Virtual Screening of Chemogenomic Libraries: Accelerating Drug Repurposing with AI and Computational Biology

Abstract

This article explores the integration of virtual screening and chemogenomic libraries as a powerful strategy for drug repurposing. Aimed at researchers and drug development professionals, it covers the foundational principles of using annotated small-molecule libraries to uncover new therapeutic uses for existing drugs. The scope extends to current methodological approaches, including AI-accelerated docking and deep learning pipelines, while also addressing critical challenges such as chemical library biases and the need for robust validation. By examining comparative case studies and future directions, this review provides a comprehensive framework for implementing these computational techniques to reduce development timelines and costs, thereby expediting the delivery of new treatments to patients.

Laying the Groundwork: Principles of Chemogenomics and Virtual Screening in Repurposing

Chemogenomic libraries represent a powerful cornerstone of modern phenotypic drug discovery and repurposing efforts. These collections of target-annotated small molecules enable researchers to probe biological systems systematically, bridging the gap between phenotypic screening and target-based drug discovery. This application note delineates the strategic design, implementation, and analytical protocols for utilizing chemogenomic libraries in virtual screening campaigns aimed at drug repurposing. We provide detailed methodologies for library construction, quantitative high-throughput screening (qHTS), and data analysis, supported by structured workflows and reagent specifications to facilitate robust experimental design and interpretation.

Chemogenomic libraries are strategically designed collections of small molecules annotated for their interactions with specific protein targets or target families [1]. Unlike traditional compound libraries focused on structural diversity, chemogenomic libraries emphasize biological relevance and target coverage, creating defined mappings between chemical space and biological space [2]. This intentional design makes them particularly powerful for drug repurposing research, where understanding a compound's polypharmacology—its ability to interact with multiple targets—can reveal new therapeutic applications beyond original indications [3].

The fundamental value proposition of these libraries lies in their information-rich composition. When a compound from a chemogenomic library produces a phenotypic response in a screening assay, the pre-existing target annotations immediately provide testable hypotheses about the biological pathways and mechanisms involved [3] [4]. This approach significantly accelerates the target deconvolution process that traditionally represents a major bottleneck in phenotypic screening [4]. For drug repurposing, this strategy efficiently identifies new therapeutic uses for existing clinical compounds by systematically probing their activities across diverse disease models and biological contexts.

Library Design Strategies and Composition

The construction of a high-quality chemogenomic library requires balancing multiple optimization parameters, including target coverage, cellular activity, chemical diversity, and compound availability [5] [6]. Two complementary design strategies have emerged: target-based and drug-based approaches.

Target-Based Design: Experimental Probe Compounds (EPCs)

The target-based approach begins with defining a comprehensive set of proteins implicated in disease pathogenesis, then identifying potent and selective small-molecule modulators for these targets [6]. This process typically generates nested compound subsets:

- Theoretical Set: A comprehensive in silico collection of established target-compound pairs covering the defined target space. One published example includes 336,758 unique compounds targeting 1,655 cancer-associated proteins [6].

- Large-Scale Set: A filtered subset (e.g., 2,288 compounds) refined through activity and similarity thresholds to reduce redundancy while maintaining target coverage [6].

- Screening Set: The final physical library (e.g., 1,211 compounds) comprising commercially available, cellularly active probes optimized for practical screening applications [5] [6].

Drug-Based Design: Approved and Investigational Compounds (AICs)

The drug-based strategy focuses on compounds with established clinical profiles, including approved drugs and investigational agents [6]. This collection is particularly valuable for repurposing applications, as these compounds have known safety profiles and often favorable pharmacokinetic properties. The AIC library is typically curated from public drug databases and clinical trials, with structural similarity analyses used to minimize redundancy [6].

Table 1: Comparative Analysis of Chemogenomic Library Design Strategies

| Design Parameter | Target-Based Approach (EPCs) | Drug-Based Approach (AICs) |
|---|---|---|
| Primary Objective | Maximize target coverage and mechanistic exploration | Leverage existing clinical compounds for repurposing |
| Compound Sources | Chemical probes, investigational compounds | Approved drugs, clinical candidates |
| Advantages | High target diversity, novel biology discovery | Favorable ADMET profiles, accelerated translation |
| Challenges | Variable clinical translatability | Limited novelty in target space |
| Target Coverage | ~84% of defined anticancer targets (1,211 compounds covering 1,386 proteins) [5] | Varies by therapeutic area |

Implementation Example: The C3L Library

The Comprehensive anti-Cancer small-Compound Library (C3L) exemplifies the practical application of these design principles. Through iterative filtering that prioritized cellular activity, potency, and commercial availability, researchers distilled a theoretical set of 336,758 compounds down to a screening-optimized library of 1,211 compounds that still covers 1,386 (about 84%) of the 1,655 anticancer targets in the original target space [5] [6]. This library identified patient-specific vulnerabilities in glioblastoma stem cells, demonstrating the utility of focused chemogenomic libraries in uncovering clinically relevant insights [5].
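The nested filtering idea (theoretical set, then large-scale set, then screening set) can be illustrated with a small sketch. The compounds, targets, and potency threshold below are hypothetical, and the redundancy-reduction step uses a greedy set cover. This is a simple stand-in chosen for illustration, not the activity and similarity thresholds actually used to build C3L. The last line reproduces the coverage arithmetic quoted above.

```python
from dataclasses import dataclass
from typing import Dict, List, Set


@dataclass
class Compound:
    name: str
    potency: Dict[str, float]  # target -> potency on a pAC50-like scale (hypothetical)
    cell_active: bool
    available: bool


def filter_compounds(compounds: List[Compound], min_potency: float) -> Dict[str, Set[str]]:
    """Keep cell-active, purchasable compounds and only their sufficiently potent targets."""
    kept: Dict[str, Set[str]] = {}
    for c in compounds:
        if not (c.cell_active and c.available):
            continue
        targets = {t for t, p in c.potency.items() if p >= min_potency}
        if targets:
            kept[c.name] = targets
    return kept


def greedy_cover(library: Dict[str, Set[str]]) -> List[str]:
    """Greedy set cover: repeatedly pick the compound that adds the most uncovered targets."""
    uncovered = set().union(*library.values())
    chosen: List[str] = []
    while uncovered:
        best = max(library, key=lambda n: len(library[n] & uncovered))
        chosen.append(best)
        uncovered -= library[best]
    return chosen


def coverage(selected: List[str], library: Dict[str, Set[str]], target_space: Set[str]) -> float:
    """Fraction of the defined target space modulated by at least one selected compound."""
    covered: Set[str] = set()
    for name in selected:
        covered |= library[name]
    return len(covered & target_space) / len(target_space)


# Hypothetical toy data.
compounds = [
    Compound("cpd_A", {"EGFR": 8.1, "ERBB2": 7.2}, cell_active=True, available=True),
    Compound("cpd_B", {"EGFR": 7.5}, cell_active=True, available=True),
    Compound("cpd_C", {"CDK4": 6.9, "CDK6": 7.0}, cell_active=True, available=True),
    Compound("cpd_D", {"BRAF": 8.0}, cell_active=False, available=True),  # dropped: not cell-active
    Compound("cpd_E", {"MTOR": 5.2}, cell_active=True, available=True),   # dropped: too weak
]
target_space = {"EGFR", "ERBB2", "CDK4", "CDK6", "BRAF", "MTOR"}

library = filter_compounds(compounds, min_potency=6.0)
screening_set = greedy_cover(library)
print(screening_set, f"{coverage(screening_set, library, target_space):.1%}")
print(f"C3L: {1386 / 1655:.1%} of targets covered")  # 83.7%, reported as ~84%
```

In this toy example, `cpd_B` is dropped as redundant because `cpd_A` already covers EGFR. The targets lost to the potency and cell-activity filters (BRAF, MTOR) show why coverage falls below 100% as a library is made more practical.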

Experimental Protocols and Workflows

Protocol 1: Virtual Screening of Chemogenomic Libraries

Virtual screening computationally prioritizes compounds from chemogenomic libraries for experimental testing, leveraging target annotations and structural information [7].

Materials:

- Chemogenomic library database (e.g., C3L, MIPE)

- Protein structure or pharmacophore model

- Molecular docking software (e.g., AutoDock, Glide)

- Computing cluster or cloud resources

Procedure:

- Library Preparation: Convert compound structures from the chemogenomic library into appropriate 3D formats for docking. Apply chemical filters to ensure drug-likeness.

- Target Preparation: Generate the 3D structure of the target protein through experimental data or homology modeling. Define the binding site coordinates.

- Molecular Docking: Perform computational docking of library compounds against the target binding site. Use scoring functions to rank compounds by predicted binding affinity.

- Hit Selection: Analyze top-ranking compounds for binding mode consistency and interaction quality. Select diverse chemotypes for experimental validation.

- Experimental Validation: Test selected compounds in biochemical or cellular assays to confirm activity.
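The drug-likeness filter and the diverse hit-selection steps can be sketched as follows. Several assumptions apply. Lipinski's rule of five is used as one common drug-likeness filter. Fingerprints are represented as sets of on-bit indices, whereas real workflows would compute them with a cheminformatics toolkit. A simple greedy diversity rule with a hypothetical Tanimoto cutoff of 0.6 is applied. All scores, descriptors, and fingerprints are invented. For brevity, the filter is applied here to the scored list, although in the protocol it belongs to library preparation.

```python
from typing import Dict, FrozenSet, List


def tanimoto(a: FrozenSet[int], b: FrozenSet[int]) -> float:
    """Tanimoto similarity of two fingerprints given as sets of on-bit indices."""
    inter = len(a & b)
    union = len(a) + len(b) - inter
    return inter / union if union else 0.0


def passes_rule_of_five(p: Dict[str, float]) -> bool:
    """Lipinski's rule of five, tolerating at most one violation."""
    violations = sum([p["mw"] > 500, p["logp"] > 5, p["hbd"] > 5, p["hba"] > 10])
    return violations <= 1


def select_diverse_hits(scores: Dict[str, float], fps: Dict[str, FrozenSet[int]],
                        n_hits: int, max_sim: float = 0.6) -> List[str]:
    """Walk the docking ranking (more negative = better) and skip any compound whose
    similarity to an already selected hit reaches max_sim."""
    picked: List[str] = []
    for name in sorted(scores, key=scores.get):
        if all(tanimoto(fps[name], fps[p]) < max_sim for p in picked):
            picked.append(name)
        if len(picked) == n_hits:
            break
    return picked


# Hypothetical docking scores (kcal/mol), descriptors, and fingerprints.
scores = {"drug_1": -9.2, "drug_2": -9.0, "drug_3": -8.7, "drug_4": -9.8}
props = {
    "drug_1": {"mw": 350, "logp": 2.1, "hbd": 2, "hba": 5},
    "drug_2": {"mw": 362, "logp": 2.4, "hbd": 2, "hba": 5},
    "drug_3": {"mw": 410, "logp": 3.0, "hbd": 1, "hba": 7},
    "drug_4": {"mw": 650, "logp": 6.2, "hbd": 3, "hba": 9},
}
fps = {
    "drug_1": frozenset({1, 2, 3, 4}),
    "drug_2": frozenset({1, 2, 3, 5}),
    "drug_3": frozenset({10, 11, 12}),
    "drug_4": frozenset({20, 21}),
}
drug_like = {n: s for n, s in scores.items() if passes_rule_of_five(props[n])}
print(select_diverse_hits(drug_like, fps, n_hits=2))
```

Here `drug_4` has the best score but fails the filter, and `drug_2` is skipped as a near-duplicate of `drug_1` (Tanimoto 0.6). This leaves two distinct chemotypes for validation. For repurposing libraries of approved drugs, the filter may need relaxing, since some marketed drugs violate the rule of five.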

Protocol 2: Quantitative High-Throughput Screening (qHTS)

qHTS assays screen compounds across multiple concentrations, generating concentration-response curves for robust potency and efficacy assessment [8].

Materials:

- Compound library in source plates

- Automated liquid handling system

- 1536-well microtiter plates

- Cell-based assay reagents

- High-content imager or plate reader

Procedure:

- Assay Development: Optimize cell density, reagent concentrations, and incubation times using control compounds.

- Compound Transfer: Using acoustic dispensing or pin tools, transfer compounds from source plates to assay plates across a concentration range (typically 3-8 points in serial dilution).

- Cell Treatment: Add cell suspension to assay plates. Incubate for predetermined time under appropriate conditions.

- Signal Detection: Measure assay endpoint using appropriate detection method (e.g., fluorescence, luminescence, high-content imaging).

- Data Processing: Normalize data to positive and negative controls. Fit concentration-response curves using the Hill equation to determine AC~50~ and E~max~ values [8].
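The compound-transfer and normalization steps reduce to simple arithmetic, sketched below. The sketch assumes a common convention in which the mean negative (vehicle) control defines 0% activity and the mean positive control defines 100%. Sign conventions vary between assays, and the signal values are hypothetical.

```python
from statistics import mean
from typing import List, Sequence


def dilution_series(top_conc: float, fold: float, n_points: int) -> List[float]:
    """Concentrations of an n-point serial dilution starting at top_conc."""
    return [top_conc / fold ** i for i in range(n_points)]


def percent_activity(raw: Sequence[float], neg_ctrl: Sequence[float],
                     pos_ctrl: Sequence[float]) -> List[float]:
    """Map raw signals so the mean negative control is 0% and the mean positive control is 100%."""
    neg, pos = mean(neg_ctrl), mean(pos_ctrl)
    if pos == neg:
        raise ValueError("positive and negative controls give identical signals")
    return [100.0 * (x - neg) / (pos - neg) for x in raw]


conc = dilution_series(10.0, 2.0, 3)  # e.g. a 3-point, 2-fold series from 10 uM
activity = percent_activity([1000, 600, 200],
                            neg_ctrl=[1000, 1020, 980], pos_ctrl=[200, 210, 190])
print(conc, activity)
```

Because the normalization uses the difference `pos - neg`, it also handles assays in which the positive control lowers the signal (as in this example), and the resulting percentages feed directly into the curve fit.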

Table 2: Key Parameters in qHTS Data Analysis Using the Hill Equation

| Parameter | Symbol | Biological Interpretation | Estimation Considerations |
|---|---|---|---|
| Baseline Response | E~0~ | Untreated system response | Should be stable across plates |
| Maximal Response | E~∞~ | Maximum compound effect | May indicate efficacy or toxicity |
| Half-Maximal Activity | AC~50~ | Compound potency | Precise estimation requires defined asymptotes [8] |
| Hill Coefficient | h | Steepness of concentration-response | Values far from 1 may suggest cooperativity but can also reflect assay artifacts |

Data Analysis and Interpretation

Concentration-Response Curve Fitting

The Hill equation remains the standard model for analyzing qHTS data:

$$R_i = E_0 + \frac{E_\infty - E_0}{1 + \exp\{-h[\log C_i - \log AC_{50}]\}}$$

Where:

- $R_i$ = measured response at concentration $C_i$

- $E_0$ = baseline response

- $E_\infty$ = maximal response

- $AC_{50}$ = half-maximal activity concentration

- $h$ = Hill slope parameter [8]

Critical Considerations:

- Parameter estimates show poor repeatability when concentration ranges fail to establish both upper and lower asymptotes [8].

- Increasing replicate measurements improves precision of AC~50~ and E~max~ estimates (Table 3).

- Flat response profiles or non-monotonic curves may indicate false negatives or complex biology not captured by the Hill equation [8].
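The Hill equation above can be fitted without specialized libraries, as shown in the dependency-free sketch below. The sketch assumes the logarithm in the equation is natural, and the grid ranges are hypothetical. Because the model is linear in $E_0$ and $E_\infty$ once $AC_{50}$ and $h$ are fixed, it searches a grid over $\log_{10} AC_{50}$ and $h$ and solves for the two asymptotes exactly at each point. This grid-plus-linear-solve approach is an illustrative choice, not the estimation method used in [8]. In practice, a nonlinear least-squares routine such as `scipy.optimize.curve_fit` would be typical.

```python
import math
from typing import Optional, Sequence, Tuple


def hill(conc: float, e0: float, einf: float, ac50: float, h: float) -> float:
    """Hill equation in the log-concentration form used in the text (natural log assumed)."""
    return e0 + (einf - e0) / (1.0 + math.exp(-h * (math.log(conc) - math.log(ac50))))


def solve_asymptotes(f: Sequence[float], r: Sequence[float]) -> Optional[Tuple[float, float, float]]:
    """With AC50 and h fixed, R = E0*(1 - f) + Einf*f is linear in (E0, Einf).
    Solve the 2x2 normal equations and return (E0, Einf, sum of squared errors)."""
    a11 = sum((1 - fi) ** 2 for fi in f)
    a12 = sum((1 - fi) * fi for fi in f)
    a22 = sum(fi ** 2 for fi in f)
    b1 = sum((1 - fi) * ri for fi, ri in zip(f, r))
    b2 = sum(fi * ri for fi, ri in zip(f, r))
    det = a11 * a22 - a12 ** 2
    if abs(det) < 1e-12:  # f is (nearly) constant, so the asymptotes are not identifiable
        return None
    e0 = (b1 * a22 - a12 * b2) / det
    einf = (a11 * b2 - a12 * b1) / det
    sse = sum((e0 * (1 - fi) + einf * fi - ri) ** 2 for fi, ri in zip(f, r))
    return e0, einf, sse


def fit_hill(concs, responses, log10_ac50_grid, h_grid):
    """Grid search over log10(AC50) and h; E0 and Einf are solved exactly at each grid point.
    Replicates can be passed by repeating concentrations. Returns (E0, Einf, AC50, h, SSE)."""
    best = None
    for log10_ac50 in log10_ac50_grid:
        ln_ac50 = log10_ac50 * math.log(10)
        for h in h_grid:
            f = [1.0 / (1.0 + math.exp(-h * (math.log(c) - ln_ac50))) for c in concs]
            solved = solve_asymptotes(f, responses)
            if solved is not None and (best is None or solved[2] < best[4]):
                best = (solved[0], solved[1], 10 ** log10_ac50, h, solved[2])
    return best


# Noise-free synthetic curve (hypothetical): E0 = 0, Einf = 100, AC50 = 0.1 uM, h = 1.
concs = [1e-3 * 3 ** i for i in range(11)]  # 0.001 to ~59 uM in 3-fold steps
responses = [hill(c, 0.0, 100.0, 0.1, 1.0) for c in concs]
e0, einf, ac50, h, sse = fit_hill(
    concs, responses,
    log10_ac50_grid=[k / 10 for k in range(-40, 21)],  # 1e-4 to 1e2 uM
    h_grid=[k / 10 for k in range(5, 31)],             # 0.5 to 3.0
)
print(f"E0={e0:.2f}  Einf={einf:.2f}  AC50={ac50:.3g} uM  h={h:.1f}")
```

If the tested concentrations do not reach both asymptotes, the error surface becomes nearly flat along the $AC_{50}$ axis and many grid points fit almost equally well. This is the poor repeatability described in the considerations above.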

Table 3: Impact of Replicate Number on Parameter Estimation Precision

| True AC~50~ (μM) | True E~max~ (%) | Number of Replicates (n) | 95% CI for AC~50~ Estimates | 95% CI for E~max~ Estimates |
|---|---|---|---|---|
| 0.001 | 50 | 1 | [4.69×10^-10^, 8.14] | [45.77, 54.74] |
| 0.001 | 50 | 3 | [5.59×10^-8^, 0.54] | [44.90, 55.17] |
| 0.001 | 50 | 5 | [5.84×10^-7^, 0.15] | [47.54, 52.57] |
| 0.1 | 50 | 1 | [0.04, 0.23] | [12.29, 88.99] |
| 0.1 | 50 | 5 | [0.06, 0.16] | [46.44, 53.71] |

Target-Phenotype Mapping

Following hit identification, systematic mapping of compound targets to observed phenotypes enables mechanistic deconvolution:

- Hit Clustering: Group active compounds by structural similarity and target annotation.

- Pathway Enrichment: Analyze annotated targets for overrepresentation in specific biological pathways using tools like KEGG or Gene Ontology [4].

- Network Analysis: Construct target-pathway-disease networks to identify key nodes connecting compound activity to potential therapeutic applications [4].
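Pathway over-representation is commonly assessed with a one-sided hypergeometric test, and the sketch below implements that test. It rests on two assumptions. The background is taken to be all annotated targets in the screened library, and the pathway memberships are invented toy sets standing in for KEGG or Gene Ontology annotations. When many pathways are tested, the p-values should also be corrected for multiple testing (for example with the Benjamini-Hochberg procedure), which is omitted here.

```python
from math import comb
from typing import Dict, List, Set, Tuple


def hypergeom_sf(k: int, N: int, K: int, n: int) -> float:
    """P(X >= k) for X ~ Hypergeometric(N background targets, K in the pathway, n hit targets)."""
    upper = min(K, n)
    return sum(comb(K, i) * comb(N - K, n - i) for i in range(k, upper + 1)) / comb(N, n)


def pathway_enrichment(hit_targets: Set[str], pathways: Dict[str, Set[str]],
                       background: Set[str]) -> List[Tuple[str, int, int, float]]:
    """One-sided over-representation test per pathway.
    Returns (pathway, overlap, pathway size, p-value) sorted by p-value."""
    hits = hit_targets & background
    N, n = len(background), len(hits)
    results = []
    for name, members in pathways.items():
        members = members & background
        k = len(hits & members)
        if k == 0:
            continue
        results.append((name, k, len(members), hypergeom_sf(k, N, len(members), n)))
    return sorted(results, key=lambda r: r[3])


# Hypothetical data: 20 annotated targets, 4 of them modulated by active compounds.
background = {f"T{i}" for i in range(20)}
pathways = {"pathway_X": {"T0", "T1", "T2", "T3", "T4"}, "pathway_Y": {"T10", "T11", "T12"}}
hit_targets = {"T0", "T1", "T2", "T10"}
for row in pathway_enrichment(hit_targets, pathways, background):
    print(row)
```

Three of the four hit targets fall in the five-member `pathway_X`, giving p ≈ 0.032. The single hit in `pathway_Y` is unremarkable (p ≈ 0.51).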

The Scientist's Toolkit: Essential Research Reagents

Table 4: Key Reagents for Chemogenomic Library Screening

| Reagent / Resource | Function | Application Notes |
|---|---|---|
| Annotated Chemical Libraries | Source of target-annotated compounds for screening | C3L, MIPE, or custom collections; ensure proper storage at -20°C |
| Cell Painting Assay Kits | Multiparametric morphological profiling | Uses 6 fluorescent dyes to mark cellular components [4] |
| High-Content Imaging Systems | Automated image acquisition and analysis | Essential for phenotypic screening; requires optimized protocols |
| T4 DNA Ligase | Adapter ligation in NGS library prep | For target identification via genomic methods [9] |
| T4 DNA Polymerase | End-repair of fragmented DNA | Creates blunt-ended DNA for NGS library construction [9] |
| Hill Equation Modeling Software | Curve fitting for qHTS data | Enables AC~50~ and E~max~ estimation; requires appropriate asymptotes [8] |

Applications in Drug Repurposing

Chemogenomic library screening has demonstrated particular utility in drug repurposing through several mechanisms:

- Target-Based Repurposing: Identification of novel targets for existing drugs reveals new therapeutic indications. For example, profiling of traditional medicine compounds identified sodium-glucose transport proteins and PTP1B as targets relevant to hypoglycemic activity [1].

- Phenotype-Based Repurposing: Screening clinical compound libraries in disease-relevant models directly identifies new indications without prior target knowledge.

- Polypharmacology Exploitation: Deliberate exploration of multi-target activities enables addressing complex diseases through systems pharmacology approaches [4].

The integration of chemogenomic screening with functional genomics technologies (e.g., CRISPR-Cas9) creates powerful convergent approaches for rapid target validation and mechanism elucidation [3].

Chemogenomic libraries provide a systematic framework for bridging chemical space and biological function, offering powerful capabilities for drug repurposing research. The strategic design of these libraries, which balances target coverage, compound diversity, and practical screening considerations, enables efficient translation from phenotypic observations to mechanistic insights. The protocols and analytical methods detailed in this application note provide researchers with a roadmap for implementing chemogenomic approaches in their repurposing campaigns. As these libraries continue to expand and evolve, incorporating increasingly sophisticated annotation and design principles, they are likely to yield new therapeutic opportunities from existing chemical matter.

Drug repurposing (also known as drug repositioning) represents a paradigm shift in pharmaceutical development, focusing on identifying new therapeutic uses for existing drugs, including those already approved, discontinued, or still in clinical trials [10] [11]. This approach stands in stark contrast to traditional de novo drug discovery, offering a more efficient and cost-effective path to market by leveraging existing clinical, pharmacological, and safety data [11]. The strategic value of drug repurposing has gained significant recognition across the pharmaceutical industry and academic research institutions, particularly for addressing persistent therapeutic challenges in areas such as oncology, neurodegenerative disorders, and rare diseases [10] [12].

The fundamental rationale for drug repurposing rests on its ability to circumvent many of the most resource-intensive stages of traditional drug development. Since repurposed candidates have already undergone extensive safety testing in humans, they can bypass much of the preclinical toxicity testing and Phase I safety trials required for novel compounds [10]. This strategic advantage translates directly into reduced development timelines, lower costs, and higher success rates, ultimately accelerating patient access to new treatments [10].

Quantitative Advantages of Drug Repurposing

Comparative Analysis: Repurposing vs. Traditional Development

The economic and temporal benefits of drug repurposing are substantial and well-documented. The tables below provide a detailed comparison of key development metrics between traditional drug discovery and drug repurposing approaches.

Table 1: Cost and Time Comparison of Drug Development Approaches

| Metric | De Novo Drug Discovery | Drug Repurposing |
|---|---|---|
| Average cost to approval | $1.5-4.5 billion (commonly around $2-3 billion) [12] | Approximately $300 million [10] [12] |
| Average time to market | 10-17 years (median ~12 years) [12] | 3-12 years [12]; at least 3 years, with the lowest reported average at 6 years [10] |
| Success probability | ~10-12% from Phase I to approval [12] | ~30%, roughly 2.5-3× higher than de novo [12] |

Table 2: Market Segments and Growth Projections in Drug Repurposing

| Segment | Market Share/Dominance | Projected Growth/Figures |
|---|---|---|
| Global Market (Overall) | Valued at USD 35.14 billion in 2025 [12]; an alternate source gives USD 36.87 billion in 2025 [11] | USD 46.87 billion by 2032 (4.2% CAGR) [12]; USD 59.30 billion by 2034 (5.42% CAGR) [11] |
| Leading Approach | Disease-centric (39.3% share in 2025) [12] | 43% revenue share in 2024 [11] |
| Dominant Therapeutic Area | Oncology (45.6% share in 2025) [12] | Driven by urgent need and high repurposing potential [12] |
| Leading Drug Type | Small molecules (55.4% share in 2025) [12] | Versatility and established profiles [12] |
| Dominant Region | North America (42.3%-47% share) [12] [11] | Well-established healthcare system and R&D infrastructure [12] |
| Fastest Growing Region | Asia Pacific (24.5% share in 2025) [12] | Expanding healthcare expenditure and investments [12] [11] |
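As a consistency check, the growth rates in the global-market row can be recomputed from their start and end values with the standard compound annual growth rate formula, where $t$ is the number of years between the two values:

$$\mathrm{CAGR} = \left(\frac{V_{\text{end}}}{V_{\text{start}}}\right)^{1/t} - 1$$

$$\left(\frac{46.87}{35.14}\right)^{1/7} - 1 \approx 0.0420 \quad (2025 \to 2032)$$

$$\left(\frac{59.30}{36.87}\right)^{1/9} - 1 \approx 0.0542 \quad (2025 \to 2034)$$

Both results match the reported 4.2% and 5.42% CAGRs. Each projection is therefore internally consistent, and the two sources differ in starting value, time horizon, and assumed growth rate rather than in arithmetic.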

Strategic Implications of Repurposing Advantages

The quantitative benefits outlined in Table 1 translate into several strategic advantages for drug development. The significantly reduced financial investment required for repurposing makes it an attractive strategy for addressing rare and orphan diseases, where the patient population may be too small to justify the enormous costs of traditional drug development [10]. Furthermore, the abbreviated development timeline proves particularly valuable during public health crises, as demonstrated during the COVID-19 pandemic when repurposed drugs like baricitinib provided rapidly available treatment options [10].

The higher probability of success for repurposed drugs (approximately 30% compared to 10-12% for novel drugs) substantially de-risks the development process [12]. This success rate advantage stems from the extensive existing knowledge about the drug's pharmacokinetics, pharmacodynamics, and safety profile in humans, which allows researchers to make more informed decisions about potential new indications [10].

Computational Frameworks for Drug Repurposing

Artificial Intelligence and Machine Learning Approaches

Artificial Intelligence (AI) and machine learning (ML) have substantially expanded the scope of drug repurposing by enabling the analysis of complex, high-dimensional biological and medical data to identify non-obvious drug-disease associations [10]. These computational techniques can exploit diverse data sources, including genomics, proteomics, clinical records, and scientific literature, to predict novel therapeutic indications for existing drugs.

Machine learning algorithms commonly applied in drug repurposing include:

- Supervised ML (using labeled input-output data examples): Logistic Regression, Support Vector Machines, Random Forest [10]

- Unsupervised ML (using unlabeled datasets): Principal Component Analysis, clustering algorithms [10]

- Deep Learning (DL): Multilayer Perceptron, Convolutional Neural Networks, Long Short-Term Memory Recurrent Neural Networks [10]

These AI-driven approaches excel at pattern recognition across diverse chemical and biological spaces, enabling researchers to identify potential repurposing candidates with a speed and scale unattainable through traditional experimental methods alone [11].

Network-Based Link Prediction Methods

Network-based approaches represent another powerful computational framework for drug repurposing. These methods analyze relationships between molecules—including protein-protein interactions, drug-disease associations, and drug-target interactions—to identify repurposing opportunities based on network proximity [10] [13]. The fundamental premise is that drugs located near a disease's molecular site in biological networks tend to be more suitable therapeutic candidates than those farther away [10].

A recent advancement in this field involves constructing bipartite networks of drugs and diseases, then applying sophisticated link prediction algorithms to identify missing connections that represent potential repurposing opportunities [13]. These network methods have demonstrated impressive performance, with some algorithms achieving area under the ROC curve above 0.95 and average precision almost a thousand times better than chance in cross-validation tests [13].

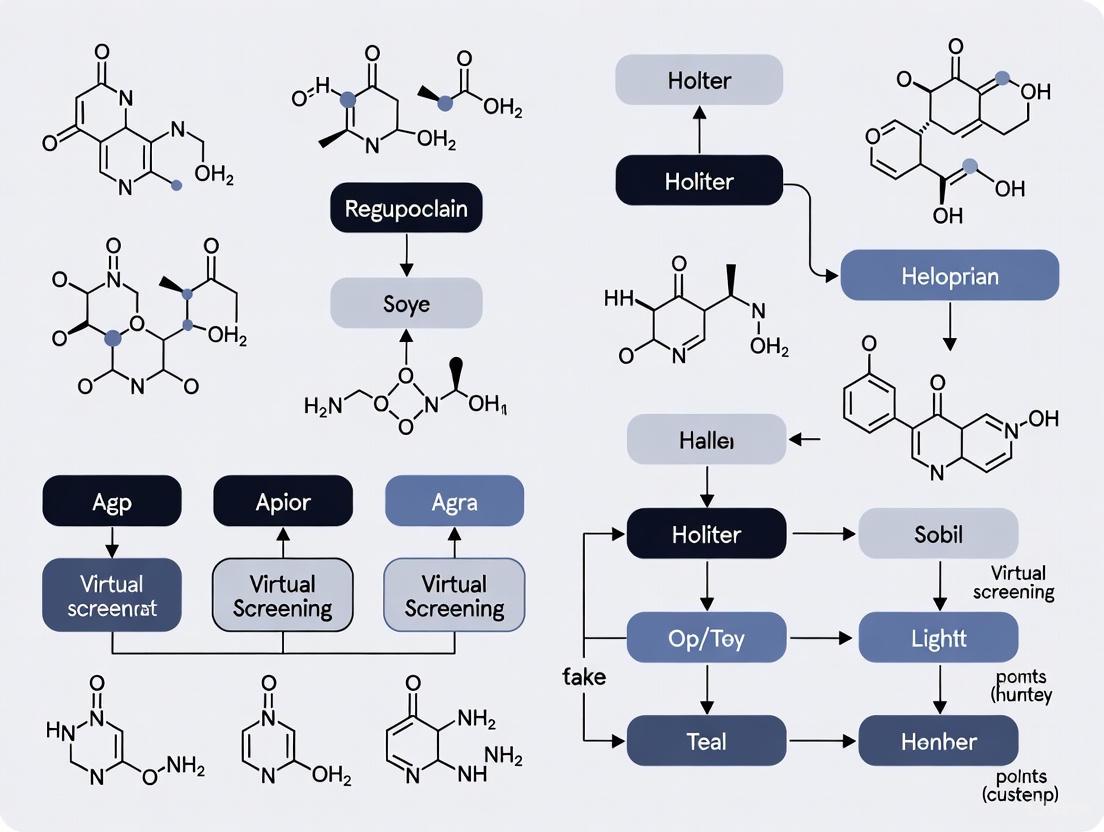

Diagram 1: Network-based drug repurposing workflow. This approach constructs bipartite networks from multiple data sources and applies link prediction algorithms to identify potential new drug-disease associations for experimental validation.
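A minimal example of link prediction on a bipartite drug-disease network follows. Common-neighbour scores are always zero between the two node types in a bipartite graph, so this sketch instead counts length-3 paths (drug → known disease → other drug → candidate disease). This is one simple, widely used heuristic and is not necessarily among the algorithms evaluated in [13]. The drug and disease names are hypothetical.

```python
from collections import defaultdict
from typing import Dict, List, Set, Tuple


def l3_link_scores(treats: Dict[str, Set[str]]) -> List[Tuple[str, str, int]]:
    """Score unobserved drug-disease pairs by counting length-3 paths
    drug -> known disease -> other drug -> candidate disease in the bipartite network."""
    treated_by: Dict[str, Set[str]] = defaultdict(set)
    for drug, diseases in treats.items():
        for disease in diseases:
            treated_by[disease].add(drug)

    scores: Dict[Tuple[str, str], int] = defaultdict(int)
    for drug, diseases in treats.items():
        for disease in diseases:
            for other in treated_by[disease] - {drug}:
                # Only diseases the drug is not already linked to are candidate new edges.
                for candidate in treats[other] - diseases:
                    scores[(drug, candidate)] += 1
    # Highest path count first; ties broken alphabetically for reproducibility.
    return sorted(((d, x, s) for (d, x), s in scores.items()), key=lambda t: (-t[2], t[0], t[1]))


# Hypothetical known indications.
treats = {
    "drug_A": {"disease_1", "disease_2"},
    "drug_B": {"disease_1", "disease_2", "disease_3"},
    "drug_C": {"disease_2", "disease_4"},
}
for drug, disease, score in l3_link_scores(treats):
    print(drug, disease, score)
```

`drug_A` shares two indications with `drug_B`, so the unobserved link `drug_A`-`disease_3` receives the highest score. In a real workflow, these candidate edges would be checked in cross-validation by hiding known links and measuring how well they are recovered.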

Experimental Protocols for Virtual Screening

Automated Virtual Screening Pipeline for Structure-Based Repurposing

Structure-based virtual screening uses target protein structural information to identify potential drug candidates. The following protocol outlines an automated virtual screening pipeline using free software tools, suitable for repurposing FDA-approved drug libraries.

Table 3: Key Research Reagents and Computational Tools

| Item/Tool | Function/Purpose | Implementation Notes |
|---|---|---|
| AutoDock Vina/QuickVina 2 | Molecular docking software for predicting small molecule binding to protein targets | Fast, accurate binding pose predictions; requires PDBQT format inputs [14] |
| FDA-Approved Drug Library | Collection of existing drugs for repurposing screening | Available from ZINC database; requires format conversion for docking [14] |
| MGLTools | Provides AutoDockTools for receptor and ligand preparation | Necessary for PDB to PDBQT file format conversion [14] |
| fpocket | Open-source software for binding pocket detection | Identifies potential binding cavities and provides druggability scores [14] |
| jamdock-suite scripts | Customizable Bash scripts for workflow automation | Modular tools (jamlib, jamreceptor, jamqvina, jamrank) streamline the screening pipeline [14] |

Protocol: Structure-Based Virtual Screening for Drug Repurposing

System Setup and Software Installation (Timing: ~35 minutes)

- Environment Setup: The protocol is designed for Linux/Unix systems. Windows 11 users can install Windows Subsystem for Linux (WSL) by opening PowerShell as administrator and running `wsl --install` [14].

- System Update: Update system packages with `sudo apt update && sudo apt upgrade -y` [14].

- Install Essential Packages: Install the required dependencies, including Open Babel, PyMOL, and build tools, with `sudo apt install -y build-essential cmake openbabel pymol libboost1.74-all-dev` [14].

- Install AutoDockTools: Download and install MGLTools from https://ccsb.scripps.edu/mgltools/downloads/ to generate input files for Vina [14].

- Install fpocket: Clone, build, and install fpocket from https://github.com/Discngine/fpocket for binding pocket detection [14].

- Install QuickVina 2: Clone the repository from https://github.com/QVina/qvina, check out the qvina2 branch, and compile it for accelerated docking [14].

- Get jamdock-suite Scripts: Clone the protocol scripts from https://github.com/jamanso/jamdock-suite and add them to your PATH [14].

Library Preparation and Receptor Setup (Timing: Variable based on library size)

- Generate Compound Library: Use `jamlib` to create a library of FDA-approved drugs in PDBQT format. The script automatically downloads molecules from the ZINC database and converts them [14].

- Prepare Receptor Structure: Use `jamreceptor` to convert protein PDB files to PDBQT format and analyze binding sites with fpocket. Select target pockets interactively to define the docking grid box [14].

Molecular Docking and Results Analysis (Timing: Hours to days based on library size)

- Execute Docking: Run `jamqvina` to perform automated docking across the entire compound library. For large libraries, use high-performance computing clusters [14].

- Resume Capability: Use `jamresume` to restart long-running jobs if they are interrupted [14].

- Rank Results: Apply `jamrank` to evaluate and rank docking results using two scoring methods, identifying the most promising repurposing candidates [14].
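To inspect docking outputs directly, a short parser can rank ligands by their best score, as in the independent sketch below. It is not `jamrank`, whose two scoring methods are not reproduced here. The sketch assumes Vina-style output files in which each pose carries a `REMARK VINA RESULT:` line with the affinity in kcal/mol as the first number. The directory layout read by `load_outputs` is hypothetical.

```python
from pathlib import Path
from typing import Dict, List, Optional, Tuple


def best_vina_score(pdbqt_text: str) -> Optional[float]:
    """Return the most negative affinity (kcal/mol) from 'REMARK VINA RESULT:' lines, if any."""
    scores = []
    for line in pdbqt_text.splitlines():
        if line.startswith("REMARK VINA RESULT:"):
            # Line layout: REMARK VINA RESULT: <affinity> <rmsd_lb> <rmsd_ub>
            scores.append(float(line.split()[3]))
    return min(scores) if scores else None


def rank_docking_outputs(outputs: Dict[str, str]) -> List[Tuple[str, float]]:
    """Rank ligands by best docking score (most negative first); unparsable files are skipped."""
    ranked = []
    for name, text in outputs.items():
        score = best_vina_score(text)
        if score is not None:
            ranked.append((name, score))
    return sorted(ranked, key=lambda item: item[1])


def load_outputs(directory: str) -> Dict[str, str]:
    """Read every *.pdbqt file in a (hypothetical) docking output directory."""
    return {p.stem: p.read_text() for p in Path(directory).glob("*.pdbqt")}


# In-memory stand-ins for docking output files.
demo = {
    "drug_1": "MODEL 1\nREMARK VINA RESULT:    -8.4      0.000      0.000\nENDMDL\n"
              "MODEL 2\nREMARK VINA RESULT:    -7.9      1.512      2.233\nENDMDL\n",
    "drug_2": "MODEL 1\nREMARK VINA RESULT:    -9.1      0.000      0.000\nENDMDL\n",
    "drug_3": "no docking result\n",
}
print(rank_docking_outputs(demo))
```

Skipping files without a parsable score, rather than failing, suits long screens in which some ligands fail preparation or docking.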

AI-Accelerated Virtual Screening Platform

Recent advances have integrated artificial intelligence with virtual screening to enhance efficiency and accuracy. The RosettaVS platform represents a state-of-the-art approach that combines physics-based docking with active learning techniques for ultra-large library screening [15].

Protocol: AI-Accelerated Virtual Screening with RosettaVS

Platform Setup and Configuration

- Install RosettaVS: Access the open-source platform and install necessary components, including the improved RosettaGenFF-VS force field for enhanced virtual screening accuracy [15].

- Receptor Preparation: Process target protein structures, accounting for side-chain flexibility and limited backbone movement to model induced fit upon ligand binding [15].

Screening Protocol Implementation

- VSX Mode (Virtual Screening Express): Perform rapid initial screening of large compound libraries using a streamlined docking protocol [15].

- Active Learning Integration: Employ target-specific neural networks that are trained during docking computations to triage and select promising compounds for more expensive calculations [15].

- VSH Mode (Virtual Screening High-Precision): Apply high-precision docking with full receptor flexibility to the top candidates identified in the VSX phase for final ranking [15].

Validation and Hit Confirmation

- Experimental Validation: Progress top-ranking computational hits to experimental validation using binding affinity assays (e.g., SPR, ITC) [15].

- Structural Validation: When possible, validate predicted binding poses using high-resolution X-ray crystallography to confirm computational predictions [15].

Hybrid Ligand- and Structure-Based Methods

Combining ligand- and structure-based methods often yields more reliable results than either approach alone. The hybrid strategy leverages the pattern recognition capabilities of ligand-based methods with the atomic-level insights of structure-based approaches [16].

Diagram 2: Hybrid virtual screening workflow integrating ligand-based and structure-based methods. This approach can be implemented through parallel or sequential strategies, balancing confidence and coverage in hit identification.

Protocol: Hybrid Virtual Screening Implementation

Sequential Integration Approach

- Ligand-Based Filtering: First, employ rapid ligand-based screening (e.g., using tools like eSim, ROCS, or FieldAlign) of large compound libraries to identify novel scaffolds and chemically diverse starting points [16].

- Structure-Based Refinement: Subject the most promising ligand-based hits to structure-based docking experiments to confirm binding interactions and binding mode predictions [16].

- Advantage: This approach conserves computationally expensive structure-based calculations for compounds already pre-filtered by ligand similarity, increasing overall efficiency [16].

Parallel Screening with Consensus Scoring

- Independent Screening: Run both ligand-based and structure-based screening simultaneously on the same compound library, with each method generating its own ranking [16].

- Consensus Scoring Framework: Create a unified ranking through multiplicative or averaging strategies that favor compounds ranking highly across both methods [16].

- Advantage: This approach reduces false positives and increases confidence in selecting true positives by requiring agreement between complementary methods [16].
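Rank-based consensus scoring can be sketched in a few lines. The sketch makes several assumptions. Ligand-based scores are similarities (higher is better) and structure-based scores are docking energies (lower is better). Both are converted to ranks before combining, and the compound names and scores are hypothetical. The mean and product modes correspond to the averaging and multiplicative strategies mentioned above.

```python
from typing import Dict, List


def to_ranks(scores: Dict[str, float], higher_is_better: bool) -> Dict[str, int]:
    """Convert raw scores to ranks (1 = best); ties broken alphabetically."""
    sign = -1.0 if higher_is_better else 1.0
    ordered = sorted(scores, key=lambda n: (sign * scores[n], n))
    return {name: i + 1 for i, name in enumerate(ordered)}


def consensus_ranking(lb_scores: Dict[str, float], sb_scores: Dict[str, float],
                      mode: str = "mean") -> List[str]:
    """Combine ligand-based and structure-based rankings of the same compounds."""
    common = lb_scores.keys() & sb_scores.keys()
    lb = to_ranks({n: lb_scores[n] for n in common}, higher_is_better=True)   # similarity
    sb = to_ranks({n: sb_scores[n] for n in common}, higher_is_better=False)  # docking energy
    if mode == "mean":
        combined = {n: (lb[n] + sb[n]) / 2 for n in common}
    elif mode == "product":
        combined = {n: lb[n] * sb[n] for n in common}
    else:
        raise ValueError(f"unknown mode: {mode}")
    return sorted(common, key=lambda n: (combined[n], n))


lb_scores = {"drug_A": 0.82, "drug_B": 0.75, "drug_C": 0.40, "drug_D": 0.65}
sb_scores = {"drug_A": -7.0, "drug_B": -9.1, "drug_C": -9.5, "drug_D": -8.0}
print(consensus_ranking(lb_scores, sb_scores, mode="mean"))
print(consensus_ranking(lb_scores, sb_scores, mode="product"))
```

`drug_B`, ranked second by both methods, comes out on top of the mean consensus even though neither method ranks it first. This illustrates how consensus favours agreement. Converting to ranks first avoids having to put similarity values and kcal/mol energies on a common scale.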

Case Study Implementation: LFA-1 Inhibitor Optimization

- Data Splitting: Chronologically split structure-activity data into training and test sets for both QuanSA (ligand-based) and FEP+ (structure-based) affinity predictions [16].

- Individual Prediction: Each method independently predicts binding affinities with similar accuracy levels [16].

- Hybrid Model: Average predictions from both approaches, resulting in significantly reduced mean unsigned error (MUE) through partial cancellation of individual method errors [16].
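The error-cancellation effect of averaging two affinity predictors is easy to demonstrate. The values below are invented pKi numbers chosen only for illustration and are not data from the LFA-1 study. By the triangle inequality, the MUE of the averaged prediction can never exceed the mean of the two individual MUEs. It drops furthest when the two methods' errors have opposite signs.

```python
from typing import Sequence


def mue(pred: Sequence[float], obs: Sequence[float]) -> float:
    """Mean unsigned (absolute) error."""
    return sum(abs(p - o) for p, o in zip(pred, obs)) / len(obs)


# Hypothetical pKi values, invented only to illustrate error cancellation.
observed = [7.0, 8.0, 6.5, 9.0]
ligand_based = [7.6, 7.5, 6.0, 9.4]     # stands in for a QuanSA-style prediction
structure_based = [6.5, 8.6, 7.1, 8.7]  # stands in for an FEP+-style prediction
hybrid = [(a + b) / 2 for a, b in zip(ligand_based, structure_based)]

for label, pred in [("ligand-based", ligand_based),
                    ("structure-based", structure_based),
                    ("hybrid", hybrid)]:
    print(f"{label:16s} MUE = {mue(pred, observed):.2f}")
```

Both individual predictors have an MUE of 0.50 here, yet their errors mostly point in opposite directions, so the average lands within 0.05 of every observed value.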

Drug repurposing represents a strategically vital approach to pharmaceutical development that offers substantial advantages in cost, time, and success probability compared to traditional de novo drug discovery. The integration of advanced computational methods—including AI-driven approaches, network-based link prediction, and hybrid virtual screening protocols—has dramatically accelerated the identification of new therapeutic indications for existing drugs.

The experimental protocols outlined in this article provide researchers with practical frameworks for implementing structure-based, ligand-based, and integrated screening approaches. These methodologies leverage publicly available resources and open-source tools, making them accessible to academic researchers, pharmaceutical companies, and biotechnology firms alike.

As the field continues to evolve, drug repurposing is poised to play an increasingly important role in addressing unmet medical needs, particularly in complex disease areas like oncology, neurodegenerative disorders, and rare diseases. The continued development and refinement of computational approaches will further enhance our ability to identify repurposing opportunities, ultimately accelerating the delivery of effective treatments to patients.

Virtual screening (VS) is a cornerstone of modern computer-aided drug design (CADD), enabling researchers to efficiently identify potential drug candidates from vast chemical libraries by computationally predicting their biological activity [17]. In the context of drug repurposing—the strategy of finding new therapeutic uses for existing drugs—VS provides a powerful and cost-effective approach to navigate chemogenomic libraries, significantly accelerating the discovery of novel treatments for diseases such as colorectal cancer [18]. The two primary methodologies, Ligand-Based Virtual Screening (LBVS) and Structure-Based Virtual Screening (SBVS), offer complementary paths to this goal. LBVS leverages known bioactive molecules to find new compounds with similar properties, while SBVS utilizes the three-dimensional structure of a biological target to predict ligand binding [19] [17]. The strategic integration of these methods, particularly with advances in artificial intelligence (AI), is increasingly vital for enhancing the efficiency and success of drug discovery and repurposing campaigns [20] [21].

Core Principles and Methodologies

Ligand-Based Virtual Screening (LBVS)

LBVS operates on the fundamental "similarity-property principle," which posits that structurally similar molecules are likely to exhibit similar biological activities [20] [17]. This approach is indispensable when the three-dimensional structure of the target protein is unknown, as it relies entirely on the information derived from known active ligands.

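- Molecular Descriptors and Similarity Searching: LBVS compares molecules using molecular descriptors, which can be categorized by their dimensionality: 1D descriptors (bulk properties such as molecular weight), 2D descriptors (topological features such as substructure fingerprints), and 3D descriptors (molecular shape and pharmacophoric features) [19] [17].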

- Quantitative Structure-Activity Relationship (QSAR): This is a more quantitative LBVS approach that constructs a mathematical model correlating molecular descriptors to a biological activity [18] [20]. Machine learning (ML) techniques, including support vector machines (SVM) and random forest algorithms, are now extensively used to build predictive QSAR models from high-throughput screening data [18] [22].
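The similarity-property principle translates directly into a similarity search, sketched below. The sketch scores each library compound by its maximum Tanimoto similarity to any known active (often called MAX group fusion). Fingerprints are represented as sets of on-bit indices; real workflows would generate them with a cheminformatics toolkit such as RDKit. All names and fingerprints are hypothetical, as is the optional threshold.

```python
from typing import Dict, FrozenSet, List, Tuple


def tanimoto(a: FrozenSet[int], b: FrozenSet[int]) -> float:
    """Tanimoto similarity of two fingerprints given as sets of on-bit indices."""
    inter = len(a & b)
    union = len(a) + len(b) - inter
    return inter / union if union else 0.0


def similarity_search(library: Dict[str, FrozenSet[int]], actives: Dict[str, FrozenSet[int]],
                      threshold: float = 0.0) -> List[Tuple[str, float]]:
    """Rank library compounds by their maximum similarity to any known active (MAX fusion)."""
    hits = []
    for name, fp in library.items():
        best = max(tanimoto(fp, act) for act in actives.values())
        if best >= threshold:
            hits.append((name, best))
    return sorted(hits, key=lambda item: (-item[1], item[0]))


actives = {"known_active": frozenset({1, 2, 3, 4})}
library = {
    "drug_X": frozenset({1, 2, 3, 5}),
    "drug_Y": frozenset({7, 8}),
    "drug_Z": frozenset({1, 2, 3, 4}),
}
print(similarity_search(library, actives))
```

This ranking makes the bias toward known chemical space visible. Compounds that share few bits with the reference actives, such as `drug_Y`, fall to the bottom regardless of whether they could bind the target.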

Structure-Based Virtual Screening (SBVS)

SBVS methodologies depend on the availability of the three-dimensional structure of the target, typically a protein, obtained through X-ray crystallography, NMR spectroscopy, or computational predictions (e.g., AlphaFold) [20] [23]. The core principle is to predict how a small molecule (ligand) interacts with the target's binding site.

- Molecular Docking: This is the most widely used SBVS technique [19] [17]. Docking involves two main steps:

- Pose Prediction: Sampling possible orientations (poses) of the ligand within the binding site.

- Scoring: Ranking these poses using a scoring function that estimates the binding affinity.

- Scoring Functions: These are mathematical functions used to predict the strength of protein-ligand interaction. They can be physics-based (estimating force field energies), empirical (based on fitted parameters), or knowledge-based (derived from statistical analyses of known protein-ligand complexes) [19] [22]. A key challenge is the accurate calculation of binding affinities in an aqueous environment [7].
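To make the empirical category concrete, such functions are often written as a weighted sum of interaction terms, with the weights fitted by regression against measured binding affinities. The generic form below is an illustrative sketch; the particular terms and weights vary between docking programs and are not specified in this article.

```latex
\Delta G_{\mathrm{bind}} \approx \Delta G_{0} + \sum_{i=1}^{n} w_i \, f_i(\text{pose})
```

Here each f_i is an interaction term evaluated on the docked pose (for example a hydrogen-bond, hydrophobic-contact, or rotatable-bond penalty term), w_i is its fitted weight, and ΔG_0 is a constant offset.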

Table 1: Comparison of LBVS and SBVS Core Characteristics

| Feature | Ligand-Based (LBVS) | Structure-Based (SBVS) |
|---|---|---|
| Primary Data | Known active ligands (1D, 2D, 3D descriptors) | 3D structure of the target protein |
| Key Principle | Molecular similarity | Structural and chemical complementarity |
| Main Techniques | Similarity search, QSAR modeling, Pharmacophore modeling | Molecular docking, Molecular dynamics simulations |
| Data Requirement | Set of active/inactive compounds | Protein structure (experimental or predicted) |
| Major Advantage | No protein structure needed; computationally fast | Can discover novel scaffolds; provides binding mode insights |
| Major Limitation | Bias towards known chemical space; limited novelty | High computational cost; sensitive to protein flexibility and scoring inaccuracies |

Integrated Workflow and Experimental Protocols

Given their complementary strengths and weaknesses, the most effective VS strategies often combine LBVS and SBVS approaches [19] [20]. The following workflow and protocol outline a synergistic hybrid strategy for a drug repurposing project.

Combined Virtual Screening Workflow

The diagram below illustrates a sequential hybrid workflow that leverages both LB and SB methods to efficiently prioritize compounds from a large chemogenomic library.

Detailed Protocol for a Hybrid VS Campaign

Objective: To identify potential repurposed drug candidates from a library of approved drugs for a specific protein target (e.g., PAK2 kinase [24]).

Materials & Software:

- Chemical Library: A database of approved drugs (e.g., 3,648 FDA-approved compounds [24]).

- Target Structure: A 3D structure of the target protein (e.g., PDB ID for PAK2).

- Software for LBVS: Tools for fingerprint calculation (e.g., RDKit) and QSAR modeling (e.g., KNIME, Python scikit-learn).

- Software for SBVS: Molecular docking software (e.g., Glide [24] [22], AutoDock Vina).

- Software for Refinement: Molecular dynamics simulation packages (e.g., GROMACS, AMBER).

Procedure:

Library Preparation:

- Obtain the structures of approved drugs in a suitable format (e.g., SDF, SMILES).

- Perform chemical cleaning: standardize structures, remove duplicates, and generate probable tautomers and protonation states at physiological pH (e.g., using MOE, Open Babel).

- Output: A curated, ready-to-screen molecular database.
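Duplicate removal is easiest once every structure carries a canonical identifier. The sketch below assumes InChIKeys have already been computed upstream by a cheminformatics toolkit and that each record is a plain dictionary with hypothetical `name`, `inchikey`, and `source` fields; it keeps the first record per key while noting every source database in which the compound appeared.

```python
from typing import Dict, List


def deduplicate_by_inchikey(records: List[Dict[str, str]]) -> List[Dict[str, str]]:
    """Collapse records sharing an InChIKey, keeping the first and merging sources.

    Hypothetical helper: each record is assumed to hold 'name', 'inchikey',
    and 'source' keys, with InChIKeys computed upstream by a toolkit.
    """
    merged: Dict[str, Dict[str, str]] = {}
    for rec in records:
        key = rec["inchikey"].strip().upper()  # normalise case and whitespace
        if key not in merged:
            merged[key] = dict(rec, inchikey=key)  # copy so the input is untouched
        else:
            # Keep provenance: record every database that listed this compound.
            sources = set(merged[key]["source"].split(";")) | {rec["source"]}
            merged[key]["source"] = ";".join(sorted(sources))
    return list(merged.values())
```

Because the second block of an InChIKey encodes stereochemistry, stereoisomers stay separate under this rule; grouping on the first 14 characters (the connectivity block) would merge them instead.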

LBVS Pre-filtering:

- Similarity Search: If known active ligands for the target are available, calculate 2D molecular fingerprints (e.g., ECFP4) for all library compounds and the reference active(s). Rank the library by similarity to the reference (e.g., using the Tanimoto coefficient [17]).

- QSAR Model: If a larger set of actives and inactives is available, train a binary classification QSAR model (e.g., using a Random Forest or SVM algorithm [22]). Use the model to predict and rank the library compounds by their probability of activity.

- Output: A subset of the top 5-20% of compounds ranked by the LBVS method.
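The similarity-search branch of this step can be sketched without any toolkit by treating each fingerprint as the set of its "on" bits. This is a minimal illustration, assuming fingerprints (e.g., ECFP4) were generated elsewhere; the helper names and the 10% default cut-off (picked from within the 5-20% range above) are hypothetical.

```python
import math
from typing import Dict, FrozenSet, List, Tuple


def tanimoto(a: FrozenSet[int], b: FrozenSet[int]) -> float:
    """Tanimoto coefficient |A & B| / |A | B| for fingerprints stored as sets of on-bits."""
    if not a and not b:
        return 0.0
    shared = len(a & b)
    return shared / (len(a) + len(b) - shared)


def similarity_prefilter(
    library: Dict[str, FrozenSet[int]],
    references: List[FrozenSet[int]],
    keep_fraction: float = 0.10,
) -> List[Tuple[str, float]]:
    """Rank compounds by their maximum similarity to any reference active and
    return the top `keep_fraction` of the ranked list (always at least one)."""
    scored = [
        (name, max(tanimoto(fp, ref) for ref in references))
        for name, fp in library.items()
    ]
    scored.sort(key=lambda item: item[1], reverse=True)  # most similar first
    n_keep = max(1, math.ceil(keep_fraction * len(scored)))
    return scored[:n_keep]
```

Taking the maximum similarity to any reference is one common fusion rule when several actives are known; averaging the similarities is an alternative.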

SBVS Screening (Molecular Docking):

- Target Preparation: Prepare the protein structure from the PDB file: add hydrogen atoms, assign partial charges, and define the binding site (often based on the co-crystallized ligand or known catalytic residues).

- Grid Generation: Define a 3D grid box that encompasses the binding site of interest for docking calculations.

- Ligand Preparation: Convert the LBVS-pre-filtered compounds into 3D structures and optimize their geometry.

- Docking Execution: Dock each prepared ligand into the defined grid. Use the docking software's scoring function to predict the binding pose and affinity for each ligand.

- Pose Analysis and Selection: Manually inspect the top-scoring docking poses for key interactions with the protein (e.g., hydrogen bonds, hydrophobic contacts, pi-stacking). Select the top 50-100 compounds with favorable interactions and high docking scores for further analysis [24].

- Output: A shortlist of high-ranking candidates with predicted binding modes.
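Manual inspection remains the decisive step, but a simple filter can pre-sort poses before a chemist reviews them. The sketch below is hypothetical: the `DockingResult` record, the residue labels, and the rule that a pose must contact every required residue are illustrative assumptions, not features of any particular docking program. Scores are assumed to follow the usual convention that more negative values indicate stronger predicted binding.

```python
from dataclasses import dataclass, field
from typing import List, Set


@dataclass
class DockingResult:
    """Hypothetical summary of one docked pose (field names are illustrative)."""
    name: str
    score: float  # docking score in kcal/mol; more negative = better predicted binding
    contacts: Set[str] = field(default_factory=set)  # residues forming key interactions


def select_candidates(results: List[DockingResult], required: Set[str],
                      top_n: int = 100) -> List[DockingResult]:
    """Keep poses that engage every required residue, then return the best-scoring top_n."""
    passing = [r for r in results if required <= r.contacts]
    passing.sort(key=lambda r: r.score)  # ascending, so the most negative score comes first
    return passing[:top_n]
```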

Post-Docking Refinement (Optional but Recommended):

- Molecular Dynamics (MD) Simulation: To account for protein flexibility and solvation effects, run MD simulations (e.g., for 100-300 ns [24]) on the top-ranked protein-ligand complexes.

- Analysis: Calculate the root-mean-square deviation (RMSD) of the ligand and protein to assess stability. Use the simulation trajectories to compute more robust binding free energies (e.g., using MM/PBSA or MM/GBSA methods). This step helps validate the stability of the docked pose and provides a more reliable estimate of binding affinity [24].
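The RMSD used to judge pose stability is the square root of the mean squared atomic displacement between a frame and a reference structure. The sketch below assumes the frames have already been superimposed on the reference (typically by fitting on the protein backbone) and that atoms appear in the same order in every frame; MD packages ship dedicated tools for this (e.g., `gmx rms` in GROMACS), so the code only illustrates the calculation, and the function names are hypothetical.

```python
import math
from typing import List, Sequence, Tuple

Coord = Tuple[float, float, float]


def rmsd(frame: Sequence[Coord], reference: Sequence[Coord]) -> float:
    """RMSD between two equally sized, already superimposed coordinate sets."""
    if not frame or len(frame) != len(reference):
        raise ValueError("coordinate sets must be non-empty and the same length")
    squared = sum(
        (x - rx) ** 2 + (y - ry) ** 2 + (z - rz) ** 2
        for (x, y, z), (rx, ry, rz) in zip(frame, reference)
    )
    return math.sqrt(squared / len(frame))


def rmsd_series(trajectory: List[Sequence[Coord]]) -> List[float]:
    """RMSD of every frame relative to the first frame; a plateau suggests a stable pose."""
    reference = trajectory[0]
    return [rmsd(frame, reference) for frame in trajectory]
```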

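Experimental Validation:

- Test the prioritized candidates in biochemical or biophysical assays (e.g., kinase activity assays, surface plasmon resonance) to confirm the computational predictions.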

Table 2: Essential Materials and Software for Virtual Screening

| Item Name | Type/Category | Primary Function in VS | Example Tools / Databases |
|---|---|---|---|
| Chemical Libraries | Database | Source of compounds for screening; crucial for repurposing. | FDA-approved drugs [24], ZINC, ChEMBL [18] |
| Target Structures | Database | Provides 3D coordinates of the biological target for SBVS. | Protein Data Bank (PDB), AlphaFold Protein Structure Database [20] |
| Molecular Descriptors | Computational Algorithm | Numerical representation of molecular structure for LBVS. | ECFP fingerprints, MOE descriptors, RDKit |
| QSAR Modeling Software | Software | Builds predictive models linking structure to activity for LBVS. | KNIME, Python scikit-learn, WEKA |
| Molecular Docking Suite | Software | Predicts ligand pose and scores binding affinity for SBVS. | Glide [24] [22], AutoDock Vina, GOLD |
| MD Simulation Package | Software | Refines docked poses and assesses complex stability. | GROMACS, AMBER, NAMD [24] |
| Binding Assay Kits | Wet Lab Reagent | Experimentally validates computational hits. | Kinase activity assays, Surface Plasmon Resonance (SPR) kits [18] [20] |

Ligand-based and structure-based virtual screening are powerful, complementary methodologies that form the backbone of modern computational drug discovery and repurposing. LBVS offers speed and efficiency by leveraging historical ligand data, while SBVS provides a mechanistic basis for binding and the potential to discover novel chemotypes. The integration of these approaches into a hybrid workflow, as detailed in this application note, mitigates their individual limitations and maximizes the probability of identifying high-quality repurposing candidates. The ongoing incorporation of artificial intelligence and machine learning is further enhancing the predictive power and scalability of both LBVS and SBVS [20] [21]. As chemogenomic libraries continue to expand and structural data becomes more accessible, these refined virtual screening protocols will play an increasingly critical role in accelerating the delivery of new therapies to patients.

Within modern drug development, repurposing existing compounds represents a paradigm shift towards more efficient and cost-effective therapeutic discovery. This approach identifies new medical applications for drugs already approved for other conditions, leveraging established safety profiles to significantly accelerate the development timeline [10]. The process typically requires only 6 years and approximately $300 million, a substantial reduction from the 10-15 years and $2.6 billion often needed for de novo drug development [10] [25]. This article examines the landmark repurposing cases of Sildenafil and Thalidomide, framing their stories within the context of modern virtual screening methodologies for chemogenomic libraries. These historical examples provide critical insights and protocols for contemporary researchers aiming to navigate the complex landscape of computational drug rediscovery.

The Repurposing Paradigm: Sildenafil and Thalidomide

Sildenafil: From Angina to Erectile Dysfunction

Originally developed by Pfizer for the treatment of angina pectoris, Sildenafil was investigated for its ability to inhibit phosphodiesterase (PDE) and promote coronary vasodilation. During Phase I clinical trials, the drug demonstrated an unexpected side effect: it induced penile erections. This serendipitous discovery pivoted its development path toward erectile dysfunction, a condition for which it received FDA approval in 1998. The drug's mechanism involves selective inhibition of phosphodiesterase type 5 (PDE5), enhancing the effect of nitric oxide (NO) by preventing the degradation of cyclic guanosine monophosphate (cGMP) in the corpus cavernosum. This success story underscores the value of clinical observation and the potential for unexpected off-target effects to reveal significant therapeutic applications.

Thalidomide: A Phoenix from the Ashes

The thalidomide narrative represents perhaps the most dramatic reversal of fortune in pharmaceutical history. Initially marketed in the late 1950s as a sedative and antiemetic for morning sickness, the drug was linked to severe congenital malformations in an estimated 10,000 infants worldwide [26]. This tragedy prompted massive regulatory reforms and seemingly consigned thalidomide to medical history.

However, decades later, thalidomide experienced a remarkable renaissance. Israeli physician Jacob Sheskin discovered its efficacy in treating erythema nodosum leprosum (ENL), an inflammatory complication of leprosy [26]. Subsequent research revealed that thalidomide possesses potent immunomodulatory and anti-angiogenic properties, notably inhibiting tumor necrosis factor-alpha (TNF-α) production and vascular endothelial growth factor (VEGF)-induced corneal neovascularization [26]. In 2006, thalidomide completed its extraordinary comeback by becoming the first new agent in over a decade approved for the treatment of plasma cell myeloma [26]. Recent research has further elucidated its molecular mechanism, showing that thalidomide promotes the degradation of transcription factors, including SALL4, which explains its teratogenic effects when administered during critical fetal development periods [27].

Table 1: Comparative Analysis of Drug Repurposing Cases

| Characteristic | Sildenafil | Thalidomide |
|---|---|---|
| Original Indication | Angina pectoris | Sedative; morning sickness (antiemetic) |
| Repurposed Indication | Erectile dysfunction | Multiple myeloma, erythema nodosum leprosum |
| Primary Mechanism | Phosphodiesterase 5 (PDE5) inhibition | Immunomodulation, anti-angiogenesis, TNF-α inhibition |
| Key Molecular Target(s) | PDE5 enzyme | Cereblon (CRBN), leading to degradation of transcription factors like SALL4 [27] |
| Development Time Reduction | Significant (exact duration not specified) | Several decades between initial use and oncology approval |
| Regulatory Impact | Standard approval process | Spurred major FDA reforms after initial toxicity [28] |

Virtual Screening and Computational Protocols

The stories of Sildenafil and Thalidomide, while rooted in serendipity, now provide a rationale for systematic, computational repurposing approaches. Modern virtual screening leverages chemogenomic libraries and sophisticated algorithms to predict drug-target interactions (DTIs) at scale, turning historical successes into reproducible protocols.

Data-Driven Repurposing Workflow

A robust virtual screening pipeline integrates diverse biological data to generate high-confidence repurposing hypotheses. The following diagram illustrates the key stages of this process, from data collection to experimental validation.

Protocol: Knowledge Graph-Based Repurposing

Purpose: To systematically identify novel drug-disease associations through structured integration of heterogeneous biomedical data.

Materials:

- Data Sources: ChEMBL, BindingDB, Guide to Pharmacology (GtoPdb), DrugBank, ClinicalTrials.gov

- Software Tools: OREGANO knowledge graph framework [29], Python/R for data analysis, molecular docking software (AutoDock Vina, Schrödinger)

- Hardware: High-performance computing cluster with adequate RAM for large graph processing

Procedure:

Data Extraction and Integration:

- Download latest releases of primary drug-target interaction databases (ChEMBL, BindingDB, GtoPdb) [30].

- Extract approved drugs, investigational compounds, protein targets, disease indications, and associated bioactivity measurements (Ki, Kd, IC50).

- Standardize chemical structures using SMILES notation and map to canonical identifiers (PubChem CID, InChIKey).

- Implement entity resolution to merge equivalent nodes across different data sources using cross-references and semantic reconciliation [29].

Graph Construction:

- Define node types: Compound, Protein, Disease, Pathway, Biological Process.

- Define relationship types: BINDS_TO, TREATS, REGULATES, ASSOCIATED_WITH.

- Construct knowledge graph using RDF triples or property graph model, implementing the schema:

(Drug)-[BINDS_TO]->(Target)-[INVOLVED_IN]->(Disease)
(Drug)-[HAS_SIDE_EFFECT]->(AdverseEvent)
(Target)-[PARTICIPATES_IN]->(Pathway)
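A small in-memory version of this schema shows how a Drug-Target-Disease path yields a repurposing hypothesis. The sketch below is a hypothetical illustration that stores (head, relation, tail) triples in Python dictionaries instead of an RDF store or property-graph database; relation names follow the schema, and the function names are illustrative.

```python
from collections import defaultdict
from typing import Dict, List, Set, Tuple

Triple = Tuple[str, str, str]  # (head, relation, tail)
Index = Dict[Tuple[str, str], Set[str]]


def build_index(triples: List[Triple]) -> Index:
    """Index triples by (head, relation) for fast neighbour lookup."""
    index: Index = defaultdict(set)
    for head, rel, tail in triples:
        index[(head, rel)].add(tail)
    return index


def drug_disease_paths(index: Index, drug: str) -> Dict[str, Set[str]]:
    """Follow Drug-[BINDS_TO]->Target-[INVOLVED_IN]->Disease paths.

    Returns {disease: set of targets linking the drug to that disease}.
    """
    paths: Dict[str, Set[str]] = defaultdict(set)
    for target in index.get((drug, "BINDS_TO"), set()):
        for disease in index.get((target, "INVOLVED_IN"), set()):
            paths[disease].add(target)
    return dict(paths)
```

With toy triples linking sildenafil to PDE5A and PDE5A to two diseases, `drug_disease_paths(index, "sildenafil")` returns both diseases, each annotated with PDE5A as the connecting target; diseases the drug does not already treat are the candidate hypotheses.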

Hypothesis Generation via Link Prediction:

- Apply graph embedding algorithms (TransE, ComplEx, Node2Vec) to represent nodes in continuous vector space.

- Train machine learning models (Random Forest, Neural Networks) on known drug-target pairs to predict novel interactions.

- Use negative sampling to generate presumed non-interacting pairs (unobserved drug-target combinations) for model training.

- Calculate probability scores for all possible drug-target pairs, ranking by prediction confidence.
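TransE, one of the embedding methods listed above, models a relation as a translation in vector space, so a plausible triple (h, r, t) satisfies h + r ≈ t. The sketch below shows the scoring rule, tail-corruption negative sampling, and the margin ranking loss used in training; the embedding vectors are assumed to come from a training loop that is omitted here, and all function names are hypothetical. TransE yields plausibility scores rather than calibrated probabilities, so a calibration step would be needed before treating them as such.

```python
import math
import random
from typing import Dict, List, Sequence, Set, Tuple

Triple = Tuple[str, str, str]


def transe_score(h: Sequence[float], r: Sequence[float], t: Sequence[float]) -> float:
    """TransE plausibility: -||h + r - t||_2 (closer to zero = more plausible)."""
    return -math.sqrt(sum((hi + ri - ti) ** 2 for hi, ri, ti in zip(h, r, t)))


def corrupt_tail(triple: Triple, entities: List[str], known: Set[Triple],
                 rng: random.Random, max_tries: int = 1000) -> Triple:
    """Negative sampling: swap the tail for a random entity not forming a known triple."""
    head, rel, tail = triple
    for _ in range(max_tries):
        candidate = rng.choice(entities)
        if candidate != tail and (head, rel, candidate) not in known:
            return (head, rel, candidate)
    raise RuntimeError("could not sample a negative triple")


def margin_loss(pos_score: float, neg_score: float, margin: float = 1.0) -> float:
    """Hinge loss pushing each positive to score at least `margin` above its negative."""
    return max(0.0, margin - pos_score + neg_score)


def rank_tails(head: str, rel: str, candidates: List[str],
               ent_emb: Dict[str, List[float]],
               rel_emb: Dict[str, List[float]]) -> List[Tuple[str, float]]:
    """Score every candidate tail for (head, rel, ?) and sort best-first."""
    scored = [(c, transe_score(ent_emb[head], rel_emb[rel], ent_emb[c])) for c in candidates]
    return sorted(scored, key=lambda item: item[1], reverse=True)
```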

Validation and Prioritization:

- Perform computational validation through literature mining (PubMed), retrospective clinical analysis (EHR, insurance claims), and existing clinical trial data [25].

- Apply mechanistic filtering based on pathway enrichment and target-disease proximity in the graph.

- Prioritize candidates with supporting evidence from multiple independent data sources.

Protocol: Molecular Docking for Target Deconvolution

Purpose: To elucidate the structural basis of drug-target interactions and identify novel binding partners for known drugs.

Materials:

- Protein Structures: RCSB Protein Data Bank (PDB), AlphaFold Protein Structure Database

- Compound Libraries: FDA-approved drug collection (e.g., FDA-DRIVe), ZINC database purchasable compounds

- Software: AutoDock Vina [31], GROMACS (for MD simulations), PyMOL (visualization)

Procedure:

Preparation of Protein Structures:

- Retrieve high-resolution 3D structures of target proteins from PDB or generate using AlphaFold2.

- Remove water molecules and non-essential heteroatoms (retaining cofactors or metal ions required for binding), add polar hydrogens, and assign partial charges using an appropriate force field (e.g., AMBER, CHARMM).

- Define the binding site using known ligand coordinates or predicted active sites.

Preparation of Ligand Library:

- Download 3D structures of FDA-approved drugs in SDF or MOL2 format.

- Generate low-energy conformers using molecular mechanics force fields.

- Convert to PDBQT format with assignment of flexible torsions.

Molecular Docking Screen:

- Configure docking grid to encompass the entire binding site with sufficient margin.

- Execute high-throughput docking using Vina with exhaustiveness setting ≥8 for adequate sampling.

- Record binding poses and affinity scores (ΔG in kcal/mol) for all compounds.
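The grid-definition step can be scripted by centring the box on a co-crystallized reference ligand and padding it on every side. The sketch below assumes the ligand's atomic coordinates (in angstroms) have already been parsed from the structure file and uses a 5 Å margin as an illustrative default; the output follows the `key = value` configuration-file format that AutoDock Vina reads through its `--config` option. File names and function names are placeholders.

```python
from typing import Sequence, Tuple

Coord = Tuple[float, float, float]


def grid_box(ligand_xyz: Sequence[Coord], margin: float = 5.0) -> Tuple[Coord, Coord]:
    """Return (center, size) of a box enclosing the ligand plus `margin` angstroms per side."""
    xs, ys, zs = zip(*ligand_xyz)
    lows = (min(xs), min(ys), min(zs))
    highs = (max(xs), max(ys), max(zs))
    center = tuple((lo + hi) / 2 for lo, hi in zip(lows, highs))
    size = tuple((hi - lo) + 2 * margin for lo, hi in zip(lows, highs))
    return center, size


def vina_config(receptor: str, ligand: str, center: Coord, size: Coord,
                exhaustiveness: int = 8) -> str:
    """Render a Vina-style configuration file made of `key = value` lines."""
    lines = [f"receptor = {receptor}", f"ligand = {ligand}"]
    lines += [f"center_{axis} = {value:.3f}" for axis, value in zip("xyz", center)]
    lines += [f"size_{axis} = {value:.3f}" for axis, value in zip("xyz", size)]
    lines.append(f"exhaustiveness = {exhaustiveness}")
    return "\n".join(lines) + "\n"
```

After writing the returned text to a file such as `conf.txt`, a single ligand could be docked with `vina --config conf.txt --out docked.pdbqt`, repeating the call (or regenerating the `ligand` line) for each library compound.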

Molecular Dynamics Validation:

- Select top-ranking complexes (based on docking score) for molecular dynamics simulation.

- Solvate the protein-ligand complex in explicit water (TIP3P) with appropriate ion concentration.

- Perform energy minimization, followed by equilibration in the NVT and NPT ensembles.

- Run production MD for 50-100 ns, analyzing trajectory stability via RMSD, RMSF, and hydrogen bond persistence [31].
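The RMSF and hydrogen-bond persistence metrics mentioned in the last step reduce to simple averages over frames. The sketch below computes per-atom RMSF (residue-level values are often reported for C-alpha atoms) and the occupancy of a single hydrogen bond. It assumes pre-aligned frames with a fixed atom order and a per-frame boolean indicating whether the hydrogen bond is present; how that flag is derived (e.g., distance and angle cut-offs) is left to the MD analysis tool, and the function names are hypothetical.

```python
import math
from typing import List, Sequence, Tuple

Coord = Tuple[float, float, float]


def rmsf(trajectory: List[Sequence[Coord]]) -> List[float]:
    """Per-atom root-mean-square fluctuation about each atom's time-averaged position."""
    n_frames = len(trajectory)
    n_atoms = len(trajectory[0])
    result = []
    for i in range(n_atoms):
        mean = [sum(frame[i][k] for frame in trajectory) / n_frames for k in range(3)]
        msd = sum(
            sum((frame[i][k] - mean[k]) ** 2 for k in range(3)) for frame in trajectory
        ) / n_frames
        result.append(math.sqrt(msd))
    return result


def hbond_occupancy(present: Sequence[bool]) -> float:
    """Fraction of frames in which a given hydrogen bond is observed."""
    return sum(present) / len(present)
```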

Analysis and Hit Confirmation:

- Calculate binding free energies using MM/GBSA or MM/PBSA methods.

- Inspect interaction fingerprints for key hydrogen bonds, hydrophobic contacts, and salt bridges.

- Validate predictions through comparison with known active compounds and experimental testing.
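For orientation, the end-point free-energy methods named in the first analysis step are commonly formulated as below. This is the standard textbook decomposition rather than a protocol-specific recipe; implementations differ in which terms they include, and the entropy term is frequently omitted when ranking related ligands.

```latex
\begin{aligned}
\Delta G_{\mathrm{bind}} &\approx \left\langle G_{\mathrm{complex}} - G_{\mathrm{receptor}} - G_{\mathrm{ligand}} \right\rangle_{\mathrm{snapshots}} \\
G_{x} &= E_{\mathrm{MM}} + G_{\mathrm{polar}} + G_{\mathrm{nonpolar}} - T S \\
E_{\mathrm{MM}} &= E_{\mathrm{bonded}} + E_{\mathrm{elec}} + E_{\mathrm{vdW}}
\end{aligned}
```

The angle brackets denote an average over MD snapshots; G_polar comes from a Generalized Born (MM/GBSA) or Poisson-Boltzmann (MM/PBSA) model, and G_nonpolar is usually estimated from the solvent-accessible surface area.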

Successful computational drug repurposing requires access to curated data sources and specialized software tools. The following table details essential resources for implementing the protocols described in this article.

Table 2: Key Research Reagents and Computational Resources for Drug Repurposing

| Resource Name | Type | Primary Function | Application in Repurposing |
|---|---|---|---|
| ChEMBL [30] | Database | Manually curated database of bioactive molecules with drug-like properties | Provides bioactivity data, target annotations, and ADMET information for ~2.4 million compounds |
| BindingDB [30] | Database | Focuses on measured binding affinities (Ki, Kd, IC50) | Supplies quantitative interaction data for ~1.3 million ligands and nearly 9,000 targets |
| Guide to Pharmacology (GtoPdb) [30] | Database | Expert-curated focus on targets of approved drugs | Offers high-quality data on key target families (GPCRs, ion channels, nuclear receptors) |
| OREGANO Knowledge Graph [29] | Computational Resource | Integrates heterogeneous drug data including natural compounds | Enables link prediction for novel drug-target associations through graph machine learning |
| AutoDock Vina [31] | Software Tool | Molecular docking and virtual screening | Predicts binding modes and affinities of drugs against new target proteins |
| ClinicalTrials.gov [25] | Database | Registry of clinical studies worldwide | Provides validation source for repurposing hypotheses through existing trial data |

The historical journeys of Sildenafil and Thalidomide from their original indications to repurposed applications provide both inspiration and methodological guidance for contemporary drug discovery. While these successes initially emerged through serendipity, they now illuminate a path for systematic, computational approaches to therapeutic rediscovery. Modern virtual screening of chemogenomic libraries, powered by knowledge graphs, molecular docking, and machine learning, transforms these historical anecdotes into reproducible protocols. By integrating heterogeneous biological data and applying rigorous computational validation, researchers can accelerate the identification of novel therapeutic applications for existing drugs, ultimately reducing development timelines and costs while addressing unmet medical needs. The frameworks and protocols presented herein offer practical guidance for leveraging these powerful approaches in ongoing repurposing efforts.

Executing the Screen: AI, Docking, and Practical Workflows

Virtual screening of chemogenomic libraries has emerged as a powerful, cost-effective strategy for identifying new therapeutic uses for existing drugs, significantly accelerating the drug discovery pipeline [32]. This approach leverages existing compounds with established safety profiles, reducing development timelines from the typical 10-15 years required for de novo drug discovery to an average of 6 years, while cutting costs from approximately $2.6 billion to around $300 million [33]. The success of any virtual screening campaign for drug repurposing is fundamentally dependent on the quality and comprehensiveness of the underlying chemical and biological data. Meticulous preparation of compound libraries and rigorous curation of associated data form the essential foundation upon which reliable and biologically relevant predictions are built. This application note provides detailed protocols for constructing high-quality chemogenomic libraries and curating the necessary data to enable effective virtual screening for drug repurposing.

Compound Library Compilation and Preparation

The first critical step involves assembling and preparing comprehensive libraries of compounds suitable for drug repurposing. These libraries typically encompass approved drugs, experimental agents, and sometimes natural compounds, each offering different repurposing opportunities.

A well-structured screening library should integrate compounds from multiple sources to maximize coverage of chemical space and therapeutic potential. The table below summarizes recommended library types and their characteristics.

Table 1: Recommended Compound Libraries for Drug Repurposing Virtual Screening

| Library Type | Source | Number of Compounds | Key Characteristics | Primary Use Case |
|---|---|---|---|---|
| Approved Drug Library | DrugBank (v5.1.7) [34] | 2,315 | FDA/other regulatory agency-approved; known safety profiles | Highest probability of clinical translation |
| Experimental Drug Library | DrugBank (v5.1.7) [34] | 5,935 | Investigational compounds; various clinical stages | Novel mechanism discovery; expanded chemical space |
| Traditional Chinese Medicine Library | Topscience Company [34] | 2,390 | Natural product-derived; diverse structural types (flavonoids, alkaloids, etc.) | Complementary chemical space exploration |

Molecular Structure Preparation and Standardization

Consistent and accurate molecular representation is crucial for computational screening. The following protocol ensures library compounds are properly prepared.

Protocol 2.2: Compound Structure Standardization

- Format Conversion and Initial Processing: Convert all compound structures into a consistent format (e.g., SDF, MOL2) using tools like Open Babel [34].

- 3D Structure Generation: For compounds lacking 3D structural information, generate initial 3D conformations using RDKit or Open Babel [34].

- Protonation and Tautomerization: Assign appropriate protonation states at physiological pH (7.4) using tools like Open Babel. Consider generating dominant tautomers.

- Energy Minimization: Perform geometry optimization using a molecular mechanics force field (e.g., MMFF94) to relieve steric clashes and ensure reasonable bond geometries.

- Docking Preparation: Convert the final, optimized structures into the specific format required by the chosen docking software (e.g., PDBQT for AutoDock Vina) [34].

Data Curation and Integration

Robust data curation integrates compound information with biological context, enabling more insightful virtual screening and hit prioritization.

Annotation with Pharmacological and Clinical Data

Each compound in the library should be annotated with key data to facilitate analysis and decision-making.

Table 2: Essential Compound Annotations for Drug Repurposing

| Data Category | Specific Annotations | Source Examples | Importance for Repurposing |
|---|---|---|---|
| Pharmacological | Known molecular targets, pathways, mechanism of action | DrugBank [34] | Predict polypharmacology and off-target effects |
| Clinical | Original indication, dosing regimens, contraindications, adverse effects | FDA labels, DrugBank [32] | Assess translational feasibility and safety |
| Pharmacokinetic | ADMET properties (Absorption, Distribution, Metabolism, Excretion, Toxicity) | DrugBank, PubChem | Predict bioavailability and potential toxicity |
| Chemical | Canonical SMILES, InChIKey, molecular weight, lipophilicity (LogP) | PubChem, ChEMBL | Assess drug-likeness and chemical properties |

Construction of Drug-Disease Networks

Network-based approaches provide a powerful framework for identifying repurposing opportunities by analyzing the complex relationships between drugs, targets, and diseases.

Protocol 3.2: Building a Drug-Disease Association Network

- Data Compilation: Assemble known drug-disease treatment pairs from machine-readable databases (e.g., DrugBank) and textual sources using natural language processing (NLP) tools, followed by manual curation [13].

- Network Representation: Construct a bipartite network where nodes represent either drugs or diseases, and edges connect a drug to a disease it is known to treat [13].

- Link Prediction: Apply network-based link prediction algorithms (e.g., graph embedding, degree-corrected stochastic block models) to this network to identify potential missing edges, which represent novel, high-probability drug repurposing candidates [13].

- Validation: Use cross-validation tests (randomly removing a subset of known edges and testing the algorithm's ability to recover them) to quantify prediction performance. Area Under the Curve (AUC) values above 0.95 have been achieved with these methods [13].
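The edge-removal validation loop can be illustrated on a toy bipartite network. The cited work uses graph embedding or stochastic block models; the sketch below deliberately swaps in a much simpler heuristic (counting length-3 paths drug -> other disease -> other drug -> disease) only because it needs no dependencies, so it demonstrates the hold-out procedure and AUC calculation, not the performance reported above. All function names are hypothetical.

```python
import random
from itertools import product
from typing import Dict, Set, Tuple

Edge = Tuple[str, str]  # (drug, disease): the drug is known to treat the disease
Adj = Dict[str, Set[str]]


def adjacency(edges: Set[Edge]) -> Tuple[Adj, Adj]:
    """Build drug->diseases and disease->drugs lookups for a bipartite network."""
    drug_to_dis: Adj = {}
    dis_to_drug: Adj = {}
    for drug, disease in edges:
        drug_to_dis.setdefault(drug, set()).add(disease)
        dis_to_drug.setdefault(disease, set()).add(drug)
    return drug_to_dis, dis_to_drug


def l3_score(drug: str, disease: str, drug_to_dis: Adj, dis_to_drug: Adj) -> int:
    """Count length-3 paths drug -> disease' -> drug' -> disease."""
    score = 0
    for other_disease in drug_to_dis.get(drug, set()):
        for other_drug in dis_to_drug.get(other_disease, set()):
            if other_drug != drug and disease in drug_to_dis.get(other_drug, set()):
                score += 1
    return score


def holdout_auc(edges: Set[Edge], test_fraction: float = 0.1, seed: int = 0) -> float:
    """Hide a random subset of edges, score them against non-edges, and return the AUC."""
    rng = random.Random(seed)
    edge_list = sorted(edges)
    hidden = set(rng.sample(edge_list, max(1, int(test_fraction * len(edge_list)))))
    drug_to_dis, dis_to_drug = adjacency(edges - hidden)  # scores use training edges only
    drugs = sorted({d for d, _ in edges})
    diseases = sorted({s for _, s in edges})
    non_edges = [pair for pair in product(drugs, diseases) if pair not in edges]
    if not non_edges:
        raise ValueError("network is complete; no non-edges to compare against")
    pos = [l3_score(d, s, drug_to_dis, dis_to_drug) for d, s in hidden]
    neg = [l3_score(d, s, drug_to_dis, dis_to_drug) for d, s in non_edges]
    # AUC = probability that a hidden edge outscores a non-edge; ties count as one half.
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))
```

The non-edges may include effective drug-disease pairs that are simply unrecorded, so this AUC measures recovery of known links rather than true predictive accuracy; repeating the split over several seeds gives a more stable estimate.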

Quality Control and Validation

Implementing rigorous quality control measures is essential to ensure the reliability of the screening library and associated data.

Library Quality Assessment

Protocol 4.1: Quality Control Checks

- Structural Integrity: Verify the absence of atomic valency errors, unusual bond lengths, or other structural anomalies using toolkits like RDKit.

- Chemical Descriptor Calculation: Compute key physicochemical properties (e.g., molecular weight, LogP, number of hydrogen bond donors/acceptors) to profile the library and filter out compounds that fall far outside the typical "drug-like" space.

- Duplicate Removal: Identify and merge duplicate compounds based on standardized representations (e.g., InChIKey).

- Benchmarking: Test the finalized library and curation pipeline using established benchmark datasets such as the Directory of Useful Decoys, Enhanced (DUD-E) to ensure the platform can effectively enrich known actives over decoys [34] [15]. Successful benchmarks should show strong early enrichment, with an enrichment factor in the top 1% of the ranked list (EF1%) above 16 [15].
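The early-enrichment criterion can be checked with a few lines of code. The sketch below computes the enrichment factor as the active rate in the top fraction of the ranked list divided by the active rate in the whole library; the function name is hypothetical, and the `higher_is_better` flag is an assumption added to accommodate docking scores, where more negative values rank first.

```python
import math
from typing import Sequence


def enrichment_factor(scores: Sequence[float], is_active: Sequence[bool],
                      fraction: float = 0.01, higher_is_better: bool = True) -> float:
    """EF_x = (actives in top x / compounds in top x) / (all actives / all compounds)."""
    n_total = len(scores)
    n_actives = sum(is_active)
    if n_total == 0 or n_actives == 0:
        raise ValueError("need at least one compound and one active")
    order = sorted(range(n_total), key=lambda i: scores[i], reverse=higher_is_better)
    n_top = max(1, math.ceil(fraction * n_total))
    hits = sum(1 for i in order[:n_top] if is_active[i])
    return (hits / n_top) / (n_actives / n_total)
```

Because the top slice holds at least 1% of the library, EF1% can never exceed 100, which is why thresholds such as 16 are meaningful only relative to this ceiling and to the active-to-decoy ratio of the benchmark.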

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Software and Data Resources for Library Preparation and Curation

| Tool/Resource Name | Type | Primary Function in Library Prep/Curation | Access |
|---|---|---|---|
| RDKit | Cheminformatics Library | 3D structure generation, molecular descriptor calculation, SMILES manipulation | Open Source |
| Open Babel | Chemical Toolbox | File format conversion, protonation state assignment, energy minimization | Open Source |
| DrugBank | Database | Source for approved & experimental drug structures and annotations | Commercial & Free |
| AutoDock Tools | Docking Utility | Preparation of receptor and ligand files in PDBQT format for docking with AutoDock Vina [34] | Open Source |
| Node2Vec | Network Algorithm | Graph embedding for link prediction in drug-disease networks [13] | Open Source |

The meticulous preparation of chemogenomic libraries and rigorous curation of associated biological and clinical data are foundational, non-negotiable steps in virtual screening for drug repurposing. By adhering to the detailed protocols outlined in this application note—from compound standardization and multi-source annotation to network-based data integration and stringent quality control—researchers can construct a robust and reliable foundation for their computational campaigns. A well-prepared library and curated dataset significantly enhance the probability of identifying genuine, therapeutically viable repurposing opportunities, thereby accelerating the delivery of new treatments to patients.

The process of traditional drug development is lengthy, costly, and carries a high risk of failure, often requiring over 10 years and an investment of approximately $2.6 billion to bring a new drug to market [10]. In contrast, drug repurposing—identifying new therapeutic uses for existing drugs—offers a promising alternative that can reduce development costs to around $300 million and shorten timelines to as little as 3-6 years by leveraging existing safety and pharmacokinetic data [10]. Within this context, virtual screening of chemogenomic libraries has emerged as a powerful computational approach to accelerate drug repurposing research.

Artificial intelligence (AI) now plays a crucial role in drug repurposing by exploiting various computational techniques to analyze large datasets of biological and medical information, predict similarities between biomolecules, and identify disease mechanisms [10]. This article provides a detailed overview of three primary AI methodologies—machine learning, deep learning, and network-based approaches—framed within the context of virtual screening for drug repurposing, complete with structured protocols and implementation guidelines for researchers and drug development professionals.

The three principal AI methodologies employed in virtual screening for drug repurposing each offer distinct advantages and applications, as summarized in Table 1.

Table 1: Comparative Analysis of AI Methodologies in Virtual Screening for Drug Repurposing

| Methodology | Key Algorithms & Techniques | Primary Applications in Drug Repurposing | Data Requirements | Performance Considerations |
|---|---|---|---|---|
| Machine Learning (ML) | Logistic Regression, Random Forest, SVM, Naive Bayesian, k-NN [10] | Initial compound prioritization, Activity prediction, Property classification [10] | Structured bioactivity data, Molecular descriptors [10] | Faster training on smaller datasets; Limited with complex molecular representations [10] |
| Deep Learning (DL) | Multilayer Perceptron, CNN, LSTM-RNN, GAN, Graph Neural Networks [10] [35] | Ultra-large library screening, 3D structure-based prediction, Novel compound generation [36] [35] | Large-scale molecular structures, Protein-ligand complexes [36] [35] | Handles complex data well; Requires substantial computational resources [35] |
| Network-Based Approaches | Random walks, Heterogeneous knowledge graph mining, Multi-view learning [10] [37] | Drug-target interaction prediction, Mechanism of action elucidation, Polypharmacology discovery [10] [37] | Drug-disease associations, Protein-protein interactions, Drug-target networks [10] [37] | Excels at identifying non-obvious relationships; Less dependent on 3D structure data [10] |

Machine Learning Approaches

Core Algorithms and Implementation

Machine learning represents a foundational approach in virtual screening, employing algorithms that enable computers to learn from data without explicit programming [10]. These algorithms are categorized based on their learning mechanisms:

- Supervised ML utilizes labeled datasets with predefined input-output pairs to train models for predicting outcomes [10]. Common applications include quantitative structure-activity relationship (QSAR) modeling and activity classification.

- Unsupervised ML operates on unlabeled datasets to identify inherent patterns or groupings within the data, useful for compound clustering and chemical space analysis [10].

- Semi-supervised ML combines both labeled and unlabeled data, addressing the common scenario in drug discovery where extensive unlabeled data exists [10].

- Reinforcement ML trains an agent through a reward-and-penalty signal, learning actions that maximize cumulative reward within a given environment [10].

Experimental Protocol: Machine Learning-Based Virtual Screening

Table 2: Key Research Reagents and Computational Tools for ML-Based Screening

| Resource/Tool | Specifications/Requirements | Primary Function | Access Information |
|---|---|---|---|
| Molecular Descriptors | alvaDesc, Dragon | Quantify physical/chemical properties of molecules | Commercial software |
| Molecular Fingerprints | ECFP, FCFP | Encode substructural information as binary strings | Open-source implementations |
| Compound Libraries | ZINC, ChEMBL | Provide chemical structures and bioactivity data | https://zinc.docking.org/ |
| ML Algorithms | Scikit-learn, Random Forest, SVM | Model training and prediction | Open-source Python libraries |

Protocol Steps:

Data Collection and Curation

- Source compound structures and associated bioactivity data from public databases such as ZINC or ChEMBL [14].

- Curate data to remove duplicates, correct errors, and ensure consistency in activity measurements.
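Curation can be illustrated with a small standard-library sketch that merges replicate measurements. It assumes each record already carries a standardized compound key, such as an InChIKey computed upstream, and a pIC50 value. Under those assumptions it drops rows without activity values, discards compounds whose replicates disagree by more than a threshold, and keeps the median of the rest. The `max_spread=1.0` log-unit cutoff is an arbitrary illustrative choice, not a published standard.

```python
from collections import defaultdict
from statistics import median


def curate(records, max_spread=1.0):
    """Merge duplicate activity records per compound.

    records: iterable of (compound_key, smiles, pIC50) tuples. The key is
    assumed to be standardized upstream (e.g. an InChIKey). max_spread is a
    hypothetical tolerance, in log units, for disagreement between replicates.
    Returns {compound_key: (smiles, median_pIC50)}.
    """
    grouped = defaultdict(list)
    smiles_for = {}
    for key, smiles, value in records:
        if value is None:  # drop rows with no activity measurement
            continue
        grouped[key].append(float(value))
        smiles_for.setdefault(key, smiles)  # keep the first structure seen
    curated = {}
    for key, values in grouped.items():
        if max(values) - min(values) > max_spread:  # inconsistent replicates
            continue
        curated[key] = (smiles_for[key], median(values))
    return curated


rows = [
    ("KEY-A", "CCO", 6.1),
    ("KEY-A", "CCO", 6.3),
    ("KEY-B", "c1ccccc1", 5.0),
    ("KEY-B", "c1ccccc1", 7.5),  # 2.5 log units apart: discarded
    ("KEY-C", "CC(=O)O", None),  # no activity value: discarded
]
print(curate(rows))
```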

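Molecular Representation

- Encode each curated compound as molecular descriptors (e.g., alvaDesc) or fingerprints (e.g., ECFP, FCFP) so that structures become numerical feature vectors suitable for model training.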
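Fingerprint-based representations are usually compared with the Tanimoto coefficient, the size of the shared on-bit set divided by the size of the combined on-bit set. The standard-library sketch below stores each fingerprint as a set of on-bit indices. The `fold` helper and its string feature labels are hypothetical stand-ins: real ECFP derives integer atom-environment identifiers with the Morgan algorithm. In practice, a toolkit such as RDKit (Morgan fingerprints, `DataStructs.TanimotoSimilarity`) would handle both steps.

```python
import zlib


def fold(features, n_bits=2048):
    """Hash substructure identifiers into a fixed-length bit vector.

    Hypothetical simplification of ECFP folding: each feature string is
    hashed with CRC32 (deterministic across runs) and reduced modulo n_bits.
    Returns the set of 'on' bit indices.
    """
    return {zlib.crc32(f.encode("utf-8")) % n_bits for f in features}


def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient |A & B| / |A | B| for sets of on-bits.

    Two empty fingerprints are scored 0.0 here, which is a convention choice.
    """
    union = len(fp_a | fp_b)
    return len(fp_a & fp_b) / union if union else 0.0


# Illustrative feature labels (hypothetical, not real ECFP identifiers)
query = fold(["ring:benzene", "group:carboxylic_acid", "group:ester"])
candidate = fold(["ring:benzene", "group:carboxylic_acid", "group:amide"])
print(tanimoto(query, candidate))
```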

Model Training and Validation

- Split data into training (80%) and test (20%) sets using stratified sampling to maintain activity class distributions.

- Train multiple ML algorithms (e.g., Random Forest, SVM) using cross-validation to optimize hyperparameters.

- Validate model performance on the test set using metrics including AUC-ROC, precision, recall, and F1-score.
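The splitting and evaluation steps can be made concrete with a standard-library sketch. It performs an 80/20 stratified split and computes precision, recall, F1 and ROC AUC for binary activity labels (1 = active). In a real pipeline, scikit-learn's `train_test_split(..., stratify=labels)` and `sklearn.metrics` provide the same functionality. The version here is only meant to show what those calls compute. AUC is calculated as the probability that a randomly chosen active outscores a randomly chosen inactive, with ties counted as one half.

```python
import random
from collections import defaultdict


def stratified_split(labels, test_frac=0.2, seed=0):
    """Return (train_idx, test_idx) with each class split in the same ratio."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for i, y in enumerate(labels):
        by_class[y].append(i)
    train, test = [], []
    for idx in by_class.values():
        rng.shuffle(idx)
        n_test = round(len(idx) * test_frac)
        test.extend(idx[:n_test])
        train.extend(idx[n_test:])
    return sorted(train), sorted(test)


def classification_metrics(y_true, y_pred):
    """Precision, recall and F1 for binary labels (1 = active)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


def roc_auc(y_true, scores):
    """AUC as P(score of random active > score of random inactive)."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


labels = [1] * 10 + [0] * 40
train_idx, test_idx = stratified_split(labels)
print(len(train_idx), len(test_idx), sum(labels[i] for i in test_idx))
```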

Virtual Screening and Hit Identification

- Apply the trained model to screen large compound libraries for repurposing candidates.

- Select top-ranking compounds for experimental validation based on predicted activity and favorable drug-like properties.
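One way to combine predicted activity with drug-likeness is to filter first and rank second. The sketch below assumes each candidate is a dict holding a model score (higher = more likely active) and precomputed properties. It applies Lipinski's rule of five, tolerating at most one violation, before taking the top-scoring compounds. The field names and example values are hypothetical. The rule of five is only one of several drug-likeness filters.

```python
def passes_rule_of_five(props, max_violations=1):
    """Lipinski's rule of five: MW <= 500, logP <= 5, HBD <= 5, HBA <= 10.

    Property keys ('mw', 'logp', 'hbd', 'hba') are hypothetical field names.
    """
    violations = sum([
        props["mw"] > 500,
        props["logp"] > 5,
        props["hbd"] > 5,
        props["hba"] > 10,
    ])
    return violations <= max_violations


def select_hits(candidates, top_n=2):
    """Keep drug-like candidates, then return the top_n ids by model score."""
    druglike = [c for c in candidates if passes_rule_of_five(c)]
    druglike.sort(key=lambda c: c["score"], reverse=True)
    return [c["id"] for c in druglike[:top_n]]


# Illustrative candidates; scores and properties are made up for the example
candidates = [
    {"id": "cmpd-1", "score": 0.91, "mw": 420, "logp": 3.2, "hbd": 2, "hba": 6},
    {"id": "cmpd-2", "score": 0.95, "mw": 710, "logp": 6.1, "hbd": 4, "hba": 12},
    {"id": "cmpd-3", "score": 0.80, "mw": 510, "logp": 4.0, "hbd": 1, "hba": 7},
    {"id": "cmpd-4", "score": 0.40, "mw": 300, "logp": 2.0, "hbd": 1, "hba": 4},
]
print(select_hits(candidates))
```

Here the highest-scoring compound (`cmpd-2`) is excluded because it violates three of the four criteria.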

Figure 1: Machine Learning Virtual Screening Workflow

Deep Learning Approaches

Advanced Architectures for Virtual Screening

Deep learning, a subset of machine learning based on artificial neural networks with multiple hidden layers, has demonstrated remarkable performance in handling large and complex datasets for virtual screening [10] [38]. Key architectures include:

- Graph Neural Networks (GNNs) directly operate on molecular graph structures, capturing both atomic properties and bond connectivity [39]. Equivariant GNNs can further extract 3D structure features of small molecules, enabling accurate prediction of docking scores [35].

- Convolutional Neural Networks (CNNs) process grid-like data, applicable to molecular representations transformed into 2D feature maps [38].

- Transformers and Language Models treat molecular representations (e.g., SMILES) as sequences, learning patterns through attention mechanisms [38].

- Generative Adversarial Networks (GANs) and Diffusion Models create novel molecular structures with desired properties [10] [39].
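The core operation shared by most GNN variants is message passing: each atom updates its feature vector from its own state and its bonded neighbors. Below is a plain-Python sketch of one sum-aggregation step, h_v' = ReLU(W_self·h_v + W_nbr·Σ_{u∈N(v)} h_u), followed by sum pooling into a molecule-level vector. The weights are fixed by hand purely for illustration. Libraries such as PyTorch Geometric or DGL learn them from data. This is not the specific architecture used in the cited studies.

```python
def relu(x):
    return [max(0.0, v) for v in x]


def matvec(W, x):
    return [sum(w * v for w, v in zip(row, x)) for row in W]


def message_passing_step(h, edges, W_self, W_nbr):
    """One sum-aggregation message-passing update on an undirected graph.

    h: list of atom feature vectors; edges: list of (i, j) bonds.
    W_self, W_nbr: weight matrices (hand-set here; learned in practice).
    """
    neighbors = {v: [] for v in range(len(h))}
    for i, j in edges:
        neighbors[i].append(j)
        neighbors[j].append(i)
    out = []
    for v, hv in enumerate(h):
        agg = [0.0] * len(hv)
        for u in neighbors[v]:  # sum messages from bonded atoms
            agg = [a + b for a, b in zip(agg, h[u])]
        combined = [s + n for s, n in zip(matvec(W_self, hv), matvec(W_nbr, agg))]
        out.append(relu(combined))
    return out


def readout(h):
    """Sum pooling: a fixed-size molecule embedding regardless of atom count."""
    return [sum(col) for col in zip(*h)]


# Ethanol heavy atoms C-C-O with one-hot features [is_C, is_O]
h0 = [[1, 0], [1, 0], [0, 1]]
bonds = [(0, 1), (1, 2)]
identity = [[1, 0], [0, 1]]
h1 = message_passing_step(h0, bonds, identity, identity)
print(h1, readout(h1))
```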

Experimental Protocol: Deep Learning-Based Virtual Screening

Case Study: AI-Enhanced Screening for NMDA Receptor Modulators [36]

Table 3: Research Reagents and Tools for DL-Based Screening

| Resource/Tool | Specifications/Requirements | Primary Function | Access Information |
|---|---|---|---|
| ROCS-BART | Shape similarity algorithm | 3D molecular shape screening | Commercial (OpenEye) |
| Graph Neural Network | PyTorch Geometric, DGL | Drug-target interaction prediction | Open-source Python libraries |
| Screening Library | 18 million compounds | Source of candidate molecules | Custom or commercial |
| Validation Assays | Calcium flux (FDSS/μCell), Patch-clamp | Functional activity confirmation | Laboratory equipment |

Protocol Steps:

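Initial Shape-Based Screening

- Screen the 18-million-compound library with the ROCS-BART shape similarity algorithm to retrieve molecules whose 3D shape resembles a reference query molecule [36].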
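ROCS-type shape screening is generally described as scoring the volume overlap of two aligned molecules with a shape Tanimoto coefficient, where each molecule is modeled as atom-centered Gaussians. The expression below gives that general definition as background. Whether ROCS-BART in [36] uses exactly this score is an assumption.

$$
T_{\mathrm{shape}}(A,B) = \frac{V_{AB}}{V_{AA} + V_{BB} - V_{AB}}
$$

Here $V_{AB}$ is the overlap volume of molecules $A$ and $B$ after alignment, and $V_{AA}$ and $V_{BB}$ are their self-overlap volumes. The score ranges from 0 (no overlap) to 1 (identical shapes).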

AI-Enhanced Docking Refinement

- Apply a graph neural network-based drug-target interaction model to enhance docking accuracy [36].

- Use the model to predict binding affinities for the shape-based hits.

- Select compounds with improved predicted affinity for functional validation.
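The refinement stage amounts to re-ranking a shape-filtered shortlist with a learned affinity model. The sketch below assumes shape hits arrive as `(compound_id, shape_score)` pairs. `predict_affinity` is a hypothetical callable standing in for the GNN drug-target interaction model, returning a predicted pKd/pKi (higher = stronger). The shape cutoff of 0.7 is an illustrative value, not one reported in [36].

```python
def refine_hits(shape_hits, predict_affinity, shape_cutoff=0.7, top_n=3):
    """Re-rank shape-based hits by predicted binding affinity.

    shape_hits: list of (compound_id, shape_score) from the first stage.
    predict_affinity: hypothetical callable, compound_id -> predicted pKd/pKi.
    shape_cutoff, top_n: illustrative thresholds (assumptions).
    """
    shortlist = [cid for cid, score in shape_hits if score >= shape_cutoff]
    scored = sorted(((predict_affinity(cid), cid) for cid in shortlist), reverse=True)
    return [cid for _, cid in scored[:top_n]]


# Toy data: a dict lookup plays the role of the trained model
shape_hits = [("m1", 0.82), ("m2", 0.75), ("m3", 0.55), ("m4", 0.91)]
predicted = {"m1": 6.2, "m2": 7.8, "m3": 9.0, "m4": 5.1}
print(refine_hits(shape_hits, predicted.get, top_n=2))
```

In this toy case `m3` never reaches the affinity model because it fails the shape filter, which mirrors the cost-saving logic of running the cheaper filter first.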

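Functional Validation

- Confirm the activity of the selected compounds using calcium flux assays (FDSS/μCell) and patch-clamp electrophysiology [36].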

Figure 2: Deep Learning Virtual Screening Workflow

Network-Based Approaches

Fundamental Principles and Methodologies

Network-based approaches study relationships between molecules—including protein-protein interactions, drug-disease associations, and drug-target interactions—to reveal drug repurposing opportunities [10]. The foundational theory posits that drugs proximal to the molecular site of a disease in biological networks tend to be more suitable therapeutic candidates than distal agents [10]. These methods are particularly valuable when 3D structural information is limited, as they can leverage existing knowledge graphs of biological relationships.
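The proximity idea can be quantified in several ways. One common choice is the "closest" distance: for each drug target, take the shortest-path distance to the nearest disease protein, then average over targets. The standard-library sketch below computes this on an unweighted, undirected interactome given as an adjacency dict. Practical implementations usually also normalize the value against randomized target sets (a z-score), which is omitted here. The toy network is hypothetical.

```python
from collections import deque


def bfs_distances(graph, source):
    """Shortest-path hop counts from source in an unweighted graph."""
    dist = {source: 0}
    queue = deque([source])
    while queue:
        node = queue.popleft()
        for nbr in graph.get(node, ()):
            if nbr not in dist:
                dist[nbr] = dist[node] + 1
                queue.append(nbr)
    return dist


def closest_proximity(graph, drug_targets, disease_genes):
    """Mean over drug targets of the distance to the nearest disease gene."""
    total = 0
    for target in drug_targets:
        dist = bfs_distances(graph, target)
        reachable = [dist[g] for g in disease_genes if g in dist]
        if not reachable:
            return float("inf")  # target disconnected from the disease module
        total += min(reachable)
    return total / len(drug_targets)


# Toy chain interactome P1 - P2 - P3 - P4; P4 is the disease protein
ppi = {"P1": ["P2"], "P2": ["P1", "P3"], "P3": ["P2", "P4"], "P4": ["P3"]}
print(closest_proximity(ppi, ["P1"], ["P4"]), closest_proximity(ppi, ["P3"], ["P4"]))
```

A drug targeting `P3` scores 1.0 and one targeting `P1` scores 3.0, so the former is the more proximal, and by the network hypothesis the more promising, candidate.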

Key methodological frameworks include:

- Multi-view learning frameworks integrate multiple data types and perspectives to enhance prediction accuracy [10].

- Heterogeneous knowledge graph mining extracts patterns from complex networks containing diverse node and relationship types [10].

- Weighted network inference, as implemented in the wSDTNBI algorithm, uses binding affinity data to weight network edges, improving prediction quality over unweighted approaches [37].

Experimental Protocol: Network-Based Virtual Screening

Case Study: Identification of RORγt Inverse Agonists [37]

Table 4: Research Reagents and Tools for Network-Based Screening

| Resource/Tool | Specifications/Requirements | Primary Function | Access Information |
|---|---|---|---|
| wSDTNBI Algorithm | Weighted network inference | Predicts novel drug-target interactions | Custom implementation [37] |
| Binding Affinity Data | IC50, Ki values | Weight edges in DTI network | Public databases (ChEMBL, BindingDB) |
| Drug-Substructure Network | Structural fragment associations | Captures structure-activity relationships | Custom constructed |
| Validation Compounds | 72 purchased compounds | Experimental confirmation | Commercial suppliers |

Protocol Steps:

Network Construction

- Compile a comprehensive drug-target interaction network from public databases and literature.

- Apply edge weighting based on binding affinity data (e.g., IC50, Ki values) to create a weighted DTI network [37].

- Construct a drug-substructure association network to capture structural relationships.

Network-Based Inference

- Implement the wSDTNBI algorithm to calculate prediction scores for potential drug-target pairs [37].

- Prioritize candidates based on their network proximity to established therapeutic targets.
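Network-based inference methods of this family score new drug-target pairs by diffusing "resource" across a bipartite network. The sketch below is a simplified, weighted two-step diffusion in that spirit. It is not a reimplementation of wSDTNBI, which additionally uses the drug-substructure network described in [37]. It assumes edge weights are positive numbers derived from affinity data (for example pIC50 or pKi). Resource starts on the query drug's known targets, flows to all drugs sharing those targets, then flows back to targets, with each split proportional to edge weight.

```python
from collections import defaultdict


def weighted_nbi(edges, drug):
    """Simplified weighted network-based inference (NBI-style sketch).

    edges: {(drug, target): weight}, weights assumed positive (e.g. pKi).
    Returns {target: score} for targets not already linked to `drug`.
    """
    drug_nbrs = defaultdict(dict)    # drug -> {target: weight}
    target_nbrs = defaultdict(dict)  # target -> {drug: weight}
    for (d, t), w in edges.items():
        drug_nbrs[d][t] = w
        target_nbrs[t][d] = w
    # Initial unit resource on each known target of the query drug
    resource = {t: 1.0 for t in drug_nbrs[drug]}
    # Step 1: targets -> drugs, split in proportion to edge weight
    drug_res = defaultdict(float)
    for t, r in resource.items():
        total = sum(target_nbrs[t].values())
        for d, w in target_nbrs[t].items():
            drug_res[d] += r * w / total
    # Step 2: drugs -> targets, again split in proportion to edge weight
    scores = defaultdict(float)
    for d, r in drug_res.items():
        total = sum(drug_nbrs[d].values())
        for t, w in drug_nbrs[d].items():
            scores[t] += r * w / total
    return {t: s for t, s in scores.items() if t not in drug_nbrs[drug]}


# Toy network: D1 and D2 share T1; D2 also hits T2, so T2 is predicted for D1
dti = {("D1", "T1"): 1.0, ("D2", "T1"): 1.0, ("D2", "T2"): 1.0}
print(weighted_nbi(dti, "D1"))
```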

Experimental Validation

- Procure top-ranking compounds (e.g., 72 compounds for initial screening) [37].

- Perform in vitro experiments to confirm activity (e.g., IC50 determination).

- Validate direct target engagement using structural methods (e.g., X-ray crystallography) where possible [37].

- Advance confirmed hits to in vivo disease models to demonstrate therapeutic efficacy [37].

Figure 3: Network-Based Virtual Screening Workflow

Integrated Approaches and Future Directions

The most effective virtual screening strategies for drug repurposing often combine multiple methodologies to leverage their complementary strengths. For instance, ML models can provide initial compound prioritization, DL approaches can refine predictions using structural information, and network-based methods can contextualize findings within biological systems.

Future advancements will likely focus on improved integration of multimodal data, development of more interpretable AI models, and creation of standardized benchmarking datasets. As these technologies continue to evolve, they promise to further accelerate the identification of repurposing opportunities, ultimately delivering safe and effective treatments to patients more rapidly and cost-efficiently.

Virtual screening is a cornerstone of modern drug discovery, enabling researchers to computationally evaluate vast chemical libraries to identify promising therapeutic candidates. Integrating artificial intelligence (AI) into this process has substantially accelerated screening and, for many targets, improved prediction accuracy. AI-accelerated platforms are particularly valuable for drug repurposing research. There, they can efficiently screen existing compound libraries against new disease targets, potentially bypassing years of preliminary safety testing. Platforms such as RosettaVS and VirtuDockDL exemplify this shift, and each uses a distinct computational strategy to predict protein-ligand interactions at scale. They allow researchers to navigate the chemical space of chemogenomic libraries at a speed and scale that conventional workflows struggle to match, identifying new therapeutic applications for existing compounds through structure-based and ligand-based approaches [10].

The significance of these platforms becomes evident when considering the traditional drug discovery pipeline. It typically requires over 10 years and $2.6 billion to bring a single drug to market, with only one marketable compound emerging from approximately one million screened candidates [40] [41]. In contrast, AI-accelerated virtual screening can complete the initial identification of hit compounds in less than a week for some targets, substantially reducing both time and financial resources [15]. Drug repurposing leverages existing compounds with known safety profiles, which can reduce development costs to approximately $300 million and shorten the timeline to as little as 3-6 years [10]. This efficiency makes AI-driven platforms increasingly important tools for addressing urgent medical needs, from rapidly evolving viral threats to rare diseases with limited treatment options.

Table 1: Overview of Featured AI-Accelerated Virtual Screening Platforms

| Platform | Computational Approach | Key Features | Optimal Use Cases |
|---|---|---|---|
| RosettaVS [15] | Physics-based docking with AI acceleration | RosettaGenFF-VS force field; VSX & VSH docking modes; Receptor flexibility modeling | High-precision structure-based screening; Targets requiring flexible receptor models |
| VirtuDockDL [41] | Deep learning with graph neural networks | Automated molecular graph processing; Integration of structural and physicochemical features; Ligand- and structure-based screening | Large-scale ligand prioritization; Multi-target screening campaigns |

Platform Comparison and Performance Benchmarking

RosettaVS and VirtuDockDL employ fundamentally different computational philosophies to achieve their virtual screening capabilities. RosettaVS utilizes a physics-based approach grounded in the Rosetta molecular modeling suite, incorporating an enhanced force field (RosettaGenFF-VS) that combines enthalpy calculations (ΔH) with entropy estimates (ΔS) for improved binding affinity predictions [15]. This platform excels in modeling receptor flexibility—a critical advantage for targets that undergo conformational changes upon ligand binding. Its docking protocol implements two distinct modes: Virtual Screening Express (VSX) for rapid initial screening with fixed protein side chains, and Virtual Screening High-precision (VSH) for detailed analysis of top hits with flexible side chains [15] [42]. This tiered approach enables efficient triaging of billion-compound libraries while maintaining accuracy for the most promising candidates.
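The tiered VSX/VSH strategy can be pictured as a generic two-stage funnel: score everything cheaply, then re-score only the best slice with an expensive method. The sketch below is not RosettaVS code. `fast_score` and `precise_score` are hypothetical callables standing in for rigid side-chain (VSX-like) and flexible side-chain (VSH-like) docking. Lower scores are assumed better, as with docking energies. The 1% carry-over fraction is an arbitrary illustrative choice.

```python
import heapq


def tiered_screen(library, fast_score, precise_score, keep_frac=0.01, final_n=10):
    """Two-tier triage of a compound library.

    fast_score / precise_score: hypothetical callables, compound -> score,
    where lower is better. Only the top keep_frac of the library (by the
    fast score) is passed to the expensive precise_score.
    """
    n_keep = max(1, int(len(library) * keep_frac))
    stage1 = heapq.nsmallest(n_keep, library, key=fast_score)  # cheap pass on all
    return heapq.nsmallest(final_n, stage1, key=precise_score)  # costly pass on few


# Toy example: compounds are integers; the "fast" score favors small ids,
# the "precise" score reverses that order within the shortlist.
hits = tiered_screen(list(range(1000)), lambda m: m, lambda m: -m, final_n=3)
print(hits)
```

The design choice is purely about cost. The precise function is called only `n_keep` times instead of once per library member, which is what makes very large libraries tractable.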

In contrast, VirtuDockDL employs a deep learning framework centered on graph neural networks (GNNs) that automatically extract relevant features from molecular structures without relying on manually crafted descriptors [41]. The platform transforms molecular structures into graph representations where atoms serve as nodes and bonds as edges, allowing the GNN to learn complex structure-activity relationships directly from the data. This approach integrates both structural information and physicochemical features—including molecular weight, topological polar surface area, hydrogen bond donors/acceptors, and lipophilicity—enabling comprehensive molecular characterization [41]. VirtuDockDL further distinguishes itself by combining both ligand-based and structure-based screening methodologies within a unified, automated workflow.
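A rough picture of this input encoding is a molecular graph (atoms as nodes, bonds as edges) paired with a vector of global physicochemical descriptors. The sketch below builds both for ethanol from hand-written atom and bond lists. A toolkit such as RDKit would normally parse SMILES and compute the descriptors. The three-element atom vocabulary, the descriptor key names, and the approximate ethanol values are illustrative assumptions, not VirtuDockDL's actual feature set.

```python
ATOM_TYPES = ["C", "N", "O"]  # toy atom vocabulary (assumption)
DESCRIPTOR_KEYS = ("mw", "tpsa", "hbd", "hba", "logp")  # hypothetical names


def one_hot(symbol):
    return [1.0 if symbol == a else 0.0 for a in ATOM_TYPES]


def mol_to_graph(atoms, bonds):
    """atoms: element symbols; bonds: (i, j) index pairs. Returns (nodes, adjacency)."""
    node_features = [one_hot(a) for a in atoms]
    adjacency = [[0] * len(atoms) for _ in atoms]
    for i, j in bonds:
        adjacency[i][j] = adjacency[j][i] = 1
    return node_features, adjacency


def model_input(atoms, bonds, descriptors):
    """Concatenate a pooled graph summary with global descriptors."""
    nodes, _ = mol_to_graph(atoms, bonds)
    pooled = [sum(col) for col in zip(*nodes)]  # atom-type counts
    return pooled + [descriptors[k] for k in DESCRIPTOR_KEYS]


# Ethanol heavy atoms; descriptor values are approximate and for illustration only
ethanol_desc = {"mw": 46.07, "tpsa": 20.23, "hbd": 1, "hba": 1, "logp": -0.31}
print(model_input(["C", "C", "O"], [(0, 1), (1, 2)], ethanol_desc))
```

In a GNN such as the one described, the node features and adjacency would feed message-passing layers rather than being pooled directly. The simple sum here only shows how graph-level and descriptor-level information end up in one vector.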

Benchmarking analyses demonstrate the distinctive strengths of each platform. On the CASF-2016 benchmark, RosettaVS achieved a top 1% enrichment factor of 16.72. This metric reflects screening power (the ability to rank true binders ahead of non-binders) rather than pose prediction, and on it RosettaVS outperformed other physics-based scoring functions [15]. In practical applications, the platform identified hit compounds for challenging targets including the ubiquitin ligase KLHDC2 (14% hit rate) and the human voltage-gated sodium channel NaV1.7 (44% hit rate), with all hits showing single-digit micromolar binding affinity [15]. High-resolution X-ray crystallography further validated the accuracy of RosettaVS's pose predictions, showing close agreement between computational models and experimental structures [15].
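The enrichment factor compares the fraction of actives among the top-ranked x% of a list with the fraction of actives in the whole list. An EF of 1 means random performance. The sketch below uses the generic definition EF_x = (actives in top x% / size of top x%) / (total actives / total compounds). Benchmarks such as CASF-2016 apply their own per-target protocol, so this is not a reimplementation of that benchmark. The toy data are made up.

```python
def enrichment_factor(scores, is_active, fraction=0.01):
    """Generic EF at the top `fraction` of a ranked list (higher score = better)."""
    ranked = sorted(zip(scores, is_active), key=lambda p: p[0], reverse=True)
    n_top = max(1, int(round(len(ranked) * fraction)))
    actives_top = sum(1 for _, active in ranked[:n_top] if active)
    actives_total = sum(1 for active in is_active if active)
    return (actives_top / n_top) / (actives_total / len(ranked))


# Toy library: 1000 compounds, 10 actives, 5 of which are ranked in the top 1%
scores = list(range(1000, 0, -1))  # compound i has the (i+1)-th best score
is_active = [i < 5 or 500 <= i < 505 for i in range(1000)]
print(enrichment_factor(scores, is_active))
```

Here half of the top-10 compounds are active while actives make up 1% of the library, giving an EF1% of about 50.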

VirtuDockDL has shown high accuracy in benchmark studies. On the HER2 cancer target dataset it reached 99% classification accuracy, an F1 score of 0.992, and an area under the curve (AUC) of 0.99, surpassing both DeepChem (89% accuracy) and AutoDock Vina (82% accuracy) [41]. The platform has identified potential inhibitors for diverse targets, including the Marburg virus VP35 protein, TEM-1 beta-lactamase in bacterial infections, and the CYP51 enzyme in fungal infections [41]. Its integrated approach, combining ligand-based pre-screening with structure-based validation, has proven particularly effective for prioritizing compounds across multiple target classes.

Table 2: Quantitative Performance Metrics of Virtual Screening Platforms

| Performance Metric | RosettaVS | VirtuDockDL | Traditional Methods (e.g., AutoDock Vina) |
|---|---|---|---|
| Screening Accuracy / Hit Rate | 14% (KLHDC2) and 44% (NaV1.7) experimental hit rates [15] | 99% classification accuracy on HER2 dataset [41] | 82% accuracy on HER2 dataset (AutoDock Vina) [41] |
| Enrichment Factor (Top 1%) | 16.72 (CASF-2016) [15] | Not explicitly reported | 11.9 (second-best method on CASF-2016) [15] |
| Pose Prediction Accuracy | Validated by X-ray crystallography [15] | Dependent on AutoDock Vina integration [41] | Varies by target and methodology |