Validating Phenotypic Screening Hits: A Chemogenomic Framework for Target Identification and Deconvolution

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on integrating chemogenomics with phenotypic screening to validate hits and identify mechanisms of action. It covers the foundational principles of phenotypic drug discovery (PDD), detailing how it expands druggable target space and enables the discovery of first-in-class therapies. The content explores practical methodologies, including the use of annotated chemical libraries, affinity-based pull-down techniques, and label-free target identification strategies. It further addresses common troubleshooting and optimization challenges, such as mitigating the limitations of genetic and small-molecule screens and leveraging AI for data integration. Finally, the article presents robust validation frameworks and comparative analyses of computational and experimental tools, offering a complete roadmap for translating phenotypic observations into validated, druggable targets.

The Resurgence of Phenotypic Screening and the Chemogenomics Advantage

Why Phenotypic Screening is a Powerhouse for First-in-Class Drugs

In the evolving landscape of pharmaceutical research, phenotypic drug discovery (PDD) has re-emerged as a productive strategy for identifying first-in-class therapeutics. Between 1999 and 2008, phenotypic screening accounted for over half of the first-in-class small-molecule drugs approved by the FDA [1]. PDD identifies bioactive compounds by their observable effects on disease phenotypes, without requiring prior knowledge of a specific molecular target; target-based drug discovery (TDD), by contrast, sets out to modulate a predefined molecular target [2]. The renewed appreciation for PDD stems from its ability to capture complex biological interactions within realistic disease models, thereby uncovering novel mechanisms of action (MoA) that hypothesis-driven, target-based approaches would likely miss [3] [1]. This guide compares the performance of phenotypic and target-based screening, drawing on experimental data and the methodological frameworks that modern drug development depends on.

Phenotypic vs. Target-Based Screening: A Comparative Analysis

The distinction between phenotypic and target-based screening strategies represents a fundamental dichotomy in drug discovery philosophy. Phenotypic screening evaluates compounds based on their ability to elicit a desired therapeutic effect in complex biological systems, including cells, tissues, or whole organisms [2]. This target-agnostic approach embraces biological complexity and has consistently identified novel therapeutic mechanisms. In contrast, target-based screening employs reductionist principles, focusing on compounds that selectively interact with a predefined molecular target, typically a protein with established disease relevance [3] [2].

Table 1: Strategic Comparison Between Phenotypic and Target-Based Screening Approaches

| Parameter | Phenotypic Screening | Target-Based Screening |
|---|---|---|
| Discovery Bias | Unbiased, allows novel target identification [2] | Hypothesis-driven, limited to known pathways [2] |
| Mechanism of Action | Often unknown at discovery, requires deconvolution [2] | Defined from the outset [2] |
| Biological Context | Captures complex systems-level interactions [3] [2] | Reductionist, focused on single targets [2] |
| Success Profile | Higher rate of first-in-class drug discovery [1] | More effective for follower drugs with optimized properties [4] |
| Technical Requirements | High-content imaging, functional genomics, AI analysis [2] | Structural biology, computational modeling, enzyme assays [2] |
| Target Validation | Required after compound identification [2] | Completed before screening begins [2] |

The disproportionate success of phenotypic screening in generating first-in-class therapeutics is particularly evident in disease areas with complex or poorly understood biology, such as cancer, neurodegenerative disorders, and rare diseases [2] [1]. Phenotypic approaches have expanded the "druggable target space" to include unexpected cellular processes, such as pre-mRNA splicing and target protein folding, trafficking, and degradation, and have revealed entirely new classes of drug targets [1].

Experimental Evidence: Key Success Stories and Data

The efficacy of phenotypic screening is demonstrated by multiple first-in-class therapies and clinical candidates that originated from this approach. Notable examples include ivacaftor and lumacaftor for cystic fibrosis, risdiplam and branaplam for spinal muscular atrophy (SMA), and the immunomodulatory drugs thalidomide, lenalidomide, and pomalidomide [3] [1].

Table 2: Drugs and Clinical Candidates Discovered Through Phenotypic Screening

| Drug | Disease Indication | Key Experimental Model | Mechanism of Action |
|---|---|---|---|
| Ivacaftor/Lumacaftor [1] | Cystic Fibrosis | Cell lines expressing disease-associated CFTR variants [1] | CFTR channel potentiators and correctors [1] |
| Risdiplam/Branaplam [1] | Spinal Muscular Atrophy | SMN2 splicing modulation assays [1] | SMN2 pre-mRNA splicing modification [1] |
| Lenalidomide/Pomalidomide [3] [1] | Multiple Myeloma | TNF-α production inhibition assays [3] | Cereblon-mediated degradation of transcription factors IKZF1/3 [3] |
| Daclatasvir [1] | Hepatitis C | HCV replicon phenotypic screen [1] | Modulation of HCV NS5A protein [1] |
| SEP-363856 [1] | Schizophrenia | Phenotypic screen in disease models | Novel mechanism targeting trace amine-associated receptor 1 [1] |

For glioblastoma multiforme (GBM), researchers developed a sophisticated phenotypic screening approach that combined tumor genomic profiling with molecular docking to create rationally enriched chemical libraries [5]. This methodology involved:

- Target Selection: Identification of 755 genes that carry somatic mutations and are overexpressed in GBM patient samples from The Cancer Genome Atlas [5]

- Network Analysis: Mapping these genes onto a protein-protein interaction network to construct a GBM-specific subnetwork [5]

- Virtual Screening: Docking approximately 9,000 in-house compounds to 316 druggable binding sites on proteins in the GBM subnetwork [5]

- Phenotypic Validation: Screening selected compounds against patient-derived GBM spheroids, leading to the identification of compound IPR-2025 [5]

IPR-2025 showed anti-GBM activity with single-digit micromolar IC50 values, outperforming the standard-of-care drug temozolomide, while exhibiting no toxicity toward normal cell lines [5]. This outcome illustrates how modern PDD can offset a traditional weakness of unbiased screening, namely low hit rates, by pairing phenotypic assays with target-informed library design.
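
The GBM workflow above (disease genes, then a protein interaction subnetwork, then docking-based compound selection) can be sketched as a small prioritization step. This is a minimal illustration under stated assumptions, not the method of [5]: the gene names, interaction edges, and docking scores are placeholders, and ranking each compound by its single best score against any subnetwork protein is a hypothetical scoring rule.

```python
from collections import defaultdict

def build_subnetwork(seed_genes, ppi_edges):
    """Induced subgraph of a PPI network restricted to disease-associated seed
    genes. Seeds with no interaction partner among the other seeds are dropped."""
    seeds = set(seed_genes)
    adjacency = defaultdict(set)
    for a, b in ppi_edges:
        if a in seeds and b in seeds:
            adjacency[a].add(b)
            adjacency[b].add(a)
    return dict(adjacency)

def rank_compounds(docking_scores, subnetwork_targets, top_n=10):
    """Rank compounds by their best (most negative) docking score against any
    protein in the subnetwork; scores against off-network proteins are ignored."""
    ranked = []
    for compound, scores in docking_scores.items():
        on_network = [s for target, s in scores.items() if target in subnetwork_targets]
        if on_network:
            ranked.append((min(on_network), compound))
    ranked.sort()
    return [compound for _, compound in ranked[:top_n]]

# Hypothetical inputs: gene names, edges, and docking scores are placeholders.
subnetwork = build_subnetwork(
    ["G1", "G2", "G3", "G4"],
    [("G1", "G2"), ("G3", "G4"), ("G1", "X"), ("G4", "Y")],
)
scores = {
    "cpd_A": {"G1": -9.1, "X": -11.0},
    "cpd_B": {"G3": -7.5},
    "cpd_C": {"Y": -10.2},
    "cpd_D": {"G4": -8.3, "G2": -9.6},
}
shortlist = rank_compounds(scores, set(subnetwork), top_n=2)
```

With these inputs `shortlist` is `["cpd_D", "cpd_A"]`: cpd_A's stronger score against X is ignored because X is not in the subnetwork, and cpd_C is dropped because it docks only to an off-network protein. In the published workflow the equivalent shortlist went on to phenotypic testing in patient-derived spheroids.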

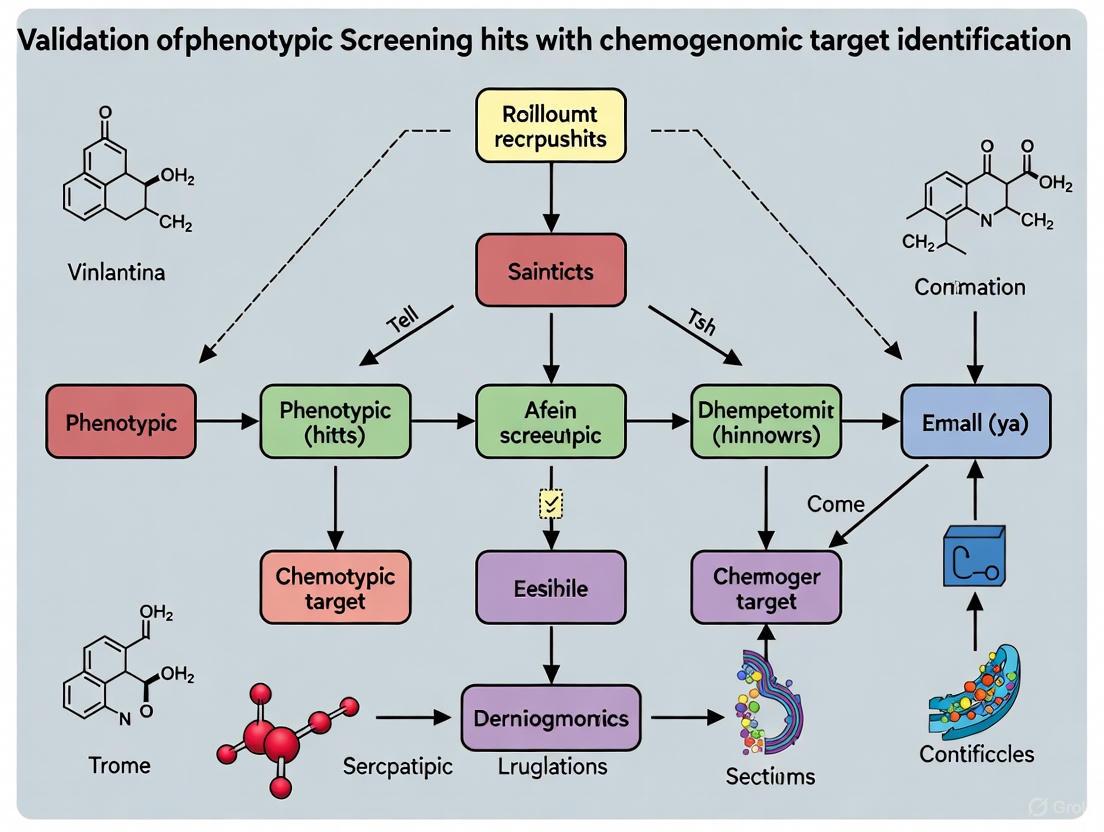

Diagram 1: Phenotypic Screening Workflow. This flowchart outlines the key steps in phenotypic drug discovery, from initial screening to target identification.

Methodological Framework: Validating Phenotypic Hits Through Chemogenomics

A critical challenge in phenotypic screening remains target deconvolution: identifying the molecular mechanism responsible for the observed therapeutic effect [2]. Modern chemogenomic approaches have accelerated this process with computational and experimental methods that systematically link compound structures to biological targets.

Computational Target Prediction Methods

Recent advances in bioinformatics have produced in silico target prediction platforms that accelerate mechanism-of-action elucidation. A 2025 benchmark study evaluated seven target prediction methods on a shared dataset of FDA-approved drugs [6]:

Table 3: Comparison of Computational Target Prediction Methods

| Method | Type | Algorithm | Database | Performance Notes |
|---|---|---|---|---|
| MolTarPred [6] | Ligand-centric | 2D similarity | ChEMBL 20 | Most effective in benchmark study [6] |
| RF-QSAR [6] | Target-centric | Random forest | ChEMBL 20&21 | Web server implementation [6] |
| TargetNet [6] | Target-centric | Naïve Bayes | BindingDB | Multiple fingerprint types [6] |
| ChEMBL [6] | Target-centric | Random forest | ChEMBL 24 | Morgan fingerprints [6] |
| CMTNN [6] | Target-centric | Neural network | ChEMBL 34 | Stand-alone code [6] |
| PPB2 [6] | Ligand-centric | Nearest neighbor/Neural network | ChEMBL 22 | Multiple algorithms [6] |
| SuperPred [6] | Ligand-centric | 2D/fragment/3D similarity | ChEMBL & BindingDB | ECFP4 fingerprints [6] |

These methods follow one of two strategies: target-centric approaches build a predictive model for each target, whereas ligand-centric approaches find known ligands similar to the query compound and transfer their annotated targets [6]. In the benchmark, MolTarPred was the most effective method, especially when using Morgan fingerprints with the Tanimoto similarity metric [6].
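
The ligand-centric idea behind methods such as MolTarPred (transfer targets from the most similar annotated ligands) can be illustrated with a nearest-neighbour sketch using the Tanimoto coefficient T(A, B) = |A ∩ B| / (|A| + |B| - |A ∩ B|). This is not MolTarPred's implementation. Fingerprints are represented here as sets of on-bit indices standing in for Morgan fingerprints (which RDKit would normally generate), and scoring each target by its single most similar reference ligand is an assumption; the reference ligands and target labels are hypothetical.

```python
def tanimoto(a: frozenset, b: frozenset) -> float:
    """Tanimoto coefficient |A & B| / (|A| + |B| - |A & B|) for on-bit sets."""
    shared = len(a & b)
    union = len(a) + len(b) - shared
    return shared / union if union else 0.0

def predict_targets(query, reference, top_k=3):
    """Ligand-centric prediction: score each target by the highest Tanimoto
    similarity between the query and any reference ligand annotated to it."""
    best = {}
    for _ligand_id, fingerprint, target in reference:
        sim = tanimoto(query, fingerprint)
        if sim > best.get(target, -1.0):
            best[target] = sim
    ranked = sorted(best.items(), key=lambda kv: (-kv[1], kv[0]))
    return ranked[:top_k]

# Hypothetical reference set: (ligand id, fingerprint on-bits, annotated target).
reference = [
    ("L1", frozenset({1, 2, 3, 4, 9}), "T_kinase"),
    ("L2", frozenset({1, 2, 10, 11}), "T_gpcr"),
    ("L3", frozenset({1, 2, 3, 4, 5, 6}), "T_protease"),
    ("L4", frozenset({20, 21}), "T_kinase"),
]
hit = frozenset({1, 2, 3, 4, 5})
predictions = predict_targets(hit, reference)
```

`predictions` ranks T_protease (similarity 5/6 ≈ 0.83) ahead of T_kinase (4/6 ≈ 0.67) and T_gpcr (2/7 ≈ 0.29). Taking the maximum rather than an average keeps one close analogue from being diluted by many distant ligands of the same target.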

Experimental Target Identification Protocols

Complementing computational approaches, experimental methods for target identification have seen significant advances:

Cellular Thermal Shift Assay (CETSA): This label-free method detects changes in protein thermal stability upon compound binding in live cells [4]. The technique measures the melting curve of proteins in compound-treated versus control cells, identifying stabilized targets that shift their denaturation profiles.
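
The melting-curve comparison behind CETSA can be sketched as follows: estimate an apparent melting temperature (Tm) for vehicle- and compound-treated samples and take the difference. This is a simplified sketch; the soluble-fraction values are invented for illustration, and linear interpolation at the 50% point stands in for the sigmoidal curve fitting that CETSA analyses usually use.

```python
def melting_temperature(temps, fractions, midpoint=0.5):
    """Temperature at which the normalized soluble fraction first falls to
    `midpoint`, found by linear interpolation between adjacent points."""
    points = list(zip(temps, fractions))
    for (t1, f1), (t2, f2) in zip(points, points[1:]):
        if f1 >= midpoint >= f2 and f1 != f2:
            return t1 + (f1 - midpoint) * (t2 - t1) / (f1 - f2)
    raise ValueError("curve never crosses the midpoint")

temps = [37, 41, 45, 49, 53, 57, 61]                  # heating steps, °C
control = [1.00, 0.98, 0.85, 0.55, 0.25, 0.08, 0.02]  # vehicle-treated (invented)
treated = [1.00, 0.99, 0.95, 0.82, 0.60, 0.30, 0.10]  # compound-treated (invented)

tm_control = melting_temperature(temps, control)
tm_treated = melting_temperature(temps, treated)
delta_tm = tm_treated - tm_control  # positive value = ligand-induced stabilization
```

Here the apparent Tm rises from about 49.7 °C to about 54.3 °C, a ΔTm of roughly 4.7 °C, consistent with compound-induced stabilization.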

Thermal Proteome Profiling (TPP): A proteome-wide extension of CETSA, TPP uses multiplexed quantitative mass spectrometry to monitor thermal stability shifts across thousands of proteins simultaneously [5] [4]. This approach was successfully applied to identify multiple targets engaged by the anti-glioblastoma compound IPR-2025 [5].
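
At proteome scale, the question becomes which of thousands of proteins shifts more than expected. A minimal sketch, assuming per-protein ΔTm values have already been computed, flags outliers with a robust z-score based on the median and the median absolute deviation (MAD, scaled by 1.4826 to approximate a standard deviation for normally distributed data). The seven-protein dataset and the cut-off of 3 are hypothetical; published TPP workflows typically fit full melting curves and use replicate-aware statistics.

```python
from statistics import median

def flag_shifted_proteins(delta_tm, z_cutoff=3.0):
    """Flag proteins whose melting-point shift is an outlier relative to the
    rest of the proteome, using a robust z-score (median and scaled MAD)."""
    values = list(delta_tm.values())
    center = median(values)
    mad = 1.4826 * median(abs(v - center) for v in values)
    if mad == 0:
        raise ValueError("MAD is zero; shifts cannot be scaled")
    hits = {}
    for protein, shift in delta_tm.items():
        z = (shift - center) / mad
        if abs(z) >= z_cutoff:  # catches both stabilized and destabilized proteins
            hits[protein] = z
    return hits

# Hypothetical per-protein shifts in °C (a real TPP run covers thousands).
shifts = {"P1": 0.1, "P2": -0.2, "P3": 0.3, "P4": 0.0,
          "P5": -0.1, "P6": 0.2, "TGT": 4.5}
hits = flag_shifted_proteins(shifts)
```

Only `TGT` is flagged (z-score above 10). Using the median and MAD keeps a single strongly shifted target from inflating the spread estimate; with a mean and standard deviation, the target's own shift widens the spread so much that its z-score falls below 3 for these values.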

Transcriptomics Analysis: RNA sequencing of compound-treated versus untreated cells can reveal pathway-level effects that inform mechanism of action [5]. This approach provides complementary data to direct binding assays by capturing downstream consequences of target engagement.
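
The effect-size arithmetic behind such a comparison can be sketched in a few lines: per-gene log2 fold changes between treated and untreated samples, then a crude pathway-level score as the mean fold change across a gene set. This is an illustrative sketch only; the counts and gene set are hypothetical, and a real analysis would use a dedicated differential-expression method (for example DESeq2 or edgeR) with replicates and dispersion estimates rather than raw ratios.

```python
import math

def log2_fold_changes(treated, control, pseudocount=1.0):
    """Per-gene log2((treated + pc) / (control + pc)) on normalized counts;
    the pseudocount avoids division by zero for unexpressed genes."""
    return {
        gene: math.log2((treated[gene] + pseudocount) / (control[gene] + pseudocount))
        for gene in treated
        if gene in control
    }

def gene_set_score(lfc, gene_set):
    """Mean log2 fold change of the genes in a set (a crude pathway score)."""
    members = [lfc[g] for g in gene_set if g in lfc]
    return sum(members) / len(members) if members else float("nan")

# Hypothetical normalized counts for four genes.
treated = {"G1": 127, "G2": 15, "G3": 31, "G4": 50}
control = {"G1": 31, "G2": 63, "G3": 31, "G4": 50}
lfc = log2_fold_changes(treated, control)
pathway_score = gene_set_score(lfc, {"G1", "G3"})
```

Here G1 is up 4-fold (log2FC = 2), G2 is down 4-fold (log2FC = -2), and the two-gene set {G1, G3} scores 1.0.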

Diagram 2: Target Deconvolution Strategies. This diagram illustrates the integrated computational and experimental approaches for identifying the molecular targets of phenotypic screening hits.

Essential Research Tools for Phenotypic Screening

Successful implementation of phenotypic screening campaigns requires specialized research tools and reagents designed to capture relevant biology while enabling high-throughput operation.

Table 4: Essential Research Reagents and Platforms for Phenotypic Screening

| Research Tool | Function | Application Notes |
|---|---|---|
| High-Content Imaging Systems [2] | Automated microscopy and image analysis for multiparametric phenotypic assessment | Enables quantification of complex morphological changes in cells [2] |
| 3D Spheroid/Organoid Cultures [2] [5] | Physiologically relevant disease models that better mimic tissue architecture | Patient-derived GBM spheroids used in glioblastoma screening [5] |
| iPSC-Derived Cell Models [2] | Patient-specific cell types for disease modeling and compound screening | Particularly valuable for neurological disorders [2] |
| Transcreener HTS Assays [7] | Biochemical assays for enzyme activity detection (kinases, ATPases, etc.) | Flexible platform for multiple target classes using FP, FI, or TR-FRET detection [7] |
| Chemical Libraries with Diverse Annotation [2] [5] | Collections of compounds for screening; non-annotated libraries preferred for novel target discovery | Rationally designed libraries tailored to disease genomics enhance hit rates [5] |
| Zebrafish Embryo Models [8] | Whole-organism screening with high genetic similarity to humans | Used for neuroactive drug screening and toxicology studies [2] |

Advanced research platforms like Recursion OS and Insilico Medicine's Pharma.AI exemplify the integration of these tools with artificial intelligence for enhanced phenotypic discovery. The Recursion OS platform leverages approximately 65 petabytes of proprietary data and includes models like Phenom-2 (a 1.9 billion-parameter vision transformer) and MolPhenix for predicting molecule-phenotype relationships [9]. Similarly, Insilico's PandaOmics module draws on 1.9 trillion data points from over 10 million biological samples and 40 million documents for target identification and prioritization [9].

Phenotypic screening remains a powerhouse for first-in-class drug discovery because it embraces biological complexity, reveals unexpected mechanisms of action, and identifies novel therapeutic targets that would elude hypothesis-driven approaches. The historical success of this approach—from early observations of penicillin's effects to modern high-throughput campaigns—underscores its enduring value in the pharmaceutical development landscape [2] [1].

The future of phenotypic discovery lies in strategic integration with complementary technologies: advanced disease models (3D organoids, patient-derived cells), sophisticated target deconvolution methods (computational prediction, thermal proteome profiling), and artificial intelligence platforms that can extract meaningful patterns from high-dimensional phenotypic data [2] [9] [1]. By combining the unbiased nature of phenotypic screening with modern tools for mechanistic elucidation, drug discovery researchers can systematically address the challenges of complex diseases and deliver the transformative medicines that patients urgently need.

Chemogenomics represents a pivotal paradigm in modern drug discovery, systematically exploring the interaction between small molecules and biological targets. This approach establishes comprehensive ligand-target structure-activity relationship matrices to accelerate the identification and validation of therapeutic targets. Within the context of phenotypic screening, chemogenomics provides a powerful framework for deconvoluting the mechanisms of action of bioactive compounds. This guide examines the core principles, methodologies, and practical applications of chemogenomics, with a focused analysis of experimental platforms and reagent solutions that enable researchers to bridge chemical and biological spaces effectively.

Chemogenomics aims at the systematic identification of small molecules that interact with the products of the genome and modulate their biological function [10]. This field operates on the fundamental premise of establishing and expanding a comprehensive ligand-target Structure-Activity Relationship (SAR) matrix, representing a key scientific challenge for the 21st century following the elucidation of the human genome [10]. The chemogenomic approach utilizes small molecules as tools to establish relationships between targets and phenotypic outcomes, operating through two primary directional strategies: reverse chemogenomics (investigating biological activity starting from enzyme inhibitors) and forward chemogenomics (identifying relevant targets of pharmacologically active small molecules) [11].
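
The ligand-target SAR matrix at the heart of this definition is, in data-structure terms, a sparse compound × target table of activity values. A minimal sketch, assuming activity records arrive as (compound, target, pActivity) triples and that replicate measurements are simply averaged: reading a row loosely corresponds to the forward question (which targets does this active compound hit?), and reading a column to the reverse one (which ligands modulate this target?). Compound and target identifiers are hypothetical, and the potency cut-off of pActivity 6 (1 µM) is an illustrative choice.

```python
from collections import defaultdict
from statistics import mean

def build_sar_matrix(records):
    """records: iterable of (compound, target, pActivity). Returns a sparse
    {compound: {target: pActivity}} matrix; replicates are averaged."""
    cells = defaultdict(list)
    for compound, target, p_activity in records:
        cells[(compound, target)].append(p_activity)
    matrix = defaultdict(dict)
    for (compound, target), values in cells.items():
        matrix[compound][target] = mean(values)
    return dict(matrix)

def targets_of(matrix, compound, min_p=6.0):
    """Row lookup (forward direction): targets hit at pActivity >= min_p."""
    return sorted(t for t, p in matrix.get(compound, {}).items() if p >= min_p)

def ligands_of(matrix, target, min_p=6.0):
    """Column lookup (reverse direction): compounds active on `target`."""
    return sorted(c for c, row in matrix.items()
                  if row.get(target, float("-inf")) >= min_p)

# Hypothetical activity records (pActivity = -log10 of molar potency).
records = [
    ("C1", "T1", 7.0), ("C1", "T1", 7.4), ("C1", "T2", 5.0),
    ("C2", "T1", 6.1), ("C2", "T3", 8.0),
]
matrix = build_sar_matrix(records)
```

`targets_of(matrix, "C1")` returns `["T1"]` (its T2 value of 5.0 falls below the cut-off), while `ligands_of(matrix, "T1")` returns `["C1", "C2"]`.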

The expansion of the physically available and bioactive chemical space represents a central objective of chemogenomics [10]. Effective systematic expansion appears possible when conserved molecular recognition principles serve as the founding hypothesis for compound design. These principles include approaches focusing on target families, privileged scaffolds, protein secondary structure mimetics, co-factor mimetics, and diversity-oriented synthesis (DOS) and biology-oriented synthesis (BIOS) libraries [10]. This systematic framework enables researchers to navigate the complex landscape of chemical-biological interactions with greater precision and efficiency.

Chemogenomics in Phenotypic Screening Hit Validation

Phenotypic drug discovery is a powerful approach for identifying compounds that produce a desired therapeutic effect without presupposing a specific molecular target; it is particularly valuable for infectious diseases, where few well-validated targets exist [12]. A significant advantage of phenotypic screening is that active compounds already modulate mechanisms or pathways essential for pathogen survival and already possess the properties needed for cellular permeation, metabolic stability, and target access without significant efflux [12]. The major limitation is that the molecular target and binding mode of a hit are usually unknown, and this is precisely the knowledge that would enable structure-guided optimization.

Target identification for phenotypic screening hits presents substantial challenges, as experimental determination can be complex, time-consuming, expensive, and not always successful [12]. Computational target prediction platforms have emerged as valuable tools to generate testable hypotheses, utilizing both ligand and protein-structure information to produce ranked sets of predicted molecular targets [12]. These platforms address the critical need for efficient mechanism deconvolution in phenotypic discovery programs.

Table 1: Challenges in Phenotypic Screening and Chemogenomic Solutions

| Challenge | Impact on Drug Discovery | Chemogenomic Approach |
|---|---|---|
| Unknown molecular target | Difficult to optimize compounds rationally | Computational target prediction and chemogenomic library screening |
| Unknown binding mode | Limited structure-guided optimization | 3D binding pose prediction and binding site analysis |
| Potential scaffold liabilities | Late-stage failure due to poor pharmacokinetics/toxicology | Early liability screening and scaffold hopping |
| Target-related unattractiveness | Resources wasted on targets of limited therapeutic value | Early target identification for prioritization |
| Multi-target interactions | Unpredictable efficacy or toxicity | Selective compound profiling and polypharmacology assessment |

The premise of computational target identification rests on molecular recognition principles: structurally similar compounds interacting through similar pharmacophores will be recognized by similar protein binding sites [12]. If a phenotypic hit molecule shares similarity with a compound bound to a specific protein site in structural databases, this information can identify proteins with similar binding sites in the pathogen proteome, enabling target hypothesis generation [12].

Experimental Platforms and Methodologies

Computational Target Prediction Workflow

An advanced target prediction platform for phenotypic actives against Mycobacterium tuberculosis exemplifies the integrated computational approach [12]. The methodology employs a fragment-based strategy to address limited chemical space coverage in structural databases, drawing analogy to fragment-based drug discovery principles that increase efficiency in chemical space sampling [12].

Preparative Steps:

- PDB Fragment Space Creation: All small molecule ligands in the Protein Data Bank (PDB) are fragmented to generate molecular fragments capturing diverse pharmacophoric patterns. For each fragment, the binding cavity is defined and fragment-protein interactions analyzed [12].

- Target Space Generation: A comprehensive M. tuberculosis target space is assembled, including existing PDB structures (2,055 structures) and high-quality modeled protein structures (3,667 structures) generated using Rosetta homology modeling [12].

Platform Workflow:

- Fragmentation of Phenotypic Active: The active hit compound is fragmented in silico to generate molecular fragments [12].

- Fragment Similarity Search: Fragments from the phenotypic hit are compared against the PDB fragment database to identify identical or similar fragments with known binding environments [12].

- Cavity Comparison: Identified PDB fragments define cavities that are compared against the M. tuberculosis target space to find similar binding sites [12].

- Docking and Binding Mode Analysis: The complete phenotypic hit is docked into putative targets, and binding modes are analyzed for consistency with observed structure-activity relationships [12].
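
Steps 2 and 3 of this workflow (fragment lookup, then cavity comparison) can be sketched as a ranking of candidate targets to pass on to docking. This is a deliberately simplified, hypothetical illustration rather than the published platform: fragmentation is assumed to have been done already, each cavity is reduced to a set of pharmacophore feature labels compared by Jaccard overlap instead of a 3D binding-site comparison, and all fragment, cavity, and target identifiers are placeholders.

```python
def jaccard(a, b):
    """Set overlap |A & B| / |A | B|; used here as a crude cavity similarity."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0

def candidate_targets(hit_fragments, fragment_index, reference_cavities,
                      target_pockets, min_similarity=0.5):
    """Return (target, n_supporting_fragments, best_similarity) tuples for
    target pockets that resemble PDB cavities binding a fragment of the hit."""
    support = {}
    for fragment in hit_fragments:
        # Step 2: look up PDB cavities in which this fragment has been observed.
        for cavity_id in fragment_index.get(fragment, []):
            ref_cavity = reference_cavities[cavity_id]
            # Step 3: compare that cavity with every pocket in the target space.
            for (target, _pocket), features in target_pockets.items():
                sim = jaccard(ref_cavity, features)
                if sim >= min_similarity:
                    fragments, best = support.get(target, (set(), 0.0))
                    fragments.add(fragment)
                    support[target] = (fragments, max(best, sim))
    # More supporting fragments first, then higher best similarity.
    ranked = sorted(support.items(), key=lambda kv: (-len(kv[1][0]), -kv[1][1], kv[0]))
    return [(t, len(frags), round(best, 2)) for t, (frags, best) in ranked]

# Hypothetical data.
hit_fragments = {"frag_pyridine", "frag_amide", "frag_unmatched"}
fragment_index = {"frag_pyridine": ["cav1"], "frag_amide": ["cav2", "cav3"]}
reference_cavities = {
    "cav1": frozenset({"HBA", "ARO", "HYD"}),
    "cav2": frozenset({"HBD", "HBA", "POS"}),
    "cav3": frozenset({"ARO", "HYD", "HBD"}),
}
target_pockets = {
    ("TGT_A", "p1"): frozenset({"HBA", "ARO", "HYD", "HBD"}),
    ("TGT_B", "p1"): frozenset({"HBD", "HBA", "POS"}),
    ("TGT_C", "p1"): frozenset({"MET"}),
}
result = candidate_targets(hit_fragments, fragment_index, reference_cavities, target_pockets)
```

TGT_A ranks first because two different fragments of the hit point to cavities resembling its pocket, even though TGT_B has the single best match; `frag_unmatched` contributes nothing because it has no PDB counterpart. Requiring support from several fragments is one plausible way to reduce chance matches before the more expensive docking step.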

The following diagram illustrates the core logical workflow of this computational approach:

Chemogenomic Set Assembly and Validation

An alternative empirical approach uses curated chemogenomic compound sets: libraries of highly annotated, biologically active compounds that are screened for phenotypic outcomes in disease-relevant models [13]. Chemical probes are the highest-quality tools for this purpose, but compounds in a chemogenomic set may individually be less potent or selective; the set is instead assembled so that its members have complementary selectivity profiles with non-overlapping off-target activities, which enables mechanistic deconvolution [13].
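
The deconvolution logic of such a set can be sketched as follows: if compounds sharing one annotated target all produce the phenotype while their off-targets differ, that shared target is the most parsimonious explanation. This sketch is an illustration under assumptions, not the analysis used in [13]: annotations and screening results are hypothetical, targets are scored by a simple hit rate, and targets annotated to fewer than two compounds are skipped. A real analysis would apply a statistical enrichment test.

```python
def rank_targets(annotations, active, min_compounds=2):
    """annotations: {compound: set_of_annotated_targets}; active: compounds
    that produced the phenotype. Score each target by the fraction of its
    annotated compounds that were active (a simple hit rate)."""
    compounds_by_target = {}
    for compound, targets in annotations.items():
        for target in targets:
            compounds_by_target.setdefault(target, set()).add(compound)
    scores = []
    for target, compounds in compounds_by_target.items():
        if len(compounds) < min_compounds:
            continue  # too few tool compounds to judge this target
        hit_rate = len(compounds & active) / len(compounds)
        scores.append((target, hit_rate, len(compounds)))
    scores.sort(key=lambda s: (-s[1], -s[2], s[0]))
    return scores

# Hypothetical set: three NR_A modulators with different off-targets.
annotations = {
    "cpd1": {"NR_A", "OFF_1"},
    "cpd2": {"NR_A", "OFF_2"},
    "cpd3": {"NR_A", "OFF_3"},
    "cpd4": {"NR_B", "OFF_1"},
    "cpd5": {"NR_B", "OFF_2"},
}
ranking = rank_targets(annotations, active={"cpd1", "cpd2", "cpd3"})
```

NR_A scores 1.0 because all three of its modulators are active, whereas each shared off-target also appears on an inactive compound and scores 0.5; OFF_3, annotated to a single compound, is not scored at all.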

The compilation of an NR1 nuclear receptor family chemogenomic set demonstrates rigorous assembly criteria [13]:

Selection Criteria:

- Compound-Bioactivity Annotations: Sourced from public repositories (PubChem, ChEMBL, IUPHAR/BPS, BindingDB, Probes&Drugs) compiled in curated datasets [13]

- Potency Requirements: Cellular potency ≤10 µM (preferably ≤1 µM) based on community-agreed criteria [13]

- Selectivity Standards: No more than five off-targets at the final concentration used [13]

- Chemical Diversity: Analyzed using Tanimoto similarity of Morgan fingerprints and Murcko molecular frameworks [13]

- Mode of Action Diversity: Inclusion of agonists, antagonists, and inverse agonists [13]

- Commercial Availability: Ensuring broad accessibility to the research community [13]
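
The quantitative parts of these selection criteria can be sketched as a filter followed by a greedy diversity pick. The potency (≤10 µM) and off-target (≤5) limits come from the criteria above; the Tanimoto cut-off of 0.7, the most-potent-first ordering, and the set-of-on-bits fingerprint representation are hypothetical choices, and the sketch ignores the Murcko-framework, mode-of-action, and availability criteria.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Candidate:
    name: str
    potency_um: float       # cellular potency (EC50/IC50) in µM
    n_off_targets: int      # off-targets at the final concentration
    fingerprint: frozenset  # fingerprint on-bits (stand-in for Morgan bits)

def tanimoto(a, b):
    shared = len(a & b)
    union = len(a) + len(b) - shared
    return shared / union if union else 0.0

def select_set(candidates, max_potency_um=10.0, max_off_targets=5, max_similarity=0.7):
    """Apply potency/selectivity limits, then greedily keep compounds that are
    not too similar to any compound already chosen (most potent first)."""
    eligible = [c for c in candidates
                if c.potency_um <= max_potency_um and c.n_off_targets <= max_off_targets]
    eligible.sort(key=lambda c: (c.potency_um, c.name))
    chosen = []
    for c in eligible:
        if all(tanimoto(c.fingerprint, s.fingerprint) < max_similarity for s in chosen):
            chosen.append(c)
    return [c.name for c in chosen]

# Hypothetical candidates.
candidates = [
    Candidate("A", 0.2, 1, frozenset({1, 2, 3, 4})),
    Candidate("B", 0.5, 2, frozenset({1, 2, 3, 4, 5})),
    Candidate("C", 3.0, 0, frozenset({10, 11, 12})),
    Candidate("D", 25.0, 0, frozenset({20, 21})),
    Candidate("E", 1.0, 7, frozenset({30})),
    Candidate("F", 8.0, 5, frozenset({1, 2, 10, 11})),
]
selected = select_set(candidates)
```

D fails the potency limit and E the off-target limit; B passes both but is dropped as a near-duplicate of the more potent A (Tanimoto 0.8), leaving A, C, and F.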

Validation Workflow:

- Identity and Purity Verification: NMR, LC-UV, LC-ELSD, and LC-MS confirmation (≥95% purity) [13]

- Viability Assessment: Primary cell viability assay in multiple cell lines (HEK293T, U-2 OS, MRC-9 fibroblasts) using confluence measurement to determine growth rate [13]

- Multiplex Toxicity Profiling: High-content microscopy-based multiplex assay evaluating apoptosis, cytoskeleton alterations, membrane permeabilization, and mitochondrial mass using orthogonal stains [13]

- Off-Target Liability Screening: Differential scanning fluorimetry (DSF) screening against representative kinases and bromodomains (BRD4, TRIM24, BRPF1, AURKA, CDK2, MAPK1, GSK3B, CSNK1D, ABL1, FGFR3) [13]

- In-Family Selectivity Profiling: Uniform hybrid reporter gene assays on main targets and all NRs in respective subfamilies [13]

Table 2: Experimental Validation Methods for Chemogenomic Sets

| Validation Method | Key Parameters Measured | Exclusion Criteria |
|---|---|---|
| Cell Viability Assay | Growth rate (GR), confluency over time | GR ≤ 0.5, atypical cellular phenotypes |
| Multiplex Toxicity Assay | Apoptosis, cytoskeleton, membrane integrity, mitochondrial mass | Phenotypic effects, precipitation, non-specific toxicity |
| Differential Scanning Fluorimetry | Protein melting temperature (ΔTm) | ΔTm > 1.8°C (≥ 2 × SD) on liability targets |
| Reporter Gene Assays | In-family selectivity, potency confirmation | Lack of intended activity, poor potency |
| Compound Solubility | Kinetic solubility in assay conditions | Insufficient solubility for testing |
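
The numeric exclusion rules in Table 2 can be applied as a simple triage step. A minimal sketch, assuming each compound's results have already been summarized into a growth rate, per-target DSF ΔTm values, an on-target activity flag, and a solubility flag; the record layout and the example values are invented, and the qualitative multiplex-toxicity criteria are not modelled.

```python
def triage(compounds, gr_cutoff=0.5, dtm_cutoff=1.8):
    """Return (kept_names, {excluded_name: [reasons]}) using the numeric
    exclusion rules: GR <= 0.5, DSF dTm > 1.8 °C on any liability target,
    missing on-target activity, or insufficient solubility."""
    kept, excluded = [], {}
    for c in compounds:
        reasons = []
        if c["growth_rate"] <= gr_cutoff:
            reasons.append("viability")
        shifted = sorted(t for t, dtm in c["liability_dtm"].items() if dtm > dtm_cutoff)
        if shifted:
            reasons.append("DSF liability: " + ", ".join(shifted))
        if not c["on_target_active"]:
            reasons.append("no on-target activity")
        if not c["soluble"]:
            reasons.append("solubility")
        if reasons:
            excluded[c["name"]] = reasons
        else:
            kept.append(c["name"])
    return kept, excluded

# Invented example records.
compounds = [
    {"name": "X1", "growth_rate": 0.95, "liability_dtm": {"BRD4": 0.3, "CDK2": 0.5},
     "on_target_active": True, "soluble": True},
    {"name": "X2", "growth_rate": 0.40, "liability_dtm": {"BRD4": 0.2},
     "on_target_active": True, "soluble": True},
    {"name": "X3", "growth_rate": 0.90, "liability_dtm": {"GSK3B": 2.4, "ABL1": 0.1},
     "on_target_active": True, "soluble": True},
    {"name": "X4", "growth_rate": 0.90, "liability_dtm": {},
     "on_target_active": False, "soluble": False},
]
kept, excluded = triage(compounds)
```

Only X1 survives; X3 is excluded for a 2.4 °C shift on GSK3B, and X4 accumulates two independent reasons. Recording every reason rather than stopping at the first keeps the audit trail complete.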

Comparative Analysis of Research Platforms

Cheminformatics Platforms for Chemogenomics

The implementation of chemogenomic approaches requires robust cheminformatics platforms capable of handling diverse chemical data and supporting target prediction workflows. The following table compares key platforms used in chemogenomic research:

Table 3: Cheminformatics Platform Comparison for Chemogenomics Applications

| Platform | License Model | Key Strengths | Target Prediction Capabilities | Integration Options |
|---|---|---|---|---|
| RDKit | Open-source (BSD) | Comprehensive functionality, high performance, active community | Ligand-based similarity searching, molecular descriptor calculation, fingerprint generation | Python, KNIME, PostgreSQL cartridge, Java, C++ |
| ChemAxon Suite | Commercial | Enterprise-level chemical data management, user-friendly interfaces | Chemical database management, substructure and similarity search | Java-based APIs, Pipeline Pilot, KNIME |
| CDK (Chemistry Development Kit) | Open-source | Cross-platform compatibility, extensive descriptor calculation | Molecular descriptor calculation, fingerprint generation, SAR analysis | Java-based applications, various programming languages |
| Open Babel | Open-source | Format conversion, structure manipulation | Chemical file format conversion, basic molecular manipulation | Command-line utilities, programming interfaces |

RDKit deserves particular emphasis because it has become a de facto standard in the field, owing to its comprehensive functionality, high performance, and active community [14]. Although RDKit is a library rather than a standalone application, it provides robust capabilities for molecular descriptor calculation, fingerprint generation for similarity searching, and substructure search, all of which are critical for chemogenomic applications [14]. RDKit supports multiple fingerprint types (Morgan fingerprints, which are similar to ECFP, as well as the RDKit fingerprint, Topological Torsion, Atom Pair, and MACCS keys) and similarity metrics (Tanimoto, Dice, Cosine, and others) that are essential for ligand-based virtual screening [14]. Its integration with the PostgreSQL database system via the RDKit cartridge enables efficient chemical database management and searching at scale [15].
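
The three similarity metrics named above behave differently on the same pair of fingerprints. RDKit provides them for its own fingerprint objects as `DataStructs.TanimotoSimilarity`, `DataStructs.DiceSimilarity`, and `DataStructs.CosineSimilarity`; the plain-Python sketch below instead works on sets of on-bit indices (an assumption made so it runs without RDKit) to show the underlying formulas.

```python
import math

def similarity(a, b, metric="tanimoto"):
    """Similarity between two binary fingerprints given as sets of on-bits."""
    c = len(a & b)  # bits set in both fingerprints
    if metric == "tanimoto":
        denom = len(a) + len(b) - c
        return c / denom if denom else 0.0
    if metric == "dice":
        denom = len(a) + len(b)
        return 2 * c / denom if denom else 0.0
    if metric == "cosine":
        denom = math.sqrt(len(a) * len(b))
        return c / denom if denom else 0.0
    raise ValueError(f"unknown metric: {metric}")

a = {1, 2, 3, 4, 5, 6}
b = {4, 5, 6, 7}
scores = {m: similarity(a, b, m) for m in ("tanimoto", "dice", "cosine")}
```

For this pair the scores are 3/7 ≈ 0.43 (Tanimoto), 0.6 (Dice), and about 0.61 (Cosine). For binary fingerprints Dice is never lower than Tanimoto, so a similarity cut-off tuned for one metric should not be reused unchanged with another.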

Specialized Chemogenomic Sets

The development of specialized chemogenomic sets for specific protein families represents another strategic approach. The following table compares two recently developed chemogenomic sets for nuclear receptor families:

Table 4: Comparative Analysis of Nuclear Receptor Chemogenomic Sets

| Parameter | NR1 Family Set [13] | NR4A Family Set [16] |
|---|---|---|
| Family Coverage | 19 NRs across 7 subfamilies | 3 receptors (Nur77/NR4A1, Nurr1/NR4A2, NOR1/NR4A3) |
| Compound Count | 69 comprehensively annotated modulators | 8 validated direct modulators |
| Activity Types | Agonists, antagonists, inverse agonists | Agonists and inverse agonists |
| Selection Criteria | Potency (≤10 µM), selectivity (≤5 off-targets), commercial availability | Direct binding validation, orthogonal cellular activity, commercial availability |
| Validation Methods | Viability assays, multiplex toxicity, DSF liability screening, reporter gene assays | ITC, DSF, reporter gene assays, solubility, multiplex toxicity |
| Proven Applications | Autophagy, neuroinflammation, cancer cell death | Endoplasmic reticulum stress, adipocyte differentiation |

The NR1 family chemogenomic set demonstrates the comprehensive approach to set assembly, with 69 compounds rigorously selected and validated to cover all 19 members of the NR1 family [13]. This set was optimized for complementary activity/selectivity profiles and chemical diversity to ensure orthogonality in phenotypic screening applications [13]. Proof-of-concept applications revealed roles of NR1 members in autophagy, neuroinflammation, and cancer cell death, confirming the set's suitability for target identification and validation [13].

In contrast, the NR4A family set represents a more focused approach, with 8 validated direct modulators addressing a smaller receptor subgroup [16]. The comparative profiling of NR4A modulators revealed a lack of on-target binding and modulation for several putative ligands, highlighting the critical importance of experimental validation in tool compound selection [16]. This smaller set nonetheless enabled the linking of orphan targets with phenotypic effects in endoplasmic reticulum stress and adipocyte differentiation [16].
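
The orthogonality goal described for the NR1 set can be checked mechanically once annotations are available. A hypothetical sketch: it assumes each compound has one primary target plus a set of known off-targets, and it treats a family member as adequately covered only if at least two compounds address it and at least one pair of them shares no off-targets. These rules are illustrative stand-ins, not the published selection procedure for either set.

```python
from itertools import combinations

def coverage_gaps(family, annotations, min_compounds=2):
    """annotations: {compound: (primary_target, off_targets)}. Return
    {target: reason} for family members lacking enough compounds or lacking
    any pair of compounds whose off-target sets are disjoint."""
    profiles = {target: [] for target in family}
    for primary, off_targets in annotations.values():
        if primary in profiles:
            profiles[primary].append(frozenset(off_targets))
    gaps = {}
    for target, sets in profiles.items():
        if len(sets) < min_compounds:
            gaps[target] = "too few compounds"
        elif not any(p.isdisjoint(q) for p, q in combinations(sets, 2)):
            gaps[target] = "no orthogonal pair"
    return gaps

# Hypothetical three-member family and annotations.
family = ["R1", "R2", "R3"]
annotations = {
    "c1": ("R1", {"X"}),
    "c2": ("R1", {"Y"}),
    "c3": ("R2", {"X", "Y"}),
    "c4": ("R2", {"Y"}),
    "c5": ("R3", set()),
}
gaps = coverage_gaps(family, annotations)
```

R1 passes because its two compounds have disjoint off-target sets; R2's two compounds share off-target Y, so an effect seen with both could come from Y; R3 has only one compound.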

The Scientist's Toolkit: Essential Research Reagents and Platforms

Successful implementation of chemogenomics approaches requires access to specialized reagents, platforms, and databases. The following table details key solutions for researchers in this field:

Table 5: Essential Research Reagent Solutions for Chemogenomics

| Resource Category | Specific Solutions | Function in Chemogenomics |
|---|---|---|
| Cheminformatics Platforms | RDKit, ChemAxon Suite, CDK, Open Babel | Chemical structure handling, descriptor calculation, similarity searching, database management [14] [15] |
| Chemical Databases | ChEMBL, PubChem, BindingDB, IUPHAR/BPS | Source of compound-bioactivity annotations for target identification [13] |
| Structural Databases | Protein Data Bank (PDB) | Source of protein-ligand complex structures for binding site analysis [12] |
| Target Prediction Tools | Fragment-based platforms, reverse docking approaches | Generation of target hypotheses for phenotypic hits [12] |
| Validation Assays | Reporter gene assays, DSF, ITC, multiplex toxicity assays | Experimental confirmation of compound-target interactions and selectivity [13] |
| Curated Chemogenomic Sets | NR1 family set, NR4A set, kinase chemogenomic sets | Annotated compound libraries for phenotypic screening and target deconvolution [16] [13] |

Chemogenomics provides a systematic framework for bridging chemical and biological spaces, enabling efficient target identification and validation in phenotypic drug discovery. The integration of computational prediction platforms with empirically validated chemogenomic sets offers complementary strategies for deconvoluting the mechanisms of action of bioactive compounds. Computational approaches like the fragment-based target prediction platform leverage structural information to generate testable target hypotheses, while carefully curated chemogenomic sets enable empirical target validation through selective modulation. As these methodologies continue to mature and integrate, they promise to accelerate the transformation of phenotypic screening hits into validated therapeutic targets, ultimately enhancing the efficiency of drug discovery pipelines across diverse therapeutic areas.

The concept of the druggable genome, first defined twenty years ago as the subset of the human genome encoding proteins capable of binding drug-like molecules, has fundamentally transformed target selection in pharmaceutical research [17]. Early estimates suggested approximately 4,500 genes constituted this space, but technological advances have continuously expanded this frontier [18] [17]. Today, researchers are moving beyond simple ligandability assessments to multi-parameter evaluations that encompass disease modification, tissue expression, functional sites, and safety profiles [17]. This evolution reflects a critical transition from asking "can this protein bind a drug?" to the more complex question: "can this target yield a successful drug?" [17].

Within this expanded framework, phenotypic screening has emerged as a powerful strategy for identifying novel biological insights and first-in-class therapies without requiring prior knowledge of specific molecular pathways [19]. However, a significant challenge persists in bridging the gap between phenotypic hits and target identification. This guide examines how integrative approaches, particularly Mendelian randomization (MR) and chemogenomic libraries, are validating phenotypic screening hits and expanding the druggable genome, with direct comparisons of their performance against conventional methods.

Case Study 1: Mendelian Randomization in Oncology Target Discovery

A 2025 study systematically applied druggable genome-wide Mendelian randomization to identify novel therapeutic targets for lung squamous cell carcinoma (LUSC), a non-small cell lung cancer subtype with poor prognosis and limited treatment options [18]. The research employed a multi-tiered validation approach using expression quantitative trait loci (eQTL) and protein QTL (pQTL) data from two independent datasets (ieub4953 and finngen) [18].

Table 1: LUSC-Related Genes Identified via Mendelian Randomization

| Gene Symbol | Identification Method | Effect on LUSC Risk | Associated Risk Factors |
|---|---|---|---|
| DNMT1 | cis-eQTL | Protective | Smoking (p=0.035) |
| ACSS2 | cis-eQTL | Risk factor | Smoking, Pulmonary fibrosis |
| YBX1 | cis-eQTL | Risk factor | Smoking, Phthisis, Alcohol |
| SELENOS | cis-eQTL | Risk factor | Pulmonary fibrosis |
| PPARA | cis-eQTL | Protective | Smoking, Pulmonary fibrosis |
| MST1 | cis-pQTL | Protective | Alcohol abuse |
| CPA4 | cis-pQTL | Protective | Phthisis (p=0.031) |
| MPO | cis-pQTL | Risk factor | Not specified |

Experimental Protocol and Validation

The methodology followed a rigorous multi-step process to ensure causal inference:

- Druggable Gene Selection: Researchers compiled 5,859 unique druggable genes from DGIdb v4.2.0 and Finan et al. databases [18].

- Instrumental Variable Selection: Variants within ±1 Mb of each gene (cis) that were associated with its expression at genome-wide significance (P < 5 × 10⁻⁸) were extracted as instrumental variables (IVs); variants with an F-statistic below 10 were excluded as weak instruments [18].

- Mendelian Randomization Analysis: Causal effects were estimated using Wald ratio (single IV) or inverse-variance weighted (IVW) method (multiple IVs) [18].

- Sensitivity Analyses: Bayesian co-localization, summary-data-based MR (SMR) analysis, and HEIDI (heterogeneity in dependent instruments) tests were conducted to distinguish pleiotropic associations between gene expression and LUSC risk from associations driven by linkage [18].

- Clinical Correlation: Researchers assessed prognosis, immune infiltration, and single-cell expression patterns for validated targets [18].

This approach identified eight LUSC-related genes with putative causal associations, demonstrating how MR can prioritize targets for further investigation. DNMT1, ACSS2, YBX1, SELENOS, and PPARA were identified through blood cis-eQTL analysis, while MST1, CPA4, and MPO emerged from cis-pQTL analysis [18].
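The estimation steps above can be sketched in a few lines. The following is a minimal illustration, not the study's pipeline (which used dedicated MR software): it assumes harmonized per-variant summary statistics supplied as `(beta_exp, se_exp, beta_out, se_out)` tuples, uses the common approximation F ≈ (β/SE)², and applies a first-order, fixed-effect IVW estimator. All function names are hypothetical.

```python
import math

def f_statistic(beta_exp: float, se_exp: float) -> float:
    # Common per-variant approximation: F ~ (beta / se)^2 for the exposure association.
    return (beta_exp / se_exp) ** 2

def two_sided_p(beta: float, se: float) -> float:
    # Two-sided normal P-value for z = beta / se.
    return math.erfc(abs(beta / se) / math.sqrt(2))

def wald_ratio(beta_exp, se_exp, beta_out, se_out):
    # Single-instrument causal estimate with a first-order standard error.
    return beta_out / beta_exp, se_out / abs(beta_exp)

def ivw(instruments):
    # Fixed-effect inverse-variance weighted mean of per-variant Wald ratios.
    ratios = [wald_ratio(*v) for v in instruments]
    weights = [1.0 / se ** 2 for _, se in ratios]
    estimate = sum(w * r for w, (r, _) in zip(weights, ratios)) / sum(weights)
    return estimate, math.sqrt(1.0 / sum(weights))

def estimate_causal_effect(variants, p_threshold=5e-8, min_f=10.0):
    """variants: list of (beta_exp, se_exp, beta_out, se_out) for cis variants."""
    instruments = [
        v for v in variants
        if two_sided_p(v[0], v[1]) < p_threshold and f_statistic(v[0], v[1]) >= min_f
    ]
    if not instruments:
        return None  # no valid instrument for this gene
    if len(instruments) == 1:
        return wald_ratio(*instruments[0])  # Wald ratio for a single IV
    return ivw(instruments)  # IVW for multiple IVs
```

With one surviving instrument the function falls back to the Wald ratio, and with several it switches to IVW, mirroring the single-IV versus multiple-IV rule described in the protocol.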

Performance Assessment

The MR approach offered several advantages for target identification. Because genetic variants are allocated at conception, using them as instrumental variables reduces the confounding and reverse causality that affect observational studies (provided the core MR assumptions hold), yielding stronger evidence for causal target-disease relationships [18]. The methodology also enabled systematic interrogation of thousands of druggable genes simultaneously, expanding the potential target space well beyond conventionally investigated candidates.

However, the study also revealed limitations. Bayesian co-localization analysis showed negative results (PPH3 + PPH4 < 0.8) for all identified genes, suggesting insufficient evidence for shared causal variants between gene expression and LUSC risk [18]. This highlights a key consideration for MR-based approaches—while they can identify statistically significant associations, complementary methods may be needed to fully establish biological mechanisms.
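To show what the PPH3 and PPH4 quantities represent, here is a minimal sketch of the single-causal-variant Bayesian colocalization calculation based on Wakefield approximate Bayes factors. It assumes both traits are summarized over the same ordered variants as `(beta, se)` pairs, a prior effect SD of 0.15 (appropriate for a standardized quantitative trait; 0.2 is often used for case-control outcomes), and per-SNP priors p1 = p2 = 1e-4 and p12 = 1e-5. It is an illustration, not a replacement for an established colocalization package.

```python
import math

def log_abf(beta, se, prior_sd):
    # Wakefield approximate Bayes factor (log scale) for a single variant.
    z2 = (beta / se) ** 2
    r = prior_sd ** 2 / (prior_sd ** 2 + se ** 2)
    return 0.5 * (math.log(1.0 - r) + r * z2)

def log_sum(values):
    # Numerically stable log(sum(exp(values))).
    m = max(values)
    return m + math.log(sum(math.exp(v - m) for v in values))

def log_diff(a, b):
    # log(exp(a) - exp(b)); returns -inf when the difference is not positive.
    gap = -math.expm1(b - a)
    return a + math.log(gap) if gap > 0 else float("-inf")

def coloc_posteriors(trait1, trait2, sd1=0.15, sd2=0.15,
                     p1=1e-4, p2=1e-4, p12=1e-5):
    """trait1, trait2: per-variant (beta, se) lists over the same ordered SNPs.

    Returns posterior probabilities [PP.H0, PP.H1, PP.H2, PP.H3, PP.H4].
    """
    l1 = [log_abf(b, s, sd1) for b, s in trait1]
    l2 = [log_abf(b, s, sd2) for b, s in trait2]
    l12 = [x + y for x, y in zip(l1, l2)]
    log_h = [
        0.0,                                   # H0: no association
        math.log(p1) + log_sum(l1),            # H1: trait 1 only
        math.log(p2) + log_sum(l2),            # H2: trait 2 only
        math.log(p1) + math.log(p2)            # H3: two distinct causal variants
        + log_diff(log_sum(l1) + log_sum(l2), log_sum(l12)),
        math.log(p12) + log_sum(l12),          # H4: one shared causal variant
    ]
    total = log_sum(log_h)
    return [math.exp(h - total) for h in log_h]
```

PPH4 is the posterior probability of a shared causal variant. When PPH3 + PPH4 is small, most of the posterior mass sits on H0 to H2, which usually indicates that the region lacks the power to distinguish shared from distinct signals.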

Case Study 2: Integrating Single-Cell MR in Ophthalmology

Study Design and Findings

A 2025 investigation into primary open-angle glaucoma (POAG) exemplified how integrating single-cell technologies with MR can reveal cell-type-specific therapeutic targets and repurposable drugs [20]. This research employed druggable genome-wide and single-cell MR using POAG genome-wide association study data, blood, and single-cell eQTL datasets [20].

Table 2: POAG Therapeutic Targets Identified via Integrated MR Approach

| Gene Symbol | Cell Type Specificity | Effect on POAG Risk | Odds Ratio (95% CI) | Potential Repurposed Drugs |
|---|---|---|---|---|
| YWHAG | Not specified | Risk factor | 1.207 (1.131-1.288) | Not identified |
| GFPT1 | CD4+KLRB1- T cells | Protective (paradoxical risk in specific T cells) | 0.874 (0.840-0.910) | Trimipramine, Desipramine, Cyclosporin |

Experimental Workflow

The study implemented a comprehensive roadmap for target identification and validation:

- Druggable Genome Annotation: 4,463 druggable genes were sourced from Finan et al. and intersected with 19,127 blood eQTL genes [20].

- Single-Cell cis-eQTL Analysis: Immune cell-specific eQTLs were derived from the OneK1K database, comprising scRNA-seq data from 1.27 million peripheral blood mononuclear cells across 982 donors [20].

- Causal Inference: MR analysis was performed with Steiger filtering to ensure correct causal direction [20].

- Drug Repurposing Prediction: Candidate drugs were identified with DSigDB, and molecular docking with CB-Dock2 predicted strong binding of existing drugs to the identified targets (Vina score < -5 kcal/mol) [20].

- Safety Assessment: Phenome-wide association studies (PheWAS) were conducted to assess potential off-target effects [20].
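Steiger filtering checks that each instrument explains more variance in the exposure than in the outcome, which supports the assumed exposure-to-outcome direction. The sketch below is a simplified version under stated assumptions: both traits are treated as continuous, variance explained is approximated from the t-statistic as t² / (t² + N − 2), and only the direction of the inequality is checked (the full Steiger test also assesses whether the difference is significant). The function names and the tuple layout are hypothetical.

```python
def r_squared(beta, se, n):
    # Approximate variance explained by one variant from its t-statistic.
    t2 = (beta / se) ** 2
    return t2 / (t2 + n - 2)

def steiger_direction_ok(exp_stats, out_stats):
    # True if the variant explains more variance in the exposure than the outcome.
    return r_squared(*exp_stats) > r_squared(*out_stats)

def steiger_filter(variants):
    """Keep variants whose inferred direction is exposure -> outcome.

    Each variant is ((beta_exp, se_exp, n_exp), (beta_out, se_out, n_out)).
    """
    return [v for v in variants if steiger_direction_ok(*v)]
```

For binary outcomes such as POAG, variance explained is normally computed on the liability scale from log odds ratios and case/control counts, so the continuous approximation used here should be treated as illustrative only.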

Performance Assessment

Single-cell resolution provided a critical advance over bulk analyses. Researchers discovered a cell-type-specific paradoxical effect: high GFPT1 expression in CD4+KLRB1- T cells increased POAG risk (OR = 1.448), contrary to its protective association in bulk blood eQTL analysis [20]. This finding shows how strongly cellular context can influence target validation and underscores a limitation of conventional approaches that overlook cellular heterogeneity.

Molecular docking identified three FDA-approved drugs (trimipramine, desipramine, and cyclosporin) predicted to bind GFPT1 strongly, and PheWAS analysis indicated no significant off-target associations, which may shorten the path to clinical translation [20]. This end-to-end pipeline, from genetic discovery to repurposing candidates, illustrates how modern MR approaches can help de-risk early drug development.

Comparative Analysis: MR Versus Conventional Phenotypic Screening

Methodological Comparison

Traditional phenotypic screening has contributed significantly to drug discovery, enabling identification of novel therapeutic mechanisms without molecular target preconceptions [19]. However, both small molecule and genetic screening approaches face inherent limitations in subsequent target identification and validation.

Table 3: Performance Comparison of Target Identification Methods

| Parameter | Mendelian Randomization | Small Molecule Screening | Genetic Screening |
|---|---|---|---|
| Target Identification Capability | Direct causal inference | Indirect, requires deconvolution | Direct for genetic targets |
| Throughput | High (genome-wide) | Moderate to high | High with CRISPR |
| Clinical Translation Success | Higher (genetically validated targets) | Variable | Lower (genetic-pharmacologic disconnect) |
| Cell Type Resolution | Achievable with sc-eQTL integration | Limited without specialized assays | Achievable with scRNA-seq |
| Limitations | Limited by GWAS sample size and diversity | Limited to ~1,000-2,000 of 20,000+ genes [19] | Fundamental differences between genetic and small molecule effects [19] |

Limitations and Mitigation Strategies

Conventional phenotypic screening faces several constraints. Small molecule libraries interrogate only a small fraction (approximately 1,000-2,000 targets) of the human genome's 20,000+ genes, creating significant coverage gaps in the druggable genome [19]. Furthermore, chemical tool compounds used for target validation often suffer from poor selectivity, creating uncertainty in associating phenotypes with specific molecular targets [19].

Genetic screening approaches, while enabling systematic perturbation of gene function, face a different set of challenges. There are fundamental differences between genetic and small molecule perturbations, including temporal resolution (permanent gene knockout versus transient pharmacological inhibition), compensation mechanisms, and the inability of genetic approaches to mimic allosteric modulation or protein degradation [19].

Mendelian randomization addresses several of these limitations by leveraging natural genetic variation as a surrogate for lifelong drug target modulation, providing human physiological context that is absent from in vitro models [18] [20]. The methodology also benefits from very large sample sizes available through biobanks, enabling robust statistical power that exceeds many conventional screening approaches.

The Scientist's Toolkit: Essential Research Reagents and Workflows

Key Research Reagent Solutions

Successful expansion of the druggable genome requires specialized reagents and datasets:

Table 4: Essential Research Reagents and Resources for Druggable Genome Studies

| Resource Type | Specific Examples | Function and Application |
|---|---|---|
| Druggable Genome Databases | Finan et al. (4,463 genes), DGIdb v4.2.0 | Define the initial target universe for screening [18] [20] |
| QTL Datasets | eQTLGen Consortium (blood cis-eQTL), OneK1K (sc-eQTL), pQTL datasets | Provide genetic instruments for MR studies [18] [20] |
| GWAS Resources | FinnGen, UK Biobank, ieuge | Supply outcome data for causal inference [18] [20] |
| Analytical Tools | TwoSampleMR R package, SMR software, COLOC for Bayesian colocalization | Enable statistical analysis and causal inference [20] |
| Validation Resources | PDBe-KB (protein structures), ChEMBL (bioactive molecules), canSAR | Facilitate structural and chemical validation of targets [17] |

Integrated Workflow Visualization

The following diagram illustrates the comprehensive workflow for expanding the druggable genome through integrated genetic and functional approaches:

Integrated Workflow for Expanding Druggable Genome

The integration of Mendelian randomization with phenotypic screening frameworks represents a powerful strategy for expanding the druggable genome and validating novel therapeutic targets. The case studies in LUSC and POAG demonstrate how genetically validated targets provide de-risked starting points for drug development, with higher likelihood of clinical translation success [18] [20]. The addition of single-cell resolution addresses critical limitations of conventional phenotypic screening by revealing cell-type-specific effects and paradoxical signaling that would otherwise remain obscured [20].

Future expansion of the druggable genome will increasingly rely on knowledge graphs that integrate data from gene-level to protein residue-level, enabling artificial intelligence approaches to navigate the complexity of biological systems and identify high-quality targets [17]. As these technologies mature, the scientific community can anticipate continued growth in the number of therapeutic targets, particularly for diseases with high unmet need where conventional target identification approaches have proven insufficient.

The combination of human genetic evidence from MR with functional validation from phenotypic screening creates a virtuous cycle for drug discovery—where genetic findings inspire phenotypic assays, and phenotypic observations motivate genetic investigations—ultimately accelerating the development of novel therapies for complex diseases.

The decline in pharmaceutical research and development productivity has spurred a resurgence of interest in phenotypic drug discovery (PDD). Unlike target-based approaches, PDD identifies compounds based on their ability to modulate disease-relevant phenotypes without prior knowledge of specific molecular targets, making it particularly valuable for complex diseases and first-in-class medicine development [21]. However, a significant challenge emerges during hit validation: understanding the mechanism of action (MOA) of phenotypically active compounds in the context of widespread polypharmacology—the phenomenon where single compounds interact with multiple biological targets [22] [23].

This guide examines the integration of phenotypic screening with chemogenomic target identification technologies, comparing experimental approaches and computational frameworks that enable researchers to navigate the complex polypharmacology of hit compounds while accelerating the development of novel therapeutics.

The Polypharmacology Landscape in Drug Discovery

Polypharmacology represents a paradigm shift from the traditional "one drug–one target" model toward understanding drugs' complex interactions with multiple biological targets. Research indicates that drug molecules interact with an average of six known molecular targets, even after optimization [23]. This multi-target activity presents both challenges and opportunities:

Therapeutic Advantages: Polypharmacology can enhance therapeutic efficacy for complex, multifactorial diseases, particularly in central nervous system (CNS) disorders and oncology, where modulating multiple pathways simultaneously may yield superior clinical outcomes [24] [22].

Validation Challenges: Promiscuous binding complicates target deconvolution and MOA determination, potentially introducing off-target effects that contribute to adverse drug reactions [25] [26].

The polypharmacology index (PPindex) has been developed as a quantitative metric to compare target specificity across compound libraries, with steeper slopes (larger absolute values) indicating more target-specific libraries [23].

Table 1: Polypharmacology Index Comparison Across Selected Compound Libraries

| Library Name | PPindex (All Targets) | PPindex (Without 0/1 Target Bins) | Relative Specificity |
|---|---|---|---|
| DrugBank | 0.9594 | 0.4721 | Most specific |
| LSP-MoA | 0.9751 | 0.3154 | Intermediate |
| MIPE 4.0 | 0.7102 | 0.3847 | Intermediate |
| Microsource Spectrum | 0.4325 | 0.2586 | Most polypharmacologic |
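The intuition behind a slope-based specificity index can be illustrated with a deliberately simplified calculation. This sketch is an assumption, not the published PPindex procedure, and it will not reproduce the values in Table 1. It histograms the number of annotated targets per compound, takes the natural log of each bin count, and fits a least-squares line. A steeper decline (larger absolute slope) means few compounds hit many targets. The optional `drop_low_bins` flag mimics the table's "without 0/1 target bins" column. The function name is hypothetical.

```python
import math
from collections import Counter

def ppindex_like_slope(targets_per_compound, drop_low_bins=False):
    """Absolute slope of ln(compound count) vs. number of annotated targets.

    Hypothetical simplification: a least-squares line through the log-transformed
    histogram, not the published PPindex fitting procedure.
    """
    counts = Counter(targets_per_compound)
    if drop_low_bins:
        # Exclude compounds with zero or one annotated target.
        counts = {k: c for k, c in counts.items() if k > 1}
    xs = sorted(counts)
    if len(xs) < 2:
        raise ValueError("need at least two non-empty bins")
    ys = [math.log(counts[x]) for x in xs]
    x_mean = sum(xs) / len(xs)
    y_mean = sum(ys) / len(ys)
    num = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    den = sum((x - x_mean) ** 2 for x in xs)
    return abs(num / den)
```

For example, a library where compound counts fall tenfold with each extra target yields a larger value than one where counts only halve, matching the "steeper is more specific" reading of the index.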

Phenotypic Screening: A Target-Agnostic Approach

Phenotypic screening assesses compound effects in physiologically relevant systems without requiring predefined molecular targets, potentially increasing translational success rates [23] [21]. This approach is particularly valuable for:

CNS Drug Discovery: The intricate interplay of neurotransmitter systems makes target-agnostic approaches particularly suitable for neuropsychiatric disorders [24].

Complex Disease Pathologies: Diseases involving multiple genetic factors and compensatory pathways may be better addressed through phenotypic approaches [21].

First-in-Class Therapeutics: Phenotypic screening has demonstrated a superior track record in discovering first-in-class medicines compared to target-based approaches [21].

However, the primary challenge remains target deconvolution—identifying the molecular mechanisms responsible for observed phenotypic effects [26] [21]. This process becomes increasingly complex when considering the polypharmacology of hit compounds, where multiple simultaneous interactions may contribute to the overall phenotypic response.

Chemogenomic Approaches for Target Identification

Chemogenomics systematically studies the interactions between chemical compounds and biological targets, providing powerful tools for target deconvolution in phenotypic screening.

Knowledge-Based Chemogenomic Platforms

Comprehensive knowledgebases enable researchers to leverage existing compound-target interaction data for polypharmacology prediction:

Drug Abuse Knowledgebase (DA-KB): This specialized resource centralizes chemogenomics data related to drug abuse and CNS disorders, incorporating genes, proteins, chemical compounds, and bioassays to facilitate polypharmacology analysis [25].

Computational Analysis of Novel Drug Opportunities (CANDO): This platform employs fragment-based multitarget docking with dynamics to construct compound-proteome interaction matrices, which are then analyzed to determine similarity of drug behavior based on proteomic interaction signatures [22].

TargetHunter Platform: Provides computational algorithms for polypharmacological target identification and tool compounds for validation, particularly for GPCRs implicated in complex disorders [25].
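The signature comparison that CANDO performs can be sketched as a distance computation over rows of a compound-proteome interaction matrix. In this minimal illustration, rows are compounds, columns are proteins, and entries are interaction scores (hypothetical values). Root-mean-square deviation is assumed as the distance between signatures, and compounds with the smallest distance to a query are treated as behaving most similarly. The function names are illustrative and not part of the platform's interface.

```python
import numpy as np

def signature_distances(interaction_matrix: np.ndarray) -> np.ndarray:
    """Pairwise root-mean-square deviation between compound interaction signatures.

    Rows are compounds, columns are proteins; entries are predicted interaction
    scores (hypothetical values in this sketch).
    """
    diff = interaction_matrix[:, None, :] - interaction_matrix[None, :, :]
    return np.sqrt((diff ** 2).mean(axis=2))

def nearest_compounds(interaction_matrix, query_index, k=3):
    # Indices of the k compounds whose signatures are closest to the query.
    dist = signature_distances(interaction_matrix)[query_index]
    order = np.argsort(dist)
    return [int(i) for i in order if i != query_index][:k]
```

In a repurposing setting, the query would be a phenotypic hit, and its nearest neighbors with known indications or mechanisms would become hypotheses for follow-up testing.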

Experimental Target Deconvolution Methods

Advanced experimental techniques enable direct identification of compound-target interactions:

Limited Proteolysis (LiP): A novel, label-free proteomics approach that detects structural changes in proteins upon compound binding, allowing for comprehensive identification of drug targets and off-targets without requiring chemical modification of the compound [26].

Compressed Phenotypic Screening: An innovative pooling approach where multiple perturbations are combined into unique pools, significantly reducing sample requirements and costs while maintaining the ability to deconvolve individual compound effects through computational regression analysis [27].

High-Content Imaging with Morphological Profiling: Using multiplexed fluorescent dyes (e.g., Cell Painting assay) to capture complex morphological features, enabling classification of compounds based on phenotypic fingerprints that can be linked to mechanisms of action [27].
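The regression step behind compressed screening can be illustrated with a small linear model. In this sketch, each pool's readout is assumed to be the sum of its members' individual effects (an additive assumption real data may violate). Individual effects are then recovered by least squares with optional ridge regularization. The random pooling design and all function names are hypothetical and are not taken from the cited method.

```python
import numpy as np

def make_pool_design(n_compounds, n_pools, per_pool, seed=0):
    # Random pooling design: each pool (row) contains `per_pool` distinct compounds.
    rng = np.random.default_rng(seed)
    design = np.zeros((n_pools, n_compounds))
    for p in range(n_pools):
        design[p, rng.choice(n_compounds, size=per_pool, replace=False)] = 1.0
    return design

def deconvolve(design, pooled_readout, ridge=0.0):
    """Estimate per-compound effects assuming additive contributions.

    Solves min ||design @ effects - readout||^2 + ridge * ||effects||^2
    via the normal equations.
    """
    n = design.shape[1]
    a = design.T @ design + ridge * np.eye(n)
    b = design.T @ pooled_readout
    return np.linalg.solve(a, b)
```

A positive `ridge` keeps the system solvable when some compounds never appear in a pool or the design is rank-deficient, at the cost of shrinking estimates toward zero. This trade-off is one reason the comparison table below lists pool size and deconvolution accuracy as limiting factors.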

Comparative Analysis of Experimental Approaches

Table 2: Comparison of Target Identification and Validation Methodologies

| Method | Key Features | Throughput | Information Gained | Key Limitations |
|---|---|---|---|---|
| Limited Proteolysis (LiP) | Label-free, detects protein structural changes | Medium | Direct binding information, proteome-wide coverage | Requires specialized expertise in proteomics |
| Compressed Phenotypic Screening | Pooled compounds, computational deconvolution | High | Cost-efficient morphological profiling | Limited by pool size and deconvolution accuracy |
| Computational CANDO Platform | In silico docking, proteome-wide interaction prediction | Very High | Putative interaction signatures for repurposing | Dependent on quality of structural and chemical data |
| High-Content Morphological Profiling | Multiplexed imaging, phenotypic fingerprinting | Medium | Functional classification based on phenotype | Indirect target inference requires validation |
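The indirect, phenotype-based inference listed for morphological profiling can be sketched as a nearest-reference lookup. In this illustration, per-feature profiles are assumed to be z-scored against negative-control wells, and a query compound is assigned the mechanism of the annotated reference compound whose profile has the highest Pearson correlation with it. Real Cell Painting pipelines add feature selection, batch correction, and replicate aggregation. The function names and toy profiles are hypothetical.

```python
import numpy as np

def normalize_to_controls(profiles, control_profiles):
    # Z-score each morphological feature against negative-control wells.
    mu = control_profiles.mean(axis=0)
    sd = control_profiles.std(axis=0, ddof=1)
    sd[sd == 0] = 1.0  # avoid division by zero for invariant features
    return (profiles - mu) / sd

def predict_moa(query, reference_profiles, reference_moas):
    # Assign the MoA of the reference profile with the highest Pearson correlation.
    corrs = [np.corrcoef(query, ref)[0, 1] for ref in reference_profiles]
    best = int(np.argmax(corrs))
    return reference_moas[best], corrs[best]
```

The returned correlation is only a similarity score. A high value suggests a shared mechanism but does not identify the binding target, so direct methods such as LiP-MS are still needed for confirmation.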

Integrated Workflow for Hit Validation

Successful validation of phenotypic screening hits requires an integrated approach that combines complementary technologies:

Diagram 1: Hit Validation Workflow

Critical Considerations for Hit Triage and Validation

Effective hit validation requires addressing several key challenges:

Biological Knowledge Integration: Successful hit triage leverages three types of biological knowledge: known mechanisms, disease biology, and safety considerations, while structure-based triage alone may be counterproductive [28].

Polypharmacology Assessment: Early evaluation of promiscuity, for example by comparing the PPindex of candidate screening libraries, helps prioritize compounds with desirable multi-target profiles while minimizing off-target liabilities [23] [29].

Chain of Translatability: Establishing a clear connection between the phenotypic assay, disease relevance, and clinical translation is essential for prioritizing hits with genuine therapeutic potential [21].

The Scientist's Toolkit: Essential Research Reagents and Platforms

Table 3: Key Research Reagent Solutions for Phenotypic Screening and Target Identification

| Tool/Platform | Primary Function | Application in Validation |
|---|---|---|
| Cell Painting Assay | Multiplexed morphological profiling | Phenotypic classification and mechanism of action prediction [27] |
| Chemogenomic Libraries | Collections of target-annotated compounds | Target deconvolution in phenotypic screens [23] |
| DA-KB Knowledgebase | Domain-specific chemogenomics database | Polypharmacology analysis for CNS targets [25] |
| CANDO Platform | Computational proteome docking | Predicting drug-target interactions and repurposing opportunities [22] |
| LiP-MS Platform | Limited proteolysis mass spectrometry | Direct identification of drug-target interactions [26] |

Case Studies and Applications

CNS Drug Discovery: Ulotaront

A compelling example of successful phenotypic polypharmacology drug discovery comes from CNS research, where the SmartCube platform was used to identify ulotaront, a first-in-class antipsychotic candidate that advanced to Phase III clinical trials [24]. This approach:

- Used in vivo phenotypic profiling without target preconceptions

- Identified a compound with a novel mechanism of action not involving dopamine receptor antagonism

- Demonstrated placebo-like tolerability despite complex polypharmacology

- Highlighted the power of behavioral phenotypic drug discovery for CNS applications

Oncology: Imatinib Polypharmacology

Imatinib, initially developed as a selective BCR-ABL inhibitor for chronic myeloid leukemia, exemplifies the importance of understanding polypharmacology:

- Originally discovered through high-throughput screening [22]

- Later found to inhibit multiple kinase targets (PDGF-R, c-Kit, c-fms) [22]

- Demonstrates that therapeutic efficacy may derive from multi-target effects [22]

- Drug resistance often emerges through mutations affecting binding, prompting development of next-generation inhibitors [22]

The integration of phenotypic screening with chemogenomic target identification represents a powerful strategy for addressing the challenges of polypharmacology in drug discovery. Key advancements driving this field include:

Improved Computational Prediction: Machine learning and network-based approaches are enhancing our ability to predict polypharmacological profiles and identify promising multi-target therapeutics [29].

Advanced Proteomics Technologies: Innovations like LiP-MS are providing more comprehensive and direct methods for target deconvolution [26].

High-Content Compression Methods: Pooled screening approaches are increasing the throughput and efficiency of phenotypic discovery campaigns [27].

Specialized Knowledgebases: Domain-specific resources like DA-KB are enabling more focused investigation of complex disease mechanisms [25].

As the field advances, the most successful drug discovery pipelines will likely embrace a holistic approach that acknowledges the inherent polypharmacology of most effective drugs while developing sophisticated tools to understand, predict, and optimize these complex interaction profiles for improved therapeutic outcomes.

A Practical Toolkit: From Phenotypic Hit to Target Hypothesis

Designing and Curating a Chemogenomic Library for Phenotypic Screening

The drug discovery paradigm has significantly shifted from a reductionist 'one target—one drug' vision to a more complex systems pharmacology perspective that acknowledges a single drug often interacts with several targets [30]. This evolution, driven by the need to address complex diseases like cancers and neurological disorders, has catalyzed the revival of phenotypic drug discovery (PDD) strategies. Phenotypic screening does not rely on a priori knowledge of specific drug targets, presenting a major challenge: deconvoluting the mechanism of action and identifying the therapeutic targets responsible for the observed phenotype [30]. Chemogenomic libraries represent a powerful solution to this challenge. A chemogenomic library is a collection of well-defined pharmacological agents where a hit in a phenotypic screen suggests that the annotated target or targets of the probe molecules are involved in the phenotypic perturbation [31]. Effectively, these libraries integrate small-molecule chemogenomics with genetic approaches, expediting the conversion of phenotypic screening projects into target-based drug discovery approaches [31].

The core value of a chemogenomic library lies in its annotation—the rich information linking compounds to their known protein targets, biological pathways, and even disease associations. This annotation transforms a simple collection of compounds into a sophisticated hypothesis-testing tool. Furthermore, the emergence of advanced cell-based phenotypic screening technologies, including induced pluripotent stem (iPS) cell technologies, gene-editing tools like CRISPR-Cas, and high-content imaging assays such as "Cell Painting," has increased the resolution and throughput of phenotypic readouts, making the need for well-curated libraries even more critical [30]. This guide will objectively compare the key strategies, experimental protocols, and performance data involved in designing and applying chemogenomic libraries for phenotypic screening.

Core Design Strategies and Comparative Analysis

Designing a chemogenomic library is a balancing act between comprehensive coverage of biological targets and practical considerations of library size, cost, and screening efficiency. Different strategies prioritize these factors differently, leading to distinct library designs. The following table summarizes the quantitative aspects of several design strategies as evidenced by recent research.

Table 1: Comparison of Chemogenomic Library Design Strategies and Performance

| Design Strategy | Reported Library Size | Target / Pathway Coverage | Key Design Criteria | Reported Applications / Outcomes |
|---|---|---|---|---|
| Systems Pharmacology Network Integration [30] | ~5,000 compounds | A large and diverse panel of drug targets involved in diverse biological effects and diseases. | Integration of drug-target-pathway-disease relationships & morphological profiles; scaffold diversity for broad coverage. | Target identification and mechanism deconvolution for phenotypic assays; integration with Cell Painting morphological profiles. |
| Precision Oncology-Focused Design [32] | A minimal library of 1,211 compounds (virtual); a physical library of 789 compounds (pilot). | 1,386 anticancer proteins; 1,320 targets covered by the physical library. | Library size, cellular activity, chemical diversity & availability, and target selectivity; adjusted for cancer. | Pilot screening on glioblastoma patient cells identified highly heterogeneous, patient-specific phenotypic vulnerabilities. |
| Machine Learning-Driven Feature Extraction [33] | 1,862 drugs (in underlying dataset). | 1,554 human target proteins (enzymes, GPCRs, ion channels, nuclear receptors). | Use of L1-regularized classifiers to identify informative chemogenomic features (chemical substructure-protein domain pairs). | Extraction of biologically meaningful substructure-domain associations; maintained drug-target interaction prediction performance. |

Beyond the general strategies, specific analytical procedures have been developed for particular therapeutic areas. For precision oncology, this involves designing compound collections adjusted for library size, cellular activity, chemical diversity and availability, and target selectivity to cover a wide range of protein targets and biological pathways implicated in various cancers [32]. The resulting libraries can be characterized by their compound and target spaces, providing a quantitative assessment of their coverage before any physical screening takes place.

Experimental Protocols for Library Construction and Validation

Protocol 1: Building a Systems Pharmacology Network for Library Curation

This protocol outlines the methodology for constructing a comprehensive data network to inform the selection of compounds for a chemogenomic library, as described in the development of a 5,000-compound library [30].

1. Data Acquisition and Integration:

- Bioactivity Data: Source standardized bioactivity data (e.g., IC50, Ki, EC50) and target annotations from public databases like ChEMBL.

- Pathway and Disease Context: Integrate pathway information from the Kyoto Encyclopedia of Genes and Genomes (KEGG) and disease associations from the Human Disease Ontology (DO) to provide biological and clinical context to the drug-target relationships.

- Morphological Profiling Data: Incorporate high-content imaging data from public benchmarks like the Broad Bioimage Benchmark Collection (BBBC022 - Cell Painting assay). This links chemical structures to a rich layer of phenotypic information.

2. Data Processing and Network Construction:

- Molecule Standardization: Process chemical structures to ensure consistency. Software such as ScaffoldHunter can be used to decompose molecules into hierarchical scaffolds and fragments, enabling analysis of chemical diversity and privileged structures [30].

- Graph Database Implementation: Integrate the heterogeneous data sources (molecules, proteins, pathways, diseases, morphological features) into a high-performance NoSQL graph database, such as Neo4j. In this model, nodes represent entities (e.g., a molecule, a target protein), and edges represent the relationships between them (e.g., "Molecule A inhibits Target B") [30].

3. Library Curation and Filtering:

- Compound Selection: Filter the universe of available compounds based on the richness of their bioactivity data and the quality of their target annotations.

- Scaffold-Based Diversity: Apply scaffold-based filtering to ensure the final library encompasses a diverse chemical space that represents a broad swath of the druggable genome, avoiding over-representation of similar chemotypes [30].
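The two curation filters in step 3 can be combined into one selection pass. This sketch assumes that scaffold keys (for example, Murcko scaffold SMILES produced upstream by a tool such as ScaffoldHunter) and a count of curated bioactivity records per compound are already available. The `Compound` record, the thresholds, and the "richest annotations first" tie-breaking rule are all hypothetical choices made for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass

@dataclass
class Compound:
    compound_id: str
    scaffold: str          # scaffold key computed upstream (assumption)
    n_bioactivities: int   # number of curated bioactivity records (annotation richness)

def curate_library(compounds, min_bioactivities=3, max_per_scaffold=2):
    """Hypothetical filter: annotation richness first, then cap per scaffold."""
    # Step 1: drop poorly annotated compounds.
    annotated = [c for c in compounds if c.n_bioactivities >= min_bioactivities]
    # Step 2: group by scaffold and keep the best-annotated members of each group.
    by_scaffold = defaultdict(list)
    for c in annotated:
        by_scaffold[c.scaffold].append(c)
    selected = []
    for scaffold in sorted(by_scaffold):
        ranked = sorted(by_scaffold[scaffold], key=lambda c: -c.n_bioactivities)
        selected.extend(ranked[:max_per_scaffold])
    return selected
```

Capping the number of compounds per scaffold is one simple way to avoid over-representing a single chemotype. Raising `max_per_scaffold` trades diversity for additional structure-activity information within each series.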

Network-Based Library Construction Workflow

Protocol 2: A Machine Learning Approach for Identifying Chemogenomic Features

This protocol details a classifier-based method for extracting the fundamental associations between drug chemical substructures and protein domains that govern drug-target interactions [33]. This approach can inform library design by highlighting the most informative features.

1. Data Preparation:

- Drug-Target Interactions: Obtain a gold-standard set of known drug-target interactions from a database like DrugBank. This serves as positive examples for model training.

- Compound Representation: Encode the chemical structures of all drugs into a binary fingerprint vector (e.g., 881-dimensional using PubChem substructures), where each element indicates the presence or absence of a specific chemical substructure.

- Protein Representation: Encode the target proteins into a binary vector representing the presence or absence of protein domains from a database like PFAM.

2. Feature Vector Construction for Drug-Target Pairs:

- Represent each drug-target pair by the tensor product (also known as the Kronecker product) of the drug fingerprint vector and the protein domain vector.

- This operation generates a very high-dimensional feature vector where each feature corresponds to a specific pair of a chemical substructure and a protein domain [33].

3. Model Training and Feature Extraction:

- Apply L1-Regularized Classifiers: Train a binary classifier, such as L1-regularized logistic regression (L1LOG) or L1-regularized support vector machine (L1SVM), to predict drug-target interactions from the tensor product feature vectors.

- Extract Informative Features: The L1-regularization has the property of driving the weights of many features to zero. The non-zero weights in the resulting model correspond to the specific substructure-domain pairs that are most informative and predictive of the interaction [33]. These features form a biologically meaningful, minimal set.
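The pair-feature construction and sparse classifier above can be sketched with NumPy alone. `np.kron` on two 1-D vectors yields one feature per (substructure, domain) pair, and `divmod` maps a flattened index back to that pair. The classifier is a plain proximal-gradient (ISTA) L1-regularized logistic regression used as a stand-in for L1LOG. The cited work may use a different solver and hyperparameters, and the step size, penalty, and iteration count here are arbitrary assumptions.

```python
import numpy as np

def pair_features(drug_fp, protein_domains):
    # Tensor (Kronecker) product: one feature per (substructure, domain) pair.
    return np.kron(drug_fp, protein_domains)

def feature_to_pair(index, n_domains):
    # Map a flattened feature index back to (substructure index, domain index).
    return divmod(index, n_domains)

def l1_logistic_regression(X, y, lam=0.01, lr=0.1, n_iter=2000):
    """L1-regularized logistic regression by proximal gradient descent (ISTA).

    A minimal stand-in for L1LOG; the intercept b is not penalized.
    """
    w = np.zeros(X.shape[1])
    b = 0.0
    for _ in range(n_iter):
        p = 1.0 / (1.0 + np.exp(-(X @ w + b)))   # predicted interaction probability
        grad_w = X.T @ (p - y) / len(y)
        grad_b = float(np.mean(p - y))
        w = w - lr * grad_w
        # Soft-thresholding drives uninformative weights toward exactly zero.
        w = np.sign(w) * np.maximum(np.abs(w) - lr * lam, 0.0)
        b -= lr * grad_b
    return w, b
```

After training on stacked `pair_features` rows, the informative substructure-domain pairs would be read off as `[feature_to_pair(i, n_domains) for i in np.flatnonzero(w)]`. With 881 substructures and thousands of PFAM domains, the full feature space is very large, so a sparse matrix representation would be needed in practice.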

Machine Learning Feature Identification Process

Successful construction and application of a chemogenomic library rely on a suite of publicly available data resources, software tools, and physical reagents.

Table 2: Essential Toolkit for Chemogenomic Library Research and Screening

| Tool / Resource Name | Type | Primary Function in Chemogenomics |
|---|---|---|
| ChEMBL [30] | Public Database | Provides curated bioactivity data (e.g., IC50, Ki) and target annotations for small molecules, forming a foundational data source for library annotation. |
| Cell Painting [30] | Experimental Assay | A high-content, image-based morphological profiling assay that generates a rich phenotypic signature for compounds, used for mechanistic deconvolution. |
| Neo4j [30] | Software / Database | A graph database platform used to integrate heterogeneous data (drug, target, pathway, phenotype) into a unified systems pharmacology network. |
| ScaffoldHunter [30] | Software | Analyzes and visualizes the molecular scaffold hierarchy of compound libraries, enabling diversity analysis and chemoinformatic curation. |
| PubChem Substructure Fingerprints [33] | Chemical Descriptor | A standardized set of 881 chemical substructures used to numerically represent a molecule for machine learning and chemogenomic analysis. |
| PFAM Database [33] | Public Database | A comprehensive collection of protein families and domains, used to functionally annotate and numerically represent target proteins. |
| C3L Explorer [32] | Web Platform / Data | A publicly available data exploration and visualization platform for a specific precision oncology-focused chemogenomic library and its screening results. |

The strategic design and curation of a chemogenomic library are pivotal for bridging the gap between phenotypic observation and target identification. As demonstrated, approaches range from extensive, systems-level networks encompassing thousands of compounds to more focused, disease-specific libraries and in silico models that distill the fundamental principles of drug-target interactions. The choice of strategy depends on the specific research goals, whether for broad mechanistic deconvolution or identifying patient-specific vulnerabilities in precision oncology. The continued development and application of these libraries, supported by robust public data resources and advanced computational methods, firmly position chemogenomics as a cornerstone of modern phenotypic drug discovery.

Affinity-based pull-down methods are a cornerstone biochemical approach for identifying the molecular targets of small molecules discovered through phenotypic screening [34] [35]. Once an unbiased phenotypic screen reveals compounds with desirable biological effects, the next challenge is to identify their specific protein targets, a step essential for understanding mechanisms of action, optimizing lead compounds, and predicting potential off-target effects [34] [36]. Pull-down methods address this challenge directly by capturing protein binding partners [35]. The small molecule of interest is chemically modified with an affinity tag, creating a bait molecule that can selectively isolate target proteins from complex biological mixtures such as cell lysates [34] [35]. The two predominant strategies, on-bead affinity matrices and biotin tagging, offer complementary advantages and limitations that researchers must weigh carefully when validating phenotypic hits through chemogenomic target identification [34].

Core Principles and Comparative Analysis

Fundamental Mechanisms

The on-bead affinity matrix approach involves covalently attaching a small molecule to a solid support (e.g., agarose beads) through a linker, creating an immobilized affinity matrix [34] [35]. This matrix is then incubated with a cell lysate containing potential target proteins. After non-specifically bound proteins are washed away, specifically bound targets are eluted and identified by mass spectrometry [34]. The linker, often polyethylene glycol (PEG), is crucial because it positions the small molecule away from the bead surface, potentially improving accessibility to protein binding partners [34].

The biotin-tagged approach exploits the strong non-covalent interaction between biotin and streptavidin (Kd ≈ 10⁻¹⁵ M) [34]. In this method, the small molecule is conjugated to a biotin tag through a chemical linker, creating a mobile bait probe [34] [35]. This biotinylated molecule is incubated with a cell lysate or living cells to allow compound-protein complexes to form, and these complexes are then captured using streptavidin-coated beads [34]. The bound proteins are typically eluted under denaturing conditions (e.g., SDS buffer at 95-100°C) and identified by SDS-PAGE and mass spectrometry [34].
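The practical consequence of the biotin-streptavidin affinity can be seen from the simple 1:1 binding isotherm, θ = [L] / ([L] + Kd). The sketch below is an idealized illustration: it assumes free ligand is approximately equal to total ligand (no depletion), uses a Kd on the order of 10⁻¹⁵ M for streptavidin, and uses a hypothetical 1 µM compound-target Kd chosen only for illustration, not taken from the cited studies. It suggests why the streptavidin capture step is effectively quantitative, so that the compound-target interaction, rather than the capture step, tends to limit recovery.

```python
def fraction_bound(ligand_molar, kd_molar):
    """Equilibrium fraction of sites occupied for a 1:1 interaction,
    assuming free ligand ~= total ligand: theta = [L] / ([L] + Kd)."""
    return ligand_molar / (ligand_molar + kd_molar)


STREPTAVIDIN_BIOTIN_KD = 1e-15  # molar, order of magnitude for biotin-streptavidin
HYPOTHETICAL_TARGET_KD = 1e-6   # molar, illustrative compound-target affinity

for probe in (1e-6, 1e-5):  # 1 and 10 micromolar probe
    print(
        f"probe {probe:.0e} M: "
        f"target occupancy {fraction_bound(probe, HYPOTHETICAL_TARGET_KD):.3f}, "
        f"streptavidin occupancy {fraction_bound(probe, STREPTAVIDIN_BIOTIN_KD):.9f}"
    )
```

Under these assumptions, a target with 1 µM affinity is only half occupied at 1 µM probe and about 91% occupied (10/11) at 10 µM, whereas streptavidin occupancy is indistinguishable from 1 at either concentration.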

Performance Comparison and Experimental Data

Table 1: Comparative Analysis of On-Bead Matrix vs. Biotin-Tagged Pull-Down Methods

| Parameter | On-Bead Affinity Matrix | Biotin-Tagged Approach |
|---|---|---|
| Tagging System | Covalent attachment to solid support (e.g., agarose beads) | Biotin tag conjugated to small molecule |
| Complexity of Probe Synthesis | Moderate to high | Moderate |
| Representative Successful Applications | KL001 (CRY), Aminopurvalanol (CDK1), BRD0476 (USP9X) [35] | Withaferin A (vimentin), stauprimide (NME2), PNRI-299 (Ref-1/AP-1) [34] [35] |
| Live-Cell Use | Limited to cell lysate applications | Possible in live cells, but the biotin tag may reduce permeability [34] |
| Elution Conditions | Native conditions possible (e.g., excess free ligand) | Often requires denaturing conditions (SDS, heat) [34] |
| Key Advantages | Preserves protein function for downstream assays; reusable matrix | Very strong binding affinity; versatile detection methods |
| Major Limitations | Potential steric hindrance from beads; attachment site requires optimization | Harsh elution conditions may denature proteins; biotin tag may affect cellular permeability and bioactivity [34] |
| Compatibility with Intact Cellular Context | No (lysate-based only) | Yes (with potential limitations due to tag effects) [34] |

Table 2: Experimental Data from Selected Studies Using Each Method

| Compound | Method | Identified Target | Key Experimental Findings | Reference |
|---|---|---|---|---|
| KL001 | On-bead matrix | Cryptochrome (CRY) | Identified a circadian clock protein; validated through competitive binding and functional assays | [35] |
| Aminopurvalanol | On-bead matrix | CDK1 | Confirmed the known cyclin-dependent kinase target; demonstrated method specificity | [35] |
| PNRI-299 | Biotin-tagged | Activator Protein 1 (AP-1)/Ref-1 | Identified redox factor 1 (Ref-1) as the molecular target; explained the compound's mechanism in transcriptional regulation | [34] [35] |
| Withaferin A | Biotin-tagged | Vimentin | Discovered an interaction with this type III intermediate filament protein; validated through imaging and co-localization | [35] |

Experimental Protocols

Protocol for On-Bead Affinity Matrix Pull-Down

1. Probe Preparation:

- Covalently attach small molecule to agarose beads using a heterobifunctional crosslinker (e.g., PEG-based spacer) at a specific site that doesn't interfere with bioactivity [34] [37].

- Prepare control beads without conjugated small molecule or with an inactive analog.

2. Sample Preparation:

- Lyse cells in appropriate buffer (e.g., 50 mM Tris-HCl, pH 7.5, 150 mM NaCl, 1% IGEPAL CA-630, protease inhibitors) [38].

- Clarify lysate by centrifugation at 16,000 × g for 15 minutes at 4°C.

3. Binding Reaction:

- Incubate cell lysate (typically 0.5-1 mg total protein) with small molecule-conjugated beads (25-50 μL bead volume) for 2-4 hours at 4°C with gentle rotation [37].

- In parallel, incubate an equal amount of the same lysate with control beads.

4. Wash Steps:

- Pellet beads by gentle centrifugation (500 × g for 1 minute).

- Wash 3-5 times with 10-20 bead volumes of wash buffer (e.g., 50 mM Tris-HCl, pH 7.5, 300 mM NaCl) to remove non-specifically bound proteins [39] [37].

- Optimize stringency by adjusting salt concentration or adding mild detergents.

5. Elution:

- Elute specifically bound proteins using one of the following:

  - Competitive elution with excess free small molecule (2-4 hours incubation)

  - Low pH buffer (e.g., 100 mM glycine, pH 2.5-3.0)

  - SDS-PAGE sample buffer (for direct analysis by electrophoresis) [37]

6. Analysis:

- Separate eluted proteins by SDS-PAGE and visualize with Coomassie or silver staining.

- Identify specifically bound proteins (present in the experimental eluate but absent from, or strongly depleted in, the control eluate) by excising bands and analyzing them by LC-MS/MS [34] [40].

- Validate putative targets through orthogonal methods (e.g., Western blotting, functional assays).

On-Bead Affinity Matrix Workflow: This diagram illustrates the sequential process of immobilizing a small molecule to beads, incubating with cell lysate, and identifying bound target proteins.
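The comparison of experimental and control eluates in step 6 is often made on spectral counts when proteins are identified by LC-MS/MS rather than by gel bands. The sketch below shows one simple, hedged way to do it: a log2 fold change with a pseudocount, plus a minimum-count filter. The function names, thresholds, protein labels, and counts are all hypothetical, and dedicated statistical scoring tools with replicate data would normally be preferred for real experiments.

```python
import math


def log2_enrichment(bait_counts, control_counts, pseudocount=1.0):
    """log2 fold change of spectral counts, compound beads vs control beads.
    The pseudocount keeps proteins absent from the control finite."""
    proteins = set(bait_counts) | set(control_counts)
    return {
        prot: math.log2(
            (bait_counts.get(prot, 0) + pseudocount)
            / (control_counts.get(prot, 0) + pseudocount)
        )
        for prot in proteins
    }


def candidate_targets(bait_counts, control_counts, min_log2fc=2.0, min_count=3):
    """Proteins seen at least min_count times on compound beads and enriched
    at least 2**min_log2fc-fold over control beads, most enriched first."""
    lfc = log2_enrichment(bait_counts, control_counts)
    hits = [
        prot for prot, value in lfc.items()
        if value >= min_log2fc and bait_counts.get(prot, 0) >= min_count
    ]
    return sorted(hits, key=lambda prot: lfc[prot], reverse=True)


# Hypothetical spectral counts for illustration only.
compound_beads = {"PROT_A": 24, "PROT_B": 40, "PROT_C": 15, "PROT_D": 2}
control_beads = {"PROT_B": 38, "PROT_C": 12}

print(candidate_targets(compound_beads, control_beads))
```

In this toy example only PROT_A passes: PROT_B and PROT_C bind both bead types, as background binders would, and PROT_D is enriched but too sparse to call at these thresholds.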

Protocol for Biotin-Tagged Pull-Down

1. Probe Preparation:

- Synthesize biotin-conjugated small molecule using a chemical linker at a position known not to affect biological activity [34].

- Confirm conjugation through analytical methods (HPLC, mass spectrometry).

2. Binding Reaction:

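- Incubate the biotinylated small molecule (typically 1-10 μM) with cell lysate for 1-2 hours at 4°C [34], or with intact cells under normal culture conditions.

- For live-cell studies, optimize concentration and incubation time to maintain cell viability, then wash away unbound probe and lyse the cells before the capture step.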

3. Capture:

- Add streptavidin-coated beads (25-50 μL) and incubate for 1 hour at 4°C with gentle rotation.

- Include controls: no compound, free (unconjugated) biotin, and competition with excess free compound.

4. Wash Steps:

- Pellet beads by gentle centrifugation (500 × g for 1 minute).

- Wash 3-5 times with wash buffer (e.g., 50 mM Tris-HCl, pH 7.5, 150 mM NaCl, 0.1% Triton X-100) [34].

5. Elution:

- Elute bound proteins by boiling beads in 1× SDS-PAGE sample buffer for 5-10 minutes [34].

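- A milder alternative is competitive elution with excess free ligand. Free biotin elutes poorly from streptavidin because the interaction is nearly irreversible, so biotin competition is practical mainly for probes built on weaker-binding analogs such as desthiobiotin.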

6. Analysis:

- Analyze eluted proteins by SDS-PAGE and mass spectrometry as described for on-bead method.

- For Western blot analysis of specific candidates, split eluate for parallel analysis.

Biotin-Tagged Pull-Down Workflow: This diagram shows the process of creating a biotinylated small molecule, forming complexes with target proteins, and capturing them with streptavidin beads for analysis.
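The controls in step 3 can be combined into a simple decision rule: a plausible target should be enriched by the biotinylated probe over the no-compound control, and it should lose signal when excess free compound is added. The sketch below encodes that rule under stated assumptions; the function name, thresholds, protein labels, and counts are hypothetical, and real analyses would use replicates and proper statistics.

```python
def competition_filter(probe, no_compound, probe_plus_competitor,
                       pseudocount=1.0, min_enrichment=4.0, min_competition=0.5):
    """Return (protein, fold enrichment, fraction competed) for proteins that are
    enriched by the probe AND lose signal when free compound competes."""
    hits = []
    for prot, count in probe.items():
        enrichment = (count + pseudocount) / (no_compound.get(prot, 0) + pseudocount)
        remaining = probe_plus_competitor.get(prot, 0)
        competed = 1.0 - remaining / count if count > 0 else 0.0
        if enrichment >= min_enrichment and competed >= min_competition:
            hits.append((prot, enrichment, competed))
    return sorted(hits, key=lambda hit: hit[2], reverse=True)


# Hypothetical spectral counts for illustration only.
probe_only = {"PROT_A": 30, "PROT_B": 28, "PROT_C": 10}
no_compound = {"PROT_C": 9}
with_competitor = {"PROT_A": 4, "PROT_B": 26, "PROT_C": 10}

for prot, fold, competed in competition_filter(probe_only, no_compound, with_competitor):
    print(f"{prot}: {fold:.1f}-fold enriched, {competed:.0%} competed")
```

Here PROT_B is enriched but barely competed, a pattern consistent with (though not proof of) binding to the biotin tag or linker rather than the compound itself, while PROT_C behaves as background.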

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Research Reagent Solutions for Affinity Pull-Down Experiments

| Reagent/Category | Specific Examples | Function and Application Notes |
|---|---|---|
| Solid Supports | Agarose beads, Magnetic beads | Provide solid matrix for immobilization; magnetic beads enable easier handling and high-throughput applications [37] |
| Affinity Tags | Biotin, GST, His-tag | Enable specific capture of bait molecule or bait-target complexes; biotin offers the strongest non-covalent interaction [34] [37] |
| Binding Matrices | Streptavidin beads, Glutathione Sepharose, Ni-NTA resin | Capture tagged molecules; choice depends on tag used [34] [39] [37] |
| Linkers/Crosslinkers | PEG spacers, Photoactivatable linkers (diazirines, benzophenones) | Connect small molecule to tag or solid support; optimize length to minimize steric hindrance [34] |
| Lysis Buffers | IGEPAL CA-630, Triton X-100, CHAPS | Extract proteins while maintaining native interactions; detergent choice affects complex stability [38] [37] |
| Protease Inhibitors | Complete Mini tablets (Roche), PMSF | Prevent protein degradation during isolation process [38] |
| Elution Reagents | Reduced glutathione (for GST), Imidazole (for His-tag), SDS sample buffer | Release captured proteins; specific to affinity system or denaturing for general elution [39] [37] |
| Detection Methods | Coomassie/silver staining, Western blotting, LC-MS/MS | Identify and validate captured proteins; MS is essential for unknown target identification [34] [40] |

Strategic Implementation in Target Validation

Method Selection Guidelines

Choosing between the on-bead matrix and biotin-tagged approaches requires weighing several factors. The on-bead affinity matrix method is particularly advantageous when the conjugation site can be designed to minimize interference with binding activity, or when the captured proteins must be recovered under native conditions for functional assays [34]. It has been used to identify and validate the targets of KL001 and BRD0476 (cryptochrome and USP9X, respectively) [35].

The biotin-tagged approach offers greater flexibility for live-cell applications and is best suited to small molecules that tolerate conjugation without significant loss of potency [34]. However, the biotin tag can reduce cellular permeability, and the harsh elution conditions it usually requires may denature proteins and preclude subsequent functional analysis [34]. The identification of vimentin as the target of withaferin A shows what this approach can achieve when appropriately optimized [35].

Technical Considerations and Optimization

Minimizing Non-Specific Binding: Non-specific binding remains a significant challenge in both approaches. Effective strategies include:

- Using appropriate control beads (empty beads or beads with inactive analogs)

- Optimizing wash stringency by adjusting salt concentration (150-500 mM NaCl) and detergent type/concentration

- Including competitor molecules (e.g., unlabeled biotin for biotin-based systems) during washes [34] [37]

Validation of Specific Interactions: Putative targets identified through pull-down experiments require rigorous validation:

- Employ orthogonal techniques such as Cellular Thermal Shift Assay (CETSA), Drug Affinity Responsive Target Stability (DARTS), or Surface Plasmon Resonance (SPR)

- Demonstrate dose-dependent competition with free compound

- Confirm functional consequences of binding through enzymatic or cellular assays [35]

- Use genetic approaches (knockdown/knockout) to validate functional relevance
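For the dose-dependent competition criterion, a simple equilibrium model predicts how a genuine same-site competitor should behave. Assuming the probe and the free compound bind one site mutually exclusively, and ignoring ligand depletion, probe occupancy is θ = ([P]/Kp) / (1 + [P]/Kp + [C]/Kc), and the competitor concentration that halves the pull-down signal is Kc(1 + [P]/Kp), the Cheng-Prusoff form. The sketch below evaluates this model; the function names and all affinities and concentrations are hypothetical.

```python
def probe_occupancy(probe_m, kd_probe_m, competitor_m, kd_competitor_m):
    """Fraction of target bound by the probe when probe and free competitor
    bind the same site exclusively (no ligand depletion assumed)."""
    p = probe_m / kd_probe_m
    c = competitor_m / kd_competitor_m
    return p / (1.0 + p + c)


def expected_ic50(probe_m, kd_probe_m, kd_competitor_m):
    """Competitor concentration that halves probe occupancy (Cheng-Prusoff form)."""
    return kd_competitor_m * (1.0 + probe_m / kd_probe_m)


# Hypothetical values: 1 uM probe with Kd 1 uM; untagged parent compound Kd 100 nM.
PROBE, KD_PROBE, KD_PARENT = 1e-6, 1e-6, 1e-7

baseline = probe_occupancy(PROBE, KD_PROBE, 0.0, KD_PARENT)
for dose in (0.0, 1e-8, 1e-7, 1e-6, 1e-5):
    relative = probe_occupancy(PROBE, KD_PROBE, dose, KD_PARENT) / baseline
    print(f"competitor {dose:.0e} M -> relative pull-down signal {relative:.2f}")
print(f"expected IC50: {expected_ic50(PROBE, KD_PROBE, KD_PARENT):.1e} M")
```

Under this model, a putative target whose pull-down signal does not fall with increasing free compound, or falls only at concentrations far above the expected IC50, would be a weaker candidate.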

Troubleshooting Common Issues:

- Low target yield may require optimization of bait concentration, incubation time, or lysis conditions

- High background binding can be addressed by increasing wash stringency or including specific competitors

- Failure to detect known interactions may indicate improper probe orientation or steric hindrance, necessitating alternative conjugation strategies [34] [37]