Validating Mechanism of Action: A Comprehensive Guide to Chemogenomic Profiling in Drug Discovery

Abstract

This article provides a comprehensive overview of chemogenomic profiling as a powerful system-based approach for validating the mechanism of action (MoA) of small molecules in drug discovery. Aimed at researchers, scientists, and drug development professionals, it explores the foundational principles of chemogenomics, contrasting forward and reverse strategies for target deconvolution and phenotypic screening. The scope extends to detailed methodological applications, including the design of targeted chemical libraries and the integration of affinity-based pull-down and label-free techniques for target identification. It further addresses common troubleshooting and optimization challenges, offering solutions for issues such as probe design and data integration. Finally, the article covers validation and comparative analysis, illustrating how chemogenomics informs decision-making in precision oncology and lead optimization, ultimately accelerating the development of safer and more effective therapeutics.

Chemogenomics 101: From Basic Concepts to System-Wide Target Exploration

Chemogenomics is a drug discovery paradigm that involves the systematic screening of targeted chemical libraries of small molecules against specific families of drug targets (e.g., GPCRs, kinases, proteases) with the ultimate goal of identifying novel drugs and drug targets [1]. In the modern context, it represents a shift from the traditional "one target—one drug" vision to a more complex systems pharmacology perspective, leveraging the wealth of genomic data to explore the intersection of all possible drugs on all potential therapeutic targets [2] [1].

This guide compares the central strategies in chemogenomics—forward and reverse approaches—and details how their integration, supported by advanced technological platforms, is pivotal for validating the mechanism of action (MOA) of new therapeutic compounds.

Strategic Approaches to Chemogenomics

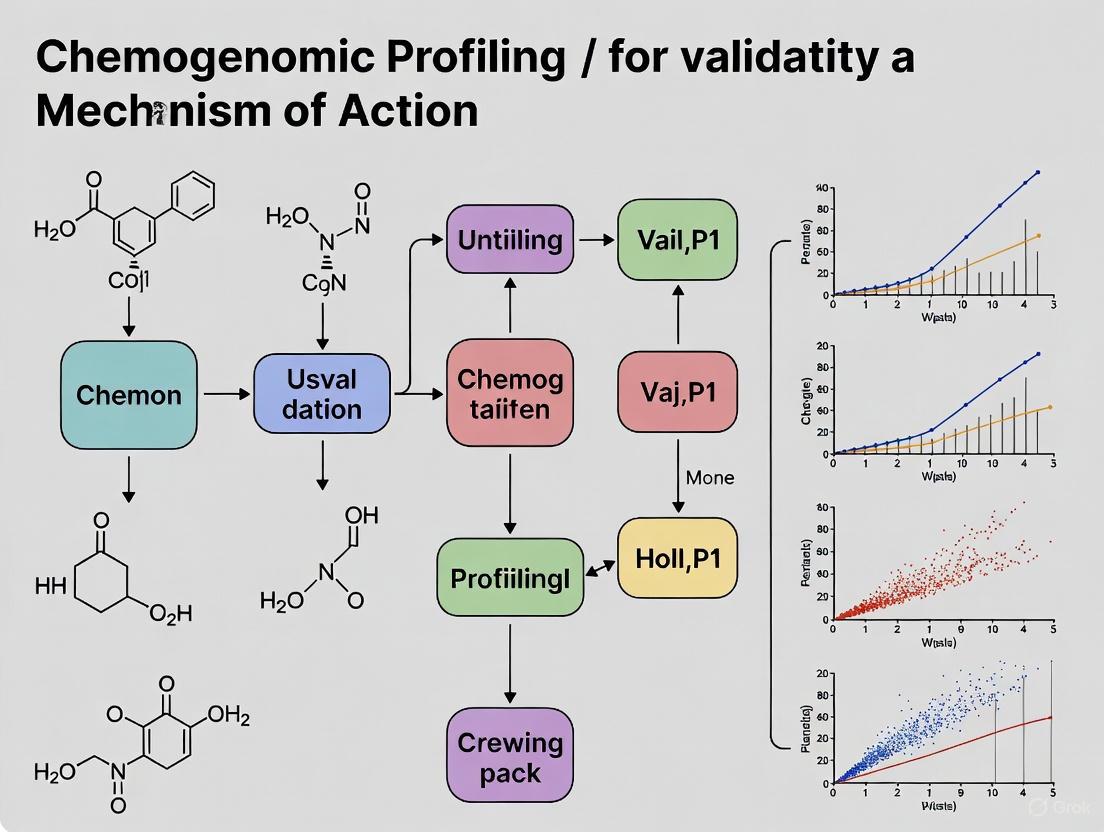

Two primary, complementary strategies define experimental chemogenomics. Their logical relationship and workflow are summarized in the diagram below.

Forward Chemogenomics

In this phenotype-first approach, small molecules are screened in cellular or animal models to identify compounds that produce a desired phenotype, such as the arrest of tumor growth [1]. The molecular basis for the phenotype is initially unknown. The core challenge lies in subsequently deconvoluting the target—identifying the specific protein(s) and biological pathways responsible for the observed effect [3] [1]. This approach pre-validates the biological effect of a compound in a disease-relevant context from the outset [3].

Reverse Chemogenomics

This target-first approach begins by identifying small molecules that perturb the function of a specific, known protein target in an in vitro assay [1]. Once a modulator is found, the phenotype it induces is analyzed in cells or whole organisms to confirm the biological role of the target and the therapeutic potential of the compound [1]. This strategy has been enhanced by the ability to perform parallel screening across entire protein families [1].

Comparison of Chemogenomic Strategies and Platforms

The following tables summarize the core characteristics of the two main strategies and examples of real-world chemogenomic libraries.

Table 1: Comparison of Forward and Reverse Chemogenomics Approaches

| Feature | Forward Chemogenomics | Reverse Chemogenomics |
|---|---|---|
| Starting Point | Phenotype in a complex biological system (e.g., cell-based assay) [1] | Known, purified protein target [1] |
| Primary Goal | Identify compounds inducing a phenotype; then find the target [3] [1] | Find compounds modulating a target; then characterize the phenotype [1] |
| Typical Assays | High-content imaging, phenotypic screening [2] | In vitro enzymatic assays, binding assays [1] |
| Target Validation | Late-stage; required after hit identification [3] | Early-stage; prerequisite for screening [3] |
| Advantage | Disease-relevant context, identifies novel biology [3] | High target specificity, straightforward for lead optimization [1] |
| Challenge | Target deconvolution can be complex and time-consuming [3] | May fail if target is not disease-relevant in a physiological context [3] |

Table 2: Exemplary Chemogenomic Libraries and Their Characteristics

| Library Name | Size (Compounds) | Key Characteristics | Application in Screening |
|---|---|---|---|
| C3L Minimal Screening Library [4] | 1,211 | Designed to target 1,386 anticancer proteins; emphasizes cellular activity and chemical diversity. | Phenotypic profiling of glioblastoma patient cells. |
| EUbOPEN Chemogenomic Library [5] | N/A | Aims to cover ~30% of the druggable genome; organized by target families (kinases, epigenetic modulators). | Functional annotation of proteins, including underexplored target areas. |
| Phenotypic Pharmacology Network Library [2] | 5,000 | Integrates drug-target-pathway-disease data with morphological profiles from Cell Painting assay. | Target identification and mechanism deconvolution for phenotypic screens. |
| Pfizer/GSK BDCS Libraries [2] | N/A | Industrial compound sets designed for broad biological diversity and target coverage. | Broad screening against diverse target families. |

Experimental Protocols for Target Identification and MOA Validation

Following a phenotypic screen, identifying the molecular target is crucial. The methodologies below, often used in tandem, form the cornerstone of MOA validation.

Direct Biochemical Methods: Affinity Purification

This method provides the most direct physical evidence of compound-target interaction [3].

- Procedure:
  - Immobilization: The small molecule of interest is covalently linked to a solid support (e.g., beads) via a chemical tether, ensuring it remains accessible for binding [3].
  - Incubation: The immobilized compound is incubated with a cell lysate containing the potential target proteins.
  - Washing: Non-specifically bound proteins are removed through a series of stringent washes [3].
  - Elution & Analysis: Specifically bound proteins are eluted, often by competition with free soluble compound, and identified using mass spectrometry [3].
- Key Considerations: The main challenges are maintaining compound activity while immobilized and designing appropriate control experiments [3].

Genetic Interaction Methods: Fitness-Based Profiling

This approach uses genetic perturbations to identify a compound's target and pathway [6].

- Procedure (Yeast Model):
  - Pooled Screening: A pooled library of barcoded yeast deletion strains is grown competitively in the presence and absence of the small molecule [6].
  - Fitness Measurement: The relative abundance of each strain in the pool is determined by quantifying the barcodes via microarray or sequencing. Strains whose growth is specifically enhanced or inhibited by the drug are identified [6].
  - Target Inference:
    - Haploinsufficiency Profiling (HIP): Strains heterozygous for a drug's essential target show heightened sensitivity (fitness defect) because reduced gene dosage amplifies the drug's effect [6].
    - Overexpression Profiling: Strains overexpressing the drug target may show increased resistance, as the higher protein level titrates out the compound [6].
- Key Considerations: This method is powerful in model organisms but requires adaptation for human cell studies using CRISPR-based gene knockout or activation screens [6].

Computational Inference: Morphological and Transcriptional Profiling

This method infers MOA by comparing the "fingerprint" of an unknown compound to a reference database of profiles for compounds with known targets [2] [6].

- Procedure:
  - Profile Generation: Cells are treated with the compound, and a morphological profile (e.g., from the Cell Painting assay) or a gene expression profile is recorded [2] [6].
  - Database Query: The resulting profile (morphological or gene expression) is used as a query against a reference database of profiles from compounds with known MOA.
  - Guilt-by-Association: The MOA of the unknown compound is inferred from the known compound(s) with the most similar profile [6].
- Key Considerations: The accuracy of this method is entirely dependent on the breadth and quality of the reference database [6].
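The database query and guilt-by-association steps above can be illustrated with a small sketch. It assumes profiles are fixed-length numeric feature vectors and uses Pearson correlation as the similarity measure. The source does not specify a metric, and the compound names, MOA labels, and values are hypothetical.

```python
import math

def pearson(x, y):
    """Pearson correlation between two equal-length numeric profiles."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        return 0.0  # a flat profile carries no shape information
    return cov / math.sqrt(var_x * var_y)

def rank_reference_matches(query, reference):
    """Return (compound, moa, correlation) tuples, most similar first."""
    scored = [
        (name, moa, pearson(query, profile))
        for name, (moa, profile) in reference.items()
    ]
    return sorted(scored, key=lambda item: item[2], reverse=True)

# Hypothetical reference database: compound -> (annotated MOA, feature profile)
reference_db = {
    "ref_A": ("tubulin binder", [2.0, -1.0, 0.5, 1.5]),
    "ref_B": ("HDAC inhibitor", [-1.5, 2.0, 0.0, -0.5]),
    "ref_C": ("kinase inhibitor", [0.2, 0.1, -2.0, 0.3]),
}
query_profile = [1.8, -0.9, 0.4, 1.2]  # profile of the uncharacterized compound

matches = rank_reference_matches(query_profile, reference_db)
best_name, best_moa, best_r = matches[0]
print(f"Closest reference: {best_name} ({best_moa}), r = {best_r:.2f}")
```

The top-ranked reference only suggests an MOA hypothesis. As noted above, the inference is no better than the coverage of the reference database.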

The Scientist's Toolkit: Essential Research Reagents and Solutions

Successful chemogenomic profiling relies on a suite of specialized reagents and platforms.

Table 3: Key Reagent Solutions for Chemogenomic Research

| Item | Function in Chemogenomics |
|---|---|
| Targeted Chemical Library (e.g., C3L, EUbOPEN) [4] [5] | A curated collection of small molecules designed to cover a wide space of drug targets, particularly protein families; the core reagent for screening. |
| Cell Painting Assay Kits [2] | A high-content imaging assay that uses fluorescent dyes to label multiple cell components; used to generate morphological profiles for MOA inference. |
| Barcoded Mutant Libraries (e.g., Yeast KO, CRISPR sgRNA) [6] | Pooled libraries of genetically perturbed cells (e.g., gene knockouts) that allow for fitness-based profiling and genetic target identification. |
| Affinity Purification Resins [3] | Solid supports (e.g., beads) for immobilizing small molecules to create affinity matrices for direct biochemical target pulldown. |
| Photoaffinity Labeling Probes [3] | Small molecules equipped with a photoactivatable crosslinker; upon UV irradiation, they form a covalent bond with their protein target, aiding in the capture of low-affinity interactions. |

The strategic integration of forward and reverse chemogenomics creates a powerful feedback loop for robust MOA validation. A phenotypic "hit" from a forward screen can be advanced to target identification via biochemical, genetic, and computational methods. Conversely, a target-focused "hit" from a reverse screen must be validated in a physiologically relevant phenotypic model. This iterative process, supported by high-quality chemogenomic libraries and advanced technological platforms, systematically bridges the gap between observable biological effects and their underlying molecular mechanisms, ultimately accelerating the development of safer and more effective therapeutics.

In the field of modern drug discovery, validating the mechanism of action (MoA) of bioactive compounds is a critical step in translating phenotypic observations into targeted therapies. Chemogenomics, the systematic study of the interaction between chemical compounds and biological systems, provides two distinct yet complementary approaches for this validation: forward and reverse chemogenomics [7] [6]. These pathways mirror classical genetic approaches but employ small molecules as perturbing agents to establish causal relationships between molecular targets and phenotypic outcomes [3] [6]. The strategic selection between forward and reverse chemogenomics depends on the starting point of the investigation—whether one begins with an uncharacterized compound eliciting a phenotype or a predefined molecular target of interest. This guide provides an objective comparison of these two methodologies, their experimental protocols, and their respective applications in MoA validation for researchers and drug development professionals.

Conceptual Frameworks and Definitions

Forward Chemogenomics: From Phenotype to Target

Forward chemogenomics begins with a biologically active small molecule whose protein target is unknown. Researchers observe a phenotypic effect in a cellular or organismal system and work to identify the molecular target(s) responsible [3] [7]. This approach is analogous to forward genetics, where one starts with an observable trait and identifies the responsible gene [3]. The strength of this strategy lies in its unbiased nature: it allows for the discovery of novel therapeutic targets and biological pathways without preconceived hypotheses about which proteins might be relevant to a disease process [3] [8]. Historically, this approach has led to significant discoveries, including the identification of FKBP12, calcineurin, and mTOR as targets of the immunosuppressive compounds FK506, cyclosporine A, and rapamycin [3].

Reverse Chemogenomics: From Target to Phenotype

Reverse chemogenomics starts with a validated protein target of known or presumed therapeutic value and seeks compounds that modulate its activity [7] [6]. This approach is analogous to reverse genetics, where a specific gene is manipulated to observe the resulting phenotypic consequences [3]. The reverse approach requires substantial upfront investment in target validation to demonstrate the protein's relevance to a biological pathway or disease process before screening begins [3]. This strategy dominates target-based drug discovery campaigns and benefits from straightforward optimization pathways once lead compounds are identified.

Table 1: Core Conceptual Differences Between Forward and Reverse Chemogenomics

| Feature | Forward Chemogenomics | Reverse Chemogenomics |
|---|---|---|
| Starting Point | Biologically active small molecule with unknown target [3] | Validated protein target with known therapeutic relevance [3] [6] |
| Analogous Genetics Approach | Forward genetics (phenotype to gene) [3] | Reverse genetics (gene to phenotype) [3] |
| Screening Context | Cell-based or organism-based phenotypic assays [3] [8] | Target-based assays using purified proteins [3] |
| Target Discovery | Required as follow-up (target deconvolution) [3] | Known prior to compound discovery |
| Typical Applications | Discovering novel targets and biological pathways [3] [8] | Developing selective modulators of characterized targets [3] |

Experimental Workflows and Methodologies

Forward Chemogenomics Workflow

The forward chemogenomics pathway involves a multi-step process to deconvolute the molecular target(s) responsible for an observed phenotype. The workflow typically proceeds through the following stages:

Step 1: Phenotypic Screening. Researchers first conduct cell-based or organism-based assays to identify compounds that induce a desired phenotypic change [3] [8]. These assays preserve cellular context and can reveal novel biology, but they require follow-up target identification [3].

Step 2: Target Deconvolution. This critical phase employs various methods to identify the protein target(s):

- Direct Biochemical Methods: Affinity purification using compound-immobilized matrices followed by mass spectrometry identification of bound proteins [3]. Challenges include maintaining compound activity while immobilized and designing appropriate control experiments [3].

- Genetic Interaction Methods: Examining how genetic perturbations (e.g., gene knockouts or knockdowns) alter compound sensitivity [6]. In yeast, haploinsufficiency profiling (HIP) can directly identify drug targets by monitoring fitness defects in heterozygous strains [6].

- Computational Inference: Comparing compound-induced gene expression profiles or chemogenomic signatures to reference databases [3] [6]. Pattern matching can infer mechanism of action based on similarity to compounds with known targets [6].

Step 3: Mechanistic Validation. Confirmed targets undergo functional studies to establish the causal relationship between target engagement and observed phenotype [3].

Figure 1: Forward Chemogenomics Workflow - From phenotypic observation to target identification.

Reverse Chemogenomics Workflow

The reverse chemogenomics pathway follows a more linear, target-centric approach:

Step 1: Target Selection and Validation. A protein target is selected based on established relevance to a disease pathway or biological process [3]. Credentialing involves demonstrating that modulation of the target will produce the desired therapeutic effect [3].

Step 2: Biochemical Screening. Purified target protein is exposed to compound libraries in high-throughput screening (HTS) formats [3]. Assays measure direct binding or functional modulation of the target.

Step 3: Cellular Validation. Hit compounds from biochemical screens are tested in cellular models to confirm target engagement and functional effects in a more physiologically relevant context [3].

Step 4: Phenotypic Characterization. Compounds with confirmed cellular activity undergo broader phenotypic assessment to evaluate potential off-target effects and comprehensive biological impact [3].

Figure 2: Reverse Chemogenomics Workflow - From target selection to compound validation.

Comparative Analysis: Strengths and Limitations

Performance Metrics and Applications

Table 2: Experimental Comparison of Forward and Reverse Chemogenomics Approaches

| Parameter | Forward Chemogenomics | Reverse Chemogenomics |
|---|---|---|
| Target Novelty Potential | High - enables discovery of novel biology [3] [8] | Limited to known biology and pre-validated targets [3] |
| Attrition Risk | Higher - phenotypic relevance established early but target deconvolution can fail [3] [8] | Lower for on-target activity but higher for clinical translation [3] |
| Technical Complexity | High - requires multiple orthogonal methods for target identification [3] | Moderate - streamlined workflow with clear optimization path [3] |
| Polypharmacology Detection | Excellent - can identify multiple relevant targets simultaneously [3] | Poor - focused on single target, though off-targets can cause issues [3] |
| Typical Timeline | Longer due to target deconvolution phase [3] | Shorter initial screening to hit identification [3] |
| Success Examples | FK506 → FKBP12/calcineurin [3]; Trapoxin A → HDACs [3] | Most kinase inhibitors; protease inhibitors [3] |

Practical Considerations for Implementation

The choice between forward and reverse chemogenomics depends on several practical considerations. Forward approaches are particularly valuable when biological understanding of a disease is incomplete, as they can reveal novel therapeutic targets and pathways without predefined hypotheses [3] [8]. However, they require sophisticated target deconvolution capabilities and may encounter challenges in differentiating primary targets from secondary binders.

Reverse approaches benefit from more straightforward structure-activity relationship development and optimization once hits are identified [3]. The main challenge lies in the initial target validation—selecting targets with genuine therapeutic potential and developing robust assays that predict physiological relevance [3].

Recent advances have blurred the boundaries between these approaches. Integrated strategies now combine initial phenotypic screening with computational target prediction and subsequent experimental validation, leveraging the strengths of both paradigms [9] [10].

Detailed Experimental Protocols

Protocol 1: Affinity Purification for Target Identification (Forward Chemogenomics)

This protocol details the biochemical approach for identifying direct protein targets of bioactive compounds, a cornerstone of forward chemogenomics [3].

Materials and Reagents:

- Compound of interest (≥95% purity)

- Inactive structural analog (for control experiments)

- Solid support matrix (e.g., agarose beads)

- Cross-linking reagent (for photoaffinity labeling variants)

- Cell lysate from relevant tissue or cell line

- Wash buffers: PBS, high-salt buffer (500 mM NaCl), and detergent-containing buffer

- Elution buffer (compound solution or denaturing conditions)

- Mass spectrometry-grade solvents for protein identification

Procedure:

- Immobilization: Covalently link the compound to a solid support matrix using appropriate chemistry that preserves its bioactivity [3]. A control matrix should be prepared with an inactive analog or blocked reactive groups.

- Incubation: Incubate the compound-conjugated matrix with cell lysate (typically 1-10 mg total protein) for 1-4 hours at 4°C with gentle agitation.

- Washing: Perform sequential washes with PBS, high-salt buffer, and detergent-containing buffer to remove nonspecifically bound proteins [3]. Stringency should be balanced to retain genuine interactions while minimizing background.

- Elution: Elute specifically bound proteins using either excess free compound (competitive elution) or denaturing conditions [3].

- Identification: Resolve eluted proteins by SDS-PAGE and identify specific bands by mass spectrometry, or digest proteins directly in solution for LC-MS/MS analysis.

Validation: Candidates should be validated through orthogonal approaches such as cellular thermal shift assays, siRNA-mediated knockdown with compound sensitivity assessment, or biophysical binding assays [3].
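One way to use the control matrix from the immobilization step is to compare each protein's signal on the compound matrix against the control matrix before choosing candidates for validation. The sketch below assumes per-protein spectral counts as input and a fold-enrichment cutoff of 4. The protein names, counts, and cutoff are hypothetical and not taken from the protocol.

```python
def enriched_binders(compound_counts, control_counts, min_fold=4.0, pseudocount=1.0):
    """Return (protein, fold) pairs enriched on the compound matrix.

    fold = (compound + pseudocount) / (control + pseudocount); the pseudocount
    avoids division by zero for proteins absent from the control pull-down.
    """
    hits = []
    for protein, count in compound_counts.items():
        background = control_counts.get(protein, 0)
        fold = (count + pseudocount) / (background + pseudocount)
        if fold >= min_fold:
            hits.append((protein, fold))
    # Most strongly enriched proteins first
    return sorted(hits, key=lambda item: item[1], reverse=True)

# Hypothetical spectral counts from the two pull-downs
compound_matrix = {"protein_X": 40, "protein_Y": 12, "abundant_Z": 55}
control_matrix = {"protein_Y": 10, "abundant_Z": 50}

hits = enriched_binders(compound_matrix, control_matrix)
for protein, fold in hits:
    print(f"{protein}: {fold:.1f}-fold over control")
```

Proteins that bind both matrices equally, such as abundant background binders, fall below the cutoff. Enriched proteins are candidates only and still need the orthogonal validation described above.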

Protocol 2: Competitive Fitness Profiling in Yeast (Forward Chemogenomics)

This genetic approach leverages barcoded yeast deletion collections to identify drug targets and responsive pathways [6].

Materials and Reagents:

- Barcoded yeast deletion collections (e.g., YKO collection)

- Compound of interest dissolved in appropriate vehicle

- Rich and selective media for yeast growth

- PCR amplification reagents for barcode amplification

- Microarray or sequencing platform for barcode quantification

Procedure:

- Pooling: Combine all yeast deletion strains in equal proportions in appropriate media.

- Compound Exposure: Divide the pool and grow in presence of compound (test) or vehicle control (reference) for multiple generations.

- Harvesting: Collect samples at multiple time points during logarithmic growth.

- Barcode Amplification: Isolate genomic DNA and amplify unique barcodes by PCR.

- Quantification: Quantify barcode abundance by microarray or next-generation sequencing [6].

- Analysis: Calculate fitness defects as the ratio of barcode abundance in test versus control conditions. Strains showing significant fitness defects indicate genes important for compound response.

Data Interpretation: Homozygous deletion strains that are hypersensitive to the compound point to genes in the drug target pathway or genes required for resistance to the compound. Heterozygous strains showing haploinsufficiency can directly identify the drug target [6].
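The analysis step can be sketched as follows. This minimal example assumes raw barcode read counts per strain, scales them to library size, and reports log2(control / treated) so that a positive score means depletion under compound. The sign convention, the pseudocount, and the strain names and counts are assumptions made for illustration.

```python
import math

def fitness_defects(control_counts, treated_counts, pseudocount=1.0):
    """Log2(control / treated) barcode frequency per strain.

    Counts are divided by the total reads of their sample so that samples
    sequenced to different depths are comparable. Positive values mean the
    strain is depleted in the presence of the compound.
    """
    control_total = sum(control_counts.values())
    treated_total = sum(treated_counts.values())
    scores = {}
    for strain, control in control_counts.items():
        treated = treated_counts.get(strain, 0)
        control_freq = (control + pseudocount) / control_total
        treated_freq = (treated + pseudocount) / treated_total
        scores[strain] = math.log2(control_freq / treated_freq)
    return scores

# Hypothetical barcode counts for four deletion strains
control = {"strain_A": 1000, "strain_B": 1000, "strain_C": 1000, "strain_D": 1000}
treated = {"strain_A": 950, "strain_B": 1050, "strain_C": 120, "strain_D": 1000}

scores = fitness_defects(control, treated)
most_sensitive = max(scores, key=scores.get)
print(most_sensitive, round(scores[most_sensitive], 2))
```

Because the scores are frequency-based, a strongly depleted strain shifts the other strains slightly negative. Real pipelines usually apply a further normalization across strains before ranking.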

Protocol 3: Virtual Screening for Target Fishing (Computational Approach)

Computational target fishing serves as a complementary approach to experimental methods in both forward and reverse chemogenomics [9] [10].

Materials and Software:

- Compound structure in standardized format (SMILES or SDF)

- Target databases (ChEMBL, BindingDB, PDB)

- Software tools: Schrödinger, Discovery Studio, ChemMapper, PharmMapper, or idTarget

- Computing resources adequate for database screening

Procedure:

- Shape Screening: Compare the 3D geometry of the query compound to annotated ligand databases using molecular similarity algorithms [10]. Top matches suggest potential targets.

- Pharmacophore Screening: Identify essential functional features and their spatial relationships, then screen against pharmacophore model databases [10].

- Reverse Docking: Dock the query compound against a database of protein active sites to identify favorable interactions [10].

- Consensus Scoring: Integrate results from multiple approaches to generate high-confidence target hypotheses.

Validation: Computational predictions require experimental validation through the biochemical or genetic methods described above [9].
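The consensus scoring step can be approximated by averaging each candidate target's rank across methods. The sketch below is one simple scheme under stated assumptions, not the procedure of any tool listed above. It assumes every method's scores are oriented so that higher is better, and that a target missing from a method gets that method's worst rank plus one. Method names, target names, and scores are hypothetical.

```python
def consensus_rank(method_scores):
    """Rank targets by their mean rank across methods (1 = best).

    method_scores maps method name -> {target: score}, higher score = better.
    A target absent from a method is assigned that method's worst rank + 1.
    """
    targets = set()
    for scores in method_scores.values():
        targets.update(scores)

    totals = {target: 0.0 for target in targets}
    for scores in method_scores.values():
        ordered = sorted(scores, key=scores.get, reverse=True)
        ranks = {target: i + 1 for i, target in enumerate(ordered)}
        worst = len(ordered) + 1
        for target in targets:
            totals[target] += ranks.get(target, worst)

    n_methods = len(method_scores)
    mean_ranks = {target: total / n_methods for target, total in totals.items()}
    # Sort by mean rank, then by name so ties are ordered deterministically
    return sorted(mean_ranks.items(), key=lambda item: (item[1], item[0]))

# Hypothetical outputs of the three screening steps
results = {
    "shape": {"target_1": 0.82, "target_2": 0.75, "target_3": 0.40},
    "pharmacophore": {"target_2": 0.90, "target_1": 0.60, "target_4": 0.55},
    "reverse_docking": {"target_2": 7.1, "target_3": 6.8, "target_1": 6.5},
}

consensus = consensus_rank(results)
for target, mean_rank in consensus:
    print(f"{target}: mean rank {mean_rank:.2f}")
```

Rank averaging avoids having to put similarity scores and docking scores on a common scale. Docking programs often report lower energies as better, so such scores would need their sign flipped before being passed in.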

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Research Reagents for Chemogenomics Studies

| Reagent/Solution | Function | Application Context |
|---|---|---|
| Immobilized Compound Beads | Affinity matrix for pull-down experiments | Forward chemogenomics - direct target identification [3] |
| Barcoded Yeast Deletion Collections | Pooled screening of loss-of-function mutants | Forward chemogenomics - fitness profiling [6] |
| Photoaffinity Probes | Covalent capture of low-affinity targets | Forward chemogenomics - cross-linking applications [3] |
| Purified Protein Targets | High-throughput screening | Reverse chemogenomics - biochemical assays [3] |
| Annotated Compound Libraries | Reference databases for computational screening | Both approaches - target prediction [9] [10] |
| 3D Protein Structure Databases | Reverse docking targets | Computational target fishing [10] |
| Gene Expression Profiling Arrays | Signature-based mechanism identification | Forward chemogenomics - MoA classification [6] |

Integrated Applications and Future Directions

The distinction between forward and reverse chemogenomics is increasingly blurred in contemporary drug discovery, with integrated approaches becoming more prevalent. For example, in a recent study on NR4A nuclear receptor modulators, researchers employed a combined strategy starting with compound profiling (reverse approach) followed by application of validated tool compounds to elucidate novel biology in endoplasmic reticulum stress and adipocyte differentiation (forward approach) [11].

Advancements in computational methods are particularly transformative for both approaches. For forward chemogenomics, improved target prediction algorithms accelerate the tedious process of target deconvolution [9] [10]. For reverse chemogenomics, structure-based design facilitates more rational compound optimization. The growing availability of large-scale chemogenomic datasets enables pattern-based MoA prediction that transcends the traditional forward/reverse dichotomy [6] [9].

In cancer research, comprehensive molecular profiling studies exemplify how these approaches converge in precision medicine. The COMPASS trial in pancreatic cancer integrated whole genome and transcriptome sequencing to identify molecular subgroups with therapeutic implications, simultaneously informing both target discovery (forward) and patient stratification for targeted therapies (reverse) [12].

The future of MoA validation will likely involve even tighter integration of these approaches, leveraging the phenotypic relevance of forward chemogenomics with the mechanistic clarity of reverse chemogenomics through iterative cycles of computational prediction and experimental validation.

The Shift from 'One-Target-One-Drug' to Systems Pharmacology

For decades, drug discovery has been dominated by the 'one-target-one-drug' paradigm, a reductionist approach that focuses on identifying single molecular targets and developing highly specific compounds to modulate them [13]. This strategy has produced successful treatments for infectious and monogenic diseases but demonstrates significant limitations when applied to complex, multifactorial diseases such as cancer, neurodegenerative disorders, and metabolic syndromes [13] [14]. These conditions involve intricate networks of genes, proteins, and signaling pathways with redundant mechanisms that diminish the efficacy of single-target therapies, leading to high failure rates in clinical trials—approximately 60-70% for drugs developed through conventional approaches [13].

The recognition of these limitations has catalyzed a fundamental shift toward systems pharmacology, a holistic framework that views the body as an integrated network of molecular interactions [13] [15]. This emerging discipline integrates systems biology, bioinformatics, and pharmacology to understand sophisticated drug-target-disease relationships within biological networks [16]. Rather than targeting individual components, systems pharmacology aims to modulate multiple nodes in disease networks simultaneously, offering enhanced therapeutic efficacy with reduced side effects for complex disorders [17]. This paradigm shift represents a move from reductionist to systems-level thinking in pharmaceutical research, enabled by advances in omics technologies, bioinformatics, and computational modeling [18].

Comparative Analysis: Classical Pharmacology vs. Systems Pharmacology

The transition from classical to systems pharmacology represents more than just technological advancement—it constitutes a fundamental rethinking of therapeutic intervention. The table below summarizes the key distinctions between these two paradigms.

Table 1: Key Features of Traditional and Systems Pharmacology

| Feature | Traditional Pharmacology | Systems Pharmacology |
|---|---|---|
| Targeting Approach | Single-target | Multi-target / network-level |
| Disease Suitability | Monogenic or infectious diseases | Complex, multifactorial disorders |
| Model of Action | Linear (receptor-ligand) | Systems/network-based |
| Risk of Side Effects | Higher (off-target effects) | Lower (network-aware prediction) |
| Failure in Clinical Trials | Higher (60-70%) | Lower due to pre-network analysis |
| Technological Tools Used | Molecular biology, pharmacokinetics | Omics data, bioinformatics, graph theory |
| Personalized Therapy | Limited | High potential (precision medicine) |

This comparison suggests why systems pharmacology is better suited to complex diseases. The single-target approach of classical pharmacology assumes a linear receptor-ligand model, which is associated with more off-target effects and higher clinical trial failure rates [13]. In contrast, systems pharmacology employs network-aware prediction that aims to minimize adverse effects by considering drug actions within the broader context of biological systems [13]. Furthermore, while classical pharmacology offers limited potential for personalized medicine, systems pharmacology enables precision medicine through the integration of multi-omics data and computational predictions that account for individual variability [13] [18].

Chemogenomic Profiling: A Key Experimental Framework for Validation

Fundamental Principles and Applications

Chemogenomic profiling has emerged as a powerful experimental framework for validating drug mechanisms of action (MoA) within systems pharmacology. This approach systematically measures how chemical perturbations affect a comprehensive collection of genetic mutants, creating fitness profiles that reveal functional connections between compounds and their cellular targets [19] [20]. The core principle involves screening libraries of genetically distinct strains—such as haploid deletion mutants in model organisms—against diverse compound collections to generate quantitative drug scores (D-scores) that indicate sensitivity or resistance patterns [19].

This methodology has been successfully applied across multiple species, including Saccharomyces cerevisiae, Schizosaccharomyces pombe, and Plasmodium falciparum, demonstrating its broad utility for MoA investigation [19] [21]. In malaria research, chemogenomic profiling of P. falciparum piggyBac mutants has revealed novel insights into antimalarial drug mechanisms and resistance pathways, including the identification of an artemisinin sensitivity cluster containing the K13-propeller gene linked to artemisinin resistance [21]. Cross-species comparisons have further revealed that compound-functional module relationships are more conserved than individual compound-gene interactions, highlighting the modular organization of drug response systems [19].

Key Methodologies and Workflows

The experimental workflow for chemogenomic profiling involves several critical steps. For yeast models, the HaploInsufficiency Profiling and HOmozygous Profiling (HIP/HOP) platform utilizes barcoded heterozygous and homozygous knockout collections grown competitively in pooled formats [20]. Haploinsufficiency profiling (HIP) detects drug-induced sensitivity in heterozygous strains deleted for one copy of essential genes, directly identifying drug target candidates when the drug targets the product of these genes [20]. Homozygous profiling (HOP) interrogates nonessential homozygous deletion strains to identify genes involved in drug target pathways and those required for drug resistance [20].

Table 2: Core Methodologies in Chemogenomic Profiling

| Method | Organism | Key Features | Primary Applications |
|---|---|---|---|
| HIP/HOP Profiling | S. cerevisiae | Barcoded heterozygous/homozygous deletion pools; competitive growth; sequencing-based fitness quantification | Drug target identification; resistance mechanism mapping |
| Cross-Species Chemogenomics | S. cerevisiae and S. pombe | Comparative analysis of orthologous genes; evolutionary conservation of drug response | MoA prediction enhancement; conserved functional module identification |
| P. falciparum PiggyBac Mutant Profiling | Plasmodium falciparum | Single insertion mutants; dose-response IC50 determination; pathway association mapping | Antimalarial drug discovery; resistance gene identification |
| Mammalian CRISPR Screens | Human cell lines | Genome-wide knockout libraries; next-generation sequencing readouts | Human-specific target validation; translational drug development |

Fitness quantification is typically achieved through barcode sequencing that measures strain abundance changes following drug treatment. The resulting fitness defect (FD) scores represent relative strain sensitivity, with the greatest FD scores in HIP assays indicating the most likely drug targets [20]. Data processing involves normalization strategies such as robust z-score transformation of log2 ratios between control and treatment conditions, enabling cross-experiment comparisons [20]. These quantitative profiles allow for MoA prediction through similarity analysis—comparing unknown compound profiles to references with established mechanisms—and target identification through resistance patterns that emerge when drugs interact with their protein targets [19] [20].
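The normalization step described above can be sketched in a few lines. This is a minimal illustration, not the pipeline used in the cited studies: the function names, the pseudocount, the direction of the ratio (control over treatment, so that depleted strains score positive), and the toy barcode counts are all assumptions. The constant 1.4826 is the usual factor that makes the median absolute deviation (MAD) comparable to a standard deviation for normally distributed data.

```python
import math
from statistics import median

def log2_ratios(control, treated, pseudocount=1.0):
    """log2(control / treated) per strain; positive means the strain was depleted by the drug."""
    return {
        strain: math.log2((control[strain] + pseudocount) / (treated[strain] + pseudocount))
        for strain in control
    }

def robust_z(values):
    """Robust z-score: (x - median) / (1.4826 * MAD)."""
    xs = list(values.values())
    med = median(xs)
    mad = median(abs(x - med) for x in xs)
    scale = 1.4826 * mad
    if scale == 0:
        raise ValueError("MAD is zero; the profile has too little spread to scale")
    return {strain: (x - med) / scale for strain, x in values.items()}

# Hypothetical barcode counts for five strains (illustrative values only).
control_counts = {"geneA": 1000, "geneB": 980, "geneC": 1020, "geneD": 1010, "geneE": 990}
treated_counts = {"geneA": 120, "geneB": 1000, "geneC": 1005, "geneD": 960, "geneE": 1015}

fd_scores = robust_z(log2_ratios(control_counts, treated_counts))
top_candidate = max(fd_scores, key=fd_scores.get)
print(top_candidate, round(fd_scores[top_candidate], 1))
```

In this toy HIP-style profile the strain with the largest score is the one whose barcode dropped most under treatment, which is the pattern the text associates with a likely drug target.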

Diagram 1: Chemogenomic Profiling Workflow. This workflow illustrates the key steps from mutant library construction to mechanism of action prediction.

Essential Research Tools and Databases for Systems Pharmacology

The implementation of systems pharmacology relies on diverse computational tools and biological databases that enable network construction, target prediction, and multi-omics integration. The table below summarizes key resources used in this field.

Table 3: Essential Research Reagent Solutions for Systems Pharmacology

| Category | Tool/Database | Functionality | Research Application |
|---|---|---|---|
| Drug Information | DrugBank, PubChem, ChEMBL | Drug structures, targets, pharmacokinetics | Compound characterization; ADME/T prediction |
| Gene-Disease Associations | DisGeNET, OMIM, GeneCards | Disease-linked genes, mutations, gene function | Target validation; disease module identification |
| Target Prediction | SwissTargetPrediction, PharmMapper, SEA | Predicts protein targets from compound structures | Polypharmacology assessment; mechanism elucidation |
| Protein-Protein Interactions | STRING, BioGRID, IntAct | Protein-protein interaction networks | Pathway analysis; network modeling |
| Pathway Analysis | KEGG, Reactome | Pathway mapping and visualization | Biological context interpretation; module identification |
| Network Analysis & Visualization | Cytoscape, NetworkX, Gephi | Network construction, topological analysis | Hub node identification; network modeling |

These resources facilitate the data-driven approach central to systems pharmacology. For instance, drug-target networks constructed using Cytoscape or NetworkX enable the identification of hub nodes and bottleneck proteins that represent key intervention points [13]. Similarly, integration of multi-omics data through tools like multi-omics factor analysis (MOFA) supports the development of comprehensive, patient-specific models for precision medicine applications [13] [18]. The strategic combination of these computational resources with experimental validation creates a powerful framework for network-based drug discovery.

Network Analysis and Mechanism of Action Prediction

Network Construction and Topological Analysis

Central to systems pharmacology is the construction and analysis of biological networks that represent complex drug-target-disease relationships. The standard workflow begins with data retrieval and curation from established databases such as DrugBank for drug information, DisGeNET for disease-associated genes, and STRING for protein-protein interactions [13]. Following data collection, target prediction employs both ligand-based (QSAR modeling, similarity ensemble approaches) and structure-based (molecular docking) strategies to identify potential drug targets [13].

Network construction typically involves creating bipartite graphs for drug-target interactions and protein-protein interaction (PPI) maps using tools like Cytoscape and NetworkX [13]. Topological analysis then applies graph-theoretical measures—including degree centrality, betweenness, closeness, and eigenvector centrality—to identify hub nodes and bottleneck proteins that represent critical control points in biological networks [13]. Community detection algorithms such as MCODE and Louvain further identify functional modules within these networks, which undergo enrichment analysis to determine overrepresented pathways and biological processes [13].
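To make the topological measures concrete, the sketch below computes degree centrality and betweenness centrality on a toy undirected interaction graph using only the Python standard library. The protein names and edges are hypothetical, and the betweenness routine is a plain implementation of Brandes' algorithm for unweighted graphs; in practice a library such as NetworkX (which offers `degree_centrality` and `betweenness_centrality`) would normally be used instead.

```python
from collections import deque

def build_adjacency(edges):
    """Build an undirected adjacency map from (node, node) pairs."""
    adj = {}
    for a, b in edges:
        adj.setdefault(a, set()).add(b)
        adj.setdefault(b, set()).add(a)
    return adj

def degree_centrality(adj):
    """Fraction of the other nodes each node is directly connected to."""
    n = len(adj)
    return {v: len(nbrs) / (n - 1) for v, nbrs in adj.items()}

def betweenness_centrality(adj):
    """Unnormalized betweenness via Brandes' algorithm (unweighted, undirected)."""
    bc = {v: 0.0 for v in adj}
    for s in adj:
        stack, queue = [], deque([s])
        preds = {v: [] for v in adj}
        sigma = {v: 0 for v in adj}  # number of shortest paths from s
        sigma[s] = 1
        dist = {s: 0}
        while queue:
            v = queue.popleft()
            stack.append(v)
            for w in adj[v]:
                if w not in dist:
                    dist[w] = dist[v] + 1
                    queue.append(w)
                if dist[w] == dist[v] + 1:
                    sigma[w] += sigma[v]
                    preds[w].append(v)
        delta = {v: 0.0 for v in adj}
        while stack:  # accumulate dependencies in reverse BFS order
            w = stack.pop()
            for v in preds[w]:
                delta[v] += sigma[v] / sigma[w] * (1 + delta[w])
            if w != s:
                bc[w] += delta[w]
    return {v: b / 2 for v, b in bc.items()}  # each unordered pair was counted twice

# Hypothetical PPI edges: a triangle (P1, P2, P3) bridged to a small star around P4.
edges = [("P1", "P2"), ("P1", "P3"), ("P2", "P3"), ("P3", "P4"), ("P4", "P5"), ("P4", "P6")]
adj = build_adjacency(edges)
degree = degree_centrality(adj)
betweenness = betweenness_centrality(adj)
for node in sorted(adj):
    print(node, round(degree[node], 2), betweenness[node])
```

Here P3 and P4 have the same degree, but P4 lies on more shortest paths, which is the distinction between a hub (high degree) and a bottleneck (high betweenness) drawn in the text.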

Mechanism of Action Prediction through Profile Similarity

Chemogenomic profiles serve as powerful phenotypic signatures for predicting mechanisms of action through similarity-based inference. The fundamental principle is that compounds sharing similar mechanisms will produce similar fitness profiles across a collection of mutants [19] [20]. This approach enables the classification of uncharacterized compounds by comparing their chemogenomic profiles to those of well-characterized references [21] [20].

Diagram 2: Mechanism of Action Prediction through Profile Similarity. This process compares unknown compound profiles against reference databases to infer mechanisms of action.

Studies have demonstrated that drugs targeting the same pathway show significantly higher profile correlations than those targeting different pathways [21]. For example, in P. falciparum, chemogenomic profiling correctly grouped inhibitors acting on related biosynthetic pathways and those targeting the same organelles, validating the approach's predictive capability [21]. Similarly, large-scale comparisons of yeast chemogenomic datasets revealed that the cellular response to small molecules is limited and can be described by a network of discrete chemogenomic signatures, with the majority (66.7%) conserved across independent studies [20].
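A minimal sketch of similarity-based inference follows, assuming fitness profiles are stored as equal-length lists of scores over the same ordered set of mutants. The reference names, MoA labels, and values are hypothetical, and Pearson correlation is used here only as one plausible similarity measure; the cited studies may use different metrics and thresholds.

```python
import math

def pearson(x, y):
    """Pearson correlation between two equal-length profiles."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)

def rank_references(query, references):
    """Return (name, moa, correlation) tuples sorted from most to least similar."""
    scored = [
        (name, moa, pearson(query, profile))
        for name, (moa, profile) in references.items()
    ]
    return sorted(scored, key=lambda item: item[2], reverse=True)

# Hypothetical reference profiles over five mutants (illustrative values only).
references = {
    "ref_A": ("MoA-1", [5.0, 0.2, -0.1, 3.8, 0.0]),
    "ref_B": ("MoA-2", [0.1, 4.5, 3.9, -0.2, 0.3]),
    "ref_C": ("MoA-1", [4.2, -0.3, 0.4, 4.4, 0.1]),
}
query_profile = [4.6, 0.0, 0.3, 4.1, -0.2]

ranking = rank_references(query_profile, references)
for name, moa, r in ranking:
    print(name, moa, round(r, 3))
```

The query correlates strongly with both MoA-1 references and negatively with the MoA-2 reference, so a nearest-reference rule would assign it to MoA-1.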

Applications in Complex Disease Treatment and Drug Repurposing

Addressing Complex Disorders and Drug Resistance

Systems pharmacology offers particular promise for treating complex disorders with multifactorial etiology, including neurodegenerative diseases, cancer, and metabolic syndromes [17] [14]. Unlike single-target approaches, multi-target drugs can simultaneously modulate multiple pathways disrupted in these conditions, potentially yielding enhanced therapeutic efficacy [17]. For neurodegenerative diseases like Alzheimer's and Parkinson's, where traditional 'one-target-one-drug' approaches have largely failed, network therapeutics provide opportunities to address shared pathological mechanisms such as protein aggregation across multiple disorders [14].

Another critical application lies in overcoming drug resistance, a major challenge in antimicrobial and anticancer therapies [17]. Simultaneously impacting multiple targets reduces the probability of resistance development through single-point mutations, as demonstrated by the effectiveness of combination therapies in HIV treatment [22] [17]. In epilepsy, where approximately one-third of patients experience drug resistance, multi-target agents like valproic acid show a broader efficacy spectrum than highly selective drugs, supporting the network approach to refractory conditions [17].

Drug Repurposing and Combination Therapy

Systems pharmacology enables systematic drug repurposing by revealing novel drug-disease relationships through network analysis [13] [17]. Computational approaches can screen existing drug libraries against new indications based on network proximity between drug targets and disease modules, as exemplified by the repositioning of metformin as an anticancer agent [13]. Multi-target agents are natural candidates for prospective drug repurposing to treat comorbid conditions, potentially addressing underlying pathologies plus disease symptoms with single therapeutic agents [17].
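Network proximity can be defined in several ways, and the source does not specify one. The sketch below assumes a simple "closest distance" formulation: for each drug target, take the shortest-path distance to the nearest disease-module gene, then average over targets. The graph, node names, and function names are hypothetical.

```python
from collections import deque

def bfs_distances(adj, source):
    """Shortest-path distances (in edges) from source to every reachable node."""
    dist = {source: 0}
    queue = deque([source])
    while queue:
        v = queue.popleft()
        for w in adj.get(v, ()):
            if w not in dist:
                dist[w] = dist[v] + 1
                queue.append(w)
    return dist

def closest_proximity(adj, drug_targets, disease_genes):
    """Mean over drug targets of the distance to the nearest disease gene."""
    total = 0
    for target in drug_targets:
        dist = bfs_distances(adj, target)
        reachable = [dist[g] for g in disease_genes if g in dist]
        if not reachable:
            raise ValueError(f"{target} cannot reach the disease module")
        total += min(reachable)
    return total / len(drug_targets)

# Hypothetical interactome: T* are drug targets, D* are disease-module genes.
edges = [("T1", "A"), ("A", "D1"), ("T2", "D2"), ("D1", "D2"), ("T3", "B"), ("B", "A")]
adj = {}
for a, b in edges:
    adj.setdefault(a, set()).add(b)
    adj.setdefault(b, set()).add(a)

disease_module = {"D1", "D2"}
print(closest_proximity(adj, ["T1", "T2"], disease_module))  # targets 2 and 1 steps away
print(closest_proximity(adj, ["T3"], disease_module))        # target 3 steps away
```

A smaller value suggests the drug's targets sit closer to the disease module. A real analysis would also compare the observed value against a randomized background, which this sketch omits.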

For drug combination prediction, systems pharmacology integrates network analysis with computational models to identify synergistic drug pairs that collectively modulate disease networks more effectively than individual agents [16]. This approach has been particularly valuable in traditional Chinese medicine research, where systems pharmacology helps dissect the mechanisms of multi-herb formulations and identify active compounds responsible for synergistic effects [16].

Future Perspectives and Challenges

The continued evolution of systems pharmacology faces both opportunities and challenges. Future developments will likely focus on multi-omics integration, combining genomics, transcriptomics, proteomics, and metabolomics data to create more comprehensive network models [13]. Additionally, advances in machine learning and artificial intelligence will enhance target prediction, drug combination optimization, and patient stratification for precision medicine applications [13] [18].

Significant challenges remain in data integration and standardization, particularly in managing the volume, variety, velocity, and veracity of biological big data [18]. Furthermore, distinguishing causation from correlation in network associations requires sophisticated computational approaches that integrate heterogeneous data types while avoiding overfitting [18]. Finally, translational validation of network-based hypotheses demands close integration between computational prediction and experimental confirmation in biologically relevant models, including advanced human in vitro systems such as iPSC-derived cultures and organ-on-a-chip technologies [14].

Despite these challenges, systems pharmacology represents a transformative approach to drug discovery that embraces biological complexity rather than reducing it. By shifting the therapeutic paradigm from single targets to integrated networks, this discipline holds exceptional promise for developing more effective treatments for complex diseases that have remained recalcitrant to traditional approaches.

In modern drug discovery, validating the mechanism of action (MoA) of therapeutic compounds is a critical step that bridges phenotypic screening and target-based development. Chemogenomic profiling has emerged as a powerful systems biology approach for MoA elucidation by analyzing the complex interactions between chemical perturbations and genetic backgrounds. This guide objectively compares four major classes of biological targets—G Protein-Coupled Receptors (GPCRs), Kinases, Proteases, and Nuclear Receptors—through the lens of chemogenomic validation, providing experimental methodologies and data-driven comparisons to inform research and development strategies. The PROSPECT (PRimary screening Of Strains to Prioritize Expanded Chemistry and Targets) platform exemplifies this approach, profiling chemical-genetic interactions (CGIs) between small molecules and pooled hypomorphic mutants to simultaneously identify bioactive compounds and provide early MoA insight [23].

Target Class Comparison and Characteristics

Table 1: Comparative Analysis of Key Biological Target Classes

| Parameter | GPCRs | Kinases | Proteases | Nuclear Receptors |
|---|---|---|---|---|
| Human Family Size | ~800 [24] | >500 [25] | ~2% of proteome [26] | 48 [27] |
| Therapeutic Significance | 34% of FDA-approved drugs [28] | Key cancer targets (e.g., EGFR, B-Raf) [29] | 12 FDA-approved replacement therapies [26] | 15-20% of pharmaceuticals [27] |
| Structural Features | 7 transmembrane domains [28] | Catalytic kinase domain | Active site with substrate recognition motifs [26] | DNA-binding, ligand-binding domains [27] |
| Primary Signaling Mechanisms | G protein coupling, arrestin recruitment [28] | Phosphorylation cascades (e.g., MAPK, PI3K/AKT/mTOR) [29] | Peptide bond hydrolysis [26] | Ligand-dependent transcription regulation [27] |
| Chemogenomic Profiling Applications | Biased signaling analysis, allosteric modulator characterization [28] | Polypharmacology assessment, resistance mechanism studies [29] | Substrate specificity engineering [26] | Selective modulator development, co-regulator interaction mapping [27] |
| Experimental Challenges | Signal transduction complexity, low native expression [28] | Pathway crosstalk, compensatory mechanisms [29] | Specificity engineering, activity control [26] | Tissue-specific effects, functional redundancy [27] |

Table 2: Therapeutic Targeting Approaches by Target Class

| Target Class | Representative Drugs | Primary Indications | Targeting Strategies |
|---|---|---|---|
| GPCRs | Propranolol, Ozanimod, Semaglutide [30] | Cardiovascular disease, multiple sclerosis, type 2 diabetes [30] | Orthosteric/allosteric modulation, biased ligands, bitopic designs [28] |
| Kinases | Gilteritinib, B-Raf inhibitors [30] [29] | Cancer, leukemia [30] [29] | ATP-competitive inhibitors, allosteric modulators, covalent inhibitors [29] |
| Proteases | Recombinant proteases, engineered variants [26] | Hematological malignancies, digestive disorders [26] | Activity engineering, substrate specificity switching, conditional activation [26] |
| Nuclear Receptors | Tamoxifen, Enzalutamide, Thiazolidinediones [27] | Breast cancer, prostate cancer, type 2 diabetes [27] | Agonists/antagonists, selective receptor modulators, coregulator disruptors [27] |

Chemogenomic Profiling Technologies and Workflows

Reference-Based MoA Prediction Using PROSPECT

The PROSPECT platform employs a reference-based approach termed Perturbagen CLass (PCL) analysis to elucidate small molecule MoA. This methodology involves screening compounds against a pool of hypomorphic Mycobacterium tuberculosis mutants, each depleted of a different essential protein. The platform measures chemical-genetic interactions through next-generation sequencing of strain-specific DNA barcodes, generating CGI profiles that serve as fingerprints for MoA prediction [23].

In practice, PCL analysis compares the CGI profile of an unknown compound against a curated reference set of compounds with annotated MOAs. In validation studies, this approach achieved 70% sensitivity and 75% precision in leave-one-out cross-validation, and comparable performance (69% sensitivity, 87% precision) with a test set of 75 antitubercular compounds with known MOA [23]. The methodology successfully identified 29 compounds targeting bacterial respiration from 98 previously unannotated compounds and enabled the discovery of a novel QcrB-targeting scaffold that initially lacked wild-type activity [23].
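The two reported metrics can be illustrated with a short calculation. The definitions below are an assumption about how such figures are typically computed for a classifier that may decline to call an MoA: sensitivity is the fraction of annotated compounds that receive the correct call, and precision is the fraction of calls made that are correct. The compound identifiers and labels are hypothetical and do not come from the PROSPECT study.

```python
def sensitivity_precision(truth, predicted):
    """Compute (sensitivity, precision) for MoA calls.

    truth:     compound -> annotated MoA
    predicted: compound -> predicted MoA, or None when no call is made
    """
    correct = sum(1 for cpd, moa in truth.items() if predicted.get(cpd) == moa)
    calls_made = sum(1 for cpd in truth if predicted.get(cpd) is not None)
    sensitivity = correct / len(truth)
    precision = correct / calls_made if calls_made else float("nan")
    return sensitivity, precision

# Hypothetical annotations and predictions (illustrative only).
truth = {"cpd_1": "MoA-1", "cpd_2": "MoA-1", "cpd_3": "MoA-2", "cpd_4": "MoA-3"}
predicted = {"cpd_1": "MoA-1", "cpd_2": "MoA-1", "cpd_3": "MoA-1", "cpd_4": None}

sens, prec = sensitivity_precision(truth, predicted)
print(f"sensitivity={sens:.2f} precision={prec:.2f}")
```

Under these definitions, a method that abstains on uncertain compounds can have higher precision than sensitivity, which is consistent with the pattern reported for the test set.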

In Silico Target Prediction Methodologies

Computational target prediction serves as a complementary approach to experimental chemogenomics. A 2025 systematic comparison of seven target prediction methods (MolTarPred, PPB2, RF-QSAR, TargetNet, ChEMBL, CMTNN, and SuperPred) evaluated their performance using a shared benchmark dataset of FDA-approved drugs [31]. The study found that MolTarPred was the most effective method, with performance optimization achieved through high-confidence filtering and the use of Morgan fingerprints with Tanimoto scores [31]. These computational approaches are particularly valuable for early-stage drug repurposing and polypharmacology assessment, though they remain constrained by the quality and comprehensiveness of existing bioactivity data [31].
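The Tanimoto score mentioned above is straightforward to compute once fingerprints are available. The sketch below represents each fingerprint as a set of "on" bit indices and ranks candidate targets by the most similar known ligand. It is a generic nearest-neighbor illustration, not the implementation of MolTarPred or any other benchmarked tool. The bit sets, target names, and the 0.5 threshold are hypothetical, and real Morgan fingerprints would be generated with a cheminformatics toolkit.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient between two fingerprints given as sets of on-bit indices."""
    a, b = set(fp_a), set(fp_b)
    union = len(a | b)
    return len(a & b) / union if union else 0.0

def predict_targets(query_fp, known_ligands, threshold=0.5):
    """Rank targets by the best Tanimoto score of any annotated ligand above the threshold."""
    best = {}
    for ligand_fp, target in known_ligands:
        score = tanimoto(query_fp, ligand_fp)
        if score >= threshold and score > best.get(target, 0.0):
            best[target] = score
    return sorted(best.items(), key=lambda item: item[1], reverse=True)

# Hypothetical annotated ligands: (fingerprint bits, target name).
known_ligands = [
    ({1, 5, 9, 12, 40}, "target_A"),
    ({1, 5, 9, 33}, "target_A"),
    ({2, 7, 8, 21}, "target_B"),
]
query_fp = {1, 5, 9, 12, 41}

predictions = predict_targets(query_fp, known_ligands)
print(predictions)
```

Raising the threshold corresponds to the high-confidence filtering described in the text: fewer targets are returned, but each is supported by a closer structural analog.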

Table 3: Experimental Platforms for Chemogenomic Profiling

| Platform/Technology | Application Scope | Key Features | Performance Metrics |
|---|---|---|---|
| PROSPECT/PCL Analysis [23] | Antibacterial discovery, MOA elucidation | Reference-based CGI profiling, hypomorphic mutant screening | 69-70% sensitivity, 75-87% precision in MOA prediction |
| In Silico Target Fishing [31] | Drug repurposing, polypharmacology assessment | Ligand-centric similarity searching, structure-based docking | Variable performance across methods; MolTarPred identified as most effective |
| GPCRdb [32] | GPCR research and drug design | Integrated data, analysis tools, structure models | Covers 200 distinct receptors, 103 inactive and 209 active states |
| Protease Engineering Platforms [26] | Protease specificity reprogramming | High-throughput screening in E. coli, yeast, phage | Achieved >5,000-fold selectivity switches in engineered proteases |

Experimental Protocols for Target Validation

PROSPECT Platform Methodology

The PROSPECT platform utilizes a systematic workflow for simultaneous compound discovery and MoA determination [23]:

Strain Pool Preparation: Generate a pooled library of hypomorphic M. tuberculosis mutants, each engineered with proteolytic depletion of a different essential gene and tagged with unique DNA barcodes.

Compound Screening: Screen small molecule libraries against the mutant pool across multiple dose conditions, typically using 96- or 384-well format.

Barcode Sequencing and Quantification: After appropriate incubation periods, extract genomic DNA and amplify barcode regions for next-generation sequencing. Quantify relative abundance changes for each mutant strain under chemical treatment compared to DMSO controls.

CGI Profile Generation: Calculate fitness defects for each mutant under each compound condition, generating a quantitative CGI profile vector for each compound-dose combination.

Reference-Based MOA Prediction: Compare CGI profiles of unknown compounds to a curated reference set using PCL analysis, assigning MOA based on similarity to compounds with known targets.

Experimental Validation: Confirm predictions through secondary assays, such as resistance mutation mapping (e.g., qcrB allele sequencing for QcrB inhibitors) or sensitivity profiling in alternative genetic backgrounds (e.g., cytochrome bd knockout strains) [23].
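The quantification and profile-generation steps above can be sketched as follows. This is an assumption-laden illustration rather than the PROSPECT pipeline: raw barcode counts are converted to relative abundances within each sample (so that sequencing depth cancels out), and each strain's score is the log2 ratio of its abundance under compound versus DMSO. The strain names, counts, and pseudocount are hypothetical.

```python
import math

def relative_abundance(counts, pseudocount=0.5):
    """Convert raw barcode counts to within-sample fractions, with a small pseudocount."""
    total = sum(counts.values()) + pseudocount * len(counts)
    return {strain: (c + pseudocount) / total for strain, c in counts.items()}

def cgi_profile(treated_counts, dmso_counts):
    """log2(treated fraction / DMSO fraction) per strain; negative means sensitized."""
    treated = relative_abundance(treated_counts)
    dmso = relative_abundance(dmso_counts)
    return {strain: math.log2(treated[strain] / dmso[strain]) for strain in dmso_counts}

# Hypothetical barcode counts for three hypomorphic strains.
dmso_counts = {"hypomorph_1": 500, "hypomorph_2": 500, "hypomorph_3": 500}
treated_counts = {"hypomorph_1": 50, "hypomorph_2": 480, "hypomorph_3": 520}

profile = cgi_profile(treated_counts, dmso_counts)
most_sensitized = min(profile, key=profile.get)
print(most_sensitized, round(profile[most_sensitized], 2))
```

Repeating this calculation for each dose yields one vector per compound-dose combination, which is the form of input the reference-based comparison step expects.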

Protease Specificity Engineering Workflow

Engineering proteases with altered substrate specificity involves distinct methodological approaches [26]:

Library Construction: Generate diverse protease variant libraries through site-directed mutagenesis, error-prone PCR, or gene synthesis focusing on active site residues and potential exosites.

Selection System Design: Implement appropriate high-throughput screening or selection systems in suitable hosts (E. coli, yeast, or cell-free systems) incorporating both positive selection (desired substrate cleavage) and counter-selection (against wild-type substrate recognition).

Variant Isolation: Screen library variants under selective pressure, isolating clones with desired specificity profiles using methods such as:

- Phage-Assisted Continuous Evolution (PACE)

- Yeast Endoplasmic Reticulum Sequestration Screen (YESS)

- β-lactamase survival screening

- FRET-based fluorescence assays

Characterization and Validation: Express and purify selected variants for biochemical characterization using kinetic assays, substrate profiling, and structural studies to confirm specificity switching and catalytic efficiency.

Signaling Pathway Mapping and Visualization

GPCR Signaling Cascades

Kinase Signaling Networks

Research Reagent Solutions and Essential Materials

Table 4: Key Research Reagents and Experimental Resources

| Reagent/Resource | Application | Key Features | Source/Reference |
|---|---|---|---|
| GPCRdb Database | GPCR research, structure analysis | Integrated data on receptors, ligands, structures, and tools | [32] |
| ChEMBL Database | Bioactivity data, target prediction | Curated bioactivity data, ligand-target interactions | [31] |
| PROSPECT Platform | Antibacterial MoA determination | Hypomorphic mutant pool, CGI profiling | [23] |
| Phage-Assisted Continuous Evolution (PACE) | Protease engineering | Continuous evolution under selection pressure | [26] |
| AlphaFold-Multistate Models | Structure-based drug design | Inactive/active state GPCR models | [32] |
| Yeast Endoplasmic Reticulum Sequestration Screen (YESS) | Protease specificity engineering | Substrate selectivity screening | [26] |

Chemogenomic profiling represents a paradigm shift in target validation and MoA elucidation, enabling researchers to move beyond traditional single-target approaches to embrace the complexity of biological systems. The comparative analysis presented here demonstrates that while GPCRs, kinases, proteases, and nuclear receptors differ significantly in their structural features and signaling mechanisms, all can be effectively studied using modern chemogenomic approaches.

The integration of reference-based profiling methods like PROSPECT with computational target prediction and specialized databases creates a powerful framework for accelerating drug discovery. As these technologies continue to evolve—with advances in structural modeling, directed evolution, and high-throughput screening—their application across target classes will further enhance our ability to validate mechanisms of action and develop more effective therapeutics with known biological targets.

Future directions in the field will likely include increased integration of artificial intelligence and machine learning approaches, expanded reference databases covering more target classes and chemical space, and the development of more sophisticated multi-omics profiling platforms that combine chemogenomic data with transcriptomic, proteomic, and metabolomic readouts for comprehensive MoA deconvolution.

Linking Small Molecule-Protein Interactions to Observable Phenotypes

Understanding the connection between small molecule-protein interactions and the resulting phenotypic changes in cells is a cornerstone of modern drug discovery and chemogenomic profiling. This process is critical for validating a compound's mechanism of action (MoA). A bioactive small molecule typically perturbs a cellular state by interacting with specific protein targets; however, binding to a protein target does not by itself confirm that the interaction causes the observed phenotype. Establishing this causal link requires a suite of experimental strategies that span from initial phenotypic observations to the identification of molecular targets and, finally, functional validation. This guide objectively compares the key methodologies used to bridge this gap, supporting research aimed at confirming therapeutic MoA through comprehensive chemogenomic profiling.

Methodological Comparison for Target Identification and Validation

The following table summarizes the core experimental approaches for linking small molecules to their protein targets and associated phenotypes, detailing their fundamental principles and primary applications [33] [34].

Table 1: Comparison of Key Methods for Linking Small Molecules to Phenotypes

| Method Category | Specific Technique | Key Principle | Primary Application in MoA Validation |
|---|---|---|---|
| Affinity-Based Pull-Down | SILAC (Stable Isotope Labeling with Amino acids in Cell culture) [33] | Uses isotopically labeled amino acids for quantitative MS; compares protein enrichment between SM-loaded and control beads [33]. | Unbiased identification of direct protein binders and their complexes from cell lysates [33]. |
| Affinity-Based Pull-Down | On-Bead Affinity Matrix [34] | Small molecule is covalently attached to solid support (e.g., agarose beads) via a linker and used to purify targets from lysate [34]. | Identification of protein targets for small molecules where a covalent attachment point is available [34]. |
| Affinity-Based Pull-Down | Biotin-Tagged Approach [34] | Small molecule is conjugated to biotin; target proteins are purified using streptavidin/avidin beads [34]. | High-affinity purification of target proteins and complexes; widely used due to strong biotin-streptavidin interaction [34]. |
| Label-Free | DARTS (Drug Affinity Responsive Target Stability) [34] | Small molecule binding protects the target protein from proteolytic degradation, evident on a gel [34]. | Rapid confirmation of binding without requiring chemical modification of the small molecule [34]. |
| Label-Free | CETSA (Cellular Thermal Shift Assay) [34] | Small molecule binding stabilizes the target protein against heat-induced denaturation [34]. | Assessment of target engagement in a cellular context, providing physiological relevance [34]. |
| Morphological & Interaction Profiling | Morphological Profiling [35] | Automated imaging and analysis to quantify small molecule-induced changes in cellular morphology [35]. | Predictive MoA analysis and detection of bioactivity in a broader biological context [35]. |
| Morphological & Interaction Profiling | PLIC (Proximity Ligation Imaging Cytometry) [36] | Combines proximity ligation assay with imaging flow cytometry to quantify PPIs/PTMs in rare cell populations at single-cell level [36]. | Validation of protein-protein interactions or oligomerization under physiological conditions in rare cells [36]. |

Detailed Experimental Protocols

To ensure reproducibility, this section outlines the core methodologies for several key techniques from the comparison table.

Quantitative Target Identification Using SILAC

This protocol enables the unbiased and quantitative identification of proteins that bind to small-molecule probes within a complex cellular proteome [33].

- Step 1: Cell Culture and Metabolic Labeling. Culture two populations of cells in media containing either "light" (natural isotopes) or "heavy" (13C, 15N) forms of arginine and lysine. Allow for at least 5 population doublings to ensure full incorporation into the proteome [33].

- Step 2: Preparation of Affinity Matrix. Conjugate the small molecule of interest to a solid support, such as agarose beads. A critical control is to prepare a separate batch of beads loaded with an inactive compound or just the solvent (e.g., ethanol) [33].

- Step 3: Affinity Pull-Down. Mix the "heavy"-labeled cell lysate with the small molecule-conjugated beads. Simultaneously, mix the "light"-labeled cell lysate with the control beads. Incubate to allow protein binding, then wash under mild stringency to preserve weakly bound complexes [33].

- Step 4: Sample Combination and MS Analysis. Combine the beads from both pull-downs. Elute the bound proteins, digest them with trypsin, and analyze the resulting peptides by liquid chromatography-tandem mass spectrometry (LC-MS/MS). The "heavy" and "light" versions of each peptide appear as distinct peaks, and their ratio indicates the level of specific enrichment by the small molecule bait [33].

- Step 5: Data Analysis. Proteins with high heavy-to-light ratios are considered specific binders. Candidates are prioritized based on these ratios and statistical significance [33].
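Step 5 can be illustrated with a small calculation, assuming peptide-level heavy and light intensities have already been extracted from the LC-MS/MS data. Each protein's enrichment is summarized here as the median log2 heavy-to-light ratio across its peptides, and proteins above a cutoff are listed as candidate binders. The protein names, intensities, and the cutoff of 2.0 (a fourfold enrichment) are hypothetical. As the protocol notes, a real analysis would also assess statistical significance, which this sketch omits.

```python
import math
from statistics import median

def protein_log2_ratios(peptides):
    """Median log2(heavy / light) per protein from (protein, heavy, light) tuples."""
    by_protein = {}
    for protein, heavy, light in peptides:
        if heavy > 0 and light > 0:  # skip peptides missing one channel
            by_protein.setdefault(protein, []).append(math.log2(heavy / light))
    return {protein: median(ratios) for protein, ratios in by_protein.items()}

def specific_binders(ratios, min_log2_ratio=2.0):
    """Proteins at or above the cutoff, sorted by decreasing enrichment."""
    hits = [(p, r) for p, r in ratios.items() if r >= min_log2_ratio]
    return sorted(hits, key=lambda item: item[1], reverse=True)

# Hypothetical peptide intensities: heavy = small-molecule beads, light = control beads.
peptides = [
    ("protein_X", 8000, 500),
    ("protein_X", 6400, 400),
    ("protein_Y", 1000, 950),
    ("protein_Y", 900, 1000),
    ("protein_Z", 0, 300),
]

ratios = protein_log2_ratios(peptides)
hits = specific_binders(ratios)
print(hits)
```

Background proteins that bind both bead types equally give ratios near zero on the log2 scale, so only proteins enriched on the small-molecule beads pass the cutoff.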

Drug Affinity Responsive Target Stability (DARTS)

This label-free method leverages the protective effect of small molecule binding on its target protein [34].

- Step 1: Protein Lysate Preparation. Prepare a lysate from cells or tissues of interest.

- Step 2: Small Molecule Incubation. Incubate the lysate with the small molecule of interest. A control sample should be incubated with the vehicle (e.g., DMSO) alone.

- Step 3: Limited Proteolysis. Subject both the small molecule-treated and vehicle-treated lysates to digestion with a non-specific protease (e.g., pronase or thermolysin) for a limited time. The concentration of protease and digestion time must be optimized.

- Step 4: Gel Electrophoresis and Analysis. Run the proteolyzed samples on a gel (e.g., SDS-PAGE). A protein band that is more stable (i.e., less degraded) in the small molecule-treated sample compared to the vehicle control is a candidate target. This band can be excised and identified by mass spectrometry.

Proximity Ligation Imaging Cytometry (PLIC) for Protein Complexes

This protocol is designed for quantifying protein-protein interactions or oligomerization in rare cell populations defined by multiple surface markers [36].

- Step 1: Cell Preparation and Staining. Isolate the rare cell population of interest (e.g., via fluorescence-activated cell sorting). Fix and permeabilize the cells.

- Step 2: Primary Antibody Incubation. Incubate the cells with a pair of primary antibodies raised in different host species, each targeting one of the two putative interacting proteins (e.g., Aire and Sirt1).

- Step 3: Proximity Ligation Assay (PLA). Add two species-specific secondary antibodies (PLA probes), each conjugated to a unique short DNA strand. If the two primary antibodies are in close proximity (<40 nm), the DNA strands on the PLA probes can be ligated to form a circular DNA template.

- Step 4: Rolling Circle Amplification (RCA) and Detection. Amplify the circular DNA via RCA. Then, add fluorescently labeled oligonucleotides that are complementary to the repeated DNA sequence generated by RCA. This results in a strong, localized fluorescent signal at the site of the protein interaction.

- Step 5: Imaging Flow Cytometry. Analyze the cells using an imaging flow cytometer. This allows for the quantification of the fluorescent PLA signal across thousands of single cells while simultaneously collecting data on multiple surface markers and the subcellular localization of the signal (e.g., nuclear speckles vs. diffuse background). Advanced data processing algorithms can filter out false-positive signals based on this subcellular distribution [36].

Experimental Workflow and Signaling Pathway Visualization

The following diagrams illustrate the logical flow of key experiments and a generalized signaling pathway.

Small Molecule Target Identification Workflow

Signaling Pathway Linking Target to Phenotype

The Scientist's Toolkit: Essential Research Reagent Solutions

Successful experimentation relies on high-quality, specific reagents. The table below lists key materials and their critical functions in the described methodologies.

Table 2: Key Research Reagents for Small Molecule-Protein Interaction Studies

| Research Reagent / Material | Critical Function in Experimentation |
|---|---|
| SILAC Media Kits | Provide defined media formulations with stable isotope-labeled arginine and lysine, essential for quantitative proteomic comparisons [33]. |
| Affinity Matrices (e.g., Agarose/NHS-Activated Beads) | Solid supports for covalent immobilization of small molecule baits, forming the core of the affinity purification system [33] [34]. |
| Biotin-Streptavidin/Avidin Systems | Utilizes the high-affinity biotin-streptavidin interaction for highly efficient pull-down of targets using biotin-tagged small molecules [34]. |
| Cell Permeabilization Buffers | Enable antibodies and PLA probes to access intracellular targets for techniques like PLIC and immunofluorescence staining [36]. |
| PLA (Proximity Ligation Assay) Kits | Provide the specialized oligonucleotide-conjugated secondary antibodies, ligation, and amplification reagents required for detecting protein proximities [36]. |
| Pronase/Thermolysin Proteases | Non-specific proteases used in DARTS experiments to digest unbound proteins while small molecule-bound targets remain protected [34]. |
| High-Specificity Antibody Pairs | Crucial for PLIC and other immunoassays; must target different epitopes/proteins and be raised in different species to avoid cross-reactivity [36]. |
| LC-MS/MS Grade Solvents and Trypsin | Ensure high sensitivity and low background noise in mass spectrometric identification of proteins, a final common step in many protocols [33] [34]. |

Practical Applications: From Library Design to Target Deconvolution Techniques

Designing Targeted Chemogenomic Libraries for Precision Oncology

Chemogenomic libraries are strategically designed collections of small molecules used to systematically probe biological systems and identify novel therapeutic vulnerabilities. In precision oncology, these libraries let researchers connect chemical compounds with specific cellular targets and phenotypes, which accelerates the identification of patient-specific treatment strategies. The fundamental premise of chemogenomic library design is to create compound sets that cover as much of the druggable genome as possible while providing enough mechanistic information to deconvolute the biological basis of observed phenotypes [37]. As the field advances toward Target 2035, a global initiative to identify pharmacological modulators for most human proteins by 2035, the strategic design of these libraries becomes increasingly critical for unlocking novel cancer vulnerabilities [37].
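The coverage goal described above can be illustrated with a small sketch. It assumes that library selection is treated as a greedy set-cover problem over compound-to-target annotations; this is an illustrative simplification, not the procedure used in [4] or [37], which also weigh cellular activity, chemical diversity, and selectivity. The function name `greedy_library` and the toy annotations are hypothetical.

```python
from typing import Dict, List, Set


def greedy_library(annotations: Dict[str, Set[str]], max_size: int) -> List[str]:
    """Pick compounds greedily so each pick adds the most not-yet-covered targets."""
    covered: Set[str] = set()
    chosen: List[str] = []
    remaining = dict(annotations)
    while remaining and len(chosen) < max_size:
        # Iterate over sorted names so ties are broken deterministically.
        best = max(sorted(remaining), key=lambda c: len(remaining[c] - covered))
        gain = remaining[best] - covered
        if not gain:
            break  # no remaining compound adds a new target
        chosen.append(best)
        covered |= gain
        del remaining[best]
    return chosen


# Hypothetical compound -> target annotations (illustrative only).
toy_annotations = {
    "cpd_A": {"EGFR", "ERBB2"},
    "cpd_B": {"EGFR"},
    "cpd_C": {"CDK4", "CDK6", "CDK2"},
    "cpd_D": {"CDK2", "AURKA"},
}
library = greedy_library(toy_annotations, max_size=3)
print(library)
```

In this toy case `cpd_B` is never selected because its only target is already covered by `cpd_A`. A real design would likely keep some redundant compounds on purpose, since overlapping target profiles are what make later deconvolution possible.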

The power of chemogenomic profiling lies in its ability to functionally link chemical compounds to biological pathways and processes. When compounds with overlapping target profiles are combined into carefully curated sets, researchers can identify the specific targets responsible for phenotypic outcomes through pattern recognition [37]. This approach has demonstrated particular value in identifying patient-specific vulnerabilities in challenging cancers like glioblastoma, where phenotypic screening of patient-derived cells against targeted compound libraries has revealed highly heterogeneous responses across patients and cancer subtypes [4]. The following sections compare alternative design strategies, present experimental validation data, and provide practical methodologies for implementing chemogenomic approaches in precision oncology research.
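As a rough illustration of the pattern-recognition idea, the sketch below ranks targets by the fraction of their annotated compounds that scored as hits in a screen. The scoring rule, the `min_compounds` threshold, and all compound and hit assignments are assumptions made for this example rather than a method taken from [37] or [4].

```python
from collections import defaultdict
from typing import Dict, List, Set, Tuple


def rank_targets(annotations: Dict[str, Set[str]], hits: Set[str],
                 min_compounds: int = 2) -> List[Tuple[str, float, int]]:
    """Rank targets by the fraction of their annotated compounds that were hits."""
    by_target: Dict[str, Set[str]] = defaultdict(set)
    for compound, targets in annotations.items():
        for target in targets:
            by_target[target].add(compound)
    ranked = []
    for target, compounds in by_target.items():
        if len(compounds) < min_compounds:
            continue  # one compound alone cannot separate on- from off-target effects
        hit_fraction = len(compounds & hits) / len(compounds)
        ranked.append((target, hit_fraction, len(compounds)))
    # Highest hit fraction first; ties go to more supporting compounds, then name.
    return sorted(ranked, key=lambda r: (-r[1], -r[2], r[0]))


# Hypothetical annotations and screen hits (illustrative only).
annotations = {
    "cpd_1": {"PLK1", "BRD4"},
    "cpd_2": {"PLK1"},
    "cpd_3": {"PLK1", "AURKA"},
    "cpd_4": {"BRD4"},
    "cpd_5": {"AURKA", "BRD4"},
}
hits = {"cpd_1", "cpd_2", "cpd_3"}
ranking = rank_targets(annotations, hits)
for target, fraction, n in ranking:
    print(f"{target}: {fraction:.2f} of {n} annotated compounds active")
```

Here every compound annotated for PLK1 is active, while compounds that hit BRD4 or AURKA without PLK1 are inactive, so the shared target stands out. A production analysis would normally add a statistical test and account for compound potency and selectivity.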

Comparison of Chemogenomic Library Design Strategies

Strategic Approaches and Their Applications

Table 1: Comparison of Chemogenomic Library Design Strategies

| Design Strategy | Library Size | Target Coverage | Key Advantages | Validated Applications | Primary Limitations |
|---|---|---|---|---|---|
| Minimal Screening Library [4] | 1,211 compounds | 1,386 anticancer proteins | Cost-effective; optimized for cellular activity and chemical diversity; widely applicable across cancers | Phenotypic profiling of glioblastoma patient cells; identification of patient-specific vulnerabilities | Limited to established anticancer targets; may miss novel mechanisms |
| Comprehensive Chemogenomic Sets [37] | Covers ~1/3 of druggable proteome | Thousands of proteins across major target families | Enables target deconvolution through overlapping selectivity patterns; covers emerging target families | EUbOPEN project; inflammatory bowel disease, cancer, and neurodegeneration research | Requires extensive characterization; more resource-intensive |
| Pathway-Targeted Libraries | Variable | Focused on specific pathways | High depth in targeted areas; ideal for hypothesis-driven research | Antifungal synergy prediction [38]; mitochondrial function studies [39] | Limited scope; potentially biased toward known biology |
| Selectivity-Focused Collections [37] | ~50-100 chemical probes | High-specificity targets | Gold-standard tool compounds; peer-reviewed with negative controls; minimal off-target effects | Donated Chemical Probes (DCP) project; target validation studies | Limited coverage; time-consuming development process |

Performance Metrics and Experimental Validation

Table 2: Experimental Performance Metrics of Different Library Types

| Library Characteristic | Minimal Screening Library [4] | Comprehensive Chemogenomic Sets [37] | Selectivity-Focused Collections [37] | AI-Enhanced Prediction [39] |
|---|---|---|---|---|
| Target Identification Accuracy | 73% (based on phenotypic correlation) | 70-80% (based on EUbOPEN criteria) | >90% (peer-reviewed probes) | AUC 0.73 (vs. 0.58 for structure-based methods) |
| Cellular Activity Confirmation | 789 compounds tested in patient cells | Comprehensive biochemical/cell-based profiling | Target engagement <1 μM demonstrated | Integrated drug/CRISPR viability screens |
| Patient-Derived Cell Validation | Yes (glioblastoma stem cells) | Yes (multiple cancer types) | Limited (dependent on probe availability) | Yes (mutation-specific predictions) |
| Data Availability | Public repository (Zenodo) | Project-specific data resource | Information sheets with recommendations | Open-source tool (GitHub) |

Experimental Protocols for Library Validation and Application

Phenotypic Screening in Patient-Derived Cells

Protocol 1: Patient-Specific Vulnerability Identification [4]

Library Preparation: Select a targeted compound library with appropriate chemical diversity and cellular activity profiles (e.g., the physical library of 789 compounds covering 1,320 anticancer targets used in the pilot screen [4]).

Cell Culture: Establish patient-derived glioma stem cells from glioblastoma patients, maintaining subtype characteristics throughout culture.

Screening Setup: Plate cells in 384-well format and treat with compound library using appropriate concentration ranges (typically 1 nM-10 μM) with DMSO controls.

Viability Assessment: Measure cell survival after 72-96 hours using imaging-based phenotypic profiling or CellTiter-Glo luminescent cell viability assay.

Data Analysis: Normalize data to controls, calculate percentage viability, and identify patient-specific vulnerabilities based on differential compound sensitivity across GBM subtypes.

Target Deconvolution: Use compound target annotations to connect sensitivity patterns to specific pathways and mechanisms.

This protocol successfully identified highly heterogeneous phenotypic responses across glioblastoma patients and subtypes, demonstrating the value of targeted libraries in uncovering patient-specific treatment opportunities [4].
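The Data Analysis step of Protocol 1 can be sketched as follows. This is a minimal example under stated assumptions: viability is expressed relative to the mean DMSO signal, and a patient-specific vulnerability is called when one patient's viability is unusually low compared with the rest of the cohort (a z-score across patients). The function names, the `z_cutoff` value, and all numbers are hypothetical and are not taken from [4].

```python
from statistics import mean, pstdev
from typing import Dict, List


def percent_viability(raw: float, dmso_wells: List[float], background: float = 0.0) -> float:
    """Express a raw signal as a percentage of the mean DMSO control signal."""
    control = mean(dmso_wells) - background
    return 100.0 * (raw - background) / control


def patient_specific_hits(viability: Dict[str, Dict[str, float]],
                          z_cutoff: float = -1.5) -> Dict[str, List[str]]:
    """For each compound, flag patients whose viability is unusually low
    relative to the other patients (z-score across the cohort)."""
    hits: Dict[str, List[str]] = {}
    for compound, per_patient in viability.items():
        values = list(per_patient.values())
        mu, sd = mean(values), pstdev(values)
        if sd == 0:
            continue  # identical response in every patient: nothing differential
        flagged = [p for p, v in per_patient.items() if (v - mu) / sd <= z_cutoff]
        if flagged:
            hits[compound] = flagged
    return hits


# Hypothetical percent-viability values per compound and patient.
viability = {
    "cpd_X": {"P1": 95.0, "P2": 90.0, "P3": 92.0, "P4": 20.0, "P5": 93.0},
    "cpd_Y": {"P1": 50.0, "P2": 52.0, "P3": 48.0, "P4": 51.0, "P5": 49.0},
}
example = percent_viability(5000.0, dmso_wells=[10000.0, 9800.0, 10200.0])
vulnerabilities = patient_specific_hits(viability)
print(example, vulnerabilities)
```

In this toy data `cpd_Y` reduces viability to about 50% in every patient, so it is broadly active but not patient-specific, whereas `cpd_X` is selectively toxic to patient `P4`. With small cohorts a population z-score is bounded in magnitude, so the cutoff should be chosen with the cohort size in mind.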

Chemogenomic Profiling for Mechanism of Action Studies

Protocol 2: Mechanism Deconvolution Using Chemogenomic Profiles [38] [40]

Strain Collection: Utilize comprehensive mutant collections (e.g., yeast gene deletion library, piggyBac mutant clones, or CRISPR-modified cell lines).

Profile Generation: Treat mutant collections with compounds of interest and measure each mutant's fitness defect relative to wild-type strains (for example, as a shift in IC50).

Data Processing: Normalize responses to untreated controls and calculate fold-change in sensitivity/resistance for each mutant.

Similarity Analysis: Compute pairwise correlations between compound profiles using Spearman correlation or specialized similarity metrics.

Cluster Identification: Apply hierarchical clustering to group compounds with similar profiles, indicating shared mechanisms of action.

Pathway Mapping: Connect profile similarities to biological pathways using enrichment analysis (KEGG, Gene Ontology).

This approach has successfully predicted antifungal synergies [38], clarified the functional activity of artemisinin in malaria parasites [21], and identified novel mechanisms of action for aurone compounds [40], demonstrating its broad applicability across biological systems.
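The Similarity Analysis and Cluster Identification steps of Protocol 2 can be sketched in plain Python. The example assumes each compound profile is a list of per-mutant fitness scores (for instance log2 fold-changes), computes Spearman correlation as the Pearson correlation of ranks, and groups compounds by average-linkage agglomerative clustering with a correlation cutoff. The `min_rho` threshold and the profile values are hypothetical.

```python
from math import sqrt
from typing import Dict, List


def ranks(values: List[float]) -> List[float]:
    """Average ranks (1-based), with tied values sharing their mean rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    out = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            out[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return out


def spearman(x: List[float], y: List[float]) -> float:
    """Spearman correlation, computed as the Pearson correlation of the ranks."""
    rx, ry = ranks(x), ranks(y)
    mx, my = sum(rx) / len(rx), sum(ry) / len(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    sx = sqrt(sum((a - mx) ** 2 for a in rx))
    sy = sqrt(sum((b - my) ** 2 for b in ry))
    return cov / (sx * sy) if sx and sy else 0.0  # flat profile: treat as uncorrelated


def cluster(profiles: Dict[str, List[float]], min_rho: float = 0.7) -> List[List[str]]:
    """Average-linkage agglomerative clustering: merge the most correlated pair
    of clusters until no pair has a mean correlation of at least min_rho."""
    names = sorted(profiles)
    rho = {(a, b): spearman(profiles[a], profiles[b]) for a in names for b in names}
    clusters = [[n] for n in names]

    def mean_rho(c1: List[str], c2: List[str]) -> float:
        return sum(rho[a, b] for a in c1 for b in c2) / (len(c1) * len(c2))

    while len(clusters) > 1:
        pairs = [(mean_rho(clusters[i], clusters[j]), i, j)
                 for i in range(len(clusters)) for j in range(i + 1, len(clusters))]
        best, i, j = max(pairs)
        if best < min_rho:
            break
        clusters[i] = sorted(clusters[i] + clusters[j])
        del clusters[j]
    return sorted(clusters)


# Hypothetical fitness profiles across six mutants (illustrative only).
profiles = {
    "drug_A": [-3.0, -2.5, 0.1, 0.2, -0.1, 1.0],
    "drug_B": [-2.8, -2.9, 0.0, 0.3, -0.2, 0.8],
    "drug_C": [0.2, 0.1, -3.1, -2.7, 0.9, -0.1],
}
groups = cluster(profiles)
print(groups)
```

`drug_A` and `drug_B` sensitize the same mutants and therefore cluster together, which is the signature used to infer a shared mechanism. For real datasets, `scipy.stats.spearmanr` and `scipy.cluster.hierarchy.linkage` provide tested implementations of the same two steps.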

Visualization of Chemogenomic Workflows and Pathways

Conceptual Framework for Chemogenomic Library Design

Figure 1: Conceptual Framework for Chemogenomic Library Design and Application

Experimental Workflow for Phenotypic Screening

Figure 2: Phenotypic Screening Workflow for Target Identification

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 3: Key Research Reagent Solutions for Chemogenomic Studies

| Reagent/Category | Specific Examples | Function/Application | Considerations for Selection |
|---|---|---|---|
| Compound Libraries | Minimal screening library (1,211 compounds) [4]; EUbOPEN chemogenomic collection [37] | Phenotypic screening; target identification | Prioritize cellular activity, chemical diversity, and target coverage based on research goals |
| Cell Models | Patient-derived glioma stem cells [4]; DepMap cancer cell lines [39] | Disease-relevant screening contexts; mechanism validation | Ensure molecular characterization; consider genetic diversity and clinical relevance |
| Genetic Tools | CRISPR-Cas9 knockout libraries [39]; piggyBac mutant collections [21] | Target validation; genetic interaction studies | Match genetic background to screening context; consider coverage and efficiency |
| Profiling Technologies | L1000 platform [41]; Cell Painting [41] | High-content phenotypic characterization | Balance content with throughput; consider data analysis capabilities |
| Data Resources | DepMap [39]; Zenodo datasets [4]; EUbOPEN data portal [37] | Benchmarking; bioinformatics analysis | Assess data quality, annotations, and compatibility with existing workflows |
| AI/Target Prediction Tools | DeepTarget [39]; Structure-based methods (RosettaFold, Chai-1) | In silico target identification; mechanism prediction | Consider cellular context incorporation and validation status |

The strategic design of targeted chemogenomic libraries represents a powerful approach for advancing precision oncology by connecting chemical compounds with biological mechanisms in patient-relevant contexts. As demonstrated through comparative analysis, different library design strategies offer distinct advantages—from the cost-effective minimal screening library ideal for initial phenotypic discovery to the comprehensive chemogenomic sets enabling sophisticated target deconvolution. The experimental protocols and visualization frameworks provided here offer practical guidance for implementation, while the research reagent toolkit equips scientists with essential resources for successful execution.

Looking forward, the integration of chemogenomic approaches with emerging technologies—particularly AI-driven target prediction tools like DeepTarget [39]—promises to accelerate our understanding of drug mechanisms of action and identify novel therapeutic opportunities in oncology. As the field progresses toward the Target 2035 goals [37], the continued refinement and strategic application of chemogenomic libraries will be essential for translating cancer genomics into effective personalized therapies that address the complex heterogeneity of human malignancies.

Affinity-based pull-down assays are cornerstone techniques in chemogenomic profiling for validating a drug's mechanism of action. These methods enable the direct isolation and identification of protein targets from complex biological systems, providing crucial evidence for target engagement and selectivity. Three principal approaches (on-bead, biotin-tagged, and photoaffinity-tagged) offer distinct strategies for capturing drug-protein interactions. This guide compares their methodologies, performance, and applications in modern drug discovery research.

The core principle of affinity-based pull-down involves using a small molecule, modified to function as "bait," to isolate its binding partners from a protein mixture such as a cell lysate. The captured proteins are then identified, typically through mass spectrometry [34] [42]. The key differentiation between the three main approaches lies in the design of the bait molecule and how it is presented to the proteome.

The table below summarizes the fundamental characteristics, advantages, and limitations of each method.

Table 1: Core Characteristics of Affinity-Based Pull-Down Methods

| Feature | On-Bead Affinity Matrix | Biotin-Tagged Approach | Photoaffinity-Tagged Approach |
|---|---|---|---|
| Core Principle | Small molecule covalently attached to solid beads via a linker [34]. | Small molecule conjugated to biotin; captured with streptavidin/avidin beads [34]. | Small molecule with a photoreactive group forms a covalent bond with target upon UV irradiation [42] [43]. |
| Probe Structure | Drug -> Linker -> Solid Bead | Drug -> Linker -> Biotin | Drug -> Linker -> Photoreactive Group -> Linker -> Affinity Tag (e.g., Biotin) |
| Key Advantage | Simple workflow; no free probe to remove before binding to beads. | High-affinity capture via biotin-streptavidin interaction (Kd ≈ 10⁻¹⁵ M). | Captures transient/weak interactions; "freezes" the binding event. |
| Primary Limitation | Bead surface can cause non-specific binding; potential steric hindrance. | Requires careful linker design; biotinylation can affect drug activity. | Requires synthesis of complex probe; potential for non-specific cross-linking. |
| Ideal Use Case | Initial target fishing for compounds with high affinity and known SAR. | Standardized pull-downs for soluble proteins and strong binders. | Identifying low-abundance targets, transient interactions, and membrane proteins. |
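Whichever bait design is used, the mass spectrometry readout still has to separate specific binders from background. The sketch below assumes one common way to do this: compare protein intensities in the bait pull-down against a control (for example, beads without compound, or a pull-down with excess free compound as competitor) and keep proteins above a log2 ratio cutoff. The function name, the cutoff, the pseudocount, and all intensity values are hypothetical.

```python
from math import log2
from typing import Dict, List, Tuple


def enriched_proteins(bait: Dict[str, float], control: Dict[str, float],
                      min_log2_ratio: float = 2.0, pseudocount: float = 1.0
                      ) -> List[Tuple[str, float]]:
    """Rank proteins by log2(bait / control) intensity and keep those above a cutoff.

    The pseudocount avoids division by zero for proteins absent from the control.
    """
    results = []
    for protein, intensity in bait.items():
        ratio = log2((intensity + pseudocount) / (control.get(protein, 0.0) + pseudocount))
        if ratio >= min_log2_ratio:
            results.append((protein, round(ratio, 2)))
    return sorted(results, key=lambda r: -r[1])


# Hypothetical MS intensities (arbitrary units) for a bait and a control pull-down.
bait_run = {"CDK1": 8000.0, "HSP90": 5000.0, "TUBB": 3000.0}
control_run = {"CDK1": 250.0, "HSP90": 4500.0, "TUBB": 2900.0}
candidates = enriched_proteins(bait_run, control_run)
print(candidates)
```

Abundant proteins such as chaperones and tubulin often appear in both runs at similar levels and are filtered out by the ratio, while a specifically captured target is strongly depleted in the control. Replicates and a statistical test would be needed before treating any candidate as a validated target.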

Experimental data illustrate the real-world performance of these techniques. A recent (2025) study on the MDM2 inhibitor Navtemadlin used a diazirine-based photoaffinity probe to selectively identify MDM2 as its primary target in cells. The probe retained sub-micromolar potency (IC₅₀ of 58 nM for one probe design) and induced the expected p53-pathway phenotype, confirming its functionality [44]. This demonstrates the capability of photoaffinity methods to validate mechanism of action in a cellular context.

Table 2: Experimental Performance Data from Select Studies

| Method | Compound Example | Identified Target(s) | Key Experimental Findings | Source |

|---|---|---|---|---|

| On-Bead | Aminopurvalanol, KL-001 | CDK1, Cryptochrome (CRY) | Successfully isolated specific protein targets from complex lysates using an agarose-based matrix. | [34] |

| Biotin-Tagged | Withaferin A, Epolactaene | Vimentin, Hsp60 | Biotin-streptavidin pull-down enabled specific isolation of target proteins, confirmed by competition. | [34] |