Target Deconvolution in Phenotypic Screening: Overcoming Challenges to Unlock First-in-Class Therapies

Abstract

Phenotypic drug discovery (PDD) has re-emerged as a powerful strategy for identifying first-in-class therapeutics, often acting through novel mechanisms. However, a central challenge in PDD is target identification, or 'target deconvolution'—the process of pinpointing the specific molecular target(s) responsible for a compound's observed effect. This article provides a comprehensive overview for researchers and drug development professionals, exploring the foundational principles of PDD, detailing modern methodological approaches for target deconvolution, addressing key troubleshooting and optimization strategies, and validating success through comparative analysis with target-based discovery. By synthesizing current methodologies and future directions, this review aims to equip scientists with the knowledge to navigate the complexities of phenotypic screening and accelerate the development of innovative medicines.

The Renaissance of Phenotypic Drug Discovery: Why Target Identification Matters

FAQs: Core Concepts of Phenotypic Drug Discovery

What is phenotypic drug discovery (PDD)?

Phenotypic Drug Discovery (PDD) is an empirical strategy where compounds are identified based on their effects on disease phenotypes or biomarkers in realistic disease models, without relying on a pre-specified molecular target hypothesis. This biology-first approach contrasts with target-based drug discovery (TDD), which begins with a specific, known molecular target [1] [2]. Modern PDD leverages complex biological systems—such as cell-based assays or patient-derived materials—to capture the complexity of disease physiology, often leading to the discovery of first-in-class medicines with novel mechanisms of action [1] [3].

How does PDD differ from target-based drug discovery?

The fundamental difference lies in the starting point. PDD starts with a biological system or disease phenotype, while TDD starts with a defined molecular target. This key distinction influences the entire drug discovery workflow, from screening and hit validation to lead optimization [2]. The table below summarizes the core differences.

| Feature | Phenotypic Drug Discovery (PDD) | Target-Based Drug Discovery (TDD) |
|---|---|---|
| Starting Point | Disease phenotype in a biologically relevant system (e.g., cell-based model) [1] | Known molecular target with a hypothesized role in disease [2] |
| Knowledge Prerequisite | No prior knowledge of the specific drug target or mechanism is required [1] | Requires a well-characterized molecular target and understanding of its function [2] |
| Primary Screening Readout | Functional, therapeutic effect on a disease-relevant phenotype [1] | Biochemical interaction with the predefined target (e.g., binding, inhibition) [2] |
| Major Challenge | Target deconvolution (identifying the molecular mechanism of action) [1] [4] | Demonstrating that target modulation translates to a therapeutic effect in a complex disease system [2] |
| Key Strength | Potential to identify first-in-class drugs with novel mechanisms [1] | Enables rational, structure-based drug design for optimized specificity [2] |

What are the main advantages of a phenotypic approach?

PDD offers several key advantages that make it a valuable discovery modality [1] [3]:

- Expansion of Druggable Target Space: It can reveal therapies that act on unexpected cellular processes (e.g., pre-mRNA splicing, protein folding) and novel target classes that might not be identified through hypothesis-driven approaches [1].

- Delivery of First-in-Class Medicines: A significant number of first-in-class drugs, which work by unprecedented mechanisms, have originated from phenotypic screens [1] [2].

- Addressing Biological Complexity: It is well-suited for polygenic diseases or situations where the underlying biology is poorly understood, as it captures system-level effects and polypharmacology (simultaneous modulation of multiple targets) that can contribute to efficacy [1].

FAQs: Implementation & Troubleshooting

What are common limitations in phenotypic screening and how can they be mitigated?

Both small-molecule and genetic phenotypic screens have inherent limitations. Understanding these is crucial for robust experimental design [4].

| Screening Approach | Common Limitations | Proposed Mitigation Strategies [4] |
|---|---|---|
| Small Molecule Screening | (1) Covers a limited fraction of the human proteome; (2) false positives from assay interference or promiscuous compounds; (3) "molecular obesity": lead compounds with high molecular weight and lipophilicity. | (1) Use diverse compound libraries (e.g., including natural product-inspired collections); (2) implement stringent hit triage (e.g., dose-response, counterscreens); (3) focus on lead-like chemical space during library design. |
| Genetic Screening (e.g., CRISPR) | (1) Fundamental differences from pharmacological perturbation (e.g., complete knockout vs. partial inhibition); (2) difficulty modeling multi-gene and polypharmacological effects; (3) limited throughput of disease-relevant models (e.g., 3D cocultures). | (1) Use more physiologically relevant models (e.g., in vivo screens); (2) employ multi-omics readouts for deeper mechanistic insight; (3) develop improved methods for combinatorial gene perturbations. |

How can I improve the quality and success of a phenotypic screen?

Robust assay design and data quality are the foundations of a successful phenotypic screening campaign [5].

- Start with a Biologically Relevant Model: Choose a cell model that accurately reflects the disease biology, such as patient-derived cells or co-culture systems that include the tumor microenvironment [5] [3].

- Optimize and Control the Assay Rigorously:

  - Adjust cell seeding density for accurate image analysis [5].

  - Automate dispensing and imaging to minimize human error and variability [5].

  - Include positive and negative controls on every plate to monitor assay performance [5].

  - Use replicates and randomize sample positions to avoid positional bias [5].

- Ensure High-Quality Data for Analysis:
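The plate-level advice above (controls on every plate, randomized sample positions) can be sketched in a few lines of Python. This is a minimal illustration, not a validated layout tool: the 96-well format, the `POS`/`NEG`/`EMPTY` labels, the control counts, and the function name `randomized_plate_layout` are all assumptions introduced here.

```python
import random


def randomized_plate_layout(compounds, n_rows=8, n_cols=12, n_pos=4, n_neg=4, seed=0):
    """Assign controls and compounds to the wells of one plate in random order."""
    # Well names such as A1 ... H12 for the assumed 96-well format.
    wells = [f"{chr(ord('A') + r)}{c + 1}" for r in range(n_rows) for c in range(n_cols)]
    contents = ["POS"] * n_pos + ["NEG"] * n_neg + list(compounds)
    if len(contents) > len(wells):
        raise ValueError("too many samples for one plate")
    # Pad unused wells so every well carries an explicit label.
    contents += ["EMPTY"] * (len(wells) - len(contents))
    rng = random.Random(seed)  # a fixed seed keeps the layout reproducible
    rng.shuffle(contents)
    return dict(zip(wells, contents))


# Hypothetical compound identifiers, for illustration only.
layout = randomized_plate_layout([f"cmpd_{i}" for i in range(80)])
```

Fixing the seed lets the layout be stored and regenerated alongside the raw images. The sketch does not handle edge-well exclusion or fixed control columns, which a real campaign may require.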

What is target deconvolution and what methods are used?

Target deconvolution is the process of identifying the specific molecular target(s) and mechanism of action (MoA) of a compound discovered in a phenotypic screen [1] [2]. It is a major challenge in PDD but is valuable for safety de-risking and guiding clinical development [1]. Common methods include:

- Chemical Proteomics: Using immobilized compound analogs to pull down and identify binding proteins from cell lysates [1].

- Functional Genomics: CRISPR or RNAi screens to identify genes that modulate the compound's activity [4].

- Resistance Mutations: Selecting for and characterizing cell clones that are resistant to the compound can point to the target pathway [1].

- Transcriptomics/Profiling: Comparing the gene expression signature induced by the compound to databases of signatures from compounds with known MoA [1].
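The signature-comparison idea in the last bullet can be illustrated with a small similarity ranking. The sketch below assumes each signature is a mapping from gene name to log fold change and uses cosine similarity as the comparison metric; real reference databases may use other scoring schemes, and the gene names, reference labels, and function names here are hypothetical.

```python
import math


def cosine_similarity(sig_a, sig_b):
    """Cosine similarity over the genes shared by two expression signatures."""
    genes = sorted(set(sig_a) & set(sig_b))
    if not genes:
        return 0.0
    dot = sum(sig_a[g] * sig_b[g] for g in genes)
    norm_a = math.sqrt(sum(sig_a[g] ** 2 for g in genes))
    norm_b = math.sqrt(sum(sig_b[g] ** 2 for g in genes))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def rank_reference_signatures(query, references):
    """Return (reference name, similarity) pairs, most similar first."""
    scores = {name: cosine_similarity(query, ref) for name, ref in references.items()}
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


# Hypothetical log fold changes for a phenotypic hit and two annotated references.
query = {"GENE_A": 2.0, "GENE_B": -1.5, "GENE_C": 0.5}
references = {
    "reference_moa_1": {"GENE_A": 1.8, "GENE_B": -1.2, "GENE_C": 0.4},
    "reference_moa_2": {"GENE_A": -2.0, "GENE_B": 1.0, "GENE_C": 0.1},
}
ranked = rank_reference_signatures(query, references)
```

A high similarity to a reference with known MoA is a hypothesis to test with the other methods in this list, not a target assignment on its own.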

Experimental Protocol: Key Methodology

Representative Protocol: High-Content Phenotypic Screening for Hit Identification

The following workflow outlines a generalized protocol for an image-based phenotypic screen, incorporating best practices for robustness [5].

Objective: To identify small molecules that induce a specific phenotypic change (e.g., altered cell morphology, protein translocation, or reduced proliferation) in a disease-relevant cell model.

Materials:

- Cell Model: Biologically relevant cell line (e.g., primary cells, iPSC-derived cells, or co-culture system).

- Compound Library: Diverse or focused small-molecule collection.

- Assay Reagents: Cell culture medium, dyes for staining cellular components (e.g., nuclei, cytoskeleton), fixation and permeabilization buffers.

- Equipment: Automated liquid handler, high-content imaging system, computational infrastructure for data storage and analysis.

Procedure:

Assay Development and Optimization:

- Seed cells in a multi-well plate, optimizing density for confluency and single-cell segmentation during image analysis [5].

- Define and validate the phenotypic readout using known positive and negative control compounds.

- Calculate the Z'-factor to statistically confirm the assay is robust for screening.

Compound Dispensing and Cell Treatment:

- Using an automated liquid handler, transfer compounds from the library to assay plates. Include DMSO vehicle controls on every plate.

- Treat cells with compounds at a predetermined concentration and time.

Cell Fixation, Staining, and Imaging:

- Fix cells and stain with fluorescent dyes to mark relevant cellular structures.

- Acquire images automatically using a high-content imager, ensuring consistent settings (exposure, offset) across all plates [5].

Image Analysis and Feature Extraction:

- Use image analysis software (e.g., CellProfiler or commercial AI-powered platforms) to segment individual cells and extract hundreds of morphological features (e.g., size, shape, texture, intensity) [5].

Hit Triage and Validation:

- Normalize data and apply statistical methods to identify compounds that significantly induce the desired phenotype.

- Prioritize hits by potency, efficacy, and chemical attractiveness.

- Confirm activity in dose-response experiments and orthogonal assays to rule out false positives and assay artifacts [4].
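The normalization and statistical hit-calling step of the triage stage can be sketched as follows. This example assumes one scalar readout per well and scores each compound well against the DMSO vehicle wells of the same plate with a robust z-score (median and median absolute deviation). The threshold of 3, the well names, and the function name `call_hits` are illustrative assumptions, not values taken from the protocol.

```python
import statistics


def call_hits(well_values, vehicle_values, threshold=3.0):
    """Score wells against vehicle controls with a robust z-score and flag hits."""
    med = statistics.median(vehicle_values)
    mad = statistics.median([abs(v - med) for v in vehicle_values])
    if mad == 0:
        raise ValueError("vehicle controls show no spread; cannot scale scores")
    scale = 1.4826 * mad  # makes the MAD comparable to a standard deviation
    scores = {well: (value - med) / scale for well, value in well_values.items()}
    hits = sorted(well for well, z in scores.items() if abs(z) >= threshold)
    return scores, hits


# Hypothetical readouts (arbitrary units) from one plate.
vehicle = [100, 102, 98, 101, 99, 103, 97, 100]
compound_wells = {"B02": 101, "B03": 60, "B04": 97, "B05": 112}
scores, hits = call_hits(compound_wells, vehicle)
```

Median-based statistics are used here because a few strongly active or artifactual wells distort them less than a mean and standard deviation would. Flagged wells still need the dose-response and orthogonal confirmation described in the last step.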

Research Reagent Solutions

Essential materials and tools for conducting phenotypic screening experiments.

| Reagent / Tool | Function in PDD | Key Considerations |
|---|---|---|
| Patient-Derived Cells | Provides a disease-relevant, physiologically accurate model for screening [3]. | Maintain genetic and phenotypic stability during culture; use low passage numbers. |
| Complex Co-culture Systems | Models cell-cell interactions and the tumor microenvironment (e.g., with immune cells) [3]. | Can be lower throughput; requires careful optimization of cell ratios. |
| High-Content Imaging Platform | Captures multiparametric data on cellular morphology and subcellular localization [5] [3]. | Generates large, complex datasets; requires robust computational analysis pipelines. |
| CRISPR Libraries | Functional genomics tool for target identification and validation via gene knockout [4]. | Knockout may not mimic pharmacological inhibition; can miss polypharmacology. |
| Diverse Compound Libraries | Maximizes chances of finding hits by covering a broad chemical space [4]. | Even the best libraries only cover a fraction of the human proteome. |
| AI/ML Analysis Platforms (e.g., phenAID) | Analyzes high-dimensional data to predict mechanism of action and identify hits [5]. | Requires high-quality, well-annotated input data to be effective. |

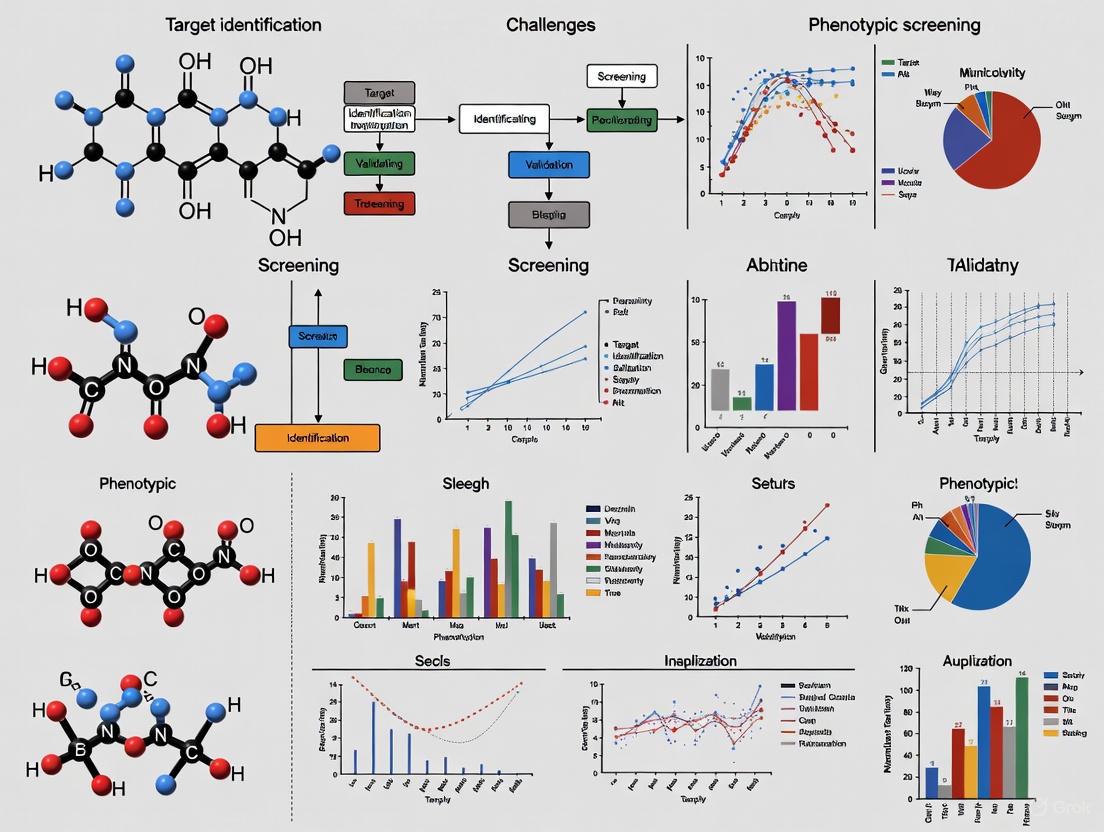

Visual Guide: Phenotypic Screening Workflow & Target Deconvolution

[Diagrams: Phenotypic Screening Workflow; Target Deconvolution Methods]

Phenotypic Drug Discovery (PDD) has experienced a major resurgence following a surprising observation: a majority of first-in-class small-molecule drugs approved between 1999 and 2008 were discovered empirically, without a predefined drug target hypothesis [1] [6]. This finding challenged the pharmaceutical industry's decades-long focus on target-based drug discovery (TDD) and sparked renewed interest in phenotypic approaches [7]. Modern PDD combines the original concept of observing therapeutic effects on disease physiology with advanced tools and strategies, systematically pursuing drug discovery based on therapeutic effects in realistic disease models [1]. This technical resource center provides practical guidance for researchers navigating the challenges and opportunities of phenotypic screening.

The Evidence Base: Quantitative Analysis of PDD Success

First-in-Class Drug Discovery Outcomes (1999-2008)

Table 1: Discovery Strategies for First-in-Class Small Molecule Drugs (1999-2008)

| Discovery Strategy | Number of First-in-Class Drugs | Percentage of Total |
|---|---|---|
| Phenotypic Drug Discovery (PDD) | 28 | 56% |
| Target-Based Drug Discovery (TDD) | 17 | 34% |
| Other/Modified Approaches | 5 | 10% |
| Total | 50 | 100% |

Source: Adapted from Swinney & Anthony analysis cited in [7] [6]

This foundational analysis revealed that PDD approaches yielded a disproportionate number of first-in-class medicines compared to target-based strategies, surprising an industry that had predominantly invested in target-based programs [7]. The continued value of PDD is demonstrated by recent groundbreaking medicines for cystic fibrosis, spinal muscular atrophy, and hepatitis C discovered through phenotypic approaches [1] [8].

Notable First-in-Class Drugs Discovered via PDD

Table 2: Recent Therapeutic Advances from Phenotypic Screening

| Drug/Therapeutic | Therapeutic Area | Key Mechanism/Target | PDD Model Used |
|---|---|---|---|
| Ivacaftor, Lumacaftor, Tezacaftor | Cystic Fibrosis | CFTR potentiator (ivacaftor) and correctors (lumacaftor, tezacaftor) | Cell lines expressing disease-associated CFTR variants [1] |
| Risdiplam, Branaplam | Spinal Muscular Atrophy | SMN2 pre-mRNA splicing modifiers | SMN2 reporter gene assays [1] [8] |
| Daclatasvir & other NS5A inhibitors | Hepatitis C | HCV NS5A protein modulation | HCV replicon phenotypic screen [1] |
| SEP-363856 | Schizophrenia | Unknown (novel mechanism) | Disease-relevant phenotypic models [1] |
| Lenalidomide | Multiple Myeloma | Cereblon E3 ligase modulation (discovered post-approval) | Multiple anti-inflammatory and anti-angiogenesis phenotypes [1] |

Technical Support Center: PDD Challenges & Troubleshooting Guides

Frequently Asked Questions: Addressing Core PDD Challenges

FAQ 1: How do we justify PDD programs when management demands predefined targets? Justification Strategy: Emphasize the historical evidence that PDD yields more first-in-class therapies. Frame PDD as an approach to address the "unknown unknowns" in disease biology that can derail even well-defined target-based programs [7]. Develop a clear translatability chain demonstrating how your phenotypic assay connects to human disease biology [9].

FAQ 2: What are the most critical factors in designing a phenotypically relevant screening assay? Key Considerations: The "Rule of 3" for predictive phenotypic assays recommends that models should demonstrate: (1) disease relevance, (2) quantifiable biomarkers, and (3) clinical translatability [9]. Prioritize physiological relevance over throughput: a medium-throughput assay with high biological relevance is preferable to a high-throughput assay with poor predictive value [10] [11].

FAQ 3: How can we overcome the major bottleneck of target identification? Deconvolution Strategies: Implement a multi-pronged approach: (1) Affinity capture using bead-based platforms to physically pull down molecular targets [12]; (2) Functional genomics using CRISPR or RNAi screens; (3) Transcriptional profiling and bioinformatics; (4) Resistance generation and whole-genome sequencing [1] [12]. Begin deconvolution early with at least two parallel methods to validate findings.

FAQ 4: What types of compound libraries work best for phenotypic screening? Library Design: Balance chemical diversity with biological relevance. Include compounds with: (1) Structural diversity to maximize novel target discovery; (2) Known bioactivity profiles for potential repurposing; (3) Favorable physicochemical properties for cellular penetration; (4) Some target-focused sets for mechanism-informed PDD [8]. Consider including annotated libraries with known mechanisms to help with target deconvolution.

FAQ 5: How do we transition from phenotypic hits to optimized leads without a clear target? Optimization Pathway: Use phenotypic outcomes as your primary guide for structure-activity relationship (SAR) studies. Develop secondary assays that provide more granular biological readouts without requiring full target identification. Implement early counterscreens to eliminate nuisance compounds and focus on genuinely interesting phenotypes [10] [11].

Experimental Protocols: Key Methodologies for PDD Success

Protocol 1: Bead/Lysate-Based Affinity Capture for Target Identification

Purpose: Identify molecular targets of phenotypic screening hits using affinity purification and mass spectrometry.

Materials Required:

- Solid support beads (e.g., agarose, magnetic beads)

- Compound derivatization chemistry (often via amino- or carboxyl-functionalized linkers)

- Cell lysates from disease-relevant models

- Mass spectrometry system with proteomics capabilities

- Control beads (without compound) for background subtraction

Procedure:

- Compound Derivatization: Chemically modify hit compound to incorporate a linker while maintaining biological activity. Verify activity retention in phenotypic assay post-modification.

- Bead Preparation: Immobilize derivatized compound on solid support beads. Prepare control beads without compound or with inactive analog.

- Lysate Preparation: Culture disease-relevant cells and prepare lysates using non-denaturing conditions to preserve native protein structures.

- Affinity Capture: Incubate compound-conjugated beads with cell lysates. Include appropriate controls to identify non-specific binding.

- Wash and Elute: Remove non-specifically bound proteins through sequential washing. Elute specifically bound proteins using competitive compound elution or denaturing conditions.

- Protein Identification: Digest eluted proteins and analyze by LC-MS/MS. Compare experimental samples with controls to identify specifically bound targets.

- Validation: Confirm target identity through orthogonal methods (cellular thermal shift assay, siRNA knockdown, or biochemical binding assays).
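The comparison of compound-bead samples against control beads in the protein identification step can be sketched as a simple enrichment calculation. The example assumes replicate label-free intensities per protein and ranks proteins by log2 ratio of mean intensities. The pseudocount, the cut-off of 2 (fourfold), the protein names, and the function names are illustrative assumptions; a real analysis would normally add a statistical test across replicates.

```python
import math
from statistics import mean


def log2_enrichment(compound_beads, control_beads, pseudocount=1.0):
    """log2 ratio of mean intensity on compound beads versus control beads."""
    proteins = set(compound_beads) | set(control_beads)
    enrichment = {}
    for protein in proteins:
        # A protein missing from one sample set is treated as zero intensity.
        on_compound = mean(compound_beads.get(protein, [0.0]))
        on_control = mean(control_beads.get(protein, [0.0]))
        enrichment[protein] = math.log2((on_compound + pseudocount) / (on_control + pseudocount))
    return enrichment


def specific_binders(enrichment, min_log2=2.0):
    """Proteins enriched at least min_log2 over control, strongest first."""
    selected = [p for p, value in enrichment.items() if value >= min_log2]
    return sorted(selected, key=lambda p: -enrichment[p])


# Hypothetical replicate intensities (arbitrary units).
compound = {"PROT_A": [800, 760, 840], "PROT_B": [500, 520, 480], "PROT_C": [30, 20, 40]}
control = {"PROT_A": [40, 50, 60], "PROT_B": [480, 510, 500], "PROT_C": [25, 35, 30]}
enrichment = log2_enrichment(compound, control)
candidates = specific_binders(enrichment)
```

In this toy data set only `PROT_A` passes the cut-off. `PROT_B` binds both bead types equally, which is the signature of a background binder.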

Troubleshooting Tips:

- If background binding is high: Optimize wash stringency, include additional control beads, or pre-clear lysates.

- If no specific targets identified: Verify compound activity post-derivatization, test different linker lengths/chemistries, or try different lysis conditions.

- If multiple potential targets identified: Use a "uniqueness index" to prioritize targets based on specificity across experiments [12].

Protocol 2: Development of Disease-Relevant Phenotypic Assays

Purpose: Create cell-based assays that faithfully recapitulate disease biology for phenotypic screening.

Materials Required:

- Disease-relevant cells (primary cells, iPSC-derived cells, or specialized cell lines)

- Appropriate culture materials for 2D or 3D formats

- Phenotypic readout systems (high-content imagers, plate-based cytometers, etc.)

- Compound libraries in appropriate storage formats

- Biomarker detection reagents (antibodies, dyes, probes)

Procedure:

- Model Selection: Choose the most disease-relevant cellular model available. Prioritize patient-derived cells or iPSC-differentiated cells over conventional cell lines when possible [7].

- Assay Design: Define the phenotypic endpoint that best represents the disease state or desired therapeutic effect. Ensure the endpoint is quantifiable and reproducible.

- Format Optimization: Test both 2D and 3D culture formats. For complex diseases, 3D organoids or co-culture systems often provide superior biological relevance [11] [13].

- Assay Validation: Establish robustness metrics (Z'-factor >0.5), reproducibility, and scalability. Verify that known modulators produce expected phenotypic changes.

- Automation and Miniaturization: Adapt assay to appropriate throughput format while maintaining phenotypic relevance. Consider balance between throughput and biological complexity [13].

- Counterscreen Development: Implement parallel assays to identify non-specific or nuisance compounds early in screening cascade.
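The Z'-factor threshold in the assay validation step can be checked with a short calculation. The sketch below uses the commonly used definition, Z' = 1 - 3(SD_pos + SD_neg) / |mean_pos - mean_neg|, computed from positive- and negative-control wells. The control values and the function name `z_prime` are hypothetical.

```python
from statistics import mean, stdev


def z_prime(positive, negative):
    """Z'-factor from positive- and negative-control well readouts."""
    separation = abs(mean(positive) - mean(negative))
    if separation == 0:
        raise ValueError("control means are identical; Z' is undefined")
    return 1.0 - 3.0 * (stdev(positive) + stdev(negative)) / separation


# Hypothetical control readouts (arbitrary units) from one validation plate.
positive_controls = [95, 100, 105, 98, 102]
negative_controls = [10, 12, 8, 11, 9]
zp = z_prime(positive_controls, negative_controls)
assay_is_screenable = zp > 0.5  # threshold quoted in the validation step
```

Because Z' depends only on the controls, it describes the assay window rather than any library compound. Recomputing it per plate during the screen helps flag plates that drift.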

Troubleshooting Tips:

- If phenotypic readout is weak: Revisit disease relevance of model, consider alternative differentiation protocols, or test additional biomarkers.

- If assay variability is high: Standardize culture conditions, implement more rigorous quality control for cells, or simplify readout parameters.

- If throughput is insufficient: Consider automation solutions or strategic assay multiplexing without compromising biological relevance [13].

Visualizing PDD Workflows: From Screening to Therapeutics

PDD Screening Cascade and Target Deconvolution

Diagram 1: PDD screening cascade highlighting the parallel paths of phenotypic optimization and target deconvolution.

PDD vs TDD: Comparative Workflow Analysis

Diagram 2: Comparison of PDD and TDD workflows showing fundamental differences in approach and timing of target validation.

The Scientist's Toolkit: Essential Research Reagents & Platforms

Table 3: Key Research Reagent Solutions for Phenotypic Screening

| Reagent/Platform Type | Specific Examples | Primary Function | Application Notes |
|---|---|---|---|
| Cellular Models | iPSC-derived cells, Primary cells, 3D organoids | Provide disease-relevant screening context | Patient-derived cells offer highest translational relevance; 3D cultures better mimic tissue architecture [10] [7] |
| Detection Systems | High-content imagers, Plate-based cytometers | Multiparametric phenotypic measurement | High-content imaging enables subcellular resolution; multiparametric readouts provide richer data [10] [11] |
| Automation Platforms | Freedom EVO, Fluent Automation systems | Enable screening throughput and reproducibility | Modular systems allow adaptation to different assay formats and throughput needs [13] |
| Affinity Capture Reagents | Functionalized beads, Crosslinkers | Target identification and validation | Magnetic bead systems facilitate separation; multiple linker chemistries needed for different compound classes [12] |
| Compound Libraries | Diverse small molecules, Known bioactives, CRISPR libraries | Source of phenotypic modulators | Diversity-oriented libraries maximize novel target discovery; annotated libraries aid target deconvolution [8] |
| Analysis Software | AI/ML image analysis, Multi-omics platforms | Data analysis and target prioritization | Machine learning essential for complex multiparametric data; integrated platforms streamline deconvolution [11] |

The disproportionate success of Phenotypic Drug Discovery in delivering first-in-class therapeutics stems from its ability to address the incompletely understood complexity of human disease [1] [9]. By starting with disease-relevant models rather than hypothetical targets, PDD bypasses the limitations of incomplete target validation and embraces the polypharmacology that often underlies therapeutic efficacy [1]. The continued resurgence of PDD will depend on developing increasingly sophisticated disease models, improving target deconvolution methodologies, and strategically integrating phenotypic approaches with target-based methods where appropriate [9] [8]. When implemented with careful attention to assay relevance and translational potential, PDD represents a powerful approach to address the ongoing challenge of delivering innovative medicines for unmet medical needs.

Frequently Asked Questions (FAQs)

Q1: What is target deconvolution and why is it a central challenge in phenotypic screening?

A: Target deconvolution is the process of identifying the specific molecular target(s) through which a compound exerts its biological effect after being identified in a phenotypic screen [14]. It serves as the essential bridge between observing a desired phenotypic outcome and understanding its underlying mechanism of action (MoA) [15] [1]. This process is critical because while phenotypic screening can identify compounds that produce therapeutic effects in complex biological systems, it cannot inherently reveal which proteins or pathways are responsible [9]. Without successful target deconvolution, researchers face significant challenges in optimizing hit compounds, predicting potential toxicity, understanding structure-activity relationships, and fulfilling regulatory requirements for drug development [1] [16].

Q2: What are the main methodological categories for target deconvolution?

A: Target deconvolution strategies generally fall into three main categories, each with distinct principles and applications:

- Affinity-Based Chemoproteomics: These methods involve immobilizing the compound of interest on a solid support to isolate binding proteins from complex biological samples [14] [16]. The captured proteins are then identified via mass spectrometry. A key variant is photoaffinity labeling (PAL), which uses a photoreactive group on the probe to covalently cross-link to its target upon light exposure, stabilizing transient interactions [14] [16].

- Activity-Based Protein Profiling (ABPP): This approach uses bifunctional probes containing a reactive group that covalently binds to active sites of specific enzyme classes (e.g., proteases, hydrolases) and a reporter tag for enrichment and identification [14] [16]. It is particularly powerful for profiling specific enzyme families in native systems.

- Label-Free Methods: These strategies, such as thermal proteome profiling or solvent-induced denaturation shifts, detect changes in protein stability or solubility upon compound binding without requiring chemical modification of the small molecule [14]. This allows for target identification under native conditions.

Q3: Our phenotypic hit is not very potent. Can we still proceed with target deconvolution?

A: Yes, but with caveats. Many affinity-based methods require high-affinity binders (typically in the nanomolar range) for successful pull-down and identification [16]. For weaker binders, label-free methods like thermal shift assays may be more suitable, as they can detect stabilization even with lower-affinity compounds [14]. Alternatively, you can first use the weak hit as a starting point for medicinal chemistry optimization to create more potent analogues with an affinity handle specifically designed for deconvolution experiments [16].

Q4: How can we distinguish the true therapeutic target from irrelevant off-target binders?

A: Distinguishing the pharmacologically relevant target from non-specific binders requires orthogonal validation strategies. A systematic approach is crucial:

- Dose-Response Correlation: Confirm that the binding affinity to the putative target correlates with the functional potency (EC50/IC50) observed in the phenotypic assay [16].

- Genetic Perturbation: Use techniques like CRISPR/Cas9 or RNAi to knock out or knock down the putative target. The phenotypic effect of the compound should be abolished or significantly diminished if the correct target has been identified [15].

- Competition Experiments: Show that excess unmodified ("free") compound competes with and reduces the signal from your affinity probe in pull-down experiments [16].

- Resistance Mutations: For some targets, especially in infectious disease, selecting for resistant mutants and sequencing can reveal the binding site or target [15].
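The dose-response correlation check in the first bullet can be made concrete with a small calculation across a series of analogues. The sketch assumes a measured binding constant (Kd) and a phenotypic EC50 for each analogue, converts both to a negative log10 scale, and computes a Pearson correlation. The values and function names are hypothetical, and a rank-based correlation may be a better choice for small or noisy series.

```python
import math


def p_scale(molar_value):
    """Convert a molar Kd or EC50 to its negative log10 (pKd, pEC50)."""
    return -math.log10(molar_value)


def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length series."""
    if len(xs) != len(ys) or len(xs) < 3:
        raise ValueError("need two equal-length series with at least 3 points")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("no variation in one of the series")
    return cov / math.sqrt(var_x * var_y)


# Hypothetical analogue series: binding to the putative target vs. phenotypic potency.
kd_molar = [5e-9, 2e-8, 1e-7, 8e-7, 5e-6]
ec50_molar = [2e-8, 9e-8, 3e-7, 4e-6, 1.5e-5]
r = pearson_r([p_scale(k) for k in kd_molar], [p_scale(e) for e in ec50_molar])
```

A strong correlation across analogues supports the putative target but does not prove causality. The genetic and competition experiments in the other bullets address that gap.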

Q5: What are the biggest bottlenecks in moving from a validated hit to a deconvoluted target?

A: The primary bottlenecks include:

- Probe Design: Creating a functional affinity probe without losing the compound's biological activity is often non-trivial and requires significant medicinal chemistry effort [16].

- Target Abundance: Identifying targets that are low in abundance within a complex proteomic background is technically challenging, even with modern mass spectrometry [14].

- Functional Validation: Establishing a definitive causal link between target engagement and the observed phenotype requires extensive follow-up work using genetic and cellular biology techniques [15] [1].

- Polypharmacology: Many compounds act on multiple targets, making it difficult to discern which interactions are responsible for the efficacy and which may lead to side effects [1].

Troubleshooting Guides

Problem 1: High Background or Non-Specific Binding in Affinity Purification

Symptoms: Multiple proteins identified in mass spectrometry with no clear front-runner; poor correlation between protein abundance and phenotypic potency.

Possible Causes and Solutions:

- Cause: The affinity matrix or linker is causing non-specific interactions.

- Solution: Include rigorous controls using "blank" beads (with linker but no compound) or beads with an inactive enantiomer of your compound. Subtract proteins identified in the control pull-down from the experimental sample [16].

- Cause: Insufficient washing stringency.

- Solution: Optimize wash buffers by increasing salt concentration (e.g., 300-500 mM NaCl), adding mild detergents (e.g., 0.1% Triton X-100), or including competitors like ATP (for kinases) to disrupt weak, non-specific interactions.

- Cause: The compound itself has promiscuous binding properties.

- Solution: Re-evaluate the hit chemistry for known pan-assay interference compounds (PAINS) and consider creating structurally distinct analogues to see if the phenotype and binding profile are maintained.

Problem 2: Failed Photo-Crosslinking

Symptoms: No or very low protein labeling observed after UV irradiation in a photoaffinity labeling (PAL) experiment.

Possible Causes and Solutions:

- Cause: The photoreactive group (e.g., diazirine, benzophenone) is not positioned correctly to interact with the target protein.

- Solution: Synthesize alternative probe versions where the photoreactive group is attached at different positions on the molecule, guided by structure-activity relationship (SAR) data [16].

- Cause: The photoreactive group is quenched or degraded.

- Solution: Ensure probes are stored properly (often in the dark, under inert atmosphere) and confirm the stability of the photoreactive group using analytical methods like NMR or MS before the experiment.

- Cause: Insufficient UV energy or irradiation time.

- Solution: Calibrate your UV lamp and optimize the irradiation time and distance from the sample. For benzophenones, longer irradiation times (minutes to hours) may be needed compared to diazirines (seconds to minutes).

Problem 3: Inconsistent Results in Label-Free Thermal Shift Assays

Symptoms: Poor reproducibility of protein melting curves; weak or unstable stabilization signals.

Possible Causes and Solutions:

- Cause: Protein aggregation or instability at assay temperatures.

- Solution: Optimize protein buffer conditions (pH, salts) and include stabilizing additives. Use a fast-reading dye and ensure homogeneous temperature control across the sample plate [14].

- Cause: The compound's effect on protein thermal stability is subtle.

- Solution: Run the assay in biological triplicates and use statistical methods to identify significant shifts. Consider using isothermal dose-response fingerprinting (ITDRF), which can be more robust for detecting small shifts [14].

- Cause: The target protein is of low abundance, making its signal hard to detect in a complex lysate.

- Solution: Pre-fractionate the cell lysate or use targeted proteomics methods (e.g., SRM, PRM) to specifically monitor the melting curve of the suspected low-abundance target.
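When judging whether a stabilization signal is real, it helps to reduce each melting curve to a single melting temperature (Tm) and compare treated and vehicle samples. The sketch below is a deliberate simplification: it estimates Tm by linear interpolation at the point where the soluble fraction crosses 0.5, whereas a sigmoidal curve fit is the more usual choice. The temperatures, fractions, and function name are hypothetical.

```python
def melting_temperature(temps, fraction_soluble):
    """Temperature at which the soluble fraction first drops through 0.5."""
    points = list(zip(temps, fraction_soluble))
    for (t0, f0), (t1, f1) in zip(points, points[1:]):
        if f0 >= 0.5 > f1:
            # Linear interpolation between the two bracketing measurements.
            return t0 + (f0 - 0.5) * (t1 - t0) / (f0 - f1)
    raise ValueError("curve does not cross a soluble fraction of 0.5")


# Hypothetical melting curves for one protein (fraction soluble vs. temperature in deg C).
temps = [37, 41, 45, 49, 53, 57, 61]
vehicle = [1.00, 0.98, 0.90, 0.60, 0.25, 0.08, 0.02]
treated = [1.00, 0.99, 0.96, 0.85, 0.55, 0.20, 0.05]

tm_vehicle = melting_temperature(temps, vehicle)
tm_treated = melting_temperature(temps, treated)
delta_tm = tm_treated - tm_vehicle  # positive values suggest stabilization
```

Computing `delta_tm` separately for each biological replicate, then testing whether the shifts are consistently different from zero, matches the triplicate-plus-statistics advice above.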

Problem 4: The Deconvoluted Target Does Not Fully Explain the Phenotype

Symptoms: Engagement with the putative target is confirmed, but genetic knockout only partially recapitulates the compound's effect, suggesting additional mechanisms.

Possible Causes and Solutions:

- Cause: The compound has polypharmacology, engaging multiple targets that contribute to the overall phenotype.

- Solution: Use a multi-pronged approach. Integrate chemoproteomic data with functional genomic screens (CRISPR) and transcriptomic profiling to build a comprehensive network of the compound's interactions [1].

- Cause: The identified target is part of a protein complex, and the compound disrupts a specific protein-protein interaction (PPI).

- Solution: Investigate whether the compound affects known protein complexes of the target using co-immunoprecipitation (co-IP) or proximity-dependent biotinylation (BioID) assays [15].

- Cause: The primary phenotype is a downstream consequence of a cascade of events initiated by target engagement.

- Solution: Use time-course experiments to map the earliest molecular events following compound treatment (e.g., phosphoproteomics, early transcriptional changes) to better understand the causal pathway.

Experimental Protocols

Protocol 1: An Integrated Workflow for Target Deconvolution Using a Knowledge Graph and Molecular Docking

This protocol, adapted from a recent study on p53 pathway activators, combines computational biology and experimental validation to efficiently narrow down candidate targets [17].

Objective: To systematically identify the direct target of a phenotypic hit (e.g., UNBS5162, a p53 pathway activator) from a vast number of potential candidates.

Materials:

- Protein-Protein Interaction Knowledge Graph (PPIKG) database

- Molecular docking software (e.g., AutoDock Vina, Glide)

- Compound of interest (e.g., UNBS5162)

- Standard cell culture and molecular biology reagents for experimental validation (antibodies, etc.)

Procedure:

- Phenotypic Screening: Identify an active compound (the "hit") using a disease-relevant phenotypic assay (e.g., a high-throughput luciferase reporter assay for p53 transcriptional activity) [17].

- Knowledge Graph Analysis:

- Construct or access a PPIKG centered on the pathway of interest (e.g., p53_HUMAN). This graph should include proteins and their functional interactions.

- Input the phenotypic hit and use the knowledge graph's inference capabilities to narrow the list of candidate proteins. In the referenced study, this step reduced candidates from 1088 to 35, focusing on proteins related to p53 activity and stability [17].

- Molecular Docking:

- Prepare the 3D structures of the shortlisted candidate proteins and the compound.

- Perform molecular docking simulations to predict the binding affinity and pose of the compound against each candidate target.

- Prioritize targets that show strong predicted binding affinity and a plausible binding mode. In the p53 example, USP7 was prioritized through this step [17].

- Experimental Validation:

- Validate the top computational prediction(s) using direct binding assays (e.g., surface plasmon resonance - SPR) and cellular functional assays (e.g., Western blotting to assess downstream pathway modulation) to confirm the target.

Integrated Knowledge Graph and Docking Workflow
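The narrowing logic of this protocol (graph neighbourhood first, docking score second) can be illustrated with a toy example. This is a sketch under stated assumptions, not the referenced study's implementation: the interaction graph, the `neighbours_within` and `rank_by_docking` helpers, and the docking scores are all hypothetical placeholders, and a real PPIKG uses far richer inference than a hop-limited search.

```python
from collections import deque

# Hypothetical toy interaction graph; edges are illustrative, not curated data.
PPI = {
    "TP53": ["MDM2", "USP7", "EP300"],
    "MDM2": ["TP53", "USP7", "MDM4"],
    "USP7": ["TP53", "MDM2"],
    "EP300": ["TP53", "CREBBP"],
    "MDM4": ["MDM2"],
    "CREBBP": ["EP300"],
    "GAPDH": [],
}

def neighbours_within(graph, seed, max_hops):
    """Breadth-first search: nodes reachable from seed within max_hops (seed excluded)."""
    distance = {seed: 0}
    queue = deque([seed])
    while queue:
        node = queue.popleft()
        if distance[node] == max_hops:
            continue
        for nxt in graph.get(node, []):
            if nxt not in distance:
                distance[nxt] = distance[node] + 1
                queue.append(nxt)
    return {n for n in distance if n != seed}

def rank_by_docking(candidates, scores):
    """Sort candidates by docking score; more negative means stronger predicted binding."""
    scored = [(c, scores[c]) for c in candidates if c in scores]
    return sorted(scored, key=lambda pair: pair[1])

# Step 1: restrict candidates to the pathway neighbourhood.
shortlist = neighbours_within(PPI, "TP53", max_hops=1)

# Step 2: rank the shortlist by placeholder docking scores (kcal/mol, made up).
docking_scores = {"MDM2": -7.1, "USP7": -9.4, "EP300": -6.3, "GAPDH": -8.0}
ranking = rank_by_docking(shortlist, docking_scores)
print(ranking)
```

Filtering on the graph before docking keeps an unrelated protein with a good docking score (here the placeholder GAPDH entry) out of the ranking, which is the point of combining the two steps.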

Protocol 2: Target Deconvolution Using Affinity Purification and Quantitative Mass Spectrometry

This is a standard "workhorse" protocol for identifying direct binding partners of a small molecule [14] [16].

Objective: To isolate and identify proteins that bind directly to an immobilized version of your phenotypic hit from a complex proteome (e.g., cell lysate).

Materials:

- Affinity resin (e.g., NHS-activated Sepharose, magnetic beads)

- Phenotypic hit compound with a known site for conjugation (e.g., a primary amine or hydroxyl group)

- Control compound (inactive analog or vehicle)

- Cell line of interest and lysis buffer

- Mass spectrometer (LC-MS/MS)

- Standard buffers: Coupling buffer, Quenching buffer, Lysis buffer, Wash buffers, Elution buffer.

Procedure:

- Probe Design and Synthesis:

- Synthesize a functionalized analogue of your hit compound containing a chemical handle (e.g., an alkyne or primary amine) for immobilization. Critically, validate that this analogue retains biological activity in your phenotypic assay.

- Immobilization:

- Covalently couple the functionalized compound to the affinity resin according to the manufacturer's protocol.

- Prepare a control resin in parallel, coupled with an inactive compound or just the linker.

- Affinity Purification:

- Prepare a clarified lysate from the relevant cell line or tissue.

- Incubate the lysate with the compound-conjugated resin and the control resin separately. Use excess unmodified compound in a competition experiment to specifically elute binders.

- Wash the resin extensively with lysis buffer followed by a high-stringency wash buffer to remove non-specific binders.

- Elute bound proteins using a low-pH buffer, SDS-PAGE loading buffer, or by boiling.

- Protein Identification and Quantification:

- Digest the eluted proteins with trypsin.

- Analyze the resulting peptides by liquid chromatography coupled to tandem mass spectrometry (LC-MS/MS).

- Use database searching to identify proteins and quantitative methods (e.g., label-free quantification, SILAC) to compare enrichment in the experimental sample versus the control and competition samples. Proteins significantly enriched in the experimental sample are high-confidence candidate targets.
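The final comparison step can be sketched as a simple enrichment filter. The example assumes one summed intensity per protein and per sample, and it treats the competition sample as a pull-down performed in the presence of excess free compound; the function names, the pseudocount, the 4-fold threshold, and all intensities are hypothetical, and real workflows would add replicates and a statistical test.

```python
from math import log2

def log2_ratio(numerator, denominator, pseudocount=1.0):
    """log2 fold change with a pseudocount to avoid division by zero."""
    return log2((numerator + pseudocount) / (denominator + pseudocount))

def candidate_targets(intensities, min_log2=2.0):
    """Keep proteins enriched over control resin AND depleted by free-compound competition."""
    hits = []
    for protein, x in intensities.items():
        vs_control = log2_ratio(x["bait"], x["control"])
        vs_competition = log2_ratio(x["bait"], x["competition"])
        if vs_control >= min_log2 and vs_competition >= min_log2:
            hits.append((protein, round(vs_control, 2), round(vs_competition, 2)))
    return sorted(hits, key=lambda h: h[1], reverse=True)

# Hypothetical intensities for three proteins.
example = {
    "PROT_A": {"bait": 6399, "control": 99, "competition": 399},     # enriched and competed
    "PROT_B": {"bait": 5000, "control": 4500, "competition": 4800},  # sticky background
    "PROT_C": {"bait": 3199, "control": 99, "competition": 3000},    # enriched, not competed
}

hits = candidate_targets(example)
print(hits)
```

Requiring both criteria removes proteins that bind the resin or linker (PROT_B) and proteins whose binding is not displaced by the free compound (PROT_C).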

Research Reagent Solutions

The following table details key reagents and tools that are essential for conducting target deconvolution studies.

Table: Essential Research Reagents for Target Deconvolution

| Reagent / Tool | Provider / Source | Primary Function in Target Deconvolution |
|---|---|---|
| TargetScout Service | Momentum Bio [14] | Provides a commercial service for affinity pull-down and profiling, handling probe immobilization, target isolation, and identification. |
| PhotoTargetScout | OmicScouts [14] | A specialized service for photoaffinity labeling (PAL), including assay optimization and target identification for challenging targets like membrane proteins. |
| SideScout | Momentum Bio [14] | A commercially available, proteome-wide protein stability assay for label-free target deconvolution based on solvent-induced denaturation shifts. |
| CysScout | Momentum Bio [14] | Enables proteome-wide profiling of reactive cysteine residues using activity-based protein profiling (ABPP), useful for identifying targets with accessible cysteines. |
| Protein-Protein Interaction Knowledge Graph (PPIKG) | Public/Commercial Databases & In-house Curation [17] | A computational tool that maps known biological interactions to infer potential targets for a compound based on its phenotypic context, drastically narrowing candidate lists. |
| ChEMBL Database | EMBL-EBI [18] | A large-scale bioactivity database containing over 20 million data points used for selecting highly selective tool compounds and for in silico target prediction. |
| High-Selectivity Compound Library | Custom selection from databases (e.g., ChEMBL) [18] | A collection of compounds known to be highly selective for single targets. When used in phenotypic screens, hits can immediately suggest a potential mechanism of action. |
| Activity-Based Probes (ABPs) | Commercial vendors & academic synthesis [16] | Bifunctional chemical probes containing a reactive group and a tag used to label and identify active enzymes within specific classes (e.g., hydrolases, proteases) in complex proteomes. |

Method Selection Guide

Choosing the right deconvolution method depends on the properties of your compound and your experimental goals. The following flowchart provides a logical framework for this decision-making process.

Target Deconvolution Method Selection Guide

Table: Comparison of Key Target Deconvolution Methods

| Method | Typical Timeframe | Required Compound Starting Point | Key Technical Challenges | Best Suited For |
|---|---|---|---|---|
| Affinity Purification + MS | 2 - 6 months | High-affinity binder; known conjugation site | Probe design; non-specific binding; low-abundance targets | Identifying direct binders from lysate; well-behaved compounds. |
| Photoaffinity Labeling (PAL) | 3 - 8 months | Compound with modifiable site for photoreactive group | Positioning of photoreactive group; low cross-linking efficiency | Transient interactions; membrane proteins; tissue samples. |
| Activity-Based Profiling (ABPP) | 1 - 4 months | Knowledge of target enzyme class | Limited to enzyme classes with nucleophilic residues | Profiling specific enzyme families (kinases, hydrolases). |
| Label-Free (Thermal Shift) | 1 - 3 months | Native compound (no modification needed) | Detecting subtle stability shifts; low-abundance targets | Native conditions; initial screening for binding. |
| Computational (KG/Docking) | 1 week - 2 months | Compound structure; knowledge of phenotype pathway | Accuracy of predictions; requires experimental validation | Rapidly generating testable hypotheses; prioritizing targets. |
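The "Required Compound Starting Point" column of the comparison table can be encoded as a small rule set. This is only a sketch of one possible reading of the table, not a reconstruction of the flowchart: the function name, the boolean inputs, and the ordering (roughly by shortest typical timeframe) are assumptions.

```python
def suggest_methods(has_conjugation_site, can_add_photoreactive_group,
                    enzyme_class_known, pathway_known):
    """Return the methods whose starting-point requirement in the table is met."""
    suggestions = []
    if pathway_known:
        suggestions.append("Computational (KG/Docking)")
    # Label-free methods need only the native, unmodified compound.
    suggestions.append("Label-Free (Thermal Shift)")
    if enzyme_class_known:
        suggestions.append("Activity-Based Profiling (ABPP)")
    if has_conjugation_site:
        suggestions.append("Affinity Purification + MS")
    if can_add_photoreactive_group:
        suggestions.append("Photoaffinity Labeling (PAL)")
    return suggestions

# A hit with no tolerated modification site and no pathway hypothesis.
print(suggest_methods(False, False, False, False))

# A hit with a validated conjugation site and a known phenotype pathway.
print(suggest_methods(True, False, False, True))
```

In practice the choice also depends on the technical challenges and sample types listed in the table, which a boolean filter like this does not capture.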

Frequently Asked Questions (FAQs) on Phenotypic Drug Discovery

FAQ 1: What is the core difference between Phenotypic Drug Discovery (PDD) and Target-Based Drug Discovery (TDD)?

- Answer: PDD is a "biology-first" approach that identifies compounds based on their ability to induce a therapeutic effect in a realistic disease model (e.g., a cell-based assay or whole organism) without prior knowledge of a specific molecular target [1] [19] [9]. In contrast, TDD is a reductionist approach that begins with a hypothesized drug target (e.g., a specific enzyme or receptor) and uses biochemical assays to find compounds that modulate it [19]. PDD is particularly valuable for discovering first-in-class medicines with novel mechanisms of action (MoA) [1].

FAQ 2: What are the major challenges in a PDD campaign, and how can they be addressed?

- Answer: The primary challenges are Hit Validation (ensuring the phenotypic change is real and relevant) and Target Deconvolution (identifying the specific molecular target(s) responsible for the observed phenotype) [9]. These can be addressed by:

- Using physiologically relevant disease models (e.g., 3D organoids, patient-derived cells) [19].

- Implementing robust assay design with multiple, disease-relevant readouts [9].

- Applying modern tools for target identification, such as chemical proteomics, CRISPR-based genetic screens, and multi-omics integration [1] [20].

FAQ 3: How does PDD help in targeting "undruggable" proteins?

- Answer: The term "undruggable" often refers to proteins that are difficult to drug with conventional small molecules, such as those without defined binding pockets (e.g., transcription factors) or non-enzymatic scaffold proteins [1]. PDD can identify compounds that work through unconventional MoAs, such as modulating pre-mRNA splicing, correcting protein folding and trafficking, or inducing targeted protein degradation [1] [20].

FAQ 4: What role do modern technologies like AI and High-Content Imaging (HCI) play in PDD?

- Answer: They are revolutionizing PDD by dramatically improving scale and insight [21] [19].

- AI/ML: AI-driven models can predict compounds that induce desired phenotypic changes from transcriptomic or other data, improving hit rates and enabling smaller, more focused screens [21] [22]. They also aid in molecular design and understanding complex biological systems [21].

- High-Content Imaging (HCI): HCI allows for the simultaneous multi-parameter visualization and quantification of thousands of cells in high-throughput. It provides deep insights into a compound's MoA, toxicity, and off-target effects by analyzing hundreds of phenotypic features at once [19].

Troubleshooting Guide for PDD Experiments

Table: Common PDD Experimental Challenges and Solutions

| Challenge | Potential Root Cause | Recommended Solution | Key References |
|---|---|---|---|
| High false-positive/negative hit rate | Poor assay robustness; overly simplistic disease model. | Implement the "Phenotypic Screening Rule of 3": Use 3+ disease-relevant cellular contexts, assay readouts, and chemical compound types. | [9] |
| Difficulty with target deconvolution | Compound may have polypharmacology (multiple targets); lack of sensitive methods. | Employ affinity-based chemical proteomics and functional genomics (e.g., CRISPR knockout/activation screens) in tandem. | [1] [20] |
| Poor clinical translatability | The cellular or animal model does not adequately recapitulate human disease biology. | Shift to more physiologically relevant models, such as patient-derived organoids or organ-on-a-chip systems. | [19] [9] |
| Identifying degradation-driven phenotypes | Difficulty distinguishing between simple protein inhibition and actual protein degradation. | Integrate direct measures of target protein abundance (e.g., Western blot, immunofluorescence) into the primary screening workflow. | [20] |

Experimental Protocols for Key PDD Methodologies

Protocol 1: High-Content Imaging (HCI) for a Phenotypic Screen

This protocol is used to run an image-based screen to identify compounds that reverse a disease-associated phenotype, such as aberrant protein aggregation or altered cell morphology [19].

- Model System Selection: Seed cells in a 384-well plate. Use a patient-derived tumor organoid model to maximize clinical relevance [19].

- Compound Treatment: Treat with a library of small molecules using a robotic liquid handler. Include appropriate positive and negative controls on every plate.

- Staining and Fixation: At the desired endpoint, fix cells and stain with fluorescent dyes or antibodies for key phenotypic markers (e.g., nuclei, cytoskeleton, a specific pathogenic protein).

- Image Acquisition: Use a confocal high-content imager (e.g., ImageXpress Micro Confocal) to automatically capture high-resolution images from each well.

- Image and Data Analysis: Apply high-content analysis (HCA) algorithms to extract quantitative data on 300+ phenotypic features (e.g., cell count, organoid size, protein intensity, texture). Use machine learning to classify hits based on their multiparameter profiles.

Protocol 2: A Workflow for Phenotypic Protein Degrader Discovery (PPDD)

This specialized PDD protocol aims to find compounds that induce the degradation of a target protein [20].

- Assay Development: Establish a cell-based reporter assay where the phenotype is directly linked to the loss of the target protein (e.g., a luminescence-based survival signal or fluorescence from a tagged target).

- Primary Screening: Screen a focused library that includes compounds known to engage E3 ligases (e.g., molecular glues) or bifunctional degraders (PROTACs).

- Hit Triage:

- Confirmatory Assay: Use Western blotting or immunofluorescence to validate that active compounds reduce the target protein level, not just its activity.

- Counter-Screen: Test hits in cells lacking the candidate E3 ligase or the target protein to confirm mechanism-specific activity.

- Target and E3 Ligase Deconvolution: For novel degraders, use techniques like thermal protein profiling, ubiquitin proteomics, and CRISPRi to identify the degraded target and the recruited E3 ligase [20].
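The hit triage logic can be written down as a short decision function. This is a sketch only: the function name, the input definitions (fraction of target protein remaining relative to vehicle, and the compound's phenotypic effect in wild-type versus E3-ligase-knockout cells), and both thresholds are assumptions chosen for illustration.

```python
def triage_hit(protein_remaining, effect_wt, effect_e3_ko,
               max_remaining=0.5, max_ko_fraction=0.5):
    """Classify a primary hit using the confirmatory assay and the counter-screen."""
    if effect_wt <= 0:
        raise ValueError("hit must show a positive effect in wild-type cells")
    # Confirmatory assay: the target protein level must actually drop.
    if protein_remaining > max_remaining:
        return "activity change without degradation"
    # Counter-screen: the effect should depend on the candidate E3 ligase.
    if effect_e3_ko / effect_wt > max_ko_fraction:
        return "protein loss independent of candidate E3"
    return "candidate degrader"

# Hypothetical hits: (protein remaining, effect in WT, effect in E3 knockout).
print(triage_hit(0.20, 0.80, 0.05))
print(triage_hit(0.90, 0.80, 0.05))
print(triage_hit(0.20, 0.80, 0.70))
```

Hits in the second category may act by inhibition rather than degradation, and hits in the third may reduce protein levels through another ligase, transcriptional effects, or toxicity; both need follow-up before deconvolution.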

The Scientist's Toolkit: Key Research Reagent Solutions

Table: Essential Materials for Advanced PDD Campaigns

| Reagent / Material | Function in PDD | Specific Application Example |
|---|---|---|
| Patient-Derived Organoids | 3D in vitro models that closely mimic the morphology, genetics, and physiology of native human tissue. | Screening for cancer therapeutics with high predictive value for patient drug response [19]. |
| CRISPR Knockout/Knockin Libraries | Functional genomics tool for systematic gene perturbation to identify genes essential for a phenotype or for target deconvolution. | Identifying which gene loss rescues a disease phenotype or which E3 ligase is required for a degrader's activity [20]. |
| Connectivity Map (CMap) Database | A public resource of gene expression profiles from cells treated with bioactive small molecules. | Using AI to compare a disease signature or a hit compound's signature to CMap to predict MoA or find repurposing opportunities [21] [22]. |
| Bifunctional Degraders (PROTACs) | Molecules with one ligand that binds a target protein and another that binds an E3 ubiquitin ligase, linked together to induce target degradation. | Targeting previously "undruggable" proteins for degradation by the proteasome [21] [20]. |

PDD Experimental Workflow and Signaling Pathways

The following diagram illustrates a generalized, modern PDD workflow that integrates AI and multi-omics for target deconvolution and hit validation.

Diagram 1: Generalized PDD workflow.

The next diagram visualizes how a phenotypic hit can lead to the discovery of unprecedented mechanisms of action, expanding the druggable genome beyond traditional targets.

Diagram 2: PDD reveals novel MoAs and targets.

This guide is framed within a broader discussion of target identification challenges in phenotypic screening. Phenotypic Drug Discovery (PDD) has re-emerged as a powerful strategy for generating first-in-class medicines, accounting for a disproportionate number of these therapies compared with target-based approaches [1]. The guide provides troubleshooting support and foundational knowledge for researchers navigating the path from phenotypic screen to validated drug target, illustrated by landmark success stories including ivacaftor, lumacaftor, daclatasvir, and risdiplam.

The PDD Advantage and Its Core Challenge

Why use Phenotypic Screening? PDD allows for the discovery of novel molecular targets and mechanisms of action (MoA) without a pre-specified target hypothesis. It expands the "druggable target space" to include unexpected cellular processes like pre-mRNA splicing, protein folding, and trafficking, as well as novel target classes [1]. However, a central paradox exists: while an understanding of a drug's mechanism is not required for regulatory approval, it is crucial for derisking safety and mapping a clinical path [23]. The following FAQs address the specific challenges you may encounter in this process.

Frequently Asked Questions (FAQs) & Troubleshooting Guides

FAQ 1: How do we proceed when a phenotypic hit has no known molecular target?

Challenge: You have a confirmed hit from a phenotypic screen that robustly reverses the disease phenotype, but the molecular target is completely unknown. This is a common starting point in PDD.

Troubleshooting Guide:

- Step 1: Profile the Compound's Signature. Use multi-omics approaches (transcriptomics, proteomics, metabolomics) to generate a detailed signature of the hit compound. Compare this signature to databases of compound profiles (e.g., Connectivity Map) to identify similar compounds with known MoAs, which can provide testable hypotheses [22] [24].

- Step 2: Employ Functional Genomics. Conduct genome-wide CRISPR knockout or RNAi screens in your disease model. Identify genes whose loss either mimics the compound's effect (phenocopy) or reverses it (suppression or resistance), pinpointing potential pathways involved [1].

- Step 3: Use Chemical Biology for Target Identification. Employ affinity-based methods (e.g., affinity chromatography, photoaffinity labeling) using a functionalized version of your hit compound to pull down direct binding partners from a cellular lysate. Mass spectrometry can then identify the bound proteins [1].

- Step 4: Validate Candidate Targets. Use genetic tools (knockdown/knockout) in your disease model to see if modulating the candidate target recapitulates the phenotypic effect of your compound. Crucially, use resistant versions of the target or cell line to confirm the specific interaction is necessary for activity [1].
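Step 1 (signature comparison) can be illustrated with a small similarity ranking. Note the substitution: this sketch uses plain cosine similarity over shared genes, not the connectivity score used by the Connectivity Map itself, and the gene-level log fold changes and reference names are hypothetical.

```python
from math import sqrt

def cosine(sig_a, sig_b):
    """Cosine similarity of two signatures (gene -> log fold change) over shared genes."""
    genes = sorted(set(sig_a) & set(sig_b))
    if not genes:
        raise ValueError("signatures share no genes")
    dot = sum(sig_a[g] * sig_b[g] for g in genes)
    norm_a = sqrt(sum(sig_a[g] ** 2 for g in genes))
    norm_b = sqrt(sum(sig_b[g] ** 2 for g in genes))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)

def rank_references(hit_signature, references):
    """Rank reference compounds from most to least similar to the hit."""
    scored = [(name, cosine(hit_signature, sig)) for name, sig in references.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)

# Hypothetical signatures.
hit = {"CDKN1A": 2.0, "MDM2": 1.5, "MYC": -1.0}
references = {
    "ref_compound_known_moa": {"CDKN1A": 1.8, "MDM2": 1.6, "MYC": -0.8},
    "ref_compound_unrelated": {"CDKN1A": -0.2, "MDM2": 0.1, "MYC": 1.2},
}

ranking = rank_references(hit, references)
for name, score in ranking:
    print(f"{name}: {score:.3f}")
```

A high-ranking reference with a known MoA yields a hypothesis to test in Steps 2 to 4, not a conclusion; strongly negative scores can also be informative, since they point to compounds with opposing effects.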

Case Study: Daclatasvir (HCV NS5A Inhibitor)

Daclatasvir was identified in a phenotypic screen using an HCV replicon system. Its target, the non-structural protein NS5A, had no known enzymatic activity and was an elusive target for years. The MoA was only elucidated after the compound's efficacy was proven in phenotypic models [1] [25].

FAQ 2: Our hit compound appears to engage multiple targets. Is this a liability or an opportunity?

Challenge: Target deconvolution efforts suggest your lead compound interacts with several targets, and you are concerned about potential toxicity (polypharmacology).

Troubleshooting Guide:

- Assess the Disease Context. For complex, polygenic diseases (e.g., CNS disorders, cancer), modulating a single target may be insufficient. Multi-target engagement can be beneficial for efficacy and to combat resistance [1].

- Differentiate On-Target vs. Off-Target Effects. Determine which target interactions are required for the therapeutic effect ("on-target" polypharmacology) and which are unrelated and potentially detrimental. Use structure-activity relationships (SAR) to try to separate efficacy from adverse-effect profiles.

- Consider the Therapeutic Window. Evaluate if the multi-target activity occurs at therapeutically relevant concentrations. Many successful drugs, like imatinib, have a defined polypharmacology profile that contributes to their clinical efficacy [1].

- Leverage the Profile. If the multi-target profile is synergistic for efficacy, it can be a strategic advantage. Focus your development on demonstrating a superior efficacy and safety profile compared to selective agents.

Case Study: Imatinib (BCR-ABL Inhibitor)

Initially designed as a selective inhibitor of the BCR-ABL fusion protein in CML, imatinib was later found to inhibit c-KIT and PDGFR. This polypharmacology was not a liability but proved critical for its activity in other cancers, such as gastrointestinal stromal tumors (GIST) [1].

FAQ 3: How can we improve the clinical translatability of our phenotypic assay results?

Challenge: Hits from a phenotypic screen performed in a simplified cell line model fail to show efficacy in more physiologically relevant models.

Troubleshooting Guide:

- Upgrade Your Disease Model. Move from 2D cell cultures to more complex and physiologically relevant models early in the screening cascade. Utilize 3D organoids, bioengineered tissue models, or organs-on-chips that better mimic human disease pathology and microenvironment [26].

- Incorporate Patient-Derived Materials. Use primary cells or patient-derived induced pluripotent stem cells (iPSCs) to ensure the genetic background of your model is relevant to the human condition [26].

- Implement High-Content Screening (HCS). Use high-content imaging and machine learning (ML) to extract rich, multi-parametric phenotypic data that goes beyond a single readout. This provides a more robust and information-rich dataset for selecting promising compounds [25].

- Use AI/ML for Virtual Screening. Leverage computational frameworks like DrugReflector to prioritize compounds with a higher likelihood of inducing the desired complex phenotype before running expensive and labor-intensive wet-lab screens. This can improve hit rates by an order of magnitude [22].

Case Study: Lumacaftor (CFTR Corrector)

Lumacaftor was discovered using target-agnostic compound screens in cell lines expressing disease-associated CFTR variants (specifically F508del). The use of a disease-relevant cellular model was crucial for identifying a compound that corrected the defective protein folding and trafficking [1] [25].

Experimental Protocols for Key Phenotypic Screening Workflows

Protocol 1: High-Content Phenotypic Screening for a Complex Cellular Phenotype

This protocol is adapted from the approaches that led to the discovery of compounds like risdiplam, where the desired phenotype was a change in SMN2 pre-mRNA splicing [1] [25].

1. Objective: To identify small molecules that modulate a specific disease-relevant phenotypic endpoint (e.g., protein localization, cytoskeletal rearrangement, splicing correction) in a high-throughput manner.

2. Materials:

- Cell Line: A disease-relevant cell model (e.g., patient-derived iPSCs, engineered cell line with a disease mutation).

- Assay Plate: 384-well microplates, optically clear for imaging.

- Staining Reagents:

- Primary Antibody: Specific to your target protein.

- Secondary Antibody: Conjugated to a fluorophore (e.g., Alexa Fluor 488, 555, 647).

- Nuclear Stain: Hoechst 33342 or DAPI.

- Fixative: 4% Paraformaldehyde (PFA).

- Permeabilization Buffer: 0.1% Triton X-100 in PBS.

- Instrumentation: High-content imager (e.g., ImageXpress Micro Confocal, Opera Phenix).

3. Procedure:

   1. Seed cells in 384-well plates at an optimized density and incubate for 24 hours.
   2. Compound Treatment: Treat cells with library compounds (e.g., 1-10 µM final concentration) and controls (positive/negative) for a predetermined time (e.g., 24-72 hours). Include DMSO vehicle controls.
   3. Fixation: Aspirate media and add 4% PFA for 15-20 minutes at room temperature.
   4. Permeabilization and Blocking: Wash with PBS, then permeabilize and block with a solution containing 0.1% Triton X-100 and 1-5% BSA for 1 hour.
   5. Immunostaining:
      * Incubate with primary antibody diluted in blocking buffer for 2 hours at RT or overnight at 4°C.
      * Wash 3x with PBS.
      * Incubate with fluorophore-conjugated secondary antibody and nuclear stain for 1 hour at RT in the dark.
      * Wash 3x with PBS, leaving a final volume of 100 µL PBS.
   6. Image Acquisition: Image plates using a 20x or 40x objective on the high-content imager. Acquire multiple fields per well to ensure statistical robustness.
   7. Image Analysis: Use integrated software (e.g., CellProfiler, IN Carta) to extract features like intensity, texture, morphology, and object count. Train a machine learning classifier to identify the desired phenotype based on positive controls.
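Before a classifier is trained, extracted features are usually normalized against the DMSO vehicle wells on the same plate. The sketch below shows one common way to do this, a robust z-score based on the median and the median absolute deviation (MAD); the protocol does not prescribe this method, and the well names, feature values, and the cutoff of 3 are hypothetical.

```python
from statistics import median

def robust_z(values, reference):
    """Scale values by the median and MAD of the reference (vehicle) wells."""
    center = median(reference)
    mad = median([abs(v - center) for v in reference])
    if mad == 0:
        raise ValueError("reference wells have zero spread")
    scale = 1.4826 * mad  # makes the MAD comparable to a standard deviation
    return [(v - center) / scale for v in values]

# Hypothetical single-feature readout (e.g., mean nuclear intensity per well).
dmso_wells = [100, 102, 98, 101, 99]
compound_wells = {"cmpd_01": 100, "cmpd_02": 115, "cmpd_03": 97}

scores = dict(zip(compound_wells, robust_z(list(compound_wells.values()), dmso_wells)))
flagged = [name for name, z in scores.items() if abs(z) > 3]
print(scores)
print(flagged)
```

Median-based scaling is less sensitive to the occasional outlier well than mean and standard deviation, which matters when a plate contains only a handful of vehicle controls.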

The workflow for this high-content screening is standardized as follows:

Protocol 2: Functional Validation of a Novel Target Post-Deconvolution

Once a candidate target is identified (e.g., via affinity purification), this protocol helps confirm its biological relevance.

1. Objective: To genetically validate that a putative target protein is responsible for the observed phenotypic effect of a small molecule.

2. Materials:

- Cell Line: Disease-relevant cell line used in the original screen.

- sgRNAs: Designed against your candidate target gene and a non-targeting control.

- Lentiviral Packaging System: psPAX2, pMD2.G.

- Puromycin or other appropriate selection antibiotic.

- qPCR Reagents: Primers for target gene and housekeeping genes.

- Western Blot Reagents: Antibodies against target protein and loading control.

3. Procedure:

   1. Generate Knockout Cells:
      * Produce lentivirus containing CRISPR/Cas9 and sgRNAs targeting your gene of interest.
      * Transduce your cell line and select with puromycin for 72 hours.
      * Confirm knockout efficiency via qPCR and Western Blot.
   2. Compound Treatment: Treat the knockout cell line and the wild-type control with your hit compound.
   3. Phenotype Re-assessment: Run the original phenotypic assay on both cell lines.
      * Expected Result for True Target: The phenotypic effect of the compound should be significantly diminished or ablated in the knockout cell line compared to the wild-type control.
   4. Rescue Experiment: Re-introduce a wild-type cDNA of the target gene (resistant to the sgRNA) into the knockout cell line. Demonstrate that this restores sensitivity to the compound, providing strong genetic evidence that the target is required for the compound's activity.
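The expected outcomes of the knockout and rescue experiments can be summarized as a simple interpretation rule. This is a sketch under stated assumptions: each effect is the compound-minus-vehicle difference measured within the same cell line, and the function name and both thresholds are hypothetical choices, not values from the protocol.

```python
def interpret_validation(effect_wt, effect_ko, effect_rescue,
                         loss_threshold=0.3, rescue_threshold=0.7):
    """Interpret compound effects in wild-type, knockout, and rescued cells."""
    if effect_wt <= 0:
        raise ValueError("compound must show a positive effect in wild-type cells")
    ko_fraction = effect_ko / effect_wt          # effect retained without the target
    rescue_fraction = effect_rescue / effect_wt  # effect restored by the cDNA
    if ko_fraction <= loss_threshold:
        if rescue_fraction >= rescue_threshold:
            return "target required for compound activity"
        return "effect lost but not rescued (check for off-target editing)"
    if ko_fraction < rescue_threshold:
        return "partial dependence (possible additional targets)"
    return "target not required"

# Hypothetical effect sizes: (wild-type, knockout, rescue).
print(interpret_validation(1.00, 0.10, 0.90))
print(interpret_validation(1.00, 0.50, 0.95))
print(interpret_validation(1.00, 0.95, 1.00))
```

The "partial dependence" outcome corresponds to the situation where knockout only partly recapitulates the compound's effect, which points toward polypharmacology or pathway-level explanations.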

Key Signaling Pathways and Mechanisms of Action

Diagram 1: Mechanism of Risdiplam in Spinal Muscular Atrophy

Risdiplam, discovered via phenotypic screening, modulates SMN2 pre-mRNA splicing to increase full-length SMN protein levels [1] [25] [27].

Diagram 2: Mechanism of CFTR Correctors (Lumacaftor) and Potentiators (Ivacaftor)

Phenotypic screens identified two classes of drugs that rescue different classes of CFTR mutations in cystic fibrosis [1].

The Scientist's Toolkit: Essential Research Reagent Solutions

The following table details key reagents and their applications in phenotypic screening and target identification, as demonstrated in the cited success stories.

| Research Reagent | Function & Application in PDD |
|---|---|
| Patient-derived iPSCs | Creates physiologically relevant human disease models for screening (e.g., for neurological disorders like SMA) [26]. |
| 3D Organoid Cultures | Provides complex, in vivo-like tissue architecture for more predictive phenotypic assays in organs like liver, gut, and brain [26]. |
| Connectivity Map (CMap) | A public database of drug-induced gene expression profiles used to compare hit compound signatures and generate MoA hypotheses [22]. |
| High-Content Imaging Systems | Automated microscopes that capture multi-parametric data (morphology, intensity, texture) from cell-based assays for rich phenotypic analysis [25]. |
| CRISPR Knockout Libraries | Enables genome-wide functional genomics screens to identify genes critical for a disease phenotype or compound mechanism [1]. |
| Affinity Chromatography Resins | Used to immobilize small-molecule hits and pull down direct binding proteins from cell lysates for target identification (e.g., Streptavidin beads for biotinylated compounds) [1]. |

The success of PDD is evidenced by the number of first-in-class medicines it has produced. The table below summarizes key data on approved drugs discussed in this guide.

| Drug / Compound | Indication | Discovery Approach | Key Molecular Target / MoA |
|---|---|---|---|
| Risdiplam (Evrysdi) | Spinal Muscular Atrophy | Phenotypic screen for SMN2 splicing modification [1] [25] | SMN2 pre-mRNA splicing modifier [1] |
| Ivacaftor/Lumacaftor | Cystic Fibrosis | Target-agnostic screen in CFTR-expressing cells [1] [25] | CFTR potentiator & corrector [1] |
| Daclatasvir (Daklinza) | Hepatitis C | Phenotypic screen using HCV replicon system [1] [25] | NS5A protein inhibitor [1] [25] |
| Vamorolone (Agamree) | Duchenne Muscular Dystrophy | Phenotypic profiling [25] | Dissociative steroidal anti-inflammatory [25] |
| Perampanel (Fycompa) | Epilepsy | Whole-system, multi-parametric modeling [25] | AMPA glutamate receptor antagonist [25] |

Table Footnote: A review of new therapies approved between 1999 and 2017 showed that PDD contributed to 58 out of 171 total drugs, underscoring its significant impact on the development of first-in-class medicines [25].

A Toolkit for Target Deconvolution: From Affinity Capture to AI-Driven Profiling

Technical Foundations: Immobilization Techniques for Affinity-Based Methods

Immobilization is a critical first step in affinity chromatography, forming the foundation upon which the entire experiment rests. The choice of strategy directly impacts the binding capacity, specificity, and overall success of your target identification efforts. The table below summarizes the core techniques.

Table 1: Core Immobilization Techniques for Affinity Chromatography

| Immobilization Method | Interaction or Reaction | Key Advantages | Key Drawbacks & Challenges |
|---|---|---|---|
| Physical Adsorption | Hydrophobic or ionic interactions [28] | Simple and fast; no need for complex chemistry or modified biomolecules [28] | Weak attachment susceptible to desorption by pH/salt changes; random orientation leading to crowding [28] [29] |
| Covalent Binding | Formation of stable covalent bonds (e.g., via -NH₂, -COOH) [28] [30] | Excellent stability and strong binding; suitable for long-term use and harsh elution conditions [28] [30] | Requires specific functional groups on the biomolecule and surface; potential for loss of activity due to improper orientation or conformational changes [28] [30] |
| Affinity Immobilization | Highly specific bioaffinity (e.g., streptavidin-biotin, antibody-antigen) [28] | Improved orientation and functionality; uses biocompatible linkers [28] | Expensive reagents; can still suffer from crowding effects and poor reproducibility [28] |
| Entrapment | Caging within a porous polymer or fiber matrix [29] | Prevents enzyme aggregation and proteolysis [29] | Can cause diffusion limitations, reducing activity; potential for enzyme leakage from the matrix [29] |

Experimental Protocols & Workflows

Protocol 1: Covalent Immobilization of a Small-Molecule Bait via Amine Coupling

This is a common and robust method for immobilizing small molecules that contain primary amine groups (-NH₂) or for coupling to the primary amines of a protein bait.

1. Reagent and Material Preparation:

- Activated Support: Select a resin with appropriate reactive groups, such as NHS-activated Sepharose or an epoxy-activated support [28].

- Immobilization Buffer: 0.1 M sodium phosphate, 0.15 M NaCl, pH 7.4. Ensure no competing primary amines (e.g., Tris or glycine buffers) are present; other nucleophiles such as sodium azide may also interfere with coupling.

- Bait Solution: Prepare your small-molecule or protein bait in the immobilization buffer.

- Blocking Buffer: 0.1 M Tris-HCl, pH 8.0.

- Wash Solutions: High-salt (e.g., 0.1 M sodium phosphate, 1 M NaCl, pH 7.4) and low-pH (e.g., 0.1 M sodium acetate, 0.5 M NaCl, pH 4.0) buffers.
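The buffer recipes above reduce to simple molarity arithmetic (mass = concentration × volume × molar mass). The sketch below illustrates this for the NaCl and Tris components only; the function name `grams_needed` and the chosen volumes are assumptions for illustration. The sodium phosphate component is omitted because it is usually prepared by mixing monobasic and dibasic salts to reach the target pH.

```python
# Molar masses in g/mol (standard values for the named forms).
MOLAR_MASS = {
    "NaCl": 58.44,
    "Tris base": 121.14,
}


def grams_needed(compound: str, molarity: float, volume_l: float) -> float:
    """Mass to weigh out: concentration (mol/L) x volume (L) x molar mass (g/mol)."""
    return molarity * volume_l * MOLAR_MASS[compound]


# 0.15 M NaCl in 1 L of immobilization buffer
nacl_g = grams_needed("NaCl", 0.15, 1.0)
# 1 M NaCl in 0.5 L of high-salt wash
high_salt_g = grams_needed("NaCl", 1.0, 0.5)
# 0.1 M Tris in 0.1 L of blocking buffer (pH is then adjusted to 8.0 with HCl)
tris_g = grams_needed("Tris base", 0.1, 0.1)

print(f"NaCl, immobilization buffer: {nacl_g:.2f} g")
print(f"NaCl, high-salt wash:        {high_salt_g:.2f} g")
print(f"Tris base, blocking buffer:  {tris_g:.2f} g")
```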

2. Immobilization Procedure:

- Step 1. Wash the Resin: Gently wash the activated support with 10-15 bed volumes of cold (4°C) immobilization buffer to remove storage solution.

- Step 2. Coupling Reaction: Incubate the bait solution with the prepared resin slurry for 2-4 hours at room temperature or overnight at 4°C with gentle end-over-end mixing.

- Step 3. Quenching and Blocking: After coupling, centrifuge the resin and collect the supernatant to determine coupling efficiency. Block any remaining active groups by incubating with blocking buffer for 1-2 hours.

- Step 4. Washing: Sequentially wash the resin with at least 10 bed volumes each of immobilization buffer, high-salt wash, and low-pH wash to remove non-covalently bound bait and other contaminants.

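3. Confirmation and Storage:

- Confirm coupling by comparing the bait concentration in the supernatant collected after coupling with that of the input bait solution.

- Store the prepared affinity resin as a 50% slurry in a storage buffer (e.g., PBS with 0.02% sodium azide) at 4°C.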

Protocol 2: Standard Affinity Purification Workflow for Target Identification

This protocol describes the general process of using your immobilized bait to pull down interacting proteins from a complex mixture, such as a cell lysate.

1. Sample and Buffer Preparation:

- Binding/Wash Buffer: Use a physiologic buffer like Phosphate Buffered Saline (PBS) or Tris-Buffered Saline (TBS), pH 7.4. Optionally, add a mild non-ionic detergent (e.g., 0.1% Triton X-100) to minimize nonspecific binding [31].

- Elution Buffers: Prepare one or more of the following based on your bait-target system [31]:

- Low-pH Elution: 0.1 M glycine•HCl, pH 2.5-3.0 (immediately neutralize fractions with 1 M Tris, pH 8.5).

- High-Salt Elution: 3.5–4.0 M magnesium chloride in 10 mM Tris, pH 7.0.

- Competitive Elution: A high concentration (e.g., >0.1 M) of a soluble ligand that competes for binding.

- Cell Lysate: Prepare a clarified lysate in binding buffer from relevant cells or tissues. Pre-clear with bare resin if high nonspecific binding is observed.

2. Affinity Purification Procedure:

- Step 1. Equilibration: Equilibrate your prepared affinity resin with 10-15 bed volumes of binding buffer.

- Step 2. Binding: Incubate the pre-cleared lysate with the resin for 1-2 hours at 4°C with gentle mixing.

- Step 3. Washing: Wash the resin with 15-20 bed volumes of binding/wash buffer to remove unbound and weakly associated proteins.

- Step 4. Elution: Apply 3-5 bed volumes of your chosen elution buffer and collect fractions.

- Step 5. Regeneration (Optional): Wash the resin with a stripping buffer (e.g., 6 M guanidine•HCl) and re-equilibrate with storage buffer if re-use is intended.
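Because the procedure is specified in bed volumes, the actual buffer volumes scale with the amount of resin used. A small sketch of that conversion follows; the bed-volume ranges are taken from the steps above, while the function name `plan_volumes` and the 0.5 mL bed volume are illustrative assumptions.

```python
# (step name, minimum bed volumes, maximum bed volumes) from the procedure above
STEP_BED_VOLUMES = [
    ("equilibration", 10, 15),
    ("wash", 15, 20),
    ("elution", 3, 5),
]


def plan_volumes(bed_volume_ml: float) -> dict[str, tuple[float, float]]:
    """Convert bed-volume ranges into (min_mL, max_mL) for a given resin bed volume."""
    if bed_volume_ml <= 0:
        raise ValueError("bed volume must be positive")
    return {name: (lo * bed_volume_ml, hi * bed_volume_ml)
            for name, lo, hi in STEP_BED_VOLUMES}


# Example: a 0.5 mL resin bed
plan = plan_volumes(0.5)
for step, (lo, hi) in plan.items():
    print(f"{step}: {lo:.1f}-{hi:.1f} mL")
```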

3. Downstream Analysis:

- Analyze eluted fractions by SDS-PAGE and silver staining or Coomassie staining.

- Identify specific binding partners using mass spectrometry (e.g., LC-MS/MS).

Troubleshooting Guide: Addressing Common Experimental Issues

FAQ 1: My affinity purification results in a high background of nonspecific proteins. How can I improve specificity?

- Problem: Numerous proteins bind to the resin regardless of the specific bait.

- Solutions:

- Optimize Wash Stringency: Increase the salt concentration (e.g., up to 500 mM NaCl) or add a mild non-ionic detergent (e.g., 0.1% Tween-20) to your wash buffer to disrupt weak, nonspecific interactions [31].

- Include a Control Resin: Always run a parallel experiment with a "dummy" resin (e.g., immobilized with an irrelevant molecule or just the blocked support). Proteins recovered at similar levels in both the experimental and control eluates are most likely nonspecific binders.

- Pre-clear the Lysate: Pass your lysate over the control resin before incubating with the bait resin to remove proteins that stick nonspecifically.

- Reduce Bait Density: Over-crowding of the bait on the resin can promote nonspecific binding. Reduce the coupling concentration to ensure proper orientation and accessibility [28].
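The control-resin comparison described above can be sketched as a simple enrichment filter on mass spectrometry results. This is an illustrative sketch only: it assumes spectral counts per protein are available for both eluates, and the protein names, the pseudocount, and the 5-fold threshold are hypothetical choices rather than recommended values.

```python
def specific_binders(bait_counts: dict[str, int], control_counts: dict[str, int],
                     min_fold: float = 5.0, pseudocount: float = 1.0) -> list[tuple[str, float]]:
    """Return (protein, fold enrichment) for proteins enriched on the bait resin.

    Fold enrichment = (bait + pseudocount) / (control + pseudocount); the pseudocount
    avoids division by zero for proteins absent from the control eluate.
    """
    hits = []
    for protein, bait in bait_counts.items():
        control = control_counts.get(protein, 0)
        fold = (bait + pseudocount) / (control + pseudocount)
        if fold >= min_fold:
            hits.append((protein, fold))
    # Most strongly enriched proteins first
    return sorted(hits, key=lambda hit: hit[1], reverse=True)


# Hypothetical spectral counts from bait and control eluates
bait = {"KINASE_X": 40, "HSP70": 55, "TUBB": 30, "TARGET_Y": 12}
control = {"HSP70": 50, "TUBB": 28}

hits = specific_binders(bait, control)
for protein, fold in hits:
    print(f"{protein}: {fold:.1f}-fold over control")
```

Here the abundant "sticky" proteins (HSP70, TUBB) appear in both eluates at similar levels and are filtered out, while proteins seen only on the bait resin are retained as candidates for follow-up validation.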

FAQ 2: I suspect my target protein is binding but not eluting efficiently. What are my options?

- Problem: The target is retained on the column and cannot be recovered for analysis.

- Solutions:

- Try a Harsher Elution Condition: If gentle, competitive elution fails, use a stronger method. A step-wise elution with low pH (e.g., 0.1 M glycine, pH 2.5) or a chaotropic agent (e.g., 2 M MgCl₂) can be effective, but may denature the protein [31].

- Test Different Elution Strategies: If the interaction is very high-affinity, use a competitive ligand. Alternatively, elute with SDS-PAGE sample buffer to recover everything bound to the resin for western blot analysis.

- Verify Bait Integrity: Ensure your immobilized bait has not degraded or leached from the resin during the procedure, which would leave nothing to elute.

FAQ 3: After covalent immobilization, my bait seems to have lost its activity. What could have gone wrong?

- Problem: The bait is immobilized but is no longer functional.

- Solutions:

- Consider Orientation: Covalent coupling may have occurred through a functional group critical for target binding. If possible, use a different immobilization chemistry (e.g., couple via a carboxyl group if amine coupling fails) or introduce a spacer arm to provide more mobility and access [28] [30].

- Reduce Coupling Density: High density can lead to steric hindrance, where bait molecules are too close together for the large target protein to access [28]. Optimize the amount of bait used during coupling.

- Use a Milder Chemistry: Explore alternative, gentler covalent methods (e.g., Schiff base formation) or switch to a high-affinity, non-covalent method like streptavidin-biotin, which often preserves functionality [28].

The Scientist's Toolkit: Essential Research Reagents

Table 2: Key Reagent Solutions for Affinity-Based Target Identification

| Reagent / Material | Function & Role in the Experiment |

|---|---|

| Activated Chromatography Resins | The solid support (e.g., beaded agarose) pre-activated with chemical groups (e.g., NHS, epoxy) for covalent ligand immobilization [28] [31]. |

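| Biotinylated Bait & Streptavidin Resin | A versatile affinity pair. The small molecule or protein bait is biotinylated and captured onto immobilized streptavidin, allowing for uniform orientation [28]. Because the streptavidin-biotin interaction is essentially irreversible under mild conditions, gentle release with excess biotin generally requires a weaker-binding variant such as a desthiobiotin tag or a monomeric avidin resin. |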

| Elution Buffers (Glycine, Competitors) | Solutions used to dissociate the target from the immobilized bait. They work by altering pH, ionic strength, or by competition, enabling recovery of the purified target [31]. |

| Chaotropic Agents (e.g., Guanidine•HCl) | Denaturing agents used in harsh elution conditions or for resin regeneration. They disrupt protein structure by breaking hydrogen bonds, effectively dissociating even very strong interactions [31]. |

| Protease Inhibitor Cocktails | Essential additives in cell lysis and binding buffers to prevent proteolytic degradation of your target protein and the immobilized bait during the purification process. |

Workflow Visualization

The following diagram illustrates the logical workflow and decision points for a direct target identification project using affinity chromatography, set within the context of phenotypic screening.

Phenotypic screening is a powerful method for identifying compounds that produce a desired therapeutic effect. However, a significant challenge, known as target deconvolution, follows: identifying the specific molecular targets responsible for the observed phenotype [32] [2]. Chemoproteomics has emerged as a crucial discipline to address this challenge, providing a suite of techniques to directly profile protein interactions of small molecules within complex biological systems [32] [33].

This technical support center focuses on two cornerstone chemoproteomic methods—Activity-Based Protein Profiling (ABPP) and Photoaffinity Labeling (PAL). These techniques enable researchers to move from a phenotypic observation to a mechanistic understanding, identifying drug targets and binding sites, which is essential for lead optimization and understanding mechanisms of action [32] [34].

Core Methodologies and Experimental Protocols

Activity-Based Protein Profiling (ABPP)

ABPP is a method for chemically interrogating the proteome of a cell using designed small-molecule probes that covalently bind to active enzymes based on their catalytic mechanism [35].

Detailed Protocol: ABPP Workflow

Probe Design: Design or select an activity-based probe (ABP). An ABP typically consists of three key elements:

- Reactive Group (Warhead): A covalent-binding group that selectively targets a specific enzyme class or nucleophilic amino acid (e.g., cysteine) [33].

- Linker Group: A spacer that provides flexibility and distance between the warhead and the tag.

- Reporter Tag: A handle for detection and/or enrichment, such as an alkyne for subsequent bioorthogonal "click chemistry" conjugation to a fluorophore (e.g., for gel-based analysis) or biotin (for affinity purification) [36].

Probe Labeling: Incubate the ABP with live cells or a complex proteome. Labeling in live cells allows the probe to engage with its protein targets in a native physiological environment, preserving cellular context and protein complexes [33].

Conjugation via Click Chemistry (if a bioorthogonal handle is used): After the labeling reaction, perform a copper-catalyzed azide-alkyne cycloaddition (CuAAC) "click" reaction to conjugate the reporter tag (e.g., biotin-azide or fluorescent dye-azide) to the alkyne-bearing probe that is now covalently attached to its protein targets [37].

Detection and Analysis:

- For Fluorescent Tags: Separate proteins by SDS-PAGE and visualize labeled proteins using in-gel fluorescence scanning.

- For Affinity Tags (e.g., Biotin): Solubilize the labeled proteome and incubate with streptavidin-conjugated beads to enrich probe-labeled proteins. After thorough washing, the bound proteins are eluted and identified by liquid chromatography-tandem mass spectrometry (LC-MS/MS) [36].
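The probe architecture and the two readout branches described above can be summarized in a short sketch. The class and function names are hypothetical, and the iodoacetamide warhead and PEG linker are illustrative example values, not a recommendation from the cited sources.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ActivityBasedProbe:
    warhead: str  # reactive group, e.g. a cysteine-reactive electrophile
    linker: str   # spacer between warhead and tag
    tag: str      # "alkyne" (bioorthogonal handle), "fluorophore", or "biotin"


def workflow_steps(probe: ActivityBasedProbe, readout: str) -> list[str]:
    """List the ABPP steps for a probe and a readout ('gel' or 'ms')."""
    if readout not in ("gel", "ms"):
        raise ValueError("readout must be 'gel' or 'ms'")
    steps = ["incubate probe with live cells or proteome"]
    if probe.tag == "alkyne":
        # A bioorthogonal handle needs a click step to attach the reporter
        reporter = "fluorescent dye-azide" if readout == "gel" else "biotin-azide"
        steps.append(f"CuAAC click reaction with {reporter}")
    if readout == "gel":
        steps += ["SDS-PAGE", "in-gel fluorescence scanning"]
    else:
        steps += ["streptavidin bead enrichment", "wash", "elute", "LC-MS/MS"]
    return steps


# Illustrative probe: cysteine-reactive warhead with an alkyne handle
probe = ActivityBasedProbe(warhead="iodoacetamide", linker="PEG", tag="alkyne")
ms_steps = workflow_steps(probe, "ms")
for number, step in enumerate(ms_steps, start=1):
    print(f"{number}. {step}")
```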

The following diagram illustrates the ABPP workflow using a biotin-azide tag and streptavidin enrichment.

Photoaffinity Labeling (PAL)

PAL is a powerful strategy to study non-covalent and transient interactions, such as protein-protein interactions (PPIs) or protein-ligand interactions, by using photoreactive groups to capture these interactions covalently [37] [36].

Detailed Protocol: PAL Workflow

Photoprobe Design: Synthesize a probe containing:

- A Ligand: A small molecule or peptide with known or suspected bioactivity and binding affinity.

- A Photoreactive Group: A chemically inert moiety that, upon UV irradiation, generates a highly reactive species. The three most common groups are diazirines, benzophenones (BP), and aryl azides [37] [36].

- An Affinity or Reporter Tag: Similar to ABPP, this is often an alkyne handle for post-labeling conjugation via click chemistry.

Interaction and Crosslinking: Incubate the photoprobe with the biological system (e.g., purified protein, cell lysate, or live cells) to allow formation of non-covalent interactions. Subsequently, irradiate the sample with UV light at a wavelength matched to the photoreactive group (typically about 350-365 nm for diazirines and benzophenones, and shorter wavelengths for simple aryl azides) to activate it, forming a covalent bond (the "photoadduct") with nearby interacting biomolecules [36].

Sample Processing and Enrichment: Lyse the cells (if working with live cells). Perform click chemistry with biotin-azide to tag the photoadducts. Use streptavidin beads to capture and enrich the biotinylated proteins, followed by rigorous washing to remove non-specifically bound proteins [37].

Protein Identification and Analysis: Digest the enriched proteins on-bead with trypsin. Analyze the resulting peptides by LC-MS/MS to identify the crosslinked protein partners. For binding site mapping, analyze the MS/MS spectra for peptides containing the crosslink [37].
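For binding site mapping, the search for crosslinked peptides relies on the mass shift the probe adds to a peptide. The sketch below estimates that shift under simplifying assumptions: diazirines and aryl azides lose N₂ on photoactivation, benzophenones add without a neutral loss, and the probe mass supplied already includes any clicked reporter that remains attached. All function names and numeric inputs are hypothetical.

```python
N2_MONO = 28.006148   # monoisotopic mass of N2 (Da)
PROTON = 1.007276     # mass of a proton (Da)

# Neutral loss on photoactivation, by photoreactive group (simplified model)
NEUTRAL_LOSS = {
    "diazirine": N2_MONO,     # carbene formed after loss of N2
    "aryl azide": N2_MONO,    # nitrene formed after loss of N2
    "benzophenone": 0.0,      # adds without a neutral loss
}


def adduct_mass_shift(probe_mono_mass: float, photogroup: str) -> float:
    """Mass added to a peptide by the crosslinked probe (Da)."""
    return probe_mono_mass - NEUTRAL_LOSS[photogroup]


def modified_peptide_mz(peptide_mono_mass: float, probe_mono_mass: float,
                        photogroup: str, charge: int) -> float:
    """Expected m/z of the probe-modified peptide at a given positive charge state."""
    if charge < 1:
        raise ValueError("charge must be a positive integer")
    neutral_mass = peptide_mono_mass + adduct_mass_shift(probe_mono_mass, photogroup)
    return (neutral_mass + charge * PROTON) / charge


# Hypothetical example: 1000.5 Da peptide, 350.2 Da diazirine probe, 2+ ion
shift = adduct_mass_shift(350.2, "diazirine")
mz = modified_peptide_mz(1000.5, 350.2, "diazirine", charge=2)
print(f"Mass shift: {shift:.4f} Da, expected m/z (2+): {mz:.4f}")
```

In practice this shift would be entered as a variable modification in the database search software; real photoadducts can also undergo side reactions that this simple model does not cover.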

The workflow for identifying protein partners using PAL-MS is summarized below.

The Scientist's Toolkit: Key Research Reagent Solutions

The following table details essential reagents and their functions in ABPP and PAL experiments.

| Item | Function in Experiment | Key Considerations |

|---|---|---|

| Covalent Small-Molecule Library [33] | A collection of compounds designed to covalently bind to protein targets; used for screening. | Library quality is critical. Compounds should have a reactive "warhead" and be designed to minimize non-specific reactivity (e.g., exclude pan-assay interference compounds, PAINS). |

| Photoaffinity Probes [37] [36] | Molecules containing a photoreactive group (e.g., diazirine, benzophenone) used in PAL to capture transient interactions. | The photoreactive group should be small to avoid disrupting native interactions and must have a well-characterized activation wavelength and reactivity. |