Systems Chemical Biology: Integrating Network Biology and Cheminformatics for Advanced Drug Discovery

Abstract

This article explores the integration of systems biology and chemical biology, a field termed 'systems chemical biology.' Aimed at researchers, scientists, and drug development professionals, it examines how this interdisciplinary fusion uses cheminformatics, multi-omics data, and network analysis to understand how small molecules perturb complex biological systems. The content covers foundational concepts, methodological applications in target identification and therapeutic optimization, strategies for overcoming data integration and technical challenges, and validation through case studies in precision medicine. The article concludes by synthesizing key takeaways and outlining future directions for transforming biomedical research and clinical translation.

From Reductionist to Comprehensive: Defining the Systems Chemical Biology Paradigm

The field of biomedical research has undergone a fundamental transformation, evolving from a traditional reductionist focus on single targets toward an integrated, holistic approach. This paradigm shift recognizes that complex diseases arise from perturbations in interconnected biological networks rather than isolated molecular defects. The integration of systems biology with chemical biology represents the vanguard of this transformation, creating a powerful framework for understanding disease complexity and accelerating therapeutic development [1] [2].

Traditional pharmaceutical research often developed highly potent compounds against specific biological mechanisms, yet frequently struggled to demonstrate clinical benefit. The new paradigm instead uses multidisciplinary teams and parallel processes to understand the underlying biology as a whole [1]. This approach addresses the fundamental challenge in drug development: translating laboratory success into genuine clinical efficacy for patients. By examining biological functions across multiple levels, from molecular interactions to population-wide effects, researchers can develop more predictive models of human disease and more effective therapeutic strategies [1].

The Historical Evolution: From Single-Target to Network-Based Approaches

The Limitations of Traditional Approaches

The latter half of the 20th century saw pharmaceutical companies increasingly producing potent compounds targeting specific biological mechanisms, particularly focusing on discrete target classes including G-protein coupled receptors (45%), enzymes (25%), ion channels (15%), and nuclear receptors (approximately 2%) [1]. Despite this target-focused approach, the industry faced a significant obstacle: demonstrating clinical benefit in patients. This challenge of translating compound potency into therapeutic efficacy revealed the critical limitations of single-target thinking and paved the way for transformative changes in drug development [1].

The traditional linear approach to drug discovery often relied on trial-and-error methods, including high-throughput technologies that failed to account for system-level complexity. This methodological gap became particularly evident with the Kefauver-Harris Amendment in 1962, which demanded proof of efficacy from adequate and well-controlled clinical trials, thereby dividing Phase II clinical evaluation into two components: Phase IIa (finding a potential disease in which the potential drug would work) and Phase IIb/Phase III (demonstrating statistical proof of efficacy and safety) [1]. This regulatory environment highlighted the need for more predictive approaches that could bridge the chasm between laboratory observations and clinical outcomes.

The Rise of Integrative Frameworks

The emergence of clinical biology represented an early organized effort to bridge relationships and foster teamwork between preclinical physiologists, pharmacologists, and clinical pharmacologists [1]. This approach, pioneered by pharmaceutical companies like Ciba (now Novartis), established four key steps based on Koch's postulates to indicate potential clinical benefits of new agents:

- Identify a disease parameter (biomarker)

- Show that the drug modifies that parameter in an animal model

- Show that the drug modifies the parameter in a human disease model

- Demonstrate a dose-dependent clinical benefit that correlates with similar change in direction of the biomarker [1]

This framework marked a significant advancement toward translational physiology, focusing on identifying human disease models and biomarkers that could more easily demonstrate drug effects before progressing to costly Phase IIb and III trials. The subsequent development of the chemical biology platform around the year 2000 took advantage of genomics information, combinatorial chemistry, improvements in structural biology, high-throughput screening, and various cellular assays that could be genetically manipulated to find and validate targets and leads [1].

Technical Integration: How Systems Biology and Chemical Biology Converge

Multi-Omics Data Integration

The holistic approach leverages diverse omics technologies to capture molecular information across multiple biological layers, enabling researchers to construct comprehensive network models rather than focusing on linear pathways.

Table 1: Omics Technologies in Integrated Drug Discovery

| Data Type | Technologies | Data Output | Applications in Drug Discovery |
|---|---|---|---|
| Genomics | GWAS, NGS, Epigenomic arrays | Genetic variants, DNA methylation patterns | Target identification, patient stratification [2] |
| Transcriptomics | Microarrays, RNA-Seq | Gene expression profiles, miRNA signatures | Mechanism of action, biomarker discovery [2] |
| Proteomics | Mass spectrometry, Protein arrays | Protein expression, post-translational modifications | Target engagement, signaling networks [2] |
| Metabolomics | LC/MS, GC/MS | Metabolite concentrations, flux measurements | Pharmacodynamic biomarkers, toxicity prediction [2] |

The integration of these diverse data types enables the construction of causal network models—representations of biological systems as objects and the directed causal relations between them [2]. Unlike traditional correlation-based approaches, these models can predict how perturbations at one network node will propagate through the system, offering tremendous power for understanding drug effects.
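As a minimal sketch of how such a model predicts downstream effects, the Python snippet below propagates the sign of a perturbation through a small signed, directed graph. The node names (`KinaseA`, `TF_B`, `Gene_C`, `Gene_D`), the edge signs, and the `propagate` helper are hypothetical. The rule that each node takes the sign of the first path that reaches it is a simplifying assumption; real causal network models also weigh conflicting paths and evidence strength.

```python
from collections import deque

# Hypothetical signed causal edges: (source, target, sign).
# +1 means the source increases the target, -1 means it decreases it.
CAUSAL_EDGES = [
    ("KinaseA", "TF_B", +1),
    ("TF_B", "Gene_C", -1),
    ("TF_B", "Gene_D", +1),
]


def propagate(edges, perturbed_node, direction):
    """Predict the direction of change (+1 up, -1 down) for every node
    downstream of a perturbed node, using breadth-first traversal."""
    downstream = {}
    for source, target, sign in edges:
        downstream.setdefault(source, []).append((target, sign))

    predicted = {perturbed_node: direction}
    queue = deque([perturbed_node])
    while queue:
        node = queue.popleft()
        for target, sign in downstream.get(node, []):
            if target not in predicted:  # keep the first (shortest-path) call
                predicted[target] = predicted[node] * sign
                queue.append(target)
    return predicted


# Inhibiting KinaseA (direction = -1) is predicted to lower TF_B and Gene_D
# and to raise Gene_C, which TF_B represses in this toy model.
effects = propagate(CAUSAL_EDGES, "KinaseA", -1)
print(effects)
```

A correlation-based view could only report that these nodes move together; the signed, directed edges are what allow a direction of change to be predicted for each one.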

Network Analysis and Computational Methods

The conversion of large omics datasets into biological knowledge requires sophisticated computational techniques that reduce data complexity while preserving biologically meaningful patterns. These methods connect results to external knowledge and integrate multiple data sources to generate testable hypotheses.

Table 2: Computational Methods for Systems-Chemical Biology Integration

| Method Category | Specific Techniques | Applications | Key Advantages |
|---|---|---|---|
| Data Reduction | PCA, clustering, filtering | Handling multivariate datasets | Identifies patterns in high-dimensional data [2] |
| Network Analysis | Co-expression, Bayesian, causal | Modeling biological interactions | Captures system-level properties [2] |
| Knowledge-Based | Pathway enrichment, GO analysis | Connecting data to prior knowledge | Leverages existing biological information [2] |
| Integration Methods | Classifiers, multi-omics fusion | Combining data types | Provides comprehensive biological view [2] |

These computational approaches enable researchers to move beyond the limitations of studying individual components in isolation, instead modeling the complex interactions and emergent properties that characterize living systems. The resulting network models provide a more accurate representation of biological reality than traditional reductionist approaches.

Experimental Methodologies for Holistic Drug Discovery

Target Identification and Validation Protocols

Protocol 1: Integrated Network-Based Target Identification

- Data Collection: Gather disease-relevant multi-omics data (transcriptomics, proteomics, epigenomics) from appropriate model systems or patient samples [2].

- Network Construction: Build gene co-expression or protein-protein interaction networks using algorithms such as Weighted Gene Co-expression Network Analysis (WGCNA) or ARACNE [2].

- Module Identification: Apply community detection algorithms to identify densely connected network modules associated with disease phenotypes.

- Prioritization: Rank candidate targets based on network topology metrics (degree, betweenness centrality) and functional annotation using enrichment analysis tools [2].

- Experimental Validation: Use CRISPR-based gene editing or RNA interference in relevant cellular models to perturb candidate targets and assess impact on disease-relevant phenotypes.
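The prioritization step can be illustrated with a short, dependency-free Python sketch that computes degree and betweenness centrality on a toy interaction network and ranks nodes by them. The node names (`G1` to `G5`), the edge list, and the choice to sort by betweenness first and degree second are all assumptions made for illustration; in practice a library such as NetworkX would normally be used, and topology would be combined with functional annotation.

```python
from collections import deque


def build_adjacency(edges):
    """Turn an undirected edge list into a node -> set(neighbours) map."""
    adjacency = {}
    for u, v in edges:
        adjacency.setdefault(u, set()).add(v)
        adjacency.setdefault(v, set()).add(u)
    return adjacency


def degree_centrality(adjacency):
    """Fraction of the other nodes that each node is directly connected to."""
    n = len(adjacency)
    return {node: len(nbrs) / (n - 1) for node, nbrs in adjacency.items()}


def betweenness_centrality(adjacency):
    """Unnormalized betweenness for an unweighted, undirected graph
    (Brandes' algorithm: one breadth-first search per source node)."""
    score = {node: 0.0 for node in adjacency}
    for source in adjacency:
        order = []                                  # nodes in BFS visiting order
        preds = {node: [] for node in adjacency}    # shortest-path predecessors
        n_paths = {node: 0 for node in adjacency}   # number of shortest paths
        dist = {node: -1 for node in adjacency}
        n_paths[source], dist[source] = 1, 0
        queue = deque([source])
        while queue:
            v = queue.popleft()
            order.append(v)
            for w in adjacency[v]:
                if dist[w] < 0:
                    dist[w] = dist[v] + 1
                    queue.append(w)
                if dist[w] == dist[v] + 1:
                    n_paths[w] += n_paths[v]
                    preds[w].append(v)
        dependency = {node: 0.0 for node in adjacency}
        for w in reversed(order):                   # back-propagate dependencies
            for v in preds[w]:
                dependency[v] += n_paths[v] / n_paths[w] * (1 + dependency[w])
            if w != source:
                score[w] += dependency[w]
    # Each unordered pair was counted once from each endpoint.
    return {node: value / 2 for node, value in score.items()}


# Hypothetical toy network.
TOY_EDGES = [("G1", "G2"), ("G1", "G3"), ("G1", "G4"), ("G4", "G5")]
network = build_adjacency(TOY_EDGES)
degree = degree_centrality(network)
betweenness = betweenness_centrality(network)
ranked = sorted(network, key=lambda g: (betweenness[g], degree[g]), reverse=True)
print(ranked)
```

In this toy graph `G1` ranks first because it has the most neighbours and sits on the most shortest paths, followed by `G4`, which bridges `G5` to the rest of the network.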

Protocol 2: BioMAP Phenotypic Profiling Platform

- Primary Cell Systems: Establish co-cultures of primary human cell types (endothelial cells, peripheral blood mononuclear cells, fibroblasts) to model disease-specific tissue environments [2].

- Compound Treatment: Expose BioMAP systems to reference compounds and experimental small molecules across multiple concentrations.

- Multiparameter Readouts: Quantify protein biomarkers using ELISA or multiplexed immunoassays, capturing diverse biological responses [2].

- Profile Database: Compare compound-induced profiles to an extensive database of reference profiles using pattern-matching algorithms.

- Mechanism Prediction: Identify potential mechanisms of action and secondary activities based on similarity to reference compound profiles [2].
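The profile-comparison step can be sketched as a nearest-neighbour search over reference profiles. The source does not specify which pattern-matching algorithm the platform uses, so the Pearson correlation below is an assumption chosen for simplicity. The reference names and the four-readout profiles are hypothetical values, not measured data.

```python
from math import sqrt


def pearson(x, y):
    """Pearson correlation between two equal-length readout vectors."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sd_x = sqrt(sum((a - mean_x) ** 2 for a in x))
    sd_y = sqrt(sum((b - mean_y) ** 2 for b in y))
    if sd_x == 0 or sd_y == 0:
        return 0.0  # a flat profile carries no pattern to match
    return cov / (sd_x * sd_y)


def rank_matches(query, references):
    """Return (reference name, correlation) pairs, best match first."""
    scores = {name: pearson(query, profile) for name, profile in references.items()}
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


# Hypothetical log-ratio readouts for four biomarkers (compound vs. vehicle).
REFERENCE_PROFILES = {
    "reference_A": [-0.9, 0.2, -0.4, 0.2],
    "reference_B": [0.7, -0.2, 0.4, -0.1],
}
query_profile = [-0.8, 0.1, -0.5, 0.3]

matches = rank_matches(query_profile, REFERENCE_PROFILES)
print(matches)
```

A high correlation with a reference compound only suggests a shared mechanism; it is a hypothesis for follow-up rather than a confirmation.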

Lead Optimization and Safety Assessment

Protocol 3: Systems Pharmacology Lead Optimization

- Pathway Modulation Assessment: Evaluate compound effects on key signaling pathways using phosphoproteomics and high-content imaging [2].

- Network Liability Identification: Apply network toxicity models to predict potential adverse effects based on target proximity to known toxicity pathways [2].

- Polypharmacology Profiling: Assess compound interactions with secondary targets using broad-based profiling assays and computational prediction tools.

- Therapeutic Index Prediction: Integrate efficacy and toxicity profiles to estimate the potential therapeutic window using quantitative systems pharmacology models.

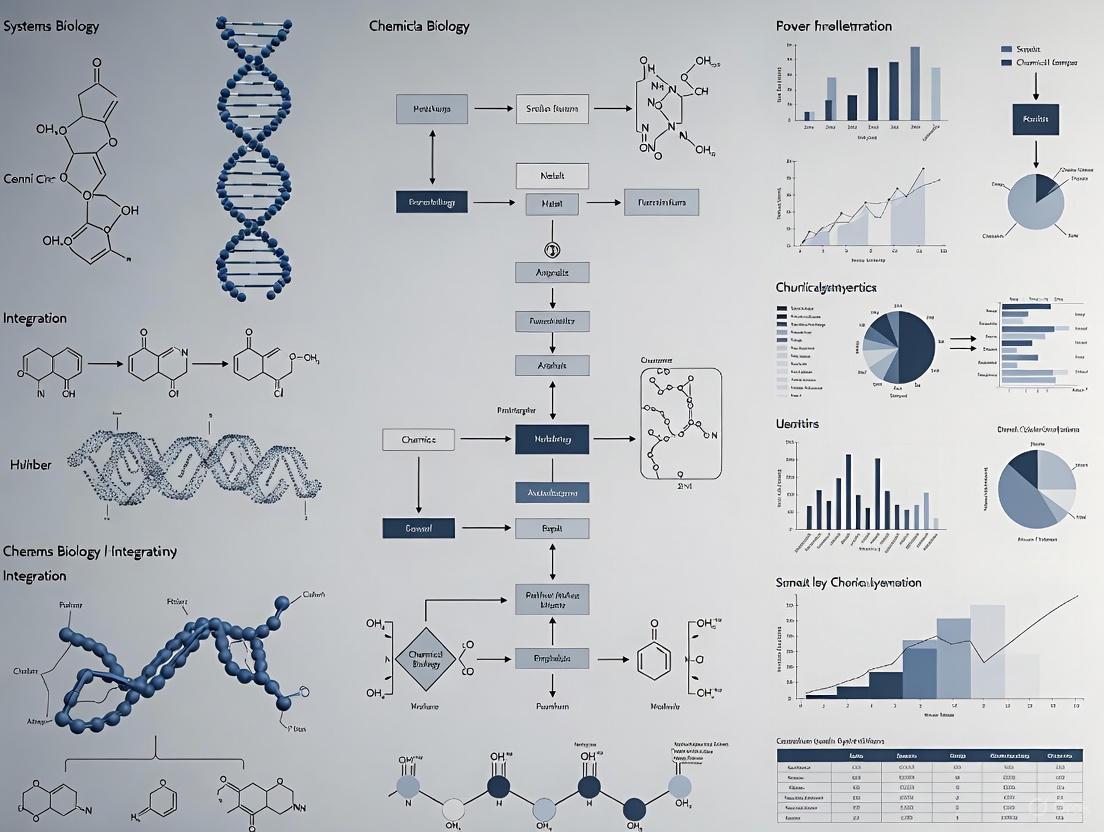

Diagram 1: Holistic drug discovery workflow integrating multi-omics data and phenotypic screening.

Key Research Tools and Reagent Solutions

The implementation of holistic approaches requires specialized research tools and reagents that enable comprehensive profiling of compound effects across multiple biological layers.

Table 3: Essential Research Reagent Solutions for Holistic Discovery

| Reagent Category | Specific Examples | Function | Application Context |
|---|---|---|---|
| Multi-Omics Profiling Kits | RNA-Seq library prep, phosphoproteomic kits, metabolomic standards | Comprehensive molecular profiling | Target identification, mechanism of action studies [2] |
| Primary Cell Co-culture Systems | BioMAP primary human cell panels, organotypic cultures | Physiologically relevant disease modeling | Phenotypic screening, toxicity prediction [2] |
| Pathway Reporter Assays | Luciferase-based pathway reporters, FRET biosensors | Monitoring specific pathway activation | Lead optimization, functional characterization [1] |
| High-Content Screening Reagents | Multiplexed fluorescent dyes, automated microscopy reagents | Multiparametric cellular analysis | Phenotypic profiling, network biology [1] |
| Chemical Proteomics Probes | Activity-based probes, photoaffinity labels | Target identification and engagement | Mechanism elucidation, polypharmacology [2] |

Quantitative Data Analysis and Visualization in Holistic Research

Effective implementation of holistic approaches requires sophisticated quantitative data analysis methods to extract meaningful patterns from complex, high-dimensional datasets. Quantitative data analysis serves as the mathematical foundation for interpreting multi-omics data, enabling researchers to discover trends, patterns, and relationships within large datasets [3].

The two primary categories of quantitative analysis, descriptive statistics (mean, median, mode, standard deviation) and inferential statistics (hypothesis testing, regression analysis, ANOVA), provide complementary ways to summarize data and to generalize from a sample to a larger population [3]. These methods matter most when comparing quantitative data between experimental groups, such as treatment conditions or patient subtypes, where the difference between group means or medians must be computed and visualized appropriately [4].

Diagram 2: Quantitative data analysis workflow for multi-omics research.

For visualizing comparative data in holistic studies, researchers should select appropriate graphical representations based on the nature of their data and research questions. Common effective visualization methods include:

- Back-to-back stemplots: Ideal for small datasets and two-group comparisons [4]

- 2-D dot charts: Effective for small to moderate amounts of data across multiple groups [4]

- Boxplots: Excellent for comparing distributions across multiple groups, displaying five-number summaries (minimum, Q1, median, Q3, maximum) [4]

- Bar charts: Simple and effective for categorical comparisons [5]

- Line charts: Ideal for displaying trends over time [5]
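The five-number summary that a boxplot displays can be computed directly with Python's standard library, as in the sketch below. The `control` and `treated` values are made-up numbers for illustration. Quartile conventions differ between tools, so the `method="inclusive"` setting is one reasonable choice rather than the only one.

```python
from statistics import quantiles


def five_number_summary(values):
    """Return (minimum, Q1, median, Q3, maximum) for a list of numbers."""
    ordered = sorted(values)
    q1, median, q3 = quantiles(ordered, n=4, method="inclusive")
    return ordered[0], q1, median, q3, ordered[-1]


# Hypothetical biomarker measurements for two experimental groups.
control = [2, 4, 4, 5, 7, 9, 10]
treated = [1, 2, 3, 3, 4, 6, 7]

control_summary = five_number_summary(control)
treated_summary = five_number_summary(treated)
median_difference = control_summary[2] - treated_summary[2]

print("control:", control_summary)
print("treated:", treated_summary)
print("difference in medians:", median_difference)
```

Reporting the difference in medians alongside the two summaries gives the same comparison a side-by-side boxplot shows visually.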

Accessibility considerations are paramount when creating visualizations for scientific communication. Guidelines include ensuring sufficient color contrast, not relying solely on color to convey information, providing text alternatives, and making interactive visualizations keyboard-accessible [6] [7].

Applications in Precision Medicine and Drug Development

The holistic integration of systems biology with chemical biology has produced tangible advances in precision medicine and pharmaceutical development. By accounting for the complex, multi-scale nature of human biology, these approaches have improved the prediction of drug effects in patients, enabled personalized medicine strategies, and begun to improve the success rate of new drugs in the clinic [2].

One significant application has been drug repurposing: finding new uses for existing drugs through systematic analysis of their effects on biological networks. By comparing the network perturbation signatures of compounds across multiple disease contexts, researchers can identify novel therapeutic indications that would not be apparent from single-target perspectives [2]. Because repurposed compounds already have established development histories, this approach can reduce development timelines and costs while expanding treatment options for diseases with unmet medical needs.

The holistic framework has also proven invaluable for understanding and predicting variable patient responses to therapies. By integrating genomic, transcriptomic, and proteomic data from patient populations, researchers can identify biomarker signatures that predict therapeutic efficacy and adverse event susceptibility, enabling more targeted clinical trial designs and ultimately more personalized treatment approaches [1] [2].

Future Perspectives and Challenges

Despite significant progress, the holistic integration of systems biology and chemical biology faces several technical and conceptual challenges. Technical issues include the well-understood computational difficulties associated with large datasets with many features but few samples, missing data concerns, and systematic biases in published literature [2]. Understanding and managing variability in data—both biological and technical—remains particularly challenging when integrating across multiple omics platforms and experimental systems.

The field continues to grapple with the development of better models of human disease biology that can accommodate multiple omics data types while remaining interpretable and clinically actionable. More integrated network-based models that capture the dynamic nature of biological systems and can predict emergent properties following therapeutic intervention represent an important frontier [2]. As these models improve, they will increasingly enable translational physiology—the examination of biological functions across levels spanning from molecules to cells to organs to populations—creating a more direct pathway from basic research to clinical application [1].

The continued evolution of holistic approaches will require advances in both experimental technologies and computational methods, particularly in managing and extracting knowledge from the expanding universe of biological data. As these integrated frameworks mature, they hold the promise of fundamentally transforming drug discovery and development, moving beyond single-target studies to embrace the true complexity of human biology and disease.

The integration of cheminformatics with biological network simulations represents a paradigm shift in systems biology and drug discovery. This convergence enables researchers to bridge the critical gap between chemical structure data and complex biological system behaviors. Cheminformatics provides the computational framework for managing, analyzing, and predicting the properties of small molecules, while biological network simulations model the intricate interplay of biomolecules within cellular systems [8] [9]. Together, they form a powerful synergistic relationship that accelerates the identification of novel therapeutic targets and the design of effective chemical modulators.

The foundation of this integration rests on the ability to translate molecular structures into quantitative descriptors that can be incorporated into systems biology models. As of 2025, advancements in artificial intelligence (AI) and machine learning (ML) have significantly enhanced our capacity to analyze complex datasets and predict molecular properties with unprecedented accuracy [9]. Simultaneously, the expansion of multi-omics technologies has produced increasingly comprehensive biological networks, creating an urgent need for computational methods that can effectively integrate chemical and biological data types [10]. The remainder of this article examines the core tenets, methodologies, and applications of this integration within the broader context of systems biology research.

Theoretical Framework: Bridging Cheminformatics and Network Biology

Cheminformatics Foundations for Systems Biology

Cheminformatics has evolved from its origins in pharmaceutical screening to become a cornerstone of modern chemical biology. The field encompasses computational methods to manage, analyze, and predict properties of chemical compounds, with a primary focus on data representation, storage, and analysis [8]. Core to this discipline is the translation of molecular structures into computer-readable formats, known as molecular representation, which serves as the foundation for training machine learning and deep learning models [11].

Molecular representation methods have advanced significantly from traditional approaches to modern AI-driven techniques. Traditional methods include:

- String-based representations: SMILES (Simplified Molecular Input Line Entry System), InChI, and SELFIES that encode molecular structures as text strings [11]

- Molecular descriptors: Quantitative features that capture physicochemical properties (e.g., molecular weight, logP, polar surface area) [8]

- Molecular fingerprints: Binary or numerical vectors that encode substructural information (e.g., ECFP, FCFP) [11]

Modern AI-driven approaches now leverage deep learning techniques to learn continuous, high-dimensional feature embeddings directly from large and complex datasets. These include graph neural networks (GNNs) that operate directly on molecular graphs, transformer models that process SMILES strings as a chemical language, and multimodal approaches that integrate multiple representation types [11].

Biological Network Principles

Biological networks provide the structural framework for understanding complex cellular processes in systems biology. These networks abstract biological systems as graphs where nodes represent biological entities (genes, proteins, metabolites) and edges represent interactions, regulations, or other functional relationships between them [10]. Key network types include:

- Protein-protein interaction (PPI) networks: Map physical interactions between proteins

- Gene regulatory networks: Capture transcriptional regulation relationships

- Metabolic networks: Represent biochemical reaction pathways

- Drug-target interaction (DTI) networks: Connect chemical compounds to their biological targets

The integration of multi-omics data has revolutionized biological network analysis by enabling more comprehensive and context-specific models. Network-based integration methods for multi-omics data can be categorized into four primary types: network propagation/diffusion, similarity-based approaches, graph neural networks, and network inference models [10]. These approaches allow researchers to capture the complex interactions between drugs and their multiple targets, providing a systems-level perspective essential for modern drug discovery.

Conceptual Integration Framework

The theoretical integration of cheminformatics with biological network simulations occurs at multiple conceptual levels:

- Structural-to-Functional Mapping: Chemical structures are mapped to their functional effects on biological networks through target engagement and downstream pathway modulation

- Multi-Scale Modeling: Small molecule properties (cheminformatics domain) are connected to cellular and phenotypic responses (network biology domain) through multi-scale models

- Network Pharmacology: Compounds are evaluated based on their interactions with multiple network nodes rather than single targets, reflecting the polypharmacology inherent to most effective drugs

This conceptual framework enables researchers to move beyond single-target drug discovery toward network-based therapeutic strategies that account for system-wide effects and emergent behaviors [10].

Methodological Approaches: Data Integration and Workflow Pipelines

Cheminformatics Data Preprocessing for Network Integration

Effective integration begins with rigorous preprocessing of chemical data to ensure quality and consistency. The standard workflow encompasses:

Data Collection and Initial Preprocessing

Data collection involves gathering chemical data from diverse sources including public databases (PubChem, ChEMBL, ZINC15), literature sources, and experimental results. The initial preprocessing phase involves removing duplicates, correcting errors, and standardizing formats to ensure consistency, typically using tools like RDKit [8].

Molecular Representation and Feature Engineering

After preprocessing, appropriate molecular representations are selected based on the specific analytical goals. SMILES strings remain widely used for their compactness and human-readability, while molecular graphs more naturally represent structural topology for graph neural networks. Feature extraction derives relevant properties such as molecular descriptors, fingerprints, or other structural characteristics for use as model inputs [8] [11].

Feature engineering transforms or creates new features to enhance model performance through techniques like normalization, scaling, and generating interaction terms. For biological network integration, particularly important are features that capture potential bioactivity, such as pharmacophore patterns, toxicity risks, and physicochemical properties affecting cell permeability [8].
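As a small illustration of the scaling step, the sketch below standardizes each descriptor column to zero mean and unit variance so that descriptors on very different scales contribute comparably to a model. The three rows of descriptor values (molecular weight, logP, polar surface area) are illustrative numbers rather than curated data, and `zscore_columns` is a hypothetical helper name.

```python
from statistics import mean, pstdev


def zscore_columns(rows):
    """Standardize each column of a row-major table to mean 0 and
    population standard deviation 1. Constant columns become 0.0."""
    columns = list(zip(*rows))
    means = [mean(col) for col in columns]
    spreads = [pstdev(col) for col in columns]
    return [
        [(x - m) / s if s > 0 else 0.0 for x, m, s in zip(row, means, spreads)]
        for row in rows
    ]


# Illustrative descriptor table: [molecular weight, logP, polar surface area].
descriptors = [
    [180.2, 1.2, 63.6],
    [194.2, -0.1, 58.4],
    [206.3, 3.9, 37.3],
]

scaled = zscore_columns(descriptors)
for row in scaled:
    print([round(value, 3) for value in row])
```

When a held-out test set is used, the column means and standard deviations should be estimated on the training compounds only and then reused for the test compounds, to avoid leaking information.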

Data Structuring for AI Models

The processed chemical data is organized into structured formats suitable for AI models, including labeled datasets for supervised learning or appropriately structured data for unsupervised learning tasks. Data augmentation techniques may be applied to expand dataset size or enhance diversity, improving model robustness and generalization [8].

Network-Based Multi-Omics Integration Methods

Network-based approaches provide powerful frameworks for integrating cheminformatics data with biological networks. These methods can be systematically categorized as shown in the table below:

Table 4: Network-Based Multi-Omics Integration Methods

| Method Category | Key Algorithms | Strengths | Limitations |
|---|---|---|---|
| Network Propagation/Diffusion | Random walk with restart, Heat diffusion | Robust to noise, Intuitive biological interpretation | Limited capacity for heterogeneous data integration |
| Similarity-Based Approaches | Similarity network fusion, Kernel methods | Flexibility in data types, Strong mathematical foundation | Computational intensity with large datasets |
| Graph Neural Networks | Graph convolutional networks, Graph attention networks | High predictive accuracy, End-to-end learning | Black-box nature, High computational resources required |
| Network Inference Models | Bayesian networks, Mutual information-based | Causal relationship modeling, Strong statistical foundation | Requires large sample sizes for robust inference |

These methods enable the identification of novel drug targets, prediction of drug responses, and drug repurposing by leveraging the complementary information from multiple omics layers integrated within biological networks [10].
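To make the network propagation category concrete, here is a minimal, dependency-free sketch of random walk with restart on a toy undirected network. The node names (`P1` to `P4`), the restart probability of 0.3, and the convergence tolerance are assumptions chosen for illustration, and the function assumes every node has at least one neighbour.

```python
def random_walk_with_restart(adjacency, seeds, restart=0.3, tol=1e-9, max_iter=1000):
    """Return steady-state visiting probabilities for a walker that, at each
    step, jumps back to a seed node with probability `restart` and otherwise
    moves to a uniformly chosen neighbour of its current node."""
    nodes = list(adjacency)
    start = {n: (1.0 / len(seeds) if n in seeds else 0.0) for n in nodes}
    current = dict(start)
    for _ in range(max_iter):
        updated = {n: restart * start[n] for n in nodes}
        for u in nodes:
            # Spread the non-restarting probability mass evenly over neighbours.
            share = (1.0 - restart) * current[u] / len(adjacency[u])
            for v in adjacency[u]:
                updated[v] += share
        change = sum(abs(updated[n] - current[n]) for n in nodes)
        current = updated
        if change < tol:
            break
    return current


# Hypothetical four-protein chain: P1 - P2 - P3 - P4.
TOY_NETWORK = {
    "P1": ["P2"],
    "P2": ["P1", "P3"],
    "P3": ["P2", "P4"],
    "P4": ["P3"],
}

scores = random_walk_with_restart(TOY_NETWORK, seeds={"P1"})
for node, score in sorted(scores.items(), key=lambda item: item[1], reverse=True):
    print(node, round(score, 4))
```

Nodes closer to the seed receive higher scores, which is the property used to prioritize genes or proteins in the neighbourhood of known targets.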

Integrated Workflow Architecture

The complete integrated workflow combines cheminformatics preprocessing with network biology analysis:

Diagram 3: Integrated cheminformatics-network biology workflow.

This architecture demonstrates how chemical data undergoes preprocessing and feature engineering before integration with biological networks constructed from multi-omics data. The integrated model training phase leverages both chemical and biological features to generate predictions that are subsequently validated experimentally.

Experimental Protocols and Implementation

Protocol 1: Network-Based Target Identification for Novel Compounds

This protocol describes a method for identifying potential biological targets for novel chemical compounds by integrating cheminformatics with biological network analyses.

Materials and Reagents

Table 5: Essential Research Reagents and Computational Tools

| Item | Function/Application | Implementation Examples |
|---|---|---|
| Chemical Databases | Source of chemical structures and properties | PubChem, ChEMBL, ZINC15 [8] |
| Bioactivity Data | Experimental compound-target interaction data | ChEMBL, BindingDB [9] |
| Molecular Representation Tools | Convert structures to computable formats | RDKit, Open Babel [8] |
| Network Analysis Software | Biological network construction and analysis | Cytoscape, NetworkX [10] |
| Multi-Omics Datasets | Genomic, transcriptomic, proteomic data | TCGA, GTEx, CPTAC [10] |
| AI/ML Frameworks | Model training and prediction | PyTorch, TensorFlow, Scikit-learn [11] |

Methodology

Step 1: Compound Profiling and Representation

- Begin with the novel compound of interest and generate standardized molecular representations

- Calculate comprehensive molecular descriptors (e.g., topological, electronic, and physicochemical properties)

- Generate multiple fingerprint types (ECFP, FCFP) and graph representations

- Apply AI-based representation learning models (e.g., graph neural networks) to create embedded representations

Step 2: Similarity-Based Target Hypothesis Generation

- Perform similarity searching against compounds with known targets in databases like ChEMBL

- Use multiple similarity metrics (structural, shape-based, pharmacophore-based)

- Apply ensemble similarity approaches to increase robustness

- Compile initial target hypotheses based on similarity principles
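A minimal sketch of the structural similarity search in this step is shown below, using the Tanimoto coefficient on fingerprints stored as sets of on-bit indices. The fingerprints, compound names, target labels, and the 0.4 similarity threshold are all hypothetical. In a real workflow the bit sets would come from a toolkit such as RDKit, and the annotated compounds from a database such as ChEMBL.

```python
def tanimoto(bits_a, bits_b):
    """Tanimoto coefficient: shared on-bits divided by total distinct on-bits."""
    if not bits_a and not bits_b:
        return 0.0
    return len(bits_a & bits_b) / len(bits_a | bits_b)


def target_hypotheses(query_bits, annotated_compounds, threshold=0.4):
    """Collect targets of compounds similar to the query, keeping for each
    target the best similarity score that supports it."""
    hypotheses = {}
    for record in annotated_compounds:
        similarity = tanimoto(query_bits, record["bits"])
        if similarity >= threshold:
            for target in record["targets"]:
                hypotheses[target] = max(hypotheses.get(target, 0.0), similarity)
    return dict(sorted(hypotheses.items(), key=lambda item: item[1], reverse=True))


# Hypothetical fingerprints (sets of on-bit indices) and target annotations.
ANNOTATED = [
    {"name": "cmpd_1", "bits": {1, 4, 7, 9, 15}, "targets": ["TARGET_A"]},
    {"name": "cmpd_2", "bits": {2, 3, 5}, "targets": ["TARGET_C"]},
    {"name": "cmpd_3", "bits": {1, 4, 12, 20}, "targets": ["TARGET_B"]},
]
query = {1, 4, 7, 9, 12}

hypotheses = target_hypotheses(query, ANNOTATED)
print(hypotheses)
```

An ensemble version would repeat this search with several fingerprint types or similarity metrics and combine the per-target scores.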

Step 3: Biological Network Contextualization

- Construct or access relevant biological networks (PPI, signaling, metabolic)

- Annotate networks with multi-omics data relevant to the disease context

- Apply network propagation algorithms starting from initial target hypotheses

- Identify network neighborhoods enriched for potential targets

Step 4: Integrated Model Prediction

- Train machine learning models on known compound-target interactions

- Incorporate both chemical features and network-based features

- Use graph neural networks that operate directly on the integrated chemical-biological network

- Generate prioritized list of potential targets with confidence scores

Step 5: Experimental Validation Planning

- Design validation experiments based on predicted targets and confidence metrics

- Prioritize targets based on both prediction confidence and therapeutic relevance

- Plan appropriate experimental assays (binding assays, functional assays, phenotypic screens)

This protocol typically identifies 3-5 high-confidence targets for experimental validation, with successful implementation yielding confirmed targets for approximately 60-70% of novel compounds [10].

Protocol 2: Scaffold Hopping Using Network-Aware Cheminformatics

Scaffold hopping aims to discover new core structures while retaining similar biological activity, representing a crucial strategy in lead optimization [11]. This protocol enhances traditional scaffold hopping by incorporating biological network context.

Methodology

Step 1: Activity Landscape Characterization

- Start with a known active compound (reference compound)

- Define the relevant biological activity profile across multiple assays or phenotypes

- Map the activity to relevant biological pathways and networks

- Identify key molecular features responsible for activity (pharmacophore pattern)

Step 2: Network-Constrained Chemical Space Exploration

- Define the relevant chemical space based on reference compound properties

- Apply generative AI models (VAEs, GANs) to explore novel scaffolds

- Constrain generation using bioactivity predictors trained on network-relevant assays

- Use transformer architectures with SELFIES representations for valid chemical structures

Step 3: Multi-Parameter Optimization

- Evaluate generated compounds using predictive models for:

  - Target binding affinity (primary target)

  - Selectivity against off-targets (network neighbors)

  - ADMET properties

  - Synthetic accessibility

- Apply multi-objective optimization to balance competing parameters

- Use Pareto front analysis to identify optimal compromises
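The Pareto front analysis in this step can be sketched in a few lines of Python. The scaffold names and the three scores per scaffold (predicted affinity, selectivity, and ease of synthesis) are hypothetical, and every objective is assumed to be scaled so that higher is better.

```python
def dominates(a, b):
    """True if score vector `a` is at least as good as `b` on every
    objective and strictly better on at least one (higher is better)."""
    return all(x >= y for x, y in zip(a, b)) and any(x > y for x, y in zip(a, b))


def pareto_front(candidates):
    """Return the names of candidates that no other candidate dominates."""
    return [
        name
        for name, scores in candidates.items()
        if not any(
            dominates(other_scores, scores)
            for other_name, other_scores in candidates.items()
            if other_name != name
        )
    ]


# Hypothetical predictions: (affinity, selectivity, ease of synthesis).
SCAFFOLDS = {
    "scaffold_1": (0.9, 0.4, 0.5),
    "scaffold_2": (0.7, 0.8, 0.6),
    "scaffold_3": (0.6, 0.7, 0.5),  # worse than scaffold_2 on every objective
    "scaffold_4": (0.5, 0.5, 0.9),
}

front = pareto_front(SCAFFOLDS)
print(front)
```

Only `scaffold_3` is discarded here; the remaining three each represent a different trade-off, and choosing among them is a project decision rather than something the analysis settles.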

Step 4: Network Activity Signature Conservation

- Predict the network-wide activity signature of proposed scaffolds

- Compare to reference compound using network perturbation similarity

- Prioritize scaffolds that maintain similar network perturbation patterns

- Apply explainable AI techniques to interpret conserved bioactivity

Step 5: Experimental Validation

- Synthesize or acquire top-ranked scaffold-hop compounds

- Test in primary and counter-screens to confirm desired activity profile

- Validate network-level effects using transcriptomics or proteomics

- Iterate based on experimental results

Advanced scaffold hopping methods using these integrated approaches have demonstrated success rates of 40-50% in maintaining biological activity while achieving significant structural changes [11].

Protocol 3: Predictive Polypharmacology Profiling Using Heterogeneous Networks

This protocol addresses the challenge of predicting polypharmacology (the interaction of compounds with multiple targets) by integrating cheminformatics with heterogeneous biological networks.

Methodology

Step 1: Heterogeneous Network Construction

- Build an integrated network containing:

  - Compound nodes (with chemical features)

  - Protein targets (with sequence and structural features)

  - Disease/phenotype nodes

  - Various relationship types (compound-target, target-pathway, etc.)

- Use standardized identifiers to enable data integration

- Apply data normalization techniques to handle different data types and scales

Step 2: Graph Neural Network Model Development

- Implement heterogeneous graph neural network architecture

- Design appropriate message passing mechanisms for different relationship types

- Incorporate attention mechanisms to weight important relationships

- Use multi-task learning to predict multiple properties simultaneously
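To make relation-specific message passing more concrete, the sketch below performs one aggregation round in plain Python. It is a deliberately simplified illustration: the per-relation weights are fixed scalars rather than learned matrices, there is no attention or nonlinearity, and the nodes, relations, and feature vectors are hypothetical.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

Edge = Tuple[str, str, str]  # (source node, relation type, target node)


def message_passing_step(
    features: Dict[str, List[float]],
    edges: List[Edge],
    relation_weight: Dict[str, float],
) -> Dict[str, List[float]]:
    """One round: each node adds the weighted mean of its incoming
    neighbor features, computed separately per relation type."""
    dim = len(next(iter(features.values())))
    inbox = defaultdict(list)
    for src, rel, dst in edges:
        inbox[(dst, rel)].append(features[src])

    updated = {}
    for node, vec in features.items():
        new_vec = list(vec)
        for rel, weight in relation_weight.items():
            messages = inbox.get((node, rel), [])
            if messages:
                mean = [sum(m[i] for m in messages) / len(messages) for i in range(dim)]
                new_vec = [x + weight * y for x, y in zip(new_vec, mean)]
        updated[node] = new_vec
    return updated


# Toy heterogeneous graph with two relation types.
features = {
    "drug_X": [1.0, 0.0],
    "EGFR": [0.0, 1.0],
    "ERBB2": [0.0, 1.0],
    "pathway_ErbB": [0.0, 0.0],
}
edges = [
    ("drug_X", "binds", "EGFR"),
    ("EGFR", "member_of", "pathway_ErbB"),
    ("ERBB2", "member_of", "pathway_ErbB"),
]
relation_weight = {"binds": 0.5, "member_of": 1.0}

updated = message_passing_step(features, edges, relation_weight)
print(updated["EGFR"], updated["pathway_ErbB"])
```

In a trained heterogeneous graph neural network, the fixed scalars would be replaced by learned transformations per relation type, and attention scores would replace the uniform mean over neighbors.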

Step 3: Model Training and Validation

- Train model on known compound-target interactions

- Use appropriate regularization to prevent overfitting

- Validate using rigorous cross-validation strategies

- Test on external validation sets to assess generalizability

Step 4: Polypharmacology Prediction

- Apply trained model to new compounds

- Generate comprehensive target interaction profiles

- Identify potential therapeutic effects and toxicity risks

- Predict network-wide effects based on multi-target engagement

Step 5: Experimental Confirmation

- Design multiplexed assays to test predicted polypharmacology

- Use high-content screening for phenotypic validation

- Apply multi-omics approaches to capture system-wide effects

- Iterate based on experimental findings

Models using this approach have demonstrated significant improvements in predicting clinical effects and toxicity compared to single-target approaches, with some implementations achieving 70-80% accuracy in predicting clinical outcomes based on preclinical data [12] [10].

Data Analysis and Interpretation Framework

Multi-Scale Data Integration and Visualization

Effective analysis of integrated cheminformatics and network simulation data requires specialized visualization approaches that can represent both chemical and biological information. Key visualization strategies include:

Chemical Space Mapping: Projecting compounds into 2D or 3D space based on molecular similarity, annotated with bioactivity data and network properties. This allows researchers to visualize structure-activity relationships in the context of network perturbations [8].

Network Visualization with Chemical Annotation: Displaying biological networks with nodes colored or shaped based on chemical interactivity. This approach highlights network regions that are particularly rich in chemical perturbations or potential drug targets [10].

Multi-Parameter Optimization Landscapes: Creating visualization dashboards that enable simultaneous evaluation of multiple compound properties, including target potency, selectivity, ADMET properties, and network perturbation scores.
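Chemical space maps start from a pairwise molecular similarity measure. The sketch below assumes the Tanimoto coefficient on binary fingerprints, a common choice that the text does not itself prescribe; fingerprints are represented as sets of on-bit indices, and the compounds and bits are invented for illustration.

```python
from typing import Dict, FrozenSet, Tuple


def tanimoto(fp_a: FrozenSet[int], fp_b: FrozenSet[int]) -> float:
    """Tanimoto coefficient: shared on-bits divided by on-bits in either."""
    if not fp_a and not fp_b:
        return 0.0
    shared = len(fp_a & fp_b)
    return shared / (len(fp_a) + len(fp_b) - shared)


# Hypothetical fingerprints given as sets of on-bit positions.
fingerprints: Dict[str, FrozenSet[int]] = {
    "cmpd_1": frozenset({1, 2, 3, 4}),
    "cmpd_2": frozenset({3, 4, 5, 6}),
    "cmpd_3": frozenset({1, 2, 3, 4, 7}),
}

# Pairwise similarity matrix, the usual input to a 2D or 3D projection.
names = sorted(fingerprints)
similarity: Dict[Tuple[str, str], float] = {
    (a, b): tanimoto(fingerprints[a], fingerprints[b]) for a in names for b in names
}
print(similarity[("cmpd_1", "cmpd_3")])
```

A projection method then places compounds with high pairwise similarity close together, after which points can be colored by bioactivity or network perturbation score.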

Statistical and AI-Driven Analysis Methods

Robust statistical analysis is essential for interpreting integrated cheminformatics-network biology data. Key analytical approaches include:

Dimensionality Reduction: Techniques such as t-SNE and UMAP are used to visualize high-dimensional chemical and biological data in lower-dimensional spaces while preserving important relationships [11].

Network Topology Analysis: Calculating network metrics (degree centrality, betweenness, closeness) for nodes affected by chemical perturbations to identify critical targets and pathways [10].

Machine Learning Model Interpretation: Using SHAP (SHapley Additive exPlanations) and other explainable AI techniques to interpret model predictions and identify key molecular features driving biological activity [11] [12].
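The network topology metrics mentioned above can be computed without specialized libraries on small graphs. This sketch calculates degree centrality and unnormalized betweenness centrality (using Brandes' algorithm) for an undirected, unweighted toy network; the node labels are placeholders.

```python
from collections import deque
from typing import Dict, Set


def degree_centrality(adj: Dict[str, Set[str]]) -> Dict[str, float]:
    """Fraction of the other nodes each node is directly connected to."""
    n = len(adj)
    return {v: len(neighbors) / (n - 1) for v, neighbors in adj.items()}


def betweenness_centrality(adj: Dict[str, Set[str]]) -> Dict[str, float]:
    """Unnormalized betweenness for an undirected, unweighted graph."""
    bc = {v: 0.0 for v in adj}
    for s in adj:
        stack = []
        preds = {v: [] for v in adj}
        sigma = {v: 0 for v in adj}  # number of shortest paths from s
        dist = {v: -1 for v in adj}
        sigma[s], dist[s] = 1, 0
        queue = deque([s])
        while queue:
            v = queue.popleft()
            stack.append(v)
            for w in adj[v]:
                if dist[w] < 0:
                    dist[w] = dist[v] + 1
                    queue.append(w)
                if dist[w] == dist[v] + 1:
                    sigma[w] += sigma[v]
                    preds[w].append(v)
        delta = {v: 0.0 for v in adj}
        while stack:  # accumulate dependencies in reverse BFS order
            w = stack.pop()
            for v in preds[w]:
                delta[v] += sigma[v] / sigma[w] * (1 + delta[w])
            if w != s:
                bc[w] += delta[w]
    # Each unordered pair was counted from both endpoints.
    return {v: b / 2 for v, b in bc.items()}


edges = [("A", "B"), ("B", "C"), ("C", "D"), ("C", "E")]
adj: Dict[str, Set[str]] = {}
for u, v in edges:
    adj.setdefault(u, set()).add(v)
    adj.setdefault(v, set()).add(u)

degree = degree_centrality(adj)
betweenness = betweenness_centrality(adj)
print(betweenness)
```

In this toy network node C lies on five shortest paths between other node pairs and B on three, so C would be flagged as the more critical intermediary.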

Figure: Multi-Modal Data Analysis Workflow (the analysis workflow for integrated cheminformatics and network biology data).

Applications in Drug Discovery and Development

AI-Driven Molecular Generation and Optimization

The integration of cheminformatics with biological network simulations has revolutionized molecular generation and optimization in drug discovery. Generative AI models can now design novel molecular structures optimized for desired network-level effects rather than single-target activity [8] [12].

Key applications include:

De Novo Drug Design: Generative models propose novel molecules, which are then optimized using cheminformatics tools to enhance properties such as solubility, bioavailability, and target engagement profile. Techniques like PASITHEA employ gradient-based optimization to refine molecular structures, ensuring they meet predefined criteria [8].

Iterative Optimization: This involves repeatedly refining AI-generated molecules based on feedback from cheminformatics models and network simulations. This process leads to the development of more effective and safer drug candidates. Tools like CIME4R enhance human-AI collaboration in chemical reaction optimization by enabling comprehensive analysis of reaction parameter spaces and AI model predictions [8].

Chemical Space Exploration: Systematic navigation of vast molecular landscapes to identify novel therapeutic compounds and enhance molecular diversity. For example, transformer architectures using SMILES structures can exhaustively explore local chemical space [8].

Predictive Toxicology and Safety Assessment

Integrating cheminformatics with biological network modeling has significantly advanced predictive toxicology by enabling mechanism-based safety assessment:

Network-Based Toxicity Prediction: Modeling compound effects on toxicity-relevant pathways (e.g., hepatotoxicity, cardiotoxicity) by simulating their interactions with relevant biological networks. This approach moves beyond correlation-based predictions to mechanism-based assessments [8] [9].

Early Toxicity Prediction: Using QSAR modeling and read-across methods that leverage physicochemical properties to assess potential toxicity risks early in the discovery process, enabling informed decision-making before significant resources are invested [8].

Mechanistic Insights into Toxicity: Molecular docking and pharmacophore mapping provide mechanistic insights into toxicity, guiding experimental validation and improving drug safety evaluation [8].

Drug Repurposing and Combination Therapy

Network-based integration approaches have proven particularly valuable for drug repurposing and combination therapy design:

Computational Drug Repurposing: Using heterogeneous networks that connect drugs, targets, diseases, and pathways to identify new therapeutic applications for existing drugs. These approaches can rapidly identify candidate drugs for repurposing by analyzing their network proximity to disease modules [10].

Synergistic Combination Prediction: Predicting effective drug combinations by modeling their complementary effects on disease networks. This approach can identify combinations that target multiple pathways simultaneously or that counter adaptive resistance mechanisms [10].

Clinical Response Prediction: Integrating chemical features with patient-specific biological networks to predict individual drug responses. This personalized medicine approach accounts for individual genetic variations that affect both drug metabolism and target pathways [10].
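The network proximity idea behind computational drug repurposing can be sketched with shortest-path distances. The measure below, the mean distance from each drug target to its nearest disease-module gene, is one possible definition assumed here for illustration; the interaction network, targets, and disease genes are invented.

```python
from collections import deque
from typing import Dict, Iterable, Set


def shortest_distances(adj: Dict[str, Set[str]], source: str) -> Dict[str, int]:
    """Breadth-first search distances from source to every reachable node."""
    dist = {source: 0}
    queue = deque([source])
    while queue:
        v = queue.popleft()
        for w in adj.get(v, ()):
            if w not in dist:
                dist[w] = dist[v] + 1
                queue.append(w)
    return dist


def closest_proximity(
    adj: Dict[str, Set[str]], drug_targets: Iterable[str], disease_genes: Set[str]
) -> float:
    """Mean over drug targets of the distance to the nearest disease gene."""
    targets = list(drug_targets)
    total = 0
    for target in targets:
        dist = shortest_distances(adj, target)
        reachable = [dist[g] for g in disease_genes if g in dist]
        if not reachable:
            return float("inf")  # target is disconnected from the module
        total += min(reachable)
    return total / len(targets)


# Hypothetical interactome and disease module.
edges = [("T1", "G1"), ("G1", "G2"), ("T2", "X"), ("X", "G2"),
         ("T3", "Y"), ("Y", "Z"), ("Z", "G1")]
adj: Dict[str, Set[str]] = {}
for u, v in edges:
    adj.setdefault(u, set()).add(v)
    adj.setdefault(v, set()).add(u)

disease_module = {"G1", "G2"}
proximity = {
    "drug_A": closest_proximity(adj, ["T1", "T2"], disease_module),
    "drug_B": closest_proximity(adj, ["T3"], disease_module),
}
print(proximity)
```

A smaller value means the drug's targets sit closer to the disease module. In practice the raw distance is usually compared against a distribution from randomly chosen node sets, since hub-rich targets are close to almost everything.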

Implementation Challenges and Future Directions

Current Limitations and Technical Challenges

Despite significant advances, several challenges remain in fully integrating cheminformatics with biological network simulations:

Data Quality and Standardization: Issues related to data quality and standardization remain critical, particularly in the consistent representation of molecular structures and annotation of biological networks. Limitations in current molecular encoding systems present challenges for accurately representing complex chemical information [9].

Computational Scalability: The integration of large-scale chemical data with increasingly complex biological networks creates significant computational demands. Methods that efficiently search ultra-large chemical spaces (e.g., libraries containing 10^14 or more molecules) while incorporating network constraints remain an active area of development [12].

Interpretability and Validation: As models increase in complexity, maintaining biological interpretability while achieving high predictive accuracy becomes challenging. Furthermore, experimental validation of network-level predictions requires sophisticated multi-omics approaches that can be resource-intensive [10].

Negative Data Reporting: The availability of high-quality negative data (inactive compounds) is essential for improving the reliability and generalizability of ML models. However, curating negative datasets remains challenging due to limited reporting of inactive compounds and potential biases in screening assays [9].

Emerging Technologies and Methodological Advances

Several emerging technologies show promise for addressing current challenges and advancing the field:

Quantum Computing: Quantum computing may make it feasible to simulate and optimize chemical processes that are computationally intractable on classical computers [9].

Advanced Molecular Representations: New molecular representation methods continue to emerge, including geometry-aware graph representations that incorporate 3D structural information, and multi-modal representations that combine structural, sequence, and property information [11].

Dynamic Network Modeling: Current biological networks typically represent static interactions, but emerging approaches incorporate temporal and spatial dynamics to model how networks change over time and in different cellular contexts [10].

Federated Learning: As data privacy concerns grow, federated learning approaches enable model training across multiple institutions without sharing raw data, facilitating collaboration while protecting proprietary information [12].

Future Outlook

The integration of cheminformatics with biological network simulations is poised to become increasingly central to drug discovery and chemical biology research. Key trends likely to shape future development include:

Increased Automation: The integration of automated synthesis and screening with computational predictions will create closed-loop systems that continuously refine models based on experimental feedback [8] [13].

Personalized Network Pharmacology: The development of patient-specific biological networks will enable truly personalized drug discovery, where compounds are selected or designed based on an individual's unique network perturbations [10].

Enhanced Explainability: New methods for explaining complex model predictions will improve trust and adoption, particularly in regulated environments like drug development [11] [12].

As these trends converge, the integration of cheminformatics with biological network simulations will increasingly enable a systems-level understanding of chemical-biological interactions, transforming drug discovery from a predominantly reductionist approach to a holistic, network-based paradigm.

In modern systems biology and chemical biology research, the integration of comprehensive data resources is paramount for advancing drug discovery and understanding complex biological systems. PubChem, KEGG, and BRENDA represent three cornerstone databases that collectively provide a framework for connecting chemical structures with biological activity, pathway context, and enzymatic function. This technical guide examines the core functionalities, data structures, and integrative applications of these resources, emphasizing their role in bridging molecular-level data with systems-level analyses. As the field moves toward increasingly mechanism-based approaches, the synergistic use of these databases enables researchers to validate targets, interpret high-throughput data, and accelerate the development of novel therapeutics within a translational physiology context.

Database Core Architectures and Data Models

PubChem: Chemical Information Repository

PubChem is a comprehensive public chemical database resource maintained by the National Institutes of Health (NIH). It operates as a large, highly integrated system containing data from over 1,000 sources, making it one of the world's most extensive chemical information resources. The database employs a multi-collection architecture that organizes information into specialized domains:

- Substance: Archives chemical descriptions provided by contributors, which may include non-discrete structures or even structureless materials. This collection contains over 322 million substance records as of late 2024.

- Compound: Stores unique chemical structures extracted from Substance records through chemical structure standardization, containing approximately 119 million compounds.

- BioAssay: Houses descriptions and test results from biological assay experiments, with 1.67 million biological assays and 295 million bioactivity data points.

- Specialized Collections: Includes Protein, Gene, Pathway, Cell Line, Taxonomy, and Patent databases that provide target-centric views of PubChem data [14].

Recent updates to PubChem have enhanced its utility for systems biology applications. The introduction of consolidated literature panels combines all references about a compound into a single searchable interface, while patent knowledge panels display chemicals, genes, and diseases co-mentioned in patent documents. Furthermore, PubChem has improved accessibility for chemicals with non-discrete structures, including biologics, minerals, polymers, and complex mixtures [14].

KEGG: Pathway Integration Framework

The Kyoto Encyclopedia of Genes and Genomes (KEGG) is a database resource dedicated to understanding high-level functions and utilities of biological systems from molecular-level information. KEGG's core strength lies in its manually curated pathway maps that represent molecular interaction, reaction, and relation networks. The pathway identification system uses a structured coding scheme:

- map: Manually drawn reference pathway

- ko: Reference pathway highlighting KOs (KEGG Orthology groups)

- ec: Reference metabolic pathway highlighting EC numbers

- rn: Reference metabolic pathway highlighting reactions

- <org>: Organism-specific pathway generated by converting KOs to geneIDs [15]
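The prefix scheme above lends itself to a small parser. This sketch assumes that a KEGG pathway identifier consists of a lowercase prefix of two to four letters followed by a five-digit number, and that any prefix other than the four reference prefixes is an organism code; both assumptions should be checked against the current KEGG documentation.

```python
import re
from typing import Tuple

# Reference prefixes taken from the list above.
REFERENCE_PREFIXES = {
    "map": "manually drawn reference pathway",
    "ko": "reference pathway highlighting KOs",
    "ec": "reference metabolic pathway highlighting EC numbers",
    "rn": "reference metabolic pathway highlighting reactions",
}

# Assumed identifier shape: lowercase prefix plus five digits.
PATHWAY_ID = re.compile(r"([a-z]{2,4})(\d{5})")


def classify_pathway_id(pathway_id: str) -> Tuple[str, str, str]:
    """Split a pathway ID into (prefix, number, description of the prefix)."""
    match = PATHWAY_ID.fullmatch(pathway_id)
    if match is None:
        raise ValueError(f"not a recognized pathway identifier: {pathway_id!r}")
    prefix, number = match.groups()
    kind = REFERENCE_PREFIXES.get(prefix, "organism-specific pathway")
    return prefix, number, kind


for pid in ("map00010", "ko00010", "hsa04110"):
    print(pid, classify_pathway_id(pid))
```

Because the numeric part is shared across prefixes, the same number under `map`, `ko`, and an organism code refers to different renderings of one pathway, which makes it a convenient join key when combining reference and organism-specific views.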

KEGG PATHWAY is organized into seven major categories: Metabolism, Genetic Information Processing, Environmental Information Processing, Cellular Processes, Organismal Systems, Human Diseases, and Drug Development. This hierarchical structure allows researchers to navigate from broad biological processes to specific molecular interactions, facilitating the integration of chemical compounds within their functional contexts [15] [16].

BRENDA: Enzyme Function Database

BRENDA (BRaunschweig ENzyme DAtabase) serves as the comprehensive enzyme information system, providing detailed functional data on enzymes classified according to the Enzyme Commission (EC) nomenclature of IUBMB. The database contains meticulously curated information from primary literature, with recent releases encompassing:

- >5 million data points for approximately 90,000 enzymes from 13,000 organisms

- Manually extracted information from 157,000 primary literature references

- Disease-related data, protein sequences, 3D structures, genome annotations, ligand information, and kinetic data [17]

BRENDA implements advanced query systems, evaluation tools, and visualization options for detailed assessment of enzyme properties. Recent developments include completely revised pathway maps, enhanced enzyme summary pages, integrated 3D structure viewers, and predictions for intracellular localization of eukaryotic enzymes. The EnzymeDetector tool combines BRENDA enzyme annotations with protein and genome databases for comprehensive enzyme detection across species [17].

Table 1: Quantitative Overview of Database Contents

| Database | Primary Content | Data Volume | Key Metrics |
|---|---|---|---|
| PubChem | Chemical compounds & bioactivities | 119 million compounds, 322 million substances | 295 million bioactivities, >1,000 data sources [14] |
| KEGG | Biological pathways & networks | 500+ pathway maps | Manually drawn molecular interaction networks [15] [16] |
| BRENDA | Enzyme functional data | >5 million data for ~90,000 enzymes | 157,000 literature references, 13,000 organisms [17] |

Systems Biology Integration Methodologies

Experimental Protocols for Database Integration

Protocol 1: Target Identification and Validation Workflow

Purpose: To identify and validate novel drug targets by integrating chemical, pathway, and enzymatic data across PubChem, KEGG, and BRENDA.

Procedure:

- Initial Compound Screening: Identify bioactive compounds from PubChem BioAssay data, filtering for potency (IC50 or EC50 < 10 μM) and selectivity (≥10-fold over related targets) [14].

- Pathway Contextualization: Map compound targets to KEGG pathways using KEGG Mapper to identify involved pathways and potential network effects. Determine if targets represent nodes with high betweenness centrality in metabolic or signaling networks [15] [16].

- Enzyme Characterization: Query BRENDA for detailed enzymatic parameters of identified targets, including kinetic values (Km, kcat), pH/temperature optima, and inhibitor profiles [17].

- Systems Validation: Apply the four-step framework adapted from Koch's postulates:

  - Identify disease-relevant biomarkers or phenotypic parameters

  - Demonstrate compound modulation of parameters in animal models

  - Verify modulation in human disease models

  - Establish dose-dependent clinical benefit correlating with biomarker changes [1]
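The potency and selectivity criteria in the initial compound screening step of this procedure can be expressed as a simple filter. The sketch below assumes activity values have already been retrieved and converted to micromolar; the compound names and values are hypothetical.

```python
from typing import Dict, List


def passes_filter(
    primary_ic50_um: float,
    off_target_ic50s_um: List[float],
    potency_cutoff_um: float = 10.0,
    min_fold_selectivity: float = 10.0,
) -> bool:
    """Keep compounds that are potent on the primary target and at least
    min_fold_selectivity times weaker on every related off-target."""
    if primary_ic50_um >= potency_cutoff_um:
        return False
    return all(
        off_target / primary_ic50_um >= min_fold_selectivity
        for off_target in off_target_ic50s_um
    )


# Hypothetical screening records: IC50 values in micromolar.
records: Dict[str, Dict[str, object]] = {
    "cmpd_1": {"primary": 0.5, "off_targets": [8.0, 50.0]},   # 16x and 100x
    "cmpd_2": {"primary": 2.0, "off_targets": [5.0]},          # only 2.5x
    "cmpd_3": {"primary": 25.0, "off_targets": [1000.0]},      # not potent
}

hits = [
    name
    for name, rec in records.items()
    if passes_filter(rec["primary"], rec["off_targets"])
]
print(hits)
```

A compound with no measured off-target data passes the selectivity check trivially in this sketch, so a real workflow would need to decide whether missing counter-screen data counts as a pass or a fail.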

Protocol 2: Multi-Omics Data Integration for Mechanism of Action Studies

Purpose: To elucidate compound mechanisms of action by integrating transcriptomic, proteomic, and metabolomic data within the framework provided by KEGG and BRENDA.

Procedure:

- High-Content Screening: Treat model systems with compound and perform:

  - RNA-seq for transcriptomic profiling

  - LC-MS/MS for proteomic analysis

  - NMR or LC-MS for metabolomic profiling [1]

- Pathway Enrichment Analysis: Map altered transcripts, proteins, and metabolites onto KEGG pathways (for example with KEGG Mapper), then test each omics layer for significantly enriched pathways (FDR-corrected p < 0.05).

- Enzyme-Ligand Interaction Mapping: Cross-reference significantly altered metabolites with BRENDA ligand database to identify potential enzyme targets and allosteric regulators.

- Bioactivity Correlation: Query PubChem for known bioactivities of the compound and structural analogs to confirm hypothesized targets [14] [17].

- Network Integration: Construct unified pathway models that incorporate transcript, protein, and metabolite changes, using KEGG pathways as scaffolding.
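The enrichment criterion in this procedure (FDR-corrected p < 0.05) can be illustrated with a small calculation. The procedure does not name a statistical test, so this sketch assumes a one-sided hypergeometric test followed by Benjamini-Hochberg adjustment; the pathway names and gene counts are invented.

```python
from math import comb
from typing import Dict, List


def hypergeom_sf(k: int, population: int, in_pathway: int, drawn: int) -> float:
    """P(X >= k) when drawing `drawn` genes from `population`, of which
    `in_pathway` belong to the pathway."""
    upper = min(in_pathway, drawn)
    favorable = sum(
        comb(in_pathway, i) * comb(population - in_pathway, drawn - i)
        for i in range(k, upper + 1)
    )
    return favorable / comb(population, drawn)


def benjamini_hochberg(pvals: List[float]) -> List[float]:
    """Benjamini-Hochberg adjusted p-values, returned in the input order."""
    m = len(pvals)
    order = sorted(range(m), key=lambda i: pvals[i])
    adjusted = [0.0] * m
    running_min = 1.0
    for rank in range(m, 0, -1):  # walk from the largest p-value down
        i = order[rank - 1]
        running_min = min(running_min, pvals[i] * m / rank)
        adjusted[i] = running_min
    return adjusted


# Hypothetical counts: 100 background genes, 10 significantly altered.
background, altered = 100, 10
pathways = {
    "pathway_A": {"size": 8, "hits": 5},
    "pathway_B": {"size": 20, "hits": 2},
}

names = list(pathways)
raw = [
    hypergeom_sf(pathways[n]["hits"], background, pathways[n]["size"], altered)
    for n in names
]
adjusted: Dict[str, float] = dict(zip(names, benjamini_hochberg(raw)))
enriched = [n for n in names if adjusted[n] < 0.05]
print(enriched)
```

Running the test separately per omics layer and then comparing the enriched pathway lists is one way to see which pathways are supported by more than one data type.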

Visualization of Database Integration in Systems Biology

Figure: Systems Biology Database Integration, illustrating how PubChem, KEGG, and BRENDA integrate to support systems biology research, particularly in drug discovery applications.

Chemical Biology Platform Implementation

The chemical biology platform represents an organizational approach that optimizes drug target identification and validation by emphasizing understanding of underlying biological processes and leveraging knowledge from similar molecules. This platform connects strategic steps to determine clinical translatability of new compounds [1].

The platform has evolved through three historical stages:

- Bridging Chemistry and Pharmacology (1950s-1960s): Integration of compound synthesis with physiological testing in animal models.

- Introduction of Clinical Biology (1980s): Development of biomarkers and human disease models to bridge preclinical and clinical research.

- Genomics-Informed Chemical Biology (2000-present): Integration of genomic information, combinatorial chemistry, structural biology, and high-throughput screening [1].

Table 2: Research Reagent Solutions for Database-Driven Research

| Reagent/Resource | Function in Experimental Workflow | Database Integration |
|---|---|---|
| Bioactive Compounds | Probe biological function and therapeutic potential | PubChem bioactivity data and compound structures [14] |
| Pathway Mapping Tools | Visualize molecular interactions within biological contexts | KEGG Mapper for pathway enrichment analysis [16] |
| Enzyme Assay Systems | Characterize kinetic parameters and inhibition profiles | BRENDA functional enzyme data [17] |
| Biomarker Panels | Assess target engagement and pharmacological effects | Clinical biology framework for translational validation [1] |
| Multi-omics Profiling | Generate systems-level views of compound effects | Integration across all databases for mechanism elucidation [1] |

Applications in Drug Discovery and Development

Translational Workflow from Target to Clinic

The integration of PubChem, KEGG, and BRENDA enables a systematic approach to drug discovery that aligns with modern translational physiology principles. This workflow examines biological functions across multiple levels, from molecular interactions to population-wide effects:

Figure: Drug Discovery Translational Workflow

Case Implementation: Natural Product Drug Discovery

The power of database integration is exemplified in natural product research, where PubChem provides structural and bioactivity data on natural compounds, KEGG maps these compounds to biosynthetic pathways, and BRENDA characterizes the enzymatic transformations involved [14] [17].

Recent Advances:

- PubChem has integrated natural product data from NPASS (Natural Product Activity and Species Source Database), enhancing coverage of biologically relevant chemical space [14].

- KEGG provides specialized pathway maps for secondary metabolite biosynthesis, including phenylpropanoids, flavonoids, alkaloids, and various antibiotic classes [15].

- BRENDA encompasses enzyme data from diverse organisms, including those producing pharmacologically important natural products [17].

This integrated approach aligns with the observed industry transition from traditional trial-and-error methods to targeted selection approaches that incorporate systems biology techniques like transcriptomics, proteomics, and metabolomics [1].

The continued evolution of PubChem, KEGG, and BRENDA reflects the growing importance of data integration in chemical and systems biology. Recent developments include:

- Expanded Data Coverage: PubChem's addition of data from over 130 new sources, including drug information from FDA and JPMDA, toxicology data from USEPA, and metabolomics resources [14].

- Enhanced Interoperability: PubChemRDF expansion to include literature co-occurrence data, enabling semantic web technologies for exploring entity relationships [14].

- Improved Accessibility: Specialized web pages for chemicals with non-discrete structures and enhanced visualization tools across all databases.

These resources collectively provide the infrastructure needed to implement chemical biology platforms that leverage accumulated knowledge and parallel processing to accelerate therapeutic development. As these databases continue to evolve, they will play an increasingly critical role in bridging chemical space with biological function, ultimately enabling more predictive and efficient approaches to understanding and manipulating biological systems.

The synergistic use of PubChem, KEGG, and BRENDA exemplifies how computational resources can drive the transition from descriptive biology to predictive, mechanism-based science, a fundamental goal of both systems biology and modern drug discovery.

The integration of chemical biology and systems biology represents a paradigm shift in biomedical research, enabling a more comprehensive understanding of complex biological systems. Chemical biology provides the tools—particularly small molecules—to precisely perturb biological systems, while systems biology offers the conceptual and computational frameworks to model and understand the emergent properties that arise from these perturbations [18]. At the intersection of these disciplines lies the need for large-scale, publicly accessible chemical and biological data.

The National Institutes of Health (NIH) Molecular Libraries Initiative (MLI) and its public database, PubChem, were established to address this critical need. This initiative has provided researchers with unprecedented access to chemical screening data and tools, creating an infrastructure that supports the integration of chemical and systems biology approaches. By generating and organizing vast amounts of data on small molecule bioactivities, these resources enable researchers to uncover complex relationships between chemical structure and biological function that would be impossible to discern through isolated investigations [19].

The Molecular Libraries Initiative: Programmatic Infrastructure for Chemical Biology

The Molecular Libraries Initiative was launched as a component of the NIH Roadmap for Medical Research, specifically under the Molecular Libraries and Imaging program [19]. This ambitious initiative was designed to democratize access to high-throughput screening (HTS) technologies that were previously confined to pharmaceutical industry settings. The primary goal was to facilitate the use of HTS and chemical library screening within the academic community, accelerating the discovery of novel research tools and potential therapeutic candidates [20].

The initiative established several key components:

- The Molecular Libraries Screening Center Network (MLSCN): Grant-supported experimental laboratories performing HTS against biological targets.

- The Molecular Libraries Small Molecule Repository (MLSMR): A shared compound repository that eventually became part of PubChem's substance database.

- PubChem: An open repository for experimental data identifying the biological activities of small molecules [19].

MLSMR Libraries and Chemical Diversity

The Molecular Libraries Small Molecule Repository (MLSMR) served as the chemical foundation for the initiative, aggregating compounds from various sources including academic institutions. For example, the Center for Chemical Methodology and Library Development at Boston University (CMLD-BU) contributed stereochemically and structurally complex chemical libraries specifically designed not to overlap in chemical space with molecules already available in public databases [20]. This emphasis on novel chemical space exploration was critical for expanding the universe of biologically active compounds available to researchers.

PubChem: Technical Architecture and Data Integration

Database Structure and Organization

PubChem is organized as three distinct but interconnected databases that form a comprehensive chemical information ecosystem:

- PubChem Substance: Contains depositor-provided chemical descriptions, serving as a repository for original data submissions.

- PubChem Compound: Stores unique chemical structures derived from the Substance database through an automated process of structure standardization.

- PubChem BioAssay: Archives biological assay descriptions and test results, including high-throughput screening data [21] [19].

The fundamental relationships between these databases are maintained through standardized identifiers. Substance identifiers (SIDs) relate to Compound identifiers (CIDs) through chemical structure standardization, while BioAssay identifiers (AIDs) connect both compounds and substances to their biological activity profiles [19].
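These identifier relationships can be traversed programmatically through PubChem's PUG REST interface. The sketch below only builds request URLs and performs no network access; the URL patterns reflect the PUG REST conventions as commonly documented and should be verified against the current PubChem documentation before use. The helper function names are hypothetical.

```python
from urllib.parse import quote

PUG_REST = "https://pubchem.ncbi.nlm.nih.gov/rest/pug"


def name_to_cids_url(name: str) -> str:
    """URL that resolves a chemical name to Compound identifiers (CIDs)."""
    return f"{PUG_REST}/compound/name/{quote(name)}/cids/JSON"


def sid_to_cids_url(sid: int) -> str:
    """URL that maps a Substance identifier (SID) to its standardized CID(s)."""
    return f"{PUG_REST}/substance/sid/{sid}/cids/JSON"


def cid_to_aids_url(cid: int) -> str:
    """URL that lists BioAssay identifiers (AIDs) in which a compound was tested."""
    return f"{PUG_REST}/compound/cid/{cid}/aids/JSON"


# CID 2244 is aspirin; requests are left to the caller (e.g. urllib.request).
print(name_to_cids_url("aspirin"))
print(cid_to_aids_url(2244))
```

Keeping URL construction separate from the HTTP call makes it easier to add request throttling later, which matters because public services of this kind typically limit request rates.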

Data Growth and Content Expansion

Since its inception, PubChem has experienced substantial growth in both data content and user base. The following table summarizes the expansion of PubChem's core data content:

Table 1: Growth of PubChem Data Content (as of August 2020)

| Database | Record Count | Increase vs. 2018 | Key Content Description |
|---|---|---|---|
| Substance | 293 million | 19% | Depositor-provided chemical descriptions |
| Compound | 111 million | 14% | Unique chemical structures |
| BioAssay | 271 million data points | 14% | Bioactivity results from 1.2 million assays |

Source: [21]

In addition to this core data, PubChem has significantly expanded its integration with external resources. Recent additions include chemical-literature links from Thieme Chemistry (covering over 745,000 chemicals), material property data from SpringerMaterials (for approximately 32,000 compounds), and patent links from the World Intellectual Property Organization (WIPO) containing over 16 million chemical structures [21]. The platform has also created specialized data collections, such as the COVID-19 dataset, which integrates relevant chemical and biological data from authoritative sources including NCBI databases, UniProt, RCSB PDB, and DrugBank [21].

Chemical Structure Standardization

A critical technical challenge in maintaining a public chemical database is handling the diverse representations of chemical structures from hundreds of data sources. PubChem addresses this through an automated structure standardization process that converts submitted structures into consistent representations [22].

The standardization process addresses several complex issues in chemical informatics:

- Tautomerism: Different representations of the same compound that exist in equilibrium and must be reduced to a single consistent form.

- Aromaticity models: Varying definitions and representations of aromatic systems across different cheminformatics toolkits.

- Stereochemistry: Consistent representation of chiral centers and geometric isomerism.

- Valence validation: Identification and correction or rejection of structures with invalid atom valences [22].

The standardization process has a rejection rate of only 0.36%, predominantly due to structures with invalid atom valences that cannot be readily corrected. Of the structures that pass standardization, 44% are modified in the process, demonstrating the critical importance of this normalization step for maintaining data quality [22].

Diagram: PubChem Data Integration and Standardization Workflow

Integration with Systems Biology: Methodologies and Applications

Data Integration Frameworks for Systems Biology

The vast data resources provided by PubChem and the Molecular Libraries Initiative serve as critical inputs for systems biology research. Biological data integration methodologies have evolved to handle the complex, multi-layered nature of this data, which spans genomic, transcriptomic, proteomic, and metabolomic domains [23]. These integration approaches can be broadly categorized as:

- Network-based methods: Using graph theory to analyze connectivity patterns across multiple biological networks.

- Machine learning approaches: Extending standard algorithms to incorporate disparate data types.

- Factorization methods: Decomposing complex data matrices into latent factors that capture underlying biological processes [23].

The integration of chemical data from PubChem with other omics data enables researchers to address fundamental biological problems including network inference, protein function prediction, disease gene prioritization, and drug repurposing [23]. These applications demonstrate how chemical biology data provides a critical perturbation dimension that enhances the explanatory power of systems biology models.

Multi-Omics Integration Platforms and Tools

The challenge of integrating chemical data with other omics layers has led to the development of sophisticated computational platforms. These tools employ various algorithmic strategies to extract meaningful patterns from heterogeneous datasets:

Table 2: Multi-Omics Data Integration Methods

| Method | Approach Type | Key Features | Applications |
|---|---|---|---|
| MOFA | Unsupervised factorization | Bayesian framework, identifies latent factors | Discovering hidden patterns, cohort stratification |
| DIABLO | Supervised integration | Uses phenotype labels, feature selection | Biomarker discovery, classification |
| SNF | Network-based | Fuses similarity networks, non-linear | Patient clustering, data fusion |
| MCIA | Multivariate statistics | Covariance optimization, multiple datasets | Cross-omics pattern recognition |

Source: [24]

These integration methods face significant computational challenges: handling different data sizes and formats, managing noise and biases, selecting informative datasets, and scaling with increasing data volume [23]. Approaches based on non-negative matrix factorization (NMF) have emerged as particularly promising for heterogeneous biological data integration, because they accommodate diverse data types and leave room for further methodological development [23].
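To make the factorization idea concrete, the following is a minimal sketch of a joint NMF, assuming two non-negative data matrices that share the same samples (rows). A single sample-factor matrix `W` is shared across data types, while each data type gets its own loading matrix `H_i`. The function names, the toy matrices, and the use of standard multiplicative updates are illustrative assumptions, not the specific algorithms reviewed in [23].

```python
import random

def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]

def transpose(m):
    return [list(col) for col in zip(*m)]

def squared_error(views, w, hs):
    """Sum of squared reconstruction errors over all data types."""
    total = 0.0
    for x, h in zip(views, hs):
        approx = matmul(w, h)
        total += sum((xv - av) ** 2 for xr, ar in zip(x, approx) for xv, av in zip(xr, ar))
    return total

def joint_nmf(views, rank, iterations=200, seed=0, eps=1e-9):
    """Factor each view X_i (samples x features_i) as W @ H_i with a shared W."""
    rng = random.Random(seed)
    n_samples = len(views[0])
    w = [[rng.random() + 0.1 for _ in range(rank)] for _ in range(n_samples)]
    hs = [[[rng.random() + 0.1 for _ in range(len(v[0]))] for _ in range(rank)]
          for v in views]
    for _ in range(iterations):
        # H_i <- H_i * (W^T X_i) / (W^T W H_i), element-wise
        wt = transpose(w)
        wtw = matmul(wt, w)
        for i, x in enumerate(views):
            num = matmul(wt, x)
            den = matmul(wtw, hs[i])
            hs[i] = [[h * n / (d + eps) for h, n, d in zip(hr, nr, dr)]
                     for hr, nr, dr in zip(hs[i], num, den)]
        # W <- W * sum_i(X_i H_i^T) / sum_i(W H_i H_i^T), element-wise
        num = [[0.0] * rank for _ in range(n_samples)]
        den = [[0.0] * rank for _ in range(n_samples)]
        for x, h in zip(views, hs):
            ht = transpose(h)
            xh = matmul(x, ht)
            whh = matmul(w, matmul(h, ht))
            for r in range(n_samples):
                for k in range(rank):
                    num[r][k] += xh[r][k]
                    den[r][k] += whh[r][k]
        w = [[wv * n / (d + eps) for wv, n, d in zip(wr, nr, dr)]
             for wr, nr, dr in zip(w, num, den)]
    return w, hs

# Toy data (invented): 4 samples measured in two data types.
expression = [[5, 4, 0], [4, 5, 0], [0, 1, 5], [0, 0, 4]]
bioactivity = [[3, 0], [4, 0], [0, 2], [0, 3]]
W, Hs = joint_nmf([expression, bioactivity], rank=2)
```

Rows of `W` can then be inspected as per-sample factor scores shared across data types. Real datasets would call for an array library and a convergence criterion rather than a fixed iteration count.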

Experimental Protocols: Leveraging PubChem for Systems Biology Research

Protocol 1: Target Identification and Validation Using PubChem Data

This protocol outlines a systematic approach to identifying and validating molecular targets using PubChem bioactivity data integrated with systems biology resources.

Materials and Reagents

Table 3: Key Research Reagent Solutions for Target Identification

| Reagent/Resource | Function | Source |
|---|---|---|
| PubChem BioAssay | Source of bioactivity data for small molecules | https://pubchem.ncbi.nlm.nih.gov/ |
| Gene Ontology (GO) | Functional annotation of potential targets | http://geneontology.org/ |
| STRING database | Protein-protein interaction network data | https://string-db.org/ |
| Pathway Commons | Integrated pathway information | https://www.pathwaycommons.org/ |
| Cytoscape | Network visualization and analysis | https://cytoscape.org/ |

Procedure

Compound Selection and Bioactivity Retrieval

- Identify small molecules of interest using PubChem search tools (name, structure, or similarity).

- Retrieve all bioactivity data for the selected compounds using PubChem's programmatic services (PUG-REST or PUG-View).

- Filter results by activity type (e.g., IC50, Ki, EC50) and confidence level (e.g., screening set, confirmed active).
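The retrieval and filtering steps above can be sketched as follows. The snippet only builds PUG-REST request URLs and filters rows that have already been parsed, so it performs no network access. The helper names are hypothetical, and the row keys `"Activity Name"` and `"Activity Outcome"` are assumptions about the assay summary columns that should be checked against an actual response.

```python
from urllib.parse import quote

PUG_REST_BASE = "https://pubchem.ncbi.nlm.nih.gov/rest/pug"

def cid_lookup_url(name):
    """URL that resolves a compound name to PubChem compound IDs (CIDs)."""
    return f"{PUG_REST_BASE}/compound/name/{quote(name, safe='')}/cids/JSON"

def assay_summary_url(cid):
    """URL for the bioassay summary of one compound."""
    return f"{PUG_REST_BASE}/compound/cid/{int(cid)}/assaysummary/JSON"

def filter_bioactivities(rows, activity_types=("IC50", "Ki", "EC50"), outcome="Active"):
    """Keep rows with a wanted activity type and outcome.

    `rows` is a list of dicts; the key names are assumed, not guaranteed.
    """
    wanted = {t.lower() for t in activity_types}
    return [
        row for row in rows
        if str(row.get("Activity Name", "")).lower() in wanted
        and row.get("Activity Outcome") == outcome
    ]

# Invented example rows standing in for a parsed response.
example_rows = [
    {"AID": 1, "Activity Name": "IC50", "Activity Outcome": "Active"},
    {"AID": 2, "Activity Name": "Potency", "Activity Outcome": "Active"},
    {"AID": 3, "Activity Name": "Ki", "Activity Outcome": "Inactive"},
]
kept = filter_bioactivities(example_rows)
```

Fetching the URLs (for example with `urllib.request.urlopen`) is left out deliberately; any real client should respect PubChem's request-rate policy.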

Target Identification and Prioritization

- Extract protein targets associated with bioactive compounds from PubChem protein pages.

- Annotate targets with functional information using Gene Ontology terms.

- Prioritize targets based on bioactivity strength, frequency across compounds, and relevance to disease pathways.
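One way to combine the three prioritization criteria is a simple additive score, sketched below. The weights, the record layout `(target, compound_id, activity_uM)`, and the scoring formula are illustrative assumptions rather than an established method; potency is expressed as pActivity, the negative base-10 logarithm of the molar activity value.

```python
import math
from collections import defaultdict

def prioritize_targets(records, disease_genes,
                       w_potency=1.0, w_frequency=0.5, w_disease=2.0):
    """Rank targets by best potency, number of active compounds, and disease relevance.

    records: iterable of (target, compound_id, activity_uM) tuples.
    disease_genes: set of targets considered relevant to the disease.
    """
    best_potency = {}
    compounds = defaultdict(set)
    for target, compound, activity_um in records:
        if activity_um <= 0:
            continue  # skip missing or invalid measurements
        p_activity = 6.0 - math.log10(activity_um)  # uM -> -log10(M)
        best_potency[target] = max(best_potency.get(target, p_activity), p_activity)
        compounds[target].add(compound)
    scores = {
        target: w_potency * potency
        + w_frequency * len(compounds[target])
        + (w_disease if target in disease_genes else 0.0)
        for target, potency in best_potency.items()
    }
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)

# Invented example records.
records = [
    ("EGFR", "cpd1", 0.01),
    ("EGFR", "cpd2", 0.1),
    ("ABL1", "cpd1", 1.0),
    ("PTGS2", "cpd3", 10.0),
]
ranked = prioritize_targets(records, disease_genes={"EGFR"})
```

The weights trade off the three criteria and would need tuning, or replacement by a rank-aggregation scheme, for any real analysis.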

Network Analysis

- Construct protein-protein interaction networks using the STRING database.

- Integrate expression data (from GEO) or mutation data (from TCGA) if available.

- Identify network modules and hubs using topological analysis (degree, betweenness centrality).
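The topological measures named above can be computed without external dependencies, as in this sketch. It assumes an undirected edge list of `(protein_a, protein_b, combined_score)` tuples with STRING-style scores on a 0 to 1000 scale; the example edges and the cutoff of 700 are illustrative. Betweenness uses Brandes' algorithm for unweighted graphs and is left unnormalized.

```python
from collections import deque

def build_adjacency(edges, min_score=700):
    """Undirected adjacency sets, keeping only edges at or above min_score."""
    adj = {}
    for a, b, score in edges:
        adj.setdefault(a, set())
        adj.setdefault(b, set())
        if score >= min_score:
            adj[a].add(b)
            adj[b].add(a)
    return adj

def degree_centrality(adj):
    """Fraction of the other nodes each node is connected to."""
    n = len(adj)
    return {v: (len(nb) / (n - 1) if n > 1 else 0.0) for v, nb in adj.items()}

def betweenness_centrality(adj):
    """Unnormalized shortest-path betweenness (Brandes, unweighted, undirected)."""
    bc = dict.fromkeys(adj, 0.0)
    for s in adj:
        stack = []
        preds = {v: [] for v in adj}
        sigma = dict.fromkeys(adj, 0)   # number of shortest paths from s
        dist = dict.fromkeys(adj, -1)
        sigma[s], dist[s] = 1, 0
        queue = deque([s])
        while queue:
            v = queue.popleft()
            stack.append(v)
            for w in adj[v]:
                if dist[w] < 0:
                    dist[w] = dist[v] + 1
                    queue.append(w)
                if dist[w] == dist[v] + 1:
                    sigma[w] += sigma[v]
                    preds[w].append(v)
        delta = dict.fromkeys(adj, 0.0)
        while stack:  # accumulate dependencies in order of decreasing distance
            w = stack.pop()
            for v in preds[w]:
                delta[v] += sigma[v] / sigma[w] * (1 + delta[w])
            if w != s:
                bc[w] += delta[w]
    return {v: b / 2 for v, b in bc.items()}  # each pair was counted twice

# Invented example edges with STRING-style combined scores.
edges = [
    ("TP53", "MDM2", 999), ("TP53", "EGFR", 850), ("TP53", "ATM", 990),
    ("EGFR", "GRB2", 995), ("ATM", "GRB2", 300),
]
adj = build_adjacency(edges)
degree = degree_centrality(adj)
betweenness = betweenness_centrality(adj)
```

In this toy network the low-scoring ATM-GRB2 edge is dropped, which leaves TP53 as the hub by both measures. For networks of realistic size, a graph library or Cytoscape's built-in analysis would be the practical choice.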

Experimental Validation

- Design experiments to test computational predictions (e.g., knockdown, overexpression).

- Measure phenotypic outcomes relevant to disease context.

- Iterate based on validation results to refine network models.

Protocol 2: Multi-Omics Data Integration for Drug Repurposing

This protocol describes a methodology for integrating chemical data from PubChem with multi-omics datasets to identify new therapeutic uses for existing drugs.

Procedure

Data Collection and Preprocessing

- Retrieve drug-target interaction data from PubChem for FDA-approved drugs.

- Obtain disease-specific omics data (transcriptomics, proteomics, genomics) from public repositories (TCGA, GEO).

- Preprocess each data type using appropriate normalization methods (e.g., RMA for microarray, TPM for RNA-seq).
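As an illustration of the RNA-seq normalization mentioned above, the sketch below converts raw read counts to transcripts per million (TPM): counts are first divided by transcript length in kilobases, then rescaled so that each sample sums to one million. The function name and the toy values are assumptions for illustration; RMA for microarrays involves background correction and quantile normalization and is not shown.

```python
def counts_to_tpm(counts, lengths_bp):
    """Convert read counts to TPM for one sample.

    counts: dict mapping gene -> raw read count.
    lengths_bp: dict mapping gene -> transcript length in base pairs.
    """
    # Reads per kilobase corrects for longer transcripts attracting more reads.
    rates = {gene: counts[gene] / (lengths_bp[gene] / 1000.0) for gene in counts}
    total = sum(rates.values())
    if total == 0:
        return {gene: 0.0 for gene in counts}
    # Rescale so that the values in the sample sum to one million.
    return {gene: rate / total * 1e6 for gene, rate in rates.items()}

# Invented example: gene B has more reads but is four times longer than gene A.
counts = {"A": 100, "B": 200, "C": 0}
lengths = {"A": 1000, "B": 4000, "C": 2000}
tpm = counts_to_tpm(counts, lengths)
```

Here gene A ends up with twice the TPM of gene B despite having half the reads, which is the length correction at work.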

Multi-Omics Data Integration

- Select integration method based on research question (see Table 2).

- For unsupervised pattern discovery (MOFA):

  - Format each dataset as a feature matrix over a shared set of samples.

  - Train the model to identify latent factors capturing variance across data types.

  - Interpret factors using feature loadings and their association with clinical variables.

- For supervised prediction (DIABLO):

  - Define the outcome variable (e.g., disease vs. control).

  - Integrate datasets to find components that discriminate between groups.

  - Select the features most relevant to discrimination.

Candidate Prioritization and Validation

- Identify drug candidates whose target profiles align with multi-omics signatures.

- Validate predictions in relevant disease models (cell lines, animal models).

- Analyze dose-response relationships using PubChem bioactivity data.
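One simple way to quantify how well a drug's target profile aligns with a multi-omics signature is a hypergeometric overlap test, sketched below. This is an illustrative heuristic, not the method prescribed by the protocol: the function names, gene sets, and background size are assumptions, all targets are assumed to lie within the background gene universe, and no multiple-testing correction is applied.

```python
from math import comb

def hypergeom_sf(k, population, successes, draws):
    """P(overlap >= k) when `draws` genes are sampled without replacement
    from `population` genes, of which `successes` belong to the signature."""
    upper = min(successes, draws)
    favorable = sum(
        comb(successes, i) * comb(population - successes, draws - i)
        for i in range(k, upper + 1)
    )
    return favorable / comb(population, draws)

def rank_drugs(drug_targets, signature, background_size):
    """Rank drugs by how unlikely their target/signature overlap is by chance.

    drug_targets: dict mapping drug name -> set of target genes.
    signature: set of genes dysregulated in the disease.
    """
    results = []
    for drug, targets in drug_targets.items():
        overlap = len(targets & signature)
        p_value = hypergeom_sf(overlap, background_size, len(signature), len(targets))
        results.append((drug, overlap, p_value))
    return sorted(results, key=lambda item: item[2])

# Invented example data.
signature = {"EGFR", "ERBB2", "KRAS", "MYC"}
drug_targets = {
    "drug_A": {"EGFR", "ERBB2", "KRAS"},
    "drug_B": {"PTGS1", "PTGS2"},
}
ranking = rank_drugs(drug_targets, signature, background_size=20000)
```

Candidates that rank highly here would still need the experimental validation and dose-response analysis described in the steps above.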

Diagram: Multi-Omics Data Integration Workflow for Drug Repurposing

Impact and Future Directions

The integration of public data resources like PubChem with systems biology approaches has fundamentally transformed biomedical research. The Molecular Libraries Initiative created an infrastructure that enables researchers to connect chemical structures to biological functions at an unprecedented scale, providing the experimental foundation for modeling complex cellular systems [19]. This has been particularly valuable for understanding signaling networks, metabolic pathways, and regulatory circuits that underlie both normal physiology and disease states.

The field continues to evolve with several promising future directions:

- Enhanced data integration algorithms that can more effectively handle the volume and heterogeneity of multi-omics data.

- Improved structure standardization methods that better handle tautomerism and stereochemical complexity.

- Expanded knowledge panels that integrate chemical data with biological pathways and disease mechanisms.

- Temporal and spatial resolution in chemical biology data to enable more dynamic systems models.

- Patient-specific data integration for precision medicine applications, combining chemical data with clinical and genomic information [23] [21] [24].

As chemical biology continues to develop more sophisticated tools for perturbing biological systems, and systems biology refines its computational frameworks for modeling complexity, the integration of these disciplines through public data resources will remain essential for advancing our understanding of biology and developing new therapeutic strategies. The NIH Molecular Libraries Initiative and PubChem have established a foundational infrastructure that continues to support this integrative approach, demonstrating the powerful synergies that emerge when chemical and systems biology perspectives are combined.

Tools and Workflows: Applying Omics and Cheminformatics in Drug Discovery

The integration of proteomics, metabolomics, and transcriptomics represents a paradigm shift in biomedical research, moving investigation beyond single-layer analysis to a holistic, systems-level understanding of biological processes. This multi-omics approach is fundamentally transforming how researchers study complex biological systems, particularly in the context of human diseases and drug development [25]. Within the broader effort to integrate systems biology with chemical biology, multi-omics technologies provide the data layers needed to construct comprehensive models of biological systems that can be strategically modulated by chemical tools and therapeutic interventions [1].

Systems biology provides the conceptual framework for understanding complex biological systems as integrated networks, while chemical biology offers the toolset for precisely probing and manipulating these systems [1]. The synergy between these disciplines is creating powerful new paradigms for drug discovery, moving beyond the traditional "one target, one drug" approach to a more holistic understanding of how chemical interventions perturb biological networks across multiple scales [26]. This convergence enables researchers to not only observe system-wide molecular changes but also to design targeted chemical probes that can test systemic hypotheses and potentially correct dysregulated networks in disease states [1].

The fundamental value of multi-omics integration lies in its ability to capture different layers of biological information that together provide a more complete picture of cellular states and dynamics. Genomics provides the blueprint, transcriptomics reveals gene expression dynamics, proteomics identifies functional effectors, and metabolomics captures the ultimate functional readout of cellular processes [27]. By integrating these layers, researchers can move beyond correlation to establish causal relationships within biological networks, identifying key regulatory nodes and therapeutic targets that would be invisible to single-omics approaches [25] [28].

Core Multi-Omics Technologies: Methodologies and Applications

Transcriptomics: Capturing the Dynamic RNA Landscape

Transcriptomics involves the comprehensive study of all RNA transcripts within a biological system, providing insights into the dynamic expression of genetic information. Modern transcriptomics has expanded beyond messenger RNA (mRNA) to include various non-coding RNAs such as long non-coding RNAs, microRNAs, and circular RNAs, all of which play crucial regulatory roles in cellular processes [25].

The predominant technology for transcriptome analysis is RNA sequencing (RNA-seq), which enables both qualitative and quantitative assessment of RNA populations. Key advancements include single-cell RNA sequencing (scRNA-seq), which resolves cellular heterogeneity by measuring gene expression in individual cells [25]. This technology has proven particularly valuable in complex tissues like tumors and brain regions, where distinct cell populations exhibit different functional states and disease susceptibilities [25] [29].

Table 1: Transcriptomics Technologies and Applications

| Technology | Key Features | Applications | Limitations |
|---|---|---|---|
| RNA-seq | High-throughput, quantitative, detects novel transcripts | Gene expression profiling, differential expression analysis | Requires RNA extraction, loses spatial context |
| Single-cell RNA-seq | Resolves cellular heterogeneity, identifies rare cell populations | Cell type classification, developmental biology, tumor heterogeneity | Technical noise, high cost, complex data analysis |
| Spatial Transcriptomics | Preserves spatial context, maps gene expression in tissue architecture | Tissue organization studies, host-pathogen interactions, developmental biology | Lower resolution than scRNA-seq, specialized equipment required |

Proteomics: From Gene Expression to Functional Effectors

Proteomics focuses on the large-scale study of proteins, including their expression levels, post-translational modifications, and interactions. While transcriptomics provides information about gene expression, proteomics directly characterizes the functional molecules that execute cellular processes, making it particularly valuable for understanding disease mechanisms and identifying therapeutic targets [25].

Mass spectrometry (MS) represents the cornerstone of modern proteomics, with both label-free and stable isotope labeling approaches enabling quantitative protein profiling. Affinity-based proteomics methods, including protein microarrays and co-immunoprecipitation coupled with MS, facilitate the study of protein-protein interactions and post-translational modifications [25]. These modifications—such as phosphorylation, glycosylation, and ubiquitination—crucially regulate protein function, localization, and stability, with specialized fields like phosphoproteomics providing insights into signaling network dynamics in diseases including type 2 diabetes, cancer, and neurodegenerative disorders [25].

Table 2: Proteomics Technologies and Applications

| Technology | Key Features | Applications | Limitations |
|---|---|---|---|
| Mass Spectrometry-based Proteomics | High sensitivity, identifies PTMs, quantitative capabilities | Biomarker discovery, signaling pathway analysis, drug target identification | Complex sample preparation, dynamic range limitations |
| Affinity-based Proteomics | Studies protein interactions, characterizes protein complexes | Protein-protein interaction networks, antibody development | Antibody specificity issues, limited throughput |
| Protein Microarrays | High-throughput, parallel protein analysis | Autoantibody profiling, drug-protein interactions, clinical diagnostics | Limited proteome coverage, protein stability challenges |

Metabolomics: The Functional Readout of Cellular Processes

Metabolomics involves the comprehensive analysis of small molecule metabolites, representing the most downstream product of gene expression and providing a direct snapshot of cellular physiology and biochemical activity [25]. The metabolome is highly dynamic and responsive to both genetic and environmental changes, making it particularly valuable for understanding functional changes in disease states and therapeutic interventions [27].