Structure-Based vs. Ligand-Based Virtual Screening: A Comprehensive Guide for Drug Discovery

Abstract

This article provides a comparative analysis of structure-based (SBVS) and ligand-based virtual screening (LBVS) for researchers and drug development professionals. It explores the foundational principles of both approaches, detailing key methodologies like molecular docking and pharmacophore modeling. The content delves into practical applications, troubleshooting common pitfalls, and advanced optimization strategies, including the integration of machine learning. Finally, it offers a framework for the validation and comparative assessment of both techniques, highlighting their synergistic potential through real-world case studies to guide effective implementation in hit identification and lead optimization campaigns.

Virtual Screening Foundations: Core Principles of SBVS and LBVS

Structure-Based Virtual Screening (SBVS) is a computational approach used in the early stages of drug discovery to identify novel bioactive molecules from extensive chemical compound libraries by leveraging the three-dimensional (3D) structure of a biological target [1]. This method involves computationally "docking" millions of small molecules into the binding site of a target protein and using scoring functions to rank these compounds based on their predicted binding affinity [2] [3]. The primary goal is to select a subset of promising "hit" compounds for further experimental validation, thereby accelerating the hit-finding process and reducing the high costs and time associated with traditional drug development [3].

The value of SBVS stems from its grounding in the physical structure of the target. Unlike ligand-based methods that rely on the similarity to known active compounds, SBVS utilizes the 3D structural information to predict how a ligand will interact with the protein's binding pocket [4]. This provides a powerful mechanism for identifying novel chemical scaffolds, even in the absence of known active compounds, making it a cornerstone of modern computer-aided drug design (CADD) [3].

SBVS in the Virtual Screening Landscape: A Comparative Framework

Virtual screening methods broadly fall into two categories: structure-based and ligand-based. Understanding how SBVS compares to its ligand-based counterpart is crucial for selecting the appropriate tool in a drug discovery campaign.

The table below outlines the core distinctions:

| Feature | Structure-Based Virtual Screening (SBVS) | Ligand-Based Virtual Screening (LBVS) |
|---|---|---|
| Fundamental Principle | Uses the 3D structure of the protein target to dock and score compounds [4]. | Uses known active ligands to identify new compounds with similar structural or pharmacophoric features [4]. |
| Primary Requirement | A reliable 3D structure of the target (from X-ray, Cryo-EM, NMR, or homology modeling) [1] [5]. | A set of known active compounds for the target of interest [4]. |
| Key Advantage | Can identify novel, diverse chemotypes without prior knowledge of active ligands; provides atomic-level interaction insights [4]. | Fast and computationally cheap; excellent at pattern recognition across diverse chemistries [4]. |
| Main Challenge | Dependence on the quality and accuracy of the protein structure; handling protein flexibility; accuracy of scoring functions [5] [3]. | Biased toward compounds that resemble known actives, so it is less likely to identify truly novel scaffolds [4]. |
| Ideal Use Case | Hit discovery when a protein structure is available, and scaffold hopping [1] [2]. | Prioritizing large chemical libraries, especially when no protein structure is available [4]. |

A powerful trend in the field is the move towards hybrid approaches, which combine the strengths of both methods. This can be done either sequentially (e.g., using fast ligand-based filtering to narrow a library before detailed structure-based docking) or in parallel (e.g., using consensus scoring from both methods to increase confidence in the final hit list) [4]. Evidence suggests that such hybrid strategies can outperform either method used alone by reducing prediction errors and increasing the confidence in identified hits [4].
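As a rough illustration of the sequential variant, the sketch below ranks a library by a fast ligand-based score, keeps only the top fraction, and docks that shortlist. The function name `sequential_screen`, the `keep_fraction` parameter, and the convention that lower docking scores are better (as with Vina-style energies in kcal/mol) are assumptions for illustration. The two scoring callables stand in for whatever similarity and docking tools a campaign actually uses.

```python
from typing import Callable, List, Sequence


def sequential_screen(
    library: Sequence[str],
    ligand_score: Callable[[str], float],
    dock_score: Callable[[str], float],
    keep_fraction: float = 0.1,
) -> List[str]:
    """Fast ligand-based filter first, then docking of the shortlist only."""
    if not 0.0 < keep_fraction <= 1.0:
        raise ValueError("keep_fraction must be in (0, 1]")
    # Stage 1: rank the whole library by the cheap score (higher = more similar to actives).
    by_similarity = sorted(library, key=ligand_score, reverse=True)
    n_keep = max(1, round(len(by_similarity) * keep_fraction))
    shortlist = by_similarity[:n_keep]
    # Stage 2: run the expensive step on the shortlist only (lower = better predicted affinity).
    return sorted(shortlist, key=dock_score)
```

With ligand scores {a: 0.9, b: 0.1, c: 0.8, d: 0.2}, docking energies {a: -7.0, b: -12.0, c: -9.0, d: -6.0} and `keep_fraction=0.5`, the function returns `['c', 'a']`. Compound b, which has the best docking score, never reaches the docking stage. That is the usual trade-off of sequential filtering: speed is bought at the risk of discarding actives that the ligand-based step does not recognize.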

The SBVS Workflow: From Protein Structure to Hit Identification

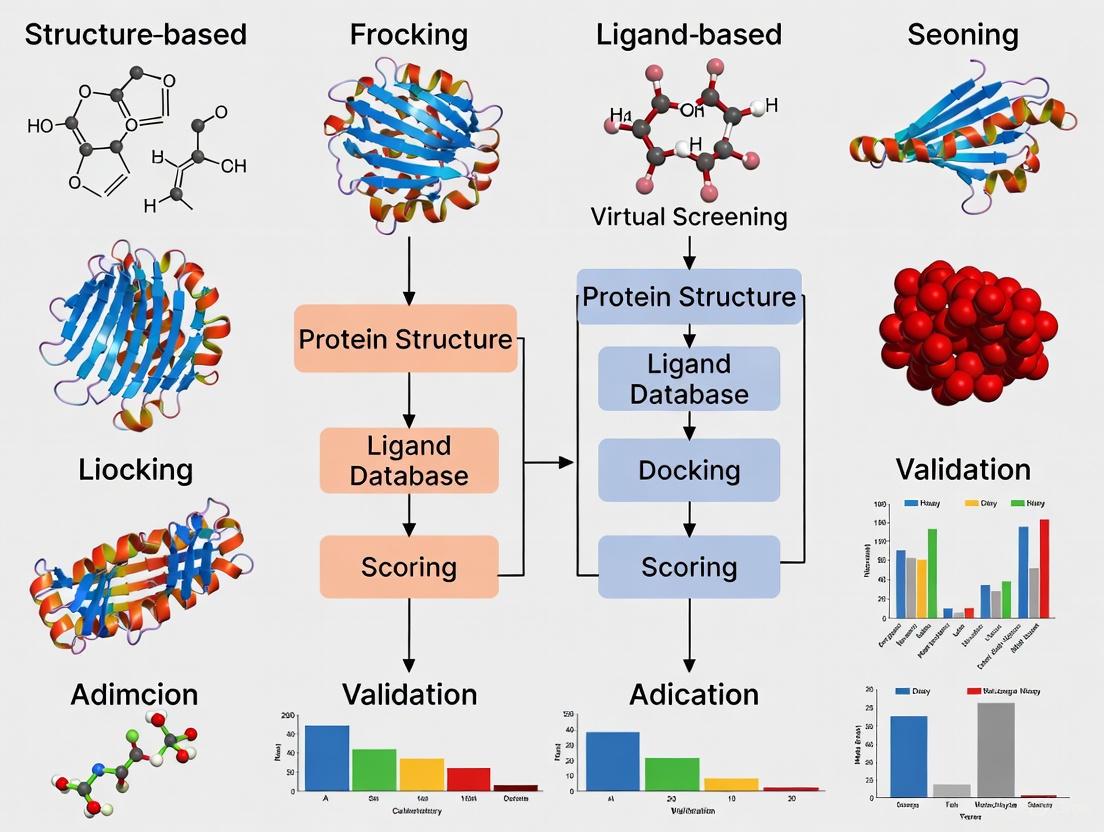

An SBVS campaign is a multi-stage pipeline in which the quality of each step is critical to overall success. The workflow can be broken down into four key stages, as visualized in the workflow overview.

SBVS Workflow Overview

Stage 1: Target Structure Preparation

This foundational step involves obtaining and preparing a high-quality 3D structure of the target protein.

- Experimental Structures: The gold standard is an experimental structure determined by X-ray crystallography, Cryo-Electron Microscopy (Cryo-EM), or NMR spectroscopy [5] [2].

- Computational Models: In the absence of an experimental structure, homology modeling (also known as comparative modeling) is a widely used and effective alternative. This method predicts the 3D structure of a target protein based on its amino acid sequence and the known structure of a homologous protein (the template) [5] [6]. The accuracy of a homology model is paramount for SBVS success [5].

- Addressing Flexibility: Proteins are dynamic. A significant challenge is accounting for protein and binding site flexibility, as a single static structure may not capture all relevant states for ligand binding. Advanced techniques like Ligand-Steered Modelling (LSM) incorporate information from known ligands during the modeling process to generate more accurate binding site conformations, which often outperforms docking into static models [5] [6].

Stage 2: Compound Library Preparation

This stage involves assembling and curating the virtual chemical library to be screened.

- Sources: Libraries can be sourced from public or commercial databases (e.g., ZINC) or custom-designed in-house collections [2].

- Preparation: Each compound in the library is processed to generate plausible 3D conformations and correct protonation states at physiological pH, typically using tools like OpenBabel [1].

Stage 3: Molecular Docking & Scoring

This is the computational heart of SBVS.

- Docking: Automated algorithms (e.g., in AutoDock Vina) position each compound from the library into the defined binding site of the target, sampling millions of possible orientations and conformations (called "poses") [1].

- Scoring: Each pose is evaluated and ranked using a scoring function. These mathematical functions estimate the binding affinity based on factors like van der Waals forces, hydrogen bonding, and electrostatic interactions [3]. The choice and accuracy of the scoring function are major determinants of SBVS success, and different software can yield varying results [3].
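For Stage 3 with AutoDock Vina, the binding site is usually defined as a rectangular search box. The sketch below assumes the box is derived from a reference (e.g. co-crystallized) ligand, padded by a hypothetical 4 Å margin on every side, and renders it in Vina's `key = value` configuration format. The file names and margin are placeholders, and the receptor and ligand are assumed to have been prepared as PDBQT files beforehand.

```python
from typing import Iterable, Sequence, Tuple

Vec3 = Tuple[float, float, float]


def vina_box(ligand_coords: Iterable[Vec3], margin: float = 4.0) -> Tuple[Vec3, Vec3]:
    """Search box enclosing a reference ligand, padded by `margin` angstroms on every side."""
    xyz = list(ligand_coords)
    if not xyz:
        raise ValueError("need at least one atom coordinate")
    lo = [min(p[i] for p in xyz) for i in range(3)]
    hi = [max(p[i] for p in xyz) for i in range(3)]
    center = tuple((l + h) / 2.0 for l, h in zip(lo, hi))  # midpoint of the bounding box
    size = tuple((h - l) + 2.0 * margin for l, h in zip(lo, hi))
    return center, size


def vina_config(receptor: str, ligand: str, center: Sequence[float], size: Sequence[float],
                exhaustiveness: int = 8, num_modes: int = 9, out: str = "docked.pdbqt") -> str:
    """Render a Vina configuration file as text."""
    lines = [f"receptor = {receptor}", f"ligand = {ligand}"]
    lines += [f"center_{ax} = {v:.3f}" for ax, v in zip("xyz", center)]
    lines += [f"size_{ax} = {v:.3f}" for ax, v in zip("xyz", size)]
    lines += [f"exhaustiveness = {exhaustiveness}", f"num_modes = {num_modes}", f"out = {out}"]
    return "\n".join(lines) + "\n"
```

The resulting text can be saved (for example as `conf.txt`) and passed to Vina with `vina --config conf.txt`. The defaults of 8 for `exhaustiveness` and 9 for `num_modes` mirror Vina's own defaults; larger boxes or higher exhaustiveness increase run time.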

Stage 4: Hit Analysis & Selection

The top-ranked compounds from the docking simulation are analyzed.

- Post-processing: This involves visual inspection of the predicted protein-ligand complexes using visualization tools like UCSF Chimera [1]. Researchers assess the rationality of the binding interactions (e.g., key hydrogen bonds, hydrophobic contacts).

- Consensus Techniques: To improve reliability, consensus virtual screening (CVS) can be employed, where results from multiple docking programs or scoring functions are combined to reduce false positives and increase the likelihood of selecting true active compounds [3].
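One simple way to combine programs is rank averaging: rank the compounds separately under each scoring function, then sort by mean rank. The sketch below is one such consensus scheme, not a specific published CVS method, and the function names are hypothetical. Because programs use different score scales and directions, each score table carries a flag saying whether lower scores are better.

```python
from typing import Dict, List, Tuple

ScoreTable = Tuple[Dict[str, float], bool]  # (scores by compound ID, lower_is_better)


def to_ranks(scores: Dict[str, float], lower_is_better: bool) -> Dict[str, int]:
    """Rank compounds within one program (1 = best); ties keep input order."""
    ordered = sorted(scores, key=scores.get, reverse=not lower_is_better)
    return {cid: rank for rank, cid in enumerate(ordered, start=1)}


def consensus_rank(tables: List[ScoreTable]) -> List[str]:
    """Order compounds by their mean rank across all programs that scored them."""
    # Only compounds scored by every program are kept in this sketch.
    common = set.intersection(*(set(scores) for scores, _ in tables))
    rank_maps = [
        to_ranks({cid: scores[cid] for cid in common}, lower)
        for scores, lower in tables
    ]
    mean_rank = {cid: sum(r[cid] for r in rank_maps) / len(rank_maps) for cid in common}
    return sorted(mean_rank, key=lambda cid: (mean_rank[cid], cid))
```

For example, Vina-style energies {A: -9.1, B: -7.5, C: -8.0} (lower is better) and a fitness-style score {A: 40, B: 55, C: 62} (higher is better) give mean ranks of 2.0, 2.5 and 1.5, so the consensus order is C, A, B. Compounds scored by only some programs are dropped here; a production pipeline might instead assign them a penalty rank.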

Experimental Data & Performance Comparison

The practical value of SBVS is demonstrated through both retrospective validation and prospective applications that have led to clinical candidates.

Performance of Homology Models in SBVS

A critical question is how well SBVS performs when using computationally predicted protein models instead of experimental structures. A comprehensive survey of 322 prospective SBVS campaigns provided insightful data [5]:

| Structure Type | Number of Prospective SBVS Studies | Reported Performance Note |
|---|---|---|
| X-ray Crystal Structures | 249 | The established standard for SBVS. |
| Homology Models | 73 | The potency of the hits identified was on average higher than for hits identified by docking into X-ray structures [5]. |

This counter-intuitive result highlights that a well-built homology model, potentially optimized for ligand binding, can be highly effective in virtual screening.

Methodology for Validating SBVS Performance

To quantitatively evaluate the performance of an SBVS protocol (e.g., a specific docking program or a new homology model), researchers use a retrospective screening experiment. The standard methodology is as follows:

- Prepare a Test Library: Create a virtual library containing a set of known active compounds for the target and a large number of "decoy" molecules that are presumed inactives. Databases like the Directory of Useful Decoys (DUD) are often used for this purpose.

- Run the SBVS Protocol: Perform the virtual screening workflow (docking and scoring) on the entire test library.

- Analyze the Enrichment: Analyze the ranking of compounds to see if the known active compounds are preferentially ranked higher than the decoys. A successful protocol will "enrich" the top of the list with true actives.

- Calculate Metrics: Common metrics to report include:

  - Enrichment Factor (EF): Measures the concentration of actives in the top fraction of the ranked list compared to a random selection.

  - Area Under the Receiver Operating Characteristic Curve (AUC-ROC): Represents the overall ability of the method to correctly rank actives above inactives.
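Both metrics are straightforward to compute from a ranked list. In the sketch below, labels are 1 for known actives and 0 for decoys, higher scores are assumed to mean "more likely active" (docking energies would need to be negated first), and tied scores count as half a win in the AUC. The quadratic pair loop is written for clarity, not for large libraries.

```python
from typing import Sequence


def enrichment_factor(ranked_labels: Sequence[int], fraction: float) -> float:
    """EF at `fraction` of a ranked list (1 = active, 0 = decoy, best-scored first)."""
    n_total = len(ranked_labels)
    n_actives = sum(ranked_labels)
    if n_total == 0 or n_actives == 0:
        raise ValueError("need a non-empty list containing at least one active")
    n_top = max(1, round(n_total * fraction))
    hits_top = sum(ranked_labels[:n_top])
    # Active rate in the selected top fraction divided by the active rate overall.
    return (hits_top / n_top) / (n_actives / n_total)


def roc_auc(scores: Sequence[float], labels: Sequence[int]) -> float:
    """Probability that a random active outscores a random decoy (ties count 0.5)."""
    actives = [s for s, y in zip(scores, labels) if y == 1]
    decoys = [s for s, y in zip(scores, labels) if y == 0]
    if not actives or not decoys:
        raise ValueError("need at least one active and one decoy")
    wins = 0.0
    for a in actives:
        for d in decoys:
            wins += 1.0 if a > d else 0.5 if a == d else 0.0
    return wins / (len(actives) * len(decoys))
```

With 2 actives among 10 compounds and one active ranked first, EF at 10% is (1/1) / (2/10) = 5, i.e. five times the active rate expected from random selection. An AUC of 0.5 corresponds to random ranking and 1.0 to perfect separation of actives from decoys.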

This validation process is crucial for establishing confidence in an SBVS setup before committing to expensive experimental testing [5].

The Scientist's Toolkit: Key Reagents & Software for SBVS

A successful SBVS project relies on a suite of specialized software tools and databases. The table below details essential "research reagents" for the field.

| Tool / Resource Name | Type | Primary Function in SBVS |
|---|---|---|
| Protein Data Bank (PDB) | Database | The single global archive for experimentally determined 3D structures of proteins and nucleic acids [2]. |
| AutoDock Vina | Software | A widely used, open-source program for molecular docking and scoring [1]. |
| UCSF Chimera | Software | A powerful tool for interactive visualization and analysis of molecular structures, used for inspecting docking results [1]. |
| OpenBabel | Software | A chemical toolbox used to convert file formats and prepare compound structures for docking [1]. |
| Homology Modeling Tools (e.g., MODELLER, SWISS-MODEL) | Software | Platforms used to generate 3D protein models from amino acid sequences when experimental structures are unavailable [5] [6]. |
| ZINC Database | Database | A free public database of commercially available compounds for virtual screening, containing over 230 million molecules [2]. |

Structure-Based Virtual Screening is a powerful and established method for mining chemical space to discover new lead compounds in drug discovery. Its unique reliance on the 3D structure of the biological target allows for the de novo identification of bioactive molecules. While challenges remain in scoring function accuracy and handling protein flexibility, the integration of SBVS with ligand-based methods and the successful use of high-quality homology models have significantly expanded its utility and impact. As computational power increases and algorithms become more sophisticated, SBVS will continue to be an indispensable tool for researchers and scientists aiming to bring new therapeutics to the market more efficiently.

Ligand-Based Virtual Screening (LBVS) is a foundational computational technique in drug discovery, employed to identify new potential drug candidates by leveraging the chemical information of known bioactive molecules. This approach is particularly valuable when the three-dimensional structure of the target protein is unavailable or difficult to obtain [7]. This guide provides an objective comparison of LBVS methodologies, supported by experimental data and detailed protocols.

Core Principles and Methodologies of LBVS

LBVS operates on the principle that molecules structurally similar to a known active compound are likely to share its biological activity [8]. It bypasses the need for a protein structure by using one or more known active ligands as templates to search large chemical databases for similar compounds.

The main computational strategies in LBVS include:

- 2D Similarity Methods: These methods use molecular fingerprints—binary vectors representing the presence or absence of specific chemical substructures—to compute similarity. Common fingerprints include ECFP (Extended Connectivity Fingerprint) and FCFP (Functional Class Fingerprint) [9]. The similarity between two molecules is typically quantified using the Tanimoto coefficient [7] [9].

- 3D Shape-Based Methods: These methods compare the three-dimensional molecular shapes and pharmacophoric features (e.g., hydrogen bond donors/acceptors, hydrophobic regions) of a query ligand against database compounds. The goal is to find molecules that can adopt a similar conformation and orientation in the binding site [7] [10]. Tools like ROCS (Rapid Overlay of Chemical Structures) are industry standards for this approach [4] [7].

- Pharmacophore Modeling: A pharmacophore model abstracts the essential steric and electronic features necessary for a molecule to interact with a biological target. LBVS uses this model to search for compounds that embody these features [4].
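The 2D similarity calculation is easy to sketch if each fingerprint is represented as the set of its on-bit indices (an assumption for illustration; real fingerprints would come from a toolkit such as RDKit). With c bits shared and a and b bits set in the two molecules, the Tanimoto coefficient is c / (a + b − c). The helper names below are hypothetical.

```python
from typing import Dict, Iterable, List, Set, Tuple


def tanimoto(fp_a: Iterable[int], fp_b: Iterable[int]) -> float:
    """Tanimoto coefficient c / (a + b - c) for fingerprints given as on-bit indices."""
    a, b = set(fp_a), set(fp_b)
    c = len(a & b)
    denom = len(a) + len(b) - c
    return c / denom if denom else 0.0  # two empty fingerprints: similarity defined as 0


def rank_by_similarity(query: Set[int], database: Dict[str, Set[int]]) -> List[Tuple[str, float]]:
    """Rank database entries by Tanimoto similarity to the query, most similar first."""
    scored = [(cid, tanimoto(query, fp)) for cid, fp in database.items()]
    return sorted(scored, key=lambda item: item[1], reverse=True)
```

For example, bits {1, 2, 3, 4} and {3, 4, 5} share 2 of 5 distinct bits, giving 0.4. In RDKit, a comparable workflow would use Morgan fingerprints with radius 2 (roughly equivalent to ECFP4) compared with `DataStructs.TanimotoSimilarity`.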

The following workflow illustrates how these methods are typically applied in sequence for an effective screening campaign:

Quantitative Performance Comparison

The performance of LBVS methods is rigorously evaluated using benchmark datasets like the Directory of Useful Decoys (DUD/DUD-E+) [7] [10]. Key metrics include the Area Under the ROC Curve (AUC), which measures overall screening performance, and the Enrichment Factor (EF), which indicates how much a method concentrates active compounds at the top of the ranked list compared to a random selection.

Table 1: Performance of LBVS Methods Across Diverse Protein Targets

| Target Protein | LBVS Method | Performance (AUC) | Key Findings / Comparative Advantage |
|---|---|---|---|
| Multiple Targets (DUD-E+) | HWZ Score (Shape-Based) | Average AUC: 0.84 ± 0.02 [7] | Showed improved overall performance and was less sensitive to the choice of target compared to other methods [7]. |
| Multiple Targets (DUD-E+) | PharmScreen & Phase Shape (3D-Based) | Varies by target and query conformation [10] | Performance is highly dependent on the query conformation, especially when 2D structural similarity between the template and actives is low [10]. |
| SARS-CoV-2 Mpro | LBVS with Boceprevir Template | N/A | Successfully identified potential inhibitors (C3, C5, C9) with higher computed binding affinity (-9.9 to -8.0 kcal mol⁻¹) than the reference compound (-7.5 kcal mol⁻¹) [11]. |

Table 2: LBVS vs. Structure-Based VS (SBVS) and Hybrid Methods

| Screening Approach | Description | Typical Use Case | Reported Performance |
|---|---|---|---|
| Ligand-Based (LBVS) | Uses known active ligands as templates for similarity search. [7] | No protein structure available; early library filtering. [4] | Fast; effective for finding structurally similar actives; performance can be query-dependent. [10] |
| Structure-Based (SBVS) | Docks compounds into the 3D structure of the target protein. [12] | High-quality protein structure is available. [4] | Can identify novel scaffolds; scoring function inaccuracies can lead to false positives. [7] |
| Hybrid / Sequential | Combines LBVS and SBVS, e.g., LBVS for fast filtering followed by SBVS for refinement. [12] [4] | Leveraging strengths of both; balancing speed and precision. [4] | Can outperform individual methods; provides more reliable results and increases confidence in hits. [4] |
| FIFI Fingerprint (Hybrid) | An Interaction Fingerprint combining ligand and structure information for machine learning. [12] | When limited active compounds and a protein structure are available. [12] | Showed higher prediction accuracy than other IFPs for 5 out of 6 targets in retrospective evaluation. [12] |

Detailed Experimental Protocols

Protocol 1: Shape-Based Screening with the HWZ Score

This protocol, which demonstrated high performance on the DUD benchmark, involves a sophisticated shape-overlapping procedure and a robust scoring function [7].

Query and Database Preparation:

- Identify a known high-affinity ligand to serve as the query structure.

- Prepare the compound database by converting structures into a standardized format and generating 3D conformations for each molecule.

Molecular Superposition:

- For each candidate molecule, identify and map common chemical functional groups (e.g., rings, chains) between the candidate and the query.

- Perform an initial superposition by aligning the centers of mass and the principal axes of inertia of the two molecules.

- Refine the alignment by treating the candidate as a rigid body and optimizing the shape-density overlap ($V_{AB}$) with the query using a steepest descent algorithm. An efficient quaternion-based algorithm is used for rotations [7].
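The initial superposition step can be sketched with NumPy. Assuming unit atomic masses, the principal axes of inertia coincide with the eigenvectors of the coordinate covariance matrix, so each molecule is centered and rotated into its own principal frame. Because eigenvector signs are arbitrary, the sketch returns the four sign combinations that remain proper rotations and leaves the choice among them to the subsequent overlap optimization. The function names are hypothetical, and this is not the published HWZ implementation.

```python
import itertools
from typing import List, Tuple

import numpy as np


def principal_frame(coords) -> Tuple[np.ndarray, np.ndarray, np.ndarray]:
    """Return (coordinates in the principal-axes frame, center, axes as columns)."""
    xyz = np.asarray(coords, dtype=float)
    center = xyz.mean(axis=0)  # center of mass, assuming unit masses
    centered = xyz - center
    # Eigenvectors of the (unnormalized) covariance matrix are the principal axes.
    _, axes = np.linalg.eigh(centered.T @ centered)
    axes = axes[:, ::-1].copy()  # largest-spread axis first
    if np.linalg.det(axes) < 0:  # keep a right-handed frame (proper rotation)
        axes[:, 2] *= -1.0
    return centered @ axes, center, axes


def initial_superpositions(query, candidate) -> List[np.ndarray]:
    """Candidate coordinates with its principal axes mapped onto the query's axes."""
    _, q_center, q_axes = principal_frame(query)
    c_local, _, _ = principal_frame(candidate)
    poses = []
    for signs in itertools.product((1.0, -1.0), repeat=3):
        flip = np.diag(signs)
        if np.linalg.det(flip) < 0:
            continue  # a reflection would invert chirality; skip it
        # Express the (possibly flipped) principal-frame coordinates in the query frame.
        poses.append(c_local @ flip @ q_axes.T + q_center)
    return poses
```

If the candidate is an exact rigid-body copy of the query with well-separated principal moments, one of the four returned poses reproduces the query coordinates. Nearly equal moments (for example, in roughly spherical molecules) make the axes ill-defined, which is one reason a refinement step follows.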

Scoring and Ranking:

- Calculate the HWZ score, a robust scoring function designed to overcome the limitations of the traditional Tanimoto score, particularly for molecules of different sizes [7].

- Rank all candidate molecules in the database based on their HWZ score against the query.
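The HWZ score itself is not reproduced here. As a reference point, the traditional shape Tanimoto that it is designed to improve on can be sketched by modeling each atom as a Gaussian and summing pairwise atom overlaps (a first-order approximation). The amplitude p = 2√2 and a single shared atomic radius of 1.7 Å are assumptions; with the exponent chosen below, each atomic Gaussian integrates exactly to the volume of a hard sphere of that radius. The function names are hypothetical.

```python
import math
from typing import Sequence, Tuple

Coord = Tuple[float, float, float]

AMPLITUDE = 2.0 * math.sqrt(2.0)  # Gaussian height p (assumed, Grant-Pickup-style choice)


def gaussian_exponent(radius: float, p: float = AMPLITUDE) -> float:
    """Exponent alpha such that p * exp(-alpha r^2) integrates to (4/3) pi radius^3."""
    return math.pi * (3.0 * p / (4.0 * math.pi * radius ** 3)) ** (2.0 / 3.0)


def gaussian_overlap(mol_a: Sequence[Coord], mol_b: Sequence[Coord], radius: float = 1.7) -> float:
    """First-order overlap volume: sum of analytic atom-pair Gaussian overlap integrals."""
    alpha = gaussian_exponent(radius)
    # For two Gaussians with equal exponent alpha, the overlap integral is
    # p^2 * (pi / (2 alpha))^(3/2) * exp(-alpha * d^2 / 2).
    prefactor = AMPLITUDE ** 2 * (math.pi / (2.0 * alpha)) ** 1.5
    total = 0.0
    for xa in mol_a:
        for xb in mol_b:
            d2 = sum((u - v) ** 2 for u, v in zip(xa, xb))
            total += prefactor * math.exp(-alpha * d2 / 2.0)
    return total


def shape_tanimoto(mol_a: Sequence[Coord], mol_b: Sequence[Coord], radius: float = 1.7) -> float:
    """Shape Tanimoto V_AB / (V_AA + V_BB - V_AB) for the poses as given (no optimization)."""
    v_ab = gaussian_overlap(mol_a, mol_b, radius)
    v_aa = gaussian_overlap(mol_a, mol_a, radius)
    v_bb = gaussian_overlap(mol_b, mol_b, radius)
    return v_ab / (v_aa + v_bb - v_ab)
```

The score is 1 for identical poses and falls toward 0 as the molecules move apart. In a full protocol it would be evaluated repeatedly while the candidate pose is optimized. When the two molecules differ in size, even a perfect embedding of the smaller one cannot reach 1, which is the size sensitivity of the Tanimoto score mentioned in the protocol.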

Protocol 2: 3D-LBVS with Multiple Query Conformations

This protocol addresses a critical factor in 3D-LBVS performance: the selection of the query conformation [10].

Template Selection and Query Generation:

- From a set of known active ligands, select a template molecule, ideally one with several rotatable bonds to maximize conformational diversity.

- Generate five query definitions for the template [10]:

  - Q_XR: The experimental crystallographic structure.

  - Q_EMXR: The energy-minimized crystallographic structure (using the MMFF94 force field).

  - Q_LEG: The lowest-energy conformer sampled in the gas phase (generated with RDKit's ETKDG method).

  - Q_LEW: The lowest-energy conformer sampled in water.

  - Q_ENS: An ensemble of accessible conformers obtained by clustering the conformational space.

Virtual Screening Execution:

- Perform parallel virtual screening campaigns against the target database (e.g., DUD-E+) using each of the five query definitions.

- Use a 3D-LBVS tool like PharmScreen (which relies on the similarity of hydrophobic/hydrophilic patterns) or Phase Shape (which refines volume overlap) [10].

Performance Analysis:

- For each screening run, calculate enrichment metrics (e.g., AUC, EF).

- Analyze the impact of the different query conformations on the recovery rate of active compounds, particularly in datasets where the 2D structural similarity between template and actives is low [10].

The relationship between query conformation and screening performance can be complex, as illustrated below:

The Scientist's Toolkit: Essential Research Reagents and Solutions

Successful implementation of LBVS relies on a suite of software tools and chemical databases.

| Resource Name | Type | Function in LBVS |
|---|---|---|
| RDKit | Cheminformatics Software | Open-source platform for molecular informatics; used for fingerprint generation, conformer generation, and molecular standardization [9] [10]. |
| VSFlow | Open-Source Software Tool | A command-line tool that integrates substructure, fingerprint, and shape-based screening into a single workflow [9]. |
| ROCS | Commercial Software | Industry-standard tool for 3D shape-based screening and molecular overlay [4] [7]. |
| DUD-E+ Database | Benchmarking Dataset | A public database of actives and decoys used to validate and benchmark virtual screening methods [10]. |
| ChEMBL / PubChem / ZINC | Chemical Databases | Public repositories containing vast amounts of chemical structures and bioactivity data used for screening and model building [11] [9]. |
| QuanSA | 3D-QSAR Method | Constructs physically interpretable binding-site models from ligand data to predict quantitative affinity, guiding compound design [4]. |

Virtual screening (VS) has become an integral part of the modern drug discovery process, serving as a computational approach to identify promising hit compounds from extensive chemical libraries [13] [14]. The two primary methodologies in this field are Structure-Based Virtual Screening (SBVS) and Ligand-Based Virtual Screening (LBVS), each with distinct knowledge requirements, operational frameworks, and application domains [15]. SBVS relies on the three-dimensional structure of the biological target, typically employing molecular docking to predict how small molecules interact with a protein binding site [16]. In contrast, LBVS operates without target structure information, instead utilizing known active ligands to search for structurally or physicochemically similar compounds under the similarity-property principle, which posits that similar molecules often exhibit similar biological activities [16] [15] [14]. This analysis systematically compares the fundamental knowledge prerequisites for implementing these complementary approaches, providing researchers with a framework for selecting appropriate methodologies based on available information and project requirements.

The successful implementation of SBVS and LBVS requires fundamentally different types of input data and technical knowledge. The table below summarizes the core prerequisites for each approach.

Table 1: Fundamental Knowledge Prerequisites for SBVS and LBVS

| Prerequisite Category | Structure-Based VS (SBVS) | Ligand-Based VS (LBVS) |
|---|---|---|
| Primary Data Input | 3D Structure of the target protein (from X-ray crystallography, NMR, or Cryo-EM) [16] [17] | Set of known active ligands for the target [16] [15] |
| Structural Knowledge | Detailed atomic-level architecture of the binding site [16] | Not required |
| Key Technical Methods | Molecular docking, scoring functions, binding site analysis [16] [15] | Molecular similarity searching, pharmacophore modeling, QSAR [16] [15] |
| Computational Demand | High (requires significant processing power and time) [18] [15] | Relatively low (faster, can run on standard workstations) [18] [15] |
| Ideal Application Scenario | Target with a known or modelable structure; seeking novel scaffolds [13] [19] | Target structure unknown; sufficient known actives available [16] [17] |

Detailed Methodological Workflows

Understanding the procedural flow of each method is crucial for planning and resource allocation. The following diagrams outline the standard workflows for SBVS and LBVS, highlighting key decision points and technical steps.

SBVS Workflow

The SBVS process is a structure-driven pipeline that begins with target preparation and ends with the selection of potential hits. The workflow is primarily sequential, with feedback loops for validation and optimization.

Diagram 1: Structure-Based Virtual Screening (SBVS) Workflow. This protocol visualizes the sequential steps for screening compounds against a known protein structure, featuring critical validation checkpoints.

LBVS Workflow

The LBVS process is ligand-centric, building models from known actives to screen large chemical databases. The workflow emphasizes chemical data analysis and model building rather than structural bioinformatics.

Diagram 2: Ligand-Based Virtual Screening (LBVS) Workflow. This protocol outlines the process of screening compounds based on similarity to known active molecules, featuring a feedback loop for model optimization.

Experimental Protocols & Technical Implementation

Key Experimental Protocol: Structure-Based Virtual Screening using Molecular Docking

Molecular docking represents the most widely used SBVS technique [16]. The following protocol details its key steps, with an example based on a benchmark study of adenosine deaminase (ADA) [19].

Table 2: Key Steps in a Molecular Docking Protocol for SBVS

| Step | Action | Purpose & Technical Details | Common Tools & Resources |
|---|---|---|---|
| 1. Target Acquisition | Obtain 3D structure of the target protein. | Use experimental (X-ray, NMR) or predicted structures. If using homology modeling (e.g., with MODELLER), validate model quality [19]. | PDB, MODELLER, AlphaFold2 [20] [19] |
| 2. Binding Site Prep | Define the protein's binding site. | Identify key residues and features. Remove water molecules unless critical. Add hydrogen atoms and assign partial charges [19]. | SYBYL, DMS, SiteHound, fPocket [17] [19] |
| 3. Ligand Library Prep | Prepare the small molecule database. | Convert 2D structures to 3D, assign correct tautomers and protonation states, and generate conformers. | LigPrep, CORINA, OMEGA [21] |
| 4. Docking Execution | Perform the docking simulation. | Systematically search for optimal ligand poses within the binding site. Use a validated docking algorithm and parameters [19]. | DOCK, AutoDock Vina, GOLD, Glide [17] [21] [19] |
| 5. Scoring & Ranking | Evaluate and rank ligand poses. | Use a scoring function to predict binding affinity. Consensus scoring from multiple functions can improve reliability [16]. | Various scoring functions (e.g., ChemScore, GoldScore) [15] |
| 6. Hit Analysis | Visually inspect top-ranked complexes. | Verify sensible binding modes, key interactions (H-bonds, hydrophobic contacts), and chemical plausibility. | Maestro, PyMOL, UCSF Chimera [21] |

Key Experimental Protocol: Ligand-Based Screening using 3D Similarity Methods

When a 3D protein structure is unavailable, LBVS using 3D molecular similarity offers a powerful alternative [18]. This protocol often employs shape-based or field-based comparisons.

Table 3: Key Steps in a 3D Similarity Protocol for LBVS

| Step | Action | Purpose & Technical Details | Common Tools & Resources |
|---|---|---|---|
| 1. Query Selection | Choose one or more known active ligands as the query. | Select a bioactive conformation if known. Using multiple diverse queries can increase scaffold diversity in results [13] [15]. | NCI Database, ZINC, In-house libraries [17] |
| 2. Conformational Analysis | Generate a representative set of 3D conformations for each molecule. | Account for ligand flexibility. Ensure the bioactive conformation is represented in the set [18]. | OMEGA, CONFGEN, CORINA [18] |
| 3. Molecular Description | Calculate 3D molecular descriptors. | Encode shape, electrostatic, or pharmacophoric properties. Methods include Gaussian functions (ROCS), atomic distances (USR), or surface descriptors [18]. | ROCS, USR, USRCAT, ESHAPE3D [18] |
| 4. Similarity Calculation | Compare database molecules to the query. | Align molecules and compute a similarity score (e.g., Volume Tanimoto Coefficient). Better superposition yields a higher score [18]. | ROCS, MolShaCS [18] |
| 5. Ranking & Prioritization | Rank the database compounds by similarity score. | Higher scores indicate greater 3D similarity to the query, suggesting a higher probability of activity. | In-house scripts, KNIME, Pipeline Pilot |
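Of the descriptor families in Table 3, USR is the simplest to illustrate because it needs no alignment. The sketch below follows the general USR recipe: distances from every atom to four reference points (the centroid, the atom closest to it, the atom farthest from it, and the atom farthest from that one), each distribution summarized by three moments to give 12 numbers. Using the mean, variance and skewness as those moments is an assumption here; published implementations differ in how the higher moments are normalized. The function names are hypothetical.

```python
import math
from typing import List, Sequence, Tuple

Coord = Tuple[float, float, float]


def _three_moments(values: Sequence[float]) -> List[float]:
    """Mean, variance and skewness of a distance distribution (normalization assumed)."""
    n = len(values)
    mean = sum(values) / n
    var = sum((v - mean) ** 2 for v in values) / n
    third = sum((v - mean) ** 3 for v in values) / n
    skew = third / var ** 1.5 if var > 0 else 0.0
    return [mean, var, skew]


def usr_descriptor(coords: Sequence[Coord]) -> List[float]:
    """12 USR-style numbers: 3 moments of atom distances to each of 4 reference points."""
    if len(coords) < 2:
        raise ValueError("need at least two atoms")
    ctd = tuple(sum(c[i] for c in coords) / len(coords) for i in range(3))
    d_ctd = [math.dist(ctd, c) for c in coords]
    cst = coords[d_ctd.index(min(d_ctd))]  # atom closest to the centroid
    fct = coords[d_ctd.index(max(d_ctd))]  # atom farthest from the centroid
    d_fct = [math.dist(fct, c) for c in coords]
    ftf = coords[d_fct.index(max(d_fct))]  # atom farthest from fct
    descriptor: List[float] = []
    for ref in (ctd, cst, fct, ftf):
        descriptor.extend(_three_moments([math.dist(ref, c) for c in coords]))
    return descriptor


def usr_similarity(desc_a: Sequence[float], desc_b: Sequence[float]) -> float:
    """USR similarity: 1 / (1 + mean absolute difference between descriptors)."""
    diff = sum(abs(x - y) for x, y in zip(desc_a, desc_b)) / len(desc_a)
    return 1.0 / (1.0 + diff)
```

Because the descriptor depends only on interatomic distances, a rigidly rotated and translated copy of a molecule scores 1 up to rounding error, with no superposition step required. This alignment-free design is what makes USR fast, at the cost of ignoring atom types (which extensions such as USRCAT add back).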

Successful virtual screening campaigns rely on a suite of computational tools and compound libraries. The following table catalogs key resources mentioned in the literature.

Table 4: Essential Virtual Screening Resources

| Resource Type | Name | Primary Function | Relevance to VS Type |
|---|---|---|---|
| Software Tools | MODELLER [19] | Comparative protein structure modeling | SBVS (when experimental structure is unavailable) |
| | DOCK, AutoDock Vina, Glide [17] [21] [19] | Molecular docking and scoring | SBVS |
| | ROCS (Rapid Overlay of Chemical Structures) [18] [15] | 3D shape-based similarity screening | LBVS |
| | Machine Learning Algorithms (SVM, kNN, ANN) [13] [14] | Building predictive QSAR and activity classification models | LBVS |
| Compound Libraries | ZINC Library [17] | >20 million purchasable compounds for screening | SBVS & LBVS |
| | NCI Open Database [17] | ~265,000 compounds available for screening | SBVS & LBVS |
| | Directory of Useful Decoys (DUD) [19] | Benchmarking set with actives and property-matched decoys | SBVS & LBVS (for method validation) |
| Computing Infrastructure | Minerva HPC [17] | High-performance computing cluster for large-scale screening | SBVS (essential), LBVS (beneficial) |

Integrated and Machine Learning-Enhanced Approaches

The distinction between SBVS and LBVS is increasingly blurred by hybrid strategies that leverage the strengths of both paradigms [16] [20]. These integrated approaches can be categorized as sequential, parallel, or hybrid. A sequential approach might use fast LBVS methods to pre-filter a massive library before applying more computationally intensive SBVS [16] [20]. A parallel approach runs LBVS and SBVS independently and then combines the results using data fusion algorithms to create a unified ranking [16] [20].

Furthermore, machine learning (ML) and deep learning (DL) are profoundly impacting both SBVS and LBVS [13] [20] [14]. In LBVS, ML models such as Support Vector Machines (SVM), Random Forest, and Neural Networks can build robust quantitative structure-activity relationship (QSAR) models from ligand data [13] [14]. In SBVS, ML is being used to develop more accurate scoring functions and to enable the direct prediction of binding affinity from protein and ligand structures, potentially bypassing traditional docking [20]. The rise of ultra-large libraries (e.g., Enamine REAL with 36 billion compounds) in competitions like CACHE makes these efficient ML-powered hybrid approaches essential for modern drug discovery [20].
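The simplest ML-style LBVS model is a k-nearest-neighbour (kNN) classifier over fingerprints. The sketch below (hypothetical function names; fingerprints as sets of on-bit indices; labels 1 = active, 0 = inactive) scores a query as the fraction of actives among its k most Tanimoto-similar training compounds. It stands in for, and is much simpler than, the SVM, Random Forest, or neural-network models mentioned in the text.

```python
from typing import AbstractSet, Sequence, Tuple

Labelled = Tuple[AbstractSet[int], int]  # (fingerprint on-bits, 1 = active / 0 = inactive)


def tanimoto(a: AbstractSet[int], b: AbstractSet[int]) -> float:
    """|A & B| / |A | B| for binary fingerprints stored as sets of on-bits."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def knn_predict(query: AbstractSet[int], training: Sequence[Labelled], k: int = 3) -> float:
    """Fraction of actives among the k training compounds most similar to the query."""
    if k < 1 or not training:
        raise ValueError("need k >= 1 and a non-empty training set")
    neighbours = sorted(training, key=lambda item: tanimoto(query, item[0]), reverse=True)[:k]
    return sum(label for _, label in neighbours) / len(neighbours)
```

For example, if the three nearest neighbours of the query {1, 2, 3, 4} are {1, 2, 3} and {1, 2, 4} (both active) and {1, 9} (inactive), the predicted score is 2/3. Compounds can then be ranked by this score, much as they are ranked by raw similarity in classical LBVS.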

SBVS and LBVS offer distinct yet complementary pathways for hit identification in drug discovery. The choice between them is fundamentally dictated by the available knowledge prerequisites: SBVS requires detailed 3D structural information of the target protein, while LBVS depends on a set of known active ligands. SBVS is often favored for its potential to discover novel chemical scaffolds, whereas LBVS is computationally more efficient and applicable when structural data is absent [13] [18] [16]. The emerging trend leans toward hybrid methods that synergistically combine both approaches, augmented by machine learning, to maximize the strengths and mitigate the limitations of each individual method [16] [20]. This integrated philosophy, leveraging all available chemical and structural information, represents the most powerful and robust strategy for navigating the vast chemical universe in the search for new therapeutic agents.

Historical Context and Evolution in Modern Drug Discovery

The escalating costs and high attrition rates associated with traditional drug discovery have propelled computational methods to the forefront of modern pharmaceutical research [22]. Virtual screening, a cornerstone of this digital transformation, provides a fast and cost-effective alternative to wet-lab high-throughput screening (HTS) by computationally narrowing vast chemical libraries to identify promising hits [4] [9]. These in silico approaches have evolved into two primary methodological streams: structure-based virtual screening (SBVS) and ligand-based virtual screening (LBVS).

SBVS relies on the 3D structure of a protein target, typically obtained through X-ray crystallography, NMR spectroscopy, or computational modeling, to dock and score small molecules [22] [19]. In contrast, LBVS operates without a target structure, leveraging known active ligands to identify new hits based on structural or pharmacophoric similarity [4] [9]. This guide provides a comparative analysis of these complementary approaches, examining their historical context, methodological underpinnings, performance metrics, and protocols to inform strategic decisions in contemporary drug discovery pipelines.

Historical Context and Evolution

The evolution of virtual screening is inextricably linked to advancements in structural biology and cheminformatics. The completion of the Human Genome Project unveiled a wealth of druggable targets, while parallel progress in X-ray crystallography and NMR spectroscopy provided the structural details necessary for SBVS to flourish [22]. Early docking programs like DOCK pioneered the field by using a negative image of the receptor site to match small molecule atoms [19].

Concurrently, LBVS methods matured from simple substructure searches to sophisticated similarity metrics using molecular fingerprints and 3D shape alignment [9]. The recent decade has witnessed a paradigm shift with the integration of artificial intelligence (AI) and machine learning (ML). AI now routinely informs target prediction, compound prioritization, and scoring functions, with some platforms reporting hit enrichment rates boosted by more than 50-fold compared to traditional methods [23]. The field is further transforming with the advent of ultra-large library screening, the application of models like AlphaFold to predict protein structures, and the creation of rigorous new benchmarks to address data leakage in ML model validation [24] [4].

Key Methodological Differences and Workflows

The fundamental distinction between SBVS and LBVS lies in their required inputs and operational logic. The workflows for each approach, and how they can be integrated, are visualized below.

Virtual Screening Workflow Comparison: This diagram illustrates the parallel pathways of structure-based and ligand-based virtual screening, and their convergence in a hybrid consensus approach.

Comparative Performance Analysis

The utility of SBVS and LBVS is ultimately gauged by their performance in retrospective benchmarks and prospective discovery campaigns. Key metrics include the enrichment factor (EF), which measures a model's ability to prioritize active compounds over inactives compared to random selection, and the hit rate, the proportion of tested compounds that show experimental activity [24] [25].
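As a minimal sketch of the standard EF calculation, the Python function below takes a score list and binary activity labels. It assumes higher scores are better unless `higher_is_better=False` is passed (as for docking energies) and that ties are broken arbitrarily by sort order. The function name and the rounding of the selection size are illustrative choices, not taken from the cited benchmarks.

```python
from typing import Sequence


def enrichment_factor(
    scores: Sequence[float],
    labels: Sequence[int],
    fraction: float = 0.01,
    higher_is_better: bool = True,
) -> float:
    """Enrichment factor at a selection fraction (e.g. 0.01 for EF1%).

    EF = (actives in selection / size of selection) / (all actives / library size)
    """
    if len(scores) != len(labels):
        raise ValueError("scores and labels must have the same length")
    if not 0 < fraction <= 1:
        raise ValueError("fraction must be in (0, 1]")
    n_total = len(labels)
    n_actives = sum(1 for y in labels if y)
    if n_actives == 0:
        raise ValueError("at least one active compound is required")
    # Rank compound indices from best to worst score.
    order = sorted(range(n_total), key=lambda i: scores[i], reverse=higher_is_better)
    n_selected = max(1, round(fraction * n_total))
    actives_selected = sum(1 for i in order[:n_selected] if labels[i])
    return (actives_selected / n_selected) / (n_actives / n_total)


# Usage: 100 compounds, 5 actives placed at the top of the ranking.
labels = [1] * 5 + [0] * 95
scores = [100 - i for i in range(100)]
print(enrichment_factor(scores, labels, fraction=0.01))  # 20.0
```

In this toy set, EF1% and EF5% both saturate at 20, because the maximum achievable EF is min(1/fraction, N/n_actives). This is the ceiling effect of the standard EF, which depends on the active-to-inactive ratio of the benchmark set.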

Performance Metrics from Benchmark Studies

Table 1: Comparative Performance of Virtual Screening Methods

| Method / Tool | Key Metric | Reported Performance | Context / Benchmark | Key Requirements |
|---|---|---|---|---|
| Docking (SBVS) | Median EF1% | 7.0 - 21 | Varies by program & scoring function [24] | Protein 3D Structure |
| LBVS (Fingerprint) | Processing Speed | ~Seconds per million compounds [9] | Efficient for large library pre-filtering | Known Active Ligands |
| AI-Enhanced Screening | Hit Enrichment | >50-fold increase [23] | Compared to traditional methods | Curated Training Data |
| Hybrid (LBVS + SBVS) | Mean Unsigned Error (MUE) | Significant reduction [4] | LFA-1 inhibitor affinity prediction | Both inputs available |

Recent work has proposed an improved metric, the Bayes enrichment factor (EFB), to address a fundamental limitation of the standard EF, which cannot estimate model performance on very large libraries due to its dependence on the ratio of actives to inactives in the benchmark set [24]. The EFB requires only random compounds instead of presumed inactives, avoids the ceiling effect of the traditional EF, and allows for enrichment estimation at much lower selection fractions, providing a better indicator of real-world screening utility [24].
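The sketch below is a Bayes-style enrichment factor in Python. It assumes the following definition, which readers should check against the exact estimator in [24]: EF_B equals the fraction of known actives scoring at or above a threshold, divided by the fraction of random library compounds scoring at or above the same threshold. The threshold is set so that the requested fraction of random compounds passes. The function name is hypothetical, and higher scores are assumed to be better.

```python
from typing import Sequence


def bayes_enrichment_factor(
    active_scores: Sequence[float],
    random_scores: Sequence[float],
    fraction: float,
) -> float:
    """Sketch of a Bayes-style enrichment factor (higher score = better).

    Assumed definition: EF_B = P(score >= t | active) / P(score >= t), where the
    threshold t is chosen so that `fraction` of the random library compounds pass.
    """
    if not active_scores or not random_scores:
        raise ValueError("need both active and random compound scores")
    if not 0 < fraction <= 1:
        raise ValueError("fraction must be in (0, 1]")
    ranked_random = sorted(random_scores, reverse=True)
    k = max(1, round(fraction * len(ranked_random)))
    threshold = ranked_random[k - 1]
    # Fraction of random compounds (a proxy for the whole library) above the threshold.
    frac_random = sum(s >= threshold for s in random_scores) / len(random_scores)
    # Fraction of known actives recovered at the same threshold.
    frac_active = sum(s >= threshold for s in active_scores) / len(active_scores)
    return frac_active / frac_random


# Usage with hypothetical scores: 1,000 random compounds and 4 known actives.
random_scores = list(range(1000))
active_scores = [995, 992, 500, 100]
print(bayes_enrichment_factor(active_scores, random_scores, fraction=0.01))
```

No inactives appear anywhere in the calculation, so the random set can be sampled directly from the library being screened. The smallest selection fraction that can be estimated is roughly 1 divided by the number of random compounds, so probing lower fractions simply requires a larger random sample.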

Quantitative modeling of large-scale docking campaigns reveals that while current scoring functions are noisy predictors of binding affinity, they can still effectively enrich for hits. Performance is heavily influenced by the virtual library's intrinsic hit rate, highlighting the importance of pre-filtering for properties like charge and hydrophobicity, especially with tera-scale libraries [25].

Experimental Protocols and Workflows

Protocol for Structure-Based Virtual Screening (Docking-Based)

A typical SBVS pipeline involves sequential steps of target and compound library preparation, docking, and post-processing [22] [19].

- Target Preparation: The protein structure (experimental or homology model) is prepared by assigning proper protonation states, adding hydrogen atoms, and handling missing residues. For flexible targets, an ensemble of conformations may be generated via molecular dynamics (MD) [22] [19].

- Compound Library Preparation: A database of small molecules (e.g., ZINC, ChEMBL) is curated. Compounds are standardized, their tautomeric and protonation states are assigned, and 3D conformers are generated [22] [9]. Pre-filtering based on drug-likeness (e.g., Rule of Five) or structure-based pharmacophores is often applied to enrich the library [22].

- Molecular Docking: Each prepared compound is computationally posed into the target's binding site. Programs like AutoDock, DOCK, or Glide use algorithms to sample possible orientations and conformations (poses) [22] [19].

- Scoring and Ranking: A scoring function evaluates the complementarity of each pose. Compounds are ranked based on their predicted binding score, and the top-ranked molecules are selected for experimental validation [22] [24].
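The library pre-filter and the final ranking step can be sketched in Python as shown below, under these assumptions:

- Molecular properties are precomputed, for example with a cheminformatics toolkit.
- Docking scores come from an external docking program, where more negative means more favorable.
- The `Compound` class and all function names are hypothetical.

The filter follows the common Lipinski convention of tolerating at most one violation.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Compound:
    name: str
    mol_weight: float  # Da
    clogp: float
    h_donors: int
    h_acceptors: int


def rule_of_five_violations(c: Compound) -> int:
    """Count Lipinski Rule of Five violations."""
    return sum([
        c.mol_weight > 500,
        c.clogp > 5,
        c.h_donors > 5,
        c.h_acceptors > 10,
    ])


def prefilter(library: List[Compound], max_violations: int = 1) -> List[Compound]:
    """Keep compounds with at most `max_violations` Rule of Five violations."""
    return [c for c in library if rule_of_five_violations(c) <= max_violations]


def select_top_hits(docking_scores: Dict[str, float], n_top: int) -> List[str]:
    """Rank by docking score, assuming more negative = more favorable (kcal/mol-style)."""
    ranked = sorted(docking_scores, key=docking_scores.get)
    return ranked[:n_top]


# Usage with hypothetical property values and docking scores.
library = [
    Compound("cpd_a", 350.4, 2.1, 1, 4),
    Compound("cpd_b", 650.7, 6.2, 2, 8),
    Compound("cpd_c", 520.3, 3.0, 2, 6),
]
kept = prefilter(library)
print([c.name for c in kept])  # ['cpd_a', 'cpd_c']
scores = {"cpd_a": -7.2, "cpd_c": -9.1}  # would come from the docking step
print(select_top_hits(scores, n_top=1))  # ['cpd_c']
```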

Protocol for Ligand-Based Virtual Screening

LBVS workflows are generally faster and rely on establishing a similarity hypothesis from known actives [9].

- Query Definition and Preparation: One or more known active compounds are defined as the query set. For 3D methods, their bioactive conformations are identified or generated [9].

- Database Screening:

- 2D Fingerprint Similarity: Molecular fingerprints (e.g., ECFP, FCFP) are computed for both query and database molecules. Similarity is calculated using the Tanimoto coefficient or related metrics, and compounds are ranked by similarity [9].

- 3D Shape-Based Screening: The 3D shape of the query molecule(s) is compared to conformers of database molecules using methods like ROCS or the open-source VSFlow. A combined score incorporating shape and pharmacophore similarity is often used for ranking [4] [9].

- Hit Selection and Validation: The top-ranking compounds from the similarity search are selected for further analysis and experimental testing.
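The 2D fingerprint similarity step can be sketched in plain Python by representing each fingerprint as a set of on-bit indices. In practice, the fingerprints would be generated with a toolkit such as RDKit (for example, its ECFP-like Morgan fingerprints). The toy bit sets and function names here are hypothetical. With several query actives, each database compound is scored by its maximum similarity to any query ("MAX fusion"), which is one common choice among several.

```python
from typing import Dict, Iterable, List, Set, Tuple


def tanimoto(fp_a: Set[int], fp_b: Set[int]) -> float:
    """Tanimoto coefficient on fingerprints given as sets of on-bit indices."""
    if not fp_a and not fp_b:
        return 0.0
    shared = len(fp_a & fp_b)
    return shared / (len(fp_a) + len(fp_b) - shared)


def rank_by_similarity(
    queries: Iterable[Set[int]], database: Dict[str, Set[int]]
) -> List[Tuple[str, float]]:
    """Score each database compound by its maximum similarity to any query (MAX fusion)."""
    query_list = list(queries)
    scored = {
        name: max(tanimoto(q, fp) for q in query_list) for name, fp in database.items()
    }
    return sorted(scored.items(), key=lambda item: item[1], reverse=True)


# Usage with toy on-bit sets standing in for ECFP-like fingerprints.
query = {1, 2, 3, 4}
database = {"x": {1, 2, 3, 4}, "y": {1, 2, 5, 6}, "z": {7, 8}}
for name, sim in rank_by_similarity([query], database):
    print(name, round(sim, 3))
# x 1.0
# y 0.333
# z 0.0
```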

Protocol for a Hybrid Screening Approach

A hybrid consensus strategy leverages the strengths of both SBVS and LBVS, often yielding more reliable results than either method alone [4].

- Sequential Integration: A large compound library is first rapidly filtered using a ligand-based method (e.g., fingerprint similarity) to identify a subset of compounds with desired features. This enriched library is then subjected to more computationally expensive structure-based docking for final refinement and selection [4].

- Parallel Screening with Consensus Scoring: The same compound library is screened independently using both SBVS and LBVS. The results are combined by either:

- Parallel Scoring: Selecting top candidates from both ranked lists to maximize the chance of recovering actives.

- Hybrid Scoring: Creating a single unified ranking by averaging or multiplying normalized scores from both methods, which increases confidence in selected hits by favoring compounds that rank highly by both criteria [4].
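Both consensus options can be sketched in Python as below. The sketch makes three assumptions:

- Docking scores are "lower is better" and similarity scores are "higher is better".
- Min-max normalization followed by a simple average is used for hybrid scoring. This is one illustrative choice; rank averaging or multiplying normalized scores, as mentioned above, are alternatives.
- All compound names and values are hypothetical.

```python
from typing import Dict, List


def min_max_normalize(scores: Dict[str, float], higher_is_better: bool = True) -> Dict[str, float]:
    """Rescale scores to [0, 1] so that 1 is always the most favorable value."""
    lo, hi = min(scores.values()), max(scores.values())
    if hi == lo:
        return {name: 1.0 for name in scores}
    norm = {name: (value - lo) / (hi - lo) for name, value in scores.items()}
    if not higher_is_better:
        norm = {name: 1.0 - value for name, value in norm.items()}
    return norm


def hybrid_rank(docking: Dict[str, float], similarity: Dict[str, float]) -> List[str]:
    """Hybrid scoring: average of normalized docking and similarity scores."""
    common = sorted(docking.keys() & similarity.keys())
    dock = min_max_normalize({n: docking[n] for n in common}, higher_is_better=False)
    sim = min_max_normalize({n: similarity[n] for n in common}, higher_is_better=True)
    combined = {n: (dock[n] + sim[n]) / 2 for n in common}
    return sorted(common, key=lambda n: combined[n], reverse=True)


def parallel_select(docking: Dict[str, float], similarity: Dict[str, float], n_each: int) -> List[str]:
    """Parallel scoring: union of the top `n_each` compounds from each ranked list."""
    top_dock = sorted(docking, key=docking.get)[:n_each]  # more negative = better
    top_sim = sorted(similarity, key=similarity.get, reverse=True)[:n_each]
    return list(dict.fromkeys(top_dock + top_sim))  # de-duplicate, keep order


docking = {"a": -10.0, "b": -8.0, "c": -6.0}  # hypothetical docking scores
similarity = {"a": 0.2, "b": 0.9, "c": 0.1}  # hypothetical Tanimoto scores
print(hybrid_rank(docking, similarity))         # ['b', 'a', 'c']
print(parallel_select(docking, similarity, 1))  # ['a', 'b']
```

Compound "b" tops the hybrid ranking even though "a" docks best, because "b" ranks well by both criteria. This is the confidence-boosting behavior that hybrid scoring aims for.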

The Scientist's Toolkit: Essential Research Reagents and Software

Successful virtual screening relies on a suite of software tools and compound databases. The table below details key resources cited in experimental protocols.

Table 2: Key Research Reagents and Software Solutions

| Item Name | Type / Category | Primary Function in Virtual Screening | Example Tools / Databases |
|---|---|---|---|
| Protein Structure Database | Data Repository | Provides experimentally-solved 3D structures for SBVS targets or templates. | Protein Data Bank (PDB) [19] |
| Compound Library | Data Repository | Curated collections of small molecules for screening; can be public or commercial. | ZINC, ChEMBL, PubChem, ChemBridge [22] [9] |
| Homology Modeling Software | Software Tool | Generates 3D protein models for SBVS when no experimental structure is available. | MODELLER [19] |
| Molecular Docking Suite | Software Tool | Poses and scores compounds in a protein binding site (core SBVS engine). | DOCK, AutoDock, Glide, GOLD [22] [19] |
| Cheminformatics Toolkit | Software Tool | Provides foundational functions for molecule handling, fingerprinting, and substructure search. | RDKit [9] |
| Ligand-Based Screening Tool | Software Tool | Performs 2D/3D similarity searches and shape-based comparisons. | VSFlow, ROCS, SwissSimilarity [9] [4] |

Structure-based and ligand-based virtual screening are both powerful, yet imperfect, technologies that have become indispensable in modern drug discovery. The choice between them is often dictated by available data: LBVS is the go-to option when ligand information is abundant but protein structures are lacking, while SBVS shines when a reliable target structure is available, providing atomic-level insights into binding interactions.

The future of virtual screening lies not in choosing one over the other, but in their strategic integration. As the performance data suggest, hybrid approaches that combine the pattern-recognition strength of LBVS with the mechanistic insights of SBVS can outperform individual methods, reducing errors and increasing confidence in hit identification [4]. The field is evolving rapidly along several lines:

- the integration of AI and machine learning to develop target-biased scoring functions [22];
- the application of AlphaFold-predicted structures to expand the scope of SBVS [4];
- the development of more rigorous benchmarks to prevent data leakage in ML models [24];
- the ability to screen ultra-large chemical libraries containing billions of molecules [25] [4].

For research teams, aligning with these trends by adopting integrated, data-driven workflows is no longer optional but a strategic necessity to mitigate risk, compress timelines, and improve the odds of translational success.

Methodologies in Action: Implementing SBVS and LBVS Workflows

Structure-Based Virtual Screening (SBVS) is a cornerstone of modern computer-aided drug design, enabling researchers to rapidly identify potential drug candidates by computationally screening large chemical libraries against three-dimensional protein structures [26]. At the heart of SBVS lie molecular docking programs and their scoring functions, which predict how small molecules bind to target proteins and estimate their binding affinity. Among the numerous docking tools available, AutoDock Vina, Glide, and DOCK have emerged as widely used solutions across academic and industrial settings. These tools employ different sampling algorithms and scoring functions, leading to variations in their performance across different protein targets and screening scenarios. This guide provides an objective comparison of these three docking programs, supported by experimental data from benchmarking studies, to inform researchers and drug development professionals in selecting appropriate tools for their virtual screening campaigns.

Fundamental Concepts in Molecular Docking

Molecular docking comprises two main components: a sampling algorithm that generates putative ligand orientations and conformations (poses) within the protein binding site, and a scoring function that evaluates and ranks these poses [26]. The performance of docking programs is typically assessed using two key metrics: the ability to reproduce experimental binding modes (measured by Root Mean Square Deviation, RMSD, between predicted and crystallographic poses), and the effectiveness in virtual screening (measured by enrichment factors and Area Under the Curve, AUC, from Receiver Operating Characteristic, ROC, analysis) [26]. An RMSD value of less than 2.0 Å is generally considered a successful pose prediction [26] [27].

Table 1: Key Characteristics of AutoDock Vina, Glide, and DOCK

| Characteristic | AutoDock Vina | Glide | DOCK |
|---|---|---|---|
| Developer | The Scripps Research Institute | Schrödinger | University of California, San Francisco |
| License | Open Source | Commercial | Free for academic use (license required) |
| Sampling Algorithm | Monte Carlo-based iterated local search with Broyden-Fletcher-Goldfarb-Shanno (BFGS) local optimization | Hierarchical series of filters with systematic search of ligand conformations, positions, and orientations, followed by energy minimization | Shape-matching and anchor-and-grow |
| Scoring Function | Empirical scoring function with machine learning optimization | GlideScore (empirical, with force-field-based terms) | Grid-based force-field scoring (van der Waals and electrostatic terms) |
| Speed | Very Fast [27] | Moderate to Slow [27] | Moderate [27] |
| Key Strengths | Speed, ease of use, good performance | High pose prediction accuracy, comprehensive scoring | Flexibility in handling various molecular features |

Performance Comparison and Benchmarking Data

Pose Prediction Accuracy

The ability to correctly predict the binding mode of a ligand as found in crystallographic structures is a fundamental test for docking programs. Multiple studies have evaluated this capability across different protein families:

COX-1 and COX-2 Enzymes: In a benchmark study of 51 cyclooxygenase-inhibitor complexes, Glide demonstrated superior performance by correctly predicting binding poses (RMSD < 2.0 Å) for 100% of studied co-crystallized ligands. Other programs showed lower success rates: GOLD (82%), AutoDock (59%), and FlexX (82%) [26].

Macrolide and Macrocyclic Complexes: A study evaluating 20 protein-macrolide complexes found that AutoDock Vina, Glide, and DOCK performed comparably in self-docking tests, with mean RMSD values of 0.55 Å, 0.94 Å, and 0.57 Å, respectively. When docking conformational ensembles, the mean RMSD values were 1.31 Å for Glide, 1.34 Å for DOCK, and 1.29 Å for AutoDock Vina [27].

General Performance Assessment: A comprehensive evaluation using the PDBBind dataset demonstrated that conventional docking workflows like Glide and Surflex-Dock achieve success rates of 67-68% for top-ranked poses at the 2.0 Å RMSD threshold in cognate re-docking scenarios with defined binding sites [28].

Virtual Screening Enrichment

The effectiveness of docking programs in distinguishing active compounds from inactive ones in virtual screening is typically measured using enrichment factors and ROC analysis:

Cyclooxygenase Virtual Screening: ROC analysis of virtual screening performance against cyclooxygenase enzymes revealed AUC values ranging from 0.61 to 0.92 across different docking methods, with enrichment factors of 8- to 40-fold [26].

DUD Dataset Benchmarking: A study across 40 protein targets from the Directory of Useful Decoys (DUD) found that the mean screening performance of AutoDock Vina combined with the NNScore 1.0 rescoring function was not statistically different from Glide's performance [29].

Scoring Biases in Reverse Docking: Large-scale reverse docking studies have revealed that all three programs exhibit scoring biases toward proteins with certain pocket properties, such as large contact areas or high hydrophobicity, which can lead to false positives in target identification [30].

Table 2: Summary of Performance Metrics from Benchmarking Studies

| Docking Program | Pose Prediction Success Rate (RMSD < 2.0 Å) | Virtual Screening Performance (AUC Range) | Notable Strengths |
|---|---|---|---|
| AutoDock Vina | 48-81%* [28] [27] | 0.61-0.92* [26] | Excellent speed, good overall performance |
| Glide | 67-100%* [28] [26] | 0.61-0.92* [26] | High pose prediction accuracy, robust scoring |
| DOCK | ~57%* [27] | 0.61-0.92* [26] | Strong performance with macrocyclic compounds |

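Note: Performance metrics vary significantly across protein targets and test sets. Values marked * are ranges compiled from multiple studies rather than results of a direct head-to-head comparison within a single study. In particular, the AUC range of 0.61-0.92 is the overall spread reported across the docking methods in the cyclooxygenase study [26] and should not be read as a program-specific result.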

Experimental Protocols and Methodologies

Standardized Benchmarking Workflow

To ensure fair and reproducible comparison of docking programs, researchers typically follow a standardized workflow for benchmarking studies:

Figure 1: Standard workflow for docking benchmarking studies.

Key Methodological Components

Data Set Collection and Preparation

Protein Structure Preparation: Crystal structures of protein-ligand complexes are downloaded from the Protein Data Bank. Proteins are typically prepared by removing redundant chains, water molecules, and cofactors, followed by adding hydrogen atoms and assigning proper protonation states at physiological pH [26] [29]. For instance, in the COX enzyme study, 51 complexes were selected and prepared using DeepView software [26].

Ligand Preparation: Small molecules are prepared using tools like Schrödinger's LigPrep to generate appropriate tautomeric, isomeric, and ionization states. Energy minimization is performed to ensure proper geometry [29]. In macrolide docking studies, conformational ensembles of ligands are often generated to account for flexibility, with conformers lying 0-10 kcal/mol above the global minimum included in docking calculations [27].

Docking Parameters and Execution

Binding Site Definition: The binding site is typically defined based on the location of the cognate ligand in the crystal structure, often using a grid box centered on the ligand. For AutoDock Vina, box dimensions are frequently taken from reference studies or defined to encompass the entire binding pocket [29].
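A ligand-centered box of this kind can be sketched in Python. The sketch derives a box from the cognate ligand's heavy-atom coordinates and writes it in AutoDock Vina's `key = value` configuration-file format, using the Vina options `receptor`, `ligand`, `center_x/y/z`, `size_x/y/z`, `exhaustiveness`, and `out`. The 8 Å padding added to each edge (half on each side) and the file names are illustrative assumptions, not values from the cited studies.

```python
from typing import Sequence, Tuple

Vec3 = Tuple[float, float, float]


def box_from_ligand(coords: Sequence[Vec3], padding: float = 8.0) -> Tuple[Vec3, Vec3]:
    """Center and edge lengths (Å) of a box enclosing the ligand plus `padding` per edge."""
    xs, ys, zs = zip(*coords)
    center = tuple((min(axis) + max(axis)) / 2 for axis in (xs, ys, zs))
    size = tuple(max(axis) - min(axis) + padding for axis in (xs, ys, zs))
    return center, size


def vina_config(receptor: str, ligand: str, center: Vec3, size: Vec3,
                exhaustiveness: int = 8, out: str = "docked.pdbqt") -> str:
    """Render a config file in AutoDock Vina's key = value option format."""
    lines = [f"receptor = {receptor}", f"ligand = {ligand}"]
    lines += [f"center_{ax} = {v:.3f}" for ax, v in zip("xyz", center)]
    lines += [f"size_{ax} = {v:.3f}" for ax, v in zip("xyz", size)]
    lines += [f"exhaustiveness = {exhaustiveness}", f"out = {out}"]
    return "\n".join(lines) + "\n"


# Hypothetical heavy-atom coordinates of a co-crystallized (cognate) ligand.
ligand_xyz = [(0.0, 0.0, 0.0), (2.0, 4.0, 6.0), (1.0, 1.0, 1.0)]
center, size = box_from_ligand(ligand_xyz)
print(vina_config("receptor.pdbqt", "ligand.pdbqt", center, size))
```

After saving the output to a file, it would be passed to Vina with `--config`. Too small a padding can clip the pocket, while too large a padding enlarges the search space that the sampler must cover.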

Docking Protocols: Each program is run with its default parameters or with parameters optimized for specific systems. For example, in the Glide assessment, multiple precision modes (HTVS, SP, XP) are often employed in sequential screening to balance accuracy and computational cost [29].

Performance Evaluation Metrics

Pose Prediction Accuracy: The root mean square deviation (RMSD) between heavy atoms of the docked pose and the experimental crystal structure pose is calculated. Success rates are reported for thresholds of 2.0 Å and sometimes 1.0 Å for high-precision requirements [28].
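A minimal Python sketch of this evaluation is shown below. It computes heavy-atom RMSD without any superposition, because the docked and crystallographic poses already share the receptor frame. It then reports the fraction of top-ranked poses under a threshold. It assumes both poses list the same atoms in the same order. Symmetry-corrected atom mapping, which dedicated tools provide, is not handled. The function names and coordinates are hypothetical.

```python
import math
from typing import Sequence, Tuple

Vec3 = Tuple[float, float, float]


def pose_rmsd(pose: Sequence[Vec3], reference: Sequence[Vec3]) -> float:
    """Heavy-atom RMSD without superposition (poses share the receptor frame).

    Atoms must be listed in the same order; symmetry-equivalent atom mappings
    are NOT considered here.
    """
    if not pose or len(pose) != len(reference):
        raise ValueError("poses must be non-empty and have the same number of atoms")
    squared = sum(math.dist(p, r) ** 2 for p, r in zip(pose, reference))
    return math.sqrt(squared / len(pose))


def success_rate(rmsds: Sequence[float], threshold: float = 2.0) -> float:
    """Fraction of top-ranked poses below the RMSD threshold (Å)."""
    return sum(r < threshold for r in rmsds) / len(rmsds)


crystal = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0)]
docked = [(0.0, 0.0, 1.0), (1.5, 0.0, 1.0)]  # whole ligand shifted by 1 Å
print(pose_rmsd(docked, crystal))          # 1.0
print(success_rate([0.5, 1.9, 2.5, 3.0]))  # 0.5
```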

Virtual Screening Performance: Enrichment factors, Area Under the ROC Curve (AUC), and Boltzmann-Enhanced Discrimination of ROC (BEDROC) metrics are used to evaluate the ability of docking programs to prioritize active compounds over decoys in screening scenarios [26] [31].
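BEDROC is the least intuitive of these metrics. The Python sketch below computes it directly from its definition as the robust initial enhancement (RIE), rescaled between its minimum and maximum possible values: (RIE - RIE_min) / (RIE_max - RIE_min), with 1-based ranks. The default α = 20 is a commonly reported setting, assumed here rather than taken from [26] or [31]. The function name and data are hypothetical.

```python
import math
from typing import Sequence


def bedroc(scores: Sequence[float], labels: Sequence[int], alpha: float = 20.0,
           higher_is_better: bool = True) -> float:
    """BEDROC computed as (RIE - RIE_min) / (RIE_max - RIE_min).

    RIE compares the exponentially weighted ranks of the actives with the value
    expected for a random ordering; alpha controls how strongly early ranks dominate.
    """
    n = len(scores)
    order = sorted(range(n), key=lambda i: scores[i], reverse=higher_is_better)
    ranks = [rank for rank, i in enumerate(order, start=1) if labels[i]]  # 1-based
    n_act = len(ranks)
    if n_act == 0 or n_act == n:
        raise ValueError("need at least one active and one inactive compound")
    ra = n_act / n
    observed = sum(math.exp(-alpha * r / n) for r in ranks)
    expected = ra * (1 - math.exp(-alpha)) / (math.exp(alpha / n) - 1)
    rie = observed / expected
    rie_max = (1 - math.exp(-alpha * ra)) / (ra * (1 - math.exp(-alpha)))
    rie_min = (1 - math.exp(alpha * ra)) / (ra * (1 - math.exp(alpha)))
    return (rie - rie_min) / (rie_max - rie_min)


labels = [1, 1] + [0] * 98
scores = [100 - i for i in range(100)]  # actives ranked first
print(round(bedroc(scores, labels), 6))  # 1.0
print(bedroc(scores[::-1], labels))      # ~0 (actives ranked last)
```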

Table 3: Key Research Reagents and Computational Resources for Docking Studies

| Resource Category | Specific Tools/Solutions | Function in Docking Workflow |
|---|---|---|
| Protein Structure Resources | Protein Data Bank (PDB) [26], PDBBind [28] | Sources of experimentally determined protein-ligand complex structures for benchmarking and method development |
| Compound Libraries | NCI Diversity Set [29], DUD/E Decoys [30] [29] | Curated sets of active compounds and matched decoys for virtual screening validation |
| Structure Preparation | Schrödinger Protein Preparation Wizard [29], MGLTools [29] | Tools for adding hydrogens, assigning bond orders, optimizing hydrogen bonding, and correcting structural issues |
| Ligand Preparation | Schrödinger LigPrep [29], Open Babel | Generation of 3D structures, tautomers, stereoisomers, and ionization states at physiological pH |
| Performance Analysis | ROC Curve Analysis [26], Enrichment Factors [26], RMSD Calculations [26] | Quantitative metrics for evaluating pose prediction and virtual screening performance |

Context-Dependent Performance and Selection Guidelines

The performance of docking programs is highly system-dependent, with each tool exhibiting strengths in specific scenarios:

For High-Precision Pose Prediction: Glide consistently demonstrates superior performance in reproducing experimental binding modes across multiple benchmarking studies, making it suitable for projects requiring accurate binding mode analysis [26] [28].

For Large Virtual Screens: AutoDock Vina offers an excellent balance of speed and accuracy, particularly valuable when screening large compound libraries where computational efficiency is paramount [27] [29].

For Specialized Applications: DOCK shows particular strength with macrocyclic and macrolide compounds, and its shape-matching algorithm can be advantageous for certain target classes [27].

Addressing Scoring Biases and Limitations

All docking programs exhibit scoring biases that researchers should acknowledge and address:

Size and Polarizability Bias: Scoring functions tend to favor larger, more polarizable compounds regardless of the target, which can lead to artificial enrichment in virtual screening [29].

Pocket Property Bias: Programs may show preference for proteins with specific pocket characteristics, such as large contact areas or high hydrophobicity, potentially leading to false positives in target fishing applications [30].

Mitigation Strategies: Score normalization approaches and the use of composite scoring functions tailored to specific receptor classes can help mitigate these biases and improve virtual screening performance [30] [29].
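The sketch below shows two simple normalization ideas in Python. They are illustrative assumptions, not necessarily the schemes used in [29] or [30]:

- **Size normalization:** divide a docking score by a power of the heavy-atom count to damp the bias toward large ligands.
- **Per-target z-scoring:** express each score relative to that target's own score distribution, so that "easy" pockets do not dominate comparisons across targets in reverse docking.

Function names, the default exponent, and all values are hypothetical. More negative docking scores are assumed to be better.

```python
from statistics import mean, pstdev
from typing import Dict


def size_normalized(score: float, heavy_atoms: int, exponent: float = 1 / 3) -> float:
    """Divide a docking score by N_heavy**exponent to damp the bias toward large ligands.

    exponent = 0 leaves the raw score; exponent = 1 gives a per-atom,
    ligand-efficiency-style score. The default is an illustrative middle ground.
    """
    if heavy_atoms <= 0:
        raise ValueError("heavy_atoms must be positive")
    return score / heavy_atoms ** exponent


def per_target_zscores(scores: Dict[str, float]) -> Dict[str, float]:
    """Z-score one target's docking scores against that target's own distribution."""
    values = list(scores.values())
    mu, sigma = mean(values), pstdev(values)
    if sigma == 0:
        return {name: 0.0 for name in scores}
    return {name: (s - mu) / sigma for name, s in scores.items()}


# Hypothetical ligands: (raw docking score in kcal/mol, heavy-atom count).
ligands = {"large": (-10.0, 64), "small": (-8.0, 27)}
for name, (score, n_heavy) in ligands.items():
    print(name, score, round(size_normalized(score, n_heavy), 2))
# large -10.0 -2.5
# small -8.0 -2.67
```

On raw scores, the larger ligand looks better. After size normalization, the smaller ligand ranks ahead, which is the intended correction for the size bias.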

Future Directions and Method Development

The field continues to evolve with several promising developments:

Machine Learning Scoring Functions: Neural network-based approaches like NNScore show comparable performance to established methods and offer potential for further improvement [29].

Hybrid Workflows: Combining multiple docking programs and rescoring strategies often yields better results than relying on a single method, taking advantage of the complementary strengths of different approaches [32].

Deep Learning Methods: New approaches like DiffDock have emerged but require careful validation, as their performance may be influenced by training set composition and may not yet surpass properly implemented conventional docking workflows [28].

In conclusion, AutoDock Vina, Glide, and DOCK each offer distinct advantages for structure-based virtual screening. Glide generally provides superior pose prediction accuracy, and AutoDock Vina excels in speed and efficiency. DOCK remains a robust option, freely available to academic users, with particular strengths for certain molecular classes. Researchers should select tools based on their specific requirements, considering factors such as target protein characteristics, the desired balance between speed and accuracy, and available computational resources. Incorporating positive controls and using multiple complementary approaches can further enhance the reliability of virtual screening campaigns in drug discovery.

Ligand-Based Virtual Screening (LBVS) is a foundational computational strategy in drug discovery, employed when the three-dimensional structure of the target protein is unknown or unavailable. Its core principle is the "Similarity-Property Principle," which posits that structurally similar molecules are likely to exhibit similar biological activities and properties [33] [20]. By leveraging information from known active compounds, LBVS provides a powerful means to identify new hit molecules from vast chemical libraries, significantly accelerating the early stages of drug development. This approach stands in contrast to Structure-Based Virtual Screening (SBVS), which relies on the 3D structure of the biological target. LBVS is particularly valuable for targets like G Protein-Coupled Receptors (GPCRs), where obtaining high-resolution structural data can be challenging [34] [35]. The primary methodologies underpinning LBVS are Quantitative Structure-Activity Relationship (QSAR) modeling, pharmacophore mapping, and chemical similarity searches, each offering distinct mechanisms for comparing and prioritizing compounds.

The relevance of LBVS continues to grow in the modern computational landscape. While SBVS often demands substantial computational resources, limiting its application in screening ultra-large chemical libraries, LBVS offers a computationally efficient alternative or complement [20]. Furthermore, the integration of machine learning (ML) and artificial intelligence (AI) is revolutionizing LBVS, evolving it from traditional similarity measures towards sophisticated chemical language models and deep learning algorithms that can leverage vast amounts of experimental data to improve predictive accuracy [36] [20]. This review will objectively compare the core LBVS methods based on their operational protocols, performance metrics, and practical applications, providing a clear guide for researchers in selecting and implementing these tools.

The three principal LBVS techniques—QSAR modeling, pharmacophore mapping, and similarity searching—operate on related principles but differ significantly in their implementation and the type of molecular information they prioritize. The typical workflow for applying these methods, from data preparation to hit identification, is visualized below.

Core Methodologies and Data Requirements

- QSAR Modeling: This approach develops a mathematical model that correlates quantitative molecular descriptors (e.g., logP, polar surface area, topological indices) of a training set of compounds with their biological activity [33]. The resulting model predicts the activity of new, untested compounds. It requires a high-quality dataset with quantitative activity data (e.g., IC₅₀, Kᵢ) and is highly dependent on the choice of molecular descriptors and machine learning algorithms [35].

- Pharmacophore Mapping: A pharmacophore is defined as an abstract representation of the steric and electronic features necessary for molecular recognition at a target site [37] [38]. Pharmacophore models can be generated in a ligand-based manner by identifying common features shared by multiple known active molecules [37]. These models are then used as 3D queries to screen compound databases for molecules that can adopt a conformation matching the feature arrangement.

- Similarity Searching: This is the most rapid LBVS method. It calculates the structural or physicochemical similarity between a query molecule (known active) and each compound in a database [33] [34]. Similarity is typically measured using molecular fingerprints (e.g., ECFP6) or 2D/3D molecular descriptors. It requires only one or a few known active compounds and is less computationally intensive than other methods.
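The 3D query screening described for pharmacophore mapping can be sketched in Python as a feature-type and distance check on precomputed conformer features. For each conformer, the code looks for some assignment of conformer features to query features such that the types agree and every pairwise inter-feature distance matches within a tolerance. Real pharmacophore tools also handle conformer generation, alignment, richer feature definitions, and exclusion volumes, none of which are modeled here. The feature labels, the 1.0 Å tolerance, and the function names are hypothetical.

```python
import itertools
import math
from typing import Dict, List, Sequence, Tuple

Feature = Tuple[str, Tuple[float, float, float]]  # (feature type, 3D position)


def matches_pharmacophore(query: Sequence[Feature], conformer: Sequence[Feature],
                          tolerance: float = 1.0) -> bool:
    """True if some assignment of conformer features to query features has matching
    types and reproduces every pairwise query distance within `tolerance` (Å)."""
    pairs = list(itertools.combinations(range(len(query)), 2))
    for assignment in itertools.permutations(conformer, len(query)):
        if any(q[0] != c[0] for q, c in zip(query, assignment)):
            continue  # feature types must agree
        if all(
            abs(math.dist(query[i][1], query[j][1])
                - math.dist(assignment[i][1], assignment[j][1])) <= tolerance
            for i, j in pairs
        ):
            return True
    return False


def screen(query: Sequence[Feature], database: Dict[str, List[List[Feature]]],
           tolerance: float = 1.0) -> List[str]:
    """Return compounds for which at least one conformer matches the query."""
    return [
        name for name, conformers in database.items()
        if any(matches_pharmacophore(query, conf, tolerance) for conf in conformers)
    ]


query = [("donor", (0.0, 0.0, 0.0)), ("acceptor", (3.0, 0.0, 0.0)), ("aromatic", (0.0, 4.0, 0.0))]
database = {
    "hit": [[("aromatic", (10.0, 4.0, 0.0)), ("donor", (10.0, 0.0, 0.0)), ("acceptor", (13.0, 0.0, 0.0))]],
    "miss": [[("aromatic", (10.0, 4.0, 0.0)), ("donor", (10.0, 0.0, 0.0)), ("acceptor", (16.0, 0.0, 0.0))]],
}
print(screen(query, database))  # ['hit']
```

Because inter-feature distances do not change under rotation or translation, the translated "hit" conformer matches without any explicit alignment. The brute-force permutation search is only practical for the handful of features typical of a pharmacophore query.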

Performance Comparison and Experimental Data

Direct comparison of LBVS methods in real-world case studies provides the most objective performance data. The following table summarizes key metrics and outcomes from selected prospective and retrospective screening campaigns.

Table 1: Comparative Performance of LBVS Methods in Virtual Screening

| Method Category | Specific Method / Software | Target / Case Study | Key Performance Metric | Result & Hit Rate | Key Finding / Advantage |
|---|---|---|---|---|---|
| Similarity Search | ECFP6 Fingerprints | CRF1 Receptor [34] | Retrospective Enrichment | Lower enrichment than 3D methods | Fast and straightforward, but may find fewer novel scaffolds. |
| Similarity Search | ROCS (Shape Tanimoto) | CRF1 Receptor [34] | Retrospective Enrichment & Scaffold Recovery | High enrichment; retrieved more active scaffolds | 3D shape-based methods show superior performance in identifying actives. |
| Pharmacophore Modeling | Ligand-based Pharmacophore | Various Targets [38] | Prospective Hit Rate (General) | Typical hit rates range from 5% to 40% | Significantly higher hit rates than random screening (<1%). |
| QSAR Modeling | kNN-QSAR | Multiple GPCRs [35] | Prediction Accuracy (vs. Similarity Methods) | Highest predictive power compared to PASS and SEA | Superior when sufficient training data is available. |
| Similarity Search | SEA (Similarity Ensemble Approach) | Multiple GPCRs [35] | Prediction Accuracy (vs. QSAR) | Lowest predictive power in the study | Chemical similarity alone may be less accurate than QSAR models. |

Experimental Protocols and Validation

The performance data presented in Table 1 is derived from rigorous experimental protocols. For prospective studies, the standard workflow involves:

- Model/Query Generation: A model is built using known active compounds. For QSAR, this involves dividing data into training and test sets [35]. For pharmacophores, active molecules are aligned to derive common features [38].

- Virtual Screening: The model or query is used to screen a large database of commercially or synthetically accessible compounds (e.g., ZINC15, Enamine REAL) [39] [20].

- Experimental Validation: A selection of top-ranking virtual hits is procured and tested in vitro for biological activity (e.g., binding affinity or functional assays) [38] [34]. The hit rate is calculated as the percentage of tested compounds confirmed active.
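The QSAR model-building step in this protocol (training/test split) and the final hit-rate calculation can be sketched in Python. The predictor is a simplified, similarity-weighted k-nearest-neighbour regressor on fingerprint bit sets, assumed here for illustration. It is not necessarily the kNN-QSAR implementation benchmarked in [35]. The activity values (pIC50-like), the bit sets, and all function names are hypothetical.

```python
import random
from typing import Sequence, Set, Tuple

Record = Tuple[Set[int], float]  # (fingerprint on-bits, activity such as pIC50)


def tanimoto(a: Set[int], b: Set[int]) -> float:
    shared = len(a & b)
    union = len(a) + len(b) - shared
    return shared / union if union else 0.0


def knn_predict(query: Set[int], training: Sequence[Record], k: int = 3) -> float:
    """Similarity-weighted mean activity of the k most similar training compounds."""
    neighbours = sorted(training, key=lambda rec: tanimoto(query, rec[0]), reverse=True)[:k]
    weights = [tanimoto(query, fp) for fp, _ in neighbours]
    if sum(weights) == 0:
        return sum(act for _, act in neighbours) / len(neighbours)
    return sum(w * act for w, (_, act) in zip(weights, neighbours)) / sum(weights)


def train_test_split(data: Sequence[Record], test_fraction: float = 0.2, seed: int = 0):
    """Random split; QSAR studies often prefer scaffold- or time-based splits."""
    items = list(data)
    random.Random(seed).shuffle(items)
    n_test = max(1, round(test_fraction * len(items)))
    return items[n_test:], items[:n_test]


def hit_rate(n_confirmed: int, n_tested: int) -> float:
    """Percentage of experimentally tested virtual hits confirmed active."""
    return 100.0 * n_confirmed / n_tested


training = [({1, 2, 3}, 7.0), ({1, 2, 4}, 6.0), ({7, 8, 9}, 4.0)]
print(round(knn_predict({1, 2, 3}, training, k=2), 3))  # 6.667
print(hit_rate(3, 20))  # 15.0
```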

In retrospective validations, a dataset with known active and inactive compounds is used [34] [35]. The virtual screening method is applied, and its ability to "enrich" actives at the top of the ranked list is measured using metrics like Enrichment Factor (EF) and Area Under the ROC Curve (AUC). EF quantifies how much better than random selection the method performs within a chosen top fraction of the list, whereas AUC summarizes ranking quality across all thresholds, with 0.5 corresponding to a random ordering [38].
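As a minimal Python sketch, ROC AUC can be computed as the probability that a randomly chosen active outscores a randomly chosen decoy, with ties counted as half. The sketch assumes higher scores are better; docking energies would need to be negated first. The pairwise loop is chosen for readability, and a rank-based formula would be preferable for large libraries. The function name and data are hypothetical.

```python
from typing import Sequence


def roc_auc(scores: Sequence[float], labels: Sequence[int]) -> float:
    """ROC AUC as the probability that a random active outscores a random decoy.

    Ties count as half. This pairwise form is O(n_actives * n_decoys).
    """
    actives = [s for s, y in zip(scores, labels) if y]
    decoys = [s for s, y in zip(scores, labels) if not y]
    if not actives or not decoys:
        raise ValueError("need at least one active and one decoy")
    wins = 0.0
    for a in actives:
        for d in decoys:
            wins += 1.0 if a > d else 0.5 if a == d else 0.0
    return wins / (len(actives) * len(decoys))


labels = [1, 0, 1, 0, 0]
scores = [0.9, 0.8, 0.4, 0.3, 0.1]  # hypothetical similarity scores, higher = better
print(roc_auc(scores, labels))  # 0.8333333333333334
```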

The Scientist's Toolkit: Essential Research Reagents and Software

Successful implementation of LBVS relies on a combination of computational tools, software, and chemical databases. The table below details key resources used in the featured studies and the broader field.

Table 2: Key Research Reagents and Software for LBVS

| Resource Name | Type | Primary Function in LBVS | Application Example |
|---|---|---|---|
| RDKit | Software Library | Open-source toolkit for cheminformatics; used for descriptor calculation, fingerprint generation, and molecular modeling [39]. | Converting SMILES strings to molecular graphs; generating molecular fingerprints for similarity searches [39]. |
| ROCS (Rapid Overlay of Chemical Structures) | Commercial Software | Performs 3D shape-based and "color" (feature-based) similarity comparisons between molecules [34]. | Scaffold hopping by finding molecules with similar shape/features but different chemical structures [34]. |
| PubChem / ChEMBL | Chemical Database | Public repositories of chemical structures and their associated bioactivity data [39] [38]. | Source of known active compounds for model building; source of decoy molecules for validation [38]. |
| ZINC / Enamine REAL | Purchasable Compound Database | Large, commercially available libraries of small molecules for virtual screening (e.g., >75 billion make-on-demand compounds) [39] [20]. | The target database for performing the virtual screen to find purchasable hits [39]. |
| Decoy Sets (e.g., DUD-E) | Validation Resource | Libraries of molecules with similar properties to actives but presumed inactive, used for retrospective validation [38]. | Benchmarking and validating the performance of a pharmacophore or QSAR model to ensure it can distinguish actives from inactives [38]. |

Integrated Approaches and Future Outlook

While each LBVS method has its strengths, the most powerful modern applications often involve their combination with each other or with structure-based methods. A sequential or parallel combination of LBVS and SBVS is a recognized strategy to leverage their complementary strengths and mitigate their individual limitations [20]. For instance, a fast LBVS method like similarity searching can first filter a multi-billion compound library down to a manageable size, which is then subjected to more computationally intensive SBVS (docking) or detailed pharmacophore screening [20].

The future of LBVS is inextricably linked to Artificial Intelligence (AI). Machine learning, particularly deep learning, is being applied to enhance all LBVS approaches [36] [20]. QSAR is evolving with more complex descriptors and neural networks. Similarity searching is being transformed by chemical language models that can learn complex molecular representations from SMILES strings or molecular graphs [20]. These AI-driven advancements promise to further improve the efficiency, accuracy, and scaffold-hopping potential of LBVS, solidifying its role as a critical tool in the era of big data and ultra-large library screening.

This guide objectively compares the performance of structure-based virtual screening (SBVS), ligand-based virtual screening (LBVS), and their integrated approaches across three critical target classes in drug discovery: enzymes, G protein-coupled receptors (GPCRs), and protein-protein interactions (PPIs). The content is framed within the broader thesis that a hybrid strategy, often enhanced by machine learning (ML), consistently outperforms either method alone by mitigating their inherent limitations.

Virtual screening is a computational cornerstone of modern drug discovery, designed to efficiently identify hit compounds from vast chemical libraries. The two primary strategies are:

- Structure-Based Virtual Screening (SBVS): Relies on the 3D structure of the target protein, typically using molecular docking to predict how small molecules bind to a specific binding pocket and estimate their binding affinity [4] [20].

- Ligand-Based Virtual Screening (LBVS): Typically applied when the protein structure is unknown but active ligands are available. It identifies new hits through their similarity to known active compounds, or through models derived from those compounds, using methods such as pharmacophore modeling or Quantitative Structure-Activity Relationship (QSAR) analysis [4] [20].

These approaches are highly complementary. SBVS can identify novel scaffolds but is computationally expensive and depends on high-quality protein structures. LBVS is computationally efficient but may miss chemically novel hits. Integrating both methods, increasingly with the help of artificial intelligence (AI), is becoming a central strategy in the field [4] [20] [36].

Performance Comparison Across Target Classes

The following case studies and summarized data demonstrate the application and performance of these methods across different target types.

Targeting Enzymes: The Case of PfDHFR

Malaria, whose deadliest form is caused by Plasmodium falciparum, remains a major global health challenge. P. falciparum dihydrofolate reductase (PfDHFR) is a vital drug target, and mutations in its binding site are a primary cause of drug resistance [40].

Experimental Protocol: A benchmarking study evaluated three docking tools (AutoDock Vina, PLANTS, and FRED) against both wild-type (WT) and quadruple-mutant (Q) PfDHFR. It used the DEKOIS 2.0 benchmark set, which pairs known active molecules with challenging decoys. The docking outputs were then re-scored with two pretrained machine learning scoring functions (MLSFs): CNN-Score and RF-Score-VS v2. Performance was measured with the Enrichment Factor at 1% (EF1%): the proportion of actives in the top-ranked 1% of the list divided by the proportion of actives in the whole library. An EF1% of 1 is what random selection achieves on average, so an EF1% of 30 means actives are 30 times more concentrated in the top 1% than expected by chance [40].
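
The EF1% definition above can be written directly as a function. This is a sketch, not the code used in the cited study. In particular, rounding the size of the top subset to the nearest integer (with a minimum of 1) is an assumption, since tools differ in how they handle non-integer cut-offs. For raw docking energies such as Vina's, where lower is better, pass `higher_is_better=False`.

```python
from typing import Sequence


def enrichment_factor(
    scores: Sequence[float],
    is_active: Sequence[bool],
    fraction: float = 0.01,
    higher_is_better: bool = True,
) -> float:
    """EF at `fraction`: active rate in the top-ranked subset / active rate overall."""
    n_total = len(scores)
    n_actives = sum(bool(a) for a in is_active)
    if n_total == 0 or n_actives == 0 or len(is_active) != n_total:
        raise ValueError("need equal-length inputs with at least one active")

    # Size of the top subset (assumption: round to nearest integer, minimum 1).
    n_top = max(1, round(fraction * n_total))
    ranked = sorted(range(n_total), key=lambda i: scores[i], reverse=higher_is_better)
    hits = sum(1 for i in ranked[:n_top] if is_active[i])
    return (hits / n_top) / (n_actives / n_total)
```

As a worked example, consider a library of 1,000 compounds containing 10 actives. If 3 of the 10 top-ranked compounds are active, then EF1% = (3/10) / (10/1000) = 30. The maximum achievable value in that setting is 100, reached when all 10 top-ranked compounds are active.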

Table 1: Benchmarking Results for PfDHFR Virtual Screening

| Target Variant | Docking Tool | EF1% (Docking Only) | ML Re-scoring Method | EF1% (After ML Re-scoring) |
|---|---|---|---|---|
| Wild-Type (WT) | AutoDock Vina | Worse-than-random | RF-Score-VS v2 / CNN-Score | Better-than-random |
| Wild-Type (WT) | PLANTS | Not Specified | CNN-Score | 28.0 |
| Quadruple-Mutant (Q) | FRED | Not Specified | CNN-Score | 31.0 |

Performance Summary: The study demonstrated that re-scoring docking results with MLSFs, particularly CNN-Score, consistently and significantly enhanced screening performance. This was evident in the high EF1% values achieved and the ability to retrieve diverse, high-affinity binders for both the wild-type and resistant mutant variants of PfDHFR [40].

Targeting GPCRs: The GPCRVS Platform

G protein-coupled receptors (GPCRs) are the largest family of membrane proteins and drug targets, but their structural flexibility and similarity pose challenges for selective drug design [41] [42].

Experimental Protocol: The GPCRVS platform is an AI-driven decision support system designed to address the limitations of using LBVS or SBVS alone. It integrates:

- Ligand-based predictions using deep neural networks and gradient boosting machines trained on data from ChEMBL and patents.

- Structure-based docking using AutoDock Vina for flexible ligand docking into defined orthosteric and allosteric sites.

- A unique six-residue peptide truncation method to handle peptide ligands, which are common for GPCRs, by focusing on the key N-terminal "message" fragment responsible for receptor activation [42].
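
The peptide truncation idea amounts to keeping the N-terminal "message" residues of a one-letter sequence. The sketch below shows only that step, under assumptions. The source does not describe how GPCRVS handles modified or non-standard residues, termini, or conversion of the fragment to a 3D structure, and the function name `n_terminal_fragment` is hypothetical. The GLP-1(7-36) sequence is used purely as an illustrative input.

```python
STANDARD_RESIDUES = set("ACDEFGHIKLMNPQRSTVWY")


def n_terminal_fragment(sequence: str, length: int = 6) -> str:
    """Return the first `length` residues of a one-letter peptide sequence."""
    seq = "".join(sequence.split()).upper()  # drop whitespace, normalize case
    unknown = set(seq) - STANDARD_RESIDUES
    if unknown:
        # Modified or non-standard residues would need dedicated handling.
        raise ValueError(f"non-standard residue codes: {sorted(unknown)}")
    if len(seq) < length:
        raise ValueError(f"peptide shorter than {length} residues")
    return seq[:length]


if __name__ == "__main__":
    glp1_7_36 = "HAEGTFTSDVSSYLEGQAAKEFIAWLVKGR"  # GLP-1(7-36), illustration only
    print(n_terminal_fragment(glp1_7_36))  # HAEGTF
```

Raising an error for peptides shorter than six residues, rather than returning them unchanged, is a design choice here. It keeps every fragment passed to a downstream model the same length.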

Table 2: GPCRVS Performance on Class B GPCRs and Chemokine Receptors

| GPCR Subfamily | Ligand Type | Key Challenge | GPCRVS Solution | Validation Outcome |
|---|---|---|---|---|
| Class B (e.g., GLP-1R, GIPR) | Peptides & Small Molecules | Large peptide ligands | 6-residue truncation + unified model | Accurate activity prediction and selectivity assessment |
| Chemokine Receptors (e.g., CCR1, CXCR3) | Inhibitors (Small Molecules) | Subtype selectivity | Combined LB/SB screening and off-target prediction | Successful identification of selective patent compounds |

Performance Summary: By combining ligand- and structure-based methods, GPCRVS allows for the evaluation of compounds ranging from small molecules to peptides, predicting their activity range, pharmacological effect (e.g., agonist, antagonist), and potential binding mode. This integrated approach provides a more robust and selective screening tool for complex GPCR targets compared to using either method in isolation [42].

Targeting Protein-Protein Interactions: The HelixVS Platform

Protein-protein interactions (PPIs) are increasingly important therapeutic targets but often feature large, shallow interfaces that are difficult for small molecules to disrupt. The HelixVS platform was applied to these challenging targets [43].

Experimental Protocol: HelixVS employs a deep learning-enhanced, multi-stage SBVS workflow:

- Stage 1 - Rapid Docking: Uses QuickVina 2 to generate multiple binding poses for millions of molecules.

- Stage 2 - Deep Learning Refinement: A more accurate, RTMscore-based deep learning model re-scores the docking poses to improve affinity predictions.

- Stage 3 - Binding Mode Filtering: An optional step to filter results based on specific, pre-defined interaction patterns (e.g., key hydrogen bonds) [43].
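
The three-stage funnel above can be expressed as a generic pipeline. This is a sketch of the workflow shape only. It does not reproduce HelixVS, QuickVina 2, or the RTMscore-based model. `rescore` is a placeholder for any learned re-scoring function, interaction labels such as `"HBOND:ASP"` are hypothetical, and keeping each compound's best re-scored pose for the Stage 3 check is an assumption.

```python
from collections import defaultdict
from dataclasses import dataclass, field
from typing import Callable, Dict, FrozenSet, Iterable, List


@dataclass(frozen=True)
class Pose:
    compound_id: str
    pose_id: str
    dock_score: float  # Stage 1 score; lower is better (Vina-style convention)
    interactions: FrozenSet[str] = field(default_factory=frozenset)  # hypothetical labels


def multistage_screen(
    poses: Iterable[Pose],
    rescore: Callable[[Pose], float],
    keep_after_docking: int,
    required: FrozenSet[str] = frozenset(),
) -> List[str]:
    by_compound: Dict[str, List[Pose]] = defaultdict(list)
    for pose in poses:
        by_compound[pose.compound_id].append(pose)

    # Stage 1: rank compounds by their best docking score and keep the top N.
    best_dock = {cid: min(p.dock_score for p in ps) for cid, ps in by_compound.items()}
    survivors = sorted(best_dock, key=lambda cid: best_dock[cid])[:keep_after_docking]

    # Stage 2: re-score every pose of the survivors (higher is better) and keep
    # each compound's top re-scored pose; `rescore` stands in for a learned model.
    top_pose = {cid: max(by_compound[cid], key=rescore) for cid in survivors}
    ranked = sorted(survivors, key=lambda cid: rescore(top_pose[cid]), reverse=True)

    # Stage 3 (optional): drop compounds whose top pose lacks a required interaction.
    return [cid for cid in ranked if required <= top_pose[cid].interactions]


if __name__ == "__main__":
    poses = [
        Pose("A", "A1", -9.0),
        Pose("A", "A2", -6.0, frozenset({"HBOND:ASP"})),
        Pose("B", "B1", -8.0),
        Pose("C", "C1", -5.0, frozenset({"HBOND:ASP"})),
    ]
    toy_ml = {"A1": 0.4, "A2": 0.9, "B1": 0.7, "C1": 0.99}  # made-up re-scores
    print(multistage_screen(poses, lambda p: toy_ml[p.pose_id], keep_after_docking=2))
    # ['A', 'B']
    print(multistage_screen(poses, lambda p: toy_ml[p.pose_id], 2, frozenset({"HBOND:ASP"})))
    # ['A']
```

Re-scoring all retained poses, not just the top docking pose, lets the second stage promote a pose that the fast docking score ranked lower. In the toy run, pose A2 overtakes A1 this way. Compound C, by contrast, never reaches Stage 2.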

The platform was tested on the standard DUD-E benchmark and in real-world drug development pipelines targeting PPIs, including projects developing immune modulators against TLR4/MD-2 and cGAS [43].

Table 3: HelixVS Performance on DUD-E Benchmark and Real-World PPI Targets

| Application Context | Metric | AutoDock Vina | HelixVS | Improvement |
|---|---|---|---|---|
| DUD-E Benchmark (102 targets) | EF₁% (Enrichment Factor) | 10.022 | 26.968 | ~169% increase |
| DUD-E Benchmark | EF₀.₁% (Early Enrichment) | 17.065 | 44.205 | ~159% increase |
| Real-World PPI Projects | Experimental Hit Rate (μM/nM activity) | Not Specified | >10% of tested molecules | Successful hit identification |
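
The Improvement column is the relative gain of HelixVS over AutoDock Vina. It can be checked directly from the reported EF values:

```latex
\text{relative gain} = \frac{\mathrm{EF}_{\text{HelixVS}} - \mathrm{EF}_{\text{Vina}}}{\mathrm{EF}_{\text{Vina}}}

\mathrm{EF}_{1\%}:\quad \frac{26.968 - 10.022}{10.022} = \frac{16.946}{10.022} \approx 1.691 \;\Rightarrow\; \approx 169\%

\mathrm{EF}_{0.1\%}:\quad \frac{44.205 - 17.065}{17.065} = \frac{27.140}{17.065} \approx 1.590 \;\Rightarrow\; \approx 159\%
```

Expressed as fold changes, the HelixVS EF values are about 2.69 times (EF₁%) and 2.59 times (EF₀.₁%) those of Vina.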

Performance Summary: HelixVS demonstrated a substantial performance gain over classical docking tools like Vina in both benchmark settings and challenging real-world applications. Its ability to identify active molecules against difficult PPI targets underscores the power of integrating deep learning models into the SBVS pipeline to achieve superior enrichment and hit rates [43].

The Hybrid Approach: Sequential, Parallel, and Integrated Workflows

The case studies above highlight a common theme: the growing dominance of hybrid approaches. These can be implemented in several ways [4] [20]:

- Sequential Combination: A funnel-based workflow where LBVS (e.g., pharmacophore screening) rapidly filters an ultra-large library, and the resulting smaller subset is analyzed with more computationally expensive SBVS (e.g., molecular docking). This conserves resources while leveraging the strengths of both methods [4].

- Parallel Combination: LBVS and SBVS are run independently on the same library, and their results are combined. This can be done by:

  - Parallel Scoring: Selecting top candidates from both lists to maximize the chance of finding hits.

  - Consensus Scoring: Creating a single unified ranking, which increases confidence in the final selection [4].

- Fully Integrated AI Models: Newer platforms like GPCRVS and HelixVS represent a deeper integration, where machine learning models are trained on both ligand chemical data and protein-structural information, creating a unified and powerful prediction engine [20] [42] [43].
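
The two parallel strategies differ mainly in how the two ranked lists are merged. The sketch below is illustrative. Parallel scoring is shown as the union of each method's top-k. Consensus scoring is shown as mean-rank fusion, which is one of several possible schemes and was chosen here because it avoids combining similarity values and docking energies that sit on different scales. All inputs are toy values.

```python
from typing import Dict, List


def rank_positions(scores: Dict[str, float], higher_is_better: bool) -> Dict[str, int]:
    """Map compound ID -> 1-based rank under the given score direction."""
    order = sorted(scores, key=lambda cid: scores[cid], reverse=higher_is_better)
    return {cid: rank for rank, cid in enumerate(order, start=1)}


def parallel_selection(lb: Dict[str, float], sb: Dict[str, float], k: int) -> List[str]:
    """Parallel scoring: union of the top-k from each method, duplicates removed."""
    top_lb = sorted(lb, key=lambda cid: lb[cid], reverse=True)[:k]  # similarity: higher is better
    top_sb = sorted(sb, key=lambda cid: sb[cid])[:k]  # docking energy: lower is better
    return list(dict.fromkeys(top_lb + top_sb))  # keeps first-seen order


def consensus_ranking(lb: Dict[str, float], sb: Dict[str, float]) -> List[str]:
    """Consensus scoring: one list ordered by mean rank across both methods."""
    r_lb = rank_positions(lb, higher_is_better=True)
    r_sb = rank_positions(sb, higher_is_better=False)
    shared = r_lb.keys() & r_sb.keys()  # only compounds scored by both methods
    # Ties in mean rank are broken by compound ID so the output is deterministic.
    return sorted(shared, key=lambda cid: ((r_lb[cid] + r_sb[cid]) / 2, cid))


if __name__ == "__main__":
    similarity = {"A": 0.9, "B": 0.8, "C": 0.1, "D": 0.5}  # toy LBVS scores
    docking = {"A": -6.0, "B": -10.0, "C": -11.0, "D": -7.0}  # toy SBVS scores
    print(parallel_selection(similarity, docking, k=1))  # ['A', 'C']
    print(consensus_ranking(similarity, docking))  # ['B', 'A', 'C', 'D']
```

Parallel selection keeps A and C, each of which is favored by only one method. Consensus ranking instead promotes B, which is ranked second by both methods. This illustrates the trade-off between breadth of candidates and confidence in each one.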

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 4: Key Research Reagents and Computational Tools for Virtual Screening

| Item / Resource | Function / Application | Relevance to VS Workflow |
|---|---|---|
| DEKOIS 2.0 Benchmark Sets | Public datasets containing known active molecules and carefully selected decoys for specific protein targets. | Essential for objectively evaluating and benchmarking the performance of virtual screening pipelines [40]. |
| AlphaFold3 Predicted Structures | AI-predicted protein-ligand complex structures, useful when experimental structures are unavailable. | Provides structural models for SBVS; supplying an active ligand during prediction can improve model accuracy for screening [44]. |
| Machine Learning Scoring Functions (e.g., CNN-Score, RF-Score-VS v2) | Pretrained ML models that re-score docking poses to more accurately predict binding affinity. | Used after classical docking to significantly improve enrichment and distinguish true actives from decoys [40]. |
| CACHE Competition Data & Targets | An independent benchmark for evaluating computational hit-finding methods on unpublished targets with experimental validation. | Provides a rigorous, real-world standard for comparing and validating new virtual screening strategies [20]. |

The comparative analysis across enzymes, GPCRs, and PPIs leads to a clear and evidence-based conclusion: while classical LBVS and SBVS methods are powerful, their synergistic integration consistently delivers superior results. The sequential application of LBVS for rapid library enrichment followed by SBVS for detailed interaction analysis represents a robust and resource-efficient strategy. Furthermore, the emerging paradigm of using machine learning and deep learning models to augment or integrate these approaches—exemplified by platforms like GPCRVS and HelixVS—is setting a new standard for performance. These hybrid systems address fundamental limitations of traditional methods, enabling higher hit rates, better affinity prediction, and the successful targeting of challenging protein classes, thereby accelerating the early stages of drug discovery.

In modern drug discovery, virtual screening (VS) serves as a critical computational technique for identifying promising hit compounds from vast chemical libraries, significantly reducing the time and cost associated with experimental screening [45]. VS methodologies fall into two broad categories. Structure-based virtual screening (SBVS) relies on three-dimensional protein structures to predict ligand binding through docking. Ligand-based virtual screening (LBVS) uses known active ligands to identify compounds with similar structural or pharmacophoric features [4]. SBVS provides atomic-level insight into binding interactions, but it is computationally demanding and requires high-quality protein structures. LBVS is faster and less resource-intensive, but it is constrained by the available ligand data and tends to return hits structurally similar to known actives [20].

The emerging paradigm recognizes that these approaches are highly complementary rather than mutually exclusive. Hybrid strategies that integrate LBVS and SBVS mitigate their individual limitations and leverage their synergistic potential to enhance screening efficiency and hit rates [20] [4]. This guide objectively compares the three principal hybrid workflows—sequential, parallel, and integrated—by examining their underlying protocols, performance metrics, and practical applications in contemporary drug discovery research.

Experimental Protocols for Hybrid Workflows

To ensure valid comparisons, benchmarking studies follow standardized protocols. The DEKOIS 2.0 benchmark set is widely used to evaluate virtual screening performance. It provides bioactive molecules alongside carefully selected, property-matched decoy molecules for specific protein targets, enabling the assessment of a method's ability to prioritize true actives [40]. Common performance metrics include the Enrichment Factor at 1% (EF1%), which measures how enriched the top 1% of the ranked library is with true actives, and the Area Under the Receiver Operating Characteristic Curve (ROC-AUC), which evaluates the overall ranking quality of actives over decoys [40].
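
ROC-AUC has a direct pairwise interpretation: it is the probability that a randomly chosen active is ranked above a randomly chosen decoy, with ties counting as half. The sketch below computes it that way for clarity. It is O(actives × decoys), which is fine for benchmark-sized sets. For large libraries, a library routine such as scikit-learn's `roc_auc_score` should give the same value more efficiently.

```python
from typing import Sequence


def roc_auc(
    scores: Sequence[float],
    is_active: Sequence[bool],
    higher_is_better: bool = True,
) -> float:
    """ROC-AUC as the fraction of (active, decoy) pairs ranked correctly; ties count 0.5."""
    sign = 1.0 if higher_is_better else -1.0  # flip docking energies so higher = better
    actives = [sign * s for s, a in zip(scores, is_active) if a]
    decoys = [sign * s for s, a in zip(scores, is_active) if not a]
    if not actives or not decoys:
        raise ValueError("need at least one active and one decoy")

    correct = 0.0
    for a in actives:
        for d in decoys:
            if a > d:
                correct += 1.0
            elif a == d:
                correct += 0.5
    return correct / (len(actives) * len(decoys))
```

Unlike EF1%, which only looks at the top of the list, ROC-AUC weights every position equally. A method can therefore have a respectable ROC-AUC and still show poor early enrichment, which is why benchmarking studies typically report both metrics.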

The following experimental protocol is typical in benchmarking studies, such as those evaluating performance against wild-type and mutant Plasmodium falciparum Dihydrofolate Reductase (PfDHFR) [40]:

- Protein Preparation: Experimentally determined protein structures (e.g., from PDB) are prepared by removing water molecules, adding hydrogen atoms, and optimizing side-chain conformations using tools like OpenEye's "Make Receptor" [40].

- Ligand Library Preparation: A library of known active compounds and decoys is prepared. Tools like Omega are used to generate multiple conformations for each ligand, and formats are standardized for different docking programs [40].

- Docking and Scoring: The prepared library is screened using docking tools such as AutoDock Vina, FRED, or PLANTS. The grid box is defined to encompass the binding site of interest [40].