Structure-Based Filtering Algorithms for Drug Discovery: A Comprehensive Guide to Dataset Curation, Implementation, and Validation


Abstract

This article provides a comprehensive guide to structure-based filtering algorithms for dataset curation in drug discovery. Aimed at researchers and development professionals, it explores the foundational principles of leveraging 3D molecular structures to prioritize compounds. The content details practical methodologies for implementation, addresses common challenges and optimization strategies, and establishes rigorous validation frameworks for comparing algorithm performance. By synthesizing current computational approaches, this resource serves as a practical roadmap for integrating efficient and effective structure-based filtering into modern drug development pipelines to enhance the quality of candidate selection.

Understanding Structure-Based Filtering: Core Concepts and Its Role in Modern Drug Discovery

Structure-based filtering represents a class of algorithms and methodologies designed to select, refine, or process data based on its inherent structural properties, relationships, or models. In the context of dataset curation for scientific research, particularly in drug development, these techniques are paramount for isolating high-quality, relevant data from noisy, heterogeneous, and massive raw data pools. The core principle moves beyond simple keyword or property matching to an intelligent analysis of how data points are organized, interconnected, and modeled, whether the "structure" refers to the spatial arrangement of atoms in a protein, the syntactic structure of text, or the topological structure of a molecular graph. The integration of Artificial Intelligence (AI), especially deep learning, has dramatically advanced these capabilities, enabling the prediction of protein structures with near-experimental accuracy and the curation of datasets that train more efficient and powerful models [1] [2]. These advancements are crucial for accelerating therapeutic discovery, as a robust understanding of target structures like G protein-coupled receptors (GPCRs) forms the foundation of structure-based drug discovery (SBDD) [2]. This document outlines the fundamental principles, advanced AI integrations, and practical protocols for applying structure-based filtering in a modern research environment.

Basic Principles and Traditional Approaches

Structure-based filtering is founded on the principle of using a predefined or learned model of "structure" to make inclusion or exclusion decisions. This can be broken down into several classic approaches:

Rule-Based and Heuristic Filtering

This approach relies on expert-defined rules to filter data based on structural characteristics. In cheminformatics, this is exemplified by functional group filters and rules like Lipinski's Rule of Five, which use the 2D molecular structure to predict drug-likeness and remove compounds with undesirable or reactive moieties [3]. Similarly, in data curation for language models, heuristic rules filter documents based on structure-like features such as duplicate lines, abnormal text lengths, or excessive symbol counts [4].
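As a minimal sketch of a rule-based filter, the Rule of Five can be written as four threshold checks on precomputed descriptors. The `Descriptors` container and the function names are illustrative assumptions; in practice the descriptor values would come from a cheminformatics toolkit. The allowance of one violation follows the usual reading of the rule.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Descriptors:
    """Precomputed 2D descriptors for one compound (hypothetical container)."""
    mol_weight: float        # molecular weight in Da
    logp: float              # calculated octanol-water partition coefficient
    h_bond_donors: int
    h_bond_acceptors: int


def lipinski_violations(d: Descriptors) -> int:
    """Count how many of the four Rule of Five thresholds are exceeded."""
    return sum([
        d.mol_weight > 500,
        d.logp > 5,
        d.h_bond_donors > 5,
        d.h_bond_acceptors > 10,
    ])


def passes_rule_of_five(d: Descriptors, max_violations: int = 1) -> bool:
    """Keep a compound if it breaks at most `max_violations` thresholds."""
    return lipinski_violations(d) <= max_violations


# Illustrative descriptor values, not measured data.
small = Descriptors(mol_weight=180.2, logp=1.2, h_bond_donors=1, h_bond_acceptors=4)
large = Descriptors(mol_weight=912.0, logp=6.3, h_bond_donors=7, h_bond_acceptors=14)
kept = [d for d in (small, large) if passes_rule_of_five(d)]
```

Here `kept` contains only the first compound, because the second exceeds all four thresholds.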

Fuzzy Logic Filtering

For multidimensional data like color images, fuzzy logic provides a robust framework for structure-based filtering. Unlike binary logic, fuzzy systems handle the imprecision inherent in real-world data by defining membership functions. For instance, in biomedical image analysis, fuzzy filters can process a pixel's neighborhood to effectively remove noise while preserving critical structural details like edges. These filters use fuzzy rules and derivatives to adaptively smooth an image based on local structural patterns, which is vital for accurate diagnosis [5].

Structure-Informed Database Filtering

The principle of pre-filtering a large database to increase the positive predictive value of subsequent, more computationally intensive screens is a key application of structure-based filtering in virtual screening. By first removing compounds that are obvious negatives based on structural and property filters (e.g., molecular weight, polar surface area, presence of toxic groups), researchers can focus valuable resources on a much smaller, higher-quality subset of compounds, significantly improving the efficiency of the hit discovery process [3].
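The effect on positive predictive value (PPV) can be illustrated with a short calculation. All numbers below are hypothetical (library size, screen sensitivity and specificity, and the fractions removed by the pre-filter); the sketch only shows why removing obvious negatives raises PPV even when a few true actives are lost.

```python
def positive_predictive_value(n_actives, n_inactives, sensitivity, specificity):
    """PPV = TP / (TP + FP) for a screen applied to a compound library."""
    true_pos = sensitivity * n_actives
    false_pos = (1.0 - specificity) * n_inactives
    return true_pos / (true_pos + false_pos)


# Hypothetical library: 1,000 actives among 1,000,000 compounds.
ppv_raw = positive_predictive_value(1_000, 999_000, sensitivity=0.8, specificity=0.99)

# Hypothetical pre-filter: removes 70% of the inactives but also 5% of the actives.
ppv_filtered = positive_predictive_value(950, 299_700, sensitivity=0.8, specificity=0.99)

print(f"PPV without pre-filter: {ppv_raw:.3f}")
print(f"PPV with pre-filter:    {ppv_filtered:.3f}")
```

Under these assumed numbers the PPV rises from roughly 7% to roughly 20%, with the downstream screen left unchanged.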

Table 1: Traditional Structure-Based Filtering Approaches

| Approach | Core Principle | Typical Application |

|---|---|---|

| Rule-Based/Heuristic | Applies expert-defined, often threshold-based, rules to structural features. | Drug-likeness prediction in cheminformatics; initial data cleaning in text curation [3] [4]. |

| Fuzzy Logic | Uses graded membership sets and rules to handle imprecision and uncertainty in structural data. | Noise reduction and edge preservation in biomedical image processing [5]. |

| Database Pre-Filtering | Uses structural and property filters to create an enriched, target-specific subset from a large compound library. | Improving the positive predictive value in high-throughput virtual screening [3]. |

Advanced AI Integration in Structure-Based Filtering

The advent of AI, particularly deep learning, has transformed structure-based filtering from a reliance on hand-crafted rules to a data-driven paradigm where complex structures are learned directly from data.

AI for Protein Structure Prediction and Filtering

AI-powered tools like AlphaFold2 (AF2) and RoseTTAFold have largely addressed the long-standing challenge of predicting protein 3D structures from amino acid sequences, often reaching near-experimental accuracy [1] [2]. These models are trained on the known structures in the Protein Data Bank (PDB) and have generated highly accurate models for entire proteomes, including those of major drug target classes like GPCRs [2]. For many Class A GPCRs, AF2 models show high confidence (pLDDT >90) in the transmembrane domain and the orthosteric ligand-binding pocket, with a root mean square deviation (RMSD) of less than 2 Å from experimental structures [2]. These AI-predicted structures serve as the foundational "filter" in SBDD, enabling research on targets without experimental structures. One limitation is that standard AF2 models often represent a single conformational state, which has prompted developments like AlphaFold-MultiState that generate state-specific models (e.g., active or inactive GPCR conformations) better suited to drug discovery [2].

AI-Powered Data Curation Pipelines

In dataset curation for training AI models, structure-based filtering uses machine learning models to assess and select data based on qualities like grammaticality, informational content, and reasoning structure. Modern pipelines, such as the one used to create the Aleph-Alpha-GermanWeb dataset, employ a multi-stage process:

- Heuristic Filtering: Applies initial rules for language identification and low-quality text removal [4].

- Model-Based Filtering: Uses classifiers (e.g., fastText, BERT) to predict document quality based on grammar, style, and informativeness, effectively filtering based on the linguistic and informational "structure" of the text [4].

- Deduplication: Removes exact and near-duplicate documents to ensure data diversity [4] [6].

- Synthetic Data Generation: Augments organic data by using LLMs to generate paraphrases and factual expansions conditioned on high-quality native documents, thereby creating new, structurally sound data [4].

This AI-driven curation has demonstrated dramatic improvements, enabling models trained on curated datasets to outperform those trained on much larger, unfiltered datasets, achieving the same performance with up to 86.9% less compute (a 7.7x training speedup) [6].

Table 2: Key AI Technologies for Advanced Structure-Based Filtering

| AI Technology | Role in Structure-Based Filtering | Impact |

|---|---|---|

| AlphaFold2 & RoseTTAFold | Predicts the 3D structure of proteins from sequence, providing the structural model for SBDD. | Revolutionized target identification and understanding for GPCRs and other proteins; expanded structural coverage of proteomes [1] [2]. |

| Model-Based Classifiers (e.g., BERT) | Filters text and other data by assessing quality dimensions like grammaticality, coherence, and reasoning structure. | Enables creation of high-quality training datasets for LLMs, leading to better performance with less data and compute [4] [6]. |

| Generative AI / LLMs | Creates synthetic data by expanding or paraphrasing high-quality source data, maintaining structural and topical accuracy. | Augments scarce data resources, particularly for non-English languages, enhancing dataset diversity and quality [4]. |

Application Notes and Protocols

Protocol: A Multi-Stage AI Curation Pipeline for a Text Dataset

This protocol details the methodology for curating a high-quality text dataset, as exemplified by modern pipelines [4] [6].

1. Objective: To create a high-quality, domain-specific dataset from a raw web-crawled corpus (e.g., RedPajama-V1) for pre-training large language models.

2. Materials: Raw text corpus (e.g., Common Crawl data); computing cluster; parsing tools (e.g., resiliparse); language identification model (e.g., fastText); MinHash libraries for deduplication; quality classification models (e.g., trained BERT/fastText); a capable LLM for generation (e.g., Mistral-Nemo-Instruct).

3. Experimental Workflow:

Diagram 1: AI Data Curation Pipeline

4. Procedure:

- Step 1: Heuristic Filtering. Extract plain text from HTML. Apply a blacklist to exclude documents from unwanted domains (e.g., adult content, fraud). Use a language identification model to retain only documents with a high probability of being in the target language [4].

- Step 2: Text Quality Cleaning. Apply repetition detection thresholds at the line, paragraph, and n-gram levels. Use document-level heuristics to filter out texts with abnormal lengths, unnatural mean word lengths, excessive symbols, or low fractions of alphabetic words [4].

- Step 3: Deduplication. Perform exact deduplication by comparing document hashes. Perform fuzzy deduplication using MinHash signatures over 5-gram character windows and locality-sensitive hashing (LSH) to cluster and remove near-duplicates [4] [6].

- Step 4: Model-Based Filtering. Train classifier models (e.g., fastText, BERT) to predict document quality using labels derived from grammar-checking tools (e.g., LanguageTool) or LLM judges. Assign quality points based on classifier outputs and sort documents into quality buckets. Only the highest-quality buckets are retained for the final dataset [4].

- Step 5: Synthetic Data Generation (Optional). For high-quality organic documents, use an instruction-tuned LLM to generate synthetic variants (e.g., Wikipedia-style rephrasing, summarization, Q&A pair creation). Limit the number of variants per organic sample (e.g., ≤5) to prevent quality degradation. Clean the outputs to remove LLM artifacts [4].

- Step 6: Blending. Combine the filtered organic data and the generated synthetic data at a controlled ratio to produce the final curated dataset [4].
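The fuzzy deduplication in Step 3 can be sketched with the standard library alone. This is a simplified illustration, not the pipeline used in [4] or [6]: salted BLAKE2b hashes stand in for random permutations, and the signature length (64) and band count (16) are arbitrary assumptions. Production pipelines typically rely on a dedicated MinHash library.

```python
import hashlib
from collections import defaultdict
from itertools import combinations


def char_shingles(text, n=5):
    """Set of character n-grams after lowercasing and whitespace normalization."""
    norm = " ".join(text.lower().split())
    return {norm[i:i + n] for i in range(max(len(norm) - n + 1, 1))}


def minhash_signature(shingles, num_perm=64):
    """One minimum per salted hash function; each salt stands in for a permutation."""
    signature = []
    for seed in range(num_perm):
        salt = seed.to_bytes(8, "little")
        signature.append(min(
            int.from_bytes(
                hashlib.blake2b(s.encode("utf-8"), digest_size=8, salt=salt).digest(),
                "big",
            )
            for s in shingles
        ))
    return signature


def estimated_jaccard(sig_a, sig_b):
    """The fraction of matching signature positions estimates Jaccard similarity."""
    return sum(a == b for a, b in zip(sig_a, sig_b)) / len(sig_a)


def lsh_candidate_pairs(signatures, bands=16):
    """Documents that share any identical signature band become candidate pairs."""
    rows = len(next(iter(signatures.values()))) // bands
    buckets = defaultdict(set)
    for doc_id, sig in signatures.items():
        for b in range(bands):
            buckets[(b, tuple(sig[b * rows:(b + 1) * rows]))].add(doc_id)
    pairs = set()
    for ids in buckets.values():
        pairs.update(combinations(sorted(ids), 2))
    return pairs


docs = {
    "a": "The quick brown fox jumps over the lazy dog",
    "b": "the  quick brown fox jumps over the LAZY dog",  # same text, different case and spacing
    "c": "0123456789 9876543210",
}
signatures = {k: minhash_signature(char_shingles(v)) for k, v in docs.items()}
pairs = lsh_candidate_pairs(signatures)
```

Documents `a` and `b` normalize to the same shingle set and therefore land in the same LSH buckets, while `c` shares no shingles with either. Only candidate pairs need an explicit similarity check, which is what keeps the approach tractable on large corpora.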

5. Evaluation: Evaluate the curated dataset by pre-training LLMs on it and benchmarking their performance on a suite of tasks (e.g., MMLU, reasoning, truthfulness) against models trained on baseline datasets like FineWeb or RefinedWeb [4] [6].

Protocol: Structure-Based Hit Identification for a GPCR Target

This protocol leverages AI-predicted structures for the initial phases of drug discovery [2].

1. Objective: To identify hit compounds for a GPCR target using an AI-predicted protein structure.

2. Materials: AI-predicted GPCR structure (e.g., from AlphaFold Protein Structure Database or generated with AlphaFold-MultiState for a specific state); compound library for virtual screening; molecular docking software (e.g., AutoDock, DiffDock); computing cluster.

3. Experimental Workflow:

Diagram 2: Structure-Based GPCR Hit Discovery

4. Procedure:

- Step 1: Receptor Modeling. Obtain or generate a 3D model of the target GPCR. For targets with no experimental structure, download the pre-computed model from the AlphaFold Database or generate a state-specific model using tools like AlphaFold-MultiState. Validate the model's confidence using the provided pLDDT score, with a focus on the transmembrane and orthosteric pocket regions [2].

- Step 2: Preparation of Compound Library. Prepare a database of compounds for screening. Apply structure-based filters (e.g., using a tool like FILTER) to remove compounds with undesirable functional groups, poor drug-like properties, or potential reactivity, creating an enriched subset for docking [3].

- Step 3: Modeling of Ligand-Bound Complex (Docking). Perform molecular docking to generate poses of ligands within the binding pocket of the GPCR model. Use the docking software to sample possible ligand conformations and score the resulting poses. Note that the accuracy of docking is highly dependent on the accuracy of the predicted binding pocket [2].

- Step 4: Hit Identification. Analyze the docking results. Rank compounds based on their docking scores and inspect the top-ranked poses for key receptor-ligand interactions (e.g., hydrogen bonds, hydrophobic contacts). Select a diverse set of high-ranking compounds for experimental validation.

- Step 5: Experimental Validation. The selected computational "hit" compounds must be procured or synthesized and tested in biochemical or cellular assays to confirm biological activity.

5. Evaluation: The success of the protocol is evaluated by the number and potency of experimentally confirmed hits. The geometric "correctness" of the docking poses can be retrospectively assessed if an experimental structure of the complex becomes available, using metrics like ligand heavy-atom RMSD and the fraction of correctly predicted receptor-ligand contacts [2].
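The two pose-quality metrics named above can be sketched in a few lines. This is a minimal illustration under stated assumptions: both poses are already in the same receptor frame, atoms are listed in the same order, ligand symmetry is ignored, and contacts are represented as hypothetical (residue, ligand atom) pairs.

```python
import math


def ligand_rmsd(pred_coords, ref_coords):
    """Heavy-atom RMSD between two poses with identical atom ordering (no alignment)."""
    if len(pred_coords) != len(ref_coords) or not pred_coords:
        raise ValueError("poses must be non-empty and have the same number of atoms")
    squared = sum(
        (p[k] - r[k]) ** 2
        for p, r in zip(pred_coords, ref_coords)
        for k in range(3)
    )
    return math.sqrt(squared / len(pred_coords))


def contact_recovery(pred_contacts, ref_contacts):
    """Fraction of reference receptor-ligand contacts reproduced by the predicted pose."""
    ref = set(ref_contacts)
    if not ref:
        raise ValueError("reference pose has no contacts")
    return len(set(pred_contacts) & ref) / len(ref)


# Made-up coordinates (in Å): the predicted pose is the reference shifted by 1 Å along x.
ref_pose = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0), (1.5, 1.5, 0.0)]
pred_pose = [(x + 1.0, y, z) for x, y, z in ref_pose]

ref_contacts = {("ASP113", "N1"), ("SER203", "O2"), ("PHE290", "C7")}
pred_contacts = {("ASP113", "N1"), ("SER203", "O2"), ("VAL114", "C3")}

rmsd = ligand_rmsd(pred_pose, ref_pose)
recovered = contact_recovery(pred_contacts, ref_contacts)
```

Reporting both numbers is useful because a pose can sit close to the reference in RMSD terms yet miss a key interaction, or the reverse.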

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for Structure-Based Filtering and Discovery

| Item / Resource | Function / Application | Explanation |

|---|---|---|

| AlphaFold Protein Structure Database | Provides pre-computed protein structure predictions for entire proteomes. | Offers immediate access to reliable 3D models for a vast array of targets, bypassing the need for experimental structure determination or de novo modeling [1]. |

| Protein Data Bank (PDB) | Repository for experimentally determined 3D structures of proteins, nucleic acids, and complex assemblies. | The primary source of ground-truth structural data for training AI predictors like AF2 and for validating computational models [1]. |

| FILTER Software (e.g., OpenEye) | Applies functional group and property-based filters to compound libraries. | Prepares databases for virtual screening by removing compounds with undesirable properties, thereby increasing the positive predictive value of downstream screens [3]. |

| FastText / BERT Classifiers | Model-based filtering for text and data quality assessment. | Used within curation pipelines to automatically score and filter documents based on grammaticality, style, and informativeness [4]. |

| Collinear AI Curators / DatologyAI Pipeline | Specialized reward models and pipelines for data curation. | Embodies the state-of-the-art in enterprise-grade data curation, using ensembles of small models to efficiently select high-quality data for training, yielding significant compute savings [7] [6]. |

The Critical Role of High-Quality Dataset Curation in Accelerating Drug Discovery Pipelines

The integration of artificial intelligence (AI) and machine learning (ML) into drug discovery has transformed the landscape of pharmaceutical research, shifting the core challenge from algorithmic innovation to data quality and integrity. The principle of "garbage in, garbage out" is particularly critical in this field, where the quality of the underlying training data fundamentally determines the predictive power, reliability, and clinical applicability of the resulting models [8]. High-quality, well-curated datasets are not merely a convenience but a prerequisite for developing robust AI models capable of accurately predicting complex biomolecular interactions, such as protein-ligand binding affinities [9] [10].

The process of data curation—involving the organization, description, quality control, preservation, and enhancement of data for reuse—is essential for creating a solid data foundation [11]. This is especially true for structure-based drug discovery (SBDD), where models learn from three-dimensional structural data of protein-ligand complexes. Inaccuracies in these structures, such as incorrect atom assignments, inconsistent geometries, or missing hydrogen atoms, are not uncommon in raw experimental data and can severely mislead AI models during training [9]. Consequently, a rigorous, structure-based filtering algorithm is indispensable for transforming raw, noisy experimental data into a refined, AI-ready knowledge base. This Application Note details the protocols and benchmarks for constructing such a high-quality dataset, providing a framework for researchers to build reliable predictive models that can accelerate the drug discovery pipeline.

Modern drug discovery is increasingly reliant on computational methods to navigate the vast combinatorial space of potential drug candidates. While AI holds the promise of drastically reducing the time and cost associated with bringing a new drug to market, its success is heavily contingent on the data from which it learns. The industry faces significant challenges related to data volume, heterogeneity, and inherent noise [10]. Data sourced from public repositories like the Protein Data Bank (PDB) or ChEMBL, while invaluable, often contain inconsistencies that must be addressed through meticulous curation before they can power reliable AI applications [9] [12].

A primary obstacle in structure-based AI model development is the limited number of publicly available protein-ligand structures (approximately 20,000) coupled with a lack of comprehensive thermodynamic data [9]. This scarcity is compounded by structural inaccuracies originating from the limited spatial resolution of experimental methods and biases in the software used for molecular geometry processing [9]. Common issues include:

- Incorrect protonation states and missing hydrogen atoms.

- Distorted bond lengths and angles, particularly for heteroatoms, which can deviate from reference values by up to 17% [9].

- Over-assignment of hydrogen atoms, leading to unrealistic local formal charges [9].

These issues prevent AI models from implicitly learning the correct physics of molecular interactions. Therefore, a structured curation pipeline that systematically refines and enriches raw structural data is critical to provide models with the highest possible correctness and consistency.

Protocols for Structure-Based Dataset Curation

This section outlines a standardized, multi-stage protocol for curating a high-quality dataset for structure-based drug discovery, with a focus on preparing data for affinity prediction tasks.

Protocol 1: Data Sourcing and Pre-Filtering

Objective: To gather a comprehensive set of raw protein-ligand complexes and apply initial filters based on experimental and chemical criteria.

Materials:

- Source Databases: PDBbind [9], BindingDB [13], ChEMBL [12].

- Computational Tools: SQLite database software, cheminformatics toolkits (e.g., RDKit, Open Babel).

Methodology:

- Data Acquisition: Download the latest version of the PDBbind database (e.g., the "refined set") or connect to the ChEMBL database via its SQLite format [12].

- Measurement Type Filtering: To ensure binding affinity data consistency, filter records to include only those with a specified measurement type (e.g., IC50, Ki, EC50). Avoid mixing different measurement types within a single curated dataset [12].

- Noise-Level Annotation: Implement a tiered filtering system to assign noise-level annotations. This creates subsets of varying quality [12]:

- Core: The highest quality subset, obtained by applying the most stringent filters (e.g., exact measurement type, unambiguous protein target, high-confidence ligand structure).

- Refined: An intermediate quality subset with moderately strict filters.

- General: A broader, more inclusive dataset with minimal filtering, representing the "raw" data landscape.

- Initial Structure Extraction: Extract the protein and ligand structures into separate files (e.g., PDB for protein, SDF or MOL2 for ligand).
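The measurement-type filter and tiered annotation can be sketched as a single function. The record fields and the exact tier criteria below are hypothetical stand-ins for the filters described in [12]; only the Core/Refined/General naming and the single-measurement-type rule come from the text above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AffinityRecord:
    """One bioactivity record (hypothetical fields for illustration)."""
    ligand_id: str
    measurement_type: str        # e.g. "IC50", "Ki", "EC50"
    relation: str                # "=" for exact values, "<" or ">" for censored ones
    single_protein_target: bool  # True if the target is one unambiguous protein
    ligand_structure_ok: bool    # True if the ligand structure passed sanity checks


def noise_tier(rec, wanted_type="Ki"):
    """Return 'core', 'refined', 'general', or None if the record is excluded."""
    if rec.measurement_type != wanted_type:
        return None  # never mix measurement types within one curated dataset
    if rec.single_protein_target and rec.ligand_structure_ok:
        # Exact values go to the strictest tier, censored values one tier below.
        return "core" if rec.relation == "=" else "refined"
    return "general"


records = [
    AffinityRecord("L1", "Ki", "=", True, True),
    AffinityRecord("L2", "Ki", "<", True, True),
    AffinityRecord("L3", "Ki", "=", False, True),
    AffinityRecord("L4", "IC50", "=", True, True),
]
tiers = {r.ligand_id: noise_tier(r) for r in records}
```

Keeping the tier as an annotation rather than deleting records lets the same curated table serve both strict benchmarks and larger, noisier training sets.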

Table 1: Key Source Databases for Protein-Ligand Complex Data

| Database Name | Primary Content | Key Features | Use Case in Curation |

|---|---|---|---|

| PDBbind [9] | Experimentally determined protein-ligand complexes with binding affinity data. | Curated from the PDB, includes ~20,000 structures. | Primary source for 3D structural data and experimental affinities. |

| ChEMBL [12] | Bioactivity data for drug-like molecules. | Large-scale, target-annotated bioactivities. | Sourcing ligand information and bioactivity data for affinity prediction. |

| BindingDB [13] | Measured binding affinities for protein-ligand interactions. | Focus on quantitative binding data. | Supplementary source for validating and enriching affinity data. |

Protocol 2: Structural Curation and Quantum Mechanical Refinement

Objective: To correct atomic-level inaccuracies in ligand structures and calculate quantum mechanical (QM) properties to enrich the dataset.

Materials:

- Software: Quantum chemical software packages (e.g., Gaussian, ORCA, DFTB+), molecular dynamics suites (e.g., GROMACS, AMBER).

- Hardware: High-performance computing (HPC) cluster.

Methodology:

- Ligand Structure Validation: Load the initial ligand structures and check for common errors, including:

- Abnormal bond lengths and angles.

- Violations of valence shell electron pair repulsion (VSEPR) theory.

- Incorrect atomic hybridizations.

- Protonation State Assignment: Programmatically assign physiologically relevant protonation states to ligand atoms using tools like Epik or PROPKA. This step often involves adding hydrogen atoms to, or removing them from, the initial PDB geometry; such changes account for up to 75% of all structural modifications [9].

- Quantum Mechanical Refinement: Use semi-empirical QM methods (e.g., GFN2-xTB) to systematically refine and optimize the geometry of each ligand. This step regularizes the structure, correcting the distorted functional groups identified in the validation step [9].

- QM Property Calculation: For each refined ligand, calculate a suite of electronic and molecular properties. These properties serve as high-quality descriptors for ML models.
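The bond-length part of the ligand validation step can be sketched as a comparison against reference values. The reference lengths below are approximate textbook values for a handful of bond types, and the 10% tolerance is an arbitrary assumption; neither is taken from [9], and a real pipeline would use a fuller parameter set and also check angles.

```python
import math

# Approximate reference bond lengths in Å, keyed by (sorted element pair, bond order).
REFERENCE_LENGTHS = {
    (("C", "C"), 1): 1.54,
    (("C", "C"), 2): 1.34,
    (("C", "N"), 1): 1.47,
    (("C", "O"), 1): 1.43,
    (("C", "O"), 2): 1.23,
}


def flag_abnormal_bonds(atoms, bonds, tolerance=0.10):
    """Return (i, j, relative_deviation) for bonds deviating beyond `tolerance`.

    atoms: list of (element, (x, y, z)); bonds: list of (i, j, bond_order).
    Bond types without a reference value are skipped rather than flagged.
    """
    flagged = []
    for i, j, order in bonds:
        (el_i, xyz_i), (el_j, xyz_j) = atoms[i], atoms[j]
        ref = REFERENCE_LENGTHS.get((tuple(sorted((el_i, el_j))), order))
        if ref is None:
            continue
        rel_dev = abs(math.dist(xyz_i, xyz_j) - ref) / ref
        if rel_dev > tolerance:
            flagged.append((i, j, round(rel_dev, 3)))
    return flagged


# Made-up fragment: a C=O bond stretched to 1.45 Å and a regular C-C bond.
atoms = [("C", (0.0, 0.0, 0.0)), ("O", (1.45, 0.0, 0.0)), ("C", (-1.54, 0.0, 0.0))]
bonds = [(0, 1, 2), (0, 2, 1)]
flagged = flag_abnormal_bonds(atoms, bonds)
```

In this example only the carbonyl bond is flagged, with a relative deviation of about 18%, which is the kind of distortion the QM refinement step is meant to regularize.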

Table 2: Quantum Mechanical Properties for Dataset Enrichment

| Property Category | Specific Properties | Significance in Drug Discovery |

|---|---|---|

| Molecular Properties | Electron affinity, Chemical hardness, Ionization potential, Electronegativity, Polarizability [9] | Indicators of chemical reactivity and stability. |

| Atomic Properties | Partial charges (e.g., MK, ESP), Bond orders, Atomic hybridizations [9] | Describe the electronic environment and reactivity at specific atoms. |

| Reactivity Indices | Fukui indices, Atomic softness [9] | Predict sites for nucleophilic or electrophilic attack. |
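Two of the molecular properties in the table are commonly derived from the ionization potential (IP) and electron affinity (EA) using finite-difference definitions. The sketch below uses the convention with a factor of one half for hardness; some sources omit that factor, so the convention should be recorded with the dataset. The input values are hypothetical.

```python
def chemical_hardness(ionization_potential, electron_affinity):
    """Hardness eta = (IP - EA) / 2, in the same energy units as the inputs."""
    return (ionization_potential - electron_affinity) / 2.0


def mulliken_electronegativity(ionization_potential, electron_affinity):
    """Mulliken electronegativity chi = (IP + EA) / 2."""
    return (ionization_potential + electron_affinity) / 2.0


# Hypothetical ligand with IP = 9.0 eV and EA = 1.0 eV.
eta = chemical_hardness(9.0, 1.0)           # 4.0 eV
chi = mulliken_electronegativity(9.0, 1.0)  # 5.0 eV
```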

Protocol 3: Domain Annotation and Splitting for Robust Validation

Objective: To annotate data points with domain information and split the dataset in a way that tests a model's ability to generalize to novel scenarios, a key aspect of out-of-distribution (OOD) evaluation.

Materials:

- Computational Tools: Scaffold analysis tools (e.g., in RDKit), sequence clustering tools (e.g., MMseqs2).

Methodology:

- Domain Annotation: Categorize each protein-ligand complex based on specific domain shifts [12]:

- Scaffold: Annotate the molecular scaffold of the ligand using the Bemis-Murcko method.

- Assay: Tag data with the assay ID from which the binding affinity was measured.

- Protein Family: Cluster protein targets by their gene family (e.g., kinases, GPCRs).

- Size: Categorize ligands based on molecular weight or heavy atom count.

- OOD Dataset Splitting: Partition the curated dataset into training, validation, and test sets using a domain-based split. For example:

- Training Set: Contains complexes from certain protein families (e.g., GPCRs) and ligand scaffolds.

- Test Set (OOD): Contains complexes from held-out protein families (e.g., kinases) and/or entirely novel ligand scaffolds not seen during training. This strategy rigorously evaluates the model's generalization capability [12].
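A scaffold-based split can be sketched as a grouping problem: every scaffold goes entirely into one partition, so no test-set scaffold is seen during training. The sketch assumes scaffold strings have already been computed (for example, Bemis-Murcko scaffold SMILES from a toolkit such as RDKit). Assigning the largest scaffold groups to training first is a common heuristic and not necessarily the procedure used in [12].

```python
from collections import defaultdict


def scaffold_split(records, test_fraction=0.2):
    """Split (record_id, scaffold) pairs so that no scaffold spans both sets."""
    groups = defaultdict(list)
    for record_id, scaffold in records:
        groups[scaffold].append(record_id)

    n_train_target = len(records) - round(test_fraction * len(records))
    train, test = [], []
    # Largest scaffold groups first; ties broken by scaffold string for determinism.
    for scaffold, ids in sorted(groups.items(), key=lambda kv: (-len(kv[1]), kv[0])):
        if len(train) + len(ids) <= n_train_target:
            train.extend(ids)
        else:
            test.extend(ids)  # rarer scaffolds end up in the OOD test set
    return train, test


# Hypothetical records: ten ligands spread over four scaffolds.
records = (
    [(f"a{i}", "scaffold_A") for i in range(5)]
    + [(f"b{i}", "scaffold_B") for i in range(3)]
    + [("c0", "scaffold_C"), ("d0", "scaffold_D")]
)
train_ids, test_ids = scaffold_split(records)
```

The same grouping logic applies to the other domains listed above: replacing the scaffold string with an assay ID or a protein-family label yields an assay-based or family-based split.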

The following workflow diagram summarizes the end-to-end curation pipeline.

Benchmarking and Validation

After curation, it is crucial to benchmark the dataset's quality and utility by establishing baseline ML performance metrics.

Validation Protocol:

- Baseline Model Training: Train standard ML models (e.g., Graph Neural Networks for ligands, Convolutional Networks on voxelized structures) on the curated dataset for a task such as binding affinity prediction.

- Performance Benchmarking: Evaluate the model's performance on the held-out test sets, particularly the OOD sets, using metrics like Root Mean Square Error (RMSE) and Pearson's R correlation coefficient.

- Comparative Analysis: Compare the model's performance against the same model trained on a non-curated version of the data. A significant improvement in accuracy, especially on the OOD sets, demonstrates the value of the curation pipeline [9].
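The two benchmark metrics can be computed without external dependencies, as in the sketch below. The affinity values are made up for illustration.

```python
import math


def rmse(y_true, y_pred):
    """Root mean square error between measured and predicted values."""
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("inputs must be non-empty and of equal length")
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))


def pearson_r(x, y):
    """Pearson correlation coefficient between two equally long sequences."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two sequences of equal length with at least 2 values")
    mean_x, mean_y = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for constant input")
    return cov / math.sqrt(var_x * var_y)


# Made-up pK values: predictions are perfectly ranked but offset by 0.5 units.
measured = [5.0, 6.0, 7.0, 8.0]
predicted = [5.5, 6.5, 7.5, 8.5]

error = rmse(measured, predicted)
correlation = pearson_r(measured, predicted)
```

The example shows why both metrics are reported: the correlation is perfect while the RMSE is 0.5, because Pearson's R is insensitive to a constant offset in the predictions.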

Table 3: Example Baseline Performance Metrics on MISATO Curated Data

| Machine Learning Task | Model Architecture | Benchmark Metric | Performance on Raw Data (Example) | Performance on Curated Data (Example) |

|---|---|---|---|---|

| Binding Affinity Prediction | 3D Convolutional Neural Network | Pearson's R | 0.45 | 0.68 |

| Ligand Property Prediction (e.g., Electron Affinity) | Graph Neural Network | RMSE | 1.25 eV | 0.85 eV |

| Protein Flexibility Prediction | Recurrent Neural Network | Accuracy | 70% | 85% |

The following table details key resources required to implement the described curation protocols.

Table 4: Essential Research Reagent Solutions for Dataset Curation

| Resource Name | Type | Function in Curation Pipeline |

|---|---|---|

| PDBbind Database [9] | Data Repository | Provides the foundational set of experimental protein-ligand structures and binding data for curation. |

| ChEMBL Database [12] | Data Repository | Supplies large-scale, target-annotated bioactivity data for ligand-based tasks and data expansion. |

| RDKit | Cheminformatics Toolkit | Used for ligand standardization, scaffold analysis, molecular descriptor calculation, and file format manipulation. |

| Quantum Chemical Software (e.g., ORCA) [9] | Computational Chemistry Tool | Performs the essential quantum mechanical refinement of ligand geometries and calculation of electronic properties. |

| Molecular Dynamics Suites (e.g., GROMACS) [9] | Simulation Software | Generates dynamic trajectories of protein-ligand complexes to capture flexibility and solvation effects, supplementing static structures. |

| DrugOOD Curator [12] | Computational Tool | A specialized tool for generating and managing datasets with out-of-distribution splits and noise-level annotations for rigorous benchmarking. |

The curation of high-quality, AI-ready datasets is a critical, non-negotiable step in modern computational drug discovery. The protocols outlined in this Application Note provide a roadmap for transforming raw, noisy structural data into a refined resource that empowers robust and generalizable AI models. By implementing a rigorous structure-based filtering and enrichment pipeline—encompassing QM refinement, dynamic simulation, and thoughtful OOD splitting—researchers can build a solid data foundation. This foundation is the key to unlocking the full potential of AI, ultimately accelerating the discovery of safe and effective therapeutics. Adherence to these curation standards will help overcome the current data quality challenges and pave the way for the next generation of predictive models in structure-based drug discovery.

Accurately identifying protein binding sites and understanding molecular interaction landscapes is a cornerstone of modern drug discovery and design. Protein-ligand interactions are fundamental to numerous biological processes, including enzyme catalysis and signal transduction [14]. The rapid growth in the number of known protein structures and small molecules has intensified the need for computational methods that can accurately and efficiently predict these binding sites, supplementing or bypassing costly experimental techniques like X-ray crystallography [14]. However, the reliability of these computational models is critically dependent on the quality of the data on which they are trained. Recent research has revealed that widespread issues like train-test data leakage and dataset redundancies have severely inflated the perceived performance of many models, leading to a significant overestimation of their real-world generalization capabilities [15]. This application note explores these data challenges, presents a structure-based filtering solution, and details protocols for leveraging these advancements to achieve more robust predictions of binding sites and molecular interactions.

Quantitative Performance Assessment

The following tables summarize key quantitative findings from recent studies that address data quality and model generalization in binding site and affinity prediction.

Table 1: Impact of PDBbind CleanSplit on Model Generalization Performance (CASF Benchmark) [15]

| Model / Training Condition | Reported Performance (Original PDBbind) | Performance (PDBbind CleanSplit) | Key Metric |

|---|---|---|---|

| GenScore (Retrained) | Excellent | Substantially Dropped | Binding Affinity Prediction |

| Pafnucy (Retrained) | Excellent | Substantially Dropped | Binding Affinity Prediction |

| GEMS (Graph Neural Network) | Not Applicable | State-of-the-Art | Binding Affinity Prediction |

Table 2: Performance of LABind on Benchmark Datasets for Binding Site Prediction [14]

| Evaluation Metric | LABind Performance | Significance |

|---|---|---|

| AUC (Area Under the ROC Curve) | Superior to baseline methods | Overall model discriminative ability |

| AUPR (Area Under the Precision-Recall Curve) | Superior to baseline methods | Better performance on imbalanced classification |

| MCC (Matthews Correlation Coefficient) | Superior to baseline methods | Robust measure for binary classification |

| F1 Score | Superior to baseline methods | Balance between precision and recall |
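For reference, MCC and F1 can both be computed from the four confusion-matrix counts of a per-residue binding-site classifier. The counts in the example are hypothetical and are not results from [14].

```python
import math


def f1_score(tp, fp, fn):
    """F1 = 2TP / (2TP + FP + FN), the harmonic mean of precision and recall."""
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom else 0.0


def matthews_corrcoef(tp, fp, fn, tn):
    """MCC = (TP*TN - FP*FN) / sqrt((TP+FP)(TP+FN)(TN+FP)(TN+FN))."""
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    return (tp * tn - fp * fn) / denom if denom else 0.0


# Hypothetical protein with 100 residues, 10 of which are true binding residues.
f1 = f1_score(tp=8, fp=2, fn=2)
mcc = matthews_corrcoef(tp=8, fp=2, fn=2, tn=88)
```

Because binding residues are usually a small minority, MCC and AUPR tend to be more informative than plain accuracy, which a classifier predicting "non-binding" everywhere would already score highly on.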

Application Notes & Protocols

Protocol: Curation of a Non-Leaking Training Set Using PDBbind CleanSplit

Background: The standard practice of training on the PDBbind database and testing on the Comparative Assessment of Scoring Functions (CASF) benchmark has been compromised by data leakage, with nearly 49% of CASF complexes having highly similar counterparts in the training set [15]. This protocol outlines the steps to create a rigorously filtered dataset.

Methodology: Structure-Based Filtering Algorithm [15]

- Multi-Modal Similarity Calculation: For every protein-ligand complex in both the training (PDBbind) and test (CASF) sets, compute a combined similarity score using three metrics:

- Protein Similarity: Calculated using TM-scores.

- Ligand Similarity: Calculated using Tanimoto scores (based on molecular fingerprints).

- Binding Conformation Similarity: Calculated using pocket-aligned ligand root-mean-square deviation (RMSD).

- Train-Test Separation: Identify and remove all training complexes that are structurally similar to any test complex based on defined thresholds for the combined similarity metrics. This step also explicitly removes training complexes whose ligands are identical or nearly identical to those in the test set (Tanimoto > 0.9).

- Redundancy Reduction within Training Set: Apply adapted filtering thresholds to identify similarity clusters within the training data itself. Iteratively remove complexes from these clusters until the most striking redundancies are resolved, encouraging the model to learn generalizable patterns rather than memorizing.

Key Outcome: The resulting PDBbind CleanSplit dataset is strictly separated from the CASF benchmarks, enabling a genuine evaluation of a model's ability to generalize to unseen protein-ligand complexes [15].
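The ligand-identity rule from the train-test separation step can be sketched in a few lines. This is a minimal illustration, not the published implementation: fingerprints are represented as plain sets of on-bit indices (in practice they would come from a cheminformatics toolkit), the function names are hypothetical, and the protein and binding-pose criteria are left out.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient between two fingerprints given as sets of on-bit indices."""
    if not fp_a and not fp_b:
        return 0.0
    shared = len(fp_a & fp_b)
    return shared / (len(fp_a) + len(fp_b) - shared)


def remove_test_like_ligands(train_fps, test_fps, threshold=0.9):
    """Keep training IDs whose ligand is not near-identical to any test ligand."""
    kept = []
    for train_id, train_fp in train_fps.items():
        max_sim = max((tanimoto(train_fp, fp) for fp in test_fps.values()), default=0.0)
        if max_sim <= threshold:
            kept.append(train_id)
    return kept


# Toy fingerprints (hypothetical bit indices, not real molecules).
train = {"train_A": {1, 2, 3, 4, 5, 6, 7, 8, 9, 10}, "train_B": {1, 2, 20, 21}}
test = {"test_X": {1, 2, 3, 4, 5, 6, 7, 8, 9, 10}}
kept = remove_test_like_ligands(train, test)
print(kept)
```

Here `train_A` shares every bit with the test ligand and is dropped, while `train_B` overlaps only weakly and is retained.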

Protocol: Ligand-Aware Binding Site Prediction with LABind

Background: Many existing methods for binding site prediction are either tailored to specific ligands or ignore ligand information altogether, limiting their practicality and generalizability to novel compounds [14]. LABind provides a unified, structure-based framework for predicting binding sites for small molecules and ions in a ligand-aware manner.

Methodology: Graph Transformer with Cross-Attention [14]

- Input Representation:

- Ligand: The ligand's SMILES sequence is input into the MolFormer pre-trained molecular language model to obtain a foundational ligand representation.

- Protein: The protein sequence is processed by the Ankh pre-trained protein language model to generate sequence embeddings. The protein's 3D structure is analyzed by DSSP to obtain structural features (e.g., secondary structure). These are concatenated to form a protein-DSSP embedding.

- Graph Construction and Encoding: The protein's 3D structure is converted into a graph where nodes represent residues. Node features include the protein-DSSP embedding and spatial features (angles, distances). A graph transformer captures complex binding patterns from the local spatial context of the protein.

- Cross-Attention for Interaction Learning: A cross-attention mechanism is employed between the ligand representation and the protein graph representation. This allows the model to learn distinct binding characteristics specific to the given protein-ligand pair.

- Binding Site Prediction: The output from the interaction learning module is fed into a multi-layer perceptron (MLP) classifier to predict the probability of each residue being part of a binding site.

Key Outcome: LABind can effectively integrate ligand information to predict binding sites not only for ligands seen during training but also for unseen ligands, demonstrating robust generalization [14].

Diagram 1: The LABind architecture integrates protein and ligand information through a cross-attention mechanism to predict binding sites.
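To make the cross-attention step more concrete, the sketch below lets each residue vector attend over a set of ligand token vectors and then maps the result to a per-residue probability. It is a toy illustration under strong assumptions: the learned query, key, and value projections are omitted, the MLP head is replaced by a fixed linear layer, and all embedding values are invented. It is not the LABind code.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def cross_attention(residue_vecs, ligand_vecs):
    """Each residue (query) attends over ligand tokens (keys and values).

    Learned projections are omitted (identity), an assumption made to keep
    the sketch short.
    """
    dim = len(ligand_vecs[0])
    updated = []
    for query in residue_vecs:
        scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
                  for key in ligand_vecs]
        weights = softmax(scores)
        # Weighted sum of ligand vectors gives a ligand-conditioned residue vector.
        context = [sum(w * vec[d] for w, vec in zip(weights, ligand_vecs))
                   for d in range(dim)]
        updated.append(context)
    return updated


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


# Toy embeddings (hypothetical values): 3 residues, 2 ligand tokens, dimension 2.
residues = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
ligand = [[2.0, 0.0], [0.0, 2.0]]
contexts = cross_attention(residues, ligand)

# Stand-in for the MLP classifier: a fixed linear layer plus sigmoid per residue.
head_weights, head_bias = [1.0, -1.0], 0.0
probs = [sigmoid(sum(w * c for w, c in zip(head_weights, ctx)) + head_bias)
         for ctx in contexts]
print(probs)
```

Because the ligand vectors enter as keys and values, changing the ligand changes every residue's output, which is the sense in which the prediction is ligand-aware.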

Protocol: Meta-Learned Data Curation with DataRater

Background: The quality of foundation models is heavily dependent on their training data. Manually curating large datasets with hand-crafted heuristics is not scalable. The DataRater framework meta-learns the value of individual data points to automate dataset curation [16].

Methodology: Meta-Gradient-Based Valuation [16]

- Meta-Objective Definition: The core objective is to improve training efficiency, as measured by performance on a held-out validation dataset. The DataRater model is trained to assign a preference weight to each training data point.

- Meta-Training Loop: The process involves two levels of learning:

- Inner Loop: A model (e.g., a binding affinity predictor) is trained on a batch of data where each example is weighted by the current DataRater.

- Outer Loop: The performance of this trained model is evaluated on a clean, held-out validation set. The gradients of this validation loss with respect to the DataRater's parameters (which determine the weights it assigns) are computed; these are the meta-gradients.

- Parameter Update: The DataRater's parameters are updated using these meta-gradients, effectively learning to assign higher weights to data that leads to better generalization on the validation set.

Key Outcome: Using DataRater to filter training data can lead to significant improvements in compute efficiency (e.g., up to 46.6% net compute gain reported) and frequently improves final model performance [16].

Diagram 2: The DataRater meta-learning cycle uses validation performance to learn the value of training data points.
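The two-level loop can be illustrated with a deliberately tiny example. In this sketch the predictor is a one-parameter model `y = theta * x`, the rater is reduced to one logit per training example instead of a neural network, and the meta-gradient is written out by hand with the chain rule. All of these are simplifying assumptions for illustration and do not reproduce the DataRater implementation.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def meta_train(train, val, inner_lr=0.05, meta_lr=0.5, meta_steps=50):
    """Learn one weight per training example from validation meta-gradients.

    Toy setting (assumption): squared-error loss on y = theta * x, and a
    rater that is just one logit per example.
    """
    n = len(train)
    logits = [0.0] * n
    for _ in range(meta_steps):
        theta = 0.0  # restart the inner model at every meta-step
        weights = [sigmoid(s) for s in logits]
        # Inner loop: one weighted gradient step on the training data.
        grads = [2.0 * x * (theta * x - y) for x, y in train]
        theta_new = theta - inner_lr * sum(w * g for w, g in zip(weights, grads)) / n
        # Outer loop: gradient of the validation loss at the updated model.
        val_grad = sum(2.0 * x * (theta_new * x - y) for x, y in val) / len(val)
        # Chain rule: dL_val/dlogit_i = dL_val/dtheta_new * dtheta_new/dw_i * dw_i/dlogit_i
        for i in range(n):
            dtheta_dw = -inner_lr * grads[i] / n
            dw_dlogit = weights[i] * (1.0 - weights[i])
            logits[i] -= meta_lr * val_grad * dtheta_dw * dw_dlogit
    return [sigmoid(s) for s in logits]


# Clean examples follow y = 2x; the last training example is deliberately corrupted.
train_data = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0), (1.5, -3.0)]
val_data = [(1.0, 2.0), (2.0, 4.0)]
learned_weights = meta_train(train_data, val_data)
print([round(w, 3) for w in learned_weights])
```

The corrupted example pushes the model away from the validation data, so its weight is driven down, while the clean examples are up-weighted.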

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 3: Key Computational Tools and Resources for Binding Site and Interaction Research

| Tool / Resource Name | Type | Primary Function & Application |

|---|---|---|

| PDBbind Database [15] | Database | A comprehensive database of protein-ligand complexes with experimentally measured binding affinity data, used for training scoring functions. |

| CASF Benchmark [15] | Benchmarking Suite | A benchmark set for the comparative assessment of scoring functions, used for evaluating the generalization power of affinity prediction models. |

| PDBbind CleanSplit [15] | Curated Dataset | A structure-filtered version of PDBbind designed to eliminate train-test data leakage, enabling realistic model evaluation. |

| LABind [14] | Software Tool | A graph transformer-based model for predicting protein binding sites for small molecules and ions in a ligand-aware manner. |

| HERGAI [17] | AI Model | A structure-based AI tool for predicting inhibitors of the hERG potassium channel, crucial for assessing cardiotoxicity in drug discovery. |

| DataRater [16] | Meta-Learning Framework | A system that meta-learns the value of individual data points to automate the curation of high-quality training datasets. |

| Smina [17] [14] | Software Tool | A fork of AutoDock Vina used for molecular docking, often employed to generate binding poses for input to machine learning models. |

| AlphaFold [18] | AI Model | A protein structure prediction tool that can generate highly accurate 3D protein models for targets with unknown structures. |

| MolFormer [14] | AI Model | A pre-trained molecular language model that generates molecular representations from SMILES strings, used in LABind for ligand encoding. |

| Ankh [14] | AI Model | A pre-trained protein language model that generates protein sequence representations, used in LABind for protein encoding. |

In modern computational drug discovery, the curation of high-quality datasets is a foundational step for developing robust filtering and machine learning algorithms. The process hinges on leveraging authoritative, well-annotated molecular databases to obtain reliable protein structures and small molecule compounds. The Protein Data Bank (PDB) and the ZINC database represent two cornerstone resources in this ecosystem, providing experimentally determined 3D structures of biological macromolecules and commercially available, ready-to-dock small molecules, respectively [19] [20]. Framed within the context of research on structure-based filtering algorithms for dataset curation, this document outlines detailed application notes and protocols for the acquisition, preparation, and integration of data from these critical resources. The methodologies described herein are designed to ensure that researchers can construct datasets that are both findable and biologically relevant, thereby enhancing the efficacy of downstream virtual screening and machine learning tasks.

A clear understanding of the scope and content of primary databases is crucial for effective experimental design. The following tables summarize key quantitative and qualitative information for the core databases discussed in this protocol.

Table 1: Core Molecular Databases for Structure-Based Research

| Database Name | Primary Content | Number of Entries/Compounds | Key Features and Formats |

|---|---|---|---|

| RCSB Protein Data Bank (PDB) [19] | Experimentally determined 3D structures of proteins, nucleic acids, and complexes. | Over 230,000 entries (as of 2025) [21]. | Structures from X-ray crystallography, cryo-EM, and NMR; formats: PDBx/mmCIF, PDBML/XML, legacy PDB; includes computed structure models from AlphaFold DB. |

| ZINC [20] [22] | Commercially available compounds for virtual screening. | Over 230 million "ready-to-dock" compounds; over 750 million purchasable compounds for analog searching. | Molecules annotated with purchasability and biogenic class (e.g., metabolites, drugs); pre-calculated physicochemical properties (e.g., MW, logP); formats: SDF, mol2, SMILES. |

| Collection of Open Natural Products (COCONUT) | Natural products. | ~695,000 molecules [23]. | Diverse chemical structures; useful for identifying novel bioactive compounds. |

Table 2: Key Protein Data Bank (PDB) File Download Services [24]

| File Format | Description | Example Download URL (Compressed) |

|---|---|---|

| PDBx/mmCIF | Standard, rich format for structural data. | https://files.wwpdb.org/download/4hhb.cif.gz |

| PDBx/BinaryCIF | Binary, efficient-to-parse version of mmCIF. | https://models.rcsb.org/4hhb.bcif.gz |

| PDBML/XML | XML representation of PDB data. | https://files.wwpdb.org/download/4hhb.xml.gz |

| Legacy PDB | Original format; limited for large structures. | https://files.wwpdb.org/download/4hhb.pdb.gz |

| Biological Assembly | File representing the functional oligomeric state. | https://files.wwpdb.org/download/5a9z-assembly1.cif.gz |
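For scripted retrieval, the URL patterns in the table above can be wrapped in a small helper. The sketch below only builds the URL strings and makes no network request. The function name and the format keys are hypothetical labels, and lowercasing the ID and restricting it to four alphanumeric characters are assumptions based on the example URLs shown.

```python
# URL templates copied from the table above; the keys are hypothetical labels.
URL_TEMPLATES = {
    "mmcif": "https://files.wwpdb.org/download/{pdb_id}.cif.gz",
    "bcif": "https://models.rcsb.org/{pdb_id}.bcif.gz",
    "pdbml": "https://files.wwpdb.org/download/{pdb_id}.xml.gz",
    "pdb": "https://files.wwpdb.org/download/{pdb_id}.pdb.gz",
    "assembly": "https://files.wwpdb.org/download/{pdb_id}-assembly{assembly}.cif.gz",
}


def pdb_download_url(pdb_id, fmt="mmcif", assembly=1):
    """Build a compressed-file download URL for a four-character PDB ID."""
    if fmt not in URL_TEMPLATES:
        raise ValueError(f"unknown format: {fmt}")
    if len(pdb_id) != 4 or not pdb_id.isalnum():
        raise ValueError(f"expected a four-character PDB ID, got: {pdb_id!r}")
    # The example URLs use lowercase IDs, so the ID is lowercased here (assumption).
    return URL_TEMPLATES[fmt].format(pdb_id=pdb_id.lower(), assembly=assembly)


print(pdb_download_url("4HHB"))
print(pdb_download_url("5a9z", fmt="assembly"))
```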

Workflow for Structure-Based Virtual Screening

The following diagram illustrates the integrated protocol for leveraging PDB and ZINC in a structure-based virtual screening campaign, incorporating machine learning filtering as detailed in the subsequent case study.

Case Study: Identifying Natural Inhibitors of βIII-Tubulin

This protocol exemplifies a structure-based filtering pipeline to identify natural product inhibitors targeting the 'Taxol site' of the human αβIII tubulin isotype, a target associated with cancer drug resistance [25]. The workflow integrates homology modeling, virtual screening, and machine learning-based filtering to curate a high-value dataset for experimental follow-up.

Experimental Procedures

Homology Modeling of the Target Protein

Objective: To construct a 3D atomic model of the human αβIII tubulin isotype when an experimental structure is unavailable.

Template Identification and Retrieval:

- Retrieve the canonical sequence for human βIII-tubulin from UniProt (ID: Q13509).

- Search the PDB for a suitable template using the BLAST service on the RCSB PDB website. The crystal structure of αIBβIIB tubulin bound to Taxol (PDB ID: 1JFF) is an ideal template, sharing 100% sequence identity with human β-tubulin [25].

- Download the template structure in mmCIF format using the URL: https://files.wwpdb.org/download/1JFF.cif.gz [24].

Model Building:

- Use homology modeling software such as Modeller 10.2 to build the 3D coordinates of the βIII-tubulin isotype.

- To preserve the ligand-binding pocket, keep the αIB-tubulin chain, GTP, Mg²⁺, GDP, and the Taxol molecule from the 1JFF template. Replace only the βIIB-tubulin chain with the newly modeled βIII-tubulin using molecular visualization software (e.g., PyMol).

Model Validation:

- Select the final homology model based on the Discrete Optimized Protein Energy (DOPE) score.

- Validate the stereo-chemical quality of the model using PROCHECK to analyze the Ramachandran plot, ensuring over 90% of residues are in the most favored regions [25].

Ligand Library Preparation from ZINC

Objective: To prepare a library of natural compounds for docking into the target site.

Library Acquisition:

Format Conversion:

- Use a tool like Open Babel to convert the downloaded SDF files into the PDBQT format required by docking software like AutoDock Vina [25].

- Command line example:

obabel -i sdf input.sdf -o pdbqt -O output.pdbqt

Structure-Based Virtual Screening (SBVS)

Objective: To rapidly screen millions of compounds and identify a manageable subset of top-ranking hits based on predicted binding energy.

Define the Binding Site:

- The binding site is defined by the coordinates of the Taxol molecule in the homology model (or template structure).

High-Throughput Docking:

- Use docking software such as AutoDock Vina or InstaDock to dock the entire prepared ZINC natural products library into the target Taxol site.

- Configure the software to output binding affinity (estimated ΔG in kcal/mol) for each compound.

Initial Hit Selection:

- Sort all docked compounds by their binding energy.

- Select the top 1,000 compounds with the most favorable (most negative) binding energies for further refinement via machine learning [25].
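The hit-selection step amounts to a sort on predicted binding energy. A minimal sketch, assuming the docking scores have already been parsed into a dictionary keyed by compound ID (the IDs and energies below are invented):

```python
import heapq


def select_top_hits(scores, n_hits=1000):
    """Return the n_hits (compound_id, energy) pairs with the most negative energy."""
    return heapq.nsmallest(n_hits, scores.items(), key=lambda item: item[1])


# Hypothetical docking results in kcal/mol.
docking_scores = {"cmpd_1": -7.2, "cmpd_2": -9.8, "cmpd_3": -5.1, "cmpd_4": -8.4}
top_two = select_top_hits(docking_scores, n_hits=2)
print(top_two)
```

`heapq.nsmallest` avoids sorting the full library when only a small fraction of a multi-million-compound screen is retained.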

Machine Learning-Based Filtering

Objective: To further refine the docking hits by distinguishing compounds with "drug-like" and "target-specific" properties from those that merely dock well.

Training Data Curation:

- Active Compounds: Compile a set of known Taxol-site targeting drugs (e.g., from existing literature or databases like ChEMBL).

- Inactive Compounds: Compile a set of molecules known not to target the Taxol site (e.g., drugs acting on different pathways). Use the DUD-E server to generate decoy molecules with similar physicochemical properties but different topologies to the actives [25].

Feature Generation:

- Calculate molecular descriptors and fingerprints for both the training data and the 1,000 docking hits. Use software like PaDEL-Descriptor to generate ~800 1D, 2D, and 3D molecular descriptors from the compounds' SMILES strings [25].

Model Training and Validation:

- Train multiple machine learning classifiers (e.g., Decision Tree, Support Vector Machine, k-Nearest Neighbors) on the training data to distinguish actives from inactives.

- Use 5-fold cross-validation and evaluate performance using metrics like Accuracy, Precision, Recall, and Area Under the ROC Curve (AUC). An AUC of 0.5 corresponds to random ranking; a value above 0.7 is taken in the referenced work to indicate good predictive power [25] [23].
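For readers who want to see what the AUC measures, it can be computed directly as the fraction of active/inactive pairs in which the active compound receives the higher score. The sketch below uses this pairwise definition on invented labels and scores; it is meant for illustration and is too slow for large sets.

```python
def roc_auc(labels, scores):
    """AUC as the probability that a random active outscores a random inactive."""
    positives = [s for s, y in zip(scores, labels) if y == 1]
    negatives = [s for s, y in zip(scores, labels) if y == 0]
    if not positives or not negatives:
        raise ValueError("need at least one active and one inactive compound")
    wins = 0.0
    for p in positives:
        for q in negatives:
            if p > q:
                wins += 1.0
            elif p == q:
                wins += 0.5  # ties count as half a win
    return wins / (len(positives) * len(negatives))


# Hypothetical classifier outputs: 1 = active, 0 = inactive/decoy.
labels = [1, 1, 1, 0, 0, 0]
scores = [0.9, 0.8, 0.4, 0.7, 0.3, 0.2]
auc = roc_auc(labels, scores)
print(round(auc, 3))
```

In this toy set, eight of the nine active/inactive pairs are ranked correctly.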

Hit Prediction and Integration:

- Use the trained ML models to predict the activity of the 1,000 docking hits.

- Select only those compounds predicted to be active by all models (consensus prediction) to minimize false positives. This process refined the initial 1,000 hits down to 20 high-confidence active natural compounds in the referenced study [25].
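The consensus rule is a set intersection over the per-model predictions. A minimal sketch, with hypothetical model names and compound IDs:

```python
def consensus_actives(predictions):
    """Return sorted compound IDs predicted active (label 1) by every model.

    predictions maps model name -> {compound_id: 0 or 1}.
    """
    per_model_actives = [
        {cid for cid, label in preds.items() if label == 1}
        for preds in predictions.values()
    ]
    if not per_model_actives:
        return []
    return sorted(set.intersection(*per_model_actives))


# Hypothetical predictions from three classifiers on four docking hits.
predictions = {
    "decision_tree": {"hit_1": 1, "hit_2": 1, "hit_3": 0, "hit_4": 1},
    "svm": {"hit_1": 1, "hit_2": 0, "hit_3": 0, "hit_4": 1},
    "knn": {"hit_1": 1, "hit_2": 1, "hit_3": 1, "hit_4": 1},
}
consensus = consensus_actives(predictions)
print(consensus)
```

Requiring unanimity trades recall for precision: a compound rejected by any one model is discarded.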

ADME-T and Toxicity Prediction

Objective: To filter the ML-refined hits for compounds with favorable drug-like properties and low potential toxicity.

- Property Calculation: Use computational tools (e.g., SwissADME, ProTox-II) to predict Absorption, Distribution, Metabolism, Excretion, and Toxicity (ADME-T) properties for the final 20 hits.

- Activity Prediction: Perform PASS (Prediction of Activity Spectra for Substances) analysis to identify other potential biological activities and flag compounds with undesirable off-target effects.

- Selection: Select the top 4 compounds (e.g., ZINC12889138, ZINC08952577, ZINC08952607, ZINC03847075) that exhibited exceptional ADME-T properties and notable predicted anti-tubulin activity [25].

Molecular Dynamics Validation

Objective: To confirm the stability of the ligand-protein complex and the reliability of the docking pose over time.

- System Setup: Solvate the protein-ligand complex in a water box and add ions to neutralize the system.

- Simulation Run: Run a molecular dynamics (MD) simulation (e.g., for 100 nanoseconds) using software like GROMACS or AMBER.

- Trajectory Analysis: Calculate key metrics including Root Mean Square Deviation (RMSD) for protein backbone stability, Root Mean Square Fluctuation (RMSF) for residue flexibility, Radius of Gyration (Rg) for compactness, and Solvent Accessible Surface Area (SASA). A stable complex will show a low RMSD that plateaus over the trajectory and no large fluctuations in the other parameters.

- Binding Affinity Calculation: Use MM/GBSA or MM/PBSA methods on the MD trajectories to calculate the binding free energy, providing a more robust affinity estimate than docking scores alone [25].
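Two of the trajectory metrics above have simple closed forms. The sketch below computes RMSD and an unweighted radius of gyration for plain coordinate lists. It assumes the frames have already been superimposed on the reference and treats all atoms as having equal mass; MD packages handle both points properly, so this is only an illustration of the definitions.

```python
import math


def rmsd(coords_a, coords_b):
    """RMSD between two equally ordered coordinate lists, assumed already superimposed."""
    if len(coords_a) != len(coords_b):
        raise ValueError("coordinate sets must have the same number of atoms")
    squared = sum((a - b) ** 2
                  for point_a, point_b in zip(coords_a, coords_b)
                  for a, b in zip(point_a, point_b))
    return math.sqrt(squared / len(coords_a))


def radius_of_gyration(coords):
    """Unweighted radius of gyration (equal atomic masses, an assumption)."""
    n = len(coords)
    center = [sum(point[d] for point in coords) / n for d in range(3)]
    squared = sum((point[d] - center[d]) ** 2 for point in coords for d in range(3))
    return math.sqrt(squared / n)


# Toy two-atom system (hypothetical coordinates in Å); the frame is shifted 1 Å along z.
reference = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
frame = [(0.0, 0.0, 1.0), (1.0, 0.0, 1.0)]
print(rmsd(reference, frame), radius_of_gyration(reference))
```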

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for Database Curation and Analysis

| Resource Name | Type | Function in Workflow | Access Link |

|---|---|---|---|

| RCSB PDB API [24] | Web Service | Programmatic access to search, retrieve, and analyze PDB data. | https://www.rcsb.org/docs |

| wwPDB File Download | Data Repository | Bulk download of PDB structures in mmCIF, XML, and PDB formats. | https://files.wwpdb.org |

| ZINC15 Subset Browser [22] | Database Interface | Graphically browse and filter purchasable compounds by biogenic class, drug-likeness, etc. | https://zinc15.docking.org |

| PaDEL-Descriptor [25] | Software | Calculate 1D, 2D, and 3D molecular descriptors/fingerprints for ML from chemical structures. | http://www.yapcwsoft.com/dd/padeldescriptor/ |

| DUD-E Server [25] | Web Server | Generate decoy molecules for training machine learning models to reduce false positives. | http://dude.docking.org |

| Open Babel | Software Tool | Convert chemical file formats between hundreds of formats (e.g., SDF to PDBQT). | http://openbabel.org |

| DSSP 4 [21] | Software/Database | Annotate protein secondary structure elements following FAIR principles; crucial for characterizing targets. | https://pdb-redo.eu/dssp |

Structure-based drug design (SBDD) leverages computational methods to discover and optimize therapeutic candidates by predicting how small molecules interact with biological targets. Molecular docking, virtual screening, and binding affinity prediction form the foundational computational toolkit for this process, enabling researchers to rapidly identify and prioritize promising compounds from vast chemical libraries [26] [27]. These methods have become indispensable in pharmaceutical research, significantly reducing the time and cost associated with experimental screening alone [26].

The reliability of these computational techniques is critically dependent on the quality of the underlying data. Recent research highlights that dataset curation, particularly through structure-based filtering algorithms, is paramount for developing models that generalize well to novel targets and compounds. Issues such as data leakage and redundancy in public datasets have been shown to severely inflate performance metrics, leading to over-optimistic assessments of model capabilities [15]. This application note details established protocols and emerging best practices in molecular docking, virtual screening, and affinity prediction, framed within the essential context of rigorous data curation for robust model development.

Molecular Docking: Principles and Protocols

Physical Basis and Molecular Recognition

Molecular docking computationally simulates the atomic-level association between a protein (receptor) and a small molecule (ligand) to predict the stable conformation of the resulting complex [26]. This binding is driven by non-covalent interactions, and the formation of a stable complex is governed by a decrease in the system's Gibbs free energy, as described by the equation:

ΔGbind = ΔH - TΔS [26]

Where ΔGbind is the change in Gibbs free energy, ΔH is the change in enthalpy, T is the absolute temperature, and ΔS is the change in entropy. The key intermolecular forces facilitating binding include [26]:

- Hydrogen Bonds: Electrostatic attractions between a hydrogen atom bonded to an electronegative donor (e.g., O, N) and another electronegative acceptor atom.

- Ionic Interactions: Attractions between oppositely charged amino acid residues and ligand functional groups.

- Van der Waals Interactions: Weak, non-specific attractions between transient dipoles in electron clouds of adjacent atoms.

- Hydrophobic Interactions: Entropy-driven association of non-polar groups to minimize disruptive interactions with the aqueous solvent.

The process of molecular recognition is commonly described by three conceptual models [26]:

- Lock-and-Key: The protein and ligand are rigid and possess pre-complementary shapes.

- Induced-Fit: The binding site undergoes a conformational change to accommodate the ligand.

- Conformational Selection: The protein exists in an ensemble of conformations, and the ligand selectively binds to the most complementary state.

Search Algorithms and Scoring Functions

A docking algorithm must solve two core problems: exploring the vast conformational space of the ligand within the binding site (search algorithm), and identifying the correct pose by estimating the binding strength (scoring function) [27].

Table 1: Common Conformational Search Algorithms in Molecular Docking

| Algorithm Type | Description | Key Characteristics | Example Software |

|---|---|---|---|

| Systematic Search | Rotates all rotatable bonds by fixed intervals to exhaustively explore conformations [27]. | Computationally intensive; complexity grows exponentially with rotatable bonds. | Glide, FRED |

| Incremental Construction | Fragments the ligand, docks rigid core fragments, and rebuilds the molecule with flexible linkers [27]. | Reduces complexity by focusing on flexible linkers between rigid fragments. | FlexX, DOCK |

| Monte Carlo | Makes random changes to conformation; new states are accepted based on energy and Boltzmann probability [27]. | Stochastic; can escape local minima. | Glide |

| Genetic Algorithm | Encodes torsions as "genes"; populations of conformations evolve via mutation and crossover based on a fitness score [27]. | Inspired by natural selection; effective for complex flexibility. | AutoDock, GOLD |
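The Monte Carlo entry in the table relies on the Metropolis criterion: downhill moves are always accepted, and uphill moves are accepted with Boltzmann probability exp(-ΔE/kT). The sketch below applies it to a one-dimensional toy energy function standing in for a single torsion angle. The energy function, step size, and kT value are hypothetical choices for illustration, not settings from any docking program.

```python
import math
import random


def metropolis_accept(delta_e, kt, rng):
    """Accept downhill moves always, uphill moves with Boltzmann probability."""
    if delta_e <= 0:
        return True
    return rng.random() < math.exp(-delta_e / kt)


def monte_carlo_search(energy, start, steps=2000, step_size=0.5, kt=0.6, seed=0):
    """Random-walk search that tracks the lowest-energy state visited."""
    rng = random.Random(seed)
    current, current_e = start, energy(start)
    best, best_e = current, current_e
    for _ in range(steps):
        trial = current + rng.uniform(-step_size, step_size)
        trial_e = energy(trial)
        if metropolis_accept(trial_e - current_e, kt, rng):
            current, current_e = trial, trial_e
            if current_e < best_e:
                best, best_e = current, current_e
    return best, best_e


def toy_energy(angle):
    """Hypothetical 1-D energy surface with several local minima."""
    return math.cos(3 * angle) + 0.1 * angle * angle


best_angle, best_energy = monte_carlo_search(toy_energy, start=3.0)
print(best_angle, best_energy)
```

Occasionally accepting uphill moves is what lets the search leave a local minimum, at the cost of needing many more energy evaluations than a pure descent.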

Scoring functions are designed to approximate the binding free energy (ΔGbind) by evaluating the physicochemical complementarity of a given protein-ligand pose [27]. They can be broadly categorized as:

- Force-Field-Based: Calculate energies from molecular mechanics terms (e.g., van der Waals, electrostatics).

- Empirical: Use weighted sums of interaction features (e.g., hydrogen bonds, hydrophobic contacts) fitted to experimental data.

- Knowledge-Based: Derive potentials from statistical analyses of atom-pair frequencies in known structures.

Experimental Protocol: A Standard Molecular Docking Workflow

Objective: To predict the binding pose and estimate the binding affinity of a small molecule ligand within a defined protein binding pocket.

Materials and Reagents:

- Protein Structure: A high-resolution 3D structure from X-ray crystallography, cryo-EM, or a predicted model from tools like AlphaFold [28].

- Ligand Structure: A 3D chemical structure of the small molecule, typically in SDF or MOL2 format.

- Docking Software: Such as AutoDock Vina, Glide, GOLD, or Surfdock [29] [27].

- Structure Preparation Tools: For example, the Protein Preparation Wizard (Schrödinger) or UCSF Chimera.

Procedure:

Protein Preparation [27]:

- Hydrogen Addition: Add hydrogen atoms appropriate for the physiological pH.

- Protonation States: Assign correct protonation states to histidine, aspartic acid, glutamic acid, and other ionizable residues.

- Structure Completion: Model any missing loops or side-chain atoms.

- Energy Minimization: Perform a constrained minimization to relieve steric clashes.

Ligand Preparation:

- Generate plausible 3D conformations and tautomers.

- Assign correct bond orders and formal charges.

- Energy-minimize the ligand structure using a molecular mechanics forcefield.

Binding Site Definition:

- If the binding site is known (for example, from a co-crystallized ligand), center the grid box on it. If it is unknown, center the grid box on the suspected binding region, or enclose the entire protein surface for "blind docking."
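A grid box of this kind can be derived from any set of reference coordinates, such as the atoms of a bound ligand or the residues lining a suspected pocket. The sketch below returns the box center and edge lengths; the function name and the 5 Å default padding are hypothetical choices for illustration.

```python
def grid_box_from_coords(coords, padding=5.0):
    """Center and edge lengths (Å) of an axis-aligned box enclosing coords plus padding."""
    if not coords:
        raise ValueError("at least one coordinate is required")
    mins = [min(point[d] for point in coords) for d in range(3)]
    maxs = [max(point[d] for point in coords) for d in range(3)]
    center = tuple((lo + hi) / 2.0 for lo, hi in zip(mins, maxs))
    # Padding is added on both sides of each axis.
    size = tuple((hi - lo) + 2.0 * padding for lo, hi in zip(mins, maxs))
    return center, size


# Hypothetical reference atom positions in Å.
reference_atoms = [(0.0, 0.0, 0.0), (4.0, 2.0, 6.0)]
center, size = grid_box_from_coords(reference_atoms)
print(center, size)
```

The resulting center and size values correspond to the search-space parameters that most docking programs ask for.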

Molecular Docking Execution:

- Configure the docking software with the prepared structures and defined search space.

- Select an appropriate search algorithm and scoring function.

- Run the docking simulation to generate multiple candidate binding poses.

Post-processing and Analysis:

- Pose Clustering: Group geometrically similar poses to identify consensus binding modes.

- Rescoring: Optionally, re-score the top poses using a more advanced or consensus scoring function.

- Interaction Analysis: Visually inspect the top-ranked poses to identify key hydrogen bonds, hydrophobic contacts, and pi-stacking interactions.

Virtual Screening: Ligand- and Structure-Based Methods

Virtual screening (VS) computationally evaluates large libraries of compounds to identify molecules with a high probability of binding to a target [28]. It serves two primary purposes: enriching a subset of a large library with active compounds and guiding the detailed optimization of smaller compound series [28].

Ligand-Based vs. Structure-Based Screening

Table 2: Comparison of Virtual Screening Approaches

| Feature | Ligand-Based Virtual Screening | Structure-Based Virtual Screening |

|---|---|---|

| Requirement | Known active ligand(s) [28]. | 3D structure of the target protein [28]. |

| Core Principle | Identifies compounds similar in shape or pharmacophore to known actives [28] [30]. | Docks compounds into the binding pocket to evaluate complementarity [28]. |

| Key Methods | Pharmacophore mapping, shape similarity (ROCS), field alignment (FieldAlign) [28]. | Molecular docking (Glide, AutoDock Vina) [29] [28]. |

| Advantages | Fast, cost-effective; useful when protein structure is unavailable [28]. | Provides atomic-level interaction insights; often better library enrichment [28]. |

| Limitations | Relies on existing ligand data; may miss novel scaffolds [28]. | Computationally expensive; sensitive to protein structure quality [28]. |

The Power of Hybrid Screening Strategies

Integrating ligand- and structure-based methods often yields more reliable results than either approach alone [28]. Two common hybrid strategies are:

- Sequential Integration: A rapid ligand-based screen filters a massive library down to a manageable number of promising candidates (e.g., thousands), which are then subjected to more computationally expensive structure-based docking for refinement [28].

- Parallel Screening with Consensus Scoring: Both ligand- and structure-based methods are run independently on the same library. Their results are combined using a consensus framework (e.g., multiplicative or averaging), favoring compounds that rank highly by both methods, which increases confidence in the selections [28].
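Parallel screening with an averaging consensus can be sketched as rank fusion: each method ranks the library independently and compounds are ordered by their mean rank. The scores below are invented, and mean rank is only one of several possible consensus schemes.

```python
def rank_positions(scores, higher_is_better=True):
    """Map each compound ID to its 1-based rank under one scoring method."""
    ordered = sorted(scores, key=scores.get, reverse=higher_is_better)
    return {cid: position for position, cid in enumerate(ordered, start=1)}


def consensus_ranking(ligand_similarity, docking_energy):
    """Order compounds by the mean of their ligand-based and structure-based ranks."""
    ligand_rank = rank_positions(ligand_similarity, higher_is_better=True)
    # More negative docking energy is better, so lower values rank first.
    docking_rank = rank_positions(docking_energy, higher_is_better=False)
    shared = set(ligand_rank) & set(docking_rank)
    return sorted(shared, key=lambda cid: ((ligand_rank[cid] + docking_rank[cid]) / 2.0, cid))


# Hypothetical results for three compounds.
ligand_similarity = {"a": 0.9, "b": 0.4, "c": 0.7}
docking_energy = {"a": -8.0, "b": -9.5, "c": -6.0}
ranking = consensus_ranking(ligand_similarity, docking_energy)
print(ranking)
```

Compound `a` comes first because it ranks well under both methods, even though `b` has the best docking energy.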

The Impact of AI-Generated Protein Structures

The emergence of AlphaFold and other AI-based protein structure prediction tools has dramatically increased the availability of protein models [28]. However, important considerations for their use in VS include:

- Static Conformation: AI models typically predict a single static state, potentially missing ligand-induced fit mechanisms [28].

- Side-Chain Reliability: While backbone predictions are often accurate, side-chain positioning—critical for specific interactions—can be less reliable without refinement [28].

- Ligand-Bound Models: Newer co-folding methods (e.g., AlphaFold3, Boltz-2) show promise in generating ligand-bound conformations but may struggle with generalizability to novel scaffolds or allosteric sites [28] [15].

Binding Affinity Prediction: From Physical Simulation to Deep Learning

Accurately predicting the binding affinity (e.g., Ki, Kd, IC50) is crucial for prioritizing compounds. The equilibrium constant Keq, taken here as the association constant (the reciprocal of the dissociation constant Kd), relates to the Gibbs free energy via:

ΔGbind = -RT ln Keq [26]

Where R is the gas constant and T is the temperature. Methods for affinity prediction span a spectrum from physics-based simulations to data-driven machine learning models.
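As a worked example of this relationship, the sketch below converts a dissociation constant into a binding free energy using ΔGbind = RT ln Kd, which is the same equation written for the reciprocal of the association constant. A 1 M standard state and a temperature of 298.15 K are assumed, and the function name is hypothetical.

```python
import math

R_KCAL = 1.987204e-3  # gas constant in kcal/(mol*K)


def delta_g_from_kd(kd_molar, temperature_k=298.15):
    """Binding free energy in kcal/mol from a dissociation constant in mol/L."""
    if kd_molar <= 0:
        raise ValueError("Kd must be positive")
    # -RT ln(K_association) equals RT ln(Kd) because K_association = 1 / Kd.
    return R_KCAL * temperature_k * math.log(kd_molar)


dg_nanomolar = delta_g_from_kd(1e-9)
dg_ten_nanomolar = delta_g_from_kd(1e-8)
print(round(dg_nanomolar, 2), round(dg_ten_nanomolar, 2))
```

A 1 nM binder corresponds to roughly -12.3 kcal/mol at room temperature, and each tenfold change in Kd shifts ΔGbind by about 1.4 kcal/mol.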

Table 3: Key Methods for Binding Affinity Prediction

| Method Category | Description | Representative Tools | Key Considerations |

|---|---|---|---|

| Free Energy Perturbation (FEP) | A high-accuracy, physics-based simulation method that calculates the free energy difference between related ligands [28] [31]. | Schrödinger FEP+, OpenFE | High computational cost; requires high-quality structure; limited to congeneric series [31]. |

| Machine Learning (ML) Scoring Functions | Data-driven models trained on protein-ligand complexes to predict affinity directly from structural and chemical features [15] [32]. | GenScore, Pafnucy, GEMS, HPDAF | Performance depends heavily on training data quality; risk of poor generalization [15]. |

| Physics-Informed ML | Hybrid methods that incorporate physical principles (e.g., molecular fields, strain energy) into ML models, bridging the gap between simulation and pure correlation [31]. | QuanSA (Quantitative Surface Analysis) [28] | More generalizable than black-box ML; less expensive than FEP; can model novel scaffolds [31]. |

Critical Considerations for Machine Learning Models

The performance of deep-learning-based scoring functions is highly susceptible to biases in the training data. A major issue identified in recent literature is data leakage between standard training sets (e.g., PDBbind) and benchmark test sets (e.g., CASF) [15]. When models are trained and tested on highly similar complexes, they can achieve high benchmark performance through memorization rather than genuine learning of protein-ligand interactions, leading to a significant overestimation of their real-world generalization capability [15].

Protocol: Mitigating Data Bias with Structure-Based Filtering

Objective: To create a rigorously curated dataset for training and evaluating affinity prediction models, ensuring genuine generalization.

Procedure [15]:

- Source Data: Start with a standard dataset, such as PDBbind.

- Multimodal Similarity Assessment: For every complex in the training set, compute its similarity to every complex in the test set using a combined metric:

- Protein Similarity: Use the TM-score to assess global protein fold similarity.

- Ligand Similarity: Calculate the Tanimoto coefficient based on molecular fingerprints.

- Binding Conformation Similarity: Compute the pocket-aligned ligand root-mean-square deviation (RMSD).

- Apply Filtering Thresholds: Remove any training complex that exceeds similarity thresholds with any test complex (e.g., TM-score > 0.7, Tanimoto > 0.9, RMSD < 2.0 Å). This step eliminates direct train-test leakage.

- Reduce Internal Redundancy: Within the training set itself, identify high-similarity clusters and remove complexes from them, so that a model cannot reach a low training loss simply by memorizing near-duplicate complexes.

- Output: The result is a filtered, non-redundant dataset (e.g., PDBbind CleanSplit) suitable for training models whose benchmark performance will reflect true generalization ability [15].
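The procedure above can be sketched as two passes over precomputed similarity values. Several points are assumptions: a train-test pair is treated as leaking only when all three example thresholds are met at once, the redundancy pass is a simple greedy filter and not the iterative cluster-based removal described in [15], and all IDs and metric values are invented.

```python
def leaks(tm_score, tanimoto, pocket_rmsd, tm_min=0.7, tanimoto_min=0.9, rmsd_max=2.0):
    """True when a train-test pair meets all three example similarity criteria (assumption)."""
    return tm_score > tm_min and tanimoto > tanimoto_min and pocket_rmsd < rmsd_max


def filter_training_set(train_ids, test_ids, pair_metrics):
    """Drop training complexes that leak with any test complex.

    pair_metrics maps (train_id, test_id) -> (tm_score, tanimoto, pocket_rmsd).
    """
    kept = []
    for train_id in train_ids:
        if not any(leaks(*pair_metrics[(train_id, test_id)]) for test_id in test_ids):
            kept.append(train_id)
    return kept


def reduce_redundancy(train_ids, is_redundant):
    """Greedy pass: keep a complex only if it is not redundant with one already kept."""
    kept = []
    for candidate in train_ids:
        if not any(is_redundant(candidate, other) for other in kept):
            kept.append(candidate)
    return kept


# Hypothetical precomputed metrics for three training complexes and one test complex.
metrics = {
    ("t1", "s1"): (0.95, 0.95, 0.8),  # same fold, same ligand, same pose
    ("t2", "s1"): (0.30, 0.20, 9.0),
    ("t3", "s1"): (0.90, 0.30, 1.0),  # similar protein, different ligand
}
separated = filter_training_set(["t1", "t2", "t3"], ["s1"], metrics)

redundant_pairs = {frozenset({"t2", "t3"})}
final = reduce_redundancy(separated, lambda a, b: frozenset({a, b}) in redundant_pairs)
print(separated, final)
```

Computing the three metrics for every train-test pair is the expensive part in practice; the filtering logic itself is a cheap lookup.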

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Computational Tools for Structure-Based Drug Design

| Tool Name | Primary Function | Key Features / Use Case |

|---|---|---|

| AutoDock Vina | Molecular Docking [29] | Open-source, widely used for binding pose prediction and virtual screening. |

| Glide (Schrödinger) | High-Accuracy Docking [29] | Known for superior pose prediction accuracy and physical validity; uses systematic and Monte Carlo search methods [29] [27]. |

| AlphaFold2/3 | Protein Structure Prediction [28] [15] | Provides high-quality protein models when experimental structures are unavailable. |

| GEMS | Deep-Learning Affinity Prediction [15] | Graph neural network model demonstrating robust generalization on curated benchmarks like PDBbind CleanSplit. |

| HPDAF | Multimodal Affinity Prediction [32] | Integrates protein sequence, drug graph, and pocket structure using a hierarchical attention mechanism. |

| PDBbind Database | Benchmarking & Training [15] | Comprehensive database of protein-ligand complexes with experimental binding affinities. |

| PoseBusters | Pose Validation [29] | Toolkit to validate the chemical and geometric plausibility of predicted docking poses. |

The synergy between molecular docking, virtual screening, and binding affinity prediction creates a powerful engine for modern drug discovery. The following diagram synthesizes these techniques into a coherent, data-centric workflow that emphasizes the critical role of curated data.

As illustrated, structure-based filtering for dataset curation is not an isolated step but a foundational practice that enhances the reliability of every subsequent computational stage. By rigorously addressing data bias and redundancy, researchers can develop more predictive AI models and docking protocols, ultimately increasing the efficiency and success rate of drug discovery campaigns. The future of these foundational techniques lies in the continued integration of physical principles with data-driven AI, all built upon a bedrock of high-quality, meticulously curated data.

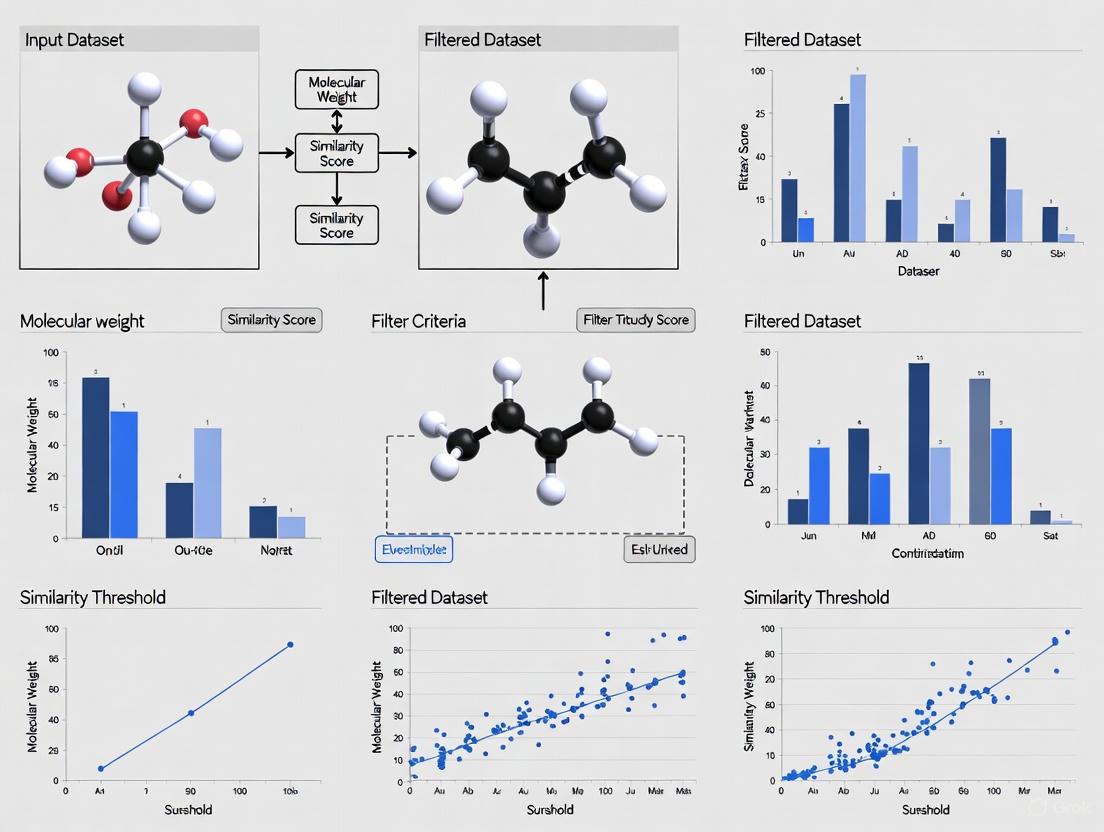

Implementing a Multi-Dimensional Filtering Pipeline: From Physicochemical Rules to AI-Powered Tools

Within structure-based filtering for dataset curation, a multi-stage filtering workflow processes complex, high-dimensional, and often noisy datasets in a way that is both computationally efficient and robust to nuisance factors or domain-specific artifacts [33]. The core motivation is to refine data quality sequentially, isolating relevant signals and enforcing task-specific constraints through a series of discrete, specialized stages [33]. For researchers and drug development professionals, this gives a structured mechanism for improving the reliability and usability of curated datasets, which is paramount in high-stakes fields like pharmaceutical research.

Core Principles of Multi-Stage Filtering

The design of an effective multi-stage filtering workflow is governed by several key principles. The overarching goal is to achieve modular control over the critical trade-offs between precision, recall, and computational cost [33]. This is practically accomplished by deploying fast, coarse-filtering algorithms in the initial stages to reduce data volume, thereby reserving more computationally intensive, fine-grained, or semantic analysis for subsequent stages where the dataset has been significantly reduced [34] [33]. This strategy ensures overall efficiency.

Filter sequencing is a further foundational decision. The guiding analogy comes from multistage decimation in signal processing: in an optimal configuration, the shortest filter is placed first and the longest filter, which has the narrowest transition width, is placed last. This arrangement ensures that the most computationally expensive filter operates at the lowest sample rate, dramatically reducing implementation costs [34]. Staging filters from simplest to most complex in the same way is a cornerstone of efficient pipeline design. Finally, the workflow must be designed for transparency and interpretability, allowing researchers to understand and validate filtering decisions at each stage, which is crucial for scientific reproducibility and debugging [33].
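A minimal sketch of the cheap-first ordering is shown below. The stage names, predicates, and records are all hypothetical stand-ins (the "expensive" stage is just a counter-instrumented toy function), and the per-stage log is one simple way to keep filtering decisions inspectable.

```python
def run_cascade(items, stages):
    """Apply (name, predicate) stages in order; return survivors and a per-stage log."""
    survivors = list(items)
    log = []
    for name, keep in stages:
        n_in = len(survivors)
        survivors = [item for item in survivors if keep(item)]
        log.append((name, n_in, len(survivors)))
    return survivors, log


calls = {"expensive": 0}  # counts how often the costly stage actually runs


def not_blank(record):
    return bool(record.strip())


def short_enough(record):
    return len(record) <= 20


def expensive_stand_in(record):
    # Placeholder for a costly model-based check; here a toy character test.
    calls["expensive"] += 1
    return sum(ch.isalpha() for ch in record) / len(record) > 0.5


records = ["CCO", "", "c1ccccc1", "x" * 50, "1234567", "   "]
stages = [
    ("blank check", not_blank),
    ("length check", short_enough),
    ("expensive stand-in", expensive_stand_in),
]
kept, log = run_cascade(records, stages)
```

Because the two cheap checks run first, the costly stage is evaluated on three of the six records rather than all of them, and `log` records how many items entered and left each stage.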

Architectural Components and Staging

A robust multi-stage filtering pipeline is composed of several logical stages, each with a distinct objective. The typical progression moves from high-speed, coarse exclusion to sophisticated, task-aware selection. Table 1 outlines the functions and key methodologies for each common stage.

Table 1: Stages of a Multi-Stage Data Filtering Pipeline

| Pipeline Stage | Primary Function | Representative Methodologies & Criteria |
|---|---|---|
| Initial Coarse Filtering | Rapidly reduce data volume using fast, domain-agnostic heuristics [33]. | Rule-based blocklists, language identification, duplicate removal, aspect ratio checks [33]. |
| Intermediate Feature-Based Selection | Apply more computationally intense operations to filter based on intrinsic data features [33]. | Metric learning, deep clustering, diffusion/intersection operators, affinity matrices [33]. |
| Task-Aware or Semantic Filtering | Execute fine-grained selection aligned with specific downstream domain uses [33]. | Fine-tuned models (e.g., BERT classifiers), contrastive losses, multi-model consensus [33]. |
| Integration and Reweighting | Prepare the final curated dataset for downstream tasks [33]. | Reintegration of retained samples, distributional alignment, rebalancing for task objectives [33]. |

The following diagram illustrates the logical flow and decision points within a generalized multi-stage filtering workflow.

Figure: Generalized Multi-Stage Filtering Workflow

Experimental Protocols and Methodologies

Protocol: Diffusion-Based Manifold Filtering

This protocol is designed to extract shared latent structures from multimodal data while removing sensor-specific or nuisance variations, as demonstrated in sensor fusion applications [33].

- Affinity Matrix Construction: For each data modality, construct an affinity matrix (e.g., using a Gaussian kernel) that encodes the pairwise similarities between data points within that modality.

- Alternating Diffusion: Apply the alternating diffusion process. This involves repeatedly applying kernel-based transitions between modalities, effectively filtering observations by emphasizing local neighborhood consistency that is common across all modalities [33].

- Union Graph Embedding: Create a unified representation by embedding the union of the diffused affinity graphs. This final embedding captures the common intrinsic structure, and data points not manifesting this consistent structure are filtered out [33].
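The first two steps of this protocol can be sketched with the standard library alone. The sketch below assumes two modalities observing the same samples, a Gaussian kernel with a hand-picked bandwidth `sigma`, and row-normalised kernels (a simplifying choice); all function names and data values are hypothetical. The final embedding step, which would normally use an eigendecomposition from a numerical library, is omitted.

```python
import math


def gaussian_affinity(points, sigma=1.0):
    """Row-normalised Gaussian-kernel affinity matrix for one modality."""
    rows = []
    for p in points:
        weights = [
            math.exp(-sum((a - b) ** 2 for a, b in zip(p, q)) / (2 * sigma ** 2))
            for q in points
        ]
        total = sum(weights)
        rows.append([w / total for w in weights])
    return rows


def matmul(a, b):
    """Plain matrix product of two list-of-lists matrices."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def alternating_diffusion(k1, k2, steps=1):
    """Alternate one diffusion step per modality, repeated `steps` times."""
    base = matmul(k2, k1)
    result = base
    for _ in range(steps - 1):
        result = matmul(base, result)
    return result


# Toy data: the first coordinate is shared across modalities, the second is
# modality-specific nuisance variation (hypothetical values).
modality_a = [(0.0, 0.3), (0.1, 2.5), (1.0, 0.1), (1.1, 2.7)]
modality_b = [(0.0, 1.9), (0.1, 0.2), (1.0, 2.2), (1.1, 0.4)]

kernel = alternating_diffusion(
    gaussian_affinity(modality_a), gaussian_affinity(modality_b), steps=2
)
```

Each row of `kernel` is a probability distribution over samples, so rows can be compared directly (for example with a Euclidean distance) to see which samples are neighbours under the structure both modalities agree on.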

Protocol: Weakly-Supervised Data Curation

This methodology is effective for curating high-quality training samples from weakly labeled or noisy data, commonly used in machine vision and audio processing [33].

- Initial Proposal Generation: Use a base model (e.g., an object localization network or an automatic speech recognition system) to generate initial pseudo-labels or data proposals.

- Metric Learning and Clustering: Employ metric learning (e.g., with triplet loss) to learn a feature space where semantically similar data points are close. Follow this with density-based clustering (e.g., DBSCAN) to identify and prune outliers and noisy proposals [33].

- Consensus Filtering and Training: Use multi-model agreement (e.g., low pairwise Character Error Rate across multiple ASR outputs) or graph-based label propagation to cross-validate and select the most robust data points for the final curated set [33].
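The consensus step can be illustrated with a small, dependency-free sketch for the ASR case. It assumes each utterance already has transcripts from at least two systems, normalises each pairwise edit distance by the longer transcript (one of several reasonable conventions), and uses an arbitrary 0.1 cutoff; the function names, utterance IDs, and transcripts are hypothetical.

```python
from itertools import combinations


def edit_distance(a, b):
    """Character-level Levenshtein distance using a rolling row."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            curr.append(min(prev[j] + 1,                # deletion
                            curr[j - 1] + 1,            # insertion
                            prev[j - 1] + (ca != cb)))  # substitution
        prev = curr
    return prev[-1]


def mean_pairwise_cer(transcripts):
    """Average symmetric character error rate over all transcript pairs."""
    pairs = list(combinations(transcripts, 2))
    rates = [edit_distance(a, b) / max(len(a), len(b), 1) for a, b in pairs]
    return sum(rates) / len(rates)


def consensus_filter(utterances, max_cer=0.1):
    """Keep utterance IDs whose system outputs agree closely with each other."""
    return [uid for uid, hyps in utterances.items()
            if mean_pairwise_cer(hyps) <= max_cer]


utterances = {
    "utt1": ["hello world", "hello world", "hello word"],
    "utt2": ["turn left", "burn loft", "torn lift"],
}
selected = consensus_filter(utterances)
```

In this toy input the three systems nearly agree on `utt1`, so it is retained, while the disagreement on `utt2` pushes its mean pairwise error above the cutoff and it is dropped.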

Data Curation and FAIRness Evaluation Protocol

Adapted from curation frameworks like the CURATE(D) model, this protocol ensures data is Findable, Accessible, Interoperable, and Reusable (FAIR) [35].

- File and Metadata Check: Inventory all files. Review and verify metadata to ensure it conforms to repository and disciplinary standards. Check that the data falls within the intended repository's scope [35].

- Data Understanding and Quality Assurance: Examine the dataset to understand file interrelations. Check for quality assurance issues like missing data, ambiguous headings, and code execution failures. Determine if documentation is sufficient for reuse [35].

- Request and Augment: Generate a list of questions to request missing information from the data submitter. Augment metadata to improve findability (e.g., assigning a permanent identifier) and interoperability [35].

- Transform and Evaluate: Transform file formats to non-proprietary, preservation-friendly types when possible. Conduct a final review of the dataset and metadata against the FAIR principles, as well as ethical frameworks like CARE (for Indigenous data) and FATE (for AI/ML research) [35]. Crucially, all curation actions must be performed on a copy, leaving the original raw data untouched [35].
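Two mechanical parts of this protocol, working on a copy and inventorying files, lend themselves to a short sketch. The helper names and directory layout below are hypothetical, and checksums are one possible way (not prescribed by the protocol) to confirm that the working copy matches the raw deposit before any curation begins.

```python
import hashlib
import shutil
import tempfile
from pathlib import Path


def make_working_copy(raw_dir, work_dir):
    """Copy the raw deposit so curation never touches the originals."""
    shutil.copytree(raw_dir, work_dir)  # work_dir must not exist yet
    return Path(work_dir)


def inventory(directory):
    """Map each file's relative path to (size in bytes, SHA-256 digest)."""
    root = Path(directory)
    listing = {}
    for path in sorted(root.rglob("*")):
        if path.is_file():
            data = path.read_bytes()
            listing[path.relative_to(root).as_posix()] = (
                len(data), hashlib.sha256(data).hexdigest())
    return listing


# Demonstration on a throwaway directory with made-up file contents.
with tempfile.TemporaryDirectory() as tmp:
    raw = Path(tmp) / "raw"
    (raw / "data").mkdir(parents=True)
    (raw / "README.txt").write_text("Example deposit\n")
    (raw / "data" / "affinities.csv").write_text("id,pKd\nc1,6.2\n")

    work = make_working_copy(raw, Path(tmp) / "work")
    raw_listing = inventory(raw)
    copies_match = raw_listing == inventory(work)
```

The inventory doubles as the file list for the first protocol step, and re-running it on the raw directory at the end of curation is a simple check that the originals were left untouched.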

The Scientist's Toolkit: Research Reagent Solutions

The successful implementation of a multi-stage filtering workflow relies on a combination of computational tools and theoretical frameworks. Table 2 details essential components for building and analyzing such pipelines.

Table 2: Essential Reagents for Multi-Stage Filtering Research

| Reagent / Tool | Type | Function in Pipeline Development |
|---|---|---|
| Similarity Metrics (Cosine, Euclidean) [36] | Algorithm | Quantify proximity between data points in a vector space to determine similarity for filtering. |
| Affinity Matrix [33] | Data Structure | Encodes pairwise similarities between data points, serving as the foundation for graph-based and diffusion filters. |
| BERT-like Classifier [33] | Model | Provides a pre-trained, adaptable model for semantic filtering and classification tasks in intermediate/late stages. |
| Clustering Algorithms (e.g., DBSCAN) [33] | Algorithm | Identify natural groupings and outliers in data based on density or metric learning for feature-based selection. |
| Rule-Based Blocklist [33] | Heuristic | A fast, transparent set of rules for initial coarse filtering to exclude structurally or semantically irrelevant data. |
| Krippendorff’s Alpha [33] | Metric | A reliability statistic used to evaluate the consistency and performance of filtering stages, particularly with multiple annotators or models. |
| CURATE(D) Checklist [35] | Framework | A structured model guiding the data curation process, from file checks to FAIRness evaluation. |
| ModernBERT [33] | Model | An example of an efficient language model used in safety-focused filtering stages to block unwanted content. |

Performance Assessment and Quantitative Outcomes

Rigorous performance assessment is critical and must extend beyond final task accuracy to include efficiency, robustness, and fairness. Empirical evaluations from the literature demonstrate the tangible benefits of the multi-stage approach. Table 3 summarizes key performance findings from various implementations.

Table 3: Quantitative Performance of Multi-Stage Filtering Pipelines

| Application Domain | Reported Performance Metrics | Key Outcome |
|---|---|---|
| Large Language Model (LLM) Data Curation [33] | Krippendorff’s α, Cost | Up to 18.4% gain in Krippendorff’s α over single-stage baselines, with computational costs reduced by ~97%. |
| Automatic Speech Recognition (ASR) [33] | Data Volume Reduction, Word Error Rate (WER) | Filtering curated 1–2% of pseudo-labeled audio data without degradation in WER, indicating high data efficiency. |
| Sensor Fusion [33] | Robustness to Artificial Noise | Demonstrated intrinsic removal of noise and spurious modalities, maintaining performance despite added noise sensors. |
| Safe LLM Pretraining [33] | Tamper Resistance, Capability Retention | Effectively blocked unwanted capabilities (e.g., biothreat knowledge) without degrading unrelated capacities, even after extensive adversarial fine-tuning. |

These results highlight the pipeline's ability to enhance robustness against noise and adversarial manipulation, significantly improve data efficiency by drastically reducing the volume of data required for training, and maintain or improve final task accuracy while simultaneously enforcing critical constraints like safety and fairness [33].

The initial triage of chemical compounds is a critical step in drug discovery, enabling researchers to focus computational and experimental resources on the most promising candidates. Drug-likeness rules, primarily Lipinski's Rule of Five (Ro5), provide a foundational framework for this initial filtering by predicting compounds with a higher probability of oral bioavailability. These rules are particularly valuable in structure-based filtering algorithms for dataset curation, where they serve as the first gatekeeper in a multi-tiered screening process. By applying these rules, researchers can efficiently reduce massive chemical libraries to a more manageable set of candidates worthy of more computationally intensive structure-based design approaches, thereby accelerating the early drug discovery pipeline.

Core Principles of Lipinski's Rule of Five

Definition and Criteria

Lipinski's Rule of Five is a widely adopted rule of thumb in drug discovery that helps predict the likelihood of a compound being orally bioavailable in humans. Formulated by Christopher A. Lipinski in 1997, the rule states that poor absorption or permeation is more probable when a compound violates more than one of the following four criteria. The thresholds are all multiples of five, hence the name "Rule of Five" [37] [38]:

- Hydrogen Bond Donors (HBD): No more than 5

- Hydrogen Bond Acceptors (HBA): No more than 10

- Molecular Weight (MWT): Less than 500 Daltons

- Partition Coefficient (log P): Not greater than 5 (in practice often estimated computationally as calculated log P, CLogP)

According to the rule, an orally active drug should have no more than one violation of these conditions [37] [38]. The underlying principle is that these physicochemical properties significantly influence a drug's pharmacokinetics, including its absorption, distribution, metabolism, and excretion (ADME) profile.
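The four criteria and the one-violation allowance translate directly into a small filter. The sketch below assumes the descriptors have already been computed by a cheminformatics toolkit of the reader's choice; the `Descriptors` record, function names, and example values are hypothetical or approximate, and the molecular weight check follows the "less than 500" wording used above.

```python
from dataclasses import dataclass


@dataclass
class Descriptors:
    """Precomputed physicochemical descriptors for one compound."""
    mol_weight: float   # Daltons
    log_p: float        # calculated octanol-water partition coefficient
    h_donors: int       # hydrogen bond donors
    h_acceptors: int    # hydrogen bond acceptors


def ro5_violations(d):
    """Return the names of the Rule of Five criteria that a compound violates."""
    checks = {
        "HBD > 5": d.h_donors > 5,
        "HBA > 10": d.h_acceptors > 10,
        "MW >= 500": d.mol_weight >= 500,   # rule as stated: MW below 500 Da
        "logP > 5": d.log_p > 5,
    }
    return [name for name, violated in checks.items() if violated]


def passes_ro5(d, max_violations=1):
    """A compound passes when it has at most `max_violations` violations."""
    return len(ro5_violations(d)) <= max_violations


# Approximate values for a small aspirin-like molecule, plus two made-up compounds.
small = Descriptors(mol_weight=180.16, log_p=1.2, h_donors=1, h_acceptors=4)
borderline = Descriptors(mol_weight=510.0, log_p=3.0, h_donors=2, h_acceptors=8)
large_greasy = Descriptors(mol_weight=720.0, log_p=6.1, h_donors=3, h_acceptors=9)

library = {"small": small, "borderline": borderline, "large_greasy": large_greasy}
retained = [name for name, d in library.items() if passes_ro5(d)]
```

Returning the list of violated criteria, rather than only a pass/fail flag, keeps the filter transparent: `borderline` is retained with a single molecular weight violation, while `large_greasy` is rejected for exceeding both the weight and lipophilicity limits.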

Scientific Rationale and Limitations

The Rule of Five emerged from the observation that most orally administered drugs are relatively small and moderately lipophilic molecules [38]. The specific criteria were chosen because they correlate with key ADME properties: excessive hydrogen bonding can reduce membrane permeability, high molecular weight may hinder absorption, and extreme lipophilicity can negatively impact solubility [37].

However, several important limitations must be recognized:

- The Ro5 specifically applies to compounds that are not substrates for active transporters [39].