Structure-Based Drug Design: Foundations, Methods, and Future Directions in Computational Drug Discovery


Abstract

This article provides a comprehensive overview of the foundations of Structure-Based Drug Design (SBDD), a pivotal computational approach in modern drug discovery. Tailored for researchers, scientists, and drug development professionals, it explores the core principles and historical context of SBDD, detailing key methodological approaches like molecular docking and dynamics. The content addresses significant challenges such as target flexibility and drug-likeness optimization, presenting advanced solutions including accelerated molecular dynamics and AI-driven frameworks. Finally, it examines validation techniques and comparative analyses of SBDD performance, synthesizing key takeaways to outline future directions and implications for biomedical and clinical research.

The Rational Foundation: Core Principles and Evolution of SBDD

Structure-Based Drug Design (SBDD) represents a rational approach to drug discovery and development that utilizes the three-dimensional structure of a biological target, typically a protein, to design and optimize drug candidates [1]. This methodology stands in contrast to traditional ligand-based approaches, which rely on knowledge of existing active compounds. The fundamental difference between these approaches is analogous to designing a key by having the blueprint of the lock (SBDD) versus only studying a collection of existing keys that fit the same lock (ligand-based design) [2]. This direct approach allows researchers to engineer molecules by understanding the precise position and nature of the target's binding site, free from the chemical biases inherent in existing ligand collections [2]. Over recent decades, SBDD has evolved from a largely experimental technique to a sophisticated computational discipline, with data now recognized not as a mere research byproduct but as a critical strategic asset in its own right [3].

The value of SBDD is particularly evident in addressing the high costs and productivity challenges of traditional drug discovery. Bringing a new drug to market carries an average cost of $2.2 billion, with high failure rates in clinical trials primarily due to insufficient efficacy (over 50% in Phase II) or safety concerns (20-25% across phases) [2]. By generating molecules tailored from the outset to be high-affinity, specific binders for their targets, SBDD aims to increase the quality of candidates entering the clinical pipeline, thereby improving the odds of clinical success and reducing late-stage attrition [2].

Core Principles and Methodological Framework of SBDD

The Defining Characteristics of SBDD

At its core, SBDD is an iterative process that fits within the broader context of a drug discovery program [4]. The process begins with the identification of small-molecule ligands that are complementary to the structure of the target through computational methods [4]. The advantages of this approach are multifold: hundreds of thousands of ligands can be virtually screened as potential drug leads without initial purchase or synthesis, the process is rapid relative to in vitro screening, and the associated costs are relatively low [4].

The value of SBDD data products is determined by several key characteristics that transform raw structural data into a strategic asset. High-quality structural data products are characterized by rigorous validation to ensure accuracy and reliability, standardized formats for seamless integration across platforms, comprehensive metadata to enhance usability, and intuitive interfaces that democratize access across multidisciplinary teams [3]. These attributes are essential for making structure-based drug discovery more efficient and effective.

The SBDD Workflow: An Iterative Cycle

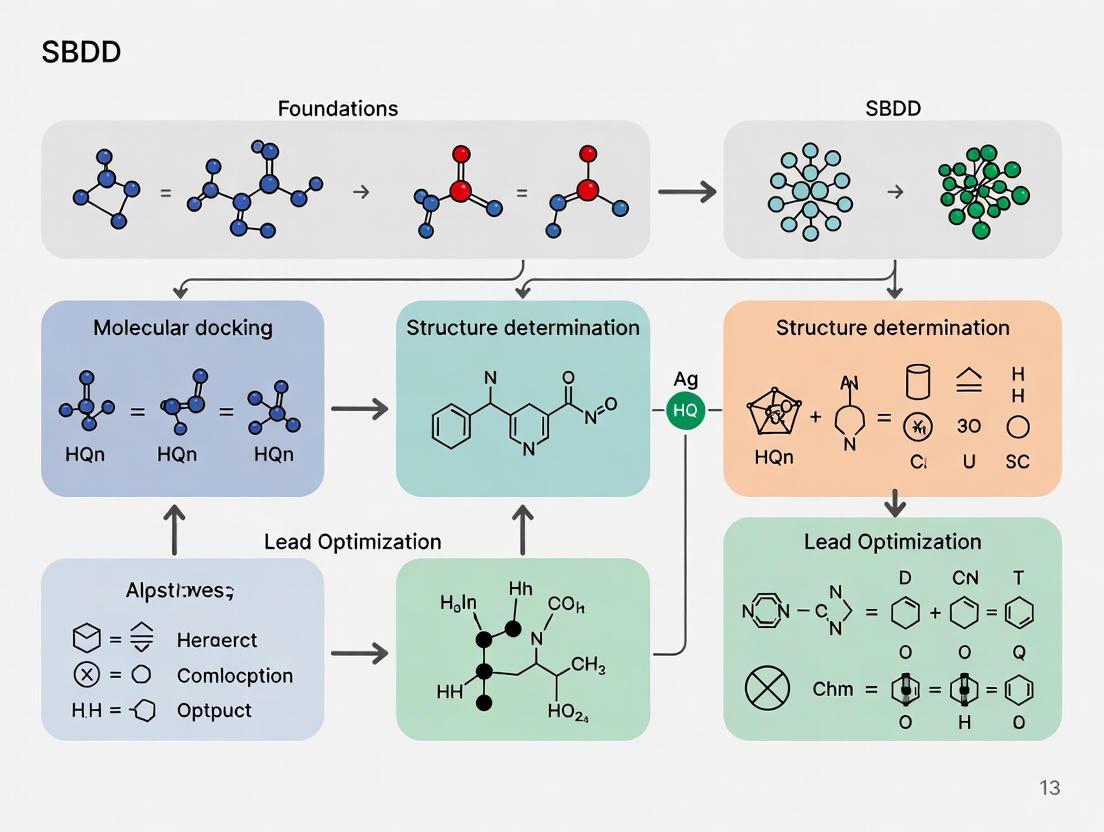

The SBDD process follows a systematic workflow that leverages three-dimensional structural information to discover and optimize drug candidates [1]. The workflow can be visualized as an iterative cycle of preparation, docking, scoring, and experimental validation, as illustrated below:

Figure 1: The Iterative SBDD Workflow. This diagram illustrates the cyclical nature of structure-based drug design, where experimental feedback informs subsequent computational cycles for continuous optimization.

As depicted in Figure 1, the SBDD workflow begins with target identification and preparation of both the target structure and ligand databases [4] [1]. After selecting and validating the target, the process requires an accurate 3D structure of the protein, which can be obtained from experimental methods (X-ray crystallography, cryo-EM, NMR) or through homology modeling when experimental structures are unavailable [4] [1]. The target model is then analyzed to identify active or allosteric binding sites using dedicated algorithms [1].

The molecular docking phase involves computational screening where software identifies optimal binding modes of small-molecule ligands in the target structure [4]. These binding modes are then scored for their noncovalent interactions, generating a ranked list of candidates [4]. Top-ranking compounds undergo visual evaluation to assess goodness of fit, formation of key interactions, and complementarity before selected molecules are purchased or synthesized for experimental testing [4]. Compounds demonstrating affinity and activity ("hits") then enter the hit-to-lead optimization phase, where they undergo iterative cycles of SBDD using focused analog libraries to improve binding affinity, selectivity, and drug-like properties [4] [1].

Key Methodologies and Experimental Protocols in SBDD

Molecular Docking and Virtual Screening

Molecular docking represents a cornerstone methodology in SBDD, used to model the interactions of small molecules with active or allosteric sites of target proteins [1]. Docking software employs various algorithms to identify optimal binding modes and orientations of small molecules within a defined binding site [4]. The field offers numerous docking programs, each with distinctive approaches and capabilities as detailed in Table 1.

Table 1: Representative Molecular Docking Software and Key Features

| Program | Key Features | Flexibility Handling | Accessibility |
|---|---|---|---|
| DOCK 6 | Docks small molecules, includes solvent effects, uses incremental construction | Ligand flexibility | Free for academic use [4] |
| AutoDock | Uses interaction grid for receptor conformations, simulated annealing for ligands | Ligand flexibility | Free of charge [4] |
| GOLD | Uses genetic algorithms | Partial protein and ligand flexibility | Commercial [4] |
| Glide | Performs complete conformational, orientational, and positional search | Ligand flexibility | Commercial [4] |
| FlexX | Uses incremental construction for ligands | Ligand flexibility | Commercial [4] |

Docking protocols support both high-throughput virtual screening (HTVS) for large-scale ligand evaluation and high-precision docking for detailed pose analysis of lead-like compounds [1]. To address the challenge of receptor flexibility, ensemble docking can be performed when multiple protein structures are available, increasing the robustness of predictions [1].
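One simple way to combine ensemble docking results is to keep, for each ligand, its best score across all receptor conformations. The sketch below illustrates that idea only; the function name, the conformation and ligand labels, and the scores are hypothetical, and it assumes the common convention that a more negative docking score is better.

```python
from typing import Dict, List, Tuple


def ensemble_best_scores(
    scores: Dict[str, Dict[str, float]]
) -> List[Tuple[str, float]]:
    """Rank ligands by their best score over all receptor conformations.

    scores[conformation][ligand] holds a docking score; more negative
    is assumed to mean a better predicted fit.
    """
    best: Dict[str, float] = {}
    for per_ligand in scores.values():
        for ligand, score in per_ligand.items():
            if ligand not in best or score < best[ligand]:
                best[ligand] = score
    # Sort so the most favorable (most negative) score comes first.
    return sorted(best.items(), key=lambda item: item[1])


# Hypothetical scores for three ligands docked into two conformations.
example_scores = {
    "conf_A": {"lig1": -7.2, "lig2": -6.1, "lig3": -5.0},
    "conf_B": {"lig1": -6.8, "lig2": -8.4, "lig3": -5.3},
}
ranked = ensemble_best_scores(example_scores)
print(ranked)  # [('lig2', -8.4), ('lig1', -7.2), ('lig3', -5.3)]
```

Here lig2 ranks first only because of conf_B, which is the kind of hit a single static structure could miss. Taking the minimum is one of several possible aggregation rules; averaging over conformations is another.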

Target and Ligand Preparation Protocols

Target Structure Preparation

The preparation of the macromolecular target structure requires several critical steps to ensure accurate docking results [4]:

- Hydrogen Addition: Hydrogens, which are typically not resolved in crystal structures unless the resolution is better than about 1 Å, must be added to the macromolecular structure.

- Charge Assignment: Charges are calculated and assigned for individual residues to properly model electrostatic interactions.

- Binding Site Definition: The docking site is defined, which can be the active site of an enzyme or an assembly site with another macromolecule. This can be done by defining individual residues within the general docking site or using a 3.5–6Å radius around a preexisting ligand.

- Cofactor and Water Decisions: A decision must be made regarding whether to retain metals, cofactors, and ordered water molecules that exist in the docking site, depending on whether they are critical to ligand binding or should be displaced.

- Flexibility Parameters: If the docking program allows target flexibility, the number and identity of flexible residues and their degree of flexibility must be defined.
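The radius-based binding site definition described above can be sketched in a few lines. This is a minimal illustration, not the procedure of any particular docking program: the atom tuple layout, the function name, the residue labels, and the 5.0 Å default (chosen from within the 3.5–6 Å range mentioned above) are all assumptions.

```python
import math
from typing import Iterable, Set, Tuple

# Hypothetical atom record: (residue_id, x, y, z), coordinates in angstroms.
Atom = Tuple[str, float, float, float]
Point = Tuple[float, float, float]


def residues_near_ligand(
    protein_atoms: Iterable[Atom],
    ligand_coords: Iterable[Point],
    radius: float = 5.0,
) -> Set[str]:
    """Return residues with at least one atom within `radius` of the ligand."""
    ligand = list(ligand_coords)
    site: Set[str] = set()
    for res_id, x, y, z in protein_atoms:
        if res_id in site:
            continue  # residue already selected by an earlier atom
        if any(math.dist((x, y, z), point) <= radius for point in ligand):
            site.add(res_id)
    return site


# Toy coordinates: two residues close to the ligand, one far away.
protein = [
    ("ASP25", 1.0, 0.0, 0.0),
    ("GLY27", 4.0, 0.0, 0.0),
    ("LEU90", 12.0, 0.0, 0.0),
]
ligand = [(0.0, 0.0, 0.0)]
print(sorted(residues_near_ligand(protein, ligand)))  # ['ASP25', 'GLY27']
```

In practice the coordinates would come from a parsed structure file, and the chosen radius trades off site coverage against search-space size.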

Ligand Database Preparation

Ligand preparation involves converting two-dimensional representations into three-dimensional structures suitable for docking [4]:

- 3D Conversion: Ligands in the database, typically represented as "strings" describing two-dimensional connectivity, are automatically converted to three-dimensional, minimized representations using software such as CONCORD or CORINA.

- Drug-Likeness Filtering: The library can be initially filtered to select compounds with improved likelihood of bioavailability based on molecular weight, number of rotatable bonds, and hydrogen bond donor/acceptor groups.

- Geometry Optimization: Ligands are checked for proper geometry, including reasonable bond distances and angles, with conformations minimized if necessary.

- Stereochemistry Handling: Ligands with stereocenters are examined as separate stereoisomers.

- Protonation State: Ligands are appropriately protonated for the pH of the target solution environment.
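The drug-likeness filtering step can be sketched as a threshold check on precomputed descriptors. The text above names the properties but not the cutoffs, so the defaults below are assumptions borrowed from the widely used Lipinski and Veber guidelines (molecular weight ≤ 500, ≤ 5 donors, ≤ 10 acceptors, ≤ 10 rotatable bonds). The class, the ligand names, and the descriptor values are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LigandDescriptors:
    """Precomputed properties for one library compound (hypothetical values)."""
    name: str
    mol_weight: float
    h_bond_donors: int
    h_bond_acceptors: int
    rotatable_bonds: int


def passes_filter(
    lig: LigandDescriptors,
    max_mw: float = 500.0,   # assumed cutoff (Lipinski)
    max_hbd: int = 5,        # assumed cutoff (Lipinski)
    max_hba: int = 10,       # assumed cutoff (Lipinski)
    max_rot: int = 10,       # assumed cutoff (Veber)
) -> bool:
    """Return True when every descriptor is within its cutoff."""
    return (
        lig.mol_weight <= max_mw
        and lig.h_bond_donors <= max_hbd
        and lig.h_bond_acceptors <= max_hba
        and lig.rotatable_bonds <= max_rot
    )


library = [
    LigandDescriptors("small_polar", 217.3, 1, 4, 4),
    LigandDescriptors("large_flexible", 812.0, 7, 14, 18),
]
kept = [lig.name for lig in library if passes_filter(lig)]
print(kept)  # ['small_polar']
```

A real pipeline would compute the descriptors with a cheminformatics toolkit and might tolerate one violation rather than requiring all checks to pass.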

Advanced Simulation Techniques: Molecular Dynamics

Molecular dynamics (MD) simulations provide a dynamic, atomistic view of ligand-receptor complexes, capturing conformational changes and binding flexibility that influence drug behavior—aspects that static structures cannot reveal [1]. Unbiased MD simulations assess pose stability, quantify protein-ligand interactions, identify water sites, reveal transient binding pockets, and evaluate potential allosteric effects [1].

Advanced MD techniques include:

- Steered MD and Umbrella Sampling: These methods study the kinetics and thermodynamics of ligand binding and unbinding processes, providing insights into binding mechanisms and residence times [1].

- Ensemble Simulations: These capture the dynamic nature of protein flexibility, addressing a significant challenge in molecular docking that typically relies on static structures [2].

MD expertise now extends across diverse biologically relevant systems, including transmembrane proteins, lipid membranes, protein-protein interfaces, and emerging modalities such as PROTACs and molecular glues [1].

Scoring Functions and Binding Affinity Estimation

Following docking, scoring functions estimate the binding affinity of ligand-receptor complexes [4]. Docking scores are inherently approximations of the true binding constant, based primarily on noncovalent interactions between ligand and target [4]. Several approaches can improve scoring accuracy:

- Solvent Corrections: Since solvent plays a crucial role in ligand binding, solvation corrections can be applied through simple dielectric constant estimation or explicit solvation models [4].

- Consensus Scoring: This approach rescores the top hits with multiple scoring algorithms and selects for further investigation those that rank near the top of several lists, which tends to improve predictive accuracy [4].

- Free Energy Perturbation (FEP): FEP calculations provide a rigorous measure of changes in free energy between unbound and bound complexes in solvent, offering more accurate binding affinity predictions [4].

- Machine Learning-Based Scoring: Newer approaches like DrugCLIP use pretrained ligand and pocket encoders to generate binding scores, demonstrating strong performance in virtual screening tasks [5].
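Consensus scoring, as described in the list above, can be illustrated with a simple voting scheme: each scoring function votes for its top-ranked ligands, and ligands with enough votes are kept. This is a sketch under assumptions, not the method of any specific package. The function name, the scoring-function labels, the scores, and the `top_n` and `min_votes` parameters are all hypothetical, and more negative scores are assumed to be better.

```python
from typing import Dict, List


def consensus_hits(
    score_tables: Dict[str, Dict[str, float]],
    top_n: int,
    min_votes: int,
) -> List[str]:
    """Return ligands ranked in the top_n by at least min_votes functions."""
    votes: Dict[str, int] = {}
    for table in score_tables.values():
        # Rank this function's ligands, most negative (best) score first.
        ranked = sorted(table, key=lambda name: table[name])
        for ligand in ranked[:top_n]:
            votes[ligand] = votes.get(ligand, 0) + 1
    return sorted(lig for lig, count in votes.items() if count >= min_votes)


# Hypothetical scores from three different scoring functions.
tables = {
    "func_a": {"lig1": -9.0, "lig2": -8.5, "lig3": -6.0, "lig4": -5.0},
    "func_b": {"lig1": -7.0, "lig2": -4.0, "lig3": -7.5, "lig4": -3.0},
    "func_c": {"lig1": -10.0, "lig2": -9.0, "lig3": -8.0, "lig4": -9.5},
}
print(consensus_hits(tables, top_n=2, min_votes=2))  # ['lig1']
```

Voting on ranks rather than averaging raw scores avoids mixing scoring functions whose units and scales differ.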

Essential Research Reagents and Computational Tools

Successful implementation of SBDD relies on a comprehensive toolkit of research reagents and computational resources. The "Scientist's Toolkit" encompasses both data resources and software solutions that enable the various stages of the SBDD workflow.

Table 2: Essential Research Reagents and Computational Tools for SBDD

| Category | Resource/Tool | Description and Function |
|---|---|---|
| Target Structures | Protein Data Bank (PDB) | Primary repository for experimental 3D structures of proteins and nucleic acids determined by X-ray crystallography, NMR, or cryo-EM [4]. |
| Ligand Databases | ZINC Database | Curated collection of commercially available compounds for virtual screening, providing 2D structures that can be converted to 3D for docking studies [4]. |
| In-house Registration Systems | Private Compound Collections | Internal databases of synthesized or acquired compounds, often including inventory systems and virtual libraries particularly important for fragment-based discovery [3]. |
| Docking Software | Programs in Table 1 | Computational tools that predict preferred binding orientation and conformation of small molecules in target binding sites [4]. |
| Specialized SBDD Platforms | Proasis (DesertSci) | Enterprise solution that translates 3D protein structural data into strategic assets, streamlining drug discovery through integrated data management [3]. |
| Molecular Dynamics Engines | GROMACS, Others | Software for performing MD simulations to study protein-ligand interactions, conformational changes, and binding thermodynamics [3] [1]. |

Current Challenges and Future Directions in SBDD

Evaluation Metrics and Practical Applicability

Despite technological advancements, practical application of SBDD models in real-world drug development remains challenging [5]. A significant limitation concerns evaluation metrics, particularly reliance on the Vina docking score as the standard for assessing binding abilities [5]. This metric is susceptible to overfitting, as scores can be artificially inflated by simply increasing molecular size, potentially leading to overly optimistic evaluations of model performance [5]. Furthermore, generated molecules are often too complex to synthesize in practice, which impedes wet-lab validation [5] [6].

To address these limitations, researchers propose a comprehensive evaluation framework that extends beyond traditional metrics [5]:

- Similarity-Based Metrics: Evaluate resemblance of generated molecules to known active compounds and FDA-approved drugs, gauging potential for modification into viable candidates.

- Virtual Screening-Based Metrics: Measure practical deployment capabilities by assessing how well generated molecules can discriminate between active and inactive compounds.

- Refined Binding Affinity Estimation: Continue using binding affinity estimates but with more nuanced evaluation, including delta scores for specific binding ability and machine learning-based scoring functions [5].
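The size bias of raw docking scores can be made concrete by dividing each score by the number of heavy atoms, in the spirit of ligand efficiency. This normalization is swapped in here for illustration and is not the delta score of the framework cited above. The function name and the example molecules are hypothetical.

```python
def score_per_heavy_atom(docking_score: float, heavy_atoms: int) -> float:
    """Normalize a docking score by ligand size (more negative is better)."""
    if heavy_atoms <= 0:
        raise ValueError("heavy_atoms must be positive")
    return docking_score / heavy_atoms


# Hypothetical generated molecules: (docking score, heavy-atom count).
candidates = {
    "large_molecule": (-10.0, 50),
    "compact_molecule": (-7.5, 25),
}

# The raw score favors the larger molecule.
best_raw = min(candidates, key=lambda name: candidates[name][0])
# The size-normalized score favors the compact one.
best_normalized = min(
    candidates, key=lambda name: score_per_heavy_atom(*candidates[name])
)
print(best_raw, best_normalized)  # large_molecule compact_molecule
```

The two rankings disagree, which shows how a generative model could improve its raw docking score just by adding atoms.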

AI Integration and Federated Data Ecosystems

The future of SBDD data products lies in their integration with AI systems [3]. As machine learning algorithms become more advanced in predicting ligand binding modes and protein-ligand interactions, the quality and organization of training data become paramount [3]. Organizations maintaining pristine structural data products will gain a competitive edge in developing next-generation AI tools for drug design [3].

Deep learning methods for structure-based drug discovery represent a particularly promising direction [2]. These generative models create novel molecules tailored to specific protein targets by learning principles of molecular structure and binding interactions from large datasets [2]. The central challenge involves effectively encoding protein structure—distilling critical structural and chemical features of the binding site from the noise of the surrounding protein [2].

Additionally, federated data ecosystems are emerging, enabling organizations to share structural information while safeguarding proprietary interests [3]. These collaborative platforms accelerate discovery across the industry while preserving competitive differentiation, potentially addressing the data scarcity issues that limit some AI approaches.

Structure-Based Drug Design has established itself as an indispensable rational approach in modern drug discovery. By leveraging the three-dimensional structural information of biological targets, SBDD enables direct, structure-guided design of therapeutic compounds, potentially reducing the high attrition rates that plague traditional discovery approaches. The methodology has evolved from relying on static experimental structures to incorporating dynamic simulations, sophisticated scoring functions, and increasingly, artificial intelligence.

The iterative cycle of target preparation, molecular docking, scoring, and experimental validation forms the core of the SBDD process, with each iteration informed by structural insights and experimental feedback. As the field advances, challenges remain in improving evaluation metrics, ensuring synthetic feasibility, and effectively integrating protein flexibility and dynamics. Nevertheless, with the growing integration of AI and the emergence of collaborative data ecosystems, SBDD is poised to become increasingly central to therapeutic development, ultimately enabling more efficient and effective drug discovery for a wide range of human diseases.

Structure-based drug design (SBDD) represents a foundational pillar in modern pharmaceutical research, enabling the rational development of therapeutic agents through detailed analysis of molecular interactions between drugs and their biological targets. This methodology stands in stark contrast to traditional ligand-based approaches, which infer target properties indirectly from known active compounds. The paradigm of SBDD has evolved from early successes grounded in hypothetical modeling to contemporary approaches leveraging advanced computational and structural biology techniques. As Anderson notes, SBDD has become "an integral part of most industrial drug discovery programs" [7], demonstrating its critical role in addressing the immense costs and high failure rates associated with drug development, where bringing a single drug to market is estimated to cost $2.2 billion [8]. This whitepaper traces the technical evolution of SBDD from its pioneering applications to its current status as a multidisciplinary field integrating structural biology, computational chemistry, and machine learning.

The Captopril Breakthrough: A Foundational Case Study

The development of captopril in the mid-1970s, followed by its approval in 1981, stands as a landmark achievement in SBDD, representing one of the first deliberate applications of target structure analysis for drug design. Captopril was engineered as a specific inhibitor of angiotensin-converting enzyme (ACE), a zinc metallopeptidase central to blood pressure regulation through its roles in synthesizing hypertensive angiotensin II and degrading hypotensive bradykinin [9].

The design strategy employed by Cushman, Ondetti, and colleagues was remarkably innovative given the technological limitations of the era. Without a direct experimental structure of ACE available, the team constructed a hypothetical model of the ACE active center based on its presumed analogy to the well-characterized zinc metallopeptidase carboxypeptidase A [9] [10]. This model guided logical sequential improvements from a weakly active prototype inhibitor—derived from a snake venom peptide (teprotide or SQ 20881)—to the highly optimized structure of captopril [9].

The molecular architecture of captopril incorporates key pharmacophoric elements essential for its mechanism:

- A thiol moiety that directly coordinates with the catalytic zinc ion in the ACE active site

- An L-proline group that enhances oral bioavailability

- A methylpropanoyl chain that optimally occupies the substrate binding pocket [11]

This rational design process established foundational principles for SBDD, demonstrating how even hypothetical target models could guide successful drug development when informed by structural similarities to characterized enzymes.

Table 1: Key Structural Elements of Captopril and Their Functional Roles

| Structural Element | Chemical Feature | Functional Role in ACE Inhibition |
|---|---|---|
| Thiol group | -SH moiety | Directly coordinates with catalytic zinc ion |
| L-proline residue | Pyrrolidine-2-carboxylic acid | Enhances oral bioavailability and binding orientation |
| Methyl group | -CH₃ side chain | Optimizes hydrophobic interactions with S1' pocket |
| Carboxyl group | -COOH terminus | Interacts with positively charged residues in active site |

Evolution of Structural Determination Techniques

The progression of SBDD has been inextricably linked to advances in methods for determining high-resolution macromolecular structures. Early SBDD efforts like captopril relied on comparative modeling, but contemporary approaches benefit from an array of sophisticated experimental techniques.

X-ray Crystallography Methods

X-ray crystallography has historically been the workhorse of structural biology, accounting for more than 85% of structures in the Protein Data Bank (PDB) [12]. Traditional cryocooling methods, while enabling high-resolution structure determination, often trap proteins in single conformational states and suppress their natural flexibility. Recent advancements have addressed these limitations:

Serial room-temperature crystallography: Enabled by X-ray Free Electron Lasers (XFELs) and advanced synchrotron sources, this technique captures structural dynamics and reveals conformational changes obscured at cryogenic temperatures [12]. For glutaminase C (GAC) inhibitors, room-temperature crystallography identified disrupted hydrogen bonds and binding site flexibility that explained potency differences undetectable in cryo-cooled structures [12].

Fixed-target approaches: Microcrystals pipetted onto silicon or polymer chips enable high-throughput data collection with minimal sample consumption (~10μL), making this method ideal for initial drug binding screening [12].

Mix-and-inject serial crystallography (MISC): Utilizing microfluidic mixers, this time-resolved technique probes ligand-binding events on millisecond to second timescales, capturing intermediate conformational states during binding [12].

Emerging Structural Biology Techniques

Cryogenic electron microscopy (cryo-EM) has emerged as a powerful alternative for targets resistant to crystallization, particularly membrane proteins and large complexes [12] [10]. While approximately 55% of cryo-EM maps in the PDB achieved resolution better than 3.5Å in 2021 (compared to 98% of crystallography structures), continuous technical improvements are rapidly closing this gap [12].

NMR-driven SBDD addresses several limitations of crystallography by providing solution-state structural information and capturing dynamic protein-ligand interactions [13]. Key advantages include:

- Direct detection of hydrogen bonding through ¹H chemical shifts

- Ability to study flexible proteins and disordered regions

- Identification of ~20% of protein-bound waters typically invisible in X-ray structures

- No crystallization requirement, applicable to proteins recalcitrant to crystallization [13]

Table 2: Comparison of Major Structural Determination Techniques in SBDD

| Technique | Resolution Range | Key Advantages | Principal Limitations |
|---|---|---|---|
| X-ray Crystallography | ~1.0-3.0 Å | High throughput, high resolution, well-established | Requires crystallization, limited dynamics representation |
| Cryo-EM | ~2.5-4.5 Å | No crystallization needed, suitable for large complexes | Lower resolution for many targets, size limitations |
| NMR Spectroscopy | Atomic-level (solution) | Captures dynamics, no crystallization, detects H-bonds | Molecular weight limitations, signal overlap in large proteins |
| AlphaFold Prediction | Varies (in silico) | Rapid, covers entire proteome, no experimental work | Limited accuracy for ligand complexes, static structures |

The Computational Revolution in SBDD

Computational methods have dramatically transformed SBDD from a structure-guided manual process to an increasingly automated, predictive discipline. The integration of advanced algorithms and machine learning has addressed fundamental challenges in molecular docking, scoring, and chemical space exploration.

Molecular Docking and Virtual Screening

Molecular docking serves as the computational core of SBDD, predicting ligand binding modes and affinities to target structures [14]. Modern implementations have evolved to address key challenges:

Scoring functions: Special attention has been devoted to developing reliable scoring functions that minimize false positives while selecting true binders. This is particularly crucial when screening billion-compound libraries, where even a one-in-a-million false positive rate yields roughly a thousand incorrect hits per billion compounds screened [10].

GPU acceleration: The computational bottleneck of docking massive libraries has been mitigated through graphics processing unit (GPU) computing resources and cloud computing, enabling screening of ultra-large virtual libraries with billions of drug-like compounds [10].

Successful virtual screening campaigns typically achieve hit rates of 10-40% in experimental testing, with novel hits often exhibiting potencies in the 0.1-10 μM range across diverse targets [10].
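The false-positive argument made above for ultra-large libraries is simple arithmetic: the expected number of false positives is the library size multiplied by the per-compound false positive rate. The short sketch below works through it. The function name is hypothetical, and the 6.7 billion figure reuses the library size quoted elsewhere in this article.

```python
def expected_false_positives(library_size: int, false_positive_rate: float) -> float:
    """Expected number of non-binders that a screen wrongly flags as hits."""
    if library_size < 0 or not 0.0 <= false_positive_rate <= 1.0:
        raise ValueError("invalid library size or rate")
    return library_size * false_positive_rate


one_in_a_million = 1e-6
per_billion = expected_false_positives(1_000_000_000, one_in_a_million)
ultra_large = expected_false_positives(6_700_000_000, one_in_a_million)
print(round(per_billion), round(ultra_large))  # 1000 6700
```

Because only a small number of compounds can be synthesized and assayed, even this tiny error rate can swamp the true binders unless the top of the ranked list is strongly enriched.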

Expanding Accessible Chemical Space

The effectiveness of structure-based screening depends critically on diverse ligand libraries encompassing broad chemical space. Recent developments have dramatically expanded accessible compounds:

Virtual on-demand libraries: Platforms like Enamine's REAL (Readily Accessible) database have grown from approximately 170 million compounds in 2017 to over 6.7 billion in 2024, using carefully selected building blocks and optimized parallel synthesis protocols [10].

Synthetically accessible virtual inventory (SAVI): Developed by the US National Institutes of Health, these libraries ensure compounds can be rapidly synthesized after virtual identification [10].

The strategic value of large, diverse libraries lies not only in increasing hit identification probability but also in improving candidate novelty and patentability while enabling meaningful structure-activity relationship analysis from hit analogs [10].

Accounting for Molecular Dynamics in Drug Design

A significant evolution in SBDD has been the recognition and incorporation of protein flexibility and dynamics, moving beyond static structural snapshots to embrace the intrinsically dynamic nature of biomolecules.

The Relaxed Complex Method

The Relaxed Complex Method (RCM) represents a sophisticated approach that integrates molecular dynamics (MD) simulations with docking studies. This methodology addresses the critical limitation of conventional docking, which typically maintains fixed protein conformations or allows only limited sidechain flexibility [10]. The RCM workflow involves:

- Running extensive MD simulations of the target protein to sample conformational diversity

- Identifying representative structures that capture distinct low-energy states, including cryptic pockets

- Utilizing these structures for docking studies to identify ligands capable of binding to various conformational states [10]

This approach proved particularly valuable in the development of the first FDA-approved HIV integrase inhibitor, where MD simulations revealed significant active site flexibility that informed inhibitor design [10].
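The second step of the Relaxed Complex Method workflow, picking representative structures from an MD trajectory, is often done by clustering frames on pairwise RMSD. The sketch below uses a simple leader-style clustering as a stand-in. It assumes the frames are already superimposed, it ignores energies, and the function names, the toy coordinates, and the 1.0 Å cutoff are hypothetical.

```python
import math
from typing import List, Sequence, Tuple

Coord = Tuple[float, float, float]
Frame = Sequence[Coord]


def rmsd(a: Frame, b: Frame) -> float:
    """Root-mean-square deviation between two pre-aligned frames."""
    if len(a) != len(b) or len(a) == 0:
        raise ValueError("frames must be non-empty and of equal length")
    squared = sum(
        (p - q) ** 2 for atom_a, atom_b in zip(a, b) for p, q in zip(atom_a, atom_b)
    )
    return math.sqrt(squared / len(a))


def pick_representatives(frames: Sequence[Frame], cutoff: float) -> List[int]:
    """Keep a frame only if it is farther than `cutoff` from every kept frame."""
    representatives: List[int] = []
    for index, frame in enumerate(frames):
        if all(rmsd(frame, frames[rep]) > cutoff for rep in representatives):
            representatives.append(index)
    return representatives


# Toy two-atom "trajectory" with two distinct conformational states.
trajectory = [
    [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)],
    [(0.1, 0.0, 0.0), (1.1, 0.0, 0.0)],
    [(0.0, 3.0, 0.0), (1.0, 3.0, 0.0)],
    [(0.0, 3.2, 0.0), (1.0, 3.2, 0.0)],
]
print(pick_representatives(trajectory, cutoff=1.0))  # [0, 2]
```

Each selected frame would then serve as a separate receptor conformation for docking. Production workflows typically use a trajectory analysis package and a more robust clustering algorithm.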

Advanced Sampling Methods

Conventional MD simulations often struggle to cross substantial energy barriers within practical timeframes. Accelerated molecular dynamics (aMD) methods address this limitation by adding a boost potential to smooth the system's potential energy surface, decreasing energy barriers and accelerating transitions between low-energy states [10]. This enhanced sampling capability enables more efficient exploration of conformational landscapes, including cryptic pockets relevant to allosteric regulation.
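The effect of the boost potential can be shown with a small numerical sketch. The text above does not give a formula, so the functional form used here, ΔV = (E − V)² / (α + E − V) applied only when V is below the threshold energy E, is an assumption taken from the commonly cited aMD formulation. The energy values and the tuning parameter α are hypothetical and in arbitrary units.

```python
def amd_boost(potential: float, threshold: float, alpha: float) -> float:
    """Boost energy added when the potential lies below the threshold.

    Assumed form: (E - V)^2 / (alpha + E - V) for V < E, otherwise 0.
    """
    if alpha <= 0:
        raise ValueError("alpha must be positive")
    if potential >= threshold:
        return 0.0  # the surface is left unchanged above the threshold
    gap = threshold - potential
    return gap * gap / (alpha + gap)


def boosted_potential(potential: float, threshold: float, alpha: float) -> float:
    """Potential energy after adding the boost."""
    return potential + amd_boost(potential, threshold, alpha)


# Hypothetical energy well and barrier top.
well, barrier_top = -100.0, -92.0
threshold, alpha = -90.0, 10.0

original_barrier = barrier_top - well
boosted_barrier = boosted_potential(barrier_top, threshold, alpha) - boosted_potential(
    well, threshold, alpha
)
print(original_barrier, round(boosted_barrier, 2))  # 8.0 3.33
```

Deep wells are raised more than regions near the threshold, so the barrier between them shrinks while the overall shape of the landscape is preserved. Smaller values of α flatten the surface more aggressively.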

Modern Integrative Approaches and Future Directions

Contemporary SBDD has evolved into a multidisciplinary endeavor integrating computational predictions, experimental structural data, and machine learning algorithms.

Machine Learning and Deep Learning

Deep learning methods have introduced transformative capabilities for structure-based drug discovery, particularly through:

Co-folding models: Newer architectures like AlphaFold3, HelixFold3, and Chai simultaneously predict protein structure and protein-ligand binding modes, offering rapid structural insights when experimental approaches prove intractable [7].

Generative models: These systems learn fundamental rules of molecular structure and binding interactions from training data, then create novel molecules tailored to specific protein targets while maintaining chemical validity [8].

A central challenge in modern SBDD involves effectively encoding complete protein structures to distill critical binding site features from structurally irrelevant information [8]. Machine learning approaches demonstrate increasing autonomy in directly incorporating structural information rather than relying on preprocessed features [8].

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagent Solutions for SBDD

| Reagent/Material | Function in SBDD | Application Context |
|---|---|---|
| Crystallization Screening Kits | Empirical identification of crystallization conditions | X-ray crystallography |
| Cryoprotectant Solutions | Protect crystals during cryocooling | Cryogenic crystallography |
| ¹³C-labeled Amino Acid Precursors | Enable specific isotopic labeling for NMR studies | NMR-driven SBDD |
| Gas Dynamic Virtual Nozzles (GDVN) | Produce thin liquid jets for crystal delivery | Serial femtosecond crystallography at XFELs |
| Fixed Target Chips (Silicon/Polymer) | Support microcrystals for serial data collection | Synchrotron serial crystallography |
| Microfluidic Mixers | Enable rapid ligand mixing for time-resolved studies | Mix-and-inject serial crystallography (MISC) |

The evolution of structure-based drug design from its seminal application in captopril development to contemporary integrated approaches represents a remarkable scientific journey. The field has progressed from hypothetical models based on analogous structures to precise atomic-level understanding enabled by advanced structural biology techniques. Modern SBDD now embraces protein dynamics, leverages unprecedented computational resources, and utilizes machine learning to navigate vast chemical spaces. Despite these advances, challenges remain in accurately predicting binding affinities, modeling full flexibility, and accounting for solvation effects and entropy-enthalpy compensation. The continued convergence of experimental structural biology, computational modeling, and artificial intelligence promises to further transform SBDD, enhancing its critical role in developing novel therapeutics against increasingly challenging targets. As technical capabilities expand, the foundational principles established by early successes like captopril continue to inform rational drug design strategies, ensuring SBDD remains at the forefront of pharmaceutical innovation.

Visual Appendices

Experimental Workflow Diagram

Diagram 1: Integrated SBDD Workflow

Technique Evolution Timeline

Diagram 2: SBDD Technique Evolution

Structure-based drug design (SBDD) has established itself as a cornerstone of modern pharmaceutical research, utilizing the three-dimensional structure of biological targets to rationally design therapeutic molecules [15]. However, the traditional drug discovery paradigm remains protracted and costly, often consuming 10–15 years and over $2 billion per approved drug, with a 90% attrition rate in clinical trials [16]. The industry is at a pivotal transformation point, driven by the integration of advanced computational methodologies. This whitepaper examines the key technological and strategic drivers—spearheaded by artificial intelligence (AI) and enhanced molecular modeling—that are now actively reducing discovery timelines and associated costs within the framework of SBDD.

The AI Revolution in Structure-Based Drug Design

Artificial intelligence, particularly generative AI and deep learning, is fundamentally reshaping the SBDD landscape. By translating structural data into predictive insights, AI addresses core bottlenecks in the discovery pipeline.

AI-Driven Protein Structure Prediction

The accuracy of SBDD is contingent on high-quality structural models of the target protein. AI-based prediction tools have dramatically expanded the universe of addressable targets.

- Breakthrough Accuracy: Tools like AlphaFold2 (AF2) and RoseTTAFold deliver structural predictions for protein families, such as G protein-coupled receptors (GPCRs), with transmembrane domain accuracy approaching 1 Å Cα RMSD, rivaling experimental methods in some cases [17].

- Expanding Coverage: While experimental structures are available for about a quarter of the GPCR superfamily, AF2 models now provide coverage for all members, including those with low homology to known structures, thus enabling SBDD for previously intractable targets [17].

- State-Specific Modeling: A significant limitation of early AI predictors was their tendency to produce a single, "average" conformation. Newer extensions, such as AlphaFold-MultiState, now leverage activation state-annotated templates to generate functionally relevant, state-specific structural ensembles, which are critical for designing agonists or antagonists [17].
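
To make the Cα RMSD metric quoted above concrete, the following is a minimal sketch of how it is computed between a predicted and an experimental model. It assumes the two coordinate sets are already superimposed and matched residue by residue; the function name `ca_rmsd` and the toy coordinates are illustrative and do not come from any cited tool.

```python
import math


def ca_rmsd(predicted, experimental):
    """Root-mean-square deviation (in Å) between two matched lists of
    (x, y, z) Cα coordinates. Assumes the structures are already
    superimposed; no optimal alignment is performed here."""
    if len(predicted) != len(experimental) or not predicted:
        raise ValueError("coordinate lists must be non-empty and equal length")
    squared = sum(
        (px - ex) ** 2 + (py - ey) ** 2 + (pz - ez) ** 2
        for (px, py, pz), (ex, ey, ez) in zip(predicted, experimental)
    )
    return math.sqrt(squared / len(predicted))


# Toy example: three Cα atoms, each displaced by 1 Å along x.
experimental = [(0.0, 0.0, 0.0), (3.8, 0.0, 0.0), (7.6, 0.0, 0.0)]
predicted = [(1.0, 0.0, 0.0), (4.8, 0.0, 0.0), (8.6, 0.0, 0.0)]
print(round(ca_rmsd(predicted, experimental), 3))  # 1.0
```

In practice the two structures would first be optimally superimposed (for example with the Kabsch algorithm) before the deviation is measured; that step is omitted here for brevity.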

Generative AI for Molecular Design and Optimization

Generative AI models are accelerating the hit identification and lead optimization phases, which traditionally consume 4–7 years [16].

- Capabilities and Models: These models, including Variational Autoencoders (VAEs), Generative Adversarial Networks (GANs), and Transformers, learn from vast chemical and structural datasets to generate novel, drug-like molecules with optimized properties [16]. They can predict key characteristics such as binding affinity, solubility, and metabolic stability before synthesis.

- Impact on Timelines and Cost: By performing in-silico simulation of millions of compounds, AI condenses months of manual design and screening into days or hours [16]. This capability has been demonstrated in real-world applications; for instance, Insilico Medicine delivered a preclinical candidate for an anti-fibrosis drug in just 13–18 months, a fraction of the traditional 2.5–4-year timeline, and at a cost of approximately $2.6 million [16]. Similarly, Exscientia has reported cutting early design efforts by 70% while slashing associated capital costs by 80% [16].

Table 1: Quantified Impact of Generative AI on Drug Discovery

| Metric | Traditional Timeline/Cost | AI-Accelerated Timeline/Cost | Reduction |
|---|---|---|---|
| Early Hit/Lead Discovery | 4–7 years [16] | 1–2 years [16] | Up to 70% [16] |
| Preclinical Candidate ID | 2.5–4 years [16] | 13–18 months [16] | ~50% [16] |
| Capital Cost (Early Design) | Industry benchmark | AI-driven benchmark | 80% [16] |
| Overall R&D Cost | ~$2.6 billion per approved drug [16] | Projected annual industry savings of $60–110 billion [18] | Significant |

Advanced Computational Methodologies

While AI generates novel candidates, physics-based computational methods are critical for validating and optimizing these designs, creating a powerful synergistic workflow.

Molecular Dynamics for Dynamic Insight

A significant challenge in SBDD is the static nature of crystal structures. Proteins are dynamic, and their movement is often essential for function.

- Capturing Flexibility: Molecular dynamics (MD) simulations, powered by high-performance software like GROMACS, model the physical movements of atoms and molecules over time [19]. This provides critical insights into protein flexibility, ligand binding modes, and molecular interactions that static models cannot capture.

- Advanced Sampling Techniques: Methods such as Steered MD and free energy perturbation (FEP) calculations allow researchers to investigate specific molecular processes, such as ligand unbinding, and to predict with high accuracy the relative binding free energies of a congeneric series of compounds [20] [19]. This enhances the ability to rationally optimize lead compounds for potency.
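
As a rough illustration of the idea behind FEP, the sketch below applies the exponential-averaging (Zwanzig) estimator, ΔG = -kT ln⟨exp(-(U_B - U_A)/kT)⟩_A, and combines two such legs into a relative binding free energy through a thermodynamic cycle. The function names and the sampled energy differences are hypothetical; production FEP workflows typically split the transformation into many intermediate windows and use more robust estimators, so this is a single-step toy version only.

```python
import math

KB_KCAL = 0.0019872041  # Boltzmann constant in kcal/(mol*K)


def zwanzig_delta_g(delta_u, temperature=298.15):
    """Free energy change A -> B (kcal/mol) from energy differences
    U_B - U_A sampled in state A, via exponential averaging."""
    kt = KB_KCAL * temperature
    # Shift by the minimum for numerical stability of the exponentials.
    shift = min(delta_u)
    mean_exp = sum(math.exp(-(du - shift) / kt) for du in delta_u) / len(delta_u)
    return shift - kt * math.log(mean_exp)


def relative_binding_free_energy(du_complex, du_solvent, temperature=298.15):
    """ddG_bind = dG(A->B in the complex) - dG(A->B in solvent)."""
    return (zwanzig_delta_g(du_complex, temperature)
            - zwanzig_delta_g(du_solvent, temperature))


# Hypothetical energy differences U_B - U_A (kcal/mol) sampled in state A.
du_complex = [-1.8, -1.4, -1.6, -1.5, -1.7]
du_solvent = [-0.6, -0.4, -0.5, -0.7, -0.3]
ddg = relative_binding_free_energy(du_complex, du_solvent)
print(f"ddG_bind = {ddg:.2f} kcal/mol")
```

A negative value indicates that ligand B is predicted to bind more tightly than ligand A, which is the quantity used to rank members of a congeneric series.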

Addressing Persistent SBDD Challenges

Despite decades of advancement, the practical application of computer-aided drug design (CADD) remains fraught with challenges that require careful expert management [20].

- Data Quality and Preparation: The success of any computational workflow depends on the quality of the input. Challenges include ensuring correct ligand stereochemistry, protonation states, and tautomeric forms during ligand preparation [20] [21].

- Docking and Scoring Limitations: Molecular docking, a cornerstone of SBDD, still struggles with accurate pose prediction and binding affinity estimation (scoring). The output from these tools cannot be used uncritically, and human judgment is essential for interpreting results [20].

- Physical Plausibility Checks: With the rise of AI-based co-folding models (e.g., Boltz-2), new challenges have emerged, such as the generation of poses with incorrect stereochemistry or physically implausible geometries [21]. Best practices now mandate automated checks using tools like PoseBusters and post-prediction refinement to add hydrogens and "clean up" geometries [21].
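
One kind of plausibility test mentioned above can be sketched as a simple steric-clash check between ligand and protein atoms. This is not the PoseBusters implementation; the 2.0 Å cutoff, the function name `find_clashes`, and the coordinates are illustrative assumptions.

```python
import math


def find_clashes(ligand_atoms, protein_atoms, min_distance=2.0):
    """Return (ligand_index, protein_index, distance) for every atom
    pair closer than min_distance (Å). Inputs are lists of (x, y, z)."""
    clashes = []
    for i, lig in enumerate(ligand_atoms):
        for j, prot in enumerate(protein_atoms):
            d = math.dist(lig, prot)
            if d < min_distance:
                clashes.append((i, j, d))
    return clashes


# Hypothetical coordinates: ligand atom 0 sits 1.2 Å from protein atom 0.
ligand = [(0.0, 0.0, 0.0), (3.0, 0.0, 0.0)]
protein = [(0.0, 1.2, 0.0), (6.0, 0.0, 0.0)]
clashes = find_clashes(ligand, protein)
print(clashes)
```

A pose that returns any clashes would be flagged for refinement or rejection. Real validation suites combine many such tests (bond lengths, angles, chirality, internal strain) rather than relying on a single distance cutoff.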

Implementation and Best Practices

Translating these technological drivers into tangible reductions in timeline and cost requires robust, enterprise-grade strategies and workflows.

Integrated SBDD Workflow

The most significant efficiency gains are realized when individual technologies are integrated into a seamless, iterative workflow. The following diagram outlines a modern, AI-enhanced SBDD cycle that connects target identification to lead optimization through continuous computational validation.

The Scientist's Toolkit: Essential Research Reagents and Solutions

Successful execution of the SBDD workflow relies on a suite of specialized computational tools and data resources.

Table 2: Key Research Reagent Solutions for Modern SBDD

| Tool/Resource Category | Example(s) | Primary Function in SBDD |
|---|---|---|
| Protein Structure Databases | Protein Data Bank (PDB), AlphaFold DB | Source of experimental and predicted 3D protein structures for target modeling and analysis [20] [17]. |
| Structure Prediction & Modeling | AlphaFold2, RoseTTAFold, OpenFold | Generate accurate 3D structural models of target proteins, enabling SBDD for targets without experimental structures [17]. |
| Molecular Docking & Pose Generation | MOE, Boltz-1/2 | Predict the binding orientation (pose) of a small molecule within a protein's binding site [21] [19]. |
| Molecular Dynamics & Simulation | GROMACS, AMBER, Desmond (Schrödinger) | Simulate the dynamic behavior of proteins and protein-ligand complexes to assess stability and binding mechanics [19]. |
| Free Energy Calculations | Free Energy Perturbation (FEP) | Accurately compute relative binding free energies to guide lead optimization [20]. |
| AI-Driven Molecular Design | VAEs, GANs, Transformers | Generate novel, synthetically accessible drug-like molecules and optimize their properties in silico [16]. |
| Structure Validation & Analysis | PoseBusters, AIMNet2 | Automatically check generated protein-ligand complexes for physical plausibility and calculate strain energy [21]. |

Strategic Enablers and Collaborative Ecosystems

Beyond specific tools, broader strategic initiatives are key drivers of efficiency.

- Unified Data Platforms: Centralized enterprise platforms that standardize, organize, and provide unified access to structural and chemical data are critical for overcoming data fragmentation, a major historical bottleneck [19].

- Cross-Functional Collaboration: Success hinges on seamless collaboration between medicinal chemists, biophysicists, and computational biologists. Streamlined data-sharing platforms that break down silos are essential [22] [19].

- Investment in M&A and Partnerships: Robust mergers and acquisitions (M&A) activity and strategic partnerships, as seen in 2024-2025, reinforce innovation pipelines, provide access to novel technologies, and accelerate time to market [22].

The confluence of artificial intelligence and advanced physics-based computational methods is ushering in a transformative era for structure-based drug design. The key drivers—AI-powered protein structure prediction, generative chemistry, dynamic molecular simulations, and integrated enterprise platforms—are no longer theoretical concepts but are actively demonstrating quantified impacts. By adopting these technologies within a strategic, collaborative framework, researchers and drug development professionals can realistically aim to slash discovery timelines by over half and reduce associated costs by billions of dollars. This progression is foundational to the evolution of SBDD, paving the way for a more efficient and productive future in pharmaceutical R&D, ultimately enabling the faster delivery of vital therapies to patients.

Structure-based drug design (SBDD) has historically relied on high-resolution three-dimensional protein structures to rationally design and optimize therapeutic compounds. For decades, X-ray crystallography served as the predominant technique, despite significant limitations for membrane proteins, large complexes, and dynamic targets. The past decade has witnessed a revolutionary transformation with the concurrent emergence of two transformative technologies: cryo-electron microscopy (cryo-EM) and artificial intelligence (AI)-based structure prediction as exemplified by AlphaFold. This paradigm shift has dramatically expanded the universe of available protein structures, moving SBDD from a target-limited endeavor to a discovery-driven science that can tackle previously intractable biological targets. These technologies are not merely incremental improvements but represent fundamental changes in how researchers obtain structural information, enabling the study of complex biological systems in near-native states and providing structural insights for virtually any protein encoded by the human genome. The integration of these data-rich structural resources is now reshaping the entire drug discovery pipeline, from target identification and validation to lead optimization, offering unprecedented opportunities for therapeutic innovation [23] [24] [10].

The Resolution Revolution: Cryo-Electron Microscopy

Technical Foundations and Workflow

Cryo-electron microscopy has undergone a "resolution revolution" since around 2013, transforming it from a low-resolution technique suitable for large complexes to a method capable of determining atomic-resolution structures. This breakthrough stems from major advancements in direct electron detectors, advanced image processing algorithms, and sample preparation techniques [25] [23]. The method involves flash-freezing protein samples in vitreous ice to preserve their native structure, followed by imaging thousands of individual particles and using computational methods to reconstruct three-dimensional densities [24].

The standard single-particle cryo-EM workflow encompasses several critical stages:

- Sample Preparation: A purified protein solution is applied to an EM grid and rapidly vitrified in liquid ethane, preserving hydration and native structure.

- Grid Screening and Data Collection: Grids are screened for optimal ice thickness and particle distribution. Thousands of micrographs are collected using advanced microscopes equipped with direct electron detectors.

- Data Processing and 3D Reconstruction: Individual particle images are picked, classified, and aligned to generate a three-dimensional electron density map through iterative refinement.

- Model Building and Validation: An atomic model is built into the density map, refined, and validated against the map and geometric constraints [25] [24].

The following diagram illustrates this integrated experimental and computational workflow:

Quantitative Impact on Structural Biology

The impact of cryo-EM on structural biology is quantitatively demonstrated by the exponential growth of structures deposited in public databases. As of August 2023, nearly 24,000 single-particle EM maps and 15,000 associated structural models had been deposited in the Electron Microscopy Data Bank (EMDB) and Protein Data Bank (PDB), respectively [25]. The technology has successfully resolved structures of 52 antibody-target and 9,212 ligand-target complexes, with approximately 80% of these complex maps achieving resolutions better than 4 Å—sufficient for informing drug design efforts [25]. The highest resolution achieved by cryo-EM currently stands at 1.15 Å for human apoferritin, demonstrating the method's capability to reach true atomic resolution [25] [24].

Table 1: Cryo-EM Performance Metrics and Applications in Drug Discovery

| Metric | Statistical Data | Significance for SBDD |
|---|---|---|
| Total EM Maps in EMDB | ~24,000 (as of Aug 2023) [25] | Enables study of large complexes and membrane proteins |
| Resolution Distribution | ~90% of maps at 2–5 Å resolution [25] | Sufficient for atomic modeling and drug design |
| Ligand Complex Structures | 9,212 ligand-target complexes [25] | Direct visualization of drug-binding sites and interactions |
| Highest Achieved Resolution | 1.15 Å (human apoferritin) [25] | Comparable to high-quality crystal structures |
| Sample Consumption | 3 μL of 0.5–2 mg/mL sample/grid (5–15 μg total) [25] | Enables work with difficult-to-express targets |

Advantages for Challenging Drug Targets

Cryo-EM offers distinct advantages for studying targets that have historically challenged crystallographic methods. Membrane proteins, particularly G protein-coupled receptors (GPCRs) and ion channels, represent one of the most significant areas of impact. These targets are notoriously difficult to crystallize but constitute over 30% of current drug targets [24]. Cryo-EM can capture these proteins in multiple conformational states under near-physiological conditions, providing insights into activation mechanisms and allosteric regulation that are crucial for drug design [25] [23]. The technique has also proven invaluable for studying large macromolecular complexes such as the ribosome, spliceosome, and viral machinery, opening new avenues for targeting complex biological processes with therapeutics [23] [24].

The Predictive Revolution: AlphaFold and AI-Based Structure Prediction

Methodology and Global Impact

AlphaFold2, developed by Google DeepMind, was unveiled at the CASP14 assessment in 2020, with its methods paper and source code following in 2021, and represents a breakthrough in protein structure prediction using deep learning algorithms. The system leverages evolutionary information from multiple sequence alignments, physical constraints of protein folding, and sophisticated attention-based neural networks to predict atomic-level protein structures from amino acid sequences with remarkable accuracy [26] [23]. The subsequent development of AlphaFold3 has extended these capabilities to include predictions of protein-ligand and protein-nucleic acid complexes [23].

The global impact of AlphaFold is demonstrated by its widespread adoption across the scientific community. The AlphaFold database, hosted by the European Molecular Biology Laboratory's European Bioinformatics Institute (EMBL-EBI), contains over 240 million predicted structures and has been accessed by 3.3 million users across more than 190 countries, including over one million users from low- and middle-income countries [26]. This unprecedented democratization of structural information has fundamentally changed the accessibility of protein models for researchers worldwide.

Quantitative Assessment of AlphaFold's Structural Coverage

AlphaFold's structural predictions have achieved unprecedented coverage of the protein universe. The database now provides models for over 214 million unique protein sequences, essentially covering the entire UniProt knowledgebase [10]. This represents a dramatic expansion beyond the approximately 200,000 experimental structures available in the PDB, which correspond to only about 60,000 unique protein sequences [10]. The scale of this resource has transformed bioinformatics and target selection, enabling researchers to work with structural models for virtually any protein of interest.

Table 2: AlphaFold Database Metrics and Applications in SBDD

| Metric | Data | Implication for Drug Discovery |
|---|---|---|
| Total Predictions | Over 214 million unique protein structures [10] | Near-complete coverage of known proteomes |
| Database Access | 3.3 million users from 190+ countries [26] | Democratizes structural information globally |
| Citation Impact | ~40,000 journal articles citing AlphaFold2 (2024) [26] | Rapid adoption across biological sciences |
| Structural Accuracy | Comparable to experimental structures for many targets [27] | Provides reliable starting points for drug design |
| Comparative Coverage | PDB: ~200,000 structures; AlphaFold: 214 million+ [10] | Expands target space by orders of magnitude |

Practical Integration into SBDD Workflows

Experimental Complementarity and Validation

While both technologies provide structural information, they exhibit complementary strengths in SBDD applications. Cryo-EM excels at determining experimental structures of complex macromolecular assemblies, membrane proteins, and multiple conformational states, often with bound ligands or drugs [25] [24]. AlphaFold provides computational predictions for proteins that may be difficult to express, purify, or crystallize, offering complete genomic coverage but typically without ligands or consideration of conformational dynamics [27] [23].

A powerful trend emerging in modern SBDD is the integration of both approaches. For instance, AlphaFold predictions can be used to resolve uncertain regions in cryo-EM maps, while cryo-EM experimental data can validate and refine AlphaFold models [23]. This synergistic approach is particularly valuable for studying conformational dynamics and allosteric mechanisms, where experimental data can guide the interpretation of computational models.

Practical Refinement for Drug Design Applications

Direct use of AlphaFold models for SBDD presents specific challenges that require computational refinement. A primary limitation is that standard AlphaFold predictions do not include ligand-bound conformations, which often differ significantly from apo-protein structures due to induced-fit binding [27]. As noted by Schrödinger's Edward Miller, "proteins change their shapes, sometimes quite substantially, when different drug molecules bind to them. As it exists now, AlphaFold2 is unable to model these very important effects" [27].

Successful applications in prospective drug discovery campaigns require physics-based refinement using molecular dynamics-based induced fit docking (IFD-MD) and free energy perturbation (FEP+) calculations to reorganize the binding site around specific ligands [27]. For example, in Schrödinger's MALT1 program, AlphaFold structures were used to resolve uncertainties in experimental structures, enabling more accurate FEP+ calculations to predict compound activity [27]. Similarly, for GPCR targets—highly dynamic membrane proteins of major pharmaceutical interest—AlphaFold models require significant refinement with known ligands to achieve accuracy comparable to experimental structures for prospective design [27].

Essential Research Toolkit

The effective implementation of these technologies requires specialized reagents, instrumentation, and computational resources. The following table summarizes key components of the modern structural biologist's toolkit for leveraging the cryo-EM and AlphaFold revolutions:

Table 3: Essential Research Toolkit for Modern Structural Biology in SBDD

| Tool Category | Specific Examples | Function in SBDD Workflow |
|---|---|---|
| Cryo-EM Hardware | Direct electron detectors (e.g., Gatan K3, Falcon 4) [23] | High-resolution image acquisition with minimal radiation damage |
| Grid Preparation | Functionalized grids (e.g., UltrAuFoil) [25] | Address preferred orientation problems and improve particle distribution |
| Processing Software | RELION, cryoSPARC, EMAN2 [25] [24] | Single-particle analysis, 2D/3D classification, and map refinement |
| AI Prediction | AlphaFold2, AlphaFold3, RoseTTAFold [26] [23] | De novo protein structure prediction from sequence |
| Refinement Tools | Molecular dynamics (GROMACS) [3], IFD-MD, FEP+ [27] | Refine protein-ligand complexes and predict binding affinities |
| Validation Resources | PDB, EMDB, MolProbity [25] | Structure validation and quality assessment |

Emerging Trends and Integration

The future of structural biology in SBDD lies in the deeper integration of cryo-EM, AI prediction, and complementary biophysical techniques. Several trends are shaping this evolution:

- Time-Resolved Cryo-EM: Technical advances are enabling the capture of transient intermediate states in biochemical reactions, providing dynamic structural information previously inaccessible [25].

- AI-Enhanced Cryo-EM Processing: Machine learning algorithms are increasingly being applied to automate particle picking, classification, and model building, addressing key bottlenecks in cryo-EM workflows [24].

- Integrated Structural Biology: Hybrid approaches that combine cryo-EM, NMR, X-ray crystallography, and computational predictions are providing comprehensive insights into protein dynamics and function [13] [10].

- Federated Data Ecosystems: Collaborative platforms are emerging that enable organizations to share structural information while protecting proprietary interests, accelerating discovery across the industry [3].

The combination of these technologies is particularly powerful for studying intrinsically disordered proteins, allosteric mechanisms, and complex molecular machines that have historically resisted structural characterization. As these methods mature, they will enable increasingly accurate predictions of drug binding affinities, specificity, and molecular mechanisms.

The concurrent revolutions in cryo-EM and AI-based structure prediction have fundamentally transformed the foundations of structure-based drug design. The dramatic expansion of available protein structures—from thousands in the PDB to hundreds of millions through AlphaFold—has democratized structural information and enabled SBDD approaches for previously inaccessible targets [26] [10]. Meanwhile, cryo-EM has provided experimental validation for many of these predictions while enabling the structural characterization of complex macromolecular assemblies and membrane proteins at unprecedented resolutions [25] [24].

The integration of these technologies into cohesive SBDD workflows represents the new frontier in drug discovery. Organizations that effectively leverage both experimental cryo-EM structures and computational AlphaFold models, while investing in the necessary refinement and validation methodologies, are positioned to accelerate the discovery of novel therapeutics against challenging targets. As these technologies continue to evolve and integrate with other advanced methods such as molecular dynamics simulations and AI-driven virtual screening, they will further compress drug discovery timelines and increase success rates, ultimately delivering innovative medicines to patients more rapidly and efficiently. The data revolution in structural biology has indeed provided the foundation for a new era of structure-based drug design.

Structure-Based Drug Design (SBDD) has revolutionized modern therapeutics by enabling the rational development of molecules that precisely interact with biological targets, moving beyond traditional serendipitous discovery approaches [28]. At the heart of this paradigm shift are membrane proteins—particularly G protein-coupled receptors (GPCRs) and ion channels—which represent the largest and most therapeutically significant class of drug targets in the human proteome [29]. These proteins mediate crucial physiological processes including cellular communication, signal transduction, and ion homeostasis, making them indispensable targets for treating numerous diseases [30] [29].

The structural elucidation of membrane proteins has historically presented substantial challenges due to their conformational flexibility, low natural abundance, and the technical difficulties associated with crystallizing membrane-embedded proteins [29] [7]. Recent breakthroughs in structural biology, particularly in cryo-electron microscopy (cryo-EM) and computational prediction methods, have dramatically accelerated SBDD for these targets by providing high-resolution structural insights [31] [29]. This section examines the current landscape of membrane protein-targeted SBDD, focusing on GPCRs and ion channels, with emphasis on structural advances, experimental methodologies, and emerging computational approaches that are expanding the frontiers of drug discovery.

Structural Biology Advances for Membrane Proteins

Experimental Structure Determination Methods

Membrane protein structural biology has been transformed by multiple complementary methodologies that enable researchers to overcome historical bottlenecks. X-ray crystallography pioneered the field with the first structures of rhodopsin and the β2 adrenergic receptor (β2AR), but requires protein engineering with fusion proteins, antibody fragments, or thermostabilizing mutations to facilitate crystallization [29]. Despite its challenges, crystallography remains valuable for obtaining high-resolution structures of protein-ligand complexes when suitable crystals can be grown [7].

Cryo-electron microscopy (cryo-EM) has emerged as a revolutionary alternative that does not rely on protein crystallization [29]. This method visualizes detergent- or nanodisc-solubilized proteins in near-native states and excels at determining structures of larger protein complexes, including GPCR-G protein complexes that were previously intractable [29]. The Protein Data Bank has experienced exponential growth in GPCR complex structures, with 523 of 554 complexes determined by cryo-EM as of November 2023 [29]. Nuclear Magnetic Resonance (NMR) spectroscopy provides complementary dynamic information in solution environments, detecting conformational changes through stable-isotope "probes" incorporated into receptors [29].

Computational Structure Prediction

Advances in machine learning now enable accurate protein structure prediction from sequence data alone [7]. Models like AlphaFold3, HelixFold3, and Chai can perform protein-ligand co-folding, simultaneously predicting protein structure and binding modes [7]. While accuracy may be lower than experimental methods, these computational approaches dramatically accelerate SBDD, particularly for targets resistant to experimental structure determination [7]. Recent research has successfully designed soluble analogues of complex membrane protein folds (including GPCRs) using computational pipelines that invert AlphaFold2 networks coupled with ProteinMPNN sequence optimization, effectively expanding the functional soluble fold space [31].

Table 1: Membrane Protein Structure Determination Methods

| Method | Resolution | Key Applications | Advantages | Limitations |
|---|---|---|---|---|
| X-ray Crystallography | Atomic (~1–3 Å) | Protein-ligand complexes with small molecules | High resolution; Well-established | Requires crystallization; Challenging for complexes |
| Cryo-electron Microscopy | Near-atomic (~2–4 Å) | Large protein complexes (e.g., GPCR-G protein) | No crystallization needed; Native-like environment | Expensive equipment; Sample preparation challenges |
| NMR Spectroscopy | Atomic to residue level | Protein dynamics, intermediate states | Studies dynamics in solution | Limited to smaller proteins; Technical complexity |
| Computational Prediction | Residue level (confidence scores) | Rapid structure generation, ligand co-folding | Fast; No experimental setup required | Accuracy varies; Validation required |

G Protein-Coupled Receptors (GPCRs)

GPCR Signaling Mechanisms

GPCRs, characterized by their seven-transmembrane (7TM) helix architecture, mediate cellular responses to diverse extracellular signals including photons, ions, lipids, neurotransmitters, and hormones [29]. Their signal transduction occurs through a sophisticated allosteric mechanism spanning approximately 40 Å between extracellular stimulus sites and intracellular signaling events [29]. GPCRs primarily signal through heterotrimeric G proteins and arrestins, creating complex signaling profiles fundamental to physiological processes [29].

The canonical G protein activation cycle begins with agonist binding, inducing conformational changes that facilitate G protein recruitment [29]. The activated GPCR catalyzes GDP/GTP exchange on the Gα subunit, triggering dissociation of Gα-GTP from the Gβγ dimer [29]. Both components modulate effector proteins: Gα-GTP regulates enzymes like adenylyl cyclase (AC) and phospholipase C (PLC), while Gβγ modulates various signaling pathways [29]. Signal termination occurs through GTP hydrolysis by Gα, followed by Gαβγ heterotrimer reformation [29]. For signal regulation, activated GPCRs undergo C-terminal phosphorylation by G protein-coupled receptor kinases (GRKs), promoting β-arrestin binding that desensitizes the receptor by blocking further G protein coupling, drives its internalization via clathrin-mediated endocytosis, and simultaneously scaffolds G-protein-independent signaling through MAP kinases and other pathways [29].

Figure 1: GPCR Signaling Pathways and Regulation

Biased Signaling in GPCRs

Biased signaling represents a paradigm shift in GPCR pharmacology, occurring when ligands selectively activate specific downstream pathways (either G proteins or β-arrestins) while avoiding others [32]. This selectivity offers tremendous therapeutic potential for developing drugs with improved efficacy and reduced side effects [32]. Structural studies reveal that biased ligands induce distinct receptor conformations and microswitch transitions that favor engagement with specific transducers [32]. Key mechanisms include intracellular interface remodeling and allosteric modulation that shape pathway-selective signaling outcomes [32].

The structural basis of biased signaling in class A GPCRs has been elucidated through cryo-EM studies combined with functional assays like bioluminescence resonance energy transfer (BRET) and NanoLuc Binary Technology (NanoBiT) [32]. These approaches reveal how distinct ligand binding modes reshape receptor conformations to favor specific transducer engagement, enabling the rational design of biased therapeutics through structure-guided approaches [32].

GPCR-Targeted Drug Discovery

Approximately 34% of FDA-approved drugs target GPCRs, with modulators in clinical trials experiencing exponential growth [29]. GPCR drug discovery has evolved from targeting orthosteric sites (conserved binding pockets for endogenous ligands) to exploiting allosteric sites that offer superior subtype selectivity and reduced side effects [29]. More recently, bitopic ligands that simultaneously engage both orthosteric and allosteric sites have emerged with advantages including improved affinity, enhanced selectivity, and biased signaling capabilities [29].

Table 2: GPCR-Targeted Drug Discovery Approaches

| Approach | Binding Site | Key Features | Advantages | Challenges |
|---|---|---|---|---|
| Orthosteric Ligands | Endogenous ligand site | Competitive with native ligands; High efficacy | Well-established; Potent activity | Limited subtype selectivity; More side effects |
| Allosteric Modulators | Topographically distinct sites | Modulate orthosteric ligand effects; Saturable effect | High selectivity; Fewer side effects | More complex screening; Subtler effects |
| Bitopic Ligands | Both orthosteric and allosteric | Single molecule with two pharmacophores | Improved affinity; Enhanced selectivity | Complex design; Optimization challenges |

Ion Channels and Direct G Protein Crosstalk

Ion Channel Regulation by G Proteins

Ion channels constitute another major class of membrane protein drug targets that regulate electrical signaling and ion homeostasis. Recent structural biology breakthroughs have illuminated unprecedented direct crosstalk between GPCRs and ion channels via G proteins [30]. Cryo-EM structures of complexes like TRPC5-Gαi3, GIRK-Gβγ, and TRPM3-Gβγ have elucidated molecular mechanisms whereby Gα or Gβγ subunits directly bind to and modulate ion channel activity [30]. This direct regulation represents a more efficient signaling mechanism compared to traditional second messenger systems.

Beyond heterotrimeric G proteins, the TRPV4-RhoA complex structure reveals that small G proteins can also directly modulate ion channels [30]. These structural insights create opportunities for developing novel therapeutics targeting specific ion channel-G protein complexes, although the physiological roles of these interactions require further characterization to fully exploit their pharmacological potential [30].

Figure 2: Ion Channel Regulation Pathways

Computational Methodologies for SBDD

Structure-Based Virtual Screening (SBVS)

Structure-Based Virtual Screening (SBVS) has become an essential component of modern drug discovery, offering a cost-effective and efficient alternative to high-throughput screening [28]. The typical SBVS workflow begins with protein preparation—processing 3D target structures from experimental data or predictions by assigning protonation states, optimizing hydrogen bonds, and treating water molecules [28]. This is followed by library preparation where compound collections are processed to assign proper stereochemistry, tautomeric, and protonation states [28].

The core SBVS process involves docking each compound into the target binding site to predict binding poses, followed by scoring to approximate binding affinity using empirical or knowledge-based functions [28]. Advanced approaches include ensemble docking (using multiple receptor conformations), induced fit docking (accommodating side-chain flexibility), and consensus docking (combining multiple scoring functions) to improve accuracy [28]. Successful SBVS campaigns have directly identified nM inhibitors, demonstrating the method's growing capability to deliver high-quality leads [28].
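As an illustration of consensus docking, the sketch below combines several scoring functions by averaging each compound's rank. It is a minimal example under stated assumptions, not the procedure of any particular docking package: the function name `consensus_rank`, the compound identifiers, and the scores are all hypothetical, and lower scores are assumed to indicate better predicted binding.

```python
from statistics import mean


def consensus_rank(score_tables):
    """Average per-compound ranks across several scoring functions.

    score_tables maps a scoring-function name to {compound_id: score}.
    Assumption: a lower (more negative) score means a better predicted binder.
    Returns compound ids ordered from best to worst mean rank.
    """
    ranks = {}
    for scores in score_tables.values():
        ordered = sorted(scores, key=scores.get)  # best score first
        for position, compound in enumerate(ordered, start=1):
            ranks.setdefault(compound, []).append(position)
    mean_rank = {compound: mean(r) for compound, r in ranks.items()}
    # Ties in mean rank are broken alphabetically for reproducibility.
    return sorted(mean_rank, key=lambda c: (mean_rank[c], c))


# Hypothetical scores from two scoring functions.
example = {
    "function_a": {"cpd1": -9.1, "cpd2": -7.4, "cpd3": -8.2},
    "function_b": {"cpd1": -8.0, "cpd2": -8.5, "cpd3": -7.9},
}
ranking = consensus_rank(example)
print(ranking)
```

Rank averaging sidesteps the fact that different scoring functions report values on different scales. Other combination schemes, such as voting or score normalization, are equally plausible choices.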

Emerging AI and LLM Applications

Artificial intelligence is extending SBDD through frameworks such as Collaborative Intelligence Drug Design (CIDD), which combines the structural precision of 3D-SBDD models with the chemical reasoning capabilities of large language models (LLMs) [33]. This approach addresses a critical limitation of current SBDD models, which often produce molecules with favorable docking scores but poor drug-like properties due to distorted substructures [33]. The CIDD framework begins with a 3D-SBDD model generating initial molecules, then refines them through LLM-powered modules for interaction analysis, design improvement, and reflection [33]. When evaluated on the CrossDocked2020 dataset, CIDD achieved a 37.94% success ratio, compared with 15.72% for the previous state of the art, while improving both binding interactions and drug-likeness [33].

Experimental Protocols and Methodologies

Structure-Based Virtual Screening Protocol

A comprehensive SBVS protocol involves multiple stages of preparation and analysis [28]:

Protein Preparation

- Obtain 3D structure from PDB or computational prediction

- Assign protonation states using PROPKA [28] or H++ [28]

- Optimize hydrogen bond network with PDB2PQR [28]

- Add hydrogen atoms, partial charges, and fill missing loops/side chains

- Decide on water molecule treatment (remove, retain, or predict positions)

- Minimize structure to relieve steric clashes

Compound Library Preparation

- Select appropriate library (e.g., ZINC, Enamine, in-house collections)

- Generate stereoisomers, tautomers, and protonation states at physiological pH

- Filter based on drug-likeness (Lipinski's Rule of Five) and undesirable substructures

- Perform conformational sampling to generate 3D conformers
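The drug-likeness filter in the list above can be sketched as follows. This is a minimal illustration of Lipinski's Rule of Five applied to precomputed descriptors. The `Descriptors` class, the example compounds, and their values are hypothetical, and allowing one violation is a common convention rather than something specified in the protocol. In practice the descriptors would come from a cheminformatics toolkit.

```python
from dataclasses import dataclass


@dataclass
class Descriptors:
    """Precomputed properties for one compound (hypothetical container)."""
    name: str
    mol_weight: float   # molecular weight in Da
    logp: float         # octanol-water partition coefficient
    h_donors: int       # hydrogen-bond donors
    h_acceptors: int    # hydrogen-bond acceptors


def ro5_violations(d):
    """Count how many of the four Rule-of-Five limits are exceeded."""
    return sum([
        d.mol_weight > 500,
        d.logp > 5,
        d.h_donors > 5,
        d.h_acceptors > 10,
    ])


def passes_ro5(d, max_violations=1):
    """Assumption: tolerate at most one violation, a common convention."""
    return ro5_violations(d) <= max_violations


# Hypothetical library entries with made-up descriptor values.
library = [
    Descriptors("small_polar", 320.4, 2.1, 2, 5),
    Descriptors("large_greasy", 712.9, 6.8, 3, 9),
]
kept = [d.name for d in library if passes_ro5(d)]
print(kept)
```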

Docking and Scoring

- Select docking program (AutoDock Vina, Glide, GOLD, or DiffDock)

- Define binding site using native ligand or pocket detection algorithms

- Set appropriate search space and exhaustiveness parameters

- Execute docking runs with multiple poses per compound

- Score poses using empirical, force field, or knowledge-based functions
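When the binding site is defined from a native ligand, the search space is often taken as a box around the ligand's atoms. The sketch below shows one way to compute such a box. The function name `docking_box`, the 5 Å padding, and the coordinates are assumptions chosen for illustration. Programs such as AutoDock Vina accept a box center and edge lengths in ångströms, but the appropriate padding depends on the target and ligand size.

```python
def docking_box(ligand_coords, padding=5.0):
    """Return (center, size) of an axis-aligned box around ligand atoms.

    ligand_coords: iterable of (x, y, z) tuples in angstroms.
    padding: margin added on every side (assumed value, tune per target).
    """
    xs, ys, zs = zip(*ligand_coords)
    mins = (min(xs), min(ys), min(zs))
    maxs = (max(xs), max(ys), max(zs))
    center = tuple((lo + hi) / 2 for lo, hi in zip(mins, maxs))
    size = tuple((hi - lo) + 2 * padding for lo, hi in zip(mins, maxs))
    return center, size


# Hypothetical heavy-atom coordinates of a co-crystallized ligand.
coords = [(10.0, 4.0, -2.0), (14.0, 6.0, 0.0), (12.0, 8.0, 2.0)]
center, size = docking_box(coords)
print(center, size)
```

A box that is too small can exclude valid poses, while an oversized box increases search cost and may require higher exhaustiveness settings.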

Post-Processing and Hit Selection

- Visual inspection of top-ranking poses for interaction validity

- Filter based on chemical moieties, metabolic liabilities, and physicochemical properties

- Assess lead-likeness and chemical diversity

- Select final compounds for experimental validation

Cryo-EM Workflow for Membrane Protein Complexes

The cryo-EM structure determination pipeline for membrane protein-ligand complexes involves [29]:

Sample Preparation

- Express and purify target membrane protein using detergent solubilization

- Incorporate into nanodiscs or amphipols for stabilization

- Form complex with binding partners (G proteins, arrestins) and ligands

- Optimize grid preparation with vitrification

Data Collection

- Screen grids for ice quality and particle distribution

- Collect multi-frame movies using direct electron detector

- Target 50,000-100,000 particles per dataset

Image Processing

- Motion correction and CTF estimation

- Automated particle picking

- 2D classification to remove junk particles

- Initial model generation

- 3D classification and refinement

- Bayesian polishing and CTF refinement

- Map sharpening and validation

Model Building and Refinement

- De novo model building or docking of existing structures

- Iterative real-space refinement

- Validation using MolProbity and EMRinger

- Deposition to PDB and EMDB

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Research Reagent Solutions for Membrane Protein SBDD

| Reagent/Category | Function/Application | Examples/Specifics |
|---|---|---|
| Stabilized Receptor Mutants | Enables crystallization and structural studies | Thermostabilized GPCR mutants (e.g., β1AR and A2A variants) |
| G Protein Mimetics | Stabilizes active GPCR conformations | NanoBiT, Mini-G proteins, camelid nanobodies |
| Cryo-EM Grids | Sample support for electron microscopy | UltraFoil, Quantifoil grids with various hole sizes |
| Detergents & Amphipols | Membrane protein solubilization | DDM, LMNG, amphipol A8-35, styrene-maleic acid copolymers |
| Functional Assay Systems | Measures signaling pathway activation | BRET, FRET, NanoLuc Binary Technology (NanoBiT) |
| Computational Tools | Protein structure prediction and docking | AlphaFold3, HelixFold3, AutoDock Vina, DiffDock |

The target landscape of membrane proteins, particularly GPCRs and ion channels, continues to evolve through structural biology breakthroughs and computational methodologies. The integration of cryo-EM, machine learning prediction, and advanced virtual screening has created new opportunities for rational drug design against these therapeutically vital targets. Emerging approaches including biased ligand design, allosteric modulation, and direct targeting of ion channel-G protein complexes represent the next frontier in membrane protein drug discovery. Furthermore, collaborative frameworks that combine structural models with the domain knowledge of large language models promise to bridge the gap between binding affinity optimization and drug-like properties, potentially accelerating the delivery of novel therapeutics to patients. As these technologies mature, SBDD for membrane proteins will continue to expand its impact across therapeutic areas, reinforcing its role as a cornerstone of modern pharmaceutical research and development.

Methodologies in Action: Core Techniques and Practical Applications

Structure-based drug design (SBDD) has become an essential tool for fast and cost-efficient lead discovery and optimization [28]. By utilizing knowledge of the three-dimensional (3D) structure of biological targets, SBDD aims to understand the molecular basis of disease and employs computational methods to investigate ligand-protein interactions at an atomic level [28]. Within this framework, structure-based virtual screening (SBVS) serves as an efficient alternative to experimental high-throughput screening (HTS), enabling researchers to computationally screen large libraries of drug-like compounds against targets of known structure and experimentally test only those predicted to bind well [28] [34].

The application of rational, structure-based drug design has proven more efficient than traditional discovery methods because it delivers new drug candidates more quickly and cost-effectively [28]. Virtual screening is broadly classified into two categories: ligand-based methods, used when the 3D structure of the receptor is unknown, and structure-based methods, employed when the receptor structure is available [34]. This technical guide focuses specifically on molecular docking as a cornerstone technique in SBVS, addressing its fundamental principles, methodological considerations, and recent advancements.

Fundamental Principles of Molecular Docking

Molecular docking is a computational method that predicts the optimal binding conformation and orientation of a small molecule (ligand) within the binding site of a biological target (receptor) [35]. This technique serves two primary objectives: predicting the binding affinity and conformation of small molecules within a receptor site, and identifying hits from large chemical databases to discover diverse chemical scaffolds [35]. The docking process involves two core computational challenges: sampling (exploring possible conformations of ligands in the receptor binding pocket) and scoring (identifying the correct binding mode and ranking different ligands by estimated binding affinity) [36].

Conformational Search Algorithms

Docking programs employ various conformational search methods to explore the flexibility and spatial arrangement of ligands within binding sites. These algorithms can be broadly categorized into systematic and stochastic approaches [35].

Table 1: Conformational Search Methods in Molecular Docking

| Method Type | Specific Approach | Principle of Operation | Representative Docking Programs |
|---|---|---|---|
| Systematic | Systematic Search | Rotates all possible rotatable bonds by fixed intervals to exhaustively explore conformational space | Glide [35], FRED [35] |
| Systematic | Incremental Construction | Fragments molecules, docks rigid components, then systematically builds linkers | FlexX [35], DOCK [35] |
| Stochastic | Monte Carlo | Uses random sampling and Boltzmann distribution probability for conformation acceptance | Glide [35] |
| Stochastic | Genetic Algorithm | Employs natural selection principles with cross-over and mutation operations | AutoDock [35], GOLD [35] |

Systematic methods thoroughly explore all potential conformations by systematically changing torsional degrees of freedom [35]. While comprehensive, these methods face exponential complexity growth as the number of rotatable bonds increases. Stochastic techniques utilize random sampling and probabilistic methods to explore conformational space, making them more efficient for complex flexible ligands [35].
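The contrast between the two families can be made concrete with a short sketch. The first function counts the conformers a fixed-interval systematic search would enumerate, which grows exponentially with the number of rotatable bonds. The second implements the Metropolis acceptance test commonly used in Monte Carlo sampling. The function names, the 30° step, and the kT value of about 0.6 kcal/mol (roughly room temperature) are illustrative assumptions, not parameters of any specific docking program.

```python
import math
import random


def systematic_conformer_count(rotatable_bonds, step_degrees=30):
    """Conformers visited when every torsion is rotated in fixed steps."""
    return (360 // step_degrees) ** rotatable_bonds


def metropolis_accept(delta_energy, kt=0.6, rng=random):
    """Boltzmann-weighted acceptance of a trial move.

    delta_energy: energy change of the move in kcal/mol.
    kt: thermal energy in kcal/mol (assumed ~0.6 near 300 K).
    Downhill moves are always accepted; uphill moves are accepted
    with probability exp(-delta_energy / kt).
    """
    if delta_energy <= 0:
        return True
    return rng.random() < math.exp(-delta_energy / kt)


for bonds in (3, 6, 10):
    print(bonds, systematic_conformer_count(bonds))

print(metropolis_accept(-1.2))  # downhill move, always accepted
```

With a 30° step, each additional rotatable bond multiplies the search space by 12, which is why exhaustive enumeration becomes impractical for flexible ligands and stochastic acceptance rules are used instead.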

Scoring Functions

Scoring functions are designed to reproduce binding thermodynamics by approximating the free energy of binding between the protein and ligand in each docking pose [28] [35]. The binding free energy (ΔGbinding) is governed by the equation: ΔGbinding = ΔH - TΔS, where ΔH represents the enthalpy component and ΔS the entropy component at temperature T [35].

Scoring functions estimate the enthalpy component by summing all interactions of different types at the atomistic level, though this approach has been criticized for treating binding as a purely additive phenomenon [35]. The accuracy of scoring functions remains a significant challenge in molecular docking, as they must balance computational efficiency with physical realism to enable the screening of large compound libraries [36].
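To connect the free-energy equation to the affinities reported in screening campaigns, the sketch below evaluates ΔG = ΔH - TΔS and converts the result to a dissociation constant through the standard relation ΔG = RT ln(Kd), assuming a 1 M standard state. The enthalpy and entropy values are hypothetical and chosen only to produce a nanomolar-range result. The function names are illustrative.

```python
import math

R_KCAL = 1.987204e-3  # gas constant in kcal/(mol*K)


def binding_free_energy(delta_h, delta_s, temperature=298.15):
    """Return dG = dH - T*dS, with dH in kcal/mol and dS in kcal/(mol*K)."""
    return delta_h - temperature * delta_s


def dissociation_constant(delta_g, temperature=298.15):
    """Return Kd in mol/L from dG = RT ln(Kd), assuming a 1 M standard state."""
    return math.exp(delta_g / (R_KCAL * temperature))


# Hypothetical thermodynamic values for one protein-ligand pair.
dg = binding_free_energy(delta_h=-15.0, delta_s=-0.010)
kd = dissociation_constant(dg)
print(f"dG = {dg:.2f} kcal/mol, Kd = {kd:.2e} M")
```

Because Kd depends exponentially on ΔG, an error of about 1.4 kcal/mol in a predicted binding energy corresponds to roughly a tenfold error in affinity at room temperature, which helps explain why ranking compounds by score remains difficult.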

Virtual Screening Workflow and Methodologies

The general scheme of an SBVS campaign follows a multi-stage process that begins with target and compound library preparation and proceeds through docking, scoring, and post-processing of top-ranking hits [28]. Successful implementation requires careful consideration at each stage to maximize the probability of identifying genuine binders.

Protein and Ligand Library Preparation