Structure and Ligand-Based Virtual Screening: Protocols, Advances, and Best Practices for Modern Drug Discovery


Abstract

This article provides a comprehensive guide to structure-based (SBVS) and ligand-based (LBVS) virtual screening for researchers and drug development professionals. It covers foundational principles, from the knowledge-driven nature of SBVS, which relies on target protein structures, to the similarity-based approach of LBVS. The content details methodological workflows, including library preparation, docking, pharmacophore modeling, and the integration of AI and machine learning. It addresses critical challenges in scoring, pose prediction, and bias mitigation, while also presenting optimization strategies like consensus scoring and hybrid workflows. Finally, the article examines validation frameworks, performance metrics, and comparative case studies, including insights from the CACHE challenge, to equip scientists with the knowledge to design effective and reliable virtual screening campaigns.

Virtual Screening Fundamentals: Core Principles and Historical Context

Defining Structure-Based and Ligand-Based Virtual Screening

Virtual screening (VS) is a cornerstone computational technique in modern drug discovery, employed to efficiently identify promising hit compounds from extensive chemical libraries by predicting their biological activity [1]. It serves as a cost-effective and rapid alternative or complement to experimental high-throughput screening, enabling researchers to prioritize a manageable number of synthesizable or purchasable candidates for laboratory testing [1] [2]. The two primary and complementary methodologies in this field are Structure-Based Virtual Screening (SBVS) and Ligand-Based Virtual Screening (LBVS). The choice between them is principally dictated by the available structural and bioactivity information for the target of interest [2]. This article defines these two approaches, details their respective protocols, and presents a comparative analysis to guide their application.

Core Definitions and Fundamental Principles

Structure-Based Virtual Screening (SBVS)

SBVS relies on the three-dimensional (3D) structure of the macromolecular target, typically a protein, determined through experimental methods like X-ray crystallography or NMR, or generated via computational homology modeling [3] [2]. Its fundamental premise is the principle of complementarity, which posits that a potent ligand must exhibit strong steric and physicochemical compatibility with its target's binding site [3]. The most widely used SBVS technique is molecular docking, which computationally predicts the preferred orientation (pose) of a small molecule within a defined binding pocket and evaluates the stability of the resulting complex using a scoring function [4] [3].

Ligand-Based Virtual Screening (LBVS)

LBVS is applied when the 3D structure of the target is unknown but a set of compounds with known activity against the target is available. Its foundation is the similarity principle, which states that structurally similar molecules are likely to exhibit similar biological activities [4] [5]. LBVS methods use molecular descriptors of known active compounds as templates to search for and rank compounds in a database. These methods range from simpler 2D approaches, such as substructure searching and fingerprint-based similarity calculations, to more complex 3D techniques that compare molecular shapes and pharmacophoric features [4] [5].

Table 1: Core Characteristics of SBVS and LBVS

| Feature | Structure-Based (SBVS) | Ligand-Based (LBVS) |
|---|---|---|
| Primary Requirement | 3D structure of the target protein [2] | Known active ligand(s) [2] |
| Underlying Principle | Structural and physicochemical complementarity [3] | Molecular similarity [4] |
| Key Methodologies | Molecular Docking, Scoring Functions [3] | Pharmacophore Mapping, 2D/3D Similarity Search, QSAR [2] |
| Key Advantage | Identifies novel scaffolds; provides atomic-level interaction insights [6] | Fast, computationally inexpensive; does not require a protein structure [5] [6] |
| Main Challenge | Handling target flexibility; accurate affinity prediction [4] [3] | Bias towards the chemical template; limited novelty of identified hits [4] |

Quantitative Performance and Benchmarking

The effectiveness of virtual screening methods is quantitatively assessed using benchmark datasets and standardized metrics. Key benchmarks include the Directory of Useful Decoys (DUD) and the Comparative Assessment of Scoring Functions (CASF) [7]. Common performance metrics are the Enrichment Factor (EF), which measures the concentration of true active compounds early in the ranked list, and the Area Under the Curve (AUC) of the Receiver Operating Characteristic (ROC) curve, which evaluates the overall ability to distinguish actives from inactives [7].
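Both metrics can be computed directly from a ranked screening list. The sketch below assumes every compound has already been labelled active (1) or decoy/inactive (0) and sorted best score first; it ignores score ties, and the rounding of the top-x% cutoff is an assumption, since published EF implementations differ on that detail.

```python
from typing import Sequence


def enrichment_factor(labels_ranked: Sequence[int], fraction: float) -> float:
    """EF at the top `fraction` of a ranked list (labels: 1 = active, 0 = decoy).

    EF_x = (actives in top x / compounds in top x) / (all actives / all compounds)
    """
    n_total = len(labels_ranked)
    n_actives = sum(labels_ranked)
    if n_total == 0 or n_actives == 0:
        raise ValueError("need at least one compound and one active")
    n_top = max(1, round(n_total * fraction))  # size of the early-recognition window
    hits_top = sum(labels_ranked[:n_top])
    return (hits_top / n_top) / (n_actives / n_total)


def roc_auc(labels_ranked: Sequence[int]) -> float:
    """ROC AUC as the probability that a random active is ranked above a random decoy."""
    n_pos = sum(labels_ranked)
    n_neg = len(labels_ranked) - n_pos
    if n_pos == 0 or n_neg == 0:
        raise ValueError("need both actives and decoys")
    decoys_above = 0   # decoys already passed, i.e. ranked above the current position
    correct_pairs = 0  # (active, decoy) pairs in which the active is ranked higher
    for label in labels_ranked:
        if label == 1:
            correct_pairs += n_neg - decoys_above
        else:
            decoys_above += 1
    return correct_pairs / (n_pos * n_neg)


# Ten ranked compounds, three of them active.
ranked = [1, 1, 0, 1, 0, 0, 0, 0, 0, 0]
print(enrichment_factor(ranked, 0.10))  # 3.33... (top compound is active vs. a 30% base rate)
print(roc_auc(ranked))                  # 0.952... (20 of 21 active/decoy pairs ordered correctly)
```

EF rewards early recognition only, whereas AUC summarizes the whole ranking, which is why benchmarks usually report both.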

Table 2: Representative Performance Metrics of Different VS Methods

| Method / Platform | Key Benchmark Dataset | Reported Performance Metric | Value |
|---|---|---|---|
| RosettaGenFF-VS (Physics-based SBVS) | CASF-2016 | Top 1% Enrichment Factor (EF1%) | 16.72 [7] |
| AutoDock Vina (SBVS) | General Performance | Virtual Screening Accuracy | Slightly lower than commercial tools like Glide [7] |
| VSFlow (LBVS, Fingerprint) | N/A | Processing Speed | Thousands to millions of compounds in hours on a single CPU [5] |

Experimental Protocols and Methodologies

A Protocol for Structure-Based Virtual Screening using Molecular Docking

The following protocol outlines a typical SBVS workflow using the open-source jamdock-suite and AutoDock Vina [8].

1. System Setup and Pre-screening

- Install Software Dependencies: On a Unix-like system or Windows Subsystem for Linux (WSL), install essential packages including `openbabel`, `pymol`, `fpocket`, `AutoDockTools` (MGLTools), and `AutoDock Vina` (or its variant QuickVina 2) [8].
- Receptor Preparation: Obtain the target protein structure (e.g., from the PDB). Use a script like `jamreceptor` to convert the PDB file to PDBQT format, which assigns atom types and charges. The script utilizes `fpocket` to detect and characterize potential binding pockets, allowing the user to select one for docking and automatically define a grid box around it [8].
- Compound Library Preparation: Select a database such as ZINC, which contains millions of commercially available compounds. Use a script like `jamlib` to generate a library of molecules in PDBQT format. This process typically includes steps for energy minimization and format conversion to ensure compatibility with the docking software [8].
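Defining the grid box amounts to enclosing the chosen pocket in an axis-aligned box. The sketch below is not the `jamreceptor` implementation; it assumes you already have the pocket's atom coordinates (for example, parsed from fpocket's output), pads the box by a hypothetical 5 Å margin on each side, and writes the `center_*`/`size_*` keys that an AutoDock Vina configuration file accepts. The receptor file name is a placeholder.

```python
from typing import Iterable, Sequence, Tuple

Coord = Tuple[float, float, float]


def grid_box(pocket_coords: Iterable[Coord],
             padding: float = 5.0) -> Tuple[Tuple[float, ...], Tuple[float, ...]]:
    """Return (center, size) of an axis-aligned box around the pocket, padded on every side (Å)."""
    pts = list(pocket_coords)
    if not pts:
        raise ValueError("no pocket coordinates supplied")
    mins = [min(p[axis] for p in pts) for axis in range(3)]
    maxs = [max(p[axis] for p in pts) for axis in range(3)]
    center = tuple((lo + hi) / 2 for lo, hi in zip(mins, maxs))
    size = tuple((hi - lo) + 2 * padding for lo, hi in zip(mins, maxs))
    return center, size


def vina_config(receptor: str, center: Sequence[float], size: Sequence[float],
                exhaustiveness: int = 8) -> str:
    """Render a minimal Vina config; ligands can then be passed with --ligand on the command line."""
    lines = [f"receptor = {receptor}"]
    lines += [f"center_{axis} = {value:.3f}" for axis, value in zip("xyz", center)]
    lines += [f"size_{axis} = {value:.3f}" for axis, value in zip("xyz", size)]
    lines.append(f"exhaustiveness = {exhaustiveness}")
    return "\n".join(lines)


# Hypothetical pocket atom coordinates (Å).
pocket = [(10.0, 4.0, -2.0), (14.0, 8.0, 0.0), (12.0, 6.0, 3.0)]
center, size = grid_box(pocket)
config = vina_config("receptor.pdbqt", center, size)
print(config)
```

The padding is a design choice: too tight a box can clip larger ligands, while an oversized box enlarges the search space and slows sampling.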

2. Docking Execution and Post-Screening

- Automated Docking: Use a script like `jamqvina` to automate the docking of the entire prepared compound library into the selected receptor grid box. For robustness in long-running screens, a tool like `jamresume` can be used to pause and restart the process [8].
- Ranking and Analysis: Once docking is complete, employ a script like `jamrank` to evaluate and rank the results based on the docking scores. The top-ranked compounds represent the virtual hits predicted to have the strongest binding affinity [8].
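The ranking step can be illustrated by parsing AutoDock Vina's output, which records each pose's predicted affinity on a `REMARK VINA RESULT:` line. This is a minimal sketch of the idea, not how `jamrank` itself works; the ligand names and file contents are hypothetical.

```python
import re
from typing import Dict, List, Optional, Tuple

# Vina writes "REMARK VINA RESULT: <affinity> <rmsd l.b.> <rmsd u.b.>" for every pose.
VINA_RESULT = re.compile(r"^REMARK VINA RESULT:\s+(-?\d+(?:\.\d+)?)", re.MULTILINE)


def best_affinity(pdbqt_text: str) -> float:
    """Best (most negative) predicted affinity, in kcal/mol, across all poses in one output file."""
    scores = [float(value) for value in VINA_RESULT.findall(pdbqt_text)]
    if not scores:
        raise ValueError("no 'REMARK VINA RESULT' lines found")
    return min(scores)


def rank_ligands(outputs: Dict[str, str],
                 top_n: Optional[int] = None) -> List[Tuple[str, float]]:
    """Rank ligands by best affinity, strongest predicted binder first."""
    ranked = sorted(((name, best_affinity(text)) for name, text in outputs.items()),
                    key=lambda item: item[1])
    return ranked if top_n is None else ranked[:top_n]


outputs = {
    "lig_001": "MODEL 1\nREMARK VINA RESULT:    -7.2      0.000      0.000\nENDMDL\n"
               "MODEL 2\nREMARK VINA RESULT:    -6.8      1.512      2.104\nENDMDL\n",
    "lig_002": "MODEL 1\nREMARK VINA RESULT:    -9.1      0.000      0.000\nENDMDL\n",
}
print(rank_ligands(outputs))  # [('lig_002', -9.1), ('lig_001', -7.2)]
```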

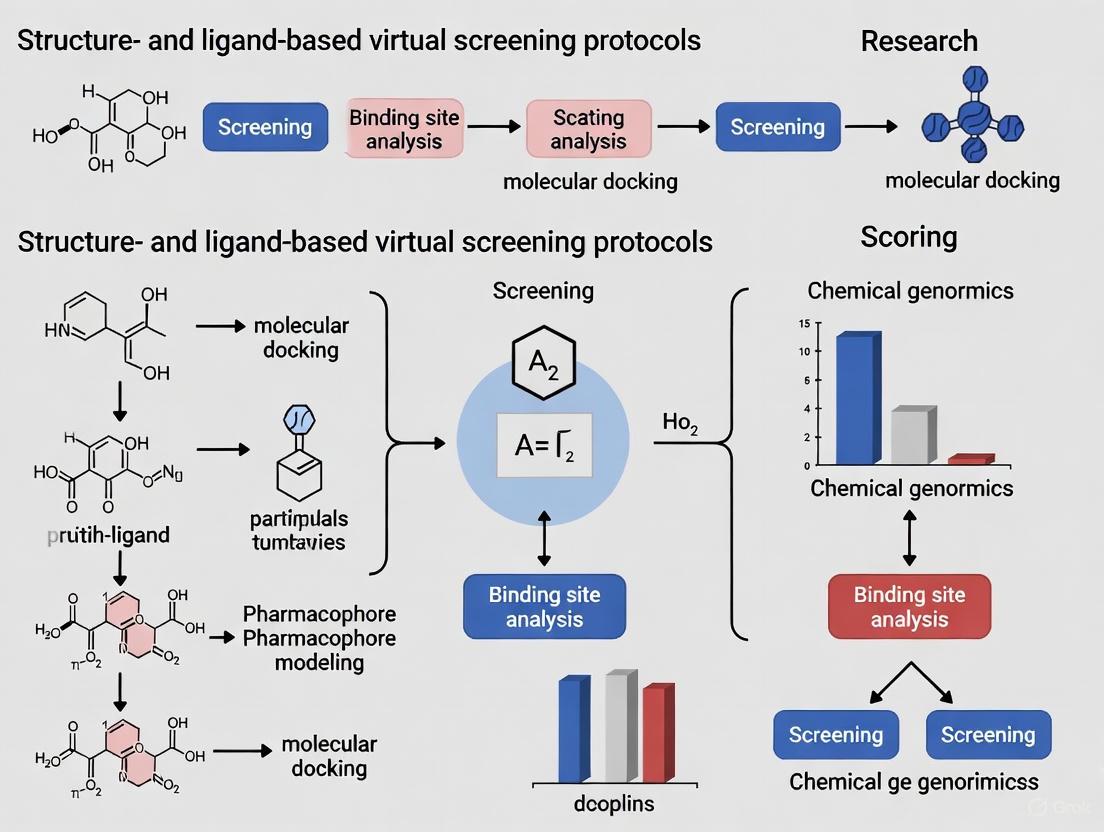

The following workflow diagram illustrates this multi-step protocol for a structure-based virtual screening pipeline.

A Protocol for Ligand-Based Virtual Screening using VSFlow

The following protocol details an LBVS workflow using the open-source command-line tool VSFlow, which integrates multiple ligand-based methods [5].

1. System and Database Setup

- Install VSFlow: Ensure a working installation of Python (v3.7+) and install VSFlow and its dependencies (RDKit, xlrd, pymol-open-source, etc.) using the provided Conda environment file [5].

- Database Preparation: Use VSFlow's `preparedb` tool to standardize a compound database (e.g., from an SDF or SMILES file). This step includes neutralization of charges, removal of salts, optional tautomer canonicalization, and generation of molecular fingerprints and/or multiple 3D conformers. The output is a standardized `.vsdb` file for rapid subsequent screening [5].

2. Screening Execution and Analysis

- Substructure Search: Use the `substructure` tool with a SMARTS pattern or a query molecule to find all compounds in the database that contain the specified substructure [5].
- Fingerprint Similarity Search: Use the `fpsim` tool with a query molecule (e.g., a known active). The tool calculates molecular fingerprints (e.g., ECFP4) for the query and all database molecules, then ranks the database by a similarity coefficient (e.g., Tanimoto) [5].
- Shape-Based Screening: Use the `shape` tool with a query molecule in a bioactive conformation (e.g., from a PDB structure). The tool aligns conformers of database molecules to the query, calculates shape similarity (and optionally 3D pharmacophore fingerprint similarity), and ranks the database based on a combined score [5].
- Result Visualization: VSFlow supports the generation of result files in various formats (SDF, Excel, CSV) and can create PDF reports with 2D structures of the hits for visual inspection [5].
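The fingerprint similarity step reduces to computing a Tanimoto coefficient between binary fingerprints and sorting. The sketch below stores each fingerprint as the set of its "on" bit indices; the toy bit sets are hypothetical stand-ins for real ECFP4 fingerprints, which a toolkit such as RDKit would generate (as Morgan fingerprints of radius 2). It does not reproduce VSFlow's internals.

```python
from typing import Dict, FrozenSet, List, Optional, Tuple

Fingerprint = FrozenSet[int]  # indices of the "on" bits of a binary fingerprint


def tanimoto(a: Fingerprint, b: Fingerprint) -> float:
    """Tanimoto coefficient: shared on-bits divided by bits set in either fingerprint."""
    if not a and not b:
        return 0.0
    shared = len(a & b)
    return shared / (len(a) + len(b) - shared)


def similarity_search(query: Fingerprint, database: Dict[str, Fingerprint],
                      threshold: float = 0.0,
                      top_n: Optional[int] = None) -> List[Tuple[str, float]]:
    """Rank database entries by similarity to the query, keeping those >= threshold."""
    hits = [(name, tanimoto(query, fp)) for name, fp in database.items()]
    hits = [hit for hit in hits if hit[1] >= threshold]
    hits.sort(key=lambda hit: hit[1], reverse=True)
    return hits if top_n is None else hits[:top_n]


# Toy fingerprints standing in for ECFP4 bit sets.
query = frozenset({1, 2, 3, 4})
database = {
    "cmpd_1": frozenset({1, 2, 3, 5}),
    "cmpd_2": frozenset({1, 2, 3, 4}),
    "cmpd_3": frozenset({7, 8}),
}
print(similarity_search(query, database, threshold=0.5))  # [('cmpd_2', 1.0), ('cmpd_1', 0.6)]
```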

The logical flow of a comprehensive ligand-based screening campaign, integrating these three methods, is depicted below.

A successful virtual screening campaign relies on a suite of computational tools and compound libraries. The table below catalogs key resources for setting up a robust VS pipeline.

Table 3: Key Resources for Virtual Screening

| Resource Name | Type / Category | Brief Description of Function |
|---|---|---|
| AutoDock Vina/QuickVina 2 [8] | SBVS Software | A widely used, open-source molecular docking program for predicting ligand poses and binding affinities. |
| RosettaVS [7] | SBVS Software | A physics-based docking method that models receptor flexibility and shows state-of-the-art performance on benchmarks. |
| VSFlow [5] | LBVS Software | An open-source command-line tool integrating substructure, fingerprint, and shape-based screening methods. |
| RDKit [5] | Cheminformatics Library | An open-source toolkit for cheminformatics, used for molecule standardization, fingerprint generation, and conformer generation. |
| ZINC [8] [3] | Compound Database | A free public database containing the structural and purchasability information for millions of compounds. |
| ChEMBL [1] | Bioactivity Database | A manually curated database of bioactive molecules with drug-like properties, containing bioactivity data. |
| PDB (Protein Data Bank) [1] | Structure Database | A single worldwide repository for the processing and distribution of 3D structural data of biological macromolecules. |
| MCE Bioactive Compound Library [2] | Commercial Compound Library | A collection of over 29,000 bioactive and structurally diverse compounds, useful for validation and screening. |

Integrated and Hybrid Screening Strategies

Given the complementary strengths and weaknesses of SBVS and LBVS, integrating them into a hybrid workflow often yields superior results [4] [6]. There are two predominant integration strategies:

- Sequential Approach: This is a multi-tiered filtering process. A computationally inexpensive LBVS method (e.g., pharmacophore or 2D similarity search) is first used to rapidly reduce a massive chemical library to a more manageable size. This enriched library is then subjected to the more computationally demanding SBVS (docking) for precise pose prediction and final ranking [4] [6].

- Parallel Approach with Consensus Scoring: Both LBVS and SBVS are run independently on the same library. The results are then combined, either by selecting top candidates from both lists to maximize hit diversity or by creating a unified consensus ranking (e.g., by averaging ranks or scores) to increase confidence in the selected hits [4] [6]. Evidence suggests that a hybrid model averaging predictions from both approaches can perform better than either method alone by partially canceling out their individual errors [6].
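Both ways of combining parallel results can be sketched in a few lines. Averaging ranks rather than raw scores sidesteps the fact that similarity values (0 to 1, higher is better) and docking scores (kcal/mol, lower is better) live on different scales. The compound names and scores below are hypothetical.

```python
from typing import Dict, List, Set


def to_ranks(scores: Dict[str, float], higher_is_better: bool) -> Dict[str, int]:
    """Map each compound to its rank (1 = best) under one screening method."""
    ordered = sorted(scores, key=scores.__getitem__, reverse=higher_is_better)
    return {name: position for position, name in enumerate(ordered, start=1)}


def mean_rank_consensus(lb_similarity: Dict[str, float],
                        sb_docking: Dict[str, float]) -> List[str]:
    """Unified ranking by mean rank across the ligand- and structure-based lists."""
    lb = to_ranks(lb_similarity, higher_is_better=True)
    sb = to_ranks(sb_docking, higher_is_better=False)
    shared = lb.keys() & sb.keys()
    mean = {name: (lb[name] + sb[name]) / 2 for name in shared}
    return sorted(mean, key=lambda name: (mean[name], name))  # ties broken by name


def union_of_top(lb_similarity: Dict[str, float], sb_docking: Dict[str, float],
                 top_n: int) -> Set[str]:
    """Diversity-oriented selection: the top_n of each list, merged."""
    lb = to_ranks(lb_similarity, higher_is_better=True)
    sb = to_ranks(sb_docking, higher_is_better=False)
    return {n for n, r in lb.items() if r <= top_n} | {n for n, r in sb.items() if r <= top_n}


lb_similarity = {"cmpd_A": 0.9, "cmpd_B": 0.7, "cmpd_C": 0.4, "cmpd_D": 0.2}
sb_docking = {"cmpd_A": -6.0, "cmpd_B": -9.5, "cmpd_C": -8.0, "cmpd_D": -5.0}
print(mean_rank_consensus(lb_similarity, sb_docking))            # ['cmpd_B', 'cmpd_A', 'cmpd_C', 'cmpd_D']
print(sorted(union_of_top(lb_similarity, sb_docking, top_n=1)))  # ['cmpd_A', 'cmpd_B']
```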

The following diagram illustrates how these two fundamental approaches can be synergistically combined into a powerful hybrid screening workflow.

The relentless pursuit of efficiency in drug discovery has catalyzed a paradigm shift towards integrated, knowledge-driven approaches in virtual screening. This paradigm moves beyond the traditional dichotomy of structure-based (reliant on target 3D structures) and ligand-based (reliant on known active ligands) methods. Instead, it strategically fuses these two streams of information to create a more powerful and predictive discovery engine [6]. The core premise is that the complementary strengths of each method can compensate for the other's weaknesses, leading to higher confidence in hit identification and a greater probability of success. Ligand-based methods excel at rapid pattern recognition and leveraging historical bioactivity data, while structure-based methods provide atomic-level insights into binding interactions [6]. This Application Note details the protocols and practical implementation of this knowledge-driven paradigm, providing a framework for researchers to accelerate lead identification and optimization.

The Hybrid Screening Framework

A knowledge-driven virtual screening workflow is not merely the sequential use of different tools, but a synergistic integration designed to maximize efficiency and accuracy. The typical workflow involves two primary strategies: sequential integration and parallel consensus scoring [6].

- Sequential Integration: This cost-effective strategy employs rapid ligand-based filtering of large compound libraries (often millions of compounds), followed by structure-based refinement of a much smaller, prioritized subset (typically a few thousand). This conserves computational resources for the most expensive calculations.

- Parallel Consensus Scoring: Here, ligand- and structure-based screening are run independently on the same compound library. The results are then combined, either by selecting top candidates from both lists to maximize hit recovery or by creating a unified consensus ranking to increase confidence in selections [6].
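The sequential integration strategy described above can be sketched as a two-stage funnel in which the expensive structure-based scorer only sees the survivors of the cheap ligand-based filter. Both scoring functions here are toy stand-ins (compounds are integer IDs), and `keep_fraction` is a hypothetical parameter; in practice stage 1 might be a fingerprint search and stage 2 a docking run.

```python
from typing import Callable, Hashable, Iterable, List


def sequential_screen(library: Iterable[Hashable],
                      fast_similarity: Callable[[Hashable], float],
                      slow_docking: Callable[[Hashable], float],
                      keep_fraction: float = 0.01,
                      top_n: int = 10) -> List[Hashable]:
    """Two-stage funnel: cheap LB filter on everything, expensive SB scoring on survivors only.

    fast_similarity: higher is better (e.g. Tanimoto similarity to a known active).
    slow_docking:    lower is better (e.g. a docking score in kcal/mol).
    """
    compounds = list(library)
    n_keep = max(1, int(len(compounds) * keep_fraction))
    survivors = sorted(compounds, key=fast_similarity, reverse=True)[:n_keep]
    return sorted(survivors, key=slow_docking)[:top_n]


docking_calls = []  # records how often the expensive step actually runs


def toy_docking(cid: int) -> float:
    docking_calls.append(cid)
    return abs(cid - 505) - 10.0


def toy_similarity(cid: int) -> float:
    return -abs(cid - 500)


hits = sequential_screen(range(1000), toy_similarity, toy_docking, keep_fraction=0.01, top_n=3)
print(hits, len(docking_calls))  # [504, 503, 502] 10
```

Only 10 of the 1,000 compounds reach the expensive stage, which is the cost saving the strategy relies on; the trade-off is that any active discarded by the first filter can never be recovered.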

The workflow, detailed in the diagram below, provides a visual roadmap for implementing this hybrid strategy.

Diagram 1: Knowledge-Driven Hybrid Virtual Screening Workflow. This diagram outlines the synergistic integration of ligand-based and structure-based methods, culminating in a consensus analysis for high-confidence hit identification.

Core Methodologies and Protocols

Ligand-Based Methods: Leveraging Known Actives

When the 3D structure of a target is unavailable or uncertain, ligand-based methods provide a powerful starting point. These methods operate on the principle that molecules with similar structural or physicochemical properties are likely to have similar biological activities.

3D Pharmacophore Modeling: A pharmacophore is an abstract model that defines the essential steric and electronic features necessary for molecular recognition. A protocol for creating and using a 3D pharmacophore model is as follows:

- Feature Identification: From a set of known active ligands, identify and align key pharmacophore features such as Hydrogen-bond Donors (HD), Hydrogen-bond Acceptors (HA), Aromatic Rings (AR), Positively/Negatively Charged Centers (PO/NE), and Hydrophobic regions (HY) [9] [10].

- Model Generation: Use software (e.g., AncPhore, PHASE) to generate a consensus pharmacophore model that captures the common interaction features from the aligned ligands [9] [10].

- Virtual Screening: Screen a large compound database (e.g., ZINC20) against the model. Compounds that match the spatial arrangement of the defined features are retained as hits.
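The matching test in the screening step can be sketched as follows: every feature of the model must be covered by a distinct ligand feature of the same type lying inside that feature's tolerance sphere. This simplified version assumes the conformers are already aligned to the pharmacophore's coordinate frame (real tools also search over alignments); the model, tolerances, and compounds are hypothetical, and the feature labels follow the abbreviations above.

```python
import math
from typing import Dict, FrozenSet, List, Sequence, Tuple

Point = Tuple[float, float, float]
PharmFeature = Tuple[str, Point, float]  # (type, center, tolerance radius in Å)
LigandFeature = Tuple[str, Point]        # (type, position) in one aligned conformer


def matches(pharmacophore: Sequence[PharmFeature], conformer: Sequence[LigandFeature]) -> bool:
    """True if each model feature is covered by a distinct ligand feature of the same
    type inside its tolerance sphere (small backtracking search over assignments)."""
    def assign(i: int, used: FrozenSet[int]) -> bool:
        if i == len(pharmacophore):
            return True
        ftype, center, tol = pharmacophore[i]
        for j, (ltype, pos) in enumerate(conformer):
            if j not in used and ltype == ftype and math.dist(center, pos) <= tol:
                if assign(i + 1, used | {j}):
                    return True
        return False

    return assign(0, frozenset())


def pharmacophore_screen(pharmacophore: Sequence[PharmFeature],
                         library: Dict[str, List[Sequence[LigandFeature]]]) -> List[str]:
    """A compound is a hit if any of its conformers matches the model."""
    return [name for name, conformers in library.items()
            if any(matches(pharmacophore, conf) for conf in conformers)]


# Hypothetical two-point model: a hydrogen-bond donor (HD) and an aromatic ring (AR) 4 Å apart.
model = [("HD", (0.0, 0.0, 0.0), 1.0), ("AR", (4.0, 0.0, 0.0), 1.5)]
library = {
    "cmpd_1": [[("HD", (0.3, 0.0, 0.0)), ("AR", (4.5, 0.5, 0.0))]],
    "cmpd_2": [[("HD", (0.0, 0.0, 0.0)), ("HA", (4.0, 0.0, 0.0))]],  # no aromatic ring
    "cmpd_3": [[("HD", (0.0, 0.0, 0.0)), ("AR", (8.0, 0.0, 0.0))],   # ring too far away
               [("HD", (0.5, 0.5, 0.0)), ("AR", (3.0, 0.0, 0.0))]],  # second conformer fits
}
print(pharmacophore_screen(model, library))  # ['cmpd_1', 'cmpd_3']
```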

Advanced AI-Driven Ligand-Based Screening: Deep learning frameworks such as DiffPhore extend this process. DiffPhore is a knowledge-guided diffusion model that performs "on-the-fly" 3D ligand-pharmacophore mapping [9] [10].

- Protocol: The model takes a pharmacophore hypothesis and a compound library as input. It then generates 3D ligand conformations that maximally map to the given pharmacophore model, using a diffusion-based process guided by type and directional alignment rules [9]. This approach has been shown to surpass traditional pharmacophore tools and several docking methods in predicting binding conformations and virtual screening power [9].

Structure-Based Methods: Utilizing the Target Architecture

Structure-based methods rely on the 3D structure of the biological target, typically obtained from the Protein Data Bank (PDB), to predict how a small molecule will bind.

Molecular Docking: This is the cornerstone technique of structure-based screening.

- Preparation: Prepare the protein structure (e.g., from PDB ID: 1ZGY for PPARG) by adding hydrogens, assigning partial charges, and removing water molecules that do not mediate ligand binding. Prepare the ligand library by generating 3D conformations and optimizing geometries.

- Docking Execution: Dock each ligand from the library into the defined binding site of the target protein. The docking algorithm (e.g., AutoDock Vina, Glide) will generate multiple poses per ligand.

- Scoring and Ranking: A scoring function ranks the generated poses based on estimated binding affinity. The top-ranked compounds are selected for further analysis.

Machine Learning-Enhanced Scoring: Classical, general-purpose scoring functions have limited "screening power", that is, limited ability to distinguish true binders from non-binders. Machine learning models trained on protein-ligand interaction fingerprints (PLIFs) can improve this screening power [11].

- Protocol (PADIF Fingerprint): For a given target, generate interaction fingerprints (e.g., PADIF) for known active and decoy molecules. Train a classifier (e.g., Random Forest) on this data. This target-specific scoring function can then re-rank docking outputs to more accurately prioritize true actives [11]. Critical to this process is the selection of non-binding "decoy" molecules to train the model, with strategies including random selection from ZINC15 or leveraging "dark chemical matter" from historical High-Throughput Screening (HTS) data [11].
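The train-then-rescore idea can be sketched without external libraries. To stay dependency-free, this sketch swaps in a plain logistic regression fitted by gradient descent for the Random Forest named in the protocol (with scikit-learn, `RandomForestClassifier` and its `predict_proba` would fill the same role). The 4-bit vectors are hypothetical stand-ins; real PADIF fingerprints encode per-atom score contributions and are much richer.

```python
import math
from typing import Dict, List, Sequence, Tuple


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def train_logistic(X: Sequence[Sequence[float]], y: Sequence[int],
                   lr: float = 0.5, epochs: int = 500) -> Tuple[List[float], float]:
    """Fit a logistic-regression classifier (active = 1, decoy = 0) by full-batch gradient descent."""
    n, d = len(X), len(X[0])
    w, b = [0.0] * d, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * d, 0.0
        for xi, yi in zip(X, y):
            err = sigmoid(b + sum(wj * xj for wj, xj in zip(w, xi))) - yi
            for j in range(d):
                grad_w[j] += err * xi[j]
            grad_b += err
        w = [wj - lr * gj / n for wj, gj in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b


def rescore(w: List[float], b: float,
            poses: Dict[str, Sequence[float]]) -> List[Tuple[str, float]]:
    """Re-rank docked poses by predicted probability of being a true binder."""
    probs = {name: sigmoid(b + sum(wj * xj for wj, xj in zip(w, x))) for name, x in poses.items()}
    return sorted(probs.items(), key=lambda item: item[1], reverse=True)


# Hypothetical 4-bit interaction fingerprints (one bit per key protein contact).
actives = [[1, 1, 0, 0], [1, 1, 1, 0], [1, 0, 0, 0]]
decoys = [[0, 0, 1, 1], [0, 1, 0, 1], [0, 0, 0, 1]]  # e.g. sampled from ZINC or dark chemical matter
w, b = train_logistic(actives + decoys, [1] * len(actives) + [0] * len(decoys))
ranking = rescore(w, b, {"pose_x": [1, 1, 0, 0], "pose_y": [0, 0, 1, 1]})
print(ranking)
```

The quality of such a target-specific model depends heavily on the decoy set: decoys that are trivially different from the actives teach the model little, which is why confirmed non-binders such as dark chemical matter are attractive.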

Table 1: Performance Metrics of Virtual Screening Methods on Diverse Targets

| Target Name | ChEMBL ID | Number of Actives | Method | Key Performance Metric |
|---|---|---|---|---|
| Aldehyde Dehydrogenase 1 | CHEMBL3577 | 245 | ML/PADIF Model | Enhanced screening power over classical scoring [11] |
| Peroxisome Proliferator-Activated Receptor γ (PPARG) | CHEMBL235 | 4,298 | Docking & Consensus | High enrichment in hybrid approach [11] [6] |
| Vitamin D Receptor (VDR) | CHEMBL1977 | 459 | DiffPhore | Superior pose prediction and hit identification [9] |
| Lymphocyte Function-Associated Antigen 1 (LFA-1) | N/A | Chronological Dataset | Hybrid (QuanSA + FEP+) | MUE* dropped significantly vs. individual methods [6] |

MUE: Mean Unsigned Error in affinity prediction.

Case Study: The DiffPhore Framework for Integrative Discovery

DiffPhore serves as a prime example of the modern knowledge-driven paradigm, seamlessly integrating ligand-based pharmacophore constraints with structure-based conformation generation.

Application Protocol:

- Input: A defined pharmacophore model for the target of interest (e.g., human glutaminyl cyclase) and a database of small molecules.

- Pose Generation: DiffPhore's diffusion-based generator, guided by a knowledge-encoder that understands pharmacophore type and direction matching rules, generates biologically relevant 3D ligand conformations [9] [10].

- Validation: The top-ranked compounds are selected for experimental testing. Their binding modes, as predicted by DiffPhore, can be validated using co-crystallographic analysis, which has confirmed high consistency with experimental structures [9].

The architecture and data flow of this advanced AI framework are illustrated below.

Diagram 2: Architectural Overview of the DiffPhore AI Framework. The model integrates pharmacophore knowledge directly into a diffusion process to generate accurate ligand binding conformations.

A successful virtual screening campaign relies on access to high-quality data and software tools. The following table catalogs essential resources for implementing the knowledge-driven paradigm.

Table 2: Essential Resources for Knowledge-Driven Virtual Screening

| Resource Name | Type | Function in Research | Key Feature / Note |
|---|---|---|---|
| RCSB Protein Data Bank (PDB) [12] | Database | Global repository for experimentally determined 3D structures of proteins, nucleic acids, and complexes. | Foundation for structure-based methods; provides atomic-level structural data. |
| ChEMBL [11] | Database | Manually curated database of bioactive molecules with drug-like properties. | Source of known active ligands and bioactivity data for ligand-based modeling. |
| ZINC15/ZINC20 [11] [9] | Database | Publicly available database of commercially available compounds for virtual screening. | Source of "decoy" molecules and a vast chemical library for screening. |
| Dark Chemical Matter (DCM) [11] | Dataset | Collections of compounds that have been tested multiple times in HTS but never shown activity. | High-quality source of confirmed non-binders for training machine learning models. |
| DiffPhore [9] [10] | Software | Deep learning framework for 3D ligand-pharmacophore mapping and binding pose prediction. | AI-driven integration of pharmacophore constraints; excels in pose prediction and screening. |
| PADIF [11] | Software/Descriptor | Protein per Atom Score Contributions Derived Interaction Fingerprint. | Machine-learning ready representation of protein-ligand interactions for target-specific scoring. |
| VTX [13] | Software | Open-source molecular visualization software for handling massive molecular systems. | Enables real-time visualization and manipulation of large complexes and screening results. |

The knowledge-driven paradigm, which strategically integrates 3D structural data with information from known ligands, represents a mature and powerful framework for modern virtual screening. By implementing the hybrid workflows, detailed protocols, and AI-enhanced tools outlined in this Application Note, researchers can significantly improve the efficiency and success rate of their drug discovery campaigns. This approach moves beyond isolated computational techniques, fostering a more holistic and intelligent process for identifying high-quality lead compounds with greater confidence.

Virtual screening (VS) is a cornerstone of modern computational drug discovery, enabling researchers to rapidly identify potential hit compounds from vast chemical libraries. The evolution of VS from its early roots in molecular docking to today's sophisticated hybrid methodologies represents a significant advancement in the field. This progression was driven by the need to overcome the inherent limitations of individual techniques, leading to integrated approaches that leverage the complementary strengths of both structure-based and ligand-based methods. Framed within a broader thesis on structure- and ligand-based virtual screening protocols, this article details the historical development, current consensus protocols, and practical workflows that define the state of the art. The journey from rigid docking algorithms to the integration of artificial intelligence and extensive receptor flexibility illustrates a continuous effort to improve the accuracy and efficiency of predicting bioactive molecules [14] [7].

For researchers and drug development professionals, understanding this evolution is critical for selecting and designing effective virtual screening campaigns. The transition from single-technique applications to holistic frameworks has consistently demonstrated enhanced performance in identifying novel active compounds, often with improved efficiency and success rates [4] [6]. This document provides a detailed account of this progression, supported by structured data, explicit experimental protocols, and visual guides to the key workflows.

The Early Era: Foundations in Molecular Docking

The development of virtual screening began with molecular docking in the 1980s. The earliest algorithms treated the ligand and protein receptor as rigid bodies, searching for complementary matches based primarily on geometric and steric criteria [14]. The pioneering work of Kuntz and colleagues, leading to the creation of the DOCK software, introduced a shape-matching strategy that explored possible configurations by calculating the geometric distance between molecules [14]. This rigid approach was a necessary simplification given the limited computational resources of the time, but it ignored critical aspects of molecular recognition, such as the inherent flexibility of both the ligand and the receptor.

The concept of the scoring function was central from the outset. These functions combine various energetic contributions, including electrostatic and van der Waals interactions, into a single score, typically reported as an estimated binding free energy (ΔG, in kcal/mol) in which more negative values indicate stronger predicted binding; this score is used to predict binding affinity and rank candidate poses [14]. The goal was to create a "new microscope" capable of revealing molecular interactions that were inaccessible to experimental observation [14]. However, the simplistic nature of early rigid docking and scoring functions limited their predictive accuracy, because they failed to account for the induced fit and conformational changes that are fundamental to ligand binding.
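Because scores are expressed on a free-energy scale, they can be translated into an implied dissociation constant through the standard relation ΔG = RT ln K_d. The sketch below is illustrative only: docking scores are rough estimates, so the resulting K_d should be read as an order-of-magnitude indication, not a prediction. A useful rule of thumb it reproduces is that about 1.4 kcal/mol corresponds to a tenfold change in K_d at room temperature.

```python
import math

R_KCAL = 1.987204e-3  # gas constant, kcal/(mol*K)


def kd_from_dg(dg_kcal: float, temperature_k: float = 298.15) -> float:
    """Dissociation constant (mol/L) implied by a binding free energy via dG = RT ln(Kd)."""
    return math.exp(dg_kcal / (R_KCAL * temperature_k))


def dg_from_kd(kd_molar: float, temperature_k: float = 298.15) -> float:
    """Binding free energy (kcal/mol) corresponding to a given Kd (mol/L)."""
    return R_KCAL * temperature_k * math.log(kd_molar)


print(f"{kd_from_dg(-9.0):.2e} M")  # 2.53e-07 M, roughly 250 nM
print(f"{dg_from_kd(10.0) - dg_from_kd(1.0):.2f} kcal/mol per tenfold change in Kd")  # 1.36
```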

Table 1: Key Developments in Early Molecular Docking

| Time Period | Key Paradigm | Representative Software | Major Advancement | Inherent Limitation |
|---|---|---|---|---|
| 1980s | Rigid Docking | DOCK (UCSF) | Shape complementarity and geometric matching | Neglected ligand and receptor flexibility |
| 1990s | Flexible Ligand Docking | AutoDock, GOLD | Sampling of ligand conformational degrees of freedom | Protein target largely held rigid |
| 2000s | Advanced Scoring & Sampling | Glide, Surflex | Improved force fields and search algorithms | Handling of protein flexibility and solvation remained challenging |

The Rise of Complementary VS Methodologies

As computational power increased, the VS landscape expanded to include two primary, complementary categories of techniques, each with distinct strengths and weaknesses.

Structure-Based Virtual Screening (SBVS)

SBVS requires the three-dimensional structure of the target, typically derived from X-ray crystallography, cryo-electron microscopy, or homology modeling. The most common SBVS technique is molecular docking, which predicts the preferred orientation (pose) of a small molecule within a target's binding site and estimates the binding affinity using a scoring function [4] [15]. Docking programs must solve two main problems: sampling (exploring possible conformations and orientations of the ligand in the binding site) and scoring (accurately ranking these poses based on their predicted binding affinity) [15]. Despite advancements, challenges persist, including the accurate treatment of protein flexibility, the role of bridging water molecules, and the entropic contributions to binding [4] [15].

Ligand-Based Virtual Screening (LBVS)

LBVS is employed when the 3D structure of the target is unknown but information about active ligands is available. It operates on the molecular similarity principle, which posits that structurally similar molecules are likely to have similar biological activities [4] [5]. LBVS methods can be broadly divided into:

- 2D Methods: These use descriptors based on the molecular graph, such as chemical fingerprints (e.g., ECFP, MACCS keys), to compute similarity, often using the Tanimoto coefficient [4] [16].

- 3D Methods: These compare molecules based on their three-dimensional shape, volume, and pharmacophoric features (e.g., hydrogen bond donors/acceptors, hydrophobic regions). Tools like ROCS (Rapid Overlay of Chemical Structures) perform 3D shape-based similarity screening, which excels at "scaffold-hopping"—identifying active compounds with novel chemical backbones [5] [16].

LBVS is typically much faster and less computationally demanding than SBVS, making it ideal for the rapid filtering of ultra-large chemical libraries [5] [6].

Table 2: Comparison of Core Virtual Screening Methodologies

| Aspect | Structure-Based (SBVS) | Ligand-Based (LBVS) |
|---|---|---|
| Requirement | 3D structure of the target protein | Known active ligand(s) |
| Primary Method | Molecular Docking | Molecular Similarity Search |
| Key Strengths | Provides atomic-level interaction insights; can identify novel scaffolds. | Fast and computationally cheap; excellent for analog searching and scaffold hopping. |
| Major Limitations | Computationally expensive; sensitive to protein flexibility and scoring inaccuracies. | Biased by the choice of reference ligand; cannot model novel interactions beyond the pharmacophore. |
| Common Tools | Glide, GOLD, AutoDock Vina, RosettaVS | ROCS, EON, FTrees, VSFlow, ECFP Fingerprints |

The Modern Paradigm: Hybrid and Consensus Approaches

Recognizing that LBVS and SBVS are highly complementary, the modern era of virtual screening has been defined by the development of hybrid and consensus strategies that integrate both approaches to mitigate their individual weaknesses and synergize their strengths [4] [6]. These integrated protocols have been shown to outperform single-method applications, leading to higher hit rates and the discovery of chemically diverse active compounds [4] [17].

The integration strategies can be classified into three main categories [4]:

- Sequential Approach: This is a multi-step filtering process where a fast, computationally inexpensive method (typically LBVS) is used to pre-filter a large chemical library. The resulting subset of promising candidates is then subjected to a more rigorous and expensive SBVS analysis, such as molecular docking. This strategy optimizes the trade-off between computational cost and predictive accuracy [4] [6].

- Parallel Approach: LBVS and SBVS are run independently on the same compound library. The final hit list is compiled by combining the top-ranking compounds from each method, either by taking the union of the lists or by creating a consensus ranking. This approach increases the likelihood of recovering true active compounds and helps mitigate the specific limitations of each method [4] [6].

- Hybrid (Integrated) Approach: This represents the most advanced strategy, where LB and SB information are combined into a single, holistic computational framework. For example, pharmacophoric constraints derived from known active ligands or from the analysis of the protein binding site can be directly incorporated into the docking process to guide pose generation and scoring [4].
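One simple way to fold pharmacophoric constraints into docking, as in the hybrid approach above, is to penalize poses that leave a required feature unsatisfied. The sketch below is a hypothetical scheme, not a specific program's method: the 2.0 kcal/mol penalty, the constraint, and the poses are all assumptions, and a single ligand feature is allowed to satisfy more than one constraint for brevity.

```python
import math
from typing import Dict, List, Sequence, Tuple

Point = Tuple[float, float, float]
Constraint = Tuple[str, Point, float]  # (feature type, center, tolerance radius in Å)
PoseFeature = Tuple[str, Point]        # (feature type, position) in the docked pose


def constrained_score(docking_score: float, pose_features: Sequence[PoseFeature],
                      constraints: Sequence[Constraint], penalty: float = 2.0) -> float:
    """Docking score (kcal/mol, lower is better) plus `penalty` per unsatisfied constraint."""
    unmet = sum(
        1
        for ftype, center, tol in constraints
        if not any(ltype == ftype and math.dist(center, pos) <= tol
                   for ltype, pos in pose_features)
    )
    return docking_score + penalty * unmet


def rank_poses(poses: Dict[str, Tuple[float, Sequence[PoseFeature]]],
               constraints: Sequence[Constraint]) -> List[Tuple[str, float]]:
    """Rank poses by constraint-penalized score, best first."""
    scored = {name: constrained_score(score, feats, constraints)
              for name, (score, feats) in poses.items()}
    return sorted(scored.items(), key=lambda item: item[1])


# Hypothetical constraint: a hydrogen-bond acceptor (HA) observed in known actives near (1, 2, 0).
constraints = [("HA", (1.0, 2.0, 0.0), 1.0)]
poses = {
    "pose_a": (-9.0, [("HD", (5.0, 5.0, 5.0))]),  # better raw score, misses the constraint
    "pose_b": (-8.2, [("HA", (1.2, 2.1, 0.0))]),  # satisfies the constraint
}
print(rank_poses(poses, constraints))  # [('pose_b', -8.2), ('pose_a', -7.0)]
```

The penalty size controls how strongly ligand-derived knowledge overrides the physics-based score; a soft penalty like this keeps poses that miss a constraint but have very strong scores in contention.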

A notable example of a successful consensus protocol was applied to identify natural product inhibitors of the tubulin-microtubule system. The protocol combined molecular similarity searches, molecular docking, pharmacophore modeling, and in silico ADMET (Absorption, Distribution, Metabolism, Excretion, and Toxicity) prediction to prioritize candidates, which were then further validated with molecular dynamics simulations [17]. This integrated workflow led to the identification of several compounds with confirmed activity against cancer cell lines [17].

The following diagram illustrates the logical workflow of a modern, consensus virtual screening protocol.

Modern Consensus Virtual Screening Workflow

Advanced Protocols and the AI-Accelerated Future

Contemporary virtual screening protocols have become increasingly refined, incorporating best practices for input preparation and leveraging advanced computational techniques.

Essential Pre-Screening Preparations

The accuracy of any VS campaign depends heavily on the quality of the input data, so careful ligand and target preparation that follows established best practices is essential [15].

Ligand Preparation: This involves generating accurate 3D geometries with optimal bond lengths and angles, as most docking programs do not alter these during the calculation [15]. Critical steps include:

- Tautomer and Protonation States: Correctly assigning the dominant tautomeric and protonation states of ligands at physiological pH (or the pH of the target environment) is crucial, as it dramatically affects hydrogen bonding patterns and molecular charge. Ensemble docking of multiple stable protomers is an effective strategy [15].

- Charge Assignment: Partial atomic charges must be assigned consistently for both the ligand and the target, as mismatches can lead to false negatives [15].

- Conformer Generation: For LBVS 3D methods and flexible docking, generating multiple, low-energy conformers for each ligand is essential. Tools like RDKit's ETKDG method are widely used for this purpose [5].
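The Henderson-Hasselbalch relation shows why protonation states matter. It gives the fraction of an ionizable group that is protonated at a given pH. The sketch below uses rounded, textbook-style pKa values chosen only for illustration. For real ligands, pKa values would come from a prediction tool or from measurement.

```python
def fraction_protonated(pka, ph):
    """Henderson-Hasselbalch: fraction of an ionizable group carrying the extra proton.

    Holds for acids (HA vs A-) and bases (BH+ vs B) alike:
    protonated fraction = 1 / (1 + 10**(pH - pKa)).
    """
    return 1.0 / (1.0 + 10.0 ** (ph - pka))


PH = 7.4  # physiological pH; substitute the pH of the target environment if different
# Approximate, illustrative pKa values (assumptions, not values for any specific ligand)
groups = {"carboxylic acid": 4.2, "aliphatic amine": 10.6, "imidazole": 7.0}
for name, pka in groups.items():
    frac = fraction_protonated(pka, PH)
    print(f"{name:16s} protonated fraction at pH {PH}: {frac:.3f}")
```

At pH 7.4, the carboxylic acid and the aliphatic amine are essentially fully ionized. A group with a pKa near 7 (here about 28% protonated) exists as a genuine mixture, and that is exactly the case where docking more than one protomer is worthwhile.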

Target Preparation: This involves processing the protein structure by removing extraneous water molecules, adding hydrogen atoms, and assigning appropriate charges. While the emergence of highly accurate protein structure prediction tools like AlphaFold has significantly expanded the library of available targets, caution is advised. These models often predict a single static conformation and may have unreliable side-chain positioning, which can impact docking performance without careful refinement [6].

The Role of Artificial Intelligence and Advanced Computing

The latest evolution in VS is marked by the integration of artificial intelligence (AI) and the screening of ultra-large chemical libraries containing billions to trillions of molecules.

- AI-Accelerated Platforms: New open-source platforms, such as OpenVS, combine physics-based docking with active learning. These platforms train target-specific neural networks on-the-fly to intelligently select the most promising compounds for expensive docking calculations, dramatically reducing the computational resources and time required to screen multi-billion compound libraries [7].

- Improved Physics-Based Methods: Continued development of physics-based force fields remains critical. For instance, the RosettaVS protocol incorporates enhanced force fields (RosettaGenFF-VS) that model both the enthalpy (ΔH) and entropy (ΔS) of binding, and allow for substantial receptor flexibility (side-chain and limited backbone movement). This has proven essential for achieving high accuracy in pose and affinity prediction, outperforming many other methods on standard benchmarks [7].
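A deliberately tiny sketch can illustrate the active-learning idea behind platforms such as OpenVS. This is not OpenVS's algorithm or API. Here the "library" is a list of one-dimensional feature values, `toy_dock` is a hypothetical stand-in for an expensive docking calculation (lower is better), and a k-nearest-neighbour average replaces the target-specific neural network as the surrogate model.

```python
import random


def knn_predict(x, labeled, k=3):
    """Surrogate model: mean score of the k labeled compounds nearest to x (1-D feature)."""
    nearest = sorted(labeled, key=lambda item: abs(item[0] - x))[:k]
    return sum(score for _, score in nearest) / len(nearest)


def active_learning_screen(library, dock, n_init=20, batch=20, rounds=5, seed=0):
    """Dock a random seed set, then in each round dock the `batch` undocked compounds
    the surrogate predicts to score best (lower = better). Returns {compound: score}
    for the docked subset only; the rest of the library is never docked."""
    rng = random.Random(seed)
    docked = {x: dock(x) for x in rng.sample(library, n_init)}
    for _ in range(rounds):
        labeled = list(docked.items())
        candidates = [x for x in library if x not in docked]
        candidates.sort(key=lambda x: knn_predict(x, labeled))
        for x in candidates[:batch]:
            docked[x] = dock(x)
    return docked


def toy_dock(x):
    """Hypothetical stand-in for a docking score; best (lowest) at x = 0.73."""
    return (x - 0.73) ** 2


library = [i / 1000 for i in range(1000)]  # 1,000 hypothetical one-feature "compounds"
docked = active_learning_screen(library, toy_dock)
best = min(docked, key=docked.get)
print(f"docked {len(docked)} of {len(library)}; best feature value {best:.3f}")
```

Only 120 of the 1,000 hypothetical compounds are docked. Each round spends the docking budget where the surrogate expects good scores, which is the source of the resource savings described above.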

Table 3: The Scientist's Toolkit: Essential Software and Resources

| Tool Name | Type/Category | Primary Function | Key Features | Access |
|---|---|---|---|---|
| Glide | SBVS Software | Molecular Docking & Virtual Screening | High accuracy; hierarchical filters; handles explicit water energetics (Glide WS) [18]. | Commercial |
| AutoDock Vina | SBVS Software | Molecular Docking | Widely used, good balance of speed and accuracy [19] [7]. | Open-Source |
| ROCS | LBVS Software | 3D Shape & Pharmacophore Similarity | Excellent for scaffold-hopping; fast overlay of chemical structures [16]. | Commercial |
| RDKit | Cheminformatics Toolkit | Core Cheminformatics & Descriptor Calculation | Foundation for many custom VS tools; includes fingerprint generation, conformer generation (ETKDG), and substructure search [5]. | Open-Source |
| VSFlow | LBVS Tool | Ligand-Based Virtual Screening | Open-source command-line tool integrating substructure, fingerprint, and shape-based screening [5]. | Open-Source |
| OpenVS | AI-VS Platform | AI-Accelerated Virtual Screening | Open-source platform using active learning to screen billion+ compound libraries efficiently [7]. | Open-Source |
| ZINC Database | Compound Library | Repository of Commercially Available Compounds | Curated database of millions to billions of "drug-like" and "lead-like" molecules for screening [15] [5]. | Free Access |

The following diagram summarizes the historical evolution of virtual screening, highlighting the key transitions and current state-of-the-art.

Evolution of Virtual Screening Paradigms

The evolution of virtual screening from early rigid docking to modern, intelligent hybrid protocols represents a remarkable trajectory of innovation in computational drug discovery. The field has moved from simplistic, single-method applications to sophisticated frameworks that leverage the synergy between ligand-based and structure-based information, augmented by AI and powerful physics-based simulations. This progression has consistently aimed at, and achieved, higher predictive accuracy, greater efficiency, and the ability to navigate the immense complexity of molecular recognition. For today's researcher, a thorough understanding of this evolution and the available toolkit is fundamental to designing and executing successful virtual screening campaigns that can reliably identify novel, potent, and drug-like compounds.

In the modern drug discovery pipeline, computational methods such as structure-based and ligand-based virtual screening have become indispensable for efficient lead identification and optimization. These approaches rest on two kinds of foundational concepts. The first estimates how likely a biological target is to be modulated by a drug-like molecule (druggability and binding site characterization). The second allows known chemical information to be extrapolated to novel compounds (the Similarity-Property Principle). This application note details these core principles and provides structured protocols for applying them within virtual screening workflows, so that researchers can better prioritize targets and compounds and accelerate the drug discovery process.

Core Concept Definitions and Applications

Druggability

Druggability describes the inherent potential of a biological target (typically a protein) to bind a drug-like molecule with high affinity, resulting in a functional, therapeutic change [20] [21]. A "druggable" target must not only be disease-modifying but also possess structural and physicochemical features compatible with drug binding.

- Quantitative Definition: A commonly used operational definition is that a druggable protein can bind drug-like compounds with a binding affinity below 10 μM [21].

- The Druggable Genome: The concept of the "druggable genome" refers to proteins capable of binding Rule-of-Five-compliant small molecules, estimated to be a small fraction of the human proteome [20]. Only about 10-15% of human proteins are estimated to be druggable, and a similar percentage are disease-modifying. Because the two properties are not correlated, only about 1-2.25% of human proteins (10% × 10% to 15% × 15%) are likely to be both disease-modifying and druggable [20].

- Beyond Small Molecules: The principle has been extended to include biologic medical products, such as therapeutic monoclonal antibodies [20].
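Rule-of-Five compliance is used above to define the druggable genome and is applied later as a library filter. It is easy to check once descriptors are available. In this minimal sketch, the descriptor values are hypothetical and precomputed. In practice they would be computed with a toolkit such as RDKit (for example `Descriptors.MolWt`, `Crippen.MolLogP`, `Lipinski.NumHDonors`, and `Lipinski.NumHAcceptors`). The "at most one violation" tolerance follows Lipinski's original formulation.

```python
from dataclasses import dataclass


@dataclass
class Props:
    """Precomputed descriptors for one compound."""
    mol_weight: float  # molecular weight, Da
    clogp: float       # calculated octanol/water logP
    h_donors: int      # hydrogen-bond donors
    h_acceptors: int   # hydrogen-bond acceptors


def ro5_violations(p):
    """Number of Lipinski Rule-of-Five criteria a compound breaks."""
    return sum([p.mol_weight > 500, p.clogp > 5, p.h_donors > 5, p.h_acceptors > 10])


def passes_ro5(p, max_violations=1):
    """Lipinski flagged likely poor absorption when more than one criterion is broken."""
    return ro5_violations(p) <= max_violations


# Hypothetical descriptor values for three library compounds
library = {
    "cpd1": Props(342.4, 2.1, 2, 5),  # no violations
    "cpd2": Props(612.7, 6.3, 3, 9),  # MW and logP violations
    "cpd3": Props(521.0, 4.2, 1, 7),  # MW violation only
}
kept = [cid for cid, p in library.items() if passes_ro5(p)]
print(kept)
```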

Table 1: Methods for Predicting Druggability

| Method | Fundamental Principle | Key Advantages | Key Limitations |
|---|---|---|---|
| Precedence-Based [20] | Guilt-by-association; target is druggable if it belongs to a protein family with known drug targets. | Simple and fast; leverages existing knowledge. | Conservative; misses novel, undrugged protein families. |
| Structure-Based [20] [22] | Analyzes 3D protein structures to identify cavities and calculate their physicochemical/geometric properties. | Can identify novel druggable sites; provides structural insights. | Requires high-quality 3D structures; training set dependent. |
| Feature-Based [20] [21] | Uses machine learning (e.g., Support Vector Machines) on amino-acid sequence features or biophysical properties. | Applicable when 3D structures are unavailable; high throughput. | Accuracy is dependent on the quality and breadth of training data. |

Binding Sites

A binding site is a localized region on a protein, typically a cavity or cleft, where a ligand binds to produce a functional effect. Successful drug discovery depends on the accurate identification and characterization of these sites [23] [24].

- Characteristics of a Druggable Binding Site: A binding site with suitable size (able to accommodate compounds with MW < 500 Da), appropriate lipophilicity, and sufficient hydrogen-bonding potential is considered druggable [21]. Sufficient volume, depth, and hydrophobicity are key contributors [22].

- Allosteric and Cryptic Sites: Beyond the traditional active site, allosteric sites (regulatory sites distinct from the active site) and cryptic sites (sites that become apparent upon protein dynamics) represent valuable targets for drug discovery, offering potential for greater selectivity [22] [24].

Table 2: Computational Approaches for Binding Site Identification

| Category | Examples | Application Context |
|---|---|---|
| Structure-Based Methods [23] [22] | Q-SiteFinder, DoGSiteScorer, MD simulations | Requires an experimental or homology-modeled 3D structure. Identifies pockets based on geometry and energy. |
| Machine Learning-Based Methods [22] | SVM-based classifiers, Deep learning models | Integrates multiple features (geometric, evolutionary, energetic) to predict binding sites. |
| Binding Site Feature Analysis [22] | Analysis of hydrophobicity, polarity, charge, volume | Used to assess the quality of an identified site and its potential to bind drug-like molecules. |

The Similarity-Property Principle

The Similarity-Property Principle (SPP), also known as the Similar-Structure, Similar-Property Principle, is the fundamental assertion in cheminformatics that structurally similar molecules tend to have similar properties [25] [26]. These properties can be physical (e.g., boiling point) or biological (e.g., biological activity, target binding) [26].

- Foundation for Ligand-Based Methods: The SPP is the theoretical cornerstone of Ligand-Based Virtual Screening (LBVS), Quantitative Structure-Activity Relationships (QSAR), and Quantitative Structure-Property Relationships (QSPR) [25] [26].

- A Foundational, Not Absolute, Principle: While a powerful guiding principle, it is a heuristic, not an absolute law. Activity cliffs, where small structural changes lead to large property changes, are notable exceptions.
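Activity cliffs can be quantified for pairs of compounds. One published measure, the Structure-Activity Landscape Index (SALI), divides the difference in activity by the structural dissimilarity, so large values flag near-identical structures with very different potency. The minimal sketch below assumes activities on a log scale (e.g., pIC50) and a Tanimoto similarity already computed for each hypothetical pair.

```python
def sali(activity_i, activity_j, similarity):
    """Structure-Activity Landscape Index for one compound pair.

    activity_*: potencies on a log scale (e.g. pIC50); similarity: Tanimoto in [0, 1).
    Large values flag activity cliffs: similar structures, very different activity.
    """
    if similarity >= 1.0:
        raise ValueError("SALI is undefined for identical fingerprints (similarity = 1)")
    return abs(activity_i - activity_j) / (1.0 - similarity)


# Hypothetical pairs: (pIC50 of compound i, pIC50 of compound j, Tanimoto similarity)
pairs = {
    "close analogues, 1000-fold potency gap": (8.0, 5.0, 0.90),
    "dissimilar pair, 1000-fold potency gap": (8.0, 5.0, 0.20),
    "close analogues, similar potency": (7.2, 7.0, 0.90),
}
for label, (a_i, a_j, sim) in pairs.items():
    print(f"{label}: SALI = {sali(a_i, a_j, sim):.1f}")
```

Only the first pair stands out. That pair combines high similarity with a large potency difference, which is the signature of an exception to the Similarity-Property Principle.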

Experimental Protocols

Protocol: Structure-Based Druggability Assessment

This protocol uses a structure-based approach to evaluate a novel protein target's potential to bind small-molecule drugs [20] [23] [24].

Objective: To computationally assess the druggability of a target protein using its 3D structure.

Step-by-Step Procedure:

- Input Protein Structure: Obtain a high-quality 3D structure of the target protein from the Protein Data Bank (PDB) or generate one via homology modeling [23].

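- Structure Pre-processing:

- Remove extraneous water molecules, add hydrogen atoms, and assign appropriate protonation states and charges.

- Binding Site Identification:

- Run a pocket detection tool (e.g., DoGSiteScorer or Q-SiteFinder) to locate candidate cavities, and select the pocket of interest for further analysis.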

- Descriptor Calculation & Druggability Prediction:

- For the selected pocket, calculate key geometric (volume, surface area, depth) and physicochemical (hydrophobicity, polarity) descriptors [20] [22].

- Input the calculated descriptors into a trained machine learning model (e.g., a Support Vector Machine as in DoGSiteScorer) or a pre-configured druggability prediction server like ChEMBL's DrugEBIlity [20] [22].

- Analysis: The output is a qualitative classification (e.g., druggable, less druggable, undruggable) and/or a quantitative druggability score. A high score indicates a high probability of finding drug-like binders.

Protocol: Ligand-Based Virtual Screening using the Similarity-Property Principle

This protocol uses the SPP to identify novel hit compounds by searching for molecules structurally similar to a known active compound [25] [27].

Objective: To identify potential hit compounds from a large chemical database that are structurally similar to a known active reference molecule.

Step-by-Step Procedure:

- Query Compound Selection: Select a known active compound with confirmed biological activity against the target of interest. This will serve as the reference or "query."

- Descriptor and Similarity Metric Definition:

- Choose a molecular representation. Extended-connectivity fingerprints (ECFPs) are a common and effective choice.

- Select a similarity coefficient. The Tanimoto coefficient is the most widely used metric for fingerprint-based similarity.

- Database Screening:

- Select a chemical database for screening (e.g., PubChem, ZINC, ChEMBL) [27].

- Using a cheminformatics toolkit (e.g., RDKit, Open Babel) or the database's built-in search function, compute the structural similarity between the query compound and every compound in the database.

- Post-processing and Hit Selection:

- Rank all database compounds based on their similarity score to the query, from highest to lowest.

- Apply additional filters to the top-ranking compounds (e.g., based on physicochemical properties, presence of undesirable functional groups, or synthetic accessibility) [27].

- Select the final hits for purchase or synthesis based on high similarity scores and favorable properties.
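The screening and ranking steps above can be sketched in plain Python. In this sketch, fingerprints are modelled as sets of "on" bit indices, and the query and database bit sets are hypothetical. In practice one would generate Morgan fingerprints with RDKit (radius 2 is roughly equivalent to ECFP4) and score them with `DataStructs.BulkTanimotoSimilarity`. The logic is the same: the Tanimoto coefficient is the number of shared bits divided by the number of bits set in either fingerprint.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient for binary fingerprints stored as sets of on-bit indices."""
    if not fp_a and not fp_b:
        return 0.0
    shared = len(fp_a & fp_b)
    return shared / (len(fp_a) + len(fp_b) - shared)


def similarity_search(query_fp, database, threshold=0.0):
    """Rank database entries by similarity to the query (highest first), keeping
    only those at or above the threshold."""
    hits = [(cid, tanimoto(query_fp, fp)) for cid, fp in database.items()]
    hits = [(cid, s) for cid, s in hits if s >= threshold]
    return sorted(hits, key=lambda hit: (-hit[1], hit[0]))


query = {1, 2, 3, 4, 5}  # hypothetical query fingerprint
database = {
    "cpd1": {1, 2, 3, 4, 6},
    "cpd2": {1, 2, 3, 4, 5},
    "cpd3": {7, 8, 9},
    "cpd4": {1, 2, 9},
}
for cid, score in similarity_search(query, database, threshold=0.5):
    print(cid, round(score, 2))
```

The 0.5 cutoff is only an example. Useful thresholds depend on the fingerprint type and length, so they are usually chosen by checking how known actives and decoys score.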

Table 3: Key Computational Tools and Databases for Virtual Screening

| Resource Name | Type | Function in Research | Access |
|---|---|---|---|
| Protein Data Bank (PDB) | Database | Repository for experimentally determined 3D structures of proteins and nucleic acids. | Public |
| PubChem [27] | Database | Public database of chemical molecules and their biological activities. Essential for LBVS. | Public |
| ChEMBL [20] [27] | Database | Manually curated database of bioactive, drug-like molecules with annotated target information. | Public |
| DoGSiteScorer [22] | Web Tool / Software | Automatically detects and analyzes binding pockets and predicts their druggability. | Public |
| RDKit | Cheminformatics | Open-source toolkit for cheminformatics and machine learning. Used for descriptor calculation and similarity searching. | Public |
| Schrödinger Suite | Software Suite | Comprehensive commercial software for protein preparation, molecular docking, and SBVS. | Commercial |
| ZINC [27] | Database | Public database of commercially available compounds for virtual screening. | Public |

The Complementary Roles of VS and High-Throughput Screening (HTS)

The drug discovery process is a complex, time-consuming, and costly endeavor, requiring over 12 years and more than $1 billion to bring a new therapeutic to market [28]. Within this pipeline, high-throughput screening (HTS) and virtual screening (VS) have emerged as powerful, complementary technologies for identifying bioactive small molecules. HTS involves the experimental screening of thousands to millions of chemical compounds against specific biological targets using automated systems [29] [30]. By contrast, VS employs computational methods to prioritize compounds from vast chemical libraries for synthesis and testing [31] [4]. While HTS has traditionally been the workhorse of early drug discovery, recent advances in computing power and algorithmic sophistication have positioned VS as a viable alternative or complementary approach that can substantially expand the accessible chemical space [32].

This article examines the complementary strengths and limitations of both techniques and provides detailed protocols for their implementation. By understanding how these methods synergize, researchers can design more efficient screening strategies that leverage the advantages of both experimental and computational approaches.

Comparative Analysis of HTS and VS

Fundamental Characteristics and Workflows

High-Throughput Screening (HTS) is an experimental method designed to rapidly evaluate the biological activity of large compound libraries. A screen is typically considered "high throughput" when it conducts over 10,000 assays per day [30]. Modern HTS utilizes miniaturized formats (96-, 384-, 1536-, or 3456-well plates), automation, robotics, and sophisticated detection systems to test thousands of compounds simultaneously [28] [33]. The primary objective is to identify "hits" – compounds showing desired therapeutic effects against specific biological targets [29].

Virtual Screening (VS) encompasses computational techniques that predict biological activity prior to synthesis or testing. VS methods are broadly categorized as structure-based (SBVS), which utilizes the three-dimensional structure of the target (e.g., molecular docking), or ligand-based (LBVS), which relies on the structural information and physicochemical properties of known active compounds [4]. VS can screen trillions of virtual molecules, far exceeding the capacity of physical HTS [32].

Key Performance Metrics and Comparative Advantages

Table 1: Comparative Analysis of HTS and VS Characteristics

| Parameter | High-Throughput Screening (HTS) | Virtual Screening (VS) |
|---|---|---|
| Throughput Potential | 10,000-100,000 physical assays per day [30] | Screening of trillion-compound libraries [32] |
| Chemical Space Access | Limited to existing physical compounds [32] | Access to synthesis-on-demand and virtual compounds [32] |
| Primary Costs | Equipment, reagents, and compound libraries [28] | Computational resources and software [32] |
| Key Limitations | False positives/negatives, assay artifacts, limited compound availability [34] [32] | Dependency on target structure/known ligands, scoring function inaccuracies [31] [4] |
| Hit Rates | Typically 0.001% - 0.1% [32] | Reported 6.7% - 7.6% in large-scale studies [32] |

Table 2: Analysis of HTS and VS Strengths and Limitations

| Aspect | Strengths | Limitations |
|---|---|---|
| HTS | Direct experimental measurement of activity [33]; amenable to complex phenotypic assays [29]; no requirement for target structural information | High rates of false positives and false negatives [34] [32]; limited to available compound collections [32]; resource-intensive and costly [28] |
| VS | Vastly greater coverage of chemical space [32]; lower cost per compound screened [32]; can predict compounds for novel targets via homology models [32] | Requires high-quality target structures or known ligands [31] [4]; challenges in accounting for full protein flexibility [4]; scoring functions may inaccurately predict binding affinities [4] |

Integrated Screening Strategies and Protocols

Strategic Integration of HTS and VS

The complementary nature of HTS and VS has led to integrated strategies that leverage the advantages of both approaches. Besides pairing computational prioritization with experimental testing, integration also happens within VS itself: ligand-based and structure-based methods are commonly combined in one of three ways [4]:

- Sequential Approaches: These involve dividing the VS pipeline into consecutive steps, typically beginning with LBVS pre-filtering due to lower computational cost, followed by more computationally intensive SBVS methods [4]. This strategy optimizes the trade-off between computational cost and methodological complexity.

- Parallel Approaches: Both LBVS and SBVS methods are run independently, and the best candidates from each method are selected for biological testing [4]. This approach can increase performance and robustness over single-modality approaches but may show sensitivity to target-specific structural details.

- Hybrid Approaches: These combine LB and SB techniques into a unified framework that simultaneously exploits all available information about both ligands and targets [4]. This represents the most integrated but also most computationally complex strategy.

Protocol 1: Implementation of a Quantitative HTS (qHTS) Campaign

Objective: To identify and validate hit compounds through experimental quantitative HTS.

Background: Quantitative HTS (qHTS) represents an advancement over traditional single-concentration HTS by testing compounds at multiple concentrations, generating concentration-response curves simultaneously for thousands of compounds [34] [35]. This approach reduces false-positive and false-negative rates compared to traditional HTS [34].

Table 3: Key Reagents and Materials for qHTS

| Reagent/Material | Function/Description |
|---|---|
| Compound Libraries | Collections of chemical compounds (e.g., combinatorial chemistry, natural products, biological libraries) [28] |
| Microtiter Plates | Miniaturized assay formats (96-, 384-, 1536-well plates) for high-density testing [28] [33] |
| Detection Reagents | Fluorescent or luminescent probes (e.g., for FRET, FP, TR-FRET) to measure biological activity [28] [33] |
| Automated Liquid Handling Systems | Robotics for precise dispensing of reagents and compounds in miniaturized formats [30] [28] |
| High-Sensitivity Detectors | Plate readers capable of detecting fluorescence, luminescence, or absorbance signals [34] [33] |

Procedure:

- Target Identification and Assay Development: Select a biologically relevant target (e.g., enzyme, receptor) and develop a robust assay measuring its activity. Common targets include G-protein coupled receptors (45%), enzymes (28%), and ion channels (5%) [28].

- Assay Validation: Validate the assay using control compounds. Calculate validation metrics including Z'-factor (aim for >0.5), signal-to-noise ratio, and coefficient of variation [33].

- Compound Preparation: Dispense compound libraries into assay plates using automated liquid handling systems. For qHTS, prepare multiple concentration points for each compound, typically via serial dilution [34].

- Assay Implementation: Incubate compounds with the biological target and detection reagents. For enzyme targets, this typically involves measuring product formation or substrate depletion over time.

- Data Acquisition: Read plates using appropriate detectors (e.g., fluorescence, luminescence). Modern HTS can test millions of compounds across 15 concentrations [34] [35].

- Data Analysis:

- Fit concentration-response data to the Hill equation [34] [35]:

$$R_i = E_0 + \frac{E_\infty - E_0}{1 + \exp\{-h[\log C_i - \log AC_{50}]\}}$$

where $R_i$ is the response at concentration $C_i$, $E_0$ is the baseline response, $E_\infty$ is the maximal response, $AC_{50}$ is the half-maximal activity concentration, and $h$ is the Hill slope [34].

- Estimate parameters ($AC_{50}$ and $E_{\max} = E_\infty - E_0$) and their uncertainties through nonlinear regression [34].

- Identify "hits" based on efficacy and potency thresholds.
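The assay-validation metrics from step 2 can be computed from control wells with the standard library. The sketch uses the usual definition Z' = 1 − 3(σ₊ + σ₋)/|μ₊ − μ₋| and the sample standard deviation. The control-well signals are hypothetical. Signal-to-noise is omitted because its definition varies between laboratories.

```python
from statistics import mean, stdev


def z_prime(positive, negative):
    """Z'-factor from control wells: 1 - 3 * (sd_pos + sd_neg) / |mean_pos - mean_neg|."""
    window = abs(mean(positive) - mean(negative))
    return 1.0 - 3.0 * (stdev(positive) + stdev(negative)) / window


def cv_percent(values):
    """Coefficient of variation in percent (sample standard deviation / mean)."""
    return 100.0 * stdev(values) / mean(values)


# Hypothetical control-well signals from one validation plate
pos_ctrl = [100, 102, 98, 101, 99]  # e.g. uninhibited enzyme
neg_ctrl = [10, 12, 8, 11, 9]       # e.g. fully inhibited or no-enzyme wells
z = z_prime(pos_ctrl, neg_ctrl)
print(f"Z' = {z:.2f} ({'passes' if z > 0.5 else 'fails'} the > 0.5 criterion)")
print(f"CV of positive controls = {cv_percent(pos_ctrl):.1f}%")
```

With these numbers the separation band between controls is wide relative to their spread (Z' ≈ 0.89), so the plate would pass the > 0.5 criterion.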

Troubleshooting Tips:

- High false-positive rates may result from compound interference (e.g., aggregation, fluorescence). Include counter-screens and additives (e.g., Tween-20) to mitigate these effects [32].

- Poor curve fits often occur when the concentration range doesn't capture both asymptotes of the Hill equation. Optimize concentration ranges to properly define baseline and maximal responses [34] [35].
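The Hill-equation fit and the asymptote issue noted above can be explored with a small, dependency-free sketch. It assumes the logarithms in the Hill equation are natural logarithms, so the model reduces to $E_0 + (E_\infty - E_0)/(1 + (AC_{50}/C)^h)$. For fixed $AC_{50}$ and $h$ the model is linear in $E_0$ and $E_\infty$. The sketch therefore grid-searches $AC_{50}$ and $h$ and solves for the asymptotes by linear least squares. This is a teaching aid, not a replacement for proper nonlinear regression with uncertainty estimates (e.g., SciPy's `curve_fit`). The data are synthetic and noise-free.

```python
import math


def hill(conc, e0, e_inf, ac50, h):
    """Hill response at `conc` (same units as `ac50`); natural logs, so this equals
    e0 + (e_inf - e0) / (1 + (ac50 / conc) ** h)."""
    return e0 + (e_inf - e0) / (1.0 + math.exp(-h * (math.log(conc) - math.log(ac50))))


def _fit_asymptotes(concs, responses, ac50, h):
    """For fixed AC50 and h the model is linear in (E0, Einf): solve 2x2 least squares."""
    s = [1.0 / (1.0 + math.exp(-h * (math.log(c) - math.log(ac50)))) for c in concs]
    a11 = sum((1 - si) ** 2 for si in s)
    a12 = sum((1 - si) * si for si in s)
    a22 = sum(si ** 2 for si in s)
    b1 = sum((1 - si) * r for si, r in zip(s, responses))
    b2 = sum(si * r for si, r in zip(s, responses))
    det = a11 * a22 - a12 ** 2
    if abs(det) < 1e-12:
        return None  # this concentration range cannot pin down both asymptotes
    e0 = (b1 * a22 - b2 * a12) / det
    e_inf = (a11 * b2 - a12 * b1) / det
    sse = sum((r - (e0 * (1 - si) + e_inf * si)) ** 2 for si, r in zip(s, responses))
    return e0, e_inf, sse


def fit_hill(concs, responses, log10_ac50_grid, h_grid):
    """Coarse grid search over (AC50, h); returns (e0, e_inf, ac50, h, sse)."""
    best = None
    for log_ac50 in log10_ac50_grid:
        ac50 = 10.0 ** log_ac50
        for h in h_grid:
            fit = _fit_asymptotes(concs, responses, ac50, h)
            if fit is not None and (best is None or fit[2] < best[4]):
                best = (fit[0], fit[1], ac50, h, fit[2])
    return best


# Hypothetical 15-point, 2.5-fold serial dilution from 100 uM; noise-free inhibitor data
concs = [100.0 / 2.5 ** i for i in range(15)]
responses = [hill(c, e0=0.0, e_inf=-100.0, ac50=1.0, h=1.0) for c in concs]
e0, e_inf, ac50, h, sse = fit_hill(
    concs, responses,
    log10_ac50_grid=[i / 10 for i in range(-30, 31)],  # AC50 from 1 nM to 1 mM (in uM)
    h_grid=[0.25 * k for k in range(2, 13)],          # Hill slopes 0.5 to 3.0
)
print(f"AC50 = {ac50:g} uM, h = {h:g}, Emax = {e_inf - e0:.1f}")
```

If the dilution series is truncated so that it covers only one side of the transition, many (AC50, h) combinations fit almost equally well. That ambiguity is the practical symptom behind the troubleshooting tip about capturing both asymptotes.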

Protocol 2: Structure-Based Virtual Screening with Molecular Docking

Objective: To identify potential hit compounds through computational prediction of protein-ligand interactions.

Background: Molecular docking, a primary SBVS method, predicts the native binding mode of a small molecule within a protein's binding site and estimates the interaction affinity [31]. Docking programs combine search algorithms to explore possible ligand poses with scoring functions to rank these poses [31].

Table 4: Key Computational Resources for Structure-Based VS

| Resource | Function/Description |
|---|---|
| Protein Structures | X-ray crystallography, cryo-EM, or homology models of target proteins [32] |
| Chemical Libraries | Virtual compound collections (e.g., ZINC, Enamine) totaling billions of molecules [32] |
| Docking Software | Programs such as AutoDock, GOLD, DOCK, or Glide for pose prediction [31] |
| Computing Infrastructure | High-performance computing clusters with thousands of CPUs/GPUs [32] |

Procedure:

- Target Preparation:

- Obtain the three-dimensional structure of the target protein from databases (e.g., PDB) or generate a homology model if an experimental structure is unavailable.

- Prepare the protein structure by adding hydrogen atoms, assigning protonation states, and removing water molecules (except functionally important waters).

- Compound Library Preparation:

- Select a virtual compound library (commercially available or custom-designed).

- Generate 3D structures for each compound and minimize their energies.

- Apply drug-like filters (e.g., Lipinski's Rule of Five) and remove compounds with problematic structural motifs, such as pan-assay interference compounds (PAINS).

- Docking Simulation:

- Define the binding site coordinates, typically based on known ligand binding sites or functional domains.

- Perform docking calculations using programs such as AutoDock [31] or AtomNet [32]. For deep learning approaches like AtomNet, this involves scoring protein-ligand complexes using convolutional neural networks [32].

- Execute the docking run, which may require extensive computational resources (e.g., 40,000 CPUs, 3,500 GPUs for large libraries) [32].

- Post-Docking Analysis:

- Cluster top-ranked poses to ensure structural diversity.

- Select the highest-ranking compounds from each cluster, avoiding manual cherry-picking to reduce bias [32].

- Visually inspect top-ranked complexes to assess binding mode plausibility.

- Hit Selection and Experimental Validation:

- Select 50-500 top-ranking compounds for synthesis or purchase from "on-demand" chemical libraries [32].

- Validate computational predictions through experimental testing following Protocol 1.
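The clustering and per-cluster selection in the post-docking step can be automated, so the final picks do not depend on manual cherry-picking. The sketch below uses a greedy leader (sphere-exclusion style) scheme. Compounds are visited from best to worst docking score, and each compound that is not already similar to a chosen representative starts a new cluster. The sketch makes three assumptions: it clusters on hypothetical fingerprint bit sets rather than on pose geometry, it treats lower docking scores as better, and it uses an illustrative Tanimoto cutoff of 0.6.

```python
def tanimoto(a, b):
    """Tanimoto coefficient for fingerprints stored as sets of on-bit indices."""
    shared = len(a & b)
    union = len(a) + len(b) - shared
    return shared / union if union else 0.0


def pick_cluster_representatives(docking_scores, fingerprints, sim_cutoff=0.6):
    """Greedy leader clustering: walk compounds from best (lowest) to worst docking
    score; a compound below sim_cutoff to every current leader starts a new cluster
    and becomes its representative. Returns representatives in score order."""
    leaders = []
    for cid in sorted(docking_scores, key=docking_scores.get):
        if all(tanimoto(fingerprints[cid], fingerprints[leader]) < sim_cutoff
               for leader in leaders):
            leaders.append(cid)
    return leaders


# Hypothetical docking scores (kcal/mol) and fingerprints for four top-ranked compounds
scores = {"a": -10.2, "b": -9.9, "c": -9.5, "d": -8.7}
fps = {"a": {1, 2, 3, 4}, "b": {1, 2, 3, 5}, "c": {6, 7, 8}, "d": {6, 7, 8, 9}}
print(pick_cluster_representatives(scores, fps))
```

Compound "b" is dropped as a close analogue of the better-scoring "a", and "d" is dropped as an analogue of "c". The picks therefore cover two chemotypes instead of four near-duplicates.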

Troubleshooting Tips:

- Poor enrichment may result from inadequate treatment of protein flexibility. Consider using multiple protein conformations or flexible residue side chains during docking [31] [4].

- Inaccurate binding affinity predictions may arise from limitations in scoring functions. Consider using consensus scoring or more computationally intensive free energy calculations for final hit prioritization [4].

Protocol 3: Ligand-Based Virtual Screening

Objective: To identify novel active compounds based on similarity to known active molecules.

Background: LBVS methods operate under the similarity principle, which states that structurally similar molecules are likely to have similar biological activities [4]. These approaches are particularly valuable when 3D structural information for the target is unavailable but known active compounds exist.

Procedure:

- Reference Compound Selection: Curate a set of known active compounds with demonstrated activity against the target of interest.

- Molecular Descriptor Calculation: Compute molecular descriptors (1D, 2D, or 3D) that encode structural and physicochemical properties relevant to biological activity.

- Similarity Searching: Screen virtual compound libraries using similarity metrics (e.g., Tanimoto coefficient) to identify compounds structurally related to reference actives.

- Pharmacophore Modeling (Optional): Develop a pharmacophore model defining essential molecular features necessary for biological activity, using it to filter virtual libraries [4].

- Hit Prioritization: Rank compounds by similarity scores and apply additional filters (e.g., ADMET properties) [4].

- Experimental Validation: Select top-ranking compounds for experimental testing.
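When several reference actives are available, as in the reference-selection step above, their similarity scores must be combined. A common choice, sometimes called MAX group fusion, scores each library compound by its highest similarity to any reference. A single search can then recover analogues of several chemotypes. The sketch models fingerprints as hypothetical sets of on-bit indices.

```python
def tanimoto(a, b):
    """Tanimoto coefficient for fingerprints stored as sets of on-bit indices."""
    shared = len(a & b)
    union = len(a) + len(b) - shared
    return shared / union if union else 0.0


def max_fusion_scores(references, library):
    """Score each library compound by its highest similarity to any reference active."""
    return {cid: max(tanimoto(fp, ref) for ref in references)
            for cid, fp in library.items()}


# Two hypothetical reference actives from different chemotypes
references = [{1, 2, 3, 4}, {10, 11, 12, 13}]
library = {"x": {1, 2, 3, 5}, "y": {10, 11, 12, 13, 14}, "z": {1, 10, 20, 30}}
scores = max_fusion_scores(references, library)
for cid in sorted(scores, key=scores.get, reverse=True):
    print(cid, round(scores[cid], 2))
```

A search using only the first reference would give compound "y" a similarity of zero. With MAX fusion, "y" ranks first because it closely resembles the second reference.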

HTS and VS represent complementary rather than competing approaches in modern drug discovery. HTS provides direct experimental measurement of compound activity in biologically relevant systems but is constrained by practical limitations of physical screening. VS offers unparalleled access to chemical diversity and lower costs per compound screened but depends on the availability of structural information or known ligands. The most effective drug discovery strategies intelligently integrate both approaches, leveraging their complementary strengths to maximize the probability of identifying novel chemical starting points for therapeutic development. As both technologies continue to evolve, with advances in qHTS, 3D cell models, AI-based screening, and computational power, their synergy is likely to become increasingly important in addressing the challenges of modern drug discovery.

Executing Virtual Screening: From Workflow Design to AI Integration

In modern drug discovery, virtual screening is a cornerstone technique for identifying potential drug candidates from vast chemical libraries [36]. The success of any virtual screening campaign depends fundamentally on rigorous initial target analysis and comprehensive bibliographic research. These first steps determine which computational methodologies are appropriate and establish the foundation for all subsequent experiments. Target analysis investigates the biological target's properties, available structural data, and known ligand information. Bibliographic research ensures that previous experimental findings and established structure-activity relationships are effectively leveraged. This systematic approach enables researchers to design more effective virtual screening protocols, whether they employ structure-based, ligand-based, or integrated hybrid methods [6]. The following sections provide detailed methodologies and protocols for conducting target analysis and bibliographic research, framed within the context of structure- and ligand-based virtual screening protocols.

Core Principles of Target Analysis

Target Characterization and Classification

Biological Target Assessment: Begin by comprehensively characterizing the biological target's role in disease pathology. Determine whether the target is an enzyme, receptor, ion channel, or nucleic acid, and investigate its biological function and therapeutic relevance. For proteins, identify key structural domains and functional regions, particularly the binding site characteristics. Classify the target according to standard protein classification systems (e.g., kinases, GPCRs, nuclear receptors) to leverage class-specific screening approaches [36]. For emerging target classes like RNA, recognize the unique biophysical phenomena that underpin their folding and interaction patterns, which require specialized screening methods [37].

Structural Biology Evaluation: Conduct an extensive search for existing structural data on the target protein. Preferred sources include the Protein Data Bank (PDB) for experimentally determined structures (X-ray crystallography, cryo-EM, NMR) and the AlphaFold Protein Structure Database for predicted structures [6]. Critically assess the quality of experimental structures using resolution values, R-factors, and electron density maps. For predicted structures, evaluate confidence scores and model reliability. Identify multiple structural conformations (apo, holo, intermediate states) that may reveal flexibility and conformational changes relevant to ligand binding [7].

Binding Site Analysis: Perform detailed analysis of the binding site geometry, physicochemical properties, and flexibility. Characterize the binding pocket dimensions, surface features, and key interaction points (hydrogen bond donors/acceptors, hydrophobic patches, charged regions). Use computational tools to assess side-chain flexibility and backbone mobility within the binding site. For targets with known resistance mutations (e.g., PfDHFR quadruple mutant N51I/C59R/S108N/I164L), analyze how mutations alter binding site properties and impact drug binding [38].

Table 1: Key Target Analysis Parameters and Assessment Methods

| Analysis Parameter | Assessment Method | Key Outputs | Interpretation Guidelines |
|---|---|---|---|
| Target Classification | Database mining (UniProt, Gene Ontology) | Protein class, biological function | Determines appropriate screening strategy (e.g., kinase-focused libraries) |
| Structural Quality | PDB metadata analysis, Model quality scores | Resolution, confidence metrics, validation reports | Structures with resolution ≤2.5 Å preferred for structure-based methods |
| Binding Site Properties | Pocket detection algorithms, Surface property mapping | Volume, surface area, hydrophobicity, electrostatic potential | Larger, hydrophobic pockets may favor docking; polar sites need precise pharmacophores |
| Structural Flexibility | Comparative analysis of multiple structures, B-factor analysis | Root-mean-square deviation (RMSD) between conformations, flexibility hotspots | High flexibility may require ensemble docking or flexible receptor protocols |
| Known Mutations | Literature mining, mutation databases | Mutation frequency, clinical significance, structural impact | Mutations at binding site residues may necessitate specialized screening approaches |

Bibliographic Research and Data Curation

Literature Mining and Data Integration: Conduct systematic reviews of scientific literature to gather comprehensive information on known active compounds, established structure-activity relationships (SARs), and previous screening efforts. Utilize databases including PubMed, Scopus, and Web of Science with targeted search queries combining target names with keywords such as "inhibitors," "ligands," "crystal structure," and "mutations." Extract quantitative activity data (IC₅₀, Ki, EC₅₀) and convert them to a consistent logarithmic scale, for example pIC₅₀ = −log₁₀(IC₅₀) with IC₅₀ expressed in mol/L, for uniform analysis [36]. Document SAR trends, key pharmacophoric features, and notable activity cliffs where small structural changes cause significant potency differences [39].
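A small helper for the unit conversion, assuming activities arrive as a value plus a unit string (the unit labels are hypothetical). The logarithm is taken of the concentration in mol/L, so an IC₅₀ of 100 nM becomes a pIC₅₀ of 7.

```python
import math

# Conversion factors to mol/L (unit labels are illustrative).
UNIT_TO_MOLAR = {"M": 1.0, "mM": 1e-3, "uM": 1e-6, "nM": 1e-9, "pM": 1e-12}


def p_activity(value: float, unit: str) -> float:
    """Convert an IC50/Ki/EC50 value to its negative log10 molar form (e.g. pIC50)."""
    if value <= 0:
        raise ValueError("activity values must be positive")
    if unit not in UNIT_TO_MOLAR:
        raise ValueError(f"unsupported unit: {unit!r}")
    return -math.log10(value * UNIT_TO_MOLAR[unit])


print(round(p_activity(100, "nM"), 2))  # 7.0
print(round(p_activity(2.5, "uM"), 2))  # 5.6
```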

Chemical Data Collection and Curation: Collect known active compounds from public databases (ChEMBL, PubChem BioAssay, BindingDB) and literature sources. Implement rigorous data curation protocols including structure standardization, removal of duplicates, and elimination of compounds with undesirable properties (e.g., pan-assay interference compounds or PAINS) [40]. For ligand-based approaches, gather comprehensive sets of active and inactive compounds where available. Pay particular attention to data quality, as erroneous activity data and mislabeled compounds significantly impact model performance [40].
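One way to sketch the duplicate-removal step, assuming each record has already been standardized and reduced to an identity key (for example an InChIKey computed upstream) plus a p-scaled activity. Replicate measurements are collapsed to their median, and compounds whose replicates disagree by more than one log unit (a hypothetical threshold) are set aside for manual review rather than averaged.

```python
from collections import defaultdict
from statistics import median
from typing import Dict, Iterable, List, Tuple


def aggregate_replicates(records: Iterable[Tuple[str, float]], max_spread: float = 1.0
                         ) -> Tuple[Dict[str, float], Dict[str, List[float]]]:
    """Collapse (identity_key, p_activity) records to one value per compound.

    Compounds whose replicate values span more than `max_spread` log units
    are returned separately for manual review.
    """
    grouped: Dict[str, List[float]] = defaultdict(list)
    for key, value in records:
        grouped[key].append(value)

    kept: Dict[str, float] = {}
    flagged: Dict[str, List[float]] = {}
    for key, values in grouped.items():
        if max(values) - min(values) > max_spread:
            flagged[key] = values
        else:
            kept[key] = median(values)
    return kept, flagged


# Placeholder keys stand in for real InChIKeys.
kept, flagged = aggregate_replicates([
    ("KEY-A", 6.0), ("KEY-A", 6.4), ("KEY-B", 5.0), ("KEY-B", 7.5), ("KEY-C", 8.0),
])
print(kept)     # KEY-A -> median of 6.0 and 6.4, KEY-C -> 8.0
print(flagged)  # {'KEY-B': [5.0, 7.5]}
```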

Dataset Validation and Bias Assessment: Implement systematic bias assessment protocols to identify and quantify potential biases in chemical datasets. Evaluate seventeen or more physicochemical properties to ensure balanced representation between active compounds and decoys [36]. Use fingerprint-based similarity methods and principal component analysis (PCA) to visualize the distribution of actives versus decoys in chemical space. Assess "analogue bias" where numerous active analogues from the same chemotype may artificially inflate model performance metrics [36]. Compare dataset characteristics with established benchmark sets like Maximum Unbiased Validation (MUV) to identify potential limitations [36].
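A sketch of the property-balance check: for each property, the standardized mean difference (SMD) between actives and decoys is the difference in means divided by a pooled standard deviation, here the square root of the mean of the two variances. The 0.5 flagging threshold and the two example properties are assumptions for illustration, and each property list must contain at least two values.

```python
import math
from statistics import mean, stdev
from typing import Dict, List, Sequence


def standardized_mean_difference(actives: Sequence[float], decoys: Sequence[float]) -> float:
    """(mean_actives - mean_decoys) / sqrt((var_actives + var_decoys) / 2)."""
    pooled = math.sqrt((stdev(actives) ** 2 + stdev(decoys) ** 2) / 2)
    diff = mean(actives) - mean(decoys)
    if pooled == 0:
        # Both groups are constant: balanced only if the constants coincide.
        return 0.0 if diff == 0 else math.copysign(math.inf, diff)
    return diff / pooled


def imbalanced_properties(actives: Dict[str, List[float]], decoys: Dict[str, List[float]],
                          threshold: float = 0.5) -> List[str]:
    """Names of properties whose |SMD| exceeds the (assumed) threshold."""
    return sorted(
        name for name in actives
        if abs(standardized_mean_difference(actives[name], decoys[name])) > threshold
    )


actives = {"MW": [300.0, 310.0, 320.0], "logP": [1.0, 2.0, 3.0]}
decoys = {"MW": [305.0, 315.0, 300.0], "logP": [4.0, 5.0, 6.0]}
print(imbalanced_properties(actives, decoys))  # ['logP']
```

In this toy set the decoys are systematically more lipophilic than the actives, which is exactly the kind of bias that lets a model separate the classes on logP alone.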

Experimental Protocols for Target Analysis

Structural Data Preparation Protocol

Protein Structure Preparation:

- Source Selection: Retrieve protein structures from PDB or predicted models from AlphaFold. Prioritize high-resolution structures (≤2.5Å) with relevant ligands bound.

- Structure Processing: Remove water molecules, ions, and crystallization additives, except for functionally important water molecules. Add and optimize hydrogen atoms using tools like OpenEye's Make Receptor [38].

- Binding Site Definition: For crystal structures, define the binding site based on the coordinates of cocrystallized ligands. For apo structures, use computational pocket detection algorithms.

- Protonation States: Assign appropriate protonation states to acidic and basic residues based on physiological pH and local environment.

- Structural Alignment: Align multiple structures to identify conserved binding site features and conformational variations.
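For the binding site definition step, a common approach is to place an axis-aligned search box around the cocrystallized ligand. The sketch below assumes ligand heavy-atom coordinates (in Å) are already available as tuples; the 5 Å padding is an assumed default. The resulting center and size could populate docking settings such as AutoDock Vina's `center_x`/`size_x` configuration keys.

```python
from typing import Sequence, Tuple


def box_from_ligand(coords: Sequence[Tuple[float, float, float]], padding: float = 5.0
                    ) -> Tuple[Tuple[float, ...], Tuple[float, ...]]:
    """Return (center, size) of an axis-aligned box enclosing the ligand plus padding (Å)."""
    if not coords:
        raise ValueError("ligand has no atoms")
    xs, ys, zs = zip(*coords)
    low = (min(xs), min(ys), min(zs))
    high = (max(xs), max(ys), max(zs))
    # Box center is the midpoint of the ligand's extent on each axis.
    center = tuple((lo + hi) / 2 for lo, hi in zip(low, high))
    # Box edge is the ligand's extent plus padding on both sides.
    size = tuple((hi - lo) + 2 * padding for lo, hi in zip(low, high))
    return center, size


center, size = box_from_ligand([(0.0, 0.0, 0.0), (2.0, 4.0, 6.0), (1.0, 1.0, 1.0)])
print(center)  # (1.0, 2.0, 3.0)
print(size)    # (12.0, 14.0, 16.0)
```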

Ligand Data Preparation:

- Compound Collection: Gather known active compounds from PubChem, ChEMBL, and literature sources [36] [40].

- Structure Standardization: Neutralize structures, remove salts, and generate canonical tautomers using tools like RDKit [36].

- Conformational Sampling: Generate multiple low-energy conformers for each ligand using tools like Omega [38].

- Descriptor Calculation: Compute molecular descriptors (molecular weight, logP, hydrogen bond donors/acceptors) and fingerprints (ECFP4, ECFP6, MACCS) using RDKit or similar toolkits [36].

Dataset Validation Protocol

Bias Assessment Workflow:

- Property Analysis: Calculate 17+ physicochemical properties (molecular weight, logP, polar surface area, etc.) for both active compounds and decoys.

- Similarity Analysis: Perform similarity searching using 2D fingerprints to identify potential analogue bias.

- Diversity Assessment: Apply the MaxMin diversity-picking algorithm (e.g., RDKit's MaxMinPicker) or similar methods to ensure chemical diversity within the dataset [37].

- Spatial Distribution Mapping: Use 2D principal component analysis (PCA) to visualize the positioning of active compounds relative to decoys in chemical space [36].

- Comparative Benchmarking: Compare dataset characteristics with standard benchmark sets like MUV to identify potential biases [36].
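The diversity-assessment step can be sketched with a naive greedy MaxMin picker on Tanimoto distance. Fingerprints are assumed to be sets of on-bit indices (for example from ECFP4); production code would normally use an optimized implementation such as RDKit's `MaxMinPicker` rather than this O(n·k) loop.

```python
from typing import FrozenSet, List, Sequence

Fingerprint = FrozenSet[int]  # set of on-bit indices (assumed representation)


def tanimoto(a: Fingerprint, b: Fingerprint) -> float:
    """Tanimoto similarity |A ∩ B| / |A ∪ B| for bit sets."""
    union = len(a | b)
    return len(a & b) / union if union else 1.0


def maxmin_pick(fps: Sequence[Fingerprint], n_pick: int, seed: int = 0) -> List[int]:
    """Greedy MaxMin selection on Tanimoto distance (1 - similarity)."""
    if not 0 < n_pick <= len(fps):
        raise ValueError("n_pick must be between 1 and the number of fingerprints")
    picked = [seed]
    # Distance from every compound to its nearest already-picked compound.
    nearest = [1.0 - tanimoto(fp, fps[seed]) for fp in fps]
    while len(picked) < n_pick:
        chosen = set(picked)
        # Pick the compound farthest from everything selected so far.
        best = max((i for i in range(len(fps)) if i not in chosen),
                   key=lambda i: nearest[i])
        picked.append(best)
        nearest = [min(d, 1.0 - tanimoto(fp, fps[best])) for d, fp in zip(nearest, fps)]
    return picked


library = [frozenset({1, 2, 3}), frozenset({1, 2, 4}),
           frozenset({7, 8, 9}), frozenset({1, 2, 3, 5})]
print(maxmin_pick(library, 3))  # [0, 2, 1]
```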

Decoy Selection and Validation:

- Source Selection: Generate or select decoys from benchmark databases such as DUD-E (Directory of Useful Decoys, Enhanced) [36] [7].

- Property Matching: Ensure decoys match the physicochemical properties of actives while being chemically distinct.

- Drug-Likeness Filtering: Apply drug-like filters (molecular weight ≤400, rotatable bonds ≤5, etc.) to maintain relevance [37].

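- Validation: Confirm that the decoy set is challenging yet unbiased by checking that every decoy has low fingerprint similarity to all known actives, which reduces the chance that a decoy is an undetected binder.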
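A compact sketch of the decoy checks listed above, applied to one active at a time. The molecular weight and rotatable-bond limits come from the filter in this protocol; the property tolerances (±25 Da, ±1 logP unit), the 0.35 Tanimoto ceiling and the dictionary field names are hypothetical, and established benchmark builders such as DUD-E match on more properties than the two used here.

```python
from typing import Dict, FrozenSet, List, Sequence


def tanimoto(a: FrozenSet[int], b: FrozenSet[int]) -> float:
    """Tanimoto similarity for fingerprints stored as sets of on-bit indices."""
    union = len(a | b)
    return len(a & b) / union if union else 1.0


def select_decoys(active: Dict, active_fps: Sequence[FrozenSet[int]],
                  candidates: Sequence[Dict], n_decoys: int,
                  mw_tol: float = 25.0, logp_tol: float = 1.0, max_sim: float = 0.35,
                  max_mw: float = 400.0, max_rotb: int = 5) -> List[str]:
    """Pick up to n_decoys candidate IDs that pass the drug-likeness filter, match the
    active's MW and logP within tolerance, and are dissimilar to every known active."""
    chosen: List[str] = []
    for cand in candidates:
        if cand["mw"] > max_mw or cand["rotb"] > max_rotb:
            continue  # drug-likeness filter (limits from the protocol)
        if (abs(cand["mw"] - active["mw"]) > mw_tol
                or abs(cand["logp"] - active["logp"]) > logp_tol):
            continue  # property matching (tolerances are assumptions)
        if max((tanimoto(cand["fp"], fp) for fp in active_fps), default=0.0) > max_sim:
            continue  # too similar to a known active
        chosen.append(cand["id"])
        if len(chosen) == n_decoys:
            break
    return chosen


active = {"mw": 350.0, "logp": 2.5}
active_fps = [frozenset({1, 2, 3, 4})]
candidates = [
    {"id": "d1", "mw": 360.0, "logp": 2.8, "rotb": 3, "fp": frozenset({10, 11, 12})},
    {"id": "d2", "mw": 355.0, "logp": 2.4, "rotb": 4, "fp": frozenset({1, 2, 3, 5})},
    {"id": "d3", "mw": 420.0, "logp": 2.5, "rotb": 2, "fp": frozenset({30})},
    {"id": "d4", "mw": 340.0, "logp": 4.0, "rotb": 2, "fp": frozenset({40})},
    {"id": "d5", "mw": 345.0, "logp": 2.0, "rotb": 7, "fp": frozenset({50})},
    {"id": "d6", "mw": 330.0, "logp": 3.0, "rotb": 2, "fp": frozenset({1, 20, 21})},
]
print(select_decoys(active, active_fps, candidates, n_decoys=5))  # ['d1', 'd6']
```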

Diagram 1: Target analysis and bibliographic research workflow

Quantitative Benchmarking Data

Performance Metrics for Method Selection

Virtual Screening Performance Benchmarks: Evaluation of virtual screening methods utilizes specific metrics to assess their ability to identify true active compounds. Key performance indicators include Enrichment Factor (EF), which measures the concentration of active compounds in the top fraction of ranked molecules, and Area Under the Curve (AUC) of Receiver Operating Characteristic (ROC) curves, which evaluates the overall ranking quality [38] [7]. The success rate indicates the percentage of targets for which the best binder is placed among the top 1%, 5%, or 10% of ranked compounds [7]. These metrics provide critical guidance for selecting appropriate virtual screening methods based on target characteristics.
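Both headline metrics can be computed directly from a ranked list. The sketch assumes higher scores are better and labels are 1 for actives and 0 for decoys; EF uses the top round(N × fraction) compounds (at least one), and ROC AUC is computed as the probability that a randomly chosen active outscores a randomly chosen decoy, with ties counted as one half. Tie handling and the rounding of the top fraction vary between publications, so EF values are only directly comparable under the same conventions.

```python
from typing import Sequence


def enrichment_factor(scores: Sequence[float], labels: Sequence[int],
                      fraction: float = 0.01) -> float:
    """EF = (actives in top fraction / size of top fraction) / (all actives / all compounds)."""
    n = len(scores)
    total_actives = sum(labels)
    if n == 0 or total_actives == 0:
        raise ValueError("need at least one compound and one active")
    n_top = max(1, round(n * fraction))
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0], reverse=True)
    hits = sum(label for _, label in ranked[:n_top])
    return (hits / n_top) / (total_actives / n)


def roc_auc(scores: Sequence[float], labels: Sequence[int]) -> float:
    """Probability that a random active outscores a random decoy (ties count 0.5)."""
    actives = [s for s, y in zip(scores, labels) if y == 1]
    decoys = [s for s, y in zip(scores, labels) if y == 0]
    if not actives or not decoys:
        raise ValueError("need both actives and decoys")
    wins = sum(1.0 if a > d else 0.5 if a == d else 0.0 for a in actives for d in decoys)
    return wins / (len(actives) * len(decoys))


# 100 compounds with strictly decreasing scores; actives at ranks 1, 2, 51 and 100.
scores = [float(100 - i) for i in range(100)]
labels = [1 if i in (0, 1, 50, 99) else 0 for i in range(100)]
print(enrichment_factor(scores, labels, 0.01))  # 25.0
print(roc_auc(scores, labels))                  # 0.625
```

The example shows why both metrics are reported: the ranking is excellent at the very top (EF 1% = 25, the maximum possible with 4 actives in 100 compounds), while the overall AUC is only moderate because two actives are ranked in the middle and at the bottom.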

Table 2: Virtual Screening Performance Benchmarks Across Methodologies

| Screening Method | Target Class | Performance Metric | Result | Reference |
|---|---|---|---|---|
| Consensus Holistic Screening | PPARG (Nuclear Receptor) | AUC | 0.90 | [36] |
| Consensus Holistic Screening | DPP4 (Protease) | AUC | 0.84 | [36] |
| PLANTS + CNN-Score | PfDHFR (Wild-Type) | EF 1% | 28 | [38] |
| FRED + CNN-Score | PfDHFR (Quadruple Mutant) | EF 1% | 31 | [38] |
| RosettaGenFF-VS | CASF-2016 Benchmark | EF 1% | 16.72 | [7] |
| RNAmigos2 | RNA Targets | Early Enrichment | Top 2.8% | [37] |
| SVM + ECFP6 | BRAF Kinase | Accuracy | 99% | [40] |

Data Quality Impact Assessment

Impact of Data Quality on Model Performance: The quality and composition of training data significantly influence virtual screening performance. Studies demonstrate that conventional machine learning algorithms like Support Vector Machines (SVM) can achieve 99% accuracy when using optimal molecular representations (Extended + ECFP6 fingerprints), surpassing more complex deep learning methods [40]. Common practices such as using decoys for training often lead to high false positive rates, while defining compounds above certain pharmacological thresholds as inactives reduces model sensitivity and recall [40]. The ratio of active to inactive compounds in training data substantially affects performance, with imbalanced datasets where inactives outnumber actives resulting in decreased recall but increased precision [40].

Implementation Toolkit

Research Reagent Solutions

Table 3: Essential Research Tools for Target Analysis and Bibliographic Research

| Tool/Category | Specific Examples | Primary Function | Application Context |
|---|---|---|---|
| Structural Databases | Protein Data Bank (PDB), AlphaFold Database | Source experimental and predicted protein structures | Structure-based screening, binding site analysis |
| Chemical Databases | PubChem, ChEMBL, BindingDB | Source bioactive compounds and activity data | Ligand-based screening, SAR analysis, model training |
| Benchmark Datasets | DUD-E, DEKOIS 2.0 | Source benchmark sets with active compounds and decoys | Method validation, bias assessment, performance benchmarking |
| Structure Preparation | OpenEye Toolkits, RDKit, Schrödinger Suite | Process protein and ligand structures for analysis | Structure cleanup, protonation state assignment, conformer generation |
| Descriptor Calculation | RDKit, PaDEL-Descriptor | Compute molecular descriptors and fingerprints | Chemical space analysis, machine learning feature generation |
| Cheminformatics | KNIME, Pipeline Pilot | Create reproducible data analysis workflows | Data curation, standardization, and preprocessing automation |
| Visualization Tools | PyMOL, Chimera, RDKit | Visualize structures and binding interactions | Binding site analysis, interaction mapping, result interpretation |

Integrated Screening Strategy Selection

Methodology Selection Framework: Based on comprehensive target analysis and bibliographic research, select appropriate virtual screening strategies:

Structure-Based Methods Priority: When high-quality target structures are available, employ docking-based approaches (AutoDock Vina, FRED, PLANTS, RosettaVS) [38] [7]. For targets with conformational flexibility, use methods that incorporate side-chain and limited backbone flexibility (RosettaVS) [7]. For challenging targets like RNA, consider specialized tools (RNAmigos2, rDock) that account for unique structural features [37].

Ligand-Based Methods Priority: When structural data is limited but known active compounds are available, implement similarity-based methods (ROCS, eSim, FieldAlign) or quantitative approaches (QuanSA) [6]. For targets with well-defined SAR, employ machine learning models (SVM, Random Forest) with optimized molecular representations [40].

Consensus and Hybrid Approaches: Implement consensus scoring strategies that combine multiple methods (QSAR, pharmacophore, docking, shape similarity) to improve hit rates [36]. Deploy sequential workflows that use rapid ligand-based filtering followed by structure-based refinement of promising subsets [6]. Apply parallel screening with both ligand- and structure-based methods, combining results through consensus frameworks to increase confidence in selections [6].
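Rank averaging is one simple consensus scheme, sketched below; it is not necessarily the scheme used in the cited studies. Each method's scores are assumed to be oriented so that higher is better, which means docking energies (where lower is better) must be negated first. The method names and scores in the demo are hypothetical.

```python
from typing import Dict, List, Sequence


def to_ranks(scores: Sequence[float]) -> List[float]:
    """1-based ranks with rank 1 = highest score; tied scores share their average rank."""
    order = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    ranks = [0.0] * len(scores)
    start = 0
    while start < len(order):
        end = start
        while end + 1 < len(order) and scores[order[end + 1]] == scores[order[start]]:
            end += 1
        average = (start + end) / 2 + 1  # mean of positions start+1 .. end+1
        for k in range(start, end + 1):
            ranks[order[k]] = average
        start = end + 1
    return ranks


def consensus_order(method_scores: Dict[str, Sequence[float]]) -> List[int]:
    """Compound indices sorted by mean rank across methods (best first)."""
    if not method_scores:
        raise ValueError("need at least one method")
    all_ranks = [to_ranks(scores) for scores in method_scores.values()]
    if len({len(r) for r in all_ranks}) != 1:
        raise ValueError("all methods must score the same compounds")
    n = len(all_ranks[0])
    mean_rank = [sum(r[i] for r in all_ranks) / len(all_ranks) for i in range(n)]
    return sorted(range(n), key=lambda i: mean_rank[i])


docking_energy = [-9.1, -7.0, -8.5]  # kcal/mol, lower is better
print(consensus_order({
    "docking": [-e for e in docking_energy],  # negate so higher is better
    "shape": [0.6, 0.9, 0.8],
    "qsar": [6.5, 5.0, 7.2],
}))  # [2, 0, 1]
```

Working on ranks rather than raw scores sidesteps the fact that docking energies, shape similarities and predicted pIC₅₀ values live on incompatible scales.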

Diagram 2: Decision workflow for virtual screening strategy selection
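The decision logic of this framework can be approximated by a small function. It is a hypothetical simplification: `structure_ok` is assumed to come from a quality check such as the ≤2.5 Å resolution criterion in Table 1, the threshold of ten known actives is an arbitrary illustration rather than a published rule, and the fallback branch for targets with neither kind of data is an added assumption.

```python
def choose_strategy(structure_ok: bool, n_actives: int, flexible_site: bool = False,
                    min_actives: int = 10) -> str:
    """Hypothetical decision rule mirroring the selection framework in this section."""
    ligands_ok = n_actives >= min_actives
    if structure_ok and ligands_ok:
        plan = "hybrid: ligand-based prefilter, docking refinement, consensus ranking"
    elif structure_ok:
        plan = "structure-based: docking"
    elif ligands_ok:
        plan = "ligand-based: similarity search or machine learning"
    else:
        plan = "insufficient data: obtain a structure or known actives first"
    if structure_ok and flexible_site:
        plan += " (ensemble docking or flexible receptor)"
    return plan


print(choose_strategy(structure_ok=True, n_actives=0, flexible_site=True))
# structure-based: docking (ensemble docking or flexible receptor)
```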

Target analysis and bibliographic research constitute the critical foundation for successful virtual screening campaigns in drug discovery. Through systematic characterization of biological targets, rigorous assessment of structural data, comprehensive literature mining, and meticulous data curation, researchers can design optimized screening strategies that maximize the probability of identifying novel bioactive compounds. The integration of performance benchmarking data with method selection frameworks enables informed decisions about appropriate virtual screening methodologies based on target-specific characteristics and available data resources. By implementing these detailed protocols for target analysis and bibliographic research, researchers can establish a robust foundation for structure- and ligand-based virtual screening campaigns that effectively navigate the complex landscape of modern drug discovery.

Within structure- and ligand-based virtual screening protocols, the construction of a high-quality screening library is a critical preliminary step that profoundly influences the success of all subsequent stages. This library serves as the foundational chemical space from which potential drug candidates are identified. The process encompasses the strategic sourcing of compounds, their meticulous computational preparation, and the generation of biologically relevant 3D conformers. The fidelity of this process directly impacts the reliability of virtual screening outcomes, as errors introduced early in library preparation can propagate through the workflow, leading to false positives, missed hits, and ultimately, costly experimental failures. This document outlines detailed application notes and protocols for building a robust screening library, framed within a comprehensive thesis on virtual screening research.

Sourcing Compounds for Your Library

The first step involves aggregating compounds from diverse and reliable sources. The choice of source dictates the chemical space explored and influences the hit discovery rate.

Key Compound Databases

Table 1: Key Databases for Sourcing Screening Compounds

| Database Name | Source Type | Approximate Scale | Key Features & Use Cases |
|---|---|---|---|
| Commercial Vendor Libraries (e.g., Enamine, ChemDiv) | Physical/Virtual | 10⁶ to 10⁷ compounds | Physical stock can be purchased for testing, and vendor catalogues can be screened virtually. Ideal for high-throughput screening (HTS) follow-up. |
| Publicly Available Repositories (e.g., ZINC, PubChem) | Virtual | 10⁸ to 10¹⁰ compounds | Free, large-scale libraries; ZINC is a standard source for initial virtual screening [41]. |
| Corporate/Institutional Collections | Physical/Virtual | Varies | Contain historical, proprietary compounds. Used for in-house lead optimization and repurposing. |