Strategies for Reducing False Positives in Chemogenomic Screening: A Comprehensive Guide for Drug Discovery

Abstract

Chemogenomic screening has become a cornerstone of modern drug discovery, enabling the systematic exploration of chemical-genetic interactions on a genome-wide scale. However, the high rate of false positives remains a significant challenge, leading to wasted resources and delayed projects. This article provides a comprehensive overview of strategies to mitigate false positives, covering foundational principles, advanced methodological applications, practical troubleshooting, and rigorous validation techniques. We explore computational target prediction, machine learning triage, experimental design optimization, and integrative multi-omics approaches. Designed for researchers, scientists, and drug development professionals, this guide synthesizes current best practices and emerging technologies to enhance the precision and efficiency of chemogenomic screening campaigns.

Understanding the Root Causes of False Positives in Chemogenomic Assays

In chemogenomic screening research, false positives are assay signals that are unrelated to the targeted biology and instead arise from compound interference with the assay detection system. These false results are particularly problematic because they are often reproducible and concentration-dependent, characteristics typically attributed to genuine actives. Because genuine actives are exceptionally rare (approximately 0.01–0.1% of screening libraries), they can easily be obscured by a high incidence of false positives [1]. This technical support center provides troubleshooting guidance to help researchers identify, understand, and mitigate these deceptive signals across common screening platforms.

Section 1: Understanding Reporter Gene Assay False Positives

Reporter gene assays (RGAs) are particularly susceptible to specific interference mechanisms that can generate misleading results.

FAQ: What are the most common causes of false positives in Reporter Gene Assays?

Q: Why does my RGA screen show an unusually high hit rate? A: Elevated hit rates in RGAs often stem from "frequent hitters", compounds that interfere with the assay system rather than modulating the intended biological target. Statistical models built from testing 650,000 compounds show that these frequent hitters often share specific chemical structures and can therefore be flagged computationally before costly follow-up studies [2].

Q: Can compounds directly inhibit the reporter enzyme itself? A: Yes, direct inhibition of firefly luciferase is a well-documented source of false positives. For example, substituted N-pyridin-2-ylbenzamides have been identified as inhibitors that are competitive with respect to the substrate luciferin, with IC₅₀ values as low as 0.069 μM [3]. These inhibitors can occupy the luciferin binding site, directly competing with the natural substrate.

Q: How can I distinguish true actives from luciferase inhibitors? A: Run a counter-screen using purified luciferase enzyme with luciferin at its KM concentration. Compounds active in both your primary RGA and this counter-screen are likely direct luciferase inhibitors rather than specific modulators of your biological target [1].

Experimental Protocol: Identifying Direct Luciferase Inhibitors

Purpose: To differentiate compounds that directly inhibit firefly luciferase from genuine modulators of your biological pathway.

Materials:

- Purified firefly luciferase enzyme

- Luciferin substrate at KM concentration

- Test compounds

- Luciferase assay buffer

- Luminescence plate reader

Procedure:

- Prepare a concentration series of each test compound in luciferase assay buffer

- Pre-incubate luciferase with compounds for 15-30 minutes at room temperature

- Initiate the reaction by adding luciferin at KM concentration

- Measure luminescence immediately

- Calculate inhibition curves and IC₅₀ values for each compound

- Compare activity patterns with primary RGA results

Interpretation: Compounds exhibiting potent inhibition in this purified enzyme assay (typically IC₅₀ < 10 μM) that also showed activity in your cellular RGA are likely false positives due to direct luciferase inhibition [3].
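The IC₅₀ calculation and cutoff in the protocol above can be sketched in Python. This is a minimal illustration, not a validated analysis pipeline: it assumes luminescence is normalized to a DMSO vehicle mean, estimates IC₅₀ by linear interpolation on a log-concentration axis instead of fitting a full four-parameter logistic model, and applies the 10 μM cutoff from the interpretation step. The function names and example values are hypothetical.

```python
import math


def percent_inhibition(signal, vehicle_mean):
    """Convert a raw luminescence reading to % inhibition relative to the DMSO vehicle mean."""
    return 100.0 * (1.0 - signal / vehicle_mean)


def interpolate_ic50(concs_um, pct_inhibition):
    """Estimate IC50 (µM) by linear interpolation on a log10 concentration axis.

    concs_um must be sorted in ascending order. Returns None if the curve never
    rises through 50% inhibition within the tested range.
    """
    points = list(zip(concs_um, pct_inhibition))
    for (c_lo, y_lo), (c_hi, y_hi) in zip(points, points[1:]):
        if y_lo < 50.0 <= y_hi:
            # Fractional position of the 50% crossing between the two bracketing points.
            frac = (50.0 - y_lo) / (y_hi - y_lo)
            log_lo, log_hi = math.log10(c_lo), math.log10(c_hi)
            return 10 ** (log_lo + frac * (log_hi - log_lo))
    return None


def flag_luciferase_inhibitor(ic50_um, active_in_rga, cutoff_um=10.0):
    """True when a cellular RGA hit also inhibits purified luciferase below the cutoff."""
    return active_in_rga and ic50_um is not None and ic50_um < cutoff_um


# Hypothetical counter-screen data for one compound (vehicle mean = 1000 RLU).
concs = [0.1, 1.0, 10.0, 100.0]
signals = [980, 700, 300, 50]
pct = [percent_inhibition(s, 1000.0) for s in signals]
ic50 = interpolate_ic50(concs, pct)
print(f"IC50 ≈ {ic50:.2f} µM; likely FLuc inhibitor: {flag_luciferase_inhibitor(ic50, True)}")
```

With these example values the 50% crossing falls halfway between 1 and 10 μM on the log axis, so the estimate is about 3.16 μM and the compound is flagged. For reported potency values, a nonlinear four-parameter logistic fit (for example with SciPy's `curve_fit`) is usually preferred; interpolation is adequate for quick triage.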

Section 2: High-Content Screening (HCS) False Positives

High-content screening generates multidimensional data where artifacts can manifest in various forms, from subtle morphological changes to complete assay interference.

FAQ: Addressing Common HCS Artifacts

Q: Why do I see inconsistent staining across my HCS plates? A: Inconsistent staining often results from technical variation in sample processing. Inadequate deparaffinization can cause spotty, uneven background staining, and slides stored for long periods can lose signal. Use freshly cut tissue sections and fresh xylene for deparaffinization to minimize these artifacts [4] [5].

Q: How does compound autofluorescence affect HCS results? A: Compound fluorescence can significantly affect assays with light-based detection, particularly those using blue-shifted spectral ranges, where fluorescent compounds can account for up to 50% of actives. Because this interference is reproducible and concentration-dependent, it can be difficult to recognize at first [1].

Q: What causes high background in my HCS images? A: High background stems from multiple potential sources including insufficient blocking, endogenous enzyme activity, non-specific antibody binding, or inadequate washing. For HRP-based detection systems, endogenous peroxidase activity in tissue samples may produce excess background signal if not properly quenched [4].

Troubleshooting Guide: HCS Image Artifacts

Table: Common HCS Image Artifacts and Solutions

| Problem | Possible Causes | Recommended Solutions |
|---|---|---|
| Weak or No Staining | Epitope masking due to fixation; insufficient antibody concentration; inactive antibodies | Optimize antigen retrieval methods; increase primary antibody concentration; include positive controls to verify antibody activity [4] [5] |
| High Background | Inadequate blocking; endogenous enzyme activity; non-specific secondary antibody binding | Increase blocking time to 30+ minutes; quench endogenous peroxidases with 3% H₂O₂; use polymer-based detection systems [4] |
| Non-Specific Staining | Inadequate deparaffinization; insufficient washing; section drying out | Increase deparaffinization time; extend washing to 3×5 minutes with TBST; ensure sections remain covered in liquid [5] |
| Uneven Staining | Inconsistent antigen retrieval; uneven reagent distribution | Use a microwave oven for consistent heat distribution during antigen retrieval; ensure complete coverage of tissue sections with all reagents [4] |

Section 3: Quantitative Analysis of False Positive Mechanisms

Understanding the prevalence and characteristics of different interference mechanisms enables more effective screening triage strategies.

Table: Prevalence and Characteristics of Common Assay Interferences

| Interference Type | Prevalence in Library | Enrichment in Actives | Key Characteristics | Prevention Strategies |
|---|---|---|---|---|
| Aggregation | 1.7–1.9% | Up to 90–95% in some biochemical assays | Non-specific inhibition; detergent-sensitive; steep Hill slopes | Add 0.01–0.1% Triton X-100 to assay buffer [1] |
| Compound Fluorescence | Ex 340 nm/Em 450 nm: 2–5%; Ex 480 nm/Em 540 nm: 0.01–0.2% | Up to 50% in blue-shifted assays | Reproducible, concentration-dependent | Use orange/red-shifted fluorophores; include a pre-read step [1] |
| Firefly Luciferase Inhibition | At least 3% | Up to 60% in some cell-based assays | Competitive inhibition with respect to luciferin | Counter-screen against purified luciferase; use orthogonal assays [1] [3] |
| Redox Cycling Compounds | ~0.03% generate H₂O₂ at appreciable levels | Up to 85% in some assays | DTT-dependent; time-dependent inactivation | Replace DTT/TCEP with weaker reducing agents, or use ≥10 mM DTT [1] |
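The pre-read step recommended for compound fluorescence can be scored as sketched below. This assumes the plate is read at the assay's excitation/emission wavelengths after compound addition but before the detection step, and it expresses each well's excess fluorescence as a fraction of the assay signal window; the 10% cutoff, the function name, and all well values are hypothetical. It only catches compounds that add light; quenchers and inner-filter effects need a separate check.

```python
from statistics import mean


def preread_flags(preread, dmso_preread, signal_window, max_fraction=0.10):
    """Flag wells whose compound-only fluorescence is large relative to the assay window.

    preread: {well: fluorescence} read after compound addition, before detection reagents
    dmso_preread: pre-read values from vehicle (DMSO) wells, used as background
    signal_window: (low_control, high_control) signals from the complete assay
    max_fraction: hypothetical cutoff, as a fraction of the assay window
    """
    background = mean(dmso_preread)
    low, high = signal_window
    window = high - low
    flagged = {}
    for well, value in preread.items():
        # Excess fluorescence contributed by the compound itself.
        fraction = (value - background) / window
        if fraction > max_fraction:
            flagged[well] = round(fraction, 3)
    return flagged


# Hypothetical plate data.
flags = preread_flags(
    preread={"A01": 150, "A02": 1100, "B01": 95},
    dmso_preread=[100, 110, 90],
    signal_window=(200, 5200),
)
print(flags)  # {'A02': 0.2}
```

Here well A02 contributes 1,000 units above background across a 5,000-unit window, so 20% of the apparent signal could come from the compound alone.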

Section 4: Computational and Chemogenomic Solutions

Advanced computational methods and chemogenomic approaches provide powerful tools for prioritizing and understanding false positives.

FAQ: Computational Mitigation Strategies

Q: How can in silico models help identify potential false positives before screening? A: In silico chemogenomics approaches can build "frequent hitter" models that flag potential false positives based on chemical structure. These models have successfully identified nonspecific actives in RGAs: compounds they prioritized showed a 50% hit rate, compared with typical hit rates as low as 2% [2]. The most frequently predicted cellular targets for these frequent hitters relate to apoptosis and cell differentiation, including kinases, topoisomerases, and protein phosphatases.

Q: What is the role of target prediction methods in false positive identification? A: Modern target prediction methods like MolTarPred, which uses 2D similarity searching with Morgan fingerprints, can help identify off-target effects that may be responsible for false positive signals. These ligand-centric methods leverage known ligand-target interactions to hypothesize mechanisms for observed off-target responses [6].
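The ligand-centric idea behind methods such as MolTarPred can be illustrated with a nearest-neighbour search on fingerprint similarity. This is not MolTarPred's code: the sketch represents each fingerprint as a set of on-bit indices (in practice these would come from Morgan fingerprints generated with a toolkit such as RDKit, with target annotations from a database such as ChEMBL), scores pairs with the Tanimoto coefficient, and ranks each target by its most similar annotated ligand. The ligand IDs, bit sets, and target names are hypothetical.

```python
def tanimoto(a, b):
    """Tanimoto coefficient for fingerprints stored as sets of on-bit indices."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def rank_targets(query_bits, reference, top_k=3):
    """Rank targets by the similarity of their most similar annotated ligand to the query.

    reference: iterable of (ligand_id, bits, target) tuples.
    Returns [(target, similarity, ligand_id), ...], most similar first.
    """
    best = {}
    for ligand_id, bits, target in reference:
        sim = tanimoto(query_bits, bits)
        if target not in best or sim > best[target][0]:
            best[target] = (sim, ligand_id)
    ranked = sorted(best.items(), key=lambda item: item[1][0], reverse=True)
    return [(target, sim, lig) for target, (sim, lig) in ranked[:top_k]]


# Hypothetical on-bit sets and target labels, for illustration only.
reference = [
    ("LIG-1", {1, 2, 3, 5}, "kinase_A"),
    ("LIG-2", {7, 8, 9}, "gpcr_B"),
    ("LIG-3", {1, 2, 3, 4, 6}, "topoisomerase_C"),
]
for target, sim, lig in rank_targets({1, 2, 3, 4}, reference):
    print(f"{target}: {sim:.2f} (nearest ligand {lig})")
```

A high-ranking unexpected target is a hypothesis for the off-target mechanism behind a suspicious signal, to be tested experimentally rather than taken as proof.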

Q: How can chemogenomic libraries address transporter-mediated false positives? A: Double gene deletion libraries for membrane transporters can systematically identify import/export routes that contribute to compound susceptibility or resistance. For example, studies using Saccharomyces cerevisiae have identified specific transporters like Itr1 responsible for importing triazole and imidazole antifungal compounds, while ABC transporter Pdr5 may play roles in both import and export of different compounds [7].

Research Reagent Solutions

Table: Essential Reagents for False Positive Mitigation

| Reagent/Category | Function in False Positive Reduction | Example Applications |
|---|---|---|
| Polymer-based Detection Reagents | More sensitive than avidin/biotin systems; reduce background in IHC/HCS | SignalStain Boost IHC Detection Reagents for enhanced sensitivity with minimal background [4] |
| Antigen Retrieval Buffers | Unmask epitopes masked by fixation; improve specific signal | Optimized buffers for microwave or pressure cooker retrieval methods [4] |
| Non-ionic Detergents | Reduce aggregation-based inhibition by disrupting compound aggregates | Triton X-100 at 0.01–0.1% in assay buffers to prevent nonspecific inhibition [1] |
| Enzyme Inhibitors | Quench endogenous enzyme activity that causes background | 3% H₂O₂ for peroxidase; 2 mM levamisole for alkaline phosphatase [5] |
| Normal Sera & Blocking Reagents | Reduce non-specific antibody binding | 5% normal goat serum for 30 minutes before primary antibody incubation [4] |

Section 5: Integrated Experimental Workflows for False Positive Identification

Implementing systematic workflows that combine computational and experimental approaches provides the most robust false positive mitigation.

Integrated Workflow for False Positive Identification

Experimental Protocol: Orthogonal Assay Validation

Purpose: To confirm that compound activity is directed toward the biological target of interest rather than being assay format-dependent.

Principle: Orthogonal assays use different reporters or detection technologies to test compounds identified as actives in the primary screen. Compounds inactive in orthogonal assays are removed from consideration, as this indicates the original activity was likely assay format-dependent [1].

Implementation Strategy:

- For luminescence-based primary screens, implement fluorescence-based orthogonal assays

- For imaging-based primary screens, implement biochemical or transcriptional readouts

- Ensure orthogonal assays measure the same biological pathway but through different detection mechanisms

- Prioritize compounds that show consistent activity across multiple assay formats

Interpretation: Compounds showing activity only in the primary screen but not in orthogonal assays are likely false positives resulting from specific interference with the detection system of the primary assay.
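The decision rule for orthogonal validation can be written as a small classification function. The category labels, and the choice to treat partial agreement across orthogonal assays as a separate "needs follow-up" class, are illustrative assumptions rather than a published scheme; the compound IDs and results are hypothetical.

```python
def classify_hit(primary_active, orthogonal):
    """Classify a compound from its primary result and orthogonal assay results.

    orthogonal: {assay_name: True if active, False if inactive}.
    Labels are illustrative, not a standard nomenclature.
    """
    if not primary_active:
        return "not a primary hit"
    if not orthogonal:
        return "unconfirmed"
    n_active = sum(orthogonal.values())
    if n_active == len(orthogonal):
        return "confirmed"
    if n_active == 0:
        # Activity seen only in the primary format: likely detection interference.
        return "likely detection artifact"
    return "needs follow-up"


hits = {
    "CPD-1": (True, {"fluorescence": True, "imaging": True}),
    "CPD-2": (True, {"fluorescence": False, "imaging": False}),
    "CPD-3": (True, {"fluorescence": True, "imaging": False}),
}
for cpd, (primary, ortho) in hits.items():
    print(cpd, classify_hit(primary, ortho))
```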

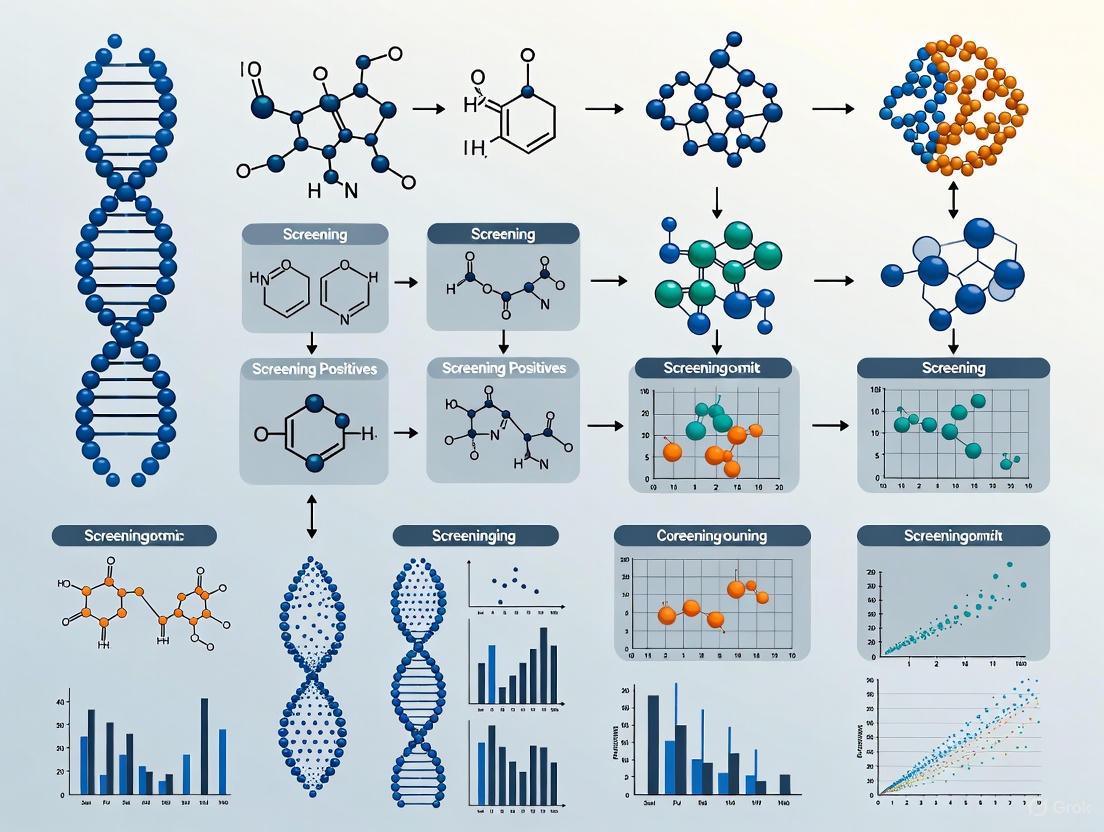

Section 6: Visualization of False Positive Mechanisms

Understanding the molecular mechanisms underlying common false positives enables more effective experimental design.

False Positive Mechanisms and Detection Strategies

Successfully navigating the challenge of false positives in chemogenomic screening requires integrated strategies that combine computational pre-screening, rigorous experimental design, and systematic follow-up validation. By implementing the troubleshooting guides, experimental protocols, and computational approaches outlined in this technical support center, researchers can significantly improve the efficiency of their screening campaigns, saving both time and resources while increasing confidence in screening results. The most effective false positive reduction strategies employ multiple orthogonal approaches, recognizing that no single method can identify all potential sources of interference in complex biological systems.

Frequently Asked Questions

What are the most common types of assay-interfering compounds? The most prevalent mechanisms of assay interference include chemical reactivity (e.g., thiol-reactive and redox-active compounds), inhibition of reporter enzymes (e.g., luciferase), and the formation of colloidal aggregates. These compounds generate false positive signals by interfering with the detection technology rather than through a specific biological interaction [8].

How can I proactively identify frequent hitters in my screening library? Computational tools can filter libraries before screening. Instead of relying solely on oversensitive substructure alerts (like traditional PAINS filters), use modern Quantitative Structure-Interference Relationship (QSIR) models. Tools like "Liability Predictor" are trained on large high-throughput screening (HTS) datasets to more reliably predict compounds with nuisance behaviors, allowing for better library design and prioritization [8].

What are the best practices for confirming that a screening hit is not a false positive? A multi-pronged approach is essential:

- Use orthogonal assays that employ a different detection technology (e.g., switch from a luminescence-based to a fluorescence-based readout) [8].

- Inspect dose-response curves for shapes characteristic of interference, such as a steep, "all-or-nothing" response [9].

- Perform experimental counter-screens specifically designed to detect reactivity, aggregation, or reporter enzyme inhibition [8].

- Apply computational triaging with QSIR models to flag potential interferers for closer scrutiny [8].

Beyond small molecules, what other screening technologies have off-target effects? CRISPR-Cas-based genetic screening is also susceptible to off-target effects. The CRISPR-Cas complex can bind and cleave DNA at sites with high sequence similarity to the intended target, leading to unintended mutations and potential genotoxicity. These effects are influenced by guide RNA design, the type of Cas protein used, and the delivery method [10] [9].

Troubleshooting Guides

Guide 1: Triage of Small Molecule Screening Hits

This guide outlines a step-by-step process to identify and eliminate false positives from small molecule screening campaigns.

1. Initial In-Silico Triage

- Action: Subject your hit list to computational analysis.

- Methodology: Use a tool like Liability Predictor to score compounds for potential thiol reactivity, redox activity, and luciferase inhibition. Additionally, screen for potential colloidal aggregators [8].

- Goal: Flag compounds with a high probability of assay interference so that they are deprioritized or must meet a higher bar of confirmatory evidence.

2. Orthogonal Assay Confirmation

- Action: Test hits in a secondary assay that does not rely on the primary assay's detection technology.

- Methodology: If the primary screen was luminescence-based, develop a fluorescence-based or cell viability counter-assay to confirm the biological effect [8] [9].

- Goal: Verify that the observed activity is genuine and not an artifact of the detection system.

3. Analytical Characterization

- Action: Investigate the behavior of the compound in solution.

- Methodology: Use dynamic light scattering (DLS) to detect the formation of colloidal aggregates. Check for compound fluorescence that could overlap with the assay's detection window [8].

- Goal: Rule out physicochemical phenomena that cause nonspecific inhibition.

4. Dose-Response Analysis

- Action: Carefully analyze the hit's dose-response curve.

- Methodology: Look for non-standard curve shapes, such as a steep Hill slope, which can be indicative of assay interference mechanisms [9].

- Goal: Identify spurious activity patterns that differ from a typical target-binding event.
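For step 4, steepness can be quantified directly from a dose-response curve. Under the Hill equation, fractional inhibition is f = C^n / (IC₅₀^n + C^n), so the concentrations giving 10% and 90% inhibition satisfy IC₉₀/IC₁₀ = 81^(1/n), which rearranges to n = ln 81 / ln(IC₉₀/IC₁₀). The sketch below applies that relation; the function names are hypothetical, and the cutoff of 2 follows the lower end of the ">2–3" range quoted for aggregators elsewhere in this guide, a judgment call rather than a universal threshold.

```python
import math


def conc_at_fraction(ic50, hill, fraction):
    """Concentration giving a fractional inhibition under the Hill equation.

    From f = C**n / (IC50**n + C**n):  C = IC50 * (f / (1 - f)) ** (1 / n).
    """
    return ic50 * (fraction / (1.0 - fraction)) ** (1.0 / hill)


def hill_from_ic10_ic90(ic10, ic90):
    """Hill coefficient implied by the IC10 and IC90: n = ln 81 / ln(IC90 / IC10)."""
    return math.log(81.0) / math.log(ic90 / ic10)


def is_suspiciously_steep(hill, cutoff=2.0):
    """True if the curve is steeper than the cutoff (a judgment call, not a standard)."""
    return hill > cutoff


# A 1:1 binding curve spans 81-fold from IC10 to IC90; an aggregator-like curve spans far less.
for n in (1.0, 3.5):
    ic10 = conc_at_fraction(5.0, n, 0.10)
    ic90 = conc_at_fraction(5.0, n, 0.90)
    est = hill_from_ic10_ic90(ic10, ic90)
    print(f"IC90/IC10 = {ic90 / ic10:.1f}, Hill = {est:.2f}, steep: {is_suspiciously_steep(est)}")
```

In practice IC₁₀ and IC₉₀ would be read from a fitted curve; a narrow IC₁₀-to-IC₉₀ span is the "all-or-nothing" shape described in the FAQ.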

The workflow for this triage process is summarized below:

Guide 2: Managing Off-Target Effects in CRISPR Screening

This guide details strategies to minimize and identify off-target effects in CRISPR-Cas9 genome editing experiments.

1. Careful gRNA Design

- Action: Select guide RNAs (gRNAs) with maximal specificity.

- Methodology: Use in-silico tools to design gRNAs with a unique spacer sequence that has minimal homology to other genomic sites. Consider the chromatin state of the target locus and avoid sequences with multiple near-matches in the genome [10].

- Goal: Reduce the inherent potential for the CRISPR complex to bind off-target sites.

2. Employ High-Fidelity Cas Variants

- Action: Choose engineered Cas proteins with improved specificity.

- Methodology: Replace the standard SpCas9 with high-fidelity variants (e.g., SpCas9-HF1, eSpCas9) or alternative Cas proteins like Cas12a that have different recognition and cleavage properties [10].

- Goal: Utilize enzymes with stricter binding requirements to decrease off-target cleavage.

3. Detect and Quantify Off-Target Activity

- Action: Empirically measure off-target editing.

- Methodology: After editing, use methods like targeted deep sequencing of predicted off-target sites or unbiased genome-wide assays (e.g., GUIDE-seq, CIRCLE-seq) to identify and quantify unintended mutations [10].

- Goal: Gain a comprehensive understanding of the editome to assess potential genotoxic risk.

4. Consider Alternative Editing Modalities

- Action: Use editors that do not cause double-strand breaks.

- Methodology: For certain applications, employ base editing or prime editing systems. These technologies can introduce precise changes without inducing double-strand breaks, thereby significantly reducing the generation of indels at off-target sites [10].

- Goal: Achieve the desired genetic outcome with a safer editing profile.
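The specificity check in step 1 can be illustrated with a brute-force scan for NGG-adjacent protospacers that differ from the spacer by only a few mismatches. This is a simplified sketch on a hypothetical toy sequence: it assumes SpCas9 with a canonical NGG PAM, counts mismatches without position weighting, and ignores DNA/RNA bulges and non-canonical PAMs, all of which dedicated off-target prediction tools take into account. The function names and the 3-mismatch default are illustrative choices.

```python
def reverse_complement(seq):
    """Reverse complement of an uppercase DNA string (A/C/G/T only)."""
    return seq.translate(str.maketrans("ACGT", "TGCA"))[::-1]


def candidate_off_targets(spacer, genome, max_mismatches=3):
    """Find protospacers followed by an NGG PAM within max_mismatches of the spacer.

    Scans both strands. Returns (strand, start, mismatches, site) tuples, where start
    is the 0-based index on the scanned strand's sequence. The on-target site appears
    as a 0-mismatch hit.
    """
    k = len(spacer)
    hits = []
    for strand, seq in (("+", genome), ("-", reverse_complement(genome))):
        for i in range(len(seq) - k - 2):
            if seq[i + k + 1 : i + k + 3] != "GG":  # PAM = N G G after the protospacer
                continue
            site = seq[i : i + k]
            mismatches = sum(a != b for a, b in zip(spacer, site))
            if mismatches <= max_mismatches:
                hits.append((strand, i, mismatches, site))
    return hits


# Hypothetical toy "genome": the on-target site plus a 2-mismatch decoy, each with a PAM.
spacer = "GACGCATAAAGATGAGACGC"
decoy = "GACGCATAAAGATGAGTCGA"
genome = "TTT" + spacer + "TGG" + "AAAA" + decoy + "AGG" + "CCCC"
for hit in candidate_off_targets(spacer, genome):
    print(hit)
```

A spacer whose scan returns additional near-matches like the decoy here would be a candidate for replacement, or at least for targeted sequencing of those sites after editing.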

The following diagram illustrates the logical decision points for managing CRISPR off-target effects:

Quantitative Data on Assay Interference

The table below summarizes key quantitative data on different types of assay interference, which can aid in risk assessment during hit triage [8].

| Interference Mechanism | Typical Assay Readouts Affected | Common Functional Groups or Behaviors | QSIR Model Performance (Balanced Accuracy) |
|---|---|---|---|
| Thiol Reactivity | Fluorescence-based assays, cell viability | Electrophilic centers (e.g., α,β-unsaturated carbonyls, alkyl halides) | 58–78% |
| Redox Activity | Assays with reducing agents, phenotypic assays | Quinones, polyphenols | 58–78% |
| Luciferase Inhibition | Luciferase reporter gene assays | Some heterocycles, non-specific enzyme inhibitors | 58–78% |
| Colloidal Aggregation | Biochemical inhibition assays | Compounds forming sub-micrometer aggregates in aqueous solution | Not given (most common cause of artifacts) |
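Balanced accuracy, the metric reported for the QSIR models above, is the mean of sensitivity and specificity. It matters here because interferers are a small minority of most libraries, so plain accuracy can look high even for a model that never flags anything. The counts below are hypothetical.

```python
def balanced_accuracy(tp, fn, tn, fp):
    """Mean of sensitivity (interferers caught) and specificity (clean compounds passed)."""
    sensitivity = tp / (tp + fn)
    specificity = tn / (tn + fp)
    return (sensitivity + specificity) / 2


def plain_accuracy(tp, fn, tn, fp):
    """Fraction of all compounds classified correctly."""
    return (tp + tn) / (tp + fn + tn + fp)


# Hypothetical library: 50 true interferers among 1,000 compounds.
never_flags = (0, 50, 950, 0)      # model that flags nothing
useful_model = (35, 15, 760, 190)  # catches 70% of interferers, passes 80% of clean compounds
for name, counts in (("never flags", never_flags), ("useful model", useful_model)):
    print(f"{name}: accuracy={plain_accuracy(*counts):.3f}, balanced={balanced_accuracy(*counts):.3f}")
```

The do-nothing model scores 95% plain accuracy but only 50% balanced accuracy, while the useful model scores lower on plain accuracy (79.5%) yet higher on balanced accuracy (75%), which is why balanced accuracy is the more informative figure for imbalanced interference data.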

Experimental Protocols

Protocol 1: Liability Triage Using a QSIR Web Tool

This protocol uses the publicly available "Liability Predictor" webtool to triage a list of screening hits [8].

- Input Preparation: Compile the chemical structures of your hit compounds in a supported digital format, such as a list of SMILES strings or an SDF file.

- Tool Access: Navigate to the "Liability Predictor" website at https://liability.mml.unc.edu/.

- Job Submission: Upload your chemical structure file or input the SMILES strings directly into the web interface. Select the desired prediction models (e.g., thiol reactivity, redox activity, luciferase inhibition).

- Result Interpretation: After processing, the tool will return a score or classification for each compound. Hits flagged as high-risk by multiple models should be considered low-priority unless their activity is confirmed in a highly orthogonal assay.
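The result-interpretation rule (multiple high-risk flags mean low priority) can be applied to exported results with a few lines of Python. The CSV layout here, one 0/1 column per model, is a hypothetical format, not the webtool's documented output, so the column handling would need to be adapted to the actual file; the treatment of single-flag compounds and the label wording are likewise assumptions.

```python
import csv
import io


def prioritize(csv_text, low_priority_at=2):
    """Assign a triage label from the number of liability models that flag each compound.

    Expects a header row with a 'compound' column plus one 0/1 column per model
    (a hypothetical layout, not the webtool's documented export format).
    """
    reader = csv.DictReader(io.StringIO(csv_text))
    model_columns = [name for name in reader.fieldnames if name != "compound"]
    labels = {}
    for row in reader:
        n_flags = sum(int(row[col]) for col in model_columns)
        if n_flags >= low_priority_at:
            labels[row["compound"]] = "low priority unless orthogonally confirmed"
        elif n_flags == 1:
            labels[row["compound"]] = "flagged: check in counter-screen"
        else:
            labels[row["compound"]] = "no liability flags"
    return labels


example = """compound,thiol_reactivity,redox_activity,fluc_inhibition
CPD-1,1,1,0
CPD-2,0,0,1
CPD-3,0,0,0
"""
for cpd, label in prioritize(example).items():
    print(cpd, "->", label)
```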

Protocol 2: Counter-Screen for Luciferase Reporter Interference

This protocol describes a direct counter-screen to identify compounds that inhibit common luciferase reporter enzymes [8].

- Reagent Preparation: Prepare a solution containing only the luciferase enzyme (firefly or NanoLuc) and its required substrate and buffer components. Do not include any biological target.

- Compound Treatment: Add the hit compounds directly to the reagent solution at the same concentration used in the primary screen. Include a DMSO-only vehicle control.

- Signal Measurement: Incubate according to the reagent's protocol and measure the luminescent signal.

- Data Analysis: Compare the luminescence in compound wells to the vehicle control. A significant decrease in signal indicates that the compound directly inhibits the luciferase reporter, marking it as a likely false positive.
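The data-analysis step can be made explicit by defining "significant decrease" in terms of the vehicle controls. This sketch assumes replicate DMSO wells and flags a compound only when its signal is more than three standard deviations below the vehicle mean and also at least 20% lower; both cutoffs, the function name, and the example readings are hypothetical and should be set from your own assay's variability.

```python
from statistics import mean, stdev


def luciferase_counter_screen(compound_signals, vehicle_signals, n_sd=3.0, min_drop_pct=20.0):
    """Return {compound: % of vehicle signal} for likely direct luciferase inhibitors.

    A compound is flagged when its signal is more than n_sd standard deviations below
    the vehicle mean AND at least min_drop_pct below it (both cutoffs hypothetical).
    """
    mu = mean(vehicle_signals)
    cutoff = mu - n_sd * stdev(vehicle_signals)
    flagged = {}
    for cpd, signal in compound_signals.items():
        pct_of_vehicle = 100.0 * signal / mu
        if signal < cutoff and 100.0 - pct_of_vehicle >= min_drop_pct:
            flagged[cpd] = pct_of_vehicle
    return flagged


vehicle = [1000, 1020, 980, 1000]  # DMSO-only wells (hypothetical RLU)
compounds = {"CPD-A": 400, "CPD-B": 990, "CPD-C": 940}
print(luciferase_counter_screen(compounds, vehicle))
```

CPD-C falls just below the statistical cutoff but loses only 6% of signal, so the magnitude criterion keeps it from being discarded on a marginal difference; only CPD-A is flagged.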

Research Reagent Solutions

The table below lists key computational and experimental tools for addressing common pitfalls in chemogenomic screening.

| Tool or Reagent | Type | Primary Function | Application in False Positive Reduction |
|---|---|---|---|
| Liability Predictor [8] | Computational Webtool | Predicts compounds with thiol reactivity, redox activity, and luciferase inhibition. | Triage of HTS hit lists and design of screening libraries. |
| RDKit [11] | Open-Source Cheminformatics Library | Calculates molecular descriptors and fingerprints; handles chemical data. | Managing chemical libraries, filtering compounds, and similarity analysis. |
| MolTarPred [6] | Computational Tool | Ligand-centric target prediction using 2D similarity searching. | Identifying potential off-targets and generating mechanism-of-action hypotheses. |
| ChEMBL Database [6] | Public Bioactivity Database | Curated database of bioactive molecules with drug-like properties. | Provides annotated chemical and bioactivity data for model training and validation. |
| High-Fidelity Cas9 [10] | Engineered Protein | CRISPR-Cas nuclease variant with reduced off-target activity. | Increases specificity in genetic screens and therapeutic genome editing. |
| Base Editors / Prime Editors [10] | Genome Editing Tool | CRISPR-based systems that edit nucleotides without double-strand breaks. | Reduces indel-related off-target effects and genotoxicity in editing experiments. |

The Impact of Chemical Libraries and Compound Properties on False Positive Rates

FAQs and Troubleshooting Guides

Frequently Asked Questions (FAQs)

Q1: What are the most common chemical features that cause false positives in high-throughput screening?

Compounds with certain chemical moieties are prone to producing false positive results through various interference mechanisms. The following table summarizes key structural alerts and the reasons for excluding them from screening libraries:

Table: Common Chemical Moieties Excluded to Reduce False Positives

| Chemical Moiety | Primary Reason for Exclusion | Examples |
|---|---|---|
| Acyl halides | Covalent bonding | Acid chlorides |
| Aldehydes | Covalent bonding | Aminoformyl moieties |
| Epoxides | Covalent bonding | Oxiranes |
| Thiols | Toxicity/Covalent bonding | Benzenethiol |
| Anhydrides | Covalent bonding | Maleic anhydride |
| Hydrazines | Covalent bonding | Phenylhydrazine |
| Michael acceptors | Covalent bonding | Vinyl ketones, chalcones |
| Activated halides | Covalent bonding | 2-Chloronitrobenzene |
| Azides | Toxicity | Organic azides |
| Nitroso compounds | Toxicity | C-nitroso compounds |

These compounds are proactively excluded from quality-controlled screening libraries like the Maybridge collection to minimize false leads [12].

Q2: How can I distinguish between true actives and false positives caused by inorganic impurities?

Zinc and other metal impurities can cause false positives that mimic genuine activity in biochemical assays. To identify these false positives:

Run a TPEN Counter-Screen: Include the zinc-selective chelator TPEN (N,N,N′,N′-tetrakis(2-pyridylmethyl)ethylenediamine) in your assay. A potency shift greater than 7-fold in the presence of TPEN (typically at 10–50 µM) suggests zinc contamination [13].

Check Synthesis Routes: Review compound synthesis history for steps involving zinc, titanium, or other metal reagents, as these are common contamination sources [13].

Elemental Analysis: For critical hits, perform elemental analysis to detect metal contamination. Apparently active batches of compounds that are intrinsically inactive can contain up to 20% zinc by mass [13].

Table: Zinc Sensitivity Across Various Assay Types

| Target Class | Example Target | Zinc IC₅₀ (µM) |
|---|---|---|
| Enzyme | Pad4 | 1.0 |
| Kinase | Jak3 | 14.9 |
| Protein-Protein Interaction | Various | 2.7–4.9 |
| Signaling Protein | Ras/Raf | <47 |

Q3: What computational tools can help identify frequent hitters before experimental screening?

The ChemFH platform provides integrated virtual evaluation of potential false positives using:

Machine Learning Models: Multi-task directed message-passing neural networks (DMPNN) combined with uncertainty estimation, achieving an average AUC of 0.91 across multiple interference mechanisms [14].

Structural Alert Libraries: 1,441 representative alert substructures derived from analysis of 823,391 compounds [14].

Multiple Screening Rules: Incorporates ten commonly used frequent hitter screening rules including PAINS, Aggregator Advisor, and Lilly Medchem rules [14].

ChemFH is freely available at https://chemfh.scbdd.com/ and has been validated across five virtual screening libraries with reliable performance on natural products and FDA-approved drugs [14].

Q4: How does the DNA linker in DNA-encoded libraries (DELs) affect hit identification?

The DNA-conjugation linker in DELs can significantly influence hit detection and create false negatives:

Linker-Induced Bias: The presence of the linker can prevent detection of otherwise active compounds, leading to widespread false negatives where active molecules are missed in selection data [15].

Apparent Selectivity: Linkers can create artificial target selectivity, where the same molecule without a linker shows broad activity across related targets, but the DNA-conjugated version appears selective [15].

Validation Imperative: Always synthesize and test DEL hits without the DNA linker to confirm true activity and selectivity [15].

Troubleshooting Common Experimental Issues

Problem: Inconsistent activity across different batches of the same compound

Solution: This often indicates inorganic contamination or decomposition.

- Resynthesis Analysis: Resynthesize compounds using alternative routes that avoid metal reagents [13].

- Purity Assessment: Employ multiple analytical techniques (NMR, LC-MS, elemental analysis) to identify inorganic contaminants not detected by standard purity methods [13].

- Biological Validation: Test multiple batches in concentration-response curves alongside positive controls.

Problem: High hit rate with unusual structure-activity relationships

Solution: This may indicate assay interference rather than true target engagement.

- Orthogonal Assays: Confirm hits using multiple detection technologies (e.g., switch from fluorescence to luminescence or ELISA) [13].

- Cytotoxicity Profiling: Implement multiplexed cell health assays to distinguish specific activity from general cytotoxicity [16].

- Aggregation Testing: Add non-ionic detergents (e.g., 0.01% Triton X-100) to identify colloidal aggregators [14].

Problem: DNA-encoded library selections yield surprisingly target-specific hits despite target homology

Solution: This may reflect linker bias rather than true selectivity.

- Cross-Target Testing: Synthesize hits without linker and test across related targets [15].

- Linker Variants: Explore different linker lengths and compositions if developing PROTACs or other bivalent molecules [15].

- Machine Learning Correction: Account for linker effects in ML models trained on DEL data [15].

Experimental Protocols

Protocol 1: TPEN Counter-Screen for Metal-Induced False Positives

Purpose: Identify false positives caused by zinc contamination in compound samples [13].

Materials:

- TPEN (N,N,N′,N′-tetrakis(2-pyridylmethyl)ethylenediamine), stock solution in DMSO

- Test compounds in DMSO

- Standard assay reagents for your target

Procedure:

- Prepare two sets of identical assay plates with your test compounds at appropriate concentrations.

- To the experimental set, add TPEN at 10-50 µM final concentration.

- To the control set, add equivalent DMSO without TPEN.

- Run your standard assay protocol in parallel.

- Calculate fold-shift in IC₅₀ values: Fold shift = IC₅₀ (+TPEN) / IC₅₀ (-TPEN)

- Compounds with >7-fold potency shift are likely zinc-contaminated false positives [13].

Interpretation: The potency of genuine actives is unaffected by TPEN, whereas activity caused by zinc contamination shows a significant potency shift.
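The fold-shift calculation and 7-fold cutoff from the procedure translate directly into code. One assumption is added here: when a compound shows no inhibition in the presence of TPEN, the top tested concentration is used in place of its IC₅₀, so the reported fold shift is a lower bound. The function name and IC₅₀ values are hypothetical.

```python
def tpen_shift(ic50_minus_tpen, ic50_plus_tpen, top_conc, threshold=7.0):
    """Return (fold_shift, likely_zinc_artifact) for one compound.

    Fold shift = IC50(+TPEN) / IC50(-TPEN). If the compound is inactive with TPEN
    (ic50_plus_tpen is None), the top tested concentration is substituted, so the
    returned fold shift is a lower bound.
    """
    plus = top_conc if ic50_plus_tpen is None else ic50_plus_tpen
    fold = plus / ic50_minus_tpen
    return fold, fold > threshold


# Hypothetical IC50 values in µM: (-TPEN, +TPEN); top tested concentration 50 µM.
results = {"CPD-1": (0.5, 10.0), "CPD-2": (2.0, 3.0), "CPD-3": (1.0, None)}
for cpd, (minus, plus) in results.items():
    fold, zinc_flag = tpen_shift(minus, plus, top_conc=50.0)
    print(f"{cpd}: {fold:.1f}-fold shift, likely zinc artifact: {zinc_flag}")
```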

Protocol 2: High-Content Cellular Health Assessment for Phenotypic Screening

Purpose: Comprehensively characterize compound effects on cellular health to distinguish specific activity from general toxicity [16].

Materials:

- HeLa, U2OS, or HEK293T cells

- Live-cell dyes: Hoechst 33342 (50 nM), MitoTracker Red or MitoTracker Deep Red, BioTracker 488 Green Microtubule Cytoskeleton Dye

- Multi-parameter high-content imaging system

Procedure:

- Plate cells in appropriate multi-well imaging plates and incubate overnight.

- Treat cells with test compounds and controls for 6–72 hours under live-cell imaging conditions.

- Image at multiple time points using standardized exposure settings.

- Analyze images for multiple parameters:

  - Nuclear morphology (pyknosis, fragmentation)

  - Mitochondrial mass and membrane potential

  - Microtubule cytoskeleton integrity

  - Cell count and viability

Interpretation: Classify compounds based on time-dependent effects on cellular health. Specific inhibitors show distinct profiles from general toxins [16].

The Scientist's Toolkit: Essential Research Reagents

Table: Key Reagents for False Positive Investigation

| Reagent/Tool | Primary Function | Application Notes |
|---|---|---|
| TPEN | Selective zinc chelator | Counter-screen for metal contamination; use at 10–50 µM [13] |
| Triton X-100 | Non-ionic detergent | Identify colloidal aggregators; use at 0.01% [14] |
| Hoechst 33342 | Nuclear stain | Live-cell imaging; use at 50 nM to avoid toxicity [16] |
| MitoTracker Red/Deep Red | Mitochondrial stain | Assess mitochondrial health in live cells [16] |
| ChemFH Platform | Computational prediction | Virtual screening for frequent hitters [14] |
| Multi-parameter Cytotoxicity Assay | Cellular health assessment | Distinguish specific from toxic compounds [16] |

Table: Comprehensive False Positive Mitigation Strategies

| False Positive Type | Detection Methods | Prevention Strategies |
|---|---|---|
| Metal Contamination | TPEN counter-screen, elemental analysis | Alternative synthesis routes, rigorous purification [13] |
| Colloidal Aggregation | Detergent addition, dynamic light scattering | Library pre-filtering for aggregators, use of chemoinformatic tools [14] |
| Assay Interference | Orthogonal assay formats, counter-screens | Fluorescence/luminescence profiling, assay design optimization [14] |
| Cytotoxic Compounds | Multiplexed cell health assays | Early cytotoxicity profiling, time-dependent analysis [16] |
| Reactive Compounds | Structural alert screening, covalent binding assays | Exclusion of reactive moieties from libraries [12] |
| DEL Linker Artifacts | Off-DNA synthesis and testing | Understanding linker bias in data interpretation [15] |

Troubleshooting Guide: Addressing False Positives in Chemogenomic Screens

This guide helps diagnose and resolve common issues in chemogenomic screening related to cellular context.

Symptom: High hit rate with non-reproducible or non-mechanistic activity in a cell-based assay.

| Potential Cause | Diagnostic Experiments | Solutions & Preventative Strategies |
|---|---|---|
| Compound Aggregation [1] | Test for detergent sensitivity (e.g., add 0.01-0.1% Triton X-100); analyze inhibition curves for steep Hill slopes (>2-3); measure IC50 shift with increasing enzyme concentration. | Include non-ionic detergent in assay buffer; use an orthogonal, non-biochemical assay (e.g., cellular phenotype) to confirm activity; assess compound behavior using dynamic light scattering. |
| Off-Target Assay Interference (e.g., Luciferase Inhibition) [1] | Test compounds in a counter-screen against the isolated reporter (e.g., purified firefly luciferase); check whether activity is consistent in an orthogonal assay with a different readout (e.g., β-lactamase, GFP). | Use alternative, structurally unrelated reporters in assay design; consult published databases of known interferers (e.g., luciferase inhibitors) to flag problematic chemotypes; employ cell-free target-based assays to isolate direct target engagement. |
| Cellular Context-Specific Toxicity [17] | Perform a cell viability counter-screen under identical assay conditions; use high-content imaging to confirm the desired phenotypic outcome, not just cell death. | Shorten compound incubation times to reduce cytotoxic effects; use tiered screening, progressing only hits that show the desired activity without concomitant cytotoxicity. |
| Presence of Redox-Active or Reactive Compounds [1] | Test for activity loss upon addition of a reducing agent (e.g., DTT) or a nucleophile (e.g., glutathione); measure activity in the presence of catalase to scavenge hydrogen peroxide. | Replace strong reducing agents (DTT, TCEP) with weaker ones (cysteine) in assay buffers; filter out compounds with known reactive functional groups (e.g., alkyl halides, Michael acceptors) from screening libraries. |
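You can check for a steep Hill slope without a curve-fitting library by linearizing the Hill equation. This sketch assumes a two-parameter model with plateaus fixed at 0% and 100%. Under that model, ln(f/(1-f)) = n·ln(c) - n·ln(IC50), so a least-squares line gives the Hill coefficient n directly. The >2 flag follows the table above. The helper names are hypothetical.

```python
import math


def hill_fraction(c, ic50_um, n):
    """Fraction inhibited under a two-parameter Hill model (0% bottom, 100% top)."""
    return 1.0 / (1.0 + (ic50_um / c) ** n)


def hill_slope_and_ic50(concs_um, fraction_inhibited):
    """Least-squares fit of ln(f / (1 - f)) against ln(c); slope = Hill coefficient."""
    xs, ys = [], []
    for c, f in zip(concs_um, fraction_inhibited):
        if 0.0 < f < 1.0:  # the logit is undefined at exactly 0 or 1
            xs.append(math.log(c))
            ys.append(math.log(f / (1.0 - f)))
    if len(xs) < 2:
        raise ValueError("need at least two points strictly between 0 and 1")
    x_mean, y_mean = sum(xs) / len(xs), sum(ys) / len(ys)
    sxx = sum((x - x_mean) ** 2 for x in xs)
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = y_mean - slope * x_mean
    ic50 = math.exp(-intercept / slope)  # intercept = -n * ln(IC50)
    return slope, ic50


concs_um = [0.1, 0.3, 1.0, 3.0, 10.0, 30.0]
for label, n_true in [("well-behaved", 1.0), ("aggregator-like", 4.0)]:
    curve = [hill_fraction(c, ic50_um=2.0, n=n_true) for c in concs_um]
    n_est, ic50_est = hill_slope_and_ic50(concs_um, curve)
    verdict = "steep: test detergent sensitivity" if n_est > 2.0 else "normal slope"
    print(f"{label}: Hill slope {n_est:.2f}, IC50 {ic50_est:.2f} uM, {verdict}")
```

With noisy data, points near 0% or 100% inhibition amplify error in the logit. A full four-parameter logistic fit is the more robust choice in practice.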

Symptom: A gene target identified as essential in one cell line is non-essential in another, confounding validation.

| Potential Cause | Diagnostic Experiments | Solutions & Preventative Strategies |
|---|---|---|
| Genetic Compensation & Pathway Redundancy [18] | Use multi-parametric phenotypic assays (e.g., transcriptomics, proteomics) to assess whether different pathways are activated upon gene loss in different contexts; perform double knockout of paralogous genes to test for synthetic lethality. | Screen across a diverse panel of cell lines to map context-specific dependencies; utilize multi-omics data (proteomic, transcriptomic) from the cell line to understand baseline pathway activity and redundancy. |
| Differential Protein Complex Assembly [18] | Use co-immunoprecipitation (Co-IP) to compare protein-protein interactions of the target in sensitive vs. resistant cell lines; employ proximity ligation assays (PLA) to visualize complex formation in situ. | Characterize protein complex stoichiometry and composition in the relevant cellular context before initiating a screen. |
| Altered Metabolic State or Nutrient Availability [19] | Measure metabolite levels or nutrient consumption in different cell media; test gene essentiality under different nutrient conditions (e.g., galactose vs. glucose media). | Use physiologically relevant culture conditions that mimic the in vivo environment; consider the metabolic profile of the cell model during experimental design and data interpretation. |
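Mapping context-specific dependencies across a cell-line panel can start with a simple tally for each gene. This sketch assumes CRISPR gene-effect scores in which more negative values mean a stronger dependency. The following are all hypothetical:

- the -0.5 essentiality cut-off,
- the 90% fraction used to call a gene "common essential",
- the gene and cell-line names.

```python
def classify_dependencies(gene_effects, essential_cutoff=-0.5, common_fraction=0.9):
    """Label each gene from CRISPR gene-effect scores across a cell-line panel.

    gene_effects maps gene -> {cell_line: score}; lower scores are assumed to mean
    stronger dependency. Both cut-offs are illustrative, not validated values.
    """
    labels = {}
    for gene, by_line in gene_effects.items():
        dependent_lines = sorted(line for line, s in by_line.items() if s <= essential_cutoff)
        fraction = len(dependent_lines) / len(by_line)
        if fraction >= common_fraction:
            labels[gene] = ("common essential", dependent_lines)
        elif dependent_lines:
            labels[gene] = ("context-specific", dependent_lines)
        else:
            labels[gene] = ("non-essential", [])
    return labels


panel = {
    "GENE_A": {"line1": -1.2, "line2": -1.0, "line3": -1.1},
    "GENE_B": {"line1": -0.9, "line2": -0.1, "line3": 0.05},
    "GENE_C": {"line1": 0.1, "line2": -0.2, "line3": 0.0},
}
for gene, (label, lines) in classify_dependencies(panel).items():
    print(gene, label, lines)
```

Genes labelled context-specific are the natural candidates for the paralog double-knockout, Co-IP and nutrient-condition experiments in the table.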

Frequently Asked Questions (FAQs)

Q1: Our high-throughput screen yielded a high hit rate. What are the first steps to triage these results and identify true positives?

A1: The immediate priority is to identify and remove compounds causing assay interference. Follow this systematic triage workflow [1]:

- Remove Promiscuous Binders: Identify compounds that are active in multiple, unrelated assays.

- Confirm Dose-Response: Confirm that activity is concentration-dependent.

- Employ Orthogonal Assays: Test hits in an assay that uses a completely different detection technology (e.g., switch from a luminescence readout to fluorescence or cell imaging). Hits that fail in the orthogonal assay are likely false positives.

- Conduct Counter-Screens: Use targeted assays to rule out common interferers like firefly luciferase inhibition, cytotoxicity, or aggregation (e.g., by adding detergent).
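The four triage steps can be applied as an ordered filter that records why each compound was dropped. This is a minimal sketch. The `Hit` fields and the promiscuity cut-off (active in more than two unrelated assays) are hypothetical choices for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Hit:
    compound_id: str
    n_unrelated_assays_active: int      # activity in unrelated historical assays
    dose_response_confirmed: bool
    orthogonal_active: bool
    counter_screen_flags: set = field(default_factory=set)  # e.g. {"luciferase", "cytotoxic"}


def triage(hits, max_unrelated_assays=2):
    """Apply the triage steps in order; return survivors and a reason per compound."""
    survivors, reasons = [], {}
    for h in hits:
        if h.n_unrelated_assays_active > max_unrelated_assays:
            reasons[h.compound_id] = "promiscuous"
        elif not h.dose_response_confirmed:
            reasons[h.compound_id] = "no dose-response"
        elif not h.orthogonal_active:
            reasons[h.compound_id] = "failed orthogonal assay"
        elif h.counter_screen_flags:
            reasons[h.compound_id] = "counter-screen: " + ", ".join(sorted(h.counter_screen_flags))
        else:
            survivors.append(h.compound_id)
            reasons[h.compound_id] = "progress"
    return survivors, reasons


hits = [
    Hit("C1", 0, True, True),
    Hit("C2", 7, True, True),
    Hit("C3", 1, True, False),
    Hit("C4", 0, True, True, {"luciferase"}),
]
survivors, reasons = triage(hits)
print(survivors, reasons)
```

Recording a reason for each compound keeps the triage auditable. It also helps when a chemotype is later found to have been dropped for an assay-specific reason.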

Q2: Why does a genetic knockout (e.g., via CRISPR) produce a strong phenotype in one cell line and no phenotype in another, even if the target is expressed?

A2: This "context specificity" is a core feature of biological complexity. The phenotype arising from a gene's loss depends on the larger molecular network in that specific cell [17]. Key factors include:

- Genetic Background: Mutations in compensatory pathways can bypass the need for your target gene (genetic rescue). Conversely, other mutations can make the gene essential only when they are present (synthetic lethality) [19].

- Molecular Redundancy: Another gene, often a paralog, may perform the same essential function in one cell line but not the other [18].

- Cellular State & Lineage: The baseline metabolic, signaling, and differentiation state of the cell determines which genes are critical for survival [20]. A one-size-fits-all approach does not work in functional genomics.

Q3: How can we better account for cellular compartmentalization in our analysis of signaling pathways?

A3: Traditional "well-stirred" biochemical models are often insufficient. To account for compartmentalization [18]:

- Spatial Techniques: Use high-resolution imaging (e.g., FRET, confocal microscopy) with specific organelle markers to confirm the localization of your target and its effectors.

- Subcellular Fractionation: Biochemically isolate organelles (e.g., plasma membrane, cytoplasm, nucleus) to measure pathway activity and protein modifications in each compartment.

- Mathematical Modeling: Develop computational models that explicitly include different cellular compartments and the rates of molecular translocation between them. This can reveal how the same molecule can carry different signals in different locations.
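A minimal example of a compartmental model is a single protein shuttling between cytoplasm and nucleus with first-order import and export rates. This sketch assumes equal compartment volumes and uses simple Euler integration. At steady state the nuclear fraction should be k_import / (k_import + k_export), and the simulation should reproduce that value.

```python
def simulate_translocation(k_import, k_export, cyto0=1.0, nuc0=0.0, dt=0.01, t_end=50.0):
    """Euler integration of a two-compartment cytoplasm <-> nucleus model.

    d[nuc]/dt = k_import * [cyto] - k_export * [nuc], and d[cyto]/dt = -d[nuc]/dt,
    so the total amount is conserved (equal compartment volumes assumed).
    """
    cyto, nuc = cyto0, nuc0
    for _ in range(int(round(t_end / dt))):
        flux = k_import * cyto - k_export * nuc  # net cytoplasm -> nucleus flux
        cyto -= flux * dt
        nuc += flux * dt
    return cyto, nuc


cyto, nuc = simulate_translocation(k_import=0.3, k_export=0.1)
print(f"nuclear fraction: {nuc / (cyto + nuc):.3f} (analytical: {0.3 / (0.3 + 0.1):.3f})")
```

Adding compartment volumes, more compartments, or stimulus-dependent rates turns this toy into the kind of model described above. In such models, the same molecule can have different signalling outputs depending on where it accumulates.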

Q4: What are some emerging computational strategies to predict and account for cell-type specificity in screening data?

A4: Machine learning (ML) is a powerful new tool. One approach involves building models that use a small set of "sentinel" CRISPR knockouts to predict genome-wide loss-of-function effects across diverse cell lines [17]. This allows for:

- Data Compression: Predicting phenotypes for unmeasured genes, reducing the scale and cost of experiments.

- Network Analysis: Identifying core functional modules that drive cellular behavior, moving beyond single-gene analysis.

- Improved Prediction: ML models that integrate multiple data types (e.g., CRISPR phenotypes, transcriptomics, lineage) significantly outperform univariate models based on a single genomic feature.
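The sentinel idea can be illustrated as a multi-output regression from a few measured knockouts to all genes. The cited models are not specified here, so this sketch swaps in closed-form ridge regression. It runs on synthetic data in which three hidden functional modules drive 10 sentinel genes and 500 other genes. All numbers are illustrative.

```python
import numpy as np


def _with_intercept(x):
    return np.hstack([np.ones((x.shape[0], 1)), x])


def fit_sentinel_model(sentinel_effects, all_gene_effects, alpha=1.0):
    """Closed-form ridge regression from sentinel knockout effects to all genes.

    sentinel_effects: (n_cell_lines, n_sentinels); all_gene_effects: (n_cell_lines, n_genes).
    Returns a (n_sentinels + 1, n_genes) coefficient matrix; row 0 is the intercept.
    """
    X = _with_intercept(sentinel_effects)
    penalty = alpha * np.eye(X.shape[1])
    penalty[0, 0] = 0.0  # leave the intercept unpenalized
    return np.linalg.solve(X.T @ X + penalty, X.T @ all_gene_effects)


def predict_gene_effects(coef, sentinel_effects):
    return _with_intercept(sentinel_effects) @ coef


# Synthetic panel: 3 hidden modules drive 10 sentinel and 500 other knockout effects.
rng = np.random.default_rng(0)
modules = rng.normal(size=(200, 3))
sentinels = modules @ rng.normal(size=(3, 10)) + 0.1 * rng.normal(size=(200, 10))
genome = modules @ rng.normal(size=(3, 500)) + 0.1 * rng.normal(size=(200, 500))

coef = fit_sentinel_model(sentinels[:150], genome[:150])   # train on 150 cell lines
predicted = predict_gene_effects(coef, sentinels[150:])    # predict 50 held-out lines
per_gene_r = [np.corrcoef(predicted[:, g], genome[150:, g])[0, 1] for g in range(genome.shape[1])]
print(f"median per-gene correlation on held-out lines: {np.median(per_gene_r):.2f}")
```

Real screens have far less clean structure than this toy. The point is only that a few well-chosen knockouts can carry information about many others when genes act in shared modules.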

Visual Guide to Experimental Workflows

Diagram: Troubleshooting False Positives in HTS

Diagram: Mapping Context Specificity with CRISPR

The Scientist's Toolkit: Key Reagents & Methods

| Tool / Reagent | Function / Description | Application in Reducing False Positives |
|---|---|---|
| Non-ionic Detergent (Triton X-100) [1] | Disrupts colloidal compound aggregates that cause non-specific inhibition. | Added to biochemical assay buffers (0.01-0.1%) to eliminate aggregation-based false positives. |
| Orthogonal Assay [1] | An assay with a different detection method or biological readout than the primary screen. | Confirms that compound activity is due to the targeted biology, not the assay format. |
| Counter-Screen Assay [1] | A targeted assay to identify compounds that interfere with the detection system. | Examples include a standalone luciferase enzyme assay or a general cytotoxicity assay. |
| Haploinsufficiency Profiling (HIP) [19] | A chemogenomic method in yeast that identifies a compound's protein target by measuring fitness defects in heterozygous deletion strains. | Provides direct, in vivo evidence of target engagement, moving beyond phenotypic artifacts. |
| TKOv3 Library [21] | A genome-scale CRISPR knockout library for human cells, containing ~71,000 sgRNAs targeting ~18,000 genes. | Enables systematic identification of context-specific genetic dependencies across diverse cell lines. |
| Cofitness Network Analysis [19] | A computational method that identifies genes whose knockout phenotypes are correlated across many conditions. | Reveals functional relationships and buffering mechanisms that explain context specificity. |
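Cofitness is typically computed as the correlation between two genes' knockout fitness profiles across many conditions. This is a minimal sketch using Pearson correlation. The 0.6 cut-off and the gene names are hypothetical.

```python
import numpy as np


def cofitness_edges(fitness, genes, min_corr=0.6):
    """Gene pairs whose knockout fitness profiles correlate across conditions.

    fitness: array-like of shape (n_genes, n_conditions); min_corr is an illustrative cut-off.
    """
    corr = np.corrcoef(np.asarray(fitness, dtype=float))  # rows = genes
    edges = []
    for i in range(len(genes)):
        for j in range(i + 1, len(genes)):
            if corr[i, j] >= min_corr:
                edges.append((genes[i], genes[j], round(float(corr[i, j]), 3)))
    return edges


genes = ["GENE_A", "GENE_B", "GENE_C"]  # hypothetical genes
fitness = [
    [1.0, 2.0, 3.0, 4.0, 5.0],
    [2.0, 4.0, 6.0, 8.0, 10.5],
    [5.0, 1.0, 4.0, 2.0, 3.0],
]
print(cofitness_edges(fitness, genes))
```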

Advanced Methodologies for Enhanced Specificity in Screening

Core Concepts and FAQs

What is the primary goal of computational pre-screening in chemogenomics?

Computational pre-screening aims to prioritize compounds from vast libraries that are most likely to be true bioactives, thereby increasing phenotypic hit rates and reducing the costs and inefficiencies associated with experimental screening of random compound collections [22].

How does compound pre-selection directly address the problem of false positives?

Pre-selection enriches screening libraries for compounds with desirable properties (e.g., cellular permeability, lower assay interference potential), which reduces the proportion of false positives resulting from artifacts like colloidal aggregation, spectroscopic interference, or chemical reactivity. This directly improves the signal-to-noise ratio in subsequent experimental assays [14].

What are the main categories of false positives that these methods aim to eliminate?

- Colloidal Aggregators: Compounds that form colloids in assay buffers, non-specifically inhibiting many proteins [14].

- Spectroscopic Interference Compounds: These include autofluorescent compounds and firefly luciferase (FLuc) inhibitors, which interfere with common detection methods [14].

- Chemically Reactive Compounds: Molecules that react covalently with protein targets non-specifically [14].

- Promiscuous Compounds: Molecules that inhibit multiple unrelated targets due to undesirable structural features [14].

Troubleshooting Common Experimental Issues

Issue: High rate of non-specific cytotoxicity or inhibition across multiple assay types.

Potential Cause: The compound library is enriched with promiscuous or pan-assay interference compounds (PAINS) [14].

Solution:

- Apply a comprehensive false-positive filter such as ChemFH before purchase or screening. This platform uses a multi-task deep learning model to identify various assay interferents with high accuracy (average AUC of 0.91) [14].

- Implement a simple two-property filter based on physicochemical properties. For example, filtering for compounds with a calculated LogP ≥ 2 and hydrogen bond acceptors ≤ 6 can enrich for bioactives while removing many undesirable compounds, potentially achieving a 30% cost saving in library acquisition [22].

Issue: Confirmed bioactivity in initial screening, but the compound shows no specificity in follow-up target identification assays.

Potential Cause: The compound may be a frequent hitter, or its physicochemical properties may not be conducive to specific target engagement [14].

Solution:

- Perform chemogenomic profiling, such as HaploInsufficiency Profiling (HIP) in yeast. This genome-wide assay can identify specific hypersensitive strains, pointing to a compound's potential target and mechanism of action, thus distinguishing specific from non-specific agents [22].

- Utilize in-silico target prediction to narrow down potential targets. Ensemble chemogenomic models that integrate multi-scale chemical and protein sequence information can rank potential targets for a query compound. High-ranking targets can then be prioritized for experimental validation [23].
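Ligand-similarity ranking is a simple way to see what in-silico target prediction does. The sketch below is a nearest-neighbour stand-in and not the ensemble chemogenomic model of [23]. It assumes fingerprints are precomputed sets of on-bit indices (for example, from ECFP). The target names and bit sets are hypothetical.

```python
def tanimoto(a: set, b: set) -> float:
    """Tanimoto (Jaccard) similarity between two sets of fingerprint on-bits."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def rank_targets(query_bits, reference, top_k=3):
    """Score each target by the best similarity of any of its annotated ligands.

    reference: iterable of (fingerprint_bits, target_name) for known ligands.
    """
    best = {}
    for bits, target in reference:
        score = tanimoto(query_bits, bits)
        if score > best.get(target, -1.0):
            best[target] = score
    return sorted(best.items(), key=lambda kv: kv[1], reverse=True)[:top_k]


reference_ligands = [
    ({1, 2, 3, 4, 5}, "TARGET_X"),
    ({1, 2}, "TARGET_Y"),
    ({9, 10}, "TARGET_Z"),
]
print(rank_targets({1, 2, 3, 4}, reference_ligands))
```

Top-ranked targets are hypotheses for experimental validation, not evidence of engagement. Real ensemble models add protein information and calibrated scores.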

Issue: Low hit rate in a phenotypic screen on a model organism (e.g., C. elegans).

Potential Cause: The compound library lacks molecules with sufficient bioavailability or cellular permeability in the chosen organism.

Solution:

- Pre-select compounds based on bioactivity in a surrogate system. For instance, screening a library for growth inhibitors in S. cerevisiae (yeast) first, and then using these "yactive" compounds in the target organism. This strategy has been shown to increase phenotypic hit-rates by 6.6-fold in C. elegans compared to random compound screening [22].

- Build an organism-specific predictive model. A Naïve Bayes model trained on the physicochemical properties of known growth-inhibitory compounds can be used to rank-order purchasable compounds for screening, dramatically improving hit rates [22] [24].
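A Naïve Bayes ranker of this kind can be sketched with scikit-learn's `GaussianNB`. Three parts of this sketch are assumptions: the Gaussian variant, the two-feature descriptor set (cLogP and H-bond acceptors), and the synthetic training data. The cited models may use different descriptors and a different Naïve Bayes formulation.

```python
import numpy as np
from sklearn.naive_bayes import GaussianNB

# Hypothetical training set: columns are [cLogP, H-bond acceptors];
# label 1 = known growth inhibitor, 0 = inactive. Values are synthetic.
rng = np.random.default_rng(0)
actives = np.column_stack([rng.normal(3.5, 0.5, 100), rng.normal(4.0, 1.0, 100)])
inactives = np.column_stack([rng.normal(1.0, 0.7, 300), rng.normal(8.0, 1.5, 300)])
X_train = np.vstack([actives, inactives])
y_train = np.array([1] * 100 + [0] * 300)

model = GaussianNB().fit(X_train, y_train)


def rank_for_purchase(model, candidate_properties):
    """Return candidate indices ordered from most to least likely bioactive."""
    p_active = model.predict_proba(candidate_properties)[:, 1]  # column 1 = class 1
    return np.argsort(-p_active), p_active


candidates = np.array([[3.6, 4.0], [0.8, 9.0], [2.5, 5.0]])
order, p_active = rank_for_purchase(model, candidates)
print(order, np.round(p_active, 3))
```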

Experimental Protocols for Validation

Protocol 1: Two-Property Filter for Library Design

This protocol provides a cost-effective method to pre-filter compound libraries before purchase to enrich for bioactives [22].

Methodology:

- Data Source: Start with a commercially available compound library (e.g., ZINC20, containing over 20 million compounds).

- Property Calculation: For each compound, calculate two key physicochemical properties:

  - LogP: The calculated octanol-water partition coefficient, a measure of lipophilicity.

  - H-Acceptors: The number of hydrogen bond acceptors.

- Application of Filter: Select only compounds that satisfy both of the following criteria:

  - LogP ≥ 2

  - H-Acceptors ≤ 6

- Outcome: This filter is empirically derived and has been shown to retain ~91% of known growth-inhibitory compounds while reducing the library size by approximately 30%, leading to significant cost savings [22].

Table: Two-Property Filter Specifications

| Property | Description | Filter Criteria | Rationale |
|---|---|---|---|
| LogP | Calculated octanol-water partition coefficient | ≥ 2 | Enriches for compounds with sufficient lipophilicity for passive cell membrane permeability [22]. |
| H-Acceptors | Number of hydrogen bond acceptors | ≤ 6 | Limits compounds to those more likely to be passively transported across cell membranes [22]. |
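Protocol 1 is straightforward to apply programmatically once properties are computed. This sketch assumes cLogP and H-bond acceptor counts have already been calculated with a cheminformatics toolkit. The `Compound` record and compound IDs are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Compound:
    compound_id: str
    clogp: float       # calculated octanol-water partition coefficient
    h_acceptors: int   # number of hydrogen-bond acceptors


def passes_two_property_filter(c: Compound, min_logp: float = 2.0, max_hba: int = 6) -> bool:
    """LogP >= 2 and H-acceptors <= 6, as specified in Protocol 1."""
    return c.clogp >= min_logp and c.h_acceptors <= max_hba


def apply_filter(library):
    """Return the retained compounds and the fraction of the library removed."""
    kept = [c for c in library if passes_two_property_filter(c)]
    removed_fraction = 1 - len(kept) / len(library)
    return kept, removed_fraction


library = [
    Compound("Z1", 3.1, 4),
    Compound("Z2", 1.2, 3),   # too polar
    Compound("Z3", 4.5, 8),   # too many acceptors
    Compound("Z4", 2.0, 6),   # exactly on both boundaries, so it is kept
]
kept, removed = apply_filter(library)
print([c.compound_id for c in kept], f"{removed:.0%} removed")
```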

Protocol 2: Machine Learning-Assisted Hit Prioritization from HTS Data

This protocol uses machine learning to distinguish true bioactives from assay artifacts directly from HTS data [25].

Methodology:

- HTS Campaign: Perform a high-throughput screen on your compound library.

- Model Training: Train a gradient boosting model on the noisy HTS data.

- Influence Analysis: Analyze the learning dynamics during model training using a novel formulation of sample influence. This step allows the model to distinguish compounds exhibiting desired biological responses from those producing assay artifacts without relying on pre-defined interference mechanisms.

- Prioritization: The model outputs a prioritized list of compounds, effectively excluding assay interferents with different mechanisms. This approach has been shown to be more efficient at prioritizing biologically relevant compounds than standard baselines [25].
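The influence formulation used in [25] is not specified in detail here. As a rough, hypothetical proxy for "learning dynamics", the sketch below tracks how poorly each training sample is fit across boosting stages, using scikit-learn's `GradientBoostingClassifier.staged_predict_proba`. Treating hard-to-fit actives as artifact candidates is an assumption that should be checked against counter-screens. It is not the published method.

```python
import numpy as np
from sklearn.ensemble import GradientBoostingClassifier


def learning_dynamics_scores(X, y, n_estimators=100, random_state=0):
    """Mean |y - p| over all boosting stages for each training sample.

    For log-loss, y - p is the negative gradient each new tree is fitted to, so
    samples the ensemble keeps failing to fit accumulate large scores.
    Hypothetical proxy only; not the influence measure from [25].
    """
    model = GradientBoostingClassifier(n_estimators=n_estimators, random_state=random_state)
    model.fit(X, y)
    scores = np.zeros(len(y))
    for proba in model.staged_predict_proba(X):
        scores += np.abs(y - proba[:, 1])
    return scores / n_estimators


def prioritize_actives(X, y, **kwargs):
    """Order primary-screen actives from most to least typical (lowest score first)."""
    scores = learning_dynamics_scores(X, y, **kwargs)
    active_idx = np.flatnonzero(y == 1)
    return active_idx[np.argsort(scores[active_idx])], scores


# Synthetic HTS data: actives cluster apart from inactives, except three
# "artifact" actives (indices 100-102) placed inside the inactive cluster.
rng = np.random.default_rng(0)
X = np.vstack([
    rng.normal(2.0, 0.5, size=(40, 2)),    # coherent actives
    rng.normal(-2.0, 0.5, size=(60, 2)),   # inactives
    rng.normal(-2.0, 0.5, size=(3, 2)),    # artifact actives
])
y = np.array([1] * 40 + [0] * 60 + [1] * 3)
order, scores = prioritize_actives(X, y)
print("lowest-priority actives:", order[-3:])
```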

Workflow Visualization

Diagram: In-silico Chemogenomics Hit Prioritization

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Computational Tools and Resources for Hit Prioritization

| Tool / Resource | Type | Primary Function | Application in Hit Prioritization |
|---|---|---|---|
| ChemFH [14] | Integrated Online Platform | Predicts multiple types of assay interferents (aggregators, fluorescent compounds, etc.) using a DMPNN model. | Virtual screening of compound libraries to remove frequent hitters before experimental screening. |
| ZINC20 [26] | Free Ultralarge Chemical Database | Provides access to over 20 million commercially available compounds in ready-to-dock formats. | Source of purchasable compounds for virtual screening and library design. |
| Ensemble Chemogenomic Model [23] | Target Prediction Algorithm | Predicts protein targets for a query compound by integrating multi-scale chemical and protein sequence information. | Identifying potential mechanisms of action for hits and assessing polypharmacology or off-target effects. |
| Naïve Bayes Model / 2-Property Filter [22] [24] | Pre-screening Prioritization Model | Ranks compounds based on likelihood of bioactivity or applies a simple physicochemical filter. | Cost-effective pre-purchase prioritization to increase phenotypic hit rates in model organism screens. |
| "Yactive" Compound Set [22] [24] | Pre-plated Chemical Library | A collection of ~7,500 compounds known to inhibit S. cerevisiae growth. | An empirically validated resource for screening in diverse model organisms to achieve higher hit rates. |

In chemogenomic screening research, the high rate of false positives poses a significant challenge, often leading to wasted resources and delayed drug discovery pipelines. Random Forest classifiers have emerged as a powerful machine learning tool to address this issue, leveraging ensemble learning to improve the accuracy of target identification and validation. This technical support center provides practical guidance for researchers implementing these methods, featuring troubleshooting guides, FAQs, and detailed experimental protocols directly applicable to chemogenomic false positive reduction.

Research Reagent Solutions

The table below outlines essential computational tools and their functions for implementing Random Forest classifiers in chemogenomic screening.

Table 1: Essential Research Reagents and Computational Tools

| Item | Function in Research |
|---|---|
| Random Forest Algorithm | An ensemble machine learning method that constructs multiple decision trees for robust classification and regression tasks. |
| Molecular Descriptors | Quantitative representations of chemical structures used as input features for predictive modeling. |
| Molecular Fingerprints | Binary vectors representing the presence or absence of specific structural features in a molecule. |
| Hyperparameter Tuning Tools (e.g., GridSearchCV) | Software utilities for systematically optimizing the settings of the Random Forest model to maximize performance. |
| Feature Selection Methods (e.g., Boruta) | Algorithms that identify the most relevant molecular descriptors or features to improve model interpretability and reduce overfitting. |

Experimental Protocols & Data

Protocol: Building a Random Forest Classifier for False Positive Reduction

This protocol outlines the key steps for constructing a Random Forest model to distinguish true positive from false positive hits in chemogenomic screening data.

Data Preparation and Feature Engineering

- Compile Actives and Decoys: Gather a dataset of confirmed active drug-target pairs and a set of "compelling decoys" (inactive pairs that are structurally similar to actives) to create a challenging classification scenario [27].

- Generate Molecular Features: Calculate molecular descriptors (e.g., using MOE software) and/or molecular fingerprints (e.g., Extended-Connectivity Fingerprints, ECFP) for all compounds [28].

- Integrate Target Information: Incorporate relevant target protein features or descriptors to create a comprehensive feature set for each drug-target pair [29].

Model Training and Validation

- Implement Cross-Validation: Use a nested cross-validation strategy, where an outer loop (e.g., 75:25 train-test split repeated 100 times) assesses generalizability, and an inner loop (e.g., 10-fold CV) is used for hyperparameter optimization [30].

- Apply Feature Selection: Utilize stable feature selection methods like Boruta to identify the most predictive biomarkers or features, reducing noise and model complexity [30].

- Train Random Forest Model: Train the classifier on the training subset, using a "balanced" class weight setting to automatically adjust for imbalanced datasets [28].

Performance Evaluation and Prospective Validation

- Evaluate Model Performance: Assess the model on the held-out test set using metrics such as Area Under the Curve (AUC), Positive Predictive Value (PPV), and Negative Predictive Value (NPV) [28].

- Prospective Screening: Apply the trained model to a new, external chemical library to predict and prioritize high-confidence compounds for experimental validation [27] [28].
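The protocol can be sketched end to end with scikit-learn. The sketch makes these assumptions:

- Features are already computed; a synthetic `make_classification` matrix with 20% actives stands in for drug-target pair features.
- The hyperparameter grid is illustrative.
- The example call uses 3 outer repeats and 3 inner folds for speed, instead of the 100 repeats and 10 folds described above.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import confusion_matrix, roc_auc_score
from sklearn.model_selection import GridSearchCV, StratifiedKFold, StratifiedShuffleSplit


def nested_cv_random_forest(X, y, n_outer=100, inner_folds=10, n_estimators=200, random_state=0):
    """Outer loop: repeated stratified 75:25 splits; inner loop: k-fold grid search."""
    outer = StratifiedShuffleSplit(n_splits=n_outer, test_size=0.25, random_state=random_state)
    param_grid = {"max_depth": [None, 8], "min_samples_leaf": [1, 5]}  # illustrative grid
    results = []
    for train_idx, test_idx in outer.split(X, y):
        inner = StratifiedKFold(n_splits=inner_folds, shuffle=True, random_state=random_state)
        forest = RandomForestClassifier(n_estimators=n_estimators, class_weight="balanced",
                                        random_state=random_state, n_jobs=-1)
        search = GridSearchCV(forest, param_grid, scoring="roc_auc", cv=inner)
        search.fit(X[train_idx], y[train_idx])  # tuning only ever sees the outer training split
        proba = search.predict_proba(X[test_idx])[:, 1]
        pred = (proba >= 0.5).astype(int)
        tn, fp, fn, tp = confusion_matrix(y[test_idx], pred, labels=[0, 1]).ravel()
        results.append({
            "auc": roc_auc_score(y[test_idx], proba),
            "ppv": tp / (tp + fp) if (tp + fp) else float("nan"),
            "npv": tn / (tn + fn) if (tn + fn) else float("nan"),
        })
    return results


X, y = make_classification(n_samples=300, n_features=20, n_informative=5,
                           weights=[0.8, 0.2], random_state=0)
results = nested_cv_random_forest(X, y, n_outer=3, inner_folds=3, n_estimators=50)
print({k: round(float(np.nanmean([r[k] for r in results])), 3) for k in ("auc", "ppv", "npv")})
```

Reporting the spread of AUC, PPV and NPV across outer repeats, not just the mean, shows how stable the model is before it is used prospectively.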

Experimental Workflow

The following diagram illustrates the logical workflow for the described experimental protocol.

Quantitative Performance Data

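The table below summarizes the performance of a Random Forest classifier in reducing false positives in newborn screening for several metabolic disorders [31]. Although these are not chemogenomic data, they illustrate the potential of the approach in screening contexts. The benefit varies widely between disorders, from a 98% reduction for OTCD to only 2% for VLCADD.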

Table 2: Example False Positive Reduction in Newborn Screening (NBS) using Random Forest

| Disorder | Confirmed Positives | First-Tier NBS False Positives | False Positives After RF Analysis | Reduction in False Positives |
|---|---|---|---|---|
| GA-1 | 48 | 1344 | 150 | 89% |
| MMA | 103 | 502 | 276 | 45% |
| OTCD | 24 | 496 | 11 | 98% |
| VLCADD | 60 | 200 | 196 | 2% |
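The reduction column follows from 1 - (false positives after RF / first-tier false positives). The sketch also computes PPV before and after RF analysis. That calculation rests on an explicit assumption not stated in the table: that the RF step retains every confirmed positive.

```python
nbs_results = {
    # disorder: (confirmed positives, first-tier false positives, false positives after RF)
    "GA-1": (48, 1344, 150),
    "MMA": (103, 502, 276),
    "OTCD": (24, 496, 11),
    "VLCADD": (60, 200, 196),
}


def fp_reduction(fp_before, fp_after):
    """Fraction of first-tier false positives removed by the second-tier model."""
    return 1 - fp_after / fp_before


def ppv(true_positives, false_positives):
    """Positive predictive value: TP / (TP + FP)."""
    return true_positives / (true_positives + false_positives)


for disorder, (tp, fp_before, fp_after) in nbs_results.items():
    # Assumes no confirmed positive is lost by the RF step (not reported in the table).
    print(f"{disorder}: FP reduction {fp_reduction(fp_before, fp_after):.0%}, "
          f"PPV {ppv(tp, fp_before):.1%} -> {ppv(tp, fp_after):.1%}")
```

Under that assumption, the GA-1 PPV rises from about 3.4% to about 24.2%. This illustrates why a large proportional cut in false positives matters most when true positives are rare.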

Troubleshooting Guides & FAQs

Frequently Asked Questions

Q1: Our Random Forest model is overfitting to the training data. What are the key hyperparameters we should tune to control this?

A: Overfitting is a common issue where the model performs well on training data but poorly on unseen test data. To mitigate this, focus on the following hyperparameters:

- `max_depth`: Limits the maximum depth of each tree. A shallow tree may underfit, while a deep tree may overfit [32] [33].

- `min_samples_split`: Specifies the minimum number of samples required to split an internal node. Increasing this value prevents the tree from creating nodes that learn from very small, noisy data subsets [33].

- `min_samples_leaf`: Sets the minimum number of samples that must be present in a leaf node. A higher value creates a more generalized model [33].

- `max_features`: Limits the number of features considered for the best split at each node. Using fewer features (e.g., `"sqrt"` or `"log2"`) introduces more randomness and helps control overfitting [32] [33].
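These hyperparameters can be tuned together with `GridSearchCV`. Comparing the mean training score with the cross-validated score for the chosen setting gives a quick overfitting check. The grid values and synthetic data are illustrative.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import GridSearchCV


def tune_forest(X, y, cv=5, random_state=0):
    """Grid-search the overfitting-related hyperparameters and report the train/CV gap."""
    param_grid = {
        "max_depth": [None, 5],
        "min_samples_split": [2, 10],
        "min_samples_leaf": [1, 5],
        "max_features": ["sqrt", "log2"],
    }
    search = GridSearchCV(
        RandomForestClassifier(n_estimators=50, random_state=random_state),
        param_grid, scoring="roc_auc", cv=cv, return_train_score=True, n_jobs=-1,
    )
    search.fit(X, y)
    i = search.best_index_
    # A large gap between training and cross-validated AUC signals overfitting.
    gap = search.cv_results_["mean_train_score"][i] - search.cv_results_["mean_test_score"][i]
    return search.best_params_, gap


X, y = make_classification(n_samples=300, n_features=30, n_informative=6, random_state=0)
best_params, gap = tune_forest(X, y, cv=3)
print(best_params, f"train-CV AUC gap: {gap:.3f}")
```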

Q2: How can we effectively identify the most important molecular features driving our classifier's predictions?

A: Stable feature selection is critical for model interpretability. We recommend:

- Boruta Algorithm: This method compares the importance of your real features against the importance of "shadow" features (randomly permuted versions). Features that are significantly more important than the best shadow feature are deemed important [30].

- Permutation-Based Feature Selection: This involves randomly shuffling each feature and measuring the drop in the model's performance. A large drop indicates an important feature. This can be done with raw or multiple-testing-corrected p-values [30].
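Both approaches can be prototyped in a few lines. The first function is a single Boruta-style pass with shadow features, not the full iterative Boruta algorithm, which adds repeated statistical testing. The second uses scikit-learn's `permutation_importance` on a held-out split. The synthetic data, in which only columns 0 and 1 drive the label, is an assumption for illustration.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier
from sklearn.inspection import permutation_importance
from sklearn.model_selection import train_test_split


def shadow_feature_screen(X, y, random_state=0):
    """One Boruta-style pass: keep features whose importance beats every shadow.

    A single pass can occasionally admit a noise feature; full Boruta repeats this
    many times and applies a statistical test.
    """
    rng = np.random.default_rng(random_state)
    shadows = np.column_stack([rng.permutation(col) for col in X.T])  # shuffled copies
    forest = RandomForestClassifier(n_estimators=300, random_state=random_state, n_jobs=-1)
    forest.fit(np.hstack([X, shadows]), y)
    importances = forest.feature_importances_
    n_real = X.shape[1]
    return np.flatnonzero(importances[:n_real] > importances[n_real:].max())


def permutation_ranking(X, y, random_state=0):
    """Rank features by mean drop in held-out accuracy when each one is shuffled."""
    X_tr, X_te, y_tr, y_te = train_test_split(X, y, test_size=0.25, stratify=y,
                                              random_state=random_state)
    forest = RandomForestClassifier(n_estimators=300, random_state=random_state, n_jobs=-1)
    forest.fit(X_tr, y_tr)
    result = permutation_importance(forest, X_te, y_te, n_repeats=10,
                                    random_state=random_state, n_jobs=-1)
    return np.argsort(result.importances_mean)[::-1]


# Synthetic descriptors: only columns 0 and 1 determine the label.
rng = np.random.default_rng(1)
X = rng.normal(size=(400, 10))
y = (X[:, 0] + X[:, 1] > 0).astype(int)
print("shadow screen keeps:", shadow_feature_screen(X, y))
print("permutation ranking:", permutation_ranking(X, y))
```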

Q3: Our dataset is highly imbalanced, with very few confirmed active compounds compared to inactives. How can we adjust the Random Forest model for this?

A: Class imbalance can bias the model toward the majority class. To address this:

- Use the `class_weight="balanced"` parameter in `sklearn.ensemble.RandomForestClassifier`. This penalizes misclassifications of the minority class more heavily, encouraging the model to pay more attention to the active compounds [28].

- In one study, applying this setting increased the Positive Predictive Value (PPV) from 61.1% to 65.6%, improving the model's ability to correctly identify true actives [28].
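scikit-learn documents the "balanced" weights as n_samples / (n_classes × count of each class). Here is a minimal check of that formula against `compute_class_weight` for a dataset with 10% actives.

```python
import numpy as np
from sklearn.utils.class_weight import compute_class_weight


def balanced_weights(y):
    """Weight for class c = n_samples / (n_classes * count_c), the 'balanced' heuristic."""
    classes, counts = np.unique(y, return_counts=True)
    return dict(zip(classes.tolist(), (len(y) / (len(classes) * counts)).tolist()))


y = np.array([0] * 90 + [1] * 10)  # 10% actives
manual = balanced_weights(y)
library = compute_class_weight(class_weight="balanced", classes=np.array([0, 1]), y=y)
print(manual, library)
```

Each active therefore counts nine times as much as each inactive during tree construction (5.0 vs about 0.56). This is how `class_weight="balanced"` shifts attention toward the minority class.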

Q4: What is the trade-off between using more trees (n_estimators) and computational cost?

A: While increasing the number of trees generally improves performance and stability, it comes with diminishing returns and a linear increase in computational time.

- Guidance: Start with a default of 100 trees. If performance is acceptable, this is often sufficient. You can try increasing this to 200 or 500, but performance typically stabilizes, and the significant increase in computation time may not be justified [34] [33]. Using parallel processing (`n_jobs=-1`) can significantly reduce fitting and prediction times [34].
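One way to find where performance plateaus without refitting from scratch is to grow a single forest with `warm_start=True` and watch the out-of-bag (OOB) score. This is a sketch on synthetic data. The tree counts are illustrative and must be increasing.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier


def oob_curve(X, y, tree_counts=(50, 100, 200, 500), random_state=0):
    """Grow one forest incrementally and record the OOB accuracy at each size."""
    forest = RandomForestClassifier(warm_start=True, oob_score=True, n_jobs=-1,
                                    random_state=random_state)
    curve = []
    for n_trees in tree_counts:  # must be increasing when warm_start=True
        forest.set_params(n_estimators=n_trees)
        forest.fit(X, y)  # with warm_start=True only the additional trees are trained
        curve.append((n_trees, forest.oob_score_))
    return curve


X, y = make_classification(n_samples=500, n_features=20, n_informative=5, random_state=0)
for n_trees, oob in oob_curve(X, y):
    print(n_trees, round(oob, 3))
```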

Common Error Guide

Problem: Poor performance on the test set despite good training accuracy.

- Possible Cause 1: Overfitting due to overly complex trees.

  - Solution: Tune hyperparameters such as `max_depth`, `min_samples_leaf`, and `max_features` to constrain the model [33].

- Possible Cause 2: Data leakage, where information from the test set is inadvertently used during training.

  - Solution: Ensure a rigorous nested cross-validation setup and avoid performing feature selection before splitting the data into training and test sets [27] [30].
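The leakage pitfall in Possible Cause 2 is easy to demonstrate. In this sketch the labels carry no information about the synthetic features, so honest accuracy should sit near 0.5. Selecting features on the full dataset before cross-validation inflates the score. A `Pipeline` that refits the selection inside each fold does not. The sample sizes and k are illustrative.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier
from sklearn.feature_selection import SelectKBest, f_classif
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline


def leakage_demo(n_samples=60, n_features=2000, k=20, random_state=0):
    """Compare CV accuracy with feature selection outside vs. inside the CV loop."""
    rng = np.random.default_rng(random_state)
    X = rng.normal(size=(n_samples, n_features))
    y = np.array([0, 1] * (n_samples // 2))  # labels are unrelated to X by construction
    cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=random_state)

    # Leaky: features are chosen using every label, including the future test folds.
    X_selected = SelectKBest(f_classif, k=k).fit_transform(X, y)
    leaky = cross_val_score(RandomForestClassifier(n_estimators=100, random_state=random_state),
                            X_selected, y, cv=cv).mean()

    # Correct: selection is refit on each training fold only.
    pipeline = make_pipeline(SelectKBest(f_classif, k=k),
                             RandomForestClassifier(n_estimators=100, random_state=random_state))
    proper = cross_val_score(pipeline, X, y, cv=cv).mean()
    return leaky, proper


leaky, proper = leakage_demo()
print(f"leaky CV accuracy: {leaky:.2f}, pipeline CV accuracy: {proper:.2f} (chance = 0.50)")
```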

Problem: The model takes too long to train.

In chemogenomic screening, false positives remain a significant barrier to efficient drug discovery. They lead to wasted resources, misdirected research efforts, and delayed identification of truly promising compounds. Multi-parameter approaches, which integrate high-content data from morphological profiling and the Cell Painting assay, provide a powerful strategy to overcome this challenge. By capturing a broad spectrum of cellular responses in an untargeted manner, these methods create distinctive bioactivity signatures that help distinguish true mechanistic effects from nonspecific or artifactual signals [35] [36]. This technical support center provides the essential troubleshooting guidance and methodologies needed to successfully implement these approaches while minimizing false positives in your research.

Frequently Asked Questions (FAQs)

Q1: How does morphological profiling specifically help reduce false positives in screening?

A1: Morphological profiling captures hundreds to thousands of quantitative cellular features in an unbiased manner, creating a distinctive "fingerprint" for each compound's effect on cells. Unlike targeted assays that measure a single predefined outcome, this comprehensive profiling allows researchers to compare unknown compounds against reference profiles of known mechanisms of action (MOAs). Compounds that show similar morphological profiles to established references are more likely to share the same biological target, while false positives often demonstrate aberrant or inconsistent profiles that don't cluster with known bioactivities [36] [37]. This approach has proven particularly effective for identifying frequently encountered off-target activities, such as tubulin binding, which might otherwise be missed in conventional assays [37].
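Reference-based annotation can be sketched as a nearest-reference lookup by cosine similarity. This assumes profiles are already normalized feature vectors (for example, z-scored to DMSO controls). The 0.6 cut-off and the reference labels are hypothetical.

```python
import numpy as np


def _unit_rows(m):
    """Scale each row to unit length; all-zero profiles stay all-zero."""
    m = np.asarray(m, dtype=float)
    norms = np.linalg.norm(m, axis=1, keepdims=True)
    return m / np.where(norms == 0, 1.0, norms)


def annotate_by_reference(query_profiles, reference_profiles, reference_moas, min_similarity=0.6):
    """Assign each query the MOA of its most similar reference, if similar enough."""
    sims = _unit_rows(query_profiles) @ _unit_rows(reference_profiles).T  # cosine similarity
    calls = []
    for row in sims:
        best = int(np.argmax(row))
        label = reference_moas[best] if row[best] >= min_similarity else "no confident match"
        calls.append((label, round(float(row[best]), 3)))
    return calls


references = np.array([[1.0, 1.0, 0.0, 0.0], [0.0, 0.0, 1.0, 1.0]])
moas = ["tubulin binder (reference)", "HDAC inhibitor (reference)"]
queries = np.array([[0.9, 1.1, 0.1, 0.0], [1.0, -1.0, 1.0, -1.0], [0.0, 0.0, 0.0, 0.0]])
print(annotate_by_reference(queries, references, moas))
```

Queries with no confident match are not automatically false positives. They can be novel mechanisms, so they warrant orthogonal follow-up rather than immediate exclusion.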

Q2: What is the difference between conventional Cell Painting and the newer Cell Painting PLUS (CPP) assay?

A2: The table below summarizes the key differences between these two approaches:

Table: Comparison of Cell Painting and Cell Painting PLUS Assays

| Feature | Conventional Cell Painting | Cell Painting PLUS (CPP) |
|---|---|---|
| Imaging Channels | Typically 4-5 channels | Up to 7 separate channels |
| Signal Merging | Dyes with overlapping spectra often merged (e.g., RNA+ER, Actin+Golgi) | Each dye imaged in separate channel |
| Cellular Compartments | 8 standard compartments | 9+ compartments, including additional lysosome staining |
| Staining Approach | Single staining procedure | Iterative staining-elution cycles |
| Organelle Specificity | Moderate due to channel merging | High due to spectral separation |
| Customization | Fixed dye set | Flexible dye selection based on research needs |
| Cost Considerations | Lower reagent costs | Higher due to additional dyes and processing [35] |

Q3: Can brightfield images alone provide sufficient data for bioactivity prediction?

A3: Often, yes. Recent research shows that deep learning models trained solely on brightfield images can predict compound bioactivity across diverse targets and assays. One large-scale study found that brightfield-only models performed comparably to multi-channel fluorescence in many assays, with an average ROC-AUC of 0.744 across 140 diverse assays [38]. However, fluorescence imaging provides higher specificity for particular subcellular structures and is recommended when investigating specific organelle-level effects.

Q4: What are the most common technical challenges that affect data quality in morphological profiling?

A4: The most frequent issues include:

- Batch effects across different experimental runs or sites

- Poor segmentation accuracy due to suboptimal staining or cell density

- Spectral bleed-through between fluorescence channels

- Signal intensity variability caused by inconsistent staining or imaging parameters

- Cell health issues that create confounding morphological changes [36] [39]

Implementing rigorous standardization protocols and quality control measures is essential to minimize these effects.

Troubleshooting Guides

Poor Image Segmentation and Feature Extraction

Table: Troubleshooting Image Segmentation Issues

| Problem | Possible Causes | Solutions |
|---|---|---|
| Weak fluorescence signal | Inadequate dye concentration, photobleaching, insufficient exposure time | Optimize dye concentrations and exposure times; include fresh controls; protect samples from light [35] |
| Inaccurate cell boundary detection | Overlapping cells, incorrect parameter settings, poor contrast | Adjust cell seeding density; optimize segmentation algorithm parameters; verify stain performance [39] |
| High background noise | Non-specific antibody binding, autofluorescence, insufficient washing | Include appropriate controls; use brighter fluorophores for low-abundance targets; increase wash steps [40] |
| Inconsistent staining across plates | Variation in fixation, permeabilization, or staining time | Standardize protocol timing; use fresh reagents; implement quality control checks [35] |

Addressing Batch Effects and Technical Variability

Batch effects represent a major source of false positives and false negatives in high-content screening. The following strategies can help mitigate these issues:

- Implement cross-site standardization: As demonstrated in large-scale morphological profiling resources, extensive assay optimization across multiple imaging sites significantly improves data reproducibility [41]

- Use reference compounds: Include compounds with known morphological profiles in every experiment to monitor technical variability

- Apply batch correction algorithms: Computational methods can help remove technical artifacts while preserving biological signals [36]

- Standardize preprocessing pipelines: Establish consistent data preprocessing workflows, being particularly cautious with transformations like randomization that can negatively affect downstream analysis [42]
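A simple and widely used normalization is to scale each plate to its own control wells. The sketch below uses a robust z-score based on the median and MAD of the DMSO wells on each plate. It is one option among many and is not a substitute for dedicated batch-correction methods. Setting a zero MAD to 1 for constant features is an assumption of this sketch.

```python
import numpy as np


def robust_z_per_plate(features, plate_ids, is_control):
    """Normalize each plate's wells to that plate's control wells (median/MAD).

    features: (n_wells, n_features); plate_ids and is_control: length n_wells.
    """
    features = np.asarray(features, dtype=float)
    plate_ids = np.asarray(plate_ids)
    is_control = np.asarray(is_control, dtype=bool)
    out = np.empty_like(features)
    for plate in np.unique(plate_ids):
        on_plate = plate_ids == plate
        ctrl = features[on_plate & is_control]
        median = np.median(ctrl, axis=0)
        mad = 1.4826 * np.median(np.abs(ctrl - median), axis=0)  # ~SD for normal data
        mad[mad == 0] = 1.0  # assumption: leave constant features unscaled
        out[on_plate] = (features[on_plate] - median) / mad
    return out


# Plate P2 carries a +10 technical offset; the treated well (last on each plate)
# has the same true effect on both plates.
features = [[1.0], [2.0], [3.0], [6.0], [11.0], [12.0], [13.0], [16.0]]
plates = ["P1"] * 4 + ["P2"] * 4
is_control = [True, True, True, False] * 2
z = robust_z_per_plate(features, plates, is_control)
print(z.ravel().round(3))
```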

Optimization of Multiplexed Fluorescence Detection

Effective multiparameter approaches require careful optimization of fluorescence detection to minimize spectral overlap and maximize signal quality:

- Perform voltage walks: For each detector, determine the minimum voltage requirement (MVR) that allows clear resolution of dim fluorescent signals from background noise [43]

- Titrate all antibodies: Use stain index calculations to identify separating concentrations that provide good distinction between labeled and unlabeled cells while minimizing spillover spreading [43]

- Implement appropriate controls: Always include fluorescence minus one (FMO) controls for gate setting and viability controls to exclude dead cells that nonspecifically bind antibodies [43]

- Validate dye stability: Characterize fluorescence properties over time, as some dyes (particularly lysosomal markers) may show significant signal decay within 24 hours [35]
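The stain-index titration step can be sketched with one commonly used formulation: (median of positives − median of negatives) / (2 × SD of negatives). The selection rule below picks the lowest concentration whose stain index is within a fraction of the best value. This rule, its 0.9 default, and the function names are assumptions chosen to reflect the goal of limiting spillover spreading. They are not a prescribed procedure from [43].

```python
import numpy as np


def stain_index(positive, negative) -> float:
    """One common formulation: (median_pos - median_neg) / (2 * SD_neg)."""
    positive = np.asarray(positive, dtype=float)
    negative = np.asarray(negative, dtype=float)
    return float((np.median(positive) - np.median(negative)) / (2 * np.std(negative, ddof=1)))


def select_titer(titration: dict, fraction_of_max: float = 0.9) -> float:
    """Lowest concentration whose stain index is within `fraction_of_max` of the best one.

    titration: {concentration: (positive_intensities, negative_intensities)}.
    Preferring the lowest near-maximal concentration avoids excess antibody,
    which can raise background and spillover spreading.
    """
    indices = {conc: stain_index(pos, neg) for conc, (pos, neg) in titration.items()}
    best = max(indices.values())
    return min(conc for conc, si in indices.items() if si >= fraction_of_max * best)
```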

Standard Operating Protocols

Core Cell Painting Protocol Workflow

Diagram: Cell Painting Experimental and Computational Workflow

Step-by-Step Methodology:

- Cell Culture Seeding: Plate adherent cells in multiwell plates at optimized density to ensure proper cell spreading without overcrowding [39]

- Compound Treatment/Perturbation: Treat wells with specific compounds or genetic perturbations, including appropriate controls (untreated, vehicle, and reference compounds) [39]

- Staining with Fluorescent Dyes: Apply the standard Cell Painting dye cocktail:

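- Nuclear DNA: Hoechst 33342 or DAPI (blue channel)

- Endoplasmic Reticulum: Concanavalin A (green channel)

- Mitochondria: MitoTracker Deep Red (far-red channel)

- Actin, Golgi Apparatus & Plasma Membrane: Phalloidin (F-actin) and Wheat Germ Agglutinin (Golgi and plasma membrane), imaged together in one shared channel

- Nucleoli & Cytoplasmic RNA: SYTO 14, a green-emitting nucleic acid stain imaged in its own RNA channel [39]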

- Multichannel Fluorescence Imaging: Acquire images using high-content imaging systems with appropriate filters for each fluorescence channel [36]

- Image Pre-processing: Correct for background fluorescence, flat-field illumination, and apply quality control filters [39]

- Cell Segmentation & Feature Extraction: Use automated algorithms to identify individual cells and subcellular compartments, then extract morphological features (size, shape, texture, intensity) [36] [39]

- Data Normalization: Apply appropriate normalization to remove technical variation while preserving biological signals [42]

- Morphological Profiling & Analysis: Generate multivariate profiles and perform similarity analysis to identify compounds with related mechanisms of action [37]
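For the Data Normalization step, one widely used option is a robust z-score computed against the vehicle-control wells on each plate. The sketch below uses the median and 1.4826 × MAD of the controls. The function name and input layout are hypothetical. The code assumes every plate carries vehicle wells, and it leaves zero-spread features unscaled.

```python
import numpy as np


def robust_z_per_plate(features, plate_ids, is_control):
    """Robust z-score each feature against the vehicle (e.g., DMSO) wells on the same plate.

    features: (n_wells, n_features); plate_ids: (n_wells,); is_control: boolean (n_wells,).
    """
    features = np.asarray(features, dtype=float)
    plate_ids = np.asarray(plate_ids)
    is_control = np.asarray(is_control, dtype=bool)
    normalized = np.empty_like(features)
    for plate in np.unique(plate_ids):
        on_plate = plate_ids == plate
        controls = features[on_plate & is_control]
        center = np.median(controls, axis=0)
        # 1.4826 * MAD approximates the SD for normally distributed data
        scale = 1.4826 * np.median(np.abs(controls - center), axis=0)
        scale[scale == 0] = 1.0  # assumption: leave constant features unscaled
        normalized[on_plate] = (features[on_plate] - center) / scale
    return normalized
```

Because each plate is centred on its own controls, a constant plate-to-plate offset cancels out. Nonlinear or feature-specific batch effects still need dedicated correction.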

Cell Painting PLUS (CPP) Iterative Staining Protocol

The CPP assay expands multiplexing capacity through iterative staining-elution cycles:

Diagram: Cell Painting PLUS Iterative Staining Workflow

Key Steps for CPP Implementation:

- Perform initial staining cycle for a subset of cellular targets

- Complete imaging of all stained structures in separate channels

- Apply elution buffer (0.5 M L-Glycine, 1% SDS, pH 2.5) to remove dyes while preserving cellular morphology [35]

- Repeat staining and imaging cycles for additional cellular targets

- Register the images from different cycles so that the signals from each cell are aligned across cycles

- Process and analyze data as in conventional Cell Painting, with enhanced compartment specificity
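The registration step can be sketched with FFT-based phase correlation, which estimates the translation between two images. This version recovers only integer, circular shifts. It assumes that a channel common to every cycle, such as a nuclear stain, serves as the reference. The source does not specify which channel CPP uses, so treat that as an assumption. For real microscopy data, scikit-image's `skimage.registration.phase_cross_correlation` offers a subpixel implementation.

```python
import numpy as np


def estimate_shift(reference: np.ndarray, moving: np.ndarray) -> tuple[int, int]:
    """Integer (dy, dx) such that moving ~= np.roll(reference, (dy, dx), axis=(0, 1))."""
    cross_power = np.fft.fft2(moving) * np.conj(np.fft.fft2(reference))
    cross_power /= np.abs(cross_power) + 1e-12  # keep phase information only
    correlation = np.fft.ifft2(cross_power).real
    peak = np.unravel_index(np.argmax(correlation), correlation.shape)
    # Peaks past the midpoint correspond to negative shifts (FFT wrap-around)
    return tuple(int(p - n) if p > n // 2 else int(p) for p, n in zip(peak, correlation.shape))


def register(moving: np.ndarray, shift: tuple[int, int]) -> np.ndarray:
    """Undo an estimated shift so the moving image overlays the reference."""
    return np.roll(moving, (-shift[0], -shift[1]), axis=(0, 1))
```

In this sketch, the shift is estimated once on the shared reference channel and then applied to every other channel from the same cycle.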

Essential Research Reagent Solutions

Table: Key Reagents for Morphological Profiling and Cell Painting Assays

| Reagent Category | Specific Examples | Function & Application Notes |
|---|---|---|
| Fluorescent Dyes - Standard CP | Hoechst 33342, DAPI; Phalloidin; Concanavalin A; MitoTracker Deep Red; SYTO 14; Wheat Germ Agglutinin | Labels nuclear DNA; stains actin cytoskeleton; labels endoplasmic reticulum; stains mitochondria; stains nucleoli and RNA; labels Golgi and plasma membrane [39] |
| Fluorescent Dyes - CPP | LysoTracker; Additional compartment-specific dyes | Labels lysosomes (requires live staining in CPP); customizable based on research questions [35] |
| Fixation & Permeabilization | Formaldehyde (4%, methanol-free); Ice-cold methanol (90%); Triton X-100; Saponin | Formaldehyde preserves cellular structure; ice-cold methanol permeabilizes (add drop-wise while vortexing); Triton X-100 and saponin are alternative permeabilization agents [40] |
| Elution Buffers (CPP) | Glycine-SDS buffer (0.5 M L-Glycine, 1% SDS, pH 2.5) | Efficiently removes dye signals between staining cycles while preserving morphology [35] |
| Viability Indicators | Propidium Iodide; 7-AAD; Fixable viability dyes (eFluor) | Identify dead cells for exclusion from analysis; propidium iodide and 7-AAD suit unfixed cells, whereas fixable viability dyes are needed when cells will be fixed for intracellular staining [43] |
| Image Analysis Tools | CellProfiler; IKOSA Platform; ResNet50 models | Open-source image analysis; commercial AI-based platform; deep learning for bioactivity prediction [38] [39] |

Data Analysis and Quality Control Pipeline

Morphological Profiling Data Analysis Workflow

Diagram: Morphological Profiling Data Analysis Pipeline

Critical Steps for Robust Analysis:

- Single-Cell Feature Extraction: Extract hundreds of morphological features from each cell using standardized feature sets [36]

- Data Quality Assessment: Implement quality control metrics including:

- Cell count thresholds per well

- Signal-to-noise ratios for each channel

- Segmentation accuracy validation

- Batch Effect Correction: Apply established methods (e.g., ComBat, Z-score normalization) to remove technical variation while preserving biological signals [42]

- Profile Aggregation: Compute median feature values per well while preserving single-cell data for subpopulation analysis [39]

- Similarity Analysis: Compute pairwise similarity (or distance) between compound profiles using an appropriate metric, such as Pearson correlation [37]

- MOA Prediction: Employ machine learning approaches (1-nearest-neighbor classification, deep learning) to predict mechanisms of action [36] [37]

- Hit Confirmation: Validate predictions through orthogonal assays and dose-response experiments
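The cell-count quality check and well-level median aggregation can be combined in a short sketch. Wells with too few segmented cells are dropped before their median profile is computed. The `min_cells=50` default and the function name are hypothetical and should be tuned per assay. Single-cell data remain untouched, so they stay available for subpopulation analysis.

```python
import numpy as np


def aggregate_wells(cell_features, well_ids, min_cells=50):
    """Median-aggregate single-cell features per well, dropping wells with too few cells.

    cell_features: (n_cells, n_features); well_ids: (n_cells,) well label per cell.
    min_cells: illustrative cell-count QC threshold (assumption; tune per assay).
    Returns a dict mapping well id -> median feature vector.
    """
    cell_features = np.asarray(cell_features, dtype=float)
    well_ids = np.asarray(well_ids)
    profiles = {}
    for well in np.unique(well_ids):
        cells = cell_features[well_ids == well]
        if len(cells) < min_cells:
            continue  # low counts may indicate toxicity, seeding, or segmentation problems
        profiles[str(well)] = np.median(cells, axis=0)
    return profiles
```

The median is used instead of the mean so that a few mis-segmented cells have limited influence on the well profile.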

Performance Metrics for MOA Prediction

Table: Evaluation Metrics for Morphological Profiling Performance

| Metric | Definition | Interpretation | Benchmark Values |
|---|---|---|---|
| NSC (Not-Same-Compound) | Accuracy in predicting MOA when test compound profiles are excluded from training | Measures model generalizability to new compounds | Varies by dataset; ~0.7-0.9 AUC in benchmark studies [36] |
| NSCB (Not-Same-Compound-and-Batch) | Accuracy when excluding same compound AND same experimental batch | Evaluates robustness to batch effects | Lower than NSC; indicates batch sensitivity [36] |
| Drop | Difference between NSC and NSCB | Quantifies batch effect magnitude | Ideally minimal; <0.1 indicates good batch handling [36] |
| ROC-AUC | Area Under Receiver Operating Characteristic curve | Overall prediction performance | 0.744±0.108 average across 140 assays in one study [38] |
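The NSC and NSCB rules can be illustrated with 1-nearest-neighbour MOA prediction. Each query profile is matched to its most similar eligible profile, and the prediction is correct if the two share an MOA. This is a minimal sketch, not the evaluation code of [36]. It assumes Pearson correlation as the similarity metric and treatment-level profiles that are not constant across features. The function name is hypothetical.

```python
import numpy as np


def nn_moa_accuracy(profiles, compounds, moas, batches, exclude_same_batch=False):
    """1-nearest-neighbour MOA accuracy under NSC (default) or NSCB exclusion rules.

    profiles: (n, n_features) treatment-level profiles; compounds, moas, batches: length-n labels.
    """
    X = np.asarray(profiles, dtype=float)
    X = X - X.mean(axis=1, keepdims=True)  # centre each profile ...
    X = X / np.linalg.norm(X, axis=1, keepdims=True)  # ... so dot products are Pearson r
    compounds, moas, batches = (np.asarray(a) for a in (compounds, moas, batches))
    similarity = X @ X.T
    correct = evaluated = 0
    for i in range(len(X)):
        allowed = compounds != compounds[i]  # NSC: never match the query compound itself
        if exclude_same_batch:
            allowed &= batches != batches[i]  # NSCB: also exclude the query's batch
        candidates = np.flatnonzero(allowed)
        if candidates.size == 0:
            continue  # no eligible reference profile for this query
        nearest = candidates[np.argmax(similarity[i, candidates])]
        correct += int(moas[nearest] == moas[i])
        evaluated += 1
    return correct / evaluated if evaluated else float("nan")
```

The "Drop" metric is then `nn_moa_accuracy(...) - nn_moa_accuracy(..., exclude_same_batch=True)`. A large drop suggests that correct neighbours were being found through shared batch signal rather than shared biology.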

Validation Strategies for Hit Compounds

To minimize false positives in chemogenomic screening, implement a comprehensive validation strategy:

- Orthogonal assay confirmation: Test hit compounds in biologically relevant functional assays whose readouts are independent of morphological profiling

- Dose-response analysis: Verify that phenotypic effects are concentration-dependent

- Structure-activity relationship (SAR) examination: Confirm that structural analogs produce similar morphological profiles

- Target-based validation: Use genetic approaches (CRISPR, RNAi) or direct binding assays to verify suspected targets

- Chemical-genetic interaction profiling: Compare morphological profiles from chemical perturbations with genetic perturbation profiles targeting the same pathway [36]
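The dose-response check can be approximated with a lightweight monotonicity test rather than a full four-parameter logistic fit. The sketch computes the Spearman rank correlation between concentration and effect, and uses its absolute value so that inhibitory responses also pass. The 0.8 threshold and the function names are illustrative assumptions. The rank calculation also assumes there are no tied values.

```python
import numpy as np


def spearman_rho(x, y) -> float:
    """Spearman rank correlation; assumes no tied values (a simplification)."""
    rank_x = np.argsort(np.argsort(x))
    rank_y = np.argsort(np.argsort(y))
    return float(np.corrcoef(rank_x, rank_y)[0, 1])


def is_concentration_dependent(concentrations, effects, min_abs_rho=0.8) -> bool:
    """True if the effect rises or falls monotonically with concentration.

    min_abs_rho is an illustrative threshold; |rho| allows for decreasing responses.
    """
    return abs(spearman_rho(concentrations, effects)) >= min_abs_rho
```

Assay interference can itself be reproducible and concentration-dependent. Passing this check is therefore necessary but not sufficient, and it complements the orthogonal and target-based validation steps rather than replacing them.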

The integration of these multi-parameter approaches provides a powerful framework for distinguishing true bioactivities from artifactual signals, ultimately accelerating the drug discovery process while reducing resource waste on false leads.

FAQs: Core Principles of Screening Design

1. What is a screening design and when should I use it? Screening designs are a type of designed experiment used as an initial step to identify the most influential factors among many potential variables. They are an efficient way to systematically separate "the vital few from the trivial many" factors, using a relatively small number of experimental runs. You should use them when you have many potential factors to study, the important factors are unknown, or the effects of the factors are unknown [44].

2. What statistical principles make screening designs effective? The effectiveness of screening designs relies on four key principles [44]:

- Sparsity of Effects: Out of many candidate factors, only a small portion will be important.

- Hierarchy: Lower-order effects (like main effects) are more likely to be important than higher-order effects (like interactions).

- Heredity: Important higher-order terms (e.g., interactions) are usually associated with important lower-order effects of the same factors.

- Projection: A good design will retain reliable statistical properties when unimportant factors are removed and the analysis focuses on the vital few.
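These principles can be seen in a small fractional factorial design. The sketch builds an 8-run 2^(4-1) design in coded units, with the fourth factor generated as D = ABC (resolution IV). It then estimates each main effect as the mean response at the high level minus the mean response at the low level. The function names are hypothetical, and the design is one standard example rather than a recommendation from [44].

```python
import itertools

import numpy as np


def fractional_factorial_2_4_1() -> np.ndarray:
    """8-run 2^(4-1) design in coded units (-1/+1) for factors A, B, C, D.

    A, B, C form a full 2^3 factorial; D is generated as D = ABC (resolution IV).
    """
    base = np.array(list(itertools.product([-1, 1], repeat=3)))
    d = base.prod(axis=1, keepdims=True)
    return np.hstack([base, d])


def main_effects(design: np.ndarray, response) -> np.ndarray:
    """Main effect per factor: mean response at +1 minus mean response at -1."""
    response = np.asarray(response, dtype=float)
    return np.array([response[col == 1].mean() - response[col == -1].mean() for col in design.T])
```

The design studies four factors in 8 runs instead of 16. Dropping any one factor leaves a full 2^3 factorial in the other three, which is the projection property described above.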

3. How can I ensure my A/B test results are reliable? To ensure reliable causal inference from your experiments, two key statistical assumptions must be met [45]:

- Independence between Groups: The outcome for one experimental group should not influence the other. Violations can occur through network effects or confounding variables.

- Independent and Identically Distributed (i.i.d.) Samples within Groups: Collecting one sample should not affect another, and the underlying distribution of the metric should not trend or change variance during the experiment (it should be stationary).

4. What are common pitfalls that lead to false positives in screening? Common pitfalls include [46]:

- Selection Bias: When your test group is not representative of the population.

- Confounding Variables: When an unaccounted factor changes simultaneously with your experimental variable.

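- Insufficient Sample Size: When you don't have enough data to detect a genuine effect. Underpowered experiments mainly produce false negatives, but the significant results they do yield are also more likely to be false positives or to overestimate the effect.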

- Data Quality Issues: When outliers or errors skew your results.

Troubleshooting Guides

Problem: Inconclusive or Unreproducible Screening Results

| Potential Cause | Diagnostic Check | Solution |
|---|---|---|
| Confounding Factors | Check if data collection for test/control groups happened under different conditions (e.g., different days, operators). | Randomize the allocation of samples to test and control groups to balance even unmeasured confounding factors [45]. |
| Violated Independence | Check for possible "network effects" where the treatment in one group affects the control group's behavior [45]. | Isolate groups or assign treatments at a level (e.g., user vs. visit) that prevents cross-pollution [45]. |
| Unclear Hypothesis | Review your experiment document. Is your hypothesis vague and open to interpretation? | Craft a precise, measurable hypothesis. Instead of "improve engagement," specify "increase task completion rate by 10%." [46] |
| Insufficient Power | Perform a power analysis before the experiment. Was the sample size too small to detect a meaningful effect? | Determine the required sample size upfront using a power analysis to avoid inconclusive results [46]. |
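A power analysis can be sketched with Python's standard library alone. The function below uses the normal-approximation formula for a two-sided comparison of two group means, n = 2(z₁₋α/₂ + z₁₋β)²σ²/δ² per group. The function name and defaults are assumptions. An exact two-sample t-test calculation gives a slightly larger answer, for example 64 rather than 63 per group when δ/σ = 0.5.

```python
import math
from statistics import NormalDist


def samples_per_group(effect: float, sd: float, alpha: float = 0.05, power: float = 0.8) -> int:
    """Normal-approximation sample size per group for a two-sided, two-sample comparison of means.

    effect: smallest difference in means worth detecting; sd: expected standard deviation.
    """
    z = NormalDist()  # standard normal distribution
    z_alpha = z.inv_cdf(1 - alpha / 2)  # two-sided significance threshold
    z_beta = z.inv_cdf(power)  # quantile corresponding to the desired power
    return math.ceil(2 * ((z_alpha + z_beta) * sd / effect) ** 2)
```

For example, `samples_per_group(0.5, 1.0)` asks how many replicates per arm are needed to detect a half-standard-deviation shift with 80% power at α = 0.05.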

Problem: Low Signal-to-Noise Ratio Obscuring True Hits

| Potential Cause | Diagnostic Check | Solution |
|---|---|---|
| Noisy Metric | Analyze the variance of your primary metric. Is it inherently highly variable? | Formulate "fast-twitch" metrics that are sensitive to the change you are testing and have lower inherent variance [45]. |
| Incorrect Factor Ranges | Review prior knowledge. Are the chosen factor levels too narrow to produce a detectable change? | Based on subject matter expertise, choose factor ranges and levels large enough to produce a detectable signal over the background noise [44]. |
| Unaccounted Interactions | After identifying main effects, check for significant lack of fit, which can suggest missing interaction or quadratic terms [44]. | Include center points in your design to detect curvature. Use the projection property of your design to run follow-up experiments that estimate interactions among the vital few factors [44]. |
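The center-point check for curvature can be sketched as a standard single-degree-of-freedom comparison. In a two-level design, a model with only linear and interaction terms predicts the same value at the center as the average of the factorial runs. A large gap between the two averages therefore points to a quadratic term. The sketch estimates pure error from the replicated center points. The function name is hypothetical, and the resulting t statistic should be compared with a t distribution on (number of center points − 1) degrees of freedom.

```python
import numpy as np


def curvature_check(factorial_y, center_y):
    """Return (curvature estimate, t statistic) comparing factorial runs with center points.

    The t statistic has len(center_y) - 1 degrees of freedom, because the pure-error
    standard deviation is estimated from the replicated center points.
    """
    factorial_y = np.asarray(factorial_y, dtype=float)
    center_y = np.asarray(center_y, dtype=float)
    n_f, n_c = len(factorial_y), len(center_y)
    curvature = factorial_y.mean() - center_y.mean()
    s_pure = center_y.std(ddof=1)  # pure-error SD from replicated center points
    t_stat = curvature / (s_pure * np.sqrt(1 / n_f + 1 / n_c))
    return float(curvature), float(t_stat)
```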

Experimental Protocol: Genome-Scale Chemogenomic CRISPR Screen

This detailed protocol is adapted for conducting high-confidence, dropout CRISPR screens to identify genetic interactions with small molecules [21].

1. Key Research Reagent Solutions

| Item | Function |
|---|---|
| TKOv3 Library | A CRISPR sgRNA library containing 70,948 guides targeting 18,053 human genes. It is used to systematically knock out genes across the genome [21]. |
| RPE1-hTERT p53-/- Cell Line | A human retinal pigment epithelial cell line with telomerase immortalization and p53 knockout. It provides a stable, genetically defined background for screening [21]. |
| Lentiviral Packaging System | Produces lentiviral particles to deliver the TKOv3 sgRNA library into the target cells, ensuring efficient and stable genomic integration [21]. |
| Selection Antibiotic (e.g., Puromycin) | Selects for cells that have been successfully transduced with the sgRNA-containing virus, creating a uniformly edited population for the screen [21]. |
| Genotoxic Agent (or other compound of interest) | The chemical perturbation whose genetic interactions are being probed. The concentration must be pre-determined to be sub-lethal [21]. |
| Illumina Sequencing Platform | Used for high-throughput sequencing of the sgRNA barcodes from the screened cell population to quantify guide abundance [21]. |
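After sgRNA counts have been extracted from the sequencing reads, a basic comparison between the compound-treated and untreated arms can be sketched as follows. This is a simplified illustration, not the scoring method of the cited protocol [21]. Reads-per-million normalization, the 0.5 pseudocount, and averaging guides per gene are all assumptions. Dedicated chemogenomic scoring tools such as drugZ use more careful statistics.

```python
import numpy as np


def guide_log2_fold_change(treated_counts, control_counts, pseudocount=0.5):
    """log2 fold change of each sgRNA (treated vs. control) after depth normalization.

    Counts are scaled to reads per million so arms sequenced to different depths compare fairly.
    pseudocount: illustrative choice that avoids log(0) for guides that drop out completely.
    """
    treated = np.asarray(treated_counts, dtype=float)
    control = np.asarray(control_counts, dtype=float)
    treated_rpm = (treated + pseudocount) / treated.sum() * 1e6
    control_rpm = (control + pseudocount) / control.sum() * 1e6
    return np.log2(treated_rpm / control_rpm)


def gene_scores(lfc, genes):
    """Mean guide-level log2 fold change per gene (a deliberately simple aggregation)."""
    lfc = np.asarray(lfc, dtype=float)
    genes = np.asarray(genes)
    return {str(g): float(lfc[genes == g].mean()) for g in np.unique(genes)}
```

In a dropout screen, strongly negative gene scores flag candidate genes whose loss sensitizes cells to the compound. These candidates still require the validation steps described above.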

2. Detailed Workflow