Strategies for Managing Computational Demands in Molecular Dynamics Simulations: A 2025 Guide for Biomedical Researchers

This article provides a comprehensive guide for researchers and drug development professionals on managing the significant computational costs of Molecular Dynamics (MD) simulations.

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on managing the significant computational costs of Molecular Dynamics (MD) simulations. It covers foundational knowledge of hardware selection, including the latest CPUs and GPUs, and explores methodological advances such as machine learning interatomic potentials (MLIPs) trained on massive new datasets like Open Molecules 2025 (OMol25). The guide offers practical troubleshooting and optimization strategies for geometry convergence and simulation setup and concludes with robust protocols for validating and comparing simulation results to ensure scientific reliability. By synthesizing current hardware benchmarks, software capabilities, and data resources, this article serves as a vital resource for executing efficient and accurate MD simulations in biomedical research.

Laying the Groundwork: Understanding Core Hardware and Computational Principles

Balancing CPU Clock Speed and Core Count for MD Workloads

Frequently Asked Questions

FAQ 1: For molecular dynamics simulations, should I prioritize CPU clock speed or core count? For most molecular dynamics (MD) workloads, you should prioritize processor clock speeds over core count [1] [2]. While having a sufficient number of cores is important, the speed at which a CPU can deliver instructions to other components of the system is crucial for optimal performance [2]. MD software is often more GPU-dependent for performing complex math calculations [1]. A processor with too many cores may lead to some being underutilized; a mid-tier workstation CPU with 32 to 64 cores and higher base/boost clock speeds is often a well-suited choice [1] [2].

FAQ 2: What is a good starting point for CPU core count in an MD workstation? A great all-around target is to choose a CPU with 32 to 64 cores for most MD workloads [1]. This range is typically sufficient and optimal, balancing parallel processing capabilities with the higher single-threaded performance needed for various simulation tasks. Processors from the Intel Xeon W-3400 series or AMD Threadripper PRO families fall into this category [1].

FAQ 3: When would a dual CPU setup be beneficial for my research? Dual CPU configurations, available with data center CPUs like AMD EPYC and Intel Xeon Scalable, can be considered for workloads requiring even more cores, as seen in software like NAMD and GROMACS [2]. However, be aware that there can be a slight decrease in speeds due to communication latency in dual CPU servers [1]. These setups are primarily beneficial when you need the extensive PCIe lanes for multiple GPUs or require extremely high memory capacity [1].

FAQ 4: How do my choices in CPU affect GPU performance in MD simulations? The CPU plays a supporting role to the GPU in many MD simulations. You should choose a CPU with the highest clock speed that can also satisfy the number of GPUs you want to use [1]. The CPU's PCIe lane capacity is critical here; workstation and data center CPUs offer more PCIe lanes, allowing for multiple GPUs to be installed without bottlenecking, which is essential for software like NAMD that scales with multiple GPUs [1].

FAQ 5: Can I use a high-end consumer CPU (e.g., Intel Core or AMD Ryzen) for MD, or do I need a workstation/server CPU? Yes, a top-tier consumer CPU can be a viable and cost-effective option [1]. The main differences between these and mid-tier workstation or data center CPUs are the available RAM capacity and the number of PCIe lanes [1]. If your workload does not require vast amounts of memory (e.g., more than 128GB) and you only plan to use one or two GPUs, a high-end consumer CPU may be perfectly adequate.

Troubleshooting Guides

Problem: Simulation performance is lower than expected despite having a high core count CPU.

- Possible Cause: Underutilized cores due to software limitations or a CPU with a lower base clock speed.

- Solution:

- Verify that your MD software (e.g., GROMACS, NAMD, AMBER) is configured to use all available CPU cores. Consult the specific software's documentation for parallelization options.

- Check the CPU's clock speed. A CPU with a very high core count but a lower base frequency might be the bottleneck for parts of the simulation that are not perfectly parallelized [1] [2].

- Consider using performance profiling tools to identify if the simulation is being limited by single-threaded performance in certain routines.

Problem: System becomes unstable or unresponsive when running simulations with multiple GPUs.

- Possible Cause: Insufficient PCIe lanes on the CPU, leading to a bandwidth bottleneck for the GPUs.

- Solution:

- Confirm that your CPU provides enough PCIe lanes to support all installed GPUs at their required bandwidth (e.g., x16 per GPU). Consumer-grade CPUs often have limited PCIe lanes [1].

- If you are using multiple high-end GPUs, consider switching to a platform with a workstation or data center CPU (e.g., AMD Threadripper PRO or Intel Xeon W-3400) that offers higher PCIe lane counts [1].

Problem: Inefficient scaling when increasing the number of MPI processes or OpenMP threads in GROMACS.

- Possible Cause: Poor thread affinity (pinning) or a suboptimal choice in the number of MPI ranks versus OpenMP threads.

- Solution:

- By default, `gmx mdrun` sets thread affinity (pinning) so that threads do not migrate between cores; such migration can dramatically degrade performance [3]. Do not disable pinning unless you are an expert.

- Experiment with different combinations of MPI ranks and OpenMP threads. A good starting point is to set the number of OpenMP threads (`-ntomp`) to match the number of cores per CPU socket, and then adjust the number of MPI ranks accordingly [3].

- For GPU-accelerated runs, it is often most efficient to use a single MPI rank per GPU [3].

Hardware Performance Data

Table 1: CPU Recommendation Summary for MD Workloads

| CPU Type | Recommended Use Case | Pros | Cons |

|---|---|---|---|

| High-End Consumer (e.g., AMD Ryzen, Intel Core) | Single-GPU or dual-GPU setups; smaller systems; budget-conscious builds [1]. | High clock speeds; cost-effective [1]. | Limited PCIe lanes; lower max memory capacity [1]. |

| Workstation (e.g., AMD Threadripper PRO, Intel Xeon W-3400) | Multi-GPU setups (up to 4x); large memory needs; general-purpose MD workstation [1]. | High core count (32-64); more PCIe lanes; high memory capacity [1]. | Higher cost than consumer CPUs. |

| Dual Data Center (e.g., AMD EPYC, Intel Xeon Scalable) | Largest simulations requiring maximum core count or memory; setups with 8 or more GPUs [1] [2]. | Maximum core and memory resources; highest PCIe lane availability [1]. | Highest cost; potential communication latency [1]. |

Table 2: Representative Hardware Configurations for Different MD Scenarios

| Component | Standard Workstation | High-Throughput Server | Cost-Conscious Build |

|---|---|---|---|

| CPU | AMD Threadripper PRO (32-64 cores) or Intel Xeon W-3400 series [1]. | Dual AMD EPYC or Intel Xeon Scalable processors [2]. | High-clock-speed AMD Ryzen 9 or Intel Core i9 [1]. |

| GPU | 2-4x NVIDIA RTX 5000/4500 Ada or RTX 4090 [1] [2]. | 4-8x NVIDIA professional RTX GPUs (e.g., RTX 6000 Ada) [1]. | 1-2x NVIDIA GeForce RTX 4090 or 4080 [1] [2]. |

| RAM | 128GB - 256GB [1]. | 256GB+ [1]. | 64GB - 128GB [1]. |

Experimental Protocols

Protocol 1: Methodology for Benchmarking CPU Core Scaling in GROMACS

- System Preparation: Choose a standard benchmark system for your field (e.g., a solvated protein like DHFR) or use your own system of interest.

- Software Configuration: Compile GROMACS with the highest SIMD instruction set supported by your target CPU (e.g., AVX2 or AVX-512) and with support for OpenMP [3].

- Benchmark Execution:

- Run a series of short simulations (e.g., 1000 steps) using the same input file.

- Systematically vary the parallelization configuration. For a CPU with N physical cores, test different combinations of MPI ranks and OpenMP threads (e.g., `-ntmpi 1 -ntomp N`, `-ntmpi 2 -ntomp N/2`, ..., `-ntmpi N -ntomp 1`).

- Use the `gmx mdrun -dlb yes` option to enable dynamic load balancing, which can improve performance [3].

- Data Collection: Record the performance in ns/day from the GROMACS log file for each run configuration.

- Analysis: Plot ns/day versus the number of cores/threads used. The point where the performance curve begins to plateau indicates the optimal core count for that specific system and hardware, beyond which adding more resources yields diminishing returns.
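The sweep in Protocol 1 can be sketched in a few lines of Python. This is a minimal illustration, not part of GROMACS: the helper names, the `bench` output prefix, the 10% gain threshold, and the sample ns/day figures are all assumptions chosen for the example. Only the `gmx mdrun` flags themselves come from the protocol above.

```python
def rank_thread_combinations(n_cores):
    """Return every (ntmpi, ntomp) pair whose product equals n_cores."""
    return [(r, n_cores // r) for r in range(1, n_cores + 1) if n_cores % r == 0]


def mdrun_command(ntmpi, ntomp, nsteps=1000, deffnm="bench"):
    """Build one benchmark command line; 'bench' is a hypothetical file prefix."""
    return (
        f"gmx mdrun -ntmpi {ntmpi} -ntomp {ntomp} "
        f"-nsteps {nsteps} -dlb yes -deffnm {deffnm}"
    )


def plateau_point(results, min_gain=0.10):
    """Return the core count after which adding cores gains less than min_gain.

    results is a list of (cores, ns_per_day) pairs. The 10% default is an
    assumed cut-off for "diminishing returns", not a GROMACS convention.
    """
    ordered = sorted(results)
    for (c_prev, p_prev), (_, p_next) in zip(ordered, ordered[1:]):
        if (p_next - p_prev) / p_prev < min_gain:
            return c_prev
    return ordered[-1][0]


if __name__ == "__main__":
    for ntmpi, ntomp in rank_thread_combinations(8):
        print(mdrun_command(ntmpi, ntomp))
    # Illustrative numbers only; replace with ns/day values from your own logs.
    measured = [(4, 20.0), (8, 38.0), (16, 60.0), (32, 63.0)]
    print("plateau at", plateau_point(measured), "cores")
```

Enumerating divisor pairs keeps every run on exactly N cores, so differences in ns/day reflect the rank/thread split rather than the amount of hardware in use.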

Protocol 2: Profiling Workflow for Identifying Hardware Bottlenecks

- Run a Representative Simulation: Execute a standard production-run simulation on your system.

- System Monitoring: Use system monitoring tools (e.g., `htop`, `nvidia-smi dmon`) to log the following metrics throughout the run:

- CPU utilization (total and per-core).

- GPU utilization.

- System memory usage.

- CPU clock speed.

- Log File Analysis: Examine the GROMACS log file for detailed timings of different parts of the simulation (PME mesh, non-bonded force calculation, communication, etc.).

- Bottleneck Identification:

- CPU-bound: If one or a few CPU cores are consistently at 100% while GPU utilization is low, the simulation is likely limited by single-threaded CPU performance [1] [2].

- GPU-bound: If GPU utilization is consistently high (e.g., >90%), the simulation is primarily limited by GPU processing power.

- Communication-bound: If the "Wait GPU local" or MPI communication times in the log file are high, the bottleneck may be data transfer between the CPU and GPU or between MPI ranks [3].
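The decision rules above can be written down as a small classifier, which is handy when the same checks are applied to many logged runs. This is a sketch under stated assumptions: only the 90% GPU threshold comes from the text, while the "low GPU" (50%), "saturated core" (99%), and "high wait" (15% of wall time) cut-offs are illustrative values to tune for your own hardware.

```python
def classify_bottleneck(per_core_cpu, gpu_util, wait_fraction,
                        gpu_high=90.0, gpu_low=50.0,
                        core_busy=99.0, wait_high=0.15):
    """Classify a run from averaged monitoring data.

    per_core_cpu  : list of per-core CPU utilizations in percent
    gpu_util      : average GPU utilization in percent
    wait_fraction : share of wall time spent in GPU-wait or MPI
                    communication, read from the MD log (0.0 to 1.0)
    All thresholds except gpu_high are assumptions for this example.
    """
    if gpu_util >= gpu_high:
        return "gpu-bound"
    if wait_fraction >= wait_high:
        return "communication-bound"
    saturated = [u for u in per_core_cpu if u >= core_busy]
    if saturated and gpu_util < gpu_low:
        return "cpu-bound"
    return "inconclusive"


if __name__ == "__main__":
    # One core pegged while the GPU idles: the single-threaded case.
    print(classify_bottleneck([100, 12, 8, 5], gpu_util=35, wait_fraction=0.02))
```

An "inconclusive" result is a prompt to look at the detailed timing breakdown in the log rather than a diagnosis in itself.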

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational "Reagents" for MD Simulations

| Item | Function / Explanation |

|---|---|

| MD Software (GROMACS, NAMD, AMBER) | The primary application that performs the numerical integration of Newton's equations of motion for the molecular system [1]. |

| High-Clock-Speed CPU | Acts as the system controller, handles serial portions of the code, manages I/O, and feeds data to the GPU(s) [1] [2]. |

| High-Performance GPU (e.g., NVIDIA RTX Ada series) | The computational workhorse that accelerates the highly parallelizable force calculations, providing a massive performance boost [1] [2] [4]. |

| Ample System RAM | Holds the entire molecular system, coordinates, and trajectory data in memory during the simulation to prevent disk I/O bottlenecks [1]. |

| Fast NVMe Storage | Provides high-speed storage for reading initial coordinates and parameter files, and for writing high-frequency trajectory data [1]. |

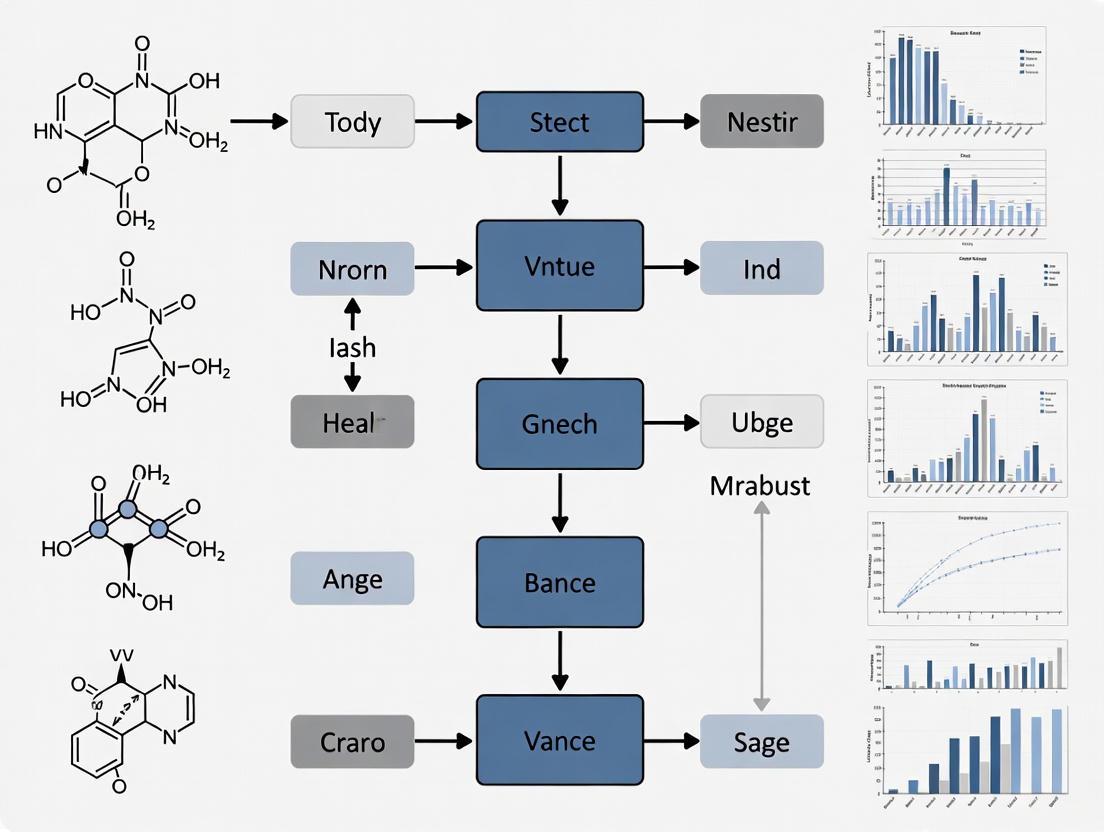

Workflow Visualization

Decision Workflow for CPU Selection in MD

Frequently Asked Questions

What makes the NVIDIA RTX 4090 suitable for Molecular Dynamics simulations? The NVIDIA RTX 4090 is built on the Ada Lovelace architecture and is highly suitable for many Molecular Dynamics (MD) simulations due to its exceptional parallel processing power. It features 16,384 CUDA cores and 24 GB of high-speed GDDR6X memory, which are crucial for handling the complex calculations in software like AMBER, GROMACS, and NAMD [5] [6] [7]. Its high FP32 (single-precision) performance offers the best cost-to-performance ratio for MD workloads that do not require full double-precision (FP64) calculations [8] [9].

When should I consider a professional Ada Lovelace GPU like the RTX 5000/6000 Ada over the RTX 4090? Consider a professional GPU like the RTX 5000 Ada or RTX 6000 Ada in these scenarios [6] [8]:

- Multi-GPU Setups: You need to build a system with 3 or more GPUs. The RTX 4090's large physical size and cooling demands make it difficult to deploy in dense multi-GPU workstations or servers. Professional Ada cards are designed with standardized form factors for such configurations [6] [8].

- Larger VRAM Requirements: Your simulations (e.g., very large systems in NAMD) require more than 24 GB of VRAM. The RTX 6000 Ada offers 48 GB of VRAM, providing headroom for more extensive models [10].

- Software and Support: For enterprise environments where certified drivers and long-term stability are prioritized, professional GPUs may be preferred.

What are the VRAM requirements for typical MD simulations? VRAM requirements vary by software and system size [6] [11]:

- AMBER & GROMACS: These are generally less memory-intensive. GPU core count and clock speed are often prioritized over massive VRAM. The 24 GB on an RTX 4090 is typically ample [6].

- NAMD: Can scale to use multiple GPUs and may benefit from larger aggregate memory when simulating very large molecular systems [6].

- General Rule: The memory must be sufficient to hold the entire simulation. Running out of VRAM can severely bottleneck performance or cause the job to fail. It's advisable to monitor memory usage during a test run of your specific workload [11].

Why is my simulation running slower than expected on the RTX 4090? Several factors can cause this [12] [9]:

- Driver Issues: Ensure you have the latest NVIDIA drivers compatible with your MD software.

- Incorrect Precision: Verify that your software is configured to use mixed or single precision (FP32). The RTX 4090 has limited FP64 (double-precision) performance. Forcing full FP64 will result in a significant performance drop [9].

- Overheating: Monitor GPU temperature during simulation. Thermal throttling can reduce performance. Ensure adequate cooling in your system [12].

- Software Configuration: Incorrect flags (e.g., not specifying `-nb gpu -pme gpu` in GROMACS) can prevent the GPU from being fully utilized [9].

- CPU or RAM Bottleneck: The CPU must be fast enough to feed data to the GPU, and system RAM must be sufficient [6].

How do I set up a multi-GPU workstation for MD simulations? For multi-GPU setups [6] [8]:

- Platform Choice: Use a workstation or server motherboard with sufficient PCIe slots and lanes. AMD Threadripper PRO or Intel Xeon W-3400 series CPUs are recommended for their high PCIe lane counts.

- GPU Selection: For more than two GPUs, professional Ada cards (RTX 5000/6000 Ada) are easier to install due to their standard double-wide cooling solutions.

- Software Utilization: In software like AMBER and GROMACS, multiple GPUs are typically used to run separate jobs in parallel for higher throughput. NAMD can sometimes scale a single simulation across multiple GPUs for a faster runtime [6].

GPU Performance and Selection Data

Table 1: Key GPU Specifications for Molecular Dynamics

| GPU Model | CUDA Cores | VRAM | Memory Type | FP32 Performance | Key Consideration for MD |

|---|---|---|---|---|---|

| NVIDIA RTX 4090 | 16,384 [7] | 24 GB [7] | GDDR6X [7] | 82.58 TFLOPS [8] | Best cost-to-performance; limited multi-GPU scalability [8] |

| NVIDIA RTX 6000 Ada | 18,176 [10] | 48 GB [10] | GDDR6 [10] | 91.61 TFLOPS [8] | Top-tier performance & VRAM; ideal for large, complex systems [8] |

| NVIDIA RTX 5000 Ada | 12,800 [10] | 32 GB [8] | GDDR6 | 65.28 TFLOPS [8] | Best balance for multi-GPU setups; great price-to-performance [8] |

Table 2: GPU Recommendation by MD Software

| Software | Priority | Recommended GPU(s) | Rationale |

|---|---|---|---|

| AMBER | Core Clock & Count [6] | RTX 4090, RTX 6000 Ada [6] | Highly GPU-dependent; CPU is mostly idle. Excellent for single or parallel jobs. [6] |

| GROMACS | Core Clock & Count [6] | RTX 4090, RTX 6000 Ada [6] [10] | Utilizes both CPU and GPU. Multi-GPU is often for separate concurrent jobs. [6] |

| NAMD | Core Clock & Count, Multi-GPU Scalability [6] | RTX 4090 (1-2 GPUs), RTX 5000/4500 Ada (3-4 GPUs) [6] | Can scale a single simulation across multiple GPUs. Benefits from a strong CPU and many PCIe lanes. [6] |

Troubleshooting Guides

Guide 1: Basic GPU Health and Performance Check

Use this flowchart to diagnose common performance issues with your GPU.

Methodology:

- Identify the Issue: Begin by pinpointing if the problem is low performance, system crashes, or failure to start [12].

- Check Connections: For physical workstations, ensure the GPU is securely seated in the PCIe slot and all power cables are firmly connected [12].

- Monitor Temperature: Use monitoring software (like `nvidia-smi`) to check the GPU's temperature during simulation. Overheating can cause throttling and reduce performance [12].

- Update Drivers: Verify that the latest NVIDIA drivers compatible with your MD software are installed [12].

- Verify Software Configuration: Confirm that your MD software is configured to use the GPU and with the correct precision (FP32/mixed) [9].

Guide 2: Systematic Workflow for GPU Selection and Experiment Setup

Follow this workflow to select the right GPU and configure your experimental setup for MD simulations.

Methodology:

- Define Requirements: Identify the primary MD software (AMBER, GROMACS, NAMD), the typical size of your molecular systems, and your target simulation speed (e.g., nanoseconds per day) [9].

- Select GPU: Use Table 1 and Table 2 to shortlist GPUs based on your software's needs, VRAM requirements, and budget. Decide between a single powerful GPU (RTX 4090) or a multi-GPU setup (RTX 5000 Ada) [6] [8].

- Choose Supporting Hardware:

- CPU: Select a CPU with high clock speeds. A mid-tier workstation CPU like an AMD Threadripper PRO with 32-64 cores is often sufficient. The CPU should have enough PCIe lanes to support your chosen number of GPUs [6] [10].

- RAM: For a workstation, 64 GB to 128 GB of RAM is a good starting point, with 256 GB recommended for larger simulations [6].

- Storage: Use fast NVMe storage (2-4 TB) for quick read/write of trajectory data [6].

- Power Supply: Ensure an appropriately sized PSU (e.g., 850W minimum for a single RTX 4090) [7].

- Benchmark: Run a small, representative simulation and measure performance (wall-clock time, ns/day) and check for errors [9].

- Validate and Scale: Confirm that the results are accurate and the performance meets your needs before committing to long-term, large-scale simulations [9].

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Essential Hardware and Software for MD Simulations

| Item | Function / Role in MD Workflow | Example / Specification |

|---|---|---|

| Primary GPU | Accelerates the bulk of computational calculations in the simulation. | NVIDIA GeForce RTX 4090, NVIDIA RTX 6000 Ada [6] [10] |

| MD Software | The application that performs the molecular dynamics calculations. | AMBER, GROMACS, NAMD [6] [9] |

| Workstation CPU | Manages simulation logistics, data I/O, and feeds tasks to the GPU. | AMD Threadripper PRO, Intel Xeon W-3400 series (high clock speed) [6] [10] |

| System Memory (RAM) | Holds the operating system, software, and simulation data before it is processed by the GPU. | 64 GB - 256 GB of DDR4/DDR5 memory [6] |

| High-Speed Storage | Stores initial structures, parameter files, and large trajectory output files. | 2 TB - 4 TB NVMe SSD [6] |

| Precision Configuration | Software setting that balances calculation speed (FP32) with numerical accuracy (FP64). | "Mixed Precision" or "FP32" for most MD on consumer GPUs [9] |

| Cooling Solution | Maintains GPU and CPU temperatures within operational limits to prevent performance loss. | Robust air cooling or liquid cooling system [12] |

| Benchmarking Suite | A standardized small simulation to validate hardware performance and stability. | A short .mdp file for GROMACS or input deck for NAMD [9] |

Evaluating RAM and Storage Needs for Large Biomolecular Systems

FAQs on RAM and Storage Fundamentals

How much RAM is typically needed for a molecular dynamics simulation?

The random access memory (RAM) required for a simulation depends primarily on the number of particles (atoms) in your system. While needs can vary, the following table provides general guidelines for system sizing based on expert recommendations and practical examples [13] [14].

| System Scale | Typical System Size (Atoms) | Recommended RAM | Use Case Context |

|---|---|---|---|

| Small | ~50,000 | 64 GB | A single RTX 4090 GPU workstation [13] [14]. |

| Medium | ~250,000 | 128 GB - 256 GB | A protein in explicit solvent; a single node on an HPC cluster [15] [14]. |

| Large | 1 - 3 Million | 256 GB+ | A large membrane protein dimer or tetramer [16]. |

| Extreme | 10 Million+ | 1 TB+ | Very large complexes; multi-node HPC simulations [15]. |

A real-world example illustrates how a ~250,000-atom system consumed over 5 TB of RAM when improperly configured across 512 MPI tasks, but ran smoothly with a different, more efficient task configuration [15]. This highlights that software settings are as critical as hardware.

What are the storage requirements for MD trajectories?

Molecular dynamics simulations generate large volumes of trajectory data. The storage needs are a function of the number of atoms, the simulation length, and the frequency at which frames are saved.

| Factor | Impact on Storage Needs | Consideration |

|---|---|---|

| System Size | Linear increase with atom count. | Larger systems produce larger per-frame file sizes. |

| Simulation Length | Linear increase with time. | Longer simulations capture more events but require more space. |

| Output Frequency | Higher frequency = more data. | Saving frames less frequently can drastically reduce storage needs. |

| File Format | Binary (e.g., XTC) is smaller than text. | Use compressed formats for long-term storage. |

A contemporary HPC node running molecular dynamics can produce around 10 GB of data per day [16]. A single, long-running simulation on a powerful cluster can easily generate multiple terabytes of data.
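The scaling relationships in the table can be turned into a quick back-of-the-envelope estimate. The sketch below assumes uncompressed single-precision coordinates (3 values × 4 bytes per atom per frame) and ignores velocities, forces, and file headers, so it is best read as a rough upper bound for coordinate-only output; compressed formats such as XTC will come in lower. The function name and the example system are illustrative.

```python
BYTES_PER_ATOM_PER_FRAME = 3 * 4  # x, y, z as 32-bit floats (assumption)


def trajectory_bytes(n_atoms, sim_length_ns, save_interval_ps):
    """Estimate uncompressed coordinate storage for one trajectory."""
    n_frames = round(sim_length_ns * 1000 / save_interval_ps)
    return n_atoms * BYTES_PER_ATOM_PER_FRAME * n_frames


if __name__ == "__main__":
    # Hypothetical 250,000-atom system simulated for 1 microsecond.
    for interval_ps in (10, 100):
        size_gb = trajectory_bytes(250_000, 1000, interval_ps) / 1e9
        print(f"save every {interval_ps} ps: about {size_gb:.0f} GB")
```

For this example, saving every 10 ps gives about 300 GB, while saving every 100 ps gives about 30 GB, which is the linear effect of output frequency described in the table.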

Why did my simulation fail with an "OUTOFMEMORY" error despite having enough total RAM?

This common error often stems from inefficient resource configuration, not a lack of physical memory. The problem is usually that the memory demand is poorly distributed across the available compute resources.

- Cause: Launching too many MPI tasks per node gives each task its own separate memory allocation, so total memory consumption grows with the task count and can become very large [15]. In one documented case, this led to a reported memory efficiency of 392.86%, meaning the job used almost four times the memory it had been allocated [15].

- Solution: Reduce the number of MPI tasks per node and increase the number of OpenMP threads per MPI task (e.g., `srun -n 128 -c 8 gmx_mpi mdrun -ntomp 8 -deffnm md`). This consolidates memory usage and can resolve the error while improving performance [15].

Troubleshooting Common Performance Issues

Problem: Simulation is slow and does not scale with more CPU cores.

- Check Software Configuration: Molecular dynamics software like GROMACS, NAMD, and AMBER often benefit more from higher processor clock speeds than a very high core count [13] [14]. A 96-core processor may leave many cores underutilized. Prioritize a CPU with a balance of high clock speeds and a sufficient number of cores (e.g., 32 to 64 cores) [13] [14].

- Check GPU Offloading: For supported software, ensure that the computationally intensive non-bonded interactions are being offloaded to the GPU. The GPU is typically far more efficient at these calculations than the CPU.

Problem: Simulation fails to start or crashes immediately with a memory error.

- Check Memory per Core: If you are running on a cluster, calculate the memory available per core (Total Node RAM / Cores per Node). Your simulation's per-core memory requirement must be less than this value. For larger systems, you may need to request nodes with more memory or use fewer cores per node.

- Validate Input Parameters: Incorrect settings in your molecular dynamics parameter (MDP) file, such as an excessively large cut-off for non-bonded interactions, can lead to unexpectedly high memory demands [17].
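The memory-per-core check is simple arithmetic, sketched here for convenience. The node size and the per-core requirement in the example are hypothetical; take the real requirement from a short test run of your own system.

```python
def memory_per_core_gb(node_ram_gb, cores_per_node):
    """Memory available to each core if all cores on the node are used."""
    return node_ram_gb / cores_per_node


def max_usable_cores(node_ram_gb, cores_per_node, required_gb_per_core):
    """Largest number of cores per node that still fits in node memory."""
    fit = int(node_ram_gb // required_gb_per_core)
    return min(cores_per_node, fit)


if __name__ == "__main__":
    # Hypothetical node: 256 GB RAM, 64 cores; job needs 6 GB per core.
    print(memory_per_core_gb(256, 64))     # 4.0 GB available per core
    print(max_usable_cores(256, 64, 6.0))  # 42 cores fit in memory
```

Here the job needs more than the 4 GB per core the node offers, so it either has to run on about 42 of the 64 cores or move to a node with more memory.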

The following diagram outlines the logical decision process for diagnosing and resolving high RAM consumption issues.

Experimental Protocols for Resource Estimation

Protocol: Benchmarking RAM Usage for a New System

Before launching a large production run, it is critical to profile the memory requirements of your specific system.

- Prepare Input: Create your system's topology and structure files as usual.

- Run a Short Test: Launch a very short simulation (e.g., `-nsteps 1000`) on a single node with high memory.

- Monitor Memory: Use system monitoring tools (e.g., `htop`, `ps`) or the cluster's job scheduler metrics to track the peak memory usage of the `mdrun` process.

- Extrapolate: The memory usage from the short test is a good approximation for the needs of a much longer simulation of the same system. Use this value to request appropriate resources in your production job script.
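As an alternative to watching `htop` by hand, the peak memory of a short test run can be captured programmatically. The sketch below uses Python's standard `resource` module and is Unix-only; the helper name is hypothetical, and the approach assumes the simulation runs as a single child process (as with a thread-MPI `gmx mdrun`). Jobs started through an MPI launcher are better measured with the scheduler's own accounting.

```python
import resource
import subprocess
import sys


def peak_child_rss_mib(cmd):
    """Run cmd to completion and return the peak resident memory in MiB.

    ru_maxrss covers the largest child this process has waited for so far,
    so call this from a fresh Python process for a clean reading.
    """
    subprocess.run(cmd, check=True)
    peak = resource.getrusage(resource.RUSAGE_CHILDREN).ru_maxrss
    # Linux reports ru_maxrss in KiB; macOS reports it in bytes.
    divisor = 1024 * 1024 if sys.platform == "darwin" else 1024
    return peak / divisor


if __name__ == "__main__":
    # Example target: a short test run as described in the protocol.
    # The 'md' file prefix is a placeholder for your own input files.
    test_cmd = ["gmx", "mdrun", "-nsteps", "1000", "-deffnm", "md"]
    print(f"peak memory: {peak_child_rss_mib(test_cmd):.0f} MiB")
```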

Protocol: Efficiently Scaling on HPC Clusters

This protocol outlines the steps to achieve good performance and memory efficiency on a high-performance computing cluster, based on the successful resolution of a real-world problem [15].

- Start with a Balanced Configuration: Begin with a configuration that uses multiple OpenMP threads per MPI task. A good starting point is to have 4-8 CPU cores dedicated to each MPI task.

- Launch the Job: Use a command structure like `srun -n [N_MPI] -c [C_PER_MPI] gmx_mpi mdrun -ntomp [OMP_THREADS] -deffnm md`. Ensure that `C_PER_MPI` equals `OMP_THREADS`.

- Profile and Adjust: Run the job for a short period and check the performance (ns/day) and memory efficiency from the log file and job metrics.

- Iterate: If performance is poor or memory efficiency is low, adjust the ratio of MPI tasks to OpenMP threads. The goal is to find the "sweet spot" for your specific system and hardware.
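The iteration step amounts to trying a handful of MPI-task/OpenMP-thread splits for a fixed core budget. A minimal sketch of generating those candidates follows; the helper names and the 1024-core budget are assumptions for illustration, and the command template is the one given in the protocol. Using a single value for both `-c` and `-ntomp` enforces the rule that `C_PER_MPI` equals `OMP_THREADS`.

```python
def candidate_layouts(total_cores, thread_options=range(4, 9)):
    """Return (n_mpi, threads) pairs that use exactly total_cores.

    The default 4 to 8 threads per task follows the starting point
    suggested in the protocol; options that do not divide evenly are skipped.
    """
    return [(total_cores // t, t) for t in thread_options if total_cores % t == 0]


def srun_command(n_mpi, cores_per_mpi, deffnm="md"):
    """Build the launch line, with -c and -ntomp kept identical."""
    return (
        f"srun -n {n_mpi} -c {cores_per_mpi} "
        f"gmx_mpi mdrun -ntomp {cores_per_mpi} -deffnm {deffnm}"
    )


if __name__ == "__main__":
    # Hypothetical allocation of 1024 cores.
    for n_mpi, threads in candidate_layouts(1024):
        print(srun_command(n_mpi, threads))
```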

The Scientist's Toolkit: Key Research Reagent Solutions

This table details essential computational "reagents" and their functions in managing the computational demands of biomolecular simulations.

| Item | Function in Computational Experiment |

|---|---|

| HPC Cluster | A network of powerful computers that provides the aggregate computational power (CPU/GPU) and memory required for large-scale simulations [18] [19]. |

| GPU Accelerators (e.g., NVIDIA RTX 4090, A100, H100) | Specialized hardware that dramatically speeds up the calculation of non-bonded forces, the most computationally intensive part of MD simulations [13] [16]. |

| Job Scheduler (e.g., SLURM, PBS) | Software that manages and allocates compute resources (nodes, cores, memory) on an HPC cluster, allowing multiple users to share the system efficiently [15]. |

| Molecular Dynamics Software (e.g., GROMACS, AMBER, NAMD) | The primary software engine that performs the simulation by integrating Newton's equations of motion for all atoms in the system [16]. |

| Container Technology (e.g., Singularity/Apptainer, Docker) | Packages software and all its dependencies into a single image, ensuring reproducibility and simplifying software deployment on diverse HPC platforms [16]. |

What is NRF? The Node Replacement Factor (NRF) is a critical metric in high-performance computing (HPC) that quantifies the performance gain achieved by using GPU-accelerated servers compared to traditional CPU-only systems. It is defined as the number of CPU-only servers replaced by a single GPU-accelerated server to achieve equivalent application performance [20]. This metric fundamentally changes the economics of data centers by delivering breakthrough performance with dramatically fewer servers, reduced power consumption, and less networking overhead, resulting in total cost savings of 5X-10X [20].

NRF Measurement Methodology

NRF is determined through a standardized benchmarking process. Application performance is first measured using up to 8 CPU-only servers. Linear scaling is then applied to extrapolate beyond this range, calculating how many CPU servers one GPU-accelerated node can replace [20]. The specific NRF value varies significantly across different applications and molecular dynamics software, reflecting their unique computational characteristics and optimization levels for GPU architecture.

Quantitative NRF Performance Data

The following tables summarize comprehensive NRF measurements across major molecular dynamics applications, demonstrating the substantial performance gains achievable with NVIDIA GB200 GPUs compared to AMD Dual Genoa 9684X CPU-only systems [20].

Table 1: NRF Performance for Molecular Dynamics Applications

| Application | Test Module | Performance Metric | AMD Dual Genoa (CPU-Only) | 2x GB200 NRF | 4x GB200 NRF |

|---|---|---|---|---|---|

| AMBER [PME-STMVNPT4fs] | DC-STMV_NPT | ns/day | 3.69 | 64x | 128x |

| AMBER [PME-JACNPT4fs] | DC-JAC_NPT | ns/day | 377.04 | 30x | 58x |

| AMBER [FEP-GTI_Complex 1fs] | FEP-GTI_Complex | ns/day | 25.07 | 15x | 31x |

| GROMACS [Cellulose] | Cellulose | ns/day | 79 | 4x | 7x |

| GROMACS [STMV] | STMV | ns/day | 20 | 6x | 10x |

| LAMMPS [Tersoff] | Tersoff | ATOM-Time Steps/s | 7.67E+07 | 37x | 63x |

| LAMMPS [SNAP] | SNAP | ATOM-Time Steps/s | 8.40E+05 | 21x | 41x |

| LAMMPS [ReaxFF/C] | ReaxFF/C | ATOM-Time Steps/s | 1.90E+06 | 19x | 27x |

| GTC | mpi#proc.in | Mpush/Sec | 146 | 13x | 26x |

Table 2: NRF Performance for Physics and Engineering Applications

| Application | Test Module | Performance Metric | AMD Dual Genoa (CPU-Only) | 2x GB200 NRF | 4x GB200 NRF |

|---|---|---|---|---|---|

| Chroma | HMC Medium | Final Timestep Time (Sec) | 10,037 | 142x | 211x |

| FUN3D [waverider-5M] | waverider-5M | Loop Time (Sec) | 170 | 19x | 27x |

| FUN3D [waverider-20M w/chemistry] | waverider-20M w/chemistry | Loop Time (Sec) | 1,927 | 18x | 36x |

Table 3: Consumer GPU Performance Comparison for AI/MD Tasks

| Hardware | Summarization Time (100 articles) | Fine-tuning Time (5 epochs) | Performance Boost vs CPU |

|---|---|---|---|

| CPU (Reference) | 24 seconds | ~12,000 seconds (estimated) | 1x (Baseline) |

| NVIDIA Tesla T4 | 1.6 seconds | 243 seconds | 10-50x |

| NVIDIA RTX 4090 | 69 seconds | 60 seconds | ~40-200x |

| NVIDIA RTX 5090 | 75 seconds | 125 seconds | Software Limited |

| NVIDIA H100 | 60 seconds | 46 seconds | Optimized for large models |

Experimental Protocols for NRF Benchmarking

Standard NRF Measurement Protocol

Step-by-Step Methodology:

- Baseline Establishment: Measure application performance using 1-8 CPU-only servers with identical system configurations except for GPU components [20]

- Linear Scaling Application: Use linear regression to extrapolate CPU performance to higher node counts, establishing the theoretical CPU server requirement for target performance levels [20]

- GPU Performance Measurement: Execute identical application workloads on GPU-accelerated nodes, ensuring all software versions and configuration parameters remain consistent

- NRF Calculation: Divide the extrapolated number of CPU-only servers needed to match the GPU result by the number of GPU-accelerated nodes that produced it (one): `NRF = CPU_Node_Equivalent / GPU_Node` [20]

- Validation: Verify results across multiple runs to ensure statistical significance and account for performance variability
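The measurement steps above can be illustrated with a short calculation. This is a sketch of the general idea (fit a line to the CPU-only measurements, then ask how many CPU servers would match one GPU node), not the vendor's actual tooling; the function names and all performance numbers are made up for the example.

```python
def linear_fit(xs, ys):
    """Ordinary least-squares fit y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def node_replacement_factor(cpu_servers, cpu_perf, gpu_node_perf, gpu_nodes=1):
    """Estimate NRF = CPU_Node_Equivalent / GPU_Node.

    cpu_servers, cpu_perf : measurements on 1 to 8 CPU-only servers
    gpu_node_perf         : performance of the GPU-accelerated node(s)
    """
    slope, intercept = linear_fit(cpu_servers, cpu_perf)
    # Extrapolate: how many CPU servers reach the GPU performance level?
    cpu_node_equivalent = (gpu_node_perf - intercept) / slope
    return cpu_node_equivalent / gpu_nodes


if __name__ == "__main__":
    # Illustrative ns/day values only; not taken from any benchmark.
    servers = [1, 2, 4, 8]
    ns_per_day = [10.0, 20.0, 40.0, 80.0]
    print(node_replacement_factor(servers, ns_per_day, gpu_node_perf=150.0))
```

Because real CPU scaling is rarely perfectly linear, an NRF obtained this way assumes the CPU cluster would keep scaling ideally beyond the measured range.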

Application-Specific Benchmarking Configurations

AMBER Molecular Dynamics Protocol:

- Software Version: AMBER 24-AT_24 [20]

- Accelerated Features: PMEMD Explicit Solvent and GB Implicit Solvent [20]

- Measurement: Nanoseconds per day (ns/day) for production MD simulations [20]

- GPU Configuration: Multiple independent instances using MPS for parallel execution [20]

- Test Modules: DC-CelluloseNPT, DC-FactorIXNPT, DC-JACNPT, DC-STMVNPT [20]

GROMACS Molecular Dynamics Protocol:

- Software Version: GROMACS 2025.3 [20]

- Accelerated Features: Implicit (5x) and Explicit (2x) Solvent handling [20]

- Scalability: Multi-GPU, single node optimization [20]

- Test Systems: Cellulose and STMV (Satellite Tobacco Mosaic Virus) benchmarks [20]

- Performance Metric: Nanoseconds per day (ns/day) for standardized biomolecular systems [20]

Troubleshooting Guides and FAQs

Frequently Asked Questions

Q1: Why does my GPU-accelerated simulation sometimes show worse performance than CPU-only execution?

A: This performance regression typically stems from suboptimal workload distribution and configuration issues [21]. Key factors include:

- Incorrect domain decomposition: The simulation box may be partitioned in a way that creates communication bottlenecks between CPU and GPU [21]

- Suboptimal GPU offloading settings: Not all computational tasks benefit from GPU execution—some operations like PME (Particle Mesh Ewald) may perform better on CPU depending on system size [21]

- Memory transfer overhead: Excessive data transfer between CPU and GPU memory can negate computational benefits, particularly for smaller systems [21] [22]

- Software compatibility: Driver versions, CUDA toolkit, and MD software must be compatible and optimally configured [21]

Q2: How does system size affect GPU acceleration efficiency in molecular dynamics?

A: GPU acceleration efficiency strongly correlates with system size due to parallelization overhead [21] [22]:

- Small systems (<50,000 atoms): Often show limited or negative GPU acceleration due to dominant communication overhead [21]

- Medium systems (50,000-500,000 atoms): Typically achieve 5-30x acceleration with proper configuration [20]

- Large systems (>500,000 atoms): Can achieve 30-150+x acceleration as computational workload dominates communication costs [20]

- Memory-bound systems: Very large systems may require high-memory GPUs (48GB VRAM) like RTX 6000 Ada to avoid memory swapping [23]

Q3: What are the key hardware considerations for maximizing NRF in MD simulations?

A: Optimal hardware selection involves balancing multiple factors [23] [24]:

- GPU Memory Capacity: Large simulations require substantial VRAM (24-48GB)—RTX 6000 Ada provides 48GB for memory-intensive workloads [23]

- CPU-GPU Balance: Powerful CPUs are needed to feed data to multiple GPUs—avoid underpowered CPUs creating bottlenecks [23]

- Memory Bandwidth: High-speed system RAM and GPU memory bandwidth are critical for data-intensive simulations [24]

- Power and Cooling: GPU nodes generate substantial heat, so adequate cooling is essential for sustained performance [24]

- Multi-GPU Configuration: Not all applications scale linearly with additional GPUs—verify application-specific multi-GPU support [23]

Troubleshooting Common Performance Issues

Problem: Abysmal GPU Performance Despite Proper Hardware

Symptoms: GPU-enabled simulation runs slower than CPU-only equivalent [21]

Solution Protocol:

Step-by-Step Resolution:

- Verify GPU Task Assignment: Check that appropriate computational tasks are assigned to the GPU. For GROMACS, use `-nb gpu -bonded gpu -pme gpu` for full GPU offloading [21]

- Optimize Domain Decomposition: Adjust the number of MPI processes and OpenMP threads. For 40-core systems, try 10 MPI processes with 4 threads each rather than 40 MPI processes [21]

- Update Software Stack: Ensure CUDA drivers (≥11.8), MD software version, and compilers are compatible and updated [21]

- Monitor Memory Transfers: Use profiling tools to identify excessive CPU-GPU data transfer and adjust workload partitioning accordingly [22]

- System Size Assessment: For systems under 50,000 atoms, consider CPU-only or hybrid execution as communication overhead may dominate [21]
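As a rough illustration of the first two steps, the sketch below assembles a `gmx mdrun` command line from a rank and thread layout. The helper name `build_mdrun_command` and the `md` file prefix are hypothetical. The `-npme 1` option is included on the assumption that GROMACS needs a single dedicated PME rank when PME runs on the GPU across multiple ranks; check this against the documentation for your GROMACS version.

```python
import shlex


def build_mdrun_command(deffnm, mpi_ranks, omp_threads, offload_pme=True):
    """Assemble a `gmx mdrun` argument list with GPU offloading flags."""
    cmd = [
        "gmx", "mdrun",
        "-deffnm", deffnm,
        "-ntmpi", str(mpi_ranks),    # number of thread-MPI ranks
        "-ntomp", str(omp_threads),  # OpenMP threads per rank
        "-nb", "gpu",                # non-bonded interactions on the GPU
        "-bonded", "gpu",            # bonded interactions on the GPU
        "-pme", "gpu" if offload_pme else "cpu",
    ]
    if offload_pme and mpi_ranks > 1:
        # Assumption: GPU PME with several ranks needs one separate PME rank.
        cmd += ["-npme", "1"]
    return cmd


# 40 cores split as 10 ranks x 4 threads, as suggested above.
cmd = build_mdrun_command("md", mpi_ranks=10, omp_threads=4)
print(shlex.join(cmd))
```

Building the argument list in one place makes it easy to benchmark several rank and thread splits with the same offloading flags.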

Problem: Memory Limitations in Large-Scale Simulations

Symptoms: Simulation fails with "out of memory" errors or exhibits severe performance degradation [23]

Solution Strategies:

- GPU Memory Upgrade: Utilize high-memory GPUs (RTX 6000 Ada with 48GB) for very large simulations [23]

- Multi-GPU Configuration: Distribute workload across multiple GPUs using application-specific multi-GPU support [23]

- Memory Optimization: Reduce output frequency, use checkpointing, and minimize unnecessary data collection [24]

- Mixed Precision: Employ mixed-precision calculations where acceptable for numerical accuracy [25]

The Scientist's Toolkit: Essential Research Reagents

Table 4: Hardware Solutions for Molecular Dynamics Research

| Component | Recommended Solutions | Function & Application |

|---|---|---|

| Primary GPU | NVIDIA RTX 6000 Ada, NVIDIA RTX 4090, NVIDIA H100 | Massively parallel computation for MD force calculations and integration [23] |

| Specialized MD GPU | NVIDIA GB200 (2x-4x configuration) | Extreme-scale simulation with 75-152x NRF for specific AMBER workloads [20] |

| CPU | AMD Threadripper PRO 5995WX, Intel Xeon Gold 6230, AMD EPYC | Coordinate integration, task distribution, and data management for GPU workflows [23] [21] |

| System Memory | 192-400 GB DDR4/DDR5 ECC RAM | Storage of coordinates, velocities, forces, and trajectory data for large systems [21] |

| MD Software | AMBER, GROMACS, NAMD, LAMMPS | Specialized molecular dynamics algorithms with GPU acceleration [20] [25] |

| Parallelization | MPI, OpenMP, CUDA | Multi-node and multi-core parallel execution frameworks [21] [25] |

| Tool Category | Specific Tools | Purpose & Implementation |

|---|---|---|

| Performance Profiling | NVIDIA Nsight Systems, GROMACS Internal Timing | Identify computational bottlenecks and communication overhead [21] |

| GPU Optimization | CUDA Graphs (`GMX_CUDA_GRAPH=1`), GPU-Aware MPI | Reduce GPU kernel launch overhead and enable direct GPU communication [21] |

| Visualization | VMD, ChimeraX | Trajectory analysis and structural visualization of simulation results [25] |

| Cluster Management | Slurm, PBS Pro | Job scheduling and resource management for HPC systems [21] |

| Benchmarking Suites | Standard MD benchmarks (CELLULOSE, STMV, JAC) | Performance validation and hardware comparison [20] |

Advanced Configuration and Optimization

Optimal GPU Acceleration Settings

GROMACS Specific Optimization:

NAMD GPU Configuration:

- Utilize CUDA and GPU-resident mode for maximum performance [25]

- Enable multiple GPUs per node for large-scale simulations [25]

- Leverage NAMD's specialized GPU algorithms for non-bonded forces [25]

Energy Efficiency Considerations

Beyond raw performance, GPU acceleration provides significant energy efficiency benefits [24]:

- Power Consumption: Well-configured GPU nodes can reduce energy consumption per simulation by 5-10X compared to CPU-only clusters [24]

- Total Cost of Ownership: Considering typical 3-5 year hardware lifespan, energy costs often exceed hardware costs—GPU efficiency directly reduces operational expenses [24]

- Cooling Requirements: GPU nodes generate concentrated heat—ensure adequate cooling capacity to maintain boost clocks during long simulations [24]

Future Directions and Emerging Technologies

The GPU acceleration landscape continues to evolve with several key trends:

- Architecture Advances: New GPU architectures like Blackwell promise further performance gains, though software optimization often lags hardware release [26]

- Multi-GPU Scaling: Improved scaling across multiple GPUs enables billion-atom simulations on single nodes [25]

- AI/ML Integration: Emerging applications of machine learning potentials and hybrid approaches further leverage GPU computational capabilities [22]

- Specialized Hardware: Domain-specific accelerators and tensor cores are being increasingly utilized for specific MD workloads [26]

This technical support resource provides researchers with comprehensive guidance for quantifying, implementing, and troubleshooting GPU acceleration in molecular dynamics simulations using the Node Replacement Factor framework. By following these protocols and recommendations, research teams can significantly enhance computational efficiency and accelerate scientific discovery.

The Role of Specialized Workstations and Cloud Computing Infrastructures

Technical Support Center: Managing Computational Demands for Molecular Dynamics

This technical support center provides troubleshooting guides and FAQs to help researchers, scientists, and drug development professionals effectively manage computational resources for molecular dynamics (MD) simulations. The content is framed within the broader thesis of optimizing computational strategies to accelerate scientific discovery.

Troubleshooting Guides

Common GROMACS Setup and Runtime Errors

This section addresses frequent issues encountered when preparing and running simulations with GROMACS, a widely used MD software package.

Error 1: Residue not found in topology database [27]

- Error Message: `Residue 'XXX' not found in residue topology database`

- Problem: The force field you selected does not contain a definition for the molecule or residue "XXX" in your input file.

- Solutions:

- Rename the residue: The residue name in your coordinate file may not match the name used in the force field's database; renaming it might solve the issue.

- Find a topology: Search the literature for a topology file (`.itp`) for your molecule that is consistent with your chosen force field and include it in your system's topology.

- Parameterize the molecule: As a last resort, you may need to parameterize the molecule yourself, which is complex and requires expert knowledge.

Error 2: Atom not found in residue [27]

- Error Message: `WARNING: atom X is missing in residue XXX Y in the pdb file`

- Problem: The input structure is missing atoms that the force field expects to be present.

- Solutions:

- Let GROMACS add hydrogens: Use the `-ignh` flag to let `pdb2gmx` ignore the hydrogen atoms in the input file and add the correct ones.

- Check terminal residues: For AMBER force fields, ensure N-terminal and C-terminal residues are prefixed with 'N' and 'C' (e.g., 'NALA' for an N-terminal alanine).

- Model missing atoms: Use external software to model in atoms listed under `REMARK 465` or `REMARK 470` in your PDB file. Avoid using the `-missing` option for generating protein topologies.

Error 3: Invalid order for directive [27]

- Error Message: `Invalid order for directive xxx` (commonly `defaults` or `atomtypes`)

- Problem: The directives in your topology (`.top`) or include (`.itp`) files are in the wrong sequence.

- Solutions:

- Place `[ defaults ]` first: The `[ defaults ]` section must appear first in your topology, typically via the `#include` statement for your force field.

- Define new types early: Any new `[ atomtypes ]` or other parameter directives must appear before the first `[ moleculetype ]` directive.
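The two ordering rules for Error 3 can be checked mechanically before running `grompp`. The sketch below is a simplified, hypothetical checker: it only inspects directives written literally in the text it is given (it does not follow `#include` statements), and the set of parameter directives is an illustrative subset rather than a complete list.

```python
import re

DIRECTIVE = re.compile(r"^\s*\[\s*(\w+)\s*\]")
# Illustrative subset of force-field-level parameter directives.
PARAMETER_DIRECTIVES = {
    "defaults", "atomtypes", "bondtypes",
    "angletypes", "dihedraltypes", "pairtypes",
}


def list_directives(topology_text):
    """Return directive names in order of appearance, ignoring comments."""
    names = []
    for line in topology_text.splitlines():
        line = line.split(";", 1)[0]  # ';' starts a comment in topology files
        match = DIRECTIVE.match(line)
        if match:
            names.append(match.group(1).lower())
    return names


def check_directive_order(topology_text):
    """Report violations of the two ordering rules described above."""
    problems = []
    names = list_directives(topology_text)
    if "defaults" in names and names[0] != "defaults":
        problems.append("[ defaults ] is not the first directive")
    if "moleculetype" in names:
        first_molecule = names.index("moleculetype")
        for name in names[first_molecule + 1:]:
            if name in PARAMETER_DIRECTIVES:
                problems.append(
                    f"[ {name} ] appears after the first [ moleculetype ]"
                )
    return problems


# Hypothetical topology fragment with a misplaced [ atomtypes ] block.
bad_topology = """
[ moleculetype ]
LIG  3
[ atomtypes ]
; new ligand atom types defined too late
"""
print(check_directive_order(bad_topology))
```

A check like this is cheap to run locally and catches the ordering mistake before any compute time is spent.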

Error 4: Stepsize too small or non-finite forces [28]

- Error Message: `Stepsize too small...` or `...force on at least one atom is not finite`

- Problem:

- The energy minimization has converged as much as possible with the current parameters.

- Two atoms in the input structure are excessively close, leading to impossibly high forces.

- Solutions:

- Check Fmax: If the potential energy is reasonable for your system (e.g., ~ -10⁵ to -10⁶ for hydrated systems), it may be sufficiently minimized to proceed.

- Inspect coordinates: Check your initial structure for atom clashes.

- Use soft-core potentials: Consider using soft-core potentials for minimizing systems with infinite forces.

Error 5: Pressure scaling and range checking errors [28]

- Error Message: `Pressure scaling more than 1%` or `Range Checking error`

- Problem: The simulation is unstable, often due to poor equilibration, incorrect topology parameters, or an overly responsive pressure coupling algorithm.

- Solutions:

- Re-equilibrate: Ensure the system is well-equilibrated in the NVT ensemble before applying pressure coupling (NPT).

- Adjust `tau-p`: Increase the pressure coupling time constant (`tau-p`) to slow down the box-size adjustments.

- Review topology: Double-check all parameters in your topology file for validity.

Performance and Optimization Issues

Issue: Simulation is running too slowly or with poor scaling [29]

- Problem: The GROMACS binary is not optimized for your hardware, or the compute resources are insufficient for the system size.

- Solutions:

- Use optimized compilers: On AWS Graviton3E processors, using the Arm Compiler for Linux (ACfL) with Scalable Vector Extension (SVE) support has been shown to yield performance gains of 6-28% over other compiler/SIMD combinations [29].

- Enable high-speed networking: In cloud environments, ensure Elastic Fabric Adapter (EFA) is enabled for near-linear scaling across multiple nodes [29].

- Use performance libraries: Link against optimized math libraries like the Arm Performance Libraries (ArmPL).

Frequently Asked Questions (FAQs)

FAQ 1: When should I use a specialized workstation versus a cloud cluster?

The choice depends on your project's stage and scale. The following table outlines the recommended approach based on common research scenarios.

| Research Scenario | Recommended Environment | Rationale |

|---|---|---|

| Method Development & Debugging | Specialized Workstation | Rapid prototyping, testing parameters, and verifying setup before committing cloud credits [30]. |

| Small System Screening (< 100,000 atoms) | Specialized Workstation | Avoids queue times and cloud management overhead; sufficient for many preliminary studies. |

| Large-Scale Production Runs | Cloud Cluster | Access to virtually unlimited, scalable compute power for systems with millions of atoms [29]. |

| Hybrid Workflow | Both | Test and debug locally on a workstation, then seamlessly scale out to the cloud for production runs [30]. |

FAQ 2: What is the single most important step to avoid wasting cloud computing credits?

Test your MD setup locally first [30]. Before submitting a job to the cloud, run a short simulation (a few hundred steps) on a local workstation or use the "Generate inputs" function in tools like the SAMSON GROMACS Wizard. This validates your configuration, input files, and topology, catching common errors that would cause a costly cloud job to fail immediately [30].

FAQ 3: My simulation failed on the cloud. What are the first things I should check?

- Inspect the Log File: Always start with the main GROMACS log file (`.log`). Look for explicit error messages (like those listed in the troubleshooting guide above) and warnings that appear just before the crash.

- Verify File Paths: Ensure all input files (topology, coordinates, parameters) were correctly transferred to the cloud instance and that all paths in your command scripts are accurate.

- Check Resource Limits: Confirm that the simulation did not run out of memory or wall-clock time allocated by the job scheduler.
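The first check can be partly automated. The sketch below scans log text for the error strings covered in the troubleshooting guide above; the hints are short reminders written for this example, not official GROMACS diagnostics, and the sample log excerpt is hypothetical.

```python
# Substrings taken from the error messages discussed in this guide.
KNOWN_ERRORS = {
    "not found in residue topology database": "force field lacks this residue",
    "Invalid order for directive": "reorder topology directives",
    "Stepsize too small": "minimization cannot proceed further",
    "is not finite": "check for overlapping atoms",
    "Pressure scaling more than 1%": "re-equilibrate or increase tau-p",
    "Range Checking error": "system is unstable; review equilibration",
}


def scan_log(log_text):
    """Return (line_number, matched_substring, hint) for each known error."""
    findings = []
    for line_number, line in enumerate(log_text.splitlines(), start=1):
        lowered = line.lower()
        for pattern, hint in KNOWN_ERRORS.items():
            if pattern.lower() in lowered:
                findings.append((line_number, pattern, hint))
    return findings


# Hypothetical log excerpt for illustration.
sample_log = """Step 1200, time 2.4 (ps)
Pressure scaling more than 1%, mu: 1.03 1.03 1.03
Fatal error:
Range Checking error
"""
findings = scan_log(sample_log)
for line_number, pattern, hint in findings:
    print(f"line {line_number}: {pattern} -> {hint}")
```

For a real run, the same function can be applied to the contents of the `.log` file retrieved from the cloud instance.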

FAQ 4: How do I achieve the best performance for GROMACS on modern cloud hardware?

For optimal performance on AWS Graviton3E (Hpc7g) instances [29]:

- Compiler: Use the Arm Compiler for Linux (ACfL).

- SIMD Support: Build GROMACS with SVE support (`-DGMX_SIMD=ARM_SVE`).

- Math Library: Link against Arm Performance Libraries (ArmPL).

- MPI: Use a recent Open MPI version configured with Libfabric for EFA support.

FAQ 5: What are the key considerations for storing and managing my simulation data in the cloud?

- Use High-Performance File Systems: For best I/O performance, use a service like Amazon FSx for Lustre, which is designed for HPC workloads and can be backed by an Amazon S3 bucket for durable storage [29].

- "Data Has Gravity": It is often more efficient and cost-effective to bring computation to the data. Store large datasets in cloud object storage (like S3) and launch compute resources in the same region to avoid expensive data transfer charges [31].

Experimental Protocols & Workflows

Objective: To catch configuration errors and ensure simulation stability before launching costly cloud jobs.

Methodology:

- System Preparation: Use `pdb2gmx` to generate the topology, `grompp` to process parameters, and `mdrun` for a short minimization and equilibration run on a local workstation.

- Short Test Run: Execute a brief MD simulation (100-1,000 steps) locally.

- Validation Check: Monitor the log file for warnings and errors. Confirm that key metrics (potential energy, temperature, pressure) stabilize as expected.

- Cloud Submission: Only if the local test completes successfully, transfer the validated input files to the cloud environment for the full production run.
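A minimal sketch of the local test sequence is shown below. It only builds the command lines and does not execute them. The file names, force field, and water model are placeholder assumptions, and box definition and solvation steps are omitted for brevity, so a real workflow would need additional commands between `pdb2gmx` and `grompp`.

```python
def local_test_commands(pdb_file, mdp_file, nsteps=500,
                        force_field="amber99sb-ildn", water="tip3p"):
    """Build the GROMACS commands for a short local validation run."""
    return [
        # Generate the topology; -ff and -water avoid interactive prompts.
        ["gmx", "pdb2gmx", "-f", pdb_file, "-o", "processed.gro",
         "-p", "topol.top", "-ff", force_field, "-water", water],
        # Combine parameters, coordinates, and topology into a run input.
        ["gmx", "grompp", "-f", mdp_file, "-c", "processed.gro",
         "-p", "topol.top", "-o", "test.tpr"],
        # Run only a few hundred steps to confirm the setup is stable.
        ["gmx", "mdrun", "-deffnm", "test", "-nsteps", str(nsteps)],
    ]


# Hypothetical input files.
cmds = local_test_commands("protein.pdb", "test.mdp", nsteps=500)
for cmd in cmds:
    print(" ".join(cmd))
```

Each list can be passed to `subprocess.run(cmd, check=True)` so that the first failing step stops the sequence before anything is uploaded to the cloud.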

Objective: To determine the optimal compute configuration (number of nodes, MPI processes) for a given molecular system.

Methodology:

- Select a Benchmark System: Use a standardized input file, such as the STMV (Satellite Tobacco Mosaic Virus) system (~28 million atoms) from the Unified European Application Benchmark Suite (UEABS) [29].

- Define Test Matrix: Run the same simulation across a range of node counts (e.g., 1, 2, 4, 8 nodes).

- Measure Performance: Record the simulation performance in nanoseconds per day.

- Analyze Scaling: Plot performance versus node count to identify the point where parallel scaling efficiency begins to drop, indicating the cost-effective limit for your system.
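The scaling analysis in the last step reduces to a short calculation: parallel efficiency is the measured speedup divided by the ideal speedup relative to the smallest run. The sketch below uses hypothetical ns/day values and an arbitrary 70% efficiency threshold; both are assumptions to adapt to your own budget.

```python
def scaling_efficiency(node_counts, ns_per_day):
    """Parallel efficiency of each run relative to the smallest node count."""
    base_nodes, base_perf = node_counts[0], ns_per_day[0]
    return [
        (perf / base_perf) / (nodes / base_nodes)
        for nodes, perf in zip(node_counts, ns_per_day)
    ]


def largest_efficient_node_count(node_counts, ns_per_day, threshold=0.7):
    """Largest node count whose efficiency stays at or above the threshold."""
    efficiencies = scaling_efficiency(node_counts, ns_per_day)
    efficient = [n for n, e in zip(node_counts, efficiencies) if e >= threshold]
    return max(efficient)


# Hypothetical benchmark results, for illustration only.
node_counts = [1, 2, 4, 8]
ns_per_day = [2.0, 3.8, 6.8, 9.6]
efficiencies = scaling_efficiency(node_counts, ns_per_day)
best = largest_efficient_node_count(node_counts, ns_per_day)
print(efficiencies, best)
```

With these example numbers, efficiency falls from 85% at 4 nodes to 60% at 8 nodes, so 4 nodes would be the cost-effective limit under the chosen threshold.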

The Scientist's Toolkit: Research Reagent Solutions

This table details essential computational "reagents" — the software, hardware, and services required to conduct modern molecular dynamics simulations.

| Item | Function / Description | Example / Specification |

|---|---|---|

| MD Simulation Engine | Software that performs the numerical integration of the equations of motion to simulate system evolution. | GROMACS [27] [29], LAMMPS [29], AMS [32] |

| Force Field | A set of mathematical functions and parameters that describe the potential energy of a system of atoms. | AMBER, CHARMM, OPLS (selected in pdb2gmx) [27] |

| Specialized Workstation | A local computer with sufficient CPU/GPU cores, RAM, and fast local storage for development and testing. | High-core-count CPU, >64 GB RAM, NVMe SSD [30] |

| Cloud Compute Instance | A virtualized server in the cloud, optimized for HPC workloads, used for scalable production runs. | AWS Hpc7g.16xlarge (Graviton3E) [29] |

| High-Performance File System | A parallel file system optimized for fast read/write operations required by large-scale MD simulations. | Amazon FSx for Lustre (PERSISTENT_2) [29] |

| Compiler Suite | Tools that translate human-readable source code into optimized machine code for a specific processor. | Arm Compiler for Linux (ACfL) with ArmPL [29] |

| Message Passing Interface (MPI) | A communication protocol that enables multiple processes to work in parallel across different compute nodes. | Open MPI with Libfabric/EFA support [29] |

Advanced Methods and Real-World Applications in Biomolecular Research

Leveraging Machine Learning Interatomic Potentials (MLIPs) for 10,000x Speedup

FAQs: Core Concepts and Setup

Q1: What is a Machine Learning Interatomic Potential (MLIP) and what key trade-off does it disrupt? An MLIP is a machine-learned model of interatomic interactions used for molecular property prediction, and it breaks the traditional trade-off between accuracy and speed. It approaches the accuracy of Density Functional Theory (DFT) at a computational cost closer to that of classical empirical force fields, enabling more efficient and precise simulations [33] [34].

Q2: I'm new to MLIPs. What user-friendly tools are available to get started? Libraries are available that provide user-friendly tools for industry experts without a deep machine learning background. These include pre-trained models for organics and seamless integration with molecular dynamics tools like ASE and JAX-MD, allowing you to run simulations without developing models from scratch [33].

Q3: What are the essential components of an MLIP library? A comprehensive MLIP library typically includes three core components: multiple modern model architectures (e.g., MACE, NequIP, ViSNet), data generation and training tools, and integrated Molecular Dynamics wrappers (e.g., ASE, JAX-MD) for running simulations [33].

FAQs: Implementation and Troubleshooting

Q4: Which model architecture should I choose for my system? The choice depends on your system's specifics and accuracy requirements. Available libraries offer several high-performance architectures. MACE, NequIP, and ViSNet are examples of models included in current releases, each with different strengths in balancing accuracy and computational efficiency [33].

Q5: How can I achieve the best computational performance when running MLIP-driven MD simulations? For peak performance, particularly on modern ARM-based HPC hardware:

- Use optimized compilers: The Arm Compiler for Linux (ACfL) with its integrated Arm Performance Libraries (ArmPL) has been shown to generate faster code than GNU compilers for molecular dynamics applications [29].

- Enable advanced instruction sets: Leverage Scalable Vector Extension (SVE) support. Benchmarks show SVE-enabled binaries can be 19-28% faster than those using NEON/ASIMD instructions [29].

- Employ efficient MPI: Use a high-performance MPI implementation like Open MPI configured with low-level libraries (e.g., Libfabric) to ensure near-linear scalability across multiple nodes [29].

Q6: My simulation results do not match my experimental data. What could be wrong? First, verify the quality of your training data. MLIPs are only as good as the data they are trained on. Ensure your reference quantum mechanics (QM) data is converged and accurate for the properties you want to predict. Second, check the transferability of your potential; a model trained on one molecular phase or configuration may not generalize well to others [34].

Performance Benchmarks and Data

The performance of MLIPs and their supporting infrastructure is key to achieving massive speedups. The following tables summarize critical performance data from recent benchmarks.

Table 1: GROMACS Performance on AWS Graviton3E (Hpc7g) Instances Using Different Compilers and SIMD Settings [29]

| Test Case | System Size (Atoms) | ACfL with SVE (ns/day) | ACfL with NEON (ns/day) | GNU with SVE (ns/day) | Performance Gain (SVE vs. NEON) |

|---|---|---|---|---|---|

| Case A: Ion Channel | 142,000 | 62.5 | 57.5 | 59.0 | ~9% |

| Case B: Cellulose | 3,300,000 | 15.8 | 12.3 | 14.9 | ~28% |

| Case C: STMV | 28,000,000 | 8.4 | 7.1 | 7.9 | ~19% |

Table 2: Key Software Tools for MLIP Development and Simulation

| Tool Name | Category | Function | Reference |

|---|---|---|---|

| MACE, NequIP, ViSNet | MLIP Model | Core machine learning architectures for representing the interatomic potential. | [33] |

| ASE | MD Wrapper | Atomic Simulation Environment; a Python library for setting up, controlling, and analyzing atomistic simulations. | [33] |

| JAX-MD | MD Wrapper | A library for accelerated Molecular Dynamics using JAX, enabling highly efficient simulations on GPUs/TPUs. | [33] |

| Arm Compiler for Linux (ACfL) | Compiler | A compiler suite optimized for Arm architecture, delivering higher performance for HPC applications. | [29] |

| Open MPI | Library | A high-performance Message Passing Interface (MPI) library for parallel computing across multiple nodes. | [29] |

Experimental Protocols for Benchmarking

Protocol 1: Benchmarking MLIP Performance on HPC Systems

This protocol outlines the steps to benchmark the performance of an MLIP-driven MD simulation, based on best practices for HPC systems [29].

- System Setup: Provision a compute cluster using a management tool like AWS ParallelCluster. Configure the cluster to use Hpc7g.16xlarge instances powered by Graviton3E processors.

- Software Configuration:

- Install the Arm Compiler for Linux (ACfL) version 23.04 or later.

- Install Open MPI 4.1.5 or later, configured to use Libfabric for network communication.

- Compile your MD software (e.g., GROMACS, LAMMPS) and the MLIP model using ACfL with the `-DGMX_SIMD=ARM_SVE` flag (a GROMACS build option) for SVE support.

- Run Benchmark: Execute standardized test cases (e.g., the Unified European Application Benchmark Suite - UEABS) with a fixed number of simulation steps (e.g., 10,000).

- Performance Profiling: Measure the simulation performance in nanoseconds per day. Conduct weak and strong scaling tests to evaluate parallel efficiency across multiple nodes.

Protocol 2: Training a Robust MLIP Model

This protocol describes the general workflow for generating a reliable MLIP [34].

- Data Generation:

- Select a diverse set of atomic configurations representative of the chemical space you intend to simulate.

- Use Density Functional Theory (DFT) to calculate the energies and forces for these configurations, creating your reference dataset.

- Feature Representation: Choose a material structure descriptor (e.g., Atomic Cluster Expansion, SOAP) to convert atomic coordinates into a suitable input for the machine learning model.

- Model Training: Train a chosen MLIP architecture (e.g., NequIP) on the generated dataset, using a loss function that minimizes the error on energies and forces.

- Model Validation: Validate the trained model on a held-out test set of configurations not seen during training. Evaluate its accuracy on predicting energies, forces, and relevant material properties.
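To make the training step concrete, the sketch below evaluates a weighted squared-error loss on energies and forces for a single configuration. This is a generic form commonly used for such models, not the specific loss of NequIP or any other library; the weights `w_energy` and `w_force` and the sample values are hypothetical.

```python
def energy_force_loss(e_pred, e_ref, f_pred, f_ref,
                      w_energy=1.0, w_force=10.0):
    """Weighted squared-error loss for one atomic configuration.

    Energies are scalars; forces are lists of [fx, fy, fz] per atom.
    """
    n_atoms = len(f_ref)
    # Per-atom energy error keeps the term comparable across system sizes.
    energy_term = ((e_pred - e_ref) / n_atoms) ** 2
    force_sq = sum(
        (fp - fr) ** 2
        for atom_pred, atom_ref in zip(f_pred, f_ref)
        for fp, fr in zip(atom_pred, atom_ref)
    )
    # Mean squared error over all 3 * n_atoms force components.
    force_term = force_sq / (3 * n_atoms)
    return w_energy * energy_term + w_force * force_term


# Hypothetical two-atom configuration (arbitrary consistent units).
loss = energy_force_loss(
    e_pred=-10.2, e_ref=-10.0,
    f_pred=[[0.1, 0.0, 0.0], [-0.1, 0.0, 0.0]],
    f_ref=[[0.0, 0.0, 0.0], [0.0, 0.0, 0.0]],
)
print(f"loss = {loss:.4f}")
```

Weighting forces more heavily than energies is a frequent choice because each configuration supplies many force components but only one energy.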

Workflow and System Diagrams

[Figure: MLIP Development and Application Cycle]

[Figure: Optimized HPC Infrastructure for MLIP MD]

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Software and Libraries for MLIP Research

| Item | Function in Research | Key Benefit |

|---|---|---|

| MLIP Library | Provides core architectures (MACE, NequIP) and training tools for developing interatomic potentials. | User-friendly interface, integrates training and MD simulation [33]. |

| JAX-MD | A molecular dynamics wrapper that runs on GPUs/TPUs using the JAX framework. | Facilitates highly efficient, hardware-accelerated MD simulations [33]. |

| Arm Compiler for Linux (ACfL) | Compiles C, C++, and Fortran code for Arm-based processors. | Generates optimized binaries, delivering 6-28% higher performance vs. GNU compilers [29]. |

| Open MPI with EFA | A high-performance MPI implementation with the Elastic Fabric Adapter for AWS. | Enables near-linear scalability of MD simulations across multiple HPC nodes [29]. |

| GROMACS/LAMMPS | High-performance molecular dynamics simulation software packages. | Widely adopted, community-standard tools optimized for various HPC architectures [29]. |

The Open Molecules 2025 (OMol25) dataset represents a transformative resource for computational chemistry and molecular dynamics (MD) simulations. Developed through a collaboration co-led by Meta and the Department of Energy's Lawrence Berkeley National Laboratory, OMol25 addresses a fundamental challenge in creating accurate machine learning (ML) models for atomic simulations: the lack of comprehensive, high-quality training data [35] [36].

This dataset enables researchers to overcome significant computational demands by providing ML models that can deliver quantum chemical accuracy at a fraction of the computational cost of traditional methods [35]. With machine learning interatomic potentials (MLIPs) trained on OMol25's density functional theory (DFT) data, researchers can achieve predictions of the same caliber as DFT but 10,000 times faster, making scientifically relevant molecular systems and reactions of real-world complexity finally accessible [36].

Table: Key Specifications of the OMol25 Dataset

| Attribute | Specification |

|---|---|

| Total DFT Calculations | >100 million [35] |

| Computational Cost | ~6 billion CPU core-hours [36] |

| Unique Molecular Systems | ~83 million [35] |

| Maximum System Size | Up to 350 atoms [35] |

| Elemental Coverage | 83 elements across the periodic table [35] |

| Dataset Volume | ~500 TB (electronic structures) [37] |

OMol25 Technical Support Center

Frequently Asked Questions (FAQs)

Q1: What are the licensing terms for using the OMol25 dataset and models? The OMol25 dataset itself is provided under a CC-BY-4.0 license, which allows for broad sharing and adaptation. However, the baseline model checkpoints are distributed under the FAIR Chemistry License, which has specific restrictions on prohibited uses, including military applications, weapon development, and illegal drug development [38]. You must agree to this license and provide your full legal details to access the models on Hugging Face [38].

Q2: How can I quickly start running calculations with the OMol25 models? For researchers wanting immediate results without local setup, the Rowan cloud chemistry platform offers a free-tier option. After creating an account, you can select the "Basic Calculation" workflow, input your molecule (by name, SMILES, or file upload), and select the Meta model from the "Level of Theory" dropdown to run geometry optimizations and frequency calculations [39].

Q3: What are the common causes of model performance issues, and how can I troubleshoot them? Performance and accuracy problems often stem from:

- Incorrect spin/charge states: Ensure you properly set the `charge` and `spin` in the `atoms.info` dictionary [39].

- Device mismatches: The `device` parameter in `load_predict_unit` must match your available hardware (`"cuda"` for GPU, `"cpu"` for CPU) [39].

- Suboptimal inference settings: Using `inference_settings="turbo"` can accelerate inference but locks the predictor to the first system size it encounters [39].

Q4: Where can I access the raw electronic structure data beyond basic properties? The comprehensive OMol25 Electronic Structures dataset, comprising ~500 TB of raw DFT outputs, electronic densities, wavefunctions, and molecular orbital information, is available through the Materials Data Facility via high-performance Globus transfer. Access requires a free Globus account and joining a permission group [37].

Q5: My research involves large biomolecular complexes. Is OMol25 suitable for this? Yes. A significant innovation of OMol25 is its inclusion of systems of up to 350 atoms, a tenfold increase over previous typical datasets. This includes explicit focus on biomolecules, electrolytes, and metal complexes, making it highly suitable for studying large, complex systems [35] [36].

Troubleshooting Guides

Issue 1: Hugging Face Access Denied

Problem: Your request to access the models on Hugging Face is denied or pending.

- Solution Steps:

- Provide Complete Information: Ensure you submit your full legal name, date of birth, and full organization name with all corporate identifiers, avoiding acronyms [38].

- Review License Terms: Carefully read the FAIR Chemistry License and Acceptable Use Policy to ensure your intended use is compliant [38].

- Check Geographic Restrictions: Note that access is restricted in comprehensively sanctioned jurisdictions, as well as in China, Russia, and Belarus [38].

Issue 2: Failed Installation or Import of `fairchem-core`

Problem: Errors occur when trying to install or import the necessary Python packages.

- Solution Steps:

- Verify Environment: Use a clean Python 3.8+ environment to avoid dependency conflicts.

- Install via PyPI: Use the standard command `pip install fairchem-core` for the core library and `pip install ase` for the Atomic Simulation Environment [39].

- Check Dependencies: The `fairchem-core` package should handle its own dependencies, but ensure you have a compatible PyTorch installation for your hardware (CPU vs. CUDA).

Issue 3: Model Produces Physically Unreasonable Results

Problem: The model returns energies or forces that are nonsensical.

- Solution Steps:

- Validate Input Structure: Check that your initial molecular geometry is reasonable. Use chemical drawing software or pre-optimization with a faster method if needed.

- Verify System Composition: Ensure all atoms in your system are among the 83 elements covered by OMol25 [35].

- Confirm Charge/Spin: Double-check that the `atoms.info = {"charge": 0, "spin": 1}` values are physically appropriate for your system [39].

- Consult Evaluations: Review the comprehensive evaluations provided by the OMol25 team to understand the model's expected performance on different chemical tasks [36].
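
As a quick sanity check for the charge/spin step, the sketch below tests whether a charge and a spin value are mutually consistent with the electron count. It assumes that the `spin` entry denotes the spin multiplicity (2S + 1), which matches the `"spin": 1` singlet example above. The helper name `check_charge_spin` and the short element table are illustrative and are not part of fairchem-core or ASE.

```python
# Illustrative helper (hypothetical; not part of fairchem-core or ASE).
# Assumption: "spin" is the spin multiplicity 2S + 1.

ATOMIC_NUMBERS = {"H": 1, "C": 6, "N": 7, "O": 8, "Fe": 26}  # extend as needed


def check_charge_spin(symbols, charge, spin):
    """Return True if charge and multiplicity are consistent with the electron count."""
    n_electrons = sum(ATOMIC_NUMBERS[s] for s in symbols) - charge
    n_unpaired = spin - 1
    if n_electrons < 0 or spin < 1 or n_unpaired > n_electrons:
        return False
    # The unpaired-electron count must have the same parity as the electron count.
    return (n_electrons - n_unpaired) % 2 == 0


water = ["O", "H", "H"]
print(check_charge_spin(water, charge=0, spin=1))        # closed-shell singlet
print(check_charge_spin(water, charge=0, spin=2))        # doublet with 10 electrons
print(check_charge_spin(["O", "H"], charge=0, spin=2))   # hydroxyl radical
```

A passing check only rules out impossible combinations; whether the chosen spin state is the physically relevant one still has to be decided from the chemistry of the system.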

The Scientist's Toolkit: Essential Research Reagent Solutions

Table: Essential Computational Tools for Leveraging OMol25

| Tool Name | Type | Primary Function | Access Link |

|---|---|---|---|

| OMol25 Dataset | Reference Data | Training ML models with 100M+ DFT calculations [35] | Hugging Face / Materials Data Facility [37] [38] |

| ORCA 6.0.1 | Quantum Chemistry Code | High-performance DFT code used to generate OMol25 data [40] | https://orcaforum.kofo.mpg.de/ |

| Atomic Simulation Environment (ASE) | Python Library | Interface for setting up and running atomistic simulations [39] | https://wiki.fysik.dtu.dk/ase/ |

| FAIRChemCalculator | Python Calculator | Bridges ASE with OMol25 models for energy/force calculations [39] | Included in fairchem-core |

| Rowan Cloud Platform | Web Application | GUI-based platform for running OMol25 models without local setup [39] | https://www.rowansci.com/ |

| Globus | Data Transfer Service | High-performance transfer of the 500TB electronic structures dataset [37] | https://www.globus.org/ |

Experimental Protocols: Methodologies for Key Use Cases

Protocol: Running a Basic Single-Point Energy Calculation

This protocol details the core methodology for obtaining the potential energy of a molecular system using the OMol25 models, which serves as the foundation for more complex simulations [39].

Step-by-Step Procedure:

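- Environment Setup: Install the required packages using `pip install fairchem-core ase` in your Python environment [39].

- Model Acquisition: Request access to and download the desired model weights (e.g., `esen_sm_conserving_all.pt`) from the OMol25 page on Hugging Face [38].

- Initialization Script: Load the downloaded weights and wrap them in a `FAIRChemCalculator`, which exposes the model to ASE [39].

- Structure Preparation: Build or read the structure as an ASE `Atoms` object and set its charge and spin, e.g., `atoms.info = {"charge": 0, "spin": 1}` [39].

- Execution: Attach the calculator to the `Atoms` object and request the potential energy (in ASE, `atoms.get_potential_energy()`).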

Protocol: Advanced Analysis of MD Trajectories using Distance Maps

For researchers analyzing complex MD trajectories, such as studying conformational changes in proteins, converting trajectories into interresidue distance maps provides a powerful analytical tool. This methodology, validated on SARS-CoV-2 spike protein simulations, enables the prediction of functional impacts of mutations [41].

Detailed Steps:

- Trajectory Preprocessing: Extract frames from your MD simulation at a consistent time interval. For protein systems, focus on the residues of interest (e.g., the receptor-binding domain) [41].

- Distance Matrix Calculation: For each frame, calculate the Euclidean distance between the alpha-carbons (Cα) of all residue pairs, generating a symmetric N x N matrix where N is the number of residues [41].

- Input Representation: Use these matrices as 2D input features (distance maps) for a deep learning model. This representation is inherently invariant to translational and rotational movements of the protein, reducing preprocessing needs [41].

- Model Training: Implement a Convolutional Neural Network (CNN) designed to classify conformational states or predict mutation effects based on the patterns in the distance maps [41].

- Validation: Correlate the model's predictions with independent biophysical measures, such as binding free energy calculations, to confirm their biological significance. Pearson correlation coefficients above 0.77 have been reported for this approach [41].
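
The distance matrix step can be sketched in a few lines. The example below is a minimal, standard-library-only illustration of building a Cα distance map for one frame and checking that it does not change when the whole structure is translated. The coordinates and the function name `distance_map` are hypothetical; in practice the coordinates would come from a trajectory-reading library and the arithmetic would normally be vectorized.

```python
import math


def distance_map(ca_coords):
    """Symmetric N x N matrix of Euclidean C-alpha distances for one frame."""
    n = len(ca_coords)
    dmap = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1, n):
            d = math.dist(ca_coords[i], ca_coords[j])
            dmap[i][j] = dmap[j][i] = d  # fill both triangles
    return dmap


# Hypothetical 3-residue frame (coordinates in angstroms).
frame = [(0.0, 0.0, 0.0), (3.8, 0.0, 0.0), (3.8, 3.8, 0.0)]
# The same frame after a rigid translation.
shifted = [(x + 10.0, y - 5.0, z + 2.0) for x, y, z in frame]

dmap = distance_map(frame)
dmap_shifted = distance_map(shifted)

same = all(
    math.isclose(a, b, abs_tol=1e-9)
    for row_a, row_b in zip(dmap, dmap_shifted)
    for a, b in zip(row_a, row_b)
)
print("translation-invariant:", same)
```

Stacking one such matrix per frame yields the image-like input that the CNN step consumes.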

Molecular dynamics (MD) simulations are an essential tool for understanding the behavior of biomolecules and designing new drugs. Since nearly all physiological processes occur in an aqueous environment, accurately modeling solvent effects is crucial for realistic simulations. Researchers primarily use two approaches: explicit solvent models, which include individual solvent molecules, and implicit solvent models, which treat the solvent as a continuous medium. This guide helps you navigate the trade-offs between these approaches to optimize your research simulations.

Model Comparison: Key Differences at a Glance

The table below summarizes the core characteristics of implicit and explicit solvent models to help you understand their fundamental differences.

| Feature | Implicit Solvent Models | Explicit Solvent Models |

|---|---|---|

| Fundamental Representation | Solvent as a continuous dielectric medium [42] [43] | Individual, discrete solvent molecules [44] [43] |

| Computational Cost | Lower; fewer degrees of freedom [45] | Significantly higher; many added atoms [44] |

| Sampling Speed | Faster conformational sampling; reduced viscosity [46] [45] | Slower sampling; solvent viscosity and friction present [46] |

| Physical Accuracy | Approximate; misses specific molecular interactions [44] [43] | High-resolution; captures specific interactions like hydrogen bonding [43] [47] |

| Key Limitations | Poor for charged species, hydrogen bonding, and non-polar effects [44] [43] [47] | High computational cost can limit system size and simulation time [44] |

Troubleshooting Common Solvation Model Issues

Why is my implicit solvent simulation producing physically unrealistic results for my charged molecule?

Implicit solvent models often fail to accurately capture the behavior of charged species and systems with strong, specific solvent-solute interactions [44] [43] [47].

- Root Cause: Continuum models lack a molecular view of the solvent. They cannot represent specific, directional interactions like strong hydrogen bonds or account for the precise structure of the first solvation shell, which is critical for ions and radicals [43] [47].

- Evidence: A 2025 study on the carbonate radical anion found that implicit solvation (SMD model) predicted only one-third of the experimentally measured reduction potential. The error arose from an inability to model the extensive hydrogen-bonding network [47].

- Solution Strategy:

- Switch to Explicit Solvent: If computationally feasible, use explicit water molecules for accurate modeling of charged systems [47].

- Use a Cluster-Continuum Approach: Incorporate a few explicit solvent molecules to capture key first-shell interactions, and then embed this cluster in a continuum model to represent the bulk solvent [48]. The number of explicit molecules needed for convergence should be benchmarked [48].
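
One simple way to benchmark the number of explicit molecules is to compute the property of interest for clusters of increasing size and look for the point where it stops changing. The sketch below assumes a list of property values indexed by the number of explicit waters. The numbers, the tolerance, and the function name `converged_cluster_size` are hypothetical placeholders, not results from [48].

```python
def converged_cluster_size(values, tolerance, window=2):
    """Smallest index n from which `window` successive changes all stay below `tolerance`.

    values[n] is the property computed with n explicit solvent molecules.
    Returns None if no such n exists in the data.
    """
    for n in range(len(values) - window):
        changes = [abs(values[k + 1] - values[k]) for k in range(n, n + window)]
        if all(c < tolerance for c in changes):
            return n
    return None


# Hypothetical property values for 0, 1, 2, ... explicit waters (arbitrary units).
property_by_n_waters = [1.00, 1.60, 1.95, 2.10, 2.13, 2.14, 2.14]

n_needed = converged_cluster_size(property_by_n_waters, tolerance=0.05)
print("explicit waters needed:", n_needed)
```

Requiring several successive small changes, rather than one, guards against stopping at an accidental plateau.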

Why are my conformational changes happening much faster in implicit solvent compared to explicit solvent?

This is a common and expected effect due to differences in solvent friction [46].

- Root Cause: Explicit solvent has viscosity and inertia, which create friction that slows down solute motion. Implicit solvent models often lack this viscous drag, leading to accelerated dynamics and faster conformational sampling [46] [45].

- Evidence: A 2015 study directly comparing explicit and implicit solvent simulations found the speedup in conformational sampling with implicit solvent was highly system-dependent, ranging from approximately 1-fold for small dihedral flips to about 100-fold for large changes like nucleosome tail collapse [46].

- Solution Strategy:

- Interpret Dynamics with Caution: The accelerated kinetics in implicit solvent are not directly comparable to real-world timescales. These simulations are excellent for probing conformational space and thermodynamics, but not for measuring exact transition rates [46] [45].

- Adjust Friction Coefficient: Many MD packages allow you to tune the friction coefficient (e.g., `gamma_ln` in AMBER) in implicit solvent to better mimic the viscosity of explicit water, though this is an approximation [46].

My implicit solvent simulation ignores my changed dielectric constant settings. What went wrong?

This suggests a potential issue with the input or model parametrization.

- Root Cause: The input parameters may not be correctly recognized by the software, or the chosen implicit solvent model may not be properly validated for the specific conditions you are using [49].

- Evidence: In a 2014 AMBER mailing list post, a user reported that changing the `extdiel` parameter to model a hydrophobic solvent had no effect on the simulation outcome. An expert response indicated that the specific Generalized Born model (`igb=7`) and `bondi` radii combination had not been successfully validated for use in low-dielectric solvents [49].

- Solution Strategy:

- Validate Input: First, check the program's output file to confirm it lists your non-default parameters (e.g., `extdiel = 26.70`). This verifies the input was read [49].

- Benchmark Your Model: Before production runs, ensure the specific implicit solvent model and parameters you have chosen are known to work for systems similar to yours. Consult the software documentation and literature. If no validated model exists for your conditions, you may need to switch to explicit solvent or perform your own validation against experimental data [49].
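
The input validation step can be automated with a small text search. The sketch below scans output text for `name = value` pairs and returns the reported values. The sample excerpt is hypothetical, and real output layouts differ between programs and versions, so the pattern may need adjusting. The function name `find_parameter` is likewise illustrative.

```python
import re


def find_parameter(output_text, name):
    """Return every value reported as 'name = value' in the output text, as floats."""
    pattern = re.compile(
        rf"\b{re.escape(name)}\s*=\s*([-+]?\d+(?:\.\d*)?(?:[eE][-+]?\d+)?)"
    )
    return [float(m.group(1)) for m in pattern.finditer(output_text)]


# Hypothetical excerpt of a simulation output file.
sample_output = """
     saltcon =   0.00000, offset  =   0.09000
     intdiel =   1.00000, extdiel =  26.70000
"""

values = find_parameter(sample_output, "extdiel")
if not values:
    print("extdiel not found: the setting may not have been read")
else:
    print("extdiel reported as", values)
```

Finding the expected value confirms only that the input was parsed. It does not show that the chosen model is valid for that dielectric constant, which is the separate benchmarking step.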

Advanced Protocols and Emerging Solutions

Detailed Protocol: Setting Up a QM/MM Semicontinuum Solvation Model

This protocol is ideal for quantum chemistry calculations (e.g., in Q-Chem) where full explicit solvation is too costly, but implicit solvent is inadequate.

- Generate Solvent Configurations: Use molecular dynamics (MD) with an explicit solvent box and a classical force field to generate an ensemble of solute-solvent configurations. Run the simulation long enough to ensure proper sampling [48].

- Select Configurations for QM Calculation: From the MD trajectory, select multiple snapshots (e.g., at regular time intervals). In each snapshot, identify a small cluster consisting of the solute molecule and the key solvent molecules from its first solvation shell [48].

- Define the QM and MM Regions: For each cluster, the solute and the critical explicit solvent molecules are treated with quantum mechanics (QM). The rest of the explicit solvent molecules from the snapshot can be treated with a molecular mechanics (MM) force field in a QM/MM calculation, or simply discarded [48].

- Embed in Continuum Solvent: The entire QM cluster (or QM/MM system) is then placed within an implicit solvent model (e.g., IEFPCM) to capture the bulk electrostatic effects of the solvent [48].

- Run and Average: Perform the QM or QM/MM calculation for each selected cluster configuration. The final energy or property is obtained by averaging the results across all configurations [48].
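
Two pieces of this workflow are easy to illustrate without any quantum chemistry package: picking first-shell solvent molecules by a distance cutoff, and averaging a property over snapshots. The sketch below uses hypothetical coordinates, a hypothetical cutoff, and placeholder energies. The function names are illustrative, and a simple distance criterion is only one possible way to define the first solvation shell.

```python
import math
import statistics


def first_shell(solute_atoms, solvent_molecules, cutoff):
    """Indices of solvent molecules with any atom within `cutoff` of any solute atom."""
    selected = []
    for idx, molecule in enumerate(solvent_molecules):
        if any(math.dist(a, s) <= cutoff for a in molecule for s in solute_atoms):
            selected.append(idx)
    return selected


def ensemble_average(values):
    """Mean and standard error of a property over snapshots."""
    mean = statistics.fmean(values)
    if len(values) > 1:
        sem = statistics.stdev(values) / math.sqrt(len(values))
    else:
        sem = 0.0
    return mean, sem


# Hypothetical snapshot: a one-atom solute and two waters (angstroms).
solute = [(0.0, 0.0, 0.0)]
waters = [
    [(2.8, 0.0, 0.0), (3.4, 0.8, 0.0), (3.4, -0.8, 0.0)],  # close to the solute
    [(6.0, 0.0, 0.0), (6.6, 0.8, 0.0), (6.6, -0.8, 0.0)],  # bulk-like
]
shell = first_shell(solute, waters, cutoff=3.5)
print("first-shell waters:", shell)

# Placeholder per-snapshot energies from the QM or QM/MM step (arbitrary units).
energies = [-10.0, -11.0, -12.0]
mean, sem = ensemble_average(energies)
print(f"average = {mean:.3f} +/- {sem:.3f}")
```

The standard error here treats snapshots as independent; frames taken too close together in time are correlated, in which case this estimate is too optimistic.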

Emerging Solution: Machine Learning for Explicit Solvent Accuracy

Machine learning (ML) is a promising path to bypass the high cost of explicit solvent simulations.

- Concept: Train an ML potential on a diverse set of configurations derived from accurate but expensive quantum chemical calculations in explicit solvent. Once trained, the ML model can predict energies and forces with quantum-mechanical accuracy at a fraction of the computational cost, enabling long-timescale MD simulations [44].

- Protocol:

- Active Learning: Use an automated workflow that runs short MD simulations, identifies new and uncertain molecular configurations, and queries a high-level QM method to compute their accurate energy. These new data points are automatically added to the training set, improving the model iteratively [44].

- Similarity-Based Selection: To reduce the number of expensive QM calculations, use fast molecular descriptors to select diverse structures for the training set, rather than solely relying on energy-based criteria [44].

- Application: This approach has been successfully used to study reactions like the cycloaddition between cyclopentadiene and methyl vinyl ketone in explicit water, revealing mechanistic details (e.g., a more stepwise pathway) that were missed by implicit solvent models [44].
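
The similarity-based selection idea can be illustrated with greedy farthest-point sampling, a common diversity-selection technique. It is shown here as one possible approach and is not necessarily the procedure used in [44]. The descriptors are hypothetical 2D vectors, and the function name is illustrative.

```python
import math


def farthest_point_selection(descriptors, n_select, start=0):
    """Greedy farthest-point sampling.

    Repeatedly adds the structure whose descriptor is farthest from
    everything selected so far, which favors diverse training structures.
    """
    if not descriptors or n_select <= 0:
        return []
    n_select = min(n_select, len(descriptors))
    selected = [start]
    # Distance from each structure to its nearest already-selected structure.
    min_dist = [math.dist(d, descriptors[start]) for d in descriptors]
    while len(selected) < n_select:
        nxt = max(range(len(descriptors)), key=min_dist.__getitem__)
        selected.append(nxt)
        for i, d in enumerate(descriptors):
            min_dist[i] = min(min_dist[i], math.dist(d, descriptors[nxt]))
    return selected


# Hypothetical descriptors: two tight clusters and one outlier.
descriptors = [(0.0, 0.0), (0.1, 0.0), (5.0, 5.0), (5.1, 5.0), (10.0, 0.0)]

picked = farthest_point_selection(descriptors, n_select=3)
print("structures sent to the QM method:", picked)
```

With three picks the selection takes one structure from each region of descriptor space instead of spending QM calculations on near-duplicates.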

The Scientist's Toolkit: Essential Research Reagents

The table below lists key computational "reagents" and methods used in solvation modeling.

| Tool / Method | Function / Description | Example Use Cases |

|---|---|---|

| GB/SASA Models [42] | Combines Generalized Born (electrostatics) with Solvent-Accessible Surface Area (non-polar contributions). | Fast, implicit-solvent MD of proteins; initial conformational sampling [42] [45]. |

| Poisson-Boltzmann [42] | Numerically solves the PB equation for electrostatic solvation free energy. | Highly accurate, single-point energy calculations; not typically for MD due to cost [42]. |

| SMD [47] | A widely used universal implicit solvation model based on electron density. | Standard for benchmarking solvation energies of small molecules in quantum chemistry [47]. |

| Interaction-Reorganization Solvation (IRS) [43] | A newer explicit-solvent approach using MD trajectories to compute solvation free energy. | Accurate solvation energy calculations where continuum models fail [43]. |