Strategic Library Size Reduction in Biomedical Research: Maximizing Efficiency Without Compromising Coverage


Abstract

This article provides a comprehensive guide for researchers and drug development professionals on strategically reducing library size while maintaining robust target coverage. It explores the foundational principles of library optimization, presents practical methodological applications across diverse fields like genomics, proteomics, and radiotherapy planning, addresses common troubleshooting and optimization challenges, and offers a comparative analysis of validation strategies. By synthesizing evidence from recent studies, this resource delivers actionable insights for enhancing research efficiency, reducing computational and experimental burdens, and accelerating discovery in biomedical and clinical contexts.

The Core Principles: Why Smaller, Smarter Libraries Work

For researchers in drug development, optimizing a library—be it chemical, genetic, or thematic—involves a fundamental trade-off: reducing its physical or virtual size while maintaining sufficient coverage of the target space. This article provides a technical support framework to guide you through the experimental and computational challenges inherent in this process.

Troubleshooting Guide: Common Challenges in Library Optimization

Q1: How can I quickly identify and resolve issues causing a loss of diversity in my condensed screening library?

- Issue: Post-optimization, the library shows significantly reduced diversity, increasing the risk of missing key hits.

- Investigation: First, compare the physicochemical property distributions (e.g., molecular weight, logP, polar surface area) of the original and reduced libraries. A sharp narrowing of distributions indicates a problem.

- Solution: Ensure your optimization algorithm includes a diversity penalty term. Re-run the analysis, increasing the weight of this penalty to force the selection of a more structurally diverse subset of compounds.

- Prevention: Continuously monitor diversity metrics in real-time during the library selection process, not just at the end [1].

Q2: My library size has been successfully reduced, but the computational model's performance has dropped. What steps should I take?

- Issue: A smaller, more manageable library fails to recapitulate the predictive power of the full library in silico.

- Investigation: This often signals overfitting during the feature selection or model training phase. Validate your model on a completely independent test set that was not used in any part of the optimization.

- Solution: Implement a more robust cross-validation protocol. Simplify your model or increase the regularization strength to prevent it from learning noise from the training data. Re-evaluate the feature selection criteria to ensure they are aligned with the ultimate biological endpoint [2].

- Prevention: Use hold-out validation sets from the beginning, and define clear success criteria for model performance before starting the optimization.

Q3: What is the best way to validate that a smaller library maintains adequate coverage of the biological target space?

- Issue: Uncertainty about whether the optimized library samples the necessary biological target space to identify active compounds.

- Investigation: Perform retrospective validation. Use historical screening data to see if a library of the same size and design, selected by your new method, would have identified known active compounds or hits.

- Solution: Employ multiple metrics to assess coverage. These should include:

  - Structural Clustering: Ensure compounds from all major chemical clusters in the original library are represented.

  - Pharmacophore Coverage: Verify that key pharmacophore features relevant to your target class are present.

  - Biological Assay: If resources allow, run a small-scale pilot screen against the target to confirm the hit rate has not unacceptably declined [2].

- Prevention: Define "coverage" with specific, measurable parameters at the start of the project.
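The retrospective check and the structural-clustering metric described in Q3 can be sketched in a few lines. This is a minimal illustration rather than a validated workflow: the function names, the `cluster_of` mapping, and the toy compound IDs are all hypothetical, and the cluster labels are assumed to have been computed beforehand with whatever clustering method you prefer.

```python
def cluster_coverage(cluster_of, selected_ids):
    """Fraction of clusters in the full library with at least one selected member."""
    all_clusters = set(cluster_of.values())
    covered = {cluster_of[c] for c in selected_ids}
    return len(covered) / len(all_clusters)


def active_recovery(known_actives, selected_ids):
    """Fraction of historical actives that the reduced library would have contained."""
    actives = set(known_actives)
    if not actives:
        raise ValueError("no known actives supplied")
    return len(actives & set(selected_ids)) / len(actives)


# Toy data (hypothetical): six compounds in three clusters, three known actives.
cluster_of = {"c1": "A", "c2": "A", "c3": "B", "c4": "B", "c5": "C", "c6": "C"}
known_actives = ["c1", "c4", "c6"]
selected = ["c1", "c3", "c6"]

coverage = cluster_coverage(cluster_of, selected)
recovery = active_recovery(known_actives, selected)
print(f"cluster coverage: {coverage:.2f}, active recovery: {recovery:.2f}")
```

Reporting both numbers side by side is a deliberate choice: a subset can touch every cluster and still miss most historical actives, or the reverse.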

Q4: How do I track the success of my library optimization efforts using data?

- Issue: The team cannot agree on whether the library optimization project is successful.

- Investigation & Solution: From day one, establish and track Key Performance Indicators (KPIs). The table below summarizes critical metrics for library optimization [1] [3].

Table 1: Key Performance Indicators for Library Optimization

| Metric | Description | Target |
|---|---|---|
| Size Reduction Factor | Percentage decrease in the number of compounds or data points. | Project-defined (e.g., 50-80%) |
| Diversity Index | A measure of the structural or thematic variety within the library (e.g., Gini-Simpson index). | Maintain >80% of original library value. |
| Hit Rate Retention | The ratio of the hit rate in the optimized library to the hit rate in the original library. | >90% |
| Model Performance Drop | The change in predictive accuracy (e.g., AUC, R²) on the independent test set. | <5% decrease |
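As a rough sketch of how the first three KPIs in the table might be computed, the example below takes cluster labels and hit counts for the full and reduced libraries. It assumes the Gini-Simpson index is calculated over cluster membership, which is one common choice but not the only one; the function names and the toy numbers are illustrative assumptions.

```python
from collections import Counter


def gini_simpson(cluster_labels):
    """Gini-Simpson index: 1 - sum(p_i^2) over the cluster proportions p_i."""
    n = len(cluster_labels)
    if n == 0:
        raise ValueError("empty library")
    return 1.0 - sum((c / n) ** 2 for c in Counter(cluster_labels).values())


def library_kpis(full_clusters, reduced_clusters, full_hits, reduced_hits):
    """Size reduction, diversity retention, and hit-rate retention as fractions."""
    full_rate = full_hits / len(full_clusters)
    reduced_rate = reduced_hits / len(reduced_clusters)
    return {
        "size_reduction": 1.0 - len(reduced_clusters) / len(full_clusters),
        # Assumes the full library has more than one cluster (non-zero index).
        "diversity_retention": gini_simpson(reduced_clusters) / gini_simpson(full_clusters),
        "hit_rate_retention": reduced_rate / full_rate,
    }


# Toy example (made-up numbers): 100 compounds reduced to 30.
full = ["A"] * 50 + ["B"] * 30 + ["C"] * 20
reduced = ["A"] * 10 + ["B"] * 10 + ["C"] * 10
kpis = library_kpis(full, reduced, full_hits=10, reduced_hits=3)
for name, value in kpis.items():
    print(f"{name}: {value:.3f}")
```

The model-performance KPI is omitted here because it depends on the specific model and test set in use.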

Experimental Protocol: Method for Rational Library Reduction

This protocol outlines a methodology for systematically reducing library size while monitoring target space coverage.

1. Goal Definition and Baseline Establishment

- Objective: Define the specific goal of the optimization (e.g., reduce from 100,000 to 20,000 compounds for an HTS feasibility study).

- Data Collection: Assemble the full library dataset. Annotate compounds with all relevant features (structural descriptors, prior bioactivity data, calculated properties).

- Baseline Metrics: Calculate baseline metrics for the full library: size, diversity index, and performance of a benchmark model.

2. Feature Selection and Algorithm Choice

- Feature Selection: Apply feature selection techniques (e.g., variance threshold, correlation analysis, model-based selection) to remove redundant or non-informative descriptors. This simplifies the problem space.

- Algorithm Selection: Choose a subset selection algorithm suited to your goal.

  - For Maximum Diversity: Use MaxMin or sphere exclusion algorithms.

  - For Representative Coverage: Use clustering-based methods (e.g., k-means) followed by centroid selection.

  - For Predictive Power: Use machine learning models with built-in feature importance (e.g., Random Forest) to guide the selection of the most informative compounds.
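To make the MaxMin option concrete, here is a small pure-Python sketch of greedy MaxMin selection over descriptor vectors. It assumes Euclidean distance on already-scaled descriptors and an arbitrary seed compound; production cheminformatics toolkits usually work on fingerprints with Tanimoto distance instead, so treat this as an illustration of the idea only. The function name and the toy points are hypothetical.

```python
import math


def maxmin_select(points, k, seed_index=0):
    """Greedy MaxMin: repeatedly add the point farthest from the current selection."""
    if not 0 < k <= len(points):
        raise ValueError("k must be between 1 and the number of points")
    selected = [seed_index]
    # Distance from every point to its nearest selected point so far.
    min_dist = [math.dist(p, points[seed_index]) for p in points]
    min_dist[seed_index] = -1.0  # mark as already selected
    while len(selected) < k:
        nxt = max(range(len(points)), key=min_dist.__getitem__)
        selected.append(nxt)
        for i, p in enumerate(points):
            if min_dist[i] >= 0:
                min_dist[i] = min(min_dist[i], math.dist(p, points[nxt]))
        min_dist[nxt] = -1.0
    return selected


# Toy 2-D descriptor space with two near-duplicate pairs and one outlier.
points = [(0.0, 0.0), (0.1, 0.0), (5.0, 5.0), (5.1, 5.0), (10.0, 0.0)]
picked = maxmin_select(points, k=3)
print(picked)
```

On this toy input the selection skips the near-duplicates and picks one compound from each distinct region, which is the behavior the diversity goal calls for.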

3. Iterative Optimization and Validation

- Subset Generation: Run the selected algorithm to generate candidate subsets of the target size.

- Validation: For each candidate subset, calculate the KPIs from Table 1. Compare them against the predefined success criteria.

- Iteration: If the criteria are not met, adjust the algorithm parameters (e.g., diversity penalty, cluster size) and iterate. This process should be data-driven [1].

4. Final Evaluation and Reporting

- Hold-out Set Testing: Evaluate the performance of the final, optimized library on a completely independent validation set that was not used during any optimization step.

- Documentation: Report all parameters, algorithms, and final KPI values to ensure the experiment is reproducible.

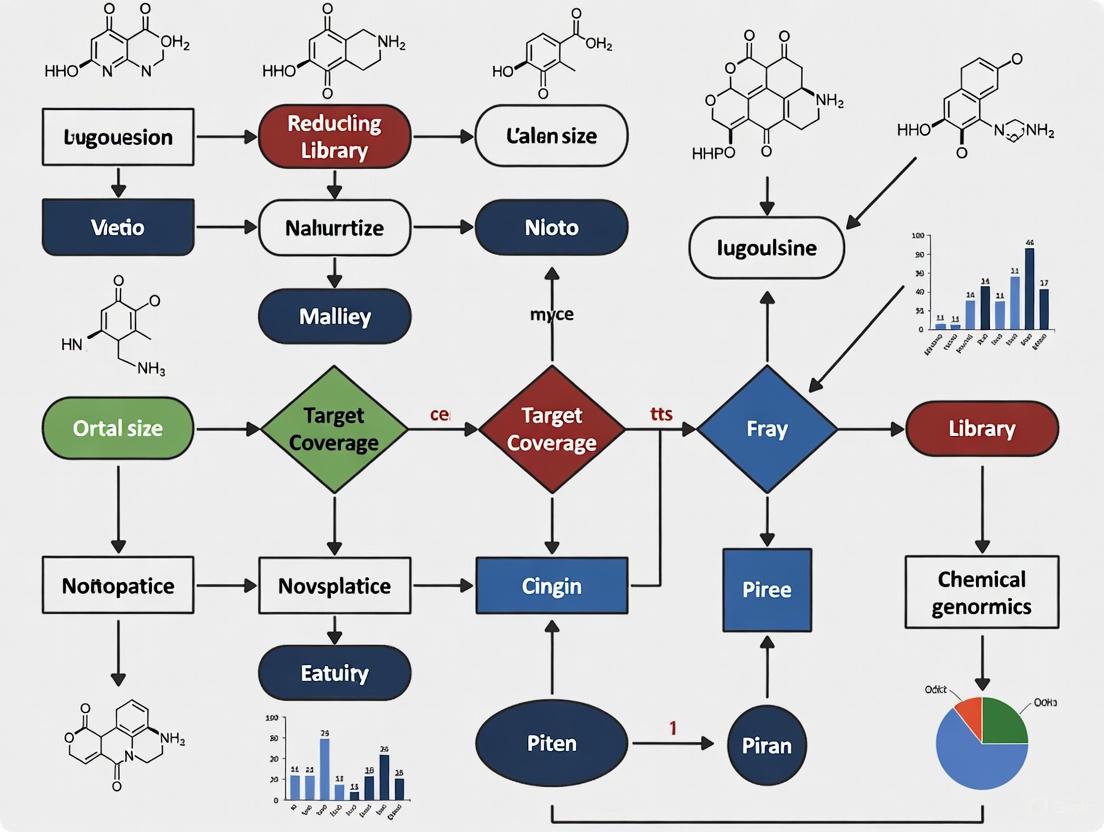

Workflow Visualization

The following diagram illustrates the logical workflow and iterative nature of the library optimization process.

Research Reagent Solutions for Library Analysis

Table 2: Essential Tools for Library Profiling and Validation

| Reagent / Tool | Function in Library Optimization |
|---|---|
| Chemical Descriptor Software (e.g., RDKit, Dragon) | Calculates quantitative features (e.g., molecular weight, polarity, charge) to numerically represent each library member for computational analysis. |
| Clustering Algorithm | Groups library members based on similarity in descriptor space, enabling the selection of representative subsets and assessment of diversity. |
| Cheminformatics Platform (e.g., Knime, Pipeline Pilot) | Provides a visual workflow environment to build, execute, and automate the complex multi-step processes of data preprocessing, analysis, and model building. |
| Statistical Analysis Software | Used to calculate diversity indices, perform hypothesis testing on hit rates, and generate visualizations to compare library properties before and after optimization. |

This technical support center provides troubleshooting guides and FAQs for researchers investigating how to reduce library size while maintaining target coverage, using evidence and methodologies from radiotherapy plan quality studies.

Frequently Asked Questions

Q1: What is the core evidence that a smaller set of radiotherapy plans can achieve output quality comparable to a larger set?

In a key intra-institutional study, 40 planners each created a treatment plan for the same clinical case, and the resulting plans spanned a wide range of quality scores. Statistical analysis found no significant correlation between plan quality and a planner's years of experience, job title, or other measured factors. This suggests that consistent, high-quality output is achievable without requiring a vast number of individual planners or plans, because the variation stems from systemic factors rather than from individual expertise [4].

Q2: How can variability in the "planning library" impact final outcomes?

In radiotherapy, variability in contouring (the delineation of tumors and organs) is a major source of inconsistency. Research on nasopharyngeal carcinoma shows that interobserver variability (IOV) in delineation has a direct dosimetric and clinical impact [5]. The relative volume difference (ΔV) in contoured targets showed a strong correlation (R=0.703) with changes in Tumor Control Probability (TCP) [5]. Inconsistencies in the initial "library" of contours can therefore propagate into the dose distribution and reduce the effectiveness of the final treatment plan.

Q3: Beyond dose metrics, what other factors define a high-quality plan?

A high-quality plan balances three core concepts [6]:

- Dose Metrics: Adherence to clinical goals for target coverage and organ sparing.

- Robustness: The plan's sensitivity to uncertainties (e.g., day-to-day anatomical changes).

- Complexity: How intricate the delivery instructions are; highly complex plans can be less deliverable and more prone to errors.

Evaluating a plan requires a holistic view of all these elements to ensure the delivered dose is clinically suitable [6].

Q4: What tools can help standardize output and reduce variability?

The literature suggests moving beyond additional training and investigating advanced, systematic solutions [4]. Promising approaches include:

- Knowledge-Based Planning (KBP): Uses a model trained on prior high-quality plans to predict achievable dose-volume objectives for new cases.

- Advanced Optimization Techniques: Automate and standardize the planning process within the treatment planning system.

These tools help encode best practices, reducing reliance on individual planner habits and decreasing variation across a smaller set of plans [4].

Troubleshooting Guides

Problem: High Variation in Output Quality

Symptoms:

- Wide range of quality scores among different plans for the same case [4].

- Inconsistent contouring of targets and organs-at-risk (OARs) between different professionals [5].

Investigation and Solutions:

- Check for Clear Protocols: Ensure all users are working from the same detailed, prioritized list of planning goals and constraints. The esophagus study provided all planners with an identical "ordered list of target dose coverage and normal tissue evaluation criteria" [4].

- Implement Standardized Metrics: Use a Plan Quality Metric (PQM) to score plans objectively. The referenced study used a points system where higher-priority clinical goals had more points at stake, allowing for quantitative ranking [4].

- Analyze Correlation with Extraneous Factors: Perform a statistical analysis (e.g., runs test, Spearman's rank correlation) to see if quality correlates with experience or other variables. If no correlation is found (as in the core study), the solution is not more training but systemic change [4].

- Adopt Advanced Standardization Tools: Transition to methods like Knowledge-Based Planning (KBP) to mitigate human variability [4].

Problem: Reduced Target Coverage or Organ Sparing

Symptoms:

- Failure to meet dose-volume criteria for Planning Target Volume (PTV) coverage (e.g., D95%) [4].

- Excessive dose to Organs-at-Risk (OARs), increasing Normal Tissue Complication Probability (NTCP) [5].

Investigation and Solutions:

- Verify Contouring Consistency: Use geometric metrics like Dice Similarity Coefficient (DSC) and Hausdorff Distance (HD95) to quantify contouring variation, which is a primary source of dosimetric errors [5].

- Re-optimize with Higher Priority: In the planning system, increase the optimization weight or priority for the violated objective.

- Assess Plan Robustness: Check if the plan is overly sensitive to minor anatomical shifts. A plan that is perfect on the planning scan may degrade upon delivery. Use robust evaluation and optimization tools if available [6].
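The Dice Similarity Coefficient mentioned under contouring consistency is simple enough to compute directly. The sketch below represents each contour as a set of voxel coordinates, which is an assumption made for brevity; real pipelines typically operate on binary mask arrays exported from the planning system. The function name and the toy squares are illustrative.

```python
def dice_coefficient(voxels_a, voxels_b):
    """Dice = 2 * |A intersect B| / (|A| + |B|); 1.0 means identical contours."""
    a, b = set(voxels_a), set(voxels_b)
    if not a and not b:
        return 1.0  # two empty contours are treated as identical
    return 2 * len(a & b) / (len(a) + len(b))


# Two 4x4 "contours" on a 2-D grid, the second shifted by one voxel in x.
observer_1 = {(x, y) for x in range(0, 4) for y in range(4)}
observer_2 = {(x, y) for x in range(1, 5) for y in range(4)}

dsc = dice_coefficient(observer_1, observer_2)
print(f"DSC = {dsc:.2f}")
```

A one-voxel shift on a small structure already lowers the DSC noticeably, which is why overlap metrics are usually reported alongside a boundary-distance metric such as HD95.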

Experimental Data and Protocols

Key Experimental Findings

Table 1: Plan Quality Score Distribution from 40 Planners [4]

| Metric | Value |
|---|---|
| Score Range | 80.24 to 135.89 |
| Mean Score | 128.7 |
| Median Score | 131.5 |
| Distribution | Negatively Skewed |

Table 2: Correlation Between Delineation Variability and Clinical Outcomes [5]

| Relationship Analyzed | Correlation Coefficient (R) |
|---|---|
| Relative Volume Difference (ΔV) vs. Prescription Dose Coverage (ΔPDC) | 0.686 |
| Relative Volume Difference (ΔV) vs. Tumor Control Probability (ΔTCP) | 0.703 |
| Relative Volume Difference (ΔV) vs. ΔTCP (Validation Set) | 0.778 |

Detailed Experimental Protocol: Intra-Institutional Plan Quality Study

This protocol is adapted from the study providing the core evidence for this article [4].

1. Objective: To investigate the sources of variability in radiotherapy treatment plan output between planners within a single institution.

2. Materials and Setup:

- Patient Case: A single thoracic esophagus patient CT scan and structure set.

- Planners: 40 treatment planners (a mix of physicists and dosimetrists).

- Planning System: Varian Eclipse treatment planning system.

- Technique: Sliding window IMRT with a Simultaneous Integrated Boost (SIB) prescription.

- Constraints: All planners had access to the same institutional planning procedures and a detailed, prioritized list of planning goals.

3. Method:

- Each planner created a treatment plan independently on a copy of the same patient dataset.

- Each planner had one week to complete the plan; submissions were collected over a 13-month period.

4. Data Analysis:

- Scoring: Plans were ranked based on a points-based Plan Quality Metric (PQM). Points were awarded or deducted based on adherence to the prioritized list of clinical goals.

- Statistical Testing:

  - A runs test was used to determine if planner qualities (job title, campus, etc.) influenced the score ranking.

  - Spearman’s rank correlation was used to investigate if plan score correlated with years of experience or plan complexity (monitor units).
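For readers who want to reproduce the correlation step on their own data, the following is a minimal pure-Python Spearman's rank correlation (Pearson correlation computed on average ranks, so ties are handled). The experience and score values are invented for illustration and are not taken from the study; in practice a statistics package would also report a p-value.

```python
import math


def average_ranks(values):
    """1-based ranks; tied values share the mean of the ranks they span."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        tie_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = tie_rank
        i = j + 1
    return ranks


def spearman_rho(x, y):
    """Spearman's rho as the Pearson correlation of the rank vectors."""
    rx, ry = average_ranks(x), average_ranks(y)
    n = len(rx)
    mx, my = sum(rx) / n, sum(ry) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    sx = math.sqrt(sum((a - mx) ** 2 for a in rx))
    sy = math.sqrt(sum((b - my) ** 2 for b in ry))
    return cov / (sx * sy)


# Hypothetical planners: years of experience vs. plan quality score.
years = [1, 3, 5, 10, 20]
scores = [130, 120, 135, 110, 128]
rho = spearman_rho(years, scores)
print(f"Spearman rho = {rho:.2f}")
```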

Workflow Diagram

Relationship Diagram: Factors Affecting Plan Quality

The Scientist's Toolkit

Table 3: Research Reagent Solutions for Radiotherapy Plan Quality Analysis

| Item / Solution | Function in the Research Context |
|---|---|
| Treatment Planning System (TPS) | Software platform (e.g., Varian Eclipse) used to design, optimize, and calculate the 3D dose distribution of radiotherapy plans. [4] |
| Plan Quality Metric (PQM) | A points-based scoring system to objectively rank plans based on their adherence to a prioritized list of clinical goals for target coverage and organ sparing. [4] |
| Dose-Volume Histogram (DVH) | A graphical plot used to summarize the 3D dose distribution, essential for extracting quantitative data for plan evaluation and scoring. [4] |
| Dice Similarity Coefficient (DSC) | A spatial overlap index (0 to 1) used to quantify the geometric concordance of contours between different observers, critical for assessing contouring variability. [5] |
| Hausdorff Distance (HD95) | A boundary-distance metric (the 95th percentile of the distances between two contour surfaces) that captures large boundary discrepancies while being less sensitive to isolated outlier points than the maximum Hausdorff distance; useful for identifying major outlier delineations. [5] |
| Knowledge-Based Planning (KBP) | A system that uses historical high-quality plans to model achievable dose objectives for new cases, reducing variability and standardizing quality. [4] |
| Virtual Unenhanced CT via Dual-Energy CT | An imaging technique that removes iodine contrast from CT scans, improving the accuracy of dose calculations by providing a more representative tissue density map. [7] |

What is a minimal sgRNA library?

A minimal sgRNA library is a compact, highly optimized collection of single-guide RNAs designed for genome-wide CRISPR screening. Unlike conventional libraries, which often use 4-10 sgRNAs per gene, minimal libraries typically employ only 2 sgRNAs per gene. In dual-guide designs, where both sgRNAs are carried on a single construct, the number of constructs is nearly identical to the number of protein-coding genes being targeted. This approach reduces library size by 42-80% compared to standard libraries while maintaining high screening performance through careful sgRNA selection [8] [9].

Why are minimal libraries important for genetic screening?

Library size presents a significant barrier in CRISPR screening. Larger libraries require massive cell numbers (typically 50-100 × library size for proper representation), raise costs, and limit applications in complex models like primary cells, organoids, and in vivo systems. Minimal libraries overcome these limitations by drastically reducing library complexity while preserving screening sensitivity and specificity, enabling more feasible and cost-effective genetic screens [8] [9].

Library Comparison and Performance Metrics

How do minimal libraries compare to conventional designs?

The table below summarizes key differences between minimal libraries and conventional designs:

Table 1: Comparison of Minimal vs. Conventional Genome-wide CRISPR Libraries

| Feature | Minimal Libraries | Conventional Libraries |
|---|---|---|
| sgRNAs per gene | 2 sgRNAs/gene | 4-10 sgRNAs/gene |
| Total library size | ~21,000 sgRNA pairs (H-mLib); ~37,700 sgRNAs (MinLibCas9) | 65,000-100,000+ sgRNAs |
| Size reduction | 42-80% smaller | Reference size |
| Target coverage | 18,761-21,157 protein-coding genes | Similar gene coverage |
| Cell number requirements | Significantly reduced | 50-100 × library size |
| Cost | Lower due to reduced reagents and sequencing | Proportionally higher |
| Applications | Ideal for complex models (primary cells, organoids, in vivo) | Best for standard cell lines with unlimited expansion capacity |

The H-mLib library exemplifies the minimal approach, containing 21,159 sgRNA pairs targeting human protein-coding genes with nearly 1:1 gene coverage. Benchmarking experiments demonstrated this library maintains high specificity and sensitivity in identifying essential genes despite its compact size [8]. Similarly, the MinLibCas9 library targets 18,761 genes using only 2 sgRNAs per gene, achieving a 42-80% size reduction while preserving the ability to identify known essential genes with >89.8% precision in most cancer cell lines tested [9].

Design Principles and Methodologies

What strategies enable effective minimal library design?

Successful minimal library design incorporates multiple optimization strategies:

- Empirical sgRNA Selection: Mining large-scale existing screening data (such as from Project Score or Avana libraries) to identify sgRNAs with strong, consistent biological effects across diverse contexts [9].

- Computational Prediction Integration: Combining on-target efficiency algorithms (Rule Set 2, DeepCas9) with off-target scoring (CFD score) to select highly active sgRNAs while minimizing off-target effects [8] [10].

- Biological Context Consideration: Prioritizing sgRNAs targeting conserved protein domains and hydrophobic cores, which are more likely to generate loss-of-function mutations when edited [8] [11].

- Genetic Variation Awareness: Filtering out sgRNAs containing single-nucleotide polymorphisms (SNPs), especially near the PAM sequence, which could reduce editing efficiency [8].

- Dual-gRNA Systems: Using vectors expressing two sgRNAs per construct to further minimize library complexity while maintaining knockout efficiency [8].


How are sgRNAs selected for minimal libraries?

The selection process employs rigorous bioinformatic and empirical approaches:

- On-target Efficiency Prediction: Integration of multiple scoring algorithms (Rule Set 2, DeepCas9, AIdit_ONs) into a composite ON-score that better predicts cleavage efficiency than individual scores [8].

- Off-target Assessment: Using Cutting Frequency Determination (CFD) scores to calculate potential off-target sites and exclude sgRNAs with high off-target potential [8].

- Functional Domain Targeting: Selecting sgRNAs that target conserved protein domains annotated in the Conserved Domain Database (CDD), as these are more likely to disrupt protein function [8].

- Empirical Performance Validation: Applying statistical tests (like Kolmogorov-Smirnov tests) to compare sgRNA fitness effects to non-targeting controls, identifying guides with strong biological activity [9].

Experimental Workflow and Protocols

What is the standard workflow for minimal library screening? The experimental workflow for minimal library screening follows these key steps:

Detailed protocol for minimal library screening:

Cell Line Preparation:

- Generate Cas9-expressing cell lines through lentiviral transduction and antibiotic selection (e.g., puromycin for stable integration)

- Validate Cas9 activity using control sgRNAs before proceeding with library screening [12]

Library Transduction:

- Produce high-titer lentivirus from the minimal sgRNA library

- Transduce Cas9-expressing cells at low multiplicity of infection (MOI = 0.3-0.4) to ensure most cells receive only one sgRNA

- Include selection markers (e.g., puromycin resistance or fluorescent markers) to enrich for successfully transduced cells [12] [13]
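The rationale for the low MOI can be checked with a quick calculation. The sketch below assumes that the number of integration events per cell follows a Poisson distribution with mean equal to the MOI, which is a common simplifying assumption rather than something stated in the protocol; the function names are illustrative.

```python
import math


def poisson_pmf(k, moi):
    """Probability that a cell receives exactly k integrations at a given MOI."""
    return math.exp(-moi) * moi ** k / math.factorial(k)


def transduction_fractions(moi):
    """Fractions of uninfected, singly and multiply infected cells (Poisson model)."""
    p0 = poisson_pmf(0, moi)
    p1 = poisson_pmf(1, moi)
    infected = 1.0 - p0
    return {
        "uninfected": p0,
        "single": p1,
        "multiple": infected - p1,
        "single_among_infected": p1 / infected,
    }


for moi in (0.3, 0.4, 1.0):
    f = transduction_fractions(moi)
    print(f"MOI {moi}: {f['single_among_infected']:.1%} of transduced cells "
          f"carry one sgRNA; {f['uninfected']:.1%} of cells are untransduced")
```

Under this model, lowering the MOI raises the share of single-integration cells among the transduced population, at the cost of leaving more cells untransduced, which is why selection markers are used afterwards.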

Application of Selection Pressure:

- For negative selection screens (identifying essential genes): Apply conditions where most cells survive but those with essential gene knockouts are depleted

- For positive selection screens (identifying resistance genes): Apply strong selection where most cells die except those with protective knockouts [14] [12]

Sample Collection and DNA Extraction:

Sequencing Library Preparation:

- Amplify sgRNA sequences from genomic DNA using PCR with primers containing Illumina adapter sequences

- Include sample barcodes for multiplexing and staggered bases to maintain library complexity during sequencing [12]

Sequencing and Data Analysis:

Troubleshooting Common Experimental Issues

What are common challenges in minimal library screening and their solutions?

Table 2: Troubleshooting Guide for Minimal Library Screens

| Problem | Potential Causes | Solutions |
|---|---|---|
| No significant gene enrichment | Insufficient selection pressure; weak phenotype | Increase selection pressure; extend screening duration; optimize screening conditions [14] |
| Large loss of sgRNAs in sample | Insufficient initial library representation; excessive selection pressure | Re-establish library cell pool with adequate coverage; ensure 200× coverage per sgRNA; reduce selection pressure [14] |
| High variability between sgRNAs targeting same gene | Differences in individual sgRNA efficiency | Design 3-4 sgRNAs per gene in initial library; use dual-guide systems; employ robust statistical methods that account for sgRNA variability [8] [14] |
| Low mapping rate in sequencing | Poor quality sequencing libraries; primer issues | Ensure sufficient absolute number of mapped reads (not just percentage); verify sequencing primer design; check library quality before sequencing [14] |
| Poor replicate correlation | Insufficient cell numbers; technical variability | Increase cell numbers for better representation; ensure consistent culture conditions; use combined analysis if correlation >0.8, otherwise perform pairwise analysis [14] |
| Unexpected log-fold-change values | Statistical artifacts; extreme values from individual sgRNAs | Use robust statistical methods (RRA); examine individual sgRNA performance; consider biological rather than just statistical significance [14] |

How can researchers determine if their minimal library screen was successful?

- Include well-validated positive control genes with corresponding sgRNAs in the library; these should show significant enrichment or depletion in the expected direction [14].

- Assess the cellular response to selection pressure; there should be clear phenotypic differences (e.g., cell killing or survival) under selective conditions [14] [12].

- Examine the distribution of sgRNA abundance and log-fold-changes across conditions; successful screens typically show clear separation between targeting and non-targeting controls [14].

- For essential gene identification, compare results to established core essential gene sets; minimal libraries should recover a high percentage of these known essentials [9].

Research Reagent Solutions

What key reagents are essential for successful minimal library screening?

Table 3: Essential Research Reagents for Minimal Library Screening

| Reagent/Category | Function | Implementation Examples |
|---|---|---|
| Minimal sgRNA Libraries | Compact library for efficient screening | H-mLib (21,159 sgRNA pairs); MinLibCas9 (37,522 sgRNAs); custom-designed minimal libraries [8] [9] |
| Lentiviral Vector Systems | Efficient delivery of sgRNA libraries | Third-generation lentiviral systems; dual-gRNA vectors with different promoters (hU6, macaque U6) to prevent recombination [8] [13] |
| Cas9-Expressing Cell Lines | Provide CRISPR nuclease activity | Stable Cas9-integrated lines; transgenic Cas9 models (e.g., Cas9 knock-in mice); inducible/conditional Cas9 systems [12] [13] |
| Selection Markers | Enrich for successfully transduced cells | Puromycin resistance; fluorescent markers (mCherry, GFP); dual-marker cassettes [12] [13] |
| NGS Library Prep Kits | Prepare sgRNA sequences for sequencing | Specialized CRISPR screening NGS kits with barcoding and Illumina adapter sequences [12] |
| Bioinformatics Tools | Analyze screening data and identify hits | MAGeCK (with RRA and MLE algorithms); STARS; RIGER; custom analysis pipelines [14] [10] |

Frequently Asked Questions

Q: How many cells are needed for a minimal library screen? A: Cell numbers depend on library size and desired coverage. For the H-mLib (21,159 sgRNA pairs), at 200× coverage, you would need approximately 4.2 million transduced cells. For larger minimal libraries like MinLibCas9 (37,722 sgRNAs), approximately 7.5 million transduced cells are needed. Always include extra cells to account for processing losses [8] [14] [12].
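The arithmetic behind these cell numbers is a single multiplication, shown here together with a rough estimate of how many cells must be exposed to virus to end up with that many transduced cells. The second function assumes a Poisson infection model at the chosen MOI, which is a simplification not taken from the cited sources; both function names are illustrative.

```python
import math


def cells_for_coverage(n_constructs, coverage):
    """Transduced cells needed so that each construct is represented `coverage` times."""
    return n_constructs * coverage


def cells_to_transduce(n_constructs, coverage, moi):
    """Starting cells needed, assuming a fraction 1 - exp(-MOI) becomes transduced."""
    transduced_fraction = 1.0 - math.exp(-moi)
    return math.ceil(cells_for_coverage(n_constructs, coverage) / transduced_fraction)


for name, size in (("H-mLib", 21_159), ("MinLibCas9", 37_722)):
    needed = cells_for_coverage(size, 200)
    start = cells_to_transduce(size, 200, moi=0.3)
    print(f"{name}: {needed / 1e6:.1f} M transduced cells, "
          f"~{start / 1e6:.1f} M cells at transduction (MOI 0.3)")
```

The gap between the two numbers is the main reason smaller libraries matter for models with limited cell supply.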

Q: Can minimal libraries really achieve comparable results to conventional libraries? A: Yes, when properly designed. The MinLibCas9 library recovered >89.8% of significant dependencies identified with full libraries across 245 cancer cell lines. Minimal libraries may even increase dynamic range by focusing on the most effective sgRNAs [9].

Q: What sequencing depth is required for minimal library screens? A: For positive selection screens: ~10 million reads per sample. For negative selection screens: up to 100 million reads due to more subtle changes in sgRNA representation. The formula for estimating required data volume is: Required Data Volume = Sequencing Depth × Library Coverage × Number of sgRNAs / Mapping Rate [14].

Q: How do I choose between single-guide and dual-guide minimal libraries? A: Single-guide libraries are simpler and sufficient for most applications. Dual-guide libraries (like H-mLib) can provide more robust knockout by targeting each gene with two sgRNAs simultaneously and are particularly beneficial for challenging targets or when complete gene disruption is critical [8].

Q: What are the key quality control metrics for minimal library screens? A: Essential QC metrics include: library coverage (>99% sgRNAs represented), coefficient of variation between replicates (<10%), strong correlation between biological replicates (Pearson R > 0.8), and appropriate enrichment of positive controls [14].

Q: Can minimal libraries be used for in vivo screening? A: Yes, the reduced size of minimal libraries makes them particularly suitable for in vivo applications where cell numbers are limited. Both direct (library delivered in vivo) and indirect (cells transduced then transplanted) approaches have been successful with minimal libraries [13].

Frequently Asked Questions

What is the difference between coverage and redundancy in a research context?

Coverage refers to how completely the collected data or analyses address the entire subject or target area. In contrast, redundancy refers to the duplication of information or effort across different data points or analyses. High coverage with low redundancy is ideal, as it means a comprehensive assessment without wasted resources [15].

My assay has failed with no observable window. What are the first things I should check?

A complete lack of an assay window is most commonly due to improper instrument setup. First, verify that the correct emission filters are installed, as this is critical for assays like TR-FRET. You can test your instrument's setup using reagents you already possess before running the full experiment [16].

Why might my positive control show lower-than-expected values?

If your positive control (e.g., a 100% phosphopeptide control) is exposed to development reagents, it can become partially cleaved, leading to an elevated signal and a lower-than-expected value. Ensure that this control is not exposed to any development reagents to guarantee it remains uncleaved and provides the lowest possible ratio [16].

How can I improve the output of my adaptive sampling run?

To maximize output, focus on maintaining high pore occupancy and optimizing your library. Load a higher amount of sample, calculated based on molarity rather than mass. Using a library with shorter fragment sizes can also increase flow cell longevity and data output by reducing pore blockages [17].

My results show high redundancy and low coverage. What experimental factors should I investigate?

High redundancy often stems from a lack of diversity in the experimental inputs. To improve coverage and reduce redundancy, consider introducing diversity into your system. Studies have shown that diversity in expertise topics, seniority levels, and publication networks can lead to broader coverage and lower redundancy in outcomes [15].

Troubleshooting Guides

Problem: Inadequate Target Coverage

Issue: The experiment fails to provide sufficient data on the regions or targets of interest.

- Potential Cause 1: Low Pore Occupancy.

- Solution: Increase the amount of sample loaded, calculating the quantity based on molarity. For a library with an N50 of ~6.5 kb, aim for 50-65 fmol, which is approximately 200 ng. Using a biomath calculator is recommended for precise conversions [17].

- Potential Cause 2: Library Fragments Are Too Long.

- Solution: Shear the library to produce shorter fragments. This increases molarity for the same mass of DNA, improves pore occupancy, reduces pore blocking, and allows the pore to process more reads in the same amount of time [17].

- Potential Cause 3: Poorly Defined or Buffered Regions of Interest (ROIs).

- Solution: When using adaptive sampling, ensure your .bed file is buffered appropriately. Add a buffer to the sides of your ROIs approximately equal to the N10 of your library's read length distribution. As a rule of thumb, for an ~8 kb N50 library, add a 20 kb buffer to account for reads that start outside but extend into the ROI [17].
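The mass-to-molarity conversion mentioned above can be sketched in a few lines. This is a minimal illustration, not a replacement for a biomath calculator: it assumes double-stranded DNA, uses the common approximation of 660 g/mol per base pair, and treats the N50 as if it were the mean fragment length, which a real read-length distribution will not satisfy exactly. The function names are hypothetical.

```python
AVG_BP_MASS = 660.0  # g/mol per base pair of dsDNA (common approximation, assumed here)

def dsdna_ng_to_fmol(mass_ng: float, length_bp: float) -> float:
    """Convert a dsDNA mass in ng to femtomoles for a given fragment length."""
    moles = (mass_ng * 1e-9) / (length_bp * AVG_BP_MASS)
    return moles * 1e15

def dsdna_fmol_to_ng(amount_fmol: float, length_bp: float) -> float:
    """Convert femtomoles of dsDNA to mass in ng for a given fragment length."""
    grams = (amount_fmol * 1e-15) * (length_bp * AVG_BP_MASS)
    return grams * 1e9

if __name__ == "__main__":
    # 200 ng at ~6.5 kb lands close to the ~50 fmol figure quoted above.
    print(round(dsdna_ng_to_fmol(200, 6500), 1))
    # Halving the fragment length doubles the molarity for the same mass,
    # which is why shearing improves pore occupancy.
    print(round(dsdna_ng_to_fmol(200, 3250), 1))
```

Because molarity scales inversely with fragment length, the same calculation also shows why loading by mass alone under-loads long-fragment libraries.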

Problem: High Redundancy and Low Diversity

Issue: Data or results are repetitive and do not provide new or unique perspectives, wasting resources.

- Potential Cause: Homogeneity in Experimental Inputs.

- Solution: Actively introduce diversity into your system. In experimental design, this can be analogous to selecting a diverse group of reviewers. The following table summarizes the effect of different diversity dimensions on redundancy and coverage, based on empirical studies [15]:

| Diversity Dimension | Impact on Coverage | Impact on Redundancy |

|---|---|---|

| Topical Diversity | Increases | Decreases |

| Seniority Diversity | Increases | Decreases |

| Publication Network Diversity | Increases | Decreases |

| Organizational Diversity | No observed evidence | Decreases |

| Geographical Diversity | No observed evidence | No observed evidence |

Problem: Poor or Inconsistent Z'-factor

Issue: The assay's robustness metric is low, making it unsuitable for screening.

- Potential Cause 1: High Signal Variability.

- Solution: Use ratiometric data analysis (e.g., acceptor signal/donor signal) to correct for pipetting variance and reagent lot-to-lot variability. The donor signal acts as an internal reference, which improves data consistency [16].

- Potential Cause 2: Insufficient Assay Window.

- Solution: While a larger assay window is generally better, the Z'-factor also depends on the standard deviation of your data. A large window with high noise can have a worse Z'-factor than a small window with low noise. Focus on optimizing both the window size and data precision. Assays with a Z'-factor > 0.5 are considered suitable for screening [16].

The following table summarizes key quantitative benchmarks for assessing experimental quality and performance.

| Metric | Definition | Calculation Formula | Target Benchmark |

|---|---|---|---|

| Z'-factor | A measure of assay robustness and quality, accounting for both the assay window and data variation [16]. | `Z' = 1 - 3*(σp + σn) / abs(μp - μn)`, where σ = standard deviation, μ = mean, p = positive control, n = negative control. | > 0.5 [16] |

| Assay Window | The dynamic range between the positive and negative controls. | (Mean of Max Signal) / (Mean of Min Signal) | Varies; assess with Z'-factor [16] |

| Enrichment Factor | The fold-enrichment for targets in adaptive sampling [17]. | (% on-target reads with adaptive sampling) / (% on-target reads without adaptive sampling) | ~5-10 fold [17] |

| Diversity Index | A quantitative measure of representation across different categories in a group or dataset [18]. | Varies by specific index (e.g., Gini-Simpson, Blau) | Organization-specific target |

| Contrast Ratio | The legibility of text or visual elements, critical for figures and interfaces [19]. | (L1 + 0.05) / (L2 + 0.05), where L1 is the relative luminance of the lighter color and L2 that of the darker color. | ≥ 4.5:1 for large text; ≥ 7:1 for small text [19] |
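To make the Z'-factor row concrete, the sketch below computes it from replicate control wells and compares a wide but noisy window with a narrow but tight one. The well values are invented for illustration, and the use of the sample standard deviation is an assumption; the function name `z_prime` is hypothetical.

```python
from statistics import mean, stdev

def z_prime(positive, negative):
    """Z' = 1 - 3*(sd_pos + sd_neg) / |mean_pos - mean_neg| (sample standard deviations)."""
    window = abs(mean(positive) - mean(negative))
    if window == 0:
        raise ValueError("controls have identical means; Z' is undefined")
    return 1 - 3 * (stdev(positive) + stdev(negative)) / window

# Illustrative (made-up) control wells.
noisy_pos, noisy_neg = [10, 14, 6, 12, 8], [2, 4, 0, 3, 1]        # ~5-fold window, high noise
tight_pos = [2.00, 2.02, 1.98, 2.01, 1.99]                        # 2-fold window, low noise
tight_neg = [1.00, 1.02, 0.98, 1.01, 0.99]

if __name__ == "__main__":
    print("large noisy window:", round(z_prime(noisy_pos, noisy_neg), 2))
    print("small tight window:", round(z_prime(tight_pos, tight_neg), 2))
```

In this toy data the 2-fold window clears the 0.5 threshold while the larger window does not, which is the point made above about precision mattering as much as window size.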

Experimental Protocols

Protocol 1: Ratiometric Data Analysis for TR-FRET Assays

Purpose: To account for pipetting variances and reagent lot-to-lot variability, ensuring a robust assay window and reliable Z'-factor [16].

- Measure Signals: Collect the acceptor signal (e.g., 520 nm for Tb) and the donor signal (e.g., 495 nm for Tb) as Relative Fluorescence Units (RFUs).

- Calculate Emission Ratio: For each well, divide the acceptor signal by the donor signal (Acceptor RFU / Donor RFU).

- Plot Data: Graph the emission ratio against the logarithm of the compound concentration.

- Normalize (Optional): To express data as a Response Ratio, divide all emission ratio values by the average emission ratio from the bottom (minimum) of the curve. This normalizes the assay window to start at 1.0.
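The ratio and normalization steps above can be sketched as follows. The RFU values are invented for illustration and the helper names are hypothetical; the arithmetic follows the protocol as written (acceptor divided by donor, then division by the mean ratio at the bottom of the curve).

```python
def emission_ratios(acceptor_rfu, donor_rfu):
    """Per-well emission ratio: acceptor RFU / donor RFU."""
    return [a / d for a, d in zip(acceptor_rfu, donor_rfu)]

def response_ratios(ratios, bottom_ratios):
    """Normalize emission ratios so the bottom of the curve sits at 1.0."""
    baseline = sum(bottom_ratios) / len(bottom_ratios)
    return [r / baseline for r in ratios]

if __name__ == "__main__":
    # Second well received ~10% less volume: both channels drop, the ratio does not.
    print(emission_ratios([2000, 1800], [10000, 9000]))
    curve = emission_ratios([1000, 1500, 3000, 4000], [10000, 10000, 10000, 10000])
    print(response_ratios(curve, bottom_ratios=curve[:1]))
```

The first print shows why the ratio absorbs pipetting variance: a well that is short on volume loses donor and acceptor signal proportionally.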

Protocol 2: Adaptive Sampling for Targeted Sequencing

Purpose: To enrich sequencing data for specific genomic regions of interest (ROIs) without physical sample manipulation, thereby efficiently utilizing sequencing capacity on targets [17].

- Library Preparation: Prepare a sequencing library as usual. For optimal results, shear DNA to a fragment size appropriate for your ROI. A smaller fragment size (e.g., N50 ~6.5 kb) increases molarity for a given DNA mass, reduces pore blocking, and increases overall output.

- Calculate Molarity: Determine the molar concentration of your library. For a 6.5 kb N50 library, 50 fmol is approximately 200 ng. Use a biomath calculator for precise conversion based on your actual fragment distribution.

- Prepare Reference Files: Create a FASTA file of the reference genome and a .bed file defining your ROIs.

- Buffer the .bed File: Add buffer regions to your ROIs in the .bed file. For an ~8 kb N50 library, a 20 kb buffer is recommended.

- Configure and Run: Load the library onto the flow cell. In MinKNOW, select "adaptive sampling" (enrichment mode) and upload your FASTA and buffered .bed files to begin the run.
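The buffering step in this protocol can be sketched as a small text transformation. This is a minimal illustration that assumes a tab-separated .bed file with 0-based start coordinates; the function name and the optional `chrom_sizes` argument are hypothetical, and the sketch does not merge ROIs that overlap after buffering.

```python
def buffer_bed_lines(lines, buffer_bp, chrom_sizes=None):
    """Extend each BED interval by buffer_bp on both sides, clamped to valid coordinates."""
    buffered = []
    for line in lines:
        text = line.rstrip("\n")
        # Pass comments, headers and blank lines through unchanged.
        if not text.strip() or text.startswith(("#", "track", "browser")):
            buffered.append(text)
            continue
        fields = text.split("\t")
        chrom, start, end = fields[0], int(fields[1]), int(fields[2])
        start = max(0, start - buffer_bp)          # never go below position 0
        end = end + buffer_bp
        if chrom_sizes and chrom in chrom_sizes:   # never run past the chromosome end
            end = min(end, chrom_sizes[chrom])
        buffered.append("\t".join([chrom, str(start), str(end)] + fields[3:]))
    return buffered

if __name__ == "__main__":
    rois = ["chr1\t5000\t30000\tgeneA", "chr2\t100000\t120000\tgeneB"]
    for row in buffer_bed_lines(rois, buffer_bp=20000):
        print(row)
```

Clamping matters for ROIs near chromosome ends, where a fixed 20 kb buffer would otherwise produce negative or out-of-range coordinates.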

The Scientist's Toolkit

| Item | Function |

|---|---|

| Microplate Reader with TR-FRET Filters | Precisely measures time-resolved fluorescence resonance energy transfer, crucial for binding and enzymatic assays. |

| Nanopore Sequencer (e.g., MinION) | Enables real-time, long-read DNA/RNA sequencing and targeted enrichment via adaptive sampling. |

| .bed File | A text file that defines genomic regions of interest (ROIs) for targeted sequencing in adaptive sampling. |

| Buffered .bed File | A .bed file where ROIs have been extended by a buffer (e.g., 20 kb) to capture reads that start outside but extend into the ROI. |

| Molarity Calculator | Converts DNA mass (ng) to molarity (fmol) based on average fragment length, critical for optimizing adaptive sampling load. |

| Z'-factor | A statistical metric used to assess the quality and robustness of a high-throughput screening assay. |

Workflow and Pathway Diagrams

Targeted Sequencing with Adaptive Sampling Workflow

Assay Failure Troubleshooting Pathway

Understanding Pareto Optimality in Library Design

Frequently Asked Questions (FAQs)

What is Pareto Optimality in the context of library design? Pareto Optimality describes a state in library design where you cannot improve one desired property (e.g., fitness) without making another property (e.g., diversity) worse. A Pareto optimal library provides the best possible balance between multiple, often competing, objectives [20]. This is distinct from the Pareto Principle (the 80/20 rule) [21].

Why should I use a Pareto-optimized library instead of a traditional NNK library? Traditional libraries, like NNK libraries, often contain a high proportion of non-functional variants. Research has shown that machine learning-guided Pareto-optimized libraries can achieve a fivefold higher packaging fitness than standard NNK libraries with negligible sacrifice in diversity. This leads to less wasted screening effort and can yield approximately 10-fold more successful variants after experimental selection [22].

My primary goal is high fitness. Why should I care about diversity? While high fitness ensures the identification of excellent starting variants, rich diversity increases the likelihood of uncovering multiple fitness peaks and exploring a wider sequence space. This is crucial for downstream tasks like machine learning-guided directed evolution (MLDE), as a diverse training set allows models to map the fitness landscape more effectively [20]. A Pareto-optimized library balances both needs.

How do I know if my library is truly Pareto optimal? The set of non-dominated library designs forms the "Pareto frontier." If your library's combination of fitness and diversity scores places it on this frontier, it is Pareto optimal: no other library design available within your parameters is at least as good on both metrics and strictly better on one. Specialized software tools, such as those implementing Bayesian optimization, can identify this frontier for you [23].
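For a small set of candidate designs that have already been scored, the frontier can be found by a direct dominance check. The sketch below assumes both objectives are to be maximized; the library names and scores are invented for illustration, and this brute-force filter is not the method used by the cited tools.

```python
def dominates(a, b):
    """True if a is at least as good as b on every objective and strictly better on one."""
    return all(x >= y for x, y in zip(a, b)) and any(x > y for x, y in zip(a, b))

def pareto_front(scored):
    """Return the names of designs that no other design dominates."""
    return [name for name, score in scored.items()
            if not any(dominates(other, score) for other in scored.values())]

# Hypothetical (fitness, diversity) scores for five candidate libraries.
candidates = {
    "A": (0.9, 0.2),
    "B": (0.7, 0.6),
    "C": (0.4, 0.9),
    "D": (0.6, 0.5),   # dominated by B
    "E": (0.3, 0.3),   # dominated by B
}

if __name__ == "__main__":
    print(pareto_front(candidates))
```

The check is quadratic in the number of designs, which is fine for a shortlist but is exactly what model-guided methods avoid on very large libraries.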

Can Pareto optimization handle more than two objectives? Yes. The principle extends to multiple objectives. For instance, in drug discovery, you might simultaneously optimize for binding affinity to a primary target, selectivity against off-targets, and suitable pharmacokinetic properties [23]. Methods like Pareto Monte Carlo Tree Search (MCTS) have been developed to search for molecules on the complex Pareto front in such multi-objective spaces [24].

Troubleshooting Guides

Problem: Poor Yield of Functional Variants After Screening

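Symptoms: A large percentage of your library variants are non-functional, unstable, or fail to package.

Possible Causes and Solutions:

- Cause 1: The library design overly prioritizes sequence diversity at the expense of basic functionality.

- Solution: Shift the trade-off back toward fitness, for example by lowering the weight on the diversity objective (λ in algorithms like MODIFY), so that the chosen design sits at a higher-fitness point on the Pareto frontier.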

- Cause 2: The scoring functions used in the design do not accurately predict real-world behavior.

- Solution: Incorporate more advanced or ensemble machine learning models for fitness prediction. For example, using a combination of protein language models and sequence density models can improve the accuracy of zero-shot fitness predictions [20].

Problem: Library Lacks Diversity and Converges on Similar Variants

Symptoms: Screening identifies hits, but they are all very similar, offering no novel scaffolds or solutions.

Possible Causes and Solutions:

- Cause 1: The optimization over-emphasized a single fitness score, leading to a narrow library.

- Solution: Adjust the trade-off parameter (e.g., λ in algorithms like MODIFY) to increase the weight assigned to the diversity objective. This will push the library design toward a different, more diverse point on the Pareto frontier [20].

- Cause 2: The diversity metric is not well-suited to your discovery goal.

- Solution: Consider using different diversity metrics. Some methods optimize diversity at the residue-level composition, which can be more effective than simple sequence-level diversity for exploring functional landscapes [20].

Problem: Inefficient Screening of Very Large Virtual Libraries

Symptoms: The computational cost of performing multi-objective virtual screens (e.g., docking against multiple targets) on a giant virtual library is prohibitive.

Possible Causes and Solutions:

- Cause: Performing exhaustive calculations on a library of billions of molecules is inherently resource-intensive.

- Solution: Implement a model-guided multi-objective optimization workflow, such as Bayesian optimization with a Pareto-based acquisition function. This can dramatically reduce the computational burden. One study successfully identified 100% of the Pareto-optimal molecules in a 4-million-compound library after evaluating only 8% of it [23].

Experimental Protocols for Pareto-Optimized Library Design

Protocol 1: Designing a Combinatorial Mutagenesis Library with POCoM

This protocol is based on the POCoM (Pareto Optimal Combinatorial Mutagenesis) method for designing protein variant libraries balanced for structural stability and evolutionary acceptance [25].

Input Preparation:

- Gather the target protein's amino acid sequence and 3D structure.

- Generate a multiple sequence alignment (MSA) of homologous proteins.

Scoring Function Calculation:

- Sequence-based Score (Φ): Derive a statistical potential from the MSA. This includes one-body terms (ϕi(si)) for positional conservation and two-body terms (ϕij(si, sj)) for correlated mutations [25].

- Structure-based Score (Ψ): Use a tool like Rosetta to predict energies for a training set of variant sequences. Then, use Cluster Expansion (CE) to build a fast, sequence-based potential (with terms ψi(si) and ψij(si, sj)) that approximates the structural energy [25].

Library Representation:

- Define the set of mutable residue positions and the allowed amino acid choices at each position.

Pareto Optimization:

- The POCoM algorithm efficiently scores entire libraries based on the average of the sequence-based and structure-based scores over all constituent variants without explicit enumeration.

- The algorithm maps the Pareto frontier, identifying all library designs where no other design has a better average score for one objective without being worse for the other.

Library Selection:

- Choose a library from the Pareto frontier that best matches your desired trade-off between evolutionary favorability and structural stability for your specific experiment.
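The idea of scoring a whole library without enumerating its members can be illustrated under two simplifying assumptions: the score is a sum of one-body and two-body terms, and every combination of allowed residues is equally likely. Under those assumptions the library average decomposes into per-position and per-pair averages. The sketch below is not the POCoM implementation; the data structures, names and score values are hypothetical, and the brute-force function exists only to check the shortcut.

```python
from itertools import combinations, product

def library_mean_score(choices, one_body, two_body):
    """Average score over all variants, computed from per-position and per-pair averages."""
    total = 0.0
    for i, allowed in enumerate(choices):
        total += sum(one_body[i][a] for a in allowed) / len(allowed)
    for i, j in combinations(range(len(choices)), 2):
        pair = two_body.get((i, j), {})
        pair_sum = sum(pair.get((a, b), 0.0) for a in choices[i] for b in choices[j])
        total += pair_sum / (len(choices[i]) * len(choices[j]))
    return total

def brute_force_mean(choices, one_body, two_body):
    """Same average, obtained by explicitly enumerating every variant (for checking only)."""
    scores = []
    for variant in product(*choices):
        s = sum(one_body[i][a] for i, a in enumerate(variant))
        for i, j in combinations(range(len(variant)), 2):
            s += two_body.get((i, j), {}).get((variant[i], variant[j]), 0.0)
        scores.append(s)
    return sum(scores) / len(scores)

# Toy library: three positions with 2, 1 and 3 allowed residues (6 variants).
choices = [["A", "V"], ["L"], ["K", "R", "E"]]
one_body = [{"A": -1.0, "V": -0.5}, {"L": -2.0}, {"K": -0.3, "R": -0.6, "E": 0.9}]
two_body = {(0, 2): {("A", "K"): -0.4, ("V", "E"): 0.8}}

if __name__ == "__main__":
    print(library_mean_score(choices, one_body, two_body))
    print(brute_force_mean(choices, one_body, two_body))
```

The shortcut touches each position and each pair once, so its cost grows with the number of positions rather than with the number of variants, which is what makes frontier mapping over many candidate libraries tractable.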

Protocol 2: ML-Guided Library Design with MODIFY

This protocol uses the MODIFY framework to co-optimize fitness and diversity for enzyme engineering, even without prior experimental fitness data [20].

Residue Selection: Specify the set of amino acid residues in the parent enzyme to be mutated.

Zero-Shot Fitness Prediction:

- Employ an ensemble machine learning model (e.g., combining protein language models like ESM-1v and ESM-2 with sequence density models like EVmutation) to predict the fitness of combinatorial variants without experimental training data.

Pareto Frontier Calculation:

- The MODIFY algorithm solves the optimization problem: max fitness + λ · diversity.

- By varying the trade-off parameter (λ), the algorithm traces out the Pareto frontier, identifying optimal libraries for different balances of exploitation (fitness) and exploration (diversity).

Library Filtering:

- Filter the sampled enzyme variants based on additional criteria like predicted foldability and stability to finalize the library for synthesis.
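The role of λ in the objective above can be shown with a toy sweep. This sketch only picks among a few pre-scored candidate libraries, whereas MODIFY optimizes the library design itself; the candidate names and their (fitness, diversity) scores are invented for illustration.

```python
def best_for_lambda(candidates, lam):
    """Return the candidate maximizing fitness + lam * diversity."""
    return max(candidates, key=lambda name: candidates[name][0] + lam * candidates[name][1])

# Hypothetical (fitness, diversity) scores.
candidates = {
    "lib_a": (0.9, 0.2),   # exploitation-heavy
    "lib_b": (0.7, 0.6),   # balanced
    "lib_c": (0.4, 0.9),   # exploration-heavy
    "lib_d": (0.6, 0.5),   # dominated by lib_b, never selected
}

if __name__ == "__main__":
    for lam in (0.0, 0.8, 3.0):
        print(lam, best_for_lambda(candidates, lam))
```

Raising λ moves the selection from the high-fitness end of the frontier to the high-diversity end. One caveat of any weighted-sum formulation is that it can only reach frontier points lying on the convex hull of the trade-off curve.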

Table 1: Performance Comparison of Library Design Methods

| Method | Key Objective | Reported Improvement vs. Standard Library | Context |

|---|---|---|---|

| ML-guided AAV Design [22] | Packaging Fitness & Diversity | 5x higher packaging fitness; ~10x more infectious variants after selection | AAV5 7-mer peptide insertion library |

| MODIFY [20] | Fitness & Diversity (Zero-shot) | Outperformed baselines in 34/87 protein deep mutational scanning datasets | Enzyme engineering for C–B and C–Si bond formation |

| Multi-objective Bayesian Optimization [23] | Computational Screening Efficiency | Identified 100% of Pareto front after exploring only 8% of a >4M compound library | Virtual screening for selective dual inhibitors |

Research Reagent Solutions

Table 2: Key Reagents and Computational Tools for Pareto-Optimal Library Design

| Item / Reagent | Function / Purpose | Example Use in Context |

|---|---|---|

| NNK Library | A standard control library for benchmarking new designs. | Used as a baseline to demonstrate a 5x improvement in packaging fitness by an ML-guided Pareto-optimal library [22]. |

| Multiple Sequence Alignment (MSA) | Provides evolutionary data for sequence-based scoring. | Used to derive statistical potentials (e.g., in POCoM) that measure evolutionary acceptability of variants [25]. |

| Cluster Expansion (CE) | Converts structure-based energy evaluations into a fast, sequence-based potential. | Enables efficient average stability scoring of massive combinatorial libraries without enumerating all members [25]. |

| Protein Language Models (e.g., ESM-1v, ESM-2) | Provides unsupervised, zero-shot fitness predictions from sequence. | Part of the MODIFY ensemble model to predict variant fitness without experimental data [20]. |

| Pareto Optimization Software (e.g., MolPAL) | Computational tool for multi-objective Bayesian optimization. | Used to efficiently search vast virtual chemical spaces for molecules on the Pareto front [23]. |

Workflow Visualization

Pareto Optimal Library Design Workflow

Practical Strategies for Building Optimized Libraries

Frequently Asked Questions (FAQs)

Q1: What is the core function of the RedLibs algorithm? RedLibs (Reduced Libraries) is an algorithm designed for the rational design of smart combinatorial libraries for pathway optimization, thereby minimizing the use of experimental resources [26]. Its primary function is to identify a single, partially degenerate DNA sequence that, when synthesized, will produce a sub-library of a user-specified size. This sub-library is computationally optimized to sample a range of a target numerical parameter, such as Translation Initiation Rate (TIR), as uniformly as possible [26] [27].

Q2: Why is optimizing library size and coverage important in metabolic engineering? Full randomization of regulatory elements like Ribosome Binding Sites (RBS) leads to combinatorial explosion, creating libraries with billions of variants that are impossible to screen comprehensively [26]. Furthermore, these fully randomized libraries are highly biased, with the vast majority of sequences (>99.5% for an 8N RBS library for mCherry) leading to very low expression, making productive variants scarce [26]. RedLibs addresses this by creating small, "smart" libraries that maximize the coverage of the functional parameter space with minimal experimental effort [26].

Q3: What input data does RedLibs require? RedLibs requires a list of sequence-value pairs as input. For RBS engineering, this is typically generated by RBS prediction software (e.g., the RBS Calculator) and consists of a comprehensive list of DNA sequences (e.g., from a fully degenerate N-region) and their corresponding predicted TIR values [26] [27]. The standalone version of RedLibs can also accept any user-provided data set of sequences and associated numerical values [27].

Q4: How does RedLibs evaluate the quality of a designed library? The algorithm compares the cumulative distribution function (CDF) of the candidate library's TIRs to the CDF of the desired target distribution (e.g., a uniform distribution). The similarity is quantitatively measured using the Kolmogorov-Smirnov distance (dKS). A lower dKS value indicates a library that more closely matches the ideal uniform distribution [26].
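The comparison described in Q4 can be sketched with a one-sample Kolmogorov-Smirnov statistic against a uniform target. This is an illustration rather than the RedLibs code: the choice of a uniform target on a fixed TIR interval, the function name, and the TIR values are assumptions.

```python
def ks_distance_to_uniform(values, low, high):
    """Largest gap between the empirical CDF of values and the uniform CDF on [low, high]."""
    xs = sorted(values)
    n = len(xs)
    d = 0.0
    for k, x in enumerate(xs):
        u = min(1.0, max(0.0, (x - low) / (high - low)))  # uniform CDF at x
        # The empirical CDF jumps from k/n to (k+1)/n at x; check both sides of the step.
        d = max(d, abs((k + 1) / n - u), abs(k / n - u))
    return d

# Hypothetical predicted TIRs for two 4-member candidate libraries on a 0-1000 scale.
evenly_spread = [125, 375, 625, 875]
clustered_low = [10, 20, 30, 900]

if __name__ == "__main__":
    print(ks_distance_to_uniform(evenly_spread, 0, 1000))
    print(ks_distance_to_uniform(clustered_low, 0, 1000))
```

The evenly spread library yields the smaller distance, so it would rank higher under a uniformity criterion of this kind.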

Troubleshooting Guides

Poor Library Diversity in Mismatch Repair Proficient (MMR+) Strains

- Problem: When integrating an RBS library into the chromosome of an MMR+ strain (e.g., via CRMAGE), the resulting diversity of recovered sequences is severely reduced compared to the expected library, and the allelic replacement efficiency is low [28].

- Background: The bacterial MMR system, specifically MutS, efficiently recognizes and repairs small mismatches (below 5-6 bp) during genome editing, introducing a strong sequence-dependent bias [28].

- Solution: Apply the Genome-Library-Optimized-Sequences (GLOS) rule.

- Principle: MutS does not efficiently recognize insertions or mismatches greater than 5 bp [28].

- Protocol: Design degenerate oligonucleotides where the mutated region creates a mismatch of at least 6 base pairs relative to the genomic sequence. This requires using a restricted set of nucleotides (three instead of four) at each randomized position to maintain the mismatch length [28].

- Implementation: Use the GLOS rule as a pre-selection filter for the sequences generated before running the RedLibs optimization. This ensures the final library is compatible with MMR+ strains [28].
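One way to read the three-instead-of-four restriction is to exclude the genomic base at every randomized position, so that each library member mismatches the chromosome along the whole window. The sketch below encodes that reading with standard IUPAC codes (B = not A, D = not C, H = not G, V = not T). It is an interpretation of the rule as described here, not the published GLOS procedure, and the function names and the example site are hypothetical.

```python
from itertools import product

# IUPAC codes that each exclude exactly one base.
EXCLUDE_GENOMIC = {"A": "B", "C": "D", "G": "H", "T": "V"}
EXPAND = {"B": "CGT", "D": "AGT", "H": "ACT", "V": "ACG"}

def glos_degenerate(genomic_site):
    """Degenerate sequence that never matches the genomic base at any position."""
    site = genomic_site.upper()
    if len(site) < 6:
        raise ValueError("window must span at least 6 bp to exceed the MutS recognition limit")
    return "".join(EXCLUDE_GENOMIC[base] for base in site)

def expand(degenerate):
    """Enumerate every concrete sequence encoded by the degenerate string."""
    return ["".join(p) for p in product(*(EXPAND[c] for c in degenerate))]

def mismatches(a, b):
    return sum(x != y for x, y in zip(a, b))

if __name__ == "__main__":
    site = "AGGAGG"                      # illustrative genomic RBS window
    code = glos_degenerate(site)
    variants = expand(code)
    print(code, len(variants), min(mismatches(site, v) for v in variants))
```

Sequences passing such a filter would then serve as the input pool for the RedLibs optimization, at the cost of a smaller starting space (3^n instead of 4^n sequences for an n-base window).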

Non-Uniform Sequence Abundance in Final Library

- Problem: While most expected library sequences are recovered after integration and selection, their abundances are not uniform [28].

- Potential Causes and Mitigation Strategies:

- Oligonucleotide Folding Energy: Single-stranded DNA oligonucleotides used in methods like MAGE can form secondary structures. Oligos with lower folding energies (more stable structures) hybridize less efficiently to the target genomic site, leading to lower incorporation efficiency [28].

- Mitigation: Analyze the folding energies (ΔG) of the library oligonucleotides and consider this as an additional filter during the library design phase to avoid sequences with highly stable secondary structures [28].

- Biases in Chemical Synthesis: The chemical process of oligonucleotide synthesis can have sequence-dependent yields, leading to under- or over-representation of certain sequences in the initial pool [28].

- Mitigation: This is harder to control, but being aware of this potential bias can help interpret experimental results. Using synthesis providers known for high quality and consistency is recommended.

Experimental Protocols

Core Protocol: Optimizing a Branched Pathway using RedLibs

This protocol outlines the key steps for applying RedLibs to optimize product selectivity in a branched metabolic pathway, as demonstrated for violacein biosynthesis [26].

1. Define the Optimization Goal

- Identify the pathway and the specific branching point to be optimized.

- Define the desired outcome (e.g., maximizing the yield of one product over another).

2. Generate RBS Sequence-TIR Pairs

- For each gene to be optimized, define the DNA sequence encompassing the region to be randomized (e.g., a Shine-Dalgarno sequence of 8 bases, "NNNNNNNN").

- Use an RBS prediction tool (e.g., the RBS Calculator) with the specific gene sequence as input to generate a comprehensive list of all possible sequences and their predicted TIRs [26].

3. Run the RedLibs Algorithm

- Input: Provide the list of sequence-TIR pairs for each gene into RedLibs.

- Set Constraints: Specify the target library size based on your screening capacity [27].

- Output: RedLibs will return a ranked list of optimal degenerate sequences (e.g., using IUPAC codes) that encode libraries with the most uniform TIR distributions.

4. Library Construction and Screening

- Synthesize the degenerate oligonucleotides output by RedLibs.

- Clone the library into your expression system using standard molecular biology techniques (e.g., PCR and assembly) [26].

- Screen or select the resulting variant library for the desired phenotype (e.g., product selectivity or yield). The small, smart library size allows for low-to-medium throughput screening methods [26].

5. Iterative Optimization (Optional)

- The high density of functional clones in RedLibs-derived libraries allows for iterative optimization. Hits from a first round can be sequenced, and their RBS sequences can be used as the input for a subsequent, finer-resolution RedLibs analysis around a more promising TIR range [26].
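Before ordering oligonucleotides, it can help to confirm that a degenerate sequence encodes the expected number of variants. The sketch below expands standard IUPAC nucleotide codes; the example sequence is invented and is not an output of RedLibs.

```python
from itertools import product
from math import prod

# Standard IUPAC nucleotide codes.
IUPAC = {
    "A": "A", "C": "C", "G": "G", "T": "T",
    "R": "AG", "Y": "CT", "S": "CG", "W": "AT", "K": "GT", "M": "AC",
    "B": "CGT", "D": "AGT", "H": "ACT", "V": "ACG", "N": "ACGT",
}

def library_size(degenerate):
    """Number of distinct sequences encoded by a degenerate string."""
    return prod(len(IUPAC[c]) for c in degenerate.upper())

def expand(degenerate):
    """List every concrete sequence encoded by a degenerate string."""
    return ["".join(p) for p in product(*(IUPAC[c] for c in degenerate.upper()))]

if __name__ == "__main__":
    print(library_size("NNNNNNNN"))   # fully randomized 8-base region
    print(library_size("RGGAKS"))     # hypothetical reduced design
    print(expand("RGGAKS"))
```

The expanded list can be joined against the sequence-TIR table from step 2 to inspect which predicted TIRs the reduced library actually samples.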

Workflow Visualization

The following diagram illustrates the logical workflow for the RedLibs optimization process.

Validation of RedLibs Library Distributions

The performance of RedLibs was validated in silico and in vivo by randomizing the RBSs of two fluorescent proteins (sfGFP and mCherry) [26]. The table below summarizes the key quantitative data from the validation.

Table 1: RedLibs Library Size and Computational Analysis for mCherry RBS Optimization [26]

| Target Library Size | Number of Possible Sub-Libraries Evaluated by RedLibs | Characteristics of Output Library |

|---|---|---|

| 4 | 4.3 million | Uniform sampling of the entire accessible TIR space, encoded by a single degenerate sequence. |

| 12 | 25.7 million | Uniform sampling of the entire accessible TIR space, encoded by a single degenerate sequence. |

| 24 | 70.2 million | Uniform sampling of the entire accessible TIR space, encoded by a single degenerate sequence. |

Impact of MMR on Library Diversity

The following data highlights the critical importance of using the GLOS rule when working with chromosomal libraries in MMR-proficient strains [28].

Table 2: Effect of MMR and GLOS on Chromosomal RBS Library Diversity [28]

| Experimental Condition | Allelic Replacement (AR) Efficiency | Library Members Recovered (out of 18 designed) | Observed Indel Frequency |

|---|---|---|---|

| MMR- Strain (N6-RedLibs Library) | ≥98% | 16 - 18 | 16.5% |

| MMR+ Strain (N6-RedLibs Library) | ~48% | 5 - 9 | 7.5% |

| MMR+ Strain (GLOS-RedLibs Library) | ≥98% | 16 - 18 | Not Specified |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Reagents for RedLibs-Driven Experiments

| Item | Function / Explanation |

|---|---|

| RBS Prediction Software | Computational tool (e.g., the RBS Calculator) required to generate the initial input for RedLibs: a list of DNA sequences and their predicted Translation Initiation Rates (TIRs) [26]. |

| RedLibs Algorithm | The core algorithm that reduces the full sequence space to a single, optimally designed degenerate sequence that encodes a uniform-coverage library of a specified size [26] [27]. |

| Degenerate Oligonucleotides | Chemically synthesized DNA primers or fragments containing the IUPAC-code degenerate sequence output by RedLibs. This is the physical implementation of the designed library [26]. |

| MMR-Proficient Strain (e.g., EcNR1) | For stable, industrial-scale metabolic engineering, it is preferable to use MMR-proficient strains to avoid off-target mutations. This requires the use of the GLOS rule during library design [28]. |

| CRMAGE System | A genome editing method combining multiplex automated genome engineering (MAGE) with CRISPR/Cas9 counter-selection. Used for high-efficiency integration of library oligonucleotides into the bacterial chromosome [28]. |

Effectiveness of Multi-Criteria Optimization-based Trade-Off Exploration in combination with RapidPlan for head & neck radiotherapy planning

Frequently Asked Questions (FAQs)

Q1: What is the primary benefit of combining Multi-Criteria Optimization (MCO) with knowledge-based planning like RapidPlan? The combination enhances plan quality by leveraging the strengths of both approaches. RapidPlan utilizes a database of previous high-quality plans to generate realistic dose-volume histogram (DVH) estimations and optimization objectives for a new patient. MCO then allows planners to interactively explore the trade-offs between these objectives, such as balancing target coverage against organ-at-risk (OAR) sparing, to select the most clinically desirable plan [29] [30]. Studies show this synergy can significantly improve OAR sparing while maintaining clinically acceptable target coverage.

Q2: During MCO trade-off exploration, what happens when I adjust a slider for one objective? When you manipulate a slider to improve a specific objective (e.g., lower the mean dose to a parotid gland), the system automatically adjusts other plan parameters. This demonstrates the inherent trade-offs, often causing other objectives to deteriorate (e.g., a slight reduction in dose to a nodal PTV) to maintain a balanced solution on the Pareto surface [29]. The algorithm aims to distribute the "cost" of the improvement evenly among other criteria unless you use restrictors to limit the range for specific objectives.

Q3: Does the combined RP+MCO approach increase plan complexity and affect deliverability? Yes, plans generated with RP and MCO combined often show increased complexity, typically measured by an increase in the number of monitor units (MUs) [29] [30]. However, research confirms that these plans remain deliverable, passing patient-specific quality assurance checks using tools like portal dosimetry with standard gamma criteria (e.g., 3%/2 mm) [30].

Q4: How does the starting plan influence the MCO process? The initial "balanced" plan is central to the subsequent approximation of the Pareto surface. Trade-off exploration generates alternative plans around this starting point. Therefore, beginning with a high-quality, promising plan—such as one generated by RapidPlan—is desirable as it provides a better foundation for exploring optimal trade-offs [29].

Troubleshooting Guides

Poor Organ-at-Risk (OAR) Sparing Despite MCO Exploration

Problem: After MCO trade-off exploration, the dose to critical OARs remains unacceptably high.

| Step | Action | Rationale & Reference |

|---|---|---|

| 1. Verify Starting Plan | Ensure the initial plan (e.g., from RapidPlan) has high-quality DVH estimations. A poor starting point can limit MCO potential [29]. | The initial plan heavily influences the Pareto surface exploration. |

| 2. Check Objective Selection | Confirm that the OARs you want to spare are included as active objectives in the MCO setup [29]. | Only selected objectives are available for trade-off exploration. |

| 3. Use Restrictors | Apply restrictors on sliders for high-priority targets to prevent their degradation when improving OAR doses [29]. | Restrictors lock an objective's value within a specified range, forcing cost to be distributed elsewhere. |

| 4. Re-evaluate Clinical Goals | Determine if slight, clinically acceptable deterioration in PTV coverage could enable significant OAR sparing [29]. | The largest OAR sparing is often achieved by accepting a slight, acceptable reduction in nodal PTV coverage. |

Inconsistent Plan Quality Among Planners

Problem: Plan quality varies significantly between junior and senior planners when using RP and MCO.

| Step | Action | Rationale & Reference |

|---|---|---|

| 1. Standardize MCO Protocol | Develop a standardized procedure for which objectives to select and a general strategy for slider manipulation [29]. | This reduces variability stemming from different planner strategies and experience levels. |

| 2. Leverage Knowledge-Based DVHs | Use the DVH predictions from the validated RapidPlan model as a baseline for achievable plan quality [30]. | The knowledge-based model encapsulates expertise from a database of high-quality plans, improving consistency. |

| 3. Implement Plan Quality Metrics | Define a set of quantifiable metrics (e.g., mean parotid dose, PTV D95%) for objective plan comparison before clinical approval [29] [30]. | Quantitative comparisons ensure all plans, regardless of the planner, meet minimum quality standards. |

Experimental Data & Protocols

The table below summarizes dose/volume parameters from a study comparing clinical VMAT plans with those optimized using RP and MCO for head and neck cancer [29].

Table 1: Dosimetric Comparison for HNC VMAT Plans (Mean ± SD)

| Structure | Parameter | Clinical Plan | RP_TO+ Plan | Change and Significance |

|---|---|---|---|---|

| Left Parotid | Mean Dose (Gy) | 22.9 ± 5.5 | 15.0 ± 4.6 | Significant improvement |

| Right Parotid | Mean Dose (Gy) | 24.8 ± 5.8 | 17.1 ± 5.0 | Significant improvement |

| Nodal PTV | D99% (Gy) | 77.4 ± 0.6 | 76.0 ± 1.2 | Slight, clinically acceptable reduction |

| Nodal PTV | D95% (Gy) | 79.7 ± 0.4 | 80.9 ± 0.9 | Slight increase |

Protocol: Combined RP and MCO Workflow for VMAT Planning

This protocol outlines the methodology for generating treatment plans using the combined approach, as described in the research [29] [30].

Model Creation and Validation:

- Database Curation: Populate a RapidPlan model with a library of 50+ clinically approved, high-quality VMAT plans for a specific disease site (e.g., head and neck) [29].

- Validation: Statistically validate the model's goodness-of-fit (e.g., R², chi-square) and estimation power (e.g., Mean Square Error) through internal validation using plans not included in the training set [30].

Plan Generation for New Patient:

- RapidPlan Setup: Contour the new patient's PTVs and OARs. Generate the initial plan using the validated RapidPlan model to automatically set optimization objectives based on the patient's anatomy.

- MCO Initialization: Create a copy of the RapidPlan-generated solution to use as the "balanced" starting plan for MCO trade-off exploration.

Trade-Off Exploration:

- Objective Selection: Choose key clinical objectives for exploration. For HNC, these typically include mean or line dose for parotid glands, maximum dose for PRV Spinal Cord and PRV Brainstem, and lower dose objectives for PTVs [29].

- Pareto Navigation: Use the sliders in the MCO interface to explore the trade-offs. For example, move the parotid gland slider towards a lower dose and observe the corresponding impact on PTV coverage and other OARs.

- Plan Selection: Select the plan that best balances all clinical goals. This may involve accepting a slight deterioration in a lower-priority objective to achieve a major gain in a higher-priority one [29].
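The idea behind trade-off exploration can be sketched with a simple Pareto-dominance filter. This is a conceptual illustration only, not the planning system's MCO implementation. The candidate plans, objective values, and function names are hypothetical, and both objectives are assumed to be "lower is better".

```python
def dominates(a, b):
    """True if objective vector a is at least as good as b everywhere and better somewhere."""
    return all(x <= y for x, y in zip(a, b)) and any(x < y for x, y in zip(a, b))

def pareto_front(plans):
    """Keep only plans that no other plan dominates. plans: list of (name, objectives)."""
    return [
        p for p in plans
        if not any(dominates(q[1], p[1]) for q in plans if q is not p)
    ]

# Hypothetical candidates: (mean parotid dose in Gy, PTV coverage shortfall in %)
candidates = [
    ("A", (22.9, 0.5)),
    ("B", (15.0, 1.5)),
    ("C", (18.0, 2.0)),   # worse than B on both objectives
    ("D", (25.0, 0.4)),
]

front = pareto_front(candidates)
print([name for name, _ in front])
```

Plan C is discarded because plan B is better on both objectives. Choosing among A, B, and D is the clinical judgment described in the Plan Selection step.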

Final Plan Analysis:

- Dosimetric Check: Verify that the final plan meets all clinical constraints for PTV coverage and OAR tolerances.

- Deliverability Check: Confirm the plan's deliverability by performing patient-specific quality assurance (e.g., portal dosimetry) [30].

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Components for RP and MCO Research Implementation

| Item | Function in Workflow | Example / Note |
|---|---|---|
| Treatment Planning System (TPS) | Platform for performing inverse planning, hosting the knowledge-based model, and running the MCO algorithm. | Varian Eclipse TPS with RapidPlan and MCO-based Trade-Off exploration [29] [30]. |
| Plan Database | A curated set of historical, high-quality treatment plans used to train and validate the knowledge-based model. | 70+ clinically approved VMAT plans for a specific disease site (e.g., left-sided breast or head and neck) [29] [30]. |
| Validation Software Tools | Scripts or tools for statistical analysis of the model's performance (goodness-of-fit, estimation power). | Calculation of R², chi-square (χ²), and Mean Square Error (MSE) to validate model robustness [30]. |
| Quality Assurance (QA) Equipment | Hardware and software to verify the deliverability of the complex plans generated by the RP+MCO process. | Portal dosimetry system (e.g., Varian Portal Dosimetry) for patient-specific QA with gamma analysis [30]. |

Rationally Reduced Libraries for Combinatorial Pathway Optimization

Core Concepts: RedLibs Algorithm

What is the fundamental principle behind the RedLibs algorithm?

RedLibs is an algorithm for the rational design of small, smart combinatorial libraries for pathway optimization, with the aim of minimizing experimental effort. It addresses the combinatorial explosion that occurs when ribosomal binding site (RBS) libraries are fully randomized. Instead of testing all possible sequences, RedLibs identifies a single, partially degenerate RBS sequence that encodes a sub-library. This sub-library is optimized to uniformly cover the entire range of possible Translation Initiation Rates (TIRs) at a user-defined, manageable size [31].

How does RedLibs select the optimal degenerate sequence?

The algorithm performs an exhaustive search. It starts with a fully degenerate input sequence (e.g., N8) and uses RBS prediction software to generate a list of all possible sequences and their predicted TIRs. It then computes the TIR distributions for all possible partially degenerate sequences that would produce a library of the user's target size. It compares each distribution to a target distribution (e.g., uniform) using the Kolmogorov-Smirnov distance (dKS) and ranks the sequences by how closely they match the ideal distribution [31].
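The search described above can be sketched on a toy scale. The code below is an illustrative reimplementation of the idea, not the RedLibs source code. It uses a three-position sequence (N3) instead of N8 to keep the runtime small, replaces RBS prediction software with randomly generated placeholder TIRs, and assumes the target is a uniform distribution on a linear scale between the lowest and highest TIR. All function names are hypothetical.

```python
from itertools import product
import random

# IUPAC degenerate base codes and the bases each one encodes
IUPAC = {
    "A": "A", "C": "C", "G": "G", "T": "T",
    "R": "AG", "Y": "CT", "S": "CG", "W": "AT", "K": "GT", "M": "AC",
    "B": "CGT", "D": "AGT", "H": "ACT", "V": "ACG", "N": "ACGT",
}

def expand(degenerate):
    """List every concrete sequence encoded by a degenerate sequence."""
    return ["".join(p) for p in product(*(IUPAC[c] for c in degenerate))]

def ks_to_uniform(values, lo, hi):
    """One-sample Kolmogorov-Smirnov distance to a uniform distribution on [lo, hi]."""
    xs = sorted((v - lo) / (hi - lo) for v in values)
    n = len(xs)
    return max(max((i + 1) / n - x, x - i / n) for i, x in enumerate(xs))

def rank_degenerate(tir, length, target_size):
    """Rank all degenerate sequences of the target library size by dKS (best first)."""
    lo, hi = min(tir.values()), max(tir.values())
    ranked = []
    for codes in product(IUPAC, repeat=length):
        size = 1
        for c in codes:
            size *= len(IUPAC[c])
        if size != target_size:
            continue  # only consider libraries of exactly the requested size
        seq = "".join(codes)
        d_ks = ks_to_uniform([tir[s] for s in expand(seq)], lo, hi)
        ranked.append((d_ks, seq))
    return sorted(ranked)

# Placeholder TIRs skewed toward low values (stand-in for RBS prediction output)
rng = random.Random(0)
tir = {s: rng.lognormvariate(0.0, 1.5) for s in expand("NNN")}

ranked = rank_degenerate(tir, length=3, target_size=12)
best_dks, best_seq = ranked[0]
print(best_seq, round(best_dks, 3), len(expand(best_seq)))
```

A lower dKS means the sub-library's TIR values are spread more evenly over the full range. With real N8 input the same exhaustive enumeration becomes far more expensive, which this sketch does not attempt to optimize.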

What are the main advantages of using RedLibs over a fully randomized library?

- Drastically Reduced Experimental Effort: It can reduce a library of billions of sequences down to a few dozen that uniformly sample the TIR space [31].

- Uniform Coverage: It avoids the skew towards low TIRs found in fully randomized libraries, ensuring coverage of intermediate and strong RBSs [31].

- One-Pot Cloning: The entire optimized library is encoded by a single degenerate sequence, simplifying cloning procedures [31].

Implementation and Experimental Protocol

What is the step-by-step workflow for using RedLibs?

The table below outlines the key stages of a RedLibs experiment.

| Step | Description | Key Inputs/Outputs |
|---|---|---|
| 1. Input Generation | Generate a gene-specific data set of sequence-TIR pairs using RBS prediction software for a fully degenerate sequence [31]. | Input: Coding gene sequence. Output: List of all RBS sequences & predicted TIRs (e.g., 65,536 pairs for N8). |
| 2. Library Design with RedLibs | Run the RedLibs algorithm, specifying the desired target library size [27]. | Input: Sequence-TIR pairs, target size. Output: Ranked list of optimal degenerate sequences & their uniformity score. |
| 3. Library Construction | Synthesize the top degenerate RBS sequence and clone it upstream of your target gene(s) via one-pot PCR/assembly [31]. | Output: A plasmid library ready for transformation. |
| 4. Screening & Selection | Screen the library for clones with improved performance (e.g., higher metabolite production, fluorescence) [31]. | Output: Identified top-performing variant(s). |

How was RedLibs validated in a proof-of-concept experiment?

Researchers constructed a plasmid (pMJ1) carrying two fluorescent protein genes (sfGFP and mCherry), each preceded by a degenerate RBS. They then compared a RedLibs-designed library against a fully randomized (N6 or N8) RBS library. The RedLibs library showed better and more uniform coverage of expression levels for both proteins, both in silico and in vivo [31].

What is a specific methodology for optimizing a branched metabolic pathway?

In the violacein biosynthesis pathway optimization:

- Library Construction: RedLibs was used to design degenerate RBS libraries for the key enzymes at the branching point of the pathway.

- Primary Screening: A library of pathway variants was screened to identify clones with altered product selectivity (ratios of different violacein derivatives).

- Iterative Optimization: Top-performing variants from the first round were used as a new starting point. The RBS regions of other pathway genes were randomized with a new RedLibs library for a second round of screening, further optimizing the product output [31].

Troubleshooting Common Issues

What should I do if my experimental results do not match the predicted TIR distribution?

- Verify Input Data: Ensure the RBS prediction data used for RedLibs is specific to your gene's 5'-coding region, as this region significantly impacts RBS strength [31].

- Check Cloning Fidelity: Confirm that the synthesized degenerate sequence is correct and that the cloning process has not introduced biases.

- Consider Model Limitations: Remember that TIR prediction models are approximate and do not account for all cellular factors like gene dosage, promoter activity, or mRNA stability [31]. The library is designed to cover a range, not to provide exact TIR values.

How do I choose the right target library size?

The target size should be selected based on your experimental screening throughput. If you can only screen 96 clones, design a library of 96 or fewer variants. RedLibs allows you to define this size, ensuring the library is "amenable to screening" and matches your analytical capabilities [31] [27].

My pathway has more than two genes. Can RedLibs handle this?

Yes. RedLibs is particularly powerful for multi-gene pathways because it combats combinatorial explosion. A 3-gene pathway with fully randomized N8 RBSs would have over 280 trillion combinations (65,536³ ≈ 2.8 × 10¹⁴), whereas RedLibs can create a single, manageably sized library for each gene. These libraries can then be combined, drastically reducing the total number of clones that need to be screened while still effectively exploring the expression space [31].
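The scale of the reduction can be checked with a few lines of arithmetic. In this sketch the per-gene reduced library size of 12 is a hypothetical choice made for illustration; the fully randomized figures follow directly from four bases at eight positions.

```python
BASES = 4
POSITIONS = 8      # N8: eight fully randomized positions
GENES = 3

full_per_gene = BASES ** POSITIONS        # 65,536 RBS variants per gene
full_combined = full_per_gene ** GENES    # all combinations across three genes

reduced_per_gene = 12                     # hypothetical RedLibs target size per gene
reduced_combined = reduced_per_gene ** GENES

print(f"Fully randomized: {full_combined:,} combinations")
print(f"Reduced:          {reduced_combined:,} combinations")
print(f"Reduction factor: {full_combined / reduced_combined:.2e}")
```

Because the combined size grows as the per-gene size raised to the number of genes, even a modest per-gene reduction has a very large effect on total screening effort.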

Research Reagent Solutions

The table below lists key reagents and computational tools essential for implementing the RedLibs method.

| Reagent / Tool | Function in the Experiment |
|---|---|
| RBS Calculator | Predictive software used to generate the initial input for RedLibs: a list of RBS sequences and their corresponding predicted Translation Initiation Rates (TIRs) [31]. |
| RedLibs Algorithm | The core algorithm that identifies the optimal degenerate RBS sequence to create a uniform-coverage library of a specified size. Available as a standalone web tool [27]. |
| Degenerate Oligonucleotide | The synthesized DNA primer or fragment containing the RedLibs-optimized degenerate RBS sequence. This is the physical implementation of the library [31]. |
| Fluorescent Protein Reporters | Proteins like sfGFP and mCherry, used for rapid in vivo validation of library performance and distribution of expression levels [31]. |
| Pathway-Specific Biosensors | Genetically encoded sensors that convert production of a target metabolite into a detectable signal (e.g., fluorescence), enabling high-throughput screening [32]. |

Library-Based vs. Library-Free Analysis Methods in Proteomics

In the field of proteomics, Data-Independent Acquisition (DIA) has become a powerful method for comprehensive and reproducible protein quantification. A central decision in designing a DIA experiment is whether to use a library-based or a library-free analysis method. Library-based DIA relies on pre-existing spectral libraries generated from Data-Dependent Acquisition (DDA) runs, while library-free DIA uses computational algorithms to identify peptides directly from the DIA data using sequence databases. This guide is designed to help you navigate this choice, troubleshoot common issues, and implement strategies to reduce dependency on large spectral libraries without compromising the coverage of your target proteins.

Core Concepts and Strategic Comparison

What is Library-Based DIA?

Library-based DIA is a method that identifies and quantifies peptides by matching acquired DIA data to a reference spectral library. This library is typically built from DDA experiments and contains empirical data on peptide fragmentation patterns, retention times, and, if applicable, ion mobility values [33]. The core principle is pattern recognition, where the complex fragment ion spectra from a DIA sample are queried against this pre-compiled library of known spectra [34] [33].

What is Library-Free DIA?

Library-free DIA, also known as directDIA, eliminates the need for empirical DDA libraries. Instead, it uses software algorithms to generate predicted spectra in silico from a protein sequence database (FASTA file). These predicted spectra are then used to identify peptides directly from the DIA data [33] [35]. Advances in deep learning have significantly improved the accuracy of spectral predictions, making library-free approaches increasingly robust [34].
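Before any spectra can be predicted, a library-free workflow has to enumerate candidate peptides from the protein sequences. The sketch below illustrates only that first step, assuming trypsin digestion (cleavage after K or R, but not before P). The function name, length limits, and example sequence are hypothetical, and the fragment-intensity and retention-time prediction performed by real tools is not shown.

```python
import re

def tryptic_digest(protein, missed_cleavages=0, min_len=6, max_len=30):
    """Enumerate tryptic peptides: cleave after K or R unless the next residue is P."""
    # Zero-width split points after K/R that are not followed by P (Python 3.7+)
    fragments = [f for f in re.split(r"(?<=[KR])(?!P)", protein) if f]
    peptides = []
    for i in range(len(fragments)):
        # Join up to `missed_cleavages` additional neighboring fragments
        last = min(i + missed_cleavages, len(fragments) - 1)
        for j in range(i, last + 1):
            peptide = "".join(fragments[i:j + 1])
            if min_len <= len(peptide) <= max_len:
                peptides.append(peptide)
    return peptides

# Hypothetical toy sequence; a real run would iterate over every FASTA entry
toy_protein = "AAKPGGRCCKDDR"
print(tryptic_digest(toy_protein, min_len=1))
print(tryptic_digest(toy_protein, missed_cleavages=1, min_len=1))
```

The first call shows that the K followed by P is not cleaved. The second shows how allowing one missed cleavage enlarges the candidate set, which is one reason the predicted search space grows quickly with permissive settings.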

Strategic Comparison

The table below summarizes the key characteristics of each approach to guide your initial selection.

Table 1: Strategic Comparison of Library-Based and Library-Free DIA Methods

| Feature | Library-Based DIA | Library-Free DIA |
|---|---|---|
| Prior DDA Requirement | Yes, for library generation | No |
| Spectral Library Source | Project-specific or public DDA libraries | Generated in silico from a FASTA file |
| Initial Setup Time | Longer (due to DDA runs and QC) | Shorter |
| Sample Demand | Higher (requires extra runs for library) | Lower |
| Ideal Project Type | Targeted validation, pathway-focused studies | Discovery-phase, large-scale profiling |
| Organism Compatibility | Well-characterized species | Broad, including novel or non-model organisms |
| Flexibility | Limited; changes may require new library | High |
| Identification Specificity | Very high (based on empirical match) | High (depends on prediction algorithm QC) |

Performance and Quantitative Data

Understanding the practical performance of each method is crucial. The following table summarizes key quantitative findings from comparative studies.

Table 2: Performance Comparison Based on Published Data

| Performance Metric | Library-Based DIA | Library-Free DIA | Context and Notes |
|---|---|---|---|
| Protein Identifications | High, especially with comprehensive libraries [36] | Can outperform library-based if library is limited; 2x more than DDA in one study [36] [35] | Performance is highly dependent on library comprehensiveness and software tool [36]. |
| Quantification Precision | Excellent, high reproducibility [34] [36] | High; ~90% of IDs quantifiable with <20% CV [35] | Both methods provide highly reproducible data when optimized. |
| Low-Abundance Protein Detection | Excellent, provided the targets are in the library [33] | Moderate, unless workflows are optimized [33] | Library-free may miss borderline signals in complex samples. |
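The precision figure in Table 2 refers to the coefficient of variation (CV) across replicate measurements. As a minimal sketch of how such a figure can be computed, the code below calculates the CV per protein and the fraction of proteins under a 20% threshold. The protein names and intensities are invented for illustration and do not come from the cited studies.

```python
from statistics import mean, stdev

def cv_percent(values):
    """Coefficient of variation in percent (sample standard deviation / mean)."""
    return 100.0 * stdev(values) / mean(values)

def fraction_below_cv(quant, threshold=20.0):
    """Return (fraction of proteins with CV below threshold, per-protein CVs)."""
    cvs = {protein: cv_percent(vals) for protein, vals in quant.items()}
    passing = [p for p, cv in cvs.items() if cv < threshold]
    return len(passing) / len(cvs), cvs

# Hypothetical replicate intensities for four proteins
quant = {
    "P1": [100.0, 110.0, 90.0],
    "P2": [50.0, 100.0, 150.0],
    "P3": [200.0, 210.0, 190.0],
    "P4": [10.0, 10.5, 9.5],
}

fraction, cvs = fraction_below_cv(quant)
print(f"{fraction:.0%} of proteins have CV < 20%")
```

Running the same calculation on library-based and library-free outputs from the same raw files gives a like-for-like view of quantification precision.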

Methodologies and Experimental Protocols

Implementing a Library-Based Workflow

- Spectral Library Generation:

- Sample Preparation: Run multiple DDA acquisitions (often with fractionation) on representative samples to maximize coverage.

- Data Acquisition: Acquire high-quality DDA data under LC-MS conditions matched as closely as possible to your planned DIA runs.

- Data Processing: Process the DDA files with a search engine (e.g., MaxQuant) against a sequence database to build the spectral library. Incorporate indexed retention time (iRT) peptides for retention time calibration [37].

- DIA Data Acquisition and Analysis: