Strategic Library Design: The Key to Unlocking Higher Hit Rates in Phenotypic Screening

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on leveraging strategically optimized compound libraries to significantly improve hit rates in phenotypic screening. It explores the foundational challenges of traditional screening, details advanced methodological approaches including AI-integrated and high-content platforms, offers practical troubleshooting strategies to mitigate false positives and enhance data quality, and validates the impact of these optimizations through comparative analysis with target-based methods. The synthesis of these areas provides an actionable framework for designing more efficient and productive phenotypic discovery campaigns.

The Phenotypic Screening Imperative: Why Hit Rates Matter

In the landscape of modern drug discovery, high-throughput screening (HTS) serves as a cornerstone technology for rapidly evaluating millions of chemical or biological entities to identify potential therapeutic starting points, or "hits" [1]. However, a central tension exists between the drive for increased speed and volume (throughput) and the necessity that the results are meaningful and translatable to human biology (biological relevance). Successfully balancing these two factors is critical for improving hit rates and reducing the high attrition rates that plague the development pipeline, where fewer than 14% of candidates entering Phase 1 trials ultimately reach patients [1]. This technical support center is designed to help you navigate the specific challenges at this intersection, providing troubleshooting guides and detailed protocols to enhance the success of your phenotypic screening campaigns.

Frequently Asked Questions (FAQs)

FAQ 1: What are the most common causes of low hit rates in phenotypic screens, and how can I address them?

Low hit rates can stem from an undersized screening library, a poor disease model, or an assay that is not optimized for the biological question.

- Problem: The screening library lacks diversity or biological relevance.

- Solution: Utilize libraries designed for your specific target class (e.g., GPCR-focused, kinase-focused) and incorporate structural and functional diversity. For genetic screens, use CRISPR genome-wide sgRNA libraries with multiple guides per gene to ensure robust knockouts and mitigate off-target effects [2].

- Problem: The disease model is too reductionist.

- Solution: Move towards more physiologically relevant models where feasible. This could include using primary cells, co-culture systems, or 3D organoids that better recapitulate the disease state [3].

- Problem: The assay design favors artifacts over genuine biology.

- Solution: Implement rigorous counter-screens and orthogonal assays that use different detection principles (e.g., switching from a fluorescence-based readout to a mass spectrometry-based one) to filter out false positives caused by compound interference [1].

FAQ 2: How can I ensure my high-throughput assay is both robust and biologically meaningful?

A robust assay is reproducible and reliable, while a biologically meaningful one measures a parameter directly linked to the disease phenotype.

- Best Practices for Robustness:

- Quality Control (QC) Metrics: Consistently monitor statistical metrics like Z'-factor to quantitatively assess assay quality. A Z'-factor > 0.5 is generally indicative of a robust assay [1].

- Plate Controls: Strategically place positive and negative controls on each assay plate to monitor performance and enable data normalization [1].

- Miniaturization Management: Be aware that assay miniaturization (e.g., to 1536-well plates) can make the assay more susceptible to evaporation and edge effects. Implement procedural adjustments to mitigate these issues [1].

- Best Practices for Biological Relevance:

- Physiologically Relevant Reagents: Use protein reagents with adequate stability, solubility, and expression levels, which may require sophisticated protein engineering strategies [1].

- Phenotypic Endpoints: Focus on measuring complex, disease-relevant phenotypic changes (e.g., cell morphology, viability, or reporter gene expression in a relevant pathway) rather than isolated molecular interactions [3].
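The Z'-factor guideline above can be checked directly from the control wells on a plate. The following is a minimal Python sketch, assuming the standard definition Z' = 1 - 3(sd_pos + sd_neg) / |mean_pos - mean_neg|. The function name `z_prime` and the well values are hypothetical and serve only to illustrate the calculation.

```python
from statistics import mean, stdev


def z_prime(positive, negative):
    """Z'-factor = 1 - 3 * (sd_pos + sd_neg) / |mean_pos - mean_neg|."""
    separation = abs(mean(positive) - mean(negative))
    if separation == 0:
        raise ValueError("control means are identical; Z' is undefined")
    return 1.0 - 3.0 * (stdev(positive) + stdev(negative)) / separation


# Hypothetical raw signals from the control wells of a single plate
pos_ctrl = [980.0, 1010.0, 1005.0, 995.0, 1020.0, 990.0]
neg_ctrl = [105.0, 98.0, 110.0, 95.0, 102.0, 100.0]

score = z_prime(pos_ctrl, neg_ctrl)
verdict = "meets" if score > 0.5 else "falls below"
print(f"Z' = {score:.2f} ({verdict} the 0.5 guideline)")
```

Computing this value per plate, rather than once per campaign, makes it easier to spot individual plates that drift out of specification.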

FAQ 3: My screen produced a long list of hits, but many are likely false positives. How can I triage them effectively?

A multi-stage triage strategy is essential to winnow down your hit list to the most promising candidates for further validation.

- Step 1: Filter for Assay Interference: Use computational filters to flag compounds known as Pan-Assay Interference Compounds (PAINS) and employ counter-screens to eliminate hits that act via non-specific mechanisms like compound aggregation or auto-fluorescence [1].

- Step 2: Confirm Activity: Retest the primary hits in a dose-response manner using the original assay to confirm the activity.

- Step 3: Orthogonal Validation: Test confirmed hits in a secondary, functionally distinct assay that measures the same biological pathway or phenotype. This is a critical step for verifying biological relevance [1].

- Step 4: Assess Reproducibility: Ensure the hit's activity is reproducible across multiple experimental replicates and, if possible, in different cellular backgrounds.
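Dose-response confirmation in Step 2 is commonly summarized with a four-parameter logistic (Hill) curve. The sketch below only evaluates that model for an assumed parameter set. The function name `four_pl`, the parameter values, and the concentration series are hypothetical, and a real analysis would fit the parameters to measured data with a curve-fitting library.

```python
def four_pl(conc, bottom, top, ec50, hill):
    """Four-parameter logistic response at a given concentration (conc > 0)."""
    return bottom + (top - bottom) / (1.0 + (ec50 / conc) ** hill)


# Hypothetical parameters: 0-100% activity, EC50 of 1 uM, Hill slope of 1
params = dict(bottom=0.0, top=100.0, ec50=1.0, hill=1.0)

# Roughly half-log dilution series in uM (illustrative only)
concentrations = [0.01, 0.03, 0.1, 0.3, 1.0, 3.0, 10.0, 30.0]
curve = [(c, four_pl(c, **params)) for c in concentrations]

for c, response in curve:
    print(f"{c:>6.2f} uM -> {response:5.1f}% activity")
```

A hit whose retest data cannot be described by a monotonic curve of this kind is a reasonable candidate for deprioritization before orthogonal validation.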

FAQ 4: In genetic screens, what is the difference between a positive and negative selection screen, and how does it impact my experimental design?

The choice between positive and negative screens dictates the selection pressure and the required analytical depth.

- Positive Selection Screen: Identifies genes whose knockout gives cells a survival or growth advantage under selective pressure (e.g., drug resistance). In these screens, most cells die, and the surviving population is enriched for specific sgRNAs. These screens are generally more robust and require a typical NGS read depth of ~1 x 10^7 reads [2].

- Negative Selection Screen: Identifies genes that are essential for survival under the screening conditions. Here, cells with knockouts in essential genes are lost from the population. Since most cells survive, detecting statistically significant depletion is more challenging and often requires a greater NGS read depth, potentially up to ~1 x 10^8 reads [2].

The table below summarizes the key differences:

| Feature | Positive Selection Screen | Negative Selection Screen |
|---|---|---|
| Objective | Identify gene knockouts that confer an advantage | Identify essential gene knockouts |
| Outcome | Enrichment of specific sgRNAs in the population | Depletion of specific sgRNAs from the population |
| Screen Robustness | Generally more robust | More challenging, requires tight controls |
| Recommended NGS Read Depth | ~1 x 10^7 reads | Up to ~1 x 10^8 reads |
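To put the read depths above in context, it can help to convert total reads into an average number of reads per sgRNA. The sketch below does this arithmetic in Python. The library size of 76,000 sgRNAs is a hypothetical value chosen for illustration and is not taken from the cited source; substitute the size of your own library.

```python
def mean_reads_per_sgrna(total_reads, n_sgrnas):
    """Average sequencing reads available per sgRNA at a given depth."""
    return total_reads / n_sgrnas


LIBRARY_SIZE = 76_000  # hypothetical number of sgRNAs in the pooled library

depths = {
    "positive selection (~1 x 10^7 reads)": 1e7,
    "negative selection (~1 x 10^8 reads)": 1e8,
}

for label, depth in depths.items():
    per_guide = mean_reads_per_sgrna(depth, LIBRARY_SIZE)
    print(f"{label}: about {per_guide:,.0f} reads per sgRNA")
```

The tenfold difference reflects the point made above: small depletions in a mostly surviving population need more reads per guide to be detected reliably than strong enrichments do.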

FAQ 5: How can I assess the biological relevance of my selected hits beyond simple classification accuracy?

While classification accuracy is a common metric, it may not fully capture biological relevance. It is crucial to use biology-based criteria for evaluation [4].

- Gene Ontology (GO) Enrichment Analysis: Determine whether your set of hit genes is significantly enriched for GO terms related to the disease or phenotype under study. This connects your hits to known biological processes, molecular functions, and cellular components.

- Linkage to Quantitative Trait Loci (QTLs): If available, check if your hit genes co-locate with known QTLs associated with the trait. This provides genetic evidence linking the gene to the phenotype [4].

- Pathway Analysis: Map your hits to known signaling or metabolic pathways to see if they cluster in a biologically plausible manner, potentially revealing a common mechanism of action.
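Enrichment analyses such as the GO test described above are often based on a hypergeometric (one-sided Fisher) test. The following is a minimal Python sketch of that test for a single term, using only the standard library. The function name and all gene counts are hypothetical, and a real analysis would test many terms and correct for multiple testing.

```python
from math import comb


def hypergeom_enrichment_p(population, annotated, sample, overlap):
    """P(X >= overlap) for X ~ Hypergeometric(population, annotated, sample).

    population: genes in the background set
    annotated:  background genes carrying the GO term
    sample:     genes in the hit list
    overlap:    hit-list genes carrying the GO term
    """
    upper = min(annotated, sample)
    total = comb(population, sample)
    tail = sum(
        comb(annotated, k) * comb(population - annotated, sample - k)
        for k in range(overlap, upper + 1)
    )
    return tail / total


# Hypothetical example: 100 hits from a 20,000-gene background,
# 8 of which carry a term annotated to 200 background genes.
p_value = hypergeom_enrichment_p(20_000, 200, 100, 8)
print(f"enrichment p-value = {p_value:.2e}")
```

With these illustrative numbers only about one annotated gene would be expected in the hit list by chance, so observing eight yields a small p-value.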

Troubleshooting Common Experimental Issues

Issue: High well-to-well variability in my HTS assay is compromising data quality.

- Potential Causes & Solutions:

- Cause: Inconsistent liquid handling.

- Solution: Calibrate liquid handlers regularly and consider using non-contact dispensing technologies, like acoustic droplet ejection, to improve precision and reduce cross-contamination [1].

- Cause: Edge effects in microplates due to evaporation.

- Solution: Pre-incubate assay plates at room temperature after seeding to allow for thermal equilibration. Alternatively, use plate seals or omit data from the outermost wells [1].

- Cause: Biological variability, such as using cells at different passage numbers or confluence.

- Solution: Standardize cell culture protocols and use cells within a strict passage range.

Issue: My CRISPR screen results are inconsistent or lack a clear signal.

- Potential Causes & Solutions:

- Cause: Poorly controlled transduction efficiency of the sgRNA library.

- Solution: Titrate the sgRNA library lentivirus to achieve a low MOI that results in a transduction efficiency of 30-40%. This ensures most transduced cells receive only a single sgRNA, which is critical for linking genotype to phenotype [2].

- Cause: Inefficient Cas9 activity.

- Solution: Use a cell line that stably expresses Cas9 at an optimal level. Validate Cas9 activity prior to the screen using a control sgRNA.

- Cause: Insufficient coverage or scale.

- Solution: Screen with a large number of cells (~76 million cells for a 40% transduction efficiency is recommended for some systems) and maintain a high representation of each sgRNA (e.g., 400-1000 cells per sgRNA) to ensure library diversity [2].

- Cause: Inadequate genomic DNA (gDNA) extraction.

- Solution: Isolate gDNA from a sufficient number of cells (100-200 million) using maxi-prep methods. Do not use miniprep kits, as they cannot handle the scale and will reduce sample diversity [2].
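The transduction and coverage figures above can be related with a short calculation. The sketch below assumes that lentiviral integrations per cell follow a Poisson distribution, which is a common simplification and not something stated in the cited source. The function names and the 76,000-sgRNA library size are hypothetical; that size was chosen because, at 400 cells per sgRNA and 40% transduction, it reproduces the ~76 million cell figure quoted above.

```python
import math


def moi_for_transduction(fraction_transduced):
    """MOI at which a given fraction of cells carries at least one integration."""
    return -math.log(1.0 - fraction_transduced)


def single_integrant_fraction(moi):
    """Among transduced cells, the fraction with exactly one integration."""
    return moi * math.exp(-moi) / (1.0 - math.exp(-moi))


def cells_to_transduce(n_sgrnas, cells_per_sgrna, fraction_transduced):
    """Starting cell number needed to reach the desired sgRNA representation."""
    return math.ceil(n_sgrnas * cells_per_sgrna / fraction_transduced)


for efficiency in (0.30, 0.40):
    moi = moi_for_transduction(efficiency)
    single = single_integrant_fraction(moi)
    print(f"{efficiency:.0%} transduced: MOI ~ {moi:.2f}, "
          f"{single:.0%} of transduced cells carry one sgRNA")

# Hypothetical library of 76,000 sgRNAs at 400 cells per sgRNA
needed = cells_to_transduce(76_000, 400, 0.40)
print(f"cells to transduce: {needed:,}")
```

Under this model, raising the transduction efficiency well beyond 40% would increase the share of cells carrying more than one sgRNA, which is why a low MOI is recommended.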

Issue: I am struggling with a high rate of false positives in my primary screen.

- Potential Causes & Solutions:

- Cause: Compound-mediated assay interference (e.g., fluorescence quenching, chemical reactivity).

- Solution: As part of your triage strategy, run assays like mass spectrometry-based HTS, which directly detects and quantifies unlabeled analytes and is less prone to such artifacts [1].

- Cause: The primary assay is overly sensitive or has a low signal-to-background ratio.

- Solution: Re-optimize the assay conditions to improve the Z'-factor and implement more stringent hit-selection criteria (e.g., a higher threshold for activity).

Experimental Protocols

Protocol: A Workflow for a Pooled Genome-Wide CRISPR Knockout Screen

This protocol provides a general overview for conducting a loss-of-function phenotypic screen using a pooled lentiviral sgRNA library [2].

1. Select a Screenable Phenotype: Choose a phenotypic change that allows for the enrichment or depletion of edited cells. Examples include resistance to a drug, changes in cell proliferation, or expression of a fluorescent reporter that can be sorted by FACS.

2. Prepare Cas9-Expressing Cells:

- Transduce your target cells with a lentivirus expressing Cas9.

- Apply antibiotic selection (e.g., puromycin) to generate a stable Cas9-expressing cell line.

- Confirm Cas9 expression and activity before proceeding.

3. Produce and Titrate sgRNA Library Lentivirus:

- Produce a high-titer lentiviral stock from the genome-wide sgRNA library.

- Titrate the virus on your Cas9+ cells to determine the volume needed to achieve 30-40% transduction efficiency. This low MOI is critical for ensuring single sgRNA integration per cell.

4. Perform the Library Screen:

- Transduce the Cas9+ cells at the determined scale to maintain library representation.

- Apply the selective pressure (e.g., add a drug, sort cells based on phenotype) for a sufficient duration (often 10-14 days) to allow phenotypes to manifest.

- Include an untreated control population.

5. Harvest Genomic DNA and Prepare NGS Libraries:

- Harvest genomic DNA from a large number of cells (e.g., 100-200 million) from both the treated and control populations using a maxi-prep method.

- Amplify the integrated sgRNA sequences from the gDNA using PCR with primers containing Illumina adapter sequences and barcodes.

- Purify the PCR product for next-generation sequencing.

6. Sequence and Analyze Data:

- Sequence the sgRNA amplicons to a sufficient depth (see FAQ 4).

- Align sequences to the reference sgRNA library.

- Calculate the enrichment or depletion of each sgRNA in the treated population compared to the control using specialized bioinformatics tools (e.g., MAGeCK).
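The enrichment or depletion calculation in step 6 can be illustrated with a simple log2 fold change on depth-normalized counts. This is a deliberately simplified Python sketch and is not how MAGeCK works internally; dedicated tools add statistical modelling and gene-level aggregation that are omitted here. The function names, sgRNA identifiers, and read counts are hypothetical.

```python
import math


def counts_per_million(counts):
    """Scale raw sgRNA read counts to reads per million within one sample."""
    total = sum(counts.values())
    return {guide: count * 1e6 / total for guide, count in counts.items()}


def log2_fold_changes(treated, control, pseudocount=1.0):
    """log2(treated / control) per sgRNA after depth normalization.

    The pseudocount avoids division by zero for guides that drop out.
    """
    t = counts_per_million(treated)
    c = counts_per_million(control)
    return {
        guide: math.log2((t.get(guide, 0.0) + pseudocount) / (c[guide] + pseudocount))
        for guide in c
    }


# Hypothetical read counts for four sgRNAs
control_counts = {"sgA_1": 500, "sgB_1": 500, "sgC_1": 500, "sgD_1": 500}
treated_counts = {"sgA_1": 900, "sgB_1": 520, "sgC_1": 480, "sgD_1": 100}

lfc = log2_fold_changes(treated_counts, control_counts)
for guide, value in sorted(lfc.items(), key=lambda item: item[1], reverse=True):
    print(f"{guide}: log2FC = {value:+.2f}")
```

In a positive selection screen the guides of interest sit at the top of this ranking, whereas in a negative selection screen they sit at the bottom.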

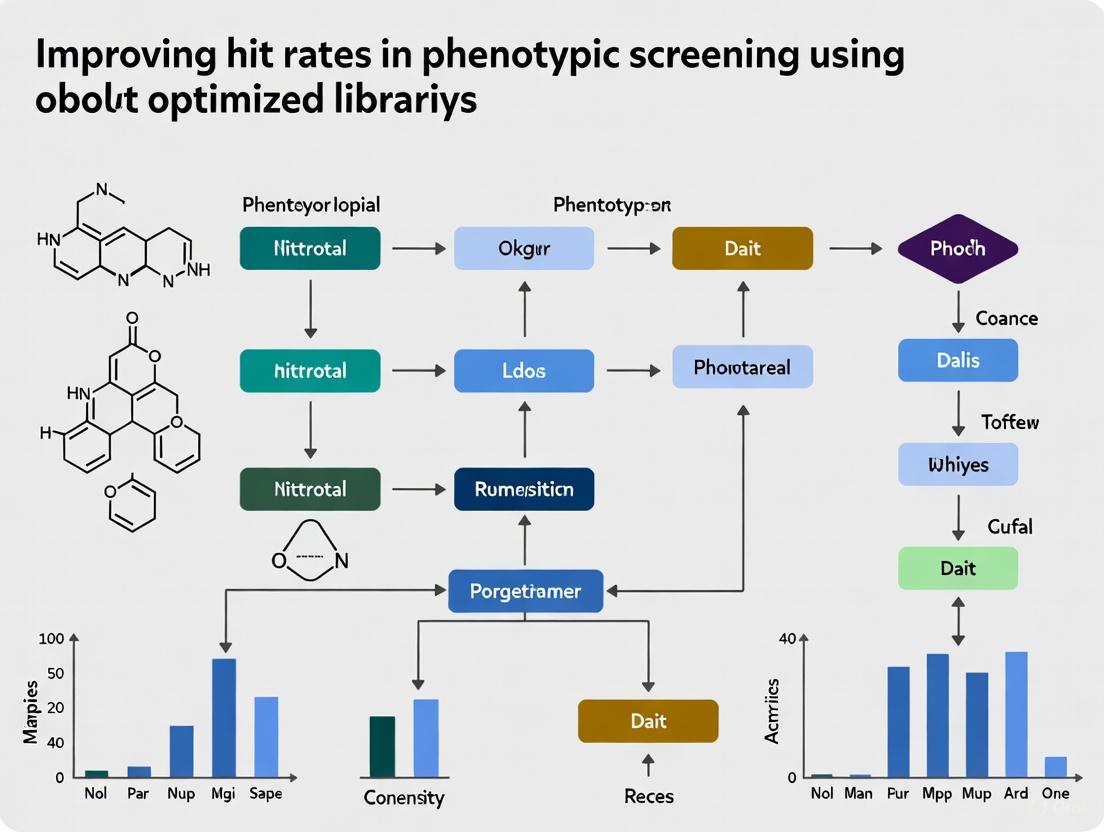

The following diagram illustrates the key steps in this workflow:

Protocol: Key Steps for a Phenotypic Small Molecule Screen

1. Assay Development and Validation:

- Define the Phenotype: Clearly define the measurable phenotypic endpoint (e.g., change in cell morphology, reporter gene activation, or cytokine secretion).

- Optimize Assay Conditions: Miniaturize the assay to the desired format (384- or 1536-well) and optimize cell density, reagent concentrations, and incubation times.

- Validate the Assay: Perform a pilot screen with known controls (positive and negative) to establish key QC metrics like Z'-factor and signal-to-background ratio. Ensure the assay is pharmacologically relevant by testing known modulators [1].

2. Library Design and Preparation:

- Select a compound library with chemical diversity and structures that are likely to be relevant to your target class or phenotypic outcome.

- Reformulate compounds into assay-ready plates at a consistent concentration.

3. Screening Execution:

- Use automated liquid handling to dispense cells and compounds.

- Include control wells on every plate (e.g., positive control, negative control, vehicle control) to monitor assay performance and allow for inter-plate normalization.

4. Hit Triage and Validation:

- Primary Hit Identification: Apply a statistical threshold (e.g., activity > 3 standard deviations from the mean) to identify initial hits from the primary screen.

- Hit Confirmation: Retest primary hits in a dose-response curve in the original assay.

- Counter-Screening: Test confirmed hits in orthogonal assays to rule out non-specific mechanisms [1].

- Secondary Assays: Progress the most promising hits into more complex, physiologically relevant models to confirm the phenotypic effect [3].
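The control-based normalization in step 3 and the 3-standard-deviation threshold in step 4 can be combined in a few lines. The Python sketch below makes two assumptions that the protocol leaves open: activity is expressed as percent inhibition relative to the plate controls, and the mean and standard deviation are taken from the vehicle control wells. Function names, well labels, and signal values are hypothetical.

```python
from statistics import mean, stdev


def percent_inhibition(raw, neg_mean, pos_mean):
    """0% at the vehicle (negative) control mean, 100% at the positive control mean."""
    return 100.0 * (raw - neg_mean) / (pos_mean - neg_mean)


def call_hits(samples, reference, n_sd=3.0):
    """Wells whose activity exceeds mean + n_sd * SD of the reference wells."""
    threshold = mean(reference) + n_sd * stdev(reference)
    return {well for well, value in samples.items() if value > threshold}


# Hypothetical raw signals from one plate (signal drops when the phenotype is inhibited)
vehicle_raw = [1000.0, 1040.0, 960.0, 1020.0, 980.0, 1000.0]
positive_raw = [200.0, 210.0, 190.0, 200.0]
sample_raw = {"A1": 990.0, "A2": 600.0, "A3": 1010.0, "A4": 950.0}

neg_mean, pos_mean = mean(vehicle_raw), mean(positive_raw)
vehicle_pct = [percent_inhibition(v, neg_mean, pos_mean) for v in vehicle_raw]
sample_pct = {w: percent_inhibition(v, neg_mean, pos_mean) for w, v in sample_raw.items()}

hits = call_hits(sample_pct, vehicle_pct)
print("primary hits:", sorted(hits))
```

Taking the reference statistics from control wells rather than from the test wells keeps a strongly active compound from inflating the standard deviation that defines its own threshold.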

The Scientist's Toolkit: Key Research Reagent Solutions

The table below details essential materials and their functions for setting up robust phenotypic screens.

| Item | Function & Importance |
|---|---|
| Validated Antibodies | Critical for immunoassays (ELISA, flow cytometry, western blot) and cell sorting. High specificity and affinity are required for sensitive and reproducible target detection [5]. |
| Stable Cell Lines | Engineered cells that consistently express a target protein, reporter gene, or Cas9 nuclease. They reduce variability and are foundational for reproducible screening [2]. |
| CRISPR sgRNA Library | A pooled collection of lentiviruses, each encoding a guide RNA targeting a specific gene. Enables genome-wide, unbiased discovery of genes involved in a phenotype [2]. |
| Phenotypic Reporter Assays | Reagents and cell systems designed to measure complex cellular outputs (e.g., pathway activation, cell death, differentiation). They form the basis of the phenotypic readout [3]. |
| High-Quality Compound Libraries | Collections of small molecules with known chemical structures and properties. Diversity and drug-likeness of the library are key determinants of screening success [1]. |
| qPCR/NGS Kits | Kits for quantifying sgRNA abundance from genomic DNA in CRISPR screens. Accurate and sensitive kits are vital for determining which genes are hits [2]. |

Visualizing the Hit Identification and Validation Pathway

The journey from a full library to a validated, biologically relevant hit involves multiple stages of filtering and validation. The following diagram outlines this critical pathway, highlighting key decision points.

Understanding the High Cost of False Positives and Assay Artifacts

In the pursuit of new therapeutic agents, high-throughput phenotypic screening allows researchers to identify compounds that produce a desired biological effect without prior knowledge of the specific molecular target [6]. However, the success of these campaigns is often hampered by a critical challenge: assay artifacts and false positives. These nuisance compounds appear active in primary screens but do not genuinely modulate the biological pathway or target of interest, leading to wasted resources and delayed projects [7]. This technical support guide explores the origins of these deceptive signals and provides validated strategies to mitigate them, thereby improving the quality of your hit selection and enhancing the efficiency of your drug discovery pipeline.

Understanding Common Assay Artifacts

Assay interference mechanisms are diverse and can persist into hit-to-lead optimization stages, resulting in significant resource depletion [7]. The table below summarizes the most prevalent types of interference and their impact on screening campaigns.

Table 1: Common Mechanisms of Assay Interference in High-Throughput Screening

| Interference Mechanism | Description | Common Assays Affected |
|---|---|---|
| Chemical Reactivity | Compounds undergo unwanted chemical reactions with target biomolecules or assay reagents, including thiol reactivity and redox cycling [7]. | Fluorescence-based thiol-reactive assays, redox activity assays [7]. |
| Luciferase Interference | Compounds inhibit the reporter enzyme luciferase, leading to a false reduction in luminescent signal [7]. | Luciferase reporter assays (firefly, nano) [7]. |
| Compound Aggregation | Compounds with poor solubility form colloidal aggregates that non-specifically perturb biomolecules [7]. | Biochemical and cell-based assays, AmpC β-lactamase inhibition [7]. |
| Fluorescence/Absorbance Interference | Small molecules are themselves fluorescent or colored, interfering with the optical detection method [7]. | Fluorescence polarization (FP), TR-FRET, Differential Scanning Fluorimetry (DSF) [7]. |
| Technology-Specific Interference | Compounds quench the signal, emit auto-fluorescence, or disrupt affinity capture components like antibodies [7]. | Homogeneous proximity assays (ALPHA, FRET, TR-FRET, HTRF, BRET, SPA) [7]. |

Computational Tools for Artifact Identification

Computational methods have been developed to assist in the detection and removal of interference compounds from HTS hit lists and screening libraries. The "Liability Predictor" webtool represents a modern approach to this problem, using Quantitative Structure-Interference Relationship (QSIR) models to predict nuisance behaviors [7].

Table 2: Performance of Modern Computational Tools vs. Traditional PAINS Filters

| Tool Name | Targeted Interference | Reported Performance | Key Advantage |
|---|---|---|---|
| Liability Predictor | Thiol reactivity, Redox activity, Luciferase inhibition | 58–78% balanced external accuracy for 256 test compounds [7]. | QSIR models outperform traditional PAINS filters [7]. |
| Luciferase Advisor | Luciferase inhibition | Not reported here. | Predicts luciferase inhibitors in luciferase-based assays [7]. |
| SCAM Detective | Colloidal aggregation | Not reported here. | Predicts the most common source of false positives [7]. |
| PAINS Filters | Multiple mechanisms | Oversensitive; fails to identify a majority of truly interfering compounds [7]. | Broad alerts but poor precision and recall [7]. |

Troubleshooting FAQs and Guides

Q: My primary phenotypic screen yielded an unusually high hit rate. What are the first steps I should take to triage these hits?

A: A high hit rate often signals a high level of false positives. Your first step should be to employ orthogonal assay technologies that use a different detection method. For example, if your primary screen was a luciferase-based reporter assay, follow up with a non-luminescent method like a fluorescent or cell viability assay. Furthermore, utilize computational triage tools like "Liability Predictor" early in your workflow to flag compounds likely to exhibit thiol reactivity, redox activity, or luciferase interference. For fluorescence-based assays, simply re-running the assay with a far-red shifted fluorophore can dramatically reduce interference [7].

Q: How can I proactively design my screening library and assay to minimize the impact of assay artifacts?

A: Proactive design is key to improving hit quality.

- Library Curation: Before screening, virtually profile your chemical library using tools like "Liability Predictor" to remove compounds with known nuisance behaviors [7].

- Assay Technology Selection: Choose detection methods less prone to interference. For instance, using red-shifted fluorophores can minimize autofluorescence from compounds [7].

- Assay Development: Incorporate specific counterscreens during development. For example, include a redox-activity or thiol-reactivity assay to quantify the level of this interference in your library under your specific assay conditions [7].

Q: Are PAINS filters still recommended for flagging potential false positives?

A: While PAINS filters are widely known, they are oversensitive and can disproportionately flag compounds as interferers while missing a majority of truly problematic compounds. Modern QSIR models like those in "Liability Predictor" have been shown to identify nuisance compounds more reliably than PAINS filters. It is recommended to use these more advanced, validated models for hit triage [7].

Q: What are the limitations of small molecule phenotypic screening that contribute to false discoveries?

A: A significant limitation is that even the best chemogenomics libraries only interrogate a small fraction of the human genome—approximately 1,000–2,000 out of 20,000+ genes. This limited target coverage means many phenotypic changes are not easily linked to a specific molecular target, complicating the validation of a true positive. Furthermore, the complexity of phenotypic assays introduces more variables where interference can occur, making them particularly vulnerable to artifacts [8]. Mitigation strategies include using diverse compound libraries with varied chemotypes and employing advanced computational methods, such as the DrugReflector framework, which uses active learning to better predict compounds that induce desired phenotypic changes [6].

The Scientist's Toolkit: Key Research Reagent Solutions

The following table lists essential reagents and tools used in the development and validation of assays discussed in this guide.

Table 3: Key Research Reagent Solutions for Assay Development and Counterscreening

| Item Name | Function/Application | Key Features |
|---|---|---|
| pHrodo Dyes (Thermo Fisher) | Fluorescent labeling of antibodies or other ligands for tracking internalization into acidic compartments (early endosomes to lysosomes) [9]. | pH-sensitive; fluorescence dramatically increases in acidic environments; low background as they are non-fluorescent at neutral pH [9]. |
| LysoLight Deep Red Dye (Thermo Fisher) | A powerful tool for monitoring the lysosomal degradation of antibodies, proteins, or ADCs [9]. | Non-fluorescent until cleaved by proteases in the lysosome; provides excellent sensitivity and specificity for degradation [9]. |
| SiteClick Antibody Labeling System (Thermo Fisher) | Allows for site-specific, gentle conjugation of pHrodo or other dyes to antibodies for internalization studies [9]. | Maintains antibody function and minimizes background via a controlled, click chemistry-based conjugation [9]. |
| Zenon pHrodo IgG Labeling Kits (Thermo Fisher) | Provides a rapid, non-covalent method for labeling antibodies with pHrodo dyes for quick internalization screens [9]. | Labeling complexes form in just 5 minutes; ideal for rapid screening of multiple antibodies [9]. |

Experimental Workflow for Hit Validation

The following diagram illustrates a recommended workflow for triaging hits from a phenotypic screen, integrating multiple counterscreens and computational tools to efficiently identify true positives.

Hit Triage and Validation Workflow

Signaling Pathways and Mechanisms of Interference

To understand how assay artifacts produce false signals, it is crucial to visualize their mechanisms of action compared to a true positive. The diagram below contrasts a specific true positive mechanism with common interference pathways.

Mechanisms of True vs. False Positives

Library Quality as a Critical Limiting Factor in Discovery Efficiency

Frequently Asked Questions (FAQs)

What defines a 'high-quality' screening library for phenotypic screening?

A high-quality screening library is the foundation of successful discovery programs. It should be representative of biologically relevant chemical space, composed of chemically attractive compounds with tractable synthetic accessibility, and free of undesirable chemical functionalities [10]. Key characteristics include a balanced distribution of drug-like physicochemical properties (adhering to principles like Lipinski's Rule of Five), the minimization of problematic structures (such as PAINS), and careful annotation of all compounds [10].
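As an illustration of the drug-likeness criterion mentioned above, the following Python sketch counts Lipinski Rule of Five violations from precomputed descriptors (molecular weight ≤ 500, calculated logP ≤ 5, hydrogen bond donors ≤ 5, hydrogen bond acceptors ≤ 10). The compound identifiers and descriptor values are hypothetical, and tolerating at most one violation is a common convention assumed here rather than a requirement taken from the cited source.

```python
def rule_of_five_violations(mw, clogp, hbd, hba):
    """Number of Lipinski Rule of Five criteria that a compound exceeds."""
    return sum([mw > 500, clogp > 5, hbd > 5, hba > 10])


# Hypothetical compounds with precomputed descriptors
library = {
    "cmpd_001": dict(mw=312.4, clogp=2.1, hbd=2, hba=5),
    "cmpd_002": dict(mw=548.7, clogp=4.3, hbd=3, hba=9),
    "cmpd_003": dict(mw=612.9, clogp=6.2, hbd=6, hba=12),
}

MAX_VIOLATIONS = 1  # assumed tolerance, adjust to your own library policy

for name, descriptors in library.items():
    n = rule_of_five_violations(**descriptors)
    status = "keep" if n <= MAX_VIOLATIONS else "flag"
    print(f"{name}: {n} violation(s) -> {status}")
```

In practice the descriptors would come from a cheminformatics package rather than being typed in by hand, and the result is best treated as one annotation among several, not as a hard exclusion rule.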

Why is library quality so crucial for phenotypic screening hit rates?

In phenotypic screening, the biological target is initially unknown. A high-quality library increases the probability that any observed activity is due to a specific, meaningful biological interaction rather than compound toxicity, reactivity, or instability [11]. Poor library quality, contaminated with promiscuous or unstable compounds, can generate a high rate of false positives that waste significant resources during follow-up [10] [11].

How can I check the quality of my compound library after long-term storage?

You can confirm the integrity of your library through quality control (QC) sampling. A standard protocol involves:

- Randomly selecting a representative subset of compounds from your storage formats (e.g., 96-way and 384-way tubes) [10].

- Analyzing by Liquid Chromatography–Mass Spectrometry (LCMS) to determine purity (via ultraviolet and evaporative light scattering detectors) and confirm identity (by mass spectrometry) [10].

- Setting a pass/fail criterion, for example, treating >80% purity as acceptable. One study found that 87.4% of compounds passed this criterion after years of storage [10].

My screening hit rate is low. Could my library be the problem?

Yes. A low hit rate can stem from several library-related issues:

- Lack of Diversity: The library may not adequately sample the chemical space relevant to your specific phenotypic assay.

- Poor Compound Integrity: Degradation during storage can reduce the concentration of active compounds [10].

- Inappropriate Physicochemical Space: The library may be skewed towards properties that are not suitable for your cellular model (e.g., compounds unable to penetrate cells). Analyzing your library's properties (e.g., molecular weight, logP) and comparing it to known bioactive compounds can reveal gaps [10].

What are the biggest pitfalls in hit validation from phenotypic screens, and how can library quality help?

A major pitfall is pursuing hits that act through non-specific or nuisance mechanisms [11]. High-quality libraries are pre-filtered to remove many of these problematic compounds, such as those with reactive functional groups or known promiscuity (PAINS) [10]. This pre-emptive filtering during library design streamlines the hit validation process by providing a cleaner starting point, allowing researchers to focus on more promising leads [11].

Troubleshooting Guides

Problem: High Rate of False Positives or Non-Specific Hits

Potential Causes:

- Presence of compounds with undesirable chemical functionalities (e.g., PAINS, toxicophores) in the library [10].

- Compound precipitation or aggregation in the assay buffer.

- Chemical degradation of compounds leading to reactive by-products [10].

Solutions:

- Implement Stringent Library Curation: Apply computational filters (PAINS filters, modified Pfizer filters) to flag or remove compounds with suspect structural moieties from your screening deck [10].

- Enhance Quality Control (QC): Institute a rigorous QC protocol for new compound acquisitions. One recommended approach is to randomly check 12.5% of the compounds from a vendor plate by LCMS to confirm identity and purity before they enter your screening workflow [10].

- Analyze Physicochemical Properties: Use tools like Pipeline Pilot or similar software to calculate key molecular descriptors (e.g., clogP, molecular weight, rotatable bonds) for your entire library. Compare the distribution to known bioactives to identify property outliers that might cause non-specific effects [10].
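The 12.5% spot check described above amounts to drawing a random subset of wells from each vendor plate. A minimal Python sketch follows, assuming a standard 96-well layout (rows A-H, columns 1-12). The function names are hypothetical, and the fixed seed is used only so that the selection can be reproduced and documented.

```python
import math
import random


def plate_wells(rows="ABCDEFGH", columns=12):
    """Well identifiers for a plate, e.g. A1 ... H12 for a 96-well plate."""
    return [f"{row}{col}" for row in rows for col in range(1, columns + 1)]


def qc_sample(well_ids, fraction=0.125, seed=None):
    """Randomly pick a fraction of wells for LCMS identity and purity checks."""
    rng = random.Random(seed)
    k = math.ceil(len(well_ids) * fraction)
    return sorted(rng.sample(well_ids, k))


plate_96 = plate_wells()
selected = qc_sample(plate_96, seed=0)
print(f"{len(selected)} of {len(plate_96)} wells selected for QC:")
print(", ".join(selected))
```

For a 96-well plate this selects 12 wells; recording the seed alongside the plate barcode makes the choice auditable later.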

Problem: Low Hit Rate or Lack of Confirmed Actives

Potential Causes:

- Library does not cover the chemical space relevant to the biological system under investigation.

- High proportion of compounds unable to reach the intracellular target (e.g., due to poor permeability or efflux).

- Compound degradation during storage, leading to loss of activity [10].

Solutions:

- Diversify Your Library: Augment your existing collection with specialized subsets, such as:

- Bioactives: FDA-approved drugs, clinical candidates, and known chemical probes [10].

- Focused Libraries: Compounds designed for specific target classes or pathways relevant to your disease area [10].

- Fragments: Low molecular weight compounds following the Rule of 3, which can increase the efficiency of sampling chemical space [10].

- Profile Library for Cell-Based Assays: Prioritize compounds with properties conducive to cell penetration. Analyze parameters like topological polar surface area (TPSA) and fraction of sp3 carbon atoms (Fsp3), which can be indicators of better cellular bioavailability [10].

- Verify Compound Integrity: Conduct a stability study as described in the FAQ section. If degradation is widespread, consider replenishing the library or implementing stricter storage conditions (e.g., consistent -20°C storage in sealed, low-volume plates with minimal freeze-thaw cycles) [10].

Problem: Hits are Difficult to Optimize or Show Poor Structure-Activity Relationships (SAR)

Potential Causes:

- The initial hit compounds are overly complex or possess structural features that are intractable for medicinal chemistry.

- The library lacks suitable analog series for follow-up, making it hard to explore the structure-activity landscape around the initial hit.

Solutions:

- Assess Compound "Lead-Likeness": During library design and hit triage, prioritize compounds with properties that are amenable to optimization. This includes a focus on lower molecular weight and complexity to leave "room" for property optimization during medicinal chemistry campaigns.

- Build Libraries with Analog Coverage: When acquiring compounds, ensure the library is not just diverse at the scaffold level but also includes multiple analogs per scaffold. This provides immediate starting points for SAR studies once a hit is identified [10].

- Collaborate with Medicinal Chemists: Establish a strong collaboration to help design and synthesize follow-up compounds based on the initial hit. Access to custom synthesis is often key to successful hit-to-lead optimization [12].

Experimental Protocols & Data

Protocol 1: Quality Control Assessment of a Screening Library

Purpose: To experimentally determine the purity and identity of compounds in a stored screening library [10].

Methodology:

- Sample Selection: Randomly select a structurally diverse set of compounds from the library storage system. For example, pick ~500-800 compounds from both reservoir plates (96-way tubes) and assay-ready plates (384-way tubes) [10].

- LCMS Analysis:

- Instrumentation: Ultra-performance liquid chromatography (UPLC) system equipped with ultraviolet (UV) and evaporative light scattering (ELS) detectors, coupled to a mass spectrometer (MS) [10].

- Purity Calculation: Inject the samples and calculate the average purity from the two detection methods (UV and ELS) [10].

- Identity Confirmation: Use the mass spectrometer to confirm the identity of the compound by matching the observed mass to the expected mass [10].

- Data Interpretation: Compounds with >80% purity are generally considered acceptable for screening. A high-quality library should have >85% of tested compounds passing this threshold [10].
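The purity and pass-rate arithmetic in this protocol can be sketched in a few lines of Python. This is a minimal illustration, not the cited QC pipeline: the record keys (`uv`, `els`, `mass_ok`) and the example values are hypothetical, and requiring a mass match in addition to the >80% purity cutoff is an assumption.

```python
from statistics import mean

PURITY_THRESHOLD = 80.0   # percent; per-compound cutoff from the protocol
LIBRARY_PASS_RATE = 85.0  # percent of tested compounds expected to pass


def average_purity(uv_purity, els_purity):
    """Average the purity estimates (in percent) from the UV and ELS detectors."""
    return mean([uv_purity, els_purity])


def summarize_qc(samples):
    """Summarize QC for a list of dicts with hypothetical keys 'uv', 'els', 'mass_ok'."""
    passed = 0
    for sample in samples:
        purity = average_purity(sample["uv"], sample["els"])
        # Assumption: a compound passes only if it is pure enough AND its mass matches.
        if purity > PURITY_THRESHOLD and sample["mass_ok"]:
            passed += 1
    pass_rate = 100.0 * passed / len(samples)
    return {
        "n_tested": len(samples),
        "n_passed": passed,
        "pass_rate": pass_rate,
        "library_ok": pass_rate > LIBRARY_PASS_RATE,
    }


# Invented example data for four compounds.
example_samples = [
    {"uv": 95.0, "els": 91.0, "mass_ok": True},   # average 93.0, passes
    {"uv": 85.0, "els": 79.0, "mass_ok": True},   # average 82.0, passes
    {"uv": 70.0, "els": 60.0, "mass_ok": True},   # average 65.0, fails purity
    {"uv": 99.0, "els": 97.0, "mass_ok": False},  # fails identity check
]
qc_summary = summarize_qc(example_samples)
```

In practice the same summary would be run over the full random sample (several hundred compounds) rather than a handful of records.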

Protocol 2: Physicochemical Property Profiling of a Compound Library

Purpose: To understand the chemical space and drug-likeness of a screening collection.

Methodology:

- Descriptor Calculation: Use cheminformatics software (e.g., Pipeline Pilot, RDKit) to calculate key molecular descriptors for all compounds in the library. Essential descriptors include [10]:

- Molecular Weight (MW)

- Calculated LogP (clogP) and LogD at pH 7.4 (clogD)

- Topological Polar Surface Area (TPSA)

- Number of Hydrogen Bond Donors (HBD) and Acceptors (HBA)

- Number of Rotatable Bonds (RB)

- Number of Aromatic Rings (AR)

- Fraction of sp3 Carbons (Fsp3)

- Structural Alert Screening: Pass the library through a set of computational filters (e.g., PAINS, a modified version of the Pfizer rule) to identify compounds with potentially problematic functional groups [10].

- Data Analysis and Visualization:

Data Presentation

Table 1: Quality Control (QC) Results for a Representative Academic Screening Library

This table summarizes QC data from a test set of 779 compounds after long-term storage [10].

| Purity Range | Number of Compounds | Percentage of Test Set | Interpretation |

|---|---|---|---|

| >90% | 606 | 77.8% | Excellent |

| 80-90% | 75 | 9.6% | Acceptable |

| <80% | 98 | 12.6% | Failed QC |

Table 2: Mean Physicochemical Properties of Screening Library Subsets

This table compares the average properties of different sub-libraries, highlighting their distinct design goals [10].

| Molecular Descriptor | Full Library | Diversity Set | Bioactives | Focused Set | Fragments |

|---|---|---|---|---|---|

| Molecular Weight (Da) | 383.6 | 390.9 | 359.1 | 432.9 | 232.9 |

| clogP | 3.4 | 3.5 | 2.9 | 4.1 | 1.6 |

| clogD | 2.7 | 2.8 | 2.1 | 3.4 | 1.1 |

| TPSA (Å²) | 75.8 | 71.5 | 89.6 | 78.6 | 61.4 |

| H-Bond Acceptors | 5.9 | 5.7 | 7.1 | 6.3 | 4.0 |

| H-Bond Donors | 1.6 | 1.5 | 2.1 | 1.6 | 1.5 |

| Rotatable Bonds | 5.8 | 5.9 | 5.6 | 6.9 | 3.2 |

| Aromatic Rings | 2.5 | 2.6 | 2.1 | 2.8 | 1.5 |

| Fraction sp3 (Fsp3) | 0.5 | 0.5 | 0.5 | 0.5 | 0.5 |

| Structural Alerts | 0.2 | 0.1 | 0.4 | 0.2 | 0.1 |
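Per-subset means like those in Table 2 can be derived from per-compound descriptor records. The sketch below assumes the descriptors have already been calculated by a cheminformatics tool and exported as plain dictionaries; the field names (`subset`, `mw`, `clogp`) and the values are hypothetical.

```python
from collections import defaultdict
from statistics import mean


def subset_means(records, descriptors):
    """Return {subset: {descriptor: mean value}} rounded to one decimal place."""
    grouped = defaultdict(list)
    for record in records:
        grouped[record["subset"]].append(record)
    return {
        subset: {d: round(mean(row[d] for row in rows), 1) for d in descriptors}
        for subset, rows in grouped.items()
    }


# Invented descriptor records for illustration only.
example_records = [
    {"subset": "Fragments", "mw": 200.0, "clogp": 1.0},
    {"subset": "Fragments", "mw": 250.0, "clogp": 2.0},
    {"subset": "Focused Set", "mw": 400.0, "clogp": 4.0},
    {"subset": "Focused Set", "mw": 450.0, "clogp": 3.0},
]
profile = subset_means(example_records, ["mw", "clogp"])
```

Comparing such a profile across sub-libraries makes it easy to spot a subset whose averages drift away from its design goal.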

Table 3: Z-Factor Ranges for HTS Assay Validation

The Z-factor is a key statistical parameter for evaluating the quality and robustness of an HTS assay itself, which is critical before screening a valuable library [13].

| Z-Factor Value | Assay Quality | Recommendation |

|---|---|---|

| 1.0 | Ideal | Theoretical perfect assay. |

| 0.5 ≤ Z < 1.0 | Excellent | A robust assay suitable for HTS. |

| 0 < Z < 0.5 | Marginal | The assay may be usable but requires optimization. |

| Z < 0 | Poor | The assay is not suitable for HTS. |
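For reference, the statistic is commonly computed from positive- and negative-control wells as Z' = 1 - 3(σp + σn) / |μp - μn|. The sketch below applies that formula and maps the result onto the bands in Table 3. The control values are invented, and the handling of values that fall exactly on a band boundary is an assumption.

```python
from statistics import mean, stdev


def z_prime(positive_controls, negative_controls):
    """Z'-factor from control wells: 1 - 3 * (sd_p + sd_n) / |mean_p - mean_n|."""
    mu_p, mu_n = mean(positive_controls), mean(negative_controls)
    sd_p, sd_n = stdev(positive_controls), stdev(negative_controls)
    if mu_p == mu_n:
        raise ValueError("Control means are identical; no assay window.")
    return 1.0 - 3.0 * (sd_p + sd_n) / abs(mu_p - mu_n)


def classify_assay(z):
    """Map a Z-factor onto the quality bands of Table 3 (boundary handling assumed)."""
    if z >= 1.0:
        return "Ideal"
    if z >= 0.5:
        return "Excellent"
    if z > 0.0:
        return "Marginal"
    return "Poor"


# Invented plate-control signals.
example_pos = [100.0, 102.0, 98.0, 101.0, 99.0]
example_neg = [10.0, 12.0, 8.0, 11.0, 9.0]
example_z = z_prime(example_pos, example_neg)
example_quality = classify_assay(example_z)
```

A wide separation between control means with small well-to-well scatter drives the value toward 1, which is why both the assay window and its variability need to be optimized.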

Workflow Visualization

[Figure: Library Screening & Quality Control Workflow]

[Figure: In silico Target Prediction Workflow]

The Scientist's Toolkit: Key Research Reagent Solutions

| Tool / Reagent | Function | Key Considerations |

|---|---|---|

| Automated Compound Storage System (e.g., Brooks Life Sciences) | Manages large compound collections in DMSO solutions at -20°C, enabling efficient cherry-picking and replication [10]. | Systems should track tube formats (e.g., 384-way for single-use, 96-way for reservoirs) and minimize freeze-thaw cycles [10]. |

| Liquid Chromatography-Mass Spectrometry (LCMS) | The gold standard for Quality Control (QC), confirming compound identity (via mass) and purity (via UV/ELS detectors) [10]. | Essential for validating new compound acquisitions and periodically checking library integrity after storage [10]. |

| Cheminformatics Software (e.g., Pipeline Pilot, RDKit) | Calculates key molecular descriptors (MW, clogP, TPSA, etc.) and runs structural alert filters to profile library quality and diversity [10]. | Allows for comparison of your library's chemical space against known bioactives and commercial libraries to identify gaps [10]. |

| CRISPR-Cas9 Libraries | Enables high-throughput functional genomic screening for target identification and validation, especially in phenotypic rescue experiments [14] [15]. | Used to confirm that a phenotypic hit acts specifically through a suspected target by genetically reversing the phenotype [15]. |

| AI-Powered Morphological Profiling (e.g., Ardigen phenAID) | Uses AI and Cell Painting assays to analyze high-content cell images, identifying active compounds and predicting their mechanism of action based on morphological "fingerprints" [16]. | Bridges the gap between cell imaging and small molecule design, helping to triage hits and understand their bioactivity [16]. |

The Resurgence of Phenotypic Screening for First-in-Class Therapies

FAQs: Enhancing Phenotypic Screening Success

This section addresses common challenges researchers face in phenotypic screening campaigns and provides strategic solutions grounded in current practices and technologies.

FAQ 1: How can we improve the quality and disease-relevance of our initial hit compounds?

The key is to use more physiologically relevant disease models and well-designed compound libraries from the outset.

- Advanced Cellular Models: Move beyond simple 2D cell cultures. Implement 3D cell cultures (e.g., spheroids, organoids) to better model tissue architecture, cell-cell interactions, and the tumor microenvironment, leading to more predictive results [17] [18].

- Optimized Compound Libraries: Utilize specialized phenotypic screening libraries that are curated for chemical diversity, favorable physicochemical properties, and enriched with compounds having known bioactivity or mechanism of action. These libraries are designed to increase the probability of identifying high-quality, drug-like hits [19].

FAQ 2: What is the biggest operational challenge after a primary screen, and how can it be managed?

The most significant challenge is hit triage—efficiently prioritizing a manageable number of promising leads from thousands of initial hits for further study.

- Rigorous Hit Validation: Establish strict, multi-parameter criteria for progression. The table below outlines essential criteria and recommended thresholds for hit validation [12]:

| Criterion | Recommended Threshold | Purpose |

|---|---|---|

| Potency | >60% activity at assay concentration (e.g., 10 µM) | Filters out weak actives so that compounds with strong effects are prioritized [12]. |

| Selectivity Index (SI) | SI >10 (ratio of toxic-to-therapeutic concentration); use MTT or similar assay | Eliminates promiscuous or overtly toxic compounds by comparing cytotoxicity to efficacy [12]. |

| Dose-Response | Confirm activity and determine IC50/EC50 | Confirms biological activity and provides a quantitative measure of compound potency. |

FAQ 3: Is identifying the exact molecular Mechanism of Action (MoA) always necessary before pre-clinical development?

Not always. While knowing the MoA is beneficial for optimization and safety profiling, it is not an absolute requirement for clinical progression.

- Focus on Phenotypic Effect: If a compound demonstrates strong efficacy, a high selectivity index, and favorable pharmacokinetic properties in the absence of a known target, it can still be advanced. Historical examples like cyclosporine and niclosamide were used effectively without a full understanding of their MoA [12].

- Staged Deconvolution: Initially, focus on identifying the phenotypic point of pathway intervention (e.g., which stage of a viral life cycle is inhibited). Full target deconvolution can be pursued in parallel or at a later stage using techniques like affinity purification, expression cloning, or genetic screens [20] [12].

Troubleshooting Guides for Common Experimental Issues

This guide provides step-by-step protocols for addressing specific technical problems in phenotypic screening workflows.

Issue 1: High False Positive Rate in Primary Screen

A high hit rate (>3%) often indicates interference from non-specific or cytotoxic compounds [12].

Investigation and Resolution Protocol:

- Confirm Activity: Retest all primary hits in a dose-response format using the original assay to confirm reproducible activity.

- Assess Cytotoxicity: Run a parallel viability assay (e.g., MTT, ATP-based) on the confirmed hits under the exact same conditions as the phenotypic assay.

- Calculate Selectivity Index (SI): For each hit, calculate SI = CC50 (cytotoxic concentration 50%) / EC50 (effective concentration 50%). Prioritize compounds with SI > 10 [12].

- Examine Chemical Structure: Filter out compounds with known undesirable chemical motifs (e.g., pan-assay interference compounds, or PAINS) and those with poor drug-like properties.
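The selectivity-index step above reduces to a simple ratio and filter. The following is a minimal sketch; the compound identifiers and concentrations are invented, and both values are assumed to be in the same units (here µM).

```python
def selectivity_index(cc50_um, ec50_um):
    """SI = CC50 / EC50, with both concentrations in the same units."""
    if ec50_um <= 0:
        raise ValueError("EC50 must be positive.")
    return cc50_um / ec50_um


def triage_by_si(hits, min_si=10.0):
    """Keep hits with SI above min_si, most selective first.

    hits: iterable of (compound_id, cc50_um, ec50_um) tuples.
    """
    kept = []
    for compound_id, cc50_um, ec50_um in hits:
        si = selectivity_index(cc50_um, ec50_um)
        if si > min_si:
            kept.append((compound_id, si))
    return sorted(kept, key=lambda item: item[1], reverse=True)


# Invented confirmed hits: (id, CC50 in µM, EC50 in µM).
example_hits = [
    ("cmpd-A", 50.0, 1.0),   # SI = 50
    ("cmpd-B", 20.0, 5.0),   # SI = 4, dropped
    ("cmpd-C", 100.0, 0.5),  # SI = 200
]
prioritized = triage_by_si(example_hits)
```

Because CC50 and EC50 come from separate assays, the ratio is only meaningful when both were measured under matched conditions, as the protocol requires.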

Issue 2: Difficulty in Elucidating Mechanism of Action (Target Deconvolution)

Successful target deconvolution requires a multi-pronged approach, as no single technique is universally successful.

Experimental Workflow for Target Deconvolution: The methods below form a sequential, integrated strategy for MoA elucidation.

Detailed Methodologies:

- Determine Phenotypic Point of Intervention: Design secondary assays to pinpoint the biological process being disrupted. For virology, this could involve time-of-addition assays, viral entry assays using reporter systems, or replication assays with subgenomic replicons [12].

- Affinity-Based Techniques: Immobilize the hit compound on a solid support to create "bait." Incubate this with cell lysates, then wash and elute bound proteins. Identify these proteins using mass spectrometry. This directly identifies physical binding partners [12].

- Genetic Screens: Use genome-wide CRISPR knockout or haploid cell libraries. Cells in which a gene knockout confers resistance to the compound's effect can reveal the compound's target or pathway members essential for its activity [12].

- Knowledge-Based & Computational Approaches: Screen for chemical similarity to known bioactive compounds. Use in silico docking against protein structure databases to generate testable hypotheses about potential targets [12].

Issue 3: Translating Hits from Cell Models to In Vivo Efficacy

Failure in animal models often stems from poor pharmacokinetic (PK) properties not assessed in cellular assays.

Pre-clinical Profiling Protocol:

- In Vitro PK Screening:

- Metabolic Stability: Incubate compounds with liver microsomes (human and relevant animal species) and measure parent compound depletion over time.

- Permeability: Assess using Caco-2 cell monolayers to predict oral absorption.

- Plasma Protein Binding: Determine the fraction of compound bound to plasma proteins using equilibrium dialysis.

- In Vivo Pharmacokinetics:

- Route of Administration: Test multiple routes (e.g., oral, intravenous) [12].

- PK Analysis: Administer compound to animals and collect serial blood samples. Measure compound concentration over time to calculate key parameters: Area Under the Curve (AUC), maximum concentration (Cmax), half-life (t1/2), and bioavailability (F) [12].

- Tissue Distribution: Measure compound levels in target organs to ensure sufficient exposure at the disease site [12].

- In Vivo Efficacy: Only proceed with in vivo disease model challenges after confirming that the compound's PK profile achieves and sustains blood/tissue concentrations above the in vitro EC50 for the required duration [12].
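The PK parameters named above can be estimated from a concentration-time series with basic non-compartmental arithmetic. The sketch below uses the linear trapezoidal rule for AUC and a log-linear fit of the last few points for half-life; these are common choices but are assumptions here, not the method of the cited source. All values are invented.

```python
import math


def auc_trapezoid(times_h, conc):
    """Area under the concentration-time curve by the linear trapezoidal rule."""
    points = list(zip(times_h, conc))
    return sum((t1 - t0) * (c0 + c1) / 2.0
               for (t0, c0), (t1, c1) in zip(points, points[1:]))


def terminal_half_life(times_h, conc, n_points=3):
    """Half-life from a least-squares fit of ln(conc) vs time over the last n_points."""
    t = times_h[-n_points:]
    y = [math.log(c) for c in conc[-n_points:]]  # concentrations must be > 0
    t_mean, y_mean = sum(t) / len(t), sum(y) / len(y)
    slope = (sum((ti - t_mean) * (yi - y_mean) for ti, yi in zip(t, y))
             / sum((ti - t_mean) ** 2 for ti in t))
    return math.log(2) / -slope


def bioavailability(auc_oral, dose_oral, auc_iv, dose_iv):
    """Absolute bioavailability F from dose-normalized oral and IV AUCs."""
    return (auc_oral / dose_oral) / (auc_iv / dose_iv)


def stays_above(conc, ec50):
    """True if every sampled concentration exceeds the in vitro EC50."""
    return min(conc) > ec50


# Invented IV profile: concentration halves every 2 h.
example_times = [0.0, 2.0, 4.0, 6.0]
example_conc = [8.0, 4.0, 2.0, 1.0]
example_auc = auc_trapezoid(example_times, example_conc)
example_cmax = max(example_conc)
example_t_half = terminal_half_life(example_times, example_conc)
```

The `stays_above` check is a coarse stand-in for the exposure criterion in the final step; real studies would also account for protein binding and tissue levels.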

The Scientist's Toolkit: Essential Research Reagents & Materials

The table below lists key solutions for setting up a robust phenotypic screening platform.

| Item | Function & Application in Phenotypic Screening |

|---|---|

| Specialized Phenotypic Screening Library | Pre-designed compound collections (e.g., 5,760 compounds) optimized for diversity, known bioactivity annotations, and drug-like properties to increase hit rates and provide starting points for MoA deconvolution [19]. |

| 3D Cell Culture Systems | Platforms (e.g., from partners like InSphero, Lonza) to generate spheroids or organoids. Used to create more physiologically relevant disease models for screening, improving the translation of hits [18]. |

| Automated Workstation | Integrated liquid handling and detection systems (e.g., Tecan Fluent or Freedom EVO). Enables high-throughput, miniaturized (384-/1536-well) assays, improves reproducibility, and manages complex workflows like 3D cell culture [18]. |

| High-Content Imaging System | Automated microscopes and analyzers for Cell Painting and other multiplexed assays. Quantifies complex morphological changes in cells upon compound treatment, providing rich, multi-parameter data for hit identification and MoA insight [21]. |

| Viability Assay Kits | Ready-to-use kits (e.g., MTT, ATP-based luminescence) for parallel assessment of cell health. Critical for calculating the Selectivity Index (SI) during hit triage to eliminate cytotoxic false positives [12]. |

Building Better Libraries: Strategic Design and Technological Integration

Foundational Principles & FAQs

Frequently Asked Questions

Q1: Why is chemical diversity critical in libraries for phenotypic screening? A: Chemical diversity is crucial because phenotypic screening aims to discover novel biology and first-in-class therapies without a pre-specified target hypothesis [3]. A comprehensive and diverse library maximizes the probability of identifying hits with a desired therapeutic effect across a wide biological space. Quantifying this diversity requires a multi-faceted approach, using molecular scaffolds, structural fingerprints, and physicochemical properties to get a complete picture of the "global diversity" of a collection [22].

Q2: How does library design for phenotypic screening (PDD) differ from target-based screening (TDD)? A: The key difference lies in the starting point. TDD begins with a known, validated molecular target, allowing for the design of focused libraries. In contrast, PDD is "molecular-target-agnostic," relying on chemical interrogation of a disease-relevant biological system to uncover novel targets and mechanisms of action (MoA) [3]. Therefore, PDD requires libraries that cover a broader, more diverse chemical space to probe complex physiology effectively.

Q3: What is the role of the Rule-of-Five and how should it be applied in modern library curation? A: The Rule-of-Five and similar lead-like rules provide valuable guidelines for ensuring favorable ADME (Absorption, Distribution, Metabolism, and Excretion) properties [22]. They are essential for filtering out compounds with poor drug-like characteristics. However, for phenotypic screening, an overly strict application might prematurely exclude chemical matter with novel scaffolds or mechanisms. A balanced approach is recommended, using these rules to guide selection while allowing for chemotypes that fall outside these norms, as they may reveal unprecedented MoAs [3].

Q4: What are the common sources of compound interference in primary assays, and how can they be mitigated? A: Homogeneous proximity assays, common in high-throughput screening (HTS), are susceptible to compound-mediated interference with the assay signal itself [23]. A key mitigation strategy is to develop and run a readout counter-assay. This orthogonal assay helps identify false-positive hits caused by compound interference rather than genuine on-target activity [24].

Q5: How many hit series should ideally progress from validation to the hit-to-lead stage? A: While the exact number can vary by project, it is generally recommended to advance around two to three validated hit series into the hit-to-lead (H2L) phase [24]. This provides a manageable number of starting points for further optimization while maintaining a backup option should the leading series fail.

Troubleshooting Common Experimental Issues

Issue 1: High hit rate with many non-reproducible or promiscuous compounds.

- Potential Causes: The primary assay may not be sufficiently robust or pharmacologically sensitive. The compound library might contain a high frequency of pan-assay interference compounds (PAINS) or compounds with unfavorable properties (e.g., aggregation).

- Solutions:

- Assay Robustness: Ensure the assay's Z'-factor is >0.5 before full-scale screening [23].

- Hit Triage: Implement a stringent, multi-parameter triage funnel. This should include dose-response confirmation, a readout counter-assay to identify interferants, and biophysical validation (e.g., SPR, NMR) to confirm target engagement [24].

- Library Curation: Improve the quality of the screening collection by filtering for lead-like properties and chemical stability [24].

Issue 2: Low hit rate from a phenotypic screen.

- Potential Causes: The chemical diversity of the screening library may be insufficient to modulate the complex biology of the disease model. The assay conditions might not be physiologically relevant enough.

- Solutions:

- Diversity Analysis: Use tools like Consensus Diversity Plots (CDPs) to evaluate and compare the global diversity of your library against known diverse sets (e.g., natural product libraries) [22].

- Library Enhancement: Introduce compounds with novel chemotypes or specialized subsets (e.g., natural product-derived libraries) to access under-explored chemical space [22].

- Model Relevance: Consider adopting more physiologically relevant models, such as stem cell-derived systems or advanced 3D cell models, to better capture the disease phenotype [23].

Issue 3: Difficulty in identifying the Mechanism of Action (MoA) of a phenotypic hit.

- Potential Causes: The triage process may not have collected sufficient biological knowledge about the hit's behavior.

- Solutions:

- Leverage Biological Knowledge: Successful MoA deconvolution is enabled by integrating three types of knowledge: known mechanisms of action (from comparable hits), deep understanding of the disease biology, and known safety profiles of related compounds [11].

- Functional Genomics: Employ techniques like CRISPR or RNAi screens in parallel to small-molecule screening to identify genes critical to the phenotype, which can provide clues to the relevant pathways [23].

- Avoid Structure-Based Triage Alone: Prioritizing hits based solely on structural attractiveness during early triage can be counterproductive, as it may discard compounds with novel and valuable MoAs [11].

Experimental Protocols & Data Presentation

Protocol: Quantifying Library Diversity with Consensus Diversity Plots (CDPs)

Objective: To comprehensively evaluate the structural diversity of a compound library using multiple molecular representations simultaneously [22].

Methodology:

- Data Curation: Curate the compound library using a tool like the wash module of Molecular Operating Environment (MOE). This involves disconnecting metal salts, removing simple components, and rebalancing protonation states [22].

- Scaffold Diversity Assessment:

- Calculate all molecular scaffolds (cyclic and acyclic systems) using a consistent methodology (e.g., with the MEQI program).

- Generate a Cyclic System Recovery (CSR) curve by plotting the fraction of scaffolds (X-axis) against the fraction of compounds retrieved (Y-axis).

- Quantify scaffold diversity using the Area Under the CSR Curve (AUC) and the fraction of scaffolds needed to recover 50% of the compounds (F50). Low AUC and high F50 indicate high scaffold diversity [22].

- Fingerprint Diversity Assessment:

- Generate structural fingerprints for all compounds (e.g., MACCS keys or Extended Connectivity Fingerprints).

- Calculate the average pairwise Tanimoto similarity within the library. Lower average similarity indicates higher fingerprint diversity [22].

- Physicochemical Property Diversity Assessment:

- Calculate key physicochemical properties (e.g., molecular weight, logP, number of hydrogen bond donors/acceptors, polar surface area, number of rotatable bonds) for each compound.

- Assess the property space diversity by calculating the Euclidean distance in a multi-dimensional property space or by analyzing the distribution of properties [22].

- Construct the CDP:

- Create a 2D plot where the X-axis represents fingerprint diversity (e.g., 1 - average Tanimoto similarity) and the Y-axis represents scaffold diversity (e.g., AUC of CSR curve).

- Each compound library is represented as a single point on this plot.

- The diversity of physicochemical properties can be mapped using a continuous or categorical color scale [22].
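The scaffold and fingerprint metrics in this protocol can be illustrated without any cheminformatics toolkit once scaffolds and fingerprints have been generated elsewhere. In the sketch below, scaffolds are represented as arbitrary string labels and fingerprints as sets of on-bit indices; both representations, and the choice to report F50 as the first curve point reaching 50% (no interpolation), are simplifying assumptions.

```python
from collections import Counter
from itertools import combinations


def csr_curve(scaffolds):
    """Cyclic System Recovery curve: cumulative fraction of compounds (y)
    vs fraction of scaffolds (x), most populated scaffolds first."""
    counts = sorted(Counter(scaffolds).values(), reverse=True)
    xs, ys, running = [0.0], [0.0], 0
    for i, count in enumerate(counts, start=1):
        running += count
        xs.append(i / len(counts))
        ys.append(running / len(scaffolds))
    return xs, ys


def csr_auc(xs, ys):
    """Area under the CSR curve (trapezoidal rule). Lower means more diverse."""
    return sum((xs[i] - xs[i - 1]) * (ys[i] + ys[i - 1]) / 2.0
               for i in range(1, len(xs)))


def f50(xs, ys):
    """Fraction of scaffolds at the first curve point covering >= 50% of compounds."""
    for x, y in zip(xs, ys):
        if y >= 0.5:
            return x
    return 1.0


def tanimoto(bits_a, bits_b):
    """Tanimoto similarity between two fingerprints given as sets of on-bits."""
    union = len(bits_a | bits_b)
    return len(bits_a & bits_b) / union if union else 0.0


def mean_pairwise_tanimoto(fingerprints):
    """Average Tanimoto similarity over all unordered pairs."""
    pairs = list(combinations(fingerprints, 2))
    return sum(tanimoto(a, b) for a, b in pairs) / len(pairs)


# Invented libraries: one scaffold per compound vs one dominant scaffold.
diverse_xs, diverse_ys = csr_curve(["a", "b", "c", "d"])
redundant_xs, redundant_ys = csr_curve(["a"] * 6 + ["b", "c", "d"])
```

Each library then contributes one point to the CDP, with `1 - mean_pairwise_tanimoto(...)` on the X-axis and `csr_auc(...)` on the Y-axis.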

Table 1: Key Metrics for Quantifying Chemical Library Diversity

| Diversity Dimension | Calculation Method | Interpretation | Target Value/Range |

|---|---|---|---|

| Scaffold Diversity | Area Under CSR Curve (AUC) | Lower AUC = Higher diversity | Context-dependent; compare vs. reference libraries [22] |

| Scaffold Diversity | F50 (Fraction of scaffolds to cover 50% of library) | Higher F50 = Higher diversity | Context-dependent; compare vs. reference libraries [22] |

| Fingerprint Diversity | Average Pairwise Tanimoto Similarity | Lower similarity = Higher diversity | Average similarity below roughly 0.15-0.30 is often considered diverse [22] |

| Property Diversity | Scaled Shannon Entropy (SSE) / Euclidean Distance | Higher SSE/distance = Higher diversity | SSE closer to 1.0 indicates maximum diversity [22] |
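A scaled Shannon entropy bounded between 0 and 1 can be computed for any categorical assignment of compounds, for example scaffold membership or binned property values. The sketch below divides the Shannon entropy by log2 of the number of occupied categories; which categories to use is left open here and is an assumption, as are the labels.

```python
import math
from collections import Counter


def scaled_shannon_entropy(labels):
    """Shannon entropy of the label distribution divided by log2(number of categories).

    Returns 1.0 when compounds are spread evenly across categories and
    approaches 0.0 when one category dominates.
    """
    counts = Counter(labels)
    n_categories = len(counts)
    if n_categories < 2:
        return 0.0  # a single category carries no diversity
    total = sum(counts.values())
    entropy = -sum((c / total) * math.log2(c / total) for c in counts.values())
    return entropy / math.log2(n_categories)


# Invented category labels (e.g., scaffold IDs or property bins).
even_sse = scaled_shannon_entropy(["a", "b", "c", "d"])
skewed_sse = scaled_shannon_entropy(["a", "a", "a", "b"])
```

Scaling by log2(n) is what makes values comparable across libraries with different numbers of categories.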

Protocol: A Standard Hit Identification and Triage Workflow

Objective: To identify and validate high-quality hits from a high-throughput phenotypic screen [24].

Methodology:

- Pilot Screen: Perform a pilot screen using a representative subset (e.g., 1-5%) of the full compound library to validate assay performance and screening conditions [24].

- Primary Screening: Screen the entire selected compound deck under the optimized conditions. Identify "primary hits" that exceed the predefined activity threshold (e.g., >3 standard deviations from the mean) [24].

- Hit Confirmation: Re-test primary hits in the same assay in replicates to confirm the activity.

- Hit Triage & Concentration-Response: Generate dose-response curves for confirmed hits in the primary assay. Test compounds against a readout counter-assay and relevant selectivity targets to filter out interferants and non-selective compounds [24].

- Hit Validation:

- Orthogonal Assays: Test prioritized hits in secondary assays with different readout technologies (e.g., biophysical, high-content imaging) and in more physiologically relevant systems to confirm the biological activity [24].

- SAR Analysis: Synthesize and test structurally related analogs to establish an initial Structure-Activity Relationship (SAR) and confirm that the activity is structure-dependent [25] [24].

- ADME-Tox Profiling: Conduct early in vitro ADME-Tox assays (e.g., metabolic stability, cytochrome P450 inhibition) to assess drug-like properties [24].
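The primary-hit threshold in this workflow can be sketched as follows. The source does not say which population the mean and standard deviation come from, so this example assumes they are estimated from neutral-control wells (for instance, vehicle-only wells) on the same plate; the well signals and compound identifiers are invented.

```python
from statistics import mean, stdev


def call_primary_hits(sample_signals, reference_signals, n_sd=3.0):
    """Return compound IDs whose signal deviates from the reference mean
    by more than n_sd reference standard deviations (in either direction).

    sample_signals: dict mapping compound ID to assay signal.
    reference_signals: list of signals from neutral-control wells (assumed).
    """
    mu = mean(reference_signals)
    sd = stdev(reference_signals)
    return [compound_id for compound_id, signal in sample_signals.items()
            if abs(signal - mu) > n_sd * sd]


# Invented plate data.
example_reference = [100.0, 102.0, 98.0, 101.0, 99.0]  # mean 100, SD about 1.58
example_samples = {"c1": 101.0, "c2": 90.0, "c3": 104.0, "c4": 106.0}
primary_hits = call_primary_hits(example_samples, example_reference)
```

Estimating the spread from controls rather than from the screened compounds keeps strong actives from inflating the threshold.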

Table 2: Essential Research Reagent Solutions for Phenotypic Screening

| Reagent / Material | Function in the Workflow | Key Considerations |

|---|---|---|

| Diverse Compound Library | Source of chemical matter to probe biological systems and identify hits [24]. | Quality, diversity, and lead-like properties are paramount. Should be optimized for size (100,000s of compounds) and contain novel chemotypes [22] [24]. |

| Cell-Based Disease Models | Provides the physiologically relevant system for phenotypic screening [3]. | Moving towards more complex models like stem cells, co-cultures, and 3D organoids to better mimic disease biology [23]. |

| High-Content Screening (HCS) Platform | Automated imaging and analysis to extract multiparametric phenotypic data from cell-based assays [26]. | Enables multiplexing of several fluorescent markers, confocal imaging for clarity, and is reliable for high-throughput workflows [26] [23]. |

| Biophysical Assay Platforms | Hit validation by confirming direct target engagement and measuring binding kinetics (e.g., SPR, NMR, ITC) [23] [24]. | Provides a label-free, orthogonal method to confirm activity beyond the primary screen. |

| Functional Genomics Tools | For target identification and validation post-hit discovery (e.g., CRISPR libraries) [23]. | Helps deconvolute the Mechanism of Action of phenotypic hits by identifying genes critical to the phenotype. |

Visual Workflows & Pathways

[Figure: Phenotypic Hit Identification Workflow]

[Figure: Library Curation & Diversity Analysis]

FAQs on Library Design and Implementation

FAQ 1: How can I create a focused library tailored to a specific disease like glioblastoma?

A rational design approach uses the disease's genomic profile to enrich a chemical library. For glioblastoma (GBM), this process involves:

- Target Identification: Analyze tumor RNA-sequencing and mutation data to identify overexpressed genes and somatic mutations.

- Network Analysis: Map these genes onto a human protein-protein interaction network to construct a disease-relevant subnetwork.

- Virtual Screening: Dock an in-house compound library against the druggable binding sites on proteins within this subnetwork. Select compounds predicted to bind multiple targets for phenotypic screening [27].

This method enabled the discovery of a compound (IPR-2025) with single-digit micromolar IC50 values in patient-derived GBM spheroids and submicromolar activity in tube-formation assays [27].

FAQ 2: What resources are available for GPCR-focused research and library design?

The GPCRdb database provides comprehensive, open-access resources for GPCR research. Its 2025 release includes:

- Expanded Receptor Coverage: Incorporation of all ~400 human odorant receptors (ORs) and their orthologs in major model organisms [28].

- Data Mapping Tools: A new Data Mapper allows users to visualize their own data on receptor wheels, trees, clusters, or heatmaps [28].

- Advanced Structure Models: Updated state-specific structure models for all human GPCRs and new models of physiological ligand complexes, built using AlphaFold and RoseTTAFold [28].

- Ligand Search: New tools to query ligands by name, identifier, similarity, or substructure across major databases like ChEMBL and Guide to Pharmacology [28].

FAQ 3: How can computational methods improve hit optimization after an initial screen?

Active learning (AL) workflows guided by free-energy calculations can efficiently explore chemical space. A successful application for LRRK2 WDR domain inhibitors involved:

- Virtual Screening: Screening billions of commercial compounds and filtering for analogs of initial hits [29].

- Free Energy Calculations: Using molecular dynamics thermodynamic integration (MD TI) to compute relative binding free energies (RBFE) [29].

- Machine Learning Guidance: An ML model was iteratively trained on computed RBFEs to predict the binding affinity of new analogs, prioritizing the most promising compounds for the next round of calculations [29].

This approach achieved a 23% experimental hit rate, identifying 8 novel inhibitors from 35 tested compounds [29].

FAQ 4: Are there pre-built libraries available for epigenetic targets?

Yes, commercially available focused libraries exist. For example, the Epigenetics Screening Library has been expanded to include over 230 small molecule modulators. These compounds target key epigenetic players, including writers, erasers, and readers, and include several inhibitors that have been used in clinical trials [30].

Troubleshooting Guides

Problem: Low hit rate or lack of efficacy in a phenotypic screen using a focused library.

| Potential Cause | Diagnostic Steps | Corrective Action |

|---|---|---|

| Inadequate target coverage | Verify that your library design is based on a comprehensive disease network analysis. | Expand the target list by integrating multi-omics data (genomic, transcriptomic) and ensure coverage of key signaling pathways [27]. |

| Poor cellular model relevance | Compare results between 2D immortalized cell lines and 3D patient-derived spheroids/organoids. | Shift screening to more physiologically relevant 3D models that better mimic the tumor microenvironment [27]. |

| Limited chemical diversity | Analyze the chemical space and scaffolds represented in your focused set. | Enrich the library with compounds predicted for selective polypharmacology or use diversity-oriented synthesis libraries [27]. |

| Insufficient compound selectivity | Perform kinome-wide profiling to identify and quantify off-target effects. | Use biochemical profiling services to calculate a selectivity index and refine compounds to minimize off-target activity [31]. |

Problem: Confirming target engagement and mechanism of action for a hit from a phenotypic screen.

| Potential Cause | Diagnostic Steps | Corrective Action |

|---|---|---|

| Uncertain direct binding | Perform binding studies like Surface Plasmon Resonance (SPR) or Isothermal Titration Calorimetry (ITC) [31]. | Use techniques like X-ray crystallography or cryo-EM to visualize compound-target interactions at the molecular level [31]. |

| Complex polypharmacology | Conduct multi-omics analysis (e.g., RNA sequencing) on treated vs. untreated cells [27]. | Employ proteome-wide techniques like Thermal Proteome Profiling (TPP) to identify all engaged protein targets directly [27]. |

| Unclear binding kinetics | Perform kinetic analysis to determine if the inhibitor is competitive, non-competitive, or allosteric. | Vary ATP or substrate concentrations to assess impact on potency and elucidate the mode of action [31]. |

Experimental Data and Protocols

Table 1: Performance Metrics of Focused Library Approaches

| Target Class | Library Strategy | Key Experimental Model | Primary Outcome Metric | Result / Hit Rate | Reference |

|---|---|---|---|---|---|

| Glioblastoma (Multiple Kinases/PPIs) | Genomic profile-guided virtual screening of ~9,000 compounds [27]. | Patient-derived GBM spheroids | Cell Viability IC50 | Single-digit µM (superior to Temozolomide) [27]. | [27] |

| LRRK2 WDR (Parkinson's Disease) | Active learning-guided optimization of 5.5B compound library [29]. | Surface Plasmon Resonance (SPR), 19F-NMR | Binding Affinity (KD), Confirmed Inhibitors | 8 novel inhibitors confirmed from 35 tested (23% hit rate) [29]. | [29] |

| Endothelial Cell Angiogenesis | Hits from the GBM-focused library [27]. | Tube-formation assay on Matrigel | Anti-angiogenesis IC50 | Sub-micromolar IC50 values [27]. | [27] |

Detailed Protocol: Kinase Inhibitor Profiling and Validation

This protocol outlines key steps for characterizing hits from a kinase-focused library, from initial biochemical confirmation to cellular target engagement [31].

1. Evaluate Biochemical Kinase Activity

- Objective: Confirm the compound inhibits the intended kinase and determine potency.

- Materials:

- Purified kinase protein

- Test compound and known control inhibitor

- ATP, substrate peptide

- Assay reagents (e.g., for luminescent or ELISA-based detection)

- Procedure:

- Select Assay Format: Choose a robust biochemical assay (e.g., luminescent, radiometric).

- Dose-Response Curve: Incubate the kinase with a serial dilution of your compound across a range of concentrations (e.g., 0.1 nM to 100 µM).

- Initiate Reaction: Start the enzymatic reaction by adding ATP and substrate.

- Measure Activity: Quantify the remaining kinase activity using the chosen detection method.

- Calculate IC50: Plot % inhibition vs. compound concentration and fit a curve to determine the IC50 value.

- Troubleshooting: Always include a positive control inhibitor to validate the assay performance. An unusually high or low IC50 for the control indicates a potential problem with the assay conditions.
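
The curve-fitting step above can be illustrated with a minimal sketch. It assumes a two-parameter Hill model (inhibition running from 0 to 100%) and a coarse grid search in place of a proper nonlinear optimizer; the function names and the synthetic data are illustrative only and do not come from the cited protocol [31]. In practice a four-parameter logistic fit in dedicated software is more common.

```python
import math

def hill_inhibition(conc, ic50, slope):
    """Percent inhibition predicted by a two-parameter Hill model (0 to 100%)."""
    return 100.0 / (1.0 + (ic50 / conc) ** slope)

def fit_ic50(concs, inhibitions, steps=400):
    """Least-squares grid search for IC50 and Hill slope.

    concs: compound concentrations, all in the same unit (e.g. nM)
    inhibitions: measured % inhibition at each concentration
    """
    lo, hi = math.log10(min(concs)), math.log10(max(concs))
    best = None
    for i in range(steps + 1):
        # Candidate IC50 values are spaced evenly on a log scale.
        ic50 = 10 ** (lo + (hi - lo) * i / steps)
        for j in range(5, 31):  # candidate Hill slopes 0.5 .. 3.0
            slope = j / 10
            sse = sum((hill_inhibition(c, ic50, slope) - y) ** 2
                      for c, y in zip(concs, inhibitions))
            if best is None or sse < best[0]:
                best = (sse, ic50, slope)
    return best[1], best[2]

# Synthetic, noise-free example: 0.1 nM to 100 µM in 10-fold steps,
# generated with an assumed true IC50 of 50 nM and slope of 1.
concs_nm = [0.1, 1, 10, 100, 1_000, 10_000, 100_000]
data = [hill_inhibition(c, 50.0, 1.0) for c in concs_nm]
ic50, slope = fit_ic50(concs_nm, data)
print(f"IC50 ~ {ic50:.1f} nM, Hill slope ~ {slope:.1f}")
```

Running the same fit on the control inhibitor on every plate gives a simple check: a control IC50 that drifts far from its historical value points to an assay problem rather than a compound effect.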

2. Decipher Mechanism of Action (MoA)

- Objective: Understand how the inhibitor binds to the kinase (e.g., competitive with ATP).

- Materials:

- SPR biosensor or ITC instrument

- Purified kinase and compound

- Procedure:

- Binding Study: Immobilize the kinase on an SPR chip or place it in the ITC sample cell.

- Inject Compound: Flow or inject the compound over the kinase.

- Analyze Binding Kinetics (SPR): Determine the association (ka) and dissociation (kd) rate constants and calculate the binding affinity from their ratio (KD = kd/ka).

- Analyze Thermodynamics (ITC): Measure heat changes to determine KD, enthalpy (ΔH), and entropy (ΔS).

- Kinetic Analysis: Repeat the IC50 determination from Step 1 at several different ATP concentrations. A rightward shift in the IC50 curve with increasing ATP concentration suggests competitive inhibition.
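
The relationships used in this step can be sketched in a few lines. The example assumes a purely ATP-competitive inhibitor, for which the Cheng-Prusoff relation IC50 = Ki (1 + [ATP]/Km) predicts a linear increase of IC50 with ATP concentration. All numerical values are hypothetical, and the helper names are not taken from the cited protocol.

```python
def kd_from_rates(ka, kd):
    """Equilibrium constant KD (M) from SPR rate constants: ka in 1/(M*s), kd in 1/s."""
    return kd / ka

def competitive_ic50(ki, atp, km_atp):
    """Cheng-Prusoff relation for an ATP-competitive inhibitor."""
    return ki * (1.0 + atp / km_atp)

def fit_line(xs, ys):
    """Ordinary least-squares slope and intercept."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, my - slope * mx

# Hypothetical SPR result: ka = 1e5 1/(M*s), kd = 1e-3 1/s
kd_molar = kd_from_rates(1e5, 1e-3)

# Hypothetical IC50 values (nM) at several ATP concentrations (µM),
# generated here with an assumed Ki of 20 nM and Km(ATP) of 50 µM.
atp_um = [10, 50, 100, 500, 1000]
ic50_nm = [competitive_ic50(20.0, a, 50.0) for a in atp_um]

# For a competitive inhibitor: IC50 = Ki + (Ki / Km) * [ATP]
slope, intercept = fit_line(atp_um, ic50_nm)
ki_est = intercept          # nM
km_est = intercept / slope  # µM
print(f"KD = {kd_molar:.1e} M, Ki ~ {ki_est:.1f} nM, Km(ATP) ~ {km_est:.1f} µM")
```

A slope close to zero in the same plot (IC50 essentially independent of ATP) would instead argue against ATP competition and is worth following up with the binding studies above.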

3. Conduct Kinase Profiling

- Objective: Assess selectivity across the kinome to minimize off-target effects.

- Materials:

- Panel of purified kinases (e.g., >100 kinases)

- High-throughput screening capability

- Procedure:

- Screen Panel: Test your compound at a single concentration (e.g., 1 µM) against a broad kinase panel.

- Dose-Response on Hits: Perform full IC50 determinations on any kinases that show >50% inhibition in the initial screen.

- Calculate Selectivity Index: Divide the IC50 for the most potent off-target kinase by the IC50 for your primary target kinase. A higher number indicates greater selectivity.
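
As a sketch of the triage and selectivity calculation described above, the following uses made-up kinase names and values. The 50% inhibition threshold and the definition of the selectivity index follow the text; everything else is an illustrative assumption.

```python
def panel_hits(percent_inhibition, threshold=50.0):
    """Kinases whose single-concentration inhibition exceeds the follow-up threshold."""
    return sorted(k for k, v in percent_inhibition.items() if v > threshold)

def selectivity_index(ic50_by_kinase, primary):
    """IC50 of the most potent off-target divided by IC50 of the primary target."""
    off_targets = {k: v for k, v in ic50_by_kinase.items() if k != primary}
    most_potent = min(off_targets, key=off_targets.get)
    return most_potent, off_targets[most_potent] / ic50_by_kinase[primary]

# Hypothetical single-point screen at 1 µM (% inhibition per kinase)
screen = {"KIN_A": 98.0, "KIN_B": 72.0, "KIN_C": 12.0, "KIN_D": 55.0}
followup = panel_hits(screen)

# Hypothetical follow-up IC50 values in nM; KIN_A is the intended target
ic50_nm = {"KIN_A": 5.0, "KIN_B": 400.0, "KIN_D": 2500.0}
closest, si = selectivity_index(ic50_nm, primary="KIN_A")
print(f"Follow up: {followup}; closest off-target {closest}, selectivity index {si:.0f}")
```

Because the index is driven by the single most potent off-target, it is a conservative summary; reporting the full IC50 table alongside it keeps the rest of the profile visible.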

4. Assess Cellular Target Engagement

- Objective: Confirm the compound engages its target in a live-cell context.

- Materials:

- Relevant cell line

- Antibodies for phosphorylated substrates (biomarkers)

- Procedure:

- Treat Cells: Incubate cells with your compound and a vehicle control.

- Lyse and Analyze: Harvest cells and analyze lysates via Western blot to monitor reduction in phosphorylation of the target kinase's downstream substrates.

- Functional Assays: Implement cell-based assays relevant to the disease phenotype (e.g., proliferation, apoptosis) to link target engagement to functional outcomes.

Visualized Workflows and Pathways

Diagram 1: Phenotypic Screening with Focused Libraries

Diagram 2: Active Learning Hit Optimization

The Scientist's Toolkit: Research Reagent Solutions

| Resource / Tool | Function / Application | Key Features / Notes |
|---|---|---|
| GPCRdb [28] | Centralized database for GPCR research. | Access reference data, structure models (AlphaFold, RoseTTAFold), ligand information, and data visualization tools. |
| Enamine REAL Database [29] | Source of commercially available compounds for virtual screening. | Contains billions of make-on-demand compounds for expansive chemical space exploration. |
| Epigenetics Screening Library [30] | Pre-built focused set for epigenetic targets. | Includes 230+ modulators targeting writers, erasers, and readers; contains clinical trial inhibitors. |
| Surface Plasmon Resonance (SPR) [29] | Label-free technique for measuring binding kinetics (KD, ka, kd). | Critical for confirming direct target engagement of hits from phenotypic screens. |
| Thermal Proteome Profiling (TPP) [27] | Proteome-wide method to identify direct protein targets. | Unbiased approach to deconvolute mechanism of action for phenotypic hits. |
| 19F-NMR [29] | Nuclear magnetic resonance for detecting ligand binding. | Useful for confirming binding of fluorinated compounds; used in hit validation. |
| Kinase Profiling Services [31] | Biochemical assays to determine inhibitor selectivity. | Screen against large panels of kinases (e.g., >100) to calculate selectivity index and minimize off-target effects. |
| AlphaFold-Multistate & RoseTTAFold [28] | Protein structure prediction tools. | Generate accurate models of GPCR-ligand complexes and receptor states for structure-based design. |

The Role of AI and Machine Learning in Virtual Compound Prioritization and Library Denoising

Technical Support Center

Troubleshooting Guides

Guide 1: Addressing High False Positive Rates in Phenotypic Screening

Problem: Initial phenotypic screens are yielding a high number of false positives, leading to inefficient use of resources in downstream validation.

Diagnosis: This is frequently caused by screening libraries containing compounds with undesirable properties, such as pan-assay interference compounds (PAINS), fluorescent compounds, or those with general cytotoxicity, which create signals unrelated to the intended biological mechanism [8].

Solution: Implement AI-driven library denoising.

- Pre-Screen Filtering: Use AI models to profile your compound library in silico before biological screening.

- Model Application:

- PAINS Filtering: Apply established PAINS substructure filters, optionally complemented by a pretrained classifier, to flag and remove compounds containing PAINS motifs.

- Cytotoxicity Prediction: Use a QSAR (Quantitative Structure-Activity Relationship) model to predict and filter compounds with a high likelihood of general cytotoxicity.

- Assay Interference Prediction: Train a model on historical screening data to identify compounds that have previously shown interference in your specific assay technologies (e.g., fluorescence, luminescence).

- Validation: Run a pilot screen with the denoised library and compare the hit rate and confirmation rate against a historical control.
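
The filtering and validation logic above might be wired together roughly as follows. This is a sketch under stated assumptions: the PAINS flag and the two probabilities are presumed to come from upstream tools or models that are not shown, the 0.5 cutoffs are arbitrary placeholders, and every compound ID and screening count is invented for illustration.

```python
from dataclasses import dataclass

@dataclass
class CompoundProfile:
    compound_id: str
    pains_flag: bool           # assumed output of a PAINS substructure filter
    cytotox_prob: float        # assumed output of a cytotoxicity QSAR model
    interference_prob: float   # assumed output of an assay-interference model

def denoise(library, cytotox_cutoff=0.5, interference_cutoff=0.5):
    """Split a library into kept compound IDs and removed IDs with reasons."""
    kept, removed = [], {}
    for c in library:
        reasons = []
        if c.pains_flag:
            reasons.append("PAINS")
        if c.cytotox_prob >= cytotox_cutoff:
            reasons.append("cytotoxicity")
        if c.interference_prob >= interference_cutoff:
            reasons.append("assay interference")
        if reasons:
            removed[c.compound_id] = reasons
        else:
            kept.append(c.compound_id)
    return kept, removed

def screen_rates(n_screened, n_primary_hits, n_confirmed):
    """Hit rate (primary hits / screened) and confirmation rate (confirmed / primary hits)."""
    hit_rate = n_primary_hits / n_screened
    confirmation_rate = n_confirmed / n_primary_hits if n_primary_hits else 0.0
    return hit_rate, confirmation_rate

library = [
    CompoundProfile("C1", False, 0.1, 0.2),
    CompoundProfile("C2", True, 0.1, 0.1),
    CompoundProfile("C3", False, 0.9, 0.1),
    CompoundProfile("C4", False, 0.2, 0.8),
]
kept, removed = denoise(library)

# Invented counts for a historical screen and a denoised pilot screen
historical = screen_rates(10_000, 300, 45)
pilot = screen_rates(2_000, 40, 22)
print(kept, removed)
print(f"Confirmation rate: historical {historical[1]:.0%}, pilot {pilot[1]:.0%}")
```

Recording the removal reason for each compound makes it possible to audit the filters later, for example to check whether a cutoff is discarding chemotypes that have confirmed in past campaigns.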

Guide 2: Overcoming Target Deconvolution Bottlenecks for Phenotypic Hits

Problem: Active compounds from a phenotypic screen are identified, but determining their molecular mechanism of action (MoA) is slow and halts development.

Diagnosis: Traditional target deconvolution methods (e.g., chemical proteomics) are low-throughput and not always successful. The target hypothesis may be entirely missing [20] [32].

Solution: Leverage AI for MoA prediction and target identification.

- Data Collection: For confirmed hits, gather multi-dimensional data, including:

- Chemical Structure (SMILES)

- High-Content Imaging Profiles (morphological data)

- Gene Expression Profiles (from RNA-seq)

- AI Analysis:

- Query a reference resource such as the L1000 Connectivity Map with each hit's gene expression signature to find compounds with similar signatures, suggesting a shared MoA [8].

- Use a platform that integrates chemical and phenotypic features (e.g., from the Cell Painting assay) to cluster hits by potential MoA and predict novel targets [32].

- Experimental Triangulation: Use the AI-generated target hypotheses to design focused follow-up experiments (e.g., cellular thermal shift assays, CRISPRi) for validation [27].
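
To make the signature-matching idea concrete, here is a minimal sketch that ranks reference compounds by cosine similarity to a hit's expression signature. Cosine similarity is a stand-in chosen for simplicity, not the scoring method used by the Connectivity Map itself, and the four-gene signatures and reference names are invented.

```python
import math

def cosine(u, v):
    """Cosine similarity between two equal-length signature vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    nu = math.sqrt(sum(a * a for a in u))
    nv = math.sqrt(sum(b * b for b in v))
    return dot / (nu * nv) if nu and nv else 0.0

def rank_references(hit_signature, reference_signatures):
    """Reference compounds sorted from most to least similar to the hit."""
    scores = {name: cosine(hit_signature, sig)
              for name, sig in reference_signatures.items()}
    return sorted(scores.items(), key=lambda kv: kv[1], reverse=True)

# Invented log fold-change signatures over the same four genes
hit = [2.0, -1.0, 0.5, -2.0]
references = {
    "ref_kinase_inhibitor": [1.8, -0.9, 0.4, -2.2],
    "ref_hdac_inhibitor": [-1.5, 2.0, 0.1, 0.3],
    "ref_tubulin_binder": [0.2, 0.1, -1.9, 0.4],
}
ranked = rank_references(hit, references)
for name, score in ranked:
    print(f"{name}: {score:+.2f}")
```

A high-ranking reference only yields an MoA hypothesis; it still needs the experimental triangulation described in the final step before the target is treated as confirmed.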

Frequently Asked Questions (FAQs)

Q1: What is the practical impact of AI-based virtual compound prioritization on discovery timelines? A1: AI can significantly compress early-stage discovery. Reported examples show AI-designed molecules entering Phase I trials within 12 to 18 months of program initiation, compared with the 4-6 years typical of traditional methods [33] [34] [35]. Taken at face value, those figures correspond to a reduction of roughly 60-80% in time to Phase I.

Q2: Our phenotypic screening uses complex 3D patient-derived spheroids. Can AI handle such complex data? A2: Yes, modern AI is particularly suited for complex phenotypic data. For instance, one documented approach used patient-derived glioblastoma (GBM) spheroids for screening. The AI and virtual screening workflow was specifically designed to identify compounds that inhibit GBM spheroid viability and angiogenesis without affecting normal cell viability, demonstrating effectiveness in biologically relevant models [27].

Q3: We are concerned about the "black box" nature of AI. How can we trust its compound prioritizations? A3: This is a valid concern. Trust is built through explainability and validation. Leading AI platforms provide insight into their decisions by highlighting which chemical features or structural properties contributed to a compound's high ranking. Furthermore, any AI prioritization must be followed by rigorous experimental validation in relevant biological systems, which confirms the prediction and builds confidence for future use [36].

Q4: What are the key data requirements for building an effective AI model for library denoising in our lab? A4: The most critical factor is high-quality, relevant data. The model's performance depends on [37] [8]:

- Historical Screening Data: Data from your past assays, including both active and inactive compounds.

- Clean Annotation: Accurate labels for undesirable compounds (e.g., "interferes with assay," "cytotoxic").

- Chemical Diversity: A broad representation of chemical space in your training data to improve model generalizability.

- Domain-Specific Training: Models trained from scratch on biomedical data often outperform general-purpose models [37].

Performance Data for AI in Discovery

Table 1: Quantitative Impact of AI on Drug Discovery Processes. Data synthesized from industry reports and published studies [33] [34].

| Metric | Traditional Approach | AI-Accelerated Approach | Improvement |
|---|---|---|---|
| Discovery to Phase I Timeline | 4-6 years | 1.5-2 years | ~60-70% faster |
| Compounds Synthesized for Lead Optimization | Hundreds to thousands | 10x fewer | ~90% reduction |
| Clinical Trial Patient Recruitment | Often delayed | Up to 80% shorter timeline | Significant acceleration |
| Estimated Cost Savings | Baseline | Billions annually across industry | Substantial |

Featured Experimental Protocol

Protocol: Target-Informed Library Enrichment for Phenotypic Screening of Glioblastoma

This protocol details the method used to create a rationally enriched chemical library for phenotypic screening against patient-derived GBM spheroids, as published in ACS Chemical Biology [27].

Objective: To create a focused screening library with a higher probability of activity against GBM by leveraging the tumor's genomic profile and AI-based virtual screening.

Materials:

- Data: GBM tumor RNA-seq and somatic mutation data from sources like The Cancer Genome Atlas (TCGA). A large-scale human protein-protein interaction network.

- Software: Structure-based molecular docking software (e.g., using SVR-KB scoring).

- Compound Library: An in-house or commercially available library of small molecules (~9000 compounds in the original study).

- Biological Models: Low-passage patient-derived GBM spheroids, primary astrocytes, CD34+ progenitor cells, brain endothelial cells.

Step-by-Step Procedure:

Target Identification:

- Perform differential expression analysis on GBM patient RNA-seq data (vs. normal samples) to identify significantly overexpressed genes (p < 0.001, FDR < 0.01, log2FC > 1).

- Compile a set of somatically mutated genes from the same patient cohort.

- Combine these lists to identify 755 genes implicated in GBM.
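
The gene-selection logic of this step can be sketched as a threshold filter followed by a set union. The thresholds are those quoted above; reading "combine" as a union is an assumption, and the gene names and statistics are placeholders rather than data from the study [27].

```python
def overexpressed(de_results, p_max=0.001, fdr_max=0.01, log2fc_min=1.0):
    """Genes passing all three differential-expression thresholds.

    de_results maps gene -> (p_value, fdr, log2_fold_change).
    """
    return {
        gene
        for gene, (p, fdr, log2fc) in de_results.items()
        if p < p_max and fdr < fdr_max and log2fc > log2fc_min
    }

def implicated_genes(de_results, mutated_genes):
    """Union of overexpressed genes and somatically mutated genes."""
    return overexpressed(de_results) | set(mutated_genes)

# Placeholder differential-expression results (tumor vs. normal)
de_results = {
    "GENE_A": (1e-5, 1e-4, 2.3),   # passes all thresholds
    "GENE_B": (1e-4, 0.02, 3.0),   # fails the FDR cutoff
    "GENE_C": (1e-6, 1e-5, 0.4),   # fails the fold-change cutoff
    "GENE_D": (0.2, 0.5, 0.1),     # not significant
}
mutated = {"GENE_C", "GENE_E"}

candidates = implicated_genes(de_results, mutated)
print(sorted(candidates))
```

Note that a gene excluded by the expression filter can still enter the candidate set through the mutation list, as GENE_C does here.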

Network and Druggability Filtering:

- Map the 755 genes onto a large-scale human protein-protein interaction (PPI) network. This narrows the list to 390 proteins that are well-connected within the GBM context.

- Identify proteins with known 3D structures in the Protein Data Bank (PDB). For each, classify druggable binding sites as catalytic (ENZ), protein-protein interaction (PPI), or allosteric (OTH). This step identified 117 proteins with 316 druggable sites.

Virtual Screening (AI/ML Component):

- Dock the entire in-house compound library (~9000 molecules) against all 316 druggable binding sites.

- Use a knowledge-based scoring function (e.g., SVR-KB) to predict binding affinities.

- Prioritize and select compounds that are predicted to bind strongly to multiple targets within the GBM network, aiming for selective polypharmacology. The original study selected 47 compounds for experimental testing.
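
One simple way to express the multi-target prioritization idea is to count, for each compound, the distinct proteins with at least one binding site scoring above a cutoff. This is only a sketch: the study's actual selection criteria are not detailed here, and the score scale (higher meaning stronger predicted binding), the cutoff, and all identifiers are assumptions.

```python
def prioritize(scores, score_cutoff, min_targets=2, top_n=47):
    """Rank compounds by the number of distinct proteins they are predicted to bind.

    scores maps compound -> {(protein, site_type): predicted_score}, where a
    higher score is assumed to mean stronger predicted binding.
    """
    ranked = []
    for compound, site_scores in scores.items():
        proteins = {
            protein
            for (protein, _site), score in site_scores.items()
            if score >= score_cutoff
        }
        if len(proteins) >= min_targets:
            ranked.append((compound, len(proteins)))
    # Most targets first; ties broken alphabetically for reproducibility.
    ranked.sort(key=lambda item: (-item[1], item[0]))
    return ranked[:top_n]

# Invented docking scores; site types follow the ENZ / PPI / OTH labels above
scores = {
    "cmpd_1": {("P1", "ENZ"): 7.5, ("P2", "PPI"): 7.1, ("P3", "OTH"): 5.0},
    "cmpd_2": {("P1", "ENZ"): 8.0, ("P1", "OTH"): 7.2},
    "cmpd_3": {("P2", "PPI"): 7.9, ("P3", "ENZ"): 7.3, ("P4", "ENZ"): 7.0},
}
selected = prioritize(scores, score_cutoff=7.0)
print(selected)
```

Counting proteins rather than sites keeps a compound that hits two pockets on the same protein (cmpd_2 here) from being mistaken for a multi-target binder.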

Phenotypic Screening:

- Screen the enriched library against 3D patient-derived GBM spheroids to assess inhibition of cell viability.

- Counterscreen hits in normal cell models (e.g., primary astrocytes, CD34+ progenitor spheroids) to assess selectivity.

- Test the effect of hits on angiogenesis using a brain endothelial cell tube formation assay.

Mechanism of Action Studies:

- For lead compounds, use RNA sequencing of treated vs. untreated cells to uncover potential pathways affected.

- Confirm target engagement using mass spectrometry-based thermal proteome profiling (TPP) [27].

The Scientist's Toolkit

Table 2: Essential Research Reagent Solutions for AI-Guided Phenotypic Screening.

| Reagent / Solution | Function / Application | Example in Context |
|---|---|---|
| Patient-Derived Spheroids/Organoids | Advanced 3D cell models that better recapitulate the tumor microenvironment and complexity for biologically relevant phenotypic screening. | Used to screen an AI-enriched library for selective anti-GBM activity [27]. |