Strategic Filtering of Compound Libraries: Enhancing Hit Discovery in Modern Drug Development


Abstract

This article provides a comprehensive guide to activity and similarity filtering procedures for compound libraries, tailored for drug discovery researchers and scientists. It explores the foundational principles of chemical space and drug-likeness, details methodological applications of property-based and functional group filters, and offers strategies for troubleshooting common pitfalls. By comparing traditional scaffold-based libraries with modern make-on-demand approaches and validating methods through real-world case studies, this resource serves as a strategic framework for optimizing virtual screening campaigns and improving the efficiency of hit identification and lead optimization.

The Principles of Chemical Space and Drug-Likeness

Frequently Asked Questions

Q1: What are the main computational bottlenecks when screening ultra-large chemical libraries? The primary bottlenecks are the immense computational time and resources required for physics-based docking, which becomes prohibitive when evaluating billions of compounds. While rigid docking is faster, it may not sample favorable protein-ligand structures, leading to potential errors. Introducing full receptor and ligand flexibility improves success rates but drastically increases computational demands [1].

Q2: How can I efficiently screen multi-billion compound libraries without exhaustive docking? Active learning techniques and evolutionary algorithms can be used to triage and select the most promising compounds for expensive docking calculations. Instead of docking every compound, these methods use machine learning to iteratively select and evaluate a small subset of the library, significantly reducing the number of molecules that require full docking simulation [2] [1].

Q3: What is the difference between 'drug-like' and 'lead-like' compounds? Lead-like compounds are generally less complex than drug-like compounds in parameters like molecular weight (MWT) and Log P. This is because medicinal chemistry optimization to develop a drug from a lead compound almost invariably increases MWT and Log P. However, a strong structural resemblance is typically maintained between a starting lead and its resulting drug [3].

Q4: How is structural similarity calculated for small molecules in virtual screening? Structural similarity is typically quantified using molecular fingerprints and a similarity metric. Fingerprints are fixed-length bit vectors that encode structural features. The Tanimoto coefficient is the most commonly used metric. It is calculated as c / (a + b - c), where 'a' and 'b' are the numbers of 'on' bits in the fingerprints of molecules A and B, and 'c' is the number of 'on' bits common to both [4].
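As a worked illustration of the formula, using made-up bit counts (the numbers below are assumptions chosen for easy arithmetic, not values from the cited source):

```latex
T(A, B) = \frac{c}{a + b - c},
\qquad
a = 40,\; b = 50,\; c = 30
\;\Rightarrow\;
T = \frac{30}{40 + 50 - 30} = \frac{30}{60} = 0.5
```

A value of 1 means the two fingerprints are identical, and 0 means they share no 'on' bits.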

Q5: Why is my virtual screening yielding a high number of false positives? A high rate of false positives can occur if the scoring function used in docking is not accurately distinguishing true binders from non-binders. It can also stem from the presence of compounds with undesirable chemical functionality that may cause assay interference. Applying exclusionary filters to remove reactive chemical groups and using more sophisticated scoring functions that account for entropy changes can help mitigate this [3] [2].

Troubleshooting Guides

Issue 1: Poor Hit Enrichment in Virtual Screening

| Potential Cause | Diagnostic Steps | Recommended Solution |

|---|---|---|

| Insufficient receptor flexibility | Compare docking results from rigid vs. flexible protocols. | Use a docking method like RosettaVS that allows for flexible side chains and limited backbone movement [2]. |

| Low-quality compound library | Analyze the property distributions (MWT, Log P, H-bond donors/acceptors) of your library against known drug-like databases. | Apply drug-likeness filters (e.g., Rule of 5) and exclude compounds with reactive functional groups [3]. |

| Inefficient chemical space sampling | Check if your screening method explores diverse scaffolds or gets stuck in a local minimum. | Implement an evolutionary algorithm (e.g., REvoLd) to efficiently explore combinatorial chemical spaces without full enumeration [1]. |

Issue 2: High Experimental Attrition of Virtual Hits

| Potential Cause | Diagnostic Steps | Recommended Solution |

|---|---|---|

| Poor physicochemical properties | Calculate key properties like polar surface area (PSA), rotatable bonds, and Log P for your hits. | Prioritize lead-like compounds with lower molecular weight and complexity to allow for optimization headroom [3]. |

| Promiscuous compound binders | Screen for common substructures known to cause assay interference or aggregate formation. | Apply positive filters for "privileged structures" and negative filters for undesired chemical functionality [3]. |

| Inaccurate binding affinity prediction | Validate docking poses with experimental techniques like X-ray crystallography, if possible. | Use a scoring function that combines enthalpy (ΔH) and entropy (ΔS) calculations, such as RosettaGenFF-VS [2]. |

Experimental Protocols & Methodologies

Protocol for AI-Accelerated Virtual Screening with RosettaVS

This protocol is designed for screening multi-billion compound libraries against a protein target with a known binding site [2].

- Platform Setup: Utilize the open-source OpenVS platform, which integrates the RosettaVS docking protocol and is designed for high-performance computing (HPC) clusters.

- Ligand Preparation: Obtain the compound library in a standardized format (e.g., SDF). For ultra-large libraries (billions of compounds), use the library's reaction and substrate definitions directly if using an evolutionary algorithm [1].

- Initial Express Screening (VSX Mode): Run the initial screen using the VSX (virtual screening express) mode. This is a rapid docking mode that sacrifices some accuracy for speed.

- Active Learning Triage: The OpenVS platform uses active learning to train a target-specific neural network during docking. This network selects the most promising compounds for further evaluation, avoiding exhaustive docking.

- High-Precision Docking (VSH Mode): Take the top-ranking hits from the initial VSX screen and re-dock them using the VSH (virtual screening high-precision) mode. This mode includes full receptor flexibility for more accurate pose and affinity prediction.

- Hit Validation: Select the top-ranked compounds from the VSH screen for in-vitro binding affinity assays (e.g., to determine IC50 or Kd values).
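The triage idea in the steps above can be sketched as a toy loop. This is only an illustration under stated assumptions: `mock_dock` is a hypothetical stand-in for an expensive docking call, compounds are represented by two invented descriptor values, and the surrogate is a simple k-nearest-neighbour average rather than the target-specific neural network that OpenVS trains.

```python
import math
import random
from typing import Dict, List, Tuple

Vector = Tuple[float, float]


def mock_dock(x: Vector) -> float:
    """Hypothetical stand-in for an expensive docking call; lower is better."""
    return math.dist(x, (0.7, 0.2))


def knn_predict(x: Vector, docked: Dict[int, float], pool: List[Vector], k: int = 3) -> float:
    """Toy surrogate: mean score of the k already-docked compounds nearest to x."""
    nearest = sorted(docked, key=lambda i: math.dist(x, pool[i]))[:k]
    return sum(docked[i] for i in nearest) / len(nearest)


def active_learning_screen(pool: List[Vector], batch_size: int = 20,
                           rounds: int = 4, seed: int = 0) -> Dict[int, float]:
    """Dock a random seed batch, then repeatedly dock the compounds the surrogate ranks best."""
    rng = random.Random(seed)
    docked = {i: mock_dock(pool[i]) for i in rng.sample(range(len(pool)), batch_size)}
    for _ in range(rounds):
        candidates = [i for i in range(len(pool)) if i not in docked]
        # Rank undocked compounds by predicted score (lowest predicted score first)
        candidates.sort(key=lambda i: knn_predict(pool[i], docked, pool))
        for i in candidates[:batch_size]:
            docked[i] = mock_dock(pool[i])  # only the selected compounds are docked
    return docked


# Invented pool of 500 compounds described by two random descriptors
pool_rng = random.Random(42)
pool = [(pool_rng.random(), pool_rng.random()) for _ in range(500)]
docked = active_learning_screen(pool)
best_idx = min(docked, key=docked.get)
print(f"docked {len(docked)} of {len(pool)} compounds; best index {best_idx}")
```

Only 100 of the 500 compounds are docked here (one seed batch plus four selected batches). In a real campaign the same pattern would apply to the VSX stage, with the top-ranked compounds passed on to VSH.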

Protocol for Structural Similarity Searching

This methodology is used to find structurally analogous compounds (hits) in existing libraries based on a reference molecule with established activity [4].

- Reference Compound Selection: Choose a known active compound as the query.

- Fingerprint Generation: Generate a molecular fingerprint for the query compound. For activity-based searching, a feature fingerprint like the Extended Connectivity Fingerprint (ECFP4) is recommended.

- Library Screening: Calculate the same type of fingerprint for every compound in the screening library.

- Similarity Calculation: Compute the similarity between the query fingerprint and every library compound fingerprint using the Tanimoto coefficient.

- Hit Selection: Rank all library compounds by their similarity score and select the top candidates (hits) for further experimental testing.
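The steps above can be sketched in a few lines. This is a minimal illustration that assumes each fingerprint is already available as a set of 'on' bit indices. The toy fingerprints and compound names are invented; in practice the bits would come from an ECFP4 calculation in a cheminformatics toolkit.

```python
from typing import Dict, List, Set, Tuple


def tanimoto(fp_a: Set[int], fp_b: Set[int]) -> float:
    """Tanimoto coefficient c / (a + b - c) for fingerprints stored as sets of 'on' bits."""
    common = len(fp_a & fp_b)                 # c: bits set in both fingerprints
    union = len(fp_a) + len(fp_b) - common    # a + b - c
    return common / union if union else 0.0   # two empty fingerprints: defined here as 0.0


def rank_by_similarity(query: Set[int], library: Dict[str, Set[int]],
                       top_n: int = 3) -> List[Tuple[str, float]]:
    """Score every library compound against the query and return the top_n most similar."""
    scored = [(name, tanimoto(query, fp)) for name, fp in library.items()]
    scored.sort(key=lambda item: item[1], reverse=True)
    return scored[:top_n]


# Hypothetical toy fingerprints (bit indices are illustrative only)
query_fp = {1, 4, 7, 9, 12}
library_fps = {
    "cmpd_A": {1, 4, 7, 9, 12, 15},
    "cmpd_B": {1, 4, 20, 21},
    "cmpd_C": {30, 31, 32},
    "cmpd_D": {1, 4, 7, 9},
}

hits = rank_by_similarity(query_fp, library_fps, top_n=2)
for name, score in hits:
    print(f"{name}: {score:.3f}")
```

With these toy inputs the two highest-ranked compounds are `cmpd_A` (5 shared bits out of 6 in the union) and `cmpd_D` (4 out of 5).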

Research Reagent Solutions

The table below lists key resources used in computational and experimental screening campaigns.

| Item Name | Function / Application | Key Features |

|---|---|---|

| Enamine REAL Space | An ultra-large, make-on-demand combinatorial chemical library for virtual screening [1] [5]. | Contains billions of readily synthesizable compounds; constructed from lists of substrates and robust chemical reactions [1]. |

| RosettaVS Software | An open-source, physics-based virtual screening method for predicting docking poses and binding affinities [2]. | Models receptor flexibility; includes VSX (fast) and VSH (accurate) docking modes; integrated with the OpenVS platform [2]. |

| REvoLd Algorithm | An evolutionary algorithm for efficient exploration of ultra-large combinatorial libraries without full enumeration [1]. | Uses crossover and mutation on molecular fragments; achieves high hit rates with only thousands of docking calculations [1]. |

| Extended Connectivity Fingerprints (ECFP) | A type of molecular fingerprint used to represent molecular structure for similarity searches and machine learning [4]. | A circular (radial) fingerprint that captures atom environments; non-substructure preserving, ideal for activity-based screening [4]. |

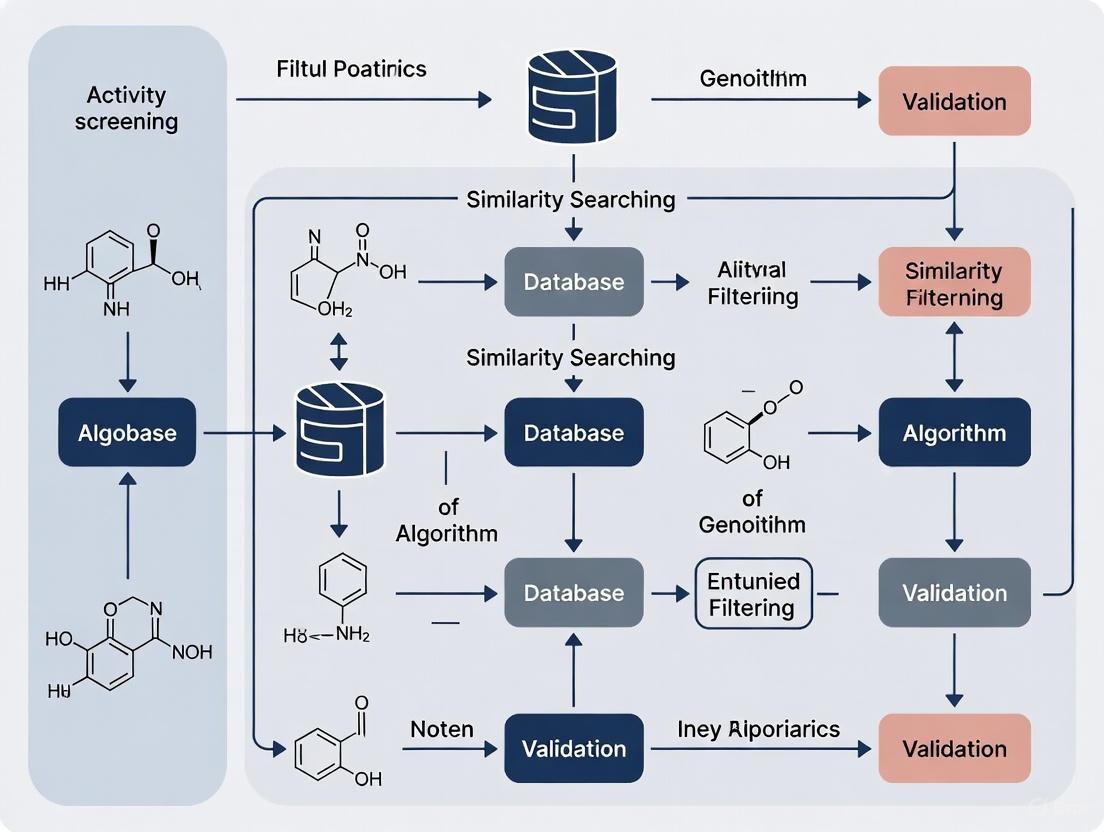

Workflow Diagrams

Ultra-Large Library Screening Workflow

Similarity Search Methodology

Core Concept Definitions

- Drug-likeness: A concept that evaluates whether a compound has physicochemical properties similar to those of known oral drugs. It is a strategic guide for selecting compounds that have a high probability of success in later-stage development and clinical trials [6] [7].

- Lead-likeness: A tactical guide for selecting initial starting points (leads) for chemical optimization. Lead-like compounds are typically smaller and less complex than drug-like compounds, providing the necessary "chemical space" to be optimized into a safe and effective drug candidate while maintaining favorable properties [6] [8].

The following table summarizes the typical physicochemical property ranges associated with each concept, based on analyses of known drugs and leads [8].

Table 1: Key Physicochemical Properties for Drug-like and Lead-like Compounds

| Property | Drug-like (Typical Profile) | Lead-like (Typical Profile) |

|---|---|---|

| Molecular Weight (MW) | Higher (e.g., ≤500) | Lower (e.g., ≤350-400) |

| Octanol-Water Partition Coefficient (LogP) | Higher (e.g., ≤5) | Lower (e.g., ≤3-4) |

| Hydrogen Bond Acceptors (HAC) | Higher (e.g., ≤10) | Lower |

| Hydrogen Bond Donors (HDO) | Higher (e.g., ≤5) | Lower |

| Molecular Complexity/Flexibility | More complex/flexible | Less complex/flexible |

| Intrinsic Water Solubility (LogSw) | Lower | Higher |

FAQs and Troubleshooting Guides

FAQ 1: Why should I apply different filters for lead-likeness and drug-likeness? Answer: Applying the correct filter at the wrong stage is a common error that can reduce the success of a discovery program.

- Problem: Applying strict drug-like filters too early, during initial library design or hit identification, can eliminate smaller, less complex lead-like compounds. These lead-like compounds are crucial as they provide the necessary chemical space for optimization. Adding functional groups to improve potency and selectivity will inevitably increase molecular weight and lipophilicity [6] [8].

- Solution: Adopt a staged filtering strategy. Use lead-like criteria for designing screening libraries and selecting initial hits. As compounds progress through the optimization cycle, their properties should be monitored against drug-like criteria to ensure they remain developable [6].
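A staged strategy of this kind might be expressed as follows. The sketch is illustrative only: the cutoffs are the example values from the drug-like versus lead-like table above (MW and LogP only), the stage names are invented, and real projects would tune both.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Compound:
    name: str
    mw: float    # molecular weight, Da
    logp: float  # calculated LogP


# Illustrative cutoffs (assumed from the example ranges in the table above)
LEAD_LIKE = {"mw": 350.0, "logp": 3.0}
DRUG_LIKE = {"mw": 500.0, "logp": 5.0}


def within(compound: Compound, limits: dict) -> bool:
    """True if the compound is at or below both property limits."""
    return compound.mw <= limits["mw"] and compound.logp <= limits["logp"]


def stage_filter(compound: Compound, stage: str) -> bool:
    """Apply lead-like limits at hit identification and drug-like limits during optimization."""
    if stage == "hit_id":
        return within(compound, LEAD_LIKE)
    if stage == "optimization":
        return within(compound, DRUG_LIKE)
    raise ValueError(f"unknown stage: {stage}")


# Hypothetical compounds
small_hit = Compound("hit_1", mw=310.0, logp=2.4)
grown_analog = Compound("analog_7", mw=455.0, logp=4.1)

print(stage_filter(small_hit, "hit_id"))           # lead-like hit is kept
print(stage_filter(grown_analog, "hit_id"))        # too large for a starting point
print(stage_filter(grown_analog, "optimization"))  # still acceptable as an optimized compound
```

Keeping the two limit sets separate makes it explicit which criteria apply at which stage, which is the point of the staged approach.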

FAQ 2: My lead compound has high potency but poor solubility. How can I address this during library design? Answer: Poor solubility is a frequent issue that can be mitigated by designing an optimization library focused on improving this property.

- Root Cause: High lipophilicity (LogP) and molecular complexity are key contributors to low aqueous solubility. Studies show that lead compounds and chemical probes tend to be more soluble than final drugs, indicating that solubility should be a key parameter for lead selection [8].

- Troubleshooting Protocol:

  - Property Analysis: Calculate the clogP and intrinsic water solubility (LogSw) of your lead compound [8].

  - Library Design: Design a focused library around the lead scaffold by introducing:

    - Polar functional groups (e.g., amines, alcohols, amides) to increase hydrophilicity.

    - Ionizable groups that can form salts with improved dissolution.

    - Small, polar substituents (e.g., -OH, -CN) to reduce logP without a large increase in molecular weight.

  - Virtual Screening: Filter the proposed library members against lead-like property rules, ensuring that new compounds maintain a LogP on the lower end of the lead-like range (e.g., <3) and have improved predicted LogSw [8].
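The final filtering step could look like the sketch below. It assumes clogP and LogSw have already been predicted by an external tool and that LogSw is on a log10 molar scale, so a higher value means better solubility. All compound names and values are invented.

```python
from typing import List, NamedTuple


class Analog(NamedTuple):
    name: str
    clogp: float
    log_sw: float  # predicted intrinsic solubility (assumed log10 mol/L; higher = more soluble)


def select_more_soluble(lead: Analog, analogs: List[Analog],
                        max_clogp: float = 3.0) -> List[Analog]:
    """Keep analogs with clogP below the cutoff and better predicted solubility than the lead."""
    keep = [a for a in analogs if a.clogp < max_clogp and a.log_sw > lead.log_sw]
    return sorted(keep, key=lambda a: a.log_sw, reverse=True)  # most soluble first


# Hypothetical lead and proposed analogs
lead = Analog("lead", clogp=4.2, log_sw=-5.1)
proposed = [
    Analog("a1_OH", clogp=3.4, log_sw=-4.6),     # more soluble, but clogP still too high
    Analog("a2_amine", clogp=2.6, log_sw=-3.9),
    Analog("a3_CN", clogp=2.9, log_sw=-4.4),
    Analog("a4_tBu", clogp=4.9, log_sw=-5.8),    # worse on both properties
]

selected = select_more_soluble(lead, proposed)
print([a.name for a in selected])
```

Sorting by predicted solubility gives a simple priority order for synthesis, although potency data would normally be weighed alongside it.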

FAQ 3: How do I balance target potency with lead-like properties during optimization? Answer: The goal is to achieve potency while preserving room for optimization.

- Problem: A common pitfall is adding large, hydrophobic groups to gain potency, which can lead to compounds that are too large and lipophilic (violating drug-like rules) too early in the process [8].

- Experimental Workflow:

  - Start: Begin with a confirmed hit that meets lead-like criteria (e.g., MW <350, ClogP <3) [8].

  - Design & Synthesize: Create analog libraries using late-stage functionalization strategies that leverage common chemical transformations [9].

  - Test & Analyze: Measure the biological activity and physicochemical properties (MW, LogP, solubility) of all new analogs.

  - Iterate: Use Structure-Activity Relationship (SAR) and Structure-Property Relationship (SPR) data to guide the next cycle of design. Prioritize analogs that maintain a balance of improved potency and lead-like properties.

The following diagram illustrates this iterative process.

FAQ 4: What are the best practices for building a virtual library for a novel target? Answer: The key is to ensure the library is both synthetically feasible and rich in high-quality leads.

- Challenge: Generating virtual compounds that are novel yet can actually be synthesized and have a high probability of becoming successful drugs [10].

- Methodology:

  - Define the Scope: Choose a set of validated chemical reactions and readily available building blocks (reagents) [10].

  - Enumerate the Library: Use open-source tools like DataWarrior or KNIME to computationally combine the reagents according to the reaction rules, generating all possible products [10].

  - Apply Lead-like Filtering: Use calculated properties (MW, HBD, HBA, LogP) to filter the enumerated virtual library, retaining only those compounds that fall within lead-like property space [8] [10].

  - Assess Synthetic Accessibility: Use additional filters or scores to prioritize compounds that are easier to synthesize based on the chosen reactions [10] [9].
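The enumerate-then-filter idea can be illustrated without any cheminformatics toolkit by tracking molecular weight alone. The sketch below assumes a single amide coupling, where each product weighs the sum of the acid and amine minus one water. The building-block lists are small invented examples, and the 250 Da cutoff is chosen only so that this tiny set excludes something. Tools such as DataWarrior or KNIME would enumerate full structures instead.

```python
from itertools import product
from typing import Dict, Iterator, Tuple

WATER_MW = 18.015  # Da, lost per amide bond formed (condensation)

# Hypothetical building blocks: name -> molecular weight (Da)
acids = {"benzoic acid": 122.12, "acetic acid": 60.05, "2-naphthoic acid": 172.18}
amines = {"aniline": 93.13, "morpholine": 87.12, "benzylamine": 107.16}


def enumerate_amides(acids: Dict[str, float],
                     amines: Dict[str, float]) -> Iterator[Tuple[str, str, float]]:
    """Yield (acid, amine, product MW) for every acid x amine combination."""
    for (acid, mw_acid), (amine, mw_amine) in product(acids.items(), amines.items()):
        yield acid, amine, mw_acid + mw_amine - WATER_MW


library = list(enumerate_amides(acids, amines))

# Illustrative MW cutoff for this toy example only (not a recommended lead-like limit)
MAX_MW = 250.0
retained = [(acid, amine, mw) for acid, amine, mw in library if mw <= MAX_MW]

print(f"enumerated {len(library)} products, retained {len(retained)}")
for acid, amine, mw in retained:
    print(f"{acid} + {amine}: {mw:.2f} Da")
```

Because the library size is the product of the building-block counts, even modest reagent lists grow quickly, which is why property filtering is applied directly after enumeration.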

Table 2: Essential Research Reagents and Resources for Library Design and Analysis

| Item | Function/Brief Explanation |

|---|---|

| Building Block Reagents | Commercially available chemical starting materials (e.g., carboxylic acids, amines, boronic acids) used to construct a combinatorial library around a core scaffold [10]. |

| Pre-validated Reaction Schemes | A set of reliable and robust chemical transformations (e.g., amide coupling, Suzuki coupling) used to virtually or physically generate the library, ensuring synthetic feasibility [10]. |

| Virtual Library Enumeration Software (e.g., DataWarrior, KNIME) | Open-source chemoinformatics tools that allow researchers to computationally generate all possible compounds from a set of reactions and building blocks [10]. |

| Property Calculation Tools (e.g., ALOGPS) | Software or algorithms for predicting key physicochemical properties like LogP (lipophilicity) and LogSw (aqueous solubility) for virtual compounds [8]. |

| Target-Annotated Compound Databases (e.g., C3L, ChEMBL) | Curated collections of small molecules with known biological activities and protein targets, used for benchmarking and validating library design strategies [11] [12]. |

In the process of screening compound libraries, activity and similarity filters are used to prioritize compounds with a high probability of success. Among the most foundational are property filters, which assess a compound's physicochemical characteristics to predict its behavior in a biological system. The most well-known of these is Lipinski's Rule of 5 (Ro5), a set of guidelines used to identify compounds with a high likelihood of good oral bioavailability. This guide provides troubleshooting support for researchers applying these filters and related classification systems in their experiments.

Troubleshooting FAQs: Rule of 5 and BDDCS

1. My pharmacologically active lead compound has two Rule of 5 violations. Should I abandon it?

Not necessarily. The Rule of 5 is a guideline, not an absolute rule. Many effective oral drugs exist beyond the Rule of 5 (bRo5), including drugs derived from peptides and natural products [13] [14]. Before making a decision, investigate the reasons for the violations. Strategies to improve bioavailability for bRo5 compounds include:

- Utilizing formulations that enhance solubility.

- Employing higher doses where physiologically permissible.

- Structural modifications like macrocyclization or designing intramolecular hydrogen bonds to improve permeability [13].

The decision should be based on the project's target and the feasibility of these mitigation strategies.

2. My compound is a BDDCS Class 1 drug. How should I approach investigating drug-drug interactions (DDIs)?

For BDDCS Class 1 compounds (high solubility, high permeability), the focus for DDI investigations should be primarily on metabolic enzymes (particularly Cytochrome P450), not transporters. Evidence suggests that BDDCS Class 1 drugs do not show clinically relevant transporter-mediated DDIs that require dosage changes [15]. This can streamline your experimental plan, allowing you to prioritize resources on metabolic stability and enzyme inhibition assays.

3. My high-throughput screening (HTS) campaign identified potent hits, but they are all outside the Rule of 5. Why is this happening, and what are the risks?

This is a common occurrence, especially when targeting protein-protein interactions or other challenging biological targets with large, complex binding sites. The chemical space for bRo5 compounds is rich with opportunities [13] [16]. The primary risks associated with these hits are:

- Poor passive permeability and aqueous solubility.

- Complex synthesis and optimization pathways.

To troubleshoot, move beyond simple potency assays and initiate early-stage ADME profiling. Use advanced predictive tools trained on bRo5 chemical space to assess properties and guide optimization toward oral drug-like properties [16].

4. How can I improve the reproducibility of my permeability and solubility assays during property screening?

Variability in assay results is a major hurdle in property-based filtering. Key steps to improve reproducibility include:

- Automation: Implement automated liquid handlers to reduce human error and intra-user variability [17].

- Sample Integrity: Track freeze-thaw cycles rigorously and ensure compounds are stored under optimal conditions to prevent degradation [18].

- Data Integrity: Use integrated laboratory information management systems (LIMS) to standardize data entry and maintain a complete, auditable trail of all procedures and results [18].

Experimental Protocols for Key Assays

Protocol 1: Determining Biopharmaceutics Classification System (BCS) / Biopharmaceutics Drug Disposition Classification System (BDDCS) Category

The BCS classifies drugs by their aqueous solubility and intestinal permeability. The BDDCS follows the same scheme but uses the extent of metabolism as a surrogate for permeability [15]. Both classifications help predict absorption and disposition.

1. Principle: A drug substance is considered highly soluble if the highest dose strength is soluble in 250 mL or less of aqueous media over the pH range of 1 to 7.5 at 37°C. A drug is considered highly permeable when the extent of absorption in humans is determined to be 90% or more of an administered dose [15].

2. Materials:

- Test and reference compound

- USP apparatus (paddle or basket)

- Buffers (pH 1.0, 4.5, 6.8)

- Caco-2 cell line or suitable model for permeability studies

- LC-MS/MS system for analytical quantification

3. Methodology:

- Solubility Determination: Perform the shake-flask method at 37°C in each pH buffer, then quantify the concentration of dissolved drug using a validated HPLC-UV or LC-MS/MS method.

- Permeability Determination: Using the Caco-2 cell model, the apparent permeability (Papp) of the drug is measured. A drug with a Papp greater than a predefined threshold (e.g., similar to metoprolol) is considered highly permeable.

4. Data Analysis and Classification:

- Class 1: High Solubility, High Permeability

- Class 2: Low Solubility, High Permeability

- Class 3: High Solubility, Low Permeability

- Class 4: Low Solubility, Low Permeability

For BDDCS, the permeability assignment is replaced by evidence that the drug is extensively metabolized (Class 1/2) or primarily excreted unchanged (Class 3/4) [15].
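The classification logic can be sketched as below. It uses the 250 mL solubility criterion stated in the Principle and treats the second axis as a boolean that stands for either high permeability (BCS) or extensive metabolism (BDDCS). Function names and the example doses and solubilities are invented for illustration.

```python
def dose_volume_ml(highest_dose_mg: float, min_solubility_mg_per_ml: float) -> float:
    """Volume needed to dissolve the highest dose at the lowest solubility across the pH range."""
    return highest_dose_mg / min_solubility_mg_per_ml


def is_highly_soluble(highest_dose_mg: float, min_solubility_mg_per_ml: float,
                      limit_ml: float = 250.0) -> bool:
    """Highly soluble if the highest dose dissolves in 250 mL or less."""
    return dose_volume_ml(highest_dose_mg, min_solubility_mg_per_ml) <= limit_ml


def classify(high_solubility: bool, high_permeability_or_metabolism: bool) -> int:
    """Return the class (1-4); the second flag is permeability for BCS, metabolism for BDDCS."""
    if high_solubility:
        return 1 if high_permeability_or_metabolism else 3
    return 2 if high_permeability_or_metabolism else 4


# Hypothetical drug X: 100 mg dose, lowest measured solubility 0.5 mg/mL, extensively metabolized
soluble_x = is_highly_soluble(100.0, 0.5)   # needs 200 mL
class_x = classify(soluble_x, True)

# Hypothetical drug Y: 400 mg dose, lowest measured solubility 0.1 mg/mL, extensively metabolized
soluble_y = is_highly_soluble(400.0, 0.1)   # needs 4000 mL
class_y = classify(soluble_y, True)

print(f"Drug X: class {class_x}; Drug Y: class {class_y}")
```

Using the lowest solubility measured across the pH range is the conservative choice, since the criterion must hold over the whole range.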

Protocol 2: Computational Assessment of Rule of 5 and Related Filters

1. Principle: Calculate key physicochemical properties from the 2D molecular structure to predict drug-likeness and potential oral bioavailability.

2. Software & Tools:

- ACD/Percepta Platform: Provides accurate predictions for pKa, logP, and other properties, even for complex bRo5 molecules [16].

- ChemAxon's Marvin Suite: Offers free calculators for molecular weight, logP, and hydrogen bond donors/acceptors [14].

- Other in-house or commercial QSAR platforms.

3. Methodology:

- Structure Input: Draw or import the 2D structure of the compound in the software.

- Property Calculation: Run the algorithms to compute the following properties:

  - Molecular Weight (MW)

  - Octanol-Water Partition Coefficient (logP)

  - Number of Hydrogen Bond Donors (HBD)

  - Number of Hydrogen Bond Acceptors (HBA)

  - Polar Surface Area (PSA)

  - Number of Rotatable Bonds (NRB)

- Application of Filters: Compare the calculated values against the criteria in the table below.

4. Data Analysis:

- A compound is considered Rule of 5 compliant if it has no more than one violation [14].

- For a more nuanced view, apply Veber's rules (Rotatable Bonds ≤ 10 and PSA ≤ 140 Ų) or other lead-like filters [14].
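Once the descriptors have been calculated, the compliance checks reduce to simple comparisons. The sketch below assumes the six properties are supplied by one of the tools listed above, and treats any value above a limit as a violation (MW > 500, logP > 5, HBD > 5, HBA > 10). The example compounds are invented.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Descriptors:
    mw: float             # molecular weight, Da
    logp: float           # octanol-water partition coefficient
    hbd: int              # hydrogen bond donors
    hba: int              # hydrogen bond acceptors
    psa: float            # polar surface area, square angstroms
    rotatable_bonds: int


def ro5_violations(d: Descriptors) -> List[str]:
    """List the Rule of 5 criteria that the compound exceeds."""
    checks = [
        ("MW > 500", d.mw > 500),
        ("logP > 5", d.logp > 5),
        ("HBD > 5", d.hbd > 5),
        ("HBA > 10", d.hba > 10),
    ]
    return [label for label, violated in checks if violated]


def passes_ro5(d: Descriptors, max_violations: int = 1) -> bool:
    """Compliant if there is no more than one violation."""
    return len(ro5_violations(d)) <= max_violations


def passes_veber(d: Descriptors) -> bool:
    """Veber's rules: rotatable bonds <= 10 and PSA <= 140."""
    return d.rotatable_bonds <= 10 and d.psa <= 140


# Hypothetical compounds with pre-calculated descriptors
candidate = Descriptors(mw=523.6, logp=4.1, hbd=2, hba=8, psa=95.0, rotatable_bonds=7)
outlier = Descriptors(mw=612.0, logp=6.3, hbd=3, hba=11, psa=152.0, rotatable_bonds=12)

for name, d in [("candidate", candidate), ("outlier", outlier)]:
    print(name, ro5_violations(d), passes_ro5(d), passes_veber(d))
```

Returning the list of violated criteria, rather than only a pass/fail flag, makes it easier to see why a compound was flagged before deciding whether to discard it.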

Data Presentation: Property Filter Criteria

Table 1: Key Physicochemical Property Filters for Oral Bioavailability

| Filter Name | Property Criteria | Threshold Value | Primary Application |

|---|---|---|---|

| Lipinski's Rule of 5 [19] [14] | Molecular Weight (MW) | ≤ 500 Da | Early-stage drug-likeness screening for oral absorption. |

| | LogP (Partition Coefficient) | ≤ 5 | |

| | Hydrogen Bond Donors (HBD) | ≤ 5 | |

| | Hydrogen Bond Acceptors (HBA) | ≤ 10 | |

| Veber's Rules [14] | Polar Surface Area (PSA) | ≤ 140 Ų | Refining bioavailability prediction, focusing on molecular flexibility and polarity. |

| | Rotatable Bonds (RB) | ≤ 10 | |

| Ghose Filter [14] | Molecular Weight | 180 - 480 Da | A quantitative filter for drug-likeness. |

| | LogP | -0.4 to +5.6 | |

| | Molar Refractivity | 40 - 130 | |

| | Total Atoms | 20 - 70 | |

| Lead-like (Rule of 3) [14] | Molecular Weight | < 300 Da | Selecting smaller, less lipophilic starting points for optimization in screening libraries. |

| | LogP | ≤ 3 | |

| | Hydrogen Bond Donors/Acceptors | ≤ 3 | |

| | Rotatable Bonds | ≤ 3 | |

Table 2: BDDCS Predictions for Drug Disposition and Drug-Drug Interactions (DDIs) for Orally Administered Drugs [15]

| BDDCS Class | Solubility | Extent of Metabolism | Predicted Role of Transporters in Drug Disposition |

|---|---|---|---|

| Class 1 | High | Extensive | Clinically insignificant transporter effects. DDIs are primarily metabolic. |

| Class 2 | Low | Extensive | Efflux transporters may affect absorption and gut metabolism; uptake and efflux transporters can be significant in the liver. |

| Class 3 | High | Poor | Uptake transporters are critical for absorption and disposition. |

| Class 4 | Low | Poor | Uptake and efflux transporters can be critical, but the low permeability is a major limiting factor. |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Tools for Property Filtering Experiments

| Item / Reagent | Function / Explanation |

|---|---|

| Caco-2 Cell Line | A human colon adenocarcinoma cell line used as an in vitro model of the human intestinal mucosa to predict drug permeability. |

| MDCK Cell Line | Madin-Darby Canine Kidney cells, often transfected with specific human transporters (e.g., MDR1), used for rapid permeability and transporter interaction assays. |

| PAMPA Assay Kit | Parallel Artificial Membrane Permeability Assay; a non-cell-based, high-throughput tool for initial passive permeability screening. |

| ACD/Percepta Platform | Software for predicting pKa, logP, and other ADME properties, with models refined for both Rule of 5 and bRo5 chemical space [16]. |

| I.DOT Liquid Handler | A non-contact dispenser that automates low-volume liquid handling for HTS, enhancing data reproducibility and reducing compound/reagent consumption [17]. |

| Laboratory Information Management System (LIMS) | A software-based solution for tracking samples, managing experimental data, and ensuring data integrity and regulatory compliance (e.g., 21 CFR Part 11) [18]. |

Visualization: Classification and Troubleshooting Workflow

The following diagram illustrates the logical workflow for classifying compounds and troubleshooting common issues related to oral bioavailability.

FAQs and Troubleshooting Guides

What are functional group filters and why are they used in virtual screening?

Functional group filters are computational tools used to identify and remove small molecules containing substructures associated with undesirable properties from chemical libraries prior to screening. These filters help eliminate compounds that may produce false-positive results in high-throughput screening (HTS) assays, exhibit toxicity, or demonstrate promiscuous behavior (activity against multiple unrelated targets) [20] [21].

The primary purpose is to increase screening efficiency by focusing resources on compounds with a higher probability of being viable leads, thereby reducing experimental noise and follow-up efforts on artifacts [21]. They are a crucial first step in computer-aided drug design workflows to narrow down vast chemical spaces into focused, high-quality libraries [21].

My screening hit was flagged as a PAINS compound. What should I do next?

A PAINS (Pan-Assay Interference Compounds) flag indicates potential assay interference, but does not automatically invalidate your hit [22]. Follow these steps:

- Perform orthogonal assays: Confirm activity using a different assay technology (e.g., switch from a fluorescence-based to a radiometric assay) to rule out technology-specific interference [23].

- Conduct counter-screens: Implement specific assays to test for common interference mechanisms:

  - Test for thiol reactivity using glutathione (GSH) probes and detection by fluorometry or mass spectrometry [23].

  - Perform aggregation testing using detergent addition experiments; colloidal aggregators typically lose their inhibitory activity in the presence of a non-ionic detergent such as Triton X-100, whereas true inhibitors retain it [23] [21].

- Evaluate structure-activity relationships (SAR): Synthesize and test close structural analogs. Genuine inhibitors typically show interpretable SAR, whereas promiscuous compounds often do not [22].

- Assess selectivity: Test the compound against unrelated targets; specific inhibitors should not show broad activity across diverse targets [22].

What is the difference between PAINS, REOS, and other functional filters?

Different functional filters serve complementary purposes in compound triage, as summarized in the table below.

| Filter Name | Primary Purpose | Key Characteristics | Common Applications |

|---|---|---|---|

| PAINS [21] [22] | Identify pan-assay interference compounds | Flags 480 substructures known to cause false positives in biochemical assays [21]. | Target-based HTS triage; early hit list prioritization. |

| REOS [21] [24] | Rapid elimination of swill | Uses 117 SMARTS patterns to remove compounds with reactive, promiscuous, or undesirable functionalities [21]. | Initial library design; removal of reactive compounds and toxicophores. |

| Aggregators Filter [21] | Identify colloidal aggregators | Hybrid approach combining functional group similarity to known aggregators with property filters (e.g., SlogP > 3) [21]. | Detecting nonspecific inhibition mechanisms in cell-free assays. |

| Reactivity Models [23] | Predict covalent reactivity | Deep learning models predict atoms involved in reactivity with biological macromolecules; provides mechanistic hypotheses [23]. | Mechanistic understanding of promiscuous bioactivity; complementary to PAINS. |

Are there approved drugs that contain PAINS scaffolds, and why weren't they filtered out?

Yes, several approved drugs contain substructures that would be flagged by PAINS filters [22]. For example, the anticancer drug doxorubicin contains a scaffold that might be flagged [22]. This occurs because:

- Context matters: Some inherently reactive scaffolds can be optimized into safe, effective drugs when the reactivity is managed (e.g., through structural modification, targeted delivery, or when the therapeutic context, like oncology, tolerates a higher risk profile) [22].

- The "privileged structure" paradox: Some chemical scaffolds classified as PAINS are also considered "privileged structures", that is, molecular frameworks that can provide ligands for multiple targets and can be tailored into specific therapeutics [22].

- Phenotypic vs. target-based screening: PAINS filters were developed for and perform best in biochemical (target-based) assays. Their application to phenotypic screening is highly debated and may inappropriately eliminate valuable chemical starting points [22].

What are the best practices for implementing a functional group filtering protocol?

- Use multiple complementary filters: Combine functional group filters (e.g., PAINS, REOS) with property-based filters (e.g., Lipinski's Rule of Five) for comprehensive library profiling [21].

- Filter before docking: Apply filters early in the computational workflow to reduce library size and save computational resources for more demanding simulations [21].

- Customize for your target and assay type: Adjust filtering stringency based on your specific assay technology (e.g., be more stringent with fluorescence-based assays) and biological target [23] [22].

- Manually review flagged compounds: Do not rely solely on automated filtering. Use interactive visualization tools to inspect flagged structures and their matched substructures before deciding to exclude them [25].

- Consider the therapeutic context: Be less restrictive with filters for life-threatening diseases (e.g., cancer) where a broader risk-benefit profile is acceptable [22].

The Scientist's Toolkit: Research Reagent Solutions

| Tool / Resource | Function | Access Information |

|---|---|---|

| useful_rdkit_utils [25] | Python package for applying functional group filters (including REOS and BMS rules) and visualizing matched substructures. | Install via pip: pip install useful_rdkit_utils |

| ZINC Database [20] | Public repository of commercially available compounds for virtual screening; includes millions of purchasable small molecules. | http://zinc.docking.org/ |

| ChEMBL [25] [24] | Manually curated database of bioactive molecules with drug-like properties; source of structural alert rules. | https://www.ebi.ac.uk/chembl/ |

| RDKit [24] | Open-source cheminformatics toolkit; fundamental for calculating molecular descriptors and handling chemical data. | https://www.rdkit.org/ |

| FILTER [26] | Commercial software for high-speed molecular filtering based on physicochemical properties and undesirable substructures. | https://www.eyesopen.com/filter |

| KNIME [21] | Visual platform for creating data workflows, including pipelines for medicinal chemistry filtering and analysis. | https://www.knime.com/ |

Experimental Protocols & Workflows

Protocol: Implementing a Functional Group Filtering Pipeline Using Python

This protocol uses the useful_rdkit_utils package to apply and visualize structural alerts [25].

Troubleshooting Tip: To visually understand why a compound was flagged, use the datamol package's lasso_highlight_image function to create images with the matched substructure highlighted, as demonstrated in the useful_rdkit_utils notebook [25].

Protocol: Counter-Screen for Thiol Reactivity

This experimental protocol helps confirm if a screening hit acts via nonspecific covalent modification [23].

- Principle: Reactive compounds may form covalent adducts with biological nucleophiles like glutathione (GSH), which can be detected by a shift in molecular weight.

- Procedure:

- Prepare a solution of your compound (e.g., 100 µM) in a suitable buffer (e.g., phosphate buffer, pH 7.4).

- Add a molar excess of glutathione (e.g., 1 mM) to the solution.

- Incubate the mixture at 37°C for 1-24 hours.

- Analyze the reaction mixture using LC-MS (Liquid Chromatography-Mass Spectrometry).

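- Monitor for the formation of a new peak corresponding to the compound-GSH adduct. The expected mass depends on the reaction type: MW_compound + MW_GSH for a simple addition (e.g., to a Michael acceptor), or MW_compound + MW_GSH − 2 × MW_H when two hydrogens are lost on coupling, plus any further modifications.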

- Interpretation: The appearance of a GSH adduct confirms thiol reactivity, suggesting a potential promiscuous mechanism. This does not automatically invalidate the compound but warrants caution and further investigation [23].
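The expected adduct peaks can be tabulated before the LC-MS run. The following is a minimal sketch, assuming monoisotopic masses, positive-mode [M+H]+ detection, and a hypothetical compound mass of 250.0 Da; the function names and dictionary keys are illustrative and do not come from the cited protocol.

```python
# Monoisotopic masses in Da (assumed constants for this sketch).
GSH_MONO = 307.0838   # glutathione, C10H17N3O6S
H_MONO = 1.00783      # hydrogen atom
PROTON = 1.00728      # proton, used for [M+H]+ ions


def gsh_adduct_masses(compound_mass: float) -> dict[str, float]:
    """Return expected neutral adduct masses for two simple cases."""
    return {
        # GSH adds across the compound with no atoms lost.
        "addition": compound_mass + GSH_MONO,
        # Two hydrogens are lost on coupling (the -2H case in the procedure).
        "minus_2H": compound_mass + GSH_MONO - 2 * H_MONO,
    }


def mz_positive(neutral_mass: float, charge: int = 1) -> float:
    """Convert a neutral mass to the m/z of its [M+nH]n+ ion."""
    return (neutral_mass + charge * PROTON) / charge


if __name__ == "__main__":
    for label, mass in gsh_adduct_masses(250.0).items():
        print(f"{label}: neutral {mass:.4f} Da, [M+H]+ {mz_positive(mass):.4f}")
```

Adducts that involve loss of a leaving group or further oxidation would need additional entries in the dictionary.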

Workflow Visualization

Compound Library Filtering Workflow

Hit Triage Strategy for PAINS

The evolution of compound libraries from thousands to billions of molecules represents a paradigm shift in early drug discovery. This expansion, powered by make-on-demand combinatorial chemistry, moves screening beyond the physical constraints of traditional compound collections into vast virtual chemical spaces [27]. While this offers unprecedented opportunities for identifying novel chemical matter, it introduces significant computational and strategic challenges that require new approaches to library design, screening, and hit identification. This technical support guide addresses the specific experimental and methodological issues researchers encounter when working with these ultra-large libraries.

Quantitative Comparison: Library Evolution

The table below summarizes the key quantitative differences between traditional and modern screening paradigms.

| Parameter | Traditional HTS | Make-on-Demand & vHTS |

|---|---|---|

| Typical Library Size | 100,000 - 1,000,000 compounds [28] [29] | Billions to tens of billions [27] [29] |

| Throughput | 10,000+ compounds per day (Ultra HTS: 100,000/day) [28] | Virtual screening of billions via computational prescreening [27] |

| Screening Format | 384-well to 1536-well microplates (2.5-10 μL volume) [28] | In-silico docking and machine learning scoring [27] [29] |

| Typical Hit Rate | ~0.001% to 0.15% [29] | Computational hit rates of ~7-10% reported [29] |

| Primary Cost & Limitation | Physical compounds, reagents, and automation [28] | Massive computational resources and synthesis of predicted hits [27] |

Experimental Protocols & Methodologies

Protocol for Evolutionary Algorithm Screening (REvoLd)

This protocol is designed for efficient navigation of billion-member make-on-demand libraries like the Enamine REAL space [27].

Step 1: Library and Target Preparation

Step 2: Initialization

- Generate a random start population of ~200 ligands by combining library fragments [27].

Step 3: Evolutionary Optimization (30 Generations)

- Scoring: Use flexible molecular docking (e.g., RosettaLigand) to score each ligand in the population [27].

- Selection: Select the top 50 scoring individuals to advance to the next generation [27].

- Reproduction:

- Duplicate Removal: Filter out identical molecules to ensure exploration of diverse chemical space.

Step 4: Output and Validation

- Output: A diverse set of top-scoring, synthetically accessible compounds.

- Validation: Select a subset of hits (e.g., 50-100) for synthesis and experimental validation in a biochemical assay [29].
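The loop structure of this protocol can be sketched in a few lines. The example below is a toy illustration only, not the REvoLd implementation: the population size (200), survivor count (50), and generation count (30) follow the protocol, while the two-fragment ligand encoding, the crossover and mutation operators, the elitism, and the `toy_score` function (a stand-in for a docking score, lower is better) are all assumptions made for this sketch.

```python
import random

N_FRAG_A, N_FRAG_B = 1000, 1000                      # hypothetical fragment pools
POP_SIZE, N_SURVIVORS, N_GENERATIONS = 200, 50, 30   # values from the protocol


def toy_score(ligand):
    """Stand-in for a docking score (lower is better); not a real docking call."""
    a, b = ligand
    return abs(a - 700) / 100 + abs(b - 250) / 100


def random_ligand(rng):
    """Combine one fragment from each pool into a ligand."""
    return (rng.randrange(N_FRAG_A), rng.randrange(N_FRAG_B))


def crossover(parent1, parent2):
    """Child takes fragment A from one parent and fragment B from the other."""
    return (parent1[0], parent2[1])


def mutate(ligand, rng):
    """Replace one of the two fragments with a random one."""
    a, b = ligand
    if rng.random() < 0.5:
        return (rng.randrange(N_FRAG_A), b)
    return (a, rng.randrange(N_FRAG_B))


def evolve(seed=0):
    rng = random.Random(seed)
    # Using a set removes identical molecules automatically.
    population = {random_ligand(rng) for _ in range(POP_SIZE)}
    best_per_generation = []
    for _ in range(N_GENERATIONS):
        survivors = sorted(population, key=toy_score)[:N_SURVIVORS]
        best_per_generation.append(toy_score(survivors[0]))
        children = set(survivors)  # survivors carry over unchanged (elitism)
        while len(children) < POP_SIZE:
            parent1, parent2 = rng.sample(survivors, 2)
            child = crossover(parent1, parent2)
            if rng.random() < 0.3:
                child = mutate(child, rng)
            children.add(child)
        population = children
    top = sorted(population, key=toy_score)[:N_SURVIVORS]
    return top, best_per_generation


if __name__ == "__main__":
    top, history = evolve()
    print("best score, first generation:", history[0])
    print("best score, last generation:", history[-1])
```

In a real campaign the scoring call dominates the cost, so the number of unique ligands scored across all generations is the quantity to budget for.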

Protocol for AI-Convolutional Neural Network Screening (AtomNet)

This protocol leverages deep learning for structure-based screening across ultra-large libraries [29].

Step 1: Pre-Screening Filtering

- Remove molecules prone to assay interference or those that are overly similar to known binders of the target or its homologs [29].

Step 2: Virtual Screening

Step 3: Compound Selection

- Cluster the top-ranked molecules to ensure structural diversity.

- Algorithmically select the highest-scoring exemplars from each cluster without manual cherry-picking [29].

Step 4: Experimental Confirmation

- Synthesize selected compounds (100-500) and confirm purity (>90% by LC-MS) [29].

- Test in a primary single-dose assay, followed by dose-response (DR) studies for confirmed hits [29].

- Perform analog expansion around confirmed hit scaffolds to establish initial Structure-Activity Relationships (SAR) [29].
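The algorithmic selection in Step 3 can be illustrated with a small helper. This is a sketch under stated assumptions: cluster labels and scores are taken as already computed elsewhere, higher scores are treated as better, and the function name and example records are hypothetical rather than part of the AtomNet workflow.

```python
def pick_cluster_exemplars(scored, n_per_cluster=1):
    """Pick the top-scoring compound(s) from each cluster.

    scored: iterable of (compound_id, cluster_id, score) tuples,
    where a higher score means a better predicted binder.
    """
    by_cluster = {}
    for compound_id, cluster_id, score in scored:
        by_cluster.setdefault(cluster_id, []).append((score, compound_id))

    picks = []
    for cluster_id, members in sorted(by_cluster.items()):
        members.sort(reverse=True)  # best score first within the cluster
        for score, compound_id in members[:n_per_cluster]:
            picks.append((compound_id, cluster_id, score))
    return picks


if __name__ == "__main__":
    ranked = [
        ("c1", "A", 0.90),
        ("c2", "A", 0.95),
        ("c3", "B", 0.70),
        ("c4", "C", 0.80),
        ("c5", "B", 0.72),
    ]
    for row in pick_cluster_exemplars(ranked):
        print(row)
```

Because the rule is fixed in code before the scores are inspected, the selection stays free of manual cherry-picking.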

The Scientist's Toolkit: Research Reagent Solutions

| Tool / Reagent | Function in Screening |

|---|---|

| Make-on-Demand Library (e.g., Enamine REAL) | Provides access to billions of synthetically accessible compounds for virtual screening [27] [29]. |

| Cellular Microarrays (2D monolayers) | Used in cell-based HTS assays for toxicity assessment and phenotypic screening in 96- or 384-well formats [28]. |

| Polymer-Supported Scavengers | Used in solution-phase library synthesis to remove excess reagents, though not a general purification method [30]. |

| Analytical/Preparative HPLC & SFC | Critical for high-throughput purification of synthesized compound libraries to ensure >90% purity for reliable assay data [30]. |

| Tool Compounds (e.g., Forskolin) | Well-characterized biological probes used as positive controls in assay development and validation [31]. |

Frequently Asked Questions (FAQs)

Library Design and Selection

Q1: How do I choose between a traditional focused library and a billion-member make-on-demand library for my project? The choice depends on your target and goal. Use a focused, annotated library built from "privileged structures" or known bio-active scaffolds if you are exploring a well-characterized target class and want to build a quick target hypothesis [31]. Opt for an ultra-large make-on-demand library when seeking truly novel scaffolds, especially for novel or less-drugged targets where few known actives exist [27] [29].

Q2: What are the critical steps to avoid being overwhelmed by the size of a billion-compound library? The key is not to screen all molecules exhaustively. Implement a tiered screening strategy:

- Pre-filtering: Use simple rules (e.g., PAINS filters, physicochemical properties) to remove undesirable compounds [29].

- Computational Prescreening: Apply efficient algorithms like evolutionary searches (REvoLd) or deep learning models (AtomNet) to explore the vast space intelligently and identify a tractable number of high-priority candidates for synthesis [27] [29].

Technical and Computational Challenges

Q3: My target lacks a high-resolution crystal structure. Can I still effectively use structure-based virtual screening on ultra-large libraries? Yes. Studies have successfully used homology models with sequence identities as low as ~42% to the template protein, as well as Cryo-EM structures, achieving hit rates comparable to those with crystal structures [29]. The robustness of modern machine-learning scoring functions can compensate for some structural uncertainty.

Q4: What computational resources are typically required for a virtual screen of a billion-plus compound library? Screening a 16-billion compound library is a massive undertaking, reported to require over 40,000 CPUs, 3,500 GPUs, 150 TB of main memory, and 55 TB of data transfers [29]. For most academic or smaller industrial labs, leveraging cloud computing or highly optimized algorithms (like REvoLd, which docks only thousands of molecules) is a more feasible approach [27].

Experimental Validation and Triage

Q5: The hit rate from my computational screen seems unusually high (~10%). How do I triage these results effectively? A high computational hit rate is a positive sign, but rigorous experimental triage is crucial.

- Confirm Purity: Ensure all compounds for testing are of high purity (>90% by LC-MS/NMR) [29].

- Dose-Response: Move from single-point hits to determining potency (IC50/EC50) [29].

- Counter-Screens: Rule out assay interference (e.g., aggregation, fluorescence) by using additives like Tween-20 and running orthogonal assays [29].

- Analog Testing: Confirm SAR by testing commercially available or quickly synthesized analogs of the initial hit [29].

Q6: Why is purification so critical for screening libraries, and what are the best methods? The purity of a screening library is paramount for obtaining high-quality, interpretable assay data. Crude mixtures can lead to false positives, missed actives present in low yields, and wasted time on resynthesis and deconvolution [30]. For libraries of a few thousand compounds, HPLC is a viable and widely used method. For larger libraries or where solvent removal is a bottleneck, Supercritical Fluid Chromatography (SFC) is a powerful alternative with faster run times and easier solvent evaporation [30].

Workflow and Decision Pathways

This workflow outlines the key decision points and processes for screening ultra-large compound libraries.

Screening Workflow for Ultra-Large Libraries

Troubleshooting Common Experimental Issues

Problem: High False Positive Rate in Virtual Screening

- Potential Cause 1: The computational model was trained on biased or non-diverse data, limiting its ability to generalize.

- Solution: Use models specifically validated on diverse targets and those that do not rely heavily on known binders for the target of interest [29].

- Potential Cause 2: Inadequate pre-filtering of promiscuous or reactive compounds (e.g., PAINS).

- Solution: Implement stringent in-silico filters to remove compounds with undesirable substructures before the main screen [29].

Problem: Failure to Identify Any Hits After Experimental Testing

- Potential Cause 1: The structural model (homology model or crystal structure) does not represent a biologically relevant conformation.

- Solution: If possible, use multiple structures or consider molecular dynamics simulations to sample flexible states. Explore different protonation states of key residues.

- Potential Cause 2: The assay conditions are not optimal for detecting the predicted activity (e.g., solubility issues, wrong co-factors).

- Solution: Include control tool compounds to verify assay functionality. Pre-check the solubility of computational hits in the assay buffer.

Problem: Success in Primary Screen but Failure in Analog Expansion

- Potential Cause: The initial hit is a false positive, or the binding mode prediction is inaccurate, leading to unproductive SAR.

- Solution: Re-confirm the purity and activity of the original hit. If feasible, attempt to obtain a co-crystal structure of the initial hit with the target to guide analog design rationally.

A Practical Guide to Filter Implementation and Workflow Integration

Frequently Asked Questions (FAQs)

Q1: Why are Molecular Weight, logP, TPSA, and Rotatable Bonds considered fundamental property-based filters?

These four properties are crucial because they are strongly correlated with key pharmacokinetic outcomes, particularly oral bioavailability and passive membrane permeability [21] [32]. They form the core of many established filtering rules, such as Lipinski's Rule of Five (MW, logP) and the Veber rules (TPSA, Rotatable Bonds) [21] [33]. Using them early in library design efficiently shifts the chemical space towards "drug-like" or "lead-like" regions, increasing the likelihood that identified hits will have favorable absorption, distribution, metabolism, and excretion (ADME) properties [21] [34].

Q2: My compound violates the standard MW filter (>500 Da) but is active. Should it be automatically discarded?

Not necessarily. While the Rule of Five provides an excellent guideline for typical oral drugs, certain target classes, such as Protein-Protein Interaction inhibitors (iPPIs), often require larger molecules (mean MW of ~521 Da) to effectively bind to their targets [34]. Automatic discard is not recommended. Instead, you should review the other properties—especially logP and TPSA—and consider the biological context. A high MW compound with acceptable logP and TPSA may still be viable. Filters should be used as a dynamic guideline rather than an inflexible rule [21] [32].

Q3: How does a high number of Rotatable Bonds negatively impact a compound?

An excessive number of rotatable bonds increases molecular flexibility, which is negatively correlated with oral bioavailability [21] [34]. Flexible molecules can adopt many conformations, so binding to the target carries a larger entropic penalty. This flexibility can also hinder the compound's ability to pass through cell membranes efficiently. The Veber filter suggests a limit of 10 or fewer rotatable bonds to optimize bioavailability [21] [33].

Q4: What is a common pitfall when applying the logP filter, and how can it be addressed?

A common pitfall is relying on a single calculated logP value. Different software packages may use varying algorithms, leading to discrepancies [32]. Furthermore, logP describes the partition coefficient for the neutral form of a molecule. For ionizable compounds, the distribution coefficient (logD) at a physiologically relevant pH (e.g., 7.4) provides a more accurate picture of lipophilicity [34]. It is good practice to calculate both logP and logD and to be aware of the specific calculation method used in your cheminformatics pipeline.
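The relationship between logP and logD can be made concrete with the textbook expression for a monoprotic compound. The sketch below assumes a single ionizable group and that only the neutral species partitions into octanol; real software often uses more elaborate models, and the function name and example values are illustrative.

```python
import math


def log_d(log_p: float, pka: float, ph: float = 7.4, kind: str = "acid") -> float:
    """Estimate logD at a given pH for a monoprotic acid or base.

    Assumes only the neutral form partitions into the organic phase.
    """
    if kind == "acid":
        ionized_ratio = 10 ** (ph - pka)   # [A-] / [HA]
    elif kind == "base":
        ionized_ratio = 10 ** (pka - ph)   # [BH+] / [B]
    else:
        raise ValueError("kind must be 'acid' or 'base'")
    return log_p - math.log10(1 + ionized_ratio)


if __name__ == "__main__":
    # Hypothetical carboxylic acid: logP 3.0, pKa 4.4
    print(round(log_d(3.0, 4.4, kind="acid"), 2))
    # Hypothetical weak acid that is essentially neutral at pH 7.4
    print(round(log_d(3.0, 12.0, kind="acid"), 2))
```

For the hypothetical acid, logD at pH 7.4 falls about three log units below logP, which is why a logP-only filter can misjudge ionizable compounds.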

Q5: Can you provide a real-world example of a consecutive filtering protocol?

A documented protocol from the READDI AViDD Center applies filters in a sequential manner for hit confirmation [33]:

- Physicochemical Filters: Apply strict cut-offs (e.g., MW ≤ 500 Da, clogP ≤ 5, TPSA < 140 Ų, Rotatable Bonds < 10).

- Assay Liability Filters: Flag or remove compounds with Pan-Assay Interference Compounds (PAINS) substructures, aggregators, fluorescent compounds, or metal chelators.

- Data Analysis Filters: Use statistical measures from the experimental data (e.g., Z'-factor, Hill slope) to eliminate false positives.

This sequential approach efficiently prioritizes compounds with the greatest potential for progression [33].
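As an illustration of the data-analysis step, the sketch below computes the Z' statistic from plate controls, assuming it refers to the standard Z'-factor, 1 − 3(σ_pos + σ_neg) / |μ_pos − μ_neg|. The control readings are made-up numbers, and the commonly quoted guideline that values of about 0.5 or higher indicate a robust assay is general practice, not a threshold taken from the cited protocol.

```python
from statistics import mean, stdev


def z_prime(positive_controls, negative_controls):
    """Z'-factor from positive and negative control readings on one plate."""
    spread = 3 * (stdev(positive_controls) + stdev(negative_controls))
    window = abs(mean(positive_controls) - mean(negative_controls))
    return 1 - spread / window


if __name__ == "__main__":
    pos = [100, 102, 98, 101, 99]   # hypothetical uninhibited signal
    neg = [10, 12, 8, 11, 9]        # hypothetical fully inhibited signal
    print(f"Z' = {z_prime(pos, neg):.3f}")
```

Plates with a low Z' are usually repeated rather than mined for hits, since the signal window is too narrow to separate actives from noise.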

Troubleshooting Guides

Issue 1: High Attrition Rate Due to Poor Physicochemical Properties

Problem: A high percentage of virtual screening hits or synthesized compounds are failing early ADMET assays, showing poor solubility or permeability.

Diagnosis and Solutions:

| Symptom | Likely Cause | Corrective Action |

|---|---|---|

| Poor aqueous solubility | logP/logD is too high | Tighten the logP filter. Consider applying a more stringent cut-off (e.g., logP < 4) to reduce lipophilicity and improve solubility [34]. |

| Low passive permeability | TPSA is too high or excessive Rotatable Bonds | Apply the Veber filter criteria (TPSA ≤ 140 Ų and Rotatable Bonds ≤ 10) to focus on compounds with better membrane permeation potential [21] [33]. |

| General poor drug-likeness | Multiple violations of property rules | Implement a multi-parameter scoring system like the "STOPLIGHT" composite score used in the AViDD Center, which provides a holistic view of a compound's properties [33]. |

Issue 2: Inconsistencies in Filtering Results Across Different Software Platforms

Problem: The same compound library, when filtered using different cheminformatics software, yields different numbers of passed compounds.

Diagnosis and Solutions:

| Symptom | Likely Cause | Corrective Action |

|---|---|---|

| Different logP values | Use of different calculation algorithms | Standardize the computational tool used for descriptor calculation across the project (e.g., RDKit, OpenEye) [35]. Validate calculated values against a small set of experimental data if available. |

| Discrepancies in passed/failed counts | Varying implementations of SMARTS patterns or perception of aromaticity/bond orders | Ensure chemical structures are standardized (e.g., using canonical isomeric SMILES) before filtering to minimize perception differences [32]. Manually inspect a sample of borderline compounds. |

Issue 3: Over-Filtering and Loss of Chemically Diverse or Novel Scaffolds

Problem: The filtering process is too stringent, eliminating potentially interesting and novel chemotypes, leading to a chemically homogenous and potentially biased hit list.

Diagnosis and Solutions:

| Symptom | Likely Cause | Corrective Action |

|---|---|---|

| Loss of all compounds for a specific target class | Blind application of "drug-like" filters to non-standard targets | Adapt filter thresholds to the target biology. For example, use different rules for Protein-Protein Interaction inhibitors [32] [34]. |

| Low scaffold diversity in final list | Over-reliance on strict property cut-offs | Use filters to flag compounds for manual review rather than automatically excluding them. This allows a medicinal chemist to make an informed decision on interesting outliers [21] [32]. |

Experimental Protocols & Workflows

Protocol 1: Standard Operating Procedure for Pre-Virtual Screening Library Preparation

This protocol details the steps for preparing a large, diverse compound library for virtual screening by applying property-based filters to focus on drug-like chemical space [21] [32] [33].

Research Reagent Solutions:

| Item | Function in the Protocol |

|---|---|

| Raw Compound Library (e.g., in SDF or SMILES format) | The starting collection of compounds to be filtered. |

| Cheminformatics Software (e.g., KNIME, RDKit, OpenEye FILTER) | The platform used to calculate molecular descriptors and apply filtering rules. |

| Standardization Tool (e.g., included in KNIME or RDKit) | Standardizes chemical structures (e.g., neutralization, tautomer normalization) to ensure consistent descriptor calculation. |

| Descriptor Calculation Node | Computes the key physicochemical properties: Molecular Weight, logP, TPSA, and number of Rotatable Bonds. |

| Data Viewing/Export Tool | Allows for inspection of results and export of the filtered library for downstream virtual screening. |

Methodology:

- Data Input and Standardization: Load the raw compound library. Standardize the molecular structures. This includes neutralizing structures, removing duplicates, and generating canonical tautomers to ensure consistency [32] [35].

- Descriptor Calculation: Using the cheminformatics software, calculate the following properties for every molecule in the library:

- Molecular Weight (MW)

- Octanol-water partition coefficient (logP)

- Topological Polar Surface Area (TPSA)

- Number of Rotatable Bonds

- Filter Application: Apply the following sequential filters to create a "drug-like" subset [21] [33]:

- Filter 1 (Size): Remove molecules with MW > 500 Da.

- Filter 2 (Lipophilicity): Remove molecules with logP > 5.

- Filter 3 (Polarity/Flexibility): Remove molecules with TPSA > 140 Ų or Rotatable Bonds > 10.

- Output: Export the resulting subset of compounds that pass all filters. This curated library is now optimized for use in downstream, computationally intensive virtual screening workflows like molecular docking.
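The filter-application step can be sketched as follows. This is a minimal example that assumes the four descriptors have already been calculated by a cheminformatics toolkit and stored with each compound; the `Compound` class, the compound names, and the descriptor values are hypothetical. Reporting the count after each stage makes it easy to see which filter removes the most compounds.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Compound:
    name: str
    mw: float        # molecular weight, Da
    logp: float      # calculated octanol-water partition coefficient
    tpsa: float      # topological polar surface area, square angstroms
    rot_bonds: int   # number of rotatable bonds


# Each filter is (stage name, predicate returning True when the compound passes).
FILTERS = [
    ("size", lambda c: c.mw <= 500),
    ("lipophilicity", lambda c: c.logp <= 5),
    ("polarity/flexibility", lambda c: c.tpsa <= 140 and c.rot_bonds <= 10),
]


def apply_filters(compounds):
    """Apply the filters in order; return survivors and per-stage counts."""
    remaining = list(compounds)
    report = [("input", len(remaining))]
    for stage, passes in FILTERS:
        remaining = [c for c in remaining if passes(c)]
        report.append((stage, len(remaining)))
    return remaining, report


EXAMPLE_LIBRARY = [
    Compound("cpd_A", 320.0, 2.1, 75.0, 4),
    Compound("cpd_B", 612.0, 3.0, 120.0, 8),    # fails size
    Compound("cpd_C", 450.0, 6.2, 60.0, 5),     # fails lipophilicity
    Compound("cpd_D", 480.0, 4.0, 155.0, 7),    # fails polarity
    Compound("cpd_E", 410.0, 3.5, 90.0, 12),    # fails flexibility
]

if __name__ == "__main__":
    kept, report = apply_filters(EXAMPLE_LIBRARY)
    for stage, count in report:
        print(f"{stage}: {count}")
    print("kept:", [c.name for c in kept])
```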

The workflow for this protocol is illustrated below:

Protocol 2: Tiered Filtering for Hit Triage and Confirmation

This protocol is used after initial screening (virtual or HTS) to triage hits for experimental validation. It employs a tiered, flagging system to prioritize compounds without immediately discarding potential leads [32] [33].

Methodology:

- Tier 1 - Property Flagging: Calculate MW, logP, TPSA, and Rotatable Bonds for all hits. Flag compounds that fall outside the desired ranges (e.g., MW > 500, logP > 5, TPSA > 140, Rotatable Bonds > 10). A composite score (e.g., STOPLIGHT) can be generated to rank compounds.

- Tier 2 - Functional Group Flagging: Apply functional group filters (e.g., PAINS, REOS, aggregators) to flag compounds with substructures known to cause assay interference or promiscuous activity [21] [33].

- Tier 3 - Manual Review: A medicinal chemist reviews all flagged compounds. Flags are not automatic rejections. The chemist assesses the context—e.g., a "PAINS" flag might be acceptable if the compound is a known covalent inhibitor for the target. The final decision to progress a compound is based on a holistic view of its properties, flags, and structural novelty.
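The flag-rather-than-discard idea behind the three tiers can be sketched as below. The example assumes descriptors and substructure alerts were computed upstream and are supplied as plain dictionaries; the field names and hit records are hypothetical, and the simple flag count used for ordering is a placeholder, not the STOPLIGHT score.

```python
# Upper limits taken from the Tier 1 example ranges.
THRESHOLDS = {"mw": 500, "logp": 5, "tpsa": 140, "rot_bonds": 10}


def property_flags(hit):
    """Tier 1: names of the properties that exceed their threshold."""
    return [key for key, limit in THRESHOLDS.items() if hit[key] > limit]


def triage(hits):
    """Attach flags to every hit and sort so the cleanest hits come first.

    Nothing is removed: flagged hits are marked for manual review (Tier 3).
    """
    rows = []
    for hit in hits:
        flags = property_flags(hit)
        # Tier 2: alerts produced by a substructure filter run elsewhere.
        flags += [f"alert:{name}" for name in hit.get("alerts", [])]
        rows.append({"name": hit["name"], "flags": flags, "needs_review": bool(flags)})
    return sorted(rows, key=lambda row: len(row["flags"]))


if __name__ == "__main__":
    hits = [
        {"name": "hit_1", "mw": 350, "logp": 2.5, "tpsa": 80, "rot_bonds": 5, "alerts": []},
        {"name": "hit_2", "mw": 540, "logp": 5.6, "tpsa": 95, "rot_bonds": 6, "alerts": ["PAINS"]},
        {"name": "hit_3", "mw": 420, "logp": 3.1, "tpsa": 150, "rot_bonds": 4, "alerts": []},
    ]
    for row in triage(hits):
        print(row)
```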

The workflow for this triage process is as follows:

Table 1: Common Property-Based Filters and Their Rationale [21] [32] [33]

| Property | Common Filter Name | Typical Cut-off | Rationale & Impact |

|---|---|---|---|

| Molecular Weight (MW) | Lipinski's Rule of 5 | ≤ 500 Da | Higher MW correlates with poorer oral absorption and permeation due to larger molecular size. |

| logP | Lipinski's Rule of 5 | ≤ 5 | High lipophilicity (logP) leads to poor aqueous solubility, metabolic instability, and promiscuity. |

| Topological Polar Surface Area (TPSA) | Veber Filter | ≤ 140 Ų | A key descriptor for cell permeability. Low TPSA is generally favorable for passive diffusion across membranes. |

| Number of Rotatable Bonds | Veber Filter | ≤ 10 | Fewer rotatable bonds reduce molecular flexibility, which is linked to improved oral bioavailability. |

Table 2: Advanced Considerations for Property-Based Filtering

| Consideration | Description | Application Tip |

|---|---|---|

| Target Class Dependence | Optimal property ranges can vary significantly by target. | For Protein-Protein Interaction (PPI) inhibitors, be prepared to accept higher MW and logP values than the standard Ro5 [34]. |

| logP vs. logD | logP is for the neutral species; logD is the distribution coefficient at a specific pH. | For ionizable compounds, use logD at pH 7.4 as it more accurately represents lipophilicity under physiological conditions [34]. |

| Beyond Rule-of-5 (bRo5) | A growing class of compounds that violate Ro5 but are still orally bioavailable. | When exploring difficult targets, consider specialized filters or models designed for the bRo5 chemical space [36]. |

Troubleshooting Guides

Guide 1: Troubleshooting Suspect Activity in a Screening Hit

| Problem | Possible Cause | Recommended Action | Interpretation of Results |

|---|---|---|---|

| Apparent inhibitory activity in a biochemical assay | Spectroscopic interference (compound absorbs or fluoresces in the assay detection region) [37] | Run an interference assay: Measure the compound's effect on the signal detection reagents in the absence of the target [37]. | Linear change in signal with concentration (follows Beer's law) suggests interference. Log-linear change suggests specific binding [37]. |

| Irreversible inhibition | Covalent modification of the target protein [37] | Perform a dilution test: Incubate the target at 5x its assay concentration with the hit at 5x its IC50. Dilute the mixture 10-fold and re-measure activity [37]. | A drop in inhibition to ~33% after dilution suggests reversible inhibition. Little change in inhibition suggests covalent activity [37]. |

| Promiscuous inhibition across multiple unrelated targets | Colloidal aggregation (compound forms nano-scale particles that non-specifically inhibit proteins) [37] | 1. Add detergent: Repeat assay with 0.01% Triton X-100 or 0.025% Tween-80 [37]. 2. Centrifuge: For cell-based assays, centrifuge compound medium; if activity decreases post-spin, it suggests aggregation [37]. | Attenuated activity with detergent or after centrifugation strongly indicates colloidal aggregation [37]. |

| In-cell activity with no clear target | Membrane disruption or general cellular toxicity [37] | Demonstrate that the compound is active at concentrations substantially lower than those causing cellular toxicity or death [37]. | Activity only at cytotoxic concentrations suggests the apparent effect is due to cell death, not specific target engagement [37]. |
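The ~33% figure quoted for the dilution test follows from a simple dose-response model. The sketch below assumes a rapidly reversible inhibitor that obeys the Hill equation with a coefficient of 1; under that assumption a hit at 5x its IC50 gives about 83% inhibition, and a 10-fold dilution to 0.5x IC50 gives about 33%. The function name is illustrative.

```python
def fractional_inhibition(conc_over_ic50: float, hill: float = 1.0) -> float:
    """Fraction of activity inhibited at a concentration given in IC50 units."""
    x = conc_over_ic50 ** hill
    return x / (1 + x)


if __name__ == "__main__":
    before = fractional_inhibition(5.0)        # pre-incubation at 5x IC50
    after = fractional_inhibition(5.0 / 10)    # after 10-fold dilution
    print(f"before dilution: {before:.0%}")
    print(f"after dilution (reversible case): {after:.0%}")
```

An inhibitor that stays near the pre-dilution level of inhibition after dilution departs from this reversible model, which is the basis for suspecting covalent modification.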

Guide 2: Troubleshooting a Potential PAINS Compound

| Problem | Investigation Method | Next Steps & Validation |

|---|---|---|

| A screening hit is flagged as a PAINS chemotype by an in silico tool [21]. | Literature Review: Search for evidence of chemotype promiscuity using resources like BadApple [37]. Counter-Screening: Test the molecule against unrelated targets [37]. | If the compound shows selective activity, proceed to SAR (Structure-Activity Relationship) analysis. A lack of logical SAR is a hallmark of a PAINS mechanism [37]. |

| A published compound, later identified as a PAINS, is reported as active against your target of interest. | Dose-Response Analysis: Determine if the concentration-response curve is well-behaved (e.g., has a Hill coefficient close to 1) [37]. Competition Assay: Test whether the compound competes with a known ligand for the binding site [37]. | If curves are ill-behaved (e.g., high Hill slope) or the compound does not compete with known ligands, the original activity is likely an artifact. Discontinue the compound [37]. |

| A natural product-derived hit shows pan-assay activity. | Recognize it may be an IMP (Invalid Metabolic Panacea), the natural product equivalent of PAINS [37]. | Apply the same rigorous controls as for synthetic PAINS, focusing on membrane perturbation as a potential mechanism [37]. |

Frequently Asked Questions (FAQs)

Q1: What are the fundamental differences between PAINS, REOS, and aggregator filters?

These functional group filters operate at different stages and with slightly different intents, as summarized in the table below.

| Filter Type | Primary Goal | Typical Application Stage | Key Characteristics & Mechanisms |

|---|---|---|---|

| PAINS (Pan-Assay INterference compoundS) | Identify compounds that appear active through multiple artifactual mechanisms (e.g., covalent reactivity, redox activity, spectroscopic interference) [37] [21]. | Post-HTS (High-Throughput Screening) analysis of hits; prior to purchasing compounds for screening [37]. | Flags ~480 problematic substructures (e.g., quinones, rhodanines, curcuminoids). Acts via multiple interference mechanisms [37] [21]. |

| REOS (Rapid Elimination Of Swill) | Remove compounds with reactive, toxic, or otherwise undesirable functional groups early in library design [21] [38]. | Pre-screening, during the design of a compound library [38]. | Uses ~117 structural rules (SMARTS strings) to filter out promiscuous ligands and toxicophores [21]. |

| Aggregator | Identify compounds that form colloidal aggregates, a primary source of false positives in screening [37]. | Post-HTS hit triage; can also be used pre-screening [37]. | A hybrid filter: uses SlogP < 3 and similarity to a database of known aggregators (e.g., via Tanimoto coefficient) [21]. |

Q2: If my compound is flagged as a PAINS by an online tool, should I immediately discard it?

No. In silico flags are a critical alert, not a final verdict [37]. A compound flagged as a potential PAINS should be subjected to rigorous experimental follow-up. If it passes these control experiments—showing well-behaved dose-response curves, specificity in counter-screens, and a logical SAR—it may be a true, well-behaved ligand [37]. The key is to provide robust experimental evidence to overcome the in silico prediction.

Q3: What are the essential experimental controls for confirming a compound acts via colloidal aggregation?

The table below outlines the primary experimental methods for confirming and ruling out colloidal aggregation.

| Experimental Control | Protocol Details | Positive Result for Aggregation |

|---|---|---|

| Detergent Addition | Repeat the activity assay in the presence of a non-ionic detergent (e.g., 0.01% Triton X-100) [37]. | Significant attenuation or loss of inhibitory activity [37]. |

| Dynamic Light Scattering (DLS) | Directly observe the compound in solution for particles in the 50–1000 nm size range [37]. | Observation of particles confirms aggregation, though not necessarily that it causes the activity [37]. |

| Enzyme Counter-Screen | Counter-screen the compound against enzymes highly sensitive to aggregation (e.g., AmpC β-lactamase, malate dehydrogenase) [37]. | Promiscuous inhibition of these sensitive enzymes suggests an aggregation-based mechanism [37]. |

| Target Concentration | Increase the concentration of the soluble target protein in the assay [37]. | Reduced inhibitory activity at higher target concentrations [37]. |

Q4: How were these functional group filters applied in the development of a real-world screening library?

Stanford University's HTS facility provides a clear example. Their compound selection process involved several filtering steps [38]:

- Standardization: Molecular structures were standardized, charges were cleared, and salts were stripped [38].

- Drug-like Filter: Compounds were filtered using a modified Lipinski's Rule of Five (e.g., MW between 100-500, AlogP between -5 and 5) [38].

- REOS Filter: Molecules were passed through a REOS filter to eliminate compounds with reactive or undesirable functional groups, removing approximately 30,000 molecules from their candidate pool [38].

- Diversity Selection: A final, diverse set was selected based on chemical fingerprints and properties compared to an in-house library [38].
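A diversity-selection step of this kind is often implemented with a greedy MaxMin picker. The sketch below is a generic illustration and not the procedure used by the Stanford facility: fingerprints are represented as plain Python sets of feature identifiers, similarity is the Tanimoto coefficient, and the function names and example fingerprints are hypothetical.

```python
def tanimoto(fp_a: set, fp_b: set) -> float:
    """Tanimoto similarity between two fingerprints stored as sets."""
    union = len(fp_a | fp_b)
    return len(fp_a & fp_b) / union if union else 0.0


def maxmin_pick(fingerprints, n_pick, seed_index=0):
    """Greedily pick compounds that are maximally distant from those already picked."""
    n_pick = min(n_pick, len(fingerprints))
    picked = [seed_index]
    # Distance from every compound to its nearest picked compound.
    min_dist = [1 - tanimoto(fp, fingerprints[seed_index]) for fp in fingerprints]
    while len(picked) < n_pick:
        candidates = [i for i in range(len(fingerprints)) if i not in picked]
        nxt = max(candidates, key=lambda i: min_dist[i])
        picked.append(nxt)
        for i, fp in enumerate(fingerprints):
            min_dist[i] = min(min_dist[i], 1 - tanimoto(fp, fingerprints[nxt]))
    return picked


if __name__ == "__main__":
    fps = [{1, 2, 3}, {1, 2, 4}, {7, 8, 9}, {1, 2, 3, 4}]
    print(maxmin_pick(fps, n_pick=3))
```

To select relative to an existing in-house collection, the `min_dist` list could be initialized against that collection's fingerprints instead of a single seed.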

Experimental Workflows & Signaling Pathways

Compound Triage Workflow

Primary Mechanisms of Assay Interference

| Tool Name | Function / Description | Key Utility |

|---|---|---|

| Non-ionic Detergents (Triton X-100, Tween-80) | Experimental control for colloidal aggregation; attenuates activity of aggregators [37]. | Rapid, low-cost test to rule out a major source of false positives [37]. |

| Dynamic Light Scattering (DLS) Instrument | Directly detects and measures the size of colloidal particles (50-1000 nm) in compound solution [37]. | Provides physical evidence of compound aggregation [37]. |

| Counter-Screen Targets (e.g., AmpC β-lactamase, Trypsin) | Enzymes highly susceptible to inhibition by colloidal aggregates; used to test for promiscuous inhibition [37]. | Confirms a compound acts via a promiscuous aggregation mechanism [37]. |

| ZINC15 / PAINS Patterns (e.g., cbligand.org/PAINS/) | Free online databases and tools to screen compound structures for PAINS chemotypes [37]. | In silico pre-screening of compound libraries or HTS hits [37]. |

| BadApple | A database and tool for literature-based promiscuity analysis of chemical scaffolds [37]. | Investigates whether a chemotype has a history of promiscuous activity [37]. |

| RDKit | Open-source cheminformatics toolkit for calculating molecular descriptors, fingerprints, and applying structural filters [39]. | Building custom filtering and analysis pipelines for compound libraries [39]. |

Frequently Asked Questions (FAQs)

1. What are the primary goals of filtering a compound library for CNS drug discovery? The primary goals are to enrich your library for molecules that can cross the blood-brain barrier (BBB) to reach their target site in the central nervous system and to ensure they possess properties conducive to becoming an oral drug, such as good absorption and metabolic stability [40] [21]. This early application of filters helps reduce late-stage attrition by eliminating compounds with undesirable ADMET (Absorption, Distribution, Metabolism, Excretion, Toxicity) profiles or functional groups that cause assay interference [41] [21].

2. My initial library is too large for virtual screening. What is the first filtering step I should take? The most efficient first step is to apply functional group filters, such as REOS (Rapid Elimination Of Swill) or PAINS (Pan-Assay Interference Compounds) filters [21]. These filters remove compounds with reactive, unstable, or promiscuous functional groups that are likely to produce false-positive results in high-throughput screens, saving significant computational time and resources [41] [21].

3. A compound passed my BBB permeability model but failed in vivo. What could be wrong? This discrepancy can arise from several factors. Your in silico model may not fully account for active efflux by transporters like P-glycoprotein [40]. Additionally, the compound might have poor metabolic stability in the bloodstream or be extensively bound to plasma proteins, reducing the free fraction available to cross the BBB [21]. Review the compound's predicted metabolic soft spots and its predicted plasma protein binding.

4. I am targeting a protein-protein interaction, which often requires larger molecules. Should I strictly adhere to the Rule of 5? No, strict adherence to the Rule of 5 is not recommended for such targets. Many approved oral drugs, particularly natural products and peptides, exist in the "Beyond Rule of 5" (bRo5) space [13]. For these compounds, properties like intramolecular hydrogen bonding (which reduces polar surface area), macrocyclization, and formulation strategies are more relevant for achieving oral bioavailability than molecular weight alone [13].

5. What are the key property filters for ensuring CNS activity and oral druggability? A combination of filters is used to prioritize compounds for CNS activity and oral administration. The following table summarizes key filters and their typical cut-offs:

Table 1: Key Property Filters for CNS and Oral Druggability

| Filter Name | Key Parameters | Typical Cut-off Values | Primary Goal |
|---|---|---|---|
| Lipinski's Rule of 5 [21] | Molecular Weight (MW), LogP, H-bond Donors (HBD), H-bond Acceptors (HBA) | MW ≤ 500, LogP ≤ 5, HBD ≤ 5, HBA ≤ 10 | Oral bioavailability |
| Veber Filter [21] | Polar Surface Area (TPSA), Rotatable Bonds | TPSA ≤ 140 Ų, Rotatable Bonds ≤ 10 | Oral bioavailability |
| BBB Permeability Predictors [40] | LogP, TPSA, Brain-to-blood ratio, Presence of specific substructures | LogP ~2-5, TPSA < 60-70 Ų | CNS penetration & activity |
| Egan Filter [21] | LogP, TPSA | LogP ≤ 5.88, TPSA ≤ 131.6 Ų | Intestinal absorption |
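
The cut-offs in Table 1 can be written as simple predicates. The sketch below is a minimal illustration that assumes the descriptors have already been computed by a cheminformatics toolkit; the `Descriptors` container, the function names, and the example values are hypothetical, and the CNS check uses the upper end (70 Ų) of the TPSA range as an adjustable assumption.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Descriptors:
    """Precomputed descriptors for one compound (hypothetical container)."""
    mw: float    # molecular weight, Da
    logp: float  # calculated LogP
    hbd: int     # hydrogen-bond donors
    hba: int     # hydrogen-bond acceptors
    tpsa: float  # topological polar surface area, square angstroms
    rotb: int    # rotatable bonds


def passes_lipinski(d):
    # Strict reading of the Table 1 cut-offs (no violations tolerated).
    return d.mw <= 500 and d.logp <= 5 and d.hbd <= 5 and d.hba <= 10


def passes_veber(d):
    return d.tpsa <= 140 and d.rotb <= 10


def passes_egan(d):
    return d.logp <= 5.88 and d.tpsa <= 131.6


def passes_cns_heuristic(d, tpsa_max=70.0):
    # LogP roughly 2-5 and TPSA below the chosen limit (assumed 70 here).
    return 2 <= d.logp <= 5 and d.tpsa < tpsa_max


def filter_report(d):
    """Return a per-filter pass/fail summary for one compound."""
    return {
        "lipinski": passes_lipinski(d),
        "veber": passes_veber(d),
        "egan": passes_egan(d),
        "cns": passes_cns_heuristic(d),
    }


# Illustrative values only, not measured data.
lipophilic_example = Descriptors(mw=300.0, logp=3.0, hbd=1, hba=3, tpsa=45.0, rotb=4)
polar_example = Descriptors(mw=450.0, logp=1.0, hbd=4, hba=9, tpsa=120.0, rotb=8)

print(filter_report(lipophilic_example))
print(filter_report(polar_example))
```

In this sketch the polar example satisfies the oral filters but fails the CNS heuristic, which mirrors how the CNS ranges are narrower than the oral bioavailability rules.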

6. How can I visualize the overall filtering workflow for a CNS-targeted library? The workflow applies filters sequentially: data preparation, functional group filtering, property-based filtering, BBB-specific prediction, and a final manual review (see Protocol 2).

Troubleshooting Guides

Problem: High Hit Rate in HTS but Low Confirmation in Secondary Assays

| Possible Cause | Recommended Action | Preventive Measure |
|---|---|---|
| Presence of PAINS [21] | Re-screen the hit list using a PAINS filter. Remove any compounds containing flagged substructures (e.g., rhodanines, curcuminoids). | Apply PAINS and REOS filters before conducting the primary HTS [21]. |
| Compound Aggregation [21] | Test hit compounds in the presence of a detergent such as Triton X-100 (non-ionic) or CHAPS (zwitterionic). If activity is abolished, colloidal aggregation is likely. | Use an aggregator filter during library design, which combines Tanimoto similarity to known aggregators with an SlogP cut-off (<3) [21]. |
| Chemical Instability | Check the integrity of the compounds after dissolution in the assay buffer (e.g., using LC-MS). | Incorporate stability filters (e.g., to exclude molecules with hydrolytically unstable esters) during library design. |
| Fluorescence or Signal Interference | Run the assay in the absence of the biological target to check for signal interference from the compound itself. | For fluorescence-based assays, pre-screen the library for intrinsic fluorescence at the relevant wavelengths. |

Problem: Good Cellular Activity but No Efficacy in Animal Models

| Possible Cause | Recommended Action | Preventive Measure |
|---|---|---|
| Poor BBB Penetration [40] | Determine the brain-to-plasma ratio (Kp) in animal models. A low ratio indicates poor penetration or active efflux. | Use validated in silico BBB models [40] and apply strict filters for TPSA (<60-70 Ų) and LogP (~2-5) early in screening. |
| Active Efflux | Co-administer a P-gp inhibitor (e.g., cyclosporine A). If efficacy is restored, the compound is likely a P-gp substrate. | Incorporate computational models to predict P-gp efflux during compound selection. |
| Rapid Systemic Clearance | Assess pharmacokinetic parameters (e.g., half-life, clearance) from plasma samples. | Prioritize compounds with favorable in vitro microsomal stability and lower rotatable bond count (e.g., ≤10) [21]. |
| Plasma Protein Binding | Measure the fraction of compound unbound in plasma. A very low unbound fraction limits the free drug available to cross the BBB. | Consider plasma protein binding predictions during compound optimization. |
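
The brain-to-plasma ratio and the unbound fraction from the table above are often combined into an unbound ratio. The sketch below is a minimal worked example using the standard definitions Kp = C_brain / C_plasma and Kp,uu = Kp × fu,brain / fu,plasma; Kp,uu and the unbound brain fraction are not discussed in the text above, and the function names and concentrations are hypothetical illustration values.

```python
def brain_to_plasma_ratio(c_brain, c_plasma):
    """Kp: total brain concentration divided by total plasma concentration.

    Both concentrations must be in the same units.
    """
    if c_plasma <= 0:
        raise ValueError("plasma concentration must be positive")
    return c_brain / c_plasma


def unbound_ratio(kp, fu_brain, fu_plasma):
    """Kp,uu = Kp * fu,brain / fu,plasma (unbound brain-to-plasma ratio)."""
    if not (0 < fu_brain <= 1 and 0 < fu_plasma <= 1):
        raise ValueError("unbound fractions must lie in (0, 1]")
    return kp * fu_brain / fu_plasma


# Hypothetical measurements, for illustration only.
kp = brain_to_plasma_ratio(c_brain=50.0, c_plasma=200.0)
kp_uu = unbound_ratio(kp, fu_brain=0.05, fu_plasma=0.10)

print(f"Kp = {kp:.3f}, Kp,uu = {kp_uu:.3f}")
```

A Kp,uu well below 1 is commonly read as a sign of net efflux or restricted entry, but the interpretation depends on the quality of the unbound-fraction measurements.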

Problem: Promising In Silico CNS Profile but Poor Experimental Permeability

| Possible Cause | Recommended Action | Preventive Measure |
|---|---|---|
| Over-reliance on a Single Model | Use multiple complementary prediction models (e.g., based on different algorithms or training sets). | Employ a consensus approach from several in silico tools and cross-validate predictions with simpler in vitro assays (e.g., PAMPA-BBB) [40]. |
| Inaccurate Descriptor Calculation | Verify the calculated molecular descriptors (e.g., TPSA, LogP) using a different software package. | Manually inspect the structures of top candidates to ensure descriptor calculation is chemically sensible. |
| Ignoring Transporter Effects | Use cell-based BBB models (e.g., hCMEC/D3) that express relevant influx/efflux transporters to assess permeation. | Integrate predictions for transporter substrates (e.g., for P-gp) into the screening workflow. |
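
One simple way to realize the consensus approach mentioned above is a majority vote over several BBB predictors. The sketch below assumes each model has already produced a yes/no permeability call; the model names, the `consensus_bbb_call` function, and the default majority rule are hypothetical choices, not a prescribed method.

```python
def consensus_bbb_call(predictions, min_agree=None):
    """Combine boolean BBB-permeability calls from several models.

    predictions: mapping of model name -> bool (True = predicted permeable).
    min_agree:   number of positive votes required; defaults to a strict majority.
    Returns (consensus_call, fraction_of_positive_votes).
    """
    votes = list(predictions.values())
    if not votes:
        raise ValueError("at least one prediction is required")
    needed = min_agree if min_agree is not None else len(votes) // 2 + 1
    positives = sum(votes)
    return positives >= needed, positives / len(votes)


# Hypothetical outputs from three independent models for one compound.
calls = {"model_a": True, "model_b": True, "model_c": False}

is_permeable, agreement = consensus_bbb_call(calls)
print(is_permeable, round(agreement, 2))
```

Compounds on which the models disagree are natural candidates for an inexpensive experimental check such as PAMPA-BBB before further investment.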

Experimental Protocols

Protocol 1: Ligand-Based Virtual Screening for CNS-Active Compounds

This protocol uses structural similarity to known CNS-active drugs to rapidly enrich a screening library [40].

- Select Query Molecules: Choose 3-5 FDA-approved drugs with known activity against your target or a related CNS pathway [40].

- Configure Screening Tool: Use a tool like Pharmit, ChemMine, or SwissSimilarity. Input the query molecules and set a Tanimoto similarity threshold (e.g., 0.7-0.8) to balance novelty and similarity [40].

- Execute Screening: Screen against large chemical databases (e.g., PubChem, ZINC15, ChEMBL). This will typically yield thousands of structurally similar molecules [40].

- Apply BBB Permeability Filter: Process the resulting molecules through a computational BBB permeability model. This classifies them into BBB-permeable and non-permeable subsets [40].

- Prioritize Candidates: Further filter the BBB-permeable molecules based on ADME properties, toxicophore absence, and drug-likeness rules to create a final, prioritized list for testing [40].
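
The similarity step of this protocol can be sketched in a few lines. The example below is a minimal illustration that represents each fingerprint as a set of "on" bit indices and keeps library compounds whose best Tanimoto similarity to any query meets the threshold; the toy fingerprints, compound names, and the `similarity_screen` function are hypothetical, and a real campaign would generate fingerprints with a cheminformatics toolkit.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient between two fingerprints given as sets of on-bits."""
    union = len(fp_a | fp_b)
    return len(fp_a & fp_b) / union if union else 0.0


def similarity_screen(queries, library, threshold=0.7):
    """Return (name, best_similarity) for library compounds at or above threshold.

    A compound is kept if it is similar enough to at least one query molecule.
    Results are sorted from most to least similar.
    """
    hits = []
    for name, fp in library.items():
        best = max(tanimoto(fp, query_fp) for query_fp in queries.values())
        if best >= threshold:
            hits.append((name, best))
    return sorted(hits, key=lambda hit: hit[1], reverse=True)


# Toy fingerprints (sets of bit indices), for illustration only.
queries = {"query_drug": set(range(1, 11))}
library = {
    "cmpd_a": set(range(1, 10)) | {11},   # shares 9 of 11 bits in the union
    "cmpd_b": {1, 2, 3, 20, 21},          # shares 3 of 12 bits in the union
    "cmpd_c": set(range(1, 11)),          # identical to the query
}

hits = similarity_screen(queries, library, threshold=0.7)
print([name for name, _ in hits])
```

Raising the threshold toward 0.8 narrows the hit list to close analogues, while lowering it admits more novel chemotypes at the cost of more inactive compounds.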

Protocol 2: Applying a Multi-Stage Filtering Pipeline

This protocol describes a sequential filtering strategy to refine a large virtual library into a focused set for CNS targets.

- Data Preparation: Convert the library (e.g., in SDF or SMILES format) for analysis. Standardize structures and remove duplicates [41] [21].

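- Functional Group Filtering: Apply REOS and PAINS filters to remove compounds with reactive, unstable, or promiscuous functional groups [21].

- Property-Based Filtering: Apply the property filters summarized in Table 1 (Lipinski, Veber, Egan, and the CNS-oriented LogP and TPSA ranges) to retain compounds with acceptable oral and CNS profiles [21] [40].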

- BBB-Specific Prediction: Use a specialized machine learning or descriptor-based BBB prediction model to score and rank the remaining compounds based on their predicted brain-to-blood ratio [40].

- Final Manual Review: Manually inspect the top-ranking compounds to ensure chemical tractability, diversity, and the absence of any subtle undesirable features missed by the automated filters.
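
The automated stages of this protocol can be chained as follows. This is a minimal sketch under several assumptions: each record already carries a standardized SMILES string, a precomputed flag from a PAINS/REOS-style filter, calculated LogP and TPSA values, and a score from some BBB model. The record fields, the `run_pipeline` function, and all values are hypothetical.

```python
def run_pipeline(records, logp_range=(2.0, 5.0), tpsa_max=70.0):
    """Deduplicate, filter, and rank compound records.

    Returns (ranked_records, funnel), where funnel counts survivors per stage.
    """
    funnel = {"input": len(records)}

    # Stage 1: remove duplicates by standardized SMILES, keeping the first seen.
    seen, unique = set(), []
    for rec in records:
        if rec["smiles"] not in seen:
            seen.add(rec["smiles"])
            unique.append(rec)
    funnel["unique"] = len(unique)

    # Stage 2: drop compounds flagged by the functional group filter.
    clean = [rec for rec in unique if not rec["flagged"]]
    funnel["functional_group"] = len(clean)

    # Stage 3: keep compounds inside the CNS-oriented LogP and TPSA ranges.
    low, high = logp_range
    in_range = [r for r in clean if low <= r["logp"] <= high and r["tpsa"] < tpsa_max]
    funnel["property"] = len(in_range)

    # Stage 4: rank survivors by the (assumed) BBB model score, best first.
    ranked = sorted(in_range, key=lambda r: r["bbb_score"], reverse=True)
    return ranked, funnel


# Placeholder records; "S1" etc. stand in for standardized SMILES strings.
records = [
    {"id": "c1", "smiles": "S1", "flagged": False, "logp": 3.0, "tpsa": 50.0, "bbb_score": 0.6},
    {"id": "c2", "smiles": "S1", "flagged": False, "logp": 3.0, "tpsa": 50.0, "bbb_score": 0.6},
    {"id": "c3", "smiles": "S3", "flagged": True, "logp": 3.5, "tpsa": 45.0, "bbb_score": 0.8},
    {"id": "c4", "smiles": "S4", "flagged": False, "logp": 1.0, "tpsa": 110.0, "bbb_score": 0.2},
    {"id": "c5", "smiles": "S5", "flagged": False, "logp": 2.5, "tpsa": 40.0, "bbb_score": 0.9},
]

ranked, funnel = run_pipeline(records)
print([rec["id"] for rec in ranked], funnel)
```

Keeping the per-stage counts makes it easy to spot a filter that removes far more of the library than expected before the manual review step.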

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Resources for Library Filtering and CNS Discovery

| Item / Resource | Function / Description | Example Tools / Databases |
|---|---|---|
| Cheminformatics Software | Calculates molecular descriptors, applies filters, and performs clustering and diversity analysis. | Schrödinger Suite, MOE, RDKit, KNIME [41] [21] |
| Virtual Screening Platforms | Web servers and software for pharmacophore-based screening and molecular docking. | Pharmit, SwissSimilarity, DOCK3.7, AutoDock Vina [40] [42] |
| Chemical Databases | Sources of commercially available and publicly documented compounds for library building. | ZINC15, PubChem, DrugBank, ChEMBL [40] [43] |
| PAINS/REOS Filter Sets | Defined sets of SMARTS patterns to identify and remove promiscuous or reactive compounds. | Published SMARTS patterns from the scientific literature, often built into modern software [21] |
| BBB Prediction Models | In silico models that predict the likelihood of a compound crossing the blood-brain barrier. | Available as standalone tools or integrated within larger drug discovery platforms [40] |
| HTS Compound Libraries | Commercially available, pre-designed libraries of compounds with drug-like properties for screening. | BOC Sciences HTS Library, Pre-plated Diversity Libraries [43] |

Troubleshooting Guides and FAQs

FAQ: Core Concepts and Design

Q1: What is the primary goal of a sequential filtering pipeline in compound library research? The primary goal is to efficiently navigate vast chemical spaces to identify high-quality, developable hit compounds. A sequential pipeline applies increasingly sophisticated filters to rapidly eliminate unsuitable compounds in early stages, saving resources for more refined selection processes on a smaller, pre-enriched subset of compounds [44]. This hierarchical approach balances efficiency and accuracy [44].

Q2: What are the key differences between activity and similarity filtering?

- Activity Filtering: This is a ligand-based approach that uses known bioactive compounds to define queries for virtual screening. The goal is to identify new compounds that share biological activity with the query, often using molecular fingerprints, topological indices, or pharmacophore models [44].

- Similarity Filtering: This method assesses structural or property similarity between compounds. It is often used to ensure diversity in a primary screening library or, conversely, to create focused libraries around a specific scaffold for lead optimization. It employs metrics like Tanimoto coefficients to quantify similarity [45] [44].

Q3: How do I decide on the sequence of filters for my pipeline? A robust strategy applies efficient, coarse filters first, followed by more advanced, computationally expensive filters. A typical sequence is [44]:

- Fast Filters: Apply rules for unwanted chemical functionalities and lead-like properties.

- Intermediate Filters: Use ligand-based methods like similarity searching or pharmacophore models.

- Advanced Filters: Employ structure-based methods like molecular docking with accurate scoring functions or free-energy calculations.
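
The reason for running cheap filters first can be shown with a short cost estimate. The sketch below assumes each stage has a fixed per-compound cost and passes a fixed fraction of its input, independent of the other stages; the stage costs, pass fractions, and the `cascade_cost` function are hypothetical numbers chosen only to illustrate the arithmetic.

```python
def cascade_cost(n_compounds, stages):
    """Estimate total cost of applying stages in the given order.

    stages: list of (cost_per_compound, pass_fraction) tuples.
    Returns (total_cost, expected_survivors).
    """
    remaining = float(n_compounds)
    total = 0.0
    for cost_per_compound, pass_fraction in stages:
        total += remaining * cost_per_compound  # every survivor so far is evaluated
        remaining *= pass_fraction              # only a fraction moves on
    return total, remaining


# Hypothetical per-compound costs in CPU seconds and pass fractions.
fast_filter = (0.001, 0.5)
similarity = (0.01, 0.1)
docking = (10.0, 0.01)

cheap_first_cost, cheap_first_left = cascade_cost(
    1_000_000, [fast_filter, similarity, docking]
)
docking_first_cost, docking_first_left = cascade_cost(
    1_000_000, [docking, similarity, fast_filter]
)

print(f"cheap first:   {cheap_first_cost:,.0f} CPU s")
print(f"docking first: {docking_first_cost:,.0f} CPU s")
```

Under these assumptions both orderings leave the same expected number of compounds, but the cheap-first ordering spends roughly a twentieth of the compute because docking only sees the pre-enriched subset.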

Q4: What are "lead-like" properties, and why are they preferred over "drug-like" properties for screening libraries? Lead-like compounds are smaller and less hydrophobic than typical drug-like compounds. Selecting for lead-like properties leaves room for molecular weight, lipophilicity, and other characteristics to increase during the lead optimization process, helping to maintain favorable ADMET properties [45]. Common lead-like criteria are summarized in Table 1 below.

FAQ: Implementation and Troubleshooting

Q5: Our HTS results show a high rate of false positives. How can the filtering pipeline address this? High false-positive rates can stem from compound reactivity, assay interference, or promiscuous binding. Your filtering pipeline should explicitly address this by implementing a "cleanup" filter to remove compounds with unwanted functionalities. This includes reactive groups (e.g., acyl halides), moieties that can interfere with assays (e.g., certain chromophores), or groups with poor pharmacokinetic profiles (e.g., sulfates) [45]. Defining and applying a list of these unwanted groups (see Table 2) during the initial filtering stage can significantly improve data quality.

Q6: How can we effectively reduce the size of a large commercial compound library for a focused screening campaign? After applying basic lead-like and unwanted-group filters, use cluster-based methods to remove redundancy and ensure diversity. Cluster the remaining compounds based on molecular similarity (e.g., using Tanimoto coefficients), grouping compounds whose similarity exceeds a chosen threshold (e.g., >0.9). Then select one representative compound from each cluster. This ensures broad coverage of chemical space without over-representing similar structures [45].
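
Representative selection can be sketched with a simple leader-style clustering, used here as a stand-in for whichever clustering method a given toolkit provides. Each compound joins the first existing cluster whose leader it resembles at or above the threshold, otherwise it starts a new cluster. Fingerprints are again toy sets of bit indices, and the `pick_representatives` function and compound names are hypothetical.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient between two fingerprints given as sets of on-bits."""
    union = len(fp_a | fp_b)
    return len(fp_a & fp_b) / union if union else 0.0


def pick_representatives(fingerprints, threshold=0.9):
    """Leader clustering: map each representative to the members of its cluster.

    Compounds are processed in insertion order, so the result depends on
    how the library is sorted (for example, by price or availability).
    """
    clusters = {}
    for name, fp in fingerprints.items():
        for leader in clusters:
            if tanimoto(fp, fingerprints[leader]) >= threshold:
                clusters[leader].append(name)
                break
        else:
            # No sufficiently similar leader found: start a new cluster.
            clusters[name] = [name]
    return clusters


# Toy fingerprints, for illustration only.
fingerprints = {
    "cmpd_a": set(range(20)),
    "cmpd_b": set(range(19)) | {100},      # 19 shared bits of 21 with cmpd_a
    "cmpd_c": set(range(50, 70)),
    "cmpd_d": set(range(50, 69)) | {200},  # 19 shared bits of 21 with cmpd_c
}

clusters = pick_representatives(fingerprints, threshold=0.9)
print(list(clusters))   # representatives
print(clusters)         # cluster membership
```

Because the outcome is order-dependent, sorting the library by a preference criterion before clustering is one way to control which member of each cluster is kept.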

Q7: Our virtual screening pipeline seems to miss known active compounds. What could be wrong? This could indicate an overly restrictive filtering strategy.