Strategic Filtering of Bioactive Compounds: Designing Efficient Screening Libraries for Cellular Activity

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on designing focused compound libraries by strategically filtering for cellular activity. It covers the foundational principles of defining cancer-associated target spaces and the critical challenge of ensuring cellular potency. The content explores advanced methodological approaches, including cheminformatics for library management, AI-driven generative models, and multi-target library design. It further addresses practical troubleshooting for common pitfalls and outlines rigorous validation strategies through phenotypic screening and computational profiling. By integrating these elements, the article serves as a roadmap for creating high-quality, target-annotated libraries that accelerate hit identification in complex phenotypic assays, ultimately enhancing the efficiency of drug discovery, particularly in precision oncology.

Laying the Groundwork: Principles of Target Space and Cellular Potency

FAQs: Troubleshooting Common Experimental Issues

Q1: Our high-content screening (HCS) assay for compound testing shows weak signal intensity. What could be the cause and how can we resolve it?

Weak staining or signal intensity in HCS can arise from multiple sources. The table below outlines common causes and their solutions.

Table 1: Troubleshooting Weak Staining in HCS Assays

| Possible Cause | Recommended Solution |

|---|---|

| Insufficient antibody concentration | Titrate the antibody to determine the optimal concentration; consider overnight incubation at 4°C. [1] |

| Masked epitope due to fixation | Use antigen retrieval methods (HIER or PIER) to unmask the epitope; reduce fixation time. [1] |

| Loss of antibody activity | Run positive controls; ensure proper antibody storage and avoid repeated freeze-thaw cycles. [1] |

| Inconsistent cellular models | Validate cell lines via genotyping; manage growth rates and passage numbers; use STR analysis for verification. [2] |

| Protein located in the nucleus | Add a permeabilizing agent (e.g., Triton X-100) to the blocking and antibody dilution buffers. [1] |

Q2: We are observing high background noise in our phenotypic screens. How can we improve the signal-to-noise ratio?

High background staining obscures critical features and is often due to non-specific binding. Key solutions are summarized in the table below.

Table 2: Troubleshooting High Background Staining

| Possible Cause | Recommended Solution |

|---|---|

| Insufficient blocking | Increase the blocking incubation period or change the blocking reagent (e.g., 10% normal serum for sections, 1-5% BSA for cell cultures). [1] |

| Primary antibody concentration too high | Titrate the antibody to find the optimal concentration and incubate at 4°C. [1] |

| Non-specific binding by secondary antibody | Run a negative control without the primary antibody. Use a pre-adsorbed secondary antibody and block with serum from the species in which the secondary was raised. [1] |

| Incomplete washing | Increase the number and duration of washes between steps. [1] [3] |

| Contaminated reagents | Use fresh, sterile buffers; avoid using equipment exposed to concentrated analytes; work in a clean environment. [3] |

Q3: How can we assess and ensure the quality of our HCS assay before running a full library screen?

Assay quality is paramount for generating reliable data. Key acceptance criteria and steps include:

- Z' Factor Calculation: The Z' factor is a statistical parameter that measures assay quality and robustness. An assay with a Z' factor greater than 0.4 is considered acceptable for screening, though a value above 0.6 is preferred. [2]

- Include Controls: Always set up positive and negative controls on every assay plate. Positive controls validate the assay's response, while negative controls establish the baseline. [2]

- Pilot Testing: Run initial pilot tests on a small scale to determine the assay's feasibility and reliability for HCS. Optimize the workflow to minimize waste and ensure reproducibility. [2]
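The Z' factor mentioned above is commonly computed from the control wells of a plate as Z' = 1 - 3(σ_pos + σ_neg) / |μ_pos - μ_neg|. The following is a minimal sketch of that calculation; the well values are hypothetical and only illustrate the arithmetic.

```python
from statistics import mean, stdev

def z_prime(positive, negative):
    """Z' = 1 - 3 * (sd_pos + sd_neg) / |mean_pos - mean_neg|."""
    separation = abs(mean(positive) - mean(negative))
    if separation == 0:
        raise ValueError("control means are identical; Z' is undefined")
    return 1.0 - 3.0 * (stdev(positive) + stdev(negative)) / separation

# Hypothetical control-well readouts from one plate (arbitrary units).
pos_wells = [98.0, 102.0, 100.0, 101.0, 99.0]
neg_wells = [10.0, 12.0, 11.0, 9.0, 13.0]

z = z_prime(pos_wells, neg_wells)
print(f"Z' = {z:.2f}")
```

Computing the value per plate, rather than once per screen, makes it easier to spot individual plates that fall below the acceptance threshold.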

Q4: Our compound library screening has identified a potential MYC inhibitor. What are the current clinically relevant standards in this field?

Targeting the oncoprotein MYC has historically been challenging but recent advances are promising. Two of the most extensively studied compounds are:

- Omomyc / OMO-103: A peptide-based inhibitor that disrupts the MYC-MAX interaction. Evidence of target inhibition was shown in a phase I trial, where decreased expression of MYC-regulated genes was observed. The treatment was reported to be well tolerated. [4]

- MYCi975: A small-molecule MYC-MAX antagonist. Preclinical studies show it exhibits anti-cancer activity in several animal models and can enhance the response to immunotherapy. [4]

Experimental Protocols for Key Methodologies

Protocol 1: Validating Compound Activity in a Phenotypic HCS Assay

This protocol is designed to filter compounds for cellular activity and assess their effect on cancer hallmarks.

- Cell Plating and Incubation: Plate validated cells (e.g., patient-derived glioblastoma stem cells) in solid black polystyrene microplates to reduce well-to-well crosstalk. Allow cells to adhere overnight. [5] [2]

- Compound Treatment: Treat cells with compounds from your library. Include a broad dose-response concentration range (e.g., 1 nM - 10 µM) to identify all associated phenotypes. Include DMSO vehicle controls. [2]

- Staining and Fixation: At the appropriate time point (determined by a time-course experiment), stain live cells with fluorescent probes or fix cells and perform immunocytochemistry for target proteins (e.g., using probes for autophagy, cell signaling, or cytotoxicity). [2]

- Image Acquisition: Use a confocal high-content screening platform (e.g., Thermo Scientific CellInsight CX7) to acquire multiplexed images at multiple focal planes. This allows for the examination of subcellular structures. [6]

- Image and Data Analysis: Extract quantitative data on features like cell count, viability, and nuclear morphology. Normalize data to controls to address systematic variations. Use a robust curve-fitting method (e.g., 4-parameter logistic) for dose-response analysis. [2] [3]

- Hit Confirmation: Retest initial hits in confirmation assays with higher replicate numbers to minimize false positives and false negatives. [2]
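The normalization and 4-parameter logistic (4PL) steps in the analysis stage can be sketched as follows. This is an illustration under stated assumptions: the function names, the half-log dilution series, and all numeric values are hypothetical, and the sketch only evaluates the 4PL model. Fitting it to data would normally be delegated to a least-squares routine such as `scipy.optimize.curve_fit`.

```python
def percent_of_control(raw, vehicle_mean, background_mean=0.0):
    """Express a raw well value as a percentage of the DMSO vehicle control."""
    return 100.0 * (raw - background_mean) / (vehicle_mean - background_mean)

def four_pl(conc, bottom, top, ec50, hill):
    """4PL response at `conc` (same units as `ec50`).

    With hill > 0 the response falls from `top` to `bottom` as the
    concentration increases, as expected for an inhibitor.
    """
    return bottom + (top - bottom) / (1.0 + (conc / ec50) ** hill)

# Half-log dilution series from 1 nM to 10 uM, expressed in molar units.
concentrations_m = [1e-9 * 10 ** (i / 2) for i in range(9)]

# Model responses for a hypothetical compound with EC50 = 100 nM.
predicted = [four_pl(c, bottom=5.0, top=100.0, ec50=1e-7, hill=1.0)
             for c in concentrations_m]

# A raw readout of 4200 against a vehicle mean of 8400 is 50% of control.
normalized = percent_of_control(raw=4200.0, vehicle_mean=8400.0)
```

At a concentration equal to the EC50, the model returns the midpoint between `bottom` and `top`, which is a quick sanity check on any fitted curve.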

Protocol 2: Assessing Compound Selectivity and Target Engagement

- Target-Annotated Library Design: Start with a comprehensive list of cancer-associated targets, such as the 1,655 proteins implicated in various hallmarks of cancer. [5]

- Cellular Potency Filtering: Filter compounds based on cellular activity data to remove inactive probes. [5]

- Selectivity Filtering: Apply similarity filtering to select the most potent and selective compound for each target, reducing redundancy and off-target effects. [5]

- Phenotypic Screening in Relevant Models: Screen the final library (e.g., a focused set of ~1,200 compounds) in physiologically relevant patient-derived models to identify patient-specific vulnerabilities and confirm on-target activity. [5]
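The potency and selectivity filtering steps of this protocol can be sketched in a few lines. The sketch assumes a hypothetical `Compound` record, a hypothetical cellular pIC50 cutoff of 6.0, and the count of annotated off-targets as a crude selectivity proxy. It does not implement structural similarity filtering, which the protocol also calls for.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Compound:
    name: str
    target: str
    cellular_pic50: float  # potency measured in a cell-based assay
    n_off_targets: int     # annotated off-targets (hypothetical selectivity proxy)

def filter_library(compounds, min_cellular_pic50=6.0):
    """Drop cell-inactive compounds, then keep the best compound per target."""
    best = {}
    for c in compounds:
        if c.cellular_pic50 < min_cellular_pic50:
            continue  # cellular potency filter
        current = best.get(c.target)
        rank = (c.cellular_pic50, -c.n_off_targets)
        if current is None or rank > (current.cellular_pic50, -current.n_off_targets):
            best[c.target] = c  # more potent, or equally potent and more selective
    return sorted(best.values(), key=lambda c: c.target)

# Hypothetical annotated compounds.
library = [
    Compound("A1", "EGFR", 7.5, 2),
    Compound("A2", "EGFR", 7.5, 0),
    Compound("B1", "CDK4", 5.2, 1),
    Compound("C1", "MYC", 6.4, 3),
]
selected = filter_library(library)
print([c.name for c in selected])
```

Ranking on a tuple keeps the tie-breaking rule explicit: potency first, then fewer off-targets.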

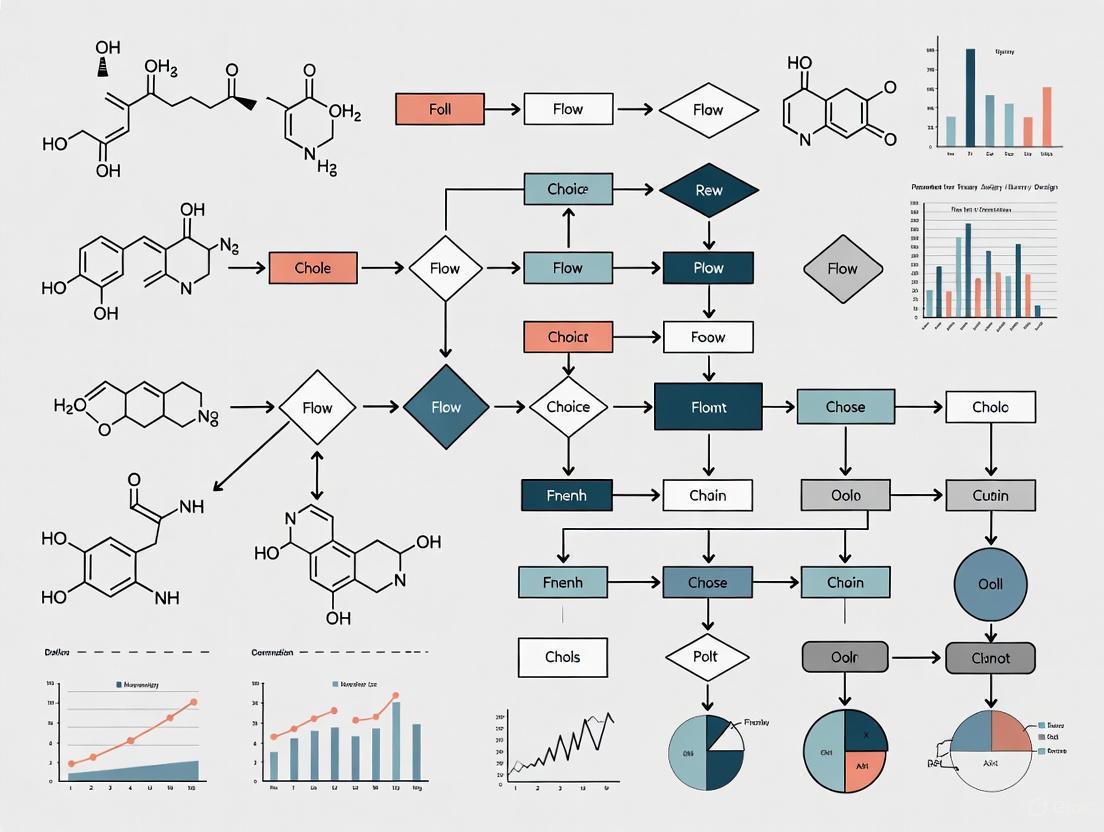

The diagram below illustrates the logical workflow for filtering a compound library to identify hits with high cellular activity and selectivity.

Signaling Pathways in Cancer Target Space

The following diagram illustrates the central role of the MYC oncoprotein, a frequently deregulated target in cancer, and its interplay with key hallmarks, providing a context for targeting this pathway.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Target Space and Compound Screening Research

| Reagent / Tool | Function / Application | Key Considerations |

|---|---|---|

| Validated Cell Lines & Patient-Derived Models | Physiologically relevant models for phenotypic screening; used to identify patient-specific vulnerabilities. [5] | Validate via genotyping (e.g., STR analysis); manage passage number; verify functional pathways with reference compounds. [2] |

| SCREEN-WELL & CELLESTIAL Libraries/Probes | Comprehensive compound libraries and fluorescent probes for monitoring autophagy, cell signaling, and cytotoxicity in HCS. [2] | Use in multiplexed assays; test tolerance to solvents like DMSO; perform dose-response curves. [2] |

| High-Content Screening (HCS) Platform | Automated, high-resolution microscopy for multiparameter analysis of compound effects at a subcellular level. [2] | Use confocal models (e.g., Thermo Scientific CX7) for improved resolution; ensure proper calibration of liquid handling systems. [6] [2] |

| Target-Annotated Compound Library (e.g., C3L) | A focused library of bioactive small molecules designed to interrogate a wide range of anticancer targets. [5] | Optimize for library size, cellular activity, chemical diversity, and target selectivity to cover a broad target space efficiently. [5] |

| Antibodies Validated for Immunofluorescence | Specific detection of target proteins and cellular phenotypes in fixed-cell assays. [1] | Check datasheet for application validation (IHC/IF); titrate for optimal concentration; use appropriate antigen retrieval if needed. [1] |

Troubleshooting Guides

Guide 1: Troubleshooting Discrepancies Between Biochemical Binding and Cellular Activity

Problem: Your compound shows excellent target binding in a biochemical assay but fails to elicit the expected cellular response.

Solution: Investigate factors that prevent the compound from engaging its target in the complex cellular environment.

| Problem | Possible Root Cause | Diagnostic Experiments | Potential Solutions |

|---|---|---|---|

| Lack of Cellular Permeability | Compound is too polar or is a substrate for efflux pumps [7]. | (1) Perform PAMPA or Caco-2 assays to measure passive permeability. (2) Use assays with efflux pump inhibitors (e.g., Elacridar) to check for transporter efflux [7]. | (1) Optimize Log P and topological polar surface area (TPSA); aim for ClogP < 3 and TPSA > 75 to reduce toxicity risk while maintaining permeability [8]. (2) Reduce the number of H-bond donors and rotatable bonds [7]. |

| Intracellular Metabolism/Instability | Compound is metabolized by cytosolic enzymes before reaching the target. | (1) Incubate compound with cell lysates or an S9 fraction and analyze by LC-MS for degradation products. (2) Use stable isotope labeling to track the parent compound. | (1) Identify and block metabolic soft spots (e.g., labile esters). (2) Consider prodrug strategies for improved delivery. |

| Off-target Binding & Promiscuity | High lipophilicity leading to non-specific binding to membranes or other proteins [8]. | (1) Profile compound in a broad panel of off-target assays (e.g., Cerep Bioprint). (2) Calculate the Lipophilic Ligand Efficiency (LLE = pIC50 - LogP); aim for LLE > 5 [8]. | (1) Reduce overall lipophilicity (ClogP). (2) Introduce polarity to improve selectivity. |

| Incorrect Cellular Context | The mechanism of action (MOA) requires a specific cellular state not present in your model. | - Validate that your cell model expresses the necessary co-factors, signaling proteins, or post-translational modifications for the intended MOA [9]. | - Switch to a more physiologically relevant cell type (e.g., primary cells, iPSC-derived cells) [10]. |

Guide 2: Troubleshooting Variable Results in Cell-Based Potency Assays

Problem: Your cell-based potency assay shows high variability, making it difficult to reliably rank compounds.

Solution: Systematically control for cell culture and assay execution factors to improve reproducibility.

| Problem | Possible Root Cause | Diagnostic Experiments | Potential Solutions |

|---|---|---|---|

| High Background Signal | Inadequate blocking or non-specific antibody binding [11]. | (1) Run a no-primary-antibody control. (2) Perform an antibody blocking validation: pre-incubate the antibody with a 10-fold excess of its immunogen; signal should be abolished [11]. | (1) Use a blocking buffer specifically formulated for cell-based assays. (2) Optimize antibody concentration and incubation time. |

| Inconsistent Cell Seeding | Cells are not adherent, unhealthy, or at the wrong confluence [11]. | (1) Check cell viability and morphology before seeding. (2) Validate the linear range of the assay by plating a dilution series of cells [11]. | (1) Never touch the bottom of the plate with the pipette tip. Gently tap the plate sides after seeding to ensure even distribution [11]. (2) Use a consistent passage number range for experiments. |

| Poor Data Linearity | Assay is run outside its dynamic range; signals are saturated or too low. | - Perform a dilution series for both the cell number and the primary antibody to find the linear response window [11]. | - Establish standard curves for all key reagents and ensure measurements fall within the linear range. |

| Edge Effects | Wells on the edge of the plate dry out or experience temperature gradients. | Compare the results from edge wells to interior wells. | (1) When incubating for more than one day, feed cells with fresh media to prevent drying [11]. (2) Use plate seals or maintain a humidified environment. |

Frequently Asked Questions (FAQs)

Q1: What is the fundamental difference between target binding and cellular potency?

A1: Target binding measures a compound's ability to interact with its purified protein target in a simplified biochemical system. Cellular potency, however, is a functional measure of the compound's ability to achieve its intended mechanism of action (MOA) and produce a desired biological effect within the complex environment of a living cell [9] [12]. A compound can be a potent binder but lack cellular potency due to factors like poor permeability, efflux, or metabolic instability [8].

Q2: Why should my library design focus on "lead-like" rather than just "drug-like" compounds?

A2: "Drug-like" properties are often modeled on marketed oral drugs, which tend to be more complex molecules. "Lead-like" compounds are smaller and less lipophilic, providing crucial "chemical space" for optimization. Medicinal chemistry optimization almost invariably increases molecular weight (MWT) and Log P [7]. Starting with a lead-like compound (lower MWT, lower Log P) allows you to add necessary bulk and functionality during optimization while staying within a safer and more developable chemical space [7].

Q3: My compound is highly potent but also highly cytotoxic. What could be the cause?

A3: This is often a sign of "molecular obesity" or promiscuity [8]. Compounds with high lipophilicity (ClogP > 3, especially > 4) have a greater tendency to engage in non-specific, hydrophobic interactions with various cellular targets, membranes, and proteins, leading to pleiotropic effects and toxicity [8]. To mitigate this, calculate the Lipophilic Ligand Efficiency (LLE = pIC50 - LogP). An LLE greater than 5 is associated with a significant reduction in the risk of toxicity [8].
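As a worked illustration of the LLE formula in this answer, the sketch below converts an IC50 in nanomolar to pIC50 and subtracts LogP. The two example compounds are hypothetical. They share the same potency, but only the less lipophilic one clears the LLE > 5 guideline.

```python
import math

def pic50_from_ic50_nm(ic50_nm):
    """pIC50 = -log10(IC50 in mol/L); 1 nM corresponds to pIC50 = 9."""
    return 9.0 - math.log10(ic50_nm)

def lle(ic50_nm, logp):
    """Lipophilic Ligand Efficiency: LLE = pIC50 - LogP."""
    return pic50_from_ic50_nm(ic50_nm) - logp

# Two hypothetical compounds, both with IC50 = 10 nM (pIC50 = 8).
greasy = lle(ic50_nm=10.0, logp=4.5)  # 8 - 4.5 = 3.5, below the guideline
polar = lle(ic50_nm=10.0, logp=2.5)   # 8 - 2.5 = 5.5, above the guideline
print(f"LLE greasy = {greasy:.1f}, LLE polar = {polar:.1f}")
```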

Q4: For my cell therapy product, the potency test doesn't perfectly correlate with clinical efficacy. Is this a problem?

A4: Not necessarily. While it is desirable for a potency test to reflect clinical efficacy, a perfect correlation is not always required for regulatory approval [9]. The primary roles of the potency test are to ensure manufacturing consistency and product stability from lot to lot [9] [12]. For many approved cell therapies, the exact MOA is not fully known, making it difficult to design a test that perfectly predicts clinical outcome. The key is that the product must be clinically efficacious with an acceptable risk-benefit profile [9].

Essential Data for Compound Filtering

Table 1: Key Property Ranges for High-Quality Screening Compounds

This table synthesizes property filters used to design compound libraries with a higher probability of demonstrating cellular potency and developability [7] [13] [8].

| Property | Lead-like / General Oral | CNS-Active | Toxicity Risk Reduction | Rationale |

|---|---|---|---|---|

| Molecular Weight (MWT) | < 400 [8] | Lower (Oral drugs are lower in MWT) [7] | < 400 [8] | Lower MWT is correlated with better absorption and reduced ADMET issues [8]. |

| clogP/clogD | < 4 [8] | Lower | < 3 (and TPSA > 75) [8] | High lipophilicity is a major driver of promiscuity, toxicity, and poor solubility [8]. |

| Hydrogen Bond Donors (HBD) | < 5 [7] | Fewer [7] | - | Impacts permeability and absorption [7]. |

| Hydrogen Bond Acceptors (HBA) | < 10 [7] | Fewer [7] | - | Impacts permeability and absorption [7]. |

| Polar Surface Area (TPSA) | - | - | > 75 [8] | Higher TPSA coupled with low clogP significantly reduces risks of in vivo toxicity [8]. |

| Lipophilic Ligand Efficiency (LLE) | - | - | > 5 [8] | Ensures potency is achieved through specific binding rather than non-specific lipophilic interactions [8]. |
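The thresholds in this table translate directly into simple boolean filters. The following sketch assumes property values have already been computed by a cheminformatics toolkit and stored in plain dictionaries; the compound names and values are hypothetical.

```python
# Hypothetical precomputed properties for three compounds.
compounds = [
    {"name": "cpd-A", "mw": 352.4, "clogp": 2.1, "hbd": 2, "hba": 5, "tpsa": 88.0},
    {"name": "cpd-B", "mw": 389.5, "clogp": 3.6, "hbd": 1, "hba": 4, "tpsa": 52.0},
    {"name": "cpd-C", "mw": 512.6, "clogp": 5.2, "hbd": 3, "hba": 8, "tpsa": 95.0},
]

def is_lead_like(c):
    """Lead-like / general oral column: MWT < 400, clogP < 4, HBD < 5, HBA < 10."""
    return c["mw"] < 400 and c["clogp"] < 4 and c["hbd"] < 5 and c["hba"] < 10

def is_low_tox_risk(c):
    """Toxicity risk reduction column: clogP < 3 together with TPSA > 75."""
    return c["clogp"] < 3 and c["tpsa"] > 75

for c in compounds:
    print(c["name"], is_lead_like(c), is_low_tox_risk(c))
```

Keeping the two checks separate lets a library designer report why a compound was rejected instead of returning a single pass/fail flag.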

The Scientist's Toolkit: Key Research Reagent Solutions

| Item / Reagent | Function in Cellular Potency Assessment |

|---|---|

| Validated Primary Antibodies | For detecting specific protein targets, phosphorylation, or expression changes in cell-based assays like In-Cell Western [11]. Critical for MoA deconvolution. |

| AzureCyto In-Cell Western Kit | A reagent kit that provides all necessary components (blocking buffer, permeabilization solution, total cell stain) for performing robust and quantitative In-Cell Western assays, reducing optimization time [11]. |

| Near-Infrared (NIR) Fluorescent Secondaries | Secondary antibodies labeled with fluorophores (e.g., AzureSpectra 700, 800) allow for multiplexed detection with minimal crosstalk in cell-based assays [11]. |

| Physiologically Relevant Cell Lines | Cell models that closely mimic the disease state (e.g., primary cells, iPSC-derived cells, organoids) are essential for measuring biologically meaningful cellular potency [14] [10]. |

| Covalent Compound Library | A collection of compounds with covalent warheads. These serve as a fruitful reservoir for target-agnostic screening, as the warhead provides an "intrinsic chemical biology handle" to expedite MoA deconvolution [15]. |

| Stem Cell Differentiation Kits | Provide standardized protocols and reagents to generate specific, terminally differentiated cell types (e.g., neurons, cardiomyocytes) from pluripotent stem cells for potency assays in a relevant cellular context [10]. |

Visualizing Core Concepts and Workflows

Target vs Cellular Screening Workflow

Relationship Between MOA, Potency, and Efficacy

Library Design as a Multi-Objective Optimization Problem

Technical Support Center

Troubleshooting Guides

Issue 1: Generative Model Produces Chemically Invalid Structures

Problem: The AI model generates molecular structures with incorrect valences or unstable rings.

Solution:

- Implement Valence Checks: Add post-generation filters to remove structures that violate basic chemical bonding rules [16].

- Augment Training Data: Ensure training libraries are curated with only valid, synthesizable compounds [16].

- Use Rule-Based Sanitization: Incorporate chemical informatics toolkits to validate outputs before proceeding to property prediction stages.

Issue 2: Optimization Favors a Single Property at the Expense of Others

Problem: The designed compound library is skewed toward high potency but poor metabolic stability.

Solution:

- Adjust Objective Weights: Rebalance the weighting scheme in the multi-objective function to prevent any single property from dominating [16].

- Apply Pareto Optimization: Analyze the Pareto front to select compounds representing the best possible trade-offs between all critical parameters [16].

- Incorporate Penalty Terms: Add penalty terms to the objective function that actively discourage extreme values in any one property.
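To make the Pareto step concrete, the sketch below extracts the non-dominated set from a list of candidates scored on two objectives that are both maximized. The scores (pIC50 and microsomal half-life in minutes) are hypothetical, and the quadratic scan is meant for small candidate lists only.

```python
def dominates(a, b):
    """True if `a` is at least as good as `b` on every objective and better on one."""
    return all(x >= y for x, y in zip(a, b)) and any(x > y for x, y in zip(a, b))

def pareto_front(points):
    """Return the points that no other point dominates (all objectives maximized)."""
    return [p for p in points if not any(dominates(q, p) for q in points)]

# Hypothetical (pIC50, HLM half-life in minutes) pairs for five candidates.
candidates = [(8.0, 10), (7.2, 45), (6.5, 60), (7.0, 40), (6.0, 20)]
front = pareto_front(candidates)
print(front)
```

Here the candidates (7.0, 40) and (6.0, 20) are dropped because (7.2, 45) is better on both objectives. The remaining three represent different potency/stability trade-offs.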

Issue 3: Limited Public Data for Target of Interest

Problem: Insufficient bioactivity data exists for a novel target to reliably train a generative model.

Solution:

- Employ Transfer Learning: Pre-train the model on a larger, general chemical dataset, then fine-tune on the limited target-specific data available [16].

- Utilize Multi-Task Learning: Design the model to learn from related targets or general pharmaceutical properties, sharing representations across tasks [16].

Frequently Asked Questions (FAQs)

Q: What is the minimum dataset size required to start a multi-objective library design project?

A: While data requirements vary, one cited approach successfully generated balanced compounds using limited public data by leveraging transfer learning and multi-task learning frameworks [16].

Q: How do I choose which properties to include in the multi-objective function?

A: Base your selection on the specific therapeutic context. Core properties often include potency (against single or multiple targets), metabolic stability, and a calculated safety profile. The function can be tailored to prioritize the balance of these conflicting features [16].

Q: Can this approach design compounds for multi-target therapies?

A: Yes. A key application is designing compounds with a well-balanced profile for engaging multiple targets, which involves optimizing for affinity across several biological targets simultaneously [16].

Experimental Protocols & Data

Table 1: Key Properties for Multi-Objective Compound Optimization

| Property Objective | Typical Target Range | Commonly Used Assay/Model | Optimization Goal |

|---|---|---|---|

| Potency (pIC₅₀) | >7.0 (IC₅₀ < 100 nM) | Biochemical inhibition assay | Maximize |

| Metabolic Stability (HLM t₁/₂) | >30 minutes | Human Liver Microsome assay | Maximize |

| Cytotoxicity (CC₅₀) | >30 µM | Cell viability assay (e.g., HepG2) | Maximize |

| Lipophilicity (LogP) | 1-3 | Chromatographic method (e.g., LogD) | Maintain in range |

| Multi-Target Affinity | pIC₅₀ >6.5 for all targets | Panel of biochemical assays | Balanced Maximization |

Detailed Methodology: Multi-Objective Optimization Workflow

Protocol 1: De Novo Compound Design with Conflicting Properties

Data Curation and Pre-processing

- Source: Gather public bioactivity data (e.g., from ChEMBL) and compound structures.

- Standardization: Curate SMILES strings, standardize descriptors, and remove duplicates and assay artifacts.

- Labeling: Annotate compounds with property labels relevant to the objectives (e.g., "high potency," "low clearance").

Model Architecture and Training

- Framework: Implement a conditional generative model (e.g., a Variational Autoencoder or Generative Adversarial Network).

- Conditioning: The model is conditioned on a multi-dimensional vector representing the desired profile for all target properties.

- Training: Train the model to reconstruct compounds and predict their properties from the latent space.

Multi-Objective Optimization and Sampling

- Objective Function Definition: Formulate a combined objective function, such as a weighted sum of predicted properties: Score = w₁ * Potency + w₂ * Stability + w₃ * Safety.

- Latent Space Search: Perform guided exploration (e.g., via Bayesian optimization) in the model's latent space to find points that maximize the objective function.

- Compound Generation: Decode the optimized latent points to generate novel molecular structures.
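The weighted-sum objective in this step can be sketched as below. Because potency, stability, and safety are measured on different scales, the sketch first rescales each property to the 0-1 range. The scaling bounds, the weights, and the example values are all assumptions chosen for illustration and are not taken from the cited work.

```python
def scale(value, low, high):
    """Clamp and linearly rescale a raw property value to the 0-1 range."""
    return min(1.0, max(0.0, (value - low) / (high - low)))

def weighted_score(potency, stability, safety, weights=(0.5, 0.3, 0.2)):
    """Score = w1 * Potency + w2 * Stability + w3 * Safety on scaled inputs."""
    w1, w2, w3 = weights
    return w1 * potency + w2 * stability + w3 * safety

# Hypothetical predicted properties for one generated compound.
potency = scale(7.5, low=5.0, high=9.0)      # pIC50
stability = scale(45.0, low=0.0, high=60.0)  # HLM half-life, minutes
safety = scale(30.0, low=0.0, high=50.0)     # CC50, micromolar

score = weighted_score(potency, stability, safety)
print(f"score = {score:.4f}")
```

A weighted sum collapses the trade-off into one number, so the choice of weights decides which compromise is preferred. Inspecting the Pareto front alongside the score helps reveal candidates that a single weight setting would hide.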

In-silico Validation and Filtering

- Property Prediction: Run generated compounds through predictive models for ADMET (Absorption, Distribution, Metabolism, Excretion, Toxicity) properties.

- Structural Filtering: Apply rules for chemical stability, synthesizability, and the presence of undesirable functional groups.

- Selection: Finally, select a diverse set of compounds from the Pareto-optimal front for further experimental validation [16].

Visualizations

Diagram 1: Multi-Objective Optimization Workflow

Diagram 2: Conflicting Property Balance

The Scientist's Toolkit

Table 2: Essential Research Reagent Solutions

| Reagent / Material | Function in Library Design Research |

|---|---|

| Human Liver Microsomes (HLMs) | Critical for conducting high-throughput in-vitro assays to assess the metabolic stability of candidate compounds. |

| Cell-Based Assay Kits (e.g., Cytotoxicity) | Used for experimentally profiling generated compounds against key secondary objectives like safety and cellular activity. |

| Target Protein Panels | Essential for validating the primary design goal of multi-target affinity by measuring binding or inhibition across multiple targets. |

| Chemical Informatics Software Toolkits | Enable the calculation of molecular descriptors, structural validation, and application of chemical rules to filter generated libraries. |

| Curated Public Bioactivity Databases (e.g., ChEMBL) | Serve as the primary source of training data for building generative models, especially for novel or less-studied targets. |

FAQs: Sourcing and Feasibility in Library Design

What are the primary goals when filtering a compound library for cellular activity?

The primary goals are to identify compounds with genuine on-target biological activity while filtering out molecules that are cytotoxic, chemically unstable, or prone to assay interference. A further aim is to venture into underexplored areas of chemical space to uncover novel mechanisms of action; in the antibiotic-discovery work cited here, this meant moving beyond compounds similar to existing antibiotics to address antimicrobial resistance [17].

Why might the activity of a compound in a virtual screen not translate to a cellular assay?

A major reason is the difference between the nominal concentration applied to the assay and the actual concentration experienced by the cell. Chemicals can adsorb to plastic well plates, bind to serum proteins in the media, evaporate, or be metabolized, all of which reduce the bioavailable concentration. A Virtual Cell Based Assay (VCBA) model can predict the real intracellular concentration by accounting for these factors using the compound's physicochemical properties [18].

What are common sources of variability in cell-based assay results?

Variability can arise from multiple factors in the cell culture workflow, including cell seeding density, passage number, the timing of analysis, and the selection of appropriate microtiter plates. Maintaining reproducibility at every step is crucial for data reliability [19].

How can AI-generated compound designs be feasibly sourced for physical testing?

Generative AI can design millions of novel compounds. The key to feasibility is screening these virtual molecules computationally before committing to synthesis. For instance, after AI generated over 36 million candidates, researchers applied filters for antibacterial activity, cytotoxicity, and chemical liabilities, narrowing the pool to a manageable number of top candidates for synthesis and testing [17]. Aggregator platforms that consolidate commercially available compounds from multiple vendors can also help source building blocks or analogous compounds [20].

Troubleshooting Guides

Issue: High Hit Rate with Non-Reproducible or Cytotoxic Compounds

Problem: An initial screening returns an unusually high number of hits, many of which kill the cells or cannot be confirmed in follow-up experiments.

Solution:

- Filter for Pan-Assay Interference Compounds (PAINS): Apply computational filters to remove compounds known to cause false positives through non-specific mechanisms, such as aggregators or fluorescence quenchers [20].

- Implement Drug-Likeness Filters: Use guidelines like Lipinski's Rule of Five (molecular weight ≤ 500, logP ≤ 5, hydrogen bond donors ≤ 5, hydrogen bond acceptors ≤ 10) early in the virtual screening process to prioritize compounds with higher probability of cellular permeability and developability [20].

- Predict Cytotoxicity: Use trained machine-learning models to computationally screen and remove compounds predicted to be toxic to human cells before they are ever synthesized or purchased [17].
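The Rule of Five check in this list can be expressed as a violation count. The sketch below assumes the four properties are already available as numbers. The example values are hypothetical, and the cutoff of at most one violation is a common convention, not a requirement stated in this guide.

```python
def ro5_violations(mw, logp, hbd, hba):
    """Count Lipinski Rule of Five violations for one compound."""
    return sum([mw > 500, logp > 5, hbd > 5, hba > 10])

def passes_ro5(mw, logp, hbd, hba, max_violations=1):
    """Accept compounds with at most `max_violations` violations (assumed cutoff)."""
    return ro5_violations(mw, logp, hbd, hba) <= max_violations

# Hypothetical virtual-screening candidates: (MW, logP, HBD, HBA).
small_polar = (342.4, 2.8, 2, 6)
large_greasy = (642.8, 6.1, 3, 9)

print(ro5_violations(*small_polar), passes_ro5(*small_polar))
print(ro5_violations(*large_greasy), passes_ro5(*large_greasy))
```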

Issue: Discrepancy Between Nominal and Effective Cellular Concentration

Problem: A compound shows promising activity at a certain concentration in silico, but no effect is observed in the cellular assay, even at high nominal doses.

Solution:

- Employ a Virtual Cell Based Assay (VCBA) Model: Use this kinetic model to estimate the time-dependent actual concentration of the test chemical inside the cell, based on its physicochemical parameters and the specific cell line being used [18].

- Account for Key Parameters: The VCBA model calculates losses due to evaporation, binding to plastic and serum proteins, and metabolic degradation. This allows you to refine dosing strategies in silico before wet-lab experiments [18].

- Use the Model for IVIVE: Apply the VCBA coupled with a physiologically based kinetic (PBK) model to extrapolate the in vitro effective concentration to a predicted in vivo dose, strengthening the feasibility argument for a compound series [18].
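The gap between nominal and effective concentration can be illustrated with a deliberately simplified calculation. This is not the VCBA model, which solves a system of ordinary differential equations. It is a toy sketch that assumes a constant unbound fraction (after serum protein and plastic binding) and a single lumped first-order loss rate (evaporation plus degradation). All parameter names and values are hypothetical.

```python
import math

def effective_concentration(nominal_um, fraction_unbound, k_loss_per_h, hours):
    """Toy estimate: nominal * unbound fraction * exp(-k_loss * t)."""
    if not 0.0 <= fraction_unbound <= 1.0:
        raise ValueError("fraction_unbound must lie between 0 and 1")
    return nominal_um * fraction_unbound * math.exp(-k_loss_per_h * hours)

# A 10 uM nominal dose, 20% unbound, lumped loss rate 0.03 per hour, read at 24 h.
c24 = effective_concentration(10.0, 0.2, 0.03, 24.0)
print(f"effective concentration at 24 h: {c24:.2f} uM")
```

Even with these mild assumptions the cell sees roughly a tenth of the nominal dose, which shows why a compound can appear inactive at a concentration that looked sufficient on paper.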

Issue: Infeasible Sourcing or Synthesis of AI-Designed Compounds

Problem: Generative AI proposes structurally novel compounds, but they are impossible to synthesize with current methods or require unavailable starting materials.

Solution:

- Incorporate Synthesizability Checks: Integrate reaction-based virtual enumeration and retrosynthetic analysis into the AI design workflow to ensure proposed molecules are synthetically feasible [20].

- Leverage Compound Aggregators: Use commercial platforms that aggregate millions of available compounds from global suppliers to search for and source the desired molecule or a structurally similar analogue for initial testing [20].

- Partner with Specialized CROs: For complex molecules, collaborate with contract research organizations that specialize in custom chemical synthesis to navigate challenging synthetic routes.

Experimental Protocols & Data

Protocol: Tandem Mass Spectrometry for Hit Identification in Barcode-Free Libraries

This protocol is for identifying hits from a self-encoded library (SEL) after affinity selection, without relying on DNA barcodes [21].

- Sample Preparation: After affinity selection against the immobilized target and washing away non-binders, elute the bound compounds. Desalt and concentrate the eluate.

- nanoLC-MS/MS Analysis:

  - Chromatography: Inject the sample onto a nanoflow liquid chromatography system using a C18 column for separation. Use a water/acetonitrile gradient with 0.1% formic acid.

  - Mass Spectrometry: Operate the mass spectrometer in data-dependent acquisition (DDA) mode. Full MS1 scans are followed by fragmentation (MS2) of the most intense ions.

- Automated Structure Annotation:

  - Data Processing: Use software like SIRIUS and CSI:FingerID for reference spectra-free structure annotation.

  - Library Matching: Score the predicted molecular fingerprints of the unknown MS2 spectra against a computationally enumerated database of all possible library structures to unequivocally identify the hit compound.
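The library-matching idea, scoring a predicted fingerprint against every enumerated library member, can be sketched with a plain Tanimoto similarity on sets of "on" bits. This is a stand-in for illustration only: CSI:FingerID uses its own scoring scheme, and the bit positions and library identifiers below are hypothetical.

```python
def tanimoto(a, b):
    """Tanimoto similarity between two sets of fingerprint bit positions."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0

def best_match(query_bits, library):
    """Return (name, similarity) of the library entry most similar to the query."""
    name, bits = max(library.items(), key=lambda item: tanimoto(query_bits, item[1]))
    return name, tanimoto(query_bits, bits)

# Hypothetical enumerated library: identifier -> set of "on" fingerprint bits.
enumerated_library = {
    "SEL-001": frozenset({1, 4, 7, 9, 12}),
    "SEL-002": frozenset({2, 4, 8}),
    "SEL-003": frozenset({1, 9}),
}

# Hypothetical fingerprint predicted from an MS2 spectrum.
predicted_bits = frozenset({1, 4, 7, 9})
hit_name, hit_score = best_match(predicted_bits, enumerated_library)
print(hit_name, round(hit_score, 2))
```

Because the search space is limited to structures the library could actually contain, even an imperfect predicted fingerprint can rank the correct compound first.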

Protocol: In Vitro to In Vivo Extrapolation (IVIVE) Using a Virtual Cell Based Assay

This methodology extrapolates effective in vitro concentrations to in vivo doses for risk assessment and compound prioritization [18].

- Define System Parameters: Input the physicochemical properties of the test compound (e.g., logP, pKa, solubility) and parameters specific to your cell line (e.g., cell volume, lipid and protein content).

- Run the VCBA Simulation: Use the set of ordinary differential equations in the VCBA to model the time-dependent concentration of the chemical in the medium and inside the cells, accounting for partitioning, binding, and degradation.

- Couple with a PBK Model: Use the output from the VCBA (the in vitro biochemically effective concentration) as the input for a human physiologically based kinetic (PBK) model.

- Extrapolate the Dose: Run the PBK model in reverse (reverse dosimetry) to estimate the external in vivo dose that would produce a target organ concentration equal to the effective concentration seen in the cell assay.
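The dose extrapolation step can be illustrated with a deliberately simplified stand-in for a PBK model. The sketch below assumes a one-compartment model at steady state, where concentration is linear in dose; a real PBK model is a multi-compartment ODE system and would be inverted numerically. The function names, parameter names, and numbers are hypothetical and are not taken from the VCBA or from [18].

```python
def steady_state_conc(dose_mg_per_kg_day: float,
                      clearance_l_per_kg_day: float,
                      fraction_absorbed: float = 1.0) -> float:
    """Forward model (assumed): steady-state concentration in mg/L for a constant daily dose."""
    return fraction_absorbed * dose_mg_per_kg_day / clearance_l_per_kg_day


def reverse_dosimetry(target_conc_mg_per_l: float,
                      clearance_l_per_kg_day: float,
                      fraction_absorbed: float = 1.0) -> float:
    """Invert the linear forward model: external dose that yields the target concentration."""
    # Concentration produced by a unit dose (1 mg/kg/day).
    conc_per_unit_dose = steady_state_conc(1.0, clearance_l_per_kg_day, fraction_absorbed)
    # Because the model is linear in dose, the required dose is a simple ratio.
    return target_conc_mg_per_l / conc_per_unit_dose


# Hypothetical inputs: effective concentration from the in vitro step, plus kinetic parameters.
effective_conc = 2.0   # mg/L
clearance = 12.0       # L/kg/day
absorbed = 0.5         # fraction of the external dose reaching circulation

dose = reverse_dosimetry(effective_conc, clearance, absorbed)
print(f"Estimated external dose: {dose:.1f} mg/kg/day")
```

The ratio trick only works because the assumed model is linear in dose; for non-linear kinetics (e.g., saturable metabolism) the forward model would need to be solved iteratively for the dose.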

Quantitative Data Tables

Table 1: Key Molecular Properties for Drug-Likeness Filtering

Table based on criteria used to curate modern screening libraries and score AI-generated virtual compounds [20].

| Property | Ideal Range for Filtering | Rationale |

|---|---|---|

| Molecular Weight (MW) | ≤ 500 Da | Impacts compound permeability and absorption. |

| LogP (lipophilicity) | ≤ 5 | Reduces risk of poor solubility and metabolic instability. |

| Hydrogen Bond Donors (HBD) | ≤ 5 | Influences membrane permeability and oral bioavailability. |

| Hydrogen Bond Acceptors (HBA) | ≤ 10 | Affects solubility and permeability. |

| Topological Polar Surface Area (TPSA) | ≤ 140 Ų | A good predictor of cell membrane penetration; blood-brain barrier penetration generally calls for a lower TPSA (a commonly cited guideline is roughly ≤ 90 Ų). |
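As a minimal sketch of how the thresholds in Table 1 might be applied, the example below checks precomputed descriptor values against each cut-off and reports which properties a compound violates. The `Compound` class, the compound names, and the descriptor values are hypothetical; in practice the descriptors would come from a cheminformatics toolkit rather than being typed in by hand.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Compound:
    name: str
    mw: float    # molecular weight, Da
    logp: float  # calculated logP
    hbd: int     # hydrogen bond donors
    hba: int     # hydrogen bond acceptors
    tpsa: float  # topological polar surface area, square angstroms


# Upper limits taken from Table 1.
THRESHOLDS = {"mw": 500.0, "logp": 5.0, "hbd": 5, "hba": 10, "tpsa": 140.0}


def violations(compound: Compound) -> List[str]:
    """Return the names of the properties that exceed their Table 1 limit."""
    return [prop for prop, limit in THRESHOLDS.items()
            if getattr(compound, prop) > limit]


def passes_filter(compound: Compound, max_violations: int = 0) -> bool:
    """Keep a compound if it has at most `max_violations` violations."""
    return len(violations(compound)) <= max_violations


# Hypothetical descriptor values for two made-up compounds.
cmpd_a = Compound("cmpd_A", mw=310.4, logp=2.1, hbd=2, hba=5, tpsa=78.0)
cmpd_b = Compound("cmpd_B", mw=720.0, logp=4.2, hbd=5, hba=12, tpsa=165.0)

for c in (cmpd_a, cmpd_b):
    print(c.name, "pass" if passes_filter(c) else f"fail: {violations(c)}")
```

The `max_violations` parameter is a design choice, not part of the table: some groups tolerate one violation rather than rejecting a compound outright.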

Table 2: Common Assay Interferences and Mitigation Strategies

Table summarizing frequent sources of false positives in cellular screening and how to address them [20] [17].

| Interference Type | Cause | Mitigation Strategy |

|---|---|---|

| Cytotoxicity | Non-specific mechanism leading to cell death. | Use cell viability assays (e.g., ATP-based assays) in parallel; filter with predictive cytotoxicity models. |

| Chemical Reactivity | Compound reacts non-specifically with protein targets. | Filter out chemical groups known for promiscuous activity (e.g., certain Michael acceptors). |

| Aggregation | Compounds form colloidal aggregates that sequester proteins. | Add a non-ionic detergent such as Triton X-100 to the assay buffer; check for aggregates by dynamic light scattering (DLS). |

| Fluorescence / Luminescence | Compound interferes with optical readout. | Confirm hits with an orthogonal readout, ideally a non-optical one (e.g., HPLC- or mass spectrometry-based detection). |

| Structural Liabilities | Molecules with unstable motifs (e.g., esters, aldehydes). | Apply computational filters to remove compounds with known unstable functional groups. |

Workflow Visualization

Virtual to Physical Screening Workflow

VCBA Compound Fate Model

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Library Screening and Feasibility Analysis

| Item | Function |

|---|---|

| Curated Compound Libraries | Pre-filtered collections of molecules designed for drug-like properties, diversity, and relevance to specific target classes (e.g., kinases), improving initial hit quality [20]. |

| Compound Aggregator Platforms | Online services that consolidate millions of commercially available chemicals from multiple suppliers, streamlining the sourcing of screening compounds and building blocks [20]. |

| Virtual Cell Based Assay (VCBA) Software | A kinetic model that uses physicochemical properties to predict the real concentration of a compound inside a cell, correcting for losses to plastic, protein, and evaporation [18]. |

| High-Content Screening Systems | Automated microscopy platforms that provide multiparametric readouts from cell-based assays, allowing simultaneous assessment of efficacy and cytotoxicity. |

| Self-Encoded Libraries (SELs) | Barcode-free solid-phase combinatorial libraries where hits are identified via tandem MS/MS, ideal for targets incompatible with DNA-encoded libraries (DELs), such as nucleic-acid binding proteins [21]. |

| AI/ML Drug Discovery Platforms | Software that uses generative models to design novel compounds and predictive models to virtually screen for activity, ADMET properties, and synthesizability [17] [20]. |

Advanced Tools and Workflows: From Cheminformatics to AI-Driven Design

Troubleshooting Guides

Issue 1: Chemical Search Returns Inaccurate or No Results

Problem: When searching a chemical library using an identifier (e.g., name, CASRN) or structure, the query returns no hits or incorrect structures.

Solutions:

- Verify Identifier Input: Ensure identifiers are correctly spelled and formatted. Mixed input (e.g., names, CASRNs, SMILES) is often supported, but a single incorrect entry can cause failures [22].

- Check SMILES/String Interpretation: If a SMILES string is used, the system may convert it into a structure rather than searching for it in the database. Confirm how your informatics tool handles different input types [22].

- Validate Structure Drawing: For substructure or similarity searches, redraw the query structure in the molecular editor to ensure the correct connectivity and functional groups are represented [23] [22].

- Confirm Database Contents: The chemical may not be present in the specific database or library you are searching. Check if your library is filtered or if the compound is part of a "make-on-demand" virtual collection that requires synthesis [24].

Issue 2: Hazard or Safety Profile Data is Missing or Inconclusive

Problem: After generating a hazard or safety comparison profile, some data cells are empty, greyed out, or marked "Inconclusive," hindering compound assessment.

Solutions:

- Interpret Data Availability: White cells typically mean no data is available, while grey "Inconclusive" (I) cells indicate the data could not yield a definitive classification [22].

- Consult Multiple Data Sources: Use integrated hyperlinks to external databases (e.g., ATSDR, EPA's CompTox Chemicals Dashboard, PubChem) to gather additional data points not shown in the initial heatmap [22].

- Leverage Predictive Models (QSAR): If experimental data is absent, use built-in QSAR (Quantitative Structure-Activity Relationship) prediction modules to estimate toxicity endpoints and physicochemical properties [24] [22].

Issue 3: Compound Integrity and Stability in Screening Libraries

Problem: Biological screening results are inconsistent, potentially due to compound degradation in stored library samples.

Solutions:

- Monitor Compound Age and Usage: Track the date of stock solution preparation and the number of times a sample has been used. Older, frequently used compounds are more likely to degrade [25] [26].

- Manage DMSO Storage Conditions: DMSO is hygroscopic, so stock solutions take up water over time, and small molecules stored in this wet DMSO can become hydrated and break down [25]. Keep stocks tightly sealed under dry storage conditions to minimize water uptake and maintain stock integrity.

- Implement Quality Control (QC) Data: Integrate QC data (e.g., purity checks) into the compound management informatics system. This allows researchers to filter out or flag compounds that have failed QC [26].
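The three checks above (age, usage, and QC status) can be combined into a simple flagging routine. This is only a sketch: the `StockSample` fields and the three cut-off values are assumptions chosen for illustration, not recommendations from [25] or [26], and a real compound management system would expose its own schema.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass
class StockSample:
    compound_id: str
    prepared_on: date
    times_used: int
    qc_purity_pct: Optional[float]  # None means no QC result is on record


# Illustrative cut-offs (assumptions); tune these to local practice.
MAX_AGE_DAYS = 365
MAX_TIMES_USED = 10
MIN_PURITY_PCT = 90.0


def qc_flags(sample: StockSample, today: date) -> List[str]:
    """Return the reasons, if any, to treat a stock solution with caution."""
    flags = []
    if (today - sample.prepared_on).days > MAX_AGE_DAYS:
        flags.append("old_stock")
    if sample.times_used > MAX_TIMES_USED:
        flags.append("heavy_use")
    if sample.qc_purity_pct is None:
        flags.append("no_qc_data")
    elif sample.qc_purity_pct < MIN_PURITY_PCT:
        flags.append("failed_qc")
    return flags


today = date(2024, 1, 10)
aged = StockSample("cmpd_001", date(2022, 1, 10), times_used=14, qc_purity_pct=82.0)
fresh = StockSample("cmpd_002", date(2023, 11, 1), times_used=2, qc_purity_pct=None)

for s in (aged, fresh):
    print(s.compound_id, qc_flags(s, today) or "ok")
```

Returning a list of reasons rather than a single pass/fail value lets a screening team decide which flags exclude a compound and which merely annotate its results.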

Frequently Asked Questions (FAQs)

General Cheminformatics

Q1: What is cheminformatics, and why is it critical for modern drug discovery?

Cheminformatics combines chemistry, computer science, and information science to organize and process chemical data [27]. It is essential for managing the vast chemical spaces of ultra-large libraries, which can contain billions of "make-on-demand" compounds, enabling virtual screening and data-driven hit identification where empirical screening is not feasible [24].

Q2: What is the difference between a pharmacophore and an informacophore?

A pharmacophore is a model based on human-defined heuristics and chemical intuition, representing the spatial arrangement of features essential for a molecule's biological activity [24]. An informacophore extends this concept by incorporating data-driven insights from computed molecular descriptors, fingerprints, and machine-learned representations of chemical structure, offering a more systematic and bias-resistant strategy for scaffold optimization [24].

Data Management & Analysis

Q3: How can I add a chemical property (like druglikeness) to a table of compounds?

Most cheminformatics software allows you to insert a calculated property column. Typically, you right-click on the molecular structure column header, select "Insert Column," then choose the desired chemical property (e.g., logP, molecular weight, druglikeness) from a function menu. The software will calculate and display the values for all compounds in the table [23].

Q4: What are the FAIR data principles, and why are they important?

FAIR stands for Findable, Accessible, Interoperable, and Reusable [27]. These principles are guidelines for scientific data management, ensuring that digital assets like chemical and biological data are well-organized and usable beyond their immediate initial application. This maximizes data utility and promotes its preservation, which is crucial for building robust machine learning models in drug discovery [27].

Experimental Design & Validation

Q5: How do biological functional assays integrate with cheminformatics predictions?

Computational tools rapidly identify potential drug candidates, but these in silico predictions must be rigorously confirmed. Biological functional assays (e.g., enzyme inhibition, cell viability) provide quantitative, empirical data on compound activity, potency, and mechanism of action [24]. This validation creates an iterative feedback loop where assay results inform and refine the computational models and structure-activity relationship (SAR) studies, guiding the design of better analogues [24].

Q6: What is the "hit-to-lead" stage?

Hit to lead (H2L) is a key stage in early drug discovery [27]. "Hits" are initial compounds with a desired therapeutic effect at a known target. The H2L process involves optimizing these hits to produce a "lead" compound: a refined candidate with improved efficacy, selectivity, and drug-like properties suitable for advanced stages of development [27].

Experimental Protocols & Data

Protocol 1: Virtual Screening for Hit Identification

Objective: To identify potential hit compounds from an ultra-large virtual library by filtering for desired properties and target binding.

- Library Curation: Select a virtual compound library (e.g., Enamine's 65-billion make-on-demand library) [24].

- Property Filtering: Apply calculated filters to narrow the chemical space. The table below summarizes key properties and typical thresholds used for lead-like compounds [24].

Table: Key Physicochemical Properties for Initial Library Filtering

| Property | Target Range | Rationale |

|---|---|---|

| Molecular Weight | 200 - 500 Da | Balances solubility and permeability. |

| LogP | < 5 | Controls lipophilicity, impacting ADMET. |

| Hydrogen Bond Donors | ≤ 5 | Improves cell membrane permeability. |

| Hydrogen Bond Acceptors | ≤ 10 | Influences solubility and permeability. |

- Structure-Based Virtual Screening: For a specific protein target, perform molecular docking of the filtered compound set to predict binding affinities and poses [24].

- Cheminformatic Analysis: Cluster the top-ranking virtual hits by structural similarity and profile them against hazard endpoints to identify promising, safe scaffolds for acquisition and testing [22].

Protocol 2: Generating a Hazard Comparison Profile

Objective: To compare and rank a set of compounds based on their potential toxicity across multiple endpoints.

- Input Chemicals: Retrieve compounds for profiling by searching with identifiers (CASRN, name, SMILES) or by drawing a structure to find analogues via similarity search [22].

- Generate Profile: Execute the hazard profiling module to create a heat map. Each cell's color and letter represent the toxicity grade: Red/VH (Very High), Orange/H (High), Yellow/M (Medium), Green/L (Low), Grey/I (Inconclusive) [22].

- Data Interpretation: Sort columns to identify compounds with the highest hazard rankings. Hover over or click on cells to reveal the underlying data sources (Authoritative, Screening, or QSAR Model) [22].

- Export and Report: Download the complete hazard profile as a multi-worksheet Excel file containing the heat-map display and the underlying data for further analysis and reporting [22].
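Once a profile has been exported, the letter grades can be ranked programmatically. The sketch below maps the grades described above (VH, H, M, L) to numeric scores and sorts compounds by their worst grade, using the summed score as a tie-breaker. The numeric mapping, the endpoint names, and the compound profiles are assumptions for illustration; the profiling tool itself may rank differently.

```python
from typing import Dict, List, Optional, Tuple

# Assumed numeric mapping of the letter grades; "I" and missing data score nothing.
GRADE_SCORE = {"VH": 4, "H": 3, "M": 2, "L": 1}

Profile = Dict[str, Optional[str]]  # endpoint name -> grade letter, or None if no data


def hazard_summary(profile: Profile) -> Tuple[int, int, int]:
    """Return (worst grade score, summed score, number of inconclusive or missing cells)."""
    grades = list(profile.values())
    scored = [GRADE_SCORE[g] for g in grades if g in GRADE_SCORE]
    gaps = sum(1 for g in grades if g not in GRADE_SCORE)
    return max(scored, default=0), sum(scored), gaps


def rank_by_hazard(profiles: Dict[str, Profile]) -> List[str]:
    """Order compounds from most to least hazardous (worst grade first, then total)."""
    return sorted(profiles,
                  key=lambda name: hazard_summary(profiles[name])[:2],
                  reverse=True)


# Hypothetical profiles with made-up endpoint names.
profiles = {
    "cmpd_1": {"acute_tox": "L", "carcinogenicity": "M", "skin_sens": "I"},
    "cmpd_2": {"acute_tox": "VH", "carcinogenicity": "L", "skin_sens": "L"},
    "cmpd_3": {"acute_tox": "M", "carcinogenicity": "M", "skin_sens": None},
}

for name in rank_by_hazard(profiles):
    worst, total, gaps = hazard_summary(profiles[name])
    print(f"{name}: worst={worst} total={total} data_gaps={gaps}")
```

Data gaps are counted separately rather than scored, so an untested compound is not mistaken for a safe one.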

The Scientist's Toolkit: Essential Research Reagent Solutions

Table: Key Materials for Cheminformatics-Driven Library Screening

| Item / Solution | Function in Experiments |

|---|---|

| Compound Library Management Software (e.g., IDBS Polar) | Tracks compound age, usage, and integrity over time, ensuring proper documentation and lessening user error [25]. |

| Cheminformatics Suites (e.g., ICM, EPA Cheminformatics Modules) | Provides integrated tools for chemical search, structure editing, 2D-to-3D conversion, property calculation, and hazard profiling [23] [22]. |

| Make-on-Demand Virtual Libraries | Ultra-large collections of novel, easily synthesizable compounds that dramatically expand accessible chemical space for virtual screening [24]. |

| Toxicity Estimation Software Tool (TEST) | Enables batch prediction of physicochemical properties and toxicity endpoints for chemicals lacking experimental data [22]. |

| Structure Drawing / Molecular Editor | An integrated tool for drawing, editing, and visualizing chemical structures, often with real-time property calculation (e.g., druglikeness) [23]. |

Workflow Diagrams

Compound Filtering and Validation Workflow

Chemical Data Retrieval and Profiling Path

Ligand-Based and Structure-Based Virtual Screening for Cellular Activity

Troubleshooting Guides and FAQs

FAQ: Core Concepts and Applications

What is the fundamental difference between LBVS and SBVS, and when should I use each approach?

Ligand-Based Virtual Screening (LBVS) uses known active ligands as references to identify new compounds with similar structural or physicochemical features, operating on the principle that similar molecules often have similar biological activity [28] [29]. It is particularly valuable when the 3D structure of the target protein is unavailable, such as for many G-protein-coupled receptors (GPCRs) [29] [30]. Structure-Based Virtual Screening (SBVS), in contrast, requires a 3D protein structure and computationally docks small molecules into the target's binding site to predict binding poses and affinity [31] [32]. SBVS often provides better library enrichment by explicitly accounting for the binding pocket's shape and volume [30]. For a new target with several known actives but no protein structure, start with LBVS. If a high-quality protein structure is available, SBVS can provide atomic-level insights into interactions.

How can I effectively combine LBVS and SBVS methods?

A sequential hybrid approach is often most efficient [30]. First, use fast LBVS methods to filter a very large compound library (e.g., millions to billions of compounds) down to a more manageable subset (e.g., thousands). This leverages the speed and pattern-recognition strength of LBVS [33]. Then, apply more computationally expensive SBVS to this pre-filtered set to refine the selection based on predicted binding interactions [30]. This conserves computational resources while leveraging the strengths of both methods. Alternatively, you can run both methods in parallel and use consensus scoring, which prioritizes compounds that rank highly in both LBVS and SBVS, thereby increasing confidence in the selected hits [30].
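One simple way to implement the parallel consensus idea is rank averaging: rank compounds separately by LBVS similarity and by docking score, then order them by mean rank. The sketch below assumes this particular scheme (other consensus schemes exist), and the compound IDs and scores are invented for illustration.

```python
from typing import Dict, List


def ranks(scores: Dict[str, float], higher_is_better: bool) -> Dict[str, int]:
    """Assign rank 1 to the best-scoring compound, 2 to the next, and so on."""
    ordered = sorted(scores, key=scores.get, reverse=higher_is_better)
    return {cid: position + 1 for position, cid in enumerate(ordered)}


def consensus_ranking(similarity: Dict[str, float],
                      docking: Dict[str, float]) -> List[str]:
    """Order compounds by the mean of their LBVS and SBVS ranks."""
    shared = sorted(similarity.keys() & docking.keys())  # only compounds scored by both
    # Higher similarity is better; a more negative docking score is better.
    sim_rank = ranks({c: similarity[c] for c in shared}, higher_is_better=True)
    dock_rank = ranks({c: docking[c] for c in shared}, higher_is_better=False)
    mean_rank = {c: (sim_rank[c] + dock_rank[c]) / 2 for c in shared}
    # Ties in mean rank are broken alphabetically by compound ID.
    return sorted(shared, key=lambda c: (mean_rank[c], c))


# Hypothetical scores: Tanimoto-like similarity and docking energies in kcal/mol.
similarity = {"A": 0.82, "B": 0.55, "C": 0.71, "D": 0.40}
docking = {"A": -9.0, "B": -9.4, "C": -7.5, "D": -6.0}

print(consensus_ranking(similarity, docking))
```

Working with ranks rather than raw scores sidesteps the fact that similarity coefficients and docking energies are on unrelated scales.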

My virtual screening campaign identified compounds with good predicted affinity, but they showed no cellular activity. What could be wrong?

This common issue often stems from compounds failing to reach the intracellular target. The problem likely lies in inadequate filtering for cell permeability and other ADMET (Absorption, Distribution, Metabolism, Excretion, and Toxicity) properties [28]. During library design and post-screening filtering, ensure you evaluate key physicochemical properties linked to cellular availability. The following table summarizes critical properties and typical thresholds for cellular activity:

Table: Key Physicochemical Properties for Cellular Activity Filtering

| Property | Description | Typical Thresholds for Cellular Activity |

|---|---|---|

| Lipid-Water Partition Coefficient (LogP) | Measures lipophilicity; impacts membrane permeability [28]. | Define an optimal range for the target; as a general upper bound, ≤5 (as per Lipinski's Rule of Five) |

| Topological Polar Surface Area (TPSA) | Predicts cell permeability and absorption [28]. | Lower TPSA is generally better for cell membrane penetration; ≤140 Ų is a common cut-off |

| Hydrogen Bond Donors (HBD) | Counts the number of OH and NH groups [28]. | ≤5 (as per Lipinski's Rule of Five) |

| Hydrogen Bond Acceptors (HBA) | Counts the number of O and N atoms [28]. | ≤10 (as per Lipinski's Rule of Five) |

| Molecular Weight (MW) | Impacts permeability and solubility [28]. | ≤500 Da (as per Lipinski's Rule of Five) |

| hERG Toxicity | Predicts potential for cardiotoxicity [28]. | "Negative" (predicted toxicity probability <0.3) |

| Solubility | Critical for compound bioavailability [28]. | Higher values (in μmol/L) are generally better |

What are the best practices for preparing my target protein structure for SBVS?

The quality of the input structure is paramount [31] [32]. Start with a high-resolution experimental structure (from X-ray crystallography or cryo-EM) if available. If using a computationally modeled structure (e.g., from AlphaFold), be aware of potential limitations in side-chain positioning and conformational dynamics, which may require post-modeling refinement [30]. If multiple receptor conformations are available, consider ensemble docking to account for target flexibility, which can significantly improve results for proteins with mobile binding sites [31] [34]. Finally, prepare the structure by adding polar hydrogens, assigning correct protonation states, and treating key water molecules and metal ions appropriately [31].

Troubleshooting Common Workflow Issues

Issue: Poor enrichment of active compounds in my SBVS results.

- Possible Cause 1: Rigid receptor model. Your docking setup may not account for necessary side-chain or backbone movements in the binding site.

- Solution: If possible, use a docking program that allows for side-chain flexibility. Alternatively, perform ensemble docking using multiple protein conformations derived from crystal structures or molecular dynamics simulations [31] [34].

- Possible Cause 2: Inadequate consideration of key interactions.

- Solution: Before docking, analyze known ligand-target complexes to identify critical interaction residues. After docking, manually inspect top-ranked poses to ensure they form these key interactions (e.g., hydrogen bonds with catalytic residues) rather than relying solely on the docking score [32] [33].

Issue: LBVS consistently returns compounds with high structural similarity (lacking scaffold diversity).

- Possible Cause: Over-reliance on a single query compound or a narrow similarity metric.

- Solution: Use multiple, diverse known active compounds as queries for parallel screening runs [29]. Instead of relying solely on topological fingerprints, employ 3D similarity methods that consider molecular shape and electrostatic fields, which can facilitate "scaffold hopping" [29] [30]. For shape-based methods, the HWZ scoring function has been shown to improve performance over traditional Tanimoto scoring [29].

Issue: High rate of false positives in the final hit list.

- Possible Cause: Relying on a single scoring metric from one virtual screening method.

- Solution: Implement a consensus approach. Filter the initial SBVS or LBVS hits using the other method. For example, take the top-ranked compounds from docking and check their similarity to known actives, or vice-versa [33] [30]. This helps eliminate compounds that are artifacts of a single method's scoring function.

Issue: Successful hits from screening show unexpected cytotoxicity or off-target effects.

- Possible Cause: Insufficient screening for safety and selectivity during the virtual screening process.

- Solution: Incorporate explicit off-target filters. Some platforms allow you to screen selected compounds against a panel of safety and kinase assays. You can set filters to discard compounds with predicted activity (pIC50) above a certain threshold (e.g., >7 for obvious toxicity) against these undesirable off-targets [28].
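To make the pIC50 threshold concrete: pIC50 is the negative base-10 logarithm of the IC50 in mol/L, so a pIC50 of 7 corresponds to an IC50 of 100 nM. The sketch below converts predicted IC50 values to pIC50 and flags off-targets above the cut-off. The target names and predicted values are hypothetical, and the function names are not from any particular platform.

```python
import math
from typing import Dict, List


def pic50_from_ic50_nm(ic50_nm: float) -> float:
    """pIC50 = -log10(IC50 in mol/L), which equals 9 - log10(IC50 in nM)."""
    return 9.0 - math.log10(ic50_nm)


def flag_off_target_liabilities(predicted_ic50_nm: Dict[str, float],
                                pic50_cutoff: float = 7.0) -> List[str]:
    """Return the off-targets whose predicted pIC50 exceeds the cut-off."""
    return sorted(target for target, ic50 in predicted_ic50_nm.items()
                  if pic50_from_ic50_nm(ic50) > pic50_cutoff)


# Hypothetical predictions for one compound against a small off-target panel.
predictions = {"hERG": 30.0, "kinase_X": 2500.0, "receptor_Y": 100.0}

for target, ic50 in predictions.items():
    print(f"{target}: IC50 = {ic50:g} nM, pIC50 = {pic50_from_ic50_nm(ic50):.2f}")
print("Flagged:", flag_off_target_liabilities(predictions))
```

Note that the comparison is strict, so a compound sitting exactly at the cut-off (100 nM here) is not flagged; whether the boundary should be inclusive is a policy decision.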

Experimental Protocols for Key Steps

Protocol: Ligand-Based Virtual Screening Using Molecular Similarity

- Query Selection: Gather a set of known active compounds for your target from databases like ChEMBL [28] or PubChem [31]. Select queries that are diverse in structure and potency.

- Compound Library Preparation: Obtain a database of small molecules (e.g., ZINC, PubChem) [31]. Prepare the library by generating realistic 3D conformations for each compound and standardizing tautomeric and protonation states.

- Similarity Calculation: For each compound in the library, calculate its similarity to the query molecule(s). Common methods include:

  - 2D Fingerprints: Use substructure keys-based fingerprints (e.g., PubChem fingerprint) and calculate the Tanimoto coefficient, typically with a cut-off > 0.5 for similarity [33].

  - 3D Shape Similarity: Use tools like ROCS to maximize the overlap of molecular shapes and chemistry between the query and database compounds [29].

- Ranking and Selection: Rank the library compounds based on their similarity score to the query. Select the top-ranked compounds for further experimental testing or as input for a subsequent SBVS workflow [30].
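The 2D similarity and ranking steps can be sketched with the Tanimoto coefficient, defined for two fingerprints as the number of shared on-bits divided by the number of on-bits present in either. In this minimal example each fingerprint is represented as a set of on-bit indices; the bit indices and compound IDs are made up, and a real workflow would generate fingerprints with a cheminformatics toolkit.

```python
from typing import Dict, List, Set, Tuple


def tanimoto(fp_a: Set[int], fp_b: Set[int]) -> float:
    """Tanimoto coefficient: shared on-bits divided by on-bits in either fingerprint."""
    if not fp_a and not fp_b:
        return 0.0  # convention chosen here for two empty fingerprints
    shared = len(fp_a & fp_b)
    return shared / (len(fp_a) + len(fp_b) - shared)


def screen(query_fp: Set[int],
           library: Dict[str, Set[int]],
           cutoff: float = 0.5) -> List[Tuple[str, float]]:
    """Return library compounds above the similarity cut-off, most similar first."""
    scored = [(cid, tanimoto(query_fp, fp)) for cid, fp in library.items()]
    hits = [(cid, score) for cid, score in scored if score > cutoff]
    return sorted(hits, key=lambda hit: hit[1], reverse=True)


# Hypothetical fingerprints given as sets of on-bit positions.
query = {1, 4, 7, 9, 12, 15}
library = {
    "lib_1": {1, 4, 7, 9, 12, 20},
    "lib_2": {1, 4, 30, 31},
    "lib_3": {1, 4, 7, 9, 12, 15},
}

for cid, score in screen(query, library):
    print(f"{cid}: {score:.2f}")
```

With several query compounds, one common option is to score each library compound against every query and keep its maximum similarity before ranking.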

Protocol: Structure-Based Virtual Screening Using Molecular Docking

- Target Preparation: Obtain the 3D structure of your target protein (PDB format). Remove native ligands and water molecules, unless a specific water is crucial for binding. Add polar hydrogens and assign protonation states at physiological pH. Define the binding site coordinates, typically centered on a known co-crystallized ligand or key residues [31] [33].

- Ligand Library Preparation: Prepare the library of small molecules by generating 3D structures, assigning correct bond orders, and enumerating possible tautomers and protonation states at a physiological pH (e.g., using OpenBabel) [33]. Convert the library and prepared protein into the required format for your docking software (e.g., PDBQT for AutoDock Vina) [33].

- Molecular Docking: Perform the docking simulation. For each ligand, the software will generate multiple poses (orientations and conformations) within the binding site. Use a grid box that amply covers the binding site (e.g., 19 × 38 × 19 Å) [33].

- Pose Scoring and Ranking: The docking program scores each pose using a scoring function. For each compound, retain the pose with the best (most negative) docking score. Rank all compounds in the library based on this score [31] [34].

- Post-Processing and Analysis: Manually inspect the top-ranked poses (e.g., top 20-50 compounds) to check for sensible binding modes, key interactions (e.g., hydrogen bonds, hydrophobic contacts), and reasonable geometry. Use tools like PLIP to systematically analyze non-covalent interactions [33]. Select the most promising candidates for experimental validation.
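The pose scoring and ranking step reduces to keeping the most negative score per compound and sorting on it. The sketch below assumes docking output has already been parsed into (compound ID, score) pairs; the parsing itself depends on the docking program and is not shown, and the IDs and scores are invented.

```python
from typing import Dict, Iterable, List, Tuple


def best_pose_per_compound(poses: Iterable[Tuple[str, float]]) -> List[Tuple[str, float]]:
    """Keep the most negative docking score per compound, then rank best-first."""
    best: Dict[str, float] = {}
    for compound_id, score in poses:
        # A lower (more negative) score indicates a more favorable predicted pose.
        if compound_id not in best or score < best[compound_id]:
            best[compound_id] = score
    return sorted(best.items(), key=lambda item: item[1])


# Hypothetical parsed docking output: several poses per compound, scores in kcal/mol.
poses = [
    ("cmpd_A", -7.2), ("cmpd_A", -8.1),
    ("cmpd_B", -6.5),
    ("cmpd_C", -9.0), ("cmpd_C", -8.4),
]

ranked = best_pose_per_compound(poses)
top_n = ranked[:2]  # shortlist for manual pose inspection
print(ranked)
```

The score-based shortlist is only a starting point for the manual inspection described in the final step, since a good score does not guarantee a sensible binding mode.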

Workflow and Pathway Visualizations

Virtual Screening Decision and Workflow Diagram

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Computational Tools and Databases for Virtual Screening

| Tool/Resource Name | Type | Function in Virtual Screening |

|---|---|---|

| ChEMBL [28] | Public Database | Provides curated bioactivity data and assay information for known active compounds, useful for selecting LBVS queries. |

| ZINC [31] [35] | Public Compound Library | A free database of commercially available compounds for building screening libraries. |

| PubChem [31] [33] | Public Database / Library | Provides a massive repository of chemical structures and biological activities for compound sourcing and similarity searching. |

| AutoDock Vina [33] [34] | Docking Software | A widely used, open-source program for molecular docking and SBVS. |

| ROCS [29] [30] | LBVS Software | A standard for 3D shape-based virtual screening, comparing molecular shape and chemistry. |

| RosettaVS [34] | Docking Software / Platform | An advanced, open-source SBVS method that models receptor flexibility and screens ultra-large libraries. |

| VirtuDockDL [36] | AI-Based Platform | A deep learning pipeline that uses Graph Neural Networks to predict compound activity, combining ligand and structure-based insights. |

| QuanSA [30] | LBVS Software | A 3D quantitative structure-activity relationship method that predicts both ligand pose and quantitative affinity. |

| ICM-Pro [35] | Computational Chemistry Suite | Software used for library enumeration, molecular modeling, and docking. |

| OpenBabel [33] | Chemical Toolbox | An open-source tool for chemical file format conversion and molecular manipulation, crucial for library preparation. |

Designing Multi-Target Focused Libraries for Complex Diseases

Frequently Asked Questions (FAQs) & Troubleshooting

This section addresses common challenges researchers face when designing and implementing multi-target focused libraries, providing practical solutions grounded in recent research.

FAQ 1: Why should I consider a multi-target approach for complex diseases like diabetes or Alzheimer's?

- Answer: Diseases such as cancer, neurodegenerative disorders, and diabetes have multifactorial etiologies: they involve complex networks of pathological processes rather than a single defect. Drugs that act on a single target have often proven insufficient for managing these conditions. Multi-target approaches, which simultaneously modulate multiple biological targets within a disease pathway, can enhance therapeutic efficacy while reducing side effects and toxicity. This strategy can also reduce polypharmacy, improving patient outcomes and adherence [37] [38].

FAQ 2: My HTS campaign yielded hits that are PAINS (Pan-Assay Interference Compounds). How can I avoid this in library design?

- Answer: A critical step in crafting a high-quality screening library is upfront filtering to eliminate compounds with problematic functionalities. Employ cheminformatics filters to remove compounds known to interfere promiscuously with assay outputs. These include functional groups such as aldehydes, Michael acceptors, rhodanines, and many others described in the PAINS and REOS (Rapid Elimination Of Swill) filters. Applying these filters with software from providers such as ACD/Labs, OpenEye, or Schrödinger is a standard best practice [39].

FAQ 3: What are the key physicochemical properties I should prioritize for a "lead-like" multi-target library?

- Answer: While rules like Lipinski's Rule of Five are a good starting point for ensuring drug-likeness, library design should focus on "lead-like" qualities. This often means prioritizing compounds with lower molecular weight and lower lipophilicity than typical drug molecules, leaving room for optimization during medicinal chemistry efforts. The library should be optimized for structural diversity and drug-like properties, and should follow established rules that favor good absorption, distribution, metabolism, and excretion (ADME) properties [39] [40].

FAQ 4: How can I expand my hit compound into a focused library for multi-target activity?

- Answer: An effective method is to use a comprehensive set of chemical transformation rules. This involves assembling a compendium of structural changes commonly used in medicinal chemistry (encoded in the SMIRKS linear notation) and applying them to your hit compound(s) to generate a virtual library. This approach allows for a rigorous exploration of the chemical space around your initial hit, helping you find new bioactive compounds and generate therapeutic options for complex diseases. The resulting virtual library can then be filtered based on medicinal chemistry criteria and synthesized for testing [38].

FAQ 5: How can computational methods and AI aid in the de novo design of multi-target ligands?

- Answer: Advanced computational methods, including artificial intelligence (AI), are powerful tools for de novo design. For instance, generative models like Cycle-Consistent Adversarial Networks (CycleGAN) can be trained on unpaired sets of target-focused chemical libraries to generate novel multi-target directed ligands (MTDLs). These AI-generated molecules can be designed to inhibit two or more primary target enzymes simultaneously, such as in Alzheimer's disease, and can be evaluated for structural novelty, binding affinity, and favorable physicochemical properties in silico before synthesis [41].

Experimental Protocols & Data Presentation

This table summarizes critical filters to apply during the library design phase to avoid problematic compounds and ensure lead-like quality.

| Filter Category | Specific Examples / Criteria | Purpose / Rationale |

|---|---|---|

| Problematic Functionalities | Aldehydes, Michael acceptors, Rhodanines, 2-halopyridines, Sulfonyl halides, Redox-cycling compounds | Eliminate compounds that promiscuously interfere with assay outputs (PAINS) or are chemically reactive/unstable. |

| Physicochemical Properties | Lipinski's Rule of Five, lead-like molecular weight and complexity | Ensure compounds have favorable ADME properties and optimization potential. |

| Structural Diversity | High number of unique scaffolds, low clustering density | Maximize the chance of finding hits against diverse target classes and biological space. |

This table outlines successful multi-target strategies explored in T2DM research, which can inform target selection for other complex diseases.

| Target Combination | Reported Lead Compounds | Therapeutic Outcome / Implication |

|---|---|---|

| PPARα/γ agonists | Ragaglitazar, Aleglitazar, MHY908, LT175 | Improves insulin sensitivity and reduces triglyceride/blood glucose levels; several compounds have reached clinical trials. |

| PPARγ/SUR agonists | Compound 5 (from research) | Simultaneously improves insulin sensitivity and stimulates insulin secretion. |

| PPARγ/FFA1 (GPR40) agonists | Compounds 6 & 7 (from research) | Improves insulin sensitivity, stimulates insulin secretion, and lowers blood glucose levels. |

| PTP1B/AR/PPARα/PPARγ | Compounds 3 & 4 (from research) | Demonstrates robust in vivo antihyperglycemic activity; targets insulin signaling and complications. |

Objective: To generate a novel, focused chemical library for multi-target drug discovery against complex diseases, starting from known active compounds.

Materials:

- Reference Compounds: A set of known active compounds against your target(s) of interest (e.g., from ChEMBL or internal HTS).

- Transformation Rules: A curated set of SMIRKS strings representing common medicinal chemistry transformations.

- Cheminformatics Software: A software environment capable of handling chemical transformations and library enumeration (e.g., RDKit, KNIME, or commercial suites).

- Computational Filters: Pre-defined filters for PAINS, physicochemical properties, and other desired criteria.

Methodology:

- Compound Curation: Collect and curate your set of reference compounds with known multi-target or single-target activity.

- Library Enumeration: Systematically apply each transformation rule from your SMIRKS compendium to every reference compound. This generates a large virtual library of structural analogs.

- Filtering: Apply a series of computational filters to the enumerated library:

  - Step 1: Remove compounds with problematic functionalities (see the library design filter table above).

  - Step 2: Filter based on lead-like physicochemical properties (e.g., molecular weight, logP).

  - Step 3: Assess structural diversity and complexity.

- Virtual Screening: Use molecular docking or other virtual screening techniques to predict the binding affinity of the filtered library members against your multiple targets of interest (e.g., PTP1B and AR for diabetes).

- ADME-Tox Prediction: Calculate predicted ADME-Tox properties for the top-ranking virtual hits using tools like ADMETlab 2.0 to prioritize compounds with a higher probability of success.

- Selection for Synthesis: The final output is a prioritized list of novel, synthetically feasible compounds for chemical synthesis and biological evaluation.
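The filtering stage of this protocol can be sketched as a staged funnel that records how many analogs survive each step. This is a minimal illustration rather than the protocol's actual implementation: the `Candidate` class, the `has_alert` flag (assumed to be computed upstream by a substructure search), and the lead-like thresholds (MW ≤ 350 Da, logP ≤ 3) are all assumptions chosen for the example.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass(frozen=True)
class Candidate:
    """An enumerated analog with precomputed descriptors (illustrative values)."""
    name: str
    mol_weight: float  # Da
    logp: float        # calculated logP
    has_alert: bool    # True if an upstream structural-alert search matched


def no_structural_alert(c: Candidate) -> bool:
    """Step 1: drop compounds flagged for problematic functionalities."""
    return not c.has_alert


def lead_like(c: Candidate, max_mw: float = 350.0, max_logp: float = 3.0) -> bool:
    """Step 2: keep lead-like compounds. Thresholds are assumptions; tune per project."""
    return c.mol_weight <= max_mw and c.logp <= max_logp


def run_funnel(
    library: List[Candidate],
    stages: List[Tuple[str, Callable[[Candidate], bool]]],
) -> Tuple[List[Candidate], List[Tuple[str, int]]]:
    """Apply filters in order and report the survivor count after each stage."""
    survivors = list(library)
    report = [("enumerated", len(survivors))]
    for label, keep in stages:
        survivors = [c for c in survivors if keep(c)]
        report.append((label, len(survivors)))
    return survivors, report


# Hypothetical enumerated analogs.
library = [
    Candidate("analog-1", 312.4, 2.1, False),
    Candidate("analog-2", 298.3, 1.7, True),
    Candidate("analog-3", 455.6, 4.8, False),
    Candidate("analog-4", 340.0, 2.9, False),
]
stages = [("alert-free", no_structural_alert), ("lead-like", lead_like)]
survivors, report = run_funnel(library, stages)
for label, count in report:
    print(f"{label:>10}: {count}")
```

Running cheap filters first keeps the expensive docking and ADME-Tox steps focused on a smaller set, and the per-stage counts make it easy to spot a filter that is removing too much of the library.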

Objective: To generate novel multi-target directed ligands (MTDLs) de novo using a Cycle-Consistent Adversarial Network (CycleGAN) trained on unpaired inhibitor datasets.

Materials:

- Target-Specific Inhibitor Libraries: Curated, non-overlapping sets of known inhibitors for each target of interest (e.g., 69 AChE, 572 BACE1, and 246 GSK3β inhibitors for Alzheimer's disease).

- Computational Framework: A configured MTDL-GAN (CycleGAN) model for molecular generation.

- Validation Software: Tools for calculating Tanimoto similarity and performing molecular docking simulations.

Methodology:

- Data Preparation: Curate and characterize inhibitor libraries for each target from databases like ChEMBL.

- Model Training: Train the MTDL-GAN on these unpaired datasets. The model learns to translate molecules from one inhibitor domain (e.g., AChE inhibitors) into another (e.g., BACE1 inhibitors) while preserving activity-relevant features, effectively generating MTDL-like molecules.

- Library Generation & Sampling: Store all molecular structures generated during training to create an in silico MTDL library. Sample a subset (e.g., 300 molecules) for detailed analysis.

- Structural Novelty Assessment: Calculate Tanimoto similarity scores between the generated molecules and known bioactive molecules in databases to confirm novelty.

- In silico Validation:

- Perform molecular docking simulations to validate the binding affinities of the generated MTDLs to all target enzymes.

- Compare the binding affinities of the top MTDLs against those of known investigational drugs.

- Analyze the generated MTDLs for favorable physicochemical properties and synthetic tractability.
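The structural novelty assessment above relies on the Tanimoto coefficient, which for two fingerprints is the number of shared on-bits divided by the number of on-bits in either. The sketch below assumes fingerprints have already been computed by a cheminformatics toolkit and are represented as sets of on-bit indices; the bit values and the 0.4 novelty threshold are hypothetical.

```python
from typing import FrozenSet, Iterable

Fingerprint = FrozenSet[int]  # set of on-bit indices from a precomputed fingerprint


def tanimoto(a: Fingerprint, b: Fingerprint) -> float:
    """Tanimoto coefficient: |A ∩ B| / |A ∪ B| (0.0 if both are empty)."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def nearest_known_similarity(candidate: Fingerprint, known: Iterable[Fingerprint]) -> float:
    """Similarity of a generated molecule to its closest known bioactive."""
    return max((tanimoto(candidate, k) for k in known), default=0.0)


def is_novel(candidate: Fingerprint, known: Iterable[Fingerprint], threshold: float = 0.4) -> bool:
    """Treat a molecule as novel if no known active exceeds the threshold (assumed value)."""
    return nearest_known_similarity(candidate, known) < threshold


# Hypothetical fingerprints.
known_actives = [frozenset({1, 2, 3, 4}), frozenset({10, 11, 12})]
generated_a = frozenset({1, 2, 3, 5})   # shares 3 of 5 bits with the first known active
generated_b = frozenset({1, 20, 21})    # shares little with either known active

for name, fp in [("generated_a", generated_a), ("generated_b", generated_b)]:
    sim = nearest_known_similarity(fp, known_actives)
    print(f"{name}: nearest similarity {sim:.2f}, novel={is_novel(fp, known_actives)}")
```

The appropriate threshold depends on the fingerprint type, so it is worth calibrating against pairs of molecules that chemists on the project already consider similar or distinct.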

The Scientist's Toolkit: Research Reagent Solutions

| Item / Resource | Function / Application in Library Design |

|---|---|

| Commercial Diversity Libraries (e.g., ChemBridge DIVERSet, ChemDiv Diversity) [40] | Provides a foundation of structurally diverse, drug-like compounds for High-Throughput Screening (HTS) against novel targets. |

| Bioactive & FDA-Approved Compound Libraries (e.g., LOPAC, Prestwick) [40] | Used for drug repurposing and validation; these compounds have well-characterized bioactivity and safety profiles. |

| Natural Product Libraries (purified compounds from Analyticon, GreenPharma) [37] [40] | A rich source of multi-activity drugs that intrinsically modulate multiple targets; offers unique chemotypes not found in synthetic libraries. |

| Cheminformatics Software (e.g., ACD Labs, OpenEye, MOE, Schrödinger) [39] [38] | Used for applying cheminformatic filters, calculating molecular descriptors, performing library enumeration, and virtual screening. |

| Transformation Rules (SMIRKS) [38] | A set of coded chemical reactions used for the systematic in silico expansion of hit compounds into focused analog libraries. |

| Virtual Screening & Docking Software (e.g., Molecular Operating Environment - MOE) [38] | Used to predict the binding affinity and binding mode of library compounds against protein targets before synthesis or purchase. |

| ADME-Tox Prediction Tools (e.g., ADMETlab 2.0) [38] | Predicts pharmacokinetic and toxicity properties of compounds in the library to prioritize those with a higher probability of in vivo success. |

Workflow Visualization

[Figure: Multi-Target Library Design Workflow]

[Figure: Multi-Target Hit Identification & Validation]

Integrating Generative AI and Active Learning for Novel Compound Design

Frequently Asked Questions (FAQs)

FAQ 1: Why might my generative AI-designed compounds show excellent binding affinity in simulations but fail in cellular activity assays?

Compounds may fail in cellular assays due to poor cellular permeability, instability in physiological conditions, off-target effects, or promiscuous functional groups that cause assay interference. A primary cause is the lack of integrated cellular activity filters during the generative process. For instance, molecules containing certain structural alerts (e.g., PAINS, Pan-Assay Interference Compounds) can generate false positives in biochemical assays but show no real cellular activity [42] [43]. Furthermore, compounds might not possess the required properties (like appropriate logP or polar surface area) to traverse cell membranes [43]. It is crucial to include early-stage filters for drug-likeness, toxicity, and known promiscuous motifs, and to validate hits using orthogonal cellular assays [44] [42].

FAQ 2: How can I improve the synthetic accessibility of compounds generated by my generative AI model?

Integrating synthetic accessibility (SA) predictors as "oracles" within the active learning cycle is an effective strategy [44]. This ensures the generative model is iteratively fine-tuned to prioritize synthetically feasible structures. Furthermore, employing fragment-based generative approaches, where the AI builds molecules from readily available chemical fragments, can significantly enhance synthetic tractability [17]. Predefined structural alert filters can also be applied to the virtual library before committing to expensive synthesis, removing compounds with problematic, unstable, or difficult-to-synthesize functional groups [42].

FAQ 3: My model is generating compounds with high similarity to known actives. How can I encourage more novel scaffold exploration?

This is a common issue, typically caused by overfitting to the training data or by mode collapse, where the generator produces only a narrow range of outputs. To encourage novelty, explicitly incorporate a "novelty" or "diversity" metric into your active learning reward function. One approach is to fine-tune the model on a temporal-specific set of generated molecules that have been evaluated for dissimilarity from the initial training data [44]. Promoting dissimilarity from the training set during the iterative cycles pushes the model to explore new regions of chemical space. Another method is to use generative architectures known for high sample diversity, such as diffusion models or variational autoencoders (VAEs), which are less prone to mode collapse than generative adversarial networks (GANs) [44] [45].

Troubleshooting Guides

Problem: High Attrition Rate Between Computational Hits and Cellular Active Compounds

A significant number of top-ranked computational hits fail to show activity in live-cell assays.

| Potential Cause | Diagnostic Steps | Solution |

|---|---|---|

| Promiscuous/Interfering Functional Groups | Perform substructure searching against known alert libraries (e.g., PAINS, REOS) [42]. Check for redox-active or metal-chelating groups. Run orthogonal, non-biochemical assays (e.g., cell-based counterscreens). | Integrate structural alert filtering before molecular docking or affinity prediction [42] [43]. Use robust, cell-based primary screening where feasible. |

| Poor Physicochemical Properties | Calculate key properties: logP, molecular weight, topological polar surface area (TPSA), H-bond donors/acceptors. Compare against lead-like or drug-like criteria (e.g., Lipinski's Rule of 5). | Implement property-based filtering during the generative AI's active learning cycle to enforce optimal ranges [44] [43]. Aim for "lead-like" properties to allow room for optimization. |

| Lack of Cellular Permeability | Assess passive permeability via calculated TPSA or PAMPA assays. Determine if the compound is a substrate for efflux pumps. | Include permeability predictors in the multi-parameter optimization workflow. Consider prodrug strategies for highly polar, active compounds. |
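As a concrete version of the property comparison in the table above, the following sketch counts Lipinski Rule of 5 violations from precomputed descriptors. The `Descriptors` class and the example values are hypothetical, and tolerating at most one violation is a common convention rather than a requirement stated in this guide.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Descriptors:
    """Precomputed physicochemical descriptors for one compound (illustrative)."""
    mol_weight: float      # Da
    logp: float            # calculated logP
    h_bond_donors: int
    h_bond_acceptors: int


def rule_of_five_violations(d: Descriptors) -> List[str]:
    """Return a human-readable list of Lipinski Rule of 5 violations."""
    violations = []
    if d.mol_weight > 500:
        violations.append("MW > 500 Da")
    if d.logp > 5:
        violations.append("logP > 5")
    if d.h_bond_donors > 5:
        violations.append("H-bond donors > 5")
    if d.h_bond_acceptors > 10:
        violations.append("H-bond acceptors > 10")
    return violations


def passes_rule_of_five(d: Descriptors, max_violations: int = 1) -> bool:
    """Common convention (an assumption here): tolerate at most one violation."""
    return len(rule_of_five_violations(d)) <= max_violations


# Hypothetical computational hits.
oral_like = Descriptors(mol_weight=349.4, logp=2.3, h_bond_donors=2, h_bond_acceptors=5)
oversized = Descriptors(mol_weight=612.7, logp=5.8, h_bond_donors=3, h_bond_acceptors=9)

for label, d in [("oral_like", oral_like), ("oversized", oversized)]:
    print(label, passes_rule_of_five(d), rule_of_five_violations(d))
```

Returning the list of violated criteria, rather than a bare pass/fail, helps when diagnosing which property is driving attrition across a whole hit list.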

Problem: Low Synthesis Success Rate for AI-Generated Compounds

Selected virtual hits cannot be synthesized or require prohibitively complex routes.

| Potential Cause | Diagnostic Steps | Solution |

|---|---|---|

| AI Model Lacks SA Awareness | Retrospectively analyze the SA score of generated molecules using tools like RAscore or SAScore. Check for overly complex ring systems or stereochemistry. | Retrain or fine-tune the generative model with an SA score as a reward signal in the active learning loop [44]. Adopt a fragment-based generative approach that builds upon synthetically tractable scaffolds [17]. |

| Presence of Unstable or Reactive Motifs | Screen generated structures for moieties known to be unstable (e.g., certain esters, aldehydes) or reactive (e.g., Michael acceptors, alkyl halides) in a cellular environment [42]. | Apply functional group filters that remove compounds with known chemical liabilities before the synthesis list is finalized [42] [43]. Collaborate closely with medicinal chemists to review proposed structures. |

Problem: Lack of Scaffold Diversity in Generated Compound Library

The generative AI output is confined to a few chemical series, limiting exploration.

| Potential Cause | Diagnostic Steps | Solution |

|---|---|---|

| Overfitting to Training Data | Calculate the pairwise structural similarity (e.g., Tanimoto coefficient) between generated molecules and the training set. Assess the diversity of the latent space in VAEs. | Incorporate an explicit diversity or novelty term in the active learning objective function, for example a penalty on similarity to the training set [44]. Use generative models like VAEs or diffusion models that better explore the chemical space [44] [45]. |

| Overly Restrictive Property Filters | Audit the property thresholds (e.g., molecular weight < 400, logP < 3) used in early filtering stages. | Loosen initial property constraints in a stepwise manner to see if diversity increases. Apply stricter filters later in the workflow after a diverse set of scaffolds has been identified. |
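One simple way to quantify whether output is "confined to a few chemical series" is to summarize how generated molecules distribute across scaffolds. The sketch below assumes each molecule has already been assigned a scaffold key upstream (for example, a Bemis-Murcko scaffold SMILES from a cheminformatics toolkit); the metric names and the example data are illustrative.

```python
import math
from collections import Counter
from typing import Dict, Sequence


def scaffold_diversity(scaffolds: Sequence[str]) -> Dict[str, float]:
    """Summarize scaffold spread for a generated library.

    `scaffolds` holds one precomputed scaffold key per generated molecule.
    """
    if not scaffolds:
        raise ValueError("scaffold list is empty")
    counts = Counter(scaffolds)
    n = len(scaffolds)
    # Shannon entropy in bits: 0 when every molecule shares one scaffold.
    entropy = -sum((c / n) * math.log2(c / n) for c in counts.values())
    return {
        "n_molecules": n,
        "n_scaffolds": len(counts),
        "unique_fraction": len(counts) / n,
        "top_scaffold_share": counts.most_common(1)[0][1] / n,
        "entropy_bits": entropy,
    }


# Hypothetical library dominated by a single series.
generated_scaffolds = ["c1ccccc1"] * 8 + ["c1ccncc1", "C1CCNCC1"]
summary = scaffold_diversity(generated_scaffolds)
for key, value in summary.items():
    print(f"{key}: {value}")
```

Tracking these numbers before and after loosening a property filter gives a direct readout of whether the change actually broadened scaffold coverage.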

Experimental Protocols & Data

Key Experimental Results from Cited Literature

Table 1: Experimental Validation of AI-Designed Compounds from Select Studies

| Study (Target) | Generative AI Approach | # Compounds Synthesized | # with Cellular/Target Activity | Key Outcome |

|---|---|---|---|---|

| CDK2 Inhibitor Design [44] | VAE with Nested Active Learning | 9 | 8 | Successfully generated novel scaffolds; 1 compound with nanomolar potency. |

| KRAS Inhibitor Design [44] | VAE with Nested Active Learning | N/A (in silico) | 4 (predicted) | Identified molecules with potential activity in a sparsely populated chemical space. |

| Antibiotics for MRSA & N. gonorrhoeae [17] | Fragment-Based VAE (F-VAE) & CReM | 22 (for S. aureus) | 6 (for S. aureus) | One candidate (DN1) cleared MRSA skin infection in a mouse model. |

Detailed Methodologies

Protocol 1: Nested Active Learning with a Generative VAE for Target-Specific Compound Design [44]

This protocol describes a workflow for iteratively generating and optimizing novel compounds with high predicted affinity and synthesizability.

Data Representation & Initial Training:

- Represent training molecules as SMILES strings, which are tokenized and converted into one-hot encoding vectors.

- Train a Variational Autoencoder (VAE) initially on a general drug-like compound set to learn viable chemical structures.

- Fine-tune the VAE on a target-specific training set to bias the generation towards relevant chemotypes.
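The data representation step (tokenizing SMILES strings and converting them to one-hot vectors) can be sketched as follows. This is a simplified illustration, not the encoding used in the cited study: the token pattern, the `<pad>` symbol, and the fixed `max_len` are assumptions, and the tokenizer does not validate chemistry.

```python
import re
from typing import Dict, Iterable, List

# Simplified SMILES token pattern (an assumption): bracket atoms, two-letter
# halogens, two-digit ring closures, single letters, digits, bond/branch symbols.
SMILES_TOKEN = re.compile(r"\[[^\]]+\]|Br|Cl|%\d{2}|[A-Za-z]|\d|[=#$:/\\.()+\-@~*]")
PAD = "<pad>"


def tokenize(smiles: str) -> List[str]:
    """Split a SMILES string into tokens, rejecting unrecognized characters."""
    tokens = SMILES_TOKEN.findall(smiles)
    if "".join(tokens) != smiles:
        raise ValueError(f"Unrecognized characters in SMILES: {smiles!r}")
    return tokens


def build_vocab(corpus: Iterable[str]) -> Dict[str, int]:
    """Map each token seen in the corpus to an index; index 0 is padding."""
    symbols = sorted({tok for smi in corpus for tok in tokenize(smi)})
    return {tok: i for i, tok in enumerate([PAD] + symbols)}


def one_hot(smiles: str, vocab: Dict[str, int], max_len: int) -> List[List[int]]:
    """Encode a SMILES string as a max_len x len(vocab) one-hot matrix."""
    tokens = tokenize(smiles)
    if len(tokens) > max_len:
        raise ValueError("SMILES is longer than max_len tokens")
    padded = tokens + [PAD] * (max_len - len(tokens))
    matrix = []
    for tok in padded:
        row = [0] * len(vocab)
        row[vocab[tok]] = 1  # raises KeyError for tokens absent from the vocabulary
        matrix.append(row)
    return matrix


# Small illustrative corpus.
corpus = ["CC(=O)Cl", "c1ccccc1", "C[C@H](N)C(=O)O"]
vocab = build_vocab(corpus)
encoded = one_hot("CC(=O)Cl", vocab, max_len=10)
print(tokenize("CC(=O)Cl"))
print(len(encoded), "positions x", len(vocab), "vocabulary entries")
```

Matching `Cl` and `Br` before single letters keeps two-letter elements as single tokens, which matters because the VAE otherwise has to learn that `C` followed by `l` is one atom.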

Inner Active Learning Cycle (Chemoinformatic Optimization):

- Generation: Sample the VAE to produce new molecules.

- Evaluation: Filter generated molecules using chemoinformatic oracles for drug-likeness, synthetic accessibility, and dissimilarity from the current training set.

- Fine-tuning: Add molecules that pass the filters to a "temporal-specific" set. Use this set to fine-tune the VAE, reinforcing the generation of compounds with desired properties. This cycle repeats for a predefined number of iterations.

Outer Active Learning Cycle (Affinity Optimization):

- Evaluation: After several inner cycles, subject the accumulated molecules in the temporal-specific set to molecular docking simulations as a physics-based affinity oracle.

- Fine-tuning: Transfer molecules with favorable docking scores to a "permanent-specific" set. Use this high-quality set to fine-tune the VAE.

- The process then returns to the inner cycle, but similarity is now assessed against the enriched permanent-specific set. These nested cycles iterate to refine the compound library.

Candidate Selection:

- Apply stringent filtration to the final permanent-specific set.

- Use advanced molecular modeling simulations (e.g., PELE, Absolute Binding Free Energy calculations) to evaluate binding interactions and stability.

- Select top candidates for synthesis and experimental validation.
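The control flow of the nested cycles in Protocol 1 can be outlined in a few lines. The skeleton below is a sketch under stated assumptions, not the published implementation: the generator, chemoinformatic filters, docking oracle, and fine-tuning step are passed in as placeholder callables, molecules are toy integers, the cycle counts and docking cutoff are arbitrary, and resetting the temporal-specific set at the start of each outer cycle is an assumption of this example.

```python
import random
from typing import Callable, List

Molecule = int  # toy stand-in for a generated structure


def nested_active_learning(
    generate: Callable[[int], List[Molecule]],
    passes_chem_filters: Callable[[Molecule, List[Molecule]], bool],
    docking_score: Callable[[Molecule], float],
    fine_tune: Callable[[List[Molecule]], None],
    n_outer: int = 2,
    n_inner: int = 3,
    batch_size: int = 20,
    docking_cutoff: float = -8.0,
) -> List[Molecule]:
    """Run inner (chemoinformatic) cycles nested inside outer (affinity) cycles."""
    permanent: List[Molecule] = []           # "permanent-specific" set
    for _ in range(n_outer):
        temporal: List[Molecule] = []        # "temporal-specific" set (reset is an assumption)
        for _ in range(n_inner):
            for mol in generate(batch_size):
                # Filters see the molecules kept so far as the similarity reference.
                if passes_chem_filters(mol, permanent + temporal):
                    temporal.append(mol)
            fine_tune(temporal)              # inner-cycle fine-tuning
        # Outer cycle: the docking oracle promotes favorable molecules.
        hits = [m for m in temporal if docking_score(m) <= docking_cutoff]
        permanent.extend(hits)
        fine_tune(permanent)                 # outer-cycle fine-tuning
    return permanent


# Toy oracles, purely for demonstrating the loop structure.
rng = random.Random(0)
fine_tune_log: List[int] = []


def toy_generate(n: int) -> List[Molecule]:
    return [rng.randrange(1000) for _ in range(n)]


def toy_filters(mol: Molecule, reference: List[Molecule]) -> bool:
    return mol % 2 == 0 and mol not in reference


def toy_docking(mol: Molecule) -> float:
    return -6.0 - (mol % 40) / 10.0


def toy_fine_tune(training_set: List[Molecule]) -> None:
    fine_tune_log.append(len(training_set))


selected = nested_active_learning(toy_generate, toy_filters, toy_docking, toy_fine_tune)
print(len(selected), "molecules in the permanent-specific set")
```

Keeping the oracles as injected callables mirrors the protocol's separation of cheap chemoinformatic checks from expensive docking, and lets either be swapped without touching the loop.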

Protocol 2: Fragment-Based and Unconstrained AI Generation for Novel Antibiotics [17]

This protocol outlines two complementary approaches for generating novel antimicrobial compounds.

Fragment-Based Generation (for N. gonorrhoeae):

- Fragment Library Curation: Assemble a large library of chemical fragments (e.g., 45 million combinations).

- Fragment Screening: Use pre-trained machine learning models to screen the library for fragments with predicted antibacterial activity and low cytotoxicity.

- Hit Expansion: Employ generative AI algorithms (CReM and F-VAE) to build complete molecules from the top fragment hit (F1). CReM generates analogs by making chemically reasonable mutations, while F-VAE learns to incorporate the fragment into a full molecular structure.