Strategic Design of Chemogenomic Libraries: Balancing Potency and Selectivity for Next-Generation Therapeutics

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on the strategic design and application of chemogenomic libraries to achieve an optimal balance between drug potency and selectivity. It explores the foundational principles of chemogenomics, details advanced methodological approaches for library assembly and screening, addresses common limitations and optimization strategies in phenotypic discovery, and reviews computational and experimental frameworks for validation and comparative analysis. By integrating insights from cutting-edge tools like COOKIE-Pro, network pharmacology, and machine learning, this resource aims to equip scientists with the knowledge to design more effective and safer targeted therapies, ultimately reducing attrition rates in clinical development.

The Principles of Chemogenomics: Building Libraries for Targeted Therapeutic Discovery

Defining Chemogenomic Libraries and Their Role in Modern Drug Discovery

A chemogenomic library is a strategically designed collection of small molecules used to systematically probe biological systems. Unlike general compound libraries, chemogenomic libraries are structured to target specific protein families (such as GPCRs or kinases) or to cover a broad spectrum of mechanisms across the proteome [1] [2]. Their primary goal is to bridge the gap between chemical compounds and biological responses, enabling researchers to deconvolute complex phenotypes and identify novel therapeutic targets [2].

In modern drug discovery, these libraries are pivotal for phenotypic screening, where compounds are tested on cells or tissues to observe changes without pre-supposing a specific molecular target. The central challenge in utilizing these libraries lies in balancing compound potency (the strength of a compound's effect on its primary target) with selectivity (its specificity for the primary target over others). Achieving this balance is critical for developing effective therapies with minimal off-target effects [3].

Core Concepts & Troubleshooting Guide

FAQ: Library Design and Application

What is the main difference between a chemogenomic library and a standard compound library? A standard compound library is often designed for general screening or chemical diversity. In contrast, a chemogenomic library is a focused set of compounds curated based on existing knowledge of drug-target interactions. It is designed to interrogate specific biological pathways or a wide range of mechanistically defined targets within a cellular system, making it particularly powerful for understanding the mechanism of action in phenotypic screens [2].

Our phenotypic screen identified a hit. How can a chemogenomic library help us find the target? This process, called target deconvolution, is a key application. By profiling your hit compound alongside a chemogenomic library in a high-content assay (like Cell Painting), you can compare the morphological profile it induces to the profiles of compounds with known mechanisms. A significant similarity in profiles often suggests a shared target or pathway [2]. The underlying system pharmacology network that links compounds to targets and pathways can then be queried to generate testable hypotheses about your hit's mechanism of action.

How selective do the compounds in a chemogenomic library need to be? This touches directly on the potency-selectivity balance. While highly selective compounds are valuable for pinpointing a single target's function, compounds with known polypharmacology (multi-target activity) can also be highly informative. They can reveal synergistic effects or be repurposed for complex diseases. The ideal library contains a mix of both, with well-annotated activity profiles for each compound [3] [2].

What are the limitations of using chemogenomic libraries in screening? A major limitation is that even the best chemogenomic libraries cover only a fraction of the human proteome: their compounds address the products of roughly 1,000–2,000 of the 20,000+ protein-coding genes [4]. This means many potential drug targets remain unaddressed. Furthermore, a compound's on-target effect in a simple system might not replicate its behavior in a more complex disease-relevant cellular model, potentially leading to misleading conclusions [4].

Troubleshooting Common Experimental Issues

Issue: Hit compounds from a phenotypic screen show high cytotoxicity at low concentrations, suggesting potential off-target effects.

- Root Cause: The compound may be potent but non-selective, interacting with multiple targets essential for cell survival.

- Solution: Perform a target-specific selectivity analysis [3]. This involves profiling the hit compound against a panel of related targets to calculate a selectivity score. Reformulate the problem as a multi-objective optimization: you need a compound that simultaneously shows high potency for the desired target and low potency for off-targets. This data can guide medicinal chemistry efforts to modify the compound and improve its selectivity profile.
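The multi-objective framing in this solution can be made concrete with a Pareto filter. The sketch below is illustrative only: the compound names and pKd values are invented, and `Hit`, `dominates`, and `pareto_front` are hypothetical helpers rather than the method of [3]. It keeps every compound that no other compound beats on both objectives at once (higher on-target pKd, lower mean off-target pKd).

```python
from typing import NamedTuple


class Hit(NamedTuple):
    name: str
    on_target_pkd: float        # higher is better
    mean_off_target_pkd: float  # lower is better


def dominates(a: Hit, b: Hit) -> bool:
    """True if a is no worse than b on both objectives and better on one."""
    no_worse = (a.on_target_pkd >= b.on_target_pkd
                and a.mean_off_target_pkd <= b.mean_off_target_pkd)
    better = (a.on_target_pkd > b.on_target_pkd
              or a.mean_off_target_pkd < b.mean_off_target_pkd)
    return no_worse and better


def pareto_front(hits: list[Hit]) -> list[Hit]:
    """Return the hits that are not dominated by any other hit."""
    return [h for h in hits if not any(dominates(o, h) for o in hits)]


# Invented example values, for illustration only.
hits = [
    Hit("cpd_A", 8.5, 7.9),  # potent but promiscuous
    Hit("cpd_B", 8.0, 5.5),  # slightly less potent, much cleaner
    Hit("cpd_C", 7.0, 6.0),  # worse than cpd_B on both objectives
]
front = pareto_front(hits)
print([h.name for h in front])
```

Compounds left on the front represent different potency/selectivity trade-offs; choosing among them remains a project-specific judgment.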

Issue: A compound shows a clear phenotypic effect in a primary screen, but its known annotated target does not seem to align with the observed phenotype.

- Root Cause: The compound's mechanism of action in your specific cellular context may be different from its canonical annotation. It could be acting on an unknown or secondary target.

- Solution: Use the chemogenomic library as a reference set. Re-run your assay with the hit compound and a broad chemogenomic library. Use high-content imaging to create a detailed morphological profile for each. If your hit compound clusters with compounds targeting a specific pathway not previously considered, this can reveal a novel mechanism. Subsequently, you can use techniques like CRISPR knockouts or proteomics to validate the newly suggested target [2].

Issue: Our chemogenomic screen yielded a large number of hits, and we are struggling to prioritize them for follow-up.

- Root Cause: Lack of a systematic framework to rank compounds based on multiple criteria, including potency, selectivity, and novelty.

- Solution: Implement a multi-parameter scoring system. Integrate the following data for each hit into a prioritization table:

- Potency (e.g., IC50/EC50): The concentration at which the desired effect is observed.

- Selectivity Score (e.g., Gini score or target-specific score): A metric quantifying how specific the compound is to the phenotype or target of interest versus other effects [3].

- Chemical Tractability: Assess whether the compound's chemical structure is suitable for further optimization (e.g., favorable drug-likeness properties).

- Morphological Profile Similarity: How closely the compound's phenotype matches other compounds with desirable mechanisms [2].
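One way to turn these four criteria into a prioritization table is a weighted sum of scaled values. This is a minimal sketch under stated assumptions: the weights, the min-max scaling, the hit names, and all numbers are invented for illustration, and every criterion is expressed so that higher is better (for example, pIC50 rather than IC50).

```python
def min_max(values):
    """Scale a list of numbers to the 0-1 range (all 0.5 if constant)."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.5] * len(values)
    return [(v - lo) / (hi - lo) for v in values]


def rank_hits(hits, weights):
    """Rank hits by a weighted sum of min-max scaled criteria.

    hits: dict of name -> dict of criterion -> value (higher = better).
    weights: dict of criterion -> weight (illustrative, not prescribed).
    """
    names = list(hits)
    totals = dict.fromkeys(names, 0.0)
    for criterion, weight in weights.items():
        scaled = min_max([hits[n][criterion] for n in names])
        for name, s in zip(names, scaled):
            totals[name] += weight * s
    return sorted(totals.items(), key=lambda kv: kv[1], reverse=True)


# Invented values: pIC50, Gini selectivity, drug-likeness (0-1),
# and profile similarity to a desired mechanism (0-1).
hits = {
    "hit_1": {"pic50": 7.8, "gini": 0.45, "tractability": 0.8, "similarity": 0.6},
    "hit_2": {"pic50": 6.9, "gini": 0.80, "tractability": 0.7, "similarity": 0.9},
    "hit_3": {"pic50": 6.0, "gini": 0.30, "tractability": 0.4, "similarity": 0.2},
}
weights = {"pic50": 0.4, "gini": 0.3, "tractability": 0.1, "similarity": 0.2}
ranking = rank_hits(hits, weights)
for name, score in ranking:
    print(f"{name}: {score:.2f}")
```

Because min-max scaling depends on the hits being compared, scores are only meaningful within one screen; the weights should be agreed on before looking at the ranking.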

Experimental Protocols & Data Analysis

Protocol 1: Assessing Target-Specific Selectivity

This protocol is adapted from methods used to analyze kinase inhibitor profiles [3].

1. Objective: To quantify how selective a given compound is for a specific primary target of interest compared to all other potential targets.

2. Materials:

- The compound of interest.

- A panel of purified target proteins (e.g., a kinase panel).

- A reliable binding affinity assay (e.g., a fluorescence polarization assay to measure dissociation constant, Kd).

3. Procedure:

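- Measure the binding affinity (Kd) of the compound for the primary target (t~j~) and convert it to pKd (-log10 of Kd in mol/L), so that larger values mean tighter binding.

- Measure the binding affinity of the compound for a broad set of off-targets and convert each value to pKd in the same way.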

- Calculate the Global Relative Potency for the compound against the primary target using the formula:

$$G_{c_i,t_j} = K_{c_i,t_j} - \operatorname{mean}\left(B_{c_i} \setminus \{K_{c_i,t_j}\}\right)$$

Where:

- $K_{c_i,t_j}$ is the binding affinity (pKd) of compound $c_i$ for target $t_j$.

- $B_{c_i} \setminus \{K_{c_i,t_j}\}$ is the set of all other measured binding affinities for this compound [3].

- A higher positive score indicates greater selectivity for the target of interest.

4. Data Interpretation: The resulting score allows you to rank multiple compounds for their selectivity against your target. This helps identify leads that are both potent and specific, a key step in optimizing a chemogenomic library for a given disease application.
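The calculation in this protocol can be sketched in a few lines. The kinase names and Kd values below are invented, and the function names are hypothetical; the only assumptions beyond the protocol are that Kd is supplied in mol/L and converted to pKd before the score is computed.

```python
import math
from statistics import mean


def pkd_from_kd_molar(kd_molar: float) -> float:
    """Convert a dissociation constant in mol/L to pKd = -log10(Kd)."""
    return -math.log10(kd_molar)


def global_relative_potency(pkd_by_target: dict[str, float], target: str) -> float:
    """G = pKd(target) minus the mean pKd over all other measured targets."""
    others = [v for t, v in pkd_by_target.items() if t != target]
    if not others:
        raise ValueError("need at least one off-target measurement")
    return pkd_by_target[target] - mean(others)


# Invented Kd values in mol/L for one compound across four kinases.
kd = {"KIN_A": 1e-9, "KIN_B": 1e-6, "KIN_C": 1e-5, "KIN_D": 1e-7}
pkd = {t: pkd_from_kd_molar(v) for t, v in kd.items()}
score = global_relative_potency(pkd, "KIN_A")
print(round(score, 2))
```

Here the primary target has a pKd of 9 and the off-targets average 6, so the score is about 3, i.e. roughly a 1,000-fold preference in Kd terms.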

Protocol 2: Integrating Morphological Profiling for Mechanism of Action Studies

This protocol outlines how to use a high-content assay like Cell Painting to deconvolute a compound's mechanism of action [2].

1. Objective: To generate a hypothesis for a hit compound's mechanism of action by comparing its morphological profile to a reference chemogenomic library.

2. Materials:

- A cell line relevant to your disease of interest (e.g., U2OS osteosarcoma cells are commonly used).

- The hit compound(s) and a reference chemogenomic library (e.g., a 5000-compound library targeting diverse mechanisms).

- Cell Painting assay reagents: fixation solution and fluorescent dyes for staining multiple cell components (nucleus, endoplasmic reticulum, etc.).

- High-content imaging system and image analysis software (e.g., CellProfiler).

3. Procedure:

- Plate cells in multiwell plates and treat them with the hit compound and the reference library compounds.

- Fix and stain the cells according to the Cell Painting protocol.

- Image the plates using a high-throughput microscope.

- Use CellProfiler to identify individual cells and measure hundreds of morphological features (size, shape, texture, intensity) for each cell object.

- For each compound, calculate an average profile from all the treated cells.

- Use dimensionality reduction and clustering algorithms to group compounds with similar morphological profiles.

4. Data Interpretation: A hit compound that clusters tightly with a set of compounds known to inhibit a specific target (e.g., BET bromodomains) provides strong circumstantial evidence that it shares a similar mechanism. This hypothesis can then be validated with direct binding assays.
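The profile-averaging and comparison steps can be illustrated without any imaging software. The sketch below assumes features have already been extracted and normalised (for example, as z-scores against vehicle controls); the three-feature vectors, reference names, and the use of cosine similarity are illustrative choices, not the pipeline of [2].

```python
import math
from statistics import mean


def average_profile(cells):
    """Average per-cell feature vectors into one per-compound profile."""
    return [mean(col) for col in zip(*cells)]


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def nearest_references(hit_profile, reference_profiles, top_n=2):
    """Rank annotated reference compounds by similarity to the hit."""
    scored = [(name, cosine(hit_profile, prof))
              for name, prof in reference_profiles.items()]
    return sorted(scored, key=lambda kv: kv[1], reverse=True)[:top_n]


# Invented, already-normalised features for two cells treated with the hit.
hit_cells = [[1.9, -0.8, 0.1], [2.1, -1.2, -0.1]]
references = {
    "BET_inhibitor_ref": [2.0, -1.0, 0.0],
    "tubulin_binder_ref": [-1.5, 0.2, 2.0],
    "HDAC_inhibitor_ref": [0.5, 1.5, 0.3],
}
hit_profile = average_profile(hit_cells)
matches = nearest_references(hit_profile, references)
print(matches[0][0])
```

Real profiles contain hundreds of correlated features, so feature selection or dimensionality reduction usually precedes the similarity step.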

Data Presentation

Table 1: Selectivity Metrics for Comparing Compounds in a Library

This table summarizes key metrics used to quantify the selectivity of compounds, which is crucial for balancing library design [3].

| Metric | Formula/Calculation | Interpretation | Best Use Case |

|---|---|---|---|

| Standard Selectivity Score | Number of targets bound above a potency threshold (e.g., Kd < 10 µM). | Lower number = more selective. Simple, intuitive. | Initial, broad filtering of promiscuous compounds. |

| Gini Selectivity Score | Derived from the Gini coefficient; measures inequality in a compound's binding affinity distribution across targets. | Closer to 1 = more selective (affinity concentrated on few targets). | Ranking compounds based on the "shape" of their entire target activity profile. |

| Target-Specific Selectivity Score | $G_{c_i,t_j} = K_{c_i,t_j} - \operatorname{mean}(B_{c_i} \setminus \{K_{c_i,t_j}\})$ | Higher positive score = more selective for target $t_j$. | Identifying the best compound for a specific target of interest. |
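The first two metrics in this table are simple enough to sketch directly. The code below is a hedged illustration: the function names are hypothetical, the data are invented, and the Gini calculation is the generic coefficient applied to non-negative activity values (here percent inhibition, which is an assumption about the input).

```python
def standard_selectivity_count(kd_um, threshold_um=10.0):
    """Number of targets bound more tightly than the threshold (Kd in µM)."""
    return sum(1 for kd in kd_um if kd < threshold_um)


def gini(values):
    """Gini coefficient of non-negative values.

    0 means activity is spread evenly; values approaching 1 mean
    activity is concentrated on very few targets.
    """
    xs = sorted(values)
    n, total = len(xs), sum(xs)
    if n == 0 or total == 0:
        return 0.0
    weighted = sum(rank * x for rank, x in enumerate(xs, start=1))
    return 2 * weighted / (n * total) - (n + 1) / n


# Invented percent-inhibition profiles across five kinases.
selective = [95, 5, 0, 0, 0]
promiscuous = [80, 75, 85, 70, 90]
print(round(gini(selective), 2), round(gini(promiscuous), 2))

# Invented Kd values in µM across four targets.
print(standard_selectivity_count([0.01, 5.0, 50.0, 200.0]))
```

With a finite panel of n targets, the Gini coefficient cannot exceed (n - 1)/n, so scores from panels of very different sizes are not directly comparable.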

Table 2: Key Materials for a Chemogenomic Screening Pipeline

This table lists essential reagents and tools for setting up a chemogenomic screening experiment [2].

| Research Reagent / Tool | Function in the Experiment | Example Sources / Software |

|---|---|---|

| Curated Chemogenomic Library | A collection of compounds with known or diverse mechanisms of action; the core reagent for profiling. | Prestwick Chemical Library, NCATS MIPE Library, GSK BDCS [2]. |

| Cell Painting Dyes | A set of fluorescent dyes that stain specific cellular compartments (nucleus, ER, etc.) for high-content imaging. | Commercially available kits (e.g., from Sigma-Aldrich). |

| Image Analysis Software | Extracts quantitative morphological features from cell images. | CellProfiler (open source) [2]. |

| Graph Database | Integrates heterogeneous data (compounds, targets, pathways, phenotypes) for network analysis. | Neo4j [2]. |

| Target Affinity Panel | A set of purified proteins to experimentally determine a compound's binding affinity and selectivity. | Commercial service providers (e.g., Eurofins, Reaction Biology). |

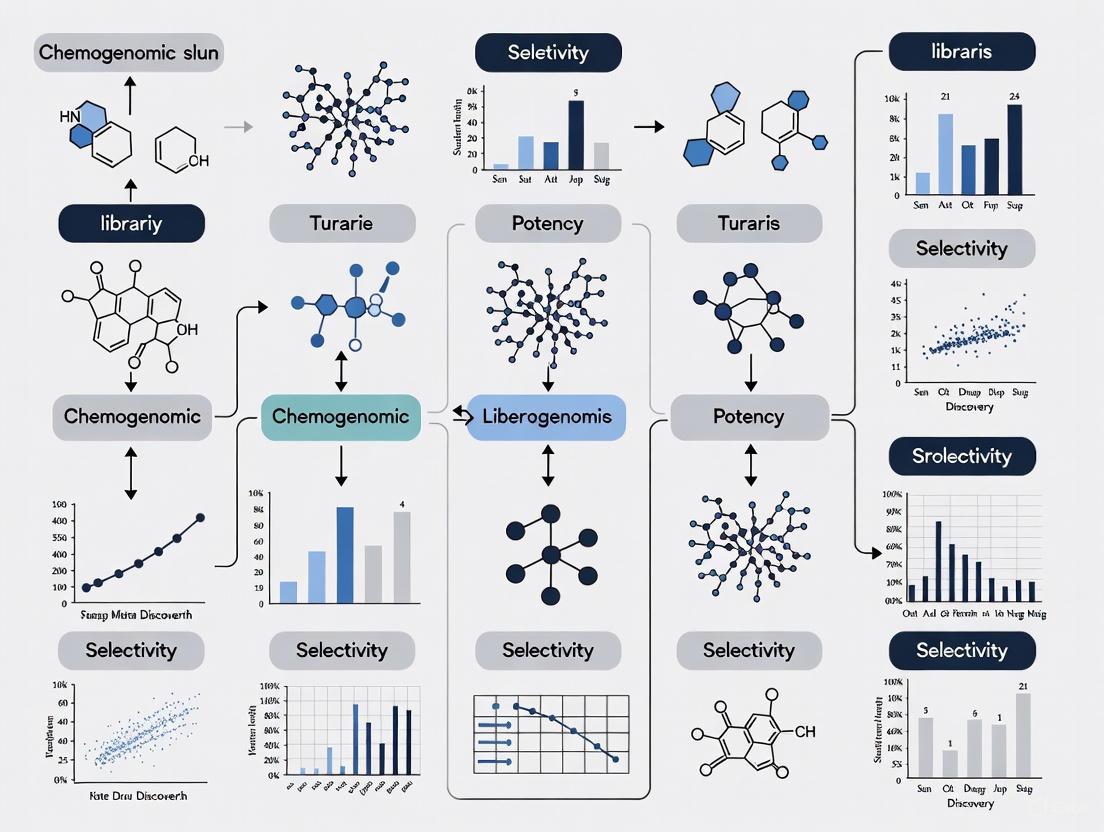

Workflow Visualization

[Figure: Chemogenomic Screening Workflow]

[Figure: Selectivity Optimization Logic]

Troubleshooting Guides

Guide 1: Diagnosing and Resolving Poor Compound Selectivity

Issue: Your compound shows potent activity against your primary target but also exhibits significant off-target effects, leading to potential toxicity or unwanted side effects in phenotypic screens.

Symptoms:

- High signal in multiple, diverse counter-screens and orthogonal assays.

- Bell-shaped or shallow dose-response curves in secondary assays [5].

- In phenotypic screening, the compound induces multiple, non-specific cellular changes, as detected in high-content analysis or cell painting assays [5].

Troubleshooting Steps:

| Step | Action | Objective & Rationale |

|---|---|---|

| 1. Confirm Specificity | Run a panel of counter screens designed to identify assay interference (e.g., autofluorescence, aggregation) [5]. | To rule out false positives caused by the compound's physicochemical properties rather than true biological activity. |

| 2. Profile Selectivity | Use broad target profiling services (e.g., kinase panels, GPCR screens) to quantify activity across a wide range of potential off-targets [3]. | To move from a qualitative (non-selective) to a quantitative (target-specific selectivity) understanding of the compound's profile [3]. |

| 3. Analyze Chemotype | Perform a Structure-Activity Relationship (SAR) analysis. Check for chemical features associated with pan-assay interference compounds (PAINS) [5]. | To determine if the non-selectivity is inherent to the chemotype and to guide further chemical optimization away from promiscuous scaffolds. |

| 4. Optimize Lead | Use the selectivity data to chemically modify the lead compound, aiming to weaken off-target binding while maintaining or improving on-target potency. | To improve the target-specific selectivity score by simultaneously optimizing absolute potency for the target of interest and relative potency against other targets [3]. |

Guide 2: Addressing Compounds with High Selectivity but Low Potency

Issue: A compound is highly selective for your target of interest but lacks sufficient biological activity (low potency, low efficacy, or both) at therapeutically relevant concentrations.

Symptoms:

- The compound shows a weak physiological response despite high receptor binding affinity [6].

- High EC50 value, indicating low potency in functional assays [6].

Troubleshooting Steps:

| Step | Action | Objective & Rationale |

|---|---|---|

| 1. Verify Binding | Use biophysical methods (e.g., Surface Plasmon Resonance - SPR, Isothermal Titration Calorimetry - ITC) to confirm direct binding to the intended target [5]. | To distinguish between true low efficacy and a failure to engage the target at all. |

| 2. Check Assay Health | Review control compound data and assay metrics (Z'-factor). Ensure the assay has a sufficient signal window to detect a weak response [5]. | To confirm that the assay itself is capable of detecting the compound's activity and is not insensitive. |

| 3. Differentiate Agonism | Test the compound in a functional agonist/antagonist mode assay. A selective but low-efficacy compound may act as a partial agonist or antagonist [6]. | To fully characterize the compound's intrinsic activity (efficacy), which is separate from its affinity and selectivity [6]. |

| 4. Explore Analogs | If the chemotype is selective but weak, synthesize and test close structural analogs to find a molecule with better potency while maintaining selectivity. | To improve the "absolute potency" component of the target-specific selectivity score [3]. |
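The assay-health check in step 2 relies on the Z'-factor, which compares the spread of the positive and negative controls with the distance between their means. A minimal sketch, assuming sample standard deviations and invented control readouts:

```python
from statistics import mean, stdev


def z_prime(positive, negative):
    """Z' = 1 - 3 * (sd_pos + sd_neg) / |mean_pos - mean_neg|."""
    window = abs(mean(positive) - mean(negative))
    if window == 0:
        raise ValueError("control means are identical; no signal window")
    return 1 - 3 * (stdev(positive) + stdev(negative)) / window


# Invented plate-control readouts (arbitrary signal units).
pos = [100.0, 102.0, 98.0, 101.0, 99.0]
neg = [10.0, 12.0, 9.0, 11.0, 8.0]
zp = z_prime(pos, neg)
print(round(zp, 3))
```

A Z' above roughly 0.5 is commonly taken to indicate a robust assay window; values near or below zero mean the control distributions overlap and a weak compound response could not be detected reliably.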

Frequently Asked Questions (FAQs)

FAQ 1: What is the fundamental difference between a drug's affinity, efficacy, potency, and selectivity?

- Affinity: The strength of binding between a drug and its receptor. A high-affinity drug binds tightly even at low concentrations [6].

- Efficacy (Intrinsic Activity): The ability of a drug, once bound, to produce a functional biological response. A drug can have high affinity but low efficacy [6].

- Potency: The concentration of a drug required to produce a defined effect (e.g., EC50). It is a function of both affinity and efficacy [6].

- Selectivity: A drug's ability to preferentially produce a particular effect by acting on a specific receptor or cell type. It is related to the structural specificity of binding [6].

- Specificity: The narrowness of a drug's action. A specific drug results in a limited set of cellular responses, often by interacting with very few targets [6].

FAQ 2: How can I quantitatively measure and compare the selectivity of different compounds in my library?

Traditional metrics like the Gini coefficient and selectivity entropy measure the overall narrowness of a compound's bioactivity profile. For a more targeted approach, a target-specific selectivity score is recommended. This score is a bi-objective optimization that considers [3]:

- Absolute Potency: The compound's potency against your specific target of interest.

- Relative Potency: The compound's potency against all other potential off-targets.

A higher score indicates a compound that is both potent and selective for your target. The following table summarizes key selectivity metrics:

| Selectivity Metric | What It Measures | Interpretation |

|---|---|---|

| Target-Specific Score [3] | Potency for a specific target vs. all others. | High score = High potency and high specificity for your target. |

| Gini Coefficient [3] | Inequality of binding affinities across all targets. | High score (closer to 1) = Selective (activity concentrated on few targets). |

| Selectivity Entropy [3] | Distribution of binding affinities across targets. | Low entropy = Selective (strong binding to few targets). |

| Partition Index [3] | Fraction of total binding strength directed to a reference target. | High index = More selective for the reference target. |
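Selectivity entropy and the partition index both work on the distribution of association constants across the panel. The sketch below follows the usual definitions as I understand them (Ka = 10^pKd, Shannon entropy of the Ka fractions, and the reference target's share of the summed Ka); the function names and pKd profiles are invented for illustration.

```python
import math


def association_constants(pkd_values):
    """Convert pKd values to association constants Ka = 10**pKd (1/M)."""
    return [10 ** p for p in pkd_values]


def selectivity_entropy(pkd_values):
    """Shannon entropy of the Ka distribution; near 0 means one dominant target."""
    ka = association_constants(pkd_values)
    total = sum(ka)
    fractions = [k / total for k in ka]
    return -sum(f * math.log(f) for f in fractions if f > 0)


def partition_index(pkd_values, reference_index):
    """Fraction of the summed Ka that belongs to the reference target."""
    ka = association_constants(pkd_values)
    return ka[reference_index] / sum(ka)


# Invented pKd profiles across four targets; index 0 is the reference target.
selective = [9.0, 5.0, 5.0, 5.0]
promiscuous = [7.0, 7.0, 7.0, 7.0]
for label, profile in (("selective", selective), ("promiscuous", promiscuous)):
    print(label,
          round(selectivity_entropy(profile), 3),
          round(partition_index(profile, 0), 3))
```

For a compound that binds all four targets equally, the entropy reaches its maximum of ln(4) and the partition index is 0.25; for the selective profile the entropy approaches 0 and the partition index approaches 1.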

FAQ 3: My primary HTS assay is biochemical. What experimental cascade should I use to triage hits and confirm specific, on-target activity?

A robust triage cascade is essential. After confirming dose-response in the primary assay, proceed with these experimental strategies [5]:

- Counter-Screens: Use assays designed to identify and eliminate false positives caused by assay technology interference (e.g., compound autofluorescence, aggregation, singlet oxygen quenching) [5].

- Orthogonal Assays: Confirm bioactivity using an assay with a different readout technology (e.g., switch from fluorescence to luminescence or absorbance) or a biophysical method (e.g., SPR, ITC, MST) to validate target engagement [5].

- Cellular Fitness Assays: Test for general toxicity or harm to cells using viability (e.g., CellTiter-Glo), cytotoxicity (e.g., LDH assay), or high-content imaging assays [5].

- Selectivity Profiling: Test confirmed hits against a panel of related and unrelated targets to understand the breadth of their activity [3].

FAQ 4: Why is it so challenging to develop highly selective kinase inhibitors, and how can polypharmacology be leveraged?

Kinases are a large family of enzymes with highly conserved ATP-binding sites. This structural similarity makes it difficult to design inhibitors that bind to one kinase without affecting others [3]. However, the resulting polypharmacology (action on multiple targets) can be leveraged for drug repurposing if a compound's off-target activities align with the mechanisms of another disease. The key is to ensure that the compound is sufficiently selective for the proteins driving progression of that new disease [3].

FAQ 5: How does the concept of "intrinsic activity" explain why two drugs binding the same receptor can have different effects?

Intrinsic activity (efficacy) describes the maximum effectiveness of a drug molecule at producing a response once it is bound to the receptor [6]. Two drugs can bind to the same set of receptors with the same affinity, but one might produce a greater maximum effect. The drug producing the greater effect has higher intrinsic activity. A drug with high affinity but low intrinsic activity may bind well but produce only a weak physiological response [6].
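A simple occupancy model makes the distinction concrete. This sketch is an assumption-laden simplification (response proportional to receptor occupancy, no receptor reserve or signal amplification), with invented Kd and intrinsic-activity values; it is meant only to show how two drugs with identical affinity can plateau at different responses.

```python
def fractional_occupancy(conc, kd):
    """Fraction of receptors bound at a ligand concentration (same units as kd)."""
    return conc / (conc + kd)


def response(conc, kd, intrinsic_activity):
    """Response as occupancy scaled by intrinsic activity (0 to 1)."""
    return intrinsic_activity * fractional_occupancy(conc, kd)


# Two invented drugs with the same affinity (Kd = 10 nM) but different
# intrinsic activity: a full agonist (1.0) and a partial agonist (0.3).
kd_nm = 10.0
for conc_nm in (1.0, 10.0, 100.0, 1000.0):
    full = response(conc_nm, kd_nm, 1.0)
    partial = response(conc_nm, kd_nm, 0.3)
    print(f"{conc_nm:7.1f} nM  full={full:.3f}  partial={partial:.3f}")
```

At every concentration both drugs occupy the same fraction of receptors, yet the partial agonist's response never exceeds 30% of the maximum.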

Experimental Protocols & Workflows

Protocol 1: Determining Target-Specific Selectivity for a Kinase Inhibitor

This protocol outlines a method for assessing the selectivity of a kinase inhibitor against a panel of kinase targets, using the target-specific selectivity scoring method [3].

1. Materials and Reagents

- Compound of Interest: Your kinase inhibitor, dissolved in DMSO.

- Kinase Panel: A diverse set of purified kinase proteins (e.g., 100-500 kinases).

- Assay Substrate: A suitable peptide or protein substrate for the kinases.

- ATP Solution: Prepared at a concentration near the Km for most kinases.

- Detection Reagents: Depending on the assay format (e.g., ADP-Glo kit, fluorescent antibodies for phospho-substrates).

2. Procedure

- Step 1 - Broad Kinase Profiling: Test your compound at a single concentration (e.g., 1 µM or 10 µM) against the entire kinase panel. This initial screen identifies potential off-targets.

- Step 2 - Dose-Response Curves: For your primary target and all kinases showing >50% inhibition in the initial screen, run full dose-response curves (typically 10 concentrations in a 1:3 or 1:2 serial dilution). Perform experiments in triplicate.

- Step 3 - Data Analysis:

- Calculate the dissociation constant (Kd) or IC50/pIC50 for each compound-kinase pair.

- Organize the data into a matrix where rows are compounds and columns are kinases.

- For your compound (c~i~) and primary target kinase (t~j~), calculate the target-specific selectivity score using the formula that considers both its absolute potency against t~j~ and its relative potency against all other kinases [3].

3. Data Interpretation: A high target-specific selectivity score indicates a compound that is both potent against your target of interest and has minimal off-target activity. This compound is a superior candidate for further development compared to one that is merely potent but non-selective.
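Steps 1 and 2 of this procedure reduce to two small bookkeeping tasks: picking the kinases that go forward to dose-response, and laying out the dilution series. The sketch below uses hypothetical helper names and invented single-point data; the 50% cutoff, ten points, and 1:3 dilution come from the protocol, while the 10 µM top concentration is an assumption.

```python
def follow_up_kinases(percent_inhibition, primary_target, cutoff=50.0):
    """Primary target plus every kinase inhibited above the cutoff."""
    selected = {k for k, v in percent_inhibition.items() if v > cutoff}
    selected.add(primary_target)
    return sorted(selected)


def serial_dilution(top_conc_um, n_points=10, factor=3.0):
    """Concentrations for a 1:factor serial dilution, highest first (µM)."""
    return [top_conc_um / factor ** i for i in range(n_points)]


# Invented single-concentration screen results (% inhibition at 1 µM).
single_point = {"KIN_A": 98.0, "KIN_B": 62.0, "KIN_C": 12.0, "KIN_D": 50.0}
follow_up = follow_up_kinases(single_point, primary_target="KIN_A")
series = serial_dilution(10.0)

print(follow_up)
print([f"{c:.4g}" for c in series])
```

Note that a kinase at exactly 50% inhibition is excluded here because the protocol specifies ">50%"; a lab might reasonably choose an inclusive cutoff instead.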

[Figure: Workflow diagram, hit triage cascade for specific lead selection]

[Figure: Conceptual diagram, the relationship between drug properties]

The Scientist's Toolkit: Research Reagent Solutions

| Reagent / Assay Type | Function in Selectivity & Potency Assessment |

|---|---|

| Broad Target Profiling Panels (e.g., kinase, GPCR, epigenetic) | Systematically measures compound activity across hundreds of targets to quantitatively define selectivity and identify off-target effects [3]. |

| Biophysical Assays (SPR, ITC, MST) | Confirms direct binding to the primary target, measures binding affinity (Kd), and provides kinetic parameters (on/off rates), orthogonal to biochemical activity [5]. |

| Orthogonal Assay Reagents (Luminescence, Absorbance, HCS) | Uses a different detection technology to confirm primary assay hits, eliminating false positives caused by assay-specific interference (e.g., fluorescence quenching) [5]. |

| Cellular Fitness Assay Kits (Viability, Cytotoxicity, Apoptosis) | Determines if the compound's observed activity is due to specific target modulation or general cellular toxicity, a critical factor in lead selection [5]. |

| Counter-Screen Assay Kits (Aggregation, Redox, Chelation) | Specifically designed to identify and flag compounds that act through undesirable, non-specific mechanisms (e.g., pan-assay interference compounds) [5]. |

What are the primary strategies for designing a chemogenomic library that balances wide target coverage with compound selectivity?

Designing a chemogenomic library requires a strategic balance between covering a wide range of biological targets and ensuring the compounds have appropriate selectivity profiles. The primary strategies involve a systems-level approach and careful analysis of chemical space.

Adopt a Systems Pharmacology Perspective: Modern drug discovery has shifted from a "one target—one drug" model to a "one drug—several targets" systems pharmacology perspective. This is particularly important for complex diseases like cancers and neurological disorders, which are often caused by multiple molecular abnormalities [2]. The library should be designed to probe these complex systems.

Exploit Structural Similarities and Differences for Selectivity: Rational design can tune selectivity by exploiting differences between protein families. Key structural features to consider include:

- Shape Complementarity: Small differences in binding site shape can be leveraged for major gains in selectivity. For example, designing compounds that fit a larger binding site (e.g., COX-2) but clash with a smaller, similar site (e.g., COX-1) can achieve over 13,000-fold selectivity [7].

- Electrostatics and Flexibility: Differences in charge distribution and the flexibility of both the target and decoy proteins can be exploited to design selective compounds [7].

Integrate Heterogeneous Data Sources: A robust library is built by integrating diverse data into a network pharmacology database. This typically includes:

- Bioactivity Data: From resources like the ChEMBL database, which contains standardized bioactivity data for millions of molecules and thousands of targets [2].

- Pathway and Disease Information: From databases like KEGG and the Disease Ontology, which help link targets to biological processes and diseases [2].

- Phenotypic Profiling Data: Incorporating data from high-content imaging assays, such as Cell Painting, which provides morphological profiles linking compound-induced changes to phenotypic outcomes [2].
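The compound-target-pathway links described above form a small graph that can be queried in either direction. The sketch below uses plain dictionaries rather than a graph database; the compound names and edges are invented for illustration and would in practice be loaded from the bioactivity, pathway, and profiling sources listed.

```python
from collections import defaultdict

# Invented edges for illustration only.
compound_targets = {
    "cpd_1": {"BRD4"},
    "cpd_2": {"BRD4", "BRD2"},
    "cpd_3": {"EGFR"},
}
target_pathways = {
    "BRD4": {"transcriptional regulation"},
    "BRD2": {"transcriptional regulation"},
    "EGFR": {"ErbB signaling"},
}


def pathways_for(compound):
    """Pathways reachable from a compound through its annotated targets."""
    found = set()
    for target in compound_targets.get(compound, ()):
        found |= target_pathways.get(target, set())
    return found


def compounds_by_pathway():
    """Invert the graph: pathway -> compounds that reach it."""
    index = defaultdict(set)
    for compound in compound_targets:
        for pathway in pathways_for(compound):
            index[pathway].add(compound)
    return dict(index)


print(pathways_for("cpd_2"))
print(compounds_by_pathway())
```

The inverted index is what supports target deconvolution: once a hit clusters with reference compounds, the pathways those compounds share become candidate mechanisms.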

[Figure: Workflow of the key steps and data integrations in building a chemogenomic library]

How can I quantify the scaffold diversity of my compound library, and what are the benchmark values for high-quality libraries?

Quantifying scaffold diversity is crucial for ensuring a library probes a broad area of chemical space and is not biased towards a few common structures. Several computational methods and metrics are available.

Key Scaffold Representations:

- Murcko Frameworks: This method dissects a molecule into its ring systems, linkers, and side chains. The Murcko framework is the union of all ring systems and linkers, representing the core scaffold of the molecule [8].

- Scaffold Tree: A more systematic, hierarchical method that iteratively prunes peripheral rings from the Murcko framework based on a set of rules until only one ring remains. This creates different "levels" of scaffolds for each molecule, providing a multi-resolution view of chemical diversity [2] [8].

Key Metrics for Quantifying Diversity:

- Scaffold Count and Cumulative Scaffold Frequency Plots (CSFPs): The simplest metric is the number of unique scaffolds in a library. A more informative approach is the CSFP, which plots the cumulative percentage of molecules represented by the scaffolds, sorted from most to least frequent [8].

- PC50C (Percentage of Scaffolds to Cover 50% of Compounds): This metric quantifies the distribution of molecules over scaffolds. A low PC50C value indicates that a small number of scaffolds account for a large proportion of the library (low diversity). A high PC50C value indicates that a larger number of scaffolds are needed to cover half the library, indicating high diversity [8].
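Both metrics can be computed from a list with one scaffold identifier per compound. In the sketch below the single-letter identifiers stand in for canonical scaffold strings (for example, Murcko framework SMILES produced by a cheminformatics toolkit); the function names and the two toy libraries are invented for illustration, and the input is assumed to be non-empty.

```python
from collections import Counter


def cumulative_scaffold_frequency(scaffolds):
    """Cumulative % of compounds covered, scaffolds sorted most frequent first."""
    counts = sorted(Counter(scaffolds).values(), reverse=True)
    total, running, curve = len(scaffolds), 0, []
    for c in counts:
        running += c
        curve.append(100.0 * running / total)
    return curve


def pc50c(scaffolds):
    """% of unique scaffolds needed to cover at least 50% of compounds."""
    curve = cumulative_scaffold_frequency(scaffolds)
    needed = next(i for i, pct in enumerate(curve, start=1) if pct >= 50.0)
    return 100.0 * needed / len(curve)


# Two invented 10-compound libraries.
skewed = ["A"] * 6 + ["B", "C", "D", "E"]  # one scaffold dominates
even = list("ABCDEFGHIJ")                  # every compound has its own scaffold

print(cumulative_scaffold_frequency(skewed))
print(pc50c(skewed), pc50c(even))
```

The skewed library needs only one of its five scaffolds (20%) to cover half the compounds, whereas the evenly spread library needs five of ten (50%), matching the low-versus-high PC50C interpretation given above.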

Benchmark Values and Comparisons: Comparative analyses of commercial libraries provide context for assessing your own library's diversity. One study analyzed standardized subsets of several libraries, each containing 41,071 compounds with matched molecular weight distributions. The table below summarizes the scaffold diversity based on Murcko frameworks and Level 1 Scaffold Trees:

Table 1: Benchmark Scaffold Diversity of Standardized Compound Libraries (n=41,071 compounds each)

| Library Name | Number of Unique Murcko Frameworks | PC50C for Murcko Frameworks | Number of Unique Level 1 Scaffolds | PC50C for Level 1 Scaffolds |

|---|---|---|---|---|

| Chembridge | 5,808 | 1.9% | 4,253 | 2.5% |

| ChemicalBlock | 5,746 | 1.9% | 4,238 | 2.5% |

| Mcule | 5,693 | 1.9% | 4,174 | 2.5% |

| VitasM | 5,581 | 1.9% | 4,106 | 2.6% |

| Enamine | 5,255 | 2.1% | 3,910 | 2.8% |

| LifeChemicals | 4,970 | 2.2% | 3,749 | 2.9% |

| Specs | 4,509 | 2.4% | 3,457 | 3.2% |

| Maybridge | 4,216 | 2.6% | 3,243 | 3.4% |

Data adapted from [8]

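Interpretation: Chembridge, ChemicalBlock, and Mcule contain the most unique scaffolds at both the Murcko and Level 1 levels and are considered the more structurally diverse libraries on that basis [8]. Their PC50C values are also the lowest in the table. Under the definition above, a lower PC50C means a smaller share of scaffolds covers half the compounds, so PC50C taken alone does not support the same ranking; the two metrics should be read together.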

What experimental and computational protocols are used to characterize and validate target coverage of a chemogenomic library?

Validating that a library adequately covers the intended target space requires a combination of computational prediction and experimental confirmation.

Computational Protocol for Target Coverage Analysis:

Data Collection and Curation:

In Silico Target Profiling:

- Use computational methods to predict the activity of each library compound against the entire panel of targets.

- Methods: These can include structure-based approaches like molecular docking if 3D structures are available, or ligand-based approaches like similarity searching or quantitative structure-activity relationship (QSAR) models if bioactivity data is available [9].

- Tools: Various commercial and open-source software platforms can perform these predictions.

Coverage and Bias Estimation:

- The output is a matrix predicting the potential interaction between each compound and each target.

- Analyze this matrix to determine which targets are "hit" by at least one compound in the library. The goal is to maximize the number of targets covered [9].

- Assess bias by ensuring coverage is relatively uniform across the target family, rather than having many compounds for a few targets and sparse coverage for others [9].
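Once the predicted compound-target matrix exists, coverage and bias are straightforward to summarise. The sketch below represents the matrix sparsely, as a mapping from each compound to the set of targets it is predicted to hit; the identifiers and predictions are invented, and the two helper functions are hypothetical names rather than part of any cited tool.

```python
def target_coverage(predicted_hits, all_targets):
    """Fraction of targets hit by at least one library compound."""
    covered = set().union(*predicted_hits.values()) & set(all_targets)
    return len(covered) / len(all_targets)


def compounds_per_target(predicted_hits, all_targets):
    """Count how many compounds are predicted active on each target."""
    counts = dict.fromkeys(all_targets, 0)
    for targets in predicted_hits.values():
        for t in targets:
            if t in counts:
                counts[t] += 1
    return counts


# Invented predictions: compound -> targets predicted active.
predicted_hits = {
    "cpd_1": {"T1", "T2"},
    "cpd_2": {"T1"},
    "cpd_3": {"T1", "T3"},
}
all_targets = ["T1", "T2", "T3", "T4"]

coverage = target_coverage(predicted_hits, all_targets)
counts = compounds_per_target(predicted_hits, all_targets)
uncovered = [t for t, n in counts.items() if n == 0]
print(f"coverage={coverage:.0%}", counts, "uncovered:", uncovered)
```

In this toy case three of four targets are covered, but T1 attracts every compound while T4 has none, which is the kind of imbalance the bias assessment is meant to expose.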

Experimental Protocol for Validation via Phenotypic Screening:

Cell-Based Phenotypic Screening:

- Cell Model Selection: Use disease-relevant cell models. For cancer, this could include patient-derived cells, such as glioma stem cells from glioblastoma patients [10].

- Perturbation: Treat cells with each compound from the physical chemogenomic library.

- Staining and Imaging: Employ a high-content imaging assay like Cell Painting. This assay uses a set of fluorescent dyes to label major cellular components (nucleus, nucleoli and cytoplasmic RNA, endoplasmic reticulum, Golgi apparatus and plasma membrane, actin, and mitochondria). Cells are then imaged using a high-throughput microscope [2].

Image and Data Analysis:

- Feature Extraction: Use image analysis software (e.g., CellProfiler) to extract hundreds of morphological features from the images for each cell [2].

- Profile Generation: Generate a morphological profile for each compound treatment, representing its unique "phenotypic fingerprint".

- Clustering and Analysis: Cluster compounds based on their morphological profiles. Compounds with similar profiles are likely to share mechanisms of action, suggesting they affect similar biological targets or pathways [2].

Validation of Target Coverage:

- The library's target coverage is validated if the phenotypic screening reveals a wide range of distinct morphological profiles and identifies compounds that induce phenotypes of interest (e.g., patient-specific vulnerabilities in cancer cells) [10].

- Compounds with known mechanisms can serve as positive controls, and their profiles should cluster together, confirming the assay's ability to deconvolute mechanisms.

The relationship between computational and experimental validation is summarized below:

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents and Resources for Chemogenomic Library Design and Screening

| Item / Resource | Function / Description | Example Sources / Tools |

|---|---|---|

| Bioactivity Databases | Provides curated data on drug-like molecules, their targets, and bioactivities for building knowledge networks. | ChEMBL [2] |

| Pathway & Ontology Databases | Provides information on biological pathways, gene functions, and disease classifications for biological annotation. | KEGG, Gene Ontology (GO), Disease Ontology (DO) [2] |

| Phenotypic Profiling Assay | A high-content imaging assay that uses fluorescent dyes to label cellular components, enabling quantification of morphological changes. | Cell Painting [2] |

| Scaffold Analysis Software | Software used to systematically dissect molecules into scaffolds and fragments for diversity analysis. | Scaffold Hunter [2] |

| Graph Database | A database technology ideal for integrating and querying complex, interconnected network pharmacology data. | Neo4j [2] |

| Commercial Compound Libraries | Pre-designed libraries focusing on specific target families (e.g., kinases, GPCRs) or broad diversity for screening. | Pfizer chemogenomic library, GSK Biologically Diverse Compound Set (BDCS), NCATS MIPE library [2] |

| Public Screening Libraries | Large, purchasable collections of small molecules for virtual or high-throughput screening. | ZINC database vendors (e.g., Mcule, Enamine, ChemBridge) [8] |

Conceptual Foundations: From Single-Target to Network Pharmacology

What is the fundamental difference between the 'magic bullet' and polypharmacology approaches?

The traditional 'magic bullet' paradigm operates on a 'one drug-one target' model, where a single drug is designed to modulate a single biological target with high specificity. In contrast, polypharmacology recognizes that complex diseases often arise from perturbations in biological networks and intentionally designs therapeutic interventions to modulate multiple targets simultaneously. This can be achieved either through a single drug binding to multiple targets or through combinations of drugs that hit different targets within a disease network [11] [12].

Why has the field shifted toward polypharmacology for complex diseases?

The shift is driven by the recognition that many diseases are not caused by single genetic determinants but involve a complex multiplicity of genetic factors and environmental influences. The 'one gene-one disease' theory has proven unsuccessful for many conditions because disease manifestations arise from the impact on protein function within regulatory networks. Systems biology has revealed that physiological functions are controlled by complicated networks of signals where each component represents a 'node' and each interaction is an 'edge'. Disease-associated genetic mutations often perturb these networks at multiple points, making multi-target approaches more effective [11].

Practical Implementation: Designing Chemogenomic Libraries

How do I balance potency and selectivity when building a targeted screening library?

Designing a targeted screening library is a multi-objective optimization problem: maximize cancer target coverage and ensure cellular potency and selectivity while minimizing the number of compounds. Systematic strategies involve:

- Target-based design: Identifying small molecules against druggable cancer targets among approved, investigational, and experimental probe compounds [13]

- Activity filtering: Removing non-active probes and selecting the most potent compounds for each target [13]

- Selectivity assessment: Evaluating both on- and off-target profiles to ensure appropriate specificity [13] [14]

The C3L (Comprehensive anti-Cancer small-Compound Library) development demonstrated that through careful curation, library size can be reduced 150-fold while still covering 84% of cancer-associated targets [13].
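One simple way to picture the trade-off between library size and target coverage is a greedy set-cover heuristic. The sketch below is an illustration under stated assumptions (a pChEMBL-style potency cutoff of 6.0 and a potency-based tie-break); it is not the curation procedure used to build C3L.

```python
def minimize_library(activities, min_pchembl=6.0):
    """Greedy set cover over targets.

    activities: {compound: {target: potency}} with potency on a pChEMBL-like
    scale. The cutoff and the tie-break rule are assumptions for this sketch.
    """
    covers = {
        compound: {t for t, potency in targets.items() if potency >= min_pchembl}
        for compound, targets in activities.items()
    }
    uncovered = set().union(*covers.values())
    selected = []
    while uncovered:
        def gain(compound):
            new_targets = covers[compound] & uncovered
            # Prefer more newly covered targets, then higher summed potency.
            return (len(new_targets), sum(activities[compound][t] for t in new_targets))

        best = max(sorted(covers), key=gain)
        selected.append(best)
        uncovered -= covers[best]
    return selected


# Hypothetical compounds and potencies.
activities = {
    "cmpd_A": {"EGFR": 8.2, "ERBB2": 7.1},
    "cmpd_B": {"EGFR": 6.4},
    "cmpd_C": {"BRAF": 7.9},
    "cmpd_D": {"BRAF": 6.2, "ERBB2": 5.1},
}
selected = minimize_library(activities)
print(selected)
```

Here two of the four compounds are enough to cover every target that has at least one sufficiently potent compound.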

What are the key quality criteria for chemical probes in polypharmacology research?

High-quality chemical probes should meet stringent quality criteria including [14]:

- Cell permeability for relevant experimental systems

- Potency appropriate for the intended biological context

- Selectivity for the intended target over other members of its target family

- Comprehensive characterization including off-target profiling

Table 1: Key Research Reagent Solutions for Polypharmacology Studies

| Reagent Type | Function | Examples/Applications |

|---|---|---|

| Kinase Chemical Probes [14] | Study kinase biology including catalytic and scaffolding functions | Allosteric inhibitors, covalent inhibitors, macrocyclic inhibitors targeting active/inactive states |

| Bromodomain Probes [14] | Modulate chromatin and epigenetic mechanisms | Inhibitors against bromodomain-containing proteins for cancer research |

| Ubiquitin System Probes [14] | Study ubiquitination processes regulating protein degradation | E3 ubiquitin ligase and deubiquitinase (DUB) inhibitors |

| Chemogenomic Library Sets [14] | Target family-directed compound collections | Kinase chemogenomic set (KCGS) inhibiting catalytic function of multiple kinases |

| Open Science Chemical Probes [14] | Community-validated research tools | Broadly characterized modulators openly available to research community |

Experimental Design & Methodologies

What network analysis methods support polypharmacology target identification?

Systems biology employs several methodologies to identify potential polypharmacology targets:

- Drug-target network analysis: Examining relationships between drugs and targets to identify multiple targets and suitable combinations [11]

- Genomic and proteomic profiling: Large-scale analysis of signaling molecules under various conditions (e.g., drug treatment, stress) [11]

- Network representation of genetic mutations: Identifying how different mutations perturb networks at nodes or edges, with edgetic perturbations often having consequences at multiple network points [11]

Diagram 1: Systems Pharmacology Workflow

How do I design experiments to validate multi-target approaches?

Effective experimental validation requires:

- Phenotypic screening in disease-relevant models using target-annotated compound libraries [13]

- Multi-parameter phenotypic profiling to characterize cellular effects and understand mechanisms of action [15]

- Pathway activity assessment using relevant biomarkers and network readouts [11]

- Patient-derived models that recapitulate disease heterogeneity, such as glioma stem cells from glioblastoma patients [13]

Table 2: Key Quantitative Metrics for Polypharmacology Assessment

| Parameter | Assessment Method | Target Threshold |

|---|---|---|

| Target Coverage [13] | Number of disease-relevant targets modulated | >80% of defined target space |

| Cellular Potency [13] | In vitro activity in disease models | IC50 <1 μM for primary targets |

| Selectivity Index [14] | Off-target profiling across target families | >10-100 fold selectivity window |

| Therapeutic Index [15] | Ratio of toxic to efficacious exposure | >10 for acceptable safety margin |

| Network Modulation [11] | Pathway activity readouts | Significant perturbation of disease network |
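The compound-level thresholds in the table reduce to simple ratios. The following sketch checks a hypothetical compound against them; the function name, the input layout, and the choice of the lower bound (10-fold) of the selectivity window are assumptions for illustration.

```python
def assess_candidate(ic50_primary_nm, ic50_offtargets_nm,
                     toxic_exposure, efficacious_exposure):
    """Check one compound against the potency, selectivity, and safety thresholds."""
    # Selectivity window: weakest margin over any profiled off-target.
    selectivity_fold = min(ic50_offtargets_nm) / ic50_primary_nm
    therapeutic_index = toxic_exposure / efficacious_exposure
    return {
        "selectivity_fold": selectivity_fold,
        "therapeutic_index": therapeutic_index,
        "potent": ic50_primary_nm < 1000,        # IC50 < 1 uM
        "selective": selectivity_fold > 10,      # lower bound of the 10-100 fold window
        "safe_margin": therapeutic_index > 10,
    }


# Hypothetical values: 50 nM on-target, 800 nM and 5000 nM off-targets.
report = assess_candidate(50, [800, 5000], toxic_exposure=30, efficacious_exposure=2)
print(report)
```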

Troubleshooting Common Experimental Challenges

How do I address insufficient network coverage in my polypharmacology approach?

Problem: Library or compound combination does not adequately cover the disease-relevant network.

Solutions:

- Expand target space by including nearest neighbors and influencer targets in the network [13]

- Implement target-based design strategies to identify compounds against under-represented targets [13]

- Use extended compound space analysis to identify additional bioactive compounds through database queries [13]

- Consider combination approaches where multiple drugs target different nodes in the network [11]

What strategies help manage selectivity concerns in multi-target compounds?

Problem: Compound shows undesirable off-target effects while attempting to hit multiple therapeutic targets.

Solutions:

- Employ allosteric inhibitors that target unique binding pockets rather than conserved active sites [14]

- Develop covalent inhibitors targeting unique cysteine residues in target proteins [14]

- Utilize macrocyclic compounds with improved shape compatibility for better selectivity [14]

- Implement counter-screening against known off-targets and orthogonal assays to eliminate false positives [16]

Diagram 2: Selectivity Optimization Strategies

How can I improve translation from cellular models to clinical relevance?

Problem: Promising polypharmacology effects in simple models don't translate to more complex systems.

Solutions:

- Use patient-derived cell models that better recapitulate disease heterogeneity [13]

- Implement disease-relevant assays including 3D co-culture and organ-on-a-chip systems [15]

- Incorporate pharmacokinetic considerations early, including absolute bioavailability assessment [17]

- Leverage human genetics data to validate therapeutic targets and prioritize those with human genetic evidence [15]

Emerging Frontiers & Advanced Applications

What innovative approaches are expanding polypharmacology capabilities?

Emerging strategies include:

- Systems pharmacology: Deliberately designing drugs to act on multiple targets where doing so affords beneficial effects [12]

- Precision polypharmacology: Patient-specific vulnerability identification through phenotypic screening of annotated libraries [13]

- Network-based target prioritization: Identifying influential nodes and edges in disease networks for optimal intervention points [11]

- Chemical biology integration: Using chemical probes to understand network biology and identify new intervention strategies [14]

The future of polypharmacology lies in integrating systems biology understanding with precision medicine approaches to develop multi-target therapies that are both effective for specific patient populations and safe through their balanced activity profiles.

Advanced Methodologies for Library Assembly and Phenotypic Screening

Frequently Asked Questions (FAQs)

FAQ 1: How can I effectively integrate data from ChEMBL, KEGG, and GO to construct a unified network?

Constructing a unified network requires a systematic approach to map compounds to targets and then to biological functions. Begin by querying ChEMBL for your compounds of interest to retrieve known protein targets. Use the standardized target identifiers (e.g., UniProt IDs) from ChEMBL to cross-reference with KEGG PATHWAY and GO databases. KEGG provides pathway context, while GO offers detailed biological processes, molecular functions, and cellular components. This creates a compound-target-pathway network, which can be visualized and analyzed in tools like Cytoscape. The key is using common identifiers to ensure seamless integration across these heterogeneous data sources [18] [19].

FAQ 2: What are the common data formatting challenges when using these databases, and how can I resolve them?

The primary challenge is the use of different nomenclature and identifier systems across databases.

- Problem: ChEMBL uses its own target identifiers, KEGG uses its own gene and pathway IDs, and GO uses GO Term IDs.

- Solution: Always use a common, standardized identifier as a bridge. The most effective method is to map all entities (e.g., drug targets) to their official UniProt IDs or Gene Symbols. Online ID conversion tools or database-specific cross-reference tables can automate this process. For instance, when you retrieve a target from ChEMBL, note its UniProt ID, which can then be used to find corresponding entries in KEGG and GO [20] [18].
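The bridging idea can be sketched as a join on UniProt accessions. The mapping tables below are small, hand-written stand-ins for tables you would download or query yourself; the identifiers are illustrative and should be verified against the databases, and the last entry is deliberately fictitious to show how unmapped targets surface.

```python
def bridge_identifiers(chembl_to_uniprot, uniprot_to_kegg, uniprot_to_go):
    """Join ChEMBL targets to KEGG genes and GO terms via UniProt accessions."""
    merged, unmapped = {}, []
    for chembl_id, uniprot in chembl_to_uniprot.items():
        kegg_gene = uniprot_to_kegg.get(uniprot)
        go_terms = uniprot_to_go.get(uniprot, [])
        if kegg_gene is None and not go_terms:
            unmapped.append(chembl_id)  # no annotation found in either resource
        merged[chembl_id] = {
            "uniprot": uniprot,
            "kegg_gene": kegg_gene,
            "go_terms": go_terms,
        }
    return merged, unmapped


# Illustrative mapping tables (assumed to be prepared beforehand).
chembl_to_uniprot = {
    "CHEMBL203": "P00533",
    "CHEMBL5145": "P15056",
    "CHEMBL_HYPOTHETICAL": "X00000",  # fictitious entry with no annotations
}
uniprot_to_kegg = {"P00533": "hsa:1956", "P15056": "hsa:673"}
uniprot_to_go = {"P00533": ["GO:0004713"]}

merged, unmapped = bridge_identifiers(chembl_to_uniprot, uniprot_to_kegg, uniprot_to_go)
print(merged["CHEMBL203"], unmapped)
```

Reporting the unmapped list explicitly makes broken edges visible early instead of silently dropping nodes from the network.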

FAQ 3: My network is too large and complex. How can I filter it to identify the most biologically relevant interactions?

Overly complex networks can be simplified by applying filters based on confidence scores and topological analysis.

- Data Confidence: In ChEMBL, filter for interactions with high-quality evidence (e.g., high `pCHEMBL` values, specific assay types). For protein-protein interactions from sources like STRING, use a high confidence score threshold (e.g., >0.7) [18].

- Network Topology: After constructing the network in an analysis tool like Cytoscape or NetworkX, calculate topological metrics. Focus on nodes with high degree centrality (number of connections) and high betweenness centrality (how often a node acts as a bridge). These "hub" and "bottleneck" nodes are often critical to the network's structure and function and make excellent candidates for further investigation [21] [18].
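A minimal, dependency-free sketch of the two filtering steps is shown below: drop low-confidence edges, then rank nodes by normalized degree centrality. The edge list and scores are made up for illustration. Betweenness centrality is omitted to keep the example short; it is available in Cytoscape and in NetworkX (`networkx.betweenness_centrality`).

```python
from collections import defaultdict


def filter_edges(edges, min_score=0.7):
    """Keep only edges whose confidence score exceeds the threshold."""
    return [(a, b, score) for a, b, score in edges if score > min_score]


def degree_centrality(edges):
    """Degree divided by (n - 1), where n is the number of nodes in the graph."""
    neighbors = defaultdict(set)
    for a, b, _score in edges:
        neighbors[a].add(b)
        neighbors[b].add(a)
    n = len(neighbors)
    if n < 2:
        return {node: 0.0 for node in neighbors}
    return {node: len(linked) / (n - 1) for node, linked in neighbors.items()}


# Hypothetical protein-protein edges with STRING-like confidence scores.
edges = [
    ("EGFR", "GRB2", 0.95), ("EGFR", "SHC1", 0.90), ("EGFR", "PIK3CA", 0.80),
    ("PIK3CA", "AKT1", 0.92), ("AKT1", "TP53", 0.40),
]
confident = filter_edges(edges)
centrality = degree_centrality(confident)
top_hub = max(sorted(centrality), key=centrality.get)
print(top_hub, centrality[top_hub])
```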

FAQ 4: Which tools are best for visualizing and analyzing the resulting pharmacology networks?

Cytoscape is a widely used standard for biological network visualization and analysis. It allows you to import your network data, apply visual styles, and perform topological calculations using built-in or third-party apps (e.g., cytoHubba for identifying important nodes, ClueGO for functional enrichment analysis) [22] [19] [18]. For programmatic analysis, NetworkX in Python is well suited to graph computations, and D3.js can be used to create interactive web visualizations [18].

Troubleshooting Guides

Issue 1: Handling Inconsistent or Missing Identifiers Across Databases

Symptoms: Inability to link compounds to targets or pathways; broken edges in the network graph; a high number of unconnected nodes.

Resolution Protocol:

- Standardize Your Input: Start with a list of compounds and map them to canonical identifiers like SMILES or InChIKeys using a tool like PubChem.

- Map to Common Gene/Protein Identifiers:

- Use the `chembl_webresource_client` library or ChEMBL API to fetch targets for your compounds. In the results, prioritize the `target_components` field, which often contains UniProt IDs.

- For these UniProt IDs, use the KEGG API (`https://rest.kegg.jp/conv/target_db/uniprot_id`) to retrieve associated KEGG Gene IDs.

- Similarly, use the UniProt ID to retrieve associated GO terms from the GO consortium database or AmiGO.

- Validate Mappings: Cross-check a subset of your mappings manually in the respective web interfaces of ChEMBL, KEGG, and GO to ensure the functional relationships are logical.

Prevention: Always design your data retrieval workflow to use UniProt IDs or official Gene Symbols as a central, stable identifier [20] [18].

Issue 2: Low Confidence in Predicted Compound-Target Interactions

Symptoms: Your network includes interactions with weak evidence, leading to unreliable hypotheses.

Resolution Protocol:

- Apply Stringent Filters: When pulling data from ChEMBL, filter bioactivity data based on:

- pCHEMBL: Use a threshold (e.g., pCHEMBL > 6.5, which corresponds to an IC50/Ki below roughly 300 nM; a 50 nM cutoff would correspond to pCHEMBL ≈ 7.3).

- Assay Type: Prefer interactions confirmed in binding assays (`B`) or functional assays (`F`).

- Use Complementary Prediction Tools: Augment experimental data with predictions from tools like:

- SwissTargetPrediction: Predicts targets based on compound structure similarity.

- SEA (Similarity Ensemble Approach): Links proteins to ligands based on the similarity of their known ligands.

- Experimental Validation: For high-value targets identified in your network, plan low-throughput validation experiments, such as Surface Plasmon Resonance (SPR) to confirm binding or qPCR to check downstream functional effects [18].
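Because pCHEMBL is the negative base-10 logarithm of a molar potency value, thresholds convert directly to concentrations. The sketch below shows the conversion and a filter over activity records. The record layout is an assumption modeled on ChEMBL's `pchembl_value` and `assay_type` activity fields, and the records themselves are invented.

```python
import math


def pchembl_to_nm(pchembl):
    """pCHEMBL = -log10(molar value), so nanomolar = 10 ** (9 - pCHEMBL)."""
    return 10 ** (9 - pchembl)


def nm_to_pchembl(nanomolar):
    return 9 - math.log10(nanomolar)


def filter_activities(records, min_pchembl=6.5, assay_types=("B", "F")):
    """Keep records above the potency threshold from binding/functional assays."""
    kept = []
    for record in records:
        value = record.get("pchembl_value")
        if value is None:
            continue  # no standardized potency available
        if float(value) > min_pchembl and record.get("assay_type") in assay_types:
            kept.append(record)
    return kept


# Invented records; values are often delivered as strings, hence float().
records = [
    {"target": "P00533", "pchembl_value": "8.1", "assay_type": "B"},
    {"target": "P00533", "pchembl_value": "5.9", "assay_type": "B"},
    {"target": "P15056", "pchembl_value": "7.2", "assay_type": "A"},
    {"target": "P15056", "pchembl_value": None, "assay_type": "F"},
]
kept = filter_activities(records)
print(round(pchembl_to_nm(6.5)), round(nm_to_pchembl(50), 2), len(kept))
```

A pCHEMBL of 6.5 works out to about 316 nM, and 50 nM corresponds to a pCHEMBL of about 7.3, which is useful to keep in mind when choosing a cutoff.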

Issue 3: Interpreting the Biological Significance of Network Modules

Symptoms: You have identified a cluster of highly interconnected nodes but are unsure of its biological meaning.

Resolution Protocol:

- Extract Network Modules: Use a community detection algorithm (e.g., the Louvain method or MCODE in Cytoscape) to identify densely connected clusters (modules) within your large network.

- Perform Functional Enrichment Analysis: For the set of genes/proteins within a module, perform an over-representation analysis using:

- GO Enrichment Analysis: Tools like `g:Profiler` or `DAVID` can identify which Biological Processes, Molecular Functions, or Cellular Components are statistically over-represented in your module.

- KEGG Pathway Enrichment Analysis: The same tools can test for enrichment of KEGG pathways.

- Synthesize Findings: A module enriched for a specific KEGG pathway (e.g., "PI3K-Akt signaling pathway") and related GO terms (e.g., "cell proliferation") suggests that the module represents a functional unit governing that process. This directly informs your understanding of the polypharmacology of your compounds [22] [18].
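Over-representation analysis is, at its core, a one-sided hypergeometric test. The sketch below computes that p-value for a single term with made-up counts. It is meant to show the underlying calculation only: dedicated tools also apply multiple-testing correction across all terms tested, which this example does not.

```python
from math import comb


def enrichment_p_value(module_size, module_hits, background_size, background_hits):
    """P(X >= module_hits) when drawing module_size genes without replacement.

    background_hits is the number of genes in the background annotated to the
    term; module_hits is how many of the module's genes carry that annotation.
    """
    upper = min(module_size, background_hits)
    total = comb(background_size, module_size)
    tail = sum(
        comb(background_hits, k) * comb(background_size - background_hits, module_size - k)
        for k in range(module_hits, upper + 1)
    )
    return tail / total


# Hypothetical counts: 8 of 20 module genes fall in a 350-gene pathway,
# against a background of 20,000 genes.
p_value = enrichment_p_value(20, 8, 20000, 350)
print(f"p = {p_value:.2e}")
```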

Research Reagent Solutions

The table below lists essential databases, tools, and their functions for building systems pharmacology networks.

| Category | Tool/Database | Primary Function in Network Construction |

|---|---|---|

| Compound & Target Data | ChEMBL | A manually curated database of bioactive molecules with drug-like properties. It provides compound structures and curated bioactivity data (e.g., IC50, Ki) against protein targets [18]. |

| Compound & Target Data | DrugBank | A comprehensive database containing information on drugs, drug mechanisms, interactions, and targets. Useful for cross-referencing and enriching drug-specific data [22] [18]. |

| Pathway & Function | KEGG (Kyoto Encyclopedia of Genes and Genomes) | A resource for understanding high-level functions and utilities of biological systems. It is used to map drug targets to specific pathways (e.g., metabolic, signal transduction) [18]. |

| Pathway & Function | Gene Ontology (GO) | A major bioinformatics initiative to standardize the representation of gene and gene product attributes. It provides controlled vocabularies for Biological Process, Molecular Function, and Cellular Component to annotate targets [18]. |

| Protein Interactions | STRING | A database of known and predicted protein-protein interactions, which is essential for building the protein-protein interaction (PPI) layer of your network [22] [18]. |

| Network Analysis & Visualization | Cytoscape | An open-source platform for complex network visualization and analysis. It is the primary tool for integrating data, visualizing networks, and performing topological analyses [22] [19] [18]. |

| Network Analysis & Visualization | NetworkX | A Python library for the creation, manipulation, and study of the structure, dynamics, and functions of complex networks. Ideal for programmable network analysis [18]. |

Experimental Protocol: Constructing a Compound-Target-Pathway Network

This protocol outlines the steps to build a systems pharmacology network from heterogeneous data sources.

Objective: To construct and analyze a multi-layered network linking compounds, their protein targets, and the associated biological pathways and processes.

Methodology:

Compound List Curation

- Compile a list of compounds of interest (e.g., a chemogenomic library, natural products).

- Obtain canonical SMILES structures for each compound from PubChem or ChEMBL.

Target Identification from ChEMBL

- For each compound, query the ChEMBL database via its web interface or API to retrieve known protein targets.

- Filtering: Retain only bioactivity data with:

- `pCHEMBL` value > 6.5.

- Assay type defined as 'B' (Binding) or 'F' (Functional).

- Extract the corresponding UniProt IDs for all confirmed targets.

Pathway and Process Annotation via KEGG and GO

- For the list of UniProt IDs, use the KEGG REST API (`https://rest.kegg.jp/link/pathway/uniprot_id`) to find associated KEGG pathways. Here `uniprot_id` is a placeholder; KEGG's `link` operation works on KEGG gene identifiers, so UniProt IDs typically need to be converted first with the `conv` operation.

- For the same list, use a GO enrichment tool (e.g., `g:Profiler`) to identify significantly over-represented Gene Ontology terms (Biological Process, Molecular Function, Cellular Component). Use an adjusted p-value cutoff of < 0.05.

Network Construction and Analysis in Cytoscape

- Create three separate node tables: Compound, Target (UniProt ID), and Pathway/GO Term.

- Create edges based on the following confirmed relationships:

- Compound --(binds)--> Target

- Target --(participates_in)--> KEGG Pathway

- Target --(annotated_to)--> GO Term

- Import these tables into Cytoscape to generate the integrated network.

- Use Cytoscape's built-in tools or apps to calculate network properties (degree, betweenness centrality) and identify functional modules.
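The edge table for this step can be assembled with the standard library before import. The sketch below writes a three-column table (source, interaction, target), a layout Cytoscape can read when importing a network from a table file. The input dictionaries and the identifiers in them are illustrative assumptions.

```python
import csv
import io


def build_edge_rows(compound_targets, target_pathways, target_go_terms):
    """Flatten the three relationship types into (source, interaction, target) rows."""
    rows = []
    for compound, targets in compound_targets.items():
        rows += [(compound, "binds", target) for target in targets]
    for target, pathways in target_pathways.items():
        rows += [(target, "participates_in", pathway) for pathway in pathways]
    for target, terms in target_go_terms.items():
        rows += [(target, "annotated_to", term) for term in terms]
    return rows


def to_csv(rows):
    """Serialize rows as CSV text with a header line."""
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerow(["source", "interaction", "target"])
    writer.writerows(rows)
    return buffer.getvalue()


# Illustrative inputs keyed by compound name and UniProt ID.
rows = build_edge_rows(
    {"gefitinib": ["P00533"]},
    {"P00533": ["hsa04012"]},
    {"P00533": ["GO:0004713"]},
)
edge_table = to_csv(rows)
print(edge_table)
```

Writing the text to a `.csv` file and mapping the three columns during import yields the typed edges described above; node tables carrying the Compound/Target/Pathway type can be built the same way.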

Visualization and Interpretation

- Apply a visual style: Color compound nodes blue (`#4285F4`), target nodes red (`#EA4335`), and pathway/GO term nodes green (`#34A853`).

- Analyze the network to identify hub targets and key pathways that are modulated by multiple compounds, providing insights into polypharmacology and potential mechanisms of action [22] [19] [18].

Workflow and Signaling Pathway Visualizations

[Diagrams not reproduced: SPN Workflow; Net Analysis; PI3K Pathway]

FAQs: Balancing Screening Technologies with Selectivity and Potency Goals

1. What is the fundamental difference between HTS and HCS, and how does this impact my early drug discovery strategy?

High-Throughput Screening (HTS) is a method designed to rapidly evaluate the biological or biochemical activity of a large number of compounds, identifying initial "hits" against a specific target. It focuses on speed and throughput, typically using a single-parameter readout. In contrast, High-Content Screening (HCS) provides a detailed, multi-parameter analysis of cellular responses, capturing information on cell morphology, viability, proliferation, and protein localization. While HTS is highly efficient for initial target-based screening of vast libraries, HCS is more suitable for secondary and tertiary screening, offering a rounded view of cellular responses and helping to understand a compound's mechanism of action and off-target effects [23] [24]. Your strategy should leverage HTS for initial broad screening and HCS for deeper, phenotypic investigation later in the cascade.

2. My Cell Painting assay is producing variable results across large campaigns. What are the common scalability challenges and potential solutions?

Cell Painting assays face several scalability challenges [25]:

- Cost and Complexity: The need for large quantities of proprietary dyes and multiple staining, fixation, and wash steps elevates costs and can compromise reproducibility with small protocol deviations.

- Spectral Overlap: Using multiple fluorescent dyes (six dyes imaged in about five channels in the standard protocol) pushes the limits of microscopy, as emission spectra often overlap, complicating image analysis.

- Data Burden: A single assay can generate millions of images and thousands of features, imposing heavy demands on data storage and computation.

- Batch Effects: Small shifts in cell seeding or plate handling can introduce artifacts that mask genuine biological signals.

As a scalable alternative, consider fluorescent ligand-based HCS. These probes bind selectively to defined targets, offering streamlined multiplexing, lower reagent costs, improved data interpretability, live-cell compatibility, and easier scaling with cleaner signals [25].

3. How can I use phenotypic profiling from Cell Painting to predict compound bioactivity and reduce screening library size?

Deep learning models can be trained on Cell Painting data, combined with a small set of single-concentration activity readouts, to predict compound activity across diverse targets. This approach can reliably prioritize compounds most likely to modulate an intended target. Research has shown that using Cell Painting data in this way can achieve an average ROC-AUC of 0.744 ± 0.108 across 140 diverse assays, with 62% of assays achieving good performance (ROC-AUC ≥ 0.7). This strategy allows for the creation of focused, enriched compound sets, enabling the use of more complex and biologically relevant assays earlier in the discovery process [26].
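For readers less familiar with the metric, ROC-AUC is the probability that a randomly chosen active compound is scored higher than a randomly chosen inactive one. The sketch below computes it directly from that definition for a single hypothetical assay; the labels and scores are invented and unrelated to the cited study.

```python
def roc_auc(labels, scores):
    """Fraction of (active, inactive) pairs ranked correctly; ties count as half."""
    actives = [s for label, s in zip(labels, scores) if label == 1]
    inactives = [s for label, s in zip(labels, scores) if label == 0]
    if not actives or not inactives:
        raise ValueError("need at least one active and one inactive compound")
    wins = sum(
        1.0 if a > i else 0.5 if a == i else 0.0
        for a in actives
        for i in inactives
    )
    return wins / (len(actives) * len(inactives))


# Invented predictions for six compounds in one assay (1 = active).
labels = [1, 1, 0, 0, 1, 0]
scores = [0.9, 0.7, 0.6, 0.2, 0.4, 0.5]
auc = roc_auc(labels, scores)
print(f"ROC-AUC = {auc:.3f}")
```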

4. Beyond traditional metrics, how can I quantify the selectivity of a compound for a specific target of interest to better balance potency and selectivity?

Traditional selectivity metrics characterize the overall narrowness of a compound's bioactivity spectrum but do not quantify selectivity for a specific target. For target-specific selectivity, a new approach decomposes the problem into two components [3]:

- Absolute Potency: The compound's potency against your target of interest.

- Relative Potency: The compound's potency against all other potential off-targets.

You can then formulate this as a multi-objective optimization problem to find compounds that simultaneously maximize absolute potency and minimize relative potency. This method provides a more nuanced view for discovering or repurposing multi-targeting drugs, such as kinase inhibitors [3].
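One simple way to treat this as a two-objective problem is to keep only the compounds that are not dominated by any other, i.e. the Pareto front. The sketch below assumes potencies on a pChEMBL-like scale and summarizes off-target activity as a single number per compound (for instance the strongest off-target potency); both choices are illustrative assumptions and not necessarily the scoring used in the cited work.

```python
def pareto_front(compounds):
    """Return compounds not dominated by any other compound.

    compounds: {name: (on_target_potency, off_target_potency)}.
    Higher on-target potency is better; lower off-target potency is better.
    """
    front = []
    for name, (on, off) in compounds.items():
        dominated = any(
            other_on >= on and other_off <= off and (other_on > on or other_off < off)
            for other, (other_on, other_off) in compounds.items()
            if other != name
        )
        if not dominated:
            front.append(name)
    return sorted(front)


# Hypothetical kinase inhibitors.
compounds = {
    "inhibitor_A": (8.5, 6.0),
    "inhibitor_B": (7.0, 4.5),
    "inhibitor_C": (7.5, 6.5),
    "inhibitor_D": (6.0, 5.0),
}
front = pareto_front(compounds)
print(front)
```

In this toy set, one compound on the front is the most potent and the other is the cleanest; choosing between them is the potency-selectivity trade-off that the optimization makes explicit.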

5. What advanced analytical tools are available to comprehensively assess the selectivity of covalent inhibitors across the proteome?

For covalent inhibitors, a powerful new data analysis method called COOKIE-Pro (Covalent Occupancy Kinetic Enrichment via Proteomics) provides an unbiased view of how these drugs interact with thousands of proteins in a cell. This technique precisely measures both the binding strength (affinity) and reaction speed (reactivity) of a drug against its intended target and off-targets simultaneously. By helping to distinguish compounds that are potent due to specific binding from those that are broadly reactive, COOKIE-Pro accelerates the rational design of more effective and safer covalent therapeutics [27].

Troubleshooting Guides

Issue 1: Poor Reproducibility and High Costs in Scalable Cell Painting Assays

Problem: Inconsistent morphological fingerprints and escalating costs during large-scale Cell Painting campaigns [25].

Investigation Checklist:

- Review staining protocol for deviations in incubation times or reagent quality.

- Check fluorescence microscope filters and light sources for degradation or misalignment causing spectral overlap.

- Analyze data for plate-to-plate or batch-to-batch normalization artifacts.

- Quantify data storage and computational pipeline burdens.

Solutions:

- Transition to Fluorescent Ligand Probes: Consider replacing the multi-dye Cell Painting approach with targeted fluorescent ligands for a more streamlined and reproducible workflow with lower operational complexity [25].

- Protocol Automation and Standardization: Implement liquid handling robots for all staining and washing steps to minimize human error.

- Implement Advanced Batch Correction: Use computational tools designed for high-content imaging data to identify and correct for batch effects before analysis.
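As a very simple example of batch correction, each feature can be rescaled per plate against that plate's control wells using a robust z-score (median and median absolute deviation). This is only a sketch of one common normalization under the assumption that every plate carries vehicle controls; dedicated tools offer more sophisticated corrections.

```python
from statistics import median


def robust_z_normalize(wells):
    """Add a per-plate robust z-score, computed against control wells, to each well.

    wells: list of dicts with keys 'plate', 'is_control', and 'value'.
    Assumes every plate has at least one control well.
    """
    controls_by_plate = {}
    for well in wells:
        if well["is_control"]:
            controls_by_plate.setdefault(well["plate"], []).append(well["value"])

    normalized = []
    for well in wells:
        controls = controls_by_plate[well["plate"]]
        center = median(controls)
        mad = median(abs(value - center) for value in controls)
        scale = 1.4826 * mad or 1.0  # fall back to 1.0 if controls are identical
        normalized.append({**well, "z": (well["value"] - center) / scale})
    return normalized


# Two hypothetical plates where plate P2 reads twice as bright overall.
wells = [
    {"plate": "P1", "is_control": True, "value": 100},
    {"plate": "P1", "is_control": True, "value": 102},
    {"plate": "P1", "is_control": True, "value": 98},
    {"plate": "P1", "is_control": False, "value": 110},
    {"plate": "P2", "is_control": True, "value": 200},
    {"plate": "P2", "is_control": True, "value": 204},
    {"plate": "P2", "is_control": True, "value": 196},
    {"plate": "P2", "is_control": False, "value": 220},
]
normalized = robust_z_normalize(wells)
treated = [well["z"] for well in normalized if not well["is_control"]]
print(treated)
```

After normalization the two treated wells receive the same score even though their raw intensities differ twofold between plates.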

Issue 2: Integrating HTS Hit Finding with HCS for Mechanistic Insight

Problem: How to effectively transition from a large number of HTS "hits" to a manageable number for in-depth HCS analysis without losing critical information [23] [26].

Investigation Checklist:

- Determine the availability of single-concentration activity data for HTS hits.

- Assess the structural diversity of the HTS hit list.

- Define the required cellular parameters and phenotypes needed from HCS (e.g., cytotoxicity, specific organelle effects).

Solutions:

- Use Phenotypic Bioactivity Prediction: If Cell Painting data are available for your compound library, apply a model trained on those data to predict activity against your target for the compounds on the HTS hit list. This can prioritize which hits to take forward into HCS [26].

- Apply Target-Specific Selectivity Scoring: Use a target-specific selectivity score on the HTS hit list to rank compounds not just by potency against the primary target, but also by their selectivity over off-targets [3].

- Employ a Tiered Screening Cascade: Use HTS for primary screening, followed by a lower-throughput HCS assay on the hits to filter out those with undesirable phenotypic profiles before committing to costly secondary assays.

Table 1: Performance of Cell Painting-Based Bioactivity Prediction Across Assay Types

This table summarizes the predictive performance of deep learning models trained on Cell Painting and single-point activity data, demonstrating its utility across diverse biological contexts [26].

| Assay Category | Number of Assays | Average ROC-AUC | Percentage of Assays with ROC-AUC ≥ 0.7 | Percentage of Assays with ROC-AUC ≥ 0.8 |

|---|---|---|---|---|

| All Assays | 140 | 0.744 ± 0.108 | 62% | 30% |

| Cell-Based Assays | Not reported | Not reported (described as particularly well suited to prediction) | Not reported | Not reported |

| Kinase Targets | Not reported | Not reported (described as particularly well suited to prediction) | Not reported | Not reported |

Table 2: Core Comparison of HTS and HCS Technologies

A fundamental comparison of HTS and HCS to guide strategic experimental planning [23] [24].

| Parameter | High-Throughput Screening (HTS) | High-Content Screening (HCS) |

|---|---|---|

| Primary Objective | Rapid identification of "hits" from large libraries | Multi-parameter analysis of cellular responses and mechanisms |

| Typical Readout | Single-parameter (e.g., enzymatic activity, binding) | Multiplexed, multi-parameter (morphology, localization, etc.) |

| Throughput | Very high (thousands to millions of compounds) | High, but generally lower than HTS |

| Data Output | Numerical, lower complexity | High-dimensional image-based data |

| Best Application Stage | Primary, initial screening | Secondary/Tertiary screening, lead optimization |

| Key Strength | Speed and efficiency for target-based screening | Profiling mechanism of action and off-target effects |

Experimental Protocols

Protocol 1: Cell Painting Assay for Morphological Profiling

Methodology: A multiplexed fluorescence assay using up to six dyes to label various cellular components for unsupervised morphological profiling [25] [26].

Detailed Workflow:

- Cell Culture: Plate cells (e.g., U2OS) in a multi-well microtiter plate and allow to adhere.

- Compound Treatment: Introduce chemical compounds or genetic perturbations to the cells for a defined period.

- Fixation and Staining: Fix cells and stain with a panel of fluorescent dyes. A typical panel includes:

- MitoTracker Red CMXRos: For mitochondria.

- Concanavalin A: For endoplasmic reticulum.

- Wheat Germ Agglutinin (WGA): For Golgi apparatus and plasma membrane.

- Phalloidin: For actin cytoskeleton (F-actin).

- Hoechst 33342: For nucleus.

- SYTO 14: For nucleoli and cytoplasmic RNA.

- Image Acquisition: Use an automated high-content fluorescence microscope to acquire high-resolution images in all relevant channels for each well of the plate.

- Image Analysis and Feature Extraction: Use image analysis software (e.g., CellProfiler) to identify individual cells and subcellular compartments. Extract hundreds to thousands of morphological features (size, shape, texture, intensity, granularity) for each cell.

- Data Analysis: The extracted features form a "phenotypic fingerprint" for each treatment, which can be used for clustering, classification, and bioactivity prediction using machine learning models.

Protocol 2: COOKIE-Pro for Covalent Inhibitor Profiling

Methodology: A proteomics-based method to measure the binding affinity and reactivity of covalent inhibitors across the proteome [27].

Detailed Workflow:

- Cell Lysis: Lyse cells to create a soluble proteome mixture.

- Drug Incubation: Add the covalent drug to the lysate, allowing it to bind to its protein targets.

- "Chaser" Probe Incubation: Introduce a broad-reactive, competitive covalent probe that carries a biotin tag. This probe will latch onto any unoccupied binding sites on proteins.

- Streptavidin Pulldown and Digestion: Use streptavidin beads to pull down all proteins bound by the chaser probe. Wash the beads and digest the proteins into peptides.

- Liquid Chromatography and Tandem Mass Spectrometry (LC-MS/MS): Analyze the peptides using LC-MS/MS to identify and quantify the proteins that were bound by the chaser probe.

- Data Analysis: The chaser-probe signal for a protein's peptides falls as the drug occupies more of that protein's binding sites, so drug occupancy can be estimated from the loss of signal relative to a sample that received no drug. This allows for the calculation of occupancy, binding affinity, and inactivation rate for thousands of proteins simultaneously, providing a comprehensive map of drug-target interactions.
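The occupancy calculation in the final step can be illustrated as follows. This is a sketch under stated assumptions rather than the published analysis: it assumes the chaser probe labels unoccupied sites with equal efficiency in drug-treated and vehicle-treated samples, and the function name and the clipping to the 0 to 1 range are choices made for the example.

```python
def occupancy_from_chaser(treated_signal, control_signal):
    """Estimate fractional drug occupancy from chaser-probe peptide intensities.

    treated_signal: chaser-labeled peptide intensity in the drug-treated sample.
    control_signal: intensity of the same peptide in a vehicle-treated sample.
    """
    if control_signal <= 0:
        raise ValueError("control_signal must be positive")
    occupancy = 1.0 - treated_signal / control_signal
    # Measurement noise can push the ratio slightly outside the physical range.
    return min(1.0, max(0.0, occupancy))
```

A peptide whose chaser signal drops to a quarter of the control value would be scored as 75% occupied by the drug.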

Experimental Workflow and Relationship Visualizations

[Diagrams not reproduced: HTS to HCS Screening Cascade; Potency vs. Selectivity Optimization]

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for HCS and Cell Painting Assays

| Reagent / Material | Function / Application | Example Use in Context |
|---|---|---|
| Cell Painting Dye Panel | A set of fluorescent dyes that label specific subcellular compartments for morphological profiling. | Staining nucleus (Hoechst), actin (Phalloidin), mitochondria (MitoTracker), ER (Concanavalin A), and Golgi/plasma membrane (WGA) to create a phenotypic fingerprint [25] [26]. |
| Fluorescent Ligands | Selective probes that bind to defined targets (e.g., GPCRs, kinases) for targeted HCS. | Used as a scalable alternative to Cell Painting for direct, reproducible readouts of target engagement with minimal spectral overlap [25]. |
| Covalent "Chaser" Probe | A broad-reactive, competitive covalent probe with a biotin tag for proteome-wide occupancy studies. | Key reagent in the COOKIE-Pro protocol to label unoccupied protein binding sites after treatment with a covalent drug [27]. |
| Live-Cell Compatible Dyes | Fluorescent dyes or probes compatible with live cells for kinetic and longitudinal analysis. | Enables HCS in true live-cell protocols, facilitating studies of dynamic processes, which is a key advantage of fluorescent ligands [25]. |
| Zebrafish Embryos | An alternative model organism for in vivo HCS due to genetic similarity, transparency, and rapid development. | Used in phenotypic screening and toxicity assessment (e.g., Acutetox Assay) for a more physiologically relevant context than cell cultures alone [23]. |

COOKIE-Pro (COvalent Occupancy KInetic Enrichment via Proteomics) is an unbiased, mass spectrometry-based method that quantifies the binding kinetics of irreversible covalent inhibitors across the entire proteome. It simultaneously determines the inactivation rate \(k_{inact}\) and the equilibrium constant \(K_I\) for both intended and off-target proteins, providing a comprehensive map of compound engagement in a native cellular context [28] [29].

This methodology directly addresses a core challenge in chemogenomic library research and covalent drug discovery: balancing the inherent potency of irreversible binders with their necessary selectivity to minimize off-target effects [28] [13]. By decoupling intrinsic chemical reactivity from binding affinity, COOKIE-Pro provides the critical data needed to rationally optimize this balance.

Troubleshooting Guide & FAQs

What are the key parameters measured by COOKIE-Pro and what do they signify? COOKIE-Pro measures two fundamental kinetic parameters for covalent inhibitors [28]:

- \(k_{inact}\): The maximum rate of covalent bond formation.

- \(K_I\): The equilibrium constant for the initial, reversible binding step.

The overall efficiency of covalent inactivation is summarized by the second-order rate constant \(k_{eff} = k_{inact}/K_I\). A potent and selective inhibitor should achieve its efficiency through a low \(K_I\) (tight binding) rather than an excessively high \(k_{inact}\) (high intrinsic reactivity), which can lead to promiscuous binding [28].
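For orientation, these parameters are usually defined through the standard two-step model of irreversible inhibition. The sketch below assumes the inhibitor is in large excess over each protein (pseudo-first-order conditions); the symbols \(E\), \(I\), \([I]\), and \(k_{obs}\) are introduced here for illustration and are not taken from the cited work.

\[
E + I \;\overset{K_I}{\rightleftharpoons}\; E\cdot I \;\xrightarrow{\;k_{inact}\;}\; E\text{-}I
\]

\[
k_{obs} = \frac{k_{inact}\,[I]}{K_I + [I]}, \qquad \text{occupancy}(t) = 1 - e^{-k_{obs}\,t}
\]

\[
[I] \ll K_I \;\;\Rightarrow\;\; k_{obs} \approx \frac{k_{inact}}{K_I}\,[I] = k_{eff}\,[I]
\]

At concentrations well below \(K_I\), the rate at which occupancy builds up is governed by \(k_{eff}\) alone, while at saturating concentrations it plateaus at \(k_{inact}\).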

How does COOKIE-Pro overcome the limitation of traditional activity-based assays? Traditional methods require purified proteins and activity-based readouts (e.g., enzyme activity), which is impractical for profiling thousands of proteins across the proteome [28]. COOKIE-Pro eliminates this need by using a "chaser" probe and mass spectrometry to quantify the occupancy of a drug on a protein by measuring the remaining unoccupied binding sites. This allows for kinetic profiling in complex biological systems like permeabilized cells, preserving native protein environments [28] [29].

The measured covalent occupancy is lower than expected for my primary target. What could be the cause?

- Insufficient Incubation Time: The covalent binding reaction may not have reached completion. Ensure incubation times are long enough to observe the saturation curve for adduct formation [28].

- Rapid Compound Degradation: The covalent inhibitor might be unstable under the assay conditions. Include stability checks for the compound in the assay buffer.

- Competing Reactions: The warhead might be reacting with small-molecule nucleophiles (e.g., glutathione) in the system, reducing its effective concentration for protein binding [28].

The data shows high variability in off-target occupancy between technical replicates. How can this be improved?

- Use Permeabilized Cells Over Lysates: The original method emphasizes using permeabilized cells instead of cell lysates. This preserves native protein complexes and eliminates variability that arises from inconsistent compound permeation rates in intact cells or from the disrupted environment of a lysate [28].

- Standardize Sample Processing: Ensure consistent protein digestion, peptide enrichment, and mass spectrometry instrument time to minimize technical noise.

Can COOKIE-Pro be applied to high-throughput screening (HTS)? Yes. A streamlined, two-point kinetic strategy has been successfully applied to screen a library of 16 covalent fragments, generating thousands of kinetic profiles in a single experiment [28] [29]. This enables the prioritization of hits based on true binding affinity rather than intrinsic reactivity early in the discovery pipeline.

Experimental Protocol & Data

Summary of COOKIE-Pro Workflow [28] [29]:

- Sample Preparation: Use permeabilized cells to maintain the native proteome environment.

- Two-Step Incubation:

  - Incubate the proteome sample with the covalent inhibitor at various concentrations and for different time points.

  - Add a desthiobiotin-conjugated "chaser" probe that irreversibly binds to any remaining unoccupied cysteine residues.

- Sample Processing: Lyse cells, digest proteins, and enrich for probe-labeled peptides using streptavidin beads.

- Quantification: Analyze enriched peptides via liquid chromatography-mass spectrometry (LC-MS). The abundance of each chaser-labeled peptide decreases as covalent occupancy by the drug increases, so the loss of chaser signal reports occupancy.

- Data Analysis: Fit the time- and concentration-dependent occupancy data to a kinetic model to extract \(k_{inact}\) and \(K_I\) values for thousands of proteins.
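The fitting step can be sketched for a single protein as follows. This is an illustrative simplification, not the fitting procedure from the cited studies: it assumes the two-step model in which occupancy follows 1 - exp(-k_obs·t) with k_obs = k_inact·[I]/(K_I + [I]), estimates k_obs per concentration by a straight-line fit through the origin, and then uses a double-reciprocal linear fit. With noisy real data, direct nonlinear regression would generally be preferable. All function names and the synthetic values are assumptions made for the example.

```python
import math


def k_obs_from_timecourse(times, occupancies):
    """Estimate k_obs from occupancy(t) = 1 - exp(-k_obs * t).

    Uses a least-squares line through the origin on -ln(1 - occupancy) vs. t.
    Occupancies must be strictly below 1.
    """
    y = [-math.log(1.0 - occ) for occ in occupancies]
    return sum(t * v for t, v in zip(times, y)) / sum(t * t for t in times)


def fit_kinact_KI(concs, k_obs_values):
    """Double-reciprocal fit: 1/k_obs = (K_I/k_inact) * (1/[I]) + 1/k_inact."""
    x = [1.0 / c for c in concs]
    y = [1.0 / k for k in k_obs_values]
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    slope = (sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
             / sum((a - mean_x) ** 2 for a in x))
    intercept = mean_y - slope * mean_x
    k_inact = 1.0 / intercept
    K_I = slope * k_inact
    return k_inact, K_I


# Synthetic, noise-free example with hypothetical "true" parameters.
true_k_inact, true_K_I = 0.27, 0.47      # min^-1, uM
concs = [0.1, 0.3, 1.0, 3.0]             # uM
times = [1.0, 2.0, 5.0, 10.0]            # min
k_obs_values = []
for c in concs:
    k_obs = true_k_inact * c / (true_K_I + c)
    occ = [1.0 - math.exp(-k_obs * t) for t in times]
    k_obs_values.append(k_obs_from_timecourse(times, occ))

k_inact_fit, K_I_fit = fit_kinact_KI(concs, k_obs_values)
```

Because the synthetic data contain no noise, the fit recovers the input parameters; in a proteome-wide experiment the same calculation would be repeated independently for each quantified site.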

Quantitative Data from Validation Studies [28] [29]

The following table summarizes kinetic parameters measured for BTK inhibitors, demonstrating the method's accuracy and its utility in identifying selectivity liabilities.

| Protein Target | Inhibitor | \(k_{inact}\) (min⁻¹) | \(K_I\) (μM) | \(k_{eff}\) (μM⁻¹·min⁻¹) |
|---|---|---|---|---|
| BTK | Ibrutinib | 0.27 | 0.47 | 0.57 |
| BTK | Spebrutinib | 0.15 | 0.081 | 1.85 |
| TEC | Spebrutinib | 0.16 | 0.0072 | 22.22 |
| ITK | Ibrutinib | 0.21 | 0.14 | 1.50 |

Key Insight from Data: COOKIE-Pro revealed that spebrutinib is over 10-fold more potent for the off-target TEC kinase than for its intended target, BTK, a finding critical for understanding its therapeutic profile [29].
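The arithmetic behind this comparison can be checked directly from the table. The snippet below is a small sketch that recomputes the efficiency constant as k_inact divided by K_I for each row and takes the ratio for spebrutinib; the variable names are illustrative.

```python
# (target, inhibitor, k_inact in min^-1, K_I in uM), copied from the table above.
rows = [
    ("BTK", "Ibrutinib", 0.27, 0.47),
    ("BTK", "Spebrutinib", 0.15, 0.081),
    ("TEC", "Spebrutinib", 0.16, 0.0072),
    ("ITK", "Ibrutinib", 0.21, 0.14),
]

# k_eff = k_inact / K_I, in uM^-1 min^-1.
k_eff = {(target, drug): k_inact / K_I for target, drug, k_inact, K_I in rows}

# Ratio of off-target (TEC) to on-target (BTK) efficiency for spebrutinib.
tec_vs_btk = k_eff[("TEC", "Spebrutinib")] / k_eff[("BTK", "Spebrutinib")]
```

With the tabulated values the ratio comes out at roughly 12, consistent with the "over 10-fold" statement, and the difference is driven almost entirely by the lower K_I for TEC rather than by a higher k_inact.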

The Scientist's Toolkit: Research Reagent Solutions

| Reagent / Material | Function in COOKIE-Pro |
|---|---|
| Permeabilized Cells | Preserves native protein complexes and cellular architecture while allowing uniform compound access [28]. |
| Covalent Inhibitor Library | The compounds being profiled; can range from clinical inhibitors to covalent fragments [28]. |
| Desthiobiotin "Chaser" Probe | A reactivity-based probe that covalently labels unoccupied cysteines after inhibitor incubation, enabling enrichment and MS quantification [28]. |
| Streptavidin Beads | Used to affinity-purify and enrich peptides that have been labeled by the desthiobiotin chaser probe [28]. |
| Mass Spectrometry | The core analytical platform for identifying and quantifying labeled peptides, providing proteome-wide coverage [28] [29]. |
| TMT Multiplexing Kits | (Optional) For tandem mass tag (TMT) labeling, allowing multiplexing of up to 18 samples to increase throughput and reduce quantitative variability [28]. |

Visualizing Workflows and Relationships

The following diagrams illustrate the core experimental workflow of COOKIE-Pro and the conceptual relationship it helps to decipher in covalent inhibitor design.

[Diagrams not reproduced: COOKIE-Pro Experimental Workflow; Inhibitor Properties and Outcomes]

This technical support center addresses common experimental challenges in the phenotypic profiling of Glioblastoma (GBM) patient cells for precision oncology. The guidance is framed within the critical research objective of balancing the potency and selectivity of compounds in chemogenomic libraries to accurately identify patient-specific therapeutic vulnerabilities.

Troubleshooting Guides and FAQs

FAQ 1: Why does my single-cell RNA sequencing (scRNA-seq) data from patient-derived xenograft (PDX) models show unexpected cell state distributions compared to in vitro cultures?

Issue: Researchers often observe a narrower diversity of cell states in in vitro cultures than in the same cells after in vivo transplantation.

Explanation: This is a recognized phenomenon driven by the tumor microenvironment. In vitro stem cell conditions maintain a less differentiated state, while the mouse brain environment activates latent differentiation potential, leading to a wider variety of transcriptional cell states [30].

Solution:

- Experimental Design: Always include a paired analysis of pre-injection cells and post-treatment tumor cells isolated from the animal model.

- Data Interpretation: Account for this expansion in your analysis. The in vivo distribution (e.g., dominance of OPC-like/MES-like states in perivascular invasion or NPC-like/AC-like states in diffuse invasion) is more representative of the tumor's biology and should be the primary focus for identifying therapeutic targets [30].

FAQ 2: How can I improve the selectivity of covalent inhibitors in my chemogenomic library to minimize off-target effects?

Issue: Covalent inhibitors form permanent bonds with target proteins, but their high reactivity can lead to binding with unintended off-target proteins, causing toxicities and confounding phenotypic results.

Explanation: Optimizing covalent inhibitors requires balancing two parameters: binding affinity (strength of attraction to the target) and reactivity (speed of bond formation). A common pitfall is misinterpreting broad reactivity as true potency [27].

Solution & Protocol:

- Utilize COOKIE-Pro Analysis: Implement the COOKIE-Pro (Covalent Occupancy Kinetic Enrichment via Proteomics) method to comprehensively profile drug interactions across the proteome [27].

- Workflow: Lyse the cells to release a soluble proteome, add the covalent drug, and then introduce a "chaser" probe that latches onto unoccupied protein-binding sites.

- Measurement: Use mass spectrometry to measure chaser probe binding, which allows for the precise calculation of occupancy, binding affinity, and inactivation rate for thousands of proteins simultaneously.

- Application: This data helps chemists prioritize compounds that are potent due to specific target affinity, not just inherent high reactivity [27].

FAQ 3: My NGS library preparation for transcriptional profiling of GBM cells is yielding low amounts of usable data. What are the common root causes?

Issue: Low library yield, high duplication rates, or adapter contamination in Next-Generation Sequencing (NGS) preparation.

Explanation: This is frequently due to issues at the sample input, fragmentation, or amplification stages [31].

Solution: The table below outlines common problems and corrective actions.

Table: Troubleshooting NGS Library Preparation Failures

| Problem Category | Typical Failure Signals | Common Root Causes | Corrective Actions |
|---|---|---|---|
| Sample Input & Quality | Low starting yield; smear in electropherogram [31] | Degraded DNA/RNA; sample contaminants (phenol, salts); inaccurate quantification [31] | Re-purify input sample; use fluorometric quantification (Qubit) over UV; ensure high purity ratios (260/230 > 1.8) [31] |
| Fragmentation & Ligation | Unexpected fragment size; sharp ~70-90 bp peak (adapter dimers) [31] | Over- or under-shearing; improper adapter-to-insert molar ratio; poor ligase performance [31] | Optimize fragmentation parameters; titrate adapter:insert ratios; ensure fresh ligase and optimal reaction conditions [31] |
| Amplification & PCR | Overamplification artifacts; high duplicate rate; bias [31] | Too many PCR cycles; carryover enzyme inhibitors; mispriming [31] | Reduce the number of PCR cycles; re-purify sample to remove inhibitors; optimize annealing conditions [31] |
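When titrating adapter:insert ratios, it helps to work in molar rather than mass units. The sketch below uses the common approximation of about 660 g/mol per base pair of double-stranded DNA; the function names are illustrative, and the target ratio is a placeholder to be replaced with the value recommended for the library kit in use.

```python
def dsdna_pmol(mass_ng, length_bp, avg_bp_mass=660.0):
    """Convert a dsDNA mass to picomoles, assuming ~660 g/mol per base pair."""
    return mass_ng * 1000.0 / (length_bp * avg_bp_mass)


def adapter_pmol_needed(insert_ng, insert_bp, adapter_to_insert_ratio):
    """Picomoles of adapter required for a chosen adapter:insert molar ratio."""
    return adapter_to_insert_ratio * dsdna_pmol(insert_ng, insert_bp)
```

Because shorter fragments contribute more molecules per nanogram, the same input mass needs proportionally more adapter when the average insert is shorter, which is one reason over-sheared samples are prone to ratio problems.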