Strategic Design and Application of Chemogenomic Libraries in Precision Oncology

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on the strategic design and application of chemogenomic libraries. It covers foundational principles, from defining the druggable genome to practical library construction, and explores advanced methodologies for phenotypic screening and target deconvolution. The content also addresses common optimization challenges and outlines rigorous validation frameworks, using real-world case studies like glioblastoma research to illustrate the transformative potential of well-designed chemogenomic libraries in accelerating the discovery of patient-specific cancer vulnerabilities and novel therapeutics.

Laying the Groundwork: Core Principles and Target Selection for Chemogenomic Libraries

Chemogenomics, or chemical genomics, represents a systematic approach in modern drug discovery that involves the screening of targeted chemical libraries of small molecules against distinct families of drug targets, such as G-protein-coupled receptors (GPCRs), nuclear receptors, kinases, and proteases [1]. The primary goal is the parallel identification of novel drugs and therapeutic targets, leveraging the vast amount of data generated by the completion of the human genome project [1] [2]. This strategy moves beyond the traditional "one drug–one target" paradigm by studying the interaction of all possible drugs on all potential therapeutic targets, thereby integrating target discovery and drug discovery into a unified process [1] [3].

The foundational principle of chemogenomics is the use of small molecules as chemical probes to perturb and characterize the functions of the proteome. The interaction between a compound and a protein induces a phenotypic change, allowing researchers to associate specific proteins with molecular and cellular events [1]. A key concept enabling this approach is "structure-activity relationship (SAR) homology," which posits that ligands designed for one member of a protein family often exhibit activity against other members of the same family. This permits the construction of targeted chemical libraries with a high probability of collectively binding to a significant proportion of a given target family [1] [3].

Key Strategic Approaches: Forward and Reverse Chemogenomics

Two primary experimental frameworks guide chemogenomics investigations: forward (or classical) chemogenomics and reverse chemogenomics. These approaches differ in their starting point and methodology for linking chemical compounds to biological function [1] [2].

Table 1: Comparison of Forward and Reverse Chemogenomics Approaches

| Feature | Forward Chemogenomics | Reverse Chemogenomics |
|---|---|---|
| Starting Point | A desired phenotype in a cell or whole organism [1] | A known, validated protein target [1] |
| Primary Screening | Phenotypic assay (e.g., inhibition of tumor growth) [1] [2] | Target-based assay (e.g., in vitro enzymatic test) [1] [2] |
| Objective | Identify compounds that induce the phenotype, then find their protein target(s) [1] | Identify compounds that modulate the target, then analyze the induced phenotype [1] |
| Also Known As | Phenotypic screening [2] | Target-based screening [2] |

Forward Chemogenomics

In forward chemogenomics, the process begins with a phenotypic assay designed to mimic a specific disease state or biological function, such as the arrest of tumor growth [1]. Libraries of small molecules are screened to identify "modulators" that produce the desired phenotypic change. The subsequent, and often more challenging, step is the deconvolution of the mechanism of action (MOA)—the identification of the specific protein target(s) responsible for the observed phenotype [1] [2]. This approach is particularly powerful for discovering novel biology without preconceived notions about the proteins involved.

Reverse Chemogenomics

Reverse chemogenomics starts with a defined, purified protein target implicated in a disease pathway. Compound libraries are screened against this target using in vitro assays to identify active modulators (e.g., inhibitors or activators) [1]. The bioactive compounds are then progressed to cellular or organismal models to study the phenotypic consequences of target modulation, thereby validating the target's role in the biological response [1] [2]. This approach has been enhanced by the ability to perform parallel screening and lead optimization across entire target families [1].

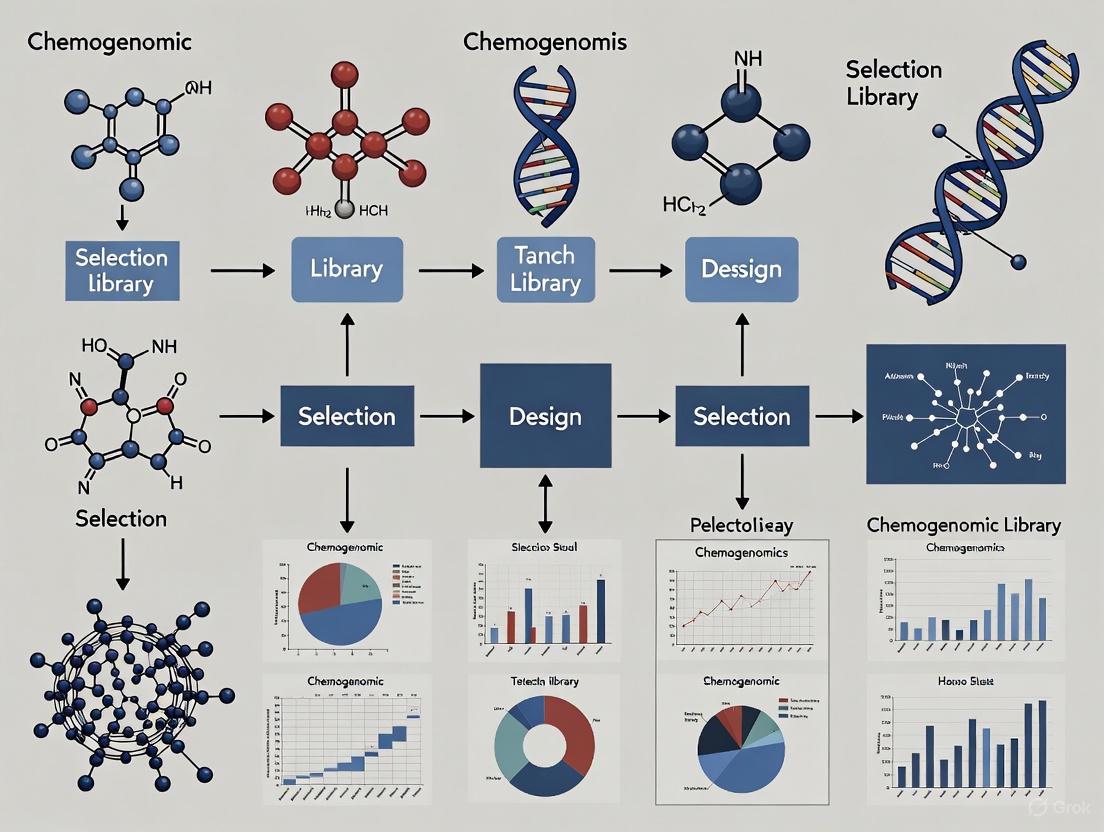

The logical relationship and workflow of these two complementary strategies are illustrated below.

Applications and Practical Protocols

Chemogenomics strategies have been successfully applied to diverse areas in biomedical research, from elucidating the mode of action of traditional medicines to identifying new drug targets and pathway components.

Determining Mode of Action (MOA) for Traditional Medicines

The complex mixtures of compounds found in traditional medicine systems like Traditional Chinese Medicine (TCM) and Ayurveda present a challenge for modern pharmacology. Chemogenomics provides a powerful tool to deconvolute their MOA [1].

Protocol 1: Elucidating MOA of Traditional Formulations

- Compound Identification: Curate a database of chemical structures present in the traditional medicine formulation [1].

- Phenotypic Annotation: Compile known therapeutic phenotypes associated with the formulation from literature (e.g., anti-inflammatory, hypoglycemic, anti-cancer) [1].

- In Silico Target Prediction: Use computational target prediction programs to identify potential protein targets for the constituent compounds. These programs leverage known chemogenomic data to predict interactions [1] [4].

- Enrichment Analysis: Statistically analyze the predicted targets to identify those that are significantly enriched and directly linked to the known therapeutic phenotypes [1]. For example, a formulation for diabetes might show enrichment for targets like sodium-glucose transport proteins or the insulin signaling regulator PTP1B [1].

- Experimental Validation: The top predicted target-phenotype links form testable hypotheses for subsequent in vitro and in vivo experimental validation.
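The enrichment step in Protocol 1 can be illustrated with a one-sided hypergeometric test. This is a minimal sketch, not the method used in [1]: the function name, the choice of test, and all counts in the example are assumptions made for illustration.

```python
from math import comb

def hypergeom_enrichment_p(universe_size, annotated_in_universe, predicted_count, overlap):
    """One-sided p-value P(X >= overlap) under a hypergeometric null.

    universe_size         -- all proteins considered by the target predictor
    annotated_in_universe -- proteins linked to the therapeutic phenotype
    predicted_count       -- proteins predicted as targets of the formulation
    overlap               -- predicted targets that are also phenotype-linked
    """
    max_overlap = min(annotated_in_universe, predicted_count)
    total = comb(universe_size, predicted_count)
    tail = sum(
        comb(annotated_in_universe, k)
        * comb(universe_size - annotated_in_universe, predicted_count - k)
        for k in range(overlap, max_overlap + 1)
    )
    return tail / total

# Hypothetical counts: 20,000 proteins, 50 linked to the phenotype,
# 200 predicted targets, 5 of which are phenotype-linked.
p_value = hypergeom_enrichment_p(20_000, 50, 200, 5)
print(f"enrichment p-value: {p_value:.2e}")
```

In practice the p-values for all tested phenotypes would also need multiple-testing correction before any target-phenotype link is treated as a hypothesis worth validating.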

Identifying New Antibacterial Drug Targets

Chemogenomics profiling can leverage existing ligand libraries to discover new therapeutic targets, as demonstrated in the search for novel antibacterial agents [1].

Protocol 2: Target Identification via Chemogenomics Similarity

- Library Selection: Start with a curated ligand library for a well-characterized member of a target family (e.g., the bacterial enzyme murD, involved in peptidoglycan synthesis) [1].

- Target Family Mapping: Apply the chemogenomics similarity principle. Using computational docking and structural studies, map the known ligand library to other, less-characterized members of the same protein family (e.g., murC, murE, murF) [1].

- Ligand-Target Pairing: Identify candidate ligands from the original library that are predicted to bind with high affinity to the new family members [1].

- Experimental Assay: Test the predicted ligands in experimental assays against the new targets. Successful inhibitors are expected to exhibit broad-spectrum antibacterial activity, especially if the target pathway is essential and unique to bacteria [1].

Key Research Reagent Solutions

The execution of chemogenomics protocols relies on specific reagents, databases, and software tools. The following table details essential components of the chemogenomics toolkit.

Table 2: Essential Research Reagents and Tools for Chemogenomics

| Category | Item | Function and Application Notes |
|---|---|---|
| Chemical Libraries | Targeted Chemogenomic Library [5] [6] | A collection of bioactive small molecules designed to cover a specific protein target family (e.g., kinases). Used for primary screening in both forward and reverse approaches. |
| Databases & Software | ExCAPE-DB [4] | An integrated, large-scale chemogenomics dataset. Used for building predictive models of polypharmacology and off-target effects. |
| Databases & Software | PubChem / ChEMBL [4] [7] | Public repositories of chemical structures and their biological activity data. Source for building custom screening libraries and for data mining. |
| Databases & Software | Structure Standardization Tools (e.g., AMBIT, RDKit) [4] [7] | Software to ensure chemical structures are accurately and consistently represented, a critical step prior to QSAR modeling or virtual screening. |
| Assay Systems | Phenotypic Assay Systems [1] [2] | Cell-based or organism-based assays designed to measure a complex phenotypic output (e.g., cell viability, morphology, reporter gene expression). |
| Assay Systems | In Vitro Target Assay Systems [1] [6] | Biochemical assays using purified protein targets to measure compound binding or functional modulation (e.g., enzymatic activity). |
| Data Curation | Data Curation Workflow [7] | A defined protocol for verifying the accuracy and consistency of both chemical structures and bioactivity data, which is crucial for reliable model development. |

Data Management and Curation in Chemogenomics

The power of chemogenomics is built upon the foundation of high-quality, large-scale data. The generation of these datasets presents significant challenges in data management, curation, and integration [2] [7].

Central to chemogenomics is the conceptual "compound-target matrix," where rows represent all possible compounds, columns represent all potential targets, and the matrix elements describe the biological interaction (e.g., IC₅₀, active/inactive) [3]. This matrix is inherently sparse, as experimentally testing every compound against every target is impossible [3]. Computational methods are therefore essential to fill the gaps and predict interactions [3] [4].
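To make the sparsity of the compound-target matrix concrete, the sketch below stores only measured pairs in a dictionary and reports how much of the matrix is filled. The compound identifiers and pIC50 values are hypothetical, and the dictionary representation is one convenient choice rather than a prescribed format.

```python
# Measured interactions only: (compound, target) -> pIC50 (hypothetical values).
measured = {
    ("cpd_A", "PLK1"): 8.1,
    ("cpd_A", "TYMS"): 5.0,
    ("cpd_B", "TYMS"): 7.4,
}
compounds = ["cpd_A", "cpd_B", "cpd_C"]
targets = ["PLK1", "TYMS", "TFRC", "LAMC1"]

def matrix_density(measured, compounds, targets):
    """Fraction of compound-target cells that have an experimental value."""
    return len(measured) / (len(compounds) * len(targets))

def unmeasured_pairs(measured, compounds, targets):
    """Cells that a predictive model would have to fill in."""
    return [(c, t) for c in compounds for t in targets if (c, t) not in measured]

density = matrix_density(measured, compounds, targets)
gaps = unmeasured_pairs(measured, compounds, targets)
print(f"density = {density:.2f}, unmeasured cells = {len(gaps)}")
```

Even in this toy case most cells are empty; at the scale of public repositories the measured fraction is far smaller, which is why predictive models are needed.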

The quality of data in public repositories like PubChem and ChEMBL is heterogeneous, necessitating rigorous curation [4] [7]. Errors in chemical structures (e.g., incorrect stereochemistry, valence violations) and bioactivity data can severely compromise the accuracy of predictive models [7]. An integrated curation workflow is recommended, involving:

- Chemical Curation: Standardization of structures, removal of inorganics and mixtures, normalization of tautomers, and verification of stereochemistry [7].

- Bioactivity Curation: Processing of chemical duplicates (where the same compound has multiple activity records) and aggregation of data to ensure one record per compound-target pair [4] [7].
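The bioactivity curation step can be sketched as a grouping operation that collapses duplicate records into one value per compound-target pair. The median as the aggregate and the 1.0 log-unit spread cutoff for flagging discordant records are assumptions for illustration, not rules taken from [4] or [7]; the records are hypothetical.

```python
from collections import defaultdict
from statistics import median

# Hypothetical raw records: (compound_id, target_id, pIC50).
records = [
    ("cpd_1", "PLK1", 7.9),
    ("cpd_1", "PLK1", 8.1),
    ("cpd_1", "PLK1", 8.3),
    ("cpd_2", "TYMS", 6.0),
    ("cpd_3", "TYMS", 5.0),
    ("cpd_3", "TYMS", 7.5),  # disagrees strongly with the record above
]

def aggregate_bioactivity(records, max_spread=1.0):
    """Return one median value per pair, plus pairs whose replicates disagree."""
    grouped = defaultdict(list)
    for compound, target, value in records:
        grouped[(compound, target)].append(value)

    aggregated, discordant = {}, []
    for pair, values in grouped.items():
        if max(values) - min(values) > max_spread:
            discordant.append(pair)  # set aside for manual review
        else:
            aggregated[pair] = median(values)
    return aggregated, discordant

aggregated, discordant = aggregate_bioactivity(records)
print(aggregated)
print("needs review:", discordant)
```

Setting discordant pairs aside rather than averaging them keeps unreliable measurements out of downstream model training.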

Initiatives like the ExCAPE-DB project have created integrated, standardized datasets by applying such curation protocols to millions of data points from PubChem and ChEMBL, facilitating robust Big Data analysis and machine learning in chemogenomics [4]. The workflow for building such a reliable resource is complex and involves multiple steps of filtering and standardization.

Chemogenomics represents a powerful, integrated strategy that accelerates the discovery of new therapeutic targets and bioactive molecules by systematically exploring the interaction between chemical space and biological target families. The complementary approaches of forward and reverse chemogenomics provide flexible frameworks for addressing different research questions, from probing novel biology to validating specific targets. As the field advances, the emphasis on high-quality, well-curated data, robust computational models, and carefully designed chemical libraries will be paramount to realizing the full potential of chemogenomics in delivering new treatments for human disease.

Application Notes

The systematic construction of a comprehensive cancer target space is a cornerstone of modern precision oncology. It involves the integration of multi-omics data, functional genomic screens, and chemoinformatic principles to identify and prioritize therapeutically vulnerable nodes across diverse cancer types. This process transforms the conceptual "druggable genome" – the subset of genes encoding proteins that can be bound by small molecules or biologics – into a mapped and actionable landscape for therapeutic intervention [1] [8]. The following application notes detail the key steps and considerations for building this target space, using a recent integrative genomic study on colorectal cancer (CRC) as a primary case study [9].

Foundational Target Identification: An Integrative Genomic Framework

A multi-layered analytical framework was employed to move from the broad druggable genome to high-confidence, causal cancer targets. The process began with a curated set of 4,479 druggable genes from databases like the Drug–Gene Interaction Database (DGIdb) [9]. To establish causal relationships between gene expression and cancer risk, the study utilized Mendelian Randomization (MR). This method uses genetic variants, specifically cis-expression quantitative trait loci (cis-eQTLs), as instrumental variables to infer causality, reducing confounding biases common in observational studies [9]. The initial MR analysis identified 47 genes significantly associated with CRC risk out of the 2,525 druggable genes with available cis-eQTL data.

Subsequently, colocalization analysis was applied to ensure that the genetic signals influencing gene expression and cancer risk were shared, strengthening the evidence for a causal relationship. This rigorous filtering culminated in the prioritization of six high-confidence druggable targets: TFRC, TNFSF14, LAMC1, PLK1, TYMS, and TSSK6 [9]. A key step in this process was the assessment of potential off-target effects via phenome-wide association studies (PheWAS), which indicated minimal side-effect profiles for these genes, enhancing their appeal as therapeutic targets.

Clinical and Preclinical Validation of Prioritized Targets

The six prioritized genes were further scrutinized across multiple dimensions to validate their clinical relevance:

- Drug Repurposing Potential: Several identified genes, such as PLK1 and TYMS, are already targeted by existing or investigational drugs, suggesting immediate opportunities for drug repurposing in CRC [9].

- Expression in the Tumor Microenvironment: Single-cell and bulk RNA sequencing analyses revealed distinct expression patterns of these genes in tumor and stromal cell populations. Notably, the immune modulator TNFSF14 was found to be involved in regulating T cell activation, highlighting its role within the immune context of the tumor [9].

- Experimental Validation: The findings were confirmed in CRC patient samples using techniques like RT-qPCR and immunohistochemistry (IHC), providing tangible evidence of their dysregulation in human tumors [9].

Designing a Chemogenomic Library for Cancer

The output from such a genomic mapping exercise directly informs the design of targeted chemogenomic libraries. The goal is to create a collection of small molecules that broadly, yet selectively, cover the key targets and pathways identified. A strategy for such a library involves [5] [10]:

- Covering a Wide Range of Protein Targets: The library should encompass compounds targeting kinases, GPCRs, nuclear receptors, proteases, and other protein families implicated in oncogenesis.

- Incorporating Cellular and Clinical Activity Data: Selecting compounds with known cellular activity and leveraging clinical data ensures biological relevance and increases the probability of identifying effective treatments.

- Ensuring Chemical Diversity and Availability: The library must be chemically diverse to probe different biological pathways but also composed of physically available compounds for practical screening.

This strategy was successfully applied in a pilot study for glioblastoma, where a library of 789 compounds covering 1,320 anticancer targets was used to profile patient-derived glioma stem cells, revealing highly heterogeneous, patient-specific vulnerabilities [5].
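One way to picture the trade-off between library size and target coverage is a greedy set-cover selection. This is a simplified sketch, not the analytic procedure of [5]: it optimizes coverage only and ignores cellular activity, chemical diversity, and selectivity, and the compound-target annotations are hypothetical.

```python
def greedy_library(compound_targets, required_targets, max_size):
    """Pick compounds one at a time, each covering the most still-uncovered targets."""
    uncovered = set(required_targets)
    selected = []
    while uncovered and len(selected) < max_size:
        candidates = [c for c in sorted(compound_targets) if c not in selected]
        if not candidates:
            break
        # max() keeps the first of equally good candidates, so ties break by name.
        best = max(candidates, key=lambda c: len(compound_targets[c] & uncovered))
        if not compound_targets[best] & uncovered:
            break  # no remaining compound adds coverage
        selected.append(best)
        uncovered -= compound_targets[best]
    return selected, uncovered

# Hypothetical annotations: compound -> set of targets it is known to modulate.
annotations = {
    "cpd_1": {"PLK1", "AURKA"},
    "cpd_2": {"PLK1"},
    "cpd_3": {"TYMS"},
    "cpd_4": {"AURKA", "TYMS", "EGFR"},
}
library, missing = greedy_library(annotations, {"PLK1", "AURKA", "TYMS", "EGFR"}, max_size=3)
print(library, missing)
```

A fuller design would add further terms to the selection score, for example penalizing promiscuous compounds or requiring confirmed cellular activity.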

Experimental Protocols

Protocol 1: Integrative Genomic Analysis for Causal Target Identification

This protocol details the computational workflow for identifying causal druggable targets from genome-scale data.

I. Materials and Reagents

- Computing Infrastructure: High-performance computing cluster with sufficient memory and storage for large-scale genomic data.

- Software and Tools: R or Python with specialized packages (e.g., TwoSampleMR, coloc in R).

- Data Sources:

  - Druggable Gene List: A curated list from DGIdb or a similar repository [9].

  - eQTL Data: Cis-eQTL summary statistics from consortia such as eQTLGen (blood tissue) or GTEx (multi-tissue) [9].

  - Disease GWAS Data: Summary statistics from large-scale genome-wide association studies for the cancer of interest (e.g., from the GWAS catalog or biobanks like FinnGen) [9].

II. Procedure

- Data Curation and Harmonization:

  - Download and preprocess GWAS and eQTL summary statistics.

  - Restrict the analysis to genes present in the druggable genome list.

  - For each druggable gene, extract significant cis-eQTLs (P < 5 × 10⁻⁸) that are independent (linkage disequilibrium r² < 0.1 within a 10,000 kb window) to serve as instrumental variables [9].

- Mendelian Randomization Analysis:

  - Perform two-sample MR to estimate the causal effect of gene expression on cancer risk.

  - Use multiple MR methods (e.g., Inverse-Variance Weighted, MR-Egger) to ensure robustness.

  - Apply multiple testing correction (e.g., Bonferroni) to identify genes with significant causal associations.

- Colocalization Analysis:

  - For significant genes from the MR analysis, conduct colocalization analysis to determine the probability that the same variant is responsible for both the eQTL and GWAS signals.

  - A high posterior probability (e.g., PP.H4 > 0.8) indicates a shared causal variant and strengthens the evidence for the target [9].

- Off-Target Effect Assessment:

  - Perform a Phenome-wide Association Study (PheWAS) by querying the lead cis-eQTLs of the prioritized genes against a database of diverse phenotypes to identify potential pleiotropic effects [9].
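The inverse-variance weighted (IVW) estimate named in the MR step can be written in a few lines. The sketch below is a fixed-effect IVW estimator on hypothetical summary statistics, with a Bonferroni threshold over the 2,525 genes that had cis-eQTL data; it is meant to show the arithmetic and is not a substitute for a dedicated package such as TwoSampleMR.

```python
from math import erfc, sqrt

def ivw_estimate(instruments):
    """Fixed-effect IVW estimate from per-variant summary statistics.

    instruments -- list of (beta_exposure, beta_outcome, se_outcome) tuples,
                   i.e. SNP effect on expression, SNP effect on disease, and
                   the standard error of the disease effect.
    """
    weight_sum = sum(bx ** 2 / se ** 2 for bx, _, se in instruments)
    beta = sum(bx * by / se ** 2 for bx, by, se in instruments) / weight_sum
    se_beta = sqrt(1.0 / weight_sum)
    z = beta / se_beta
    p_value = erfc(abs(z) / sqrt(2))  # two-sided p-value from a normal approximation
    return beta, se_beta, p_value

def bonferroni_threshold(alpha, n_tests):
    return alpha / n_tests

# Hypothetical instruments for one gene.
snps = [(0.2, 0.04, 0.01), (0.3, 0.06, 0.015), (0.1, 0.02, 0.01)]
beta, se_beta, p_value = ivw_estimate(snps)
threshold = bonferroni_threshold(0.05, 2525)
print(f"beta={beta:.3f} se={se_beta:.4f} p={p_value:.2e} significant={p_value < threshold}")
```

Sensitivity methods such as MR-Egger relax the assumption that every instrument acts only through gene expression, which is why the protocol asks for more than one estimator.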

III. Analysis and Interpretation

- Genes that pass the significance thresholds in both MR and colocalization analyses, and show minimal off-target effects in PheWAS, are considered high-confidence causal targets.

- These candidates should be taken forward for experimental validation.
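The triage rule described above amounts to a three-way filter. In the sketch below, the gene labels and statistics are placeholders, and treating "minimal off-target effects" as zero significant PheWAS associations is an assumption made only to keep the example concrete.

```python
def prioritize_targets(results, mr_threshold, pp_h4_cutoff=0.8, max_phewas_hits=0):
    """Keep genes that pass MR, colocalization, and PheWAS criteria together."""
    return [
        r["gene"]
        for r in results
        if r["mr_p"] < mr_threshold
        and r["pp_h4"] > pp_h4_cutoff
        and r["phewas_hits"] <= max_phewas_hits
    ]

# Placeholder results for four hypothetical genes.
candidates = [
    {"gene": "GENE_A", "mr_p": 1e-7, "pp_h4": 0.93, "phewas_hits": 0},
    {"gene": "GENE_B", "mr_p": 1e-6, "pp_h4": 0.41, "phewas_hits": 0},  # fails colocalization
    {"gene": "GENE_C", "mr_p": 3e-3, "pp_h4": 0.95, "phewas_hits": 0},  # fails MR
    {"gene": "GENE_D", "mr_p": 2e-8, "pp_h4": 0.88, "phewas_hits": 4},  # pleiotropic
]
high_confidence = prioritize_targets(candidates, mr_threshold=0.05 / 2525)
print(high_confidence)
```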

Protocol 2: Phenotypic Profiling Using a Targeted Chemogenomic Library

This protocol describes a cell-based phenotypic screen to identify patient-specific vulnerabilities using a pre-designed chemogenomic library.

I. Materials and Reagents

- Cell Model: Patient-derived cells, such as glioma stem cells (GSCs) for glioblastoma or patient-derived organoids for CRC [5].

- Chemogenomic Library: A physically available library of 500-1500 bioactive small molecules targeting a wide range of anticancer proteins (e.g., kinases, epigenetic regulators) [5] [10].

- Staining Reagents:

  - Hoechst 33342: For nuclear staining.

  - CellMask Deep Red: For cytoplasmic staining.

  - Antibodies for Cleaved Caspase-3: For apoptosis detection.

- Equipment: High-content imaging system and automated liquid handler.

II. Procedure

- Cell Preparation and Plating:

  - Culture patient-derived cells under standard conditions.

  - Seed cells into 384-well microplates at an optimized density using an automated liquid handler.

  - Incubate for 24 hours to allow cell attachment.

- Compound Treatment:

  - Using a pintool transfer or acoustic dispenser, treat cells with compounds from the chemogenomic library at a single concentration (e.g., 1 µM) or a range of concentrations. Include DMSO-only wells as negative controls.

- Phenotypic Staining and Fixation:

  - After 72-96 hours of compound exposure, stain live cells with Hoechst 33342 and CellMask Deep Red.

  - Fix cells with 4% paraformaldehyde and perform immunocytochemistry for cleaved caspase-3 to quantify apoptosis.

  - Wash plates with PBS and seal for imaging.

- High-Content Imaging and Analysis:

  - Image each well using a high-content imager with a 20x objective.

  - Extract quantitative features for each cell, including:

    - Nuclear area and intensity

    - Cell count (for viability)

    - Cytoplasmic morphology

    - Cleaved caspase-3 positivity

III. Data Analysis and Hit Calling

- Normalize cell counts in compound wells to DMSO control wells to calculate percent viability.

- Calculate a Z-score for each feature to identify phenotypic outliers.

- Compounds that significantly reduce viability (e.g., >50% reduction) or induce a strong apoptotic response are considered "hits."

- Analyze the heterogeneity of responses across different patient-derived models to identify patient-specific and subtype-specific vulnerabilities.
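The normalization and hit-calling steps above can be sketched as follows. The cell counts are hypothetical, and computing the Z-score against the DMSO control wells is an assumption; some screens score each well against the whole-plate distribution or use robust statistics instead.

```python
from statistics import mean, stdev

def percent_viability(count, dmso_counts):
    """Cell count in a compound well as a percentage of the mean DMSO count."""
    return 100.0 * count / mean(dmso_counts)

def z_score(value, dmso_values):
    """Distance of a well from the DMSO controls in control standard deviations."""
    return (value - mean(dmso_values)) / stdev(dmso_values)

def call_hits(compound_counts, dmso_counts, viability_cutoff=50.0):
    """Return {compound: (percent viability, z-score)} for wells below the cutoff."""
    hits = {}
    for compound, count in compound_counts.items():
        viability = percent_viability(count, dmso_counts)
        if viability < viability_cutoff:
            hits[compound] = (viability, z_score(count, dmso_counts))
    return hits

# Hypothetical cell counts from one plate.
dmso_wells = [980, 1000, 1020, 1000]
compound_wells = {"cpd_1": 400, "cpd_2": 950, "cpd_3": 120}
hits = call_hits(compound_wells, dmso_wells)
for compound, (viability, z) in hits.items():
    print(f"{compound}: {viability:.0f}% viability, z = {z:.1f}")
```

Running the same function per patient-derived model and comparing the resulting hit sets is one simple way to surface the patient-specific responses described in the final step.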

Data Presentation

Table 1: High-Confidence Druggable Targets Identified via Integrative Genomics in Colorectal Cancer

| Gene Symbol | Gene Name | Primary Known Function | MR P-value | Colocalization Confidence | Known Drug Candidates (from DrugBank/DGIdb) |
|---|---|---|---|---|---|
| TFRC | Transferrin Receptor | Iron transport | < 5 × 10⁻⁸ | High | (e.g., Anti-TFRC antibodies) |
| TNFSF14 | TNF Superfamily Member 14 | T cell activation, Immune modulation | < 5 × 10⁻⁸ | High | (e.g., Recombinant TNFSF14) |
| LAMC1 | Laminin Subunit Gamma 1 | Extracellular matrix organization, Cell adhesion | < 5 × 10⁻⁸ | High | - |
| PLK1 | Polo Like Kinase 1 | Cell cycle progression (Mitosis) | < 5 × 10⁻⁸ | High | Volasertib, BI 2536 |
| TYMS | Thymidylate Synthetase | DNA synthesis | < 5 × 10⁻⁸ | High | 5-Fluorouracil, Pemetrexed |
| TSSK6 | Testis Specific Serine Kinase 6 | Spermatogenesis | < 5 × 10⁻⁸ | High | - |

Data derived from [9]. MR P-value indicates significance in Mendelian Randomization analysis.

Table 2: Essential Research Reagent Solutions for Druggable Genome Mapping

| Reagent / Solution | Function / Application | Specific Example(s) |
|---|---|---|
| DGIdb / DrugBank Database | Curated sources for identifying and annotating druggable genes and their known drug interactions. | Used to compile the initial list of 4,479 druggable genes [9]. |
| eQTL Summary Statistics | Provides data on genetic variants that influence gene expression levels; used for selecting instrumental variables in MR. | eQTLGen Consortium dataset (blood tissue) [9]. |
| Cancer GWAS Summary Statistics | Provides data on genetic variants associated with cancer risk; used as the outcome in MR. | Data from FinnGen biobank and other large meta-analyses [9]. |
| Targeted Chemogenomic Library | A collection of bioactive small molecules designed to probe a wide range of predefined protein targets in phenotypic screens. | A library of 789 compounds targeting 1,320 proteins for profiling glioma stem cells [5]. |
| High-Content Imaging Assays | Multiparametric cell-based assays to quantify complex phenotypic responses (viability, apoptosis, morphology) to library compounds. | Hoechst 33342 (nuclei), CellMask (cytosol), antibodies for cleaved caspase-3 (apoptosis) [5]. |

Visualizations

Research Framework

Analytical Workflow

Strategic compound sourcing is a cornerstone of modern chemogenomics, which aims to systematically understand the interactions between small molecules and biological targets. A chemogenomic library is not merely a collection of compounds; it is a strategically curated set of bioactive molecules designed to probe diverse biological pathways and protein families efficiently. The fundamental challenge in library design lies in balancing several competing factors: library size, cellular activity, chemical diversity, and target selectivity [5]. By applying rigorous analytic procedures, researchers can design targeted screening libraries that cover a wide range of protein targets and biological pathways implicated in various diseases, making them widely applicable to precision oncology and other therapeutic areas [5].

The strategic sourcing approach leverages existing chemical assets—including approved drugs and late-stage investigational probes—as a foundation for library development. This methodology provides several distinct advantages over de novo compound discovery: established safety profiles, known bioavailability parameters, and reduced development timelines. In a practical demonstration of this approach, researchers successfully identified patient-specific vulnerabilities by imaging glioma stem cells from patients with glioblastoma using a physically assembled library of 789 compounds covering 1,320 anticancer targets [5]. The resulting phenotypic profiling revealed highly heterogeneous responses across patients and cancer subtypes, highlighting the critical importance of well-curated compound selections for precision medicine applications.

Approved Drugs as Chemical Starting Points

Approved drugs represent valuable starting points for chemogenomic libraries due to their well-characterized safety profiles and known target interactions. These compounds serve as excellent chemical probes for understanding fundamental biological processes and can be repurposed for new therapeutic indications. The structural diversity of approved drugs provides coverage across multiple target classes, including G-protein-coupled receptors, ion channels, enzymes, and nuclear receptors. When incorporating approved drugs into a chemogenomic library, researchers should prioritize compounds with known molecular mechanisms, favorable physicochemical properties, and potential for polypharmacology.

Investigational New Drugs

Late-stage investigational drugs represent a rich source of novel chemical matter with optimized pharmacological properties. These compounds often target emerging biological pathways and may exhibit novel mechanisms of action compared to approved drugs. The following table summarizes key investigational drugs advancing through regulatory review with potential utility for chemogenomic library inclusion:

Table 1: Selected Late-Stage Investigational Drugs for Library Sourcing

| Drug Name | Molecular Target | Therapeutic Area | Company | PDUFA Date | Key Characteristics |
|---|---|---|---|---|---|
| Paltusotine [11] | SST2 agonist [11] | Acromegaly [11] | Crinetics Pharmaceuticals [11] | Sep 25, 2025 [11] | Once-daily oral dosing; durable IGF-1 regulation [11] |
| Ziftomenib [11] | Menin inhibitor [11] | NPM1-mutant AML [11] | Kura Oncology & Kyowa Kirin [11] | Nov 30, 2025 [11] | Oral administration; achieves significant complete remission [11] |
| Aficamten [11] | Cardiac myosin inhibitor [11] | Obstructive hypertrophic cardiomyopathy [11] | Cytokinetics [11] | Dec 26, 2025 [11] | Improves peak oxygen uptake and cardiac performance [11] |
| RGX-121 [11] | IDS gene therapy [11] | Mucopolysaccharidosis II [11] | Regenxbio Inc. [11] | Nov 9, 2025 [11] | One-time gene therapy; adeno-associated viral vector [11] |
| Sibeprenlimab [11] | APRIL inhibitor [11] | IgA nephropathy [11] | Otsuka Pharmaceutical [11] | Nov 28, 2025 [11] | Subcutaneous administration; reduces proteinuria [11] |
| Reproxalap [11] | RASP modulator [11] | Dry eye disease [11] | Aldeyra Therapeutics [11] | Dec 16, 2025 [11] | First-in-class; targets elevated RASP levels [11] |
| Epioxa [11] | Corneal cross-linking [11] | Keratoconus [11] | Glaukos Corporation [11] | Oct 20, 2025 [11] | Non-invasive therapy; combines bio-activated formulation with UV-A light [11] |

These investigational compounds illustrate the breadth of contemporary drug discovery across diverse therapeutic areas including rare diseases, ophthalmology, hematology, autoimmune disorders, and cardiovascular conditions [11]. Their inclusion in chemogenomic libraries provides access to cutting-edge chemical matter targeting novel biological pathways.

Experimental Protocols for Library Assembly and Screening

Protocol 1: Design and Assembly of a Targeted Screening Library

Objective: To design and assemble a targeted screening library of 1,000-2,000 compounds from approved drugs and investigational probes for phenotypic screening in disease-relevant cellular models.

Materials:

- Compound management system (e.g., Echo acoustic dispenser)

- Approved drug collection (e.g., Prestwick Chemical Library, Selleckchem FDA-approved Drug Library)

- Investigational compounds sourced from commercial suppliers

- DMSO (cell culture grade)

- 384-well tissue culture-treated microplates

- Automated liquid handling system

Procedure:

- Compound Selection: Apply analytic procedures for designing anticancer compound libraries adjusted for library size, cellular activity, chemical diversity, and target selectivity [5]. Prioritize compounds that cover a wide range of protein targets and biological pathways implicated in the disease area of interest.

- Stock Solution Preparation: Prepare 10 mM stock solutions of all compounds in DMSO using an automated liquid handling system. Verify compound identity and purity through LC-MS analysis for a quality control subset (≥5% of library).

- Plate Formatting: Format compounds into 384-well master plates at a concentration of 10 mM using an acoustic dispenser. Include control wells containing DMSO only (0.1% final concentration).

- Intermediate Dilution: Create intermediate working plates by diluting master plates to 500 μM in DMSO for cell-based assays.

- Quality Control: Implement quality control measures including:

  - HPLC-UV analysis to assess compound purity

  - LC-MS to confirm compound identity

  - Absorbance-based assay to detect precipitated compounds

- Storage: Store master and working plates at -20°C in sealed containers with desiccant to prevent moisture absorption.

Expected Outcomes: A formatted screening library suitable for high-throughput phenotypic profiling with comprehensive documentation of compound structures, concentrations, and storage locations.
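The plate-formatting step can be documented with a simple plate map generator. The sketch below assumes a 384-well layout (rows A-P, columns 1-24) with the four outer columns reserved for DMSO controls; that control layout and the compound identifiers are illustrative choices, not part of the protocol in [5].

```python
import string

ROWS = string.ascii_uppercase[:16]   # rows A-P of a 384-well plate
CONTROL_COLUMNS = [1, 2, 23, 24]     # assumption: outer columns hold DMSO controls
COMPOUND_COLUMNS = [c for c in range(1, 25) if c not in CONTROL_COLUMNS]

def well_names(columns):
    """Row-major well names such as 'A03' for the given columns."""
    return [f"{row}{col:02d}" for row in ROWS for col in columns]

def assign_plates(compound_ids):
    """Split a compound list across as many master plates as needed."""
    compound_wells = well_names(COMPOUND_COLUMNS)  # 16 x 20 = 320 wells per plate
    control_wells = well_names(CONTROL_COLUMNS)    # 16 x 4 = 64 wells per plate
    plates = []
    for start in range(0, len(compound_ids), len(compound_wells)):
        batch = compound_ids[start:start + len(compound_wells)]
        layout = {well: "DMSO" for well in control_wells}
        layout.update(zip(compound_wells, batch))
        plates.append(layout)
    return plates

# A 789-compound library, matching the size of the glioblastoma pilot library.
library_ids = [f"cpd_{i:04d}" for i in range(789)]
plates = assign_plates(library_ids)
print(len(plates), "plates;", plates[0]["A03"], "is in well A03 of plate 1")
```

Writing the resulting dictionaries to a file alongside the physical plates gives the documentation of storage locations that the expected outcomes call for.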

Protocol 2: Phenotypic Profiling Using Patient-Derived Cells

Objective: To identify patient-specific vulnerabilities by screening the curated compound library against patient-derived cells, such as glioma stem cells from glioblastoma patients [5].

Materials:

- Patient-derived cell lines

- Curated compound library from Protocol 1

- Cell culture media and supplements

- 384-well black-walled, clear-bottom assay plates

- High-content imaging system

- Cell staining reagents (Hoechst 33342, Phalloidin, MitoTracker)

- Cell viability assay reagents (e.g., CellTiter-Glo)

Procedure:

- Cell Preparation: Culture patient-derived cells under appropriate conditions. For glioma stem cells, use neurobasal media supplemented with EGF, FGF, and B27.

- Cell Plating: Plate cells in 384-well assay plates at a density of 500-1,000 cells per well in 50 μL media using an automated liquid dispenser. Allow cells to adhere overnight.

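- Compound Treatment: Transfer 50 nL of compound from working plates (500 μM) to assay plates using an acoustic dispenser. A 50 nL transfer into 50 μL of media is an approximately 1,000-fold dilution, giving a final concentration of about 0.5 μM and 0.1% DMSO; reaching 5 μM at the same DMSO level would require a 5 mM working plate. Include positive controls (e.g., staurosporine for cell death) and negative controls (DMSO only).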

- Incubation: Incubate compound-treated cells for 72-120 hours at 37°C, 5% CO₂.

- Endpoint Assaying:

  - Viability Assessment: Add CellTiter-Glo reagent and measure luminescence according to the manufacturer's instructions. Because this reagent lyses the cells, run the viability readout on a replicate plate separate from the imaging plate.

  - Morphological Profiling: Stain live cells with MitoTracker (mitochondria), then fix with 4% formaldehyde, permeabilize with 0.1% Triton X-100, and stain with Hoechst 33342 (nuclei) and Phalloidin (actin cytoskeleton).

- High-Content Imaging: Acquire images using a 20x objective on a high-content imaging system. Capture at least 9 fields per well to ensure adequate cell sampling.

- Image Analysis: Extract morphological features including cell count, nuclear size, cytoskeletal organization, and mitochondrial morphology using image analysis software.

Expected Outcomes: Dose-response data for viability and multivariate morphological profiles for each compound. Patient-specific sensitivity patterns revealing potential therapeutic vulnerabilities.
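
The dilution arithmetic in the compound-treatment step is easy to get wrong by a factor of ten, so it can be worth checking in code. The sketch below is illustrative only: the function names are hypothetical, and it assumes the working stock is dissolved in 100% DMSO and that the transferred volume adds to the well volume.

```python
def final_concentration_um(stock_um: float, transfer_nl: float, well_ul: float) -> float:
    """Final compound concentration (uM) after adding transfer_nl of stock to a well."""
    transfer_ul = transfer_nl / 1000.0
    return stock_um * transfer_ul / (well_ul + transfer_ul)


def dmso_percent(transfer_nl: float, well_ul: float) -> float:
    """Final DMSO content (% v/v), assuming the stock is 100% DMSO."""
    transfer_ul = transfer_nl / 1000.0
    return 100.0 * transfer_ul / (well_ul + transfer_ul)


# Compare two candidate working-stock concentrations for a 50 nL transfer
# into 50 uL of medium.
for stock_um in (500.0, 5000.0):
    conc = final_concentration_um(stock_um, transfer_nl=50, well_ul=50)
    dmso = dmso_percent(transfer_nl=50, well_ul=50)
    print(f"{stock_um:.0f} uM stock -> {conc:.2f} uM final, {dmso:.2f}% DMSO")
```

Running the same check for every plate map before dispensing is a cheap way to catch stock-concentration mismatches.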

Workflow Visualization

Diagram 1: Chemogenomic Library Screening Workflow. This flowchart illustrates the complete process from compound selection to hit identification in phenotypic screening assays.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Research Reagents for Chemogenomic Library Screening

| Reagent / Tool | Function | Application Notes |

|---|---|---|

| Approved Drug Libraries [5] | Source of clinically relevant compounds with known safety profiles | Pre-formatted plates available from commercial suppliers; typically 1,000-2,000 compounds |

| Acoustic Liquid Handlers | Contact-free transfer of nanoliter volumes of compound solutions | Essential for minimizing DMSO concentration in assays; enables high-density plate formatting |

| High-Content Imaging Systems | Automated microscopy for multiparametric phenotypic assessment | Capable of capturing multiple fluorescence channels; requires specialized image analysis software |

| DNA-Encoded Libraries (DELs) [12] | Technology for high-throughput screening of vast chemical libraries | Utilizes DNA as a unique identifier for each compound; allows screening of millions of compounds [12] |

| Computer-Aided Drug Design (CADD) [12] | Computational methods to predict binding affinity of small molecules | Reduces time and resources required for experimental screening [12] |

| Click Chemistry Toolkits [12] | Modular reactions for efficient synthesis of diverse compounds | Enables rapid construction of compound libraries; useful for library expansion [12] |

| Targeted Protein Degradation Protocols [12] | Methods to tag proteins for degradation via cellular machinery | Provides access to previously "undruggable" targets; requires specialized compound designs [12] |

Data Analysis and Integration Framework

The analysis of screening data from strategically sourced compound libraries requires specialized computational approaches. For quantitative data analysis, researchers should employ dose-response modeling to calculate IC₅₀ values and efficacy parameters for each compound. The quantitative data generated consists of discrete and distinct objects with no overlap between data points, typically represented in structured tables with clear variables and values [13]. Each data point must be properly contextualized within its experimental variables to enable correct interpretation.

In contrast, qualitative data from morphological profiling captures complex, condensed information about cell state that cannot be fully reduced to individual variables without losing critical biological insights [13]. This qualitative data requires specialized analytical approaches such as machine learning-based pattern recognition to identify compound-specific phenotypes and patient-specific vulnerabilities. The integration of these quantitative and qualitative datasets enables a comprehensive understanding of compound activities and cellular responses.
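
As a rough illustration of the dose-response modeling mentioned above, the following sketch estimates an IC₅₀ and Hill slope from viability values normalized to the DMSO control. It is a simplified stand-in rather than the analysis pipeline used in the cited work: it assumes a two-parameter Hill model with the top fixed at 100% and the bottom at 0%, and fits it by linear regression on the logit-transformed data. The function name and the example data are hypothetical. A four-parameter nonlinear fit is the more common choice for production analysis.

```python
import math


def fit_hill_ic50(concs_um, viability_pct):
    """Estimate (IC50, Hill slope) from viability given as % of DMSO control.

    Linearized two-parameter Hill model:
        log10((100 - v) / v) = h * log10(c) - h * log10(IC50)
    Points at or outside 0% / 100% are skipped because the transform
    is undefined there.
    """
    xs, ys = [], []
    for c, v in zip(concs_um, viability_pct):
        if c > 0 and 0.0 < v < 100.0:
            xs.append(math.log10(c))
            ys.append(math.log10((100.0 - v) / v))
    if len(xs) < 2:
        raise ValueError("need at least two usable points")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    if sxx == 0 or sxy == 0:
        raise ValueError("data do not define a dose-response slope")
    hill = sxy / sxx                      # slope of the linearized fit
    intercept = mean_y - hill * mean_x    # equals -h * log10(IC50)
    ic50_um = 10 ** (-intercept / hill)
    return ic50_um, hill


# Synthetic example: noise-free curve generated with IC50 = 1 uM, slope = 1.5.
concs = [0.01, 0.1, 1.0, 10.0, 100.0]
viability = [100.0 / (1.0 + (c / 1.0) ** 1.5) for c in concs]
ic50, hill = fit_hill_ic50(concs, viability)
print(f"IC50 = {ic50:.3f} uM, Hill slope = {hill:.2f}")
```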

Successful implementation of this strategic sourcing framework facilitates the identification of novel therapeutic vulnerabilities and accelerates the drug discovery process. By leveraging approved drugs and investigational probes as a foundation for chemogenomic libraries, researchers can efficiently explore chemical space while reducing the resource expenditures associated with de novo compound discovery [12].

Chemogenomics represents a systematic approach in modern drug discovery that integrates genomics and chemistry to accelerate the identification of both therapeutic targets and bioactive compounds [1]. This strategy involves the screening of targeted chemical libraries of small molecules against distinct drug target families—such as GPCRs, kinases, nuclear receptors, and proteases—with the dual objective of discovering novel drugs and their molecular targets [1]. The completion of the human genome project provided an unprecedented abundance of potential targets for therapeutic intervention, and chemogenomics aims to systematically study the intersection of all possible drugs on these potential targets [1] [2].

The fundamental strategy of chemogenomics involves using active compounds as chemical probes to characterize proteome functions [1]. The interaction between a small molecule and a protein induces a measurable phenotype, allowing researchers to associate specific proteins with molecular events [1]. A key advantage of chemogenomics over traditional genetic approaches is its ability to modify protein function reversibly and in real-time, observing phenotypic changes only after compound addition and their potential reversal upon compound withdrawal [1]. Currently, two primary experimental approaches dominate the field: forward (classical) chemogenomics and reverse chemogenomics [1].

Forward Chemogenomics: Phenotype-Based Screening

Core Principles and Workflow

Forward chemogenomics begins with the observation of a particular phenotype, followed by the identification of small molecules that induce or modify this phenotypic response [1]. The molecular basis of the desired phenotype is initially unknown in this approach. Once modulators are identified, they serve as tools to investigate the protein responsible for the observed phenotype [1]. For example, a loss-of-function phenotype might manifest as arrested tumor growth, and compounds inducing this effect become candidates for target identification [14].

The major challenge in forward chemogenomics lies in designing phenotypic assays that enable direct progression from screening to target identification [1]. This approach is particularly valuable for uncovering novel biological mechanisms and therapeutic strategies without preconceived notions about specific molecular targets.

Table: Key Characteristics of Forward Chemogenomics

| Aspect | Description |

|---|---|

| Starting Point | Observable phenotype in cells or whole organisms [1] |

| Screening Focus | Identification of compounds that modify the phenotype [1] |

| Target Knowledge | Molecular target unknown at screening initiation [1] |

| Primary Strength | Unbiased discovery of novel biological mechanisms [1] |

| Main Challenge | Subsequent target deconvolution [1] |

Experimental Protocol: Phenotypic Screening for Novel Drug Targets

Purpose: To identify compounds inducing a specific phenotype (e.g., inhibition of cancer cell growth) and subsequently determine their molecular targets.

Materials and Reagents:

- Cell culture materials (appropriate cell lines, culture media, supplements)

- Chemical library (diverse small molecule collections)

- Cell viability assay reagents (e.g., MTT, CellTiter-Glo)

- Staining and fixation solutions for image-based assays

- Lysis buffers for protein extraction

- Proteomics equipment (mass spectrometer, chromatography system)

Procedure:

- Model System Development: Establish a biologically relevant model system that recapitulates the disease phenotype of interest. For cancer research, this may involve patient-derived cell models, 3D organoids, or engineered tumor cells [14].

- Phenotypic Screening: Plate cells in multiwell plates and treat with compounds from the chemical library. Include appropriate controls (vehicle-only and positive controls) [15].

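- Phenotype Assessment: Incubate for predetermined time periods, then quantify phenotypic responses with a readout suited to the phenotype, such as a cell viability assay (e.g., MTT, CellTiter-Glo) or an image-based assay on fixed and stained cells.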

- Hit Identification: Select compounds that produce the desired phenotype based on statistical significance compared to controls.

- Target Deconvolution: Identify molecular targets of hit compounds using various approaches:

- Affinity Purification: Immobilize hit compounds on solid support for pull-down assays with cell lysates followed by mass spectrometry [1].

- Genetic Approaches: Utilize chemogenomic profiling in model organisms like yeast to identify gene products that functionally interact with small molecules [16].

- Transcriptomic Profiling: Compare gene expression patterns induced by compounds with unknown mechanism to those with known targets [16].

- Target Validation: Confirm target identity through complementary approaches such as CRISPR-based gene editing, RNA interference, or biochemical binding assays [17].
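
The hit-identification step above asks for statistical significance relative to controls without prescribing a method. One common option, shown here purely as an assumption, is a robust z-score computed against the vehicle-only wells using the median and the median absolute deviation (MAD). The function name, the example values, and the cutoff of -3 are all hypothetical choices for illustration.

```python
from statistics import median


def robust_z_scores(sample_values, control_values):
    """Robust z-score of each sample relative to vehicle-control wells."""
    control_median = median(control_values)
    mad = median([abs(v - control_median) for v in control_values])
    scale = 1.4826 * mad  # makes the MAD comparable to a standard deviation
    if scale == 0:
        raise ValueError("control wells show no variation; cannot scale")
    return {name: (v - control_median) / scale for name, v in sample_values.items()}


# Viability signal (arbitrary units) for DMSO wells and three test compounds.
dmso_wells = [100, 98, 102, 101, 99, 97, 103, 100]
compounds = {"cpd_A": 95, "cpd_B": 40, "cpd_C": 101}

z = robust_z_scores(compounds, dmso_wells)
hits = [name for name, score in z.items() if score <= -3.0]  # assumed cutoff
print(hits)
```

Median-based statistics are less sensitive to the occasional dead or empty control well than a mean and standard deviation would be.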

Applications and Case Studies

Forward chemogenomics has proven valuable in multiple domains:

- Target Identification: A key application is the identification of entirely new therapeutic targets, for example for novel antibacterial agents that act on the peptidoglycan synthesis pathway in bacteria [1].

- Pathway Elucidation: Researchers have used this approach to identify genes in biological pathways, such as the enzyme responsible for the final step in diphthamide biosynthesis, which was found some thirty years after diphthamide itself was characterized [1].

- Oncology Research: In glioblastoma research, phenotypic screening of patient-derived glioma stem cells using focused compound libraries revealed highly heterogeneous, patient-specific vulnerabilities across different cancer subtypes [14].

Reverse Chemogenomics: Target-Based Screening

Core Principles and Workflow

Reverse chemogenomics adopts the opposite strategy, beginning with a specific protein target of interest and screening for compounds that perturb its function [1]. This approach initially identifies small molecules that modulate the activity of a defined enzyme or receptor in the context of an in vitro biochemical assay [1]. Once modulators are identified, researchers then analyze the phenotype induced by these molecules in cellular systems or whole organisms [1].

This strategy essentially mirrors the target-based approaches that have dominated pharmaceutical discovery over recent decades but is enhanced by parallel screening capabilities and the ability to perform lead optimization across multiple targets belonging to the same protein family [1]. Reverse chemogenomics is particularly powerful for validating the therapeutic potential of specific targets and understanding their role in biological responses [1].

Table: Key Characteristics of Reverse Chemogenomics

| Aspect | Description |

|---|---|

| Starting Point | Known protein target with suspected therapeutic relevance [1] |

| Screening Focus | Identification of compounds that modulate target activity in vitro [1] |

| Target Knowledge | Molecular target well-defined at screening initiation [1] |

| Primary Strength | Straightforward validation of target therapeutic potential [1] |

| Main Challenge | Translating in vitro activity to physiologically relevant phenotypes [1] |

Experimental Protocol: Target-Focused Compound Screening

Purpose: To identify compounds that modulate the activity of a predefined molecular target and characterize their phenotypic effects.

Materials and Reagents:

- Purified target protein(s)

- Biochemical assay reagents (substrates, cofactors, detection reagents)

- Chemical library (often target-family focused)

- Cell culture materials for secondary assays

- Analytical instruments (plate readers, liquid handling systems)

Procedure:

- Target Selection and Production: Select a therapeutically relevant protein target and produce it in purified form (e.g., recombinant expression in E. coli or insect cells) [1].

- Biochemical Assay Development: Develop a robust in vitro assay capable of measuring target activity:

- For enzymes: Design activity assays measuring substrate conversion (e.g., fluorescence, absorbance, or luminescence-based readouts).

- For receptors: Develop binding assays (e.g., fluorescence polarization, surface plasmon resonance).

- Primary Screening: Screen compound libraries against the target using the biochemical assay. Typical screening includes:

- Testing compounds at a single concentration (e.g., 10 μM) in duplicate [14].

- Including appropriate controls (no compound, reference inhibitors/activators).

- Hit Confirmation: Retest primary hits in dose-response experiments to confirm activity and determine potency (IC50, EC50, Ki values).

- Selectivity Profiling: Counter-screen hits against related targets to assess selectivity and minimize off-target effects [14].

- Cellular Phenotype Analysis: Evaluate phenotypic effects of confirmed hits in relevant cellular models:

- Assess cellular target engagement (e.g., cellular thermal shift assays, downstream pathway modulation) [14].

- Determine functional consequences (viability, differentiation, migration, etc.).

- Mechanism of Action Studies: Investigate compound effects in more complex models (tissue explants, animal models) for therapeutic efficacy and potential toxicity [18].
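
For the primary screening step in this procedure, raw signals are usually normalized to the plate controls before hits are called. The sketch below shows one way to do that for an enzyme activity assay tested in duplicate. It assumes that a higher signal means more enzyme activity, that the no-compound wells define 0% inhibition, and that the reference-inhibitor wells define 100% inhibition. The 50% hit threshold and all names and values are hypothetical.

```python
from statistics import mean


def percent_inhibition(signal, uninhibited_mean, inhibited_mean):
    """Normalize a raw signal to % inhibition using plate controls."""
    window = uninhibited_mean - inhibited_mean
    if window == 0:
        raise ValueError("controls are identical; assay window is zero")
    return 100.0 * (uninhibited_mean - signal) / window


# Plate controls (raw signal).
no_compound = [1000, 980, 1020]      # 0% inhibition
reference_inhibitor = [100, 90, 110]  # 100% inhibition
hi, lo = mean(no_compound), mean(reference_inhibitor)

# Duplicate measurements per compound at the single screening concentration.
duplicates = {"cpd_1": (550, 530), "cpd_2": (950, 970), "cpd_3": (190, 210)}
inhibition = {
    name: mean(percent_inhibition(s, hi, lo) for s in signals)
    for name, signals in duplicates.items()
}
primary_hits = sorted(n for n, pct in inhibition.items() if pct >= 50.0)
print(primary_hits)
```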

Applications and Case Studies

Reverse chemogenomics has enabled significant advances in multiple areas:

- Mode of Action Determination: This approach has been used to determine the mechanism of action for traditional medicines, including Traditional Chinese Medicine and Ayurveda, by predicting ligand targets relevant to known phenotypes [1].

- Drug Repurposing: By screening approved drugs against defined molecular targets, researchers have identified new therapeutic applications for existing medications [14] [18].

- Selectivity Profiling: The strategy enables comprehensive assessment of compound selectivity across target families, helping to optimize drug candidates for reduced off-target effects [14].

Comparative Analysis: Forward vs. Reverse Approaches

Direct Comparison of Strategic Features

Table: Comprehensive Comparison of Forward and Reverse Chemogenomics

| Parameter | Forward Chemogenomics | Reverse Chemogenomics |

|---|---|---|

| Screening Strategy | Phenotype-first approach [1] | Target-first approach [1] |

| Target Identification | Post-screening, requires deconvolution [1] | Predefined before screening [1] |

| Primary Screening System | Cells or whole organisms [1] | Isolated molecular targets [1] |

| Typical Assay Format | High-content phenotypic assays [15] | Biochemical or binding assays [1] |

| Hit-to-Target Pathway | Complex, requires extensive validation [1] | Straightforward, target known from start [1] |

| Therapeutic Relevance | High physiological relevance [14] | May lack physiological context [1] |

| Risk of Translation Failure | Lower, due to physiological context [14] | Higher, due to potential lack of translation to whole systems [1] |

| Suitable For | Novel target discovery, pathway elucidation [1] | Target validation, lead optimization [1] |

Visualizing Screening Workflows

The following diagram illustrates the fundamental differences in workflow between forward and reverse chemogenomics approaches:

Chemogenomic Library Design for Screening

Essential Research Reagent Solutions

Successful implementation of both forward and reverse chemogenomics approaches requires carefully designed chemical libraries and associated research tools. The following table outlines key reagent solutions essential for chemogenomic studies:

Table: Essential Research Reagents for Chemogenomic Screening

| Reagent Type | Function/Purpose | Examples/Specifications |

|---|---|---|

| Focused Chemical Libraries | Targeted screening against specific protein families or pathways [15] | Kinase inhibitor collections, GPCR-focused libraries, epigenetic modulator sets [15] |

| Diverse Compound Collections | Broad phenotypic screening for novel biology [15] | 10,000-100,000 compounds with maximal structural diversity [15] |

| Annotated Bioactive Compounds | Mechanism of action studies and reference standards [15] | Prestwick Chemical Library, NCATS MIPE library [15] |

| Cell Painting Assay Kits | High-content morphological profiling [15] | Multiplexed fluorescent dyes for organelles (nucleus, ER, Golgi, etc.) [15] |

| Barcoded Knockout Collections | Chemogenomic fitness profiling in yeast [16] | Yeast heterozygous and homozygous deletion pools [16] |

| CRISPR Screening Libraries | Genetic screening in mammalian cells [14] | Genome-wide guide RNA libraries for gene knockout [14] |

Strategic Library Design Considerations

Designing effective chemogenomics libraries requires balancing multiple objectives:

Target Coverage: Ensure comprehensive coverage of the intended target space, whether focused on specific protein families or broad across the druggable genome [14]. For example, the C3L (Comprehensive anti-Cancer small-Compound Library) was designed to cover 1,386 anticancer proteins with just 1,211 compounds through careful selection [14].

Cellular Activity: Prioritize compounds with demonstrated cellular activity rather than just biochemical potency, as this increases the likelihood of observing physiologically relevant effects [14].

Chemical Diversity: Include structurally diverse compounds to maximize the chances of identifying novel chemotypes and avoid redundant structure-activity relationships [15].

Selectivity Considerations: Balance the need for selective tool compounds with the potential benefits of multi-target agents, particularly for complex diseases where polypharmacology may be advantageous [15].

Practical Constraints: Consider compound availability, solubility, stability, and compatibility with screening formats when assembling physical screening libraries [14].

Integrated Applications in Drug Discovery

Synergistic Use of Forward and Reverse Approaches

The most effective drug discovery programs often integrate both forward and reverse chemogenomics strategies in a complementary manner:

Target Discovery to Validation Pipeline: Use forward chemogenomics to identify novel therapeutic targets in phenotypic screens, then apply reverse chemogenomics to develop selective compounds against these newly validated targets [1].

Mechanism of Action Deconvolution: Employ reverse chemogenomics approaches to characterize the molecular targets of hits identified in phenotypic forward screens, accelerating the understanding of compound mechanism of action [18].

Predictive Chemogenomics: Develop computational models that leverage data from both approaches to holistically characterize gene-compound response associations, enabling prediction of novel therapeutic molecules and their mechanisms [2].

Emerging Trends and Future Directions

The field of chemogenomics continues to evolve with several emerging trends:

Increased Integration of Chemoinformatic and Bioinformatic Data: There is growing emphasis on refined integration of chemical and biological data to build more predictive models of drug-target interactions [2].

Focus on Data Quality Over Quantity: A shift from simply generating large screening datasets toward producing higher-quality, better-annotated data with improved physiological relevance [2].

Advanced Phenotypic Profiling: Development of more sophisticated phenotypic screening platforms, including high-content imaging with Cell Painting and complex 3D tissue models, that provide richer biological information [15].

Expansion to Novel Therapeutic Modalities: Application of chemogenomics principles beyond traditional small molecules to include targeted protein degraders, covalent inhibitors, and other emerging modalities [18].

Forward and reverse chemogenomics represent complementary strategies in modern drug discovery, each with distinct advantages and applications. Forward chemogenomics offers an unbiased approach to identifying novel biological mechanisms and therapeutic strategies by starting with phenotypic observations. In contrast, reverse chemogenomics provides a targeted approach for validating specific molecular targets and optimizing compounds with known mechanisms of action.

The strategic integration of both approaches, supported by carefully designed chemogenomic libraries and advanced screening technologies, creates a powerful framework for accelerating drug discovery. As the field continues to evolve, emphasizing data quality, physiological relevance, and computational integration will further enhance the impact of chemogenomics on identifying and validating new therapeutic strategies for human diseases.

From Theory to Practice: Library Construction and Phenotypic Screening Applications

Chemogenomic libraries represent strategically designed collections of small molecules used to systematically probe biological systems and identify therapeutic agents. These libraries have emerged as powerful tools in phenotypic drug discovery, where they enable the identification of novel biological targets and mechanisms of action when combined with high-content screening technologies [15] [18]. The fundamental challenge in developing these libraries lies in balancing multiple, often competing objectives: comprehensive target coverage, structural diversity, cellular activity, selectivity, and practical constraints such as compound availability and cost [14].

Multi-objective optimization (MOO) frameworks provide mathematical rigor to this design process, allowing researchers to navigate complex trade-offs without prematurely prioritizing one objective over others. Unlike single-objective optimization that relies on scalarization, Pareto optimization identifies a set of optimal solutions that reveal the inherent trade-offs between objectives [19]. This approach is particularly valuable in chemogenomic library design, where the relationship between chemical structure, target coverage, and biological activity is complex and multidimensional.

This protocol outlines detailed methodologies for applying multi-objective optimization to chemogenomic library design, with specific examples from published libraries and practical guidance for implementation.

Theoretical Framework: Multi-Objective Optimization in Library Design

Pareto Optimization Principles

In multi-objective molecular optimization, the goal is to identify molecules that simultaneously optimize multiple properties. The Pareto front defines the set of optimal solutions where improvement in one objective necessitates deterioration in at least one other objective [19]. For example, when designing selective drugs, strong affinity to the target and weak affinity to off-targets are both desired but often competing objectives.

Formally, for n objectives {f₁, f₂, ..., fₙ} to be maximized, solution A dominates solution B if:

- fᵢ(A) ≥ fᵢ(B) for all i ∈ {1, 2, ..., n}

- fᵢ(A) > fᵢ(B) for at least one i

The Pareto front consists of all non-dominated solutions, providing researchers with a set of optimal trade-offs from which to select based on their specific research priorities [19].
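
The dominance definition above translates almost directly into code. The following minimal sketch applies it to a handful of hypothetical compounds scored on two objectives to be maximized: on-target affinity and the negated off-target affinity (so that weaker off-target binding scores higher). The compound names and values are invented for illustration.

```python
def dominates(a, b):
    """True if solution a dominates b when every objective is maximized."""
    at_least_as_good = all(x >= y for x, y in zip(a, b))
    strictly_better = any(x > y for x, y in zip(a, b))
    return at_least_as_good and strictly_better


def pareto_front(solutions):
    """Return the non-dominated subset of a {name: objectives} mapping."""
    return {
        name: objs
        for name, objs in solutions.items()
        if not any(
            dominates(other_objs, objs)
            for other, other_objs in solutions.items()
            if other != name
        )
    }


# (on-target pKd, negated off-target pKd)
candidates = {
    "cpd_A": (8.5, -6.0),
    "cpd_B": (7.0, -4.5),
    "cpd_C": (7.5, -6.5),  # dominated by cpd_A
    "cpd_D": (8.5, -7.0),  # dominated by cpd_A
}
front = pareto_front(candidates)
print(sorted(front))
```

This pairwise check scales quadratically with the number of candidates, which is acceptable for small sets; dedicated algorithms such as NSGA-II use faster non-dominated sorting.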

Application to Chemogenomic Libraries

In chemogenomic library design, the key objectives typically include:

- Target coverage: Maximizing the number of protein targets addressed by the library

- Structural diversity: Ensuring broad coverage of chemical space to increase chances of discovering novel bioactivities

- Cellular potency: Selecting compounds with demonstrated biological activity

- Selectivity: Preferring compounds with specific target interactions over promiscuous binders

- Practical constraints: Considering compound availability, cost, and compatibility with screening technologies [14] [15]

Table 1: Key Objectives in Chemogenomic Library Design

| Objective | Description | Measurement Approach |

|---|---|---|

| Target Coverage | Number of distinct biological targets modulated by library | Annotation from databases (ChEMBL, DrugBank) |

| Structural Diversity | Breadth of chemical space covered | Molecular fingerprints, scaffold analysis, Tanimoto similarity |

| Cellular Potency | Demonstrated biological activity in cellular assays | IC₅₀, EC₅₀, or Kᵢ values from literature |

| Selectivity | Specificity for intended targets | Selectivity scores, off-target profiling |

| Practicality | Availability and compatibility with screening | Commercial availability, solubility, stability |
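
To make the Tanimoto similarity listed under Structural Diversity concrete, the sketch below computes it on fingerprints represented as sets of on-bit indices and uses it for a simple diversity pick. The fingerprints here are toy values; in practice they would come from a cheminformatics toolkit. The greedy selection rule and the 0.6 similarity cutoff are assumptions made for illustration, not a method taken from the cited libraries.

```python
def tanimoto(fp_a: frozenset, fp_b: frozenset) -> float:
    """Tanimoto coefficient: shared on-bits divided by total distinct on-bits."""
    union = len(fp_a | fp_b)
    return len(fp_a & fp_b) / union if union else 0.0


def pick_diverse(fingerprints, max_similarity=0.6):
    """Keep a compound only if it is dissimilar to everything already kept."""
    picked = []
    for name, fp in fingerprints.items():
        if all(tanimoto(fp, fingerprints[p]) < max_similarity for p in picked):
            picked.append(name)
    return picked


# Toy fingerprints as sets of on-bit positions.
fps = {
    "cpd_A": frozenset({1, 2, 3, 4}),
    "cpd_B": frozenset({1, 2, 3, 5}),      # close analog of cpd_A
    "cpd_C": frozenset({10, 11, 12}),
    "cpd_D": frozenset({10, 11, 12, 13}),  # close analog of cpd_C
}
diverse_subset = pick_diverse(fps)
print(diverse_subset)
```

The result depends on input order, so sorting compounds by potency first means the most potent member of each similarity neighborhood is the one retained.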

Protocol: Designing a Focused Chemogenomic Library Using Multi-Objective Optimization

Compound Collection and Initial Curation

Materials:

- Chemical databases (ChEMBL, DrugBank, PubChem)

- Commercial compound suppliers (e.g., Selleckchem, Tocris, MedChemExpress)

- Bioinformatics tools (KNIME, Pipeline Pilot, or custom Python/R scripts)

Procedure:

- Define target space: Compile a comprehensive list of proteins implicated in disease pathogenesis from The Human Protein Atlas, PharmacoDB, and literature review [14].

- Identify compound-target interactions: Extract known bioactive compounds for each target from ChEMBL and other annotated databases.

- Apply initial filters: Remove compounds with undesirable properties (e.g., reactive groups, poor drug-likeness) using established filters such as PAINS.

- Compile initial collection: Create a theoretical compound set covering the defined target space.

Table 2: Performance Metrics for the C3L Library Design

| Library Version | Compound Count | Target Coverage | Reduction from Theoretical Set | Key Characteristics |

|---|---|---|---|---|

| Theoretical Set | 336,758 | 1,655 targets (100%) | - | Comprehensive target annotation |

| Large-Scale Set | 2,288 | 1,655 targets (100%) | 147-fold | Activity and similarity filtered |

| Screening Set (C3L) | 1,211 | 1,386 targets (84%) | 278-fold | Commercially available, potent probes |

Multi-Objective Filtering and Optimization

Materials:

- Molecular fingerprinting tools (RDKit, OpenBabel)

- Similarity calculation algorithms (Tanimoto, Dice)

- Multi-objective optimization algorithms (NSGA-II, SPEA2)

Procedure:

- Activity filtering: Retain only compounds with demonstrated cellular activity (IC₅₀/EC₅₀/Kᵢ < 10 µM) and remove the rest [14].

- Potency-based selection: For each target, select the most potent compounds to reduce redundancy.

- Structural diversity optimization:

- Calculate molecular fingerprints (ECFP4/6, MACCS)

- Cluster compounds using Butina clustering or similar methods

- Select representative compounds from each cluster

- Availability filtering: Filter for commercially available compounds

- Multi-objective optimization:

- Define objectives: maximize target coverage, maximize structural diversity, minimize library size

- Apply NSGA-II or similar algorithm to identify Pareto-optimal solutions

- Select final library based on project requirements
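
The trade-off between target coverage and library size in this procedure can be illustrated with a much simpler technique than NSGA-II. The sketch below uses a greedy set-cover heuristic, which is a single-objective approximation and not the multi-objective algorithm named above: at each step it adds the compound that covers the most targets not yet covered. The compound-target annotations are hypothetical.

```python
def greedy_cover(compound_targets):
    """Pick compounds greedily until every annotated target is covered."""
    uncovered = set().union(*compound_targets.values())
    selected = []
    while uncovered:
        # Compound that adds the most not-yet-covered targets.
        best = max(compound_targets, key=lambda c: len(compound_targets[c] & uncovered))
        gained = compound_targets[best] & uncovered
        if not gained:
            break
        selected.append(best)
        uncovered -= gained
    return selected


annotations = {
    "cpd_1": {"EGFR", "ERBB2"},
    "cpd_2": {"EGFR"},
    "cpd_3": {"CDK4", "CDK6", "ERBB2"},
    "cpd_4": {"BRAF"},
}
library = greedy_cover(annotations)
covered = set().union(*(annotations[c] for c in library))
print(library, len(covered))
```

A greedy pass like this gives a quick baseline for how small a library can be at full coverage, against which Pareto-optimal solutions that also weigh diversity can be compared.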

Diagram 1: Chemogenomic Library Optimization Workflow

Library Validation and Profiling

Materials:

- Cell-based screening assays (Cell Painting, high-content imaging)

- Data analysis pipelines (CellProfiler, custom Python/R scripts)

- Target annotation databases (GO, KEGG, Disease Ontology)

Procedure:

- Experimental validation: Screen library against disease-relevant cell models (e.g., patient-derived glioblastoma stem cells) [14].

- Morphological profiling: Use Cell Painting or similar assay to capture multiparametric phenotypic responses [15].

- Target deconvolution: Integrate screening results with target annotations to identify mechanism of action.

- Performance assessment: Evaluate library performance based on hit rates and target identification success.

Advanced Applications and Case Studies

Phenotypic Screening for Patient-Specific Vulnerabilities

In a pilot application of the Comprehensive anti-Cancer small-Compound Library (C3L), researchers screened 789 compounds against glioma stem cells from glioblastoma patients. The approach revealed highly heterogeneous phenotypic responses across patients and molecular subtypes, demonstrating the value of targeted libraries in identifying patient-specific vulnerabilities [14].

Key findings:

- Library coverage: 1,320 anticancer targets with 789 compounds

- Identification of patient-specific vulnerabilities despite common diagnosis

- Successful deconvolution of mechanisms due to target-annotated library design

Chemogenomic Library for Morphological Profiling

Another approach integrated drug-target-pathway-disease relationships with morphological profiles from Cell Painting assays. This platform enables:

- Systematic exploration of chemical perturbations on cellular morphology

- Prediction of mechanism of action for novel compounds

- Identification of polypharmacology and off-target effects [15]
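
One way such a platform can suggest a mechanism of action is by comparing the morphological profile of an uncharacterized compound with profiles of annotated reference compounds. The sketch below does this with cosine similarity on short, made-up feature vectors. It assumes the profiles are already normalized per feature; the reference names, values, and the choice of cosine similarity are illustrative assumptions rather than details from the cited platform.

```python
import math


def cosine_similarity(u, v):
    """Cosine of the angle between two equal-length feature vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v) if norm_u and norm_v else 0.0


def nearest_reference(query, references):
    """Return (name, similarity) of the most similar reference profile."""
    scored = ((name, cosine_similarity(query, prof)) for name, prof in references.items())
    return max(scored, key=lambda item: item[1])


# Normalized features, e.g. nuclear area, actin texture, mitochondrial intensity.
reference_profiles = {
    "tubulin_inhibitor_ref": [2.0, -1.0, 0.5],
    "HDAC_inhibitor_ref": [-0.5, 1.5, 2.0],
}
unknown_compound = [1.8, -0.9, 0.7]

best_name, best_score = nearest_reference(unknown_compound, reference_profiles)
print(best_name, round(best_score, 3))
```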

Diagram 2: Chemogenomic Platform for Phenotypic Screening

Research Reagent Solutions

Table 3: Essential Research Reagents and Tools for Chemogenomic Library Development

| Reagent/Tool | Function | Example Sources |

|---|---|---|

| ChEMBL Database | Bioactivity data for target annotation | European Molecular Biology Laboratory |

| Cell Painting Assay | Morphological profiling for phenotypic screening | Broad Institute |

| Neo4j Graph Database | Integration of heterogeneous biological data | Neo4j, Inc. |

| RDKit | Cheminformatics and molecular fingerprinting | Open-source toolkit |

| NSGA-II Algorithm | Multi-objective optimization | Various implementations (PyGMO, JMetal) |

| Commercial Compound Libraries | Source of biologically active compounds | Selleckchem, Tocris, MedChemExpress |

Multi-objective optimization provides a powerful framework for designing targeted chemogenomic libraries that balance the competing demands of target coverage, structural diversity, and practical screening considerations. The protocols outlined here enable researchers to create focused libraries that maximize biological insights while minimizing resource requirements. As phenotypic screening continues to regain prominence in drug discovery, rationally designed chemogenomic libraries will play an increasingly important role in bridging the gap between phenotypic observations and target identification.

Within the strategic framework of chemogenomics—the systematic screening of targeted chemical libraries against families of drug targets—the selection of optimal compounds is a critical challenge [1]. This process aims to identify novel drugs and drug targets by leveraging the fact that ligands designed for one family member often bind to additional, related targets [1]. However, the ultimate success of this approach depends on a rigorous triage of screening candidates. This application note details a refined protocol for the systematic filtering of compound libraries based on the three pivotal criteria of potency, selectivity, and availability. By providing detailed methodologies and data presentation standards, we empower researchers to construct high-quality, focused libraries that maximize the probability of success in both forward and reverse chemogenomics campaigns [1].

Theoretical Foundation: Quantifying Compound-Target Interactions

The Target-Specific Selectivity Paradigm

Traditional selectivity metrics, such as the Gini coefficient or selectivity entropy, characterize the narrowness of a compound's bioactivity profile across all tested targets [20]. While useful for identifying highly specific compounds, these metrics fall short when the goal is to find a compound that is selective for a particular target of interest, which is a common requirement in drug discovery and repurposing [20]. To address this, the concept of target-specific selectivity has been developed. It is defined as the potency of a compound to bind to a particular protein of interest relative to its potency against all other potential off-targets [20].

This target-specific selectivity can be decomposed into two core components:

- Absolute Potency: The intrinsic binding affinity (e.g., pKd or IC50) of the compound against the target of interest.

- Relative Potency: The compound's affinity for the target of interest relative to its affinity for other potential (off-)targets, which can be quantified using global or local statistical comparisons [20].

The most desirable compounds are those that simultaneously maximize absolute potency and relative potency, a challenge that can be formulated as a bi-objective optimization problem [20].

Experimental Design and Data Considerations

Large-scale, consistent bioactivity datasets are a prerequisite for robust compound filtering. The protocol outlined below was developed and tested using a published dataset of fully measured interactions between 72 kinase inhibitors and 442 kinases, which provides a wide spectrum of polypharmacological activities for method validation [20]. When working with such data, the careful design of tables is essential for efficient communication. Key principles include ordering data to match the table's purpose, rounding numbers for readability, performing computations for the user (e.g., providing summary statistics), and ensuring a clear visual hierarchy to guide the reader's eye [21] [22].

Experimental Protocol: A Tiered Filtering Workflow

This protocol describes a sequential, tiered approach to filter a chemogenomics compound library. An overview of the workflow is provided in the diagram below.

Tier 1: Primary Potency Screen

Objective: To identify all compounds with sufficient binding affinity for the primary target.

- Data Input: Load the bioactivity matrix (e.g., pKd values, where pKd = -log10(Kd)) for all compound-target pairs [20].

- Threshold Setting: Define a potency threshold based on the project's goals. For example, a pKd > 7 (Kd < 100 nM) is a common starting point for a high-affinity interaction.

- Filtering: For the target of interest (Tj), select all compounds (Ci) where pKd(Ci, Tj) exceeds the defined threshold.

- Output: A subset of compounds demonstrating meaningful potency against the primary target.
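The Tier 1 steps above can be sketched in a few lines of Python. This is a minimal illustration, assuming the bioactivity matrix is held as a nested dictionary of Kd values in nM; the helper names (`kd_nm_to_pkd`, `tier1_potency_filter`), compound labels, and Kd values are hypothetical.

```python
import math
from typing import Dict, List


def kd_nm_to_pkd(kd_nm: float) -> float:
    """Convert a dissociation constant in nM to pKd = -log10(Kd in mol/L)."""
    return -math.log10(kd_nm * 1e-9)


def tier1_potency_filter(
    pkd_matrix: Dict[str, Dict[str, float]],
    target: str,
    threshold: float = 7.0,
) -> List[str]:
    """Return compounds whose pKd for `target` strictly exceeds `threshold`."""
    return sorted(
        compound
        for compound, profile in pkd_matrix.items()
        if target in profile and profile[target] > threshold
    )


# Hypothetical Kd values in nM, for illustration only.
kd_nm = {
    "cmpd_A": {"MEK1": 0.63, "ABL1": 1500.0},
    "cmpd_B": {"MEK1": 250.0, "ABL1": 12.0},
    "cmpd_C": {"MEK1": 150.0, "ABL1": 9000.0},
}

# Convert every measurement to the pKd scale before thresholding.
pkd = {
    compound: {target: kd_nm_to_pkd(value) for target, value in profile.items()}
    for compound, profile in kd_nm.items()
}

hits = tier1_potency_filter(pkd, "MEK1", threshold=7.0)
print(hits)
```

Only `cmpd_A` (Kd below 100 nM for MEK1) passes; `cmpd_B` is potent against a different kinase and is correctly excluded at this tier.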

Tier 2: Target-Specific Selectivity Assessment

Objective: To rank the potent compounds from Tier 1 based on their selectivity for the primary target over all off-targets.

- Calculate Target-Specific Selectivity Score: For each compound (Ci) passing Tier 1, calculate its selectivity score for the primary target (Tj). The score incorporates both absolute and relative potency [20]. A simplified, robust implementation is the Global Relative Potency:

$$G_{c_i,t_j} = K_{c_i,t_j} - \operatorname{mean}\left(B_{c_i} \setminus \{K_{c_i,t_j}\}\right)$$ [20]

where $K_{c_i,t_j}$ is the binding affinity for the target of interest, and $\operatorname{mean}\left(B_{c_i} \setminus \{K_{c_i,t_j}\}\right)$ is the average affinity of the compound against all other targets.

- Rank Compounds: Rank the compounds in descending order of their $G_{c_i,t_j}$ score. Compounds with the highest scores are both potent and selective.

- Statistical Validation (Optional): For large or noisy datasets, perform a permutation-based procedure to calculate empirical p-values and assess the statistical significance of the observed selectivity scores [20].

- Output: A ranked list of potent and selective compounds for the target of interest.
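The scoring and ranking steps of Tier 2 can be sketched as follows. This is a minimal illustration of the Global Relative Potency as defined above (target affinity minus the mean affinity over all other targets); the function names, compound labels, and pKd values are hypothetical.

```python
from statistics import mean
from typing import Dict, List, Tuple


def global_relative_potency(profile: Dict[str, float], target: str) -> float:
    """G = affinity for `target` minus the mean affinity over all other targets."""
    off_targets = [value for name, value in profile.items() if name != target]
    if not off_targets:
        raise ValueError("at least one off-target measurement is required")
    return profile[target] - mean(off_targets)


def rank_by_selectivity(
    pkd_matrix: Dict[str, Dict[str, float]], target: str
) -> List[Tuple[str, float]]:
    """Return (compound, G) pairs sorted from most to least selective."""
    scored = [
        (compound, global_relative_potency(profile, target))
        for compound, profile in pkd_matrix.items()
    ]
    return sorted(scored, key=lambda item: item[1], reverse=True)


# Hypothetical pKd profiles for compounds that passed Tier 1.
pkd_matrix = {
    "cmpd_A": {"MEK1": 9.0, "ABL1": 5.0, "EGFR": 6.0},
    "cmpd_B": {"MEK1": 9.5, "ABL1": 8.0, "EGFR": 8.0},
    "cmpd_C": {"MEK1": 8.0, "ABL1": 5.0, "EGFR": 5.0},
}

ranking = rank_by_selectivity(pkd_matrix, "MEK1")
for compound, score in ranking:
    print(compound, score)
```

Note that `cmpd_B` has the highest absolute potency but ranks last, because its off-target affinities are also high. This is the behavior that separates target-specific selectivity from a plain potency ranking.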

Tier 3: Availability and Drug-Likeness Filter

Objective: To ensure the top-ranking compounds are readily accessible and possess properties conducive to drug development.

- Commercial Availability Check: Cross-reference the list of compounds with internal inventories, commercial vendor catalogs, and curated compound databases (e.g., WOMBAT, Beilstein) [23]. Prioritize compounds that are physically available for purchase.

- Drug-Likeness Evaluation: Filter compounds based on established rules, such as Lipinski's Rule of Five, to increase the likelihood of favorable pharmacokinetics [23].

- Output: A final, prioritized list of candidates suitable for experimental validation.
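A Tier 3 filter can be sketched as below. The sketch assumes the molecular descriptors have already been computed elsewhere (for example with a chemoinformatics toolkit) and that availability is known as a simple flag. The Rule of Five thresholds are the standard ones (molecular weight ≤ 500 Da, logP ≤ 5, at most 5 hydrogen-bond donors, at most 10 hydrogen-bond acceptors); tolerating one violation is a common convention assumed here, and the class, function names, and descriptor values are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Descriptors:
    mol_weight: float       # molecular weight in Da
    logp: float             # octanol-water partition coefficient
    h_bond_donors: int
    h_bond_acceptors: int


def lipinski_violations(d: Descriptors) -> int:
    """Count how many of the four Rule of Five criteria are violated."""
    return sum(
        [
            d.mol_weight > 500,
            d.logp > 5,
            d.h_bond_donors > 5,
            d.h_bond_acceptors > 10,
        ]
    )


def tier3_filter(
    descriptors: Dict[str, Descriptors],
    available: Dict[str, bool],
    max_violations: int = 1,  # assumed convention; adjust to project needs
) -> List[str]:
    """Keep compounds that are purchasable and sufficiently drug-like."""
    return sorted(
        name
        for name, d in descriptors.items()
        if available.get(name, False) and lipinski_violations(d) <= max_violations
    )


# Hypothetical descriptor values and availability flags.
descriptors = {
    "cmpd_A": Descriptors(457.7, 3.9, 3, 6),
    "cmpd_B": Descriptors(612.0, 6.1, 2, 9),
    "cmpd_C": Descriptors(430.0, 4.2, 1, 5),
}
available = {"cmpd_A": True, "cmpd_B": True, "cmpd_C": False}

shortlist = tier3_filter(descriptors, available)
print(shortlist)
```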

Data Presentation and Analysis

The following table provides a clear, consolidated view of the filtering outcomes, allowing researchers to quickly assess the progression and stringency of each tier. Numbers should be rounded, and a visual hierarchy used to guide the reader to the most important information [21].

Table 1: Example Compound Filtering Summary for Kinase Target MEK1

| Filtering Tier | Applied Criteria | Compounds Remaining | Attrition Rate (vs. Previous Tier) |
|---|---|---:|---:|
| Starting Library | N/A | 72 | N/A |
| Tier 1: Potency | pKd (MEK1) > 7.0 | 18 | 75% |
| Tier 2: Selectivity | Global Relative Potency > 2.0 | 5 | 72% |
| Tier 3: Availability | Commercially Available | 4 | 20% |
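
The attrition rates in Table 1 are computed relative to the number of compounds entering each tier, not relative to the starting library. The short sketch below reproduces that arithmetic from the compound counts in the table; the function name `attrition_rates` is hypothetical.

```python
from typing import List


def attrition_rates(counts: List[int]) -> List[int]:
    """Percent of compounds lost at each tier, relative to the previous tier."""
    return [
        round(100 * (previous - current) / previous)
        for previous, current in zip(counts, counts[1:])
    ]


# Compounds remaining after: starting library, Tier 1, Tier 2, Tier 3.
counts = [72, 18, 5, 4]

rates = attrition_rates(counts)
overall_retention = round(100 * counts[-1] / counts[0], 1)

print(rates)              # per-tier attrition in percent
print(overall_retention)  # percent of the starting library that survives
```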

Detailed Profile of Top Candidates

For the final candidates, a detailed table should be constructed to facilitate comparison and final selection. Alignment is critical here: numerical data should be right-aligned for easy comparison, while text should be left-aligned [22].

Table 2: Detailed Characteristics of Final Candidate Compounds

| Compound ID | Potency vs. MEK1 (pKd) | Mean Potency vs. Off-Targets (pKd) | Selectivity Score (G) | Lipinski Rule Compliance | Vendor ID |
|---|---:|---:|---:|---|---|
| AZD-6244 | 9.2 | 5.8 | 3.4 | Yes | VendorA12345 |
| CEP-701 | 9.5 | 6.5 | 3.0 | Yes | VendorB67890 |
| Compound_X | 8.8 | 6.1 | 2.7 | Yes | VendorC54321 |
| Compound_Y | 8.5 | 5.9 | 2.6 | Yes | VendorA98765 |

The Scientist's Toolkit: Research Reagent Solutions

Successful implementation of this protocol relies on key reagents and databases. The following table lists essential resources and their functions in the filtering workflow.

Table 3: Essential Research Reagents and Databases for Compound Filtering

| Item | Function / Purpose | Example Sources / Notes |
|---|---|---|
| Bioactivity Database | Provides raw binding affinity or inhibition data for compound-target pairs on a large scale. | PubChem BioAssay, ChEMBL, Davis et al. kinase dataset [20]. |
| Compound Vendor Catalog | Determines the physical availability and source of short-listed compounds. | Sigma-Aldrich, Vitas-M, MolPort, internal corporate libraries. |
| Chemoinformatic Software | Calculates drug-likeness descriptors (e.g., molecular weight, logP) and performs structural analysis. | Open-source tools (RDKit), commercial packages (Schrödinger Suite). |
| Statistical Computing Environment | Implements the target-specific selectivity scoring and statistical validation procedures. | R or Python with necessary data manipulation and statistical libraries. |

Computational Implementation

The core of the target-specific selectivity scoring can be implemented in a statistical computing environment such as R or Python.
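As a conceptual outline, the scoring and the optional statistical validation step can be sketched as follows. The sketch uses Python rather than R and only the standard library. The permutation scheme shown (shuffling a compound's affinities across target labels and recomputing the score) is one simple choice assumed for illustration; the procedure in [20] may differ. Function names, target labels, and pKd values are hypothetical.

```python
import random
from statistics import mean
from typing import Dict


def global_relative_potency(profile: Dict[str, float], target: str) -> float:
    """G = affinity for `target` minus the mean affinity over all other targets."""
    off_targets = [value for name, value in profile.items() if name != target]
    return profile[target] - mean(off_targets)


def permutation_p_value(
    profile: Dict[str, float],
    target: str,
    n_perm: int = 1000,
    seed: int = 0,
) -> float:
    """Empirical p-value for the observed G under label shuffling (assumed null).

    Affinities are shuffled across target labels; the p-value is the share of
    shuffles whose G is at least as large as the observed one, with the usual
    +1 correction so that the estimate is never exactly zero.
    """
    rng = random.Random(seed)
    observed = global_relative_potency(profile, target)
    targets = list(profile)
    values = list(profile.values())
    at_least_as_extreme = 0
    for _ in range(n_perm):
        rng.shuffle(values)
        shuffled = dict(zip(targets, values))
        if global_relative_potency(shuffled, target) >= observed:
            at_least_as_extreme += 1
    return (at_least_as_extreme + 1) / (n_perm + 1)


# Hypothetical profiles over 40 targets: one selective, one completely flat.
selective = {"MEK1": 9.2, **{f"kinase_{i}": 5.5 for i in range(1, 40)}}
flat = {"MEK1": 6.0, **{f"kinase_{i}": 6.0 for i in range(1, 40)}}

p_selective = permutation_p_value(selective, "MEK1")
p_flat = permutation_p_value(flat, "MEK1")
print(p_selective, p_flat)
```

Under this scheme the smallest attainable p-value is roughly one over the number of profiled targets, so the check is only informative for broadly profiled compounds such as those in a kinome-wide panel.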

The systematic, tiered filtering protocol detailed in this application note provides a robust and practical framework for selecting high-value compounds from a chemogenomics library. By moving beyond simple potency thresholds to incorporate a rigorous, target-specific definition of selectivity and practical availability constraints, researchers can significantly de-risk the early stages of drug discovery. This approach ensures that resources are focused on compounds with the highest probability of success in subsequent experimental validation, thereby accelerating the identification of novel drugs and drug targets within a chemogenomics paradigm.

The discovery and development of new therapeutic agents face significant challenges due to the complexity of biological systems and the multifactorial nature of most diseases. Traditional single-target approaches often yield drugs with insufficient efficacy, rapid development of resistance, and significant side effects [24]. In this context, systems pharmacology has emerged as a powerful interdisciplinary framework that integrates computational and experimental methods to understand drug actions within complex biological networks [25]. This approach is particularly valuable for chemogenomic library selection, where the goal is to design compound libraries targeted to specific families of biological macromolecules [23].

Systems pharmacology enables researchers to move beyond the traditional "one drug, one target" paradigm by constructing comprehensive drug-target-pathway-disease networks that capture the complexity of therapeutic interventions. By mapping these multi-scale relationships, researchers can identify more effective therapeutic strategies, including multi-target drugs and optimized drug combinations [24] [25]. This network-based perspective is especially relevant for understanding the mechanisms of traditional medicine approaches, such as Traditional Chinese Medicine (TCM), where multi-herb therapies have demonstrated synergistic effects that cannot be explained by simple additive models [25].

The integration of systems pharmacology into chemogenomic library design represents a paradigm shift in drug discovery. Rather than screening compounds against isolated targets, researchers can now prioritize compounds based on their predicted behavior within complex biological networks, significantly increasing the efficiency of the drug discovery process and improving the quality of candidate compounds [23].
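To make the network idea concrete, a drug-target-pathway-disease network can be represented as a directed graph and queried for the diseases a drug can reach or the targets two drugs share. The sketch below is a minimal illustration using an adjacency dictionary; all node names are placeholders rather than real biological entities, and the helper names are hypothetical.

```python
from collections import deque
from typing import Dict, List, Set

# Directed edges: drug -> target -> pathway -> disease (placeholder names).
network: Dict[str, List[str]] = {
    "drug:D1": ["target:T1", "target:T2"],
    "drug:D2": ["target:T2"],
    "target:T1": ["pathway:P1"],
    "target:T2": ["pathway:P2"],
    "pathway:P1": ["disease:X"],
    "pathway:P2": ["disease:Y"],
}


def reachable(graph: Dict[str, List[str]], start: str, prefix: str) -> Set[str]:
    """Breadth-first search from `start`; return reached nodes with `prefix`."""
    seen = {start}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for neighbour in graph.get(node, []):
            if neighbour not in seen:
                seen.add(neighbour)
                queue.append(neighbour)
    return {node for node in seen if node.startswith(prefix)}


def shared_targets(graph: Dict[str, List[str]], drug_a: str, drug_b: str) -> Set[str]:
    """Targets directly linked to both drugs."""
    return set(graph.get(drug_a, [])) & set(graph.get(drug_b, []))


d1_diseases = reachable(network, "drug:D1", "disease:")
d2_diseases = reachable(network, "drug:D2", "disease:")
common = shared_targets(network, "drug:D1", "drug:D2")
print(sorted(d1_diseases), sorted(d2_diseases), sorted(common))
```

In this toy graph the multi-target drug `D1` reaches both diseases, while `D2` reaches only one, which is the kind of distinction a network view makes visible when prioritizing library compounds.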

Core Methodologies and Technologies

The construction of drug-target-pathway-disease networks relies on the integration of multiple complementary technologies, each contributing unique insights into the network structure and dynamics.

Foundational Technological Pillars

Modern systems pharmacology integrates four core technological pillars that provide the data, analytical frameworks, and predictive capabilities required for network construction [24]:

Table 1: Core Technologies in Systems Pharmacology

| Technology | Primary Function | Key Applications | Inherent Limitations |
|---|---|---|---|
| Omics Technologies (Genomics, Proteomics, Metabolomics) | Generate high-throughput molecular data | Reveal disease-related molecular characteristics; provide foundational data for drug research | Data heterogeneity; lack of standardization; potential for biased predictions |
| Bioinformatics | Process and analyze biological data using computer science and statistical methods | Identify drug targets; elucidate mechanisms of action; analyze differentially expressed genes | Prediction accuracy depends on chosen algorithms; may not fully capture biological complexity |
| Network Pharmacology (NP) | Study drug-target-disease networks using systems biology approaches | Develop multi-target therapeutic strategies; understand polypharmacology | May overlook biological complexity aspects (e.g., protein expression variations); potential for false positives without experimental validation |
| Molecular Dynamics (MD) Simulation | Examine drug-target interactions at atomic level by tracking atomic movements | Enhance precision of drug design and optimization; calculate binding free energy | High computational costs; model accuracy sensitive to force field parameters; difficult to replicate under real-life conditions |

Quantitative Systems Pharmacology (QSP) Workflows

Quantitative Systems Pharmacology (QSP) represents a more formalized implementation of systems pharmacology principles, using computational models to describe dynamic interactions between drugs and pathophysiological systems [26] [27]. QSP models integrate features of the drug (dose, dosing regimen, exposure at target site) with target biology and downstream effectors at molecular, cellular, and pathophysiological levels [26].

A mature QSP modeling workflow typically includes several key components that enable efficient, reproducible model development [26]:

- Data Programming and Standardization: Converting raw data from various sources into a standardized format that constitutes the basis for all subsequent modeling tasks.

- Multi-Conditional Model Setup: Handling different values of the same model parameter across different experimental conditions during both estimation and simulation.

- Robust Parameter Estimation: Implementing multistart strategies for parameter estimation to identify multiple potential solutions and assess reliability.

- Parameter Identifiability Analysis: Using methods such as profile likelihood to investigate parameter identifiability and compute confidence intervals.

- Model Qualification and Validation: Progressive maturation through comparison with experimental data and refinement of model structures.

This workflow is particularly valuable for chemogenomic library design as it provides a quantitative framework for predicting how compounds from targeted libraries might behave in complex biological systems, enabling more informed selection of compounds for inclusion in screening libraries [23] [26].
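The multistart idea from the workflow above can be illustrated on a deliberately small problem. The sketch below fits a mono-exponential decay, used here as a stand-in for a real QSP model, from several random starting points with a simple derivative-free pattern search, then reports how many starts converge to the best objective value. The model, the optimizer, the synthetic noise-free data, and all names are assumptions made for illustration and do not represent the tooling described in [26].

```python
import math
import random
from typing import Callable, List, Sequence, Tuple

Fit = Tuple[List[float], float]


def sse(params: Sequence[float], times: Sequence[float], observed: Sequence[float]) -> float:
    """Sum of squared errors for the model y(t) = A * exp(-k * t)."""
    amplitude, rate = params
    return sum(
        (amplitude * math.exp(-rate * t) - y) ** 2 for t, y in zip(times, observed)
    )


def pattern_search(
    objective: Callable[[Sequence[float]], float],
    start: Sequence[float],
    step: float = 0.5,
    tol: float = 1e-6,
    max_iter: int = 10_000,
) -> Fit:
    """Coordinate-wise local search; the step is halved when nothing improves."""
    x = list(start)
    fx = objective(x)
    iterations = 0
    while step > tol and iterations < max_iter:
        improved = False
        for i in range(len(x)):
            for delta in (step, -step):
                candidate = list(x)
                candidate[i] += delta
                fc = objective(candidate)
                if fc < fx:
                    x, fx, improved = candidate, fc, True
        if not improved:
            step /= 2
        iterations += 1
    return x, fx


def multistart(
    objective: Callable[[Sequence[float]], float],
    bounds: Sequence[Tuple[float, float]],
    n_starts: int = 20,
    seed: int = 0,
) -> List[Fit]:
    """Run the local search from random starts; return fits sorted by objective."""
    rng = random.Random(seed)
    fits = []
    for _ in range(n_starts):
        start = [rng.uniform(low, high) for low, high in bounds]
        fits.append(pattern_search(objective, start))
    return sorted(fits, key=lambda fit: fit[1])


# Synthetic, noise-free data generated from A = 10, k = 0.3 (illustrative only).
times = [0.0, 1.0, 2.0, 4.0, 8.0]
observed = [10.0 * math.exp(-0.3 * t) for t in times]

fits = multistart(lambda p: sse(p, times, observed), bounds=[(1.0, 20.0), (0.01, 2.0)])
best_params, best_sse = fits[0]
n_converged = sum(1 for _, value in fits if value - best_sse < 1e-6)
print(best_params, best_sse, n_converged)
```

If only a small share of starts reach the best objective value, that is a hint that the fit is sensitive to initialization and that identifiability should be examined before the model is used for compound prioritization.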

Experimental Protocols and Applications

Protocol for Constructing Drug-Target-Pathway-Disease Networks

The following step-by-step protocol outlines the integrated process for building comprehensive drug-target-pathway-disease networks, with particular emphasis on applications for chemogenomic library design and validation.

Table 2: Key Research Reagent Solutions for Network Construction

| Reagent/Category | Specific Examples | Primary Function | Relevance to Chemogenomics |

|---|---|---|---|

| Compound Libraries | WOMBAT: World of Molecular Bioactivity [23] | Provides structured biological activity data for diverse compounds | Foundation for chemogenomic library design; enables analysis of structure-activity relationships across target families |

| Bioinformatics Databases | TCGA (The Cancer Genome Atlas) [24]; TCMSP (Traditional Chinese Medicine Systems Pharmacology) [25] | Provide disease-related molecular data and compound-target relationships | Supplies necessary annotation data for predicting compound-target interactions within gene families |