Strategic Compound Selection for Diverse Chemogenomics Libraries in Modern Drug Discovery

This article provides a comprehensive framework for researchers, scientists, and drug development professionals to design and implement effective chemogenomics libraries.

Abstract

This article provides a comprehensive framework for researchers, scientists, and drug development professionals to design and implement effective chemogenomics libraries. It covers foundational principles of chemogenomics, advanced cheminformatics methodologies for compound selection, strategies to overcome common screening limitations, and rigorous validation approaches. By integrating insights from recent initiatives like EUbOPEN and leveraging technologies such as morphological profiling and AI-driven screening, this guide aims to enhance the success of phenotypic screening campaigns and accelerate target deconvolution and drug discovery pipelines.

The Foundations of Chemogenomics: Building Libraries for Systems Pharmacology

Defining Chemogenomics Libraries and Their Role in Phenotypic Drug Discovery

Chemogenomics libraries are systematically designed collections of small molecules, typically comprising potent, selective, and well-annotated pharmacological agents. These libraries are constructed to target a broad spectrum of proteins across the human proteome, facilitating the study of gene function and biological pathways through chemical intervention [1]. In the context of phenotypic drug discovery (PDD), these libraries serve a critical function. When a compound from a chemogenomics library produces a hit in a phenotypic screen, it suggests that the compound's annotated molecular target or targets are involved in the observed phenotypic perturbation [2]. This approach has the potential to significantly expedite the conversion of phenotypic screening projects into target-based drug discovery campaigns [2].

The modern drug discovery paradigm has evolved from a reductionist "one target—one drug" vision toward a more holistic systems pharmacology perspective that acknowledges a "one drug—several targets" reality [1]. This shift is partly driven by the understanding that complex diseases often arise from multiple molecular abnormalities rather than a single defect, making phenotypic screening a valuable strategy for identifying novel therapeutics [1]. Chemogenomics libraries represent a powerful tool for bridging the gap between phenotypic observations and molecular target identification, thereby addressing one of the most significant challenges in phenotypic drug discovery.

Key Characteristics and Design Principles

Composition and Annotation

A high-quality chemogenomics library is characterized by several key features. Firstly, it consists of compounds with well-defined mechanisms of action (MoA) and high target selectivity [3]. These libraries are often composed of chemically diverse compounds selected for their drug-like properties and ability to represent a wide array of bioactive chemotypes [4]. For instance, commercial chemogenomics libraries can contain over 1,600 diverse, highly selective, and well-annotated pharmacological probe molecules designed to cover a broad panel of drug targets involved in diverse biological effects and diseases [4] [1].

The biological annotation of these libraries is paramount. Each compound should be comprehensively characterized not only for its primary target affinity but also for its effects on basic cellular functions. This includes assessments of cell viability, mitochondrial health, membrane integrity, cell cycle progression, and potential interference with cytoskeletal functions [3]. Such comprehensive profiling helps differentiate between target-specific effects and non-specific cytotoxicity, which is crucial for accurate data interpretation in phenotypic screens.

Polypharmacology Considerations

A critical aspect in the design and use of chemogenomics libraries is understanding and managing polypharmacology—the phenomenon where a single compound interacts with multiple molecular targets. While polypharmacology can sometimes be therapeutically beneficial, it complicates target deconvolution in phenotypic screening [5].

Quantitative measures such as the polypharmacology index (PPindex) have been developed to evaluate the target-specificity of chemogenomics libraries. This index is derived from the distribution of known targets across all compounds in a library, with larger PPindex values (slopes closer to a vertical line) indicating more target-specific libraries, and smaller values (slopes closer to a horizontal line) indicating more polypharmacologic libraries [5]. Studies comparing different libraries have revealed significant variations in their polypharmacology profiles, which must be considered when selecting a library for phenotypic screening [5].

Table 1: Polypharmacology Index (PPindex) of Exemplary Chemogenomics Libraries

| Library Name | PPindex (All Data) | PPindex (Excluding Compounds with 0 or 1 Target) | Interpretation |
|---|---|---|---|
| DrugBank | 0.9594 | 0.4721 | More target-specific |
| LSP-MoA | 0.9751 | 0.3154 | Moderate polypharmacology |
| MIPE 4.0 | 0.7102 | 0.3847 | Moderate polypharmacology |
| Microsource Spectrum | 0.4325 | 0.2586 | More polypharmacologic |

Library Design and Curation

The process of building a chemogenomics library involves careful compound selection and curation strategies. Computational approaches often integrate drug-target-pathway-disease relationships with morphological profiling data from assays like Cell Painting to select compounds that represent a large and diverse panel of drug targets [1]. These approaches may utilize system pharmacology networks that integrate heterogeneous data sources, including bioactivity data from databases like ChEMBL, pathway information from KEGG, gene ontologies, and disease ontologies [1].

Systematic methodologies like the Tool Score (TS) have been developed to prioritize tool compounds from large-scale, heterogeneous bioactivity data [6]. This evidence-based, quantitative metric ranks compounds based on confidence in their strength and selectivity, enabling researchers to create more reliable targeted screening sets. Validation studies have demonstrated that high-TS tools show more reliably selective phenotypic profiles in cell-based pathway assays compared to lower-TS compounds [6].

The Role of Chemogenomics in Phenotypic Drug Discovery

Target Deconvolution

The primary application of chemogenomics libraries in PDD is target identification and mechanism deconvolution. In traditional phenotypic screening, identifying the molecular targets responsible for an observed phenotype represents a major bottleneck. Chemogenomics libraries directly address this challenge by providing a collection of compounds with known targets, enabling researchers to make informed hypotheses about the mechanisms driving phenotypic changes [5] [2].

When a compound from a chemogenomics library produces a phenotype, the pre-existing knowledge about its molecular target(s) provides immediate starting points for understanding the biological mechanism. This approach is particularly powerful when multiple compounds with different chemical scaffolds but overlapping target profiles produce similar phenotypes, strengthening the association between a specific target and the observed effect [3].
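The scaffold-based reasoning above can be sketched in a few lines of Python. This is a minimal illustration, not a published scoring method: the hit list, compound names, scaffold labels, and the ranking rule (distinct scaffolds first, then number of supporting compounds) are all assumptions made for the example.

```python
from collections import defaultdict

# Hypothetical screening hits: (compound, scaffold class, annotated targets).
hits = [
    ("cmpd_A", "scaffold_1", {"BRD4", "BRD2"}),
    ("cmpd_B", "scaffold_2", {"BRD4"}),
    ("cmpd_C", "scaffold_2", {"CDK9"}),
    ("cmpd_D", "scaffold_3", {"BRD4", "CDK9"}),
]


def rank_target_hypotheses(hits):
    """Rank targets by how many distinct scaffolds support them among the hits."""
    scaffolds_by_target = defaultdict(set)
    compounds_by_target = defaultdict(set)
    for compound, scaffold, targets in hits:
        for target in targets:
            scaffolds_by_target[target].add(scaffold)
            compounds_by_target[target].add(compound)
    # Sort by scaffold support, then compound support, then name for stable output.
    ranked = sorted(
        scaffolds_by_target,
        key=lambda t: (-len(scaffolds_by_target[t]), -len(compounds_by_target[t]), t),
    )
    return [(t, len(scaffolds_by_target[t]), len(compounds_by_target[t])) for t in ranked]


ranking = rank_target_hypotheses(hits)
for target, n_scaffolds, n_compounds in ranking:
    print(f"{target}: {n_scaffolds} scaffolds, {n_compounds} compounds")
```

In this toy data BRD4 ranks first because three structurally distinct hits share it, which is the pattern that most strengthens a target hypothesis.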

Integration with Genetic Screening

Chemogenomics approaches can be integrated with genetic screening methodologies, such as RNA interference (RNAi) and CRISPR-Cas9, to strengthen target validation [2]. Genetic screens systematically perturb gene function, whereas small-molecule screens perturb protein function; each approach has distinct limitations that integration can mitigate [7].

For example, genetic perturbations are permanent and affect the entire cell, while pharmacological inhibition is tunable and reversible. Additionally, genetic approaches can probe proteins that are currently considered "undruggable," while small molecules can target specific protein domains or functions [7]. The concordance between genetic and chemical perturbations of the same target provides compelling evidence for its therapeutic relevance, creating a more robust foundation for drug discovery programs.

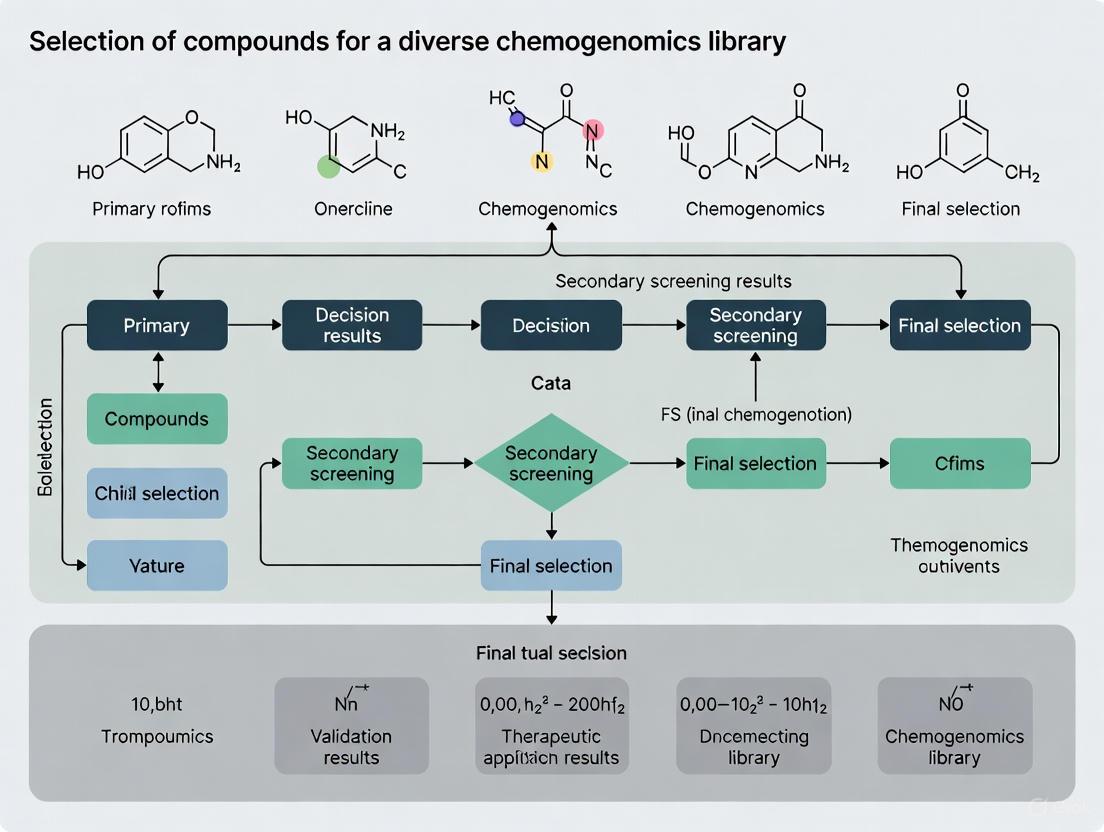

Practical Workflow

The typical workflow for using chemogenomics libraries in phenotypic screening involves several key steps, from library development to target hypothesis generation, as illustrated below.

Diagram 1: Chemogenomics Library Development and Screening Workflow

Experimental Protocols for Library Validation and Screening

High-Content Phenotypic Profiling

Advanced high-content screening (HCS) technologies play a crucial role in both validating chemogenomics libraries and conducting phenotypic screens. The Cell Painting assay is particularly valuable for this purpose, as it uses multiple fluorescent dyes to label various cellular components and extracts thousands of morphological features to create a detailed profile of compound effects [1]. This approach enables researchers to detect disease-relevant morphological signatures and group compounds with similar mechanisms of action based on their morphological profiles.

An optimized live-cell multiplexed assay has been developed specifically for annotating chemogenomic libraries. It classifies cells based on nuclear morphology, an excellent indicator of cellular responses such as early apoptosis and necrosis [3]. The assay combines the detection of nuclear changes with other general cell-damaging activities of small molecules, including alterations in cytoskeletal morphology, cell cycle, and mitochondrial health, providing a comprehensive, time-dependent characterization of compound effects on cellular health in a single experiment [3].

Protocol: HighVia Extend Viability Assay

The HighVia Extend protocol represents a sophisticated approach for comprehensive compound annotation [3]:

- Cell Preparation: Plate appropriate cell lines (e.g., U2OS, HEK293T, MRC9) in multiwell plates compatible with high-content imaging.

- Compound Treatment: Apply chemogenomic library compounds at relevant concentrations, including appropriate controls.

- Staining Solution: Prepare a dye mixture containing:

  - 50 nM Hoechst33342 for nuclear staining

  - MitotrackerRed for mitochondrial visualization

  - BioTracker 488 Green Microtubule Cytoskeleton Dye for cytoskeletal assessment

  - MitotrackerDeepRed for additional mitochondrial content evaluation

- Live-Cell Imaging: Acquire images at multiple time points (e.g., 24, 48, 72 hours) using a high-throughput microscope.

- Image Analysis: Use automated image analysis software (e.g., CellProfiler) to identify individual cells and measure morphological features.

- Population Gating: Apply supervised machine-learning algorithms to categorize cells into distinct populations based on health status (healthy, early/late apoptotic, necrotic, lysed).

This continuous assay format captures the kinetics of diverse cell death mechanisms, differentiating between rapid cytotoxic responses (e.g., staurosporine) and slower, more specific phenotypic changes (e.g., JQ1) [3].
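The population-gating step of the protocol can be illustrated with a deliberately simple supervised classifier. The sketch below uses a nearest-centroid rule as a stand-in; it is not the algorithm used in the cited work. The feature values, class labels, and the assumption that features were scaled to comparable ranges beforehand are all hypothetical.

```python
import math

# Hypothetical per-cell features, already scaled to comparable ranges:
# (nuclear area, nuclear intensity). Labels would come from annotated control wells.
training = {
    "healthy": [(1.00, 0.30), (1.10, 0.35), (0.95, 0.28)],
    "apoptotic": [(0.40, 0.90), (0.45, 0.95), (0.38, 0.85)],
    "necrotic": [(1.40, 0.10), (1.50, 0.12), (1.35, 0.08)],
}


def centroid(points):
    """Mean feature vector of a list of equally sized tuples."""
    n = len(points)
    return tuple(sum(p[i] for p in points) / n for i in range(len(points[0])))


centroids = {label: centroid(points) for label, points in training.items()}


def classify(cell):
    """Assign a cell to the class whose centroid is closest (Euclidean distance)."""
    return min(centroids, key=lambda label: math.dist(cell, centroids[label]))


def gate_population(cells):
    """Return the fraction of cells assigned to each class for one well."""
    counts = {label: 0 for label in centroids}
    for cell in cells:
        counts[classify(cell)] += 1
    return {label: counts[label] / len(cells) for label in counts}


well = [(1.05, 0.31), (0.42, 0.88), (0.41, 0.92), (1.45, 0.11)]
fractions = gate_population(well)
print(fractions)
```

Repeating this gating at each imaging time point gives the per-class population fractions over time that a kinetic readout is built from.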

Nuclear Phenotype Classification

Research has demonstrated that nuclear phenotype alone can provide robust assessment of compound effects when comprehensive cellular profiling is not feasible [3]. The classification is based on:

- Healthy Nuclei: Normal size and shape, uniform chromatin distribution

- Pyknosis: Nuclear condensation and shrinkage

- Nuclear Fragmentation: Breakdown into discrete fragments

This simplified approach produces highly comparable IC50 values and population distribution profiles to more complex multi-parameter assays, though it may increase vulnerability to assay interference from fluorescent compounds [3].

Research Reagent Solutions

Successful implementation of chemogenomics approaches requires specific research reagents and tools. The following table outlines key solutions used in the field.

Table 2: Essential Research Reagents for Chemogenomics and Phenotypic Screening

| Reagent / Solution | Function | Application Example |
|---|---|---|
| Chemogenomic Library (e.g., BioAscent, ChemDiv) | Collection of well-annotated compounds with known targets | Phenotypic screening and target deconvolution [4] [2] |
| Cell Painting Assay Reagents | Multiplexed fluorescent labeling of cellular components | High-content morphological profiling [1] |
| HighVia Extend Assay Dyes | Live-cell multiplexed staining for viability assessment | Comprehensive compound annotation [3] |
| Tool Score (TS) Algorithm | Quantitative metric for compound selectivity prioritization | Evidence-based compound ranking [6] |
| System Pharmacology Network (Neo4j) | Integration of heterogeneous biological data | Network-based compound selection [1] |
| Polypharmacology Index (PPindex) | Quantitative measure of library target specificity | Library quality assessment [5] |

Current Limitations and Future Directions

Technical and Practical Challenges

Despite their utility, chemogenomics libraries face several limitations. The best chemogenomics libraries currently interrogate only a small fraction of the human genome—approximately 1,000–2,000 targets out of 20,000+ genes [7]. This limited coverage reflects the reality that many proteins remain poorly addressed by chemical tools. Additionally, issues with compound polypharmacology, despite mitigation efforts, continue to complicate target deconvolution [5].

There are also fundamental differences between genetic and small molecule perturbations that must be considered. Small molecules typically inhibit protein function rather than eliminating the protein entirely, can access different subcellular compartments based on physicochemical properties, and may exhibit off-target effects despite careful design [7]. Furthermore, many phenotypic assays lack the throughput required to screen comprehensive chemogenomics libraries in complex physiological systems, creating practical constraints on implementation.

Emerging Solutions and Innovations

Several strategies are emerging to address these limitations. The development of more sophisticated computational approaches, including artificial intelligence for target prediction, is enhancing our ability to design better libraries and interpret screening results [7] [1]. International initiatives such as the EUbOPEN project aim to create open-access chemogenomic libraries covering more than 1,000 proteins with well-annotated chemical tools, while Target 2035 represents a broader effort to expand this coverage to the entire druggable proteome [3].

The integration of high-content phenotypic profiling with multi-omics approaches and advanced data analysis methods is creating more robust frameworks for understanding compound mechanisms. Furthermore, the development of quantitative metrics like the Tool Score and Polypharmacology Index provides researchers with objective criteria for library selection and compound prioritization [5] [6]. The relationship between phenotypic screening and target deconvolution strategies illustrates the evolving methodology in the field.

Diagram 2: Integrated Target Deconvolution Strategy for Phenotypic Screening

Chemogenomics libraries represent a powerful strategic resource in modern phenotypic drug discovery, directly addressing the critical challenge of target deconvolution. Through their carefully curated composition of target-annotated compounds, integration with high-content screening technologies, and systematic approach to data interpretation, these libraries provide an essential bridge between phenotypic observations and molecular mechanisms. While limitations in target coverage and compound specificity persist, ongoing initiatives and technological advancements continue to enhance the utility and application of chemogenomics approaches. For researchers engaged in selecting compounds for diverse chemogenomics libraries, a thorough understanding of both the capabilities and constraints of these resources is essential for maximizing their potential in identifying novel therapeutic targets and mechanisms.

Chemogenomics integrates drug discovery and target identification through the detection and analysis of chemical-genetic interactions, providing a powerful approach for understanding the genome-wide cellular response to small molecules [8]. The fundamental principle involves using targeted chemical libraries to probe biological systems, enabling direct, unbiased identification of drug target candidates as well as genes required for drug resistance. Designing a targeted screening library of bioactive small molecules presents significant challenges since most compounds modulate their effects through multiple protein targets with varying degrees of potency and selectivity [9]. Successfully implemented chemogenomic assays and analytical frameworks help bridge the critical gap between bioactive compound discovery and drug target validation, addressing a persistent challenge in drug discovery—the validation of molecular targets and pathways modulated by bioactive small molecules [8].

Table 1: Key Definitions in Chemogenomic Library Development

| Term | Definition | Primary Function |
|---|---|---|
| Chemical Probe | A selective small molecule meeting specific potency and selectivity criteria [10] | Investigates target function, safety, and translation [10] |
| Annotated Bioactive Compound | A compound with known biological activity and target information | Provides starting points for drug discovery campaigns |
| Chemogenomic Profiling | Method analyzing genome-wide cellular response to compounds [8] | Identifies drug targets and resistance mechanisms [8] |
| Target Validation | Process of confirming a protein's role in a disease context | Establishes therapeutic relevance before costly development |

Core Component 1: Selective Chemical Probes

Defining Quality Criteria for Probes

Chemical probes are defined by four main criteria that ensure their utility for investigating target function: (1) in vitro potency below 100 nM; (2) greater than 30-fold selectivity over sequence-related proteins; (3) profiling against an industry-standard selection of pharmacologically relevant targets; and (4) demonstrated on-target cellular effects at concentrations of 1 μM or below [10]. These stringent criteria distinguish high-quality chemical probes from less selective tool compounds, ensuring researchers can attribute observed phenotypic effects to modulation of the intended target with high confidence.

Exemplary Probe: (+)-JQ1 for BET Bromodomains

The development of (+)-JQ1 exemplifies the application of these criteria to a high-quality chemical probe. (+)-JQ1 is a potent inhibitor of both bromodomains of BRD4 (KD(BRD4(1)) = 50 nM, KD(BRD4(2)) = 90 nM by isothermal titration calorimetry) with similar potency against both bromodomains of BRD3, and approximately three-fold weaker binding against BRD2 and BRDT [10]. This triazolothienodiazepine-based probe was key to establishing the mechanistic significance of BET inhibition in multiple haematological and solid malignancies, including breast, colorectal, and brain cancers, as well as multiple myeloma, leukaemia, and lymphoma [10]. While (+)-JQ1 itself was unsuitable for clinical progression due to its short half-life, it provided an invaluable starting point for medicinal chemistry optimization campaigns that led to clinical candidates.

Table 2: Evolution from Chemical Probes to Clinical Candidates

| Compound | Probe/Target Profile | Key Optimizations | Clinical Status |
|---|---|---|---|
| (+)-JQ1 (Probe) | Pan-BET inhibitor; KD BRD4(1) = 50 nM [10] | Prototype probe; insufficient half-life [10] | Research tool only [10] |
| I-BET762/GSK525762 | Inspired by (+)-JQ1; IC50 (FP): BRD2=794 nM, BRD3=398 nM, BRD4=631 nM [10] | Improved PK properties, solubility, and half-life [10] | Clinical trials for AML, breast, and prostate cancer [10] |
| OTX015/MK-8628 | Potent BET inhibitor; IC50 = 92-112 nM (FRET) [10] | Structural alterations to improve drug-likeness [10] | Clinical development terminated due to lack of efficacy [10] |
| CPI-0610 | Inspired by (+)-JQ1 structure [10] | Amino-isoxazole fragment with constrained azepine ring [10] | Not stated |

Core Component 2: Annotated Bioactive Compound Sets

Publicly Available Compound Collections

Several organizations provide carefully curated compound sets that form the backbone of chemogenomic screening efforts. These include the SGC Chemical Probes (small, drug-like molecules meeting specific criteria: in vitro IC50 or Kd < 100 nM, > 30-fold selectivity over proteins in the same family, and significant on-target cellular activity at 1 μM) [11]. The Open Science Probes represent another valuable resource, providing a unique collection of probes with associated data, control compounds, usage recommendations, and ordering information [11]. Additional specialized collections include the Bromodomain Toolbox (25 chemical probes covering 29 human bromodomain targets) [11] and the Methyltransferases Toolbox for studying methylation-mediated signaling in epigenetics and inflammation [11].
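The SGC-style criteria quoted above translate directly into a filter over annotated compounds. The following is a minimal sketch under stated assumptions: the `Candidate` record, its field names, and the example values are hypothetical, and "significant on-target cellular activity at 1 μM" is reduced to a boolean flag that a curator would have to set from the underlying data.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    potency_nm: float              # in vitro IC50 or Kd, in nM
    selectivity_fold: float        # fold selectivity over proteins in the same family
    active_in_cells_at_1um: bool   # significant on-target cellular activity at 1 uM


def meets_probe_criteria(c: Candidate) -> bool:
    """Apply the three thresholds: < 100 nM, > 30-fold, cellular activity at 1 uM."""
    return c.potency_nm < 100 and c.selectivity_fold > 30 and c.active_in_cells_at_1um


# Hypothetical annotated compounds.
candidates = [
    Candidate("tool_1", 12.0, 150.0, True),
    Candidate("tool_2", 250.0, 80.0, True),    # fails potency
    Candidate("tool_3", 40.0, 10.0, True),     # fails selectivity
    Candidate("tool_4", 5.0, 500.0, False),    # fails cellular activity
]

probes = [c.name for c in candidates if meets_probe_criteria(c)]
print(probes)
```

Keeping the thresholds in one function makes it straightforward to report which criterion a rejected compound failed, which is often more useful for curation than a bare pass/fail flag.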

Drug Collections for Comparative Screening

Beyond chemical probes, annotated drug collections enable researchers to benchmark new compounds against molecules with established clinical profiles. Key resources include DrugBank, which provides clinical information, side effects, drug interactions, chemical structures, and protein interaction data for approved and investigational drugs [11]. The NIH NCATS Inxight Drugs database serves as a comprehensive portal for drug development information, containing data on ingredients in medicinal products [11]. For cancer research, the FDA-Approved Anticancer Drugs set (AOD XI) contains 179 agents plated across 3 microtiter plates, enabling cancer research, drug discovery, and combination studies [11].

Experimental Protocols for Chemogenomic Profiling

Haploinsufficiency Profiling (HIP) and Homozygous Profiling (HOP)

The HIP/HOP chemogenomic platform employs barcoded heterozygous and homozygous yeast knockout collections to provide mechanistic insight into drug-gene interactions [8]. HIP exploits drug-induced haploinsufficiency, a phenomenon where strain-specific sensitivity occurs in heterozygous strains deleted for one copy of an essential gene when exposed to a drug targeting that gene's product. The complementary HOP assay interrogates approximately 4800 nonessential homozygous deletion strains to identify genes involved in the drug target's biological pathway and those required for drug resistance [8]. In practice, molecular identifiers unique to each strain enable competitive growth in a single pool, with fitness quantified by barcode sequencing to generate fitness defect scores that report drug sensitivity.

Protocol Workflow and Data Analysis

The experimental workflow involves several critical steps: (1) construction of pooled heterozygous and homozygous strains; (2) robotic collection of samples for both HIP and HOP assays; (3) barcode sequencing and quantification of strain abundance; and (4) data normalization and analysis [8]. Key methodological variations include collection parameters (fixed time points versus doubling-based collection) and normalization approaches (batch effect correction versus study-based normalization). Data processing typically involves calculating relative strain abundance as the log2 of the median control signal divided by the compound treatment signal, with final fitness defect scores expressed as robust z-scores [8]. This comprehensive genome-wide profile provides a complete view of the cellular response to a specific compound.
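The scoring step described above can be sketched as follows. This is an illustrative calculation, not the pipeline of any particular study: the strain names and counts are made up, the pseudocount is an assumption added to avoid division by zero, and the robust z-score uses the common median/MAD form with the 1.4826 scaling constant, which the source does not specify.

```python
import math
from statistics import median

# Hypothetical barcode counts: control replicates and one compound-treated sample.
control = {
    "strainA": [1000, 1100, 900],
    "strainB": [800, 820, 790],
    "strainC": [1200, 1250, 1180],
    "strainD": [950, 1000, 970],
    "strainE": [1050, 1020, 1080],
}
treated = {"strainA": 1020, "strainB": 100, "strainC": 1150, "strainD": 990, "strainE": 1000}


def log2_ratios(control, treated, pseudocount=1.0):
    """log2(median control signal / treatment signal) for each strain."""
    return {
        strain: math.log2((median(control[strain]) + pseudocount) / (treated[strain] + pseudocount))
        for strain in control
    }


def robust_z(values):
    """Robust z-score: (x - median) / (1.4826 * median absolute deviation)."""
    med = median(values.values())
    mad = median(abs(v - med) for v in values.values())
    if mad == 0:
        raise ValueError("MAD is zero; robust z-scores are undefined for this profile")
    return {strain: (v - med) / (1.4826 * mad) for strain, v in values.items()}


fitness_defect = robust_z(log2_ratios(control, treated))
most_sensitive = max(fitness_defect, key=fitness_defect.get)
print(most_sensitive, round(fitness_defect[most_sensitive], 1))
```

With this sign convention, a strain depleted by the compound has a large positive score, so the most sensitive strains sit at the top of the ranked profile.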

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Research Reagent Solutions for Chemogenomics

| Resource/Solution | Provider/Type | Function & Application |
|---|---|---|
| SGC Chemical Probes | Structural Genomics Consortium [11] | High-quality, open-access probes for target validation and functional studies |
| ChemicalProbes.org | Community-driven wiki [11] | Recommends appropriate chemical probes, provides usage guidance, documents limitations |
| opnMe Portal | Boehringer Ingelheim [11] | Open innovation portal providing access to BI's molecule library for collaboration |
| Probe Miner | Computational resource [11] | Computational assessment and scoring of literature compounds for probe suitability |
| CLOUD Library | CeMM [11] | Library of Unique Drugs covering prodrugs and active forms at pharmacologically relevant concentrations |
| Kinase Chemogenomic Set | Various sources [11] | Focused collection for probing the kinome with selective inhibitors |
| Yeast Deletion Pools | Commercial/academic [8] | Barcoded knockout collections for HIP/HOP chemogenomic profiling |
| DepMAP Portal | Broad Institute [8] | Complementary data on cancer cell lines and chemical sensitivity |

Library Design Strategies and Implementation

Quantitative Design Framework

Effective chemogenomic library design requires analytic procedures adjusted for multiple factors: library size, cellular activity, chemical diversity and availability, and target selectivity [9]. Systematic approaches can result in minimal screening libraries of 1,211 compounds capable of targeting 1,386 anticancer proteins, as demonstrated in recent studies focused on precision oncology applications [9]. These designed compound collections cover a wide range of protein targets and biological pathways implicated in various cancers, making them widely applicable for identifying patient-specific vulnerabilities through phenotypic screening approaches.
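One way to see how a small library can cover many targets is to treat selection as a set-cover problem. The sketch below uses a greedy heuristic as an illustration; it is not the procedure used in the cited study, which also weighs cellular activity, chemical diversity, availability, and selectivity. The compound names and target annotations are hypothetical.

```python
# Hypothetical compound -> annotated target sets.
annotations = {
    "cmpd_1": {"EGFR", "ERBB2"},
    "cmpd_2": {"EGFR"},
    "cmpd_3": {"CDK4", "CDK6"},
    "cmpd_4": {"ERBB2", "CDK4"},
    "cmpd_5": {"BRD4"},
}


def greedy_library(annotations, max_size=None):
    """Repeatedly pick the compound that covers the most still-uncovered targets."""
    uncovered = set().union(*annotations.values())
    selected = []
    while uncovered and (max_size is None or len(selected) < max_size):
        # Iterating in sorted order makes ties resolve deterministically by name.
        best = max(sorted(annotations), key=lambda c: len(annotations[c] & uncovered))
        gained = annotations[best] & uncovered
        if not gained:
            break
        selected.append(best)
        uncovered -= gained
    return selected, uncovered


library, missed = greedy_library(annotations)
print(library, missed)
```

Passing `max_size` caps the library at a fixed number of compounds and returns the targets left uncovered, which makes the trade-off between library size and target coverage explicit.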

Implementation in Precision Oncology

In practice, these design strategies have been successfully implemented in pilot screening studies. For instance, researchers have employed physical libraries of 789 compounds covering 1,320 anticancer targets to perform imaging-based screening of glioma stem cells from patients with glioblastoma (GBM) [9]. The resulting cell survival profiling revealed highly heterogeneous phenotypic responses across patients and GBM subtypes, demonstrating how strategically designed chemogenomic libraries can uncover patient-specific therapeutic vulnerabilities. The data and assay annotations from such studies are increasingly made freely available through repositories like Zenodo and GitHub, along with web platforms for data exploration and visualization [9].

The systematic construction of chemogenomic libraries relies on two fundamental components: high-quality selective chemical probes that enable precise target validation, and comprehensively annotated bioactive compounds that provide pharmacological context and starting points for drug discovery. By implementing rigorous design strategies that balance library size, chemical diversity, cellular activity, and target selectivity, researchers can create efficient screening collections capable of uncovering novel therapeutic vulnerabilities. The continued expansion of publicly available compound resources, standardized profiling protocols, and open-data initiatives will further enhance the power of chemogenomic approaches to bridge the critical gap between basic research and clinical translation in precision medicine.

The systematic selection of compounds for a diverse chemogenomics library is a foundational step in modern drug discovery. Such libraries are designed to interrogate a wide range of biological targets, enabling the identification of novel chemical starting points and the exploration of complex biological phenomena. The core challenge lies in ensuring that the chemical library provides adequate coverage of the druggable proteome (the subset of human proteins that can be bound by small molecules with high affinity) to maximize the probability of success in phenotypic screens or target-based campaigns. A library with limited coverage may miss critical interactions, leading to false negatives and wasted resources. This guide provides an in-depth technical framework for assessing library coverage, with methodologies and metrics that map chemical diversity directly to the druggable proteome, thereby supporting the broader thesis that informed compound selection is crucial for successful chemogenomics research.

The druggable proteome is estimated to encompass approximately 4,000 proteins, yet current chemogenomics libraries typically probe only a fraction of this space. A comprehensive analysis reveals that the best chemogenomics libraries interrogate only about 1,000–2,000 targets out of the more than 20,000 protein-coding genes in the human genome [7]. This coverage gap underscores the critical need for robust assessment methods. Computational approaches, particularly chemoinformatics, have become indispensable for bridging this gap, allowing researchers to manage chemical data, predict molecular properties, and design novel compounds with unprecedented efficiency [12]. By applying the principles and protocols outlined in this guide, researchers can quantify the structural and functional coverage of their libraries, identify areas of under-representation, and make data-driven decisions to optimize library composition for specific research objectives within a chemogenomics context.

Core Concepts and Definitions

The Druggable Proteome

The druggable proteome refers to the subset of proteins within an organism that are capable of binding small molecules with high affinity, and whose activity can be modulated by such binding events. This concept is central to target-based drug discovery. The druggable proteome is not a fixed entity; it expands with advancements in structural biology, such as the resolution of new protein structures via cryo-EM, and the emergence of novel therapeutic modalities, such as molecular glues and PROTACs, that can engage targets previously considered "undruggable." The arrival of machine learning-powered structure prediction tools, like AlphaFold, which has generated over 214 million unique protein structure models, has dramatically increased access to putative target structures, further expanding the known druggable universe [13]. A critical task in library design is to ensure that the chemical space covered by a compound collection aligns with the structural and physicochemical space of binding sites across this proteome.

Chemical Library Coverage

Chemical library coverage is a measure of how well a given collection of compounds samples the relevant chemical space in relation to the biological targets of interest. It is a multi-faceted concept that requires assessment from several complementary angles:

- Structural Coverage: The diversity of molecular scaffolds and chemotypes present in the library. A library with poor structural coverage will have many similar compounds, leading to redundant biological information.

- Property Coverage: The distribution of key physicochemical properties (e.g., molecular weight, lipophilicity, polar surface area) across the library, which should align with drug-like or lead-like principles to ensure favorable pharmacokinetics.

- Target-Family Coverage: The library's implicit or explicit ability to interact with key protein families (e.g., kinases, GPCRs, ion channels) that constitute major portions of the druggable proteome.

Assessing coverage is not merely about maximizing diversity. It involves a balanced approach to ensure the library is both broad enough to probe novel biology and focused enough to contain compounds with a high probability of success against the intended target classes [14]. The following workflow diagram illustrates the core process for evaluating this coverage.

Figure 1: Workflow for Assessing Library Coverage. This process integrates chemical space analysis with biological space mapping to generate a comprehensive coverage report.

Quantitative Metrics for Coverage Assessment

A multi-faceted assessment using well-defined quantitative metrics is essential to avoid the biases inherent in any single method. The following tables summarize key metrics and property ranges critical for evaluating library coverage.

Table 1: Core Metrics for Assessing Chemical Diversity and Coverage

| Metric Category | Specific Metric | Description | Interpretation in Proteome Coverage |

|---|---|---|---|

| Scaffold Diversity | Scaffold Count (Unique) [14] | Number of distinct molecular frameworks (cyclic systems) after removing side chains. | A higher count suggests an ability to interact with a wider variety of protein binding site architectures. |

| | Scaled Shannon Entropy (SSE) [14] | Measures the evenness of compound distribution across different scaffolds. Ranges from 0 (minimal diversity) to 1 (maximal diversity). | An SSE closer to 1 indicates a library is not dominated by a few common chemotypes, reducing redundancy in screening. |

| | F50 Value [14] | The fraction of unique scaffolds needed to cover 50% of the compounds in a library. | A higher F50 value indicates higher scaffold diversity, because more scaffolds are needed to account for half the library; a low value means a few scaffolds dominate. |

| Structural Diversity | Type-Token Ratio (TTR) / Moving Window TTR (MWTTR) [15] | The ratio of unique "chemical words" (MCS) to total words in the library's "vocabulary." | A higher TTR indicates greater linguistic richness and structural diversity, analogous to a broader biological target vocabulary. |

| | Tanimoto Similarity [16] [14] | A measure of structural similarity based on chemical fingerprints (e.g., ECFP, MACCS). Average and distribution are key. | A lower average similarity suggests a more diverse library, potentially leading to more diverse biological outcomes. |

| Property Diversity | Principal Component Analysis (PCA) [17] | Reduces the dimensionality of multiple physicochemical properties to visualize and quantify property space coverage. | A larger area covered in PCA space indicates coverage of a wider range of drug-like properties, relevant to a larger proteome fraction. |

Table 2: Key Physicochemical Properties for Drug-Like Coverage

| Property | Typical Target Range (Drug-/Lead-Like) | Significance in Proteome Coverage |

|---|---|---|

| Molecular Weight (MW) | 200-500 Da [12] | Lower MW compounds often have better permeability and are more likely to target a wider range of binding sites. |

| Octanol-Water Partition Coefficient (cLogP) | 1-3 [12] | Optimal lipophilicity balances solubility and membrane permeability, crucial for engaging intracellular targets. |

| Hydrogen Bond Donors (HBD) | ≤ 5 | Impacts compound solubility and ability to form specific interactions with polar residues in binding pockets. |

| Hydrogen Bond Acceptors (HBA) | ≤ 10 | Influences desolvation penalties and the nature of interactions with diverse target families. |

| Polar Surface Area (PSA) | < 140 Ų | A key predictor of cell permeability; critical for ensuring compounds can reach intracellular targets. |

| Rotatable Bonds | ≤ 10 | Affects molecular flexibility and the entropy penalty upon binding to a protein target. |
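
The ranges in Table 2 can be applied as a simple screen over precomputed properties. The following is a minimal sketch, assuming the property values have already been calculated with a cheminformatics toolkit; the dictionary keys, function names, and example values are hypothetical and chosen only for illustration.

```python
# Ranges transcribed from Table 2. (low, high) bounds; None means unbounded.
PROPERTY_RANGES = {
    "mw": (200.0, 500.0),
    "clogp": (1.0, 3.0),
    "hbd": (None, 5),
    "hba": (None, 10),
    "psa": (None, 140.0),
    "rotatable_bonds": (None, 10),
}
# Table 2 gives PSA as "< 140", so its upper bound is treated as strict.
STRICT_UPPER = {"psa"}


def violations(props):
    """Return the names of properties that fall outside the Table 2 ranges."""
    failed = []
    for name, (low, high) in PROPERTY_RANGES.items():
        value = props[name]
        if low is not None and value < low:
            failed.append(name)
        elif high is not None:
            too_high = value >= high if name in STRICT_UPPER else value > high
            if too_high:
                failed.append(name)
    return failed


def in_range_fraction(library):
    """Fraction of compounds with no property violations."""
    if not library:
        return 0.0
    passing = sum(1 for props in library if not violations(props))
    return passing / len(library)


# Hypothetical property records for two compounds.
library = [
    {"mw": 320.4, "clogp": 2.1, "hbd": 2, "hba": 5, "psa": 78.0, "rotatable_bonds": 4},
    {"mw": 612.7, "clogp": 5.3, "hbd": 3, "hba": 9, "psa": 150.2, "rotatable_bonds": 12},
]

for record in library:
    print(violations(record))
print(in_range_fraction(library))
```

Reporting which properties fail, rather than a single pass/fail flag, makes it easier to see whether a library drifts out of range on size, lipophilicity, or polarity.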

The Consensus Diversity Plot (CDP) is a powerful visualization tool that represents the global diversity of a compound library by integrating multiple metrics into a single, two-dimensional plot [14]. Typically, scaffold diversity (e.g., using SSE or F50) is plotted on the Y-axis, and fingerprint diversity (e.g., average Tanimoto similarity) on the X-axis. A third dimension, such as property diversity, can be mapped using a color scale. This allows researchers to quickly classify and compare libraries. Because the X-axis in this configuration reports similarity, lower X values correspond to higher fingerprint diversity. For instance, a library in the top-left quadrant (high SSE or F50, low average similarity) would have high scaffold and high fingerprint diversity, indicating broad potential coverage of the druggable proteome, whereas a library in the bottom-right would be considered coverage-poor.

Experimental Protocols for Coverage Mapping

Protocol 1: Scaffold-Based Diversity Analysis

This protocol assesses the fundamental building blocks of a chemical library, providing insight into the core structural motifs available to interact with protein targets.

Objective: To quantify the diversity of molecular scaffolds within a compound library and assess the risk of structural redundancy.

Materials & Software:

- Compound library in SDF or SMILES format.

- Cheminformatics toolkit (e.g., RDKit [18] or Chemistry Development Kit (CDK) [18]).

- Software for calculating molecular scaffolds (e.g., MEQI for generating chemotypes [14]).

Procedure:

- Data Curation: Load the compound library. Remove duplicates, salts, and neutralize charges using a tool like the wash module in MOE [14] or equivalent functionality in RDKit/CDK.

- Scaffold Extraction: Apply the Bemis-Murcko method or the Johnson and Xu methodology [14] to extract the central scaffold from each molecule, discarding all side chain atoms.

- Generate Cyclic System Retrieval (CSR) Curve:

- Rank all unique scaffolds from most frequent to least frequent.

- On the X-axis, plot the cumulative fraction of unique scaffolds (from 0 to 1).

- On the Y-axis, plot the cumulative fraction of total compounds accounted for by those scaffolds.

- Calculate the Area Under the Curve (AUC). A lower AUC indicates higher scaffold diversity, as a small number of scaffolds does not account for a large portion of the library [14].

- Calculate Scaled Shannon Entropy (SSE):

- For the n most populated scaffolds, calculate the Shannon Entropy (SE): SE = -∑(p_i * log2(p_i)), where p_i is the proportion of compounds belonging to scaffold i.

- Normalize the SE to obtain the SSE: SSE = SE / log2(n). An SSE value closer to 1.0 indicates a more even distribution of compounds across scaffolds [14].
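
The CSR curve, its AUC, and the SSE from the procedure above can be sketched in a few lines once each compound has been assigned a scaffold identifier. This is an illustrative sketch, not the implementation used in [14]: it assumes scaffold extraction has already been done elsewhere, and the function names and toy scaffold labels are hypothetical.

```python
import math
from collections import Counter


def csr_curve(scaffold_ids):
    """Return (x, y) points: cumulative fraction of scaffolds vs. compounds."""
    counts = sorted(Counter(scaffold_ids).values(), reverse=True)
    total = sum(counts)
    n_scaffolds = len(counts)
    xs, ys = [0.0], [0.0]
    covered = 0
    for rank, count in enumerate(counts, start=1):
        covered += count
        xs.append(rank / n_scaffolds)
        ys.append(covered / total)
    return xs, ys


def csr_auc(xs, ys):
    """Area under the CSR curve by the trapezoidal rule."""
    return sum(
        (xs[i + 1] - xs[i]) * (ys[i] + ys[i + 1]) / 2 for i in range(len(xs) - 1)
    )


def scaled_shannon_entropy(scaffold_ids, n_top=None):
    """SSE over the n_top most populated scaffolds (all scaffolds if None)."""
    counts = sorted(Counter(scaffold_ids).values(), reverse=True)
    if n_top is not None:
        counts = counts[:n_top]
    n = len(counts)
    if n < 2:
        return 0.0  # a single scaffold carries no distributional diversity
    total = sum(counts)
    se = -sum((c / total) * math.log2(c / total) for c in counts)
    return se / math.log2(n)


# Toy libraries: one scaffold label per compound.
even_library = ["a", "b", "c", "d"]
skewed_library = ["a"] * 7 + ["b", "c", "d"]

for name, lib in [("even", even_library), ("skewed", skewed_library)]:
    xs, ys = csr_curve(lib)
    print(name, round(csr_auc(xs, ys), 3), round(scaled_shannon_entropy(lib), 3))
```

In the toy data, the skewed library yields the larger AUC and the smaller SSE, matching the interpretation given in the procedure.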

Protocol 2: Linguistic Diversity Analysis Using Chemical Words

This innovative protocol adapts methods from computational linguistics to chemistry, using maximal common substructures (MCS) as "chemical words" to profile a library's structural vocabulary [15].

Objective: To characterize the structural diversity of a library using linguistic metrics and identify characteristic "keywords" that define the collection.

Materials & Software:

- Compound library in SMILES format.

- RDKit or equivalent software for MCS calculation.

- Custom scripts (e.g., in Python) for frequency and distribution analysis.

Procedure:

- Pairwise MCS Calculation: For all possible pairs of molecules in the library (or a large, statistically significant random subset), compute the Maximum Common Substructure (MCS) for each pair. This MCS is defined as a "chemical word" [15].

- Build Vocabulary and Rank Words: Compile all unique MCS words into a "vocabulary" for the library. Rank the words by their frequency of occurrence.

- Plot Frequency vs. Rank: Generate a log-log plot of frequency versus rank. A power-law (Zipfian) distribution is expected, similar to natural language [15].

- Calculate Moving Window Type-Token Ratio (MWTTR):

- Randomly sample a sequence of chemical words (e.g., 50,000) from the total vocabulary.

- Calculate the Type-Token Ratio (TTR = number of unique words / total words) within a moving window of fixed size (e.g., 1,000 words) slid across the sequence.

- Plot the distribution of these TTR values. A higher average MWTTR indicates greater "linguistic richness" and, by analogy, greater structural diversity, which has been shown to be higher in natural product libraries compared to random molecular collections [15].
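
The moving-window TTR step can be sketched as follows. This is a minimal illustration that assumes the MCS "words" have already been computed and are available as a list of strings (for example, canonical SMILES); the function name and the toy token sequence are hypothetical, and the window slides one token at a time, which is one of several reasonable choices.

```python
from collections import Counter


def moving_window_ttr(tokens, window):
    """Type-token ratio for every window of `window` consecutive tokens."""
    if window <= 0 or window > len(tokens):
        raise ValueError("window must be between 1 and len(tokens)")
    counts = Counter(tokens[:window])
    ttrs = [len(counts) / window]
    for i in range(window, len(tokens)):
        incoming, outgoing = tokens[i], tokens[i - window]
        counts[incoming] += 1
        counts[outgoing] -= 1
        if counts[outgoing] == 0:
            del counts[outgoing]  # keep len(counts) equal to the number of types
        ttrs.append(len(counts) / window)
    return ttrs


# Toy sequence of chemical words; a real analysis would use thousands of tokens.
words = ["c1ccccc1", "c1ccccc1", "C=O", "c1ccccc1", "C=O"]
ttrs = moving_window_ttr(words, window=2)
print(ttrs)
print(sum(ttrs) / len(ttrs))  # average MWTTR
```

Updating the counts incrementally keeps the cost linear in the sequence length, which matters at the 50,000-word scale mentioned in the procedure.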

Protocol 3: Machine Learning-Driven Target Prediction

This protocol uses machine learning models trained on known chemogenomic interactions to predict the potential target coverage of a novel library.

Objective: To impute the potential biological target space of a compound library based on its chemical features.

Materials & Software:

- Known target-compound interaction database (e.g., ChEMBL, BindingDB [17]).

- Cheminformatics toolkit (e.g., RDKit for descriptor calculation [17]).

- Machine learning libraries (e.g., Scikit-learn for Random Forest, SVM [17]).

Procedure:

- Prepare Training Data: Extract a dataset of small molecules with known protein targets (actives) and a set of decoy molecules (presumed inactives) from a source like DUD-E [17].

- Calculate Molecular Descriptors: Generate 2D molecular descriptors (e.g., molecular weight, logP, topological indices) and/or chemical fingerprints (e.g., ECFP4) for all molecules using RDKit.

- Train Predictive Models: Train multiple machine learning classifiers (e.g., Random Forest, Support Vector Machine) to distinguish active from inactive compounds for a specific target or target family. Evaluate model performance using cross-validation and metrics like AUC-ROC [17].

- Predict Library Activity: Apply the trained models to the compound library of interest. The output is a prediction of which compounds are likely active against the target family.

- Aggregate Coverage: By repeating this process for key druggable target families (e.g., kinases, GPCRs, ion channels, proteases), an aggregate profile of the library's predicted target coverage can be built, identifying strengths and gaps.
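
The final aggregation step can be illustrated independently of the models themselves. The sketch below assumes that each target-family classifier has already produced a predicted activity probability per compound; the probability threshold, the gap cutoff, the compound identifiers, and the scores are all hypothetical placeholders rather than recommended values.

```python
def aggregate_coverage(predictions, threshold=0.5, min_fraction=0.05):
    """Summarize predicted target-family coverage.

    predictions: {family: {compound_id: predicted probability of activity}}
    A family is flagged as a gap when the fraction of compounds predicted
    active falls below min_fraction.
    """
    report = {}
    for family, scores in predictions.items():
        hits = sorted(cid for cid, p in scores.items() if p >= threshold)
        fraction = len(hits) / len(scores) if scores else 0.0
        report[family] = {
            "predicted_active": hits,
            "fraction": fraction,
            "gap": fraction < min_fraction,
        }
    return report


# Hypothetical model outputs for a four-compound library.
predictions = {
    "kinases": {"cpd1": 0.91, "cpd2": 0.12, "cpd3": 0.77, "cpd4": 0.05},
    "GPCRs": {"cpd1": 0.08, "cpd2": 0.64, "cpd3": 0.10, "cpd4": 0.02},
    "ion channels": {"cpd1": 0.03, "cpd2": 0.20, "cpd3": 0.11, "cpd4": 0.07},
}

report = aggregate_coverage(predictions, threshold=0.5, min_fraction=0.25)
for family, summary in report.items():
    print(family, summary)
```

Because the predictions come from models of varying quality, a flagged gap is best read as a prompt for closer inspection rather than as evidence that the library cannot engage that family.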

The relationship between the chemical features of a library and the biological space it probes is complex. The following diagram outlines the logical framework for connecting these two domains, which is the foundation of the above protocols.

Figure 2: Logic of Chemical-to-Biological Space Mapping. Predictive models, trained on known chemical-biological interactions, map a library's features to its potential target coverage.

The Scientist's Toolkit: Essential Research Reagents and Software

Table 3: Key Software Tools for Cheminformatics and Coverage Analysis

| Tool Name | Type / Category | Primary Function in Coverage Assessment | Reference |

|---|---|---|---|

| RDKit | Open-Source Cheminformatics Library | Calculating molecular descriptors, generating chemical fingerprints, scaffold decomposition, and molecular visualization. Essential for Protocol 1. | [18] [17] |

| Chemistry Development Kit (CDK) | Open-Source Java Library | Similar to RDKit, provides a wide range of cheminformatics functionalities including structure manipulation, descriptor calculation, and QSAR. | [18] |

| MayaChemTools | Collection of Command-Line Tools | Performing molecular descriptor calculation, property prediction, and substructure searching in a high-throughput, scriptable manner. | [18] |

| PaDEL-Descriptor | Software for Descriptor Calculation | Calculating a comprehensive set of molecular descriptors and fingerprints. Can be accessed via a Python wrapper for integration into workflows. | [18] |

| KNIME | Open-Source Data Analytics Platform | Visual programming for building and executing complex cheminformatics workflows, including library enumeration and diversity analysis. | [19] |

| DataWarrior | Open-Source Data Visualization/Analysis | An interactive program for data visualization, filtering, and analysis, with built-in chemistry functions for diversity plots. | [19] |

| Consensus Diversity Plot (CDP) | Online Visualization Tool | Specifically designed to represent the global diversity of compound libraries using multiple metrics (scaffolds, fingerprints, properties) on a single 2D plot. | [14] |

The process of assessing chemical library coverage against the druggable proteome is a critical, multi-dimensional exercise that moves library design from an art to a data-driven science. By employing a combination of scaffold analysis, linguistic profiling, and machine learning-based target prediction, researchers can obtain a comprehensive and quantitative understanding of their library's strengths and weaknesses. This guide has outlined the core concepts, provided definitive metrics in structured tables, and detailed practical protocols for executing this assessment.

The ultimate goal in chemogenomics library design is to select a compound set that is not merely large, but intelligently configured to maximize the probability of meaningful biological discovery. A library optimized for broad proteome coverage increases the likelihood of identifying novel hit compounds for diverse targets, including those that are currently underrepresented or poorly understood. As the field advances with the integration of more sophisticated AI models, ultra-large virtual libraries, and dynamic structural data from molecular simulations, the frameworks for coverage assessment will become even more precise and predictive. By adopting these rigorous assessment strategies, researchers can ensure their chemogenomics libraries are powerful engines for innovation in drug discovery.

The systematic selection of compounds for a diverse chemogenomics library is a foundational step in modern drug discovery, directly influencing the success of high-throughput screening (HTS) campaigns against novel biological targets [20]. A well-designed library maximizes the coverage of biologically relevant chemical space while minimizing redundancy, thereby increasing the probability of identifying high-quality hits across diverse target classes [21]. The core challenge lies in moving beyond simple compound counting to a multi-faceted assessment of molecular diversity using complementary computational approaches.

This guide details the three primary and interdependent axes for evaluating compound library diversity: scaffold diversity, structural fingerprint diversity, and physicochemical property diversity [14]. By integrating quantitative metrics from these domains, researchers can make informed decisions to prioritize compounds that collectively explore broad regions of chemical space, ensuring that a chemogenomics library is poised for success against both known and unforeseen biological targets.

Molecular Scaffolds and Frameworks

Defining Molecular Scaffolds

A molecular scaffold, or chemotype, represents the core structure of a molecule, essential for classifying compounds and correlating structural classes with biological activity [22]. In chemoinformatics, objective and systematic scaffold definitions are crucial for consistent analysis. The most prevalent definitions include:

- Murcko Framework: Proposed by Bemis and Murcko, this method deconstructs a molecule into ring systems, linkers, and side chains. The framework is defined as the union of all ring systems and the linkers that connect them [23] [24]. This representation retains atom and bond type information, providing a detailed view of the core structure.

- Scaffold Tree: This hierarchical approach, introduced by Schuffenhauer et al., iteratively prunes rings from a molecule based on a set of prioritization rules until only a single ring remains [23] [24]. Each level of the tree represents a different abstraction of the molecule, with Level n-1 typically corresponding to the Murcko framework. This hierarchy allows for the analysis of scaffold relationships and diversity at multiple levels of complexity.

- Molecular Anatomy: A more recent, flexible approach that generates a multi-dimensional network of hierarchically interconnected molecular frameworks. It uses multiple representations and fragmentation rules to overcome the limitations of single-rule methods, effectively clustering active molecules from different structural classes and capturing critical structure-activity information [22].

Key Metrics for Scaffold Diversity Analysis

Quantifying scaffold distribution is vital for understanding the structural diversity and potential bias of a compound library. Key quantitative metrics include:

- Scaffold Frequency and Counts: The most fundamental metrics involve identifying the total number of unique scaffolds in a library and the frequency of compounds represented by each scaffold. A high number of unique scaffolds relative to the total number of compounds often indicates high diversity [23].

- Scaffold Hinges and Distribution Metrics: The NC₅₀C and PC₅₀C metrics are widely used. NC₅₀C is the number of scaffolds required to cover 50% of the compounds in a library, while PC₅₀C is the percentage of unique scaffolds needed to cover 50% of the compounds [23] [24]. A low PC₅₀C value indicates that a small subset of scaffolds accounts for a large portion of the library, suggesting potential redundancy.

- Cyclic System Recovery (CSR) Curves: These curves visualize the distribution of compounds over scaffolds. The fraction of unique scaffolds is plotted on the X-axis, and the cumulative fraction of compounds covered by those scaffolds is plotted on the Y-axis [14] [24]. The Area Under the CSR Curve (AUC) and F₅₀ (the fraction of scaffolds needed to recover 50% of the database) are derived metrics, where low AUC and high F₅₀ values point to high scaffold diversity [14].

- Shannon Entropy (SE) and Scaled Shannon Entropy (SSE): SE measures the distribution of compounds across scaffolds. It is calculated as SE = -∑pᵢ log₂pᵢ, where pᵢ is the probability of a compound belonging to scaffold i [14]. SSE normalizes SE by the maximum possible entropy (log₂n, where n is the total number of scaffolds), yielding a value between 0 (minimal diversity) and 1 (maximum diversity) [14]. This is particularly useful for comparing libraries of different sizes.

Table 1: Key Metrics for Quantifying Scaffold Diversity

| Metric | Description | Interpretation |

|---|---|---|

| NC₅₀C | Number of scaffolds covering 50% of compounds | Lower value suggests less diversity (a few common scaffolds) |

| PC₅₀C | Percentage of unique scaffolds covering 50% of compounds | Lower value suggests less diversity |

| AUC of CSR Curve | Area Under the Cyclic System Recovery Curve | Lower value indicates higher scaffold diversity |

| F₅₀ from CSR | Fraction of scaffolds to retrieve 50% of compounds | Higher value indicates higher scaffold diversity |

| Scaled Shannon Entropy (SSE) | Normalized measure of the "evenness" of scaffold distribution | Ranges from 0 (all same scaffold) to 1 (perfect, even distribution) |
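
As a worked illustration of the first two rows of the table, NC₅₀C and PC₅₀C can be computed directly from per-compound scaffold labels. The sketch assumes scaffolds have already been assigned; the function name and toy labels are hypothetical.

```python
from collections import Counter


def nc50c_pc50c(scaffold_ids):
    """Return (NC50C, PC50C) for a list with one scaffold label per compound."""
    counts = sorted(Counter(scaffold_ids).values(), reverse=True)
    total = sum(counts)
    covered = 0
    for n_scaffolds, count in enumerate(counts, start=1):
        covered += count
        if covered * 2 >= total:  # at least 50% of compounds covered
            return n_scaffolds, 100.0 * n_scaffolds / len(counts)
    raise ValueError("scaffold_ids must not be empty")


# Seven compounds share scaffold "a"; three scaffolds are singletons.
skewed_library = ["a"] * 7 + ["b", "c", "d"]
even_library = ["a", "b", "c", "d"]

print(nc50c_pc50c(skewed_library))  # one scaffold already covers 70% of compounds
print(nc50c_pc50c(even_library))
```

Expressed as a fraction rather than a percentage, PC₅₀C corresponds to the F₅₀ value read from the CSR curve.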

Experimental Protocol: Scaffold Diversity Analysis

Objective: To determine the scaffold diversity of a candidate compound library using Murcko frameworks and the Scaffold Tree hierarchy.

Materials:

- A curated dataset of chemical structures in SDF or SMILES format.

- Cheminformatics software such as MOE (Molecular Operating Environment), RDKit, or KNIME with relevant plugins.

- A computational environment capable of running Pipeline Pilot protocols or custom Python/R scripts.

Procedure:

- Data Curation: Standardize the input structures. This typically includes neutralizing charges, removing salts, and generating canonical tautomers and stereochemistry representations [14] [24].

- Scaffold Generation:

- Murcko Frameworks: For every molecule in the library, remove all acyclic side chains and atom-specific hybridization, retaining only the ring systems and the linkers that connect them [23] [24].

- Scaffold Tree: For each molecule, generate the hierarchical tree by iteratively removing rings based on prioritization rules (e.g., complexity, ring size, heteroatom content) until a single ring remains. Export the scaffolds at Level 1 (one level above the single-ring root at Level 0, consistent with the Murcko framework sitting at Level n-1) for analysis [23] [24].

- Calculate Scaffold Metrics:

- Identify all unique scaffolds from the generated Murcko and Level 1 sets.

- For each scaffold representation, calculate the NC₅₀C and PC₅₀C values.

- Generate the CSR curve by sorting scaffolds by frequency (most to least frequent), then plotting the cumulative fraction of compounds recovered versus the cumulative fraction of scaffolds.

- Calculate the SSE for the library based on the distribution of compounds across the Level 1 scaffolds.

- Visualization:

- Tree Maps: Use software to generate Tree Maps where the size of each rectangle represents the number of compounds for a given scaffold, and the color can represent a property (e.g., average molecular weight). Scaffolds are clustered based on structural similarity (e.g., using Tanimoto similarity of their ECFP4 fingerprints) [23] [24].

- SAR Maps: Create SAR Maps to visualize the structure-activity relationship landscape of the scaffolds, linking structural similarity to potential biological activity [24].

Structural Fingerprints and Chemical Space

Chemical Space and Fingerprint Representations

Chemical space is a theoretical concept where different molecules occupy different regions of a mathematical space defined by their properties [25]. Since exhaustively evaluating the entire chemical universe is impossible, compound libraries are designed to sample biologically relevant regions of this space [20]. Structural fingerprints are a cornerstone of this navigation, providing a numerical representation of molecular structure that enables computational comparison.

Common fingerprint types include:

- Extended Connectivity Fingerprints (ECFP): These are circular fingerprints that capture molecular topology around each atom up to a specified bond diameter. They are excellent for assessing general structural similarity and are widely used in activity prediction [26].

- MACCS Keys: A set of 166 predefined structural fragments (bits). A molecule is represented by a binary vector indicating the presence or absence of each fragment. These keys are highly interpretable [14].

- Other Representations: Shape-based fingerprints and pharmacophore fingerprints capture three-dimensional molecular information, which can be critical for targets where steric and electronic complementarity are key.

Key Metrics for Fingerprint-Based Diversity

The diversity of a library based on fingerprints is typically assessed using similarity measures:

- Average Pairwise Tanimoto Similarity: The Tanimoto coefficient is a standard measure for comparing binary fingerprints. It is calculated as T(A,B) = c/(a+b-c), where a and b are the number of bits set in molecules A and B, and c is the number of common bits. The average of all pairwise comparisons within a library indicates its internal diversity; a lower average similarity signifies higher diversity [14] [25].

- Intrinsic Similarity (iSIM) Framework: For very large libraries (N > 10⁵), calculating all pairwise similarities becomes computationally prohibitive (O(N²)). The iSIM framework overcomes this by providing a method to compute the average pairwise Tanimoto similarity (iT) with linear complexity (O(N)), making it feasible to analyze massive datasets [25].

- Complementary Similarity: This concept from the iSIM framework involves calculating a library's iT after removing a single molecule. A molecule with low complementary similarity is central to the library (medoid-like), while one with high complementary similarity is an outlier. Analyzing the diversity of these subgroups (e.g., lowest and highest 5th percentiles) over time provides a granular view of chemical space evolution [25].

- Clustering with BitBIRCH: The BitBIRCH algorithm, an adaptation of the BIRCH clustering method for binary fingerprints, allows for efficient clustering of ultra-large libraries. Tracking the formation of new clusters over time helps identify which new compounds are exploring truly novel regions of chemical space [25].
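
The Tanimoto formula and the brute-force average it feeds into can be sketched as below. Fingerprints are represented here as Python sets of "on" bit indices, which is an assumption made for readability; the toy fingerprints and function names are hypothetical, and returning 0.0 for two empty fingerprints is a convention chosen for this sketch.

```python
from itertools import combinations


def tanimoto(fp_a, fp_b):
    """T(A,B) = c / (a + b - c) for fingerprints given as sets of on-bit indices."""
    a, b = len(fp_a), len(fp_b)
    c = len(fp_a & fp_b)
    union = a + b - c
    return c / union if union else 0.0  # convention for two empty fingerprints


def mean_pairwise_tanimoto(fingerprints):
    """Average Tanimoto over all unordered pairs; O(N^2) in library size."""
    pairs = list(combinations(fingerprints, 2))
    if not pairs:
        raise ValueError("need at least two fingerprints")
    return sum(tanimoto(x, y) for x, y in pairs) / len(pairs)


# Toy fingerprints: two similar molecules and one unrelated molecule.
fingerprints = [{1, 2, 3}, {2, 3, 4}, {7, 8}]
print(tanimoto(fingerprints[0], fingerprints[1]))
print(mean_pairwise_tanimoto(fingerprints))
```

The quadratic number of pairs is what motivates the linear-time iSIM approach for libraries above roughly 10⁵ compounds.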

Table 2: Key Metrics for Fingerprint-Based Diversity

| Metric | Description | Interpretation |

|---|---|---|

| Average Pairwise Tanimoto | Mean of all pairwise Tanimoto coefficients between library molecules | Lower average indicates higher fingerprint diversity |

| iSIM Tanimoto (iT) | Efficient, O(N) calculation of the average pairwise Tanimoto for large libraries | Same interpretation as average pairwise, but feasible for massive libraries |

| Complementary Similarity | iT of a library after removing one molecule | Identifies central (low value) and outlier (high value) molecules |

Experimental Protocol: Fingerprint Diversity Analysis

Objective: To evaluate the structural diversity and intrinsic similarity of a compound library using molecular fingerprints and the iSIM framework.

Materials:

- A curated compound library.

- Cheminformatics toolkit with fingerprint capabilities (e.g., RDKit, CDK).

- Computational resources for handling the library size.

- Implementation of the iSIM algorithm (e.g., custom Python script based on published methods [25]).

Procedure:

- Fingerprint Generation: For every compound in the standardized library, compute structural fingerprints. ECFP4 (with a diameter of 4) and MACCS keys are recommended as standard representations for a comprehensive analysis [14].

- Calculate Global Diversity:

- For smaller libraries (<100,000 compounds), compute the full pairwise Tanimoto similarity matrix and report the mean of all off-diagonal elements.

- For larger libraries, implement the iSIM algorithm: arrange all fingerprints in a matrix, sum the "on" bits for each column to create a vector K = [k₁, k₂, ..., kₘ], then calculate iT using the formula: iT = Σ[kᵢ(kᵢ-1)] / Σ[kᵢ(kᵢ-1) + 2kᵢ(N-kᵢ)], where both sums run over i from 1 to M, N is the number of molecules, and M is the number of fingerprint bits [25].

- Identify Chemical Space Regions:

- Calculate the complementary similarity for every molecule in the library.

- Define the "medoids" as molecules in the lowest 5th percentile of complementary similarity and the "outliers" as those in the highest 5th percentile.

- Calculate the internal diversity (iT) of the medoid and outlier sets separately to understand the diversity within the core and periphery of the library's chemical space [25].

- Cluster Analysis:

- Apply the BitBIRCH clustering algorithm to the entire library's fingerprints to group compounds into structurally similar clusters.

- Analyze the distribution of compounds across clusters and note the number of singleton clusters. A large number of small clusters and singletons indicates high diversity [25].
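
The iT formula and the complementary-similarity step from the procedure above can be sketched with plain Python. This is an illustration of the column-sum formula as stated in this protocol, not the reference implementation from [25]; fingerprints are assumed to be sets of on-bit indices, and the function names and toy data are hypothetical.

```python
def column_sums(fingerprints, n_bits):
    """Vector K: number of molecules with each bit switched on."""
    sums = [0] * n_bits
    for fp in fingerprints:
        for bit in fp:
            sums[bit] += 1
    return sums


def isim_tanimoto(sums, n):
    """iT = sum k(k-1) / sum [k(k-1) + 2k(N-k)], computed from column sums."""
    numerator = sum(k * (k - 1) for k in sums)
    denominator = sum(k * (k - 1) + 2 * k * (n - k) for k in sums)
    return numerator / denominator if denominator else 0.0


def complementary_similarities(fingerprints, n_bits):
    """iT of the library with each molecule removed in turn."""
    total = column_sums(fingerprints, n_bits)
    n = len(fingerprints)
    results = []
    for fp in fingerprints:
        reduced = list(total)
        for bit in fp:
            reduced[bit] -= 1  # remove this molecule's contribution
        results.append(isim_tanimoto(reduced, n - 1))
    return results


# Toy library: three related fingerprints and one unrelated fingerprint.
fingerprints = [{0, 1, 2}, {0, 1, 3}, {0, 1, 2, 3}, {4, 5}]
n_bits = 6

it_value = isim_tanimoto(column_sums(fingerprints, n_bits), len(fingerprints))
comp = complementary_similarities(fingerprints, n_bits)
print(round(it_value, 4))
print([round(value, 4) for value in comp])
```

In the toy data, removing the unrelated fingerprint raises the similarity of the remaining set the most, so it has the highest complementary similarity and is classed as the outlier, while the fingerprint sharing the most bits with the others has the lowest value and is medoid-like. Each removal reuses the precomputed column sums, so the whole pass remains linear in the number of molecules.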

Physicochemical Properties and Multi-Dimensional Assessment

Property Ranges and Diversity

While scaffolds and fingerprints describe molecular structure, physicochemical properties directly influence a compound's behavior in biological systems, including its absorption, distribution, metabolism, excretion, and toxicity (ADMET) profile [20]. For a diverse chemogenomics library, ensuring a broad coverage of lead-like and drug-like property space is crucial.

Key properties for analysis include:

- Molecular Weight (MW)

- Calculated Log P (cLogP), measuring lipophilicity

- Number of Hydrogen Bond Donors (HBD)

- Number of Hydrogen Bond Acceptors (HBA)

- Polar Surface Area (PSA)

- Number of Rotatable Bonds (RB) [14] [20]

Diversity is assessed by examining the distribution of compounds within the multi-dimensional space defined by these properties, often using Principal Component Analysis (PCA) to reduce dimensionality for visualization [20].

The Consensus Diversity Plot (CDP): An Integrated View

The Consensus Diversity Plot (CDP) is a powerful method that integrates multiple diversity criteria into a single, two-dimensional visualization, providing a "global diversity" perspective essential for final compound selection [14].

Construction of a CDP:

- Axes: A CDP uses two primary diversity metrics as its axes. A common configuration is to plot scaffold diversity (e.g., SSE or F₅₀ from CSR analysis) on the Y-axis and fingerprint diversity (e.g., average pairwise Tanimoto similarity) on the X-axis [14].

- Data Points: Each data point on the plot represents an entire compound library or a subset thereof.

- Quadrants: The plot can be divided into four quadrants using dashed lines at meaningful threshold values for each axis. This allows for the immediate classification of libraries as high/low in scaffold and fingerprint diversity [14].

- Third Dimension - Physicochemical Properties: A third diversity criterion, such as the diversity of physicochemical properties (measured by the average Euclidean distance in a normalized property space), is incorporated using a continuous color scale for the data points [14].
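
The pieces of a single CDP data point can be assembled as in the sketch below. It assumes z-score scaling as the normalization for the property space and uses caller-supplied quadrant thresholds; both are illustrative choices rather than the settings of the CDP tool in [14], and all names and values are hypothetical.

```python
import math
from itertools import combinations


def zscore_columns(rows):
    """Scale each property column to zero mean and unit variance."""
    scaled_columns = []
    for column in zip(*rows):
        mean = sum(column) / len(column)
        sd = math.sqrt(sum((v - mean) ** 2 for v in column) / len(column))
        scaled_columns.append([(v - mean) / sd if sd else 0.0 for v in column])
    return [list(row) for row in zip(*scaled_columns)]


def mean_euclidean_distance(rows):
    """Average pairwise Euclidean distance (the color dimension of the CDP)."""
    pairs = list(combinations(rows, 2))
    if not pairs:
        raise ValueError("need at least two compounds")
    return sum(math.dist(a, b) for a, b in pairs) / len(pairs)


def cdp_quadrant(mean_similarity, scaffold_diversity, x_threshold, y_threshold):
    """Classify a library; the X-axis is a similarity, so lower means more diverse."""
    fingerprint = "high" if mean_similarity < x_threshold else "low"
    scaffold = "high" if scaffold_diversity > y_threshold else "low"
    return f"{scaffold} scaffold / {fingerprint} fingerprint diversity"


# Hypothetical property rows (MW, cLogP) and summary metrics for one library.
properties = [[250.0, 1.2], [410.0, 2.9], [330.0, 2.0]]
color_value = mean_euclidean_distance(zscore_columns(properties))
print(round(color_value, 3))
print(cdp_quadrant(mean_similarity=0.22, scaffold_diversity=0.85,
                   x_threshold=0.30, y_threshold=0.50))
```

When several libraries are compared on one plot, the scaling would normally be fitted on the pooled compounds of all libraries so that the distances are on a common scale.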

Table 3: Core Physicochemical Properties for Diversity Analysis

| Property | Description | Role in Library Design |

|---|---|---|

| Molecular Weight (MW) | Mass of the molecule | Impacts permeability and solubility; kept in lead-like range. |

| cLogP | Calculated octanol-water partition coefficient | Measure of lipophilicity; critical for ADMET. |

| H-Bond Donors (HBD) | Number of O-H and N-H bonds | Affects membrane permeability and solubility. |

| H-Bond Acceptors (HBA) | Number of O and N atoms | Influences desolvation and target binding. |

| Polar Surface Area (PSA) | Surface area of polar atoms | Strong predictor of cell permeability. |

| Rotatable Bonds (RB) | Number of non-rigid bonds | Related to molecular flexibility and oral bioavailability. |

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table details key software tools and resources essential for conducting the diversity analyses described in this guide.

Table 4: Essential Computational Tools for Diversity Analysis

| Tool / Resource | Type | Primary Function in Diversity Analysis |

|---|---|---|

| MOE (Molecular Operating Environment) | Commercial Software Suite | Data curation, scaffold generation (Murcko, Scaffold Tree via sdfrag), fingerprint calculation, and molecular property calculation [14] [24]. |

| RDKit | Open-Source Cheminformatics Library | Programmatic data curation, generation of Murcko frameworks and fingerprints (ECFP, etc.), and calculation of molecular descriptors. The core engine for many custom scripts and workflows [24]. |

| Pipeline Pilot | Scientific Workflow Platform | Used to build automated, reproducible workflows for data curation, fragment generation, and diversity metric calculation [22] [24]. |

| Consensus Diversity Plot (CDP) Web Tool | Online Application | Freely available web service for generating Consensus Diversity Plots to integrate and visualize multiple diversity metrics [14]. |

| iSIM / BitBIRCH Algorithm | Computational Method | A specific algorithmic framework for efficiently calculating the intrinsic similarity (iT) and clustering ultra-large compound libraries (e.g., >10⁶ compounds) that are otherwise intractable with traditional methods [25]. |

| Tree Maps / SAR Maps Software | Visualization Tool | Software functionality (often within broader platforms) for creating Tree Maps to visualize scaffold distribution and SAR Maps to link structural similarity with activity data [23] [24]. |

The strategic selection of compounds for a diverse chemogenomics library demands a multi-faceted approach that moves beyond simple counts. By systematically applying the quantitative metrics and experimental protocols outlined for scaffold diversity, structural fingerprint diversity, and physicochemical property space, researchers can make data-driven decisions.

The ultimate power lies in integration. Tools like the Consensus Diversity Plot (CDP) [14] and advanced clustering algorithms like BitBIRCH [25] enable a holistic "global diversity" assessment, ensuring that a selected library is not merely large, but is genuinely diverse across multiple complementary representations of chemical space. This rigorous, metrics-driven foundation maximizes the probability of success in high-throughput screening and the subsequent identification of novel chemical probes and drug leads across the proteome.

The paradigm of drug discovery has progressively shifted from a reductionist, "one target–one drug" approach to a holistic, systems-level perspective that acknowledges complex diseases arise from multifactorial molecular abnormalities [1]. Systems biology provides the foundational framework for this transition, enabling the integration of heterogeneous biological data to elucidate complex target-pathway-disease relationships. For chemogenomics library research—which utilizes diverse chemical probes to interrogate biological systems—this integrative approach is transformative. It facilitates the strategic selection of compounds that collectively cover a wide swath of the druggable genome and are rationally linked to disease-relevant biological pathways [1] [9].

The core objective of integrating systems biology into chemogenomics is to move beyond single-target screening toward a network-based understanding of compound action. This involves constructing multi-scale models that connect a compound's protein targets to its effects on intracellular pathways, cellular phenotypes, and ultimately, disease outcomes [1] [27]. Such an approach is particularly vital for precision oncology, where patient-specific vulnerabilities often stem from complex, rewired regulatory networks rather than isolated genetic lesions [9]. This guide details the core methodologies, data integration strategies, and experimental protocols for implementing a systems biology-driven framework in chemogenomics library design and analysis.

Core Methodologies for Mapping Relationships

Network Pharmacology and Multi-Omics Integration

Network pharmacology is an interdisciplinary approach that integrates systems biology, omics technologies, and computational methods to identify and analyze multi-target drug interactions [27]. It serves as a primary tool for mapping target-pathway-disease relationships by constructing and analyzing heterogeneous biological networks.

- Data Integration: Successful network construction relies on synthesizing data from multiple sources. Key databases include:

- ChEMBL: A repository of bioactive molecules with their curated protein targets and bioactivities (e.g., IC50, Ki) [1].

- KEGG & GO: Provide structured knowledge on biological pathways, molecular functions, and cellular components [1].

- Disease Ontology (DO): Offers a standardized classification of human diseases, enabling consistent disease annotation [1].

- STRING: A database of known and predicted protein-protein interactions (PPIs), crucial for understanding functional relationships between targets [28] [27].

- Network Construction and Analysis: Data from these sources are integrated into a unified graph database, such as Neo4j, where nodes represent entities (e.g., compounds, targets, pathways, diseases) and edges represent their relationships (e.g., "binds," "participates-in," "treats") [1]. Analyzing these networks involves identifying highly connected regions (clusters) and central nodes (hubs) that often represent key functional modules or critical regulatory points in disease biology. Gene Ontology (GO) and KEGG pathway enrichment analyses are then performed on these clusters to infer biological meaning [1].
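The network construction and hub analysis described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the Neo4j implementation cited in [1]: the edge list, node names (`cmpd_A`, `TARGET_1`, `pathway_X`, `disease_D`), and the "interacts-with" and "associated-with" relation labels are hypothetical placeholders, and node degree is used as the simplest possible hub measure.

```python
from collections import defaultdict

# Hypothetical (head, relation, tail) edges. In practice these would be
# exported from sources such as ChEMBL, KEGG, Disease Ontology, and STRING.
EDGES = [
    ("cmpd_A", "binds", "TARGET_1"),
    ("cmpd_A", "binds", "TARGET_2"),
    ("cmpd_B", "binds", "TARGET_1"),
    ("cmpd_C", "binds", "TARGET_1"),
    ("TARGET_1", "participates-in", "pathway_X"),
    ("TARGET_2", "participates-in", "pathway_X"),
    ("TARGET_1", "interacts-with", "TARGET_2"),
    ("pathway_X", "associated-with", "disease_D"),
]


def build_adjacency(edges):
    """Return an undirected adjacency map: node -> set of neighbouring nodes."""
    adjacency = defaultdict(set)
    for head, _relation, tail in edges:
        adjacency[head].add(tail)
        adjacency[tail].add(head)
    return adjacency


def top_hubs(adjacency, n=3):
    """Rank nodes by degree (distinct neighbours), ties broken by name."""
    ranked = sorted(adjacency.items(), key=lambda item: (-len(item[1]), item[0]))
    return [(node, len(neighbours)) for node, neighbours in ranked[:n]]


adjacency = build_adjacency(EDGES)
hubs = top_hubs(adjacency)
print(hubs)
```

Degree is only one notion of centrality; a production analysis would typically run inside the graph database or a dedicated network library and combine several centrality and clustering measures.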

Knowledge Graphs and Automated Reasoning

Biological knowledge graphs represent an advanced evolution of network models, formalizing entities and their relationships into (head, relation, tail) triples [29]. This structured format enables the application of knowledge base completion (KBC) models, which can predict novel, unseen relationships—such as new drug-disease treatments—by reasoning across the graph.

- Rule-Based Reasoning: Symbolic KBC models, such as AnyBURL, learn logical rules that explain connections within the graph [29]. For example, a rule might state:

compound_treats_disease(X, Y) ⇐ compound_binds_gene(X, A), gene_involved_in_pathway(A, B), pathway_associated_with_disease(B, Y).

This provides an interpretable, biological rationale for a predicted drug repositioning.

- Automated Evidence Generation: A significant challenge is the generation of overwhelmingly large numbers of evidence paths, many of which are biologically irrelevant. Automated filtering pipelines address this by incorporating disease-specific "landscape" data (e.g., key genes and pathways known to be important for a disease like cystic fibrosis or Parkinson's) to prioritize the most mechanistically meaningful evidence chains for expert review [29]. This approach was experimentally validated, showing strong correlation between automatically extracted paths and preclinical experimental data [29].
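To make the rule and the filtering step concrete, the sketch below grounds the example rule body against a toy set of triples and then keeps only the evidence paths that touch a disease "landscape". It is an illustration only: it does not use AnyBURL or reproduce the pipeline in [29]; all entity names are hypothetical, and the filter criterion (a path is kept if its gene or its pathway is in the landscape) is an assumption chosen for simplicity.

```python
from collections import defaultdict

# Hypothetical knowledge-graph triples (head, relation, tail).
TRIPLES = [
    ("cmpd_A", "compound_binds_gene", "GENE_1"),
    ("cmpd_A", "compound_binds_gene", "GENE_2"),
    ("cmpd_B", "compound_binds_gene", "GENE_3"),
    ("GENE_1", "gene_involved_in_pathway", "pathway_X"),
    ("GENE_2", "gene_involved_in_pathway", "pathway_Y"),
    ("GENE_3", "gene_involved_in_pathway", "pathway_Y"),
    ("pathway_X", "pathway_associated_with_disease", "disease_D"),
    ("pathway_Y", "pathway_associated_with_disease", "disease_D"),
]


def index_by_relation(triples):
    """Build relation -> head -> set of tails for fast lookups."""
    index = defaultdict(lambda: defaultdict(set))
    for head, relation, tail in triples:
        index[relation][head].add(tail)
    return index


def evidence_paths(index):
    """Yield (compound, gene, pathway, disease) chains satisfying the rule body."""
    for compound, genes in index["compound_binds_gene"].items():
        for gene in genes:
            for pathway in index["gene_involved_in_pathway"].get(gene, ()):
                for disease in index["pathway_associated_with_disease"].get(pathway, ()):
                    yield (compound, gene, pathway, disease)


def filter_by_landscape(paths, landscape_genes, landscape_pathways):
    """Keep paths whose gene or pathway appears in the disease landscape."""
    return [p for p in paths if p[1] in landscape_genes or p[2] in landscape_pathways]


index = index_by_relation(TRIPLES)
all_paths = sorted(evidence_paths(index))
kept = filter_by_landscape(all_paths, landscape_genes={"GENE_1"}, landscape_pathways=set())
print(len(all_paths), kept)
```

Each surviving path doubles as a human-readable explanation for the predicted `compound_treats_disease` link, which is the property that makes rule-based models attractive for expert review.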

Computational Target Discovery and Validation

Systems biology combines computational methods with complementary experimental techniques for initial target discovery, hypothesis generation, and validation.

- Machine Learning (ML) for Drug-Target Interaction (DTI) Prediction: ML models are trained on known drug-target interactions to predict novel interactions for new drug or target candidates. These methods use various molecular descriptors of drugs and biological features of proteins to learn complex patterns [30].

- Molecular Docking: This computational technique predicts the binding affinity and orientation of a small molecule within a protein's binding site. In a study targeting the Oropouche virus, molecular docking using PyRx software was used to evaluate the binding of compounds like Acetohexamide and Deptropine to prioritized host targets (e.g., IL10, FASLG), helping to rationalize their potential efficacy before experimental validation [28].

- Drug Affinity Responsive Target Stability (DARTS): This experimental method identifies potential protein targets of a small molecule by exploiting the principle that a protein's stability against proteolysis often increases upon ligand binding. DARTS is label-free and can be applied to complex cell lysates, making it a valuable tool for deconvoluting the mechanisms of action of compounds identified in phenotypic screens [30].
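As a rough illustration of the DTI prediction idea in the first bullet above, the sketch below uses a similarity-weighted nearest-neighbour vote, one of the simplest learning schemes. It is a stand-in chosen for brevity, not the models reviewed in [30]: fingerprints are represented as hypothetical sets of "on" bit indices, and the compound names, targets, and the choice of `k` are all assumptions.

```python
def tanimoto(a, b):
    """Tanimoto coefficient between two fingerprints given as sets of on-bits."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


# Hypothetical training data: compound -> (fingerprint on-bits, known targets).
KNOWN = {
    "cmpd_A": ({1, 4, 7, 9}, {"TARGET_1"}),
    "cmpd_B": ({1, 4, 8}, {"TARGET_1", "TARGET_2"}),
    "cmpd_C": ({2, 3, 5}, {"TARGET_3"}),
}


def predict_targets(query_bits, known, k=2):
    """Score targets by the summed similarity of the k most similar known ligands."""
    neighbours = sorted(
        ((tanimoto(query_bits, bits), name) for name, (bits, _targets) in known.items()),
        reverse=True,
    )[:k]
    scores = {}
    for similarity, name in neighbours:
        for target in known[name][1]:
            scores[target] = scores.get(target, 0.0) + similarity
    # Highest score first; ties broken alphabetically for reproducibility.
    return sorted(scores.items(), key=lambda item: (-item[1], item[0]))


query = {1, 4, 7}
predictions = predict_targets(query, KNOWN)
print(predictions)
```

Predictions of this kind are hypotheses only; in the workflow described here they would feed into docking and into experimental methods such as DARTS for confirmation.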

Quantitative Data and Experimental Protocols

Structured Data for Chemogenomics

Table 1: Key Databases for Building Target-Pathway-Disease Networks

| Database Name | Primary Content | Application in Network Building |
|---|---|---|
| ChEMBL [1] | Bioactive molecules, protein targets, bioactivities (IC50, Ki) | Core source for compound-target relationships. |
| KEGG [1] | Manually drawn pathway maps for metabolism, disease, etc. | Annotates targets with pathway membership. |
| Gene Ontology (GO) [1] | Standardized terms for biological processes, molecular functions, cellular components | Provides functional annotation for protein targets. |
| Disease Ontology (DO) [1] | Structured classification of human disease terms | Enables consistent disease annotation. |
| STRING [27] | Known and predicted protein-protein interactions (PPIs) | Informs on functional protein complexes and networks. |

Table 2: Compounds Prioritized by a Systems Biology Workflow and Evaluated by Molecular Docking (Case Study: Oropouche Virus) [28]

| Compound | Molecular Weight (g/mol) | Key Prioritized Host Targets | Reported Binding Affinity |
|---|---|---|---|
| Acetohexamide | 324.40 | IL10, FASLG, PTPRC, FCGR3A | Strong binding to multiple targets |
| Deptropine | 333.47 | IL10, FASLG, PTPRC, FCGR3A | Strong binding to multiple targets |
| Methotrexate | 454.44 | Dihydrofolate reductase (implicit) | Evaluated in docking studies |
| Retinoic Acid | 300.44 | Nuclear receptors (implicit) | Evaluated in docking studies |

Detailed Experimental Protocols

Protocol 1 outlines the computational and experimental steps for identifying host-targeted therapeutics, as applied to the Oropouche virus [28].

Identification of Virus-Associated Host Targets:

- Step 1: Retrieve a list of human genes known to interact with the virus or play a role in its life cycle from databases like OMIM and GeneCards.

- Step 2: Remove duplicate entries and map the remaining genes to standardized identifiers in the UniProt database (restricting to Homo sapiens).

Drug Prediction and Compound Selection:

- Step 3: Use the DSigDB database with the list of mapped host targets to predict compounds that may modulate these targets.

- Step 4: Filter the resulting compounds using Lipinski's Rule of Five (and other drug-likeness criteria) to prioritize molecules with favorable pharmacokinetic properties. Exclude compounds with known toxicity issues.
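The filtering in Step 4 can be sketched as follows. This is a minimal example, assuming the descriptors have already been computed by a cheminformatics toolkit; the compound names, descriptor values, and toxicity list are hypothetical, and tolerating a single violation is a common convention rather than something specified by the source.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Descriptors:
    name: str
    mol_weight: float  # g/mol
    log_p: float       # calculated octanol-water partition coefficient
    h_donors: int      # hydrogen-bond donors
    h_acceptors: int   # hydrogen-bond acceptors


def lipinski_violations(d: Descriptors) -> int:
    """Count how many of the four Rule of Five thresholds are exceeded."""
    return sum([
        d.mol_weight > 500,
        d.log_p > 5,
        d.h_donors > 5,
        d.h_acceptors > 10,
    ])


def select_candidates(candidates, toxic_names, max_violations=1):
    """Keep compounds within the violation budget that are not flagged as toxic."""
    return [
        d.name
        for d in candidates
        if lipinski_violations(d) <= max_violations and d.name not in toxic_names
    ]


# Hypothetical descriptor values, for illustration only.
CANDIDATES = [
    Descriptors("cmpd_A", 324.4, 2.0, 2, 5),
    Descriptors("cmpd_B", 720.0, 6.2, 3, 12),
    Descriptors("cmpd_C", 410.0, 3.1, 1, 6),
]
KNOWN_TOXIC = {"cmpd_C"}

selected = select_candidates(CANDIDATES, KNOWN_TOXIC)
print(selected)
```

Here `cmpd_B` is rejected for three violations and `cmpd_C` passes the rule but is removed by the toxicity flag, which mirrors the two-stage filter in the protocol.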

Protein-Protein Interaction (PPI) Network Analysis:

- Step 5: Analyze the host targets using the STRING database and Cytoscape software to construct a PPI network.

- Step 6: Identify densely connected clusters and central hubs within the network. Perform functional enrichment analysis (e.g., GO, KEGG) on these clusters to identify critical, dysregulated biological pathways (e.g., Fc-gamma receptor signaling, T-cell receptor signaling).

Molecular Docking Validation:

- Step 7: Select the 3D structures of the prioritized host targets from the Protein Data Bank (PDB).

- Step 8: Perform molecular docking simulations using software such as PyRx to evaluate the binding affinities and modes of the selected small molecules against the target proteins.

- Step 9: Prioritize compounds based on computed binding energies and the biological plausibility of the binding poses.

Experimental Validation:

- Step 10: Confirm the predicted antiviral efficacy and mechanism of action of the top-ranking compounds through in vitro and in vivo experiments (not covered in detail by the source).

Protocol 2 describes the use of a systems-annotated chemogenomic library in a phenotypic screen.

Library Curation:

- Step 1: Assemble a diverse collection of small molecules representing a wide range of pharmacological targets. For example, a minimal screening library might contain ~1,200 compounds targeting over 1,300 anticancer proteins [9].

- Step 2: Annotate each compound with its known protein targets and associated pathways using databases like ChEMBL.

- Step 3: To ensure structural diversity, use software like ScaffoldHunter to classify compounds based on their core chemical scaffolds.

Cell-Based Phenotypic Screening:

- Step 4: Plate disease-relevant cells (e.g., patient-derived glioma stem cells) in multiwell plates.

- Step 5: Treat cells with the compounds from the chemogenomic library. Include appropriate controls (e.g., DMSO vehicle).

- Step 6: After a predetermined incubation period, stain the cells with a panel of fluorescent dyes (e.g., as used in the Cell Painting assay) and image them using a high-throughput microscope [1].

Morphological Profiling and Data Analysis:

- Step 7: Use automated image analysis software (e.g., CellProfiler) to extract quantitative morphological features (e.g., cell size, shape, texture, granularity) for each cell and treatment condition.

- Step 8: Generate a morphological profile for each compound by aggregating feature data across cells.

- Step 9: Cluster compounds based on the similarity of their morphological profiles. Compounds with similar profiles are predicted to share mechanisms of action.
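Steps 8 and 9 can be illustrated with a small, self-contained sketch. It assumes the per-cell features have already been extracted and normalized against the DMSO controls; the feature values, the per-feature median as the aggregation, cosine similarity as the metric, and the 0.9 linkage threshold are all illustrative assumptions rather than settings taken from the source.

```python
from math import sqrt
from statistics import median

# Hypothetical per-cell feature vectors (e.g., size, texture, granularity).
CELLS = {
    "cmpd_A": [[1.0, 0.2, -0.5], [1.2, 0.0, -0.7], [0.8, 0.1, -0.6]],
    "cmpd_B": [[0.9, 0.1, -0.4], [1.1, 0.2, -0.6], [1.0, 0.0, -0.5]],
    "cmpd_C": [[-0.8, 1.0, 0.3], [-1.0, 1.2, 0.2], [-0.9, 0.9, 0.4]],
}


def aggregate_profile(cells):
    """Collapse per-cell vectors into one profile using per-feature medians."""
    return [median(values) for values in zip(*cells)]


def cosine_similarity(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm = sqrt(sum(x * x for x in a)) * sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def group_by_similarity(profiles, threshold=0.9):
    """Single-linkage grouping: join compounds whose similarity >= threshold."""
    names = sorted(profiles)
    parent = {name: name for name in names}

    def find(name):
        # Follow parent links to the group representative, compressing the path.
        while parent[name] != name:
            parent[name] = parent[parent[name]]
            name = parent[name]
        return name

    for i, a in enumerate(names):
        for b in names[i + 1:]:
            if cosine_similarity(profiles[a], profiles[b]) >= threshold:
                parent[find(b)] = find(a)

    groups = {}
    for name in names:
        groups.setdefault(find(name), []).append(name)
    return sorted(groups.values())


profiles = {name: aggregate_profile(cells) for name, cells in CELLS.items()}
groups = group_by_similarity(profiles)
print(groups)
```

With real screening data, the same two steps are usually followed by feature selection and a more robust clustering method, but the logic of "aggregate, compare, group" stays the same.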

Target and Mechanism Deconvolution:

- Step 10: For hits of interest from the screen, use the pre-built systems pharmacology network to connect the compound to its known targets and associated pathways.

- Step 11: Perform GO and KEGG pathway enrichment analysis on the collective set of targets for a given phenotypic cluster to infer the biological processes and pathways underlying the observed phenotype [1].
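The enrichment analysis in Step 11 (and the cluster-level enrichment in the PPI protocol) is commonly framed as a one-sided hypergeometric test. The sketch below shows that calculation under stated assumptions: the gene identifiers, background size, and pathway membership are hypothetical, and a single pathway is tested, so no multiple-testing correction is shown even though one is needed when many GO terms or KEGG pathways are tested together.

```python
from math import comb


def hypergeom_tail(n_background, n_annotated, n_drawn, n_overlap):
    """P(X >= n_overlap) when drawing n_drawn genes without replacement."""
    upper = min(n_annotated, n_drawn)
    favourable = sum(
        comb(n_annotated, k) * comb(n_background - n_annotated, n_drawn - k)
        for k in range(n_overlap, upper + 1)
    )
    return favourable / comb(n_background, n_drawn)


def enrichment_p_value(target_set, pathway_genes, background):
    """Over-representation p-value of pathway_genes within target_set."""
    targets = target_set & background
    pathway = pathway_genes & background
    overlap = len(targets & pathway)
    return hypergeom_tail(len(background), len(pathway), len(targets), overlap)


# Hypothetical universe of 20 genes, 5 of which belong to the pathway.
background = {f"G{i}" for i in range(20)}
pathway_genes = {"G0", "G1", "G2", "G3", "G4"}
# Pooled annotated targets of the compounds in one phenotypic cluster.
cluster_targets = {"G0", "G1", "G2", "G10"}

p_value = enrichment_p_value(cluster_targets, pathway_genes, background)
print(round(p_value, 4))
```

The choice of background matters: restricting it to the targets actually covered by the library, rather than the whole genome, avoids inflating significance for pathways the library was designed to hit.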

Signaling Pathways and Workflow Visualization

Systems Biology Workflow for Chemogenomics

[Figure: integrated computational and experimental workflow for applying systems biology in chemogenomics library research, from initial data integration to experimental validation.]

Knowledge Graph Reasoning for Drug Repurposing

[Figure: use of a biological knowledge graph and rule-based reasoning to generate explainable drug repurposing hypotheses, followed by automated filtering to isolate biologically meaningful evidence.]

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Software and Database Resources

| Tool/Resource | Type | Primary Function in Research |
|---|---|---|
| Cytoscape [28] [27] | Software Platform | Network visualization and analysis; used for constructing and analyzing PPI and drug-target networks. |
| STRING [28] [27] | Database & Web Tool | Provides a database of known and predicted PPIs, essential for building functional association networks. |
| PyRx [28] | Software Tool | A platform for virtual screening and molecular docking, used to evaluate compound-target binding. |
| Neo4j [1] | Database | A graph database management system used to store and query complex network pharmacology data. |
| CellProfiler [1] | Software Tool | Automated image analysis software for extracting quantitative morphological features from cellular images. |
| ChEMBL [1] | Database | A manually curated database of bioactive molecules with drug-like properties, providing compound-target annotations. |
| AnyBURL [29] | Algorithm | A symbolic, rule-based knowledge graph completion model used for generating explainable drug-disease predictions. |

Application in Chemogenomics Library Research

Integrating systems biology into chemogenomics library design transforms it from a collection of chemicals into a targeted, hypothesis-generating system. The overarching goal is to create a library where compounds are not only structurally diverse but also strategically chosen to perturb a wide range of disease-relevant biological pathways [9].

A practical application involves designing a minimal screening library for precision oncology. This process involves:

- Target Space Definition: Compiling a comprehensive list of proteins implicated in various cancers from literature and databases.

- Compound Selection and Annotation: Selecting bioactive small molecules that collectively cover this target space. Analytics are applied to optimize for library size, cellular activity, chemical diversity, and target selectivity [9].