Strategic Compound Selection: A Modern Framework for Identifying Potent, Target-Specific Hits in Drug Discovery

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on the strategic selection of potent compounds for specific biological targets. It covers the foundational principles of target identification and validation, explores modern methodological approaches including High-Throughput Screening (HTS) and in silico methods, addresses critical troubleshooting and optimization strategies to mitigate common pitfalls, and outlines robust validation frameworks for confirming compound efficacy and specificity. By integrating the latest advancements in AI, computational chemistry, and functional assays, this resource offers a holistic framework designed to improve the efficiency and success rates of early-stage drug discovery campaigns.

Laying the Groundwork: From Target Identification to Druggability Assessment

Target identification is a critical early step in the drug discovery pipeline. It requires collaboration among experts from several disciplines to define disease mechanisms and to evaluate candidate therapeutic targets on efficacy, safety, and competitive landscape. Biomedical literature serves as a foundational resource for this process, where associations between biological entities reported across millions of scientific publications can reveal fundamental drivers of disease pathogenesis and untapped therapeutic opportunities. This technical support center provides troubleshooting guidance and methodological frameworks for researchers navigating the complexities of target identification through biomedical data mining and genetic association studies.

Troubleshooting Guides

Problem: Insufficient or Weak Gene-Disease Association Signals

Question: "My data mining efforts are returning weak or inconsistent gene-disease associations. What could be causing this issue?"

Answer: Weak association signals often stem from incomplete data extraction or suboptimal analytical approaches. Consider these solutions:

Expand Text Mining Scope: Implement comprehensive named entity recognition (NER) and normalization (NEN) systems to identify human genes, diseases, cell types, and drugs across the entire PubMed corpus of over 39 million abstracts, not just limited subsets [1].

Apply Statistical Significance Scoring: Utilize quantitative scoring schemas that calculate the statistical significance of entity co-occurrences rather than relying solely on frequency counts [1].

Leverage Specialized NLP Frameworks: Employ established biomedical natural language processing pipelines like SciLinker, which applies pre-trained models including Stanza's BiLSTM-CNN-Char architecture for entity recognition (F1 score: 88.08 for diseases/drugs) and PubMedBERT for relationship extraction [1].

Validate with Clinical Data: Confirm that your text mining results show enrichment of clinically validated targets, which serves as an important validation step for identified associations [1].
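The statistical scoring idea above can be illustrated with a small sketch. The scoring schema used in [1] is not described here, so the one-sided hypergeometric test below is an assumption chosen for illustration, and the function name and counts are hypothetical.

```python
from math import comb


def cooccurrence_pvalue(n_total, n_gene, n_disease, n_both):
    """Upper-tail hypergeometric p-value, P(X >= n_both).

    n_total   : abstracts in the corpus
    n_gene    : abstracts mentioning the gene
    n_disease : abstracts mentioning the disease
    n_both    : abstracts mentioning both
    """
    max_overlap = min(n_gene, n_disease)
    denominator = comb(n_total, n_disease)
    # Sum the probability of every overlap at least as large as the observed one.
    tail = sum(
        comb(n_gene, k) * comb(n_total - n_gene, n_disease - k)
        for k in range(n_both, max_overlap + 1)
    )
    return tail / denominator


# Toy corpus: 1,000 abstracts, 50 mention the gene, 40 the disease, 10 both.
# By chance alone about 2 shared abstracts would be expected (50 * 40 / 1000).
p = cooccurrence_pvalue(1000, 50, 40, 10)
print(f"p-value for 10 co-mentions: {p:.2e}")
```

A pair that co-occurs often only because both entities are very common will receive a large p-value, which is the advantage over raw frequency counts. At the scale of the full PubMed corpus the exact binomial coefficients become very large, so a log-space calculation or a statistics library would be the more practical choice.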

Problem: High Background Noise in Association Data

Question: "My association data contains excessive background noise, making specific signals difficult to distinguish. How can I improve signal-to-noise ratio?"

Answer: High background noise typically indicates issues with specificity in either data collection or analysis:

Optimize Entity Normalization: Ensure all recognized biological entities are properly normalized to standardized terminologies like the Unified Medical Language System (UMLS) to minimize false positives from synonym variations [1].

Implement Relationship Extraction: Move beyond simple co-occurrence statistics by applying fine-tuned BERT-based models (BioBERT, SciBERT, PubMedBERT) that can extract specific relationship types rather than just co-mention [1].

Utilize Multi-modal Data Integration: Integrate text-derived knowledge with multi-omics data streams to create corroborating evidence across different data types [1].

Apply Precision Filtering: Use modular NLP framework designs that allow for expansion to additional entities and text corpora, enabling more precise filtering of irrelevant associations [1].

Problem: Inconsistent Results Across Different Mining Approaches

Question: "I'm getting conflicting association results when using different text mining tools. How should I resolve these discrepancies?"

Answer: Inconsistent results often arise from methodological differences that can be addressed through:

Standardized Evaluation Framework: Apply consistent evaluation metrics across all mining approaches, focusing on precision and recall measures specific to biomedical entity recognition [1].

Benchmark Against Gold Standards: Compare results against established biomedical relationship databases and known pathway associations to calibrate different mining methods [1].

Hybrid Methodology Implementation: Combine co-occurrence-based models with rule-based and machine learning approaches to leverage the strengths of each method while mitigating their individual limitations [1].

Frequently Asked Questions (FAQs)

Q: What are the main computational approaches for extracting gene-disease associations from literature?

A: The primary approaches fall into three categories: (1) Co-occurrence-based models that quantify relationships based on statistical co-occurrence in texts; (2) Rule-based approaches using predefined patterns and linguistic structures; and (3) Machine learning methods, particularly deep neural networks (CNNs, RNNs) and pre-trained language models (BioBERT, SciBERT, PubMedBERT) that learn relationship patterns from annotated data [1].

Q: How can I assess the quality of compounds for screening in target validation?

A: High-quality screening compounds should meet these criteria: compliance with Lipinski's Rule of Five and Veber criteria for drug-likeness, exclusion of PAINS (pan-assay interference compounds), toxic, reactive, and unstable compounds, purity confirmation (≥90% by LCMS/NMR), and validation in relevant biological assays [2].
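As a minimal sketch of the drug-likeness part of these criteria, the filter below applies the commonly cited Rule of Five and Veber cutoffs to precomputed descriptors. The class, function names, and example values are hypothetical; in practice the descriptors would come from a cheminformatics toolkit, and PAINS, reactivity, and purity checks would be handled separately.

```python
from dataclasses import dataclass


@dataclass
class Descriptors:
    mol_weight: float      # Da
    logp: float            # calculated octanol-water partition coefficient
    h_donors: int          # hydrogen-bond donors
    h_acceptors: int       # hydrogen-bond acceptors
    rotatable_bonds: int
    tpsa: float            # topological polar surface area, in square angstroms


def lipinski_violations(d):
    """Count Rule of Five violations (MW > 500, logP > 5, HBD > 5, HBA > 10)."""
    return sum([d.mol_weight > 500, d.logp > 5, d.h_donors > 5, d.h_acceptors > 10])


def passes_veber(d):
    """Veber criteria: at most 10 rotatable bonds and TPSA of at most 140."""
    return d.rotatable_bonds <= 10 and d.tpsa <= 140


def is_drug_like(d, max_ro5_violations=0):
    """Combine both filters; the allowed number of violations is a policy choice."""
    return lipinski_violations(d) <= max_ro5_violations and passes_veber(d)


# Illustrative descriptor values, not measurements of real compounds.
small_polar = Descriptors(310.4, 2.1, 2, 5, 4, 78.0)
large_greasy = Descriptors(910.0, 6.3, 3, 12, 15, 190.0)
print(is_drug_like(small_polar), is_drug_like(large_greasy))
```

Some groups tolerate a single Rule of Five violation; the `max_ro5_violations` parameter is there so that the strictness can be set explicitly rather than hard-coded.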

Q: What are the key considerations when building a screening compound library?

A: Essential considerations include: structural diversity to efficiently cover chemical space, adequate collection size (commercial libraries range from thousands to over 4.6 million compounds), proper storage conditions (DMSO solutions at specified concentrations), quality control protocols, and format flexibility (96- to 1536-well microplates) [3] [2].

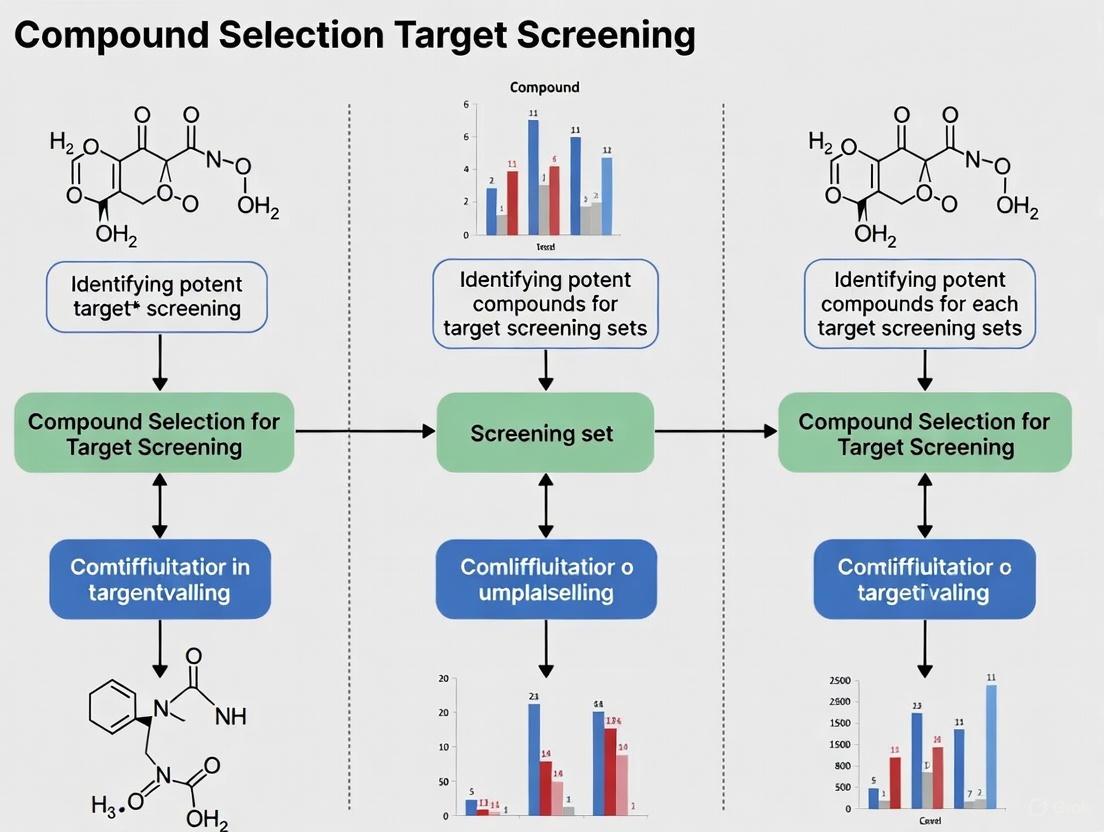

Workflow Visualization

Figure: Target Identification and Validation Pipeline

Figure: Biomedical Data Mining Architecture

Research Reagent Solutions

Table: Essential Resources for Target Identification and Screening

| Resource Type | Specific Examples | Key Features/Applications | Quality Metrics |
|---|---|---|---|
| Screening Compound Libraries | Enamine Screening Collection (4.67M compounds) [3] | HTS Collection (1.77M), Legacy Collection (1.73M), Advanced Collection (880K) | ≥90% purity (LCMS/NMR), drug-like filters, PAINS-free |
| Pre-plated Compound Sets | Life Chemicals Screening Sets [2] | 10 mM DMSO solutions, 96-/384-well formats, custom concentrations | Rule of Five compliant, Veber criteria, structural diversity |
| Text Mining Tools | SciLinker NLP Framework [1] | Modular pipeline, UMLS normalization, relationship extraction | F1 score: 88.08 (disease/drug), 84.34 (gene/cell type) |
| Named Entity Recognition | Stanza NER Models [1] | BiLSTM-CNN-Char architecture, pre-trained on BC5CDR/BioNLP13CG | Multiple entity type recognition |
| Relationship Extraction | PubMedBERT [1] | Fine-tuned for biomedical relationships, co-occurrence scoring | Statistical significance quantification |

Experimental Protocols

Protocol 1: Large-Scale Literature Mining for Gene-Disease Associations

Methodology:

- Corpus Acquisition: Download PubMed Baseline XML files from NCBI's FTP server, including daily update files for current data [1].

- Text Preprocessing: Implement tokenization, part-of-speech tagging, and dependency parsing using spaCy, Stanza, and scispaCy libraries [1].

- Named Entity Recognition: Apply Stanza's pretrained biomedical NER models - the BC5CDR model (F1: 88.08) for disease/drug identification and BioNLP13CG model (F1: 84.34) for gene/cell type recognition [1].

- Entity Normalization: Map all recognized entities to Unified Medical Language System (UMLS) terminology to ensure consistent nomenclature [1].

- Relationship Extraction: Process co-occurrence sentences using fine-tuned PubMedBERT model specifically trained for gene-disease relationship extraction [1].

- Statistical Scoring: Apply significance scoring schema to quantify statistical relevance of identified associations, focusing on p-values and enrichment scores [1].

Quality Control:

- Validate against known gene-disease associations from curated databases

- Measure precision/recall using manually annotated gold standard datasets

- Verify enrichment of clinically validated targets in results
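The precision/recall check in the quality control list can be sketched as follows. The gene and disease labels are placeholders rather than real annotations, and the function name is hypothetical.

```python
def precision_recall_f1(predicted, gold):
    """Score predicted (gene, disease) pairs against a gold-standard set."""
    predicted, gold = set(predicted), set(gold)
    true_positives = len(predicted & gold)
    precision = true_positives / len(predicted) if predicted else 0.0
    recall = true_positives / len(gold) if gold else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1


# Placeholder pairs: 4 mined associations, 5 manually annotated ones, 3 shared.
mined = [("GENE_A", "disease_1"), ("GENE_B", "disease_1"),
         ("GENE_C", "disease_2"), ("GENE_D", "disease_3")]
annotated = [("GENE_A", "disease_1"), ("GENE_B", "disease_1"),
             ("GENE_C", "disease_2"), ("GENE_E", "disease_2"),
             ("GENE_F", "disease_4")]

p, r, f1 = precision_recall_f1(mined, annotated)
print(f"precision={p:.2f} recall={r:.2f} F1={f1:.2f}")
```

Comparing pairs only after entity normalization (for example, to UMLS identifiers) avoids penalizing a tool for reporting a synonym of the annotated term.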

Protocol 2: Compound Library Preparation for Target Screening

Methodology:

- Library Selection: Choose appropriate screening collection based on target characteristics and assay requirements [3].

- Compound Acquisition: Source compounds as neat samples (50-150 mg) or pre-plated DMSO solutions (typically 10mM) [3].

- Quality Verification: Confirm compound purity (≥90%) through LCMS and/or 1H NMR analysis [3].

- Reformatting: Transfer compounds to appropriate assay format (96-, 384-, or 1536-well microplates) using liquid handling systems [2].

- Storage Management: Maintain compounds at the proper temperature (-20°C for DMSO solutions) in barcoded containers to track inventory, and aliquot stocks to minimize freeze-thaw cycles [3].

Quality Control:

- Confirm concentration accuracy through random sampling

- Verify compound structural integrity after storage

- Validate biological activity using reference standards
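For the reformatting step, the transfer volume from a DMSO stock follows from C1·V1 = C2·V2. The sketch below assumes the well volume is the final assay volume and that the stock is added directly without an intermediate dilution; the function names and the example numbers are illustrative only.

```python
def stock_volume_nl(stock_mm, final_um, well_volume_ul):
    """Volume of stock (nL) needed to reach final_um in a well of well_volume_ul."""
    stock_um = stock_mm * 1000.0  # mM -> uM
    if final_um > stock_um:
        raise ValueError("final concentration cannot exceed the stock concentration")
    volume_ul = final_um * well_volume_ul / stock_um  # C2 * V2 / C1
    return volume_ul * 1000.0  # uL -> nL


def dmso_percent(transfer_nl, well_volume_ul):
    """Final DMSO content (% v/v) contributed by the transferred stock."""
    return transfer_nl / (well_volume_ul * 1000.0) * 100.0


# Example: 10 mM stock, 10 uM final concentration, 50 uL final assay volume.
transfer = stock_volume_nl(10.0, 10.0, 50.0)
print(f"transfer {transfer:.0f} nL, final DMSO {dmso_percent(transfer, 50.0):.2f}% v/v")
```

Tracking the final DMSO percentage alongside the transfer volume is useful because many cell-based and biochemical assays tolerate only a limited amount of DMSO.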

Frequently Asked Questions (FAQs)

Q: What does "druggability" mean?

A: Druggability refers to the likelihood that a protein target can be bound with high affinity (typically with a dissociation constant, Kd, below 10 μM) by a small, drug-like molecule that can subsequently modulate the target's function to produce a therapeutic effect [4]. It is an estimate of the probability of finding potent, selective, and bioavailable compounds for a given target [5] [4].

Q: My target is considered "undruggable." Are there still viable strategies to target it?

A: Yes. Many targets once considered undruggable are now being targeted with novel therapeutic modalities [6] [7]. These include Beyond Rule of Five (bRo5) compounds (e.g., macrocycles), covalent inhibitors, allosteric inhibitors, peptidomimetics, and advanced technologies like Targeted Protein Degradation (e.g., PROTACs) that can degrade proteins without the need for a traditional active binding site [6] [7].

Q: What are the most common reasons a target is deemed undruggable?

A: Common characteristics of undruggable sites include [5] [6]:

- Strongly hydrophilic surfaces with little hydrophobic character.

- Very small, shallow, or featureless binding pockets.

- Binding sites that are part of large, flat protein-protein interaction (PPI) interfaces.

- The absence of a defined pocket that can be occupied by a drug-like molecule.

Q: How does binding site accessibility influence druggability?

A: A binding site must be physically accessible for a ligand to reach it. Computational studies model proteins as physical environments and ligands as "robots" that must find a path to the binding site [8]. If no such path exists, or if the access tunnels are too narrow or energetically unfavorable, the site is effectively inaccessible, and the target may be considered very difficult or undruggable for that class of molecules [8].

Troubleshooting Guides

Problem: Inconclusive Results from Computational Druggability Assessment

Potential Cause 1: Over-reliance on a single computational method. Different algorithms have varying strengths and weaknesses.

- Solution: Employ a consensus approach by using multiple computational methods.

- Action: Run several druggability prediction tools. If using FTMap (which identifies binding hot spots by overlapping probe clusters), also use a method like MixMD or SILCS (which use mixed-solvent molecular dynamics to account for full protein flexibility and solvent competition) [6]. Cross-reference the results to find consensus hot spots.

Potential Cause 2: Using a single, rigid protein structure. Proteins are dynamic, and binding sites can open, close, or change shape.

- Solution: Assess druggability across multiple protein conformations.

- Action: If available, run mapping tools like FTMap or SILCS on all X-ray structures of the target protein to understand the impact of conformational changes. If structures are limited, consider using molecular dynamics simulations to generate alternative conformations for analysis [6].

Problem: Experimental Screening Fails to Identify Quality Hits for a Putatively Druggable Target

Potential Cause 1: The compound library used is not optimal for the target's binding site chemistry. A library biased towards hydrophobic targets may fail on a highly polar site.

- Solution: Curate or select a screening library matched to the target's predicted properties.

Potential Cause 2: The primary screen identified promiscuous or nuisance compounds. These compounds inhibit many targets through non-specific mechanisms, leading to false positives.

- Solution: Implement stringent counter-screens and computational filters early in the validation process.

Methodologies for Assessing Druggability and Accessibility

This section provides detailed protocols for key experiments and analyses cited in druggability research.

Experimental Protocol 1: NMR-Based Fragment Screening to Assess Druggability (Ligandability)

Purpose: To experimentally measure a protein's potential to bind small, drug-like molecules by determining the hit rate from a screen of a fragment library [5].

Principle: A protein is screened against a library of low molecular weight compounds ("fragments") using NMR spectroscopy. A high hit rate indicates the presence of a binding site with favorable physicochemical properties for ligand binding, correlating with high druggability [5].

Procedure:

- Protein Preparation: Produce and purify 15N-labeled target protein. Concentrate to 10-50 μM in a suitable NMR buffer.

- Library Preparation: Obtain a curated fragment library (e.g., 500-2000 compounds). Prepare fragments as 100-500 mM stock solutions in DMSO-d6.

- NMR Data Collection:

  - Perform 2D 1H-15N HSQC NMR experiments on the protein alone (reference spectrum).

  - Titrate fragments individually into the protein sample (typical final protein:fragment ratio of 1:10 to 1:100).

  - Record a new 2D 1H-15N HSQC spectrum after each addition.

- Data Analysis:

  - Monitor chemical shift perturbations, line broadening, or signal disappearance in the protein spectra.

  - A compound is considered a "hit" if it causes significant changes, indicating binding.

  - Calculate the hit rate: (Number of fragment hits / Total fragments screened) × 100%.

- Interpretation: A hit rate of >5% is generally indicative of a highly druggable site, while a hit rate of <1% suggests a challenging or undruggable target [5].
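The hit-rate calculation and the interpretation thresholds above can be written as a short sketch. The >5% and <1% cutoffs come from the text; the "intermediate" label for values in between is an assumption added here, and the function names are hypothetical.

```python
def fragment_hit_rate(n_hits, n_screened):
    """Hit rate in percent: (hits / fragments screened) * 100."""
    if n_screened <= 0:
        raise ValueError("n_screened must be positive")
    if not 0 <= n_hits <= n_screened:
        raise ValueError("n_hits must be between 0 and n_screened")
    return 100.0 * n_hits / n_screened


def interpret_hit_rate(rate_percent):
    """Map a hit rate onto the qualitative categories used in the protocol."""
    if rate_percent > 5.0:
        return "highly druggable"
    if rate_percent < 1.0:
        return "challenging or undruggable"
    return "intermediate"  # label assumed for the 1-5% range


for hits in (60, 30, 5):
    rate = fragment_hit_rate(hits, 1000)
    print(f"{hits} hits / 1000 fragments -> {rate:.1f}% ({interpret_hit_rate(rate)})")
```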

Experimental Protocol 2: Computational Mapping of Binding Hot Spots Using FTMap

Purpose: To identify and energetically rank regions on a protein surface that have the highest potential for binding small molecules (hot spots) [6].

Principle: FTMap is a computational analog of multiple solvent crystal structures (MSCS). It exhaustively docks a diverse set of small molecular probes onto the protein surface, finds favorable positions, and identifies "consensus sites" where multiple probes cluster. These consensus sites represent binding hot spots [6].

Procedure:

- Protein Structure Preparation:

  - Obtain a 3D structure of the target protein (e.g., from PDB).

  - Use a tool like Chimera or MOE to remove water molecules and co-crystallized ligands, add hydrogen atoms, and assign partial charges.

- Run FTMap:

  - Access the FTMap server (https://ftmap.bu.edu/).

  - Upload the prepared protein structure file in PDB format.

- Analysis of Results:

  - FTMap returns a results file showing the top consensus sites (clusters), typically ranked by the number of probe clusters per site.

  - Visually inspect the results in molecular visualization software. The strongest hot spot (highest ranked consensus site) often corresponds to the most druggable region.

- Interpretation:

  - Easily Druggable: A target with one strong hot spot (e.g., >16 probe clusters) and 3-4 additional supporting hot spots [6].

  - Challenging Target (e.g., PPI): A target with weak, shallow hot spots or a fragmented hot spot region across a flat surface [6].

  - Need for bRo5 Compounds: A target with a complex hot spot structure of four or more strong hot spots may require larger compounds to achieve high affinity and selectivity [6].
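The interpretation rules above can be turned into a rough triage heuristic. This is only a sketch: it takes the per-site probe cluster counts as a plain list (not FTMap's actual output format), treats every consensus site at or below the cutoff as "supporting" (an assumption, since the text does not define the term), and ignores the spatial arrangement of the sites, which matters in a real analysis.

```python
def classify_hot_spots(probe_clusters, strong_cutoff=16):
    """Classify a target from the probe cluster count of each consensus site."""
    strong = [count for count in probe_clusters if count > strong_cutoff]
    supporting = len(probe_clusters) - len(strong)
    if len(strong) >= 4:
        return "may need bRo5 compounds"
    if len(strong) >= 1 and supporting >= 3:
        return "easily druggable"
    if not strong:
        return "challenging"
    return "indeterminate"  # e.g. a strong site with too few supporting sites


# Hypothetical cluster counts for four imaginary targets.
print(classify_hot_spots([20, 10, 8, 6, 5]))
print(classify_hot_spots([18, 17, 19, 20, 5]))
print(classify_hot_spots([9, 7, 4]))
print(classify_hot_spots([18, 5]))
```

A label from a heuristic like this is best used to prioritize targets for visual inspection, not to replace it.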

Experimental Protocol 3: Evaluating Ligand Binding Site Accessibility with Motion Planning

Purpose: To computationally determine if a specific ligand can physically access a buried binding site through protein tunnels or channels [8].

Principle: This method transforms the accessibility problem into a robot motion planning problem. The ligand is modeled as a flexible agent that must navigate from outside the protein to the binding site. The algorithm explores the protein's void space to find valid, low-energy paths for the ligand [8].

Procedure:

- Input Preparation:

  - Obtain the 3D atomic structure of the protein, including hydrogen atoms.

  - Define the 3D structure of the ligand and its flexible bonds.

  - Pre-identify the binding site coordinates (e.g., from a co-crystallized ligand or a hot spot mapping tool).

- Algorithm Execution:

  - Implement a motion planning algorithm such as Rapidly-exploring Random Graphs (RRG).

  - Bias the exploration using a workspace skeleton (e.g., a Mean Curvature Skeleton) of the protein's interior to guide the search towards the binding site.

  - Assign weights to potential paths based on the influence of intermolecular forces (e.g., van der Waals, electrostatics).

- Path Analysis:

  - The algorithm outputs a set of possible paths from the protein exterior to the binding site.

  - Extract low-weight paths, which represent energetically favorable routes.

- Interpretation:

  - The existence of a low-weight path indicates the binding site is accessible for that ligand.

  - The absence of any valid path suggests the site is inaccessible, and the ligand or the target protein may need to be re-considered [8].
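The path analysis step can be illustrated on a toy roadmap. The sketch below does not implement RRG or the skeleton-guided sampling described in [8]; it assumes a roadmap has already been built and uses Dijkstra's algorithm, a standard shortest-path method swapped in here, to extract the lowest-weight route. Node names and edge weights are invented for illustration.

```python
import heapq


def lowest_weight_path(graph, start, goal):
    """Return (total_weight, path) for the cheapest route, or None if unreachable."""
    queue = [(0.0, start, [start])]
    settled = {}
    while queue:
        cost, node, path = heapq.heappop(queue)
        if node == goal:
            return cost, path
        if node in settled and settled[node] <= cost:
            continue  # already reached this node at least as cheaply
        settled[node] = cost
        for neighbor, weight in graph.get(node, {}).items():
            heapq.heappush(queue, (cost + weight, neighbor, path + [neighbor]))
    return None


# Toy roadmap: two tunnels lead from the protein exterior to the binding site.
# Weights stand in for an energy-like cost of moving the ligand along an edge.
roadmap = {
    "exterior": {"mouth_a": 1.0, "mouth_b": 0.5},
    "mouth_a": {"bottleneck_a": 1.0},
    "mouth_b": {"bottleneck_b": 4.0},
    "bottleneck_a": {"site": 1.5},
    "bottleneck_b": {"site": 0.5},
    "site": {},
}

print(lowest_weight_path(roadmap, "exterior", "site"))
# -> (3.5, ['exterior', 'mouth_a', 'bottleneck_a', 'site'])
```

A return value of `None` corresponds to the "no valid path" outcome in the interpretation step, while a path with a very high total weight would indicate a route that exists geometrically but is energetically unfavorable.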

Quantitative Data on Druggability Assessment Methods

Table 1: Comparison of Key Druggability Assessment Methods

| Method | Type | Key Measurable(s) | Typical Output | Performance/Advantages | Limitations |
|---|---|---|---|---|---|
| NMR Fragment Screening [5] | Experimental | Hit Rate (%) | Continuous score (Hit Rate %) | High correlation with ability to bind drug-like molecules; gold standard. | Requires protein labeling/purification; lower throughput; resource-intensive. |
| DrugFEATURE [5] | Computational (Microenvironment) | Druggability Score | Categorical (Druggable/Undruggable) & Continuous score | Correlates with NMR hit rate (R²=0.47); accurately discriminated druggable targets. | Relies on knowledge of known drug-binding microenvironments. |
| FTMap [6] | Computational (Hot Spot) | Number of Probe Clusters per Consensus Site | Ranked list of binding hot spots | Fast; provides spatial information on bindable regions; no required prior knowledge of site. | Assumes a relatively rigid protein structure. |
| Mixed-Solvent MD (MixMD, SILCS) [6] | Computational (Hot Spot) | Probe Occupancy/Free Energy | 3D maps of favorable probe binding locations | Accounts for full protein flexibility and explicit solvent; more physically realistic. | Computationally expensive; lower throughput. |

Table 2: Key Characteristics of Druggable vs. Challenging Targets

| Characteristic | Druggable Target | Challenging/Undruggable Target |
|---|---|---|
| Binding Site Geometry | Sufficient volume, depth, and enclosure [5] [4] | Very small, shallow, or featureless [5] [6] |
| Surface Properties | Balanced hydrophobicity with some H-bonding potential [5] [4] | Strongly hydrophilic with little hydrophobic character, or highly lipophilic [5] [6] |
| Hot Spot Structure | One strong hot spot with several supporting ones [6] | Weak, fragmented, or no hot spots [6] |
| Location | Traditional orthosteric enzyme pocket or receptor cleft [5] | Flat protein-protein interaction interface [6] |
| Accessibility | Clear, solvent-exposed access tunnel [8] | Buried site with no clear or energetically favorable access path [8] |

Visualization of Experimental Workflows

Figure: Druggability Assessment Workflow

Figure: Binding Site Accessibility Analysis

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Reagents and Resources for Druggability Research

| Item | Function/Purpose | Example/Notes |
|---|---|---|
| Pre-plated Screening Libraries | Collections of compounds for experimental HTS or fragment screening. | Diverse Collection (e.g., 127.5K drug-like molecules) [9]. Fragment Libraries (e.g., 5,000 compounds, MW <300, compliant with Rule of 3) [2] [9] [10]. Targeted Libraries (e.g., Kinase, Covalent, CNS) [9]. |
| Known Bioactives & FDA-Approved Drugs | For assay validation and drug repurposing screens. | Libraries such as LOPAC1280, Selleckchem FDA-approved library, or the Broad Repurposing Hub (5,691 compounds) [9] [10]. |
| NMR-Ready Fragment Library | A curated set of low MW fragments for NMR-based screening to assess ligandability. | Typically 500-2000 compounds. Requires high solubility and structural diversity [5]. |
| FTMap Web Server | Computational tool for identifying binding hot spots on a protein structure. | Freely available at https://ftmap.bu.edu/ [6]. |
| Stable, Purified Target Protein | Essential for all experimental assessments (NMR, SPR, biochemical HTS). | Requires high purity (>95%) and stability at concentrations and conditions used in the assay. For NMR, 15N-labeling is needed [5]. |
| Motion Planning Software | For evaluating ligand access to buried binding sites. | Custom algorithms (e.g., based on RRG) as described in research [8]. |
| PAINS/Nuisance Compound Filters | Computational filters to remove promiscuous compounds from screening libraries or hit lists. | Tools like Badapple or the cAPP from the Hoffmann Lab [9]. |

Core Concepts in Target Validation

What is the primary goal of target validation in drug discovery?

Target validation is the critical process of experimentally confirming that a specific gene, protein, or biological pathway plays a key role in a disease and that modulating it will provide a therapeutic benefit. It provides the essential link between an initial hypothesis and the commitment to a costly drug discovery program. For researchers working with screening sets, a validated target ensures you are screening for compounds that act on a biologically relevant mechanism, maximizing the value of your resources.

How do Antisense Oligonucleotides (ASOs) function as target validation tools?

Antisense Oligonucleotides (ASOs) are single-stranded, synthetically prepared DNA sequences, typically 18-21 nucleotides in length, designed to be complementary to a specific target messenger RNA (mRNA) [11]. They modulate gene expression through several well-characterized mechanisms, making them powerful tools for validating gene function:

- RNase H-Mediated Degradation: This is a primary mechanism for many ASOs. The ASO binds to its complementary mRNA sequence, forming a DNA-RNA heteroduplex. This structure is recognized and cleaved by the ubiquitous cellular enzyme RNase H, which degrades the target mRNA, thereby preventing its translation into protein [11] [12]. The ASO is then free to bind another mRNA molecule, creating a catalytic effect.

- Steric Hindrance: Some ASO chemistries are incapable of recruiting RNase H. Instead, their binding to the mRNA physically blocks the cellular machinery (like the ribosome) from accessing the RNA, leading to translational arrest [11] [13].

- Splicing Modulation: ASOs can be designed to bind to specific sequences on pre-mRNA to alter its splicing pattern. This can lead to the exclusion (exon skipping) or inclusion of specific exons in the final mRNA transcript, which can restore protein function or eliminate a dysfunctional protein domain [13].
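Because every mechanism above depends on Watson-Crick pairing with the target, an ASO sequence is the reverse complement of its mRNA target site. A minimal sketch, assuming an unmodified DNA ASO and a target site written 5'→3' (the function name and the example site are hypothetical):

```python
_COMPLEMENT = {"A": "T", "U": "A", "G": "C", "C": "G"}


def antisense_dna(mrna_site):
    """Return the DNA ASO (5'->3') complementary to an mRNA site given 5'->3'."""
    site = mrna_site.upper()
    unknown = set(site) - set(_COMPLEMENT)
    if unknown:
        raise ValueError(f"unexpected characters in mRNA site: {sorted(unknown)}")
    # Reverse the site, then complement each base (U in the mRNA pairs with A).
    return "".join(_COMPLEMENT[base] for base in reversed(site))


print(antisense_dna("AUGGCC"))  # -> GGCCAT
```

A real design would apply this to an 18-21 nucleotide window and then screen the candidates for accessibility and off-target homology; chemical modifications change the backbone or sugar, not the base sequence computed here.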

Table: Key Mechanisms of Action for Antisense Oligonucleotides

| Mechanism | ASO Type | Key Outcome | Therapeutic Example |
|---|---|---|---|
| RNase H Degradation | Gapmers (e.g., Phosphorothioates) | Cleavage and reduction of target mRNA | Reduction of disease-causing proteins |
| Steric Hindrance | Morpholinos (PMOs), PNAs | Blockage of ribosomal translation | Translational inhibition |
| Splicing Modulation | 2'-MOE, PMOs | Altered mRNA splicing to include or exclude exons | Production of functional protein variants (e.g., for Spinal Muscular Atrophy, Duchenne Muscular Dystrophy) |

Troubleshooting Guide: FAQs for Experimental Challenges

FAQ 1: Our initial ASO validation experiment shows high cytotoxicity. What could be the cause and how can we mitigate this?

Issue: Non-specific cytotoxic effects observed in cell culture models after ASO treatment.

Potential Causes & Solutions:

Cause: Off-Target Effects:

- Troubleshooting: The ASO sequence may have partial complementarity to other, non-target mRNAs, leading to unintended silencing. Use BLAST and other bioinformatics tools to perform a thorough sequence homology check against the entire transcriptome before ordering your ASOs [12].

- Solution: Redesign the ASO to target a more unique region of the mRNA or use a different ASO sequence altogether.

Cause: Immune Stimulation:

- Troubleshooting: Certain ASO backbone chemistries, particularly first-generation Phosphorothioates (PS-ODNs), can stimulate immune responses by interacting with toll-like receptors or other immune components [11] [13].

- Solution: Switch to a more advanced ASO chemistry. Second-generation (e.g., 2'-MOE) and third-generation (e.g., PMO, LNA) ASOs generally have reduced immunogenicity and improved safety profiles [11] [13].

Cause: Non-Specific Protein Binding:

- Troubleshooting: The PS backbone can bind to a wide range of cellular proteins, potentially disrupting their function and leading to cytotoxicity [11].

- Solution: Consider using charge-neutral backbones like Peptide Nucleic Acids (PNAs) or Phosphorodiamidate Morpholino Oligomers (PMOs), which significantly reduce non-specific protein interactions [11] [13].

FAQ 2: We have confirmed mRNA knockdown with our ASO, but see no corresponding change in the target protein or phenotype. What are the next steps?

Issue: A disconnect between molecular knockdown and functional outcome.

Potential Causes & Solutions:

Cause: Insufficient Knockdown:

- Troubleshooting: The level of mRNA reduction may not cross the critical threshold required to produce a measurable phenotypic change. Use quantitative PCR (qPCR) to accurately measure the percentage of mRNA knockdown.

- Solution: Optimize ASO delivery to increase cellular uptake or test higher concentrations (while monitoring for cytotoxicity). Consider testing multiple ASOs targeting different regions of the same mRNA.

Cause: Protein Half-Life:

- Troubleshooting: The target protein may have an exceptionally long half-life, meaning that even with effective mRNA knockdown, the pre-existing protein pool persists for a long time.

- Solution: Extend the duration of your experiment to allow for natural protein turnover. Alternatively, use a direct protein degradation method (e.g., PROTACs) in parallel to confirm the target's phenotypic role.

Cause: Redundancy or Compensation:

- Troubleshooting: Other genes or pathways may compensate for the loss of your target's function, masking the phenotypic effect.

- Solution: Use a combination of tools (e.g., ASO plus siRNA or CRISPRi) to achieve more complete inhibition, or analyze pathway activity through phospho-protein profiling or transcriptomics to identify compensatory mechanisms.
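The protein half-life point lends itself to a quick estimate. The sketch below assumes simple first-order decay of the pre-existing protein pool and a complete stop of new synthesis, which is an idealization, since knockdown is rarely total; the function names are hypothetical.

```python
from math import log2


def fraction_remaining(hours_elapsed, half_life_hours):
    """Fraction of the pre-existing protein pool left after hours_elapsed."""
    if half_life_hours <= 0:
        raise ValueError("half_life_hours must be positive")
    return 0.5 ** (hours_elapsed / half_life_hours)


def hours_to_reach(target_fraction, half_life_hours):
    """Time needed for the pool to fall to target_fraction of its starting level."""
    if not 0 < target_fraction <= 1:
        raise ValueError("target_fraction must be in (0, 1]")
    return half_life_hours * log2(1.0 / target_fraction)


# After a 72 h experiment, a protein with a 24 h half-life is down to 12.5%,
# whereas one with a 100 h half-life still has roughly 60% of its pool.
print(fraction_remaining(72, 24))
print(round(fraction_remaining(72, 100), 2))
print(hours_to_reach(0.25, 48))  # hours needed to reach 25% with a 48 h half-life
```

An estimate like this helps decide whether extending the experiment is realistic or whether a direct degradation approach is the better parallel experiment.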

FAQ 3: Our ASO is effective in cell culture but shows no efficacy in a mouse model. How should we proceed?

Issue: Failure to translate in vitro findings to an in vivo context.

Potential Causes & Solutions:

Cause: Poor Pharmacokinetics (PK) and Delivery:

- Troubleshooting: Unconjugated ASOs have limited stability in circulation and poor tissue penetration. They are rapidly cleared from the blood and accumulate predominantly in the liver, kidney, and spleen [14].

- Solution:

  - Chemical Modification: Ensure you are using nuclease-resistant chemistries (e.g., PS, 2'-MOE, PMO) [11].

  - Conjugation for Targeting: Employ targeted delivery systems. For liver targets, GalNAc conjugation is highly effective for hepatocyte-specific delivery via the ASGPR receptor [14].

  - Formulation: For other tissues, investigate lipid nanoparticles (LNPs) or other formulations to enhance bioavailability.

  - Dosing Regimen: The standard bolus injection may not maintain effective tissue concentrations. Consider sustained-release formulations or more frequent dosing, as predicted by PBPK modeling [14].

Cause: Species-Specific Sequence Differences:

- Troubleshooting: The human ASO sequence may not be fully complementary to the rodent mRNA sequence due to evolutionary divergence.

- Solution: Perform cross-species conservation analysis of the target mRNA and design a species-specific ASO for your preclinical studies [12].
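A first-pass check for species differences is to count mismatches between the ASO and the aligned site in the other species' mRNA. The sketch below assumes the orthologous site has already been located and aligned without gaps, and it counts only strict Watson-Crick pairs (G-U wobble is treated as a mismatch). The sequences are short invented examples, not real transcripts.

```python
_PAIR = {"A": "U", "T": "A", "G": "C", "C": "G"}


def target_site_rna(aso_dna):
    """mRNA site (5'->3') that pairs perfectly with a DNA ASO given 5'->3'."""
    return "".join(_PAIR[base] for base in reversed(aso_dna.upper()))


def count_mismatches(aso_dna, mrna_site):
    """Number of positions where the ASO fails to pair with the given mRNA site."""
    expected = target_site_rna(aso_dna)
    observed = mrna_site.upper().replace("T", "U")
    if len(expected) != len(observed):
        raise ValueError("ASO and mRNA site must have the same length")
    return sum(e != o for e, o in zip(expected, observed))


aso = "GGCCAT"                            # hypothetical ASO against a human site
print(count_mismatches(aso, "AUGGCC"))    # human site   -> 0
print(count_mismatches(aso, "AUGGUC"))    # rodent site  -> 1
```

Any nonzero count for the preclinical species is a signal to design a species-specific surrogate ASO instead of reusing the human sequence.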

Table: Quantitative Considerations for In Vivo ASO Studies

| Parameter | Consideration | Typical Range/Example |
|---|---|---|
| Tissue Exposure | Governed by blood flow, endothelial permeability, and tissue binding [14]. | Liver & Kidney > Muscle & Lung > Brain (for unconjugated ASOs) |
| Uptake Pathways | Non-specific fluid-phase endocytosis vs. receptor-mediated endocytosis (RME) [14]. | GalNAc conjugation increases liver uptake by ~10-50x via ASGPR RME. |
| Clearance | Primarily via nuclease degradation and renal filtration. | Half-life can range from hours to days depending on chemistry. |
| PBPK Modeling | A predictive tool to simulate tissue uptake and optimize dosing [14]. | Can predict AUC ratios and identify key parameters like unbound plasma fraction and RME efficiency. |

Experimental Protocols for Key Validation Techniques

Protocol: In Vitro Target Validation Using ASOs in Cell Culture

Objective: To functionally validate a gene target by knocking down its mRNA and assessing downstream molecular and phenotypic consequences.

Materials:

- Validated ASO and scrambled control ASO (e.g., from Life Chemicals pre-plated sets) [15].

- Appropriate cell line expressing the target.

- Transfection reagent compatible with oligonucleotides.

- Lysis buffers for RNA and protein extraction.

- qPCR reagents for mRNA quantification.

- Western blot or ELISA reagents for protein quantification.

- Assay kits for phenotypic readouts (e.g., viability, apoptosis, migration).

Methodology:

- ASO Design & Bioinformatics: Design or select ASOs targeting accessible regions of the mRNA, as predicted by secondary structure modeling tools (e.g., mfold) [12]. Confirm specificity using BLAST.

- Cell Seeding & Transfection: Seed cells in appropriate plates. The next day, transfect with a range of ASO concentrations (e.g., 10-100 nM) using a suitable transfection reagent. Include a scrambled-sequence ASO as a negative control and a positive control ASO if available.

- Incubation: Incubate cells for 48-72 hours to allow for mRNA turnover and protein degradation.

- Molecular Validation (Step 1):

- mRNA Analysis: Harvest cells and extract total RNA. Perform reverse transcription followed by qPCR using primers for the target gene and housekeeping genes (e.g., GAPDH). Calculate fold-change in mRNA expression relative to the control ASO.

- Molecular Validation (Step 2):

- Protein Analysis: Harvest a separate set of cells and extract protein. Perform Western blotting or an ELISA to quantify the level of the target protein. This confirms the functional consequence of mRNA knockdown.

- Phenotypic Assessment:

- Functional Assays: Perform assays relevant to your disease model (e.g., MTT assay for cell viability, caspase assay for apoptosis, transwell assay for invasion) to link target knockdown to a biological effect.

- Data Interpretation: Correlate the degree of mRNA/protein knockdown with the magnitude of the phenotypic effect. A strong, dose-dependent correlation provides compelling evidence for target validation.
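The fold-change calculation in the mRNA analysis step can be sketched as follows. The protocol does not name a quantification method, so the widely used 2^-ΔΔCt approach is assumed here, along with roughly 100% primer efficiency for both genes. The function names and Ct values are hypothetical and purely illustrative.

```python
from statistics import mean


def fold_change_ddct(target_ct_treated, ref_ct_treated,
                     target_ct_control, ref_ct_control):
    """Relative target expression (treated vs. control ASO) by 2^-ddCt.

    Each argument is a list of replicate Ct values. Assumes ~100% primer
    efficiency for the target and the housekeeping (reference) gene.
    """
    d_ct_treated = mean(target_ct_treated) - mean(ref_ct_treated)
    d_ct_control = mean(target_ct_control) - mean(ref_ct_control)
    dd_ct = d_ct_treated - d_ct_control
    return 2 ** (-dd_ct)


def percent_knockdown(fold_change):
    """Convert a fold-change (1.0 = no change) into percent knockdown."""
    return (1.0 - fold_change) * 100.0


# Hypothetical Ct values: target gene and GAPDH, three replicates each.
fc = fold_change_ddct(
    target_ct_treated=[26.0, 26.2, 25.8], ref_ct_treated=[18.0, 18.1, 17.9],
    target_ct_control=[24.0, 24.1, 23.9], ref_ct_control=[18.0, 18.0, 18.0],
)
print(f"fold-change = {fc:.2f}, knockdown = {percent_knockdown(fc):.0f}%")
```

Repeating this calculation at each ASO concentration gives the dose-response series needed for the data interpretation step.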

Protocol: In Vivo Validation Using Transgenic Models

Objective: To validate the therapeutic relevance of a target in a whole-organism context that recapitulates human disease.

Materials:

- Genetically modified mouse model (e.g., knockout, knock-in, or disease-specific transgenic model).

- Therapeutic ASO or control.

- Dosing equipment (e.g., osmotic minipumps, injection supplies).

- Equipment for in vivo imaging and/or behavioral tests.

- Tissue collection supplies for histology and molecular biology.

Methodology:

- Model Selection: Select a transgenic model that best mimics the human disease genetics and phenotype. For genetic disorders, this may be a knock-in of a human mutation. For complex diseases, a transgenic line overexpressing a key protein might be appropriate.

- Study Design: Randomize age- and sex-matched animals into treatment groups (e.g., ASO-treated, control-ASO treated, untreated). Ensure adequate sample size for statistical power.

- ASO Administration:

- Dosing: Administer ASO via a clinically relevant route (e.g., subcutaneous, intravenous). Dosing regimens (frequency, duration) should be informed by PBPK modeling or prior PK studies to maintain target engagement [14].

- Formulation: Use appropriate buffers for ASO dissolution. For non-liver targets, consider formulation strategies to enhance delivery.

- Monitoring & Functional Endpoints:

- Behavioral/Phenotypic Tracking: Regularly monitor and score disease-relevant phenotypes (e.g., motor function, tumor size, cognitive tests).

- Terminal Analysis:

- Tissue Collection: At the end of the study, collect relevant tissues (e.g., target organ, plasma).

- Molecular Analysis: Quantify target mRNA and protein reduction in the tissues to confirm in vivo mechanism of action (MoA).

- Histopathological Analysis: Examine tissue sections for changes in pathology, biomarkers, or signs of toxicity.

- Data Integration: Integrate the molecular data (proof of knockdown), phenotypic data (disease modification), and histopathological data to build a comprehensive case for in vivo target validation.

Visualization of Workflows and Pathways

ASO Mechanism of Action Diagram

Integrated Target Validation Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Resources for Target Validation and Screening

| Reagent / Resource | Function in Validation | Examples & Sources |

|---|---|---|

| Pre-plated Screening Libraries | Provides structurally diverse, drug-like compounds for high-throughput screening (HTS) against a validated target. | Life Chemicals Diversity Sets, Focused Libraries (e.g., Kinase, GPCR, Covalent Inhibitor libraries) [15]. |

| Chemical Probes | High-quality, selective small-molecule inhibitors or modulators used for pharmacological validation of a target. | SGC Probes, ChemicalProbes.org recommendations, opnMe portal (Boehringer Ingelheim) [16]. |

| Bioactive Compound Libraries | Collections of compounds with known biological activity, useful for screening against related targets or pathways. | Pre-plated Bioactive Compound Library (Life Chemicals), NIH Molecular Libraries Program collection [15] [16]. |

| Approved Drug Libraries | Sets of clinically used drugs; useful for drug repurposing screens and for understanding polypharmacology. | CLOUD library, DrugBank, collections of FDA-approved drugs [16]. |

| Fragment Libraries | Low molecular weight compounds for Fragment-Based Drug Discovery (FBDD); used to identify starting points for lead optimization. | 3D-shaped Fragment Sets, High-solubility Fragment Sets (Life Chemicals) [15]. |

| ASO Design & Synthesis | Custom antisense oligonucleotides for gene knockdown experiments and validation. | Various commercial suppliers offering ASOs with diverse chemistries (PS, MOE, PMO, LNA). |

This technical support center provides troubleshooting guides and frequently asked questions for researchers building screening hypotheses in drug discovery. A robust screening hypothesis connects the modulation of a specific molecular target to a desired therapeutic effect, forming the foundation for identifying potent and selective compounds [17]. The content herein is framed within a broader thesis on selecting potent compounds for each target in screening set research, addressing common experimental challenges and providing practical solutions to streamline your workflow.

Frequently Asked Questions (FAQs)

Fundamental Concepts

Q1: What is the core purpose of building a screening hypothesis in early drug discovery?

A screening hypothesis proposes that modulating a specific biological target (e.g., a protein or gene) will produce a therapeutic effect in a disease context [17]. It is the foundational premise that justifies a drug discovery program. The core purpose is to establish a causal link between target modulation and a disease-relevant phenotypic outcome, thereby reducing the high risk of clinical failure due to a lack of efficacy [18] [17]. A well-validated hypothesis provides confidence that compounds discovered in a screen will have a mechanistic and therapeutic impact.

Q2: What are the key differences between target-based and phenotypic screening approaches?

The table below summarizes the core differences between these two primary screening strategies [19] [20].

| Feature | Target-Based Screening | Phenotypic Screening |

|---|---|---|

| Starting Point | A known, hypothesized molecular target [19] | A measurable biological or disease-relevant phenotype [19] |

| Assay Type | Biochemical (e.g., enzyme activity) [20] | Cell-based or whole-organism [20] |

| Primary Goal | Identify compounds that interact with and modulate the target [20] | Identify compounds that induce a desired functional change [19] |

| Advantage | Mechanism is known from the outset; rational design is facilitated [19] | Unbiased; can discover novel targets and mechanisms [19] |

| Major Challenge | Target may not be causally linked to the disease [17] | Target deconvolution can be difficult and time-consuming [19] |

Q3: What are the standard plate formats used in High-Throughput Screening (HTS), and how do they impact an assay?

HTS relies on miniaturization and automation to test thousands to millions of samples rapidly [21] [22]. Assays are typically run in microtiter plates, with the choice of format representing a balance between throughput, cost, and technical feasibility [21].

| Well Format | Typical Assay Volume | Primary Use Case & Impact |

|---|---|---|

| 96-well | Higher (e.g., 100-200 µL) | Lower complexity assays; easier liquid handling; lower throughput [21] |

| 384-well | Medium (e.g., 10-50 µL) | Standard for modern HTS; good balance of throughput and assay performance [21] [22] |

| 1536-well | Low (e.g., <10 µL) | Ultra-HTS; maximizes throughput and minimizes reagent cost; requires specialized instrumentation [21] [22] |

Troubleshooting Common Experimental Issues

Q4: How can I troubleshoot a high rate of false positives in my HTS campaign?

False positives, where compounds appear active but are not, are a major challenge in HTS. A multi-faceted troubleshooting approach is recommended:

- 1. Counterscreening and Hit Triaging: Implement secondary assays designed to identify compounds that act through undesired mechanisms. This includes:

- Assay Interference Screening: Test hits in the absence of the target to detect signal artifacts (e.g., auto-fluorescence, compound quenching) [20].

- Selectivity Screening: Test hits against unrelated targets to identify promiscuous inhibitors [20].

- Rule out PAINS: Be vigilant for Pan-Assay Interference Compounds (PAINS), which are chemical compounds that often give false-positive results across various assay formats [20].

- 2. Employ Quantitative HTS (qHTS): Screen compounds at multiple concentrations instead of a single dose. Generating concentration-response curves immediately helps distinguish true dose-dependent actives from false signals and provides preliminary potency data [22].

- 3. Validate Assay Robustness: Before the primary screen, confirm that your assay has a high Z'-factor, a statistical measure of the separation between positive and negative control signals relative to their variability. A Z'-factor between 0.5 and 1.0 indicates an excellent, robust assay with a wide signal window, which minimizes false results [20].

Q5: Our screening hypothesis failed during validation—the compound hits modulate the target but do not produce the expected phenotypic effect. What are the potential causes?

This disconnect between target engagement and phenotypic outcome is a critical failure point. Key areas to investigate are:

- 1. Flawed Biological Hypothesis: The target may not be causally central to the disease pathway in the relevant cellular context. Re-interrogate the foundational literature using systematic, AI-assisted approaches. Newer models, like BERT-based classifiers, can help systematically analyse published literature to establish causal, not just correlative, relationships between a target and a health effect [18].

- 2. Inadequate Model System: The cellular or animal model used for phenotypic validation may not accurately recapitulate the human disease biology. Consider adopting more physiologically relevant models, such as 3D cell cultures, organoids, or primary cell screens, which can provide more predictive information [23] [20].

- 3. Compensatory Mechanisms: Biological systems often have redundant pathways or feedback loops that compensate for the targeted modulation, masking the expected phenotypic effect [19]. Using tools like siRNA or CRISPR in gain/loss-of-function studies can help validate the target's role and uncover such mechanisms [17] [23].

Q6: What strategies can be used for target deconvolution following a phenotypic screen?

Target deconvolution—identifying the molecular target of a compound discovered in a phenotypic screen—is a classic challenge. The following table outlines common methodologies.

| Strategy | Brief Description | Key Consideration |

|---|---|---|

| Affinity Purification | Immobilizing the compound to pull down interacting proteins from a cell lysate for identification by mass spectrometry. | Requires chemical modification of the compound, which must not affect its bioactivity. |

| Resistance Mutagenesis | Generating resistant cell clones and identifying genomic mutations that confer resistance, often pointing to the target or pathway. | Can identify the direct target or components in the same pathway. |

| Genetic Screens (CRISPR/siRNA) | Using genome-wide loss-of-function (CRISPR knockout) or gain-of-function (ORF) screens to identify genes that modify the compound's effect. | Provides functional evidence for target involvement within the cellular context [23]. |

| Bioinformatic Profiling | Comparing the compound's gene expression or proteomic signature to databases of signatures for compounds with known targets. | Relies on the availability and quality of reference databases. |

Experimental Protocols & Workflows

Protocol 1: Workflow for Developing and Validating a Screening Hypothesis

This workflow outlines the key stages from initial hypothesis generation to assay execution, integrating both computational and experimental validation.

Step-by-Step Guide:

Hypothesis Generation & Target Identification:

- Start with a clear unmet clinical need.

- Use data mining of biomedical literature, genetic association studies (e.g., linking polymorphisms to disease risk), gene expression data, and proteomics to identify a potential target [17] [24]. The hypothesis is that modulating this target will produce a therapeutic effect.

Target Validation:

- Goal: Increase confidence that the target is causally linked to the disease and is "druggable."

- Methods: Use a combination of tools to prosecute the target. This is a critical multi-validation step [17].

- Genetic Tools: siRNA or CRISPR for loss-of-function studies [17] [23]. Transgenic animals (knockout/knock-in) for in vivo validation [17].

- Biological Tools: Function-blocking monoclonal antibodies (for extracellular targets) [17].

- Chemical Tools: Use known small-molecule modulators (if available) to test the hypothesis [17].

Assay Development & Quality Control:

- Develop a robust, miniaturized assay suitable for HTS formats (e.g., 384-well plate) [21] [20].

- Critical Step: Rigorously validate the assay before the full screen. Calculate the Z'-factor; a value >0.5 is considered excellent [20]. Also assess signal-to-noise ratio and well-to-well reproducibility.

Primary Screening & Hit Validation:

- Execute the screen of your compound library.

- Troubleshooting: For potential false positives, use strategies like quantitative HTS (testing multiple concentrations) and counter-screens to rule out assay interference [22] [20].

- Confirm "hit" compounds through dose-response assays to determine IC50/EC50 values and confirm on-target activity [23] [20].

Protocol 2: Multi-level Classification for Literature-Based Hypothesis Support

Systematically analysing scientific literature is key to building a strong hypothesis. This protocol, based on a state-of-the-art BERT model, classifies sentences from PubMed abstracts to establish evidence for target-health effect relationships [18].

Application Guide:

This model allows for systematic, unbiased parsing of literature to support your hypothesis. For example:

- A sentence classified as a Direct relationship, with Target Downregulation leading to a Health Effect Decrease, provides strong mechanistic evidence that an inhibitor of your target could be therapeutic [18].

- This AI-assisted approach helps overcome the hurdles of manually reviewing vast amounts of unstructured literature and reduces expert bias [18]. The pipeline is available through platforms like TargetTri for assessing potential drug targets [18].

The Scientist's Toolkit: Key Research Reagent Solutions

The following table details essential materials and reagents used in building and executing a screening hypothesis.

| Reagent / Solution | Function / Application |

|---|---|

| siRNA / shRNA Libraries | Used for loss-of-function genetic screens to validate the functional role of a target in a disease phenotype [21] [17]. |

| CRISPR-Cas9 Systems | Enables more precise gene knockout, activation (CRISPRa), or inhibition (CRISPRi) in genetic screens for target validation and deconvolution [23]. |

| Primary Cells | Provide a more physiologically relevant model for phenotypic screening compared to immortalized cell lines, leading to more predictive data [23]. |

| Monoclonal Antibodies | Used as highly specific target validation tools, particularly for cell-surface and secreted proteins. Also used as therapeutic modalities themselves [17] [19]. |

| Chemical Probe Libraries | Collections of well-characterized small molecules used to perturb specific protein families (e.g., kinases) to test target hypotheses [17]. |

| Transcreener HTS Assays | A universal, biochemical assay platform based on ADP/NADP detection, applicable for screening enzymes like kinases, GTPases, and PARPs, reducing assay development time [20]. |

| 384-well Nucleofector System | A system designed for high-throughput transfection of primary cells and cell lines in 384-well format, enabling genetic screens [23]. |

The Screening Toolkit: Integrating HTS, AI, and Computational Methods for Hit Identification

Troubleshooting Common HTS Experimental Issues

Q1: Our HTS assay is generating an unacceptably high number of false positives. What are the primary causes and solutions?

A: False positives can arise from several sources, including compound interference, assay design, and liquid handling. The table below summarizes common causes and corrective actions.

| Cause of False Positives | Description | Corrective Action |

|---|---|---|

| Compound Fluorescence/Opacity | Test compounds interfere with optical detection (e.g., fluorescence, luminescence) [25]. | Run counterscreens using detergent-based assays or test compounds in assay buffer without biological components [26]. |

| Non-selective Binding | Compounds non-specifically bind to proteins or other assay components [25]. | Include control assays to identify promiscuous inhibitors; use more stringent washing steps if applicable. |

| Assay Signal Quality | Poor distinction between positive and negative controls increases false results [27]. | Calculate the Z'-factor; a value >0.5 indicates a robust assay suitable for HTS. Re-optimize assay conditions if needed [26] [25]. |

| Liquid Handling Errors | Inconsistent pipetting, splashing, or cross-contamination between wells [28]. | Implement automated liquid handlers with dispensing verification technology and ensure regular equipment calibration [28]. |

Q2: We observe significant well-to-well variation (edge effects) across our microtiter plates. How can this be mitigated?

A: Well-position-based variation, commonly referred to as "edge effects," is often caused by uneven evaporation or temperature distribution during incubation.

- Plate Design: Incorporate effective controls across the entire plate, not just in a single column. Use a randomized or balanced plate layout for test compounds to avoid confounding positional effects with true biological activity [27] [25].

- Normalization: Apply statistical normalization methods, such as the B-score, which is particularly effective at removing systematic row and column biases from the data [27].

- Environmental Control: Use plate seals to minimize evaporation and ensure the incubator or plate hotel has a stable, uniform temperature. Automated systems should handle plates consistently to reduce environmental shocks [28].
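The B-score normalization mentioned above can be illustrated with a short sketch. It assumes the common formulation in which row and column effects are removed by two-way median polish and the residuals are scaled by the plate's median absolute deviation (MAD). The 1.4826 scaling constant, the fixed iteration count, and the simulated 4 x 6 plate are illustrative choices, not values taken from the cited sources.

```python
import random
from statistics import median


def median_polish(plate, n_iter=10):
    """Residuals after iteratively subtracting row and column medians."""
    resid = [list(row) for row in plate]
    n_rows, n_cols = len(resid), len(resid[0])
    for _ in range(n_iter):
        for r in range(n_rows):
            row_med = median(resid[r])
            resid[r] = [v - row_med for v in resid[r]]
        for c in range(n_cols):
            col_med = median([resid[r][c] for r in range(n_rows)])
            for r in range(n_rows):
                resid[r][c] -= col_med
    return resid


def b_scores(plate):
    """Median-polish residuals scaled by 1.4826 * MAD of the plate."""
    resid = median_polish(plate)
    flat = [v for row in resid for v in row]
    center = median(flat)
    mad = median([abs(v - center) for v in flat])
    if mad == 0:
        raise ValueError("MAD is zero; B-score is undefined for this plate")
    scale = 1.4826 * mad
    return [[v / scale for v in row] for row in resid]


# Simulated plate: additive row/column bias, unit noise, one active well.
rng = random.Random(0)
row_bias = [0.0, 2.0, 4.0, 6.0]
col_bias = [0.0, 1.0, 2.0, 3.0, 4.0, 5.0]
plate = [[100 + r + c + rng.gauss(0, 1) for c in col_bias] for r in row_bias]
plate[1][2] -= 50  # hypothetical inhibitor in row 1, column 2
scores = b_scores(plate)
```

Because medians rather than means are subtracted, a single strong active does not distort the estimated row and column effects, which is why the approach suits sparse-hit primary screens.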

Q3: Our HTS data is inconsistent and difficult to reproduce. What steps can we take to improve reliability?

A: Poor reproducibility often stems from manual process variability and human error.

- Automate and Standardize: Integrate robotics for liquid handling, plate washing, and dispensing. This reduces inter- and intra-user variability. For example, non-contact dispensers can verify dispensed volumes to ensure accuracy [28].

- Quality Control (QC) Metrics: Routinely employ QC metrics like the Z'-factor or Strictly Standardized Mean Difference (SSMD) to quantitatively measure the performance and reliability of each assay plate [27].

- Data Management: Use automated data processing pipelines to minimize manual data handling errors. Software platforms can capture output directly from instruments, run QC checks, and generate analysis-ready datasets [28] [29].

Frequently Asked Questions (FAQs)

Q4: What is the difference between a "Hit" and a "Lead" compound?

A: In HTS, a "Hit" is a compound that shows a desired level of activity in the primary screen. These are starting points that require confirmation and further characterization. A "Lead" compound is a validated hit that has undergone subsequent optimization and profiling for properties like potency, selectivity, and preliminary toxicity, making it a candidate for more advanced development [22].

Q5: How do I choose the right statistical method for hit selection in my primary screen?

A: The choice depends on whether your screen includes replicates.

- Screens without replicates: Use methods that assume a common variability across all compounds, such as the z-score, z*-score (robust to outliers), or SSMD based on the overall assay background [27].

- Screens with replicates: Use methods that can leverage the per-compound replicate data, such as the t-statistic or replicate-based SSMD. These provide a more accurate estimation of each compound's effect size and variability [27].
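The two cases above can be sketched in a few lines. This is a minimal illustration, assuming the z*-score is computed from the median and MAD (scaled by 1.4826 so that it matches the standard deviation for normally distributed data) and that the replicate-based statistics are computed on paired differences between each compound replicate and its plate's negative reference. All function names and readouts are hypothetical.

```python
from math import sqrt
from statistics import mean, median, stdev


def z_scores(values):
    """Classical z-score: sensitive to outliers (including true hits)."""
    mu, sd = mean(values), stdev(values)
    return [(v - mu) / sd for v in values]


def robust_z_scores(values):
    """z*-score: median and MAD replace mean and standard deviation."""
    med = median(values)
    mad = median([abs(v - med) for v in values])
    return [(v - med) / (1.4826 * mad) for v in values]


def replicate_stats(differences):
    """t-statistic and paired SSMD from per-replicate differences."""
    n = len(differences)
    d_bar, s_d = mean(differences), stdev(differences)
    return {"t": d_bar / (s_d / sqrt(n)), "ssmd": d_bar / s_d}


# No-replicate screen: one strong active among eight hypothetical readouts.
readouts = [10, 11, 9, 10, 12, 8, 10, 40]
z = z_scores(readouts)
z_star = robust_z_scores(readouts)
print(f"z = {z[-1]:.2f}, z* = {z_star[-1]:.2f}")

# Replicate screen: compound minus negative reference, three replicates.
stats = replicate_stats([-40.0, -42.0, -38.0])
```

In this toy data set the active inflates the standard deviation enough that its classical z-score stays below 3, while the robust score flags it clearly, which is the practical argument for outlier-resistant statistics in small or hit-rich plates.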

Q6: What is Quantitative HTS (qHTS) and how does it benefit the screening process?

A: Quantitative High-Throughput Screening (qHTS) is a paradigm where each compound in the library is tested at multiple concentrations simultaneously. Instead of a single activity data point, qHTS generates a full concentration-response curve for every compound immediately after the screen. This approach provides more information early on, including EC50, maximal response, and Hill coefficient, which helps to identify and triage false positives and yields nascent structure-activity relationships (SAR) from the primary screen [27] [22].
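To make the concentration-response idea concrete, the sketch below defines a four-parameter Hill model and a deliberately crude EC50 estimate obtained by log-linear interpolation at the half-maximal response. It assumes an ascending concentration series, a monotonically increasing response, and that both plateaus are sampled. Real qHTS analyses fit the curve by nonlinear regression; the parameter values here are hypothetical.

```python
from math import log10


def hill(conc, bottom, top, ec50, hill_coeff):
    """Four-parameter Hill (logistic) concentration-response model."""
    return bottom + (top - bottom) / (1.0 + (ec50 / conc) ** hill_coeff)


def interpolate_ec50(concs, responses):
    """Crude EC50: log-linear interpolation at the half-maximal response.

    Returns None if no adjacent pair of points brackets the half-maximum.
    """
    half = (min(responses) + max(responses)) / 2.0
    points = list(zip(concs, responses))
    for (c_lo, r_lo), (c_hi, r_hi) in zip(points, points[1:]):
        if r_lo <= half <= r_hi and r_hi != r_lo:
            frac = (half - r_lo) / (r_hi - r_lo)
            return 10 ** (log10(c_lo) + frac * (log10(c_hi) - log10(c_lo)))
    return None


# Simulated 13-point titration in half-log steps from 1 nM to 1 mM.
concs = [10 ** (-9 + 0.5 * i) for i in range(13)]
responses = [hill(c, bottom=0.0, top=100.0, ec50=2e-6, hill_coeff=1.0)
             for c in concs]
ec50_estimate = interpolate_ec50(concs, responses)
print(f"estimated EC50 = {ec50_estimate:.2e} M")
```

A flat or bell-shaped profile returns `None` or an implausible value here, which mirrors how curve shape is used in qHTS to triage artifacts.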

Essential Research Reagent Solutions

The following table details key materials and reagents essential for establishing a robust HTS workflow.

| Item | Function in HTS |

|---|---|

| Microtiter Plates | The core labware for HTS; typically disposable plastic plates with 96, 384, 1536, or more wells in a standardized grid pattern where assays are performed [27]. |

| Compound Libraries | Curated collections of small molecules, natural product extracts, or siRNAs that are screened for biological activity. These can include FDA-approved drugs for repurposing efforts [26] [25]. |

| Assay Reagents (Biological Targets) | The biological entities used to test the compound library, such as purified proteins (enzymes, receptors), cells (cell-based assays), or even animal embryos [27] [30]. |

| Detection Reagents | Reagents that produce a measurable signal (e.g., fluorescence, luminescence, absorbance) to indicate biological activity or binding events in the assay [31]. |

| Positive/Negative Controls | Reference compounds that produce a known strong response (positive) or no response (negative). They are critical for validating assay performance and normalizing data on every plate [27] [25]. |

Experimental Workflow and Data Analysis Protocols

Primary HTS Experimental Workflow

The following diagram illustrates the standard workflow for a primary High-Throughput Screening campaign.

HTS Data Analysis and Hit Selection Protocol

This protocol outlines the critical steps for processing HTS data to identify high-quality "hits."

1. Quality Control (QC) Review

- Calculate the Z'-factor for each assay plate using the positive and negative control data [27] [26]: \( Z' = 1 - \frac{3(\sigma_{p} + \sigma_{n})}{|\mu_{p} - \mu_{n}|} \), where \( \sigma_{p} \) and \( \sigma_{n} \) are the standard deviations of the positive and negative controls, and \( \mu_{p} \) and \( \mu_{n} \) are their means.

- Acceptance Criterion: Plates with a Z'-factor > 0.5 are considered to have excellent separation and are accepted for analysis. Plates below this threshold should be investigated and potentially repeated [26].
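A minimal sketch of the per-plate QC check follows. It implements the Z'-factor as one minus three times the sum of the control standard deviations divided by the absolute difference of the control means. The use of the sample standard deviation, the helper names, and the control readouts are assumptions made for illustration.

```python
from statistics import mean, stdev


def z_prime(pos_controls, neg_controls):
    """Z'-factor from positive and negative control wells on one plate."""
    spread = 3.0 * (stdev(pos_controls) + stdev(neg_controls))
    window = abs(mean(pos_controls) - mean(neg_controls))
    return 1.0 - spread / window


def plate_passes_qc(pos_controls, neg_controls, threshold=0.5):
    """Accept the plate only if Z' exceeds the threshold (0.5 by default)."""
    return z_prime(pos_controls, neg_controls) > threshold


# Hypothetical control readouts from a single plate.
pos = [100.0, 102.0, 98.0, 101.0, 99.0]
neg = [10.0, 12.0, 8.0, 11.0, 9.0]
print(f"Z' = {z_prime(pos, neg):.3f}, accepted = {plate_passes_qc(pos, neg)}")
```

Running the check plate by plate, rather than once per campaign, makes it easier to trace a failing plate back to a specific dispensing or incubation event.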

2. Data Normalization

- Normalize raw readouts (e.g., fluorescence) to account for inter-plate variation. A common method is Percent Inhibition [25]: \( \text{\% Inhibition} = \frac{\text{Negative Control Mean} - \text{Compound Well}}{\text{Negative Control Mean} - \text{Positive Control Mean}} \times 100 \)

- Alternative methods include z-score normalization or B-score for correcting spatial biases [27].

3. Hit Selection

- Apply a predetermined hit-threshold to the normalized data.

- For a Percent Inhibition metric, a common threshold might be >50% Inhibition [25].

- For a z-score-based method (typically used in no-replicate screens), a threshold of |z| > 3 is often used, flagging compounds that are more than 3 standard deviations from the plate mean [27].
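The normalization and thresholding steps can be combined in a short sketch. It assumes the convention implied by the Percent Inhibition formula: the negative control defines 0% inhibition (uninhibited signal) and the positive control defines 100%. Well identifiers, readouts, and function names are hypothetical.

```python
from statistics import mean


def percent_inhibition(signal, neg_mean, pos_mean):
    """Percent inhibition of one well relative to the plate controls."""
    return (neg_mean - signal) / (neg_mean - pos_mean) * 100.0


def select_hits(wells, neg_controls, pos_controls, threshold=50.0):
    """Return {well_id: % inhibition} for wells above the hit threshold."""
    neg_mean, pos_mean = mean(neg_controls), mean(pos_controls)
    hits = {}
    for well_id, signal in wells.items():
        pct = percent_inhibition(signal, neg_mean, pos_mean)
        if pct > threshold:
            hits[well_id] = pct
    return hits


# Hypothetical raw readouts for four compound wells on one plate.
wells = {"B02": 95.0, "B03": 30.0, "B04": 60.0, "B05": 12.0}
hits = select_hits(wells, neg_controls=[100.0, 98.0, 102.0],
                   pos_controls=[10.0, 9.0, 11.0])
for well_id, pct in sorted(hits.items()):
    print(f"{well_id}: {pct:.1f}% inhibition")
```

Fixing the threshold before the screen, as the protocol states, avoids tuning it to the observed data.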

4. Hit Confirmation

- "Cherry-pick" the putative hits from the original compound library into new assay plates.

- Re-test these compounds in a dose-response manner (e.g., qHTS) to confirm activity and generate preliminary potency data (EC50) [27]. This step is crucial for eliminating false positives.

Logical Decision Process for HTS Data Normalization

The diagram below outlines a logical workflow for selecting an appropriate data normalization method based on the characteristics of your HTS dataset.

In modern drug discovery, the selection of potent compounds for screening sets relies heavily on a suite of integrated in silico tools. The paradigm has shifted from purely experimental high-throughput screening to computationally driven prioritization. This approach leverages Virtual Screening (VS), Molecular Docking, and Quantitative Structure-Activity Relationship (QSAR) modeling to filter vast chemical libraries down to a manageable number of high-probability hits, significantly reducing time and resource expenditure [32] [33]. By framing these tools within a cohesive workflow, researchers can systematically address the central challenge of identifying promising candidates for a given biological target, which is the core thesis of efficient screening set research.

The integration of artificial intelligence (AI) and machine learning (ML) has transformed these tools from supportive utilities to foundational components of the R&D pipeline [32]. By 2025, AI-enhanced in silico methods are routinely used for target prediction, compound prioritization, and pharmacokinetic property estimation, driving a transformative shift in early-stage research [32]. This technical support center provides a foundational guide, troubleshooting common issues, and detailing protocols to empower researchers in effectively deploying these powerful computational tools.

Frequently Asked Questions (FAQs) and Troubleshooting Guides

General Workflow and Strategy

Q1: In what order should I apply VS, QSAR, and Docking in a new project? A robust, hierarchical strategy applies these tools sequentially to trade speed for accuracy, progressively narrowing the candidate list. The following workflow is recommended for efficient screening:

- Troubleshooting Tip: If your initial virtual library is exceptionally large (trillions of compounds), consider a fragment-based or "bottom-up" approach. This method first screens a smaller space of fragment-sized compounds to identify binding "hotspots" and essential scaffolds, which are then grown into larger drug-like molecules using ultra-large chemical spaces, vastly improving computational efficiency [34].

Q2: How do I validate my computational workflow before committing to expensive experimental work?

- Internal Validation: For QSAR models, always use a held-out test set (e.g., 20-30% of your data) and perform Y-randomization to ensure the model is not learning chance correlations. Check the model's applicability domain to ensure new predictions are reliable [35].

- Experimental Cross-Check: If available, include a set of known active and inactive compounds in your screening workflow. The ability of your pipeline to correctly rank and separate these groups builds confidence in its predictive power [36].

- Retrospective Screening (Docking): Perform a control docking with a co-crystallized ligand. A successful protocol should be able to reproduce the native binding pose (Root Mean Square Deviation, or RMSD, typically < 2.0 Å) and rank it favorably [37].
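The redocking check in the last point reduces to an RMSD calculation between the crystallographic and docked ligand coordinates. The sketch below assumes both poses list the same heavy atoms in the same order and already share the receptor's coordinate frame, so no superposition is performed. It does not handle symmetry-equivalent atoms, which dedicated tools correct for. The coordinates are made up.

```python
from math import sqrt


def rmsd(coords_a, coords_b):
    """Root mean square deviation between two matched sets of 3D points."""
    if len(coords_a) != len(coords_b) or not coords_a:
        raise ValueError("Poses must contain the same, non-zero number of atoms")
    squared = sum(
        (a - b) ** 2
        for atom_a, atom_b in zip(coords_a, coords_b)
        for a, b in zip(atom_a, atom_b)
    )
    return sqrt(squared / len(coords_a))


def redocking_successful(native_pose, docked_pose, cutoff=2.0):
    """Apply the < 2.0 Angstrom criterion for reproducing the native pose."""
    return rmsd(native_pose, docked_pose) < cutoff


# Hypothetical heavy-atom coordinates (Angstrom) for a three-atom fragment.
native = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0), (2.2, 1.2, 0.0)]
docked = [(0.3, 0.1, 0.0), (1.7, 0.2, 0.1), (2.5, 1.0, 0.2)]
print(f"RMSD = {rmsd(native, docked):.2f} A, "
      f"success = {redocking_successful(native, docked)}")
```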

QSAR Modeling

Q3: My QSAR model has high accuracy on the training data but performs poorly on new compounds. What is the cause? This is a classic case of overfitting, where the model learns noise and specific features of the training set instead of the underlying structure-activity relationship.

- Solution:

- Simplify the Model: Reduce the number of molecular descriptors. Use feature selection techniques like Variance Threshold and Pearson Correlation analysis to remove constant and highly correlated descriptors [35].

- Increase Data: A larger and more diverse dataset of compounds improves model generalizability.

- Apply Regularization: Use machine learning algorithms that incorporate regularization (e.g., L1 or L2) to penalize model complexity.

- Check Applicability Domain: Ensure that the new compounds you are predicting are structurally similar to those in your training set. Predictions for compounds outside this domain are unreliable [35].
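The descriptor-pruning step ("Simplify the Model") can be sketched without any external library. The example drops constant descriptors with a variance threshold and then greedily removes any descriptor whose absolute Pearson correlation with an already-kept descriptor exceeds a cutoff. The greedy keep-first policy, the 0.9 cutoff, and the descriptor values are assumptions for illustration, not settings reported in the cited study.

```python
from math import sqrt
from statistics import mean, pvariance


def pearson(x, y):
    """Pearson correlation coefficient between two equal-length lists."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sqrt(sum((a - mx) ** 2 for a in x))
    sy = sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def select_descriptors(columns, min_variance=0.0, max_abs_r=0.9):
    """Return descriptor names that survive both filters, in input order.

    columns maps a descriptor name to its values across all compounds.
    """
    kept = []
    for name, values in columns.items():
        if pvariance(values) <= min_variance:
            continue  # constant or near-constant descriptor
        if any(abs(pearson(values, columns[k])) > max_abs_r for k in kept):
            continue  # redundant with a descriptor that is already kept
        kept.append(name)
    return kept


# Hypothetical descriptor table for four compounds.
descriptors = {
    "mol_weight": [300.0, 350.0, 400.0, 450.0],
    "heavy_atoms": [21.0, 25.0, 28.0, 32.0],   # tracks mol_weight closely
    "n_charged": [1.0, 1.0, 1.0, 1.0],         # constant
    "logp": [2.1, 3.5, 1.2, 2.9],
}
print(select_descriptors(descriptors))
```

To avoid information leakage, the filters should be derived from the training set only and then applied unchanged to the test set.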

Q4: Which machine learning algorithm is best for QSAR modeling? There is no single "best" algorithm; the optimal choice depends on your dataset size, descriptor type, and the non-linearity of the structure-activity relationship. A comparative approach is recommended.

Table 1: Comparison of Common ML Algorithms for QSAR

| Algorithm | Best For | Key Advantages | Considerations |

|---|---|---|---|

| Random Forest (RF) [36] | Medium to large datasets, non-linear relationships. | Robust to outliers, provides feature importance. | Can be prone to overfitting on noisy data if not tuned. |

| Artificial Neural Network (ANN) [35] | Large, complex datasets with strong non-linearities. | High predictive accuracy, can model complex patterns. | "Black box" nature; requires large data and computational power. |

| Support Vector Machine (SVM) [35] | Small to medium-sized datasets. | Effective in high-dimensional spaces, memory efficient. | Performance heavily dependent on kernel and parameter selection. |

Molecular Docking and Virtual Screening

Q5: My top-docked compound has an excellent binding score but shows no activity in the lab assay. Why? This common discrepancy can arise from several factors:

- Inaccurate Solvation Model: The docking scoring function may not accurately represent the role of water molecules in the binding pocket. Some water molecules are essential for ligand binding and displacing them can be energetically unfavorable.

- Protein Flexibility: The X-ray crystal structure used for docking is a single, static snapshot. In reality, proteins are dynamic, and the binding site conformation might change (induced fit).

- Off-Target Promiscuity: The compound may be binding more strongly to a different, untested target.

- Troubleshooting Steps:

- Post-Docking Analysis: Always visually inspect the top poses. Look for sensible interactions like hydrogen bonds, salt bridges, and hydrophobic contacts with key binding site residues.

- Use More Advanced Methods: Follow up docking with Molecular Dynamics (MD) simulations (e.g., 100-300 ns). MD assesses the stability of the protein-ligand complex over time and provides a more rigorous estimate of binding free energy using methods like MM/GBSA [36] [37]. A stable RMSD and favorable free energy (e.g., -35.77 kcal/mol for a promising inhibitor vs. -18.90 kcal/mol for a control [37]) are strong positive indicators.

- Check for Assay Interference: Ensure the compound is not aggregating or reacting with assay components.

Q6: How do I handle water molecules and co-factors in my docking target protein?

- Strategic Approach: This is a target-specific decision. A common strategy is to dock with and without key crystallographic water molecules.

- Protocol: If a water molecule is involved in a conserved hydrogen-bonding network between the native ligand and the protein, it may be critical for binding. In such cases, include it as part of the receptor structure and treat it as a fixed part of the binding site during docking. For co-factors (e.g., metal ions, heme groups), they are almost always integral to the protein structure and must be included.

Target Engagement and Validation

Q7: My compound shows great in silico affinity, but how can I be confident it engages the actual target in a cellular context? This highlights the gap between computational prediction and cellular efficacy. In silico tools predict binding, but not necessarily cellular target engagement.

- Solution:

- Prioritize Tools that Use Cellular Context: When possible, use structural data from proteins in complex cellular environments (e.g., cryo-EM structures).

- Incorporate Cellular Target Engagement Assays: Plan for experimental validation using techniques like the Cellular Thermal Shift Assay (CETSA) or the Chemical Protein Stability Assay (CPSA). These methods measure drug-target engagement directly in intact cells or lysates, confirming that your compound stabilizes the target protein in a more complex, physiologically relevant system [32] [38]. CPSA, for example, is a plate-based, cost-effective assay that detects binding-induced protein stability against chemical denaturants and is scalable for high-throughput screening [38].

Detailed Experimental Protocols

Protocol 1: Integrated ML-QSAR and Docking Workflow for Novel Inhibitor Identification

This protocol, adapted from studies on NDM-1 and T. cruzi inhibitors, provides a robust framework for lead identification [36] [35].

1. Data Curation and Preparation

- Source: Retrieve a curated dataset of known inhibitors (with SMILES strings and IC50 values) from public databases like ChEMBL.

- Standardization: Convert IC50 values to pIC50 (-log10(IC50)) to normalize the activity scale [35].

- Descriptor Calculation: Use software like PaDEL-descriptor to calculate molecular descriptors or fingerprints (e.g., CDK fingerprints, MACCS keys) [36] [35].
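The pIC50 standardization step can be sketched as follows. The formula -log10(IC50) expects the IC50 in molar units; this example assumes the curated values are stored in nanomolar, which should be checked against the units column of the actual export. The function name is illustrative.

```python
import math


def ic50_nm_to_pic50(ic50_nm):
    """Convert an IC50 given in nanomolar to pIC50 = -log10(IC50 in mol/L)."""
    if ic50_nm <= 0:
        raise ValueError("IC50 must be positive")
    # 1 nM = 1e-9 M, so -log10(x * 1e-9) = 9 - log10(x).
    return 9.0 - math.log10(ic50_nm)


# Hypothetical activities in nM: weaker binders map to lower pIC50 values.
activities_nm = [10.0, 1000.0, 50000.0]
pic50_values = [ic50_nm_to_pic50(v) for v in activities_nm]
```

On this scale a tenfold gain in potency corresponds to an increase of one pIC50 unit, which is why the transformed values are easier to model than raw IC50s.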

2. Machine Learning QSAR Model Development

- Data Splitting: Split the data into a training set (70-80%) and a test set (20-30%).

- Model Training & Validation: Train multiple ML models (e.g., RF, ANN, SVM) on the training set. Use k-fold cross-validation (e.g., k=10) for hyperparameter tuning.

- Model Selection: Select the best model based on statistical metrics for the test set: Pearson Correlation Coefficient, Root Mean Squared Error (RMSE), and Mean Absolute Error (MAE). A high Pearson R for the test set indicates good predictive power [35].
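The splitting and model-selection metrics above are simple to compute directly. The sketch below uses only the Python standard library; the measured and predicted pIC50 values are made up for illustration, and in practice a library such as scikit-learn would typically handle both the split and the metrics.

```python
import math
import random


def split_indices(n_samples, test_fraction=0.2, seed=0):
    """Shuffle sample indices reproducibly and return (train, test) index lists."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    n_test = round(n_samples * test_fraction)
    return indices[n_test:], indices[:n_test]


def pearson_r(y_true, y_pred):
    """Pearson correlation coefficient between measured and predicted values."""
    mean_t = sum(y_true) / len(y_true)
    mean_p = sum(y_pred) / len(y_pred)
    cov = sum((t - mean_t) * (p - mean_p) for t, p in zip(y_true, y_pred))
    var_t = sum((t - mean_t) ** 2 for t in y_true)
    var_p = sum((p - mean_p) ** 2 for p in y_pred)
    return cov / math.sqrt(var_t * var_p)


def rmse(y_true, y_pred):
    """Root mean squared error."""
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))


def mae(y_true, y_pred):
    """Mean absolute error."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


train_idx, test_idx = split_indices(10, test_fraction=0.2, seed=42)

# Hypothetical test-set pIC50 values (measured vs. predicted).
measured = [5.0, 6.0, 7.0, 8.0]
predicted = [5.5, 6.0, 6.5, 8.0]
metrics = {
    "pearson_r": pearson_r(measured, predicted),
    "rmse": rmse(measured, predicted),
    "mae": mae(measured, predicted),
}
```

Reporting all three metrics together is useful because a high Pearson R can coexist with a systematic offset that only RMSE and MAE reveal.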

3. Virtual Screening of Compound Libraries

- Screening: Use the validated QSAR model to predict the pIC50 of compounds in a large virtual library (e.g., natural product libraries, commercial collections).

- Filtering: Select compounds predicted to be more active than a predefined threshold or a control compound.

4. Molecular Docking of Top Hits

- Protein Preparation: Obtain the 3D structure from the PDB (e.g., PDB ID: 4EYL for NDM-1). Remove native ligands, add hydrogens, and assign charges.

- Grid Generation: Define the docking grid box centered on the native ligand's binding site with adequate size (e.g., 20x16x16 Å) to accommodate ligand flexibility [37].

- Docking Execution: Perform docking with an exhaustiveness level of 10-20 using AutoDock Vina. Generate multiple poses (e.g., 10) per ligand [37].
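The grid and docking settings above map onto an AutoDock Vina configuration file. The following sketch centers the box on the centroid of the native ligand and writes the corresponding `key = value` lines; the coordinates and file names are hypothetical placeholders, and the box size and exhaustiveness simply reuse the example values from the protocol.

```python
def ligand_centroid(coords):
    """Geometric center of the native ligand's atom coordinates (in Å)."""
    n = len(coords)
    return tuple(sum(atom[axis] for atom in coords) / n for axis in range(3))


def vina_config(receptor, ligand, center, size=(20.0, 16.0, 16.0),
                exhaustiveness=16, num_modes=10):
    """Return the text of a Vina configuration file for one docking run."""
    cx, cy, cz = center
    sx, sy, sz = size
    lines = [
        f"receptor = {receptor}",
        f"ligand = {ligand}",
        f"center_x = {cx:.3f}",
        f"center_y = {cy:.3f}",
        f"center_z = {cz:.3f}",
        f"size_x = {sx:.1f}",
        f"size_y = {sy:.1f}",
        f"size_z = {sz:.1f}",
        f"exhaustiveness = {exhaustiveness}",
        f"num_modes = {num_modes}",
    ]
    return "\n".join(lines) + "\n"


# Hypothetical native-ligand coordinates and file names.
native_ligand_coords = [(10.0, 4.0, -2.0), (12.0, 6.0, 0.0), (11.0, 5.0, -1.0)]
center = ligand_centroid(native_ligand_coords)
config_text = vina_config("receptor.pdbqt", "ligand.pdbqt", center)
```

Saving `config_text` to a file and passing it to Vina with `--config` keeps the box definition identical across every ligand in the screen, which makes the scores comparable.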

5. Post-Docking Analysis and Prioritization

- Pose Analysis: Visually inspect the binding modes of top-scoring compounds. Prioritize those forming key interactions with active site residues.

- Similarity Clustering: Use Tanimoto similarity and k-means clustering to group selected compounds and maximize chemical diversity for the final shortlist [37].
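To make the diversity step concrete, the sketch below computes Tanimoto similarity on fingerprints represented as sets of on-bit indices and then picks a diverse subset. Note that it uses a greedy MaxMin selection as a simple stand-in for the k-means clustering named above, not the cited procedure itself; the fingerprints and compound names are invented for illustration.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient: shared on-bits divided by total distinct on-bits."""
    union = len(fp_a | fp_b)
    return len(fp_a & fp_b) / union if union else 0.0


def maxmin_pick(fingerprints, n_pick):
    """Greedily pick compounds that are maximally dissimilar to those already chosen.

    Starts from the first compound (e.g., the top-ranked hit) and repeatedly adds
    the candidate whose nearest picked neighbor is farthest away (1 - Tanimoto).
    """
    names = list(fingerprints)
    picked = [names[0]]
    while len(picked) < min(n_pick, len(names)):
        remaining = [n for n in names if n not in picked]
        best = max(
            remaining,
            key=lambda n: min(1.0 - tanimoto(fingerprints[n], fingerprints[p])
                              for p in picked),
        )
        picked.append(best)
    return picked


# Hypothetical fingerprints as sets of on-bit positions, ordered by docking rank.
fingerprints = {
    "hit_1": {1, 2, 3, 4},
    "hit_2": {1, 2, 3, 5},
    "hit_3": {7, 8, 9},
    "hit_4": {1, 2, 9, 10},
}
shortlist = maxmin_pick(fingerprints, n_pick=3)
```

In this toy set, `hit_2` is a close analog of `hit_1` and is therefore left off the three-compound shortlist in favor of the two structurally distinct hits.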

Protocol 2: Validation via Molecular Dynamics (MD) Simulations

This protocol is used to validate the stability of docking-predicted complexes [36] [37].

1. System Setup

- Complex Selection: Take the top-ranked docking pose of your hit compound and the native ligand (control) for simulation.

- Solvation and Ionization: Place the protein-ligand complex in a simulation box filled with explicit water (e.g., the TIP3P water model) and add ions to neutralize the system's charge.

2. Simulation Production Run

- Software: Use MD software like Desmond, GROMACS, or AMBER.

- Duration: Run an unrestrained simulation for a sufficient time (e.g., 100-300 ns) to observe stable binding.

3. Trajectory Analysis

- Root Mean Square Deviation (RMSD): Calculate the RMSD of the protein backbone and the ligand. A stable complex will reach a plateau in RMSD, indicating equilibrium.

- Root Mean Square Fluctuation (RMSF): Analyze per-residue RMSF to identify flexible regions of the protein.

- Interaction Analysis: Monitor hydrogen bonds and other key interactions over the simulation time.

- Binding Free Energy Calculation: Use the MM/GBSA method to calculate the binding free energy. A significantly favorable energy (e.g., -35.77 kcal/mol for a hit vs. -18.90 kcal/mol for a control) strongly validates the interaction [37].
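The trajectory metrics above reduce to a few short formulas. The sketch below computes RMSD between two coordinate sets, applies a simple plateau check to an RMSD time series, and compares the MM/GBSA energies quoted above. It assumes the frames have already been superimposed on the reference (MD analysis packages normally do this alignment), and the window and tolerance in the plateau check are arbitrary illustrative choices, not established cutoffs.

```python
import math


def rmsd(coords_a, coords_b):
    """RMSD (Å) between two equally sized, pre-aligned coordinate sets."""
    if len(coords_a) != len(coords_b):
        raise ValueError("coordinate sets must have the same number of atoms")
    squared = sum((a - b) ** 2
                  for atom_a, atom_b in zip(coords_a, coords_b)
                  for a, b in zip(atom_a, atom_b))
    return math.sqrt(squared / len(coords_a))


def is_plateaued(rmsd_series, window=5, tolerance=0.5):
    """True if the last `window` RMSD values vary by less than `tolerance` Å."""
    tail = rmsd_series[-window:]
    return len(tail) == window and (max(tail) - min(tail)) < tolerance


# Toy example: every atom displaced by 1 Å along z gives an RMSD of 1 Å.
reference = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
frame = [(0.0, 0.0, 1.0), (1.0, 0.0, 1.0)]
frame_rmsd = rmsd(reference, frame)

# Hypothetical ligand RMSD trace (Å) sampled along a trajectory.
ligand_rmsd_trace = [0.5, 1.2, 1.8, 2.0, 2.1, 2.0, 2.1, 2.05]
stable = is_plateaued(ligand_rmsd_trace)

# MM/GBSA comparison using the example energies quoted in the text (kcal/mol).
dg_hit, dg_control = -35.77, -18.90
ddg = dg_hit - dg_control  # negative means the hit is predicted to bind more favorably
```

A plateau check like this is only a screening aid; the RMSD trace should still be inspected visually, since a ligand can settle into a stable pose that differs from the docked one.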

The following diagram illustrates this validation workflow:

Table 2: Key Software and Databases for In Silico Drug Discovery

| Category | Tool/Resource | Primary Function | Reference/Comment |
|---|---|---|---|
| Cheminformatics & Descriptors | PaDEL-Descriptor [35] | Calculates molecular descriptors and fingerprints. | Critical for featurization in QSAR modeling. |
| | RDKit [36] [37] | Open-source cheminformatics toolkit. | Used for chemical informatics, descriptor calculation, and similarity analysis. |
| Docking & VS | AutoDock Vina [37] | Molecular docking and virtual screening. | Widely used for its speed and accuracy; configurable exhaustiveness. |
| | SwissADME [32] | Predicts ADME properties and drug-likeness. | Used for filtering compounds based on pharmacokinetic properties. |
| MD Simulation | Desmond [37] | Molecular dynamics simulation system. | Used for 100-300 ns simulations to validate complex stability. |
| Commercial AI Platforms | PandaOmics [39] | AI-powered target discovery and multi-omics analysis. | Integrates multi-omics data and literature for target prioritization. |
| | Chemistry42 [39] | AI-driven de novo molecular design and optimization. | An ensemble of generative AI and physics-based methods for lead optimization. |
| Data Resources | ChEMBL [36] [35] | Manually curated database of bioactive molecules. | Primary source for bioactivity data to build QSAR models. |
| | Protein Data Bank (PDB) [36] [37] | Repository for 3D structural data of proteins and nucleic acids. | Source of protein structures for docking and MD simulations. |

Technical Support Center

Troubleshooting Guide: Common Experimental Challenges in AI-Driven Hit-to-Lead

Problem 1: Poor Synthetic Accessibility of AI-Generated Molecules

- Symptoms: Generated molecules are chemically unrealistic, require too many synthetic steps, or use unavailable reagents.

- Diagnosis: The generative model lacks proper constraints or training on synthetic feasibility data.

- Solution: Integrate a retrosynthesis planning tool directly into the generation loop. Use a synthetic accessibility score (SAS) as a penalty term in the reinforcement learning reward function to guide the AI toward more readily synthesizable compounds [40].
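One way to express the SAS penalty is as a hinge term subtracted from the activity reward. The sketch below is an assumption-laden illustration: it presumes a synthetic accessibility score on the common 1 (easy) to 10 (hard) scale, and the threshold and weight are hypothetical tuning parameters rather than recommended values.

```python
def shaped_reward(predicted_pic50, sa_score, sa_threshold=4.0, penalty_weight=0.5):
    """Activity reward minus a penalty for molecules that look hard to make.

    No penalty is applied at or below `sa_threshold`; above it, the penalty grows
    linearly with the excess synthetic accessibility score.
    """
    penalty = penalty_weight * max(0.0, sa_score - sa_threshold)
    return predicted_pic50 - penalty


# Two hypothetical molecules with equal predicted potency but different SA scores.
easy_to_make = shaped_reward(predicted_pic50=7.0, sa_score=3.0)
hard_to_make = shaped_reward(predicted_pic50=7.0, sa_score=6.0)
```

Using a hinge rather than a plain linear penalty avoids pushing the generator toward trivially simple molecules once synthesizability is already acceptable.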

Problem 2: Model Generates Chemically Invalid Structures

- Symptoms: High rate of invalid SMILES strings or molecules with incorrect valences.

- Diagnosis: The model's underlying molecular representation or architecture does not enforce chemical rules.

- Solution: Switch from string-based representations (like SMILES) to graph-based models (Graph Neural Networks). Models like GraphAF, which use autoregressive graph generation, inherently preserve chemical validity during structure construction [41].

Problem 3: Limited Exploration of Chemical Space (Mode Collapse)

- Symptoms: The AI repeatedly generates minor variations of the same molecular scaffold, lacking diversity.

- Diagnosis: The generative algorithm is over-exploiting a local optimum in the chemical space.

- Solution: Implement a "novelty" or "diversity" reward in a multi-objective reinforcement learning setup. Techniques like Bayesian optimization can help balance the exploration of new regions with the exploitation of known promising areas [41].

Problem 4: Inaccurate ADMET Predictions

- Symptoms: Compounds perform well in silico but fail in vitro due to toxicity, poor permeability, or rapid metabolism.

- Diagnosis: The ADMET prediction models are trained on low-quality, sparse, or biased public data.

- Solution: Curate a high-quality, internal dataset of experimental ADMET properties. Use this to fine-tune pre-trained models. For critical decisions, rely on models that provide confidence scores for their predictions, and be cautious with low-confidence outputs [42] [40].

Problem 5: Inability to Balance Multiple, Competing Objectives

- Symptoms: Optimizing for one property (e.g., potency) causes significant deterioration in others (e.g., solubility).

- Diagnosis: Using a single-objective optimization strategy for a multi-faceted problem.

- Solution: Adopt a formal multi-objective optimization (MOO) framework. Use Pareto optimization to identify a set of candidate compounds that represent the best possible trade-offs between all desired properties, such as potency, selectivity, and metabolic stability [41] [40].
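Pareto optimization can be illustrated with a small non-dominated filter. In this sketch every objective is assumed to be oriented so that higher is better (properties where lower is better would need to be negated first), and the compound names and scores are invented.

```python
def dominates(a, b):
    """True if score vector `a` is at least as good as `b` everywhere and better somewhere."""
    return all(x >= y for x, y in zip(a, b)) and any(x > y for x, y in zip(a, b))


def pareto_front(candidates):
    """Return the names of candidates that no other candidate dominates."""
    return [
        name
        for name, scores in candidates.items()
        if not any(dominates(other, scores)
                   for other_name, other in candidates.items()
                   if other_name != name)
    ]


# Hypothetical scores: (potency as pIC50, selectivity as log fold, metabolic stability).
candidates = {
    "cpd_A": (8.0, 1.0, 0.3),
    "cpd_B": (7.0, 2.0, 0.6),
    "cpd_C": (6.5, 1.5, 0.5),  # worse than cpd_B on every objective
    "cpd_D": (7.5, 0.5, 0.9),
}
front = pareto_front(candidates)
```

The front contains the compounds that represent distinct trade-offs; choosing among them remains a project decision rather than something the filter resolves.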

Frequently Asked Questions (FAQs)

FAQ 1: What is the most critical factor for the success of an AI-driven hit-to-lead project? The single most critical factor is high-quality, robust, and consistently assayed training data. Models are only as good as the data they learn from. Using noisy, inconsistent, or biased data will lead to the generation of molecules that fail experimentally. Investing in data curation is non-negotiable [40].

FAQ 2: How do we know when to trust an AI's molecule recommendation? Look for AI platforms that provide confidence scores for their predictions. These scores are generated by analyzing the correlation between the model's previous predictions and subsequent experimental results. A high-confidence prediction gives the medicinal chemist a quantifiable reason to prioritize a molecule for synthesis [40].

FAQ 3: Can AI help us design molecules for targets with very little known ligand data? Yes, but this is challenging. Strategies include using transfer learning from models pre-trained on large, general chemical databases, and then fine-tuning them with the limited target-specific data you have. Alternatively, you can use few-shot learning techniques specifically designed for low-data regimes [41].

FAQ 4: How is "success" quantitatively measured in AI-driven generative chemistry? Success is measured by a combination of key metrics tracked throughout the optimization cycles. The table below summarizes the primary quantitative indicators.

Table: Key Performance Indicators for AI-Driven Hit-to-Lead Optimization

| Metric Category | Specific Metric | Target Value / Benchmark |
|---|---|---|
| Molecular Quality | Chemical Validity | >95% of generated molecules [41] |
| | Synthetic Accessibility Score (SAS) | Lower is better; aim for drug-like ranges |
| Optimization Efficiency | Improvement in Primary Activity (e.g., IC50) | ≥10-fold over starting hit [40] |
| | Success in Multi-Parameter Optimization | Positive movement in 3+ key properties simultaneously [40] |
| Discovery Outcome | Novel Scaffold Identification (Scaffold Hop) | Successful generation of novel, potent chemotypes [40] |
| | Experimental Validation Rate | High correlation between predicted and measured properties [40] |
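Two of the benchmarks in the table are plain arithmetic and can be tracked per optimization cycle. The sketch below checks the validity rate and the fold improvement in IC50 against the table's thresholds; the counts and IC50 values are hypothetical.

```python
def validity_rate(valid_flags):
    """Fraction of generated molecules that passed a chemical validity check."""
    return sum(valid_flags) / len(valid_flags)


def fold_improvement(start_ic50_nm, new_ic50_nm):
    """How many times more potent the new compound is (lower IC50 is better)."""
    return start_ic50_nm / new_ic50_nm


# Hypothetical cycle: 97 of 100 generated molecules were valid.
rate = validity_rate([True] * 97 + [False] * 3)
meets_validity_target = rate > 0.95

# Hypothetical potency gain: starting hit at 500 nM, optimized analog at 40 nM.
gain = fold_improvement(500.0, 40.0)
meets_potency_target = gain >= 10.0
```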

FAQ 5: What is the role of the medicinal chemist in an AI-driven workflow? The medicinal chemist is more critical than ever. The AI acts as a powerful idea generator and pattern recognizer, but the chemist provides the essential creativity, intuition, and strategic oversight. Their role is to set the project goals, curate data, interpret the AI's suggestions in a chemical and biological context, and make the final decisions on which compounds to synthesize [40].

Experimental Protocols & Methodologies

Protocol 1: Property-Guided Molecular Generation with Graph Neural Networks

Purpose: To generate novel, valid molecules optimized for specific target properties from a starting hit compound.

Detailed Workflow:

- Data Preparation and Featurization:

- Curate a dataset of molecules with associated experimental data for the target properties (e.g., binding affinity, solubility).

- Represent each molecule as a graph where atoms are nodes and bonds are edges.

- Use a Graph Neural Network (GNN) to convert the molecular graph into a latent vector representation that encodes its structural features [41].
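The graph representation and the idea behind a GNN encoder can be shown on a toy molecule. The sketch below encodes ethanol (heavy atoms only) as nodes and edges, runs one untrained round of neighbor averaging, and sum-pools the result into a single vector. It is a conceptual illustration with hypothetical function names: a real GNN adds learned weight matrices, nonlinearities, and richer atom and bond features.

```python
def one_hot(symbol, vocabulary=("C", "N", "O")):
    """Encode an element symbol as a one-hot feature vector."""
    return [1.0 if symbol == element else 0.0 for element in vocabulary]


def build_adjacency(n_atoms, bonds):
    """Map each atom index to the indices of its bonded neighbors."""
    adjacency = {i: [] for i in range(n_atoms)}
    for i, j in bonds:
        adjacency[i].append(j)
        adjacency[j].append(i)
    return adjacency


def message_pass(features, adjacency):
    """One round of message passing: average each atom's features with its neighbors'."""
    updated = []
    for i, own in enumerate(features):
        group = [own] + [features[j] for j in adjacency[i]]
        updated.append([sum(column) / len(group) for column in zip(*group)])
    return updated


def readout(features):
    """Sum-pool atom features into one molecule-level vector."""
    return [sum(column) for column in zip(*features)]


# Ethanol, CH3-CH2-OH: atoms are nodes, bonds are edges (hydrogens omitted).
atoms = ["C", "C", "O"]
bonds = [(0, 1), (1, 2)]

adjacency = build_adjacency(len(atoms), bonds)
node_features = [one_hot(symbol) for symbol in atoms]
hidden = message_pass(node_features, adjacency)
molecule_vector = readout(hidden)
```

After one round, the central carbon's features already reflect its oxygen neighbor, which is the mechanism by which stacked rounds let each atom's representation capture its wider chemical environment.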

- Model Setup and Training:

- Employ a Generative Adversarial Network (GAN) or a Variational Autoencoder (VAE) architecture designed for graph structures.

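- Train the model to learn the distribution of the input chemical space. In a GAN, the generator creates new molecular graphs, while the discriminator evaluates how "real" they look compared to the training set [41]. A VAE instead learns to encode molecules into a latent space and decode latent vectors back into molecular graphs.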

- Conditional Generation:

- Guide the generation process by conditioning the model on desired property values. This is achieved by feeding the target property as an additional input to the generator.