Strategic Chemogenomics Library Design for Advanced Phenotypic Screening in Drug Discovery

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on designing effective chemogenomics libraries for phenotypic assays. It explores the foundational principles of chemogenomics as a bridge between phenotypic and target-based discovery, detailing methodological strategies for library assembly that integrate diverse bioactive compounds with genomic and proteomic data. The content addresses critical troubleshooting aspects, including mitigating promiscuous inhibitors and assay limitations, while covering validation frameworks and comparative analyses with genetic screening. By synthesizing these elements, the article offers a strategic roadmap to enhance the success of phenotypic screening campaigns in identifying novel therapeutic targets and mechanisms.

Laying the Groundwork: Core Principles and Strategic Value of Chemogenomics

The drug discovery landscape has progressively shifted from a reductionist 'one target–one drug' vision toward a more complex systems pharmacology perspective, recognizing that many complex diseases arise from multiple molecular abnormalities rather than a single defect [1]. Chemogenomics represents a strategic framework that bridges phenotypic and target-based discovery approaches by systematically investigating the interactions between chemical libraries and biological systems. This methodology leverages large-scale screening of diverse compound libraries against panels of biological targets to elucidate complex protein-ligand interaction networks [2] [3]. The resurgence of phenotypic screening in drug discovery has further emphasized the value of chemogenomics, as phenotypic assays do not rely on predefined molecular targets but require sophisticated chemical biology approaches to deconvolute mechanisms of action and identify therapeutic targets associated with observable phenotypes [1]. Within precision oncology, chemogenomics has emerged as a particularly powerful approach for addressing the challenges of tumor heterogeneity and adaptive resistance, enabling the identification of compounds with selective polypharmacology that can simultaneously modulate multiple targets across different signaling pathways [4] [3].

Core Principles and Definitions

Conceptual Framework

Chemogenomics operates on the fundamental principle that systematic analysis of chemical-biological interactions can reveal novel therapeutic opportunities that might be missed through conventional single-target approaches. This paradigm encompasses two complementary strategies: forward chemogenomics, which begins with phenotypic screening of compound libraries followed by target deconvolution, and reverse chemogenomics, which starts with specific protein targets of interest and identifies modulators through target-based screening [1]. The approach recognizes that most bioactive small molecules exert their effects through polypharmacology—modulating multiple protein targets with varying degrees of potency and selectivity—rather than through exclusive single-target engagement [4] [3]. This understanding has driven the development of specialized chemogenomic libraries designed to cover broad swaths of the druggable genome while providing sufficient structural diversity to enable the discovery of novel chemical matter with desired bioactivity profiles.

Key Methodological Components

The practice of chemogenomics integrates several critical components that distinguish it from traditional screening approaches. Chemical library design requires careful consideration of library size, cellular activity, chemical diversity, compound availability, and target selectivity to ensure comprehensive coverage of relevant biological space [3]. Data curation and standardization represent equally crucial elements, as the accuracy of both chemical structures and biological measurements directly impacts the reliability of resulting models and predictions [2]. This necessitates rigorous protocols for structural cleaning, stereochemistry verification, bioactivity processing, and detection of chemical duplicates to maintain data quality [2]. Furthermore, advanced assay systems including three-dimensional spheroids, organoids, and high-content imaging platforms have become essential for generating physiologically relevant phenotypic data in chemogenomic studies [4] [1]. These components collectively enable the construction of sophisticated pharmacology networks that integrate drug-target-pathway-disease relationships, forming the foundation for predictive chemogenomic models.

Chemogenomic Library Design Strategies

Rational Design Principles

Designing effective chemogenomic libraries requires balancing multiple competing constraints to maximize biological relevance while maintaining practical feasibility. Several systematic strategies have emerged for constructing targeted screening libraries of bioactive small molecules tailored for precision oncology applications [3]. These approaches prioritize compounds based on cellular activity to ensure physiological relevance, chemical diversity to broadly explore chemical space, commercial availability to enable practical implementation, and target selectivity profiles to facilitate mechanism deconvolution [3]. The design process must also account for the need to cover a wide range of protein targets and biological pathways implicated in various cancer types while maintaining a manageable library size suitable for phenotypic screening in disease-relevant model systems [3]. Advanced computational methods, including structure-based molecular docking to cancer-specific targets identified from tumor genomic profiles, have demonstrated particular utility in creating focused libraries enriched for compounds with desired polypharmacology profiles [4].

Implementation Examples

Recent implementations illustrate the practical application of these design principles. One research group created a rational library for glioblastoma (GBM) phenotypic screening by using structure-based molecular docking to map approximately 9,000 in-house compounds against 316 druggable binding sites on proteins within a GBM-specific subnetwork identified through tumor genomic profiling [4]. This approach enabled the selection of just 47 candidates for phenotypic screening, several of which showed promising activity against patient-derived GBM spheroids with substantially better efficacy than standard-of-care temozolomide [4]. In another example, researchers developed a minimal screening library of 1,211 compounds targeting 1,386 anticancer proteins, strategically condensed from larger virtual libraries through analytical procedures that optimized library size and target coverage [3]. A subsequent pilot screening study utilizing a physical library of 789 compounds covering 1,320 anticancer targets successfully identified patient-specific vulnerabilities in glioma stem cells from glioblastoma patients, revealing highly heterogeneous phenotypic responses across patients and GBM subtypes [3]. These examples demonstrate how targeted chemogenomic library design can yield practically screenable compound sets with comprehensive target coverage and high probability of identifying biologically active molecules.
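
The trade-off between library size and target coverage described above can be illustrated with a greedy set-cover heuristic. This is a minimal sketch only: the source does not specify the analytical procedure used to condense the published libraries, and the compound names and target annotations below are hypothetical.

```python
from typing import Dict, List, Set


def greedy_minimal_library(annotations: Dict[str, Set[str]]) -> List[str]:
    """Pick compounds greedily until every annotated target is covered.

    Assumption: each compound maps to the set of targets it is annotated
    to modulate. Greedy set cover is a stand-in heuristic, not the
    procedure used in the cited studies.
    """
    uncovered: Set[str] = set().union(*annotations.values()) if annotations else set()
    selected: List[str] = []
    while uncovered:
        # Choose the compound covering the most still-uncovered targets;
        # iterating in sorted order makes tie-breaking deterministic.
        best = max(sorted(annotations), key=lambda c: len(annotations[c] & uncovered))
        selected.append(best)
        uncovered -= annotations[best]
    return selected


# Hypothetical compound-to-target annotations (illustrative only).
toy_annotations = {
    "cmpd_A": {"EGFR", "ERBB2"},
    "cmpd_B": {"EGFR"},
    "cmpd_C": {"CDK4", "CDK6", "ERBB2"},
    "cmpd_D": {"PIK3CA"},
}
library = greedy_minimal_library(toy_annotations)
print(library)
```

In this toy case three of the four compounds cover all five targets. A practical design would also weigh cellular activity, selectivity, and availability, which this sketch ignores.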

Table 1: Comparative Analysis of Chemogenomic Library Design Strategies

| Design Strategy | Library Size | Target Coverage | Screening Approach | Key Outcomes |
|---|---|---|---|---|
| Genomic Profile-Based Enrichment [4] | 47 candidates | 316 druggable binding sites in GBM subnetwork | Phenotypic screening using 3D patient-derived GBM spheroids | Identification of IPR-2025 with single-digit μM IC50 in GBM spheroids, sub-μM activity in angiogenesis assay |
| Minimal Screening Library [3] | 1,211 compounds | 1,386 anticancer proteins | Phenotypic profiling of glioma stem cells from GBM patients | Identification of patient-specific vulnerabilities and heterogeneous responses across GBM subtypes |
| System Pharmacology Network [1] | 5,000 compounds | Broad panel of drug targets across biological pathways | Integration with Cell Painting morphological profiling | Platform for target identification and mechanism deconvolution in phenotypic assays |

Experimental Protocols and Methodologies

Target Selection and Library Enrichment Protocol

A critical protocol in chemogenomics involves the identification of disease-relevant targets and enrichment of chemical libraries for phenotypic screening. The process begins with differential gene expression analysis of disease-specific genomic data from resources such as The Cancer Genome Atlas (TCGA). In the glioblastoma study, RNA sequencing data from 169 GBM tumors and 5 normal samples were analyzed to identify significantly overexpressed genes (p < 0.001, FDR < 0.01, and log2 fold change > 1) [4]. The resulting gene set is then filtered to retain only genes whose protein products participate in protein-protein interaction networks, drawing on large-scale human interactome maps such as the one described by Rolland and colleagues (approximately 8,000 proteins and 27,000 interactions) [4]. The final target selection step identifies druggable binding sites on protein structures from the Protein Data Bank, classified by site type: catalytic sites (ENZ), protein-protein interaction interfaces (PPI), or allosteric sites (OTH) [4]. For virtual screening, compound libraries are docked against these binding sites and scored with methods such as the support vector regression knowledge-based (SVR-KB) scoring function to predict binding affinities; compounds predicted to bind multiple disease-relevant targets simultaneously are then selected [4].
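
The overexpression filter in this protocol can be sketched as a simple threshold test. The thresholds are the ones quoted above; the `GeneStat` record, the function name, and the example statistics are hypothetical, and a real analysis would take these values from a differential expression tool.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class GeneStat:
    gene: str
    log2_fold_change: float  # tumor vs. normal
    p_value: float
    fdr: float


def overexpressed_genes(
    stats: List[GeneStat],
    max_p: float = 0.001,
    max_fdr: float = 0.01,
    min_log2fc: float = 1.0,
) -> List[str]:
    """Keep genes that pass all three thresholds quoted in the text."""
    return [
        s.gene
        for s in stats
        if s.p_value < max_p and s.fdr < max_fdr and s.log2_fold_change > min_log2fc
    ]


# Made-up statistics for illustration; not values from the cited study.
toy_stats = [
    GeneStat("GENE1", 2.4, 1e-6, 1e-4),   # passes all filters
    GeneStat("GENE2", 0.6, 1e-8, 1e-6),   # fold change too small
    GeneStat("GENE3", 3.1, 5e-4, 0.03),   # FDR too high
    GeneStat("GENE4", 1.2, 2e-4, 0.005),  # passes all filters
]
candidates = overexpressed_genes(toy_stats)
print(candidates)
```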

Phenotypic Screening and Validation Workflow

Once a designed library is established, a comprehensive phenotypic screening protocol is implemented. For glioblastoma research, this involves three-dimensional spheroid models using low-passage patient-derived GBM cells rather than traditional monolayer cultures to better recapitulate the tumor microenvironment [4]. The screening process typically includes multiple phenotypic endpoints: cell viability inhibition measured through dose-response curves to determine IC50 values, anti-angiogenic activity assessed via endothelial cell tube formation assays in Matrigel, and differential toxicity evaluated in nontransformed control cells (e.g., primary hematopoietic CD34+ progenitor spheroids or astrocytes) to identify selective compounds [4]. For mechanism deconvolution, active compounds undergo RNA sequencing of treated versus untreated cells to identify differentially expressed pathways, followed by target engagement validation through mass spectrometry-based thermal proteome profiling and cellular thermal shift assays with target-specific antibodies [4]. This integrated approach enables the correlation of phenotypic effects with specific molecular targets and pathways, bridging the gap between phenotypic screening and target-based discovery.
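
As a rough illustration of how an IC50 can be read off a dose-response series, the sketch below interpolates the 50% viability crossing on a log-concentration scale. This is a simplification chosen for brevity: the source does not describe its fitting procedure, and a production analysis would more likely fit a four-parameter logistic curve. The concentrations and viabilities are invented.

```python
import math
from typing import Optional, Sequence


def interpolate_ic50(
    concs_um: Sequence[float], viability_pct: Sequence[float]
) -> Optional[float]:
    """Estimate the concentration (in uM) at which viability crosses 50%.

    Interpolates linearly in log10(concentration) between the two doses
    that bracket 50% viability. Returns None if the curve never crosses.
    """
    points = sorted(zip(concs_um, viability_pct))
    for (c_lo, v_lo), (c_hi, v_hi) in zip(points, points[1:]):
        if v_lo >= 50.0 >= v_hi and v_lo != v_hi:
            frac = (v_lo - 50.0) / (v_lo - v_hi)
            log_ic50 = math.log10(c_lo) + frac * (math.log10(c_hi) - math.log10(c_lo))
            return 10 ** log_ic50
    return None


# Hypothetical dose-response data for a tumor spheroid and a control cell type.
doses = [0.1, 1.0, 10.0, 100.0]
tumor_ic50 = interpolate_ic50(doses, [95.0, 80.0, 20.0, 5.0])
control_ic50 = interpolate_ic50(doses, [100.0, 98.0, 97.0, 90.0])
print(tumor_ic50, control_ic50)
```

A `None` result for the control series corresponds to the "no significant effect" outcome used in the text to argue for selectivity.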

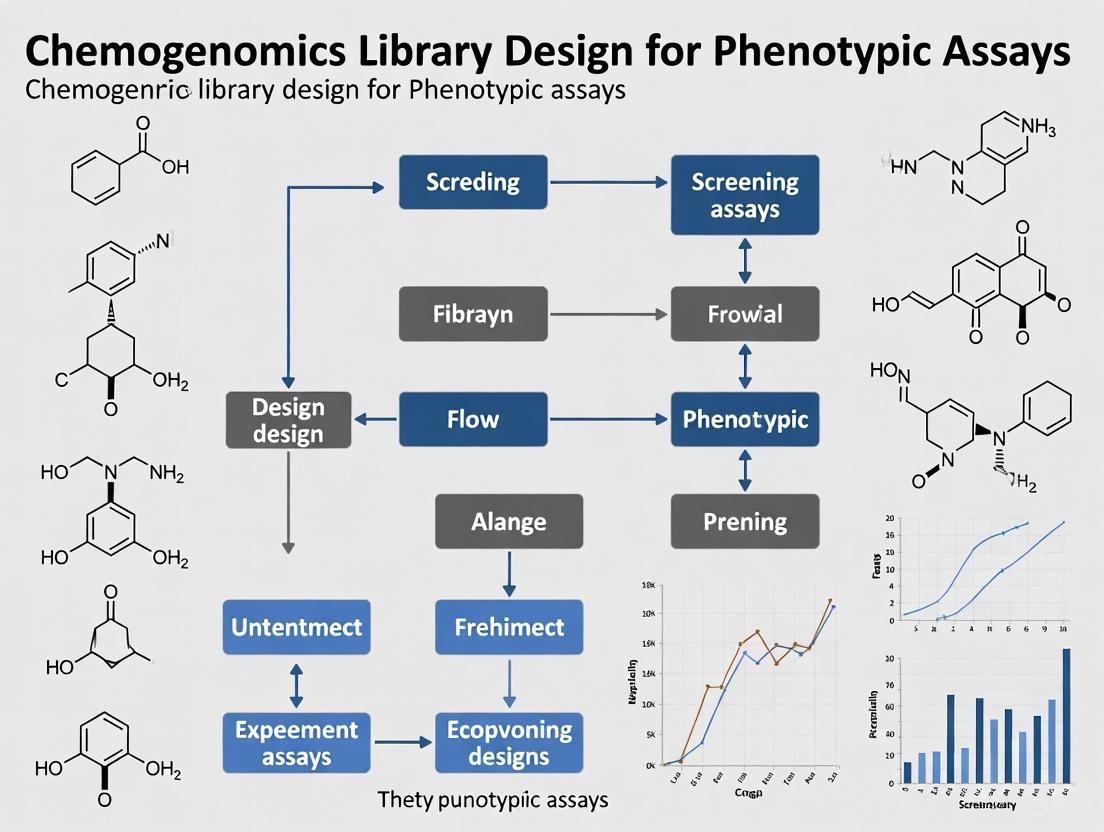

Workflow for Chemogenomic Library Design and Screening

The Scientist's Toolkit: Essential Research Reagents and Solutions

Successful implementation of chemogenomics approaches requires specialized reagents and tools that enable comprehensive compound screening and data integration. The following table summarizes key solutions utilized in contemporary chemogenomics research.

Table 2: Essential Research Reagent Solutions for Chemogenomics

| Reagent/Tool Category | Specific Examples | Function in Chemogenomics |
|---|---|---|
| Compound Libraries | Pfizer chemogenomic library, GSK Biologically Diverse Compound Set (BDCS), Prestwick Chemical Library, Sigma-Aldrich Library of Pharmacologically Active Compounds, NCATS MIPE library [1] | Provide diverse chemical matter with annotated bioactivities for screening across multiple target classes |
| Bioactivity Databases | ChEMBL, PubChem, PDSP Ki Database [2] [1] | Supply curated compound-target interaction data for library design and target prediction |
| Pathway Resources | KEGG Pathway Database, Gene Ontology (GO) Resource [1] | Enable biological context interpretation and pathway enrichment analysis of screening results |
| Disease Ontologies | Human Disease Ontology (DO) [1] | Standardize disease associations for targets and compounds |
| Phenotypic Profiling Assays | Cell Painting, High-content imaging-based morphological profiling [1] | Generate multidimensional phenotypic data for mechanism inference and compound functional classification |
| Structural Bioinformatics Tools | Molecular docking programs, Protein Data Bank (PDB) [4] | Enable structure-based target assessment and virtual screening |
| Data Integration Platforms | Neo4j graph database, ScaffoldHunter [1] | Integrate heterogeneous data sources and analyze chemical scaffolds |

Data Analysis and Integration Frameworks

Computational Integration Approaches

The complexity and scale of chemogenomics data necessitate robust computational frameworks for integration and analysis. Graph databases such as Neo4j have emerged as powerful tools for constructing pharmacology networks that integrate heterogeneous data sources, including chemical structures, bioactivities, protein targets, pathways, and disease associations [1]. These networks enable sophisticated queries across biological scales, from molecular interactions to phenotypic outcomes. Scaffold analysis using tools like ScaffoldHunter facilitates the organization of chemical libraries around hierarchical structural frameworks, identifying representative core structures that can inform structure-activity relationship studies and library design optimization [1]. Additionally, enrichment analysis methods implemented through packages like clusterProfiler in R enable the identification of statistically overrepresented biological pathways, Gene Ontology terms, or disease associations within sets of active compounds from screening campaigns [1]. These computational approaches collectively support the translation of complex chemogenomics data into actionable biological insights and therapeutic hypotheses.
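
The over-representation analysis mentioned above typically reduces to a hypergeometric tail probability. The sketch below computes that probability directly; it is an illustration of the statistic, not a reimplementation of clusterProfiler, and the counts in the example are invented.

```python
from math import comb


def enrichment_p_value(
    hits_in_term: int, hits_total: int, term_size: int, universe_size: int
) -> float:
    """One-sided hypergeometric p-value: P(X >= hits_in_term).

    universe_size: all annotated targets considered in the screen
    term_size:     targets in the universe annotated to the pathway/term
    hits_total:    targets linked to active compounds
    hits_in_term:  hit targets that also belong to the pathway/term
    """
    upper = min(hits_total, term_size)
    total = comb(universe_size, hits_total)
    tail = sum(
        comb(term_size, k) * comb(universe_size - term_size, hits_total - k)
        for k in range(hits_in_term, upper + 1)
    )
    return tail / total


# Invented example: 20 targets in the universe, 5 in a pathway,
# 4 hit targets of which 3 fall in the pathway.
p = enrichment_p_value(hits_in_term=3, hits_total=4, term_size=5, universe_size=20)
print(round(p, 4))
```

When many terms are tested, the resulting p-values still need multiple-testing correction, which this sketch leaves out.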

Data Curation and Quality Control

The reliability of chemogenomics studies depends critically on rigorous data curation protocols that address both chemical and biological data quality. Chemical structure curation must include steps for structural cleaning, detection of valence violations, ring aromatization, normalization of specific chemotypes, standardization of tautomeric forms, and verification of stereochemistry [2]. Biological data curation requires processing of bioactivities for chemical duplicates, detection of outliers, and assessment of experimental variability, with mean errors in pKi measurements typically around 0.44 units with a standard deviation of 0.54 units based on analyses of ChEMBL data [2]. These curation processes are essential for minimizing the propagation of irreproducible data in public repositories and for ensuring the accuracy of computational models derived from chemogenomics datasets [2]. Implementation of automated curation workflows using tools such as RDKit, ChemAxon JChem, or KNIME pipelines can help standardize these processes and improve the reliability of chemogenomics data resources [2].
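
The duplicate-handling step of biological data curation can be sketched as grouping replicate measurements by compound-target pair and flagging pairs whose values disagree. The record layout, the identifiers, and the 1.0 pKi-unit discordance threshold are assumptions made for illustration; the sketch also assumes chemical structures have already been standardized so that duplicates share an identifier.

```python
from collections import defaultdict
from statistics import mean
from typing import Dict, Iterable, Tuple

Record = Tuple[str, str, float]  # (compound_id, target_id, pKi)


def aggregate_duplicates(
    records: Iterable[Record], max_spread: float = 1.0
) -> Dict[Tuple[str, str], dict]:
    """Summarize replicate pKi values per compound-target pair.

    max_spread is a hypothetical cutoff (in pKi units) above which
    replicate measurements are flagged as discordant for manual review.
    """
    grouped = defaultdict(list)
    for compound, target, pki in records:
        grouped[(compound, target)].append(pki)

    summary = {}
    for key, values in grouped.items():
        spread = max(values) - min(values)
        summary[key] = {
            "n": len(values),
            "mean_pki": mean(values),
            "spread": spread,
            "discordant": spread > max_spread,
        }
    return summary


# Invented measurements for illustration.
toy_records = [
    ("CMPD1", "TARGET_A", 7.1),
    ("CMPD1", "TARGET_A", 7.3),
    ("CMPD2", "TARGET_A", 5.0),
    ("CMPD2", "TARGET_A", 6.8),
    ("CMPD3", "TARGET_B", 8.2),
]
curated = aggregate_duplicates(toy_records)
for pair, info in curated.items():
    print(pair, info)
```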

[Figure: Data Integration and Analysis Framework for Chemogenomics]

Case Study: Glioblastoma Chemogenomics

Implementation and Workflow

A comprehensive example of chemogenomics application comes from glioblastoma multiforme (GBM) research, where researchers developed an integrated approach to address the challenges of tumor heterogeneity and adaptive resistance [4]. The implementation began with target identification using RNA sequencing data from 169 GBM tumors and 5 normal samples from TCGA, identifying 755 overexpressed genes with somatic mutations in GBM patients [4]. These genes were mapped onto a combined protein-protein interaction network (approximately 8,000 proteins and 27,000 interactions), resulting in a GBM-specific subnetwork of 390 proteins, 117 of which contained druggable binding sites [4]. Virtual screening of approximately 9,000 compounds against 316 druggable binding sites using molecular docking with SVR-KB scoring identified candidates predicted to bind multiple targets within the network [4]. The phenotypic screening component utilized patient-derived GBM spheroids in three-dimensional culture, assessing cell viability, tube formation inhibition in endothelial cells (angiogenesis), and selectivity against normal cells (primary hematopoietic CD34+ progenitor spheroids and astrocytes) [4].
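
The final selection step, keeping compounds predicted to bind several sites in the disease subnetwork, can be sketched as a count over per-site docking scores. The score scale (higher means stronger predicted binding), the cutoff, and all names below are assumptions; the source does not state the criteria used to arrive at the 47 candidates.

```python
from typing import Dict, List


def select_multitarget(
    predicted: Dict[str, Dict[str, float]],
    score_cutoff: float,
    min_targets: int = 2,
) -> Dict[str, List[str]]:
    """Return compounds predicted to bind at least `min_targets` sites.

    predicted maps compound -> {binding_site: predicted score}, where a
    higher score is assumed to mean stronger predicted binding.
    """
    selected = {}
    for compound, site_scores in predicted.items():
        hits = sorted(s for s, score in site_scores.items() if score >= score_cutoff)
        if len(hits) >= min_targets:
            selected[compound] = hits
    return selected


# Hypothetical predicted affinities (for example, pKd-like values).
toy_scores = {
    "cmpd_1": {"site_ENZ_1": 7.2, "site_PPI_4": 6.9, "site_OTH_2": 4.1},
    "cmpd_2": {"site_ENZ_1": 7.8, "site_PPI_4": 5.0},
    "cmpd_3": {"site_ENZ_1": 4.0, "site_PPI_4": 4.5},
}
multitarget = select_multitarget(toy_scores, score_cutoff=6.5)
print(multitarget)
```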

Outcomes and Validation

This chemogenomics approach identified several active compounds, including compound IPR-2025, which demonstrated particularly promising characteristics [4]. The compound exhibited potent anti-GBM activity with single-digit micromolar IC50 values in low-passage patient-derived GBM spheroids, substantially better than standard-of-care temozolomide [4]. It also showed strong anti-angiogenic effects with submicromolar IC50 values in endothelial cell tube formation assays, while displaying excellent selectivity with no significant effects on primary hematopoietic CD34+ progenitor spheroids or astrocyte cell viability [4]. Mechanism deconvolution through RNA sequencing revealed the compound's impact on multiple signaling pathways, and mass spectrometry-based thermal proteome profiling confirmed engagement with multiple protein targets, illustrating the successful implementation of selective polypharmacology [4]. This case study demonstrates how chemogenomics can bridge phenotypic screening and target-based approaches to identify compounds with complex polypharmacology profiles tailored to specific disease contexts.

The continued evolution of chemogenomics will likely focus on several key areas. Integration of multi-omics data at single-cell resolution should enhance understanding of cell-to-cell heterogeneity in drug response and resistance mechanisms. Advanced phenotypic profiling using high-content imaging and transcriptomic readouts will provide increasingly detailed characterization of compound effects across diverse cellular contexts [1]. Machine learning approaches are poised to dramatically improve target prediction and compound prioritization by leveraging the growing wealth of public chemogenomics data [2] [1]. Furthermore, the development of standardized data curation protocols and community-wide data quality initiatives will be essential for addressing reproducibility challenges and maximizing the value of shared chemogenomics resources [2].

In conclusion, chemogenomics represents a powerful integrative framework that effectively bridges phenotypic and target-based drug discovery paradigms. By systematically investigating chemical-biological interactions across multiple scales, from molecular targets to phenotypic outcomes, chemogenomics enables the identification of compounds with tailored polypharmacology profiles that can address the complexity of multifactorial diseases such as cancer. The strategic design of targeted screening libraries, coupled with sophisticated data integration and analysis platforms, positions chemogenomics as a cornerstone approach in precision oncology and the development of next-generation therapeutics. As chemical biology technologies continue to advance and chemogenomics datasets expand, this approach will play an increasingly central role in translating insights from genomic medicine into effective therapeutic strategies.

The Resurgence of Phenotypic Screening in Drug Discovery

Phenotypic screening has experienced a significant resurgence as a powerful strategy in modern drug discovery, marking a shift from traditional target-based approaches toward more physiologically relevant systems. This revival is largely driven by the recognition that complex diseases often arise from perturbations across multiple genes and pathways, and that cellular systems possess inherent redundancy and compensatory mechanisms [5]. Unlike target-based screening, which focuses on modulating a single predefined protein, phenotypic screening identifies compounds that produce a desired therapeutic effect in a cell-based or organism-based system, even when that effect requires the coordinated targeting of several biological pathways [5] [6]. This approach is particularly valuable for identifying novel mechanisms of action (MoA) and for tackling diseases where the underlying biology is not fully understood [6].

The modern application of phenotypic screening is framed within the sophisticated context of chemogenomics library design. These libraries are curated collections of small molecules designed to interrogate a wide range of protein targets and biological pathways in a systematic manner [7]. They enable researchers to not only identify hit compounds but also to begin deconvoluting their mechanisms of action by leveraging known drug-target-pathway-disease relationships [7]. This integration of phenotypic screening with chemogenomics represents a maturation of the field, moving beyond simple observation of effects to generating rich, data-dense profiles that can be mined for deeper biological insight.

The Modern Phenotypic Screening Workflow

Contemporary phenotypic screening is a multi-stage process that integrates advanced cell models, high-dimensional readouts, and sophisticated computational analysis. The core workflow is illustrated below, highlighting the critical steps from assay design to lead identification.

[Figure: Workflow diagram of the modern phenotypic screening process]

This workflow begins with the development of a disease-relevant biological system, progresses through the screening of a carefully designed compound library, and culminates in the identification of hits and the deconvolution of their mechanisms of action using computational and experimental methods [7] [6]. A critical enabler of this process is the chemogenomic library, which is purpose-built to cover a wide swath of the druggable genome, thereby increasing the probability of identifying compounds that induce a relevant phenotype and facilitating subsequent target identification [7] [3].

Advanced Computational Methods

The complexity and high-dimensionality of data generated from modern phenotypic screens necessitate powerful computational approaches. Artificial intelligence (AI) and machine learning (ML) are now at the forefront of analyzing these rich datasets to identify hit compounds and predict their mechanisms of action.

The DrugReflector Framework

A recent breakthrough in the field is the development of a closed-loop active reinforcement learning framework incorporating a model called DrugReflector [5]. This approach was specifically designed to improve the prediction of compounds that induce desired phenotypic changes based on transcriptomic signatures.

The core innovation of DrugReflector lies in its iterative learning process. The model was initially trained on a subset of the Connectivity Map, a vast public database of compound-induced gene expression profiles [5]. A closed-loop feedback process then uses additional experimental transcriptomic data to iteratively refine the model's predictions. This active learning strategy allows the system to become increasingly efficient at selecting compounds that are likely to produce the target phenotype.

Performance benchmarks demonstrate that DrugReflector provides an order of magnitude improvement in hit-rate compared with screening of a random drug library, and outperforms alternative algorithms used for predicting phenotypic screening outcomes [5]. This represents a significant leap forward in screening efficiency, potentially enabling phenotypic campaigns to be smaller, more focused, and more cost-effective.

AI-Driven Platforms in Drug Discovery

The integration of AI into phenotypic screening is part of a broader movement in drug discovery. Leading AI-driven platforms have successfully advanced novel candidates into the clinic by leveraging diverse approaches, from generative chemistry to phenomics-first systems [8].

Table 1: Leading AI-Driven Drug Discovery Platforms with Phenotypic Screening Capabilities

| Company/Platform | Core AI Approach | Phenotypic Screening Integration | Clinical-Stage Candidates |
|---|---|---|---|
| Recursion [8] | Phenomics-first systems, computer vision | High-content cellular imaging paired with ML | Multiple candidates in clinical trials |
| Exscientia [8] | Generative chemistry, automated design | Patient-derived tissue models (ex vivo) | DSP-1181 (OCD), EXS-21546 (Immuno-oncology) |
| Insilico Medicine [8] | Generative AI, target discovery | Multi-omics data integration for phenotype simulation | ISM001-055 (Idiopathic Pulmonary Fibrosis) |
| BenevolentAI [8] | Knowledge-graph repurposing | Network pharmacology linking compounds to phenotypic outcomes | Several candidates in clinical stages |

The Recursion–Exscientia merger in 2024 exemplifies the strategic integration of complementary AI technologies, specifically combining Exscientia's strength in generative chemistry with Recursion's extensive phenomics and biological data resources [8]. This creates an end-to-end platform where AI-designed compounds can be rapidly validated in sophisticated phenotypic assays.

Experimental Protocols & Methodologies

The technical execution of a phenotypic screen requires careful consideration of cellular models, assay readouts, and target deconvolution strategies. The following protocols detail established methodologies in the field.

High-Content Morphological Profiling with Cell Painting

The Cell Painting assay is a high-content, image-based phenotypic profiling method that uses up to six fluorescent dyes to label eight cellular components, thereby generating a rich, morphological profile for each compound tested [7].

Protocol Steps:

- Cell Culture and Plating: Plate U2OS osteosarcoma cells (or other relevant cell lines) in multiwell plates.

- Compound Treatment: Perturb the cells with the small-molecule compounds from the chemogenomic library.

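- Staining and Fixation: Stain the cells with a panel of fluorescent dyes to mark key cellular components and fix them; in the standard protocol, MitoTracker is applied to live cells before fixation and the remaining dyes are applied afterward. A typical dye panel includes:

  - Hoechst 33342 to label DNA.

  - Concanavalin A to label the endoplasmic reticulum.

  - Wheat germ agglutinin to label the Golgi apparatus and plasma membrane.

  - SYTO 14 to label nucleoli and cytoplasmic RNA.

  - Phalloidin to label filamentous actin.

  - MitoTracker to label mitochondria.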

- Image Acquisition: Image the stained plates on a high-throughput microscope, capturing multiple fields per well.

- Image Analysis and Feature Extraction: Use automated image analysis software (e.g., CellProfiler) to identify individual cells and measure a vast array of morphological features (size, shape, texture, intensity, etc.) for each cell object (cell, cytoplasm, nucleus). The BBBC022 dataset, for example, contains 1,779 morphological features per cell [7].

- Data Processing: For each compound, average each feature across replicates. Retain only features with a non-zero standard deviation, then remove highly correlated features (e.g., pairwise correlation above 0.95) to reduce dimensionality [7].
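
The data processing step above can be sketched in plain Python as follows. The feature names and values are invented, and the rule for choosing which member of a correlated pair to keep (the first in alphabetical order) is an assumption; the source specifies only the filtering criteria.

```python
from math import sqrt
from statistics import mean, pstdev
from typing import Dict, List

Profile = Dict[str, float]


def average_replicates(profiles: Dict[str, List[Profile]]) -> Dict[str, Profile]:
    """Average each feature across replicate wells for every compound."""
    return {
        cmpd: {f: mean(rep[f] for rep in reps) for f in reps[0]}
        for cmpd, reps in profiles.items()
    }


def pearson(x: List[float], y: List[float]) -> float:
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    return cov / sqrt(sum((a - mx) ** 2 for a in x) * sum((b - my) ** 2 for b in y))


def filter_features(averaged: Dict[str, Profile], max_corr: float = 0.95) -> List[str]:
    """Drop constant features, then drop features that correlate above
    max_corr (absolute Pearson r) with a feature that was already kept."""
    compounds = sorted(averaged)
    features = sorted(averaged[compounds[0]])
    columns = {f: [averaged[c][f] for c in compounds] for f in features}
    varying = [f for f in features if pstdev(columns[f]) > 0]
    kept: List[str] = []
    for f in varying:
        if all(abs(pearson(columns[f], columns[k])) <= max_corr for k in kept):
            kept.append(f)
    return kept


# Invented per-well profiles: two replicates for each of three compounds.
toy_profiles = {
    "cmpd_A": [
        {"area": 100, "perimeter": 40, "intensity": 0.2, "constant": 1.0},
        {"area": 110, "perimeter": 44, "intensity": 0.4, "constant": 1.0},
    ],
    "cmpd_B": [
        {"area": 200, "perimeter": 80, "intensity": 0.9, "constant": 1.0},
        {"area": 210, "perimeter": 84, "intensity": 0.7, "constant": 1.0},
    ],
    "cmpd_C": [
        {"area": 150, "perimeter": 60, "intensity": 0.1, "constant": 1.0},
        {"area": 150, "perimeter": 60, "intensity": 0.3, "constant": 1.0},
    ],
}
averaged_profiles = average_replicates(toy_profiles)
kept_features = filter_features(averaged_profiles)
print(kept_features)
```

Here `constant` is removed for having zero variance and `perimeter` for being proportional to `area`. With roughly 1,800 features per cell, an array library would be the practical choice for the pairwise correlation step.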

Mechanism of Action Deconvolution

Once a phenotypic hit is identified, determining its Mechanism of Action (MoA) is the next critical challenge. Multiple experimental strategies can be employed, each with distinct strengths [6].

Table 2: Key Methods for Mechanism of Action Deconvolution

| Method | Process | Key Strength | Example Application |
|---|---|---|---|
| Affinity Chromatography [6] | Immobilized compound is used to pull down direct protein targets from cell lysates. | Identifies direct binding targets. | Kartogenin (KGN) was found to bind Filamin A (FLNA), disrupting its interaction with CBFβ and inducing chondrogenesis. |
| Gene Expression Profiling [6] | Global transcriptomic changes (via RNA-Seq or microarrays) are measured after compound treatment. | Uncovers modulated pathways and dependencies. | Gene profiling of KGN-treated cells revealed activation of RUNX transcription pathways. |
| Genetic Modifier Screening [6] | CRISPR-Cas9 or shRNA is used to knock down gene targets; synergy/antagonism with compound is assessed. | Identifies genes whose loss mimics or rescues the phenotype. | shRNA knockdown of FLNA was shown to recapitulate the chondrocyte-forming effect of KGN. |
| Computational Profiling [5] [7] | A compound's signature (e.g., morphological, transcriptomic) is compared to reference databases. | Enables rapid hypothesis generation based on similarity to compounds with known MoA. | The DrugReflector framework uses transcriptomic signatures from the Connectivity Map to predict compound activity. |
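
The computational profiling row can be illustrated by ranking reference signatures by their similarity to a query signature. Cosine similarity is used here purely as a simple stand-in: it is not the scoring used by the Connectivity Map or by DrugReflector, and the gene names, values, and reference labels are hypothetical.

```python
from math import sqrt
from typing import Dict, List, Tuple

Signature = Dict[str, float]  # gene -> differential expression score


def cosine_similarity(a: Signature, b: Signature) -> float:
    """Cosine similarity over the genes shared by both signatures."""
    genes = sorted(set(a) & set(b))
    dot = sum(a[g] * b[g] for g in genes)
    norm_a = sqrt(sum(a[g] ** 2 for g in genes))
    norm_b = sqrt(sum(b[g] ** 2 for g in genes))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def rank_references(
    query: Signature, references: Dict[str, Signature]
) -> List[Tuple[str, float]]:
    """Rank reference compounds from most to least similar to the query."""
    scored = [(name, cosine_similarity(query, sig)) for name, sig in references.items()]
    return sorted(scored, key=lambda item: item[1], reverse=True)


# Hypothetical signatures for a screening hit and two annotated references.
hit_signature = {"G1": 2.0, "G2": -1.0, "G3": 0.5}
reference_signatures = {
    "ref_kinase_inhibitor": {"G1": 1.8, "G2": -0.9, "G3": 0.4},
    "ref_hdac_inhibitor": {"G1": -1.5, "G2": 1.2, "G3": 0.1},
}
ranking = rank_references(hit_signature, reference_signatures)
print(ranking)
```

A high-ranking reference with a known mechanism gives a testable hypothesis for the hit's mechanism of action, which still needs experimental confirmation by the other methods in the table.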

The Scientist's Toolkit: Essential Research Reagents

The successful implementation of phenotypic screening relies on a core set of research reagents and platforms.

Table 3: Essential Research Reagent Solutions for Phenotypic Screening

| Reagent / Platform | Function / Application | Key Features |
|---|---|---|
| Cell Painting Dye Set [7] | High-content morphological profiling of cells. | Labels 8+ cellular components; generates ~1,800 quantitative features per cell. |
| Curation-Mined Compound Libraries [7] [3] | Targeted screening against a defined subset of the druggable genome. | ~1,200 compounds can target >1,300 anticancer proteins; enables MoA hypothesis generation. |
| ChEMBL Database [7] | Public repository of bioactive molecules with drug-like properties. | Contains curated bioactivity data (IC₅₀, Kᵢ) for over 1.6 million molecules and 11,000 targets. |
| Connectivity Map (CMap) [5] | Public resource of transcriptomic profiles from compound-treated cells. | Serves as a training ground for AI models like DrugReflector to link gene signatures to phenotypes. |
| Neo4j Graph Database [7] | Integrates heterogeneous data types (drug-target-pathway-disease) into a unified network. | Enables system pharmacology queries and network-based target identification. |

The resurgence of phenotypic screening represents a paradigm shift in early drug discovery, driven by more disease-relevant models, high-content readouts, and the integration of sophisticated AI and computational tools. Framing these efforts within the context of rational chemogenomics library design is crucial for maximizing the biological insights gained from each screen. The future of the field lies in the continued refinement of closed-loop systems that tightly integrate predictive AI, automated experimental validation, and multi-omics data analysis. This powerful combination promises to unlock novel biology, identify first-in-class therapeutics for complex diseases, and ultimately improve the success rate of clinical translation.

Target deconvolution is a critical component of the modern phenotypic drug discovery (PDD) pipeline, serving as the essential link between the observation of a therapeutic phenotype and the understanding of its underlying molecular mechanism. In contrast to target-based approaches that begin with a known molecular target, PDD identifies chemical compounds based on their ability to induce a desired cellular phenotype, such as cell death or differentiation [9]. The subsequent process of target deconvolution involves identifying the specific molecular target(s) through which these bioactive small molecules function, thereby clarifying their mechanism of action (MoA) [9]. This approach is particularly valuable for complex diseases like cancer, neurological disorders, and metabolic diseases, which often involve multiple molecular abnormalities rather than a single defect [1]. Within the context of chemogenomics library design, strategic target deconvolution transforms phenotypic screening from a "black box" into a powerful, hypothesis-generating engine that systematically connects chemical structure to biological function through defined molecular targets.

The revival of phenotypic screening in recent years, accelerated by advances in cell-based technologies including induced pluripotent stem (iPS) cells, CRISPR-Cas gene-editing tools, and high-content imaging assays, has created an urgent need for robust target deconvolution methodologies [1]. Chemogenomics libraries specifically designed for phenotypic screening provide researchers with structured collections of chemical probes representing diverse targets across the human proteome, enabling systematic exploration of chemical space while maintaining annotated target relationships [1]. These libraries serve as essential resources for bridging the gap between observed phenotypic changes and their corresponding molecular targets, thereby accelerating the drug discovery process for researchers and scientists working across diverse therapeutic areas.

Core Methodologies for Target Deconvolution

Affinity-Based Purification Approaches

Affinity-based purification represents a foundational "workhorse" technique in target deconvolution that leverages immobilized small molecules to capture and identify interacting proteins from complex biological mixtures [9]. The methodology begins with chemical modification of the compound of interest to incorporate a solid support handle while preserving its biological activity. This immobilized "bait" compound is then exposed to cell lysates or other protein sources, allowing potential target proteins to bind. After extensive washing to remove non-specific interactions, specifically bound proteins are eluted and identified primarily through mass spectrometry analysis [9].

Key advantages of this approach include its applicability to a wide range of target classes and its ability to provide dose-response profiles and IC50 information that guide downstream drug development efforts [9]. The technique works effectively under native conditions, preserving physiological protein folding and interaction states. However, this method requires the synthesis of a high-affinity chemical probe that can be successfully immobilized without disrupting its target-binding capabilities, which can present significant medicinal chemistry challenges for some compound classes [9]. Commercially available services such as TargetScout offer robust and scalable implementations for researchers who prefer not to establish the methodology in-house [9].

Activity-Based Protein Profiling (ABPP)

Activity-based protein profiling (ABPP) represents a powerful complementary approach that utilizes reactive chemical probes to covalently label and identify protein targets based on their enzymatic activity or chemical functionality [9]. This methodology employs bifunctional probes containing both a reactive group that covalently binds to specific amino acid residues (such as cysteine) and a reporter tag for enrichment and detection. ABPP strategies can be implemented in two primary configurations: direct labeling with a functionalized compound of interest, or competitive labeling where a promiscuous probe is applied with and without the test compound to identify targets whose probe occupancy is reduced through competitive binding [9].

This approach is particularly powerful for identifying specific enzyme families and characterizing functional states of proteins, providing information beyond mere physical interaction [9]. However, ABPP requires the presence of accessible reactive residues in the target protein(s) and may not be suitable for all target classes. Specialized implementations such as CysScout enable proteome-wide profiling of reactive cysteine residues, while customized assays can be developed for other nucleophilic amino acids [9]. The covalent nature of the labeling enables stringent washing procedures that reduce non-specific background interactions, potentially increasing confidence in identified targets.
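The competitive labeling configuration described above lends itself to a simple numerical readout. The following is a minimal sketch, assuming that per-protein probe signal (for example, MS intensity) has already been quantified with and without the test compound. The function names, the protein identifiers, and the 0.5 ratio cutoff are illustrative assumptions, not values from the cited work.

```python
def competition_ratios(probe_only, probe_plus_compound, min_signal=1e-9):
    """Return {protein: ratio}, where ratio = signal with compound / signal without.

    A ratio well below 1 means the test compound reduced probe occupancy.
    Proteins with no usable baseline signal are skipped.
    """
    ratios = {}
    for protein, baseline in probe_only.items():
        if baseline <= min_signal:
            continue  # no reliable probe labeling to compete against
        treated = probe_plus_compound.get(protein, 0.0)
        ratios[protein] = treated / baseline
    return ratios


def competed_targets(ratios, max_ratio=0.5):
    """Proteins whose probe signal dropped to max_ratio or below (hypothetical cutoff)."""
    return sorted(p for p, r in ratios.items() if r <= max_ratio)


# Hypothetical intensities for three proteins.
probe_only = {"PROT_A": 1000.0, "PROT_B": 800.0, "PROT_C": 500.0}
probe_plus_compound = {"PROT_A": 150.0, "PROT_B": 760.0, "PROT_C": 490.0}

ratios = competition_ratios(probe_only, probe_plus_compound)
hits = competed_targets(ratios)
print(hits)
```

In practice the cutoff would be set from replicate variability rather than fixed in advance, and candidate targets would still need orthogonal confirmation.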

Photoaffinity Labeling (PAL)

Photoaffinity labeling (PAL) represents a sophisticated target deconvolution strategy that combines the specificity of affinity-based approaches with the trapping capability of covalent labeling through photochemically induced crosslinking [9]. This methodology utilizes trifunctional probes comprising the small molecule of interest, a photoreactive moiety (such as aryl azides, diazirines, or benzophenones), and an enrichment handle. The experiment proceeds with the probe binding to its cellular targets under physiological conditions, followed by UV irradiation to activate the photoreactive group, forming covalent bonds with adjacent target proteins [9].

PAL offers distinct advantages for studying challenging protein classes, including integral membrane proteins, and for identifying compound-protein interactions that may be too transient for detection by other methods [9]. The technology is particularly valuable when working with low-affinity interactions or complex native environments where maintaining interaction stability during purification is challenging. However, PAL requires significant optimization of probe design and irradiation conditions, and may not be suitable for targets with shallow surface binding sites that prevent efficient crosslinking [9]. Commercially available services such as PhotoTargetScout provide specialized expertise in implementing this technology, including both assay optimization and target identification modules [9].

Label-Free Target Deconvolution Strategies

Label-free approaches represent an emerging category of target deconvolution methodologies that eliminate the need for chemical modification of the test compound, thereby avoiding potential perturbations to its structure, function, or cellular distribution [9]. One prominent implementation of this concept leverages solvent-induced denaturation shifts (SIDS) or thermal proteome profiling to detect changes in protein stability induced by ligand binding. By comparing the kinetics of physical or chemical denaturation before and after compound treatment, researchers can identify target proteins based on their altered stability profiles using proteome-wide quantitative mass spectrometry [9].

The key advantage of label-free strategies is their ability to evaluate compound-protein interactions under completely native conditions without any structural modifications that might alter target engagement [9]. This approach can provide invaluable insights into chemical interactions in physiologically relevant contexts and advances both target deconvolution and off-target profiling. However, this technique can be challenging for very low-abundance proteins, very large proteins, and membrane proteins due to technical limitations in detection and analysis [9]. For feasible targets, commercially available implementations such as SideScout offer robust proteome-wide protein stability assays that can be applied to researchers' compounds of interest [9].
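To make the idea of a ligand-induced stability shift concrete, here is a minimal sketch for the thermal case. It assumes each protein has a melt curve of soluble fraction versus temperature, normalized to the lowest temperature, and estimates the melting temperature as the point where the curve crosses 0.5 by linear interpolation. Real pipelines typically fit sigmoidal models; the interpolation, the function names, and the example values are simplifying assumptions.

```python
def melting_temperature(temps, fractions, threshold=0.5):
    """Temperature at which the soluble fraction first drops below `threshold`.

    Uses linear interpolation between the two bracketing measurements.
    Returns None if the curve never crosses the threshold.
    """
    points = list(zip(temps, fractions))
    for (t0, f0), (t1, f1) in zip(points, points[1:]):
        if f0 >= threshold > f1:
            return t0 + (f0 - threshold) * (t1 - t0) / (f0 - f1)
    return None


def thermal_shift(temps, vehicle, treated):
    """Difference in melting temperature (treated minus vehicle), or None."""
    tm_vehicle = melting_temperature(temps, vehicle)
    tm_treated = melting_temperature(temps, treated)
    if tm_vehicle is None or tm_treated is None:
        return None
    return tm_treated - tm_vehicle


# Hypothetical melt curves for one protein (temperatures in degrees Celsius).
temps = [37, 45, 53, 61]
vehicle = [1.0, 0.8, 0.2, 0.0]
treated = [1.0, 0.9, 0.6, 0.1]

shift = thermal_shift(temps, vehicle, treated)
print(shift)  # a positive value suggests stabilization by the compound
```

A positive shift is only a candidate signal; low-abundance and membrane proteins, as noted above, often give curves too noisy for this kind of estimate.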

Table 1: Comparison of Major Target Deconvolution Methodologies

| Method | Key Principle | Advantages | Limitations | Ideal Use Cases |
|---|---|---|---|---|
| Affinity-Based Purification | Immobilized compound captures binding proteins from lysate [9] | Works for diverse target classes; provides binding affinity data [9] | Requires high-affinity, immobilizable probe; chemical modification may alter activity [9] | Broad target identification; established "workhorse" methodology [9] |
| Activity-Based Protein Profiling (ABPP) | Reactive probes covalently label functional protein sites [9] | Identifies functional states; reduces false positives through covalent capture [9] | Limited to proteins with accessible reactive residues [9] | Enzyme families; catalytic function studies; competitive binding assays [9] |
| Photoaffinity Labeling (PAL) | Photoreactive probes form covalent bonds with targets upon UV irradiation [9] | Captures transient interactions; suitable for membrane proteins [9] | Complex probe synthesis; optimization intensive; may miss shallow binding sites [9] | Low-affinity binders; membrane proteins; native environment studies [9] |
| Label-Free Stability Profiling | Detects ligand-induced changes in protein stability [9] | No compound modification needed; works under native conditions [9] | Challenging for low-abundance, large, and membrane proteins [9] | Native interaction mapping; off-target profiling; sensitive compounds [9] |

Experimental Design and Workflow Integration

Strategic Workflow for Target Deconvolution

Implementing a successful target deconvolution strategy requires careful planning and integration of complementary approaches to maximize the likelihood of identifying physiologically relevant molecular targets. The following workflow diagram illustrates a systematic approach that combines multiple methodologies to overcome individual limitations and provide orthogonal validation:

The Scientist's Toolkit: Essential Research Reagents

Successful implementation of target deconvolution methodologies requires access to specialized research reagents and tools. The following table details essential components of the target deconvolution toolkit:

Table 2: Essential Research Reagents for Target Deconvolution Studies

| Research Reagent | Function & Application | Key Considerations |
|---|---|---|
| Chemical Probes | Modified versions of hit compounds with affinity handles (biotin, alkyne/azide for click chemistry) or photoreactive groups [9] | Must preserve target binding affinity and specificity; position of modification critical for success [9] |
| Cell Lysates | Complex protein mixtures for in vitro binding studies; can be from diverse cell types or tissues [9] | Should reflect physiologically relevant context; consider protein concentration, integrity, and post-translational modifications [9] |
| Affinity Matrices | Solid supports (agarose, magnetic beads) for immobilizing bait compounds [9] | Low non-specific binding essential; compatibility with downstream analytical methods [9] |
| Activity-Based Probes | Bifunctional reagents with reactive groups (electrophiles) and detection tags [9] | Specificity for protein families; membrane permeability for live-cell applications [9] |
| Mass Spectrometry-Grade Reagents | High-purity solvents, proteases (trypsin), and labeling reagents for proteomic analysis [9] | Compatibility with LC-MS/MS systems; minimal chemical interference [9] |
| Validated Tool Compounds | Selective small-molecule modulators with known targets and mechanisms [10] | Serve as positive controls; must have established potency, selectivity, and cellular activity [10] |

Validation Criteria for High-Quality Tool Compounds

The use of high-quality tool compounds is essential for both target deconvolution and subsequent mechanism of action studies. Well-characterized tool compounds must meet specific criteria to ensure reliable experimental outcomes [10]:

Efficacy and Potency: A tool compound should demonstrate adequate efficacy to empirically test the experimental hypothesis, with potency confirmed through at least two orthogonal methodologies such as biochemical assays and surface plasmon resonance (SPR) [10].

Selectivity Profile: The compound should exhibit defined selectivity against related targets, typically demonstrated through profiling against panels of potential off-targets, ensuring that observed phenotypes can be confidently attributed to modulation of the intended target [10].

Cellular Activity: The tool compound must demonstrate cell permeability and appropriate exposure at the site of action, with proven utility as a probe through demonstration of phenotypic relevance via a proximal biomarker [10].

Availability and Reproducibility: The compound should be readily available to the research community with documented purity and stability, enabling reproduction of findings across different laboratories and experimental systems [10].

Applications and Impact on Drug Discovery

Advancing Phenotypic Drug Discovery

Target deconvolution strategies play an indispensable role in bridging the critical gap between initial phenotypic screening and downstream drug development activities [9]. By systematically identifying the on-target and off-target interactions of bioactive compounds, researchers can make informed decisions about a compound's feasibility as a drug candidate and elucidate its precise mechanism of action [9]. This is particularly crucial in phenotypic screening frameworks where hits are identified based on their ability to induce desired cellular phenotypes rather than through predefined target binding [9]. Following successful target deconvolution, researchers are empowered to optimize drug candidates through medicinal chemistry to enhance on-target activity, reduce off-target effects, improve deliverability, and tailor pharmacokinetic properties [9].

The integration of target deconvolution with chemogenomics library design creates a powerful virtuous cycle for drug discovery. Annotated chemogenomics libraries provide the foundational knowledge connecting chemical structures to biological targets, while phenotypic screening reveals novel biological connections that expand these annotations [1]. This iterative process continuously enriches the library's value while accelerating the identification of both novel molecular targets and conserved pharmacological pathways [1]. Furthermore, understanding a compound's mechanism of action enables better prediction of potential clinical efficacy and safety concerns, allowing for earlier mitigation of development risks and more efficient resource allocation in the drug discovery pipeline [9].

Integration with AI and Advanced Technologies

Artificial intelligence and machine learning are increasingly transforming target deconvolution practices, particularly through the analysis of complex multidimensional data generated by high-content screening technologies [11]. The application of AI foundation models for biology and protein design integrated into design-build-test-learn (DBTL) cycles enables more efficient candidate optimization and novel target identification [11]. These models leverage sequence, structure, and functional data to generate and optimize candidates in silico before experimental validation, dramatically streamlining the therapeutic development process [11].

Advanced image-based high-content screening (HCS) technologies, such as the Cell Painting assay, provide rich morphological profiles that can be connected to target modulation through specialized computational approaches [1]. This assay quantitatively captures cellular morphology through automated imaging of stained cells, measuring hundreds of morphological features that create distinctive profiles for different mechanism of action classes [1]. When integrated with chemogenomics libraries, these morphological profiles enable researchers to connect observed phenotypic changes to specific targets or pathways, significantly accelerating both target deconvolution and drug discovery [1]. Reinforcement learning, generative modeling, and active-learning feedback loops now enable iterative refinement of both compounds and their target hypotheses, representing a significant advancement over traditional single-step approaches [11].
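One simple way to connect a morphological profile to a target hypothesis is to compare it against profiles of annotated reference compounds. The sketch below assumes profiles are equal-length lists of normalized feature values and ranks references by Pearson correlation with the query. The reference names, feature values, and the choice of Pearson correlation are illustrative assumptions rather than the method of the cited studies.

```python
import math


def pearson(x, y):
    """Pearson correlation between two equal-length feature vectors."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sd_x = math.sqrt(sum((a - mean_x) ** 2 for a in x))
    sd_y = math.sqrt(sum((b - mean_y) ** 2 for b in y))
    if sd_x == 0 or sd_y == 0:
        return 0.0  # a flat profile carries no shape information
    return cov / (sd_x * sd_y)


def rank_references(query, references):
    """Return [(reference_name, correlation)] sorted from most to least similar."""
    scored = [(name, pearson(query, profile)) for name, profile in references.items()]
    return sorted(scored, key=lambda item: item[1], reverse=True)


# Hypothetical 4-feature profiles; real Cell Painting profiles have far more features.
references = {
    "tubulin_ref": [2.0, -1.0, 0.5, 1.5],
    "hdac_ref": [-1.0, 2.0, 1.0, -0.5],
}
query = [1.8, -0.8, 0.4, 1.2]

ranked = rank_references(query, references)
print(ranked[0][0])
```

A high correlation with an annotated reference yields a mechanism hypothesis to test, not a confirmed target.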

Target deconvolution represents a cornerstone of modern phenotypic drug discovery, providing the critical connection between observed therapeutic phenotypes and their underlying molecular mechanisms. The integration of sophisticated methodological approaches—including affinity-based purification, activity-based protein profiling, photoaffinity labeling, and label-free strategies—within a structured chemogenomics framework enables researchers to systematically elucidate mechanism of action for novel bioactive compounds [9]. As drug discovery continues to address increasingly complex diseases involving polypharmacology and network pharmacology, the strategic importance of robust target deconvolution will only intensify [1].

The future of target deconvolution lies in the intelligent integration of complementary methodologies, leveraging the unique strengths of each approach while mitigating their individual limitations [9]. Furthermore, the accelerating integration of artificial intelligence and machine learning with experimental data promises to transform target deconvolution from a challenging bottleneck to a predictive, hypothesis-generating engine [11]. By adopting these advanced strategies and tools, researchers and drug development professionals can significantly enhance their ability to translate promising phenotypic hits into validated lead compounds with understood mechanisms of action, ultimately increasing the efficiency and success rate of the therapeutic development pipeline [9].

In the field of phenotypic drug discovery, the design of chemogenomic libraries represents a critical foundation for successful screening campaigns. These libraries, composed of small molecules designed to modulate a wide range of protein targets, aim to provide comprehensive coverage of the "druggable genome"—the subset of the human genome encoding proteins that can be targeted by pharmacological compounds [1] [12]. However, a significant gap persists between the theoretical expansiveness of the druggable genome and the practical coverage achieved by most screening libraries. This whitepaper provides a technical assessment of this coverage gap and outlines experimental methodologies for its systematic evaluation, framed within the context of advanced chemogenomics library design for phenotypic assays research.

The druggable genome encompasses approximately 4,479 genes categorized into three tiers based on evidence supporting their potential as drug targets [13]. Tier 1 includes proteins with direct evidence as targets of approved drugs or clinical candidates, Tier 2 contains proteins with structural or functional similarities to Tier 1 targets, and Tier 3 comprises genes with more distant similarities [13]. Despite this well-categorized universe, most screening libraries fail to achieve balanced representation across these categories, leading to systematic biases in phenotypic screening outcomes and potentially overlooking valuable therapeutic opportunities.

Defining Assessment Criteria for Library Coverage

Quantitative Metrics for Gap Analysis

A critical assessment of library coverage begins with establishing robust quantitative metrics that enable researchers to evaluate how well a chemical library represents the druggable genome. These metrics should extend beyond simple compound counts to encompass biological and chemical diversity parameters.

Table 1: Key Quantitative Metrics for Assessing Library Coverage of the Druggable Genome

| Metric Category | Specific Parameter | Optimal Range/Target | Assessment Method |
|---|---|---|---|
| Target Coverage | Tier 1 Genes Covered | >90% | Bioinformatics mapping of compounds to druggable genes [13] |
| Target Coverage | Tier 2 Genes Covered | >70% | Similarity-based target prediction |
| Target Coverage | Novel Target Representation | 10-15% | Comparison with approved drug targets |
| Compound Quality | Rule of 5 Compliance | >80% | Calculation of MW, LogP, HBD, HBA [12] |
| Compound Quality | Chemical Probes Availability | >40% | Presence of selective, potent compounds per target [12] |
| Diversity Metrics | Scaffold Diversity Index | >0.7 | Shannon entropy based on molecular frameworks [1] |
| Diversity Metrics | Biological Pathway Coverage | >75% | Enrichment analysis against KEGG/GO databases [1] |

The application of these metrics reveals significant disparities in library composition. For instance, an analysis of curated libraries shows that while Tier 1 gene coverage often exceeds 80%, Tier 2 coverage typically falls below 60%, creating a substantial gap in probing emerging target classes [13] [3]. Furthermore, scaffold analysis frequently demonstrates that approximately 60% of compounds in typical libraries cluster around only 20% of available chemical frameworks, indicating substantial redundancy [1].
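The Rule of 5 compliance metric in the table above can be computed directly once MW, LogP, HBD, and HBA are available for each compound. The following is a minimal sketch that assumes the descriptors were calculated beforehand with a cheminformatics toolkit. It uses the standard Lipinski thresholds (MW ≤ 500, LogP ≤ 5, HBD ≤ 5, HBA ≤ 10); the compound identifiers, the descriptor values, and the `max_violations` parameter are illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass
class Descriptors:
    mw: float    # molecular weight in Da
    logp: float  # calculated octanol-water partition coefficient
    hbd: int     # hydrogen-bond donors
    hba: int     # hydrogen-bond acceptors


def ro5_violations(d):
    """Count how many of the four Lipinski thresholds a compound exceeds."""
    return sum([d.mw > 500, d.logp > 5, d.hbd > 5, d.hba > 10])


def ro5_compliance(library, max_violations=0):
    """Percentage of compounds with at most `max_violations` violations."""
    if not library:
        return 0.0
    passing = sum(1 for d in library.values() if ro5_violations(d) <= max_violations)
    return 100.0 * passing / len(library)


# Hypothetical descriptor values for four compounds.
library = {
    "cpd1": Descriptors(mw=350.0, logp=2.1, hbd=2, hba=5),
    "cpd2": Descriptors(mw=620.0, logp=6.3, hbd=1, hba=8),
    "cpd3": Descriptors(mw=480.0, logp=4.9, hbd=5, hba=10),
    "cpd4": Descriptors(mw=510.0, logp=3.0, hbd=3, hba=7),
}

strict = ro5_compliance(library)                       # no violations allowed
lenient = ro5_compliance(library, max_violations=1)    # one violation tolerated
print(strict, lenient)
```

Whether one violation is tolerated changes the result noticeably, so the convention used should be stated alongside any reported compliance percentage.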

Qualitative Dimensions in Coverage Assessment

Beyond quantitative metrics, qualitative assessment dimensions must be considered:

- Target Quality: Evidence supporting the disease relevance of targeted genes, incorporating genetic validation from sources such as Mendelian randomization studies [13].

- Compound Annotation: Comprehensiveness of mechanistic information, including potency (IC50, Ki), selectivity (S-score), and cellular activity [1] [12].

- Pathway Context: Representation of targets within their functional biological pathways rather than as isolated entities [1] [3].

- Cellular Environment Considerations: Accounting for the differential behavior of compounds across varied cellular contexts and disease states [14].

Experimental Frameworks for Systematic Evaluation

Bioinformatics Pipeline for Target Coverage Assessment

A robust bioinformatics pipeline enables systematic evaluation of library coverage against the druggable genome. The following workflow provides a standardized approach:

Library Coverage Assessment Workflow

Protocol 1: Target-Based Coverage Analysis

Data Compilation: Assemble the druggable genome list from established sources such as Finan et al. (2017), encompassing 4,479 genes categorized into Tiers 1, 2, and 3 [13]. Exclude genes on sex chromosomes, mitochondrial DNA, and Tier 3 genes to focus on 2,030 high-priority targets.

Compound-Target Mapping: Annotate library compounds using bioactivity data from ChEMBL (version 22 or higher), including IC50, Ki, and EC50 values [1]. Employ similarity-based target prediction tools for compounds lacking direct target annotations.

Coverage Calculation: For each tier category, calculate coverage percentage as (Number of genes with ≥1 compound / Total genes in tier) × 100. Apply minimum potency thresholds (e.g., <1µM for direct targets, <10µM for predicted targets) to ensure pharmacological relevance.

Diversity Assessment: Process compounds through ScaffoldHunter software to generate molecular frameworks [1]. Calculate scaffold diversity using the Shannon entropy index: H = -Σ p_i × ln(p_i), where p_i represents the proportion of compounds belonging to scaffold i and the sum runs over all scaffolds.
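The Coverage Calculation and Diversity Assessment steps of Protocol 1 can be sketched in a few lines. The example below assumes compound-target annotations are available as (compound, gene, potency in µM) tuples and that each compound has already been assigned a scaffold label. The gene lists, potencies, and function names are hypothetical. The normalized entropy (H divided by the logarithm of the number of distinct scaffolds) is an added convenience that scales the index to the range 0 to 1; the source does not state whether its >0.7 target refers to the raw or a normalized index.

```python
import math
from collections import Counter


def tier_coverage(tier_genes, annotations, max_potency_um=1.0):
    """Percent of genes in a tier hit by at least one compound below the potency cutoff.

    annotations: iterable of (compound_id, gene, potency_um) tuples.
    """
    if not tier_genes:
        return 0.0
    covered = {
        gene
        for _, gene, potency_um in annotations
        if gene in tier_genes and potency_um < max_potency_um
    }
    return 100.0 * len(covered) / len(tier_genes)


def scaffold_entropy(scaffolds):
    """Shannon entropy H = -sum(p_i * ln(p_i)) over scaffold proportions."""
    counts = Counter(scaffolds)
    total = sum(counts.values())
    return -sum((c / total) * math.log(c / total) for c in counts.values())


def normalized_scaffold_entropy(scaffolds):
    """Entropy divided by ln(number of distinct scaffolds); 0.0 if fewer than two."""
    n_scaffolds = len(set(scaffolds))
    if n_scaffolds < 2:
        return 0.0
    return scaffold_entropy(scaffolds) / math.log(n_scaffolds)


# Hypothetical tier and annotations.
tier1 = {"EGFR", "BRAF", "CDK4", "HDAC1"}
annotations = [
    ("c1", "EGFR", 0.05),
    ("c2", "EGFR", 0.2),
    ("c3", "BRAF", 0.8),
    ("c4", "CDK4", 5.0),   # too weak for the 1 µM direct-target cutoff
    ("c5", "KRAS", 0.1),   # not in this tier
]
coverage = tier_coverage(tier1, annotations)

# Hypothetical scaffold assignment, one label per compound.
scaffolds = ["s1", "s1", "s2", "s3"]
entropy = scaffold_entropy(scaffolds)
norm_entropy = normalized_scaffold_entropy(scaffolds)
print(coverage, entropy, norm_entropy)
```

For predicted targets, the same function can be called with `max_potency_um=10.0` to apply the looser threshold mentioned in the protocol.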

Functional Validation Using CRISPR-based Screening

CRISPR/Cas9-based genome-wide screening provides a functional validation method to assess whether library coverage aligns with biologically relevant targets [14] [15]. This approach is particularly valuable for identifying non-coding regulatory elements (NCREs) that may be overlooked in traditional library design.

Protocol 2: CRISPR Functional Validation of Library Targets

Library Design: Implement a dual-CRISPR system using paired single-guide RNAs (sgRNAs) under U6 and H1 promoters to delete NCREs ranging from 50 to 200 bp in length [14]. Design each sgRNA pair so that one guide targets each end of the regulatory element.

Screening Execution: Transduce cells stably expressing Cas9 with the dual-CRISPR library at low MOI (0.3-0.5) to ensure single-copy integration. Include a minimum of 500 cells per sgRNA pair to maintain library representation [14].

Phenotypic Assessment: Culture transduced cells for 15 days to identify NCREs affecting cell growth or specific phenotypic endpoints. Isolate genomic DNA and amplify integrated CRISPR sequences for sequencing.

Data Analysis: Apply robust ranking algorithms such as MAGeCK (Model-based Analysis of Genome-wide CRISPR/Cas9 Knockout) to identify significantly depleted or enriched sgRNAs following phenotypic selection [14]. Compare functional hits with existing library coverage to identify gaps in biologically relevant targets.
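The Screening Execution step of Protocol 2 implies a quick scale calculation before any cells are plated. The sketch below assumes that integration events per cell follow a Poisson distribution with mean equal to the MOI, which is a common simplification and not something stated in the source. The library size of 10,000 sgRNA pairs and the function names are hypothetical.

```python
import math


def transduced_fraction(moi):
    """Fraction of cells with at least one integration, assuming Poisson statistics."""
    if moi <= 0:
        raise ValueError("MOI must be positive")
    return 1.0 - math.exp(-moi)


def single_copy_fraction(moi):
    """Among transduced cells, the fraction carrying exactly one integration."""
    return moi * math.exp(-moi) / transduced_fraction(moi)


def cells_to_plate(n_sgrna_pairs, cells_per_pair, moi):
    """Cells to expose to virus so that transduced cells reach the desired representation."""
    required_transduced = n_sgrna_pairs * cells_per_pair
    return math.ceil(required_transduced / transduced_fraction(moi))


# Hypothetical library of 10,000 sgRNA pairs at 500 cells per pair and MOI 0.3.
n_cells = cells_to_plate(n_sgrna_pairs=10_000, cells_per_pair=500, moi=0.3)
single = single_copy_fraction(0.3)
print(n_cells, round(single, 3))
```

Under this assumption, lowering the MOI raises the share of single-copy cells but increases the number of cells that must be plated, which is the trade-off behind the 0.3-0.5 range.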

Table 2: Experimental Approaches for Coverage Validation

| Method | Key Application | Advantages | Limitations |
|---|---|---|---|
| CRISPR/Cas9 Screening [14] [15] | Functional validation of gene essentiality | Identifies biologically relevant targets in specific contexts | May miss redundant targets; technical challenges with NCREs |
| Cell Painting Phenotypic Profiling [1] | Morphological response assessment | Captures complex phenotypic signatures; target-agnostic | Difficult to deconvolute mechanisms of action |
| CETSA Target Engagement [16] | Direct binding confirmation in cells | Measures actual compound-target engagement; physiologically relevant | Requires compound treatment; lower throughput |
| Network Pharmacology Analysis [1] [3] | Pathway-level coverage assessment | Systems-level perspective; identifies network properties | Computationally intensive; depends on database quality |

Bridging the Gap: Strategies for Enhanced Library Design

Targeted Expansion Approaches

Based on coverage assessment results, specific strategies can address identified gaps:

Focus Library Enhancement: Develop targeted sub-libraries around underrepresented target classes. For example, after identifying underrepresentation in epigenetic regulators, a focused library might include chemical probes such as UNC0638 (lysine methyltransferase inhibitor) and trapoxin analogs (HDAC inhibitors) [12].

Scaffold Hopping Strategies: Apply computational scaffold hopping techniques to generate novel chemotypes for targets with limited representation. Deep graph networks have demonstrated success in generating 26,000+ virtual analogs with substantial potency improvements over initial hits [16].

Privileged Structure Integration: Incorporate "privileged structures" with demonstrated broad bioactivity across target classes, such as 1,4-benzodiazepin-2-ones and purines, while ensuring sufficient structural diversification to maintain selectivity [12].

Practical Implementation Framework

Implementing these strategies requires a systematic approach:

Iterative Design-Validate Cycle: Establish continuous cycles of library design, coverage assessment, and functional validation. This process should incorporate high-throughput technologies such as AI-guided retrosynthesis and scaffold enumeration to rapidly expand coverage [16].

Multi-Omic Data Integration: Integrate genetic validation data from sources such as Mendelian randomization studies, which can identify genetically-supported drug targets with higher clinical success probability [13]. For example, a recent study identified 12 new genetically-supported targets for osteomyelitis, including LTA4H, LAMC1, QDPR, and NEK6 [13].

Context-Specific Customization: Tailor library composition to specific phenotypic screening contexts. For glioblastoma research, this approach has yielded a minimal screening library of 1,211 compounds covering 1,386 anticancer proteins, successfully identifying patient-specific vulnerabilities [3].
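Building a small library that still covers a required target set can be framed as a set-cover problem. The sketch below uses a greedy heuristic: repeatedly pick the compound that covers the most still-uncovered targets. This is a swapped-in illustration; the source does not describe how the glioblastoma library was selected, and the compound names and target annotations here are hypothetical.

```python
def greedy_minimal_library(compound_targets, required_targets):
    """Greedy set cover.

    compound_targets: {compound_id: set of target names}
    required_targets: iterable of targets that should be covered
    Returns (selected compound ids in pick order, set of targets left uncovered).
    """
    uncovered = set(required_targets)
    selected = []
    while uncovered and compound_targets:
        # Sorting makes tie-breaking deterministic (alphabetical by compound id).
        best = max(
            sorted(compound_targets),
            key=lambda cid: len(compound_targets[cid] & uncovered),
        )
        gain = compound_targets[best] & uncovered
        if not gain:
            break  # no remaining compound covers any uncovered target
        selected.append(best)
        uncovered -= gain
    return selected, uncovered


# Hypothetical annotations.
compound_targets = {
    "cpdA": {"EGFR", "ERBB2"},
    "cpdB": {"ERBB2"},
    "cpdC": {"CDK4", "CDK6"},
    "cpdD": {"EGFR", "CDK4", "CDK6"},
}
required = {"EGFR", "ERBB2", "CDK4", "CDK6", "KRAS"}

selected, uncovered = greedy_minimal_library(compound_targets, required)
print(selected, uncovered)
```

The targets returned as uncovered are exactly the gaps that a targeted expansion effort would need to fill. A production design would also weigh potency, selectivity, and scaffold diversity rather than coverage alone.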

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Research Reagent Solutions for Library Assessment

| Reagent/Category | Primary Function | Application Notes | Quality Metrics |
|---|---|---|---|
| Druggable Genome Reference Set [13] | Benchmarking library coverage | 2,030 high-priority targets (Tiers 1-2); excludes Tier 3 and sex chromosomes | Comprehensive gene annotation; regular updates |
| CRISPR Dual-sgRNA Library [14] | Functional validation of target essentiality | Targets 4,047 ultra-conserved elements; enables deletion of 50-200bp regulatory regions | >90% target matching with guide RNAs; Spearman correlation >0.38 between replicates |
| Cell Painting Assay Kit [1] | Morphological profiling | 1,779 morphological features across cell, cytoplasm, and nucleus objects | Standardized staining protocol; feature correlation <95% |
| CETSA Platform [16] | Cellular target engagement confirmation | Measures thermal stabilization of targets in intact cells; compatible with high-resolution MS | Dose- and temperature-dependent stabilization; confirmation in complex tissues |
| ScaffoldHunter Software [1] | Chemical diversity analysis | Hierarchical scaffold decomposition; identifies representative core structures | Multiple visualization modes; batch processing capability |
| ChEMBL Database [1] | Bioactivity annotation | >1.6M molecules with standardized IC50, Ki, EC50 values | Regular updates; manual curation of literature data |

Visualization of Integrated Library Optimization Strategy

The complete workflow for addressing the druggable genome gap integrates assessment and optimization strategies into a cohesive framework:

Integrated Library Optimization Strategy

Critical assessment of library coverage against the druggable genome reveals systematic gaps that impact the effectiveness of phenotypic screening campaigns. By implementing standardized quantitative metrics, robust experimental validation protocols, and targeted expansion strategies, researchers can systematically address these limitations. The integration of genetic evidence, functional screening data, and chemical diversity analysis enables the design of chemogenomic libraries with enhanced biological relevance and coverage. As drug discovery continues to evolve toward systems-level approaches, comprehensive library assessment and optimization will play increasingly critical roles in identifying novel therapeutic opportunities and reducing attrition in later development stages.

Integrating Chemogenomics with Systems Pharmacology and Network Biology

The modern drug discovery paradigm is shifting from the traditional "one target–one drug" model to a more comprehensive "one drug–multiple targets" approach, driven by the understanding that complex diseases like cancer, neurological disorders, and metabolic conditions arise from multiple molecular abnormalities rather than single defects [1]. This shift has catalyzed the convergence of three powerful disciplines: chemogenomics, which systematically investigates the interactions between chemical compounds and biological targets; systems pharmacology, which examines drug actions within complex biological networks; and network biology, which maps the intricate relationships between biomolecules. This integration is particularly crucial for phenotypic screening, where the molecular mechanisms of observed effects are initially unknown, requiring sophisticated computational approaches to deconvolve complex biological responses [1] [17].

The primary challenge in phenotypic drug discovery lies in transitioning from observed phenotypic effects to understanding the underlying mechanisms of action. Chemogenomics libraries provide the essential bridge between chemical space and biological space by containing compounds with known or predicted target annotations. When these libraries are screened in phenotypic assays, the resulting data can be integrated with network biology and systems pharmacology to generate testable hypotheses about which targets and pathways are responsible for the observed phenotypes [1]. This integrated framework enables researchers to address the fundamental limitation of phenotypic screening—target identification—while simultaneously capturing the complex polypharmacology that often underlies efficacy against multifactorial diseases.

Theoretical Foundations and Computational Framework

Core Principles and Network Representations

The integrated framework rests on several foundational principles. First, similar chemical structures often interact with functionally related proteins, though this relationship is not absolute [18]. Second, therapeutic effects (phenotypic outcomes) emerge from perturbations to interconnected networks of biomolecules rather than isolated targets. Third, by mapping chemical and phenotypic similarities onto biological networks, we can infer novel drug-target relationships and mechanisms of action.

Biological networks can be represented at different levels of abstraction, each serving distinct purposes in the integrated framework:

- Interaction networks represent lists of physical or functional interactions without directionality or mechanistic information, useful for analyzing system structure [19].

- Activity flows (influence diagrams) capture directional influences between nodes without detailed biochemical mechanisms, commonly used for signaling pathways and gene regulatory networks [19].

- Logic models use qualitative rules to represent network relationships, suitable when quantitative parameters are unavailable [19].

- Quantitative biochemical networks employ detailed kinetic parameters to simulate system dynamics, providing the most mechanistic but data-intensive representation [19].
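To make the difference between these abstraction levels concrete, the following minimal sketch encodes one toy pathway three ways: as an interaction network, as an activity flow, and as a logic model. The node names (`Receptor`, `Kinase`, `TF`, `Drug`) and the helper functions are hypothetical and chosen only for illustration; a quantitative biochemical model is omitted because it would need kinetic parameters that are not given here.

```python
# Hypothetical toy pathway; node names are illustrative, not taken from any database.

# 1. Interaction network: undirected pairs, no direction or mechanism.
interactions = {
    frozenset(pair)
    for pair in [("Receptor", "Kinase"), ("Kinase", "TF"), ("Drug", "Kinase")]
}

# 2. Activity flow: directed, signed influences (+1 activates, -1 inhibits).
activity_flow = {
    ("Receptor", "Kinase"): +1,
    ("Kinase", "TF"): +1,
    ("Drug", "Kinase"): -1,
}

# 3. Logic model: qualitative Boolean rules, no kinetic parameters.
logic_rules = {
    "Receptor": lambda s: s["Receptor"],              # input node, held fixed
    "Drug": lambda s: s["Drug"],                      # input node, held fixed
    "Kinase": lambda s: s["Receptor"] and not s["Drug"],
    "TF": lambda s: s["Kinase"],
}


def degree(node, edges):
    """Number of interaction partners of a node in the undirected network."""
    return sum(node in edge for edge in edges)


def simulate(state, rules, steps=5):
    """Synchronously update every node from the previous state for a few steps."""
    for _ in range(steps):
        state = {node: bool(rule(state)) for node, rule in rules.items()}
    return state


start = {"Receptor": True, "Drug": False, "Kinase": False, "TF": False}
untreated = simulate(start, logic_rules)
treated = simulate({**start, "Drug": True}, logic_rules)
```

In this toy example the interaction network only supports structural questions (for instance, `Kinase` is the most connected node), while the logic model can already predict that adding the hypothetical drug switches `TF` off.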

The drugCIPHER methodology exemplifies the power of integrating pharmacological and genomic spaces. It computes two key similarity metrics between drugs—Therapeutic Similarity (based on Anatomic Therapeutic Chemical classification) and Chemical Similarity (based on structural resemblance)—and relates these to the closeness of their protein targets within protein-protein interaction networks [18]. This approach demonstrates that drugs with high therapeutic and chemical similarity are more likely to share targets, and that modest but significant correlations exist between pharmacological similarities and genomic relatedness [18].
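As an illustration of the chemical similarity term, the sketch below computes a Tanimoto coefficient between two fingerprints represented as sets of "on" bit indices. Using the Tanimoto coefficient is an assumption made here for illustration; the text above specifies only structural resemblance, and the fingerprints shown are hypothetical rather than derived from real molecules.

```python
def tanimoto(fp_a: set, fp_b: set) -> float:
    """Tanimoto coefficient between two fingerprints given as sets of on-bit indices."""
    if not fp_a and not fp_b:
        return 0.0  # convention chosen here for two empty fingerprints
    return len(fp_a & fp_b) / len(fp_a | fp_b)


# Hypothetical fingerprints (bit indices), not computed from real structures.
drug_1 = {1, 2, 3}
drug_2 = {2, 3, 4}
chemical_similarity = tanimoto(drug_1, drug_2)  # 2 shared bits out of 4 distinct bits
```

In practice the bit sets would come from a cheminformatics toolkit; the set-based form is used here only to keep the example dependency-free.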

Key Computational Methods and Their Applications

Table 1: Computational Methods for Integrating Chemogenomics with Network Biology

| Method | Primary Function | Data Requirements | Key Applications |
|---|---|---|---|
| drugCIPHER [18] | Relates pharmacological and genomic spaces | Drug structures, therapeutic classifications, known drug-target interactions, PPI networks | Genome-wide drug target prediction, drug repurposing, side effect prediction |
| CSP Analysis [20] | Compares disease and drug-induced transcriptional profiles | Gene expression data from diseases and drug perturbations | Identifying drug targets that reverse disease-associated gene signatures |
| Network Pharmacology [21] | Maps drug-target-disease-pathway relationships | Compound-target interactions, pathway databases, disease ontologies | Validating multi-target mechanisms of traditional therapies, drug repurposing |
| Virtual Screening Enrichment [4] | Prioritizes compounds for phenotypic screening | Tumor genomic profiles, protein structures, PPI networks, compound libraries | Creating targeted libraries for selective polypharmacology in cancer |

The Chemogenomics Systems Pharmacology (CSP) approach provides another powerful method for identifying potential drug targets by comparing disease-induced transcriptional profiles with those induced by genetic or chemical perturbations [20]. This method operates on the principle that if a drug's effect on the transcriptional profile is contrary to the profile associated with a disease, it may reverse the disease phenotype. In traumatic brain injury (TBI), CSP analysis identified TRPV4, NEUROD1, and HPRT1 as top therapeutic target candidates and revealed strong molecular associations between TBI and Alzheimer's disease through shared gene expression patterns [20].
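The reversal principle can be illustrated with a deliberately simplified score. The sketch below is not the rank-based statistic used in the cited work; it assumes each signature is a mapping from gene to signed log fold change and simply compares directions over shared genes. The function name, gene labels, and values are hypothetical.

```python
def reversal_score(disease: dict, perturbation: dict) -> float:
    """Return a score in [-1, 1]; +1 means every shared gene moves in the opposite direction.

    Simplified stand-in for a rank-based enrichment statistic: only the sign of
    each gene's change is compared, and magnitudes are ignored.
    """
    shared = [gene for gene in disease if gene in perturbation]
    if not shared:
        return 0.0
    opposite = sum(1 for g in shared if disease[g] * perturbation[g] < 0)
    same = sum(1 for g in shared if disease[g] * perturbation[g] > 0)
    return (opposite - same) / len(shared)


# Hypothetical signatures (gene -> signed log fold change).
disease_signature = {"GENE_A": 2.0, "GENE_B": -1.5, "GENE_C": 1.0}
perturbation_x = {"GENE_A": -1.0, "GENE_B": 0.8, "GENE_C": -0.5, "GENE_D": 3.0}
perturbation_y = {"GENE_A": 1.0, "GENE_B": -0.8, "GENE_C": -0.5}

score_x = reversal_score(disease_signature, perturbation_x)
score_y = reversal_score(disease_signature, perturbation_y)
```

Under this toy scoring, `perturbation_x` fully opposes the disease signature and would be prioritized, whereas `perturbation_y` mostly mimics it.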

Chemogenomics Library Design for Phenotypic Screening

Strategic Design and Curation Principles

Designing effective chemogenomics libraries for phenotypic screening requires balancing multiple competing objectives: comprehensive target coverage, cellular activity, chemical diversity, target selectivity, and practical constraints like availability and cost [3]. The fundamental challenge is that even the best chemogenomics libraries interrogate only a fraction of the human genome—approximately 1,000–2,000 targets out of 20,000+ genes—due to the limited number of proteins with known chemical probes [17]. This limitation necessitates strategic prioritization of targets based on the biological context and screening objectives.

Several design strategies have emerged for creating targeted screening libraries:

- Disease-focused design utilizes genomic profiles from specific diseases to identify overexpressed genes and somatic mutations, which are then mapped onto protein-protein interaction networks to identify druggable binding sites [4]. For glioblastoma multiforme (GBM), this approach identified 755 genes with somatic mutations overexpressed in patient samples, which were filtered to 390 proteins with network interactions and further refined to 117 proteins with druggable binding sites [4].

- Target family-based design creates libraries focused on specific protein families (e.g., kinases, GPCRs) with known ligandability, enabling systematic exploration of chemotypes across related targets [1].

- Diversity-oriented design aims to cover broad chemical space without specific target annotations, useful for exploratory biology but requiring larger libraries [17].

- Selective polypharmacology design specifically seeks compounds that modulate multiple predefined targets across different signaling pathways, requiring advanced virtual screening approaches [4].

A key consideration in library design is the appropriate balance between target coverage and library size. Research has demonstrated that a minimal screening library of 1,211 compounds can effectively target 1,386 anticancer proteins, indicating that careful compound selection can maximize target coverage while minimizing screening costs [3].
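One way to think about this trade-off is as a set-cover problem: choose as few compounds as possible so that every target of interest is hit by at least one of them. The sketch below uses a greedy heuristic; this is an assumption chosen for illustration and is not necessarily how the cited library was assembled. Compound and target identifiers are hypothetical.

```python
def greedy_minimal_library(compound_targets: dict[str, set[str]]) -> list[str]:
    """Greedily pick compounds until every annotated target is covered."""
    uncovered = set().union(*compound_targets.values())
    selected = []
    while uncovered:
        # Sorting makes tie-breaking deterministic (alphabetical by compound ID).
        best = max(
            sorted(compound_targets),
            key=lambda c: len(compound_targets[c] & uncovered),
        )
        gained = compound_targets[best] & uncovered
        if not gained:
            break  # defensive guard; should not trigger when uncovered is non-empty
        selected.append(best)
        uncovered -= gained
    return selected


# Hypothetical target annotations.
annotations = {
    "cmpd_1": {"target_A", "target_B"},
    "cmpd_2": {"target_A"},
    "cmpd_3": {"target_C", "target_D"},
    "cmpd_4": {"target_B", "target_C"},
}
library = greedy_minimal_library(annotations)
```

Here two of the four compounds suffice to cover all four targets. A real design would additionally weight potency, selectivity, cellular activity, and availability rather than coverage alone.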

Implementation and Practical Considerations

Successful implementation of chemogenomics libraries requires integration of multiple data sources and careful curation. The ExCAPE-DB dataset exemplifies the scale and complexity of modern chemogenomics resources, integrating over 70 million structure-activity relationship data points from PubChem and ChEMBL, standardized through rigorous processing pipelines [22]. Such resources enable Big Data analysis for building predictive models of polypharmacology and off-target effects.

Table 2: Essential Components of Chemogenomics Library Design

| Component | Description | Examples/Standards |
|---|---|---|
| Chemical Structures | Standardized representations of compounds | SMILES, InChI, InChIKey [22] |
| Target Annotations | Protein targets with standardized identifiers | Entrez ID, gene symbols, orthologue information [22] |
| Bioactivity Data | Quantitative measurements of compound-target interactions | IC50, Ki, EC50 values with standardized units [22] |
| Pathway Context | Biological pathways and processes involving targets | KEGG, Gene Ontology (GO) annotations [21] [1] |
| Disease Associations | Relationships between targets and disease phenotypes | Disease Ontology (DO), therapeutic classifications [1] |
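The components in Table 2 could be captured in a simple record structure such as the one sketched below. The class and field names, the choice of nanomolar as the standard unit, and all example values are assumptions made for illustration; they do not reflect the schema of any particular database.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Bioactivity:
    """One compound-target measurement, with potency standardized to nanomolar."""
    target_entrez_id: int
    gene_symbol: str
    activity_type: str  # e.g. "IC50", "Ki", "EC50"
    value_nm: float


@dataclass
class LibraryCompound:
    """One library entry combining structure, target, pathway, and disease layers."""
    compound_id: str
    smiles: str
    inchikey: str
    activities: list[Bioactivity] = field(default_factory=list)
    pathways: set[str] = field(default_factory=set)
    disease_terms: set[str] = field(default_factory=set)

    def targets_below(self, threshold_nm: float) -> set[str]:
        """Gene symbols of targets hit at or below a potency threshold."""
        return {a.gene_symbol for a in self.activities if a.value_nm <= threshold_nm}


# Hypothetical entry; identifiers and potencies are placeholders, not real data.
example = LibraryCompound(
    compound_id="CMPD-0001",
    smiles="PLACEHOLDER_SMILES",
    inchikey="PLACEHOLDER-INCHIKEY",
    activities=[
        Bioactivity(1001, "TARGET_A", "IC50", 35.0),
        Bioactivity(1002, "TARGET_B", "Ki", 4200.0),
    ],
    pathways={"hypothetical_pathway"},
)
potent_targets = example.targets_below(100.0)
```

Keeping potency in a single unit makes threshold-based annotation (for example, "targets hit below 100 nM") a one-line query.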

Morphological profiling data, such as that generated by the Cell Painting assay, provides a valuable layer of functional information that can be integrated with chemogenomics libraries. This assay quantitatively measures 1,779 morphological features across multiple cellular compartments, creating distinctive profiles for compounds that can be linked to their target annotations [1]. Such integrative approaches enable researchers to connect chemical structure to target engagement to cellular phenotype in a unified framework.
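A simple way to link a morphological profile to target annotations is to find the most similar profile among annotated reference compounds. The sketch below uses cosine similarity on short toy vectors; the similarity measure, the reference labels, and the three-feature profiles are assumptions for illustration only (real profiles would contain many more features and would normally be normalized first).

```python
import math


def cosine_similarity(u, v):
    """Cosine of the angle between two equal-length feature vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0 or norm_v == 0:
        return 0.0
    return dot / (norm_u * norm_v)


def nearest_annotated(query_profile, reference_profiles):
    """Name of the annotated reference whose profile is most similar to the query."""
    return max(
        sorted(reference_profiles),
        key=lambda name: cosine_similarity(query_profile, reference_profiles[name]),
    )


# Hypothetical three-feature profiles for two annotated reference compounds.
references = {
    "ref_annotated_1": [0.9, 0.1, -0.4],
    "ref_annotated_2": [-0.8, 0.5, 0.3],
}
query = [1.0, 0.2, -0.5]
best_match = nearest_annotated(query, references)
```

The target annotation of the best-matching reference then serves as a hypothesis for the query compound's mechanism, to be tested experimentally.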

Experimental Protocols and Methodologies

Chemogenomics Systems Pharmacology (CSP) Analysis

Objective: To identify potential drug targets by comparing disease-induced transcriptional profiles with those induced by genetic or chemical perturbations.

Materials:

- Disease gene expression datasets (e.g., from GEO database)

- Drug-induced gene expression profiles (e.g., from BaseSpace software)

- Protein-protein interaction data (e.g., from STRING database)

- Chemogenomic database (e.g., DrugBank, ChEMBL)

Procedure:

- Compile Disease Transcriptional Profiles: Collect differentially expressed genes (DEGs) from disease studies. For TBI analysis, 26 datasets from mice and rats with time points ranging from 3 hours to 7 days post-injury were used [20]. Select only genes with p-values <0.05 and absolute fold changes >1.2 as DEGs.

- Acquire Perturbation Signatures: Gather gene expression signatures induced by genetic perturbations (knockdown, knockout, mutation, or overexpression) and chemical perturbations (drug treatments) from databases like BaseSpace.

- Calculate Gene Signature Correlations: Use rank-based enrichment statistics to compute correlations between disease DEGs and perturbation-induced DEGs. The algorithm should handle cross-species comparisons and meta-analyses of multiple similar perturbations.

- Identify Inverse Correlations: Prioritize perturbations that induce transcriptional profiles opposite to the disease profile, as these may reverse the disease phenotype.

- Construct Protein Interaction Networks: Use tools like STRING to generate protein-protein interaction networks of predicted targets and identify closely connected clusters that may represent key regulatory modules.

- Validate Predictions: Compare computationally predicted targets with literature evidence and experimental data.

This CSP protocol successfully identified TRPV4, NEUROD1, and HPRT1 as top therapeutic target candidates for traumatic brain injury, consistent with independent literature reports [20].
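The DEG selection in the first step of the procedure can be sketched as a simple filter. The sketch assumes that input values are log2 fold changes and that "absolute fold change >1.2" means at least a 1.2-fold change in either direction; the function name and the example rows are hypothetical.

```python
import math

FOLD_CHANGE_CUTOFF = 1.2  # from the protocol: absolute fold change > 1.2
P_VALUE_CUTOFF = 0.05     # from the protocol: p-value < 0.05


def select_degs(results):
    """Keep genes passing both cutoffs.

    results: iterable of (gene, log2_fold_change, p_value) tuples.
    Returns a dict mapping each retained gene to its log2 fold change.
    """
    min_abs_log2fc = math.log2(FOLD_CHANGE_CUTOFF)
    return {
        gene: log2fc
        for gene, log2fc, p_value in results
        if p_value < P_VALUE_CUTOFF and abs(log2fc) > min_abs_log2fc
    }


# Hypothetical differential expression results.
rows = [
    ("GENE_1", 1.0, 0.01),   # passes both cutoffs
    ("GENE_2", 0.1, 0.001),  # significant but change too small
    ("GENE_3", -0.8, 0.2),   # large change but not significant
    ("GENE_4", -0.5, 0.04),  # passes both cutoffs (downregulated)
]
degs = select_degs(rows)
```

The retained dictionary keeps the sign of each change, which is what the later inverse-correlation step needs.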

Network-Based Drug Target Identification

Objective: To predict novel drug-target interactions by relating pharmacological and genomic spaces.

Materials:

- Drug therapeutic similarity matrix (based on ATC classification)

- Drug chemical similarity matrix (based on 2D structural similarity)

- Known drug-target interactions (e.g., from DrugBank)

- Protein-protein interaction network (e.g., from HPRD)

Procedure:

- Compute Drug Similarities: Calculate Therapeutic Similarity (TS) using a probabilistic model to characterize similarity between ATC codes. Calculate Chemical Similarity (CS) as 2D structural similarity.

- Define Target Closeness: For each drug-protein pair, define "closeness" based on their positions in the PPI network, considering both direct and indirect interactions.

- Construct Regression Models: Formulate three regression models:

  - drugCIPHER-TS: Relates therapeutic similarity to target closeness

  - drugCIPHER-CS: Relates chemical similarity to target closeness

  - drugCIPHER-MS: Relates multiple similarity (combining TS and CS) to target closeness

- Calculate Concordance Scores: For a query drug, assign each protein in the PPI network three concordance scores based on the different regression models.

- Prioritize Targets: Rank proteins by their concordance scores, with higher scores indicating greater likelihood of being genuine drug targets.

In validation studies, drugCIPHER-MS outperformed both drugCIPHER-TS and drugCIPHER-CS as well as the Bipartite Local Model method in predicting known drug-target interactions [18].
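The closeness and concordance steps above can be sketched on a toy network. Two modeling choices in this sketch are assumptions rather than details confirmed by the text: closeness is computed with a Gaussian kernel over shortest-path distance, exp(-L²), and the concordance score is taken as the Pearson correlation between the query drug's similarity to each reference drug and the candidate protein's closeness to that drug's known targets. All protein and drug names are hypothetical.

```python
import math
from collections import deque


def shortest_path_lengths(ppi, source):
    """Breadth-first search distances from source in an unweighted PPI graph."""
    dist = {source: 0}
    queue = deque([source])
    while queue:
        node = queue.popleft()
        for neighbor in ppi.get(node, ()):
            if neighbor not in dist:
                dist[neighbor] = dist[node] + 1
                queue.append(neighbor)
    return dist


def closeness(ppi, protein, targets):
    """Sum of exp(-L^2) over a drug's known targets (assumed kernel)."""
    dist = shortest_path_lengths(ppi, protein)
    return sum(math.exp(-dist[t] ** 2) for t in targets if t in dist)


def pearson(x, y):
    """Pearson correlation; returns 0.0 if either vector is constant."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        return 0.0
    return cov / math.sqrt(var_x * var_y)


def concordance_scores(ppi, query_similarity, known_targets):
    """Score every protein by how well its closeness tracks drug similarity."""
    drugs = sorted(known_targets)
    sims = [query_similarity[d] for d in drugs]
    return {
        protein: pearson(sims, [closeness(ppi, protein, known_targets[d]) for d in drugs])
        for protein in ppi
    }


# Hypothetical linear PPI network: P1 - P2 - P3 - P4.
ppi = {"P1": ["P2"], "P2": ["P1", "P3"], "P3": ["P2", "P4"], "P4": ["P3"]}
known_targets = {"drug_a": {"P1"}, "drug_b": {"P4"}, "drug_c": {"P2"}}
# Hypothetical similarity of the query drug to each reference drug (TS, CS, or combined).
query_similarity = {"drug_a": 0.9, "drug_b": 0.1, "drug_c": 0.6}

scores = concordance_scores(ppi, query_similarity, known_targets)
ranked = sorted(scores, key=scores.get, reverse=True)
```

Because the query drug most resembles `drug_a`, proteins near `drug_a`'s target rank highest, which mirrors the intuition that similar drugs tend to act on nearby proteins in the network.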

Phenotypic Screening with Enriched Chemical Libraries

Objective: To identify compounds with selective polypharmacology against disease-relevant phenotypes.

Materials:

- Patient-derived cells (e.g., GBM stem cells for glioblastoma)

- 3D spheroid culture systems

- Enriched chemical library (designed using the disease-focused strategy described under Chemogenomics Library Design for Phenotypic Screening)

- Phenotypic readouts (e.g., cell viability, morphology, functional assays)

Procedure:

- Library Enrichment: Design a focused chemical library using the disease-focused strategy described under Chemogenomics Library Design for Phenotypic Screening. For GBM, this involved docking approximately 9,000 in-house compounds to 316 druggable binding sites on proteins in the GBM subnetwork [4].

- Phenotypic Screening: Screen the enriched library against disease-relevant models, such as patient-derived GBM spheroids in 3D culture. Include appropriate controls and normalization procedures.

- Counter-Screening: Test active compounds against non-transformed primary cell lines (e.g., CD34+ progenitor cells, astrocytes) to assess selectivity.

- Secondary Assays: Evaluate promising compounds in additional phenotypic assays relevant to the disease (e.g., tube formation assays for angiogenesis inhibition).

- Mechanism Deconvolution: Use RNA sequencing and thermal proteome profiling to identify potential mechanisms of action and direct targets of active compounds.

- Target Validation: Confirm compound-target interactions using cellular thermal shift assays with specific antibodies.

This approach identified compound IPR-2025, which inhibited GBM spheroid viability with single-digit micromolar IC50 values and blocked endothelial tube formation with submicromolar potency, while sparing normal cells [4].
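The screening and counter-screening steps can be sketched as a normalization followed by a selectivity filter. The thresholds (at most 50% viability in tumor spheroids, at least 80% in normal cells), the control layout, and all compound identifiers and readings below are hypothetical; they are not taken from the cited study.

```python
def percent_viability(signal, neg_ctrl_mean, pos_ctrl_mean):
    """Normalize a raw readout: vehicle control = 100%, cytotoxic control = 0%."""
    return 100.0 * (signal - pos_ctrl_mean) / (neg_ctrl_mean - pos_ctrl_mean)


def selective_hits(tumor_viability, normal_viability, max_tumor=50.0, min_normal=80.0):
    """Compounds that reduce tumor-model viability while sparing normal cells."""
    return sorted(
        compound
        for compound in tumor_viability
        if compound in normal_viability
        and tumor_viability[compound] <= max_tumor
        and normal_viability[compound] >= min_normal
    )


# Hypothetical normalized viabilities (percent of vehicle control).
tumor = {"cmpd_1": 20.0, "cmpd_2": 30.0, "cmpd_3": 90.0}
normal = {"cmpd_1": 95.0, "cmpd_2": 40.0, "cmpd_3": 98.0}
hits = selective_hits(tumor, normal)
```

In this toy data `cmpd_2` is active but also toxic to normal cells, and `cmpd_3` is inactive, so only `cmpd_1` would advance to secondary assays.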

Applications and Case Studies

Target Discovery for Traumatic Brain Injury

The application of CSP to traumatic brain injury demonstrates how this integrated approach can identify novel therapeutic targets for conditions with high unmet medical need. Despite tremendous efforts, no treatment effectively limits the progression of secondary injury following TBI [20]. By comparing TBI-induced transcriptional profiles with those induced by various perturbations, researchers identified several potential drug targets that, when modulated, could reverse the TBI-associated gene expression patterns.

Notably, this analysis revealed strong molecular connections between TBI and Alzheimer's disease (AD): perturbations of AD-related genes (APOE, APP, PSEN1, and MAPT) induced gene expression patterns similar to those observed in TBI [20]. This finding provides mechanistic insight into clinical observations linking TBI to increased AD risk and suggests therapeutic strategies that might address both conditions.

Selective Polypharmacology in Glioblastoma

The rational design of enriched chemical libraries for phenotypic screening has shown promising results in addressing challenging diseases like glioblastoma multiforme (GBM). Despite standard-of-care treatment with surgery, irradiation, and temozolomide, GBM remains largely incurable, with a median survival of only 14–16 months [4]. This treatment resistance stems from intra-tumoral genetic instability, which allows tumors to modulate multiple survival pathways simultaneously.

By creating a chemical library enriched for compounds predicted to simultaneously bind multiple GBM-specific targets identified from the tumor's RNA sequencing and mutation data, researchers identified several active compounds [4]. The most promising compound, IPR-2025, demonstrated: