Strategic Chemogenomic Library Design: Principles, Applications, and Best Practices for Target Discovery

Abstract

This article provides a comprehensive guide to the basic principles of chemogenomic library design for researchers, scientists, and drug development professionals. It explores the foundational concepts of systematically screening small molecules against target families to identify novel drugs and targets. The scope covers strategic methodologies for compound selection based on cellular activity, chemical diversity, and target selectivity, alongside practical applications in areas like precision oncology. It also addresses common challenges and optimization strategies, concluding with rigorous validation and comparative profiling approaches to ensure the generation of robust, high-quality chemical tools for effective target identification and validation in phenotypic drug discovery.

Foundations of Chemogenomics: From Basic Concepts to Strategic Target Family Screening

Chemogenomics represents a modern paradigm in chemical biology and drug discovery that investigates the systematic interplay between small molecules and biological target families on a genomic scale [1] [2]. This approach integrates combinatorial chemistry with genomic and proteomic sciences to comprehensively study the response of biological systems to chemical perturbations [2]. The primary goal is the parallel identification of novel drug targets and biologically active compounds that modulate phenotypic outcomes [2]. Unlike traditional single-target approaches, chemogenomics operates on the principle that focused chemical libraries can probe entire families of related proteins, such as G-protein-coupled receptors (GPCRs), kinases, nuclear receptors, and proteases [1]. This strategy leverages the structural and functional similarities within protein families, enabling the identification of ligands for less-characterized family members based on their similarity to well-established targets [1] [3].

The completion of the Human Genome Project revealed a vast landscape of potential therapeutic targets, many with unknown functions and no known ligands; receptors in this category are termed orphan receptors [1]. Chemogenomics provides a powerful framework to elucidate the function of these novel targets by identifying small molecules that modulate their activity [1]. Furthermore, the hits discovered for these targets serve as valuable starting points for drug discovery campaigns [1]. The approach is characterized by a two-dimensional screening methodology: the first dimension is a chemical library and the second is a library of different cell types or protein targets [4]. The resulting data matrix links chemical structures to biological responses across both dimensions, which helps deconvolute complex mechanism-of-action relationships [4].

Fundamental Principles and Strategic Approaches

Core Concepts and Definitions

At its foundation, chemogenomics operates through several interconnected paradigms. Chemical genetics involves the modulation of protein function using small molecules, allowing researchers to probe biological systems with temporal and dose-dependent control [4]. In this view, a small molecule acts much like a conditional mutation: it reversibly alters protein function, so phenotypic changes can be observed in real time upon compound addition or withdrawal [1]. Chemical space, defined as the entirety of theoretically possible arrangements of atoms that form small molecules, is the universe from which screening libraries are drawn [4]. Systematic exploration of this chemical space against biological target families forms the operational core of chemogenomics [1].

A key operational principle in chemogenomics is the use of targeted chemical libraries designed around known ligands of specific protein families [1]. Because ligands designed for one family member frequently show affinity for other members of the same family, such libraries should collectively bind a high percentage of the target family [1]. This approach significantly increases the probability of identifying modulators for orphan receptors within well-characterized protein families [1]. The underlying similarity principle enables knowledge transfer from characterized to uncharacterized family members, maximizing the information gained from screening efforts [3].
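The similarity principle can be made concrete with a nearest-neighbour sketch: a query compound inherits the target annotations of the most similar annotated ligands. This is a minimal illustration, not a method from the cited work. The fingerprints are hypothetical sets of "on" bits (in practice they would be computed with a cheminformatics toolkit such as RDKit), and the ligand names, target names, `k`, and `min_sim` are all invented for illustration.

```python
from __future__ import annotations


def tanimoto(a: set[int], b: set[int]) -> float:
    """Tanimoto (Jaccard) similarity of two binary fingerprints stored as sets of 'on' bits."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def predict_targets(query: set[int],
                    annotated: dict[str, tuple[set[int], str]],
                    k: int = 3,
                    min_sim: float = 0.3) -> list[tuple[str, float]]:
    """Transfer target annotations from the k ligands most similar to `query`.

    `annotated` maps ligand name -> (fingerprint, annotated target). Each target keeps the
    best similarity among its ligands; neighbours below `min_sim` are ignored.
    """
    scored = sorted(((tanimoto(query, fp), target) for fp, target in annotated.values()),
                    reverse=True)
    best: dict[str, float] = {}
    for sim, target in scored[:k]:
        if sim >= min_sim and sim > best.get(target, 0.0):
            best[target] = sim
    return sorted(best.items(), key=lambda item: item[1], reverse=True)


# Hypothetical annotated ligands with toy fingerprints
library = {
    "lig_A": ({1, 2, 3, 4, 5}, "kinase_X"),
    "lig_B": ({1, 2, 3, 9}, "kinase_Y"),
    "lig_C": ({20, 21, 22}, "protease_Z"),
}
print(predict_targets({1, 2, 3, 4}, library))  # [('kinase_X', 0.8), ('kinase_Y', 0.6)]
```

Keeping only the best similarity per target avoids rewarding targets that merely have many annotated ligands.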

Forward versus Reverse Chemogenomics

Chemogenomics employs two complementary experimental strategies, each with distinct applications and workflows.

Table 1: Comparison of Forward and Reverse Chemogenomics Approaches

| Feature | Forward Chemogenomics | Reverse Chemogenomics |
|---|---|---|
| Starting Point | Phenotype of interest with unknown molecular mechanism | Known protein target with established in vitro assay |
| Screening Approach | Phenotypic screening for desired phenotype | Target-based screening for modulators of specific protein |
| Primary Challenge | Designing assays that enable target identification | Translating in vitro hits to cellular and organismal phenotypes |
| Typical Applications | Pathway discovery, phenotypic drug discovery | Target validation, mechanism confirmation |
| Target Identification | Required after hit identification; often complex | Known from the outset |

Forward chemogenomics (also called classical chemogenomics) begins with a phenotypic screen where the molecular basis of the observed phenotype is unknown [1]. Researchers identify small molecules that induce or suppress a specific phenotype in cells or whole organisms, then use these bioactive compounds as tools to identify the protein targets responsible for the observed effect [1]. For example, a loss-of-function phenotype might manifest as inhibition of tumor growth, with subsequent target identification necessary to understand the mechanism [1]. The main challenge lies in designing phenotypic assays that facilitate eventual target deconvolution [1].

Reverse chemogenomics follows a target-based approach where small molecules are first identified for their ability to perturb the function of a specific enzyme or receptor in an in vitro assay [1]. Once modulators are confirmed, the phenotypes induced by these compounds are analyzed in cellular or whole-organism contexts [1]. This strategy, enhanced by parallel screening capabilities across multiple targets within a family, helps confirm the biological role of the targeted protein and establishes therapeutic relevance [1]. Reverse chemogenomics closely resembles traditional target-based drug discovery but with the advantage of family-wide profiling [1].

Chemogenomic Library Design and Composition

Library Design Principles

The construction of targeted chemical libraries represents a critical success factor in chemogenomics. These libraries are strategically designed to maximize coverage of relevant chemical space while maintaining focus on specific protein families [3]. A proven design protocol involves chemogenomic classification of protein binding sites into subsites, followed by the collection of bioactive molecular fragments and virtual library generation [5]. This approach was successfully applied to the design of a focused library for 5-HT7 receptor ligands, where principal component analysis of molecular descriptors demonstrated effective focusing of the targeted library into regions of chemical space defined by known actives [5]. Computational validations indicated an enrichment factor of 5-HT7 ligand-like molecules in the range of 2-4 for the targeted library compared to a diverse reference library [5].
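The enrichment factor is a ratio of rates. The following is a minimal sketch, assuming EF is computed as the fraction of ligand-like molecules in the focused library divided by the same fraction in the reference library. The counts are hypothetical and are not the 5-HT7 data.

```python
def enrichment_factor(hits_focused: int, size_focused: int,
                      hits_reference: int, size_reference: int) -> float:
    """Rate of ligand-like molecules in a focused library relative to a reference library.

    EF = (hits_focused / size_focused) / (hits_reference / size_reference);
    EF > 1 means the focused library is enriched for the property of interest.
    """
    if size_focused <= 0 or size_reference <= 0 or hits_reference <= 0:
        raise ValueError("library sizes and reference hit count must be positive")
    return (hits_focused / size_focused) / (hits_reference / size_reference)


# Hypothetical counts: 300 of 2,000 focused compounds vs. 100 of 2,000 diverse compounds
ef = enrichment_factor(300, 2000, 100, 2000)
print(f"EF = {ef:.1f}")  # EF = 3.0
```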

Effective library design incorporates several key considerations. Chemical diversity must be balanced with target family relevance, so that the library offers sufficient variety while retaining a higher probability of hits against the protein family of interest [3] [6]. In addition, drug-likeness and lead-likeness filters, such as Lipinski's Rule of Five for oral drug-likeness, help ensure that library members have physicochemical properties associated with successful drug development [4]. The inclusion of annotated chemical libraries, in which some bioactivity data are already available, facilitates knowledge transfer and structure-activity relationship analysis across target families [4].
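A Rule-of-Five filter can be sketched as follows, assuming descriptor values have already been calculated (for example with a cheminformatics toolkit). The compound names and values are hypothetical. Tolerating one violation follows the usual reading of Lipinski's rule, under which poor absorption becomes more likely when more than one criterion is broken.

```python
from dataclasses import dataclass


@dataclass
class Descriptors:
    """Precomputed descriptors for one compound (values assumed to come from a toolkit)."""
    name: str
    mol_weight: float  # Da
    clogp: float       # calculated octanol-water partition coefficient
    h_donors: int
    h_acceptors: int


def ro5_violations(d: Descriptors) -> int:
    """Count Rule-of-Five violations: MW > 500, cLogP > 5, HBD > 5, HBA > 10."""
    return sum([d.mol_weight > 500, d.clogp > 5, d.h_donors > 5, d.h_acceptors > 10])


def passes_ro5(d: Descriptors, max_violations: int = 1) -> bool:
    """Keep a compound if it has at most `max_violations` violations."""
    return ro5_violations(d) <= max_violations


# Hypothetical library members
candidates = [
    Descriptors("cmpd_1", 342.4, 2.1, 2, 5),
    Descriptors("cmpd_2", 612.7, 6.3, 3, 9),
    Descriptors("cmpd_3", 515.0, 4.2, 1, 7),
]
print([d.name for d in candidates if passes_ro5(d)])  # ['cmpd_1', 'cmpd_3']
```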

Composition of Representative Libraries

Several well-characterized chemogenomic libraries have been developed by academic and industrial groups, each with distinct characteristics and applications.

Table 2: Characteristics of Representative Chemogenomic Libraries

| Library Name | Size | Key Characteristics | Primary Applications |
|---|---|---|---|
| GSK Biologically Diverse Compound Set (BDCS) | Not specified | High chemical and biological diversity | Broad phenotypic screening |
| Pfizer Chemogenomic Library | Not specified | Focused on privileged structures | Target family screening |
| Prestwick Chemical Library | 1,280 compounds | High percentage of approved drugs | Repurposing, phenotypic screening |
| Sigma-Aldrich LOPAC (LOPAC1280) | 1,280 compounds | Pharmacologically active compounds | Mechanism of action studies |
| NCATS MIPE | Not specified | Annotated with mechanism data | Translational research |
| Chemogenomic library described in [6] | 5,000 compounds | Represents diverse drug targets | Phenotypic screening, target ID |

Contemporary chemogenomic libraries increasingly incorporate structural annotation and pathway mapping to facilitate mechanism deconvolution. For example, one recently developed library of 5,000 small molecules was designed to represent a large and diverse panel of drug targets involved in various biological effects and diseases [6]. This library was constructed through systematic analysis of drug-target-pathway-disease relationships integrated with morphological profiling data from high-content imaging assays [6]. The incorporation of morphological profiling from assays such as "Cell Painting" enables the characterization of cell states based on microscopic imaging, providing rich phenotypic fingerprints that can connect compound treatment to specific biological pathways [6].

Experimental Methodologies and Workflows

Screening Technologies and Platforms

Chemogenomic screening employs diverse experimental platforms tailored to specific research questions. Two-dimensional screening methodologies, which combine chemical libraries with genetic variant libraries, form the core approach [4]. In yeast models, three primary mutant library types are used: heterozygous deletion strains, which are sensitive to haploinsufficiency and can point directly to a compound's target; homozygous deletion strains, which identify genes that buffer or compensate for the compound's effect; and overexpression libraries, in which increased gene dosage can confer resistance or, in some cases, sensitize cells to the compound [4]. Each library type offers distinct advantages for probing chemical-genetic interactions and identifying mechanism of action.

Detection methods in chemogenomic screens primarily fall into two categories: non-competitive arrays and competitive mutant pools [4]. In non-competitive arrays, each mutant strain is cultured separately, providing robust quantitative data but requiring significant resources [4]. Competitive mutant pools culture all strains together, using molecular barcodes to quantify relative fitness through microarray hybridization or sequencing—a more efficient approach suitable for larger screens [4]. The choice between these methods depends on the specific research question, available resources, and required data resolution.
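For competitive pools, a simplified fitness score is the log2 ratio of each strain's barcode abundance in the treated pool versus the control pool. This sketch assumes raw barcode counts per strain are already available and normalizes only by total reads. The strain names and counts are hypothetical, and published pipelines typically add replicate handling and more careful normalization.

```python
from __future__ import annotations

import math


def log2_fitness(treated: dict[str, int], control: dict[str, int],
                 pseudocount: float = 0.5) -> dict[str, float]:
    """Per-strain log2 ratio of barcode abundance, treated pool vs. untreated control pool.

    Counts are scaled by each sample's total read count before the ratio, and a pseudocount
    avoids log(0). Negative scores mean the strain was depleted under treatment.
    """
    t_total, c_total = sum(treated.values()), sum(control.values())
    if t_total == 0 or c_total == 0:
        raise ValueError("both samples need at least one read")
    scores = {}
    for strain in set(treated) | set(control):
        t_frac = (treated.get(strain, 0) + pseudocount) / t_total
        c_frac = (control.get(strain, 0) + pseudocount) / c_total
        scores[strain] = math.log2(t_frac / c_frac)
    return scores


# Hypothetical barcode counts for three deletion strains
control = {"strain_A": 1000, "strain_B": 1000, "strain_C": 1000}
treated = {"strain_A": 1500, "strain_B": 1400, "strain_C": 100}
for strain, score in sorted(log2_fitness(treated, control).items(), key=lambda kv: kv[1]):
    print(f"{strain}: {score:+.2f}")
```

Here strain_C comes out most depleted, which in a heterozygous pool would flag its deleted gene as a candidate target or pathway member.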

Workflow for Chemogenomic Screening and Target Identification

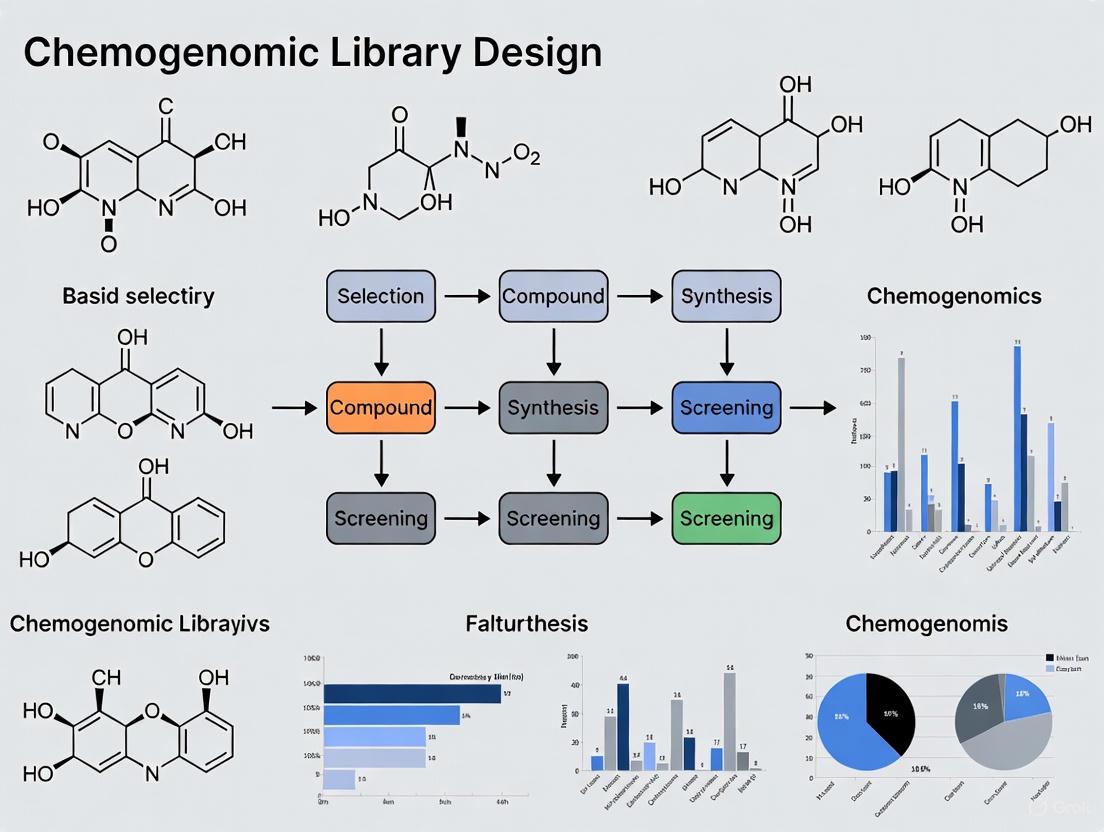

The following diagram illustrates the integrated workflow for chemogenomic screening and target identification, incorporating both experimental and computational components:

Data Analysis and Interpretation Methods

The interpretation of chemogenomic data requires specialized computational approaches to extract meaningful biological insights. Principal component analysis (PCA) of molecular descriptors helps visualize the distribution of compound libraries in chemical space, demonstrating the focusing of targeted libraries into regions populated by known active compounds [5]. Genetic interaction mapping creates epistatic profiles that reveal functional relationships between genes and compounds, while morphological clustering groups compounds with similar phenotypic effects based on high-content screening data [6] [4].
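The PCA step can be sketched without external libraries: center the descriptor matrix, form the covariance matrix, and extract its two leading eigenvectors by power iteration. The descriptor values below are hypothetical. In practice descriptors are usually standardized first, and a linear-algebra library (for example NumPy or scikit-learn) would do this work.

```python
import math
import random


def _dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def _orthonormalise(v, basis):
    """Remove components of v along each (unit) basis vector, then rescale to unit length."""
    for b in basis:
        p = _dot(v, b)
        v = [vi - p * bi for vi, bi in zip(v, b)]
    norm = math.sqrt(_dot(v, v))
    return None if norm < 1e-12 else [vi / norm for vi in v]


def pca_2d(rows, iters=200, seed=0):
    """Project descriptor vectors (one list of floats per compound) onto the first two PCs.

    Mean-centres the data, builds the sample covariance matrix and finds its leading
    eigenvectors by power iteration, keeping each new vector orthogonal to earlier ones.
    """
    n, d = len(rows), len(rows[0])
    means = [sum(r[j] for r in rows) / n for j in range(d)]
    centred = [[r[j] - means[j] for j in range(d)] for r in rows]
    cov = [[sum(c[a] * c[b] for c in centred) / (n - 1) for b in range(d)] for a in range(d)]

    rng = random.Random(seed)
    components = []
    for _ in range(min(2, d)):
        v = _orthonormalise([rng.uniform(-1.0, 1.0) for _ in range(d)], components)
        for _ in range(iters):
            w = _orthonormalise([_dot(row, v) for row in cov], components)  # w ~ C v
            if w is None:  # no variance left outside the earlier components
                break
            v = w
        components.append(v)
    return [tuple(_dot(c, comp) for comp in components) for c in centred]


# Hypothetical (mol. weight / 100, cLogP) descriptors for four compounds
scores = pca_2d([[3.0, 2.0], [3.5, 2.4], [4.1, 3.1], [2.2, 1.0]])
print([tuple(round(s, 2) for s in row) for row in scores])
```

The sign of each component is arbitrary, so plots of two libraries should be compared within a single projection rather than across separate runs.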

Network pharmacology approaches integrate chemogenomic data with biological pathway information, creating multi-scale networks that connect compound-target interactions to downstream phenotypic effects [6]. These networks typically incorporate diverse data types, including:

- Chemical structures and properties from databases such as ChEMBL [7] [6]

- Protein-target information including family classification and functional annotations [1] [6]

- Pathway data from resources like KEGG and Gene Ontology [6]

- Disease associations from Disease Ontology and other biomedical resources [6]

- Morphological profiles from high-content imaging assays [6]

The integration of these diverse data types enables the identification of complex relationships between chemical structures, biological targets, and phenotypic outcomes, facilitating the prediction of mechanism of action for novel compounds [6].
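As a minimal sketch of such an integrated network, the compound-target-pathway-disease relationships can be held in a plain adjacency map and traversed breadth-first to list the pathways and diseases a compound may influence. The node names are hypothetical, and a graph database such as Neo4j would normally store and query a network of realistic size.

```python
from __future__ import annotations

from collections import deque


def reachable(graph: dict[str, set[str]], start: str) -> dict[str, int]:
    """Breadth-first search: every node reachable from `start`, with its hop distance."""
    dist = {start: 0}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for nxt in graph.get(node, set()):
            if nxt not in dist:
                dist[nxt] = dist[node] + 1
                queue.append(nxt)
    return dist


# Hypothetical compound -> target -> pathway -> disease edges
network = {
    "compound:C1": {"target:T1", "target:T2"},
    "target:T1": {"pathway:P1"},
    "target:T2": {"pathway:P1", "pathway:P2"},
    "pathway:P1": {"disease:D1"},
    "pathway:P2": {"disease:D2"},
}
hits = reachable(network, "compound:C1")
print(sorted(n for n in hits if n.startswith("pathway:")))  # ['pathway:P1', 'pathway:P2']
```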

Applications in Biotechnology and Drug Discovery

Target Identification and Validation

Chemogenomics provides powerful approaches for identifying and validating novel drug targets. In one application, researchers capitalized on an existing ligand library for the bacterial enzyme MurD, which is involved in peptidoglycan synthesis [1]. Using chemogenomic similarity principles, they mapped the MurD ligand library to the other Mur ligases (MurC, MurE, and MurF) and to further enzymes of the pathway (MurA and MurG) to identify new targets for known ligands [1]. Structural and molecular docking studies revealed candidate ligands for the MurC and MurE ligases, demonstrating how chemogenomic approaches can extend the utility of existing compound collections to related targets [1].

Another innovative application involved the identification of genes in biological pathways through chemogenomic profiling [1]. Researchers utilized Saccharomyces cerevisiae cofitness data—representing similarity of growth fitness under various conditions between different deletion strains—to identify the enzyme responsible for the final step in diphthamide biosynthesis [1]. By identifying strains with high cofitness to known diphthamide biosynthesis genes, they discovered YLR143W as the missing diphthamide synthetase, subsequently confirmed through experimental validation [1]. This demonstrates how chemogenomic data can elucidate even long-standing biological mysteries.
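Cofitness is commonly measured as the correlation between two strains' fitness profiles across many conditions. This is a minimal sketch, assuming Pearson correlation and a small hypothetical fitness matrix. The gene names and values are invented and are not the diphthamide data.

```python
from __future__ import annotations

import math


def pearson(x: list[float], y: list[float]) -> float:
    """Pearson correlation of two equal-length profiles (0.0 if either profile is flat)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return 0.0 if sxx == 0 or syy == 0 else sxy / math.sqrt(sxx * syy)


def top_cofit(profiles: dict[str, list[float]], query: str, n: int = 3) -> list[tuple[str, float]]:
    """Rank other deletion strains by cofitness with `query` (correlation across conditions)."""
    scores = [(s, pearson(profiles[query], p)) for s, p in profiles.items() if s != query]
    return sorted(scores, key=lambda sp: sp[1], reverse=True)[:n]


# Hypothetical fitness scores of four deletion strains under five conditions
profiles = {
    "geneA": [0.1, -2.0, 0.0, -1.5, 0.2],
    "geneB": [0.0, -1.8, 0.1, -1.4, 0.1],
    "geneC": [0.3, 0.2, -1.0, 0.1, 0.0],
    "geneD": [-0.2, 0.1, 0.0, 0.2, -1.2],
}
print(top_cofit(profiles, "geneA", n=1))
```

A strain of unknown function that ranks at the top for several known pathway genes, as geneB does for geneA here, becomes a candidate member of that pathway.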

Mechanism of Action Deconvolution

Determining the mechanism of action (MOA) for bioactive compounds represents a major application of chemogenomics. This approach has been successfully applied to traditional medicine systems, including Traditional Chinese Medicine (TCM) and Ayurveda, where the complex mixture of natural products presents significant challenges for target identification [1]. Compounds from traditional medicines often possess "privileged structures" with favorable solubility and safety profiles, making them attractive starting points for drug development [1]. Chemogenomic analysis of these compounds using target prediction programs has identified relevant protein targets linked to observed phenotypes, such as sodium-glucose transport proteins and PTP1B for hypoglycemic activity, or steroid-5-alpha-reductase and P-gp for anti-cancer formulations [1].

The integration of chemogenomics with phenotypic screening creates a powerful framework for MOA deconvolution. In one implementation, researchers developed a system pharmacology network integrating drug-target-pathway-disease relationships with morphological profiles from the Cell Painting assay [6]. This platform enabled the connection of compound-induced morphological changes to specific biological targets and pathways, facilitating rapid hypothesis generation about mechanism of action for hits from phenotypic screens [6]. Such integrated approaches are particularly valuable for complex disease models where the relevant molecular targets may not be known in advance.
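One simple way to connect a hit's morphological profile to candidate mechanisms is to compare it with reference compounds of known MOA. This sketch assumes profiles are already normalized against vehicle controls and scores each MOA by mean cosine similarity. The feature values are hypothetical, and this is not the specific procedure used in [6].

```python
from __future__ import annotations

import math
from collections import defaultdict


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity of two feature vectors (0.0 if either has zero length)."""
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return 0.0 if na == 0 or nb == 0 else sum(x * y for x, y in zip(a, b)) / (na * nb)


def rank_moas(query: list[float], references: list[tuple[str, list[float]]]) -> list[tuple[str, float]]:
    """Score each MOA by the mean cosine similarity of the query to its reference profiles.

    Profiles are assumed normalised to vehicle (e.g. DMSO) controls, so 0 means 'no change'.
    """
    sims: dict[str, list[float]] = defaultdict(list)
    for moa, profile in references:
        sims[moa].append(cosine(query, profile))
    return sorted(((moa, sum(v) / len(v)) for moa, v in sims.items()),
                  key=lambda mv: mv[1], reverse=True)


# Hypothetical four-feature morphological profiles of annotated reference compounds
refs = [
    ("tubulin inhibitor", [2.0, -1.0, 0.5, 0.0]),
    ("tubulin inhibitor", [1.8, -1.2, 0.4, 0.1]),
    ("HDAC inhibitor", [-0.5, 0.3, 2.2, 1.5]),
    ("HDAC inhibitor", [-0.4, 0.2, 2.0, 1.7]),
]
print(rank_moas([1.9, -1.1, 0.6, 0.0], refs))
```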

Essential Research Reagents and Materials

Successful chemogenomic screening requires carefully selected reagents and materials designed to maximize information content and reproducibility.

Table 3: Essential Research Reagents for Chemogenomic Studies

| Reagent Category | Specific Examples | Function and Application |
|---|---|---|
| Chemical Libraries | Pfizer Chemogenomic Library, GSK BDCS, Prestwick Library, LOPAC, NCATS MIPE [6] | Source of chemical diversity for screening |
| Strain and Cell Line Libraries | Yeast deletion strains (heterozygous/homozygous), cancer cell line panels, iPSC-derived cells [4] | Genetic diversity for chemical-genetic interaction studies |
| Assay Reagents | Cell Painting dyes, viability indicators, reporter constructs [6] | Phenotypic profiling and response measurement |
| Target Annotation Databases | ChEMBL, KEGG, Gene Ontology, Disease Ontology [6] | Biological context and target identification |
| Computational Tools | ScaffoldHunter, Neo4j, RDKit, ChemAxon JChem [7] [6] | Structural analysis and data integration |

The selection of appropriate chemical libraries represents a critical decision point in chemogenomic study design. Two fundamentally different approaches exist: diverse libraries that sample broad chemical space and focused libraries that target specific regions of chemical space [4]. Diverse libraries maximize the chance of discovering novel chemotypes but may require larger screening efforts, while focused libraries provide higher hit rates against specific target families but may limit serendipitous discoveries [4]. Many successful chemogenomic campaigns employ a hybrid approach, using diverse libraries for initial discovery followed by focused libraries for target family exploration.
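When a diverse subset must be drawn from a larger collection, greedy MaxMin picking is one widely used heuristic: each new compound is the one farthest, in Tanimoto distance, from everything already selected. The fingerprints and compound names below are hypothetical toy data.

```python
from __future__ import annotations


def tanimoto(a: set[int], b: set[int]) -> float:
    """Tanimoto similarity of two fingerprints stored as sets of 'on' bits."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def maxmin_pick(fps: dict[str, set[int]], k: int, seed_name: str) -> list[str]:
    """Greedy MaxMin selection of up to k diverse compounds, starting from `seed_name`.

    Each step adds the candidate whose closest already-picked compound is farthest away
    (distance = 1 - Tanimoto similarity). Ties go to the first candidate in dict order.
    """
    picked = [seed_name]
    # Distance from every remaining candidate to its nearest picked compound
    min_dist = {n: 1.0 - tanimoto(fp, fps[seed_name]) for n, fp in fps.items() if n != seed_name}
    while len(picked) < k and min_dist:
        best = max(min_dist, key=min_dist.get)
        picked.append(best)
        del min_dist[best]
        for n in min_dist:
            min_dist[n] = min(min_dist[n], 1.0 - tanimoto(fps[n], fps[best]))
    return picked


# Hypothetical fingerprints: a2 is a close analogue of a1
fps = {
    "a1": {1, 2, 3, 4},
    "a2": {1, 2, 3, 5},
    "b1": {10, 11, 12},
    "c1": {20, 21, 22, 23},
}
print(maxmin_pick(fps, 3, "a1"))  # ['a1', 'b1', 'c1']
```

The close analogue a2 is passed over, which is the intended behaviour for a diversity-oriented library; a focused library would instead favour such analogues.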

Chemogenomics has established itself as a powerful integrative approach that systematically explores the interface between chemical compounds and biological systems. By combining targeted library design with high-throughput screening and computational analysis, this methodology enables the parallel identification of bioactive small molecules and their protein targets. The continued development of more sophisticated chemical libraries, screening technologies, and data integration platforms will further enhance the utility of chemogenomics in both basic research and drug discovery. As the field evolves, emphasis on data quality and reproducibility—through rigorous curation practices and standardized workflows—will ensure that chemogenomic approaches deliver robust, translatable insights into the complex relationship between chemical structure and biological function.

Chemogenomics represents a systematic approach in modern drug discovery that involves screening targeted libraries of small molecules against families of functionally related proteins, such as G-protein-coupled receptors (GPCRs), kinases, proteases, and nuclear receptors [8] [1]. The fundamental goal is the parallel identification of novel drugs and their biological targets by studying the intersection of all possible chemical compounds against all potential therapeutic targets derived from genomic information [9] [1]. This strategy marks a significant evolution from traditional one-drug-one-target approaches, instead considering the complex interactions between small molecules and biological systems on a broader scale.

The field operates on the principle that targeting entire gene families rather than individual proteins enables more efficient exploration of chemical and biological spaces [8]. Because some ligands designed to bind one family member also bind other members, the compounds in a targeted chemical library should collectively bind a high percentage of the target family [1]. Chemogenomics integrates target and drug discovery by using active compounds as probes to characterize proteome functions: the interaction between a small molecule and a protein induces a phenotype that can then be studied systematically [1].

Core Conceptual Frameworks

Forward Chemogenomics

Forward chemogenomics, also termed classical chemogenomics, begins with the investigation of a specific phenotype of interest, such as inhibition of cancer cell growth or induction of cell death [9] [1]. Researchers identify small molecules that produce this desired phenotype through systematic screening of compound libraries against cellular or organismal model systems [1]. The molecular basis of the phenotype is initially unknown, and the identified active compounds serve as tools to pinpoint the protein targets responsible for the observed effect [1].

The primary challenge in forward chemogenomics lies in designing phenotypic assays that lead efficiently from screening to target identification [1]. A prominent example is the Developmental Therapeutics Program of the National Cancer Institute (NCI), which screens compound libraries against a panel of 60 representative human cancer cell lines (NCI60) [9]. The anti-proliferative effects are recorded and analyzed to differentiate classes of cytotoxic compounds, allowing researchers to generate hypotheses about the mechanisms of action of novel agents [9]. This approach has shown particular utility in cancer biology, where patient-specific vulnerabilities can be identified through phenotypic profiling [10] [11].

Reverse Chemogenomics

Reverse chemogenomics adopts a target-first strategy, beginning with specific protein targets of interest [9] [1]. Gene sequences are cloned and expressed as target proteins, which are then screened against compound libraries using high-throughput, target-based assays [9]. These assays monitor compound effects on specific targets, cellular pathways, or whole-cell phenotypes [9]. Once modulators are identified, the phenotype induced by the molecule is analyzed in cellular or whole-organism contexts to validate biological function [1].

This approach resembles traditional target-based drug discovery but is enhanced by parallel screening capabilities and the ability to perform lead optimization across multiple targets belonging to the same gene family [1]. Reverse chemogenomics benefits from well-established methods including combinatorial chemistry, high-throughput screening, computational chemistry, structural biology, and bioinformatics [9]. The availability of protein structures for gene family members, obtained through X-ray crystallography, NMR spectroscopy, or homology modeling, facilitates in silico chemogenomics approaches that predict molecules active against additional family members [8].

Table 1: Core Characteristics of Forward and Reverse Chemogenomics

| Feature | Forward Chemogenomics | Reverse Chemogenomics |
|---|---|---|
| Starting Point | Phenotype of interest | Known protein target |
| Screening Approach | Phenotypic screening | Target-based screening |
| Primary Goal | Identify drug targets | Validate biological function |
| Key Challenge | Target deconvolution | Phenotypic validation |
| Common Assays | Cell-based, whole organism | Cell-free, enzymatic, binding |
| Information Flow | Phenotype → Compound → Target | Target → Compound → Phenotype |

Experimental Methodologies and Workflows

Forward Chemogenomics Workflow

The experimental workflow for forward chemogenomics typically involves several standardized stages. First, a suitable model system is selected based on the phenotype of interest—this may include cancer cell lines, yeast strains, or other cellular/organismal models [9] [12]. A compound library is then screened against this model system under controlled conditions, with phenotypic responses quantitatively measured [10] [12]. Hit compounds that produce the desired phenotype are selected for target identification, which may involve various deconvolution strategies such as affinity chromatography, protein microarrays, or genetic approaches [9] [1]. Finally, confirmed target-compound pairs are validated through secondary assays and mechanistic studies [12].
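Hit selection from a primary phenotypic screen is often done with plate-wise robust z-scores, which resist distortion by the strong actives themselves. This is a minimal sketch, assuming one readout value per compound on a single plate (here, viability as a percentage of vehicle control) and an illustrative cutoff of -3. All compound names and values are hypothetical.

```python
from __future__ import annotations

import statistics


def robust_z(values: dict[str, float]) -> dict[str, float]:
    """Robust z-scores, (x - median) / (1.4826 * MAD), over all wells on the plate."""
    med = statistics.median(values.values())
    mad = statistics.median(abs(v - med) for v in values.values())
    scale = 1.4826 * mad  # 1.4826 makes the MAD comparable to a standard deviation for normal data
    if scale == 0:
        raise ValueError("MAD is zero; robust z-score undefined")
    return {k: (v - med) / scale for k, v in values.items()}


def call_hits(values: dict[str, float], threshold: float = -3.0) -> list[str]:
    """Flag compounds whose robust z-score is at or below `threshold` (reduced viability)."""
    return sorted(k for k, z in robust_z(values).items() if z <= threshold)


# Hypothetical viability readouts (% of vehicle control) for one plate
plate = {"cmpd_01": 98, "cmpd_02": 102, "cmpd_03": 95, "cmpd_04": 35,
         "cmpd_05": 100, "cmpd_06": 97, "cmpd_07": 104, "cmpd_08": 99}
print(call_hits(plate))  # ['cmpd_04']
```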

Reverse Chemogenomics Workflow

The reverse chemogenomics workflow initiates with target selection and characterization, typically focusing on members of pharmaceutically relevant gene families [8] [1]. Target proteins are produced through cloning and expression systems, then used to develop specific screening assays [9]. Compound libraries are screened against these targets using high-throughput approaches, with hit compounds evaluated for selectivity across related targets [8] [1]. Promising compounds are then advanced to phenotypic characterization in cellular or organismal models to confirm biological relevance and therapeutic potential [1].
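Selectivity across related targets can be summarized with a simple selectivity score: the fraction of panel members a compound hits above an activity threshold. The sketch assumes pIC50 values and a 1 µM (pIC50 6.0) cutoff, both illustrative choices. The panel and values are hypothetical.

```python
from __future__ import annotations


def selectivity_score(pic50: dict[str, float], threshold: float = 6.0) -> float:
    """Fraction of tested family members hit at or above `threshold` (pIC50 6.0 = 1 µM).

    Lower scores indicate a more selective compound.
    """
    if not pic50:
        raise ValueError("no targets tested")
    return sum(1 for v in pic50.values() if v >= threshold) / len(pic50)


# Hypothetical pIC50 values across an eight-member kinase panel
panel = ["K1", "K2", "K3", "K4", "K5", "K6", "K7", "K8"]
cmpd_x = dict(zip(panel, [7.8, 5.1, 4.9, 5.0, 4.5, 5.3, 4.8, 5.2]))
cmpd_y = dict(zip(panel, [7.1, 6.8, 6.2, 6.5, 6.9, 5.0, 4.7, 5.5]))
print(selectivity_score(cmpd_x), selectivity_score(cmpd_y))  # 0.125 0.625
```

Because the score depends on both the threshold and the panel composition, scores are only comparable between compounds profiled on the same panel.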

Implementation in Drug Discovery

Library Design Considerations

Designing appropriate compound libraries is crucial for successful chemogenomics approaches. Targeted screening libraries of bioactive small molecules must be carefully constructed considering library size, cellular activity, chemical diversity and availability, and target selectivity [10]. Most compounds modulate their effects through multiple protein targets with varying degrees of potency and selectivity, requiring analytic procedures to optimize library composition [10] [11]. The resulting compound collections should cover a wide range of protein targets and biological pathways implicated in the disease area of interest [10].

In practice, chemogenomic libraries can be constructed to include known ligands of at least one—and preferably several—members of the target family [1]. For example, specialized libraries include the GlaxoSmithKline Biologically Diverse Compound Set (targeting GPCRs and kinases), the LOPAC1280 (Library of Pharmacologically Active Compounds), Pfizer's Chemogenomic library (focusing on ion channels, GPCRs, and kinases), and the Prestwick Chemical Library (comprising approved drugs selected for target diversity, bioavailability, and safety) [8]. The design of a minimal screening library of 1,211 compounds targeting 1,386 anticancer proteins demonstrates how virtual libraries can be translated into physical screening collections for experimental validation [10] [11].
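Selecting a compact library that still covers a target list is a set-cover problem. A greedy sketch is shown below, assuming each compound is annotated with a set of targets. This is a standard approximation, not the procedure of [10] [11], and the annotations are hypothetical (the gene symbols serve only as labels).

```python
from __future__ import annotations


def greedy_min_library(annotations: dict[str, set[str]], required: set[str]) -> list[str]:
    """Greedy set cover: repeatedly add the compound covering the most still-uncovered targets.

    Required targets that no compound is annotated with are ignored. Greedy selection
    yields a small, but not guaranteed minimal, library; ties go to the first compound.
    """
    uncovered = set(required) & set().union(*annotations.values())
    chosen: list[str] = []
    while uncovered:
        best = max(annotations, key=lambda c: len(annotations[c] & uncovered))
        chosen.append(best)
        uncovered -= annotations[best]
    return chosen


# Hypothetical compound -> target annotations
ann = {
    "cmpd_1": {"EGFR", "ERBB2"},
    "cmpd_2": {"EGFR"},
    "cmpd_3": {"CDK4", "CDK6"},
    "cmpd_4": {"ERBB2", "CDK4", "PIK3CA"},
}
required = {"EGFR", "ERBB2", "CDK4", "CDK6", "PIK3CA"}
print(greedy_min_library(ann, required))  # ['cmpd_4', 'cmpd_1', 'cmpd_3']
```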

Research Reagent Solutions

Table 2: Essential Research Reagents in Chemogenomics

| Reagent/Category | Function & Application | Examples |
|---|---|---|
| Compound Libraries | Small molecule collections for screening | LOPAC1280, Prestwick Chemical Library, NCI Sets [8] [12] |
| Biological Models | Organismal/cellular systems for phenotypic screening | Yeast deletion strains, cancer cell lines, patient-derived cells [10] [12] |
| Target Proteins | Expressed and purified proteins for target-based screens | Recombinant kinases, GPCRs, nuclear receptors [8] [1] |
| Screening Assays | Test systems for compound evaluation | Cell-free binding, cell-based phenotypic, enzymatic assays [9] [12] |

Case Study: Glioblastoma Patient Cell Profiling

A recent application of chemogenomics in precision oncology demonstrated the power of phenotypic screening for identifying patient-specific vulnerabilities [10] [11]. Researchers implemented analytic procedures for designing anticancer compound libraries adjusted for library size, cellular activity, chemical diversity and availability, and target selectivity [10]. In a pilot study, they screened a physical library of 789 compounds covering 1,320 anticancer targets against glioma stem cells from patients with glioblastoma (GBM), using an imaging-based readout [10] [11]. The resulting cell survival profiles revealed highly heterogeneous phenotypic responses across patients and GBM subtypes, highlighting the potential of forward chemogenomics approaches to identify personalized therapeutic strategies [10].

The experimental protocol involved the following key steps [10]:

1. Design of virtual compound libraries covering a wide range of cancer-related targets.
2. Creation of a minimal screening library of 1,211 compounds targeting 1,386 anticancer proteins.
3. Culture of patient-derived glioma stem cells representing different GBM subtypes.
4. High-throughput phenotypic screening using automated imaging.
5. Quantification of cell survival and morphological parameters.
6. Identification of patient-specific compound sensitivities based on differential responses.
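The final step, identifying patient-specific sensitivities, can be sketched by comparing each patient's response to a compound with the median response across patients, so that broadly toxic compounds are not mistaken for patient-specific ones. The viability values, patient IDs, and 30-point margin are hypothetical.

```python
from __future__ import annotations

import statistics


def patient_selective(viability: dict[str, dict[str, float]],
                      margin: float = 30.0) -> dict[str, list[str]]:
    """For each compound, list patients whose viability is at least `margin` points below
    the cross-patient median for that compound (viability in % of vehicle control).
    """
    out: dict[str, list[str]] = {}
    for cmpd, by_patient in viability.items():
        med = statistics.median(by_patient.values())
        sensitive = sorted(p for p, v in by_patient.items() if med - v >= margin)
        if sensitive:
            out[cmpd] = sensitive
    return out


# Hypothetical survival data: cmpd_B is broadly toxic, cmpd_A hits one patient's cells
viab = {
    "cmpd_A": {"GBM_01": 92, "GBM_02": 88, "GBM_03": 35, "GBM_04": 90},
    "cmpd_B": {"GBM_01": 40, "GBM_02": 42, "GBM_03": 38, "GBM_04": 45},
}
print(patient_selective(viab))  # {'cmpd_A': ['GBM_03']}
```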

Comparative Analysis and Strategic Applications

Advantages and Limitations

Both forward and reverse chemogenomics offer distinct advantages and face particular limitations. Forward chemogenomics enables discovery of novel biological mechanisms without predefined target hypotheses, potentially identifying unexpected therapeutic strategies [9] [1]. However, it faces significant challenges in target deconvolution and may involve complex mechanistic follow-up studies [1]. Reverse chemogenomics provides clear intellectual property positions and straightforward structure-activity relationship development but may produce compounds that lack cellular efficacy due to permeability or metabolic issues [9].

The main advantage of chemogenomics overall is its predictive power: enormous public datasets, including target sequences and compound chemical structures, can be used to predict drug-target interactions [8]. Limitations include the need for meticulous integration of cheminformatics and bioinformatics data, the development of rational methods for selecting hits from a vast space of virtual possibilities, and the construction of information-specific catalogs [8].

Application Scenarios

Table 3: Application Scenarios for Forward and Reverse Chemogenomics

| Application | Forward Chemogenomics | Reverse Chemogenomics |
|---|---|---|
| Target Identification | Primary approach for novel target discovery | Limited to known target families |
| Mechanism of Action Studies | Identifying mechanisms of phenotypic effects | Confirming target engagement |
| Drug Repurposing | Discovering new indications through phenotypic screening | Predicting new targets for existing compounds |
| Pathway Elucidation | Mapping novel biological pathways | Validating hypothesized pathways |
| Personalized Medicine | Identifying patient-specific vulnerabilities | Developing targeted therapies |

Chemogenomics has been successfully applied to determine mechanisms of action for traditional medicines, identify new drug targets, and discover genes in biological pathways [1]. For example, chemogenomics approaches helped identify the enzyme responsible for the final step in the synthesis of diphthamide, a modified histidine residue found on translation elongation factor 2, thirty years after the compound was first characterized [1]. In antibacterial drug discovery, chemogenomics profiling has identified new therapeutic targets by capitalizing on existing ligand libraries for enzymes in the peptidoglycan synthesis pathway and mapping these to other members of the enzyme family [1].

Forward and reverse chemogenomics represent complementary strategies in modern drug discovery, each with distinct strengths and application domains. Forward chemogenomics begins with phenotypic observation and progresses to target identification, making it ideal for exploring novel biology and identifying unexpected therapeutic strategies. Reverse chemogenomics starts with defined molecular targets and progresses to phenotypic validation, providing a more structured approach for optimizing compounds against well-characterized target families.

Integrating both approaches within one chemogenomics framework lets researchers move from phenotype to target and from target to phenotype, which helps address the complexity of biological systems and drug interactions. As screening technologies, bioinformatics, and chemical biology advance, chemogenomics offers increasingly sophisticated means of systematically exploring biological and chemical space, accelerating the discovery of novel therapeutic agents and their molecular targets. Applying these core approaches strategically will be essential for meeting unmet medical needs through more efficient, targeted drug discovery pipelines.

The Role of Chemogenomics in Bridging Target and Drug Discovery

Chemogenomics represents a paradigm shift in modern drug discovery, moving away from the traditional "one drug, one target" model toward a more holistic, systematic investigation of the interactions between chemical compounds and biological systems. Fundamentally, chemogenomics is defined as the investigation of classes of compounds (libraries) against families of functionally related proteins [13]. In principle, this strategy searches for all molecules capable of interacting with any biological target; in practice, it focuses on the systematic analysis of chemical-biological interactions, using congeneric series of chemical analogs to investigate their action on specific target classes such as GPCRs, kinases, phosphodiesterases, and ion channels [13].

The discipline operates through two complementary approaches: reverse chemogenomics, where gene sequences of interest are expressed as target proteins and screened in a high-throughput manner against compound libraries, and forward chemogenomics, where active compounds are identified based on their phenotypic effects on whole biological systems, followed by mechanistic investigation of the phenotype [14]. This integrated framework enables the parallel identification of therapeutic targets and bioactive compounds, significantly accelerating the early drug discovery pipeline [14].

Theoretical Foundations and Strategic Approaches

Key Principles and Concepts

The theoretical framework of chemogenomics rests on several foundational concepts that differentiate it from traditional drug discovery approaches. The structure-activity relationship (SAR) homology concept enables the parallel exploration of gene and protein families by leveraging the premise that similar targets often bind similar ligands [14]. This principle allows researchers to extrapolate knowledge from well-characterized targets to less-studied members of the same protein family.
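The SAR homology idea can be illustrated with a small sketch that transfers ligand annotations from well-characterized family members to a less-studied one. It assumes, for illustration only, that target similarity is measured as identity over pre-aligned binding-site residues; the function names, sequences, compound IDs, and the 0.6 identity cut-off are hypothetical, and real pipelines may instead use full-length alignments, structural binding-site comparison, or ligand-based similarity.

```python
from typing import Dict, Set


def sequence_identity(a: str, b: str) -> float:
    """Fraction of identical residues between two pre-aligned, equal-length sequences.

    Alignment columns containing a gap ('-') in either sequence are ignored.
    """
    if len(a) != len(b):
        raise ValueError("sequences must be pre-aligned to the same length")
    pairs = [(x, y) for x, y in zip(a, b) if x != "-" and y != "-"]
    if not pairs:
        return 0.0
    return sum(x == y for x, y in pairs) / len(pairs)


def transfer_ligands(query_site: str,
                     annotated_sites: Dict[str, str],
                     ligands_by_target: Dict[str, Set[str]],
                     min_identity: float = 0.6) -> Dict[str, float]:
    """Propose ligands for an under-studied target from similar family members.

    Each candidate ligand is scored with the highest identity among the annotated
    targets it is known to bind (a hypothetical scoring rule).
    """
    scores: Dict[str, float] = {}
    for target, site in annotated_sites.items():
        identity = sequence_identity(query_site, site)
        if identity < min_identity:
            continue  # too dissimilar to extrapolate from
        for ligand in ligands_by_target.get(target, set()):
            scores[ligand] = max(scores.get(ligand, 0.0), identity)
    # Most transferable ligands first.
    return dict(sorted(scores.items(), key=lambda kv: -kv[1]))


# Hypothetical aligned binding-site residues and ligand annotations.
sites = {"kinA": "ACDEFGHIKM", "kinB": "WCDEYGHPKM", "kinC": "WWWWWGHIKL"}
ligands = {"kinA": {"cmpd1", "cmpd2"}, "kinB": {"cmpd2", "cmpd3"}, "kinC": {"cmpd4"}}
print(transfer_ligands("ACDEFGHIKL", sites, ligands))
```

Here kinC falls below the cut-off, so its ligand is not proposed, while cmpd2 inherits the higher of its two supporting identities.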

Another pivotal concept is that of "privileged structures" – molecular scaffolds that frequently produce biologically active analogs across a target family [13]. For example, benzodiazepines have demonstrated remarkable versatility in generating active compounds, particularly within the G-protein-coupled receptor class. The identification and utilization of such privileged structures enables more efficient library design and increases the probability of discovering novel bioactive compounds.
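One simple way to surface candidate privileged structures is to count how many distinct family members the active analogs of each scaffold hit. The sketch below assumes scaffolds have already been assigned (for example, as molecular frameworks computed with a cheminformatics toolkit); the record format, scaffold labels, target names, and the `min_targets` threshold are hypothetical.

```python
from collections import defaultdict
from typing import Iterable, List, Tuple


def rank_privileged_scaffolds(records: Iterable[Tuple[str, str, str]],
                              min_targets: int = 3) -> List[Tuple[str, int, int]]:
    """Rank scaffolds by how many distinct family targets their active analogs hit.

    records: (compound_id, scaffold_id, target) triples for active measurements only.
    Returns (scaffold, n_targets, n_compounds) for scaffolds hitting >= min_targets.
    """
    targets = defaultdict(set)
    compounds = defaultdict(set)
    for compound, scaffold, target in records:
        targets[scaffold].add(target)
        compounds[scaffold].add(compound)
    ranked = [(s, len(targets[s]), len(compounds[s]))
              for s in targets if len(targets[s]) >= min_targets]
    # Broadest family coverage first; ties broken by number of analogs, then by name.
    ranked.sort(key=lambda r: (-r[1], -r[2], r[0]))
    return ranked


records = [
    ("c1", "scaf_A", "GPCR_1"), ("c2", "scaf_A", "GPCR_2"), ("c3", "scaf_A", "GPCR_3"),
    ("c4", "scaf_B", "GPCR_4"), ("c5", "scaf_B", "GPCR_4"),
    ("c6", "scaf_C", "GPCR_5"), ("c7", "scaf_C", "GPCR_6"),
    ("c8", "scaf_C", "GPCR_7"), ("c9", "scaf_C", "GPCR_7"),
]
print(rank_privileged_scaffolds(records))   # [('scaf_C', 3, 4), ('scaf_A', 3, 3)]
```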

The SOSA (Selective Optimization of Side Activities) approach provides another strategic foundation, focusing on modifying the selectivity of biologically active compounds to generate new drug candidates from the side activities of therapeutically used drugs [13]. This approach leverages the extensive pharmacological characterization of existing drugs to identify new therapeutic applications, potentially reducing development risks and timelines.

Chemogenomics in Precision Oncology

The application of chemogenomics strategies has proven particularly transformative in oncology, where the approach helps identify treatment strategies that selectively target the multiple and complex molecular alterations observed in human tumors [14]. Recent advances have demonstrated the power of phenotypic screening in identifying patient-specific vulnerabilities, as evidenced by a pilot study on glioblastoma (GBM) patient cells that revealed highly heterogeneous responses across patients and GBM subtypes [10] [15].

Precision oncology applications require carefully designed chemogenomic libraries that cover a wide range of protein targets and biological pathways implicated in various cancers. Modern library design strategies emphasize cellular activity, chemical diversity, and target selectivity, with one proposed minimal screening library comprising 1,211 compounds targeting 1,386 anticancer proteins [10]. This targeted approach enables researchers to efficiently map the complex landscape of cancer vulnerabilities while managing screening costs and logistical constraints.
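The trade-off between library size and target coverage can be framed as a set-cover problem. The sketch below uses the standard greedy heuristic (repeatedly pick the compound that covers the most still-uncovered targets); it is an illustrative technique, not necessarily the analytic procedure used in [10], and the compound-target annotations are hypothetical. Real designs also weigh potency, selectivity, and availability.

```python
from typing import Dict, List, Set, Tuple


def greedy_minimal_library(coverage: Dict[str, Set[str]],
                           targets: Set[str]) -> Tuple[List[str], Set[str]]:
    """Greedy set cover over compound -> annotated-target sets.

    Returns the chosen compounds (in pick order) and any targets left uncovered.
    """
    uncovered = set(targets)
    chosen: List[str] = []
    while uncovered and coverage:
        # Iterating over sorted names makes ties resolve alphabetically.
        best = max(sorted(coverage), key=lambda c: len(coverage[c] & uncovered))
        gain = coverage[best] & uncovered
        if not gain:
            break  # no remaining compound hits any uncovered target
        chosen.append(best)
        uncovered -= gain
    return chosen, uncovered


coverage = {  # hypothetical annotations
    "cpdA": {"EGFR", "ERBB2"},
    "cpdB": {"EGFR"},
    "cpdC": {"CDK4", "CDK6", "PARP1"},
    "cpdD": {"PARP1", "BRD4"},
}
targets = {"EGFR", "ERBB2", "CDK4", "CDK6", "PARP1", "BRD4", "TP53"}
chosen, missing = greedy_minimal_library(coverage, targets)
print(chosen, missing)   # ['cpdC', 'cpdA', 'cpdD'] {'TP53'}
```

Reporting the uncovered targets explicitly shows where the candidate pool itself lacks suitable compounds.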

Chemogenomic Library Design: Methodologies and Protocols

Fundamental Design Strategies

Designing a targeted screening library of bioactive small molecules presents significant challenges, as most compounds modulate their effects through multiple protein targets with varying degrees of potency and selectivity [10]. Effective library design must balance several competing factors: library size, cellular activity, chemical diversity, compound availability, and target selectivity. Analytic procedures for designing anticancer compound libraries have been developed that systematically address these constraints, resulting in collections widely applicable to precision oncology initiatives [10] [15].

A critical consideration in library design is the concept of library focus. Broadly, libraries can be categorized as either target-family-focused or diversity-oriented. Target-family-focused libraries concentrate on compounds likely to interact with specific protein families (e.g., kinases, GPCRs), leveraging privileged structures and known pharmacophores. Diversity-oriented libraries aim to cover maximum chemical space to facilitate the discovery of novel chemotypes, often employing complex scaffold architectures with high stereochemical diversity.
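For diversity-oriented selection, a common approach is MaxMin picking: each new compound is the one whose nearest already-selected neighbor is farthest away. The sketch assumes fingerprints are represented as sets of on-bit indices (in practice they would come from a cheminformatics toolkit) and uses Tanimoto distance; the toy fingerprints and seed choice are hypothetical, and MaxMin is one option among several, not a method the text prescribes.

```python
from typing import Dict, FrozenSet, List


def tanimoto(a: FrozenSet[int], b: FrozenSet[int]) -> float:
    """Tanimoto similarity of two binary fingerprints stored as sets of on-bit indices."""
    union = len(a | b)
    return len(a & b) / union if union else 1.0


def maxmin_pick(fps: Dict[str, FrozenSet[int]], n_pick: int, seed: str) -> List[str]:
    """MaxMin selection starting from `seed`."""
    picked = [seed]
    # min_dist[c]: Tanimoto distance from c to its nearest already-picked compound.
    min_dist = {c: 1.0 - tanimoto(fp, fps[seed]) for c, fp in fps.items() if c != seed}
    while len(picked) < n_pick and min_dist:
        nxt = max(sorted(min_dist), key=min_dist.get)  # farthest from current picks
        picked.append(nxt)
        del min_dist[nxt]
        for c in min_dist:  # update nearest-pick distances with the new pick
            min_dist[c] = min(min_dist[c], 1.0 - tanimoto(fps[c], fps[nxt]))
    return picked


fps = {
    "a": frozenset({1, 2, 3, 4}),
    "b": frozenset({1, 2, 3, 5}),     # close analog of "a"
    "c": frozenset({10, 11, 12}),     # unrelated chemotype
    "d": frozenset({1, 2, 10, 11}),   # intermediate
}
print(maxmin_pick(fps, 3, seed="a"))   # ['a', 'c', 'd']
```

The close analog "b" is picked last, which is the intended behavior for a library that should minimize redundancy.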

Practical Implementation and Optimization

The practical implementation of chemogenomic library design involves a multi-step process beginning with target selection and compound identification. Researchers must first define the target space of interest, which in oncology might include proteins involved in apoptosis, cell cycle regulation, DNA repair, and signaling pathways commonly dysregulated in cancer [10]. Subsequently, compounds are selected based on their documented activity against these targets, with careful attention to potency, selectivity, and chemical tractability.

Compound filtering represents a crucial step in library optimization. This process typically employs physicochemical property filters (e.g., molecular weight <1000 Da, organic compounds without metal atoms) to remove non-drug-like molecules while retaining sufficient chemical diversity [16]. The resulting compound collections balance comprehensive target coverage with practical screening constraints, as demonstrated by a physical library of 789 compounds that covers 1,320 anticancer targets successfully applied to profile glioma stem cells from glioblastoma patients [10].
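The physicochemical filter described above can be sketched without a cheminformatics toolkit by working from molecular formulas. This is a simplification: real pipelines apply the filter to standardized structures, and the element table and metal list below are deliberately small, illustrative subsets.

```python
import re
from typing import Dict

# Average atomic masses (g/mol) for a deliberately small, illustrative element table.
ATOMIC_MASS: Dict[str, float] = {
    "H": 1.008, "C": 12.011, "N": 14.007, "O": 15.999, "F": 18.998,
    "P": 30.974, "S": 32.06, "Cl": 35.45, "Br": 79.904, "I": 126.904,
    "Na": 22.990, "K": 39.098, "Fe": 55.845, "Zn": 65.38, "Pt": 195.08,
}
METALS = {"Na", "K", "Fe", "Zn", "Pt"}  # illustrative subset, not exhaustive


def parse_formula(formula: str) -> Dict[str, int]:
    """Parse a flat molecular formula such as 'C9H8O4' (no brackets, hydrates or charges)."""
    counts: Dict[str, int] = {}
    for element, number in re.findall(r"([A-Z][a-z]?)(\d*)", formula):
        if element not in ATOMIC_MASS:
            raise KeyError(f"element {element!r} is not in the illustrative mass table")
        counts[element] = counts.get(element, 0) + (int(number) if number else 1)
    return counts


def molecular_weight(formula: str) -> float:
    return sum(ATOMIC_MASS[e] * n for e, n in parse_formula(formula).items())


def passes_filter(formula: str, max_mw: float = 1000.0) -> bool:
    """Keep carbon-containing, metal-free molecules lighter than max_mw Da."""
    counts = parse_formula(formula)
    return ("C" in counts
            and METALS.isdisjoint(counts)
            and molecular_weight(formula) < max_mw)


for name, formula in [("aspirin", "C9H8O4"),
                      ("cisplatin", "Cl2H6N2Pt"),
                      ("cyclosporin A", "C62H111N11O12")]:
    print(name, passes_filter(formula))
# aspirin True / cisplatin False (metal, no carbon) / cyclosporin A False (about 1203 Da)
```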

The continuous refinement of library design represents an ongoing trend in chemogenomics that increasingly emphasizes data quality rather than merely the number of data points generated [14]. This quality-focused approach recognizes that well-annotated, highly characterized compounds of moderate chemical diversity often yield more valuable insights than massive libraries with poor annotation and questionable data quality.

Figure 1: Chemogenomic Library Design Workflow. This flowchart outlines the systematic process for designing targeted compound libraries, from initial target definition through experimental validation.

Data Curation and Quality Control Protocols

Integrated Curation Workflow

The accuracy and reproducibility of chemogenomics data fundamentally determine the success of downstream modeling and discovery efforts. Numerous studies have alerted the scientific community to concerning rates of errors in both chemical structures and biological measurements within public databases [7]. To address these challenges, a comprehensive chemical and biological data curation workflow must be implemented prior to model development or database deposition [7].

The proposed integrated workflow encompasses both chemical structure verification and bioactivity data validation. For chemical structures, this includes identification and correction of structural errors, removal of problematic compound classes (inorganics, organometallics, mixtures), structural cleaning (detection of valence violations, abnormal bond parameters), ring aromatization, normalization of specific chemotypes, and standardization of tautomeric forms [7]. The treatment of stereochemistry demands particular attention, as compounds with multiple asymmetric centers show increased likelihood of erroneous assignments.

Biological Data Standardization

Bioactivity data curation presents unique challenges, as there are no absolute rules defining the "true" value of a biological measurement [7]. Nevertheless, systematic approaches can flag suspicious entries through processing of bioactivities for chemical duplicates – instances where the same compound is recorded multiple times within or across databases [7]. When duplicates are identified, bioactivity values must be carefully compared and potentially aggregated, with the best potency measurement typically selected as the representative value.
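The duplicate-handling step can be sketched as follows. The compound key is assumed to be a standardized identifier (such as an InChIKey) produced by the structure-curation step; the 1 log-unit spread used to flag inconsistent replicates is an assumption, while keeping the best potency follows the practice described above.

```python
import math
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple


def to_pxc50(value_nm: float) -> float:
    """Convert an IC50/EC50 in nM to pXC50 = -log10(concentration in M) = 9 - log10(nM)."""
    if value_nm <= 0:
        raise ValueError("concentration must be positive")
    return 9.0 - math.log10(value_nm)


def aggregate_duplicates(records: Iterable[Tuple[str, str, float]],
                         max_spread: float = 1.0) -> Dict[Tuple[str, str], dict]:
    """Collapse repeated (compound, target) measurements into one representative value.

    Keeps the best (highest) pXC50 and flags pairs whose replicate values span more
    than `max_spread` log units so they can be reviewed rather than trusted blindly.
    """
    groups: Dict[Tuple[str, str], List[float]] = defaultdict(list)
    for compound_key, target, value_nm in records:
        groups[(compound_key, target)].append(to_pxc50(value_nm))
    summary = {}
    for pair, values in groups.items():
        summary[pair] = {
            "pXC50": max(values),
            "n": len(values),
            "suspicious": max(values) - min(values) > max_spread,
        }
    return summary


records = [  # hypothetical keys and values
    ("KEY-1", "EGFR", 10.0), ("KEY-1", "EGFR", 100.0),   # pXC50 8.0 and 7.0: consistent
    ("KEY-2", "EGFR", 1.0), ("KEY-2", "EGFR", 5000.0),   # pXC50 9.0 and about 5.3: flagged
]
for pair, info in aggregate_duplicates(records).items():
    print(pair, info)
```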

The standardization of bioactivity data requires careful attention to experimental details and measurement contexts. For example, the type of dispensing technology (tip-based versus acoustic) used in high-throughput screening can significantly influence experimental responses measured for the same compounds in the same assay [7]. These technical variations can dramatically affect both prediction performance and interpretation of computational models, highlighting the importance of comprehensive assay annotation.

Table 1: Key Steps in Chemogenomics Data Curation

| Curation Phase | Specific Procedure | Tools & Techniques |
|---|---|---|
| Chemical Structure Curation | Structure standardization; Tautomer normalization; Stereochemistry verification; Removal of inorganics/organometallics | ChemAxon JChem; RDKit; Schrödinger LigPrep; KNIME workflows |
| Bioactivity Data Curation | Identification of chemical duplicates; Aggregation of multiple measurements; Unit conversion; Outlier detection | Custom scripts; AMBIT; Database-specific tools |
| Target Annotation | Standardization of target identifiers; Gene symbol verification; Orthologue mapping | Entrez ID; NCBI gene2accession; Orthologue tables |
| Assay Annotation | Mode of action classification; Technology type documentation; Experimental condition standardization | Controlled vocabularies; Ontologies (e.g., BioAssay Ontology) |

Experimental Protocols and Research Applications

Phenotypic Screening in Glioblastoma

A recent pilot study exemplifies the application of chemogenomics in precision oncology, specifically in profiling glioblastoma (GBM) patient cells [10] [15]. The experimental protocol began with the establishment of glioma stem cell cultures derived from patient tumors, preserving the cellular heterogeneity and phenotypic characteristics of the original malignancies. These cells were then screened against a physical library of 789 compounds carefully selected to cover 1,320 anticancer targets, with particular emphasis on pathways implicated in GBM pathogenesis.

The screening methodology employed high-content imaging to quantify multiple phenotypic endpoints, including cell viability, morphology, and more complex phenotypic signatures. This multi-parameter approach enabled the identification of subtle compound-induced phenotypes beyond simple cytotoxicity, providing richer information on mechanism of action and patient-specific vulnerabilities. The resulting data revealed highly heterogeneous responses across patients and established GBM molecular subtypes, underscoring the value of chemogenomic approaches in capturing the complexity of cancer biology and therapy resistance.

Chemogenomic Library Screening Protocol

A standardized protocol for chemogenomic library screening involves several critical stages. First, library preparation requires compound dissolution in appropriate solvents (typically DMSO) at standardized concentrations, followed by transfer to assay-ready plates using precision liquid handling systems. Next, cell seeding optimizes cell density and culture conditions to maintain physiological relevance while ensuring robust assay performance. The compound treatment phase typically employs a concentration-response format (e.g., 8-point serial dilution) to enable quantitative assessment of compound potency and efficacy.
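The concentration-response arithmetic can be sketched as below. The 10 µM top concentration, 3-fold dilution factor, 10 mM DMSO stock, and 25 µL well volume are illustrative assumptions, not values from the text; in practice the lower points of a series are often dispensed from pre-diluted source plates, which this sketch does not model.

```python
from typing import List


def serial_dilution(top_um: float, factor: float = 3.0, points: int = 8) -> List[float]:
    """Final assay concentrations (µM) for a serial dilution series, highest first."""
    if factor <= 1 or points < 1:
        raise ValueError("factor must be > 1 and points >= 1")
    return [top_um / factor ** i for i in range(points)]


def transfer_volume_nl(final_um: float, well_volume_ul: float, stock_mm: float = 10.0) -> float:
    """Stock volume (nL) giving `final_um` in a well of `well_volume_ul` µL.

    From C_stock * V_transfer = C_final * V_well, with 1 mM = 1000 µM and
    1 µL = 1000 nL the unit factors cancel: V_nL = C_final[µM] * V_well[µL] / C_stock[mM].
    The small volume added by the transfer itself is neglected.
    """
    return final_um * well_volume_ul / stock_mm


concs = serial_dilution(10.0)
print([round(c, 4) for c in concs])
top_nl = transfer_volume_nl(concs[0], well_volume_ul=25.0)
print(top_nl, "nL of stock;", 100 * top_nl / 25_000, "% DMSO v/v")   # 25.0 nL; 0.1 % DMSO
```

Tracking the transferred volume also makes the final DMSO concentration explicit, which matters for keeping vehicle effects constant across the plate.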

Following an appropriate incubation period (typically 72-144 hours for viability assays), phenotypic readouts are captured using methods appropriate to the biological endpoints of interest. For cell viability assays, this might include ATP quantification (CellTiter-Glo), resazurin reduction, or high-content imaging of nuclear and cellular morphology. Finally, data analysis involves normalization to positive and negative controls, curve-fitting to calculate IC50/EC50 values, and identification of hits based on statistically defined thresholds. Throughout this process, quality control measures including Z'-factor calculation and plate uniformity assessment ensure data reliability.
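The normalization and plate-quality calculations mentioned above reduce to a few formulas, sketched here with hypothetical control values. The four-parameter logistic (4PL) function is the model commonly fitted to obtain IC50/EC50 values; fitting it to data would typically use a nonlinear least-squares routine (for example `scipy.optimize.curve_fit`), which is not shown.

```python
import statistics
from typing import Sequence


def percent_inhibition(signal: float, neg_mean: float, pos_mean: float) -> float:
    """Scale a raw well signal so the negative (vehicle) control is 0% and the positive control 100%."""
    return 100.0 * (neg_mean - signal) / (neg_mean - pos_mean)


def z_prime(pos: Sequence[float], neg: Sequence[float]) -> float:
    """Z'-factor = 1 - 3 * (sd_pos + sd_neg) / |mean_pos - mean_neg|."""
    spread = 3.0 * (statistics.stdev(pos) + statistics.stdev(neg))
    return 1.0 - spread / abs(statistics.mean(pos) - statistics.mean(neg))


def four_pl(conc: float, bottom: float, top: float, ic50: float, hill: float) -> float:
    """4PL model: response falls from `top` to `bottom` as concentration rises (hill > 0)."""
    return bottom + (top - bottom) / (1.0 + (conc / ic50) ** hill)


pos_ctrl = [10.0, 12.0, 11.0, 9.0]      # hypothetical, e.g. luminescence with a cytotoxic control
neg_ctrl = [100.0, 102.0, 98.0, 100.0]  # hypothetical DMSO-only wells
print(round(z_prime(pos_ctrl, neg_ctrl), 3))
print(percent_inhibition(55.25, statistics.mean(neg_ctrl), statistics.mean(pos_ctrl)))   # 50.0
```

A Z'-factor above 0.5 is the conventional threshold for an excellent assay, so this hypothetical plate would pass.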

Table 2: Essential Research Reagents and Tools in Chemogenomics

| Reagent/Tool Category | Specific Examples | Function in Research |
|---|---|---|
| Compound Libraries | Targeted anticancer library; Diversity-oriented synthesis collections; Annotated compound libraries with known bioactivity | Source of chemical probes for systematic target perturbation; enables connection of chemical structure to biological effect |
| Cell-Based Assay Systems | Glioblastoma stem cell cultures; Engineered isogenic cell lines; Patient-derived organoids | Biologically relevant screening platforms that preserve disease pathophysiology and cellular context |
| Detection Technologies | High-content imaging systems; Luminescence/fluorescence plate readers; Label-free biosensors | Multiparametric phenotypic assessment; quantitative measurement of compound effects |
| Bioinformatics Resources | ExCAPE-DB; ChEMBL; PubChem; Connectivity Map | Publicly accessible chemogenomics data repositories enabling data integration and meta-analysis |

Public Chemogenomics Databases

The growth of chemogenomics has been facilitated by the development of extensive public databases that compile chemical structures and associated bioactivity data. Key resources include ChEMBL, a manually curated database of bioactive molecules with drug-like properties extracted from the scientific literature; PubChem, a comprehensive repository of chemical substances and their bioactivities primarily from high-throughput screening efforts; and ExCAPE-DB, an integrated large-scale dataset specifically designed to facilitate big data analysis in chemogenomics [16].

The ExCAPE-DB represents a particularly significant resource, comprising over 70 million structure-activity relationship data points from PubChem and ChEMBL with standardized chemical structures, target information, and activity annotations [16]. This database employs rigorous curation protocols including chemical structure standardization (using ambitcli and the Chemistry Development Kit), bioactivity data standardization with controlled vocabularies, and aggregation of multiple activity records for the same compound-target pair. The resulting resource reflects industry-scale data quality and serves as a valuable foundation for building predictive models of polypharmacology and off-target effects.

Data Standardization and Integration Challenges

The integration of heterogeneous chemogenomics data presents substantial challenges, as publicly available data often lack standardized annotation for biological endpoints, mode of action, and target identifiers [16]. Addressing these inconsistencies requires the implementation of controlled vocabularies and standardization protocols for key data elements including target identifiers (preferably Entrez ID or UniProt ID), activity values (with uniform units), assay type classification, and experimental technology documentation.
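Controlled vocabularies and unit harmonization can be sketched as lookup tables that fail loudly on anything unmapped. The vocabulary and unit table below are hypothetical; resources such as ExCAPE-DB define their own standardization rules.

```python
from typing import Dict, Tuple

# Hypothetical controlled vocabulary: raw activity-type strings -> canonical term.
ACTIVITY_TYPES: Dict[str, str] = {
    "ic50": "IC50", "ic-50": "IC50", "ec50": "EC50",
    "ki": "Ki", "kd": "Kd", "k_d": "Kd",
}
# Multipliers converting each concentration unit to nM ("\u00b5" micro sign, "\u03bc" Greek mu).
TO_NM: Dict[str, float] = {
    "m": 1e9, "mm": 1e6, "um": 1e3, "\u00b5m": 1e3, "\u03bcm": 1e3, "nm": 1.0, "pm": 1e-3,
}


def standardize(activity_type: str, value: float, unit: str) -> Tuple[str, float]:
    """Return (canonical activity type, value in nM); unmapped terms raise instead of passing silently."""
    type_key = activity_type.strip().lower()
    unit_key = unit.strip().lower()
    if type_key not in ACTIVITY_TYPES:
        raise ValueError(f"unmapped activity type: {activity_type!r}")
    if unit_key not in TO_NM:
        raise ValueError(f"unmapped unit: {unit!r}")
    return ACTIVITY_TYPES[type_key], value * TO_NM[unit_key]


print(standardize("IC50", 1.5, "uM"))   # ('IC50', 1500.0)
print(standardize(" Ki ", 20, "nM"))    # ('Ki', 20.0)
```

Raising on unmapped terms, rather than guessing, keeps new vocabulary visible so it can be curated deliberately.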

Chemical structure representation presents particular standardization challenges, with error rates in public and commercial databases ranging from 0.1% to 3.4% depending on the database [7]. Common issues include incorrect stereochemistry, inaccurate tautomer representation, and presence of unwanted counterions or salts. The use of community-established standards such as InChI (International Chemical Identifier) and implementation of automated structure-checking pipelines can significantly improve data quality and interoperability across different platforms and research groups.

Figure 2: Chemogenomics Data Flow from Sources to Applications. This diagram illustrates the pathway from raw experimental data through standardization and integration into publicly accessible databases that enable various research applications.

Future Directions and Concluding Perspectives

The evolution of chemogenomics continues to transform early drug discovery by systematically investigating the complex interface between chemical space and biological systems. Current trends emphasize data quality over quantity, with refined integration of bioinformatics and chemoinformatics data enabling more rational selection of designed compounds from virtually infinite synthetic possibilities [14]. This quality-focused approach, combined with advances in library design and screening technologies, promises to enhance the efficiency of identifying both validated therapeutic targets and novel drug candidates.

The application of chemogenomics strategies in precision oncology particularly illustrates the power of this approach to address complex disease biology. By enabling the systematic mapping of patient-specific vulnerabilities against targeted compound libraries, chemogenomics provides a framework for developing more personalized treatment approaches that match the molecular heterogeneity of diseases like glioblastoma [10] [15]. As chemogenomics continues to evolve, its integration with emerging technologies including CRISPR-based functional genomics, proteomics, and artificial intelligence will further accelerate the identification and validation of novel therapeutic targets and corresponding chemical probes.

In conclusion, chemogenomics represents a powerful strategy that has matured from a niche concept to a central paradigm in modern drug discovery. By systematically exploring the intersection of chemical and biological space, it provides a robust framework for simultaneously addressing the key challenges of target identification and compound optimization. As data quality initiatives advance and integration with complementary technologies deepens, chemogenomics is poised to play an increasingly central role in bridging the critical gap between target discovery and therapeutic development across a broad spectrum of human diseases.

Chemogenomics represents a strategic paradigm in modern drug discovery, connecting the chemical and biological domains to establish ligand-target relationships on a systematic scale. This approach enables target classification, focused library design, and the interrogation of selectivity and polypharmacology profiles [17]. By organizing chemical libraries around specific protein families, researchers can leverage conserved structural and functional knowledge to accelerate the identification of novel therapeutic agents. This guide details the core principles and practical methodologies for designing chemogenomic libraries focused on three of the most therapeutically significant target families: G protein-coupled receptors (GPCRs), kinases, and nuclear receptors (NRs). The strategic selection of these families allows for the efficient exploration of chemical space by applying family-specific design rules, ultimately enabling more effective deconvolution of phenotypic screening outcomes and target validation in complex biological systems [10] [18] [19].

Core Design Principles for Chemogenomic Libraries

The development of a high-quality chemogenomic library requires adherence to several interconnected design principles that ensure both broad target coverage and interpretable screening results. These principles guide the selection and annotation of compounds to create resources that are maximally informative for biological discovery.

- Cellular Activity and Potency: Priority is given to compounds with demonstrated cellular activity (typically potency ≤ 10 µM, preferably ≤ 1 µM) to ensure relevance in biological systems [18] [19].

- Selectivity and Orthogonality: While absolute selectivity for a single target is rare and often unnecessary, libraries should be optimized for complementary selectivity profiles. This orthogonality allows for the deconvolution of mechanisms when observing phenotypic outcomes [20] [18].

- Target Coverage and Diversity: Libraries should aim for broad coverage across the target family, including understudied ("dark") members. Including multiple chemotypes per target increases confidence in linking biology to specific targets [20].

- Chemical Diversity and Drug-Likeness: Structural diversity in scaffolds and frameworks helps explore a wider swath of chemical space and minimizes redundancy. Compounds should generally exhibit drug-like properties (e.g., MW 200 to 550 Da, ClogP -1.5 to 5.5) to enhance cellular permeability and reduce toxicity liabilities [21] [19].

- Data Annotation and Availability: Comprehensive bioactivity annotation, including primary potency, selectivity profiles, and known off-targets, is essential for interpreting screening data. Open access to data and compounds fosters broader scientific utility [10] [20].
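Several of these principles can be checked mechanically. The sketch below applies the potency and drug-likeness windows quoted above and then reports targets that are not yet supported by at least two distinct chemotypes. The data model, compound IDs, property values, and chemotype labels are hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List, Set


@dataclass
class Candidate:
    compound: str
    target: str
    potency_um: float  # best reported cellular potency on this target (µM)
    mw: float          # molecular weight (Da)
    clogp: float
    chemotype: str     # scaffold or cluster label


def passes(c: Candidate, max_potency_um: float = 10.0) -> bool:
    """Potency and drug-likeness windows (MW 200 to 550 Da, ClogP -1.5 to 5.5)."""
    return (c.potency_um <= max_potency_um
            and 200.0 <= c.mw <= 550.0
            and -1.5 <= c.clogp <= 5.5)


def under_covered_targets(cands: List[Candidate], min_chemotypes: int = 2) -> Set[str]:
    """Targets whose passing compounds span fewer than `min_chemotypes` distinct chemotypes."""
    chemotypes: Dict[str, Set[str]] = defaultdict(set)
    for c in cands:
        if passes(c):
            chemotypes[c.target].add(c.chemotype)
    return {c.target for c in cands if len(chemotypes[c.target]) < min_chemotypes}


cands = [
    Candidate("c1", "BRD4", 0.05, 450.0, 3.0, "chemA"),
    Candidate("c2", "BRD4", 0.5, 380.0, 2.1, "chemB"),
    Candidate("c3", "PLK1", 0.02, 620.0, 4.0, "chemC"),  # fails the MW window
    Candidate("c4", "PLK1", 2.0, 410.0, 3.5, "chemD"),
    Candidate("c5", "PLK1", 25.0, 300.0, 2.0, "chemE"),  # fails the potency cut-off
]
print(under_covered_targets(cands))   # {'PLK1'}
```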

The following workflow outlines the sequential stages of a target-family focused chemogenomic library design process, integrating these core principles from initial conceptualization to final deployment for screening.

Library Design Strategies by Target Family

Kinase-Focused Library Design

Kinases represent one of the most successfully targeted protein families for therapeutic intervention, with over 60 FDA-approved small-molecule inhibitors. The design of kinase chemogenomic sets leverages the deep understanding of the conserved ATP-binding site while seeking to achieve selectivity through unique interactions with variable regions.

Key Design Considerations: The primary challenge is managing selectivity, given the high conservation of the ATP-binding pocket across the more than 500 kinases of the human kinome. Successful libraries therefore prioritize compounds with defined, narrow selectivity profiles rather than absolute specificity. For example, the Kinase Chemogenomic Set (KCGS) selects inhibitors with a dissociation constant (K_D) < 100 nM for the primary target and a strict selectivity score (S10), meaning they inhibit fewer than 2.5% of the kinase panel tested at 1 µM [20]. This ensures the tools are potent yet selective enough for meaningful biological inference. Another critical strategy is maximizing kinome coverage, with special emphasis on understudied "dark" kinases nominated by initiatives such as the Illuminating the Druggable Genome (IDG) program; the KCGS, for instance, covers 215 human kinases, providing starting points for understudied targets [20]. Finally, including multiple chemotypes per kinase is essential: using two or more structurally distinct inhibitors for a single target builds confidence that an observed phenotype results from inhibiting the intended kinase rather than from an off-target effect specific to one chemotype [20].
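The S10 selectivity score can be computed directly from a single-concentration panel. The sketch assumes a competition-binding readout reported as percent of DMSO control (so a value below 10 means more than 90% inhibition of binding); the defaults mirror the K_D < 100 nM and 2.5% figures above, although the comparative table below quotes S10 thresholds of 0.025 to 0.04. Function names and the panel are hypothetical.

```python
from typing import Dict


def selectivity_score(percent_of_control: Dict[str, float], cutoff: float = 10.0) -> float:
    """S(10)-style score: fraction of tested kinases with percent-of-control below `cutoff`.

    A percent-of-control value below 10 means binding was reduced by more than 90%
    relative to the DMSO control at the single test concentration.
    """
    if not percent_of_control:
        raise ValueError("empty kinase panel")
    hits = sum(1 for poc in percent_of_control.values() if poc < cutoff)
    return hits / len(percent_of_control)


def passes_kcgs_like_filter(kd_primary_nm: float, s10: float,
                            max_kd_nm: float = 100.0, max_s10: float = 0.025) -> bool:
    """Potent on the primary target (K_D below max_kd_nm) and narrowly selective (S10 below max_s10)."""
    return kd_primary_nm < max_kd_nm and s10 < max_s10


# Hypothetical 401-kinase panel at 1 µM: 9 kinases strongly bound, the rest weakly.
panel = {f"KIN{i:03d}": (5.0 if i < 9 else 85.0) for i in range(401)}
s10 = selectivity_score(panel)
print(round(s10, 4), passes_kcgs_like_filter(kd_primary_nm=40.0, s10=s10))   # 0.0224 True
```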

Case Study: The Kinase Chemogenomic Set (KCGS)

The KCGS represents an open-science resource that exemplifies these principles. It was assembled through a collaborative community effort, with pharmaceutical companies and academic labs donating compounds. Each candidate inhibitor was profiled against a panel of 401 wild-type human kinases using a binding assay (DiscoverX scanMAX) at 1 µM [20]. Compounds meeting the initial selectivity threshold underwent full dose-response experiments to determine K_D values. The final selection of 187 inhibitors was manually triaged to maximize kinome coverage and chemical diversity, intentionally avoiding over-representation of well-studied kinases [20]. This rigorous process ensures KCGS is a highly annotated set of selective kinase inhibitors optimized for use in cell-based phenotypic screens to elucidate kinase biology.

GPCR-Focused Library Design

GPCRs are the largest and most successful family of druggable targets, with over one-third of all approved drugs acting on them. Designing GPCR-focused libraries involves unique strategies to target both orthosteric and allosteric binding sites.

Key Design Considerations: A major focus is leveraging privileged scaffolds, structural motifs that recur in known GPCR ligands. Library design often involves framework 2D-fingerprint similarity searches and the careful selection of these GPCR-privileged scaffolds, extended by 3D pharmacophore searches to identify novel chemotypes with potential activity [21]. Explicitly targeting allosteric sites is another powerful strategy for achieving subtype selectivity and exploring novel modes of modulation. Allosteric ligands can modulate receptor function more subtly than orthosteric ligands, offering new therapeutic opportunities, and libraries can contain dedicated sublibraries for allosteric modulators [17] [21]. Given the diversity of GPCRs and their ligands, achieving high chemical diversity is paramount. This involves designing novel, sp3-enriched scaffolds and ensuring drug-like molecular properties (e.g., MW 200 to 550 Da, ClogP -1.5 to 5.5) to create high-quality starting points for optimization [21].
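The 2D similarity step of such a workflow can be sketched as a nearest-reference search: each library compound is scored by its most similar known GPCR ligand and kept if that similarity reaches a threshold. Fingerprints are again assumed to be sets of on-bit indices; the 0.5 threshold, compound names, and toy fingerprints are hypothetical, and the scaffold-selection and 3D pharmacophore steps are not shown.

```python
from typing import Dict, FrozenSet, List, Tuple


def tanimoto(a: FrozenSet[int], b: FrozenSet[int]) -> float:
    """Tanimoto similarity of two binary fingerprints stored as sets of on-bit indices."""
    union = len(a | b)
    return len(a & b) / union if union else 1.0


def similarity_search(library: Dict[str, FrozenSet[int]],
                      references: Dict[str, FrozenSet[int]],
                      threshold: float = 0.5) -> List[Tuple[str, str, float]]:
    """Keep library members whose nearest reference ligand reaches `threshold`.

    Uses max fusion: each compound is scored by its single most similar reference.
    """
    if not references:
        raise ValueError("need at least one reference ligand")
    hits = []
    for compound, fp in library.items():
        best_ref, best_sim = max(
            ((name, tanimoto(fp, ref_fp)) for name, ref_fp in references.items()),
            key=lambda pair: pair[1],
        )
        if best_sim >= threshold:
            hits.append((compound, best_ref, best_sim))
    return sorted(hits, key=lambda h: -h[2])


references = {"ref1": frozenset({1, 2, 3, 4}), "ref2": frozenset({20, 21, 22})}
library = {"x": frozenset({1, 2, 3}), "y": frozenset({20, 21, 30, 31}), "z": frozenset({40, 41})}
print(similarity_search(library, references))   # [('x', 'ref1', 0.75)]
```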

Implementation Example: Commercial GPCR Libraries

Commercial providers have created large GPCR-focused libraries using integrated in silico approaches. For instance, one provider's library of 53,440 compounds was designed using a combination of 2D similarity, privileged scaffold selection, and 3D pharmacophore searches, followed by medicinal chemistry filtering [21]. This library also includes specialized sublibraries, such as an Allosteric GPCR Library (14,160 compounds) and a Lipid GPCR Library (6,400 compounds), enabling targeted research into specific GPCR classes and modes of action [21].

Nuclear Receptor-Focused Library Design

Nuclear receptors (NRs) are ligand-activated transcription factors that regulate gene expression. The NR1 family, which includes receptors for hormones, vitamins, and lipids, presents a unique opportunity for chemogenomic library design due to its partially explored therapeutic potential.

Key Design Considerations: A critical aspect is covering diverse modes of action. Unlike kinases and many GPCRs, NR ligands can function as agonists, antagonists, or inverse agonists. A high-quality NR library must include compounds representing these different functional outcomes to fully probe NR biology [18]. Comprehensive in-family selectivity profiling is also essential due to structural similarities within NR subfamilies. This involves profiling each candidate compound not just against its primary target, but against all NRs in its subfamily using uniform cellular assays (e.g., hybrid reporter gene assays) to map cross-reactivity and identify selective tools [18]. Additionally, stringent liability profiling is necessary. Candidates should be triaged in cell viability assays and screened against common off-targets like kinases and bromodomains, which are highly ligandable and can cause confounding phenotypic effects [18].

Case Study: An NR1 Chemogenomic Set

A recent effort compiled a chemogenomic (CG) set for the 19 members of the NR1 family. The process began with curated public bioactivity data, identifying 30,862 annotated NR1 ligands [18]. Candidates were filtered for potency (≤ 10 µM), selectivity (up to five off-targets), commercial availability, and chemical diversity. The final selection of 69 compounds was rigorously profiled for identity/purity, cytotoxicity in multiple cell lines, and activity on liability targets such as BRD4 and AURKA. In-family selectivity was confirmed using reporter gene assays, resulting in a set of highly annotated tools suitable for probing NR1 biology in phenotypic screens related to autophagy, neuroinflammation, and cancer cell death [18].
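The potency and off-target filters can be sketched per candidate as below. Here an "off-target" is assumed to be any other receptor with potency at or below the same 10 µM cut-off; that definition, the function name, and the potency values are hypothetical. The fold window between the primary target and the most potent off-target is a convenient summary of in-family selectivity.

```python
from typing import Dict, Optional


def nr_triage(activities_um: Dict[str, float], primary: str,
              max_potency_um: float = 10.0,
              max_off_targets: int = 5) -> Optional[Dict[str, float]]:
    """Apply potency and off-target filters to one candidate; return a summary or None.

    activities_um: potency (µM) per receptor from a uniform assay panel; receptors
    with no measurable activity are simply absent.
    """
    primary_um = activities_um.get(primary)
    if primary_um is None or primary_um > max_potency_um:
        return None
    off = {t: v for t, v in activities_um.items() if t != primary and v <= max_potency_um}
    if len(off) > max_off_targets:
        return None
    # Fold window between the primary target and the most potent off-target.
    fold = min(off.values()) / primary_um if off else float("inf")
    return {"primary_um": primary_um, "n_off_targets": len(off), "fold_selectivity": fold}


# Hypothetical reporter-assay potencies for one PPARG-directed candidate.
print(nr_triage({"PPARG": 0.1, "PPARA": 5.0, "PPARD": 20.0}, primary="PPARG"))
```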

Table 1: Comparative Overview of Target Family Library Design Strategies

| Design Principle | Kinase Libraries (e.g., KCGS) | GPCR Libraries | Nuclear Receptor Libraries (e.g., NR1 Set) |
|---|---|---|---|
| Primary Selectivity Metric | Selectivity Index (S10 < 0.025-0.04 at 1 µM) [20] | Target-focused similarity & 3D pharmacophores [21] | Up to five known off-targets; in-family selectivity profiling [18] |
| Key Screening Assay | Binding assays (e.g., DiscoverX scanMAX) [20] | Functional and binding assays, often proprietary | Uniform cellular reporter gene assays [18] |
| Coverage Goal | 215+ kinases; 2+ chemotypes per kinase [20] | Broad coverage across GPCR families; specialized sublibraries [21] | Full coverage of a defined family (e.g., all 19 NR1 receptors) [18] |
| Typical Library Size | ~200-1200 compounds [10] [20] | ~40,000-50,000+ compounds [21] [22] | ~70-5000 compounds [18] [19] |
| Unique Challenge | Achieving selectivity in a conserved ATP-binding site | Identifying selective ligands for ~800 receptors | Distinguishing agonists from antagonists/inverse agonists |

Experimental Protocols for Library Validation and Screening

Standardized Selectivity and Liability Profiling

A defining feature of a high-quality chemogenomic library is the comprehensive experimental profiling of its constituents. This involves a cascade of assays to validate identity, purity, potency, selectivity, and absence of toxicity.

Key Experimental Protocols:

- Quality Control and Compound Integrity: All compounds must undergo rigorous quality control prior to inclusion. This is typically done via NMR, LC-UV, and LC-MS analyses to confirm chemical identity and ensure a purity of ≥95% [18].

- Primary Potency and Selectivity Profiling:

- Kinases: The standard is a broad panel binding assay, such as the DiscoverX scanMAX platform, which quantitatively measures compound binding against 400+ human kinases at a single concentration (e.g., 1 µM). Compounds passing an initial selectivity threshold then undergo full dose-response (K_D determination) for all kinases inhibited beyond a cut-off (e.g., >90%) [20].

- Nuclear Receptors: Uniform cellular reporter gene assays are used to profile compound activity (agonism/antagonism) across all members of a subfamily. This ensures data comparability and accurate in-family selectivity assessment [18].

- Liability and Toxicity Profiling:

- Cell Viability: Candidates are screened in cell viability assays across multiple cell lines (e.g., HEK293T, U-2 OS). Normalized growth rate inhibition (GR) values are measured over time to identify compounds with growth-inhibiting (GR < 1) or cytotoxic (GR < 0) effects [18].

- High-Content Phenotypic Screening: Compounds of concern can be further evaluated in multiplex high-content microscopy assays. These assays use orthogonal stains to capture phenotypic features related to cell health, such as apoptosis, cytoskeleton alterations, and mitochondrial mass, over extended time periods (12 to 48 h) [18].

- Liability Target Screening: Differential scanning fluorimetry (DSF) is used to screen for binding to common, highly ligandable off-targets that cause strong phenotypes, such as representative kinases (AURKA, CDK2) and bromodomains (BRD4). A compound-induced increase in protein melting temperature (ΔTm > 1.8°C) is considered a significant interaction [18].
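The viability and DSF criteria above can be combined into simple triage flags. The GR formula below is the published normalized growth rate definition, GR = 2^(log2(x/x0) / log2(x_ctrl/x0)) - 1, which requires the vehicle-treated control to have grown during the assay; the cell counts, ΔTm values, and flag names are hypothetical, and real triage would apply tolerances rather than treating any GR below 1 as a hit.

```python
import math
from typing import List


def gr_value(x_treated: float, x_control: float, x0: float) -> float:
    """Normalized growth rate inhibition (GR) value for one concentration.

    x0: cell count when the compound is added; x_treated / x_control: counts at the
    end of the assay in treated and vehicle wells. GR = 1 means no effect, 0 means
    complete cytostasis, and negative values mean net cell loss.
    """
    return 2 ** (math.log2(x_treated / x0) / math.log2(x_control / x0)) - 1


def liability_flags(gr: float, delta_tm_c: float, tm_cutoff_c: float = 1.8) -> List[str]:
    """Combine the viability and DSF criteria described above into triage flags."""
    flags = []
    if gr < 0:
        flags.append("cytotoxic")
    elif gr < 1:
        flags.append("growth-inhibiting")
    if delta_tm_c > tm_cutoff_c:
        flags.append("binds liability target")
    return flags


# Hypothetical counts: control wells double twice (1000 -> 4000 cells) during the assay.
print(liability_flags(gr_value(2000, 4000, 1000), delta_tm_c=0.4))   # ['growth-inhibiting']
print(liability_flags(gr_value(500, 4000, 1000), delta_tm_c=3.1))    # ['cytotoxic', 'binds liability target']
```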

Application in Phenotypic Screening and Target Deconvolution

Validated chemogenomic libraries are powerful tools for phenotypic drug discovery. The key to their utility lies in the strategic deconvolution of screening results.

Workflow for Phenotypic Screening: Cells (e.g., patient-derived glioma stem cells, iPSCs) are treated with the chemogenomic library and analyzed using a phenotypic endpoint, such as cell survival, high-content imaging (e.g., Cell Painting), or a specific functional readout [10] [19]. The resulting phenotypic profiles are clustered to identify "hits" that induce a desired phenotypic change.
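As a sketch of the hit-calling step that typically precedes clustering, the snippet below scales each morphological feature to a robust z-score against DMSO control wells (median and scaled median absolute deviation) and flags wells in which a minimum fraction of features deviate strongly. The normalization scheme, the |z| > 3 cut-off, the 5% feature fraction, and the toy data are illustrative assumptions, not values from the cited studies.

```python
import numpy as np


def robust_z_profiles(features, dmso_mask):
    """Convert raw per-well features to robust z-scores relative to DMSO wells.

    features: array of shape (n_wells, n_features); dmso_mask: boolean per well.
    """
    features = np.asarray(features, dtype=float)
    dmso_mask = np.asarray(dmso_mask, dtype=bool)
    controls = features[dmso_mask]
    median = np.median(controls, axis=0)
    # 1.4826 rescales the MAD so it estimates the standard deviation for normal data
    mad = 1.4826 * np.median(np.abs(controls - median), axis=0)
    mad[mad == 0] = 1.0  # guard against constant features
    return (features - median) / mad


def call_hits(z_profiles, z_cut=3.0, min_fraction=0.05):
    """Flag wells where at least `min_fraction` of features have |z| > z_cut."""
    fraction_shifted = (np.abs(z_profiles) > z_cut).mean(axis=1)
    return fraction_shifted >= min_fraction


# Toy data: four DMSO wells and two compound wells, two features each
wells = np.array([
    [1.0, 10.0], [1.1, 10.2], [0.9, 9.8], [1.0, 10.0],  # DMSO controls
    [5.0, 10.1],                                        # compound A
    [1.05, 10.05],                                      # compound B
])
is_dmso = [True, True, True, True, False, False]
print(call_hits(robust_z_profiles(wells, is_dmso)))  # only compound A is flagged
```

The flagged wells' z-score profiles would then be the input to the clustering step described above.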

Target Deconvolution Strategies: Once hits are identified, the rich annotation of the library enables mechanistic hypotheses.

- Profile Matching: The phenotypic profile of a hit compound is compared to a database of reference profiles (e.g., from the Cell Painting assay) generated by known tool compounds. Similarity to the profile of a compound with a known mechanism can implicate a specific target or pathway [19].

- Connectivity Analysis: If multiple compounds sharing a common primary target (or off-target) all produce the same phenotypic outcome, this provides strong evidence for the involvement of that target in the observed phenotype. This is most powerful when different chemotypes for the same target are available, as in the KCGS [20].

- Cross-reactivity Analysis: For a single hit compound, its known selectivity profile is used to generate a list of candidate targets responsible for the phenotype. This list can be prioritized for further validation using genetic techniques like CRISPR [10] [23].
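The three deconvolution strategies can be illustrated with small helper functions. This is a sketch under stated assumptions: phenotypic profiles are plain numeric vectors compared by Pearson correlation (one of several reasonable similarity measures), and the library annotation is a hypothetical dictionary mapping each compound to its targets and K_D values in nM. All compound-target assignments in the example are invented for illustration.

```python
from collections import Counter

import numpy as np


def rank_reference_matches(hit_profile, reference_profiles):
    """Profile matching: rank reference compounds by Pearson correlation."""
    scores = {
        name: float(np.corrcoef(hit_profile, profile)[0, 1])
        for name, profile in reference_profiles.items()
    }
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


def shared_targets(hit_compounds, annotations, min_compounds=2):
    """Connectivity analysis: targets annotated for at least `min_compounds`
    hits, most widely shared first."""
    counts = Counter(
        target
        for compound in hit_compounds
        for target in annotations.get(compound, {})
    )
    return [target for target, n in counts.most_common() if n >= min_compounds]


def candidate_targets(compound, annotations, max_kd_nm=1000):
    """Cross-reactivity analysis: targets a single hit binds at or below
    `max_kd_nm`, strongest first, as a shortlist for genetic validation."""
    profile = annotations.get(compound, {})
    return sorted(
        ((target, kd) for target, kd in profile.items() if kd <= max_kd_nm),
        key=lambda item: item[1],
    )


# Hypothetical annotations: compound -> {target: K_D in nM}
annotations = {
    "cmpd_A": {"CDK9": 5, "CDK7": 400, "GSK3B": 2000},
    "cmpd_B": {"CDK9": 20, "PLK1": 300},
    "cmpd_C": {"CDK9": 15, "CDK7": 50},
}
print(shared_targets(["cmpd_A", "cmpd_B", "cmpd_C"], annotations))  # ['CDK9', 'CDK7']
print(candidate_targets("cmpd_A", annotations))  # [('CDK9', 5), ('CDK7', 400)]
```

Counting shared targets rewards convergence across several hits, which is why chemically distinct compounds for the same target strengthen the inference.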

Table 2: The Scientist's Toolkit: Key Research Reagent Solutions

| Resource Name | Target Family | Key Features & Function | Source/Reference |
|---|---|---|---|
| Kinase Chemogenomic Set (KCGS) | Kinases | 187 inhibitors; pre-defined potency/selectivity; covers 215 kinases; ideal for phenotypic screening and probe discovery [20]. | www.randomactsofkinase.org [20] |
| NR1 Chemogenomic Set | Nuclear Receptors | 69 agonists/antagonists; comprehensively profiled for in-family selectivity and low toxicity; used for target identification in autophagy and inflammation [18]. | Zenodo (10.5281/zenodo.10474037) [18] |
| GPCR-Focused Libraries | GPCRs | Large libraries (40,000-50,000+ compounds) designed using privileged scaffolds & pharmacophore models; includes allosteric sub-libraries [21] [22]. | Commercial providers (e.g., Enamine, ChemDiv) [21] [22] |
| DiscoverX scanMAX | Kinases | Service platform for broad kinome profiling (400+ kinases); essential for validating inhibitor selectivity during library construction and hit follow-up [20]. | Discovery Life Sciences |
| Cell Painting Assay | Pan-target | High-content imaging assay that quantifies ~1,800 morphological features; generates rich phenotypic profiles for mode-of-action analysis [19]. | Broad Bioimage Benchmark Collection (BBBC022) [19] |

The strategic design of target-family-focused chemogenomic libraries provides a powerful framework for systematic biological and therapeutic exploration. By applying the core principles of cellular potency, orthogonal selectivity, broad coverage, and chemical diversity, tailored to the specific characteristics of GPCRs, kinases, and nuclear receptors, researchers can create valuable resources for both target-based and phenotypic drug discovery. The rigorous, multi-stage experimental validation of compound properties, as detailed in the protocols above, is what transforms a simple compound collection into a deeply annotated chemogenomic toolset.

The future of this field lies in the continued expansion of library coverage to understudied "dark" targets, the deepening of mechanistic annotations to include multi-omics data, and the development of more sophisticated computational models to predict and deconvolve polypharmacology. As these libraries become more accessible to the global research community through open-science initiatives, they are well positioned to accelerate the identification and validation of novel therapeutic targets for complex diseases.

Methodologies and Real-World Applications in Oncology and Beyond

Chemogenomic libraries are strategically designed collections of small molecules that enable the systematic exploration of biological targets and pathways. The primary objective of these libraries is to modulate protein function to understand disease mechanisms and identify therapeutic opportunities. The design and construction of these libraries are governed by three fundamental pharmacological criteria: potency, selectivity, and cellular activity [10] [24]. These parameters ensure that chemical tools produce reliable, interpretable data in biological systems, ultimately supporting robust target validation and drug discovery efforts.

These design principles have gained prominence through initiatives like Target 2035, a global effort aiming to develop pharmacological modulators for most human proteins by 2035 [24]. Within this framework, public-private partnerships such as the EUbOPEN consortium are creating openly available chemical tools annotated to high standards, emphasizing the critical importance of well-characterized compounds for biomedical research [24]. The rigorous application of potency, selectivity, and cellular activity criteria during library design ensures maximum biological relevance and utility across diverse research applications, particularly in precision oncology where understanding patient-specific vulnerabilities is paramount [10].

Defining the Core Design Criteria

Quantitative Standards for Chemical Probes and Tool Compounds

The minimum criteria for high-quality chemical tools, often referred to as "fitness factors," have been established by consensus among chemical biologists and pharmacologists [25]. These criteria provide quantitative benchmarks for evaluating whether compounds are suitable for mechanistic biological investigations.

Table 1: Quantitative Criteria for High-Quality Chemical Probes

| Criterion | Benchmark | Justification |
|---|---|---|
| Potency | In vitro activity < 100 nM | Ensures strong target engagement at physiologically relevant concentrations [25] |
| Selectivity | ≥30-fold against related targets | Minimizes confounding off-target effects in cellular assays [25] |
| Cellular Activity | Target engagement < 1 μM (10 μM for challenging targets) | Demonstrates compound functionality in biologically complex environments [24] [25] |
| Toxicity Window | Reasonable separation between efficacy and toxicity | Confirms phenotypic effects are target-mediated rather than general toxicity [24] |
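A minimal sketch of how the Table 1 thresholds might be applied to an annotated compound record follows. The record fields and their names are hypothetical; selectivity is expressed here as the ratio of the compound's potency on the closest related target to its potency on the primary target, and the toxicity window is omitted because the table gives no numeric threshold for it.

```python
from dataclasses import dataclass


@dataclass
class CompoundRecord:
    """Hypothetical annotation record; all potencies in nM (IC50, K_D, or EC50)."""
    name: str
    primary_potency_nm: float      # in vitro activity on the intended target
    closest_related_nm: float      # best in vitro activity on any related target
    cellular_engagement_nm: float  # cellular target-engagement EC50
    challenging_target: bool = False


def fitness_factors(rec: CompoundRecord) -> dict:
    """Check a compound against the potency, selectivity, and cellular criteria."""
    fold_selectivity = rec.closest_related_nm / rec.primary_potency_nm
    cellular_limit_nm = 10_000 if rec.challenging_target else 1_000
    return {
        "potency": rec.primary_potency_nm < 100,
        "selectivity": fold_selectivity >= 30,
        "cellular_activity": rec.cellular_engagement_nm < cellular_limit_nm,
    }


probe = CompoundRecord("probe_X", 12, 900, 250)
cg_compound = CompoundRecord("cg_Y", 40, 400, 3000)
print(fitness_factors(probe))        # all three criteria met
print(fitness_factors(cg_compound))  # fails selectivity (10-fold) and cellular activity
```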

While these thresholds define the gold standard for chemical probes, chemogenomic libraries often include compounds with less stringent profiles. Chemogenomic (CG) compounds may bind a small number of targets rather than a single one, provided that this polypharmacology is well characterized [24]. Such compounds remain valuable for target deconvolution when used in sets with overlapping selectivity patterns, because pattern recognition across the set allows researchers to identify the target responsible for a specific phenotype [24].

Experimental Methodologies for Criteria Assessment

Potency Assessment Protocols

Potency evaluation requires a multi-assay approach to determine compound effectiveness across different biological contexts:

- In vitro biochemical assays: Measure direct binding affinity or functional inhibition of purified target proteins using techniques such as fluorescence polarization, surface plasmon resonance, or enzymatic activity assays. These establish fundamental compound-target interactions in the absence of cellular complexity [26].

- Cellular target engagement assays: Employ techniques such as the cellular thermal shift assay (CETSA) or NanoBRET to confirm compound interaction with intended targets in live cells, providing critical context for biochemical potency [25].

- Phenotypic response measurements: Quantify functional consequences of target engagement through imaging-based viability assays, apoptosis markers, or pathway-specific reporters in disease-relevant cell models [10].
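Dose-response data from these assays are usually summarized as an IC50 or EC50, most often by fitting a four-parameter logistic model. The dependency-free sketch below substitutes a simpler technique: linear interpolation on a log10 concentration axis between the two doses that bracket 50% inhibition. It assumes a monotonically increasing response and is meant only as a quick estimate, not as a replacement for proper curve fitting; the function name and example data are hypothetical.

```python
import math


def interpolate_ic50(concs_nm, pct_inhibition):
    """Rough IC50 (nM) by log-linear interpolation across the 50% crossing.

    Returns None when no pair of adjacent doses brackets 50% inhibition
    (for example, when the IC50 lies outside the tested range).
    """
    points = sorted(zip(concs_nm, pct_inhibition))
    for (c_lo, y_lo), (c_hi, y_hi) in zip(points, points[1:]):
        if y_lo < 50 <= y_hi:
            frac = (50 - y_lo) / (y_hi - y_lo)
            log_lo, log_hi = math.log10(c_lo), math.log10(c_hi)
            return 10 ** (log_lo + frac * (log_hi - log_lo))
    return None


ic50 = interpolate_ic50([1, 10, 100, 1000], [5, 20, 80, 95])
print(f"{ic50:.1f} nM")  # 31.6 nM, below the 100 nM potency benchmark
```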

Selectivity Validation Methods

Comprehensive selectivity profiling employs complementary approaches to identify off-target interactions:

- Profiling panels against related targets: Screen compounds against panels of sequence-related proteins (e.g., kinase families, GPCR arrays) to identify selectivity within target families [24] [26].

- Broad-scale profiling platforms: Utilize high-throughput methodologies like chemical proteomics to assess interactions across thousands of proteins simultaneously, identifying unexpected off-target engagements [24].

- Family-specific selectivity criteria: Apply target class-specific guidelines that account for binding site conservation, ligandability, and available chemical matter, as implemented by EUbOPEN's expert committees [24].
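Broad profiling panels produce many affinity values per compound, which are often condensed into a single selectivity metric. As one example (not necessarily the metric used by the cited consortia), the selectivity entropy described by Uitdehaag and Zaman converts each K_D into an association constant, normalizes these into a distribution, and takes its Shannon entropy: a compound binding a single target scores 0, and one binding n targets equally scores ln n. The target names below are placeholders.

```python
import math


def selectivity_entropy(kd_by_target):
    """Selectivity entropy (nats) from K_D values in any consistent unit.

    Lower values indicate a more selective compound.
    """
    ka = [1.0 / kd for kd in kd_by_target.values()]  # association constants
    total = sum(ka)
    probs = [a / total for a in ka]
    return -sum(p * math.log(p) for p in probs if p > 0)


selective = {"KIN_A": 1, "KIN_B": 1000, "KIN_C": 1000}
promiscuous = {"KIN_A": 10, "KIN_B": 10, "KIN_C": 10}
print(round(selectivity_entropy(selective), 3))    # close to 0
print(round(selectivity_entropy(promiscuous), 3))  # ln(3), about 1.099
```

Because the score uses the whole affinity distribution, it complements rather than replaces the simpler fold-selectivity check against the closest related target.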

Cellular Activity Confirmation

Demonstrating target modulation in biologically relevant systems requires:

- Pathway modulation assays: Measure downstream biomarkers of target engagement, such as phosphorylation status, gene expression changes, or metabolic alterations [10] [25].

- Primary cell testing: Evaluate compound activity in patient-derived cells or physiologically relevant models to confirm functionality in disease-relevant contexts [10] [24].

- Functional rescue experiments: Demonstrate reversal of phenotypic effects through genetic complementation or orthogonal targeting to establish causal relationships between target engagement and phenotypic outcomes [25].

Figure 1: Experimental Framework for Characterizing Chemical Tools. This workflow illustrates the multi-dimensional assessment required to establish compound quality across potency, cellular activity, and selectivity domains, culminating in phenotypic validation.

Implementation in Library Design and Screening

Practical Application in Library Design Strategies

The translation of potency, selectivity, and cellular activity criteria into practical library design involves balancing ideal characteristics with practical constraints:

- Target-family-focused design: Libraries for well-established target families (e.g., kinases, GPCRs) leverage abundant structural information to design compounds that engage conserved interaction motifs, while strategic substitution introduces selectivity elements [26]. For example, kinase-focused libraries may include scaffolds capable of binding multiple kinase conformations (e.g., active and DFG-out), with substituents that access diverse pocket environments to enable both broad coverage and potential selectivity [26].

- Druggable genome coverage: Minimal screening libraries aim to maximize target coverage within practical screening constraints. One study reports that approximately 1,200 compounds, selected on the basis of rigorous potency, selectivity, and cellular activity criteria, can collectively target more than 1,300 anticancer proteins [10] [27].

- Cell-active compound prioritization: Library design prioritizes compounds with demonstrated cellular activity, as cellular target engagement represents a significant bottleneck in tool compound development. In the EUbOPEN consortium, all compounds undergo comprehensive characterization including primary patient cell profiling to confirm biological relevance [24].
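One way to approach the coverage goal is greedy maximum-coverage selection: repeatedly add the compound that covers the most targets not yet covered. The sketch assumes each compound's target set has already been filtered by the potency, selectivity, and cellular-activity criteria; it is a generic heuristic, not the procedure reported in the cited study, and the compound and target names are placeholders.

```python
def greedy_library_selection(compound_targets, max_compounds=None):
    """Greedy maximum-coverage selection.

    compound_targets: dict mapping compound -> set of (criteria-filtered) targets.
    Returns the selected compounds in pick order and the set of covered targets.
    """
    remaining = dict(compound_targets)
    selected, covered = [], set()
    while remaining and (max_compounds is None or len(selected) < max_compounds):
        # Compound contributing the most not-yet-covered targets (ties: first seen)
        best = max(remaining, key=lambda c: len(remaining[c] - covered))
        gain = remaining.pop(best) - covered
        if not gain:
            break  # no remaining compound adds new targets
        selected.append(best)
        covered |= gain
    return selected, covered


candidates = {
    "cmpd_1": {"T1", "T2", "T3"},
    "cmpd_2": {"T3", "T4"},
    "cmpd_3": {"T1", "T2"},
    "cmpd_4": {"T5"},
}
picked, covered = greedy_library_selection(candidates)
print(picked, len(covered))  # ['cmpd_1', 'cmpd_2', 'cmpd_4'] 5
```

Greedy selection is simple and scales to thousands of compounds, but it does not guarantee the smallest possible library; a real design would also weigh chemical diversity and redundancy (e.g., keeping two chemotypes per target).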

The Scientist's Toolkit: Essential Research Reagents

Table 2: Key Research Reagents and Resources for Chemogenomic Studies

| Resource Type | Specific Examples | Function and Utility |
|---|---|---|
| Chemical Probes | Peer-reviewed compounds from EUbOPEN, SGC, Donated Chemical Probes [24] | High-quality, selective modulators for specific target validation; typically satisfy all key design criteria [25] |
| Matched Inactive Controls | Structurally similar but target-inactive analogs [25] | Critical negative controls to distinguish target-mediated from off-target effects; should be used alongside active probes [25] |
| Orthogonal Probes | Chemically distinct compounds targeting same protein [25] | Confirm phenotypic effects through different chemical matter; essential for validating biological findings [25] |
| Chemogenomic Compound Sets | EUbOPEN CG library (covers about one-third of the druggable proteome) [24] | Well-annotated compounds with overlapping selectivity patterns enable target deconvolution through phenotypic screening [24] |
| Online Assessment Portals | Chemical Probes Portal, Probe Miner, Probes & Drugs [25] | Community-vetted resources for identifying appropriate chemical tools and usage guidelines including recommended concentrations [25] |

Current Research Context and Implementation Challenges

The Reality of Chemical Probe Use in Biomedical Research

Despite established guidelines and available resources, implementation of optimal practices remains challenging. A systematic review of 662 publications revealed significant gaps in chemical probe implementation [25]:

- Only 25% of studies used chemical probes within the recommended concentration range

- Just 21% employed available inactive control compounds

- Merely 15% utilized orthogonal chemical probes targeting the same protein

- A mere 4% of publications adhered to all three best practices simultaneously

This implementation gap demonstrates the critical need for continued education and adherence to established design criteria when employing chemical tools in research settings.

Addressing Implementation Challenges Through Structured Frameworks

To improve experimental rigor, researchers have proposed "the rule of two" framework: employing at least two chemical probes (either orthogonal target-engaging probes and/or a pair of an active probe and matched target-inactive compound) at recommended concentrations in every study [25]. This approach directly addresses the validation gaps observed in current literature.

Figure 2: The "Rule of Two" Framework for Robust Chemical Biology. This systematic approach to experimental design emphasizes multiple lines of pharmacological evidence to increase confidence in research findings.
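The "rule of two" lends itself to a simple checklist. In the sketch below, a study is represented as a list of hypothetical `ProbeUse` records, and the rule is satisfied for a target when, counting only uses at or below the recommended maximum concentration, there are either two distinct active probes (assumed here to be chemically orthogonal) or an active probe plus an inactive control. The record fields, and the treatment of the recommended concentration as an upper limit, are assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass
class ProbeUse:
    """Hypothetical record of one compound used in a study."""
    name: str
    target: str
    role: str                  # "active" or "inactive_control"
    conc_nm: float             # concentration used
    max_recommended_nm: float  # recommended upper limit, e.g. from a probe portal


def satisfies_rule_of_two(uses, target):
    """True if the study meets the rule of two for `target`."""
    in_range = [u for u in uses
                if u.target == target and u.conc_nm <= u.max_recommended_nm]
    actives = {u.name for u in in_range if u.role == "active"}
    controls = {u.name for u in in_range if u.role == "inactive_control"}
    orthogonal_pair = len(actives) >= 2              # two distinct active probes
    matched_pair = bool(actives) and bool(controls)  # active plus inactive control
    return orthogonal_pair or matched_pair


study = [
    ProbeUse("probe_A", "TARGET_1", "active", 500, 1000),
    ProbeUse("probe_A_neg", "TARGET_1", "inactive_control", 500, 1000),
]
print(satisfies_rule_of_two(study, "TARGET_1"))  # True
```

Note that a probe used above its recommended concentration does not count toward the rule, mirroring the concentration gap reported in the literature survey above.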