Solving the PDBbind Data Leakage Crisis: Strategies for Generalizable Binding Affinity Prediction

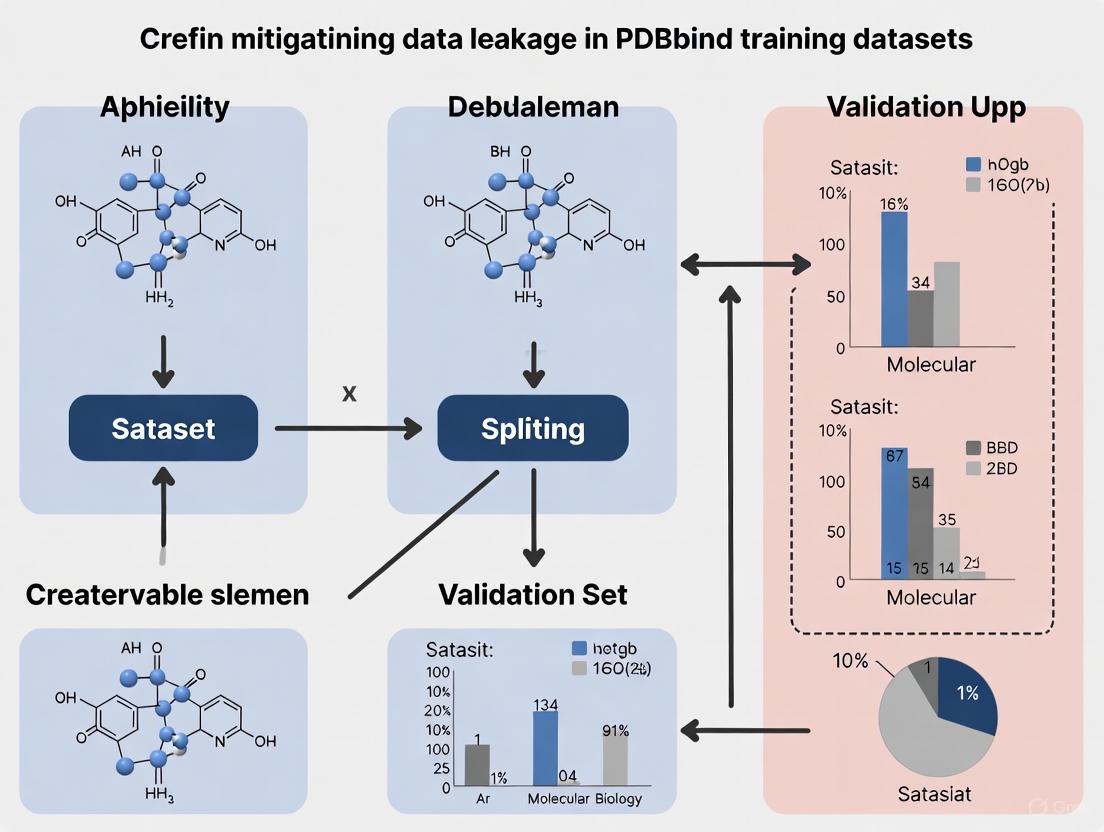

Abstract

This article addresses data leakage in PDBbind training datasets, which has been shown to severely inflate the performance metrics of machine learning models for protein-ligand binding affinity prediction. We explore the root causes of this leakage, including structural redundancies and similarities between the standard training sets and test sets such as CASF. We then review modern mitigation strategies, such as the PDBbind CleanSplit and LP-PDBBind protocols, which filter or re-split the data by structural, sequence, and chemical similarity to create independent training and test sets. Furthermore, we discuss how these methods fit with broader data quality initiatives, such as HiQBind-WF, and evaluate the real-world performance of retrained models on independent benchmarks. This guide is intended for researchers and drug development professionals who want to build predictive models that generalize in structure-based drug discovery.

The PDBbind Data Leakage Problem: Why Your Model's Performance Might Be an Illusion

Defining Data Leakage in the Context of PDBbind and CASF Benchmarks

Frequently Asked Questions

1. What is data leakage in the context of PDBbind and the CASF benchmark? Data leakage occurs when information from the test dataset (in this case, the CASF core sets) inadvertently influences the training process of a model. For PDBbind, this is not typically a literal duplication of data points, but rather the presence of highly similar protein-ligand complexes in both the training (general/refined sets) and test (core sets) data. This similarity allows models to "cheat" by making predictions based on memorization of structural patterns, rather than learning generalizable principles of binding, leading to an overestimation of the model's true performance on novel complexes [1] [2].

2. Why is data leakage between PDBbind and CASF a problem? Data leakage creates an over-optimistic assessment of a model's "scoring power," which is its ability to predict binding affinity. When a model is evaluated on test complexes that are very similar to those it was trained on, its high performance does not translate to real-world drug discovery scenarios, where it must score entirely new protein targets and novel chemical compounds. This inflates benchmark results and masks the model's true generalization capability [1] [2] [3].

3. How can I detect potential data leakage in my dataset? You can analyze your dataset for these key risk factors:

- Unrealistically High Performance: If your model achieves exceptionally strong benchmark metrics (e.g., very low RMSE or very high Pearson R) with minimal tuning, treat it as a major red flag [4] [5].

- High Structural Similarity: Use algorithms to check for complexes in your training set that have high protein structure similarity (TM-score), ligand similarity (Tanimoto score), and similar binding conformations (pocket-aligned ligand RMSD) to complexes in your test set [1].

- Identity Clusters: Check for the same protein or the same ligand appearing in both your training and test splits [2].
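The identity and ligand-similarity checks above can be sketched in a few lines of Python. This is a minimal illustration, not a published protocol: it assumes each complex is a record with hypothetical `id`, `protein_id`, and `ligand_bits` fields, where `ligand_bits` is the set of on-bit indices of a fingerprint computed beforehand with a cheminformatics toolkit. The 0.9 cutoff mirrors the "very similar" ligand threshold quoted in this article.

```python
def tanimoto(bits_a, bits_b):
    """Tanimoto coefficient between two fingerprints given as sets of on-bit indices."""
    if not bits_a and not bits_b:
        return 0.0  # convention chosen here for two empty fingerprints
    shared = len(bits_a & bits_b)
    return shared / (len(bits_a) + len(bits_b) - shared)


def find_overlaps(train, test, ligand_cutoff=0.9):
    """Return (train_id, test_id, reason) tuples for suspicious train/test pairs."""
    flagged = []
    for tr in train:
        for te in test:
            if tr["protein_id"] == te["protein_id"]:
                flagged.append((tr["id"], te["id"], "same protein"))
            if tanimoto(tr["ligand_bits"], te["ligand_bits"]) > ligand_cutoff:
                flagged.append((tr["id"], te["id"], "similar ligand"))
    return flagged


# Toy records; the IDs and bit sets are invented for illustration only.
train = [
    {"id": "tr1", "protein_id": "P1", "ligand_bits": {1, 2, 3, 4}},
    {"id": "tr2", "protein_id": "P2", "ligand_bits": {10, 11, 12}},
]
test = [
    {"id": "te1", "protein_id": "P1", "ligand_bits": {7, 8, 9}},
    {"id": "te2", "protein_id": "P3", "ligand_bits": {10, 11, 12}},
]
overlaps = find_overlaps(train, test)
```

In this toy case, `tr1`/`te1` is flagged for sharing a protein and `tr2`/`te2` for sharing a ligand. The pairwise loop is quadratic, so a real dataset would likely need precomputed similarity matrices or an index.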

4. What are the main solutions for mitigating data leakage? The research community has developed curated datasets and splits to address this issue:

- PDBbind CleanSplit: A re-splitting of PDBbind that uses a structure-based filtering algorithm to remove training complexes that are structurally similar to any CASF test complex. It also reduces redundancies within the training set itself [1].

- LP-PDBBind (Leak-Proof PDBBind): A reorganized dataset that controls for data leakage by minimizing the sequence and chemical similarity of proteins and ligands between the training, validation, and test datasets [2] [6].

- HiQBind-WF: A workflow focused on creating high-quality protein-ligand binding datasets by correcting common structural artifacts in proteins and ligands, which can further improve model reliability [7].

Troubleshooting Guides

Guide 1: Diagnosing Over-optimistic Model Performance

Symptoms: Your model performs exceptionally well on the CASF benchmark (e.g., low RMSE, high Pearson R) but performs poorly when you test it on your own, truly independent data from other sources like BindingDB.

Diagnostic Steps:

- Benchmark with Clean Splits: Retrain your model on a leak-proof dataset like PDBbind CleanSplit or LP-PDBBind and re-evaluate its performance on the corresponding test set. A significant drop in performance (e.g., an increase in RMSE) is a strong indicator that your original model was benefiting from data leakage [1] [2].

- Perform an Ablation Study: Systematically remove different types of information from your model's input during training and testing. For example, try omitting protein node information from a graph neural network. If the model's performance does not drop significantly, it suggests the predictions are not based on genuine protein-ligand interactions but are likely relying on memorized ligand patterns or other leaked information [1].

- Run a Simple Baseline Algorithm: Implement a naive prediction method that finds the most structurally similar training complex for each test complex and uses its affinity as the prediction. If this simple, non-machine-learning method performs competitively with your complex model, it confirms that the test set can be "solved" through data lookup rather than learned principles [1].
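A lookup baseline of this kind needs very little code. The sketch below assumes you already have a test-by-train similarity matrix (for example, a combined structural score); the function names and all numbers are illustrative and not taken from the cited study.

```python
import math


def lookup_baseline(similarity, train_affinity):
    """For each test complex, copy the affinity of its most similar training complex.

    similarity[i][j] is the similarity of test complex i to training complex j.
    """
    predictions = []
    for row in similarity:
        nearest = max(range(len(row)), key=row.__getitem__)
        predictions.append(train_affinity[nearest])
    return predictions


def rmse(pred, true):
    """Root-mean-square error between predicted and measured affinities."""
    return math.sqrt(sum((p - t) ** 2 for p, t in zip(pred, true)) / len(true))


# Toy numbers, invented for illustration (affinities in pK units).
train_affinity = [5.0, 7.5, 9.0]
similarity = [
    [0.95, 0.20, 0.10],  # test complex 0 closely resembles training complex 0
    [0.15, 0.30, 0.90],  # test complex 1 closely resembles training complex 2
]
test_affinity = [5.5, 8.0]

baseline_pred = lookup_baseline(similarity, train_affinity)
baseline_rmse = rmse(baseline_pred, test_affinity)
```

If `baseline_rmse` on your real benchmark is close to your model's RMSE, the benchmark is probably rewarding lookup rather than learned binding principles.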

Guide 2: Implementing a Leakage-Aware Data Splitting Strategy

Objective: To create a training and test split from PDBbind that ensures a rigorous evaluation of your model's generalization.

Methodology: The following workflow, based on the PDBbind CleanSplit protocol, outlines the key steps for creating a leakage-aware dataset [1].

Experimental Protocol:

- Calculate Complex Similarity: For every possible pair between a training complex (from PDBbind general/refined sets) and a test complex (from a CASF core set), compute three metrics [1]:

- Protein Structure Similarity: Use the TM-score algorithm. A score of 1.0 indicates perfect structural alignment.

- Ligand Chemical Similarity: Use the Tanimoto coefficient based on molecular fingerprints. A score > 0.9 typically indicates very similar or identical ligands.

- Binding Conformation Similarity: Calculate the root-mean-square deviation (RMSD) of the ligand atoms after aligning the protein binding pockets.

- Identify Leakage: Define similarity thresholds to flag problematic pairs. For example, a pair might be considered a "leak" if it has a high TM-score, a high Tanimoto score, and a low RMSD simultaneously [1].

- Filter the Training Set: Remove all training complexes identified in the previous step from your training dataset. This ensures no test complex has a close relative in the training data.

- Reduce Internal Redundancy (Optional but Recommended): Apply a similar clustering algorithm within the training set itself and remove some complexes to break up large clusters of highly similar structures. This encourages the model to learn general rules instead of memorizing specific structural motifs [1].
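The flag-and-filter steps above can be sketched as follows, assuming the three metrics have already been computed for each train/test pair. The `PairSimilarity` record and the default thresholds are illustrative assumptions: they echo the example values quoted in the protocol section of this article, and are not necessarily the exact criteria of the CleanSplit algorithm.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PairSimilarity:
    train_id: str
    test_id: str
    tm_score: float     # protein structure similarity, 0..1
    tanimoto: float     # ligand fingerprint similarity, 0..1
    pocket_rmsd: float  # ligand RMSD after pocket alignment, in angstroms


def is_leak(pair, tm_min=0.7, tanimoto_min=0.9, rmsd_max=2.0):
    """A pair counts as a leak only if all three criteria hold at once."""
    return (pair.tm_score >= tm_min
            and pair.tanimoto > tanimoto_min
            and pair.pocket_rmsd < rmsd_max)


def filter_training_set(train_ids, pairs):
    """Drop every training complex that leaks into at least one test complex."""
    leaked = {p.train_id for p in pairs if is_leak(p)}
    return [t for t in train_ids if t not in leaked]


# Toy pairs, invented for illustration.
pairs = [
    PairSimilarity("tr1", "te1", 0.98, 0.95, 0.6),  # near-duplicate complex
    PairSimilarity("tr2", "te1", 0.92, 0.30, 5.1),  # same fold, different ligand
    PairSimilarity("tr3", "te2", 0.35, 0.97, 1.2),  # same ligand, unrelated protein
]
clean_train = filter_training_set(["tr1", "tr2", "tr3"], pairs)
```

Requiring all three conditions together is a deliberately conservative choice for this sketch; a stricter split could also remove pairs that match on protein or ligand alone.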

Quantitative Impact of Data Leakage

The table below summarizes the demonstrated effect of data leakage on model performance and the benefits of using leak-proof datasets.

Table 1: Performance Impact of Data Leakage and Mitigation Strategies

| Model / Scenario | Training Dataset | Test Dataset | Performance (Example) | Implication |
|---|---|---|---|---|
| State-of-the-Art Models (GenScore, Pafnucy) | Original PDBbind | CASF Benchmark | High Performance [1] | Performance is artificially inflated due to data leakage. |
| Same Models Retrained | PDBbind CleanSplit | CASF Benchmark | Substantial Performance Drop [1] | Confirms that original high scores were driven by leakage. |
| GEMS (Graph Neural Network) | PDBbind CleanSplit | CASF Benchmark | Maintains High Performance (RMSE ~1.22 pK) [1] | Demonstrates genuine generalization capability when trained on a clean dataset. |
| Various SFs (Vina, IGN, etc.) | LP-PDBBind | Independent BDB2020+ Set | Better Performance vs. models trained on standard PDBbind [2] | Leak-proof training leads to more reliable application on new data. |

The Scientist's Toolkit: Research Reagents & Solutions

Table 2: Key Resources for Leakage-Aware Binding Affinity Prediction

| Item | Type | Function & Relevance |
|---|---|---|
| PDBbind CleanSplit | Curated Dataset | A reorganized split of PDBbind designed to eliminate train-test leakage and reduce internal redundancy, enabling a true test of generalization [1]. |
| LP-PDBBind | Curated Dataset | A "Leak-Proof" version of PDBbind that controls for protein and ligand similarity across splits [2] [6]. |
| HiQBind & HiQBind-WF | Dataset & Workflow | A high-quality dataset and an open-source, semi-automated workflow for curating protein-ligand complexes by fixing structural errors, which improves data quality for training [7]. |
| BDB2020+ | Independent Benchmark | A rigorously compiled test set from BindingDB entries deposited after 2020, used for true external validation of model performance [2]. |
| Structure-Based Clustering Algorithm | Methodology | An algorithm that combines TM-score, Tanimoto score, and RMSD to identify overly similar complexes for filtering [1]. |
| Graph Neural Networks (e.g., GEMS, IGN) | Model Architecture | GNNs that use sparse graph modeling of protein-ligand interactions are showing promising generalization capabilities when trained on clean data [1] [2]. |

Troubleshooting Guides

Guide 1: Diagnosing and Resolving Data Leakage in PDBbind

Problem: Your machine learning model for binding affinity prediction performs excellently on standard benchmarks (like CASF) but fails dramatically when applied to genuinely new protein-ligand complexes.

Root Cause: Data leakage due to high structural, sequence, and chemical similarities between the training data (PDBbind general/refined sets) and test data (CASF core set) [1] [2]. Nearly half (49%) of CASF test complexes have exceptionally similar counterparts in the training data, allowing models to "cheat" by memorization rather than learning generalizable principles [1].

Diagnosis Steps:

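- Similarity Analysis: Use a structure-based clustering algorithm to compare your training and test complexes across three metrics: protein structure similarity (TM-score), ligand chemical similarity (Tanimoto coefficient), and binding conformation similarity (pocket-aligned ligand RMSD) [1].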

- Performance Drop Test: Retrain your model on a leakage-free split (like PDBbind CleanSplit or LP-PDBBind). A substantial performance drop indicates previous results were inflated by leakage [1] [2].

- Ablation Study: Run predictions while omitting protein node information. Accurate predictions without protein data suggest ligand memorization is a primary mechanism, indicating fundamental leakage [1].

Resolution Steps:

- Adopt a Cleaned Dataset: Replace the standard PDBbind split with a rigorously filtered dataset.

- PDBbind CleanSplit: Uses a structure-based filtering algorithm to remove training complexes that resemble any CASF test complex and reduces redundancies within the training set [1].

- LP-PDBBind (Leak-Proof PDBBind): A reorganized dataset minimizing sequence and chemical similarity of both proteins and ligands between splits, also filtering out covalent binders and structures with steric clashes [6] [2].

- HiQBind: Created via an open-source workflow (HiQBind-WF) that corrects common structural artifacts in PDB structures and ensures reliable binding data [8].

- Use Advanced Splitting Tools: For new data, employ tools like DataSAIL to perform similarity-aware data splits that minimize information leakage by formulating the split as a combinatorial optimization problem [9].

- Benchmark on Truly Independent Data: Use recently proposed independent test sets like BDB2020+ (built from post-2020 BindingDB data matched with PDB structures) for a genuine assessment of generalizability [2].

Guide 2: Improving Model Generalization for Novel Complexes

Problem: After fixing data leakage, model performance on independent tests is lower than desired.

Root Cause: The model architecture itself may lack the inductive biases necessary to generalize to novel protein-ligand pairs that are structurally dissimilar to training examples.

Diagnosis Steps:

- Analyze Performance by Similarity: Stratify your test results based on the similarity of the test complexes to the nearest neighbors in the training set. Poor performance on low-similarity complexes confirms a generalization failure.

- Check Input Representations: Determine if your model uses representations that overly rely on ligand features alone, which is a common shortcut [1].
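Stratifying errors by similarity to the training set can be sketched as below. The input format and the bin edges (0.3 and 0.6) are assumptions made for this example; choose edges that suit whichever similarity measure you use.

```python
import math
from collections import defaultdict


def rmse_by_similarity_bin(records, edges=(0.3, 0.6)):
    """Group test complexes by their maximum similarity to training, then compute RMSE per bin.

    records: iterable of (max_train_similarity, predicted, measured).
    edges: hypothetical bin boundaries separating "low", "medium", and "high".
    """
    labels = ("low", "medium", "high")
    squared_errors = defaultdict(list)
    for sim, pred, true in records:
        idx = sum(sim >= e for e in edges)  # number of edges at or below sim
        squared_errors[labels[idx]].append((pred - true) ** 2)
    return {label: math.sqrt(sum(errs) / len(errs))
            for label, errs in squared_errors.items()}


# Toy records, invented for illustration (affinities in pK units).
records = [
    (0.10, 5.0, 7.0),
    (0.20, 6.0, 8.0),
    (0.50, 6.5, 7.5),
    (0.90, 8.0, 8.0),
    (0.95, 7.0, 7.5),
]
per_bin = rmse_by_similarity_bin(records)
```

An error that grows steadily from the "high" bin to the "low" bin, as in this toy data, is the pattern that points to a generalization failure rather than noisy labels.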

Resolution Steps:

- Architecture Selection: Implement models designed for robust generalization.

- Sparse Graph Neural Networks (GNNs): Models like GEMS (Graph neural network for Efficient Molecular Scoring) represent protein-ligand interactions as sparse graphs and can maintain high performance even when trained on leakage-free data [1].

- Transfer Learning: Incorporate pre-trained language models (e.g., for protein sequences or ligand SMILES) to provide a richer initial representation that helps with generalization [1].

- Data Augmentation: Augment limited experimental data with high-quality modeled structures from datasets like BindingNet v2. Training on this larger and more diverse dataset has been shown to significantly improve model performance on novel ligands [10].

- Physics-Based Refinement: Combine deep learning models with physics-based refinement and rescoring methods (e.g., MM-GB/SA) to improve the quality of predicted poses and affinities [10].

Frequently Asked Questions (FAQs)

Q1: What exactly is "data leakage" in the context of PDBbind and the CASF benchmark?

Data leakage here is not merely having identical complexes in both training and test sets. It refers to the presence of highly similar proteins (high sequence/TM-score) and/or ligands (high Tanimoto coefficient) in both the PDBbind training data and the CASF test set [1] [2]. This similarity allows models to achieve high benchmark performance by exploiting structural memorization rather than learning the underlying principles of binding, leading to an overestimation of their true generalization capability [1].

Q2: What quantitative evidence exists for this data leakage crisis?

Studies have quantified the extent of the problem. One analysis found nearly 600 high-similarity pairs between the standard PDBbind training set and the CASF-2016 benchmark, involving 49% of all CASF test complexes [1]. A simple algorithm that finds the 5 most similar training complexes for each test complex and averages their affinities achieved a competitive Pearson R of 0.716 on CASF-2016, demonstrating that similarity-based lookup can mimic "intelligent" prediction [1].
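To make the averaging idea concrete, here is a minimal sketch of a top-k similarity baseline scored with Pearson R. It assumes a precomputed test-by-train similarity matrix; the data are invented and use `k=3` because the toy training set is tiny, so the result says nothing about the 0.716 figure reported in [1].

```python
import math


def knn_average(similarity_row, train_affinity, k=5):
    """Average the affinities of the k most similar training complexes."""
    ranked = sorted(range(len(similarity_row)),
                    key=lambda j: similarity_row[j], reverse=True)
    top = ranked[:k]
    return sum(train_affinity[j] for j in top) / len(top)


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


# Toy data, invented for illustration (affinities in pK units).
train_affinity = [4.0, 5.0, 6.0, 7.0, 8.0, 9.0]
similarity = [
    [0.9, 0.8, 0.7, 0.2, 0.1, 0.0],
    [0.1, 0.2, 0.8, 0.9, 0.7, 0.3],
    [0.0, 0.1, 0.2, 0.7, 0.8, 0.9],
]
measured = [5.2, 6.8, 8.1]

predicted = [knn_average(row, train_affinity, k=3) for row in similarity]
r = pearson_r(predicted, measured)
```

No parameters are learned here, which is the point: any correlation this baseline achieves comes purely from the overlap between training and test data.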

Q3: How much does data leakage inflate model performance?

The inflation is substantial. When top-performing models like GenScore and Pafnucy were retrained on a leakage-free split (PDBbind CleanSplit), their benchmark performance dropped markedly [1]. This confirms that the previously excellent performance was largely driven by data leakage and not model generalization.

Q4: Are certain model architectures more susceptible to data leakage?

All models trained on leaked data will show inflated performance. However, some architectures may be more prone to exploiting shortcuts. For instance, models that primarily rely on ligand information can accurately predict affinities for test ligands that are highly similar to those seen in training, even without protein context [1]. The solution is not just about architecture but about training data quality.

Q5: What is the practical impact of using a leak-proof dataset on real-world drug discovery?

Using leak-proof splits like LP-PDBBind for training leads to models that perform significantly better on truly independent test sets (e.g., BDB2020+) [2]. This translates to more reliable predictions for novel drug targets and compounds, which is the central goal of computational drug discovery. It prevents wasted resources based on over-optimistic in-silico results.

Q6: Beyond protein-ligand binding, is this a broader issue in biomedical machine learning?

Yes, data leakage due to similarity is a pervasive problem. It has been documented in other areas such as prediction of protein-protein interactions and missense variant deleteriousness, where standard random splits allow models to use protein-level shortcuts, leading to poor performance on out-of-distribution data [9].

Quantitative Evidence of the Crisis and Its Resolution

| Metric / Finding | Value / Description | Implication |
|---|---|---|
| CASF Complexes with Highly Similar Training Counterparts | 49% | Nearly half the benchmark does not test generalization to new complexes. |
| Performance of Similarity-Based Lookup Algorithm | Pearson R = 0.716 (CASF-2016) | Simple memorization can achieve performance rivaling complex models. |
| Performance Drop of Top Models on CleanSplit | "Marked" and "Substantial" drop | Previous high performance was largely driven by data leakage. |

Table 2: Comparison of Datasets and Splits for Mitigating Leakage

| Dataset / Split | Key Curation Methodology | Key Advantage |
|---|---|---|
| PDBbind CleanSplit [1] | Structure-based filtering removing complexes with high protein (TM-score), ligand (Tanimoto), and binding pose (RMSD) similarity to test set. | Creates a strictly separated training set, turning CASF into a true external test. |
| LP-PDBBind [2] | Minimizes sequence/chemical similarity of both proteins and ligands between splits. Removes covalent binders and clashes. | Provides a standardized, cleaned data split for robust model comparison. |
| HiQBind & HiQBind-WF [8] | Open-source workflow to correct structural artifacts (bonds, protonation, clashes) in PDB structures. | Improves structural quality and reliability of binding affinity annotations. |
| DataSAIL [9] | Algorithmic tool for similarity-aware data splitting, formulated as an optimization problem. | Generic tool for creating leakage-reduced splits for various biomedical data types. |

Experimental Protocols for Creating a Leakage-Free Benchmark

Protocol 1: Creating a Cleaned Data Split (e.g., PDBbind CleanSplit)

Objective: To generate a training dataset free of complexes that are highly similar to a designated test benchmark.

Materials:

- Source dataset (e.g., PDBbind general/refined set for training, CASF core set for test)

- Protein structure alignment tool (e.g., for calculating TM-scores)

- Cheminformatics toolkit (e.g., for calculating Tanimoto coefficients from ligand SMILES)

- Structural analysis tool (e.g., for calculating pocket-aligned ligand RMSD)

Methodology:

- For each complex in the test set, compare it against every complex in the training set using a multi-modal similarity assessment [1]:

- Calculate protein structure similarity using the TM-score. A TM-score ≥ 0.7 suggests significant structural similarity.

- Calculate ligand similarity using the Tanimoto coefficient based on molecular fingerprints. A Tanimoto coefficient > 0.9 indicates high chemical similarity.

- Calculate binding pose similarity by aligning the protein pockets and computing the RMSD of the ligand heavy atoms. An RMSD < 2.0 Å suggests a very similar binding mode.

- Filter the training set by removing any complex that exceeds similarity thresholds (e.g., TM-score ≥ 0.7 AND Tanimoto > 0.9) with any test complex [1].

- Remove redundant training complexes by applying the same multi-modal comparison within the training set itself and iteratively removing complexes to dissolve the largest similarity clusters. This encourages the model to learn general rules instead of memorizing specific patterns [1].

- The resulting filtered dataset (e.g., PDBbind CleanSplit) is now strictly separated from the test benchmark and can be used for training models to assess true generalization.
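The redundancy-reduction step can be illustrated with a simple greedy heuristic: treat each pair of overly similar training complexes as an edge in a graph and repeatedly remove the most-connected complex until no edges remain. This is an assumption made for the sketch, not the procedure used to build PDBbind CleanSplit, and `similar_pairs` is taken to be the output of the multi-modal comparison described above.

```python
def reduce_redundancy(ids, similar_pairs):
    """Greedily drop the most-connected complex until no similar pair remains.

    Returns (kept_ids, removed_ids). Ties are broken by the smallest ID.
    """
    neighbors = {i: set() for i in ids}
    for a, b in similar_pairs:
        neighbors[a].add(b)
        neighbors[b].add(a)

    removed = []
    while neighbors:
        # Complex with the most remaining similar partners.
        worst = max(sorted(neighbors), key=lambda i: len(neighbors[i]))
        if not neighbors[worst]:
            break  # no similar pairs are left
        for other in neighbors.pop(worst):
            neighbors[other].discard(worst)
        removed.append(worst)
    return sorted(neighbors), removed


# Toy similarity graph, invented for illustration.
ids = ["a", "b", "c", "d", "e"]
similar_pairs = [("a", "b"), ("a", "c"), ("a", "d"), ("b", "c")]
kept, removed = reduce_redundancy(ids, similar_pairs)
```

Removing hubs first tends to dissolve large clusters while discarding few complexes, but it keeps only mutually dissimilar structures; a gentler variant could stop once clusters fall below a chosen size.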

Protocol 2: Retraining and Evaluating Models on a Cleaned Split

Objective: To realistically assess the generalization capability of a scoring function.

Materials:

- Cleaned dataset split (from Protocol 1 or a pre-made one like LP-PDBBind)

- Independent test set (e.g., BDB2020+ [2], or a cluster-based split with no similarity to training)

- Model implementations (e.g., Graph Neural Networks, RF-Score, etc.)

Methodology:

- Retrain the model using the training partition of the cleaned dataset.

- Evaluate the model on the test partition of the cleaned dataset. This gives a baseline performance without leakage.

- Evaluate the model on a truly independent test set like BDB2020+, which contains protein-ligand complexes released after 2020 and filtered for similarity to the training data [2]. This is the ultimate test of generalizability.

- Conduct an ablation study to probe the model's reasoning. For example, run predictions on the test set after omitting the protein nodes from the input. A model that still performs well is likely relying heavily on ligand memorization, whereas a model whose performance crashes is genuinely using protein-ligand interaction information [1].

Research Reagent Solutions

Table 3: Essential Tools and Datasets for Robust Binding Affinity Prediction

| Reagent / Resource | Type | Function / Purpose |
|---|---|---|
| PDBbind CleanSplit [1] | Curated Dataset | A training set filtered to remove structural similarities with CASF benchmarks, mitigating train-test leakage. |
| LP-PDBBind [2] | Curated Dataset | A leak-proof reorganization of PDBbind with minimized protein and ligand similarity between splits. |
| HiQBind & HiQBind-WF [8] | Data Curation Workflow | An open-source, semi-automated workflow to correct common structural artifacts in PDB complexes. |
| DataSAIL [9] | Software Tool | A Python package for performing similarity-aware data splits to minimize information leakage in biomedical ML. |
| BDB2020+ [2] | Independent Test Set | A high-quality benchmark compiled from post-2020 BindingDB and PDB data, useful for final model validation. |
| BindingNet v2 [10] | Augmented Dataset | A large set of modeled protein-ligand complexes to augment training data and improve model generalization. |

Workflow Diagrams

Diagram 1: Data Leakage Crisis in PDBbind

Diagram 2: Creating a Leakage-Free Benchmark

Frequently Asked Questions

1. What is data leakage in the context of PDBbind, and why is it a problem? Data leakage occurs when highly similar proteins or ligands are present in both the training and testing datasets. These are usually not exact duplicates; more often they are proteins with high sequence similarity or ligands with high chemical similarity. This inflates performance metrics during benchmarking because the model is tested on data that is not truly novel, giving a false impression of its ability to generalize to new, unseen complexes. Consequently, a model may perform poorly in real-world drug discovery applications where it encounters truly novel targets [6] [1] [2].

2. How can I detect if my model's performance is compromised by data leakage? A key red flag is a significant performance drop when evaluating your model on a carefully curated, leakage-proof test set compared to a standard benchmark like the CASF core set. For instance, when state-of-the-art models were retrained on a leakage-proof dataset, their performance on the CASF benchmark dropped markedly [1]. Another method is to use a simple similarity-search algorithm that predicts affinity by averaging labels from the most similar training complexes; competitive performance from this naive approach suggests that your model might be leveraging memorization rather than learning generalizable principles [1].

3. What are the main types of errors found in PDBbind that affect model training? Beyond data leakage, the database contains curation and structural errors. A manual analysis of a protein-protein subset found an ~19% error rate in curated equilibrium dissociation constants (KD). These errors fall into the categories listed in the curation-error table in Guide 2 below [11]. Furthermore, common structural artifacts include covalent binders incorrectly labeled as non-covalent, ligands with rare elements, and severe steric clashes between protein and ligand atoms, all of which can mislead the training of scoring functions [8].

4. What solutions and resources are available to mitigate these issues? Researchers have developed new dataset splits and cleaning workflows to address these problems:

- Leak-Proof Splits: LP-PDBBind and PDBbind CleanSplit are reorganized versions of the dataset that control for protein and ligand similarity across training, validation, and test sets [6] [1] [2].

- Independent Test Sets: BDB2020+ and HiQBind are new independent datasets compiled from recent PDB entries and other sources like BindingDB, filtered with strict similarity controls to serve as reliable benchmarks [6] [8].

- Quality Control Workflows: HiQBind-WF is an open-source, semi-automated workflow that corrects common structural artifacts in PDB files, ensuring higher-quality input data [8].

Troubleshooting Guides

Guide 1: Diagnosing and Resolving Data Leakage

Symptoms: Your model shows excellent performance on standard benchmarks (e.g., CASF core set) but fails to make accurate predictions for your own novel protein-ligand complexes.

Methodology:

- Test on a Curated Benchmark: Evaluate your model on an independent benchmark like BDB2020+, which contains complexes deposited after 2020 and is filtered to be dissimilar to common training sets [6] [2]. A large performance gap between your results on this set and the CASF set indicates likely leakage.

- Perform Cluster-Based Cross-Validation: Instead of random splits, split your data using single-linkage clustering based on protein sequence similarity. This ensures that similar proteins are not scattered across training and test sets, providing a more realistic estimate of performance on new protein families [11].

- Analyze Similarity: Use a structure-based clustering algorithm that assesses protein similarity (TM-score), ligand similarity (Tanimoto score), and binding conformation similarity (pocket-aligned ligand RMSD). Identify and remove training complexes that are highly similar to any test complex [1].
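Cluster-based splitting can be sketched with a union-find structure: linking every pair of proteins whose similarity exceeds a chosen threshold and taking connected components is equivalent to single-linkage clustering cut at that threshold. The `similar_pairs` input and the smallest-clusters-first assignment rule are assumptions made for this example.

```python
def single_linkage_clusters(ids, similar_pairs):
    """Union-find: any chain of pairwise-similar proteins ends up in one cluster."""
    parent = {i: i for i in ids}

    def find(i):
        while parent[i] != i:
            parent[i] = parent[parent[i]]  # path halving keeps trees shallow
            i = parent[i]
        return i

    for a, b in similar_pairs:
        parent[find(a)] = find(b)

    clusters = {}
    for i in ids:
        clusters.setdefault(find(i), []).append(i)
    return list(clusters.values())


def cluster_split(clusters, test_fraction=0.2):
    """Assign whole clusters to the test set (smallest first) up to the target size."""
    total = sum(len(c) for c in clusters)
    train, test = [], []
    for cluster in sorted(clusters, key=len):
        if len(test) + len(cluster) <= test_fraction * total:
            test.extend(cluster)
        else:
            train.extend(cluster)
    return train, test


# Toy proteins and similarity links, invented for illustration.
ids = ["p1", "p2", "p3", "p4", "p5", "p6", "p7", "p8", "p9", "p10"]
similar_pairs = [("p1", "p2"), ("p2", "p3"), ("p4", "p5"), ("p6", "p7"), ("p7", "p8")]
clusters = single_linkage_clusters(ids, similar_pairs)
train, test = cluster_split(clusters)
```

Because clusters are never divided, no protein in `test` has a linked relative in `train`. Filling the test set with the smallest clusters is only one possible policy, and it biases the test set toward singleton proteins.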

Solution: Retrain your model on a leak-proof dataset. The table below summarizes the performance impact of retraining models on such datasets, demonstrating a more realistic assessment of generalization capability.

Table 1: Impact of Leak-Proof Training on Model Performance

| Model | Performance with Standard Training | Performance with Leak-Proof Training | Key Change |
|---|---|---|---|
| GenScore [1] | Excellent CASF benchmark performance | Marked performance drop on CASF | Trained on PDBbind CleanSplit |
| Pafnucy [1] | Excellent CASF benchmark performance | Marked performance drop on CASF | Trained on PDBbind CleanSplit |
| IGN [6] [2] | Good performance | Better generalizability on independent BDB2020+ set | Trained on LP-PDBBind |

Diagram: Troubleshooting workflow for data leakage.

Guide 2: Addressing Data Curation Errors

Symptoms: Your model's predictions are inconsistent or show poor correlation with experimental results, even after accounting for data leakage.

Methodology:

- Manual Verification: For a subset of your data, manually check the primary literature associated with the PDB entry to verify the reported KD value. Focus on categories known to have high error rates.

- Categorize Discrepancies: Classify any found errors using established categories to understand the root cause. The table below details common curation error types identified in research [11].

- Implement Automated Filters: Use a workflow like HiQBind-WF to automatically filter out problematic complexes, such as covalent binders, structures with steric clashes, or ligands with rare atomic elements [8].

Solution: Correct the errors in your dataset or use a pre-corrected dataset. Research shows that correcting curation errors can improve the Pearson correlation between predicted and measured log10(KD) values by approximately 8 percentage points [11].

Table 2: Common Categories of Curation Errors in PDBbind

| Error Category | Description | Example |
|---|---|---|
| No KD | The protein complex in the PDB structure does not have a KD value reported in the primary publication. | KD is reported for a different protein construct than the one crystallized [11]. |
| Different Heterodimer | The KD value belongs to a different protein heterodimer than the one in the PDB structure. | KD is for full-length protein, but PDB structure is of a truncated variant [11]. |
| Units | The units of the KD value are incorrect (e.g., nM vs. µM). | PDBbind reports 1.5 × 10⁻⁷ M, but the primary paper reports 1.5 × 10⁻¹⁰ M [11]. |
| Approximate | PDBbind reports an approximate value, while the primary citation reports a more precise one. | Paper reports 7.4 × 10⁻⁷ M; PDBbind reports 8 × 10⁻⁷ M [11]. |
| Multisite KD | PDBbind provides a single KD, but the primary publication reports multiple values for a multi-site binding model. | Publication reports two KDs; PDBbind reports only one [11]. |
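A short calculation shows why unit errors matter so much for training labels. Affinity models are usually trained on the negative base-10 logarithm of KD, so the factor-of-1000 discrepancy in the Units example above shifts the label by three log units. The helper name `pkd` is chosen here for illustration.

```python
import math


def pkd(kd_molar):
    """Convert a dissociation constant in mol/L to pKd = -log10(KD)."""
    return -math.log10(kd_molar)


reported = pkd(1.5e-7)    # value recorded in the database (Units example)
published = pkd(1.5e-10)  # value given in the primary paper
label_error = published - reported
```

Here `reported` is about 6.82 and `published` about 9.82, so a single mislabeled complex carries an error of roughly 3 pK units, well above the ~1.2 pK RMSE quoted for a well-performing model earlier in this article.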

Diagram: Workflow for addressing data curation errors.

The Scientist's Toolkit

Table 3: Essential Research Reagents and Resources

| Item Name | Type | Function and Explanation |
|---|---|---|
| LP-PDBBind [6] [2] | Dataset | A leak-proof reorganization of PDBbind with minimized protein/ligand similarity between splits to train more generalizable models. |
| PDBbind CleanSplit [1] | Dataset | A filtered training dataset created via structure-based clustering to eliminate data leakage and redundancy within the training set. |
| BDB2020+ [6] [2] | Benchmark Dataset | An independent evaluation set compiled from BindingDB and PDB entries post-2020, used for true external validation of model generalizability. |
| HiQBind-WF [8] | Software Workflow | An open-source, semi-automated workflow that corrects common structural artifacts in PDB files (e.g., bond orders, steric clashes, protonation states). |
| Cluster-Based Cross-Validation [11] | Methodology | A validation technique that groups similar proteins into clusters, ensuring all members of a cluster are in the same data split to prevent over-optimistic performance estimates. |
| Structure-Based Clustering Algorithm [1] | Algorithm | A method to identify similar complexes using combined protein structure (TM-score), ligand chemistry (Tanimoto), and binding pose (RMSD) metrics. |

Troubleshooting Guides

Guide 1: Diagnosing and Mitigating Data Leakage in PDBbind Training

Problem: Your machine learning model for binding affinity prediction performs well on benchmark tests (like CASF) but fails dramatically in real-world drug discovery applications on novel protein targets.

Explanation: This performance gap often stems from data leakage, where models memorize similarities between training and test data instead of learning generalizable principles of protein-ligand interactions. The standard PDBbind dataset and CASF benchmark share significant structural similarities, inflating performance metrics [1] [2].

Diagnosis and Solutions:

| Symptom | Root Cause | Investigation Method | Solution |

|---|---|---|---|

| High benchmark performance but poor performance on novel targets | Protein Similarity: Highly similar protein sequences or folds between training and test sets [1] [12]. | Calculate TM-scores or sequence identity between training and test proteins [1]. | Use similarity-aware data splits (e.g., PDBbind CleanSplit, LP-PDBBind) [1] [2]. |

| Model accurately predicts affinity for known ligand scaffolds but fails on new chemotypes | Ligand Memorization: Same or highly similar ligands (Tanimoto score >0.9) in both training and test sets [1] [2]. | Compute Tanimoto coefficients between training and test ligands [1]. | Filter training set to remove ligands highly similar to those in the test set [1]. |

| Model performs well on specific binding conformations but poorly on novel poses | Binding Conformation Leakage: Nearly identical protein-ligand binding geometries (low pocket-aligned RMSD) in both datasets [1]. | Calculate pocket-aligned ligand RMSD between complexes [1]. | Implement structure-based filtering using combined protein, ligand, and conformation metrics [1]. |
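
The ligand check in the table above can be sketched in a few lines. This is a minimal illustration, assuming each ligand fingerprint is already available as a set of "on" bit indices (in practice these would typically come from a cheminformatics toolkit such as RDKit). The function names, the toy fingerprints, and the requirement that the training set is non-empty are assumptions made for this example, not part of any published protocol.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient between two fingerprints given as sets of on-bits."""
    if not fp_a and not fp_b:
        return 0.0
    shared = len(fp_a & fp_b)
    return shared / (len(fp_a) + len(fp_b) - shared)


def leaked_test_ligands(train_fps, test_fps, threshold=0.9):
    """Map each test ligand above the threshold to its closest training ligand."""
    flagged = {}
    for test_id, test_fp in test_fps.items():
        # Find the most similar training ligand for this test ligand.
        best_id, best_sim = max(
            ((train_id, tanimoto(test_fp, train_fp))
             for train_id, train_fp in train_fps.items()),
            key=lambda pair: pair[1],
        )
        if best_sim > threshold:
            flagged[test_id] = (best_id, best_sim)
    return flagged


# Toy fingerprints (hypothetical bit indices, not real ligands).
train = {"trainA": {1, 2, 3, 4, 5, 6, 7, 8, 9, 10}, "trainB": {20, 21, 22}}
test = {"testX": {1, 2, 3, 4, 5, 6, 7, 8, 9, 10}, "testY": {1, 2, 30, 31}}
print(leaked_test_ligands(train, test))
```

Any test ligand reported here has a near-duplicate in the training data and is a candidate for ligand memorization.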

Quantitative Impact of Data Leakage:

The table below summarizes the extent of data leakage identified in the standard PDBbind dataset and the performance drop observed when models are retrained on leakage-free splits [1].

| Metric | Standard PDBbind | After CleanSplit Filtering | Notes |

|---|---|---|---|

| Test Complexes Affected | ~49% of CASF complexes | Strictly independent | 49% of test complexes had highly similar counterparts in training [1]. |

| Training Complexes Removed | N/A | ~12% total removed | 4% removed due to test similarity, ~8% for internal redundancy [1]. |

| Model Performance (RMSE) | Artificially low | Increases significantly | e.g., State-of-the-art model performance dropped on CASF2016 after retraining on CleanSplit [1]. |

Guide 2: Implementing a Leakage-Free Data Split for Your Dataset

Problem: You need to create a robust training/test split for your proprietary protein-ligand dataset to ensure your model will generalize.

Explanation: Random splitting is insufficient for biomolecular data due to inherent structural and chemical similarities. Specialized algorithms and tools are required to minimize data leakage.

Workflow for Creating a Leakage-Free Split:

Implementation Methods:

| Method | Description | Tools | Applicability |

|---|---|---|---|

| Multi-Metric Filtering | Uses combined protein, ligand, and conformation similarity to identify and remove overly similar complexes [1]. | Custom scripts (e.g., PDBbind CleanSplit algorithm) [1]. | Best for structure-based affinity prediction models. |

| Optimization-Based Splitting | Formulates splitting as a combinatorial optimization problem to minimize inter-split similarity [12] [9]. | DataSAIL [12] [9] | General purpose; handles 1D (proteins or ligands) and 2D (protein-ligand pairs) data. |

| Cluster-Based Splitting | Clusters data by similarity, then assigns entire clusters to splits to ensure independence [2]. | LP-PDBBind protocol [2] | Good for controlling both protein and ligand leakage simultaneously. |
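
To make the cluster-based row concrete, the sketch below groups complexes with a simple union-find (single-linkage) pass and then assigns whole clusters to one split. It is an illustrative stand-in, not the LP-PDBBind or DataSAIL implementation. The list of "similar pairs" is assumed to have been computed beforehand with whatever similarity thresholds you chose, and all function names are hypothetical.

```python
import random


def cluster_by_similarity(ids, similar_pairs):
    """Single-linkage clustering: complexes joined by any similar pair share a cluster."""
    parent = {i: i for i in ids}

    def find(x):
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path halving keeps trees shallow
            x = parent[x]
        return x

    for a, b in similar_pairs:
        parent[find(a)] = find(b)

    clusters = {}
    for i in ids:
        clusters.setdefault(find(i), []).append(i)
    return list(clusters.values())


def split_clusters(clusters, test_fraction=0.2, seed=0):
    """Assign whole clusters to the test split until the target fraction is reached."""
    rng = random.Random(seed)
    shuffled = clusters[:]
    rng.shuffle(shuffled)
    total = sum(len(c) for c in shuffled)
    train, test = [], []
    for cluster in shuffled:
        if len(test) + len(cluster) <= test_fraction * total:
            test.extend(cluster)
        else:
            train.extend(cluster)
    return train, test


ids = list("abcdefghij")
pairs = [("a", "b"), ("b", "c"), ("d", "e")]  # hypothetical above-threshold pairs
clusters = cluster_by_similarity(ids, pairs)
train_ids, test_ids = split_clusters(clusters)
print(len(clusters), sorted(train_ids), sorted(test_ids))
```

Because clusters are never divided, no pair listed as similar can end up straddling the training and test sets.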

Validation Protocol: After creating your splits, validate them by:

- Checking that no protein in the test set has a TM-score >0.5 with any training protein [1].

- Ensuring no test ligand has a Tanimoto coefficient >0.9 with any training ligand [1].

- Using an independent external test set (e.g., BDB2020+) [2] for final evaluation.
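
The first two checks in this validation protocol can be automated once the cross-split similarity scores exist. The sketch below assumes the scores have already been computed and stored in dictionaries keyed by `(test_id, train_id)`. The function names and the toy values are hypothetical.

```python
def split_violations(pair_scores, threshold):
    """Return (test_id, train_id, score) for every cross-split pair above the threshold."""
    return [(t, r, s) for (t, r), s in pair_scores.items() if s > threshold]


def validate_split(tm_scores, tanimoto_scores, tm_max=0.5, tanimoto_max=0.9):
    """Check protein (TM-score) and ligand (Tanimoto) separation between splits."""
    report = {
        "protein": split_violations(tm_scores, tm_max),
        "ligand": split_violations(tanimoto_scores, tanimoto_max),
    }
    report["passed"] = not report["protein"] and not report["ligand"]
    return report


# Toy cross-split scores (hypothetical values).
tm = {("test1", "train1"): 0.42, ("test1", "train2"): 0.61}
tani = {("test1", "train1"): 0.35, ("test1", "train2"): 0.12}
report = validate_split(tm, tani)
print(report)
```

A failing report lists the offending pairs, which makes it straightforward to decide which training complexes to drop or reassign.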

Frequently Asked Questions

Q1: What exactly is "data leakage" in the context of PDBbind and protein-ligand affinity prediction? Data leakage occurs when information from the test dataset inadvertently influences the training process, leading to overly optimistic performance estimates. In PDBbind, this is not usually exact duplicates but high structural and chemical similarities between complexes in the standard training set (e.g., PDBbind general/refined) and the test set (e.g., CASF core set). Models then exploit these similarities through "shortcut learning" rather than learning generalizable binding principles [1] [2].

Q2: My model uses a graph neural network (GNN). Why is it particularly vulnerable to ligand memorization? GNNs can exploit statistical shortcuts. Studies show that GNNs for binding affinity sometimes rely heavily on ligand features alone to make predictions, especially when the same or similar ligands appear in both training and test sets. An ablation test makes this visible: if prediction accuracy stays largely unchanged when protein nodes are omitted from the graph, the model is memorizing ligands rather than learning protein-ligand interactions, whereas a substantial drop indicates that the model actually uses the protein context [1].

Q3: Are there any ready-to-use, leakage-free versions of PDBbind available? Yes, recent research has produced curated, leakage-reduced datasets:

- PDBbind CleanSplit: A re-split of PDBbind using a structure-based filtering algorithm to remove complexes with high similarity to the CASF benchmarks and reduce internal redundancies [1].

- LP-PDBBind (Leak-Proof PDBbind): A reorganized dataset with splits designed to minimize protein and ligand similarity between training, validation, and test sets [2] [6].

These datasets are designed to enable more realistic model evaluation and improve generalization [1] [2].

Q4: How does the DataSAIL tool help prevent data leakage, and when should I use it? DataSAIL is a Python package that formally treats data splitting as a combinatorial optimization problem. It is particularly valuable when:

- You are working with non-PDBbind data or a proprietary dataset and need to create robust splits.

- Your task involves two-dimensional data, such as protein-ligand pairs, where you need to control for similarity along both the protein and ligand dimensions simultaneously [12] [9].

DataSAIL helps ensure that the similarity between training and test data is minimized, providing a more realistic performance estimate for out-of-distribution applications [12].

The Scientist's Toolkit

Research Reagent Solutions

| Reagent / Resource | Type | Function in Mitigating Data Leakage |

|---|---|---|

| PDBbind CleanSplit | Curated Dataset | Provides a leakage-reduced version of PDBbind for training and evaluation, ensuring the test set (CASF) is structurally independent of the training data [1]. |

| LP-PDBBind | Curated Dataset | Offers a reorganized PDBbind with training/validation/test splits designed to minimize protein and ligand similarity, controlling for both dimensions of leakage [2]. |

| DataSAIL | Software Tool | A versatile Python package for performing similarity-aware data splits on biomolecular data, including complex protein-ligand pairs [12] [9]. |

| BDB2020+ | Independent Benchmark | An external test set compiled from BindingDB entries deposited after 2020, used for truly independent evaluation of model generalizability [2]. |

| TM-score Algorithm | Metric Algorithm | Quantifies protein structural similarity; used to identify and filter out proteins with high TM-score (>0.5) between splits [1]. |

| Tanimoto Coefficient | Metric Algorithm | Calculates ligand chemical similarity; used to filter out ligands with high Tanimoto score (>0.9) between splits [1]. |

Building Robust Datasets: Practical Protocols for Leakage-Free Splits

Troubleshooting Guides

Guide 1: Diagnosing and Mitigating Data Leakage in PDBbind

Problem: Models exhibit high benchmark performance on CASF datasets but fail dramatically in real-world applications or on truly independent tests.

Root Cause: Significant data leakage exists between the standard PDBbind training set and the common CASF benchmark test sets [1] [13]. Nearly 49% of CASF complexes have exceptionally similar counterparts (in protein structure, ligand chemistry, and binding conformation) in the training data, allowing models to "memorize" rather than generalize [1]. This inflates performance metrics and creates over-optimistic expectations of model capability.

Solution: Implement the PDBbind CleanSplit protocol, which applies a structure-based filtering algorithm to remove problematic similarities [1] [13].

| Step | Action | Rationale |

|---|---|---|

| 1. Identify Leakage | Compare all training and test complexes using combined protein similarity (TM-score), ligand similarity (Tanimoto), and binding conformation similarity (pocket-aligned ligand RMSD) [1]. | A multi-faceted approach catches leaks that single-metric (e.g., sequence-based) checks miss. |

| 2. Remove Test Similarities | Exclude any training complex with TM-score > 0.8, Tanimoto > 0.9, or a combined (Tanimoto + (1 - RMSD)) score > 0.8 versus any test complex [1]. | Severs the direct structural shortcut between training and test examples. |

| 3. Prevent Ligand Memorization | Remove training complexes whose ligands are identical or nearly identical (Tanimoto > 0.9) to those in the test set [1]. | Stops the model from predicting affinity based solely on recognizing a known ligand. |

| 4. Reduce Internal Redundancy | Apply adapted thresholds to identify and break up large similarity clusters within the training set itself [1]. | Forces the model to learn generalizable rules instead of relying on numerous near-duplicates. |

Verification: After applying CleanSplit, retrain your model. A significant performance drop on the CASF benchmark indicates that the original model's performance was likely inflated by data leakage. A model with genuine generalization capability will maintain robust performance [1].

Guide 2: Addressing Poor Generalization on Novel Complexes

Problem: A model, trained on a leakage-free dataset like CleanSplit, still performs poorly on novel protein families or ligand scaffolds.

Root Cause: The model architecture itself may be prone to learning shortcuts or lacks the necessary inductive biases to capture genuine protein-ligand interactions [1] [13].

Solution: Adopt an architecture designed for generalization, such as the GEMS (Graph neural network for Efficient Molecular Scoring) model, and leverage transfer learning [1].

| Component | Implementation | Benefit |

|---|---|---|

| Sparse Graph Representation | Model the protein-ligand complex as a graph, with atoms as nodes and interactions as edges [1]. | Focuses the model on relevant local chemical environments and interactions, improving efficiency and generalization. |

| Ablation Study | Systematically remove parts of the input (e.g., protein nodes) during evaluation [1]. | Verifies that predictions are based on genuine protein-ligand interactions and not just ligand-based memorization. |

| Transfer Learning | Initialize model components using pre-trained language models on large corpora of protein sequences or chemical compounds [1]. | Provides the model with a strong foundational understanding of biochemistry and chemistry before learning the specific task of affinity prediction. |
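
The ablation check in the table above can be illustrated with a toy example. The graph layout (a dict with `nodes` and `edges`), the helper names, and the two stand-in "models" are all hypothetical. A real study would apply the same idea to a trained network and compare benchmark metrics with and without protein nodes.

```python
def drop_protein_nodes(graph):
    """Return a copy of the graph that keeps only ligand atoms and ligand-ligand edges."""
    keep = {node_id for node_id, kind in graph["nodes"].items() if kind == "ligand"}
    return {
        "nodes": {node_id: "ligand" for node_id in keep},
        "edges": [(a, b) for a, b in graph["edges"] if a in keep and b in keep],
    }


def ablation_gap(predict, graphs):
    """Mean absolute change in prediction when protein nodes are removed."""
    deltas = [abs(predict(g) - predict(drop_protein_nodes(g))) for g in graphs]
    return sum(deltas) / len(deltas)


def interaction_model(graph):
    """Toy predictor that scores protein-ligand contacts."""
    kinds = graph["nodes"]
    return float(sum(1 for a, b in graph["edges"] if kinds[a] != kinds[b]))


def ligand_only_model(graph):
    """Toy predictor that looks at ligand atoms only."""
    return float(sum(1 for kind in graph["nodes"].values() if kind == "ligand"))


complex_graph = {
    "nodes": {"L1": "ligand", "L2": "ligand", "P1": "protein"},
    "edges": [("L1", "L2"), ("L1", "P1"), ("L2", "P1")],
}
print(ablation_gap(interaction_model, [complex_graph]))
print(ablation_gap(ligand_only_model, [complex_graph]))
```

A gap near zero means the prediction barely changes without the protein, which is the signature of ligand-based memorization.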

Frequently Asked Questions (FAQs)

Q1: What is the single most critical change I should make to my PDBbind training pipeline to improve model generalization?

A: The most critical change is to replace the standard PDBbind training split with a leakage-free version, such as PDBbind CleanSplit or LP-PDBBind [1] [2]. This ensures your model is evaluated on a test set that truly represents novel challenges, providing a realistic measure of its real-world applicability.

Q2: My model's performance dropped significantly after I switched to CleanSplit. Does this mean my model is bad?

A: Not necessarily. A performance drop is an expected and positive sign that you have successfully eliminated the data leakage that was artificially inflating your metrics [1]. It means you are now measuring your model's true generalization capability. This provides a more honest starting point for further model improvement.

Q3: Are there automated tools available to create my own leakage-free data splits for other biomolecular datasets?

A: Yes. Tools like DataSAIL are specifically designed for this purpose [12]. DataSAIL formulates leakage-reduced data splitting as a combinatorial optimization problem, handling complex scenarios involving one-dimensional (e.g., single molecules) and two-dimensional (e.g., drug-target pairs) data while controlling for similarity across splits.

Q4: Beyond data leakage, what other data quality issues should I be aware of in PDBbind?

A: Several other issues can compromise model training, which workflows like HiQBind-WF and PDBBind-Opt aim to fix [8] [14]. Key problems include:

- Covalent binders: Complexes where the ligand is covalently linked to the protein, which have a different binding mechanism [8] [14].

- Structural artifacts: Incorrect bond orders, severe steric clashes, and missing atoms in the original PDB structures [8] [14].

- Presence of rare chemical elements: Ligands containing elements such as tellurium (Te) or selenium (Se) can be problematic because they appear too rarely in the training data for a model to learn from them [8].

Experimental Protocols & Workflows

PDBbind CleanSplit Filtering Algorithm Workflow

The following diagram illustrates the logical workflow of the structure-based filtering algorithm used to create PDBbind CleanSplit.

Protocol: Executing the CleanSplit Filtering Algorithm

Objective: To create a training dataset (CleanSplit) free of data leakage against a designated test set (e.g., CASF core set) by removing structurally similar complexes.

Inputs:

- Full set of protein-ligand complexes from PDBbind.

- Test set complexes (e.g., from CASF 2016).

Methodology:

- Similarity Computation: For each training-test complex pair, compute three key metrics [1]:

  - Protein Structure Similarity: Use TM-align to calculate the TM-score. A score > 0.8 indicates high structural similarity, even with low sequence identity [1].

  - Ligand Chemical Similarity: Calculate the Tanimoto coefficient based on molecular fingerprints (e.g., using RDKit). A score > 0.9 indicates nearly identical ligands [1].

  - Binding Conformation Similarity: After aligning protein pockets via TM-align, compute the root-mean-square deviation (RMSD) of the ligand heavy atoms. This measures how similarly the ligand is positioned in the binding site [1].

- Application of Exclusion Criteria: A training complex is excluded if it meets ANY of the following conditions versus a test complex [1]:

  - Its ligand is nearly identical (Tanimoto > 0.9) AND has a similar binding affinity (label difference |ΔpK| ≤ 1).

  - It has high protein similarity (TM-score > 0.8).

  - It has a high combined score for ligand similarity and positioning (Tanimoto + (1 - RMSD) > 0.8).

- Redundancy Reduction (Optional but Recommended): Apply adapted versions of the above thresholds to identify and remove similar complexes within the training set, ensuring greater diversity and discouraging memorization [1].

Output: A filtered training dataset (PDBbind CleanSplit) rigorously separated from the test set.
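
The exclusion step of this protocol can be sketched as follows. The thresholds are copied from the criteria listed above. The data structure, the function names, and the assumption that all pairwise metrics were precomputed (TM-score via TM-align, RMSD in Å after pocket alignment) are illustrative choices, not the reference implementation of CleanSplit.

```python
from dataclasses import dataclass


@dataclass
class PairSimilarity:
    tm_score: float   # protein structure similarity
    tanimoto: float   # ligand fingerprint similarity
    rmsd: float       # pocket-aligned ligand heavy-atom RMSD, in angstroms
    delta_pk: float   # absolute difference between the two affinity labels


def should_exclude(pair):
    """Apply the three exclusion conditions; any single one is sufficient."""
    same_ligand_same_label = pair.tanimoto > 0.9 and pair.delta_pk <= 1.0
    similar_protein = pair.tm_score > 0.8
    similar_ligand_and_pose = pair.tanimoto + (1.0 - pair.rmsd) > 0.8
    return same_ligand_same_label or similar_protein or similar_ligand_and_pose


def filter_training_set(train_ids, pair_similarities):
    """pair_similarities maps (train_id, test_id) -> PairSimilarity."""
    excluded = {
        train_id
        for (train_id, _test_id), pair in pair_similarities.items()
        if should_exclude(pair)
    }
    return [train_id for train_id in train_ids if train_id not in excluded]


# Toy values (hypothetical complexes).
pairs = {
    ("tr1", "te1"): PairSimilarity(tm_score=0.95, tanimoto=0.20, rmsd=4.0, delta_pk=2.5),
    ("tr2", "te1"): PairSimilarity(tm_score=0.40, tanimoto=0.70, rmsd=0.5, delta_pk=3.0),
    ("tr3", "te1"): PairSimilarity(tm_score=0.30, tanimoto=0.30, rmsd=3.0, delta_pk=0.2),
}
clean_train = filter_training_set(["tr1", "tr2", "tr3"], pairs)
print(clean_train)
```

Here `tr1` is dropped for protein similarity and `tr2` for the combined ligand and pose score, while `tr3` survives because it triggers none of the three conditions.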

Performance Validation Experiment

Objective: To quantify the impact of data leakage and validate the effectiveness of the CleanSplit dataset.

Method:

- Select two state-of-the-art binding affinity prediction models (e.g., GenScore and Pafnucy) [1], plus a model designed for generalization (e.g., GEMS) as a comparison point [1].

- Train two instances of each state-of-the-art model:

  - Instance A: Trained on the standard PDBbind training set.

  - Instance B: Trained on the PDBbind CleanSplit set.

- Evaluate the performance of all trained models on the same CASF benchmark, using standard metrics like Pearson's R and Root-Mean-Square Error (RMSE).

Expected Results:

| Model | Training Set | CASF Benchmark Performance (Pearson R / RMSE) | Interpretation |

|---|---|---|---|

| GenScore | Standard PDBbind | High (Inflated) | Performance likely driven by data leakage [1] |

| GenScore | PDBbind CleanSplit | Substantially Lower | Reveals the model's true generalization capability [1] |

| GEMS | PDBbind CleanSplit | Maintains High | Demonstrates genuine generalization, not reliant on leakage [1] |
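
The two metrics used in this experiment are simple to compute by hand, which is useful for sanity-checking a benchmarking pipeline. The sketch below uses only the standard library. The affinity labels and the predictions for the two training instances are made-up toy numbers, not results from any model.

```python
import math


def pearson_r(y_true, y_pred):
    """Pearson correlation coefficient between labels and predictions."""
    n = len(y_true)
    mean_t = sum(y_true) / n
    mean_p = sum(y_pred) / n
    cov = sum((t - mean_t) * (p - mean_p) for t, p in zip(y_true, y_pred))
    var_t = sum((t - mean_t) ** 2 for t in y_true)
    var_p = sum((p - mean_p) ** 2 for p in y_pred)
    return cov / math.sqrt(var_t * var_p)


def rmse(y_true, y_pred):
    """Root-mean-square error between labels and predictions."""
    n = len(y_true)
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / n)


# Hypothetical pK labels and predictions from two training instances.
labels = [4.0, 5.5, 6.0, 7.5, 9.0]
instance_a = [4.2, 5.4, 6.3, 7.2, 8.8]   # trained on the standard split
instance_b = [5.5, 5.0, 6.8, 6.5, 7.5]   # trained on the filtered split

for name, preds in [("A", instance_a), ("B", instance_b)]:
    print(name, round(pearson_r(labels, preds), 3), round(rmse(labels, preds), 3))
```

Reporting both metrics for both instances on the same test complexes is what allows the performance gap to be attributed to the training data rather than to the model.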

The Scientist's Toolkit: Research Reagent Solutions

| Tool / Resource | Type | Primary Function | Relevance to Mitigating Data Leakage |

|---|---|---|---|

| PDBbind CleanSplit | Curated Dataset | Provides a leakage-free training split for PDBbind. | The core solution; a benchmark-ready dataset for robust model training and evaluation [1] [13]. |

| LP-PDBBind | Curated Dataset | A reorganized PDBbind split controlling for protein and ligand similarity. | An alternative leakage-proof dataset, also used to retrain and re-evaluate scoring functions [2] [6]. |

| DataSAIL | Software Tool | Computes optimal data splits for biomedical ML to minimize information leakage. | Generalizes the splitting protocol; can be applied to create custom leakage-free splits for various datasets and problem types [12]. |

| HiQBind-WF / PDBBind-Opt | Workflow | An open-source, automated workflow for correcting structural artifacts in protein-ligand complexes. | Addresses data quality issues orthogonal to leakage, such as fixing incorrect bond orders, removing covalent binders, and resolving steric clashes [8] [14]. |

| GEMS Model | Machine Learning Model | A graph neural network for binding affinity prediction. | An example of a model architecture designed to achieve high performance without relying on data leakage, using sparse graphs and transfer learning [1]. |

Core Concept FAQs

What is the primary objective of the LP-PDBBind protocol? The primary objective of the LP-PDBBind (Leak-Proof PDBBind) protocol is to reorganize the popular PDBBind dataset into training, validation, and test sets that rigorously control for data leakage. Data leakage is defined as the presence of proteins and ligands with high sequence and structural similarity across different dataset splits, which can lead to artificially inflated performance metrics and poor generalizability of scoring functions to truly novel protein-ligand complexes [2].

How does "data leakage" specifically impact the development of scoring functions? When data leakage occurs, machine learning models or empirical scoring functions may achieve high performance on test sets by "memorizing" similarities to the training data, rather than by learning generalizable principles of binding. This creates an overoptimistic assessment of a model's capability. Consequently, a model that performs excellently on a contaminated test set may perform poorly in real-world drug discovery applications on novel targets or compounds [2] [3].

What are the key differences between LP-PDBBind and the standard PDBBind split? The standard PDBBind's "general," "refined," and "core" sets are known to be cross-contaminated with highly similar proteins and ligands. In contrast, LP-PDBBind introduces a new data splitting strategy that minimizes sequence and chemical similarity of both proteins and ligands between the training, validation, and test datasets. It also includes additional data cleaning steps to remove covalent binders and correct structural artifacts [2].

Implementation & Troubleshooting FAQs

What are the specific similarity thresholds used to define data leakage in LP-PDBBind? The LP-PDBBind protocol defines and controls for similarity using pairwise comparisons. The specific thresholds are designed to ensure that proteins and ligands in the test set are not highly similar to those in the training set. The following table summarizes the key criteria:

Table: Key Similarity Control Criteria in LP-PDBBind

| Entity | Similarity Measure | Objective |

|---|---|---|

| Protein | Pairwise sequence similarity | Ensure test proteins have low sequence similarity to training proteins [2]. |

| Ligand | Chemical fingerprint similarity (e.g., Tanimoto similarity) | Ensure test ligands are chemically dissimilar to training ligands [2]. |

| Protein-Ligand Pair | Structural interaction patterns | Minimize similarity in protein-ligand interaction patterns between splits [2]. |

The dataset size after applying LP-PDBBind is smaller. Is this a problem? A reduction in dataset size is an expected and acceptable consequence of rigorous data curation. The primary goal of LP-PDBBind is not to maximize quantity, but to ensure quality and reliability for model evaluation. A smaller, "leak-proof" dataset provides a more realistic and trustworthy benchmark for assessing the true generalizability of your scoring function [2] [3].

How do I access and use the LP-PDBBind dataset?

The LP-PDBBind dataset is available via a GitHub repository. The repository contains meta-information files (e.g., LP_PDBBind.csv) that specify the new data splits, clean levels, and other annotations. You will need to cross-reference this with structure files downloaded from the PDBBind website [15].

Table 1: LP-PDBBind Dataset Structure

| Component | Description | File/Location |

|---|---|---|

| Meta-information | PDB IDs, splits, SMILES, sequences, affinity data | dataset/LP_PDBBind.csv |

| Structure Files | Protein (.pdb) and ligand (.sdf/.mol2) structures | To be downloaded from the official PDBBind website. |

| Clean Levels | Boolean flags (CL1, CL2, CL3) indicating data quality tiers | Specified in the meta-information file. |
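
Selecting a split from the meta-information file might look like the sketch below. The column names `pdb_id` and `new_split`, and the `"True"`/`"False"` encoding of the clean-level flag, are assumptions for illustration and should be checked against the header of the actual `LP_PDBBind.csv`. The sample rows are invented so that the example runs without downloading anything.

```python
import csv
import io

# Invented sample in the assumed layout; replace with open("dataset/LP_PDBBind.csv").
SAMPLE = """pdb_id,new_split,CL1,value
1abc,train,True,6.2
2def,val,True,5.1
3ghi,test,False,7.8
4jkl,test,True,4.4
"""


def load_split(handle, split, clean_flag="CL1"):
    """Return the IDs assigned to `split` that also pass the given clean level."""
    rows = csv.DictReader(handle)
    return [
        row["pdb_id"]
        for row in rows
        if row["new_split"] == split and row[clean_flag] == "True"
    ]


train_ids = load_split(io.StringIO(SAMPLE), "train")
test_ids = load_split(io.StringIO(SAMPLE), "test")
print(train_ids, test_ids)
```

The returned IDs can then be used to pick the matching structure files from a local PDBBind download.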

My model, trained on LP-PDBBind, shows lower performance on the test set. What does this mean? A drop in performance when moving from a standard split to LP-PDBBind is not a failure of your model, but rather an indication that the previous evaluation was likely biased. LP-PDBBind provides a more rigorous and realistic assessment of your model's scoring power. This result underscores the importance of using a leakage-free benchmark to guide the development of generalizable models [2].

Research Reagent Solutions

Table 2: Essential Materials for LP-PDBBind and Related Research

| Research Reagent / Tool | Type | Primary Function |

|---|---|---|

| LP-PDBBind Dataset | Curated Dataset | A leakage-proof benchmark for training and evaluating protein-ligand scoring functions [2] [15]. |

| BDB2020+ Dataset | Independent Test Set | An independent benchmark compiled from BindingDB entries deposited after 2020, used for final model validation [2] [15]. |

| DataSAIL | Software Tool | A Python package for performing similarity-aware data splitting to minimize information leakage in biomedical ML tasks [12]. |

| HiQBind-WF | Software Workflow | An open-source, semi-automated workflow for curating high-quality, non-covalent protein-ligand datasets and correcting structural artifacts [8]. |

Experimental Protocol: Creating the LP-PDBBind Split

The following diagram illustrates the workflow for generating the LP-PDBBind dataset, which involves data cleaning and similarity-based splitting.

LP-PDBBind Creation Workflow

Step-by-Step Methodology:

Data Cleaning and Curation:

- Input: Begin with the raw PDBBind dataset (e.g., version 2020) [2].

- Remove Covalent Binders: Filter out protein-ligand complexes where the ligand is covalently bound to the protein, as non-covalent binding is the primary focus [2] [8].

- Apply Rare Element Filter: Exclude ligands containing elements other than H, C, N, O, F, P, S, Cl, Br, and I to avoid data sparsity issues [8].

- Remove Steric Clashes: Exclude structures where any protein-ligand heavy atom pairs are closer than 2 Å, as these represent physically unrealistic interactions [8].
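
The element and clash filters above reduce to two small checks. This sketch assumes atoms are already parsed into `(element, (x, y, z))` tuples with coordinates in Å. The parsing step, the function names, and the example atoms are hypothetical, and covalent-binder detection is not covered.

```python
import math

ALLOWED_ELEMENTS = {"H", "C", "N", "O", "F", "P", "S", "Cl", "Br", "I"}


def has_rare_element(ligand_atoms):
    """True if the ligand contains any element outside the allowed set."""
    return any(element not in ALLOWED_ELEMENTS for element, _xyz in ligand_atoms)


def has_steric_clash(protein_atoms, ligand_atoms, cutoff=2.0):
    """True if any protein-ligand heavy-atom pair is closer than the cutoff."""
    protein_heavy = [xyz for element, xyz in protein_atoms if element != "H"]
    ligand_heavy = [xyz for element, xyz in ligand_atoms if element != "H"]
    return any(
        math.dist(p, l) < cutoff for p in protein_heavy for l in ligand_heavy
    )


def passes_filters(protein_atoms, ligand_atoms):
    """Keep a complex only if it passes both the element and the clash filter."""
    return not has_rare_element(ligand_atoms) and not has_steric_clash(
        protein_atoms, ligand_atoms
    )


protein = [("C", (0.0, 0.0, 0.0)), ("H", (5.0, 0.0, 0.5))]
good_ligand = [("C", (5.0, 0.0, 0.0)), ("O", (6.2, 0.0, 0.0))]
clashing_ligand = [("C", (1.5, 0.0, 0.0))]
selenium_ligand = [("Se", (8.0, 0.0, 0.0))]
print(passes_filters(protein, good_ligand))
```

The nested loop is quadratic in the number of atoms, so for large structures a spatial index or a pocket-only atom selection would be a sensible refinement.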

Similarity Analysis:

- Protein Similarity: For all protein sequences in the cleaned dataset, compute pairwise sequence similarity (e.g., using BLAST or an equivalent algorithm) [2] [15].

- Ligand Similarity: For all ligands, compute pairwise chemical similarity using molecular fingerprint representations (e.g., ECFP fingerprints) and a metric like Tanimoto similarity [2] [15].

Similarity-Aware Data Splitting:

- Algorithm: Use a splitting algorithm that formulates the assignment of complexes to training, validation, and test sets as an optimization problem. The objective is to minimize the maximum similarity of proteins and ligands between the different splits [2].

- Output: The result is the LP-PDBBind dataset, comprising three distinct splits where proteins and ligands in the test set are not highly similar to those in the training set. This dataset is now suitable for training and evaluating generalizable scoring functions [2].

What is the primary goal of this multimodal filtering approach?

This filtering methodology aims to mitigate data leakage in protein-ligand binding affinity prediction models, particularly for datasets like PDBbind. Data leakage occurs when models are trained and tested on non-independent data, leading to overoptimistic performance that doesn't generalize to real-world applications. By employing three complementary metrics (TM-score, Tanimoto coefficient, and pocket-aligned RMSD), the approach ensures training and test sets contain structurally distinct complexes [13] [1].

Why are these three specific metrics used together?

Each metric captures a different dimension of protein-ligand complex similarity, providing a more robust assessment than any single metric could achieve [13]:

- TM-score assesses 3D protein structure similarity

- Tanimoto score assesses 2D ligand structural similarity

- Pocket-aligned RMSD assesses binding conformation similarity

This multimodal approach can identify complexes with similar interaction patterns even when proteins have low sequence identity, addressing limitations of traditional sequence-based filtering [13].
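
Of the three metrics, the RMSD is the simplest to write down explicitly. The sketch below computes it for two ligand poses, assuming the binding pockets have already been superimposed and that both coordinate lists contain the same heavy atoms in the same order. How atoms are matched between different ligands is outside the scope of this example, and the function name is hypothetical.

```python
import math


def ligand_rmsd(coords_a, coords_b):
    """RMSD in angstroms between two equally ordered sets of heavy-atom coordinates."""
    if len(coords_a) != len(coords_b) or not coords_a:
        raise ValueError("coordinate lists must be non-empty and of equal length")
    squared = sum(math.dist(a, b) ** 2 for a, b in zip(coords_a, coords_b))
    return math.sqrt(squared / len(coords_a))


# Two toy poses: the second is the first shifted by 1 angstrom along z.
pose_1 = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
pose_2 = [(0.0, 0.0, 1.0), (1.0, 0.0, 1.0)]
print(ligand_rmsd(pose_1, pose_2))
```

A small value after pocket alignment means the two ligands occupy the binding site in nearly the same way, which is exactly the situation the filtering is meant to detect.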

Technical Specifications & Thresholds

What are the quantitative thresholds for identifying problematic similarities?

The table below summarizes the key filtering thresholds used to identify and remove overly similar protein-ligand complexes:

Table 1: Multimodal Filtering Thresholds for Identifying Data Leakage

| Metric | Measurement Focus | Similarity Threshold | Interpretation Guidelines |

|---|---|---|---|

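| TM-score | Protein structure similarity | >0.5 | Generally indicates the same protein fold [16]; note that the CleanSplit exclusion criteria described elsewhere in this guide use a higher cutoff of >0.8 [1] |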

| Tanimoto Coefficient | Ligand chemical similarity | >0.9 | Indicates highly similar or identical ligands [13] |

| Pocket-aligned RMSD | Binding conformation similarity | <2.0 Å | Suggests nearly identical ligand positioning [13] |

What practical impact does this filtering have on datasets?

Application of these thresholds to the PDBbind-CASF benchmark relationship revealed:

- ~600 similarities identified between training and test complexes [13]

- 49% of CASF test complexes had highly similar counterparts in training data [13]

- ~12% of training complexes removed to create a "clean" dataset (4% for test separation + 7.8% for internal redundancy) [13]

Implementation Workflow

The following diagram illustrates the complete multimodal filtering process:

Research Reagent Solutions

Table 2: Essential Tools and Resources for Implementing Multimodal Filtering

| Tool/Resource | Type | Primary Function | Implementation Notes |

|---|---|---|---|

| TM-score | Software utility | Quantifies protein structural similarity | Available as C++ or Fortran source code; values >0.5 indicate same fold [16] |

| Tanimoto Coefficient | Mathematical metric | Calculates 2D molecular similarity based on chemical fingerprints | Typically implemented using RDKit or similar cheminformatics libraries [13] |

| Pocket-aligned RMSD | Geometric calculation | Measures binding mode similarity after structural alignment | Requires prior pocket alignment; values <2.0 Å indicate near-identical positioning [13] |

| PDBbind Database | Data resource | Source of protein-ligand complexes with binding affinities | General/refined sets for training; core set for testing [13] [2] |

| CASF Benchmark | Evaluation dataset | Standard benchmark for scoring functions | Must be separated from training data via filtering [13] |

Frequently Asked Questions

How does this approach improve model generalization?

By ensuring strict separation between training and test complexes, models cannot rely on memorizing similar structures and must learn genuine protein-ligand interaction principles. When state-of-the-art models were retrained on the filtered PDBbind CleanSplit, their performance dropped substantially, indicating previous benchmark results were inflated by data leakage [13].

What's the difference between this and time-based splitting?

Time-based splitting (training on pre-2020 data, testing on post-2020 data) doesn't adequately address the issue because new drugs often target established proteins, and existing drugs are tested on new proteins. Structural similarities can still occur across time partitions, making multimodal filtering more reliable for ensuring true independence [2].

How computationally intensive is this process?

The all-against-all comparison of protein-ligand complexes is computationally demanding but crucial. For large datasets like PDBbind, this requires efficient implementation and potentially high-performance computing resources. The TM-score calculation, in particular, involves complex structural alignments that can be computationally expensive [16].

Can these methods identify similarities despite low sequence identity?

Yes, this is a key advantage. Unlike sequence-based methods, the multimodal approach can detect complexes with similar interaction patterns even when protein sequences show low identity. This makes it particularly valuable for identifying subtle data leakage that would escape traditional filtering methods [13].

Troubleshooting Guide

Problem: Inconsistent TM-score values

Solution: Ensure you're normalizing by the same chain length when comparing scores. TM-score values depend on the normalization length, so consistent implementation is crucial for reproducible filtering [16].
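
The effect of the normalization length can be seen directly from the TM-score definition, which sums 1 / (1 + (d_i / d0)^2) over aligned residue pairs and divides by the chosen length L, with d0 = 1.24 (L - 15)^(1/3) - 1.8. The sketch below is a simplified calculator for already-aligned residue distances, not a replacement for TM-align. The lower bound of 0.5 Å on d0 for short chains is an assumption made here to keep the formula well defined.

```python
def d0(norm_length):
    """Distance scale in angstroms for a given normalization length."""
    if norm_length <= 15:
        return 0.5  # assumed floor; the cube-root formula is undefined here
    return max(0.5, 1.24 * (norm_length - 15) ** (1.0 / 3.0) - 1.8)


def tm_score(distances, norm_length):
    """TM-score from per-residue distances of an existing alignment."""
    scale = d0(norm_length)
    return sum(1.0 / (1.0 + (d / scale) ** 2) for d in distances) / norm_length


# 100 perfectly superimposed residue pairs, scored against two chain lengths.
aligned = [0.0] * 100
print(tm_score(aligned, 100), tm_score(aligned, 200))
```

The same alignment scores twice as high when normalized by the shorter chain, which is why a threshold is only meaningful if the normalization choice is fixed across the whole dataset.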

Problem: High computational overhead

Solution: Consider implementing a tiered approach where rapid fingerprint-based screening (Tanimoto) is performed first, followed by more computationally intensive structural comparisons (TM-score, pocket-RMSD) only for promising candidates.
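
A tiered screen of this kind can be sketched with two scoring callables: a cheap one applied to every pair and an expensive one applied only to the survivors. The function name, the cutoffs, and the toy score tables are hypothetical. The counter is included only to show how many expensive comparisons were avoided.

```python
def tiered_screen(pairs, cheap_score, expensive_score, cheap_cutoff, expensive_cutoff):
    """Run the expensive comparison only for pairs that pass the cheap prefilter."""
    flagged = []
    expensive_calls = 0
    for pair in pairs:
        if cheap_score(pair) <= cheap_cutoff:
            continue  # skipped without any structural alignment
        expensive_calls += 1
        if expensive_score(pair) > expensive_cutoff:
            flagged.append(pair)
    return flagged, expensive_calls


# Toy precomputed scores standing in for fingerprint and alignment calculations.
pairs = [("t1", "r1"), ("t1", "r2"), ("t2", "r1")]
tanimoto_table = {("t1", "r1"): 0.95, ("t1", "r2"): 0.20, ("t2", "r1"): 0.92}
tm_table = {("t1", "r1"): 0.90, ("t1", "r2"): 0.95, ("t2", "r1"): 0.30}

flagged, calls = tiered_screen(
    pairs, tanimoto_table.get, tm_table.get, cheap_cutoff=0.9, expensive_cutoff=0.8
)
print(flagged, calls)
```

Note that the pair `("t1", "r2")` is never structurally compared even though its proteins are similar, so this shortcut matches the full all-against-all result only when the final criterion requires high ligand similarity. If protein similarity alone is grounds for exclusion, the structural comparison cannot be skipped.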

Problem: Residual data leakage after filtering

Solution: Re-examine your similarity thresholds. You may need to tighten them for specific applications. Additionally, check for similarities within the training set itself, as internal redundancies can also hamper model generalization [13].

Problem: Handling covalent binders

Solution: Exclude covalent protein-ligand complexes from your dataset before applying multimodal filtering, as they represent a different binding paradigm that requires specialized treatment in scoring functions [8].

In the field of computational drug design, accurately predicting protein-ligand binding affinity is crucial for structure-based drug discovery. While the issue of data leakage between training and test sets has gained significant attention, a more insidious problem often lurks within the training data itself: redundancy. This technical guide addresses strategies for identifying and mitigating redundancy within training sets, specifically focusing on PDBbind datasets, to build models that genuinely generalize to novel protein-ligand complexes rather than merely memorizing structural similarities.

Frequently Asked Questions (FAQs)

FAQ 1: What is the fundamental difference between train-test leakage and intra-training set redundancy?

- Train-Test Leakage occurs when information from the test set inadvertently influences the training process. This leads to inflated performance metrics during benchmarking that do not reflect the model's true ability to generalize to unseen data. A known issue in molecular benchmarking is the structural similarity between complexes in the general PDBbind set and those in the CASF benchmark [1].

- Intra-Training Set Redundancy refers to the presence of numerous highly similar data points within the training set itself. This encourages the model to settle for a local minimum in the loss landscape by simply memorizing these redundant patterns, rather than learning the underlying principles of protein-ligand interactions. It hampers generalization by promoting a reliance on similarity-based shortcuts [1].

FAQ 2: Why is simple random splitting insufficient for complex biomolecular data?

Random splitting assumes data points are independent and identically distributed. However, biomolecular data, such as protein-ligand complexes, exhibit complex dependency structures. For example, multiple complexes might share nearly identical protein structures, highly similar ligands, or comparable binding conformations. A random split can easily place these highly similar complexes in both the training and validation sets, leading to overoptimistic validation metrics and masking poor true generalization [1] [12].

FAQ 3: How can I quantify redundancy in my training set?

Redundancy can be quantified using a multimodal similarity approach that assesses several axes of similarity between data points. Key metrics include:

- Protein Similarity: Using metrics like the TM-score to compare protein structures [1].

- Ligand Similarity: Using metrics like the Tanimoto coefficient to compare small molecules [1].

- Binding Conformation Similarity: Using metrics like the pocket-aligned ligand root-mean-square deviation (RMSD) to compare how ligands sit in the binding pocket [1].

By applying thresholds across these combined metrics, you can identify clusters of highly similar complexes that constitute redundancy.
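As a rough illustration of how the three metrics might be combined into a single redundancy test, consider the Python sketch below. The names `tanimoto`, `PairSimilarity`, and `is_redundant` are hypothetical, the cutoffs are the illustrative values used elsewhere in this guide rather than values confirmed from [1], and requiring all three criteria to hold at once is an assumption. TM-scores and pocket-aligned RMSDs are assumed to have been computed beforehand with external structure-alignment tools.

```python
from __future__ import annotations

from dataclasses import dataclass


def tanimoto(fp_a: set[int], fp_b: set[int]) -> float:
    """Tanimoto coefficient of two fingerprints given as sets of on-bit indices."""
    if not fp_a and not fp_b:
        return 0.0
    shared = len(fp_a & fp_b)
    return shared / (len(fp_a) + len(fp_b) - shared)


@dataclass(frozen=True)
class PairSimilarity:
    tm_score: float     # protein structural similarity (0 to 1), precomputed
    tanimoto: float     # ligand fingerprint similarity (0 to 1)
    pocket_rmsd: float  # pocket-aligned ligand RMSD in angstroms, precomputed


def is_redundant(sim: PairSimilarity,
                 tm_min: float = 0.8,
                 tani_min: float = 0.9,
                 rmsd_max: float = 2.0) -> bool:
    """Flag a pair as redundant only if all three criteria hold (an assumption)."""
    return (sim.tm_score > tm_min
            and sim.tanimoto > tani_min
            and sim.pocket_rmsd < rmsd_max)


# Toy fingerprints: two shared bits out of four distinct bits in total.
ligand_similarity = tanimoto({1, 2, 3}, {2, 3, 4})
pair = PairSimilarity(tm_score=0.95, tanimoto=0.92, pocket_rmsd=1.1)
flagged = is_redundant(pair)
```

In practice the fingerprints would come from a cheminformatics toolkit; plain sets of bit indices are used here only to keep the sketch self-contained.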

FAQ 4: What is the practical impact of removing redundant data? Won't it hurt performance?

Counterintuitively, removing redundant data can improve model generalization and final test performance on independent data. Training on a highly redundant set is like studying for an exam by reading the same paragraph repeatedly; you become an expert on that paragraph but fail to understand the chapter. Models trained on diverse, non-redundant sets are instead forced to learn broader, more generalizable patterns. Supporting evidence comes from a different domain: research on chest X-ray datasets showed that models trained on a redundancy-reduced "informative subset" of the data significantly outperformed models trained on the full, redundant dataset in both internal and external testing [17].

Troubleshooting Guides

Problem 1: My model performs excellently on the validation set but poorly on external tests.

Diagnosis: This classic sign suggests either train-test leakage or that your validation set is not truly independent due to underlying redundancy in the entire dataset.

Solution: Implement a similarity-clustered split.

- Calculate Similarity: Compute all-against-all protein, ligand, and binding site similarities for your entire dataset (including any planned validation/test sets) [1].

- Cluster Complexes: Use a clustering algorithm (e.g., agglomerative clustering) to group complexes that exceed your similarity thresholds (e.g., TM-score > 0.8, Tanimoto > 0.9, RMSD < 2.0 Å) [1] [17].

- Assign Splits by Cluster: Ensure that all complexes within a single cluster are assigned to the same data split (training, validation, or test). This guarantees that the validation and test sets contain truly novel complexes not represented in the training data [12].
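The three steps above can be sketched in a few lines of Python. This is a minimal illustration, not the procedure from [1] or [12]: it assumes the list of over-threshold pairs has already been computed, it swaps in connected components via union-find (equivalent to single-linkage clustering cut at the threshold) for a general agglomerative method, and the names `cluster_ids` and `cluster_split` are hypothetical.

```python
import random
from collections import defaultdict


def cluster_ids(n_items, similar_pairs):
    """Label items so that any two linked by a similar pair share a label."""
    parent = list(range(n_items))

    def find(i):
        while parent[i] != i:
            parent[i] = parent[parent[i]]  # path halving
            i = parent[i]
        return i

    for a, b in similar_pairs:
        parent[find(a)] = find(b)
    return [find(i) for i in range(n_items)]


def cluster_split(n_items, similar_pairs, fractions=(0.8, 0.1, 0.1), seed=0):
    """Assign whole clusters to train/val/test so no cluster straddles a split."""
    clusters = defaultdict(list)
    for idx, label in enumerate(cluster_ids(n_items, similar_pairs)):
        clusters[label].append(idx)

    groups = list(clusters.values())
    random.Random(seed).shuffle(groups)  # randomise order among equal sizes
    groups.sort(key=len, reverse=True)   # place the largest clusters first

    names = ("train", "val", "test")
    targets = [f * n_items for f in fractions]
    split = {name: [] for name in names}
    for group in groups:
        # Put the cluster into the split that is furthest below its target size.
        k = max(range(3), key=lambda j: targets[j] - len(split[names[j]]))
        split[names[k]].extend(group)
    return split


# Ten complexes; 0-1-2 and 3-4 exceed the similarity thresholds pairwise.
example = cluster_split(10, [(0, 1), (1, 2), (3, 4)])
```

Because whole clusters are moved, the realised split sizes only approximate the requested fractions, and a single very large cluster can dominate one split.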

Problem 2: I have a limited dataset and am concerned that removing data will lead to underfitting.

Diagnosis: The concern is valid, but the goal is to remove redundant information, not unique information. The key is to prioritize quality and diversity over sheer quantity.

Solution: Use an entropy-based informative sample selection.

- Train a Baseline Model: First, train a model on your entire, potentially redundant, training set.

- Score Sample Informativeness: Use the trained model to evaluate each training sample. Calculate the entropy of the model's prediction for each sample. Samples with high entropy (high prediction uncertainty) are deemed more "informative" as the model has not yet learned them well, while low-entropy samples are considered learned or redundant [17].

- Select an Informative Subset: Use an optimization procedure (e.g., Bayesian optimization) to select a subset of training data that maximizes the average informativeness (entropy) score.

- Fine-Tune the Model: Fine-tune your model on this curated, informative subset. This approach has been shown to yield better performance on external test sets than using the full dataset [17].
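To make the scoring step concrete, here is a small Python sketch for a classification setting. It is a simplification under stated assumptions: the helper names `prediction_entropy` and `select_informative` are hypothetical, and a plain top-fraction ranking stands in for the Bayesian optimization search described above.

```python
import math


def prediction_entropy(probs):
    """Shannon entropy (natural log) of one predicted class distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0.0)


def select_informative(prob_rows, keep_fraction=0.5):
    """Return indices of the highest-entropy samples, most uncertain first."""
    scores = [prediction_entropy(row) for row in prob_rows]
    n_keep = max(1, round(keep_fraction * len(scores)))
    ranked = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    return ranked[:n_keep]


# Predicted class probabilities from a baseline model for four samples.
baseline_probs = [
    [0.50, 0.50],  # maximally uncertain
    [0.99, 0.01],  # confidently learned
    [0.70, 0.30],  # moderately uncertain
    [1.00, 0.00],  # fully certain
]
informative_idx = select_informative(baseline_probs, keep_fraction=0.5)
```

Ranking by entropy alone can over-select noisy or mislabeled samples, which is one reason a search guided by validation performance may be preferable to a fixed cutoff.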

Problem 3: I am working with paired data (like protein-ligand interactions) and need to avoid leakage on both axes.

Diagnosis: In two-dimensional data, leakage can occur if similar proteins or similar ligands appear across different splits.

Solution: Use a specialized tool for two-dimensional splitting.

- Define Similarity for Both Entities: Calculate separate similarity metrics for the proteins and the ligands in your dataset.

- Formulate a Constrained Optimization: The goal is to split the data such that no protein or ligand in the test set is highly similar to any in the training set. Tools like DataSAIL formalize this as a combinatorial optimization problem [12].

- Run the Splitting Algorithm: DataSAIL uses clustering and integer linear programming to heuristically solve this NP-hard problem, producing splits that minimize information leakage across both dimensions of the data [12].
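The idea behind a two-dimensional split can be illustrated without any specialized tooling. The sketch below does not use or reproduce DataSAIL's API or its optimization; it is a naive stand-in that assumes protein and ligand cluster labels are already available, assigns each cluster to a side at random, and discards interactions whose protein and ligand land on different sides. The function name `two_dimensional_split` and all identifiers in the toy data are hypothetical.

```python
import random


def two_dimensional_split(pairs, protein_cluster, ligand_cluster,
                          test_fraction=0.2, seed=0):
    """Split (protein, ligand) pairs so clusters never straddle train and test."""
    rng = random.Random(seed)

    def assign(cluster_labels):
        # Each cluster goes wholly to one side.
        return {c: ("test" if rng.random() < test_fraction else "train")
                for c in sorted(set(cluster_labels))}

    protein_side = assign(protein_cluster.values())
    ligand_side = assign(ligand_cluster.values())

    split = {"train": [], "test": [], "dropped": []}
    for prot, lig in pairs:
        p_side = protein_side[protein_cluster[prot]]
        l_side = ligand_side[ligand_cluster[lig]]
        # Keep a pair only if both of its entities were sent to the same side.
        split[p_side if p_side == l_side else "dropped"].append((prot, lig))
    return split


# Toy data: cluster labels for four proteins and four ligands.
protein_cluster = {"P1": 0, "P2": 0, "P3": 1, "P4": 2}
ligand_cluster = {"L1": 0, "L2": 1, "L3": 1, "L4": 2}
pairs = [(p, l) for p in protein_cluster for l in ligand_cluster]
result = two_dimensional_split(pairs, protein_cluster, ligand_cluster,
                               test_fraction=0.5, seed=1)
```

Random assignment can discard a large share of the interactions. Reducing that loss while keeping split sizes balanced is the kind of objective that a constrained optimization approach is designed to handle.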

Experimental Protocols & Data

Protocol 1: Creating a PDBbind CleanSplit

This protocol is based on the methodology established to address data leakage in the PDBbind database [1].

- Data Acquisition: Download the PDBbind database and the CASF benchmark datasets.

- Similarity Calculation:

  - For every protein-ligand complex in CASF, compare it against every complex in the PDBbind general set.

  - Compute three similarity metrics: TM-score (protein similarity), Tanimoto coefficient (ligand similarity), and pocket-aligned ligand RMSD (binding pose similarity).

- Filtering for Train-Test Separation:

  - Identify and remove all complexes from the PDBbind training set that are above a threshold of similarity to any complex in the CASF test set. The original study used this to remove ~4% of training complexes, addressing 49% of CASF test complexes that had a highly similar counterpart in training [1].

- Filtering for Intra-Training Redundancy:

  - Within the remaining PDBbind training set, identify clusters of highly similar complexes using the same multimodal similarity approach.

  - Iteratively remove complexes from these clusters until the largest remaining cluster is below a defined size threshold. This was shown to remove an additional ~7.8% of training complexes [1].

- Result: The remaining dataset, termed PDBbind CleanSplit, is a refined training set with minimized train-test leakage and reduced internal redundancy.
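The two filtering stages of this protocol can be sketched as follows. This is an illustrative reading of the steps, not the implementation from [1]: the over-threshold pairs are assumed to be precomputed, clusters are taken to be connected components of the similarity graph, and removing the best-connected member of the largest cluster first is an assumption. The function names and toy identifiers are hypothetical.

```python
from collections import defaultdict


def remove_test_neighbours(train_ids, leaky_pairs):
    """Drop every training complex that has a too-similar test counterpart.

    leaky_pairs holds (train_id, test_id) pairs that exceeded the thresholds.
    """
    leaky = {train_id for train_id, _ in leaky_pairs}
    return [t for t in train_ids if t not in leaky]


def _components(nodes, adjacency):
    """Connected components of the similarity graph (depth-first search)."""
    seen, comps = set(), []
    for start in nodes:
        if start in seen:
            continue
        seen.add(start)
        stack, comp = [start], []
        while stack:
            node = stack.pop()
            comp.append(node)
            for nb in adjacency[node]:
                if nb not in seen:
                    seen.add(nb)
                    stack.append(nb)
        comps.append(comp)
    return comps


def thin_clusters(train_ids, similar_pairs, max_cluster_size):
    """Remove complexes until no similarity cluster exceeds max_cluster_size."""
    kept = set(train_ids)
    adjacency = defaultdict(set)
    for a, b in similar_pairs:
        if a in kept and b in kept:
            adjacency[a].add(b)
            adjacency[b].add(a)
    while True:
        largest = max(_components(sorted(kept), adjacency), key=len, default=[])
        if len(largest) <= max_cluster_size:
            return sorted(kept)
        # Assumed rule: drop the member with the most similar neighbours.
        victim = max(sorted(largest), key=lambda n: len(adjacency[n]))
        kept.discard(victim)
        for nb in adjacency.pop(victim):
            adjacency[nb].discard(victim)


# Stage 1: "f" resembles a test complex. Stage 2: "a" links b, c and d.
stage1 = remove_test_neighbours(list("abcdef"), [("f", "t1")])
stage2 = thin_clusters(stage1, [("a", "b"), ("a", "c"), ("a", "d")], 2)
```

Removing a hub complex tends to break a cluster apart with few deletions, which keeps more of the training data than deleting whole clusters would.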

Protocol 2: Entropy-Based Redundancy Reduction

This protocol is adapted from methods successfully applied to medical imaging datasets to remove semantic redundancy [17].

- Initial Training: Train a baseline model (e.g., a Graph Neural Network for binding affinity prediction) on the entire available training set. Let's call this model M_baseline.

- Inference and Entropy Calculation:

  - Pass each training sample through M_baseline to get a prediction.

  - For a regression task, adapt the concept by measuring the model's uncertainty (e.g., using the variance from a probabilistic model or the error magnitude).

  - For classification, calculate the prediction entropy directly: $H = -\sum_i p_i \log p_i$, where $p_i$ is the predicted probability for class $i$.

  - High entropy or uncertainty indicates an informative sample that the model finds challenging.

- Subset Selection:

  - Use a search algorithm like Bayesian Optimization to find the subset of training data that, when used for training, results in a model with the lowest possible validation loss.

  - The optimization process is guided by the entropy scores, prioritizing the inclusion of high-entropy samples.

- Final Model Training: Train a new model from scratch on the optimized, informative subset identified in the previous step.
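For binding affinity regression, where class probabilities are not available, the uncertainty-based adaptation mentioned in this protocol might look like the sketch below. It assumes an ensemble of models whose per-sample predictions are already collected, which is only one possible source of variance and is not prescribed by [17]. The function name `regression_informativeness` and the example values are hypothetical.

```python
from statistics import mean, pvariance


def regression_informativeness(ensemble_preds, targets):
    """Score each sample by ensemble disagreement and by absolute error.

    ensemble_preds: one list of predictions per sample, one entry per model.
    targets: the experimental value for each sample.
    """
    scores = []
    for preds, y in zip(ensemble_preds, targets):
        scores.append({
            "variance": pvariance(preds),       # spread across ensemble members
            "abs_error": abs(mean(preds) - y),  # error of the ensemble mean
        })
    return scores


# Two samples, three hypothetical ensemble members, affinities in pK units.
scores = regression_informativeness(
    ensemble_preds=[[6.0, 6.0, 6.0], [5.0, 7.0, 9.0]],
    targets=[6.0, 6.0],
)
```

The two scores capture different things: variance reflects model disagreement and needs no labels, while absolute error also grows with label noise, so it may be worth inspecting both before discarding samples.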

Quantitative Data on Data Redundancy and Filtering

Table 1: Impact of Data Filtering as Reported in PDBbind CleanSplit Study [1]

| Filtering Type | Complexes Removed | Key Consequence |
|---|---|---|
| Train-Test Leakage Reduction | ~4% of PDBbind training set | Addressed similarity for 49% of CASF-2016 test complexes, turning them into genuine external tests. |
| Intra-Training Redundancy Reduction | ~7.8% of PDBbind training set | Broke up large similarity clusters within the training set, discouraging memorization. |
| Cumulative Filtering | ~11.8% of PDBbind training set | Created the PDBbind CleanSplit, a refined dataset for robust model evaluation. |

Table 2: Performance Comparison on Redundant vs. Non-Redundant Data

| Dataset / Strategy | Reported Performance Insight |
|---|---|
| Standard PDBbind Split | Top models (e.g., GenScore, Pafnucy) showed high CASF performance, which dropped substantially when retrained on CleanSplit, indicating performance was previously driven by data leakage [1]. |
| PDBbind CleanSplit | A GNN model (GEMS) maintained high CASF performance when trained on CleanSplit, demonstrating genuine generalization capability [1]. |
| Entropy-Based Subset (Medical Imaging) | Model trained on an informative subset achieved significantly higher recall (0.7164 vs 0.6597) on internal test and dramatically better generalization on external test (0.3185 vs 0.2589) compared to a model trained on the full, redundant dataset [17]. |

Workflow Visualization

Diagram 1: Multimodal Filtering for Clean Training Set Creation

Multimodal Filtering Workflow - This diagram illustrates the two-stage process for creating a non-redundant training set, first by removing data points too similar to the test set, and then by reducing redundancy within the training data itself.

Diagram 2: Entropy-Based Informative Sample Selection

Entropy-Based Sample Selection - This diagram shows the process of using a baseline model to identify the most informative samples in a dataset based on prediction entropy, leading to a refined, non-redundant training subset.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Key Tools and Resources for Mitigating Data Redundancy

| Tool / Resource | Type | Function & Application |
|---|---|---|
| PDBbind CleanSplit [1] | Curated Dataset | A pre-filtered version of PDBbind with reduced train-test leakage and internal redundancy. Use as a benchmark training set for robust evaluation. |
| DataSAIL [12] | Python Package | Performs similarity-aware data splitting for 1D and 2D data. Ideal for creating splits that minimize leakage for protein, ligand, or protein-ligand pairs. |
| TM-score [1] | Algorithm/Metric | Measures protein structural similarity. A key metric for identifying redundant protein complexes in a dataset. |
| Tanimoto Coefficient [1] | Algorithm/Metric | Measures ligand similarity based on molecular fingerprints. Essential for identifying redundant ligands in a dataset. |
| Pocket-Aligned RMSD [1] | Algorithm/Metric | Measures the similarity of ligand binding conformations. Critical for assessing redundancy in the binding pose. |
| Entropy-Based Scoring [17] | Methodology | A strategy to score training samples by their informativeness, allowing for the creation of a potent, non-redundant subset without predefined similarity thresholds. |

Beyond Splitting: Integrating Data Quality and Mitigation Strategies

Addressing Broader Data Quality Issues with HiQBind-WF

Frequently Asked Questions (FAQs)

1. What is HiQBind-WF and why was it developed? HiQBind-WF is an open-source, semi-automated workflow designed to create high-quality, non-covalent protein-ligand binding datasets. It was developed to address common structural artifacts and data quality issues found in widely used datasets like PDBbind, which can compromise the accuracy and generalizability of scoring functions used in drug discovery [18] [19] [20].

2. What are the main types of structural errors corrected by this workflow? The workflow specifically identifies and corrects several key issues [18] [19] [14]:

- Structural Artifacts: Incorrect bond orders, protonation states, and aromaticity in ligands; missing atoms in proteins.

- Inappropriate Complexes: Covalently bound protein-ligand complexes.

- Non-Physical Structures: Severe steric clashes between protein and ligand heavy atoms.

- Problematic Ligands: Ligands containing rarely-occurring elements or that are too small for meaningful binding studies.

3. How does HiQBind-WF improve dataset reproducibility? HiQBind-WF is designed as a semi-automated, open-source workflow. This minimizes manual intervention and fosters transparency, ensuring that the data curation process is consistent and reproducible for the entire research community [18] [19].

4. What is the difference between the optimized PDBbind and the new HiQBind dataset? The workflow can be applied to optimize the existing PDBbind dataset (creating PDBbind-Opt). Furthermore, it was used to create a completely new dataset, HiQBind, by matching binding free energies from sources like BioLiP, Binding MOAD, and BindingDB with co-crystallized structures from the PDB. HiQBind serves as an independent benchmark for scoring functions [18] [21] [19].

5. Where can I access the HiQBind-WF tools and datasets? The code for the HiQBind workflow is available on GitHub under an MIT license [21]. The prepared HiQBind dataset is accessible via a Figshare repository [21].

Troubleshooting Guides

Guide 1: Resolving Protein-Ligand Complex Structural Errors

Problem: Your dataset contains protein-ligand complexes with structural errors that negatively impact scoring function training.

| Symptoms | Root Cause | Solution with HiQBind-WF |
|---|---|---|
| Poor scoring function performance/generalizability [18] | Underlying training data contains structural artifacts [18] [14] | Apply the full HiQBind-WF curation pipeline to fix ligand and protein structures [19]. |