Scoring Functions in Virtual Screening: A Comprehensive Guide for Drug Discovery

Abstract

Scoring functions are a critical, yet challenging, component of structure-based virtual screening (SBVS), directly impacting the success of modern drug discovery. This article provides a comprehensive analysis for researchers and drug development professionals, covering the foundational principles, diverse methodological approaches, and persistent limitations of these functions. It explores cutting-edge optimization strategies, including machine learning and consensus scoring, and delivers a rigorous comparative assessment of their performance for pose prediction, binding affinity estimation, and active compound enrichment. By synthesizing the latest research and benchmarking studies, this review serves as a strategic guide for selecting, applying, and validating scoring functions to enhance the efficiency and success rates of virtual screening campaigns.

The Engine of Virtual Screening: Foundational Concepts and Classification of Scoring Functions

In the realm of structure-based drug discovery, computational methods have become indispensable for identifying and optimizing potential therapeutic compounds. At the heart of these methodologies lie three core tasks: pose prediction, virtual screening, and binding affinity prediction. These tasks are unified by their critical dependence on scoring functions—mathematical algorithms that approximate the binding affinity of a ligand to a protein target by calculating their interaction energy [1] [2]. Scoring functions serve as the primary decision-making tools in docking protocols, enabling researchers to prioritize compounds for further experimental investigation [3].

The evolution of scoring functions has progressed from classical approaches to modern artificial intelligence (AI)-driven methods. Traditional functions typically fall into three categories: force-field-based (using molecular mechanics), empirical (fitting parameters to experimental data), and knowledge-based (deriving potentials from structural databases) [4]. However, these classical approaches often suffer from limitations in accuracy and high false-positive rates [1]. The emergence of AI, particularly deep learning, has revolutionized the field by introducing models capable of learning complex patterns from vast datasets of protein-ligand complexes [5] [6]. These AI-driven methods significantly enhance predictive performance across all three core tasks, though challenges in generalization and physical plausibility remain active research areas [7] [6].

Table 1: Categories of Scoring Functions in Molecular Docking

| Category | Basis of Function | Strengths | Limitations |
|---|---|---|---|
| Force-Field-Based | Molecular mechanics force fields | Strong theoretical foundation | Computationally intensive, limited accuracy |
| Empirical | Weighted energy terms fitted to experimental data | Faster computation, simpler functions | Limited transferability across target classes |
| Knowledge-Based | Statistical potentials from structural databases | Good balance of speed and accuracy | Dependent on quality and size of database |
| AI-Driven | Deep learning models trained on complex structures | High accuracy, ability to learn complex patterns | Generalization challenges, data bias concerns |

Core Task 1: Pose Prediction

Definition and Significance

Pose prediction, also known as binding mode prediction, aims to determine the correct three-dimensional orientation and conformation of a small molecule (ligand) within a target protein's binding site [6]. The primary objective is to computationally generate a ligand pose that closely matches the native binding structure observed in experimental crystallographic complexes [8]. Accurate pose prediction is foundational to structure-based drug design as it provides critical insights into the molecular interactions governing binding, such as hydrogen bonding, hydrophobic contacts, and electrostatic interactions, which inform the rational optimization of lead compounds.

The accuracy of pose prediction is typically quantified using the root-mean-square deviation (RMSD) between the predicted ligand pose and the experimentally determined reference structure [3] [2]. A predicted pose is generally considered successful if its heavy-atom RMSD relative to the crystal structure is less than 2.0 Å [6]. The resulting success rate reflects two distinct capabilities: the sampling power of the docking algorithm (its ability to generate poses close to the native structure) and the docking power of the scoring function (its capacity to rank those near-native poses above the generated decoys).
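The RMSD criterion can be illustrated with a minimal sketch. It assumes that both poses list the same heavy atoms in the same order and already share the protein's coordinate frame; it does not handle ligand symmetry, which dedicated tools correct for. The function names are illustrative and not taken from any docking package.

```python
import math

def heavy_atom_rmsd(pred, ref):
    """RMSD (Å) between two equally ordered lists of (x, y, z) heavy-atom coordinates."""
    if len(pred) != len(ref) or not pred:
        raise ValueError("coordinate lists must be non-empty and of equal length")
    squared = sum((p - r) ** 2 for a, b in zip(pred, ref) for p, r in zip(a, b))
    return math.sqrt(squared / len(pred))

def is_successful_pose(pred, ref, threshold=2.0):
    """Apply the 'RMSD below 2.0 Å' success criterion described in the text."""
    return heavy_atom_rmsd(pred, ref) < threshold

# Toy example: the predicted pose is the reference shifted by 1 Å along z.
ref = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0), (1.5, 1.5, 0.0)]
pred = [(0.0, 0.0, 1.0), (1.5, 0.0, 1.0), (1.5, 1.5, 1.0)]
print(heavy_atom_rmsd(pred, ref))     # 1.0
print(is_successful_pose(pred, ref))  # True
```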

Methodological Approaches and Workflow

The pose prediction process typically involves two main components: a conformational search algorithm that explores possible ligand orientations and conformations within the binding site, and a scoring function that evaluates and ranks these generated poses [6]. Traditional docking tools like AutoDock Vina and Glide employ search algorithms such as Monte Carlo simulations or genetic algorithms combined with empirical or force-field-based scoring functions [8].

Recent AI-driven approaches have transformed pose prediction through several innovative paradigms:

- Generative diffusion models (e.g., SurfDock, DiffBindFR) progressively denoise random initial structures to generate accurate binding poses, achieving superior pose accuracy with RMSD ≤ 2 Å success rates exceeding 70% across diverse benchmarks [6].

- Regression-based models directly predict ligand coordinates and conformations from input protein and ligand structures but often struggle with producing physically plausible structures without steric clashes [6].

- Hybrid methods combine traditional conformational searches with AI-driven scoring functions, balancing accuracy and physical validity [6].

Table 2: Performance Comparison of Docking Methods in Pose Prediction

| Method Type | Representative Tools | RMSD ≤ 2 Å Success Rate | Physical Validity (PB-Valid Rate) | Combined Success Rate |
|---|---|---|---|---|
| Traditional | Glide SP | 75-85% | >94% | 70-80% |
| Generative Diffusion | SurfDock | 75-92% | 40-64% | 33-61% |
| Regression-Based | KarmaDock, QuickBind | 20-50% | 10-45% | 5-30% |
| Hybrid AI | Interformer | 60-80% | 70-90% | 50-75% |

Experimental Protocol for Pose Prediction Evaluation

To rigorously evaluate pose prediction performance, researchers can implement the following protocol based on community-standard benchmarks:

Dataset Preparation: Curate a diverse set of protein-ligand complexes from the PDBbind database [2] or specialized benchmarks like the Astex diverse set [6]. Ensure complexes cover various protein families and ligand chemotypes.

Complex Processing: Prepare protein structures by adding hydrogen atoms, assigning protonation states, and optimizing hydrogen bonding networks. Generate 3D ligand structures from SMILES strings and ensure proper charge assignment.

Docking Execution: Perform molecular docking using selected methods, saving multiple poses (typically 20-30) per ligand to ensure adequate sampling of the conformational space [2].

Pose Analysis: Calculate RMSD values between predicted poses and experimental reference structures after optimal structural alignment of protein binding sites.

Performance Metrics: Calculate success rates using the 2.0 Å RMSD threshold, and employ the PoseBusters toolkit to assess physical plausibility, including bond lengths, angles, stereochemistry, and protein-ligand clashes [6].
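As a rough illustration of the final step, the sketch below aggregates per-complex results into the three rates reported for docking methods: RMSD success, physical validity, and their combination. It assumes that the RMSD of the top-ranked pose and a boolean validity flag (for example, from a PoseBusters run performed separately) are already available; the field names `rmsd` and `pb_valid` are hypothetical.

```python
def success_rates(results, rmsd_cutoff=2.0):
    """Summarize a benchmark run.

    results: list of dicts with 'rmsd' (Å, top-ranked pose) and
             'pb_valid' (bool, precomputed physical-validity flag).
    """
    n = len(results)
    if n == 0:
        raise ValueError("no results to summarize")
    rmsd_ok = sum(r["rmsd"] <= rmsd_cutoff for r in results)
    valid = sum(bool(r["pb_valid"]) for r in results)
    both = sum(r["rmsd"] <= rmsd_cutoff and bool(r["pb_valid"]) for r in results)
    return {
        "rmsd_success": rmsd_ok / n,
        "pb_valid": valid / n,
        "combined": both / n,
    }

# Toy data for four complexes (values are made up for illustration).
toy_results = [
    {"rmsd": 1.2, "pb_valid": True},
    {"rmsd": 0.8, "pb_valid": False},  # accurate but physically implausible
    {"rmsd": 3.5, "pb_valid": True},   # plausible but inaccurate
    {"rmsd": 1.9, "pb_valid": True},
]
print(success_rates(toy_results))
```

Reporting the combined rate alongside the RMSD rate makes it visible when a method produces accurate but physically implausible poses.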

Core Task 2: Virtual Screening

Definition and Significance

Virtual screening (VS) represents the computational counterpart to high-throughput experimental screening, enabling researchers to rapidly prioritize potential hit compounds from vast chemical libraries for further experimental validation [1] [8]. The primary objective of structure-based virtual screening is to identify novel compounds with the potential to bind to a specific protein target of therapeutic interest, thereby accelerating the early stages of drug discovery [1]. VS is particularly valuable for addressing challenging target classes such as protein-protein interactions (PPIs), which often require novel chemotypes not well-represented in traditional compound libraries [8].

The performance of a virtual screening campaign is commonly measured by the enrichment factor, which quantifies how strongly the scoring function concentrates active compounds, relative to inactive ones, at the top of a ranked list [8]. Effective VS strategies must address several challenges, including the management of large datasets containing millions to billions of compounds, structural filtration to remove compounds with unfavorable properties, and accurate prediction of binding affinities while minimizing false positives [1].
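The enrichment factor at a given fraction of the ranked library is usually defined as the hit rate among the top-ranked compounds divided by the hit rate in the whole library. The following sketch implements that common definition; the function name, the toy scores, and the convention that lower scores are better are assumptions made for illustration.

```python
import math

def enrichment_factor(scores, labels, fraction=0.01, lower_is_better=True):
    """EF at `fraction`: (actives in top fraction / size of top fraction)
    divided by (total actives / library size).

    scores: one docking score per compound
    labels: 1 for known actives, 0 for inactives or decoys
    """
    n = len(scores)
    total_actives = sum(labels)
    if n == 0 or total_actives == 0:
        raise ValueError("need a non-empty library with at least one active")
    n_top = max(1, math.ceil(n * fraction))
    order = sorted(range(n), key=lambda i: scores[i], reverse=not lower_is_better)
    actives_top = sum(labels[i] for i in order[:n_top])
    return (actives_top / n_top) / (total_actives / n)

# Toy library of ten compounds with two actives (made-up scores).
toy_scores = [-9.5, -9.1, -8.0, -7.5, -7.2, -7.0, -6.8, -6.1, -5.9, -5.0]
toy_labels = [1, 0, 1, 0, 0, 0, 0, 0, 0, 0]
print(enrichment_factor(toy_scores, toy_labels, fraction=0.2))
```

An EF of 1 corresponds to random selection; in this toy case one of the two top-ranked compounds is active, so the top 20% is enriched 2.5-fold over the library average.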

Advanced Screening Strategies

Modern virtual screening employs sophisticated multi-step workflows that leverage both structure-based and ligand-based approaches:

- Pharmacophore-Based Screening: Utilizes the 3D arrangement of structural features essential for biological activity as queries to screen compound libraries. Elsaman et al. demonstrated this approach by screening 460,000 compounds from the National Cancer Institute library to identify KHK-C inhibitors for metabolic disorders [1].

- Multi-Step Docking Protocols: Combine fast initial screening with more sophisticated rescoring methods. Shahwan et al. implemented a virtual screening of 3,648 drug molecules against MAO-B followed by molecular dynamics simulations, identifying brexpiprazole and trifluperidol as promising candidates for Parkinson's disease and depression [1].

- Target-Specific Machine Learning Scoring Functions: Graph convolutional neural networks (GCNs) can be trained on target-specific data to significantly enhance screening accuracy compared to generic scoring functions, as demonstrated for targets like cGAS and kRAS [9].

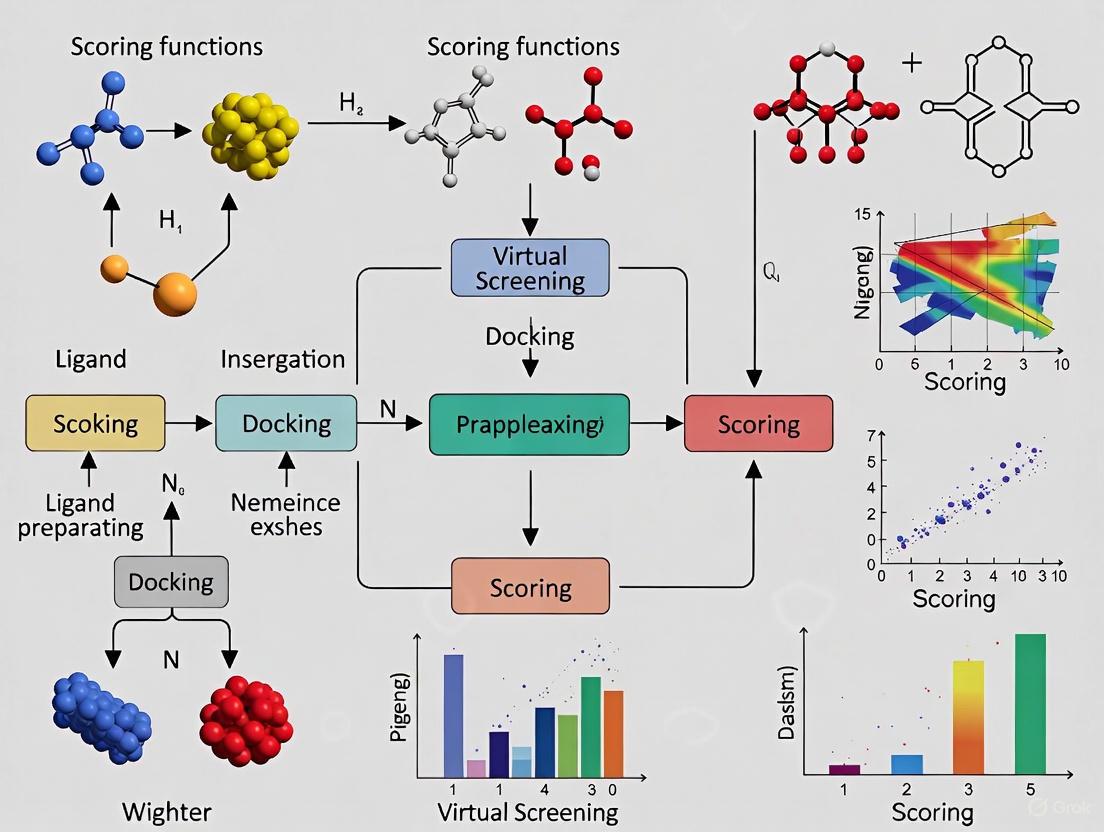

Figure 1: Virtual Screening Workflow. This diagram illustrates the multi-stage process of structure-based virtual screening, from initial compound library to final hit selection.

Experimental Protocol for Virtual Screening

A robust virtual screening protocol incorporates multiple filtering stages to balance computational efficiency with accuracy:

Library Preparation: Curate a screening library from commercial sources (e.g., ZINC, Enamine) or design focused libraries tailored to specific target classes. Apply chemical filters to remove compounds with undesirable properties or structural features [1].

Receptor Preparation: Select appropriate protein structures, considering flexibility through ensemble docking if multiple structures are available. The choice of receptor structure significantly impacts screening outcomes, with "close" methods using co-crystal structures with similar ligands often performing best [8].

Multi-Step Screening:

- Stage 1: Perform high-throughput pharmacophore screening or fast docking to rapidly reduce library size.

- Stage 2: Apply more computationally intensive molecular docking with multiple scoring functions to the top compounds.

- Stage 3: Implement post-docking analysis using molecular dynamics simulations (e.g., 300 ns MD) and free energy calculations (MM-PBSA) to further refine selections [1].

Hit Selection and Validation: Prioritize compounds based on consensus scoring, favorable predicted pharmacokinetic profiles, and synthetic accessibility. Proceed to experimental validation through biochemical or cellular assays.
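Consensus scoring can take many forms. One simple variant, sketched below, averages the rank that each scoring function assigns to a compound; this "rank-by-rank" scheme is chosen here for illustration and is not necessarily the scheme used in the cited studies. The compound identifiers and scores are made up.

```python
def consensus_by_mean_rank(score_tables, lower_is_better=True):
    """Rank compounds by their average rank across several scoring functions.

    score_tables: dict mapping scoring-function name -> {compound_id: score}
    Returns a list of (compound_id, mean_rank), best first.
    """
    if not score_tables:
        raise ValueError("at least one scoring function is required")
    # Only compounds scored by every function are compared.
    compounds = sorted(set.intersection(*(set(t) for t in score_tables.values())))
    mean_rank = {c: 0.0 for c in compounds}
    for table in score_tables.values():
        ordered = sorted(compounds, key=lambda c: table[c], reverse=not lower_is_better)
        for rank, compound in enumerate(ordered, start=1):
            mean_rank[compound] += rank / len(score_tables)
    return sorted(mean_rank.items(), key=lambda item: item[1])

toy_tables = {
    "sf_a": {"cmpd1": -9.0, "cmpd2": -7.5, "cmpd3": -8.2},
    "sf_b": {"cmpd1": -6.1, "cmpd2": -6.5, "cmpd3": -7.0},
}
for compound, rank in consensus_by_mean_rank(toy_tables):
    print(compound, rank)
```

Working with ranks rather than raw scores sidesteps the fact that different scoring functions report values on different scales.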

Core Task 3: Binding Affinity Prediction

Definition and Significance

Binding affinity prediction aims to quantitatively estimate the strength of interaction between a protein and ligand, typically expressed as a binding free energy (ΔG) or as an inhibition or dissociation constant (Ki/Kd) [10] [7]. Accurate affinity prediction represents the most challenging aspect of molecular docking, as it requires precise quantification of the subtle thermodynamic balance governing molecular recognition [7]. While classical scoring functions have demonstrated limited accuracy in this domain, recent AI-driven approaches have shown significant improvements in correlating predicted affinities with experimental measurements [5].
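The two ways of expressing affinity are linked by the standard thermodynamic relation ΔG = RT ln(Kd / c°), with c° = 1 M. The short sketch below applies it and also computes pKd, the log-scale value often used as a regression target; the function names and the default temperature of 298.15 K are choices made for this example.

```python
import math

R_KCAL = 1.987204e-3  # gas constant in kcal/(mol*K)

def kd_to_delta_g(kd_molar, temperature_k=298.15):
    """Binding free energy (kcal/mol) from a dissociation constant given in mol/L.

    Uses dG = RT ln(Kd / c0) with the standard concentration c0 = 1 M.
    """
    if kd_molar <= 0:
        raise ValueError("Kd must be positive")
    return R_KCAL * temperature_k * math.log(kd_molar)

def kd_to_pkd(kd_molar):
    """pKd = -log10(Kd in mol/L)."""
    return -math.log10(kd_molar)

# A 1 nM binder corresponds to roughly -12.3 kcal/mol at room temperature.
print(round(kd_to_delta_g(1e-9), 2), kd_to_pkd(1e-9))
```

At room temperature each tenfold change in Kd shifts ΔG by about 1.4 kcal/mol, which is why RMSE values of 1.3 to 1.8 pK units still correspond to substantial uncertainty in potency.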

The ability to reliably predict binding affinities directly impacts lead optimization—the medicinal chemistry process of enhancing the potency and properties of initial hit compounds. Furthermore, accurate affinity prediction enables more effective virtual screening by improving the prioritization of true actives over non-binders [10]. However, significant challenges remain, including accounting for solvent effects, entropy contributions, and protein flexibility, which collectively complicate the relationship between structural features and binding strength.

Addressing Data Bias and Generalization Challenges

A critical advancement in binding affinity prediction has been the recognition and addressing of data bias in standard benchmarks. Recent research has revealed substantial train-test data leakage between the PDBbind database and the Comparative Assessment of Scoring Functions (CASF) benchmark, leading to inflated performance metrics for many deep-learning-based scoring functions [7]. When models are trained on PDBbind and tested on CASF, nearly half of the test complexes have highly similar counterparts in the training set, enabling prediction through memorization rather than genuine understanding of protein-ligand interactions [7].

To address this issue, the PDBbind CleanSplit dataset was developed using a structure-based filtering algorithm that eliminates data leakage by removing training complexes that closely resemble any CASF test complex [7]. This approach ensures more realistic evaluation of model generalization capabilities. When state-of-the-art models like GenScore and Pafnucy were retrained on CleanSplit, their performance dropped markedly, confirming that previous high scores were largely driven by data leakage rather than true generalization [7].

Advanced Methodologies and Protocols

Modern binding affinity prediction incorporates sophisticated physical modeling and machine learning:

Hybrid Physics-AI Approaches: Methods like DockBind integrate docking pose information with physical and chemical descriptors, including neural potential energy estimates, molecular fingerprints, and DFT-based energy calculations [10]. Ensembling predictions across multiple top-ranked docking poses improves robustness by mitigating the impact of misranked conformations [10].

Graph Neural Networks: GNNs like GEMS (Graph neural network for Efficient Molecular Scoring) leverage sparse graph modeling of protein-ligand interactions and transfer learning from protein language models to achieve state-of-the-art predictions on strictly independent test datasets [7].

Multi-Modal Feature Integration: Advanced models combine protein sequence embeddings from language models (ESM), detailed atomic environments captured by equivariant graph neural networks (MACE), and traditional molecular descriptors to enhance prediction accuracy [10] [7].

Table 3: Binding Affinity Prediction Performance on CASF Benchmark

| Method Category | Representative Methods | Original PDBbind (RMSE) | CleanSplit (RMSE) | Performance Drop |
|---|---|---|---|---|
| Classical SF | AutoDock Vina, GBVI/WSA dG | ~1.6-1.8 | ~1.6-1.8 | Minimal |
| Deep Learning SF | GenScore, Pafnucy | ~1.2-1.4 | ~1.5-1.7 | Significant |
| GNN with CleanSplit | GEMS | ~1.3 (on CleanSplit) | ~1.3 | Minimal |

Experimental Protocol for Binding Affinity Prediction

To rigorously evaluate binding affinity prediction methods while avoiding data bias, researchers should implement the following protocol:

Dataset Preparation: Utilize the PDBbind CleanSplit dataset or implement similar structure-based filtering to ensure no significant similarity exists between training and test complexes [7]. Filtering thresholds should consider protein similarity (TM-score > 0.8), ligand similarity (Tanimoto > 0.9), and binding conformation similarity (pocket-aligned ligand RMSD < 2.0 Å) [7].
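The filtering idea can be sketched as follows. The three thresholds come from the text above, but how they are combined is an assumption of this example: a training complex is dropped only when all three similarity criteria are met for some test complex. The published CleanSplit procedure may combine them differently. Similarity values are assumed to be precomputed, and all identifiers are hypothetical.

```python
def resembles_test_complex(pair, tm_cut=0.8, tanimoto_cut=0.9, rmsd_cut=2.0):
    """True if a train/test pair is similar in protein, ligand, and binding mode.

    ASSUMPTION: the three criteria are combined with a logical AND.
    pair: dict with 'tm_score', 'ligand_tanimoto', 'ligand_rmsd' (Å).
    """
    return (
        pair["tm_score"] > tm_cut
        and pair["ligand_tanimoto"] > tanimoto_cut
        and pair["ligand_rmsd"] < rmsd_cut
    )

def filter_training_set(train_ids, comparisons):
    """Keep training complexes that do not closely resemble any test complex.

    comparisons: dict mapping train_id -> list of pair dicts, one per test complex.
    """
    return [
        t for t in train_ids
        if not any(resembles_test_complex(p) for p in comparisons.get(t, []))
    ]

# Hypothetical precomputed similarities for two training complexes.
toy_comparisons = {
    "train_A": [{"tm_score": 0.95, "ligand_tanimoto": 0.97, "ligand_rmsd": 0.6}],
    "train_B": [{"tm_score": 0.95, "ligand_tanimoto": 0.35, "ligand_rmsd": 4.1}],
}
print(filter_training_set(["train_A", "train_B"], toy_comparisons))
```

Here `train_A` is removed because it shares protein fold, ligand, and binding mode with a test complex, while `train_B` is kept despite the similar protein.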

Feature Engineering: Extract comprehensive features including atomic-level graph representations, molecular fingerprints, quantum chemical descriptors (DFT calculations), and protein sequence embeddings from language models like ESM [10] [7].

Model Training and Validation:

- Implement graph neural network architectures that explicitly model protein-ligand interactions through sparse graph representations.

- Utilize multi-task learning or transfer learning from related predictive tasks.

- Apply rigorous cross-validation with structure-based splits to prevent overoptimistic performance estimates.

Performance Assessment: Evaluate using multiple metrics including Root Mean Square Error (RMSE), Pearson correlation coefficient (R), and Spearman rank correlation (ρ) on strictly independent test sets. Compare against classical and other machine-learning-based scoring functions as baselines.
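The three metrics named above can be computed with a few lines of standard-library Python. This is a minimal sketch for illustration (in practice a statistics library would typically be used); Spearman's ρ is obtained as the Pearson correlation of average ranks, which handles ties.

```python
import math

def rmse(predicted, observed):
    """Root mean square error between predicted and observed affinities."""
    return math.sqrt(sum((p - o) ** 2 for p, o in zip(predicted, observed)) / len(observed))

def pearson(x, y):
    """Pearson correlation coefficient R."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)

def average_ranks(values):
    """1-based ranks; tied values share the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return ranks

def spearman(x, y):
    """Spearman rank correlation: Pearson correlation of the rank vectors."""
    return pearson(average_ranks(x), average_ranks(y))

# Made-up pKd values for four complexes.
observed = [5.0, 6.2, 7.1, 8.4]
predicted = [5.5, 6.0, 7.9, 8.0]
print(rmse(predicted, observed), pearson(predicted, observed), spearman(predicted, observed))
```

In the toy data the predictions preserve the experimental ordering, so ρ is 1 even though the RMSE is about 0.5 pK units; reporting both separates ranking ability from absolute accuracy.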

Table 4: Essential Computational Tools for Core Docking Tasks

| Tool Name | Type/Function | Application in Core Tasks | Key Features |
|---|---|---|---|
| MOE (Molecular Operating Environment) | Commercial drug discovery platform | Pose prediction, Virtual screening | Implements 5 scoring functions (London dG, ASE, Alpha HB, etc.) [3] [2] |
| AutoDock Vina | Open-source docking tool | Pose prediction, Virtual screening | Fast conformational search, widely used benchmark [8] [6] |
| Glide | Commercial docking software | Pose prediction, Virtual screening | High pose accuracy, strong physical validity [6] |
| PDBbind Database | Comprehensive protein-ligand database | Method development & benchmarking | >20,000 complexes with binding affinity data [2] [7] |
| CASF Benchmark | Curated benchmark sets | Method evaluation | Standardized assessment of scoring functions [2] [7] |
| PoseBusters | Validation toolkit | Pose quality assessment | Checks physical plausibility and chemical validity [6] |
| Graph Neural Networks | Deep learning architecture | All three tasks | Target-specific scoring, improved generalization [9] [7] |
| DiffDock | Diffusion-based docking | Pose prediction | Blind docking with state-of-the-art accuracy [10] |

The three core tasks of pose prediction, virtual screening, and binding affinity prediction represent interconnected components of a comprehensive structure-based drug discovery pipeline. While each task presents distinct challenges, they collectively depend on the continuous refinement of scoring functions through innovative methodologies, particularly AI and deep learning. The integration of physical modeling with data-driven approaches shows significant promise for developing more accurate and generalizable scoring functions.

Future advancements will likely focus on several key areas: improved handling of protein flexibility and solvent effects, development of standardized benchmarks without data leakage, and creation of more efficient algorithms capable of screening ultra-large chemical libraries. Additionally, the integration of generative AI for de novo ligand design coupled with accurate affinity prediction represents an emerging frontier that may further accelerate therapeutic development. As these computational methods continue to evolve, they will play an increasingly central role in bridging the gap between in silico predictions and experimental reality, ultimately enabling more efficient and successful drug discovery campaigns.

Scoring functions are fundamental components of structure-based virtual screening, enabling the prediction of ligand-receptor binding affinity and the identification of potential drug candidates. This whitepaper provides an in-depth technical examination of the three classical families of scoring functions—force field-based, empirical, and knowledge-based—that remain crucial in computational drug discovery. We detail their underlying theoretical principles, mathematical formulations, and implementation methodologies, contextualized within contemporary virtual screening research. The document includes structured comparisons of quantitative performance data, detailed experimental protocols for benchmark validation, and visualization of key workflows. Additionally, we present essential computational resources that constitute the researcher's toolkit for developing and applying these functions. Understanding the strengths and limitations of each scoring function family is paramount for optimizing virtual screening pipelines and advancing drug development efforts.

In the drug discovery pipeline, structure-based virtual screening (SBVS) has become an indispensable approach for identifying novel bioactive molecules from large compound libraries. Molecular docking, a core methodology of SBVS, predicts the binding mode and affinity of a small molecule within a target's binding site. The accuracy of these predictions hinges critically on the scoring function—a mathematical algorithm that approximates the binding affinity by calculating the interaction energy between the ligand and the biomacromolecule [11] [2]. Scoring functions are employed for three primary goals: pose prediction (identifying the correct binding geometry), virtual screening (distinguishing active from inactive compounds), and binding affinity prediction (ranking compounds by potency) [11]. While pose prediction is often performed with satisfactory accuracy, the precise prediction of binding affinity remains a significant challenge, driving continuous methodological refinements [11] [12].

The development of more accurate scoring functions is strategic in structure-based drug design (SBDD). Although no universal scoring function with reliable accuracy for all molecular systems exists, the classical approaches provide a robust foundation. Traditionally, scoring functions are classified into three main families: force field-based, empirical, and knowledge-based functions [11] [4]. Some recent classification schemes have proposed alternative categories, such as physics-based, regression-based, potential of mean force, and descriptor-based [11]. However, the traditional classification offers a general and adequate framework for understanding their fundamental development strategies [11]. This whitepaper delves into the technical specifics of these three classical families, providing researchers with a comprehensive guide to their mechanisms, applications, and assessment protocols.

Force Field-Based Scoring Functions

Theoretical Foundation and Algorithmic Workflow

Force field-based scoring functions root their methodology in classical molecular mechanics. They calculate the binding energy as a sum of multiple energy terms derived from a molecular force field. The core components typically include the interaction energies of the protein-ligand complex, encapsulated by non-bonded terms, and the internal ligand energy, which includes bonded and non-bonded terms [11]. The interaction energy is primarily calculated using Lennard-Jones potentials to describe van der Waals interactions and Coulomb potentials to describe electrostatic interactions [2] [13]. A critical advancement in this family is the incorporation of solvation effects, which can be computed using continuum solvation models like the Poisson-Boltzmann (PB) equation or the related Generalized Born (GB) model [11]. This consideration is vital for achieving a more physiologically relevant estimation of binding affinity.

The general form of a force field-based scoring function can be represented as:

\[ \Delta G_{\text{bind}} = w_{\text{vdW}} \cdot E_{\text{vdW}} + w_{\text{elec}} \cdot E_{\text{elec}} + w_{\text{sol}} \cdot E_{\text{sol}} + E_{\text{internal}} \]

where \( E_{\text{vdW}} \) and \( E_{\text{elec}} \) are the van der Waals and electrostatic interaction energies, respectively, \( E_{\text{sol}} \) is the solvation energy, \( E_{\text{internal}} \) is the ligand's internal energy, and each \( w \) is the weight of the corresponding term [11] [13]. The weights may be unity in purely physics-based functions or calibrated for specific applications. Prominent examples of force field-based scoring functions include those implemented in DOCK and DockThor [11], as well as the GBVI/WSA dG function in the Molecular Operating Environment (MOE) [2].
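To make the two non-bonded interaction terms concrete, the sketch below evaluates a 12-6 Lennard-Jones potential and a Coulomb potential over all protein-ligand atom pairs. It is a simplified illustration under several assumptions: Lorentz-Berthelot combining rules, a constant dielectric, no distance cutoff, and no solvation or internal-energy term. The atom parameters are made up and do not come from any specific force field.

```python
import math

COULOMB_K = 332.0637  # Coulomb constant in kcal*Å/(mol*e^2)

def lennard_jones(r, epsilon, sigma):
    """12-6 Lennard-Jones energy (kcal/mol) at distance r (Å)."""
    s6 = (sigma / r) ** 6
    return 4.0 * epsilon * (s6 * s6 - s6)

def coulomb(r, q_i, q_j, dielectric=1.0):
    """Coulomb energy (kcal/mol) between partial charges q_i and q_j (in e)."""
    return COULOMB_K * q_i * q_j / (dielectric * r)

def interaction_energy(protein, ligand, w_vdw=1.0, w_elec=1.0):
    """Weighted sum of the vdW and electrostatic terms over all atom pairs.

    Each atom is a dict with 'xyz', 'q', 'eps', 'sigma'.
    E_sol and E_internal from the general form are omitted in this sketch.
    """
    e_vdw = e_elec = 0.0
    for p_atom in protein:
        for l_atom in ligand:
            r = math.dist(p_atom["xyz"], l_atom["xyz"])
            eps = math.sqrt(p_atom["eps"] * l_atom["eps"])      # assumed combining rule
            sigma = 0.5 * (p_atom["sigma"] + l_atom["sigma"])   # assumed combining rule
            e_vdw += lennard_jones(r, eps, sigma)
            e_elec += coulomb(r, p_atom["q"], l_atom["q"])
    return w_vdw * e_vdw + w_elec * e_elec

# One protein atom and one ligand atom with opposite partial charges (toy values).
toy_protein = [{"xyz": (0.0, 0.0, 0.0), "q": -0.5, "eps": 0.1, "sigma": 3.4}]
toy_ligand = [{"xyz": (3.8, 0.0, 0.0), "q": 0.4, "eps": 0.1, "sigma": 3.4}]
print(interaction_energy(toy_protein, toy_ligand))
```

The double loop scales with the product of the atom counts, which is one reason practical implementations precompute interaction grids or apply distance cutoffs.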

Key Characteristics and Experimental Considerations

Table 1: Key Characteristics of Force Field-Based Scoring Functions

| Aspect | Description | Representative Examples |
|---|---|---|
| Theoretical Basis | Classical molecular mechanics force fields. | DOCK, DockThor [11] |
| Core Energy Terms | Van der Waals (Lennard-Jones), Electrostatics (Coulomb), Solvation, Internal energy. | GBVI/WSA dG in MOE [2] |
| Solvation Treatment | Explicitly calculated, e.g., via continuum models (PB, GB). | Poisson-Boltzmann, Generalized Born [11] |
| Parameterization | Based on experimental physicochemical data and quantum mechanical calculations. | - |
| Computational Cost | Generally high, especially with explicit solvation models [4]. | - |
| Primary Strength | Strong physical basis, theoretically transferable. | - |
| Common Limitation | High computational cost; sensitivity to parameterization and charge assignments. | - |

When applying force field-based functions in virtual screening, particular attention must be paid to system preparation. This includes the accurate assignment of protonation states of ionizable residues at the target pH, typically done with tools like PROPKA [13], and the assignment of partial atomic charges. The use of a consistent force field for both the protein and the ligand is critical to avoid artifacts. The high computational cost of these functions can be a limiting factor in large-scale virtual screening campaigns; however, they are often used for final re-scoring of top-ranked compounds from faster, initial screens [11].

Empirical Scoring Functions

Theoretical Foundation and Algorithmic Workflow

Empirical scoring functions operate on the principle that the binding free energy can be correlated to a set of weighted, physically relevant descriptors. Unlike force field-based functions, they are not derived from first principles but are calibrated to reproduce experimental binding affinity data. The development of an empirical scoring function requires three key components: (i) descriptors that describe the binding event (e.g., hydrogen bonds, hydrophobic contacts), (ii) a dataset of 3D structures of protein-ligand complexes with associated experimental affinity data (e.g., from the PDBbind database), and (iii) a regression or classification algorithm to establish a relationship between the descriptors and the affinity [11]. Multiple linear regression (MLR) is frequently used, leading to linear scoring functions, but more sophisticated machine-learning techniques are increasingly employed [11].

The functional form of a linear empirical scoring function is:

\[ \Delta G_{\text{bind}} = w_0 + \sum_i w_i \cdot \Delta X_i \]

where \( \Delta X_i \) are the interaction descriptors (e.g., number of hydrogen bonds, buried surface area), \( w_i \) are the weights obtained through regression, and \( w_0 \) is a constant [11]. LUDI, developed by Böhm, was the first empirical scoring function, and other prominent examples include ChemScore, GlideScore (used in Glide), and the various empirical functions in MOE such as London dG, ASE, Affinity dG, and Alpha HB [11] [2].
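Evaluating a linear empirical function is a weighted sum of descriptor values plus a constant, as the following sketch shows. The descriptor names, weights, and intercept are invented for illustration and do not reproduce the parameters of LUDI, ChemScore, or any other published function.

```python
def empirical_score(descriptors, weights, intercept):
    """Linear empirical score: w_0 + sum_i w_i * X_i.

    descriptors: {name: value} computed for one protein-ligand pose
    weights:     {name: regression weight}; every descriptor needs a weight
    """
    missing = set(descriptors) - set(weights)
    if missing:
        raise KeyError(f"no weight for descriptors: {sorted(missing)}")
    return intercept + sum(weights[name] * value for name, value in descriptors.items())

# Hypothetical weights (kcal/mol per unit) and descriptor counts for one pose.
toy_weights = {"h_bonds": -1.0, "hydrophobic_contacts": -0.2, "rotatable_bonds": 0.3}
toy_descriptors = {"h_bonds": 3, "hydrophobic_contacts": 10, "rotatable_bonds": 4}
print(empirical_score(toy_descriptors, toy_weights, intercept=-0.5))
```

With these toy numbers the favorable hydrogen-bond and hydrophobic terms (-3.0 and -2.0) are partly offset by the rotatable-bond penalty (+1.2), giving a score of about -4.3; in a real function the weights would come from regression against measured affinities.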

Key Characteristics and Experimental Considerations

Table 2: Key Characteristics of Empirical Scoring Functions

| Aspect | Description | Representative Examples |
|---|---|---|
| Theoretical Basis | Regression model trained on experimental complex and affinity data. | LUDI [11], ChemScore, GlideScore [11] |
| Core Descriptors | Hydrogen bonding, hydrophobic interactions, ionic interactions, entropy loss, etc. | London dG, Alpha HB (MOE) [2] |
| Training Algorithm | Multiple Linear Regression (MLR) or more complex Machine Learning (ML). | Linear: MLR; Nonlinear: RF, SVM [11] |
| Training Data | Curated datasets of protein-ligand complexes with binding affinities (e.g., PDBbind). | PDBbind, CASF benchmarks [2] [13] |
| Computational Cost | Generally fast, suitable for high-throughput screening. | - |
| Primary Strength | Fast and reasonably accurate for the chemical space covered by training data. | - |
| Common Limitation | Risk of overfitting; performance depends heavily on the quality and representativeness of the training set. | - |

A critical consideration when using empirical scoring functions is the domain of applicability. Since the model is derived from a specific training set, its predictive power may diminish when applied to targets or ligand chemotypes that are poorly represented in that set. Therefore, understanding the composition of the training data is essential. The quality of the input data—both the structural complexes and the affinity data—directly impacts model performance. Pre-processing steps to remove erroneous structures and normalize affinity measurements (e.g., pKd/pKi) are crucial. Empirical functions are often the default in many docking programs due to their good balance between speed and accuracy [11] [2].

Knowledge-Based Scoring Functions

Theoretical Foundation and Algorithmic Workflow

Knowledge-based scoring functions, also known as statistical-potential functions, derive their parameters from statistical analysis of structural databases. The fundamental assumption is that the frequency of occurrence of certain structural features (e.g., interatomic distances) in experimentally determined protein-ligand complexes reflects their energetic favorability. More frequently observed interactions are deemed more favorable. These observed frequencies are converted into pseudo-energy potentials through the inverse Boltzmann relationship, resulting in a Potential of Mean Force (PMF) [11] [4].

The general process involves analyzing a large database of known protein-ligand complexes (e.g., from the Protein Data Bank). For each pair of atom types, the radial distribution function, \( g(r) \), is computed. This function is then converted into an energy term:

\[ w(r) = -k_B T \ln \left[ g(r) \right] \]

where \( k_B \) is Boltzmann's constant, \( T \) is the absolute temperature, and \( w(r) \) is the pairwise potential [4]. The total score for a complex is the sum of the contributions from all interacting atom pairs. Examples of knowledge-based scoring functions include DrugScore and PMF [11].
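The inverse Boltzmann conversion can be sketched for a single atom-type pair as follows. Here g(r) is approximated per distance bin as the ratio of the normalized observed counts to the normalized counts of a reference distribution; the choice of reference state, the bin layout, and the toy counts are assumptions of this example. Energies are reported per mole, so the gas constant (Avogadro's number times the Boltzmann constant) is used.

```python
import math

R_KCAL = 1.987204e-3  # k_B per mole (gas constant) in kcal/(mol*K)

def pair_potential(observed_counts, reference_counts, temperature_k=298.15):
    """Per-bin potential w(r) = -kT ln g(r) for one atom-type pair.

    g(r) is the normalized observed frequency divided by the normalized
    reference frequency. Bins without data yield None (potential undefined).
    """
    obs_total = sum(observed_counts)
    ref_total = sum(reference_counts)
    potentials = []
    for obs, ref in zip(observed_counts, reference_counts):
        if obs == 0 or ref == 0:
            potentials.append(None)
            continue
        g = (obs / obs_total) / (ref / ref_total)
        potentials.append(-R_KCAL * temperature_k * math.log(g))
    return potentials

# Toy counts in four distance bins; the reference state is uniform here.
toy_observed = [10, 40, 30, 20]
toy_reference = [25, 25, 25, 25]
print(pair_potential(toy_observed, toy_reference))
```

Bins that are populated more often than the reference (g > 1) receive a negative, favorable potential, and under-populated bins receive a positive one. Practical implementations also need a strategy for sparse bins, which is only stubbed out here with `None`.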

Key Characteristics and Experimental Considerations

Table 3: Key Characteristics of Knowledge-Based Scoring Functions

| Aspect | Description | Representative Examples |
|---|---|---|
| Theoretical Basis | Inverse Boltzmann law applied to statistical frequencies from structural databases. | DrugScore [11], PMF [11] |
| Core Data | Pairwise distances between protein and ligand atom types from 3D structures. | - |
| Database Used | Large collections of high-resolution protein-ligand complexes (e.g., PDB). | Protein Data Bank (PDB) [4] |
| Functional Form | Sum of pairwise atom-type potentials. | - |
| Computational Cost | Fast, offering a good balance between accuracy and speed [4]. | - |
| Primary Strength | No need for experimental affinity data for parameterization; captures implicit effects. | - |
| Common Limitation | "Reference state" problem; performance depends on the size and quality of the database. | - |

A key challenge in developing knowledge-based scoring functions is the definition of the reference state, which represents the expected distribution of atom pairs in the absence of interactions. An inaccurate reference state can introduce biases into the potential. The quality and size of the structural database are also paramount; a larger, non-redundant, and high-resolution set of complexes will lead to more robust and generalizable statistical potentials. Knowledge-based functions are valued for their ability to implicitly capture complex effects, including solvation, without the need for explicit parameterization [4].

Comparative Performance and Benchmarking

Quantitative Performance Metrics and Datasets

Evaluating the performance of scoring functions requires standardized benchmarks and metrics. The Comparative Assessment of Scoring Functions (CASF) benchmark, built from the PDBbind database, is a widely used resource for this purpose [3] [2] [13]. The CASF-2013 dataset, for instance, contains 195 high-quality protein-ligand complexes [2]. Common performance metrics include the root-mean-square deviation (RMSD) for assessing pose prediction accuracy (a lower RMSD indicates a pose closer to the experimental structure) and the correlation coefficient between predicted scores and experimental binding affinities for assessing scoring power [3] [2]. In virtual screening, the screening power (the ability to separate active from inactive compounds) is often the most critical property, and it is typically quantified with enrichment factors [14] [13].
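As a rough illustration of two of these metrics, the sketch below computes Pearson's correlation and an enrichment factor in plain Python. It assumes that lower scores are more favorable and that labels are 1 for actives and 0 for inactives; the function names and example numbers are hypothetical.

```python
import math

def pearson_r(xs, ys):
    """Pearson correlation between two equally long lists of numbers."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sd_x = math.sqrt(sum((x - mean_x) ** 2 for x in xs))
    sd_y = math.sqrt(sum((y - mean_y) ** 2 for y in ys))
    return cov / (sd_x * sd_y)

def enrichment_factor(scores, labels, fraction=0.01):
    """EF = (active rate in the top fraction) / (active rate in the whole library).

    Assumes lower scores rank first and labels are 1 (active) or 0 (inactive).
    """
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0])
    n_top = max(1, int(round(fraction * len(ranked))))
    actives_top = sum(label for _, label in ranked[:n_top])
    return (actives_top / n_top) / (sum(labels) / len(labels))

# Made-up predicted scores (already on a pKd-like scale) and experimental values.
predicted = [5.1, 6.3, 7.0, 8.2]
experimental = [5.0, 6.0, 7.5, 8.0]
r_example = pearson_r(predicted, experimental)
```

An enrichment factor of 1 corresponds to random selection. Note that when raw docking scores are used (more negative is better), the correlation with pKd or pKi is expected to be negative, so the sign convention should be stated alongside the result.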

Table 4: Classical Scoring Functions Performance Comparison (Based on CASF and related benchmarks)

| Scoring Function | Class | Primary Use Case | Pose Prediction (RMSD) | Scoring Power (Correlation) | Screening Power (Enrichment) |

|---|---|---|---|---|---|

| GBVI/WSA dG | Force Field | Affinity prediction, Re-scoring | Variable | Moderate [2] | Moderate |

| London dG | Empirical | Pose prediction, Virtual screening | Good [2] | Moderate | Good |

| Alpha HB | Empirical | Pose prediction (H-bond dependent targets) | Good [2] | Moderate | Good |

| Affinity dG | Empirical | General docking | Moderate | Moderate | Moderate |

| DrugScore | Knowledge-Based | Pose prediction, Binding site analysis | Good [11] | Moderate | Moderate |

Standardized Experimental Protocol for Benchmarking

A standardized protocol for benchmarking scoring functions ensures fair and reproducible comparisons. The following workflow, adapted from recent studies, outlines a robust methodology [3] [2] [13]:

- Dataset Selection: Obtain a standardized benchmark dataset, such as the CASF benchmark subset from the PDBbind database. This ensures results are comparable across different studies.

- System Preparation: Prepare all protein structures by adding hydrogen atoms, assigning protonation states (e.g., using PROPKA at pH 7.0), and optimizing the hydrogen-bonding network using a tool like the Protein Preparation Wizard in Schrödinger [13].

- Ligand Preparation: Prepare ligand structures using a tool like LigPrep, generating correct ionization states at physiological pH (e.g., 7.0 ± 2.0) and relevant tautomers and stereoisomers [13].

- Re-docking: For each protein-ligand complex, perform re-docking. The ligand is extracted from the complex and then docked back into the prepared protein structure. This tests the function's ability to reproduce the experimentally observed binding pose (pose prediction).

- Data Extraction: From the docking results, extract key outputs for analysis:

- Best Docking Score (BestDS): The most favorable score from all generated poses.

- Best RMSD (BestRMSD): The lowest RMSD value between any generated pose and the co-crystallized ligand.

- RMSD of BestDS (RMSDBestDS): The RMSD of the pose that had the best docking score.

- DS of BestRMSD (DSBestRMSD): The docking score of the pose that had the lowest RMSD [2].

- Performance Calculation:

- Pose Prediction: Calculate the success rate based on RMSDBestDS, the RMSD of the top-scored pose. A common threshold for a "correct" pose is an RMSD < 2.0 Å.

- Scoring Power: Calculate the correlation (e.g., Pearson's R) between the BestDS for each complex and its experimental binding affinity (pKd or pKi).

- Screening Power: For targets with known actives and decoys (e.g., from DUD-E or LIT-PCBA), calculate enrichment factors in the top 1% or 5% of the ranked library to evaluate the function's ability to prioritize active compounds [14] [13].
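The data-extraction and pose-prediction steps of this protocol can be sketched as follows. The example assumes each docked pose is available as a `(docking_score, rmsd)` tuple with lower scores being more favorable; the tuple layout, function names, and example values are hypothetical.

```python
def summarize_poses(poses):
    """Extract the four per-complex quantities from a list of (score, rmsd) tuples."""
    best_score_pose = min(poses, key=lambda p: p[0])  # most favorable score
    best_rmsd_pose = min(poses, key=lambda p: p[1])   # closest to the crystal pose
    return {
        "BestDS": best_score_pose[0],
        "BestRMSD": best_rmsd_pose[1],
        "RMSDBestDS": best_score_pose[1],
        "DSBestRMSD": best_rmsd_pose[0],
    }

def pose_success_rate(summaries, threshold=2.0):
    """Fraction of complexes whose top-scored pose lies within `threshold` angstroms."""
    hits = sum(1 for s in summaries if s["RMSDBestDS"] < threshold)
    return hits / len(summaries)

# Two made-up complexes with a handful of poses each.
complex_a = summarize_poses([(-9.1, 1.2), (-8.5, 0.8), (-7.0, 4.0)])
complex_b = summarize_poses([(-6.0, 3.5), (-5.5, 1.0)])
success = pose_success_rate([complex_a, complex_b])
```

Comparing BestRMSD with RMSDBestDS separates sampling failures (no near-native pose was generated) from scoring failures (a near-native pose existed but was not ranked first).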

Table 5: Essential Computational Resources for Scoring Function Research

| Resource Name | Type | Primary Function in Research | Key Application Context |

|---|---|---|---|

| PDBbind Database | Curated Dataset | Provides a comprehensive collection of protein-ligand complexes with experimental binding affinity data for training and testing. | Empirical SF development; Benchmarking [2] [13] |

| CASF Benchmark | Benchmarking Tool | Offers a standardized diverse subset of PDBbind for comparative assessment of scoring functions. | Performance evaluation (Pose, Scoring, Screening power) [3] [2] |

| DUD-E / LIT-PCBA | Benchmarking Dataset | Provides datasets with known active compounds and property-matched decoys to test virtual screening performance. | Evaluation of screening power and model robustness [14] [13] |

| ZINC15 | Compound Library | A public database of commercially available compounds for virtual screening; used as a source for decoy molecules. | Decoy selection for ML-based SF training [14] |

| MOE (Molecular Operating Environment) | Software Platform | A commercial drug discovery suite implementing multiple classical scoring functions (London dG, Alpha HB, etc.) for docking. | Docking simulations; Comparative studies [3] [2] |

| Smina | Software Tool | A fork of AutoDock Vina designed for better scoring function development and customizability. | Docking and feature generation for ML-based SFs [13] |

| CCharPPI Server | Web Server | Allows for the assessment of scoring functions independent of the docking process. | Isolated evaluation of scoring performance [4] |

The drug discovery process has long relied on computational methods to identify and optimize potential therapeutic molecules. Structure-based virtual screening, particularly molecular docking, serves as a fundamental computational method in early-stage drug discovery by enabling scientists to quickly evaluate potential binding conformations of small molecules to protein targets [14]. Traditional scoring functions, which estimate how well a given ligand binds, have been based on either physical principles or empirical knowledge. However, these conventional approaches offer a trade-off between accuracy and speed, often relying on heuristics and physical approximations that limit their predictive accuracy [15]. This limitation has created a critical bottleneck in virtual screening campaigns, where the "screening power"—the ability to correctly select active ligands from mixtures of binders and non-binders—is paramount for success [14].

The emergence of machine learning (ML) and deep learning (DL) represents a paradigm shift in scoring function development. By learning complex patterns directly from growing repositories of protein-ligand structural and affinity data, these data-driven approaches promise to bridge the gap between accuracy and speed [16]. ML-based scoring functions have demonstrated potential not only to enhance affinity prediction ("scoring power") but also to significantly improve the identification of biologically active molecules ("screening power"), thereby accelerating virtual screening workflows [9] [14]. This whitepaper examines the transformative impact of ML/DL scoring functions, exploring their architectures, performance, and practical implementation in modern drug discovery research.

Fundamental Architectures and Methodological Approaches

Molecular Representations for Machine Learning

A critical differentiator among ML/DL scoring functions lies in how they represent molecular structures and interactions. The choice of representation fundamentally influences what patterns a model can learn and how well it generalizes to novel targets.

Table 1: Key Molecular Representations in ML/DL Scoring Functions

| Representation Type | Description | Key Examples | Advantages |

|---|---|---|---|

| Graph-Based Representations | Treats molecules as graphs with atoms as nodes and bonds as edges | Graph Convolutional Networks (GCNs) [9] | Naturally captures molecular topology and connectivity |

| Interaction Fingerprints | Encodes specific protein-ligand interactions as binary or numerical vectors | Protein per Atom Score Contributions Derived Interaction Fingerprint (PADIF) [14] | Provides human-interpretable features of binding interfaces |

| Atomic Environment Vectors | Describes local chemical environments using Gaussian functions | Atomic Environment Vectors (AEVs) [15] | Captures nuanced distance-dependent interactions |

| 3D Surface Representations | Models molecular surfaces and interaction potentials | MaSIF [17] | Captures shape complementarity and physicochemical properties |

Prominent Architectural Frameworks

Graph Convolutional Networks (GCNs)

GCNs have shown remarkable success in target-specific scoring function development. These networks operate directly on molecular graphs, learning hierarchical feature representations through message-passing between connected atoms. For challenging targets like cGAS and kRAS, GCN-based scoring functions demonstrated significant superiority over generic scoring functions, exhibiting remarkable robustness and accuracy in determining whether a molecule is active [9]. The graph structure enables GCNs to capture complex molecular patterns that translate to improved extrapolation performance when facing new compounds within a defined chemical space.
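To make the message-passing idea concrete, here is a deliberately simplified sketch of one propagation round on a molecular graph. It uses plain mean aggregation with self-loops and omits the learned weight matrices, normalization scheme, and nonlinearities of a real GCN layer; the atom features and function name are hypothetical.

```python
def message_passing_step(features, edges):
    """One round of neighbor averaging (with self-loops) over an undirected graph.

    features: list of per-atom feature vectors; edges: list of (i, j) bonds.
    """
    n_atoms = len(features)
    neighbors = {i: [i] for i in range(n_atoms)}  # self-loop keeps the atom's own features
    for a, b in edges:
        neighbors[a].append(b)
        neighbors[b].append(a)
    updated = []
    for i in range(n_atoms):
        group = [features[j] for j in neighbors[i]]
        dim = len(features[i])
        updated.append([sum(vec[k] for vec in group) / len(group) for k in range(dim)])
    return updated

# Heavy atoms of an ethanol-like chain C-C-O with one-hot [is_carbon, is_oxygen] features.
atom_features = [[1.0, 0.0], [1.0, 0.0], [0.0, 1.0]]
bonds = [(0, 1), (1, 2)]
after_one_round = message_passing_step(atom_features, bonds)
```

After one round the central carbon already carries information about its oxygen neighbor; stacking several rounds lets each atom summarize a progressively larger chemical environment.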

Protein-Ligand Interaction Graphs with Attention Mechanisms

The AEV-PLIG (Atomic Environment Vector-Protein Ligand Interaction Graph) framework represents a sophisticated evolution in interaction modeling [15]. This approach combines atomic environment vectors with protein-ligand interaction graphs, using an attentional graph neural network architecture to learn the relative importance of neighboring environments. Unlike earlier methods that simply count contacts, AEV-PLIG uses radial atomic environment vectors centered on ligand atoms as node features, capturing distance-dependent interaction information. The model leverages GATv2 layers, an enhanced version of graph attention networks that improves expressiveness, followed by global pooling and readout layers to generate binding affinity predictions.
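A radial atomic environment vector of the kind described above can be sketched with Gaussian radial functions and a smooth cosine cutoff, in the style of Behler-Parrinello symmetry functions. This is an assumption-laden illustration, not the AEV-PLIG implementation: the values of `eta`, `r_c`, and the shift grid are arbitrary, and a single vector is built instead of one per protein element type.

```python
import math

def cosine_cutoff(r, r_c):
    """Smoothly decay a contribution to zero at the cutoff radius r_c."""
    return 0.5 * (math.cos(math.pi * r / r_c) + 1.0) if r <= r_c else 0.0

def radial_aev(center, neighbors, shifts, eta=4.0, r_c=5.2):
    """Radial environment vector for one ligand atom.

    Each entry sums Gaussians centered at one radial shift over all neighbor
    atoms, so the vector encodes how many neighbors sit at which distance.
    """
    vector = []
    for r_s in shifts:
        total = 0.0
        for position in neighbors:
            r = math.dist(center, position)
            total += math.exp(-eta * (r - r_s) ** 2) * cosine_cutoff(r, r_c)
        vector.append(total)
    return vector

# One ligand atom at the origin with a single protein atom 2.0 angstroms away (made up).
shifts = [1.0, 2.0, 3.0]
aev_near = radial_aev((0.0, 0.0, 0.0), [(2.0, 0.0, 0.0)], shifts)
aev_far = radial_aev((0.0, 0.0, 0.0), [(10.0, 0.0, 0.0)], shifts)
```

In a full model, one such vector would typically be computed per neighbor element type and concatenated to form the node features of each ligand atom.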

Interaction-Focused Architectures for Improved Generalization

A significant challenge in ML scoring functions is generalizability to novel protein families or chemical series unseen during training. The CORDIAL (Convolutional Representation of Distance-dependent Interactions with Attention Learning) framework addresses this by incorporating an inductive bias toward learning distance-dependent physicochemical interaction signatures while explicitly avoiding direct parameterization of chemical structures [16]. This "interaction-only" approach has maintained its predictive performance in leave-superfamily-out validation, which simulates encounters with novel protein families, and it outperforms contemporary ML models whose predictive ability degrades under these conditions.
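The leave-superfamily-out idea can be illustrated with a small grouped-split sketch: every complex from one protein group is held out together, so the model is always tested on a family it never saw. The group labels and function name below are hypothetical, and this is not the CORDIAL evaluation code.

```python
from collections import defaultdict

def leave_group_out_splits(groups):
    """Yield (held_out_group, train_indices, test_indices) for each distinct group."""
    by_group = defaultdict(list)
    for index, group in enumerate(groups):
        by_group[group].append(index)
    for group in sorted(by_group):
        test_idx = by_group[group]
        train_idx = [i for i, g in enumerate(groups) if g != group]
        yield group, train_idx, test_idx

# One superfamily label per protein-ligand complex (made-up labels).
superfamilies = ["kinase", "kinase", "protease", "GPCR", "protease"]
splits = list(leave_group_out_splits(superfamilies))
```

A random split of the same data would place kinases in both the training and test sets, which tends to reward memorization of family-specific patterns.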

Motif Prediction Networks

Beyond affinity prediction, deep learning approaches like MotifGen predict potential binding motifs directly from receptor structures without requiring known binders [17]. This network generates motif profiles at protein surface grid points for 14 types of functional groups or 6 chemical interaction classes. These human-interpretable profiles serve as pre-trained embedding inputs for versatile few-shot binder design applications, offering a strategy for novel binder discovery for challenging receptor targets with limited known binders.

Performance Benchmarking and Comparative Analysis

Quantitative Performance Metrics

Rigorous benchmarking is essential for evaluating ML/DL scoring functions against traditional methods and established benchmarks. The Comparative Assessment of Scoring Functions (CASF) benchmark provides standardized evaluation, though recent work suggests a need for more challenging out-of-distribution tests [15].

Table 2: Performance Comparison of Scoring Function Approaches

| Method Category | Representative Methods | CASF-2016 PCC | RMSE (kcal/mol) | Screening Power | Computational Speed |

|---|---|---|---|---|---|

| Traditional Scoring Functions | ChemPLP, other docking scores | 0.60-0.70 | 2.0-3.0 | Variable | Fastest |

| Machine Learning Scoring Functions | RF-Score, PADIF-based models [14] | 0.75-0.85 | 1.5-2.0 | Enhanced | Fast |

| Deep Learning Models | AEV-PLIG, CORDIAL, GCN models [16] [15] | 0.85-0.90 | 1.5-2.0 | Superior | Moderate |

| Free Energy Perturbation (FEP) | FEP+ [15] | 0.68 (FEP benchmark) | ~1.0 (when successful) | High (when applicable) | Slowest (~400,000x slower) |

Performance in Practical Applications

In virtual screening for targets like cGAS and kRAS, target-specific scoring functions developed using graph convolutional networks showed significant superiority over generic scoring functions [9]. These models were robust and accurate in determining whether a molecule is active, and the GCNs in particular generalized to heterogeneous data by learning complex patterns of protein-ligand binding.

For binding affinity prediction, modern DL approaches like AEV-PLIG achieve competitive performance on standardized benchmarks while being orders of magnitude faster than FEP calculations [15]. When trained with augmented data (generated using template-based modeling or molecular docking), these models show significantly improved binding affinity prediction correlation and ranking on FEP benchmarks, with weighted mean PCC and Kendall's τ increasing from 0.41 and 0.26 to 0.59 and 0.42, narrowing the performance gap with FEP+ (which achieves 0.68 and 0.49 respectively) while being approximately 400,000 times faster.
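The ranking statistics quoted above can be sketched in a few lines. The example computes Kendall's τ in its simplest form (tau-a, without tie correction) and a weighted mean across congeneric series; weighting each series by its number of ligands is an assumption made here for illustration, and all numbers are made up.

```python
def kendall_tau(xs, ys):
    """Kendall's tau-a: (concordant - discordant) pairs over all pairs, ignoring tie correction."""
    concordant = discordant = 0
    n = len(xs)
    for i in range(n):
        for j in range(i + 1, n):
            sign = (xs[i] - xs[j]) * (ys[i] - ys[j])
            if sign > 0:
                concordant += 1
            elif sign < 0:
                discordant += 1
    return (concordant - discordant) / (n * (n - 1) / 2)

def weighted_mean(values, weights):
    """Average per-series statistics, weighting each series (here: by ligand count)."""
    return sum(v * w for v, w in zip(values, weights)) / sum(weights)

# Predicted versus experimental ranks for one made-up congeneric series.
tau_example = kendall_tau([1, 2, 3, 4], [1, 3, 2, 4])
# Two made-up series with 10 and 30 ligands.
mean_example = weighted_mean([0.5, 1.0], [10, 30])
```

Rank-based statistics such as τ are useful in lead optimization because the practical question is usually which analogue to make next, not the absolute affinity value.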

Implementation Frameworks and Experimental Protocols

Integrated Software Platforms

The implementation and application of ML/DL scoring functions have been facilitated by developing software frameworks that unify functionality and benchmark generation.

Table 3: Key Research Reagent Solutions and Software Frameworks

| Tool/Framework | Primary Function | Key Features | Application Context |

|---|---|---|---|

| MolScore [18] | Scoring, evaluation and benchmarking framework for generative models | Unified scoring functions, performance metrics, benchmark implementation | De novo molecular design and evaluation |

| PADIF [14] | Protein-ligand interaction fingerprint | Granular atom typing and piecewise linear potential for interaction strength | Virtual screening and target prediction |

| MotifGen [17] | Binding motif prediction from receptor structures | Predicts 14 functional group types or 6 interaction classes at surface points | Peptide binder design and binding site prediction |

| AEV-PLIG [15] | Attention-based graph neural network for affinity prediction | Combines atomic environment vectors with protein-ligand interaction graphs | Binding affinity prediction and lead optimization |

Experimental Workflow for Target-Specific Scoring Function Development

Critical Experimental Considerations

Data Curation and Decoy Selection Strategies

The performance of ML scoring functions critically depends on appropriate decoy selection—choosing inactive compounds that resemble active compounds in physicochemical properties but lack biological activity [14]. Several strategic approaches have been analyzed:

- Random Selection: Choosing compounds randomly from extensive databases like ZINC15, which positively impacts model performance but may increase false negatives.

- Dark Chemical Matter (DCM): Leveraging recurrent non-binders from high-throughput screening assays stored as dark chemical matter.

- Data Augmentation: Utilizing diverse conformations from docking results to expand training data.

Studies reveal that models trained with random selections from ZINC15 and compounds from dark chemical matter closely mimic the performance of those trained with actual non-binders, presenting viable alternatives for creating accurate models lacking specific inactivity data [14].
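The random-selection strategy can be sketched as sampling presumed inactives from a large compound pool while excluding known actives. The identifiers below stand in for database entries (for example, ZINC15 records), and the function name and fixed seed are hypothetical choices for reproducibility.

```python
import random

def sample_random_decoys(pool, actives, n_decoys, seed=0):
    """Draw presumed-inactive compounds at random, excluding known actives."""
    active_set = set(actives)
    candidates = [molecule for molecule in pool if molecule not in active_set]
    rng = random.Random(seed)  # fixed seed so the decoy set can be regenerated
    return rng.sample(candidates, min(n_decoys, len(candidates)))

# Made-up compound identifiers.
compound_pool = ["C1", "C2", "C3", "C4", "C5", "C6"]
known_actives = ["C2", "C5"]
decoys = sample_random_decoys(compound_pool, known_actives, n_decoys=3)
```

Because randomly drawn compounds are only presumed inactive, some true binders may end up labeled as decoys, which is the false-negative risk noted above.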

Advanced Training Methodologies

To address limited training data, augmented data approaches have proven highly effective. By training on both experimentally determined 3D protein-ligand complexes and structures modeled using template-based ligand alignment or molecular docking, models show significantly improved prediction correlation and ranking for congeneric series typically encountered in drug discovery [15]. For protein-peptide interface predictions, fine-tuning pre-trained models on specialized datasets (e.g., protein-peptide complexes) has demonstrated improved recovery of known binding motifs, particularly for aliphatic and aromatic categories [17].

Future Directions and Implementation Challenges

Addressing Generalizability and Interpretability

Despite impressive performance on benchmarks, the application of ML scoring functions in real-world drug discovery pipelines has been limited by challenges with generalizability to novel targets and chemical series [16]. The development of more robust out-of-distribution benchmarks that penalize ligand and/or protein memorization represents an important step toward more reliable models [15]. Similarly, model interpretability remains a significant concern, with ongoing research focusing on making these "black box" systems more transparent and their predictions more interpretable for medicinal chemists.

Integration with Workflows and Prospective Validation

Future developments will likely focus on tighter integration of ML/DL scoring functions with end-to-end drug discovery workflows, including de novo molecular design platforms like MolScore [18]. As these models mature, prospective validation—where predictions are experimentally tested—will be essential for establishing confidence and refining approaches. The remarkable speed advantage of ML methods (orders of magnitude faster than FEP) positions them as valuable tools for initial screening and prioritization, potentially complementing more rigorous but slower physical methods for final candidate selection [15].

Machine learning and deep learning scoring functions represent a genuine fourth paradigm in structure-based virtual screening, moving beyond traditional physics-based and empirical approaches to data-driven predictive modeling. By leveraging sophisticated architectures like graph convolutional networks, attention mechanisms, and interaction-focused representations, these methods have demonstrated superior screening power and binding affinity prediction accuracy compared to conventional scoring functions. While challenges remain in generalizability, interpretability, and real-world validation, the rapid advancement of frameworks like AEV-PLIG, CORDIAL, and target-specific GCN models highlights the transformative potential of this approach. As data availability increases and methodologies mature, ML/DL scoring functions are poised to become indispensable tools in accelerating early-stage drug discovery and expanding the accessible chemical space for challenging therapeutic targets.

The acceleration of drug discovery hinges on the ability to rapidly and accurately identify promising therapeutic compounds. Within structure-based virtual screening, the scoring function is the central component that predicts the binding affinity of a small molecule to a biological target. This whitepaper details the core computational pipeline—encompassing molecular descriptors, curated datasets, and machine learning regression models—that underpins the development of modern, robust scoring functions. By framing this discussion within the critical context of virtual screening research, we provide a technical guide for scientists and developers aiming to build predictive models that enhance the efficiency and success of early-stage drug discovery.

In the drug discovery pipeline, virtual screening serves as a computational triage, evaluating vast chemical libraries to identify a manageable number of high-priority candidates for experimental validation [19]. The success of this process depends crucially on the "screening power"—the ability of the scoring function to correctly distinguish true binders from non-binders [14]. Traditional, generic scoring functions often struggle with this task due to their empirical nature and inability to fully capture the complex physics of molecular recognition.

The emergence of machine learning (ML) has transformed this landscape. ML offers a data-driven approach to develop scoring functions by learning the complex relationships between a molecule's features and its biological activity [20] [21]. These models require three foundational pillars: numerical representations of molecules (descriptors), high-quality and relevant datasets for training, and robust regression or classification algorithms. The interplay of these components dictates the real-world performance of the scoring function, impacting its accuracy, generalizability, and ultimately, its success in a drug discovery campaign.

Molecular Descriptors: The Language of Cheminformatics

Molecular descriptors are quantitative representations of a molecule's structural and physicochemical properties. They translate chemical structures into a numerical format that machine learning models can process. The choice of descriptors is critical, as it determines what information the model has access to for learning.

Key Descriptor Categories and Their Calculation

Feature engineering involves calculating these descriptors from a standardized molecular representation, typically a SMILES (Simplified Molecular Input Line Entry System) string, using toolkits like RDKit [20] [19]. The following table summarizes essential descriptors for virtual screening applications.

Table 1: Key Molecular Descriptors for Virtual Screening Models

| Descriptor | Description | Role in Virtual Screening |

|---|---|---|

| Molecular Weight (MW) | The mass of the molecule. | Indicates molecular size and drug-likeness; influences pharmacokinetics [20]. |

| LogP | The octanol-water partition coefficient. | Measures hydrophobicity, which critically affects membrane permeability [20]. |

| Hydrogen Bond Donors (HBD) | Number of donor atoms (e.g., OH, NH). | Defines key interactions with the protein target, influencing binding affinity and specificity [20]. |

| Hydrogen Bond Acceptors (HBA) | Number of acceptor atoms (e.g., O, N). | Defines key interactions with the protein target, influencing binding affinity and specificity [20]. |

| Topological Polar Surface Area (TPSA) | The surface area over polar atoms. | Represents molecular polarity; a crucial predictor for solubility and cellular bioavailability [20]. |

| Number of Rotatable Bonds | Number of non-ring bonds that can rotate. | Reflects molecular flexibility, which influences the entropy cost upon binding to a target [20]. |

Feature Selection for Model Performance

Not all descriptors contribute equally to a model's predictive power. Including irrelevant features can lead to overfitting and reduced generalizability. Techniques like Recursive Feature Elimination (RFE) are employed to identify and retain only the most predictive descriptors [20]. For instance, in a model for HIV integrase inhibitors, TPSA, Molecular Weight, and LogP were identified as the strongest predictors, while the number of rotatable bonds had a lower impact [20]. This process ensures a more robust and interpretable model.

Dataset Curation: The Foundation of Model Generalization

The performance of an ML-based scoring function is profoundly dependent on the quality, size, and composition of the dataset used for its training. A meticulously curated dataset is the foundation of a generalizable model.

Data Acquisition and Preparation

The process begins with acquiring bioactivity data from public databases such as ChEMBL, which contains experimentally measured data (e.g., IC50 values) for compounds against various biological targets [20] [14]. This raw data must undergo rigorous preprocessing:

- Data Cleaning: Removal of duplicates, erroneous entries, and salts from molecular structures using toolkits like RDKit to ensure a standardized dataset [20] [19].

- Activity Standardization: IC50 values (half-maximal inhibitory concentration) are often converted to pIC50 (the negative base-10 logarithm of the IC50 expressed in molar units) to improve data linearity and model performance [20].

- Activity Thresholding: For classification models, compounds are labeled as active (e.g., pIC50 > 5) or inactive based on a predefined, biologically relevant threshold [20].
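The standardization and thresholding steps above can be sketched as follows, assuming IC50 values are reported in nanomolar (a common convention in bioactivity databases, but an assumption here). The function names are hypothetical.

```python
import math

def pic50_from_nm(ic50_nm):
    """Convert an IC50 in nanomolar to pIC50 = -log10(IC50 in molar)."""
    return 9.0 - math.log10(ic50_nm)  # 1 nM = 1e-9 M, hence the offset of 9

def label_active(pic50, threshold=5.0):
    """Binary activity label using the example threshold pIC50 > 5."""
    return pic50 > threshold

# Made-up measurements: 100 nM, 1 uM, and 100 uM.
ic50_values_nm = [100.0, 1000.0, 100000.0]
pic50_values = [pic50_from_nm(v) for v in ic50_values_nm]
labels = [label_active(p) for p in pic50_values]
```

On this scale, each unit of pIC50 corresponds to a tenfold change in potency, so a threshold of 5 marks compounds with an IC50 better than 10 µM as active.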

The Critical Role of Decoy Selection

A unique challenge in training virtual screening models is the selection of decoys—molecules that are presumed to be inactive but are physically similar to active compounds to make the discrimination task meaningful [14]. The strategy for decoy selection significantly influences model performance.

Table 2: Common Strategies for Decoy Selection in Virtual Screening

| Strategy | Methodology | Advantages & Considerations |

|---|---|---|

| Random Selection | Selecting compounds at random from large databases like ZINC15. | A viable and simple alternative, especially when experimental non-binders are unavailable [14]. |

| Dark Chemical Matter (DCM) | Using compounds from HTS assays that never showed activity across many screens. | Provides molecules with confirmed inactivity, closely mimicking true non-binders [14]. |

| Data Augmentation | Using diverse, non-native conformations generated by docking active molecules. | Generates target-specific decoys from known actives, enriching the negative dataset [14]. |

Research has shown that models trained with decoys from random selection or dark chemical matter can closely approximate the performance of models trained with confirmed non-binders, providing practical pathways for model development [14].

Regression Models: From Baseline to Advanced Architectures

With features and labels defined, the next step is selecting and training the machine learning model. The choice of algorithm ranges from interpretable baseline models to complex, high-capacity deep learning architectures.

Model Training and Evaluation Protocol

A standard experimental protocol ensures rigorous model development:

- Data Splitting: The curated dataset is split into a training set (e.g., 80%) for model learning and a held-out test set (e.g., 20%) for final evaluation [20].

- Model Selection & Training: Models are trained on the training set. Common choices include:

- Logistic Regression: Serves as an interpretable baseline for classification tasks [20].

- Random Forest: An ensemble method robust to overfitting and capable of capturing non-linear relationships [20].

- Graph Convolutional Networks (GCNs): A deep learning approach that operates directly on the molecular graph structure, learning relevant features automatically [9].

- Hyperparameter Tuning: Parameters not learned directly from the data (e.g., number of trees in a forest) are optimized using techniques like GridSearchCV to find the best-performing configuration [20].

- Performance Evaluation: The trained model is evaluated on the test set using a suite of metrics [20] [22].
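The splitting and tuning steps of this protocol can be sketched in plain Python. The grid search below simply enumerates every parameter combination and keeps the best score from a caller-supplied `evaluate` function; it is a simplified stand-in and does not perform the cross-validation that scikit-learn's GridSearchCV adds. All names and the toy scoring function are hypothetical.

```python
import itertools
import random

def train_test_split(items, test_fraction=0.2, seed=42):
    """Shuffle a copy of the data and hold out a fraction for final evaluation."""
    rng = random.Random(seed)
    shuffled = list(items)
    rng.shuffle(shuffled)
    n_test = int(round(test_fraction * len(shuffled)))
    return shuffled[n_test:], shuffled[:n_test]

def grid_search(param_grid, evaluate):
    """Try every combination in param_grid and return the best (params, score)."""
    names = sorted(param_grid)
    best_params, best_score = None, float("-inf")
    for combo in itertools.product(*(param_grid[name] for name in names)):
        params = dict(zip(names, combo))
        score = evaluate(params)
        if score > best_score:
            best_params, best_score = params, score
    return best_params, best_score

train_set, test_set = train_test_split(list(range(10)))

# Toy validation score that peaks at n_trees=100, max_depth=5 (made up).
def toy_score(params):
    return -((params["n_trees"] - 100) ** 2) - (params["max_depth"] - 5) ** 2

best, best_value = grid_search({"n_trees": [50, 100, 200], "max_depth": [3, 5]}, toy_score)
```

In practice `evaluate` would train a model on part of the training set and score it on a validation fold, so that the held-out test set is touched only once, at the end.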

Quantitative Model Performance Comparison

The performance of different models can be quantitatively compared using standard metrics. The following table illustrates a typical comparison, demonstrating how more advanced models can outperform simpler ones.

Table 3: Performance Comparison of Machine Learning Models for Virtual Screening

| Metric | Random Forest | Logistic Regression | Graph Convolutional Network (GCN) |

|---|---|---|---|

| Accuracy | 0.816 [20] | 0.580 [20] | Significant superiority over generic scoring functions [9] |

| AUC-ROC | 0.886 [20] | 0.595 [20] | High accuracy & robustness [9] |

| Precision | 0.792 [20] | 0.571 [20] | - |

| Recall | 0.790 [20] | 0.187 [20] | - |

| Enrichment Factor (EF1%) | - | - | 16.72 (RosettaGenFF-VS) [22] |

As shown, the Random Forest model significantly outperforms Logistic Regression across all metrics, highlighting its ability to model the complex structure-activity relationships in chemical data [20]. Furthermore, advanced target-specific scoring functions, including those using GCNs and improved physics-based forcefields like RosettaGenFF-VS, demonstrate state-of-the-art performance, offering superior screening power and enrichment for challenging targets [9] [22].
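For reference, the classification metrics in the table can be computed from predictions as in the sketch below. AUC-ROC is calculated through its rank interpretation (the probability that a randomly chosen active scores higher than a randomly chosen inactive); the labels and scores are made up and the function names are hypothetical.

```python
def classification_metrics(y_true, y_pred):
    """Accuracy, precision, and recall for binary labels (1 = active, 0 = inactive)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    return {
        "accuracy": (tp + tn) / len(pairs),
        "precision": tp / (tp + fp) if (tp + fp) else 0.0,
        "recall": tp / (tp + fn) if (tp + fn) else 0.0,
    }

def roc_auc(y_true, y_score):
    """AUC-ROC as the fraction of active/inactive pairs ranked correctly (ties count half)."""
    positives = [s for t, s in zip(y_true, y_score) if t == 1]
    negatives = [s for t, s in zip(y_true, y_score) if t == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0
               for p in positives for n in negatives)
    return wins / (len(positives) * len(negatives))

y_true = [1, 1, 0, 0, 1, 0]
y_pred = [1, 0, 0, 1, 1, 0]
y_score = [0.9, 0.4, 0.3, 0.6, 0.8, 0.1]
metrics = classification_metrics(y_true, y_pred)
auc = roc_auc(y_true, y_score)
```

A low recall combined with moderate precision, as reported for Logistic Regression, means the model misses most true actives even though the compounds it does flag are often correct.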

Integrated Workflow and The Scientist's Toolkit

The development of a scoring function is a multi-stage process where each component—descriptors, data, and models—is deeply interconnected. The following diagram visualizes this integrated pipeline.

Diagram 1: The Scoring Function Development Pipeline

To implement this workflow, researchers rely on a suite of software tools and databases.

Table 4: Essential Research Reagents and Computational Tools

| Tool / Resource | Type | Primary Function in the Pipeline |

|---|---|---|

| ChEMBL | Database | A primary source for publicly available bioactivity data and known active compounds [20] [14]. |

| ZINC15 | Database | A curated repository of commercially available compounds, widely used for virtual screening and decoy selection [14]. |

| RDKit | Cheminformatics Toolkit | Calculates molecular descriptors, standardizes structures, and performs molecular operations [20] [19]. |

| scikit-learn | ML Library | Provides implementations for standard ML models (Random Forest, Logistic Regression) and evaluation metrics [20]. |

| PyTorch / TensorFlow | ML Framework | Enables the development and training of advanced deep learning models like Graph Convolutional Networks [9]. |

| RosettaVS | Docking & VS Platform | A state-of-the-art, physics-based virtual screening platform that incorporates machine learning and active learning [22]. |

The development of high-performance scoring functions is a sophisticated exercise in integrating cheminformatics and machine learning. This guide has detailed the core components of the pipeline: the critical role of well-chosen molecular descriptors, the non-negotiable need for rigorously curated datasets with thoughtful decoy selection, and the power of modern regression models from Random Forests to Graph Convolutional Networks. As the field advances, the integration of these target-specific, ML-driven scoring functions into scalable, open-source platforms is setting a new standard for rapid and effective hit identification in drug discovery [22]. By mastering the interplay of descriptors, datasets, and models, researchers can continue to push the boundaries of virtual screening, accelerating the delivery of novel therapeutics.

From Theory to Practice: Methodological Advances and Target-Specific Applications

Virtual screening has become an indispensable component of modern drug discovery, serving as a computational bridge between target identification and experimental validation. At the heart of virtual screening lie scoring functions—algorithms that predict the binding affinity and specificity of small molecules to biological targets. The accuracy of these scoring functions directly determines the success rate of identifying viable drug candidates, making their optimization a critical research focus [23]. Traditionally, these functions relied on physics-based principles or empirical scoring terms, but the field has witnessed a paradigm shift with the integration of machine learning (ML) techniques. This evolution has progressed from robust ensemble methods like Random Forests to sophisticated deep learning architectures, substantially improving the predictive power and applicability of virtual screening in early drug discovery [24] [25]. This technical guide examines the development and application of these ML-based scoring functions, providing a comprehensive overview of their methodologies, performance, and implementation for researchers and drug development professionals.

Machine Learning Fundamentals for Scoring Functions

Scoring functions are computational models that predict the binding affinity of a protein-ligand complex. In structure-based virtual screening, they are crucial for ranking compounds from large libraries by their predicted binding strength [23]. Traditional scoring functions are categorized as:

- Force field-based: Use molecular mechanics energy terms.

- Empirical: Weighted sum of interaction terms parameterized against experimental data.

- Knowledge-based: Derived from statistical analyses of atom pair frequencies in known structures [23].

Machine learning enhances these approaches by learning complex, non-linear relationships between molecular features and binding affinity from large datasets. The key advantage of ML-based scoring functions is their ability to capture intricate patterns in structural and interaction data that are difficult to model with predefined mathematical forms [25] [23]. The development of any ML-based scoring function requires three core components: (i) descriptors representing the protein-ligand complex, (ii) a dataset of complexes with experimental binding affinities, and (iii) a learning algorithm to establish the structure-activity relationship [23].

Random Forest Models in Virtual Screening

Random Forest (RF) algorithms have established themselves as highly effective and reliable tools for constructing scoring functions in virtual screening. Their popularity stems from robust performance across diverse target classes and relative ease of implementation.

Core Methodology and Applications

RF models construct many decision trees during training and output the mode of the trees' class predictions (classification) or the mean of their predictions (regression). This ensemble approach resists overfitting and handles high-dimensional feature spaces effectively [25]. In one application to anti-breast cancer drug discovery, researchers collected 1,974 compounds and used XGBoost (a gradient-boosting implementation) for feature selection, identifying the 20 molecular descriptors with the greatest influence on biological activity. They then compared multiple ML algorithms using pIC₅₀ values as the measure of biological activity and found that Random Forest, XGBoost, and Gradient Boosting all performed well, with minimal differences among them, and significantly outperformed Support Vector Machines [26].

After semi-automatic parameter tuning, the Random Forest algorithm achieved a prediction accuracy of 0.745 and showed strong resistance to overfitting and good algorithmic stability [26]. This robustness makes RF particularly valuable for virtual screening campaigns in which model generalizability is crucial.
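
The ensemble idea described above can be illustrated with a deliberately small sketch. It bags one-split regression trees (stumps) on a single descriptor and averages their predictions, which is the regression form of the aggregation rule. This is not the model from [26]: a real Random Forest grows deeper trees and samples a random subset of descriptors at each split, and the descriptor values, pIC₅₀ values, and function names below are invented for illustration.

```python
import random
from statistics import mean


def fit_stump(xs, ys):
    """Fit a one-split regression tree on a single descriptor."""
    best = None
    # Every unique value except the largest is a candidate threshold,
    # so both sides of the split are guaranteed to be non-empty.
    for threshold in sorted(set(xs))[:-1]:
        left = [y for x, y in zip(xs, ys) if x <= threshold]
        right = [y for x, y in zip(xs, ys) if x > threshold]
        left_mean, right_mean = mean(left), mean(right)
        sse = sum((y - left_mean) ** 2 for y in left)
        sse += sum((y - right_mean) ** 2 for y in right)
        if best is None or sse < best[0]:
            best = (sse, threshold, left_mean, right_mean)
    if best is None:  # bootstrap sample contained a single unique x value
        overall = mean(ys)
        return (xs[0], overall, overall)
    return best[1:]


def predict_stump(stump, x):
    threshold, left_value, right_value = stump
    return left_value if x <= threshold else right_value


def fit_forest(xs, ys, n_trees=25, seed=0):
    """Train each stump on a bootstrap resample of the data (bagging)."""
    rng = random.Random(seed)
    n = len(xs)
    forest = []
    for _ in range(n_trees):
        idx = [rng.randrange(n) for _ in range(n)]
        forest.append(fit_stump([xs[i] for i in idx], [ys[i] for i in idx]))
    return forest


def predict_forest(forest, x):
    """Regression forests return the mean of the individual tree outputs."""
    return mean(predict_stump(stump, x) for stump in forest)


# Toy data (invented): one descriptor value per compound and its pIC50.
descriptor = [1.0, 1.5, 2.0, 2.5, 3.0, 3.5, 4.0, 4.5]
pic50 = [5.0, 5.1, 5.2, 5.0, 6.8, 7.0, 6.9, 7.1]

forest = fit_forest(descriptor, pic50)
low_pred = predict_forest(forest, 1.2)
high_pred = predict_forest(forest, 4.2)
print(low_pred, high_pred)
```

Because every tree sees a different bootstrap sample, individual stumps disagree on the exact split point, and averaging smooths out those differences. That averaging is the source of the overfitting resistance noted above.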

Advanced Implementation: Kullback-Leibler Divergence Framework

An innovative application of Random Forests in Drug-Target Interaction (DTI) prediction incorporates Kullback-Leibler divergence (KLD) as a novel feature input. This approach utilizes E3FP three-dimensional molecular fingerprints to compute 3D similarities between ligands within each target (Q-Q matrix) and between a query and ligand (Q-L vector) [27].

The methodological workflow involves:

- 3D Conformer Generation: Generating multiple conformers for each ligand using tools like OpenEye Omega or RDKit.

- Fingerprint Calculation: Encoding each 3D conformer into E3FP fingerprints represented as 1024-bit vectors.

- Similarity Matrix Construction: Building Q-Q matrices (15,000×15,000 dimensions) for intra-target comparisons and Q-L vectors for query-target interactions.

- Probability Density Estimation: Transforming similarity matrices and vectors into probability density functions using kernel density estimation.

- KLD Feature Calculation: Using Kullback-Leibler divergence as a "quasi-distance" between density models to create feature vectors.

- Random Forest Classification: Employing the KLD feature vectors to predict DTIs across multiple targets [27].

Across 17 representative targets, this approach achieved a mean accuracy of 0.882, an out-of-bag score estimate of 0.876, and a ROC AUC of 0.990, showing the value of combining advanced feature engineering with Random Forest classification [27].
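
The density-estimation and KLD steps of the workflow can be sketched in a few lines. The sketch below is a minimal illustration, not the implementation from [27]: it assumes a Gaussian kernel with a fixed bandwidth, evaluates both densities on an evenly spaced grid over [0, 1], and computes KL(Q-L || Q-Q). The direction of the divergence, the bandwidth, and all similarity values are assumptions made for illustration.

```python
import math


def gaussian_kde(samples, bandwidth=0.05):
    """Return a function that estimates the density of `samples`."""
    norm = 1.0 / (len(samples) * bandwidth * math.sqrt(2.0 * math.pi))

    def density(x):
        return norm * sum(
            math.exp(-0.5 * ((x - s) / bandwidth) ** 2) for s in samples
        )

    return density


def kl_divergence(p, q, grid, eps=1e-12):
    """Discrete approximation of KL(P || Q) on a shared grid."""
    p_vals = [p(x) + eps for x in grid]  # eps avoids log(0) and division by 0
    q_vals = [q(x) + eps for x in grid]
    p_sum, q_sum = sum(p_vals), sum(q_vals)
    return sum(
        (pv / p_sum) * math.log((pv / p_sum) / (qv / q_sum))
        for pv, qv in zip(p_vals, q_vals)
    )


grid = [i / 100 for i in range(101)]  # similarity values lie in [0, 1]

# Invented similarities: ligand-ligand values within one target (Q-Q),
# and query-to-ligand values (Q-L) for two hypothetical queries.
qq_similarities = [0.62, 0.70, 0.66, 0.74, 0.58, 0.69]
ql_similar_query = [0.64, 0.71, 0.60, 0.68]
ql_dissimilar_query = [0.12, 0.20, 0.15, 0.18]

qq_density = gaussian_kde(qq_similarities)
kld_similar = kl_divergence(gaussian_kde(ql_similar_query), qq_density, grid)
kld_dissimilar = kl_divergence(gaussian_kde(ql_dissimilar_query), qq_density, grid)
print(kld_similar, kld_dissimilar)
```

Repeating the calculation once per target gives one KLD value per target, and those values together form the feature vector passed to the classifier. A query that resembles a target's known ligands produces a small divergence for that target, while an unrelated query produces a large one.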

Performance Comparison of Random Forest Models

Table 1: Performance Metrics of Random Forest Models in Virtual Screening

| Application Context | Dataset/Targets | Key Performance Metrics | Reference |
|---|---|---|---|
| Anti-breast cancer QSAR modeling | 1,974 compounds | Prediction accuracy: 0.745; Excellent anti-overfitting properties | [26] |
| Drug-target interaction prediction | 17 targets from ChEMBL26 | Mean accuracy: 0.882; OOB score: 0.876; ROC AUC: 0.990 | [27] |
| Target-specific scoring functions | DUD-E benchmark (102 targets) | Average ROC-AUC: 0.98 when combined with deep learning | [28] |

Deep Learning Architectures for Enhanced Screening

Deep learning architectures have pushed the boundaries of virtual screening performance beyond what was achievable with traditional ML methods, particularly through their ability to automatically learn relevant features from raw molecular data.

Graph Neural Networks for Molecular Representation

Graph Neural Networks (GNNs) have emerged as particularly powerful architectures for molecular representation because they naturally model molecular structure—atoms as nodes and bonds as edges. The VirtuDockDL pipeline exemplifies this approach, employing a customized GNN to predict compound effectiveness as drug candidates [29].

The GNN architecture processes molecular graphs through:

- Graph Convolution Operations: Linear transformation of node features followed by batch normalization for stability: \(\hat{x} = \frac{x - \mu_{\beta}}{\sqrt{\sigma_{\beta}^{2} + \epsilon}}\)

- Activation: ReLU non-linearity: \(h''_{v} = \max(0, \hat{h}'_{v})\)

- Residual Connections: Smooth gradient flow in deeper networks: \(h'''_{v} = h_{v} + h''_{v}\)

- Feature Fusion: Concatenation of graph-derived features with molecular descriptors and fingerprints: \(f_{combined} = \mathrm{ReLU}\left(W_{combine} \cdot \left[h_{agg} ; f_{eng}\right] + b_{combine}\right)\) [29]

This approach achieved 99% accuracy, an F1 score of 0.992, and an AUC of 0.99 on the HER2 dataset, significantly surpassing DeepChem (89% accuracy) and AutoDock Vina (82% accuracy) [29].
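
The four operations listed above can be traced through a small pure-Python sketch. It is a simplified illustration and not the VirtuDockDL code: it omits neighbor aggregation over the molecular graph and the learnable scale and shift of batch normalization, it assumes the linear layer preserves the feature dimension so the residual sum is defined, and all weights and feature values are invented.

```python
import math


def batch_norm(column, eps=1e-5):
    """Normalize one feature across all nodes: (x - mean) / sqrt(var + eps)."""
    mu = sum(column) / len(column)
    var = sum((x - mu) ** 2 for x in column) / len(column)
    return [(x - mu) / math.sqrt(var + eps) for x in column]


def gnn_layer(h, weight):
    """Apply linear transform, batch norm, ReLU, and a residual connection.

    h is a list of node feature vectors; weight is a square matrix
    (input dimension == output dimension, required by the residual sum).
    """
    dim = len(weight[0])
    # Linear transformation of every node's features: h' = h W
    h_lin = [
        [sum(node[k] * weight[k][j] for k in range(len(node))) for j in range(dim)]
        for node in h
    ]
    # Batch normalization, feature by feature, across the nodes
    columns = [batch_norm([row[j] for row in h_lin]) for j in range(dim)]
    h_norm = [[columns[j][i] for j in range(dim)] for i in range(len(h))]
    # ReLU activation: h'' = max(0, h')
    h_act = [[max(0.0, x) for x in row] for row in h_norm]
    # Residual connection: h''' = h + h''
    return [[a + b for a, b in zip(node, act)] for node, act in zip(h, h_act)]


def fuse(h_agg, f_eng, w_combine, b_combine):
    """Feature fusion: ReLU(W . [h_agg ; f_eng] + b)."""
    concatenated = h_agg + f_eng  # list concatenation plays the role of [ ; ]
    return [
        max(0.0, sum(w * x for w, x in zip(row, concatenated)) + b)
        for row, b in zip(w_combine, b_combine)
    ]


# Three nodes (atoms) with two invented features each.
node_features = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
layer_weight = [[0.5, -0.2], [0.1, 0.3]]
updated = gnn_layer(node_features, layer_weight)

# Mean-pool the nodes into a graph-level vector, then fuse with one descriptor.
h_agg = [sum(node[j] for node in updated) / len(updated) for j in range(2)]
combined = fuse(h_agg, [0.5], [[1.0, 0.0, 0.0], [-1.0, -1.0, -1.0]], [0.0, 0.0])
print(updated, combined)
```

Because ReLU outputs are never negative, the residual sum can only keep or increase each input feature in this simplified form. In a full model the learnable parameters and stacked layers remove that restriction.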

Complex-Based Deep Learning Models

Beyond ligand-based approaches, deep learning has been successfully applied to structure-based methods that explicitly model protein-ligand complexes. DeepScore represents an innovative framework that adopts the scoring form of Potential of Mean Force (PMF) scoring functions but calculates scores for protein-ligand atom pairs using fully connected neural networks rather than traditional statistical potentials [28].

The DeepScore architecture:

- Input Features: Atom type, hybridization state, valence, partial charge, and chemical properties (aromatic, hydrophobic, H-bond donor/acceptor) represented as feature vectors.

- Network Architecture: Feedforward neural networks that replace traditional PMF pair potentials.

- Consensus Scoring: DeepScoreCS combines DeepScore with traditional Glide Gscore for enhanced performance [28].

When validated on the DUD-E benchmark dataset containing 102 targets, DeepScore achieved an average ROC-AUC of 0.98, demonstrating exceptional performance across diverse target classes [28].
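
The PMF-style scoring form can be made concrete with a short sketch: the score of a complex is a sum over protein-ligand atom pairs, and each pair's contribution comes from a small feedforward network instead of a tabulated statistical potential. Everything specific below is an assumption made for illustration and is not taken from [28]: the 6 Å distance cutoff, the four-element pair feature vector, the network size, and the fixed weights, which would be learned in a real model.

```python
import math

CUTOFF = 6.0  # distance cutoff in angstroms (assumed for illustration)

# Hypothetical fixed weights for a tiny network: 4 inputs, 2 hidden units, 1 output.
W_HIDDEN = [[0.4, -0.6, 1.0, -0.5], [-0.2, 0.3, 0.5, 0.1]]
W_OUT = [-1.0, 0.5]


def pair_features(lig_atom, prot_atom, distance):
    """Build a simplified feature vector for one protein-ligand atom pair."""
    hbond_possible = lig_atom["hbond_donor"] and prot_atom["hbond_acceptor"]
    return [
        lig_atom["charge"],
        prot_atom["charge"],
        1.0 if hbond_possible else 0.0,
        distance / CUTOFF,
    ]


def pair_network(features):
    """Fully connected network with one ReLU hidden layer and a scalar output."""
    hidden = [max(0.0, sum(w * x for w, x in zip(row, features))) for row in W_HIDDEN]
    return sum(w * h for w, h in zip(W_OUT, hidden))


def complex_score(ligand, protein):
    """Sum the network output over all atom pairs within the cutoff."""
    total = 0.0
    for lig_atom in ligand:
        for prot_atom in protein:
            distance = math.dist(lig_atom["xyz"], prot_atom["xyz"])
            if distance <= CUTOFF:
                total += pair_network(pair_features(lig_atom, prot_atom, distance))
    return total


ligand = [
    {"xyz": (0.0, 0.0, 0.0), "charge": -0.3, "hbond_donor": True},
    {"xyz": (1.5, 0.0, 0.0), "charge": 0.1, "hbond_donor": False},
]
protein = [
    {"xyz": (3.0, 0.0, 0.0), "charge": 0.2, "hbond_acceptor": True},
    {"xyz": (0.0, 4.0, 0.0), "charge": -0.1, "hbond_acceptor": False},
]
far_atom = {"xyz": (50.0, 0.0, 0.0), "charge": 0.5, "hbond_acceptor": True}

score = complex_score(ligand, protein)
score_with_far_atom = complex_score(ligand, protein + [far_atom])
print(score)
```

The additive form keeps the score decomposable into per-pair contributions, as in a classical PMF function, while the network supplies the flexibility that a fixed statistical potential lacks.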

Performance Comparison of Deep Learning Approaches

Table 2: Performance Metrics of Deep Learning Models in Virtual Screening

| Model/Architecture | Screening Type | Key Performance Metrics | Advantages |
|---|---|---|---|
| VirtuDockDL (GNN) | Ligand-based | 99% accuracy, F1=0.992, AUC=0.99 on HER2 | Automated feature learning; superior to DeepChem, AutoDock Vina |
| DeepScore (Fully Connected NN) | Structure-based | Average ROC-AUC: 0.98 on DUD-E (102 targets) | Target-specific performance; combines with traditional scoring |
| CNN-Based Complex Scoring | Structure-based | State-of-the-art on multiple benchmarks | Direct processing of 3D complex structures |

Experimental Protocols and Implementation

Dataset Preparation and Curation

The quality and appropriateness of training data fundamentally determine the performance of any ML-based scoring function. Several benchmark datasets have become standards in the field:

- DUD-E (Directory of Useful Decoys-Enhanced): Contains 102 targets with an average of 224 active ligands and 13,835 decoys per target. Although some noncausal biases have been identified, it remains widely used for evaluating virtual screening performance [28].

- ChEMBL: A large-scale bioactivity database containing over 17 million activity entries, providing extensive training data across diverse target classes [25].

- ZINC: Contains over 230 million commercially available compounds, frequently used as a screening library [25].

Proper data preparation involves:

- Protonation State Assignment: Ensuring biologically relevant ionization states.

- Tautomer Generation: Considering relevant tautomeric forms.

- Conformer Sampling: Generating multiple 3D conformations to account for flexibility.

- Deduplication: Removing duplicate structures to prevent bias [28] [27].
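
Of the preparation steps above, deduplication is the easiest to show in isolation. The sketch below assumes each record already carries a canonical structure identifier (for example, one computed with a cheminformatics toolkit after protonation and tautomer handling). The field name `structure_id` and the placeholder identifiers are hypothetical.

```python
def deduplicate(records, key="structure_id"):
    """Keep the first record for each canonical structure identifier."""
    seen = set()
    unique = []
    for record in records:
        identifier = record[key]
        if identifier not in seen:
            seen.add(identifier)
            unique.append(record)
    return unique


# Placeholder identifiers stand in for real canonical keys.
compounds = [
    {"structure_id": "ID-A", "label": "active"},
    {"structure_id": "ID-B", "label": "decoy"},
    {"structure_id": "ID-A", "label": "active"},  # duplicate of the first entry
    {"structure_id": "ID-C", "label": "decoy"},
]

unique_compounds = deduplicate(compounds)
print(len(compounds), len(unique_compounds))
```

Running deduplication before the train/test split matters most: a structure that appears on both sides of the split inflates the apparent performance of the model.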

Feature Engineering and Molecular Representation

The choice of molecular representation significantly impacts model performance:

- Extended-Connectivity Fingerprints (ECFPs): Circular topological fingerprints capturing molecular substructures.

- E3FP Fingerprints: 3D fingerprints encoding stereochemical and conformational information [27].

- Molecular Descriptors: Physicochemical properties like molecular weight, logP, topological polar surface area (TPSA).

- Graph Representations: Atoms as nodes (with features like element type, charge, hybridization) and bonds as edges (with bond type, conjugation) [29].

- Complex-Based Features: For structure-based methods, features include atom pairwise interactions, interaction fingerprints, and voxelized representations of binding sites [28] [25].

Model Training and Validation Protocols

Robust training methodologies are essential for developing generalizable models:

- Cross-Validation: K-fold cross-validation to optimize hyperparameters and assess model stability.

- Parameter Optimization: Semi-automatic tuning of key parameters (number of trees, learning rate, network architecture) [26].

- Evaluation Metrics: Comprehensive assessment using ROC-AUC, enrichment factors, precision-recall curves, and early enrichment metrics [28] [23].

- Benchmarking: Comparison against established baselines and state-of-the-art methods on standardized datasets.
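
Two of the evaluation metrics named above, ROC-AUC and the enrichment factor, are simple enough to compute directly. The sketch below assumes that higher scores indicate stronger predicted binding and that labels are 1 for actives and 0 for decoys. The scores and labels are invented, and the function names are illustrative.

```python
def roc_auc(scores, labels):
    """Probability that a random active outscores a random decoy (ties count 0.5)."""
    actives = [s for s, y in zip(scores, labels) if y == 1]
    decoys = [s for s, y in zip(scores, labels) if y == 0]
    wins = sum(
        1.0 if a > d else 0.5 if a == d else 0.0
        for a in actives
        for d in decoys
    )
    return wins / (len(actives) * len(decoys))


def enrichment_factor(scores, labels, fraction=0.01):
    """Active rate in the top-ranked fraction divided by the overall active rate."""
    n = len(scores)
    n_top = max(1, round(n * fraction))
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0], reverse=True)
    hits_in_top = sum(label for _, label in ranked[:n_top])
    return (hits_in_top / n_top) / (sum(labels) / n)


# Ten screened compounds: three actives (1) and seven decoys (0).
scores = [0.90, 0.80, 0.70, 0.60, 0.50, 0.40, 0.30, 0.20, 0.10, 0.05]
labels = [1, 1, 0, 1, 0, 0, 0, 0, 0, 0]

auc = roc_auc(scores, labels)
ef_20 = enrichment_factor(scores, labels, fraction=0.20)
print(auc, ef_20)
```

Here 20 of the 21 active-decoy pairs are ranked correctly, so the ROC-AUC is 20/21 (about 0.952). Both compounds in the top 20% are actives against a base rate of 3 in 10, giving an enrichment factor of 10/3 (about 3.33). The enrichment factor is reported alongside ROC-AUC because screening campaigns only test the top of the ranked list, and ROC-AUC alone does not reveal how many actives are found there.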

Table 3: Key Computational Tools and Resources for ML-Based Virtual Screening

| Tool/Resource | Type | Function | Application Context |
|---|---|---|---|
| RDKit | Cheminformatics Library | Molecular descriptor calculation, fingerprint generation, SMILES processing | General-purpose cheminformatics; feature engineering [29] [27] |
| Glide | Docking Software | Generate docking poses and initial scoring | Structure-based virtual screening; pose generation for rescoring [28] |
| AutoDock Vina | Docking Software | Molecular docking with empirical scoring | Structure-based screening; benchmark comparison [29] |
| PyTorch Geometric | Deep Learning Library | Graph neural network implementation | Molecular graph processing; GNN models [29] |
| E3FP | 3D Fingerprint Algorithm | 3D molecular representation | Conformation-aware similarity calculations [27] |
| OpenEye Omega | Conformer Generation | 3D conformer ensemble generation | Structure-based screening preparation [27] |
| ChEMBL Database | Bioactivity Database | Source of training data for ML models | Model development and validation [27] |
| DUD-E Benchmark | Benchmark Dataset | Evaluation of virtual screening performance | Method comparison and validation [28] |

Workflow Visualization

[Figure: ML Virtual Screening Workflow]

[Figure: Performance Comparison]

The integration of machine learning techniques, from Random Forests to deep convolutional networks, has fundamentally transformed the capabilities of scoring functions in virtual screening. Random Forest models provide robust, interpretable, and high-performing solutions for a range of virtual screening tasks, reaching a mean accuracy of 88.2% in DTI prediction [27] and showing strong resistance to overfitting in QSAR modeling [26]. Deep learning approaches such as Graph Neural Networks and complex-based models have pushed performance further, with a GNN achieving 99% accuracy on the HER2 dataset and DeepScore reaching an average ROC-AUC of 0.98 across the 102 DUD-E targets [29] [28]. As the field advances, combining these approaches with larger and more diverse training datasets promises more accurate, efficient, and cost-effective virtual screening pipelines. The ongoing challenge is to develop models that balance high performance with interpretability and generalizability to novel target classes.

Building Target-Specific Scoring Functions (TSSFs) for Precision Drug Discovery