Scaling Up: Overcoming Graph Neural Network Challenges for Biomedical Breakthroughs


Abstract

Graph Neural Networks (GNNs) hold immense potential for revolutionizing biomedicine, from drug discovery to clinical risk prediction. However, their scalability remains a critical bottleneck when applied to large, real-world biomedical datasets. This article provides a comprehensive guide for researchers and drug development professionals on the fundamental, methodological, and optimization challenges of scaling GNNs. We explore the root causes of scalability issues, such as neighborhood explosion and data heterogeneity, and detail cutting-edge solutions, including novel sampling algorithms, stable learning frameworks, and transferable architectures. Through a comparative analysis of performance and a forward-looking perspective, this article equips scientists with the knowledge to build robust, efficient, and generalizable GNN models that can unlock new frontiers in biomedical research and patient care.

The Scalability Bottleneck: Why GNNs Struggle with Large-Scale Biomedical Data

Frequently Asked Questions (FAQs)

FAQ 1: Why do I encounter "Out of Memory" (OOM) errors when training my GNN on large biomolecular graphs? This is primarily due to the neighborhood explosion problem and workload imbalance [1]. In message-passing GNNs, the number of neighboring nodes that must be processed grows exponentially with each additional layer. Furthermore, datasets containing graphs of irregular sizes (e.g., proteins of varying lengths) can create severely imbalanced mini-batches, where a single batch containing a very large graph can exceed GPU memory capacity [1] [2].
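
To make the exponential growth concrete, the following back-of-the-envelope sketch counts the worst-case number of nodes that a single target node pulls in. The average degree of 20 is an illustrative assumption, not a figure from [1], and real graphs usually have overlapping neighborhoods, so this is an upper bound.

```python
def receptive_field_upper_bound(avg_degree: int, num_layers: int) -> int:
    """Worst-case number of nodes touched for one target node.

    Assumes every node has `avg_degree` distinct neighbors with no overlap,
    so hop k contributes avg_degree**k nodes.
    """
    return sum(avg_degree ** k for k in range(num_layers + 1))


for layers in (1, 2, 3, 4):
    print(layers, receptive_field_upper_bound(avg_degree=20, num_layers=layers))
```

Under these assumptions, each extra layer multiplies the work by roughly the average degree, which is why memory use can jump sharply between a 2-layer and a 3-layer model.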

FAQ 2: What is "embedding staleness" in historical embedding methods, and how does it harm performance? Historical embedding methods (e.g., VR-GCN, GAS) use cached node embeddings from previous training iterations to reduce computational cost. Staleness occurs when these cached embeddings are not updated with the most recent model parameters, leading to a significant approximation error. This bias severely impacts training convergence and final model performance, particularly when using small batch sizes where model updates are frequent [3].

FAQ 3: My deep GNN model's performance degrades with too many layers. Is this a scalability issue? Yes, this is a classic scalability challenge known as over-smoothing. As the number of GNN layers increases, node embeddings can become indistinguishable, causing performance to plateau or degrade. This limits the ability of GNNs to capture long-range dependencies in large graphs, such as those found in extensive protein structures [4].

FAQ 4: What strategies can I use to scale GNN training on large biomedical graphs without partitioning the graph? Emerging strategies focus on memory-efficient preprocessing and distributed training. Index-batching constructs graph snapshots dynamically at runtime to avoid data duplication. When combined with Distributed Data Parallel (DDP) training, this allows for training on very large spatiotemporal graphs without partitioning, achieving significant memory reduction and speedups [5].

Troubleshooting Guides

Problem: GPU Memory Exhaustion during Training

Symptoms: Training run fails with an Out-of-Memory (OOM) exception.

Solutions:

- Implement Balanced Batching: Replace the default random sampler with a balancing strategy that creates mini-batches with similar graph sizes. This prevents a single batch of large graphs from spiking memory usage and can reduce the maximum GPU memory footprint by over 30% [1].

- Utilize Historical Embeddings with Staleness Mitigation: Employ methods like GAS or GraphFM that use a historical embedding table. To counter staleness, integrate techniques like the REST algorithm, which decouples forward/backward passes and updates the memory table more frequently than the model parameters. This has been shown to improve performance on large-scale benchmarks like ogbn-papers100M by 2.7% [3].

- Adopt Multiscale Architectures: For large biomolecules, use specialized architectures like Schake. These models are designed to handle proteins with thousands of atoms efficiently by directly accounting for long-range interactions, thus improving transferability to larger structures [2].
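
The balanced-batching idea can be sketched as a small greedy packer. This is a minimal illustration, not the sampler from [1]: the function name, the node budget, and the example graph sizes are all hypothetical, and node count is used as a rough proxy for memory.

```python
from typing import List


def size_balanced_batches(graph_sizes: List[int], max_nodes_per_batch: int) -> List[List[int]]:
    """Group graph indices into batches with a capped total node count.

    Graphs are sorted by node count, then packed greedily so that no batch
    exceeds `max_nodes_per_batch` total nodes (a single oversized graph
    still gets a batch of its own).
    """
    order = sorted(range(len(graph_sizes)), key=lambda i: graph_sizes[i])
    batches, current, current_nodes = [], [], 0
    for idx in order:
        size = graph_sizes[idx]
        if current and current_nodes + size > max_nodes_per_batch:
            batches.append(current)
            current, current_nodes = [], 0
        current.append(idx)
        current_nodes += size
    if current:
        batches.append(current)
    return batches


sizes = [120, 4000, 90, 3500, 150, 110, 5000, 100]  # hypothetical node counts
batches = size_balanced_batches(sizes, max_nodes_per_batch=5000)
print([[sizes[i] for i in b] for b in batches])
```

In a real training loop the order of the resulting batches would typically be shuffled each epoch so that the model does not always see small graphs first.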

Problem: Slow or Unstable Training Convergence

Symptoms: Model performance plateaus or fluctuates wildly; training is slow even with a small dataset.

Solutions:

- Address Embedding Staleness: If using a historical embedding method, the instability is likely due to staleness. The REST algorithm is a direct solution to this, leading to notably accelerated convergence [3].

- Apply Normalization and Skip Connections: To enable deeper, more powerful GNNs without over-smoothing, use Differentiable Group Normalization (DGN) combined with residual/skip connections. This allows for training networks with over 30 layers without significant performance degradation, which is essential for capturing complex interactions in large graphs [4].
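
The effect of a skip connection on over-smoothing can be seen on a toy graph. The sketch below is an assumption-laden illustration: it uses parameter-free mean aggregation and a simple skip back to the input features, not DGN or the architecture from [4], and the 4-node chain and feature values are made up.

```python
def mean_aggregate(adj, h):
    """One parameter-free message-passing step: average over self + neighbors."""
    return [(h[i] + sum(h[j] for j in adj[i])) / (1 + len(adj[i])) for i in range(len(h))]


def propagate(adj, x, num_layers, skip_weight=0.0):
    """Run `num_layers` steps; `skip_weight` mixes the input features back in."""
    h = list(x)
    for _ in range(num_layers):
        agg = mean_aggregate(adj, h)
        h = [(1 - skip_weight) * a + skip_weight * x0 for a, x0 in zip(agg, x)]
    return h


def spread(h):
    """Gap between the largest and smallest node value (0 means indistinguishable)."""
    return max(h) - min(h)


path_graph = {0: [1], 1: [0, 2], 2: [1, 3], 3: [2]}  # toy 4-node chain
features = [0.0, 0.0, 1.0, 1.0]

plain = propagate(path_graph, features, num_layers=30)
skipped = propagate(path_graph, features, num_layers=30, skip_weight=0.2)
print(f"spread without skip: {spread(plain):.6f}")
print(f"spread with skip:    {spread(skipped):.6f}")
```

Without the skip term the node values collapse toward a common value after 30 layers, while the skip term keeps them separated. Normalization schemes such as DGN address the same collapse through a different mechanism.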

Key Experimental Protocols

Experiment 1: Protocol for Evaluating Historical Embeddings and Staleness Reduction

- Objective: Quantify the performance impact of embedding staleness and evaluate the effectiveness of the REST training algorithm.

- Methodology:

  - Baseline Training: Train a standard GNN (e.g., GraphSAGE) and a historical embedding method (e.g., GAS) on a large-scale graph dataset (e.g., ogbn-papers100M).

  - Introduce REST: Integrate the REST algorithm into the historical embedding method's training loop. This involves modifying the training cycle to perform multiple forward/backward passes to update the historical embedding table for each model parameter update.

  - Evaluation: Measure and compare the prediction accuracy and training convergence speed (loss over time) across the three setups: Standard GNN, Standard Historical Embedding, and Historical Embedding with REST [3].

- Key Metrics: Test accuracy (%), training loss convergence rate.
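
Why refreshing the embedding table more often than the parameters helps can be illustrated with a toy simulation. This is not the REST implementation from [3]: the "model" is a single scalar weight, the update rule is a fixed increment, and every name and number here is a hypothetical stand-in chosen only to show the staleness gap shrinking.

```python
def simulate_staleness(num_nodes=12, batch_size=2, refreshes_per_update=1, num_updates=20):
    """Toy model of a historical embedding table.

    The "model" is a single scalar weight w, and a node's true embedding is
    w * x_i. The table caches embeddings and is refreshed round-robin,
    `batch_size` nodes per forward pass. Returns the mean absolute gap
    between cached and fresh embeddings, averaged over all updates.
    """
    x = [float(i + 1) for i in range(num_nodes)]
    w = 1.0
    table = [w * xi for xi in x]
    cursor, total_gap = 0, 0.0
    for _ in range(num_updates):
        # Forward-only passes that refresh the table with the current weight.
        for _ in range(refreshes_per_update):
            for _ in range(batch_size):
                table[cursor] = w * x[cursor]
                cursor = (cursor + 1) % num_nodes
        w += 0.1  # stand-in for one optimizer step on the model parameters
        total_gap += sum(abs(w * xi - t) for xi, t in zip(x, table)) / num_nodes
    return total_gap / num_updates


baseline = simulate_staleness(refreshes_per_update=1)
frequent = simulate_staleness(refreshes_per_update=3)
print(f"mean staleness gap, 1 refresh per update:   {baseline:.3f}")
print(f"mean staleness gap, 3 refreshes per update: {frequent:.3f}")
```

The same comparison, run with a real GNN and the metrics listed above, is what the protocol asks for; the toy version only shows the direction of the effect.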

Experiment 2: Protocol for Benchmarking GNN Scalability on Large Proteins

- Objective: Assess the scalability and transferability of various GNN architectures on large protein structures.

- Methodology:

  - Dataset: Use the DISPEF dataset, which contains over 200,000 proteins with sizes up to 12,499 atoms, including implicit solvation free energies and forces [2].

  - Model Selection: Benchmark a diverse set of GNNs (e.g., SchNet, EGNN, Equivariant Transformer) and a novel multiscale architecture (e.g., Schake).

  - Training and Evaluation:

    - Train models on smaller protein subsets (DISPEF-S, DISPEF-M).

    - Evaluate transferability by testing on significantly larger proteins (DISPEF-L) to see if the model can generalize to structures beyond the training distribution.

    - Measure computational cost (memory, time) on the DISPEF-c subset [2].

- Key Metrics: Mean Absolute Error (MAE) of energy/force predictions, GPU memory usage, inference time.

Experiment 3: Protocol for Distributed ST-GNN Training with PGT-I

- Objective: Achieve scalable training of Spatiotemporal GNNs on a large dataset (e.g., PeMS) without graph partitioning.

- Methodology:

  - Baseline: Attempt to load and preprocess the entire dataset using a standard framework (e.g., PyTorch Geometric Temporal), noting the memory usage and potential OOM failure.

  - Implement Index-Batching: Use the PGT-I framework to dynamically construct temporal snapshots at runtime using an index, rather than storing all preprocessed snapshots in memory.

  - Scale with DDP: Combine index-batching with Distributed Data Parallel training across multiple GPUs (distributed-index-batching).

  - Evaluation: Compare the peak memory usage, total training time, and final model accuracy against the baseline [5].

- Key Metrics: Peak memory footprint (GB), training speedup (x), model accuracy (MAE).
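
The core idea behind index-batching can be sketched in plain Python. This is a conceptual illustration, not the PGT-I API: the class name, window lengths, and synthetic series are hypothetical. The point is that only window start indices are stored, and each snapshot is sliced from the single shared series when requested.

```python
from typing import List, Tuple


class IndexBatchedWindows:
    """Sliding-window dataset that stores the series once and slices on demand."""

    def __init__(self, series: List[List[float]], history: int, horizon: int):
        self.series = series      # shape: [time_steps][num_nodes]
        self.history = history    # input window length
        self.horizon = horizon    # prediction window length
        last_start = len(series) - history - horizon
        self.starts = list(range(last_start + 1))  # only integers are stored

    def __len__(self) -> int:
        return len(self.starts)

    def __getitem__(self, i: int) -> Tuple[List[List[float]], List[List[float]]]:
        s = self.starts[i]
        x = self.series[s : s + self.history]
        y = self.series[s + self.history : s + self.history + self.horizon]
        return x, y


time_steps, num_nodes = 100, 4
series = [[float(t + n) for n in range(num_nodes)] for t in range(time_steps)]
dataset = IndexBatchedWindows(series, history=12, horizon=12)

stored_rows_indexed = time_steps                      # one copy of the series
stored_rows_materialized = len(dataset) * (12 + 12)   # every window stored separately
print(len(dataset), stored_rows_indexed, stored_rows_materialized)
```

Materializing every window duplicates each time step many times, which is the duplication that index-batching avoids. In a distributed setup, each worker would draw a different subset of `starts` from the same underlying series.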

The Scientist's Toolkit

Table: Essential Reagents for Scalable GNN Research in Biomedicine

| Research Reagent | Function in Experiment |
|---|---|
| DISPEF Dataset [2] | Provides a benchmark of large, biologically-relevant protein structures with implicit solvation free energies for training and evaluating GNN scalability. |
| Historical Embeddings [3] | A memory table storing node embeddings from previous iterations, reducing the sampling variance and computational cost of mini-batch training. |
| REST Training Algorithm [3] | A simple method that reduces feature staleness in historical embedding approaches by decoupling forward and backward passes, improving convergence. |
| Differentiable Group Norm (DGN) [4] | A normalization technique that helps combat over-smoothing, enabling the training of much deeper GNNs (e.g., >30 layers) for complex tasks. |
| Balanced Mini-Batch Sampler [1] | A data loading strategy that groups graph samples of similar size together to prevent GPU memory imbalance and OOM errors. |
| PGT-I Framework [5] | An extension to PyTorch Geometric Temporal that enables memory-efficient and distributed training of spatiotemporal GNNs via index-batching. |

Table: Performance Improvements from Scalability Techniques

| Technique | Key Metric Improvement | Dataset / Context | Source |
|---|---|---|---|
| REST for Historical Embeddings | +2.7% & +3.6% Performance | ogbn-papers100M & ogbn-products | [3] |
| Balanced Mini-Batching | Up to 32.14% memory reduction | High-Energy Physics (HEP) GNNs | [1] |
| DeeperGATGNN (DGN + Skip Connections) | Up to 10% MAE reduction vs. SOTA | 5/6 Materials Property Datasets | [4] |
| PGT-I (Index-Batching + DDP) | 89% memory reduction; 13.1x speedup | PeMS Dataset with 128 GPUs | [5] |

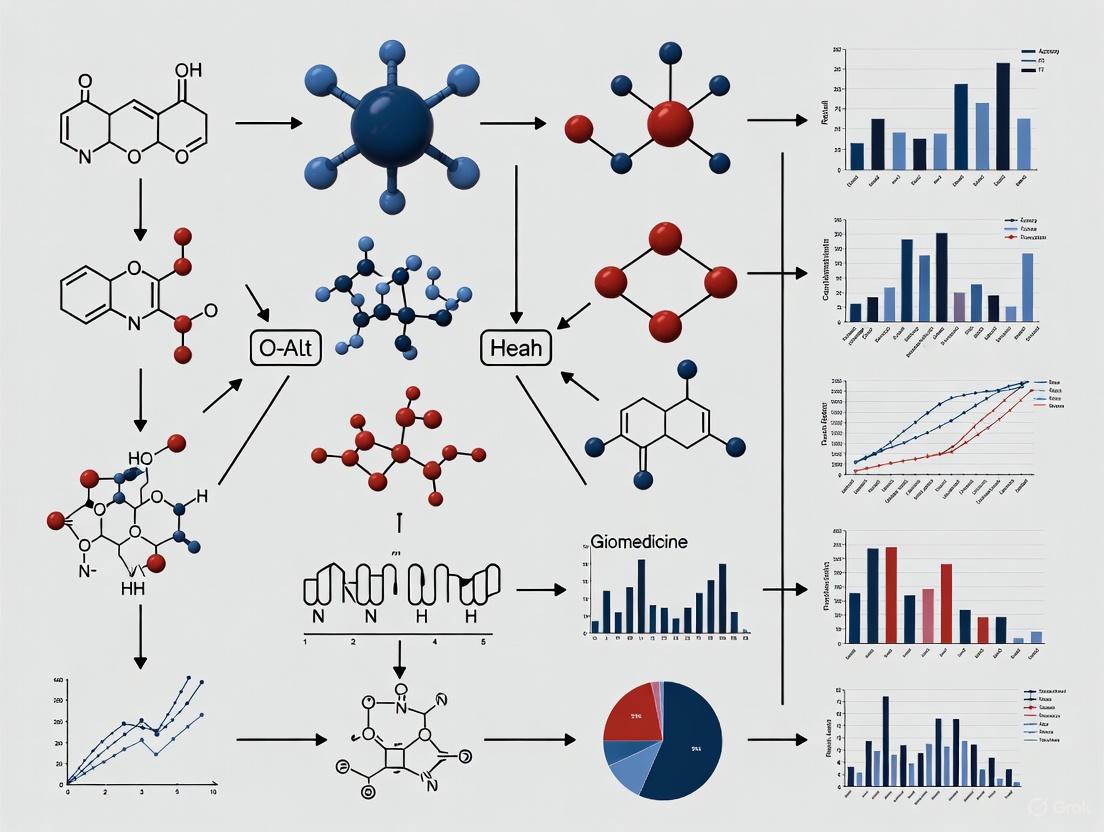

Workflow and Conceptual Diagrams

Diagram 1: Neighborhood explosion in a 2-layer GNN.

Diagram 2: REST algorithm decouples forward/backward passes.

Diagram 3: Distributed training with index batching (PGT-I).

Frequently Asked Questions (FAQs)

FAQ 1: What are the most common types of heterogeneity I will encounter in biomedical graph data? Biomedical graphs are inherently heterogeneous, which can be categorized along several dimensions. You will encounter node heterogeneity, where a single graph contains multiple types of entities (e.g., genes, diseases, drugs, proteins) [6] [7]. Edge heterogeneity is also common, with relationships having different types and semantics (e.g., "inhibits," "associated with," "expresses") [8] [9]. Furthermore, feature heterogeneity arises from the diverse attribute representations for different node and edge types, such as genomic sequences for genes and textual descriptions for diseases [6] [9].
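
One way to picture the three kinds of heterogeneity is as a small typed data structure. The sketch below is illustrative only: all node identifiers, relation names, and feature values are placeholders rather than real biological facts, and the helper function is hypothetical.

```python
from collections import defaultdict

# Node heterogeneity: every node carries a type. All identifiers are placeholders.
node_types = {"gene_A": "gene", "gene_B": "gene", "disease_X": "disease", "drug_1": "drug"}

# Feature heterogeneity: feature vectors may differ in length per node type.
node_features = {
    "gene_A": [0.1, 0.7, 0.2],
    "gene_B": [0.4, 0.1, 0.9],
    "disease_X": [1.0, 0.0],
    "drug_1": [0.3, 0.3, 0.3, 0.1],
}

# Edge heterogeneity: (source, relation, target) triples.
edges = [
    ("gene_A", "associated_with", "disease_X"),
    ("gene_B", "associated_with", "disease_X"),
    ("drug_1", "treats", "disease_X"),
    ("drug_1", "inhibits", "gene_B"),
]


def incoming_by_relation(edge_list, target):
    """Group a node's incoming neighbors by relation type."""
    grouped = defaultdict(list)
    for source, relation, dest in edge_list:
        if dest == target:
            grouped[relation].append(source)
    return dict(grouped)


print(incoming_by_relation(edges, "disease_X"))
```

Grouping neighbors by relation is the usual first step before applying a separate aggregation per edge type, which is how heterogeneous GNNs avoid treating "treats" and "associated_with" as the same signal.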

FAQ 2: My GNN model isn't generalizing well to new, unseen graph data. What could be wrong? This is a classic challenge of transitioning from transductive to inductive learning [8]. Your model may be overfitting to the specific graph structure it was trained on. To address this:

- Utilize Inductive Frameworks: Employ models like GraphSAGE [8], which learn aggregation functions from node features rather than relying on a fixed, global graph structure.

- Incorporate External Knowledge: Integrate your graph with larger, more diverse biomedical knowledge graphs (e.g., PrimeKG [10]) to provide broader biological context and improve model robustness.

- Benchmark Generalization: Use dedicated datasets and benchmarks from resources like the Open Graph Benchmark (OGB) [10] that are designed to test model performance on unseen data.

FAQ 3: How can I handle missing modalities or incomplete graph data in my experiments? Missing data is a frequent issue in clinical and biomedical settings [9]. Advanced methods are being developed to address this, such as:

- Modality-Prompted Completion: This technique, used in models like GTP-4o, generates "hallucination" nodes or graph topologies to complete the representation of a missing modality, steering the model towards an embedding that resembles the complete data [9].

- Graph Autoencoders: These models can learn to reconstruct missing parts of a graph or node features from the available, observed data [11].

FAQ 4: What are the best practices for making my large-scale GNN experiments computationally feasible? Training GNNs on massive biomedical graphs (with millions of nodes and billions of edges [10] [7]) requires optimized hardware and software.

- Leverage Optimized Libraries: Use frameworks like WholeGraph and RAPIDS cuGraph that are specifically designed to optimize memory storage and retrieval for large-scale GNN training on NVIDIA GPUs [12].

- Efficient Sampling: Implement neighbor sampling algorithms (e.g., with counts like [15, 10, 5] instead of [30, 30, 30]) to significantly reduce computational load while maintaining model accuracy [12].

- Distributed Training: Distribute the graph data and computations across multiple GPUs to overcome the memory and processing limitations of a single device [12].
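
The saving from smaller sample counts can be estimated directly, and the sampling itself fits in a few lines. This is a minimal sketch, not the cuGraph or WholeGraph sampler: the function names and the toy graph are hypothetical, and the sample counts are assumed to be listed from the first hop outward.

```python
import random


def sample_neighborhood(adj, seeds, fanouts, rng):
    """Layer-wise neighbor sampling: keep at most `fanouts[k]` neighbors per node at hop k."""
    frontier, visited = list(seeds), set(seeds)
    for fanout in fanouts:
        next_frontier = []
        for node in frontier:
            neighbors = adj[node]
            picked = neighbors if len(neighbors) <= fanout else rng.sample(neighbors, fanout)
            for nb in picked:
                if nb not in visited:
                    visited.add(nb)
                    next_frontier.append(nb)
        frontier = next_frontier
    return visited


def worst_case_nodes(fanouts):
    """Upper bound on sampled nodes per seed when no neighbors overlap."""
    total, layer = 1, 1
    for f in fanouts:
        layer *= f
        total += layer
    return total


print(worst_case_nodes([15, 10, 5]))   # 1 + 15 + 150 + 750
print(worst_case_nodes([30, 30, 30]))  # 1 + 30 + 900 + 27000

# Toy graph: 200 nodes, each pointing to the next 40 nodes on a ring.
n = 200
adj = {i: [(i + k) % n for k in range(1, 41)] for i in range(n)}
sampled = sample_neighborhood(adj, seeds=[0], fanouts=[15, 10, 5], rng=random.Random(0))
print(len(sampled))
```

On real graphs the sampled set is usually well below the worst-case bound because neighborhoods overlap, but the bound is a useful guide when choosing sample counts against a memory budget.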

FAQ 5: How can I improve the interpretability of my GNN model for biomedical discovery? Moving beyond "black box" models is crucial for generating biologically meaningful insights.

- Use Explainability Tools: Leverage resources like GraphXAI [10], which provides a framework and benchmark datasets (e.g., via its ShapeGGen generator) to systematically evaluate and interpret the explanations provided by your GNN model.

- Attention Mechanisms: Implement models like Graph Attention Networks (GAT) [11] [8], which assign learned importance weights to a node's neighbors, providing insight into which connections the model deems most significant for a prediction.
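
The attention weights that make GAT models inspectable can be computed by hand for a single node. The sketch below follows the standard GAT scoring form but simplifies it: the learned projection matrix is treated as the identity, and the feature vectors and attention parameters are hypothetical values chosen for illustration.

```python
import math


def leaky_relu(z, slope=0.2):
    return z if z > 0 else slope * z


def attention_weights(h_i, neighbor_feats, a):
    """Softmax-normalized attention of node i over its neighbors.

    Scores follow the GAT form LeakyReLU(a . [h_i || h_j]); the learned
    projection W is omitted (treated as identity) to keep the sketch short.
    """
    scores = [
        leaky_relu(sum(w * v for w, v in zip(a, h_i + h_j)))
        for h_j in neighbor_feats
    ]
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    z = sum(exps)
    return [e / z for e in exps]


h_target = [1.0, 0.0]
h_neighbors = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
attn_vector = [0.3, -0.1, 0.8, -0.4]  # hypothetical learned parameters
alphas = attention_weights(h_target, h_neighbors, attn_vector)
print([round(alpha, 3) for alpha in alphas])
```

The weights sum to one over the neighborhood, so they can be read as a relative ranking of neighbors. They describe what the layer attends to, which is a useful hint but not a complete explanation of the prediction.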

Troubleshooting Guides

Issue 1: Poor Model Performance on Node Classification or Link Prediction

Symptoms: Low accuracy, precision, or recall on tasks like disease gene association prediction or drug-target interaction prediction.

Potential Causes and Solutions:

| Cause | Diagnostic Steps | Solution |
|---|---|---|
| Inadequate Graph Representation | Check if your graph captures all relevant biological scales (e.g., from molecular to phenotypic). | Integrate multiple data sources. Use a comprehensive knowledge graph like PrimeKG, which includes 17,080 diseases and over 5 million relationships across ten biological scales [10]. |
| Over-smoothing | Monitor performance degradation as the number of GNN layers increases. | Reduce model depth. Use techniques like skip connections or shallow architectures. Experiment with different GNN layers (e.g., GAT [11] or GCN [11]) that may be less prone to over-smoothing. |
| Low-Quality or Sparse Features | Evaluate node feature quality through basic classifiers. | Incorporate pre-trained feature embeddings. Use resources like ClinVec [10], which provides unified embeddings for clinical codes, or generate embeddings from large-scale biological networks [7]. |

Experimental Protocol for Benchmarking Model Performance:

- Dataset Selection: Choose a standard benchmark dataset relevant to your task, such as the ogbn-papers100M dataset for node classification [12] or a BioSNAP dataset like DG-Miner for disease-gene association [7].

- Feature Storage: Use WholeGraph for efficient storage of graph features to avoid I/O bottlenecks [12].

- Model Setup: Implement a baseline GNN model (e.g., GraphSAGE or GAT) using a framework like cuGraph-Ops [12].

- Training & Evaluation: Use a standard train/validation/test split. For the ogbn-papers100M dataset, a sample count of [15, 10, 5] and training for 24 epochs can be a starting point to achieve ~65% test accuracy [12]. Tune hyperparameters like batch size and learning rate for your specific task.

Diagnostic workflow for poor GNN performance, outlining checks for graph completeness, over-smoothing, and feature quality.

Issue 2: Scaling GNNs to Very Large Graphs (Billions of Edges)

Symptoms: Running out of GPU memory, extremely long training times, or inability to load the graph.

Potential Causes and Solutions:

| Cause | Diagnostic Steps | Solution |
|---|---|---|
| Hardware Bandwidth Bottleneck | Profile your code to see if data gathering is the slowest step. | Utilize WholeGraph's chunked device memory, which can achieve ~75% of NVLink bandwidth, drastically speeding up feature gathering [12]. |
| Inefficient Graph Storage | Check if the graph structure and features are stored in a format not optimized for GPU access. | Store the entire graph in GPU memory or distributed across multiple GPUs using a framework like WholeGraph [12]. For host memory storage, WholeGraph can achieve ~80% of PCIe bandwidth [12]. |
| Large Memory Footprint | Monitor GPU memory usage during training. | Implement neighbor sampling [12] and use distributed graph storage to shard the graph across multiple GPUs [12]. |

Experimental Protocol for Large-Scale GNN Training:

- System Configuration: Use a multi-GPU system like an NVIDIA DGX-A100 with high-speed interconnects (NVLink) [12].

- Data Loading: Leverage WholeGraph to store the graph's node features and the cuGraph library to manage the graph structure [12].

- Model Configuration: Choose a model architecture known for its scalability, such as GraphSAGE. Configure the sampling parameters appropriately (e.g., [15, 10, 5]) to balance accuracy and computational load [12].

- Distributed Training: Launch a distributed training job, ensuring the model and data are correctly partitioned across available GPUs.

A troubleshooting map for scaling GNNs to very large graphs, addressing hardware, software, and memory constraints.

Issue 3: Handling Multi-Modal and Heterogeneous Biomedical Data

Symptoms: Model fails to effectively integrate information from different data types (e.g., genomics, images, text), leading to suboptimal predictions.

Potential Causes and Solutions:

| Cause | Diagnostic Steps | Solution |
|---|---|---|
| Large Semantic Gaps | Check if the model is treating all modality relations identically. | Use a heterogeneous GNN framework. Explicitly model different node and edge types. Employ models like GTP-4o that use knowledge-guided meta-paths to capture the specific semantics of different cross-modal relations (e.g., "gene expresses protein" vs. "drug treats disease") [9]. |
| Missing Modalities | Check your dataset for incomplete samples. | Implement a modality-prompted completion module [9]. This technique generates placeholder representations for missing data, allowing the model to function even with an incomplete input. |

Experimental Protocol for Multi-Modal Learning with GTP-4o:

- Data Processing and Feature Extraction: For a patient subject, extract features from each available modality (e.g., Genomics X_G, Pathological Images X_I, Cell Graphs X_C, Diagnostic Texts X_T) into a unified feature dimension d [9].

- Heterogeneous Graph Construction: Establish a heterogeneous graph G where each modality is a node type, and edges represent cross-modal relations with specific semantic types [9].

- Modality-Prompted Completion: If a modality is missing, employ a graph prompting function g_φ(·) to generate a "hallucination" node, completing the graph representation [9].

- Hierarchical Aggregation: Perform knowledge-guided aggregation using a global meta-path neighbouring module to capture long-range dependencies and a local multi-relation aggregation module for fine-grained cross-modal interaction [9].

- Task-Specific Head: Use the final integrated representation for downstream tasks like glioma grading or survival outcome prediction [9].

The Scientist's Toolkit: Key Research Reagent Solutions

| Resource Name | Type | Primary Function | Reference |
|---|---|---|---|
| PrimeKG | Knowledge Graph | A precision medicine-oriented KG integrating 20 resources to describe 17,080 diseases with over 5 million relationships. Useful for drug-disease prediction and hypothesis generation. | [10] |
| BioSNAP | Dataset Collection | A collection of diverse, ready-to-use biomedical networks (e.g., protein-protein, drug-target, disease-gene) with node features and metadata. | [10] [7] |
| Therapeutics Data Commons (TDC) | Framework & Datasets | A unifying framework providing AI/ML-ready datasets and learning tasks across the entire drug discovery and development pipeline. | [10] |
| WholeGraph | Software Library | A high-performance storage library for GNN training that optimizes memory storage and retrieval for large-scale graphs on NVIDIA GPUs. | [12] |
| GraphXAI | Evaluation Resource | A resource to systematically evaluate and benchmark the quality and faithfulness of explanations provided by GNN models. | [10] |
| OGB (Open Graph Benchmark) | Benchmark Suite | A collection of scalable, real-world benchmark datasets for graph machine learning with standardized data splits and evaluators. | [10] |
| ClinVec / ClinGraph | Clinical Embeddings | A set of unified clinical code embeddings (ClinVec) derived from a clinical knowledge graph (ClinGraph) that capture semantic relationships among medical concepts. | [10] |

Frequently Asked Questions (FAQs)

FAQ 1: Why does my Graph Neural Network model perform well during training but poorly on real-world, unseen biomedical data?

This is a classic symptom of poor Out-of-Distribution (OOD) generalization. GNNs, like other deep learning models, are often developed under the Independent and Identically Distributed (I.I.D.) hypothesis [13]. In practice, they can exploit subtle statistical correlations in the training set for predictions, even when those correlations are spurious [13]. When the testing environment changes, these spurious correlations may break, leading to a significant performance drop. In biomedical contexts, this can be caused by differences in patient populations, differences in medical practice patterns between institutions, or heterogeneity in data collection methods [8] [14].

FAQ 2: What are the common types of distribution shifts encountered when applying GNNs to biomedical graphs?

The common types of shifts can be categorized as follows:

- Feature Shift: The distribution of node features (e.g., lab test values, genetic markers) differs between training and testing graphs.

- Topological Shift: The structure of the graphs changes. For example, a model trained on molecular graphs of a certain size may fail on larger, more complex molecules [15].

- Label Shift: The distribution of target labels, or the relationship between the input graphs and their target labels, changes (the latter is often called concept shift).

These shifts often occur when moving from data collected in a controlled research setting to real-world clinical data, or between different healthcare institutions with varying coding practices [14].
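
A quick screen for feature shift is to compare each feature's distribution between the training and test graphs. The sketch below uses a standardized mean difference as one possible statistic; the choice of statistic, the function name, and the lab values are assumptions for illustration and do not come from the cited studies.

```python
from statistics import mean, pstdev


def standardized_mean_difference(train_values, test_values):
    """Absolute mean difference divided by the pooled standard deviation."""
    pooled = ((pstdev(train_values) ** 2 + pstdev(test_values) ** 2) / 2) ** 0.5
    if pooled == 0:
        return 0.0
    return abs(mean(train_values) - mean(test_values)) / pooled


# Hypothetical values of one node feature (e.g., a lab test) at two sites.
train_lab = [1.0, 1.2, 0.9, 1.1]
test_lab = [1.8, 2.0, 1.7, 1.9]

smd = standardized_mean_difference(train_lab, test_lab)
print(f"standardized mean difference: {smd:.2f}")
```

A large value flags a feature worth inspecting before trusting the model on the new data. The same comparison can be applied to structural statistics such as node degree to screen for topological shift.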

FAQ 3: Are GNNs fundamentally incapable of generalizing to unseen data with different distributions?

No, recent theoretical and empirical studies show that GNNs can generalize well to unseen data, even in the presence of some model mismatch [16]. For instance, GNNs trained on graphs generated from one manifold model have been proven to generalize robustly to graphs generated from a mismatched manifold [16]. The key is to use GNN architectures and training strategies specifically designed to focus on stable, causal relationships in the data rather than spurious correlations [13] [17].

FAQ 4: How can I make my GNN model more robust to distribution shifts for clinical event prediction?

A promising approach is an adaptable GCNN design [14]. This involves using data elements that are recorded consistently across institutions (e.g., key demographics) for explicit learning (node features), while data elements with wide variations across institutions (e.g., specific billing code patterns) are used for implicit learning through graph edge formation. The edge formation function can be systematically adapted for a new institution without retraining the entire model, thus improving generalizability [14].

Troubleshooting Guide: Diagnosis and Solutions

Step 1: Diagnose the Generalization Problem

Use this flowchart to identify the potential root cause of the performance drop.

Step 2: Implement Proven Solutions

The table below summarizes advanced methods designed to improve the OOD generalization of GNNs.

Table 1: Summary of GNN OOD Generalization Methods

| Method Name | Core Principle | Applicable Scenario | Key Theoretical/Experimental Result |
|---|---|---|---|
| StableGNN [13] | Uses causal inference to distinguish and prioritize stable correlations from spurious correlations in the graph data. | General OOD graphs, especially when spurious correlations are prevalent. | Outperforms baselines on synthetic and real-world OOD graph datasets; offers model interpretability. |
| OOD-GNN [17] | Employs a nonlinear graph representation decorrelation method to force the model to be independent of spurious features. | Scenarios with distribution shifts between training and testing graph data. | Significantly outperforms state-of-the-art baselines on 2 synthetic and 12 real-world datasets with shifts. |
| Adaptable GCNN Design [14] | Separates learning: consistent data elements as node features, variable elements for adaptable graph edge formation. | Clinical prediction across institutions with different practice patterns. | Achieved AUROCs of 0.70 (discharge) and 0.91 (mortality) externally, outperforming non-adaptive models. |
| MaxEnt Loss [18] | A loss function that improves model calibration, ensuring predicted probabilities reflect true correctness, both ID and OOD. | All GNN applications, critical for real-world deployment where confidence matters. | Improves calibration on a novel ID and OOD graph form of the Celeb-A dataset. |

Step 3: Experimental Protocols for Validation

Protocol for Testing OOD Generalization on Biomedical Graphs [13] [17]

- Data Splitting: Instead of a random split, split the graph data into training and testing sets in a way that intentionally creates a distribution shift. This can be done by:

  - Splitting by time (training on older data, testing on newer data).

  - Splitting by institution or data source (training on one hospital's data, testing on another's).

  - Synthetically generating test graphs with different feature distributions or topological properties [15].

- Baseline Establishment: Train a standard GNN (e.g., GCN or GAT) on the training set and evaluate its performance on the OOD test set. This establishes the baseline performance drop.

- Intervention: Implement your chosen OOD generalization method (e.g., from Table 1).

- Evaluation Metrics: Report standard metrics (e.g., Accuracy, AUROC, F1-score) on both the training distribution (in-distribution) and the test distribution (out-of-distribution). Crucially, also monitor the generalization gap (the difference between training and test performance) [16].

- Ablation Studies: Conduct ablation studies to verify the contribution of key components of your method (e.g., the causal regularizer in StableGNN or the decorrelation module in OOD-GNN).
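
The splitting and evaluation steps of this protocol can be sketched with a toy dataset. Everything here is hypothetical: the records, the "site" field, and the threshold classifier standing in for a trained GNN are illustrative placeholders, and the gap is computed between in-distribution and out-of-distribution accuracy.

```python
def split_by_group(records, held_out_group):
    """Hold out one institution entirely to create a distribution shift."""
    in_dist = [r for r in records if r["site"] != held_out_group]
    out_dist = [r for r in records if r["site"] == held_out_group]
    return in_dist, out_dist


def accuracy(records, predict):
    return sum(predict(r) == r["label"] for r in records) / len(records)


def threshold_model(record):
    """Stand-in for a trained model: a fixed threshold tuned on site A."""
    return int(record["x"] > 0.5)


records = [
    {"site": "A", "x": 0.2, "label": 0}, {"site": "A", "x": 0.3, "label": 0},
    {"site": "A", "x": 0.8, "label": 1}, {"site": "A", "x": 0.9, "label": 1},
    {"site": "B", "x": 0.6, "label": 0}, {"site": "B", "x": 0.7, "label": 0},
    {"site": "B", "x": 1.2, "label": 1}, {"site": "B", "x": 1.3, "label": 1},
]

in_dist, out_dist = split_by_group(records, held_out_group="B")
acc_id = accuracy(in_dist, threshold_model)
acc_ood = accuracy(out_dist, threshold_model)
generalization_gap = acc_id - acc_ood
print(acc_id, acc_ood, generalization_gap)
```

Reporting both accuracies alongside the gap makes it clear whether an OOD method improves robustness or merely lowers in-distribution performance.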

Protocol for Testing Generalization in Clinical Event Prediction [14]

- Graph Formation: Model patients as nodes in a graph. Connect nodes (patients) with edges based on clinical similarity. The similarity function can use features like lab results, vital signs, or demographics.

- Internal Validation: Train the GCNN model on the graph built from one institution's data. Validate it on a held-out test set from the same institution.

- External Validation: Apply the trained model to a completely separate dataset from a different institution. Key step: Before application, adapt the graph for the new institution by recomputing the patient similarity edges using the new institution's data patterns, while keeping the trained GCNN model weights frozen.

- Comparison: Compare the performance of the adaptable GCNN against static models that do not allow for this graph adaptation.
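
The external-validation step, rebuilding edges for the new institution while keeping weights frozen, can be sketched as follows. This is a simplified illustration and not the model from [14]: the k-nearest-neighbor similarity, the single mean-aggregation layer, the weights, and all feature values are hypothetical.

```python
def knn_edges(features, k):
    """Connect each patient to its k most similar patients (squared Euclidean distance)."""
    edges = {}
    for i, fi in enumerate(features):
        dists = sorted(
            (sum((a - b) ** 2 for a, b in zip(fi, fj)), j)
            for j, fj in enumerate(features) if j != i
        )
        edges[i] = [j for _, j in dists[:k]]
    return edges


def frozen_gcn_layer(node_feats, edges, weights):
    """One mean-aggregation layer; `weights` are reused as-is and never updated here."""
    out = []
    for i, h in enumerate(node_feats):
        group = [h] + [node_feats[j] for j in edges[i]]
        pooled = [sum(col) / len(group) for col in zip(*group)]
        out.append(sum(w * v for w, v in zip(weights, pooled)))
    return out


trained_weights = [0.5, -0.2]  # hypothetical weights learned at the original site

# New institution: consistent elements become node features,
# variable elements are used only to build edges.
site_b_node_feats = [[0.1, 1.0], [0.2, 0.9], [0.9, 0.1], [1.0, 0.2]]
site_b_similarity_feats = [[1.0], [1.1], [5.0], [5.2]]

edges_b = knn_edges(site_b_similarity_feats, k=1)
scores_b = frozen_gcn_layer(site_b_node_feats, edges_b, trained_weights)
print(edges_b, [round(s, 3) for s in scores_b])
```

Only `knn_edges` depends on the new site's data patterns, so adapting to another institution means swapping or re-running that one function rather than retraining the network.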

The Scientist's Toolkit

Table 2: Essential Research Reagents for GNN Generalization Experiments

| Item / Concept | Function in Experimentation |
|---|---|
| Synthetic Graph Datasets | Allows for controlled introduction of distribution shifts (e.g., feature or topological shifts) to precisely study model behavior [13] [15]. |
| Real-World OOD Benchmarks | Provides realistic testbeds (e.g., multi-institutional clinical datasets, molecular graphs with different scaffolds) to validate method effectiveness [13] [17] [14]. |
| Causal Regularizer | A software component that penalizes the model for relying on spurious statistical correlations, guiding it to learn more stable relationships [13]. |
| Representation Decorrelation Module | A software component that forces different dimensions of the learned graph representations to be independent, helping to eliminate spurious features [17]. |
| Adaptable Edge Formation Function | A function that defines how nodes (e.g., patients, molecules) are connected in a graph. It can be updated for new data environments without retraining the core model [14]. |
| Calibration Metrics (e.g., ECE) | Tools to measure if a model's predicted probabilities match the true likelihood of correctness, which is crucial for trustworthy deployment in biomedicine [18]. |
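
For the calibration metric in the last row, Expected Calibration Error (ECE) can be computed with a short binning routine. This is a minimal sketch of the standard equal-width-bin definition; the bin count and example predictions are illustrative assumptions, not values from [18].

```python
def expected_calibration_error(confidences, correct, num_bins=10):
    """ECE: bin-size-weighted average of |accuracy - mean confidence| per bin."""
    n = len(confidences)
    bins = [[] for _ in range(num_bins)]
    for conf, ok in zip(confidences, correct):
        idx = min(int(conf * num_bins), num_bins - 1)  # conf == 1.0 goes in the last bin
        bins[idx].append((conf, ok))
    ece = 0.0
    for members in bins:
        if not members:
            continue
        avg_conf = sum(c for c, _ in members) / len(members)
        acc = sum(ok for _, ok in members) / len(members)
        ece += len(members) / n * abs(acc - avg_conf)
    return ece


# Hypothetical predictions: very confident, but right only half the time.
overconfident = expected_calibration_error([0.95, 0.95, 0.95, 0.95], [1, 1, 0, 0])
print(f"ECE: {overconfident:.2f}")
```

Computing ECE separately on in-distribution and OOD test sets shows whether a model's confidence degrades along with its accuracy under shift.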

Visualization of Solution Architectures

The following diagram illustrates the core architecture of two key OOD generalization solutions, providing a blueprint for implementation.

Core GNN Architectures and Their Inherent Scalability Limits (GCN, GAT, GraphSAGE)

Frequently Asked Questions (FAQs)

FAQ 1: What are the most common GNN architectures used in biomedicine and what are their primary applications? In biomedicine, foundational GNN architectures including Graph Convolutional Networks (GCNs), Graph Attention Networks (GATs), and GraphSAGE are widely applied. Their primary applications include:

- Drug Discovery: Learning molecular fingerprints and predicting molecular properties or interactions. [8] [19]

- Clinical Event Prediction: Modeling patient data as graphs to predict outcomes like mortality, hospital discharge, or the need for procedures such as blood transfusion. [14]

- Protein-Protein Interaction (PPI) Prediction: Analyzing biological networks to understand complex cellular functions. [8]

FAQ 2: I keep encountering "Out-of-Memory" (OOM) errors when training on large biomedical graphs. What is the root cause? OOM errors are a primary symptom of scalability limits. The root causes are multifaceted: [1]

- GPU Memory Bottleneck: The size of GNN models and datasets often far exceeds the memory capacity of even modern GPUs. [1]

- Irregular Graph Samples: In applications like particle physics or patient records, your dataset may consist of many individual graphs (e.g., each patient or event is a graph). If these graphs vary significantly in size (number of nodes/edges), a standard random sampler can create mini-batches where one batch contains several very large graphs, spiking GPU memory consumption and causing OOM exceptions. [1]

- Full-Graph Training: Attempting to process an entire large graph (such as a massive knowledge graph or social network) in one pass is often computationally infeasible. [20]

FAQ 3: How can I improve my GNN model's generalizability across different healthcare institutions? A key strategy is an adaptable GCNN design that separates learning from data elements that are consistent across institutions from those that are not. [14]

- Node Features: Use stable, consistently recorded data (like specific lab results) as explicit node features for the model to learn from directly.

- Graph Edges (Connections): Define edges between nodes (e.g., patients) based on clinical similarity. The function that calculates this similarity can be adapted or re-defined for a new institution without retraining the entire core model. This allows the pre-trained GNN to leverage the pattern of similarity without being tied to the original institution's specific data coding practices. [14]

FAQ 4: What are "over-smoothing" and "over-squashing," and how do they limit GNN performance? These are fundamental architectural limitations that arise as GNNs get deeper: [21]

- Over-squashing: This occurs when information from many neighbors must pass through a narrow "bottleneck" in the graph structure. As messages from all these neighbors are funneled through just a few edges, information becomes compressed and distorted, limiting the model's ability to capture long-range dependencies. [21]

- Over-smoothing: After too many layers of message passing, the representations of nodes in different parts of the graph can become indistinguishable from one another, losing the unique information that was necessary for the task. [21]
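Over-smoothing can be reproduced on a toy graph. The sketch below assumes a parameter-free layer that simply averages each node's feature with its neighbors' features (real GNN layers add learned weights and nonlinearities); the graph and feature values are made up for illustration.

```python
from typing import Dict, List

def mean_aggregate(features: List[float], neighbors: Dict[int, List[int]]) -> List[float]:
    """One parameter-free message-passing layer: average self and neighbors."""
    updated = []
    for node, value in enumerate(features):
        group = [value] + [features[n] for n in neighbors[node]]
        updated.append(sum(group) / len(group))
    return updated

def spread(features: List[float]) -> float:
    """Gap between the largest and smallest node feature."""
    return max(features) - min(features)

# Path graph 0 - 1 - 2 - 3 with one scalar feature per node.
neighbors = {0: [1], 1: [0, 2], 2: [1, 3], 3: [2]}
initial = [0.0, 1.0, 2.0, 3.0]

features = initial
spread_by_depth = [spread(features)]
for _ in range(50):
    features = mean_aggregate(features, neighbors)
    spread_by_depth.append(spread(features))

# spread_by_depth shrinks toward zero: after many layers the four nodes
# carry almost the same value and can no longer be told apart.
```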

Troubleshooting Guides

Issue 1: Resolving GPU Out-of-Memory (OOM) Errors

Symptoms: Training fails with a CUDA out-of-memory error. The error may occur inconsistently, not on every training epoch.

Diagnosis: The most likely cause is a workload imbalance due to irregularly sized input graphs in your mini-batches. [1]

Solution: Implement workload-balancing sampling strategies.

- Step 1: Analyze your dataset's graph size distribution (e.g., number of nodes per graph). You will likely observe a right-skewed distribution with a large standard deviation. [1]

- Step 2: Replace your standard random sampler with a balancing sampler. Research has shown strategies like balancing by graph size can reduce the maximum GPU memory footprint by over 30% compared to a naive sampler. [1]

- Step 3: For extremely large graphs that cannot fit in memory even with balanced sampling, leverage specialized frameworks like NVIDIA's WholeGraph. This library is designed as a storage solution for large-scale GNN training, optimizing memory storage and retrieval across multiple GPUs, achieving up to 75% of the theoretical NVLink bandwidth. [12]
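Steps 1 and 2 can be sketched with a node-budget batcher. This is an illustrative stand-in, not the sampler from [1]: `balanced_batches`, `max_nodes_per_batch`, and the toy size distribution are assumptions, and total node count per batch is used only as a rough proxy for peak GPU memory.

```python
import random
from typing import List

def balanced_batches(graph_sizes: List[int], max_nodes_per_batch: int) -> List[List[int]]:
    """Group graph indices so that batches stay within a node budget.

    Graphs are sorted by size so each batch holds similarly sized graphs;
    a graph larger than the budget is placed in a batch of its own.
    """
    order = sorted(range(len(graph_sizes)), key=lambda i: graph_sizes[i])
    batches, current, current_nodes = [], [], 0
    for idx in order:
        size = graph_sizes[idx]
        if current and current_nodes + size > max_nodes_per_batch:
            batches.append(current)
            current, current_nodes = [], 0
        current.append(idx)
        current_nodes += size
    if current:
        batches.append(current)
    return batches

def random_batches(num_graphs: int, batch_size: int, rng: random.Random) -> List[List[int]]:
    """Naive baseline: shuffle and cut into fixed-count batches."""
    order = list(range(num_graphs))
    rng.shuffle(order)
    return [order[i:i + batch_size] for i in range(0, num_graphs, batch_size)]

def peak_nodes(batches: List[List[int]], graph_sizes: List[int]) -> int:
    """Largest total node count in any batch."""
    return max(sum(graph_sizes[i] for i in batch) for batch in batches)

# Toy right-skewed size distribution: many small graphs, a few very large ones.
graph_sizes = [20] * 28 + [900, 950, 1000, 1100]

naive = random_batches(len(graph_sizes), batch_size=8, rng=random.Random(0))
balanced = balanced_batches(graph_sizes, max_nodes_per_batch=1200)
random.Random(1).shuffle(balanced)  # shuffle batch order, not batch contents

naive_peak = peak_nodes(naive, graph_sizes)
balanced_peak = peak_nodes(balanced, graph_sizes)
```

In this toy setting the naive sampler always puts the largest graph in a batch with seven others, so its peak exceeds the budget, while the balanced batcher keeps every batch at or below it.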

Experimental Protocol: Evaluating Sampling Strategies

- Objective: Measure the impact of different mini-batch samplers on maximum GPU memory consumption and model accuracy.

- Materials: A dataset of graph samples with irregular sizes (e.g., HEP event graphs, molecular graphs). [1]

- Method:

- Baseline: Train the GNN model (e.g., a GAT or GraphSAGE) using a standard random sampler.

- Intervention: Train the same model using a balanced sampler that groups graphs of similar sizes together in batches.

- Metrics: For each run, record (a) the maximum GPU memory allocated and (b) the final task accuracy (e.g., classification accuracy).

- Expected Outcome: The balanced sampler should show a significant reduction in maximum memory usage while maintaining comparable model accuracy. [1]

Issue 2: Poor Generalization to Unseen Data (OOD Problem)

Symptoms: Your model performs well on the training data and internal test sets but suffers a significant performance drop (e.g., 5-20%) when applied to data from a different institution, a different time period, or a different molecular library. [22]

Diagnosis: The model has learned spurious correlations specific to the training data distribution rather than the true causal features for the task.

Solution: Integrate stable learning techniques with your GNN architecture to create a Stable-GNN (S-GNN).

- Step 1: Apply a feature sample weighting decorrelation technique. This method assigns a weight to each training sample to reduce the spurious correlations between all input features. [22]

- Step 2: Implement this using a Random Fourier Features (RFF) based nonlinear independence test. The RFF approximation makes this decorrelation computationally feasible (O(nD) complexity). [22]

- Step 3: Combine this sample weighting with your baseline GNN model (e.g., GCN) during training. This forces the model to rely on genuine causal features, improving its stability on Out-of-Distribution (OOD) data. [22]
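The idea behind Steps 1 and 2 can be illustrated on two scalar features. The sketch below is not the S-GNN implementation from [22]: it uses a single random Fourier feature per input with a fixed frequency and phase, and the sample weights are hand-picked rather than learned. It only shows that reweighting samples can remove a dependence that uniform weighting leaves in place.

```python
import math
from typing import List, Sequence, Tuple

def rff(x: float, w: float, b: float) -> float:
    """One random Fourier feature: sqrt(2) * cos(w * x + b)."""
    return math.sqrt(2.0) * math.cos(w * x + b)

def weighted_cov(u: Sequence[float], v: Sequence[float], weights: Sequence[float]) -> float:
    """Covariance of u and v under normalized sample weights."""
    total = sum(weights)
    mean_u = sum(wi * ui for wi, ui in zip(weights, u)) / total
    mean_v = sum(wi * vi for wi, vi in zip(weights, v)) / total
    return sum(wi * (ui - mean_u) * (vi - mean_v)
               for wi, ui, vi in zip(weights, u, v)) / total

# Toy data: the two features agree in 8 of 10 samples (a spurious link).
samples: List[Tuple[float, float]] = [(0, 0)] * 4 + [(1, 1)] * 4 + [(0, 1), (1, 0)]

# Fixed illustrative frequency and phase; in practice w ~ N(0, 1), b ~ U(0, 2*pi).
w, b = 1.0, 0.5
u = [rff(a, w, b) for a, _ in samples]
v = [rff(c, w, b) for _, c in samples]

uniform_weights = [1.0] * len(samples)
# Hand-picked weights that up-weight the two minority samples; a real method
# would learn the weights by minimizing a dependence measure like this one.
balancing_weights = [1.0] * 8 + [4.0, 4.0]

dependence_uniform = weighted_cov(u, v, uniform_weights)
dependence_reweighted = weighted_cov(u, v, balancing_weights)
```

Under the balancing weights every combination of the two feature values carries equal total weight, so the weighted covariance of the transformed features drops to (numerically) zero.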

Experimental Protocol: Testing Cross-Site Generalization

- Objective: Validate that the S-GNN model outperforms a standard GNN on data from an unseen source.

- Materials: Graph datasets from at least two different sources (e.g., TUDataset or OGB datasets). [22]

- Method:

- Train a standard GCN model on data from Source A and evaluate it on a held-out test set from Source A (i.i.d. test) and a full dataset from Source B (OOD test).

- Train an S-GNN model on the same data from Source A and evaluate it on the same Source A and Source B test sets.

- Compare the performance metrics (e.g., accuracy, AUROC) on the OOD test (Source B).

- Expected Outcome: The S-GNN model should demonstrate a smaller performance degradation on the Source B data compared to the standard GNN, indicating superior generalization. [22]

Table 1: Performance and Memory Footprint of Scalability Techniques

| Technique | Dataset/Context | Key Result | Citation |
|---|---|---|---|
| Workload-Balancing Samplers | High-Energy Physics (HEP) event graphs | Up to 32.14% reduction in max GPU memory footprint compared to a naive random sampler. | [1] |
| WholeGraph Storage | ogbn-papers100M dataset (111M nodes, 3.2B edges) | Achieved ~75% of NVLink bandwidth for chunked device memory, significantly accelerating data retrieval. | [12] |
| Stable-GNN (S-GNN) | OGB and TU Datasets | Addressed 5.66–20% performance degradation in OOD settings, achieving SOTA cross-site classification results. | [22] |

Table 2: Core GNN Architectures and Scalability Limits

| Architecture | Core Mechanism | Primary Scalability Limitation | Common Biomedical Use Case |
|---|---|---|---|
| GCN | Applies spectral convolution to aggregate features from a node's neighbors. [8] [21] | Limited scalability to very large graphs; fixed and equal weighting of neighbors may not be optimal. | Molecular property prediction, protein interface prediction. [8] [19] |
| GAT | Uses self-attention to assign different importance weights to each neighbor. [8] [21] | Computational and memory overhead of calculating attention scores for each edge, which can be prohibitive for graphs with billions of edges. | Drug repurposing, disease risk prediction where some relationships are more important than others. [8] |
| GraphSAGE | Efficiently generates node embeddings by sampling and aggregating features from a node's local neighborhood. [8] | Sampling depth and neighborhood size create a trade-off between performance and computational cost. Potential information loss from sampling. | Large-scale knowledge graph reasoning, patient similarity networks for clinical prediction. [8] [14] |

Workflow and System Diagrams

GNN Scalability Limits and Solutions

Stable GNN for OOD Generalization

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Software and Hardware for Scalable GNN Research

| Tool/Resource | Type | Function in GNN Experimentation |
|---|---|---|
| NVIDIA DGX-A100 / H100 Systems | Hardware | Provides high-performance multi-GPU setup with NVLink technology, essential for distributing the computational load and memory footprint of large graphs. [1] [12] |
| WholeGraph (RAPIDS cuGraph) | Software Library | Acts as an optimized storage and retrieval solution for massive graph feature data, minimizing communication bottlenecks and enabling training on graphs with hundreds of millions of nodes. [12] |
| OGB (Open Graph Benchmark) & TUDataset | Data | Standardized benchmark datasets (e.g., ogbn-papers100M) for fairly evaluating and comparing the scalability and accuracy of new GNN models and techniques. [22] |
| Stable-GNN (S-GNN) Framework | Algorithmic Framework | A methodology combining sample reweighting decorrelation with standard GNNs to improve model generalizability and performance on out-of-distribution data, a critical need in biomedicine. [22] |
| Workload-Balancing Samplers | Algorithm | Data loaders that group similarly-sized graphs together in mini-batches to prevent GPU memory spikes and Out-of-Memory errors during training. [1] |

Architectural Solutions and Real-World Applications in Biomedicine

Graph Neural Networks (GNNs) have emerged as a powerful tool for biomedical research, enabling the analysis of complex biological systems represented as networks—from protein-protein interactions and molecular structures to patient-disease graphs and healthcare systems [23] [11]. However, as GNNs increase in depth, their receptive field grows exponentially, leading to the "neighbor explosion" problem where processing a single node requires aggregating information from a substantial portion of the graph [24] [25]. This creates significant memory and computational challenges, particularly when working with large-scale biomedical graphs that contain millions of nodes and edges [24].
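A quick back-of-the-envelope calculation makes the exponential growth concrete. The sketch below computes an upper bound that ignores overlap between neighborhoods; the average degree of 50, the fan-out cap of 10, and the depth of 3 are illustrative assumptions, not figures from the cited work.

```python
def receptive_field_bound(branching: int, num_layers: int) -> int:
    """Upper bound on nodes touched for one target node.

    Sums branching**l for l = 0..num_layers, i.e. the target itself plus
    every node reachable within num_layers hops if no neighborhoods overlap.
    """
    return sum(branching ** layer for layer in range(num_layers + 1))

# Full neighborhoods with an assumed average degree of 50.
full_neighborhood = receptive_field_bound(branching=50, num_layers=3)

# Neighbor sampling with the fan-out capped at 10 per node.
sampled_neighborhood = receptive_field_bound(branching=10, num_layers=3)
```

With these numbers a three-layer model touches up to 127,551 nodes per target without sampling and at most 1,111 with a fan-out of 10, which is why sampling is the usual first remedy.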

Graph sampling techniques address this scalability issue by decoupling sampling from forward and backward propagation during minibatch training, enabling GNNs to scale to much larger graphs [25]. These methods primarily fall into three categories: node-wise, layer-wise, and subgraph sampling, each with distinct advantages and implementation considerations for biomedical applications.

Frequently Asked Questions: Sampling Strategy Selection

Q: What is the fundamental difference between node-wise, layer-wise, and subgraph sampling methods?

A: These methods differ in their sampling unit and approach:

- Node-wise sampling selects a fixed number of neighbors for each target node independently, which can lead to redundancy as nodes may be sampled multiple times [24].

- Layer-wise sampling samples nodes at each GNN layer with probabilities often proportional to their degree, minimizing variance across layers [24].

- Subgraph sampling extracts complete subgraphs for minibatch training, preserving local structure but potentially losing long-range dependencies [25] [26].
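The difference in sampling unit is easiest to see side by side. The sketch below contrasts a GraphSAGE-style node-wise sampler with a uniform-node subgraph sampler on a toy graph; both are simplified illustrations with hypothetical names, and layer-wise sampling is omitted for brevity.

```python
import random
from typing import Dict, List, Set, Tuple

Graph = Dict[int, List[int]]

def sample_neighbors(graph: Graph, targets: List[int], fanout: int,
                     num_layers: int, rng: random.Random) -> List[Set[int]]:
    """Node-wise sampling: each frontier node independently keeps <= fanout neighbors."""
    layers = [set(targets)]
    frontier = set(targets)
    for _ in range(num_layers):
        next_frontier: Set[int] = set()
        for node in sorted(frontier):
            neighbors = graph[node]
            k = min(fanout, len(neighbors))
            next_frontier.update(rng.sample(neighbors, k))
        layers.append(next_frontier)
        frontier = next_frontier
    return layers

def sample_subgraph(graph: Graph, num_nodes: int,
                    rng: random.Random) -> Tuple[Set[int], List[Tuple[int, int]]]:
    """Subgraph sampling: pick nodes uniformly, keep only edges among them."""
    nodes = set(rng.sample(sorted(graph), num_nodes))
    edges = [(u, v) for u in sorted(nodes) for v in graph[u] if v in nodes and u < v]
    return nodes, edges

# Toy undirected ring of 12 nodes with a chord to the opposite node.
graph: Graph = {i: [(i - 1) % 12, (i + 1) % 12, (i + 6) % 12] for i in range(12)}
rng = random.Random(0)

layers = sample_neighbors(graph, targets=[0], fanout=2, num_layers=2, rng=rng)
sub_nodes, sub_edges = sample_subgraph(graph, num_nodes=6, rng=rng)
```

The node-wise sampler returns one node set per hop and grows with depth, whereas the subgraph sampler returns a single fixed-size node set and runs all layers on the edges that survive inside it.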

Q: How do I choose the right sampling strategy for my biomedical graph dataset?

A: Consider these factors:

- For homophilous graphs (where connected nodes often share labels), simple random sampling may perform adequately [24].

- For heterophilous graphs or multi-label graphs, adaptive methods like GRAPES that learn sampling probabilities typically outperform fixed heuristics [24].

- If your graph has a scale-free structure with core-periphery organization (common in biological networks), hierarchical methods like HISGCNs that preserve critical chains are preferable [25].

Q: Why does my sampled subgraph performance degrade despite using theoretically sound sampling methods?

A: Common issues and solutions include:

- Lost long-chain dependencies: Subgraph samplers may break critical information pathways; use chain-preserving methods like HISGCNs [25].

- Sample bias: Nodes frequently sampled may dominate training; implement loss normalization to correct for uneven sampling probabilities [25].

- Inappropriate heuristic: Fixed sampling policies may not adapt to your specific graph topology; consider learnable methods like GRAPES that optimize sampling for your task [24].
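Loss normalization for uneven sampling probabilities can be sketched with inverse-probability weighting. This is one common way to do it and is not necessarily the exact scheme used in [25]; the sampler, the per-node losses, and all names below are hypothetical.

```python
import random
from collections import Counter
from typing import Callable, Dict, List

def estimate_inclusion_prob(sampler: Callable[[], List[int]],
                            num_trials: int) -> Dict[int, float]:
    """Estimate how often each node appears by running the sampler repeatedly."""
    counts: Counter = Counter()
    for _ in range(num_trials):
        counts.update(set(sampler()))
    return {node: count / num_trials for node, count in counts.items()}

def normalized_loss(per_node_loss: Dict[int, float],
                    inclusion_prob: Dict[int, float]) -> float:
    """Weight each sampled node's loss by 1 / inclusion probability, then average."""
    weights = {n: 1.0 / inclusion_prob[n] for n in per_node_loss}
    total_weight = sum(weights.values())
    return sum(weights[n] * per_node_loss[n] for n in per_node_loss) / total_weight

rng = random.Random(0)

def hub_biased_sampler() -> List[int]:
    """Toy sampler: hub node 0 is always drawn, plus one random peripheral node."""
    return [0, rng.choice([1, 2, 3, 4])]

inclusion_prob = estimate_inclusion_prob(hub_biased_sampler, num_trials=2000)

batch_loss = {0: 2.0, 1: 1.0}  # hypothetical per-node losses for one minibatch
plain_mean = sum(batch_loss.values()) / len(batch_loss)
corrected = normalized_loss(batch_loss, inclusion_prob)
```

Because the hub appears in every minibatch, its loss is down-weighted relative to the rarely sampled peripheral node, so the corrected loss leans less on the over-represented node than the plain mean does.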

Q: How can I validate that my sampling method preserves important graph structural properties?

A: Monitor these metrics during experimentation:

- Discrete Ricci curvature of edges in sampled subgraphs [25]

- Node embedding variance across training iterations [25]

- Classification accuracy compared to full-graph baselines [24]

- Convergence speed during training [25]

Troubleshooting Guides

Problem: High Memory Consumption During Training

Symptoms

- GPU memory exhaustion errors

- Inability to increase batch size or model depth

- Training crashes with large neighbor samples

Solution Steps

- Switch to subgraph sampling methods like GraphSAINT [26] or HISGCNs [25] that decouple sampling from propagation

- Reduce sample size while using adaptive methods like GRAPES that maintain accuracy with smaller samples [24]

- Implement historical embeddings like in GAS to approximate neighbor embeddings [24]

Verification of Fix

- Monitor GPU memory usage during training

- Check that accuracy remains within 1-2% of full-batch performance

- Ensure training time per epoch decreases significantly

Problem: Poor Model Generalization on Heterophilous Graphs

Symptoms

- High training accuracy but low validation/test accuracy

- Performance degradation on graphs where connected nodes have different labels

- Inconsistent results across different biomedical domains

Solution Steps

- Implement adaptive sampling with GRAPES that learns task-specific sampling probabilities [24]

- Preserve structural properties using methods that maintain discrete Ricci curvature [25]

- Ensure core-periphery awareness with hierarchical sampling for scale-free biomedical networks [25]

Verification of Fix

- Test on multi-label heterophilous graph benchmarks [24]

- Compare performance against fixed heuristic baselines

- Validate that sampling probabilities correlate with task-relevant node importance

Problem: Lost Long-Range Dependencies in Sampled Subgraphs

Symptoms

- Performance degradation on global graph property prediction

- Reduced accuracy on node classification requiring multi-hop information

- Inability to capture distant node relationships

Solution Steps

- Use chain-preserving samplers like HISGCNs that maintain critical information pathways [25]

- Implement hierarchical sampling that preserves both core connectivity and peripheral chains [25]

- Adjust sampling depth to ensure sufficient receptive field for your specific task

Verification of Fix

- Validate preservation of important chains in sampled subgraphs [25]

- Test performance on tasks requiring long-range dependency capture

- Measure convergence speed improvement [25]

Sampling Method Comparison

Table 1: Characteristics of Major Graph Sampling Approaches

| Method Type | Key Examples | Sampling Approach | Best For | Limitations |
|---|---|---|---|---|
| Node-wise | GraphSAGE [24] | Randomly samples fixed number of neighbors per node | Homophilous graphs, Simple architectures | High redundancy, Neighbor explosion in deep GNNs |
| Layer-wise | FastGCN [24] | Samples nodes in each layer independently | Deep GNNs, Memory-constrained environments | May miss important low-degree nodes |
| Subgraph | GraphSAINT [25] [26] | Samples complete subgraphs for minibatches | Large graphs, Training stability | Potential loss of long-range dependencies |
| Adaptive | GRAPES [24] | Learns sampling probabilities optimized for task | Heterophilous graphs, Multi-label datasets | Higher computational overhead |
| Hierarchical | HISGCNs [25] | Preserves core-periphery structure and critical chains | Scale-free biomedical networks | Complex implementation |

Table 2: Performance Characteristics Across Biomedical Graph Types

| Graph Type | Optimal Sampling Method | Expected Accuracy Preservation | Memory Reduction | Implementation Complexity |
|---|---|---|---|---|
| Homophilous | Random Node/Layer Sampling | 95-100% of full-graph [24] | 5-10x [24] | Low |
| Heterophilous | GRAPES [24] | 98-100% of full-graph [24] | 3-8x [24] | High |
| Scale-free | HISGCNs [25] | Superior to alternatives [25] | 4-10x [25] | Medium-High |
| Multi-label | Adaptive Methods [24] | State-of-the-art [24] | 3-7x [24] | Medium-High |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools and Implementations for Graph Sampling Research

| Tool/Resource | Function | Application Context | Availability |
|---|---|---|---|
| GRAPES | Adaptive sampling method that learns node probabilities | Heterophilous and multi-label graphs [24] | Public GitHub |
| HISGCNs | Hierarchical importance sampling preserving core-periphery structure | Scale-free biomedical networks [25] | Public GitHub |
| GraphSAINT | Subgraph sampling for inductive learning | Large-scale graph training [25] [26] | Multiple implementations |
| GNN-BS | Bandit-based sampling with variance reduction | Dynamic sampling policy learning [24] | Research implementations |
| PyTorch Geometric | Framework for GNN implementations with sampling utilities | General GNN experimentation | Open-source |

Experimental Protocols and Workflows

Protocol 1: Implementing Adaptive Sampling with GRAPES

Graph Adaptive Sampling Workflow

Materials

- GRAPES implementation from official repository

- Biomedical graph dataset (e.g., protein-protein interactions, patient similarity networks)

- GNN framework (PyTorch Geometric or DGL)

Procedure

- Initialize two GNNs: one for sampling policy (predicts node inclusion probabilities) and one for classification [24]

- For each training iteration:

- Pass completed subgraph to classifier GNN for prediction [24]

- Compute classification loss and backpropagate through both GNNs [24]

- Update parameters for both sampling policy and classifier using gradient-based optimization [24]

Validation Metrics

- Node classification accuracy on test set

- Comparison against full-graph and fixed heuristic baselines

- Training time and memory usage reduction

Protocol 2: Hierarchical Sampling for Scale-Free Biomedical Networks

Hierarchical Sampling for Scale-free Graphs

Materials

- HISGCNs implementation

- Scale-free biomedical network (e.g., disease comorbidity, genetic association networks)

- Computing resources with sufficient RAM for graph partitioning

Procedure

- Partition graph into core and periphery using degree threshold [25]:

- Preserve core centrum in most minibatches to maintain connectivity [25]

- Sample periphery edges without core node interference to preserve long chains [25]

- Construct minibatches using sampled subgraphs focusing on both core and periphery importance [25]

- Train GCN on minibatches with loss normalization for frequently sampled nodes [25]
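The first step of the procedure, splitting the graph by a degree threshold, can be sketched as below. This is not the HISGCNs code from [25]: the threshold value, the function names, and the toy graph are assumptions chosen to show how a peripheral chain survives once core nodes are set aside.

```python
from typing import Dict, List, Set, Tuple

Graph = Dict[int, List[int]]

def partition_core_periphery(graph: Graph, degree_threshold: int) -> Tuple[Set[int], Set[int]]:
    """Nodes with degree >= threshold form the core; the rest are periphery."""
    core = {node for node, nbrs in graph.items() if len(nbrs) >= degree_threshold}
    periphery = set(graph) - core
    return core, periphery

def periphery_edges(graph: Graph, periphery: Set[int]) -> List[Tuple[int, int]]:
    """Edges whose endpoints are both peripheral (chains untouched by core nodes)."""
    return sorted((u, v) for u in periphery for v in graph[u]
                  if v in periphery and u < v)

# Toy graph: hub 0 linked to nodes 1-4, plus a chain 4 - 5 - 6.
graph: Graph = {
    0: [1, 2, 3, 4],
    1: [0], 2: [0], 3: [0],
    4: [0, 5], 5: [4, 6], 6: [5],
}

core, periphery = partition_core_periphery(graph, degree_threshold=3)
chain_edges = periphery_edges(graph, periphery)
```

Here the hub becomes the core and the chain 4 - 5 - 6 is recovered intact from the periphery, which is the kind of long path a sampler would want to keep together.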

Validation Metrics

- Chain preservation rate in sampled subgraphs

- Node embedding variance across training

- Convergence speed and final accuracy [25]

Key Decision Framework for Sampling Strategy Selection

When designing sampling strategies for biomedical GNN applications, consider this structured approach:

- Characterize your graph: Determine if it exhibits homophily vs. heterophily, scale-free properties, or specific core-periphery structures [24] [25]

- Identify critical dependencies: Assess whether your prediction task requires long-range dependencies or primarily local information [25]

- Evaluate computational constraints: Consider available memory, graph size, and required training throughput [24]

- Select appropriate method class: Choose from fixed heuristics for simple graphs or adaptive methods for complex, heterophilous networks [24]

- Validate and iterate: Implement chosen method and verify it preserves task-relevant structural properties while reducing computational burden [25]

This structured approach ensures your sampling strategy aligns with both the topological characteristics of your biomedical graph and the specific requirements of your prediction task.

What are historical embedding methods and why are they important for biomedical GNNs?

Historical embedding methods are a class of Graph Neural Network training algorithms that use cached, historical node embeddings from previous training iterations to approximate the state of unsampled neighbors. This approach effectively mitigates the "neighbor explosion" problem, where the number of neighbors involved in GNN computations grows exponentially with network depth [27]. For biomedical researchers, these methods enable the training of deeper, more expressive models on large-scale graphs such as molecular structures, protein-protein interaction networks, and patient comorbidity graphs, while maintaining computational feasibility [8] [28].

How do historical embeddings differ from sampling methods?

Unlike sampling methods (node-wise, layer-wise, or subgraph sampling) that discard information from unsampled nodes and edges, historical embedding methods preserve all neighbor information by using cached embeddings as approximations [27]. This key difference reduces the estimation variance inherent in sampling approaches, potentially leading to more stable training and better preservation of graph structural information—critical factors when working with complex biomedical networks where no relationship is truly incidental [8].

Troubleshooting Common Experimental Issues

How can I diagnose staleness issues in my historical embeddings?

Staleness occurs when historical embeddings become significantly outdated compared to their true values as model parameters update. Diagnose this issue by monitoring these key indicators:

- Performance Degradation: Accuracy plateaus at suboptimal levels or decreases despite continued training

- Slowed Convergence: Model requires significantly more epochs to converge compared to baseline methods

- Embedding Divergence: Increasing discrepancy between historical embeddings and their recalculated values

The core issue is update frequency disparity: model parameters update N/B times per epoch (where N=nodes, B=batch size), while each node's cache refreshes only once per epoch when it serves as a target node [27].
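The disparity is easy to quantify. The sketch below counts parameter updates per epoch for two batch sizes; the graph size of one million nodes is an illustrative assumption.

```python
import math

def updates_per_epoch(num_nodes: int, batch_size: int) -> int:
    """Number of optimizer steps (parameter updates) in one epoch: ceil(N / B)."""
    return math.ceil(num_nodes / batch_size)

num_nodes = 1_000_000  # assumed graph size

# Each node's cached embedding is refreshed once per epoch, so the number
# below is also how many parameter updates occur between two refreshes.
updates_small_batch = updates_per_epoch(num_nodes, batch_size=1_000)
updates_large_batch = updates_per_epoch(num_nodes, batch_size=10_000)
```

With a batch size of 1,000 the parameters change 1,000 times between refreshes of a given node's cache, versus 100 times with a batch size of 10,000, which is consistent with staleness being worse for small batches.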

What strategies can mitigate historical embedding staleness?

Several advanced approaches address staleness:

VISAGNN Framework: Incorporates staleness awareness through three mechanisms [27]:

- Dynamic Staleness Attention: Weighted message-passing using staleness scores

- Staleness-aware Loss: Regularization term to reduce staleness influence

- Staleness-Augmented Embeddings: Direct injection of staleness into node representations

GraphFM-OB: Compensates for staleness using feature momentum for both in-batch and out-of-batch nodes [27]

Refresh: Introduces staleness scores to avoid using highly stale embeddings, though this may sacrifice some neighbor information [27]

Why does my model converge slower with historical embeddings versus sampling?

Slower convergence typically indicates significant staleness bias dominating the variance reduction benefits. Address this by:

- Implementing Progressive Staleness Tolerance: Begin training with lower tolerance for stale embeddings, gradually increasing as model stabilizes

- Hybrid Sampling: Combine historical embeddings with selective neighbor sampling for critical nodes

- Strategic Cache Refresh: Implement periodic full-batch recalculations of historical embeddings after model parameters undergo substantial updates

How can I manage memory constraints when using historical embeddings?

While historical embeddings reduce GPU memory by storing embeddings on CPU or disk, large-scale biomedical graphs still present challenges:

- Embedding Compression: Apply dimensionality reduction techniques to cached embeddings

- Selective Caching: Implement caching policies that prioritize frequently accessed or high-degree nodes

- Cluster-Based Partitioning: Use graph clustering (as in GAS) to reduce inter-connectivity and cache synchronization needs [27]

Experimental Protocols & Methodologies

Benchmarking Historical Embedding Performance

When evaluating historical embedding methods for biomedical applications, follow this structured protocol:

Experimental Setup:

- Baselines: Compare against GraphSAGE (node-wise), ClusterGCN (subgraph), and Full-Batch GCN

- Datasets: Use biologically relevant graphs (PPI, molecular, patient networks)

- Metrics: Track accuracy, training time, memory usage, and convergence speed

Implementation Details:

- Employ consistent GNN architecture (e.g., 3-layer GAT) across comparisons

- Implement staleness tracking to correlate with performance metrics

- Conduct multiple runs with different random seeds for statistical significance

Staleness Impact Assessment Methodology

To quantitatively evaluate staleness:

Staleness Measurement:

- Compute L2 distance between historical and recalculated embeddings

- Track per-node update intervals (iterations since last refresh)
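Both measurements can be collected with a small bookkeeping class. The sketch below is a hypothetical helper (`StalenessTracker` is not part of any cited framework) that stores plain Python lists; a real implementation would hold tensors and recompute embeddings with the current model.

```python
import math
from typing import Dict, List

class StalenessTracker:
    """Keeps cached embeddings and the training step at which each was refreshed."""

    def __init__(self) -> None:
        self.cache: Dict[int, List[float]] = {}
        self.last_refresh: Dict[int, int] = {}

    def refresh(self, node: int, embedding: List[float], step: int) -> None:
        """Store a freshly computed embedding for a node."""
        self.cache[node] = list(embedding)
        self.last_refresh[node] = step

    def update_interval(self, node: int, step: int) -> int:
        """Iterations elapsed since the node's cache entry was last refreshed."""
        return step - self.last_refresh[node]

    def l2_drift(self, node: int, recomputed: List[float]) -> float:
        """L2 distance between the cached and a recomputed embedding."""
        cached = self.cache[node]
        return math.sqrt(sum((c - r) ** 2 for c, r in zip(cached, recomputed)))

tracker = StalenessTracker()
tracker.refresh(node=7, embedding=[0.0, 0.0], step=10)

interval = tracker.update_interval(node=7, step=25)
drift = tracker.l2_drift(node=7, recomputed=[3.0, 4.0])
```

Logging `interval` and `drift` per node alongside prediction errors gives the raw data needed for the correlation analysis that follows.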

Correlation Analysis:

- Correlate staleness metrics with per-node prediction errors

- Analyze layer-wise staleness propagation through the GNN

Ablation Studies:

- Test individual components of VISAGNN (attention, loss, augmentation)

- Compare refresh strategies (periodic, adaptive, momentum-based)

Technical Reference

Quantitative Comparison of Historical Embedding Methods

Table 1: Performance Characteristics of Historical Embedding Approaches

| Method | Staleness Handling | Memory Efficiency | Convergence Rate | Best For Biomedical Use Cases |
|---|---|---|---|---|
| VR-GCN [27] | Basic historical embeddings | Medium | Medium | Medium-scale molecular graphs |
| GAS [27] | Graph clustering + regularization | High | Medium-Fast | Large-scale knowledge graphs |
| GraphFM-OB [27] | Feature momentum compensation | Medium | Medium | Dynamic patient networks |
| VISAGNN [27] | Dynamic staleness attention | Medium | Fast | Critical applications requiring high accuracy |
| Refresh [27] | Staleness evasion | High | Variable | Resource-constrained environments |

Table 2: Staleness Mitigation Techniques Comparison

| Technique | Implementation Complexity | Computational Overhead | Effectiveness | Compatibility |
|---|---|---|---|---|
| Dynamic Staleness Attention | High | Medium | High | GAT-based architectures |
| Staleness-aware Loss | Low | Low | Medium | All GNN variants |
| Embedding Augmentation | Medium | Low | Medium-High | All historical embedding methods |
| Feature Momentum | Medium | Low | Medium | Most sampling-based approaches |
| Strategic Cache Refresh | Low | Variable (periodic spikes) | High | All caching systems |

Research Reagent Solutions

Table 3: Essential Components for Historical Embedding Experiments

| Component | Function | Example Implementations |
|---|---|---|
| Embedding Cache | Stores historical node embeddings | CPU memory, SSD with efficient serialization |
| Staleness Tracker | Monitors embedding staleness metrics | Update counter, embedding divergence calculator |
| Graph Partitioning | Reduces inter-cluster connectivity | METIS, spectral clustering for biomedical graphs |
| Memory Manager | Balances CPU-GPU data transfer | Prefetching, cache-aware batching algorithms |
| Staleness-aware Sampler | Selects nodes minimizing staleness impact | Refresh-inspired algorithms, priority queues |

Architectural Diagrams

VISAGNN Staleness-Aware Architecture

Historical Embedding Update Pipeline

Frequently Asked Questions

Implementation Questions

Q: How often should I update historical embeddings in my biomedical graph experiment? A: The optimal update frequency depends on your specific graph characteristics:

- For rapidly evolving embeddings (early training, high learning rates): Update more frequently

- For stable training phases: Implement adaptive strategies that refresh embeddings based on staleness thresholds

- Consider a hybrid approach: Full updates every K epochs with selective updates for high-staleness nodes between epochs

Q: What is the optimal cache size for large-scale biomedical knowledge graphs? A: Cache sizing involves trade-offs:

- Minimum: Store embeddings for all nodes in your graph

- Optimal: Cache size = graph nodes + buffer for intermediate computations

- Constrained environments: Implement node importance scoring (by degree, centrality, or task relevance) to prioritize critical embeddings
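A rough size estimate helps when planning the cache. The sketch below multiplies node count, embedding width, number of cached layers, and bytes per value. The 111M node count matches the ogbn-papers100M figure quoted earlier in this article; the hidden dimension of 256, the two cached layers, and the precisions are assumptions.

```python
def cache_size_bytes(num_nodes: int, hidden_dim: int,
                     num_cached_layers: int, bytes_per_value: int = 4) -> int:
    """Bytes needed to cache one embedding per node for each cached layer."""
    return num_nodes * hidden_dim * num_cached_layers * bytes_per_value

# 111M nodes, assumed hidden size 256, assumed 2 cached layers.
fp32_bytes = cache_size_bytes(111_000_000, hidden_dim=256, num_cached_layers=2)
fp16_bytes = cache_size_bytes(111_000_000, hidden_dim=256, num_cached_layers=2,
                              bytes_per_value=2)

fp32_gb = fp32_bytes / 1e9  # decimal gigabytes
fp16_gb = fp16_bytes / 1e9
```

Under these assumptions the full-precision cache needs about 227 GB and the half-precision one about 114 GB, which is why such caches usually live in CPU memory or on disk and why compression or selective caching matters in constrained environments.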

Domain-Specific Questions

Q: How do historical embedding methods perform on heterogeneous biomedical graphs? A: Performance varies by heterogeneity type:

- Entity type heterogeneity: Methods like VISAGNN perform well as staleness attention can weight different entity types appropriately

- Relationship heterogeneity: Requires careful staleness threshold tuning as different relationship types may tolerate different staleness levels

- Temporal heterogeneity: Historical embeddings may struggle with rapidly evolving temporal graphs without frequent cache updates

Q: Which historical embedding method is most suitable for molecular property prediction? A: Based on current research:

- For small-molecule graphs: GAS or VISAGNN due to their clustering and staleness awareness

- For protein-protein interaction networks: GraphFM-OB handles the complex feature relationships effectively

- For large-scale drug-target networks: Refresh provides good performance with lower memory overhead

Performance & Optimization Questions

Q: Why does my historical embedding implementation show high GPU memory usage despite caching? A: Common causes and solutions:

- Inefficient batch construction: Including too many neighbors triggers excessive fresh computations. Solution: Implement neighbor sampling with historical embedding fallback.

- Cache management overhead: Poor prefetching causes frequent CPU-GPU transfers. Solution: Implement cache-aware batching that maximizes cache hits.

- Gradient computation for cached embeddings: Gradients are tracked unnecessarily for retrieved embeddings. Solution: Use detach() operations on retrieved historical embeddings.

Q: How can I adapt historical embedding methods for temporal biomedical graphs? A: Temporal adaptations require:

- Time-aware staleness metrics: Incorporate temporal decay in addition to update-based staleness

- Snapshot caching: Maintain historical embeddings for different temporal segments

- Temporal attention: Extend staleness attention to consider both update recency and temporal relevance

Frequently Asked Questions (FAQs)

1. What are spurious correlations in machine learning, and why are they a problem in biomedicine? Spurious correlations are associations between non-essential input features (like background, texture, or secondary objects) and target labels that a model learns to rely on. These correlations do not reflect a true causal relationship and often stem from biases in the dataset, such as selection bias or imbalanced group labels [29]. In biomedicine, this is particularly dangerous. For instance, a model for pneumonia detection might learn to rely on the presence of metal tokens from specific hospitals in chest X-rays instead of actual pathological features of the disease. This causes the model to fail catastrophically when deployed in new hospitals or with different equipment, potentially leading to misdiagnosis and harmful outcomes [29] [30].

2. Why are Graph Neural Networks (GNNs) especially susceptible to spurious correlations? GNNs are susceptible due to their inherent learning mechanisms. They can easily overfit to "spurious subgraphs" – parts of the graph structure that are correlated with the label but are not causally related to the task [31]. A prevalent yet often overlooked cause is Endogenous Task-oriented Spurious Correlations (ETSC). In node-level tasks, an ego-graph contains edges formed by diverse mechanisms, but only a subset is causally related to a specific task. The ego node acts as a confounder, creating spurious correlations between the task and non-causal edges [31]. Furthermore, from a signal processing perspective, a GNN's generalization error is tied to the alignment between node features and graph structure; misalignment can cause failures [32].

3. How can I detect if my model is relying on spurious correlations? A key indicator is a significant performance drop on Out-of-Distribution (OOD) data or on a "worst-group" test set curated to contain samples where the spurious correlation does not hold [29] [33]. You can also train a deliberately biased model (e.g., using high weight decay or generalized cross-entropy loss) and analyze its predictions. High disagreement between this biased model's predictions and the true labels can help identify "bias-conflicting" samples (those lacking the spurious correlation), which a robust model should handle correctly [34].
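Worst-group accuracy is simple to compute once each test sample carries a group label. The sketch below uses made-up labels, predictions, and group names purely to show the calculation.

```python
from collections import defaultdict
from typing import Dict, Hashable, Sequence

def group_accuracies(y_true: Sequence[int], y_pred: Sequence[int],
                     groups: Sequence[Hashable]) -> Dict[Hashable, float]:
    """Accuracy computed separately for each group label."""
    correct: Dict[Hashable, int] = defaultdict(int)
    total: Dict[Hashable, int] = defaultdict(int)
    for truth, pred, group in zip(y_true, y_pred, groups):
        total[group] += 1
        correct[group] += int(truth == pred)
    return {group: correct[group] / total[group] for group in total}

def worst_group_accuracy(y_true: Sequence[int], y_pred: Sequence[int],
                         groups: Sequence[Hashable]) -> float:
    """Lowest per-group accuracy."""
    return min(group_accuracies(y_true, y_pred, groups).values())

# Toy test set: 8 samples where the spurious cue holds, 2 where it does not.
y_true = [1, 1, 1, 1, 0, 0, 0, 0, 1, 0]
y_pred = [1, 1, 1, 1, 0, 0, 0, 0, 0, 1]
groups = ["aligned"] * 8 + ["conflicting"] * 2

overall = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
per_group = group_accuracies(y_true, y_pred, groups)
worst = worst_group_accuracy(y_true, y_pred, groups)
```

In this toy case the overall accuracy of 0.8 hides the fact that the model gets every sample wrong in the group where the spurious cue is absent, which is exactly the pattern the worst-group metric is meant to expose.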

4. What is the difference between "bias-aligned" and "bias-conflicting" samples? These terms categorize data points based on their relationship with a spurious correlation.

- Bias-aligned samples are those where the spurious correlation holds (e.g., an image of a cow on grass). Models trained with Empirical Risk Minimization (ERM) find these samples easy and achieve high accuracy on them [34].

- Bias-conflicting samples are those that lack the spurious correlation (e.g., a cow in a desert). Models relying on spurious features will perform poorly on these. The core challenge in debiasing is to improve performance on this minority group [34].

5. My GNN generalizes poorly. Is this due to spurious correlations or architectural limitations like over-smoothing? While architectural issues like over-smoothing can cause poor performance, they do not fully explain why performance varies drastically across similar architectures or datasets [32]. If your model performs well on standard test sets (i.i.d. data) but fails on data from new domains, institutions, or under specific subgroup analysis, the root cause is likely its reliance on spurious correlations rather than genuine causal features [22] [30]. Deriving the exact generalization error can help disentangle these factors [32].

Troubleshooting Guide: Diagnosing and Mitigating Spurious Correlations

This guide addresses common failure scenarios related to spurious correlations in graph-structured biomedical data.

Problem 1: Poor Performance on Out-of-Distribution (OOD) Data

- Symptoms: High accuracy on the training and in-distribution test set, but a significant performance drop when the model is deployed on data from a new hospital, a different population, or a shifted data distribution [33] [30].

- Diagnosis: The model has likely learned dataset-specific nuisances (e.g., scanner type, hospital-specific protocols) instead of the underlying biological mechanism [33].

- Solutions:

- Employ Stable Learning with Feature Decorrelation: Methods like Stable-GNN (S-GNN) learn sample weights that decorrelate features in a Random Fourier Features space. This helps the model discard spurious features and extract genuinely causal ones, improving robustness to distribution shifts [22].

- Use Nuisance-Randomized Distillation (NURD): This algorithm trains a classifier under a distribution where the nuisance-label relationship is broken. It ensures the model's representations are independent of the nuisance variable, leading to better OOD detection and performance on "shared-nuisance" inputs [33].
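One way to picture "breaking the nuisance-label relationship" is importance reweighting: each sample is weighted by p(y) / p(y | z), so that the label y and the nuisance z are independent in the reweighted data. The sketch below covers only this reweighting idea for a discrete nuisance (for example a hospital ID) and estimates probabilities from counts. The function name and toy data are assumptions, and the distillation stage of NURD is not shown.

```python
from collections import Counter

def nuisance_randomizing_weights(labels, nuisances):
    """Weight each sample by p(y) / p(y | z), both estimated from counts."""
    n = len(labels)
    count_y = Counter(labels)
    count_z = Counter(nuisances)
    count_yz = Counter(zip(labels, nuisances))
    weights = []
    for y, z in zip(labels, nuisances):
        p_y = count_y[y] / n
        p_y_given_z = count_yz[(y, z)] / count_z[z]
        weights.append(p_y / p_y_given_z)
    return weights

# Hypothetical data: hospital "A" is mostly positive, hospital "B" mostly negative.
labels = [1, 1, 1, 0, 0, 0, 0, 1]
nuisances = ["A"] * 4 + ["B"] * 4
weights = nuisance_randomizing_weights(labels, nuisances)

# Weighted mass of each (label, hospital) cell after reweighting.
mass = Counter()
for y, z, w in zip(labels, nuisances, weights):
    mass[(y, z)] += w
print(dict(mass))
```

After reweighting, positives and negatives carry equal mass within each hospital, so the hospital ID no longer predicts the label in the weighted training set.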

Problem 2: Failure on Minority Subgroups in Training Data

- Symptoms: The model achieves high average accuracy, but performance is unacceptably low on a specific demographic subgroup or a rare biological class [29] [34].

- Diagnosis: The training data contains imbalanced group labels, and the model has overfitted to the spurious correlations that hold for the majority groups.

- Solutions:

- Implement Resampling based on Disagreement Probability (DPR): This method involves two key steps. First, train a deliberately biased model. Then, for the main model, upsample training examples based on the probability that the biased model disagrees with the true label. This automatically gives more weight to "bias-conflicting" samples without needing explicit bias labels [34].

- Apply Group Distributionally Robust Optimization (GroupDRO): If you have access to group labels (e.g., demographic information), GroupDRO directly optimizes for the worst-performing group by modifying the training objective to minimize the maximum loss across all groups [29].
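The GroupDRO idea of focusing on the worst-performing group can be sketched with per-group mean losses and an exponentiated-gradient update of group weights. This is a simplified illustration in plain Python: the step size, group names, and loss values are assumptions, and in practice the weighted loss would be backpropagated through the model.

```python
import math
from collections import defaultdict

def per_group_mean_loss(losses, groups):
    """Average the per-sample losses within each group."""
    sums, counts = defaultdict(float), defaultdict(int)
    for loss, g in zip(losses, groups):
        sums[g] += loss
        counts[g] += 1
    return {g: sums[g] / counts[g] for g in sums}

def update_group_weights(q, group_losses, step_size=0.1):
    """Exponentiated-gradient step: groups with higher loss gain weight."""
    raw = {g: q[g] * math.exp(step_size * group_losses[g]) for g in group_losses}
    norm = sum(raw.values())
    return {g: v / norm for g, v in raw.items()}

def robust_loss(q, group_losses):
    """Group-weighted loss that replaces the plain ERM average."""
    return sum(q[g] * group_losses[g] for g in group_losses)

# Hypothetical per-sample losses for one mini-batch.
losses = [0.1, 0.2, 0.1, 0.2, 1.5, 2.5]
groups = ["majority"] * 4 + ["minority"] * 2

group_losses = per_group_mean_loss(losses, groups)
q = {"majority": 0.5, "minority": 0.5}          # start from uniform weights
q = update_group_weights(q, group_losses)
erm = sum(losses) / len(losses)
print(f"ERM loss={erm:.3f} robust loss={robust_loss(q, group_losses):.3f}")
```

Repeating the weight update over training steps shifts more and more of the objective toward whichever group currently has the highest loss.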

Problem 3: GNNs Overfitting to Task-Irrelevant Graph Structures

- Symptoms: In node-level tasks, the model's predictions are overly influenced by parts of the graph structure that are not causally relevant to the scientific task [31].

- Diagnosis: The model is suffering from Endogenous Task-oriented Spurious Correlations (ETSC), where it uses non-causal edges within the ego-graph for prediction [31].

- Solutions:

- Adopt Counterfactual Contrastive Learning (CCL-Gn): This framework automatically learns to decompose the ego-graph into causally relevant and spuriously correlated subgraphs. It then uses an auxiliary contrastive learning objective to force the GNN to pull the representation of the raw ego-graph closer to its causal counterpart and push it away from the non-causal counterpart [31].

- Utilize Causal Graph Neural Networks (CIGNNs): These architectures explicitly incorporate causal inference principles. They are designed to learn invariant mechanisms and support interventional prediction and counterfactual reasoning, which helps in ignoring spurious structural correlations and focusing on biologically plausible pathways [30].

Experimental Protocols for Mitigating Spurious Correlations

Protocol 1: Disagreement Probability Resampling (DPR)

Objective: To debias a model without requiring explicit annotations for the spurious attributes [34].

Methodology:

- Train a Biased Model: First, train a model f_b using high weight decay or generalized cross-entropy loss. This encourages the model to rely on simple, spurious features.

- Calculate Disagreement Probability: For each training sample (x_i, y_i), compute the probability that the biased model f_b disagrees with the true label: p_disagree = 1 - P(f_b(x_i) = y_i).

- Upsample Based on Disagreement: Train the main debiased model using a loss function where each sample is weighted by its p_disagree. This effectively upsamples the bias-conflicting samples.

- Validation: Evaluate the final model on a separate test set containing bias-conflicting samples to measure worst-group performance.

Key Hyperparameters:

- Weight decay for the biased model.

- Loss function for the main model (e.g., weighted cross-entropy).
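The disagreement-based resampling in this protocol can be sketched as follows. The biased-model probabilities are made-up numbers, and the small `floor` that keeps bias-aligned samples from disappearing entirely is an assumption of this sketch rather than something the protocol specifies.

```python
import random

def disagreement_probabilities(prob_true_label):
    """p_disagree_i = 1 - P(f_b(x_i) = y_i) for each training sample."""
    return [1.0 - p for p in prob_true_label]

def sampling_distribution(p_disagree, floor=1e-3):
    """Normalise disagreement scores into sampling probabilities.

    `floor` (an assumption) guarantees every sample a non-zero chance.
    """
    scores = [max(p, floor) for p in p_disagree]
    total = sum(scores)
    return [s / total for s in scores]

# Hypothetical biased-model outputs: probability assigned to the true label.
# Samples 0-3 are bias-aligned (easy for f_b); samples 4-5 are bias-conflicting.
prob_true_label = [0.99, 0.98, 0.97, 0.95, 0.10, 0.20]
p_disagree = disagreement_probabilities(prob_true_label)
probs = sampling_distribution(p_disagree)

rng = random.Random(0)
batch = rng.choices(range(len(probs)), weights=probs, k=1000)
share_conflicting = sum(i >= 4 for i in batch) / len(batch)
print(f"share of bias-conflicting samples in resampled data: {share_conflicting:.2f}")
```

Although only two of the six original samples are bias-conflicting, they dominate the resampled data, which is the intended effect of the upsampling step.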

Protocol 2: Automated Counterfactual Contrastive Learning for Graphs (CCL-Gn)

Objective: To mitigate Endogenous Task-oriented Spurious Correlations (ETSC) in node-level tasks [31].

Methodology:

- Generate Counterfactual Views: For a given ego-graph, the framework learns to generate two counterfactual views:

- Causal View: The subgraph causally correlated to the task.

- Non-Causal View: The subgraph spuriously correlated to the task.

- Contrastive Learning: The GNN is optimized with an auxiliary contrastive learning objective. The representation of the raw ego-graph is pulled closer to the causal view and pushed apart from the non-causal view in the embedding space.

- Joint Training: The contrastive loss is combined with the standard supervised loss (e.g., node classification loss) to train the GNN end-to-end.

Key Hyperparameters:

- Temperature parameter in the contrastive loss.

- Weighting factor between the supervised and contrastive losses.
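The two hyperparameters above appear naturally in a generic InfoNCE-style objective with one positive (the causal view) and one negative (the non-causal view). The sketch below is a minimal illustration of that pull-closer and push-apart behaviour on fixed embeddings; it is not the exact loss of CCL-Gn, and the vectors, default values, and function names are assumptions.

```python
import math

def cosine(u, v):
    """Cosine similarity between two embedding vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

def contrastive_loss(anchor, causal_view, non_causal_view, temperature=0.5):
    """InfoNCE-style loss: low when the anchor is near the causal view
    and far from the non-causal view."""
    pos = math.exp(cosine(anchor, causal_view) / temperature)
    neg = math.exp(cosine(anchor, non_causal_view) / temperature)
    return -math.log(pos / (pos + neg))

def joint_loss(supervised, contrastive, weight=0.1):
    """Supervised loss plus the weighted auxiliary contrastive term."""
    return supervised + weight * contrastive

# Hypothetical 2-d embeddings of the raw ego-graph and its two views.
raw_ego = [1.0, 0.0]
causal = [0.9, 0.1]
non_causal = [0.0, 1.0]

good = contrastive_loss(raw_ego, causal, non_causal)
bad = contrastive_loss(raw_ego, non_causal, causal)   # views swapped
print(f"aligned with causal view: {good:.3f}, aligned with non-causal view: {bad:.3f}")
print(f"joint loss: {joint_loss(0.4, good):.3f}")
```

Lowering the temperature sharpens the contrast between the two views, while the weighting factor controls how strongly the auxiliary term influences the supervised objective.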

Protocol 3: Stable-GNN with Feature Decorrelation

Objective: To enhance the stability of GNN predictions across different data distributions by decorrelating features [22].

Methodology:

- Sample Reweighting: Learn instance-specific weights for the training data that, when applied, suppress spurious correlations between features and the target variable.

- Random Fourier Features (RFF): Use RFF, an efficient kernel approximation technique, to map nonlinear features into a low-dimensional space where decorrelation is performed. This step is computationally efficient (O(nD) complexity).

- Model Training: Train the GNN model using the reweighted samples. The sample weights are optimized to ensure the independence of input variables in the learned representation, promoting stability.

Key Hyperparameters:

- Dimensionality D of the Random Fourier Features.

- Optimization parameters for the sample weight update algorithm.
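To make the decorrelation objective more concrete, the sketch below maps two scalar feature columns through Random Fourier Features and measures their weighted cross-covariance. Sample weights would then be optimised to shrink this quantity. The unit-bandwidth Gaussian frequencies, the scalar inputs, and all function names are assumptions for illustration; the objective used by S-GNN may differ in detail.

```python
import math
import random

def make_rff(num_features, rng):
    """Return a map x -> [sqrt(2) * cos(w_k * x + b_k)] with D random (w_k, b_k)."""
    params = [(rng.gauss(0.0, 1.0), rng.uniform(0.0, 2.0 * math.pi))
              for _ in range(num_features)]

    def transform(x):
        return [math.sqrt(2.0) * math.cos(w * x + b) for w, b in params]

    return transform

def decorrelation_penalty(col_a, col_b, weights, rff_a, rff_b):
    """Squared Frobenius norm of the weighted cross-covariance between the
    RFF maps of two feature columns (zero when they are uncorrelated)."""
    total = sum(weights)
    w = [wi / total for wi in weights]          # normalise sample weights
    fa = [rff_a(x) for x in col_a]              # n rows, D columns
    fb = [rff_b(x) for x in col_b]
    n, d = len(fa), len(fa[0])
    mean_a = [sum(w[i] * fa[i][k] for i in range(n)) for k in range(d)]
    mean_b = [sum(w[i] * fb[i][k] for i in range(n)) for k in range(d)]
    penalty = 0.0
    for j in range(d):
        for k in range(d):
            cov = sum(w[i] * (fa[i][j] - mean_a[j]) * (fb[i][k] - mean_b[k])
                      for i in range(n))
            penalty += cov * cov
    return penalty

rng = random.Random(0)
rff_a, rff_b = make_rff(4, rng), make_rff(4, rng)
x = [rng.gauss(0.0, 1.0) for _ in range(100)]
uniform = [1.0] * len(x)

# A column that is a nonlinear function of x versus a constant column.
dependent = decorrelation_penalty(x, [v * v for v in x], uniform, rff_a, rff_b)
constant = decorrelation_penalty(x, [1.0] * len(x), uniform, rff_a, rff_b)
print(dependent, constant)
```

The nonlinear mapping is what lets a covariance-style penalty react to nonlinear dependence such as x versus x squared, which a plain linear correlation could miss.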

Table 1: Comparison of Debiasing Methods Without Bias Labels

| Method Name | Core Principle | Bias Labels Required? | Reported Performance Gain |
|---|---|---|---|
| DPR [34] | Upsamples based on disagreement with a biased model | No | +20.87% vs ERM on Biased FFHQ [34] |
| CCL-Gn [31] | Counterfactual contrastive learning on graphs | No | Superior performance on 13 real-world datasets vs. GCL and OOD methods [31] |
| Stable-GNN (S-GNN) [22] | Sample reweighting for feature decorrelation | No | Surpasses SOTA GNNs on single-site and cross-site classification [22] |
| LfF [34] | Uses losses from two networks to identify bias-conflicting samples | No | Strong baseline, but outperformed by DPR [34] |

Table 2: Common Sources of Spurious Correlations in Biomedical Data

| Source | Description | Example in Biomedicine |
|---|---|---|
| Selection Bias [29] | Dataset does not represent the true population | Training data from a single hospital with specific patient demographics [30]. |
| Confounding Factors [29] | An unobserved variable influences both features and label | Patient age affecting both biological markers and disease prevalence [30]. |
| Imbalanced Group Labels [29] | Certain combinations of attributes are over-represented | A skin lesion dataset containing mostly light-skinned individuals with a specific disease [29]. |
| Simplicity Bias [29] | Model prefers to learn simple, highly available features | Using background (e.g., hospital scanner metadata) over complex pathological features in medical images [29] [33]. |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for Experimentation

| Resource / Algorithm | Type | Function / Application |
|---|---|---|
| CCL-Gn Framework [31] | Software Algorithm | Mitigates endogenous spurious correlations in node-level graph tasks. |
| DPR Resampling [34] | Software Algorithm | Debiasing without bias labels for image and graph classification. |
| Stable-GNN (S-GNN) [22] | Software Algorithm | Enhances GNN stability and cross-domain generalization via decorrelation. |
| NURD [33] | Software Algorithm | Improves OOD detection by breaking nuisance-label relationships. |
| TUDataset [22] | Benchmark Data | A collection of graph-based datasets for molecular and biological property prediction. |
| Open Graph Benchmark (OGB) [22] | Benchmark Data | Large-scale, diverse benchmark datasets for graph learning. |

Conceptual Workflow Diagrams

[Diagram: Strategies for Robust Predictions]

[Diagram: Model Failure from Spurious Features]

Technical Support Center

Troubleshooting Guides

Problem 1: Neighbor Explosion During Training

- Symptoms: Training runs out of memory (OOM) when processing large graphs or using multiple GNN layers. The receptive field grows exponentially with each layer [35].

- Diagnosis: This is a classic scalability bottleneck in message-passing GNNs, where each node aggregates information from its neighbors, and this process repeats across layers.

- Solutions:

- Graph Sampling: Implement mini-batch training with neighbor sampling (e.g., GraphSAGE) to create manageable subgraphs [36].

- Pre-Propagation GNNs (PP-GNNs): Decouple feature propagation from model training. Precompute the propagated features across the graph as a one-time preprocessing step. This eliminates the need for expensive message-passing during each training epoch and can improve training throughput by up to 15x [35].

- Simplified Architectures: Use decoupled GNNs like SAGN, which separate graph convolutions from feature transformations. This allows the use of scalable classifiers like MLPs on preprocessed graph features [36].
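The pre-propagation idea can be sketched in a few lines: neighbor-averaged features for several hops are computed once, and the resulting table can be fed row by row to an MLP without touching the graph again. The mean aggregator with self-loops, the toy path graph, and the function names are assumptions of this sketch; real PP-GNN pipelines use sparse matrix operations on much larger graphs.

```python
def mean_propagate(features, neighbors):
    """One hop of mean aggregation over each node and its neighbors."""
    out = {}
    for node, feat in features.items():
        hood = [node] + neighbors.get(node, [])
        out[node] = [sum(features[m][k] for m in hood) / len(hood)
                     for k in range(len(feat))]
    return out

def precompute_hops(features, neighbors, num_hops):
    """Concatenate the 0-hop to num_hops-hop features per node, computed once."""
    hops = [features]
    for _ in range(num_hops):
        hops.append(mean_propagate(hops[-1], neighbors))
    return {node: [v for hop in hops for v in hop[node]] for node in features}

# Toy path graph 0 - 1 - 2 with a single feature that is set only on node 0.
features = {0: [1.0], 1: [0.0], 2: [0.0]}
neighbors = {0: [1], 1: [0, 2], 2: [1]}

table = precompute_hops(features, neighbors, num_hops=2)
for node, row in table.items():
    print(node, [round(v, 3) for v in row])
```

Node 2 only sees the signal from node 0 in its 2-hop column, which shows how multi-hop information ends up in a fixed-width row that can be mini-batched like ordinary tabular data.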

Problem 2: Handling Sparse, Irregular, and Heterogeneous EHR Data

- Symptoms: Model performance is poor; the graph constructed from EHR data has many node types (e.g., patients, medications) and connections are infrequent or irregular over time [37].

- Diagnosis: Standard GNNs designed for homogeneous, static graphs struggle with the complex, multi-relational, and temporal nature of clinical data.

- Solutions:

- Heterogeneous Graph Networks: Model different entity types (patients, diagnoses, drugs) as distinct node types and their relationships as distinct edge types. This preserves critical semantic information [38].

- Temporal Graph Networks: For dynamic data (e.g., patient journeys), use models like STM-GNN or Temporal Graph Networks (TGN). These incorporate memory modules (e.g., LSTMs) to update node embeddings based on historical sequences of graph events [37].

- Recurrent Augmentations: Enhance node features with their previous temporal embeddings before message passing and add spatial embeddings to node features before storing them in memory [37].
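A minimal sketch of the heterogeneous-graph idea is shown below: nodes and edges carry explicit types, and messages are aggregated separately per relation so that, for instance, diagnoses and medications are not blended into one average. The class, the relation names, and the toy features are hypothetical; libraries such as PyTorch Geometric or DGL offer production implementations.

```python
from collections import defaultdict

class HeteroGraph:
    """Tiny typed graph: nodes keyed by (type, id), edges keyed by relation triple."""

    def __init__(self):
        self.node_features = {}            # (node_type, node_id) -> feature list
        self.edges = defaultdict(list)     # (src_type, relation, dst_type) -> [(src_id, dst_id)]

    def add_node(self, node_type, node_id, features):
        self.node_features[(node_type, node_id)] = features

    def add_edge(self, src_type, relation, dst_type, src_id, dst_id):
        self.edges[(src_type, relation, dst_type)].append((src_id, dst_id))

    def aggregate_by_relation(self, dst_type, dst_id):
        """Mean of incoming source features, kept separate for each edge type."""
        result = {}
        for (src_type, relation, d_type), pairs in self.edges.items():
            if d_type != dst_type:
                continue
            msgs = [self.node_features[(src_type, s)] for s, d in pairs if d == dst_id]
            if msgs:
                result[(src_type, relation)] = [
                    sum(m[k] for m in msgs) / len(msgs) for k in range(len(msgs[0]))
                ]
        return result

g = HeteroGraph()
g.add_node("patient", "p1", [0.0, 0.0])
g.add_node("diagnosis", "d1", [1.0, 0.0])
g.add_node("diagnosis", "d2", [0.0, 1.0])
g.add_node("medication", "m1", [2.0, 2.0])
g.add_edge("diagnosis", "diagnosed_in", "patient", "d1", "p1")
g.add_edge("diagnosis", "diagnosed_in", "patient", "d2", "p1")
g.add_edge("medication", "prescribed_to", "patient", "m1", "p1")

messages = g.aggregate_by_relation("patient", "p1")
print(messages)
```

Keeping one aggregated message per relation lets a downstream layer apply relation-specific transformations before combining them, which is how the semantic distinction between edge types is preserved.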

Problem 3: Model Performance Degrades on Large-Scale Graphs

- Symptoms: Despite scaling the graph size, model accuracy does not improve or training becomes computationally prohibitive [39].

- Diagnosis: The model architecture or training framework may not be designed to leverage the benefits of scale.

- Solutions:

- Leverage Scaling Laws: Systematically increase model width (embedding dimensions), depth (number of layers), and dataset size. Studies on molecular graphs show a 30.25% improvement when scaling to 1 billion parameters and a 28.98% improvement when increasing the dataset size eightfold [39].

- Self-Label-Enhanced (SLE) Training: Incorporate self-training techniques. Use the model to generate pseudo-labels on unlabeled data to augment the training set and improve label propagation (Knowledge-Label Propagation) [36].
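The pseudo-labeling step in self-training can be sketched as a confidence filter over the model's predictions on unlabeled nodes. The 0.9 threshold, the node names, and the probabilities are assumptions for illustration; the SLE framework may select and use pseudo-labels differently.

```python
def select_pseudo_labels(probabilities, threshold=0.9):
    """Keep unlabeled nodes whose top predicted class probability >= threshold."""
    selected = {}
    for node, probs in probabilities.items():
        best_class = max(range(len(probs)), key=probs.__getitem__)
        if probs[best_class] >= threshold:
            selected[node] = best_class
    return selected

# Hypothetical class probabilities predicted for three unlabeled nodes.
predictions = {
    "n1": [0.95, 0.05],
    "n2": [0.55, 0.45],   # too uncertain, will be skipped
    "n3": [0.02, 0.98],
}
labeled = {"n0": 1}

pseudo = select_pseudo_labels(predictions)
augmented = {**labeled, **pseudo}      # training labels for the next round
print(augmented)
```

Raising the threshold trades coverage for label quality, which matters because confidently wrong pseudo-labels can reinforce the model's own errors in later rounds.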