Rational Drug Design: Foundational Concepts, AI-Driven Methods, and Translational Success


Abstract

This article provides a comprehensive overview of the foundational concepts and modern practices of Rational Drug Design (RDD) for researchers and drug development professionals. It explores the paradigm shift from traditional trial-and-error methods to a hypothesis-driven approach grounded in structural biology and computational modeling. The scope spans from core principles and the latest AI-powered methodologies to strategies for troubleshooting optimization challenges and validating candidate efficacy. By synthesizing current trends and real-world case studies, this resource aims to equip scientists with the knowledge to design more effective and safer therapeutics efficiently.

From Intuition to Informacophore: Core Principles of Rational Drug Design

Rational Drug Design (RDD) represents a fundamental shift in pharmaceutical development from traditional stochastic methods to a targeted, knowledge-driven approach. Unlike empirical discovery that relies on random screening of compounds, RDD involves the inventive process of finding new medications based on detailed knowledge of a biological target [1]. This methodological transition has transformed drug discovery from a high-cost, time-consuming endeavor into a more efficient, predictive science. The core premise of RDD is the design of molecules that are complementary in shape and charge to their biomolecular targets, enabling precise binding and modulation of target activity [1] [2]. This paradigm shift has been accelerated by advancements in structural biology, computational power, and artificial intelligence, allowing researchers to explore vast chemical spaces with unprecedented accuracy.

Historical Evolution: From Empirical Screening to Rational Design

The development of rational drug design emerged as a distinct methodology in the 1950s, with early examples demonstrating the power of targeting specific physiological mechanisms. Three landmark cardiovascular drugs—propranolol, captopril, and losartan—exemplify this historical progression and the increasing integration of epistemic and practical projects in pharmaceutical research [3].

Table 1: Historical Evolution of Rational Drug Design Through Case Studies

| Drug | Therapeutic Class | Development Period | Key Innovation | Target Knowledge Base |
|---|---|---|---|---|
| Propranolol | Beta-blocker | 1958-1964 | First clinically successful β-adrenoreceptor antagonist | Receptor pharmacology without detailed structural data |
| Captopril | ACE inhibitor | 1970s | First structure-based design targeting ACE | Detailed enzyme mechanism and active site chemistry |
| Losartan | Angiotensin II receptor antagonist | 1980s-1990s | First AT1 receptor blocker | Receptor subtype characterization and binding requirements |

The development of propranolol by James Black and colleagues at Imperial Chemical Industries (1958-1964) marked a pivotal transition. The rationale was straightforward—design a molecule to inhibit adrenaline's action on β-adrenoreceptors to reduce cardiac oxygen demand—but represented a new approach to pharmaceutical development [3]. Captopril's design in the 1970s demonstrated even greater integration of target knowledge, leveraging understanding of angiotensin-converting enzyme (ACE) and its zinc-containing active site to design specific inhibitors [3]. By the time losartan was developed in the 1980s-1990s, the approach had evolved further to include receptor subtype characterization and detailed binding requirements [3].

This historical progression shows how rational drug design became possible when theoretical knowledge of drug-target interaction and experimental testing could interlock in cycles of mutual advancement [3]. The methodology has progressively shifted from targeting receptor systems without detailed structural knowledge to precise atomic-level intervention based on comprehensive understanding of target architecture.

Core Methodologies in Rational Drug Design

Structure-Based Drug Design (SBDD)

Structure-Based Drug Design relies on knowledge of the three-dimensional structure of biological targets obtained through experimental methods such as X-ray crystallography or NMR spectroscopy, or computational predictions [1] [2]. When an experimental structure is unavailable, homology modeling may create a target model based on related proteins with known structures [1]. The SBDD process involves four critical steps:

- Preparation of protein structure: Refining the target structure for computational analysis

- Identification of binding sites: Locating specific regions where ligand binding occurs

- Preparation of ligands: Optimizing candidate compounds for docking studies

- Docking and scoring: Computational prediction of binding poses and affinity estimation [2]

Key SBDD techniques include virtual screening of large molecular databases to identify potential ligands, de novo ligand design building molecules within constraints of the binding pocket, and optimization of known ligands by evaluating proposed analogs [1]. Modern implementations of SBDD increasingly incorporate artificial intelligence and machine learning to enhance prediction accuracy [4].

Ligand-Based Drug Design (LBDD)

When structural information about the biological target is limited or unavailable, Ligand-Based Drug Design provides an alternative approach. LBDD relies on knowledge of molecules known to interact with the target of interest [1]. The primary methods include:

- Pharmacophore modeling: Defining the essential structural features a molecule must possess to bind effectively to the target [1] [2]

- Quantitative Structure-Activity Relationship (QSAR): Establishing mathematical relationships between chemical structure descriptors and biological activity to predict new analogs [1]

These approaches enable indirect drug design by extrapolating from known active compounds to novel chemical entities with improved properties.
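The QSAR idea described above can be illustrated with a one-descriptor linear model. This is a minimal sketch rather than a production workflow: the logP and pIC50 values are hypothetical, and real QSAR models typically use many descriptors and proper cross-validation.

```python
from typing import List, Tuple


def fit_line(x: List[float], y: List[float]) -> Tuple[float, float]:
    """Ordinary least squares fit of y = slope * x + intercept."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need at least two paired observations")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    if sxx == 0:
        raise ValueError("descriptor has no variance")
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


# Hypothetical training set: one descriptor (logP) against measured pIC50.
logp = [1.0, 2.0, 3.0, 4.0]
pic50 = [5.1, 5.9, 7.1, 7.9]

slope, intercept = fit_line(logp, pic50)


def predict_pic50(logp_value: float) -> float:
    """Predict activity of a new analog from its descriptor value."""
    return slope * logp_value + intercept


print(f"pIC50 = {slope:.2f} * logP + {intercept:.2f}")
```

A fitted model of this kind should only be trusted for analogs whose descriptor values fall inside the range covered by the training compounds.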

Diagram: Ligand-Based Drug Design Workflow

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 2: Essential Research Reagents and Materials for Rational Drug Design

| Category | Specific Examples | Function in RDD |
|---|---|---|
| Target Production | Cloning vectors, expression cells, purification resins | Generate and purify biological targets for structural studies and screening assays [1] |
| Structural Biology | Crystallization screens, cryo-protectants, NMR isotopes | Determine 3D structures of targets and target-ligand complexes [1] [2] |
| Compound Libraries | Diverse small molecules, fragment libraries, natural products | Provide starting points for lead identification and optimization [1] [5] |
| Computational Resources | Molecular docking software, QSAR packages, MD simulations | Predict binding, optimize compounds, and simulate molecular interactions [1] [4] |
| Binding Assays | Fluorescent dyes, radioisotopes, surface plasmon resonance chips | Quantitatively measure ligand-target interactions and binding affinity [1] [5] |
| ADME/Tox Screening | Metabolic enzymes, cell barriers, toxicity biomarkers | Assess pharmacokinetic properties and safety profiles of candidates [1] [2] |

Quantitative Framework: Key Parameters and Data Analysis

Successful implementation of rational drug design requires careful optimization of multiple physicochemical and biological parameters. The following quantitative framework guides decision-making throughout the drug discovery process.

Table 3: Key Quantitative Parameters in Rational Drug Design

| Parameter Category | Specific Metrics | Optimal Ranges/Targets | Computational Methods |
|---|---|---|---|
| Binding Affinity | Kd, Ki, IC50 | Lower values indicating stronger binding (nM-pM range) | Molecular docking, free energy calculations, QSAR [1] [6] |
| Drug-Likeness | Molecular weight, logP, H-bond donors/acceptors | Lipinski's Rule of Five and related guidelines [1] | Physicochemical property prediction, lipophilic efficiency [1] |
| Structural Optimization | Binding energy (ΔG), enthalpy (ΔH), entropy (ΔS) | Negative ΔG for spontaneous binding | Molecular mechanics, quantum mechanics, molecular dynamics [1] [2] |
| Selectivity | Selectivity indices, therapeutic window | Higher values indicating better safety profiles | Binding site comparison, off-target screening [1] [5] |

The binding affinity can be mathematically represented using the Gibbs free energy equation:

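\[ \Delta G = RT \ln K_d \]

where ΔG is the Gibbs free energy change of binding, R is the universal gas constant, T is the temperature in Kelvin, and Kd is the dissociation constant expressed relative to a 1 M standard state [6]. Equivalently, ΔG = -RT ln Ka, where Ka = 1/Kd is the association constant. Because Kd is well below 1 M for any useful ligand, ln Kd is negative and so is ΔG. A negative ΔG value indicates spontaneous binding, with more negative values corresponding to stronger interactions.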
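As a worked illustration, the sketch below converts dissociation constants into binding free energies. It uses the convention ΔG = RT ln(Kd / 1 M), so that sub-molar Kd values give negative ΔG, in line with the statement that negative values indicate spontaneous binding. The function name and the default temperature of 298.15 K are choices made for this example.

```python
import math

R_KCAL = 1.987204e-3  # gas constant in kcal/(mol*K)


def binding_free_energy(kd_molar: float, temperature_k: float = 298.15) -> float:
    """Binding free energy in kcal/mol from Kd in mol/L (1 M standard state)."""
    if kd_molar <= 0:
        raise ValueError("Kd must be positive")
    return R_KCAL * temperature_k * math.log(kd_molar)


for label, kd in [("1 uM", 1e-6), ("1 nM", 1e-9), ("1 pM", 1e-12)]:
    print(f"Kd = {label}: dG = {binding_free_energy(kd):.2f} kcal/mol")
```

At room temperature a 1 nM binder corresponds to roughly -12.3 kcal/mol, and each tenfold improvement in Kd is worth about 1.4 kcal/mol.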

For multi-parameter optimization during lead compound development, scoring functions incorporate various terms:

\[ \text{Score} = w_1 \Delta G_{\text{bind}} + w_2\,\text{LipophilicEfficiency} + w_3\,\text{SAS} + w_4\,\text{RotatableBonds} + \cdots \]

where \( w_n \) represents weighting factors for different physicochemical and pharmacological properties [1].
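A weighted-sum score of this kind might be sketched as follows. The weights, their signs, and the property values are hypothetical, and SAS is treated here as a penalty for synthetic difficulty, which is an assumption about the term rather than something the source specifies.

```python
from typing import Dict

# Hypothetical weights. A more negative dG and a higher lipophilic efficiency
# raise the score; a higher SAS and more rotatable bonds lower it.
WEIGHTS: Dict[str, float] = {
    "dg_bind": -1.0,
    "lipophilic_efficiency": 0.5,
    "sas": -0.3,
    "rotatable_bonds": -0.1,
}


def composite_score(props: Dict[str, float],
                    weights: Dict[str, float] = WEIGHTS) -> float:
    """Weighted sum over the properties named in `weights`."""
    missing = set(weights) - set(props)
    if missing:
        raise KeyError(f"missing properties: {sorted(missing)}")
    return sum(w * props[name] for name, w in weights.items())


# Two made-up candidates for illustration.
candidate_a = {"dg_bind": -10.0, "lipophilic_efficiency": 5.0,
               "sas": 3.0, "rotatable_bonds": 4}
candidate_b = {"dg_bind": -11.0, "lipophilic_efficiency": 3.0,
               "sas": 6.0, "rotatable_bonds": 9}

score_a = composite_score(candidate_a)
score_b = composite_score(candidate_b)
print(f"A: {score_a:.2f}  B: {score_b:.2f}")
```

In this toy case candidate B binds more tightly but ranks lower overall, which is the trade-off a multi-parameter score is meant to expose.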

Experimental Protocols: Methodological Details

Structure-Based Virtual Screening Protocol

Virtual screening represents a cornerstone methodology in modern rational drug design, enabling efficient exploration of vast chemical spaces. The following protocol outlines a standardized approach for structure-based virtual screening:

Target Preparation:

- Obtain 3D structure from Protein Data Bank or through homology modeling

- Add hydrogen atoms and optimize hydrogen bonding network

- Assign partial charges using appropriate force fields (e.g., AMBER, CHARMM)

- Remove crystallographic water molecules except those involved in key interactions

Binding Site Identification:

- Analyze known ligand binding locations from co-crystal structures

- Use computational detection methods (e.g., GRID, FPOCKET)

- Characterize physicochemical properties of binding pockets (hydrophobicity, electrostatic potential)

Compound Library Preparation:

- Curate database of purchasable compounds (e.g., ZINC, ChEMBL)

- Generate plausible 3D conformations for each compound

- Apply filters for drug-likeness and structural alerts
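The drug-likeness filter in the library preparation step can be sketched with Lipinski's Rule of Five applied to precomputed descriptors. The compound records below are invented for illustration. In practice the descriptor values would come from a cheminformatics toolkit, and tolerating one violation is a common convention rather than a requirement.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Descriptors:
    name: str
    mol_weight: float  # Da
    logp: float
    h_donors: int
    h_acceptors: int


def lipinski_violations(d: Descriptors) -> int:
    """Count how many of the four Rule of Five limits are exceeded."""
    return sum([
        d.mol_weight > 500,
        d.logp > 5,
        d.h_donors > 5,
        d.h_acceptors > 10,
    ])


def passes_rule_of_five(d: Descriptors, max_violations: int = 1) -> bool:
    return lipinski_violations(d) <= max_violations


# Hypothetical library entries.
library: List[Descriptors] = [
    Descriptors("cmpd-001", 342.4, 2.1, 2, 5),
    Descriptors("cmpd-002", 612.8, 6.3, 4, 9),
    Descriptors("cmpd-003", 498.0, 5.4, 1, 7),
]

kept = [d.name for d in library if passes_rule_of_five(d)]
print(kept)
```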

Molecular Docking:

- Perform high-throughput docking using rapid algorithms (e.g., AutoDock Vina, FRED)

- Select top-ranked compounds for more precise docking with flexible side chains

- Cluster results based on binding poses and chemical similarity

Post-Screening Analysis:

Chemogenomic Profiling Protocol

Chemogenomic approaches systematically explore interactions between chemical and target spaces, providing a framework for polypharmacology assessment and selectivity optimization:

Ligand and Target Space Description:

- Encode compounds using 1D, 2D, and 3D descriptors (molecular weight, topological fingerprints, pharmacophores)

- Represent targets by sequence motifs, binding site features, and structural domains

- Calculate similarity metrics (e.g., Tanimoto coefficient for compounds, sequence identity for targets)
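The Tanimoto coefficient mentioned above is the size of the intersection of two fingerprints divided by the size of their union. A minimal sketch, assuming each fingerprint is stored as the set of its "on" bit indices (the example bit sets are made up):

```python
from typing import AbstractSet


def tanimoto(a: AbstractSet[int], b: AbstractSet[int]) -> float:
    """Tanimoto (Jaccard) similarity of two fingerprints given as sets of on-bits."""
    if not a and not b:
        return 0.0  # convention chosen here for two empty fingerprints
    shared = len(a & b)
    return shared / (len(a) + len(b) - shared)


fp_query = {1, 4, 7, 9}
fp_reference = {1, 4, 8, 9, 12}
similarity = tanimoto(fp_query, fp_reference)
print(similarity)
```

Here three bits are shared out of six distinct bits in total, giving a similarity of 0.5.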

Interaction Matrix Construction:

- Compile experimental data (Kd, Ki, IC50) for known target-ligand pairs

- Organize as 2D matrix with targets as columns and compounds as rows

- Identify data gaps for predictive modeling

Knowledge-Based Prediction:

- Apply ligand-based prediction (similar compounds → similar targets)

- Implement target-based prediction (similar targets → similar ligands)

- Use machine learning models to fill interaction matrix gaps

- Validate predictions with experimental testing [5]
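The ligand-based prediction step (similar compounds are expected to hit similar targets) can be sketched as a similarity-weighted average over neighbours in the interaction matrix. Everything in this example is hypothetical: the compound and target names, the fingerprints, the pKi values, and the 0.3 similarity cut-off. A machine learning model would normally replace this simple neighbour rule.

```python
from typing import Dict, FrozenSet, Optional

Fingerprint = FrozenSet[int]


def tanimoto(a: Fingerprint, b: Fingerprint) -> float:
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def predict_affinity(query: str, target: str,
                     fingerprints: Dict[str, Fingerprint],
                     matrix: Dict[str, Dict[str, float]],
                     min_similarity: float = 0.3) -> Optional[float]:
    """Fill one matrix gap from similar compounds with data for `target`."""
    weighted_sum = 0.0
    weight_total = 0.0
    for compound, row in matrix.items():
        if compound == query or target not in row:
            continue
        sim = tanimoto(fingerprints[query], fingerprints[compound])
        if sim >= min_similarity:
            weighted_sum += sim * row[target]
            weight_total += sim
    # None signals that no sufficiently similar neighbour has data.
    return weighted_sum / weight_total if weight_total else None


fingerprints = {
    "c1": frozenset({1, 2, 3, 4}),
    "c2": frozenset({1, 2, 3, 5}),
    "c3": frozenset({7, 8, 9}),
}
# Rows are compounds, columns are targets; missing keys are data gaps.
matrix = {
    "c1": {"T1": 8.0, "T2": 6.0},
    "c2": {"T1": 7.5},
    "c3": {"T2": 5.0},
}

print(predict_affinity("c2", "T2", fingerprints, matrix))
print(predict_affinity("c3", "T1", fingerprints, matrix))
```

Returning `None` instead of a number when no neighbour qualifies keeps unsupported predictions out of the matrix, so they can be prioritised for experimental testing instead.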

Diagram: Structure-Based Drug Design Workflow

Emerging Technologies and Future Directions

Artificial Intelligence and Deep Learning

The integration of artificial intelligence represents the cutting edge of rational drug design. AI models, particularly deep learning networks, are increasingly applied to predict key properties such as binding affinity, toxicity, and pharmacokinetic profiles [4]. These models complement traditional physics-based simulations by identifying complex patterns in large chemical and biological datasets. The emergence of AlphaFold 3 exemplifies this trend, providing an accurate atomic-level view of biomolecular systems that includes proteins, nucleic acids, small molecule ligands, and post-translational modifications [7]. This technology enables prediction of novel complexes without experimental structural data, dramatically accelerating target assessment and compound design.

Nanomedicine and Delivery Optimization

Rational design principles are expanding beyond small molecules to encompass nanomedicines and delivery systems. Computer-aided design strategies are being applied to optimize nanoparticles for drug delivery, particularly through high-throughput screening of lipid-like materials [8]. For example, computational chemistry and machine learning help identify ionizable lipids with optimal delivery efficiency for mRNA vaccines and therapeutics, moving beyond trial-and-error approaches that dominated early nanomedicine development [8].

Challenges and Limitations

Despite significant advances, rational drug design faces several persistent challenges. Accurate prediction of binding affinity remains imperfect, requiring iterative design-synthesis-test cycles [1]. Incorporating target flexibility, solvent effects, and accurate simulation of molecular dynamics demands substantial computational resources [2]. Furthermore, optimizing for multiple parameters simultaneously—including affinity, selectivity, pharmacokinetics, and safety—presents complex multi-objective optimization problems [1]. Future methodological developments must address these limitations while further integrating experimental and computational approaches to accelerate therapeutic discovery.

Rational Drug Design has fundamentally transformed pharmaceutical discovery from a stochastic process to a predictive science. By leveraging detailed knowledge of biological targets and their interactions with chemical entities, RDD enables more efficient, targeted therapeutic development. The continued integration of structural biology, computational modeling, and artificial intelligence promises to further enhance the precision and efficiency of drug discovery. As these methodologies mature and expand to encompass novel therapeutic modalities, rational design principles will remain foundational to advancing human health through targeted therapeutic interventions.

The field of computer-aided drug design is undergoing a profound transformation, driven by the integration of advanced machine learning with traditional biochemical principles. This evolution marks a shift from the static, expert-defined pharmacophore—an abstract model of steric and electronic features essential for molecular recognition—to a dynamic, data-driven informacophore. The informacophore leverages large-scale biological data and sophisticated algorithms to generate novel molecular structures with desired bioactivity, thereby expanding the foundational concepts of rational drug design (RDD) research. This whitepaper delineates this conceptual and technical progression, providing an in-depth examination of the underlying methodologies, experimental protocols, and computational tools that are redefining the landscape of pharmaceutical development.

Rational Drug Design (RDD) is a methodology for developing new pharmaceuticals through a scientific understanding of physiological mechanisms and drug-target interactions, integrating both epistemic (knowledge-seeking) and practical (technology-design) research aims [9]. Its emergence was made possible when theoretical knowledge of drug-target interaction and experimental testing began to interlock in cycles of mutual advancement.

A cornerstone concept in this field is the pharmacophore, officially defined by the International Union of Pure and Applied Chemistry (IUPAC) as "the ensemble of steric and electronic features that is necessary to ensure the optimal supra-molecular interactions with a specific biological target structure and to trigger (or to block) its biological response" [10] [11]. Historically, pharmacophores were used to denote common structural or functional elements essential for activity, but the modern definition emphasizes an abstract description of stereoelectronic molecular properties, not specific functional groups [10].

This abstract nature gives pharmacophores an inherent scaffold hopping ability, enabling the identification of structurally diverse molecules that share the same essential chemical functionalities required for biological activity [10]. The transition from this established concept to the nascent informacophore represents a paradigm shift. Whereas a pharmacophore is a static hypothesis derived from known actives or a single protein structure, an informacophore is a generative, data-driven model. It utilizes vast chemical and biological datasets—often derived from large-scale virtual screening or 'omics' technologies—within deep learning architectures to actively design and optimize novel bioactive compounds, thereby operationalizing RDD principles on an unprecedented scale.

The Pharmacophore: A Foundational Model

3D Representation and Feature Types

Pharmacophores represent the nature and location of chemical features involved in ligand-target interactions as geometric entities in three-dimensional space [10]. This representation captures the active conformation of a molecule and the essential interactions contributing to its activity. The core set of pharmacophoric features includes [10] [11]:

Table 1: Core Pharmacophore Features and Their Interactions

| Feature Type | Geometric Representation | Complementary Feature | Interaction Type | Structural Examples |
|---|---|---|---|---|
| Hydrogen-Bond Acceptor (HBA) | Vector / Sphere | HBD | Hydrogen-Bonding | Amines, Carboxylates, Ketones |
| Hydrogen-Bond Donor (HBD) | Vector / Sphere | HBA | Hydrogen-Bonding | Amines, Amides, Alcohols |
| Aromatic (AR) | Plane / Sphere | AR, PI | π-Stacking, Cation-π | Any Aromatic Ring |
| Positive Ionizable (PI) | Sphere | AR, NI | Ionic, Cation-π | Ammonium Ions |
| Negative Ionizable (NI) | Sphere | PI | Ionic | Carboxylates |
| Hydrophobic (H) | Sphere | H | Hydrophobic Contact | Alkyl Groups, Alicycles |

To account for spatial constraints imposed by the binding site shape, pharmacophore models often incorporate exclusion volumes (XVOL). These represent forbidden areas where the ligand cannot occupy space due to steric clashes with the receptor, a feature that can be reliably extracted from X-ray structures of ligand-receptor complexes [10] [11].
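One way to picture a pharmacophore with exclusion volumes is as a small data structure plus a matching test. The sketch below assumes the ligand pose is already aligned to the model's coordinate frame and that each atom is annotated with the feature types it can provide. The class names, coordinates, and tolerances are illustrative and do not come from any particular software package.

```python
import math
from dataclasses import dataclass
from typing import FrozenSet, List, Tuple

Point = Tuple[float, float, float]


@dataclass(frozen=True)
class Feature:
    kind: str         # e.g. "HBA", "HBD", "AR", "PI", "NI", "H"
    center: Point
    tolerance: float  # sphere radius in angstroms


@dataclass(frozen=True)
class ExclusionVolume:
    center: Point
    radius: float


@dataclass(frozen=True)
class LigandAtom:
    position: Point
    kinds: FrozenSet[str]  # feature types this atom can provide


def matches(features: List[Feature], exclusions: List[ExclusionVolume],
            atoms: List[LigandAtom]) -> bool:
    """True if every feature is satisfied and no atom enters an exclusion volume."""
    for xv in exclusions:
        if any(math.dist(a.position, xv.center) < xv.radius for a in atoms):
            return False
    return all(
        any(f.kind in a.kinds and math.dist(a.position, f.center) <= f.tolerance
            for a in atoms)
        for f in features
    )


model = [Feature("HBA", (0.0, 0.0, 0.0), 1.0), Feature("H", (4.0, 0.0, 0.0), 1.5)]
xvols = [ExclusionVolume((2.0, 3.0, 0.0), 1.0)]

ligand = [
    LigandAtom((0.3, 0.0, 0.0), frozenset({"HBA"})),
    LigandAtom((2.0, 0.0, 0.0), frozenset()),
    LigandAtom((4.5, 0.5, 0.0), frozenset({"H"})),
]
# Same ligand with one extra atom placed inside the exclusion volume.
clashing = ligand + [LigandAtom((2.0, 2.5, 0.0), frozenset())]

print(matches(model, xvols, ligand), matches(model, xvols, clashing))
```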

Pharmacophore Model Generation Methodologies

The generation of pharmacophore models depends on available data, and can be broadly classified into two approaches.

Structure-Based Pharmacophore Modeling

This approach requires the three-dimensional structure of a macromolecular target, typically obtained from X-ray crystallography, NMR spectroscopy, or computational techniques like homology modelling and AlphaFold2 [11]. The workflow is as follows:

- Protein Preparation: The target structure is critically evaluated and prepared. This involves assessing residue protonation states, adding hydrogen atoms (absent in X-ray structures), and checking for missing residues or atoms to ensure the general quality and biological relevance of the structure [11].

- Ligand-Binding Site Detection: The binding site is identified manually from co-crystallized ligands or, more commonly, using bioinformatics tools like GRID or LUDI. GRID is a grid-based method that uses different functional groups to sample a protein region and identify energetically favorable interaction points, while LUDI predicts interaction sites using distributions of non-bonded contacts from experimental structures [11].

- Feature Generation and Selection: The binding site is analyzed to generate a map of potential interactions. Initially, many features are detected; only those essential for bioactivity are selected for the final model. This selection can be based on the conservation of interactions across multiple structures, the energetic contribution to binding, or key functional residues identified from sequence alignments [11]. When a protein-ligand complex is available, the ligand's bioactive conformation directly guides the placement of pharmacophore features.

Diagram 1: Structure-Based Pharmacophore Modeling Workflow

Ligand-Based Pharmacophore Modeling

This method is employed when the 3D structure of the target is unknown. It builds models from a collection of ligands known to be active against the same target, at the same binding site, and in the same orientation [10] [12]. The key steps are:

- Ligand Set Compilation and Conformational Analysis: A set of active and sometimes inactive ligands is compiled. Each ligand undergoes a conformational analysis to generate a representative set of its low-energy 3D conformations [12].

- Molecular Alignment and Feature Extraction: The conformational ensembles of the active ligands are superimposed to find their common pharmacophoric alignment. The algorithm then identifies the chemical features (e.g., HBA, HBD, Hydrophobic) that are spatially conserved across the aligned set [12].

- Model Hypothesis and Validation: One or more pharmacophore hypotheses are generated. These models are validated for their ability to discriminate known active compounds from inactive ones, and the best model is selected [12].
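The validation step, checking how well a hypothesis separates known actives from inactives, can be quantified with a few standard retrieval metrics. This sketch assumes the model has already been used to screen a labelled set and returned a set of hit identifiers. The compound IDs are invented, and the choice of metrics is one common option rather than the procedure prescribed in [12].

```python
from typing import Dict, Set


def validation_metrics(hits: Set[str], actives: Set[str],
                       inactives: Set[str]) -> Dict[str, float]:
    """Sensitivity, specificity and enrichment factor for one screening run."""
    if not actives or not inactives:
        raise ValueError("need at least one active and one inactive compound")
    true_pos = len(hits & actives)
    false_pos = len(hits & inactives)
    retrieved = true_pos + false_pos
    total = len(actives) + len(inactives)
    hit_rate = true_pos / retrieved if retrieved else 0.0
    return {
        "sensitivity": true_pos / len(actives),
        "specificity": 1 - false_pos / len(inactives),
        # How much richer in actives the hit list is than the full set.
        "enrichment_factor": hit_rate / (len(actives) / total),
    }


actives = {"a1", "a2", "a3", "a4"}
inactives = {"i1", "i2", "i3", "i4", "i5", "i6"}
model_hits = {"a1", "a2", "a3", "i1"}

metrics = validation_metrics(model_hits, actives, inactives)
print(metrics)
```

Computing the same metrics for each competing hypothesis gives a simple basis for selecting the best model.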

Diagram 2: Ligand-Based Pharmacophore Modeling Workflow

The Paradigm Shift: From Pharmacophore to Informacophore

The pharmacophore model, while powerful, faces limitations: it often requires explicit expert knowledge, depends on the quality and size of the initial input data (a few known actives or a single protein structure), and is primarily a static query for screening existing libraries. The informacophore paradigm overcomes these by leveraging deep learning to generate novel molecular structures directly from pharmacophoric constraints.

The PGMG Framework: A Prime Example

The Pharmacophore-Guided deep learning approach for bioactive Molecule Generation (PGMG) exemplifies the informacophore concept [13]. It uses pharmacophore hypotheses as a bridge to connect different types of activity data and directly generate bioactive molecules.

Experimental Protocol and Workflow of PGMG:

- Training Data Construction: Training samples are built from SMILES strings of molecules in large chemical databases (e.g., ChEMBL). Chemical features are identified, and a subset is randomly selected to build a pharmacophore network, \( G_p \). The shortest-path distances on the molecular graph are used as a proxy for Euclidean distances between pharmacophore features [13].

- Model Architecture: PGMG employs a graph neural network (GNN) to encode the spatially distributed chemical features of the pharmacophore hypothesis. A latent variable, \( z \), is introduced to model the many-to-many relationship between pharmacophores and molecules, boosting the diversity of generated molecules. A transformer decoder then learns to map this encoded information (\( c \) for the pharmacophore and \( z \) for chemical groups) into a valid SMILES string representing a novel molecule [13].

- Molecule Generation: To generate molecules, a pharmacophore hypothesis \( c \) is provided. Latent variables \( z \) are sampled from a prior distribution (e.g., standard Gaussian), and molecules are generated from the conditional distribution \( p(x|z,c) \) [13]. This process allows for flexible, on-demand generation in both ligand-based and structure-based design scenarios.
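The idea of using shortest-path distances on the molecular graph as a stand-in for Euclidean distances can be sketched with a breadth-first search. This is not the PGMG implementation. It is a simplified illustration that counts bonds on a hand-written adjacency list, with a hypothetical molecule and feature assignment.

```python
from collections import deque
from itertools import combinations
from typing import Dict, List, Tuple


def bond_distances(adjacency: Dict[int, List[int]], start: int) -> Dict[int, int]:
    """Breadth-first search: number of bonds from `start` to each reachable atom."""
    dist = {start: 0}
    queue = deque([start])
    while queue:
        atom = queue.popleft()
        for neighbour in adjacency[atom]:
            if neighbour not in dist:
                dist[neighbour] = dist[atom] + 1
                queue.append(neighbour)
    return dist


def pharmacophore_edges(adjacency: Dict[int, List[int]],
                        feature_atoms: Dict[int, str]) -> Dict[Tuple[int, int], int]:
    """Fully connected feature graph with bond-count distances as edge labels.

    Assumes the molecule is a single connected component.
    """
    edges = {}
    for a, b in combinations(sorted(feature_atoms), 2):
        edges[(a, b)] = bond_distances(adjacency, a)[b]
    return edges


# Hypothetical molecular graph: chain 0-1-2-3-4 with a branch atom 5 on atom 2.
adjacency = {0: [1], 1: [0, 2], 2: [1, 3, 5], 3: [2, 4], 4: [3], 5: [2]}
feature_atoms = {0: "HBD", 4: "HBA", 5: "H"}

edges = pharmacophore_edges(adjacency, feature_atoms)
print(edges)
```

Graph distances of this kind need no 3D conformer, which is what makes it practical to build training samples directly from SMILES strings.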

Diagram 3: PGMG's Informacophore Generation Process

Performance and Validation

In evaluations, PGMG demonstrated its capability to generate molecules with strong docking affinities while maintaining high scores of validity, uniqueness, and novelty [13]. It outperformed other methods in the ratio of available molecules (a metric for novel molecule generation) by 6.3% and successfully captured the distribution of physicochemical properties (Molecular Weight, LogP, QED, TPSA) of the training data, confirming its ability to learn underlying chemical space principles [13].
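Validity, uniqueness, and novelty are often computed along the following lines. The definitions used here are common conventions and may differ in detail from those in [13]. The `balanced_parentheses` check is only a placeholder for a real SMILES parser, and plain string comparison assumes the SMILES have already been canonicalized.

```python
from typing import Callable, Dict, Iterable


def generation_metrics(generated: Iterable[str], training: Iterable[str],
                       is_valid: Callable[[str], bool]) -> Dict[str, float]:
    """Fractions of valid, unique (among valid) and novel (among unique) molecules."""
    generated = list(generated)
    valid = [s for s in generated if is_valid(s)]
    unique = set(valid)
    novel = unique - set(training)
    return {
        "validity": len(valid) / len(generated) if generated else 0.0,
        "uniqueness": len(unique) / len(valid) if valid else 0.0,
        "novelty": len(novel) / len(unique) if unique else 0.0,
    }


def balanced_parentheses(smiles: str) -> bool:
    """Placeholder validity check; a real pipeline would parse the SMILES."""
    depth = 0
    for ch in smiles:
        if ch == "(":
            depth += 1
        elif ch == ")":
            depth -= 1
            if depth < 0:
                return False
    return depth == 0


generated = ["CCO", "CCO", "CC(=O)O", "CC(C", "c1ccccc1"]
training = {"CCO"}

metrics = generation_metrics(generated, training, balanced_parentheses)
print(metrics)
```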

The Scientist's Toolkit: Essential Research Reagents and Computational Solutions

The implementation of pharmacophore and informacophore approaches relies on a suite of computational tools and data resources.

Table 2: Key Research Reagents and Computational Solutions

| Tool/Resource Name | Type/Function | Brief Description and Role in RDD |
|---|---|---|
| RDKit [13] | Cheminformatics Software | Open-source toolkit for cheminformatics used to identify chemical features from molecules and construct pharmacophore networks in workflows like PGMG. |
| RCSB Protein Data Bank (PDB) [11] | Structural Database | Primary source for 3D structures of proteins and protein-ligand complexes, serving as the essential starting point for structure-based pharmacophore modeling. |
| GRID [11] | Binding Site Analysis Software | A grid-based method that uses different molecular probes to sample a protein region and identify energetically favorable interaction points for feature generation. |
| LUDI [11] | Binding Site Analysis Software | A knowledge-based method that predicts potential interaction sites using geometric rules and distributions of non-bonded contacts from experimental structures. |
| AlphaFold2 [11] | Protein Structure Prediction | AI system that predicts protein 3D structures from amino acid sequences with high accuracy, providing reliable models for structure-based design when experimental structures are unavailable. |
| ChEMBL [13] | Bioactivity Database | A large-scale, open-access database of bioactive molecules with drug-like properties, used as a primary data source for training deep generative models like PGMG. |
| PGMG Framework [13] | Deep Generative Model | A pharmacophore-guided deep learning approach that represents the informacophore concept, generating novel bioactive molecules from pharmacophore hypotheses using GNNs and transformers. |

The conceptual evolution from the pharmacophore to the data-driven informacophore marks a significant maturation in Rational Drug Design. The pharmacophore remains a vital, interpretable model that abstracts the essence of molecular recognition. However, its integration into deep learning architectures has given rise to the informacophore—a generative, predictive, and dynamic tool that actively designs novel chemical entities. This synergy between foundational biochemical principles and cutting-edge artificial intelligence is overcoming traditional limitations of data scarcity and restricted chemical space exploration. As these data-driven methods continue to evolve, they promise to accelerate the drug discovery process, enabling more efficient and creative development of therapeutics for challenging diseases. The informacophore, therefore, is not a replacement for the pharmacophore, but rather its logical evolution, deeply embedding the wisdom of the past into the powerful computational frameworks of the future.

Rational Drug Design (RDD) represents a foundational pillar of modern pharmaceutical science, marking a revolutionary departure from traditional, serendipity-based drug discovery methods. Unlike the trial-and-error approach that dominated early pharmaceutical development, RDD employs a systematic, knowledge-driven process where compounds are deliberately designed to interact with specific molecular targets involved in disease pathways [14] [15]. This methodology is predicated on a deep understanding of the target's structure and function, enabling scientists to design molecules that precisely fit and modulate biological activity.

The significance of RDD lies in its ability to increase efficiency, reduce costs, and improve success rates in the drug discovery pipeline. By focusing on defined biological targets and using structural information to guide synthesis, RDD minimizes the reliance on random screening of thousands of compounds [15]. This review chronicles the pivotal historical successes of RDD, from its early conceptual origins to contemporary applications, highlighting the methodological breakthroughs and transformative therapies that have emerged from this paradigm.

The Foundational Shift: From Serendipity to Rational Design

The landscape of drug discovery was fundamentally transformed by the emergence of RDD principles in the mid-20th century. Historically, drug development was largely characterized by accidental discoveries and random screening of compound libraries. Landmark drugs like penicillin and chlordiazepoxide were found through serendipity rather than design [15]. This process was inefficient, with estimates suggesting that only one compound out of ten thousand tested would eventually become an approved medicine [15].

George Hitchings and Gertrude Elion pioneered the systematic approach that would become known as rational drug design at Burroughs Wellcome Laboratories in the 1940s. They deliberately diverged from the traditional path by designing new molecules with specific molecular structures to interfere with cellular processes [14] [16]. Their foundational hypothesis centered on targeting nucleic acid synthesis, speculating that differences in nucleic acid metabolism between normal human cells, cancer cells, protozoa, bacteria, and viruses could be exploited to develop selective therapeutics [14]. This targeted approach represented a paradigm shift from random compound screening to a biology-first, hypothesis-driven methodology that would define RDD.

Table 1: Comparison of Traditional Drug Discovery vs. Rational Drug Design

| Aspect | Traditional Discovery (Trial-and-Error) | Rational Drug Design |
|---|---|---|
| Approach | Random screening, serendipity | Targeted, knowledge-based design |
| Efficiency | Low (~1 in 10,000 compounds succeed) | Higher, due to targeted approach |
| Key Players | Fleming (penicillin), Sternbach (chlordiazepoxide) | Hitchings & Elion, Cushman & Ondetti |
| Timeframe | Indefinite, unpredictable | Structured, iterative optimization |
| Theoretical Basis | Limited biological understanding | Deep target engagement knowledge |

The Hitchings and Elion Era: Purine Analogs and the First RDD Successes

The collaboration between George Hitchings and Gertrude Elion at Burroughs Wellcome produced the first definitive successes of rational drug design, establishing core principles that would guide future efforts. Their work focused on purines—building blocks of DNA and RNA—based on the hypothesis that interfering with nucleic acid synthesis could selectively inhibit the growth of pathogenic cells [14] [16].

Hitchings assigned Elion to investigate purines and their role in nucleic acid metabolism. They discovered that bacterial cells required specific purines to synthesize DNA, and reasoned that blocking these purines from being incorporated into DNA would halt cell growth [14]. This led to their development of "antimetabolites"—compounds structurally similar to natural purines that would trick metabolic enzymes into latching onto them instead of the natural substrates, thereby blocking DNA production [14].

By 1950, this approach yielded two significant compounds: diaminopurine and thioguanine, structural analogs of adenine and guanine respectively. These drugs proved effective against leukemia, a cancer characterized by uncontrolled white blood cell proliferation [14]. Elion later created 6-mercaptopurine (6-MP, Purinethol) by substituting an oxygen atom with a sulfur atom on a purine molecule [14]. Through six years of dedicated research, she discovered that combining 6-MP with other drugs could cure most childhood leukemia cases, representing a monumental achievement in cancer therapy [14] [16].

Table 2: Early RDD Successes from Hitchings and Elion's Laboratory

| Drug | Year | Target/Condition | Mechanism of Action | Impact |

|---|---|---|---|---|

| Diaminopurine | ~1950 | Leukemia | Purine analog, inhibits DNA synthesis | First successful RDD-based leukemia treatment |

| Thioguanine | ~1950 | Leukemia | Guanine analog, inhibits DNA synthesis | Effective against specific forms of leukemia |

| 6-Mercaptopurine (6-MP) | Post-1950 | Childhood leukemia | Purine antimetabolite | Cure for most patients when combined with other drugs |

| Azathioprine (Imuran) | 1960s | Organ transplantation | Suppresses immune system | Enabled successful organ transplants by preventing rejection |

| Allopurinol (Zyloprim) | 1960s | Gout | Reduces uric acid production | Treatment for painful gout symptoms |

| Acyclovir (Zovirax) | 1970s | Herpes | Selective antiviral; interferes with viral replication | Proof that drugs could target viruses selectively |

The legacy of Hitchings and Elion extended far beyond these individual drugs. Their work established several foundational principles of RDD:

- Target Identification: Focus on specific biological pathways essential to disease pathology

- Exploitation of Biochemical Differences: Design compounds that capitalize on metabolic differences between normal and pathogenic cells

- Structure-Based Design: Create molecules that mimic natural substrates to interfere with enzymatic processes

- Iterative Optimization: Continuously refine lead compounds based on biological results [14] [16]

Their approach also demonstrated the potential for unexpected therapeutic applications, as when drugs originally developed for leukemia were found to suppress the immune system, leading to the development of azathioprine (Imuran) for organ transplantation [14]. Similarly, their development of allopurinol for gout emerged from this systematic approach to drug design [14]. For their contributions, Hitchings and Elion shared the 1988 Nobel Prize in Physiology or Medicine with James Black [14] [16].

Captopril: A Case Study in Structure-Based Design

The development of Captopril, the first angiotensin-converting enzyme (ACE) inhibitor, represents another landmark achievement in RDD that demonstrates the power of target-based design. The Captopril story began with observations of drastically reduced blood pressure in individuals bitten by the Brazilian viper, Bothrops jararaca [17]. Researchers discovered that the venom contained peptides that potently inhibited ACE, an enzyme crucial for producing the vasoconstrictor angiotensin II [17].

Scientists at Squibb Pharmaceuticals isolated and purified the active peptide from the venom, naming it teprotide. While teprotide showed promising blood pressure-lowering effects in clinical trials, its peptide nature meant it had to be administered intravenously and was unsuitable as a chronic treatment for hypertension [17]. The project was nearly abandoned until researchers made a critical connection: ACE was identified as a zinc metalloprotease, similar to the previously studied carboxypeptidase A (CPA) [17].

This conceptual breakthrough enabled a structure-based design approach. Despite the absence of a direct crystal structure for ACE, researchers led by Cushman and Ondetti constructed a hypothetical model of its active site based on the known structure of CPA [17]. They hypothesized that a molecule combining elements of the snake venom peptides and the CPA inhibitor benzylsuccinic acid could effectively block ACE activity.

Their design strategy proceeded through several iterations:

- Initial lead compounds focused on succinyl proline, which provided specificity but limited potency

- Incorporation of the "Phe-Ala-Pro" pharmacophore from venom peptides led to 2-methyl succinyl proline with improved activity

- Optimization of the zinc-binding group, replacing a carboxylate with a thiol, dramatically increased potency

The resulting drug, Captopril, proved 1000 times more potent than the initial lead compound and became the first orally active ACE inhibitor, establishing an entirely new class of cardiovascular therapeutics [17].

Figure 1: The Rational Design Workflow for Captopril

Modern Advancements: From Structure-Based Design to AI-Driven RDD

The principles established by early RDD pioneers have evolved dramatically with technological advancements, particularly in structural biology and computational methods. The latter part of the 20th century saw the rise of structure-based drug design, enabled by X-ray crystallography and nuclear magnetic resonance (NMR) spectroscopy, which allowed researchers to visualize drug targets at atomic resolution [18].

Contemporary RDD increasingly leverages artificial intelligence (AI) and machine learning (ML) to accelerate and enhance the drug discovery process. AI models can now explore vast chemical spaces, predict binding affinities, and optimize drug candidates with unprecedented efficiency [19] [4]. These computational approaches complement experimental techniques by providing rapid insights that would traditionally require extensive laboratory work.

A transformative development in modern RDD is the emergence of AlphaFold, an AI system that predicts protein structures with remarkable accuracy. The latest iteration, AlphaFold 3, extends this capability to predict the structures of complexes containing proteins, nucleic acids, small molecules, and ions [7]. This breakthrough provides researchers with an atomic-level view of biomolecular interactions, enabling the design of therapeutics against targets previously considered intractable.

The impact of these technologies is exemplified in cases like the immune checkpoint protein TIM-3, a cancer immunotherapy target. AlphaFold 3 accurately predicted the structure of TIM-3 bound to small molecule ligands, including the characterization of a previously unknown binding pocket, demonstrating its utility in rational structure-based design [7].

Table 3: Evolution of Tools and Technologies in Rational Drug Design

| Era | Key Technologies | Capabilities | Limitations |

|---|---|---|---|

| 1950s-1970s (Hitchings & Elion) | Basic biochemistry, metabolite analysis, enzyme assays | Understanding metabolic pathways, designing substrate analogs | Limited structural information, reliance on biochemical inference |

| 1980s-2000s (Structure-Based Design) | X-ray crystallography, NMR, homology modeling, molecular docking | 3D visualization of targets, structure-based optimization | Experimental structure determination slow and not always feasible |

| 2010s-Present (AI-Enhanced RDD) | AI/ML models, molecular dynamics, virtual screening, AlphaFold | Rapid prediction of structures and interactions, exploration of vast chemical spaces | Model interpretability, computational resource requirements |

The Scientist's Toolkit: Essential Reagents and Methods in RDD

The practice of rational drug design relies on a sophisticated toolkit of research reagents and methodologies that enable the identification and optimization of therapeutic compounds.

Figure 2: Core Methodologies and Reagents in the RDD Workflow

Table 4: Essential Research Reagent Solutions in Rational Drug Design

| Reagent/Method | Function in RDD | Specific Examples from Case Studies |

|---|---|---|

| Enzyme Assay Systems | Quantitative measurement of target engagement and inhibition | Hitchings & Elion's purine incorporation assays; Cushman's first quantitative ACE assay [14] [17] |

| X-ray Crystallography | Determination of 3D atomic structures of targets and target-ligand complexes | BACE-1 inhibitor complex visualization; carboxypeptidase A structure guiding Captopril design [18] [17] |

| Homology Modeling | Prediction of unknown protein structures based on related proteins with known structures | ACE active site modeling based on carboxypeptidase A structure [17] |

| Virtual Screening Libraries | Computational screening of compound databases to identify potential hits | Modern AI/ML platforms for exploring chemical space [19] [4] |

| Structure-Activity Relationship (SAR) Analysis | Systematic evaluation of structural modifications on compound activity | Optimization of 6-MP combinations; Captopril lead optimization (>60 analogs) [14] [17] |

| AI/ML Prediction Platforms | Prediction of binding modes, affinities, and molecular properties | AlphaFold 3 for protein-ligand complex prediction; machine learning models for binding affinity [19] [7] [4] |

Rational Drug Design has fundamentally transformed pharmaceutical development from a serendipitous process to a deliberate, knowledge-driven science. The historical successes chronicled in this review—from the pioneering work of Hitchings and Elion on purine analogs to the structure-based development of Captopril and contemporary AI-powered discoveries—demonstrate the progressive refinement of this paradigm.

The foundational concepts established by early RDD practitioners remain highly relevant: identify critical biological targets, understand their structure and function, and design compounds that selectively modulate their activity. What has evolved dramatically are the tools available to implement this approach, with modern structural biology and artificial intelligence providing unprecedented insights into molecular interactions.

As RDD continues to evolve, the integration of increasingly sophisticated computational methods with experimental validation promises to accelerate the discovery of novel therapeutics for diseases that remain intractable. The historical successes of RDD not only represent monumental achievements in their own right but also provide a foundation for future innovation in pharmaceutical research and development.

Rational Drug Design (RDD) represents a paradigm shift from traditional trial-and-error approaches to a targeted strategy based on understanding molecular interactions between drugs and their biological targets. The core premise of RDD is exploiting the detailed recognition and discrimination features associated with the specific arrangement of chemical groups in the active site of a target macromolecule. This approach allows researchers to conceive new molecules that can optimally interact with proteins to block or trigger specific biological actions [20]. The modern RDD workflow has evolved into an integrated framework that synergistically combines computational predictions with experimental validation, significantly accelerating the timeline from target identification to viable drug candidates while reducing associated costs [2] [21].

The foundational concepts of RDD are built upon molecular recognition principles, notably the lock-and-key model proposed by Emil Fischer in 1894, which explains how substrates fit into the active sites of macromolecules much as keys fit into locks. This was later expanded by Daniel Koshland's induced-fit theory in 1958, which accounted for the conformational changes that occur in both ligand and target during the recognition process [20]. These fundamental principles continue to inform contemporary drug design strategies, now enhanced by sophisticated computational infrastructure and high-throughput experimental techniques.

Core Methodologies in Rational Drug Design

Structure-Based Drug Design (SBDD)

Structure-Based Drug Design (SBDD) relies directly on the three-dimensional structural information of biological targets, typically obtained through X-ray crystallography, NMR spectroscopy, or cryo-electron microscopy. When the experimental structure of the target protein is unavailable, computational techniques like homology modeling can generate reliable structural models based on homologous proteins with known structures [2]. The SBDD process involves several critical steps: First, preparation of the protein structure involves adding hydrogen atoms, assigning partial charges, and optimizing side-chain orientations. Second, identification of binding sites locates pockets on the protein surface suitable for ligand binding. Third, preparation of ligands involves generating 3D structures with proper geometry and charge distributions. Finally, docking and scoring predict how small molecules bind to the target and estimate binding affinity [2].
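The final docking-and-scoring step can be illustrated with a deliberately simplified pairwise scoring function. The sketch below is not the scoring function of any particular docking program: the `Atom` type, the Lennard-Jones parameters, and the distance-dependent dielectric are illustrative assumptions, and real tools add terms for hydrogen bonding, desolvation, and ligand flexibility.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Atom:
    """Hypothetical minimal atom record: coordinates in angstroms, partial charge in e."""
    x: float
    y: float
    z: float
    charge: float


def pair_score(a: Atom, b: Atom, epsilon: float = 0.1, r_min: float = 3.8) -> float:
    """Toy interaction energy (kcal/mol) for one ligand-atom / pocket-atom pair."""
    r = math.dist((a.x, a.y, a.z), (b.x, b.y, b.z))
    r = max(r, 0.5)  # clamp to avoid a singularity for overlapping atoms
    # 12-6 Lennard-Jones term with its minimum (-epsilon) at r = r_min (assumed values)
    lj = epsilon * ((r_min / r) ** 12 - 2.0 * (r_min / r) ** 6)
    # Coulomb term with an assumed distance-dependent dielectric of 4r
    coulomb = 332.06 * a.charge * b.charge / (4.0 * r * r)
    return lj + coulomb


def score_pose(ligand: list, pocket: list) -> float:
    """Sum pairwise terms over all ligand/pocket atom pairs; lower is better."""
    return sum(pair_score(l, p) for l in ligand for p in pocket)


# Usage: compare two hypothetical poses of a one-atom "ligand" against a two-atom "pocket".
pocket = [Atom(0.0, 0.0, 0.0, -0.5), Atom(4.0, 0.0, 0.0, 0.1)]
pose_a = [Atom(2.0, 3.5, 0.0, 0.4)]
pose_b = [Atom(2.0, 9.0, 0.0, 0.4)]
best_pose = min((pose_a, pose_b), key=lambda pose: score_pose(pose, pocket))
```

A score of this kind only ranks poses relative to one another; it should not be read as a quantitative binding free energy.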

SBDD provides a visual framework for direct design of new molecular prototypes, allowing researchers to utilize detailed 3D features of the active site by introducing appropriate functionalities in designed ligands [20]. However, SBDD faces several challenges, including accounting for target flexibility throughout molecular docking and modeling, considering the role of water molecules in facilitating hydrogen bonding interactions, and incorporating solvation effects for drug molecules in aqueous environments [2].

Ligand-Based Drug Design (LBDD)

When the three-dimensional structure of the target protein is unavailable, Ligand-Based Drug Design (LBDD) offers an alternative approach that utilizes the information from known active molecules. This indirect method expedites drug development through analysis of the stereochemical and physicochemical features of reference compounds [2]. Key techniques in LBDD include pharmacophore modeling, which identifies the essential spatial arrangement of molecular features responsible for biological activity, and three-dimensional Quantitative Structure-Activity Relationship (3D QSAR) studies, which correlate biological activity with molecular properties [2].
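One common way to use known actives in a ligand-based setting is similarity searching with the Tanimoto coefficient. The following minimal sketch assumes each molecule has already been reduced to a set of integer feature identifiers (in practice these would come from a cheminformatics toolkit's fingerprinting routine); the compound names and feature sets are hypothetical.

```python
def tanimoto(fp_a: set, fp_b: set) -> float:
    """Tanimoto coefficient: shared features divided by total distinct features."""
    if not fp_a and not fp_b:
        return 0.0  # convention chosen here for two empty fingerprints
    shared = len(fp_a & fp_b)
    return shared / (len(fp_a) + len(fp_b) - shared)


def rank_by_similarity(reference: set, library: dict) -> list:
    """Return (name, similarity) pairs sorted from most to least similar."""
    scored = [(name, tanimoto(reference, fp)) for name, fp in library.items()]
    return sorted(scored, key=lambda item: item[1], reverse=True)


# Usage with hypothetical fingerprints (sets of "on" bit indices).
known_active = {1, 4, 7, 9, 12}
library = {
    "cmpd_1": {1, 4, 7, 9, 13},
    "cmpd_2": {2, 3, 5},
    "cmpd_3": {1, 4, 12},
}
ranking = rank_by_similarity(known_active, library)
```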

LBDD employs molecular mimicry strategies, in which new chemical entities are designed to reproduce the relative 3D arrangement of the structural elements recognized as necessary in active molecules. This approach has successfully generated mimics of biologically important compounds including ATP, dopamine, histamine, and estradiol [20]. A specialized application of molecular mimicry focused on peptides has evolved into the field of peptidomimetics, which aims to transform peptide leads into drug-like molecules with improved stability and bioavailability [20].

Synergistic Integration of SBDD and LBDD

The most powerful modern RDD approaches leverage both SBDD and LBDD methodologies synergistically. When information is available for both the target protein and active molecules, the two approaches can be developed independently yet inform each other [20]. The synergy is realized when promising docked molecules designed through SBDD are compared to active structures from LBDD, and when interesting mimics from LBDD are docked into the protein structure to verify convergent conclusions [20].
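One simple way to check for the convergent conclusions described above is a rank-sum consensus over a structure-based score and a ligand-based score. The sketch below is an assumption about how such a comparison could be set up, not a method taken from the cited work; the compound names and values are hypothetical.

```python
def ranks(values: dict, reverse: bool = False) -> dict:
    """Map each name to its 1-based rank (rank 1 = best)."""
    order = sorted(values, key=values.get, reverse=reverse)
    return {name: position for position, name in enumerate(order, start=1)}


def consensus_ranking(docking_scores: dict, similarities: dict) -> list:
    """Order compounds by the sum of their docking rank and similarity rank."""
    shared = docking_scores.keys() & similarities.keys()
    # Docking: more negative is better, so ascending order gives rank 1 to the best.
    dock_rank = ranks({n: docking_scores[n] for n in shared})
    # Similarity to known actives: higher is better.
    sim_rank = ranks({n: similarities[n] for n in shared}, reverse=True)
    # Ties in the rank sum are broken alphabetically for reproducibility.
    return sorted(shared, key=lambda n: (dock_rank[n] + sim_rank[n], n))


# Usage: compound A docks best but resembles no known active, so B is ranked first.
docking = {"A": -9.5, "B": -9.0, "C": -7.0}       # hypothetical scores, kcal/mol
similarity = {"A": 0.20, "B": 0.80, "C": 0.70}    # hypothetical Tanimoto values
ordered = consensus_ranking(docking, similarity)
```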

Establishing this synergy requires correct binding models that position active molecules accurately into the active site of the target protein. The ideal situation involves having X-ray structures of complexes between active compounds and the target protein, though computational modeling can predict binding modes when structural data is unavailable [20]. This integrated global approach aims to identify structural models that rationalize the biological activities of known molecules based on their interactions with the 3D structure of the target protein [20].

Table 1: Key Computational Methods in Modern RDD

| Method Category | Specific Techniques | Primary Applications | Key Advantages |

|---|---|---|---|

| Structure-Based Methods | Molecular Docking, Molecular Dynamics Simulations, Binding Free Energy Calculations | Binding Pose Prediction, Virtual Screening, Lead Optimization | Direct visualization of binding interactions; Structure-based optimization |

| Ligand-Based Methods | Pharmacophore Modeling, 3D-QSAR, Similarity Searching | Lead Identification, Scaffold Hopping, Activity Prediction | Applicable when target structure is unknown; Leverages existing bioactivity data |

| Integrated Approaches | Structure-Based Pharmacophore, MD-Informed Docking | Binding Mode Validation, Scaffold Optimization | Combines strengths of both approaches; Increases confidence in predictions |

The Integrated RDD Workflow: From Computation to Experimentation

The modern RDD workflow follows an iterative cycle where computational predictions guide experimental work, which in turn refines computational models. This integrated approach creates a positive feedback loop that continuously improves both the understanding of the biological system and the quality of drug candidates.

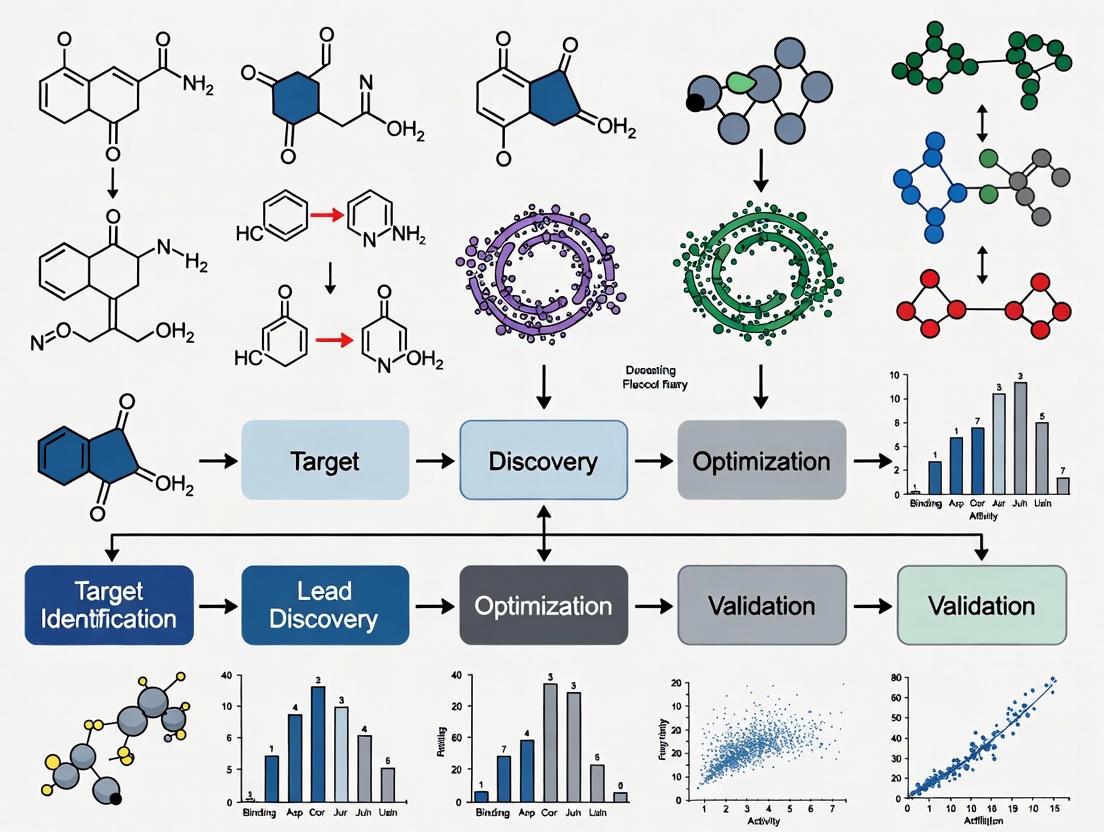

Workflow Visualization

Target Identification and Validation

The RDD process begins with identification and validation of a biological target—typically a protein, receptor, or enzyme—that plays a key role in a disease pathway. Modern target discovery increasingly leverages genomic and proteomic data to link specific genes to disease mechanisms at the molecular level [20]. Target validation establishes that modulation of the target (inhibition or activation) will produce a therapeutic effect with acceptable safety margins. Techniques for target validation include genetic approaches (knockout/knockdown studies), biochemical methods, and cellular models of disease [2].

Computational Screening and Compound Prioritization

Once a validated target is established, computational screening methods identify potential lead compounds. Virtual screening of compound libraries can encompass millions of structures, with molecular docking predicting how each compound might bind to the target. For example, Schrödinger's automated reaction workflow (AutoRW) enables high-throughput screening of catalysts, reagents, and substrates by automating the computation of reaction coordinates, transition states, and energetic barriers [21].
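When a library contains millions of structures, prioritization usually means keeping only the best-scoring few rather than sorting everything in memory. The sketch below assumes scores arrive as a stream of `(compound_id, score)` pairs in which lower is better, as is conventional for docking scores; the identifiers and numbers are made up for illustration.

```python
import heapq


def prioritize(scored_compounds, top_k: int = 3) -> list:
    """Return the top_k (compound_id, score) pairs with the lowest scores.

    Accepts any iterable, including a generator, so the full library
    never has to be held in memory at once.
    """
    return heapq.nsmallest(top_k, scored_compounds, key=lambda pair: pair[1])


def stream_scores():
    """Stand-in for a docking run that yields results one compound at a time."""
    yield from [
        ("Z001", -6.2), ("Z002", -9.4), ("Z003", -7.8),
        ("Z004", -10.1), ("Z005", -5.0), ("Z006", -8.9),
    ]


shortlist = prioritize(stream_scores(), top_k=3)
```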

Advanced enterprises have scaled these efforts significantly; teams using platforms like LiveDesign can collaboratively screen over 2000 catalysts per year, compared to approximately 150 catalysts annually for a single modeling user [21]. This enterprise-scale approach demonstrates the dramatic efficiency gains possible with modern computational infrastructure.

Table 2: Key Research Reagents and Computational Tools in Modern RDD

| Category | Reagent/Tool | Function/Purpose | Application Example |

|---|---|---|---|

| Computational Tools | AutoRW (Schrödinger) | Automated reaction workflow for high-throughput screening | Large-scale catalyst screening for polymer design [21] |

| Computational Tools | VTK/ParaView | Scalable visualization and analysis | HPC-based simulation analysis for aerospace and energy R&D [22] |

| Computational Tools | Molecular Dynamics (GROMACS) | Simulate protein-ligand interactions over time | Stability analysis of peptide-protein complexes [23] |

| Experimental Assays | In Vitro Binding Assays | Measure direct compound-target interactions | Determination of inhibition constants (Ki) for lead compounds |

| Experimental Assays | Cellular Activity Assays | Assess functional effects in biological systems | Measurement of IC50 values in cancer cell lines [23] |

Experimental Validation and Iterative Optimization

Computational predictions must be experimentally validated to confirm biological activity. Initial validation typically involves in vitro assays to measure binding affinity and functional effects. For example, in the development of peptide inhibitors targeting survivin for cancer therapy, researchers synthesized the computationally designed P3 peptide and experimentally validated its efficacy [23].
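In vitro assays often report an IC50, whereas binding affinity is usually expressed as an inhibition constant Ki. For a competitive inhibitor under Michaelis-Menten conditions, the two are related by the Cheng-Prusoff equation, Ki = IC50 / (1 + [S]/Km). The helper below is a minimal sketch of that conversion; the competitive mechanism is an assumption here, since the source does not state it for any specific compound.

```python
def ki_from_ic50(ic50: float, substrate_conc: float, km: float) -> float:
    """Cheng-Prusoff conversion for a competitive inhibitor.

    ic50, substrate_conc, and km must be in consistent concentration units;
    the returned Ki is in the same units as ic50.
    """
    if km <= 0 or ic50 < 0 or substrate_conc < 0:
        raise ValueError("km must be positive; ic50 and substrate_conc non-negative")
    return ic50 / (1.0 + substrate_conc / km)


# Usage with hypothetical assay values (all in nM): substrate at its Km halves the IC50.
ki = ki_from_ic50(ic50=100.0, substrate_conc=10.0, km=10.0)
```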

The experimental results feed back into computational models to refine predictions and guide the next cycle of compound design. This iterative process continues until compounds with desired potency, selectivity, and drug-like properties are identified. The integration of computational and experimental data occurs most effectively on collaborative platforms that allow research teams to "share, analyze and communicate data seamlessly and make rapid decisions" across departments and geographical locations [21].

Case Study: Peptide Inhibitors Targeting Survivin for Cancer Therapy

A recent study exemplifies the modern integrated RDD workflow in the development of peptide inhibitors targeting the survivin protein for cancer therapy [23]. Survivin, a member of the Inhibitor of Apoptosis Protein (IAP) family, is overexpressed in various human cancers but largely absent in most normal tissues, making it an attractive therapeutic target [23].

Computational Design and Analysis

Researchers designed anti-cancer peptides derived from the Borealin protein, which naturally interacts with survivin as part of the Chromosomal Passenger Complex (CPC) essential for cell division [23]. Through single-point mutations, they developed several peptide variants and evaluated them using computational approaches:

- Molecular Docking identified peptides P2 and P3 as having the highest binding affinities, interacting with the Borealin-binding region and linker region of survivin [23].

- Molecular Dynamics (MD) Simulations using GROMACS analyzed the stability of the protein-peptide complexes through three measures:

  - Root Mean Square Deviation (RMSD): after 18 ns, the RMSD curves for the protein-ligand systems showed nearly identical patterns of conformational change within an acceptable range [23].

  - Radius of Gyration (Rg): all systems showed consistent, steady conformational behavior, indicating stable interactions [23].

  - Protein-Ligand Interaction Energy: calculations revealed favorable binding energies, with short-range Coulombic interaction energies of -232.263 kJ mol⁻¹ for P2 and -229.382 kJ mol⁻¹ for P3 [23].
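For readers less familiar with these trajectory metrics, the sketch below shows how RMSD and radius of gyration are defined for a single frame. It is a simplified illustration, not the GROMACS implementation: it assumes the two coordinate sets are already superimposed (analysis tools typically perform a least-squares fit first), and the coordinates and masses are toy values.

```python
import math


def rmsd(coords_a: list, coords_b: list) -> float:
    """Root mean square deviation between two equally sized coordinate sets."""
    if len(coords_a) != len(coords_b) or not coords_a:
        raise ValueError("coordinate sets must be non-empty and the same length")
    total = sum(math.dist(a, b) ** 2 for a, b in zip(coords_a, coords_b))
    return math.sqrt(total / len(coords_a))


def radius_of_gyration(coords: list, masses: list) -> float:
    """Mass-weighted RMS distance of atoms from their center of mass."""
    total_mass = sum(masses)
    center = tuple(
        sum(m * c[i] for c, m in zip(coords, masses)) / total_mass
        for i in range(3)
    )
    weighted = sum(m * math.dist(c, center) ** 2 for c, m in zip(coords, masses))
    return math.sqrt(weighted / total_mass)


# Usage: a two-atom toy system shifted by one unit along z between frames.
frame_0 = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
frame_1 = [(0.0, 0.0, 1.0), (1.0, 0.0, 1.0)]
drift = rmsd(frame_0, frame_1)
compactness = radius_of_gyration([(-1.0, 0.0, 0.0), (1.0, 0.0, 0.0)], [12.0, 12.0])
```

Tracking both values frame by frame gives the curves discussed above: a plateau in RMSD suggests the complex has equilibrated, and a steady Rg suggests it remains compact.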

Experimental Validation

Based on computational analysis, the P3 peptide was synthesized for experimental validation. The peptide demonstrated significant potential as a novel anti-cancer agent by targeting key mechanisms in cancer cell survival and proliferation [23]. The study illustrates the dual approach of modern cancer therapeutics: disrupting cell division through inhibition of CPC formation while simultaneously inducing apoptosis in cancer cells.

Survivin Signaling Pathway

Advanced Applications and Future Directions

Automated Workflows in Catalysis and Polymer Design

Beyond traditional drug discovery, integrated RDD approaches are advancing fields like catalysis and materials science. Schrödinger's AutoRW workflow exemplifies this trend, automating the processes of enumeration, mapping, organization, and output needed for high-throughput screening [21]. Applications include:

- Polypropylene Tacticity Control: Scientists studied 13 isotactic catalysts using AutoRW to understand adjacent stereoselectivity in polypropylene production, with results showing good agreement with experimental selectivities (R² = 0.8) [21].

- Epoxy-Amine Reaction Screening: Researchers screened a library of 12 amines and 21 epoxides to build a relative reaction barrier heat map, enabling efficient design of high-performance polymers [21].

- Comonomer Selectivity Optimization: AutoRW screened 35 catalyst derivatives with different polymer substrates to understand effects on comonomer selectivity for block copolymerization [21].
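Agreement between computed and experimental selectivities, such as the R² value quoted above, can be quantified with the coefficient of determination. The sketch below uses the 1 - SS_res/SS_tot definition; whether the cited study used this definition or the squared Pearson correlation is not stated, so treat the choice as an assumption. The data are invented for illustration.

```python
def r_squared(observed: list, predicted: list) -> float:
    """Coefficient of determination: 1 - (residual SS / total SS)."""
    if len(observed) != len(predicted) or len(observed) < 2:
        raise ValueError("need two sequences of equal length with at least 2 points")
    mean_obs = sum(observed) / len(observed)
    ss_res = sum((o - p) ** 2 for o, p in zip(observed, predicted))
    ss_tot = sum((o - mean_obs) ** 2 for o in observed)
    return 1.0 - ss_res / ss_tot


# Usage with hypothetical experimental vs. computed selectivities.
experimental = [0.62, 0.71, 0.80, 0.88, 0.93]
computed = [0.60, 0.75, 0.78, 0.90, 0.91]
agreement = r_squared(experimental, computed)
```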

High-Performance Computing and Visualization

The increasing complexity of RDD simulations demands advanced computing infrastructure. High-Performance Computing (HPC) environments now enable simulations that were previously impractical, while interactive visual workflows help bridge the gap between data generation and insight [22]. Modern visual workflow platforms combine high-performance back-end frameworks with flexible interfaces, allowing deployment of custom solutions on desktops, in Jupyter notebooks, or directly on the web [22]. These platforms transform how organizations explore, validate, and communicate results by making workflows "visual, collaborative, and accessible to both experts and non-specialists" [22].

Enterprise-Scale Collaboration Platforms

The future of RDD lies in platforms that support enterprise-scale collaboration, such as Schrödinger's LiveDesign, which enables teams to "collaborate, design, experiment, analyze, track, and report in a centralized platform" [21]. These platforms break down silos between research functions and geographical locations, creating environments where computational chemists, medicinal chemists, and biologists can work from the same live data rather than static reports [21]. This approach accelerates the iterative design-make-test-analyze cycles that are fundamental to successful drug discovery.

The modern RDD workflow represents a sophisticated integration of computational and experimental approaches that has transformed drug discovery from an empirical art to a rational science. By combining structure-based and ligand-based design methodologies within collaborative frameworks, researchers can accelerate the identification and optimization of therapeutic compounds while reducing the costs and timelines associated with traditional approaches. As computational power increases and algorithms become more refined, this integration will deepen further, potentially incorporating artificial intelligence and machine learning to extract even more insight from the growing body of chemical and biological data. The continued evolution of these integrated workflows promises to enhance our ability to address increasingly complex therapeutic challenges and deliver novel medicines to patients more efficiently.

AI and Structural Insights: The Modern RDD Toolkit for Accelerated Discovery

AI-Powered De Novo Molecular Design and Virtual Screening

Rational Drug Design (RDD) is a systematic process for creating new medications based on knowledge of a biological target, a paradigm that has evolved from intuition-led approaches to a data-driven discipline [24] [25]. The overarching goal of RDD is to design small molecules that are complementary in shape and charge to their biomolecular targets, thereby activating or inhibiting function to provide therapeutic benefit [25]. De novo molecular design represents a pivotal advancement within this framework, referring to computational methods that generate novel molecular structures from atomic building blocks with no a priori relationships, tailored to specific therapeutic objectives [26] [27]. This approach stands in contrast to traditional virtual screening, which is limited to exploring existing chemical libraries [28] [29].

The integration of Artificial Intelligence (AI), particularly deep learning, has catalyzed a paradigm shift in de novo design [26] [30]. AI enables the rapid exploration of the vast chemical space—estimated to contain 10^33 to 10^60 drug-like molecules—which is computationally intractable for traditional screening methods [26] [28]. This review explores how AI-powered de novo design and virtual screening are reshaping the foundational concepts of RDD, providing researchers with powerful tools to accelerate the discovery of novel therapeutic agents.

Foundations of Rational Drug Design

Core Principles and Evolution

Rational Drug Design was first formalized in the 1950s, becoming the methodological ideal in the 1980s following successful developments like lovastatin and captopril [24]. Traditional drug discovery follows a structured pipeline of complex, time-consuming steps: target identification, hit discovery, hit-to-lead progression, lead optimization, and preclinical and clinical testing [24]. This process is exceedingly costly, averaging USD 2.6 billion, and lengthy, taking over 12 years from inception to market approval [24] [30]. RDD aimed to counter these inefficiencies by using molecular modeling combined with structure-activity relationship (SAR) studies to strategically modify functional chemical groups to improve drug candidate effectiveness [24].

The core concept of RDD involves three general steps: (1) identifying a specific target that plays a key role in disease; (2) elucidating the structure and function of this target; and (3) using this information to design a drug molecule that interacts with the target in a therapeutically beneficial way [25]. This approach contrasts with traditional trial-and-error testing of chemical substances on cultured cells or animals, instead beginning with a hypothesis that modulation of a specific biological target may have therapeutic value [25].

Key Methodological Approaches

Two primary computational approaches dominate traditional RDD:

Structure-Based Drug Design (SBDD): Also known as direct drug design, this approach uses the three-dimensional structure of a biological target to develop new drug molecules [27] [25]. When the three-dimensional structure of a receptor is known through X-ray crystallography, NMR, or electron microscopy, researchers can analyze the molecular shape, physical properties, and chemical properties of the active site to design ligands that form optimal non-covalent interactions [27]. SBDD encompasses two main strategies: de novo drug design (building molecules from scratch) and virtual screening (computational screening of large databases of known molecules) [25].

Ligand-Based Drug Design (LBDD): Also termed indirect drug design, this approach relies on knowledge of other molecules that bind to the biological target of interest [27] [25]. When the target structure is unknown, researchers use known active binders to develop a pharmacophore model or quantitative structure-activity relationship (QSAR) models that define the essential chemical features required for biological activity [27]. Key LBDD methods include scaffold hopping, pseudoreceptor modeling, and QSAR studies [25].
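At its simplest, a QSAR model is a regression that relates a molecular descriptor to measured activity. The sketch below fits a one-descriptor linear model by ordinary least squares; the descriptor values and pIC50 data are hypothetical, and practical QSAR models use many descriptors together with proper validation.

```python
def fit_line(x: list, y: list) -> tuple:
    """Ordinary least squares fit of y = slope * x + intercept."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need two sequences of equal length with at least 2 points")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def predict(model: tuple, descriptor: float) -> float:
    """Predict activity for a new compound from its descriptor value."""
    slope, intercept = model
    return slope * descriptor + intercept


# Usage: hypothetical descriptor (for example a lipophilicity value) vs. pIC50.
descriptor_values = [1.0, 2.0, 3.0, 4.0]
pic50_values = [5.5, 6.0, 6.5, 7.0]
model = fit_line(descriptor_values, pic50_values)
estimate = predict(model, 2.5)
```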

Table 1: Key Methodological Approaches in Rational Drug Design

| Approach | Core Principle | Key Techniques | Application Context |

|---|---|---|---|

| Structure-Based Drug Design (SBDD) | Uses 3D structure of biological target | Molecular docking, de novo design, virtual screening | Known target structure from X-ray crystallography, NMR, cryo-EM |

| Ligand-Based Drug Design (LBDD) | Uses known active ligands as templates | Pharmacophore modeling, QSAR, scaffold hopping | Unknown target structure but known active compounds |

| AI-Powered De Novo Design | Generates novel molecules from scratch | Deep generative models, reinforcement learning | Exploration of vast chemical spaces beyond existing libraries |

AI-Powered De Novo Molecular Design

The Machine Learning Revolution in Molecular Generation

The emergence of generative AI has fundamentally transformed de novo molecular design, enabling the rapid, semi-automatic design and optimization of drug-like molecules [26]. While conventional de novo methods faced challenges with synthetic feasibility and required specialized computational skills, generative AI algorithms have revitalized the field by leveraging vast data on bioactivity, toxicity, and protein structures [26].

The development of ultra-large, "make-on-demand" or "tangible" virtual libraries has significantly expanded the range of accessible drug candidate molecules [24]. For example, chemical suppliers Enamine and OTAVA offer 65 and 55 billion novel make-on-demand molecules, respectively [24]. Screening such vast chemical spaces requires ultra-large-scale virtual screening for hit identification, as direct empirical screening of billions of molecules is not feasible [24].
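A back-of-the-envelope calculation shows why libraries of this size call for large-scale computing. The throughput of one second per molecule per core and the 10,000-core cluster are assumptions chosen purely for illustration; actual docking times vary widely with the method and the target.

```python
SECONDS_PER_DAY = 24 * 3600
SECONDS_PER_YEAR = 365 * SECONDS_PER_DAY


def core_years(n_molecules: int, seconds_per_molecule: float) -> float:
    """Total compute, in core-years, to score every molecule once."""
    return n_molecules * seconds_per_molecule / SECONDS_PER_YEAR


def wall_clock_days(n_molecules: int, seconds_per_molecule: float, cores: int) -> float:
    """Elapsed days assuming perfect parallel scaling across the given cores."""
    return n_molecules * seconds_per_molecule / cores / SECONDS_PER_DAY


library_size = 65_000_000_000  # the 65-billion-molecule figure quoted above
total_core_years = core_years(library_size, seconds_per_molecule=1.0)
days_on_cluster = wall_clock_days(library_size, seconds_per_molecule=1.0, cores=10_000)
```

Under these assumptions the screen costs roughly 2,000 core-years, or about 75 days on 10,000 cores, which is why hierarchical filtering and faster surrogate models are attractive.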

Key AI Architectures and Applications

Several deep learning architectures have demonstrated remarkable success in de novo molecular design:

Generative Pre-trained Transformer (GPT) Models: MolGPT, a transformer-decoder model, has shown excellent performance in generating drug-like molecules compared to earlier approaches such as CharRNN, variational autoencoders (VAEs), and generative adversarial networks (GANs) [28]. Recent modifications to GPT architectures include rotary position embedding (RoPE) to better handle relative position dependencies, DeepNorm for enhanced training stability, and GEGLU activation functions to improve expressiveness [28].
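To see why rotary position embedding helps with relative positions, consider the generic sketch below. It rotates consecutive pairs of vector components by a position-dependent angle, so that the dot product between a rotated query and a rotated key depends only on the distance between their positions. This is a plain-Python illustration of the general technique, not the implementation used in the cited models; the interleaved pairing and the base of 10000 follow a common convention and are assumptions here.

```python
import math


def rope(vector: list, position: int, base: float = 10000.0) -> list:
    """Apply rotary position embedding to an even-length vector."""
    dim = len(vector)
    if dim % 2:
        raise ValueError("vector length must be even")
    rotated = []
    for i in range(0, dim, 2):
        # Each pair of components gets its own rotation frequency.
        theta = position * base ** (-i / dim)
        cos_t, sin_t = math.cos(theta), math.sin(theta)
        x0, x1 = vector[i], vector[i + 1]
        rotated.extend((x0 * cos_t - x1 * sin_t, x0 * sin_t + x1 * cos_t))
    return rotated


def dot(a: list, b: list) -> float:
    return sum(x * y for x, y in zip(a, b))


# Usage: attention scores match whenever the query-key offset is the same (here, 2).
query = [1.0, 0.5, -0.3, 2.0]
key = [0.2, -1.0, 0.7, 0.4]
score_near_start = dot(rope(query, 3), rope(key, 1))
score_further_on = dot(rope(query, 10), rope(key, 8))
```

For a SMILES string, this means the interaction between two tokens is encoded by how far apart they are rather than by where they sit in the sequence.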

Encoder-Decoder Transformers: The T5-based T5MolGe model implements a complete encoder-decoder transformer architecture for conditional molecular generation tasks, learning the internal relationships between conditional properties and SMILES sequences to enable better control over specified molecular properties [28].

Selective State Space Models (Mamba): This emerging architecture addresses the quadratic computational complexity of transformers, showing promising results in language modeling and molecular generation tasks, particularly for handling long sequences [28].
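The scaling argument can be illustrated with a toy example. The sketch below is not Mamba's actual parameterization; it is a minimal, assumed input-dependent (gated) linear recurrence that processes a sequence in one left-to-right pass, contrasted with the number of pairwise interactions full self-attention considers. The function names and the gate weight are hypothetical.

```python
import math


def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def gated_scan(xs: list[float], w_gate: float = 1.0) -> list[float]:
    """One pass over the sequence: h_t = a_t * h_{t-1} + (1 - a_t) * x_t.

    The retention factor a_t depends on the current input, which is the
    'selective' idea in a very reduced form. Cost grows linearly with len(xs).
    """
    h = 0.0
    outputs = []
    for x in xs:
        a = sigmoid(w_gate * x)      # input-dependent gate (illustrative choice)
        h = a * h + (1.0 - a) * x    # update the single running state
        outputs.append(h)
    return outputs


def attention_pair_count(n_tokens: int) -> int:
    """Number of query-key pairs scored by full self-attention."""
    return n_tokens * n_tokens


for n in (100, 1_000, 10_000):
    print(f"n={n}: scan steps={n}, attention pairs={attention_pair_count(n)}")
```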

Monte Carlo Tree Search (MCTS) with Neural Networks: Combined with multitask neural network surrogate models and recurrent neural networks for rollouts, MCTS has been successfully applied to explore chemical space and design novel therapeutic agents against SARS-CoV-2 [31].
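The four MCTS phases (selection, expansion, simulation, backpropagation) can be sketched on a toy problem. Everything problem-specific here is an assumption made for illustration: the four-token alphabet, the `surrogate_score` function standing in for a trained multitask network, and the uniform random rollout standing in for an RNN rollout policy. It is not the implementation used in [31].

```python
import math
import random

ALPHABET = ["C", "N", "O", "$"]  # "$" is a hypothetical end-of-sequence token
MAX_LEN = 8


def surrogate_score(seq: str) -> float:
    """Toy stand-in for a learned predictor; rewards "CN" motifs, range [0, 1]."""
    body = seq.rstrip("$")
    return min(1.0, 2.0 * body.count("CN") / MAX_LEN)


class Node:
    def __init__(self, seq: str, parent: "Node | None" = None):
        self.seq, self.parent = seq, parent
        self.children: dict[str, Node] = {}
        self.visits, self.total = 0, 0.0

    def is_terminal(self) -> bool:
        return self.seq.endswith("$") or len(self.seq) >= MAX_LEN

    def untried(self) -> list[str]:
        return [t for t in ALPHABET if t not in self.children]


def ucb(child: Node, parent_visits: int, c: float = 1.0) -> float:
    """UCB1: mean reward plus an exploration bonus."""
    return child.total / child.visits + c * math.sqrt(math.log(parent_visits) / child.visits)


def rollout(seq: str, rng: random.Random) -> str:
    """Complete a partial sequence with random tokens (stand-in for an RNN)."""
    while not (seq.endswith("$") or len(seq) >= MAX_LEN):
        seq += rng.choice(ALPHABET)
    return seq


def mcts(iterations: int = 2000, seed: int = 0) -> tuple[str, float]:
    rng = random.Random(seed)
    root = Node("")
    best_seq, best_score = "", -1.0
    for _ in range(iterations):
        node = root
        # Selection: descend through fully expanded nodes by UCB1.
        while not node.is_terminal() and not node.untried():
            parent_visits = node.visits
            node = max(node.children.values(), key=lambda ch: ucb(ch, parent_visits))
        # Expansion: add one untried child.
        if not node.is_terminal():
            token = rng.choice(node.untried())
            node.children[token] = Node(node.seq + token, parent=node)
            node = node.children[token]
        # Simulation: finish the sequence and score it with the surrogate.
        final = rollout(node.seq, rng)
        score = surrogate_score(final)
        if score > best_score:
            best_seq, best_score = final, score
        # Backpropagation: update statistics up to the root.
        while node is not None:
            node.visits += 1
            node.total += score
            node = node.parent
    return best_seq.rstrip("$"), best_score


if __name__ == "__main__":
    print(mcts())
```

Because the search only ever sees the surrogate's output, any systematic error in the surrogate is inherited by the designs it proposes.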

Table 2: Performance Comparison of AI Models for Molecular Generation

| Model Architecture | Key Features | Strengths | Reported Limitations |
|---|---|---|---|
| Generative Pre-trained Transformer (GPT) | Autoregressive, decoder-only architecture | Excellent performance in unconditional generation | Limited control for conditional generation tasks |
| T5-based Encoder-Decoder | Complete encoder-decoder, conditional generation | Better learning of property-SMILES relationships | Higher computational requirements |
| Selective State Space (Mamba) | Linear scaling with sequence length | Efficient for long sequences | Emerging technology, less extensively validated |
| Monte Carlo Tree Search (MCTS) | Combinatorial search with surrogate models | Effective exploration of chemical space | Dependent on quality of surrogate model |

AI-Driven Drug Discovery Workflow - This diagram illustrates the iterative cycle of AI-powered molecular generation, virtual screening, and experimental validation within the modern drug discovery paradigm.

Experimental Protocols and Methodologies

AI-Guided De Novo Design Protocol for Specific Targets

The following detailed methodology outlines an AI-powered workflow for designing inhibitors against specific drug targets, such as the L858R/T790M/C797S-mutant EGFR in non-small cell lung cancer [28]:

Step 1: Problem Formulation and Objective Definition

- Define precise molecular objectives: Specify target properties including binding affinity (Vina score < -9.0 kcal/mol), selectivity profile, ADMET properties, and synthetic accessibility [28] [31].

- Establish constraints: Molecular weight (<500 Da), logP range, rotatable bonds, and specific structural alerts to avoid.
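The objectives and constraints from Step 1 can be captured as an explicit pass/fail check. In this minimal sketch, the Vina score and molecular weight cutoffs are the ones stated above; the logP range and rotatable-bond limit are assumed placeholder values (the text does not specify them), and the `Candidate` fields are expected to be filled in by external tools.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    name: str
    vina_score: float     # kcal/mol; more negative means stronger predicted binding
    mol_weight: float     # Da
    logp: float
    rotatable_bonds: int


# Thresholds stated in the protocol.
MAX_VINA_SCORE = -9.0
MAX_MOL_WEIGHT = 500.0
# ASSUMPTION: illustrative values only; the protocol leaves these unspecified.
LOGP_RANGE = (0.0, 5.0)
MAX_ROTATABLE_BONDS = 10


def meets_objectives(c: Candidate) -> bool:
    """True if the candidate satisfies every hard constraint."""
    return (
        c.vina_score < MAX_VINA_SCORE
        and c.mol_weight < MAX_MOL_WEIGHT
        and LOGP_RANGE[0] <= c.logp <= LOGP_RANGE[1]
        and c.rotatable_bonds <= MAX_ROTATABLE_BONDS
    )


# Hypothetical candidates with made-up property values.
pool = [
    Candidate("cand_A", -9.6, 452.3, 3.1, 6),
    Candidate("cand_B", -8.7, 410.0, 2.4, 5),   # fails the Vina cutoff
    Candidate("cand_C", -10.2, 538.9, 4.0, 8),  # fails the weight cutoff
]
print([c.name for c in pool if meets_objectives(c)])
```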

Step 2: Data Curation and Preprocessing

- Collect known active compounds: Compile structural data and binding affinities for existing EGFR inhibitors from databases like ChEMBL and BindingDB [27] [31].

- Generate molecular representations: Convert structures to SMILES strings or molecular graphs, ensuring standardized representation and data cleaning [28] [29].

- Apply transfer learning: Pretrain on a large-scale molecular dataset (e.g., a subset of roughly 250k molecules drawn from the ZINC database), then fine-tune on target-specific data to overcome small dataset limitations [28] [31].
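The representation step can be made concrete with a small SMILES tokenizer. This is a simplified sketch using a regular expression that covers common organic-subset symbols; it is an assumption for illustration, not the preprocessing code of the cited work, and it performs no chemical validation.

```python
import re

# Bracket atoms, two-letter halogens, single-letter atoms, ring closures, bonds.
SMILES_TOKEN = re.compile(
    r"(\[[^\]]+\]|Br|Cl|[BCNOPSFI]|[bcnops]|%\d{2}|\d|[()=#\-+\\/:.~@*$])"
)


def tokenize(smiles: str) -> list[str]:
    """Split a SMILES string into tokens; reject strings with unknown symbols."""
    tokens = SMILES_TOKEN.findall(smiles)
    if "".join(tokens) != smiles:
        raise ValueError(f"unrecognized characters in SMILES: {smiles!r}")
    return tokens


def clean(corpus: list[str]) -> list[str]:
    """Strip whitespace, drop empty entries and exact duplicates, keep order."""
    seen, result = set(), []
    for s in (raw.strip() for raw in corpus):
        if s and s not in seen:
            seen.add(s)
            result.append(s)
    return result


def build_vocab(corpus: list[str]) -> dict[str, int]:
    """Map special tokens and every observed SMILES token to an integer id."""
    specials = ["<pad>", "<bos>", "<eos>"]
    symbols = sorted({tok for s in corpus for tok in tokenize(s)})
    return {tok: i for i, tok in enumerate(specials + symbols)}


corpus = clean(["CC(=O)Oc1ccccc1C(=O)O", " ClCCBr ", "ClCCBr", "C[NH3+]"])
vocab = build_vocab(corpus)
print(tokenize("ClCCBr"), len(vocab))
```

Treating `Cl`, `Br`, and bracket atoms as single tokens keeps the model from learning chemically meaningless fragments such as a lone `l`.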

Step 3: Model Selection and Training

- Architecture selection: Choose appropriate generative model (e.g., GPT-based, T5MolGe, or Mamba) based on dataset size and conditional generation requirements [28].

- Implement conditional generation: Train models to explicitly learn relationships between molecular structures and target properties through embedding vector representation spaces [28].

- Optimize hyperparameters: Adjust learning rates, batch sizes, and network architectures through cross-validation.

Step 4: Molecular Generation and Optimization

- Generate candidate molecules: Use trained model to produce novel molecular structures meeting specified constraints.

- Apply Monte Carlo Tree Search: Implement MCTS with rollout using RNN to explore chemical space efficiently, guided by multi-task neural network predictions of binding affinity [31].

- Incorporate multi-objective optimization: Simultaneously optimize for binding affinity, drug-likeness, and synthetic accessibility using penalty terms for undesirable properties [31].
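One simple way to combine these objectives is a scalar reward with hinge-style penalty terms. The sketch below assumes that drug-likeness is summarized by a QED value in [0, 1] and synthetic accessibility by an SA score on the usual 1 (easy) to 10 (hard) scale; the thresholds and weights are hypothetical and would need tuning for a real campaign.

```python
def hinge(excess: float) -> float:
    """Penalty that is zero when a constraint is met and grows linearly otherwise."""
    return max(0.0, excess)


def reward(
    vina_score: float,
    qed: float,
    sa_score: float,
    *,
    qed_min: float = 0.5,   # ASSUMPTION: illustrative drug-likeness floor
    sa_max: float = 4.0,    # ASSUMPTION: illustrative accessibility ceiling
    w_qed: float = 5.0,     # ASSUMPTION: penalty weights chosen arbitrarily
    w_sa: float = 1.0,
) -> float:
    """Higher is better: predicted affinity minus penalties for undesirable properties."""
    affinity_term = -vina_score                # more negative Vina score gives larger reward
    qed_penalty = w_qed * hinge(qed_min - qed)
    sa_penalty = w_sa * hinge(sa_score - sa_max)
    return affinity_term - qed_penalty - sa_penalty


# A strong binder that is hard to make can rank below a slightly weaker, cleaner one.
print(reward(-10.0, qed=0.3, sa_score=6.0))  # penalized on both QED and SA
print(reward(-9.5, qed=0.7, sa_score=3.0))   # no penalties
```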

Step 5: Validation and Experimental Testing

- Conduct virtual screening: Filter generated molecules using molecular docking simulations against target structure [31].

- Synthesize promising candidates: Prioritize molecules with best predicted properties for chemical synthesis.

- Perform biological assays: Evaluate actual binding affinity, cellular activity, and selectivity through enzyme inhibition assays, cell viability assays, and mechanism of action studies [24].

Advanced Model Architectures and Implementation

For the T5MolGe implementation [28]:

- The model is built on a complete encoder-decoder transformer architecture based on the T5 (Text-to-Text Transfer Transformer) framework.

- The encoder processes conditional molecular properties and learns their embedding vector representation.

- This encoded representation guides the vector representation of SMILES sequences during generation.

- The final decoder block employs a softmax output with maximum likelihood objective to generate valid molecular structures.

- The model is trained to learn the mapping relationship between conditional properties and SMILES sequences, enabling precise control over generated molecular characteristics.
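The softmax output and maximum likelihood objective can be written out in a few lines. This sketch is independent of any particular framework and uses made-up logits; in a trained model the per-step logits would come from the decoder, conditioned on the encoder's representation of the target properties.

```python
import math


def softmax(logits: list[float]) -> list[float]:
    """Numerically stable softmax over one decoding step's vocabulary logits."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    z = sum(exps)
    return [e / z for e in exps]


def sequence_nll(step_logits: list[list[float]], target_ids: list[int]) -> float:
    """Negative log-likelihood of the target tokens; minimizing it is the MLE objective."""
    if len(step_logits) != len(target_ids):
        raise ValueError("need exactly one logit vector per target token")
    return -sum(
        math.log(softmax(logits)[target])
        for logits, target in zip(step_logits, target_ids)
    )


# Hypothetical 4-token vocabulary and a 3-token target sequence.
logits = [
    [2.0, 0.1, 0.1, 0.1],
    [0.1, 2.0, 0.1, 0.1],
    [0.1, 0.1, 0.1, 2.0],
]
print(sequence_nll(logits, [0, 1, 3]))   # low loss: targets match the peaks
print(sequence_nll(logits, [3, 3, 0]))   # higher loss: targets miss the peaks
```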

For GPT-based implementations with advanced modifications [28]:

- GPT-RoPE uses rotary position embedding, which encodes absolute position with a rotation matrix so that attention scores depend explicitly on the relative position between tokens.

- GPT-Deep modifies layer normalization and residual connections using DeepNorm to combine the performance of Post-LN with the training stability of Pre-LN.

- GPT-GEGLU adopts the GEGLU activation function, a gated linear unit (GLU) variant that uses GELU as its gate, to dynamically adjust neuron activation.
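Two of these modifications are easy to demonstrate numerically. The sketch below is a plain-Python illustration, not code from [28]: `rope` rotates consecutive pairs of vector components by a position-dependent angle, and `geglu` applies a GELU gate elementwise. The learned linear projections that normally produce the query, key, gate, and value vectors are omitted, and the example vectors are arbitrary.

```python
import math


def rope(vec: list[float], position: int, base: float = 10000.0) -> list[float]:
    """Rotate each pair (vec[i], vec[i+1]) by the angle position * base**(-i/d)."""
    d = len(vec)
    if d % 2:
        raise ValueError("vector length must be even")
    out = []
    for i in range(0, d, 2):
        angle = position * base ** (-i / d)
        c, s = math.cos(angle), math.sin(angle)
        x, y = vec[i], vec[i + 1]
        out += [x * c - y * s, x * s + y * c]
    return out


def dot(a: list[float], b: list[float]) -> float:
    return sum(x * y for x, y in zip(a, b))


def gelu(x: float) -> float:
    """Exact GELU: x times the standard normal CDF of x."""
    return 0.5 * x * (1.0 + math.erf(x / math.sqrt(2.0)))


def geglu(gate_input: list[float], value_input: list[float]) -> list[float]:
    """Elementwise GELU(gate) * value; projections of the input are omitted."""
    return [gelu(g) * v for g, v in zip(gate_input, value_input)]


q = [0.3, -1.2, 0.8, 0.5]
k = [1.0, 0.4, -0.7, 0.9]
# Same relative offset (3) at different absolute positions gives the same score.
score_near = dot(rope(q, 5), rope(k, 2))
score_far = dot(rope(q, 13), rope(k, 10))
print(abs(score_near - score_far) < 1e-9)
print(geglu([0.0, 1.0], [5.0, 2.0]))
```

The attention score depends only on the offset between the two positions, which is the relative-position property the first bullet describes.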

T5MolGe Encoder-Decoder Architecture - This diagram shows the complete encoder-decoder transformer architecture for conditional molecular generation, which learns embedding relationships between properties and structures.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Reagents and Computational Tools for AI-Powered De Novo Design

| Resource Category | Specific Tools/Resources | Function and Application |
|---|---|---|
| Chemical Databases | ZINC Database (250k+ molecules), BindingDB (800k+ molecules) [31] | Provide training data for AI models; sources of known active compounds for ligand-based design |
| Make-on-Demand Libraries | Enamine (65 billion compounds), OTAVA (55 billion compounds) [24] | Ultra-large virtual libraries of synthetically accessible compounds for virtual screening |
| Molecular Representations | SMILES, Deep SMILES, SELFIES, Molecular Graph Representations [28] [29] | Standardized formats for encoding chemical structures for AI processing and generation |
| Generative AI Frameworks | ChemTS Python Library [31], MolGPT [28], T5MolGe [28] | Software implementations of molecular generation algorithms for de novo design |
| Validation Assays | Enzyme Inhibition Assays, Cell Viability Assays, Pathway-Specific Readouts [24] | Experimental methods to validate AI-generated molecules and confirm biological activity |
| Docking Software | Molecular Docking Simulations (Vina) [31] | Computational tools for predicting binding affinity and orientation of generated molecules |

Case Studies and Clinical Applications

Successful Implementations of AI-Powered De Novo Design

Several notable achievements demonstrate the real-world impact of AI-powered de novo molecular design:

SARS-CoV-2 Therapeutics: Researchers employed a de novo design strategy combining Monte Carlo Tree Search with multitask neural networks to discover novel therapeutic agents against SARS-CoV-2 [31]. The approach generated hundreds of new candidates whose Vina docking scores against the spike protein were better than those of existing FDA-approved molecules [31].

Fourth-Generation EGFR Inhibitors: AI-driven de novo design has been applied to target L858R/T790M/C797S-mutant EGFR in non-small cell lung cancer, addressing acquired resistance to third-generation inhibitors like osimertinib [28]. Transformer-based models generated novel molecular structures optimized for overcoming the C797S mutation.

Clinical-Stage AI-Designed Compounds: Drugs developed using AI-powered de novo design, including DSP-1181, EXS21546, and DSP-0038, have reached clinical trials, demonstrating the viability of AI-generated therapeutic agents [26]. While these compounds primarily target well-researched biological targets and do not necessarily innovate structural or binding properties, they validate the utility of generative algorithms in producing effective therapeutics [26].

Integration with Traditional Medicinal Chemistry

A critical insight from successful implementations is that AI-powered de novo design works most effectively when integrated with traditional medicinal chemistry expertise [24] [30]. For instance, the "informacophore" concept represents a fusion of structural chemistry with informatics, extending the traditional pharmacophore by incorporating data-driven insights derived from SARs, computed molecular descriptors, fingerprints, and machine-learned representations of chemical structure [24]. This hybrid approach enables more systematic and bias-resistant strategies for scaffold modification and optimization while maintaining connections to chemical intuition [24].

The iterative feedback loop spanning computational prediction, experimental validation, and optimization remains central to modern drug discovery [24]. Biological functional assays are not just confirmatory tools but strategic enablers that shape the direction of both computational exploration and chemical design [24]. As noted in recent reviews, AI represents a valuable complementary tool in small-molecule drug discovery, augmenting traditional methodologies rather than replacing them [30].

The field of AI-powered de novo molecular design continues to evolve rapidly, with several emerging trends shaping its future development. The convergence of generative models with Bayesian retrosynthesis planners, self-supervised pretraining on ultra-large chemical corpora, and multimodal integration of omics-derived features represents the next frontier in precision therapeutics [32]. The emergence of agentic AI systems that can autonomously navigate discovery pipelines points toward increasingly automated molecular design ecosystems [30].

Despite these advances, significant challenges remain. Model interpretability continues to present obstacles, as machine-learned informacophores can be challenging to link back to specific chemical properties [24]. The synthetic accessibility of AI-generated molecules requires careful consideration, and clinical success is not guaranteed, as demonstrated by the discontinuation of DSP-1181 after Phase I trials despite a favorable safety profile [30].

In conclusion, AI-powered de novo molecular design represents a transformative advancement within the framework of Rational Drug Design. By enabling the systematic exploration of vast chemical spaces and the generation of novel molecular entities with optimized properties, these approaches are reshaping drug discovery paradigms. When thoughtfully integrated with traditional medicinal chemistry expertise and experimental validation, AI-powered de novo design holds significant promise for accelerating the delivery of innovative therapeutics to address unmet medical needs.

High-Fidelity Structure Prediction with AlphaFold 3 and Beyond

Rational Drug Design (RDD) has traditionally relied on hypothesis-driven experimentation to modulate therapeutic targets, a process often constrained by incomplete structural knowledge of biomolecular systems. AlphaFold 3 (AF3) marks a foundational shift in this paradigm, giving researchers an atomic-level view of nearly the entire biomolecular landscape [33] [7]. Developed by Google DeepMind and Isomorphic Labs, the model extends beyond its predecessor's protein structure prediction to a unified framework that predicts the joint 3D structures of proteins, nucleic acids (DNA, RNA), small-molecule ligands, ions, and modified residues [34] [35]. AF3 is reported to be the first AI system to surpass specialized physics-based tools in predicting drug-like interactions, with an improvement of at least 50% over existing methods on standard benchmarks [34] [35]. This advance provides the structural foundation for a new era of RDD, enabling scientists to understand and target biological complexes in their full cellular context.

AlphaFold 3: Architectural Revolution

Core Model Architecture and Innovations

AlphaFold 3's architecture is a substantial evolution of AlphaFold 2, engineered to handle the diverse chemistry of life's molecules within a single, unified deep-learning framework [34]. The model replaces AF2's Evoformer with the simpler Pairformer and replaces the structure module with a diffusion module that directly predicts raw atom coordinates.

Table: AlphaFold 3 Architectural Components and Functions

| Component | Function | Improvement over AlphaFold 2 |
|---|---|---|
| Pairformer | Processes pair and single representations only | Replaces Evoformer; substantially reduces MSA processing [34] |
| Diffusion Module | Generates atomic coordinates via iterative denoising | Directly predicts raw coordinates; eliminates need for rotational frames/torsion angles [34] |
| Cross-Distillation | Enriches training with predicted structures | Reduces hallucination in unstructured regions [34] |