Phenotypic vs. Target-Based Drug Discovery: A Modern Guide to Strategies, Successes, and AI Integration


Abstract

This article provides a comprehensive analysis of the two dominant paradigms in pharmaceutical research: phenotypic drug discovery (PDD) and target-based drug discovery (TDD). Aimed at researchers and drug development professionals, it explores the foundational principles of each approach, detailing their methodological workflows and key technological advancements, including the integration of AI and multi-omics data. The content addresses common challenges such as target deconvolution in PDD and efficacy attrition in TDD, offering practical troubleshooting and optimization strategies. Through a critical comparative analysis of success rates, particularly for first-in-class medicines, and an examination of emerging hybrid models, this article serves as a strategic resource for selecting and optimizing drug discovery pipelines for complex diseases.

Defining the Paradigms: The Core Principles of Phenotypic and Target-Based Drug Discovery

What is Target-Based Drug Discovery (TDD)? A History of Rational Design

Target-based drug discovery (TDD) represents a paradigm shift from traditional phenotypic approaches to a rational, hypothesis-driven framework centered on modulating specific molecular targets. This section delineates the historical emergence, core principles, and methodological workflows of TDD, contextualizing it within the modern drug discovery landscape alongside phenotypic drug discovery (PDD). The transition to TDD was catalyzed by advancements in genomics and molecular biology, enabling high-throughput screening of compounds against isolated proteins implicated in disease pathways. We provide a comprehensive examination of TDD's experimental protocols, key reagents, and strategic advantages while addressing its limitations in translating in vitro efficacy to clinical success. By synthesizing contemporary research and quantitative data, this guide offers drug development professionals a technical resource for navigating target-centric therapeutic development.

Target-based drug discovery (TDD) is a systematic approach to pharmaceutical development that begins with the identification and validation of a specific biological macromolecule—typically a protein or gene—hypothesized to play a critical role in disease pathogenesis. This molecular target serves as the foundational element for all subsequent discovery activities, establishing a causal linkage between target modulation and therapeutic outcome [1]. The TDD paradigm operates on the principle of rational design, wherein drug candidates are deliberately engineered or selected for their ability to interact with a predefined target with high specificity and affinity [2].

The strategic adoption of TDD must be contextualized within the broader dichotomy of drug discovery approaches, particularly in contrast to phenotypic drug discovery (PDD). While PDD identifies compounds based on their observable effects in complex biological systems without presupposition of mechanism, TDD employs a reductionist framework that prioritizes molecular specificity [2] [3]. This target-centric approach gained predominance following the molecular biology revolution and the sequencing of the human genome, which collectively provided an expansive repository of potential therapeutic targets [2]. The fundamental distinction between these approaches has profound implications for screening strategies, lead optimization, and ultimately, clinical translatability.

Historical Emergence of Target-Based Discovery

The evolution of drug discovery methodologies reveals a clear trajectory from serendipitous observation toward rational design. Historically, therapeutic agents were discovered through the empirical screening of natural products or crude extracts in whole organisms—a classical pharmacological approach now categorized as PDD [2] [1]. Seminal examples include morphine from opium poppy and digoxin from foxglove, where therapeutic utility was established long before their molecular mechanisms were understood [1].

The conceptual transition to TDD was catalyzed by several critical scientific advancements. The "one gene, one enzyme" hypothesis proposed by Beadle and Tatum, coupled with the elucidation of DNA's structure, established a mechanistic framework for understanding disease at the molecular level [1]. This foundation enabled pioneering work by researchers such as Gertrude Elion and George Hitchings, who systematically developed purine analogues to intercept specific metabolic pathways, yielding the first antiviral agents and immunosuppressants [1]. Similarly, James Black's rational design of beta-blockers and H₂ receptor antagonists demonstrated the power of targeting specific receptor subtypes to achieve therapeutic selectivity [1].

The completion of the Human Genome Project marked a watershed moment, providing researchers with an unprecedented catalog of potential drug targets and accelerating the pharmaceutical industry's commitment to TDD [2] [4]. Between 1999 and 2008, however, a surprising observation emerged: more first-in-class small-molecule drugs were discovered through phenotypic screening than through target-based approaches [2]. This revelation prompted a re-evaluation of drug discovery strategies, fostering a contemporary perspective that recognizes the complementary strengths of both TDD and PDD within a diversified research portfolio.

Core Principles and Key Characteristics of TDD

The TDD framework is governed by several defining characteristics that distinguish it from phenotypic approaches and establish its rational foundation. Understanding these core principles is essential for effective implementation.

Target-First Hypothesis: TDD initiates with the selection of a specific molecular entity—most commonly proteins such as G-protein-coupled receptors (GPCRs), enzymes, ion channels, or nuclear receptors—that is hypothesized to be causally involved in a disease pathway [1] [4]. This "druggable" target must demonstrate therapeutic relevance, with ideal candidates possessing clear genetic or biochemical evidence linking their activity to disease pathology [4].

Molecular Specificity: A central tenet of TDD is the design of compounds with high selectivity for the intended target over related biological macromolecules [1]. This specificity aims to minimize off-target interactions that could lead to adverse effects, though the therapeutic value of polypharmacology (activity at multiple targets) is increasingly recognized for certain complex disorders [2].

Reductionist Assay Systems: TDD relies predominantly on biochemical or cell-based assays employing purified targets or engineered cell lines with simplified pathophysiology [3]. These systems enable precise measurement of compound-target interactions but may lack the physiological context of native tissue environments.

Established vs. New Targets: The distinction between "established" and "new" targets is crucial in TDD. Established targets are those with a well-understood function in normal physiology and disease pathology, supported by extensive scientific literature and often with clinically validated drugs available [1]. In contrast, new targets represent emerging biological understanding with less comprehensive validation, offering potential for first-in-class therapies but carrying greater development risk [1].

The successful prosecution of TDD programs requires rigorous validation of the proposed target's role in disease and its tractability to pharmacological intervention. This process leverages genetic, biochemical, and clinical evidence to establish confidence in the target-disease relationship before committing substantial resources to screening efforts [4].

The TDD Workflow: From Target to Candidate

The implementation of TDD follows a structured, sequential workflow designed to progressively refine compound properties and validate therapeutic hypotheses. The core stages of this process are described below.

Target Identification and Validation

The initial stage involves identifying a biologically relevant molecule with a hypothesized role in disease pathology. Modern approaches leverage genomic analyses (including genome-wide association studies), proteomic profiling, and bioinformatic mining of biological networks to nominate potential targets [4]. Following identification, targets undergo rigorous validation to establish their essential role in disease processes using techniques such as RNA interference, CRISPR-based gene editing, or pharmacological modulation with tool compounds [4] [3]. The emergence of multi-omics integration and machine learning approaches has enhanced the efficiency of this discovery stage [4].

Assay Development and High-Throughput Screening (HTS)

With a validated target, the next phase involves developing robust screening assays capable of interrogating large chemical libraries. TDD typically employs biochemical assays with purified protein targets or cell-based assays employing engineered reporter systems [1]. These assays are optimized for miniaturization and automation to enable high-throughput screening (HTS) of compound libraries ranging from hundreds of thousands to millions of molecules [1]. A critical aspect of this stage is counterscreening, which assesses compound specificity by testing against unrelated targets to eliminate non-selective hits early in the process [1].
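
Assay robustness for HTS is commonly summarized with the Z'-factor, which compares the separation between positive and negative control signals to their variability. The sketch below computes it with the standard formula, Z' = 1 - 3(σ_pos + σ_neg) / |μ_pos - μ_neg|. The function name and the control-well readings are hypothetical and chosen only for illustration; they do not come from any assay described here.

```python
from statistics import mean, stdev


def z_prime(positive_controls, negative_controls):
    """Z'-factor: 1 - 3 * (sd_pos + sd_neg) / |mean_pos - mean_neg|."""
    mu_p, mu_n = mean(positive_controls), mean(negative_controls)
    sd_p, sd_n = stdev(positive_controls), stdev(negative_controls)
    window = abs(mu_p - mu_n)  # dynamic range between the control means
    if window == 0:
        raise ValueError("control means are identical; Z' is undefined")
    return 1.0 - 3.0 * (sd_p + sd_n) / window


# Hypothetical raw signals from one plate's control wells (arbitrary units).
pos = [100.0, 98.0, 102.0, 101.0, 99.0]
neg = [10.0, 12.0, 9.0, 11.0, 8.0]

print(f"Z' = {z_prime(pos, neg):.3f}")
```

By convention, values above roughly 0.5 are taken to indicate an assay with enough signal window for screening, while values near or below zero mean the control distributions overlap.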

Hit to Lead and Lead Optimization

Compounds demonstrating activity in primary screens ("hits") undergo confirmation and preliminary characterization to exclude artifacts or promiscuous inhibitors. Medicinal chemistry efforts then focus on improving the properties of confirmed hits through iterative structure-activity relationship (SAR) studies [1]. Key optimization parameters include:

- Increasing potency against the primary target

- Enhancing selectivity over related targets

- Improving drug-like properties (solubility, metabolic stability, permeability)

- Optimizing pharmacokinetic profiles (absorption, distribution, metabolism, excretion)

This optimization process leverages techniques such as computer-aided drug design, molecular modeling, and structural biology to inform compound design [1]. Contemporary approaches increasingly incorporate fragment-based drug discovery and protein-directed dynamic combinatorial chemistry to explore chemical space more efficiently [1].
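
As a rough illustration of how the potency and selectivity criteria above might be applied together, the following sketch filters a small set of confirmed hits. The compound names, IC50 values, and both thresholds (100 nM potency, 30-fold selectivity) are assumptions made for the example, not recommended cut-offs.

```python
from dataclasses import dataclass


@dataclass
class Compound:
    name: str
    ic50_target_nm: float     # potency against the primary target
    ic50_offtarget_nm: float  # potency against the closest related target


def selectivity_fold(c):
    """Fold-selectivity: off-target IC50 divided by on-target IC50."""
    return c.ic50_offtarget_nm / c.ic50_target_nm


def triage(compounds, max_ic50_nm=100.0, min_fold=30.0):
    """Keep potent, selective compounds; most potent first."""
    kept = [
        c for c in compounds
        if c.ic50_target_nm <= max_ic50_nm and selectivity_fold(c) >= min_fold
    ]
    return sorted(kept, key=lambda c: c.ic50_target_nm)


# Hypothetical hit list.
hits = [
    Compound("A", 50.0, 5000.0),    # 100-fold selective
    Compound("B", 10.0, 100.0),     # potent but only 10-fold selective
    Compound("C", 500.0, 50000.0),  # selective but too weak
    Compound("D", 20.0, 1000.0),    # 50-fold selective
]

for c in triage(hits):
    print(c.name, c.ic50_target_nm, round(selectivity_fold(c)))
```

In a real program these two filters would sit alongside the drug-like property and pharmacokinetic criteria listed above, usually as a weighted multi-parameter score rather than hard cut-offs.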

Essential Research Reagents and Methodologies

The execution of TDD relies on a specialized toolkit of reagents and methodologies designed to enable precise interrogation of molecular targets. The following table catalogs essential resources for prosecuting target-based campaigns.

Table 1: Key Research Reagent Solutions for Target-Based Drug Discovery

| Reagent/Methodology | Function in TDD | Technical Considerations |
|---|---|---|
| Recombinant Proteins | Purified target proteins for biochemical assays and structural studies | Requires appropriate expression systems (e.g., E. coli, insect, mammalian cells) and functional characterization |
| Engineered Cell Lines | Cellular systems expressing target of interest; may include reporter constructs | Choice of host cell background (e.g., HEK293, CHO) and genetic modification method (transient vs. stable expression) critical |
| Chemical Libraries | Diverse collections of compounds for screening against molecular targets | Library design (diversity, drug-like properties), format (solution, DMSO stocks), and management systems essential |
| Pharmacological Tool Compounds | Reference molecules with established activity at target or related proteins | Used for assay validation, as positive controls, and for understanding structure-activity relationships |
| Target-Specific Assay Kits | Optimized reagents for measuring target activity (e.g., kinase, protease, receptor assays) | Commercial availability, compatibility with HTS formats, and robustness (Z'-factor) influence utility |
| Antibodies | Detection and quantification of target protein expression and modification | Specificity validation (e.g., knockout cell lines) and application compatibility (e.g., Western blot, immunofluorescence) required |

Advanced methodologies that have become integral to modern TDD include DNA-encoded libraries (DELs) for efficient exploration of chemical space, fragment-based screening to identify low molecular weight starting points, and cryo-electron microscopy for structural characterization of challenging targets [1] [5]. The increasing application of artificial intelligence and machine learning further augments these experimental approaches by enabling predictive modeling of compound-target interactions [4] [6].

Comparative Analysis: TDD vs. PDD

The strategic choice between target-based and phenotypic approaches represents a fundamental decision in drug discovery program planning. The following table synthesizes key comparative metrics derived from historical analysis and contemporary research.

Table 2: Quantitative Comparison of TDD and PDD Approaches

| Parameter | Target-Based Discovery (TDD) | Phenotypic Discovery (PDD) |
|---|---|---|
| First-in-Class Medicine Discovery | Lower proportion historically [2] | Higher proportion historically; source of ~50% of first-in-class drugs (1999-2008) [2] |
| Target Space | Limited to previously validated or understood targets [2] | Expands "druggable" space to include unexpected mechanisms and multi-target therapies [2] |
| Screening Throughput | Very high (millions of compounds) [1] | Variable; typically medium to high throughput [3] |
| Mechanism Deconvolution | Inherent to approach | Requires additional target deconvolution efforts; can be resource-intensive [3] |
| Physiological Context | Reductionist; may lack tissue complexity [7] [3] | Higher physiological relevance through use of primary cells, co-cultures, or whole organisms [7] [3] |
| Polypharmacology Assessment | Typically viewed as undesirable (off-target effects) [2] | Can intentionally identify multi-target compounds with potential synergistic effects [2] |
| Technical Success Rate in Primary Screening | Higher probability of technical success [7] | Lower probability due to complex assay systems [7] |

The integration of TDD and PDD represents an emerging paradigm that leverages the strengths of both approaches. This hybrid model may employ phenotypic screening for initial hit identification followed by target-based methods for lead optimization, or conversely, use target-focused assays to characterize compounds discovered in phenotypic screens [3]. The development of more physiologically relevant in vitro systems, including microphysiological systems ("organ-on-a-chip"), 3D organoids, and complex co-cultures, further blurs the distinction between these approaches by enabling target-focused questions to be addressed in more physiological contexts [8] [3].

Target-based drug discovery has established itself as a cornerstone of modern pharmaceutical research, providing a rational, systematic framework for interrogating biological pathways and developing therapeutic agents with defined mechanisms of action. The historical transition to TDD reflected advancements in molecular biology and genomics, enabling unprecedented precision in drug design. Despite challenges in clinical translation, TDD continues to evolve through incorporation of more physiologically relevant model systems, advanced computational methods, and integrative strategies that bridge the divide between target-centric and phenotypic approaches.

The future of TDD will likely be shaped by several convergent trends: the expanding repertoire of "druggable" targets including RNA and protein degradation machinery; the increasing application of artificial intelligence for target validation and compound design; and the growing recognition that polypharmacology may be therapeutically advantageous for complex diseases [2] [5] [4]. For drug development professionals, strategic target selection remains paramount, requiring thoughtful consideration of both biological rationale and practical druggability. As the field advances, the continued refinement of TDD principles—complemented by insights from phenotypic approaches—promises to enhance the efficiency and productivity of therapeutic development.

Phenotypic Drug Discovery (PDD) has re-emerged as a powerful, unbiased strategy for identifying first-in-class therapeutics, marking a significant shift from the reductionist approach of Target-Based Drug Discovery (TDD). This empirical, biology-first approach uses screening methods that do not require prior knowledge of specific molecular targets, instead identifying active molecules based on their effects on cells, tissues, or whole organisms relevant to human disease [9]. The renewed interest in PDD follows a systematic analysis revealing that between 1999 and 2008, phenotypic approaches were responsible for 28 first-in-class small molecule drugs compared to 17 from target-based methods [9] [10]. This surprising finding triggered a major resurgence in PDD adoption, with large pharmaceutical companies like AstraZeneca and Novartis increasing their use of phenotypic screens from less than 10% to an estimated 25-40% of their project portfolios between 2012 and 2022 [9].

Modern PDD should not be confused with historical approaches. Today's PDD leverages modern tools, including high-content imaging, RNA profiling, CRISPR, and advanced computational methods, to recreate disease in microplates with higher physiological relevance [10]. This paradigm shift represents a fundamental change in how we conceptualize drug discovery, challenging assumptions about what is druggable and expanding the target space to include unexpected cellular processes and mechanisms of action [2].

PDD vs. TDD: Comparative Analysis and Strategic Implementation

Fundamental Philosophical Differences

The core distinction between PDD and TDD lies in their starting points and underlying philosophies. TDD begins with a hypothesis about a specific molecular target's role in disease, followed by screening for compounds that modulate this predefined target [11]. In contrast, PDD starts with a disease-relevant biological system and identifies compounds that produce a therapeutic phenotype without requiring target knowledge [9] [11]. This fundamental difference leads to distinct advantages and limitations for each approach (Table 1).

Table 1: Comparison of Phenotypic vs. Target-Based Drug Discovery Approaches

| Parameter | Phenotypic Screening (PDD) | Target-Based Screening (TDD) |
|---|---|---|
| Discovery Approach | Identifies compounds based on functional biological effects in complex systems [11] | Screens for compounds that modulate a predefined molecular target [11] |
| Discovery Bias | Unbiased, allows for novel target identification [11] | Hypothesis-driven, limited to known pathways and targets [11] |
| Mechanism of Action | Often unknown at discovery, requiring later deconvolution [11] | Defined from the outset based on target knowledge [11] |
| Target Space | Broad, includes novel and diverse target types [9] | Narrow, typically limited to enzymes and receptors with known function [9] |
| Success Rate for First-in-Class | Higher proportion of first-in-class medicines [9] [10] | Lower proportion of first-in-class medicines [9] [10] |
| Technological Requirements | Requires high-content imaging, functional genomics, and AI/ML [9] [11] | Relies on structural biology, computational modeling, and enzyme assays [11] |
| Typical Applications | Diseases with complex biology or unknown mechanisms; novel target discovery [12] | Well-validated targets with established biology [12] |

Quantitative Outcomes Assessment

The impact of PDD on drug discovery is demonstrated through quantitative analysis of approved therapies. A comprehensive review showed that from 1999 to 2017, PDD contributed to 58 out of 171 total approved drugs, compared to 44 approvals from TDD and 29 from monoclonal antibody-based therapies [9]. This track record of success, particularly for first-in-class medicines, has solidified PDD's position as a valuable discovery modality in both academia and the pharmaceutical industry [2].
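
To put these counts on a common scale, the short calculation below converts them to shares of the 171 approvals. It uses only the figures quoted above; the 40 approvals left over after the three named categories are not broken down in the text, so they are reported here simply as "other".

```python
# Approval counts for 1999-2017 as quoted in the text.
approvals = {"PDD": 58, "TDD": 44, "Monoclonal antibodies": 29}
total = 171

# Share of all approvals attributed to each named modality.
shares = {name: count / total for name, count in approvals.items()}

# Approvals not assigned to any of the three named categories.
other = total - sum(approvals.values())

for name, share in shares.items():
    print(f"{name}: {share:.1%}")
print(f"Other / unspecified: {other} approvals ({other / total:.1%})")
```

On these numbers PDD accounts for about a third of approvals and TDD for about a quarter.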

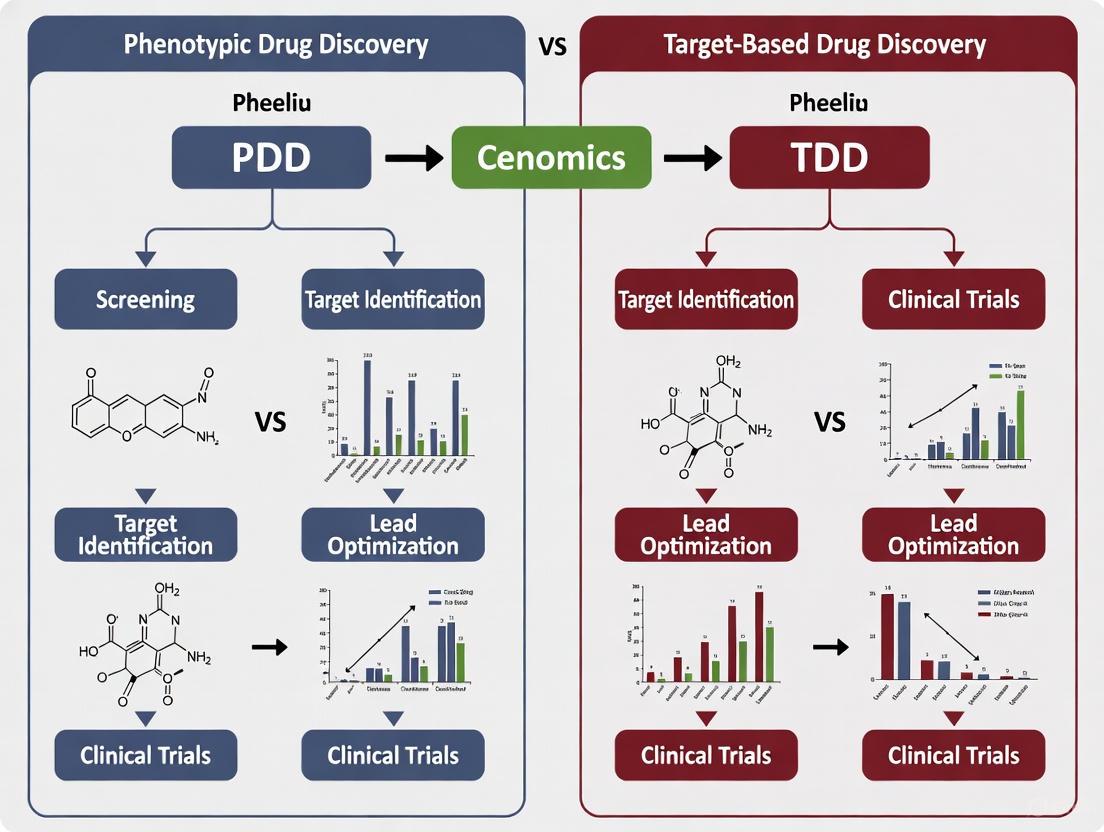

The following diagram illustrates the fundamental differences in workflow between PDD and TDD approaches:

Modern PDD Methodologies: Experimental Frameworks and Workflows

Core Screening Platforms and Model Systems

Modern PDD employs sophisticated biological systems that closely mimic human disease physiology. The selection of appropriate model systems is critical for generating clinically relevant results [10].

In Vitro PDD Platforms have evolved significantly from simple 2D cell cultures to complex, physiologically relevant systems:

- 2D Monolayer Cultures: Traditional cell culture models used for basic functional assays and cytotoxicity screening [11]

- 3D Organoids and Spheroids: More physiologically relevant models that better mimic tissue architecture and function, commonly used in cancer and neurological research [11]

- iPSC-Derived Models: Induced pluripotent stem cells differentiated into specific cell types, enabling patient-specific drug screening and disease modeling [11]

- Patient-Derived Primary Cells: Cells derived directly from patients, offering high clinical relevance for disease modeling [10] [11]

- Organ-on-Chip Models: Systems that recapitulate human physiological processes by merging cell culture with microengineering techniques in microfluidic devices [11]

In Vivo PDD Platforms provide whole-organism context for evaluating therapeutic effects:

- Zebrafish: Small vertebrate model with high genetic similarity to humans, used for neuroactive drug screening and toxicology studies [11]

- Caenorhabditis elegans: Simple, well-characterized organism used in neurodegenerative disease research and longevity studies [11]

- Rodent Models: Gold-standard mammalian models in preclinical research that provide robust data on pharmacodynamics and pharmacokinetics [11]

High-Content Screening and Phenotypic Profiling

High-content screening (HCS) represents arguably the most powerful enhancement to modern PDD, combining automated microscopy with computational image analysis to extract rich morphological data from cells [9]. The Cell Painting assay has emerged as a particularly valuable phenotypic profiling technique, using multiple fluorescent dyes to mark key cellular components and computational analysis to extract thousands of morphological features [13]. This approach enables clustering of cellular phenotypes to help identify potential drug candidates and elucidate mechanisms of action [9].
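
A common way to use such morphological profiles is to compare a test compound's feature vector with profiles of reference compounds and look for the closest match. The sketch below does this with cosine similarity in plain Python. The four-feature vectors and the class labels are invented for illustration; real Cell Painting profiles contain hundreds to thousands of normalized features, and the choice of similarity metric is itself an assumption here.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length feature vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0 or norm_v == 0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / (norm_u * norm_v)


def nearest_reference(query, references):
    """Return (label, similarity) of the most similar reference profile."""
    scored = ((label, cosine(query, profile)) for label, profile in references.items())
    return max(scored, key=lambda pair: pair[1])


# Hypothetical normalized profiles for two reference mechanism classes.
references = {
    "class_A": [2.0, -1.0, 0.5, 0.0],
    "class_B": [-0.5, 1.5, 2.0, -1.0],
}
query = [1.8, -0.9, 0.7, 0.1]  # hypothetical profile of an uncharacterized hit

label, similarity = nearest_reference(query, references)
print(label, round(similarity, 3))
```

A high similarity to a reference class is a hypothesis about mechanism of action, not a confirmation; it would normally be followed up with orthogonal assays.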

The experimental workflow for a typical phenotypic screening campaign involves multiple standardized steps:

Essential Research Reagents and Solutions

Successful implementation of PDD requires carefully selected biological tools and reagents. The following table details key research solutions essential for modern phenotypic screening:

Table 2: Essential Research Reagent Solutions for Phenotypic Drug Discovery

| Research Solution | Function in PDD | Application Examples |
|---|---|---|
| Cell Painting Kits | Multiplexed fluorescent dye sets that mark multiple organelles for high-content morphological profiling [13] [14] | Phenotypic profiling, mechanism of action studies, hit triage [13] |
| CRISPR Libraries | Enable genome-wide functional screening to identify genes essential for specific phenotypic responses [10] | Target identification, validation of compound mechanism [10] |
| iPSC Differentiation Kits | Standardized protocols and reagents for generating disease-relevant cell types from induced pluripotent stem cells [11] | Neurological disease modeling, patient-specific screening [11] |
| 3D Culture Matrices | Specialized extracellular matrix materials that support formation and maintenance of organoids and spheroids [11] | Complex disease modeling, tumor biology studies [11] |
| L1000 Assay Reagents | Gene expression profiling technology that measures 978 representative transcripts for low-cost transcriptional profiling [13] | Mechanism of action classification, connectivity mapping [13] |
| High-Content Imaging Reagents | Fluorescent dyes, antibodies, and probes for monitoring cellular processes and morphological changes [9] [11] | Multiparameter phenotypic assessment, live-cell imaging [9] |

Recent Success Stories: PDD-Generated Therapeutics

PDD has contributed to numerous recently approved therapies, particularly for diseases with complex biology or previously undruggable targets. These success stories demonstrate the power of phenotypic approaches to identify first-in-class medicines with novel mechanisms of action.

Table 3: Recently Approved Therapies Identified Through Phenotypic Drug Discovery

| Drug (Brand Name) | Therapeutic Area | Year Approved | Key Mechanism/Target | PDD Approach |
|---|---|---|---|---|
| Risdiplam (Evrysdi) | Spinal Muscular Atrophy | 2020 [9] | SMN2 pre-mRNA splicing modifier [9] [2] | Phenotypic screen for compounds increasing full-length SMN protein [9] |
| Vamorolone (AGAMREE) | Duchenne Muscular Dystrophy | 2023 [9] | Dissociative steroid that modifies downstream receptor activity [9] | Phenotypic profiling to elucidate sub-activities and dissociate efficacy from steroid side effects [9] |
| Lumacaftor/Ivacaftor (ORKAMBI) | Cystic Fibrosis | 2015 [9] | CFTR corrector/potentiator combination [9] [2] | Target-agnostic compound screens using cell lines expressing disease-associated CFTR variants [9] [2] |
| Daclatasvir (Daklinza) | Hepatitis C Virus | 2014/2015 [9] | NS5A replication complex inhibitor [9] [2] | Phenotypic screening using HCV replicon system [2] |
| Perampanel (Fycompa) | Epilepsy | 2012 [9] | AMPA receptor antagonist [9] | Whole-system, multi-parametric modeling in phenotypic assays [9] |
| Lenalidomide (Revlimid) | Multiple Myeloma | 2005 [12] | Cereblon E3 ligase modulator leading to IKZF1/3 degradation [2] [12] | Phenotypic screening of thalidomide analogs for enhanced TNF inhibition [12] |

Case Study: Risdiplam for Spinal Muscular Atrophy

Spinal Muscular Atrophy (SMA) is a rare neuromuscular disease caused by loss-of-function mutations in the SMN1 gene. Phenotypic screens identified small molecules that modulate SMN2 pre-mRNA splicing to increase levels of functional SMN protein [2]. The approved drug, risdiplam, works by engaging two sites at SMN2 exon 7 and stabilizing the U1 snRNP complex, an unprecedented drug target and mechanism of action [2]. Such a target would have been unlikely to emerge from traditional target-based approaches, since SMN2 lacked known functional activity relevant to the disease [9].

Case Study: Cystic Fibrosis Modulators

Cystic fibrosis is caused by mutations in the CFTR gene that decrease CFTR function or interrupt intracellular folding and membrane insertion. Target-agnostic compound screens using cell lines expressing wild-type or disease-associated CFTR variants identified both potentiators (such as ivacaftor) that improve CFTR channel gating, and correctors (such as lumacaftor, tezacaftor, and elexacaftor) that enhance CFTR folding and plasma membrane insertion [2]. The combination of elexacaftor, tezacaftor, and ivacaftor was approved in 2019 and addresses 90% of the CF patient population [2].

AI and Machine Learning in PDD

Computational Advances in Phenotypic Analysis

Artificial intelligence and machine learning have dramatically enhanced PDD by enabling automated analysis of complex phenotypic data. ML/AI tools provide significant advantages for PDD through automated analysis of cell image data, extraction of diverse morphological features, and clustering of cellular phenotypes to help identify potential drug candidates [9]. Advanced computational methods can leverage multimodal data, combining chemical structure features with extracted image features to significantly improve the prediction of mechanism of action and bioactivity properties [9].

Recent research demonstrates that combining multiple data modalities dramatically improves bioactivity prediction. One study found that while chemical structures (CS), morphological profiles (MO) from Cell Painting, and gene expression profiles (GE) could individually predict 6-10% of assays with high accuracy (AUROC >0.9), in combination they could predict 21% of assays - a 2 to 3 times improvement over single modalities [13]. At more practical accuracy thresholds (AUROC >0.7), combining modalities increased predictable assays from 37% with chemical structures alone to 64% when integrated with phenotypic data [13].
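
The idea of combining modalities can be sketched with a simple late-fusion scheme: each modality produces its own per-compound activity score, and the scores are merged before evaluation. The example below takes the maximum score across modalities and evaluates it with a rank-based AUROC. Both the max-pooling rule and the toy scores are assumptions made for illustration; they are not presented as the cited study's method or data.

```python
def auroc(scores, labels):
    """AUROC as the fraction of (active, inactive) pairs ranked correctly; ties count 0.5."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one active and one inactive compound")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def late_fusion(*modality_scores):
    """Combine per-modality scores by taking the per-compound maximum."""
    return [max(values) for values in zip(*modality_scores)]


# Hypothetical assay: 3 active (1) and 3 inactive (0) compounds.
labels = [1, 1, 1, 0, 0, 0]
cs = [0.9, 0.2, 0.3, 0.4, 0.1, 0.3]  # scores from a chemical-structure model
mo = [0.3, 0.8, 0.2, 0.1, 0.4, 0.2]  # scores from a morphology model
ge = [0.2, 0.3, 0.9, 0.3, 0.2, 0.1]  # scores from a gene-expression model

fused = late_fusion(cs, mo, ge)
for name, scores in [("CS", cs), ("MO", mo), ("GE", ge), ("fused", fused)]:
    print(name, round(auroc(scores, labels), 3))
```

The toy data are contrived so that each modality detects a different active compound, which is the situation in which fusion helps most. Max-pooling can also amplify false positives from any single modality, so the benefit has to be checked per assay.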

Emerging AI Platforms and Foundation Models

The field is rapidly evolving with new AI-driven platforms specifically designed for phenotypic discovery:

PhenoModel: A multimodal molecular foundation model using dual-space contrastive learning to connect molecular structures with phenotypic information from cellular morphological profiles [14]. This model outperforms baseline methods in molecular property prediction and active molecule screening based on targets, phenotypes, and ligands [14].

Recursion-Exscientia Integrated Platform: Following their 2024 merger, this integrated platform combines Recursion's extensive phenomics data with Exscientia's generative chemistry capabilities, creating a closed-loop design-make-test-learn cycle powered by automated robotics and AI [15].

Ardigen's phenAID Platform: Dedicated to reducing analysis time and enhancing prediction quality for high-content screening datasets through advanced machine learning algorithms [9].

The integration of AI into PDD workflows has created powerful new capabilities for analyzing complex biological systems and predicting compound activity, significantly accelerating the early stages of the drug discovery process [13].

Phenotypic Drug Discovery has firmly re-established itself as an essential approach for identifying first-in-class medicines with novel mechanisms of action. By focusing on therapeutic outcomes in physiologically relevant systems rather than predefined molecular targets, PDD has expanded the druggable target space to include previously inaccessible biological processes [2]. The continued evolution of PDD will be driven by advances in human-based phenotypic platforms, improved disease models, and sophisticated computational methods including AI and machine learning [8].

As these technologies mature and integrate, PDD is poised to address some of the most challenging limitations in drug discovery, particularly for complex diseases with polygenic origins or poorly understood biology. The future will likely see increased convergence of phenotypic and target-based approaches, creating hybrid workflows that leverage the strengths of both strategies [12]. This integration, powered by AI and multimodal data analysis, represents the next frontier in therapeutic discovery - enabling researchers to systematically navigate biological complexity while delivering innovative medicines for patients with unmet medical needs.

The history of modern drug discovery has been characterized by a pendulum swing between two fundamental strategies: Phenotypic Drug Discovery (PDD) and Target-Based Drug Discovery (TDD). PDD, the older of the two approaches, can be defined as a "compound-first" strategy that uses target-agnostic, system-based assays to identify pharmacologically active molecules based on their effects on disease phenotypes or translational biomarkers [16]. In contrast, TDD represents a "mechanism-first" approach focused on a specific molecular target—a gene product that provides a starting point for inventing a therapeutic which modulates its expression, function, or activity [16]. The evolution between these strategies represents more than a simple methodological preference; it reflects deeper philosophical differences in how researchers bridge the gap between understanding disease mechanisms and inventing effective medicines.

This analysis traces the historical trajectory of drug discovery from its phenotypic origins through the dominance of reductionist target-based approaches and the contemporary resurgence of phenotypic strategies, examining the technological and scientific forces driving these transitions and their implications for future therapeutic development.

The Historical Dominance of Phenotypic Drug Discovery

The empirical principles of PDD formed the foundation of early pharmaceutical development, with pioneers like Paul Ehrlich, who invented the first "magic bullet" (salvarsan) for syphilis from chemical dyes, and Sir James Black and Dr. Paul Janssen, who emphasized starting with a "pharmacologically active compound" [16]. George H. Hitchings Jr. highlighted the power of empirical, phenotypic screens when he stated in his 1988 Nobel lecture that "those early, untargeted studies led to the development of useful drugs for a wide variety of diseases and has justified our belief that this approach to drug discovery is more fruitful than narrow targeting" [16].

Before the genetic revolution, most medicines were identified primarily through this compound-first approach, relying on observable therapeutic effects in disease models or even serendipitous clinical observations rather than predefined molecular mechanisms. This empirical tradition produced many foundational therapeutics, but as molecular biology advanced, the limitations of this approach—including lengthy development cycles and uncertain mechanisms of action—became increasingly apparent, setting the stage for a paradigm shift.

The Ascendancy of Target-Based Drug Discovery

The genetic revolution of the 1980s-1990s, culminating in the publication of the draft human genome sequence in 2001, fundamentally reshaped drug discovery philosophy [2]. The powerful new understanding of genes and their protein products created the vision that new medicines could be discovered rationally based on this molecular understanding of disease. This "mechanism-first" strategy promised greater efficiency, specificity, and a more scientific foundation for therapeutic development.

TDD dominated pharmaceutical research from approximately 1990-2010, driven by several perceived advantages:

- Clearer Development Pathways: Known molecular targets enabled rational drug design and optimization

- Improved Specificity: Drugs could be designed to interact specifically with validated targets, potentially reducing off-target effects

- High-Throughput Screening: Target-based assays were often more amenable to automation and miniaturization

- Biomarker Development: Known targets facilitated companion diagnostic development

The reductionist appeal of TDD aligned with the scientific zeitgeist of the period, leading to notable successes such as vemurafenib, a BRAF inhibitor for melanoma [16]. However, despite these advances, the cost of producing new medicines far outpaced the industry's ability to discover them, revealing a troubling gap between understanding disease mechanisms and actually inventing effective new medicines [16].

The Contemporary Resurgence of Phenotypic Approaches

A pivotal 2011 analysis by Swinney and Anthony of discovery strategies for new molecular entities approved by the FDA between 1999 and 2008 revealed a surprising pattern: a majority of first-in-class small-molecule drugs were discovered empirically through PDD approaches, while the majority of follower drugs were discovered using TDD [2] [16]. This analysis demonstrated that the mechanistic knowledge available when a program is initiated is often insufficient to provide a blueprint for discovering first-in-class medicines, creating a knowledge gap that PDD addresses empirically.

This revelation, combined with stagnating productivity in the pharmaceutical industry despite massive investments in target-based approaches, sparked a major resurgence in PDD beginning around 2011 [2]. Modern PDD has evolved significantly from its historical predecessors, now combining the original concept with sophisticated tools and strategies to systematically pursue drug discovery based on therapeutic effects in realistic disease models [2].

Table 1: Notable Drug Discoveries from Modern Phenotypic Approaches

| Drug | Disease Area | Key Target/Mechanism Identified | Screen Type |

|---|---|---|---|

| Ivacaftor, Tezacaftor, Elexacaftor [2] | Cystic Fibrosis | CFTR correctors/potentiators (channel folding/gating) | Cell lines expressing CFTR variants |

| Risdiplam, Branaplam [2] | Spinal Muscular Atrophy | SMN2 pre-mRNA splicing modulators | SMN2 reporter gene assays |

| Daclatasvir [2] | Hepatitis C | NS5A protein inhibitor | HCV replicon phenotypic screen |

| Lenalidomide [2] | Multiple Myeloma | Cereblon E3 ligase modulator (protein degradation) | Clinical observation (thalidomide derivatives) |

| SEP-363856 [2] | Schizophrenia | Novel mechanism (trace amine-associated receptor) | Phenotypic screen |

The return to phenotypic strategies has been facilitated by several technological advances:

- Improved Disease Models: Development of induced pluripotent stem cells (iPSCs), organoids, and more physiologically relevant cellular systems [16]

- Advanced Biomarkers: Sophisticated functional readouts with improved clinical translatability

- Chemical Biology Tools: Libraries designed for phenotypic screening with balanced diversity and tractability [16]

- Analytical Technologies: -omics approaches and bioinformatics for mechanism deconvolution

Quantitative Comparison: PDD vs. TDD Performance Metrics

Analyzing the relative performance of PDD and TDD approaches reveals distinct strengths and limitations for each strategy. The following table synthesizes data from industry analyses and clinical outcomes:

Table 2: Comparative Analysis of PDD vs. TDD Output and Characteristics

| Parameter | Phenotypic Drug Discovery (PDD) | Target-Based Drug Discovery (TDD) |

|---|---|---|

| First-in-class medicines (1999-2008) [16] | Majority (from empirical discovery) | Minority |

| Follower medicines (1999-2008) [16] | Minority | Majority |

| Target Space | Novel, unexpected targets and mechanisms | Established, validated target classes |

| Mechanism of Action | Often identified post-discovery | Defined before compound optimization |

| Disease Models | Complex, systems-based, disease-relevant | Reductionist, target-focused |

| Typical Development Timeline | Often longer due to mechanism deconvolution | Potentially shorter with validated targets |

| Probability of Phase 2 → 3 Transition [16] | 32.4-48.6% (across strategies) | 32.4-48.6% (across strategies) |

| Probability of Phase 3 → Approval [16] | 50-59% (across strategies) | 50-59% (across strategies) |

| Major Challenge | Target identification, clinical translation | Target validation, clinical efficacy |

These data indicate that while TDD has proven effective for developing follower drugs that improve upon existing mechanisms, PDD has contributed a disproportionate share of breakthrough first-in-class medicines with novel mechanisms of action. However, both approaches face significant challenges in late-stage development, with lack of therapeutic efficacy accounting for >50% of Phase 3 failures across strategies [16].
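As a rough illustration of what the transition ranges in Table 2 imply, the sketch below multiplies the Phase 2 → 3 and Phase 3 → approval probabilities to estimate the chance that a Phase 2 candidate reaches approval. It assumes the two transitions are independent and that the range endpoints can be combined directly; both are simplifications introduced here, not claims from the cited analysis. The function name `cumulative_probability` is hypothetical.

```python
def cumulative_probability(*stage_probabilities):
    """Multiply per-stage success probabilities (assumes independent stages)."""
    result = 1.0
    for p in stage_probabilities:
        result *= p
    return result


# Range endpoints taken from Table 2 (Phase 2 -> 3, then Phase 3 -> approval).
low_estimate = cumulative_probability(0.324, 0.50)
high_estimate = cumulative_probability(0.486, 0.59)

if __name__ == "__main__":
    print(f"Phase 2 entry to approval: {low_estimate:.1%} to {high_estimate:.1%}")
```

Under these assumptions, roughly one in six to just over one in four Phase 2 candidates would be expected to reach approval, regardless of the discovery strategy that produced them.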

Experimental Framework: Methodologies for Modern PDD

Implementing a successful phenotypic drug discovery program requires carefully designed experimental workflows that balance physiological relevance with practical screening considerations. The following diagram illustrates a generalized PDD workflow:

Detailed Experimental Protocols

Phenotypic Screening Protocol: Cystic Fibrosis CFTR Correctors

The discovery of CFTR correctors (elexacaftor, tezacaftor) and potentiators (ivacaftor) for cystic fibrosis exemplifies modern PDD success [2].

Primary Screening Protocol:

- Cell Model: Utilize Fischer Rat Thyroid (FRT) cells co-expressing human CFTR mutants (e.g., ΔF508-CFTR) and a halide-sensitive yellow fluorescent protein (YFP)

- Assay Principle: Functional CFTR at membrane enables iodide influx, quenching YFP fluorescence

- Screening Format: 384-well plates, 10,000-100,000 compound libraries

- Compound Incubation: 24 hours to allow CFTR maturation and trafficking

- Assay Execution:

  - Aspirate compound media and add iodide-free PBS

  - Add iodide solution and measure YFP fluorescence (500/525 nm) every second for 20 seconds

  - Calculate initial fluorescence slope as indicator of CFTR function

- Hit Criteria: Compounds showing >3 standard deviations above DMSO control mean

- Counterscreens: Toxicity assays, verification in primary human bronchial epithelial cells
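The slope calculation and hit criterion in the protocol above can be sketched as follows. This is a minimal illustration, not the published analysis pipeline: the function names, the choice of the first five time points for the "initial" slope, and the sign convention (reporting the quench rate as a positive number so that "above the DMSO mean" means more CFTR activity) are all assumptions made for the example.

```python
from statistics import mean, stdev


def initial_slope(times, signal, n_points=5):
    """Least-squares slope over the first n_points of a fluorescence trace."""
    t = times[:n_points]
    y = signal[:n_points]
    t_bar, y_bar = mean(t), mean(y)
    numerator = sum((ti - t_bar) * (yi - y_bar) for ti, yi in zip(t, y))
    denominator = sum((ti - t_bar) ** 2 for ti in t)
    return numerator / denominator


def quench_rate(times, signal, n_points=5):
    """Negated initial slope: faster YFP quenching gives a larger positive value."""
    return -initial_slope(times, signal, n_points)


def call_hits(compound_rates, dmso_rates, n_sd=3.0):
    """Return compounds whose quench rate exceeds DMSO mean + n_sd * SD."""
    threshold = mean(dmso_rates) + n_sd * stdev(dmso_rates)
    hits = sorted(name for name, rate in compound_rates.items() if rate > threshold)
    return hits, threshold


if __name__ == "__main__":
    # Hypothetical per-well quench rates (normalized fluorescence units per second).
    dmso = [0.010, 0.012, 0.008, 0.011, 0.009]
    compounds = {"cmpd_A": 0.050, "cmpd_B": 0.012, "cmpd_C": 0.016}
    hits, threshold = call_hits(compounds, dmso)
    print(f"threshold={threshold:.4f}, hits={hits}")
```

In practice the threshold is usually computed per plate from that plate's own DMSO wells, so that plate-to-plate drift does not inflate or suppress the hit rate.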

Target Deconvolution Protocol: Mechanism of Action Studies

Following primary phenotypic screening, identifying molecular targets represents a critical PDD challenge.

Integrated Target Identification Workflow:

- Chemical Genetics:

  - CRISPR/Cas9 knockout or RNAi screening with hit compounds

  - Resistance generation and whole-exome sequencing of resistant clones

- Chemical Proteomics:

  - Immobilize compound on solid support for affinity purification

  - Incubate with cell lysates, wash, and elute bound proteins

  - Identify proteins by mass spectrometry

- Transcriptomics/Proteomics:

  - RNA sequencing or proteomic profiling of compound-treated vs. untreated cells

  - Pattern matching to compounds with known mechanisms

- Bioinformatics Integration:

  - Cross-reference multiple approaches to identify consensus targets

  - Validate through genetic manipulation (overexpression/knockdown)
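The cross-referencing step in the workflow above can be illustrated with a simple vote count across methods. This is a sketch under stated assumptions: each method is reduced to a flat list of candidate genes, every method gets equal weight, and the gene names and the `consensus_targets` helper are hypothetical. Real campaigns would typically weight each line of evidence by its score or confidence.

```python
from collections import Counter


def consensus_targets(evidence, min_methods=2):
    """Rank candidates by how many independent methods nominated them.

    evidence: dict mapping method name -> iterable of candidate gene symbols.
    Returns (gene, method_count) pairs supported by at least min_methods methods,
    sorted by descending support, then alphabetically.
    """
    counts = Counter()
    for candidates in evidence.values():
        counts.update(set(candidates))  # count each gene once per method
    supported = [(gene, n) for gene, n in counts.items() if n >= min_methods]
    return sorted(supported, key=lambda item: (-item[1], item[0]))


if __name__ == "__main__":
    # Hypothetical candidate lists from three orthogonal approaches.
    evidence = {
        "crispr_resistance": ["GENE_A", "GENE_B", "GENE_C"],
        "affinity_pulldown_ms": ["GENE_A", "GENE_C", "GENE_D"],
        "expression_signature": ["GENE_A", "GENE_E"],
    }
    for gene, n_methods in consensus_targets(evidence):
        print(gene, n_methods)
```

Candidates that survive this filter are the natural starting point for the genetic validation step (overexpression or knockdown).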

The Scientist's Toolkit: Essential Research Reagents for PDD

Implementing effective phenotypic screening requires carefully selected reagents and tools designed to maximize physiological relevance while maintaining screening feasibility.

Table 3: Essential Research Reagents for Modern Phenotypic Drug Discovery

| Reagent Category | Specific Examples | Function in PDD |

|---|---|---|

| Cell Models | iPSC-derived cells, primary human cells, organoids, 3D culture systems | Provide physiologically relevant environments for compound screening |

| Compound Libraries | Diversity-oriented synthesis libraries, known bioactives, natural product extracts | Source of chemical matter with balanced diversity and tractability [16] |

| Functional Reporters | Fluorescent calcium indicators, membrane potential dyes, YFP halide sensors | Enable measurement of functional phenotypes beyond simple viability |

| Genetic Tools | CRISPR/Cas9 libraries, siRNA collections, cDNA overexpression libraries | Facilitate target identification and validation |

| Analytical Technologies | High-content imagers, automated patch clamp, mass cytometers | Multiparametric readout capabilities for complex phenotypes |

| Biomarker Assays | Phospho-specific antibodies, metabolic flux assays, secreted protein markers | Bridge phenotypic observations to molecular mechanisms |

Integrated Approaches: The Future of Drug Discovery

The historical oscillation between PDD and TDD is evolving toward a more integrated approach that leverages the strengths of both strategies. The concept of "Mechanism-Informed PDD" (MIPDD) has emerged, which uses empirical assays to identify molecular mechanisms of action within target-based strategies [16]. This hybrid approach acknowledges that knowledge of a target alone does not always provide the molecular details required to predict a specific therapeutic response.

The future of drug discovery lies in recognizing that PDD and TDD represent complementary rather than competing approaches. PDD excels at identifying first-in-class medicines with novel mechanisms when knowledge gaps exist between targets and disease phenotypes, while TDD provides efficient optimization paths for validated targets and follower drugs. Successful organizations will maintain flexibility in selecting the optimal strategy based on the specific biological context, available tools, and project goals rather than adhering to methodological dogma.

The continued evolution of both approaches will be shaped by emerging technologies including artificial intelligence, functional genomics, and increasingly sophisticated disease models that further blur the traditional boundaries between phenotypic and target-based discovery, ultimately creating more opportunities to address unmet medical needs through innovative therapeutic mechanisms.

In the pharmaceutical landscape, two principal paradigms guide the discovery of new therapeutics: Target-Based Drug Discovery (TDD) and Phenotypic Drug Discovery (PDD). Historically, PDD was the primary method for discovering new medicines through observation of their effects on disease physiology in whole organisms or cellular models. The molecular biology revolution of the 1980s shifted focus toward TDD, a reductionist approach that modulates specific molecular targets with known roles in disease. Since approximately 2011, PDD has experienced a major resurgence following the observation that a majority of first-in-class drugs approved between 1999 and 2008 were discovered through phenotypic approaches without a predefined target hypothesis [2].

The modern iteration of PDD is defined by its focus on modulating a disease phenotype or biomarker in a realistic disease model, rather than a pre-specified target, to provide therapeutic benefit [2]. Conversely, TDD relies on an established causal relationship between a molecular target and a disease state. This technical guide examines the key rationales for choosing between these strategies, providing a structured decision-making framework for researchers and drug development professionals, supported by comparative data, experimental protocols, and practical toolkits.

Strategic Decision Framework: TDD vs. PDD

The choice between phenotypic and target-centric strategies depends on multiple project-specific variables, including the understanding of disease biology, desired innovation level, and available tools. The following table summarizes the key strategic considerations for selecting each approach.

Table 1: Strategic Decision Framework for TDD vs. PDD

| Decision Factor | Favor Phenotypic Screening (PDD) | Favor Target-Based Screening (TDD) |

|---|---|---|

| Target/Mechanism Understanding | No attractive or known target; complex, polygenic diseases with poorly understood pathophysiology [2] [17] | Well-validated target with established causal link to disease; understood mechanism of action [2] |

| Innovation Goals | First-in-class medicine; novel mechanism of action (MoA); expansion of druggable target space [2] | Best-in-class agent; improvement over existing therapies; optimization of known MoA [2] |

| Biological Complexity | Diseases requiring multi-target modulation (polypharmacology); unexpected biological connections [2] | Diseases with linear, well-defined pathways; single target modulation is sufficient for efficacy [2] |

| Technical Capabilities | Physiologically relevant disease models (e.g., human cell-based, microphysiological systems) [8] [18] | Target-based assay systems (e.g., enzymatic, binding, simple cellular assays) [17] |

| Risk Tolerance | Higher tolerance for uncertain target identity; investment in target deconvolution [17] [18] | Lower tolerance for target uncertainty; need for clear regulatory path based on target validation [17] |

Quantitative Performance Comparison

Empirical data reveals distinct performance patterns for TDD and PDD approaches in delivering new therapeutic agents. The following table summarizes key quantitative comparisons based on industry analyses.

Table 2: Performance Comparison of PDD and TDD Approaches

| Metric | Phenotypic Drug Discovery (PDD) | Target-Based Drug Discovery (TDD) |

|---|---|---|

| First-in-Class Drugs | Disproportionate source of first-in-class medicines [2] | Less common origin for first-in-class drugs [2] |

| Approved Small-Molecule Drugs | Majority of approved small-molecule drugs originated from PDD approaches [19] | Only 123 of 1144 approved small-molecule drugs discovered by purely TDD methods [19] |

| Novel Mechanisms/Targets | Identifies unexpected cellular processes and novel mechanisms [2] | Primarily addresses known targets and established mechanisms |

| Target Identification | Requires subsequent target deconvolution - a key challenge [17] [18] | Target known from outset - no deconvolution needed |

| Integration Potential | Benefits from integration with TDD for mechanism elucidation [19] | Benefits from PDD data for understanding complex biology [19] |

Recent computational approaches demonstrate how integrating both strategies can enhance outcomes. The Knowledge-Guided Drug Relational Predictor (KGDRP) framework, which integrates multimodal biomedical data including biological networks, gene expression, and chemical structures within a heterogeneous graph structure, shows a 12% improvement in predictive performance in real-world screening scenarios and a 26% enhancement in target prioritization for drug target discovery [19].

Experimental Design and Methodologies

Phenotypic Screening Protocol Workflow

A robust phenotypic screening protocol requires careful model selection, assay development, and hit validation. The following diagram illustrates a generalized workflow for phenotypic screening campaigns:

The phenotypic screening workflow begins with careful definition of a disease-relevant phenotype, followed by selection of a physiological disease model that accurately recapitulates key aspects of human disease pathophysiology. Modern PDD increasingly utilizes human-based systems, including primary cells, induced pluripotent stem cells (iPSCs), and microphysiological systems (organ-on-a-chip) to enhance clinical translatability [8] [18]. After implementing a robust screening campaign, significant effort is dedicated to hit triage and prioritization, employing secondary assays and counter-screens to eliminate compounds with undesirable mechanisms [18]. A critical phase follows with target deconvolution to identify the molecular mechanism of action, employing methods such as affinity chromatography, expression cloning, protein microarrays, and biochemical suppression [20].

Integrated Graph-Based Learning Methodology

The KGDRP framework represents an advanced approach that integrates PDD and TDD data through biological heterogeneous graphs (BioHG). The following diagram illustrates this methodology:

The BioHG construction specifically incorporates several critical data types: drug response data (capturing drug-cell relationships), drug-target interaction data (describing drug-protein interactions), RNA expression profiles of cell lines (representing protein-cell line relationships), protein-protein interactions (from UniProt database), Gene Ontology data, and pathway data from Reactome [19]. Notably, drugs and cell lines are not directly connected in this graph structure, forcing the model to learn drug response through proteins, thereby enabling more comprehensive use of network information to enrich representations [19]. For the relationship between proteins and cell lines, proteins exhibiting expression values higher than the mean value of each cell line establish edges, with transcriptional expression values assigned as edge weights [19].
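The protein-cell line edge rule described above (keep a protein when its expression exceeds the mean for that cell line, and use the expression value as the edge weight) can be sketched in a few lines. This is an illustration of the stated rule only, not the KGDRP implementation; the input layout, the identifiers, and the function name `protein_cell_line_edges` are assumptions.

```python
from statistics import mean


def protein_cell_line_edges(expression):
    """Build weighted protein-cell line edges from expression profiles.

    expression: dict mapping cell line -> dict of protein -> expression value.
    A protein is linked to a cell line only if its value is strictly above
    that cell line's mean expression; the value becomes the edge weight.
    """
    edges = []
    for cell_line, profile in expression.items():
        cutoff = mean(profile.values())
        for protein, value in profile.items():
            if value > cutoff:
                edges.append((protein, cell_line, value))
    return edges


if __name__ == "__main__":
    # Hypothetical expression values for two cell lines.
    expression = {
        "line_1": {"P1": 2.0, "P2": 8.0, "P3": 5.0},
        "line_2": {"P1": 9.0, "P2": 1.0, "P3": 2.0},
    }
    for edge in protein_cell_line_edges(expression):
        print(edge)
```

Because the cutoff is computed per cell line, a protein with the same absolute expression can be connected in one cell line and absent in another.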

The framework incorporates several predictors: the RNA expression predictor, the drug-target interaction predictor, and the biological process predictor, which enable KGDRP to capture inherent correlations and dependencies across diverse biological networks through multi-task learning [19]. To address the drug cold-start problem (where drugs in PDD and TDD may not overlap), KGDRP introduces a transformation function that learns a mapping from chemical structure to knowledge-informed drug representations [19].

The Scientist's Toolkit: Essential Research Reagents and Solutions

Successful implementation of either screening strategy requires specific research tools and reagents. The following table details essential components for establishing robust screening platforms.

Table 3: Essential Research Reagent Solutions for Drug Discovery Screening

| Reagent Category | Specific Examples | Function and Application |

|---|---|---|

| Cell Models | Primary human cells, iPSCs, engineered cell lines, co-culture systems | Provide physiologically relevant systems for phenotypic screening; engineered lines for target-based assays [8] [18] |

| Compound Libraries | Diverse small-molecule collections, targeted libraries, FDA-approved drug collections | Source of chemical matter for screening; diversity critical for PDD; focused libraries for TDD [2] |

| Detection Reagents | Fluorescent dyes, antibodies, luminescent probes, biosensors | Enable quantification of phenotypic changes or target engagement in screening assays [17] |

| Omics Tools | CRISPR libraries, transcriptomic profiling, proteomic arrays | Functional genomics for target identification/validation; mechanism of action studies [2] [17] |

| Specialized Assays | High-content imaging, reporter gene assays, pathway-specific assays | Phenotypic profiling and characterization; pathway modulation assessment in TDD [8] [17] |

Signaling Pathways and Biological Mechanisms

Phenotypic screening has uniquely expanded the "druggable target space" by identifying compounds that modulate previously unexplored biological pathways and mechanisms. The following diagram illustrates key pathways successfully targeted by PDD-derived therapeutics:

These successfully targeted pathways share a common characteristic: they would have been difficult to identify through purely target-based approaches. For example, the CFTR correctors that enhance protein folding and membrane insertion were discovered through phenotypic screens of cells expressing disease-associated CFTR variants, identifying compounds with an unexpected mechanism of action [2]. Similarly, the target and mechanism of lenalidomide were only elucidated several years post-approval, when it was found to bind the E3 ubiquitin ligase Cereblon and redirect its substrate selectivity [2].

The choice between target-centric and phenotype-centric strategies remains a fundamental consideration in therapeutic development. PDD offers distinct advantages for discovering first-in-class medicines with novel mechanisms, particularly for complex diseases with poorly understood pathophysiology. TDD provides a more direct path for optimizing known mechanisms and developing best-in-class agents against validated targets.

Future directions in the field point toward increased integration of both approaches through computational frameworks like KGDRP that leverage multimodal data [19], greater use of human-based physiological systems including microphysiological systems and organ-on-chip technologies [8] [18], and application of advanced artificial intelligence for target prediction and mechanism elucidation. The combination of robust phenotypic screening with modern target deconvolution technologies represents a powerful strategy for expanding the druggable genome and addressing unmet medical needs across diverse disease areas.

Ultimately, the decision between phenotypic and target-based approaches should be guided by specific project goals, biological understanding of the disease, and available technical resources rather than doctrinal adherence to either paradigm. Strategic integration of both approaches throughout the drug discovery pipeline offers the most promising path for delivering innovative therapeutics to patients.

Modern Workflows and Technologies: Implementing PDD and TDD in the AI Era

Target-based drug discovery (TDD) represents a cornerstone strategy in modern pharmaceutical research, operating in parallel with, and in contrast to, phenotypic drug discovery (PDD). While PDD identifies compounds based on their effects in complex biological systems without requiring prior knowledge of a specific molecular target, TDD follows a reductionist approach that begins with the selection and validation of a single molecular target believed to play a critical role in disease pathogenesis [21] [2]. This methodological dichotomy creates distinct advantages and challenges for each approach. TDD offers the significant benefit of a clear mechanism of action from the project's inception, facilitating rational drug design and optimization [22]. The TDD paradigm has been empowered by advances in genomics, structural biology, and screening technologies, enabling researchers to systematically pursue therapeutic interventions against an expanding array of biological targets [2] [22].

The disproportionate number of first-in-class medicines originating from PDD between 1999-2008 sparked renewed interest in phenotypic approaches [2]. However, TDD remains a dominant force in drug discovery, particularly for programs where the target biology is well-understood and the primary goal is to create best-in-class therapeutics against validated mechanisms rather than discover novel biology [2]. The TDD pipeline comprises a series of methodical stages, each with defined objectives and decision gates, designed to maximize the probability of clinical success while efficiently allocating resources. This technical guide provides an in-depth examination of the core TDD pipeline, from initial target identification through high-throughput screening, while contextualizing its strategic position within the broader drug discovery landscape that includes PDD approaches.

Phase 1: Target Identification and Validation

Principles of Target Identification

Target identification represents the foundational stage of the TDD pipeline, focusing on the selection of a biological entity whose modulation is expected to provide therapeutic benefit. A promising drug target typically exhibits several key properties [22]:

- A confirmed role in the pathophysiology of a disease and/or is disease-modifying

- Uneven expression distribution throughout the body to enable therapeutic targeting without widespread systemic effects

- An available 3D-structure to assess druggability through computational and experimental methods

- Being easily 'assayable' to enable high-throughput screening campaigns

- A promising toxicity profile where potential adverse effects can be predicted using phenotypic and bioinformatic data

The process of identifying a novel drug target can follow one of two principal strategic pathways (Figure 1) [22]. Target discovery operates on the paradigm that discovering a new drug requires first finding a new target, after which compound libraries are screened to identify molecules that interact with this target. In contrast, target deconvolution begins with a drug or compound that demonstrates efficacy, with the molecular target being identified retrospectively.

Figure 1: Strategic Pathways for Target Identification in TDD

Target Validation Techniques

Once a potential target is identified, rigorous validation is essential to demonstrate that modulating its activity will produce a therapeutic effect with an acceptable safety profile. Comprehensive target validation typically requires 2-6 months to complete and applies multiple complementary techniques to build compelling evidence for the biological target [23]. The three major components of target validation using human data include tissue expression profiling, genetic evidence, and clinical experience [24]. For each component, specific metrics can guide investment decisions and confidence levels (Table 1).

Table 1: Key Techniques for Target Validation

| Validation Category | Specific Techniques | Key Outputs/Metrics |

|---|---|---|

| Functional Analysis | In vitro assays using 'tool' compounds; Pharmacological modulation | Demonstration of desired biological effect; Dose-response relationships; Potency measurements (IC50, EC50) |

| Expression Profiling | mRNA and protein distribution analysis in healthy vs. disease states; qPCR; Immunohistochemistry | Correlation of target expression with disease progression; Tissue-specific expression patterns |

| Genetic Validation | Genome-wide association studies (GWAS); Genetic linkage analysis; siRNA/shRNA screening | Evidence of target-disease association from human genetics; Phenotypic effects of gene suppression |

| Biomarker Identification | Transcriptomics (qPCR); Protein analyte detection (Luminex); Flow cytometry | Quantifiable biomarkers for monitoring target engagement and therapeutic efficacy |

| Cell-Based Models | 3D cultures; Co-culture systems; Human induced pluripotent stem cells (iPSC) | Disease-relevant cellular models for evaluating target modulation in physiological context |

According to the National Academies, establishing pharmacologically relevant exposure levels and target engagement are two key steps in target validation [24]. Additionally, there is growing recognition of the importance of rapid target invalidation to avoid costly investment in targets that ultimately lack therapeutic potential. The ultimate validation occurs when a drug engaging the target demonstrates safety and efficacy in patients, but the goal of pre-clinical validation is to build sufficient confidence to justify proceeding to clinical development [24].

Phase 2: Assay Development and High-Throughput Screening

Fundamentals of High-Throughput Screening

High-throughput screening (HTS) serves as the primary engine for lead discovery in the TDD pipeline, enabling the rapid testing of hundreds of thousands to millions of compounds against a validated biological target [21] [25]. HTS leverages automation, miniaturization, and parallel processing to conduct biological or chemical tests on an unprecedented scale, dramatically accelerating the early drug discovery process [25] [26]. A typical HTS system consists of several integrated components: robotics for plate handling, liquid dispensing devices for reagent and compound transfer, environmental controllers for incubation, and sensitive detectors for signal readout [25].

The core labware for HTS is the microtiter plate, which features a grid of small wells arranged in standardized formats. Modern HTS primarily utilizes 384-well or 1536-well plates, with ongoing trends toward further miniaturization to 3456-well formats to reduce reagent costs and increase throughput [25] [26]. The working volumes in these systems have decreased substantially, with typical assays now running in 2.5-10 μL total volume, and ultra-high density systems operating with volumes as low as 1-2 μL per well [26]. This miniaturization enables the screening of vast compound libraries while conserving precious biological reagents and chemical compounds.
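To make the effect of plate density concrete, the sketch below estimates how many plates and how much total assay volume a screen would need in two formats. The library size, the number of wells reserved for controls, and the per-well volumes are illustrative assumptions (the volumes are picked from the 2.5-10 μL range quoted above); the function names are hypothetical.

```python
import math


def plates_needed(n_compounds, wells_per_plate, control_wells):
    """Plates required when each compound occupies one non-control well."""
    test_wells = wells_per_plate - control_wells
    return math.ceil(n_compounds / test_wells)


def total_assay_volume_ml(n_plates, wells_per_plate, volume_ul):
    """Total assay volume in mL across all wells (controls included)."""
    return n_plates * wells_per_plate * volume_ul / 1000.0


if __name__ == "__main__":
    library_size = 500_000  # assumed library size
    # (wells per plate, assumed control wells, assumed volume per well in uL)
    formats = {"384-well": (384, 32, 10.0), "1536-well": (1536, 128, 5.0)}
    for name, (wells, controls, volume_ul) in formats.items():
        n_plates = plates_needed(library_size, wells, controls)
        volume_ml = total_assay_volume_ml(n_plates, wells, volume_ul)
        print(f"{name}: {n_plates} plates, {volume_ml:.0f} mL total assay volume")
```

With these assumed numbers, moving from 384-well to 1536-well plates cuts the plate count roughly fourfold and the total assay volume roughly in half, which is the practical motivation for miniaturization.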

HTS assays are predominantly classified as either biochemical (cell-free) or cell-based formats. Biochemical assays measure direct interactions between compounds and purified targets (enzymes, receptors), while cell-based assays examine compound effects in a more physiological context, including pathway activation and phenotypic changes [21]. The choice between these formats depends on the target biology, assay feasibility, and the desired information about compound activity.

HTS Assay Development and Quality Control

Robust assay development is critical for successful HTS campaigns. A well-developed HTS assay must balance sensitivity, reproducibility, and scalability while maintaining biological relevance [21]. The development process involves optimizing reagent concentrations, incubation times, detection methods, and tolerance to dimethyl sulfoxide (DMSO)—the common solvent for compound libraries.

Several key performance metrics are employed to ensure assay quality and reliability (Table 2). The Z'-factor is particularly important, providing a normalized measure of assay robustness that accounts for both the signal dynamic range and data variation. A Z'-factor between 0.5 and 1.0 indicates an excellent assay suitable for HTS [21]. Other critical parameters include the signal-to-noise ratio, signal window, and coefficient of variation across wells and plates.

Table 2: Key Performance Metrics for HTS Assay Validation

| Performance Metric | Calculation/Definition | Acceptance Criteria | Application in HTS |
|---|---|---|---|
| Z'-factor | 1 - (3σ_positive + 3σ_negative) / \|μ_positive - μ_negative\| | 0.5-1.0: Excellent assay; 0-0.5: Marginal assay; <0: Poor assay | Overall assay quality assessment; Day-to-day robustness |
| Signal-to-Background Ratio | Mean_signal / Mean_background | >3: Typically acceptable; higher values preferred | Measures assay window magnitude |
| Signal-to-Noise Ratio | (Mean_signal - Mean_background) / σ_background | >10: Typically acceptable; dependent on assay type | Assesses detection sensitivity |
| Coefficient of Variation (CV) | (σ / μ) × 100% | <10-20% depending on assay type | Measures well-to-well reproducibility |
| Strictly Standardized Mean Difference (SSMD) | (μ_positive - μ_negative) / √(σ²_positive + σ²_negative) | >3: Strong hit selection | Hit selection in screens with replicates |
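The formulas in Table 2 translate directly into code. The following is a minimal sketch using only the Python standard library; the control-well readings are hypothetical, and the use of the sample standard deviation (`statistics.stdev`) rather than the population form is an assumption.

```python
import math
from statistics import mean, stdev

def z_prime(positive, negative):
    """Z'-factor: 1 - (3*sd_pos + 3*sd_neg) / |mean_pos - mean_neg|."""
    spread = 3 * stdev(positive) + 3 * stdev(negative)
    return 1 - spread / abs(mean(positive) - mean(negative))

def signal_to_background(signal, background):
    """Ratio of mean signal to mean background."""
    return mean(signal) / mean(background)

def signal_to_noise(signal, background):
    """Signal window divided by the background standard deviation."""
    return (mean(signal) - mean(background)) / stdev(background)

def cv_percent(values):
    """Coefficient of variation as a percentage."""
    return stdev(values) / mean(values) * 100

def ssmd(positive, negative):
    """Strictly standardized mean difference between two control groups."""
    pooled = math.sqrt(stdev(positive) ** 2 + stdev(negative) ** 2)
    return (mean(positive) - mean(negative)) / pooled

# Hypothetical raw plate-reader signals for control wells on one plate.
positive_controls = [980, 1010, 1005, 995, 1020, 990]
negative_controls = [105, 98, 102, 95, 100, 100]

print(f"Z'-factor: {z_prime(positive_controls, negative_controls):.2f}")
print(f"S/B:       {signal_to_background(positive_controls, negative_controls):.1f}")
print(f"S/N:       {signal_to_noise(positive_controls, negative_controls):.1f}")
print(f"CV (pos):  {cv_percent(positive_controls):.1f}%")
print(f"SSMD:      {ssmd(positive_controls, negative_controls):.1f}")
```

In practice these metrics are usually tracked per plate, so that a plate falling below the chosen Z'-factor threshold can be flagged and repeated.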

Recent advances in HTS include the development of quantitative HTS (qHTS) paradigms, where compounds are tested at multiple concentrations to generate concentration-response curves directly from the primary screen [25] [27]. This approach provides richer pharmacological data early in the discovery process, enables better assessment of structure-activity relationships, and reduces false positive and negative rates by more fully characterizing compound effects.
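To show what deriving a concentration-response curve from a titration involves, the sketch below fits a four-parameter logistic (Hill) model to a synthetic, noise-free nine-point series. It is only an illustration: the grid search with fixed plateaus is a simplification chosen to keep the example dependency-free, not the fitting method used in the cited qHTS work, and all concentrations and responses are hypothetical.

```python
import math

def hill(conc, bottom, top, ic50, slope):
    """Four-parameter logistic: response falls from top to bottom as conc rises."""
    return bottom + (top - bottom) / (1 + (conc / ic50) ** slope)

def fit_ic50(concs, responses, bottom=0.0, top=100.0):
    """Coarse least-squares grid search over IC50 and Hill slope.

    Plateaus are held fixed (an assumption); a nonlinear optimizer would
    normally fit all four parameters.
    """
    log_lo, log_hi = math.log10(min(concs)), math.log10(max(concs))
    best = None
    for i in range(201):                      # 201 log-spaced IC50 candidates
        ic50 = 10 ** (log_lo + (log_hi - log_lo) * i / 200)
        for j in range(5, 41):                # Hill slopes 0.5 to 4.0
            slope = j / 10
            sse = sum((hill(c, bottom, top, ic50, slope) - r) ** 2
                      for c, r in zip(concs, responses))
            if best is None or sse < best[0]:
                best = (sse, ic50, slope)
    return best[1], best[2]

# Hypothetical titration in micromolar; responses are % activity remaining.
concentrations = [0.01, 0.03, 0.1, 0.3, 1, 3, 10, 30, 100]
responses = [hill(c, 0.0, 100.0, 1.0, 1.0) for c in concentrations]

ic50_estimate, slope_estimate = fit_ic50(concentrations, responses)
print(f"IC50 ~ {ic50_estimate:.2f} uM, Hill slope ~ {slope_estimate:.1f}")
```

With real data, curve quality (plateau definition, fit residuals) would also need to be assessed before a potency value is trusted.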

The HTS workflow follows a staged approach to efficiently identify high-quality hits (Figure 2). The process begins with primary screening of entire compound libraries, typically in single-point format, to identify initial "hits." These hits progress to confirmation screening, often with replicates and counter-screens to eliminate false positives, followed by concentration-response experiments to determine compound potency (IC50/EC50 values).

Figure 2: HTS Workflow from Assay Development to Hit Identification
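The first two stages of this workflow can be sketched as a pair of filters. The example below is a hedged illustration only: the three-standard-deviation hit threshold, the 30% confirmation and counter-screen cutoffs, and all compound identifiers and values are hypothetical assumptions rather than criteria stated in the source.

```python
from statistics import mean, stdev

def primary_hits(percent_inhibition, neutral_controls, n_sd=3.0):
    """Single-point hit calling: inhibition above control mean + n_sd * SD."""
    cutoff = mean(neutral_controls) + n_sd * stdev(neutral_controls)
    return {cid for cid, value in percent_inhibition.items() if value > cutoff}

def confirmed_hits(replicates, counter_screen, cutoff, counter_cutoff):
    """Keep compounds active in every replicate and inactive in the counter-screen."""
    return {
        cid for cid, values in replicates.items()
        if all(v > cutoff for v in values)
        and counter_screen.get(cid, 0.0) < counter_cutoff
    }

# Hypothetical % inhibition values.
neutral_controls = [-2, 1, 0, 3, -1, -1]
primary_screen = {"cpd-1": 62, "cpd-2": 4, "cpd-3": 48, "cpd-4": 55}

hits = primary_hits(primary_screen, neutral_controls)

# Confirmation in triplicate plus a counter-screen for assay interference.
replicate_data = {
    "cpd-1": [60, 65, 58],
    "cpd-3": [3, 50, 2],      # not reproducible
    "cpd-4": [52, 57, 54],
}
counter_screen = {"cpd-1": 5, "cpd-4": 70}  # cpd-4 is active in the counter-screen

confirmed = confirmed_hits(replicate_data, counter_screen, cutoff=30, counter_cutoff=30)
print(sorted(hits), sorted(confirmed))
```

Only compounds that survive both filters would move on to concentration-response testing.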

The Scientist's Toolkit: Essential Research Reagents and Technologies

Successful implementation of the TDD pipeline requires a comprehensive suite of research tools and technologies. The following table details essential reagents and platforms used throughout target validation and HTS phases.

Table 3: Essential Research Reagents and Technologies for TDD

| Tool Category | Specific Examples | Key Applications in TDD |
|---|---|---|
| Gene Modulation Tools | siRNA, shRNA, CRISPR-Cas9 | Target validation through gene knockdown/knockout; Functional genomics |
| Detection Technologies | Fluorescence Polarization (FP), TR-FRET, Fluorescence Intensity, Luminescence | HTS assay detection; Quantifying biochemical interactions and cellular responses |
| Cell-Based Model Systems | Immortalized cell lines, Primary cells, iPSC-derived cells, 3D cultures, Co-culture systems | Disease-relevant models for target validation and phenotypic screening |
| Compound Libraries | Diverse small molecule collections, Focused libraries, Natural product extracts | Source of chemical starting points for HTS campaigns |
| Automation & Robotics | Liquid handlers, Plate readers, Automated incubators, Central robotics systems | Enabling HTS throughput and reproducibility; Reducing manual labor |
| Labeling & Detection Reagents | Fluorescent probes, Antibodies, Aptamers, Luminescent substrates | Signal generation in HTS assays; Target detection and quantification |
| Bioinformatic Tools | Chemical databases, Structural modeling software, Data analysis pipelines | Target assessment; Compound library design; HTS data analysis and hit selection |

Strategic Considerations: TDD vs. PDD in Modern Drug Discovery

The choice between TDD and PDD is a fundamental strategic decision when planning a drug discovery program. Each paradigm offers distinct advantages and faces particular challenges (Table 4). TDD provides a clear mechanism of action from the outset, enables rational drug design based on target structure, typically offers higher screening throughput, and facilitates the development of pharmacodynamic biomarkers for clinical development [22]. Conversely, PDD has demonstrated a superior track record in producing first-in-class medicines with novel mechanisms, expands the "druggable target space" to include previously unexplored biological processes, and can identify compounds that act through polypharmacology (simultaneous modulation of multiple targets) [2].

Table 4: Comparative Analysis of TDD and PDD Approaches

| Parameter | Target-Based Drug Discovery (TDD) | Phenotypic Drug Discovery (PDD) |
|---|---|---|
| Starting Point | Known molecular target with hypothesized role in disease | Disease-relevant phenotype or biomarker without pre-specified target |
| Mechanism of Action | Known from project inception | Often unknown initially; requires deconvolution |
| Throughput Potential | Typically higher; streamlined assay systems | Often lower due to complex assay systems |
| Druggable Space | Limited to targets with established assay feasibility | Expands to novel targets and mechanisms |
| Historical Success | Majority of best-in-class drugs | Disproportionate number of first-in-class drugs |
| Target Identification | Required before screening | Required after hit identification |
| Chemical Optimization | Facilitated by structural knowledge of target | Often empirical without structural guidance |
| Clinical Translation | Biomarker strategies can be developed early | Physiological relevance may improve translation |

Rather than viewing TDD and PDD as competing strategies, modern drug discovery increasingly recognizes their complementary nature [22]. Many successful drug discovery programs employ elements of both approaches—using phenotypic assays to validate target biology and assess compound efficacy in physiologically relevant systems, while employing target-based assays for mechanistic studies and structure-based optimization. The strategic integration of both paradigms represents a powerful approach to addressing the ongoing challenges of drug discovery productivity.

Recent trends include the use of human-based phenotypic platforms throughout the discovery process to triage and prioritize hits, eliminate hits with unsuitable mechanisms, and support clinical strategies through pathway-based decision frameworks [8]. As these approaches mature, they offer the potential to generate better leads faster by leveraging the strengths of both TDD and PDD within integrated discovery workflows.

Phenotypic Drug Discovery (PDD) has re-emerged as a critical strategy in modern therapeutic development, driven by the observation that it disproportionately yields first-in-class medicines [2]. Unlike Target-Based Drug Discovery (TDD), which begins with a predefined molecular hypothesis, PDD identifies compounds based on their ability to modify disease-relevant phenotypes in biologically complex systems without prior knowledge of the specific drug target [2] [17]. This approach has successfully addressed complex diseases where the underlying pathophysiology is incompletely understood or where multi-target modulation provides therapeutic benefits [2]. Modern PDD combines this foundational concept with advanced tools and strategies, systematically pursuing drug discovery based on therapeutic effects in realistic disease models [2]. This technical guide details the core components of the PDD pipeline, from assay design principles to hit identification and validation, providing researchers with a framework for implementing this powerful approach.

The distinction between PDD and TDD represents more than a technical difference; it fundamentally shapes discovery strategy and outcomes. An analysis of first-in-class drugs approved between 1999 and 2008 found that a majority were discovered through phenotypic approaches rather than target-based methods [2] [19]. PDD expands the "druggable target space" to include unexpected cellular processes and novel mechanisms of action (MoA), as demonstrated by breakthroughs in cystic fibrosis, spinal muscular atrophy, and hepatitis C treatment [2]. Furthermore, PDD naturally accommodates and even exploits polypharmacology, in which a compound engages multiple targets, and this can be advantageous for treating complex, polygenic diseases [2]. Broader adoption still depends on resolving key challenges, including the progression of poorly qualified leads and the advancement of compounds with undesirable mechanisms that fail at later stages [8].

Phenotypic Assay Design Fundamentals

Core Principles and System Selection

Effective phenotypic assays balance biological relevance with technical feasibility, requiring careful consideration of multiple factors:

- Disease Relevance: The assay system must capture key aspects of human disease pathophysiology. This includes relevant cell types, disease-associated stimuli, and endpoints that reflect clinical manifestations [17].

- Translational Bridge: Establish a "chain of translatability" connecting the assay phenotype to human disease biology through 'omics signatures and pathway engagement [17].

- Technical Robustness: Ensure the assay meets standard performance criteria (Z' factor >0.5, coefficient of variation <20%) to reliably detect compound effects amid system complexity [17].

- Scalability: Design with screening feasibility in mind, considering timeline, resource requirements, and compatibility with automation when moving to higher-throughput formats [8].

Modern phenotypic screening tests compounds directly in biological systems, ranging from cell-based assays to higher-order screens in small animal models [28]. The choice of experimental model is a critical decision point that balances physiological relevance against practical screening constraints.

Table 1: Comparison of Phenotypic Screening Models

| Model System | Physiological Relevance | Throughput Capacity | Key Applications | Major Limitations |
|---|---|---|---|---|
| 2D Cell Cultures | Moderate | High | Initial hit identification, mechanism studies | Limited tissue context, simplified microenvironment |
| 3D Organoids/Spheroids | High | Medium | Complex cell-cell interactions, tissue morphogenesis | Higher variability, more complex image analysis |
| Microphysiological Systems (Organs-on-Chips) | High | Low-medium | Human pathophysiology, complex tissue interfaces | Specialized equipment, limited throughput |
| Small Animal Models | Highest | Low | Whole-organism physiology, integrated systems | Low throughput, high cost, translatability questions |

Key Technological Components

Advanced technologies enable the detailed interrogation of complex phenotypes in modern PDD:

- High-Content Imaging and Analysis: Multiparametric imaging captures morphological and spatial information at single-cell resolution, providing rich datasets on compound effects [28]. Automated image analysis pipelines extract quantitative features that define phenotypic states.

- Functional Genomic Integration: CRISPR-based screening identifies genes and pathways that modulate disease phenotypes, validating targets and generating mechanistic insights [17].

- Transcriptomic Profiling: Gene expression signatures contextualize compound effects within known disease pathways and enable comparison to reference compounds [17] [19].

- Microphysiological Systems: These human cell-based platforms recapitulate tissue-level and organ-level functions, providing more physiologically relevant contexts for compound testing [8].
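Comparing a compound's multiparametric profile to a reference compound, as described for imaging features and expression signatures above, can be sketched as follows. This is a minimal illustration under stated assumptions: the three features, all well values, and the choice of z-score scaling against DMSO controls followed by cosine similarity are hypothetical, and real pipelines typically use hundreds of features with additional normalization.

```python
import math
from statistics import mean, stdev

def zscore_profile(treated, control_wells):
    """Scale each feature of a treated well against the control-well distribution."""
    profile = []
    for k, value in enumerate(treated):
        control = [well[k] for well in control_wells]
        profile.append((value - mean(control)) / stdev(control))
    return profile

def cosine_similarity(a, b):
    """Cosine of the angle between two phenotypic profiles (1 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm

# Hypothetical per-well features: [cell area, nuclear intensity, mitochondria count].
dmso_wells = [[100, 50, 20], [110, 52, 22], [90, 48, 18]]

reference = zscore_profile([130, 44, 20], dmso_wells)   # known reference compound
candidate = zscore_profile([120, 46, 21], dmso_wells)   # screening hit
unrelated = zscore_profile([95, 56, 26], dmso_wells)    # different phenotype

similar_score = cosine_similarity(reference, candidate)
unrelated_score = cosine_similarity(reference, unrelated)
print(f"candidate vs reference: {similar_score:.2f}")
print(f"unrelated vs reference: {unrelated_score:.2f}")
```

A high similarity to a reference compound suggests a shared phenotypic effect and can help generate mechanism hypotheses, though it does not by itself identify the target.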

Quantitative Phenotypic Endpoints and Assay Validation

The selection and validation of quantitative endpoints is fundamental to successful phenotypic screening. Modern approaches move beyond single-parameter measurements to capture multidimensional phenotypes that better reflect disease biology.

Table 2: Categories of Phenotypic Endpoints and Their Applications

| Endpoint Category | Measured Parameters | Detection Methods | Therapeutic Area Examples |
|---|---|---|---|
| Morphological | Cell size, shape, organelle distribution, spatial relationships | High-content imaging, automated microscopy | Oncology, neurodegenerative diseases |
| Proteomic | Protein expression, localization, post-translational modifications | Immunofluorescence, FRET, flow cytometry | Immunology, inflammation |
| Functional | Calcium flux, membrane potential, metabolic activity | FLIPR, electrophysiology, Seahorse analyzer | Cardiology, metabolic diseases |
| Secretory | Cytokine release, hormone secretion, extracellular matrix deposition | ELISA, luminescence, mass spectrometry | Immunology, fibrosis |
| Transcriptional | Gene expression changes, pathway activation | Reporter gene assays, RT-qPCR | Oncology, virology |

Assay validation establishes the reliability and predictive value of the phenotypic system. The "Phenotypic Screening Rule of 3" provides a framework for this process, emphasizing three critical elements: (1) clinical relevance of the assay system, (2) pharmacological credibility of known reference compounds, and (3) statistical robustness of the assay performance [17]. Technical validation should establish a Z' factor >0.5, signal-to-noise ratio >3, and coefficient of variation <20% for key parameters. Biological validation should demonstrate that the assay detects efficacy of known therapeutic agents with appropriate potencies and generates disease-relevant phenotypes that align with clinical manifestations.
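The technical and biological validation criteria above can be expressed as a simple acceptance gate. The sketch below is illustrative only: the function names are hypothetical, and the three-fold window used to judge whether a reference compound shows "appropriate potency" is an assumed tolerance, not a value given in the source.

```python
def technical_failures(z_prime, signal_to_noise, cv_percent):
    """Return the names of any technical criteria the assay fails to meet."""
    checks = [
        ("Z' factor > 0.5", z_prime > 0.5),
        ("signal-to-noise > 3", signal_to_noise > 3),
        ("CV < 20%", cv_percent < 20),
    ]
    return [name for name, passed in checks if not passed]

def potency_within_fold(measured_ec50, expected_ec50, max_fold=3.0):
    """Check a reference compound's measured EC50 against its expected value.

    max_fold is an assumed tolerance; choose it per assay and compound class.
    """
    fold = max(measured_ec50 / expected_ec50, expected_ec50 / measured_ec50)
    return fold <= max_fold

# Hypothetical validation runs.
good_run = technical_failures(z_prime=0.62, signal_to_noise=8.0, cv_percent=12.0)
poor_run = technical_failures(z_prime=0.41, signal_to_noise=8.0, cv_percent=25.0)

print(good_run)
print(poor_run)
print(potency_within_fold(measured_ec50=2.0, expected_ec50=1.0))
print(potency_within_fold(measured_ec50=0.1, expected_ec50=1.0))
```

Returning the list of failed criteria, rather than a single pass/fail flag, makes it easier to see which aspect of the assay needs further optimization.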

Experimental Workflow for Phenotypic Screening