Phenotypic Screening: The Resurgent Engine for First-in-Class Drug Discovery

Abstract

This article explores the powerful resurgence of phenotypic screening as a primary driver for discovering first-in-class therapeutics. Aimed at researchers and drug development professionals, it covers the foundational principles that make phenotypic approaches uniquely suited for identifying novel mechanisms of action. The scope extends to modern methodologies integrating high-content imaging, functional genomics, and AI, while also addressing key challenges like target deconvolution and assay design. Through comparative analysis of recent successes and an examination of the evolving landscape, this article provides a comprehensive resource for leveraging phenotypic screening to innovate drug discovery pipelines.

Why Phenotypic Screening is a Powerhouse for First-in-Class Drug Discovery

Phenotypic Drug Discovery (PDD) represents a biology-first approach to identifying novel therapeutics by focusing on observable changes in disease-relevant models without requiring prior knowledge of specific molecular targets. This empirical strategy has re-emerged as a powerful platform for discovering first-in-class medicines, accounting for a disproportionate number of groundbreaking therapies approved over the past two decades. This technical review examines the core principles, methodological frameworks, and recent successes of PDD, highlighting its unique value in addressing complex disease mechanisms and expanding druggable target space. We detail experimental protocols, analytical workflows, and technological innovations that enable modern phenotypic screening, with particular emphasis on applications in drug discovery for poorly characterized diseases. The integrated data presentation and visualization provided herein offer drug development professionals a comprehensive reference for implementing PDD strategies within their research portfolios.

Phenotypic Drug Discovery (PDD) is defined by its focus on modulating disease phenotypes or biomarkers in realistic biological systems rather than targeting predefined molecular mechanisms [1]. This approach stands in contrast to Target-Based Drug Discovery (TDD), which relies on explicit hypotheses about specific proteins, enzymes, or receptors and their roles in disease pathology. After being largely supplanted by reductionist target-based strategies during the molecular biology revolution, PDD has experienced a major resurgence following a seminal observation that between 1999 and 2008, a majority of first-in-class drugs were discovered empirically without a target hypothesis [1] [2].

Modern PDD combines the original concept of observing therapeutic effects on disease physiology with contemporary tools and strategies, enabling systematic pursuit of drug candidates based on efficacy in physiologically relevant disease models [1]. This renaissance has been fueled by notable clinical successes and the recognition that phenotypic approaches can access novel biological mechanisms and target spaces that remain invisible to conventional target-based screening methods [3]. The field now serves as an accepted discovery modality in both academia and the pharmaceutical industry, with estimates suggesting that phenotypic screens account for 25-40% of the project portfolios in major pharmaceutical companies [3].

Core Principles and Comparative Framework

Fundamental Characteristics of Phenotypic Screening

PDD operates on several core principles that distinguish it from target-based approaches. First, it is target-agnostic, meaning it does not require predetermined knowledge of the specific molecular target or its role in disease [4]. Second, it emphasizes biological context by employing disease-relevant cellular or physiological systems that maintain native molecular interactions and signaling networks [2]. Third, it prioritizes functional outcomes over mechanistic understanding at the initial discovery phase, selecting compounds based on their ability to reverse or modify disease-associated phenotypes [1] [4].

The phenotypic approach is particularly valuable when: (1) no attractive molecular target is known to modulate the pathway or disease phenotype of interest; (2) the project goal is to obtain a first-in-class drug with a differentiated mechanism of action; or (3) the disease pathophysiology involves complex, polygenic mechanisms that cannot be adequately modeled by single-target modulation [1].

Comparative Analysis: PDD versus Target-Based Approaches

Table 1: Strategic Comparison Between Phenotypic and Target-Based Drug Discovery Approaches

| Parameter | Phenotypic Drug Discovery | Target-Based Drug Discovery |
|---|---|---|
| Starting Point | Disease phenotype in biologically relevant system | Predefined molecular target |
| Knowledge Requirement | Limited target knowledge required | Extensive target validation needed |
| Chemical Library | Diverse, often including compounds with unknown mechanisms | Focused libraries optimized for target class |
| Primary Screening Readout | Functional reversal of disease phenotype | Binding affinity or modulation of target activity |
| Target Identification | Required after compound identification (target deconvolution) | Defined before compound screening |
| Strength | Identifies novel mechanisms and targets; suitable for complex diseases | Rational design; easier optimization; clear mechanism |
| Challenge | Target deconvolution difficult; complex assay development | Limited to known biology; may miss synergistic effects |

The comparative advantage of PDD is evidenced by its track record in generating first-in-class medicines. A landmark analysis covering 1999-2008 found that PDD approaches yielded 28 first-in-class small molecule drugs compared to 17 from target-based strategies [2] [3]. This disproportionate productivity has driven increased investment in phenotypic screening across the pharmaceutical industry despite the significant challenges associated with target deconvolution and assay complexity [1] [5].

Notable Successes and Case Studies

PDD has contributed to the development of numerous groundbreaking therapies, particularly for diseases with complex or poorly understood etiology. The following case studies illustrate the transformative potential of phenotypic approaches.

Cystic Fibrosis Modulators

Cystic fibrosis (CF) is a progressive genetic disease caused by mutations in the CF transmembrane conductance regulator (CFTR) gene. Target-agnostic compound screens using cell lines expressing disease-associated CFTR variants identified multiple therapeutic classes [1]:

- Potentiators such as ivacaftor that improve CFTR channel gating properties

- Correctors including lumacaftor, tezacaftor, and elexacaftor that enhance CFTR folding and plasma membrane insertion

The combination therapy elexacaftor/tezacaftor/ivacaftor was approved in 2019 and addresses 90% of the CF patient population [1] [3]. This breakthrough would have been unlikely through target-based approaches alone, as the corrector mechanism involved unexpected effects on protein folding and trafficking not readily predicted from CFTR biology.

Spinal Muscular Atrophy Therapeutics

Spinal muscular atrophy (SMA) is caused by loss-of-function mutations in the SMN1 gene. Humans possess a related SMN2 gene, but a splicing mutation leads to exclusion of exon 7 and production of an unstable protein. Phenotypic screens identified small molecules that modulate SMN2 pre-mRNA splicing and increase full-length SMN protein levels [1].

Risdiplam, approved by the FDA in 2020, emerged from this approach and works by stabilizing the U1 snRNP complex - an unprecedented drug target and mechanism of action [1] [3]. As SMN2 lacked known therapeutic activity before these screens, it would have been an unlikely target for traditional discovery campaigns [3].

Additional Pioneering Therapies

Table 2: Recently Approved Therapies Discovered Through Phenotypic Screening

| Drug | Indication | Year Approved | Key Target/Mechanism | Discovery Approach |
|---|---|---|---|---|
| Vamorolone | Duchenne muscular dystrophy | 2023 | Dissociative steroid that modulates mineralocorticoid receptor signaling | Phenotypic profiling in disease models [3] |
| Risdiplam | Spinal muscular atrophy | 2020 | SMN2 splicing modifier | Phenotypic screen for SMN2 splicing modification [1] [3] |
| Daclatasvir | Hepatitis C | 2014-2015 | NS5A replication complex inhibitor | HCV replicon phenotypic screen [1] [3] |
| Lumacaftor | Cystic fibrosis | 2015 | CFTR corrector | Target-agnostic screen in CFTR cell lines [1] [3] |
| Perampanel | Epilepsy | 2012 | AMPA receptor antagonist | Whole-system, multi-parametric modeling [3] |
| Lenalidomide | Multiple myeloma | 2005+ | Cereblon modulator leading to IKZF1/3 degradation | Phenotypic optimization of thalidomide analogs [1] [4] |

These case studies demonstrate how PDD has expanded the "druggable target space" to include unexpected cellular processes such as pre-mRNA splicing, protein folding, trafficking, and degradation [1]. The approach has revealed novel mechanisms of action for traditional target classes and unveiled entirely new target classes that would have remained inaccessible through hypothesis-driven approaches.

Methodological Framework and Experimental Protocols

Core Workflow for Phenotypic Screening

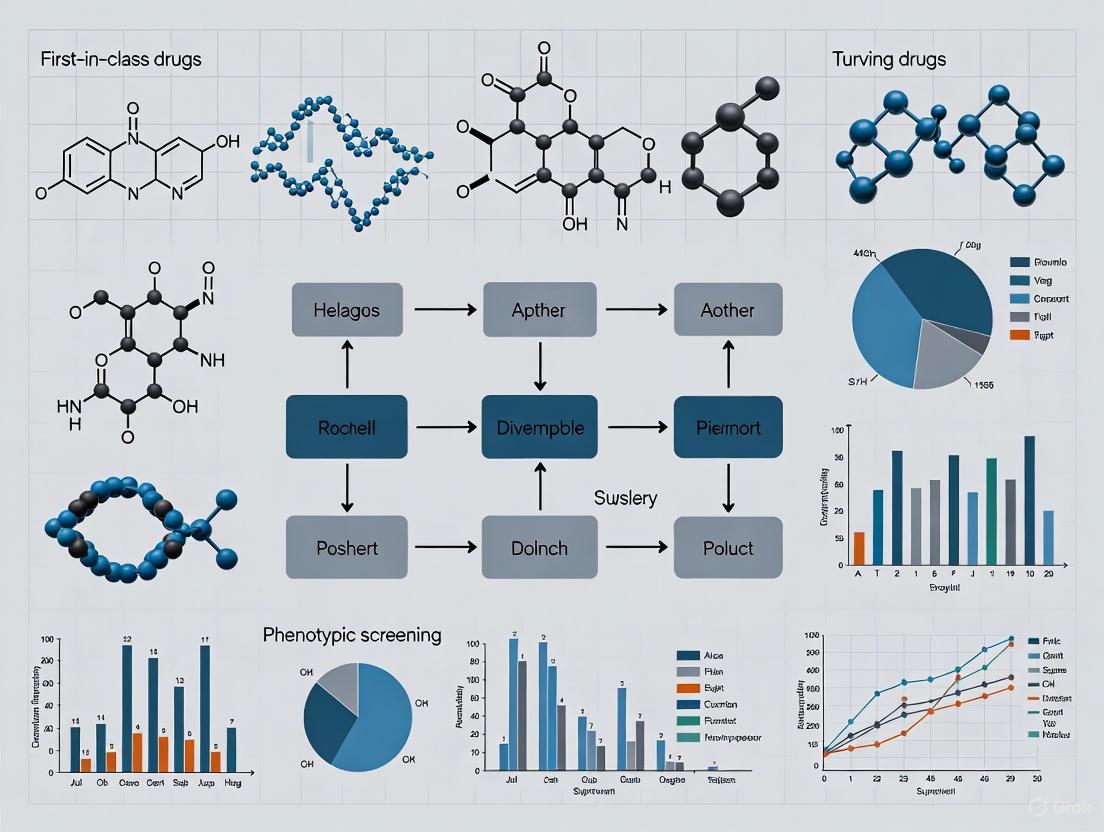

The standard workflow for image-based phenotypic profiling involves multiple interconnected stages, each requiring rigorous optimization and validation [6]. The following diagram illustrates this integrated process:

Critical Protocol Components

Disease-Relevant Model Systems

The foundation of successful PDD is a biologically relevant model system that faithfully recapitulates key aspects of human disease pathophysiology [2]. Preferred models include:

- Primary patient-derived cells: These offer the highest physiological relevance as they originate from individuals with the actual disease [2]. For example, cystic fibrosis screens utilized bronchial epithelial cells from CF patients with specific CFTR mutations [1] [2].

- Induced pluripotent stem cell (iPSC)-derived models: iPSCs can be differentiated into disease-relevant cell types while maintaining patient-specific genetic backgrounds [5].

- Complex co-culture systems: These models incorporate multiple cell types to better mimic tissue-level interactions and microenvironmental influences [1].

Model validation should include demonstration of disease-relevant phenotypes, genetic fidelity, and appropriate responses to known reference compounds where available [5].

High-Content Phenotypic Profiling

Image-based phenotypic profiling enables quantification of multidimensional morphological and functional features in response to chemical or genetic perturbations [6]. The Cell Painting protocol is a particularly powerful implementation of this approach, using six multiplexed fluorescent dyes to label eight broadly relevant cellular components:

- Nucleus (Hoechst or DAPI)

- Nucleoli and cytoplasmic RNA (SYTO 14)

- Endoplasmic reticulum (Concanavalin A)

- Mitochondria (MitoTracker)

- Golgi apparatus and plasma membrane (wheat germ agglutinin)

- F-actin cytoskeleton (phalloidin)

This comprehensive staining strategy enables detection of subtle morphological perturbations across multiple organelles and cellular compartments in a single assay [6].

Image Acquisition and Analysis Pipeline

High-content screening requires automated image acquisition systems capable of rapidly capturing high-resolution images from multi-well plates (typically 384-well or 1536-well format) [6]. Following acquisition, images undergo a multi-step analytical pipeline:

- Illumination correction: Compensates for spatial heterogeneity in microscope optics that could bias intensity-based measurements

- Quality control: Identifies and excludes images with artifacts, improper focus, or other technical issues

- Segmentation: Delineates individual cells and subcellular compartments using intensity thresholds or machine learning-based detection

- Feature extraction: Quantifies morphological, intensity, and texture features for each segmented object

- Data normalization and standardization: Adjusts for plate-to-plate and batch variations using positive and negative controls

The output is a high-dimensional dataset capturing hundreds to thousands of features per cell, enabling comprehensive characterization of compound-induced phenotypic effects [6].
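The normalization step in the pipeline above can be sketched in a few lines. This is a minimal illustration, assuming a robust z-score against the negative-control wells of the same plate; the function name, the choice of median and MAD, and the example readings are all hypothetical rather than taken from the cited protocol.

```python
from statistics import median


def robust_z_scores(values, control_values):
    """Scale one feature on one plate against that plate's negative controls."""
    center = median(control_values)
    # Median absolute deviation, scaled by 1.4826 so that it estimates the
    # standard deviation when the control values are normally distributed.
    mad = median(abs(v - center) for v in control_values) * 1.4826
    if mad == 0:
        raise ValueError("control wells show no spread; cannot normalize")
    return [(v - center) / mad for v in values]


# Hypothetical nuclear-area readings (arbitrary units) from a single plate.
dmso_wells = [100.0, 102.0, 98.0, 101.0, 99.0]
treated_wells = [100.0, 110.0, 85.0]

scores = robust_z_scores(treated_wells, dmso_wells)
print([round(s, 2) for s in scores])
```

Applying the same function plate by plate puts every feature on a common scale relative to its own controls, which is one common way to reduce plate-to-plate and batch effects before profiles are compared.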

Technological Enablers and Advanced Methodologies

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Research Reagent Solutions for Phenotypic Screening

| Reagent Category | Specific Examples | Function in PDD |
|---|---|---|
| Cell Models | Primary patient-derived cells, iPSC-derived lineages, 3D organoids | Provide disease-relevant biological context for screening [1] [2] |
| Fluorescent Probes | Cell Painting cocktail, organelle-specific dyes, viability indicators | Enable multiparametric readout of cellular morphology and function [6] |
| Compound Libraries | Diverse small molecule collections, targeted probe sets, clinical candidates | Source of chemical perturbations for phenotypic modulation [7] |
| CRISPR Tools | Genome-wide knockout libraries, targeted guide RNA sets | Enable genetic validation and functional genomics follow-up [1] [5] |
| Bioinformatics Platforms | CellProfiler, ImageJ/Fiji, HighContentProfiler | Extract and analyze high-dimensional feature data [6] |

Machine Learning and Artificial Intelligence

Advanced computational methods have become indispensable for analyzing the complex, high-dimensional datasets generated in phenotypic screens [4] [6]. Several machine learning approaches are commonly employed:

- Supervised machine learning: Utilizes labeled training datasets to classify compounds based on known mechanisms of action or toxicity profiles [6]. Algorithms include support vector machines, random forests, and gradient boosting machines.

- Unsupervised machine learning: Identifies patterns and clusters in data without pre-existing labels, enabling discovery of novel mechanisms [6]. Principal component analysis, t-distributed stochastic neighbor embedding, and k-means clustering are frequently used.

- Deep learning: Employs multi-layered neural networks to extract features directly from raw images, reducing reliance on manual feature engineering [6]. Convolutional neural networks have demonstrated particular utility in image-based profiling.

These AI-driven approaches can significantly enhance pattern recognition in complex phenotypic data, improve prediction of mechanisms of action, and accelerate target identification [3] [6].
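To make the unsupervised case concrete, the sketch below runs plain k-means (Lloyd's algorithm) on a handful of two-feature profiles. It is an illustration only: the profiles are invented, the centroids are seeded with the first k points for reproducibility (an assumption that works poorly if those points fall in the same cluster), and a production analysis would normally rely on an established library rather than hand-written code.

```python
import math


def kmeans(points, k, iterations=20):
    """Plain Lloyd's algorithm; the first k points seed the centroids."""
    centroids = [list(p) for p in points[:k]]
    labels = [0] * len(points)
    for _ in range(iterations):
        # Assignment step: attach each profile to its nearest centroid.
        labels = [
            min(range(k), key=lambda c: math.dist(p, centroids[c]))
            for p in points
        ]
        # Update step: move each centroid to the mean of its members.
        for c in range(k):
            members = [p for p, lab in zip(points, labels) if lab == c]
            if members:  # keep the old centroid if a cluster empties
                centroids[c] = [sum(dim) / len(members) for dim in zip(*members)]
    return labels, centroids


# Hypothetical normalized profiles (two features per compound).
profiles = [(0.1, 0.2), (5.0, 5.1), (0.0, 0.1), (5.2, 4.9), (0.2, 0.0)]

labels, centroids = kmeans(profiles, k=2)
print(labels)
```

Compounds that share a cluster label induce similar phenotypes, which is the starting point for grouping unannotated hits with compounds whose mechanism is already known.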

Target Deconvolution Strategies

Once phenotypic hits are identified, determining their molecular targets (deconvolution) represents a critical challenge. Common approaches include:

- Chemical proteomics: Uses immobilized compound analogs to capture and identify interacting proteins from cell lysates [1]

- Functional genomics: Employs CRISPR-based knockout or knockdown screens to identify genes whose modulation affects compound sensitivity [1] [5]

- Transcriptional profiling: Compares gene expression signatures induced by phenotypic hits to reference compounds with known mechanisms [5]

- Resistance generation: Selects for and characterizes cell populations with acquired compound resistance to identify potential targets [1]
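The signature-comparison idea behind transcriptional profiling can be sketched as a similarity ranking. The example below is a minimal illustration, assuming each signature is a vector of log fold changes over the same ordered gene set and that Pearson correlation is the similarity measure; the reference names and values are hypothetical.

```python
import math


def pearson(a, b):
    """Pearson correlation between two equal-length signatures."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    return cov / math.sqrt(var_a * var_b)


def rank_references(hit_signature, reference_signatures):
    """Return (name, correlation) pairs, most similar reference first."""
    scored = [
        (name, pearson(hit_signature, signature))
        for name, signature in reference_signatures.items()
    ]
    return sorted(scored, key=lambda item: item[1], reverse=True)


# Hypothetical log fold changes over the same four genes.
references = {
    "reference_A": [2.0, -1.0, 0.5, 0.0],
    "reference_B": [-1.5, 1.0, 0.0, 0.5],
    "reference_C": [0.0, 0.2, -0.1, 0.1],
}
hit = [1.8, -0.9, 0.4, 0.1]

ranking = rank_references(hit, references)
print(ranking[0][0])
```

A high correlation with a well-annotated reference suggests a shared mechanism, but it is a hypothesis to be tested with orthogonal methods such as those listed above, not a confirmed target assignment.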

The "tool score" concept provides a systematic framework for prioritizing chemical probes based on integrated bioactivity data, helping to distinguish true target engagement from off-target effects [7].

Quantitative Performance and Industry Impact

Analysis of drug approval data demonstrates the significant impact of PDD on the pharmaceutical landscape. Between 1999 and 2017, phenotypic screening contributed to the development of 58 out of 171 total new drugs, compared to 44 approvals from target-based discovery and 29 from monoclonal antibody-based therapies [3]. This productivity is particularly notable given the greater resources typically allocated to target-based approaches during this period.

The superior performance of PDD in generating first-in-class medicines is especially pronounced in certain therapeutic areas:

- Neurological disorders: PDD has successfully addressed complex polygenic conditions where single-target approaches have repeatedly failed [1]

- Rare genetic diseases: Phenotypic approaches have delivered transformative therapies for conditions with well-defined genetic causes but poorly understood pathophysiology [1] [3]

- Oncology: Several groundbreaking cancer therapeutics, including immunomodulatory drugs and molecular glues, originated from phenotypic screens [1] [4]

Future Directions and Concluding Perspectives

PDD continues to evolve with advancements in disease modeling, screening technologies, and analytical methods. Key emerging trends include:

- Integration of multi-omics data: Combining phenotypic readouts with genomic, proteomic, and metabolomic profiling to enhance mechanistic understanding [4]

- Microphysiological systems: Development of organ-on-a-chip and other 3D culture technologies that better mimic human tissue architecture and function [5]

- Automated profiling platforms: Implementation of end-to-end automated systems for high-throughput phenotypic characterization [6]

- Open data initiatives: Collaborative consortia such as JUMP-CP that generate large-scale public datasets for method development and discovery [3]

Despite ongoing challenges in target deconvolution and assay standardization, PDD has firmly reestablished itself as an essential component of modern drug discovery. By embracing biological complexity and remaining agnostic to predefined targets, this approach continues to deliver transformative medicines for diseases with high unmet need while expanding the boundaries of druggable target space. As technological innovations enhance our ability to model human disease and interpret complex phenotypic data, PDD is poised to make increasingly significant contributions to the pharmaceutical development landscape.

The strategic approach to drug discovery has historically oscillated between two paradigms: phenotypic screening, which observes compound effects in whole biological systems without presupposing molecular targets, and target-based screening, which employs rational drug design against specific molecular mechanisms. For decades, the pharmaceutical industry predominantly favored target-based strategies, driven by advances in molecular biology and genomics. However, a seminal analysis published in Nature Reviews Drug Discovery fundamentally challenged this preference by demonstrating that between 1999 and 2008, phenotypic screening was responsible for the discovery of 28 first-in-class small molecule drugs, compared to just 17 from target-based methods [3]. This empirical evidence of phenotypic screening's superior performance in generating innovative therapies catalyzed a dramatic resurgence in its application. From 2012 to 2022, the use of phenotypic drug discovery (PDD) in large pharmaceutical companies grew from less than 10% to an estimated 25-40% of project portfolios [3]. This whitepaper analyzes the historical track record of first-in-class drug origins, examining the quantitative evidence, detailing successful experimental protocols, and exploring how modern technological innovations are cementing PDD's role in generating transformative medicines.

Quantitative Analysis of First-in-Class Drug Discovery Strategies

Systematic analyses of drug approval patterns reveal a consistent and compelling narrative: phenotypic screening disproportionately contributes to the discovery of first-in-class medicines with novel mechanisms of action. The following data synthesizes findings from multiple comprehensive reviews to illustrate this trend.

Table 1: Comparison of Drug Discovery Strategies and Their Outcomes (1999-2017)

| Discovery Strategy | Time Period | Number of Drugs | Share | Notable Advantages |
|---|---|---|---|---|
| Phenotypic Screening | 1999-2008 | 28 first-in-class | 62% (28 of the 45 first-in-class drugs from these two strategies) | Identifies novel targets and mechanisms; more likely first-in-class |
| Target-Based Screening | 1999-2008 | 17 first-in-class | 38% (17 of the 45 first-in-class drugs from these two strategies) | Enables rational drug design; higher precision for validated targets |
| Phenotypic Screening | 1999-2017 | 58 out of 171 total drugs | 34% of all new drugs | Expands "druggable" target space; reveals unexpected biology |
| Target-Based Screening | 1999-2017 | 44 out of 171 total drugs | 26% of all new drugs | More straightforward optimization; clearer regulatory path |
| Monoclonal Antibodies | 1999-2017 | 29 out of 171 total drugs | 17% of all new drugs | High specificity; favorable pharmacokinetics |
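The percentages in the table can be reproduced from the counts. The short check below assumes that the 1999-2008 shares use the 45 drugs from the two listed strategies as the denominator, which is the only denominator consistent with the reported 62% and 38%; the variable names are illustrative.

```python
def share(part, total):
    """Percentage of total, rounded to the nearest whole number."""
    return round(100 * part / total)


# 1999-2008: first-in-class small molecules from the two listed strategies.
first_in_class = {"phenotypic": 28, "target_based": 17}
fic_total = sum(first_in_class.values())
fic_share = {name: share(n, fic_total) for name, n in first_in_class.items()}

# 1999-2017: counts out of 171 new drugs.
all_new_drugs = {"phenotypic": 58, "target_based": 44, "monoclonal_antibodies": 29}
approval_share = {name: share(n, 171) for name, n in all_new_drugs.items()}

# Drugs not covered by the three listed categories.
unlisted = 171 - sum(all_new_drugs.values())

print(fic_share, approval_share, unlisted)
```

Note that the three 1999-2017 categories account for 131 of the 171 drugs, so the remaining 40 fall outside the strategies shown here.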

Table 2: Recent First-in-Class Drugs Discovered Through Phenotypic Screening (2012-2023)

| Drug Name | Year Approved | Indication | Novel Mechanism of Action | Molecular Target (if later identified) |
|---|---|---|---|---|
| Risdiplam (Evrysdi) | 2020 | Spinal Muscular Atrophy | SMN2 pre-mRNA splicing modifier | Stabilizes U1 snRNP complex binding to SMN2 pre-mRNA |
| Vamorolone (AGAMREE) | 2023 | Duchenne Muscular Dystrophy | Dissociative steroid | Mineralocorticoid receptor (modifies downstream signaling) |
| Lumacaftor/Ivacaftor (ORKAMBI) | 2015 | Cystic Fibrosis | CFTR corrector/potentiator | CFTR protein (enhances folding and membrane insertion) |
| Daclatasvir (Daklinza) | 2014 (EU), 2015 (USA) | Hepatitis C | NS5A replication complex inhibitor | HCV NS5A protein (non-enzymatic viral protein) |
| Perampanel (Fycompa) | 2012 | Epilepsy | AMPA receptor antagonist | AMPA glutamate receptor |

The data demonstrates that phenotypic screening consistently delivers a higher number of first-in-class medicines across different analysis periods. A particularly revealing statistic comes from Novartis, which reported a dramatic increase in the percentage of phenotypic screens conducted within its organization from 2011 to 2015, reflecting the industry's strategic pivot toward this approach [3]. The continued success of PDD is evident in the 2023 approval of vamorolone for Duchenne muscular dystrophy, which was identified through phenotypic profiling that elucidated its unique "dissociative" sub-activities, separating therapeutic efficacy from typical steroid safety concerns [3].

Detailed Experimental Protocols in Modern Phenotypic Screening

Successful phenotypic screening campaigns employ carefully designed experimental workflows that balance biological relevance with practical screening considerations. Below are detailed protocols for key methodologies that have yielded successful first-in-class therapies.

High-Content Screening (HCS) with Multiparametric Imaging

The discovery of perampanel, an AMPA receptor antagonist for epilepsy, required whole-system, multi-parametric modeling that exemplifies sophisticated phenotypic screening [3].

Protocol Workflow:

Model System Preparation: Utilize primary neuronal cultures or brain slice preparations that maintain native cellular architecture and network connectivity. For epilepsy research, hippocampal slices with intact tri-synaptic circuits are preferred.

Compound Library Handling: Prepare compound libraries as DMSO stocks (typically 10 mM) and dilute in physiological buffer to final test concentrations (1-10 µM), keeping the final DMSO concentration at or below 0.1% (a 10 mM stock diluted to 10 µM gives exactly 0.1% DMSO).

Multielectrode Array (MEA) Recording: Plate neuronal networks on MEAs containing 64-256 electrodes. Record spontaneous and evoked electrical activity at 37°C with 5% CO₂. Include positive controls (known anticonvulsants) and negative controls (vehicle alone).

Multiparametric Assessment: Simultaneously measure multiple parameters including:

- Neuronal firing rate (extracellular action potentials)

- Bursting behavior (synchronized network activity)

- Synaptic potentiation (using evoked responses)

- Cytotoxicity (using propidium iodide or similar marker)

Data Acquisition and Analysis: Record baseline activity for 30 minutes, apply test compounds, and monitor for 2-4 hours. Analyze data using specialized software (e.g., NeuroExplorer, Axion Biosystems Integrated Studio) to detect compounds that normalize hyperexcitable networks without complete suppression of physiological activity.
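The selection criterion in the final step (normalizing hyperexcitable networks without silencing them) can be expressed as a simple per-well rule. The sketch below is purely illustrative: the `WellActivity` fields, the category names, and every threshold are hypothetical placeholders that a real campaign would calibrate against its own positive and negative controls.

```python
from dataclasses import dataclass


@dataclass
class WellActivity:
    firing_rate_hz: float      # mean extracellular spike rate
    bursts_per_min: float      # synchronized network bursts
    dead_cell_fraction: float  # e.g. propidium iodide-positive fraction


def classify_well(baseline, treated,
                  min_burst_reduction=0.5,   # hypothetical threshold
                  min_firing_retained=0.3,   # hypothetical threshold
                  max_dead_fraction=0.1):    # hypothetical threshold
    """Label a well by comparing post-compound activity with its own baseline."""
    if baseline.firing_rate_hz <= 0 or baseline.bursts_per_min <= 0:
        raise ValueError("baseline must show firing and bursting activity")
    if treated.dead_cell_fraction > max_dead_fraction:
        return "cytotoxic"
    # Reject compounds that suppress physiological activity altogether.
    if treated.firing_rate_hz / baseline.firing_rate_hz < min_firing_retained:
        return "silenced"
    burst_reduction = 1 - treated.bursts_per_min / baseline.bursts_per_min
    return "hit" if burst_reduction >= min_burst_reduction else "inactive"


baseline = WellActivity(firing_rate_hz=8.0, bursts_per_min=12.0, dead_cell_fraction=0.02)
normalizing = WellActivity(firing_rate_hz=5.0, bursts_per_min=4.0, dead_cell_fraction=0.03)
suppressing = WellActivity(firing_rate_hz=0.5, bursts_per_min=0.0, dead_cell_fraction=0.02)

print(classify_well(baseline, normalizing), classify_well(baseline, suppressing))
```

Checking cytotoxicity and residual firing before burst reduction keeps compounds that merely kill or silence the network from being scored as hits.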

Cell-Based Phenotypic Screening for Genetic Disorders

The discovery of risdiplam for spinal muscular atrophy (SMA) exemplifies target-agnostic screening in disease-relevant cellular models [1].

Protocol Workflow:

Development of Reporter Cell Line: Generate patient-derived fibroblasts or induced pluripotent stem cells (iPSCs) containing an SMN2 minigene reporter construct where exon 7 inclusion produces luciferase or fluorescence signal.

Assay Optimization and Validation: Optimize cell density (e.g., 5,000 cells/well in 384-well plates), incubation times, and reporter signal detection. Validate assay quality using Z'-factor >0.5 and signal-to-background ratio >3:1.
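The two quality metrics named in this step can be computed directly from control wells. The sketch below uses the standard Z'-factor definition, 1 - 3(σ_pos + σ_neg) / |μ_pos - μ_neg|, with sample standard deviations; the luminescence readings are hypothetical.

```python
from statistics import mean, stdev


def z_prime(positive, negative):
    """Z'-factor: separation of control distributions relative to their spread."""
    window = abs(mean(positive) - mean(negative))
    return 1 - 3 * (stdev(positive) + stdev(negative)) / window


def signal_to_background(positive, negative):
    """Ratio of mean positive-control signal to mean negative-control signal."""
    return mean(positive) / mean(negative)


# Hypothetical reporter readings (relative light units) from control wells.
positive_controls = [980.0, 1010.0, 1000.0, 1020.0, 990.0]
negative_controls = [205.0, 195.0, 200.0, 190.0, 210.0]

z = z_prime(positive_controls, negative_controls)
sb = signal_to_background(positive_controls, negative_controls)
print(round(z, 3), sb)
```

With these example values the assay clears both acceptance criteria stated above (Z' above 0.5 and signal-to-background above 3).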

Primary Screening: Screen compound libraries (typically 100,000 - 1,000,000 compounds) at a single concentration (e.g., 10 µM) in duplicate. Incubate compounds with reporter cells for 48 hours.

Hit Confirmation: Retest active compounds in dose-response (typically an 8-point, 1:3 serial dilution starting at 30 µM, which bottoms out near 14 nM; covering the range down to 1 nM takes about 11 points) to confirm activity and calculate EC₅₀ values.
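The concentration range covered by a serial dilution follows from simple arithmetic, which is worth checking when planning the dose-response plate. The helper names below are illustrative.

```python
def dilution_series(top_um, fold, points):
    """Concentrations (µM) for a serial dilution, highest first."""
    return [top_um / fold**i for i in range(points)]


def points_to_reach(top_um, fold, floor_um):
    """Number of points needed before the series reaches floor_um or below."""
    points, concentration = 1, top_um
    while concentration > floor_um:
        concentration /= fold
        points += 1
    return points


series = dilution_series(top_um=30.0, fold=3, points=8)
lowest_nm = series[-1] * 1000  # convert µM to nM

print(round(lowest_nm, 1), points_to_reach(30.0, 3, 0.001))
```

An 8-point, 1:3 series from 30 µM ends at roughly 14 nM, so reaching 1 nM from the same top concentration requires 11 points at this dilution factor.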

Secondary Functional Assays: Validate hits in patient-derived motor neuron cultures measuring full-length SMN protein levels by immunofluorescence or Western blot, and SMN protein function through gem formation (subnuclear structures where SMN localizes).

Table 3: Research Reagent Solutions for Phenotypic Screening

| Reagent/Category | Specific Examples | Function in Experimental Protocol |
|---|---|---|
| Cell Models | Patient-derived iPSCs, Primary neuronal cultures, Reporter cell lines (SMN2 minigene) | Provide disease-relevant biological context with preserved pathophysiology for compound screening |
| Detection Systems | High-content imagers, Multielectrode arrays (MEAs), Luciferase/Fluorescence reporters | Enable multiparametric readouts of compound effects on cellular phenotypes and functions |
| Compound Libraries | Diverse small molecule collections (100,000 - 2,000,000 compounds), FDA-approved drug libraries | Provide chemical starting points for identifying active molecules against disease phenotypes |
| Analysis Software | Image analysis algorithms (CellProfiler), Network activity analyzers (NeuroExplorer), Machine learning platforms | Extract meaningful biological signals from complex datasets and identify subtle phenotypic changes |

Mechanism of Action: From Phenotype to Molecular Target

A critical phase in phenotypic drug discovery is target deconvolution—identifying the specific molecular mechanism responsible for the observed phenotypic effect. Successful elucidation of mechanisms of action (MoA) has repeatedly revealed novel biological pathways and expanded the "druggable" target space.

Case Study: Daclatasvir and HCV NS5A Protein

Daclatasvir, discovered through an HCV replicon phenotypic screen, targets NS5A, a non-structural viral protein with no known enzymatic activity that plays a key role in HCV replication [3] [1]. At the time of discovery, NS5A was an elusive target that was unlikely to have been pursued through traditional target-based approaches. The mechanism was elucidated through resistance mutation mapping and biophysical binding studies, which showed that daclatasvir disrupts the formation of HCV replication complexes by binding to NS5A dimer interfaces [3].

Case Study: Risdiplam and SMN2 Splicing Modulation

Risdiplam modulates SMN2 pre-mRNA splicing by engaging two specific sites at the SMN2 exon 7 and stabilizing the U1 snRNP complex, an unprecedented drug target and mechanism of action [1]. This mechanism was identified through detailed RNA-protein binding studies and structural biology approaches, revealing how the compound promotes inclusion of exon 7 to produce functional SMN protein.

Current Innovations and Future Directions

The next generation of phenotypic screening integrates advanced computational technologies that address historical limitations while amplifying strengths. Artificial intelligence and machine learning now enable automated analysis of complex phenotypic data, extracting subtle morphological features that might escape human detection [3]. Consortia such as JUMP-CP are fostering collaboration by sharing large public datasets and analysis methods, with supporting tools like the JUMP-CP Data Explorer enhancing accessibility [3].

Modern AI-driven drug discovery platforms exemplify this evolution. Companies like Recursion utilize massive-scale phenotypic profiling with their Recursion OS, which integrates approximately 65 petabytes of proprietary data and employs models like Phenom-2 (a 1.9 billion-parameter model trained on 8 billion microscopy images) to map biological relationships [8]. Similarly, Insilico Medicine's Pharma.AI platform leverages multimodal data fusion, combining textual information from published literature and patents with omics-level insights and chemical libraries to create comprehensive biological representations [8].

These technological advances directly address the primary challenges of phenotypic screening—particularly target identification and hit validation—while preserving its fundamental advantage: the ability to identify novel therapeutic mechanisms without predetermined target hypotheses. As these platforms mature, they are poised to systematically accelerate the discovery of first-in-class medicines for increasingly complex diseases.

The historical track record of first-in-class drug origins presents a compelling case for phenotypic screening as a primary engine of pharmaceutical innovation. Quantitative analyses spanning two decades consistently demonstrate that phenotypic approaches disproportionately yield first-in-class medicines with novel mechanisms of action, from the groundbreaking HCV therapy daclatasvir to the transformative SMA treatment risdiplam. The experimental methodologies that enabled these successes—ranging from high-content cellular screening to whole-system multiparametric modeling—provide robust templates for future campaigns. While phenotypic screening presents distinct challenges in target deconvolution and assay design, modern innovations in AI, machine learning, and data science are systematically addressing these limitations. The continued strategic integration of phenotypic screening within drug discovery pipelines, particularly when applied to areas of unmet medical need with complex biology, promises to sustain its legacy as a vital source of transformative medicines.

Phenotypic drug discovery (PDD) represents a powerful strategy for identifying first-in-class therapeutics by focusing on observable changes in complex biological systems rather than predefined molecular targets. This approach enables the unbiased discovery of novel biological targets and mechanisms of action (MoA) that would remain inaccessible through target-based methods. Historically, PDD has demonstrated remarkable success in identifying transformative medicines, with a 2011 review revealing that between 1999 and 2008, phenotypic screening strategies yielded 28 first-in-class small molecule drugs compared to only 17 from target-based approaches [2]. This significant advantage stems from the ability to identify compounds based on their functional effects in disease-relevant models without relying on potentially incomplete or incorrect assumptions about underlying disease biology.

The fundamental strength of phenotypic screening lies in its capacity to capture the complexity of cellular signaling networks and adaptive resistance mechanisms seen in clinical settings [4]. By observing compound effects in systems that more closely mimic human disease pathophysiology, researchers can uncover unexpected therapeutic opportunities and novel biology. This approach is particularly valuable for diseases with poorly characterized molecular pathways or those involving complex polygenic interactions, where single-target approaches often fail due to compensatory mechanisms and network robustness [4]. The unbiased nature of phenotypic discovery allows researchers to identify compounds that modify disease states through multiple potential mechanisms simultaneously, potentially leading to more effective therapeutic strategies with reduced susceptibility to resistance development.

Quantitative Evidence: Phenotypic Screening Outcomes

The superior performance of phenotypic screening in generating first-in-class medicines is well-documented in both historical and contemporary analyses. The following table summarizes key quantitative evidence demonstrating the advantages of this approach for novel target and mechanism identification:

Table 1: Comparative Performance of Phenotypic vs. Target-Based Drug Discovery

| Metric | Phenotypic Screening | Target-Based Approach | Data Source |

|---|---|---|---|

| First-in-class small molecule drugs (1999-2008) | 28 | 17 | 2011 Industry Review [2] |

| Novel target identification capability | High - Identifies previously unknown targets | Limited to previously validated targets | [4] |

| Translation to clinical success | Enhanced through disease-relevant models | Higher attrition due to flawed target hypotheses | [4] [2] |

| Biological complexity capture | High - Accounts for system-level interactions | Low - Focused on single targets | [4] |

| Resistance mitigation potential | Higher through multi-target effects | Lower due to single-target focus | [4] |

The evidence indicates that phenotypic strategies have outperformed target-based approaches in generating first-in-class therapeutics. This advantage is most pronounced for diseases with complex, multifactorial pathophysiology or those lacking well-validated molecular targets. The improved clinical translation reported for candidates identified through phenotypic screening further underscores the value of using disease-relevant systems early in the discovery process [2]. By focusing on functional outcomes in biologically complex systems, researchers can sidestep some limitations of reductionist target-based approaches and identify compounds with a higher probability of clinical success.

Experimental Framework for Unbiased Phenotypic Discovery

Implementing a robust phenotypic screening platform requires careful consideration of experimental design, model systems, and analytical approaches. The following protocols outline key methodological considerations for establishing an effective phenotypic discovery workflow:

Protocol 1: Development of Disease-Relevant Cellular Models

Purpose: To establish biologically relevant screening systems that faithfully recapitulate key aspects of human disease pathophysiology.

Methodology:

- Primary Cell Isolation: Source primary human cells from patients with the target disease when possible. For example, in cystic fibrosis research, use bronchial epithelial cells from CF patients to screen for compounds that restore airway surface liquid layer [2].

- Stem Cell Differentiation: Employ induced pluripotent stem cell (iPSC) technology to generate disease-relevant cell types through directed differentiation protocols.

- Complex Co-culture Systems: Establish multicellular systems incorporating stromal, immune, and parenchymal cells to better mimic tissue microenvironments.

- 3D Culture Implementation: Utilize organoid or spheroid models to capture spatial organization and cell-cell interactions absent in monolayer cultures.

- Disease-Relevant Stimuli: Apply pathophysiologically appropriate insults or stimuli to create disease-like states (e.g., inflammatory cytokines, metabolic stressors, mechanical stress).

Validation Parameters:

- Transcriptomic profiling against human disease tissue signatures

- Functional assessment of disease-relevant pathways

- Pharmacological response to known reference compounds

- Genetic validation using CRISPR-based approaches

Protocol 2: High-Content Phenotypic Screening

Purpose: To quantitatively capture multidimensional phenotypic responses to compound treatment using automated imaging and analysis.

Methodology:

- Multiparameter Assay Design: Develop assays measuring multiple cellular features simultaneously (morphology, proliferation, death, differentiation, organelle function).

- High-Content Imaging: Implement automated microscopy platforms to capture high-resolution cellular images across multiple channels (e.g., nuclei, cytoskeleton, specific organelles).

- Image Analysis Pipeline: Apply machine learning-based feature extraction to quantify hundreds of morphological and intensity-based parameters per cell.

- Phenotypic Profiling: Cluster compounds based on their multidimensional phenotypic signatures to identify novel mechanisms of action.

- Concentration Response: Screen compounds across multiple concentrations to assess potency and therapeutic index.
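
The Phenotypic Profiling step above can be sketched as a nearest-reference lookup: an uncharacterized hit is compared with reference compounds of known mechanism, and the most similar profile suggests a mechanistic hypothesis. This is a minimal illustration only. Pearson correlation as the similarity measure, the reference class names, and all feature values are assumptions made for the example, not details from the protocol.

```python
import math

def pearson(a, b):
    """Pearson correlation between two equal-length feature vectors."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    return cov / math.sqrt(var_a * var_b)

def nearest_reference(profile, references):
    """Return (name, correlation) for the reference profile most similar to `profile`."""
    scored = ((name, pearson(profile, ref)) for name, ref in references.items())
    return max(scored, key=lambda item: item[1])

# Hypothetical normalized feature profiles; names and values are illustrative only.
references = {
    "tubulin_binder": [2.1, -0.4, 1.8, 0.2, -1.5],
    "proteasome_inhibitor": [-1.2, 2.3, -0.7, 1.9, 0.4],
}
unknown_hit = [1.9, -0.1, 1.5, 0.4, -1.2]

best_name, best_r = nearest_reference(unknown_hit, references)
print(f"closest reference: {best_name} (r = {best_r:.2f})")
```

A high correlation with a reference class is a hypothesis to test in deconvolution experiments, not proof of a shared target.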

Key Reagents:

- Multiplexed fluorescent dyes for cellular compartments and functions

- Validated antibodies for key signaling nodes and differentiation markers

- Disease-relevant reporter constructs (GFP-tagged proteins, promoter-reporter systems)

- Reference compounds with known mechanisms of action

Protocol 3: Target Deconvolution Strategies

Purpose: To identify the molecular targets and mechanisms underlying observed phenotypic effects.

Methodology:

- Chemical Proteomics: Use compound-conjugated beads to pull down interacting proteins from cell lysates, followed by mass spectrometry identification.

- Genome-Wide CRISPR Screening: Perform positive and negative selection screens to identify genes that modify compound sensitivity or resistance.

- Expression Cloning: Transfect cells with cDNA libraries and screen for clones that confer compound resistance or hypersensitivity.

- Cellular Thermal Shift Assay (CETSA): Monitor protein thermal stability changes upon compound binding to identify direct targets.

- Transcriptomic/Proteomic Profiling: Assess global gene expression or protein abundance changes following compound treatment to infer pathway engagement.
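
To make the genome-wide CRISPR screening step more concrete, the sketch below scores genes by the median log2 fold-change of their guide RNA counts between compound-treated and control populations. It is a deliberately simplified illustration: the function name, guide and gene identifiers, counts, and pseudocount are all hypothetical, and a real screen would use replicate-aware statistics rather than a bare fold-change.

```python
import math
from collections import defaultdict
from statistics import median

def gene_log2fc(control, treated, guide_to_gene, pseudocount=1.0):
    """Median per-gene log2 fold-change of depth-normalized guide counts."""
    ctrl_total, trt_total = sum(control.values()), sum(treated.values())
    per_gene = defaultdict(list)
    for guide, gene in guide_to_gene.items():
        # Normalize to reads per million so sequencing depth does not drive the ratio.
        ctrl_rpm = control[guide] / ctrl_total * 1e6
        trt_rpm = treated[guide] / trt_total * 1e6
        per_gene[gene].append(
            math.log2((trt_rpm + pseudocount) / (ctrl_rpm + pseudocount))
        )
    return {gene: median(values) for gene, values in per_gene.items()}

# Hypothetical guide counts (illustrative only).
guide_to_gene = {"g1": "GENE_A", "g2": "GENE_A", "g3": "GENE_B", "g4": "GENE_B"}
control = {"g1": 500, "g2": 480, "g3": 510, "g4": 510}
treated = {"g1": 1500, "g2": 1400, "g3": 50, "g4": 50}

scores = gene_log2fc(control, treated, guide_to_gene)
for gene, score in sorted(scores.items(), key=lambda kv: kv[1]):
    print(f"{gene}: {score:+.2f}")
```

In this toy data, guides against GENE_A are enriched under treatment (knockout confers resistance, positive selection) while guides against GENE_B are depleted (knockout sensitizes, negative selection).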

Validation Approaches:

- Genetic knockdown/knockout of putative targets

- Orthogonal binding assays (SPR, ITC)

- Resistance mutation generation and mapping

- Target engagement assays in cellular contexts

Research Reagent Solutions for Phenotypic Discovery

Successful implementation of phenotypic screening campaigns requires carefully selected reagents and tools. The following table outlines essential research solutions and their applications in unbiased discovery:

Table 2: Essential Research Reagent Solutions for Phenotypic Screening

| Reagent Category | Specific Examples | Function in Phenotypic Discovery |

|---|---|---|

| Primary Cell Models | Patient-derived primary cells, iPSC-derived lineages | Provide disease-relevant biological context with preserved pathophysiology [2] |

| Advanced Culture Systems | 3D organoids, spheroids, microfluidic chips | Recapitulate tissue-level complexity and microenvironmental cues |

| Biosensors | GFP-tagged proteins, FRET reporters, calcium indicators | Enable real-time monitoring of signaling pathway activity and cellular responses |

| High-Content Imaging Reagents | Multiplexed fluorescent dyes, validated antibodies | Facilitate multiparameter phenotypic characterization at single-cell resolution |

| CRISPR Screening Libraries | Genome-wide knockout, activation, inhibition libraries | Enable systematic genetic screening for target identification and validation |

| Proteomic Tools | Activity-based probes, biotinylated compound analogs | Support target deconvolution through chemical proteomics approaches |

| Multi-omics Platforms | Single-cell RNA sequencing, spatial transcriptomics, phosphoproteomics | Provide comprehensive molecular profiling for mechanism elucidation |

The selection of appropriate research reagents fundamentally influences the success and interpretability of phenotypic screening campaigns. Prioritizing physiological relevance, analytical robustness, and compatibility with downstream deconvolution approaches ensures maximum value from screening investments. Furthermore, establishing standardized reagent validation procedures minimizes technical variability and enhances reproducibility across experiments.

Visualization of Phenotypic Screening Workflows

Three diagrams illustrate the key workflows and pathways relevant to unbiased phenotypic discovery:

- Phenotypic Drug Discovery Workflow

- Target Deconvolution Pathways

- Phenotypic Screening to Clinical Translation

Phenotypic drug discovery represents a powerful paradigm for identifying first-in-class therapeutics through its capacity for unbiased exploration of biological space. By focusing on functional outcomes in disease-relevant systems rather than predetermined molecular targets, this approach enables the discovery of novel mechanisms and targets that would remain inaccessible through reductionist strategies. The documented superiority of phenotypic screening in generating innovative medicines, combined with advances in disease modeling, high-content screening, and target deconvolution technologies, positions this approach as an essential component of modern drug discovery. As biological complexity increasingly challenges conventional target-based methods, the unbiased nature of phenotypic discovery offers a path toward addressing diseases with unmet medical need through novel therapeutic mechanisms.

Abstract

Phenotypic Drug Discovery (PDD), an approach that identifies compounds based on their effects on disease-relevant models without prior knowledge of a specific molecular target, is experiencing a major resurgence. This renewed interest is fueled by its proven track record of delivering first-in-class medicines, particularly for complex diseases where the underlying biology is incompletely understood. This whitepaper examines the key factors driving the return to phenotypic screening, including the limitations of purely target-based approaches, and the convergence of technological advancements in high-content screening, functional genomics, and artificial intelligence. We detail the experimental protocols enabling this renaissance and present a toolkit of essential reagents, providing researchers and drug development professionals with a technical guide to modern PDD.

Drug discovery was originally built upon phenotypic observations, in which compounds were selected for their effects on whole cells, tissues, or organisms. With the advent of molecular biology and genomics, the industry largely pivoted to a target-based paradigm, which focuses on modulating a predefined, hypothesized molecular target. This target-based approach promised precision and rational design. However, analysis of drug discovery outcomes revealed a critical insight: between 1999 and 2008, a majority of first-in-class new molecular entities were discovered through phenotypic screening, underscoring a significant advantage of this method for innovative therapy development [1].

This finding, among others, has catalyzed a renaissance in PDD. Modern PDD is not a return to old methods but an evolution, combining the original philosophy with sophisticated tools and strategies. It is now an accepted and integrated discovery modality in both academia and the pharmaceutical industry, valued for its ability to address the complexity of polygenic diseases and to reveal unprecedented biological mechanisms and targets [1] [5]. This whitepaper explores the specific factors and data behind this strategic shift.

Key Drivers of the Phenotypic Screening Resurgence

The renewed focus on phenotypic screening is not due to a single factor but results from a convergence of scientific, technological, and strategic drivers. These fall into four primary areas, each discussed below.

Demonstrated Success in Delivering First-in-Class Therapies

The most compelling driver for PDD's resurgence is its empirical success. Phenotypic screens have consistently identified pioneering drugs with novel mechanisms of action (MoAs) that would have been difficult to predict or design for using a target-based rationale.

- Expansion of Druggable Target Space: PDD has enabled the pharmacologic targeting of processes and proteins previously considered "undruggable." Notable examples include:

  - CFTR Correctors (e.g., Tezacaftor): Discovered in a target-agnostic screen for compounds that improve the folding and membrane insertion of the mutant CFTR protein in cystic fibrosis [1].

  - Splicing Modulators (e.g., Risdiplam): Identified through phenotypic screening for small molecules that correct SMN2 pre-mRNA splicing, leading to an oral therapy for spinal muscular atrophy [1].

  - Molecular Glues (e.g., Lenalidomide): The teratogenic and therapeutic effects of thalidomide and its analogs were discovered phenotypically. Their MoA, rerouting the Cereblon E3 ubiquitin ligase to degrade specific transcription factors, was elucidated years post-approval, creating an entirely new modality in drug discovery [4] [1].

- Polypharmacology: Phenotypic screening naturally identifies compounds whose therapeutic effect may rely on modulating multiple targets simultaneously, an approach that is advantageous for complex diseases like cancer and central nervous system disorders [1].

Limitations of Reductionist Target-Based Approaches

While target-based discovery has been successful, its limitations have become increasingly apparent, creating a strategic need for complementary approaches.

- High Attrition from Flawed Hypotheses: Target-based approaches often suffer substantial attrition from lack of efficacy, which can stem from an incomplete or incorrect understanding of a target's role in human disease. Such flawed hypotheses increase false positives early in discovery and reduce eventual approval rates [4].

- Inability to Capture Disease Complexity: Single-target strategies frequently fail to address the complexity of cellular signaling networks, redundancy, and adaptive resistance mechanisms seen in clinical settings. PDD, by contrast, tests compounds in systems that preserve some of this native complexity, potentially leading to more clinically relevant hits [4].

Technological Advancements in Screening Platforms

Modern tools have overcome many historical bottlenecks of PDD, making it a more scalable, informative, and reliable strategy.

- High-Content Screening (HCS): The HCS market, a cornerstone of modern PDD, is experiencing robust growth, projected to rise from USD 1.52 billion in 2024 to USD 3.12 billion by 2034 [9]. HCS combines automated microscopy, multi-parameter image analysis, and informatics to quantitatively analyze complex cellular events and phenotypic changes. The adoption of 3D cell cultures and organoids within HCS provides more physiologically relevant data compared to traditional 2D cultures [9] [10].

- Functional Genomics: Technologies like CRISPR-Cas9 enable genome-wide screens to identify genes essential for specific disease phenotypes, revealing new therapeutic targets and synthetic lethal interactions [11].

- Compressed Screening Methods: Innovative experimental designs now allow for the pooling of perturbations (e.g., multiple small molecules), followed by computational deconvolution. This dramatically reduces the sample size, cost, and labor required for high-content phenotypic screens, unlocking their use in complex, patient-derived models [12].

The Integrative Power of AI and Multi-Omics

The challenges of data analysis and target identification (deconvolution) in PDD are being met with powerful new computational and analytical approaches.

- Artificial Intelligence and Machine Learning: AI/ML models are now central to parsing the high-dimensional, complex datasets generated by HCS and other phenotypic assays. They streamline image analysis, enable automated phenotypic classification, and help identify predictive patterns that link chemical structure to biological effect and MoA [4] [9] [13].

- Multi-Omics Integration: The integration of transcriptomics, proteomics, and metabolomics data with phenotypic readouts provides a systems-level view of biological mechanisms. This multi-omics layer adds crucial biological context, helping researchers connect observed phenotypic outcomes to discrete molecular pathways and thereby facilitating target identification and hypothesis generation [4] [13].

Table 1: Quantitative Growth of the High-Content Screening Market, a Key Enabler of PDD [9] [10]

| Metric | 2024 Value | 2025 Value | 2034 Projection | CAGR (2025-2034) |

|---|---|---|---|---|

| Global Market Size | USD 1.52 B | USD 1.63 B | USD 3.12 B | 7.54% |

| Largest Regional Market (2024) | North America (39% share) | | | |

| Fastest-Growing Application | Phenotypic Screening Segment | | | |
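
As a quick consistency check on the market figures in the table, the compound annual growth rate implied by the 2025 and 2034 values can be recomputed directly. This is plain arithmetic on the numbers quoted above, and the function name is illustrative. The implied rate comes out at roughly 7.5%, in line with the reported 7.54% once rounding of the endpoint values is taken into account.

```python
def cagr(start_value, end_value, years):
    """Compound annual growth rate between two values separated by `years` years."""
    return (end_value / start_value) ** (1 / years) - 1

# Values from the table: USD 1.63 B in 2025, USD 3.12 B projected for 2034.
implied = cagr(1.63, 3.12, 2034 - 2025)
print(f"implied CAGR 2025-2034: {implied:.2%}")
```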

Table 2: Notable First-in-Class Drugs Discovered Through Phenotypic Screening [4] [1]

| Drug | Indication | Key Phenotypic Readout | Novel Mechanism of Action (MoA) Elucidated Post-Discovery |

|---|---|---|---|

| Risdiplam | Spinal Muscular Atrophy | Increased full-length SMN protein | Modulates SMN2 pre-mRNA splicing |

| Ivacaftor, Tezacaftor | Cystic Fibrosis | Improved CFTR channel function | CFTR potentiator (ivacaftor) and corrector (tezacaftor) |

| Lenalidomide | Multiple Myeloma | Downregulation of TNF-α | Cereblon-dependent degradation of IKZF1/3 |

| Daclatasvir | Hepatitis C | Inhibition of HCV replication | Binds and inhibits the non-enzymatic NS5A protein |

Detailed Experimental Protocols in Modern Phenotypic Screening

To illustrate the practical application of these drivers, we detail two key protocols: a standard High-Content Screening workflow and an innovative compressed screening method.

Protocol 1: High-Content Phenotypic Screening Using Cell Painting

Objective: To identify small molecules that induce morphologic changes in a disease-relevant cell model, enabling unsupervised clustering of compounds by MoA.

Materials: See "The Scientist's Toolkit" in Section 4.

Methodology:

- Cell Seeding and Treatment: Seed U2OS or other relevant cell lines into 384-well microplates. After cell adherence, treat with a library of small molecules (e.g., an FDA-approved drug repurposing library) at a predetermined concentration (e.g., 1 µM) and incubate for a set period (e.g., 24 hours) [12].

- Staining (Cell Painting Assay): Fix cells and stain with a multiplexed fluorescent dye panel:

  - Nuclei: Hoechst 33342

  - Endoplasmic Reticulum: Concanavalin A, AlexaFluor 488 conjugate

  - Mitochondria: MitoTracker Deep Red

  - F-Actin: Phalloidin, AlexaFluor 568 conjugate

  - Golgi Apparatus & Plasma Membrane: Wheat Germ Agglutinin, AlexaFluor 594 conjugate

  - Nucleoli & Cytoplasmic RNA: SYTO 14 [12]

- Automated Imaging: Image plates using a high-throughput automated microscope, capturing five fluorescence channels that together cover the six probes (in Cell Painting, two of the dyes are typically imaged in a shared channel).

- Image Analysis and Feature Extraction:

  - Perform illumination correction and quality control.

  - Use segmentation algorithms to identify individual cells and subcellular compartments.

  - Extract morphological features (e.g., area, intensity, texture, shape) for each compartment. A typical analysis can yield over 800 informative morphological features per cell [12].

- Data Analysis and Hit Identification:

  - Normalize data per plate to correct for systematic bias.

  - Compute the Mahalanobis Distance (MD), a multivariate measure of effect size, between the vector of median feature values for each compound and the control (DMSO) wells. A larger MD indicates a stronger phenotypic effect [12].

  - Use unsupervised machine learning (e.g., clustering after dimensionality reduction) to group compounds inducing similar morphological profiles, which often correspond to shared MoAs.
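
The Mahalanobis Distance step can be sketched as follows, with MD = sqrt((x - mu)^T S^-1 (x - mu)), where mu and S are the mean and covariance of the DMSO control profiles and x is a compound's median feature vector. This is a dependency-free illustration rather than the pipeline used in [12]. The helper names, the toy two-feature data, and the small ridge term added to the covariance diagonal are assumptions. With hundreds of features, the covariance is only invertible if there are more control wells than features or if dimensionality is reduced first.

```python
import math

def mean_vector(rows):
    """Column-wise mean of a list of equal-length feature vectors."""
    n = len(rows)
    return [sum(col) / n for col in zip(*rows)]

def covariance(rows, ridge=1e-6):
    """Sample covariance matrix; `ridge` on the diagonal is a stabilizing assumption."""
    n, mu = len(rows), mean_vector(rows)
    d = len(mu)
    cov = [[sum((r[i] - mu[i]) * (r[j] - mu[j]) for r in rows) / (n - 1)
            for j in range(d)] for i in range(d)]
    for i in range(d):
        cov[i][i] += ridge
    return cov

def solve(a, b):
    """Solve a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (m[i][n] - sum(m[i][j] * x[j] for j in range(i + 1, n))) / m[i][i]
    return x

def mahalanobis(profile, control_rows):
    """Distance of one compound profile from the distribution of control wells."""
    mu = mean_vector(control_rows)
    diff = [p - m for p, m in zip(profile, mu)]
    weighted = solve(covariance(control_rows), diff)  # S^-1 (x - mu)
    return math.sqrt(sum(d * w for d, w in zip(diff, weighted)))

# Hypothetical DMSO well profiles (two features) and two compound profiles.
dmso = [[1.0, 2.0], [1.2, 1.9], [0.8, 2.1], [1.1, 2.2], [0.9, 1.8]]
md_active = mahalanobis([1.5, 2.0], dmso)
md_inactive = mahalanobis([1.05, 2.0], dmso)
print(f"active: {md_active:.2f}, inactive: {md_inactive:.2f}")
```

Compounds can then be ranked by MD, with a hit threshold chosen from the distribution of MD values among the control wells themselves.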

Protocol 2: Compressed Phenotypic Screening for Scaling Complex Models

Objective: To map transcriptional responses to a library of protein ligands in a patient-derived pancreatic cancer organoid model with significantly reduced sample number and cost.

Materials: Patient-derived pancreatic ductal adenocarcinoma organoids, library of recombinant TME protein ligands, scRNA-seq reagents.

Methodology:

- Pooling Design: Combine N perturbations (e.g., 80 different cytokines) into unique pools of size P (e.g., 10 ligands per pool). The experimental design ensures each individual ligand appears in R (e.g., 5) distinct pools. This creates a P-fold compression, drastically reducing the number of samples needed for scRNA-seq [12].

- Organoid Treatment and scRNA-seq: Treat organoids with each pool of ligands. After incubation, harvest cells and perform single-cell RNA sequencing (scRNA-seq) on the entire pool-treated sample.

- Computational Deconvolution: Use a regularized linear regression framework to deconvolve the individual effect of each ligand from the complex, pooled scRNA-seq data. The model infers the specific transcriptional signature of each ligand within the pooled sample [12].

- Hit Validation and Biological Insight:

  - Prioritize top "hits" (ligands causing significant transcriptional shifts) for individual validation.

  - Correlate deconvoluted ligand-induced signatures with clinical outcome data from separate patient cohorts to assess biological and translational relevance [12].
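
The pooling design in the first step can be sketched with a simple randomized construction: in each of R rounds the ligand list is shuffled and cut into pools of size P, so every ligand lands in exactly one pool per round and therefore in R pools overall. This construction, the function name, and the placeholder ligand names are assumptions for illustration, and the design procedure in [12] may differ. The sketch also assumes N is divisible by P.

```python
import random

def design_pools(perturbations, pool_size, replicates, seed=0):
    """Assign each perturbation to `replicates` pools of `pool_size` members each."""
    if len(perturbations) % pool_size:
        raise ValueError("this sketch assumes N is divisible by P")
    rng = random.Random(seed)  # fixed seed so the design is reproducible
    pools = []
    for _ in range(replicates):
        shuffled = list(perturbations)
        rng.shuffle(shuffled)
        # One round: every perturbation appears in exactly one pool.
        pools.extend(
            shuffled[i:i + pool_size] for i in range(0, len(shuffled), pool_size)
        )
    return pools

# Example sizes from the protocol: N = 80 ligands, P = 10 per pool, R = 5 pools per ligand.
ligands = [f"ligand_{i:02d}" for i in range(80)]
pools = design_pools(ligands, pool_size=10, replicates=5)
print(len(pools), "pooled samples")
```

With these example sizes the design yields 40 pooled samples, compared with the 400 samples needed to test each of the 80 ligands individually five times.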

Figure: Workflow of the compressed screening approach (pooling design, organoid treatment and scRNA-seq, computational deconvolution, hit validation).

The Scientist's Toolkit: Essential Research Reagent Solutions

Successful implementation of modern phenotypic screens relies on a suite of essential reagents and tools. The following table details key components and their functions.

Table 3: Essential Reagents and Tools for a Phenotypic Screening Lab

| Category | Specific Item / Technology | Critical Function in PDD |

|---|---|---|

| Cell Models | Primary cells, patient-derived organoids, 3D spheroids | Provide physiologically relevant and clinically predictive disease models. |

| Perturbation Libraries | Bioactive small-molecule collections, CRISPR libraries, siRNAs | Introduce genetic or chemical perturbations to probe biological systems. |

| Multiplexed Stains | Cell Painting dye panel (Hoechst, MitoTracker, etc.) [12] | Enable comprehensive, high-content profiling of cell morphology. |

| Imaging Systems | High-content imagers (e.g., from Thermo Fisher, Yokogawa) [9] [10] | Capture fluorescent cellular images in an automated, high-throughput manner. |

| Analysis Software | AI/ML-based image analysis tools (e.g., PhenAID [13]) | Segment images, extract features, and classify complex phenotypes. |

| Omics Technologies | scRNA-seq, proteomics, metabolomics platforms | Provide deep molecular context for phenotypic observations and aid target deconvolution. |

The resurgence of phenotypic screening represents a strategic maturation of the drug discovery field. It is driven by the undeniable success of PDD in delivering pioneering therapies, a clear-eyed assessment of the limitations of a purely reductionist approach, and, most critically, the development of powerful technologies that overcome PDD's traditional challenges. The integration of high-content biology with multi-omics data and artificial intelligence has created a new, robust operating system for drug discovery. For researchers aiming to identify first-in-class drugs for complex and poorly understood diseases, the modern, integrated phenotypic approach offers a powerful and essential pathway from biological complexity to clinical breakthrough.

Modern Phenotypic Screening Workflows: From High-Content Imaging to AI Integration

The pursuit of first-in-class drugs represents one of the most challenging frontiers in pharmaceutical research. Traditional target-based approaches often struggle to deliver novel therapeutics with unprecedented mechanisms of action, as they are constrained by pre-existing knowledge of biological targets. In contrast, phenotypic screening offers a powerful alternative by assessing compound effects in complex biological systems without requiring prior understanding of specific molecular targets, thereby enabling serendipitous discovery of novel biology and therapeutic mechanisms [12]. The success of this approach, however, is critically dependent on the biological relevance of the experimental models used.

Recent regulatory shifts are further accelerating the adoption of human-relevant models. The United States Food and Drug Administration (FDA) Modernization Act 3.0 has formally positioned human-relevant alternative models—including organ-on-chip systems, computational modeling, and AI-driven in silico approaches—as viable substitutes for traditional animal testing [14]. This paradigm change, combined with initiatives like the Society for Immunotherapy of Cancer's strategic plan to integrate AI technologies, underscores the growing importance of physiologically representative models in therapeutic discovery [14].

This technical guide examines the development and implementation of disease-relevant models ranging from patient-derived cells to complex cocultures, with a specific focus on their application in phenotypic screening for first-in-class drug discovery. We provide detailed methodologies, analytical frameworks, and practical considerations for researchers aiming to implement these systems in their discovery pipelines.

Model Systems: Technical Foundations and Applications

Patient-Derived Xenografts (PDX) and Conditionally Reprogrammed Cells (CRC)

Patient-derived xenograft (PDX) models are established by transplanting patient tumor tissue directly into immunodeficient mice, creating an in vivo platform that retains key characteristics of the original malignancy. These models faithfully maintain gene expression profiles, histopathological features, drug responses, and molecular signatures of the source tumors, offering significant advantages over traditional cell line models [15]. PDX models have demonstrated remarkable potential in drug development, combination therapy optimization, and precision medicine applications [15].

The conditionally reprogrammed cell (CRC) technique provides an alternative approach for establishing patient-derived models without requiring murine hosts. This method utilizes a feeder layer of irradiated J2 murine fibroblasts and a Rho-associated kinase inhibitor (Y-27632) to create an in vitro environment that supports rapid expansion of primary epithelial cells from patient specimens while preserving their original genetic and phenotypic characteristics [16]. The CRC platform enables establishment of cell cultures from minimal patient material, including endoscopic ultrasound-guided fine-needle biopsies or surgical resection specimens [16].

Table 1: Comparison of Patient-Derived Model Platforms

| Model Type | Key Features | Establishment Timeline | Applications | Limitations |

|---|---|---|---|---|

| PDX Models | Retains tumor microenvironment, high clinical predictive value | 3-6 months | Drug efficacy testing, biomarker discovery, co-clinical trials | High cost, low throughput, murine stroma replacement |

| CRC Platform | Rapid expansion, preserves original tumor genetics, suitable for drug screening | 2-4 weeks | High-throughput compound screening, personalized medicine | Limited tumor microenvironment components |

| CRC Organoids | 3D architecture, drug penetration barriers, transcriptomic profiling | 2-4 weeks | Drug sensitivity testing, biomarker validation, tumor biology studies | Matrix-dependent, variable growth rates |

Three-Dimensional Organoid Models

Three-dimensional (3D) organoid cultures address fundamental limitations of two-dimensional (2D) systems by better replicating the structural complexity, cell-cell interactions, and metabolic heterogeneity of native tissues. Established from patient-derived CRC lines, 3D organoid cultures are typically developed using a Matrigel-based platform without organoid-specific medium components that might influence molecular subtypes [16].

The technical protocol for generating CRC organoids involves mixing conditionally reprogrammed cells with 90% growth factor-reduced Matrigel at densities of 5,000-10,000 cells per 20 μL of matrix, depending on growth characteristics [16]. The cell-Matrigel mixture is aliquoted into culture plates as dome structures, solidified at 37°C, and overlaid with appropriate culture medium. This approach preserves intrinsic molecular subtypes while enabling formation of 3D structures that mimic in vivo pathology.
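
The seeding arithmetic behind this protocol can be sketched as a small helper. It assumes that "90% Matrigel" means 90% of the final dome volume, with the remaining 10% contributed by the cell suspension. The function name and the 10% pipetting overage are hypothetical conveniences, not part of the cited protocol.

```python
def dome_mix(n_domes, cells_per_dome=5000, dome_volume_ul=20.0,
             matrigel_fraction=0.9, overage=1.1):
    """Volumes and cell numbers needed to plate `n_domes` Matrigel domes."""
    total_volume = n_domes * dome_volume_ul * overage
    matrigel_ul = total_volume * matrigel_fraction
    suspension_ul = total_volume - matrigel_ul
    total_cells = n_domes * cells_per_dome * overage
    return {
        "matrigel_ul": matrigel_ul,
        "cell_suspension_ul": suspension_ul,
        "total_cells": total_cells,
        # Concentration the cell suspension must have before mixing with Matrigel.
        "suspension_cells_per_ul": total_cells / suspension_ul,
    }

plan = dome_mix(n_domes=24)
for key, value in plan.items():
    print(f"{key}: {value:.1f}")
```

For 24 domes at 5,000 cells each, this works out to about 475 µL of Matrigel mixed with about 53 µL of cell suspension at 2,500 cells/µL. Seeding at 10,000 cells per dome doubles the required suspension concentration.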

Drug sensitivity profiling in 3D organoid models has demonstrated superior clinical predictability compared to 2D cultures. Studies in pancreatic cancer models revealed that IC₅₀ values for 3D organoids were generally higher than their 2D counterparts, reflecting the structural complexity and drug penetration barriers observed in vivo [16]. When tested against standard chemotherapy regimens (gemcitabine plus nab-paclitaxel and FOLFIRINOX), 3D organoids more accurately mirrored patient clinical responses than 2D cultures [16].

Complex Coculture Systems

Incorporating multiple cell types into coculture systems enables modeling of the tumor microenvironment (TME) and its role in therapeutic response. These systems typically combine patient-derived cancer cells with relevant stromal components, including cancer-associated fibroblasts, immune cell populations, and endothelial cells. The composition and spatial arrangement of these cocultures can be tailored to address specific biological questions related to immune evasion, drug resistance, and metastatic potential.

Advanced coculture platforms now leverage automated systems like the MO:BOT platform that standardizes 3D cell culture processes to improve reproducibility and reduce the need for animal models [17]. This fully automated system handles seeding, media exchange, and quality control, rejecting sub-standard organoids before screening and scaling from six-well to 96-well formats to provide up to twelve times more data on the same footprint [17].

Advanced Phenotypic Screening Methodologies

Compressed Screening for Enhanced Throughput

A significant innovation in phenotypic screening is the development of compressed experimental designs that pool multiple perturbations to reduce sample requirements, labor, and cost [12]. This approach combines N perturbations into unique pools of size P, with each perturbation appearing in R distinct pools overall. Relative to conventional screens where each perturbation is tested individually, compressed screening reduces sample number, cost, and labor by a factor of P (P-fold compression) [12].

The analytical framework for compressed screens employs regularized linear regression and permutation testing to deconvolve the effects of individual perturbations from pooled measurements [12]. This method has been successfully applied to map transcriptional responses to tumor microenvironment protein ligands in pancreatic cancer organoids, uncovering reproducible phenotypic shifts induced by specific ligands that were distinct from canonical reference signatures and correlated with clinical outcome [12].
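
The deconvolution idea can be illustrated on a toy design. Each pooled sample's readout is modeled as the sum of the effects of the perturbations it contains, and ridge regression (one possible form of regularized linear regression, chosen here as an assumption) estimates the individual effects. The four-ligand design, the scalar readout, and the penalty value are hypothetical. Permutation testing and the per-gene scRNA-seq readouts used in [12] are omitted for brevity.

```python
def solve(a, b):
    """Solve a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (m[i][n] - sum(m[i][j] * x[j] for j in range(i + 1, n))) / m[i][i]
    return x

def ridge_deconvolve(pools, readouts, perturbations, lam=0.1):
    """Estimate per-perturbation effects from pooled readouts via ridge regression."""
    index = {name: j for j, name in enumerate(perturbations)}
    n = len(perturbations)
    # Design matrix X: one row per pool, 1.0 where the perturbation is present.
    x = [[0.0] * n for _ in pools]
    for i, pool in enumerate(pools):
        for name in pool:
            x[i][index[name]] = 1.0
    # Normal equations: (X^T X + lam * I) beta = X^T y
    xtx = [[sum(row[j] * row[k] for row in x) + (lam if j == k else 0.0)
            for k in range(n)] for j in range(n)]
    xty = [sum(row[j] * y for row, y in zip(x, readouts)) for j in range(n)]
    return dict(zip(perturbations, solve(xtx, xty)))

# Toy design: 4 ligands, pools of 2, each ligand in 3 pools.
ligands = ["A", "B", "C", "D"]
pools = [("A", "B"), ("C", "D"), ("A", "C"), ("B", "D"), ("A", "D"), ("B", "C")]
# Simulated noise-free readout relative to control: only ligand B has an effect (5.0).
readouts = [5.0 if "B" in pool else 0.0 for pool in pools]

effects = ridge_deconvolve(pools, readouts, ligands)
for name, effect in effects.items():
    print(f"{name}: {effect:+.2f}")
```

The penalty shrinks the estimate for B slightly below its simulated value while keeping the inactive ligands near zero. In a real screen the same regression would be fit per gene or per phenotypic feature, with significance assessed against permuted pool assignments.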

Table 2: Compression Parameters and Performance in Phenotypic Screening

| Compression Level (P) | Replication (R) | Theoretical Cost Reduction | Hit Recovery Rate | Optimal Use Cases |
|---|---|---|---|---|
| 3x | 3 | ~67% | >90% | Small libraries (<100 compounds), precious primary cells |
| 10x | 5 | 90% | 85-90% | Medium libraries (100-500 compounds), moderate biomass |
| 20x | 7 | 95% | 75-85% | Large libraries (>500 compounds), expandable models |
| 80x | 7 | 98.75% | 60-70% | Massive libraries, initial prioritization only |
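The theoretical cost reduction column follows from the P-fold compression itself: pooling P perturbations per well removes a fraction 1 - 1/P of the samples relative to testing each perturbation individually at the same replication. A short sketch of that arithmetic, with a hypothetical library size of 240 perturbations and a hypothetical helper name `compression_summary`:

```python
def compression_summary(n_perturbations, pool_size, replicates):
    """Return (conventional wells, compressed wells, percent reduction)."""
    conventional = n_perturbations * replicates
    compressed = conventional / pool_size
    reduction_pct = 100.0 * (1.0 - 1.0 / pool_size)
    return conventional, compressed, reduction_pct

for p in (3, 10, 20, 80):
    _, wells, pct = compression_summary(n_perturbations=240, pool_size=p, replicates=3)
    print(f"P={p:>2}: {wells:.0f} wells, {pct:.2f}% fewer samples")
```

The hit recovery rates in the table are empirical and cannot be derived from this formula.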

High-Content Readouts and Analysis

Modern phenotypic screens increasingly employ high-content readouts such as single-cell RNA sequencing (scRNA-seq) and high-content imaging to capture complex cellular responses. The Cell Painting assay, for example, multiplexes six fluorescent dyes to examine multiple cellular components and organelles: nuclei (Hoechst 33342), endoplasmic reticulum (concanavalin A–AlexaFluor 488), mitochondria (MitoTracker Deep Red), F-actin (phalloidin–AlexaFluor 568), Golgi apparatus and plasma membranes (wheat germ agglutinin–AlexaFluor 594), and nucleoli and cytoplasmic RNA (SYTO14) [12].

Computational analysis of high-content data typically involves illumination correction, quality control, cell segmentation, morphological feature extraction, plate normalization, and highly variable feature selection. For morphological profiling, the Mahalanobis Distance (MD) serves as a multidimensional generalization of the z-score to quantify effect sizes across multiple features [12]. Dimensionality reduction techniques applied to morphological features can identify distinct phenotypic clusters enriched for specific drug classes or mechanisms of action.
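To make the z-score analogy concrete, the sketch below computes the Mahalanobis distance of a treated profile from a set of control profiles using only the standard library. How the control covariance is estimated in [12] is not stated here, so the plain sample covariance is an assumption; with many correlated features the covariance is typically singular, and in practice it would be estimated after dimensionality reduction or with regularization.

```python
import math

def solve(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        s = sum(m[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (m[i][n] - s) / m[i][i]
    return x

def mahalanobis(profile, controls):
    """Distance of `profile` from the control distribution.

    `controls` is a list of feature vectors; it needs more samples than
    features so that the sample covariance is invertible.
    """
    n, k = len(controls), len(profile)
    mu = [sum(row[j] for row in controls) / n for j in range(k)]
    cov = [[sum((row[i] - mu[i]) * (row[j] - mu[j]) for row in controls) / (n - 1)
            for j in range(k)] for i in range(k)]
    d = [profile[j] - mu[j] for j in range(k)]
    w = solve(cov, d)  # w = cov^-1 d, without forming the inverse
    return math.sqrt(sum(di * wi for di, wi in zip(d, w)))

# One feature: the distance reduces to the absolute z-score.
print(mahalanobis([4.0], [[1.0], [2.0], [3.0]]))

# Two uncorrelated features with hypothetical control values.
controls = [[0.0, 0.0], [2.0, 0.0], [0.0, 2.0], [2.0, 2.0]]
print(mahalanobis([3.0, 1.0], controls))
```

In the one-feature case the control mean is 2 and the sample standard deviation is 1, so a value of 4 sits two standard deviations away and the distance equals the z-score magnitude.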

Integration with Artificial Intelligence and Machine Learning

Artificial intelligence is transforming the design and interpretation of phenotypic screens. Machine learning (ML) and deep learning (DL) algorithms enable analysis of high-dimensional data from complex models, identification of subtle phenotypic patterns, and prediction of compound efficacy [14]. These approaches are particularly valuable for first-in-class drug discovery, where novel mechanisms of action may produce distinctive but previously uncharacterized phenotypic signatures.

Generative AI models like BoltzGen represent a significant advance by unifying protein design and structure prediction while maintaining state-of-the-art performance [18]. This model incorporates built-in constraints informed by wet-lab collaborators to ensure generated protein structures respect physical and chemical principles [18]. Such tools can design novel protein binders for challenging targets, expanding the scope of therapeutic intervention.

AI-powered platforms also enhance the analysis of complex coculture systems. For example, Sonrai Analytics' Discovery platform integrates advanced AI pipelines and visual analytics to generate interpretable biological insights from multi-modal datasets, including complex imaging, multi-omic, and clinical data [17]. By layering these datasets, researchers can uncover links between molecular features and disease mechanisms more quickly [17].

Experimental Protocols

Establishment of CRC Organoids from Patient-Derived Cells

Materials Required:

- Patient-derived conditionally reprogrammed cells (CRCs)

- Growth factor-reduced Matrigel (Corning)

- F medium: 70% Ham's F-12 nutrient mix + 25% complete Dulbecco's Modified Eagle Medium

- Supplement cocktail: 0.4 μg/mL hydrocortisone, 5 μg/mL insulin, 8.4 ng/mL cholera toxin, 10 ng/mL epidermal growth factor, 5% fetal bovine serum, 24 μg/mL adenine, 10 μg/mL gentamicin, 250 ng/mL Amphotericin B

- Rho-associated kinase inhibitor Y-27632 (5 μM final concentration)

- 6-well cell culture plates

- Dissociation reagent (e.g., Human Tumor Dissociation Kit)

Procedure:

- Harvest patient-derived CRCs from 2D culture using appropriate dissociation reagent.

- Centrifuge cell suspension at 300 × g for 5 minutes and resuspend in F medium.

- Mix CRCs with 90% growth factor-reduced Matrigel on ice. Use 5,000 cells/20 μL for rapidly growing cells or 10,000 cells/20 μL for slower-growing cells.

- Aliquot 20 μL of cell-Matrigel mixture into each well of a 6-well plate, forming dome structures.

- Incubate plate at 37°C for 20 minutes to solidify Matrigel.

- Carefully add 4 mL of F medium supplemented with Y-27632 to each well.

- Refresh medium every 3-4 days, monitoring organoid growth.

- Harvest organoids when >50% exceed 300 μm in size (typically 2-4 weeks).

- For passaging, dissociate organoids from Matrigel using ice-cold PBS, centrifuge at 1500 RPM for 3 minutes, and repeat the procedure starting from the Matrigel mixing step (the third step above) [16].
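The seeding arithmetic and the harvest criterion in this procedure can be sketched as two small helpers. The function names are hypothetical, and reading "90% Matrigel" as 90% of the final dome volume (with the remaining 10% being cell suspension) is an assumption; no pipetting overage is included.

```python
def dome_seeding_plan(n_domes, fast_growing, dome_volume_ul=20.0,
                      matrigel_fraction=0.90):
    """Total cells and reagent volumes for `n_domes` Matrigel domes."""
    # 5,000 cells per 20 uL dome for rapidly growing cells, 10,000 otherwise.
    cells_per_dome = 5_000 if fast_growing else 10_000
    total_volume_ul = n_domes * dome_volume_ul
    return {
        "total_cells": n_domes * cells_per_dome,
        "matrigel_ul": total_volume_ul * matrigel_fraction,
        "cell_suspension_ul": total_volume_ul * (1.0 - matrigel_fraction),
    }

def ready_to_harvest(diameters_um, size_threshold_um=300.0,
                     fraction_required=0.5):
    """True when more than half of the measured organoids exceed 300 um."""
    if not diameters_um:
        return False
    n_large = sum(d > size_threshold_um for d in diameters_um)
    return n_large / len(diameters_um) > fraction_required

# Example: one dome in each well of a 6-well plate, rapidly growing cells.
print(dome_seeding_plan(n_domes=6, fast_growing=True))
print(ready_to_harvest([350, 320, 280, 310]))
```

For six domes of rapidly growing cells this gives 30,000 cells in 120 μL of mixture, of which about 108 μL is Matrigel under the stated assumption.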

Compressed Phenotypic Screening Workflow

Materials Required:

- Perturbation library (small molecules, cytokines, etc.)

- Target cells (e.g., pancreatic cancer organoids, PBMCs)

- Appropriate culture medium

- Multi-well plates (96-well or 384-well format)

- High-content readout reagents (e.g., Cell Painting dyes, scRNA-seq reagents)

Procedure:

Library Pooling Design:

- Select pool size (P) based on desired compression level (typically 3-80 perturbations per pool).

- Ensure each perturbation appears in multiple pools (R = 3-7) for robust deconvolution.

- Use combinatorial pooling designs to maximize efficiency.

Screening Execution:

- Plate target cells in appropriate assay format.

- Apply perturbation pools to replicate wells.

- Incubate for predetermined duration (e.g., 24 hours for acute responses).

- Process for high-content readout (e.g., fix and stain for Cell Painting, or prepare for scRNA-seq).

Data Acquisition:

- For imaging: Acquire 5-channel images using high-content imaging system.

- For scRNA-seq: Perform library preparation and sequencing appropriate for pooled samples.

Computational Deconvolution:

- Extract features from high-content data (e.g., 886 morphological features from Cell Painting).

- Apply regularized linear regression to infer individual perturbation effects.

- Use permutation testing to establish significance thresholds.

- Validate top hits in conventional individual compound screens [12].

Research Reagent Solutions

Table 3: Essential Research Reagents for Disease-Relevant Models

| Reagent Category | Specific Products | Application | Key Features |
|---|---|---|---|
| Extracellular Matrices | Growth factor-reduced Matrigel (Corning) | 3D organoid culture | Basement membrane extract, promotes polarization |
| Cell Culture Media Supplements | Rho-associated kinase inhibitor Y-27632 | Conditional reprogramming | Enhances survival of primary epithelial cells |
| High-Content Imaging Reagents | Cell Painting kit (Sigma-Aldrich) | Morphological profiling | 6-plex fluorescent staining of cellular compartments |
| Dissociation Reagents | Human Tumor Dissociation Kit (Miltenyi Biotec) | Primary tissue processing | Gentle enzymatic cocktail for viable single cells |
| Automation Platforms | MO:BOT platform (mo:re) | High-throughput 3D culture | Automated seeding, feeding, quality control |
| Cell Line Engineering | Agilent SureSelect Max DNA Library Prep Kits | Genomic sequencing | Automated target enrichment on firefly+ platform |

The development and implementation of disease-relevant models ranging from patient-derived cells to complex cocultures represents a cornerstone of modern phenotypic screening for first-in-class drug discovery. These systems bridge the translational gap between traditional models and human pathophysiology, enabling identification of novel therapeutic mechanisms with higher clinical predictive value. As regulatory frameworks evolve toward human-relevant testing systems and AI-powered analysis becomes more sophisticated, these advanced models will play an increasingly central role in unlocking unprecedented therapeutic mechanisms and delivering transformative medicines for challenging diseases.

High-content screening (HCS) and Cell Painting represent a paradigm shift in phenotypic drug discovery, enabling the systematic identification of first-in-class medicines through unbiased morphological profiling. These technologies extract rich, quantitative data from cellular images to decipher complex biological responses to genetic or chemical perturbations, offering distinct advantages over traditional target-based approaches. When integrated with functional genomics, they create a powerful framework for linking genetic variation to cellular phenotype and function, accelerating the discovery of novel therapeutic mechanisms. This technical guide details the methodologies, applications, and integrative strategies that position these core technologies at the forefront of modern drug development, supported by standardized protocols and advanced computational analysis pipelines that have matured significantly over the past decade.

Phenotypic drug discovery (PDD) identifies compounds that alter disease phenotypes in biologically relevant systems without requiring prior knowledge of specific molecular targets. Mounting evidence suggests that PDD yields more first-in-class medicines than target-based drug discovery (TDD), making it particularly valuable for polygenic diseases or those with poorly understood pathophysiology [19]. This approach has produced notable clinical successes including ivacaftor for cystic fibrosis, risdiplam for spinal muscular atrophy, and lenalidomide for multiple myeloma, often revealing novel mechanisms of action years after initial discovery [1].

The resurgence of phenotypic strategies has been fueled by advances in high-content technologies that capture complex cellular responses with increasing resolution and scale. Modern PDD leverages sophisticated disease models, functional genomics tools, and computational methods to systematically bridge the gap between phenotypic observation and mechanistic understanding, expanding the "druggable target space" to include previously inaccessible biological processes [1].

Technical Foundations

High-Content Screening (HCS)

High-content screening is an advanced phenotypic screening strategy that uses automated microscopy and image analysis to capture and quantify complex cellular features at scale. Unlike traditional assays that measure limited pre-selected parameters, HCS generates multidimensional data from each sample, typically at single-cell resolution [19]. This approach enables detection of subtle phenotypes and heterogeneous responses within cell populations that would be obscured in bulk measurements.

Core Principles:

- Multiplexed Imaging: Simultaneous measurement of multiple cellular components using fluorescent labels

- Automated Analysis: Software-driven extraction of quantitative features from images

- High-Throughput Compatibility: Adaptation to multi-well plate formats for screening applications

- Single-Cell Resolution: Preservation of cellular heterogeneity in data analysis

Cell Painting Assay

Cell Painting is the most widely adopted morphological profiling assay, first described in 2013 and optimized through subsequent iterations [19] [20]. It employs a multiplexed fluorescent staining strategy to "paint" eight major cellular components, creating a comprehensive representation of cellular state that can detect subtle phenotypic changes induced by genetic or chemical perturbations [19] [20].

Key Advantages:

- Unbiased Profiling: Captures morphological changes without pre-specified hypotheses

- Standardization: Consistent protocol applicable across diverse cell types and perturbations

- Information Rich: Extracts ~1,500 morphological features per cell [20]

- Cost Effectiveness: Uses inexpensive fluorescent dyes rather than antibodies [20]

Table: Evolution of the Cell Painting Protocol

| Version | Publication Year | Key Improvements | Reference |
|---|---|---|---|
| Original Protocol | 2013 | Initial six-dye, five-channel implementation | [19] |
| Version 2 | 2016 | Minor adjustments based on implementation experience | [19] |
| Version 3 (JUMP-CP) | 2022 | Quantitative optimization using 90 reference compounds | [19] |

Detailed Methodologies and Protocols

Standard Cell Painting Protocol

The established Cell Painting protocol involves sequential staining of cells with six fluorescent dyes imaged across five channels to visualize eight cellular components [20] [21]. The complete process from cell culture to data analysis typically spans 2-3 weeks, with 1-2 weeks dedicated to computational analysis [20].

Cell Culture and Preparation:

- Plate cells in multi-well plates (typically 384-well format)

- Use flat, non-overlapping cell lines (U2OS osteosarcoma cells are commonly used) [19]

- Incubate at 37°C for specified duration (typically 24 hours before perturbation)

- Apply experimental perturbations (small molecules, genetic manipulations, etc.)

Staining Procedure (Adapted from Bray et al. 2016) [20] [21]:

- Live-cell staining: Incubate with MitoTracker Deep Red (500 nM) for 30 minutes at 37°C to label mitochondria

- Fixation: Treat with paraformaldehyde (3.2-4% vol/vol) for 20 minutes at room temperature

- Permeabilization: Apply Triton X-100 (0.1%) for 20 minutes

- Multiplexed staining: Incubate for 30 minutes with a staining cocktail containing:

  - Hoechst 33342 (5 μg/mL) - nuclei

  - Phalloidin (5 μL/mL) - filamentous actin

  - Concanavalin A (100 μg/mL) - endoplasmic reticulum

  - Wheat Germ Agglutinin (1.5 μg/mL) - Golgi apparatus and plasma membrane

  - SYTO 14 (3 μM) - nucleoli and cytoplasmic RNA

- Washing: Perform multiple washes with HBSS before imaging
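When preparing these working solutions, the stock volume for each dye follows from C1·V1 = C2·V2. The helper below is a small sketch of that calculation; the stock concentrations in the example (1 mM MitoTracker Deep Red, 10 mg/mL Hoechst 33342) and the 10 mL batch volume are hypothetical and should be replaced with the values on the actual reagent vials.

```python
def stock_volume_ul(final_conc, stock_conc, final_volume_ul):
    """Volume of stock (in uL) needed for a given final concentration.

    `final_conc` and `stock_conc` must be expressed in the same unit.
    """
    if stock_conc <= 0 or final_conc > stock_conc:
        raise ValueError("stock must be positive and at least as concentrated as the target")
    return final_conc * final_volume_ul / stock_conc

batch_ul = 10_000  # hypothetical 10 mL of staining solution

# MitoTracker Deep Red: 500 nM final from an assumed 1 mM (1,000,000 nM) stock.
print(stock_volume_ul(500, 1_000_000, batch_ul))

# Hoechst 33342: 5 ug/mL final from an assumed 10 mg/mL (10,000 ug/mL) stock.
print(stock_volume_ul(5, 10_000, batch_ul))
```

Phalloidin is already specified as a volume ratio (5 μL per mL), so a 10 mL batch would take 50 μL of the supplied stock without any conversion.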

Image Acquisition:

- Acquire images using high-content imaging systems (e.g., ImageXpress Confocal HT.ai) [22]