Overcoming the Cold-Start Problem in Chemogenomic Target Prediction: AI Strategies for Novel Drug and Target Discovery

Abstract

Accurately predicting interactions between novel drugs and targets—the 'cold-start problem'—is a major bottleneck in AI-driven drug discovery. This article provides a comprehensive overview for researchers and drug development professionals, exploring the foundational causes of this challenge and its impact on predictive models. We detail cutting-edge methodological solutions, from transfer learning and biological large language models to advanced data handling techniques. The content further offers practical troubleshooting and optimization strategies, and concludes with a critical evaluation of validation frameworks and performance benchmarks for real-world application, synthesizing key insights to guide future research and development efforts.

Understanding the Cold-Start Challenge: Why Novel Drugs and Targets Stump AI Models

Frequently Asked Questions (FAQs)

Q1: What exactly is the "cold-start problem" in chemogenomics? The cold-start problem refers to the significant drop in model performance when predicting interactions for novel drugs or novel targets that were not present in the training data [1] [2]. This is a major challenge in drug discovery and repurposing, as the primary goal is often to find targets for new drug compounds or to repurpose existing drugs for new proteins [3] [4].

Q2: What are the different types of cold-start scenarios? Research typically defines four main scenarios based on the novelty of the entities involved [5] [6]:

- Warm Start: Predicting interactions for drug-target pairs where both the drug and target have known interactions in the training data.

- Compound Cold Start (Novel Drug): Predicting interactions for a new drug compound against known targets.

- Protein Cold Start (Novel Target): Predicting interactions for known drugs against a new target protein.

- Blind Start (Two Novel Entities): Predicting interactions for a completely new drug against a completely new target.
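The following minimal sketch shows how a test pair can be assigned to one of these scenarios. It assumes each drug and target has a string ID, and it treats an entity as "known" if it appears in at least one training interaction. The function and variable names are illustrative and are not taken from any cited framework.

```python
def cold_start_scenario(drug, target, train_drugs, train_targets):
    """Label a test pair by which of its two entities were seen during training."""
    drug_known = drug in train_drugs
    target_known = target in train_targets
    if drug_known and target_known:
        return "warm start"
    if target_known:
        return "compound cold start"  # novel drug, known target
    if drug_known:
        return "protein cold start"   # known drug, novel target
    return "blind start"              # novel drug and novel target


# Hypothetical training interactions, as (drug ID, target ID) pairs.
train_pairs = [("D1", "T1"), ("D1", "T2"), ("D2", "T1")]
train_drugs = {d for d, _ in train_pairs}
train_targets = {t for _, t in train_pairs}

for drug, target in [("D2", "T2"), ("D9", "T1"), ("D1", "T9"), ("D9", "T9")]:
    print(drug, target, "->", cold_start_scenario(drug, target, train_drugs, train_targets))
```

Note that ("D2", "T2") counts as a warm start even though that exact pair never appears in training. The scenario depends on whether each entity is known, not on whether the pair itself has been seen.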

Q3: Why do traditional similarity-based methods fail for cold-start problems? Traditional methods often rely on the "guilt-by-association" principle, which assumes that similar drugs bind similar targets. This principle breaks down for novel entities that have no close analogs with known interactions, and it tends not to produce serendipitous discoveries [3]. Furthermore, some network-based inference methods are inherently biased and cannot make predictions for new drugs or targets at all, because such entities have no links in the known interaction network [3].
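To make this failure mode concrete, here is a toy guilt-by-association scorer. It assumes fingerprints are given as sets of on-bit indices and uses Tanimoto similarity with a hypothetical cutoff of 0.4. A query drug inherits the targets of sufficiently similar known drugs. A compound with a novel scaffold has no such neighbors, so it receives no scores at all.

```python
def tanimoto(a, b):
    """Tanimoto (Jaccard) similarity between two fingerprints given as sets of on-bits."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def gba_scores(query_fp, known_drugs, min_sim=0.4):
    """Score targets for a query drug by the similarity of known drugs that bind them.

    known_drugs maps drug ID -> (fingerprint, set of known targets).
    Each target's score is the highest similarity of any neighbour drug that
    binds it; neighbours below min_sim (a hypothetical cutoff) are ignored.
    """
    scores = {}
    for fp, targets in known_drugs.values():
        sim = tanimoto(query_fp, fp)
        if sim < min_sim:
            continue  # too dissimilar to transfer any evidence
        for t in targets:
            scores[t] = max(scores.get(t, 0.0), sim)
    return scores


known = {
    "D1": ({1, 2, 3, 4}, {"T1"}),
    "D2": ({3, 4, 5, 6}, {"T2"}),
}
print(gba_scores({1, 2, 3, 9}, known))  # close analogue of D1 -> {'T1': 0.6}
print(gba_scores({20, 21, 22}, known))  # novel scaffold -> {} (no evidence at all)
```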

Q4: Which cold-start scenario is the most challenging for predictive models? The "Blind Start" scenario, involving both a novel drug and a novel target, is generally the most challenging because the model has no prior interaction data for either entity to learn from [5]. However, studies have shown that the "Protein Cold Start" (novel target) can also be particularly difficult for many state-of-the-art methods [4].

Troubleshooting Guides

Issue 1: Poor Performance on Novel Drug Predictions

Problem: Your model performs well on known drugs but fails to generalize to novel drug compounds.

Solution: Integrate external chemical knowledge to build a robust representation for new compounds.

- Step 1: Employ unsupervised pre-training on large, unlabeled chemical databases (e.g., PubChem) using language models on SMILES sequences or graph neural networks on molecular graphs [1]. This helps the model learn the fundamental "grammar" of chemistry.

- Step 2: Utilize transfer learning from related tasks. For example, pre-train your model on Chemical-Chemical Interaction (CCI) data. The interaction patterns learned from CCI can be transferred to the drug-target interaction task, providing the model with crucial inter-molecule interaction information it wouldn't get from drug structures alone [1] [2].

- Step 3: Represent novel drugs using pre-trained features like Mol2Vec [5]. These embeddings capture semantic features of drug substructures and provide a meaningful input representation even for previously unseen compounds.
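Mol2vec builds a compound vector by summing the word2vec embeddings of the compound's Morgan substructure identifiers. This is why an unseen compound can still be embedded from substructures encountered during pre-training. The sketch below reproduces only that summation step and assumes a precomputed vocabulary. The identifiers, the 3-dimensional vectors, and the `UNSEEN` fallback key are hypothetical stand-ins for a real trained model.

```python
def compound_vector(substructure_ids, vocab, unseen_key="UNSEEN"):
    """Sum the embeddings of a compound's substructure identifiers.

    vocab maps substructure identifier -> embedding (list of floats).
    Identifiers missing from vocab fall back to vocab[unseen_key].
    """
    dim = len(next(iter(vocab.values())))
    total = [0.0] * dim
    for sid in substructure_ids:
        vec = vocab.get(sid, vocab[unseen_key])
        total = [x + y for x, y in zip(total, vec)]
    return total


# Toy "pre-trained" vocabulary; real Mol2vec vectors are learned with word2vec
# over Morgan substructure identifiers from a large compound corpus.
vocab = {
    "s_a": [1.0, 0.0, 0.0],
    "s_b": [0.0, 1.0, 0.0],
    "s_c": [0.0, 0.0, 1.0],
    "UNSEEN": [0.1, 0.1, 0.1],
}

# A new compound built from known substructures still gets a meaningful vector.
print(compound_vector(["s_a", "s_c", "s_c"], vocab))  # -> [1.0, 0.0, 2.0]
# An unknown substructure contributes only the generic fallback vector.
print(compound_vector(["s_b", "s_new"], vocab))
```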

Issue 2: Poor Performance on Novel Target Predictions

Problem: Your model cannot accurately predict interactions for novel target proteins.

Solution: Enhance protein representation with structural and functional context.

- Step 1: Use protein language models (e.g., ProtTrans) trained on millions of protein sequences to generate feature representations for novel targets [5]. These models capture evolutionary and structural information directly from the amino acid sequence.

- Step 2: Apply transfer learning from Protein-Protein Interaction (PPI) networks [1] [2]. Since the protein interface in PPI can reveal effective drug-target binding modes, this knowledge helps the model understand how a protein might interact with a drug.

- Step 3: If available, incorporate predicted or experimental protein structures. A protein can be represented as a graph where nodes are residues and edges represent contacts or distances, providing a simplified yet informative 2D structural representation [1].
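A residue graph of this kind can be sketched from C-alpha coordinates alone. The 8 Å contact cutoff and the use of C-alpha atoms are common conventions assumed here, not values taken from [1]. The coordinates are toy values.

```python
import math


def contact_graph(ca_coords, cutoff=8.0):
    """Build a residue contact graph from C-alpha coordinates (in angstroms).

    Nodes are residue indices; an undirected edge joins residues i and j when
    their C-alpha atoms lie within `cutoff` of each other.
    Returns an adjacency list {residue index: ascending list of neighbours}.
    """
    n = len(ca_coords)
    adj = {i: [] for i in range(n)}
    for i in range(n):
        for j in range(i + 1, n):
            if math.dist(ca_coords[i], ca_coords[j]) <= cutoff:
                adj[i].append(j)
                adj[j].append(i)
    return adj


# Four toy residues on a line, 3.8 angstroms apart (a typical consecutive C-alpha spacing).
coords = [(0.0, 0.0, 0.0), (3.8, 0.0, 0.0), (7.6, 0.0, 0.0), (11.4, 0.0, 0.0)]
print(contact_graph(coords))  # -> {0: [1, 2], 1: [0, 2, 3], 2: [0, 1, 3], 3: [1, 2]}
```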

Issue 3: Model Failure in a Complete Cold-Start (Blind) Setting

Problem: Your model is ineffective when both the drug and target are novel.

Solution: Adopt a framework specifically designed for this hardest case, leveraging flexible molecular representations.

- Step 1: Move beyond the rigid "lock-and-key" theory. Implement models inspired by the induced-fit theory, where compounds and proteins are treated as flexible molecules [5]. This aligns better with biological reality and can improve generalization.

- Step 2: Use a framework like ColdstartCPI [5]. This involves:

  - Generating features for the novel compound and protein using Mol2Vec and ProtTrans.

  - Using a Transformer module to learn compound and protein features by extracting inter- and intra-molecular interaction characteristics, allowing the features of one molecule to adapt based on the other.

- Step 3: Combine knowledge graph embeddings with a recommendation system approach (e.g., KGE_NFM) [4]. The knowledge graph integrates multi-omics data, providing a rich context for both drugs and targets, while the recommendation system paradigm is naturally suited to predicting new links.
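The core idea in Step 2 is that one molecule's features are updated in the context of its partner. Plain scaled dot-product attention is enough to illustrate it. This is a deliberately stripped-down sketch, not the ColdstartCPI architecture: it omits the learned query/key/value projections, multiple heads, and normalization layers of a real Transformer. The feature matrices are toy values.

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def cross_attend(queries, keys_values):
    """Scaled dot-product attention with a residual connection (no learned projections).

    Each query row (e.g. a compound substructure feature) is updated with an
    attention-weighted average of the partner's rows (e.g. protein residue
    features), so the output depends on which partner it is paired with.
    """
    d = len(queries[0])
    out = []
    for q in queries:
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in keys_values]
        weights = softmax(scores)
        context = [sum(w * v[i] for w, v in zip(weights, keys_values)) for i in range(d)]
        out.append([qi + ci for qi, ci in zip(q, context)])  # residual connection
    return out


# Toy 2-dimensional features: rows are substructures (compound) or residues (protein).
compound = [[1.0, 0.0], [0.0, 1.0]]
protein_a = [[1.0, 0.0], [0.5, 0.5]]
protein_b = [[0.0, 1.0], [0.0, 2.0]]

adapted_a = cross_attend(compound, protein_a)
adapted_b = cross_attend(compound, protein_b)
print(adapted_a)
print(adapted_b)
```

Because the attention weights depend on the protein rows, the same compound receives different output features with `protein_a` and `protein_b`. A lock-and-key style model would instead compute one fixed compound vector regardless of the partner.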

Experimental Protocols & Data

| Method Name | Core Approach | Best Suited For Cold-Start Scenario | Key Advantage |
|---|---|---|---|
| C2P2 [1] [2] | Transfer Learning from CCI & PPI | Novel Drugs & Novel Targets | Incorporates critical inter-molecule interaction information. |
| KGE_NFM [4] | Knowledge Graph & Recommendation System | Novel Proteins (Protein Cold Start) | Integrates heterogeneous data; does not rely on similarity matrices. |
| ColdstartCPI [5] | Pre-training & Induced-Fit Theory | Blind Start (Novel Drug & Target) | Models molecular flexibility; performs well in data-sparse conditions. |
| Ensemble Chemogenomic Model [7] | Multi-scale Descriptors & Ensemble Learning | Novel Drugs & Novel Targets | Combines multiple protein and compound descriptors for robustness. |

Protocol: Implementing a C2P2-Inspired Framework

This protocol outlines a transfer learning procedure to mitigate cold-start problems by leveraging interaction data [1].

1. Pre-training on Auxiliary Tasks

- Objective: Learn generalized representations for chemicals and proteins from related interaction tasks.

- Chemical-Chemical Interaction (CCI) Pre-training:

  - Data Source: Gather CCI data from pathway databases or via text mining [1].

  - Model Training: Train a model to predict CCI. The goal is to learn a representation function that encodes chemical structures in a way that reflects their interaction potential.

- Protein-Protein Interaction (PPI) Pre-training:

  - Data Source: Obtain PPI data from curated biological databases.

  - Model Training: Train a model to predict PPI. This helps the model learn representations that capture the properties of protein interfaces involved in binding.

2. Transfer Learning to Drug-Target Affinity (DTA)

- Objective: Transfer the learned knowledge to the main DTA prediction task.

- Model Architecture: Use the pre-trained models from Step 1 as the foundation (encoder) for your DTA model.

- Fine-tuning: Train the entire model on your DTA dataset. The pre-trained weights provide a head start, as they are already tuned to understand molecular interactions, making the model more robust to novel drugs and targets.

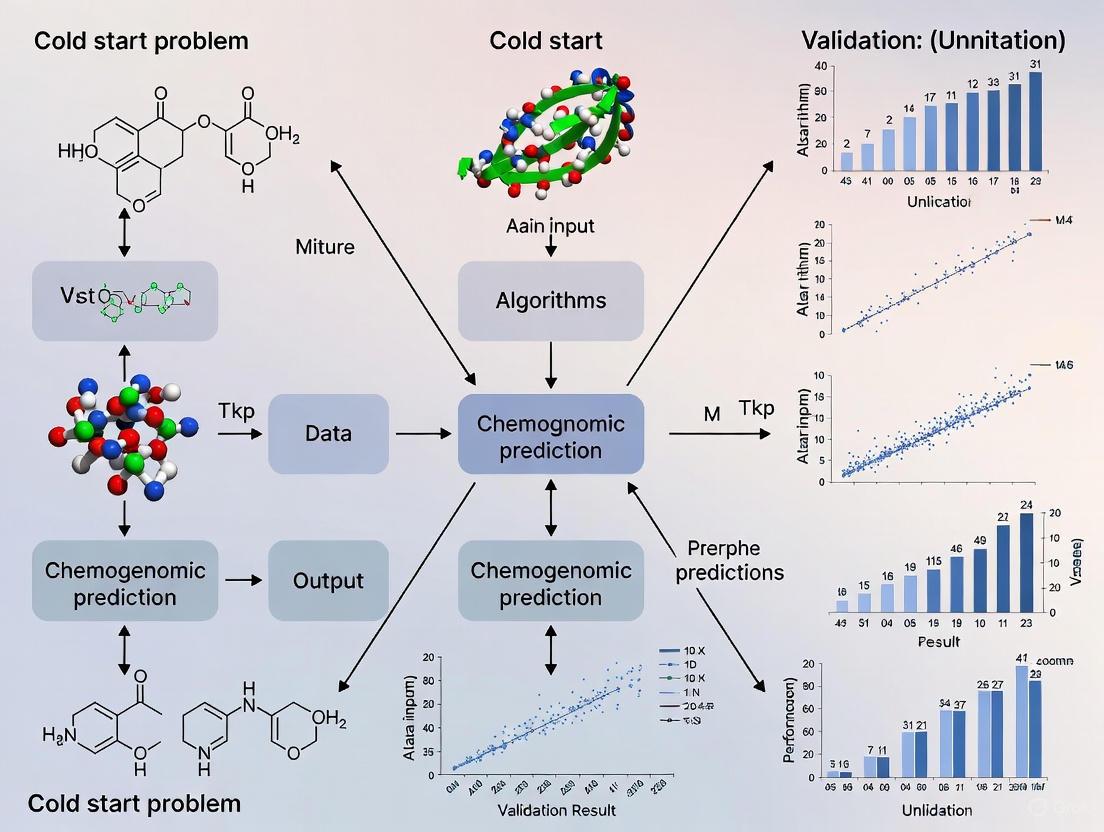

Visualization: Knowledge Graph Framework for Cold-Start Problems

The diagram below illustrates how a knowledge graph (KG) integrates diverse data to address cold-start issues.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools and Databases

| Item Name | Type | Function in Cold-Start Research |
|---|---|---|
| ChEMBL [7] | Database | Provides curated bioactivity data for known drug-target interactions, used for model training and benchmarking. |
| BindingDB [7] [5] | Database | A public database of measured binding affinities, essential for training and validating affinity prediction models. |
| UniProt [7] | Database | Provides comprehensive protein sequence and functional annotation data (e.g., Gene Ontology terms) for generating protein descriptors. |
| PubChem [1] | Database | A vast repository of chemical structures and properties, used for unsupervised pre-training of compound representation models. |
| Mol2Vec [5] | Pre-trained Model | Generates numerical representations (embeddings) for compounds based on their chemical substructures, useful for novel drugs. |
| ProtTrans [5] | Pre-trained Model | A suite of protein language models that generate state-of-the-art feature representations from amino acid sequences, crucial for novel targets. |
| Knowledge Graph (e.g., PharmKG) [4] | Data Framework | Integrates diverse biological data (drugs, targets, diseases, pathways) into a unified graph, providing rich context for new entities. |

Frequently Asked Questions (FAQs)

FAQ 1: What is the "cold-start problem" in drug-target prediction? The cold-start problem refers to the significant drop in machine learning model performance when predicting interactions for novel drugs or target proteins that were not present in the training data. This is a critical challenge in drug discovery and repurposing, as it directly limits the ability to identify new therapeutic uses for existing drugs or predict targets for novel compounds. The problem manifests in three main scenarios: compound cold start (predicting for new drugs), protein cold start (predicting for new targets), and blind start (predicting for both new drugs and new targets simultaneously) [1] [5].

FAQ 2: Why do traditional computational methods fail with novel drugs or targets? Many traditional methods rely heavily on similarity principles or existing network data. When a new drug or target has no known interactions or close analogs in the training set, these methods have no basis for making predictions. Furthermore, models based solely on lock-and-key theory or rigid docking treat molecular features as fixed, failing to account for the flexible nature of actual binding interactions, which is especially problematic for novel entities [5].

FAQ 3: What advanced computational strategies can mitigate the cold-start problem? Several advanced strategies have shown promise:

- Transfer Learning: Knowledge gained from predicting chemical-chemical interactions (CCI) and protein-protein interactions (PPI) can be transferred to the drug-target affinity (DTA) task, as the underlying interaction principles are similar [1] [8].

- Knowledge Graph Embeddings (KGE): Representing diverse biological data (e.g., drug-disease associations, side-effects, pathways) as a knowledge graph allows models to learn robust representations of drugs and targets, improving generalization to new entities [4] [9].

- Unsupervised Pre-training: Using large, unlabeled datasets of chemical structures (e.g., SMILES) and protein sequences to pre-train models helps them learn fundamental biochemical "grammar," creating a better starting point for specific prediction tasks [1] [5].

- Induced-Fit Theory Models: Frameworks like ColdstartCPI treat proteins and compounds as flexible molecules, using Transformer modules to learn interaction characteristics. This aligns with biological reality and improves prediction for unseen compounds and proteins [5].

FAQ 4: How can I evaluate if my model is robust to cold-start scenarios? It is essential to evaluate models using realistic data splits that simulate real-world conditions. Instead of random cross-validation, set up experiments where all interactions for specific drugs or proteins are held out from the training set to create compound cold-start, protein cold-start, and blind start test sets. Performance on these dedicated test sets is the true indicator of a model's utility in drug repurposing and de novo discovery [4] [5].
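The following is a minimal sketch of such a split. It assumes interactions are stored as `(drug, target, label)` tuples. A random subset of drugs and a random subset of targets are withheld, and every record is routed according to which of its entities were withheld. The 20% hold-out fraction and the function name are illustrative choices.

```python
import random


def cold_start_split(pairs, test_frac=0.2, seed=0):
    """Split (drug, target, label) records into a training set and three cold-start test sets.

    A random fraction of drugs and of targets is held out entirely; every
    interaction touching a held-out entity is removed from training.
    """
    rng = random.Random(seed)
    drugs = sorted({d for d, _, _ in pairs})
    targets = sorted({t for _, t, _ in pairs})
    cold_drugs = set(rng.sample(drugs, max(1, int(len(drugs) * test_frac))))
    cold_targets = set(rng.sample(targets, max(1, int(len(targets) * test_frac))))

    split = {"train": [], "compound_cold": [], "protein_cold": [], "blind": []}
    for rec in pairs:
        d, t, _ = rec
        if d in cold_drugs and t in cold_targets:
            split["blind"].append(rec)
        elif d in cold_drugs:
            split["compound_cold"].append(rec)
        elif t in cold_targets:
            split["protein_cold"].append(rec)
        else:
            split["train"].append(rec)
    return split


# Toy fully observed 10 x 10 interaction matrix with arbitrary labels.
toy = [(f"D{i}", f"T{j}", (i + j) % 2) for i in range(10) for j in range(10)]
parts = cold_start_split(toy)
print({k: len(v) for k, v in parts.items()})
# -> {'train': 64, 'compound_cold': 16, 'protein_cold': 16, 'blind': 4}
```

Only the `train` partition should be used for fitting. This includes any pre-training or embedding step that sees interaction labels; otherwise the cold-start numbers are optimistic.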

Troubleshooting Guides

Problem: Poor Model Generalization on Novel Drugs or Targets

Symptoms: High accuracy during training and random cross-validation, but a dramatic performance drop when predicting interactions for molecules or proteins not seen during training.

| Possible Cause | Diagnostic Steps | Solution |
|---|---|---|
| Insufficient Feature Generalization | Check if your model relies only on simplistic or fixed molecular descriptors. | Integrate pre-trained features from large-scale models (e.g., ProtTrans for proteins, Mol2Vec for compounds) to capture deeper semantic and functional information [5]. |
| Data Sparsity | Analyze the training data for new entities; if they have no similar neighbors in the training set, similarity-based methods will fail. | Employ knowledge graph frameworks (e.g., KGE_NFM) that leverage heterogeneous data (like drug-disease networks) to infer relationships beyond direct similarity [4]. |
| Lock-and-Key Assumption | Review the model architecture; if features for a protein are static regardless of the compound it is paired with, it may be too rigid. | Implement models inspired by induced-fit theory, like ColdstartCPI, which use attention mechanisms to allow molecular features to adapt contextually during binding prediction [5]. |

Problem: Instability in Cold-Start Prediction Training

Symptoms: Large fluctuations in validation loss or failure to converge when training models designed for cold-start scenarios, such as those using adversarial learning.

| Possible Cause | Diagnostic Steps | Solution |
|---|---|---|
| Adversarial Training Instability | Monitor the losses of the feature extractor and the domain classifier in domain-adversarial networks like DrugBAN_CDAN. If one overwhelms the other, training can fail. | Use gradient reversal layers with a careful scheduling strategy, and consider stabilization techniques developed for Generative Adversarial Networks (GANs), such as Wasserstein-distance objectives [5]. |
| Information Leakage | Perform rigorous data separation to ensure no information from the "cold" test entities leaks into the training process, which can inflate performance. | Ensure a strict separation where all interactions for cold-start drugs/targets are completely absent from training. Use dedicated knowledge graph splits that withhold entire entities [5]. |
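A simple guard against this kind of leakage is to assert, before training, that no entity meant to be novel appears in the training pairs. The sketch below checks identifiers only and assumes each drug and target has a single canonical ID. It will not catch the same molecule registered under two IDs, nor leakage through a knowledge graph whose edges include test interactions.

```python
def check_no_leakage(train_pairs, test_pairs, scenario):
    """Raise if a drug or target that should be novel in the test set appears in training.

    scenario is one of "compound_cold", "protein_cold" or "blind".
    Pairs are (drug, target, ...) tuples; fields after the first two are ignored.
    """
    train_drugs = {p[0] for p in train_pairs}
    train_targets = {p[1] for p in train_pairs}
    leaked = set()
    if scenario in ("compound_cold", "blind"):
        leaked |= {p[0] for p in test_pairs} & train_drugs
    if scenario in ("protein_cold", "blind"):
        leaked |= {p[1] for p in test_pairs} & train_targets
    if leaked:
        raise ValueError(f"entities leaked into training: {sorted(leaked)}")
    return True


train = [("D1", "T1"), ("D2", "T2")]
print(check_no_leakage(train, [("D3", "T1")], "compound_cold"))  # True: T1 may be known here
try:
    check_no_leakage(train, [("D1", "T3")], "blind")
except ValueError as err:
    print(err)  # entities leaked into training: ['D1']
```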

Quantitative Performance Data

The following table summarizes the performance of various state-of-the-art models under different cold-start conditions, as measured by the area under the ROC curve (AUC). Higher values indicate better performance.

Table 1: Model Performance (AUC) in Cold-Start Scenarios on Benchmark Datasets [5]

| Model | Warm Start | Compound Cold Start | Protein Cold Start | Blind Start |
|---|---|---|---|---|
| ColdstartCPI | 0.989 | 0.912 | 0.917 | 0.879 |
| DeepDTA | 0.984 | 0.802 | 0.821 | 0.701 |
| GraphDTA | 0.985 | 0.811 | 0.823 | 0.712 |
| KGE_NFM | 0.978 | 0.842 | 0.855 | 0.768 |
| DrugBAN_CDAN | 0.986 | 0.861 | 0.869 | 0.785 |

Table 2: Impact of Transfer Learning on Cold-Start Performance (AUC) [1]

| Training Strategy | Cold-Drug AUC | Cold-Target AUC |
|---|---|---|
| C2P2 (with CCI/PPI Transfer) | 0.892 | 0.901 |
| Standard Pre-training | 0.843 | 0.855 |
| From Scratch (No Pre-training) | 0.791 | 0.802 |

Experimental Protocols

Protocol 1: Implementing a CCI/PPI Transfer Learning Framework (C2P2)

Principle: Enhance drug-target affinity (DTA) prediction by first pre-training models on related tasks with abundant data—Chemical-Chemical Interaction (CCI) and Protein-Protein Interaction (PPI)—to learn generalized interaction knowledge [1].

Workflow:

- CCI Pre-training:

  - Data Collection: Gather a large-scale CCI dataset from databases like STITCH or PubChem.

  - Model Training: Train a graph neural network (GNN) or sequence model (e.g., on SMILES strings) to predict chemical-chemical interactions. The objective is to learn a robust representation that captures how molecules interact with one another.

- PPI Pre-training:

  - Data Collection: Obtain a comprehensive PPI dataset from a source like BioGRID or STRING.

  - Model Training: Train a model (e.g., a Transformer on amino acid sequences) to predict protein-protein interactions. This teaches the model the grammar of protein interfaces and binding.

- Knowledge Transfer & DTA Model Fine-tuning:

  - Feature Extraction: Use the pre-trained CCI and PPI models to generate initial feature representations for drugs and targets in your DTA dataset.

  - Fine-tuning: Integrate these features into a DTA prediction model (e.g., a neural network) and fine-tune the entire model on the specific DTA task, allowing the transferred knowledge to be adapted and refined.

Protocol 2: Building a Knowledge Graph Embedding Framework (KGE_NFM)

Principle: Overcome data sparsity and cold-start by learning low-dimensional representations of drugs and targets from a rich knowledge graph (KG) that integrates multiple data types (e.g., drug-disease, target-pathway, drug-side-effect associations) [4].

Workflow:

- Knowledge Graph Construction:

  - Integrate data from multiple biomedical databases (e.g., DrugBank, UniProt, DisGeNET) to build a heterogeneous graph where nodes represent entities (drugs, targets, diseases, etc.) and edges represent their relationships (interacts-with, treats, causes, etc.).

- Knowledge Graph Embedding (KGE):

  - Use a KGE model (e.g., TransE, DistMult, or PairRE) to encode all entities and relations into a continuous vector space. The model learns to preserve the graph's structure, so entities with similar relational neighborhoods receive similar embeddings.

- Neural Factorization Machine (NFM) Integration:

  - For a given drug-target pair, retrieve their pre-trained KG embeddings.

  - Feed these embeddings into an NFM, a neural recommendation-system model. The NFM learns to model the complex, non-linear feature interactions between the drug and target embeddings to predict the likelihood of interaction.
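The scoring functions of two of the KGE models named above, together with the bi-interaction pooling layer at the heart of an NFM, can each be written in a few lines. This sketch shows only those pieces, with toy 3-dimensional vectors and a hypothetical `interacts_with` relation. A full KGE_NFM pipeline would also train the embeddings and stack hidden layers on top of the pooled vector.

```python
import math


def transe_score(h, r, t):
    """TransE plausibility: negative L2 distance between h + r and t (higher = more plausible)."""
    return -math.sqrt(sum((hi + ri - ti) ** 2 for hi, ri, ti in zip(h, r, t)))


def distmult_score(h, r, t):
    """DistMult plausibility: trilinear product sum_i h_i * r_i * t_i."""
    return sum(hi * ri * ti for hi, ri, ti in zip(h, r, t))


def bi_interaction(vectors):
    """NFM bi-interaction pooling, element-wise: 0.5 * ((sum of v)^2 - sum of v^2).

    This equals the sum of element-wise products over all pairs of input vectors.
    """
    dim = len(vectors[0])
    summed = [sum(v[k] for v in vectors) for k in range(dim)]
    squares = [sum(v[k] ** 2 for v in vectors) for k in range(dim)]
    return [0.5 * (s * s - q) for s, q in zip(summed, squares)]


drug = [1.0, 0.0, 2.0]
interacts_with = [0.0, 1.0, -1.0]  # hypothetical relation embedding
target = [1.0, 1.0, 3.0]

print(transe_score(drug, interacts_with, target))    # -> -2.0
print(distmult_score(drug, interacts_with, target))  # -> -6.0
print(bi_interaction([drug, target]))                # -> [1.0, 0.0, 6.0]
```

With exactly two inputs (one drug and one target embedding), bi-interaction pooling reduces to their element-wise product. With additional feature vectors, it sums the element-wise products over all pairs, which is how an NFM captures pairwise feature interactions before its hidden layers.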

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools and Databases for Cold-Start Research

| Item Name | Type | Function & Explanation |
|---|---|---|
| ProtTrans | Pre-trained Model | Provides deep learning-based protein language model embeddings. Used to generate high-quality, functional representations of protein sequences, crucial for cold-start targets [5]. |
| Mol2Vec | Pre-trained Model | Generates vector representations for molecular substructures from SMILES strings. Captures chemical context and similarity, aiding in representing novel compounds [5]. |
| BindingDB | Database | A public, web-accessible database of measured binding affinities, focusing chiefly on the interactions of proteins considered to be drug targets. Essential for training and benchmarking DTA models [5]. |
| DrugBank | Database | A comprehensive knowledgebase for drug and drug-target information. Serves as a key data source for building knowledge graphs and validating predictions [4]. |
| BioKG | Knowledge Graph | A publicly available knowledge graph that integrates data from multiple biomedical sources. Provides a ready-made resource for KGE pre-training to mitigate cold-start problems [4]. |
| Transformer Module | Algorithm | A deep learning architecture using self-attention. In frameworks like ColdstartCPI, it is used to model flexible, context-dependent interactions between compounds and proteins, mimicking induced-fit binding [5]. |

Frequently Asked Questions (FAQs)

FAQ 1: What are the most common cold-start scenarios in chemogenomic prediction? Cold-start problems occur when a model must make predictions for drugs or targets that were not present in the training data. These scenarios are formally categorized as follows [1] [5]:

- Compound Cold Start: Predicting interactions for novel drugs against known protein targets.

- Protein Cold Start: Predicting interactions for known drugs against novel protein targets.

- Blind Start: The most challenging scenario, requiring prediction of interactions between completely novel drugs and novel proteins.

FAQ 2: Why do models fail with novel molecular structures, even when pre-trained? Model failure often stems from a representation gap. While unsupervised pre-training on large molecular datasets helps learn internal structural patterns (intra-molecule interaction), it may lack specific information about how molecules interact with each other (inter-molecule interaction), which is critical for binding affinity prediction [1]. Furthermore, models trained on biased data or simplified assumptions (like the rigid "key-lock" theory) struggle to generalize to the flexible nature of real-world binding events [5].

FAQ 3: How can I assess the generalizability of my DTI model beyond standard metrics? Beyond standard metrics like AUC, use data splitting strategies that simulate real-world challenges [10]. Instead of random splits, employ:

- Scaffold Splits: Test the model's performance on entirely new molecular scaffolds.

- Temporal Splits: Train on older data and test on newer data to simulate a real discovery pipeline.

- Stratified Cold Splits: Explicitly hold out specific drugs or proteins during training to test cold-start performance [5]. Reporting performance on these splits provides a more realistic view of generalizability.
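Temporal and scaffold splits both reduce to holding out records by a key. The sketch assumes each record carries a publication `year` and a precomputed `scaffold` ID, for example a Bemis-Murcko scaffold computed with RDKit's `MurckoScaffold` module. Both field names are hypothetical.

```python
def temporal_split(records, cutoff_year):
    """Train on records from before cutoff_year; test on records from that year onward."""
    train = [r for r in records if r["year"] < cutoff_year]
    test = [r for r in records if r["year"] >= cutoff_year]
    return train, test


def group_split(records, key, test_groups):
    """Hold out whole groups (e.g. scaffold IDs) so no test group is seen in training."""
    train = [r for r in records if r[key] not in test_groups]
    test = [r for r in records if r[key] in test_groups]
    return train, test


records = [
    {"drug": "D1", "scaffold": "S1", "year": 2015},
    {"drug": "D2", "scaffold": "S1", "year": 2019},
    {"drug": "D3", "scaffold": "S2", "year": 2021},
    {"drug": "D4", "scaffold": "S3", "year": 2022},
]
train, test = temporal_split(records, 2020)
print([r["drug"] for r in train], [r["drug"] for r in test])  # -> ['D1', 'D2'] ['D3', 'D4']
train, test = group_split(records, "scaffold", {"S1"})
print([r["drug"] for r in train], [r["drug"] for r in test])  # -> ['D3', 'D4'] ['D1', 'D2']
```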

FAQ 4: What practical strategies can mitigate data sparsity?

- Leverage Auxiliary Data: Use transfer learning from related tasks, such as Chemical-Chemical Interaction (CCI) and Protein-Protein Interaction (PPI), to incorporate valuable inter-molecule interaction knowledge into your DTI model [1].

- Data Augmentation: Techniques that generate valid variations of existing data can help, though their effectiveness varies [10].

- Multi-Task Learning: Training a model on several related prediction tasks simultaneously can help it learn more robust representations, although this does not always solve core generalization issues [10].

FAQ 5: Are deep learning models always superior to traditional methods for DTI prediction? No. The performance advantage is highly context-dependent. On small datasets, traditional machine learning methods (e.g., Random Forests, SVM) with expert-designed descriptors often outperform deep learning models [11] [12]. Deep learning methods typically require large amounts of high-quality data to excel and become competitive on larger datasets [11] [12].

Troubleshooting Guides

Issue 1: Poor Performance on Novel Drugs or Targets (Cold-Start Problem)

Problem: Your model performs well on known drug-target pairs but fails to generalize to new entities.

Solution Checklist:

Implement Transfer Learning:

- Action: Pre-train your drug and target encoders on large, auxiliary datasets before fine-tuning on your specific DTI task.

- Protocol: The C2P2 framework suggests this workflow [1]:

  - Step 1: Learn a general protein representation by pre-training on a Protein-Protein Interaction (PPI) prediction task.

  - Step 2: Learn a general chemical representation by pre-training on a Chemical-Chemical Interaction (CCI) prediction task.

  - Step 3: Integrate these pre-trained encoders into your DTA model, allowing it to leverage prior knowledge of molecular interactions.

Adopt an Induced-Fit Theory Approach:

- Action: Move beyond the rigid "key-lock" model. Use architectures that allow the representations of compounds and proteins to adapt to each other.

- Protocol: The ColdstartCPI framework provides a methodology [5]:

  - Step 1: Encode proteins and compounds using pre-trained models (e.g., ProtTrans for proteins, Mol2vec for compounds) to get initial feature matrices.

  - Step 2: Construct a joint representation of the compound-protein pair.

  - Step 3: Process this joint matrix through a Transformer module. The attention mechanism allows the model to learn flexible, context-dependent features for both molecules, mimicking the biological induced-fit effect.

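Validate with Rigorous Splitting:

- Action: Evaluate the model on compound cold-start, protein cold-start, and blind-start test sets in which every interaction involving the held-out drugs or proteins is excluded from training, instead of relying on random splits [5].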

Issue 2: Model Fails to Learn Meaningful Molecular Representations

Problem: Your model does not capture the essential features required for accurate interaction prediction, leading to low performance.

Solution Checklist:

Fuse Multiple Representation Types:

- Action: Combine different molecular representations to capture both structural and functional characteristics.

- Protocol [11] [13]:

  - Step 1 (Graph Representation): Use a Graph Neural Network (GNN) to process the molecular graph, capturing topological information.

  - Step 2 (Sequence Representation): Use a language model (e.g., Transformer) to process the SMILES string of a drug or the amino acid sequence of a protein, capturing sequential context.

  - Step 3 (Feature Combination): Combine the learned embeddings from both representations (e.g., via concatenation or a learned weighted sum) before the final prediction layer.

Incorporate Domain Knowledge via Features:

- Action: Augment learned features with expert-designed descriptors or fingerprints.

- Protocol: This multi-view learning approach can be implemented as follows [11]:

  - Step 1: Generate traditional descriptors (e.g., ECFP fingerprints for drugs, composition/transition/distribution descriptors for proteins).

  - Step 2: Learn data-driven descriptors using a deep learning encoder (e.g., GNN).

  - Step 3: Concatenate the traditional and learned descriptors to create an enriched feature vector for the prediction task.
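The two combination strategies in the fusion protocol (concatenation and a learned weighted sum) are sketched below with toy vectors; the same concatenation also covers the multi-view step that joins expert and learned descriptors. Two simplifications are assumed for illustration: the softmax-weighted sum requires views of equal length, and its `logits` stand in for parameters a real model would learn.

```python
import math


def concat_fusion(*views):
    """Concatenate per-view feature vectors (views may differ in length)."""
    return [x for view in views for x in view]


def weighted_sum_fusion(views, logits):
    """Combine equal-length views with softmax-normalised weights.

    In a trained model `logits` would be learnable parameters; here they are fixed.
    """
    exps = [math.exp(z) for z in logits]
    total = sum(exps)
    weights = [e / total for e in exps]
    dim = len(views[0])
    return [sum(w * v[k] for w, v in zip(weights, views)) for k in range(dim)]


ecfp_bits = [1, 0, 1, 1]          # expert-designed fingerprint view (toy, 4 bits)
gnn_embedding = [0.2, -0.7, 0.5]  # learned graph view
seq_embedding = [0.1, 0.4, -0.3]  # learned SMILES/sequence view

print(concat_fusion(ecfp_bits, gnn_embedding))  # 7-dimensional multi-view vector
print(weighted_sum_fusion([gnn_embedding, seq_embedding], [0.0, 0.0]))  # equal weights
```

Concatenation keeps every view intact at the cost of a wider input layer, while the weighted sum keeps the dimension fixed and lets training decide how much each view contributes.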

Issue 3: High-Variance Results and Plateaued Error Metrics

Problem: Model performance is inconsistent across different data splits or random seeds, and error metrics appear to have plateaued.

Solution Checklist:

Diagnose Data Sparsity and Quality:

- Action: Quantify the sparsity of your dataset and check for experimental noise.

- Protocol: Calculate the sparsity value as the ratio of known interactions to all possible drug-target pairs in your dataset [14]. Benchmark datasets often have sparsity values below 0.07, meaning over 93% of possible interactions are unknown [14]. Be aware that a '0' in the interaction matrix may indicate a lack of data rather than a true non-interaction [14].

Address Extreme Class Imbalance:

- Action: If framing DTI prediction as a classification task, use metrics that are robust to class imbalance.

- Protocol: Prioritize the Area Under the Precision-Recall Curve (AUPR) over the Area Under the ROC Curve (AUC) for a more realistic performance assessment on imbalanced datasets, where positive interactions are the minority class. AUC can remain high even when many false positives outrank the true interactions [12] [14].
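A small worked example shows why AUPR is preferred here. It assumes 2 positives among 20 drug-target pairs and no tied scores. ROC AUC is computed as the probability that a random positive outranks a random negative, and AUPR is estimated with average precision. In practice, `sklearn.metrics.roc_auc_score` and `sklearn.metrics.average_precision_score` compute these metrics with proper tie handling.

```python
def roc_auc(labels, scores):
    """ROC AUC as the probability that a random positive outranks a random negative (ties = 0.5)."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def average_precision(labels, scores):
    """Average precision, a step-wise estimate of the area under the precision-recall curve.

    Assumes no tied scores, for simplicity.
    """
    ranked = sorted(zip(scores, labels), reverse=True)
    hits, ap = 0, 0.0
    for rank, (_, y) in enumerate(ranked, start=1):
        if y == 1:
            hits += 1
            ap += hits / rank  # precision at each new recall level
    return ap / sum(labels)


# 20 candidate pairs ranked by score; the true interactions sit at ranks 3 and 7.
scores = list(range(20, 0, -1))
labels = [0, 0, 1, 0, 0, 0, 1] + [0] * 13
print(f"AUC = {roc_auc(labels, scores):.3f}, AUPR (AP) = {average_precision(labels, scores):.3f}")
# -> AUC = 0.806, AUPR (AP) = 0.310
```

The same ranking looks strong by AUC, where a random ranking scores 0.5. It looks weak by AUPR, where a random ranking scores roughly the positive rate (0.1 here), because the first true interaction only appears at rank 3.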

Experimental Protocols & Data

Key Benchmark Dataset Statistics

The Yamanishi benchmark is a widely used gold-standard data set for comparing DTI prediction algorithms. Its statistics are summarized below [14].

Table 1: Benchmark Data Set for DTI Prediction

| Data Set | Number of Drugs | Number of Targets | Number of Known Interactions | Sparsity Value |
|---|---|---|---|---|
| Enzyme | 445 | 664 | 2,926 | 0.010 |
| Ion Channel (IC) | 210 | 204 | 1,476 | 0.034 |
| GPCR | 223 | 95 | 635 | 0.030 |
| Nuclear Receptor (NR) | 54 | 26 | 90 | 0.064 |
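The sparsity values in the table follow directly from the counts. Sparsity is the number of known interactions divided by the number of possible drug-target pairs. The short script below recomputes the values from the table's own numbers.

```python
def sparsity(n_drugs, n_targets, n_interactions):
    """Fraction of all possible drug-target pairs that are known interactions."""
    return n_interactions / (n_drugs * n_targets)


yamanishi = {
    "Enzyme": (445, 664, 2926),
    "Ion Channel (IC)": (210, 204, 1476),
    "GPCR": (223, 95, 635),
    "Nuclear Receptor (NR)": (54, 26, 90),
}
for name, (d, t, k) in yamanishi.items():
    s = sparsity(d, t, k)
    print(f"{name}: sparsity = {s:.3f}, unknown pairs = {1 - s:.1%}")
```

Even the densest subset (NR) leaves about 93.6% of pairs without a recorded interaction, which is why unlabeled pairs should not be read as confirmed negatives.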

Protocol: Implementing a Transfer Learning Workflow for Cold-Start Mitigation

This protocol is based on the C2P2 framework described in [1].

Objective: Improve DTA prediction for novel drugs/targets by transferring knowledge from CCI and PPI tasks.

Materials:

- Software: Python, deep learning library (e.g., PyTorch, TensorFlow).

- Data:

  - Source DTI Data: e.g., BindingDB, BioSNAP [5].

  - Auxiliary PPI Data: e.g., from BioGRID or STRING databases.

  - Auxiliary CCI Data: e.g., from STITCH or pathway databases.

Method:

- Pre-training Phase (Knowledge Transfer):

  - PPI Task: Train a protein encoder model (e.g., a Transformer) to predict whether two protein sequences interact. Use a large dataset of known PPIs.

  - CCI Task: Train a drug encoder model (e.g., a GNN) to predict the interaction between two chemical compounds. Use a large dataset of known CCIs.

- Fine-Tuning Phase (DTA Prediction):

  - Initialization: Use the pre-trained protein and drug encoders from the pre-training phase to initialize the encoders in your DTA model.

  - Training: Train the entire DTA model on your specific drug-target affinity data. The model starts with robust general-purpose representations and fine-tunes them for the specific task of affinity prediction.

Validation: Compare the performance of the model with pre-trained encoders against a model with randomly initialized encoders. Use a strict cold-start test set where all drugs or all targets are unseen [5].

Research Reagent Solutions

Table 2: Key Computational Tools for DTI Research

| Tool / Resource | Type | Primary Function | Reference/Source |
|---|---|---|---|
| Mol2vec | Molecular Representation | Generates unsupervised numerical representations for chemical compounds based on their substructures. | [5] |
| ProtTrans | Protein Representation | Learns protein language models from millions of protein sequences, providing powerful feature extraction. | [5] |
| SIMCOMP | Cheminformatics Tool | Computes structural similarity scores between drug molecules, used to build drug similarity matrices. | [14] |
| KEGG LIGAND & GENES | Database | Provides curated data on drugs, targets, and their interactions for building benchmark datasets. | [14] |
| ECFP (Extended-Connectivity Fingerprints) | Molecular Descriptor | Creates a fixed-length binary bit string representing the presence of molecular substructures. | [13] |
| SMILES | Molecular Representation | A string-based notation for representing the structure of chemical molecules. | [13] |

Workflow Diagrams

Diagram 1: C2P2 Transfer Learning Framework. Knowledge transfer from PPI and CCI tasks enhances DTA model performance on cold-start problems.

Diagram 2: ColdstartCPI Induced-Fit Workflow. The ColdstartCPI framework uses a Transformer to model flexible molecular interactions.

FAQ: Understanding the Cold-Start Problem

What is the "cold-start" problem in Drug-Target Affinity (DTA) prediction? The cold-start problem refers to the significant drop in machine learning model performance when predicting interactions for novel drugs or target proteins that were not present in the training data. This is a major challenge in real-world drug discovery and repurposing, where researchers often work with new molecular entities [1].

Why do traditional models fail in cold-start scenarios? Traditional computational methods often rely heavily on the chemogenomic properties of drugs and proteins. When a new drug or target with a novel structure is introduced, these models lack the specific interaction data needed to make accurate predictions, as they cannot effectively generalize from their training set to these unseen entities [15].

What strategies can mitigate the cold-start problem? Advanced strategies focus on learning more generalized representations. Key approaches include:

- Transfer Learning: Leveraging knowledge from related tasks, such as Chemical-Chemical Interaction (CCI) and Protein-Protein Interaction (PPI), to inform the DTA model [1].

- Topology-Preserving Embeddings: Creating molecular representations that maintain the structural and functional relationships from a heterogeneous network, adhering to the "guilt-by-association" principle [16].

- Heterogeneous Network Integration: Using diverse biological and pharmacological data (e.g., from side effects, diseases, gene expression) to build robust features for drugs and targets, reducing reliance on a single data type [15].

Troubleshooting Guide: Addressing Common Experimental Issues

Problem: Model performance is poor on new drugs (cold-drug scenario).

- Potential Cause 1: The drug encoder has not learned a generalized representation that captures meaningful chemical features transferable to novel structures.

Solution:

- Apply Pre-training: Use a language model (such as a Transformer) pre-trained on large-scale, unlabeled SMILES sequences (e.g., from PubChem) to learn the intrinsic "grammar" of chemical compounds [1].

- Incorporate CCI Knowledge: Fine-tune the pre-trained encoder using chemical-chemical interaction data. This teaches the model how molecules interact with each other, providing valuable information for predicting how they might interact with proteins [1].

- Use Graph Representations: Represent drugs as graphs (atoms as nodes, bonds as edges) and employ graph neural networks pre-trained on tasks like attribute masking. This captures both local atom environments and global molecular topology [1].

- Potential Cause 2: The model is overfitting to the specific drugs in the training set and cannot generalize.

Solution:

- Implement Topology-Aware Loss: As in the GLDPI model, use a prior loss function that forces the embeddings of similar drugs (based on network similarity) to be close in the latent space. This ensures the model respects the "guilt-by-association" principle, even for unseen drugs that are similar to known ones [16].

- Leverage Heterogeneous Networks: Integrate multiple drug-related networks (e.g., based on side-effects, therapy domains) using a graph attention network (GAT) to learn a comprehensive and robust drug representation [15].
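The sketch below illustrates the two GLDPI-style ingredients in NumPy, assuming drug and protein embeddings are already computed. Interaction scores are cosine similarities between drug and protein embeddings. A prior term penalizes the gap between embedding similarity and a given network-similarity matrix. The squared-error form of the prior loss and the helper names are illustrative assumptions. The published GLDPI loss may be defined differently.

```python
import numpy as np

def l2_normalize(x, eps=1e-8):
    # Row-normalize so that dot products equal cosine similarities.
    return x / (np.linalg.norm(x, axis=1, keepdims=True) + eps)

def cosine_scores(drug_emb, prot_emb):
    """Interaction score matrix (n_drugs x n_proteins) from cosine similarity."""
    return l2_normalize(drug_emb) @ l2_normalize(prot_emb).T

def topology_prior_loss(emb, network_sim):
    """Mean squared gap between pairwise embedding similarity and a
    precomputed network similarity matrix (e.g., from shared interactions)."""
    z = l2_normalize(emb)
    return float(np.mean((z @ z.T - network_sim) ** 2))

rng = np.random.default_rng(0)
drug_emb = rng.normal(size=(4, 16))   # placeholder drug embeddings
prot_emb = rng.normal(size=(3, 16))   # placeholder protein embeddings
scores = cosine_scores(drug_emb, prot_emb)  # shape (4, 3), values in [-1, 1]
```

During training, a term like `topology_prior_loss` would be added to the interaction loss. Its effect is to pull together unseen drugs whose network similarity to known drugs is high.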

Problem: Model performance is poor on new target proteins (cold-target scenario).

- Potential Cause 1: The protein encoder lacks a fundamental understanding of protein sequence and function.

Solution:

- Utilize Protein Language Models: Employ a protein language model pre-trained on massive protein sequence databases such as UniRef (e.g., ProtTrans, which offers BERT- and T5-based variants). This helps the model capture evolutionary and structural constraints in protein sequences [1].

- Incorporate PPI Knowledge: Transfer learning from protein-protein interaction tasks can be highly beneficial. PPI data teaches the model about residues and regions critical for binding at protein interfaces, which often overlap with drug-target binding sites [1].

- Potential Cause 2: The protein representation is not informed by diverse functional data.

Solution: Use an integration framework like BIONIC to learn protein representations from multiple heterogeneous networks (e.g., genetic interactions, pathway co-membership). A Graph Attention Network (GAT) can encode each network, and features are combined through a weighted, stochastically masked summation to create a comprehensive profile [15].

Problem: The overall model struggles with severe class imbalance in real-world DTI data.

- Potential Cause: Known interactions (positive samples) are vastly outnumbered by unknown pairs (negative samples), causing the model to be biased towards predicting "no interaction."

Solution:

- Avoid 1:1 Negative Sampling in Evaluation: During testing, use imbalanced test sets (e.g., 1:10, 1:100, or 1:1000 positive-to-negative ratios) to simulate real-world conditions and properly evaluate model robustness [16].

- Use AUPR as the Primary Metric: The Area Under the Precision-Recall curve is more informative than AUROC for imbalanced datasets, as it focuses on the performance of the minority (positive) class [16].

- Adopt a Similarity-Based Architecture: Implement models like GLDPI that use cosine similarity between drug and protein embeddings to predict interactions. This design, combined with a topology-preserving loss, inherently leverages the "guilt-by-association" principle, which is robust to data imbalance [16].
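The example below shows why AUPR is the more informative metric at realistic ratios. It implements AUROC (as the probability that a positive outranks a negative) and average precision (a standard step-wise estimate of AUPR) in NumPy. Both metrics are then applied to a synthetic 1:100 test set. The score distributions are arbitrary assumptions. In practice, scikit-learn's `roc_auc_score` and `average_precision_score` compute these quantities.

```python
import numpy as np

def auroc(y_true, scores):
    """Probability that a random positive outranks a random negative (ties count 0.5)."""
    pos, neg = scores[y_true == 1], scores[y_true == 0]
    diff = pos[:, None] - neg[None, :]
    return float(np.mean((diff > 0) + 0.5 * (diff == 0)))

def average_precision(y_true, scores):
    """Mean precision at the rank of each positive (assumes no tied scores)."""
    order = np.argsort(-scores)
    hits = y_true[order]
    precision_at_k = np.cumsum(hits) / np.arange(1, len(hits) + 1)
    return float(np.mean(precision_at_k[hits == 1]))

rng = np.random.default_rng(0)
n_pos, n_neg = 20, 2000                      # 1:100 positive-to-negative ratio
y = np.concatenate([np.ones(n_pos, dtype=int), np.zeros(n_neg, dtype=int)])
s = np.concatenate([rng.normal(2.0, 1.0, n_pos), rng.normal(0.0, 1.0, n_neg)])
print(f"AUROC={auroc(y, s):.3f}  AUPR(AP)={average_precision(y, s):.3f}")
```

The score distributions here are separated by two standard deviations, so AUROC is high (about 0.92 in expectation). Average precision comes out much lower, because even a small fraction of 2,000 negatives still outranks most of the positives.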

Experimental Data & Performance Comparison

Table 1: Cold-Start Performance of Advanced DTA Models

This table summarizes the reported performance of models specifically designed to address cold-start challenges. AUPR (Area Under the Precision-Recall Curve) is highlighted as a key metric for imbalanced data.

| Model | Cold-Start Scenario Tested | Key Methodology | Reported Performance Gain |
|---|---|---|---|
| C2P2 [1] | Cold-Drug, Cold-Target | Transfer learning from CCI & PPI tasks. | Shows an advantage over other pre-training methods in cold-start DTA tasks. |
| GLDPI [16] | Cold-Drug, Cold-Target | Topology-preserving embeddings with prior loss; cosine similarity for prediction. | >100% improvement in AUPR on imbalanced benchmarks; >30% improvement in AUROC/AUPR in cold-start experiments. |
| DrugMAN [15] | Cold-Drug, Cold-Target, Both-Cold | Integration of heterogeneous networks with a Mutual Attention Network. | Smallest performance drop from warm-start to Both-cold scenario; best overall performance in real-world scenarios. |

Table 2: Essential Research Reagents & Computational Tools

This toolkit lists key resources mentioned in the cited research for building robust, cold-start-resistant DTA models.

| Research Reagent / Tool | Function in the Experiment | Key Implementation Details |
|---|---|---|
| Protein Language Model (e.g., ProtTrans) [1] | Learns generalized sequence representations for proteins. | Pre-trained on billions of sequences (e.g., UniRef); can be based on BERT or T5 architectures. |
| Chemical Language Model (e.g., SMILES Transformer) [1] | Learns generalized sequence representations for drugs from SMILES strings. | Pre-trained on millions of compounds (e.g., from PubChem) using Transformer architectures. |
| Graph Attention Network (GAT) [15] | Integrates multiple heterogeneous biological networks for drugs or proteins. | Uses multi-head attention to weight the importance of neighboring nodes; outputs low-dimensional node features. |
| Mutual Attention Network (MAN) [15] | Captures interaction information between drug and target representations. | Built with Transformer encoder layers; takes concatenated drug and target features to learn pairwise interactions. |
| Topology-Preserving Prior Loss [16] | Ensures molecular embeddings reflect the structure of the drug-protein network. | A loss function based on "guilt-by-association," aligning embedding distances with network similarity. |

Experimental Workflow Visualization

The following diagram illustrates the integrated workflow of the C2P2 and DrugMAN frameworks, combining transfer learning and heterogeneous data integration to tackle the cold-start problem.

Diagram 1: A unified workflow to overcome cold-start challenges in DTA prediction.

Advanced AI Methodologies to Solve Cold-Start Prediction

Frequently Asked Questions (FAQs)

Q1: What is the core principle behind using CCI and PPI for Drug-Target Affinity (DTA) prediction? The core principle is transfer learning. Instead of learning drug and protein representations from scratch on often limited DTA data, the model first learns the fundamental principles of molecular and protein interaction from large, related databases of Chemical-Chemical Interactions (CCI) and Protein-Protein Interactions (PPI). This learned "interaction knowledge" is then transferred and fine-tuned for the specific task of predicting drug-target binding affinity, making the model more robust, especially for novel drugs or targets [1] [2].

Q2: Why does the cold-start problem occur in DTA prediction, and how does C2P2 address it? The cold-start problem occurs because standard machine learning models perform poorly when predicting interactions for new drugs or targets that were not present in the training data. The C2P2 framework tackles this by pre-training on CCI and PPI tasks. This provides the model with a generalized understanding of biochemical interaction patterns before it even sees DTA data, leading to better generalization on novel entities [1].

Q3: What kind of data and features are needed to implement this approach? The implementation leverages multiple data types and feature representations for both drugs and targets [1] [17]:

| Entity | Data Source Examples | Feature Representation Methods |
|---|---|---|
| Drug/Chemical | PubChem [1], DrugBank [18] | SMILES Sequences (for language models) [1], Molecular Graphs (for GNNs) [1], MACCS Keys/Structural Fingerprints [17] |
| Protein/Target | UniProt [18], Pfam [1] | Amino Acid Sequences (for language models like Transformer, ESM-2) [1] [18], Amino Acid/Dipeptide Composition [17], Protein Graphs (from contact maps) [1] |
| Interaction Data | CCI databases, PPI databases [1] | Labeled interaction pairs for pre-training tasks. |

Q4: My model performs well in pre-training but poorly on the DTA task. What could be wrong? This is often an issue of negative transfer, where the pre-trained knowledge is not properly adapted to the new task. Ensure your fine-tuning dataset is relevant and of high quality. Also experiment with different fine-tuning strategies: you may need to "unfreeze" and train more layers of the pre-trained model, or use a lower learning rate than in pre-training so the valuable pre-trained weights are not overwritten too quickly.

Troubleshooting Guides

Problem 1: Poor Performance on Novel Drugs/Targets (Cold-Start Scenario)

Even with transfer learning, your model might struggle with true cold-start cases.

| Possible Cause | Solution | Related Experimental Protocol |
|---|---|---|
| Insufficient interaction diversity in pre-training data. | Curate more comprehensive CCI/PPI datasets that cover a wider range of interaction types and molecular scaffolds. | Use databases like BindingDB for DTA data, and dedicated CCI/PPI databases for pre-training. Always rigorously define cold-start splits (new drugs or new proteins not in training) for evaluation [1] [17]. |
| The transferred features are not effectively integrated for the DTA task. | Implement a cross-attention mechanism between the transferred drug and protein representations. This allows the model to focus on the most relevant parts of the molecule and protein for their specific interaction [17]. | In your model architecture, after obtaining pre-trained features for drug (D) and target (T), use a cross-attention layer to compute a context-aware representation of T conditioned on D, and vice-versa, before the final affinity prediction layer. |
| Simple fine-tuning is causing catastrophic forgetting of pre-trained knowledge. | Use a multi-task learning approach during fine-tuning. Jointly train the model on the main DTA prediction task and an auxiliary task like Masked Language Modeling (MLM) on the drug and protein sequences. This helps retain the generalized knowledge [17]. | During the DTA model training phase, add a loss term that also predicts masked tokens in the SMILES and protein sequences based on their context. |
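The cross-attention protocol in the table is sketched below in NumPy. It assumes the pre-trained encoders have already produced token-level features for the drug (atoms or SMILES tokens) and the target (residues) with a shared hidden size. The sketch uses single-head scaled dot-product attention. Random projection matrices stand in for learned weights, and one projection set is reused in both directions for brevity. A real model would learn separate parameters, typically with multiple heads.

```python
import numpy as np

def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def cross_attention(query_feats, context_feats, Wq, Wk, Wv):
    """Each query token attends over all context tokens (scaled dot-product)."""
    q, k, v = query_feats @ Wq, context_feats @ Wk, context_feats @ Wv
    attn = softmax(q @ k.T / np.sqrt(k.shape[-1]), axis=-1)  # (n_query, n_context)
    return attn @ v, attn

rng = np.random.default_rng(0)
d_model = 32
drug_tokens = rng.normal(size=(12, d_model))    # e.g., 12 atoms from a pre-trained drug encoder
prot_tokens = rng.normal(size=(300, d_model))   # e.g., 300 residues from a protein language model
W = {name: rng.normal(scale=d_model ** -0.5, size=(d_model, d_model)) for name in ("q", "k", "v")}

# Drug conditioned on target, and target conditioned on drug.
drug_ctx, drug_attn = cross_attention(drug_tokens, prot_tokens, W["q"], W["k"], W["v"])
prot_ctx, prot_attn = cross_attention(prot_tokens, drug_tokens, W["q"], W["k"], W["v"])

# Pool each side and concatenate before the affinity-prediction layers.
pair_repr = np.concatenate([drug_ctx.mean(axis=0), prot_ctx.mean(axis=0)])
```

The rows of `drug_attn` show which residues each atom attends to. This is the property that lets the model focus on the parts of the protein relevant to a particular molecule.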

Problem 2: Model Training Is Unstable or Slow

These issues relate to the practical aspects of training complex models.

| Possible Cause | Solution | Related Experimental Protocol |
|---|---|---|
| Class or data imbalance in the DTA dataset. | Apply data balancing techniques. Use Generative Adversarial Networks (GANs) to generate synthetic data for the minority class (e.g., interacting pairs) to reduce false negatives [17]. | On a dataset like BindingDB, analyze the distribution of positive and negative interactions. Train a GAN (e.g., with a Generator and Discriminator network) to create plausible synthetic positive interaction pairs and add them to the training set. |
| High-dimensional feature space leading to noisy gradients. | Employ robust feature selection. Use algorithms like Genetic Algorithms (GA) with Roulette Wheel Selection to identify and use only the most predictive 85-90 features from a larger set of 180+, improving accuracy and stability [18]. | From your initial feature set (e.g., 183 features from UniProt/DrugBank), run a Genetic Algorithm to evolve a subset of features that maximizes the model's performance on a validation set. |
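The genetic-algorithm row can be sketched in NumPy. Each individual is a binary mask over features. Parents are drawn by roulette-wheel (fitness-proportionate) selection, and offspring come from uniform crossover and bit-flip mutation, with the best mask carried over unchanged (elitism). The fitness function is a hypothetical stand-in: absolute correlation with the label, minus a per-feature cost. In the cited setting it would be a model's validation score. The population size, rates, and cost are arbitrary.

```python
import numpy as np

def roulette_select(fitness, rng, n):
    """Fitness-proportionate (roulette-wheel) selection of n parent indices."""
    weights = fitness - fitness.min() + 1e-9      # shift so every weight is positive
    return rng.choice(len(fitness), size=n, p=weights / weights.sum())

def ga_feature_selection(score_fn, n_features, pop_size=40, n_gen=100, p_mut=0.02, seed=0):
    """Evolve binary feature masks that maximize score_fn(mask)."""
    rng = np.random.default_rng(seed)
    pop = rng.random((pop_size, n_features)) < 0.5          # random initial masks
    best_mask, best_fit = None, -np.inf
    for _ in range(n_gen):
        fit = np.array([score_fn(m) for m in pop])
        if fit.max() > best_fit:                             # track the best mask seen so far
            best_fit, best_mask = float(fit.max()), pop[fit.argmax()].copy()
        parents = pop[roulette_select(fit, rng, 2 * pop_size)]
        a, b = parents[:pop_size], parents[pop_size:]
        children = np.where(rng.random((pop_size, n_features)) < 0.5, a, b)  # uniform crossover
        children ^= rng.random(children.shape) < p_mut       # bit-flip mutation
        children[0] = best_mask                              # elitism
        pop = children
    return best_mask, best_fit

# Toy data: only features 0-4 carry signal.
data_rng = np.random.default_rng(42)
X = data_rng.normal(size=(300, 30))
y = X[:, :5].sum(axis=1) + 0.5 * data_rng.normal(size=300)
abs_corr = np.abs([np.corrcoef(X[:, j], y)[0, 1] for j in range(X.shape[1])])

def score(mask):
    # Hypothetical fitness: reward correlated features, charge 0.15 per selected feature.
    return float(mask.astype(float) @ (abs_corr - 0.15))

mask, fit = ga_feature_selection(score, X.shape[1])
print("selected features:", np.flatnonzero(mask))
```

To use this on real data, swap `score` for a function that trains and validates a model on `X[:, mask]`. That call dominates the run time, so the population size and generation count are typically kept modest.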

Experimental Protocols for Key Scenarios

Protocol 1: Pre-training a Graph Neural Network (GNN) on CCI Data

- Data Collection: Obtain a large dataset of chemical-chemical interactions, including the SMILES representation for each molecule.

- Graph Representation: Convert each molecule from its SMILES string into a molecular graph. Atoms become nodes (with features like atom type, charge), and bonds become edges (with features like bond type).

- Pre-training Task: Use a context prediction task. Mask a part of the molecular graph and train the GNN to predict the surrounding context of the missing subgraph. This teaches the model about the intra-molecular interactions and functional groups that dictate how chemicals interact [1].

- Model Output: The trained model provides a powerful molecular encoder that can convert any new molecule (represented as a graph) into a meaningful numerical vector (embedding).
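Context prediction needs two views for each center atom: a K-hop neighborhood, and a surrounding context ring defined as the atoms between r1 and r2 hops away. With r1 < K, the two views share "anchor" atoms. The pure-Python sketch below follows that commonly used formulation on an adjacency-list graph. The hop values and the toy n-heptane carbon skeleton are illustrative assumptions. Converting SMILES to a graph (for example with RDKit) is not shown.

```python
from collections import deque

def hop_distances(adj, center):
    """Breadth-first search: shortest hop count from center to every reachable atom."""
    dist = {center: 0}
    queue = deque([center])
    while queue:
        u = queue.popleft()
        for v in adj[u]:
            if v not in dist:
                dist[v] = dist[u] + 1
                queue.append(v)
    return dist

def neighborhood_and_context(adj, center, k=2, r1=1, r2=4):
    """K-hop neighborhood and the context ring between r1 and r2 hops (r1 < k)."""
    dist = hop_distances(adj, center)
    neighborhood = {a for a, d in dist.items() if d <= k}
    context = {a for a, d in dist.items() if r1 <= d <= r2}
    anchors = neighborhood & context      # atoms shared by both views
    return neighborhood, context, anchors

# Toy heavy-atom graph: a 7-carbon chain (n-heptane skeleton), atoms 0..6.
adj = {0: [1], 1: [0, 2], 2: [1, 3], 3: [2, 4], 4: [3, 5], 5: [4, 6], 6: [5]}
neighborhood, context, anchors = neighborhood_and_context(adj, center=0)
```

In a full pipeline, one GNN would embed the neighborhood and a second GNN would embed the context. The pair would then be trained to tell true neighborhood/context pairs from mismatched ones.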

Protocol 2: Fine-tuning a Pre-trained Model for DTA Prediction

- Model Architecture: Construct a DTA model that uses your pre-trained encoders. For example:

- A GNN (pre-trained on CCI) to process the drug molecule.

- A Transformer-based protein language model (pre-trained on PPI) to process the target protein sequence.

- The final embeddings from both encoders are then fused (e.g., concatenated) and passed through a few fully connected layers to predict the binding affinity value.

- Training Procedure:

- Initialize your drug and protein encoders with the pre-trained weights.

- Use a loss function like Mean Squared Error (MSE) for the affinity value.

- You can choose to freeze the encoder weights initially and only train the final layers, or unfreeze all parameters and use a very low learning rate for the pre-trained parts to gently adapt them to the DTA task.
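Both fine-tuning options come down to giving the pre-trained encoder a smaller (or zero) step size than the new prediction head. In PyTorch this is usually done with optimizer parameter groups. To keep it framework-free, the sketch below uses NumPy with a linear "encoder" and hand-written MSE gradients. The synthetic data and the 0.1 learning-rate ratio are assumptions for illustration.

```python
import numpy as np

def finetune(X, y, W_enc, w_head, lr=0.05, enc_lr_scale=0.1, epochs=200):
    """Full-batch gradient descent on MSE with a reduced step size for the encoder.

    enc_lr_scale=0.0 freezes the pre-trained encoder; a small positive value
    adapts it gently while the new prediction head learns at the full rate.
    """
    W_enc, w_head = W_enc.copy(), w_head.copy()
    n = len(y)
    for _ in range(epochs):
        h = X @ W_enc                          # encoder output (linear for brevity)
        pred = h @ w_head                      # affinity-prediction head
        g_pred = 2.0 * (pred - y) / n          # gradient of the MSE w.r.t. predictions
        g_head = h.T @ g_pred
        g_enc = X.T @ np.outer(g_pred, w_head)
        w_head -= lr * g_head
        W_enc -= lr * enc_lr_scale * g_enc
    mse = float(np.mean((X @ W_enc @ w_head - y) ** 2))
    return W_enc, w_head, mse

rng = np.random.default_rng(0)
X = rng.normal(size=(100, 8))                    # stand-in input features
W_pre = rng.normal(scale=0.5, size=(8, 4))       # stand-in "pre-trained" encoder weights
y = X @ W_pre @ np.array([1.0, -0.5, 0.3, 0.8])  # synthetic affinities
w0 = np.zeros(4)                                 # freshly initialized head

W_frozen, _, mse_frozen = finetune(X, y, W_pre, w0, enc_lr_scale=0.0)
W_tuned, _, mse_tuned = finetune(X, y, W_pre, w0, enc_lr_scale=0.1)
```

A common schedule freezes the encoder for the first epochs, then unfreezes it at a reduced rate. The reason is that gradients from a randomly initialized head are noisiest at the start of training.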

The Scientist's Toolkit: Research Reagent Solutions

| Reagent / Resource | Function in the Experiment |
|---|---|
| ESM-2 (Evolutionary Scale Modeling) [18] | A state-of-the-art protein language model. Used to generate deep, context-aware numerical representations (embeddings) of protein sequences from primary structure alone, capturing evolutionary and structural information. |
| MACCS Keys [17] | A type of molecular fingerprint. Provides a fixed-length bit-vector representation of a molecule's structure based on the presence or absence of 166 predefined chemical substructures. Useful for fast similarity searching and as input features for ML models. |
| Random Forest / XGBoost Classifiers [17] [18] | Powerful ensemble machine learning algorithms. Often used for classification tasks (e.g., interaction yes/no) and for interpretability studies via feature importance analysis, especially on tabular data derived from features like fingerprints and protein descriptors. |
| SHAP (SHapley Additive exPlanations) [18] | A game-theoretic method for model interpretability. It quantifies the contribution of each input feature (e.g., a specific protein property or molecular descriptor) to the final prediction, helping to identify key predictors of druggability or binding. |
| Generative Adversarial Network (GAN) [17] | A deep learning framework consisting of two neural networks (Generator and Discriminator) trained adversarially. Used in DTI prediction to generate synthetic minority-class data to address dataset imbalance and improve model sensitivity. |

Workflow and Architecture Diagrams

Diagram 1: C2P2 Transfer Learning Workflow

Diagram 2: Cold-Start Problem Troubleshooting Guide

Core Concepts & FAQs

FAQ 1: What are the primary advantages of using ESM-2 and Mol2Vec for cold start target prediction?

ESM-2 and Mol2Vec provide powerful, sequence-based representations that bypass the need for historical interaction data, which is the core challenge of the cold start problem. The key advantages are summarized in the table below.

Table 1: Advantages of ESM-2 and Mol2Vec for Cold Start Scenarios

| Model | Input Data | Key Advantage for Cold Start | Underlying Principle |
|---|---|---|---|
| ESM-2 | Protein amino acid sequences | Generates structural and functional insights without Multiple Sequence Alignments (MSAs) or 3D structure data for new proteins [19]. | Learns evolutionary patterns and residue-residue contacts via masked language modeling on millions of sequences [19] [20]. |
| Mol2Vec | Compound SMILES strings | Creates meaningful molecular embeddings based on chemical intuition, without requiring known binding partners [21]. | An unsupervised machine learning approach that treats chemical substructures as "words" in a molecular "sentence" [21]. |

FAQ 2: My model fails to predict any interactions for a newly discovered protein. How can I improve its performance?

This is a classic cold start problem. Instead of relying on interaction-based models, leverage the intrinsic information captured by the biological language models.

- Strategy 1: Utilize Pre-trained Embeddings. Use a pre-trained ESM-2 model to generate a feature vector for your new protein sequence. Similarly, use Mol2Vec to create an embedding for your compound. These embeddings can be used as input to a simple classifier like Random Forest, as demonstrated in a recent study [21].

- Strategy 2: Leverage Transfer Learning. Fine-tune a pre-trained ESM-2 model on a related, larger dataset of protein-ligand interactions if available. This can help the model adapt its general protein knowledge to the specific task of binding prediction.

- Strategy 3: Analyze Attention Maps. For ESM-2, examine the model's self-attention maps. Specific attention patterns can correspond to residue-residue contact maps, potentially revealing binding pockets or functional sites even for novel proteins [19].
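Strategy 3 depends on a standard post-processing step. Attention maps are symmetrized and corrected with average product correction (APC) before being read as contact scores. The ESM contact-prediction work then feeds maps processed this way into a logistic-regression contact head. The NumPy sketch below applies only the symmetrization and APC steps to a single attention map. Extracting the map from the model is not shown, and the planted toy map is an assumption for illustration.

```python
import numpy as np

def symmetrize(a):
    # Attention from i to j and from j to i both count as evidence of a contact.
    return a + a.T

def apc(a):
    """Average product correction: subtract the row/column background signal."""
    row = a.sum(axis=1, keepdims=True)
    col = a.sum(axis=0, keepdims=True)
    return a - row * col / a.sum()

def top_contacts(attn, min_sep=6, top_k=10):
    """Rank residue pairs (i, j) with j - i >= min_sep by corrected attention score."""
    scores = apc(symmetrize(attn))
    i, j = np.triu_indices(scores.shape[0], k=min_sep)
    order = np.argsort(-scores[i, j])[:top_k]
    return list(zip(i[order].tolist(), j[order].tolist()))

# Toy 40-residue map: uniform background plus one planted long-range contact.
attn = np.full((40, 40), 1 / 40)
attn[5, 30] += 0.5
contacts = top_contacts(attn)
```

The `min_sep` filter discards short-range pairs, which sit close together in the sequence and are in contact trivially.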

FAQ 3: How does a language model-based approach compare to traditional network-based methods for cold start problems?

Traditional network-based methods often suffer from the cold start problem, as they rely heavily on the connectivity and similarity within an interaction network [3]. The table below outlines the key differences.

Table 2: Language Models vs. Network-Based Methods for Cold Start

| Feature | Language Models (ESM-2 & Mol2Vec) | Traditional Network-Based Methods |
|---|---|---|
| Data Requirement | Primary sequence (protein or compound) | Existing network of interactions and similarities |
| Cold Start Capability | High; designed for zero-shot inference on new sequences [19] | Low; biased towards high-degree nodes and fails on new entities [3] |
| Information Source | Evolutionary patterns and chemical intuition from pre-training [19] [21] | Topology of the existing interaction network [3] |
| Interpretability | Moderate; can analyze attention weights [19] | High; predictions are often based on "wisdom of the crowd" [3] |

Experimental Protocols & Workflows

Protocol: Predicting Drug-Target Binding Using ESM-2 and Mol2Vec

This protocol is based on a study that combined ESM-2 and Mol2Vec embeddings with a Random Forest classifier for robust prediction of protein-ligand binding [21].

1. Data Preparation

- Proteins: Obtain the amino acid sequences of your target proteins in FASTA format.

- Compounds: Obtain the SMILES strings of your candidate drug compounds.

- Ground Truth: Compile a labeled dataset of known binding interactions (positive examples) and non-interactions (negative examples) for model training.

2. Feature Vector Generation

- Protein Embeddings:

- Use a pre-trained ESM-2 model (e.g., esm2_t30_150M_UR50D from Hugging Face).

- Pass each protein sequence through the model and extract the per-residue embeddings.

- Generate a single fixed-size representation for the entire protein by performing mean pooling over the sequence dimension.

- Compound Embeddings:

- Use a pre-trained Mol2Vec model.

- Input the SMILES string of each compound to generate a 200-dimensional molecular embedding vector [21].

3. Model Training and Prediction

- Feature Concatenation: For each protein-compound pair, concatenate the ESM-2 protein vector and the Mol2Vec compound vector to create a unified feature representation.

- Classifier Training: Train a Random Forest classifier on the concatenated features using the labeled interaction data. The Random Forest model is noted for providing robust predictive performance and a conservative strategy that minimizes false positives [21].

- Binding Prediction: Use the trained model to predict the interaction probability for novel protein-compound pairs.
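Steps 2 and 3 meet at feature concatenation, sketched below in NumPy. The sketch assumes per-residue ESM-2 embeddings have already been extracted, including the beginning-of-sequence (`<cls>`) and end-of-sequence (`<eos>`) tokens the ESM tokenizer adds. It also assumes a 200-dimensional Mol2Vec vector is available for each compound. Random arrays stand in for both. The width 640 is the hidden size of the 150M-parameter ESM-2 model. Excluding the special tokens from the mean is a design choice, not a requirement of the cited study.

```python
import numpy as np

def mean_pool(residue_emb, attention_mask, drop_special=True):
    """Average per-token embeddings over real residues only.

    residue_emb: (padded_len, hidden) token embeddings from ESM-2.
    attention_mask: (padded_len,) 1 for real tokens, 0 for padding.
    drop_special: exclude the first (<cls>) and last real (<eos>) tokens.
    """
    mask = attention_mask.astype(float).copy()
    if drop_special:
        real = np.flatnonzero(mask)
        mask[real[0]] = 0.0
        mask[real[-1]] = 0.0
    return (residue_emb * mask[:, None]).sum(axis=0) / mask.sum()

def pair_features(protein_vec, compound_vec):
    # Unified feature vector for one protein-compound pair.
    return np.concatenate([protein_vec, compound_vec])

rng = np.random.default_rng(0)
n_tokens, hidden, padded_len = 52, 640, 60   # 50 residues + <cls> + <eos>, padded to 60
emb = rng.normal(size=(padded_len, hidden))
mask = np.r_[np.ones(n_tokens), np.zeros(padded_len - n_tokens)]
protein_vec = mean_pool(emb, mask)
compound_vec = rng.normal(size=200)          # placeholder for a Mol2Vec embedding
x = pair_features(protein_vec, compound_vec) # 640 + 200 = 840 features
```

Stack one such vector per pair into a matrix. That matrix and the interaction labels can then go to scikit-learn's `RandomForestClassifier.fit`, and `predict_proba` returns interaction probabilities for novel pairs.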

Workflow Visualization

Diagram 1: ESM2 & Mol2Vec Prediction Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools and Resources for ESM-2 and Mol2Vec Experiments

| Resource Name | Type | Function | Access Link / Reference |
|---|---|---|---|
| ESM-2 Pre-trained Models | Protein Language Model | Generates contextual embeddings from protein sequences; available in various sizes (8M to 15B parameters). | GitHub: facebookresearch/esm [20] |
| Mol2Vec | Molecular Embedding Model | Converts SMILES strings into numerical vectors capturing chemical substructures. | [21] |
| Hugging Face Transformers | Python Library | Provides easy access to ESM-2 and other transformer models for fine-tuning and inference. | https://huggingface.co/docs/transformers/index |
| OpenProtein.AI | Commercial Platform | Offers cloud-based access to ESM and other foundation models for protein engineering tasks with minimal coding. | [20] |
| Random Forest (scikit-learn) | Machine Learning Classifier | A robust model for integrating ESM-2 and Mol2Vec embeddings to predict interactions. | [21] |
| BioKG / PharmKG | Knowledge Graph | Curated biomedical databases that can be used for pre-training or as an additional data source to enrich predictions. | [4] |

Troubleshooting Advanced Scenarios

FAQ 4: The perplexity of ESM-2 for my protein of interest is high. What does this indicate and how should I proceed?

High perplexity indicates that the protein sequence is "surprising" or out-of-distribution for the ESM-2 model. This is common for proteins with few evolutionary relatives or novel, engineered sequences [19].

- Interpretation: The model's representation for this protein may be less reliable, which could lead to lower accuracy in downstream tasks like structure or interaction prediction. A strong negative correlation exists between perplexity and structure prediction accuracy (TM-Score) [19].

- Actionable Steps:

- Validate with Alternative Methods: Do not rely solely on ESM-2-based predictions. Use molecular docking or other homology-based methods if a remotely related structure exists.

- Seek Similar Sequences: Check if any similar sequences exist in metagenomic databases, as ESM-2 was trained on a vast dataset that includes metagenomic proteins [19].

- Proceed with Caution: Acknowledge the higher uncertainty in your results for this specific target.
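For masked language models such as ESM-2, a common choice is a pseudo-perplexity. Each position is masked in turn and the model's log-probability of the true residue is recorded. The exponentiated negative mean of those values is the reported score. The sketch below shows only that final arithmetic and assumes the per-position log-probabilities have already been collected. The example values are made up.

```python
import math

def pseudo_perplexity(true_token_log_probs):
    """exp of the negative mean log-probability assigned to the true residues."""
    if not true_token_log_probs:
        raise ValueError("need at least one position")
    return math.exp(-sum(true_token_log_probs) / len(true_token_log_probs))

uniform = [math.log(1 / 20)] * 50     # model no better than guessing among 20 amino acids
confident = [math.log(0.9)] * 50      # model assigns 0.9 to every true residue
```

A value near 20 means the model does no better than guessing uniformly among the 20 standard amino acids. Values close to 1 indicate confident, accurate predictions.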

FAQ 5: How can I visualize the model's reasoning to build trust in its predictions for a novel target?

Interpretability is a known challenge for deep learning models [3]. However, you can use the following techniques:

- For ESM-2: Extract Attention Maps. The self-attention weights in the transformer layers can be visualized. Specific attention heads often learn to capture residue-residue contacts, which can highlight potential binding sites or functional domains on your novel protein [19]. The following diagram illustrates this analytical process.

Diagram 2: Analysis of ESM2 Attention Maps

- For the Overall Pipeline: Analyze Feature Importance. After training your Random Forest (or other) classifier, you can perform permutation importance or SHAP analysis to determine which features—from either the ESM-2 embedding or the Mol2Vec embedding—were most critical for the prediction. This can reveal whether the model is "reasoning" based on protein characteristics, compound characteristics, or both.
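A block-wise permutation importance asks directly whether the classifier relies on protein features, compound features, or both. All ESM-2 columns (or all Mol2Vec columns) are shuffled together across samples, and the resulting drop in a score is measured. The sketch works with any callable that maps a feature matrix to predicted labels and uses accuracy as the score. The toy model and the column layout (protein block first) are illustrative. For per-feature rather than per-block importance, scikit-learn provides `sklearn.inspection.permutation_importance`.

```python
import numpy as np

def block_permutation_importance(predict, X, y, blocks, n_repeats=5, rng=None):
    """Mean drop in accuracy when each named column block is shuffled across rows."""
    rng = rng or np.random.default_rng(0)
    base = np.mean(predict(X) == y)
    result = {}
    for name, cols in blocks.items():
        drops = []
        for _ in range(n_repeats):
            Xp = X.copy()
            perm = rng.permutation(len(X))
            Xp[:, cols] = X[perm][:, cols]      # shuffle the whole block jointly
            drops.append(base - np.mean(predict(Xp) == y))
        result[name] = float(np.mean(drops))
    return result

rng = np.random.default_rng(0)
X = rng.normal(size=(200, 15))            # columns 0-9: protein block, 10-14: compound block
y = (X[:, 0] > 0).astype(int)             # toy label driven by one protein feature

def toy_predict(M):
    # Stand-in for a fitted classifier's predict method.
    return (M[:, 0] > 0).astype(int)

blocks = {"protein": np.arange(0, 10), "compound": np.arange(10, 15)}
importance = block_permutation_importance(toy_predict, X, y, blocks)
```

The whole block is permuted jointly so that correlated features inside a block cannot stand in for each other. The result is one importance value per data source.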

Troubleshooting Guides and FAQs

Q1: My model's performance drops significantly when predicting interactions for novel drugs or targets not seen during training. What fusion strategies can mitigate this "cold start" problem?

A: The cold-start problem is common when your training set lacks examples of new molecular entities. Address it with transfer learning from related interaction tasks, which infuses crucial inter-molecule interaction knowledge into your representations [1].

- Problem Detail: Models often rely solely on intra-molecule information (e.g., from language model pre-training on SMILES or protein sequences). This lacks the inter-molecule interaction information critical for binding affinity prediction [1].

- Recommended Solution: Implement a framework like C2P2 (Chemical-Chemical Protein-Protein Transferred DTA). This approach transfers knowledge learned from predicting Chemical-Chemical Interactions (CCI) and Protein-Protein Interactions (PPI) to the Drug-Target Affinity (DTA) task [1].

- Methodology:

- Pre-training for Inter-Molecule Knowledge: First, train separate models on large CCI and PPI datasets. This teaches the models the "grammar" of how chemicals interact with other chemicals and how proteins interact with other proteins.

- Feature Integration: Integrate these learned representations into your primary DTA model. This can be done by:

- Using the pre-trained models as feature extractors.

- Adding specific fusion layers that combine the CCI/PPI-derived features with sequence or graph representations.

- Expected Outcome: This transfer learning approach provides a more robust and generalized representation for drugs and proteins, leading to improved prediction accuracy for novel entities [1].

Q2: How can I effectively represent and fuse highly heterogeneous data types (like sequences, graphs, and knowledge graphs) for a unified prediction?

A: A unified framework that combines Knowledge Graph Embeddings (KGE) with a powerful fusion model like a Neural Factorization Machine (NFM) has proven effective [4].

- Problem Detail: Simple feature concatenation or early fusion can lead to suboptimal performance due to the complex, non-linear relationships between different data modalities [22].

- Recommended Solution: Adopt a two-stage framework such as KGE_NFM [4].

- Methodology:

- Knowledge Graph Embedding (KGE): Construct a knowledge graph containing various entities (drugs, targets, diseases, side-effects) and their relationships. Use a KGE model (e.g., TransE, DistMult) to learn low-dimensional vector representations for all entities. This step integrates heterogeneous information into a unified semantic space.

- Neural Factorization Machine (NFM) for Fusion: Feed the learned KGE representations (along with other features) into an NFM. The NFM excels at modeling second-order and higher-order feature interactions, allowing for deep and effective fusion of the multimodal inputs for the final DTA prediction [4].

- Expected Outcome: This framework captures complex, multi-relational data from various sources, leading to more accurate and robust predictions, especially in challenging scenarios like cold-start for new proteins [4].
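Both building blocks named above have compact scoring rules. TransE scores a triple (head, relation, tail) by how close head + relation lands to tail. DistMult scores it with a trilinear product. The NFM's bi-interaction pooling sums all pairwise element-wise products of feature embeddings, using the identity ½[(Σv)² − Σv²]. The NumPy sketch below implements these formulas on random vectors. It is not the KGE_NFM code, and the vectors are placeholders for learned embeddings.

```python
import numpy as np

def transe_score(h, r, t, norm=1):
    # Higher is more plausible: negative distance between h + r and t.
    return float(-np.linalg.norm(h + r - t, ord=norm))

def distmult_score(h, r, t):
    # Trilinear product <h, r, t>; symmetric in h and t.
    return float(np.sum(h * r * t))

def bi_interaction(field_vectors):
    """NFM bi-interaction pooling over k embedding vectors (k x d) -> d.

    Vectors are assumed to be already scaled by their feature values. The result
    equals the sum over all pairs i < j of v_i * v_j, computed in O(k * d).
    """
    s = field_vectors.sum(axis=0)
    return 0.5 * (s ** 2 - (field_vectors ** 2).sum(axis=0))

rng = np.random.default_rng(0)
drug, relation, target = rng.normal(size=(3, 8))   # placeholder entity/relation embeddings
pooled = bi_interaction(rng.normal(size=(4, 8)))   # e.g., 4 input embeddings for one pair
```

The identity reduces pairwise interaction modeling from O(k²d) to O(kd), which makes NFM practical when many embedding fields are fused.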

Q3: The features from my different modalities (e.g., sequence and graph) are not semantically aligned, leading to poor fusion. How can I improve alignment?

A: This is a core challenge in multimodal learning. Instead of directly fusing features, project them into a common latent space where semantically similar concepts are close.

- Problem Detail: When features from different modalities (images and text in the general multimodal literature; molecular graphs and protein sequences here) are derived from separate, modality-specific models, they may not be semantically aligned. Passing them directly to a fusion module yields suboptimal results [22].

- Recommended Solution: Utilize models or layers designed for implicit alignment.

- Methodology:

- Shared Encoders: Employ a shared encoder or an integrated encoding-decoding process to handle multimodal inputs simultaneously. This allows different data types to be transformed into a common representation space [22].

- Attention-Based Alignment: Implement cross-modal attention mechanisms. This allows features from one modality (e.g., a molecular graph) to directly attend to and influence the representation of another (e.g., a protein sequence), dynamically aligning relevant parts of the inputs.

- Expected Outcome: Improved semantic coherence between modalities, which allows the subsequent fusion module to more effectively leverage complementary information [22].
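One common way to build the shared latent space described above is a symmetric contrastive (InfoNCE-style) objective. Both modalities are projected into the same dimension, and matched pairs are rewarded for being more similar than mismatched pairs in the batch. This technique is swapped in for illustration and is not taken from the cited work. The projection matrices, batch, and temperature are assumptions, and gradient updates are not shown.

```python
import numpy as np

def log_softmax(x, axis):
    x = x - x.max(axis=axis, keepdims=True)
    return x - np.log(np.exp(x).sum(axis=axis, keepdims=True))

def info_nce(a, b, temperature=0.1):
    """Symmetric contrastive loss; row i of a and row i of b are a matched pair."""
    a = a / np.linalg.norm(a, axis=1, keepdims=True)
    b = b / np.linalg.norm(b, axis=1, keepdims=True)
    logits = a @ b.T / temperature
    idx = np.arange(len(a))
    loss_ab = -log_softmax(logits, axis=1)[idx, idx].mean()   # a -> b direction
    loss_ba = -log_softmax(logits, axis=0)[idx, idx].mean()   # b -> a direction
    return float(0.5 * (loss_ab + loss_ba))

rng = np.random.default_rng(0)
graph_feats = rng.normal(size=(8, 64))    # e.g., molecular-graph features for 8 compounds
seq_feats = rng.normal(size=(8, 128))     # e.g., SMILES-sequence features for the same compounds
P_graph = rng.normal(scale=64 ** -0.5, size=(64, 32))   # projection into a shared 32-d space
P_seq = rng.normal(scale=128 ** -0.5, size=(128, 32))
loss = info_nce(graph_feats @ P_graph, seq_feats @ P_seq)
```

Minimizing this loss with respect to the projections pulls the two views of the same compound together in the shared space. Once the views are aligned, the fusion module can combine them.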

Experimental Protocols and Data

Table 1: Key Performance Metrics of Multimodal Fusion Frameworks on Cold-Start Scenarios

| Model / Framework | Core Fusion Strategy | Cold-Start Scenario Tested | Key Metric (e.g., AUPR) | Performance Highlight |
|---|---|---|---|---|
| C2P2 [1] | Transfer Learning from CCI & PPI | Cold-Drug, Cold-Target | AUPR | Shows advantage over other pre-training methods in DTA tasks. |
| KGE_NFM [4] | KGE + Neural Factorization Machine | Cold Start for Proteins | AUPR | Achieves accurate and robust predictions, outperforming baseline methods. |
| G2MF [23] | Graph-based feature-level fusion | Generalization to new cities (Geographic Isolation) | Overall Accuracy (88.5%) | Exhibits good generalization ability on data with geographic isolation. |

Detailed Methodology for C2P2 Transfer Learning Experiment [1]:

- Objective: Incorporate inter-molecule interaction information into drug and target representations to mitigate the cold-start problem in DTA prediction.

- Pre-training Tasks:

- Chemical-Chemical Interaction (CCI): Train a model to predict interactions between two chemical entities. The data can be derived from pathway databases, text mining, or structure/activity similarity.

- Protein-Protein Interaction (PPI): Train a model to predict physical interactions between two protein macromolecules.

- Representation Learning:

- For Proteins: Learn representations via language modeling on protein sequences (e.g., using Transformer models) or by constructing protein graphs based on contact maps.

- For Molecules (Drugs): Learn representations via language modeling on SMILES sequences or via Graph Neural Networks on molecular graphs.

- Knowledge Transfer & Fusion:

- The knowledge (model weights or features) learned from the CCI and PPI tasks is transferred to the main DTA model.

- The final DTA model fuses the intra-molecule information (from sequence/graphs) with the inter-molecule information (from CCI/PPI) to predict the binding affinity value.

The Scientist's Toolkit

Table 2: Essential Research Reagent Solutions for Multimodal Fusion Experiments

| Item / Resource | Function in Multimodal Fusion Experiments |
|---|---|
| Knowledge Graphs (e.g., PharmKG, BioKG) [4] | Provides structured, multi-relational biological data for learning robust entity representations via KGE. |
| Interaction Datasets (CCI, PPI) [1] | Serves as a source for transfer learning, providing critical inter-molecule interaction knowledge to combat the cold-start problem. |
| Pre-trained Language Models (e.g., ProtTrans for proteins) [1] | Provides high-quality initial sequence representations for proteins and drugs (SMILES), capturing intra-molecule contextual information. |
| Graph Neural Networks (GNNs) | The core architecture for processing naturally graph-structured data like molecules (atoms/bonds) and proteins (residue contact maps). |
| Neural Factorization Machine (NFM) [4] | A powerful fusion component that models second-order and higher-order feature interactions between combined multimodal embeddings. |
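The second-order part of an NFM is its bi-interaction pooling layer, which sums the element-wise products of every pair of embedding vectors. The NumPy sketch below assumes the multimodal embeddings (e.g. KGE and sequence features) have already been projected to a common dimension; the four "fields" are hypothetical. It shows the standard identity that makes pooling linear in the number of fields, and omits the deep layers NFM stacks on top to capture higher-order interactions.

```python
import numpy as np
from itertools import combinations

def bi_interaction_pooling(embeddings):
    """NFM bi-interaction pooling.

    embeddings: (n_fields, k) array, one (already value-scaled) vector per field.
    Returns the k-dim sum over all pairs i<j of v_i * v_j (element-wise),
    computed in O(n_fields * k) as 0.5 * ((sum_i v_i)**2 - sum_i v_i**2).
    """
    sum_then_square = np.sum(embeddings, axis=0) ** 2
    square_then_sum = np.sum(embeddings ** 2, axis=0)
    return 0.5 * (sum_then_square - square_then_sum)

def bi_interaction_naive(embeddings):
    """Reference O(n_fields**2) implementation used to check the identity."""
    out = np.zeros(embeddings.shape[1])
    for i, j in combinations(range(embeddings.shape[0]), 2):
        out += embeddings[i] * embeddings[j]
    return out

rng = np.random.default_rng(1)
# Hypothetical fields: drug KGE, target KGE, drug fingerprint projection, target sequence projection.
fields = rng.normal(size=(4, 8))
pooled = bi_interaction_pooling(fields)
print(np.allclose(pooled, bi_interaction_naive(fields)))  # True
```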

Architectural Visualizations

Diagram 1: Unified KGE and NFM Fusion Workflow

Diagram 2: C2P2 Transfer Learning for Cold-Start Problem

Diagram 3: Graph-Based Multimodal Fusion (G2MF) for Complex Data

Frequently Asked Questions (FAQs)

Q1: What are the most common failure modes when training GANs on imbalanced chemogenomic data, and how can I identify them?

GAN training is inherently unstable, and several common failure modes can be identified by monitoring the loss functions and generated outputs [24] [25].

- Vanishing Gradients: This occurs when the discriminator becomes too good and provides almost no useful gradient information for the generator to learn from. The discriminator's loss quickly approaches zero while the generator's loss stays high, and the generator fails to produce realistic data [24] [25].

- Mode Collapse: The generator produces the same or a very limited variety of plausible outputs (e.g., generating nearly identical molecular structures) instead of a diverse set. This is often visible in the generated samples and can be reflected in oscillating loss values [24] [25].

- Failure to Converge: The generator and discriminator loss values oscillate wildly without stabilizing, indicating that the two models have not found an equilibrium. The quality of the generated samples does not improve over time [24] [25].
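Mode collapse is easiest to confirm from the samples themselves. As a minimal sketch, assuming the generator outputs binary fingerprint vectors, the NumPy code below reports the fraction of unique samples and the internal diversity (one minus the mean pairwise Tanimoto similarity). A sharp drop in either during training suggests collapse; any alarm thresholds you attach to these numbers are a judgment call, not an established standard.

```python
import numpy as np

def tanimoto_matrix(fps):
    """Pairwise Tanimoto similarity for an (n, n_bits) array of 0/1 values."""
    fps = fps.astype(np.float64)
    intersection = fps @ fps.T
    counts = fps.sum(axis=1)
    union = counts[:, None] + counts[None, :] - intersection
    # Two all-zero fingerprints are treated as identical (similarity 1).
    with np.errstate(divide="ignore", invalid="ignore"):
        sim = np.where(union > 0, intersection / union, 1.0)
    return sim

def diversity_report(fps):
    """Unique-sample fraction and internal diversity of a batch of generated fingerprints."""
    n = fps.shape[0]
    unique_fraction = np.unique(fps, axis=0).shape[0] / n
    sim = tanimoto_matrix(fps)
    upper = sim[np.triu_indices(n, k=1)]  # exclude self-similarity on the diagonal
    internal_diversity = 1.0 - upper.mean()
    return {"unique_fraction": float(unique_fraction),
            "internal_diversity": float(internal_diversity)}

rng = np.random.default_rng(0)
collapsed = np.tile(rng.integers(0, 2, size=166), (10, 1))  # 10 copies of one fingerprint
diverse = rng.integers(0, 2, size=(10, 166))
print(diversity_report(collapsed))
print(diversity_report(diverse))
```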

Q2: My GAN for generating synthetic minority-class drug candidates suffers from mode collapse. What are the proven solutions?

Mode collapse, where the generator produces limited varieties, can be addressed with specific architectural and loss function modifications.

- Use Wasserstein Loss with Gradient Penalty (WGAN-GP): This loss gives smoother, more informative gradients even when the critic (the WGAN discriminator) is well trained, which stabilizes training and lets the generator keep learning. It has been successfully applied in network intrusion detection to generate diverse minority attack samples [26].

- Implement Unrolled GANs: This technique optimizes the generator against future states of the discriminator, preventing it from over-optimizing for a single, fixed discriminator and encouraging diversity in the output [24].

- Employ a Conditional GAN (CGAN): By providing class labels as input to both the generator and discriminator, you can guide the data generation process. The CE-GAN model uses conditional constraints to ensure both the balance and diversity of generated network intrusion samples [26].
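The gradient-penalty term in WGAN-GP is short enough to write out. In practice you would compute the critic's gradient with your framework's automatic differentiation; to keep this sketch dependency-free, it assumes a NumPy setting, uses central finite differences, and uses a toy linear critic. The penalty pushes the critic's gradient norm toward 1 at points interpolated between real and generated samples, with λ = 10 as in the original WGAN-GP paper.

```python
import numpy as np

def numerical_grad(critic, x, eps=1e-5):
    """Central finite-difference gradient of a scalar critic at each row of x."""
    grads = np.zeros_like(x)
    for d in range(x.shape[1]):
        step = np.zeros(x.shape[1])
        step[d] = eps
        grads[:, d] = (critic(x + step) - critic(x - step)) / (2 * eps)
    return grads

def gradient_penalty(critic, real, fake, lam=10.0, rng=None):
    """WGAN-GP penalty: lam * E[(||grad critic(x_hat)||_2 - 1)^2]."""
    rng = np.random.default_rng() if rng is None else rng
    alpha = rng.uniform(size=(real.shape[0], 1))  # one mixing weight per sample
    x_hat = alpha * real + (1.0 - alpha) * fake   # random interpolates
    grad_norm = np.linalg.norm(numerical_grad(critic, x_hat), axis=1)
    return lam * np.mean((grad_norm - 1.0) ** 2)

def critic_loss(critic, real, fake, lam=10.0, rng=None):
    """The critic minimizes E[D(fake)] - E[D(real)] + gradient penalty."""
    return (np.mean(critic(fake)) - np.mean(critic(real))
            + gradient_penalty(critic, real, fake, lam, rng))

def make_linear_critic(w):
    return lambda x: x @ w  # toy critic whose gradient is w everywhere

rng = np.random.default_rng(0)
real = rng.normal(size=(32, 4))
fake = rng.normal(loc=1.0, size=(32, 4))
w_unit = np.array([1.0, 0.0, 0.0, 0.0])  # ||w|| = 1, so the penalty is ~0
w_big = np.array([2.0, 0.0, 0.0, 0.0])   # ||w|| = 2, so the penalty is ~lam
print(gradient_penalty(make_linear_critic(w_unit), real, fake, rng=rng))
print(gradient_penalty(make_linear_critic(w_big), real, fake, rng=rng))
```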

Q3: How can I evaluate the quality and effectiveness of synthetic data generated for cold-start drug-target interaction (DTI) prediction?

Beyond standard machine learning metrics, specific evaluation methods are required for generative models.

- Fréchet Inception Distance (FID) and Inception Score (IS): These are standard metrics for evaluating the quality and diversity of generated images; a lower FID and a higher IS indicate generated data that is closer to the real data distribution. Both rely on features from a pre-trained Inception image classifier, so they apply directly only to image data. They were used to validate the performance of the Damage GAN model on imbalanced image datasets [27].

- Downstream Model Performance: The most critical test is to use your generated synthetic data to augment the training set for your primary DTI prediction model. If the synthetic data is effective, you should see a significant improvement in the predictive accuracy for the minority class (e.g., novel drugs or targets) without degrading the performance on the majority class. Studies in DTI prediction have shown that GAN-augmented data can lead to high sensitivity and specificity on benchmark datasets like BindingDB [17].

- Analysis of Chemical Space: For chemogenomic data, you can analyze the distribution of the generated molecules in a chemical descriptor space (e.g., using t-SNE) to ensure they occupy a similar region to the real minority class molecules and do not just replicate existing samples.
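FID is the Fréchet distance between two Gaussians fitted to feature vectors: ||μ_r − μ_g||² + Tr(Σ_r + Σ_g − 2(Σ_r Σ_g)^(1/2)). The sketch below computes that distance in NumPy for any feature representation. The choice of feature space is your assumption: FID itself uses Inception-v3 activations, so if you apply the same formula to fingerprints or learned drug/target embeddings, the result is a Fréchet distance in that space and is not comparable to published FID values.

```python
import numpy as np

def _psd_sqrt(mat):
    """Symmetric square root of a positive semi-definite matrix via eigh."""
    vals, vecs = np.linalg.eigh(mat)
    vals = np.clip(vals, 0.0, None)
    return (vecs * np.sqrt(vals)) @ vecs.T

def frechet_distance(real_feats, gen_feats):
    """Fréchet distance between Gaussians fitted to two (n, d) feature arrays."""
    mu_r, mu_g = real_feats.mean(axis=0), gen_feats.mean(axis=0)
    cov_r = np.cov(real_feats, rowvar=False)
    cov_g = np.cov(gen_feats, rowvar=False)
    sqrt_r = _psd_sqrt(cov_r)
    # Tr((cov_r cov_g)^(1/2)) equals the sum of square roots of the eigenvalues
    # of the symmetric PSD matrix sqrt_r @ cov_g @ sqrt_r (same spectrum).
    eig = np.linalg.eigvalsh(sqrt_r @ cov_g @ sqrt_r)
    tr_covmean = np.sum(np.sqrt(np.clip(eig, 0.0, None)))
    mean_term = np.sum((mu_r - mu_g) ** 2)
    dist = mean_term + np.trace(cov_r) + np.trace(cov_g) - 2.0 * tr_covmean
    return max(0.0, float(dist))  # clamp tiny negative values from rounding error

rng = np.random.default_rng(0)
real = rng.normal(size=(500, 5))
shifted = real + np.array([1.0, 0, 0, 0, 0])  # same covariance, mean moved by 1
print(frechet_distance(real, real))     # close to 0
print(frechet_distance(real, shifted))  # close to 1
```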

Q4: Are there specific GAN architectures better suited for handling complex, structured data like molecular graphs or protein sequences?

Yes, standard GANs are often designed for images, but variants exist for structured data.

- Graph Neural Network-based GANs: Since molecules can be natively represented as graphs (atoms as nodes, bonds as edges), using a GAN where the generator and discriminator are built with Graph Neural Networks (GNNs) is a promising approach. Pre-training GNNs on related tasks can also provide a robust starting point [1].

- Conditional GANs (CGAN): As mentioned, CGANs are highly adaptable. For DTI, you can condition the generation on specific target protein features, guiding the generator to create drug-like molecules that are more likely to interact with that target, which is directly relevant to mitigating the cold-start problem [28].

- Knowledge Graph Embedding Models: While not a GAN, frameworks like KGE_NFM integrate knowledge graphs to learn low-dimensional representations of drugs, targets, and their interactions from heterogeneous data. This approach has shown advantages in cold-start scenarios for DTI prediction [4].
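Conditioning in a CGAN amounts to feeding the same auxiliary vector to both networks. In the NumPy sketch below, the layer sizes are hypothetical and randomly initialized single-layer networks stand in for trained ones. It shows how target-protein features can be concatenated with the noise vector for the generator and with the candidate sample for the discriminator.

```python
import numpy as np

rng = np.random.default_rng(0)
NOISE_DIM, COND_DIM, SAMPLE_DIM = 16, 20, 166  # hypothetical sizes (e.g. 20-d AAC, 166-bit MACCS)

# Randomly initialized single-layer stand-ins for trained networks.
W_gen = rng.normal(scale=0.1, size=(NOISE_DIM + COND_DIM, SAMPLE_DIM))
W_disc = rng.normal(scale=0.1, size=(SAMPLE_DIM + COND_DIM, 1))

def sigmoid(h):
    return 1.0 / (1.0 + np.exp(-h))

def generator(noise, cond):
    """Map [noise, condition] to a sample; sigmoid keeps outputs in (0, 1) like bit probabilities."""
    return sigmoid(np.concatenate([noise, cond], axis=1) @ W_gen)

def discriminator(sample, cond):
    """Probability that a sample is real *for the given condition*."""
    return sigmoid(np.concatenate([sample, cond], axis=1) @ W_disc)

batch = 8
target_feats = rng.dirichlet(np.ones(COND_DIM), size=batch)  # e.g. AAC-like vectors summing to 1
z = rng.normal(size=(batch, NOISE_DIM))
fake = generator(z, target_feats)
scores = discriminator(fake, target_feats)
print(fake.shape, scores.shape)  # (8, 166) (8, 1)
```

Because the discriminator also sees the condition, it can penalize samples that look realistic in general but implausible for that particular target, which is what steers generation toward target-relevant candidates.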

Troubleshooting Guide

This guide maps common symptoms and performance issues to their likely causes and solutions.

| Problem/Symptom | Possible Cause | Solution |
|---|---|---|
| Discriminator loss drops toward zero while generator loss stays high or keeps rising. | Vanishing gradients; the discriminator has become too strong and gives the generator almost no useful gradient. | Switch to a Wasserstein loss (WGAN-GP) to ensure the discriminator provides useful gradients [24] [26]. |
| Generated samples have low diversity (e.g., same molecular scaffold). | Mode collapse. | Implement unrolled GANs or use mini-batch discrimination to encourage diversity [24]. |
| Loss values for generator and discriminator oscillate wildly without convergence. | The models are not reaching an equilibrium (Nash equilibrium). | Apply regularization techniques, such as adding noise to the discriminator's input or penalizing the discriminator's weights [24]. |
| Synthetic data does not improve cold-start DTI model performance. | Poor quality or non-representative synthetic data. | Use a conditional GAN (CGAN) to tightly control the generation based on protein or drug features [26] [28]. Validate with FID/IS and t-SNE plots [27]. |
| Training is unstable and slow on high-dimensional data. | Model architecture is too simple or learning rate is poorly tuned. | Use a deep convolutional architecture (DCGAN) with best practices (e.g., strided convolutions, Adam optimizer with tuned LR) [27] [25]. |
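One regularizer named in the table, adding noise to the discriminator's input (often called instance noise), can be sketched as below. The linear annealing schedule and the starting standard deviation of 0.1 are illustrative assumptions. The idea is to blur the real and generated distributions early in training so that they overlap, then remove the noise gradually.

```python
import numpy as np

def instance_noise_std(step, total_steps, start_std=0.1):
    """Linearly anneal the noise standard deviation from start_std to 0 (hypothetical schedule)."""
    frac = min(max(step / total_steps, 0.0), 1.0)
    return start_std * (1.0 - frac)

def add_instance_noise(batch, step, total_steps, rng, start_std=0.1):
    """Add Gaussian noise to a discriminator input batch (apply it to BOTH real and fake batches)."""
    std = instance_noise_std(step, total_steps, start_std)
    return batch + rng.normal(scale=std, size=batch.shape)

rng = np.random.default_rng(0)
real_batch = rng.normal(size=(4, 3))
early = add_instance_noise(real_batch, step=0, total_steps=1000, rng=rng)
late = add_instance_noise(real_batch, step=1000, total_steps=1000, rng=rng)
print(np.allclose(late, real_batch))  # True: noise fully annealed
```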

Experimental Protocols & Data

Summary of GAN Performance in Imbalanced Learning

The table below summarizes quantitative results from recent studies that employed GANs to address data imbalance.

| Study/Model | Application Domain | Key Metric | Performance with GAN | Baseline Performance |
|---|---|---|---|---|
| GAN + Random Forest (RFC) [17] | Drug-Target Interaction (BindingDB-Kd) | Sensitivity (Recall) | 97.46% | Not Reported |
| | | Specificity | 98.82% | Not Reported |
| | | ROC-AUC | 99.42% | Not Reported |
| Damage GAN [27] | Image Generation (Imbalanced CIFAR-10) | FID (Lower is better) | Outperformed DCGAN & ContraD GAN | DCGAN (Higher FID) |
| CE-GAN [26] | Network Intrusion Detection (NSL-KDD) | Minority Class Detection | Significant improvement | Poor detection of rare attacks |

Detailed Methodology: GAN-based Oversampling for DTI Prediction

This protocol is adapted from studies that successfully used GANs for data augmentation in drug-target affinity prediction [17].

Data Preparation and Feature Engineering:

- Drug Features: Encode drug molecules using molecular fingerprints such as MACCS keys to create fixed-length bit-vectors that represent structural features [17].

- Target Features: Encode protein sequences using composition-based descriptors like amino acid composition (AAC) and dipeptide composition (DPC) to create a fixed-length numerical representation [17].

- Formulate Pairs: Create feature vectors for drug-target pairs by concatenating the drug and target feature vectors.

- Split Data: Separate the pairs into interacting (positive/minority class) and non-interacting (negative/majority class). Further split the data into training and testing sets, ensuring that novel drugs or targets are held out in the test set to simulate a cold-start scenario.
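The feature-engineering and splitting steps above can be sketched as follows. AAC (20-d) and DPC (400-d) are computed directly from the sequence. In practice the drug fingerprint would come from a cheminformatics toolkit (RDKit's `MACCSkeys.GenMACCSKeys`, for example, returns a 167-bit vector whose bit 0 is unused); a placeholder 166-bit vector is used here so the sketch runs without RDKit. `cold_drug_split` is a hypothetical helper that holds out whole drugs, so that no test drug appears in training.

```python
from itertools import product
import numpy as np

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"  # the 20 standard residues
DIPEPTIDES = ["".join(p) for p in product(AMINO_ACIDS, repeat=2)]
DIPEPTIDE_INDEX = {dp: i for i, dp in enumerate(DIPEPTIDES)}

def aac(seq):
    """Amino acid composition: fraction of each of the 20 residues (20-d)."""
    counts = np.array([seq.count(a) for a in AMINO_ACIDS], dtype=float)
    return counts / max(len(seq), 1)

def dpc(seq):
    """Dipeptide composition: fraction of each of the 400 adjacent residue pairs (400-d)."""
    vec = np.zeros(len(DIPEPTIDES))
    pairs = [seq[i:i + 2] for i in range(len(seq) - 1)]
    for p in pairs:
        if p in DIPEPTIDE_INDEX:  # skip pairs containing non-standard residues
            vec[DIPEPTIDE_INDEX[p]] += 1
    return vec / max(len(pairs), 1)

def pair_features(drug_fp, protein_seq):
    """Concatenate a drug fingerprint with the AAC and DPC target descriptors."""
    return np.concatenate([np.asarray(drug_fp, dtype=float), aac(protein_seq), dpc(protein_seq)])

def cold_drug_split(drug_ids, test_frac=0.2, seed=0):
    """Boolean test mask: every pair involving a held-out drug lands in the test set."""
    rng = np.random.default_rng(seed)
    unique = np.unique(drug_ids)
    n_test = max(1, int(round(test_frac * len(unique))))
    test_drugs = rng.choice(unique, size=n_test, replace=False)
    return np.isin(drug_ids, test_drugs)

fp = np.zeros(166)
fp[[3, 42, 100]] = 1  # placeholder for a 166-bit MACCS vector
x = pair_features(fp, "MKTAYIAKQR")
print(x.shape)  # (586,)
drugs = np.array(["d1", "d1", "d2", "d3", "d3", "d4", "d5"])
test_mask = cold_drug_split(drugs, test_frac=0.4)
print(set(drugs[test_mask]).isdisjoint(drugs[~test_mask]))  # True: no drug on both sides
```

The same idea applies to a cold-target split: group by target identifier instead of drug identifier.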

GAN Training for Synthetic Data Generation:

- Model Selection: Choose a GAN architecture suitable for your data type. For vector-based representations, a fully connected GAN or a Conditional GAN (CGAN) can be effective. For graph-based data, consider a Graph GAN.

- Train on Minority Class: Train the GAN only on the feature vectors of the minority class (the interacting pairs) from the training set.

- Generate Synthetic Samples: After training, use the generator to create a sufficient number of synthetic minority-class samples to balance the class distribution in the training set.
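The balancing arithmetic and the augmentation step can be sketched as follows. `sample_fn` is a hypothetical stand-in for the trained generator's sampling call. Here it simply resamples positive training rows with small jitter so that the example runs; it is not a GAN. Only the training split is augmented, and the generator sees only training-set positives, so no held-out drug or target leaks into training.

```python
import numpy as np

def n_synthetic_needed(y):
    """Number of extra positives (label 1) needed to match the negatives (label 0)."""
    n_pos = int(np.sum(y == 1))
    n_neg = int(np.sum(y == 0))
    return max(n_neg - n_pos, 0)

def augment_with_synthetic(X, y, sample_fn):
    """Append generated minority-class rows so that both classes have equal counts."""
    n_new = n_synthetic_needed(y)
    if n_new == 0:
        return X, y
    X_new = sample_fn(n_new)
    return np.vstack([X, X_new]), np.concatenate([y, np.ones(n_new, dtype=y.dtype)])

rng = np.random.default_rng(0)
X = rng.normal(size=(100, 5))          # training-set pair features
y = np.array([1] * 10 + [0] * 90)      # 10 interacting, 90 non-interacting pairs
X_pos = X[y == 1]                      # the generator would be trained on these rows only

def sample_fn(n):
    # Placeholder for generator(noise): resample positives and add small jitter.
    idx = rng.integers(0, len(X_pos), size=n)
    return X_pos[idx] + rng.normal(scale=0.05, size=(n, X_pos.shape[1]))

X_aug, y_aug = augment_with_synthetic(X, y, sample_fn)
print(X_aug.shape, np.bincount(y_aug))  # (180, 5) [90 90]
```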

Model Training and Evaluation:

- Augment Training Set: Combine the original training data with the generated synthetic samples.

- Train Predictor: Train your DTI prediction model (e.g., a Random Forest classifier) on the augmented dataset.

- Evaluate on Cold-Start Test: Evaluate the model's performance on the held-out test set containing novel drugs or targets. Key metrics to report include Sensitivity (Recall) to measure the detection of true interactions, Specificity, and ROC-AUC [17].
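The three reported metrics can be computed without extra dependencies, as sketched below in NumPy. ROC-AUC is computed in its rank form: the probability that a randomly chosen interacting pair scores higher than a randomly chosen non-interacting pair, with ties counting one half. This equals the area under the ROC curve. The 0.5 decision threshold and the toy scores are assumptions. In a cold-start evaluation, these would be computed only on the held-out pairs involving novel drugs or targets.

```python
import numpy as np

def sensitivity_specificity(y_true, y_pred):
    """Sensitivity (recall on positives) and specificity (recall on negatives)."""
    y_true, y_pred = np.asarray(y_true), np.asarray(y_pred)
    tp = np.sum((y_true == 1) & (y_pred == 1))
    fn = np.sum((y_true == 1) & (y_pred == 0))
    tn = np.sum((y_true == 0) & (y_pred == 0))
    fp = np.sum((y_true == 0) & (y_pred == 1))
    return tp / (tp + fn), tn / (tn + fp)

def roc_auc(y_true, scores):
    """ROC-AUC as P(random positive outranks random negative), ties counting 1/2."""
    y_true, scores = np.asarray(y_true), np.asarray(scores, dtype=float)
    pos, neg = scores[y_true == 1], scores[y_true == 0]
    greater = (pos[:, None] > neg[None, :]).sum()
    ties = (pos[:, None] == neg[None, :]).sum()
    return (greater + 0.5 * ties) / (len(pos) * len(neg))

y_true = [1, 1, 1, 0, 0, 0, 0]
scores = [0.9, 0.8, 0.3, 0.4, 0.2, 0.1, 0.05]
y_pred = (np.asarray(scores) >= 0.5).astype(int)  # hypothetical 0.5 threshold
sens, spec = sensitivity_specificity(y_true, y_pred)
auc = roc_auc(y_true, scores)
print(round(float(sens), 3), round(float(spec), 3), round(float(auc), 3))  # 0.667 1.0 0.917
```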

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in the Experiment |
|---|---|
| MACCS Keys | A standardized set of 166 molecular substructures used to convert a drug's chemical structure into a fixed-length binary fingerprint for feature representation [17]. |
| Amino Acid Composition (AAC) | A simple protein sequence descriptor that calculates the fraction of each amino acid type in the sequence, providing a fundamental feature vector for target proteins [17]. |
| Conditional GAN (CGAN) | A GAN variant where both the generator and discriminator are conditioned on auxiliary information (e.g., class labels or protein features), allowing for targeted generation of specific data classes [26] [28]. |
| Wasserstein GAN with Gradient Penalty (WGAN-GP) | A stable GAN architecture that uses the Earth-Mover distance and a gradient penalty term to overcome issues like vanishing gradients and mode collapse, leading to more reliable training [26]. |
| Fréchet Inception Distance (FID) | A metric for assessing the quality of generated images by calculating the distance between feature distributions of real and generated data in a pre-trained neural network's feature space [27]. |

Workflow and Architecture Diagrams

Frequently Asked Questions

What is the cold-start problem in chemogenomics? The cold-start problem occurs when a machine learning model for Drug-Target Affinity (DTA) or Compound-Protein Interaction (CPI) prediction performs poorly on novel drugs or targets that were not present in the training data. This is a major challenge in drug discovery and repurposing, where predicting interactions for new entities is the primary goal [1] [5].

How can pre-trained feature extractors help with this issue? Pre-trained models learn robust and generalized representations of molecules and proteins from vast, unlabeled datasets. By leveraging this pre-existing knowledge, your DTA/CPI model does not start from scratch. This provides a foundational understanding of biochemical properties and internal structures (intra-molecule interactions), which improves the model's ability to generalize to unseen compounds and proteins [1] [5].

What are some common pre-trained models for drugs and proteins? For proteins, models like ProtTrans [5] are used. For drug-like compounds, common models include Mol2vec [5]. These models can convert raw input sequences (e.g., amino acid sequences for proteins, SMILES strings for compounds) into informative feature matrices that capture structural and functional characteristics [5].
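Protein language models such as ProtTrans return one embedding per residue, so a protein of length L becomes an L × d feature matrix. A common way (though not the only one) to turn such matrices into fixed-length inputs for a DTA/CPI head is masked mean pooling over the valid positions, sketched below in NumPy. The random array stands in for real model output, and the embedding width is a hypothetical value.

```python
import numpy as np

def masked_mean_pool(features, mask):
    """Average per-token embeddings over valid (unpadded) positions.

    features: (batch, max_len, d) per-residue or per-token embeddings.
    mask: (batch, max_len) with 1 for real tokens and 0 for padding.
    """
    mask = mask.astype(features.dtype)[:, :, None]
    summed = (features * mask).sum(axis=1)
    counts = np.clip(mask.sum(axis=1), 1.0, None)  # avoid division by zero
    return summed / counts

rng = np.random.default_rng(0)
d = 1024          # hypothetical embedding width
lengths = [5, 3]  # two proteins padded to length 5
feats = rng.normal(size=(2, 5, d))
mask = np.array([[1 if i < n else 0 for i in range(5)] for n in lengths])
pooled = masked_mean_pool(feats, mask)
print(pooled.shape)  # (2, 1024)
print(np.allclose(pooled[1], feats[1, :3].mean(axis=0)))  # True: padding ignored
```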

My model performs well on training data but poorly on novel compounds. What could be wrong? This is a classic sign of overfitting and insufficient generalization. Ensure you are using features from a model pre-trained on a large and diverse chemical library. Also, consider incorporating interaction information during training, not just the static features of the compounds and proteins. Frameworks inspired by induced-fit theory, which treat molecules as flexible entities, can enhance performance on unseen data [5].

What is the difference between intra- and inter-molecule interaction information?

- Intra-molecule interactions refer to the internal structural relationships within a single molecule or protein, such as the bonds between atoms in a drug or the sequence of amino acids in a protein. This is what language model pre-training primarily learns [1].