Overcoming the Cold-Start Challenge in Drug-Target Interaction Prediction: Modern Computational Strategies

Abstract

Accurate prediction of Drug-Target Interactions (DTIs) is fundamental to accelerating drug discovery and repurposing. However, the 'cold-start' problem—predicting interactions for novel drugs or targets with no prior interaction data—severely limits the applicability of traditional computational models. This article synthesizes the latest advances in overcoming this challenge, exploring foundational concepts, innovative methodologies like meta-learning and multi-level protein modeling, strategies for optimizing model generalization, and rigorous validation frameworks. Tailored for researchers, scientists, and drug development professionals, it provides a comprehensive overview of how next-generation in silico methods are enabling more reliable and efficient predictions in data-sparse scenarios, ultimately de-risking the early stages of drug development.

Understanding the Cold-Start Problem in DTI Prediction: Definitions, Scenarios, and Impact

Cold-Start Scenarios: Definitions and Performance Data

Cold-start problems occur when predicting interactions for new entities not seen during model training. The table below defines the core scenarios and summarizes the performance of various state-of-the-art methods.

Table 1: Cold-Start Scenarios and Method Performance

| Cold-Start Scenario | Definition | Key Challenges | Representative Methods & Reported Performance |
|---|---|---|---|
| Cold-Drug | Predicting interactions for novel drugs that are not in the training set [1]. | Lack of known interactions for the new drug, making it impossible to learn a direct representation from historical DTI data [2]. | C2P2 Framework [1]: Transfers knowledge from Chemical-Chemical Interaction (CCI) tasks. DTI-LM [2]: Uses drug SMILES sequences with language models; performance disparity noted between cold-drug and cold-target scenarios. |
| Cold-Target | Predicting interactions for novel target proteins that are not in the training set [1]. | Lack of known interactions for the new target protein [2]. The problem can be more challenging if the protein has no structural or sequential homologs in the training data [2]. | DTI-LM [2]: Leverages protein amino acid sequences; reported to excel in cold-target predictions. ColdDTI [3] [4]: Uses multi-level protein structures; demonstrates strong performance. |
| Full Cold-Start | Predicting interactions for pairs involving both a novel drug and a novel target [3]. | The most challenging scenario with no direct interaction data for either molecule, requiring high model generalization. | MGDTI [5]: Employs meta-learning and graph transformers, showing effectiveness in full cold-start scenarios. ColdDTI [3] [4]: Attends to multi-level protein structures to capture transferable biological priors. |

Frequently Asked Questions (FAQs) and Troubleshooting

Q1: My model performs well on known drugs and targets but fails on new ones. What is the root cause?

- A: This is the classic cold-start problem. Models that rely heavily on existing interaction graphs or deep learned representations from known DTIs lack the mechanism to generalize to unseen entities. The root cause is the absence of interaction information for new nodes in the network [3] [1].

- Troubleshooting Steps:

- Diagnose the Scenario: Determine if you are facing a cold-drug, cold-target, or full cold-start problem.

- Shift Strategy: Move from graph-based methods, which struggle without informative neighbors for new nodes [3], to structure-based approaches that exploit the intrinsic features of the drugs and proteins themselves [3].

- Incorporate Transferable Priors: Use methods that learn biologically meaningful patterns, such as interactions between drug substructures and multi-level protein motifs, which can transfer to novel entities [3] [1].

Q2: How can I represent a novel protein when its 3D structure is unavailable?

- A: While 3D structure is informative, it is often unavailable. You can use the following hierarchical representations to capture rich biological information:

- Primary Structure: The amino acid sequence, which can be encoded with pre-trained language models (e.g., ProtBert) [2].

- Secondary & Tertiary Structures: Predict or incorporate information about secondary motifs (e.g., α-helices, β-sheets) and tertiary substructures [3].

- Holistic Embeddings: Use the entire protein sequence to generate a quaternary-level global representation [3]. Frameworks like ColdDTI are explicitly designed to attend to and fuse these multi-level structures [3].

Q3: What is the benefit of using meta-learning for cold-start DTI prediction?

- A: Meta-learning, as used in MGDTI, trains a model to be adaptive to new tasks with limited data [5]. In the context of DTI:

- It simulates cold-start tasks during training by learning from a variety of "learning episodes."

- The model learns a general initialization that can be quickly fine-tuned with only a few examples of a new drug or target, significantly improving its generalization capability for true cold-start scenarios [5].

Experimental Protocol: Implementing a Cold-Start Evaluation

To rigorously benchmark your model against cold-start problems, follow this standardized protocol.

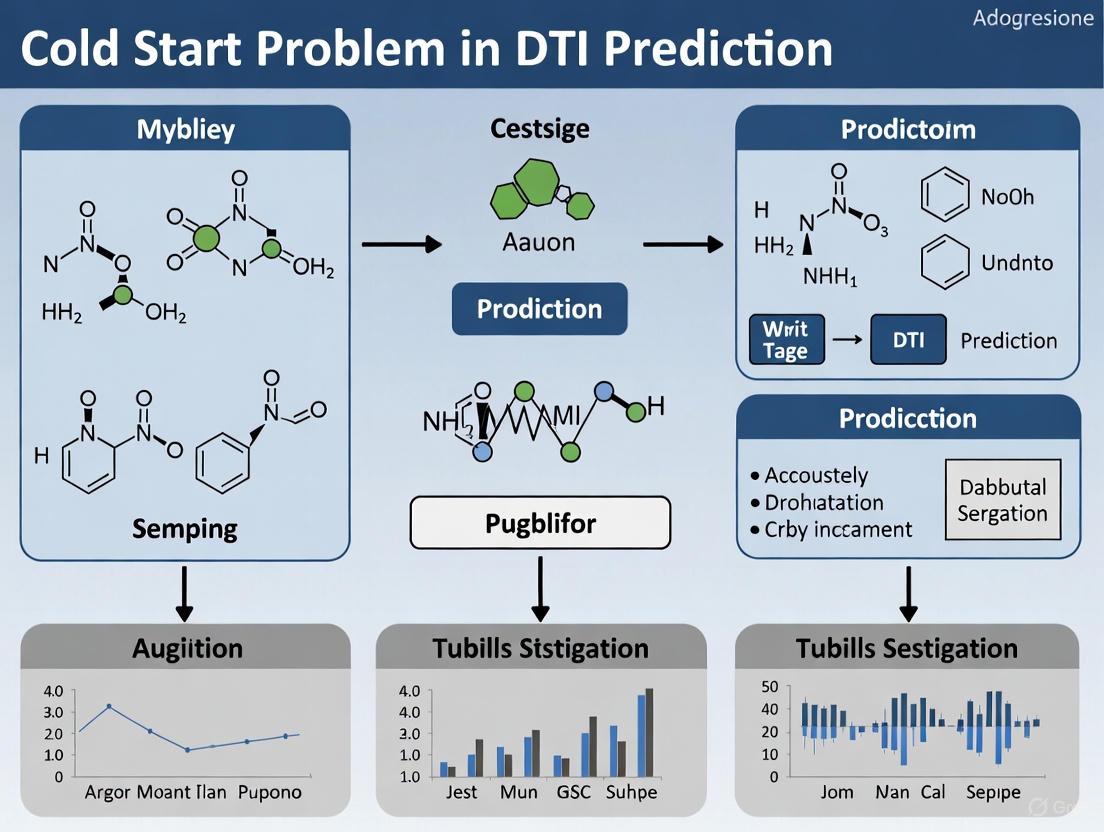

Workflow Description: The diagram outlines the core process for a cold-start evaluation. The first critical step is Data Partitioning, where you must create training and test sets that ensure drugs, targets, or both in the test set are completely absent from the training set to simulate the desired cold-start scenario [1].
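The partitioning step can be sketched as follows. This is a minimal illustration, assuming interactions are stored as `(drug, target, label)` tuples; the function name `cold_split` and the toy identifiers are hypothetical and do not come from any of the cited frameworks.

```python
import random

def cold_split(pairs, scenario, test_frac=0.2, seed=0):
    """Split (drug, target, label) tuples so held-out entities never appear in training.

    scenario: "cold_drug" holds out whole drugs, "cold_target" holds out whole targets.
    """
    index = {"cold_drug": 0, "cold_target": 1}[scenario]
    entities = sorted({p[index] for p in pairs})
    rng = random.Random(seed)
    rng.shuffle(entities)
    n_test = max(1, int(len(entities) * test_frac))
    held_out = set(entities[:n_test])
    # Every interaction of a held-out entity goes to the test set.
    train = [p for p in pairs if p[index] not in held_out]
    test = [p for p in pairs if p[index] in held_out]
    return train, test

# Toy data: 5 drugs x 4 targets with arbitrary labels.
pairs = [(f"D{i}", f"T{j}", (i + j) % 2) for i in range(5) for j in range(4)]
train, test = cold_split(pairs, "cold_drug")
train_drugs = {d for d, _, _ in train}
test_drugs = {d for d, _, _ in test}
```

Splitting by entity rather than by interaction is what prevents leakage: a random split over pairs would leave most test drugs with some training interactions.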

Key Evaluation Metrics:

- AUC (Area Under the ROC Curve): A key metric used to measure overall model performance across cold-start scenarios [3] [2].

- Other Metrics: Consider including metrics like Precision-Recall AUC (AUPR) and F1-score for a comprehensive assessment.
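For reference, both AUC and AUPR can be computed without external libraries. The sketch below uses the pairwise-ranking definition of AUC and the step-wise average-precision estimate of AUPR; the function names are illustrative, and in practice an established library implementation would normally be preferred.

```python
def roc_auc(labels, scores):
    """Probability that a random positive is scored above a random negative (ties count 0.5)."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative example")
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

def average_precision(labels, scores):
    """Step-wise area under the precision-recall curve (ties keep input order)."""
    ranked = sorted(zip(scores, labels), key=lambda t: -t[0])
    hits, total = 0, 0.0
    for rank, (_, y) in enumerate(ranked, start=1):
        if y == 1:
            hits += 1
            total += hits / rank  # precision at each recalled positive
    if hits == 0:
        raise ValueError("need at least one positive example")
    return total / hits

labels = [1, 0, 1, 0]
scores = [0.9, 0.8, 0.7, 0.1]
auc = roc_auc(labels, scores)             # 3 of 4 positive-negative pairs ranked correctly
aupr = average_precision(labels, scores)  # (1/1 + 2/3) / 2
```

AUPR is usually the more informative of the two on DTI data, because known interactions are a small minority of all drug-target pairs.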

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools for Cold-Start DTI Research

| Item / Resource | Function / Description | Relevance to Cold-Start |
|---|---|---|
| SMILES Sequences | A string representation of a drug's molecular structure [3] [6]. | Provides the fundamental input feature for representing novel drugs in most structure-based models [2]. |
| Protein Amino Acid Sequences | The primary structure of a target protein [3]. | The most universally available input for representing novel targets, especially when 3D structure is unknown [2]. |
| Pre-trained Language Models (e.g., ProtBert, ESM, ChemBERTa) | Models trained on vast corpora of protein or chemical sequences to generate contextual embeddings [2]. | Provides robust, generalized feature representations for novel drugs and targets, mitigating the lack of task-specific data [2]. |
| Protein Structure Databases (e.g., AlphaFold DB) | Resources providing computationally predicted 3D structures for proteins [1]. | Enables the use of graph or point-cloud representations of novel targets, capturing structural information beyond the primary sequence [1]. |
| Interaction Knowledge (PPI, CCI) | Data on Protein-Protein Interactions and Chemical-Chemical Interactions [1]. | Can be transferred via transfer learning to imbue models with general "interaction knowledge" before they learn the specific DTI task, improving performance on cold-start entities [1]. |

Frequently Asked Questions

1. What exactly is the "cold-start" problem in Drug-Target Interaction (DTI) prediction? The cold-start problem refers to the significant drop in model performance when predicting interactions for novel drugs or target proteins that were not present in the training data. This is a major challenge because the primary goal of in silico drug discovery is to identify interactions for precisely these new entities. The problem is usually divided into a "cold-drug" scenario (predicting for new drugs against known proteins) and a "cold-target" scenario (predicting for new proteins against known drugs), with a third, full cold-start case in which both the drug and the protein are new. Traditional models that rely heavily on existing interaction networks or similarity to known entities struggle in these situations [3] [1].

2. Why do graph-based methods often fail in cold-start scenarios? Graph-based methods formulate DTI prediction as a link prediction task on a heterogeneous network. They work by propagating information through the network topology. However, their performance heavily relies on existing connections. In cold-start scenarios, new drugs or proteins are "orphan nodes" with no or very few connecting edges, leaving them without informative neighbors from which to learn. This makes these models vulnerable when the DTI data is sparse, which is often the case with novel compounds and targets [3].

3. How can we incorporate protein structure information to improve generalization? Proteins have a natural hierarchy of structural levels—primary (amino acid sequence), secondary (motifs like α-helices), tertiary (3D substructures), and quaternary (the whole protein complex). Traditional methods often use only the primary sequence. Explicitly modeling these multi-level structures allows the model to learn more transferable, biologically grounded priors about how interactions occur at different granularities, rather than overfitting to specific sequences seen during training. This can be achieved through hierarchical attention mechanisms that mine interactions between drug structures and these different protein levels [3].

4. My model performs well on validation splits but poorly on novel compounds. Is this a data or model issue? This is a classic sign of a cold-start problem and is likely a limitation of the model's architecture and training paradigm. Models that are overly reliant on learning from the specific patterns of seen drugs and targets may fail to generalize. The solution often involves shifting the model's learning objective. Instead of just learning to predict interactions for specific pairs, the model should be guided to learn fundamental, transferable interaction patterns. This can be achieved through techniques like meta-learning, transfer learning from related tasks, or incorporating stronger biological priors [1] [5].

Troubleshooting Guides

Problem: Poor Performance on New Drugs (Cold-Drug Scenario)

Diagnosis: The model's predictions are inaccurate when evaluating drugs that were not in the training set.

Solutions:

- Leverage Transfer Learning from Chemical Interactions: Transfer knowledge from a pre-training task focused on Chemical-Chemical Interactions (CCI). This teaches the model the "grammar" of how molecules interact with each other, providing a foundational understanding of intermolecular forces and reaction principles that are directly relevant to drug-target binding. This incorporates crucial inter-molecule interaction information that pure sequence-based language models lack [1].

- Implement a Meta-Learning Framework: Train your model using a meta-learning approach, such as Model-Agnostic Meta-Learning (MAML). This framework simulates cold-start tasks during training by repeatedly showing the model small "support" sets of known interactions for a specific task and then asking it to predict for a "query" set. This forces the model to learn a parameter initialization that can rapidly adapt to new drugs with very few examples, making it inherently more robust to novelty [5].
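The episode construction described in the meta-learning bullet can be sketched as follows. This is a simplified illustration that assumes one task per drug and `(drug, target, label)` tuples; `sample_episode` is a hypothetical helper, and the inner and outer gradient updates of MAML are deliberately left out. How MGDTI itself defines tasks is not specified here.

```python
import random
from collections import defaultdict

def sample_episode(pairs, rng, n_support=2, n_query=2):
    """Pick one drug as the task and split its interactions into support and query sets."""
    by_drug = defaultdict(list)
    for drug, target, label in pairs:
        by_drug[drug].append((drug, target, label))
    # Only drugs with enough interactions can fill both sets.
    eligible = sorted(d for d, rows in by_drug.items() if len(rows) >= n_support + n_query)
    if not eligible:
        raise ValueError("no drug has enough interactions for an episode")
    rows = by_drug[rng.choice(eligible)][:]
    rng.shuffle(rows)
    return rows[:n_support], rows[n_support:n_support + n_query]

episode_pairs = [(f"D{i}", f"T{j}", (i + j) % 2) for i in range(5) for j in range(4)]
support, query = sample_episode(episode_pairs, random.Random(0))
```

In a MAML-style loop, the model would take a few gradient steps on `support`, be scored on `query`, and the query loss would drive the update of the shared initialization.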

Problem: Poor Performance on New Target Proteins (Cold-Target Scenario)

Diagnosis: The model fails to generalize to target proteins unseen during training.

Solutions:

- Utilize Multi-Level Protein Representations: Move beyond flat amino acid sequences. Represent and encode proteins using their hierarchical biological structures [3].

- Experimental Protocol for ColdDTI-style Multi-Level Feature Extraction: [3]

- Primary Structure: Encode the amino acid sequence using a pre-trained protein language model (e.g., from ProtTrans).

- Secondary Structure: Annotate sequences with secondary structure types (e.g., α-helix, β-sheet) using tools like DSSP. Represent each motif by its start/end position and type.

- Tertiary & Quaternary Structure: Use predicted or experimental 3D structures (e.g., from AlphaFold2) to define tertiary substructures and global quaternary embeddings.

- Hierarchical Attention: Employ an attention mechanism to let the model learn the importance of interactions between drug substructures and each level of the protein hierarchy.

- Transfer Learning from Protein-Protein Interaction (PPI) Data: Pre-train your protein encoder on a large-scale PPI prediction task. The physical interactions at protein interfaces (e.g., electrostatics, hydrogen bonding, hydrophobic effects) reveal effective binding modes and the distribution of ligand-binding pockets. This provides the model with a strong prior on what a "bindable" protein region looks like [1].

Problem: Model is Overly Sensitive to Noisy Labels and Sparse Data

Diagnosis: The known interaction matrix is sparse (many unknown pairs are treated as non-interacting), and the model's performance is unstable.

Solutions:

- Employ a Robust Loss Function: Replace standard loss functions (like Mean Squared Error) with a more robust alternative designed to handle outliers. The $L_2$-C loss combines the low error of the $L_2$ loss with the robustness of the C-loss, making the model less sensitive to potentially incorrect negative labels in the sparse interaction matrix [7].

- Adopt Multi-Kernel and Ensemble Learning: Use multiple kernels to represent drugs and targets from different views (e.g., based on structure, sequence, and interaction profiles). Then, use multi-kernel learning to dynamically weight these views. Complement this with ensemble learning to model multiple data structures simultaneously (e.g., drug structure, target structure, and the low-rank structure of the interaction matrix), which improves robustness [7].
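To illustrate why a bounded loss helps with mislabeled negatives, the sketch below compares the squared error with one common form of the correntropy-induced C-loss. It is not the combined loss used in DTI-RME [7], whose exact formulation is not reproduced here; the kernel width `sigma` and the function names are assumptions made for illustration.

```python
import math

def squared_loss(error):
    """Standard squared error: grows without bound as the error grows."""
    return error ** 2

def c_loss(error, sigma=1.0):
    """Correntropy-induced loss: roughly quadratic near zero, saturating for large errors."""
    # beta normalizes the loss so that c_loss(1.0) == 1.0 for any sigma.
    beta = 1.0 / (1.0 - math.exp(-1.0 / (2.0 * sigma ** 2)))
    return beta * (1.0 - math.exp(-error ** 2 / (2.0 * sigma ** 2)))

for e in (0.1, 1.0, 3.0, 10.0):
    print(f"error={e:5.1f}  squared={squared_loss(e):8.3f}  c_loss={c_loss(e):6.3f}")
```

Because the C-loss saturates, a single badly mislabeled pair contributes a bounded penalty instead of dominating the gradient, which is the behavior a robust loss is meant to provide.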

Experimental Protocols & Data

Table 1: Summary of Key Cold-Start DTI Prediction Methods

| Method | Core Strategy | Technical Mechanism | Best For Scenario |
|---|---|---|---|
| ColdDTI [3] | Multi-Level Protein Modeling | Hierarchical attention across primary, secondary, tertiary, quaternary protein structures. | Cold-Drug, Cold-Target |
| C2P2 [1] | Transfer Learning | Pre-training on Chemical-Chemical (CCI) & Protein-Protein (PPI) interaction tasks. | Cold-Drug, Cold-Target |
| MGDTI [5] | Meta-Learning | Graph Transformer trained with meta-learning to adapt quickly to new tasks. | Cold-Drug, Cold-Target |
| DTI-RME [7] | Robust Ensemble | $L_2$-C loss, multi-kernel learning, and ensemble modeling of multiple data structures. | Noisy & Sparse Data |
| ColdstartCPI [8] | Induced-Fit Theory | Models flexibility of both compounds and proteins using pre-trained features and Transformer. | Cold-Drug, Cold-Target |

The Researcher's Toolkit: Essential Reagents for Cold-Start DTI Experiments

| Item / Resource | Function in the Experiment | Specification Notes |
|---|---|---|
| Protein Data Source (e.g., UniRef, Pfam) | Provides large-scale protein sequences for pre-training language models or extracting features. | Critical for learning robust, generalizable representations. [1] |
| Chemical Compound Database (e.g., PubChem) | Source of SMILES strings and molecular structures for pre-training chemical encoders. | The PubChem dataset contains over 77 million SMILES sequences. [1] |
| PPI Database (e.g., HPRD, STRING) | Provides data for the protein-protein interaction pre-training task. | Teaches the model the physics of protein interfaces. [1] |
| 3D Structure Predictor (e.g., AlphaFold2) | Generates tertiary and quaternary structure data from amino acid sequences. | Required for multi-level structure modeling; experimental data can be time-consuming to acquire. [3] [1] |
| Gold-Standard DTI Datasets (e.g., NR, IC, GPCR, E) | Benchmark datasets for evaluating model performance under different cold-start settings. | Nuclear Receptors (NR), Ion Channels (IC), GPCRs, and Enzymes (E) are common benchmarks. [7] |

Workflow: Meta-Learning for Cold-Start DTI Prediction

[Diagram: the meta-learning process that enables models to handle new tasks efficiently]

Workflow: Multi-Level Protein Structure Feature Extraction

[Diagram: the process of creating hierarchical representations of a protein target]

FAQs: Addressing Common Experimental Challenges

FAQ 1: Why does my model's performance degrade significantly when predicting interactions for novel drugs or proteins?

Answer: This is a classic symptom of the Cold-Start Problem. The degradation occurs because traditional models rely heavily on patterns learned from existing data, which are absent for new entities.

- In Graph-Based Models: Your model likely depends on network topology. New drugs or proteins appear as isolated nodes with no connecting edges, providing no topological information for inference. This is known as the "neighborlessness" issue [3]. Models built on Graph Neural Networks (GNNs) struggle because message passing has nothing to aggregate when a new node has no neighbors [2] [9].

- In Structure-Based Models: Performance drops when the novel entity has no structural or sequential homologs with known interactions in the training data [2]. Many methods use only primary structures (e.g., amino acid sequences), missing higher-level structural interactions critical for binding [3].

Troubleshooting Steps:

- Diagnose: Check if the poor-performing test cases involve drugs or proteins with low similarity to your training set.

- Mitigate: Shift towards methods that incorporate heterogeneous biological information (e.g., side effects, diseases) or use pre-trained language models on large corpora of sequences, which can provide better priors for unseen entities [2] [9].

FAQ 2: How can I validate if my model is overly dependent on network topology and lacks generalization power?

Answer: You can design a specific ablation experiment to test this dependency.

Experimental Protocol:

- Data Splitting: Create two test sets from your data.

- Test Set A (Warm Start): Contains drugs and proteins that have known interactions in the training graph.

- Test Set B (Cold Start): Contains drugs or proteins completely absent from the training graph.

- Model Evaluation: Train your graph-based model and evaluate its performance (e.g., AUC, AUPR) separately on Test Set A and Test Set B.

- Interpretation: A significant performance drop in Test Set B indicates excessive reliance on network topology and poor generalization. For example, network-based models like DTINet and NeoDTI are known to show such a decrease [9].

Table 1: Sample Experimental Results Demonstrating the Cold-Start Performance Drop

| Model Type | Example Model | Warm-Start AUC | Cold-Start AUC | Performance Drop |
|---|---|---|---|---|
| Graph-Based | DTINet [9] | 0.92 | 0.71 | -0.21 |
| Structure-Based (Primary) | TransformerCPI [3] | 0.89 | 0.75 | -0.14 |
| Advanced Multi-level | ColdDTI [3] | 0.91 | 0.83 | -0.08 |
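When an existing test set was not built with cold-start in mind, it can still be partitioned after the fact. The sketch below tags each test pair by whether its drug and target occur in the training pairs, so that metrics can be reported per group; `tag_test_pairs` and the toy identifiers are hypothetical.

```python
def tag_test_pairs(train_pairs, test_pairs):
    """Label each (drug, target) test pair as warm, cold_drug, cold_target, or full_cold."""
    seen_drugs = {d for d, _ in train_pairs}
    seen_targets = {t for _, t in train_pairs}
    tags = {}
    for drug, target in test_pairs:
        known_drug = drug in seen_drugs
        known_target = target in seen_targets
        if known_drug and known_target:
            tags[(drug, target)] = "warm"
        elif known_target:
            tags[(drug, target)] = "cold_drug"    # new drug, known target
        elif known_drug:
            tags[(drug, target)] = "cold_target"  # known drug, new target
        else:
            tags[(drug, target)] = "full_cold"    # both unseen
    return tags

train_example = [("D1", "T1"), ("D2", "T2")]
test_example = [("D1", "T2"), ("D3", "T1"), ("D1", "T3"), ("D3", "T3")]
tags = tag_test_pairs(train_example, test_example)
```

Reporting AUC and AUPR separately for each tag makes a drop like the one in the table visible instead of being averaged away.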

FAQ 3: My structure-based model performs well on benchmarks but yields biologically implausible results. What could be wrong?

Answer: This often stems from a simplistic representation of biological structures. Many models treat proteins as flat amino acid sequences, ignoring the hierarchical nature of protein structure (primary, secondary, tertiary, quaternary) that dictates function and interaction [3]. Similarly, representing drugs only as SMILES strings may overlook 3D conformational and functional group information.

Troubleshooting Guide:

- Problem: Over-reliance on primary structure (sequence) only.

- Solution: Integrate multi-level structural information. For proteins, incorporate predictions or data on secondary structures (e.g., α-helices, β-sheets) and tertiary contacts [3]. For drugs, consider using molecular graph representations that capture 2D topology or 3D conformation where available.

- Problem: The model learns spurious correlations from data artifacts instead of true interaction patterns.

FAQ 4: How do I handle the issue of false negative samples in my training data?

Answer: This is a critical data quality issue. Many datasets treat unverified interactions as negative samples, but some of them may be true, undiscovered interactions [11]. Training on these "false negatives" misleads the model.

Methodology to Mitigate False Negatives:

- Strategy 1: Positive-Unlabeled Learning: Reformulate the problem to treat unknown interactions as unlabeled rather than negative.

- Strategy 2: Fuzzy Theory & Feature Projection: As in DTI-HAN, use only positive samples and high-confidence similarities to estimate a membership distribution function for classification, avoiding false negatives during the prediction stage [11].

- Strategy 3: Robust Loss Functions: Design loss functions that are less sensitive to label noise.

Experimental Protocols & Workflows

Protocol 1: Benchmarking Model Performance Under Cold-Start Scenarios

This protocol is essential for evaluating a model's real-world applicability.

- Data Preparation:

- Use benchmark datasets (e.g., from Yamanishi et al.) that include drug structures (SMILES), protein sequences, and known interactions [11].

- Strategically split the data to simulate different cold-start conditions:

- Cold-Drug: All interactions of specific drugs are held out for testing.

- Cold-Protein: All interactions of specific proteins are held out for testing.

- Both-Cold: Specific drugs and specific proteins are both held out, and only pairs in which neither the drug nor the protein appears in training are used for testing.

- Model Training: Train your model only on the warm-start training set.

- Evaluation: Predict on the cold-start test sets and compare performance metrics (AUC, AUPR) against warm-start performance. A robust model will show the smallest decrease in performance [9].
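The both-cold split is the easiest one to get wrong, because pairs that mix a held-out entity with a training entity belong to neither set. A minimal sketch, assuming `(drug, target, label)` tuples and a hypothetical helper `both_cold_split`:

```python
import random

def both_cold_split(pairs, test_frac=0.3, seed=0):
    """Hold out a set of drugs and a set of targets; test only on pairs where both are held out."""
    drugs = sorted({d for d, _, _ in pairs})
    targets = sorted({t for _, t, _ in pairs})
    rng = random.Random(seed)
    test_drugs = set(rng.sample(drugs, max(1, int(len(drugs) * test_frac))))
    test_targets = set(rng.sample(targets, max(1, int(len(targets) * test_frac))))
    # Training pairs contain no held-out entity at all.
    train = [p for p in pairs if p[0] not in test_drugs and p[1] not in test_targets]
    # Test pairs contain only held-out entities; mixed pairs are discarded.
    test = [p for p in pairs if p[0] in test_drugs and p[1] in test_targets]
    return train, test

all_pairs = [(f"D{i}", f"T{j}", (i + j) % 2) for i in range(5) for j in range(4)]
bc_train, bc_test = both_cold_split(all_pairs)
```

The discarded mixed pairs are the cost of this split, so both-cold test sets tend to be small and metrics on them noisier than in the single-entity scenarios.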

[Diagram: key steps for a comprehensive cold-start benchmark evaluation]

Protocol 2: Integrating Multi-Level Protein Structures

This methodology, inspired by ColdDTI, enhances the biological fidelity of structure-based models [3].

- Feature Extraction:

- Primary Structure: Use a pre-trained protein language model (e.g., ESM, ProtBert) to get embeddings from the amino acid sequence.

- Secondary Structure: Use tools like DSSP or deep learning predictors to assign secondary structure types (α-helix, β-sheet) to sequence regions.

- Tertiary Structure: If available, use residue contact maps or distance maps derived from 3D structures.

- Quaternary Structure: Often represented by the global protein embedding.

- Hierarchical Interaction Modeling:

- Use a hierarchical attention mechanism to model the interactions between drug representations (at both local functional group and global molecular levels) and each level of the protein structure.

- Adaptive Fusion:

- Dynamically combine the contributions from the different protein structural levels and drug granularities to make the final prediction.
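The fusion idea can be illustrated with a parameter-free toy: each protein level is scored against the drug vector with a scaled dot product, the scores are normalized with a softmax, and the level vectors are averaged with those weights. This is only a sketch of attention-weighted fusion under simplifying assumptions (fixed toy vectors, no learned projections); it is not the ColdDTI architecture, and all names are illustrative.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def fuse_levels(drug_vec, level_vecs):
    """Weight each protein-level vector by its scaled dot-product score against the drug vector."""
    names = list(level_vecs)
    scale = math.sqrt(len(drug_vec))
    scores = [sum(a * b for a, b in zip(drug_vec, level_vecs[n])) / scale for n in names]
    weights = softmax(scores)
    fused = [
        sum(w * level_vecs[n][i] for w, n in zip(weights, names))
        for i in range(len(drug_vec))
    ]
    return dict(zip(names, weights)), fused

drug_vec = [1.0, 0.0]
level_vecs = {
    "primary": [1.0, 0.0],
    "secondary": [0.0, 1.0],
    "tertiary": [0.5, 0.5],
    "quaternary": [-1.0, 0.0],
}
weights, fused = fuse_levels(drug_vec, level_vecs)
```

In a trained model the drug and level vectors would come from learned encoders and projections, so the weights would reflect which structural level matters for a given drug rather than raw vector alignment.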

[Diagram: multi-level representation and fusion process]

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Resources for Advanced DTI Prediction Research

| Resource Name | Type | Function in Experiment | Key Application |
|---|---|---|---|
| ESM/ProtBert [2] | Pre-trained Language Model | Generates context-aware feature embeddings from protein amino acid sequences. | Captures semantic and structural information from primary sequences, improving cold-start performance. |
| ChemBERTa / MoLFormer [2] | Pre-trained Language Model | Generates feature embeddings from drug SMILES strings. | Understands chemical syntax and semantics for better drug representation. |
| Graph Attention Network (GAT) [9] [12] | Neural Network Architecture | Learns node representations in a graph by assigning different importance to neighbors. | Integrates heterogeneous network data (drug-drug, target-target similarities) for robust feature learning. |
| BIONIC [9] | Network Integration Framework | Learns comprehensive node features from multiple biological networks using GATs. | Creates accurate and holistic drug/target representations by combining different data sources. |
| Line Graph Transformation [10] | Graph Theory Technique | Converts drug-target interaction edges in a bipartite graph into nodes in a new graph. | Enables direct modeling of relationships between different drug-target pairs. |
| AutoDock Vina [11] | Molecular Docking Software | Simulates how a drug molecule binds to a 3D protein structure and calculates binding affinity. | Used for in silico validation of predicted DTIs, providing biological plausibility. |
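The line graph transformation listed in the table can be made concrete with a small example. In the sketch below, each known drug-target pair becomes a node, and two nodes are connected when the pairs share a drug or a target, which is the standard line-graph construction; the function name and identifiers are illustrative and not taken from [10].

```python
from itertools import combinations

def line_graph(interactions):
    """Turn drug-target edges into nodes; connect two nodes if they share a drug or a target."""
    nodes = sorted(set(interactions))
    edges = [
        (a, b)
        for a, b in combinations(nodes, 2)
        if a[0] == b[0] or a[1] == b[1]
    ]
    return nodes, edges

interactions = [("D1", "T1"), ("D1", "T2"), ("D2", "T2")]
lg_nodes, lg_edges = line_graph(interactions)
# ("D1","T1") and ("D1","T2") share drug D1; ("D1","T2") and ("D2","T2") share target T2.
```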

Understanding the hierarchical structure of proteins is fundamental to elucidating drug-target interactions (DTIs), particularly when addressing the cold-start problem—predicting interactions for novel drugs or targets with no prior interaction data. Proteins exhibit a natural hierarchy of structural levels: primary (amino acid sequence), secondary (local folding patterns like α-helices and β-sheets), tertiary (the overall three-dimensional structure), and quaternary (assembly of multiple protein chains). Computational models traditionally limited to primary sequences face significant generalization challenges in cold-start scenarios. Emerging research demonstrates that explicitly modeling this structural hierarchy enables more accurate and generalizable predictions by capturing biologically meaningful interaction patterns transferable to new entities [3].

➤ Troubleshooting Guide: FAQs on Protein Structures in DTI Prediction

Q1: Why do my DTI predictions fail for novel targets despite high sequence similarity to known targets?

- Problem Analysis: This common cold-start scenario often occurs when models rely solely on primary sequence similarity. Proteins with similar sequences can fold into different tertiary structures or form distinct quaternary assemblies, leading to different interaction profiles.

- Solution: Incorporate higher-order structural information.

- Recommended Action: Utilize models that explicitly represent tertiary and quaternary structures. For example, the ColdDTI framework represents tertiary structures by their starting and ending positions on the residue sequence and uses hierarchical attention to model interactions across all structural levels [3].

- Validation: Cross-reference predictions with experimental data on protein folding and complex formation from databases such as the Protein Data Bank (PDB).

Q2: What experimental techniques can validate computational predictions for novel protein targets?

- Problem Analysis: Computational predictions for novel targets require experimental validation to confirm biological relevance, especially when higher-order structural data is unavailable.

- Solution: A multi-technique approach is recommended.

- For Binary Interaction Screening: Yeast Two-Hybrid (Y2H) is a genetic method useful for high-throughput screening of protein-protein interactions. However, it may produce false positives and requires proteins to localize to the nucleus [13] [14].

- For Complex Purification and Identification: Tandem Affinity Purification (TAP) combined with Mass Spectrometry (MS) allows for the purification of protein complexes under near-physiological conditions and identification of interacting partners [13] [14].

- For Detailed Atomic-Level Information: Nuclear Magnetic Resonance (NMR) spectroscopy is particularly valuable for studying weak protein-protein interactions and structures in solution, providing atomic-level detail without the need for crystallization [13].

Q3: How can I represent protein multi-level structures for computational DTI models?

- Problem Analysis: Effectively encoding biological structural hierarchies into machine-learning models is a key challenge.

- Solution: Implement a structured representation schema.

- Primary Structure: Represent as a sequence of amino acid residues.

- Secondary Structure: Represent each element (e.g., α-helix, β-sheet) by its start/end positions on the sequence and its type.

- Tertiary Structure: Represent sub-structures or domains by their start/end positions on the sequence.

- Quaternary Structure: Often represented as the global protein embedding, as it encompasses the entire functional unit [3]. Frameworks like ColdDTI use this multi-level representation with pre-trained models to extract meaningful features for downstream prediction tasks [3].
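The start/end/type encoding of secondary structure can be derived from a per-residue annotation string. The sketch below assumes a three-state alphabet (`H` helix, `E` strand, `C` coil) and 0-based inclusive positions; the sequence and annotation are made-up toy values, and `to_segments` is a hypothetical helper.

```python
from itertools import groupby

def to_segments(ss_string):
    """Turn a per-residue annotation such as 'CHHHE' into (start, end, type) tuples."""
    segments, pos = [], 0
    for ss_type, run in groupby(ss_string):
        length = len(list(run))
        segments.append((pos, pos + length - 1, ss_type))  # 0-based, inclusive
        pos += length
    return segments

sequence = "MKTAYIAKQR"       # toy primary structure
ss_annotation = "CHHHHCEEEC"  # toy per-residue secondary structure
segments = to_segments(ss_annotation)
# [(0, 0, 'C'), (1, 4, 'H'), (5, 5, 'C'), (6, 8, 'E'), (9, 9, 'C')]
```

The same positional encoding applies to tertiary sub-structures or domains, with domain boundaries in place of secondary-structure runs.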

➤ Experimental Protocols for Protein Interaction Analysis

Protocol 1: Tandem Affinity Purification (TAP) with Mass Spectrometry for Complex Identification

- Principle: A target protein is fused with a TAP tag (e.g., comprising protein A and a calmodulin-binding peptide). The tag facilitates two sequential purification steps under native conditions, yielding highly pure protein complexes for identification by MS [13] [14].

- Workflow:

- Fusion: Fuse the gene of the target protein with the DNA encoding the TAP tag.

- Expression: Express the fusion protein in the host organism (e.g., yeast) to allow native complex formation.

- First Purification: Pass the cell lysate over an IgG matrix. The protein A part of the tag binds tightly to IgG. Contaminants are washed away.

- Tag Cleavage: Release the complex from the beads using a specific protease (e.g., Tobacco Etch Virus protease).

- Second Purification: Incubate the eluate with calmodulin-coated beads in the presence of calcium. After washing, the target complex is released using a calcium-chelating agent (e.g., EGTA).

- Analysis: Separate complex components by gel electrophoresis, digest with proteases, and identify the fragments via Mass Spectrometry [14].

[Diagram: TAP-MS Experimental Workflow]

Protocol 2: Yeast Two-Hybrid (Y2H) Screening for Binary Interactions

- Principle: A transcription factor is split into a DNA-Binding Domain (BD) and an Activation Domain (AD). The "bait" protein is fused to BD, and the "prey" protein is fused to AD. Interaction between bait and prey reconstitutes the transcription factor, activating reporter gene expression [13] [14].

- Workflow:

- Construct Creation: Clone the bait protein gene into a vector fused with BD. Clone the prey protein (or library) into a vector fused with AD.

- Transformation: Co-transform both plasmids into a suitable yeast reporter strain.

- Selection: Plate transformed yeast on selective media that requires reporter gene activation for growth (e.g., lacking specific nutrients like histidine).

- Validation: Confirm interaction through secondary reporter assays (e.g., β-galactosidase activity). Sequence plasmid DNA from positive colonies to identify interacting prey proteins [14].

➤ Research Reagent Solutions for Protein Interaction Studies

Table: Essential Research Reagents and Resources

| Reagent/Resource | Function/Application | Key Characteristics |
|---|---|---|
| TAP Tag Systems | Affinity purification of protein complexes under native conditions. | Typically a dual-tag (e.g., Protein A & Calmodulin Binding Peptide) for high-specificity, two-step purification [14]. |
| Yeast Two-Hybrid Systems | High-throughput screening for binary protein-protein interactions. | Available as GAL4/LexA-based systems; can be matrix or library-based for screening [14]. |
| Heterogeneous Interaction Networks | Data integration for computational DTI prediction models. | Networks combining drug-drug, target-target, and drug-target data from sources like DrugBank, HPRD, and SIDER [15]. |
| Knowledge Graphs (e.g., Gene Ontology) | Providing biological context for computational models. | Used in frameworks like Hetero-KGraphDTI for knowledge-based regularization, improving model interpretability and biological plausibility [16]. |
| Benchmark Datasets (e.g., DrugBank, KEGG) | Training and evaluation of computational DTI models. | Contain known drug-target pairs, chemical structures, and protein sequences; essential for performance comparison (AUC, AUPR) [16] [17] [15]. |

➤ Advanced Computational Frameworks for Cold-Start DTI

To directly address the cold-start problem, novel computational frameworks move beyond primary sequences. The following diagram illustrates the architecture of one such advanced model, ColdDTI, which leverages multi-level protein structures:

ColdDTI Multi-Level Prediction Framework

ColdDTI Framework: This framework explicitly represents and processes proteins at all four structural levels. It uses a hierarchical attention mechanism to model interactions between drug structures (local and global) and each level of protein structure. This allows the model to learn transferable biological priors, reducing over-reliance on historical interaction data and improving performance in cold-start scenarios [3].

DTIAM Framework: A unified, self-supervised approach that learns representations of drugs and targets from large amounts of unlabeled data. Its pre-training modules for drugs (using molecular graphs) and targets (using protein sequences) extract critical substructure and contextual information, which significantly enhances generalization for downstream DTI, binding affinity (DTA), and mechanism of action (MoA) prediction tasks, especially when labeled data is scarce [17].

Hetero-KGraphDTI: This framework combines graph neural networks with knowledge integration from biomedical ontologies (e.g., Gene Ontology) and databases. It uses a knowledge-based regularization strategy to infuse biological context into the learned representations of drugs and targets, improving the accuracy and biological plausibility of predictions [16].

Innovative Computational Architectures for Cold-Start DTI Prediction

Frequently Asked Questions & Troubleshooting Guides

Protein Structure Representation

Q1: What are the key challenges in representing multi-level protein structures for cold-start DTI prediction?

Traditional methods typically represent proteins only by their primary structure (amino acid sequences), which limits their ability to capture interactions involving higher-level structures [3]. This becomes particularly problematic in cold-start scenarios where you're predicting interactions for novel drugs or proteins with no prior interaction data. The main challenge is developing representations that capture primary, secondary, tertiary, and quaternary structural information while maintaining biological accuracy and computational efficiency.

Troubleshooting Guide: When your model shows poor generalization to novel proteins

- Problem: Performance degradation when testing on newly discovered proteins not in training data.

- Solution: Implement hierarchical attention mechanisms that explicitly model interactions between drug structures and multiple levels of protein organization [3].

- Verification: Check if your framework includes representations for secondary structure elements (α-helices, β-sheets) and their positions in the residue sequence.

Q2: How can we effectively extract and represent secondary and tertiary protein structures?

Secondary structures should be represented by their starting and ending positions on the residue sequence along with their type (e.g., α-helix or β-sheet) [3]. For tertiary structures, represent them by their spatial positioning and domain organization. Quaternary structures represent the complete functional protein assembly and can be captured through global embedding techniques.
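As a minimal sketch of the span-based representation described above, the snippet below stores each secondary structure element as a (start, end, type) record and expands the records into one label per residue. The class name, the one-letter codes ("H", "E"), the 0-based inclusive indexing, and the toy sequence are illustrative assumptions, not part of the ColdDTI implementation.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class SecondaryElement:
    start: int  # 0-based index of the first residue in the element
    end: int    # 0-based index of the last residue (inclusive)
    kind: str   # assumed codes: "H" = alpha-helix, "E" = beta-sheet strand


def per_residue_labels(seq_len: int, elements: List[SecondaryElement]) -> str:
    """Expand (start, end, kind) spans into one label per residue ("-" = unassigned)."""
    labels = ["-"] * seq_len
    for el in elements:
        if not (0 <= el.start <= el.end < seq_len):
            raise ValueError(f"element {el} falls outside the sequence")
        for i in range(el.start, el.end + 1):
            labels[i] = el.kind
    return "".join(labels)


# Toy 16-residue sequence with one helix and one strand (made-up annotation).
sequence = "MKTAYIAKQRQISFVK"
elements = [SecondaryElement(1, 6, "H"), SecondaryElement(10, 13, "E")]
labels = per_residue_labels(len(sequence), elements)
print(labels)  # -HHHHHH---EEEE--
```

Keeping the spans as records preserves the start/end/type information, while the per-residue string is convenient for aligning structure labels with residue embeddings.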

Troubleshooting Guide: Handling incomplete structural data

- Problem: Missing tertiary or quaternary structure data for novel protein targets.

- Solution: Use self-supervised pre-training on large amounts of unlabeled protein sequence data to learn meaningful representations even when complete structural data is unavailable [17].

- Alternative Approach: Implement transfer learning from proteins with known structures to those with only sequence information.

Cold-Start Scenarios

Q3: What specific techniques address the cold-start problem for novel drugs and targets?

Meta-learning approaches train models to be adaptive to cold-start tasks by learning transferable interaction patterns [5]. Self-supervised learning on large unlabeled datasets of drug molecules and protein sequences helps learn meaningful representations without relying solely on labeled interaction data [17]. Hierarchical attention mechanisms specifically mine interactions between multi-level protein structures and drug structures at both local and global granularities [3].

Troubleshooting Guide: Addressing data scarcity in cold-start scenarios

- Problem: Insufficient interaction data for new drugs or targets.

- Solution: Incorporate drug-drug similarity and target-target similarity as additional information to mitigate interaction scarcity [5].

- Implementation: Use graph-based methods that leverage similarity networks while preventing over-smoothing through attention mechanisms or graph transformers.
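One simple way to obtain drug-drug similarity for a drug with no known interactions is to compare structural fingerprints. The sketch below assumes fingerprints are already available as sets of "on" bit indices (for example from a cheminformatics toolkit) and uses Tanimoto similarity; the function names and toy fingerprints are hypothetical and only illustrate the idea.

```python
from typing import Dict, List, Set, Tuple


def tanimoto(a: Set[int], b: Set[int]) -> float:
    """Tanimoto (Jaccard) similarity between two fingerprints given as sets of on-bits."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def nearest_known_drugs(
    new_fp: Set[int], known_fps: Dict[str, Set[int]], k: int = 2
) -> List[Tuple[str, float]]:
    """Return the k known drugs most similar to a new drug, best first."""
    scored = [(name, tanimoto(new_fp, fp)) for name, fp in known_fps.items()]
    scored.sort(key=lambda item: (-item[1], item[0]))  # ties broken by name
    return scored[:k]


# Toy fingerprints (made-up bit indices).
known = {"drugA": {1, 2, 3, 4}, "drugB": {3, 4, 5, 6}, "drugC": {7, 8}}
neighbours = nearest_known_drugs({1, 2, 3, 5}, known, k=2)
print(neighbours)
```

The interaction profiles of the returned neighbours can then serve as extra context for the new drug, for instance as additional edges in a similarity network.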

Q4: How do we validate cold-start DTI predictions experimentally?

Experimental validation typically involves high-throughput screening followed by specific binding assays. For example, in DTIAM framework validation, researchers successfully identified effective inhibitors of TMEM16A from a high-throughput molecular library (10 million compounds) which were then verified by whole-cell patch clamp experiments [17]. Independent validation on specific targets like EGFR and CDK 4/6 provides additional confirmation of prediction reliability.

Computational Methods & Implementation

Q5: What computational architectures best handle multi-level protein structures?

The ColdDTI framework employs hierarchical attention mechanisms to capture interactions across primary, secondary, tertiary, and quaternary structures [3]. Transformer-based architectures with multi-task self-supervised learning have proven effective for learning representations from molecular graphs of drugs and primary sequences of proteins [17]. Graph transformers with meta-learning components (MGDTI) help prevent over-smoothing while capturing long-range dependencies in structural data [5].

Troubleshooting Guide: Managing computational complexity

- Problem: High computational demands when processing multiple structural levels.

- Solution: Implement adaptive fusion mechanisms that dynamically balance contributions from different protein structural levels and drug granularities [3].

- Optimization: Use layer-wise pre-training and transfer learning to reduce training time for specific prediction tasks.

Quantitative Data Comparison

Performance Metrics of DTI Prediction Frameworks

The following table summarizes key performance metrics across recent DTI prediction methods, particularly focusing on cold-start scenarios:

| Framework | Primary Approach | Cold-Start Performance | Structural Levels Utilized | Key Innovation |

|---|---|---|---|---|

| ColdDTI [3] | Hierarchical attention | Consistently outperforms previous methods in cold-start settings | Primary to quaternary structures | Explicit multi-level protein structure modeling |

| DTIAM [17] | Self-supervised pre-training | Substantial improvement in cold-start scenarios | Primary sequences with substructure focus | Unified prediction of interactions, affinities, and mechanisms |

| MGDTI [5] | Meta-learning graph transformer | Effective in cold-start scenarios | Molecular graphs and similarity networks | Meta-learning adaptation to cold-start tasks |

| Traditional Methods [3] | Sequence-based models | Limited generalization in cold-start scenarios | Primarily primary structure only | Baseline for comparison |

Protein Structure Representation Methods

| Structural Level | Representation Approach | Data Requirements | Biological Accuracy |

|---|---|---|---|

| Primary Structure [18] | Amino acid sequence | Sequence data only | Limited to linear information |

| Secondary Structure [3] | Position and type (α-helix, β-sheet) | Sequence with structural annotation | Medium - captures local folding |

| Tertiary Structure [3] | Spatial positioning and domains | 3D structural data or predictions | High - captures spatial organization |

| Quaternary Structure [3] | Global protein embeddings | Complete assembly data | Highest - functional protein form |

Experimental Protocols

Protocol 1: Implementing Multi-Level Protein Representation

Purpose: To extract and represent hierarchical protein structures for cold-start DTI prediction.

Materials:

- Protein sequence databases (UniProt)

- Structural databases (PDB, AlphaFold DB)

- Computational framework (ColdDTI implementation)

Procedure:

- Primary Structure Encoding:

- Input raw amino acid sequences: T = (a₁, a₂, ..., aₘ) where aⱼ is an amino acid residue [3]

- Convert to embedding vectors using pre-trained protein language models

- Secondary Structure Annotation:

- Extract secondary structure elements (α-helices, β-sheets) from structural data or predictions

- Record starting positions, ending positions, and structure types for each element [3]

- Map secondary structure information to corresponding residue positions

- Tertiary Structure Representation:

- Identify protein domains and substructures from 3D structural data

- Annotate starting and ending positions of tertiary substructures [3]

- Calculate spatial relationships between different domains

- Quaternary Structure Modeling:

- For multi-chain proteins, identify subunit composition and interactions

- Generate global embeddings representing the complete functional assembly [3]

- Hierarchical Integration:

- Employ attention mechanisms to align representations across structural levels

- Implement adaptive fusion to balance contributions from different levels

- Validate representation quality through downstream prediction tasks

Troubleshooting: If structural data is unavailable, use predicted structures from AlphaFold or similar tools. For novel proteins with no homologs, rely on primary sequence with self-supervised learning.
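The adaptive fusion step in the procedure above can be illustrated with a small sketch: each structural level contributes an embedding, and softmax-normalised gate scores decide how much each level counts. In a real model the gate scores would be learned; here they are passed in by hand, and all names are hypothetical rather than taken from ColdDTI. Levels without data can simply be left out of the input, which matches the fallback to primary sequence described in the troubleshooting note.

```python
import math
from typing import Dict, List


def softmax(scores: List[float]) -> List[float]:
    """Numerically stable softmax."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def fuse_levels(
    level_embeddings: Dict[str, List[float]], gate_scores: Dict[str, float]
) -> List[float]:
    """Weighted sum of per-level embeddings; weights are softmax(gate_scores)."""
    names = list(level_embeddings)
    weights = softmax([gate_scores[n] for n in names])
    dim = len(level_embeddings[names[0]])
    fused = [0.0] * dim
    for weight, name in zip(weights, names):
        vec = level_embeddings[name]
        if len(vec) != dim:
            raise ValueError("all level embeddings must share one dimension")
        for i, value in enumerate(vec):
            fused[i] += weight * value
    return fused


# Equal gate scores give a plain average of the available levels.
fused = fuse_levels(
    {"primary": [1.0, 0.0], "secondary": [0.0, 1.0]},
    {"primary": 0.0, "secondary": 0.0},
)
print(fused)  # [0.5, 0.5]
```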

Protocol 2: Cold-Start Validation Framework

Purpose: To validate DTI predictions for novel drugs and targets with no prior interaction data.

Materials:

- Benchmark datasets with cold-start splits

- High-throughput screening capabilities

- Patch clamp apparatus for electrophysiological validation [17]

Procedure:

- Data Partitioning:

- Create warm-start, drug cold-start, and target cold-start splits

- Ensure no overlap between training and test compounds/targets in cold-start scenarios

- Model Training:

- Experimental Validation:

- Performance Assessment:

- Compare against state-of-the-art baselines across multiple metrics

- Focus on AUC improvements in cold-start scenarios

- Evaluate model interpretability through attention weight analysis
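The data partitioning step of this protocol can be sketched as follows. The idea is to hold out whole entities rather than individual pairs, so that no test drug (or test target) ever appears in training. The function name, the tuple layout, and the toy pairs are assumptions made for illustration.

```python
import random
from typing import List, Sequence, Tuple

Pair = Tuple[str, str, int]  # (drug_id, target_id, interaction label)


def cold_split(
    pairs: Sequence[Pair],
    entity_index: int,
    test_fraction: float = 0.2,
    seed: int = 0,
) -> Tuple[List[Pair], List[Pair]]:
    """Hold out whole entities: entity_index=0 gives a cold-drug split, 1 a cold-target split."""
    if entity_index not in (0, 1):
        raise ValueError("entity_index must be 0 (drug) or 1 (target)")
    entities = sorted({p[entity_index] for p in pairs})
    rng = random.Random(seed)
    rng.shuffle(entities)
    n_test = max(1, round(len(entities) * test_fraction))
    held_out = set(entities[:n_test])
    train = [p for p in pairs if p[entity_index] not in held_out]
    test = [p for p in pairs if p[entity_index] in held_out]
    return train, test


pairs = [
    ("D1", "T1", 1), ("D1", "T2", 0), ("D2", "T1", 0),
    ("D3", "T2", 1), ("D4", "T3", 1), ("D5", "T1", 0),
]
train, test = cold_split(pairs, entity_index=0)
# No drug may appear on both sides of a cold-drug split.
assert not ({p[0] for p in train} & {p[0] for p in test})
```

A warm-start split, by contrast, would shuffle the pairs themselves, so the same drug or target can appear in both training and test data.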

Workflow Diagrams

[Figure: ColdDTI Framework Architecture]

[Figure: Multi-Level Protein Representation Pipeline]

Research Reagent Solutions

| Resource Type | Specific Examples | Primary Function | Application in Cold-Start DTI |

|---|---|---|---|

| Protein Databases [3] | UniProt, PDB, AlphaFold DB | Provide sequence and structural information | Source data for multi-level protein representation |

| Drug Compound Resources [3] | PubChem, ChEMBL | Offer molecular structures and properties | SMILES sequences and molecular graphs for drug representation |

| Interaction Databases [17] | DrugBank, BindingDB | Contain known drug-target interactions | Training data and benchmark evaluation |

| Computational Frameworks [3] [17] | ColdDTI, DTIAM | Implement hierarchical structure modeling | Primary tools for cold-start prediction |

| Validation Assays [17] | High-throughput screening, Patch clamp | Experimental verification of predictions | Confirm computational predictions for novel interactions |

This technical support center provides troubleshooting guides and FAQs for researchers employing meta-learning frameworks to address the cold-start problem in drug-target interaction (DTI) prediction.

Frequently Asked Questions (FAQs)

1. What is meta-learning and why is it relevant to the cold-start problem in DTI prediction? Meta-learning, or "learning to learn," is a machine learning technique that enables models to quickly adapt to new tasks with limited data by leveraging prior experience from a variety of training tasks [19]. In DTI prediction, the cold-start problem refers to the challenge of predicting interactions for new drugs or new targets that have little to no known interaction data [5] [20]. Traditional models rely heavily on sufficient existing interaction data and thus fail in these scenarios. Meta-learning directly addresses this by training models on a distribution of tasks (e.g., predicting interactions for different subsets of drugs and targets), which allows the model to develop a generalized initialization that can be rapidly fine-tuned with only a few examples of a new cold-start task [5] [21].

2. What are the main categories of meta-learning algorithms I should consider? Meta-learning algorithms are broadly categorized into three main approaches [19] [22]:

- Optimization-based (e.g., MAML, Reptile): These methods learn a set of optimal initial model parameters that can be quickly adapted to a new task with a few gradient steps [23].

- Metric-based (e.g., Prototypical Networks): These methods learn a metric space in which classification is performed by computing distances to prototype representations of each class, making them highly effective for few-shot classification [23] [24].

- Model-based: These models are designed with internal or architectural mechanisms to facilitate fast adaptation, often using recurrent networks or memory modules.
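To make the metric-based category concrete, here is a minimal sketch of the prototype idea behind Prototypical Networks: each class prototype is the mean of its support embeddings, and a query is assigned to the nearest prototype. The embeddings are toy 2-D vectors written by hand; in practice they would come from a learned encoder, and the class labels used here are hypothetical.

```python
import math
from typing import Dict, List

Vector = List[float]


def mean_vector(vectors: List[Vector]) -> Vector:
    """Element-wise mean of equally sized vectors."""
    n = len(vectors)
    return [sum(column) / n for column in zip(*vectors)]


def build_prototypes(support: Dict[str, List[Vector]]) -> Dict[str, Vector]:
    """One prototype per class: the mean of that class's support embeddings."""
    return {label: mean_vector(vectors) for label, vectors in support.items()}


def classify(query: Vector, prototypes: Dict[str, Vector]) -> str:
    """Assign the query to the class whose prototype is nearest (Euclidean distance)."""
    return min(prototypes, key=lambda label: math.dist(query, prototypes[label]))


support = {
    "binder": [[1.0, 1.0], [1.2, 0.8]],
    "non_binder": [[-1.0, -1.0], [-0.8, -1.2]],
}
prototypes = build_prototypes(support)
print(classify([0.9, 1.1], prototypes))  # binder
```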

3. My meta-learning model for cold-start DTI is overfitting to the major tasks and ignoring minor user groups or rare targets. How can I address this? Task-overfitting, where a model performs well on common tasks (major users/drugs) but poorly on rare ones, is a known challenge. To mitigate this:

- Implement Personalized Adaptive Learning Rates: Instead of a universal learning rate for all tasks, design a personalized adaptive learning rate for each user or task group. This can be achieved by using a similarity-based method to find similar users/tasks as a reference for setting the learning rate [21].

- Incorporate a Memory Agnostic Regularizer: This technique helps reduce overfitting while maintaining performance and can control space complexity, making it efficient for large-scale datasets [21].

4. How can I effectively design tasks for meta-learning in a DTI context? Task design is critical for successful meta-learning. For DTI prediction, tasks should share an underlying structure but differ in specific parameters [23]. A common approach is N-way K-shot classification:

- N-way: The number of classes (e.g., types of drugs or targets) in a task.

- K-shot: The number of examples per class available for adaptation.

For example, you can structure your dataset so that each task involves predicting interactions for a unique, disjoint set of N drugs or targets. The model then learns from a large number of such tasks, enabling it to generalize to novel drugs or targets (the cold-start scenario) [5] [24]. The Neurenix API provides utilities for generating such classification tasks [23].
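The N-way K-shot construction can be sketched in plain Python. This is an illustrative sampler, not the Neurenix utility mentioned above; the function name, the q_queries parameter, and the toy data are assumptions.

```python
import random
from typing import Dict, Hashable, List, Tuple

Example = Tuple[Hashable, str]  # (example, class label)


def sample_task(
    examples_by_class: Dict[str, List[Hashable]],
    n_way: int,
    k_shot: int,
    q_queries: int,
    rng: random.Random,
) -> Tuple[List[Example], List[Example]]:
    """Sample one N-way K-shot task: K support and q_queries query examples per class."""
    eligible = sorted(
        c for c, ex in examples_by_class.items() if len(ex) >= k_shot + q_queries
    )
    if len(eligible) < n_way:
        raise ValueError("not enough classes with sufficient examples")
    support: List[Example] = []
    query: List[Example] = []
    for label in rng.sample(eligible, n_way):
        picked = rng.sample(examples_by_class[label], k_shot + q_queries)
        support += [(x, label) for x in picked[:k_shot]]
        query += [(x, label) for x in picked[k_shot:]]
    return support, query


# Toy data: each class holds ten example ids.
data = {f"class{i}": [f"class{i}_ex{j}" for j in range(10)] for i in range(6)}
support, query = sample_task(data, n_way=3, k_shot=2, q_queries=4, rng=random.Random(0))
print(len(support), len(query))  # 6 12
```

Support and query examples for a class are drawn without replacement from the same pool, so they never overlap within a task.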

5. My graph neural network for DTI suffers from over-smoothing when capturing long-range dependencies. What are some solutions? Over-smoothing is a common issue in deep GNNs where node representations become indistinguishable. The MGDTI (Meta-learning-based Graph Transformer) framework proposes a solution [5] [20]:

- Use a Graph Transformer: Replace or augment standard GNN layers with a graph transformer module. Transformers are adept at capturing long-range dependencies in data without the same over-smoothing constraints of message-passing GNNs.

- Node Neighbor Sampling: Generate contextual sequences for each node through sampling before processing them with the transformer, which helps in capturing local structural information effectively [20].
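As a rough illustration of node neighbor sampling, the sketch below builds a fixed-length contextual sequence for a node by sampling from its direct neighbors. Uniform sampling of 1-hop neighbors is an assumption made here for simplicity; the sampling scheme actually used by MGDTI may differ, and all names are hypothetical.

```python
import random
from typing import Dict, List


def sample_context_sequence(
    adjacency: Dict[str, List[str]], node: str, max_len: int, rng: random.Random
) -> List[str]:
    """Return [node, sampled neighbors...] with at most max_len entries."""
    if max_len < 1:
        raise ValueError("max_len must be at least 1")
    neighbors = sorted(adjacency.get(node, []))
    k = min(max_len - 1, len(neighbors))
    return [node] + rng.sample(neighbors, k)


graph = {
    "drugA": ["targetX", "targetY", "drugB"],
    "drugB": ["drugA"],
}
sequence = sample_context_sequence(graph, "drugA", max_len=3, rng=random.Random(0))
print(sequence)
```

Each sequence can then be fed to a transformer as a short token list, with the node itself in the first position.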

Troubleshooting Guides

Issue 1: Poor Performance on Cold-Start Tasks After Meta-Training

Problem: Your meta-learned model fails to adapt effectively to new drugs or targets (cold-start tasks), showing low predictive accuracy.

Solution: This often indicates that the model has not learned sufficiently generalizable prior knowledge. Follow this diagnostic workflow to identify and address the root cause.

Diagnostic Steps & Fixes:

- Check Task Diversity in Meta-Training: The model may have been trained on tasks that are not representative of the true variety of cold-start scenarios. Fix: Curate your meta-training tasks to cover a broad and realistic distribution of drugs and targets, ensuring the model encounters a wide range of structural and functional variations during training [23].

- Check Model Architecture for Bottlenecks: A model with limited capacity cannot capture the complex patterns needed for rapid adaptation. Fix: Consider using a more expressive architecture. For graph-based DTI data, the MGDTI framework employs a Graph Transformer to prevent over-smoothing and better capture long-range dependencies in the biological network [5] [20].

- Check Inner-Loop Learning Rate: A poorly chosen learning rate for the inner-loop adaptation can hinder fast learning. Fix: Systematically tune the inner-loop learning rate (inner_lr). For scenarios with highly diverse tasks, consider implementing a personalized adaptive learning rate that varies per task or user group to prevent major groups from dominating the learning process [23] [21].

- Check Use of Auxiliary Similarity Information: Relying solely on interaction data is insufficient for cold-starts. Fix: Integrate auxiliary information to mitigate data scarcity. The MGDTI method, for instance, uses drug-drug similarity and target-target similarity as additional inputs to provide context for new entities with no known interactions [5] [20].

Issue 2: Unstable Meta-Training and Slow Convergence

Problem: The meta-training process is unstable, with a high-variance loss that converges slowly or diverges.

Solution: This is frequently related to the meta-optimization process and the batch construction.

Diagnostic Steps & Fixes:

- Reduce the Meta-Batch Size: Using too many tasks per meta-batch can lead to unstable updates. Fix: Start with a smaller meta-batch size (e.g., 4-8 tasks per batch) as recommended in the Neurenix best practices [23].

- Use First-Order Approximation: Computing second-order derivatives in MAML is computationally expensive and can sometimes introduce noise. Fix: If using MAML, set the first_order flag to True. This approximates the meta-gradient using only first-order derivatives, which often stabilizes training with minimal impact on performance [23].

- Adjust the Number of Inner-Loop Steps: Too many inner-loop steps can cause overfitting to the support set of each task, while too few may prevent sufficient adaptation. Fix: Experiment with the number of inner-loop steps (inner_steps), typically starting between 5 and 8 [23].

- Gradient Clipping: Implement gradient clipping during the meta-update phase to prevent exploding gradients.
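To show where the knobs discussed above sit in a training loop, here is a toy first-order meta-learning sketch. It uses Reptile (first-order by construction) instead of MAML, a one-parameter linear model, and synthetic regression tasks. The parameter names inner_lr, inner_steps, and meta_batch_size mirror the text, but this is not the Neurenix API, and for simplicity the clipping is applied to the inner-loop gradient.

```python
import random
from typing import List, Tuple

Data = List[Tuple[float, float]]  # (x, y) pairs


def mse_grad(w: float, data: Data) -> float:
    """Gradient of mean((w*x - y)^2) with respect to w."""
    return sum(2.0 * (w * x - y) * x for x, y in data) / len(data)


def clip(g: float, max_abs: float) -> float:
    """Clip a scalar gradient to [-max_abs, max_abs]."""
    return max(-max_abs, min(max_abs, g))


def adapt(w: float, support: Data, inner_lr: float, inner_steps: int,
          max_grad: float = 10.0) -> float:
    """Inner loop: a few clipped gradient steps on one task's support set."""
    for _ in range(inner_steps):
        w -= inner_lr * clip(mse_grad(w, support), max_grad)
    return w


def make_task(rng: random.Random, n_points: int = 5) -> Data:
    """Synthetic task y = slope * x with a task-specific slope in [1, 3]."""
    slope = rng.uniform(1.0, 3.0)
    xs = [rng.uniform(-1.0, 1.0) for _ in range(n_points)]
    return [(x, slope * x) for x in xs]


def reptile(meta_steps: int = 200, meta_batch_size: int = 4, inner_lr: float = 0.1,
            inner_steps: int = 5, meta_lr: float = 0.5, seed: int = 0) -> float:
    """Outer loop: move the initialisation toward the task-adapted weights."""
    rng = random.Random(seed)
    w = 0.0
    for _ in range(meta_steps):
        deltas = [adapt(w, make_task(rng), inner_lr, inner_steps) - w
                  for _ in range(meta_batch_size)]
        w += meta_lr * sum(deltas) / len(deltas)
    return w


w_meta = reptile()
print(w_meta)  # an initialisation inside the range of task slopes
```

Varying inner_lr, inner_steps, or meta_batch_size in this toy setting is a cheap way to build intuition before tuning a full DTI model.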

Experimental Protocols & Performance Data

Protocol: Implementing a Meta-Learning Framework for Cold-Start DTI

This protocol outlines the key steps for implementing and evaluating a meta-learning framework like MGDTI for cold-start DTI prediction [5] [20].

1. Data Preparation and Task Generation:

- Construct a Drug-Target Information Network: Build a heterogeneous graph G = (V, E), where nodes V represent entities such as drugs, targets, and diseases, and edges E represent interactions or similarities between them [20].

- Define Meta-Learning Tasks: For cold-start scenarios, create two types of tasks:

- Cold-Drug Task: Predict interactions between new drugs (absent from training) and known targets.

- Cold-Target Task: Predict interactions between new targets (absent from training) and known drugs.

- Format as N-way K-shot: For each task, sample N novel classes (drugs/targets), then split each class's examples into a support set (K examples per class) and a query set.

2. Model Setup (e.g., MGDTI):

- Graph Enhanced Module: Integrate drug-drug and target-target similarity matrices as additional information to enrich node features [20].

- Local Graph Structural Encoder: Use a node neighbor sampling method to generate contextual sequences for each node, preparing them for the next stage.

- Graph Transformer Module: Feed the contextual sequences into a graph transformer to capture long-range dependencies and generate final node embeddings without over-smoothing [5].

- Meta-Learning Wrapper: Employ an optimization-based algorithm like MAML or Reptile to train the entire model.

3. Meta-Training:

- The model is trained on a large number of tasks sampled from the training classes.

- For each task, the model uses the support set for inner-loop adaptation and the query set for the meta-update.

4. Meta-Testing (Evaluation on Cold-Start Scenarios):

- Evaluate the meta-trained model on a held-out set of test tasks involving truly novel drugs or targets.

- The model must not be trained on the test classes beforehand; only the few examples in each test task's support set may be used for adaptation.

Performance Benchmarking

The following table summarizes the performance of the MGDTI model compared to other baseline methods on benchmark datasets under cold-start scenarios, measured by Area Under the Precision-Recall Curve (AUPR) [5] [20].

Table 1: Performance Comparison (AUPR) of DTI Prediction Methods in Cold-Start Scenarios

| Method | Type | Cold-Drug AUPR | Cold-Target AUPR | Notes |

|---|---|---|---|---|

| MGDTI (Proposed) | Meta-learning + Graph Transformer | 0.961 | High Performance | Excels in cold-target scenarios [5] [25] |

| KGE_NFM | Knowledge Graph + Recommendation | 0.922 (Warm) | Robust Performance | A unified framework, robust in cold-start for proteins [25] |

| DTiGEMS+ | Heterogeneous Data Driven | 0.957 (Warm) | Not Specified | High performance in warm start [25] |

| TriModel | Knowledge Graph Embedding | 0.946 (Warm) | Not Specified | Good performance in warm start [25] |

| NFM (standalone) | Feature-based | 0.922 (Warm) | Reduced in Cold-start | Performance drops over 10% in imbalanced/cold-start [25] |

| MPNN_CNN | End-to-end Deep Learning | 0.788 (Warm) | Not Specified | Struggles with limited training data [25] |

Note: "Warm" indicates performance reported in warm-start settings, provided for context. Direct cold-start comparisons between all methods are not always available in the cited sources, but MGDTI is explicitly designed and evaluated for this challenge [5].
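For readers who want to reproduce the metric, AUPR is commonly estimated as average precision: the mean of the precision values at each rank where a true interaction is retrieved. The sketch below is a plain-Python version of that estimator with made-up labels and scores; tied scores are ordered arbitrarily, which established libraries handle more carefully.

```python
from typing import List


def average_precision(labels: List[int], scores: List[float]) -> float:
    """Average precision: mean precision at the rank of each positive example."""
    n_pos = sum(labels)
    if n_pos == 0:
        raise ValueError("at least one positive label is required")
    order = sorted(range(len(scores)), key=lambda i: -scores[i])
    hits = 0
    total = 0.0
    for rank, i in enumerate(order, start=1):
        if labels[i] == 1:
            hits += 1
            total += hits / rank
    return total / n_pos


# Positives are ranked 1st and 3rd: (1/1 + 2/3) / 2 = 5/6.
ap = average_precision([1, 0, 1, 0], [0.9, 0.8, 0.7, 0.1])
print(round(ap, 4))  # 0.8333
```

Because AUPR ignores true negatives, it is more informative than AUC when known interactions are rare, which is the usual situation in DTI data.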

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools and Data for Meta-Learning in DTI Prediction

| Item Name | Function / Application | Specifications / Examples |

|---|---|---|

| Meta-Learning API (e.g., Neurenix) | Provides high-level implementations of algorithms (MAML, Reptile, Prototypical Networks) for rapid prototyping. | Supports CPU, CUDA, ROCm; offers MAML(), Reptile(), and PrototypicalNetworks() classes [23]. |

| Knowledge Graph Embedding (KGE) Models | Learns low-dimensional vector representations of entities (drugs, targets) from a knowledge graph for feature extraction. | Models like DistMult, TriModel; used in frameworks like KGE_NFM [25]. |

| Graph Neural Network (GNN) Libraries | Builds and trains models on graph-structured data, fundamental for network-based DTI prediction. | PyTorch Geometric, DGL; MGDTI uses a custom Graph Transformer [5] [20]. |

| Benchmark DTI Datasets | Standardized datasets for training and fair evaluation of DTI prediction models. | Yamanishi_08's dataset, BioKG [25] [20]. |

| Similarity Matrices | Provides auxiliary information (drug-drug, target-target) to mitigate data scarcity in cold-start scenarios. | Can be derived from chemical structure fingerprints or protein sequence similarities [5] [20]. |

| Task Generator Utilities | Automates the creation of N-way K-shot tasks from a dataset for meta-learning training and evaluation. | Functions like generate_classification_tasks() in the Neurenix API [23]. |

Key Architectural Diagrams

Meta-Learning for Cold-Start DTI Workflow

This diagram illustrates the end-to-end workflow for applying meta-learning to cold-start Drug-Target Interaction prediction, from task construction to final prediction.

MGDTI Model Architecture

The MGDTI framework integrates graph learning with meta-learning to tackle cold-start DTI prediction.

Frequently Asked Questions (FAQs)

Q1: What is the "cold-start" problem in Drug-Target Interaction (DTI) prediction, and why is it a significant challenge? The cold-start problem refers to the major challenge of predicting interactions for novel drugs or target proteins that have little to no known interaction data. This is a critical bottleneck because most computational models rely on observed interaction patterns from existing data. In cold-start scenarios, this historical data is absent, making it difficult for models to generalize and provide reliable predictions for new entities [3] [5].

Q2: How can data from Protein-Protein Interactions (PPIs) and Chemical-Chemical Interactions (CCIs) help with cold-start DTI prediction? PPI and CCI data provide a rich source of prior knowledge about how proteins and chemicals interact with one another. This information can be transferred to DTI tasks in several ways:

- Providing Structural Priors: PPI networks can reveal a protein's functional context and multi-level structural organization, which influences how it interacts with drugs [3].

- Enabling Homology-Based Inference: If a protein with unknown drug interactions is structurally similar to a protein in a well-characterized PPI network, some interaction patterns can be inferred, though this requires high sequence similarity [26].

- Offering Transferable Patterns: The fundamental principles of molecular recognition learned from analyzing PPI and CCI data can be formalized and transferred to model the interactions between drugs and their targets [27].

Q3: What are the key limitations of using homology transfer from PPI data? While promising, homology-based transfer has important limitations that require caution:

- It requires high sequence similarity: Accurate transfer of interactions typically only occurs at very high levels of sequence similarity (e.g., BLAST E-values < 10⁻¹⁰) [26].

- Conservation is not guaranteed: Surprisingly, interactions are often more conserved between paralogs (within the same species) than between orthologs (across different species), challenging the assumption that model organism data can be directly applied to humans [26].

- Data incompleteness and noise: PPI datasets from high-throughput experiments are often incomplete and can contain a high rate of false positives, which can mislead models if not carefully accounted for [26].

Q4: My DTI model performs well overall but fails on specific drug pairs. What could be the issue? This is a classic symptom of the "activity cliff" (AC) problem. Your model may be overly reliant on the principle that similar drugs have similar effects. An activity cliff occurs when two structurally very similar drugs have dramatically different biological activities or binding affinities towards the same target. Traditional models struggle with these highly discontinuous structure-activity relationships [28]. A potential solution is to use transfer learning from a dedicated AC prediction task to make your DTI model "AC-aware" and more robust to these cases [28].

Troubleshooting Guides

Problem: Poor Generalization to Novel Drugs or Targets

This is the core cold-start problem. Your model fails when presented with a new drug or target protein not seen during training.

| Potential Cause | Recommended Solution | Related Concept |

|---|---|---|

| Over-reliance on drug-drug or protein-protein similarity graphs. | Shift to structure-based methods that use intrinsic features (e.g., SMILES for drugs, amino acid sequences for proteins) instead of relational data [3] [29]. | Graph-based vs. Structure-based Models [3] |

| Using only a protein's primary structure (sequence). | Explicitly model the multi-level structure of proteins (primary, secondary, tertiary) in your framework to capture more biologically transferable priors [3]. | Protein Multi-level Structure [3] |

| Simple model architecture with limited transfer learning. | Implement a hint-based knowledge adaptation strategy. Use a large, pre-trained protein language model (teacher) to provide "general knowledge" to a smaller, efficient student model tailored for DTI [29]. | Hint-based Learning [29] |

| Data scarcity for specific protein families. | Apply meta-learning. Train your model on a wide variety of DTI tasks so it can quickly adapt to new, unseen drugs or targets with limited data [5]. | Meta-learning [5] |

Experimental Protocol: Implementing Hint-Based Knowledge Adaptation for Proteins

This methodology transfers general protein knowledge to a task-specific DTI model.

- Teacher Model Setup: Select a pre-trained protein language model (e.g., ProtBERT [29]).

- Feature Extraction (Caching): Pass all protein sequences in your dataset through the teacher model and extract the intermediate feature representations (hidden states) from one or more layers. Cache these features to avoid recomputation.

- Student Model Design: Construct a smaller, efficient neural network (e.g., a shallow Transformer or CNN) as your target protein encoder.

- Training with Hint Loss: Train the student model with a composite loss function:

- Prediction Loss: Standard loss (e.g., Binary Cross-Entropy) for the DTI prediction task.

- Hint Loss: A Mean Squared Error (MSE) loss that penalizes the difference between the student model's intermediate features and the cached features from the teacher model.

- Joint Learning: The student model learns to simultaneously mimic the teacher's general understanding of proteins and perform the specific DTI task accurately and efficiently [29].
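The composite loss in this protocol can be written as L = L_pred + λ · L_hint, where L_pred is binary cross-entropy and L_hint is the MSE between student and cached teacher features. The sketch below computes it for a single example in plain Python. The weight λ (hint_weight) is an assumed hyperparameter, and the features are assumed to share one dimension; if the student and teacher dimensions differ, a learned projection is typically placed in front of the hint loss.

```python
import math
from typing import List


def binary_cross_entropy(pred_prob: float, label: int, eps: float = 1e-7) -> float:
    """BCE for one example; the probability is clamped to avoid log(0)."""
    p = min(max(pred_prob, eps), 1.0 - eps)
    return -(label * math.log(p) + (1 - label) * math.log(1.0 - p))


def mse(a: List[float], b: List[float]) -> float:
    """Mean squared error between two equally sized feature vectors."""
    if len(a) != len(b):
        raise ValueError("student and teacher features must have the same size")
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


def composite_loss(pred_prob: float, label: int, student_feat: List[float],
                   teacher_feat: List[float], hint_weight: float = 0.5) -> float:
    """Prediction loss plus weighted hint loss (hint_weight is an assumed knob)."""
    return binary_cross_entropy(pred_prob, label) + hint_weight * mse(student_feat, teacher_feat)


loss = composite_loss(0.5, 1, student_feat=[0.0, 0.0], teacher_feat=[1.0, 1.0])
print(round(loss, 4))  # 1.1931 = ln(2) + 0.5 * 1.0
```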

Problem: Model Struggles with "Activity Cliffs"

Your model inaccurately predicts interactions for pairs of structurally similar drugs that have large differences in potency.

| Potential Cause | Recommended Solution | Related Concept |

|---|---|---|

| Model is biased towards smooth structure-activity relationships. | Integrate transfer learning from an explicit Activity Cliff (AC) prediction task. Pre-train part of your model to identify ACs, then fine-tune it on your primary DTI task [28]. | Activity Cliffs (ACs) [28] |

| Imbalanced data with few known AC examples. | Use specialized dataset splitting (compound-based split) to ensure AC pairs are properly represented in the test set and to avoid data leakage [28]. | Compound-based Splitting [28] |

Experimental Protocol: Transfer Learning from Activity Cliff Prediction

This protocol enhances DTI prediction by first learning the challenging patterns of activity cliffs.

- AC Dataset Construction:

- From your DTI data, for each target, pair all drugs that interact with it.

- Calculate the structural similarity between paired drugs (e.g., using ECFP fingerprints or SMILES similarity).

- Classify a pair as an AC if their similarity is >90% and the difference in their binding affinities is greater than a threshold (e.g., 10-fold) [28].

- AC Model Pre-training: Train a model on the AC prediction task (binary classification: AC vs. non-AC pair).

- Knowledge Transfer: Use the pre-trained weights from the AC model's encoder to initialize the drug encoder (or relevant components) in your main DTI model.

- DTI Model Fine-tuning: Finally, fine-tune the entire DTI model on the primary drug-target interaction dataset. This equips the model with better capabilities to handle tricky structural nuances [28].
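The AC dataset construction step of this protocol can be sketched in plain Python. The sketch assumes fingerprints are available as sets of on-bit indices (for example, exported from a cheminformatics toolkit) and that affinities are positive values in a common unit such as nM. The function names and the toy drugs are hypothetical.

```python
from itertools import combinations

def tanimoto(fp_a, fp_b):
    """Tanimoto similarity between two fingerprints given as sets of on-bits."""
    union = len(fp_a | fp_b)
    return len(fp_a & fp_b) / union if union else 0.0

def label_ac_pairs(drugs, sim_threshold=0.9, fold_threshold=10.0):
    """Label all drug pairs for ONE target as activity cliff (True) or not.

    drugs maps a drug name to (fingerprint_set, affinity), affinity > 0.
    """
    labelled = []
    for a, b in combinations(sorted(drugs), 2):
        fp_a, aff_a = drugs[a]
        fp_b, aff_b = drugs[b]
        similar = tanimoto(fp_a, fp_b) > sim_threshold
        fold = max(aff_a, aff_b) / min(aff_a, aff_b)
        labelled.append((a, b, similar and fold > fold_threshold))
    return labelled

# Toy example: drug_a and drug_b share 19 of 20 bits but differ 160-fold
drugs = {
    "drug_a": (set(range(20)), 5.0),
    "drug_b": (set(range(19)), 800.0),
    "drug_c": (set(range(10, 30)), 6.0),
}
pairs = label_ac_pairs(drugs)
for name_a, name_b, is_ac in pairs:
    print(name_a, name_b, is_ac)
```

The resulting labelled pairs form the binary classification dataset used for AC pre-training.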

Problem: Inefficient Handling of Long Protein Sequences

Training your model on large datasets is slow, and memory requirements for processing full-length protein sequences are prohibitively high.

| Potential Cause | Recommended Solution | Related Concept |

|---|---|---|

| Using a standard Transformer encoder for proteins, which has quadratic complexity. | Adopt efficient Transformer architectures (e.g., Performer, Linformer) specifically designed for long sequences [29]. | Quadratic Complexity [29] |

| Large model size of standard protein encoders. | Employ knowledge distillation or the hint-based adaptation method to train a compact, efficient student model [29]. | Knowledge Distillation [29] |
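To make the quadratic-complexity cause concrete, the back-of-the-envelope estimate below compares the memory needed for full attention score matrices with a Linformer-style variant that projects keys to a fixed length. The head count, projection length, and 4-byte values are illustrative assumptions, and the estimate covers only the score matrices of one layer for one sequence.

```python
def attention_matrix_bytes(seq_len, n_heads=8, bytes_per_value=4):
    """Memory for full (seq_len x seq_len) attention scores, one layer."""
    return n_heads * seq_len * seq_len * bytes_per_value

def projected_attention_bytes(seq_len, proj_len=256, n_heads=8, bytes_per_value=4):
    """Same estimate when keys are projected to a fixed length proj_len."""
    return n_heads * seq_len * proj_len * bytes_per_value

for length in (500, 1000, 2000):
    full = attention_matrix_bytes(length) / 2**20
    proj = projected_attention_bytes(length) / 2**20
    print(f"L={length}: full {full:.1f} MiB, projected {proj:.1f} MiB")
```

Doubling the protein length quadruples the full-attention cost but only doubles the projected cost, which is why long sequences benefit most from efficient architectures.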

Research Reagent Solutions

The following table lists key computational tools and data resources essential for experiments in knowledge transfer for DTI prediction.

| Resource Name | Type | Function in Research |

|---|---|---|

| Cytoscape [30] | Software Platform | Visualize and analyze biological networks, including PPI and CCI data. Useful for exploring the functional context of a target protein. |

| STRING App [30] | Cytoscape Plugin | Access and import the STRING database's PPI data directly into Cytoscape for analysis and visualization. |

| ProtBERT / ProtTrans [29] | Pre-trained Model | Provides general-purpose, powerful embeddings for protein sequences. Often used as a "teacher" model for knowledge transfer. |

| ChemBERTa [29] | Pre-trained Model | Provides embeddings for drug molecules represented as SMILES strings, capturing chemical semantics. |

| BindingDB [29] [28] | Dataset | A public database of measured binding affinities between drugs and target proteins, commonly used for training and evaluating DTI models. |

| BIOSNAP [29] | Dataset | A benchmark dataset collection for network-based problems, often used in DTI prediction research. |

Pathway and Workflow Visualizations

Experimental Workflow for ColdDTI

This diagram illustrates the workflow of the ColdDTI framework, which explicitly models protein multi-level structure to address cold-start prediction [3].

Knowledge Transfer via Hint-Based Learning

This diagram shows how knowledge is transferred from a large teacher model to an efficient student model for protein encoding [29].

Logic of Sufficient and Necessary Edges

This diagram visualizes the causal logic relationships in biological networks, a concept that can be transferred to understand drug-target interactions [27].

## FAQs and Troubleshooting Guides

This technical support center addresses common challenges researchers face when implementing advanced encoders for Drug-Target Interaction (DTI) prediction, with a special focus on overcoming the cold start problem for novel drug molecules.

### Encoder Selection and Performance

Q1: For a cold start scenario with a novel drug structure, should I prioritize a Graph Neural Network or a Transformer-based encoder?

A: The choice depends on the nature of the structural information you need to capture. Our benchmark studies, summarized in Table 1, indicate that explicit and implicit structure learning methods have complementary strengths.

Table 1: Benchmark Comparison of GNN vs. Transformer Encoders for DTI Prediction

| Encoder Type | Representative Models | Key Strength | Key Weakness | Recommended Scenario for Cold Start |

|---|---|---|---|---|

| Explicit (GNN) | GCN, GIN, GAT [31] | Excels at learning local graph topology and functional group relationships [31]. | Limited expressive power; can suffer from over-smoothing and over-squashing with deep layers [32]. | Novel drugs where local atom-bond arrangements are critical for binding. |

| Implicit (Transformer) | MolTrans, TransformerCPI [31] | Superior at capturing long-range, contextual dependencies within the molecular structure [31]. | May lose fine-grained local structural details without proper inductive biases [32]. | Novel, complex drugs where global molecular context determines activity. |

Troubleshooting Guide:

- Problem: Model fails to learn meaningful representations for novel molecular graphs.

- Solution: Consider a hybrid or ensemble approach. Architectures like EHDGT combine GNNs and Transformers in parallel, using a gate mechanism to dynamically balance local and global features, which can be highly effective for generalizing to unseen data [32].

Q2: How can I manage the high computational complexity of Graph Transformers when working with large molecular graphs?

A: The quadratic complexity of standard self-attention is a known bottleneck. Here are two proven strategies:

- Adopt Linear Attention Variants: Replace standard softmax attention with a linear attention mechanism, whose cost grows linearly rather than quadratically with the number of nodes. The EHDGT model uses this to significantly reduce complexity [32].

- Leverage Simplified Architectures: SGFormer shows that a single-layer, single-head attention model with linear complexity can achieve highly competitive performance. It scales to the web-scale graph ogbn-papers100M (111 million nodes) while providing up to 141x inference speedup over deeper Transformers [33].
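The general idea behind linear attention can be sketched as follows. This uses a common kernel feature-map formulation (`elu(x) + 1`) as an assumption. It is not the specific attention used in EHDGT [32] or SGFormer [33]. The key point is that the key-value summary is accumulated once and reused for every query, so the cost grows linearly with the number of nodes.

```python
import math

def phi(x):
    """Positive feature map elu(x) + 1, applied element-wise."""
    return [v + 1.0 if v > 0 else math.exp(v) for v in x]

def linear_attention(Q, K, V):
    """O(N) attention: build sum_j phi(k_j) v_j^T once, reuse per query."""
    d, dv = len(K[0]), len(V[0])
    kv = [[0.0] * dv for _ in range(d)]    # d x dv key-value summary
    k_sum = [0.0] * d                      # summary for the normaliser
    for k, v in zip(K, V):
        fk = phi(k)
        for a in range(d):
            k_sum[a] += fk[a]
            for b in range(dv):
                kv[a][b] += fk[a] * v[b]
    out = []
    for q in Q:
        fq = phi(q)
        norm = sum(fq[a] * k_sum[a] for a in range(d))
        out.append([sum(fq[a] * kv[a][b] for a in range(d)) / norm
                    for b in range(dv)])
    return out

def quadratic_reference(Q, K, V):
    """Same result computed the O(N^2) way, scoring every query-key pair."""
    out = []
    for q in Q:
        fq = phi(q)
        w = [sum(a * b for a, b in zip(fq, phi(k))) for k in K]
        total = sum(w)
        out.append([sum(wi * v[b] for wi, v in zip(w, V)) / total
                    for b in range(len(V[0]))])
    return out

Q = [[0.5, -1.0], [1.5, 0.2]]
K = [[0.1, 0.3], [-0.7, 1.0], [2.0, -0.5]]
V = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
fast = linear_attention(Q, K, V)
slow = quadratic_reference(Q, K, V)
print(fast)
```

Both functions produce the same outputs; only the order of the multiplications changes, which is what removes the N x N score matrix.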

Troubleshooting Guide:

- Problem: Training runs out of memory on large graph datasets.

- Solution: Shift from a deep, multi-head Transformer design to a shallow, simplified architecture like SGFormer, which forgoes complex components like positional encodings and deep layers while maintaining performance [33].

### Feature Engineering and Data Representation

Q3: What is the most effective way to incorporate positional and structural information into a Graph Transformer to boost its performance on molecular data?

A: Standard Transformers lack an innate sense of graph structure. Injecting this via positional and structural encoding is critical. The EHDGT model employs a robust strategy of superimposing node-level random walk positional encoding with edge-level positional encoding to enhance the original graph input [32]. Furthermore, the SPEGT model proposes a continuous injection of ensembled structural and positional encodings via a gate mechanism, preventing the information from becoming blurred through the Transformer layers [34].
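As a minimal illustration of node-level random walk positional encoding, the sketch below computes, for each node, the probability of returning to itself after 1 to `k` random-walk steps. This is the standard formulation of the encoding. How EHDGT [32] or SPEGT [34] combine it with edge-level encodings is not reproduced here. The function name and the three-atom toy graph are assumptions.

```python
def random_walk_pe(adj, k=3):
    """Return per-node return probabilities for walks of length 1..k.

    adj is a dense 0/1 adjacency matrix (list of lists).
    """
    n = len(adj)
    deg = [sum(row) for row in adj]
    # Row-normalised transition matrix: P[i][j] = prob of stepping i -> j
    P = [[adj[i][j] / deg[i] if deg[i] else 0.0 for j in range(n)]
         for i in range(n)]
    power = [row[:] for row in P]
    pe = [[] for _ in range(n)]
    for _ in range(k):
        for i in range(n):
            pe[i].append(power[i][i])      # diagonal = return probability
        power = [[sum(power[i][m] * P[m][j] for m in range(n))
                  for j in range(n)] for i in range(n)]
    return pe

# Toy molecular graph: a three-atom chain (atom 1 is bonded to atoms 0 and 2)
adj = [
    [0, 1, 0],
    [1, 0, 1],
    [0, 1, 0],
]
pe = random_walk_pe(adj, k=3)
print(pe)
```

The central atom receives a different encoding from the two terminal atoms, which gives the Transformer a structural signal it would otherwise lack.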

Troubleshooting Guide:

- Problem: Your Graph Transformer performs worse than a simple GNN on molecular tasks.

- Solution: Systematically enhance your input graph features. Implement combined positional encoding strategies (e.g., Laplacian eigenvectors combined with random walk) and ensure edge features are incorporated directly into the attention calculation [32] [34].

Q4: Our dataset has missing features for some nodes (atoms) in the molecular graph. How can we best reconstruct this data?

A: Graph-based feature propagation is a powerful technique for this issue. For example, a spatio-temporal graph attention network proposed for wind data reconstruction used a feature propagation method that incorporates edge features and 3D coordinates to reconstruct missing node feature sequences, yielding a complete graph-structured dataset for downstream prediction tasks [35]. This approach can be adapted for molecular graphs by using the known molecular structure to define connectivity.
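A simplified version of feature propagation on a molecular graph might look like the sketch below. It repeatedly replaces each missing value with the mean of its neighbours while keeping observed values fixed. This is a generic scheme, not the method of [35], which additionally uses edge features and 3D coordinates. The function name, the use of `None` for missing values, and the scalar features are assumptions.

```python
def propagate_features(adj_list, features, n_iters=50):
    """Fill missing node features (None) by iterative neighbour averaging.

    adj_list[i] lists the neighbours of node i; observed values stay fixed.
    """
    known = [f is not None for f in features]
    x = [f if f is not None else 0.0 for f in features]
    for _ in range(n_iters):
        new_x = []
        for i, nbrs in enumerate(adj_list):
            if known[i] or not nbrs:
                new_x.append(x[i])             # keep observed or isolated nodes
            else:
                new_x.append(sum(x[j] for j in nbrs) / len(nbrs))
        x = new_x
    return x

# Toy chain of four atoms; the two middle atoms lack a feature value
adj_list = [[1], [0, 2], [1, 3], [2]]
features = [1.0, None, None, 4.0]
filled = propagate_features(adj_list, features)
print([round(v, 3) for v in filled])
```

On this chain the missing values converge to a smooth interpolation between the two observed atoms.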

### Model Architecture and Training

Q5: How can I design an architecture that effectively balances the local and global learning capabilities for molecular graphs?

A: A parallelized architecture that dynamically fuses GNN and Transformer outputs is a state-of-the-art solution. The EHDGT model uses this design:

- A GNN branch processes local subgraphs to augment its proficiency in local information.

- A Transformer branch incorporates edges into the attention calculation for global, long-range dependency modeling.

- A gate-based fusion mechanism dynamically integrates the outputs of both branches, maintaining an optimal balance between local and global features [32].
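The gate-based fusion idea can be sketched with a simple element-wise gate. The exact gating function in EHDGT [32] may differ. The form below (a sigmoid gate that interpolates between the two branches) and the per-dimension parameters `w_local`, `w_global`, and `bias` are assumptions chosen for clarity; in a real model they would be learned.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def gated_fusion(h_local, h_global, w_local, w_global, bias):
    """Fuse GNN (local) and Transformer (global) features per dimension.

    gate = sigmoid(w_local*h_local + w_global*h_global + bias)
    out  = gate * h_local + (1 - gate) * h_global
    """
    fused = []
    for hl, hg, wl, wg, b in zip(h_local, h_global, w_local, w_global, bias):
        g = sigmoid(wl * hl + wg * hg + b)
        fused.append(g * hl + (1.0 - g) * hg)
    return fused

h_local = [1.0, 2.0]     # output of the GNN branch for one node
h_global = [3.0, 6.0]    # output of the Transformer branch for the same node

# Zero parameters give a gate of 0.5, i.e. a plain average of both branches
balanced = gated_fusion(h_local, h_global, [0.0, 0.0], [0.0, 0.0], [0.0, 0.0])
# A large positive bias pushes the gate towards the local branch
local_heavy = gated_fusion(h_local, h_global, [0.0, 0.0], [0.0, 0.0], [20.0, 20.0])
print(balanced, local_heavy)
```

Because the gate depends on the features themselves, the balance between local and global information can vary from node to node.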

Experimental Protocol for DTI Benchmarking (Based on GTB-DTI [31]):

- Objective: Fairly compare the effectiveness and efficiency of GNN and Transformer-based drug structure encoders.

- Datasets: Use six standardized datasets for DTI classification and regression tasks.

- Model Training: For each model, use its individually reported optimal hyperparameters and training settings to ensure a fair comparison.

- Evaluation Metrics:

  - Effectiveness: Use task-specific metrics like AUC-ROC for classification and Mean Squared Error (MSE) for regression.

  - Efficiency: Measure peak GPU memory usage, total running time, and time to convergence.

- Featurization: Use consistent drug featurization techniques that inform chemical and physical properties across all models.
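The two effectiveness metrics named in this protocol can be computed with a few lines of plain Python. The sketch below is a reference implementation for small examples, with toy labels and scores as assumptions. For real benchmarks, an established metrics library is the safer choice.

```python
def auc_roc(labels, scores):
    """AUC-ROC as the fraction of (positive, negative) pairs ranked correctly.

    Ties between a positive and a negative score count as half a win.
    """
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("AUC-ROC needs at least one positive and one negative")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0
               for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

def mean_squared_error(y_true, y_pred):
    """MSE for regression-style affinity prediction."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)

# Classification toy example: one positive is ranked below one negative
auc = auc_roc([1, 0, 1, 0], [0.9, 0.8, 0.7, 0.1])
# Regression toy example on two affinity values
mse = mean_squared_error([5.0, 7.0], [5.5, 6.0])
print(auc, mse)
```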

Diagram: Parallel GNN-Transformer Fusion Architecture. This design, used in EHDGT, allows dynamic balancing of local and global features [32].

## The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Components for Building Advanced Graph Encoders in DTI Research

| Component / Algorithm | Function | Example Use-Case |

|---|---|---|

| Gate-based Fusion Mechanism [32] | Dynamically balances the contributions of local (GNN) and global (Transformer) feature streams. | Mitigates over-smoothing in GNNs and enhances local feature learning in Transformers for novel drugs. |

| Linear Attention [32] [33] | Replaces standard self-attention to reduce computational complexity from quadratic to linear. | Enables training on large molecular graphs or high-throughput virtual screening. |

| Multi-order Similarity Graph Construction [36] | Constructs graph topology by considering higher-order node relationships beyond direct (1st-order) connections. | Captures complex topological patterns in molecular structures for more robust representation learning. |

| Structural & Positional Ensembled Encoding [34] | Combines multiple graph encoding types (e.g., Laplacian, random walk) to provide a richer structural context. | Improves model's understanding of molecular geometry and relational context, crucial for cold start. |

| Feature Propagation for Data Imputation [35] | Reconstructs missing node features in a graph by leveraging information from connected nodes. | Handles incomplete molecular data or datasets with partial feature availability. |

Diagram: Technical Pathway for Addressing Cold Start DTI. This workflow helps select the right encoder strategy for novel drugs.

Frequently Asked Questions (FAQs)

Q1: What is the cold start problem in Drug-Target Interaction (DTI) prediction, and why is it a significant challenge?

A: The cold start problem refers to the significant difficulty in predicting interactions for novel drugs or targets that have no known interactions in the training data. This is a major challenge in drug discovery because it limits the ability to identify new therapeutic uses for existing drugs or to predict targets for newly developed compounds. Traditional models often rely heavily on the network topology of known interactions or similarity to other drugs/targets, which fails when such prior information is absent [37].

Q2: How can multimodal data fusion help mitigate the cold start problem?

A: Multimodal data fusion addresses the cold start problem by integrating diverse, intrinsic information about drugs and targets that does not depend on existing interaction networks. By combining features from 1D sequences (SMILES for drugs, amino acid sequences for targets), 2D topological graphs (molecular structures for drugs, contact maps for targets), and even 3D spatial structures, models can learn fundamental functional and structural properties. This provides a robust basis for making predictions about novel entities, as demonstrated by frameworks like MIF-DTI and EviDTI [37] [38].

Q3: My model produces overconfident and incorrect predictions for novel drug-target pairs. How can I improve prediction reliability?

A: Overconfidence in false predictions is a common issue, particularly with out-of-distribution samples. Implementing Evidential Deep Learning (EDL), as in the EviDTI framework, allows the model to quantify its own uncertainty. This provides a confidence score for each prediction, enabling you to prioritize experimental validation on predictions with high probability and low uncertainty, thereby reducing resource waste on false positives [37].
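A minimal sketch of how evidential outputs translate into probabilities and an uncertainty score is given below. It follows the common Dirichlet-based EDL formulation (alpha = evidence + 1, uncertainty = K / sum of alpha) as an assumption. The exact parameterization in EviDTI [37] may differ, and the triage thresholds and pair names are hypothetical.

```python
def evidential_output(evidence):
    """Convert non-negative per-class evidence into (probabilities, uncertainty)."""
    k = len(evidence)
    alpha = [e + 1.0 for e in evidence]      # Dirichlet parameters
    strength = sum(alpha)
    probs = [a / strength for a in alpha]
    return probs, k / strength               # uncertainty lies in (0, 1]

def shortlist(predictions, min_prob=0.8, max_uncertainty=0.2):
    """Keep pairs predicted to interact with high probability and low uncertainty.

    predictions maps a pair name to evidence [no_interaction, interaction].
    """
    selected = []
    for name, evidence in predictions.items():
        probs, u = evidential_output(evidence)
        if probs[1] >= min_prob and u <= max_uncertainty:
            selected.append(name)
    return selected

predictions = {
    "pair_1": [0.0, 18.0],   # strong evidence for interaction
    "pair_2": [0.3, 0.4],    # almost no evidence either way
    "pair_3": [30.0, 1.0],   # strong evidence against interaction
}
selected = shortlist(predictions)
print(selected)
```

A pair with almost no evidence, such as `pair_2`, receives a near-uniform probability and a high uncertainty, so it is filtered out rather than reported as a confident prediction.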

Q4: What is the role of cross-attention and bilinear attention in interaction extraction?

A: Cross-attention mechanisms are crucial for capturing the complex, pairwise correlations between a drug and a target. Instead of simply concatenating their features, cross-attention allows the model to focus on the most relevant parts of a target's sequence when analyzing a specific drug, and vice versa. This is a key component in models like MFCADTI and MIF-DTI for learning effective interaction features [39] [38].
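The cross-attention idea can be illustrated with plain scaled dot-product attention in which drug tokens act as queries and protein residues act as keys and values. This is a generic sketch without learned projections, not the specific architecture of MFCADTI or MIF-DTI. The toy embeddings are assumptions.

```python
import math

def softmax(xs):
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def cross_attention(queries, keys, values):
    """Each query (drug token) attends over all keys (protein residues)."""
    d = len(keys[0])
    outputs, weights = [], []
    for q in queries:
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in keys]
        w = softmax(scores)
        weights.append(w)
        outputs.append([sum(wi * v[c] for wi, v in zip(w, values))
                        for c in range(len(values[0]))])
    return outputs, weights

drug_tokens = [[1.0, 0.0]]                           # one drug substructure
residues = [[4.0, 0.0], [0.0, 4.0], [0.0, 0.0]]      # three protein residues
outputs, weights = cross_attention(drug_tokens, residues, residues)
print([round(w, 3) for w in weights[0]])
```

The attention weights show which residues the drug token focuses on. Swapping the roles of the two inputs gives the target-to-drug direction.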