Overcoming Molecular Docking Limitations for Novel Targets: A 2025 Guide to Robust Protocols



Abstract

Molecular docking is a cornerstone of computational drug discovery but faces significant challenges when applied to novel biological targets, leading to unreliable predictions. This article provides a comprehensive, current analysis for researchers and drug development professionals, synthesizing the latest findings from traditional and deep learning docking paradigms. We explore the fundamental physical and algorithmic roots of these limitations, present advanced methodological strategies including hybrid AI-physics frameworks, detail practical troubleshooting and protocol optimization techniques, and establish rigorous validation standards. By integrating insights across these four areas, this guide aims to equip scientists with an actionable framework to enhance the accuracy, reliability, and biological relevance of docking studies for unexplored therapeutic targets.

The Core Challenge: Understanding the Fundamental Limits of Docking for Novel Targets

Frequently Asked Questions (FAQs)

FAQ 1: What is the 'Novel Target' problem in molecular docking? The 'Novel Target' problem refers to the significant drop in performance and reliability that computational docking methods exhibit when applied to proteins, binding pockets, or ligands that differ in structure or sequence from those in their training data. This failure to generalize is a critical bottleneck in drug discovery for new disease targets and is primarily driven by gaps in three key areas: protein sequence similarity, 3D binding pocket structure, and ligand chemical topology [1].

FAQ 2: Why do some deep learning docking methods produce physically implausible results? Despite achieving favorable Root-Mean-Square Deviation (RMSD) scores, some deep learning models, particularly regression-based architectures, often generate poses with high steric tolerance. This means they may produce configurations with incorrect bond lengths/angles, stereochemistry, or severe protein-ligand clashes. These models prioritize learned data distributions over physical constraints, leading to poses that are geometrically impossible or chemically invalid, a flaw often revealed by validation toolkits like PoseBusters [1].

FAQ 3: Which docking paradigm currently offers the best balance for novel targets? Recent multidimensional evaluations indicate that hybrid methods, which integrate traditional conformational search algorithms with deep learning-enhanced scoring functions, offer the most robust balance for novel targets. They synergize the physical plausibility of physics-based approaches with the data-driven accuracy of AI. For instance, the hybrid method Interformer has been shown to maintain competitive pose accuracy while retaining robust physical validity across diverse benchmark datasets, including those containing novel protein binding pockets [1].

FAQ 4: What is the role of experimental validation in addressing generalization gaps? Experimental validation is non-negotiable. In-silico predictions, especially for novel targets, must be confirmed experimentally: structural methods such as X-ray crystallography, NMR spectroscopy, or cryo-electron microscopy verify the binding mode, and binding assays verify the affinity. A docking prediction should be considered a hypothesis until it is empirically tested. This is crucial for mitigating the risks posed by inaccurate scoring functions and physically implausible poses generated by some methods [2].

Troubleshooting Guides

Problem 1: Poor Pose Prediction on Proteins with Low Sequence Similarity to Training Data

Symptoms: Consistently high RMSD values and failure to recapitulate known key protein-ligand interactions, even when the overall binding site fold appears similar.

Diagnosis and Solutions:

- Root Cause: The docking model has overfitted to specific amino acid sequences and lacks the fundamental understanding of physico-chemical interactions (e.g., hydrogen bonding, hydrophobic patches) that are conserved across evolutionarily distant proteins.

- Solution A: Employ Multi-Structure Docking

- Action: Do not rely on a single protein structure. Instead, perform docking against an ensemble of structures. This ensemble can include:

- Multiple experimental structures (e.g., from PDB) of the same protein.

- Homology models generated from diverse templates.

- Conformational snapshots from Molecular Dynamics (MD) simulations [3].

- Rationale: This accounts for inherent protein flexibility and reduces the risk of failure due to minor structural variations in a single static snapshot.
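One simple way to combine ensemble results is to keep, for each ligand, the best score found across all receptor conformations. The sketch below assumes the scores have already been collected from the docking runs and that more negative means better, as in AutoDock Vina; the structure labels, ligand names, and values are hypothetical.

```python
def best_over_ensemble(scores_by_structure):
    """Return {ligand: (best_score, structure)} using the lowest score per ligand.

    scores_by_structure maps a receptor label to {ligand: docking score}.
    Assumes lower (more negative) scores are better.
    """
    best = {}
    for structure, ligand_scores in scores_by_structure.items():
        for ligand, score in ligand_scores.items():
            if ligand not in best or score < best[ligand][0]:
                best[ligand] = (score, structure)
    return best


# Hypothetical docking scores (kcal/mol) for two ligands against three conformations.
ensemble_scores = {
    "xray_apo": {"lig_A": -6.1, "lig_B": -7.4},
    "homology_model": {"lig_A": -7.9, "lig_B": -6.8},
    "md_snapshot": {"lig_A": -7.2, "lig_B": -8.3},
}
result = best_over_ensemble(ensemble_scores)
for ligand, (score, structure) in sorted(result.items()):
    print(f"{ligand}: {score} kcal/mol in {structure}")
```

Taking the minimum is only one possible aggregation; averaging over conformations is a common alternative when a single outlier structure should not dominate.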


- Solution B: Utilize Hybrid or Traditional Docking Methods

- Action: For novel sequences, prioritize docking tools known for strong generalization. According to benchmarks, traditional methods like Glide SP and AutoDock Vina, or hybrid methods like Interformer, demonstrate more consistent performance and physical validity on datasets containing novel protein sequences compared to purely regression-based deep learning models [1].

Problem 2: Inaccurate Docking to Novel Binding Pocket Geometries

Symptoms: The ligand fails to dock correctly into a binding pocket that has a shape or architecture not represented in the method's training set, even if the overall protein is known.

Diagnosis and Solutions:

- Root Cause: The scoring function or pose generation algorithm is biased toward pocket geometries it has encountered before and cannot extrapolate to novel spatial arrangements.

- Solution A: Leverage Pocket-Specific Data Curation

- Action: Use rigorous benchmark sets like DockGen that are specifically curated to test performance on novel binding pockets. Validate your method's performance on such a set before applying it to your true target [1].

- Rationale: This provides a realistic assessment of your workflow's limitations and helps in selecting the most appropriate tool.

- Solution B: Integrate AI-Driven Pocket Flexibility

- Action: For critical targets, consider using advanced docking solutions that incorporate pocket flexibility either through explicit side-chain movement or by using generative models trained on a diverse set of pocket structures. Methods like DynamicBind, designed for blind docking, can be more adaptable to novel pocket geometries [1].

Problem 3: Failure to Dock Ligands with Unfamiliar Topologies or Scaffolds

Symptoms: The method performs well on ligand analogs but produces unrealistic poses for chemically distinct or structurally novel compounds.

Diagnosis and Solutions:

- Root Cause: The model's internal representation of chemical space is limited to the scaffolds and functional groups present in its training data.

- Solution A: Apply Pose Refinement with Molecular Dynamics

- Action: Use the top-ranked docking poses as starting points for short MD simulations. This allows the ligand and receptor to relax into a more physiologically realistic conformation, accounting for induced-fit effects that rigid docking cannot capture [3].

- Rationale: MD refinement can correct minor steric clashes and optimize interactions, improving the physical plausibility of the final pose.

- Solution B: Adopt a Multi-Method Consensus Approach

- Action: Dock the same ligand using multiple, algorithmically distinct methods (e.g., one stochastic, one systematic, one deep learning-based). Poses that are consistently predicted across different programs are more likely to be correct.

- Rationale: This strategy mitigates the individual weaknesses and biases of any single docking algorithm [2].
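The consensus idea can be sketched as counting, for each program, how many other programs produced a top pose within a chosen RMSD cutoff of its own. This example assumes the pairwise RMSD values between top poses have already been computed; the program labels, the values, and the 2 Å cutoff are illustrative assumptions.

```python
def consensus_support(pairwise_rmsd, cutoff=2.0):
    """Count, per program, how many other programs agree with its top pose.

    pairwise_rmsd maps an unordered pair (program_a, program_b) to the RMSD
    in angstroms between their top-ranked poses.
    """
    support = {}
    for (prog_a, prog_b), value in pairwise_rmsd.items():
        support.setdefault(prog_a, 0)
        support.setdefault(prog_b, 0)
        if value <= cutoff:
            support[prog_a] += 1
            support[prog_b] += 1
    return support


# Hypothetical RMSD values between the top poses of three distinct methods.
pairwise = {
    ("stochastic", "systematic"): 0.9,
    ("stochastic", "deep_learning"): 6.4,
    ("systematic", "deep_learning"): 6.1,
}
support = consensus_support(pairwise)
print(support)
```

Here the stochastic and systematic programs agree with each other while the deep learning pose is isolated, which would make the shared pose the more credible hypothesis.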

Quantitative Performance Data

The following tables summarize the performance of various docking paradigms across critical dimensions, highlighting their relative strengths and weaknesses when facing generalization challenges.

Table 1: Comparative Docking Performance Across Benchmark Datasets [1]

| Docking Paradigm | Specific Method | Astex (Known Complexes): RMSD ≤ 2 Å (%) | Astex (Known Complexes): PB-Valid (%) | PoseBusters (Unseen Complexes): RMSD ≤ 2 Å (%) | PoseBusters (Unseen Complexes): PB-Valid (%) | DockGen (Novel Pockets): RMSD ≤ 2 Å (%) | DockGen (Novel Pockets): PB-Valid (%) |

|---|---|---|---|---|---|---|---|

| Traditional | Glide SP | ~70.6 | 97.7 | ~58.0 | 97.9 | ~40.2 | 94.2 |

| Traditional | AutoDock Vina | Not available | 82.4 | Not available | 79.0 | Not available | 88.4 |

| Generative Diffusion | SurfDock | 91.8 | 63.5 | 77.3 | 45.8 | 75.7 | 40.2 |

| Generative Diffusion | DiffBindFR (MDN) | 75.3 | Not available | 50.9 | 47.2 | 30.7 | 47.1 |

| Hybrid (AI Scoring) | Interformer-Energy | 81.2 | 72.9 | 59.6 | 72.0 | 46.6 | 69.8 |

| Regression-Based DL | QuickBind / GAABind / KarmaDock | Performance significantly lower, often failing to produce physically valid poses. | | | | | |

Table 2: Strengths and Weaknesses by Docking Paradigm [1]

| Paradigm | Pose Accuracy | Physical Validity | Generalization | Best Use Case |

|---|---|---|---|---|

| Traditional | Moderate | Excellent | Good | Benchmarking; when physical plausibility is paramount. |

| Generative Diffusion | Excellent | Moderate to Low | Variable | High-accuracy pose prediction on known target types. |

| Regression-Based DL | Low | Poor | Poor | Not recommended for novel targets in current state. |

| Hybrid | High | Good | Best Balance | Robust applications involving diverse or novel targets. |

Experimental Protocols & Workflows

Protocol 1: A Rigorous Workflow for Validating Docks on Novel Targets

This protocol provides a step-by-step guide for assessing the binding pose and affinity of a ligand against a novel protein target.

1. Target Preparation:

- Obtain the 3D structure of the target protein from PDB, homology modeling, or AI-based prediction (e.g., AlphaFold2).

- Clean the structure: remove water molecules, co-factors, and original ligands. Add hydrogen atoms and assign correct protonation states for key residues (e.g., His, Asp, Glu) using tools like PDB2PQR or the protein preparation wizard in Maestro/MOE.

- Define the binding site coordinates based on biological data or predicted active sites.

2. Ligand Preparation:

- Sketch or obtain the 3D structure of the ligand.

- Generate likely tautomers and protonation states at physiological pH (e.g., using Epik or LigPrep).

- Perform an energy minimization to ensure proper bond lengths and angles.

3. Docking Execution:

- Select at least two docking programs from different paradigms (e.g., one traditional like Glide SP or AutoDock Vina, and one hybrid or diffusion-based).

- Run the docking simulations, generating a large number of poses (e.g., 50-100 per ligand).

4. Pose Selection and Analysis:

- Cluster the generated poses based on spatial similarity (RMSD).

- Score and rank poses using the native scoring functions of the docking programs.

- Visually inspect the top-ranked poses from each cluster to check for key interactions (H-bonds, pi-stacking, hydrophobic contacts) and physical plausibility (no severe clashes).

5. Validation:

- Cross-validate with a different method: If available, compare results with a pose generated by a fundamentally different technique (e.g., a different docking algorithm or MD simulation).

- Experimental Validation: The ultimate validation step. Proceed with experimental techniques like X-ray crystallography or mutagenesis to confirm the predicted binding mode [2].
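The pose clustering in step 4 can be illustrated with a greedy "leader" scheme: poses are visited from best to worst score, and each pose either joins the first cluster whose representative lies within the cutoff or starts a new cluster. This is a minimal sketch, not the clustering used by any particular docking program; it assumes a precomputed symmetric RMSD matrix, and the scores, matrix values, and 2 Å cutoff are hypothetical.

```python
def cluster_by_rmsd(rmsd_matrix, scores, cutoff=2.0):
    """Greedy leader clustering of poses.

    rmsd_matrix[i][j] is the RMSD (angstroms) between poses i and j.
    scores[i] is the docking score of pose i (lower = better, an assumption).
    Returns a list of clusters; the first index in each is its representative.
    """
    order = sorted(range(len(scores)), key=lambda i: scores[i])  # best score first
    clusters = []
    for i in order:
        for cluster in clusters:
            if rmsd_matrix[i][cluster[0]] <= cutoff:
                cluster.append(i)
                break
        else:
            clusters.append([i])  # no representative close enough: new cluster
    return clusters


# Hypothetical data for four poses forming two binding modes.
scores = [-7.0, -8.2, -6.5, -7.8]
rmsd_matrix = [
    [0.0, 1.1, 6.0, 5.5],
    [1.1, 0.0, 6.3, 5.8],
    [6.0, 6.3, 0.0, 1.4],
    [5.5, 5.8, 1.4, 0.0],
]
clusters = cluster_by_rmsd(rmsd_matrix, scores)
print(clusters)
```

The representative of each cluster is its best-scoring member, which is the pose that would then go forward to visual inspection.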

Protocol 2: Structure-Based Virtual Screening Protocol

Objective: To computationally screen a large library of compounds to identify potential hits that bind to a novel target.

1. Library Curation:

- Select a chemically diverse, synthesizable compound library (e.g., ZINC, ChEMBL).

- Prepare all library ligands: generate 3D conformers, optimize geometry, and assign correct protonation states.

2. High-Throughput Docking:

- Use a fast, reliable docking program (e.g., AutoDock Vina, DOCK) to screen the entire library against the prepared target structure.

- The scoring function ranks compounds based on predicted binding affinity.

3. Post-Screening Analysis:

- Re-docking: Take the top-ranked compounds (e.g., top 1%) and re-dock them using a more rigorous, computationally expensive method (e.g., Glide XP, hybrid methods) to improve pose prediction accuracy.

- Interaction Analysis: Manually inspect the binding modes of the top re-docked hits to ensure they form sensible interactions with the target.

- Consensus Scoring: Rank hits based on a combination of scores from multiple scoring functions to reduce false positives [4] [2].
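Consensus scoring can be implemented in several ways. A simple option is rank averaging, which sidesteps the fact that different scoring functions use different scales. The sketch below assumes every scoring function follows a lower-is-better convention; the scoring function names, compound names, and scores are hypothetical.

```python
def rank_average(score_tables):
    """Rank compounds by their mean rank across several scoring functions.

    score_tables maps a scoring-function name to {compound: score}, where a
    lower score is assumed to be better. Only compounds scored by every
    function are ranked. Returns [(compound, mean_rank), ...], best first.
    """
    compounds = set.intersection(*(set(t) for t in score_tables.values()))
    mean_rank = {c: 0.0 for c in compounds}
    for table in score_tables.values():
        # Ties are broken by compound name to keep the ordering deterministic.
        ranked = sorted(compounds, key=lambda c: (table[c], c))
        for rank, compound in enumerate(ranked, start=1):
            mean_rank[compound] += rank / len(score_tables)
    return sorted(mean_rank.items(), key=lambda kv: (kv[1], kv[0]))


# Hypothetical scores from a fast screen and a more rigorous re-docking step.
score_tables = {
    "fast_screen": {"cpd1": -9.1, "cpd2": -8.7, "cpd3": -7.2},
    "rigorous_redock": {"cpd1": -6.0, "cpd2": -8.9, "cpd3": -7.5},
}
consensus = rank_average(score_tables)
print(consensus)
```

In this toy case cpd1 tops the fast screen but drops to last on re-docking, so the consensus favors cpd2, which ranks well under both functions.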

Signaling Pathways and Workflows

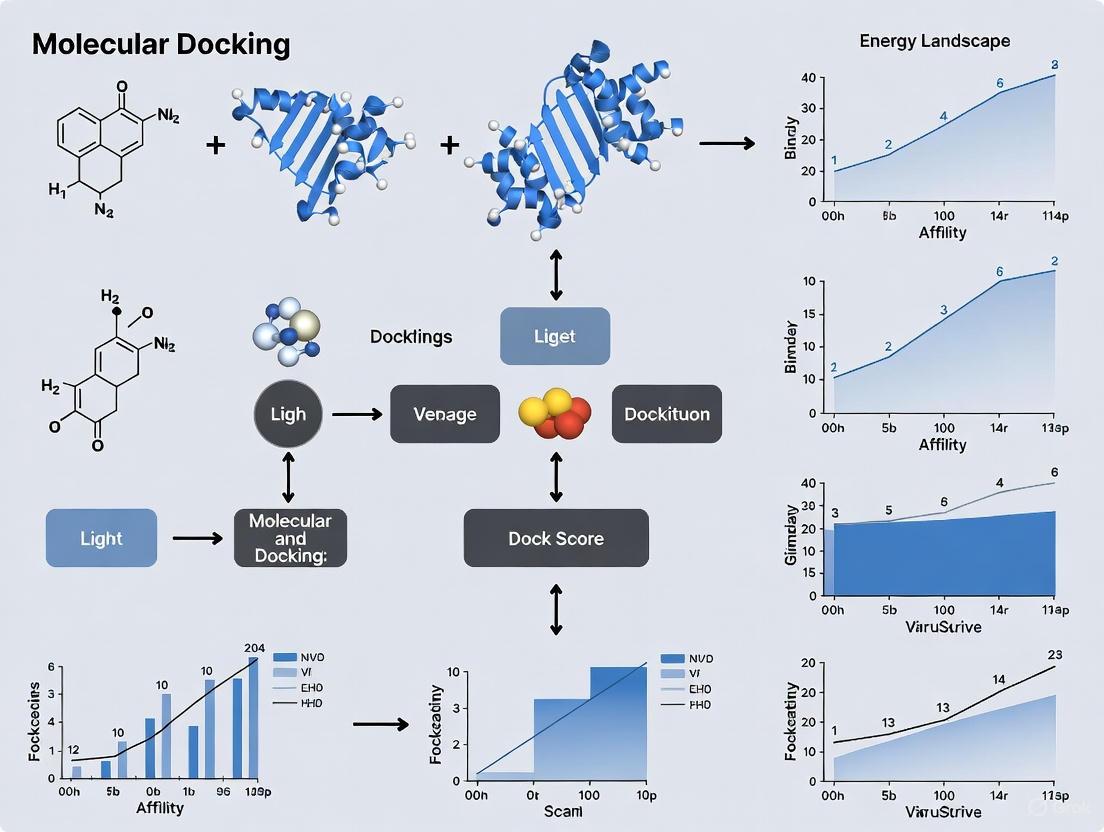

Diagram 1: Novel Target Docking Validation Workflow

Diagram 2: Generalization Gap Taxonomy

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Reagents for Docking Research

| Reagent / Resource | Type | Primary Function | Key Consideration |

|---|---|---|---|

| PDB (Protein Data Bank) | Database | Repository for experimentally-determined 3D structures of proteins and nucleic acids. | The gold standard for obtaining target structures; quality and resolution can vary [2]. |

| AlphaFold Protein Structure Database | Database | Repository of highly accurate predicted protein structures generated by AlphaFold2. | Invaluable for targets without experimental structures, but entries are predictions rather than experimental data [3]. |

| ZINC Database | Database | Curated database of commercially-available compounds for virtual screening. | Provides readily accessible starting points for drug discovery [4]. |

| DockGen Dataset | Benchmark Set | A dataset specifically curated to test docking performance on novel protein binding pockets. | Critical for evaluating a method's generalization capability before applying it to a true novel target [1]. |

| PoseBusters | Validation Tool | A toolkit to systematically evaluate docking predictions against chemical and geometric consistency criteria. | Essential for detecting physically implausible poses that might have good RMSD [1]. |

| AutoDock Vina | Docking Software | A widely-used, open-source program for molecular docking and virtual screening. | A robust traditional method known for its speed and general reliability [1] [2]. |

| Glide (Schrödinger) | Docking Software | A comprehensive docking suite offering different levels of precision (SP, XP). | Noted for its high physical validity and strong performance in benchmarks [1]. |

| GROMACS / AMBER | MD Software | Software packages for performing Molecular Dynamics simulations. | Used for pre-docking conformational sampling or post-docking pose refinement to account for flexibility [3]. |

FAQs: Understanding Physical Plausibility in Docking

What is "physical plausibility" in molecular docking and why is it a critical metric? Physical plausibility refers to whether a predicted protein-ligand binding pose adheres to fundamental chemical and physical constraints, such as reasonable bond lengths and angles, proper stereochemistry, and the absence of severe atomic clashes [5]. It is critical because a pose can have an excellent (low) computational docking score yet be physically impossible or unstable in a real biological environment. Relying solely on score can lead to false positives and wasted research resources [6].

My docking pose has a high score (low binding energy) but looks unnatural. Should I trust it? No, you should not automatically trust it. A high score does not guarantee biological relevance. Computational scoring functions are simplifications and can be misled [6]. It is essential to visually inspect the pose for obvious issues like unrealistic atom overlaps or strained geometries and to use additional validation tools like PoseBusters [5] or molecular dynamics simulations to test the pose's stability over time [3].

Why do deep learning docking models sometimes generate physically invalid poses? Some deep learning models, particularly regression-based architectures, are trained to minimize the root-mean-square deviation (RMSD) from a known structure. In this process, they may prioritize this single metric over fundamental physical constraints, leading to poses with incorrect bond lengths, angles, or atomic clashes, despite a favorable RMSD [5].

How can a docking pose be correct based on RMSD but still be physically implausible? RMSD measures the root-mean-square distance between corresponding atoms in a predicted pose and a reference pose. A low RMSD indicates that the ligand is placed close to the reference overall, but it says nothing about the quality of the internal ligand geometry or about contacts with the protein. A pose could be slightly shifted in a way that creates severe atomic clashes or distorted bonds, yielding a good RMSD but a poor physical structure [5].
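A toy calculation makes the point concrete. In the sketch below a three-atom "ligand" is shifted rigidly by 1.5 Å toward a single protein atom: the RMSD stays under the usual 2 Å success threshold, yet the closest ligand-protein contact shrinks to a distance that a crude check flags as a clash. The coordinates and the 2.5 Å heavy-atom cutoff are illustrative assumptions; this is a simplified stand-in and not the criteria PoseBusters applies.

```python
import math


def rmsd(pred, ref):
    """RMSD between two poses with identical atom ordering (no symmetry handling)."""
    if len(pred) != len(ref):
        raise ValueError("poses must contain the same atoms in the same order")
    total = sum(math.dist(p, r) ** 2 for p, r in zip(pred, ref))
    return math.sqrt(total / len(pred))


def min_distance(ligand, protein):
    """Shortest distance between any ligand atom and any protein atom."""
    return min(math.dist(a, b) for a in ligand for b in protein)


CLASH_CUTOFF = 2.5  # angstroms; an assumed, deliberately crude threshold

ref = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0), (3.0, 0.0, 0.0)]    # reference pose
pred = [(1.5, 0.0, 0.0), (3.0, 0.0, 0.0), (4.5, 0.0, 0.0)]   # shifted by 1.5 along x
protein = [(6.8, 0.0, 0.0)]                                   # one nearby protein atom

print("RMSD:", rmsd(pred, ref))
print("reference closest contact:", min_distance(ref, protein))
print("predicted closest contact:", min_distance(pred, protein))
print("clash:", min_distance(pred, protein) < CLASH_CUTOFF)
```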

What is the relationship between docking scores (ΔG) and experimental results (IC50)? The relationship is often not straightforward. While a more negative ΔG theoretically suggests stronger binding and thus a lower (more potent) IC50, studies frequently find a poor correlation [7]. Discrepancies arise from factors ignored in simplified docking simulations, such as cellular permeability, compound metabolism, and the dynamic nature of the true biological environment [7].
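For orientation, the standard thermodynamic relation ΔG = RT ln(Kd) connects a binding free energy to a dissociation constant, not directly to an IC50, which also depends on assay conditions. The sketch below converts between the two under that relation, assuming Kd is expressed in mol/L relative to a 1 M standard state and a temperature of 298.15 K; the example value of -9.0 kcal/mol is hypothetical.

```python
import math

R_KCAL = 1.987204e-3  # gas constant in kcal/(mol*K)


def kd_from_delta_g(delta_g, temperature=298.15):
    """Dissociation constant (mol/L) implied by a binding free energy in kcal/mol."""
    return math.exp(delta_g / (R_KCAL * temperature))


def delta_g_from_kd(kd_molar, temperature=298.15):
    """Binding free energy (kcal/mol) implied by a dissociation constant in mol/L."""
    return R_KCAL * temperature * math.log(kd_molar)


kd = kd_from_delta_g(-9.0)                      # hypothetical docking-style estimate
per_decade = R_KCAL * 298.15 * math.log(10)     # free energy per 10-fold change in Kd

print(f"Kd for -9.0 kcal/mol: {kd:.2e} M")
print(f"kcal/mol per tenfold change in Kd: {per_decade:.2f}")
```

Because a tenfold change in Kd corresponds to only about 1.4 kcal/mol at room temperature, modest errors in a docking score translate into order-of-magnitude uncertainty in predicted affinity.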

Troubleshooting Guides

Guide 1: Identifying and Rectifying Physically Implausible Poses

Symptoms: The top-ranked docking pose exhibits unrealistic ligand geometry, severe atomic clashes with the protein, or unlikely interaction patterns that violate chemical principles.

Root Causes:

- Inadequate Ligand Preparation: The ligand's starting structure may have incorrect bond orders, missing hydrogens, or an unrealistic initial conformation [8].

- Overly Simplified Scoring Function: The scoring function may underestimate the energy penalty of atomic clashes or fail to properly model certain interaction types [5] [6].

- Insufficient Sampling: The docking algorithm may not have explored enough conformational space to find a physically realistic low-energy pose [3].

- Rigid Protein Receptor: The docking run may have kept the protein completely rigid, not allowing side-chains to move slightly to accommodate the ligand, which can lead to clashes [3].

Solutions:

- Validate Ligand Geometry: Before docking, ensure your ligand has proper chemistry. Use toolkits to add missing hydrogens, assign correct bond orders, and perform a quick energy minimization [8].

- Employ Pose Validation Tools: After docking, run your top poses through a validation tool like PoseBusters [5]. This checks for physical and chemical sanity.

- Inspect Poses Visually: Always visually examine the top-ranked poses in a molecular viewer. Look for obvious steric clashes and check if key interactions (e.g., hydrogen bonds) are geometrically sound.

- Use Molecular Dynamics (MD) for Refinement: Run a short MD simulation on the docked complex. An unstable or implausible pose will often quickly fall apart or rearrange significantly in simulation, while a physically sound pose will remain stable [6] [3].

Guide 2: Improving Pose Validation for Novel Targets

Challenge: When working with a new protein target with no known experimental ligand structures, validating the physical plausibility of docking results becomes more challenging.

Methodology:

- Use Ensemble Docking: If available, dock against an ensemble of multiple receptor conformations instead of a single static structure. These conformations can be taken from different crystal structures or generated through MD simulations. This accounts for protein flexibility and reduces the chance of clashes [3].

- Leverage Conservation: If the binding site of your novel target is similar to a well-characterized protein, use the known interaction patterns from the characterized protein as a guide for what constitutes a plausible pose in your target.

- Apply Consensus Scoring: Re-score your docking poses using multiple different scoring functions. A pose that is ranked highly by several independent scoring methods is more likely to be physically plausible and correct [6].

- Prioritize MD Validation: For a novel target with high stakes, MD simulation refinement is strongly recommended. It is one of the best ways to assess the stability and physical realism of a predicted complex in the absence of experimental ligand data [3].

Quantitative Data on Docking Performance and Plausibility

The table below summarizes key performance metrics for various docking methods, highlighting the critical gap between traditional accuracy metrics (RMSD) and physical plausibility.

Table 1: Comparative Performance of Docking Methods Across Different Benchmarks [5]

| Method Category | Method Name | Astex Diverse Set (RMSD ≤ 2 Å) | Astex Diverse Set (PB-Valid) | PoseBusters Set (RMSD ≤ 2 Å) | PoseBusters Set (PB-Valid) | DockGen (Novel Pockets, RMSD ≤ 2 Å) | DockGen (Novel Pockets, PB-Valid) |

|---|---|---|---|---|---|---|---|

| Traditional | Glide SP | 91.76% | 97.65% | 80.37% | 97.20% | 70.18% | 94.25% |

| Generative Diffusion | SurfDock | 91.76% | 63.53% | 77.34% | 45.79% | 75.66% | 40.21% |

| Regression-Based | KarmaDock | 52.94% | 11.76% | 28.97% | 9.35% | 18.52% | 11.11% |

Table 2: Correlation of Docking Performance with Experimental Results [7] [9]

| Compound Class | Protein Target Family | Correlation between ΔG and IC50/Kd | Key Findings |

|---|---|---|---|

| Drug-like compounds | Various | Stronger | Scoring functions are often parameterized for pharmaceutical compounds. |

| Neonicotinoids (Environmental chemicals) | nAChRs / AChBPs | No clear correlation | Highlights a bias in docking software and a significant limitation for non-pharmaceutical applications. |

| Anti-breast cancer compounds | Breast cancer-related proteins | No consistent linear correlation | Discrepancies attributed to cellular factors (permeability, metabolism) and docking simplifications. |

Experimental Protocols for Validation

Protocol 1: Post-Docking Validation with PoseBusters and Visual Inspection

Objective: To systematically filter out physically implausible docking poses that may have high scores.

Materials:

- Output file from your docking run (e.g., PDBQT file from AutoDock Vina).

- The original protein structure file.

- PoseBusters toolkit (https://github.com/posebusters/posebusters) [5].

- Molecular visualization software (e.g., PyMOL, Chimera).

Step-by-Step Procedure:

- Pose Extraction: Extract the top N poses (e.g., top 10) from your docking output results.

- Run PoseBusters: For each pose, run the PoseBusters validation suite. The tool will generate a report indicating whether the pose passes basic checks for bond lengths, angles, steric clashes, and other geometric criteria.

- Filter and Triage: Separate the poses that are "PB-valid" from those that are not. Prioritize the valid poses for further analysis.

- Visual Inspection: Manually inspect the top-scoring valid and invalid poses in your molecular viewer. Pay close attention to:

- The fit of the ligand within the binding pocket.

- The geometry of hydrogen bonds and other specific interactions.

- Any remaining minor clashes that might be tolerated.
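The filter-and-triage step can be sketched as a small helper that splits poses by validity and orders each group by score. The example assumes the pass/fail flag has already been obtained from a validation report and stored alongside each pose; the field names `name`, `score`, and `pb_valid` and all values are hypothetical, and no PoseBusters call is made here.

```python
def triage(poses):
    """Split poses into (valid, invalid), each sorted by score, best first.

    Each pose is a dict with 'name', 'score' (lower = better, an assumption)
    and 'pb_valid' (True if the pose passed all validation checks).
    """
    valid = sorted((p for p in poses if p["pb_valid"]), key=lambda p: p["score"])
    invalid = sorted((p for p in poses if not p["pb_valid"]), key=lambda p: p["score"])
    return valid, invalid


# Hypothetical top poses from a docking run with their validation outcome.
poses = [
    {"name": "pose_1", "score": -9.4, "pb_valid": False},
    {"name": "pose_2", "score": -8.8, "pb_valid": True},
    {"name": "pose_3", "score": -8.1, "pb_valid": True},
    {"name": "pose_4", "score": -7.9, "pb_valid": False},
]
valid, invalid = triage(poses)
print("prioritize:", [p["name"] for p in valid])
print("inspect before discarding:", [p["name"] for p in invalid])
```

Note that the best-scoring pose in this toy set fails validation, which is exactly the situation the protocol is designed to catch.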

Protocol 2: Molecular Dynamics Refinement of Docked Poses

Objective: To assess the stability and physical realism of a docked complex under dynamic, solvated conditions that more closely mimic a biological environment.

Materials:

- A docked protein-ligand complex (the "pose").

- MD simulation software (e.g., GROMACS, NAMD).

- Appropriate force field parameters for the protein and the ligand.

- Access to high-performance computing (HPC) resources.

Step-by-Step Procedure:

- System Setup:

- Place the docked complex in the center of a simulation box.

- Solvate the system with water molecules (e.g., TIP3P water model).

- Add ions (e.g., Na⁺, Cl⁻) to neutralize the system's charge and achieve a physiological salt concentration.

- Energy Minimization:

- Run a steepest descent or conjugate gradient minimization to remove any bad contacts (steric clashes) that remain from the docking process.

- Equilibration:

- Perform a short (100-200 ps) simulation in the NVT ensemble (constant Number of particles, Volume, and Temperature) to stabilize the temperature.

- Follow with a short simulation in the NPT ensemble (constant Number of particles, Pressure, and Temperature) to stabilize the pressure and density of the system.

- Production Run:

- Run an unrestrained MD simulation for a timescale relevant to your research question (typically tens to hundreds of nanoseconds).

- Analysis:

- Calculate the Root-Mean-Square Deviation (RMSD) of the protein backbone and the ligand. A stable complex will show a stable, converged RMSD.

- Analyze the protein-ligand interactions (hydrogen bonds, hydrophobic contacts) over time to see if the key interactions predicted by docking are maintained.
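A simple heuristic for the RMSD part of the analysis is to look only at the final portion of the trajectory and require both a low mean and a small spread. The sketch below assumes the ligand RMSD time series has already been exported from the MD package as a list of values in Å; the thresholds and the two example series are illustrative assumptions, not established standards.

```python
from statistics import mean, pstdev


def is_stable(rmsd_series, tail_fraction=0.5, max_mean=2.0, max_std=0.5):
    """Heuristic stability check on the last part of a ligand RMSD time series.

    Returns True when the tail has a mean RMSD <= max_mean and a standard
    deviation <= max_std (both in angstroms). All thresholds are assumptions.
    """
    n_tail = max(1, int(len(rmsd_series) * tail_fraction))
    tail = rmsd_series[-n_tail:]
    return mean(tail) <= max_mean and pstdev(tail) <= max_std


# Hypothetical ligand RMSD values sampled at regular intervals.
settled = [0.0, 0.8, 1.2, 1.3, 1.2, 1.4, 1.3, 1.2]     # plateaus near 1.3
drifting = [0.0, 1.0, 2.1, 3.4, 4.8, 5.9, 7.2, 8.5]    # ligand leaves the pocket

print("settled pose stable:", is_stable(settled))
print("drifting pose stable:", is_stable(drifting))
```

A check like this is a screening aid only; plotting the full series and inspecting the trajectory remain necessary.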

Visualization of Workflows and Concepts

Figure 1: A recommended workflow for validating the physical plausibility of a docking pose, integrating both fast checks (visual, PoseBusters) and rigorous simulation (MD).

Figure 2: Taxonomy of scoring function types used in molecular docking to predict binding affinity, each with different strengths and weaknesses in assessing physical plausibility [10] [3].

The Scientist's Toolkit: Essential Research Reagents & Software

Table 3: Key Software and Tools for Ensuring Physical Plausibility in Docking

| Tool Name | Type | Primary Function in Pose Validation | Access |

|---|---|---|---|

| PoseBusters [5] | Validation Toolkit | Automatically checks docking poses for physical and chemical errors (bonds, angles, clashes). | Open Source |

| AutoDock Vina [10] [11] | Docking Software | Widely-used docking program with a good balance of speed and accuracy. | Open Source |

| Glide [5] | Docking Software | A traditional docking program noted for high physical validity and pose accuracy. | Commercial |

| GROMACS | Molecular Dynamics | A high-performance MD package for refining docked poses and testing their stability. | Open Source |

| PyMOL [11] | Visualization | Industry-standard for 3D visualization and manual inspection of molecular complexes. | Freemium |

| SAMSON / AutoDock Vina Extended [8] | Modeling Platform | Provides an interactive environment for ligand preparation and docking with visual feedback on rotatable bonds. | Freemium |

Performance Tiers of Molecular Docking Methods

A comprehensive, multi-dimensional evaluation of molecular docking methods reveals distinct performance tiers, highlighting the inherent strengths and systematic errors of each approach. The table below summarizes the key performance metrics across different method types.

Table 1: Performance Tiers and Characteristics of Docking Methods

| Method Type | Performance Tier | Pose Accuracy (RMSD ≤ 2 Å) | Physical Validity (PB-Valid Rate) | Combined Success Rate | Key Characteristics & Systematic Errors |

|---|---|---|---|---|---|

| Traditional Methods (Glide SP, AutoDock Vina) | 1 (Highest) | Moderate to High | Very High (Glide SP: >94% across datasets) [5] | High | Excellent physical plausibility; systematic errors from scoring function biases [12]; computationally intensive [5] |

| Hybrid Methods (Interformer) | 2 | High | High | Best Balance [5] | Integrates traditional searches with AI scoring; balanced performance across metrics [5] |

| Generative Diffusion Models (SurfDock, DiffBindFR) | 3 | Highest (e.g., SurfDock: >70% across datasets) [5] | Moderate to Low (e.g., SurfDock: 40-64%) [5] | Moderate | Superior pose accuracy; systematic errors in physical plausibility (steric clashes, H-bonding) [5] |

| Regression-Based Models (KarmaDock, QuickBind) | 4 (Lowest) | Low | Very Low [5] | Low | Computationally efficient; frequent production of physically invalid poses [5]; poor generalization [5] |

Troubleshooting Guide: FAQs on Docking Method Limitations

How do I diagnose poor pose prediction in real-world scenarios?

Problem: Docking performance significantly degrades under realistic conditions compared to idealized benchmarks.

Solution:

- Understand Real-World Accuracy Limits: Even the best ML-based docking methods achieve only ~18% success when both geometric and chemical validity are enforced under realistic benchmark conditions (PLINDER-MLSB) [13]. Classical tools perform significantly worse.

- Use Ensemble Approaches: Combine multiple docking methods into a single ensemble, which can improve accuracy to approximately 35% [13].

- Implement Statistical Thinking: Treat docking as a statistical filter rather than a precision predictor, especially for novel targets [13].

Systematic Error Source: Over-reliance on idealized benchmark performance that doesn't translate to real-world applications with unbound and predicted protein structures.

What causes physically implausible docking results and how can I avoid them?

Problem: Docking results show steric clashes, incorrect bond lengths/angles, or chemically invalid structures despite favorable RMSD scores.

Solution:

- Validate with PoseBusters: Systematically evaluate docking predictions against chemical and geometric consistency criteria [5].

- Method Selection: Prefer traditional methods (Glide SP maintains >94% physical validity) or hybrid methods for critical applications requiring physical plausibility [5].

- Avoid Over-Reliance on Single Metrics: RMSD alone is insufficient; always assess physical validity alongside pose accuracy [5].

Systematic Error Source: Regression-based and generative models often prioritize pose accuracy over physical constraints, leading to chemically impossible structures.

How should I handle binding site uncertainty for new targets?

Problem: When the actual binding site is unknown, blind docking methods produce unreliable results with high false positive rates.

Solution:

- Avoid Blind Docking Abuse: Blind docking frequently places ligands in incorrect sites with artificially favorable energy scores [14].

- Implement Binding Site Detection: Use binding site detection software/algorithms before docking [14].

- Leverage Known Structural Information: When available, use the location of original crystal ligands in proteins as binding sites [14].

Systematic Error Source: Docking algorithms based on energy minimization principles will preferentially place ligands in any low-energy site, not necessarily the biologically relevant one.
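When a crystal ligand is available, its coordinates can be turned into a search box for box-based docking programs such as AutoDock Vina, which take a box center and box size. The sketch below computes an axis-aligned box around the ligand with a margin on every side; the 4 Å padding and the coordinates are hypothetical, and parsing the ligand from a structure file is left out.

```python
def box_from_ligand(coords, padding=4.0):
    """Axis-aligned search box around ligand atoms.

    coords is a list of (x, y, z) positions in angstroms.
    Returns (center, size), where size includes `padding` on every side.
    The padding value is an assumption to be tuned per target.
    """
    axes = list(zip(*coords))  # [(all x), (all y), (all z)]
    center = tuple((min(a) + max(a)) / 2 for a in axes)
    size = tuple((max(a) - min(a)) + 2 * padding for a in axes)
    return center, size


# Hypothetical heavy-atom coordinates of a co-crystallized ligand.
ligand_coords = [(10.0, 4.0, -2.0), (14.0, 6.0, 0.0), (12.0, 8.0, 2.0)]
center, size = box_from_ligand(ligand_coords)
print("center:", center)
print("size:", size)
```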

Why do my docking results fail to generalize to novel protein structures?

Problem: Methods that perform well on known complexes fail dramatically when encountering novel protein binding pockets or sequences.

Solution:

- Assess Generalization Capability: Evaluate methods across diverse datasets including Astex diverse set (known complexes), PoseBusters benchmark (unseen complexes), and DockGen (novel binding pockets) [5].

- Method Awareness: Recognize that most DL methods exhibit significant performance drops on novel protein binding pockets [5].

- Hybrid Strategy: For novel targets, consider traditional methods or hybrid approaches that maintain more consistent performance across diverse protein landscapes [5].

Systematic Error Source: Overfitting to training data distributions; limited exposure to structural diversity during model development.

Experimental Protocols for Method Validation

Protocol: Comprehensive Docking Method Evaluation

Purpose: To systematically evaluate and compare docking methods across multiple performance dimensions.

Workflow:

Methodology:

- Dataset Curation: Utilize three benchmark datasets: Astex diverse set (known complexes), PoseBusters benchmark set (unseen complexes), and DockGen dataset (novel protein binding pockets) [5].

- Method Selection: Include representatives from each performance tier: traditional (Glide SP, AutoDock Vina), generative diffusion (SurfDock, DiffBindFR), regression-based (KarmaDock, QuickBind), and hybrid methods (Interformer) [5].

- Performance Metrics:

- Calculate RMSD ≤ 2 Å success rates for pose accuracy

- Assess PB-valid rates for physical plausibility

- Determine combined success rate (RMSD ≤ 2 Å & PB-valid)

- Evaluate generalization across protein sequence similarity, ligand topology, and binding pocket structural similarity [5]
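The three success rates listed above can be aggregated from per-complex results along the following lines. This is a minimal sketch: the `PoseResult` record and its field names are hypothetical, and it assumes RMSD values and PoseBusters pass/fail flags have already been computed elsewhere.

```python
from dataclasses import dataclass


@dataclass
class PoseResult:
    complex_id: str   # hypothetical identifier for the benchmark complex
    rmsd: float       # RMSD of the top-ranked pose to the experimental pose, in Å
    pb_valid: bool    # True if the pose passed every PoseBusters check


def success_rates(results, rmsd_cutoff=2.0):
    """Return pose-accuracy, PB-valid, and combined success rates in percent."""
    n = len(results)
    if n == 0:
        raise ValueError("no results to aggregate")
    accurate = sum(r.rmsd <= rmsd_cutoff for r in results)
    valid = sum(r.pb_valid for r in results)
    both = sum(r.rmsd <= rmsd_cutoff and r.pb_valid for r in results)
    return {
        "rmsd_success": 100.0 * accurate / n,
        "pb_valid": 100.0 * valid / n,
        "combined": 100.0 * both / n,
    }


# Toy data for illustration only; these are not benchmark results.
example = [
    PoseResult("a", 1.2, True),
    PoseResult("b", 1.8, False),
    PoseResult("c", 3.5, True),
    PoseResult("d", 0.9, True),
]
rates = success_rates(example)
```

Reporting the combined rate alongside the two individual rates makes the gap between geometric accuracy and physical plausibility visible for each method.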

Protocol: Virtual Screening Validation for New Targets

Purpose: To validate docking methods for virtual screening campaigns targeting novel protein structures.


Methodology:

- Binding Site Definition:

Method Implementation:

Validation Metrics:

Research Reagent Solutions

Table 2: Essential Computational Tools for Docking Research

| Tool Category | Specific Tools | Primary Function | Application Context |
|---|---|---|---|
| Traditional Docking | Glide SP, AutoDock Vina, UCSF DOCK 3.7 | Physics-based and empirical docking | High physical validity requirements; benchmark comparisons [5] [12] |
| Generative AI Docking | SurfDock, DiffBindFR, DynamicBind | DL-based pose generation | Maximum pose accuracy; known binding sites [5] |
| Validation & Analysis | PoseBusters, TorsionChecker | Geometric and chemical validation | Method evaluation; pose quality assessment [5] [12] |
| Benchmark Datasets | Astex Diverse Set, PoseBusters Set, DockGen | Performance benchmarking | Comprehensive method evaluation [5] |
| Scoring Functions | Traditional SFs, AI-enhanced SFs | Binding affinity prediction | Virtual screening; hit identification [5] [15] |

Frequently Asked Questions (FAQs)

FAQ 1: What is the primary cause of training set bias in protein-ligand prediction models? The primary cause is the uneven representation of protein families and ligand types in public databases. Models trained on these datasets learn to rely on patterns from frequently observed proteins or ligands, rather than general principles of molecular recognition. Analysis of major affinity databases (PDBbind, BindingDB, ChEMBL) confirms that binding affinity can often be predicted using protein features alone, not from specific compound-protein interactions, because most compounds show consistent affinities due to high sequence or functional similarity among their target proteins [16].

FAQ 2: How does this bias specifically affect predictions for novel protein targets? When a model encounters a protein from a family not well-represented in its training data, its performance significantly drops. For instance, deep learning docking methods exhibit high success rates on known complexes (e.g., >90% pose accuracy for some on the Astex set) but this can fall dramatically to around 30-50% on datasets containing novel protein binding pockets (e.g., DockGen set) [5]. The models struggle to generalize to unseen binding site geometries.

FAQ 3: What does "physically implausible" docking output mean? Despite achieving a good RMSD (Root-Mean-Square Deviation) score, a predicted ligand pose might violate fundamental physical laws. The PoseBusters toolkit reveals that many deep learning methods produce structures with incorrect bond lengths/angles, clashing atoms, or implausible stereochemistry [5]. A high-confidence prediction from a model like Boltz-1 can be completely incorrect due to steric clashes, even when the overall peptide orientation seems reasonable [17].

FAQ 4: Are newer, AI-based models like AlphaFold immune to these biases? No. AlphaFold2-Multimer (AF2-Multimer) and AlphaFold3 (AF3) show remarkable accuracy in predicting protein-peptide complexes, but they also demonstrate a strong bias for previously seen structures. Their performance is best when predicting interactions for proteins or interface geometries that are well-represented in their training data, and they struggle to generalize to novel binding sites [17]. Their accuracy is also linked to the quality and depth of the Multiple Sequence Alignments (MSAs) used as input.

FAQ 5: What practical steps can I take to diagnose bias in my own prediction results? You can perform a "sequence similarity check" by comparing your target protein against the training set of the model you are using. Additionally, use validation tools like PoseBusters to check the physical validity of docking poses beyond simple RMSD metrics [5]. Be highly skeptical of high-confidence scores from a model if your target is phylogenetically distant from common model organisms in structural databases.

Troubleshooting Guides

Troubleshooting Guide 1: Poor Pose Prediction Accuracy for a Novel Target

Symptoms:

- Consistently high RMSD values (>2 Å) for predicted ligand poses, even when the model outputs high confidence scores.

- Failure to recover key, known catalytic or binding interactions in the predicted pose.

- Physically impossible ligand conformations or severe steric clashes with the protein [5].

Diagnostic Steps:

- Check Target Similarity: Use BLAST to compare your target's sequence against the PDB. A low sequence identity (<30%) to any protein in the model's training set is a strong indicator of potential failure due to bias [18].

- Validate the Protocol: Run a control docking with a known ligand-protein complex from a well-represented family (e.g., a kinase) to verify your computational setup is correct.

- Assess MSA Depth (for AF-based models): Inspect the depth and quality of the Multiple Sequence Alignment generated for your target. A shallow or poor-quality MSA for either the protein or peptide partner is a known factor reducing AlphaFold's prediction accuracy [17].

- Cross-Validate with Multiple Methods: Perform docking with one traditional physics-based method (e.g., AutoDock Vina, Glide SP) and at least one deep learning method. A consistent failure across diverse methods points to a fundamental challenge with the target itself or the setup [5].

Solutions:

- Utilize Bias-Reduced Datasets: If training a model, use resources like the BASE web service to obtain datasets where protein similarity between training and test sets is controlled, forcing the model to learn more generalized features [16].

- Employ Hybrid or Traditional Docking: In benchmarks, traditional methods like Glide SP consistently maintain high physical validity (PB-valid rates >94% across diverse datasets) and can be more reliable for novel targets where DL models fail [5].

- Incorporate Ligand-Based Constraints: Use pharmacophore models or 3D shape similarity from known active compounds to guide the docking search and pose selection, adding information not reliant on the protein structure alone [19].

Troubleshooting Guide 2: High-Ranking Virtual Screening Hits Are Inactive in Assays

Symptoms:

- Virtual screening identifies compounds with excellent predicted binding affinity, but experimental validation shows no biological activity.

- The top hits are structurally very similar to each other and to compounds in the training data, but do not generalize.

Diagnostic Steps:

- Analyze the Chemical Space: Use fingerprint-based methods (e.g., ECFP) and visualization such as UMAP to check whether your top-ranked hits cluster in only a few regions of chemical space, which indicates that the model is over-relying on specific learned scaffolds [16].

- Test for "Dataset Bias": Train a simple model (e.g., a multilayer perceptron) using only compound fingerprints. If this model can "predict" affinity with reasonable accuracy, it indicates a strong bias in your dataset where affinity is correlated with ligand structure alone, not the specific interaction with the protein [16].

- Inspect Protein Features Importance: For deep learning-based affinity prediction (DTA) models, conduct a feature importance analysis. Previous models have been shown to rely disproportionately on protein features rather than a balanced use of compound and interaction features [16].
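As a quick first pass on the chemical-space check above, the mean pairwise Tanimoto similarity of the top hits can be computed before any UMAP projection. The sketch below assumes each fingerprint is already available as a set of on-bit indices (for example, exported from an ECFP calculation in a cheminformatics toolkit); the bit sets shown are invented for illustration.

```python
from itertools import combinations


def tanimoto(a, b):
    """Tanimoto similarity between two fingerprints given as sets of on-bits."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def mean_pairwise_similarity(fingerprints):
    """Average Tanimoto similarity over all unordered pairs of fingerprints."""
    pairs = list(combinations(fingerprints, 2))
    if not pairs:
        raise ValueError("need at least two fingerprints")
    return sum(tanimoto(a, b) for a, b in pairs) / len(pairs)


# Hypothetical on-bit sets for three top-ranked hits.
hit_fingerprints = [{1, 2, 3, 4}, {1, 2, 3, 5}, {1, 2, 3, 4, 5}]
similarity = mean_pairwise_similarity(hit_fingerprints)
```

A high mean similarity among the top hits suggests the ranking is dominated by one scaffold, which is the pattern described in the symptoms above.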

Solutions:

- Apply More Stringent Filters: Use drug-likeness (e.g., Lipinski's Rule of Five), PAINS filters, and synthetic accessibility scoring to remove unreasonable hits early.

- Use Blind Docking: If the binding site is unknown or suspected to be novel, use a docking method capable of blind docking (e.g., DynamicBind) to explore the entire protein surface [5].

- Rescore with Advanced Functions: Take the top poses from a fast docking run and rescore them using more sophisticated, potentially slower, scoring functions or free energy perturbation methods to improve the affinity ranking [19].
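The drug-likeness filter mentioned in the first solution above can be sketched as a simple violation count for Lipinski's Rule of Five. The descriptor values are assumed to be precomputed by a cheminformatics toolkit, the compound records are invented, and tolerating one violation is a common convention rather than something the cited sources prescribe.

```python
def lipinski_violations(mw, logp, hbd, hba):
    """Count Rule-of-Five violations from precomputed descriptors."""
    return sum([
        mw > 500,    # molecular weight above 500 Da
        logp > 5,    # octanol-water logP above 5
        hbd > 5,     # more than 5 hydrogen-bond donors
        hba > 10,    # more than 10 hydrogen-bond acceptors
    ])


# Hypothetical hits with precomputed descriptors.
hits = [
    {"id": "cmpd_1", "mw": 342.4, "logp": 2.1, "hbd": 2, "hba": 5},
    {"id": "cmpd_2", "mw": 712.9, "logp": 6.3, "hbd": 4, "hba": 9},
    {"id": "cmpd_3", "mw": 523.6, "logp": 4.2, "hbd": 1, "hba": 7},
]

# Keep compounds with at most one violation (assumed tolerance).
kept = [
    h["id"]
    for h in hits
    if lipinski_violations(h["mw"], h["logp"], h["hbd"], h["hba"]) <= 1
]
```

PAINS and synthetic accessibility filters would be applied as further independent steps; they are not covered by this sketch.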

Experimental Protocols & Data

Protocol 1: Benchmarking Docking Method Generalization

Objective: To systematically evaluate the performance of a docking method when faced with novel protein binding pockets.

Methodology:

- Dataset Curation:

- Use the DockGen dataset, specifically designed to test generalization to novel binding pockets, or create a custom set by clustering proteins based on binding site structural similarity [5].

- Ensure the test set proteins have low sequence and structural similarity to proteins in the method's training set.

- Performance Metrics:

- Pose Accuracy: Calculate the ligand RMSD (Root-Mean-Square Deviation) of the top-ranked pose against the experimental structure. A threshold of ≤ 2 Å is commonly used for a "successful" prediction [5].

- Physical Validity: Use the PoseBusters toolkit to check for chemical and geometric inconsistencies, including bond lengths, angles, stereochemistry, and steric clashes. Report the "PB-valid" rate [5].

- Interaction Recovery: Quantify the recovery of key molecular interactions (e.g., hydrogen bonds, hydrophobic contacts, salt bridges) present in the experimental structure.

- Execution:

- Run the docking method(s) on the benchmark dataset.

- For each complex, record the RMSD, PB-valid status, and number of recovered native contacts.

- Aggregate results to calculate success rates for each metric.
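The per-complex quantities recorded in the execution step can be computed roughly as follows. This is a sketch under two stated assumptions: the predicted and reference atoms are already in matching order, and ligand symmetry is ignored, which a production tool would correct for. No superposition is applied, because docking RMSD is measured in the receptor's frame of reference. The interaction labels are hypothetical strings.

```python
import math


def ligand_rmsd(pred, ref):
    """RMSD in Å between matched atom coordinates, without superposition."""
    if len(pred) != len(ref) or not pred:
        raise ValueError("coordinate lists must be non-empty and of equal length")
    squared = sum(
        (p[k] - r[k]) ** 2 for p, r in zip(pred, ref) for k in range(3)
    )
    return math.sqrt(squared / len(pred))


def interaction_recovery(native, predicted):
    """Fraction of native interactions that also appear in the predicted pose."""
    if not native:
        raise ValueError("native interaction set is empty")
    return len(native & predicted) / len(native)


# Toy ligand with three atoms; the prediction is shifted by 1 Å along x.
reference = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0), (1.5, 1.5, 0.0)]
predicted = [(x + 1.0, y, z) for x, y, z in reference]
rmsd = ligand_rmsd(predicted, reference)

# Hypothetical interaction labels of the form "type:residue".
native_contacts = {"hbond:ASP86", "hbond:LYS33", "hydrophobic:LEU134"}
pose_contacts = {"hbond:ASP86", "hydrophobic:LEU134", "hydrophobic:PHE80"}
recovery = interaction_recovery(native_contacts, pose_contacts)
```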

This workflow is summarized in the following diagram:

Protocol 2: Creating a Bias-Reduced Dataset for Affinity Prediction

Objective: To generate a training/test split for a Drug-Target Affinity (DTA) model that minimizes the influence of protein similarity bias.

Methodology (as implemented in the BASE web service) [16]:

- Data Collection: Collect binding affinity data from public sources (PDBbind, BindingDB, ChEMBL, etc.), restricting to human proteins and standardizing affinity measurements to a common negative-log scale (e.g., pKd or pKi).

- Identify Multi-Target Compounds: For compounds with affinity data for multiple proteins, calculate the Coefficient of Variation (CV) of their binding affinities. CV = standard deviation / mean.

- Set a Variation Threshold: Use the mean CV of approved drugs (from DrugBank) as a threshold to categorize multi-target compounds into "Low Variation" and "High Variation" groups.

- Create Bias-Reduced Splits: Systematically split the data to ensure that proteins in the test set have low sequence or functional similarity to proteins in the training set. This can be done by clustering proteins and ensuring no cluster is represented in both training and test sets.

- Validation: Train a simple model (e.g., MLP on compound fingerprints) on the new dataset. A significant drop in performance compared to a random split indicates the bias has been reduced, forcing the model to learn true interactions.
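The variation and splitting steps above can be illustrated with a short sketch. Everything concrete here is an assumption for illustration: the function names, the use of the population standard deviation, the threshold value of 0.1 (the protocol derives its threshold from approved drugs), and the precomputed protein-to-cluster mapping.

```python
from statistics import mean, pstdev


def coefficient_of_variation(affinities):
    """CV = standard deviation / mean (population standard deviation assumed)."""
    m = mean(affinities)
    if m == 0:
        raise ValueError("CV is undefined for a zero mean")
    return pstdev(affinities) / m


def split_by_variation(compound_affinities, threshold):
    """Group multi-target compounds into low- and high-variation lists."""
    low, high = [], []
    for compound, values in compound_affinities.items():
        if len(values) < 2:
            continue  # single-target compounds carry no variation information
        cv = coefficient_of_variation(values)
        (low if cv <= threshold else high).append(compound)
    return low, high


def cluster_disjoint_split(protein_to_cluster, test_clusters):
    """Assign whole protein clusters to either the training or the test set."""
    train = sorted(p for p, c in protein_to_cluster.items() if c not in test_clusters)
    test = sorted(p for p, c in protein_to_cluster.items() if c in test_clusters)
    return train, test


# Hypothetical affinities (negative-log scale) per compound across its targets.
affinities = {
    "cmpd_A": [6.0, 6.1, 5.9],
    "cmpd_B": [4.0, 8.0],
    "cmpd_C": [7.2],
}
low_var, high_var = split_by_variation(affinities, threshold=0.1)

# Hypothetical cluster labels from a prior sequence-similarity clustering.
clusters = {"P1": 0, "P2": 0, "P3": 1, "P4": 2}
train_proteins, test_proteins = cluster_disjoint_split(clusters, test_clusters={1})
```

Splitting at the cluster level, rather than at the protein level, is what prevents close homologs from appearing on both sides of the split.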

The logical flow of this analysis is as follows:

Quantitative Performance Data

Table 1: Comparative Performance of Docking Methods on Novel vs. Known Complexes [5]

| Method Category | Example Method | Known Complexes (Astex) RMSD ≤ 2 Å / PB-Valid | Novel Pockets (DockGen) RMSD ≤ 2 Å / PB-Valid | Key Limitation |
|---|---|---|---|---|
| Traditional | Glide SP | ~80% / >97% | ~45% / >94% | Computationally intensive search |
| Generative Diffusion | SurfDock | ~92% / ~64% | ~76% / ~40% | Poor physical plausibility |
| Regression-Based | KarmaDock | ~40% / ~10% | ~15% / ~5% | Often produces invalid poses |
| Hybrid (AI Scoring) | Interformer | ~85% / ~90% | ~50% / ~65% | Balance of accuracy and validity |

Table 2: Impact of Protein Similarity on AlphaFold2-Multimer Performance [17]

| Condition | Protein-Peptide Complexes with High-Quality Prediction (DockQ >0.8) | Key Observation |
|---|---|---|
| High similarity to training data | High success rate (≥60%) | Performance strongly depends on overlap with training set. |
| Low similarity to training data | Significant performance drop | Struggles to generalize to novel proteins/binding sites. |
| With shallow/poor peptide MSA | Reduced accuracy | Peptide MSA quality is critical for peptide conformation prediction. |
| Confidence Score (ipTM+pTM) >0.75 | 66-77% are high-quality | Low-confidence predictions are rarely accurate, but false positives exist. |

Research Reagent Solutions

Table 3: Essential Computational Tools for Bias Analysis and Mitigation

| Research Reagent | Function & Utility | Reference |
|---|---|---|
| BASE Web Service | Provides binding affinity prediction datasets with reduced protein similarity bias between training and test sets, promoting generalized model development. | [16] |
| PoseBusters Toolkit | Validates the physical plausibility and chemical correctness of docking poses, a critical check for deep learning model outputs that may have good RMSD but bad geometry. | [5] |
| DockGen Dataset | A benchmark set containing novel protein binding pockets, specifically designed to test the generalization capabilities of docking methods beyond their training data. | [5] |
| AlphaFold2/3 & AF2-Multimer | State-of-the-art protein structure and complex prediction tools. Performance is contingent on MSA depth and can show bias towards previously seen structures. | [17] |
| Boltz-1 & Chai-1 | Newer deep learning models for predicting protein-peptide binding geometry. Exhibit performance trends and biases similar to the AlphaFold family. | [17] |

Beyond Standard Protocols: Advanced Docking Strategies and Hybrid Frameworks

➤ Troubleshooting Guide: Common Experimental Issues

FAQ 1: My diffusion model predicts a ligand pose with a low RMSD, but the structure looks physically implausible. What is wrong? This is a known limitation where models prioritize RMSD over physical constraints [5]. The PoseBusters toolkit can systematically check for issues like invalid bond lengths, angles, or steric clashes [5].

- Solution: Implement a post-prediction refinement step using a physics-based force field or a traditional scoring function to correct physicochemical inaccuracies without significantly altering the overall pose [5].

FAQ 2: Why does my model perform well on standard benchmarks but fail on my novel protein target? This indicates a generalization failure. Most deep learning docking models are trained on datasets such as PDBBind, which primarily contain holo (ligand-bound) structures. Because of induced fit, these models struggle with apo (unbound) or otherwise novel protein conformations [20].

- Solution:

- Use Flexible Docking Models: Employ next-generation models like FlexPose or DynamicBind that explicitly model protein sidechain or backbone flexibility to account for conformational changes upon ligand binding [20].

- Data Augmentation: If fine-tuning, incorporate cross-docking and apo-docking data into your training set to expose the model to a wider variety of protein conformations [20].

FAQ 3: During inference, my diffusion model is slow and computationally expensive. How can I optimize this? The iterative denoising process of diffusion models is inherently more computationally intensive than a single forward pass in regression-based models [21].

- Solution: Explore hybrid methods, such as using a diffusion model to generate initial poses and then a faster, traditional method for scoring and refinement. This balances accuracy and efficiency [5].

FAQ 4: The model fails to reproduce key molecular interactions (e.g., hydrogen bonds) even when the overall pose is correct. How can I fix this? The model's loss function may be overly focused on coordinate error (RMSD) and not sufficiently weighted to recover critical interactions [5].

- Solution: Integrate interaction-specific terms (e.g., hydrogen bonding, hydrophobic contact loss) into the training loss function to guide the model toward biophysically realistic predictions [5].

➤ Performance Data: Quantitative Comparisons

The table below summarizes the performance of different docking method classes across key benchmarks, illustrating the trade-off between pose accuracy and physical validity [5].

Table 1: Docking Method Performance Comparison (Success Rates %)

| Method Class | Representative Model | Astex Diverse Set (RMSD ≤ 2 Å & PB-Valid) | PoseBusters Benchmark (RMSD ≤ 2 Å & PB-Valid) | DockGen Novel Pockets (RMSD ≤ 2 Å & PB-Valid) |
|---|---|---|---|---|
| Generative Diffusion | SurfDock | 61.18 | 39.25 | 33.33 |
| Generative Diffusion | DiffBindFR | ~34.73 (avg.) | ~34.23 (avg.) | ~20.90 (avg.) |
| Traditional | Glide SP | 78.82 | 63.55 | 52.63 |
| Regression-Based | KarmaDock, GAABind | < 20.00 | < 10.00 | < 5.00 |

➤ Featured Experimental Protocol: DiffDock Workflow

DiffDock is a seminal diffusion model for molecular docking that treats pose prediction as a generative problem [21].

Detailed Step-by-Step Protocol:

Input Representation:

- Protein Structure: Process the protein's 3D structure into a graph or geometric representation, often using residue-level or atomic-level features.

- Ligand Structure: Represent the ligand as a graph or with its initial 3D coordinates, including features like atom type and bonds.

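Forward Noising Process:

- During training, noise is progressively added to the ligand's position, orientation, and torsion angles, so that the bound pose is gradually diffused toward a random one.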

Reverse Denoising Process (Inference):

- A neural network (an SE(3)-Equivariant Graph Neural Network) is trained to learn the reverse of this noising process [20].

- Starting from a random ligand pose (pure noise), the trained network iteratively refines the pose over multiple steps by predicting and removing noise.

- The model outputs multiple potential poses along with confidence estimates for each prediction [21].

Output and Validation:

- The top-ranked pose (based on the model's confidence) is selected.

- The predicted complex must be validated using a toolkit like PoseBusters to check for physical plausibility and the RMSD calculated against an experimental ground truth if available [5].

➤ The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Resources for Diffusion-Based Docking Experiments

| Item | Function in Research | Example / Note |
|---|---|---|
| Structured Datasets | Training and benchmarking models. | PDBBind (general), DockGen (novel pockets) [5]. |
| Evaluation Toolkits | Assessing physical plausibility of predictions. | PoseBusters toolkit checks steric clashes, bond lengths/angles [5]. |
| Specialized Software | Implementing core diffusion algorithms. | DiffDock [21], SurfDock [5], FlexPose (for flexibility) [20]. |
| Traditional Docking Suites | For hybrid workflow refinement and scoring. | Glide SP, AutoDock Vina; excel in physical validity [5]. |
| Computational Resources | Handling the iterative denoising process. | GPUs/TPUs with high VRAM; inference is more costly than regression models [21]. |

Frequently Asked Questions (FAQs)

FAQ 1: Why does my AI-powered virtual screening return good binders that are synthetically inaccessible? This is a common issue where the scoring function is disconnected from practical chemistry. AI scoring functions, including geometric deep learning models like DeepDock, are often trained solely on binding affinity data and may prioritize compounds that are difficult or impossible to synthesize [22]. To address this:

- Implement a pre-filtering step: Use rule-based filters (e.g., REOS) or a retrosynthetic analysis tool to remove compounds with problematic functional groups or complex synthetic routes before running the AI scoring.

- Incorporate synthetic accessibility scores: Integrate a synthetic accessibility score as a weighted term alongside the AI-based binding affinity score during the final ranking of compounds [23] [22].
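One way to fold synthetic accessibility into the final ranking, as suggested above, is a weighted sum. The sketch below is illustrative only: it assumes the AI binding score has been normalized to [0, 1] with higher meaning better, that the synthetic accessibility (SA) score lies on the commonly used 1 (easy) to 10 (hard) scale, and that the weight of 0.3 is an arbitrary placeholder to be tuned.

```python
def combined_score(binding, sa, weight=0.3):
    """Blend a normalized binding score with a synthetic accessibility penalty.

    binding: AI-predicted binding score scaled to [0, 1], higher is better.
    sa:      synthetic accessibility score from 1 (easy) to 10 (hard).
    weight:  relative importance of synthesizability (assumed value).
    """
    sa_penalty = (sa - 1.0) / 9.0  # rescale the SA score to [0, 1]
    return (1.0 - weight) * binding - weight * sa_penalty


# Hypothetical candidates: (identifier, normalized binding score, SA score).
candidates = [("A", 0.9, 8.0), ("B", 0.8, 2.5), ("C", 0.5, 2.0)]

ranked = sorted(
    candidates,
    key=lambda c: combined_score(c[1], c[2]),
    reverse=True,
)
ranked_ids = [c[0] for c in ranked]
```

In this toy case the strongest predicted binder drops to second place because it is much harder to synthesize, which is the intended effect of the weighting.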

FAQ 2: My AI scoring function performs well on test sets but fails during prospective screening on a new target. How can I improve its generalizability? This indicates overfitting to the training data. AI models, including graph neural networks and transformers, can struggle to generalize across diverse protein-ligand pairs, especially for new protein folds or chemotypes [24].

- Use hybrid scoring: Employ a consensus scoring strategy that combines the AI score with a physics-based or empirical score (e.g., MM/GBSA). This leverages the strengths of both approaches [22] [25].

- Augment training data: If possible, incorporate data from the new target family, even if it's sparse or from low-fidelity assays, to fine-tune the model. Techniques like transfer learning can be beneficial.

- Validate with controls: Before full-scale screening, run a control screen with known actives and decoys for the new target to benchmark the AI function's performance [23].
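For the control screen with known actives and decoys, a standard summary statistic is the enrichment factor (EF) in the top fraction of the ranked list. The choice of EF here is ours, not something the cited sources mandate, and the scores and labels below are invented.

```python
def enrichment_factor(scores, labels, fraction=0.01, higher_is_better=True):
    """Enrichment factor: active rate in the top fraction / overall active rate.

    scores: one score per compound.
    labels: 1 for a known active, 0 for a decoy (same order as scores).
    """
    n = len(scores)
    total_actives = sum(labels)
    if n == 0 or total_actives == 0:
        raise ValueError("need at least one compound and one active")
    n_top = max(1, int(round(n * fraction)))
    order = sorted(range(n), key=lambda i: scores[i], reverse=higher_is_better)
    top_actives = sum(labels[i] for i in order[:n_top])
    return (top_actives / n_top) / (total_actives / n)


# Toy control screen: 2 actives among 10 compounds, both ranked at the top.
scores = [9.1, 8.7, 5.0, 4.2, 4.0, 3.3, 3.1, 2.0, 1.5, 0.4]
labels = [1, 1, 0, 0, 0, 0, 0, 0, 0, 0]
ef_20 = enrichment_factor(scores, labels, fraction=0.2)
```

An EF close to 1 means the scoring function ranks actives no better than random selection for that target.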

FAQ 3: How do I handle water molecules and protonation states when integrating AI scoring with a traditional conformational search? AI scoring functions can be sensitive to the precise chemical environment. Incorrect protonation states or misplaced key water molecules are a major source of false positives and pose prediction errors [25].

- Systematic preparation: Use tools like the Protein Preparation Wizard (Schrödinger) or PROPKA to calculate the protonation states of ionizable residues (especially Histidine) at physiological pH before generating conformational ensembles [22] [25].

- Conserve key waters: If crystallographic data shows conserved water molecules in the binding site that mediate interactions, include them in both the conformational search and the subsequent docking/scoring steps. The decision can be based on their B-factors and hydrogen-bonding networks [25].

FAQ 4: The conformational ensemble I generated is too large for efficient AI rescoring. What is the best way to reduce it? Traditional methods like Replica Exchange Molecular Dynamics (REMD) can generate millions of conformations, creating a computational bottleneck [26] [27].

- Cluster analysis: Use cluster analysis (e.g., using RMSD metrics) on the MD trajectory to identify representative conformations from the largest clusters. This significantly reduces the number of structures while capturing the essential conformational diversity [26].

- Geometric deep learning for pre-screening: Train an autoencoder to project conformations into a low-dimensional latent space. You can then sample efficiently from this space to select a diverse and representative subset of conformations for more expensive AI scoring [27].
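The cluster-analysis option above can be sketched with a greedy leader algorithm, which is a simple stand-in and not necessarily the clustering method used in the cited work. The `distance` callable is assumed to return a pairwise RMSD; the toy example substitutes scalar values so that it runs without structure files.

```python
def leader_cluster(items, distance, cutoff):
    """Greedy leader clustering.

    Each item joins the first cluster whose leader lies within `cutoff`;
    otherwise it starts a new cluster. Returns lists of item indices,
    largest cluster first, with the leader as the first index.
    """
    clusters = []
    for i, item in enumerate(items):
        for cluster in clusters:
            if distance(items[cluster[0]], item) <= cutoff:
                cluster.append(i)
                break
        else:
            clusters.append([i])
    return sorted(clusters, key=len, reverse=True)


# Scalars stand in for conformations; abs difference stands in for RMSD.
conformations = [0.0, 0.3, 5.0, 5.2, 0.1]
clusters = leader_cluster(conformations, lambda a, b: abs(a - b), cutoff=1.0)

# One representative (the leader) per cluster is passed on to AI rescoring.
representatives = [cluster[0] for cluster in clusters]
```

The result depends on input order and on the cutoff, so both should be reported when the reduced ensemble is used downstream.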

Troubleshooting Guides

Problem: Poor enrichment of known active compounds during a hybrid virtual screening campaign. This suggests a failure in either the conformational search, the scoring function, or the integration between them.

| Potential Cause | Diagnostic Steps | Solution |
|---|---|---|
| Inadequate conformational sampling of the protein target. | Check if the known active ligands can be docked into the generated conformational ensemble in a pose that resembles their crystal structure. | Expand the conformational search. Use enhanced sampling methods like REMD instead of single, short MD simulations to better capture flexibility and rare states [26]. |
| A bias in the training data of the AI scoring function. | Check the chemical space and target classes the AI model was trained on. Test the scoring function on a held-out test set of known actives/decoys for your target. | Switch to a more generalizable scoring function or retrain/fine-tune the AI model with data relevant to your target. Employ a hybrid MM/GBSA + AI scoring approach to add physical realism [24] [22]. |
| The ligand conformational library is poor. | Check if low-energy ligand conformers can sterically fit and form key interactions in the binding site. | Improve the ligand conformational search. Use an explicit-solvent REMD workflow, as solvation can significantly impact low-energy conformations, which is missed by gas-phase searches [26]. |

Problem: High computational cost of the integrated workflow, making large-scale screening infeasible.

| Potential Cause | Diagnostic Steps | Solution |
|---|---|---|
| The traditional conformational search stage is too expensive. | Profile the computation time. REMD with explicit solvent is highly accurate but computationally intensive [26]. | Consider a multi-stage approach. Use a fast, implicit-solvent search to broadly sample space, followed by a focused explicit-solvent refinement on promising regions. Alternatively, use AI-based generative autoencoders to mine conformational space from short, and hence cheaper, MD simulations [27]. |
| AI rescoring is applied to too many conformer-ligand complexes. | Determine the number of poses being rescored. | Implement a stricter filtering funnel. Use a traditional scoring function to quickly screen down the compound library to a manageable number (e.g., top 1%) before applying the more expensive AI rescoring [23] [22]. |

Experimental Protocols & Data Presentation

Protocol 1: Explicit-Solvent Conformational Search Using REMD

This protocol generates a biologically relevant conformational ensemble for a flexible drug target, accounting for solvation effects [26].

- System Setup: Place the protein or molecule of interest in a solvation box filled with explicit water molecules. Add counterions to neutralize the system's charge.

- Energy Minimization: Perform energy minimization to remove any steric clashes introduced during the setup process.

- Equilibration: Run a short MD simulation with positional restraints on the solute to equilibrate the solvent and ions around it.

- REMD Production Run:

- Launch multiple replicas (e.g., 24-48) of the system, each at a different temperature (e.g., ranging from 300 K to 500 K).

- Run MD for a fixed simulation time (e.g., 100 ps to 1 ns) per replica.

- Attempt an exchange of coordinates between neighboring temperature replicas periodically based on the Metropolis criterion.

- Continue for a duration long enough to see multiple folding/unfolding events or conformational transitions.

- Trajectory Analysis: Extract the trajectory from the replica of interest (usually 300 K). Use cluster analysis (e.g., using RMSD) to group similar structures and identify the most probable conformational states.
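The exchange step in the production run relies on the Metropolis criterion, which for temperature replica exchange accepts a swap between replicas i and j with probability min(1, exp[(β_i − β_j)(E_i − E_j)]), where β = 1/(k_B T). The sketch below evaluates that expression; the energies and temperatures are made-up values, and kcal/mol is an assumed unit choice.

```python
import math

K_B = 0.0019872041  # Boltzmann constant in kcal/(mol*K)


def exchange_probability(e_i, t_i, e_j, t_j):
    """Metropolis acceptance probability for swapping replicas i and j.

    e_i, e_j: potential energies (kcal/mol) of the configurations currently
              held at temperatures t_i and t_j (K).
    """
    beta_i = 1.0 / (K_B * t_i)
    beta_j = 1.0 / (K_B * t_j)
    delta = (beta_i - beta_j) * (e_i - e_j)
    return 1.0 if delta >= 0 else math.exp(delta)


# The colder replica holds the higher-energy configuration: always accepted.
p_favourable = exchange_probability(-1000.0, 300.0, -1005.0, 310.0)

# The colder replica holds the lower-energy configuration: accepted sometimes.
p_unfavourable = exchange_probability(-1005.0, 300.0, -1000.0, 310.0)
```

Because the acceptance probability falls off quickly as the temperature gap widens, the spacing of the temperature ladder largely determines how often neighboring replicas actually exchange.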

Protocol 2: Hybrid AI/Energy-Based Virtual Screening Workflow

This protocol, adapted from a JAK3 inhibitor discovery campaign, integrates traditional docking with AI-based scoring to improve hit rates [22].

- Preparation: Prepare the protein structure (e.g., from PDB 5LWN) and a large compound library (e.g., ChemDiv, ZINC) using standard tools (e.g., Schrödinger's Protein Preparation Wizard and LigPrep).

- Standard Docking Screen:

- Perform high-throughput molecular docking (e.g., Glide SP) to screen the entire library, keeping the top 250,000 compounds.

- Re-dock the top hits with higher precision (e.g., Glide XP), keeping the top 20,000 compounds.

- Energy Refinement: Refine the top docking poses and estimate binding free energies using a more rigorous method like MM/GBSA.

- AI Rescoring: Score the MM/GBSA-refined poses using a geometric deep learning-based scoring function (e.g., DeepDock).

- Final Selection: Manually inspect the top-ranked compounds by the AI model, considering factors like chemical novelty, synthetic feasibility, and interaction patterns, to select a final set (e.g., 10-50 compounds) for experimental testing.
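The staged narrowing in this protocol can be expressed as a small filtering funnel. The sketch below is schematic: the score fields (`sp`, `xp`, `ai`) are hypothetical precomputed values standing in for the outputs of the respective programs, the MM/GBSA refinement stage is omitted for brevity, and the cutoffs are scaled down from the 250,000 and 20,000 used in the protocol.

```python
def keep_top(compounds, key, n, lower_is_better=True):
    """Return the n best compounds according to the score stored under `key`."""
    ordered = sorted(compounds, key=lambda c: c[key], reverse=not lower_is_better)
    return ordered[:n]


# Hypothetical library with precomputed scores. Docking scores are treated as
# lower-is-better; the AI score is treated as higher-is-better.
library = [
    {"id": "c1", "sp": -9.0, "xp": -10.0, "ai": 0.70},
    {"id": "c2", "sp": -8.5, "xp": -7.0, "ai": 0.90},
    {"id": "c3", "sp": -8.0, "xp": -9.5, "ai": 0.85},
    {"id": "c4", "sp": -7.5, "xp": -9.0, "ai": 0.60},
    {"id": "c5", "sp": -6.0, "xp": -11.0, "ai": 0.95},
    {"id": "c6", "sp": -5.0, "xp": -8.0, "ai": 0.80},
]

stage1 = keep_top(library, "sp", n=4)                       # fast docking screen
stage2 = keep_top(stage1, "xp", n=3)                        # higher-precision re-docking
final = keep_top(stage2, "ai", n=2, lower_is_better=False)  # AI rescoring
final_ids = [c["id"] for c in final]
```

Note that compound `c5` has the best high-precision and AI scores but never reaches those stages, which illustrates how an early cutoff in a funnel can discard true positives.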

Table 1: Comparison of Conformational Search Methods

| Method | Key Features | Solvent Handling | Relative Computational Cost | Best Use Case |
|---|---|---|---|---|
| Systematic Torsional Scan [28] | Exhaustively scans dihedral angles | Gas phase or Implicit | Low to Medium | Small, rigid molecules |
| Molecular Dynamics (MD) [26] | Samples Boltzmann-weighted ensemble | Explicit | High | Studying dynamics and kinetics |
| Replica Exchange MD (REMD) [26] | Enhanced sampling across temperatures | Explicit | Very High | Complex biomolecules, overcoming energy barriers |
| Generative Autoencoder [27] | AI learns from short MD to generate vast ensembles | Can be trained on explicit-solvent MD | Low (after training) | Sampling vast spaces of IDPs |

Table 2: Performance of AI-Driven Methods in PLI Prediction

| AI Model Type | Application in PLI | Reported Advantage | Key Limitation |
|---|---|---|---|
| Geometric Deep Learning / GNNs [24] [22] | Scoring, Affinity Prediction | Incorporates 3D structural information; outperforms traditional docking in virtual screening [24]. | Requires high-quality 3D structures; generalizability [24]. |
| Generative Autoencoders [27] | Conformational Mining | Can generate full conformational ensembles of IDPs from short MD simulations, validated by SAXS/NMR [27]. | Reconstruction accuracy decreases for larger proteins (>40 residues) [27]. |
| Diffusion Models [24] | Pose Prediction | Improves accuracy of ligand pose generation. | Still emerging; sampling efficiency can be a challenge. |
| Transformers & Mixture Density Networks [24] | Binding Site Prediction | Refines binding site ID using hybrid sequence and structure embeddings. | Performance depends on training data breadth. |


The Scientist's Toolkit: Essential Research Reagents & Software

Table 3: Key Resources for Hybrid Conformational Search and Screening

| Item / Resource | Type | Function / Application | Example Tools / Sources |
|---|---|---|---|
| Molecular Dynamics Engine | Software | Samples protein/ligand conformations using physics-based force fields; essential for generating initial training data and rigorous ensembles. | GROMACS, AMBER, NAMD, OpenMM [26] [27] |
| Conformational Search Tool | Software | Systematically or heuristically generates low-energy molecular conformers. | TINKER (scan), OMEGA, CONFGEN [26] [28] |
| Docking Software | Software | Predicts binding poses and scores for ligand-receptor complexes. | DOCK3.7, AutoDock Vina, Glide (SP/XP) [23] [22] [25] |
| AI Scoring Function | Algorithm / Software | Rescores docking poses using trained neural networks for improved affinity prediction. | DeepDock, other geometric deep learning models [24] [22] |
| Free Energy Calculator | Software | Calculates more rigorous binding free energies (MM/GBSA, MM/PBSA) for pose refinement or consensus scoring. | Schrödinger (Prime), AMBER, GROMACS [22] [25] |
| Structured Compound Library | Database | Provides chemically diverse, often commercially available, small molecules for virtual screening. | ZINC15, ChemDiv, MCEC [23] [22] |

Leveraging Molecular Dynamics for Pre- and Post-Docking Conformational Sampling

Frequently Asked Questions (FAQs)

FAQ 1: Why should I use Molecular Dynamics (MD) simulations before docking? MD simulations prior to docking generate multiple, physiologically relevant conformations of your target protein. This is crucial for capturing inherent protein flexibility and conformational changes induced by mutations, which rigid docking often misses. Using an ensemble of receptor structures from MD trajectories significantly improves the biological relevance of your docking results, especially for proteins with flexible binding sites or those affected by allosteric effects [3] [29].

FAQ 2: How does post-docking MD refinement improve my results? Post-docking MD simulations allow the docked ligand-receptor complex to relax and evolve into a more realistic, energetically stable conformation. This process refines the binding pose by accounting for induced-fit effects—subtle adjustments in the protein's structure upon ligand binding—which are largely ignored by standard docking programs. This leads to more accurate prediction of binding modes and interaction energies [3].

FAQ 3: My docking results are poor despite a correct binding site. What conformational sampling issue could be the cause? This is a common problem when the protein's active conformation is not adequately represented by a single, static crystal structure. Flexible loops or side-chain reorientations can drastically alter the binding site geometry. Implementing a pre-docking MD simulation can sample these alternative conformations. Clustering the resulting MD trajectories based on binding site residue RMSD allows you to dock against representative scaffold structures that reflect the true conformational diversity of the target [29].

FAQ 4: What is the recommended simulation time for generating meaningful pre-docking conformational ensembles? The necessary simulation length is highly protein-dependent. However, for the purpose of capturing variant-induced changes in a ligand-binding interface, simulations on the order of hundreds of nanoseconds are often sufficient to sample the relevant structural diversity. The goal is to achieve convergence in the conformational space of the binding site residues [29].

FAQ 5: Are there alternatives to full MD for conformational sampling in resource-limited scenarios? Yes, advanced conformational sampling tools like CREST (using iterated metadynamics) or Multiple-Minimum Monte Carlo (MMMC) methods can be highly effective. CREST uses metadynamics to bias simulations away from already-seen conformations, efficiently exploring the energy landscape. The MMMC method randomly modifies dihedral angles, followed by minimization, to find low-energy conformers and can be particularly effective for large, flexible molecules [30] [31].

Troubleshooting Guides

Issue 1: Inadequate Sampling of Receptor Conformations

Problem: Virtual screening fails to identify active compounds because the rigid receptor structure does not represent the conformational state that binds the ligand.

Solution:

- Perform pre-docking MD: Run an all-atom, explicit solvent MD simulation of the apo (ligand-free) receptor.

- Cluster the trajectory: Analyze the MD trajectory and cluster frames based on the Root Mean Square Deviation (RMSD) of the backbone atoms of the binding site residues.

- Select representative scaffolds: Choose the central structure from the most populated clusters for your docking studies.

Workflow Implementation (varScaffold Module from SNP2SIM):

- Input: PDB or DCD trajectory files from MD simulations [29].

- Alignment: Align all trajectory frames to a common reference (e.g., the protein backbone) [29].

- Clustering: Use the VMD "measure cluster" command on the binding site residue backbone RMSD with a user-defined threshold to identify the top 5 most populated conformational clusters [29].

- Output: Generate a set of variant-specific protein scaffolds from the representative structure of each major cluster for subsequent docking [29].
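The clustering step can be illustrated with a minimal sketch. It is not the varScaffold or VMD implementation: it is a simple greedy neighbour-count clustering written in plain Python, and the function names (`rmsd`, `cluster_frames`), the toy coordinates, and the 0.15 threshold are assumptions made for illustration. Frames are assumed to be pre-aligned and reduced to binding-site backbone atoms.

```python
import math

def rmsd(a, b):
    """RMSD between two pre-aligned frames given as lists of (x, y, z) tuples."""
    sq = sum((p - q) ** 2 for u, v in zip(a, b) for p, q in zip(u, v))
    return math.sqrt(sq / len(a))

def cluster_frames(frames, threshold, max_clusters=5):
    """Greedy clustering: repeatedly pick the frame with the most unassigned
    neighbours within `threshold` and remove that neighbourhood.
    Returns [(representative_index, member_indices), ...], largest first."""
    n = len(frames)
    dist = [[rmsd(frames[i], frames[j]) for j in range(n)] for i in range(n)]
    unassigned = set(range(n))
    clusters = []
    while unassigned and len(clusters) < max_clusters:
        # The representative is the frame with the largest neighbourhood;
        # ties are broken by the lowest frame index.
        best = max(
            sorted(unassigned),
            key=lambda i: sum(dist[i][j] <= threshold for j in unassigned),
        )
        members = sorted(j for j in unassigned if dist[best][j] <= threshold)
        clusters.append((best, members))
        unassigned -= set(members)
    return clusters

# Toy trajectory: five frames of a two-atom "binding site" (made-up coordinates).
frames = [
    [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)],
    [(0.1, 0.0, 0.0), (1.1, 0.0, 0.0)],
    [(0.2, 0.0, 0.0), (1.2, 0.0, 0.0)],
    [(5.0, 0.0, 0.0), (6.0, 0.0, 0.0)],
    [(5.1, 0.0, 0.0), (6.1, 0.0, 0.0)],
]
clusters = cluster_frames(frames, threshold=0.15)
print(clusters)  # -> [(1, [0, 1, 2]), (3, [3, 4])]
```

The representative index of each cluster is the frame that would be exported as a docking scaffold. For real trajectories the pairwise RMSD matrix grows quadratically with the number of frames, so striding the trajectory before clustering is usually worthwhile.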

Issue 2: Physically Implausible or Clashed Docking Poses

Problem: Even top-ranked docking poses exhibit steric clashes, unrealistic bond angles, or poor interaction geometry, despite good RMSD to a crystal structure.

Solution:

- Employ post-docking MD refinement: Use the best docking poses as starting points for short MD simulations of the solvated ligand-receptor complex.

- Allow full flexibility: Simulate the complex with flexible ligand and flexible binding site residues to enable induced-fit adjustments.

- Re-score binding affinity: Extract the equilibrated structure and use MM/GBSA or a similar method to calculate the binding free energy from the simulation trajectory, which is often more reliable than docking scoring functions.
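For reference, the end-point estimate behind MM/GBSA re-scoring is commonly written as below. This is the generic textbook form, not a formula taken from the cited sources; the angle brackets denote averages over trajectory frames, and the entropy term is often omitted in practice when only relative ranking is needed.

\[ \Delta G_{\text{bind}} \approx \langle G_{\text{complex}} \rangle - \langle G_{\text{receptor}} \rangle - \langle G_{\text{ligand}} \rangle, \qquad G = E_{\text{MM}} + G_{\text{solv}} - TS \]

Here \(E_{\text{MM}}\) is the molecular mechanics energy, \(G_{\text{solv}}\) is the implicit-solvent (generalized Born plus surface area) solvation free energy, and \(TS\) is the conformational entropy contribution.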

Issue 3: Handling Highly Flexible or Large Molecules

Problem: Standard docking conformational search algorithms (systematic, genetic algorithm) struggle to find accurate low-energy structures for large, flexible molecules like macrocycles or dimeric catalysts.

Solution: Utilize the Multiple-Minimum Monte Carlo (MMMC) method for conformer generation [31].

- Random Dihedral Modification: Randomly modify an input molecule's dihedral angles [31].

- Steric Test & Minimization: Subject the new conformation to a quick steric check, then perform a minimization to find the nearest energy minimum [31].

- Energetic and RMSD Filtering: Accept the new minimum into the ensemble if it is within a specified energy window (e.g., 10 kcal/mol) of the global minimum and is structurally unique based on an RMSD threshold [31].
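The three steps above can be sketched on a toy model. This is an illustrative assumption-laden example, not the MMMC package from [31]: the "molecule" is just a list of dihedral angles, `energy` is a made-up torsional potential in arbitrary units, the steric check is skipped because the toy has no Cartesian coordinates, and structural uniqueness is judged by an RMS difference over dihedrals rather than a Cartesian RMSD.

```python
import math
import random

def energy(angles):
    """Hypothetical torsional energy: threefold term plus a bias favouring 180 degrees."""
    return sum((1 + math.cos(3 * a)) + 0.5 * (1 + math.cos(a)) for a in angles)

def minimize(angles, step=0.05, iters=500):
    """Plain gradient descent to the nearest local minimum."""
    angles = list(angles)
    for _ in range(iters):
        grad = [-3 * math.sin(3 * a) - 0.5 * math.sin(a) for a in angles]
        angles = [(a - step * g) % (2 * math.pi) for a, g in zip(angles, grad)]
    return angles

def angular_rms(a, b):
    """RMS difference between two dihedral sets, respecting periodicity."""
    diffs = [math.atan2(math.sin(x - y), math.cos(x - y)) for x, y in zip(a, b)]
    return math.sqrt(sum(d * d for d in diffs) / len(diffs))

def mmmc(start, n_steps=200, window=1.0, unique_tol=0.3, seed=0):
    rng = random.Random(seed)
    current = minimize(start)
    ensemble = [(energy(current), current)]
    for _ in range(n_steps):
        # 1. Random dihedral modification
        trial = list(current)
        trial[rng.randrange(len(trial))] = rng.uniform(0.0, 2 * math.pi)
        # 2. Minimization (steric pre-check omitted in this toy model)
        trial = minimize(trial)
        e_trial = energy(trial)
        # 3. Energy-window and uniqueness filtering
        e_min = min(e for e, _ in ensemble)
        is_new = all(angular_rms(trial, c) > unique_tol for _, c in ensemble)
        if e_trial <= e_min + window and is_new:
            ensemble.append((e_trial, trial))
            # A new lowest minimum can push older members out of the window.
            e_min = min(e_min, e_trial)
            ensemble = [(e, c) for e, c in ensemble if e <= e_min + window]
        current = trial
    return sorted(ensemble, key=lambda item: item[0])

ensemble = mmmc(start=[1.0, 1.0])
for e, conf in ensemble:
    print(round(e, 3), [round(math.degrees(a)) for a in conf])
```

The window is applied relative to the lowest minimum found so far, which is why the ensemble is re-filtered whenever a new lowest-energy conformer appears. In a production setting the toy energy and minimizer would be replaced by a force field or semi-empirical method.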

Experimental Protocols & Data Presentation

Table 1: Comparison of Conformational Sampling Methods

| Method | Key Principle | Best Use Case | Advantages | Limitations |

|---|---|---|---|---|

| Molecular Dynamics (MD) | Solves Newton's equations of motion to simulate atomic movements over time [3]. | Pre- and post-docking refinement; capturing full protein flexibility and dynamics [3]. | Physically realistic sampling; accounts for solvation and entropy. | Computationally intensive; time-scale limitations. |

| Metadynamics (e.g., in CREST) | Accelerates exploration by biasing the simulation away from already-seen conformations [30] [31]. | Efficiently finding global minima and conformational ensembles of single molecules [30]. | Efficient exploration of complex energy landscapes. | Requires careful selection of collective variables. |

| Multiple-Minimum Monte Carlo (MMMC) | Randomly samples dihedral angles, minimizes, and filters for unique, low-energy conformers [31]. | Flexible molecules and catalysts where MD struggles with rare events [31]. | Robust exploration; effective for large, flexible systems [31]. | May miss energy minima that require subtle concerted motions. |

| Genetic Algorithm (e.g., in AutoDock) | Uses principles of natural selection (mutation, crossover) to optimize poses based on a fitness score [3] [10]. | Standard ligand conformational search during docking. | Good balance of exploration and exploitation. | Can get trapped in local minima; population size and iteration dependent. |

Table 2: Essential Research Reagent Solutions

| Tool / Software | Function in Workflow | Key Application |

|---|---|---|

| NAMD | Performs all-atom, explicit solvent molecular dynamics simulations [29]. | Generating conformational trajectories of protein variants (varMDsim module in SNP2SIM) [29]. |

| VMD | Visualizes and analyzes MD trajectories; used for structural clustering [29]. | Clustering MD trajectories based on binding site RMSD to generate variant scaffolds (varScaffold module) [29]. |

| AutoDock Vina | Performs flexible-ligand docking into a rigid protein scaffold [29]. | High-throughput docking of small molecule libraries into MD-generated protein structures [29]. |

| CREST | Uses iterated metadynamics (iMTD-GC) for conformational ensemble generation [30]. | Exploring pressure-modified potential energy surfaces and finding conformational ensembles of single molecules [30]. |

| MMMC Package | Implements Multiple-Minimum Monte Carlo sampling for conformer generation [31]. | Locating low-energy conformers for large, flexible molecules where MD struggles [31]. |

| Libpvol Library | Extends molecular Hamiltonian with a PV term for modeling high-pressure effects [30]. | Conformational sampling of systems exposed to elevated pressures within CREST [30]. |

Workflow Visualization

Pre-docking MD Sampling Workflow

Post-docking MD Refinement Workflow

Molecular docking, the computational prediction of how ligands bind to target proteins, faces significant challenges when applied to novel targets. Traditional methods often struggle with accuracy and efficiency, particularly when dealing with undruggable targets that lack well-defined binding pockets or when experimental structural data is scarce. Artificial intelligence (AI) has emerged as a transformative technology to address these limitations, enabling more reliable predictions and accelerating drug discovery pipelines. By integrating geometric deep learning and unsupervised pre-training strategies, researchers can now overcome traditional bottlenecks, achieving superior performance in predicting binding affinities and identifying potential drug candidates even for poorly characterized targets. This technical support center provides essential guidance for researchers implementing these advanced AI methodologies in their molecular docking experiments.

Core AI Methodologies in Modern Docking

Geometric Deep Learning for Molecular Representation

Geometric deep learning (GDL) extends conventional neural networks to non-Euclidean data like molecular graphs and 3D structures, enabling more sophisticated molecular representations. Unlike traditional approaches that rely solely on covalent bonds, modern GDL frameworks incorporate both covalent and non-covalent interactions, capturing essential physical and chemical properties that govern molecular binding.

Molecular Geometric Deep Learning (Mol-GDL) represents a significant advancement by modeling molecular topology as a series of graphs reflecting different scales of atomic interactions [32]. In this framework, a molecular graph representation \(G(I) = (V, E(I))\) is defined for a molecule with N atoms, where V represents nodes (atoms) and \(E(I)\) represents edges determined by an interaction region \(I = [x_{\min}, x_{\max})\). The adjacency matrix \(A(I) = (a(I)_{ij})\) is defined by:

\[ a(I)_{ij} = \begin{cases} 1, & x_{\min} \leq \|r_i - r_j\| < x_{\max} \text{ and } i \neq j \\ 0, & \text{otherwise} \end{cases} \]

This formulation allows the creation of multiple graph representations by varying the distance parameters, capturing different interaction types including short-range covalent bonds and longer-range non-covalent interactions critical for molecular recognition and binding [32].
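As a minimal sketch of this construction, the function below builds the adjacency matrix for one interaction region from atomic coordinates. The function name `interaction_adjacency`, the three-atom toy coordinates, and the distance cutoffs (0 to 2 Å for a covalent-scale graph, 2 to 5 Å for a non-covalent-scale graph) are illustrative assumptions, not values taken from [32].

```python
import math

def interaction_adjacency(coords, x_min, x_max):
    """Adjacency matrix for the interaction region [x_min, x_max):
    entry (i, j) is 1 when i != j and x_min <= |r_i - r_j| < x_max."""
    n = len(coords)
    adj = [[0] * n for _ in range(n)]
    for i in range(n):
        for j in range(n):
            if i != j and x_min <= math.dist(coords[i], coords[j]) < x_max:
                adj[i][j] = 1
    return adj

# Three atoms on a line (made-up coordinates, in angstroms).
coords = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0), (4.0, 0.0, 0.0)]

covalent_scale = interaction_adjacency(coords, 0.0, 2.0)
noncovalent_scale = interaction_adjacency(coords, 2.0, 5.0)

print(covalent_scale)     # -> [[0, 1, 0], [1, 0, 0], [0, 0, 0]]
print(noncovalent_scale)  # -> [[0, 0, 1], [0, 0, 1], [1, 1, 0]]
```

Varying the interval yields a family of graphs over the same atoms, each of which can be passed to its own message-passing branch.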

Figure 1: Mol-GDL Multi-scale Graph Representation Workflow

Knowledge-Guided Pre-training Frameworks

Self-supervised pre-training on large molecular datasets has emerged as a powerful strategy for learning generalizable molecular representations that enhance downstream docking tasks. The Knowledge-guided Pre-training of Graph Transformer (KPGT) framework addresses key limitations in conventional pre-training by integrating additional molecular knowledge into the learning process [33].

KPGT combines a specialized graph transformer architecture called Line Graph Transformer (LiGhT) with a knowledge-guided pre-training strategy. The model incorporates a Knowledge Node (K Node) connected to original molecular graph nodes, with its feature embedding initialized using additional molecular knowledge such as descriptors or fingerprints. During pre-training, this K node interacts with other nodes in the multi-head attention module, providing semantic guidance for predicting masked components [33].

Experimental Protocol for KPGT Implementation:

Pre-training Data Curation: Assemble approximately two million molecules from sources like ChEMBL29 for initial pre-training [33]

Knowledge Node Initialization: Calculate molecular descriptors or fingerprints using established tools (e.g., RDKit) and encode them as initial K node features

Masked Graph Modeling: Randomly mask 15-20% of molecular graph nodes and train the model to reconstruct them using both structural context and knowledge node guidance

Transfer Learning Setup:

- Feature Extraction: Fixed backbone model with trained parameters

- Fine-tuning: Trainable backbone model with layer-wise learning rate decay and regularization strategies (L2-SP, FLAG) [33]

Downstream Task Adaptation: Integrate task-specific prediction heads and train on target docking datasets with reduced learning rates (10⁻³ to 10⁻⁵)
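Two of the protocol steps lend themselves to a short, framework-independent sketch: choosing which nodes to mask, and assigning layer-wise decayed learning rates for fine-tuning. The helper names (`select_masked_nodes`, `layerwise_learning_rates`), the decay factor of 0.5, and the three-layer example are hypothetical and are not taken from the KPGT code base [33].

```python
import random

def select_masked_nodes(n_nodes, mask_fraction=0.15, seed=None):
    """Pick a random subset of node indices to mask (at least one node)."""
    rng = random.Random(seed)
    n_mask = max(1, round(n_nodes * mask_fraction))
    return sorted(rng.sample(range(n_nodes), n_mask))

def layerwise_learning_rates(base_lr, n_layers, decay):
    """Learning rate per layer, index 0 = layer closest to the input.
    The top layer gets base_lr; each layer below is scaled by `decay`,
    so early (more general) layers change more slowly during fine-tuning."""
    return [base_lr * decay ** (n_layers - 1 - layer) for layer in range(n_layers)]

masked = select_masked_nodes(n_nodes=20, mask_fraction=0.15, seed=0)
rates = layerwise_learning_rates(base_lr=1e-4, n_layers=3, decay=0.5)

print(len(masked))  # -> 3
print(rates)
```

In a deep learning framework, the per-layer rates would typically be supplied to the optimizer as separate parameter groups.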

Troubleshooting Guides & FAQs

Common Computational Errors & Solutions

Problem: "Ligand Not Found" or "Cannot Find Ligand" Errors

Table 1: Troubleshooting Ligand Recognition Issues

| Error Cause | Diagnostic Steps | Solution |

|---|---|---|

| Incorrect file format | Verify file structure using grep "ROOT" ligand.pdbqt | Convert to appropriate format (PDBQT for single ligands, SDF for multiple ligands) [34] |

| Memory allocation failure | Check system logs for memory errors | Split large ligand sets into smaller batches (<100 ligands/file) [34] |

| Improper protonation states | Validate ligand charge states at physiological pH | Use tools like OpenBabel to adjust protonation states prior to docking [11] |

| Missing atomic coordinates | Confirm structural completeness with visualization tools | Add missing atoms or reconstruct incomplete regions using energy minimization |
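The first two diagnostics in the table can be scripted. The sketch below is a rough equivalent of the grep check plus a batch splitter that keeps each file under 100 ligands; the function names and the sample PDBQT text are made up for illustration, and the check only looks for a line starting with ROOT rather than validating the full file.

```python
def has_root_record(pdbqt_text):
    """True if the PDBQT text contains a line beginning with the ROOT record."""
    return any(line.startswith("ROOT") for line in pdbqt_text.splitlines())

def split_into_batches(items, batch_size=99):
    """Split a list of ligands into consecutive batches of at most batch_size."""
    return [items[i:i + batch_size] for i in range(0, len(items), batch_size)]

# Hypothetical single-atom ligand, only to exercise the check.
sample_pdbqt = (
    "REMARK  hypothetical ligand\n"
    "ROOT\n"
    "ATOM      1  C   LIG     1       0.000   0.000   0.000  0.00  0.00     0.000 C\n"
    "ENDROOT\n"
    "TORSDOF 0\n"
)

print(has_root_record(sample_pdbqt))              # -> True
print(has_root_record("REMARK receptor only\n"))  # -> False

ligand_names = [f"ligand_{i:04d}" for i in range(250)]
batches = split_into_batches(ligand_names)
print([len(b) for b in batches])                  # -> [99, 99, 52]
```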

Problem: Unrealistic Binding Poses or Poor Affinity Predictions

- Cause: Inadequate sampling of binding site or insufficient pose generation

- Solution: Increase the number of pose generation attempts (e.g., 50-100 poses) and adjust search exhaustiveness parameters (e.g., 24-48 for Vina) [11]

- Advanced Approach: Implement ensemble docking with multiple protein conformations to account for flexibility [11] [23]
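One simple way to combine ensemble docking results is to keep, for each ligand, the best score obtained against any receptor conformation. The sketch below assumes Vina-style scores where more negative is better; the ligand names, conformer labels, and score values are invented for illustration, and other aggregation rules (for example, averaging over conformers) are equally possible.

```python
def best_over_ensemble(scores):
    """For each ligand, return (best_conformer, best_score) across the ensemble.
    `scores` maps ligand -> {conformer_label: docking_score}; lower is better."""
    best = {}
    for ligand, per_conformer in scores.items():
        conformer = min(per_conformer, key=per_conformer.get)
        best[ligand] = (conformer, per_conformer[conformer])
    return best

def rank_ligands(best):
    """Ligand names ordered from best (most negative) to worst score."""
    return sorted(best, key=lambda ligand: best[ligand][1])

# Made-up docking scores (kcal/mol) against two MD-derived receptor clusters.
scores = {
    "lig_A": {"cluster_1": -7.2, "cluster_2": -8.5},
    "lig_B": {"cluster_1": -6.9, "cluster_2": -6.1},
}

best = best_over_ensemble(scores)
print(best)                # -> {'lig_A': ('cluster_2', -8.5), 'lig_B': ('cluster_1', -6.9)}
print(rank_ligands(best))  # -> ['lig_A', 'lig_B']
```

Recording which conformer produced the best score is useful for later inspection, since a ligand that only scores well against a rarely populated conformation may deserve extra scrutiny.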

Problem: Model Performance Degradation with Novel Targets

- Cause: Domain shift between pre-training data and target application

- Solution: Implement progressive fine-tuning strategies, starting with general molecular representations and gradually specializing to target class [33] [35]