Overcoming Key Hurdles in Phenotypic Screening Library Optimization for Better Drug Discovery

Phenotypic screening has regained prominence for discovering first-in-class medicines but faces significant challenges in library design and optimization that impact efficiency and success rates.

Abstract

Phenotypic screening has regained prominence for discovering first-in-class medicines but faces significant challenges in library design and optimization that impact efficiency and success rates. This article explores the core hurdles and modern solutions, covering foundational principles, advanced methodological applications, practical troubleshooting strategies, and rigorous validation frameworks. It provides researchers and drug development professionals with a comprehensive guide to navigating the complexities of creating optimized screening libraries, from selecting chemically diverse and target-informed compounds to leveraging AI and novel pooling techniques for enhanced predictivity and translatability in complex disease models.

The Resurgence and Rationale of Phenotypic Screening in Modern Drug Discovery

What are the fundamental definitions of Phenotypic and Target-Based Screening in drug discovery?

In modern drug discovery, two principal strategies guide the identification of new therapeutic compounds: phenotypic screening and target-based screening. These approaches differ in their fundamental philosophy and starting point.

- Phenotypic Screening is an empirical strategy that identifies active compounds based on their ability to induce a measurable biological response in cells, tissues, or whole organisms, without prior knowledge of the specific molecular target involved. It is an unbiased method that captures the complexity of biological systems [1] [2] [3].

- Target-Based Screening is a hypothesis-driven approach that begins with a specific, well-characterized molecular target (e.g., a protein, enzyme, or receptor) believed to play a critical role in a disease process. It involves screening compounds for their ability to interact with and modulate that predefined target [1] [2].

The table below summarizes the core strategic differences between these two approaches.

| Feature | Phenotypic Screening | Target-Based Screening |
|---|---|---|
| Starting Point | Observable biological effect or phenotype [1] [3] | Predefined molecular target [2] |
| Knowledge Prerequisite | Does not require prior understanding of disease mechanism [2] | Relies on established knowledge of target and its role in disease [2] |
| Primary Screening Readout | Complex, integrated cellular response (e.g., cell death, differentiation, cytokine secretion) [1] [3] | Specific biochemical interaction (e.g., enzyme inhibition, receptor binding) [2] |
| Throughput | Often lower due to complex assays [3] | Typically high, amenable to HTS [2] [4] |
| Target Deconvolution | Required after hit identification; can be challenging and time-consuming [1] [3] | Not required; target is known from the outset [2] |
| Key Strength | Identifies first-in-class medicines; captures system complexity and polypharmacology [5] [4] | Efficient, rational design; easier to optimize leads; yields best-in-class drugs [2] [3] |

FAQs and Troubleshooting Guides

FAQ: Strategic Considerations

1. When should I choose a phenotypic screening approach over a target-based one? Choose phenotypic screening when investigating diseases with poorly understood molecular mechanisms, when you aim to discover first-in-class drugs with novel mechanisms of action, or when the therapeutic goal involves modulating complex, system-level biological responses, such as in immuno-oncology or neurodegenerative diseases [1] [2] [3]. It is also valuable for uncovering polypharmacology—when a compound acts on multiple targets [6].

2. What are the major limitations of phenotypic screening, and how can I mitigate them? The main limitations are the significant challenge of target deconvolution (identifying the molecular mechanism of action) and generally lower throughput [7] [3].

- Mitigation Strategy: Employ advanced technologies for target identification, such as proteomics (e.g., thermal proteome profiling), CRISPR-based genetic screens, and chemical genomics. Furthermore, using high-content imaging and AI-powered data analysis can extract more mechanistic information from the phenotypic readouts themselves, accelerating deconvolution [7] [1] [4].

3. Can these two strategies be integrated? Yes, and this is a growing trend in modern drug discovery. A hybrid approach is increasingly common, where a target-focused screen is conducted in a cellular context, making it both target-based and phenotypic [3]. For instance, you might screen for compounds that affect the phosphorylation of a specific target protein (target-based readout) using high-content imaging that also captures other cellular morphological changes (phenotypic readout) [3]. This combines the precision of a targeted approach with the contextual richness of a phenotypic one.

Troubleshooting Guide: Common Experimental Issues

Problem: High false-positive rate in a high-throughput phenotypic screen.

- Potential Cause: Assay artifacts or promiscuous, pan-assay interference compounds (PAINS) that generically disrupt assays.

- Solution: Implement rigorous cheminformatics filters to flag and remove PAINS. Use orthogonal, biophysical confirmation methods to validate hits. Ensure your compound library is designed with high chemical diversity and drug-likeness in mind to improve the quality of starting points [4].

Problem: Target deconvolution fails for a potent hit from a phenotypic screen.

- Potential Cause: The compound may act through weak interactions with multiple targets (polypharmacology), making it difficult to pinpoint a single mechanism.

- Solution: Consider that polypharmacology might be integral to the compound's efficacy. Instead of searching for a single target, use system-wide approaches like RNA sequencing and thermal proteome profiling to identify the network of engaged targets and pathways [6].

Problem: A hit compound is effective in a 2D cell culture but loses efficacy in a more complex 3D model.

- Potential Cause: The simplified 2D assay does not recapitulate the tumor microenvironment, including cell-cell interactions, hypoxia, and drug penetration barriers.

- Solution: Move primary screening to more physiologically relevant models from the outset. Use patient-derived spheroids, organoids, or organ-on-chip platforms to better mimic the in vivo disease state and improve translational success [4] [6].

Experimental Protocol: A Phenotypic Screening Case Study

The following workflow and protocol outline a rational approach to phenotypic screening for glioblastoma (GBM), demonstrating how to address the key challenge of library optimization [6].

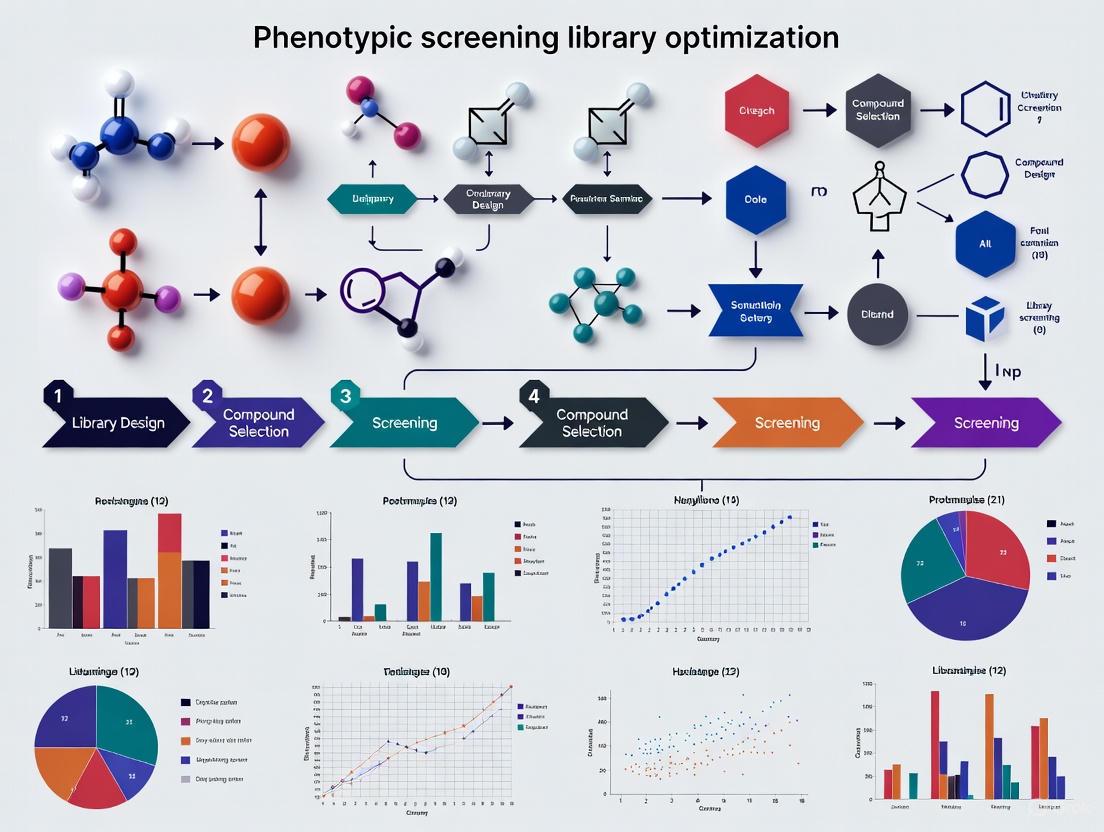

Diagram: Phenotypic Screening Workflow with Library Optimization.

Detailed Methodology:

Target Selection and Library Enrichment:

- Input Data: Begin with genomic data (e.g., RNA sequencing and mutation data) from patient tumors (e.g., from The Cancer Genome Atlas) [6].

- Differential Expression: Perform analysis to identify genes significantly overexpressed in the disease state compared to normal samples (p < 0.001, FDR < 0.01, log2FC > 1) [6].

- Network Construction: Map the products of these genes onto large-scale human protein-protein interaction networks to construct a disease-specific subnetwork [6].

- Virtual Screening: Identify proteins in this network with druggable binding pockets. Perform molecular docking of an in-house compound library (e.g., ~9000 compounds) against these druggable sites to rank-order compounds based on predicted binding affinity. Select a focused subset of compounds predicted to engage multiple disease-relevant targets for phenotypic screening [6].
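The differential-expression cutoffs quoted above (p < 0.001, FDR < 0.01, log2FC > 1) can be applied as a simple filter. The sketch below is a minimal illustration and assumes the statistics have already been computed by an upstream tool; the `GeneStat` record, the `select_overexpressed` helper, and the gene names are hypothetical and do not come from the cited study.

```python
from dataclasses import dataclass


@dataclass
class GeneStat:
    """Per-gene differential-expression result (hypothetical record layout)."""
    gene: str
    log2fc: float   # log2 fold change, disease vs. normal
    p_value: float  # nominal p-value
    fdr: float      # multiple-testing-adjusted p-value


def select_overexpressed(stats, p_max=0.001, fdr_max=0.01, log2fc_min=1.0):
    """Return genes that pass all three cutoffs quoted in the protocol."""
    return [
        s.gene
        for s in stats
        if s.p_value < p_max and s.fdr < fdr_max and s.log2fc > log2fc_min
    ]


# Made-up values, for illustration only.
example_stats = [
    GeneStat("GENE_A", 2.3, 1e-5, 1e-4),  # passes all cutoffs
    GeneStat("GENE_B", 0.4, 1e-6, 1e-5),  # fails the fold-change cutoff
    GeneStat("GENE_C", 1.8, 5e-3, 2e-2),  # fails the p-value and FDR cutoffs
    GeneStat("GENE_D", 1.2, 5e-4, 5e-3),  # passes all cutoffs
]

selected = select_overexpressed(example_stats)
print(selected)
```

Only the genes that pass this filter would then be mapped onto the protein-protein interaction network.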

Phenotypic Screening Assay:

- Cell Model: Use low-passage, patient-derived glioblastoma cells grown as three-dimensional (3D) spheroids. This model more accurately captures the tumor microenvironment than traditional 2D cell lines [6].

- Screening Protocol:

- Culture GBM cells in ultra-low attachment plates to promote spheroid formation.

- Treat mature spheroids with the enriched compound library at a single-digit micromolar concentration range.

- Incubate for a defined period (e.g., 72-96 hours).

- Measure the primary phenotypic endpoint: inhibition of cell viability using a standard assay like CellTiter-Glo.

- Include standard-of-care drugs (e.g., temozolomide for GBM) as a positive control.

Selectivity and Secondary Phenotyping:

- Counter-Screening: Test active hits against non-transformed primary cell lines (e.g., hematopoietic CD34+ progenitor spheroids or astrocytes) to identify compounds that selectively target diseased cells while sparing normal cells [6].

- Angiogenesis Assay: For oncology, perform a secondary phenotypic assay such as a tube formation assay with brain endothelial cells to assess the compound's ability to inhibit angiogenesis [6].

Target Deconvolution and Mechanism of Action:

- Transcriptomics: Perform RNA sequencing on compound-treated versus untreated cells to observe changes in gene expression and infer affected pathways [6].

- Proteomics: Use mass spectrometry-based thermal proteome profiling (TPP) to identify proteins that show a thermal stability shift upon compound binding, indicating direct target engagement [6].

- Validation: Confirm binding to key targets identified by TPP using cellular thermal shift assays (CETSA) with target-specific antibodies [6].

The Scientist's Toolkit: Key Research Reagent Solutions

The table below lists essential materials and their functions for setting up a phenotypic screening campaign, particularly one based on the protocol above.

| Research Reagent / Tool | Function in the Experiment |
|---|---|
| Patient-Derived Cells & 3D Spheroids | Provides a physiologically relevant disease model that recapitulates key features of the native tumor microenvironment, leading to more translatable results [4] [6]. |
| Diverse & Focused Compound Libraries | A high-quality library is crucial. Diversity libraries explore broad chemical space, while target-focused libraries (e.g., kinase, epigenetic) enrich for activity against specific target families [4]. |
| High-Content Imaging Systems | Enables multiparametric analysis of complex phenotypic outcomes in cell-based assays, such as morphological changes, protein localization, and cell viability [3]. |
| CRISPR Screening Tools | Allows for systematic perturbation of genes to infer gene function and validate potential targets in a phenotypic context [7] [3]. |
| AI/ML Data Analysis Platforms | Machine learning helps "denoise" screening data, prioritize hits, identify frequent hitters, and can even assist in predicting a compound's molecular target from its phenotypic signature [4]. |
| Multi-Omics Platforms (RNA-seq, Proteomics) | Used for target deconvolution. RNA-seq reveals altered pathways, while proteomic methods like thermal proteome profiling identify direct protein targets [1] [6]. |

Troubleshooting Guides & FAQs

This guide addresses common challenges in phenotypic screening library optimization to enable the unbiased discovery of first-in-class therapeutic mechanisms.

Frequently Asked Questions

FAQ 1: Our phenotypic screens generate hits, but we struggle to identify the mechanism of action (MoA). What strategies can improve target deconvolution?

- Challenge: Target deconvolution remains a significant bottleneck, often prolonging discovery timelines and complicating hit validation [1].

- Solution: Implement an integrated approach using modern tools:

- Functional Genomics: Combine your screening hits with CRISPR-based genetic screens to identify genes that modulate the compound's activity or resistance [7].

- Chemical Proteomics: Use techniques like thermal proteome profiling (TPP) to directly identify protein targets that engage with your hit compound in a cellular context [6] [8].

- AI-Powered Pattern Matching: Leverage artificial intelligence (AI) to integrate phenotypic signatures (e.g., from high-content imaging) with chemical descriptors and omics data to predict potential targets [4] [9].

- Multi-omics Integration: Correlate compound-induced phenotypic changes with transcriptomic (RNA-seq) and proteomic profiles to narrow down the involved pathways [1] [6].

FAQ 2: How can we design a screening library that maximizes the chance of discovering first-in-class mechanisms?

- Challenge: Standard chemogenomic libraries only interrogate a small fraction of the human genome (~1,000-2,000 out of 20,000+ genes), limiting novelty [7].

- Solution: Employ strategic library design:

- Prioritize Diversity: Use libraries optimized for broad structural and chemical diversity to cover wide biological and chemical space [4] [10].

- Incorporate Bioactive Compounds: Enrich libraries with compounds known to be bioactive, including natural products and their analogs, to increase the likelihood of hitting relevant pathways [10].

- Rational Library Enrichment: For complex diseases, create focused libraries by virtually screening compounds against multiple disease-relevant targets identified from genomic and protein-interaction networks [6].

- Quality Control: Rigorously filter libraries to remove pan-assay interference compounds (PAINS), reactive molecules, and compounds with poor drug-like properties [4] [10].

FAQ 3: What are the key considerations for choosing between a pooled versus arrayed CRISPR library for a genetic screen?

- Challenge: Selecting the wrong library format can lead to inconclusive results or unmanageable experimental scale.

- Solution: Base the decision on your screening goals and resources.

- Use Pooled Libraries for Discovery: Ideal for unbiased, genome-wide screens where the phenotype results in enrichment or depletion of cells (e.g., drug resistance or essential gene identification). They are more scalable and do not require automated liquid handling [11].

- Use Arrayed Libraries for Targeted Screens: Best for screening a predefined subset of genes or when assaying complex phenotypes that don't confer a growth advantage (e.g., high-content imaging). Requires automation but allows you to know the exact perturbation in each well from the start [11].

FAQ 4: Our hit compounds are active in simple 2D cell models but fail in more complex, physiologically relevant assays. How can we improve translational relevance early on?

- Challenge: Traditional 2D monolayer assays often fail to capture the complexity of human disease, leading to high attrition rates later in development [6].

- Solution: Invest in more disease-relevant model systems from the outset.

- 3D Models: Utilize three-dimensional cultures, such as patient-derived spheroids and organoids, which better mimic the tumor microenvironment, cell-cell interactions, and drug penetration barriers [4] [6].

- Primary Cells: Whenever possible, use low-passage patient-derived cells instead of immortalized cell lines, as they maintain more authentic biological responses [6].

- Co-culture Systems: Implement assays that include multiple cell types (e.g., immune cells, fibroblasts) to capture complex biological interactions that drive disease [1].

FAQ 5: How can we effectively triage hits to focus on the most promising leads with novel mechanisms?

- Challenge: Phenotypic screens can generate false positives and hits with undesired polypharmacology, wasting resources on leads that later prove invalid [4].

- Solution: Establish a robust, multi-parameter triage cascade.

- Orthogonal Assays: Confirm activity in a functionally independent secondary assay that measures the same phenotype.

- Counter-Screens: Rule out common assay artifacts and undesired mechanisms (e.g., cytotoxicity, interference with the detection system).

- Cheminformatic Filters: Use AI/ML tools to flag frequent hitters, compounds with structural alerts, and undesirable physicochemical properties [4] [9].

- Early ADME/Tox Profiling: Integrate predictive models for absorption, distribution, metabolism, excretion, and toxicity (ADME/Tox) early in the process to deprioritize compounds with poor pharmacokinetic or safety profiles [4] [9].

Experimental Protocols for Key Methodologies

Protocol 1: Phenotypic Screening of an Enriched Compound Library in a 3D Glioblastoma Model

This protocol, adapted from a published study, outlines a rational approach to screen for compounds with selective polypharmacology in a patient-derived glioblastoma (GBM) spheroid model [6].

Library Enrichment & Virtual Screening:

- Input: Use tumor genomic data (e.g., from TCGA) to identify a set of overexpressed and mutated genes in GBM.

- Network Analysis: Map these genes onto a human protein-protein interaction network to create a disease-relevant subnetwork.

- Target Selection: Identify proteins within this subnetwork that contain druggable binding pockets (catalytic sites, protein-protein interfaces).

- Virtual Screening: Dock an in-house compound library (e.g., ~9,000 compounds) against these druggable pockets. Select the top-ranking compounds predicted to bind multiple disease-relevant targets.
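The final selection step, keeping compounds predicted to bind several disease-relevant targets, can be sketched as a ranking over a table of docking scores. This is an illustrative sketch only: the score cutoff of -8.0, the minimum of two targets, and all compound and target names are assumptions made for the example, not values from the cited study.

```python
def rank_multitarget(docking_scores, score_cutoff=-8.0, min_targets=2):
    """Rank compounds by how many targets they are predicted to bind.

    docking_scores: {compound: {target: score}}, where a more negative
    score means stronger predicted binding (a common docking convention,
    assumed here).
    """
    ranked = []
    for compound, by_target in docking_scores.items():
        # Targets whose predicted score is at or below the cutoff.
        hits = sorted(t for t, s in by_target.items() if s <= score_cutoff)
        if len(hits) >= min_targets:
            ranked.append((compound, hits))
    # Most targets first; ties broken alphabetically by compound name.
    ranked.sort(key=lambda item: (-len(item[1]), item[0]))
    return ranked


# Made-up scores for three hypothetical compounds and targets.
scores = {
    "cmpd_1": {"T1": -9.1, "T2": -8.4, "T3": -6.0},
    "cmpd_2": {"T1": -7.0, "T2": -7.5, "T3": -8.2},
    "cmpd_3": {"T1": -8.8, "T2": -9.0, "T3": -8.1},
}

enriched = rank_multitarget(scores)
for compound, targets in enriched:
    print(compound, targets)
```

In practice the cutoff would depend on the docking program and scoring function, so it is best treated as a tunable parameter rather than a fixed threshold.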

Phenotypic Screening in 3D Culture:

- Cell Culture: Plate low-passage patient-derived GBM cells in ultra-low attachment plates to form 3D spheroids.

- Compound Treatment: Treat spheroids with the enriched library compounds. Include standard-of-care (e.g., temozolomide) and DMSO vehicle controls.

- Viability Assay: After 5-7 days, measure cell viability using a 3D-optimized ATP-based assay (e.g., CellTiter-Glo 3D).

- Selectivity Counter-Screen: Test active compounds in parallel against non-transformed primary cell lines (e.g., hematopoietic CD34+ progenitor spheroids or astrocytes) to identify compounds with selective toxicity toward cancer cells.
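Raw luminescence from an ATP-based readout is usually normalized to the vehicle control before hits are called, and plate quality is often summarized with the Z'-factor, Z' = 1 - 3(σ_high + σ_low) / |μ_high - μ_low|. The sketch below assumes a plate layout with DMSO wells as the high-signal control and a low-signal control (for example, wells treated with a cytotoxic reference). That layout, the helper names, and the numbers are illustrative assumptions and are not part of the published protocol.

```python
from statistics import mean, stdev


def percent_viability(signal, vehicle_mean, background_mean=0.0):
    """Express a well's signal as a percentage of the vehicle (DMSO) control."""
    return 100.0 * (signal - background_mean) / (vehicle_mean - background_mean)


def z_prime(high_controls, low_controls):
    """Z'-factor: separation between high- and low-signal control wells."""
    spread = stdev(high_controls) + stdev(low_controls)
    window = abs(mean(high_controls) - mean(low_controls))
    return 1.0 - 3.0 * spread / window


# Made-up luminescence values (arbitrary units).
dmso_wells = [1000, 1040, 960, 1000]  # vehicle control, high signal
low_wells = [100, 110, 90, 100]       # assumed low-signal control

plate_quality = z_prime(dmso_wells, low_wells)
viability = percent_viability(550, mean(dmso_wells), mean(low_wells))

print(f"Z' = {plate_quality:.2f}")
print(f"Viability = {viability:.1f}%")
```

The same normalization applied to the non-transformed counter-screen cells gives a direct comparison of the two viability values for each compound.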

Secondary Phenotypic Assay (Angiogenesis):

- Tube Formation Assay: Seed brain endothelial cells on a layer of Matrigel. Treat with the selective hit compound and quantify the inhibition of tube network formation after 6-18 hours.

Protocol 2: Executing a Pooled Genome-Wide CRISPR Knockout Screen

This protocol provides a general workflow for conducting a loss-of-function genetic screen to identify genes essential for a specific phenotype [11].

Cell Line Preparation:

- Generate a Cas9-expressing cell line by transducing your target cells with a lentiviral Cas9 construct. Select stable pools using an antibiotic like puromycin.

- Validate Cas9 activity and functionality in the cell line.

sgRNA Library Transduction:

- Produce a high-titer lentiviral stock of a genome-wide pooled sgRNA library (e.g., Brunello library).

- Transduce the Cas9+ cells at a low Multiplicity of Infection (MOI ~0.3-0.4) to ensure most cells receive only one sgRNA. Use ~76 million cells to maintain library representation.

- Apply selection (e.g., blasticidin) to eliminate non-transduced cells.
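The reasoning behind the low MOI and the cell number can be checked with a Poisson model of viral integration, which is a standard simplifying assumption. The sketch below assumes a library of about 76,441 guides (the commonly cited size of the Brunello library, which the text above does not state); the function names are illustrative.

```python
import math


def poisson_fraction(moi, k):
    """Fraction of cells expected to receive exactly k integrations."""
    return math.exp(-moi) * moi**k / math.factorial(k)


def transduction_summary(moi, n_cells, n_guides):
    """Estimate transduction outcome and library coverage under a Poisson model."""
    frac_transduced = 1.0 - poisson_fraction(moi, 0)
    frac_single = poisson_fraction(moi, 1)
    return {
        # Cells with at least one sgRNA (these survive selection).
        "fraction_transduced": frac_transduced,
        # Among surviving cells, the share carrying exactly one sgRNA.
        "single_among_transduced": frac_single / frac_transduced,
        # Average number of transduced cells per guide (library coverage).
        "cells_per_guide": n_cells * frac_transduced / n_guides,
    }


# Assumed library size; not given in the protocol text.
summary = transduction_summary(moi=0.3, n_cells=76_000_000, n_guides=76_441)
for name, value in summary.items():
    print(f"{name}: {value:.3f}")
```

Under these assumptions, roughly a quarter of cells are transduced at an MOI of 0.3, most of them with a single guide, and coverage lands in the low hundreds of cells per guide.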

Phenotypic Selection:

- Split the transduced cell population into treatment and control groups (e.g., drug-treated vs. DMSO control).

- Culture the cells for a sufficient duration (typically 10-14 days) to allow for phenotypic manifestation and enrichment/depletion of specific knockouts.

Genomic DNA (gDNA) Extraction and Sequencing:

- Harvest at least 100-200 million cells from each population. Isolate high-quality gDNA using a maxi-prep method to maintain sgRNA representation.

- Amplify the integrated sgRNA sequences from the gDNA by PCR and prepare next-generation sequencing (NGS) libraries.

Data Analysis:

- Sequence the libraries to a depth of ~10-100 million reads, depending on the screen type (positive/negative).

- Align sequences to the reference sgRNA library and quantify the abundance of each guide in treatment vs. control groups.

- Use specialized algorithms (e.g., MAGeCK) to identify sgRNAs and genes that are significantly enriched or depleted.
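To make the quantification step concrete, the sketch below normalizes guide counts to reads per million, computes a log2 fold change per guide, and takes the median per gene. It is a deliberately simplified stand-in and not the MAGeCK algorithm, which additionally models count variance and ranks genes statistically. The pseudocount, guide names, and gene names are assumptions for illustration.

```python
import math
from collections import defaultdict
from statistics import median


def log2_fold_changes(treated, control, pseudocount=1.0):
    """Per-guide log2 fold change after reads-per-million normalization."""
    treated_total = sum(treated.values())
    control_total = sum(control.values())
    lfc = {}
    for guide, control_count in control.items():
        treated_rpm = treated.get(guide, 0) / treated_total * 1e6
        control_rpm = control_count / control_total * 1e6
        # The pseudocount avoids division by zero for dropped-out guides.
        lfc[guide] = math.log2((treated_rpm + pseudocount) / (control_rpm + pseudocount))
    return lfc


def gene_scores(lfc, guide_to_gene):
    """Summarize each gene by the median log2 fold change of its guides."""
    per_gene = defaultdict(list)
    for guide, value in lfc.items():
        per_gene[guide_to_gene[guide]].append(value)
    return {gene: median(values) for gene, values in per_gene.items()}


# Made-up counts: GENE_X guides are enriched under treatment.
control_counts = {"g1": 100, "g2": 100, "g3": 100, "g4": 100}
treated_counts = {"g1": 400, "g2": 400, "g3": 100, "g4": 100}
guide_map = {"g1": "GENE_X", "g2": "GENE_X", "g3": "GENE_Y", "g4": "GENE_Y"}

scores = gene_scores(log2_fold_changes(treated_counts, control_counts), guide_map)
print(scores)
```

Because the normalization is relative to total reads, strong enrichment of a few guides makes all other guides appear depleted, which is one reason dedicated tools use more robust normalization.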

Table 1: Key Quantitative Considerations for Screening Library Design

| Parameter | Typical Range or Value | Significance & Rationale |
|---|---|---|
| Chemogenomic Library Coverage | 1,000 - 2,000 / 20,000+ human genes [7] | Highlights the limited fraction of the genome probed by annotated compound sets, underscoring the need for diverse libraries for novel discovery. |
| Recommended Cell Number for Pooled CRISPR Screen | ~76 million cells [11] | Ensures adequate representation of the entire sgRNA library, typically aiming for 200-1000 cells per sgRNA to avoid stochastic dropout. |
| Target Transduction Efficiency (CRISPR) | 30 - 40% [11] | A low MOI is critical to ensure most cells receive a single sgRNA, allowing for clear genotype-to-phenotype linkage. |
| NGS Read Depth (Positive Screen) | ~10 million reads [11] | Sufficient for identifying enriched sgRNAs in a positive selection screen (e.g., for drug resistance). |
| NGS Read Depth (Negative Screen) | Up to ~100 million reads [11] | Deeper sequencing is required to detect subtle depletion signals in negative screens (e.g., for essential genes). |

Table 2: Comparison of Phenotypic Screening Libraries

| Library Type | Key Characteristics | Best Use Cases |
|---|---|---|
| ChemDiversity Library [10] | Emphasizes broad structural diversity; filtered for drug-like properties, PAINS-free. | Unbiased discovery when the goal is to explore entirely novel chemical and biological space. |
| BioDiversity Library [10] | Enriched with known bioactive compounds, drugs, and natural product-like scaffolds. | Increasing the probability of finding a hit by leveraging chemical matter with proven biological activity. |
| Disease-Enriched Library [6] | Virtually screened against a network of disease-specific targets derived from genomic data. | Complex polygenic diseases (e.g., glioblastoma) where selective polypharmacology is desired. |
| CRISPR Knockout Library [11] | Provides complete gene knockouts; genome-wide or focused formats; pooled or arrayed. | Identifying genes essential for a phenotype (synthetic lethality, drug resistance) in an unbiased manner. |

Key Signaling Pathways and Workflows

Integrated Phenotypic Screening Workflow

Multi-Modal Target Deconvolution Strategy

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Tools for Phenotypic Screening and Optimization

| Research Tool | Function & Application | Examples / Key Features |
|---|---|---|
| Diverse Compound Libraries [4] [10] | Provide a broad source of chemical matter for unbiased phenotypic screening. | ChemDiversity libraries (structurally diverse), BioDiversity libraries (bioactive-enriched). High drug-likeness, PAINS-free. |
| CRISPR sgRNA Libraries [7] [11] | Enable genome-wide or targeted loss-of-function genetic screens to identify genes involved in a phenotype. | Genome-wide pooled libraries (e.g., Brunello). Arrayed libraries for specific gene sets. Lentiviral delivery for stable integration. |
| 3D Culture Systems [4] [6] | Provide physiologically relevant disease models that better mimic the in vivo microenvironment. | Patient-derived spheroids, organoids. Used for screening and validating compound efficacy and selectivity. |
| High-Content Imaging Systems [1] [4] | Enable multiparametric analysis of complex phenotypic changes in cells (e.g., morphology, signaling). | Used in Cell Painting assays. Generates rich, high-dimensional data for AI/ML analysis. |
| AI/ML Software Platforms [4] [9] | Analyze complex screening data, predict compound properties, prioritize hits, and suggest targets. | Capabilities include virtual screening, ADME/Tox prediction, and image analysis for phenotypic profiling. |

FAQs: Navigating the Throughput-Relevance Trade-off

Q1: Our high-throughput primary screen identified promising hits, but these fail in secondary, more biologically complex assays. How can we improve the translational relevance of our primary screening data?

- Answer: This common challenge often stems from an over-optimization for throughput at the expense of biological context. To bridge this gap:

- Adopt Phased Screening: Implement a tiered screening strategy. Use a high-throughput but simplified assay for primary screening, but immediately follow up on hits with a secondary, lower-throughput assay that incorporates greater biological complexity (e.g., co-culture systems, 3D models, or high-content imaging) [12]. This balances initial speed with necessary validation.

- Leverage Computational Prediction: Use tools like InferLoop, which leverages accessible data (e.g., scATAC-seq) to predict biologically relevant signals, such as cell-type-specific chromatin interactions, that are difficult to capture in a high-throughput setup [13]. This adds a layer of biological insight without additional complex experiments.

- Utilize Advanced Pooling Designs: For genetic screens, employ sophisticated combinatorial pooling methods like DCP-CWGC (Distance- and Balance-aware Constant-Weight Gray Codes). This design allows for the detection of consecutive positives (e.g., overlapping peptides or regulatory elements) and includes error-detection capabilities, making large-scale screens more reliable and biologically informative [14].

Q2: We need to extract multiple phenotypic endpoints from a single screen to capture biological complexity, but this drastically reduces our throughput. What solutions are available?

- Answer: The key is to implement workflows that are multiplexed by design.

- Implement High-Content, Multi-Parameter Assays: Design experiments where multiple relevant parameters are measured simultaneously from the same sample. For example, a protocol using CM-H2DCFDA and TMRM in a high-content microscopy workflow can concurrently quantify intracellular ROS levels, mitochondrial membrane potential, and mitochondrial morphology in individual cells [15]. While image acquisition takes time, the rich, multi-dimensional data extracted per experiment provides a much deeper biological understanding than multiple separate, single-endpoint assays.

- Invest in Automated Data Integration: The bottleneck often shifts from data collection to data analysis. Utilize automated image analysis pipelines and multivariate data analysis tools to efficiently process the complex, multi-parametric data generated, turning it into actionable biological insights [15].

Q3: How can we design a screening library and strategy that is efficient for large-scale discovery while still being sensitive to subtle, cell-type-specific phenotypes?

- Answer: This requires a combination of smart experimental design and modern computational tools.

- Focus on Perturbation Efficiency: Ensure your library design and screening model are optimally matched. For genetic screens, this means using efficient delivery systems (e.g., lentiviral vectors) and well-designed guide RNA or siRNA libraries. For compound screens, this involves careful selection of compound concentration and vehicle controls to minimize false positives/negatives [12].

- Incorporate Cell-Type-Specific Computational Analysis: Use single-cell sequencing technologies (e.g., scATAC-seq) and computational tools like InferLoop to deconvolve cell-type-specific signals from a heterogeneous screening population. This allows you to run a pooled screen but still extract insights relevant to specific cell subtypes, preserving biological relevance [13].

- Employ AI-Driven Pathway Optimization: In fields like synthetic biology, AI models can predict optimal biological pathways (e.g., for metabolite production) before any wet-lab experiment begins. This "in-silico screening" drastically narrows down the experimental space, allowing you to focus high-throughput validation on the most promising, biologically sound targets [16].

Experimental Protocols for Balanced Screening

Protocol: High-Content Multiplexed Analysis of Oxidative Stress and Mitochondrial Function

This protocol allows for the simultaneous quantification of multiple interconnected cellular health parameters in a single, automated workflow, ideal for secondary screening or lower-throughput, high-information-content primary screens [15].

- Key Application: Simultaneously quantifying intracellular reactive oxygen species (ROS) levels, mitochondrial membrane potential (ΔΨm), and mitochondrial morphology in adherent cells.

- Principle: The assay uses two cell-permeable fluorescent reporters: CM-H2DCFDA for ROS and TMRM for ΔΨm and mitochondrial morphology. Automated widefield fluorescence microscopy and subsequent image analysis enable the extraction of intensity- and morphology-based features at a single-cell level.

Workflow:

The following diagram illustrates the key stages of this multiplexed high-content screening protocol.

Detailed Methodology:

- Cell Seeding: Seed adherent cells (e.g., Normal Human Dermal Fibroblasts - NHDF) in a 96-well plate and allow them to adhere overnight [15].

- Reagent Preparation:

- Prepare imaging buffer (e.g., HBSS supplemented with HEPES).

- Prepare stock and working solutions of the fluorescent dyes (CM-H2DCFDA and TMRM).

- Prepare an inducer of oxidative stress, such as tert-Butyl hydroperoxide (TBHP).

- Microscope Setup: Configure an automated widefield microscope with a 20x air objective, an environmental chamber, and appropriate filter sets for GFP (CM-H2DCFDA) and TRITC (TMRM). Define an acquisition protocol that images multiple non-overlapping fields per well [15].

- Dye Loading and Staining:

- Aspirate the culture medium and wash cells with pre-warmed imaging buffer.

- Load cells with a working solution containing both CM-H2DCFDA (e.g., 1 µM) and TMRM (e.g., 100 nM) in imaging buffer. Incubate for 30-45 minutes at 37°C protected from light [15].

- Replace the dye solution with fresh imaging buffer before microscopy.

- Live-Cell Imaging:

- First Measurement (Basal Conditions): Acquire images for both fluorescent channels to establish baseline ROS levels and mitochondrial parameters [15].

- Second Measurement (Induced Conditions): Add the stress inducer (e.g., TBHP) directly to the wells. After a defined incubation period (e.g., 30 minutes), re-acquire images to measure the induced cellular response [15].

- Image Analysis: Use automated image analysis software to:

- Perform cell segmentation.

- Quantify mean fluorescence intensity for CM-H2DCFDA (ROS) and TMRM (ΔΨm) per cell.

- Extract morphological features (e.g., form factor, aspect ratio) from the TMRM channel to classify mitochondrial morphology [15].

- Data Analysis and QC: Perform multivariate statistical analysis on the extracted feature set to detect differences between cell types or treatments. Implement quality control steps to exclude out-of-focus images or debris [15].
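The morphology features named in the image-analysis step can be computed from basic shape measurements of each segmented object. The sketch below assumes one common convention for mitochondrial form factor, perimeter² / (4π · area), which equals 1 for a perfect circle and grows with branching and elongation; some software reports the inverse, so the convention should be checked against the analysis package in use. The function names and example numbers are illustrative.

```python
import math


def form_factor(area, perimeter):
    """Shape complexity: 1.0 for a circle, larger for branched or elongated objects."""
    return perimeter**2 / (4.0 * math.pi * area)


def aspect_ratio(major_axis, minor_axis):
    """Elongation: ratio of the fitted ellipse's major to minor axis."""
    return major_axis / minor_axis


def induced_fold_change(induced_intensity, basal_intensity):
    """Relative change in mean fluorescence after adding the stress inducer."""
    return induced_intensity / basal_intensity


# A circle of radius 1 (area pi, perimeter 2*pi) gives a form factor of 1.
circle_ff = form_factor(math.pi, 2.0 * math.pi)

# Made-up measurements for one segmented mitochondrial object and one cell.
object_ff = form_factor(area=12.0, perimeter=30.0)
object_ar = aspect_ratio(major_axis=4.0, minor_axis=2.0)
ros_response = induced_fold_change(induced_intensity=150.0, basal_intensity=100.0)

print(f"circle form factor: {circle_ff:.2f}")
print(f"object form factor: {object_ff:.2f}, aspect ratio: {object_ar:.1f}")
print(f"ROS fold change after induction: {ros_response:.2f}")
```

Per-cell values like these form the feature table that the multivariate analysis step then operates on.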

Protocol: Error-Detecting Combinatorial Pooling for Complex Target Identification

This protocol outlines the use of a sophisticated combinatorial pooling strategy to enhance the efficiency and reliability of large-scale genetic or peptide screens, particularly when targeting consecutive or overlapping elements [14].

- Key Application: Efficiently deconvolve positive hits from a large library of samples (e.g., peptides, gRNAs) in a minimal number of pooled tests, with built-in error detection and a focus on detecting consecutive positives.

- Principle: The DCP-CWGC (Distance- and Balance-aware Constant-Weight Gray Codes) method assigns each sample a unique binary "address" that determines its placement in testing pools. The design ensures samples are evenly distributed (balanced), each is tested the same number of times (constant weight), and the pattern allows for the identification of consecutive positives and the detection of experimental errors [14].

Workflow:

The diagram below visualizes the core process of designing and executing a screen using the DCP-CWGC pooling method.

Detailed Methodology:

- Parameter Definition: Determine the screening parameters:

- n: The number of samples (items) to be screened.

- r: The constant Hamming weight (number of '1's in the code), which defines how many pools each sample is placed in.

- m: The number of pools, which must satisfy m ≥ 2r + 1 for optimal performance [14].

- Code Generation: Use specialized algorithms to generate the DCP-CWGC.

- Branch-and-Bound Algorithm (BBA): Constructs near-perfectly balanced codes for small to medium n by traversing an address-joint bipartite graph [14].

- Recursive Combination BBA (rcBBA): Efficiently constructs long codes by recursively combining shorter DCP-CWGCs, ideal for large-scale screens [14].

- Implementation Note: The open-source Python package codePUB provides implementations of these algorithms [14].

- Pooling Plan and Experiment:

- Create a pooling plan based on the generated code. Each sample's binary address dictates in which of the m pools it is included (a '1' indicates inclusion).

- Perform the pooled assay. For example, combine peptide pools with reporter cells to test for immune activation.

- Result Analysis and Hit Deconvolution:

- Identify which pools test positive.

- Error Detection: The DCP-CWGC design expects exactly r+1 positive pools for a single hit spanning two consecutive items, because adjacent addresses in a constant-weight Gray code differ in exactly two positions and their union therefore covers r+1 pools. A deviation from this number indicates a potential experimental error (e.g., a false positive or negative) [14].

- Hit Identification: The unique OR-sum of the addresses of consecutive positive items allows for their precise identification, even in the presence of a limited number of errors, by cross-referencing the positive pools [14].
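The pooling and deconvolution logic above can be illustrated with a toy example. This sketch does not use codePUB and does not generate a real DCP-CWGC: the five addresses are hand-written for n = 5, r = 2, m = 5 (so m ≥ 2r + 1 holds), chosen only so that every item sits in two pools, every pool holds two items, and neighbouring addresses differ by swapping one pool. All names (`ADDRESSES`, `build_pools`, `decode`) are hypothetical.

```python
# Toy addresses: item index -> set of pool indices. Hand-written for illustration,
# NOT produced by codePUB. n = 5 items, r = 2 pools per item, m = 5 pools.
ADDRESSES = [{0, 1}, {1, 2}, {2, 3}, {3, 4}, {0, 4}]
R = 2


def build_pools(addresses, n_pools):
    """Pooling plan: for each pool, list the items whose address includes it."""
    pools = [[] for _ in range(n_pools)]
    for item, address in enumerate(addresses):
        for pool in sorted(address):
            pools[pool].append(item)
    return pools


def positive_pools(addresses, positive_items):
    """Simulate an error-free assay: a pool is positive if it holds any positive item."""
    observed = set()
    for item in positive_items:
        observed |= addresses[item]
    return observed


def decode(addresses, observed, r):
    """Return the positive item(s), or None if the pattern signals an error.

    r positive pools     -> one item whose address equals the pattern.
    r + 1 positive pools -> two consecutive items whose OR-sum equals the pattern.
    Anything else, or an unmatched pattern, is flagged as a likely error.
    """
    observed = set(observed)
    if len(observed) == r:
        matches = [[i] for i, a in enumerate(addresses) if a == observed]
    elif len(observed) == r + 1:
        matches = [
            [i, i + 1]
            for i in range(len(addresses) - 1)
            if addresses[i] | addresses[i + 1] == observed
        ]
    else:
        matches = []
    return matches[0] if len(matches) == 1 else None


plan = build_pools(ADDRESSES, n_pools=5)
hit = decode(ADDRESSES, positive_pools(ADDRESSES, [1, 2]), R)
```

In this toy design, a pattern such as pools {0, 2} matches no address and is reported as an error rather than forced onto the nearest item. Real designs from [14] carry additional distance constraints that this sketch does not reproduce.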

Data Presentation: Comparative Analysis of Screening Approaches

Table 1: Quantitative Comparison of Screening Methodologies

This table summarizes key performance metrics for different screening approaches, highlighting the trade-off between throughput and biological relevance.

| Screening Methodology | Typical Throughput | Key Biological Relevance Features | Key Limitations | Ideal Use Case |

|---|---|---|---|---|

| Biochemical (e.g., ELISA, Enzyme Activity) [12] | Very High (10,000s of data points/day) | Direct measurement of molecular interactions. | Lack of cellular context; may not reflect physiology. | Primary screening for target binding or enzymatic inhibition. |

| Cell-Based (Simple Monolayer) [12] | High (1,000s of data points/day) | Cellular permeability; basic cytotoxicity. | Limited tissue structure; absence of microenvironment. | Primary phenotypic screening (e.g., cell viability, reporter assays). |

| High-Content Analysis (e.g., Multiplexed Imaging) [15] | Medium (100s of wells/day, 10,000s of cells) | Multiplexed readouts (signaling, morphology, subcellular structures) at single-cell resolution. | Throughput limited by image acquisition and analysis time; cost. | Secondary validation & lower-throughput primary screens requiring deep phenotyping. |

| Advanced Pooling (e.g., DCP-CWGC) [14] | Theoretical efficiency gain of log(n) | Capable of detecting complex patterns (e.g., consecutive positives); includes error-detection. | Complex experimental design and deconvolution; not suitable for all assay types. | Large-scale genetic or peptide screens where library size is a major constraint. |

| 3D & Co-culture Models | Low (10s of wells/day) | Physiologically relevant tissue context; cell-cell interactions; improved predictive validity. | Very low throughput; high cost; challenging for automated handling and analysis. | Late-stage secondary validation and mechanistic studies for top hits. |

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 2: Key Reagents for Balanced Phenotypic Screening

This table details essential reagents and their specific functions in setting up robust and informative screening assays.

| Research Reagent | Function & Role in Screening | Example Application in Protocol |

|---|---|---|

| CM-H2DCFDA [15] | Cell-permeable fluorescent dye that becomes highly fluorescent upon oxidation. Used as a reporter for intracellular Reactive Oxygen Species (ROS) levels. | Multiplexed high-content analysis of oxidative stress and mitochondrial function [15]. |

| TMRM (Tetramethylrhodamine, Methyl Ester) [15] | Cell-permeable, cationic fluorescent dye that accumulates in active mitochondria. Used to measure mitochondrial membrane potential (ΔΨm) and, via high-resolution imaging, mitochondrial morphology. | Multiplexed high-content analysis of oxidative stress and mitochondrial function [15]. |

| DCP-CWGC Code [14] | A specific binary code design used for combinatorial pooling. Its properties (balanced, constant weight, Gray code) enable efficient deconvolution, error detection, and identification of consecutive positives in large-scale screens. | Error-detecting combinatorial pooling for complex target identification (e.g., immunopeptide screening) [14]. |

| Single-Cell ATAC-seq (scATAC-seq) Data [13] | Sequencing data revealing regions of open chromatin in individual cells. Serves as input for computational prediction of regulatory elements. | Used as input for the InferLoop tool to predict cell-type-specific chromatin 3D structure (loops), adding a layer of biological insight without complex Hi-C experiments [13]. |

| HBSS-HEPES Imaging Buffer [15] | A physiological salt solution buffered with HEPES to maintain stable pH outside a CO₂ incubator. Essential for maintaining cell health during live-cell imaging experiments. | Used as the dye loading and imaging medium in the multiplexed oxidative stress protocol [15]. |

Troubleshooting Guides and FAQs

FAQ: Addressing Common Experimental Challenges

Q1: Our phenotypic screen yielded a high hit rate, but many compounds were false positives or promiscuous binders. How can we improve the quality of our initial library?

A1: High false-positive rates often indicate library quality issues. To address this:

- Apply Advanced Cheminformatic Filters: Implement filters beyond basic "drug-likeness" to remove pan-assay interference compounds (PAINS), compounds with poor solubility, and those with reactive functional groups [4].

- Utilize Orthogonal Confirmation: Use biophysical methods like surface plasmon resonance (SPR) early in the workflow to confirm binding and eliminate assay-specific artifacts [17] [4].

- Enhance Library Design: Curate libraries with emphasis on structural novelty, high purity, and validated solution stability to reduce noise and improve discovery efficiency [4].

Q2: Our fragment library screening failed to identify any novel chemotypes for our target. Are we limited by our library's coverage of chemical space?

A2: This is a common limitation. Even diverse experimental fragment libraries (e.g., 1,000-10,000 compounds) represent only a fraction of commercially available fragments (>500,000) and may miss critical chemotypes [17].

- Solution: Integrate virtual screening with your empirical screens. Docking large, commercially available fragment libraries can identify novel, potent chemotypes missing from your physical library, filling "chemotype holes" with little extra resource cost [17].

Q3: How can we balance the need for broad chemical space coverage with the practical constraints of screening capacity?

A3: A hybrid approach is most effective.

- Combine HTS and AI: Use high-throughput screening (HTS) for experimental validation while employing AI and machine learning to "denoise" data, recognize artifacts, and virtually screen vast in-silico chemical spaces to prioritize physical screening efforts [4].

- Use Focused Libraries: For well-validated target families (e.g., kinases, GPCRs), use targeted libraries to increase hit rates while maintaining a core diverse library for broader exploration [18] [4].

Q4: What are the key considerations when moving from a target-based screen to a more complex phenotypic screen?

A4: Phenotypic screening introduces new variables.

- Assay Relevance: Ensure cellular models (e.g., 3D cultures, organoids) are sufficiently physiologically relevant to capture disease complexity [4] [19].

- Target Deconvolution: A major challenge. Integrate high-content imaging, proteomics, and computational techniques early to link phenotypic outcomes to molecular targets [1].

- Compound Logistics: Phenotypic assays often have lower throughput. Employ robotics, assay miniaturization, and integrated workflows to maintain efficiency [4].

Quantitative Data on Library Composition and Performance

The design and composition of a chemical library directly influence screening outcomes. The tables below summarize key performance data and design criteria.

Table 1: Performance Comparison of Screening Methods for AmpC β-lactamase

| Screening Method | Library Size | Hit Rate | Most Potent KI (mM) | Key Findings |

|---|---|---|---|---|

| NMR (TINS) Screening | 1,281 fragments | 3.2% (41 hits) | 0.2 | Discovered novel chemotypes (Avg. Tc* 0.21) [17] |

| Virtual Screening | ~290,000 fragments | Not specified | 0.03 | Filled "chemotype holes" from the empirical library [17] |

| Integrated Approach | Empirical + Virtual | Combined benefits | 0.03 | Captured unexpected and target-tailored chemotypes [17] |

*Tc: Tanimoto coefficient, a measure of structural similarity (0 to 1); lower values indicate greater structural novelty.
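For readers unfamiliar with the Tanimoto coefficient, a minimal sketch follows. It assumes fingerprints are already available as sets of "on" bit indices; the bit values shown are made up for illustration, and in practice they would come from a cheminformatics toolkit. The function names are hypothetical.

```python
def tanimoto(fp_a: set, fp_b: set) -> float:
    """Tanimoto coefficient |A & B| / |A | B| for fingerprints stored as sets of on-bits."""
    union = fp_a | fp_b
    if not union:
        return 0.0  # two empty fingerprints: treat as no measurable similarity
    return len(fp_a & fp_b) / len(union)


def max_similarity(candidate: set, references: list) -> float:
    """Highest Tanimoto coefficient between a candidate and any reference compound."""
    return max((tanimoto(candidate, ref) for ref in references), default=0.0)


# Illustrative bit sets only: two shared bits out of four distinct bits.
example_tc = tanimoto({1, 2, 3}, {2, 3, 4})
```

A hit whose maximum similarity to known ligands is low would count as a novel chemotype in the sense used in the table.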

Table 2: Key Design Criteria for Different Library Types

| Library Type | Typical Size | Primary Goal | Key Design/Filtration Criteria | Common Applications |

|---|---|---|---|---|

| Diverse Screening Library | 10,000 - 50,000+ | Maximize exploration of chemical space | Drug-likeness (e.g., Ro5), structural diversity, solubility, purity [18] [4] | Initial HTS, unbiased discovery |

| Focused/Targeted Library | 1,000 - 10,000 | Target specific protein families | Prior knowledge of target class, ligand-based or structure-based design [20] [18] | Kinase, GPCR, epigenetic target screening |

| Fragment Library | 1,000 - 2,000 | Identify weak-binding starting points | Rule of 3 (MW <300, HBD/HBA ≤3, cLogP ≤3), low rotatable bonds [17] [18] | Fragment-Based Drug Discovery (FBDD) |
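The property cut-offs in the table lend themselves to a simple filter. The sketch below assumes descriptors have already been computed elsewhere. The `Descriptors` class and function names are hypothetical, and the example values are invented. The Rule of 3 thresholds follow the table. The Rule of Five thresholds are the commonly quoted Lipinski limits (MW ≤ 500, cLogP ≤ 5, HBD ≤ 5, HBA ≤ 10), which the table references only as "Ro5".

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Descriptors:
    mol_weight: float  # molecular weight in Da
    clogp: float       # calculated logP
    hbd: int           # hydrogen-bond donors
    hba: int           # hydrogen-bond acceptors


def passes_rule_of_three(d: Descriptors) -> bool:
    """Fragment filter from the table: MW < 300, HBD <= 3, HBA <= 3, cLogP <= 3."""
    return d.mol_weight < 300 and d.hbd <= 3 and d.hba <= 3 and d.clogp <= 3


def rule_of_five_violations(d: Descriptors) -> int:
    """Count Lipinski violations: MW > 500, cLogP > 5, HBD > 5, HBA > 10."""
    return sum([d.mol_weight > 500, d.clogp > 5, d.hbd > 5, d.hba > 10])


# Invented descriptor values, for illustration only.
fragment_like = Descriptors(mol_weight=180.2, clogp=1.2, hbd=1, hba=3)
large_lipophilic = Descriptors(mol_weight=650.0, clogp=6.1, hbd=2, hba=8)
```

Whether to discard compounds with a single Rule of Five violation is a library-design choice; many collections tolerate one.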

Experimental Protocols

Protocol 1: Integrated Empirical and Virtual Fragment Screening

This protocol, adapted from a study on AmpC β-lactamase, combines unbiased empirical screening with structure-based virtual screening to maximize chemotype coverage [17].

1. Target Immobilized NMR Screening (TINS) - Primary Empirical Screen

- Objective: Identify fragments binding to the target protein.

- Methodology:

- Immobilize the target protein (e.g., AmpC β-lactamase) on a solid support.

- Screen the empirical fragment library (e.g., 1,281 compounds) using TINS.

- Use a reference protein to subtract non-specific binding.

- Confirm initial hits in a replication experiment.

- Output: A list of confirmed binding fragments.

2. Surface Plasmon Resonance (SPR) - Secondary Confirmatory Assay

- Objective: Validate binding and determine affinity (KD) for NMR hits.

- Methodology:

- Immobilize the target on an SPR chip.

- Inject confirmed NMR hits at varying concentrations.

- Measure binding kinetics (association/dissociation) to determine KD values.

- Output: Affinity data for binding fragments.

3. Enzymological Inhibition Assay (KI Determination)

- Objective: Determine the inhibitory potency of binding fragments.

- Methodology:

- Incubate the target enzyme with a substrate and varying concentrations of the fragment.

- Measure reaction rates (e.g., spectrophotometrically).

- Calculate the inhibition constant (KI) from the dose-response data.

- Output: Functional inhibition data and ligand efficiency (LE).
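Ligand efficiency is typically computed from the inhibition constant as the binding free energy per heavy atom, LE = -RT ln(KI) / N_heavy. A minimal sketch under that standard definition follows. The function name and the default temperature of 298.15 K are assumptions, and KI must be supplied in molar units (for example, 0.2 mM is 2e-4 M).

```python
import math

GAS_CONSTANT_KCAL = 1.987e-3  # kcal / (mol * K)


def ligand_efficiency(ki_molar: float, heavy_atoms: int,
                      temperature_k: float = 298.15) -> float:
    """Ligand efficiency in kcal/mol per heavy atom: -RT ln(KI) / N_heavy."""
    if ki_molar <= 0 or heavy_atoms <= 0:
        raise ValueError("ki_molar and heavy_atoms must be positive")
    delta_g = GAS_CONSTANT_KCAL * temperature_k * math.log(ki_molar)  # negative for KI < 1 M
    return -delta_g / heavy_atoms


# Hypothetical fragment: KI of 1 mM and 10 heavy atoms.
example_le = ligand_efficiency(1e-3, 10)
```

Because fragments bind weakly, LE rather than raw potency is usually the more useful number for ranking them against larger compounds.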

4. Parallel Virtual Screening of a Large Commercial Library

- Objective: Identify potent chemotypes not present in the empirical library.

- Methodology:

- Select a large library of purchasable fragments with chemotypes unrepresented in the empirical library (e.g., 290,000 compounds).

- Perform molecular docking against the target's active site using an appropriate scoring function.

- Prioritize top-ranking compounds for purchase and testing.

- Output: A list of computationally prioritized fragments.

5. Experimental Validation of Docking Hits

- Objective: Confirm the activity of virtual screening hits.

- Methodology:

- Subject the purchased, docking-prioritized fragments to the same enzymological inhibition assay (Step 3).

- Determine KI values and ligand efficiencies.

- Output: Potent inhibitors discovered via virtual screening.

6. X-ray Crystallography for Structural Insights

- Objective: Understand binding modes and validate docking predictions.

- Methodology:

- Co-crystallize the target protein with bound fragments (from both NMR and docking screens).

- Solve the crystal structure and analyze the protein-fragment interactions.

- Output: Atomic-resolution structures guiding further optimization.

Protocol 2: Phenotypic Screening in a Complex Cell Model

This protocol outlines a generalized workflow for a phenotypic screen using a disease-relevant cellular model [1] [4] [19].

1. Development of a Phenotypically Relevant Assay

- Objective: Establish a robust and translatable cellular model.

- Methodology:

- Select a physiologically relevant cell system (e.g., patient-derived cells, induced pluripotent stem cell (iPSC)-derived tissues, 3D organoids).

- Define a quantifiable, disease-relevant phenotypic endpoint (e.g., neurite outgrowth for neurodegeneration, tumor cell killing in co-culture, cytokine secretion profile).

- Implement a high-content imaging or multiparametric readout system to capture the complex phenotype.

2. Primary Screening and Hit Identification

- Objective: Identify compounds that modulate the desired phenotype.

- Methodology:

- Screen the compound library in the phenotypic assay. Use automation and miniaturization (e.g., 384- or 1536-well plates) for throughput.

- Apply statistical rigor for hit selection (e.g., Z'-factor for assay quality, setting hit thresholds based on standard deviations from the mean).
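The two statistical checks named in this step can be sketched as follows. The Z'-factor uses the standard definition, 1 - 3(σ_pos + σ_neg) / |μ_pos - μ_neg|. The hit-calling rule shown here, a deviation of more than three standard deviations from the negative-control mean, is one common choice and is an assumption, since the protocol does not fix the reference population or the multiplier. Function names and control values are illustrative.

```python
from statistics import mean, stdev


def z_prime(positive_controls, negative_controls):
    """Z'-factor: 1 - 3 * (sd_pos + sd_neg) / |mean_pos - mean_neg|."""
    separation = abs(mean(positive_controls) - mean(negative_controls))
    return 1.0 - 3.0 * (stdev(positive_controls) + stdev(negative_controls)) / separation


def call_hits(sample_values, negative_controls, n_sd=3.0):
    """Indices of wells deviating from the negative-control mean by more than n_sd SDs."""
    mu, sd = mean(negative_controls), stdev(negative_controls)
    return [i for i, value in enumerate(sample_values) if abs(value - mu) > n_sd * sd]


# Invented control readings, for illustration only.
pos = [100, 102, 98, 101, 99]
neg = [10, 11, 9, 10, 10]
quality = z_prime(pos, neg)
hits = call_hits([10.0, 50.0, 9.5], neg)
```

A Z'-factor above 0.5 is conventionally taken to indicate an assay window wide enough for screening. Robust alternatives based on the median and MAD are often preferred when plates contain outliers.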

3. Hit Triage and Counter-Screening

- Objective: Eliminate false positives and prioritize promising leads.

- Methodology:

- Use cheminformatic filters to remove compounds with undesirable properties (PAINS, poor solubility).

- Perform counter-screens against general cytotoxicity to ensure phenotype-specific effects.

- Confirm activity in dose-response experiments.

4. Target Deconvolution

- Objective: Identify the molecular target(s) of the phenotypic hit.

- Methodology:

- Employ computational methods using chemical descriptors and omics data to infer potential targets.

- Use experimental techniques such as chemical proteomics (e.g., affinity purification pull-downs with the hit compound as bait), or genetic approaches (e.g., CRISPR-based screens).

Workflow and Pathway Diagrams

Diagram 1: Screening Strategy Selection Workflow

Diagram 2: Virtual Screening Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Reagents and Libraries for Screening

| Reagent / Resource | Type | Primary Function in Screening |

|---|---|---|

| Diversity Screening Library [4] | Small Molecule Collection | Provides broad coverage of chemical space for initial unbiased screening in HTS or phenotypic campaigns. |

| Focused/Targeted Libraries (e.g., Kinase, GPCR) [18] [4] | Small Molecule Collection | Enriches for compounds active against specific target families, increasing hit rates for those targets. |

| Fragment Library [17] [18] | Small Molecule Collection | Provides low molecular weight starting points for FBDD, enabling efficient coverage of chemical space. |

| FDA-Approved Drug Library [4] | Small Molecule Collection | Used in repurposing screens, offering compounds with known safety profiles for new indications. |

| Target Protein (e.g., AmpC β-lactamase) [17] | Protein Reagent | The biological target for biochemical, biophysical, and structural studies in target-based screening. |

| SPR Instrumentation [17] | Biophysical Instrument | Confirms binding of hits and provides quantitative affinity (KD) and kinetic data. |

| NMR for TINS [17] | Biophysical Instrument | Detects weak binding of fragments in a primary screen using target-immobilized NMR. |

| X-ray Crystallography System [17] | Structural Biology Tool | Determines high-resolution structures of target-hit complexes to guide rational optimization. |

The Problem of False Positives and Assay Artifacts in Primary Screens

Frequently Asked Questions (FAQs)

1. What are the most common types of assay artifacts in primary screens? The most prevalent assay artifacts fall into several key categories [21]:

- Chemical Reactivity: Includes thiol-reactive compounds (TRCs) that covalently modify cysteine residues and redox-active compounds that produce hydrogen peroxide, indirectly modulating target activity.

- Interference with Reporter Enzymes: Compounds that directly inhibit common reporter proteins like firefly or nano luciferase, leading to false positive signals in reporter gene assays.

- Compound Aggregation: Small, colloidally aggregating molecules (SCAMs) form colloidal aggregates at screening concentrations and non-specifically perturb biomolecules.

- Interference with Optical Detection: Compounds that are intrinsically fluorescent or colored, interfering with fluorescence or absorbance-based readouts.

- Technology-Specific Interference: Signal quenching, inner-filter effects, or disruption of affinity capture components in assays like FRET, TR-FRET, ALPHA, or SPA.

2. How do PAINS filters work, and what are their limitations? Pan-Assay INterference compoundS (PAINS) filters are a set of substructural alerts designed to flag compounds associated with various assay interference mechanisms [21]. However, they have significant limitations: they are often oversensitive, disproportionately flagging compounds as potential false positives while failing to identify a majority of truly interfering compounds. This is because chemical fragments do not act independently from their structural surroundings, and many original PAINS alerts were derived from very few compounds, making them less reliable [21].

3. What computational tools are available to predict assay interference? Researchers can use several modern computational tools that are more reliable than PAINS filters [21]:

- Liability Predictor: A free webtool that uses Quantitative Structure-Interference Relationship (QSIR) models to predict compounds exhibiting thiol reactivity, redox activity, and luciferase inhibitory activity.

- Luciferase Advisor: Predicts luciferase inhibitors in luciferase-based assays.

- SCAM Detective: Predicts colloidal aggregators.

- InterPred: Predicts compounds that exhibit autofluorescence and luminescence interference.

4. Can assay technology itself help reduce false positives? Yes, the choice of detection technology can significantly impact false positive rates. For instance [22]:

- Fluorescence Lifetime Technology (FLT) can offer a superior readout compared to Time-Resolved Fluorescence Resonance Energy Transfer (TR-FRET). FLT measures the characteristic fluorescence decay of a fluorophore, which is less susceptible to common interference issues that affect fluorescence intensity measurements.

- Utilizing readouts in the far-red spectrum for fluorescence-based HTS assays dramatically reduces interference from compound autofluorescence [21].

5. How does a phenotypic screening approach influence false positive rates? Phenotypic screening, which measures functional outcomes in cellular systems, can overcome some limitations of target-based approaches. However, it is not immune to false positives arising from the interference mechanisms listed above [1]. A key challenge is that observed activity may not be due to the intended biological mechanism. Furthermore, target deconvolution for hits from phenotypic screens can be complex and time-consuming [1]. Integrating phenotypic data with multi-omics and AI can help address this by providing a systems-level view and uncovering true mechanisms of action [23].

Troubleshooting Guide: Identifying and Mitigating False Positives

This guide outlines a systematic approach to triage hits from a primary screen.

Step 1: In-silico Triage of Primary Hit List

Action: Filter your hit list using modern computational liability predictors.

Methodology:

- Submit your list of hit compounds in SMILES or SDF format to a tool like Liability Predictor (https://liability.mml.unc.edu/) [21].

- The tool will use QSIR models to predict the likelihood of each compound being a thiol-reactive, redox-active, or luciferase-interfering artifact.

- Prioritize compounds with low interference scores for downstream confirmation.

Why it works: This provides an initial, rapid assessment of potential chemical liabilities, flagging promiscuous compounds before investing in costly experimental follow-up [21].

Step 2: Experimental Confirmation of Activity

Action: Confirm that the observed activity is real and not an artifact of the primary screening conditions.

Methodology:

- Dose-Response: Re-test hits in a concentration-dependent manner (e.g., a 10-point dose-response curve) in the original primary assay. True hits will typically show a saturable, stoichiometric dose-response relationship.

- Orthogonal Assay: Test confirmed hits in a secondary assay that uses a completely different detection technology. For example, if the primary screen was a luciferase-based reporter assay, the orthogonal assay could be a High-Content Imaging (HCI) readout of a relevant downstream protein marker or a mass spectrometry-based assay like RapidFire MS (RF-MS) [22].

Why it works: Orthogonal assays with different readout mechanisms are unlikely to be susceptible to the same interference compounds. A compound active across multiple assay formats is more likely to be a true positive.
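As a rough illustration of what a saturable dose-response looks like, the sketch below evaluates a four-parameter logistic (Hill) model over a 10-point dilution series. It only evaluates the curve and does not fit one. The 3-fold dilution factor, the 30 µM top concentration, and the function names are assumptions chosen for the example, not values from the protocol.

```python
def four_parameter_logistic(conc, bottom, top, ec50, hill):
    """Response at concentration `conc` for a 4PL curve rising from bottom to top."""
    return bottom + (top - bottom) / (1.0 + (ec50 / conc) ** hill)


def dilution_series(top_conc, dilution=3.0, n_points=10):
    """Serial dilution from top_conc downward, highest concentration first."""
    return [top_conc / dilution ** i for i in range(n_points)]


# Hypothetical compound: EC50 of 1 uM, Hill slope of 1, response spanning 0 to 100 %.
concentrations_um = dilution_series(30.0)
responses = [four_parameter_logistic(c, 0.0, 100.0, 1.0, 1.0) for c in concentrations_um]
```

A genuine hit would be expected to trace a curve of this general shape with clear upper and lower plateaus. Very steep slopes or curves that never plateau are often treated as warning signs worth following up in the aggregation and counter-screen steps.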

Step 3: Investigate Mechanism-Based Activity

Action: Rule out nonspecific mechanisms of action.

Methodology:

- Test for Aggregation: Perform assays in the presence and absence of non-ionic detergents (e.g., 0.01% Triton X-100). Inhibition that is reversed by detergent is a strong indicator of colloidal aggregation [21].

- Counter-Screens: Test compounds against unrelated targets or enzymes. A compound that inhibits many unrelated targets is likely a promiscuous, nonspecific inhibitor.

- Cellular Toxicity Assay: For cell-based phenotypic screens, perform a parallel viability assay (e.g., measuring ATP levels). This ensures that the observed phenotype is not simply a consequence of generalized cellular toxicity.

Why it works: These experiments help distinguish specific, target-mediated activity from nonspecific effects like aggregation-based inhibition or cytotoxicity.

Quantitative Data on Assay Interference and Mitigation

| Assay Interference Type | External Balanced Accuracy | Number of External Compounds Tested |

|---|---|---|

| Thiol Reactivity | 58-78% | 256 |

| Redox Activity | 58-78% | 256 |

| Luciferase (Firefly) Interference | 58-78% | 256 |

| Luciferase (Nano) Interference | 58-78% | 256 |
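Balanced accuracy, the metric reported in the table above, is the mean of sensitivity and specificity. It is preferred over plain accuracy when interfering compounds are a small minority of the test set. The sketch below uses invented confusion-matrix counts purely to show the difference; they are not the results behind the table, and the function names are hypothetical.

```python
def balanced_accuracy(tp: int, fn: int, tn: int, fp: int) -> float:
    """Mean of sensitivity (true-positive rate) and specificity (true-negative rate)."""
    sensitivity = tp / (tp + fn)
    specificity = tn / (tn + fp)
    return (sensitivity + specificity) / 2.0


def accuracy(tp: int, fn: int, tn: int, fp: int) -> float:
    """Fraction of all compounds classified correctly."""
    return (tp + tn) / (tp + fn + tn + fp)


# Invented counts: 50 true interferers, of which the model finds only 5.
plain = accuracy(tp=5, fn=45, tn=190, fp=10)
balanced = balanced_accuracy(tp=5, fn=45, tn=190, fp=10)
```

With these made-up counts, plain accuracy looks respectable because most compounds are clean, while balanced accuracy exposes the poor recall on the interfering class.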

| Detection Technology | Principle | Relative Reduction in False Positives (Model System: TYK2 Kinase) |

|---|---|---|

| Time-Resolved Fluorescence Resonance Energy Transfer (TR-FRET) | Measures fluorescence intensity after a time delay to reduce background. | Baseline |

| Fluorescence Lifetime Technology (FLT) | Measures the characteristic decay time of fluorescence, which is largely independent of concentration and fluorescence intensity. | Marked Decrease |

| RapidFire Mass Spectrometry (RF-MS) | Label-free method that directly detects substrate depletion or product formation. | Significant Decrease (considered a gold-standard confirmatory method) |

Experimental Protocols

Objective: To establish a robust, medium-throughput phenotypic assay for identifying inhibitors of cancer-associated fibroblast (CAF) activation, a key process in cancer metastasis.

Materials (Research Reagent Solutions):

- Cell Lines: Human primary lung fibroblasts, highly invasive breast cancer cells (MDA-MB-231), human monocytes (THP-1 cells).

- Culture Media: DMEM-F12 and RPMI-1640, supplemented with 10% Fetal Calf Serum (FCS) and 1% penicillin-streptomycin.

- Assay Plates: 96-well plates suitable for In-Cell ELISA (ICE).

- Key Reagents: Primary antibody against α-Smooth Muscle Actin (α-SMA), fluorescently labeled secondary antibody, fixation reagent (e.g., ice-cold methanol), blocking buffer (e.g., 10% donkey serum in PBS).

Methodology:

- Co-culture Setup: Seed human lung fibroblasts alone or in co-culture with MDA-MB-231 breast cancer cells and THP-1 monocytes in a 96-well plate. Include appropriate controls (fibroblasts alone).

- Incubation: Incubate the co-culture for a predetermined period (e.g., 72 hours) at 37°C and 5% CO₂ to allow for CAF activation.

- Cell Fixation and Staining:

- Fix cells with ice-cold methanol.

- Permeabilize and block nonspecific binding sites using a blocking buffer.

- Incubate with anti-α-SMA primary antibody.

- Wash and incubate with a fluorescently conjugated secondary antibody.

- Signal Detection and Analysis: Measure the fluorescence signal using a plate reader. The expression level of α-SMA, a marker of myofibroblast/CAF activation, is the primary readout.

- Validation: A robust assay should show a significant increase (e.g., 2.3-fold) in α-SMA expression in co-culture conditions compared to fibroblasts alone, with a Z'-factor >0.5, indicating its suitability for screening [24].

Protocol 2: Experimental Workflow for Triage of HTS Hits

This workflow diagrams the multi-step process for validating primary screen hits.

Protocol 3: Key Phases of Hit Triage and Validation

This diagram breaks down the critical phases and decision points in the hit validation pipeline.

The Scientist's Toolkit: Essential Research Reagent Solutions

| Item | Function in the Assay |

|---|---|

| Primary Human Lung Fibroblasts | The primary cell type whose activation into a CAF state is being measured. |

| MDA-MB-231 Cell Line | A highly invasive breast cancer cell line used to induce fibroblast activation in co-culture. |

| THP-1 Cell Line | A human monocyte cell line; monocytes/macrophages are key regulators in the CAF activation microenvironment. |

| Anti-α-SMA Antibody | The primary antibody used in the In-Cell ELISA to detect and quantify the levels of α-Smooth Muscle Actin, a key biomarker of CAF activation. |

| Fluorescent Secondary Antibody | Conjugated to a fluorophore; binds to the primary antibody to allow for detection and quantification of α-SMA levels. |

Advanced Strategies for Designing and Enriching Phenotypic Screening Libraries

This technical support center provides troubleshooting guides and FAQs to help researchers navigate common challenges in phenotypic screening library optimization.

Frequently Asked Questions

What is the primary limitation of a diverse screening library? Diverse "chemogenomics" libraries typically interrogate only a small fraction of the human genome—approximately 1,000–2,000 out of 20,000+ genes. This limited coverage can miss critical, novel, or undrugged targets, restricting the scope of your phenotypic discoveries [7].

When should I use a focused versus a diverse library? A focused library is more efficient when structural information on the target or target family is available or when ligands of the target are known. A diverse library is preferable when very little is known about the target and no or few ligands have been identified [25].

How does library design impact target identification (ID) in phenotypic screening? A major challenge is that compounds from phenotypic screens can be highly promiscuous, acting on multiple unexpected targets. This complicates and can even mislead target ID and validation efforts. Strategies like affinity purification and genetic approaches are needed for target deconvolution [7].

What are the key considerations for scaffold selection in library design? The choice of scaffold is critical as it predetermines many properties of the future lead. An ideal scaffold should have favorable ADME properties, present good vector orientation for substituents, enable robust binding interactions, be synthetically amenable, and offer patentability [26].

Troubleshooting Guides

Problem: High Hit Rate with Non-Specific or Promiscuous Compounds

Issue: Initial screen yields many hits, but most compounds show poor selectivity or engage multiple off-targets.

Diagnosis: This is common with libraries built around "privileged" scaffolds or those lacking sufficient chemical diversity, leading to frequent-hitter behavior [7].

Solution:

- Apply PAINS Filters: Use computational filters to identify and remove Pan-Assay Interference Compounds (PAINS) early in the triage process [7].

- Counter-Screening: Implement secondary assays against common off-targets (e.g., kinases, GPCRs) to identify and eliminate promiscuous binders [7].

- Library Refinement: For follow-up libraries, incorporate structural motifs known to avoid promiscuity and enhance selectivity [25].

Problem: Difficulty in Target Identification & Deconvolution

Issue: A compound shows a robust phenotypic response, but its molecular mechanism of action (MOA) remains unknown.

Diagnosis: This is a fundamental limitation of phenotypic screening. Without a known target, further medicinal chemistry optimization and safety profiling are challenging [7].

Solution:

- Affinity Purification Mass Spectrometry: Use biotinylated or photo-affinity probes derived from your hit compound to pull down and identify direct protein targets from cell lysates [7].

- Functional Genomics (CRISPR) Screens: Perform parallel genetic screens to identify genes whose loss-of-function phenocopies or rescues the compound's effect. This can pinpoint pathways and potential targets [7].

- Resistance Mutation Mapping: In microbial or cell-based systems, select for resistance to the compound and sequence the genome to identify mutated genes, which often encode the target or related proteins [7].

Problem: Poor Coverage of Relevant Chemical or Target Space

Issue: The screening library does not yield hits, potentially because it lacks compounds capable of modulating the biology in your specific phenotypic assay.

Diagnosis: The chemical space covered by the library is too narrow, biased towards certain target classes, or lacks the complexity needed for the phenotype [7] [26].

Solution:

- Analyze Library Composition: Computationally map your library's coverage of chemical space and target annotations to identify gaps [26].

- Incorporate Privileged Structures: For specific target families (e.g., kinases, GPCRs), design focused libraries around known privileged scaffolds to increase the likelihood of success [25].

- Explore New Modalities: Consider expanding your library to include compounds suitable for emerging target areas like protein-protein interactions (PPI) or the ubiquitin proteasome pathway, which may require specialized chemotypes [25].

Problem: Inefficient Translation from Hit to Lead

Issue: Confirmed hits have poor drug-like properties (e.g., solubility, metabolic stability), making them difficult to optimize into viable lead compounds.

Diagnosis: The initial library was designed without sufficient consideration of ADMET (Absorption, Distribution, Metabolism, Excretion, Toxicity) properties during the hit identification phase [26].

Solution:

- Early ADMET Profiling: Integrate high-throughput solubility, microsomal stability, and cytotoxicity assays into the primary screening workflow [26].

- Structure-Based Design: If the target is identified, use structural information (e.g., from X-ray crystallography or cryo-EM) to guide optimization of potency and selectivity [1].

- Property-Based Design: Use guidelines like Lipinski's Rule of Five during the library design and hit-to-lead stages to prioritize compounds with better drug-like properties [26].
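The property-based triage above can be sketched as a simple Rule of Five check. The thresholds are Lipinski's standard ones (molecular weight ≤ 500 Da, logP ≤ 5, at most 5 hydrogen-bond donors, at most 10 hydrogen-bond acceptors). The `Descriptors` container, the example values, and the default of tolerating one violation are assumptions for illustration; in practice the descriptors would be computed by a cheminformatics toolkit.

```python
from dataclasses import dataclass


@dataclass
class Descriptors:
    mol_weight: float       # molecular weight in Da
    logp: float             # calculated octanol-water partition coefficient
    h_bond_donors: int
    h_bond_acceptors: int


def ro5_violations(d):
    """Return the number of Rule of Five criteria the compound violates."""
    checks = [
        d.mol_weight > 500,
        d.logp > 5,
        d.h_bond_donors > 5,
        d.h_bond_acceptors > 10,
    ]
    return sum(checks)


def passes_ro5(d, max_violations=1):
    """Accept compounds with at most `max_violations` violations (assumed default: 1)."""
    return ro5_violations(d) <= max_violations


# Hypothetical hits with made-up descriptor values.
hits = {
    "hit-1": Descriptors(350.4, 2.1, 2, 5),
    "hit-2": Descriptors(720.9, 6.3, 6, 12),
    "hit-3": Descriptors(510.0, 4.2, 1, 8),
}
kept = [name for name, d in hits.items() if passes_ro5(d)]
print(kept)
```

Such a filter is best treated as a prioritization aid, not a hard gate, because some compound classes are known to fall outside these guidelines and still make viable drugs.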

Experimental Protocols & Data

Quantitative Comparison of Screening Approaches

The table below summarizes key limitations and mitigation strategies for small molecule and genetic screening, two primary tools for phenotypic discovery [7].

| Screening Type | Key Limitation | Quantitative Impact | Mitigation Strategy |
|---|---|---|---|
| Small Molecule Screening | Limited target coverage of chemogenomics libraries | 1,000-2,000 of 20,000+ genes addressed [7] | Use multiple, diverse library types; include covalent and novel chemotypes [7]. |
| Small Molecule Screening | Promiscuity & assay interference | PAINS compounds can constitute a significant fraction of initial hits [7] | Early triage with counter-screens and computational filters [7]. |
| Genetic Screening (e.g., CRISPR) | Fundamental difference from pharmacological perturbation | Genetic knockout is irreversible and complete, unlike transient, partial inhibition by drugs [7] | Use inducible or partial loss-of-function systems (e.g., CRISPRi) for better mimicry [7]. |
| Genetic Screening (e.g., CRISPR) | Limited phenotypic robustness in high-throughput | Many validated hits fail in lower-throughput, more complex phenotypic assays [7] | Use high-content, multiparametric readouts; prioritize screens with high biological relevance [7]. |

Protocol: Designing a Focused Library for a Novel Target Family

This methodology outlines the creation of a target-focused library, such as for kinases or other well-characterized families [25].

Principle: When structural information on the target or target family is available, it is more efficient to design or select compounds that can be expected to modulate the target, rather than screening a vast, diverse library [25].

Procedure:

- Target Analysis: Collect all available structural data (X-ray, cryo-EM) of the target family, including apo structures and ligand-bound complexes. Identify conserved binding motifs and key interaction sites.

- Scaffold Identification: Select a core scaffold (e.g., a heterocycle) that can effectively present substituents to the key interaction sites identified during Target Analysis. The scaffold should be synthetically tractable and have known routes for diversification [26].

- Virtual Library Construction: Using combinatorial chemistry software, generate a virtual library by combining the chosen scaffold with a large set of available reagents at each variable position (R1, R2...). The size of this virtual library can be enormous (e.g., 1 million compounds for 200x50x100 reagents) [26].

- In-Silico Screening:

  - Docking: If 3D structures are available, computationally dock virtual compounds into the binding site to score and rank them based on predicted binding affinity.

  - Pharmacophore Modeling: Use known active ligands to build a pharmacophore model and filter virtual compounds that match this model.

  - QSAR: Use existing bioactivity data to build a Quantitative Structure-Activity Relationship (QSAR) model to predict activity of new virtual compounds.

- ADMET Filtering: Apply computational filters to remove compounds with predicted poor solubility, high metabolic instability, or potential toxicity. Adhere to drug-like property guidelines [26].

- Final Selection and Synthesis: Select a manageable number of compounds (e.g., hundreds to a few thousand) from the top-ranked, filtered virtual list for actual synthesis or acquisition.
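The enumeration and selection steps of this procedure can be sketched as follows. The example reproduces the 200 x 50 x 100 arithmetic from the text (1,000,000 virtual products) and shows a lazy enumeration followed by a filter-then-rank selection. The reagent labels, `toy_score`, and `toy_filter` are placeholders standing in for real reagents, a docking score, and an ADMET filter; the convention that lower scores are better is also an assumption.

```python
import heapq
from itertools import islice, product
from math import prod


def virtual_library_size(reagent_sets):
    """Number of products from one scaffold with independent R-group positions."""
    return prod(len(reagents) for reagents in reagent_sets)


def enumerate_virtual_library(scaffold, reagent_sets):
    """Lazily yield (scaffold, R1, R2, ...) tuples without storing the full library."""
    for combo in product(*reagent_sets):
        yield (scaffold, *combo)


def select_for_synthesis(candidates, passes_filters, score, n):
    """Drop candidates failing the filters, then keep the n lowest-scoring ones."""
    survivors = (c for c in candidates if passes_filters(c))
    return heapq.nsmallest(n, survivors, key=score)


# Placeholder reagent labels matching the 200 x 50 x 100 example in the text.
r1 = [f"R1_{i}" for i in range(200)]
r2 = [f"R2_{i}" for i in range(50)]
r3 = [f"R3_{i}" for i in range(100)]
reagent_sets = [r1, r2, r3]

size = virtual_library_size(reagent_sets)
first_three = list(islice(enumerate_virtual_library("scaffold", reagent_sets), 3))


def toy_score(compound):
    # Toy stand-in for a docking score (lower is better); not a real model.
    return -sum(int(part.split("_")[1]) for part in compound[1:])


def toy_filter(compound):
    # Toy stand-in for an ADMET filter: keep even-numbered R1 reagents only.
    return int(compound[1].split("_")[1]) % 2 == 0


# Triage only the first 5,000 virtual compounds to keep the example fast.
subset = islice(enumerate_virtual_library("scaffold", reagent_sets), 5000)
picked = select_for_synthesis(subset, toy_filter, toy_score, 2)
print(size)
print(first_three)
print(picked)
```

Enumerating lazily keeps memory use flat, which matters once the scoring and filtering functions are replaced by real, slower models applied to the whole virtual library.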

The Scientist's Toolkit: Key Research Reagents

| Reagent / Material | Function in Library Design & Screening |
|---|---|
| Chemogenomics Library | A collection of small molecules with known or predicted annotations against a set of biological targets. Used for initial phenotypic screens to provide mechanistic starting points [7]. |
| CRISPR Library | A pooled or arrayed collection of guide RNAs (gRNAs) targeting genes across the genome. Used in functional genomic screens to identify genes involved in a phenotype [7]. |
| Privileged Scaffold | A core molecular structure (e.g., benzimidazole, indole) known to produce ligands for multiple receptor types. Serves as a template for building focused libraries [26]. |
| Photo-affinity Probe | A chemical probe containing a photoreactive group (e.g., diazirine) and an affinity tag (e.g., biotin). Used for target deconvolution by covalently capturing protein targets upon UV irradiation [7]. |

Workflow Visualization

[Figure: Strategic Library Design Workflow]

[Figure: Phenotypic Screening & Target Deconvolution]

Frequently Asked Questions (FAQs)

FAQ 1: What is the core principle behind using multi-omics data for library enrichment? Multi-omics integration combines data from various molecular layers—such as genomics, transcriptomics, proteomics, and metabolomics—to build a comprehensive understanding of disease biology. This integrative approach helps identify key dysregulated pathways and networks in diseases like cancer. By using the tumor's specific genomic profile (e.g., RNA sequence and mutation data), researchers can pinpoint overexpressed proteins and map them onto protein-protein interaction networks to select a collection of biologically relevant targets. A chemical library is then computationally enriched by docking compounds against these selected targets to find molecules that potentially modulate multiple key proteins simultaneously, a strategy known as selective polypharmacology [6].

FAQ 2: What are the primary data sources for obtaining disease-specific genomic and multi-omics data? Large-scale public repositories are essential resources. These include:

- The Cancer Genome Atlas (TCGA): Provides comprehensive molecular profiles of various cancer types, including genomic, epigenomic, transcriptomic, and proteomic data [27].

- NCI Genomic Data Commons (GDC): A unified data repository that standardizes and distributes cancer genomic and clinical data from programs like TCGA. It provides harmonized data aligned to a reference genome, facilitating integrated analysis [28].

- Clinical Proteomic Tumor Analysis Consortium (CPTAC): Focuses on proteogenomic characterization, linking genomic alterations to protein-level changes in cancer [27].

FAQ 3: My multi-omics datasets have complex directional relationships. How can I account for this in analysis? Directional dependencies are a key challenge. Methods like Directional P-value Merging (DPM) have been developed to address this. DPM allows you to define a Constraints Vector (CV) that specifies the expected directional relationship between datasets (e.g., positive correlation between mRNA and protein levels, or negative correlation between promoter DNA methylation and gene expression). This method prioritizes genes with consistent, significant changes across omics layers that align with your biological hypothesis, while penalizing those with conflicting signals, leading to more accurate gene and pathway prioritization [27].
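To make the idea of directional merging concrete, here is a minimal sketch of a directional variant of Fisher's method, written in the spirit of DPM. It is not the published implementation: it assumes the datasets are independent and uses a chi-squared reference distribution with 2k degrees of freedom, whereas the published method may treat dependencies between datasets differently. The function names are hypothetical.

```python
import math


def chi2_sf_even_df(x, k):
    """Upper-tail probability of a chi-squared variable with 2k degrees of freedom.

    Uses the closed form exp(-x/2) * sum_{i<k} (x/2)^i / i!, valid for even df only.
    """
    half = x / 2.0
    term, total = 1.0, 1.0
    for i in range(1, k):
        term *= half / i
        total += term
    return math.exp(-half) * total


def directional_merge(p_values, directions, constraints):
    """Merge one gene's P-values across datasets, penalizing directional conflicts.

    p_values:    P-value per dataset (each must be > 0).
    directions:  observed direction per dataset (+1 up, -1 down).
    constraints: Constraints Vector entry per dataset (+1 or -1 for datasets
                 with an expected direction, 0 for non-directional datasets).
    """
    directional = 0.0
    non_directional = 0.0
    for p, o, e in zip(p_values, directions, constraints):
        if e == 0:
            non_directional += math.log(p)
        else:
            # Agreeing signs accumulate; conflicting signs cancel.
            directional += math.log(p) * o * e
    statistic = -2.0 * (-abs(directional) + non_directional)
    return chi2_sf_even_df(statistic, len(p_values))


cv = [+1, +1]  # expect mRNA and protein to change in the same direction
concordant = directional_merge([0.01, 0.01], [+1, +1], cv)
discordant = directional_merge([0.01, 0.01], [+1, -1], cv)
print(f"concordant: {concordant:.6f}, discordant: {discordant:.6f}")
```

With the constraint `[+1, +1]`, a gene that is up in both datasets (or down in both) keeps its full combined evidence, while a gene that is up in one and down in the other has its evidence cancelled and receives a merged P-value near 1.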

FAQ 4: How do I determine the optimal sampling frequency for different omics layers in a longitudinal study? Not all omics layers change at the same rate. A rational, hierarchical approach is recommended:

- Genome: Generally static; requires a single baseline assessment.

- Epigenome: Dynamic, but relatively stable.

- Transcriptome: Highly sensitive to environment and treatment; may require frequent assessments (e.g., daily or hourly in shift-work studies) [29].

- Proteome: Proteins have longer half-lives; testing frequency can be lower and aligned with specific clinical time points [29].

- Metabolome: Provides a near real-time snapshot of metabolic activity; may also require frequent sampling in certain contexts [29].

Table 1: Recommended Omics Sampling Frequency in Longitudinal Studies

| Omics Layer | Dynamic Nature | Recommended Sampling Frequency | Rationale |
|---|---|---|---|
| Genomics | Static | Once (baseline) | DNA sequence is largely unchanging. |
| Transcriptomics | Highly Dynamic | High Frequency | Gene expression rapidly responds to stimuli, environment, and treatment [29]. |
| Proteomics | Moderately Dynamic | Lower Frequency | Proteins are more stable, with longer half-lives than transcripts [29]. |
| Metabolomics | Highly Dynamic | High Frequency (in specific contexts) | Metabolites provide a real-time view of cellular activity and response [29]. |

FAQ 5: What are common data heterogeneity issues when integrating multi-omics data, and how can they be mitigated? Data heterogeneity arises from:

- Different Data Types: Combining sequence data, expression counts, and intensity values.

- Technical Bias: Variations in platforms, protocols, and batch effects.

- Dimensionality: The vast number of features measured.

Mitigation strategies include:

- Data Harmonization: Using pipelines like those from the GDC to re-align genomic data to a common reference genome [28].

- Significance-Based Fusion: Integrating data at the level of P-values and directionality estimates (e.g., fold-change) to overcome scale differences, as done in methods like DPM and ActivePathways [27].

- Advanced Computational Methods: Employing deep learning, graph neural networks (GNNs), and other AI tools to synthesize and interpret complex datasets [30].

Troubleshooting Guides

Issue 1: High Attrition Rate in Phenotypic Screening

Problem: Compounds active in initial phenotypic screens fail to show efficacy in more disease-relevant models or exhibit high toxicity.

Possible Causes and Solutions:

Cause 1: Lack of Biological Relevance in Library Design

- Solution: Implement a target-informed library enrichment strategy.

- Protocol: Begin with the tumor's genomic profile (e.g., from TCGA). Perform differential expression and somatic mutation analysis to identify overexpressed and mutated genes. Map these genes onto a large-scale protein-protein interaction network (e.g., from literature-curated databases) to create a disease-specific subnetwork. Identify proteins within this network that have druggable binding pockets. Finally, computationally dock your compound library against these prioritized targets to select molecules for screening [6].
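The network-mapping part of this protocol can be sketched with plain Python data structures. The example assumes differential expression and mutation calling have already produced gene sets, keeps only interactions between those genes, and then ranks druggable proteins by how many interactions they have in the resulting subnetwork. Ranking by degree is an illustrative assumption and not necessarily the criterion used in [6]; the gene names are placeholders.

```python
from collections import Counter


def disease_subnetwork(seed_genes, ppi_edges):
    """Keep PPI edges whose two endpoints are both disease-associated seed genes."""
    seeds = set(seed_genes)
    return [(a, b) for a, b in ppi_edges if a in seeds and b in seeds]


def prioritize_targets(subnetwork_edges, druggable_genes):
    """Rank druggable proteins in the subnetwork by number of interactions."""
    degree = Counter()
    for a, b in subnetwork_edges:
        degree[a] += 1
        degree[b] += 1
    candidates = [g for g in degree if g in druggable_genes]
    # Highest degree first; ties broken alphabetically for reproducibility.
    return sorted(candidates, key=lambda g: (-degree[g], g))


# Placeholder inputs; real ones would come from expression, mutation,
# interaction, and binding-pocket analyses.
overexpressed = {"GENE_A", "GENE_B", "GENE_C"}
mutated = {"GENE_D"}
ppi_edges = [
    ("GENE_A", "GENE_B"),
    ("GENE_A", "GENE_C"),
    ("GENE_A", "GENE_D"),
    ("GENE_B", "GENE_X"),  # GENE_X is not disease-associated, so this edge is dropped
    ("GENE_C", "GENE_D"),
]
druggable = {"GENE_A", "GENE_C", "GENE_X"}

subnet = disease_subnetwork(overexpressed | mutated, ppi_edges)
targets = prioritize_targets(subnet, druggable)
print(targets)
```

The prioritized list would then define the set of binding sites against which the compound library is docked.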

Cause 2: Use of Oversimplified Biological Models

- Solution: Transition to more physiologically relevant screening assays.

- Protocol: Replace traditional 2D monolayer cultures of immortalized cell lines with 3D models. For glioblastoma (GBM) screening, use low-passage, patient-derived GBM spheroids. As a counter-screen for toxicity, employ non-transformed primary cell models, such as 3D spheroids of hematopoietic CD34+ progenitor cells or 2D cultures of astrocytes. This helps identify compounds that selectively inhibit tumor growth without affecting normal cell viability [6].

Issue 2: Inconsistent Findings Across Omics Datasets

Problem: Significant genes or pathways identified in one omics dataset (e.g., transcriptomics) are not supported by another (e.g., proteomics).

Possible Causes and Solutions:

Cause 1: Ignoring Directional Biological Relationships

- Solution: Apply directional integration methods in your analysis workflow.

- Protocol: For each omics dataset, generate a matrix of gene-level P-values and a matrix of directional changes (e.g., +1 for up-regulation, -1 for down-regulation). Define a Constraints Vector (CV) that encapsulates the expected relationships (e.g., `[+1, +1]` for concordant mRNA-protein changes). Use the DPM algorithm to merge P-values, which will boost the ranking of genes with consistent changes and penalize those with inconsistent signals. Proceed with pathway enrichment analysis on the merged gene list [27].

Cause 2: Technical Variation and Lack of Standardization

- Solution: Ensure rigorous data preprocessing and harmonization.

- Protocol: When using public data, leverage pre-harmonized data from resources like the GDC, which realigns genomic data to a consistent reference genome (GRCh38) and applies uniform processing pipelines [28]. For in-house data, apply standard quality control checks before integration (e.g., FastQC for sequence data, followed by normalization and batch-effect correction).

Issue 3: Managing the Scale and Complexity of Multi-Omics Data

Problem: Computational challenges in storing, processing, and analyzing large multi-omics datasets.

Possible Causes and Solutions:

- Cause: Inadequate Computational Infrastructure

- Solution: Utilize cloud computing and specialized data transfer tools.