Overcoming Class Imbalance in Drug-Target Interaction Prediction: A Guide to Robust Machine Learning Models

Abstract

Class imbalance, where experimentally validated drug-target interactions are vastly outnumbered by non-interacting pairs, is a critical and pervasive challenge that biases predictive models and hinders drug discovery. This article provides a comprehensive guide for researchers and drug development professionals on managing this imbalance. It explores the fundamental causes and impacts of skewed datasets, details a suite of data-level and algorithm-level mitigation techniques—from resampling methods like SMOTE and GANs to ensemble and cost-sensitive learning—and offers strategies for troubleshooting and hyperparameter optimization. Finally, it establishes a rigorous framework for model validation using imbalanced data-specific metrics and discusses the translational impact of robust, reliable DTI prediction on accelerating therapeutic development.

The Class Imbalance Problem: Why Your DTI Predictions Might Be Biased

Defining Class Imbalance in Drug-Target Interaction Datasets

Frequently Asked Questions (FAQs)

What is class imbalance, and why is it a critical issue in Drug-Target Interaction (DTI) prediction?

In DTI prediction, class imbalance refers to the situation where the number of known interacting drug-target pairs (positive class) is vastly outnumbered by the number of non-interacting pairs (negative class) [1]. This is a critical issue because most standard machine learning algorithms are designed with the assumption of an equal class distribution. When this assumption is violated, models become biased toward predicting the majority class, leading to poor sensitivity in identifying the minority class—which, in this case, are the novel drug-target interactions of primary interest [2] [1]. This data-driven bias can result in a high false negative rate, causing potentially valuable interactions to be overlooked during virtual screening.

What is the difference between between-class and within-class imbalance?

Class imbalance in DTI datasets is a two-fold problem:

- Between-class imbalance: This is the overall disparity between the number of interacting (positive) and non-interacting (negative) drug-target pairs. In a typical DTI dataset, non-interacting pairs far outnumber the interacting ones [1].

- Within-class imbalance: This occurs within the positive class itself. The known interactions can be categorized into multiple types (e.g., inhibition, activation), and some of these interaction types may have relatively fewer examples than others. These underrepresented types are known as "small disjuncts" and are a significant source of prediction errors, as models tend to be biased toward the better-represented interaction types [1].

A model that reports high accuracy yet rarely predicts an interaction is showing a classic symptom of training on a highly imbalanced dataset. Such a model can achieve high accuracy simply by always predicting the "non-interacting" class, because that class dominates the dataset. For example, in a dataset where 98% of pairs are non-interacting, a model that never predicts an interaction will still be 98% accurate but is practically useless. To properly diagnose this issue, you should rely on metrics that are more sensitive to class imbalance, such as Sensitivity (Recall), Specificity, the F1-score, and the Area Under the Precision-Recall Curve (AUPR) [3] [4].

Which metrics should I use to evaluate my model on an imbalanced DTI dataset?

Standard metrics like Accuracy can be misleading. The following metrics provide a more reliable assessment of model performance on imbalanced DTI data [3] [4]:

| Metric | Description | Why it's useful for Imbalanced Data |
|---|---|---|
| Sensitivity (Recall) | Proportion of actual positives correctly identified. | Directly measures the model's ability to find true interactions. |
| Specificity | Proportion of actual negatives correctly identified. | Measures how well the model rules out non-interactions. |
| Precision | Proportion of positive predictions that are correct. | Indicates the reliability of a predicted interaction. |
| F1-Score | Harmonic mean of Precision and Recall. | Single metric balancing the trade-off between Precision and Recall. |
| AUPR (Area Under the Precision-Recall Curve) | Area under the plot of Precision vs. Recall. | More informative than ROC-AUC when the positive class is rare. |
| MCC (Matthews Correlation Coefficient) | A balanced measure considering all confusion matrix categories. | Robust metric that works well even on imbalanced datasets. |
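As a minimal sketch of how the threshold-based metrics in the table relate to the confusion matrix, the following standard-library Python reproduces the 98%-accuracy scenario described earlier. The function names are illustrative, and returning 0.0 for an undefined ratio (for example, precision when nothing is predicted positive) is a convention assumed here, not something the cited studies prescribe.

```python
import math

def confusion_counts(y_true, y_pred):
    """Return (tp, fp, tn, fn) for binary labels where 1 = interacting pair."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    return tp, fp, tn, fn

def safe_div(num, den):
    """Assumed convention: an undefined ratio is reported as 0.0."""
    return num / den if den else 0.0

def imbalance_metrics(y_true, y_pred):
    tp, fp, tn, fn = confusion_counts(y_true, y_pred)
    precision = safe_div(tp, tp + fp)
    recall = safe_div(tp, tp + fn)
    mcc_den = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    return {
        "accuracy": safe_div(tp + tn, len(y_true)),
        "sensitivity": recall,
        "specificity": safe_div(tn, tn + fp),
        "precision": precision,
        "f1": safe_div(2 * precision * recall, precision + recall),
        "mcc": safe_div(tp * tn - fp * fn, mcc_den),
    }

# 98 non-interacting pairs, 2 interacting pairs, and a model that
# never predicts an interaction.
y_true = [0] * 98 + [1] * 2
y_pred = [0] * 100
report = imbalance_metrics(y_true, y_pred)
```

Here `report["accuracy"]` is 0.98 while sensitivity, F1, and MCC are all 0.0, which is the gap the table's metrics are meant to expose.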

What are the most effective strategies to mitigate class imbalance in DTI prediction?

Multiple strategies have been successfully applied to DTI prediction, which can be broadly categorized as follows:

| Strategy Category | Core Principle | Example Methods |
|---|---|---|
| Data-Level Methods | Adjust the training dataset to create a more balanced class distribution. | Random Undersampling, Oversampling (e.g., SMOTE [2]), Generative Adversarial Networks (GANs) [3] |
| Algorithm-Level Methods | Modify the learning algorithm to reduce bias toward the majority class. | Cost-sensitive learning, Ensemble methods (e.g., Random Forest with balanced bags [5]), Evidential Deep Learning [4] |
| Hybrid Methods | Combine data-level and algorithm-level approaches. | Using GANs for data augmentation followed by a Random Forest classifier [3] |

Troubleshooting Guides

Issue: Model shows high performance on training data but poor generalization to new drug-target pairs.

Potential Causes and Solutions:

Cause 1: Overfitting on synthetic data.

- Solution: If you are using oversampling techniques like SMOTE, ensure that the synthetic data generation process does not introduce unrealistic examples that create artificial decision boundaries. Consider using advanced variants like Borderline-SMOTE or ADASYN, which are more careful about where synthetic samples are generated [2]. Alternatively, validate the realism of generated samples with a domain expert or use model-based approaches like GANs, which can learn more complex data distributions [3].

Cause 2: Data leakage or improper negative sample selection.

- Solution: Re-evaluate your negative dataset. A common practice is to treat unknown interactions as negative samples, but this can introduce false negatives. Employ rigorous negative sampling strategies. Some methods select negatives that are distant from all positive samples in the chemogenomical space to reduce the chance of missing true interactions [5]. Also, ensure that no information from the test set (e.g., highly similar drugs/targets) is leaked into the training process.

Issue: After applying undersampling, the model's performance on the majority class has degraded significantly.

Potential Causes and Solutions:

- Cause: Loss of informative majority-class examples.

- Solution: Random undersampling can discard potentially useful information about the non-interacting space. Instead of random sampling, use informed undersampling strategies. The NearMiss algorithm can be used to selectively remove majority-class samples that are redundant or lie far from the decision boundary, preserving those that are most informative [5]. Another robust approach is to use ensemble methods that train multiple learners on balanced subsets of the data, thereby leveraging the full dataset without bias [1] [5].

Issue: I have a complex, high-dimensional feature representation for drugs and targets. Which imbalance strategy should I prioritize?

Potential Causes and Solutions:

- Cause: High-dimensional data can make simple resampling less effective.

- Solution: In such scenarios, algorithm-level and hybrid methods often yield better results. Consider using a Generative Adversarial Network (GAN) for data augmentation, as it is designed to model complex, high-dimensional data distributions. For instance, one study used GANs to generate synthetic minority class data and combined it with a Random Forest classifier, achieving high accuracy (97.46%), sensitivity (97.46%), and ROC-AUC (99.42%) on the BindingDB-Kd dataset [3]. Alternatively, explore Evidential Deep Learning (EDL) frameworks like EviDTI, which provide reliable uncertainty estimates and have demonstrated robust performance on challenging, imbalanced benchmarks like the Davis and KIBA datasets [4].

The following table summarizes quantitative results from recent studies that explicitly addressed class imbalance in DTI prediction, providing a benchmark for expected outcomes.

Table: Performance of Different Imbalance Handling Strategies on DTI Prediction

| Strategy / Model | Dataset | Key Metric 1 | Key Metric 2 | Key Metric 3 |
|---|---|---|---|---|
| GAN + Random Forest [3] | BindingDB-Kd | Accuracy: 97.46% | Sensitivity: 97.46% | ROC-AUC: 99.42% |
| NearMiss + Random Forest [5] | Gold Standard (Enzymes) | AUROC: 99.33% | - | - |
| EviDTI (Evidential Deep Learning) [4] | Davis | Accuracy: ~0.82* | F1-Score: ~0.82* | AUPR: ~0.65* |
| Class Imbalance-Aware Ensemble [1] | DrugBank | Improved over 4 state-of-the-art baselines | - | - |

*Note: Values marked with an asterisk are approximate. Exact values for EviDTI on the Davis dataset were not fully listed in the material consulted, but the model was reported to achieve competitive and robust performance across multiple metrics [4].

Experimental Protocols

Protocol 1: Implementing a GAN-based Data Augmentation Framework

This protocol outlines the steps for using Generative Adversarial Networks (GANs) to generate synthetic minority class samples, as demonstrated in a state-of-the-art study [3].

Feature Engineering:

- Drug Features: Extract molecular features using MACCS keys or other structural fingerprints to represent drugs as fixed-length vectors.

- Target Features: Represent target proteins using features derived from their amino acid sequences, such as amino acid composition, dipeptide composition, and pseudo-amino acid composition.

Data Preprocessing: Normalize all feature vectors and combine drug and target features for each pair to create a unified feature representation for the DTI pair.

GAN Training:

- Architecture: Set up a GAN comprising a generator and a discriminator. The generator learns to create synthetic feature vectors for the minority class (interacting pairs), while the discriminator learns to distinguish between real (from the training set) and synthetic samples.

- Training Loop: Train the GAN in an adversarial manner until the generator produces synthetic data that the discriminator can no longer reliably distinguish from real data.

Synthetic Data Generation: Use the trained generator to create a sufficient number of synthetic minority-class samples to balance the training dataset.

Classifier Training: Train a Random Forest classifier (or another suitable classifier) on the augmented, balanced training set that now includes both original and synthetic positive samples.

Validation: Evaluate the trained classifier on a held-out test set that contains only real, non-synthetic data, using metrics like Sensitivity, Specificity, and ROC-AUC.
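The feature-engineering and preprocessing steps of this protocol can be sketched in a few lines. The example below computes an amino acid composition vector for a target sequence and concatenates it with a drug bit vector. It is an illustration under stated assumptions: the drug fingerprint is taken as already computed (a toy 3-bit list stands in for a real MACCS vector), the function names are hypothetical, and the GAN itself is not shown.

```python
# The 20 standard amino acids, in a fixed order so every target
# yields a vector with the same layout.
AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"

def amino_acid_composition(sequence):
    """Fraction of each standard amino acid in the sequence (length-20 vector)."""
    sequence = sequence.upper()
    counts = [sequence.count(aa) for aa in AMINO_ACIDS]
    total = sum(counts)  # non-standard letters are ignored
    return [c / total if total else 0.0 for c in counts]

def pair_features(drug_bits, target_sequence):
    """Concatenate a precomputed drug bit vector with the target's composition."""
    return [float(b) for b in drug_bits] + amino_acid_composition(target_sequence)

# Toy inputs (assumptions for illustration only).
toy_fingerprint = [1, 0, 1]
toy_sequence = "AACD"
features = pair_features(toy_fingerprint, toy_sequence)
```

Because composition values are fractions in [0, 1] and fingerprint bits are 0 or 1, the concatenated vector is already on a common scale. Richer descriptors such as dipeptide composition would need the normalization step the protocol mentions.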

Protocol 2: Applying Informed Undersampling with NearMiss

This protocol details the use of the NearMiss algorithm to balance the dataset by reducing majority class samples [5].

Feature Extraction and Representation: Calculate comprehensive feature descriptors for drugs and targets. For drugs, this can include various molecular fingerprints. For targets, use sequence-based features like amino acid composition.

Dimensionality Reduction (Optional): To handle the high dimensionality of the combined feature set and reduce computational complexity, apply a dimensionality reduction technique like Random Projection.

Apply NearMiss Undersampling:

- Implement the NearMiss algorithm (version 1, 2, or 3) to select the most informative majority-class samples to keep.

- NearMiss-1: Selects majority samples whose average distance to the k closest minority samples is the smallest.

- The goal is to reduce the number of non-interacting pairs to a level comparable with the number of interacting pairs.

Model Training: Train a Random Forest classifier on the newly balanced dataset produced by the NearMiss algorithm.

Evaluation: Rigorously test the model on an untouched test set, reporting metrics such as AUROC and AUPR.
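The NearMiss-1 selection rule described above can be sketched as follows. This is a plain standard-library illustration with Euclidean distance and hypothetical function names, not the implementation used in [5]. Production work would more likely rely on an established library.

```python
import math

def euclidean(a, b):
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def nearmiss1(majority, minority, n_keep, k=3):
    """Keep the n_keep majority samples whose mean distance to their
    k nearest minority samples is smallest (NearMiss-1 rule).
    Assumes the minority list is non-empty."""
    k = min(k, len(minority))
    scored = []
    for idx, sample in enumerate(majority):
        dists = sorted(euclidean(sample, m) for m in minority)
        scored.append((sum(dists[:k]) / k, idx))
    scored.sort()
    keep = sorted(idx for _, idx in scored[:n_keep])  # preserve original order
    return [majority[i] for i in keep]

# Toy 2-D example: two interacting pairs, four non-interacting pairs.
minority = [(0.0, 0.0), (1.0, 0.0)]
majority = [(0.5, 0.5), (5.0, 5.0), (0.0, 1.0), (10.0, 10.0)]
kept = nearmiss1(majority, minority, n_keep=2, k=2)
```

Setting `n_keep` to the number of interacting pairs gives the roughly 1:1 balance the protocol aims for.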

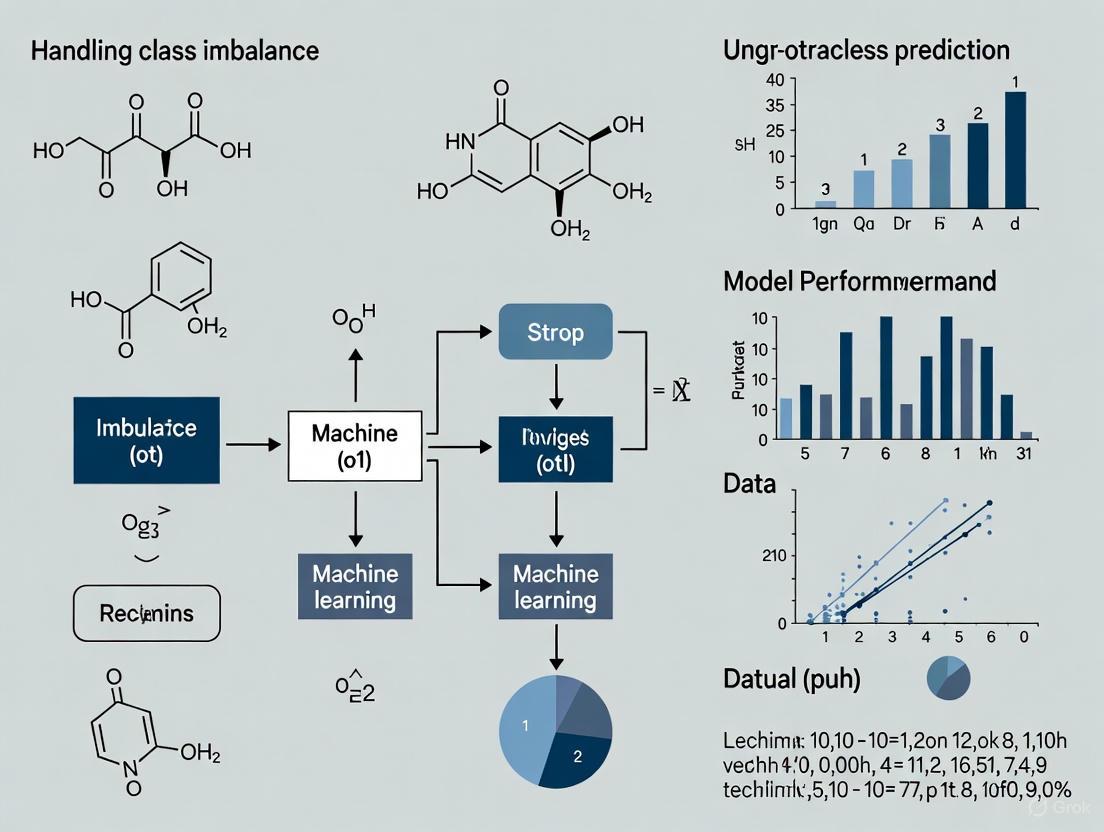

The following diagram illustrates the core logical relationship between the class imbalance problem and the two primary solution pathways described in the protocols above.

Research Reagent Solutions

This table lists key computational "reagents"—algorithms, tools, and techniques—essential for conducting experiments on imbalanced DTI datasets.

Table: Essential Research Reagents for Handling DTI Class Imbalance

| Reagent / Technique | Category | Primary Function | Example Application in DTI |
|---|---|---|---|
| Generative Adversarial Network (GAN) | Data Augmentation | Generates synthetic samples of the minority class to balance the training dataset. | Creating synthetic interacting drug-target pairs [3]. |
| SMOTE & Variants | Data Augmentation | Synthesizes new minority class instances by interpolating between existing ones. | Oversampling active compounds in inhibitor searches [2]. |
| NearMiss Algorithm | Data Sampling | Selectively removes majority class samples based on their distance to minority class instances. | Downsampling non-interacting pairs in gold standard datasets [5]. |
| Evidential Deep Learning (EDL) | Algorithmic | Provides predictive uncertainty quantification, helping to identify and down-weight unreliable predictions common in imbalanced settings. | Prioritizing high-confidence DTI predictions for experimental validation [4]. |
| Random Forest Classifier | Algorithmic | An ensemble learner that can be effective on imbalanced data, especially when used with balanced bagging. | Serving as a robust predictor after data balancing with GAN or NearMiss [3] [5]. |
| MACCS Keys / Molecular Fingerprints | Feature Engineering | Provides a standardized structural representation of drug molecules for machine learning. | Used as drug features in hybrid GAN-RF frameworks [3]. |
| Amino Acid Composition | Feature Engineering | Provides a fixed-length, sequence-based representation of target proteins. | Used as target features for input into classifiers and data augmentation models [3]. |

Technical Support Center: Troubleshooting Guides & FAQs

This support center is designed for researchers grappling with the practical and computational challenges of Drug-Target Interaction (DTI) prediction, with a specific focus on mitigating the effects of class imbalance to improve experimental outcomes.

Troubleshooting Guide: Common DTI Experimental Challenges

The following table outlines frequent issues, their underlying causes, and evidence-based solutions.

| Error / Problem | Root Cause | Proposed Solution |
|---|---|---|
| High False Negative Rate in Validation | Class imbalance biases computational models toward the majority (non-interacting) class, causing them to miss true interactions [1] [6]. | Implement ensemble learning methods that use random undersampling of the majority class and oversampling of minority interaction types to create balanced training sets [1] [6]. |
| Poor Model Performance on New Drugs/Targets | The "within-class imbalance" problem: models are biased toward well-represented interaction types in the training data and perform poorly on rare or new types [1]. | Use cluster-based oversampling. First, cluster the positive interactions to detect homogenous groups, then artificially enhance small clusters to help the model learn these "small concepts" [1]. |
| High Cost of Wet-Lab Validation | Traditional DTI validation (e.g., docking simulations) is expensive, time-consuming, and requires 3D protein structures that are not always available [1] [6]. | Employ a tiered validation strategy. Use high-throughput, cost-effective in silico screening to prioritize the most promising candidates before committing to expensive experimental validation [6]. |
| Inability to Afford Prescription Medicines | Patients, including those in clinical trials, may face financial stress and food insecurity, leading to cost-related non-adherence (CRN) that confounds experimental results [7]. | Implement screening for financial stress and food insecurity. Proactively discuss lower-cost medication options with participants, as this has been shown to be protective against CRN [7]. |

Frequently Asked Questions (FAQs)

Q1: My computational model achieves a high AUC, but most of its predictions fail in the lab. Why?

This is a classic symptom of class imbalance. The Area Under the ROC Curve (AUC) can be misleading when the test set is highly unbalanced, as the model's bias toward the majority class is not sufficiently penalized [6]. Relying on metrics like the Area Under the Precision-Recall Curve (AUPRC) provides a more realistic performance assessment for imbalanced datasets where the minority class (interactions) is of primary interest [6].

Q2: Besides random sampling, what other techniques can address class imbalance in DTI data?

Advanced methods go beyond simple random sampling. These include:

- Synthetic Oversampling (e.g., SMOTE): Generates synthetic examples of the minority class to balance the dataset [6].

- Cluster-Based Undersampling (CUS): Reduces the majority class by removing redundant examples from dense clusters [6].

- Ensemble Deep Learning: Combines multiple deep learning models, each trained on a balanced subset of data where the negative samples are randomly undersampled. This minimizes information loss from the majority class while reducing bias [6].

Q3: How can I make my DTI prediction model more robust for real-world applications?

The key is to focus on the imbalance issue directly during model development. One effective approach is to use an ensemble of models and, crucially, to experimentally validate the computational predictions. Studies show that models trained with a balancing step not only perform better computationally but also yield significantly higher success rates in subsequent laboratory experiments, thereby saving time and resources [6].

Quantitative Data on Class Imbalance in DTI Research

The following tables summarize core quantitative data related to class imbalance and the associated costs of research.

Table 1: Impact of Class Balancing on DTI Model Performance

This table summarizes the performance gains achievable by addressing class imbalance, as demonstrated in foundational studies.

| Study & Method | Key Metric (Balanced Model) | Key Metric (Unbalanced Model) | Experimental Validation Outcome |
|---|---|---|---|
| Ensemble of Deep Learning Models [6] | Outperformed unbalanced models in AUPRC and other metrics. | Lower performance across all metrics. | The balanced model showed significantly better correlation with real-world experimental validation results. |
| Class Imbalance-Aware Ensemble [1] | Improved results over 4 state-of-the-art methods. | N/A (Compared to other methods) | Displayed satisfactory performance in simulating predictions for new drugs and targets with no prior known interactions. |

Table 2: Cost and Failure Statistics in Drug Development

This data highlights the high-stakes environment that makes efficient DTI prediction critical.

| Metric | Statistic | Context / Source |
|---|---|---|
| Drug Development Cost | ~$1.8 Billion [1] | Average cost to develop a new drug. |
| Development Timeline | Over 12 years [1] | Average time from discovery to market. |
| Startup Failure Rate | 90% fail globally [8] | Analogous to the high-risk nature of drug discovery projects. |
| Product Failure Cause | 42% fail due to "no market need" [8] | Underscores the importance of validating the "problem" (i.e., the biological target and interaction) before major investment. |

Experimental Protocols for Robust DTI Prediction

Protocol: Ensemble Deep Learning with Random Undersampling

This protocol is designed to mitigate between-class imbalance bias [6].

- Data Preparation: Collect known DTIs from a public database like BindingDB. Use an affinity threshold (e.g., pIC50 ≥ 7) to label pairs as positive (interacting) or negative (non-interacting). Split the data into training and test sets.

- Feature Representation:

  - Drugs: Encode drugs using molecular fingerprints such as ErG (Extended reduced Graphs) or ESPF (Explainable Substructure Partition Fingerprint) derived from their SMILES strings.

  - Targets: Encode target proteins using Protein Sequence Composition (PSC) descriptors or other features derived from their amino acid sequences.

- Create Balanced Subsets: For each base learner in the ensemble, keep all positive samples constant. Then, perform random undersampling (without replacement) on the negative set to create a balanced training subset.

- Train Base Learners: Train multiple independent deep learning models (e.g., neural networks) on the different balanced subsets generated in the previous step.

- Aggregate Predictions: Combine the predictions of all base learners through an aggregation method (e.g., majority voting or averaging) to produce the final, robust prediction.
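The subset-creation and aggregation steps above can be sketched as follows. To keep the example self-contained, a nearest-centroid scorer stands in for each deep learning base learner. That stand-in, and all function names, are assumptions for illustration and not the architecture used in [6].

```python
import random

def balanced_subsets(positives, negatives, n_learners, seed=0):
    """For each learner: all positives plus an equally sized random draw
    of negatives (sampled without replacement within each subset)."""
    rng = random.Random(seed)
    n = min(len(positives), len(negatives))
    return [(list(positives), rng.sample(negatives, n)) for _ in range(n_learners)]

def centroid(rows):
    return [sum(col) / len(rows) for col in zip(*rows)]

def sq_dist(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))

def train_centroid_learner(pos, neg):
    """Hypothetical stand-in for a neural network: returns a function that
    scores a pair in [0, 1], higher meaning closer to the positive centroid."""
    c_pos, c_neg = centroid(pos), centroid(neg)
    def score(x):
        d_pos, d_neg = sq_dist(x, c_pos), sq_dist(x, c_neg)
        total = d_pos + d_neg
        return 0.5 if total == 0 else d_neg / total
    return score

def ensemble_score(learners, x):
    """Aggregate by averaging the base learners' scores."""
    return sum(f(x) for f in learners) / len(learners)
```

A typical use would build the subsets once, train one learner per subset with `train_centroid_learner(pos, neg)`, and call `ensemble_score` on each new drug-target feature vector. Swapping the stand-in for a real model only requires that each trained learner be callable on a feature vector.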

Protocol: Addressing Within-Class Imbalance via Clustering and Oversampling

This protocol addresses the problem of under-represented interaction types within the positive class [1].

- Cluster Positive Interactions: Apply a clustering algorithm (e.g., k-means) exclusively to the known positive interaction pairs in the training data. The goal is to group interactions into homogenous clusters, where each cluster ideally represents a specific type or concept of interaction.

- Identify Small Disjuncts: Analyze the resulting clusters. Clusters with a relatively low number of members are the "small disjuncts" or less-represented interaction types that are vulnerable to being ignored by the model.

- Oversample Small Clusters: Artificially increase the number of data points in these small clusters using oversampling techniques (e.g., duplication or synthetic generation). This enhances their representation and forces the classification model to focus on these difficult concepts.

- Proceed with Model Training: Use this within-class balanced dataset, potentially in conjunction with between-class balancing techniques, to train your final predictor.
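A minimal sketch of the oversampling step is shown below. It assumes the clustering step has already assigned a cluster ID to every positive pair (for example, from k-means), and it uses simple duplication to bring every cluster up to the size of the largest one. The target size and the function name are illustrative choices, not details taken from [1].

```python
import random
from collections import defaultdict

def oversample_small_clusters(samples, cluster_ids, seed=0):
    """Duplicate members of small clusters until every cluster
    matches the size of the largest cluster."""
    rng = random.Random(seed)
    clusters = defaultdict(list)
    for sample, cid in zip(samples, cluster_ids):
        clusters[cid].append(sample)
    target = max(len(members) for members in clusters.values())
    out_samples, out_ids = [], []
    for cid, members in clusters.items():
        # Random duplication; synthetic generation could be substituted here.
        extra = [rng.choice(members) for _ in range(target - len(members))]
        out_samples.extend(members + extra)
        out_ids.extend([cid] * target)
    return out_samples, out_ids

# Toy example: cluster 0 has four interactions, cluster 1 has one.
samples = ["a1", "a2", "a3", "a4", "b1"]
cluster_ids = [0, 0, 0, 0, 1]
balanced_samples, balanced_ids = oversample_small_clusters(samples, cluster_ids)
```

Matching the largest cluster exactly is the most aggressive setting. A softer target, such as the median cluster size, may reduce the risk of overfitting to duplicated examples.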

Visualizing Workflows and Relationships

The Class Imbalance Problem in DTI Prediction

This diagram illustrates the two fundamental types of class imbalance that degrade DTI prediction performance.

Ensemble Learning Solution for Between-Class Imbalance

This workflow outlines the ensemble learning method that uses random undersampling to mitigate bias against the positive class.

The Scientist's Toolkit: Research Reagent Solutions

This table details key computational and data resources essential for conducting robust DTI prediction studies.

| Item / Resource | Function | Relevance to DTI / Class Imbalance |
|---|---|---|
| BindingDB [6] | A public database of experimentally measured binding affinities (Kd, Ki, IC50) for drugs and target proteins. | Serves as a primary source for building labeled datasets of interacting and non-interacting pairs. |
| DrugBank [1] [6] | A comprehensive database containing drug, chemical, and target information. | Provides critical data on known drugs and their targets for feature generation and model training. |
| PROFEAT Web Server [1] | Computes numerical descriptors for proteins from their amino acid sequences. | Generates fixed-length feature vectors (e.g., amino acid composition) to represent target proteins for machine learning models. |
| Rcpi Package [1] | An R package for calculating molecular descriptors and fingerprints for drug compounds. | Generates features for small-molecule drugs (e.g., constitutional, topological descriptors) for model input. |
| SMILES [6] | A string-based notation system for representing molecular structures. | The standard input for generating molecular fingerprints (like ErG and ESPF) used to represent drugs in deep learning models. |

Frequently Asked Questions

What is the class imbalance problem in Drug-Target Interaction (DTI) prediction?

In DTI datasets, the number of known interacting pairs (positive class) is vastly outnumbered by the known non-interacting or unknown pairs (negative class). This skewed distribution is a fundamental characteristic of biological data, where confirmed interactions are rare and costly to obtain experimentally [9] [2].

Why is a model trained on imbalanced data considered biased?

Most machine learning algorithms are designed to maximize overall accuracy, which, on imbalanced data, is most easily achieved by always predicting the majority class. This results in a model that is biased towards the majority class (non-interacting pairs) and fails to learn the discriminative patterns of the minority class (interacting pairs) [9] [6]. Consequently, while the model may show high accuracy, it performs poorly at its primary task: identifying true drug-target interactions.

What is the direct link between model bias and false negatives?

A model biased towards the non-interacting class will systematically misclassify many true interacting pairs as non-interactions. These misclassifications are false negatives. In drug discovery, a false negative means a potential new drug or a new therapeutic use for an existing drug is mistakenly overlooked, potentially halting a promising research avenue and wasting resources spent on subsequent experiments [3] [6].

Can't we just trust a high Accuracy score?

No, accuracy is a highly misleading metric for imbalanced problems. For example, in a dataset where 99% of pairs are non-interacting, a model that never predicts an interaction would still achieve 99% accuracy, while completely failing to identify any true drug-target interactions [10]. It is crucial to rely on a suite of metrics that are robust to imbalance.

Which metrics should I use to properly evaluate my DTI model?

You should prioritize metrics that capture the model's performance on the minority class. Key metrics include [3] [11] [10]:

- Sensitivity (Recall): The ability to correctly identify true interactions.

- Precision: The proportion of predicted interactions that are correct.

- F1-Score: The harmonic mean of Precision and Sensitivity.

- AUPRC (Area Under the Precision-Recall Curve): More informative than ROC-AUC for imbalanced datasets, as it focuses directly on the performance of the positive class.

- MCC (Matthews Correlation Coefficient): A balanced measure that accounts for all four categories of the confusion matrix.
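Unlike the threshold-based metrics in this list, AUPRC is computed from ranked scores. The sketch below uses the common step-wise "average precision" summary of the precision-recall curve: the mean of the precision values observed at each true interaction when pairs are sorted by descending score. It assumes no tied scores, and the function name is illustrative.

```python
def average_precision(y_true, scores):
    """Step-wise area under the precision-recall curve.
    y_true holds 0/1 labels (1 = interacting); scores are model outputs."""
    order = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    n_pos = sum(y_true)
    if n_pos == 0:
        return 0.0  # assumed convention when there are no positives
    tp = 0
    total = 0.0
    for rank, i in enumerate(order, start=1):
        if y_true[i] == 1:
            tp += 1
            total += tp / rank  # precision at the rank of this positive
    return total / n_pos

# Two true interactions ranked 1st and 3rd out of four pairs.
ap = average_precision([1, 0, 1, 0], [0.9, 0.8, 0.7, 0.1])
```

In this toy case the precisions at the two positives are 1 and 2/3, so `ap` is about 0.83. For an uninformative ranking, this value tends toward the fraction of positives in the data, which is why AUPRC is a demanding baseline on heavily imbalanced DTI sets.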

Troubleshooting Guide: Solving Imbalance-Induced Bias

This section provides actionable methodologies to diagnose and correct for model bias in your DTI pipelines.

Symptom: High Accuracy, Low Sensitivity

Your model's accuracy is high, but its ability to find true interactions (sensitivity/recall) is unacceptably low.

Solution 1: Apply Data-Level Resampling Techniques

Resampling alters your training dataset to create a more balanced class distribution before training the model.

| Technique | Description | Best For / Considerations |
|---|---|---|
| Random Undersampling (RUS) | Randomly removes samples from the majority class. | Very large datasets where discarding some data is acceptable. Can lead to loss of informative patterns [9] [10]. |
| Synthetic Minority Oversampling (SMOTE) | Creates synthetic minority class samples by interpolating between existing ones. | Medium-sized datasets; avoids mere duplication. May introduce noisy samples if the minority class is not well-clustered [9] [11]. |
| Advanced Oversampling (GANs) | Uses Generative Adversarial Networks to generate highly realistic synthetic minority samples. | Complex, high-dimensional data where simpler methods fail. More computationally intensive but can yield superior results [3]. |
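To make the interpolation idea behind SMOTE concrete, here is a simplified standard-library sketch. It picks a random minority sample, picks one of its k nearest minority neighbours, and places a synthetic point at a random position on the segment between them. It is an illustration of the core idea only, with a hypothetical function name, and it omits refinements found in maintained implementations.

```python
import math
import random

def smote_sketch(minority, n_synthetic, k=3, seed=0):
    """Generate n_synthetic points by interpolating between minority
    samples and their nearest minority neighbours.
    Assumes at least two minority samples."""
    rng = random.Random(seed)
    synthetic = []
    for _ in range(n_synthetic):
        base = rng.choice(minority)
        neighbours = sorted(
            (m for m in minority if m is not base),
            key=lambda m: math.dist(base, m),
        )[:k]
        other = rng.choice(neighbours)
        gap = rng.random()  # position along the segment base -> other
        synthetic.append([b + gap * (o - b) for b, o in zip(base, other)])
    return synthetic

# Four interacting pairs at the corners of the unit square.
minority = [[0.0, 0.0], [1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
new_points = smote_sketch(minority, n_synthetic=10, k=2, seed=1)
```

Every synthetic point lies on a line segment between two real minority samples, which is also why SMOTE can create noisy samples when the minority class is scattered among majority examples, as the table notes.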

Experimental Protocol: Implementing K-Ratio Random Undersampling

A systematic approach to finding the optimal imbalance ratio, as validated in recent research [10]:

- Prepare Data: Start with your imbalanced training set.

- Define Ratios: Instead of balancing to a 1:1 ratio, test a series of milder undersampling ratios (e.g., 1:50, 1:25, 1:10), where the second number is the size of the majority class relative to the minority class.

- Train Models: For each ratio, train your chosen model (e.g., Random Forest, XGBoost, GCN) on the resampled data.

- Validate & Select: Evaluate each model on a held-out validation set using robust metrics like F1-score and MCC. The results often show that a moderately balanced ratio (e.g., 1:10) outperforms both the highly imbalanced original data and a perfectly balanced 1:1 dataset.
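The ratio sweep above can be sketched as a small loop. In this illustration, `evaluate` is a hypothetical callback supplied by the user: it is assumed to train a model on the given positives and retained negatives and return a validation score such as F1 or MCC. The function names and the toy scoring rule in the usage lines are assumptions for demonstration only.

```python
import random

def undersample_to_ratio(positives, negatives, k, seed=0):
    """Randomly keep at most k negatives per positive (a 1:k ratio)."""
    rng = random.Random(seed)
    n_keep = min(len(negatives), k * len(positives))
    return rng.sample(negatives, n_keep)

def ratio_sweep(positives, negatives, ratios, evaluate, seed=0):
    """Score each candidate ratio and return (best_ratio, all_scores)."""
    results = {}
    for k in ratios:
        kept = undersample_to_ratio(positives, negatives, k, seed)
        results[k] = evaluate(positives, kept)  # hypothetical train-and-validate step
    best = max(results, key=results.get)
    return best, results

# Toy usage: the dummy evaluator simply prefers a 1:10 ratio, so that
# the sweep has something to select. A real evaluator would train a model.
pos = list(range(10))
neg = list(range(1000))
dummy_eval = lambda p, n: -abs(len(n) / len(p) - 10)
best_k, scores = ratio_sweep(pos, neg, [50, 25, 10, 1], dummy_eval)
```

The best ratio is dataset- and model-dependent, so the sweep should be rerun whenever either changes, and the final choice confirmed on data not used for selection.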

Solution 2: Leverage Algorithm-Level Adjustments

These methods adjust the learning algorithm itself to compensate for the imbalance.

- Cost-Sensitive Learning: Modify the model to assign a higher misclassification cost for errors on the minority class. This forces the model to pay more attention to learning the characteristics of drug-target interactions [10] [12].

- Ensemble Learning with Balanced Base Learners: Train an ensemble of models where each base learner is trained on a balanced subset of the data. For example, you can keep all positive samples and repeatedly draw random subsets of negative samples to create multiple balanced training sets. The final prediction is an aggregation of all base learners, which reduces variance and bias [6].
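For the cost-sensitive option, one common way to set misclassification costs is the inverse-frequency ("balanced") heuristic, where each class weight is n_samples / (n_classes × class_count). The sketch below computes those weights and applies them in a weighted log loss. It is an illustration with hypothetical function names; the cited studies may set costs differently.

```python
import math

def balanced_class_weights(labels):
    """Inverse-frequency weights: n_samples / (n_classes * class_count)."""
    n = len(labels)
    classes = sorted(set(labels))
    return {c: n / (len(classes) * labels.count(c)) for c in classes}

def weighted_log_loss(y_true, probs, weights, eps=1e-12):
    """Binary cross-entropy where each sample's loss is scaled by its
    class weight; probs are predicted probabilities of interaction."""
    total, norm = 0.0, 0.0
    for y, p in zip(y_true, probs):
        p = min(max(p, eps), 1 - eps)  # avoid log(0)
        w = weights[y]
        total += -w * (y * math.log(p) + (1 - y) * math.log(1 - p))
        norm += w
    return total / norm

# 90 non-interacting pairs and 10 interacting pairs.
labels = [0] * 90 + [1] * 10
weights = balanced_class_weights(labels)
```

With these labels an interacting pair carries a weight of 5.0 versus roughly 0.56 for a non-interacting pair, so a confidently missed interaction contributes about nine times more to the loss than an equally confident false alarm.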

Experimental Protocol: Building a Deep Learning Ensemble

A protocol to mitigate information loss from undersampling by using an ensemble of deep learning models [6]:

- Data Setup: From your training data, keep the set of positive interactions fixed.

- Create Multiple Subsets: Perform multiple rounds of Random Undersampling on the majority (negative) class to create several balanced training datasets. Each dataset will have a different, random sample of negative interactions.

- Train Base Learners: Train a separate, identical deep learning model (e.g., a multilayer perceptron with protein and drug features) on each of these balanced datasets.

- Aggregate Predictions: For a new drug-target pair, obtain predictions from all base learners and combine them (e.g., by averaging or majority voting) to produce the final prediction.

Solution 3: Utilize Advanced Feature Representations

Instead of, or in conjunction with, resampling, you can use powerful feature representations that better capture the underlying biochemistry.

- Pre-trained Model Embeddings: Use embeddings (dense vector representations) generated from models pre-trained on vast biological and chemical corpora (e.g., BioGPT, SapBERT) or structures (e.g., Graph Neural Networks). These embeddings can provide a richer, more informative starting point for your classifier, making it easier to distinguish between classes even with imbalanced data [13].

- Unified Feature Engineering: Combine multiple robust feature extraction methods for drugs (e.g., Extended-Connectivity Fingerprints - ECFP) and targets (e.g., structural property sequences), then unite them via simple operations like element-wise addition to create a discriminative input vector [14].

Experimental Workflow Visualization

The following diagram illustrates a robust experimental workflow that integrates multiple solutions discussed above to effectively combat model bias.

Research Reagent Solutions

The table below catalogs key computational tools and methods used in state-of-the-art DTI research to address class imbalance.

| Research Reagent | Function & Application | Key Reference |
|---|---|---|
| GANs (Generative Adversarial Networks) | Generate high-quality synthetic samples of the minority class to create a balanced training set, overcoming limitations of simpler oversampling. | [3] |
| K-Ratio Random Undersampling (K-RUS) | A systematic undersampling method that finds the optimal imbalance ratio (e.g., 1:10) instead of full 1:1 balance, maximizing model performance. | [10] |
| Pre-trained Model Embeddings (e.g., BioGPT) | Provides rich, informative feature vectors for drugs and targets from models pre-trained on vast corpora, improving learning even from few examples. | [13] |
| Ensemble Deep Learning | Combines predictions from multiple deep learning models trained on different balanced data subsets, reducing variance and bias from any single sample. | [6] |
| SMOTE & Variants (e.g., Borderline-SMOTE) | Classic synthetic oversampling techniques that create new minority class instances in feature space, helping to define the decision boundary more clearly. | [9] [11] |
| Cost-Sensitive Learning | An algorithm-level approach that increases the penalty for misclassifying a minority class instance, directly countering the bias in the learning process. | [10] [12] |

Troubleshooting Guides

Guide 1: Diagnosing Model Bias in DTI Prediction

Problem: Your model achieves high overall accuracy but fails to predict true drug-target interactions (minority class).

Explanation: This is a classic symptom of between-class imbalance [15] [16]. In DTI datasets, known interacting pairs are vastly outnumbered by non-interacting pairs. Standard classifiers are biased toward the majority class (non-interacting pairs) to minimize overall error, which harms the prediction of the critical minority class (interacting pairs) [15] [1].

Solution Steps:

- Confirm the Imbalance: Calculate the Imbalance Ratio (IR): IR = (Number of Non-interacting Pairs) / (Number of Interacting Pairs). A high IR (e.g., 10:1 or more) confirms a significant between-class imbalance [17].

- Apply Resampling Techniques: Use the following techniques to rebalance the class distribution before training:

- Oversampling: Generate synthetic samples for the minority (interacting) class using algorithms like SMOTE or its variants (Borderline-SMOTE, SVM-SMOTE) [9] [2].

- Undersampling: Randomly remove samples from the majority (non-interacting) class. Use with caution to avoid losing valuable information [9].

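- Use Algorithm-Level Adjustments: Employ models that can intrinsically handle imbalance, such as cost-sensitive classifiers that assign a higher misclassification cost to the minority class, or ensembles trained on balanced subsets of the data.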

Guide 2: Addressing Poor Performance on Specific Interaction Types

Problem: Your DTI model performs well on some drug-target interaction types but poorly on others, even though all are "interacting" pairs.

Explanation: This indicates within-class imbalance [15] [16]. The positive class (interacting pairs) itself contains multiple subtypes (e.g., different binding affinities, interaction mechanisms). Some of these subtypes are less represented, forming "small disjuncts" or rare cases that the model fails to learn effectively, biasing results toward the better-represented interaction types [15].

Solution Steps:

- Identify Small Disjuncts: Perform cluster analysis (e.g., K-means) on the feature vectors of the known interacting pairs. Small, distinct clusters represent the under-represented interaction types [15].

- Apply Cluster-Informed Oversampling: Once small clusters are identified, specifically apply oversampling techniques (like SMOTE) within these clusters to artificially enhance their representation in the training data [15].

- Tailored Model Strategies: For targets with a very small number of known interactions (TWSNI), leverage positive samples from similar targets (neighbors). For targets with abundant interactions (TWLNI), train models using only their own positive samples [20].
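The first two steps (find small clusters, then oversample inside them) might look roughly like the sketch below. It is a simplification made for illustration: the function name and toy data are hypothetical, and new points are interpolated between random pairs of members of the same cluster instead of between k-nearest neighbours as full SMOTE does.

```python
import numpy as np
from sklearn.cluster import KMeans


def oversample_small_clusters(X_pos, n_clusters=3, seed=0):
    """Grow every positive-class cluster to the size of the largest one."""
    rng = np.random.default_rng(seed)
    labels = KMeans(n_clusters=n_clusters, n_init=10,
                    random_state=seed).fit_predict(X_pos)
    sizes = np.bincount(labels, minlength=n_clusters)
    target = sizes.max()
    synthetic = []
    for c in range(n_clusters):
        members = X_pos[labels == c]
        n_new = target - len(members)
        if n_new == 0 or len(members) < 2:
            continue  # already the largest cluster, or too small to interpolate
        # Interpolate between random pairs drawn from the same cluster only.
        a = members[rng.integers(0, len(members), size=n_new)]
        b = members[rng.integers(0, len(members), size=n_new)]
        gap = rng.random((n_new, 1))
        synthetic.append(a + gap * (b - a))
    if not synthetic:
        return np.empty((0, X_pos.shape[1]))
    return np.vstack(synthetic)


# Toy positive class with one common and two rare interaction "subtypes".
rng = np.random.default_rng(1)
X_pos = np.vstack([
    rng.normal(0.0, 0.5, (60, 4)),
    rng.normal(10.0, 0.5, (10, 4)),
    rng.normal(-10.0, 0.5, (5, 4)),
])
X_new = oversample_small_clusters(X_pos)  # synthetic rows for the rare subtypes
```

Restricting interpolation to a single cluster avoids creating synthetic points in the empty space between unrelated interaction subtypes.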

Frequently Asked Questions (FAQs)

FAQ 1: What is the fundamental difference between between-class and within-class imbalance?

- Between-Class Imbalance: Refers to the overall disparity in the number of instances between the major classes—specifically, the number of non-interacting drug-target pairs (majority class) far exceeds the number of interacting pairs (minority class) [15] [1] [16]. This causes a model bias against predicting any interactions at all.

- Within-Class Imbalance: Occurs within a single class. In DTI, the minority class (interacting pairs) consists of multiple subtypes of interactions. Some of these subtypes have relatively few known examples compared to others, causing the model to be biased towards the more common interaction types and perform poorly on the rare ones [15] [16].

FAQ 2: Which evaluation metrics should I use instead of accuracy for imbalanced DTI data?

Accuracy is misleading for imbalanced datasets. Use metrics that focus on the performance of the minority class:

- Sensitivity (Recall): Measures the model's ability to correctly identify true interacting pairs.

- Specificity: Measures the model's ability to correctly identify true non-interacting pairs.

- Precision: Of all pairs predicted as interacting, what proportion are truly interacting?

- F1-Score: The harmonic mean of Precision and Sensitivity.

- AUC-ROC: Measures the overall ability to distinguish between interacting and non-interacting pairs [3] [17].

- MCC (Matthews Correlation Coefficient): A balanced metric that is particularly informative for imbalanced datasets [18].
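To see why accuracy misleads, the metrics listed above can be computed directly from confusion-matrix counts. The sketch below uses only the standard definitions; the function name and the example counts are made up for illustration.

```python
import math


def imbalance_metrics(tp, fp, tn, fn):
    """Compute accuracy and imbalance-aware metrics from confusion-matrix counts."""
    sensitivity = tp / (tp + fn) if (tp + fn) else 0.0
    specificity = tn / (tn + fp) if (tn + fp) else 0.0
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    f1 = (2 * precision * sensitivity / (precision + sensitivity)
          if (precision + sensitivity) else 0.0)
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    mcc = (tp * tn - fp * fn) / denom if denom else 0.0
    return {
        "accuracy": (tp + tn) / (tp + fp + tn + fn),
        "sensitivity": sensitivity,
        "specificity": specificity,
        "precision": precision,
        "f1": f1,
        "mcc": mcc,
    }


# 1000 pairs, 10 of them true interactions.
# A model that predicts "non-interacting" for everything:
always_negative = imbalance_metrics(tp=0, fp=0, tn=990, fn=10)
# A model that finds 8 of the 10 interactions at the cost of 20 false alarms:
useful_model = imbalance_metrics(tp=8, fp=20, tn=970, fn=2)
```

The always-negative model has the higher accuracy of the two (0.99 versus 0.978), yet its sensitivity and MCC are both zero, which is exactly the failure the minority-focused metrics expose.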

FAQ 3: Can deep learning models like GNNs automatically handle class imbalance?

No, not automatically. While robust architectures like Graph Neural Networks (GNNs) can learn complex patterns, they are still susceptible to bias from imbalanced data. Explicit balancing techniques are required. Studies show that applying oversampling or a weighted loss function significantly improves the performance of GNNs on imbalanced DTI and drug discovery tasks, often leading to a higher Matthews Correlation Coefficient (MCC) [18].
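A weighted loss can be illustrated without any deep learning framework. The sketch below is a plain NumPy version of a positively weighted binary cross-entropy; the function name is hypothetical, and setting the weight to the negative-to-positive count ratio is a common heuristic assumed here, not a value taken from [18].

```python
import numpy as np


def weighted_bce(y_true, p_pred, pos_weight=1.0):
    """Binary cross-entropy in which errors on positives count pos_weight times."""
    y_true = np.asarray(y_true, dtype=float)
    p = np.clip(np.asarray(p_pred, dtype=float), 1e-7, 1 - 1e-7)
    per_sample = -(pos_weight * y_true * np.log(p)
                   + (1.0 - y_true) * np.log(1.0 - p))
    return float(per_sample.mean())


# One interacting pair among ten; the model outputs a low score everywhere.
y_true = np.array([1, 0, 0, 0, 0, 0, 0, 0, 0, 0])
p_pred = np.full(10, 0.1)

plain = weighted_bce(y_true, p_pred, pos_weight=1.0)
# Heuristic weight: number of negatives / number of positives = 9.
weighted = weighted_bce(y_true, p_pred, pos_weight=9.0)
```

With the weight applied, the single missed interaction dominates the loss, so gradient updates push the model toward recovering it instead of settling for the majority answer.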

FAQ 4: Are synthetic samples generated by oversampling techniques like SMOTE reliable for DTI data?

Yes, when used appropriately. SMOTE and its advanced variants (e.g., Borderline-SMOTE, Safe-level-SMOTE) generate synthetic samples in feature space by interpolating between existing, real minority class instances. This has been proven effective in various chemistry and drug discovery domains, including DTI prediction, for improving model sensitivity [9] [2]. More recently, Generative Adversarial Networks (GANs) have been used to create high-quality synthetic minority class data, further enhancing prediction performance on challenging datasets [3].
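The interpolation step that SMOTE relies on is small enough to sketch directly. The code below is a simplified illustration (the function name, brute-force neighbour search, and toy data are assumptions for the example); a maintained implementation such as the one in imbalanced-learn is preferable for real work.

```python
import numpy as np


def smote_like(X_min, n_new, k=5, seed=0):
    """Create n_new synthetic minority rows by interpolating toward near neighbours."""
    rng = np.random.default_rng(seed)
    n = len(X_min)
    k = min(k, n - 1)
    # Brute-force pairwise distances between minority samples.
    dist = np.linalg.norm(X_min[:, None, :] - X_min[None, :, :], axis=2)
    np.fill_diagonal(dist, np.inf)  # a sample is not its own neighbour
    neighbours = np.argsort(dist, axis=1)[:, :k]
    base = rng.integers(0, n, size=n_new)                    # random seed sample
    partner = neighbours[base, rng.integers(0, k, size=n_new)]  # one of its k neighbours
    gap = rng.random((n_new, 1))                             # position along the segment
    return X_min[base] + gap * (X_min[partner] - X_min[base])


# Toy minority class: 20 interacting pairs with 3 features each.
rng = np.random.default_rng(3)
X_min = rng.normal(0.0, 1.0, (20, 3))
X_syn = smote_like(X_min, n_new=40)
```

Every synthetic row lies on a line segment between two real minority samples, which is why outliers or mixed sub-clusters in the minority class can produce unrealistic points.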

Experimental Protocols & Data

Table 1: Comparison of Imbalance Handling Techniques in DTI Research

| Technique Category | Specific Method | Key Principle | Best Suited For | Reported Performance (Example) |
|---|---|---|---|---|
| Data-Level (Resampling) | SMOTE [9] | Generates synthetic minority samples by interpolating between neighbors. | General between-class imbalance. | Improved prediction of HDAC8 inhibitors when combined with Random Forest (RF-SMOTE) [9] [2]. |
| Data-Level (Resampling) | Borderline-SMOTE [9] [2] | Focuses oversampling on minority samples near the class decision boundary. | Datasets with complex decision boundaries. | Enhanced prediction of protein-protein interaction sites when combined with CNN [9] [2]. |
| Data-Level (Resampling) | Random Undersampling (RUS) [9] | Randomly removes majority class samples to balance the dataset. | Very large datasets where data loss is acceptable. | Used in DTI prediction to reduce negative sample bias [9]. |
| Algorithm-Level | Weighted Loss Functions [18] | Increases the cost of misclassifying minority class instances during training. | Use with deep learning models (e.g., GNNs, CNNs). | Oversampling and weighted loss improved GNN MCC scores on molecular datasets [18]. |
| Algorithm-Level | Ensemble Learning [15] | Combines multiple models, often with built-in sampling or weighting mechanisms. | Both between-class and within-class imbalance. | Outperformed 4 state-of-the-art methods by addressing both imbalance types [15]. |
| Algorithm-Level | Bayesian Optimization (CILBO) [19] | Automatically selects best hyperparameters and imbalance strategy (e.g., class weight). | Optimizing machine learning models like Random Forest. | Achieved ROC-AUC of 0.99 for antibacterial prediction, comparable to a complex deep learning model [19]. |
| Hybrid/Advanced | GANs for Oversampling [3] | Uses a generative model to create synthetic minority class data. | Severe imbalance where SMOTE may be insufficient. | GAN + Random Forest achieved 97.46% sensitivity and 99.42% ROC-AUC on BindingDB-Kd dataset [3]. |
| Hybrid/Advanced | Multiple Classification Strategies (MCSDTI) [20] | Applies different prediction strategies based on target interaction abundance. | Within-class imbalance and targets with few known interactions. | AUC increased by 1-3% on various datasets (NR, IC, GPCR, E) compared to next-best methods [20]. |

Table 2: Essential Research Reagent Solutions for DTI Imbalance Studies

| Reagent / Resource | Type | Function in Experiment | Key Features / Examples |
|---|---|---|---|
| DrugBank [15] [1] | Database | Provides known drug-target interactions for building positive class datasets. | Contains thousands of drug-target interactions; essential for ground truth data [15]. |
| PROFEAT [15] [1] | Feature Extraction | Computes fixed-length feature vectors from protein sequences for machine learning. | Calculates descriptors like amino acid composition, dipeptide composition, quasi-sequence-order [15] [1]. |
| Rcpi [15] [1] | Feature Extraction | Calculates molecular descriptors for drugs from their structure. | Generates constitutional, topological, and geometrical descriptors for small-molecule drugs [15] [1]. |
| SMOTE & Variants [9] | Software Algorithm | Addresses between-class imbalance by generating synthetic positive samples. | Available in libraries like imbalanced-learn (Python); includes Borderline-SMOTE, SVM-SMOTE [9]. |
| Bayesian Optimization Frameworks | Software Library | Automates hyperparameter tuning, including class weights and sampling strategy. | Libraries like scikit-optimize or Optuna can implement pipelines like CILBO [19]. |
| Graph Neural Network (GNN) Libraries | Software Library | Builds models that learn from molecular graph structures. | Architectures like GCN, GAT; can be combined with weighted loss functions for imbalance [18]. |

Workflow Visualization

Diagram 1: Ensemble Learning Workflow for Dual Imbalance

Diagram 2: MCSDTI Strategy for Target-Specific Imbalance

A Toolkit for Balance: Data-Level and Algorithm-Level Solutions

Frequently Asked Questions

Q1: What is the class imbalance problem in drug-target interaction (DTI) prediction? In DTI prediction, known interacting drug-target pairs (positive samples) are vastly outnumbered by non-interacting pairs (negative samples), which creates a significant class imbalance. For instance, bioassay datasets for infectious diseases can have imbalance ratios (IR) ranging from 1:82 to 1:104 (active to inactive compounds) [10]. This imbalance causes machine learning models to become biased toward the majority class (inactive), leading to poor detection of the pharmacologically important minority class (active) [9] [10].

Q2: When should I choose SMOTE over Random Undersampling for my DTI dataset? The choice depends on your dataset size and imbalance ratio. Random Undersampling (RUS) is often superior for extremely imbalanced datasets, as it significantly enhances recall, balanced accuracy, and F1-score [10]. For example, one study found RUS outperformed other techniques on highly imbalanced bioassay data (IR: 1:82–1:104) [10]. Conversely, SMOTE might be preferable when preserving the entire majority class is critical, as it generates new synthetic minority samples instead of discarding data [9]. However, SMOTE can sometimes introduce noisy samples and is not always the best-performing technique in DTI contexts [10].

Q3: How does Borderline-SMOTE improve upon standard SMOTE? Standard SMOTE generates synthetic examples for any instance in the minority class without considering how easily those instances are classified. Borderline-SMOTE is a more sophisticated variant that first identifies the "borderline" minority instances—those that are misclassified by a k-nearest neighbor classifier or are surrounded by many majority class instances. It then focuses synthetic data generation specifically on these more critical, hard-to-learn borderline instances. This leads to a more informative decision boundary and has been successfully used in protein-protein interaction site prediction [9] [21].
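The "borderline" selection can be made concrete with a small sketch. The code below marks a minority sample as borderline when at least half, but not all, of its m nearest neighbours belong to the majority class, which is the usual "danger set" rule; the function name and the one-dimensional toy data are assumptions for illustration, and the subsequent interpolation step is left out.

```python
import numpy as np


def borderline_minority_indices(X, y, m=5):
    """Return indices of minority (label 1) samples in the 'danger' zone.

    A minority sample is borderline when at least half, but not all, of its
    m nearest neighbours (searched over the whole training set) are majority.
    """
    min_idx = np.flatnonzero(y == 1)
    # Distances from each minority sample to every sample.
    dist = np.linalg.norm(X[min_idx][:, None, :] - X[None, :, :], axis=2)
    dist[np.arange(len(min_idx)), min_idx] = np.inf  # ignore self-matches
    nearest = np.argsort(dist, axis=1)[:, :m]
    n_majority = (y[nearest] == 0).sum(axis=1)
    danger = (n_majority >= m / 2) & (n_majority < m)
    return min_idx[danger]


# 1-D toy: three majority points on the left, five minority points on the right.
X = np.array([[0.0], [0.3], [0.5], [0.6], [1.0], [1.1], [1.25], [1.45]])
y = np.array([0, 0, 0, 1, 1, 1, 1, 1])
borderline = borderline_minority_indices(X, y, m=3)
```

Only the minority point at 0.6 (index 3), which sits right next to the majority cluster, is selected; the four minority points deeper inside their own region are treated as safe and would not seed new synthetic samples.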

Q4: I've applied RUS, but my model's overall accuracy dropped. Is this normal? Yes, this is expected, and the drop is often misleading. Before RUS, a high overall accuracy mostly reflects the model's ability to correctly predict the overrepresented inactive class. Once the training set is balanced, the model must classify both classes correctly, which is a harder task. A drop in overall accuracy is therefore common, but it is typically accompanied by a crucial increase in sensitivity (recall) for the active class. For DTI prediction, metrics like Matthews Correlation Coefficient (MCC), F1-score, and balanced accuracy are more reliable indicators of model performance than overall accuracy [10].

Q5: What are the common pitfalls when using these resampling techniques?

- Random Undersampling (RUS): The primary risk is the loss of potentially useful information from the majority class, which could lead to a model that is less robust [9] [10].

- SMOTE: It can generate noisy synthetic samples if it interpolates between minority class instances that are outliers or from different sub-clusters. This can blur the class boundary and lead to overfitting [9].

- Borderline-SMOTE: While it improves upon SMOTE, its performance is dependent on correctly identifying the borderline region, which can be unstable in very high-dimensional feature spaces common in chemogenomics [9].

- General Pitfall: Applying resampling without proper validation. It is critical to ensure that no data leakage occurs between the training and test sets. Resampling should only be applied to the training data fold during cross-validation.
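The leakage pitfall is easiest to avoid by resampling inside the cross-validation loop. The sketch below assumes scikit-learn, a logistic regression as a stand-in classifier, and toy Gaussian data; the function names are hypothetical. The key point is that each validation fold keeps its original class distribution.

```python
import numpy as np
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import matthews_corrcoef
from sklearn.model_selection import StratifiedKFold


def undersample(X, y, rng):
    """Balance a training fold 1:1 by dropping random negatives."""
    pos = np.flatnonzero(y == 1)
    neg = np.flatnonzero(y == 0)
    keep_neg = rng.choice(neg, size=len(pos), replace=False)
    idx = np.concatenate([pos, keep_neg])
    return X[idx], y[idx]


def cv_mcc_with_fold_resampling(X, y, n_splits=5, seed=0):
    """Cross-validate with resampling applied to the training fold only."""
    rng = np.random.default_rng(seed)
    skf = StratifiedKFold(n_splits=n_splits, shuffle=True, random_state=seed)
    scores = []
    for train_idx, val_idx in skf.split(X, y):
        X_tr, y_tr = undersample(X[train_idx], y[train_idx], rng)
        clf = LogisticRegression(max_iter=1000).fit(X_tr, y_tr)
        # The validation fold is left untouched (still imbalanced).
        scores.append(matthews_corrcoef(y[val_idx], clf.predict(X[val_idx])))
    return scores


rng = np.random.default_rng(7)
X = np.vstack([rng.normal(0.0, 1.0, (500, 5)), rng.normal(1.5, 1.0, (50, 5))])
y = np.concatenate([np.zeros(500, dtype=int), np.ones(50, dtype=int)])
fold_scores = cv_mcc_with_fold_resampling(X, y)
```

If an oversampler were fitted on the full dataset before splitting, synthetic points derived from validation samples could end up in the training fold and inflate the scores.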

Troubleshooting Guides

Problem: Model shows high accuracy but fails to predict any active compounds.

Diagnosis: This is a classic sign of a model biased by severe class imbalance. The algorithm is essentially learning to always predict "inactive" because that strategy yields high accuracy.

Solution:

- Apply Resampling: Implement a resampling technique on your training data. For highly imbalanced datasets (more extreme than 1:50, active:inactive), start with Random Undersampling (RUS). Evidence from DTI research shows that undersampling to a moderate imbalance ratio of 1:10 (active:inactive) can provide an optimal balance between true positive and false positive rates [10].

- Change Performance Metrics: Immediately stop using overall accuracy. Instead, monitor Sensitivity (Recall), Specificity, Precision, F1-Score, and Matthews Correlation Coefficient (MCC) [10].

- Algorithm-Level Adjustment: As an alternative or complement to resampling, use cost-sensitive learning. Many algorithms (e.g., in Random Forest or XGBoost) allow you to assign a higher class weight or misclassification cost to the minority class, forcing the model to pay more attention to it [10].
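For the cost-sensitive option, a minimal sketch with scikit-learn's Random Forest is shown below. The helper name and toy data are assumptions for the example; the weights follow the inverse-frequency rule n_samples / (n_classes × class count), which is the same rule scikit-learn applies for `class_weight="balanced"`. XGBoost offers a comparable `scale_pos_weight` parameter.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier


def balanced_class_weights(y):
    """Inverse-frequency weights: n_samples / (n_classes * class_count)."""
    classes, counts = np.unique(y, return_counts=True)
    weights = len(y) / (len(classes) * counts)
    return {int(c): float(w) for c, w in zip(classes, weights)}


# Toy data: 900 inactive (0) and 100 active (1) samples.
rng = np.random.default_rng(0)
X = np.vstack([rng.normal(0.0, 1.0, (900, 6)), rng.normal(1.0, 1.0, (100, 6))])
y = np.concatenate([np.zeros(900, dtype=int), np.ones(100, dtype=int)])

weights = balanced_class_weights(y)  # roughly {0: 0.56, 1: 5.0}
clf = RandomForestClassifier(n_estimators=100, class_weight=weights,
                             random_state=0)
clf.fit(X, y)
```

Unlike resampling, this keeps every training sample and changes only how much each misclassification costs, so it combines cleanly with cross-validation.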

Problem: After applying SMOTE, model performance did not improve or became worse.

Diagnosis: Standard SMOTE might be creating unrealistic or noisy synthetic samples that do not correspond to chemically viable active compounds.

Solution:

- Switch to an Advanced SMOTE Variant: Use Borderline-SMOTE or SVM-SMOTE. These methods are more strategic about where to generate new samples, focusing on the decision boundary, which can lead to more meaningful synthetic data [9] [22].

- Try a Hybrid Approach: Combine SMOTE with an undersampling method to clean the resulting data. For example, use SMOTEENN (SMOTE + Edited Nearest Neighbors) which uses SMOTE to oversample the minority class and then uses ENN to remove any samples from both classes that are misclassified by their k-nearest neighbors. This can help remove noisy samples from both classes [21] [23].

- Consider Undersampling: For your specific dataset, undersampling might simply be more effective. A comparative study on physiological signals found that RUS was the best option for improving sensitivity [23].

Problem: The computational cost of training on the resampled data is too high.

Diagnosis: This can happen with SMOTE on large datasets or when the feature dimension is very high, as it involves extensive nearest-neighbor calculations.

Solution:

- Use Random Undersampling (RUS): RUS significantly reduces the size of the training set, leading to faster model training times [9].

- Apply Feature Dimensionality Reduction: Before resampling, use techniques like Principal Component Analysis (PCA) or Random Projection to reduce the number of features. This simplifies the distance calculations for SMOTE and overall model complexity. One DTI study successfully used random projection for this purpose [5].

- Optimize the Imbalance Ratio: Instead of balancing to a perfect 1:1 ratio, find a more moderate ratio that still yields good performance. Research has shown that a 1:10 ratio can be highly effective, requiring much less data manipulation than a 1:1 ratio [10].

Performance Comparison of Resampling Techniques

The table below summarizes quantitative findings from various studies to guide technique selection.

Table 1: Comparative Performance of Resampling Techniques in Different Domains

| Technique | Domain / Application | Key Performance Findings | Citation |
|---|---|---|---|
| Random Undersampling (RUS) | Drug Discovery (Anti-HIV, Malaria, Trypanosomiasis) | Outperformed ROS, ADASYN, and SMOTE; achieved best MCC & F1-score on highly imbalanced data (IR 1:82-1:104). | [10] |
| NearMiss Undersampling | Drug-Target Interaction (DTI) Prediction | Combined with Random Forest, achieved state-of-the-art auROC (up to 99.33%) on gold-standard DTI datasets. | [5] |
| SMOTE | General Imbalanced Classification | Improved recall and balanced accuracy compared to no resampling, but sometimes led to a significant decrease in precision. | [10] [21] |
| Borderline-SMOTE | Protein-Protein Interaction Site Prediction | Superior to standard SMOTE for predicting interaction sites, aiding in protein design and mutation analysis. | [9] |
| SVM-SMOTE | Drug-Target Interaction (DTI) Prediction | Achieved superior performance in DTI prediction compared to other state-of-the-art methods on benchmark datasets. | [22] |
| Hybrid (SMOTE-NC + RUS) | Educational Data Mining (Extreme Imbalance) | Identified as the best-performing method for datasets with extreme class imbalance. | [21] |

Experimental Protocol: Implementing Resampling for DTI Prediction

The following workflow, based on established research methodologies, details the steps for integrating resampling into a DTI prediction pipeline [5] [22].

Data Collection & Feature Extraction:

- Drug Descriptors: Extract molecular features from drug compounds. Common descriptors include molecular fingerprints (e.g., FP2, PubChem fingerprints), counting vectors, and other physicochemical properties. Studies have used tools like PaDEL-Descriptors to generate these features [5] [22].

- Target Descriptors: Extract features from target protein sequences. Common methods include Amino Acid Composition (AAC), Dipeptide Composition (DPC), and features from databases like AAindex1 [5] [22].

- Formation of Pairs: Each data instance is a drug-target pair, represented by the concatenation of the drug and target feature vectors. The label is binary (1 for interaction, 0 for no interaction).

Data Preprocessing:

- Split Data: Partition the dataset into training and independent test sets. The test set must be kept separate and untouched during the resampling and model tuning phases to ensure an unbiased evaluation.

- Dimensionality Reduction (Optional): If the combined feature vector is very high-dimensional, apply a dimensionality reduction technique like Random Projection or PCA to the training data to reduce computational cost and mitigate the curse of dimensionality [5].

Resampling (Applied only to the Training Set):

- Identify the imbalance ratio in your training set.

- Apply your chosen resampling technique exclusively to the training data.

- Example: Implementing RUS with a 1:10 Ratio. From the majority class (non-interacting pairs), randomly remove samples until the number of majority class samples is only 10 times the number of minority class (interacting) samples [10].

- Example: Implementing Borderline-SMOTE. Use a library like imbalanced-learn in Python. The algorithm will first identify the borderline minority instances and then generate synthetic samples along the line segments joining these borderline instances and their nearest neighbors.
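The 1:10 undersampling example from the list above can be sketched with NumPy alone. The function name, the `ratio` parameter, and the toy data are assumptions for illustration; imbalanced-learn provides a ready-made random undersampler for production use.

```python
import numpy as np


def undersample_to_ratio(X, y, ratio=10, seed=0):
    """Keep all positives and at most `ratio` negatives per positive."""
    rng = np.random.default_rng(seed)
    pos = np.flatnonzero(y == 1)
    neg = np.flatnonzero(y == 0)
    n_keep = min(len(neg), ratio * len(pos))
    keep_neg = rng.choice(neg, size=n_keep, replace=False)
    idx = np.sort(np.concatenate([pos, keep_neg]))  # preserve original order
    return X[idx], y[idx]


# Toy training set with a 1:100 imbalance: 20 interacting, 2000 non-interacting.
rng = np.random.default_rng(5)
X_train = rng.normal(0.0, 1.0, (2020, 3))
y_train = np.concatenate([np.ones(20, dtype=int), np.zeros(2000, dtype=int)])

imbalance_before = (y_train == 0).sum() / (y_train == 1).sum()  # 100.0
X_res, y_res = undersample_to_ratio(X_train, y_train, ratio=10)
imbalance_after = (y_res == 0).sum() / (y_res == 1).sum()       # 10.0
```

Only the training split is passed to the function; the held-out test set keeps its original class distribution.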

Model Training and Validation:

- Train your chosen classifier (e.g., Random Forest, Support Vector Machine) on the resampled training data.

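- Use cross-validation on the training data to tune hyperparameters, applying the resampling step inside each training fold so that the validation folds keep the original class distribution. Use appropriate metrics like MCC, F1-score, and AUC-ROC for evaluation.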

Final Evaluation:

- Use the held-out, original (unresampled) test set to perform a final, unbiased assessment of your model's performance. Report the key metrics as described in the troubleshooting section.

Workflow Diagram: Resampling Strategy for DTI Prediction

The following diagram visualizes the logical decision process for selecting and applying a resampling technique in a DTI prediction project.

Table 2: Key Computational Tools and Data Resources for DTI Research

| Item / Resource | Type | Function / Description |
|---|---|---|
| Gold Standard DTI Datasets | Data | Publicly available benchmark datasets (e.g., Nuclear Receptors, Ion Channels, GPCRs, Enzymes) for developing and comparing DTI prediction models. [5] |
| PubChem Bioassay | Data | A key public database containing bioactivity data from high-throughput screening (HTS) experiments, which are often highly imbalanced. [10] |
| PaDEL-Descriptors | Software | A software tool used to calculate molecular fingerprints and descriptors for drug compounds from their structures. [5] |
| AAindex1 Database | Data | A database of numerical indices representing various physicochemical and biochemical properties of amino acids, used for creating target protein descriptors. [5] |
| imbalanced-learn (Python library) | Software | A comprehensive library providing numerous implementations of oversampling (SMOTE, Borderline-SMOTE, ADASYN) and undersampling (RUS, NearMiss, Tomek Links) techniques. |
| Random Forest / XGBoost | Algorithm | Ensemble learning algorithms that are frequently used as robust classifiers in DTI prediction tasks and can be combined with resampling techniques. [9] [10] [5] |

In computational drug discovery, a significant obstacle hampers the development of accurate predictive models: class imbalance. Drug-Target Interaction (DTI) datasets are typically characterized by a vast number of known non-interacting drug-target pairs (the majority class) and a relatively small number of known interacting pairs (the minority class of interest) [1]. This imbalance leads to models that are biased toward predicting non-interactions, resulting in poor sensitivity and a high rate of false negatives, meaning promising drug candidates are missed [24] [25]. Generative Adversarial Networks (GANs) have emerged as a powerful advanced data augmentation technique to address this critical issue. By generating high-quality, synthetic minority class samples, GANs can balance datasets, leading to more robust and sensitive DTI prediction models and ultimately accelerating the drug discovery pipeline [24] [18].

Frequently Asked Questions (FAQs) on GANs for Data Augmentation

Q1: What is data augmentation, and why are GANs considered superior to traditional methods in the context of DTI prediction?

Data augmentation encompasses techniques used to artificially expand the size and diversity of a training dataset. While traditional methods involve simple transformations like rotation or scaling for images, these are often inapplicable to molecular and interaction data [26]. GANs are superior because they can learn the complex, underlying distribution of real molecular structures and generate novel, synthetic data that is both diverse and representative of real-world biochemical space. This allows for a more principled and effective augmentation of the minority class in DTI datasets compared to simple oversampling [27] [24].

Q2: How do GANs specifically help with the class imbalance problem in DTI datasets?

GANs help mitigate class imbalance by focusing their generative power on the underrepresented class, the interacting drug-target pairs. Once trained on the known interactions, the GAN's generator can produce a large number of realistic, synthetic interacting pairs. These generated samples are then added to the training set, effectively balancing the class distribution. This process reduces the model's bias toward the majority class, improves its ability to recognize true interactions, and significantly lowers the false negative rate [24] [18].

Q3: What are the most common failure modes when training GANs for this purpose, and are there established solutions?

Training GANs is notoriously challenging, and several common failure modes can impede success [28]. The table below summarizes these key issues and their potential remedies.

Table 1: Common GAN Failure Modes and Proposed Solutions

| Failure Mode | Description | Potential Solutions |
|---|---|---|
| Mode Collapse [28] | The generator produces a limited variety of outputs, often collapsing to a few similar samples. | Use Wasserstein loss (WGAN) [28] [29] or Unrolled GANs [28]. |
| Vanishing Gradients [28] | The discriminator becomes too good, providing no useful gradient for the generator to learn from. | Employ Wasserstein loss [28] [29] or a modified minimax loss [28]. |
| Failure to Converge [28] | The model training is unstable and never reaches a satisfactory equilibrium. | Apply regularization techniques, such as adding noise to discriminator inputs or penalizing discriminator weights [28]. |

Q4: Beyond standard GANs, what are some advanced architectures used in recent DTI prediction research?

Researchers have developed sophisticated hybrid frameworks that integrate GANs with other deep learning models to enhance performance. For instance, the VGAN-DTI framework combines GANs, Variational Autoencoders (VAEs), and Multilayer Perceptrons (MLPs) to improve prediction accuracy [27]. Another approach is GANsDTA, a semi-supervised method that uses GANs for unsupervised feature extraction from protein sequences and drug SMILES strings, which is particularly useful when labeled data is limited [30].

Troubleshooting Guide: Implementing GANs for DTI Augmentation

Problem 1: Lack of Output Diversity (Mode Collapse)

Symptoms: The generated molecular structures or interaction profiles lack diversity and are highly similar to each other.

Recommended Steps:

- Switch the Loss Function: Replace the standard minimax loss with a Wasserstein loss (WGAN). This change provides more stable training and gives better gradients even when the discriminator is very accurate, directly combating mode collapse [28] [29].

- Implement Mini-batch Discrimination: This technique allows the discriminator to look at multiple data samples in combination, helping it to detect a lack of diversity in the generator's output and providing a gradient that encourages variety.

- Use Architectural Constraints: Consider using an Unrolled GAN architecture. This method optimizes the generator against several future steps of the discriminator, preventing it from over-optimizing for a single, static discriminator and thus promoting diverse output generation [28].

Problem 2: Unstable Training and Non-Convergence

Symptoms: Training losses for the generator and discriminator oscillate wildly without settling, and the quality of generated samples does not improve over time.

Recommended Steps:

- Apply Gradient Penalty: When using a WGAN, use a gradient penalty (WGAN-GP) instead of weight clipping to enforce the Lipschitz constraint. This is a widely used regularization method that stabilizes training [29].

- Add Noise to Inputs: Introduce noise to the inputs of the discriminator. This acts as a regularizer, preventing the discriminator from becoming overconfident too quickly and overwhelming the generator [28].

- Optimize Optimizers: Use well-tuned optimization algorithms like Adam or RMSprop. Often, using a lower learning rate for the discriminator than for the generator can help maintain a training equilibrium.
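For reference, the gradient-penalty objective named in the first step is usually written as follows. The notation is the standard one from the WGAN-GP literature and is an assumption here, since this guide does not define symbols: $P_r$ is the distribution of real interacting pairs, $P_g$ the generator's distribution, $D$ the critic (discriminator), $G$ the generator, and $\lambda$ the penalty weight (10 is a commonly used value).

$$
L_D = \mathbb{E}_{\tilde{x} \sim P_g}\big[D(\tilde{x})\big] - \mathbb{E}_{x \sim P_r}\big[D(x)\big] + \lambda \, \mathbb{E}_{\hat{x} \sim P_{\hat{x}}}\Big[\big(\lVert \nabla_{\hat{x}} D(\hat{x}) \rVert_2 - 1\big)^2\Big]
$$

$$
\hat{x} = \epsilon x + (1 - \epsilon)\tilde{x}, \quad \epsilon \sim U[0, 1], \qquad L_G = -\mathbb{E}_{z \sim p(z)}\big[D(G(z))\big]
$$

The penalty term pushes the critic's gradient norm toward 1 at points interpolated between real and generated samples, which enforces the Lipschitz constraint softly instead of through weight clipping.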

Problem 3: Generating Chemically Invalid or Infeasible Molecules

Symptoms: The generator outputs molecular structures (e.g., in SMILES format) that are syntactically invalid or represent molecules that are not synthetically feasible.

Recommended Steps:

- Incorporate Domain Knowledge: Use a VAE-GAN hybrid model, as seen in the VGAN-DTI framework. The VAE component is particularly effective at encoding and generating syntactically valid molecular structures, ensuring the generated samples are chemically plausible [27].

- Post-Generation Validation: Implement a filter that checks all generated SMILES strings for chemical validity using established cheminformatics toolkits (e.g., RDKit). Only valid molecules are passed to the next stage.

- Rule-Based Constraints: During the GAN training process, incorporate rules or rewards that penalize the generation of chemically invalid structures, guiding the learning process toward feasible regions of the chemical space.

Experimental Protocols & Performance Benchmarks

Detailed Methodology: A GAN-Random Forest Framework for DTI

A robust experimental protocol for leveraging GANs in DTI prediction involves a hybrid machine learning and deep learning approach [24].

Workflow Description: The process begins with feature extraction from raw drug and target data. Drug features are typically represented using molecular fingerprints like MACCS keys, which encode chemical structures. Target protein features are derived from their amino acid sequences using compositions like Amino Acid Composition (AAC) and Dipeptide Composition (DPC). These drug and target features are then combined into a single feature vector for each pair.
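The two protein descriptors mentioned above are simple frequency vectors and can be sketched in pure Python. The function names and the short example sequence are hypothetical; residues outside the 20 standard amino acids are simply dropped in this sketch, which is one possible convention among several.

```python
from collections import Counter
from itertools import product

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"  # the 20 standard residues
DIPEPTIDES = ["".join(p) for p in product(AMINO_ACIDS, repeat=2)]  # 400 pairs


def clean(seq):
    """Upper-case the sequence and drop non-standard residue codes."""
    return "".join(a for a in seq.upper() if a in AMINO_ACIDS)


def aac(seq):
    """Amino Acid Composition: 20 residue frequencies that sum to 1."""
    seq = clean(seq)
    counts = Counter(seq)
    return [counts[a] / len(seq) if seq else 0.0 for a in AMINO_ACIDS]


def dpc(seq):
    """Dipeptide Composition: 400 frequencies of consecutive residue pairs."""
    seq = clean(seq)
    pairs = [seq[i:i + 2] for i in range(len(seq) - 1)]
    counts = Counter(pairs)
    return [counts[d] / len(pairs) if pairs else 0.0 for d in DIPEPTIDES]


# Toy sequence; a real target would be a full protein sequence.
target_features = aac("ACAC") + dpc("ACAC")  # 20 + 400 = 420 values
```

The resulting 420-dimensional protein vector would then be combined with the drug fingerprint to form the feature vector for one drug-target pair.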

The core of the augmentation is handled by the GAN. The Generator (G) takes a random noise vector and aims to produce synthetic feature vectors that represent synthetic minority class (interacting) samples. The Discriminator (D) is trained to distinguish between real interacting pairs from the training set and the fake ones produced by G. Through this adversarial game, G learns to produce highly realistic synthetic interacting pairs.

These generated samples are then used to augment the original, imbalanced training set. Finally, a Random Forest Classifier (RFC), known for its effectiveness with high-dimensional data, is trained on this balanced dataset to perform the final DTI prediction.

Quantitative Performance Metrics

The following table summarizes the performance of a GAN-based DTI prediction model, specifically a GAN + Random Forest (GAN+RFC) model, on different benchmark datasets, demonstrating its high efficacy [24].

Table 2: Performance of a GAN-RFC Model on BindingDB Datasets

| Dataset | Accuracy (%) | Precision (%) | Sensitivity/Recall (%) | F1-Score (%) | ROC-AUC (%) |
|---|---|---|---|---|---|
| BindingDB-Kd | 97.46 | 97.49 | 97.46 | 97.46 | 99.42 |
| BindingDB-Ki | 91.69 | 91.74 | 91.69 | 91.69 | 97.32 |
| BindingDB-IC50 | 95.40 | 95.41 | 95.40 | 95.39 | 98.97 |

For comparison, another advanced framework, VGAN-DTI, which integrates GANs with VAEs and MLPs, also reported state-of-the-art performance, achieving 96% accuracy, 95% precision, 94% recall, and a 94% F1 score [27].

The Scientist's Toolkit: Essential Research Reagents & Materials

Successful implementation of GAN-based data augmentation for DTI prediction relies on a set of key computational "reagents" and resources.

Table 3: Essential Tools and Datasets for GAN-based DTI Research

| Item Name | Type | Function & Application |
|---|---|---|
| BindingDB [27] [24] | Database | A public, curated database of measured binding affinities, providing the primary interaction data (both positive and negative pairs) for training and evaluation. |
| MACCS Keys [24] | Molecular Fingerprint | A set of 166 structural keys used to represent drug compounds as fixed-length binary vectors, enabling machine learning. |
| Amino Acid Composition (AAC) [24] | Protein Descriptor | Represents a protein sequence by its composition of the 20 standard amino acids, providing a fixed-length feature vector. |
| Dipeptide Composition (DPC) [24] | Protein Descriptor | Extends AAC by counting the frequency of dipeptides (two consecutive amino acids), capturing some sequence-order information. |
| SMILES [27] [30] | Molecular Representation | A string-based notation system for representing molecular structures, used as a direct input for some GAN models like GANsDTA. |
| Wasserstein GAN (WGAN) [28] [29] | Algorithm | A GAN variant with a more stable training process, used to overcome common issues like mode collapse and vanishing gradients. |
| Variational Autoencoder (VAE) [27] | Algorithm | Often used in hybrid models with GANs (e.g., VGAN-DTI) to ensure the generation of syntactically valid and diverse molecular structures. |

Troubleshooting Guides & FAQs

Frequently Asked Questions

FAQ 1: What is the fundamental difference between using an ensemble method and applying a cost-sensitive learning technique for handling class imbalance?

Ensemble methods and cost-sensitive learning tackle the class imbalance problem from different angles. Ensemble methods, like AdaBoost, combine multiple weak classifiers to create a strong learner, often improving overall predictive performance and robustness. When used for imbalanced data, they can be particularly effective at learning complex patterns from both the majority and minority classes [31]. Cost-sensitive learning is an algorithm-level approach that directly assigns a higher misclassification cost to the minority class. This forces the model to pay more attention to the minority class examples. A common implementation is using a weighted loss function, where the cost of misclassifying a minority class sample is weighted more heavily in the calculation of the model's error [18]. In practice, these approaches are not mutually exclusive and can be combined for superior results.
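To make the weighted loss concrete, here is a minimal sketch of a class-weighted binary cross-entropy in NumPy. The function name and the `pos_weight` parameter are illustrative choices, not taken from [18]; the only idea shown is that errors on positive (interacting) samples are multiplied by a larger factor.

```python
import numpy as np


def weighted_bce(y_true, p_pred, pos_weight=1.0, eps=1e-12):
    """Mean binary cross-entropy with the positive-class term scaled by pos_weight."""
    y = np.asarray(y_true, dtype=float)
    # Clip probabilities so that log(0) never occurs.
    p = np.clip(np.asarray(p_pred, dtype=float), eps, 1.0 - eps)
    per_sample = -(pos_weight * y * np.log(p) + (1.0 - y) * np.log(1.0 - p))
    return float(per_sample.mean())


# One positive and one negative sample, both predicted with probability 0.5.
unweighted = weighted_bce([1, 0], [0.5, 0.5], pos_weight=1.0)
weighted = weighted_bce([1, 0], [0.5, 0.5], pos_weight=3.0)
print(unweighted, weighted)  # the weighted loss is twice the unweighted one here
```

With `pos_weight=1.0` this reduces to ordinary cross-entropy. Raising it makes each missed interaction cost more than a false alarm, which is the behavior cost-sensitive learning is after.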

FAQ 2: My model has high accuracy but fails to predict any true positive interactions. What is the most likely cause and how can I resolve it?

This is a classic symptom of severe class imbalance. A model may achieve high accuracy by simply always predicting the majority class (non-interactions), which is unhelpful for drug discovery. The primary cause is that the training data is skewed, and the model learning process is not sufficiently penalized for ignoring the minority class.
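A small worked example shows how this happens. The 1% positive rate below is hypothetical and chosen only for illustration.

```python
def accuracy_and_recall(y_true, y_pred):
    """Return (accuracy, recall on the positive class) for binary labels."""
    correct = sum(1 for t, p in zip(y_true, y_pred) if t == p)
    true_pos = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    positives = sum(y_true)
    recall = true_pos / positives if positives else 0.0
    return correct / len(y_true), recall


y_true = [1] * 10 + [0] * 990  # 10 interactions among 1000 pairs (hypothetical)
y_pred = [0] * 1000            # a model that always predicts "no interaction"

acc, rec = accuracy_and_recall(y_true, y_pred)
print(f"accuracy={acc:.2%}, recall={rec:.2%}")  # accuracy=99.00%, recall=0.00%
```

The classifier looks excellent by accuracy while finding none of the interactions, which is why the resolution paths below target the minority class directly.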

Resolution Paths:

- Data-Level Solution: Use oversampling techniques to balance the dataset. For example, employ Generative Adversarial Networks (GANs) to create synthetic data for the minority class (positive interactions), which has been shown to significantly improve sensitivity and reduce false negatives [3]. Alternatively, the SMOTETomek algorithm can be used for this purpose [12].

- Algorithm-Level Solution: Implement cost-sensitive learning by applying class weights. In a Random Forest or Support Vector Machine, you can set the `class_weight` parameter to "balanced" or manually assign higher weights to the minority class. This has been shown to lead to a significant percentage improvement in metrics like F1-score and MCC [12].
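For intuition about what "balanced" does, the sketch below reproduces the heuristic that scikit-learn documents for this setting, `n_samples / (n_classes * count_of_class)`, in plain Python. The helper name and the 5% positive rate are illustrative.

```python
from collections import Counter


def balanced_class_weights(labels):
    """Weight each class inversely to its frequency (the 'balanced' heuristic)."""
    counts = Counter(labels)
    n_samples, n_classes = len(labels), len(counts)
    return {cls: n_samples / (n_classes * count) for cls, count in counts.items()}


# Hypothetical training labels: 50 interactions, 950 non-interactions.
weights = balanced_class_weights([1] * 50 + [0] * 950)
print(weights)  # the minority class gets weight 10.0, the majority about 0.53
```

The resulting dictionary can also be passed as a manual `class_weight` mapping, which is useful when you want to start from the balanced values and then adjust the minority weight by hand.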

FAQ 3: Are there specific ensemble methods better suited for DTI prediction on imbalanced data?

Yes, certain ensemble methods have demonstrated excellent performance in this domain. The AdaBoost (Adaptive Boosting) classifier is a prominent example, which has been shown to enhance prediction accuracy, AUC, and F-score significantly over other methods in DTI prediction tasks [31]. Furthermore, using Random Forest as part of a hybrid framework, especially when combined with data-level balancing techniques like GANs, has achieved state-of-the-art performance metrics (e.g., accuracy >97%, sensitivity >97%) on benchmark datasets like BindingDB [3].

FAQ 4: How should I evaluate my model to ensure the performance on the minority class is adequate?

Relying solely on accuracy is misleading for imbalanced datasets. You should use a suite of metrics that are robust to class imbalance.

- Key Metrics:

  - Sensitivity (Recall): Measures the model's ability to identify true positive interactions.

  - Precision: Measures the fraction of predicted positive interactions that are correct.

  - F1-Score: The harmonic mean of precision and recall.

  - Matthews Correlation Coefficient (MCC): A balanced metric that considers all four cells of the confusion matrix and is reliable for imbalanced classes.

  - Area Under the Precision-Recall Curve (AUPR): More informative than ROC-AUC for imbalanced datasets, as it focuses directly on the performance of the positive (minority) class [32].
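The threshold-based metrics in this list can all be computed from the four confusion-matrix counts. The sketch below uses hypothetical counts. AUPR is left out because it needs ranked prediction scores rather than counts.

```python
import math


def imbalance_metrics(tp, fp, fn, tn):
    """Compute sensitivity, precision, F1 and MCC from confusion-matrix counts."""
    sensitivity = tp / (tp + fn) if (tp + fn) else 0.0
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    f1 = (2 * precision * sensitivity / (precision + sensitivity)
          if (precision + sensitivity) else 0.0)
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    mcc = (tp * tn - fp * fn) / denom if denom else 0.0
    return {"sensitivity": sensitivity, "precision": precision, "f1": f1, "mcc": mcc}


# Hypothetical test set: 10 true interactions among 1000 pairs.
scores = imbalance_metrics(tp=8, fp=4, fn=2, tn=986)
for name, value in scores.items():
    print(f"{name}: {value:.3f}")
```

In this example accuracy would be 99.4%, yet precision is only about 0.67, a gap that accuracy alone would hide.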

Troubleshooting Common Experimental Issues

Problem: Poor Generalization to Novel Drugs or Targets (Cold-Start Scenario)

- Symptoms: Model performs well on validation split but poorly on new drug-target pairs not seen during training.

- Potential Solutions:

  - Leverage Pre-trained Models: Use feature encoders pre-trained on large, diverse biological and chemical datasets. For example, employ ProtTrans for protein sequences and molecular pre-trained models like MG-BERT for drug features to get better generalized representations [33].

  - Incorporate Multi-dimensional Data: Go beyond 1D sequences. Use 2D molecular graphs and 3D spatial drug structures, along with target protein sequence features, to create a more robust model that can handle novelty [33].

  - Uncertainty Quantification: Implement frameworks like Evidential Deep Learning (EviDTI). This allows the model to estimate its own uncertainty, helping researchers prioritize predictions with higher confidence for experimental validation and flagging unreliable predictions on novel pairs [33].
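Before applying these remedies, it helps to measure the cold-start gap explicitly. One common way, shown here as an assumption and not as a procedure from [33], is to split the data by drug so that no drug in the test set appears in training. The function name and the `(drug_id, target_id, label)` tuple layout are hypothetical.

```python
import random


def cold_drug_split(pairs, test_fraction=0.2, seed=0):
    """Split (drug_id, target_id, label) tuples so test drugs are unseen in training."""
    drugs = sorted({drug for drug, _, _ in pairs})
    rng = random.Random(seed)
    rng.shuffle(drugs)
    n_test = max(1, int(round(len(drugs) * test_fraction)))
    test_drugs = set(drugs[:n_test])
    train = [p for p in pairs if p[0] not in test_drugs]
    test = [p for p in pairs if p[0] in test_drugs]
    return train, test


# Toy data: 10 drugs, 3 targets, arbitrary labels.
pairs = [(f"D{i}", f"T{j}", (i + j) % 2) for i in range(10) for j in range(3)]
train, test = cold_drug_split(pairs, test_fraction=0.2, seed=1)
print(len(train), len(test))  # 24 6
```

Comparing scores on this split against a random split gives a rough estimate of how much performance depends on having seen the drug before. The same idea applies to targets by grouping on `target_id`.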

Problem: High Computational Cost of Complex Balancing Techniques

- Symptoms: Training times are prohibitively long, especially when using GANs or large ensembles.

- Potential Solutions:

  - Start Simpler: Before deploying GANs, try more straightforward oversampling techniques like SMOTE or evaluate the performance gain from simply using a weighted loss function, which is computationally less expensive [18] [12].

  - Benchmark and Compare: Systematically compare different balancing techniques. Evidence shows that while oversampling performs well, the optimal strategy can be dataset-dependent [18]. A focused benchmark can identify the most cost-effective method for your specific data.

  - Feature Selection: Reduce the dimensionality of your input data. For instance, instead of using all gene expression features, it has been shown that as few as 10 carefully selected genes can be sufficient for high prediction power in some tasks, drastically reducing computational load [32].
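To illustrate why SMOTE is the cheaper starting point, the sketch below implements its core idea in NumPy: each synthetic sample is an interpolation between a minority sample and one of its nearest minority neighbors. This is a simplified illustration with a hypothetical function name, not the reference implementation. In practice a maintained library such as imbalanced-learn is preferable.

```python
import numpy as np


def smote_like_oversample(X_min, n_new, k=5, seed=0):
    """Create n_new synthetic minority samples by neighbor interpolation."""
    X_min = np.asarray(X_min, dtype=float)
    n = len(X_min)
    if n < 2:
        raise ValueError("need at least two minority samples to interpolate")
    k = min(k, n - 1)
    rng = np.random.default_rng(seed)

    # Pairwise Euclidean distances; a sample is never its own neighbor.
    dist = np.linalg.norm(X_min[:, None, :] - X_min[None, :, :], axis=2)
    np.fill_diagonal(dist, np.inf)
    neighbors = np.argsort(dist, axis=1)[:, :k]

    base = rng.integers(0, n, size=n_new)                 # random seed samples
    chosen = neighbors[base, rng.integers(0, k, size=n_new)]
    gap = rng.random((n_new, 1))                          # position along the segment
    return X_min[base] + gap * (X_min[chosen] - X_min[base])


X_min = np.array([[0.0, 0.0], [1.0, 0.0], [0.0, 1.0], [1.0, 1.0]])
X_new = smote_like_oversample(X_min, n_new=20, k=2)
print(X_new.shape)  # (20, 2)
```

There is no training loop, so the cost is dominated by the neighbor search. One caveat for DTI features: interpolating binary fingerprints yields fractional values, so the synthetic vectors are no longer valid bit strings.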

Performance Comparison of Balancing Techniques

The table below summarizes quantitative results from various studies on handling class imbalance in drug discovery, providing a benchmark for expected outcomes.

Table 1: Performance Metrics of Different Balancing Approaches in Drug Discovery Models

| Model / Technique | Dataset | Key Metric | Performance | Note |
|---|---|---|---|---|
| GAN + Random Forest [3] | BindingDB-Kd | Sensitivity | 97.46% | Hybrid framework with synthetic data generation. |
| GAN + Random Forest [3] | BindingDB-Kd | ROC-AUC | 99.42% | |
| AdaBoost Classifier [31] | DrugBank | Accuracy | 2.74% improvement | Over existing methods; uses multiple feature sets. |
| AdaBoost Classifier [31] | DrugBank | F-Score | 3.53% improvement | |
| Oversampling (GNNs) [18] | Molecular Datasets | MCC | Higher chance of high score | Outperformed other techniques in 8/9 experiments. |
| Weighted Loss Function (GNNs) [18] | Molecular Datasets | MCC | Can achieve high score | More variable than oversampling. |
| Cost-sensitive ML & AutoML [12] | Various Imbalanced Sets | F1 Score | Up to 375% improvement | With threshold optimization and class-weighting. |

Experimental Protocols

Protocol 1: Implementing a GAN-based Data Balancing Framework for DTI Prediction

This protocol is based on the hybrid framework that achieved state-of-the-art results [3].

- Feature Engineering:

  - Drug Features: Extract molecular features from drug compounds represented in SMILES format. Use MACCS keys or Morgan fingerprints to generate a fixed-length binary structural fingerprint (e.g., the 166 MACCS keys, or a 1024-bit Morgan fingerprint).

  - Target Features: From the target protein's amino acid sequence (FASTA format), compute the Amino Acid Composition (AAC) and Dipeptide Composition (DPC) to represent biomolecular properties.

- Data Balancing:

  - Train a Generative Adversarial Network (GAN) on the feature vectors of the minority class (confirmed interactions).

  - Use the trained generator to create a set of synthetic minority class samples.

  - Combine these synthetic samples with the original training data to create a balanced dataset.

- Model Training and Prediction:

  - Train a Random Forest Classifier on the balanced dataset. The ensemble nature of Random Forest is effective for high-dimensional data.

  - Tune hyperparameters using a validation set.

  - Evaluate the final model on a held-out test set using robust metrics like Sensitivity, F1-score, and AUPR.
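The balancing and training steps of this protocol can be sketched as follows. This is an illustration under loud assumptions: the data are random toy vectors, and `stand_in_generator` is NOT a GAN. It only jitters real positives and marks the place where a trained generator's sampling function would be plugged in. Only the training split is augmented, so the held-out test set keeps its original distribution.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier


def balance_with_generator(X, y, generate_positive):
    """Append synthetic positives until both classes have the same size."""
    X, y = np.asarray(X, dtype=float), np.asarray(y)
    n_needed = int((y == 0).sum() - (y == 1).sum())
    if n_needed <= 0:
        return X, y
    X_syn = generate_positive(n_needed)
    return np.vstack([X, X_syn]), np.concatenate([y, np.ones(n_needed, dtype=y.dtype)])


# Toy training split: 300 negatives, 30 positives, 8 features each.
rng = np.random.default_rng(0)
X_pos = rng.normal(1.5, 1.0, size=(30, 8))
X_train = np.vstack([rng.normal(0.0, 1.0, size=(300, 8)), X_pos])
y_train = np.array([0] * 300 + [1] * 30)


def stand_in_generator(n):
    """Placeholder for a trained GAN generator (assumption, see lead-in)."""
    idx = rng.integers(0, len(X_pos), size=n)
    return X_pos[idx] + rng.normal(0.0, 0.1, size=(n, X_pos.shape[1]))


X_bal, y_bal = balance_with_generator(X_train, y_train, stand_in_generator)
clf = RandomForestClassifier(n_estimators=100, random_state=0).fit(X_bal, y_bal)
print(X_bal.shape, int((y_bal == 1).sum()))  # (600, 8) 300
```

Keeping the generator behind a plain callable makes it easy to swap in a GAN, SMOTE, or any other sampler and compare them with the same downstream classifier.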

Protocol 2: Cost-Sensitive Learning with Ensemble Methods

This protocol outlines integrating class weights directly into ensemble classifiers [31] [12].

- Feature Extraction:

  - Utilize a library like PyBioMed or RDKit to compute diverse features. For drugs, use Morgan fingerprints and constitutional descriptors. For proteins, use Amino Acid Composition and Dipeptide Composition.

- Model Implementation:

  - Select an ensemble algorithm such as AdaBoost or Random Forest.

  - Set the `class_weight` parameter to "balanced", which automatically adjusts weights inversely proportional to class frequencies. Alternatively, calculate weights manually (e.g., `weight_minority = total_samples / (2 * n_minority_samples)`). Note that in scikit-learn, `RandomForestClassifier` accepts `class_weight` directly, whereas `AdaBoostClassifier` does not; for AdaBoost, supply the class weights through the `sample_weight` argument of `fit` or through a class-weighted base estimator.

- Evaluation:

  - Perform 10-fold Cross-Validation.

  - Report metrics critical for imbalance: F1-score, MCC, and Balanced Accuracy.
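A minimal scikit-learn sketch of this protocol is shown below. The data are random toy vectors standing in for real drug-target features, and the use of stratified folds is an assumption added here so that every fold contains positives; the protocol itself only specifies 10-fold cross-validation.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import make_scorer, matthews_corrcoef
from sklearn.model_selection import StratifiedKFold, cross_validate

# Toy imbalanced data: 450 negatives, 50 positives, 10 features.
rng = np.random.default_rng(0)
X = np.vstack([rng.normal(0.0, 1.0, size=(450, 10)),
               rng.normal(1.5, 1.0, size=(50, 10))])
y = np.array([0] * 450 + [1] * 50)

# Cost-sensitive Random Forest: class weights inversely proportional to frequency.
clf = RandomForestClassifier(n_estimators=100, class_weight="balanced", random_state=0)

# Stratified folds preserve the class ratio in each split (assumption, see lead-in).
cv = StratifiedKFold(n_splits=10, shuffle=True, random_state=0)
scoring = {
    "f1": "f1",
    "mcc": make_scorer(matthews_corrcoef),
    "balanced_accuracy": "balanced_accuracy",
}

results = cross_validate(clf, X, y, cv=cv, scoring=scoring)
summary = {name: float(results[f"test_{name}"].mean()) for name in scoring}
for name, value in summary.items():
    print(f"{name}: {value:.3f}")
```

Reporting the per-fold spread alongside the mean is worthwhile with so few positives per fold, since a single fold can swing MCC noticeably.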

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools and Datasets for DTI Prediction on Imbalanced Data

| Item Name | Type | Function / Application | Key Feature |
|---|---|---|---|
| BindingDB [3] [34] | Database | A public database of measured binding affinities and interactions. | Provides Kd, Ki, and IC50 values for benchmarking. |
| DrugBank [33] [31] | Database | A comprehensive database containing drug and target information. | Source for known Drug-Target Interactions (DTIs). |
| PyBioMed [31] | Python Library | For feature extraction from drugs (SMILES) and proteins (sequences). | Computes molecular fingerprints, constitutional descriptors, and protein composition. |
| RDKit [31] | Cheminformatics Library | Open-source toolkit for cheminformatics. | Used to compute Morgan fingerprints and other molecular descriptors. |
| ProtTrans [33] | Pre-trained Model | Protein language model for generating protein sequence embeddings. | Provides powerful, context-aware representations of target proteins. |
| MG-BERT [33] | Pre-trained Model | Molecular graph pre-trained model for generating drug representations. | Encodes 2D topological information of drug molecules. |
| GAN (e.g., CTGAN) | Algorithm | Generates synthetic samples of the minority class. | Addresses data imbalance at the data level. |
| scikit-learn | Python Library | Provides machine learning algorithms (RF, SVM, AdaBoost) and tools. | Includes implementations for cost-sensitive learning (`class_weight`). |

Experimental Workflow and System Architecture

The following diagram illustrates a robust hybrid workflow for DTI prediction that integrates both data-level and algorithm-level solutions to class imbalance.

[Figure: Hybrid DTI Prediction Workflow with Imbalance Handling]

The diagram shows two parallel paths for handling imbalance: a data-level path using GANs to create a balanced dataset, and an algorithm-level path where a cost-sensitive loss function is applied directly within the ensemble model.

In the field of drug discovery, predicting drug-target interactions (DTIs) is a crucial but challenging task. A significant obstacle is class imbalance, where the number of known interactions (positive samples) is vastly outnumbered by unknown or non-interacting pairs (negative samples). This imbalance can lead to biased machine learning models that fail to accurately identify true interactions, ultimately limiting their utility in real-world drug development pipelines.