Optimizing RNA-seq Pipeline Parameters for Precision Variant Calling in Biomedical Research

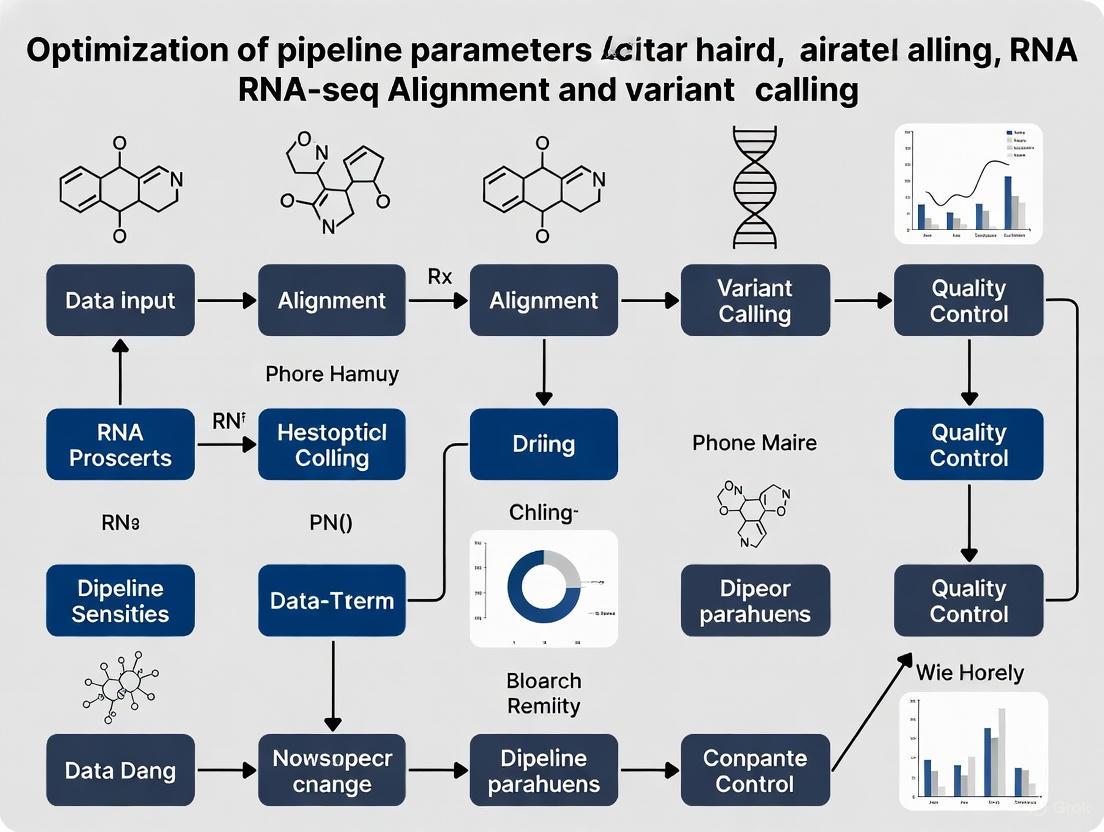

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on optimizing RNA-seq pipelines for accurate alignment and variant calling. It covers foundational principles, from RNA extraction and quality control to the selection of splice-aware aligners. The guide details advanced methodological applications, including specialized parameters for tools like GATK HaplotypeCaller and DeepVariant, and explores machine learning classifiers such as VarRNA. It addresses common troubleshooting scenarios and optimization strategies for parameters affecting sensitivity and specificity. Finally, it discusses validation frameworks and comparative analyses to benchmark pipeline performance, highlighting how optimized RNA-seq workflows can uncover clinically actionable mutations and augment DNA-based precision medicine approaches.

Laying the Groundwork: Core Principles and Challenges of RNA-seq Alignment

The Critical Role of Sample Preparation and RNA Quality Control

Troubleshooting Guide: Common RNA Preparation Issues

The table below outlines frequent problems encountered during RNA extraction and purification, their potential causes, and recommended solutions.

| Problem | Cause | Solution |

|---|---|---|

| Low Yield | Incomplete cell lysis or homogenization [1]. | Increase sample digestion/homogenization time; ensure complete sample disruption [1] [2]. |

| Low Yield | Incomplete elution from the purification column [2]. | Incubate the column with nuclease-free water for 5-10 minutes at room temperature before centrifugation; perform a second elution [2]. |

| RNA Degradation | RNase contamination during the procedure [1]. | Repeat preparation with fresh samples, exercising more care to prevent RNase contamination; use specific RNA protection reagents [1] [2]. |

| RNA Degradation | Improper handling or storage of starting material [2]. | Flash-freeze samples or use DNA/RNA Protection Reagent during storage; store input samples at -80°C prior to use [2]. |

| Low A260/A280 Ratio | Residual protein contamination in the purified sample [2]. | Ensure proper Proteinase K digestion; remove all debris from the lysate before loading onto the purification column [2]. |

| Low A260/A230 Ratio | Carryover of guanidine salts or other contaminants from the purification process [2]. | Ensure all wash steps are performed thoroughly; after the final wash, centrifuge the column to remove residual buffer; avoid contact between the column tip and the flow-through [2]. |

| DNA Contamination | Genomic DNA not removed by the purification column [2]. | Perform an optional on-column DNase I treatment; for more thorough removal, perform an in-tube/off-column DNase I treatment [2]. |

| Clogged Column | Insufficient sample disruption or too much starting material [2]. | Increase homogenization time; centrifuge to pellet debris and use only the supernatant; reduce the amount of starting material to match the kit's specifications [2]. |

Frequently Asked Questions (FAQs)

What are the key metrics for assessing RNA quality and purity?

- RNA Integrity Number (RIN): This score, typically obtained from an Agilent Bioanalyzer, assesses the degradation level of the RNA. A RIN ≥ 8 is generally required for high-quality applications like full-length cDNA synthesis [3].

- 28S:18S Ribosomal Ratio: This ratio evaluates the integrity of ribosomal RNA, with a higher ratio (e.g., close to 2:1) indicating good quality.

- A260/A280 Ratio: This spectrophotometric measurement assesses protein contamination. A ratio of ~2.0 is generally accepted as pure for RNA [1].

- A260/A230 Ratio: This measures contamination by compounds like guanidine salts. A ratio of 2.0 or higher is desirable [2].
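The thresholds above can be turned into a simple pre-sequencing gate. The sketch below is illustrative only: the `RnaQc` class, the `qc_flags` function, and the 1.8-2.2 window used to interpret "~2.0" for A260/A280 are assumptions for this example, not values taken from the cited kits.

```python
from dataclasses import dataclass


@dataclass
class RnaQc:
    rin: float        # RNA Integrity Number from the Bioanalyzer
    a260_a280: float  # protein contamination indicator
    a260_a230: float  # guanidine salt / organic carryover indicator


def qc_flags(sample: RnaQc,
             min_rin: float = 8.0,
             a280_window: tuple = (1.8, 2.2),  # assumed tolerance around ~2.0
             min_a230: float = 2.0) -> list:
    """Return a list of QC warnings; an empty list means all checks passed."""
    flags = []
    if sample.rin < min_rin:
        flags.append(f"RIN {sample.rin} is below {min_rin}")
    low, high = a280_window
    if not (low <= sample.a260_a280 <= high):
        flags.append(f"A260/A280 {sample.a260_a280} is outside {low}-{high}")
    if sample.a260_a230 < min_a230:
        flags.append(f"A260/A230 {sample.a260_a230} is below {min_a230}")
    return flags


if __name__ == "__main__":
    print(qc_flags(RnaQc(rin=9.1, a260_a280=2.0, a260_a230=2.1)))
    print(qc_flags(RnaQc(rin=2.5, a260_a280=1.6, a260_a230=1.2)))
```

A sample that fails only the RIN check may still be usable with a random-primed, rRNA-depleted protocol, so the flags are best treated as prompts for a protocol decision rather than hard rejections.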

How should I assess RNA quantity and quality in my sample?

The recommended method is to use the Agilent RNA 6000 Pico Kit with a Bioanalyzer. This provides both the RIN value for integrity and an accurate quantification, which is especially important for low-concentration samples [3].

My RNA quality is good, but my downstream application (e.g., variant calling) is failing. What should I do?

If RNA quality and purity are verified, the issue likely lies with the downstream application itself [1]. For processes like RNA-seq variant calling, this could involve optimizing bioinformatics parameters, such as using tools like SplitNCigarReads and flagCorrection to properly handle spliced alignments in BAM files before variant calling [4].

My input RNA is degraded or of low quality (low RIN). What are my options?

For degraded RNA (e.g., from FFPE samples) or low-quality total RNA (RIN 2–3), use a random-primed cDNA synthesis kit, such as the SMARTer Universal Low Input RNA Kit for Sequencing. These kits are designed for such inputs but require prior ribosomal RNA (rRNA) depletion to prevent the majority of reads from mapping to rRNA [3].

The Scientist's Toolkit: Essential Reagent Solutions

| Item | Function |

|---|---|

| Monarch Total RNA Miniprep Kit | For total RNA extraction and purification from a variety of sample types, includes components for on-column DNase I treatment [2]. |

| DNA/RNA Protection Reagent | Maintains RNA integrity in biological samples during storage at -80°C, preventing degradation before processing [2]. |

| SMART-Seq v4 Ultra Low Input RNA Kit | Designed for full-length cDNA synthesis and sequencing from ultra-low input samples (10 pg–10 ng) or 1–1,000 intact cells. It is oligo(dT)-primed and requires high-quality input RNA (RIN ≥8) [3]. |

| SMARTer Stranded RNA-Seq Kit | Used for sensitive, strand-specific RNA-seq from 100 pg–100 ng of both full-length and degraded RNA. It utilizes random priming and requires prior rRNA depletion or poly(A) enrichment [3]. |

| RiboGone - Mammalian Kit | Depletes ribosomal RNA from mammalian total RNA samples (10–100 ng), dramatically increasing the useful sequencing reads for mRNA and other RNA species [3]. |

| Agilent RNA 6000 Pico Kit | Used with the Agilent Bioanalyzer to assess RNA integrity (RIN) and accurately quantify RNA, especially critical for low-concentration samples [3]. |

Experimental Workflow: From Sample to Quality RNA

The following diagram outlines the critical steps for obtaining high-quality RNA, integrated with key quality control checkpoints.

Impact of RNA Quality on Downstream Variant Calling

High-quality RNA is the foundation for accurate bioinformatics results. The diagram below illustrates how sample preparation choices directly impact the success of RNA-seq alignment and variant calling.

Selecting and Generating a Suitable Reference Genome

Frequently Asked Questions (FAQs)

What is a reference genome and why is it critical for my analysis? A reference genome is a high-quality genome assembly that represents the complete genetic sequence of an organism as a continuous string of nucleotides. It serves as a standardized baseline for comparison in genomic studies. For RNA-seq alignment and variant calling, the reference genome is essential for determining where in the genome each sequencing read originated, which in turn enables the measurement of gene expression levels and the identification of genetic variants and structural variations. Using an unsuitable reference can introduce mapping errors and false positives, compromising all downstream analysis [5] [6].

How do I choose the best reference genome for my organism? The choice depends on your organism and the quality of available assemblies. For well-studied model organisms, use the assembly designated as "reference" by authoritative consortia like the Genome Reference Consortium (for human/mouse) or RefSeq. For other species, select the assembly with the highest contiguity (highest scaffold N50), completeness (lowest number of gaps), and most comprehensive gene annotation. Key selection criteria for prokaryotes and eukaryotes are systematically different and are detailed in the tables below [7] [8].

What are the limitations of using a single reference genome? A single reference genome does not represent the full genetic diversity of a species, which can lead to reference bias. In regions with high allelic diversity, your sample's sequence may differ significantly from the reference, causing reads to map poorly or not at all. This can result in an under-representation of variants, particularly in populations not represented in the reference construction. Initiatives like the Human Pangenome Reference Project aim to address this by building more diverse reference sets [5] [6].

My RNA-seq reads are not aligning well. Could the reference be the issue? Yes. Poor alignment rates can often be traced to a mismatch between your sample and the reference genome. This can occur if you are using a different subspecies or strain, or if the reference assembly is fragmented or of low quality. Ensure your reference includes comprehensive annotations (GTF/GFF file) for your specific organism and strain. For spliced aligners like STAR, a high-quality annotation file is crucial for accurately mapping reads across intron boundaries [9] [10].

Troubleshooting Guides

Problem: Low Mapping Rates in RNA-seq Alignment

Potential Cause 1: Incorrect or Poor-Quality Reference Genome or Annotation The reference genome or its associated gene annotation file (GTF/GFF) may be from a different genetic background than your samples, or the annotation may be incomplete.

- Solution Steps:

- Verify Source and Version: Confirm you are using the correct, most up-to-date reference and annotation from an authoritative source like Ensembl, NCBI, or UCSC. Ensure the versions of the genome FASTA file and annotation GTF file match.

- Check Species and Strain: Double-check that the reference corresponds to the exact species (and ideally, the strain) of your experimental samples.

- Evaluate Assembly Quality: Consult the assembly database for metrics like N50 and completeness. Prefer chromosome-level assemblies over those consisting of many unplaced scaffolds [5] [8].

- Re-generate Genome Indices: If you switch references or annotations, you must re-generate the aligner-specific index before running the alignment again [10].

Potential Cause 2: Suboptimal Alignment Parameters The aligner's parameters may be too stringent or not optimized for your specific data and reference.

- Solution Steps:

- Review Intron Sizes: Aligners like STAR have a maximum intron size parameter (--alignIntronMax). The default is optimized for mammals. For organisms with much shorter introns (e.g., fungi) or essentially none (e.g., bacteria), this value should be reduced to improve mapping [10] [11].

- Consult Best Practices: Refer to published best practices for your aligner and organism. For STAR, specific guidelines exist for achieving maximum mapping accuracy and speed [11].

Problem: High False Positive Variant Calls

Potential Cause: Systematic Errors from Reference Mismatch or Poor Assembly Variants that cluster in specific genomic regions, such as areas with low complexity or high repetitiveness, can be artifacts of mis-mapping reads to an incomplete or erroneous reference.

- Solution Steps:

- Use the Latest Reference: Older reference genomes (e.g., hg19) have known gaps and misassemblies. Upgrade to the most recent version (e.g., GRCh38) which has fewer gaps and corrected sequences [5] [12].

- Employ Best Practices for Pre-processing: Follow established germline or somatic variant calling best practices, which include steps like duplicate marking and base quality score recalibration (BQSR) to reduce technical artifacts [12] [13].

- Benchmark Your Pipeline: Use a sample with a known set of variants (a "ground truth" dataset like Genome in a Bottle's NA12878) to optimize and validate your variant calling pipeline's parameters, ensuring a balance between sensitivity and precision [14] [12] [13].
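The benchmarking step can be sketched as a comparison of two variant sets. This is a deliberately simplified illustration: the `benchmark` function and the tuple representation `(chrom, pos, ref, alt)` are assumptions for this example, and it ignores variant normalization and confident-region filtering, which dedicated comparison tools handle.

```python
def benchmark(called: set, truth: set) -> dict:
    """Compare called variants with a truth set of (chrom, pos, ref, alt) tuples."""
    tp = len(called & truth)   # called and present in the truth set
    fp = len(called - truth)   # called but absent from the truth set
    fn = len(truth - called)   # in the truth set but not called
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if (precision + recall) else 0.0)
    return {"tp": tp, "fp": fp, "fn": fn,
            "precision": precision, "recall": recall, "f1": f1}


if __name__ == "__main__":
    truth = {("chr1", 100, "A", "G"), ("chr1", 250, "C", "T"), ("chr2", 75, "G", "A")}
    called = {("chr1", 100, "A", "G"), ("chr1", 250, "C", "T"), ("chr3", 10, "T", "C")}
    print(benchmark(called, truth))
```

Re-running the same comparison after each parameter change makes the trade-off between sensitivity (recall) and precision explicit.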

Reference Genome Selection Criteria

The National Center for Biotechnology Information (NCBI) maintains specific criteria for selecting a single "reference" genome for each species in the RefSeq database. The tables below summarize the primary criteria for prokaryotes and eukaryotes [7].

Table 1: Prokaryotic Reference Genome Selection Criteria (prioritized in order)

| Priority | Criterion | Description |

|---|---|---|

| 1 | Manual Selection | Selected based on community input, known biological features, or other a priori knowledge. |

| 2 | Assembly Length | Prefers assemblies with lengths closest to the species average (avoiding outliers). |

| 3 | CheckM Completeness | Selects assemblies with the highest estimated completeness. |

| 4 | Pseudo CDS Count | Prefers assemblies with the lowest number of pseudo genes (log-transformed). |

| 5 | Plasmid Presence | Assemblies containing plasmid sequences are preferred. |

Table 2: Eukaryotic Reference Genome Selection Criteria

| Criterion | Description |

|---|---|

| Quality & Status | Must not be contaminated or from an unverified species. RefSeq genomes are preferred. |

| Assembly Contiguity | Among eligible assemblies, the one with the highest contig N50 is selected. |

| Chromosome Completion | The hierarchy prefers, in order: 1) gapless chromosomes, 2) a full chromosome set, 3) at least one chromosome, 4) sequences assigned to chromosomes. |
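Because contig N50 drives the contiguity criterion, it helps to see how the metric is computed. The sketch below uses the standard definition (the length of the shortest contig in the smallest set of longest contigs that together cover at least half of the assembly); the function name `n50` is chosen for this example.

```python
def n50(lengths: list) -> int:
    """Return the N50 of a list of contig (or scaffold) lengths."""
    if not lengths:
        raise ValueError("at least one contig length is required")
    total = sum(lengths)
    running = 0
    # Walk from the longest contig downwards until half the assembly is covered.
    for length in sorted(lengths, reverse=True):
        running += length
        if 2 * running >= total:
            return length


if __name__ == "__main__":
    print(n50([2, 3, 4, 5, 6, 7, 8, 9, 10]))
```

A higher N50 means the assembly is made of fewer, longer pieces, which is why it is used to rank otherwise eligible assemblies.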

Experimental Protocol: Generating a STAR Genome Index

A crucial first step for RNA-seq alignment is generating a custom genome index for your chosen aligner. This protocol details the process for the STAR aligner [10].

Methodology:

Prerequisite Software and Data:

- Install STAR (version 2.5.2b or higher).

- Obtain the reference genome sequence in FASTA format (e.g., Homo_sapiens.GRCh38.dna.chromosome.1.fa).

- Obtain the corresponding gene annotation file in GTF format (e.g., Homo_sapiens.GRCh38.92.gtf).

Create a Directory for Indices: Use a space with sufficient storage capacity (e.g., scratch space).

Execute the Genome Generate Command: Run STAR in genome-generation mode with the options below. Adjust the thread count (--runThreadN) and memory as needed.

- --runMode genomeGenerate: Instructs STAR to run in index generation mode.

- --genomeDir: Path to the directory where the indices will be stored.

- --genomeFastaFiles: Path to the reference genome FASTA file.

- --sjdbGTFfile: Path to the annotation file.

- --sjdbOverhang: Specifies the length of the genomic sequence around the annotated junctions. This should be set to (Read Length - 1). For 100bp paired-end reads, use 99 [10].
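As a rough sketch, the options described above can be assembled into a single STAR invocation. The helper name `star_index_cmd`, the output directory `star_index`, and the thread count are placeholders for this example; the function only builds the argument list, and running it requires STAR to be installed.

```python
import shlex


def star_index_cmd(genome_dir: str, fasta: str, gtf: str,
                   read_length: int, threads: int = 8) -> list:
    """Build the argument list for STAR genome index generation."""
    if read_length < 2:
        raise ValueError("read_length must be at least 2")
    return [
        "STAR",
        "--runThreadN", str(threads),
        "--runMode", "genomeGenerate",
        "--genomeDir", genome_dir,
        "--genomeFastaFiles", fasta,
        "--sjdbGTFfile", gtf,
        "--sjdbOverhang", str(read_length - 1),  # read length minus one
    ]


cmd = star_index_cmd(
    genome_dir="star_index",  # placeholder directory
    fasta="Homo_sapiens.GRCh38.dna.chromosome.1.fa",
    gtf="Homo_sapiens.GRCh38.92.gtf",
    read_length=100,
)
print(shlex.join(cmd))
# To execute: import subprocess; subprocess.run(cmd, check=True)
```

Keeping the command as a list avoids shell-quoting problems and makes the derived value for --sjdbOverhang easy to audit.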

Workflow Diagram: Reference Genome Selection and Implementation

The diagram below outlines the logical workflow for selecting a reference genome and implementing it in an RNA-seq analysis pipeline.

Diagram 1: Reference genome selection and implementation workflow.

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Materials for Reference-Based Genomic Analysis

| Item | Function in the Workflow |

|---|---|

| Reference Genome (FASTA) | The baseline DNA sequence for read alignment and variant comparison. Provides the coordinate system. [5] |

| Genome Annotation (GTF/GFF) | File specifying genomic coordinates of genes, exons, transcripts, and other features. Critical for transcriptomic analysis. [10] |

| Benchmark Variant Set (e.g., GIAB) | A set of validated, high-confidence variants for a reference sample (e.g., NA12878). Used for pipeline optimization and benchmarking. [12] [13] |

| Alignment Software (e.g., STAR, HISAT2) | "Splice-aware" tools that map RNA-seq reads to the reference genome, accounting for introns. [10] [11] |

| Variant Caller (e.g., GATK, DeepVariant) | Software that identifies single nucleotide polymorphisms (SNPs) and insertions/deletions (indels) by comparing sample data to the reference. [12] [13] |

| High-Performance Computing (HPC) Cluster | Essential computational resources for memory-intensive tasks like genome indexing and alignment. [10] |

Fundamentals of Splice-Aware Alignment with STAR and HISAT2

Troubleshooting Guide: Common Alignment Issues and Solutions

Low Alignment Rates

Problem: An unusually low percentage of reads are successfully aligned to the reference genome.

Solutions:

- Check Input File Quality: Use FastQC to assess quality issues like poor base quality or adapter contamination. Trim reads using Trimmomatic or Cutadapt if necessary [15].

- Verify Reference Genome Integrity: Ensure the HISAT2 or STAR index was built correctly and matches your experiment's reference genome version. Rebuild the index if integrity is in doubt [15] [16].

- Review Alignment Parameters: Adjust parameters such as --max-intronlen for your specific organism. Test both --end-to-end and --local alignment modes in HISAT2 to see which yields better results, after confirming with hisat2 --help that your version accepts them [15].

- Consult Log Files: Thoroughly review HISAT2 or STAR log files, which provide detailed statistics on unaligned reads and potential causes of alignment failure [15] [16].

Spurious Junction Alignment in Repetitive Regions

Problem: Splice-aware aligners can introduce erroneous spliced alignments between repeated sequences, creating falsely spliced transcripts [17].

Solutions:

- Apply EASTR Filtering: Use EASTR (Emending Alignments of Spliced Transcript Reads) to detect and remove falsely spliced alignments by examining sequence similarity between intron-flanking regions [17].

- Leverage Known Splice Sites: Use known splice site files with HISAT2's --known-splicesite-infile option to guide alignment [15].

- Validate with SpliceAI: Use SpliceAI, a machine learning-based splice site prediction tool, to score splice junctions overlapping repetitive elements like HERVs [17].

Indel Detection Challenges in RNA-seq

Problem: Difficulty detecting indels in RNA-seq alignments, particularly with splice-aware aligners [18].

Solutions:

- Tool Selection: For indel detection, consider using non-splice-aware tools like BWA or Bowtie2 for RNA-to-RNA mappings [18].

- Parameter Tuning: For STAR, specific parameter tuning may improve indel detection [18].

- Focus on SNVs: Be skeptical of indels called from RNA-seq data; SNVs are generally more reliable for variant calling from RNA-seq [19].

- Visual Validation: Use IGV to manually inspect putative indel regions and compare with exome data when available [18].

Alignment Rate Discrepancies Between Library Types

Problem: Significant differences in alignment rates between ribosomal RNA-depletion (ribo-minus) and poly(A) selection library preparation methods [17].

Solutions:

- Expect More Filtering in ribo-minus Libraries: ribo-minus libraries typically have higher rates of spuriously spliced alignments. EASTR removed 8.0% of HISAT2 and 6.4% of STAR spliced alignments in ribo-minus samples, compared to only 1.0-1.2% in poly(A) samples [17].

- Consider Developmental Stage: Prenatal samples may have higher rates of removed spliced alignments compared to adult samples [17].

- Apply Method-Specific Filtering: Implement more stringent filtering for ribo-minus libraries using tools like EASTR [17].

Quantitative Performance Comparison

Table 1: EASTR Filtering Impact on Human Brain RNA-seq Data

| Metric | HISAT2 | HISAT2 + EASTR | STAR | STAR + EASTR |

|---|---|---|---|---|

| Total Spliced Alignments | 153,192,435 | 148,983,542 (-3.4%) | 134,202,142 | 130,602,771 (-2.7%) |

| Non-reference Junctions | - | 138,111 removed | - | 75,273 removed |

| Reference-matching Junctions Removed | - | 114 | - | 119 |

Table 2: Typical HISAT2 Alignment Statistics for Paired-End Reads

| Category | Percentage | Read Counts |

|---|---|---|

| Reads Aligned Concordantly 0 Times | 47.42% | 56,230 pairs |

| Reads Aligned Concordantly Exactly 1 Time | 52.09% | 61,769 pairs |

| Reads Aligned Concordantly >1 Times | 0.48% | 572 pairs |

| Overall Alignment Rate | 52.75% | 125,077 reads |
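The concordance percentages in the table follow directly from the pair counts, which is a useful sanity check when reading an alignment summary. The function name `concordance_rates` is chosen for this sketch. The overall alignment rate is not reproduced here because it also counts discordant and single-mate alignments that are not listed in the table.

```python
def concordance_rates(zero: int, once: int, multi: int) -> dict:
    """Convert concordant-pair counts into percentages of all input pairs."""
    total = zero + once + multi
    if total == 0:
        raise ValueError("no read pairs supplied")
    return {
        "total_pairs": total,
        "concordant_0_times": round(100 * zero / total, 2),
        "concordant_1_time": round(100 * once / total, 2),
        "concordant_multi": round(100 * multi / total, 2),
    }


if __name__ == "__main__":
    print(concordance_rates(zero=56_230, once=61_769, multi=572))
```

With the counts from the table, the three rates come out at 47.42%, 52.09%, and 0.48% of 118,571 pairs.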

Experimental Protocols

Protocol 1: STAR Alignment Workflow

Genome Index Generation:

Read Alignment:
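The original commands for this protocol are not shown, so the following is only a plausible sketch of a paired-end STAR alignment step against a previously generated index (built with --runMode genomeGenerate). The helper name `star_align_cmd`, the file names, and the choice to enable two-pass mode are assumptions for this example.

```python
def star_align_cmd(genome_dir: str, read1: str, read2: str, out_prefix: str,
                   threads: int = 8, two_pass: bool = True) -> list:
    """Build the argument list for a paired-end STAR alignment."""
    cmd = [
        "STAR",
        "--runThreadN", str(threads),
        "--genomeDir", genome_dir,
        "--readFilesIn", read1, read2,
        "--outSAMtype", "BAM", "SortedByCoordinate",
        "--outFileNamePrefix", out_prefix,
    ]
    if read1.endswith(".gz"):
        # Gzipped FASTQ files must be decompressed on the fly.
        cmd += ["--readFilesCommand", "zcat"]
    if two_pass:
        # Assumed choice: re-align using junctions discovered in a first pass.
        cmd += ["--twopassMode", "Basic"]
    return cmd


if __name__ == "__main__":
    print(" ".join(star_align_cmd("star_index", "sample_R1.fastq.gz",
                                  "sample_R2.fastq.gz", "aligned/sample_")))
```

Two-pass mode is often used ahead of variant calling because it improves alignment around novel junctions, at the cost of extra run time.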

Protocol 2: HISAT2 Alignment Workflow

Genome Index Generation:

Read Alignment:
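The original commands for this protocol are likewise missing. A minimal sketch of the two HISAT2 steps, assuming paired-end reads, might look like the following; the helper names, file names, and default thread count are placeholders for this example.

```python
def hisat2_build_cmd(fasta: str, index_base: str, threads: int = 8) -> list:
    """Build the argument list for HISAT2 index generation."""
    return ["hisat2-build", "-p", str(threads), fasta, index_base]


def hisat2_align_cmd(index_base: str, read1: str, read2: str, out_sam: str,
                     threads: int = 8, max_intronlen: int = None,
                     known_splicesites: str = None) -> list:
    """Build the argument list for a paired-end HISAT2 alignment."""
    cmd = ["hisat2", "-p", str(threads), "-x", index_base,
           "-1", read1, "-2", read2, "-S", out_sam]
    if max_intronlen is not None:
        cmd += ["--max-intronlen", str(max_intronlen)]
    if known_splicesites:
        cmd += ["--known-splicesite-infile", known_splicesites]
    return cmd


if __name__ == "__main__":
    print(" ".join(hisat2_build_cmd("genome.fa", "hisat2_index/genome")))
    print(" ".join(hisat2_align_cmd("hisat2_index/genome", "sample_R1.fastq.gz",
                                    "sample_R2.fastq.gz", "sample.sam",
                                    known_splicesites="splicesites.txt")))
```

The optional arguments mirror the troubleshooting advice above: they are only added when a value is supplied, so the default command stays close to a plain HISAT2 run.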

Workflow Diagrams

STAR Two-Step Alignment Process

HISAT2 Hierarchical Indexing Alignment

The Scientist's Toolkit

Table 3: Essential Research Reagents and Software Solutions

| Tool/Reagent | Function | Application Context |

|---|---|---|

| EASTR | Detects and removes falsely spliced alignments between repetitive sequences | Post-alignment filtering for STAR and HISAT2 outputs [17] |

| SpliceAI | Machine learning-based splice site prediction | Validating splice junctions in problematic genomic regions [17] |

| FastQC | Quality control assessment of raw sequencing data | Initial data quality evaluation pre-alignment [20] |

| Trimmomatic | Adapter trimming and quality control | Preprocessing raw reads to improve alignment rates [15] |

| SAMtools | Processing and analysis of SAM/BAM files | Downstream manipulation of alignment files [21] |

| StringTie2 | Transcript assembly and quantification | Downstream analysis of aligned reads [17] |

Frequently Asked Questions

Q: Which aligner should I choose for my RNA-seq experiment? A: The choice depends on your specific needs. STAR demonstrates high accuracy but is memory-intensive [10]. HISAT2 is faster with lower memory requirements [22] [20]. For most users, HISAT2 provides a good balance of speed and accuracy, while STAR may be preferable for publication-quality results where accuracy is paramount [22].

Q: How can I improve indel detection in RNA-seq data? A: RNA-seq indel detection remains challenging. Consider using non-splice-aware aligners like BWA for RNA-to-RNA mappings specifically for indel detection [18]. For variant calling, focus on SNVs rather than indels, as they are more reliable in RNA-seq data [19]. Always visually validate putative indels in IGV [18].

Q: What percentage of alignment removal by EASTR is normal? A: Typically, EASTR removes 2.7-3.4% of spliced alignments, with the vast majority (99.7-99.8%) being non-reference junctions. Higher removal rates (6-8%) are expected in ribo-minus libraries compared to poly(A) selected libraries (1-1.2%) [17].

Q: How do I handle low alignment rates in HISAT2?

A: First verify FASTQ quality and reference genome compatibility. Ensure proper pairing of paired-end reads. Consider adjusting parameters like --max-intronlen and experiment with --end-to-end versus --local alignment modes. Use the --known-splicesite-infile option if you have known splice site information [15].

Q: Can I use GATK's SplitNCigarReads with HISAT2 alignments? A: Caution is advised when using SplitNCigarReads with HISAT2 alignments, as spliced reads may be incorrectly interpreted as indels, potentially generating thousands of false positive indels. For variant calling from HISAT2 alignments, consider focusing on SNVs rather than indels [19].

Key Differences Between DNA and RNA Variant Calling

Technical Comparison: DNA vs. RNA Variant Calling

The table below summarizes the core technical and practical differences between DNA and RNA variant calling methodologies, highlighting key considerations for researchers when selecting the appropriate approach for their studies.

| Feature | DNA Variant Calling | RNA Variant Calling |

|---|---|---|

| Genetic Material Analyzed | Comprehensive view of the entire genome (all coding and non-coding regions) [23] | Limited to regions that produce RNA, mainly protein-coding regions [23] |

| Typical Read/Coverage | Hundreds of millions of reads for thorough coverage (e.g., ~13-fold coverage used in studies) [23] [24] | Fewer reads; ~30 million for expression, ~100 million for splicing/advanced analysis [23] [24] |

| Variant Detection Scope | Broad detection of variants across the genome [23] | Targets ~40% of variants called from DNA-seq; enriched for expressed regions [24] |

| Primary Applications | Comprehensive variant discovery, association studies, population genetics [25] | Studying gene expression, alternative splicing, eQTL/sQTL mapping [23] [9] |

| Key Challenges | Handling large data volumes, complex structural variants [25] | Allele-specific expression, RNA editing, reliance on gene expression levels [23] [24] |

| Precision/Recall | High precision and recall genome-wide [24] | ~80% overall precision, >97% in highly expressed coding regions [24] |

Troubleshooting FAQs

1. Why does RNA-seq identify fewer genetic variants than DNA-seq? RNA-seq is fundamentally limited by its focus on transcribed regions of the genome. It primarily captures messenger RNA (mRNA), which represents only a small portion of an organism's total genetic material. Consequently, many genetic variations in non-coding or non-expressed regions are missed when using RNA-seq alone [23]. Studies show RNA-seq typically identifies only about 40% of the variants called by DNA sequencing [24].

2. What are the main sources of false positives in RNA variant calling? The primary sources of false positives and inaccuracies in RNA variant calling include:

- Allele-Specific Expression (ASE): Where one allele is preferentially expressed over the other, which can skew variant calling and complicate the determination of genetic differences [23] [24].

- RNA Editing Events: Post-transcriptional modifications (e.g., A-to-I, C-to-U editing) alter the RNA sequence relative to the DNA template. These can be misinterpreted as genetic variants if not properly accounted for [23] [26].

- Technical Artifacts: Sequencing errors, particularly in complex regions like homopolymer runs, can be mistaken for true variants [26].

3. Can I use RNA-seq data for expression Quantitative Trait Loci (eQTL) mapping? Yes, RNA-seq is a powerful tool for eQTL mapping. Variants called from RNA-seq are highly enriched for eQTLs and can detect roughly 75% of the eGenes identified using DNA-seq variants [24]. However, caution is required. While there is a strong correlation in association p-values between methods, a low percentage (around 9%) of eGenes may share the same top associated variant. Using RNA-seq variant genotypes alone can sometimes result in false-positive eQTLs that are not found with DNA sequencing [24].

4. How does sequencing depth impact RNA variant calling accuracy? The accuracy of variant calling from RNA-seq is highly dependent on sequencing coverage. Research has shown that the quality of variant calling decreases significantly when sequencing coverage drops [23]. For highly expressed genes, deep RNA-seq (e.g., ~250 million reads) can achieve high accuracy (up to 98% precision in coding regions). Performance remains strong even when subsampled to 100 million and 30 million reads, but accuracy is substantially lower for genes with low expression levels [23] [24].

5. What are RNA-DNA differences (RDDs) and how should I handle them? RNA-DNA differences (RDDs) are instances where a variant is called from the RNA-seq data but is not present in the DNA-seq data from the same individual [24]. These differences can arise from:

- Genuine biological processes like RNA editing [23] [26].

- Technical artifacts or limitations in the sequencing technology [23].

It is crucial to investigate these RDDs thoroughly rather than automatically dismissing them, as they can provide valuable insights into post-transcriptional regulation. Specialized pipelines like CADRES have been developed to precisely identify true RNA editing sites by combining DNA and RNA variant calling with statistical analysis across different biological conditions [26].

Experimental Workflows and Protocols

Workflow for Comparative DNA-RNA Variant Analysis

This diagram outlines a robust experimental and computational workflow for a study designed to directly compare variants called from DNA and RNA sequencing, which is essential for identifying RDDs.

Protocol for RNA Variant Calling with NMD Inhibition

This protocol is particularly relevant for clinical diagnostics and studies of genetic disorders where nonsense-mediated decay (NMD) might degrade mutant transcripts [27].

Sample Preparation:

- Collect peripheral blood mononuclear cells (PBMCs) or other clinically accessible tissues.

- Split the cell culture. Treat one portion with an NMD inhibitor (e.g., Cycloheximide/CHX), and leave the other untreated. CHX treatment has been shown to more effectively inhibit NMD compared to other agents like puromycin (PUR) [27].

- Proceed with total RNA extraction from both treated and untreated samples.

Library Preparation and Sequencing:

- Use ribosomal depletion during library preparation to retain a broader spectrum of RNA transcripts compared to poly-A selection alone [24].

- Sequence the libraries to an appropriate depth. For variant calling, deeper sequencing (>100 million reads) is recommended over standard gene expression panels (~30 million reads) [23] [24].

Bioinformatic Processing:

- Trim adapter sequences and remove low-quality bases from RNA reads using tools like fastp [24].

- Align reads using a splice-aware aligner (e.g., STAR) with settings like --waspOutputMode to flag reads affected by reference mapping bias, which can otherwise distort allelic ratios [24].

- Call variants using a tool with a model specifically trained for RNA-seq data, such as the DeepVariant RNA-seq model, which improves accuracy and reduces false positives from common RNA editing sites [24] [25].
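The first two bioinformatic steps can be sketched as command builders. This is an illustration under assumptions: the helper names and file names are placeholders, and the WASP arguments assume a VCF of known heterozygous variants for the sample is available, since STAR needs one (--varVCFfile) to apply WASP filtering.

```python
def fastp_cmd(read1: str, read2: str, out1: str, out2: str, threads: int = 4) -> list:
    """Build a paired-end fastp trimming command."""
    return ["fastp", "-i", read1, "-I", read2, "-o", out1, "-O", out2,
            "--thread", str(threads)]


def star_wasp_args(variant_vcf: str) -> list:
    """Extra STAR arguments that tag reads with WASP filtering results."""
    return ["--waspOutputMode", "SAMtag", "--varVCFfile", variant_vcf]


if __name__ == "__main__":
    print(" ".join(fastp_cmd("sample_R1.fastq.gz", "sample_R2.fastq.gz",
                             "trimmed_R1.fastq.gz", "trimmed_R2.fastq.gz")))
    # Append these to an existing STAR alignment command.
    print(" ".join(star_wasp_args("sample_het_variants.vcf")))
```

The variant-calling step is left out on purpose, because the exact invocation depends on the installed caller and on which RNA-seq model files are used.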

The Scientist's Toolkit: Research Reagent Solutions

The table below lists key reagents, tools, and datasets used in advanced RNA variant calling studies.

| Item Name | Function / Application | Key Features / Notes |

|---|---|---|

| DeepVariant (RNA-seq model) [24] [25] | AI-based variant caller from RNA-seq data. | Uses deep learning on pileup images; significantly improves RNA variant accuracy over previous methods. |

| STAR Aligner [24] | Splice-aware alignment of RNA-seq reads. | Essential for accurately mapping reads across exon junctions. |

| CADRES Pipeline [26] | Precise detection of differential RNA editing sites (DVRs). | Combines DNA/RNA variant calling & statistical analysis to filter artefacts. |

| Cycloheximide (CHX) [27] | Nonsense-Mediated Decay (NMD) inhibitor. | Used in sample prep to prevent degradation of transcripts with PTCs, aiding variant detection. |

| SDR-seq Tool [28] | Single-cell method capturing both DNA & RNA from same cell. | Links genetic variants to gene activity patterns in individual cells, even in non-coding regions. |

| FLAIR [29] | Tool for isoform discovery and analysis using long-read RNA-seq. | Reconstructs and quantifies full-length transcript isoforms, important for understanding splicing variants. |

| GIAB & SEQC2 Truth Sets [30] | Reference benchmark datasets for pipeline validation. | Used to validate accuracy and reproducibility of germline and somatic variant calling pipelines. |

Frequently Asked Questions (FAQs)

FAQ 1: What are the most common sources of false positive variant calls in RNA-seq data? False positives frequently arise from technical artifacts rather than true biological variation. Key sources include:

- RNA Editing Events: Post-transcriptional modifications, such as Adenosine-to-Inosine (A-to-I) editing, appear as A-to-G mismatches in sequencing data and can be mistaken for single nucleotide variants (SNVs). [31] [32]

- Mapping Errors: Reads that span splice junctions or originate from repetitive genomic regions are often misaligned, leading to incorrect variant identification. [33] [31]

- Reverse Transcription Artifacts: Enzymes like reverse transcriptase can introduce errors during cDNA synthesis, especially when encountering RNA secondary structures. [31]

- Strand-Specific Biases: Asymmetric coverage between forward and reverse strands during library preparation can create systematic artifacts. [31]

FAQ 2: How can I distinguish a true genomic variant from an RNA editing event?

Reliably distinguishing between the two requires an integrated approach, as they are indistinguishable from RNA-seq data alone. Recommended strategies include:

- Utilize Matched DNA Sequencing: The most definitive method is to compare the RNA-seq variant calls with data from whole-genome or exome sequencing of the same sample. Variants present only in RNA are candidates for RNA editing. [33] [31]

- Leverage Databases and Sequence Context: Use known RNA editing databases (e.g., RADAR) and analyze sequence motifs. A-to-G changes, especially in specific sequence contexts, are hallmarks of A-to-I editing by ADAR enzymes. [31] [32]

- Apply Specialized Computational Tools: Tools like VarRNA employ machine learning models that consider multiple features (e.g., variant allele frequency, sequence context, mapping quality) to classify variants and filter out likely editing events. [33] [34]
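The strategies above can be combined into a simple triage rule. The sketch below is illustrative only: the `Variant` type, the `triage` function, and the label names are hypothetical, and it assumes you already have matched DNA calls and a set of known editing positions (for example, exported from an editing database) loaded in memory. It is not how VarRNA classifies variants.

```python
from typing import NamedTuple, Set, Tuple


class Variant(NamedTuple):
    chrom: str
    pos: int
    ref: str
    alt: str


# A-to-I editing is read as A>G, or as T>C when the transcript
# originates from the reverse strand of the reference.
EDITING_LIKE = {("A", "G"), ("T", "C")}


def triage(variant: Variant,
           dna_calls: Set[Variant],
           known_editing_sites: Set[Tuple[str, int]]) -> str:
    """Assign a coarse label to one RNA-seq variant call."""
    if variant in dna_calls:
        # Supported by matched DNA sequencing: treat as genomic.
        return "genomic"
    if (variant.chrom, variant.pos) in known_editing_sites:
        return "known_editing_site"
    if (variant.ref, variant.alt) in EDITING_LIKE:
        # RNA-only and editing-like substitution: needs follow-up.
        return "candidate_editing"
    return "rna_only_other"


dna = {Variant("chr1", 100, "C", "T")}
editing_db = {("chr2", 500)}
labels = [
    triage(Variant("chr1", 100, "C", "T"), dna, editing_db),
    triage(Variant("chr2", 500, "A", "G"), dna, editing_db),
    triage(Variant("chr3", 42, "T", "C"), dna, editing_db),
    triage(Variant("chr3", 77, "G", "T"), dna, editing_db),
]
```

A rule like this only prioritizes candidates. RNA-only calls labeled `rna_only_other` may still be artifacts or low-coverage genomic variants missed in the DNA data.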

FAQ 3: Why does allelic expression bias occur, and how is it detected?

Allelic expression bias (or Allele-Specific Expression, ASE) occurs when one allele of a gene is expressed at a higher level than the other, often due to variants in cis-regulatory elements such as promoters or enhancers. [35]

- Detection Method: ASE is identified from RNA-seq data by quantifying the number of reads originating from each allele at heterozygous single nucleotide polymorphism (SNP) sites. A significant deviation from the expected 1:1 ratio indicates ASE. [35]

- Analysis Tools: Common tools for ASE analysis include MBASED, GeneiASE, and ASEP, the latter of which can analyze ASE across multiple individuals. [35]

FAQ 4: What steps can I take to minimize mapping errors in my RNA-seq analysis?

- Use Splice-Aware Aligners: Always use aligners designed for RNA-seq data, such as STAR, which can handle reads that span introns. [33] [32] [36]

- Perform Quality Control and Trimming: Use tools like fastp or Trim Galore to remove low-quality bases and adapter sequences, which improves mapping accuracy. [9] [35] [36]

- Consider Graph-Based Genomes: Emerging graph-based aligners that incorporate population variation can reduce reference bias and improve alignment in complex genomic regions. [31]

FAQ 5: My pipeline has low variant calling sensitivity in lowly expressed genes. What can I do?

This is a common challenge, as RNA-seq coverage is directly proportional to gene expression. [31]

- Aggregate Information: For single-cell RNA-seq, computational methods can integrate information across cells with similar transcriptional profiles to boost detection power. [31]

- Leverage Advanced Callers: Machine learning-based variant callers like DeepVariant are being adapted for RNA-seq and can offer improved sensitivity for low-frequency variants. [31]

- Acknowledge the Limitation: Be transparent that variant calling from RNA-seq is inherently limited to expressed regions and highly biased towards genes with sufficient coverage. [31]

Troubleshooting Guides

Issue 1: Suspected RNA Editing Artifacts

Problem: You have identified variants, particularly A-to-G or C-to-T changes, that you suspect are RNA editing events rather than true genomic mutations.

Solution: Table: Strategies to Resolve RNA Editing Artifacts

| Action | Protocol/Method | Expected Outcome |

|---|---|---|

| Database Cross-Referencing | Check candidate variants against public RNA editing databases (e.g., RADAR, REDIportal). | Filter out known, common editing sites from your variant list. [31] |

| Sequence Motif Analysis | Use tools like HOMER or custom scripts to analyze the sequence context around the variant. | Identification of characteristic motifs (e.g., for ADAR enzymes) supporting an editing event. [31] [32] |

| Apply a Classifier Tool | Run your VCF file through a specialized tool like VarRNA. Its XGBoost model uses features like variant type, read support, and genomic context to classify variants as germline, somatic, or artifact. [33] [34] | A filtered and annotated list of variants with reduced false positives from RNA editing. |

Preventive Measures:

- Whenever possible, design experiments with matched RNA and DNA sequencing from the same sample. This provides a ground truth for variant classification. [33] [32]

Issue 2: High Rate of Apparent Heterozygous Variants

Problem: An unusually high number of heterozygous variants are called, which may be due to mapping errors or allelic dropout.

Solution: Table: Troubleshooting High Heterozygous Variant Calls

| Investigation Area | Diagnostic Check | Resolution |

|---|---|---|

| Mapping Quality | Check the BAM file for regions with low mapping quality (MAPQ score) or many reads with multiple alignments. Use tools like SAMtools and QualiMap. | Re-align reads with optimized parameters or a different splice-aware aligner (e.g., STAR). [33] [36] |

| Allelic Expression Balance | For known heterozygous SNPs, check for allelic imbalance using an ASE tool like MBASED or GeneiASE. Extreme skews may indicate mapping issues. [35] | Re-evaluate alignment in regions with systematic allelic bias. |

| Strand Bias | Check for strand bias in variant callers (e.g., using GATK's StrandOddsRatio filter). A strong bias can indicate technical artifacts. [31] | Apply appropriate filters to remove variants with significant strand bias. |

Experimental Workflow Diagram:

Issue 3: Detecting and Validating Allelic Expression Bias

Problem: You want to identify genes that show significant allele-specific expression (ASE) in your RNA-seq data.

Solution:

- Prerequisite: Identify Heterozygous SNPs. You first need a set of high-confidence heterozygous SNPs, which can be derived from matched DNA-seq data or called directly from the RNA-seq data if no DNA is available. [35]

- Count Allelic Reads. For each heterozygous SNP, count the number of reads supporting the reference and alternative alleles. Tools like ASEP are designed for this and can analyze data across multiple individuals. [35]

- Statistical Testing. Test for significant deviation from the expected 1:1 expression ratio using a binomial test. ASEP uses a generalized linear mixed-effects model for this purpose. [35]

- Validation. Colocalize your ASE findings with expression quantitative trait loci (eQTL) data from resources like the GTEx Consortium or the Farm Animal GTEx (FarmGTEx) for agricultural species. [35]
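The counting and testing steps can be illustrated with an exact two-sided binomial test against the 1:1 expectation. This is a minimal sketch using only the standard library. The function name `ase_binomial_pvalue` is hypothetical, and the test ignores overdispersion and reference-mapping bias, which is one reason tools such as ASEP use a mixed-effects model instead.

```python
from math import comb


def ase_binomial_pvalue(ref_reads: int, alt_reads: int) -> float:
    """Exact two-sided binomial p-value for a 1:1 allelic ratio."""
    n = ref_reads + alt_reads
    if n == 0:
        raise ValueError("no reads cover this SNP")
    minor = min(ref_reads, alt_reads)
    # Probability of seeing `minor` or fewer reads from one allele
    # when both alleles are equally likely (p = 0.5).
    lower_tail = sum(comb(n, i) for i in range(minor + 1)) / 2 ** n
    # The distribution is symmetric at p = 0.5, so double the tail
    # and cap at 1 for perfectly balanced counts.
    return min(1.0, 2.0 * lower_tail)


balanced = ase_binomial_pvalue(5, 5)    # no evidence of imbalance
skewed = ase_binomial_pvalue(10, 0)     # all reads from one allele
```

When many SNPs are tested, the resulting p-values would still need multiple-testing correction before any gene is reported as showing ASE.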

Allelic Expression Analysis Diagram:

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Computational Tools and Resources

| Tool/Resource Name | Function/Purpose | Reference/Link |

|---|---|---|

| fastp / Trim Galore | Performs quality control and adapter trimming of raw RNA-seq reads. fastp is noted for its speed and simplicity. [9] | [9] [35] |

| STAR | A splice-aware aligner for mapping RNA-seq reads to a reference genome. Essential for accurate junction mapping. [33] [32] | [33] [32] |

| GATK HaplotypeCaller | A widely used tool for calling SNPs and indels from sequencing data. It is part of the best-practices pipeline for RNA-seq variant discovery. [33] | [33] |

| VarRNA | A specialized tool that uses machine learning (XGBoost) to classify variants called from tumor RNA-seq data as germline, somatic, or artifact. [33] [34] | https://github.com/nch-igm/VarRNA [34] |

| ASEP | A tool for detecting Allele-Specific Expression across a population of samples, using a generalized linear mixed-effects model. [35] | [35] |

| rMATS | A tool for detecting differential alternative splicing from RNA-seq data. Simulation studies indicate it remains an optimal choice. [9] | [9] |

| FarmGTEx / GTEx | Databases of regulatory variants and gene expression across tissues. Used to validate and colocalize ASE findings with known eQTLs. [35] | [35] |

Advanced Tools and Parameters for Robust RNA-seq Variant Detection

Frequently Asked Questions

1. Why is pre-processing like trimming and duplicate marking crucial for RNA-seq variant calling? Pre-processing raw RNA-seq data is a foundational step that directly impacts the accuracy of all downstream analyses, including variant calling. Trimming removes technical sequences (adapters) and low-quality bases that can cause misalignment. Duplicate marking helps distinguish between PCR artifacts and true biological signals. Inaccurate pre-processing can lead to false positive variant calls, reduced alignment rates, and biased gene expression estimates, ultimately compromising the biological validity of your results [37] [38].

2. Should I always remove duplicate reads from my RNA-seq data? The decision depends on your experimental protocol and analysis goals. For standard RNA-seq analysis focused on gene expression, marking duplicates is recommended to mitigate PCR amplification bias [39] [40]. However, for variant calling, best practices suggest marking but not automatically removing duplicates, as highly expressed genes will naturally generate many duplicate reads. If your library was prepared with Unique Molecular Identifiers (UMIs), then UMI-based deduplication is highly recommended as it can accurately distinguish technical duplicates from biological duplicates [38] [40].

3. What is a good quality score threshold for trimming RNA-seq reads? A Phred quality score of 20 (Q20) is a common starting point, representing a 1% error rate. However, many modern pipelines recommend more stringent thresholds. For example, the Geneious Prime documentation suggests a minimum quality of 30 (Q30) for Illumina data [41]. It is best practice to use quality control reports from tools like FastQC both before and after trimming to visually confirm the improvement in base quality scores and determine the optimal threshold for your specific dataset [42].
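The relationship between a Phred score and its error rate follows from the definition Q = -10 * log10(P). The short sketch below shows the conversion and decodes a Phred+33 quality string, the encoding used in current Illumina FASTQ files. The function names are illustrative.

```python
def phred_to_error_prob(q: float) -> float:
    """Convert a Phred quality score to a base-call error probability."""
    return 10 ** (-q / 10)


def decode_phred33(quality_string: str) -> list:
    """Decode a FASTQ quality string that uses the Phred+33 offset."""
    return [ord(ch) - 33 for ch in quality_string]


def expected_errors(quality_string: str) -> float:
    """Sum of per-base error probabilities across a read."""
    return sum(phred_to_error_prob(q) for q in decode_phred33(quality_string))


q20_error = phred_to_error_prob(20)   # about 0.01, i.e. 1 error in 100 bases
q30_error = phred_to_error_prob(30)   # about 0.001, i.e. 1 error in 1,000 bases
scores = decode_phred33("5?I")        # '5' -> 20, '?' -> 30, 'I' -> 40
```

Moving from Q20 to Q30 therefore cuts the tolerated per-base error rate tenfold, at the cost of discarding more sequence.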

4. How does the choice of trimming tool affect downstream alignment? The trimming tool can influence data quality and alignment efficiency. A 2024 benchmarking study noted that while several tools improve base quality, they can behave differently. For instance, fastp was shown to significantly enhance the proportion of high-quality Q20 and Q30 bases, whereas Trim Galore sometimes led to unbalanced base distributions in the tail ends of reads [9]. Testing different tools on a subset of your data and checking the post-trimming alignment rate is a reliable way to select the best performer for your dataset.

5. My RNA-seq data is for variant calling. Are there any special pre-processing considerations? Yes, variant calling from RNA-seq requires extra care to handle spliced alignments. After the initial splice-aware alignment (e.g., with STAR), a critical step is processing the BAM file with a tool like GATK's SplitNCigarReads. This function splits reads that span exon-exon junctions (containing 'N' in the CIGAR string) into contiguous exon segments, making the data compatible with DNA-focused variant callers. This step is essential for reducing false positives and improving accuracy [37] [43] [33].
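To make the splitting idea concrete, the sketch below breaks one alignment into exon segments wherever its CIGAR string contains an `N` operation. It is a simplified illustration, not GATK's implementation: the function name `split_at_introns` is hypothetical, and the real tool additionally clips the parts of the read outside each segment and handles overhangs and mapping qualities.

```python
import re

CIGAR_OP = re.compile(r"(\d+)([MIDNSHP=X])")
# Operations that advance the position on the reference.
REF_CONSUMING = set("MDN=X")


def split_at_introns(pos: int, cigar: str) -> list:
    """Return (reference_start, cigar) pairs, one per exon segment."""
    segments = []
    current_ops = []
    segment_start = pos
    ref_pos = pos
    for length_text, op in CIGAR_OP.findall(cigar):
        length = int(length_text)
        if op == "N":
            # Intron: close the current segment and skip the gap.
            if current_ops:
                segments.append((segment_start, "".join(current_ops)))
            current_ops = []
            ref_pos += length
            segment_start = ref_pos
        else:
            current_ops.append(f"{length}{op}")
            if op in REF_CONSUMING:
                ref_pos += length
    if current_ops:
        segments.append((segment_start, "".join(current_ops)))
    return segments


simple = split_at_introns(100, "50M1000N50M")
complex_read = split_at_introns(500, "10S40M200N30M2D20M")
```

Here `simple` contains two 50-base segments starting at positions 100 and 1150, which is the shape of input a DNA-oriented caller expects.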

Troubleshooting Guides

Poor Alignment Rates After Trimming

- Symptoms: A low percentage of reads successfully align to the reference genome/transcriptome after the trimming step.

- Possible Causes and Solutions:

- Cause: Overly aggressive trimming, resulting in a large number of reads that are too short to map uniquely.

- Solution: Adjust the `MINLEN` parameter (or equivalent) in your trimmer to retain shorter reads. For example, instead of discarding reads below 36 bases, try a threshold of 20-25 bases and re-run the alignment [42].

- Cause: Incorrect or incomplete adapter sequences specified for trimming.

- Solution: Confirm the exact adapter sequences used in your library preparation kit with your sequencing provider. Many tools like Trim Galore can auto-detect common adapters, but manual specification may be necessary [40].

Unexpectedly High Duplicate Rates

- Symptoms: Tools like Picard MarkDuplicates or UMI-tools report a very high rate of duplicate reads (e.g., >50%).

- Possible Causes and Solutions:

- Cause: High expression of a small number of genes (biological duplication) or insufficient sequencing depth.

- Solution: This may not be a technical problem. Use tools like `dupRadar` to analyze the duplication rate as a function of gene expression level, which can help distinguish technical duplicates from biological duplicates [40].

- Cause: The library preparation involved excessive PCR amplification.

- Solution: If UMIs are available, use a UMI-aware deduplication tool (e.g., UMI-tools `dedup`). This is the most accurate method because UMIs label each molecule before amplification, so PCR copies can be told apart from independent fragments [40]. If UMIs are not available, consider using paired-end sequencing if possible, as it has been shown to reduce the false-negative rate in differential expression analysis caused by deduplication [38].

Performance Comparison of Pre-processing Tools and Parameters

| Tool | Key Characteristics | Performance Notes |

|---|---|---|

| fastp | Fast; all-in-one operation; generates QC reports | Significantly enhanced Q20 and Q30 base proportions in benchmark studies. |

| Trim Galore | Wrapper for Cutadapt and FastQC; automated adapter detection | Can sometimes lead to an unbalanced base distribution in read tails. |

| Trimmomatic | Highly customizable parameters; widely cited | Parameter setup can be complex and does not offer a speed advantage. |

| BBDuk (in Geneious) | User-friendly interface with preset options for Illumina data | Recommended quality score threshold for Illumina data is Q30 [41]. |

| Method | Principle | Impact on Differential Expression Analysis |

|---|---|---|

| No Deduplication | All reads are retained for quantification. | Biased towards an increased number of false positive results. |

| Coordinate-Based (e.g., SAMtools, Picard) | Removes reads mapped to identical genomic positions. | Can produce a high proportion of false negative results, especially for highly expressed genes. |

| UMI-Based (1 or 2 indices) | Uses unique molecular barcodes to identify PCR duplicates. | Greatly improves accuracy; minimizes both false positives and false negatives. |
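The difference between the coordinate-based and UMI-based principles in the table can be shown on a toy example. The sketch below is an assumption-laden illustration: the `Read` type and `count_after_dedup` function are hypothetical, and real tools such as UMI-tools also account for sequencing errors within UMIs, which this sketch does not.

```python
from typing import NamedTuple


class Read(NamedTuple):
    chrom: str
    pos: int
    strand: str
    umi: str


def count_after_dedup(reads, use_umi: bool) -> int:
    """Count reads kept when collapsing on position, optionally plus UMI."""
    keys = set()
    for read in reads:
        key = (read.chrom, read.pos, read.strand)
        if use_umi:
            key += (read.umi,)
        keys.add(key)
    return len(keys)


# Four reads from a highly expressed gene, all starting at the same position.
# Two share a UMI (a PCR copy); the other two are independent molecules.
reads = [
    Read("chr1", 1000, "+", "AAA"),
    Read("chr1", 1000, "+", "AAA"),
    Read("chr1", 1000, "+", "CCC"),
    Read("chr1", 1000, "+", "GGG"),
]

kept_by_coordinate = count_after_dedup(reads, use_umi=False)
kept_by_umi = count_after_dedup(reads, use_umi=True)
```

Coordinate-based collapsing keeps one read and discards two genuine molecules, while UMI-based collapsing keeps three, which is the behavior behind the false-negative pattern described for highly expressed genes.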

Experimental Protocols

Protocol 1: Standard Quality Control and Trimming with FastQC and Trimmomatic

This protocol is adapted from the H3ABionet SOPs and other best practices [37] [42].

- Quality Check (Raw Data):

- Run FastQC on the raw FASTQ files.

`fastqc -o output_directory input_sample_R1.fastq.gz input_sample_R2.fastq.gz`

- Use MultiQC to aggregate results across all samples if working with a large project.

- Adapter and Quality Trimming:

- Execute Trimmomatic in PE (Paired-End) mode. The following command is a standard example [37]:

- Parameters:

- `ILLUMINACLIP`: Removes adapter sequences.

- `LEADING`/`TRAILING`: Trims low-quality bases from the start/end of reads.

- `SLIDINGWINDOW`: Scans the read with a 4-base window, trimming when the average quality drops below 15.

- `MINLEN`: Discards reads shorter than 36 bases after trimming.

- Quality Recheck (Trimmed Data):

- Run FastQC again on the trimmed `*_paired.fastq.gz` files.

- Compare the reports, specifically the "Per base sequence quality" plot, to verify improvement [42].
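To show how the `SLIDINGWINDOW` and `MINLEN` settings described in this protocol interact, here is a simplified sketch of sliding-window trimming using the 4-base window, average quality of 15, and 36-base minimum length mentioned above. The function names are hypothetical, and Trimmomatic's own implementation may differ in detail (for example, in how it treats the bases inside the failing window).

```python
def sliding_window_trim(quals, window: int = 4, min_avg: float = 15) -> int:
    """Return how many bases to keep from the 5' end of a read.

    The read is cut at the start of the first window whose mean
    quality falls below `min_avg`.
    """
    for start in range(0, len(quals) - window + 1):
        window_quals = quals[start:start + window]
        if sum(window_quals) / window < min_avg:
            return start
    return len(quals)


def passes_minlen(kept_length: int, minlen: int = 36) -> bool:
    """Apply the minimum-length filter after trimming."""
    return kept_length >= minlen


good_read = [30] * 50                 # uniformly high quality
late_drop = [30] * 40 + [2] * 10      # quality collapses near the 3' end
early_drop = [30] * 20 + [2] * 30     # quality collapses early

kept_good = sliding_window_trim(good_read)
kept_late = sliding_window_trim(late_drop)
kept_early = sliding_window_trim(early_drop)
```

With these inputs the read with an early quality drop is trimmed to 19 bases and then discarded by the length filter, which illustrates how aggressive settings can reduce the number of mappable reads.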

Protocol 2: RNA-seq Specific BAM Preparation for Variant Calling

This protocol is based on the GATK best practices for RNA-seq and recent research on optimizing long-read data for variant callers like DeepVariant [37] [43] [33].

- Splice-Aware Alignment:

- Align trimmed reads using a splice-aware aligner like STAR.

- Mark Duplicates:

- Identify, but do not remove, PCR duplicates. This preserves information for highly expressed genes while marking technical artifacts.

- Split Reads at N CIGAR Operations (Critical for RNA-seq):

- Use GATK's SplitNCigarReads to process reads that cross splice junctions.

- A 2023 study highlights that performing an additional "flag correction" after this step can further improve performance with DeepVariant by ensuring all split reads are correctly flagged [43].

Workflow Diagram

Research Reagent Solutions

Table 3: Essential Tools for the RNA-seq Pre-processing Workflow

| Item | Function in Pre-processing | Example Tools / Kits |

|---|---|---|

| Quality Control Tool | Provides initial assessment of raw read quality, base distribution, and adapter contamination. | FastQC [37] [42], MultiQC [42] |

| Trimming Tool | Removes adapter sequences, trims low-quality bases, and filters short reads. | Trimmomatic [37] [42], fastp [9], Trim Galore [9] [40], BBDuk [41] |

| Splice-aware Aligner | Aligns RNA-seq reads to a reference genome, correctly handling reads that span exon-exon junctions. | STAR [37] [42] [33], HISAT2 [42] |

| Duplicate Marking Tool | Identifies and marks PCR duplicates to mitigate amplification bias. | Picard MarkDuplicates [37] [40], UMI-tools dedup [40] |

| RNA-seq Variant Caller | Calls genetic variants from processed RNA-seq alignments, accounting for RNA-specific challenges. | GATK HaplotypeCaller [37] [33], DeepVariant [37] [43] |

| UMI Adapter Kit | Provides unique molecular identifiers during library prep for accurate deduplication. | ThruPLEX Tag-seq, NEXTFlex qRNA-seq [38] |

Implementing the GATK RNA-seq Short Variant Discovery Workflow

The GATK RNA-seq short variant discovery pipeline is designed to identify SNPs and Indels from RNA sequencing data. This process involves specific steps to handle the unique challenges of transcriptomic data, such as splicing and lower coverage in lowly expressed genes [44] [31].

Essential Research Reagents and Tools

Table 1: Key research reagents, tools, and their functions in the GATK RNA-seq variant discovery workflow

| Item | Function/Description | Notes |

|---|---|---|

| STAR Aligner | Maps RNA reads to a reference genome [44] | Two-pass mode recommended for better alignment around novel splice junctions [44] |

| SplitNCigarReads | Reformats alignments spanning introns for HaplotypeCaller [44] | Splits reads with N in CIGAR; crucial for RNA-seq data [44] |

| HaplotypeCaller | Calls SNPs and Indels simultaneously via local de novo assembly [44] | Default minimum confidence threshold is adjusted to 20 for RNA-seq [44] |

| VariantFiltration | Applies hard filters to raw variant calls [44] | Used instead of VQSR for RNA-seq, which requires truth data not yet available [44] |

| Known Sites VCF | Provides known variant locations for Base Quality Recalibration [44] | Must have compatible contigs with reference genome and GTF file [44] |

Frequently Asked Questions (FAQs)

Pipeline Configuration

Q: The workflow documentation mentions "germline" variant discovery, not somatic. Is there a dedicated pipeline for somatic short variant discovery using RNA-seq?

While the title of the provided GitHub repository and primary workflow is "germline" snps-indels [45], researchers in the forum comments have successfully adapted this pipeline for somatic calling. The core steps, especially the critical SplitNCigarReads transformation, are equally relevant for preparing RNA-seq data for somatic variant callers [44].

Q: How should I prepare for the BaseRecalibrator step if I don't have a known sites VCF file for my organism?

The BaseRecalibrator tool requires known variant sites for optimal operation [44]. If you are working with an organism without this resource, you have two options:

- Omit the BQSR step: Some researchers, especially those working with non-model organisms, proceed without base quality recalibration [44].

- Use a carefully selected resource: If using a different source for known variants (e.g., from the GATK resource bundle), ensure the contigs in your reference genome FASTA, GTF annotation, and known sites VCF files are perfectly compatible to avoid "incompatible contigs" errors [44].

Troubleshooting Common Errors

Q: When running BaseRecalibrator, I get the error: "Input files reference and features have incompatible contigs: No overlapping contigs found." How can I resolve this?

This error indicates a mismatch between the chromosome/contig names in your reference genome FASTA file, GTF annotation file, and known sites VCF file [44].

- Solution: Ensure all input files use the same naming conventions for contigs. You may need to obtain files from a single source or modify your files to make them consistent. Using the same reference genome build (e.g., all from Ensembl or all from the GATK resource bundle) for all inputs is crucial [44].
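Before running BaseRecalibrator, a quick check of contig names across the inputs can surface this mismatch early. The sketch below works on lines of text so that it stays self-contained; the helper names are hypothetical, and in practice you would pass open file handles for the FASTA, GTF, and VCF files instead of the inline lists.

```python
def fasta_contigs(lines) -> set:
    """Collect contig names from FASTA header lines ('>name description')."""
    return {
        line[1:].split()[0]
        for line in lines
        if line.startswith(">") and len(line.strip()) > 1
    }


def tabular_contigs(lines) -> set:
    """Collect first-column contig names from VCF or GTF lines."""
    return {
        line.split("\t", 1)[0]
        for line in lines
        if line.strip() and not line.startswith("#")
    }


def shared_contigs(*contig_sets) -> set:
    """Contig names present in every input."""
    return set.intersection(*contig_sets) if contig_sets else set()


reference = [">chr1 primary assembly", "ACGT", ">chr2", "GGCC"]
known_sites = ["##fileformat=VCFv4.2", "#CHROM\tPOS\tID\tREF\tALT", "1\t12345\t.\tA\tG"]
annotation = ['chr1\tsource\texon\t1\t100\t.\t+\t.\tgene_id "g1";']

ref_vs_vcf = shared_contigs(fasta_contigs(reference), tabular_contigs(known_sites))
ref_vs_gtf = shared_contigs(fasta_contigs(reference), tabular_contigs(annotation))
```

In this example the VCF uses `1` while the reference uses `chr1`, so the overlap is empty, which is the situation that produces the "No overlapping contigs found" message.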

Q: The BaseRecalibrator tool requires a --known-sites argument, but I don't have this data for my plant species. What should I do?

This is a common issue for researchers working with non-model organisms. You can bypass the BaseRecalibrator step and proceed directly to variant calling with HaplotypeCaller. While BQSR can improve accuracy, the pipeline can still be run without it, though you may need to adjust variant filtering parameters accordingly [44].

Q: After SplitNCigarReads, downstream tools report issues with read flags. How can I fix this?

The SplitNCigarReads tool applies the "supplementary alignment" flag to all but one split read segment, which can impact the performance of some downstream variant callers [4]. Recent research suggests implementing a "flagCorrection" step after SplitNCigarReads to ensure all fragments retain the original alignment flag, which has been shown to improve variant calling performance, particularly for DeepVariant and Clair3 on long-read RNA-seq data [4].
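The idea behind flag correction can be sketched as restoring each fragment's flag from the pre-split alignment. This is an illustration of the concept only, not the published tool: the function name is hypothetical, and keying on read name alone is a simplifying assumption that would not be sufficient for multi-mapped or paired reads.

```python
from typing import Dict, List, Tuple

SUPPLEMENTARY = 0x800  # SAM flag bit for a supplementary alignment


def restore_original_flags(original_flags: Dict[str, int],
                           split_fragments: List[Tuple[str, int]]) -> List[Tuple[str, int]]:
    """Give every split fragment the flag its read had before splitting."""
    return [
        (name, original_flags.get(name, flag))
        for name, flag in split_fragments
    ]


# One reverse-strand read (flag 16) split into two fragments; the second
# fragment was marked supplementary during splitting.
before_split = {"read1": 16}
after_split = [("read1", 16), ("read1", 16 | SUPPLEMENTARY)]

corrected = restore_original_flags(before_split, after_split)
```

After correction both fragments carry flag 16, so a caller that skips supplementary alignments would no longer ignore the second exon segment.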

Workflow Adaptation and Performance

Q: Should I run BaseRecalibrator if I am searching for novel RNA-editing sites?

Be cautious. The BaseRecalibrator step operates under the assumption that all reference mismatches are errors, which could lead it to incorrectly mark genuine RNA-editing variants as errors to be corrected [44]. For experiments focused on discovering RNA-editing events, omitting the BQSR step may be necessary to preserve these true biological signals.

Q: How can I improve the performance of my variant calling on long-read RNA-seq data like PacBio Iso-Seq or Nanopore?

For long-read data, applying the SplitNCigarReads step is still critical. Furthermore, a 2023 study in Genome Biology recommends an additional "flagCorrection" step after SplitNCigarReads to reset alignment flags. This manipulation of the BAM file makes it more suitable for DNA-based variant callers, significantly improving the performance of tools like DeepVariant and Clair3 on long-read RNA-seq data [4].

Table 2: Common error messages, their causes, and solutions

| Error Message | Likely Cause | Solution |

|---|---|---|

| "Argument known-sites was missing" | Missing required --known-sites VCF for BaseRecalibrator [44] | Provide a known sites VCF or omit the BQSR step [44] |

| "Incompatible contigs" | Mismatched chromosome names between reference, GTF, and VCF [44] | Ensure all files use the same contig naming convention from a single source [44] |

| Low recall of variants near splice junctions | Intron-containing reads (with N in CIGAR) can disrupt variant calling [4] | Ensure SplitNCigarReads is run; for long-read data, consider adding flag correction [4] |

| High false positives in final VCF | VQSR is not yet available for RNA-seq; hard filtering may be suboptimal [44] | Use the recommended hard filters; consider validating with orthogonal methods [44] |

Key Methodological Insights

The SplitNCigarReads step is the most critical transformation specific to RNA-seq data. It splits reads into exon segments at splice junctions (represented by 'N' in the CIGAR string) and hard-clips overhanging bases, making the alignment structure compatible with HaplotypeCaller's engine [44]. For long-read RNA-seq data, follow this with a flagCorrection step to ensure optimal performance with downstream callers [4].

Variant filtering for RNA-seq currently relies on hard filtering using the VariantFiltration tool, as the more advanced Variant Quality Score Recalibration (VQSR) requires truth data that is not yet available for RNA-seq [44]. Researchers should be aware of inherent challenges such as allelic dropout in lowly expressed genes and the difficulty of distinguishing true mutations from RNA-editing events, which may require matched DNA-seq data for confirmation [31].
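Hard filtering amounts to applying fixed thresholds to per-variant annotations. The sketch below assumes the thresholds commonly published for the GATK RNA-seq workflow (FisherStrand `FS > 30.0` and QualByDepth `QD < 2.0`); these values are not stated in this article and should be checked against the current GATK documentation. The function name is hypothetical, and the sketch omits the SNP-cluster filter that is often applied alongside them.

```python
def hard_filter_labels(info: dict, max_fs: float = 30.0, min_qd: float = 2.0) -> list:
    """Return the filter names a variant fails, or ['PASS'] if none."""
    labels = []
    # High FisherStrand values indicate strand bias.
    if info.get("FS", 0.0) > max_fs:
        labels.append("FS")
    # Low QualByDepth means weak support relative to coverage.
    if info.get("QD", float("inf")) < min_qd:
        labels.append("QD")
    return labels or ["PASS"]


suspicious = hard_filter_labels({"FS": 45.2, "QD": 1.1})
clean = hard_filter_labels({"FS": 3.0, "QD": 12.5})
```

Because missing annotations are treated as passing in this sketch, variants lacking `FS` or `QD` would need separate handling in a real pipeline.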

Frequently Asked Questions (FAQs)

Q1: What are the main advantages of using RNA-seq data for variant calling compared to DNA-seq?

RNA-seq variant calling focuses on the actively transcribed regions of the genome, offering several unique benefits. It can identify mutations in expressed genes that are more likely to have functional consequences, reveal isoform-specific mutations, detect allele-specific expression, and uncover post-transcriptional modifications. If RNA-seq data is already available for expression analysis, variant calling can be performed on the same dataset, requiring no additional sequencing costs. This approach is particularly valuable for confirming the pathogenicity of variants, identifying deep intronic variants that affect splicing, and detecting mutations in genes with tissue-specific expression [31].

Q2: What are the most significant challenges in RNA-seq variant calling that DeepVariant must overcome?

Several key challenges exist when calling variants from RNA-seq data:

- Uneven Coverage: Coverage is directly proportional to gene expression levels. Lowly expressed genes have sparse coverage, leading to a high risk of false negatives and allelic dropout, where one allele is not represented in the data [31].

- RNA Editing: Processes like adenosine-to-inosine (A-to-I) editing, which sequencers read as A-to-G mismatches, can mimic genuine genomic mutations. Distinguishing true genomic variants from these post-transcriptional modifications is difficult without matched DNA sequencing data [31].

- Mapping Ambiguity: Reads that span splice junctions are challenging to align accurately, and high sequence similarity among gene families can lead to multi-mapped reads, complicating variant identification [31] [46].

- Technical Artifacts: Reverse transcription during library preparation can introduce errors, and strand-specific protocols can create coverage biases that may be mistaken for variants [31].

Q3: Can DeepVariant be used to detect somatic mutations from tumor RNA-seq data without a matched normal sample?

While DeepVariant itself is primarily a germline variant caller, a novel method called VarRNA has been developed to address this specific challenge. VarRNA uses a combination of two machine learning models (XGBoost) to classify variants from tumor RNA-seq data as artifact, germline, or somatic. This approach has been validated to outperform existing RNA-seq variant calling methods and can identify about 50% of the variants detected by exome sequencing, while also finding unique RNA variants [33]. For traditional somatic callers, one study notes that Mutect2's statistical model, which depends on detected allele frequency, can be strongly biased in RNA-seq data, and suggests that Strelka2 (with its --rna flag) may offer higher sensitivity, though it requires subsequent filtering to remove germline variants [47].

Q4: I encountered the error "Data already present in output" when running DeepVariant in Parabricks. How can I resolve it?

This error can occur when processing specific genomic intervals. User reports indicate that it might be related to certain chromosomes or regions, such as chr20, 21, 22, X, and Y in hg19 assemblies. A potential workaround is to run the analysis on individual chromosomes or a subset of intervals to isolate the problematic region. Ensuring that all input files (BAM, FASTA, and interval files) are consistent with the same reference genome (e.g., all based on hg19 or all on GRCh38) is also a critical troubleshooting step [48].

Q5: How can I improve the performance and reduce the cost of running DeepVariant?

For users of NVIDIA Parabricks, significant performance gains can be achieved by:

- Using Fast Local SSDs: Storing both input/output and temporary files on a fast local SSD is crucial. Use the `--tmp-dir` option to specify a temporary directory on the SSD [49].

- Leveraging GPU Acceleration: Utilize flags like `--gpusort` and `--gpuwrite` to accelerate BAM sorting, duplicate marking, and compression. For multi-GPU systems, the `--run-partition` flag efficiently splits work across GPUs [49].

- Retraining for Specific Data: The DeepVariant retraining tool in Parabricks allows fine-tuning the model for specific lab protocols or sequencing platforms (like Singular Genomics' G4), which can improve accuracy, especially for indel calling [50].

Troubleshooting Guides

Guide for Resolving "Data already present in output" Error

This guide addresses a specific runtime error encountered in a high-performance computing (HPC) environment.

Error Message:

`[src/PBBgzfFile.cpp:893] Data already present in output., expected absent == 1, exiting.` [48]

Scenario: The error occurred when running DeepVariant in Parabricks via Apptainer/Singularity on a cluster. The job failed at a specific genomic region (e.g., chr20) after processing some data successfully [48].

Diagnosis Steps:

- Identify the Failing Interval: Check the job log to determine the exact chromosome or genomic interval where the failure occurred.

- Check File Consistency: Verify that your input BAM file, reference genome (FASTA), and any provided interval file (BED) are all aligned to the same reference build (e.g., all hg19 or all GRCh38). Inconsistencies can cause critical errors.

Solution Steps:

- Isolate the Problematic Region: Create a new, smaller interval file that contains only the chromosome or region where the job failed (e.g., just chr20).

- Re-run on the Subset: Execute your DeepVariant command again, using the new, smaller interval file via the `--interval-file` parameter.

- Iterate if Necessary: If the job completes on the previously failing region, the issue is localized. You can then process the genome in chunks, excluding the problematic region until a root cause is found. If the error persists on a specific region even in isolation, it may indicate corruption in the input data for that locus.

Guide for Optimizing DeepVariant Performance in Parabricks

This guide provides key recommendations for achieving the best runtime performance when using NVIDIA Parabricks.

Objective: Drastically reduce the time and cost of analysis.

Recommended Commands and Flags: The following table summarizes the key flags for optimizing a DeepVariant germline pipeline in Parabricks.

Table: Key Performance Flags for Parabricks DeepVariant

| Flag | Function | Recommendation |

|---|---|---|

| `--tmp-dir` | Specifies directory for temporary files. | Point to a fast local SSD (e.g., /raid on DGX systems) [49]. |

| `--gpusort` | Uses GPUs to accelerate BAM sorting. | Always use for best performance [49]. |

| `--gpuwrite` | Uses a GPU to accelerate BAM/CRAM compression. | Always use for best performance [49]. |

| `--run-partition` | Efficiently splits work across multiple GPUs. | Use when running with 2 or more GPUs [49]. |

| `--num-streams-per-gpu` | Controls parallelism per GPU. | The default is auto. For further tuning, can be increased up to 6 [49]. |

| `--bwa-cpu-thread-pool` | Specifies CPU threads for BWA alignment. | Set based on your system (e.g., 16) [49]. |

- Example Optimized Command: Source: Adapted from NVIDIA Parabricks documentation [49].

Performance Data and Benchmarks

Table: DeepVariant Performance and Accuracy Metrics

| Metric | Value / Finding | Context |

|---|---|---|

| Runtime on NVIDIA H100 DGX | Under 10 minutes | For a full germline pipeline (FASTQ to VCF) [49]. |

| Cost on Google Cloud Platform | \$2-\$3 per genome | For a typical coverage whole genome in v0.7 [51]. |

| Accuracy Improvement via Retraining | Improved Indel F1-score | Retraining a default model on Singular G4 platform data led to significant indel calling improvement [50]. |

| Variant Detection in RNA-seq (VarRNA) | ~50% of exome sequencing variants | Validation against exome sequencing ground truth [33]. |

Experimental Protocols

Standardized RNA-seq Preprocessing Workflow for Variant Calling

A robust preprocessing pipeline is critical for generating high-quality input for DeepVariant. The following workflow is adapted from best practices and the VarRNA methodology [33].

- Alignment: Use STAR (v2.7.10) for two-pass alignment of FASTQ files to the reference genome (e.g., GRCh38.p13). This improves the detection of novel splice junctions, which is crucial for accurate mapping around exon boundaries [33].

- Post-processing with GATK:

  - Add Read Groups: Use GATK's AddOrReplaceReadGroups to assign metadata to the aligned BAM file.

  - SplitNCigarReads: This critical step uses GATK's SplitNCigarReads to split reads that contain "N" in their CIGAR string (indicating they span introns) into separate alignments. This prevents artificial insertions or deletions at splice junctions from being misinterpreted as variants.

  - Base Quality Score Recalibration (BQSR): Perform BQSR using known variant sites (e.g., from dbSNP) to correct for systematic errors in base quality scores.

- Variant Calling with DeepVariant: Run DeepVariant on the processed BAM file. Key parameters for RNA-seq include using the --rna mode if available, and considering disabling the use of soft-clipped bases to reduce false positives [33].
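The GATK post-processing steps in this protocol can be sketched as a chain of commands in which each output feeds the next input. The sketch below only builds the command lists and does not run them. The read-group values (`lib1`, `ILLUMINA`, `unit1`) and the file-naming scheme are hypothetical placeholders, and the options shown should be checked against the GATK version in use.

```python
def gatk_postprocessing_cmds(bam, ref, known_sites, sample="sample1"):
    """Return the GATK commands for read groups, SplitNCigarReads and BQSR.

    File names are derived from the sample name purely for illustration.
    """
    rg_bam = f"{sample}.rg.bam"
    split_bam = f"{sample}.split.bam"
    recal_table = f"{sample}.recal.table"
    recal_bam = f"{sample}.recal.bam"
    return [
        # 1. Attach read-group metadata (placeholder values).
        ["gatk", "AddOrReplaceReadGroups", "-I", bam, "-O", rg_bam,
         "--RGID", sample, "--RGLB", "lib1", "--RGPL", "ILLUMINA",
         "--RGPU", "unit1", "--RGSM", sample],
        # 2. Split reads whose CIGAR contains N (intron-spanning reads).
        ["gatk", "SplitNCigarReads", "-R", ref, "-I", rg_bam, "-O", split_bam],
        # 3. Model systematic base-quality errors using known sites.
        ["gatk", "BaseRecalibrator", "-R", ref, "-I", split_bam,
         "--known-sites", known_sites, "-O", recal_table],
        # 4. Apply the recalibration model to produce the final BAM.
        ["gatk", "ApplyBQSR", "-R", ref, "-I", split_bam,
         "--bqsr-recal-file", recal_table, "-O", recal_bam],
    ]


if __name__ == "__main__":
    for step in gatk_postprocessing_cmds("aligned.bam", "GRCh38.fa", "dbsnp.vcf.gz"):
        print(" ".join(step))
```

Each list can be handed to `subprocess.run(step, check=True)` so that a failing step stops the chain before a truncated BAM reaches the variant caller.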

The workflow for this protocol can be visualized as follows:

Protocol for Retraining DeepVariant on Custom Data

Fine-tuning DeepVariant on data from specific sequencing platforms or protocols can enhance variant calling accuracy. This process is accelerated within NVIDIA Parabricks [50].

- Data Preparation: Obtain a set of aligned BAM files and a high-confidence set of known variants (e.g., from a NIST reference material like NA12878) for training and validation.

- Example Generation (make_examples): Run the make_examples step from the DeepVariant retraining workflow. This converts candidate variants from the BAM files into a tensor format (image pileups) used for training. In Parabricks v4.1+, this step is GPU-accelerated.

- Model Training: Execute the model training step. You can choose:

  - Warm Start: Training from a previous model (e.g., the default Illumina WGS model). This is faster and suitable for incremental improvements.

  - De Novo: Training from scratch, which is more computationally intensive but allows for exploring non-standard parameters.

- Model Validation: Validate the newly trained model by performing variant calling on a held-out validation dataset (e.g., NIST HG002) that was not used in training. Use the hap.py tool to benchmark the variant calling accuracy (F1 score for SNPs and Indels) against the ground truth.
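To make the benchmarking metric concrete, the sketch below computes precision, recall, and F1 from true-positive, false-positive, and false-negative counts, which is how a retrained model would be compared with the default one. The counts in the example are hypothetical and are not taken from any benchmark in this article.

```python
def precision_recall_f1(tp, fp, fn):
    """Compute precision, recall and F1 from variant-comparison counts.

    tp: calls matching the truth set
    fp: calls absent from the truth set
    fn: truth-set variants that were not called
    """
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1


if __name__ == "__main__":
    # Hypothetical indel counts before and after retraining.
    for label, counts in {"default": (900, 150, 200), "retrained": (950, 100, 150)}.items():
        p, r, f1 = precision_recall_f1(*counts)
        print(f"{label}: precision={p:.3f} recall={r:.3f} F1={f1:.3f}")
```

Reporting SNPs and indels separately matters here because indel F1 is typically the metric that moves most after retraining.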

The retraining workflow is outlined below:

The Scientist's Toolkit

Table: Essential Research Reagents and Computational Tools

| Item Name | Type | Function in RNA-seq Variant Calling |

|---|---|---|

| STAR | Software (Aligner) | Performs sensitive, accurate splice junction mapping via two-pass alignment, which is crucial for RNA-seq [33]. |

| GATK | Software (Toolkit) | Provides essential utilities for BAM post-processing, including SplitNCigarReads and base quality recalibration [33]. |

| dbSNP | Database | A public repository of known genetic variants used as known sites for base quality score recalibration (BQSR) and filtering [33]. |

| NIST Reference Materials | Biological Standard | Well-characterized genomic samples (e.g., HG001, HG002) used as ground truth for training and validating variant callers [50]. |

| NVIDIA Parabricks | Software (Pipeline) | A suite providing GPU-accelerated versions of tools like DeepVariant and BWA, dramatically reducing compute time [49]. |

| VarRNA | Software (Classifier) | A specialized tool that classifies variants from tumor RNA-seq data as germline, somatic, or artifact using machine learning [33]. |

VarRNA is a computational method that classifies single nucleotide variants (SNVs) and insertions/deletions (indels) from tumor RNA-Seq data as germline, somatic, or artifact using a machine learning approach [33]. This tool addresses a significant challenge in cancer research: discerning somatic variants in cancer samples without a matched normal comparator, which is typically required for DNA-based somatic variant calling [33].

The system utilizes two XGBoost machine learning models in sequence [33] [34]:

- First Model: Classifies variant calls as either true variants or artifacts (false positives).

- Second Model: Classifies the true variants as either germline or somatic.

This approach was trained and validated on pediatric cancer samples with paired tumor and normal DNA exome sequencing data serving as the ground truth [33]. VarRNA has been shown to identify approximately 50% of the variants detected by exome sequencing while also detecting unique RNA variants absent in the corresponding DNA data [33] [34].
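The sequential design can be sketched as a simple cascade: the second model is consulted only for calls that the first model accepts as true variants. The sketch below uses plain Python scoring functions as stand-ins for the two trained XGBoost models. The feature names (`depth`, `vaf`), the toy rules, and the 0.5 threshold are hypothetical and do not reflect VarRNA's actual features or cutoffs.

```python
from typing import Callable, Dict, List

Variant = Dict[str, float]
Scorer = Callable[[Variant], float]  # returns a probability-like score in [0, 1]


def classify_variants(variants: List[Variant],
                      true_variant_score: Scorer,
                      somatic_score: Scorer,
                      threshold: float = 0.5) -> List[str]:
    """Label each call as 'artifact', 'germline' or 'somatic' in two stages."""
    labels = []
    for variant in variants:
        # Stage 1: separate true variants from artifacts.
        if true_variant_score(variant) < threshold:
            labels.append("artifact")
            continue
        # Stage 2: only true variants reach the germline/somatic model.
        if somatic_score(variant) >= threshold:
            labels.append("somatic")
        else:
            labels.append("germline")
    return labels


if __name__ == "__main__":
    # Toy stand-ins for the trained models (illustration only).
    stage1 = lambda v: 1.0 if v["depth"] >= 10 else 0.0
    stage2 = lambda v: 1.0 if v["vaf"] < 0.3 else 0.0
    calls = [
        {"depth": 5, "vaf": 0.50},
        {"depth": 40, "vaf": 0.12},
        {"depth": 40, "vaf": 0.48},
    ]
    print(classify_variants(calls, stage1, stage2))
```

In a real deployment, the two scorers would wrap the `predict_proba` output of the trained classifiers, with the cascade logic unchanged.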

Troubleshooting Guides & FAQs

Common Installation and Dependency Issues

Problem: Dependency errors when setting up the VarRNA pipeline.

Solution:

- Ensure all required software is installed and accessible in your PATH [52].

- The VarRNA pipeline is primarily developed in Python and uses Snakemake for workflow scalability [33]. Confirm you have the correct versions of these dependencies.

- Required software includes GATK (v4.2.6.1 or higher) and STAR aligner (v2.7.10 or higher) [33] [52].

- If issues persist, specify full paths to software dependencies in your configuration file [52].

Problem: Software version compatibility issues.

Solution:

- VarRNA was developed and tested with specific tool versions [33]. Using different versions may cause unexpected behavior.

- Refer to the VarRNA GitHub repository for the most current list of compatible versions [33] [34].

Pipeline Execution Errors

Problem: Premature EOF (End of File) errors when processing BAM files.

Solution:

- This error often indicates file corruption or incomplete processing in previous steps [53].

- Validate input BAM files using tools like ValidateSamFile from Picard or samtools [53].

- Ensure all previous pipeline steps (read alignment, duplicate marking, splitting) complete successfully before proceeding to variant calling.

- Check that BAM files are properly indexed [53].
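A quick way to catch truncated BAM files before a long run is to check for the 28-byte BGZF end-of-file marker that the SAM/BAM specification defines for a complete file. The sketch below performs only that check. It does not replace ValidateSamFile or a full samtools validation, and the file name in the usage example is hypothetical.

```python
import os

# Empty BGZF block that terminates every complete BAM file (SAM/BAM specification).
BGZF_EOF = bytes.fromhex(
    "1f8b0804 00000000 00ff0600 42430200"
    "1b000300 00000000 00000000"
)


def has_bgzf_eof(path):
    """Return True if the file ends with the BGZF end-of-file marker."""
    with open(path, "rb") as handle:
        handle.seek(0, os.SEEK_END)
        if handle.tell() < len(BGZF_EOF):
            return False  # too short to contain the marker
        handle.seek(-len(BGZF_EOF), os.SEEK_END)
        return handle.read() == BGZF_EOF


if __name__ == "__main__":
    bam_path = "sample.split.bam"  # hypothetical path
    if os.path.exists(bam_path) and not has_bgzf_eof(bam_path):
        print(f"{bam_path} appears truncated; rerun the step that produced it")
```

A missing marker usually means the upstream step was interrupted, so rerunning that step is generally more reliable than attempting to repair the file.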

Problem: Memory-related errors during execution.

Solution:

- Decrease the number of threads allocated in the configuration file [52].

- Increase available memory if system resources permit [52].

- For large datasets, consider splitting the analysis into smaller batches [52].

Problem: BaseRecalibrator fails due to missing known sites VCF file.

Solution:

- The BaseRecalibrator step in GATK requires known variant sites for calibration [44].

- Obtain appropriate known sites VCF files (e.g., dbSNP build 151 for human data) that match your reference genome [33] [44].

- Ensure the reference genome version used for alignment matches the version associated with your known sites file [44].

Data Quality and Interpretation Issues

Problem: High false positive variant calls.

Solution:

- VarRNA's two-stage XGBoost model is specifically designed to reduce false positives by classifying variants as artifacts [33].

- Ensure proper quality control steps are performed, including base quality score recalibration and variant filtering according to GATK best practices for RNA-Seq [33] [44].

- Verify that RNA-Seq data has sufficient depth (recommended >70 million reads for reliable variant calling) [33].

Problem: Inconsistent contig names between reference files.

Solution:

- This error occurs when reference genome FASTA files, GTF annotation files, and known sites VCF files use different naming conventions for chromosomes [44].

- Ensure all reference files use consistent contig naming (e.g., "chr1" vs. "1") [44].

- Use the same source for all reference files when possible [44].
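Contig mismatches can be detected before running GATK by comparing the names in the reference index with those declared in the VCF header. The sketch below works on lines of text, so it can be tested without real files. It assumes a standard tab-delimited `.fai` index and `##contig=<ID=...>` header lines, and the example records are made up.

```python
import re

CONTIG_HEADER = re.compile(r"^##contig=<ID=([^,>]+)")


def fasta_index_contigs(fai_lines):
    """Collect contig names from the first column of a .fai index."""
    return {line.split("\t")[0] for line in fai_lines if line.strip()}


def vcf_header_contigs(vcf_lines):
    """Collect contig names declared in ##contig header lines of a VCF."""
    names = set()
    for line in vcf_lines:
        match = CONTIG_HEADER.match(line)
        if match:
            names.add(match.group(1))
    return names


def contigs_missing_from_reference(reference_contigs, other_contigs):
    """Return names used by another file that the reference does not define."""
    return sorted(other_contigs - reference_contigs)


if __name__ == "__main__":
    fai = ["chr1\t248956422\t112\t70\t71", "chr2\t242193529\t252513167\t70\t71"]
    vcf = ["##fileformat=VCFv4.2", "##contig=<ID=1,length=248956422>",
           "##contig=<ID=chr2,length=242193529>"]
    missing = contigs_missing_from_reference(fasta_index_contigs(fai),
                                             vcf_header_contigs(vcf))
    print("Contigs not in reference:", missing)
```

A non-empty result indicates that one of the files needs to be renamed or replaced with a build-matched version before BQSR or variant calling.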

Performance Benchmarking and Experimental Protocols

VarRNA Performance Metrics

VarRNA was extensively validated against multiple cancer datasets. The table below summarizes key performance metrics compared to other methods:

Table 1: VarRNA Performance Benchmarking

| Metric | VarRNA Performance | Comparison to Other Methods |

|---|---|---|

| DNA Variant Detection | Identifies ~50% of variants detected by exome sequencing [33] | Outperforms existing RNA variant calling methods [33] |

| Unique RNA Variant Detection | Detects additional variants not found in paired DNA exome data [33] | Provides insights into RNA editing and allele-specific expression [33] |

| Cancer Driver Detection | Reveals variant allele frequencies distinct from DNA data in cancer-driving genes [33] | Can identify overexpression of mutant alleles in oncogenes [33] [34] |

| Computational Framework | Two XGBoost models with Snakemake workflow [33] | Designed for efficient deployment on high-performance computing systems [33] |

Detailed VarRNA Workflow Protocol

The complete VarRNA workflow consists of the following steps, which researchers can implement for their own RNA-Seq variant calling:

Step 1: RNA-Seq Alignment

- Use STAR (v2.7.10+) with two-pass alignment to GRCh38 reference genome [33].

- Recommended read depth: 70-400 million reads per sample [33].

- Check alignment rates (target: 70-96% reads aligned to reference) [33].
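The depth and alignment-rate checks can be automated by parsing the summary that STAR writes after alignment. The sketch below assumes the summary uses `key | value` lines with the keys `Number of input reads` and `Uniquely mapped reads %`, as in STAR's `Log.final.out`. Treating the uniquely mapped percentage as the alignment rate is also an assumption, and the thresholds are the lower bounds quoted above.

```python
def parse_star_summary(text):
    """Parse 'key | value' lines from a STAR summary into a dictionary."""
    stats = {}
    for line in text.splitlines():
        if "|" in line:
            key, value = line.split("|", 1)
            stats[key.strip()] = value.strip()
    return stats


def check_alignment_qc(text, min_reads=70_000_000, min_mapped_pct=70.0):
    """Flag samples below the recommended depth or alignment rate."""
    stats = parse_star_summary(text)
    reads = int(stats["Number of input reads"])
    mapped_pct = float(stats["Uniquely mapped reads %"].rstrip("%"))
    return {
        "input_reads": reads,
        "uniquely_mapped_pct": mapped_pct,
        "depth_ok": reads >= min_reads,
        "alignment_ok": mapped_pct >= min_mapped_pct,
    }


if __name__ == "__main__":
    # Made-up summary excerpt for illustration.
    example = (
        "          Number of input reads |\t85000000\n"
        "        Uniquely mapped reads % |\t88.50%\n"
    )
    print(check_alignment_qc(example))
```

Running this check per sample before post-alignment processing avoids spending compute on libraries that are too shallow for reliable variant calling.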

Step 2: Post-Alignment Processing

- Process BAM files using GATK (v4.2.6.1+) Best Practices for RNA-Seq [33] [44]:

  - Add read groups

  - Split reads with N in CIGAR string (spliced alignments)

  - Perform base quality score recalibration using known sites from dbSNP (build 151) [33]

Step 3: Variant Calling

- Use GATK HaplotypeCaller with RNA-specific parameters [33]:

  - Set --dont-use-soft-clipped-bases (the GATK 4 spelling of this option)

  - Set --standard-min-confidence-threshold-for-calling to 20

  - Disable read down-sampling with --max-reads-per-alignment-start 0
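The RNA-specific HaplotypeCaller settings listed in this step can be collected into a single command, sketched below. Note that GATK 4 spells the soft-clip option `--dont-use-soft-clipped-bases`. The file names are hypothetical, and the sketch only builds the argument list without running GATK.

```python
def haplotypecaller_rna_cmd(ref, bam, out_vcf):
    """Build a GATK HaplotypeCaller command with RNA-seq specific settings."""
    return [
        "gatk", "HaplotypeCaller",
        "-R", ref,
        "-I", bam,
        "-O", out_vcf,
        # Ignore soft-clipped bases, which often overhang splice junctions.
        "--dont-use-soft-clipped-bases",
        # Phred-scaled confidence threshold for emitting a call.
        "--standard-min-confidence-threshold-for-calling", "20",
        # 0 disables down-sampling of reads sharing an alignment start.
        "--max-reads-per-alignment-start", "0",
    ]


if __name__ == "__main__":
    print(" ".join(haplotypecaller_rna_cmd("GRCh38.fa", "sample.recal.bam", "sample.vcf.gz")))
```

Disabling down-sampling keeps all reads in highly expressed genes, at the cost of longer runtimes and higher memory use on those loci.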

Step 4: Variant Classification with VarRNA Models

- Apply the two XGBoost models sequentially [33]:

  - First model filters artifacts from true variants

  - Second model classifies true variants as germline or somatic

- Input: Annotated variant table from previous steps

- Output: Classified variants with prediction scores

Step 5: Validation and Interpretation

- Compare results with available DNA sequencing data when possible

- Focus on variants in cancer-driving genes showing allele-specific expression

- Investigate biological significance of RNA-specific variants

Essential Research Reagent Solutions

Table 2: Key Research Reagents and Computational Tools for VarRNA Implementation

| Resource Type | Specific Tool/Resource | Function in Workflow | Compatibility Notes |

|---|---|---|---|

| Reference Genome | GRCh38.p13 | Read alignment and variant calling | Use consistent version across all steps [33] |

| Alignment Tool | STAR (v2.7.10+) | RNA-Seq read alignment | Two-pass mode recommended for sensitivity [33] [44] |

| Variant Caller | GATK HaplotypeCaller (v4.2.6.1+) | Initial variant calling | Use RNA-seq specific parameters [33] |

| Known Variants | dbSNP build 151 | Base quality recalibration | Required for BQSR step [33] |

| Machine Learning Framework | XGBoost | Variant classification | Core of VarRNA classification system [33] |

| Workflow Management | Snakemake | Pipeline scalability | Enables efficient HPC deployment [33] |

| Validation Resource | Paired DNA exome sequencing (when available) | Ground truth validation | Used in VarRNA development but not required for application [33] |

Workflow Visualization

Frequently Asked Questions

Q1: Can I use HaplotypeCaller for somatic variant discovery?

- A: No. HaplotypeCaller is designed for germline variant calling and uses a model that assumes a fixed ploidy. Somatic variants, with their varying allele fractions due to tumor purity and heterogeneity, require a specialized model like that in Mutect2. Using the wrong tool will result in poor sensitivity and specificity for somatic mutations [54] [55].

Q2: In tumor-only mode, can Mutect2 reliably distinguish between germline and somatic variants?

- A: This is challenging. Without a matched normal, the tool lacks direct evidence from the patient's germline. Mutect2 tries to distinguish them using population germline resources and the allele fraction in the tumor, but the model is inherently less accurate. The exception is when tumor purity is low, as most somatic variants will have low allele fractions, making them statistically distinguishable from germline heterozygous SNPs [55].

Q3: How can I increase the sensitivity of my somatic variant calls?

- A: In the FilterMutectCalls step, you can increase the -f-score-beta parameter from its default value of 1. This increases sensitivity at the expense of precision (i.e., it may allow more false positives) [55].
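For context on why a larger beta favors sensitivity, the standard definition of the F-beta score is shown below, where $P$ is precision and $R$ is recall (sensitivity). This is the general formula rather than a description of Mutect2's internal implementation.

$$
F_\beta = (1 + \beta^2)\,\frac{P \cdot R}{\beta^2 P + R}
$$

With $\beta = 1$ this reduces to the usual F1 score, $2PR/(P+R)$. For $\beta > 1$, recall carries more weight than precision, so a filtering threshold chosen to maximize $F_\beta$ retains more candidate variants.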

Q4: What is the purpose of a Panel of Normals (PoN) and how does it help?