Optimizing RNA-Seq for Compound Mode of Action Studies: A Complete Guide from Experimental Design to Data Analysis


Abstract

This article provides a comprehensive guide for researchers and drug development professionals on applying RNA sequencing to elucidate compound mode of action. It covers foundational principles of transcriptome analysis in drug discovery, detailed methodological protocols optimized for high-throughput screening, critical troubleshooting and optimization strategies for reliable results, and validation approaches for robust data interpretation. By integrating the latest research on experimental design, sample size determination, and bioinformatics pipelines, this resource enables scientists to design and execute RNA-Seq studies that effectively distinguish primary drug effects from secondary responses and generate biologically meaningful insights for therapeutic development.

Laying the Groundwork: How RNA-Seq Revolutionizes MoA Studies in Drug Discovery

The Critical Role of Transcriptomics in Deconvoluting Compound Mechanisms

Transcriptomics, the global analysis of RNA expression, has become a cornerstone of modern drug discovery and development. By enabling comprehensive profiling of gene expression, it provides critical insights into the complex molecular mechanisms of action (MoA) of therapeutic compounds [1] [2]. Unlike genomic data, which provide a largely static view, transcriptomics reveals the dynamic landscape of gene expression, capturing how cells respond to perturbations such as drug treatments [1]. This capability is fundamental for understanding both disease mechanisms and compound-induced changes at the molecular level.

The transition from microarray technology to RNA sequencing (RNA-Seq) represents a significant technological evolution. Whereas microarrays only measure sequences represented by predefined probes, RNA-Seq quantifies the expression of thousands of genes simultaneously, can discover novel transcripts, and provides insight into functional pathways and regulation without requiring prior knowledge of the genome [2]. This high-throughput capability has transformed the way biologists examine transcriptomes, making RNA-Seq an indispensable tool for identifying drug-related genes, microRNAs, and fusion transcripts [2]. As a result, transcriptomics now plays a pivotal role across the drug discovery pipeline, from initial target identification to understanding drug resistance and toxicity [1].

Key Applications in Deconvoluting Compound Mechanisms

Target Discovery and Validation

Transcriptomics serves as a powerful tool for identifying potential drug target genes, a critical yet challenging step in drug development. By comparing transcriptomic profiles between diseased and normal states, researchers can uncover genes and pathways that play important roles in disease pathogenesis [1]. For example, RNA-Seq has helped identify distinct oncogene-driven transcriptome profiles, enabling the identification of potential targets for cancer therapy [1]. Once a compound is selected for further study, RNA-Seq can detect drug-induced genome-wide changes in gene expression, helping to confirm engagement with the intended target and understand downstream effects [1].

Differentiating Primary from Secondary Effects

A significant challenge in MoA studies is distinguishing direct (primary) from indirect (secondary) drug effects. Conventional RNA-Seq, which captures a single snapshot of the transcriptome, cannot properly differentiate between these effects [1]. This limitation is addressed by time-resolved RNA-Seq, which observes RNA abundances over time in biological samples [1]. This temporal dimension allows researchers to resolve complex regulatory networks and predict combinatorial effects, significantly enhancing MoA deconvolution. Techniques like SLAMseq enable high-throughput kinetic RNA sequencing, providing the resolution needed to separate primary transcriptional responses from secondary adaptive changes [1].
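To make the SLAMseq principle concrete: cells are fed 4-thiouridine (4sU), which is incorporated into newly made RNA; after alkylation, reverse transcription reads labeled positions as C instead of T, so new transcripts carry T>C mismatches relative to the reference. The sketch below is a simplified illustration, not a production pipeline (dedicated tools such as SLAMdunk handle this properly). It assumes gapless, sense-strand alignments, and the cutoff of two conversions per read for calling a read "new" is a hypothetical choice.

```python
# Simplified, hypothetical SLAMseq-style read classification.
# Assumes gapless alignments to the sense strand; real pipelines must also
# handle antisense reads (A>G), indels, SNPs and background error rates.

def count_t_to_c(read: str, reference: str) -> int:
    """Count positions where the reference base is T and the read shows C."""
    if len(read) != len(reference):
        raise ValueError("read and reference segment must have equal length")
    return sum(1 for base, ref in zip(read.upper(), reference.upper())
               if ref == "T" and base == "C")


def classify_reads(pairs, min_conversions=2):
    """Count reads called newly synthesized vs pre-existing."""
    new = old = 0
    for read, ref in pairs:
        if count_t_to_c(read, ref) >= min_conversions:
            new += 1
        else:
            old += 1
    return new, old


reads = [
    ("ACGCCAGC", "ACGTTAGT"),  # 3 T>C conversions: called new
    ("ACGTTAGT", "ACGTTAGT"),  # no conversions: pre-existing
    ("ACGCTAGT", "ACGTTAGT"),  # 1 conversion: below the cutoff
]
new, old = classify_reads(reads)
print(f"newly synthesized fraction: {new / (new + old):.2f}")
```

Tracking this new-RNA fraction per gene across time points is what gives kinetic methods their ability to see transcriptional changes before steady-state mRNA levels shift.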

Understanding Drug Resistance and Sensitivity

Transcriptomic approaches are invaluable for identifying genes and mechanisms involved in both innate and acquired drug resistance. By comparing gene expression profiles between drug-resistant and sensitive cell lines or patient samples, researchers can pinpoint resistance-associated pathways and develop strategies to overcome them [1]. For instance, in triple-negative breast cancer (TNBC), RNA-Seq analysis of drug-resistant cell lines revealed significant differences in cytokine-cytokine receptor interaction pathways, highlighting candidate pathways for drug development [1]. Similarly, small RNA-Seq has been used to identify microRNAs that drive resistance to chemotherapeutic agents such as doxorubicin in hepatocellular carcinoma [1].

Biomarker Discovery

Transcriptomics facilitates the discovery of biomarkers that can indicate disease presence, progression, or severity, serving as both diagnostic tools and potential therapeutic targets [1]. RNA-Seq has proven particularly valuable in cancer biomarker discovery, identifying fusion genes that drive malignancy in acute myeloid leukemia, breast cancer, and colorectal cancer [1]. Additionally, various non-coding RNAs, including miRNAs and lncRNAs, have been identified as promising biomarkers through transcriptomic analysis [1].

Experimental Protocols and Workflows

RNA Sequencing Wet-Lab Protocol

Sample Preparation and RNA Extraction

- Cell Culture & Compound Treatment: Culture appropriate cell lines and treat with the compound of interest at various concentrations and time points. Include vehicle controls. For time-resolved studies, plan multiple time points to capture kinetic responses [1].

- RNA Extraction: Isolate total RNA using phenol-chloroform extraction or commercial kits. Assess RNA quality using a Bioanalyzer or TapeStation; ensure an RNA Integrity Number (RIN) > 8.0 for reliable sequencing.

- Library Preparation: Deplete ribosomal RNA or enrich polyadenylated RNA. Fragment RNA and synthesize cDNA. Ligate sequencing adapters, typically using kits compatible with your sequencing platform (e.g., Illumina). Amplify library via PCR and validate quality using Bioanalyzer.

- Sequencing: Pool libraries and sequence on an appropriate NGS platform (e.g., Illumina NovaSeq X) with sufficient depth (typically 25-50 million reads per sample for differential expression analysis) [3].
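Pooling libraries at equal molar amounts helps each sample receive a similar share of the 25-50 million reads. A common conversion from mass concentration to molarity uses roughly 660 g/mol per base pair of double-stranded DNA. The sketch below applies it; the concentrations, fragment sizes and the 10 fmol per-library target are hypothetical values, not kit recommendations.

```python
def library_molarity_nm(conc_ng_per_ul: float, mean_fragment_bp: float) -> float:
    """Convert a dsDNA library concentration (ng/uL) to molarity (nM),
    assuming ~660 g/mol per base pair."""
    return conc_ng_per_ul * 1e6 / (660.0 * mean_fragment_bp)


def pooling_volumes(libraries, fmol_per_library=10.0):
    """Volume (uL) of each library giving the same molar amount.

    libraries: dict name -> (concentration ng/uL, mean fragment size bp).
    Because 1 nM equals 1 fmol/uL, volume = target fmol / molarity.
    """
    return {name: fmol_per_library / library_molarity_nm(conc, size)
            for name, (conc, size) in libraries.items()}


# Hypothetical fluorometric concentrations and electrophoresis fragment sizes
libs = {"vehicle_rep1": (5.0, 350), "compound_rep1": (3.3, 330)}
for name, vol in pooling_volumes(libs).items():
    print(f"{name}: {vol:.2f} uL")
```

Very small volumes like these are usually handled by first diluting each library to a common working concentration; the arithmetic stays the same.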

Computational Analysis Workflow

The computational analysis of RNA-Seq data follows a structured bioinformatics pipeline to transform raw sequencing data into biologically meaningful insights [4].

Step-by-Step Computational Protocol [4]:

Quality Control of Raw Reads

- Tool: FastQC

- Process: Assess read quality, GC content, adapter contamination, and sequence duplication levels.

- Output: Quality reports for each sample.

Read Trimming and Filtering

- Tool: Trimmomatic

- Process: Remove adapter sequences, trim low-quality bases from read ends, and exclude very short reads.

- Command Example:
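As a hedged illustration of this step, the paired-end usage pattern shown in the Trimmomatic manual can be assembled as an argument list in Python. The jar name, adapter file (`TruSeq3-PE.fa`), output file names and thresholds are assumptions to adapt to the library kit and read length.

```python
import shlex


def trimmomatic_pe_cmd(r1, r2, prefix, jar="trimmomatic-0.39.jar",
                       adapters="TruSeq3-PE.fa", threads=4):
    """Build (but do not run) a paired-end Trimmomatic command."""
    outputs = [f"{prefix}_R1.paired.fq.gz", f"{prefix}_R1.unpaired.fq.gz",
               f"{prefix}_R2.paired.fq.gz", f"{prefix}_R2.unpaired.fq.gz"]
    return ["java", "-jar", jar, "PE", "-threads", str(threads), "-phred33",
            r1, r2, *outputs,
            f"ILLUMINACLIP:{adapters}:2:30:10",  # clip adapter sequences
            "LEADING:3", "TRAILING:3",           # trim low-quality read ends
            "SLIDINGWINDOW:4:15",                # cut when 4-base mean Q < 15
            "MINLEN:36"]                         # drop reads shorter than 36 bp


cmd = trimmomatic_pe_cmd("S1_R1.fastq.gz", "S1_R2.fastq.gz", "S1")
print(shlex.join(cmd))  # execute with subprocess.run(cmd, check=True)
```

Only the `*.paired.fq.gz` outputs are normally carried forward to paired-end alignment.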

Alignment to Reference Genome

- Tool: HISAT2 or STAR

- Process: Map quality-filtered reads to a reference genome (e.g., GRCh38).

- Command Example (HISAT2):

- Post-processing: Convert SAM to BAM, sort, and index using SAMtools.
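Along the same lines, here is a sketch of HISAT2 alignment followed by SAMtools post-processing for one paired-end sample. The index basename, file names and thread count are hypothetical. `-x` takes the basename of an index built with `hisat2-build`, and `samtools sort` writes coordinate-sorted BAM by default, so it covers both the SAM-to-BAM conversion and the sorting. `run_all` only prints the commands unless `dry_run=False`.

```python
import shlex
import subprocess


def alignment_cmds(index_prefix, r1, r2, sample, threads=8):
    """Return HISAT2 + SAMtools commands for one paired-end sample."""
    sam = f"{sample}.sam"
    bam = f"{sample}.sorted.bam"
    return [
        # Splice-aware alignment of trimmed, paired reads to the genome
        ["hisat2", "-p", str(threads), "-x", index_prefix,
         "-1", r1, "-2", r2, "-S", sam],
        # Convert to BAM and sort by coordinate in one step
        ["samtools", "sort", "-@", str(threads), "-o", bam, sam],
        # Build the .bai index expected by most downstream tools
        ["samtools", "index", bam],
    ]


def run_all(cmds, dry_run=True):
    for cmd in cmds:
        print(shlex.join(cmd))
        if not dry_run:
            subprocess.run(cmd, check=True)


run_all(alignment_cmds("grch38_index", "S1_R1.paired.fq.gz",
                       "S1_R2.paired.fq.gz", "S1"))
```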

Gene Quantification

- Tool: featureCounts or HTSeq

- Process: Count reads that align to each gene feature.

- Command Example (featureCounts):
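A comparable sketch for gene-level counting with featureCounts (from the Subread package) follows. The GTF and BAM names are placeholders. The strandedness value must match the library kit; `-s 2` (reversely stranded) is an assumption that fits dUTP-based stranded protocols.

```python
import shlex


def featurecounts_cmd(gtf, bams, out="counts.txt", threads=8,
                      strandedness=2, paired=True):
    """Build a gene-level featureCounts command.

    strandedness: 0 = unstranded, 1 = stranded, 2 = reversely stranded.
    By default featureCounts counts reads on 'exon' features and sums
    them per 'gene_id' attribute of the GTF.
    """
    cmd = ["featureCounts", "-T", str(threads), "-s", str(strandedness),
           "-a", gtf, "-o", out]
    if paired:
        # -p declares paired-end input; Subread 2.0.2+ additionally needs
        # --countReadPairs to count fragments instead of individual reads.
        cmd += ["-p", "--countReadPairs"]
    return cmd + list(bams)


print(shlex.join(featurecounts_cmd("genes.gtf",
                                   ["S1.sorted.bam", "S2.sorted.bam"])))
```

Passing all BAM files in one call yields a single count matrix with one column per sample, which is the input format DESeq2 and edgeR expect.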

Differential Gene Expression Analysis

- Tool: DESeq2 or edgeR in R

- Process: Normalize count data and perform statistical testing to identify significantly differentially expressed genes between conditions (e.g., treated vs. control).

- Key Outputs: List of DEGs with log2 fold changes and adjusted p-values.
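DESeq2 normalizes for sequencing depth and library composition with median-of-ratios size factors. Each gene's count is divided by that gene's geometric mean across samples, and a sample's size factor is the median of those ratios. The pure-Python sketch below reproduces the idea for illustration only; in practice, use `DESeq2::estimateSizeFactors` (edgeR uses a different method, TMM). The gene names and counts are made up.

```python
from math import exp, log
from statistics import median


def size_factors(counts):
    """Median-of-ratios size factors (computed in log space, as DESeq2 does).

    counts: dict gene -> list of raw counts, one per sample.
    Genes with a zero in any sample are skipped, because their geometric
    mean would be zero.
    """
    n_samples = len(next(iter(counts.values())))
    log_ratios = [[] for _ in range(n_samples)]
    for values in counts.values():
        if min(values) <= 0:
            continue
        log_geo_mean = sum(log(v) for v in values) / n_samples
        for j, v in enumerate(values):
            log_ratios[j].append(log(v) - log_geo_mean)
    return [exp(median(r)) for r in log_ratios]


def normalize(counts):
    """Divide each sample's counts by its size factor."""
    sf = size_factors(counts)
    return {g: [v / s for v, s in zip(vals, sf)] for g, vals in counts.items()}


# Hypothetical counts: sample 2 was sequenced about twice as deeply
counts = {"GENE1": [10, 20], "GENE2": [100, 200],
          "GENE3": [5, 10], "GENE4": [0, 3]}
print(size_factors(counts))
```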

Visualization and Functional Analysis

- Visualization: Generate heatmaps, volcano plots, and PCA plots to visualize results.

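- Pathway Analysis: Use GSEA (on the full gene list ranked by a differential expression statistic) or over-representation tools such as Enrichr (on the DEG list) to identify enriched biological pathways.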
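A PCA plot shows whether samples separate by treatment before any gene-level testing. The NumPy sketch below computes principal components from log2(count + 1) values of made-up counts. DESeq2's `plotPCA` instead works on variance-stabilized data (e.g., from `vst`) and, by default, on the 500 most variable genes, so treat this as an approximation.

```python
import numpy as np


def pca_scores(counts, n_components=2):
    """PCA of samples using log2(count + 1); counts has shape (genes, samples)."""
    x = np.log2(np.asarray(counts, dtype=float) + 1.0).T  # rows = samples
    x -= x.mean(axis=0)                                    # center each gene
    u, s, _ = np.linalg.svd(x, full_matrices=False)
    scores = u[:, :n_components] * s[:n_components]        # sample coordinates
    explained = s ** 2 / np.sum(s ** 2)                    # variance fractions
    return scores, explained[:n_components]


# Columns: vehicle_1, vehicle_2, treated_1, treated_2 (hypothetical counts)
counts = [[10, 12, 100, 110],
          [50, 55, 5, 6],
          [20, 21, 19, 20]]
scores, explained = pca_scores(counts)
print(np.round(scores[:, 0], 2), np.round(explained, 3))
```

Replicates that fail to cluster with their group on PC1/PC2 are candidates for closer QC before differential testing.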

Data Analysis and Interpretation

Key Analytical Approaches for MoA Deconvolution

Pathway and Enrichment Analysis

Identification of dysregulated biological pathways is fundamental to understanding compound MoA. Tools like Gene Set Enrichment Analysis (GSEA) identify pathways that are coordinately up- or down-regulated in response to treatment, even when individual gene changes are modest. This systems-level view helps connect transcriptional changes to biological processes and functions, revealing whether a compound affects processes like cell cycle progression, DNA damage repair, or specific metabolic pathways [2].
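The core of GSEA is a running-sum enrichment score. Walking down the ranked gene list, the sum steps up at gene-set members (weighted by their ranking metric) and down at non-members, so a set concentrated at either end of the list yields a large positive or negative score. The sketch computes only this score. The permutation-based normalization and significance that produce GSEA's reported NES and FDR are omitted, and the example genes and metric values are hypothetical.

```python
def enrichment_score(ranked_genes, scores, gene_set, weight=1.0):
    """GSEA-style running-sum enrichment score (no permutation testing).

    ranked_genes: genes ordered from most up- to most down-regulated
    scores: ranking metric for each gene, in the same order
    gene_set: set of genes in the pathway of interest
    Returns the running-sum value with the largest absolute deviation from 0.
    """
    in_set = [gene in gene_set for gene in ranked_genes]
    n_miss = len(ranked_genes) - sum(in_set)
    hit_norm = sum(abs(s) ** weight for s, hit in zip(scores, in_set) if hit)
    if hit_norm == 0 or n_miss == 0:
        raise ValueError("gene set must contain scored genes and leave some out")
    running = best = 0.0
    for s, hit in zip(scores, in_set):
        # Step up at pathway genes (weighted by |score|), down elsewhere
        running += (abs(s) ** weight / hit_norm) if hit else (-1.0 / n_miss)
        if abs(running) > abs(best):
            best = running
    return best


ranked = ["G1", "G2", "G3", "G4", "G5", "G6"]
metric = [3.0, 2.0, 1.0, -1.0, -2.0, -3.0]
print(enrichment_score(ranked, metric, {"G1", "G2"}))  # pathway at the top
print(enrichment_score(ranked, metric, {"G5", "G6"}))  # pathway at the bottom
```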

Time-Resolved Analysis

As previously mentioned, analyzing transcriptomic data across multiple time points is crucial for distinguishing primary drug effects from secondary effects. Statistical methods for analyzing time-course data, including clustering of genes with similar temporal expression patterns, can reveal sequential events in drug response and help reconstruct regulatory networks [1].
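A very simple way to order genes by response timing is to record, for each gene, the first time point at which its fold change crosses a threshold. Early-onset genes are candidate primary responders and late-onset genes candidate secondary ones. The threshold, early cutoff, time points and gene names below are hypothetical. A real analysis would define onset from statistically significant changes (for example, per-time-point adjusted p-values) rather than from fold change alone.

```python
def first_response_time(series, threshold=1.0):
    """Earliest time at which |log2FC| >= threshold, or None if never.

    series: list of (time, log2_fold_change) pairs in any order.
    """
    for time, lfc in sorted(series):
        if abs(lfc) >= threshold:
            return time
    return None


def group_by_onset(profiles, early_cutoff, threshold=1.0):
    """Label genes as early, late or unresponsive by response onset."""
    groups = {"early": [], "late": [], "unresponsive": []}
    for gene, series in profiles.items():
        onset = first_response_time(series, threshold)
        if onset is None:
            groups["unresponsive"].append(gene)
        elif onset <= early_cutoff:
            groups["early"].append(gene)
        else:
            groups["late"].append(gene)
    return groups


# Hypothetical log2 fold changes vs. vehicle at 0.5, 2, 6 and 24 h
profiles = {
    "GENE_A": [(0.5, 1.8), (2, 2.5), (6, 2.0), (24, 0.4)],
    "GENE_B": [(0.5, 0.1), (2, 0.3), (6, 1.2), (24, 2.4)],
    "GENE_C": [(0.5, 0.0), (2, -0.2), (6, 0.1), (24, 0.3)],
}
print(group_by_onset(profiles, early_cutoff=2))
```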

Integration with Spatial Transcriptomics

Advanced spatial transcriptomics technologies, when integrated with single-cell RNA-Seq data through deconvolution methods like Weight-Induced Sparse Regression (WISpR), allow researchers to map cell-type distributions and drug effects within the tissue context [5]. This is particularly valuable for understanding how compounds affect cellular interactions in complex tissues like tumors, providing insights into both efficacy and potential microenvironment-mediated resistance mechanisms.
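The sketch below does not implement WISpR. As a stand-in, it uses plain non-negative least squares (NNLS), a common baseline for reference-based deconvolution, in which a spot's expression is modeled as a non-negative mixture of cell-type signature profiles (in practice derived from single-cell RNA-Seq). The signatures and spot values are invented so that the true mixture (50% T cell, 20% B cell, 30% tumor) is known.

```python
def nnls_proportions(signatures, spot, n_sweeps=2000):
    """Estimate cell-type proportions for one spot by non-negative least squares.

    signatures: dict cell_type -> expression profile (same gene order as spot)
    spot: observed expression profile of one spot or bulk sample
    Solves min ||spot - sum_k w_k * signature_k||^2 with w_k >= 0 by cyclic
    coordinate descent, then rescales w to proportions summing to 1.
    """
    types = list(signatures)
    cols = [[float(v) for v in signatures[t]] for t in types]
    weights = [0.0] * len(cols)
    residual = [float(v) for v in spot]  # spot minus current fitted mixture
    for _ in range(n_sweeps):
        for k, col in enumerate(cols):
            norm2 = sum(c * c for c in col)
            if norm2 == 0:
                continue
            # Exact minimizer along coordinate k, clipped at zero
            step = sum(c * r for c, r in zip(col, residual)) / norm2
            new_w = max(0.0, weights[k] + step)
            delta = new_w - weights[k]
            if delta != 0.0:
                residual = [r - delta * c for r, c in zip(residual, col)]
                weights[k] = new_w
    total = sum(weights)
    return {t: (w / total if total > 0 else 0.0) for t, w in zip(types, weights)}


# Hypothetical 3-gene signatures; the spot is 50% T cell, 20% B cell, 30% tumor
signatures = {"T_cell": [10, 0, 1], "B_cell": [0, 8, 1], "tumor": [2, 2, 20]}
spot = [5.6, 2.2, 6.7]
print({t: round(p, 3) for t, p in nnls_proportions(signatures, spot).items()})
```

Comparing estimated proportions between vehicle- and compound-treated sections is one way to ask whether a treatment shifts tissue composition.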

Quantitative Data Presentation

Table 1: RNA-Seq Quality Control Metrics and Thresholds

| Quality Metric | Optimal Threshold | Importance for Analysis |
|---|---|---|
| RNA Integrity Number (RIN) | > 8.0 | Ensures RNA is not degraded; critical for library preparation |
| Total Reads per Sample | 25-50 million | Provides sufficient depth for accurate gene quantification |
| Q30 Score | > 80% | Indicates high base-calling accuracy |
| Alignment Rate | > 85% | Measures efficiency of mapping to reference genome |
| rRNA Contamination | < 2% | Confirms effective ribosomal RNA removal |

Table 2: Transcriptomic Signatures in Compound Mechanism Analysis

| Analysis Type | Key Parameters | Interpretation in MoA Context |
|---|---|---|
| Differential Expression | Adjusted p-value < 0.05, \|log2FC\| > 1 | Identifies significantly altered genes; magnitude indicates strength of response |
| Pathway Enrichment | FDR < 0.25 (GSEA) | Reveals biological processes affected by compound |
| Time-Resolved Analysis | Early (0-6h) vs Late (24h+) responses | Distinguishes primary drug targets from secondary adaptive changes |
| Cell-Type Deconvolution | Cell-type proportions & localization | Maps drug effects to specific cell populations in tissue context [5] |
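Applying the differential expression thresholds in the table above to a results table can be sketched as follows. Genes without an adjusted p-value (DESeq2 reports NA for genes removed by independent filtering) are represented as `None` and skipped. Gene names and values are illustrative only. Note that post-hoc fold-change filtering is a heuristic; DESeq2 can instead test against the threshold directly via `results(dds, lfcThreshold = 1)`.

```python
def call_degs(results, padj_cutoff=0.05, lfc_cutoff=1.0):
    """Split genes into up- and down-regulated lists.

    results: iterable of (gene, log2_fold_change, padj) tuples; padj is None
    for genes without an adjusted p-value (e.g., removed by filtering).
    """
    up, down = [], []
    for gene, lfc, padj in results:
        if padj is None or padj >= padj_cutoff or abs(lfc) <= lfc_cutoff:
            continue
        (up if lfc > 0 else down).append(gene)
    return up, down


# Hypothetical results for four genes
res = [("CCND1", -1.6, 0.001), ("CDKN1A", 2.3, 0.0004),
       ("GAPDH", 0.1, 0.9), ("MYC", -1.2, None)]
print(call_degs(res))  # (['CDKN1A'], ['CCND1'])
```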

Essential Research Reagent Solutions

Table 3: Key Research Reagents for Transcriptomic Studies in Compound MoA

| Reagent / Kit | Primary Function | Application in MoA Studies |
|---|---|---|
| Total RNA Extraction Kits | Isolation of high-quality RNA from cells/tissues | Preserves transcriptomic profile; critical for accurate downstream analysis |
| rRNA Depletion Kits | Removal of ribosomal RNA | Enriches for mRNA and non-coding RNAs; essential for total RNA-Seq |
| Stranded cDNA Library Prep Kits | Construction of sequencing libraries | Maintains strand information; improves transcript annotation |
| Single-Cell RNA-Seq Kits | Barcoding and capture of single cells | Resolves cellular heterogeneity in compound response |
| Spatial Transcriptomics Slides | Spatial capture of mRNA on tissue sections | Maps compound effects within tissue architecture [5] |
| SLAMseq/Kinetic RNA-Seq Kits | Metabolic labeling of newly synthesized RNA | Enables time-resolved analysis of transcription [1] |

Transcriptomics, particularly through advanced RNA-Seq technologies, provides an unparalleled window into the molecular mechanisms of action of bioactive compounds. The integration of comprehensive experimental protocols with sophisticated computational analyses enables researchers to move beyond simple gene lists to meaningful biological insights. As technologies evolve—especially in the realms of single-cell resolution, spatial context, and temporal dynamics—transcriptomic approaches will continue to enhance our ability to deconvolute complex compound mechanisms, ultimately accelerating the development of safer and more effective therapeutics.

In the field of drug discovery, particularly in compound mode of action (MoA) studies, RNA sequencing (RNA-Seq) has emerged as a transformative technology. It supports two fundamentally different approaches to scientific inquiry: focused, hypothesis-driven research and broad, unbiased, discovery-based research [6]. The choice between these pathways significantly influences experimental design, resource allocation, and interpretation of results in compound MoA studies [7].

This application note details the strategic implementation of both approaches in RNA-Seq protocols for compound MoA research, providing researchers with structured methodologies, comparative analyses, and practical tools to guide their study design.

Conceptual Frameworks and Their Applications

Hypothesis-Driven Research

Hypothesis-driven research begins with a specific, pre-formed hypothesis that seeks to explain a biological phenomenon [6]. In the context of compound MoA, this involves proposing a specific mechanism—such as "compound X induces cell death by inhibiting protein Y"—and then designing experiments to test this hypothesis [6]. The approach is grounded in prior knowledge from published research or previous work within a laboratory, and follows the scientific method of repeatedly attempting to disprove the hypothesis [6]. When applied to RNA-Seq, this results in a highly focused experimental design.

Unbiased Discovery Research

In contrast, unbiased discovery research (also termed hypothesis-generating) does not begin with a predefined hypothesis [6]. Instead, it uses non-biased approaches, often involving large-scale screens or 'omics' technologies like RNA-Seq, to generate novel hypotheses from the data [6]. This approach is particularly valuable when investigating poorly understood biological systems or when seeking breakthrough insights that might be constrained by current scientific paradigms [6]. For compound MoA studies, this could involve identifying novel pathways or biomarkers affected by a treatment without preconceived notions of the outcome.

Comparative Analysis

The table below summarizes the core characteristics of each approach.

Table 1: Strategic Comparison of Research Approaches

| Characteristic | Hypothesis-Driven Approach | Unbiased Discovery Approach |
|---|---|---|
| Starting Point | A specific, pre-defined hypothesis [6] | A general research question without a pre-formed hypothesis [6] |
| Primary Goal | To test and provide evidence for or against the stated hypothesis [6] | To generate new hypotheses from comprehensive data [6] |
| Typical RNA-Seq Design | Targeted; may focus on specific pathways or gene sets | Global transcriptome analysis; often wider sequencing coverage |
| Best Applications in MoA Studies | Validating a suspected molecular target or pathway | De novo target identification, biomarker discovery, and exploring novel mechanisms [8] |
| Key Advantage | Clear experimental path and interpretation criteria | Potential for ground-breaking, novel discoveries not limited by current knowledge [6] |
| Key Challenge | Risk of the hypothesis being wrong, potentially leading to inconclusive results [6] | Longer research process (hypothesis generation must be followed by testing); requires careful multiple testing correction [6] |

RNA-Seq Experimental Design for MoA Studies

Foundational Considerations

A successful RNA-Seq experiment for compound MoA studies, regardless of the overarching approach, begins with a clear definition of aims and objectives [7]. Key questions to consider include:

- Is a global, unbiased readout needed, or is a targeted approach more suitable? [7]

- What is the expected magnitude of differential expression?

- Is the chosen model system (e.g., cell line, organoid) suitable for screening the desired drug effects? [7]

- What are the potential sources of biological and technical variation, and how can they be separated from genuine drug-induced effects? [7]

Sample Size, Power, and Replication

The sample size has a significant impact on the quality and reliability of RNA-Seq results [7]. Statistical power—the ability to detect genuine differential expression—is influenced by biological variation, study complexity, cost, and sample availability [7].

Replication is non-negotiable for robust conclusions.

- Biological Replicates are independent samples (e.g., from different animals, cell culture passages) within the same experimental group. They account for natural biological variation and are critical for generalizability. At least 3 biological replicates per condition are typically recommended, with 4-8 being ideal for most experiments [7].

- Technical Replicates involve processing the same biological sample multiple times to assess technical variation introduced by the workflow itself [7].

Table 2: Replication Strategy for RNA-Seq Experiments

| Replicate Type | Definition | Purpose | Example in MoA Study |
|---|---|---|---|
| Biological Replicate | Different biological samples or entities [7] | To assess biological variability and ensure findings are reliable and generalizable [7] | 3 different cell culture plates treated with the same compound and control. |
| Technical Replicate | The same biological sample, measured multiple times [7] | To assess and minimize technical variation from sequencing runs and lab workflows [7] | Splitting the RNA extract from one plate into 3 separate library prep reactions. |
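How many biological replicates are enough depends on effect size, biological variability and read depth. As a rough, hedged guide, the normal-approximation formula used by the Bioconductor package RNASeqPower estimates replicates per group as n = 2 (z_(1-alpha/2) + z_power)^2 (1/mu + CV^2) / (ln FC)^2. Here mu is the expected read count of a gene, CV the biological coefficient of variation, and FC the fold change to detect. The inputs below are illustrative assumptions, and alpha should be far smaller than 0.05 when thousands of genes are tested.

```python
from math import ceil, log
from statistics import NormalDist


def replicates_per_group(mean_count, cv, fold_change, alpha=0.05, power=0.8):
    """Approximate biological replicates per group for one gene.

    mean_count: expected read count of the gene in each sample
    cv: biological coefficient of variation between replicates
    fold_change: smallest fold change worth detecting (e.g. 2.0)
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided test
    z_power = NormalDist().inv_cdf(power)
    n = (2 * (z_alpha + z_power) ** 2 * (1 / mean_count + cv ** 2)
         / log(fold_change) ** 2)
    return ceil(n)


print(replicates_per_group(10, 0.4, 2.0))               # single test
print(replicates_per_group(10, 0.4, 2.0, alpha=0.001))  # stricter threshold
```

With these inputs the sketch returns 9 replicates at alpha = 0.05 and 19 at alpha = 0.001. Because the biological CV term dominates once depth is moderate, adding replicates usually buys more power than adding reads.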

Critical Wet-Lab Workflow Decisions

The aims of the study directly dictate the wet-lab workflow [7].

Library Preparation Method: The choice is critical and depends on the sample type, RNA quality, and the biological question.

- Poly(A) Enrichment: Selects for mRNA with polyadenylated tails, providing a detailed view of coding transcripts. It is prone to 3' coverage bias when RNA is degraded [9] [10].

- Ribosomal RNA Depletion: Removes abundant ribosomal RNA, allowing for the sequencing of both coding and non-coding RNA. It performs better than poly(A) selection on degraded samples [9] [10].

- RNA Capture: Uses probes to target specific exons and is particularly robust for highly degraded samples, such as those from FFPE tissues [10].

- 3' mRNA-Seq (e.g., Discovery-seq): A cost-efficient, high-throughput method ideal for large-scale compound screens where the goal is gene expression and pathway analysis rather than full isoform characterization [8] [7].

Sample Quality and Quantity: For low-quality (degraded) or low-quantity samples, ribosomal depletion or exon capture methods are generally superior to poly(A) enrichment [10]. A comparative study found that ribosomal depletion protocols can generate accurate data even with inputs as low as 1-2 ng for degraded RNA, while exon capture performs best on highly degraded samples down to 5 ng input [10].

Controls: Include appropriate controls such as untreated and vehicle controls and, for large-scale experiments, artificial spike-in controls (e.g., SIRVs). Spike-ins are invaluable for measuring assay performance, normalizing data, and assessing technical variability [7].
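One way to use spike-ins to measure assay performance is to check how linearly observed counts track the known input amounts on a log-log scale: a slope near 1 and R² near 1 indicate good quantitative behavior. The sketch is a plain least-squares fit, and the input amounts and counts are invented.

```python
from math import log2


def spike_in_fit(expected_amounts, observed_counts, pseudocount=1.0):
    """Least-squares fit of log2(observed + pseudocount) on log2(expected).

    Returns (slope, intercept, r_squared); a slope near 1 and r_squared
    near 1 indicate counts that scale linearly with input amount.
    """
    xs = [log2(a) for a in expected_amounts]
    ys = [log2(c + pseudocount) for c in observed_counts]
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    r_squared = sxy ** 2 / (sxx * syy)
    return slope, intercept, r_squared


# Hypothetical spike-in input amounts (arbitrary units) and read counts
expected = [1, 2, 4, 8, 16]
observed = [3, 7, 15, 31, 63]
print(spike_in_fit(expected, observed))
```

Comparing these fit parameters across samples or batches helps flag libraries whose technical behavior deviates from the rest.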

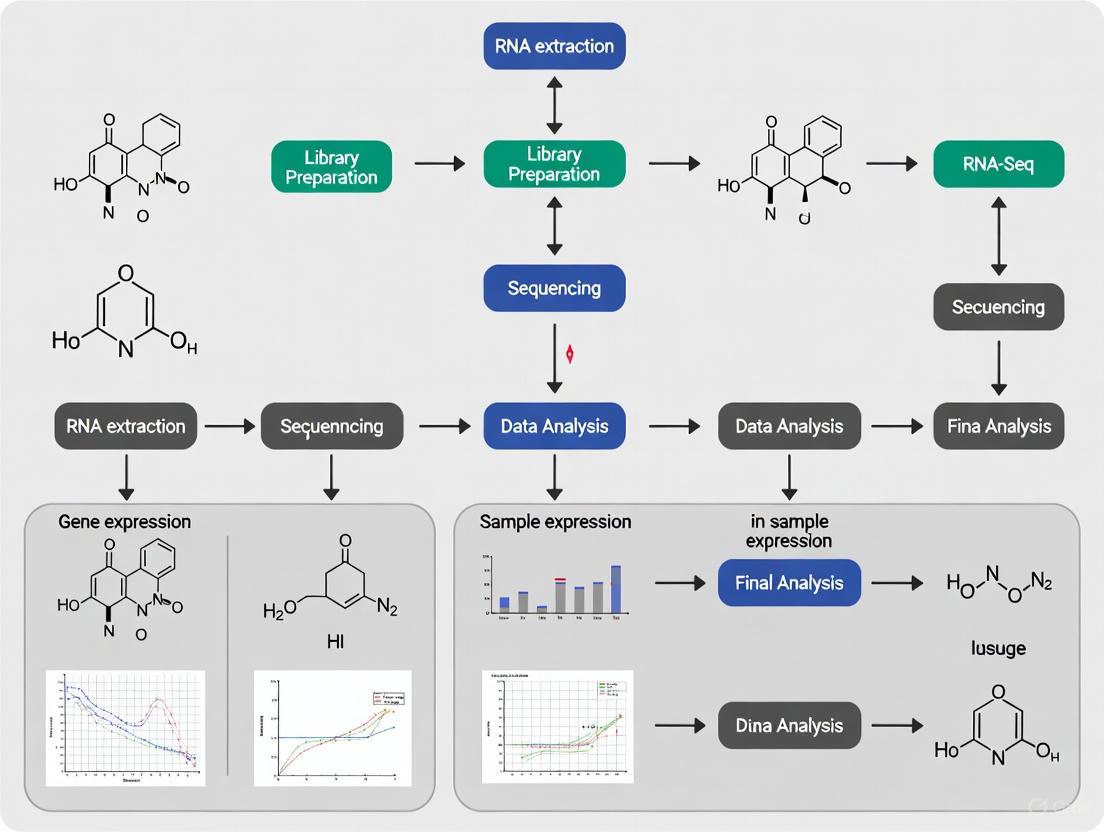

The following workflow diagram illustrates the key decision points in designing an RNA-Seq study for compound MoA research.

Detailed Methodological Protocols

Protocol A: Hypothesis-Driven MoA Validation Study

This protocol is designed to validate whether a compound acts through a specific, pre-defined pathway.

Objective: To test the hypothesis that "Compound X induces G1 cell cycle arrest in the A549 cell line via transcriptional suppression of Cyclin D1."

Step-by-Step Workflow:

Cell Culture and Treatment:

- Culture A549 cells in standard conditions.

- Seed cells in independent cultures (e.g., separate plates from different passages) to provide at least three biological replicates per condition.

- Treat with Compound X at the predetermined IC50 concentration. Include a vehicle control (e.g., DMSO).

- Harvest cells at multiple time points (e.g., 6h, 12h, 24h) post-treatment to capture kinetic effects.

RNA Isolation:

- Lyse cells and isolate total RNA using a silica-membrane column kit.

- Treat samples with DNase to remove genomic DNA contamination [9].

- Assess RNA quality using an automated electrophoresis system (e.g., Agilent TapeStation) to assign an RNA Integrity Number (RIN). Only proceed with samples having RIN > 8.0 [11] [10].

Library Preparation and Sequencing:

- Use a poly(A) enrichment-based library prep kit (e.g., TruSeq Stranded mRNA) to focus on coding mRNA [10].

- Add RNA spike-in controls (e.g., ERCC) to the total RNA before library preparation to monitor technical performance [7].

- Perform single-end sequencing (e.g., 75 bp) on an Illumina platform to a depth of 20-30 million reads per sample.

Data Analysis:

- Quality Control: Assess raw read quality using FastQC.

- Alignment: Map reads to the human reference genome (e.g., GRCh38) using a splice-aware aligner like STAR.

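- Quantification: Generate a genome-wide gene count matrix using featureCounts or HTSeq, then focus interpretation on a pre-selected gene set related to cell cycle regulation [11]. Count and normalize all genes rather than only the gene set, because normalization factors are estimated across the whole transcriptome.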

- Differential Expression: Perform statistical testing (e.g., using edgeR or DESeq2) to compare treated vs. control groups at each time point, with a primary focus on Cyclin D1 and other G1/S phase genes [11].

- Validation: Confirm key findings (e.g., Cyclin D1 downregulation) using an orthogonal method like qRT-PCR.
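qRT-PCR confirmation of an RNA-Seq fold change commonly uses the 2^-ΔΔCt (Livak) method, which assumes near-100% and similar amplification efficiency for the target and reference assays. The Ct values and the use of GAPDH as the reference gene are hypothetical. Any reference gene should be checked for stability under compound treatment, for example in the RNA-Seq data itself.

```python
def ddct_fold_change(ct_target_treated, ct_ref_treated,
                     ct_target_control, ct_ref_control):
    """Relative expression (treated vs control) by the 2^-ddCt method.

    Inputs are mean Ct values; assumes ~100% PCR efficiency for both assays.
    """
    dct_treated = ct_target_treated - ct_ref_treated   # normalize to reference
    dct_control = ct_target_control - ct_ref_control
    ddct = dct_treated - dct_control
    return 2.0 ** (-ddct)


# Hypothetical mean Ct values: CCND1 (target) and GAPDH (reference)
fc = ddct_fold_change(26.0, 18.0, 24.0, 18.0)
print(fc)  # 0.25, i.e. a 4-fold decrease in the treated cells
```

A qRT-PCR fold change in the same direction and of similar magnitude as the RNA-Seq log2 fold change supports the sequencing result.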

Protocol B: Unbiased MoA Deconvolution Study

This protocol is designed for situations where the MoA of a compound is completely unknown.

Objective: To identify the global transcriptomic changes and potential MoA of a novel Compound Y on primary human hepatocytes.

Step-by-Step Workflow:

Sample Preparation:

- Treat primary human hepatocytes from 5 different donors (biological replicates) with Compound Y at its IC50.

- Include vehicle controls for each donor to account for donor-to-donor variability.

- Harvest cells at a single, early time point (e.g., 8h) to capture primary transcriptional responses.

RNA Isolation and QC:

- Isolate total RNA. Given the potential for moderate degradation in primary cells, prioritize methods that maintain RNA integrity.

- Assess RNA quality. Proceed with samples with RIN > 7.0.

Library Preparation and Sequencing:

- Use a ribosomal RNA depletion kit (e.g., Ribo-Zero) to capture both coding and non-coding RNA species, allowing for a truly global analysis [9] [10].

- Include UMIs (Unique Molecular Identifiers) in the library prep protocol to correct for PCR amplification biases [8].

- Perform paired-end sequencing (e.g., 2x100 bp) on an Illumina platform to a depth of 40-50 million reads per sample to facilitate isoform-level analysis.
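UMI-based deduplication treats reads that share an alignment position, strand and UMI as PCR copies of one original molecule. The sketch uses exact UMI matching only; dedicated tools such as UMI-tools also merge UMIs that differ by sequencing errors (its default "directional" method). The read tuples are a simplified, hypothetical representation of aligned reads.

```python
from collections import defaultdict


def count_molecules(alignments):
    """Count unique molecules per gene from UMI-tagged alignments.

    alignments: iterable of (gene, start_position, strand, umi) tuples.
    Reads with identical position, strand and UMI are collapsed as PCR
    duplicates (exact UMI matching only, no error correction).
    """
    molecules = defaultdict(set)
    for gene, start, strand, umi in alignments:
        molecules[gene].add((start, strand, umi))
    return {gene: len(keys) for gene, keys in molecules.items()}


reads = [
    ("GENE1", 1050, "+", "ACGTAC"),
    ("GENE1", 1050, "+", "ACGTAC"),  # PCR duplicate of the read above
    ("GENE1", 1050, "+", "TTGACA"),  # same position, different molecule
    ("GENE2", 8800, "-", "ACGTAC"),
]
print(count_molecules(reads))  # {'GENE1': 2, 'GENE2': 1}
```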

Data Analysis:

- Quality Control and Processing: Process raw data as in Protocol A, using UMI-aware pipelines for accurate quantification.

- Unsupervised Analysis: Begin with exploratory analyses like Principal Component Analysis (PCA) to visualize global sample relationships and identify potential outliers [11].

- Differential Expression: Perform genome-wide differential expression testing without pre-filtering for specific genes. Apply strict multiple testing correction (e.g., FDR < 0.05).

- Pathway and Network Analysis: Use pathway enrichment tools to identify significantly perturbed biological processes, pathways, and functions, thereby generating new hypotheses about the compound's MoA [11]. Over-representation tools (e.g., Enrichr) take the list of differentially expressed genes, whereas GSEA takes the full gene list ranked by a differential expression statistic.
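The two statistical steps in this workflow can be sketched in plain Python. The first is Benjamini-Hochberg adjustment of genome-wide p-values. The second is the one-sided hypergeometric (Fisher exact) test that over-representation tools such as Enrichr apply to a DEG list. In practice, DESeq2 and edgeR already report BH-adjusted values. The gene counts in the example are hypothetical.

```python
from math import comb


def benjamini_hochberg(pvalues):
    """Benjamini-Hochberg adjusted p-values, returned in the input order."""
    m = len(pvalues)
    order = sorted(range(m), key=lambda i: pvalues[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down, enforcing monotonicity
    for rank in range(m, 0, -1):
        i = order[rank - 1]
        running_min = min(running_min, pvalues[i] * m / rank)
        adjusted[i] = running_min
    return adjusted


def overrepresentation_p(n_universe, n_pathway, n_degs, n_overlap):
    """One-sided hypergeometric P(overlap >= n_overlap).

    n_universe: genes tested; n_pathway: pathway genes among them;
    n_degs: differentially expressed genes; n_overlap: DEGs in the pathway.
    """
    upper = min(n_pathway, n_degs)
    hits = sum(comb(n_pathway, k) * comb(n_universe - n_pathway, n_degs - k)
               for k in range(n_overlap, upper + 1))
    return hits / comb(n_universe, n_degs)


print(benjamini_hochberg([0.01, 0.04, 0.03, 0.5]))
# Hypothetical: 40 of 300 DEGs fall in a 200-gene pathway, 15,000 genes tested
print(overrepresentation_p(15000, 200, 300, 40))
```

Pathway-level p-values from many gene sets should themselves be BH-adjusted before any pathway is declared enriched.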

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagent Solutions for RNA-Seq in MoA Studies

| Item | Function | Considerations for MoA Studies |
|---|---|---|
| DNase I Enzyme | Degrades genomic DNA during RNA isolation to prevent DNA contamination in RNA-seq libraries [9]. | Critical for ensuring that observed expression changes are RNA-derived, not from genomic DNA. |
| Poly(dT) Magnetic Beads | Enriches for eukaryotic mRNA by binding to the polyadenylated (poly(A)) tail [9]. | Ideal for high-quality RNA from cell lines; not suitable for non-polyadenylated RNAs or degraded samples [10]. |
| Ribo-Zero / rRNA Depletion Kit | Selectively removes abundant ribosomal RNA (rRNA) from total RNA, allowing sequencing of other RNA species [9] [10]. | Preferred for degraded samples or when studying non-coding RNAs [10]. |
| RNA Spike-In Controls (e.g., SIRVs, ERCC) | Exogenous RNA added in known quantities to the sample before library prep [7]. | Essential for monitoring technical performance, normalization, and quantitative accuracy in large-scale screens [7]. |
| UMI Adapters | Oligonucleotide tags that provide a unique identifier to each mRNA molecule before PCR amplification [8]. | Reduces quantitative bias from PCR amplification, improving accuracy for differential expression analysis. |
| TruSeq RNA Access | Library prep kit that uses exome capture probes to enrich for coding RNA from degraded samples [10]. | The best-performing method for highly degraded samples, such as FFPE tissues [10]. |

The strategic selection between a hypothesis-driven and an unbiased discovery approach is a cornerstone of effective research into compound mode of action. The hypothesis-driven path offers focus and efficiency for validation studies, while the unbiased discovery approach opens the door to novel, breakthrough insights. By aligning the research question with the appropriate experimental framework—meticulously planning the design, replication, library preparation, and analysis—researchers can leverage the full power of RNA-Seq to deconvolve the mechanisms of therapeutic compounds and accelerate the drug discovery pipeline.

A critical challenge in modern drug discovery is elucidating a compound's precise Mode of Action (MoA), which describes the biological interactions through which a molecule produces its pharmacological effect [12]. Transcriptional dynamics, or the changes in gene expression over time, serve as a central gateway to understanding these mechanisms [13]. However, a fundamental distinction must be made between primary and secondary transcriptional effects. Primary effects are the direct, immediate consequences of a compound interacting with its cellular target(s). Secondary effects are the subsequent, downstream consequences resulting from the primary transcriptional changes and other cellular feedback mechanisms [14]. Accurately distinguishing between these is paramount, as primary effects reveal the initial therapeutic intervention point, while secondary effects can illuminate efficacy, resistance mechanisms, and potential side-effects [12].

Traditional mRNA-sequencing (RNA-Seq) measures cellular mRNA concentrations, but it faces an inherent limitation in temporal resolution due to the substantial lag between changes in transcriptional activity and detectable changes in mRNA levels. This lag, resulting from the time required for transcription, post-transcriptional processing, and the buffering capacity of pre-existing mRNAs, makes it difficult to separate primary from secondary regulatory events, as significant changes may require hours to detect [14]. This application note details how advanced RNA-Seq protocols and analytical frameworks can overcome this challenge, providing researchers with robust methodologies to deconvolve complex transcriptional responses and accelerate MoA studies.
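The buffering effect of pre-existing mRNA can be made quantitative with a first-order turnover model, dm/dt = k_s - k_d * m, where k_s is the synthesis rate and k_d = ln(2) / half-life is the decay rate. After a sudden change in k_s, the mRNA level approaches its new steady state as 1 - exp(-k_d * t). Stable transcripts therefore change slowly even when transcription changes instantly. The half-lives below are illustrative assumptions.

```python
from math import exp, log


def fraction_of_change(t_hours, half_life_hours):
    """Fraction of the eventual change in mRNA level reached after t_hours,
    assuming first-order decay and an instantaneous change in synthesis rate."""
    k_d = log(2) / half_life_hours
    return 1 - exp(-k_d * t_hours)


for half_life in (1, 4, 10):  # hypothetical mRNA half-lives in hours
    frac = fraction_of_change(1.0, half_life)
    print(f"half-life {half_life} h: {frac:.3f} of the change after 1 h")
```

For a transcript with a 10-hour half-life, only about 7% of the eventual change is visible after one hour (the printed fractions are 0.500, 0.159 and 0.067), whereas nascent RNA methods read out the change in synthesis itself.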

Experimental Design for Temporal Resolution

A carefully considered experimental design is the most crucial aspect of any RNA-Seq study aimed at dissecting transcriptional dynamics [15]. The objective is to capture the transcriptional response at a resolution fine enough to identify the earliest initiating events.

Key Considerations for Robust Design

- Hypothesis and Objectives: Begin with a clear hypothesis about the expected drug effects. This will guide critical decisions on the model system, time points, and controls. Determine if you need quantitative gene expression data or qualitative data, such as isoform or splice variation information [15].

- Time Course Selection: The choice of time points is critical. Drug effects on gene expression can vary dramatically over time. To capture primary effects, very early time points (e.g., minutes) are essential. A kinetic RNA-Seq approach makes it possible to distinguish primary from secondary drug effects and is particularly useful in MoA studies [15]. The use of multiple, tightly spaced time points is a defining feature of successful studies, enabling the observation of response waves [14].

- Biological Replicates: Biological replicates—independent samples for the same experimental condition—are required to account for natural biological variation. At least 3 biological replicates per condition are typically recommended, but between 4–8 replicates per sample group are ideal for most experimental requirements, especially when dealing with the high variability that can dampen a genuine drug-induced signal [15].

- Pilot Studies: Conducting a pilot study with a representative sample subset is an excellent way to validate experimental parameters, including time course spacing and variability, before committing to a full-scale, resource-intensive experiment [15].

Table 1: Key Experimental Design Considerations for Temporal Transcriptomics

| Design Factor | Recommendation | Rationale |
|---|---|---|
| Initial Time Points | Within 10-30 minutes of treatment [14] | Captures immediate primary transcriptional responses before secondary effects manifest. |
| Time Course Density | Multiple, tightly spaced points (e.g., 10, 20, 40, 60 min) [14] | Enables observation of the dynamic progression of the transcriptional response. |
| Biological Replicates | Minimum of 3, ideally 4-8 per time point [15] | Ensures statistical power and reliability to account for biological variability. |
| Controls | Untreated and vehicle (mock) controls | Provides a baseline for identifying genuine drug-induced changes. |

Figure 1: Experimental time course design for separating primary and secondary drug effects. Early, dense time points are critical for capturing initial responses.
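Translating these recommendations into a sample sheet shows how quickly library numbers grow. The sketch crosses the example time points from the table above (10, 20, 40 and 60 min) with matched vehicle and compound arms and four biological replicates. The sample-naming scheme and the randomized processing order (used so that time point is not confounded with processing batch) are assumptions.

```python
import random
from itertools import product


def build_sample_sheet(time_points_min, conditions, n_replicates, seed=1):
    """One row per library; rows are shuffled into a random processing order."""
    rows = [
        {"sample_id": f"{cond}_t{t}min_rep{rep}", "condition": cond,
         "time_min": t, "replicate": rep}
        for t, cond, rep in product(time_points_min, conditions,
                                    range(1, n_replicates + 1))
    ]
    random.Random(seed).shuffle(rows)  # decouple processing order from design
    for order, row in enumerate(rows, start=1):
        row["processing_order"] = order
    return rows


sheet = build_sample_sheet([10, 20, 40, 60], ["vehicle", "compound"], 4)
print(len(sheet))  # 4 time points x 2 arms x 4 replicates = 32 libraries
```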

Advanced RNA-Seq Methodologies

Choosing the appropriate RNA-Seq methodology is a decisive factor in successfully capturing transcriptional dynamics. While standard RNA-Seq is valuable, specific protocols offer superior temporal resolution.

Nascent RNA Sequencing

Nascent RNA sequencing techniques, such as PRO-seq (Precision Run-On sequencing), directly measure the production of new RNAs by capturing RNA polymerase activity. This approach has a significant advantage: it can detect changes in transcription in minutes rather than hours [14]. By assaying transcription itself rather than the steady-state mRNA pool, these methods eliminate the lag from RNA processing and turnover, allowing for the direct detection of primary responses before secondary effects cascade through the cellular system. As demonstrated in a study on the compound celastrol, PRO-seq can reveal dramatic transcriptional effects within 10 minutes of treatment, including a two-wave response pattern that delineates early and later regulatory events [14].

High-Throughput 3'-End Sequencing

For large-scale drug screens involving many compounds, doses, or time points, 3'-end mRNA-Seq methods (e.g., QuantSeq) are a cost-effective and efficient alternative. These methods are ideal for gene expression and pathway analysis and facilitate the processing of larger sample numbers, often by enabling library preparation directly from cell lysates, thus omitting the need for RNA extraction [15]. While they do not offer the same direct view of transcription as nascent RNA-Seq, their efficiency makes them well-suited for generating the large, dense time-course datasets needed for kinetic analysis of drug effects.

Table 2: Comparison of RNA-Seq Methodologies for MoA Studies

| Methodology | Key Feature | Best Suited For | Temporal Resolution |
|---|---|---|---|
| Standard RNA-Seq | Measures steady-state mRNA levels | Profiling overall expression changes; identifying long-term outcomes. | Hours to days |
| Nascent RNA-Seq (e.g., PRO-seq) | Captures actively transcribing RNA polymerases | Identifying primary effects; studying rapid transcriptional regulation and enhancer activity. | Minutes |
| 3'-End mRNA-Seq (e.g., QuantSeq) | Focused on the 3'-end of transcripts; high-throughput | Large-scale screens; dose-response and time-course studies with many samples; pathway analysis. | Hours (finer kinetics through dense time-point sampling) |

Computational Analysis and Data Integration

The complex, high-dimensional data generated from temporal RNA-Seq studies require robust bioinformatic analyses. Consulting with a bioinformatician during the experimental design phase is essential for success [15].

Differential Expression Analysis

Time-course data necessitates specialized statistical methods to identify differentially expressed genes across multiple time points. Tools like DESeq2 can be applied to read counts from nascent or standard RNA-Seq to pinpoint genes with significant expression changes at each time point relative to the untreated control [14]. In a PRO-seq study on celastrol, this approach identified that ~80% of differentially expressed genes were down-regulated, with a subset showing rapid and dramatic repression within the first 10 minutes, highlighting the immediate primary impact of the compound [14].
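DESeq2 itself fits a negative binomial generalized linear model with shrunken dispersion estimates and Wald or likelihood-ratio tests. That machinery should come from the package, not be reimplemented by hand. The sketch below illustrates only two ingredients of the workflow. The first is median-of-ratios size-factor normalization, which is the normalization DESeq2 uses. The second is a naive log2 fold change of one time point against the untreated control. The sketch performs no statistical testing, and the sample names and counts are hypothetical.

```python
import math
from statistics import median


def size_factors(counts):
    """Median-of-ratios size factors, the normalization idea used by DESeq2.

    counts: dict of sample name -> list of raw counts (same gene order everywhere).
    Genes with a zero count in any sample are left out of the reference.
    """
    samples = list(counts)
    n_genes = len(counts[samples[0]])
    log_geo_means = []
    for g in range(n_genes):
        values = [counts[s][g] for s in samples]
        if all(v > 0 for v in values):
            log_geo_means.append(sum(math.log(v) for v in values) / len(values))
        else:
            log_geo_means.append(None)  # gene excluded from size-factor estimation
    factors = {}
    for s in samples:
        log_ratios = [math.log(counts[s][g]) - ref
                      for g, ref in enumerate(log_geo_means) if ref is not None]
        factors[s] = math.exp(median(log_ratios))
    return factors


def log2_fold_changes(counts, treated, control, pseudocount=1.0):
    """Naive per-gene log2 fold change of mean normalized counts (no test)."""
    sf = size_factors(counts)
    n_genes = len(next(iter(counts.values())))
    lfcs = []
    for g in range(n_genes):
        t = sum(counts[s][g] / sf[s] for s in treated) / len(treated)
        c = sum(counts[s][g] / sf[s] for s in control) / len(control)
        lfcs.append(math.log2((t + pseudocount) / (c + pseudocount)))
    return lfcs


# Hypothetical counts for three genes: untreated control and one time point.
counts = {
    "ctrl_1": [100, 100, 100], "ctrl_2": [100, 100, 100],
    "t10min_1": [100, 100, 400], "t10min_2": [100, 100, 400],
}
print(log2_fold_changes(counts, ["t10min_1", "t10min_2"], ["ctrl_1", "ctrl_2"]))
```

To cover additional time points, call `log2_fold_changes` again with that time point's samples as `treated` and the same control group.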

Pathway and Network Analysis

Once differentially expressed genes are identified, pathway enrichment analysis is used to place them in a biological context. This helps determine if the drug-induced genes are involved in specific pathways, such as heat shock response or inflammation. Furthermore, regression approaches can be applied to time-course data to identify key transcription factors that drive the observed transcriptional responses [14].
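A common form of pathway enrichment is an over-representation test. It asks whether a pathway contains more differentially expressed genes than expected by chance, using the hypergeometric distribution. The sketch below is a minimal, dependency-free version of that test. The gene universe and the two "pathways" are hypothetical toy sets. HSPA1A, HSPA1B, DNAJB1 and HSPH1 are real heat-shock genes, but they do not form a curated gene set here. A real analysis would draw gene sets from curated resources such as GO, KEGG or Reactome and would correct the resulting p-values for multiple testing (e.g., Benjamini-Hochberg).

```python
from math import comb


def enrichment_p_value(n_universe, n_pathway, n_de, n_overlap):
    """One-sided hypergeometric P(X >= n_overlap) for over-representation.

    n_universe: genes tested; n_pathway: pathway genes among them;
    n_de: differentially expressed genes; n_overlap: DE genes in the pathway.
    """
    upper = min(n_pathway, n_de)
    tail = sum(comb(n_pathway, k) * comb(n_universe - n_pathway, n_de - k)
               for k in range(n_overlap, upper + 1))
    return tail / comb(n_universe, n_de)


def rank_pathways(de_genes, pathways, universe):
    """Return (pathway, overlap, p-value) tuples sorted by p-value."""
    de = set(de_genes) & universe
    results = []
    for name, genes in pathways.items():
        members = set(genes) & universe
        k = len(de & members)
        p = enrichment_p_value(len(universe), len(members), len(de), k)
        results.append((name, k, p))
    return sorted(results, key=lambda r: r[2])


# Hypothetical universe of 24 tested genes and two toy gene sets.
universe = {f"GENE{i}" for i in range(1, 21)} | {"HSPA1A", "HSPA1B", "DNAJB1", "HSPH1"}
pathways = {
    "heat_shock_demo": ["HSPA1A", "HSPA1B", "DNAJB1", "HSPH1"],
    "other_demo": ["GENE1", "GENE2", "GENE3", "GENE4"],
}
de_genes = ["HSPA1A", "HSPA1B", "DNAJB1", "GENE10"]
for row in rank_pathways(de_genes, pathways, universe):
    print(row)
```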

Deep Learning for Prediction

Emerging computational tools like PRnet represent a significant advancement. PRnet is a deep generative model that predicts transcriptional responses to novel chemical perturbations. It uses the compound's molecular structure (as a SMILES string) and the unperturbed cellular transcriptome to forecast the perturbed transcriptional profile. This model can be used for in-silico drug screening by identifying compounds whose predicted expression signature opposes a disease signature, thereby nominating new therapeutic candidates for experimental validation [16].

Figure 2: Workflow of the PRnet deep learning model for predicting transcriptional responses to novel compounds, enabling in-silico screening.
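The screening step described for PRnet can be illustrated as follows: rank compounds by how strongly their predicted signature opposes a disease signature. The sketch does not reproduce PRnet or its own scoring. It uses plain Pearson correlation between predicted and disease log2 fold changes over shared genes as a simple, generic stand-in, so that the most negative correlation marks the strongest reversal. Connectivity-style methods often use rank-based scores instead. All gene names, compound names and values are hypothetical, and each signature needs at least two genes with non-constant values.

```python
import math


def pearson(x, y):
    """Pearson correlation; requires non-constant x and y."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def rank_by_reversal(disease_lfc, predicted_lfc):
    """Rank compounds by how strongly their (predicted) signature opposes the
    disease signature: most negative correlation first.

    disease_lfc: gene -> log2 fold change (disease vs. healthy).
    predicted_lfc: compound -> {gene -> predicted log2 fold change}.
    """
    scores = []
    for compound, signature in predicted_lfc.items():
        genes = sorted(set(disease_lfc) & set(signature))
        r = pearson([disease_lfc[g] for g in genes], [signature[g] for g in genes])
        scores.append((compound, r))
    return sorted(scores, key=lambda item: item[1])


disease = {"GENE_A": 2.0, "GENE_B": -1.0, "GENE_C": 0.5}
predicted = {
    "compound_x": {"GENE_A": -1.8, "GENE_B": 0.9, "GENE_C": -0.4},
    "compound_y": {"GENE_A": 1.5, "GENE_B": -0.7, "GENE_C": 0.6},
}
print(rank_by_reversal(disease, predicted))
```

In this toy example, `compound_x` ranks first because its predicted changes run opposite to the disease signature. It would be the candidate nominated for experimental validation.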

The Scientist's Toolkit: Research Reagent Solutions

The following table details key reagents and materials essential for implementing the protocols described in this application note.

Table 3: Essential Research Reagents and Materials

| Reagent/Material | Function/Application | Example Use Case |
|---|---|---|
| SIRV Spike-in Controls | Artificial RNA controls to measure assay performance, dynamic range, and normalization accuracy [15]. | Quality control and data normalization in large-scale RNA-Seq experiments to ensure consistency. |
| PRO-seq / GRO-seq Reagents | Specific reagents for nascent RNA sequencing protocols to capture newly synthesized RNA [14]. | Identifying primary transcriptional effects within minutes of drug treatment. |
| QuantSeq Library Prep Kit | A 3'-end mRNA-Seq library preparation kit for focused gene expression profiling [17]. | High-throughput, cost-effective drug screening across multiple compounds and time points. |
| gDNA Removal Kit | Critical pre-treatment to remove genomic DNA contamination from RNA samples. | Ensuring clean RNA-Seq libraries free of gDNA-derived reads. |
| rRNA Depletion Kit | Removal of abundant ribosomal RNAs to enrich for mRNA and non-coding RNAs. | Whole transcriptome analysis where non-polyadenylated RNAs are of interest. |
| Cell Line or Organoid Models | Biologically relevant model systems for drug treatment. | Using patient-derived organoids to study intra-tumor response heterogeneity [17]. |

The selection of an appropriate biological model system is a critical first step in the design of RNA-Seq protocols for compound mode of action (MoA) studies. The model must accurately recapitulate key aspects of human biology while remaining experimentally tractable for high-throughput screening. Traditional two-dimensional (2D) cell lines, patient-derived xenografts (PDX), and more recently developed three-dimensional (3D) organoids each present distinct advantages and limitations for probing drug effects transcriptomically [18]. This Application Note provides a structured comparison of these systems and details optimized RNA-Seq protocols tailored for each model, with a specific focus on leveraging organoid technology for high-content MoA deconvolution in drug discovery pipelines.

Comparative Analysis of Model Systems

Characteristics and Applications

Table 1: Comparative analysis of model systems for RNA-Seq in drug discovery.

| Feature | Traditional Cell Lines | Patient-Derived Xenografts (PDX) | Organoids |
|---|---|---|---|
| Complexity | 2D monoculture; low complexity [18] | In vivo; maintains 3D structure [18] | 3D in vitro culture; self-organizing [19] [20] |
| Physiological Relevance | Low; lacks tissue context and cellular crosstalk [18] | High; interacts with host stroma and immune cells [18] | High; recapitulates tissue microarchitecture and function [19] [20] |
| Genetic Stability | Prone to genetic drift and instability over time [18] | Mouse stromal cells eventually replace human stroma [18] | Retains genetic and phenotypic heterogeneity of original tissue over long-term culture [18] |
| Throughput & Scalability | High; suitable for high-throughput screening [18] | Low; time-consuming and expensive [18] | Moderate to high; scalable for drug screening [19] [21] |
| Personalized Medicine Potential | Low; limited patient specificity | Moderate; patient-derived but requires immunodeficient mice [18] | High; can be biobanked from individual patients [18] |
| Typical RNA-Seq Applications | Initial target identification, high-throughput compound screening [15] | Preclinical validation of drug response [18] | High-content MoA studies, personalized drug screening, disease modeling [19] [21] |

Model System Selection Guidelines

- Choose Traditional Cell Lines for large-scale, high-throughput initial compound screens where cost, speed, and scalability are prioritized over physiological complexity [15] [18].

- Utilize Patient-Derived Organoids for high-content MoA studies, patient-specific drug response profiling, and disease modeling where retaining human tissue context and genetic heterogeneity is critical [19] [21] [20].

- Reserve PDX Models for late-stage preclinical validation of efficacy and toxicity, where in vivo context is necessary, acknowledging the higher costs and ethical considerations [18].

RNA-Seq Experimental Design for Compound MoA Studies

Foundational Design Considerations

A robust RNA-Seq experimental design is paramount for generating meaningful MoA data. Begin with a clear hypothesis regarding the expected transcriptional changes induced by the compound [15]. Key considerations include:

- Biological vs. Technical Replicates: Biological replicates (independent biological samples per condition) are essential to account for natural variation and ensure findings are generalizable. Technical replicates (repeated library preparation or sequencing of the same sample) capture only technical noise and cannot substitute for them. A minimum of 3 biological replicates per condition is typically recommended, with 4-8 being ideal for increased reliability [15].

- Sample Size and Power: The sample size significantly impacts the ability to detect genuine differential expression. For precious samples (e.g., patient organoids), maximize the use of available material. For more accessible systems (e.g., cell lines), include larger sample numbers to enhance statistical power [15].

- Pilot Studies: Conduct pilot experiments with a representative sample subset to validate experimental parameters, wet lab workflows, and data analysis pipelines before committing to a full-scale study [15].
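The sample-size consideration above can be roughed out with a normal-approximation formula. It estimates the biological replicates per group needed to detect a given log2 fold change, assuming the between-replicate standard deviation of log2 expression (`sd_log2`) is known, for example from a pilot study. This is a simplified sketch. It ignores count-level (negative binomial) noise and the t-distribution at small n, and it handles multiple testing only by letting you lower `alpha`. Treat its output as a lower bound and prefer dedicated RNA-seq power tools (e.g., the Bioconductor package RNASeqPower) for final decisions.

```python
import math
from statistics import NormalDist


def replicates_per_group(log2_fc, sd_log2, alpha=0.05, power=0.8):
    """Normal-approximation sample size for a two-group comparison of mean
    log2 expression: n = 2 * (z_(1-alpha/2) + z_power)^2 * sd^2 / log2_fc^2.

    sd_log2 is the between-replicate SD of log2 expression (e.g. from a pilot).
    """
    z = NormalDist()
    z_alpha = z.inv_cdf(1 - alpha / 2)
    z_power = z.inv_cdf(power)
    n = 2 * (z_alpha + z_power) ** 2 * sd_log2 ** 2 / log2_fc ** 2
    return math.ceil(n)


# Detecting a 2-fold change (log2 FC = 1) with a replicate SD of 0.5 log2 units,
# at a nominal alpha and at a stricter alpha as a crude stand-in for multiple testing.
print(replicates_per_group(1.0, 0.5))
print(replicates_per_group(1.0, 0.5, alpha=0.001))
```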

Critical Experimental Parameters

- Time Points: Compound effects on gene expression are dynamic. Multiple time points may be necessary to capture primary drug effects (direct target engagement) and distinguish them from secondary downstream consequences [15].

- Batch Effects: Design experiments to minimize and enable correction for batch effects—systematic, non-biological variations from processing samples across different times or locations. A balanced plate layout and randomized processing order are recommended [15].

- Controls: Include appropriate "no treatment" and vehicle ("mock") controls. Artificial spike-in RNA controls are valuable for monitoring technical performance, normalization, and data quality throughout the assay [15].
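One simple way to use spike-in controls for monitoring technical performance is a dose-response check. Regress log2 observed counts on log2 known input amounts. A slope near 1 with a high R² suggests a roughly proportional response across the dynamic range, and spike-ins that drop out at low input indicate the detection limit. The sketch below is a minimal version of that check. The spike-in identifiers and amounts are hypothetical; real ERCC or SIRV mixes come with vendor-supplied concentration tables.

```python
import math


def spike_in_linearity(expected, observed):
    """Least-squares fit of log2(observed) on log2(expected) for detected spike-ins.

    expected: spike-in id -> known input amount (any consistent unit).
    observed: spike-in id -> measured count. Undetected spike-ins (count 0) are
    excluded from the fit and reported separately.
    Returns (slope, r_squared, undetected_ids).
    """
    detected = [i for i in expected if observed.get(i, 0) > 0]
    undetected = sorted(i for i in expected if observed.get(i, 0) <= 0)
    x = [math.log2(expected[i]) for i in detected]
    y = [math.log2(observed[i]) for i in detected]
    n = len(detected)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    return sxy / sxx, sxy ** 2 / (sxx * syy), undetected


expected = {"spike_01": 1.0, "spike_02": 2.0, "spike_03": 4.0, "spike_04": 8.0, "spike_05": 0.125}
observed = {"spike_01": 10, "spike_02": 20, "spike_03": 40, "spike_04": 80, "spike_05": 0}
print(spike_in_linearity(expected, observed))
```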

Specialized Protocols for Model Systems

Protocol 1: Targeted RNA-Seq in Organoids for High-Content MoA Screening

Application: TORNADO-seq (Targeted ORganoid NA-seq for Drug Discovery) is a cost-effective ($5 per sample) method for high-content screening that quantifies cell types and differentiation states in intestinal organoids, including responses to differentiation-inducing drugs [21].

Table 2: Key research reagents for organoid culture and TORNADO-seq.

| Reagent Category | Specific Examples | Function |
|---|---|---|
| Extracellular Matrix | Matrigel, ECM hydrogels, synthetic gels [19] | Provides 3D scaffold mimicking the tissue microenvironment. |
| Essential Medium Supplements | R-Spondin 1, Noggin, Wnt-3a (or CHIR99021), EGF [19] | Maintains stem cell niche and supports proliferation. |
| Tissue-Specific Factors | FGF7, FGF10 (lung), Neuregulin-1 (airway), Gastrin (GI) [19] | Promotes tissue-specific morphogenesis and self-renewal. |
| Library Prep Kit | Targeted RNA-Seq Kit (e.g., Lexogen) | Enables highly multiplexed, targeted gene expression profiling. |

Workflow:

- Organoid Culture & Compound Treatment: Culture normal and cancer organoids (e.g., colorectal cancer) in Matrigel with appropriate, defined medium [19] [21]. Treat organoids with compounds of interest across multiple doses.

- Organoid Harvesting & Lysis: Mechanically or enzymatically dissociate organoids. Lyse cells directly for RNA extraction.

- Targeted Library Preparation: Use a targeted RNA-Seq approach (e.g., 3'-end sequencing like QuantSeq) focusing on a predefined gene signature panel relevant to the biology and MoA hypotheses. This method is efficient for large sample numbers [15] [21].

- Sequencing & Analysis: Perform shallow sequencing on a high-throughput sequencer. Analyze data against the custom gene signature to evaluate compound-induced phenotypic changes, such as differentiation state shifts [21].
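The final analysis step above can be illustrated by scoring each sample against a gene signature. A common generic approach z-scores each signature gene across samples and averages the z-scores per sample. This sketch is not TORNADO-seq's published analysis. LGR5 and OLFM4 are real intestinal stem-cell markers, but the two-gene "signature", the sample names and the expression values are hypothetical. Z-scores are computed across the samples supplied, so scores are only comparable within one batch or plate.

```python
from statistics import mean, pstdev


def signature_scores(expr, signature):
    """Mean per-gene z-score of signature genes for each sample.

    expr: gene -> {sample -> log-scale normalized expression}; every gene is
    assumed to have the same samples. Signature genes missing from expr, or
    constant across samples, are skipped.
    """
    samples = list(next(iter(expr.values())))
    scores = {s: 0.0 for s in samples}
    used = 0
    for gene in signature:
        if gene not in expr:
            continue
        values = [expr[gene][s] for s in samples]
        sd = pstdev(values)
        if sd == 0:
            continue  # uninformative: identical in every sample
        mu = mean(values)
        for s in samples:
            scores[s] += (expr[gene][s] - mu) / sd
        used += 1
    return {s: v / used for s, v in scores.items()} if used else scores


expr = {  # hypothetical log2 normalized expression
    "LGR5": {"dmso": 6.0, "cmpd_a": 3.0, "cmpd_b": 6.5},
    "OLFM4": {"dmso": 8.0, "cmpd_a": 5.0, "cmpd_b": 8.2},
}
print(signature_scores(expr, ["LGR5", "OLFM4", "ASCL2"]))
```

A compound that lowers the stem-cell signature score relative to the vehicle (here `cmpd_a`) would be flagged as shifting the differentiation state.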

Diagram 1: TORNADO-seq workflow for organoid MoA screening.

Protocol 2: RNA Extraction and Sequencing from Primary Tissues

Application: This protocol is optimized for extracting high-quality RNA from primary tissues (e.g., surgical specimens, biopsies) for whole transcriptome sequencing, which is essential for benchmarking organoids or creating patient-specific models [19] [22].

Key Considerations and Troubleshooting:

- RNA Degradation: The primary risk is RNase contamination and improper sample handling. Solutions: Use RNase-free reagents and consumables; operate in a clean, dedicated area; wear gloves; flash-freeze fresh tissues in liquid nitrogen and store at -80°C; avoid repeated freeze-thaw cycles [22].

- Genomic DNA Contamination: Causes inhibition in downstream applications. Solutions: Reduce sample input volume if needed; use RNA extraction kits with DNase I digestion steps; employ reverse transcription reagents with genomic DNA removal modules [22].

- Low RNA Yield/Purity: Can result from incomplete homogenization, excessive sample input, or contaminants (protein, polysaccharides, fat). Solutions: Optimize homogenization conditions; adjust sample-to-reagent ratios (e.g., TRIzol volume); increase ethanol wash steps during purification [22].

Workflow:

- Sample Preservation: Immediately post-collection, snap-freeze tissue samples in liquid nitrogen and store at -80°C.

- Homogenization: Grind frozen tissue to a powder under liquid nitrogen. Transfer the powder to a lysis buffer containing a strong denaturant (e.g., guanidinium thiocyanate, as in TRIzol) and β-mercaptoethanol to inactivate RNases.

- RNA Extraction: Perform acid-phenol:chloroform extraction (e.g., with TRIzol) to separate RNA into the aqueous phase. Precipitate RNA with isopropanol.

- RNA Purification: Wash the pellet with 75% ethanol. Dissolve the RNA in RNase-free water. Use a DNase I treatment kit to remove residual genomic DNA.

- Quality Control: Assess RNA integrity (RIN > 8.0) using an Agilent Bioanalyzer or TapeStation. Quantify RNA accurately by fluorometry (e.g., Qubit).

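- Library Preparation: For ribodepletion, use the Ribo-Zero Gold kit to remove ribosomal RNA; for poly(A) selection, use oligo(dT) beads. Proceed with a standard stranded library prep matched to the enrichment strategy (e.g., Illumina TruSeq Stranded Total RNA for ribodepleted samples or TruSeq Stranded mRNA for poly(A)-selected samples).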

Diagram 2: RNA-seq workflow for primary tissues.

Advanced Applications and Integrative Analysis

Single-Cell RNA-Seq for Organoid Characterization

Single-cell RNA sequencing (scRNA-seq) resolves cellular heterogeneity within organoids, providing cell-type-level resolution for MoA studies. For example, scRNA-seq of human pancreatic organoids (hPOs) revealed distinct ductal subpopulations, from progenitor to mature states, which would be masked in bulk analyses [23]. This technique is crucial for understanding how compounds affect specific cell types within a complex 3D model.

Critical Tips for scRNA-seq:

- Pilot Experiment: Always conduct a pilot study to optimize dissociation protocols and confirm cell viability, as different organoid types vary in robustness [24].

- Cell Handling: Resuspend cells in an appropriate, EDTA-/Mg2+-/Ca2+-free buffer (e.g., PBS) to prevent interference with reverse transcription. Work quickly from cell collection to lysis or snap-freezing to minimize RNA degradation and transcriptome changes [24].

Quantitative Organoid Validation

Tools like the Web-based Similarity Analytics System (W-SAS) quantitatively assess the fidelity of organoids to native human organs by calculating a similarity percentage based on organ-specific gene expression panels (Organ-GEPs) derived from databases like GTEx [25]. This provides a standardized quality control metric, ensuring organoid models used in MoA studies are physiologically relevant.

Computational Clustering for Subtype Discovery

Feature Selection and Clustering of RNA-seq (FSCseq) is a model-based clustering algorithm designed specifically for RNA-seq count data. It can uncover novel molecular subtypes within cell lines or patient-derived samples, adjust for confounders like batch effects, and select cluster-discriminatory genes, thereby aiding in the interpretation of compound responses across different cellular subtypes [26].

The strategic selection of a model system, coupled with a rigorously designed RNA-Seq protocol, is fundamental to the successful deconvolution of a compound's mode of action. While traditional cell lines offer unmatched throughput for primary screens, and PDX models provide an in vivo context for validation, patient-derived organoids represent a powerful intermediate model that combines high physiological relevance with scalability for intermediate-to-high content MoA studies. By applying the specialized protocols and analytical frameworks outlined in this document—such as TORNADO-seq for high-content organoid screening, rigorous RNA extraction methods for primary tissues, and advanced integrative analyses like scRNA-seq—researchers can generate rich, mechanistically insightful transcriptomic data to accelerate the drug discovery process.

From Bench to Bioinformatics: Implementing RNA-Seq Protocols for MoA Analysis

High-Throughput Screening (HTS) is a critical tool in modern drug discovery, enabling researchers to rapidly test large libraries of chemical or biological compounds to identify promising "hit" compounds that interact with a specific biological target in a desired way [27]. The integration of transcriptomic analyses into this pipeline, particularly through advanced RNA sequencing (RNA-seq) methods, provides a powerful means to understand not just if a compound is active, but how it works. RNA-seq has become an indispensable tool in the drug development pipeline, allowing researchers to explore gene expression profiles, uncover mechanisms of action (MoA), and identify biomarkers of drug sensitivity or resistance [28].

However, traditional RNA-seq methods are often impractical for large-scale screens due to their cost, time requirements, and sensitivity to sample quality. This application note details two tailored solutions—3'-Seq and Discovery-seq—designed to overcome these limitations. These high-throughput workflows enable the transcriptomic phenotyping of thousands of samples, making comprehensive compound screening both feasible and cost-effective [8] [29]. A well-designed RNA-seq experiment begins with a clear hypothesis, which directly influences decisions on the best model system, sample size, sequencing depth, and the specific RNA-seq method to employ [28] [15].

3'-Seq (e.g., DRUG-seq, BRB-seq)

3'-Seq technologies represent a fundamental shift from traditional, full-length RNA-seq. They focus sequencing on the 3' end of mRNA transcripts, which is sufficient for robust gene expression quantification [28]. A key advantage of extraction-free 3' mRNA-seq methods like MERCURIUS DRUG-seq is the ability to process hundreds of cell or organoid samples simultaneously directly from cell lysates, eliminating tedious, time-consuming, and costly RNA isolation and cleanup steps [28]. These methods are typically massively multiplexed, allowing dozens to hundreds of samples to be processed in a single library preparation tube, drastically reducing per-sample costs and handling time [28]. They also demonstrate high sensitivity and robust performance even with degraded RNA samples (RIN as low as 2), which is often a concern for patient-derived samples or RNA from FFPE tissues [28].

Discovery-seq

Discovery-seq is another high-throughput method for performing 3' bulk RNA sequencing on thousands of samples within one experiment [8] [29]. It relies on molecular biology similar to that of other 3'-Seq methods, but it was developed to improve on existing protocols like DRUG-seq by enhancing sensitivity and reducing PCR bias, resulting in higher accuracy and lower cost [8] [29]. The workflow is highly automated and standardized, using robotics and automated steps to ensure both high throughput and high-quality results [8]. Clients typically submit washed and frozen cells or organoids in plates, and the protocol uses a direct in-well plate lysis method, removing the need for RNA extraction [29]. Discovery-seq offers a significant price reduction (up to a 10-fold decrease compared to traditional RNA-seq methods), making transcriptomic readouts accessible for high-throughput screens [29].

Comparative Analysis of High-Throughput RNA-seq Methods

The table below summarizes the key characteristics of these high-throughput methods against traditional RNA-seq.

Table 1: Comparison of High-Throughput RNA-seq Technologies for Drug Screening

| Feature | Traditional RNA-Seq | 3'-Seq (e.g., DRUG-seq) | Discovery-seq |
|---|---|---|---|
| Throughput | Low to moderate (tens of samples) | High (hundreds to thousands of samples) [28] | Very High (thousands of samples) [8] |
| Multiplexing Capacity | Low or none per tube | High (96-384 samples per tube) [28] | High (96-384 well plates) [8] |
| Typical Cost | High | Cost-effective [28] | Highly cost-effective (up to 10x reduction vs. traditional) [29] |
| RNA Input Quality | Requires high-quality RNA (RIN >8) | Robust for low-quality RNA (RIN as low as 2) [28] | Compatible with cell lysates; no RNA extraction needed [29] |
| Key Innovation | Full-transcript coverage | 3' focusing; direct lysis; early multiplexing [28] | Automated workflow; reduced PCR bias [8] |
| Ideal For | Isoform, splicing, fusion analysis | Large-scale gene expression screens [28] | Massive-scale compound & CRISPR screens [8] |

Application in Compound Mode of Action Studies

A primary application of these high-throughput transcriptomic methods is the elucidation of a compound's Mode of Action (MoA). Performing RNA sequencing on thousands of treated samples allows for a comprehensive understanding of a drug's effects across different cell types, conditions, or doses [8] [29]. This approach enables the identification of both common and unique gene expression patterns, enhancing the precision and reliability of MoA predictions.

- Unbiased Pathway Identification: Unlike targeted assays, Discovery-seq and 3'-Seq provide unbiased, transcriptome-wide gene expression data (3'-end counts rather than full-length coverage), uncovering complex drug responses and mechanisms of action that might be missed by hypothesis-driven methods [29]. This can reveal novel therapeutic targets or unexpected off-target effects.

- Dose-Response Profiling: The cost-effectiveness of these methods allows for the generation of detailed concentration-response curves at the transcriptomic level. This quantitative HTS (qHTS) approach helps distinguish specific, potent effects from non-specific toxicity [30].

- Multimodal Data Integration: These transcriptomic workflows are designed to be integrated into existing screening pipelines. Screening plates can first be evaluated using viability assays, cell painting, or high-content screening. Subsequently, the same samples can be snap-frozen and submitted for high-throughput RNA-seq, allowing for the correlation of phenotypic changes with global gene expression alterations [8] [29].
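Transcriptomic concentration-response data are usually summarized by fitting a sigmoidal model (e.g., Hill or four-parameter logistic) per gene. The sketch below is a lighter-weight, model-free stand-in. It estimates the concentration at which a gene's response reaches half of its maximal change, by linear interpolation on the log10 concentration axis. Concentrations and log2 fold changes are hypothetical, and the approach assumes a roughly monotonic response. It does not estimate a plateau or a confidence interval.

```python
import math


def interpolated_ec50(concentrations, responses):
    """Concentration where the response first reaches half of its maximal change
    relative to the lowest-concentration response, interpolated on log10(dose).

    Works for up- or down-regulated genes; returns None if the response never
    changes. Concentrations must be positive.
    """
    points = sorted(zip(concentrations, responses))
    baseline = points[0][1]
    extreme = max(points, key=lambda p: abs(p[1] - baseline))[1]
    half = baseline + (extreme - baseline) / 2
    for (c0, r0), (c1, r1) in zip(points, points[1:]):
        if r0 != r1 and (r0 - half) * (r1 - half) <= 0:
            frac = (half - r0) / (r1 - r0)
            log_c = math.log10(c0) + frac * (math.log10(c1) - math.log10(c0))
            return 10 ** log_c
    return None


doses_um = [0.01, 0.1, 1, 10, 100]          # hypothetical concentrations (uM)
induced = [0.0, 0.2, 1.0, 1.8, 2.0]         # log2 FC of an up-regulated gene
repressed = [0.0, -0.1, -0.5, -1.5, -2.0]   # log2 FC of a down-regulated gene
print(interpolated_ec50(doses_um, induced), interpolated_ec50(doses_um, repressed))
```

Comparing per-gene potencies of this kind with a viability EC50 from the same plates is one way to separate specific transcriptional effects from responses that only appear near cytotoxic concentrations.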

The following diagram illustrates the strategic role of high-throughput transcriptomics in an integrated drug MoA screening workflow.

Experimental Protocol and Workflow

A successful high-throughput RNA-seq screen requires careful planning and execution. The following protocol outlines the key steps from experimental design to data delivery.

Table 2: Key Experimental Parameters for High-Throughput RNA-seq Screens

| Parameter | Recommendation | Notes |
|---|---|---|
| Cell Seeding Density | 3,000-10,000 cells/well [29] | As few as 2,500 cells may be used [8]. |
| Biological Replicates | Minimum 3, ideally 4-8 per condition [28] [15] | Critical for capturing biological variability and statistical power. |
| Controls | Untreated/vehicle controls; spike-in RNAs (SIRVs, ERCC) [28] [15] | Controls differentiate drug effects from background and assess technical performance. |
| Sequencing Depth | 1-4 million reads/sample [29] | 1-2M reads recover ~12,000 genes; deeper sequencing increases sensitivity [29]. |
| Read Configuration | Single-end (SR) 75-100 bp [28] | Sufficient for 3' gene counting; paired-end needed for inline barcodes/UMIs [28]. |

Detailed Workflow Steps

The step-by-step workflow for a high-throughput screen using technologies like Discovery-seq is visualized below.

Experimental Design and Plate Seeding: Begin with a clear hypothesis and aim [15]. Seed cells or organoids in 96- or 384-well plates at an optimized density (e.g., 3,000-10,000 cells/well) [29]. Treat with compound libraries, ensuring inclusion of appropriate controls (e.g., untreated, vehicle) and a sufficient number of biological replicates (minimum 3) to account for biological variation [28] [15]. Plan the plate layout to minimize and enable correction for batch effects [28].
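The plate-layout advice above can be made concrete with blocked randomization. Each plate (batch) receives the same number of replicates of every condition, and well positions are shuffled within each plate so that edge or position effects are not confounded with treatment. This is a minimal sketch. It assumes an 8 x 12 (96-well) plate, a replicate count divisible by the number of plates, and hypothetical condition names. Controls are treated as ordinary conditions and must be listed explicitly.

```python
import random
from string import ascii_uppercase


def randomized_layout(conditions, replicates, n_plates, rows=8, cols=12, seed=0):
    """Blocked, randomized plate layout.

    Every plate gets replicates // n_plates wells of each condition, and well
    positions are shuffled within each plate. Returns a list of
    (plate, well, condition, replicate) tuples.
    """
    if replicates % n_plates:
        raise ValueError("replicates must be divisible by n_plates for a balanced design")
    per_plate = replicates // n_plates
    if len(conditions) * per_plate > rows * cols:
        raise ValueError("not enough wells per plate")
    rng = random.Random(seed)  # fixed seed makes the layout reproducible
    wells = [f"{ascii_uppercase[r]}{c + 1:02d}" for r in range(rows) for c in range(cols)]
    layout = []
    for plate in range(n_plates):
        samples = [(cond, plate * per_plate + k + 1)
                   for cond in conditions for k in range(per_plate)]
        positions = rng.sample(wells, len(samples))
        for (cond, rep), well in zip(samples, positions):
            layout.append((plate + 1, well, cond, rep))
    return layout


for row in randomized_layout(["vehicle", "cmpd_1uM", "cmpd_10uM"], replicates=4, n_plates=2):
    print(row)
```

Because plate membership is balanced across conditions, a plate (batch) term can later be included in the statistical model without being confounded with treatment.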

Sample Preparation and Submission: After treatment and any preliminary phenotypic assays, wash cells with PBS to remove media contaminants. Snap-freeze the cell pellets or organoids in the plate and submit for sequencing [8] [29]. For DRUG-seq and similar methods, this is the point of transition to a direct lysis protocol.

Library Preparation (3'-Seq/Discovery-seq): The core of the protocol involves in-well lysis, which bypasses the need for total RNA extraction [28] [29]. This is followed by reverse transcription. A key step is the early introduction of sample-specific barcodes during cDNA synthesis, allowing for massive multiplexing by pooling hundreds of samples before subsequent amplification and library construction steps [28] [8]. This early pooling significantly reduces hands-on time and costs.
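Early pooling only works if every read can be traced back to its well, so sample barcodes are usually designed to differ at several positions. A barcode set with minimum pairwise Hamming distance d can correct up to floor((d - 1) / 2) substitution errors. The sketch below checks that property for a candidate set. The four 6-mer barcodes are hypothetical and far shorter and fewer than a real kit's barcode set.

```python
from itertools import combinations


def hamming(a, b):
    """Number of positions at which two equal-length barcodes differ."""
    if len(a) != len(b):
        raise ValueError("barcodes must have equal length")
    return sum(x != y for x, y in zip(a, b))


def min_pairwise_distance(barcodes):
    """Smallest Hamming distance between any two barcodes in the set."""
    return min(hamming(a, b) for a, b in combinations(barcodes, 2))


def correctable_errors(barcodes):
    """A set with minimum distance d can correct floor((d - 1) / 2) substitutions."""
    return (min_pairwise_distance(barcodes) - 1) // 2


well_barcodes = ["AAAAAA", "CCCAAA", "AAACCC", "CCCCCC"]  # hypothetical 6-mers
print(min_pairwise_distance(well_barcodes), correctable_errors(well_barcodes))
```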

Sequencing: Sequence the pooled libraries on an appropriate high-throughput platform (e.g., Illumina). For 3'-Seq methods, a sequencing depth of 1-4 million reads per sample is typically sufficient for robust gene expression quantification, which is significantly lower than the 20-30 million reads per sample often recommended for standard bulk RNA-seq, contributing to the cost savings [28] [29].
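The effect of shallower sequencing on capacity is simple arithmetic: the number of samples per run scales inversely with reads per sample. The sketch below makes that explicit. The run output is a placeholder, not a specification for any particular instrument or flow cell. `usable_fraction` is an assumed allowance for PhiX, undetermined and low-quality reads and should be adjusted to your own run statistics.

```python
import math


def samples_per_run(run_output_reads, reads_per_sample, usable_fraction=0.9):
    """Samples that fit on one run at a target depth."""
    return math.floor(run_output_reads * usable_fraction / reads_per_sample)


def runs_needed(n_samples, run_output_reads, reads_per_sample, usable_fraction=0.9):
    """Sequencing runs (or lanes) required for n_samples at a target depth."""
    return math.ceil(n_samples * reads_per_sample / (run_output_reads * usable_fraction))


# Placeholder run output of 1 billion reads; check your platform's specifications.
RUN_OUTPUT = 1_000_000_000
for label, depth in [("3'-Seq, 2M reads", 2_000_000), ("standard bulk, 25M reads", 25_000_000)]:
    print(label, samples_per_run(RUN_OUTPUT, depth), runs_needed(384, RUN_OUTPUT, depth))
```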

Data Analysis and Delivery: The standard data analysis pipeline includes demultiplexing (assigning reads to samples based on barcodes), read alignment to a reference genome, and gene-level quantification. A typical deliverable is an exploratory report containing quality control metrics (e.g., number of genes detected per sample) and initial analyses, such as differential expression between treatment and control groups [8] [29].
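The demultiplexing and gene-level quantification steps can be sketched as follows. The sketch assumes a hypothetical upstream stage that has already parsed each read into a well barcode and a UMI and has aligned and assigned it to a gene (gene set to `None` if unassigned). Reads are assigned to the unique well whose barcode is within one mismatch. Each gene's count is the number of distinct UMIs, so PCR duplicates that share a UMI are counted once. Production pipelines (for example STARsolo or zUMIs) also correct UMI sequencing errors and handle ambiguous barcodes more carefully; this toy version does not.

```python
from collections import defaultdict


def match_barcode(observed, whitelist, max_mismatches=1):
    """Return the single well whose barcode is within max_mismatches, else None."""
    hits = [well for well, bc in whitelist.items()
            if len(bc) == len(observed)
            and sum(a != b for a, b in zip(bc, observed)) <= max_mismatches]
    return hits[0] if len(hits) == 1 else None


def umi_counts(records, whitelist):
    """records: iterable of (barcode, umi, gene) tuples.

    Returns ({well: {gene: distinct UMI count}}, number of unassigned reads).
    """
    seen = defaultdict(set)
    unassigned = 0
    for barcode, umi, gene in records:
        well = match_barcode(barcode, whitelist)
        if well is None or gene is None:
            unassigned += 1
            continue
        seen[(well, gene)].add(umi)  # duplicate UMIs collapse in the set
    counts = defaultdict(dict)
    for (well, gene), umis in seen.items():
        counts[well][gene] = len(umis)
    return dict(counts), unassigned


whitelist = {"well_A01": "AAAAAA", "well_A02": "CCCAAA"}  # hypothetical barcodes
records = [
    ("AAAAAA", "UMI1", "GAPDH"),
    ("AAAAAA", "UMI1", "GAPDH"),   # PCR duplicate: same UMI
    ("AAAAAT", "UMI2", "GAPDH"),   # one sequencing error in the barcode
    ("CCCAAA", "UMI1", "HSPA1A"),
    ("GGGGGG", "UMI3", "GAPDH"),   # matches no well
]
print(umi_counts(records, whitelist))
```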

The Scientist's Toolkit: Essential Research Reagents and Materials

The successful implementation of high-throughput transcriptomic screens relies on a set of key reagents and materials. The following table details these essential components.

Table 3: Essential Research Reagent Solutions for High-Throughput RNA-seq

| Reagent / Material | Function | Application Notes |
|---|---|---|
| Cell/Organoid Models | Biologically relevant system for compound testing. | Compatible with various animal species; organoids provide more physiological relevance [8] [15]. |
| Compound Libraries | Source of chemical or biological perturbations for screening. | Can include small molecules, siRNAs, CRISPR guides, or antibodies [8] [27]. |
| Lysis Buffer | Cell membrane disruption and RNA stabilization. | Enables direct in-well lysis, eliminating need for RNA extraction [28] [29]. |
| Barcoded Reverse Transcription Primers | cDNA synthesis and sample multiplexing. | Primers contain sample barcodes and Unique Molecular Identifiers (UMIs) for pooling and accurate quantification [28]. |
| Automated Liquid Handling Systems | Precision and reproducibility in plate processing. | Robotics are essential for standardization and throughput in 384-well formats [8] [30]. |
| Spike-in RNA Controls (e.g., ERCC, SIRV) | Internal standards for technical performance. | Used for normalization, assessing sensitivity, reproducibility, and dynamic range [28] [15]. |

High-throughput RNA-seq workflows, specifically 3'-Seq and Discovery-seq, have revolutionized the scale and efficiency at which researchers can integrate transcriptomic phenotyping into drug discovery pipelines. By offering a cost-effective, scalable, and robust solution for processing thousands of samples, these methods move beyond simple hit identification to enable deep mechanistic insights into compound MoA. Strategic experimental design—incorporating appropriate controls, replicates, and batch effect management—is paramount to generating high-quality, biologically meaningful data. The adoption of these technologies empowers scientists to deconvolute complex drug responses more comprehensively, accelerating the journey from compound screening to target identification and validation.

In the field of transcriptomics, RNA sequencing (RNA-Seq) has become an indispensable tool for elucidating the mode of action (MoA) of chemical compounds in drug discovery research. The strategic selection of a library preparation method directly influences the depth of biological insight, experimental cost, and scalability of MoA studies. While standard full-length RNA-Seq provides comprehensive transcriptome coverage, emerging 3'-end mRNA-Seq methods now enable high-throughput screening at a fraction of the cost, making them particularly suitable for large-scale compound testing. This application note examines the key considerations for selecting appropriate library preparation strategies that balance information content with practical constraints in pharmaceutical research settings. We present quantitative comparisons, detailed protocols, and strategic frameworks to guide researchers in optimizing their experimental designs for robust and economically viable MoA studies.

RNA-Seq Library Preparation Technologies: A Comparative Analysis

The choice between full-length and 3'-end RNA-Seq methods represents the primary strategic decision in designing MoA studies. Full-length RNA-Seq, exemplified by protocols such as Illumina's TruSeq Stranded mRNA, sequences fragments distributed across the entire transcript, enabling comprehensive analysis of splicing variants, fusion genes, and single-nucleotide variants [15]. In contrast, 3'-end methods such as BOLT-seq, BRB-seq, and 3'Pool-seq focus sequencing on the 3'-terminal region of mRNA transcripts, providing accurate gene expression quantification with significantly reduced sequencing depth requirements and costs [31] [32].

For MoA studies, this distinction carries significant implications. While full-length protocols are essential for investigating compounds that potentially alter splicing patterns (e.g., certain chemotherapeutic agents), 3'-end methods provide sufficient information for the majority of cases where differential gene expression analysis is the primary goal. The substantially lower cost of 3'-end methods enables researchers to include more biological replicates, test more compound concentrations, and analyze more time points within the same budget, thereby increasing the statistical power and temporal resolution of MoA studies [28].

Quantitative Comparison of Library Preparation Methods

The following table summarizes key performance and cost metrics for prominent RNA-Seq methods applicable to compound MoA studies:

Table 1: Comparative Analysis of RNA-Seq Library Preparation Methods

| Method | Approximate Cost per Sample (excl. sequencing) | Hands-on Time | Optimal Sequencing Depth | Key Applications in MoA Studies |
|---|---|---|---|---|
| Traditional Full-Length (e.g., TruSeq) | $64-$69 [33] | 2-3 days | 20-30 million reads/sample [28] | Splicing analysis, isoform characterization, fusion detection |
| BOLT-seq | <$1.40 [31] | ~2 hours [31] | 3-5 million reads/sample | High-throughput compound screening, time-course experiments |
| BRB-seq | ~$24 [33] | ~4 hours | 3-5 million reads/sample [33] | Mid-to-high-throughput screening, dose-response studies |
| 3'Pool-seq | ~90% reduction vs. TruSeq [32] | <12 hours [32] | 3-5 million reads/sample | Large-scale compound profiling, mechanism-based clustering |

Additional economic considerations extend beyond per-sample preparation costs. The reduced sequencing requirements of 3'-end methods (typically 3-5 million reads per sample compared to 20-30 million for full-length protocols) create a compounding cost-saving effect [33]. When implemented at full capacity on high-throughput sequencing platforms such as the Illumina NovaSeq S4 flow cell, the total cost per sample for 3'-end methods can approach $4.60, comparable to profiling four genes by qRT-PCR [33].
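The compounding effect described above can be captured in a two-term cost model: library preparation cost plus the sample's proportional share of a sequencing run. The preparation costs and depths in the usage example are taken from the ranges in Table 1. The run cost and run output are placeholders, not quotes for any platform, so only the relative comparison is meaningful.

```python
def cost_per_sample(prep_cost, reads_per_sample, run_cost, run_output_reads):
    """Library prep cost plus the sample's proportional share of a sequencing run."""
    return prep_cost + run_cost * reads_per_sample / run_output_reads


def samples_within_budget(budget, prep_cost, reads_per_sample, run_cost, run_output_reads):
    """Number of samples an experiment budget can cover at a given depth."""
    per_sample = cost_per_sample(prep_cost, reads_per_sample, run_cost, run_output_reads)
    return int(budget // per_sample)


RUN_COST = 10_000.0            # placeholder cost of one sequencing run
RUN_OUTPUT = 2_000_000_000     # placeholder reads per run
for label, prep, depth in [("full-length, 25M reads", 64.0, 25_000_000),
                           ("3'-end (BOLT-seq), 4M reads", 1.40, 4_000_000)]:
    print(label,
          round(cost_per_sample(prep, depth, RUN_COST, RUN_OUTPUT), 2),
          samples_within_budget(20_000.0, prep, depth, RUN_COST, RUN_OUTPUT))
```

The same budget buys several-fold more samples with the 3'-end design, and those samples can go toward extra replicates, concentrations or time points.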

Experimental Workflow Comparison

The following diagram illustrates the procedural differences between traditional full-length and streamlined 3'-end RNA-Seq workflows, highlighting steps where 3'-end methods achieve significant efficiency gains:

Diagram 1: Workflow comparison between traditional full-length and modern 3'-end RNA-Seq methods. 3'-end protocols eliminate multiple purification and processing steps, significantly reducing hands-on time and cost [31] [28].

Recommended Protocols for Compound Mode of Action Studies

BOLT-seq: A Cost-Effective Approach for High-Throughput Screening

The Bulk transcriptOme profiling of cell Lysate in a single poT (BOLT-seq) method enables library construction directly from crude cell lysates, eliminating the need for RNA purification and significantly streamlining the workflow for processing large compound libraries [31]. This protocol is particularly suitable for dose-response studies and time-course experiments where hundreds of samples need to be processed economically.

Protocol Steps

Cell Lysis and RNA Denaturation

- Seed cells in 96-well or 384-well plates and treat with test compounds

- Wash cells twice with DPBS and lyse in 60 µL of lysis buffer containing 0.3% IGEPAL CA-630

- Incubate plates for 30 minutes with shaking at 800 RPM

- Transfer 6 µL of cell lysate to a PCR tube

- Add 1 µL RT-Mix-A containing 10 µM anchored oligo(dT)30-P7 RT primer and 1 µL 1 mM dNTP mix

- Denature RNA at 65°C for 5 minutes, then quickly cool on ice for 3 minutes [31]

Reverse Transcription

- Add 7 µL RT-Mix-B containing 117 mM Tris-HCl (pH 8.3), 175 mM KCl, 7 mM MgCl2, 23 mM DTT, 23% PEG8000 (w/v), 5 U RNaseOUT ribonuclease inhibitor, and 0.5 µL in-house purified M-MuLV reverse transcriptase

- Incubate at 50°C for 60 minutes

- Inactivate reaction at 80°C for 10 minutes

- No purification step is required at this stage [31]

Tagmentation

- Add 5 µL of TD-Mix containing 40 mM Tris-HCl (pH 7.5), 20 mM MgCl2, 30% PEG8000 (w/v), 20% tetraethylene glycol, and 0.5 µL of in-house purified Tn5 transposase

- Incubate at 55°C for 30 minutes

- Stop tagmentation by adding 5 µL of 0.2% SDS

- No purification step is required at this stage [31]

Gap-Filling and PCR Amplification

- Add 25 µL PCR-Mix containing 5x HiFi Fidelity Buffer, KAPA dNTP Mix, KAPA HiFi HotStart DNA Polymerase, 0.5 µL in-house purified reverse transcriptase, and 2 µL NGS indexed primers

- Perform gap-filling and PCR with the following program:

  - 50°C for 10 minutes (gap-filling)

  - 95°C for 3 minutes (initial denaturation and RT inactivation)

  - 18 cycles of: 95°C for 30 seconds, 60°C for 30 seconds, 72°C for 30 seconds

  - 72°C for 3 minutes (final extension) [31]

- Purify the indexed products with SpeedBead Magnetic Carboxylate Modified Particles at a 0.6X bead-to-sample volume ratio

- Quantify library concentration and quality before sequencing
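A single-pot protocol is easier to run when the cumulative volume is known at each step, for example to size plates and set the bead volume for the 0.6X cleanup. The sketch below tallies the additions listed above and scales a master mix for a plate.

Two assumptions apply:

- "1 µL RT-Mix-A ... and 1 µL 1 mM dNTP mix" is read as two separate 1 µL additions.
- The 10% pipetting overage is a hypothetical default.

```python
# Cumulative reaction volume through the BOLT-seq steps, plus master-mix scaling.
# Assumption: RT-Mix-A and the dNTP mix are two separate 1 µL additions.

BOLT_SEQ_ADDITIONS_UL = [
    ("cell lysate", 6.0),
    ("RT-Mix-A (oligo-dT primer)", 1.0),
    ("dNTP mix", 1.0),
    ("RT-Mix-B", 7.0),
    ("TD-Mix (Tn5)", 5.0),
    ("0.2% SDS stop", 5.0),
    ("PCR-Mix", 25.0),
]


def running_volumes(additions):
    """Return (step, cumulative volume in µL) after each addition."""
    total = 0.0
    out = []
    for step, vol in additions:
        total += vol
        out.append((step, total))
    return out


def bead_volume(sample_ul: float, ratio: float = 0.6) -> float:
    """Bead volume for a ratio-based cleanup, e.g. 0.6X of the sample volume."""
    return sample_ul * ratio


def master_mix_ul(per_reaction_ul: float, n_reactions: int,
                  overage: float = 0.1) -> float:
    """Total master-mix volume including a fractional overage (hypothetical 10%)."""
    return per_reaction_ul * n_reactions * (1.0 + overage)


if __name__ == "__main__":
    steps = running_volumes(BOLT_SEQ_ADDITIONS_UL)
    for step, total in steps:
        print(f"{step:<28s} {total:5.1f} µL")
    final_ul = steps[-1][1]
    print(f"0.6X beads for {final_ul:.0f} µL: {bead_volume(final_ul):.1f} µL")
    print(f"RT-Mix-B for 96 wells: {master_mix_ul(7.0, 96):.1f} µL")
```

Under the stated reading, the final PCR volume is 50 µL, so a 0.6X cleanup uses 30 µL of beads per well.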

Experimental Design Considerations for MoA Studies

Robust experimental design is critical for generating meaningful MoA data from RNA-Seq experiments. The following considerations help ensure statistical reliability and biological relevance:

Biological Replicates

- Include a minimum of 3 biological replicates per condition to account for natural biological variation

- Ideally increase to 4-8 replicates for cell line studies where sample availability is not limiting [15] [28]

- Biological replicates should originate from independent cell cultures treated separately with the compound of interest

Controls and Benchmark Compounds

- Include vehicle controls (e.g., DMSO) treated in parallel with experimental compounds

- Incorporate benchmark compounds with known mechanisms of action to facilitate pattern recognition and MoA classification

- Consider using spike-in controls (e.g., ERCC RNA) for normalization and quality control, particularly in large-scale studies [15]

Time Points and Concentrations

- Include multiple time points (e.g., 6, 24, 48 hours) to capture both primary and secondary transcriptional responses

- Test multiple compound concentrations to establish dose-response relationships and distinguish specific from nonspecific effects [28]

Plate Layout and Batch Effects

- Distribute replicates across different plates to avoid confounding technical batch effects with biological conditions

- Randomize compound placement to prevent systematic bias

- Include control samples on every plate to monitor technical variability [15]
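The three plate-layout rules above can be combined in a small layout generator:

- spread replicates across plates;
- randomize well positions;
- place vehicle controls on every plate.

The sketch below is a hypothetical helper, not a published tool. Replicate `r` of each condition goes to plate `r % n_plates`, and well positions are drawn at random with a fixed seed so the layout is reproducible. The number of control wells per plate is an assumed parameter.

```python
import random
from collections import defaultdict


def randomized_layout(conditions, n_replicates, n_plates, wells_per_plate,
                      control="vehicle", controls_per_plate=2, seed=0):
    """Assign samples to plates and wells (hypothetical helper).

    - Replicate r of each condition goes to plate r % n_plates, so replicates
      of one condition are spread over plates rather than sharing one.
    - Every plate receives `controls_per_plate` vehicle wells.
    - Well positions within each plate are shuffled with a seeded RNG.
    Returns {plate_index: [(well_index, condition, replicate), ...]}.
    """
    rng = random.Random(seed)
    plates = defaultdict(list)
    for cond in conditions:
        for rep in range(n_replicates):
            plates[rep % n_plates].append((cond, rep))
    for p in range(n_plates):
        plates[p].extend((control, k) for k in range(controls_per_plate))

    layout = {}
    for p in range(n_plates):
        samples = plates[p]
        if len(samples) > wells_per_plate:
            raise ValueError(f"plate {p} needs {len(samples)} wells")
        wells = rng.sample(range(wells_per_plate), len(samples))
        layout[p] = sorted((w, c, r) for w, (c, r) in zip(wells, samples))
    return layout


if __name__ == "__main__":
    design = randomized_layout(["cmpdA_1uM", "cmpdB_1uM", "cmpdC_1uM"],
                               n_replicates=3, n_plates=3, wells_per_plate=96)
    for plate, wells in design.items():
        print(plate, wells)
```

When there are more replicates than plates, some replicates necessarily share a plate. In that case, plate should also be modeled as a batch covariate in the downstream analysis.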

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful implementation of RNA-Seq library preparation for MoA studies requires careful selection of reagents and materials. The following table details essential components and their functions:

Table 2: Essential Research Reagents and Materials for RNA-Seq Library Preparation

| Reagent/Material | Function | Example Products |
|---|---|---|
| Cell Lysis Reagent | Releases RNA while maintaining stability for direct library preparation | IGEPAL CA-630 [31] |
| Anchored Oligo(dT) Primers | Binds to poly-A tail of mRNA and initiates reverse transcription; contains platform-specific adapter sequences | Integrated DNA Technologies custom primers [31] |
| Reverse Transcriptase | Synthesizes cDNA from mRNA templates | M-MuLV RT [31] |
| Tn5 Transposase | Fragments and tags cDNA in a single step (tagmentation) | In-house purified Tn5 [31] |
| RNase Inhibitor | Prevents RNA degradation during reverse transcription | RNaseOUT [31] |
| Indexed PCR Primers | Adds sample-specific barcodes and platform-compatible adapters for multiplexing | Nextera-style indexes, TruSeq indexes [31] [32] |
| High-Fidelity DNA Polymerase | Amplifies library fragments with minimal bias and errors | KAPA HiFi HotStart [31] |
| Magnetic Beads | Purifies and size-selects final libraries | SpeedBead Magnetic Carboxylate Modified Particles [31] |
| Spike-in RNA Controls | Monitors technical performance and enables cross-sample normalization | ERCC RNA Spike-In Mix, SIRVs [15] |

Strategic Implementation Framework

Decision Pathway for Method Selection

The following diagram outlines a systematic approach for selecting the appropriate library preparation method based on specific research objectives and constraints in MoA studies:

Diagram 2: Decision pathway for selecting RNA-Seq library preparation methods in compound MoA studies. This framework prioritizes research questions and practical constraints to guide method selection [31] [15] [28].
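Diagram 2 itself is not reproduced here. The sketch below therefore encodes only the criteria stated in the surrounding text and in Table 1:

- full-length protocols for splicing, isoform, fusion, or novel-transcript questions;
- BOLT-seq when libraries must be built directly from crude lysate;
- BRB-seq or 3'Pool-seq for other expression-focused designs.

The `StudyNeeds` fields and the branch order are a hypothetical simplification, not a transcription of the diagram.

```python
from dataclasses import dataclass


@dataclass
class StudyNeeds:
    """Hypothetical summary of study requirements used for method selection."""
    isoform_or_splicing: bool  # splicing, isoforms, fusions, or novel transcripts
    lysate_direct: bool        # libraries must be built from crude lysate
    large_scale: bool          # hundreds of samples (screens, time courses)


def suggest_method(needs: StudyNeeds) -> str:
    """Map study needs to a library-prep family, following Table 1."""
    if needs.isoform_or_splicing:
        # 3'-end reads cannot resolve splice junctions along the transcript.
        return "full-length (e.g., TruSeq), 20-30M reads/sample"
    if needs.lysate_direct:
        return "BOLT-seq, 3-5M reads/sample"
    if needs.large_scale:
        return "3'Pool-seq or BRB-seq, 3-5M reads/sample"
    return "BRB-seq, 3-5M reads/sample"


if __name__ == "__main__":
    print(suggest_method(StudyNeeds(isoform_or_splicing=False,
                                    lysate_direct=False, large_scale=True)))
```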

Data Analysis Considerations for MoA Studies

Following library preparation and sequencing, appropriate bioinformatic analysis is essential for extracting meaningful insights about compound mechanisms:

Quality Control and Preprocessing

- Assess raw read quality using FastQC or similar tools

- Remove adapter sequences and low-quality bases using Trimmomatic or Cutadapt

- Align reads to reference genome using splice-aware aligners such as STAR [31]
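The preprocessing steps above can be scripted per sample. This sketch only builds the command lines; it runs nothing unless you pass each one to `subprocess.run(cmd, check=True)`. It uses standard options of FastQC (`-o`), Cutadapt (`-a`, `-q`, `-m`, `-o`), and STAR (`--runThreadN`, `--genomeDir`, `--readFilesIn`, `--readFilesCommand`, `--outSAMtype`, `--outFileNamePrefix`).

Several settings are illustrative assumptions and should be checked against your library's read structure:

- single-end gzipped reads;
- the adapter sequence;
- the quality cutoff of 20 and the minimum read length of 20.

```python
import shlex
from pathlib import Path


def preprocessing_commands(sample, fastq, genome_dir, adapter,
                           threads=8, outdir="out"):
    """Build FastQC -> Cutadapt -> STAR command lines for one single-end sample.

    Thresholds (-q 20, -m 20) are illustrative assumptions, not recommendations
    from the source protocols. The output directory must exist before running.
    """
    out = Path(outdir)
    trimmed = out / f"{sample}.trimmed.fastq.gz"
    return [
        ["fastqc", "-o", str(out), fastq],
        ["cutadapt", "-a", adapter, "-q", "20", "-m", "20",
         "-o", str(trimmed), fastq],
        ["STAR", "--runThreadN", str(threads), "--genomeDir", genome_dir,
         "--readFilesIn", str(trimmed), "--readFilesCommand", "zcat",
         "--outSAMtype", "BAM", "SortedByCoordinate",
         "--outFileNamePrefix", str(out / f"{sample}.")],
    ]


if __name__ == "__main__":
    # Example adapter: the Nextera/Tn5 sequence often trimmed from tagmented
    # libraries; confirm it matches your read layout before use.
    for cmd in preprocessing_commands("cmpdA_rep1", "cmpdA_rep1.fastq.gz",
                                      "star_index", "CTGTCTCTTATACACATCT"):
        print(shlex.join(cmd))
```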

Quantification and Differential Expression

- Generate count matrices using featureCounts or HTSeq

- Perform differential expression analysis with DESeq2 or edgeR

- Apply multiple testing correction to control false discovery rate
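DESeq2 and edgeR perform normalization and multiple-testing correction internally. The sketch below only makes two of those steps concrete; it is not a substitute for either package:

- the median-of-ratios size factors that DESeq2 uses by default (edgeR defaults to TMM instead);
- Benjamini-Hochberg adjustment of p-values to control the false discovery rate.

```python
import numpy as np


def median_of_ratios_size_factors(counts) -> np.ndarray:
    """DESeq-style size factors; `counts` is a genes x samples matrix."""
    counts = np.asarray(counts, dtype=float)
    with np.errstate(divide="ignore"):
        log_counts = np.log(counts)
    log_geo_mean = log_counts.mean(axis=1)
    usable = np.isfinite(log_geo_mean)  # drop genes with a zero in any sample
    log_ratios = log_counts[usable] - log_geo_mean[usable][:, None]
    return np.exp(np.median(log_ratios, axis=0))


def benjamini_hochberg(pvalues) -> np.ndarray:
    """Benjamini-Hochberg adjusted p-values, returned in the input order."""
    p = np.asarray(pvalues, dtype=float)
    m = p.size
    order = np.argsort(p)
    scaled = p[order] * m / np.arange(1, m + 1)
    # Enforce monotonicity by taking the running minimum from the largest p down.
    adjusted_sorted = np.minimum.accumulate(scaled[::-1])[::-1]
    adjusted = np.empty(m)
    adjusted[order] = np.clip(adjusted_sorted, 0.0, 1.0)
    return adjusted


if __name__ == "__main__":
    counts = [[10, 20], [100, 200], [5, 10], [0, 7]]
    print("size factors:", median_of_ratios_size_factors(counts))
    print("BH-adjusted:", benjamini_hochberg([0.01, 0.04, 0.03, 0.20]))
```

In this toy matrix the second sample is sequenced twice as deeply, so its size factor is twice the first. The gene with a zero count is excluded from the size-factor estimate.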

MoA-Specific Analysis

- Conduct gene set enrichment analysis (GSEA) to identify affected pathways

- Compare expression profiles to reference databases (e.g., LINCS L1000) to identify similar compounds

- Perform clustering analysis to group compounds with similar mechanisms

- Construct interaction networks to elucidate signaling pathways
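Comparing a compound's profile to reference signatures amounts to ranking references by similarity over a shared gene list. The sketch below uses Pearson correlation of log2 fold changes as a deliberately simple stand-in. It is not the connectivity score used by LINCS L1000 tools, and the reference names are hypothetical.

```python
import numpy as np


def rank_by_similarity(query, references):
    """Rank reference signatures by Pearson correlation with a query signature.

    query: 1-D array of log2 fold changes over a shared, ordered gene list.
    references: dict mapping a (hypothetical) compound name to a 1-D array
    on the same gene order.
    Returns [(name, r), ...] sorted from most to least similar.
    """
    q = np.asarray(query, dtype=float)
    scores = {
        name: float(np.corrcoef(q, np.asarray(sig, dtype=float))[0, 1])
        for name, sig in references.items()
    }
    return sorted(scores.items(), key=lambda kv: kv[1], reverse=True)


if __name__ == "__main__":
    query = [1.0, 2.0, 3.0, 4.0]
    refs = {"ref_A": [2, 4, 6, 8], "ref_B": [4, 3, 2, 1], "ref_C": [1, 3, 2, 4]}
    for name, r in rank_by_similarity(query, refs):
        print(f"{name}: r = {r:+.2f}")
```

A positive correlation suggests a shared mechanism, and a strongly negative one suggests an opposing effect. The same pairwise scores can feed a distance matrix (1 - r) for clustering compounds.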

Strategic selection of RNA-Seq library preparation methods represents a critical decision point in designing compound MoA studies. While full-length RNA-Seq methods remain necessary for investigating specific mechanisms involving splicing alterations or novel transcript discovery, 3'-end methods such as BOLT-seq and BRB-seq offer compelling advantages for high-throughput screening applications where cost and scalability are primary concerns. The protocols and frameworks presented herein provide researchers with practical guidance for implementing these technologies in drug discovery pipelines. By aligning method selection with specific research objectives and applying rigorous experimental design principles, scientists can maximize the informational return on investment while advancing the understanding of compound mechanisms through transcriptomic profiling.

Within drug discovery, RNA sequencing (RNA-Seq) has become an indispensable tool for elucidating the mode of action (MoA) of novel compounds. The power of this transcriptomic analysis, however, is wholly dependent on the rigor of its experimental design [15] [28]. A carefully constructed plan that meticulously defines time points, dosing regimens, and control groups is paramount for distinguishing genuine, drug-induced transcriptional changes from background biological variation and technical artifacts. This document provides detailed application notes and protocols to guide researchers in designing robust RNA-Seq experiments specifically for compound MoA studies, ensuring that the resulting data is biologically meaningful and statistically sound.

Core Design Considerations for MoA Studies

Time Point Selection

The temporal dimension of gene expression response is critical for MoA studies, as drug effects can be transient, sustained, or delayed. Capturing the dynamic transcriptional landscape is essential for distinguishing primary drug targets from secondary downstream effects [15].

Table 1: Time Point Selection Strategy for MoA Studies

| Time Point Category | Typical Range | Rationale and Application | Key Considerations |
|---|---|---|---|
| Early Phase | 30 minutes - 4 hours | Captures immediate-early response genes and primary drug effects on direct targets. | Useful for distinguishing primary from secondary effects; may miss later phenotypic changes. |
| Intermediate Phase | 8 - 24 hours | Assesses established transcriptional reprogramming and secondary response waves. | A common and often essential range for capturing a broad spectrum of MoA-related changes. |
| Late Phase | 48 - 72 hours | Reveals downstream consequences, adaptive responses, and potential compensatory mechanisms. | May be confounded by secondary effects like cell toxicity or differentiation. |

Protocol: Designing a Time-Course Experiment

- Define Objectives: Determine if the goal is to capture the peak response, understand kinetics, or distinguish primary from secondary effects. Kinetic RNA-Seq approaches, such as SLAMseq, can be specifically employed to monitor RNA synthesis and decay rates globally [15].

- Conduct a Pilot Study: Use a subset of conditions and a wide range of time points (e.g., 1h, 4h, 12h, 24h, 48h) to identify periods of maximal transcriptional change for your specific compound and model system.

- Balance Sample Number: Each added time point multiplies the sample count by the number of compounds, concentrations, and replicates. Full time-course designs are therefore often reserved for selected candidate compounds undergoing in-depth MoA investigation, which keeps the study manageable [15].

- Standardize Processing: Harvest and process all samples for RNA extraction in an identical manner to minimize technical batch effects introduced across different time points [11].
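How quickly time points inflate a study is easiest to see by counting libraries for a full factorial design. The sketch below assumes one vehicle group per time point, with the same replicate number as the treated groups. The example screen size is hypothetical.

```python
def total_samples(n_compounds: int, n_concentrations: int, n_timepoints: int,
                  n_replicates: int, vehicle_per_timepoint: bool = True) -> int:
    """Count libraries for a compound x concentration x time factorial design.

    Assumes one vehicle (e.g., DMSO) group per time point, each with the same
    number of replicates as the treated groups.
    """
    treated = n_compounds * n_concentrations * n_timepoints * n_replicates
    vehicle_groups = n_timepoints if vehicle_per_timepoint else 1
    return treated + vehicle_groups * n_replicates


if __name__ == "__main__":
    # Hypothetical screen: 10 compounds, 3 concentrations, 3 replicates.
    print("pilot time course (5 time points):", total_samples(10, 3, 5, 3))
    print("single time point:", total_samples(10, 3, 1, 3))
```

Here the five-point pilot series (1h, 4h, 12h, 24h, 48h) needs 465 libraries versus 93 for a single time point. This is why the time course is usually restricted to shortlisted compounds.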

Dosing and Concentration

Selecting appropriate compound concentrations is vital for interpreting the pharmacological relevance of observed transcriptional changes.

Table 2: Dosing Strategy for Transcriptomic Profiling

| Dosing Approach | Concentration Range | Rationale and Data Output | Advantages |
|---|---|---|---|
| Single High Dose | IC50 or EC50 (e.g., 1-10 µM) | Generates a strong signal for initial MoA hypothesis generation. | Simpler, more cost-effective; good for initial screens. |
| Dose-Response | Multiple concentrations (e.g., 0.1x, 1x, 10x IC50) | Provides data on concentration-dependent effects, enhancing MoA interpretation and specificity. | Identifies pathways that are dose-responsive; helps separate on-target from off-target effects. |

Protocol: Establishing a Dose-Response RNA-Seq Workflow

- Determine Potency: Prior to RNA-Seq, establish the IC50 (for inhibitory compounds) or EC50 (for activators) using relevant phenotypic assays (e.g., viability, pathway reporter assays).

- Select Concentrations: Choose a minimum of three concentrations spanning a range around the IC50/EC50 (e.g., a low sub-therapeutic dose, the IC50/EC50, and a high supra-therapeutic dose).

- Include a Vehicle Control: A DMSO control (or other relevant vehicle) is mandatory for each dose and time point to account for solvent effects [34].

- Integrate with Screening: For large-scale compound screens, dosing can be performed in 384-well or 1536-well plate formats, with subsequent RNA-Seq analysis using high-throughput methods like Discovery-seq or DRUG-seq [8] [28].
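A matched vehicle control is only valid if every dose carries the same final solvent concentration. In practice this means preparing one DMSO stock per dose rather than diluting the compound into different solvent volumes. The sketch below derives the 0.1x/1x/10x series from Table 2 and the stock concentration each dose needs. The 0.1% final DMSO fraction is an assumed placeholder.

```python
def dose_series(ic50_um: float, multipliers=(0.1, 1.0, 10.0)):
    """Final concentrations (µM) as multiples of the IC50/EC50."""
    return [ic50_um * m for m in multipliers]


def stock_concentration_mm(final_um: float, final_dmso_fraction: float = 0.001) -> float:
    """Stock concentration (mM, in 100% DMSO) for a fixed final DMSO fraction.

    final = stock * fraction, so stock = final / fraction; divide by 1000
    to convert µM to mM. The 0.1% default is an assumed placeholder.
    """
    return final_um / final_dmso_fraction / 1000.0


if __name__ == "__main__":
    for dose in dose_series(1.0):  # hypothetical IC50 of 1 µM
        print(f"{dose:g} µM final <- {stock_concentration_mm(dose):g} mM stock")
```

Because each well receives the same volume of its own stock, the vehicle control simply receives that same volume of DMSO.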

Control Selection

Proper controls are the foundation for attributing observed gene expression changes to the compound's specific MoA and not to experimental variables.

Table 3: Essential Control Groups for RNA-Seq MoA Studies

| Control Type | Description | Purpose in Experimental Design |
|---|---|---|
| Untreated / Vehicle Control | Cells or model system treated with the compound's solvent (e.g., DMSO) at the same concentration as experimental groups. | Serves as the baseline for identifying differential expression; accounts for effects of the solvent itself [28] [34]. |