Optimizing NGS Library Prep for Chemogenomic cDNA: A 2025 Guide for Robust Transcriptomic Profiling in Drug Discovery

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on optimizing Next-Generation Sequencing (NGS) library preparation specifically for chemogenomic cDNA studies. It covers foundational principles, from nucleic acid extraction to adapter ligation, and details tailored methodological approaches for handling limited, drug-perturbed samples. The content explores critical troubleshooting strategies to mitigate bias and contamination, and offers a framework for the rigorous validation and comparative analysis of library quality. By synthesizing current methodologies and emerging trends, this guide aims to empower scientists to generate high-quality, reproducible transcriptomic data that can reliably inform mechanism-of-action studies and therapeutic development.

The Building Blocks of Chemogenomic NGS: From cDNA to Sequencing-Ready Libraries

Within the context of chemogenomic cDNA research, the quality and success of next-generation sequencing (NGS) experiments are fundamentally dependent on the initial construction of the sequencing library. Proper library preparation minimizes biases, ensures even coverage, and reduces errors, leading to high-quality data essential for discovering novel drug targets and understanding cellular responses to chemical compounds [1]. This application note details the core principles of three critical steps in NGS library preparation—fragmentation, end-repair, and adapter ligation—providing optimized protocols and quantitative data to guide researchers and drug development professionals in generating robust sequencing libraries from cDNA.

Core Step 1: Fragmentation

Principle and Purpose

Fragmentation generates DNA fragments of a uniform, desired length, which is a prerequisite for most short-read sequencing technologies [2]. The optimal insert size is determined by both the sequencing platform's limitations and the specific application [3]. For instance, in cDNA research, fragment size can be tailored for basic gene expression analysis or for more complex investigations into alternative splicing and transcript isoforms [4].

Quantitative Comparison of Fragmentation Methods

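DNA fragmentation methods fall into two broad classes, physical and enzymatic; tagmentation is a transposase-based enzymatic variant that fragments and adapter-tags DNA in a single step. The choice of method affects sequence bias, required equipment, and hands-on time.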

Table 1: Comparison of DNA Fragmentation Methods

| Method | Principle | Optimal Insert Size | Advantages | Disadvantages/Limitations |
|---|---|---|---|---|
| Physical (e.g., Acoustic Shearing) | Uses acoustic energy or sonication to shear DNA [2]. | 100–5000 bp [3]. | Accurate, unbiased results with uniform coverage [2] [1]. | Requires specialized equipment (e.g., Covaris) [2]. |
| Enzymatic | Digests DNA using non-specific endonucleases (e.g., Fragmentase) [3]. | Adjustable via digestion time. | Quick, easy, no special equipment required [2]. | Can introduce sequence bias and a greater number of artifactual indels [3] [5]. |
| Tagmentation | Uses a transposase enzyme to simultaneously fragment and tag DNA with adapters [3] [6]. | Fixed by kit design (e.g., ~450 bp) [5]. | Rapid, reduced sample handling and preparation time [3]. | May exhibit higher sequence bias and offers less flexibility in size modulation [5] [1]. |

Detailed Protocol: Enzymatic Fragmentation of cDNA

Application Note: This protocol is optimized for generating cDNA libraries for transcriptome analysis in chemogenomic studies, where sample input can be limited.

- Reaction Setup: In a nuclease-free PCR tube, combine the following components on ice:
  - cDNA (from reverse transcription): 10–100 ng
  - 10X Fragmentation Buffer: 5 µL
  - Enzymatic Fragmentation Mix: 2 µL
  - Nuclease-free water to a final volume of 50 µL
- Incubation: Place the tube in a thermal cycler and incubate at 20–37°C for 10–20 minutes. The fragmentation time must be optimized empirically to achieve the desired insert size (see Table 1).
- Reaction Termination: Add 5 µL of 0.5 M EDTA to the tube to chelate divalent cations and stop the enzymatic reaction. Mix thoroughly.
- Purification: Purify the fragmented cDNA using magnetic beads or a spin column according to the manufacturer's instructions. Elute in 20 µL of nuclease-free water or an appropriate elution buffer.
- Quality Control: Analyze 1 µL of the purified product using a Bioanalyzer or TapeStation to verify the fragment size distribution.
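As a minimal sketch of the quality-control step, the snippet below summarizes a fragment-size trace exported as (size, signal) pairs. The trace values, the 200–500 bp target window, and the function name are illustrative assumptions, not instrument output or values prescribed by this protocol.

```python
def summarize_trace(trace, target_min_bp, target_max_bp):
    """Return (signal-weighted mean size, fraction of signal inside the target window)."""
    total = sum(signal for _, signal in trace)
    if total <= 0:
        raise ValueError("trace has no signal")
    mean_bp = sum(size * signal for size, signal in trace) / total
    in_window = sum(signal for size, signal in trace
                    if target_min_bp <= size <= target_max_bp)
    return mean_bp, in_window / total


# Hypothetical trace: (fragment size in bp, fluorescence units)
example_trace = [(100, 5), (200, 20), (300, 50), (400, 20), (500, 5)]
mean_bp, frac_in_window = summarize_trace(example_trace, 200, 500)
print(f"mean size ~{mean_bp:.0f} bp, {frac_in_window:.0%} of signal in 200-500 bp")
```

A low in-window fraction would suggest revisiting the fragmentation time before proceeding to end-repair.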

Core Step 2: End-Repair

Principle and Purpose

Fragmentation produces DNA ends that are often uneven and lack the necessary 5'-phosphate groups for ligation. The end-repair (or "end-polishing") step converts these mixed overhangs into blunt-ended, 5'-phosphorylated fragments, making them compatible with sequencing adapters [3] [1].

Detailed Protocol: End-Repair and A-Tailing

This one-tube protocol combines the end-repair and A-tailing reactions for efficiency.

- Reaction Setup: Combine the following with the purified, fragmented cDNA from the previous step:
  - Fragmented cDNA: 20 µL
  - 10X End-Repair Buffer: 5 µL
  - End-Repair Enzyme Mix (containing T4 DNA Polymerase, T4 Polynucleotide Kinase, and Klenow Fragment): 5 µL
  - Nuclease-free water to a final volume of 50 µL
- Incubation: Incubate the reaction at 20–25°C for 30 minutes.
- A-Tailing Reaction: Without purifying the end-repaired products, add the following directly to the same tube:
  - 10X A-Tailing Buffer: 5 µL
  - Klenow Fragment (exo–) or Taq Polymerase: 2 µL
  - dATP (1 mM): 3 µL
- Incubation: Incubate at 37°C (for Klenow) or 72°C (for Taq) for 30 minutes. This adds a single 'A' base to the 3' ends of the blunt-ended fragments.
- Purification: Purify the A-tailed DNA using magnetic beads. Elute the final product in 20 µL of nuclease-free water or elution buffer.

Core Step 3: Adapter Ligation

Principle and Purpose

Adapter ligation covalently attaches platform-specific oligonucleotide adapters to the prepared cDNA fragments using a ligase enzyme [2]. These adapters are critical as they:

- Provide the single 3' T-overhang that pairs with the 3' A-overhang of the A-tailed fragments during ligation.

- Contain sequences for binding to the flow cell during cluster generation.

- Can include barcodes (indexes) for multiplexing samples and unique molecular identifiers (UMIs) for error correction and accurate variant detection [2] [6].

Detailed Protocol: Adapter Ligation

Application Note: The adapter-to-insert ratio is critical for maximizing ligation efficiency and minimizing adapter-dimer formation.

- Reaction Setup: In a clean tube, combine the following:
  - A-tailed cDNA: 20 µL
  - Universal or Indexed Adapter (15 µM): 2.5 µL
  - 10X DNA Ligase Buffer: 5 µL
  - T4 DNA Ligase: 2.5 µL
  - Nuclease-free water to a final volume of 50 µL
  - Note: A ~10:1 molar ratio of adapter to insert fragment is typically optimal [3].
- Incubation: Incubate at 20–25°C for 30–60 minutes.
- Purification and Size Selection: Purify the ligated product using magnetic beads with a double-sided size selection to remove excess adapters and adapter dimers. This step is crucial as adapter dimers can cluster efficiently and consume sequencing capacity [3] [1].
- Library QC and Quantification: Quantify the final library using a fluorometric method (e.g., Qubit) and assess it on a Bioanalyzer to confirm the absence of adapter dimers and the correct size profile. For precise clustering on the sequencer, use quantitative PCR (qPCR) or digital PCR (dPCR, e.g., droplet digital PCR) for absolute quantification, as these methods provide superior accuracy compared to spectrophotometry [7].
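The adapter-to-insert ratio can be checked with a short calculation. The sketch below uses the common approximation of 660 g/mol per base pair of double-stranded DNA; the insert mass (100 ng) and mean length (300 bp) are hypothetical inputs, and the function names are illustrative.

```python
DS_DNA_G_PER_MOL_PER_BP = 660.0  # approximate average mass of one base pair


def dsdna_pmol(mass_ng, mean_length_bp):
    """Convert a dsDNA mass in ng to picomoles of fragments."""
    return mass_ng * 1000.0 / (mean_length_bp * DS_DNA_G_PER_MOL_PER_BP)


def adapter_pmol(conc_uM, volume_uL):
    """Picomoles of adapter added (1 uM x 1 uL = 1 pmol)."""
    return conc_uM * volume_uL


def adapter_to_insert_ratio(adapter_uM, adapter_uL, insert_ng, insert_bp):
    """Molar ratio of adapter to insert fragments."""
    return adapter_pmol(adapter_uM, adapter_uL) / dsdna_pmol(insert_ng, insert_bp)


# Adapter amount from the reaction setup above; insert mass and size are assumed.
ratio = adapter_to_insert_ratio(15.0, 2.5, insert_ng=100.0, insert_bp=300.0)
print(f"adapter:insert ~ {ratio:.0f}:1")
```

Under these assumed inputs the ratio comes out near 74:1, well above the ~10:1 target, which suggests diluting the adapter stock for inputs of this size. Lower inputs push the ratio higher still, so the adapter amount is worth recalculating per sample.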

Performance Data and Troubleshooting

Impact of Library Preparation on Sequencing Performance

Recent comparative studies of commercial library prep kits highlight key performance parameters.

Table 2: Performance of Selected Library Prep Kits in Whole Genome Sequencing [5]

| Kit Name | Technology | Input DNA (PCR-free) | Average Insert Size (by seq. reads) | Key Performance Notes |
|---|---|---|---|---|
| Nextera DNA Flex (Illumina) | Tagmentation | 100 ng | 366 bp | Requires PCR for indexing. Fixed insert size. |
| KAPA HyperPlus (Roche) | Enzymatic | 100 ng | 227 bp | Libraries with longer inserts avoid read overlap, improving genome coverage and SNV/indel detection. |
| NEBNext Ultra II FS (NEB) | Enzymatic | 100 ng | 188 bp | Minimal PCR cycles required. Performance is improved with optimized fragmentation. |

Common Challenges and Solutions

- Challenge: Adapter Dimer Formation. Excess adapters can ligate to each other, creating small fragments that dominate sequencing output [3] [1].
  - Solution: Precisely control the adapter-to-insert ratio and perform a rigorous bead-based or gel-based size selection after ligation [3].
- Challenge: PCR Amplification Bias. Excessive PCR cycles during library amplification can distort sequence heterogeneity and reduce library complexity [7] [4].
  - Solution: Use the minimum number of PCR cycles that yields enough library for sequencing, and amplify with a high-fidelity polymerase (see Table 3).
- Challenge: Insert Size Deviation. Enzymatic fragmentation times that are not optimized can yield insert sizes that deviate from the target, affecting coverage [5].
  - Solution: Optimize fragmentation conditions (time, temperature) for each sample type and input amount.

The Scientist's Toolkit: Essential Reagents

Table 3: Key Research Reagent Solutions for NGS Library Prep

| Item | Function | Example Kits/Products |
|---|---|---|
| Enzymatic Fragmentation Mix | Digests double-stranded cDNA/DNA into fragments of desired length. | xGen DNA Library Prep EZ Kit (IDT) [2], KAPA HyperPlus Kit (Roche) [5] |
| Methylated Adapters | Oligonucleotides containing sequencing compatibility sites, indexes for multiplexing, and UMIs. Methylation prevents digestion by certain restriction enzymes. | Illumina TruSeq UDI Adapters [6] |
| T4 DNA Ligase | Covalently links the adapter to the A-tailed DNA fragment. | Found in most commercial ligation-based kits (e.g., IDT, Illumina) [2] [1] |
| Size Selection Beads | Magnetic beads used to purify nucleic acids and select for a specific fragment size range, crucial for removing adapter dimers. | SPRIselect Beads (Beckman Coulter) |
| High-Fidelity DNA Polymerase | Amplifies the adapter-ligated library with minimal bias during optional PCR enrichment. | KAPA HiFi HotStart ReadyMix (Roche) |

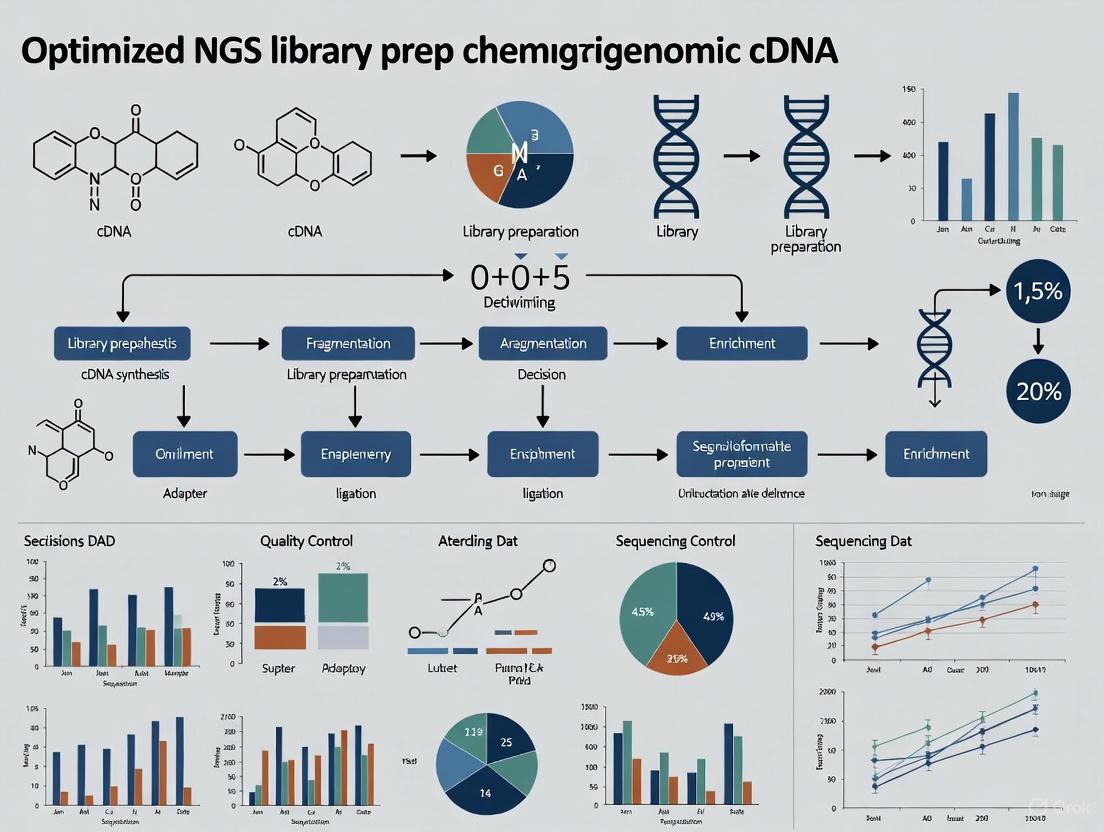

Workflow Visualization

The following diagram illustrates the complete workflow for the core steps of NGS library preparation, from fragmented cDNA to a sequencer-ready library.

Mastering the core principles of fragmentation, end-repair, and adapter ligation is non-negotiable for generating high-quality NGS libraries, especially in the demanding field of chemogenomic cDNA research. The protocols and data presented here provide a robust foundation for constructing libraries that ensure high data quality, minimize biases, and yield accurate, reproducible sequencing results. By carefully selecting fragmentation methods, optimizing reaction conditions, and implementing rigorous quality control, researchers can significantly enhance the reliability of their downstream analyses, thereby accelerating drug discovery and the understanding of chemical-genetic interactions.

Chemogenomics research, which explores the complex interactions between chemical compounds and biological systems, places unique and demanding requirements on next-generation sequencing (NGS) library preparation. The field inherently grapples with two major technical challenges: sample scarcity and complex transcriptomic responses. Researchers often work with limited material, such as rare cell populations treated with compound libraries or patient-derived samples exposed to drug candidates, where starting RNA can be exceptionally scarce [8]. Furthermore, the biological responses to chemical perturbations are multifaceted, involving subtle shifts in diverse RNA species that require highly sensitive and accurate detection methods [9]. This application note details optimized protocols and solutions specifically designed to overcome these challenges, enabling robust and reproducible cDNA library construction for chemogenomic studies.

Quantitative Challenges in Chemogenomic Library Preparation

The success of NGS in chemogenomics is highly dependent on the quantity and quality of input material. The table below summarizes key performance metrics for library preparation methods under conditions of sample scarcity, highlighting the critical thresholds for maintaining data quality.

Table 1: Performance Metrics of Library Prep Methods with Limited Input RNA

| Input RNA Amount | Number of Genes Detected | Detection of Low-Abundance Genes (FPKM 0-5) | Recommended Reverse Transcriptase | Key Limitations |
|---|---|---|---|---|
| 1 ng (bulk sample) | ~18,743 genes | Standard detection | Multiple options | Baseline for comparison |
| 5 pg | ~11,754 genes | Good detection with optimized protocols | Maxima H Minus | ~37% reduction in gene detection |
| 2 pg | Significant reduction | Moderate detection | Maxima H Minus | Mapping rate to marker genes drops to ~50% |
| 0.5 pg | >2,000 genes | Compromised without specialized methods | Maxima H Minus | Requires ultralow input optimization |

Even minor technical variations can significantly impact results. For instance, a pipetting inaccuracy of just 5% can result in a 2 ng variation in template DNA (equivalent to the error on a nominal 40 ng input), which becomes critically important when working with scarce samples [10]. Additionally, inefficient library construction shows up as a low percentage of fragments carrying correct adapters, which reduces usable sequencing output and increases chimeric fragments [4]. Batch effects arising from variations in reagents, equipment, or operators can substantially affect gene expression analysis, with particularly severe impacts on miRNA-seq data [10].
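The figures quoted in this section can be reproduced with simple arithmetic. The sketch below recomputes the drop in gene detection between the 1 ng and 5 pg rows of the table and the absolute effect of a relative pipetting error; the 40 ng nominal input is an assumption chosen so that a 5% error equals 2 ng.

```python
def percent_reduction(baseline, observed):
    """Relative drop from baseline to observed, in percent."""
    return 100.0 * (baseline - observed) / baseline


def absolute_error_ng(nominal_ng, relative_error):
    """Mass error caused by a relative pipetting inaccuracy."""
    return nominal_ng * relative_error


genes_1ng, genes_5pg = 18743, 11754  # gene counts from the table above
drop = percent_reduction(genes_1ng, genes_5pg)
print(f"gene detection drop at 5 pg: {drop:.1f}%")

# Assumed nominal input of 40 ng; 5% inaccuracy
print(f"pipetting error: {absolute_error_ng(40.0, 0.05):.1f} ng")
```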

Optimized Protocols for Challenging Chemogenomic Samples

Ultralow Input RNA Sequencing Protocol

Based on systematic optimization studies, the following protocol significantly enhances sensitivity and low-abundance gene detection for scarce chemogenomic samples [8]:

Day 1: Reverse Transcription with Enhanced Efficiency

- Sample Preparation: Dilute extracted RNA in RNase-free water. For inputs below 10 pg, use siliconized (low retention) microcentrifuge tubes to maximize recovery.

- Reverse Transcription Master Mix:
  - 2.0 μL 5X RT Buffer
  - 1.0 μL dNTPs (10 mM each)
  - 0.5 μL RNase Inhibitor (40 U/μL)
  - 0.5 μL Maxima H Minus Reverse Transcriptase (200 U/μL)
  - 1.0 μL Template-Switching Oligo (rN-modified TSO, 20 μM)
  - 1.0 μL Gene-Specific Primer (2 μM)
  - Add RNA sample (0.5-10 pg total RNA)
  - Adjust to 10.0 μL with nuclease-free water
- Incubation:
  - 42°C for 90 minutes
  - 70°C for 5 minutes (enzyme inactivation)
  - Hold at 4°C
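When several samples are processed together, the master mix above is usually scaled up in one tube. The sketch below does this with the per-reaction volumes listed in the protocol; the 10% pipetting overage and the function names are assumptions for illustration.

```python
# Per-reaction volumes (uL) from the master mix listed above
RT_MIX_UL = {
    "5X RT Buffer": 2.0,
    "dNTPs (10 mM each)": 1.0,
    "RNase Inhibitor (40 U/uL)": 0.5,
    "Maxima H Minus RT (200 U/uL)": 0.5,
    "Template-Switching Oligo (20 uM)": 1.0,
    "Gene-Specific Primer (2 uM)": 1.0,
}
FINAL_VOLUME_UL = 10.0


def scale_master_mix(n_reactions, overage=0.10):
    """Scale each component for n reactions plus an assumed pipetting overage."""
    factor = n_reactions * (1.0 + overage)
    return {name: round(vol * factor, 2) for name, vol in RT_MIX_UL.items()}


def rna_plus_water_ul():
    """Volume left in each reaction for the RNA sample plus nuclease-free water."""
    return FINAL_VOLUME_UL - sum(RT_MIX_UL.values())


mix = scale_master_mix(8)
for name, vol in mix.items():
    print(f"{name}: {vol} uL")
print(f"RNA + water per reaction: {rna_plus_water_ul()} uL")
```

Each reaction then receives 6.0 μL of master mix, leaving 4.0 μL for RNA and water.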

Day 2: cDNA Amplification and Library Construction

- PCR Amplification:
  - 10.0 μL RT product
  - 15.0 μL 2X PCR Master Mix
  - 1.0 μL PCR Primer (25 μM)
  - 4.0 μL Nuclease-free water
- Thermocycling Conditions:
  - 98°C for 3 minutes
  - 18-22 cycles of:
    - 98°C for 15 seconds
    - 60°C for 30 seconds
    - 72°C for 3 minutes
  - 72°C for 5 minutes
  - Hold at 4°C
- Purification and QC:
  - Purify PCR products using magnetic beads (size selection if necessary)
  - Quantify using fluorometric methods
  - Assess quality via Bioanalyzer/TapeStation
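For planning purposes, the thermocycling program above can be written as data and its programmed hold time estimated. This is a rough sketch: it reads the three short steps as the repeated cycle and the trailing 72°C step as a final extension, and it ignores ramp times, which vary by instrument. The class and function names are illustrative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Step:
    temp_c: int
    seconds: int


INITIAL_DENATURATION = Step(98, 180)
CYCLE = (Step(98, 15), Step(60, 30), Step(72, 180))
FINAL_EXTENSION = Step(72, 300)


def hold_time_minutes(n_cycles):
    """Total programmed hold time, excluding ramping and the final 4 C hold."""
    if not 18 <= n_cycles <= 22:
        raise ValueError("the protocol above specifies 18-22 cycles")
    per_cycle = sum(step.seconds for step in CYCLE)
    total = (INITIAL_DENATURATION.seconds
             + n_cycles * per_cycle
             + FINAL_EXTENSION.seconds)
    return total / 60.0


for n in (18, 22):
    print(f"{n} cycles: {hold_time_minutes(n)} min")
```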

This optimized protocol incorporates rN-modified template-switching oligos (TSO) and m7G-capped RNA templates to significantly improve sequencing sensitivity and low-abundance gene detection capability [8].

Automated Library Preparation for Enhanced Reproducibility

Automation addresses several challenges in chemogenomic library prep, particularly for screening applications involving multiple compounds or time points:

System Setup:

- Utilize liquid handling systems with non-contact dispensing technology

- Implement integrated thermocyclers to minimize sample transfer

- Configure for 96-well or 384-well plates based on throughput needs

Workflow Advantages:

- Reduced hands-on time: Approximately 30 minutes hands-on time for 96 samples with systems like ExpressPlex [10]

- Improved consistency: Inter-user coefficient of variation reduced from >15% to <5%

- Miniaturized reactions: Capacity to work with sub-microliter volumes conserves precious samples

- Contamination control: Closed systems minimize environmental exposure [11]

Implementation Considerations:

- Select automation-compatible reagents (e.g., bead-based purification chemistries)

- Validate performance with standard reference materials

- Establish QC checkpoints for critical steps
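A plate layout for a compound-by-time-point screen can be generated programmatically before it is loaded into a liquid handler. The sketch below fills a 96-well plate in row-major order; the compound names, time points, and replicate count are hypothetical, and the output format is not tied to any particular automation system.

```python
from itertools import product
from string import ascii_uppercase


def plate_wells(rows=8, cols=12):
    """Well names in row-major order (A1..A12, B1.. for a 96-well plate)."""
    return [f"{ascii_uppercase[r]}{c}"
            for r in range(rows) for c in range(1, cols + 1)]


def assign_conditions(compounds, timepoints_h, replicates, rows=8, cols=12):
    """Map wells to (compound, time point in hours, replicate) tuples."""
    conditions = list(product(compounds, timepoints_h, range(1, replicates + 1)))
    wells = plate_wells(rows, cols)
    if len(conditions) > len(wells):
        raise ValueError("more conditions than wells on the plate")
    return dict(zip(wells, conditions))


# Hypothetical screen: vehicle control plus two compounds, two time points
layout = assign_conditions(["DMSO", "cmpd_A", "cmpd_B"], [6, 24], replicates=3)
for well, condition in layout.items():
    print(well, condition)
```

Passing `rows=16, cols=24` gives a 384-well layout. A production design would likely also randomize well positions to limit the batch and position effects discussed earlier.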

[Figure: Ultrasensitive Library Prep Workflow for Scarce Samples]

Essential Research Reagent Solutions for Chemogenomics

Successful library preparation for chemogenomics requires carefully selected reagents specifically designed to address the challenges of sample scarcity and complex transcriptomic responses.

Table 2: Essential Research Reagents for Chemogenomic Library Preparation

| Reagent Category | Specific Product Examples | Function in Protocol | Considerations for Chemogenomics |
|---|---|---|---|
| Reverse Transcriptase | Maxima H Minus, SuperScript III | Converts RNA to cDNA; critical for sensitivity | Maxima H Minus shows superior sensitivity for low-abundance genes and minimal end bias [8] |
| Template-Switching Oligos | rN-modified TSO | Facilitates cDNA amplification from minimal input | rN modification significantly improves sequencing sensitivity and low-abundance gene detection [8] |
| Magnetic Beads | Sera-Mag SpeedBeads, AMPure XP | Size selection and purification | Core-shell design provides tight size distributions; essential for FFPE and degraded samples [12] |
| Library Prep Kits | NEBNext UltraExpress, Illumina Stranded Total RNA Prep | Streamlined workflow integration | UltraExpress reduces tips by 32% and tubes by 50%; crucial for high-throughput compound screens [12] |
| Automation Systems | ExpressPlex, Callisto Sample Prep System | Standardization and throughput | ExpressPlex enables 96-sample prep in 30 minutes hands-on time; critical for multi-condition studies [10] |

Addressing Technical Complexities in Transcriptomic Responses

Chemogenomic experiments capture complex biological responses to chemical perturbations, requiring special consideration during library preparation:

Minimizing Amplification Bias

- Employ unique molecular identifiers (UMIs) to correct for PCR duplicates and enable accurate quantification [13]

- Utilize high-fidelity polymerases with minimal sequence preference

- Limit PCR cycles (typically 18-22) while maintaining sufficient library complexity

- Implement duplex-specific nucleases to normalize the representation of highly abundant transcripts
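The UMI-based correction listed above can be illustrated with a toy example. In this minimal sketch, reads that share a gene, mapping position, and UMI are collapsed into one molecule; the read tuples are invented, and the exact-match logic is a simplification, since dedicated tools such as UMI-tools also account for sequencing errors within the UMI.

```python
from collections import defaultdict


def umi_counts(reads):
    """Count unique molecules per gene from (gene, position, umi) read tuples."""
    molecules = defaultdict(set)
    for gene, position, umi in reads:
        # Identical (position, UMI) pairs are treated as PCR copies of one molecule
        molecules[gene].add((position, umi))
    return {gene: len(keys) for gene, keys in molecules.items()}


# Hypothetical aligned reads
reads = [
    ("GENE1", 100, "ACGT"),
    ("GENE1", 100, "ACGT"),  # PCR duplicate of the read above
    ("GENE1", 100, "TTAG"),  # same position, different UMI: distinct molecule
    ("GENE2", 250, "ACGT"),
]
counts = umi_counts(reads)
print(counts)
```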

Handling Diverse RNA Species

Chemogenomic responses involve multiple RNA classes beyond mRNA, each requiring specific handling:

Small RNAs (miRNAs, siRNAs):

- Specialized ligation-based methods with pre-annealed adapter constructs [14]

- Biotinylated nucleotide incorporation in RT reaction for efficient cDNA purification

- Reduced gel purification steps to minimize sample loss

Long Non-coding RNAs:

- Ribosomal RNA depletion rather than poly-A selection to capture non-polyadenylated transcripts

- Enhanced fragmentation optimization to address secondary structures

Low-Abundance Transcripts:

- CRISPR-based depletion of abundant transcripts to increase coverage of rare targets [12]

- Hybridization-based capture approaches for focused analysis of specific pathway genes

[Figure: Transcriptomic Complexity in Chemogenomic Studies]

Quality Control and Validation Strategies

Rigorous QC protocols are essential for generating reliable chemogenomics data:

Pre-library Preparation QC:

- RNA Integrity Number (RIN) >8.5 for intact samples, >7.0 for FFPE

- Fluorometric quantification with sensitivity to 1 pg/μL

- Spike-in controls for absolute quantification

Post-library Preparation QC:

- Fragment size distribution analysis (Bioanalyzer/TapeStation)

- Library quantification via qPCR (more accurate than fluorometry for sequencing prediction)

- Adapter dimer detection (<5% threshold)

Post-sequencing QC:

- Sequencing saturation analysis

- Unique mapping rates (>70% for human transcripts)

- Gene detection counts compared to expected values

- Spike-in recovery calculations
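The numeric thresholds in the checklists above lend themselves to an automated gate. The sketch below encodes three of them (RIN for intact samples, adapter dimer fraction, and unique mapping rate); the metric names and the sample values are hypothetical.

```python
# Thresholds taken from the QC lists above; the metric names are assumptions.
QC_RULES = {
    "rin": ("min", 8.5),                  # intact (non-FFPE) samples
    "adapter_dimer_pct": ("max", 5.0),
    "unique_mapping_pct": ("min", 70.0),  # human transcripts
}


def failed_checks(metrics):
    """Return the names of reported metrics that miss their threshold."""
    failures = []
    for name, (kind, limit) in QC_RULES.items():
        if name not in metrics:
            continue  # metric not measured for this sample
        value = metrics[name]
        passed = value > limit if kind == "min" else value < limit
        if not passed:
            failures.append(name)
    return failures


# Hypothetical sample with too much adapter dimer
sample = {"rin": 9.1, "adapter_dimer_pct": 6.2, "unique_mapping_pct": 82.0}
print(failed_checks(sample))
```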

For specialized applications like single-cell chemogenomics, additional validation through comparison to bulk RNA-seq or orthogonal methods (qPCR, NanoString) is recommended for a subset of targets.

Chemogenomics presents distinctive challenges for NGS library preparation that demand specialized approaches. The protocols and solutions detailed here address sample scarcity through ultrasensitive methods, and complex transcriptomic responses through optimized reagent systems and specialized handling of diverse RNA species. By implementing these tailored methods (Maxima H Minus reverse transcriptase, rN-modified template-switching oligos, automated workflows, and rigorous QC protocols), researchers can significantly enhance the quality and reproducibility of their chemogenomic studies. These library preparation techniques enable more accurate characterization of compound mode of action, identification of novel therapeutic targets, and ultimately more efficient drug discovery pipelines.

The reverse transcription of RNA into complementary DNA (cDNA) is the foundational step in transcriptomic studies, determining the success and quality of all subsequent next-generation sequencing (NGS) data. For researchers in chemogenomics and drug development, where experiments often rely on limited or precious samples derived from compound treatments, optimizing this initial step is paramount for achieving accurate gene expression profiles. Inefficient reverse transcription can introduce significant bias, compromise detection sensitivity, and ultimately lead to misleading biological conclusions. This application note details the critical parameters and optimized protocols for the RNA-to-cDNA conversion, providing a robust framework for constructing high-quality transcriptomic libraries.

The Critical Role of Reverse Transcription

In transcriptomic workflows, RNA is first converted into a more stable DNA copy before sequencing. This cDNA synthesis process directly influences key outcomes:

- Library Complexity: The number of unique RNA molecules successfully captured and converted.

- Gene Detection Sensitivity: The ability to detect low-abundance transcripts, including key drug targets and biomarkers.

- Coverage Uniformity: The evenness of representation across different regions of a transcript.

The fidelity of this process is especially critical in chemogenomic research, where accurately quantifying subtle, compound-induced changes in the transcriptome is essential for understanding mechanisms of action and identifying novel therapeutic targets.

Optimizing Key Parameters for cDNA Synthesis

Primer Design and Selection

The choice of priming strategy is one of the most influential factors in reverse transcription. The table below summarizes the primary options and their optimal use cases.

Table 1: Primer Selection for Reverse Transcription

| Primer Type | Common Uses | Advantages | Limitations |
|---|---|---|---|
| Oligo(dT) | mRNA sequencing, poly-A tailed RNA enrichment [15] | Selects for mature, polyadenylated mRNA; reduces rRNA background. | Inefficient for degraded RNA; biased towards 3' end; unsuitable for non-polyA RNAs. |
| Random Hexamers | Whole transcriptome, degraded RNA [16] | Binds throughout transcript length; can detect non-polyA RNAs. | May not fully reverse transcribe long RNAs due to low binding stability. |
| Random 18mers | Whole transcriptome, long RNA transcripts [16] | Superior detection of long genes and low-abundance transcripts; more stable binding. | Less efficient for very short RNA biotypes (e.g., snRNAs, snoRNAs). |
| Gene-Specific | Targeted expression analysis (qPCR) | Highly specific and sensitive for targeted genes. | Not suitable for global transcriptome profiling. |

A pivotal study investigating primer length found that the commonly used random 6mer does not yield optimal performance. Instead, random 18mer primers demonstrated superior efficiency in overall transcript detection, particularly for long RNA transcripts like protein-coding genes and long non-coding RNAs in complex human tissue samples [16]. The 18mer detected approximately 10% more unique genes than the 6mer, with a significant advantage in detecting lowly expressed genes (FPKM 1-20) [16].

Input RNA Quantity and PCR Amplification

The amount of starting RNA and the subsequent amplification are tightly linked and must be carefully balanced to preserve library diversity and minimize artifacts.

Table 2: Impact of Input RNA and PCR Cycles on Data Quality

| Input RNA | Recommended PCR Cycles | Impact on PCR Duplicates | Effect on Gene Detection |
|---|---|---|---|
| High Input (≥ 125 ng) | Minimal cycles (e.g., 10-12) | Low rate (e.g., < 5%) [17] | High sensitivity; robust detection of low-expression genes. |
| Low Input (15 - 125 ng) | Increased but minimized cycles | High and variable rate (e.g., 34-96%) [17] | Reduced read diversity; fewer genes detected; increased noise. |
| Very Low Input (< 15 ng) | Maximum cycles per protocol | Very high rate; further increased by library conversion [17] | Severe loss of complexity; strong bias towards highly amplified fragments. |

For input amounts above 10 ng but below 125 ng, there is a strong negative correlation between input amount and the proportion of PCR duplicates. A positive correlation exists between the number of PCR cycles and duplicates. Therefore, the highest quality data is obtained using the lowest number of PCR cycles possible for a given input amount [17]. The use of Unique Molecular Identifiers (UMIs) is highly recommended for low-input samples to accurately distinguish biological duplicates from PCR-amplified artifacts during computational analysis [17].
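The input tiers in Table 2 and the duplicate proportion discussed above can be expressed in a few lines. In this sketch the tier boundaries follow the table (15 ng and 125 ng), while the read counts in the example are invented for illustration.

```python
def input_tier(input_ng):
    """Classify an RNA input using the boundaries in Table 2."""
    if input_ng >= 125:
        return "high"
    if input_ng >= 15:
        return "low"
    return "very low"


def duplication_rate(total_reads, unique_reads):
    """Fraction of reads that are duplicates of another read."""
    if total_reads <= 0 or not 0 <= unique_reads <= total_reads:
        raise ValueError("read counts are inconsistent")
    return 1.0 - unique_reads / total_reads


# Hypothetical library: 1,000,000 reads, of which 660,000 are unique
rate = duplication_rate(1_000_000, 660_000)
print(f"{input_tier(50)} input, duplication rate {rate:.0%}")
```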

Detailed Experimental Protocol: cDNA Library Construction

The following diagram illustrates the core workflow for constructing a cDNA library, from RNA isolation to ready-to-sequence libraries.

Materials: The Researcher's Toolkit

Table 3: Essential Reagents for cDNA Library Construction

| Reagent / Kit | Function | Considerations for Optimization |
|---|---|---|
| Oligo(dT) Magnetic Beads | Enriches for polyadenylated mRNA from total RNA [15]. | Reduces ribosomal RNA background; critical for mRNA-seq. |
| Reverse Transcriptase | Synthesizes first-strand cDNA using mRNA as a template [15] [18]. | Use high-fidelity, thermostable enzymes for long/structured RNAs. |
| Random Primers (6mer, 18mer) | Initiates reverse transcription at multiple sites along RNA fragments [16]. | 18mers recommended for superior detection of long transcripts [16]. |
| RNase H | Degrades the RNA strand in cDNA:RNA hybrids [15]. | Essential for second-strand synthesis. |
| DNA Polymerase I | Synthesizes the second strand of cDNA [15]. | Creates stable double-stranded cDNA. |
| dNTPs | Building blocks for cDNA synthesis. | Use balanced, high-quality stocks to prevent incorporation errors. |
| Platform-Specific Adapters | Allows cDNA fragments to bind to the sequencing flow cell [19]. | Contains barcodes for sample multiplexing. |
| Library Amplification Mix | PCR master mix containing a high-fidelity polymerase. | Minimize cycles to reduce duplication rates and bias [17]. |

Step-by-Step Procedure

Step 1: Isolation and Quality Control of mRNA

Begin with high-quality total RNA. Isolate mRNA via chromatographic purification using an oligo(dT) matrix to retain poly(A)+ RNA molecules, effectively depleting abundant tRNAs and rRNAs [15]. Assess RNA integrity using an instrument like an Agilent Bioanalyzer to ensure an RNA Integrity Number (RIN) > 8.0 for optimal results.

Step 2: First-Strand cDNA Synthesis

- Combine 1-1000 ng of mRNA with random 18mer primers (or oligo(dT) primers for 3'-end focused assays) and dNTPs [16].

- Denature at 65°C for 5 minutes to remove secondary structures, then immediately place on ice.

- Add reaction buffer, DTT, RNase inhibitor, and a robust reverse transcriptase (e.g., SuperScript II or equivalent) [18].

- Incubate at 42-50°C for 50-60 minutes, followed by enzyme inactivation at 70°C for 15 minutes. This produces an mRNA:cDNA hybrid.

Step 3: Second-Strand cDNA Synthesis

- To the first-strand reaction, add RNase H to nick the mRNA strand, and DNA Polymerase I to synthesize the second DNA strand using the dNTPs provided [15].

- Incubate at 16°C for 1 hour. The low temperature minimizes exonuclease activity and favors polymerase activity.

- Purify the double-stranded cDNA using magnetic beads or column-based purification. The cDNA can now be stored at -20°C.

Step 4: Adapter Ligation and Library Amplification

- Prepare the double-stranded cDNA for adapter ligation, typically by end-repair (blunting and 5' phosphorylation) followed by A-tailing to create ends compatible with the adapters.

- Ligate platform-specific adapters to the cDNA fragments. For blunt-end ligations, use high enzyme concentrations and incubate at room temperature for 15-30 minutes. For cohesive-end ligations, incubate at 12-16°C for longer durations [19].

- Perform a limited-cycle PCR (e.g., 10-15 cycles depending on input) to amplify the adapter-ligated library. Minimize PCR cycles to preserve library complexity and reduce duplicate rates [17].

- Purify the final library using magnetic bead-based cleanup to remove primer dimers and fragments that are too short or too long [19].

Step 5: Library Quality Control and Normalization

- Quantify the library accurately using fluorometric methods (e.g., Qubit) and qPCR.

- Assess size distribution with a Bioanalyzer or TapeStation.

- Normalize libraries to an equimolar concentration before pooling to ensure balanced representation in sequencing [19]. Automated systems can significantly improve the consistency of this step.
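Equimolar normalization starts from converting each library's mass concentration to molarity. The sketch below uses the common approximation of 660 g/mol per base pair of double-stranded DNA; the example concentration, mean fragment size, and 4 nM target are assumptions for illustration and should be replaced with the values your sequencing platform calls for.

```python
def library_nM(conc_ng_per_uL, mean_fragment_bp):
    """Convert a dsDNA library concentration from ng/uL to nM."""
    return conc_ng_per_uL * 1e6 / (660.0 * mean_fragment_bp)


def dilution_volumes(stock_nM, target_nM, final_uL):
    """Return (library uL, diluent uL) needed to reach target_nM in final_uL."""
    if stock_nM < target_nM:
        raise ValueError("library is more dilute than the target concentration")
    library_uL = target_nM * final_uL / stock_nM
    return library_uL, final_uL - library_uL


# Hypothetical library: 2.0 ng/uL with a 400 bp mean fragment size
stock = library_nM(2.0, 400)
lib_uL, diluent_uL = dilution_volumes(stock, target_nM=4.0, final_uL=20.0)
print(f"{stock:.2f} nM stock: mix {lib_uL:.2f} uL library + {diluent_uL:.2f} uL diluent")
```

Once every library sits at the same molarity, equal volumes can be pooled to give balanced representation.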

Troubleshooting Common Issues

- High PCR Duplication Rate: This indicates low library complexity, often due to insufficient input RNA or excessive PCR cycles. Solution: Increase RNA input where possible, and use the minimum number of PCR cycles required. Incorporate UMIs to bioinformatically identify and remove duplicates [17] [4].

- Low Library Yield: Can result from degraded RNA, inefficient enzymatic reactions, or losses during purification. Solution: Check RNA quality, ensure fresh reagents and proper enzyme handling to maintain activity, and use purification methods with high recovery rates [19].

- Adapter Dimer Contamination: Caused by self-ligation of adapters. Solution: Optimize adapter molar ratios during ligation and use bead-based size selection to remove short fragments [19].

The conversion of RNA to cDNA is a critical gateway in the transcriptomic library construction pipeline, whose quality dictates the validity of downstream data and analysis. For drug development professionals, consistent application of optimized protocols—embracing strategic primer selection, careful input RNA quantification, and minimized PCR amplification—is non-negotiable. By adhering to the detailed methodologies and best practices outlined in this application note, researchers can ensure the generation of robust, high-complexity cDNA libraries. This, in turn, provides a reliable foundation for uncovering meaningful biological insights in chemogenomic research and advancing therapeutic discovery.

Next-generation sequencing (NGS) library preparation is a critical first step in any sequencing workflow, profoundly impacting the quality, reliability, and interpretation of generated data. For researchers in chemogenomics and drug development, selecting the appropriate library construction method is paramount for obtaining meaningful biological insights from cDNA experiments. Among the available techniques, ligation-based and tagmentation-based workflows have emerged as two principal approaches, each with distinct advantages, limitations, and optimal application scenarios. This application note provides a detailed comparison of these methodologies, supported by quantitative performance data and step-by-step experimental protocols, to guide researchers in selecting and implementing the optimal strategy for their specific research objectives.

Core Technology Comparison

Fundamental Mechanisms

Ligation-based library preparation involves the physical or enzymatic fragmentation of DNA or cDNA, followed by a series of enzymatic steps to repair ends and ligate specialized adapters to both ends of the fragments using DNA ligase [13]. This traditional approach provides consistent performance across diverse genomic contexts.

Tagmentation-based library preparation utilizes a bead-linked transposome (BLT) system in which a transposase enzyme fragments DNA and attaches adapter sequences to the fragment ends in a single enzymatic step [20] [13]. Because fragmentation and adapter tagging are combined, this approach substantially reduces hands-on time and workflow complexity.

Performance Characteristics and Bias Profiles

Each method exhibits distinct performance characteristics and potential biases that researchers must consider:

- Sequence Bias: Tagmentation methods may display sequence pattern preference in the initial 10-15 bases of sequencing reads [20]. However, multiple studies indicate this does not adversely affect library complexity or genome coverage across diverse species and sequence contexts [20].

- Fragment Size Distribution: Ligation-based methods typically produce more variable fragment sizes, while BLT methods generate more homogeneous fragment distributions, independent of DNA input quantity and quality [20].

- Coverage Uniformity: Comparative studies demonstrate that the most crucial factor for even genome coverage is library fragment size, with BLT methods producing superior reproducibility in fragment size homogeneity compared to ligation-based approaches [20].

Quantitative Comparison of Performance Metrics

Table 1: Direct performance comparison of ligation, tagmentation, and PCR-based library prep methods for bacterial genomics [21]

| Performance Metric | Ligation-Based (LIG) | Tagmentation-Based (TAG) | PCR-Based (PCR) |

|---|---|---|---|

| Average Read Length | >5,000 bp | >5,000 bp | <1,100 bp |

| Total Output (Gbp) | 33.62 | 11.72 | 4.79 |

| Mappable Reads | 92.9% | 87.3% | 22.7% |

| Artifactual Tandem Content | 0.9% | 2.2% | 22.5% |

| Output Homogeneity | Most homogeneous | Intermediate | Most variable |

Table 2: Workflow and efficiency comparison between library preparation methods [21] [22] [13]

| Characteristic | Ligation-Based | Tagmentation-Based |

|---|---|---|

| Hands-on Time | ~3-6 hours [22] | ~1-1.5 hours [13] |

| Total Workflow Time | ~6.5 hours [22] | ~3-4 hours [13] |

| Input DNA Requirement | 100-1000 ng [22] | 1-500 ng [13] |

| PCR Requirement | Often required | Optional |

| Multiplexing Capacity | Standard | Standard |

| Cost Considerations | Higher reagent and labor costs | Lower overall cost due to reduced hands-on time |

Detailed Experimental Protocols

Ligation-Based Library Preparation Protocol

Principle: This method utilizes sequential enzymatic reactions to fragment DNA, repair ends, and ligate adapters in a multi-step process [13].

Table 3: Key reagents for ligation-based library prep [13]

| Reagent | Function |

|---|---|

| Fragmentation Enzyme | Fragments DNA to desired size distribution |

| End Repair Mix | Repairs fragmented ends to create blunt ends |

| A-Tailing Enzyme | Adds single 'A' nucleotide to 3' ends |

| DNA Ligase | Ligates adapters to A-tailed fragments |

| SPRI Beads | Size selection and purification |

| Unique Dual Index Adapters | Enable sample multiplexing |

Step-by-Step Workflow:

DNA Fragmentation:

- Use either mechanical (acoustic shearing) or enzymatic fragmentation methods

- Target fragment size: 200-500 bp for standard applications

- Recommended input: 100-1000 ng high-quality DNA [22]

End Repair and A-Tailing:

- Incubate fragmented DNA with end repair enzyme mix (30 minutes, 20°C)

- Add A-tailing buffer and enzyme (30 minutes, 37°C)

- Purify using SPRI beads (1.8X ratio)

Adapter Ligation:

- Add unique dual index adapters and DNA ligase

- Incubate (15 minutes, 20°C)

- Purify with SPRI beads (1.8X ratio)

Library Amplification (Optional):

- Add PCR master mix with appropriate cycle number

- Purify final library with SPRI beads (1.0X ratio)

Quality Control:

- Quantify using fluorometric methods (Qubit)

- Assess size distribution (Bioanalyzer/TapeStation)

- Validate library concentration for sequencing

Tagmentation-Based Library Preparation Protocol

Principle: This approach uses bead-linked transposomes to simultaneously fragment DNA and incorporate sequencing adapters in a single reaction [20] [13].

Table 4: Key reagents for tagmentation-based library prep [20] [13]

| Reagent | Function |

|---|---|

| Bead-Linked Transposomes (BLT) | Simultaneously fragments and tags DNA with adapters |

| Tagmentation Buffer | Optimizes transposase enzyme activity |

| Neutralization Buffer | Stops tagmentation reaction |

| PCR Master Mix | Amplifies library (if required) |

| SPRI Beads | Size selection and purification |

| Unique Dual Index Primers | Enable sample multiplexing |

Step-by-Step Workflow:

Tagmentation Reaction:

- Combine DNA with bead-linked transposomes and tagmentation buffer

- Incubate (5-15 minutes, 55°C)

- Add neutralization buffer to stop reaction

- Recommended input: 1-500 ng DNA [13]

Library Amplification (Optional):

- Add PCR master mix with unique dual index primers

- Amplify with limited cycles (typically 12-15 cycles)

- Note: PCR-free workflows are possible with sufficient input

Purification and Size Selection:

- Clean up with SPRI beads (0.6X-0.8X ratio for size selection)

- Elute in appropriate buffer

Quality Control:

- Quantify using fluorometric methods

- Assess fragment size distribution

- Validate library for sequencing

Application-Specific Recommendations

Chemogenomic cDNA Research Considerations

For chemogenomic studies investigating gene expression responses to chemical compounds, several factors warrant special consideration:

- Input Material: Tagmentation methods offer superior performance with limited cDNA samples common in drug screening assays [13].

- Sequence-Specific Bias: Ligation-based approaches may be preferable for detecting transcripts with extreme GC content, as tagmentation can exhibit sequence-specific bias [20].

- Multiplexing Requirements: Both methods support extensive multiplexing, but tagmentation workflows enable more rapid processing of large compound libraries [23].

- Data Reproducibility: For longitudinal studies assessing compound effects over time, ligation-based methods may provide more consistent coverage of transcript ends [21].

Specialized Applications

FFPE and Degraded Samples:

- Modified tagmentation protocols demonstrate excellent performance with degraded RNA from FFPE samples [24]

- Ligation-based methods with specialized repair enzymes can rescue data from heavily damaged samples

Low-Input and Single-Cell Applications:

- Tagmentation methods are preferred for single-cell cDNA libraries due to higher efficiency [25]

- Modified tagmentation protocols enable library preparation from as little as 40 ng DNA [25]

Multimodal Sequencing:

- Advanced tagmentation approaches enable concurrent readout of genetic sequence, CpG methylation, and chromatin accessibility from a single library [25]

Method Selection Guide

Table 5: Application-based recommendations for library preparation methods [21] [20] [13]

| Research Scenario | Recommended Method | Rationale |

|---|---|---|

| Maximum Data Quality | Ligation-based | Superior mappable reads (92.9%) and lowest artifactual content [21] |

| High-Throughput Screening | Tagmentation-based | 65% faster workflow and higher throughput capabilities [23] |

| Limited Input Samples | Tagmentation-based | Effective with 1 ng input vs. 100 ng for ligation-based [13] |

| Complex Genome Regions | Ligation-based | Reduced sequence-specific bias for challenging regions [20] |

| Cost-Sensitive Projects | Tagmentation-based | Lower reagent costs and reduced hands-on time [23] |

| Multimodal Analysis | Tagmentation-based | Enables concurrent genetic and epigenetic profiling [25] |

The choice between ligation-based and tagmentation-based library preparation methods represents a critical decision point in designing chemogenomic cDNA research studies. Ligation-based methods remain the gold standard for applications demanding the highest data quality and minimal technical artifacts, as evidenced by their superior mappable read rates (92.9%) and low artifactual content [21]. Conversely, tagmentation-based approaches offer compelling advantages in workflow efficiency, requiring significantly less hands-on time (65% reduction) and lower input requirements while maintaining robust performance across most applications [13] [23].

For drug development professionals, the selection framework should prioritize project-specific requirements including input material limitations, throughput needs, data quality thresholds, and budget constraints. As both technologies continue to evolve, tagmentation methods show particular promise for emerging applications in multimodal sequencing and complex sample types, while ligation methods maintain their position for standardized applications requiring maximal data fidelity. By implementing the detailed protocols and considerations outlined in this application note, researchers can make informed decisions that optimize their library preparation strategies for successful chemogenomic investigations.

The Role of Adapters and Barcodes in Multiplexing Drug Treatment Samples

Within chemogenomic cDNA research, where the systematic screening of chemical compounds on biological systems is paramount, Next-Generation Sequencing (NGS) has become an indispensable tool for profiling transcriptomic changes. The efficiency of such studies is often gated by the throughput and cost-effectiveness of the sequencing workflow. Sample multiplexing, the simultaneous sequencing of multiple libraries in a single run, addresses this bottleneck directly [26] [27]. This technique relies on the strategic use of adapters and barcodes (also known as indexes) to enable the precise pooling and subsequent deconvolution of data from dozens of drug treatment samples [27]. By assigning a unique molecular identifier to each sample, researchers can dramatically reduce per-sample costs and minimize technical variability, thereby accelerating the pace of discovery in drug development [26]. These Application Notes detail the principles and provide a robust protocol for implementing adapter and barcode-based multiplexing in chemogenomic studies.

Conceptual Foundations of Multiplexing

The Core Components: Adapters and Barcodes

Multiplexing is fundamentally enabled by attaching short, unique DNA sequences to the cDNA fragments derived from each sample. This process involves two key components:

- Adapters: Short, known oligonucleotide sequences that are ligated to both ends of every cDNA fragment in a library [4]. These adapters are essential for the sequencing process itself, containing complementary sequences that allow the library fragments to bind to the flow cell and be amplified via bridge PCR. They also contain the primer-binding sites necessary for the sequencing reactions [4] [27].

- Barcodes (Indexes): Short, unique DNA sequences embedded within the adapter oligonucleotides [27]. Each sample in a multiplexed pool receives a unique barcode. After a pooled sequencing run is complete, the barcode sequence on each read is used to computationally assign the read back to its sample of origin, a process known as demultiplexing [27].

Advantages of a Multiplexed Workflow

The primary advantage of sample multiplexing is a significant increase in throughput and a reduction in sequencing costs. By pooling multiple samples, the time and reagent expenses for a sequencing run are distributed across all samples in the pool [26] [27]. Furthermore, processing samples in a single multiplexed run, rather than across multiple individual runs, reduces batch effects and technical variability, leading to more robust and reproducible comparative analyses—a critical consideration when assessing the subtle transcriptional impacts of drug treatments [26].

Indexing Strategies: Single vs. Dual Indexing

The configuration of barcodes within the adapters is a critical design choice. The two main strategies are single and dual indexing, with unique dual indexes being the recommended best practice for modern applications [27].

Table 1: Comparison of Single and Dual Indexing Strategies

| Feature | Single Indexing | Dual Indexing (Recommended) |

|---|---|---|

| Barcode Location | A single barcode sequence on one adapter. | Two unique barcode sequences, one on each adapter. |

| Multiplexing Capacity | Lower | Higher |

| Error Detection | Poor; cannot reliably detect index hopping. | Excellent; can identify and filter reads affected by index hopping. |

| Data Fidelity | Lower confidence in sample assignment. | High confidence in sample assignment. |

Index hopping is a phenomenon where barcode sequences are incorrectly assigned during sequencing, potentially leading to cross-contamination of data between samples [27]. Dual indexing provides a robust solution to this problem, as a read must match both expected barcode sequences to be assigned to a sample, thereby preventing misassignment if one index is corrupted [27].

Technical Implementation and Workflow

The integration of adapters and barcodes occurs during the library preparation stage, which transforms cDNA into a sequence-ready library.

Library Preparation Workflow

The workflow proceeds from fragmented cDNA to a pooled, multiplexed library ready for sequencing; the key steps are explained below.

Key Steps Explained

- End Repair & A-Tailing: The cDNA fragments are blunted and a single 'A' nucleotide is added to the 3' ends. This creates a compatible overhang for ligation with adapters that have a complementary 'T' overhang [4].

- Adapter Ligation: Adapters containing the platform-specific sequences and binding sites for sequencing primers are ligated to the 'A-tailed' cDNA fragments [4]. In many modern kits, these adapters are already partially or fully double-stranded.

- Library Amplification & Indexing: This is the critical step where barcodes are incorporated. A limited-cycle PCR is performed using primers that contain:

- The P5 and P7 flow cell binding sequences.

- The unique barcode sequences that will identify the sample.

- The sequencing primer binding sites.

This step simultaneously amplifies the library to generate sufficient mass for sequencing and adds the complete adapter structure, including the barcodes, to each molecule [27].

- Library QC & Purification: The final library is purified to remove excess primers, adapter dimers, and PCR reagents. Quality control, typically using fluorometry and fragment analysis, is performed to confirm library concentration and size distribution [4].

- Normalization & Pooling: Libraries are quantified and normalized to ensure equimolar representation. They are then combined into a single pool for the sequencing run [27].

Essential Protocol: Multiplexing Drug Treatment Samples

This protocol provides a detailed methodology for generating multiplexed cDNA libraries from drug-treated samples.

Research Reagent Solutions

Table 2: Essential Reagents and Materials for Library Preparation

| Item | Function | Example/Note |

|---|---|---|

| DNA Library Prep Kit | Provides enzymes and buffers for end repair, A-tailing, ligation, and PCR. | Select a kit compatible with your sequencing platform and read length. |

| Unique Dual Indexed Adapters | Pre-synthesized adapter mixes containing unique barcode pairs for each sample. | Commercial sets (e.g., Illumina) are available in various plexities. |

| SPRIselect Beads | Magnetic beads for size selection and purification of the library between steps. | Enables removal of unwanted reagents and selection of optimal fragment sizes. |

| Qubit dsDNA HS Assay | Fluorometric quantification of library concentration. | More accurate for library quantitation than spectrophotometry. |

| Bioanalyzer/TapeStation | Capillary electrophoresis system for assessing library size distribution and quality. | Critical for detecting adapter dimers and verifying insert size. |

Step-by-Step Procedure

- Input Material: Begin with 100 ng of high-quality, double-stranded cDNA derived from drug-treated and control samples. Ensure cDNA is purified and in nuclease-free water.

- End Repair & A-Tailing:

- Combine cDNA with the provided end-prep enzyme mix.

- Incubate at 20°C for 30 minutes, followed by 65°C for 30 minutes.

- Purify the reaction using SPRIselect beads (1.0X ratio) to retain all fragments and elute in nuclease-free water.

- Adapter Ligation:

- To the purified end-repaired cDNA, add ligation buffer, DNA ligase, and a unique dual index adapter for each sample.

- Incubate at 20°C for 15 minutes.

- Purify with SPRIselect beads (0.9X ratio) to remove excess adapters and elute.

- Library Amplification:

- Prepare a PCR mix with the universal P5 and P7 primers and a high-fidelity DNA polymerase.

- Run the following PCR program:

- 98°C for 30 seconds (initial denaturation)

- 8-12 cycles of:

- 98°C for 10 seconds (denaturation)

- 60°C for 30 seconds (annealing)

- 72°C for 30 seconds (extension)

- 72°C for 5 minutes (final extension)

- Final Library Clean-up:

- Purify the amplified library with SPRIselect beads (0.9X ratio).

- Elute in nuclease-free water or TE buffer.

- Quality Control:

- Quantify the final library concentration using the Qubit dsDNA HS Assay.

- Analyze 1 µL of the library on a Bioanalyzer or TapeStation using a High Sensitivity DNA chip to confirm a peak in the expected size range (e.g., 300-500 bp) and the absence of a primer-dimer peak at ~100 bp.

- Pooling for Sequencing:

- Normalize all libraries to the same concentration (e.g., 10 nM) based on Qubit and fragment analyzer data.

- Combine an equal volume of each normalized library into a single tube. This is your multiplexed sequencing pool.

- The final pool can be diluted to the loading concentration required by your specific sequencer.
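
The normalization arithmetic in the pooling step can be sketched in a few lines of Python. This is a minimal illustration, not part of any kit protocol: it assumes the common approximation of 660 g/mol per base pair of double-stranded DNA, and the sample names, concentrations, and fragment sizes are hypothetical placeholders.

```python
def library_molarity_nM(conc_ng_per_ul: float, mean_fragment_bp: float) -> float:
    """Convert a dsDNA mass concentration to molarity (assumes ~660 g/mol per bp)."""
    return conc_ng_per_ul * 1e6 / (660.0 * mean_fragment_bp)


def dilution_to_target(conc_nM: float, target_nM: float, final_volume_ul: float):
    """Return (library_ul, diluent_ul) needed to reach target_nM in final_volume_ul."""
    if conc_nM < target_nM:
        raise ValueError("library is below the target concentration")
    library_ul = target_nM * final_volume_ul / conc_nM
    return library_ul, final_volume_ul - library_ul


# Hypothetical libraries: (Qubit concentration in ng/µL, mean fragment size in bp)
libraries = {"DMSO_ctrl": (12.0, 400), "drug_A": (8.5, 380), "drug_B": (20.0, 420)}

for name, (ng_ul, bp) in libraries.items():
    nM = library_molarity_nM(ng_ul, bp)
    lib_ul, diluent_ul = dilution_to_target(nM, target_nM=10.0, final_volume_ul=20.0)
    print(f"{name}: {nM:.1f} nM -> {lib_ul:.2f} µL library + {diluent_ul:.2f} µL diluent")
```

Once every library sits at the same molarity, combining equal volumes gives an approximately equimolar pool. qPCR-based quantification may still be preferable where pooling accuracy is critical.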

Data Analysis and Demultiplexing

Upon completion of the sequencing run, the primary data output is a pool of sequence reads from all samples. Demultiplexing is the first bioinformatic step: it uses the barcode information to sort the reads back into their respective sample-specific files. This process is typically performed automatically by the sequencer's onboard software or by dedicated demultiplexing tools [27]. The output is a set of FASTQ files (or similar), one for each sample, which are then ready for standard downstream processing such as alignment, quantification, and differential expression analysis. With unique dual indexes, reads affected by index hopping carry an unexpected index combination, so they can be identified and filtered out, preserving the integrity of the data for critical chemogenomic analyses [27].
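
The assignment logic behind dual-index demultiplexing can be illustrated with a small Python sketch. It is a simplified model rather than the behavior of any specific demultiplexing tool: the index sequences and sample names are illustrative placeholders, only exact matches are accepted, and the "hopped" label is an assumption used here for reads whose two indexes are each valid but do not belong together.

```python
from collections import Counter

# Hypothetical sample sheet: each sample owns one unique (i7, i5) index pair.
SAMPLE_SHEET = {
    ("ATTACTCG", "TATAGCCT"): "DMSO_ctrl",
    ("TCCGGAGA", "ATAGAGGC"): "drug_A",
    ("CGCTCATT", "CCTATCCT"): "drug_B",
}
KNOWN_I7 = {i7 for i7, _ in SAMPLE_SHEET}
KNOWN_I5 = {i5 for _, i5 in SAMPLE_SHEET}


def assign_read(i7: str, i5: str) -> str:
    """Classify a read by its index pair (exact matching only, for simplicity)."""
    sample = SAMPLE_SHEET.get((i7, i5))
    if sample is not None:
        return sample
    if i7 in KNOWN_I7 and i5 in KNOWN_I5:
        return "hopped"  # both indexes exist in the pool, but not as a pair
    return "undetermined"


reads = [
    ("ATTACTCG", "TATAGCCT"),  # expected pair for DMSO_ctrl
    ("TCCGGAGA", "ATAGAGGC"),  # expected pair for drug_A
    ("ATTACTCG", "ATAGAGGC"),  # valid indexes in an unexpected combination
    ("GGGGGGGG", "TATAGCCT"),  # i7 not present in the sample sheet
]
counts = Counter(assign_read(i7, i5) for i7, i5 in reads)
print(counts)
```

With single indexing, the third read would have been assigned to a sample on the strength of one index alone. Requiring both indexes to match is what allows it to be excluded.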

Streamlined Protocols for Chemogenomic cDNA: From Low-Input Samples to Target Enrichment

Strategies for Low-Input and Degraded RNA from Treated Cell Cultures

Next-generation sequencing (NGS) has revolutionized biological research by enabling in-depth analysis of transcriptomes, yet analyzing samples with limited material or compromised quality remains a significant challenge [28]. In chemogenomic research, where cell cultures are treated with chemical compounds or drugs, researchers frequently encounter low-input and degraded RNA resulting from treatment-induced cytotoxicity or the necessity of using rare cell populations. These samples are particularly vulnerable to degradation and yield limitations, making conventional RNA sequencing approaches unsuitable [28] [4].

The success of transcriptomic studies in this context heavily depends on selecting appropriate library preparation strategies that can effectively handle minimal inputs while preserving biological complexity [3]. This application note provides a comprehensive framework for generating high-quality sequencing libraries from low-input and degraded RNA derived from treated cell cultures, with specific methodologies optimized for chemogenomic cDNA research.

Key Considerations for Experimental Design

Input Requirements and Sample Quality

Library preparation kits vary significantly in their input requirements, which is a primary consideration when working with limited samples from treated cultures. Input amounts generally fall into three categories: standard input (100-1000 ng), low-input (1-100 ng), and ultra-low-input (below 1 ng) [28] [29]. For degraded samples, which are common in chemogenomic studies involving fixed cells or stressful chemical treatments, higher input amounts may be necessary to compensate for fragmentation [28].

Sample quality assessment is crucial before library preparation. For RNA samples, the RNA Integrity Number (RIN) provides a valuable metric, though specialized kits can handle severely degraded samples with RIN values as low as 2 [30]. In treated cell cultures where extraction yields may be low, verification of sample quantity using sensitive methods such as fluorometry is recommended [31].

Strategic Selection of Library Preparation Technology

The choice of library preparation method significantly impacts data quality, coverage uniformity, and detection sensitivity. Three primary technological approaches have emerged for handling challenging RNA samples:

Template-switching technology: Utilizes the template-switching activity of reverse transcriptase to add universal adapter sequences during cDNA synthesis, enabling efficient library construction from minimal input [32]. This approach is particularly valuable for maintaining sequence representation in ultra-low-input scenarios.

Stranded protocols with specialized chemistry: Employ molecular techniques such as dUTP marking or ligation-based methods to preserve strand orientation information; some kits achieve this without toxic reagents such as actinomycin D [30]. These protocols are essential for accurate transcript annotation and identification of antisense transcription events in chemogenomic studies.

Unique molecular identifiers (UMIs): Incorporate molecular barcodes during reverse transcription to tag individual RNA molecules, enabling bioinformatic correction of amplification biases and PCR duplicates [33]. This technology provides more accurate quantitation, especially important when assessing expression changes in drug-treated samples.

Commercial Kit Comparisons and Selection Guide

Comprehensive Kit Comparison

Table 1: Comparison of Low-Input and Degraded RNA Library Preparation Kits

| Manufacturer | Kit Name | Input Range | Protocol Duration | Automation Compatibility | Key Features |

|---|---|---|---|---|---|

| Takara Bio | SMARTer Universal Low Input RNA Kit | 10-100 ng total RNA or 200 pg-10 ng rRNA-depleted RNA | 2 hours | No | SMART technology with random priming; useful for degraded RNA without polyA-tails [28] |

| Roche | KAPA RNA HyperPrep Kit | 1-100 ng RNA | 4 hours | Yes | Single-tube chemistry; optimized for degraded and low-input samples [28] |

| Watchmaker | Watchmaker RNA Library Prep Kit | 0.25-100 ng total RNA | 3.5 hours | Yes | Novel engineered reverse transcriptase for degraded FFPE samples [28] |

| Illumina | Stranded Total RNA Prep | 1-1000 ng standard quality RNA; 10 ng for FFPE | ~7 hours | Yes | Integrated enzymatic rRNA depletion; works with degraded samples [33] |

| Lexogen | Proprietary Ultra-low Input Technology | 10 pg to 1 ng total RNA | Varies | Yes | Extraction-free capability; works with cell lysates [29] |

| IDT | xGen Broad-Range RNA Library Preparation Kit | 10 ng-1 µg RNA or 100 pg-100 ng mRNA | 4.5 hours | Yes | Adaptase technology eliminates second-strand synthesis [28] |

Table 2: Performance Characteristics Across Input Ranges

| Input Range | Recommended Technology | Expected Gene Detection | Best For |

|---|---|---|---|

| >100 ng | Standard stranded protocols | >80% of transcriptome | High-quality samples from abundant cell cultures |

| 1-100 ng | Modified low-input protocols | 60-80% of transcriptome | Treated cultures with moderate yield |

| 100 pg-1 ng | Template-switching methods | 40-60% of transcriptome | Rare cell populations or limited material |

| 10-100 pg | Ultra-low input specialized kits | 20-40% of transcriptome | Single-cell or subcellular analyses |
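
For planning purposes, the input ranges in the table above can be encoded as a simple lookup. The sketch below is only a convenience wrapper around those rows; the function name and the handling of values that fall exactly on a boundary are assumptions made for illustration.

```python
def recommend_technology(input_pg: float) -> str:
    """Map an RNA input mass (in picograms) to the technology listed in the table."""
    if input_pg < 10:
        raise ValueError("below the lowest input range covered by the table")
    if input_pg < 100:
        return "Ultra-low input specialized kits"
    if input_pg < 1_000:  # 100 pg up to 1 ng
        return "Template-switching methods"
    if input_pg <= 100_000:  # 1 ng up to 100 ng
        return "Modified low-input protocols"
    return "Standard stranded protocols"


# Example: 500 pg recovered from a cytotoxic treatment well
print(recommend_technology(500))
```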

Selection Guidelines for Chemogenomic Applications

For chemogenomic studies involving drug-treated cultures, kit selection should be guided by specific experimental parameters:

High-throughput compound screening: Automated-compatible kits such as the KAPA RNA HyperPrep or Watchmaker RNA Library Prep Kit enable processing of multiple samples with minimal hands-on time [28].

Time-course experiments with sequential sampling: Rapid protocol kits like the Takara SMARTer Universal Low Input (2 hours) provide quick turnaround for dynamic transcriptome assessment [28].

Pathway-focused analysis: Targeted RNA sequencing approaches using enrichment panels concentrate sequencing power on genes of interest, providing cost-effective solutions for focused questions [33].

Detailed Experimental Protocols

Protocol A: Ultra-Low Input RNA (10 pg-1 ng) Using Template-Switching Technology

This protocol is adapted from the SMARTer and Lexogen approaches for minute RNA quantities [28] [29].

Step-by-Step Methodology:

RNA Fragmentation and Priming

- Combine 10 pg to 1 ng total RNA with 1 µL of 3' SMART CDS Primer II A in nuclease-free water to a total volume of 4.5 µL

- Incubate at 72°C for 3 minutes, then hold at 42°C for 2 minutes

- Centrifuge briefly to collect contents

Reverse Transcription with Template Switching

- Prepare RT mix: 2.0 µL 5× First-Strand Buffer, 0.25 µL DTT (100 mM), 1.0 µL SMARTer II A Oligonucleotide, 1.0 µL RNase Inhibitor, 0.25 µL SMARTScribe Reverse Transcriptase

- Add 4.5 µL RT mix to each RNA-primer sample, mix gently by pipetting

- Incubate at 42°C for 90 minutes, then heat-inactivate at 70°C for 10 minutes

cDNA Amplification

- Prepare PCR mix: 25 µL SeqAmp PCR Buffer, 1 µL PCR Primer II A, 1 µL SeqAmp DNA Polymerase, 16.5 µL nuclease-free water

- Add 6.5 µL cDNA from previous step to 43.5 µL PCR mix

- Amplify using cycling conditions: 95°C for 1 min; 15-20 cycles of 95°C for 10 sec, 65°C for 30 sec, 68°C for 3 min; final extension at 72°C for 5 minutes

- Purify using AMPure XP beads at 0.8× ratio

Library Construction and Indexing

- Fragment 100 ng purified cDNA using Covaris shearing (200 bp target)

- Perform end repair and A-tailing using commercial enzyme mix (20°C for 30 min, 65°C for 30 min)

- Ligate Illumina adapters with UMI barcodes (T4 DNA ligase, 20°C for 15 min)

- Clean up using AMPure XP beads (0.8× ratio)

Library Amplification and Final Cleanup

- Amplify with index primers using reduced cycles (8-12 cycles) with high-fidelity polymerase

- Perform double-sided size selection with AMPure XP beads (0.5×/0.8× ratios)

- Quantify using Qubit dsDNA HS Assay and profile with Bioanalyzer High Sensitivity DNA chip
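
The bead volumes for the double-sided size selection can be worked out as follows. This is a minimal sketch that assumes both ratios are expressed relative to the original sample volume, which is a common convention but should be checked against the bead manufacturer's instructions; the 50 µL sample volume is a hypothetical example.

```python
def double_sided_spri(sample_ul: float, lower_ratio: float = 0.5, upper_ratio: float = 0.8):
    """Return bead volumes (µL) for the two additions of a double-sided selection.

    first_ul:  beads added to the sample; large fragments bind and are discarded
               with the beads while the supernatant is kept.
    second_ul: extra beads added to the kept supernatant so that the cumulative
               ratio reaches upper_ratio; the desired fragments bind and are eluted.
    """
    if not 0 < lower_ratio < upper_ratio:
        raise ValueError("ratios must satisfy 0 < lower_ratio < upper_ratio")
    first_ul = lower_ratio * sample_ul
    second_ul = (upper_ratio - lower_ratio) * sample_ul
    return first_ul, second_ul


first, second = double_sided_spri(50.0)
print(f"first addition: {first:.1f} µL, second addition: {second:.1f} µL")
```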

Critical Steps and Troubleshooting:

- For highly degraded samples, increase input amount by 1.5-2× while maintaining the same reaction volumes

- If yields are low after cDNA amplification, increase PCR cycles by 2-3 while monitoring for over-amplification artifacts

- To minimize batch effects, prepare master mixes for all enzymatic steps and process all samples in parallel
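
Scaling a master mix for a batch of samples is simple arithmetic, sketched here for the reverse-transcription mix in this protocol. The per-reaction volumes are taken from the step above; the 10% pipetting overage and the batch size of 24 are assumptions chosen for illustration.

```python
# Per-reaction volumes (µL) from the reverse-transcription step of this protocol.
RT_MIX_PER_RXN = {
    "5x First-Strand Buffer": 2.0,
    "DTT (100 mM)": 0.25,
    "SMARTer II A Oligonucleotide": 1.0,
    "RNase Inhibitor": 1.0,
    "SMARTScribe Reverse Transcriptase": 0.25,
}


def scale_master_mix(per_rxn: dict, n_samples: int, overage: float = 0.10) -> dict:
    """Scale per-reaction volumes to n_samples plus a fractional pipetting overage."""
    factor = n_samples * (1.0 + overage)
    return {reagent: round(vol * factor, 2) for reagent, vol in per_rxn.items()}


mix = scale_master_mix(RT_MIX_PER_RXN, n_samples=24)
for reagent, vol in mix.items():
    print(f"{reagent}: {vol} µL")
per_sample = sum(RT_MIX_PER_RXN.values())
print(f"total: {sum(mix.values()):.2f} µL; dispense {per_sample} µL per sample")
```

The per-sample volume printed at the end (4.5 µL) matches the amount of RT mix added to each RNA-primer sample in the protocol, which serves as a quick consistency check.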

Protocol B: Degraded RNA from Treated Cultures (1-100 ng) Using Stranded Chemistry

This protocol utilizes the principles behind KAPA and Illumina stranded kits optimized for compromised samples [28] [33].

Step-by-Step Methodology:

rRNA Depletion

- Dilute 1-100 ng total RNA to 11 µL with nuclease-free water

- Add 1 µL rRNA Removal Solution, mix thoroughly by pipetting

- Incubate at 68°C for 10 minutes, then hold at room temperature for 5 minutes

- Add 1 µL rRNA Removal Beads, incubate at room temperature for 5 minutes

- Place on magnet, transfer supernatant to new tube

RNA Fragmentation and Priming

- Add 2 µL Fragmentation Solution to depleted RNA

- Incubate at 94°C for 4 minutes (adjust time based on desired fragment size)

- Immediately place on ice, add 2 µL Fragmentation Stop Solution

First Strand cDNA Synthesis

- Add 4 µL First Strand Synthesis Act D Mix, 1 µL Random Primers, 2 µL SuperScript II Reverse Transcriptase

- Incubate at 25°C for 10 min, 42°C for 50 min, 70°C for 15 min

- Proceed immediately to second strand synthesis

Second Strand Synthesis with dUTP Incorporation

- Prepare second strand mix: 10 µL Second Strand Marking Master Mix, 4 µL dUTP Solution, 26 µL nuclease-free water

- Add to first strand reaction, incubate at 16°C for 60 minutes

- Purify using AMPure XP beads (0.8× ratio), elute in 25 µL Resuspension Buffer

Adapter Ligation and Library Completion

- Add 2.5 µL End Repair & A-Tailing Control, 12.5 µL Ligation Mix, 2.5 µL RNA Adapter

- Incubate at 20°C for 15 minutes

- Add 5 µL UDG, incubate at 37°C for 30 minutes to degrade the dUTP-marked second strand

- Amplify with index primers: 98°C for 45 sec; 10-12 cycles of 98°C for 15 sec, 60°C for 30 sec, 72°C for 30 sec; 72°C for 1 min

- Perform double-sided size selection with AMPure XP beads

Quality Control Parameters:

- Assess library concentration using Qubit dsDNA HS Assay and convert to molarity using the mean fragment size (target > 5 nM)

- Verify size distribution using Bioanalyzer High Sensitivity DNA chip (peak ~280-320 bp)

- Confirm absence of adapter dimers (< 3% of total signal)

- Check molarity by qPCR using library quantification kit for accurate pooling

The Scientist's Toolkit: Essential Research Reagents

Table 3: Critical Reagents for Low-Input and Degraded RNA Studies

| Reagent Category | Specific Products | Function & Importance | Application Notes |

|---|---|---|---|

| Reverse Transcriptases | SMARTScribe, SuperScript II | cDNA synthesis with high processivity and template-switching capability | Critical for full-length cDNA from degraded templates; engineered enzymes show better performance with inhibitors [28] |

| Library Amplification Kits | KAPA HiFi HotStart ReadyMix, CleanStart HiFi PCR Mastermix | High-fidelity amplification with uniform coverage | Minimize GC bias and maintain sequence representation; essential for accurate variant calling [28] [30] |

| RNA Depletion Kits | Illumina Ribo-Zero Gold, QIAseq FastSelect rRNA | Remove abundant ribosomal RNA | Significantly increases mapping rates; particularly important for bacterial or non-polyA samples [30] [33] |

| Nucleic Acid Purification | AMPure XP Beads, QIAseq Beads | Size selection and cleanup between steps | Bead-based methods preferred for low-input work due to higher recovery rates [28] [30] |

| Quality Control Tools | Agilent Bioanalyzer, TapeStation, Qubit fluorometer | Assess RNA integrity and library quality | Essential for troubleshooting and optimizing input requirements; Bioanalyzer provides critical size distribution data [30] [3] |

| Unique Dual Indexes | Illumina UDI, IDT xGen UDI | Sample multiplexing and cross-contamination reduction | Enable complex experimental designs with multiple treatment conditions and time points [28] [33] |

Data Analysis and Bioinformatics Considerations

Specialized Processing for Challenging Libraries

Sequencing data from low-input and degraded RNA requires specialized bioinformatic processing to extract meaningful biological insights:

Unique Molecular Identifier (UMI) processing: Deduplication based on UMIs provides accurate molecular counting, correcting for amplification biases inherent in low-input protocols [33]. With tools such as UMI-tools or zUMIs, UMI sequences are extracted from the reads before alignment and deduplication is performed on the aligned reads, which distinguishes PCR duplicates from reads that originate from distinct RNA molecules.

Adapter trimming and quality control: Aggressive adapter trimming is essential for degraded samples with short fragment sizes. Trimming tools should be configured with parameters specific to your library preparation kit, particularly for technologies like Adaptase that add specific sequences [34].

Strand-specific alignment: Ensure alignment software (STAR, HISAT2) is configured for the specific strandedness of your protocol to improve transcript assignment accuracy, particularly important for identifying overlapping transcripts in chemogenomic studies [30].
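As a minimal sketch of the UMI-based molecular counting described above, the Python snippet below collapses reads that share a gene, alignment position, and UMI into a single molecule. The `AlignedRead` record, the gene names, and the UMI sequences are hypothetical and exist only for illustration. The sketch uses exact UMI matching and does not reproduce the error-aware grouping that dedicated tools such as UMI-tools apply.

```python
from collections import defaultdict
from typing import Iterable, NamedTuple


class AlignedRead(NamedTuple):
    """Hypothetical minimal read record; real pipelines read these from a BAM file."""
    gene: str
    position: int  # alignment start coordinate
    umi: str       # UMI sequence extracted from the read before alignment


def count_unique_molecules(reads: Iterable[AlignedRead]) -> dict[str, int]:
    """Count distinct (position, UMI) pairs per gene.

    Reads sharing gene, position, and UMI are treated as PCR duplicates of one
    original molecule. Exact UMI matching only: no sequencing-error correction.
    """
    molecules: dict[str, set[tuple[int, str]]] = defaultdict(set)
    for read in reads:
        molecules[read.gene].add((read.position, read.umi))
    return {gene: len(pairs) for gene, pairs in molecules.items()}


# Illustrative input: four reads, one of which is a PCR duplicate.
reads = [
    AlignedRead("CYP1A1", 100, "ACGTAC"),
    AlignedRead("CYP1A1", 100, "ACGTAC"),  # PCR duplicate of the read above
    AlignedRead("CYP1A1", 100, "TTGCAA"),  # same position, different molecule
    AlignedRead("GAPDH", 250, "ACGTAC"),
]
umi_counts = count_unique_molecules(reads)
print(umi_counts)
```

Counting molecules rather than reads is what makes expression estimates from heavily amplified, low-input libraries comparable across treatment conditions.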

Quality Metrics for Degraded Samples

Traditional RNA-Seq QC metrics require adaptation for degraded samples:

- Mapping rates as low as 70% may be acceptable for severely degraded samples (RIN < 4)

- 3' bias is expected in degraded samples; normalize using TPM or similar methods that account for transcript length

- Monitor ribosomal RNA content (<5% ideal, though higher percentages may be acceptable with certain depletion strategies)

- Assess duplication rates in the context of input amount: higher duplication is expected with lower inputs and should be corrected with UMIs
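The adapted metrics above can be sketched as a simple per-sample check. The 70% mapping floor for RIN < 4 and the 5% rRNA ceiling come from the list above. The `SampleQC` fields, the 80% mapping floor for intact RNA, and the 50% duplication threshold are assumptions chosen for illustration and should be replaced with values validated for your own protocol.

```python
from dataclasses import dataclass


@dataclass
class SampleQC:
    """Hypothetical per-sample QC summary; field names are illustrative."""
    name: str
    rin: float
    mapping_rate: float      # fraction of reads mapped, 0-1
    rrna_fraction: float     # fraction of reads assigned to rRNA, 0-1
    duplication_rate: float  # fraction of reads marked as duplicates, 0-1
    has_umis: bool


def qc_flags(
    sample: SampleQC,
    degraded_min_mapping: float = 0.70,  # from the text, for RIN < 4
    intact_min_mapping: float = 0.80,    # assumed placeholder for intact RNA
    max_rrna: float = 0.05,              # from the text
    high_duplication: float = 0.50,      # assumed placeholder
) -> list[str]:
    """Return a list of QC warnings for one sample."""
    flags = []
    # Relax the mapping threshold only for severely degraded RNA.
    min_mapping = degraded_min_mapping if sample.rin < 4 else intact_min_mapping
    if sample.mapping_rate < min_mapping:
        flags.append("low mapping rate")
    if sample.rrna_fraction > max_rrna:
        flags.append("high rRNA content")
    # High duplication is tolerated when UMIs allow it to be corrected.
    if sample.duplication_rate > high_duplication and not sample.has_umis:
        flags.append("high duplication without UMIs")
    return flags


degraded = SampleQC("vehicle_24h", 3.2, 0.74, 0.08, 0.62, has_umis=True)
intact = SampleQC("compound_24h", 8.9, 0.74, 0.02, 0.30, has_umis=False)
degraded_flags = qc_flags(degraded)
intact_flags = qc_flags(intact)
print(degraded.name, degraded_flags)
print(intact.name, intact_flags)
```

The same 74% mapping rate passes for the degraded sample but is flagged for the intact one, which reflects the point that thresholds should be interpreted relative to RNA quality.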

Successful transcriptomic analysis of low-input and degraded RNA from treated cell cultures requires integrated optimization across sample preparation, library construction, and bioinformatic analysis. Based on the methodologies presented in this application note, the following recommendations emerge for chemogenomic research:

For ultra-low input scenarios (single-cell or limited cell populations), template-switching technologies such as SMARTer protocols provide the most robust performance, enabling library construction from as little as 10 pg total RNA while maintaining strand specificity [28] [29]. For moderately degraded samples from compound-treated cultures, streamlined stranded protocols like the KAPA RNA HyperPrep or Illumina Stranded Total RNA Prep offer the optimal balance of sensitivity, throughput, and data quality [28] [33].

The integration of UMIs is strongly recommended for all low-input applications to control for amplification biases and provide accurate quantitation of expression changes in response to chemical treatments [33]. Additionally, automated library preparation should be considered for studies involving multiple treatment conditions or time points to enhance reproducibility and throughput [28].

By implementing these optimized strategies and protocols, researchers can overcome the technical challenges associated with low-input and degraded RNA, thereby expanding the scope of chemogenomic investigations to include precious samples from complex treatment regimens and rare cell populations.

In the field of chemogenomic cDNA research, the choice between whole transcriptome and targeted RNA-Seq represents a critical strategic decision that directly influences data quality, experimental cost, and biological interpretation. Next-generation sequencing (NGS) library preparation serves as the foundational step that determines the scope, depth, and reliability of transcriptomic data. As the US EPA's ecological high-throughput transcriptomics challenge demonstrated, multiple technical approaches can yield viable results, but their relative strengths must be aligned with specific research objectives [35] [36]. This alignment becomes particularly crucial in drug development pipelines, where decisions progress from initial discovery to targeted validation, requiring different transcriptomic approaches at each phase [37].

The fundamental distinction between these approaches lies in their scope: whole transcriptome sequencing (WTS) aims to capture all RNA species in an unbiased manner, while targeted RNA-Seq focuses sequencing resources on a predefined set of genes of interest. Understanding the technical specifications, performance characteristics, and practical implications of each method enables researchers to optimize their NGS library prep strategy for chemogenomic applications, ultimately enhancing the reliability and actionability of research outcomes in both pharmaceutical development and environmental toxicology.

Technical Comparison: Scope, Applications, and Performance Metrics

Fundamental Methodological Differences

The core distinction between whole transcriptome and targeted RNA-Seq approaches lies in library preparation strategy. Whole transcriptome methods employ random primers during cDNA synthesis, distributing sequencing reads across entire transcripts [38]. This requires effective ribosomal RNA (rRNA) removal prior to library preparation—either through poly(A) selection for mRNA enrichment or rRNA depletion—to prevent sequencing resources from being dominated by abundant ribosomal RNAs [38] [33]. The resulting data provides comprehensive coverage across the transcriptional landscape, enabling detection of novel features and global pattern recognition.

In contrast, targeted RNA-Seq employs either enrichment-based or amplicon-based approaches to focus sequencing on specific transcripts of interest [39]. Enrichment methods use probes to capture targeted regions, while amplicon approaches employ PCR to amplify specific sequences. Both channel sequencing resources toward predefined genes, dramatically increasing coverage depth for those targets while ignoring off-target transcripts. Targeted approaches can be further refined through sentinel gene sets, which represent key portions of the transcriptome for specific applications. The TempO-Seq platform, which won the US EPA challenge while covering only 5-11% of the whole transcriptome, illustrates this strategy [35] [36].
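The effect of concentrating reads on a panel can be illustrated with simple arithmetic. The sketch below assumes a fixed read budget spread evenly across genes, which real expression distributions do not follow. The gene counts, read budget, and on-target fraction are hypothetical values, not figures from the cited studies.

```python
def mean_reads_per_gene(
    total_reads: int, n_genes: int, on_target_fraction: float = 1.0
) -> float:
    """Average reads per gene if usable reads were spread evenly across genes."""
    return total_reads * on_target_fraction / n_genes


total_reads = 5_000_000  # hypothetical per-sample read budget

# Whole transcriptome: reads distributed over roughly 20,000 genes (assumed).
whole = mean_reads_per_gene(total_reads, n_genes=20_000)

# Targeted panel: 1,000 genes with 90% of reads on target (both assumed).
targeted = mean_reads_per_gene(total_reads, n_genes=1_000, on_target_fraction=0.9)

fold_gain = targeted / whole
print(f"whole transcriptome: {whole:.0f} reads/gene")
print(f"targeted panel:      {targeted:.0f} reads/gene")
print(f"fold gain in depth:  {fold_gain:.1f}x")
```

Under these assumptions the panel receives about 18 times more reads per gene from the same sequencing run, which is the basis for its higher sensitivity on low-abundance targets.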

Comparative Performance and Applications

Table 1: Technical Comparison of Whole Transcriptome and Targeted RNA-Seq Approaches

| Parameter | Whole Transcriptome Sequencing | Targeted RNA Sequencing | 3' mRNA-Seq |
|---|---|---|---|
| Transcriptome Coverage | Comprehensive; all RNA types (coding, non-coding) [38] | Focused; predefined gene sets [39] | 3' ends of polyadenylated transcripts [38] |
| Primary Applications | Novel isoform discovery, alternative splicing, gene fusions, non-coding RNA analysis [38] | Gene expression validation, pathway-focused studies, clinical biomarker assays [39] [37] | High-throughput gene expression quantification, degraded/FFPE samples [38] |
| Detection Sensitivity | Lower for low-abundance transcripts due to distributed reads [37] | Higher for targeted genes due to concentrated reads [37] [40] | Moderate; limited by 3' UTR annotation quality [38] |
| Differentially Expressed Genes Detected | More comprehensive detection [38] | Limited to predefined panel | Fewer detected, but sufficient for pathway analysis [38] |
| Workflow Time | ~7 hours turnaround [33] | <9 hours turnaround [33] | Rapid protocol (<3 hours) [38] |
| Compatible Input | 1-1000 ng standard RNA; 10 ng for FFPE [33] | 10 ng standard RNA; 20 ng for FFPE/degraded [39] [33] | Compatible with degraded RNA and FFPE [38] |
| Cost per Sample | Higher [37] | Lower for large studies [37] | Most cost-effective for large-scale studies [38] |

The performance differences between these approaches have direct implications for experimental outcomes. In comparative studies, whole transcriptome sequencing consistently detects more differentially expressed genes due to its comprehensive coverage [38]. However, targeted approaches provide superior sensitivity for low-abundance transcripts within their panel, reducing the "gene dropout" problem that plagues single-cell whole transcriptome studies [37]. Notably, despite detecting fewer differentially expressed genes, 3' mRNA-Seq and targeted methods yield highly similar biological conclusions at the pathway and gene set enrichment level [38].

For chemogenomic applications, this sensitivity advantage of targeted approaches proves particularly valuable when analyzing expressed mutations. A 2025 study demonstrated that targeted RNA-Seq uniquely identified clinically relevant variants missed by DNA sequencing alone, while simultaneously verifying that DNA-detected variants were actually expressed [40]. This capability to bridge the "DNA to protein divide" makes targeted RNA-Seq especially valuable for precision oncology and mechanism-of-action studies in drug development.

Decision Framework for Method Selection

Table 2: Strategic Selection Guide for RNA-Seq Approaches

| Research Goal | Recommended Approach | Rationale | Implementation Considerations |
|---|---|---|---|
| Discovery-phase Research | Whole Transcriptome Sequencing | Unbiased detection of novel transcripts, isoforms, and splicing events [38] | Requires higher sequencing depth; more complex bioinformatics analysis |
| Large-scale Screening | 3' mRNA-Seq or Targeted Panels | Cost-effective profiling of many samples; streamlined data analysis [38] [37] | Dependent on well-annotated 3' UTRs; limited transcriptome coverage |
| Low-abundance Transcript Detection | Targeted RNA-Seq | Superior sensitivity for focused gene sets; minimizes dropout rate [37] [40] | Blind to genes outside panel; requires prior knowledge for panel design |
| Challenging Samples (FFPE, degraded) | Targeted RNA-Seq or 3' mRNA-Seq | Robust performance with suboptimal RNA quality [38] [39] | May require specialized protocols; lower RNA input requirements |
| Pathway-focused Validation | Targeted RNA-Seq | Confirms discovery findings; provides quantitative accuracy for specific genes [37] | Custom panel design needed; limited exploratory capability |
| Expression Quantification Only | 3' mRNA-Seq | Simplified analysis; one fragment per transcript enables direct counting [38] | Less information per sample; may miss regulatory events in coding regions |

The strategic selection between these approaches often follows a logical progression throughout the research pipeline. Whole transcriptome sequencing typically serves for initial discovery and atlas-building, as exemplified by initiatives like the Human Cell Atlas [37]. As research questions become more focused, targeted approaches provide the validation and precision required for translational applications. In the drug development continuum, this often means using whole transcriptome methods for target identification and mechanism of action studies, then transitioning to targeted panels for biomarker validation, patient stratification, and clinical trial applications [37].

Experimental Protocols and Methodologies

Whole Transcriptome Library Preparation Protocol

The Illumina Stranded Total RNA Prep provides a representative protocol for whole transcriptome analysis [33]. This workflow begins with RNA quantification and quality assessment, crucial steps that determine subsequent input adjustments. For the library preparation process:

rRNA Depletion: The protocol uses integrated enzymatic RNA depletion to remove both rRNA and globin mRNA in a single, rapid step, compatible with human, mouse, rat, bacterial, and epidemiological samples [33]. This enzymatic depletion offers advantages over bead-based methods for certain sample types.

RNA Fragmentation and cDNA Synthesis: RNA is fragmented, then reverse transcribed into cDNA using random primers. The strand specificity is preserved through incorporation of dUTP during second-strand synthesis [33].

Adapter Ligation: Illumina adapters are ligated to the cDNA fragments, with index sequences incorporated for sample multiplexing. The protocol accommodates up to 384 unique dual indexes, enabling high-throughput sequencing [33].

Library Amplification and QC: The final library is amplified via PCR, followed by quality control using fragment analysis, qPCR, or fluorometry [19]. Libraries are normalized before pooling to ensure equimolar representation.
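The normalization step before pooling can be sketched as follows. The conversion uses the common approximation of 660 g/mol per base pair of double-stranded DNA. The library names, concentrations, fragment sizes, and the 100 fmol target are hypothetical values for illustration, and in practice the concentration would come from fluorometry or qPCR and the fragment size from fragment analysis.

```python
AVG_BP_MASS_G_PER_MOL = 660.0  # approximate average mass of one dsDNA base pair


def library_molarity_nm(conc_ng_per_ul: float, mean_fragment_bp: float) -> float:
    """Convert a dsDNA concentration (ng/uL) and mean fragment length (bp) to nM."""
    return conc_ng_per_ul * 1e6 / (AVG_BP_MASS_G_PER_MOL * mean_fragment_bp)


def equimolar_volumes_ul(
    libraries: dict[str, tuple[float, float]], fmol_per_library: float
) -> dict[str, float]:
    """Volume of each library that contributes the same molar amount to the pool.

    1 nM equals 1 fmol/uL, so volume (uL) = fmol / nM.
    """
    return {
        name: fmol_per_library / library_molarity_nm(conc, size)
        for name, (conc, size) in libraries.items()
    }


# Hypothetical libraries: (concentration in ng/uL, mean fragment size in bp).
libraries = {
    "vehicle_rep1": (10.0, 400.0),
    "compound_rep1": (4.0, 350.0),
}
volumes = equimolar_volumes_ul(libraries, fmol_per_library=100.0)
for name, vol in volumes.items():
    molarity = library_molarity_nm(*libraries[name])
    print(f"{name}: {molarity:.1f} nM, pool {vol:.2f} uL")
```

Pooling by molar amount rather than by mass prevents libraries with shorter fragments from being over-represented in the sequencing run.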