Optimizing Compound Selectivity in Chemogenomic Libraries: Strategies for Precision Drug Discovery


Abstract

This article provides a comprehensive guide for researchers and drug development professionals on optimizing compound selectivity in chemogenomic libraries. It explores the foundational role of these libraries in expanding the druggable proteome, details advanced design and screening methodologies, addresses common challenges and optimization strategies, and presents frameworks for validation and comparative analysis. By synthesizing the latest advances from initiatives like EUbOPEN and Target 2035, this resource aims to enhance the efficiency and precision of early drug discovery, facilitating the development of high-quality chemical tools and therapeutics.

The Foundation of Chemogenomics: Expanding the Druggable Proteome

Defining Chemogenomic Libraries and Their Role in Modern Drug Discovery

Frequently Asked Questions

What is a chemogenomic library? A chemogenomic library is a collection of small molecules specifically designed to systematically probe families of related biological targets, such as kinases, GPCRs, or ion channels [1]. Unlike general compound libraries, they are structured around the understanding that related proteins often bind similar ligands, which helps in exploring chemical and target spaces in parallel [2].

Why is compound selectivity a major challenge in chemogenomics? Achieving selectivity is difficult because proteins within the same family often have highly similar binding sites. A compound designed for one target might unintentionally bind to off-targets, leading to unexpected side effects or toxicities. This makes the optimization of selectivity a central theme in designing high-quality chemogenomic libraries [3] [1].

My screening hit shows promising on-target activity but poor selectivity. What is the first step I should take? The first step is to profile the compound against a panel of closely related targets from the same protein family [1]. These quantitative data will help you identify the most problematic off-target interactions and establish a baseline for your optimization campaign. The table below summarizes the properties of BET bromodomain inhibitors, illustrating the journey from a probe to a clinical candidate with improved properties [3].

A colleague suggested I use a "privileged structure" approach. What does this mean? A "privileged structure" is a specific molecular scaffold that is known to produce biologically active compounds for a given target family [2]. For example, benzodiazepine-based scaffolds have been used to develop ligands for G-protein-coupled receptors. Starting from such structures can increase the probability of discovering potent and selective compounds for members of that family.

Which computational techniques are most effective for predicting selectivity early in the design process? Structure-Based Drug Design (SBDD) and chemogenomics-based models are highly effective. SBDD uses the 3D structures of the target and off-target proteins to model how a compound fits into their binding pockets [4]. Chemogenomic models, a generalization of QSAR methods, can simultaneously predict a compound's interaction with multiple proteins, helping to flag potential selectivity issues before synthesis [1].

My project involves a target with no known crystal structure. How can I design selective inhibitors? In the absence of a crystal structure, you can employ ligand-based approaches. If active ligands for your target or related proteins are known, you can use pharmacophore modeling or molecular similarity analysis to design new compounds [5]. Furthermore, you can use computational tools like AlphaFold2 to generate high-quality predicted protein structures, which can then be used for structure-based design [4].

Troubleshooting Guides

Problem 1: Poor Selectivity Profile in a Lead Compound

Issue: Your lead compound demonstrates strong potency against the intended target but shows significant activity against several off-targets from the same protein family, potentially leading to adverse effects.

Diagnostic Steps:

- Confirm the Binding Mode: Use molecular docking studies against the high-resolution structures of your primary target and the key off-targets. Analyze the binding interactions to understand which molecular features of your compound are responsible for the lack of selectivity [4].

- Profile Against a Wider Panel: Expand your in vitro screening panel to include a broader range of members from the same protein family, as well as other pharmacologically relevant targets, to fully understand the compound's polypharmacology [3] [1].

- Analyze Structure-Activity Relationships (SAR): Correlate the chemical modifications in your compound series with the activity data across the target panel. Identify substituents that increase off-target activity and those that improve selectivity [3].

Solutions & Optimization Strategies:

- Exploit Structural Differences: Identify amino acid residues that differ between the binding site of your target and the off-targets. Redesign your compound to form favorable interactions (e.g., hydrogen bonds) exclusively with your target, or to introduce steric clashes with the off-targets [4].

- Leverage "Reverse" Chemogenomics: Use the chemogenomic library screening data itself. If your compound is active against an undesired off-target, consult historical screening data to identify chemical features that are notorious for binding to that off-target and eliminate them from your design [1].

- Iterative Design and Screening: Use a focused library around your lead scaffold, systematically varying the substituents suspected to influence selectivity. The table below outlines a hypothetical optimization path for a kinase inhibitor [3].

Table: Example Selectivity Optimization for a Kinase Inhibitor Lead

| Compound | Target IC₅₀ (nM) | Off-Target 1 IC₅₀ (nM) | Off-Target 2 IC₅₀ (nM) | Selectivity Ratio (vs Off-Target 1) | Key Structural Change |
|---|---|---|---|---|---|
| Lead | 10 | 15 | 200 | 1.5 | - |
| Analog A | 12 | 450 | 180 | 37.5 | Introduced bulky group |
| Analog B | 8 | >1000 | 150 | >125 | Optimized hydrogen bond donor |
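The selectivity ratios in the table can be reproduced with a few lines of Python. This is a minimal sketch: the function and variable names are illustrative, and the ">1000" entry is entered as 1000, so the corresponding ratio is only a lower bound.

```python
def fold_selectivity(target_ic50_nm, off_target_ic50_nm):
    """Fold selectivity = off-target IC50 / on-target IC50 (higher is more selective)."""
    if target_ic50_nm <= 0:
        raise ValueError("on-target IC50 must be positive")
    return off_target_ic50_nm / target_ic50_nm

# (target IC50, [off-target 1 IC50, off-target 2 IC50]) in nM, copied from the table.
# ">1000" is entered as 1000, so that ratio is a lower bound.
table = {
    "Lead": (10, [15, 200]),
    "Analog A": (12, [450, 180]),
    "Analog B": (8, [1000, 150]),
}

ratios = {name: [fold_selectivity(t, o) for o in offs] for name, (t, offs) in table.items()}
# The smallest ratio identifies the off-target that currently limits selectivity.
worst_case = {name: min(r) for name, r in ratios.items()}

for name in table:
    print(name, ratios[name], "worst case:", worst_case[name])
```

Tracking the worst-case ratio alongside the headline ratio is a design choice worth considering: in this hypothetical series, once Off-Target 1 is addressed, Off-Target 2 becomes the limiting liability.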

Problem 2: Low Success Rate in Virtual Screening

Issue: Virtual screening of a chemogenomic library against your target yields a high number of false positives, or no viable hits are found.

Diagnostic Steps:

- Verify Library Quality: Check the drug-likeness and chemical diversity of your virtual library. Ensure it has been pre-filtered for undesirable functional groups and reactive compounds that can produce assay artifacts [5].

- Interrogate the Screening Protocol: Review the parameters of your molecular docking simulation. Inaccurate scoring functions, improper handling of protein flexibility, or an incorrectly defined binding site are common culprits [4].

- Validate the Model: Test your virtual screening workflow on a target with known active compounds to see if it can successfully "re-discover" them (a process called enrichment testing) [5].
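The enrichment test in the last diagnostic step can be quantified with an enrichment factor (EF). The sketch below assumes you already have a list of compound IDs ordered best-first by your screening workflow and a set of known actives seeded into it. The compound IDs and the 10% cutoff are hypothetical.

```python
def enrichment_factor(ranked_ids, known_actives, top_fraction=0.01):
    """EF = (active rate in the top slice) / (active rate in the whole library)."""
    n_total = len(ranked_ids)
    n_top = max(1, round(n_total * top_fraction))
    actives = set(known_actives)
    n_actives = sum(1 for cid in ranked_ids if cid in actives)
    if n_actives == 0:
        raise ValueError("no known actives found in the ranked list")
    hits_top = sum(1 for cid in ranked_ids[:n_top] if cid in actives)
    # Same ratio as (hits_top / n_top) / (n_actives / n_total), written with one division.
    return (hits_top * n_total) / (n_top * n_actives)

# Hypothetical ranked library of 100 compounds with 5 seeded actives.
ranked = [f"cpd{i}" for i in range(100)]
seeded_actives = {"cpd0", "cpd3", "cpd7", "cpd40", "cpd90"}
ef_10 = enrichment_factor(ranked, seeded_actives, top_fraction=0.10)
print(f"EF at 10%: {ef_10:.1f}")
```

An EF close to 1 means the workflow ranks known actives no better than random selection, which points back to the scoring function or binding-site definition.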

Solutions & Optimization Strategies:

- Refine the Scoring Function: Use a consensus of different scoring functions or integrate machine learning-based scoring to improve the prediction of binding affinity [5].

- Incorporate Pharmacophore Constraints: Before docking, use a pharmacophore model to pre-filter the library. This ensures that only compounds capable of forming key interactions with the target are considered, increasing the hit rate [4].

- Utilize a Multi-Conformer Library: Ensure your virtual library contains multiple reasonable 3D conformations for each compound, as the initial conformation can significantly impact docking results [5].
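One simple way to build a consensus across scoring functions is to average each compound's rank rather than its raw score, since scores from different programs are not on a common scale. The sketch below is one such scheme, not a prescribed method. It assumes every scoring function scored the same compounds and that lower scores are better. The score values are invented for illustration.

```python
def consensus_rank(score_tables):
    """Average each compound's rank across scoring functions (rank 1 = best)."""
    totals = {}
    for scores in score_tables:
        ordered = sorted(scores, key=scores.get)  # lower score = better predicted binding
        for rank, cid in enumerate(ordered, start=1):
            totals[cid] = totals.get(cid, 0) + rank
    n = len(score_tables)
    # Sort by mean rank; ties are broken alphabetically by compound ID.
    return sorted((total / n, cid) for cid, total in totals.items())

# Hypothetical scores from two different scoring functions.
scores_fn1 = {"a": -9.1, "b": -7.0, "c": -8.2}
scores_fn2 = {"a": -6.5, "b": -5.0, "c": -7.9}
consensus = consensus_rank([scores_fn1, scores_fn2])
print(consensus)
```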

Problem 3: Inconsistent Biological Readouts in Phenotypic Screens

Issue: When screening a chemogenomic library in a phenotypic assay (e.g., cell viability), the results are inconsistent between replicates or do not align with the known biology of the target family.

Diagnostic Steps:

- Interrogate Assay Conditions: Ensure cell passage number, culture conditions, and assay reagent stability are consistent. Small variations can significantly impact phenotypic outputs.

- Check Compound Integrity: Verify the stability of compounds in the library under assay conditions (e.g., in DMSO or aqueous buffer). Compound degradation is a major source of inconsistency [3].

- Identify Assay Interferers: Test if "hit" compounds are acting through the intended mechanism. Use counter-screens to rule out fluorescence interference, aggregation-based inhibition, or general cytotoxicity [5].

Solutions & Optimization Strategies:

- Implement Rigorous QC: Use liquid chromatography (e.g., UPLC) to confirm the purity and stability of library compounds before and during screening campaigns.

- Use Orthogonal Assays: Confirm hits from a phenotypic screen with a secondary, target-based biochemical assay. This helps triage compounds that are acting through the desired mechanism [1].

- Employ "Forward" Chemogenomics: If a compound induces an interesting but unexpected phenotype, use it as a tool to identify its protein target(s) through methods like affinity purification or resistance mutation sequencing, thereby discovering new biology [1].

Experimental Protocols for Key Experiments

Protocol 1: Selectivity Profiling Using a Kinase Inhibitor Library

Objective: To quantitatively evaluate the selectivity of a lead compound against a panel of 50 human kinases.

Materials:

- Research Reagent Solutions:

- Kinase Enzyme Library: A collection of purified, active kinases from the same family (e.g., CMGC, AGC).

- ADP-Glo Max Assay Kit: A luminescent kinase assay kit for detecting ADP production.

- Test Compound: Prepared as a 10 mM stock in DMSO.

- ATP Solution: Prepared at the Km ATP concentration for each kinase.

- Specific Peptide Substrate: For each kinase in the panel.

Methodology:

- Reaction Setup: In a white 384-well plate, add kinase, buffer, and the peptide substrate.

- Compound Addition: Transfer the test compound via acoustic dispensing to create a 10-point, half-log dilution series. Include a DMSO-only control for 100% activity.

- Reaction Initiation: Start the reaction by adding ATP. Incubate the plate at 30°C for 60 minutes.

- Detection: Add an equal volume of ADP-Glo Reagent to stop the reaction and deplete remaining ATP. After 40 minutes, add the Kinase Detection Reagent to convert ADP to ATP and detect it via luciferase-driven luminescence.

- Data Analysis: Plot the dose-response curve for each kinase. Calculate the IC₅₀ value for your compound against each kinase. Generate a selectivity score (e.g., the Gini coefficient or the number of kinases inhibited by >90% at 1 µM).
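The two selectivity scores named in the data analysis step can be computed as follows. This is a sketch under stated assumptions: the Gini coefficient is calculated here on percent-inhibition values measured at a single compound concentration, negative readings are clipped to zero, and the function names are illustrative.

```python
def gini_coefficient(percent_inhibition):
    """Gini coefficient of an inhibition profile.

    0 means inhibition is spread evenly across the panel (non-selective);
    values approaching 1 mean inhibition is concentrated on a few kinases.
    """
    values = sorted(max(0.0, v) for v in percent_inhibition)  # clip negatives, sort ascending
    n = len(values)
    total = sum(values)
    if n == 0 or total == 0:
        raise ValueError("need at least one non-zero inhibition value")
    weighted = sum(i * v for i, v in enumerate(values, start=1))
    return 2 * weighted / (n * total) - (n + 1) / n

def count_inhibited(percent_inhibition, threshold=90.0):
    """Number of kinases inhibited by more than `threshold` percent."""
    return sum(1 for v in percent_inhibition if v > threshold)

# Hypothetical four-kinase profiles at a single test concentration.
print(gini_coefficient([50, 50, 50, 50]))   # evenly spread inhibition
print(gini_coefficient([0, 0, 0, 100]))     # a single kinase inhibited
print(count_inhibited([95, 10, 91, 90]))
```

For a panel of n kinases the maximum attainable value is (n - 1)/n, so Gini scores are best compared across compounds profiled on the same panel.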

Protocol 2: In Silico Selectivity Screening Workflow

Objective: To computationally predict and prioritize compounds from a virtual library with a high likelihood of being selective for Target A over homologous Off-Target B.

Materials:

- Software: Molecular docking software (e.g., AutoDock Vina, Glide), a cheminformatics toolkit (e.g., RDKit), and a protein visualization program.

- Structures: High-resolution crystal structures or high-quality AlphaFold2 models of Target A and Off-Target B [4].

- Compound Library: A virtual library in SDF or SMILES format, pre-filtered for drug-likeness [5].

Methodology:

- Binding Site Preparation: Prepare the protein structures by adding hydrogen atoms, assigning protonation states, and defining the grid box for docking around the binding site of both targets.

- Parallel Docking: Dock the entire virtual library against both Target A and Off-Target B using the same parameters.

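- Post-Docking Analysis: For each compound, calculate the differential docking score (Score Target A - Score Off-Target B). How to read the sign depends on the scoring function: AutoDock Vina and Glide report more negative scores for stronger predicted binding, so with these tools a more negative difference suggests better selectivity for Target A. If your scoring function reports higher values for stronger binding, a higher positive difference suggests better selectivity.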

- Interaction Analysis: Visually inspect the predicted binding poses of top-ranked compounds. Prioritize compounds that form unique, favorable interactions with Target A that are not possible with Off-Target B due to differing residues [4].

- Priority List: Generate a final ranked list of compounds for synthesis or purchase based on a combined score of predicted affinity for Target A and selectivity over Off-Target B.
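The post-docking analysis and priority list can be sketched as below. Assumptions to note: scores follow the AutoDock Vina convention (more negative means stronger predicted binding), so the sketch computes a selectivity margin as the off-target score minus the target score, which makes a larger positive margin favour Target A. The affinity cutoff, compound IDs, and scores are hypothetical, and filtering on affinity before sorting on margin is only one way to combine the two criteria.

```python
def rank_by_selectivity(scores_target, scores_off_target, affinity_cutoff=-7.0):
    """Rank compounds by predicted selectivity for the target over the off-target.

    Scores are assumed to be Vina-style (more negative = stronger binding).
    Compounds whose target score is weaker than `affinity_cutoff` are dropped.
    """
    ranked = []
    for cid, s_target in scores_target.items():
        if cid not in scores_off_target or s_target > affinity_cutoff:
            continue
        margin = scores_off_target[cid] - s_target  # positive = favours the target
        ranked.append((margin, cid))
    return sorted(ranked, reverse=True)

# Hypothetical docking scores in kcal/mol.
target_a = {"c1": -9.5, "c2": -8.0, "c3": -6.0}
off_target_b = {"c1": -9.0, "c2": -5.5, "c3": -4.0}
priority = rank_by_selectivity(target_a, off_target_b)
print(priority)
```

Docking score differences of this size are within the typical error of scoring functions, so the ranking is best treated as a triage aid ahead of the visual interaction analysis rather than as a prediction of measured selectivity.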

The Scientist's Toolkit

Table: Essential Research Reagent Solutions for Chemogenomics

| Item | Function in Research | Example Application |
|---|---|---|
| Focused Target Family Library | A collection of compounds biased towards a specific protein family (e.g., kinases, GPCRs). | Used for initial screening to rapidly identify hits against a new target from a known family [1]. |
| DNA-Encoded Library (DEL) | Vast libraries of small molecules (billions) each tagged with a DNA barcode for identity. | Enables ultra-high-throughput screening against purified protein targets to find novel chemical starting points [6]. |
| Pharmacologically Active Compound Library (e.g., LOPAC) | A collection of well-annotated, known bioactive compounds. | Used as a control and validation set in assay development and for identifying promiscuous inhibitors [1]. |
| PROTAC Molecule Set | A library of Proteolysis-Targeting Chimeras, which recruit proteins to degradation machinery. | Used to investigate phenotypes resulting from protein degradation rather than inhibition, and to target previously "undruggable" proteins [6]. |
| Crystal Structures & AlphaFold2 Models | 3D structural data of target proteins. | Essential for structure-based drug design and understanding the structural basis for selectivity [4]. |
| Cheminformatics Software (e.g., RDKit) | Open-source software for cheminformatic analysis. | Used for calculating molecular descriptors, analyzing chemical space, and managing compound libraries [5]. |

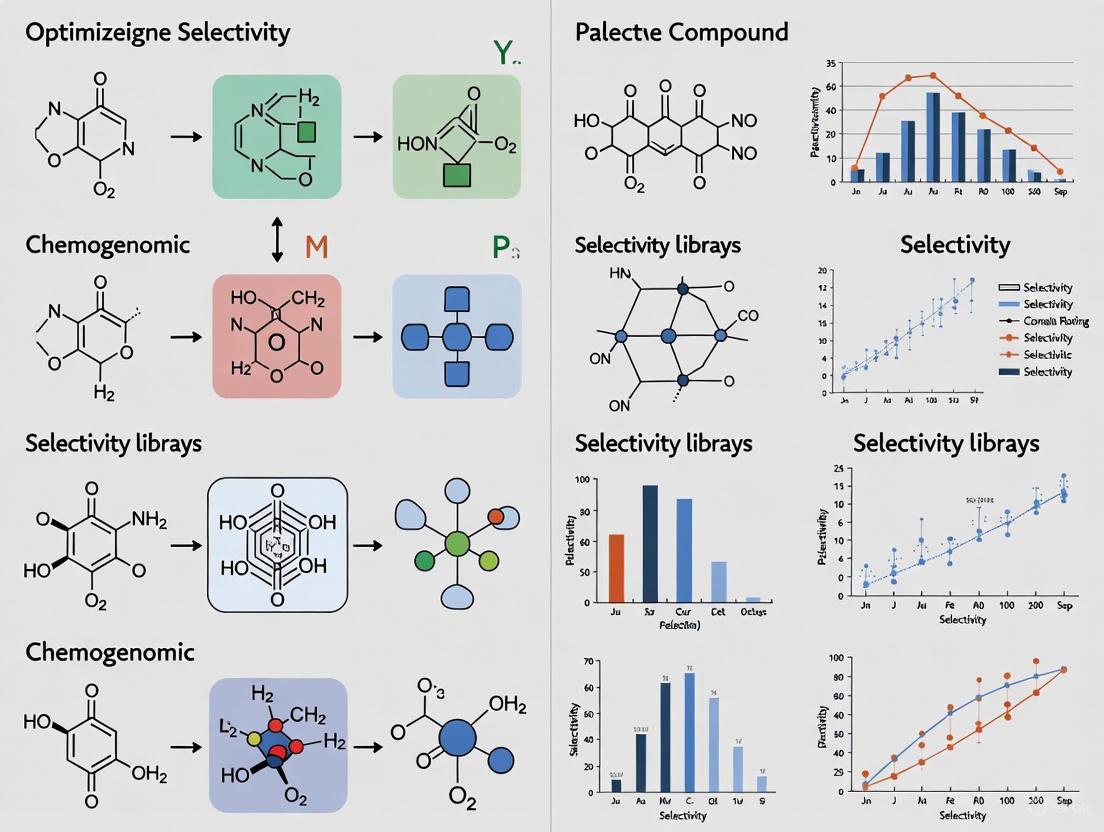

Workflow and Relationship Visualizations

The following diagrams illustrate the core workflows and logical relationships in chemogenomics research.

Diagram 1: The core workflow for a chemogenomics screening campaign, from target selection to lead optimization.

Diagram 2: The logical relationship between the core goal of selectivity optimization and the strategies and tools used to achieve it.

In the pursuit of target validation and drug discovery, two distinct but complementary classes of small molecules are essential: chemical probes and chemogenomic (CG) compounds. Understanding their precise definitions, appropriate applications, and limitations is fundamental to designing robust biological experiments and optimizing compound selectivity in chemogenomic library research.

A chemical probe is a highly characterized, potent, and selective small molecule used to investigate the function of a specific protein in biochemical, cellular, or in vivo settings. According to consensus criteria, a high-quality chemical probe must meet stringent standards [7] [8]:

- Potency: In vitro activity (IC50 or Kd) < 100 nM.

- Selectivity: >30-fold selectivity over related proteins within the same family.

- Cellular Activity: Evidence of on-target engagement at concentrations ≤ 1 μM.

- Characterization: Profiled against a broad panel of pharmacologically relevant off-targets.
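The four criteria above lend themselves to a simple checklist. The sketch below encodes the thresholds exactly as listed. The `Candidate` fields and the example compounds are hypothetical, and real probe assessment involves expert review beyond these numeric cutoffs.

```python
from dataclasses import dataclass

@dataclass
class Candidate:
    name: str
    potency_nm: float              # in vitro IC50 or Kd against the target
    closest_off_target_nm: float   # IC50 or Kd against the most potent related off-target
    cellular_engagement_um: float  # lowest concentration with on-target engagement in cells
    broad_panel_profiled: bool     # profiled against a broad off-target panel

def probe_criteria_failures(c):
    """Return the names of the probe criteria the candidate does not meet."""
    failures = []
    if not c.potency_nm < 100:
        failures.append("potency")
    if not c.closest_off_target_nm / c.potency_nm > 30:
        failures.append("selectivity")
    if not c.cellular_engagement_um <= 1.0:
        failures.append("cellular activity")
    if not c.broad_panel_profiled:
        failures.append("characterization")
    return failures

good = Candidate("hypothetical-1", 20, 900, 0.5, True)
weak = Candidate("hypothetical-2", 150, 3000, 2.0, False)
print(probe_criteria_failures(good))
print(probe_criteria_failures(weak))
```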

In contrast, a chemogenomic (CG) compound is a modulator that may bind to multiple targets but possesses a well-characterized selectivity profile [9]. While not achieving the narrow selectivity of a chemical probe, CG compounds are invaluable for systematic, network-level exploration of target families and for target deconvolution when used in sets with overlapping profiles [10] [9].

The mission of global initiatives like Target 2035 is to provide chemical tools for the entire human proteome. Current data shows that available chemical tools target only about 3% of the human proteome, yet they already cover 53% of human biological pathways, highlighting their extensive utility [10].

Comparative Analysis: Key Differences at a Glance

Table 1: Characteristic Comparison of Chemical Probes and Chemogenomic Compounds

| Characteristic | Chemical Probe | Chemogenomic Compound |
|---|---|---|
| Primary Goal | Selective modulation of a single target | Multi-target modulation for systematic biology |
| Selectivity | >30-fold over related targets; extensively profiled [7] [8] | Well-characterized but multi-target profile [9] |
| Potency | < 100 nM (in vitro) [11] [8] | Varies; typically bioactive at μM or nM range |
| Ideal Use Case | Definitive functional studies of a single protein | Pathway analysis, phenotypic screening, target identification |
| Data Requirement | Comprehensive selectivity profiling, cellular target engagement [7] | Bioactivity data against a defined target set [9] |
| Availability | Often paired with a matched target-inactive control compound [7] | Often available in library sets covering target families |

Table 2: Current Proteome and Pathway Coverage of Chemical Tools

| Metric | Coverage | Source/Initiative |
|---|---|---|
| Proteins targeted by Chemical Probes | ~2.2% of human proteome [10] | Multiple (e.g., SGC, EUbOPEN) |
| Proteins targeted by Chemogenomic Compounds | ~1.8% of human proteome [10] | Multiple (e.g., EUbOPEN library) |
| Proteins targeted by Drugs | ~11% of human proteome [10] | DrugBank |
| Pathways covered by available chemical tools | ~53% of human biological pathways [10] | Target 2035 Analysis |
| EUbOPEN CG Library Coverage | ~1/3 of the druggable proteome [9] | EUbOPEN Consortium |

Troubleshooting Guides & FAQs for Experimental Design

FAQ 1: How do I choose between a chemical probe and a chemogenomic compound for my experiment?

Answer: The choice depends entirely on your experimental question and hypothesis. Adhere to the following decision workflow to make an informed choice.

FAQ 2: My experiment with a chemical probe produced an unexpected phenotype. What should I do?

Answer: An unexpected phenotype can be exciting but requires careful validation to ensure it is on-target. Follow this troubleshooting protocol to confirm your results.

Experimental Protocol for Phenotype Validation:

- Confirm Probe Concentration: Immediately verify that the probe was used within its recommended concentration range. Even selective probes become promiscuous at high concentrations [8]. The maximal specific concentration is often provided by resources like the Chemical Probes Portal [12].

- Employ a Matched Inactive Control: Repeat the experiment with the structurally similar but target-inactive negative control compound that should be paired with a high-quality probe. If the control does not reproduce the phenotype, the effect is likely on-target. If the control also produces the phenotype, an off-target effect is likely [7] [11].

- Use an Orthogonal Probe: Utilize a second, structurally distinct chemical probe targeting the same protein. If the same phenotype is observed with both probes, confidence that it is on-target is greatly increased. This is the core of "the rule of two" [8].

- Rescue Experiments: If possible, express a probe-resistant mutant of the target protein (e.g., a kinase with a "gatekeeper" mutation) to see if the phenotype is reversed.

- Check Probe Resources: Consult the Chemical Probes Portal or Probe Miner to ensure you are using the recommended probe for your target and to be aware of any known off-targets or limitations [12].

FAQ 3: How can I use a chemogenomic library to identify the target of a phenotypic hit?

Answer: Chemogenomic libraries are specifically designed for target deconvolution. The strategy relies on using a collection of compounds with overlapping but non-identical selectivity profiles.

Experimental Protocol for Target Deconvolution:

- Library Selection: Select a well-annotated CG library that covers the target family of interest (e.g., kinases, GPCRs). An example is the EUbOPEN library, which covers one-third of the druggable proteome [9].

- Phenotypic Screening: Screen the CG library in your phenotypic assay (e.g., cell viability, migration, reporter gene assay).

- Profile Correlation: Identify all compounds in the library that produce your phenotype of interest.

- Pattern Analysis: Compare the bioactivity profiles (their known targets) of the active compounds. The true molecular target is likely the one that is common to all or most of the active compounds. Statistical methods are used to rank potential targets based on the strength of this correlation [9].

- Validation: Confirm the identified target using a highly selective chemical probe or genetic methods (e.g., CRISPR knockout) as described in FAQ 2.
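The pattern analysis step can be sketched as an over-representation test: for each annotated target, ask whether it appears among the active compounds more often than chance would predict. The sketch below uses a one-sided hypergeometric test as one possible statistical method. The source does not specify which statistics are used, and the compound IDs and target annotations are invented for illustration.

```python
from math import comb

def hypergeom_tail(k, n_active, n_with_target, n_total):
    """P(X >= k) actives hitting a target, if actives were drawn at random from the library."""
    upper = min(n_active, n_with_target)
    numerator = sum(
        comb(n_with_target, i) * comb(n_total - n_with_target, n_active - i)
        for i in range(k, upper + 1)
    )
    return numerator / comb(n_total, n_active)

def rank_targets(annotations, actives):
    """Rank annotated targets by enrichment among phenotypically active compounds."""
    active_set = set(actives) & set(annotations)
    n_total, n_active = len(annotations), len(active_set)
    targets = {t for ts in annotations.values() for t in ts}
    results = []
    for target in targets:
        with_target = {c for c, ts in annotations.items() if target in ts}
        k = len(with_target & active_set)
        p = hypergeom_tail(k, n_active, len(with_target), n_total)
        results.append((p, target, k, len(with_target)))
    return sorted(results)  # smallest p-value first

# Hypothetical library: compound -> set of annotated targets.
annotations = {
    "cg1": {"TargetA", "TargetB"}, "cg2": {"TargetB", "TargetC"},
    "cg3": {"TargetB"}, "cg4": {"TargetA"}, "cg5": {"TargetC"},
    "cg6": {"TargetD"}, "cg7": {"TargetD", "TargetA"}, "cg8": {"TargetE"},
}
ranked_targets = rank_targets(annotations, actives=["cg1", "cg2", "cg3"])
for p, target, k, n in ranked_targets:
    print(f"{target}: {k}/{n} annotated compounds active, p = {p:.3f}")
```

With many targets tested at once, the p-values should be corrected for multiple testing before any target is taken forward to validation.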

FAQ 4: I found a compound in a vendor catalog listed as a "probe." How can I verify its quality?

Answer: Vendor catalogs are not always reliable sources for quality assessment. A systematic approach using dedicated resources is crucial to avoid using poor-quality tools [12].

Verification Protocol:

- Consult the Chemical Probes Portal (Expert Curation): This portal provides expert-reviewed assessments and star ratings for compounds. It specifically flags "Historical Compounds" that are outdated and should not be used [12].

- Check Probe Miner (Data-Driven Assessment): This resource quantitatively ranks compounds based on statistical analysis of public bioactivity data. It provides objective metrics on selectivity and potency [12].

- Cross-Reference and Decide: Use these resources in tandem. A high-quality probe will typically have both a good rating on the Portal (e.g., 3-4 stars) and a high ranking in Probe Miner.

- Verify Availability of Controls: Ensure that a matched target-inactive control compound is available. If it isn't, the tool's utility is significantly limited [7] [8].

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Resources for Selecting and Using Chemical Tools

| Resource Name | Type | Key Function | URL |
|---|---|---|---|
| Chemical Probes Portal | Expert-Curated Portal | Provides peer-reviewed recommendations and star ratings for chemical probes; flags outdated compounds [8] [12]. | www.chemicalprobes.org |
| Probe Miner | Data-Driven Platform | Offers objective, quantitative ranking of >1.8M molecules based on bioactivity data; comprehensive and frequently updated [12]. | https://probeminer.icr.ac.uk |
| SGC Chemical Probes | Source of Unencumbered Probes | Provides access to high-quality, open-access chemical probes developed by the Structural Genomics Consortium and partners [7] [3]. | https://www.thesgc.org/chemical-probes |
| EUbOPEN Consortium | Source of CG Libraries & Probes | Generates and distributes openly available chemogenomic compound sets and new chemical probes, focusing on understudied targets [9]. | https://www.eubopen.org |
| Donated Chemical Probes (DCP) | Source of Probes | Provides access to high-quality chemical probes donated by pharmaceutical companies and academics after peer review [9]. | https://www.sgc-ffm.uni-frankfurt.de |
| OPnMe | Source of Probes | Boehringer Ingelheim's platform to provide free access to some of their in-house developed tool compounds [7]. | https://opnme.com |

FAQs: Navigating the Expanding Druggable Proteome

What is the druggable proteome? The druggable proteome is defined as the fraction of human proteins that can bind to a small molecule or antibody with the required affinity and chemical properties to become a potential drug target linked to a disease [13]. It consists of proteins suitable for drug interactions, where a drug can induce a favorable clinical response [14].

Which protein families are currently most targeted by FDA-approved drugs? FDA-approved drugs are directed against 754 human proteins. These are predominantly concentrated within a few major protein families [13]. The table below provides a detailed breakdown.

Table 1: Classification of Targets for FDA-Approved Drugs [13]

| Protein Class | Number of Genes Targeted |
|---|---|
| Enzymes | 304 |
| Transporters | 182 |
| G-protein Coupled Receptors (GPCRs) | 103 |
| CD Markers | 79 |
| Voltage-gated Ion Channels | 55 |
| Nuclear Receptors | 21 |

Why is the field expanding beyond established targets like kinases and GPCRs? While targets like kinases and GPCRs are well-established, sequencing efforts have identified many disease-associated mutations in other protein families, providing a compelling rationale for exploring them [9]. Expanding into understudied families like E3 ligases and Solute Carriers (SLCs) unlocks new therapeutic opportunities for diseases with limited treatment options.

What are the key characteristics of an ideal drug target? An ideal target should have a critical role in the disease process, less significant involvement in other important processes (to limit side-effects), a favorable expression pattern (e.g., tissue-specific), and structural properties that allow for drug specificity [13]. It should also be amenable to high-throughput screening and have a biomarker for monitoring efficacy [14].

How do chemical probes differ from chemogenomic compounds? Chemical Probes are the gold standard: highly characterized, potent (typically <100 nM), and selective (at least 30-fold over related proteins) small molecules that modulate a protein's function in cells [9]. Chemogenomic (CG) Compounds may bind to multiple targets but have well-characterized off-target profiles. They are valuable tools for initial target discovery and deconvolution, especially when highly selective probes are not yet available [9].

Troubleshooting Guides

Issue: Achieving Sufficient Selectivity for a Novel Target

Problem: Your lead compound shows promising on-target activity but also inhibits several closely related proteins from the same family, raising concerns about potential side effects.

Solution:

- Apply Structure-Based Design: Analyze the structural differences between your primary target and the off-targets. Key principles to exploit include [15]:

- Shape Complementarity: Design compounds that fit into unique sub-pockets or that create steric clashes with off-targets. For example, a single V523I difference between COX-1 and COX-2 was exploited to achieve over 13,000-fold selectivity for COX-2 [15].

- Electrostatic Interactions: Leverage differences in charge distribution or unique hydrogen bonding opportunities.

- Flexibility and Hydration: Consider the flexibility of the binding site and the role of water molecules, which can differ between homologs.

- Utilize Broad Selectivity Panels: Screen your compounds against a broad panel of related proteins. Resources like the EUbOPEN consortium offer extensively profiled chemogenomic sets and data for various target families [16] [9].

- Iterative Design and Profiling: Use the selectivity data from each compound iteration to inform the design of the next, systematically refining the structure to diminish off-target binding while maintaining on-target potency [15].

Issue: Identifying a Viable Starting Point for an "Undruggable" Target

Problem: Your target (e.g., a protein phosphatase, a transcription factor, or a shallow protein-protein interaction interface) lacks a well-defined, druggable pocket and has no known small-molecule binders.

Solution:

- Leverage Machine Learning Predictions: Use in-silico classifiers to assess the inherent "ligandability" of the target based on its amino acid sequence and structural features. Advanced models using amino acid composition descriptors can achieve high accuracy in predicting druggable proteins [14].

- Explore New Modalities: For targets where traditional small molecules fail, consider alternative approaches.

- PROTACs and Molecular Glues: These molecules induce targeted protein degradation by recruiting the target to E3 ubiquitin ligases. This is particularly powerful because it requires only a transient binding event to the target protein, not necessarily functional inhibition [9].

- Covalent Inhibitors: Target unique cysteine or other nucleophilic residues. The EUbOPEN project, for instance, developed covalent inhibitors for the hard-to-drug SH2 domain of the SOCS2 protein [9].

- Focus on Chemogenomic Sets with Overlapping Profiles: Screen libraries of compounds with known, multi-target profiles. The phenotypic effect of your compound might be due to its action on a known, druggable target within its profile, providing a new therapeutic hypothesis for that disease [9].

Issue: Interpreting Complex Phenotypic Screening Results

Problem: A phenotypic screen with a chemogenomic library identifies a compound that produces a desired phenotype, but the specific protein target responsible for the effect is unknown.

Solution:

- Employ a Target Deconvolution Strategy: Use a set of well-characterized chemogenomic compounds with overlapping but distinct target profiles. By correlating the phenotypic readout with the target profiles of active and inactive compounds, you can identify the common target responsible for the effect [9].

- Validate with a High-Quality Chemical Probe: Once a candidate target is identified, confirm the phenotype using a highly selective and potent chemical probe for that target, if available. Resources like the EUbOPEN Donated Chemical Probes project provide peer-reviewed probes for this purpose [9].

- Integrate Multi-Omics Data: Enhance confidence by integrating your findings with other data. For example, check if the candidate target shows unfavorable prognostic significance in cancer patient data or if it is central to relevant disease pathways [14].

Experimental Protocols

Protocol: Machine Learning Prediction of Druggable Cancer-Driving Proteins

This protocol is adapted from a study that developed a classifier to identify druggable cancer-driving proteins using amino acid composition [14].

1. Objective: To build a predictive machine learning model for identifying druggable proteins from a set of cancer-driving proteins.

2. Materials and Reagents:

- Positive Set: FASTA sequences of 666 druggable proteins with FDA-approved drugs (from DrugBank and Broad Institute's Drug Repurposing Hub).

- Negative Set: FASTA sequences of 219 'hard-to-drug' proteins (e.g., protein phosphatases).

- Software: R package RCPI for calculating protein descriptors; Python scikit-learn and Jupyter notebooks for machine learning.

3. Methodology:

- Step 1: Calculate Protein Descriptors. Use the RCPI package to compute three families of composition descriptors for each protein sequence:

- Amino Acid Composition (AC): 20 descriptors.

- Di-amino Acid Composition (DC): 400 descriptors.

- Tri-amino Acid Composition (TC): 8000 descriptors.

- Step 2: Prepare Dataset. Label druggable proteins as '1' and hard-to-drug proteins as '0'. Address class imbalance using the Synthetic Minority Over-sampling Technique (SMOTE).

- Step 3: Train ML Classifiers. Use a threefold cross-validation (CV) pipeline. For each fold:

- Scale the training set and transform the test set accordingly.

- Apply Feature Selection/Dimension Reduction (e.g., PCA, LinearSVC) to the training set.

- Evaluate multiple ML classifiers (e.g., SVM, Random Forest, XGBoost) by calculating the Area Under the Receiver Operating Characteristic (AUROC) score.

- Step 4: Select and Apply the Best Model. The optimal model reported was a Support Vector Machine (SVM) using 200 tri-amino acid composition descriptors, achieving an AUROC of 0.975 [14]. This model can then be used to scan new cancer-driving protein sets.
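To make the descriptor counts in Step 1 concrete, the sketch below computes amino acid, di-amino acid, and tri-amino acid composition in plain Python. It is a generic re-implementation for illustration, not the RCPI code used in the study, so details such as output scaling or handling of non-standard residues may differ. The example sequence is arbitrary.

```python
from itertools import product

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"  # the 20 standard residues

def composition(sequence, k):
    """Relative frequency of every k-mer over the 20 standard amino acids.

    k=1 gives 20 descriptors, k=2 gives 400, and k=3 gives 8000.
    """
    seq = sequence.upper()
    counts = {"".join(p): 0 for p in product(AMINO_ACIDS, repeat=k)}
    windows = 0
    for i in range(len(seq) - k + 1):
        kmer = seq[i:i + k]
        if kmer in counts:  # skip windows containing non-standard residues
            counts[kmer] += 1
            windows += 1
    return {kmer: (n / windows if windows else 0.0) for kmer, n in counts.items()}

example_sequence = "MKVLAAGIK"  # arbitrary toy sequence, not a real protein
ac = composition(example_sequence, 1)
dc = composition(example_sequence, 2)
tc = composition(example_sequence, 3)
print(len(ac), len(dc), len(tc))
```

Because the tri-amino acid family alone contributes 8000 mostly sparse features, the feature selection in Step 3 is what reduces the model to the 200 descriptors reported for the best classifier.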

The workflow below visualizes this machine learning process for predicting druggable proteins.

Protocol: Chemogenomic Library Screening for Target Identification

This protocol outlines a general approach for using chemogenomic libraries to identify novel therapeutic vulnerabilities, as applied in precision oncology studies [17] [9].

1. Objective: To identify patient-specific cancer vulnerabilities by screening a targeted chemogenomic compound library against patient-derived cells.

2. Materials and Reagents:

- Chemogenomic Library: A physically available library of well-annotated small molecules. For example, a minimal library of 1,211 compounds targeting 1,386 anticancer proteins [17].

- Cell Model: Patient-derived disease-relevant cells (e.g., glioma stem cells from glioblastoma patients).

- Assay Reagents: Reagents for cell viability/phenotypic readouts (e.g., imaging-based assays).

3. Methodology:

- Step 1: Library Design and Curation. Select compounds based on:

- Coverage of a wide range of protein targets and pathways implicated in cancer.

- Cellular activity and bioavailability.

- Chemical diversity and availability.

- Known target selectivity profiles.

- Step 2: Phenotypic Screening. Plate patient-derived cells and treat them with the chemogenomic library compounds. Use an imaging-based assay to measure a phenotypic endpoint such as cell survival or death.

- Step 3: Data Analysis. Analyze the phenotypic responses to identify "hits" – compounds that selectively inhibit the growth or survival of a specific patient's cancer cells.

- Step 4: Target Deconvolution. For the hit compounds, use their known and profiled target annotations to hypothesize which specific protein target(s) are responsible for the observed phenotype. This can be validated with additional experiments using selective chemical probes.
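The hit-calling logic in Step 3 can be sketched as a simple comparison of viability values. The example below is a minimal illustration under stated assumptions: viability is expressed as a fraction of the untreated control, selectivity is judged against a reference cell model, and the thresholds (`max_patient`, `min_margin`) and compound names are hypothetical rather than taken from the cited studies.

```python
def call_selective_hits(patient_viability, reference_viability,
                        max_patient=0.5, min_margin=0.3):
    """Flag compounds that reduce patient-cell viability far more than reference cells.

    Both inputs map compound IDs to viability as a fraction of untreated control.
    """
    hits = []
    for compound, patient in patient_viability.items():
        reference = reference_viability[compound]
        strongly_active = patient <= max_patient
        selective = (reference - patient) >= min_margin
        if strongly_active and selective:
            hits.append(compound)
    return sorted(hits)


# Hypothetical screening results
patient = {"cpd_A": 0.20, "cpd_B": 0.45, "cpd_C": 0.90, "cpd_D": 0.30}
reference = {"cpd_A": 0.85, "cpd_B": 0.50, "cpd_C": 0.95, "cpd_D": 0.35}

hits = call_selective_hits(patient, reference)
print(hits)  # ['cpd_A']
```

Here `cpd_D` is potent but equally toxic to the reference cells, so it is not treated as a patient-specific vulnerability.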

The following diagram illustrates the key stages of a chemogenomic screening workflow.

The Scientist's Toolkit: Research Reagent Solutions

This table details key resources and reagents that are essential for research in the expanding druggable proteome.

Table 2: Essential Research Reagents and Resources

| Item | Function / Description | Example / Source |

|---|---|---|

| DrugBank Database | A comprehensive database containing detailed information about drugs, their mechanisms, interactions, and targets. | www.drugbank.ca [13] |

| Chemical Probes | High-quality, selective, and potent small molecules used to validate the function of a specific protein target in cells. | EUbOPEN Donated Chemical Probes [9] |

| Chemogenomic (CG) Libraries | Collections of well-annotated compounds with known, often overlapping, target profiles. Used for phenotypic screening and target deconvolution. | EUbOPEN CG Library; Kinase Chemogenomic Set (KCGS) [16] [9] |

| Machine Learning Classifiers | Computational models that predict the druggability of proteins based on sequence or structural features, helping to prioritize new targets. | SVM classifier with tri-amino acid composition descriptors [14] |

| Patient-Derived Cell Assays | Disease-relevant cellular models derived directly from patient tissues, used for screening compounds in a physiologically relevant context. | Glioma stem cells from glioblastoma patients [17] |

The challenge of translating human genomic information into new medicines has revealed a significant bottleneck in biomedical research: the vast majority of the human proteome remains uncharacterized and unexploited for therapeutic purposes. While approximately 65% of the human proteome has been partially characterized, a substantial proportion (∼35%) remains uncharacterized, and less than 5% has been successfully targeted for drug discovery [18]. This knowledge gap highlights the profound disconnect between our ability to obtain genetic information and our subsequent development of effective medicines.

In response to this challenge, Target 2035 has emerged as an international federation of biomedical scientists from public and private sectors with an ambitious goal: to develop and apply new technologies to create chemogenomic libraries, chemical probes, and/or biological probes for the entire human proteome by the year 2035 [18]. This open science initiative represents a collaborative effort to address the "dark proteome" - those proteins with suspected or potential roles in disease states but which lack research tools to study their function.

As a key contributor to this global effort, the EUbOPEN (Enable and Unlock Biology in the OPEN) consortium operates as a public-private partnership with specific objectives to create, distribute, and annotate the largest openly available set of high-quality chemical modulators for human proteins [19] [9]. Together, these initiatives are establishing new paradigms for open collaboration in early-stage drug discovery.

The following table summarizes the core objectives, structures, and outputs of these complementary initiatives:

| Feature | Target 2035 | EUbOPEN |

|---|---|---|

| Primary Objective | Generate pharmacological modulators for most human proteins by 2035 [18] | Create the largest openly available set of high-quality chemical modulators [9] |

| Governance | International federation coordinated by Structural Genomics Consortium (SGC) [18] | Public-private partnership with 22 academic/industry partners [19] |

| Core Strategy | Open science, collaboration, and data sharing between public and private sectors [18] | Four pillars: chemogenomic libraries, probe discovery, patient-derived assays, data/reagent dissemination [20] |

| Key Outputs | Chemical/biological probes [18]; chemogenomic libraries [18]; open datasets [21] | Chemogenomic library (∼5,000 compounds) [19]; 100+ peer-reviewed chemical probes [9]; patient-derived assay protocols [9] |

| Target Coverage | Entire human proteome [18] | ∼1,000 proteins (1/3 of druggable genome) [19] [22] |

| Timeline | 2035 [18] | 5-year project (2020-2025) [19] |

Technical Support Center: Troubleshooting Compound Selectivity

Frequently Asked Questions

Q1: What criteria distinguish a chemical probe from a chemogenomic compound?

A1: The EUbOPEN consortium has established strict, peer-reviewed criteria for chemical probes [9]:

- Potency: In vitro activity < 100 nM

- Selectivity: ≥30-fold selectivity over related proteins

- Cellular Activity: Target engagement in cells at <1 μM (or <10 μM for protein-protein interaction targets)

- Toxicity Window: Reasonable cellular toxicity window (unless cell death is target-mediated)

- Negative Controls: Availability of structurally similar inactive control compound

In contrast, chemogenomic compounds have less stringent selectivity requirements but provide well-characterized target profiles across multiple targets, enabling target deconvolution through overlapping selectivity patterns when used in sets [22].
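The quantitative criteria above translate directly into a checklist. The following sketch encodes them as a small function; the class and field names are hypothetical, and the toxicity-window criterion is left out because the source describes it only qualitatively.

```python
from dataclasses import dataclass


@dataclass
class CompoundData:
    potency_nM: float               # in vitro potency
    fold_selectivity: float         # versus the closest related protein
    cellular_engagement_uM: float   # concentration showing target engagement in cells
    is_ppi_target: bool = False     # protein-protein interaction target?
    has_negative_control: bool = False


def failed_probe_criteria(c):
    """Return the criteria a compound fails; an empty list means all are met."""
    failures = []
    if not c.potency_nM < 100:
        failures.append("potency")
    if not c.fold_selectivity >= 30:
        failures.append("selectivity")
    cell_limit_uM = 10 if c.is_ppi_target else 1
    if not c.cellular_engagement_uM < cell_limit_uM:
        failures.append("cellular activity")
    if not c.has_negative_control:
        failures.append("negative control")
    return failures


probe_like = CompoundData(12, 150, 0.4, has_negative_control=True)
cg_like = CompoundData(80, 8, 2.5)
print(failed_probe_criteria(probe_like))  # []
print(failed_probe_criteria(cg_like))     # ['selectivity', 'cellular activity', 'negative control']
```

A compound such as `cg_like` may still be a useful chemogenomic compound, provided its off-target profile is well characterized.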

Q2: How can I access and utilize the chemogenomic libraries for my target validation studies?

A2: The EUbOPEN chemogenomic library comprises approximately 5,000 compounds covering about 1,000 proteins across major target families including kinases, membrane proteins, and epigenetic modulators [9] [22]. To effectively utilize these resources:

- Access Point: Compounds can be requested through https://www.eubopen.org/chemical-probes [9]

- Annotation: All compounds are comprehensively characterized for potency, selectivity, and cellular activity across biochemical and cell-based assays [9]

- Application: Use compound sets with overlapping target profiles to identify the target responsible for specific phenotypes through pattern recognition [22]

- Data Access: Accompanying assay data and protocols are deposited in public repositories and project-specific data resources [9]
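The pattern-recognition idea behind overlapping target profiles can be illustrated with set operations. This is a deliberately simplified sketch: it assumes the annotations are complete, that every annotated target is modulated at the screening concentration, and that a single target drives the phenotype. Compound names and annotations are hypothetical.

```python
def candidate_targets(annotations, active, inactive):
    """Targets shared by all active compounds and hit by no inactive compound."""
    if not active:
        return set()
    shared = set.intersection(*(annotations[c] for c in active))
    excluded = set().union(*(annotations[c] for c in inactive))
    return shared - excluded


# Hypothetical target annotations for four chemogenomic compounds
annotations = {
    "cpd_1": {"CDK2", "CDK9", "GSK3B"},
    "cpd_2": {"CDK9", "DYRK1A"},
    "cpd_3": {"CDK9", "CDK2"},
    "cpd_4": {"CDK2", "GSK3B"},
}

result = candidate_targets(
    annotations,
    active=["cpd_1", "cpd_2", "cpd_3"],  # produced the phenotype
    inactive=["cpd_4"],                  # did not produce the phenotype
)
print(sorted(result))  # ['CDK9']
```

No single compound here is selective for CDK9, yet the set as a whole points to it, which is the rationale for using chemogenomic compounds in sets rather than individually.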

Q3: What experimental strategies are recommended for progressing difficult targets from gene to chemical modulator?

A3: For challenging target classes, Target 2035 recommends a multi-pronged approach [18]:

- Technology Test-Beds: Pilot projects focused on specific protein families (e.g., WD40 repeat domains) to compare experimental strategies [18]

- AI-Guided Methods: Implementation of design-make-test-analyze (DMTA) cycles that integrate experimental data generation and modeling workflows in real-time [21]

- Collaborative Networks: Participation in open chemistry networks where chemical resources are contributed on a patent-free, open access basis [18]

- Primary Cell Assays: Development of protocols using patient-derived cells for biologically relevant screening [9]

Troubleshooting Guides

Problem: Inconsistent Cellular Activity Despite Strong In Vitro Binding

| Potential Cause | Diagnostic Steps | Solution |

|---|---|---|

| Compound Permeability | Measure logP/logD; perform PAMPA assay; test in efflux pump assays | Modify physicochemical properties; utilize prodrug strategies (e.g., phosphate masking) [9] |

| Protein Abundance | Quantify target protein levels (Western blot); measure baseline phosphorylation | Use chemogenomic compound sets to establish correlation [22]; employ complementary targeting modalities (PROTACs) [9] |

| Cellular Compartmentalization | Perform cellular fractionation; use target engagement assays (CETSA) | Optimize compound properties for specific compartments; validate with orthogonal cellular assays [9] |

Problem: Interpreting Phenotypic Screening Results with Chemogenomic Libraries

When using chemogenomic compound sets for phenotypic screening, target deconvolution presents specific challenges. The following workflow outlines a systematic approach to address this problem:

Problem: Inadequate Data Quality for AI-Guided Compound Optimization

Robust artificial intelligence (AI) and machine learning (ML) models require high-quality, well-annotated datasets. Target 2035 has established best practices for data management to enable AI-guided drug discovery [21].

The Scientist's Toolkit: Research Reagent Solutions

The following table details essential research reagents and platforms available through Target 2035 and EUbOPEN initiatives:

| Resource | Description | Key Features | Access Information |

|---|---|---|---|

| EUbOPEN Chemical Probes | High-quality, peer-reviewed chemical modulators with negative controls [9] | Potency <100 nM; selectivity ≥30-fold; cell-active; open access | https://www.eubopen.org/chemical-probes [9] |

| Chemogenomic Library | ∼5,000 compounds covering ∼1,000 druggable targets [19] | Well-annotated selectivity profiles; covers kinases, GPCRs, SLCs, E3 ligases; patient-cell assay data | Available through EUbOPEN portal [22] |

| MAINFRAME Network | International network of ML researchers and data scientists [23] | Curated datasets; experimental feedback; collaborative benchmarking | Participation through Target 2035 [23] |

| Open Benchmarking Challenges | Computational challenges for hit-finding algorithms [23] | Real-world experimental testing; CACHE, CASP, DREAM Challenges; community validation | Through Target 2035 partnerships [23] |

| Patient-Derived Assay Protocols | Standardized protocols for primary cell assays [9] | Focus on inflammatory bowel disease, cancer, neurodegeneration; clinically relevant models | Disseminated through EUbOPEN outputs [9] |

Experimental Protocols for Key Methodologies

Comprehensive Compound Profiling for Selectivity Assessment

Purpose: To generate comprehensive selectivity profiles for compounds in chemogenomic libraries, enabling accurate target deconvolution in phenotypic screening [9] [22].

Materials:

- EUbOPEN chemogenomic library compounds [22]

- Selectivity panels for relevant target families (kinases, GPCRs, etc.) [9]

- Biochemical and cell-based assay reagents [9]

- Primary patient-derived cells (inflammatory bowel disease, cancer, neurodegeneration) [9]

Procedure:

- Panel Configuration: Establish target family-specific selectivity panels covering key representatives

- Concentration Range Testing: Profile each compound across relevant concentration ranges (typically 0.1 nM - 10 μM)

- Primary Assays: Conduct biochemical assays to determine initial potency and selectivity

- Cellular Target Engagement: Validate cellular activity using appropriate assays (CETSA, nanoBRET, etc.)

- Patient-Derived Cell Profiling: Test compounds in disease-relevant primary cell assays

- Data Integration: Compile all activity data into unified selectivity profiles

- Quality Control: Apply target family-specific criteria to validate compound utility [22]
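Once panel data are compiled, a selectivity profile can be condensed into a few summary numbers. The sketch below is one possible summary, not the EUbOPEN procedure: it computes a selectivity score (the fraction of panel targets inhibited at or below a threshold) and the fold-selectivity over the nearest off-target. The function name, the 1 μM threshold, and the panel values are assumptions, and the panel is assumed to contain at least two targets.

```python
def selectivity_summary(ic50_nM, primary_target, threshold_nM=1000.0):
    """Summarize a panel profile given IC50 values (nM) keyed by target name."""
    hits = [t for t, value in ic50_nM.items() if value <= threshold_nM]
    s_score = len(hits) / len(ic50_nM)  # lower means more selective

    off_targets = {t: v for t, v in ic50_nM.items() if t != primary_target}
    nearest, nearest_ic50 = min(off_targets.items(), key=lambda kv: kv[1])
    fold = nearest_ic50 / ic50_nM[primary_target]

    return {
        "s_score": s_score,
        "nearest_off_target": nearest,
        "fold_selectivity": fold,
    }


# Hypothetical four-target panel for one compound
profile = {"KIN_A": 10.0, "KIN_B": 450.0, "KIN_C": 5000.0, "KIN_D": 10000.0}
summary = selectivity_summary(profile, "KIN_A")
print(summary)
# {'s_score': 0.5, 'nearest_off_target': 'KIN_B', 'fold_selectivity': 45.0}
```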

Troubleshooting:

- If cellular activity doesn't correlate with biochemical data, investigate membrane permeability and efflux potential

- For inconsistent selectivity patterns, verify assay quality controls and compound integrity

- When target deconvolution remains challenging, utilize larger chemogenomic compound sets with overlapping profiles [22]

Implementing AI-Enabled Design-Make-Test-Analyze (DMTA) Cycles

Purpose: To accelerate hit identification and optimization through integrated experimental and computational workflows [21].

Materials:

- Standardized ELN and LIMS systems with controlled vocabulary [21]

- Automated laboratory equipment for compound synthesis and screening [21]

- Cloud computing infrastructure for data analysis and model training [21]

- Curated legacy datasets for model initialization [21]

Procedure:

- Data Collection Setup: Implement FAIR data principles across all experimental workflows [21]

- Model Training: Curate legacy data to build initial AI/ML models for compound design [21]

- Compound Design: Use models to generate novel compound designs with improved properties

- Automated Synthesis: Deploy automated synthesis platforms to generate designed compounds

- High-Throughput Screening: Test synthesized compounds in relevant biological assays

- Data Integration: Feed experimental results back into models to refine predictions

- Cycle Iteration: Continuously iterate the DMTA cycle to optimize compound properties [21]

Quality Control Considerations:

- Ensure precise ontologies and standardized vocabulary for all data entries [21]

- Capture both positive and negative data with appropriate metadata [21]

- Implement automated data validation checks at each workflow stage

- Establish criteria for model performance monitoring and refinement
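An automated validation check of the kind listed above might look like the following sketch. The required fields and the controlled vocabulary are hypothetical placeholders; a real deployment would take them from the ELN/LIMS ontology in use.

```python
# Hypothetical controlled vocabulary and required fields
ALLOWED_UNITS = {"nM", "uM"}
ALLOWED_OUTCOMES = {"active", "inactive", "inconclusive"}
REQUIRED_FIELDS = ("compound_id", "target", "assay_type", "value", "unit", "outcome")


def validate_record(record):
    """Return a list of validation errors for one assay record (empty if valid)."""
    errors = []
    for field in REQUIRED_FIELDS:
        if record.get(field) in (None, ""):
            errors.append(f"missing field: {field}")

    unit = record.get("unit")
    if unit not in (None, "") and unit not in ALLOWED_UNITS:
        errors.append(f"unit not in vocabulary: {unit}")

    outcome = record.get("outcome")
    if outcome not in (None, "") and outcome not in ALLOWED_OUTCOMES:
        errors.append(f"outcome not in vocabulary: {outcome}")

    value = record.get("value")
    if isinstance(value, (int, float)) and value <= 0:
        errors.append("value must be positive")
    return errors


good = {"compound_id": "CPD-1", "target": "KIN_A", "assay_type": "biochemical",
        "value": 35.0, "unit": "nM", "outcome": "inactive"}
bad = {"compound_id": "CPD-2", "target": "KIN_A", "assay_type": "biochemical",
       "value": 250, "unit": "ng/mL", "outcome": ""}

print(validate_record(good))  # []
print(validate_record(bad))   # ['missing field: outcome', 'unit not in vocabulary: ng/mL']
```

Note that the valid record is a negative result: records marked `inactive` pass validation like any other, consistent with the recommendation to capture both positive and negative data.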

Target 2035 and EUbOPEN represent transformative approaches to early-stage drug discovery through their commitment to open science, collaborative research models, and systematic coverage of the druggable proteome. By providing well-characterized chemical tools, robust experimental protocols, and comprehensive data resources, these initiatives are establishing the foundation for accelerated target validation and drug discovery. The technical support resources outlined in this article provide practical guidance for researchers navigating the challenges of compound selectivity and target deconvolution, while the standardized experimental protocols enable consistent implementation across the scientific community. As these initiatives progress toward their 2035 goals, they continue to demonstrate the power of open collaboration in addressing complex challenges in biomedical research.

Frequently Asked Questions

What are the primary goals of a well-designed chemogenomic library? A high-quality chemogenomic library aims for two main objectives: broad Target Coverage, meaning it contains compounds able to probe as many members of a protein family as possible, and high Chemical Diversity, ensuring the compounds represent a wide range of distinct scaffolds and structures to maximize the chance of identifying novel hits [24].

Our HTS campaign yielded many non-specific hits. How can library design prevent this? A high rate of non-specific binders, or "frequent hitters," often results from compounds with reactive or undesirable functional groups. Applying substructure filters during library design to remove molecules with these problematic motifs can significantly reduce false positives and improve the quality of your hit list [25].

How can we effectively measure the structural diversity of a screening library? Structural diversity can be measured using several computational methods [26]:

- Scaffold-based analysis: Quantifying the number and frequency of unique molecular cores or frameworks.

- Fingerprint-based methods: Using molecular fingerprints to calculate similarity and cluster compounds.

- Principal Component Analysis (PCA): Visualizing the chemical space in 2D or 3D plots to assess the spread of compounds.

What is the key trade-off in designing a targeted library? The main trade-off is between Coverage and Bias [24]. An ideal library provides uniform coverage across the entire target family. However, designs often introduce bias, where certain targets are over-represented by many similar compounds while others are neglected. The goal is to maximize coverage while minimizing bias.

Can I design a highly diverse library without synthesizing billions of compounds? Yes. Advanced strategies like factorizable libraries are designed for this. This approach involves creating smaller, optimized segment libraries (e.g., prefix and suffix segments) that are combined combinatorially. The result is an ultra-high-diversity library with efficient coverage of sequence space without the prohibitive cost of synthesizing every single variant [27].
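The arithmetic behind this approach is that synthesis effort grows with the sum of the segment library sizes, while the number of assembled variants grows with their product. The toy sketch below illustrates this with hypothetical segment names; it says nothing about how the segments themselves are optimized.

```python
from itertools import product


def assemble(prefixes, suffixes):
    """Combine every prefix segment with every suffix segment."""
    return [f"{p}-{s}" for p, s in product(prefixes, suffixes)]


prefixes = ["P1", "P2", "P3"]        # hypothetical prefix segment library
suffixes = ["S1", "S2", "S3", "S4"]  # hypothetical suffix segment library

variants = assemble(prefixes, suffixes)
segments_made = len(prefixes) + len(suffixes)
print(segments_made, len(variants))  # 7 12
```

With 1,000 segments in each library, the same scheme would require 2,000 synthesized segments for 1,000,000 assembled variants.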

Troubleshooting Guides

Issue: Inadequate Coverage of the Biological Target Space

Problem Description The screening library fails to interact with a broad range of proteins within the target family, leading to low hit rates and an inability to identify chemical starting points for important targets.

Diagnostic Steps

- Perform In silico Target Profiling: Use computational methods to predict the interaction profile of your compound library across the entire target family of interest (e.g., all kinases or GPCRs). This generates a ligand-target interaction matrix [24].

- Analyze the Interaction Matrix: Assess the predicted matrix for:

- Coverage: The percentage of targets that have at least one predicted binder in the library.

- Bias: The distribution of compounds across targets. Identify targets with a high density of predicted binders and those with none [24].

- Compare to Known Bioactive Space: Map your library's chemical space against a reference database of known bioactive compounds, such as ChEMBL, to identify gaps in coverage [28].
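Coverage and bias can both be read off the predicted interaction matrix. The sketch below assumes the matrix is stored as a mapping from compound to its set of predicted targets; the bias indicator used here (the share of all predicted interactions that fall on the single most populated target) is one simple choice among several, and all names are hypothetical.

```python
from collections import Counter


def coverage_and_bias(predicted, target_family):
    """Summarize a predicted ligand-target matrix for one target family."""
    counts = Counter()
    for targets in predicted.values():
        counts.update(t for t in targets if t in target_family)

    per_target = {t: counts.get(t, 0) for t in target_family}
    total = sum(per_target.values())
    covered = [t for t, n in per_target.items() if n > 0]

    return {
        "coverage": len(covered) / len(target_family),
        "uncovered": sorted(t for t, n in per_target.items() if n == 0),
        "binders_per_target": per_target,
        "max_share": max(per_target.values()) / total if total else 0.0,
    }


# Hypothetical predictions for a four-member target family
predicted = {"c1": {"T1", "T2"}, "c2": {"T1"}, "c3": {"T1"}, "c4": {"T3"}}
family = ["T1", "T2", "T3", "T4"]

report = coverage_and_bias(predicted, family)
print(report["coverage"], report["uncovered"], report["max_share"])
# 0.75 ['T4'] 0.6
```

In this toy case the library covers three of four targets, but 60% of all predicted interactions fall on `T1`, so new compounds would be better directed at `T4` and the thinly covered targets.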

Solution To achieve uniform and broad target coverage, follow this iterative design and assessment workflow:

Preventative Best Practices

- Integrate Multiple Data Sources: Design libraries using both ligand-based information (known bioactive compounds) and structure-based data (protein structures) when available [24].

- Focus on Target Family-Directed Masterkeys: Prioritize compound scaffolds known to have inherent binding promiscuity across a protein family, which can then be optimized for selectivity [24].

Issue: Poor Chemical and Structural Diversity

Problem Description The compound library is clustered in a narrow region of chemical space, leading to redundant hits with similar scaffolds and a lack of novelty.

Diagnostic Steps

- Calculate Diversity Metrics:

- Scaffold Analysis: Generate all molecular frameworks in the library. A high number of unique scaffolds relative to the library size indicates good scaffold diversity [26].

- Molecular Similarity: Calculate pairwise Tanimoto coefficients using molecular fingerprints (e.g., ECFP). A low average similarity indicates a diverse set [25] [26].

- Visualize Chemical Space: Use dimensionality reduction techniques like PCA, t-SNE, or Generative Topographic Maps (GTM) to project compounds into a 2D map. Visually check for clusters and large empty areas [29] [26].
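The molecular similarity metric above can be sketched without any cheminformatics toolkit if fingerprints are represented as sets of "on" bit indices. In practice the fingerprints would be generated by a toolkit; the bit sets below are made up for illustration.

```python
from itertools import combinations


def tanimoto(a, b):
    """Tanimoto coefficient of two fingerprints given as sets of on-bit indices."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def mean_pairwise_similarity(fingerprints):
    """Average Tanimoto coefficient over all distinct pairs."""
    pairs = list(combinations(fingerprints, 2))
    if not pairs:
        raise ValueError("need at least two fingerprints")
    return sum(tanimoto(a, b) for a, b in pairs) / len(pairs)


# Hypothetical fingerprints for three compounds
fps = [{1, 2, 3, 4}, {3, 4, 5, 6}, {7, 8, 9}]
mean_sim = mean_pairwise_similarity(fps)
print(round(mean_sim, 3))  # 0.111
```

For large libraries the all-pairs calculation scales quadratically, so the average is usually estimated on a random sample of pairs.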

Solution To increase the chemical diversity of a screening library, employ a combination of selection and design strategies.

Table 1: Methods for Enhancing Library Diversity

| Method | Description | Application |

|---|---|---|

| Fingerprint-Based Clustering | Groups compounds by structural similarity using molecular fingerprints. | Select a representative subset of compounds from each cluster to ensure broad coverage [26]. |

| Scaffold Tree Analysis | Hierarchically breaks down molecules to classify scaffolds and sub-scaffolds. | Quantify scaffold diversity using Shannon entropy and prioritize libraries with many unique, non-similar scaffolds [26]. |

| Factorizable Library Design | Uses combinatorially assembled segment libraries (e.g., via Golden Gate assembly) to maximize theoretical diversity from a limited number of physically synthesized compounds [27]. | Achieve ultra-high-diversity libraries for antibody fragments or other combinatorial constructs while managing synthesis costs [27]. |

| Diversity-Oriented Synthesis (DOS) | A synthetic chemistry strategy designed to produce structurally diverse compounds from simple starting materials. | Build screening libraries with high skeletal and functional group diversity to explore underexplored chemical space [30]. |
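As an illustration of the Shannon entropy measure mentioned for scaffold analysis, the sketch below computes the entropy of a scaffold frequency distribution and scales it by its maximum possible value. It assumes scaffolds have already been assigned to each compound; the scaffold labels are placeholders.

```python
import math
from collections import Counter


def scaffold_entropy(scaffolds):
    """Return (Shannon entropy in bits, entropy scaled to the range 0 to 1)."""
    counts = Counter(scaffolds)
    n = len(scaffolds)
    entropy = -sum((c / n) * math.log2(c / n) for c in counts.values())
    max_entropy = math.log2(len(counts)) if len(counts) > 1 else 0.0
    scaled = entropy / max_entropy if max_entropy else 0.0
    return entropy, scaled


# Hypothetical library: eight compounds distributed over four scaffolds
library = ["benzene"] * 4 + ["indole"] * 2 + ["pyridine", "quinoline"]
entropy, scaled = scaffold_entropy(library)
print(entropy, scaled)  # 1.75 0.875
```

A scaled entropy of 1.0 means compounds are spread evenly across scaffolds; values well below 1.0 indicate that a few scaffolds dominate the library.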

Preventative Best Practices

- Balance Synthetic and Natural Compounds: Incorporate natural products or natural product-like compounds to access chemotypes often absent from purely synthetic libraries [25].

- Employ "Smart" Design: Use computational models and structural knowledge to focus diversification on regions of molecules most likely to yield functional improvements, maximizing functional diversity within a limited library size [31].

Issue: High Attrition Rate from Undesirable Compound Properties

Problem Description A high percentage of screening hits are false positives, exhibit toxicity, or have poor drug-like properties, making them unsuitable for lead optimization.

Diagnostic Steps

- Analyze Hit Compounds: Profile the structures of frequent hitters and toxic compounds for common substructures or functional groups.

- Audit Library Composition: Screen the entire library computationally for violations of established rules (e.g., PAINS filters) and undesirable physicochemical properties.

Solution Implement a robust compound filtering protocol to curate a high-quality, hit-like library.

Table 2: Key Compound Filters for Library Curation

| Filter Type | Objective | Typical Criteria & Notes |

|---|---|---|

| Drug-like/Lead-like | Ensure compounds have properties conducive to oral bioavailability and are suitable starting points for optimization. | Apply Lipinski's Rule of 5 and related rules. Enforce more stringent criteria for lead-like compounds (e.g., lower molecular weight, logP) to allow for optimization into drug-like molecules [25]. |

| Substructure Alerts | Remove compounds with functional groups prone to reactivity, toxicity, or assay interference. | Use filters like REOS (Rapid Elimination of Swill) and PAINS (Pan-Assay Interference Compounds) to identify and exclude frequent hitters and reactive molecules [25]. |

| Physicochemical Properties | Maintain a desirable balance of properties across the library. | Filter based on calculated properties like molecular weight, logP, number of hydrogen bond donors/acceptors, and polar surface area to keep compounds within a "hit-like" space [25]. |
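A drug-likeness filter is straightforward to apply once the properties have been calculated. The sketch below checks the four Lipinski criteria (molecular weight ≤ 500, logP ≤ 5, at most 5 hydrogen bond donors, at most 10 hydrogen bond acceptors) on precomputed values. The property dictionaries are hypothetical, and tolerating one violation is a common convention rather than a requirement of the source.

```python
def rule_of_five_violations(props):
    """Count Lipinski Rule of 5 violations from precomputed properties."""
    checks = [
        props["mw"] > 500,    # molecular weight (Da)
        props["logp"] > 5,    # calculated logP
        props["hbd"] > 5,     # hydrogen bond donors
        props["hba"] > 10,    # hydrogen bond acceptors
    ]
    return sum(checks)


def drug_like(library, max_violations=1):
    """Return the IDs of compounds with at most `max_violations` violations."""
    return sorted(
        cid for cid, props in library.items()
        if rule_of_five_violations(props) <= max_violations
    )


# Hypothetical precomputed properties
library = {
    "cpd_X": {"mw": 342.4, "logp": 2.1, "hbd": 2, "hba": 5},
    "cpd_Y": {"mw": 712.9, "logp": 6.3, "hbd": 4, "hba": 11},
}
kept = drug_like(library)
print(kept)  # ['cpd_X']
```

Substructure alerts such as PAINS need pattern matching on the structures themselves and are not covered by this property-only sketch.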

The Scientist's Toolkit

Table 3: Essential Research Reagents and Resources for Library Design and Screening

| Item | Function in Research |

|---|---|

| Commercial Diverse Libraries (e.g., 50K Diversity Library) | Pre-selected collections of drug-like compounds, ideal as a starting point for phenotypic or target-based High-Throughput Screening (HTS) to maximize the chance of initial hit identification [30]. |

| Scaffold-Focused Libraries | Libraries where each compound represents a unique molecular framework. Essential for exploring novel chemical space and identifying new lead series during hit explosion [30]. |

| Natural Product Collections | Provides access to complex, evolutionarily optimized chemical structures often with unique bioactivity. Particularly valuable for phenotypic screening campaigns [25]. |

| ChEMBL Database | A manually curated database of bioactive molecules with drug-like properties. Serves as a critical reference for known target-compound interactions, bioactivity data, and benchmarking library coverage [28]. |

| ZINC Database | A freely available public repository of commercially available compounds for virtual screening. Used to select and purchase compounds for building a custom screening library [25]. |

| Cell Painting Assay | A high-content, image-based morphological profiling assay. Used to characterize the phenotypic response of cells to compound treatment, providing a rich dataset for mechanism of action deconvolution [28]. |

| DNA-Encoded Libraries (DELs) | Ultra-large libraries of compounds covalently tagged with DNA barcodes, allowing for simultaneous screening of billions of compounds against a purified target. Used for initial hit identification against isolated targets [29]. |

Design and Deployment: Methodologies for Building and Screening Selective Libraries

Leveraging Cheminformatics for Library Design and Virtual Screening

Troubleshooting Guides and FAQs

FAQ: Library Design and Enumeration

What strategies can be used to design a targeted chemogenomic library? Designing a targeted library is a multi-objective optimization problem. The goal is to maximize cancer target coverage while ensuring cellular potency, selectivity, and a minimal final library size. Two primary strategies exist:

- Target-Based Approach: Start with a defined protein target space (e.g., 1,655 cancer-associated proteins) and identify established compound-target interactions from public databases. This yields Experimental Probe Compounds (EPCs) [32].

- Drug-Based Approach: Curate Approved and Investigational Compounds (AICs) with known safety profiles, which is advantageous for drug repurposing.

Both approaches require rigorous filtering based on activity, chemical diversity, and commercial availability to create a focused screening set [32].

How can I generate an ultra-large, synthetically accessible virtual library? Ultra-large libraries of REAL (REadily AvailabLe) compounds can be created using combinatorial chemistry. By employing reliable reactions like Sulfur Fluoride Exchange (SuFEx) and accessing large, diverse sets of building blocks from vendors (e.g., Enamine, ChemDiv), you can enumerate libraries of hundreds of millions of compounds. Software like ICM-Pro can be used for this library enumeration [33].

My virtual library is too large to screen efficiently. What filtering methods should I use? After enumeration, apply sequential filters to reduce library size while maintaining quality and diversity:

- Activity Filtering: Remove compounds predicted to be inactive or lacking desired properties.

- Potency Filtering: For each target, select the most potent compounds to reduce redundancy.

- Availability Filtering: Filter based on the commercial availability of building blocks or final compounds for physical screening.

This process can reduce a theoretical set of over 300,000 compounds to an optimized screening set of about 1,200 compounds while retaining coverage of over 80% of the intended target space [32].
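The sequential filters can be sketched as a small pipeline over compound-target records. The record fields, the `top_n` parameter, and the example data are hypothetical; the filters are applied in the order listed above, and target coverage is reported afterwards so that the effect of shrinking the library stays visible.

```python
from collections import defaultdict


def filter_library(records, top_n=2):
    """Apply activity, potency, and availability filters in sequence."""
    # 1. Activity filter: drop records without demonstrated activity
    active = [r for r in records if r["active"]]

    # 2. Potency filter: keep the top_n most potent compounds per target
    by_target = defaultdict(list)
    for r in active:
        by_target[r["target"]].append(r)
    potent = []
    for target_records in by_target.values():
        potent.extend(sorted(target_records, key=lambda r: r["potency_nM"])[:top_n])

    # 3. Availability filter: keep only compounds that can be purchased
    available = [r for r in potent if r["available"]]

    compounds = sorted({r["compound"] for r in available})
    all_targets = {r["target"] for r in records}
    coverage = len({r["target"] for r in available}) / len(all_targets)
    return compounds, coverage


records = [
    {"compound": "c1", "target": "T1", "potency_nM": 5, "active": True, "available": True},
    {"compound": "c2", "target": "T1", "potency_nM": 50, "active": True, "available": True},
    {"compound": "c3", "target": "T1", "potency_nM": 500, "active": True, "available": True},
    {"compound": "c4", "target": "T2", "potency_nM": 20, "active": True, "available": False},
    {"compound": "c5", "target": "T2", "potency_nM": 80, "active": False, "available": True},
    {"compound": "c6", "target": "T2", "potency_nM": 200, "active": True, "available": True},
]

compounds, coverage = filter_library(records)
print(compounds, coverage)  # ['c1', 'c2', 'c6'] 1.0
```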

FAQ: Virtual Screening and Docking

How do I account for binding site flexibility during virtual screening? Relying solely on a single crystal structure may not capture the full range of binding poses. A recommended method is to use a 4D structural model. This involves:

- Generating an ensemble of receptor conformations using algorithms like ligand-guided receptor optimization.

- Using diverse sets of known high-affinity agonists and antagonists to generate distinct models of the binding site.

- Combining the best-performing models (e.g., antagonist-bound, agonist-bound, and crystal structure) into a single 4D model for docking. This accounts for flexibility and can improve the discrimination between binders and non-binders [33].

What criteria should I use to select compounds for synthesis after virtual screening? After docking a large library, prioritize compounds based on a combination of computational and practical factors:

- Docking Score: Compounds with the most favorable (lowest) predicted binding scores.

- Binding Pose Analysis: Prefer poses that form key hydrogen bonds with residues critical for binding (e.g., T114, S285, S90 for CB2) [33].

- Chemical Novelty and Diversity: Cluster top hits and select representatives from diverse chemical scaffolds to avoid bias.

- Synthetic Tractability: Prioritize compounds built from readily accessible building blocks (e.g., primary amines over secondary amines, azides from halide precursors) and consider the cost and stability of the final product [33].

My virtual screening hits are not validating experimentally. How can I improve my hit rate? A high experimental hit rate (e.g., 55% was achieved for CB2 antagonists) relies on multiple factors [33]:

- Library Quality: Use libraries built from reliable chemistry (e.g., SuFEx) to ensure synthesizability.

- Receptor Model Quality: Validate your docking model's ability to discriminate known binders from decoys using metrics like ROC AUC before screening.

- Iterative Screening: Perform an initial fast docking (low effort) to narrow the field, then re-dock the top hundreds of thousands of compounds with higher conformational sampling (high effort) for more accurate ranking [33].
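The receptor-model check above can be illustrated with a small ROC AUC calculation. The sketch uses the rank interpretation of AUC: the probability that a randomly chosen known binder receives a better docking score than a randomly chosen decoy. It assumes that lower (more negative) scores are better; the scores are invented for illustration.

```python
def roc_auc(binder_scores, decoy_scores):
    """ROC AUC for docking scores where lower values indicate better binding."""
    wins = 0.0
    for b in binder_scores:
        for d in decoy_scores:
            if b < d:
                wins += 1.0   # binder ranked ahead of decoy
            elif b == d:
                wins += 0.5   # ties count as half
    return wins / (len(binder_scores) * len(decoy_scores))


# Hypothetical docking scores from a benchmark run
binders = [-42.0, -38.5, -35.0, -20.0]
decoys = [-36.0, -25.0, -18.0, -15.0, -10.0]

auc = roc_auc(binders, decoys)
print(auc)  # 0.85
```

An AUC near 0.5 means the model cannot separate binders from decoys, in which case screening a large library against it is unlikely to be productive.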

FAQ: Data Analysis and Visualization

How can I visualize and analyze the chemical space of my screening library? With libraries containing millions of compounds, efficient visualization is crucial. Recent advances use dimensionality reduction algorithms to project high-dimensional chemical descriptor data into 2D or 3D maps. These chemical space maps facilitate:

- Assessment of library diversity and clustering.

- Identification of activity cliffs and structure-property relationships.

- Visual validation of QSAR models and the analysis of activity/property landscapes [34].

What tools can I use to profile and compare the hazard of identified hits? Tools like the EPA's Cheminformatics Modules (CIM) include a Hazard Module. This tool generates a heatmap profile comparing multiple chemicals across various toxicity endpoints. The data is color-coded (e.g., Red-Very High, Green-Low) and sources information from authoritative, screening, and QSAR model data, helping in the early safety assessment of candidates [35].

Key Experimental Protocols

Protocol 1: Structure-Based Virtual Screening of an Ultra-Large Library

Methodology Overview This protocol details the process of screening a 140-million compound library against the Cannabinoid Type II receptor (CB2) to identify antagonists, achieving a 55% experimental validation rate [33].

Step-by-Step Workflow

Library Enumeration:

- Use combinatorial chemistry tools within ICM-Pro software.

- Retrieve building blocks from vendor servers (Enamine, ChemDiv, Life Chemicals, ZINC15).

- Enumerate two separate libraries based on SuFEx reactions for sulfonamide-functionalized triazoles and isoxazoles.

- Combine the libraries for a unified virtual screening campaign.

Receptor Model Preparation (4D Docking):

- Start with a high-resolution crystal structure of the target receptor (e.g., CB2 with an antagonist).

- Use a ligand-guided receptor optimization algorithm to refine the side chains within an 8Å radius of the co-crystallized ligand.

- Generate an ensemble of receptor conformations using diverse sets of known agonists and antagonists.

- Select the best structural models based on their Receiver Operating Characteristic (ROC) Area Under Curve (AUC) values in benchmark docking.

- Combine the top models (e.g., antagonist-bound, agonist-bound, crystal structure) into a single 4D structural model.

Virtual Ligand Screening:

- Perform the first docking pass of the entire library into the 4D receptor maps using a standard docking effort.

- Save all compounds with a binding score better than a set threshold (e.g., -30).

- From these, select the top 340,000 compounds (170,000 from each sub-library) and re-dock them using a higher effort setting for more comprehensive conformational sampling.

- For each model in the 4D ensemble, select the top 10,000 compounds with the lowest docking scores.
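As a rough illustration of the first selection steps above, the following Python sketch applies a score threshold and then picks the best compounds from each sub-library for re-docking. The record type, function names, and toy scores are assumptions made for this example; the real campaign operates on millions of compounds inside the docking software.

```python
from typing import Dict, List, NamedTuple


class DockResult(NamedTuple):
    compound_id: str
    sublibrary: str  # e.g. "triazole" or "isoxazole"
    score: float     # docking score, more negative = better


def first_pass_filter(results: List[DockResult], threshold: float = -30.0) -> List[DockResult]:
    """Keep compounds whose score is better (lower) than the threshold."""
    return [r for r in results if r.score < threshold]


def top_per_sublibrary(results: List[DockResult], n_per_library: int) -> List[DockResult]:
    """Select the n best-scoring compounds from each sub-library for re-docking."""
    by_library: Dict[str, List[DockResult]] = {}
    for r in results:
        by_library.setdefault(r.sublibrary, []).append(r)
    selected: List[DockResult] = []
    for library in sorted(by_library):
        ranked = sorted(by_library[library], key=lambda r: r.score)
        selected.extend(ranked[:n_per_library])
    return selected


# Toy first-pass results (hypothetical identifiers and scores).
results = [
    DockResult("T1", "triazole", -34.2),
    DockResult("T2", "triazole", -31.0),
    DockResult("T3", "triazole", -25.5),
    DockResult("I1", "isoxazole", -38.9),
    DockResult("I2", "isoxazole", -30.5),
    DockResult("I3", "isoxazole", -29.9),
]
saved = first_pass_filter(results)                      # threshold step
redock_set = top_per_sublibrary(saved, n_per_library=1)  # per-library selection
print([r.compound_id for r in redock_set])
```

In the protocol, `n_per_library` would correspond to 170,000 per sub-library; the value of 1 here only keeps the toy example readable.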

Compound Selection and Prioritization:

- Cluster the top-scoring compounds based on their chemical scaffold to ensure diversity.

- Apply filters to ensure novelty compared to known ligands for the target.

- Visually inspect predicted binding poses, prioritizing compounds that form key hydrogen bonds with binding site residues.

- Finally, nominate 500 compounds for synthesis based on a combined assessment of docking score, binding pose, chemical novelty, diversity, and synthetic tractability.
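The diversity and novelty steps above could be approximated in code as follows. This sketch assumes each candidate already carries a scaffold (cluster) label and that the scaffolds of known ligands are available as a set; both are hypothetical inputs. Visual inspection of poses and synthetic tractability are expert judgments that the sketch does not capture.

```python
from typing import Dict, List, NamedTuple, Set


class Candidate(NamedTuple):
    compound_id: str
    scaffold: str  # cluster label, e.g. a scaffold identifier
    score: float   # docking score, more negative = better


def pick_diverse(candidates: List[Candidate],
                 known_scaffolds: Set[str],
                 max_picks: int) -> List[Candidate]:
    """Keep the best-scoring compound per novel scaffold, then the best max_picks."""
    best: Dict[str, Candidate] = {}
    for c in candidates:
        if c.scaffold in known_scaffolds:
            continue  # novelty filter: skip scaffolds of known ligands
        if c.scaffold not in best or c.score < best[c.scaffold].score:
            best[c.scaffold] = c
    return sorted(best.values(), key=lambda c: c.score)[:max_picks]


# Toy candidates (hypothetical identifiers, scaffolds, and scores).
candidates = [
    Candidate("A1", "s1", -40.0),
    Candidate("A2", "s1", -38.0),
    Candidate("B1", "s2", -37.0),
    Candidate("C1", "s3", -36.0),
    Candidate("D1", "s4", -33.0),
]
picks = pick_diverse(candidates, known_scaffolds={"s3"}, max_picks=2)
print([c.compound_id for c in picks])
```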

Protocol 2: Designing a Focused Chemogenomic Library

Methodology Overview This protocol describes a systematic procedure for constructing a targeted anticancer compound library (C3L), optimized for size, cellular activity, and target coverage [32].

Step-by-Step Workflow

Define the Target Space:

- Compile a comprehensive list of proteins and gene products associated with cancer development using resources like The Human Protein Atlas and PharmacoDB.

- Expand this list by including proteins from pan-cancer studies, aiming to cover all "hallmarks of cancer." The final target space may include ~1,655 proteins.

Identify and Curate Compound-Target Interactions:

- For the EPC (Experimental Probe Compound) Collection: Manually extract compound-target interactions from public databases to create a theoretical in-silico set.

- For the AIC (Approved and Investigational Compound) Collection: Curate compounds from public sources and clinical trials to include drugs with known safety profiles.

Apply Multi-Stage Filtering:

- Global Activity Filtering: Remove compounds lacking demonstrated biological activity.

- Potency-based Redundancy Reduction: For each target, select the most potent compounds to minimize library size.

- Structural Diversity Filtering: Use chemical fingerprints (e.g., ECFP4, MACCS) and similarity metrics (e.g., Tanimoto, Dice) to remove highly redundant structures. A typical approach keeps a compound only if its similarity to the compounds already retained is below a cutoff (e.g., <0.99).

- Availability Filtering: Filter based on the commercial availability of compounds for physical screening.
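The potency and diversity filters above can be sketched in plain Python. This minimal example assumes fingerprints are already computed and stored as sets of "on" bit indices (in practice they would come from a cheminformatics toolkit) and that each compound has a single annotated target; the record type, function names, and toy data are all hypothetical.

```python
from typing import Dict, FrozenSet, List, NamedTuple


class Compound(NamedTuple):
    name: str
    target: str
    pic50: float                 # -log10(IC50 in M); higher = more potent
    fingerprint: FrozenSet[int]  # indices of "on" bits in a binary fingerprint


def tanimoto(a: FrozenSet[int], b: FrozenSet[int]) -> float:
    """Tanimoto coefficient: shared bits divided by the union of bits."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def most_potent_per_target(compounds: List[Compound], keep: int) -> List[Compound]:
    """Potency-based redundancy reduction: keep the top `keep` per target."""
    by_target: Dict[str, List[Compound]] = {}
    for c in compounds:
        by_target.setdefault(c.target, []).append(c)
    kept: List[Compound] = []
    for target in sorted(by_target):
        ranked = sorted(by_target[target], key=lambda c: c.pic50, reverse=True)
        kept.extend(ranked[:keep])
    return kept


def diversity_filter(compounds: List[Compound], cutoff: float) -> List[Compound]:
    """Greedy filter: keep a compound only if it is < cutoff similar to all kept."""
    kept: List[Compound] = []
    for c in compounds:
        if all(tanimoto(c.fingerprint, k.fingerprint) < cutoff for k in kept):
            kept.append(c)
    return kept


library = [
    Compound("cpd_a", "EGFR", 8.5, frozenset({1, 2, 3, 4})),
    Compound("cpd_b", "EGFR", 7.9, frozenset({1, 2, 3, 4})),  # same bits as cpd_a
    Compound("cpd_c", "EGFR", 6.0, frozenset({7, 8})),
    Compound("cpd_d", "CDK2", 7.2, frozenset({1, 2, 5, 6})),
]
potent = most_potent_per_target(library, keep=2)
final = diversity_filter(potent, cutoff=0.99)
print([c.name for c in final])
```

The greedy pass depends on input order, so sorting by potency beforehand makes the more potent member of a redundant pair survive.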

Library Validation:

- The final screening set is a compact library (e.g., 1,211 compounds) that retains high coverage (e.g., 84%) of the original target space. This library is then ready for phenotypic screening in relevant disease models.
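Target coverage, as quoted above, can be read as the fraction of the defined target space that is hit by at least one library compound. The short sketch below computes it under that assumption; the function name and the toy annotations are hypothetical.

```python
from typing import Dict, Set


def target_coverage(target_space: Set[str], library_targets: Dict[str, Set[str]]) -> float:
    """Fraction of the target space hit by at least one library compound."""
    if not target_space:
        raise ValueError("target space is empty")
    covered: Set[str] = set()
    for targets in library_targets.values():
        covered |= targets & target_space  # ignore targets outside the space
    return len(covered) / len(target_space)


target_space = {"EGFR", "CDK2", "BRAF", "PARP1"}
library_targets = {
    "cpd_a": {"EGFR"},
    "cpd_d": {"CDK2", "BRAF"},
    "cpd_x": {"HTR2A"},  # annotated target outside the defined space
}
coverage = target_coverage(target_space, library_targets)
print(f"coverage = {coverage:.0%}")
```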

Quantitative Data and Reagent Solutions

Table 1: Virtual Screening Performance Metrics

The following table summarizes key quantitative data from a virtual screening campaign that successfully identified CB2 receptor antagonists, demonstrating a high experimental hit rate [33].

| Metric | Value | Description/Context |
|---|---|---|
| Initial Library Size | 140 million compounds | Combinatorial library created using SuFEx chemistry [33]. |
| Top Compounds Selected | 500 compounds | Nominated for synthesis based on docking and diversity [33]. |
| Compounds Synthesized | 11 compounds | Successfully synthesized with >95% purity from the top 14 selected [33]. |
| Functional Antagonists Identified | 6 compounds | Showed CB2 antagonist potency better than 10 μM in functional assays [33]. |
| Validated Hit Rate | 55% | Proportion of synthesized compounds that were functionally active (6/11) [33]. |
| Best Binding Affinity (Ki) | 0.13 μM | The highest affinity measured for the most potent hit (BRI-13901) [33]. |
| Docking Score Threshold | -30 | Energy score cutoff used to save compounds from the first docking pass [33]. |

Table 2: Essential Research Reagent Solutions

This table lists key tools, software, and databases essential for conducting cheminformatics-driven library design and virtual screening.

| Item / Reagent | Function / Application | Specific Example / Vendor |
|---|---|---|
| Combinatorial Library Software | Enumerates virtual chemical libraries from building blocks. | ICM-Pro [33] |
| Building Block Vendors | Source of chemical reagents for virtual or physical library synthesis. | Enamine, ChemDiv, Life Chemicals, ZINC15 Database [33] |
| Molecular Docking Software | Predicts binding poses and scores of small molecules against a protein target. | ICM-Pro [33] |
| Chemical Database | Public repositories for obtaining chemical structures and bioactivity data. | PubChem, ChEMBL [36] [37] |
| Focused DEL Provider | For experimental hit discovery and optimization using DNA-Encoded Libraries. | HitGen [38] |
| Hazard Profiling Tool | Creates toxicity hazard comparison profiles across multiple endpoints. | EPA Cheminformatics Modules (CIM) - Hazard Module [35] |

Workflow and Pathway Visualizations

Virtual Screening Workflow

This diagram illustrates the multi-step computational and experimental workflow for virtual screening of an ultra-large library, from library creation to experimental validation [33].

Chemogenomic Library Design

This diagram outlines the target-based strategy for designing a focused, target-annotated chemogenomic library, highlighting the key filtering steps [32].

Core Concepts of Hit-to-Lead Profiling

What is the primary objective of the hit-to-lead phase?

The key objective is to rapidly assess several hit clusters to identify the most promising series for development into drug-like leads. This involves confirming a true structure-activity relationship (SAR), evaluating potency and selectivity, and conducting early assessment of in-vitro ADMET (Absorption, Distribution, Metabolism, Excretion, and Toxicity) properties. This phase typically runs for 6–9 months. [39]

What parameters are profiled during hit-to-lead?

Hit-to-lead assays investigate compounds in greater depth than the initial high-throughput screening (HTS), focusing on the following parameters [40]:

- Potency: Quantifying how strongly a compound modulates its target (e.g., IC50 values).

- Selectivity: Determining whether the compound acts specifically on the intended target rather than on other proteins, including closely related family members.

- Mechanism of Action: Uncovering how the compound binds or interferes with target function.

- ADME Properties: Assessing characteristics that influence "drug-likeness."
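To make the potency parameter concrete, the sketch below estimates an IC50 from a dose-response series by interpolating on a log-concentration scale between the two points that bracket 50% activity. The Hill equation is used only to generate toy data; the function names and values are assumptions for illustration, and a real analysis would normally fit a four-parameter logistic model instead.

```python
import math
from typing import List, Optional


def hill_response(conc: float, ic50: float, hill: float = 1.0,
                  top: float = 100.0, bottom: float = 0.0) -> float:
    """Percent remaining activity at an inhibitor concentration (Hill model)."""
    return bottom + (top - bottom) / (1.0 + (conc / ic50) ** hill)


def estimate_ic50(concs: List[float], responses: List[float],
                  half: float = 50.0) -> Optional[float]:
    """Log-linear interpolation between the points bracketing `half` activity.

    Returns None when the series never crosses the half-maximal response.
    """
    points = sorted(zip(concs, responses))
    for (c_lo, r_lo), (c_hi, r_hi) in zip(points, points[1:]):
        if r_lo >= half >= r_hi and r_lo != r_hi:
            frac = (r_lo - half) / (r_lo - r_hi)
            log_ic50 = math.log10(c_lo) + frac * (math.log10(c_hi) - math.log10(c_lo))
            return 10 ** log_ic50
    return None


# Toy ten-fold dilution series in micromolar, generated with a true IC50 of 0.5.
concs = [0.01, 0.1, 1.0, 10.0, 100.0]
responses = [hill_response(c, ic50=0.5) for c in concs]
estimate = estimate_ic50(concs, responses)
print(f"estimated IC50 ~ {estimate:.2f} uM")
```

With only ten-fold spacing the interpolated value is approximate, which is one reason denser dilution series and curve fitting are preferred for reporting potency.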

Essential Assay Types for Hit Profiling

The following table summarizes the common categories of assays used in hit-to-lead profiling.

Table 1: Key Assay Types in Hit-to-Lead Profiling

| Assay Category | Description | Common Examples |
|---|---|---|
| Biochemical Assays | Cell-free systems measuring direct interaction with a purified molecular target. [40] | Enzyme activity assays (kinases, GTPases), binding assays (Fluorescence Polarization, TR-FRET), mechanistic studies. [40] |
| Cell-Based Assays | Evaluate compound effects in a cellular environment, adding physiological relevance. [40] | Reporter gene activity, signal transduction pathway modulation, cell proliferation, cytotoxicity. [40] |
| Profiling & Counter-Screening Assays | Confirm selectivity and rule out off-target activity. [40] [41] | Screening against a panel of related enzymes or proteins, testing for interactions with cytochrome P450 enzymes. [40] |
| Orthogonal Assays | Confirm bioactivity using a different readout technology or assay condition to confirm specificity and eliminate technology-dependent artifacts. [41] | Using luminescence or absorbance to follow up a fluorescence-based primary screen; employing biophysical methods like SPR or MST. [41] |
| Cellular Fitness Assays | Identify compounds that are not generally toxic to cells and exclude those causing general cellular harm. [41] | Cell viability (CellTiter-Glo), cytotoxicity (LDH assay), apoptosis (caspase assay), high-content analysis of cell health. [41] |

Troubleshooting Guide: FAQs for Experimental Challenges

FAQ 1: How can I distinguish true bioactive hits from assay artifacts?

A multi-pronged experimental strategy is crucial for triaging primary hits toward high-quality, specific compounds. [41]

- Implement Counter Screens: Design assays that bypass the biological reaction to measure the compound's effect on the detection technology itself. This identifies artifacts like autofluorescence, signal quenching, or reporter enzyme modulation. [41]

- Employ Orthogonal Assays: Confirm activity using a completely different readout technology (e.g., follow a fluorescence screen with a luminescence-based assay). Biophysical methods like Surface Plasmon Resonance (SPR) or Thermal Shift Assays (TSA) can directly validate binding. [41]

- Analyze Dose-Response Curves: Scrutinize the shape of the curves. Steep, shallow, or bell-shaped curves may indicate toxicity, poor solubility, or compound aggregation. [41]

- Leverage Computational Filters: Use chemoinformatic filters (e.g., for pan-assay interference compounds or PAINS) to flag promiscuous compounds and undesirable chemotypes based on historical screening data. [41]
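The four checks above can be combined into a simple triage rule. The sketch below is one possible encoding, assuming each check has already been reduced to a yes/no result per compound; the field names, category labels, and ordering of the rules are illustrative choices rather than a prescribed standard.

```python
from dataclasses import dataclass


@dataclass
class HitEvidence:
    compound_id: str
    interferes_with_readout: bool  # counter screen shows a detection artifact
    confirmed_orthogonal: bool     # activity reproduced with a different readout
    well_behaved_curve: bool       # dose-response shape looks normal
    flagged_by_filters: bool       # e.g. a PAINS-type substructure alert


def triage(hit: HitEvidence) -> str:
    """Assign a hit to one of four illustrative triage categories."""
    if hit.interferes_with_readout:
        return "artifact"
    if not hit.confirmed_orthogonal:
        return "unconfirmed"
    if not hit.well_behaved_curve or hit.flagged_by_filters:
        return "review"
    return "prioritize"


hits = [
    HitEvidence("H1", False, True, True, False),
    HitEvidence("H2", True, True, True, False),
    HitEvidence("H3", False, True, False, False),
    HitEvidence("H4", False, False, True, False),
]
labels = {h.compound_id: triage(h) for h in hits}
print(labels)
```

Computational alerts send a compound to "review" rather than rejecting it outright, reflecting that such filters flag risk and do not prove interference.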

FAQ 2: My hits are potent but lack selectivity. What strategies can improve my selectivity profile?

A lack of selectivity indicates potential off-target effects. The following strategies can help de-risk your lead series.

- Panel Screening: Test compounds against a panel of related targets (e.g., a kinase panel if your target is a kinase) to understand the selectivity landscape and identify problematic off-target interactions. [40]

- Chemogenomic Library Design: Utilize or design a targeted library based on chemogenomic principles. This involves building a system pharmacology network that integrates drug-target-pathway-disease relationships, helping to select compounds that cover a diverse target space with known selectivity profiles. [42]

- Explore Structure-Activity Relationships (SAR): A genuine and steep SAR, where small structural changes lead to significant potency differences, provides confidence for selectivity optimization through medicinal chemistry. [41]

- Core-Hopping: Use in-silico profiling to enable scaffold core-hopping techniques, which can generate new hit series with improved properties and potentially different selectivity profiles. [39]
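Panel screening results are often summarized with simple selectivity metrics. The sketch below shows two common ones, neither taken from the cited sources: fold selectivity (off-target IC50 divided by primary-target IC50) and a threshold-based selectivity score (the fraction of panel members inhibited below a chosen IC50). Target names, values, and the 1,000 nM threshold are hypothetical.

```python
from typing import Dict


def fold_selectivity(ic50_nm: Dict[str, float], primary: str) -> Dict[str, float]:
    """Off-target IC50 divided by primary-target IC50 (higher = more selective)."""
    primary_ic50 = ic50_nm[primary]
    return {t: v / primary_ic50 for t, v in ic50_nm.items() if t != primary}


def selectivity_score(ic50_nm: Dict[str, float], threshold_nm: float) -> float:
    """Fraction of panel targets inhibited with an IC50 below the threshold.

    Lower values indicate a more selective compound across the panel.
    """
    if not ic50_nm:
        raise ValueError("panel is empty")
    hits = sum(1 for v in ic50_nm.values() if v < threshold_nm)
    return hits / len(ic50_nm)


# Toy kinase panel, IC50 values in nM.
panel = {"KinaseA": 10.0, "KinaseB": 50.0, "KinaseC": 5000.0, "KinaseD": 20000.0}
folds = fold_selectivity(panel, primary="KinaseA")
score = selectivity_score(panel, threshold_nm=1000.0)
print(folds, score)
```

In this toy panel the compound is only 5-fold selective over KinaseB, which would mark that pair as the off-target interaction to address first.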

FAQ 3: How can I ensure my in vitro assay results will translate to more physiologically relevant models?

Translational relevance is a common challenge. Bridging this gap requires careful assay design and follow-up.

- Incorporate Cell-Based Assays Early: Early use of cell-based systems that better recapitulate the disease biology can reduce late-stage failures. This includes using disease-relevant cell lines, primary cells, or more complex models like 3D cultures. [40] [41]

- Stage Assays Appropriately: Ensure that in vitro assays are run under conditions relevant to the disease, including the correct cell type, the appropriate lifecycle stage of a pathogen, and relevant host cells. [43]

- Use Phenotypic Profiling: Technologies like high-content imaging and "Cell Painting" can provide a comprehensive morphological profile of a compound's effect on cells, offering a more physiologically relevant readout than bulk assays. [42]

- Advance to Complex Models: Use follow-up validation in more biologically relevant settings, such as 3D cultures or organoids, to confirm activity observed in simpler 2D cell models. [41]

FAQ 4: What are the best practices for managing variability and reproducibility in HTS and hit confirmation?

Variability can obscure true signals and lead to irreproducible results.

- Automate Workflows: Automated liquid handling and robotic systems standardize processes, reducing inter- and intra-user variability and human error. Tools with integrated verification features (e.g., droplet detection) further enhance data reliability. [44]

- Use Homogeneous Assay Formats: Implement "mix-and-read" assays that minimize wash steps to reduce complexity and variability. [40]

- Ensure Robust Signal Windows: During assay development, optimize the assay to ensure a clear differentiation between active and inactive compounds. [40]

- Include Proper Controls: Always run positive and negative controls to continuously monitor the quality of the assay and the generated data. [41]
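One widely used way to quantify the signal window from positive and negative controls is the Z'-factor, defined as 1 - 3(σ_pos + σ_neg) / |μ_pos - μ_neg|. The metric is not named in the text above, so the sketch below is offered as a common illustration rather than the cited authors' method; the control readings are invented.

```python
from statistics import mean, stdev
from typing import Sequence


def z_prime(positive: Sequence[float], negative: Sequence[float]) -> float:
    """Z'-factor computed from positive- and negative-control well readings."""
    separation = abs(mean(positive) - mean(negative))
    if separation == 0:
        raise ValueError("control means are identical; no signal window")
    return 1.0 - 3.0 * (stdev(positive) + stdev(negative)) / separation


# Toy control readings from one plate (arbitrary signal units).
positive_controls = [100.0, 102.0, 98.0, 101.0, 99.0]
negative_controls = [10.0, 12.0, 8.0, 11.0, 9.0]
quality = z_prime(positive_controls, negative_controls)
print(f"Z' = {quality:.2f}")
```

A common rule of thumb treats Z' values above 0.5 as indicating a robust assay; tracking the value plate by plate helps spot drift during a screening run.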

Experimental Workflow for Hit Validation

The diagram below outlines a logical workflow for experimentally validating and triaging primary hits, integrating counter, orthogonal, and fitness screens to prioritize high-quality leads. [41]

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials and Reagents for Hit-to-Lead Profiling

| Item | Function / Application |
|---|---|
| Transcreener Assays | Homogeneous, high-throughput biochemical assays for measuring enzyme activity (e.g., kinases, GTPases), ideal for both primary screens and hit-to-lead follow-up. [40] |
| Cell Painting Kits | Multiplexed fluorescent dye sets for high-content morphological profiling. They stain multiple organelles to provide a comprehensive picture of the cellular state upon compound treatment, useful for assessing mechanism and toxicity. [41] [42] |
| Cell Viability/Cytotoxicity Assays | Reagents like CellTiter-Glo (ATP quantitation for viability), MTT, and LDH assays to evaluate cellular fitness and rule out general toxicity. [41] |