Next-Generation Sequencing in Chemical Sensitivity Profiling: A Comprehensive Guide for Predictive Cancer Model Development



Abstract

This article explores the integration of next-generation sequencing (NGS) technologies for chemical sensitivity profiling in cancer models, addressing both foundational principles and advanced applications. It covers the role of comprehensive genomic profiling in identifying actionable mutations and resistance mechanisms that inform drug response predictions. The content details methodological approaches for implementing NGS in both tissue and liquid biopsy contexts, while addressing key challenges in assay optimization, sensitivity thresholds, and data interpretation. Through validation frameworks and comparative analyses across sequencing platforms, we demonstrate how NGS-driven profiling enables more accurate prediction of therapeutic responses, ultimately advancing personalized cancer treatment strategies and drug development pipelines.

The Genomic Foundation of Drug Response: How NGS Reveals Actionable Targets and Resistance Mechanisms

Next-generation sequencing (NGS) represents a fundamental paradigm shift in genomic analysis, enabling the massively parallel sequencing of millions to billions of DNA fragments simultaneously. This transformative technology has revolutionized our approach to biological research and clinical diagnostics, particularly in oncology, by providing unprecedented insights into the molecular underpinnings of disease [1] [2]. Unlike first-generation Sanger sequencing, which processes a single DNA fragment at a time, NGS employs parallel processing to dramatically increase throughput while reducing costs and time requirements [3] [4]. The core principle of NGS lies in its ability to fractionate DNA samples into vast libraries of fragments that are sequenced concurrently, generating enormous datasets that computational algorithms then reassemble into a complete genomic sequence [4].

The evolution of NGS technology has progressed through distinct generations. The foundational years (1977-2005) were dominated by Fred Sanger's chain-termination method, which first made reading DNA possible but was limited in throughput and scalability [5]. The period from 2005 to 2010 witnessed the NGS revolution with the introduction of massively parallel short-read technologies from companies like 454 Life Sciences and Illumina, which reduced sequencing costs from approximately $10,000 per megabase to mere cents [5]. The 2010s saw the emergence of third-generation sequencing with platforms from Pacific Biosciences and Oxford Nanopore Technologies that enabled single-molecule, long-read sequencing [5]. Today, we are in an era defined by multi-omic compatibility, spatially-resolved sequencing, and ultra-high-throughput machines that continue to push the boundaries of genomic discovery [5].

Basic Principles and Chemistry of NGS

Fundamental Workflow

The NGS workflow comprises four essential steps that convert biological samples into interpretable genetic information. While platform-specific variations exist, these core principles remain consistent across most modern systems [3] [4]:

- Nucleic Acid Extraction: DNA or RNA is isolated from source material (e.g., tissue, cells) through cell lysis and purification to remove contaminants, ensuring high-quality input material for subsequent steps [3].

- Library Preparation: This critical step fragments the nucleic acids into appropriately sized pieces and ligates platform-specific adapters to both ends of each fragment. These adapters facilitate amplification, provide primer binding sites, and often include unique molecular barcodes that enable sample multiplexing [3]. Fragmentation methods include enzymatic digestion, sonication, or nebulization, with size selection tailored to specific applications [3].

- Sequencing: The prepared library is loaded onto the sequencing platform, where bases are determined one at a time through a platform-specific chemistry, most commonly "sequencing by synthesis" [3].

- Data Analysis: Raw sequencing data undergoes computational processing including base calling, quality control, alignment to reference sequences, variant identification, and biological interpretation—a process requiring sophisticated bioinformatics pipelines and substantial computational resources [3].

Core Sequencing Chemistries

NGS platforms employ distinct biochemical approaches to determine nucleotide sequences:

Sequencing by Synthesis (SBS) represents the most widely implemented chemistry, utilized predominantly by Illumina platforms [3] [2]. This method uses fluorescently labeled reversible terminator nucleotides that are added iteratively to growing DNA strands. Each nucleotide incorporation event is detected through fluorescence imaging before the terminator is cleaved to enable subsequent additions [3]. This cyclic process continues for predetermined numbers of cycles, generating short reads typically ranging from 50-300 bases with exceptional accuracy (error rates of 0.1-0.6%) [1].

Semiconductor Sequencing, implemented by Ion Torrent platforms, employs a unique detection mechanism based on pH changes [3]. When DNA polymerase incorporates a nucleotide into a growing strand, a hydrogen ion is released. Ion-sensitive field-effect transistors detect these pH fluctuations, converting biochemical events directly to digital information without requiring optical imaging [3]. This approach simplifies instrumentation but can present challenges with homopolymer regions where multiple identical nucleotides occur sequentially [2].

Single Molecule Real-Time (SMRT) Sequencing, developed by Pacific Biosciences, observes DNA synthesis in real time at the single molecule level [5] [2]. DNA polymerase molecules are immobilized within microscopic wells called zero-mode waveguides (ZMWs), where fluorescently tagged nucleotides diffuse freely. As nucleotides are incorporated, their fluorescent signatures are detected before the tags diffuse away. This technology generates exceptionally long reads (typically 10,000-25,000 bases) but historically had higher error rates—a limitation addressed through circular consensus sequencing (CCS) that produces High-Fidelity (HiFi) reads with >99.9% accuracy [5].

Nanopore Sequencing, pioneered by Oxford Nanopore Technologies, measures changes in electrical current as DNA molecules pass through protein nanopores embedded in a membrane [5] [2]. Each combination of nucleotides passing through the pore produces a characteristic current disruption that machine learning algorithms decode into sequence information. This technology enables extremely long reads (often tens of kilobases) and real-time data analysis, with recent duplex sequencing chemistry achieving accuracies exceeding Q30 (>99.9%) [5].

Table 1: Comparison of Major NGS Sequencing Chemistries

| Chemistry | Representative Platforms | Read Length | Accuracy | Key Advantages | Key Limitations |
|---|---|---|---|---|---|
| Sequencing by Synthesis (SBS) | Illumina NovaSeq X, NextSeq | 50-300 bp | High (Q30-Q40) | High throughput, low cost per base | Short reads, GC bias |
| Semiconductor Sequencing | Ion Torrent | 200-400 bp | Moderate | Rapid, simple workflow | Homopolymer errors |
| Single Molecule Real-Time (SMRT) | PacBio Revio, Sequel | 10,000-25,000 bp | High with HiFi (Q30-Q40) | Long reads, epigenetic detection | Higher cost, complex instrumentation |
| Nanopore Sequencing | Oxford Nanopore MinION, PromethION | Up to 2+ Mb | Moderate to High (Q20-Q30 with duplex) | Ultra-long reads, real-time analysis | Higher error rates for simplex reads |
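
The Q-scores quoted for each chemistry are Phred-scaled error probabilities, Q = -10·log10(p). The short sketch below converts between the two so that figures such as "Q30" and ">99.9%" can be compared directly. The function names are illustrative and not taken from any sequencing vendor's software.

```python
import math


def phred_to_error_prob(q: float) -> float:
    """Convert a Phred quality score Q into a per-base error probability."""
    return 10 ** (-q / 10)


def error_prob_to_phred(p: float) -> float:
    """Convert a per-base error probability into a Phred quality score."""
    return -10 * math.log10(p)


if __name__ == "__main__":
    # Print the accuracy implied by the Q-scores used in the comparison table.
    for q in (20, 30, 40):
        p = phred_to_error_prob(q)
        print(f"Q{q}: error probability {p:.4%}, accuracy {1 - p:.4%}")
```

Read this way, Q20 corresponds to one expected error per 100 bases, Q30 to one per 1,000, and Q40 to one per 10,000.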

Advanced NGS Platforms and Specifications

The contemporary NGS landscape features diverse platforms optimized for specific applications and throughput requirements. As of 2025, the market includes approximately 37 sequencing instruments across 10 key companies, each with distinct technical specifications and performance characteristics [5].

Illumina dominates the short-read sequencing market with platforms ranging from benchtop systems like the MiSeq and NextSeq to production-scale instruments such as the NovaSeq X series [5] [6]. The NovaSeq X represents the current pinnacle of Illumina's technology, capable of outputting up to 16 terabases of data in a single run (approximately 26 billion reads per flow cell) while reducing the cost of sequencing a human genome below $200 [5] [6]. Illumina's sequencing-by-synthesis chemistry with reversible dye terminators provides exceptional accuracy and throughput for a wide range of applications from targeted sequencing to whole genomes [5].

Pacific Biosciences (PacBio) specializes in long-read sequencing through its SMRT technology [5]. The Revio system, launched in 2023, dramatically increased throughput and reduced costs for HiFi sequencing, making long-read applications more accessible [5]. PacBio's HiFi reads combine the length advantages of long-read sequencing (typically 10-25 kb) with accuracies exceeding 99.9% (Q30) through circular consensus sequencing that repeatedly reads the same molecule [5]. More recently, PacBio introduced the SPRQ ("spark") chemistry, their first multi-omics approach that extracts both DNA sequence and regulatory information from the same molecule by labeling accessible chromatin regions [5].

Oxford Nanopore Technologies (ONT) offers a unique sequencing approach based on protein nanopores that detect electrical signal changes as DNA or RNA molecules pass through [5]. Their platforms range from the portable, USB-sized MinION to the high-throughput PromethION series [5]. A significant advancement came with their Q20+ and subsequent Q30 Duplex kits, which sequence both strands of DNA molecules to achieve accuracies rivaling short-read platforms while maintaining the advantages of ultra-long reads (sometimes exceeding 2 megabases) and real-time analysis [5]. This technology enables direct detection of epigenetic modifications and has applications from field sequencing to comprehensive genome assembly [5].

Table 2: Comparison of Representative Advanced NGS Platforms (2025)

| Platform | Technology Type | Maximum Output Per Run | Read Length | Accuracy | Best Applications |
|---|---|---|---|---|---|
| Illumina NovaSeq X | Short-read (SBS) | 16 Tb | 2x150 bp | >80% bases ≥ Q30 | Population sequencing, large-scale genomics |
| PacBio Revio | Long-read (SMRT) | 360 Gb | 10-25 kb HiFi | >99.9% (Q30) | De novo assembly, variant phasing, isoform sequencing |
| Oxford Nanopore PromethION | Long-read (Nanopore) | 100+ Gb | 10-30 kb (ultra-long >2 Mb) | >99.9% duplex (Q30) | Real-time surveillance, structural variant detection |
| Illumina NextSeq 1000/2000 | Short-read (SBS) | 120-360 Gb | 2x150 bp | >75% bases ≥ Q30 | Targeted sequencing, single-cell analysis, transcriptomics |

NGS Workflow for Cancer Chemical Sensitivity Profiling

The application of NGS to chemical sensitivity profiling in cancer models requires specialized experimental designs and analytical approaches that link genomic features with therapeutic response. The following protocol outlines a comprehensive framework for such investigations.

Sample Preparation and Library Construction

Materials:

- Cancer cell lines or patient-derived models

- QIAamp DNA FFPE Tissue Kit (Qiagen) or equivalent

- Agilent SureSelectXT Target Enrichment System

- Illumina-compatible library preparation reagents

- Bioanalyzer system (Agilent 2100) or equivalent

Procedure:

- Model Treatment and DNA Extraction: Treat cancer models with the chemical compounds of interest across a concentration range. After the treatment period, extract high-quality genomic DNA using validated kits, ensuring a minimum concentration of 20 ng/μL and an A260/A280 ratio between 1.7 and 2.2 [7].

- Quality Control: Assess DNA integrity and quantity using fluorometric methods and fragment analyzers. For formalin-fixed paraffin-embedded (FFPE) samples, consider repair enzymes to address cross-linking artifacts [7].

- Library Preparation: Fragment DNA to appropriate size (typically 250-400 bp) via acoustic shearing or enzymatic fragmentation. Perform end-repair, A-tailing, and adapter ligation using platform-specific reagents. Include unique dual indexes to enable sample multiplexing [7].

- Target Enrichment: For focused analyses, employ hybrid capture-based target enrichment using panels covering cancer-associated genes, resistance markers, and pharmacogenomic loci. The SNUBH Pan-Cancer v2.0 panel (544 genes) represents an example of a comprehensive oncology-focused target capture system [7].

- Library Quantification and Validation: Precisely quantify final libraries using qPCR-based methods and assess size distribution via Bioanalyzer or TapeStation. Pool libraries at equimolar ratios for multiplexed sequencing [7].
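
Equimolar pooling in the final step depends on converting each library's mass concentration and mean fragment size into molarity. The sketch below uses the common approximation of 660 g/mol per base pair of double-stranded DNA. The library names, concentrations, and the 40 fmol target are hypothetical values chosen for illustration, not figures from the protocol.

```python
AVG_BP_MASS_G_PER_MOL = 660.0  # approximate average mass of one dsDNA base pair


def library_molarity_nm(conc_ng_per_ul: float, mean_fragment_bp: float) -> float:
    """Molarity in nM from concentration (ng/uL) and mean fragment length (bp)."""
    return conc_ng_per_ul / (AVG_BP_MASS_G_PER_MOL * mean_fragment_bp) * 1e6


def pooling_volumes_ul(libraries: dict, fmol_per_library: float) -> dict:
    """Volume (uL) of each library that contributes the same molar amount.

    `libraries` maps a library name to (concentration in ng/uL, mean size in bp).
    Because 1 nM equals 1 fmol/uL, volume = fmol / nM.
    """
    return {
        name: fmol_per_library / library_molarity_nm(conc, size_bp)
        for name, (conc, size_bp) in libraries.items()
    }


if __name__ == "__main__":
    # Hypothetical libraries: (ng/uL from fluorometry, mean size from fragment analysis)
    libs = {"model_A": (6.6, 500), "model_B": (3.3, 350), "model_C": (10.0, 400)}
    for name, vol in pooling_volumes_ul(libs, fmol_per_library=40).items():
        conc, size_bp = libs[name]
        print(f"{name}: {library_molarity_nm(conc, size_bp):.1f} nM -> pool {vol:.2f} uL")
```

In practice, qPCR-based quantification reports molarity directly, so the conversion is mainly useful as a cross-check against fluorometric readings.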

Sequencing and Data Analysis

Sequencing Parameters:

- Platform: Illumina NextSeq 550Dx or equivalent

- Configuration: 2×150 bp paired-end reads

- Target Coverage: Minimum 200× with ≥80% of targets at 100× coverage

- Quality Metrics: ≥Q30 score for >75% of bases
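
The coverage targets above can be checked per sample once per-target depths are available. The sketch below assumes that "minimum 200×" refers to mean depth across targets and that depths arrive as a plain list of numbers. Both are assumptions, and a real pipeline would read these values from its coverage tool's output.

```python
from statistics import mean


def coverage_qc(target_depths, min_mean_depth=200, depth_floor=100, min_fraction=0.80):
    """Summarise per-target depths and apply the two coverage thresholds.

    Passes when the mean depth is at least `min_mean_depth` and at least
    `min_fraction` of targets reach `depth_floor`.
    """
    mean_depth = mean(target_depths)
    fraction_at_floor = sum(d >= depth_floor for d in target_depths) / len(target_depths)
    return {
        "mean_depth": mean_depth,
        "fraction_at_floor": fraction_at_floor,
        "passed": mean_depth >= min_mean_depth and fraction_at_floor >= min_fraction,
    }


if __name__ == "__main__":
    # Hypothetical mean depths for five capture targets in one sample
    print(coverage_qc([250, 300, 90, 220, 180]))
```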

Bioinformatic Analysis:

- Primary Analysis: Perform base calling and demultiplexing using platform-specific software (e.g., Illumina's bcl2fastq).

- Sequence Alignment: Map reads to reference genome (GRCh38/hg38) using optimized aligners such as BWA-MEM or Bowtie2.

- Variant Calling: Identify single nucleotide variants (SNVs) and small insertions/deletions (indels) using Mutect2 or similar variant callers, applying minimum variant allele frequency threshold of 2% [7].

- Copy Number Analysis: Detect amplifications and deletions using CNVkit, considering average copy number ≥5 as amplification [7].

- Fusion Detection: Identify gene fusions and structural variants using tools like LUMPY, with minimum supporting read count of 3 [7].

- Tumor Mutational Burden (TMB) Calculation: Compute TMB as the number of eligible variants per megabase, excluding polymorphisms with population frequency >1% and known benign variants [7].
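
The filtering thresholds in the steps above combine naturally into a TMB calculation. The following is a minimal sketch: the `Variant` record, the example calls, and the 1.5 Mb panel size are hypothetical. Production pipelines also typically restrict TMB to particular variant classes, which is omitted here.

```python
from dataclasses import dataclass


@dataclass
class Variant:
    gene: str
    alt_reads: int
    total_reads: int
    population_af: float  # allele frequency in a population database (0.0 if absent)
    known_benign: bool = False

    @property
    def vaf(self) -> float:
        """Variant allele frequency: fraction of reads supporting the alternate allele."""
        return self.alt_reads / self.total_reads


def tmb_per_mb(variants, panel_size_mb, min_vaf=0.02, max_population_af=0.01):
    """Eligible variants per megabase of sequenced territory.

    Drops calls below the 2% VAF threshold, polymorphisms with population
    frequency above 1%, and variants flagged as known benign.
    """
    eligible = [
        v for v in variants
        if v.vaf >= min_vaf and v.population_af <= max_population_af and not v.known_benign
    ]
    return len(eligible) / panel_size_mb


if __name__ == "__main__":
    calls = [
        Variant("TP53", 40, 200, 0.0),                        # kept
        Variant("KRAS", 3, 300, 0.0),                         # VAF 1%, below threshold
        Variant("BRCA2", 90, 180, 0.05),                      # common polymorphism
        Variant("EGFR", 25, 250, 0.0001),                     # kept
        Variant("PIK3CA", 30, 150, 0.0, known_benign=True),   # known benign
        Variant("ATM", 12, 240, 0.0),                         # kept
    ]
    print(f"TMB: {tmb_per_mb(calls, panel_size_mb=1.5):.1f} variants/Mb")
```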

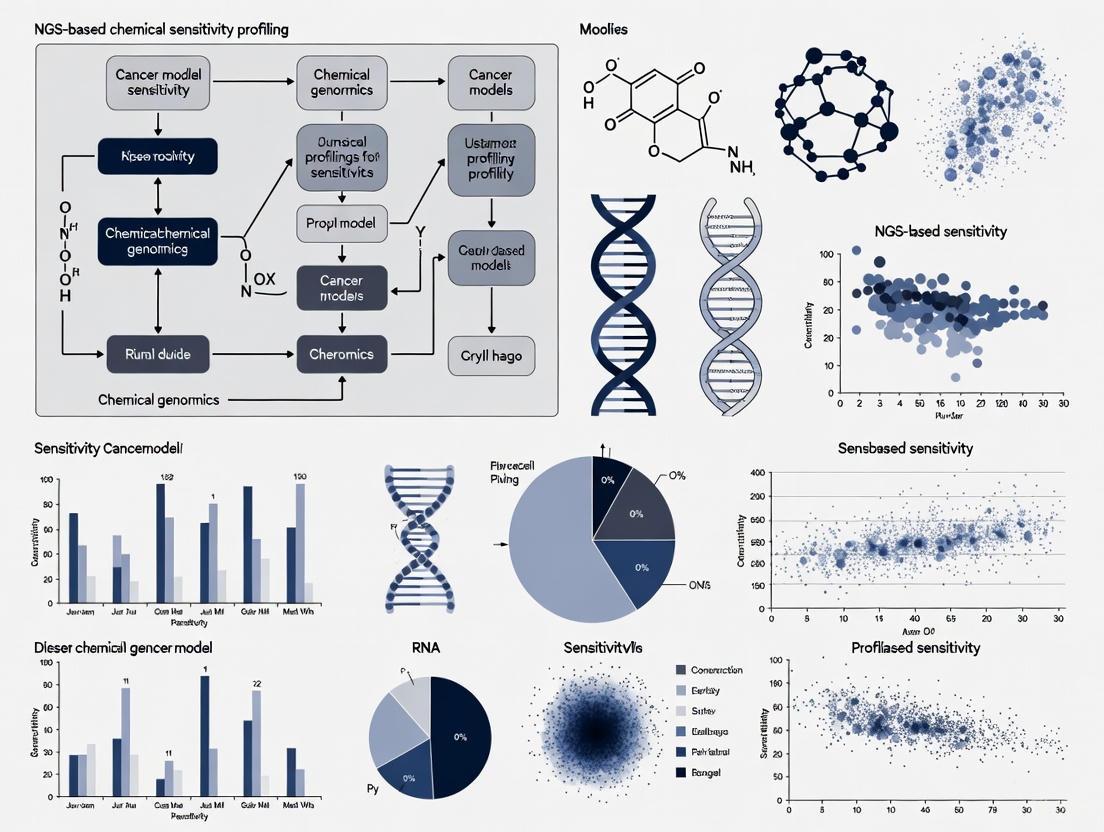

The following workflow diagram illustrates the complete experimental process for NGS-based chemical sensitivity profiling:

Diagram 1: NGS Chemical Sensitivity Profiling Workflow

Essential Research Reagent Solutions

Successful implementation of NGS-based chemical sensitivity profiling requires carefully selected reagents and materials. The following table outlines critical components for establishing robust experimental workflows.

Table 3: Essential Research Reagents for NGS-Based Chemical Sensitivity Profiling

| Reagent Category | Specific Products | Function | Application Notes |
|---|---|---|---|
| Nucleic Acid Extraction | QIAamp DNA FFPE Tissue Kit, DNeasy Blood & Tissue Kit | Isolation of high-quality genomic DNA from various sample types | FFPE-specific kits include cross-link reversal; minimum 20 ng DNA required [7] |
| Library Preparation | Illumina DNA Prep, KAPA HyperPrep Kit | Fragmentation, end-repair, A-tailing, adapter ligation | Incorporates unique dual indexes for sample multiplexing [3] |
| Target Enrichment | Agilent SureSelectXT, Illumina Nextera Flex | Hybrid capture-based selection of genomic regions of interest | Pan-cancer panels (e.g., 544-gene SNUBH panel) provide comprehensive coverage [7] |
| Quality Control | Agilent High Sensitivity DNA Kit, Qubit dsDNA HS Assay | Quantification and size distribution analysis of libraries | Average library size: 250-400 bp; concentration >2 nM [7] |
| Sequencing Reagents | Illumina SBS Chemistry, PacBio SMRTbell | Platform-specific nucleotides and buffers for sequencing reactions | Configuration: 2×150 bp paired-end for Illumina; >10 kb for PacBio HiFi [5] [7] |

Data Analysis and Integration with Sensitivity Metrics

The integration of genomic data with chemical response profiles represents the critical analytical phase that generates biologically actionable insights.

Variant Annotation and Interpretation

- Functional Annotation: Annotate identified variants using SnpEff or similar tools to predict functional consequences (missense, nonsense, splice-site, etc.) [7].

- Variant Classification: Categorize variants according to established guidelines such as the Association for Molecular Pathology (AMP) standards:

  - Tier I: Variants of strong clinical significance (FDA-approved therapies, professional guidelines)

  - Tier II: Variants of potential clinical significance (investigational therapies, different tumor type indications)

  - Tier III: Variants of unknown significance

  - Tier IV: Benign or likely benign variants [7]

- Actionability Assessment: Link genomic alterations to therapeutic options using knowledge bases such as OncoKB, CIViC, or Drug-Gene Interaction Database.

Correlation with Chemical Response

- Dose-Response Modeling: Calculate IC50 values and other pharmacodynamic parameters from viability assays following compound treatment.

- Association Analysis: Identify significant relationships between genomic features and sensitivity/resistance using statistical methods including:

  - Fisher's exact tests for categorical genomic features

  - Linear regression for continuous variables (e.g., TMB, variant allele frequency)

  - Multivariate models incorporating clinical covariates

- Pathway Enrichment Analysis: Determine whether specific molecular pathways are enriched in sensitive or resistant models using gene set enrichment analysis (GSEA) or similar approaches.
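
Two of the steps above lend themselves to a compact illustration: estimating an IC50 from viability data and testing whether a mutation is associated with sensitivity. The sketch below uses only the standard library. The IC50 is found by log-linear interpolation rather than a fitted dose-response curve, which is a deliberate simplification, and all concentrations, viabilities, and counts are hypothetical.

```python
import math
from math import comb


def ic50_by_interpolation(concentrations, viabilities):
    """Estimate IC50 by interpolating on log10(concentration) where viability crosses 0.5.

    Returns None if viability never falls below 50% in the tested range.
    """
    points = sorted(zip(concentrations, viabilities))
    for (c_lo, v_lo), (c_hi, v_hi) in zip(points, points[1:]):
        if v_lo >= 0.5 > v_hi:
            frac = (v_lo - 0.5) / (v_lo - v_hi)
            log_ic50 = math.log10(c_lo) + frac * (math.log10(c_hi) - math.log10(c_lo))
            return 10 ** log_ic50
    return None


def fisher_exact_two_sided(a, b, c, d):
    """Two-sided Fisher's exact p-value for the 2x2 table [[a, b], [c, d]].

    Sums the hypergeometric probabilities of all tables (with the same margins)
    that are no more likely than the observed one.
    """
    row1, row2, col1, n = a + b, c + d, a + c, a + b + c + d

    def prob(x):
        return comb(row1, x) * comb(row2, col1 - x) / comb(n, col1)

    p_obs = prob(a)
    lo, hi = max(0, col1 - row2), min(row1, col1)
    return sum(prob(x) for x in range(lo, hi + 1) if prob(x) <= p_obs * (1 + 1e-9))


if __name__ == "__main__":
    # Hypothetical viability fractions at four concentrations (uM)
    ic50 = ic50_by_interpolation([0.01, 0.1, 1, 10], [0.95, 0.80, 0.20, 0.05])
    print(f"IC50 estimate: {ic50:.3f} uM")

    # Hypothetical counts: rows = mutated / wild-type, columns = sensitive / resistant
    print(f"Fisher p-value: {fisher_exact_two_sided(3, 1, 1, 3):.4f}")
```

For real analyses, a four-parameter logistic fit and an established statistics library are the more usual choices. The point here is only to make the two calculations concrete.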

The following diagram illustrates the analytical workflow for integrating genomic data with chemical sensitivity profiles:

Diagram 2: Genomic and Sensitivity Data Integration

Future Directions and Emerging Technologies

The NGS landscape continues to evolve rapidly, with several emerging technologies and methodologies poised to enhance chemical sensitivity profiling in cancer models.

Multi-omic Integration: The simultaneous analysis of genomic, transcriptomic, epigenomic, and proteomic data from the same samples provides comprehensive molecular portraits that better predict therapeutic response [8] [6]. PacBio's SPRQ chemistry exemplifies this trend, enabling concurrent assessment of DNA sequence and chromatin accessibility [5]. The integration of genetic alterations with gene expression signatures and epigenetic modifications will enable more accurate prediction of chemical vulnerabilities.

Spatial Transcriptomics and In Situ Sequencing: Emerging technologies now enable sequencing of cells within their native tissue context, preserving spatial information that is critical for understanding tumor microenvironment interactions [6]. These approaches will be particularly valuable for profiling heterogeneous tumor models and understanding how spatial relationships influence drug response.

Artificial Intelligence and Machine Learning: AI/ML algorithms are increasingly applied to NGS data to identify complex patterns predictive of chemical sensitivity [8] [6]. Deep learning models like Google's DeepVariant already improve variant calling accuracy, while more sophisticated neural networks can integrate multi-omic features to predict drug response with unprecedented precision [8].

Long-Read Applications: The improving accuracy and decreasing cost of long-read sequencing technologies open new possibilities for characterizing complex genomic regions that influence drug response, including highly repetitive regions, structural variations, and phased haplotypes [5]. These technologies are particularly valuable for resolving complex rearrangement patterns that emerge following chemical treatment.

As these technologies mature, they will increasingly enable researchers to build comprehensive predictive models of chemical sensitivity based on multidimensional molecular data, accelerating both basic cancer research and therapeutic development.

The advent of precision oncology has fundamentally shifted cancer treatment from a one-size-fits-all approach to a targeted strategy based on the unique molecular characteristics of an individual's tumor. This paradigm shift has been enabled by advances in genomic testing technologies, primarily through two distinct approaches: traditional single-gene assays and comprehensive genomic profiling (CGP). Single-gene testing methodologies, such as polymerase chain reaction (PCR), fluorescence in-situ hybridization (FISH), and immunohistochemistry (IHC), focus on identifying alterations in individual genes or limited protein expressions [9] [10]. While these tests have historically formed the foundation of molecular diagnostics, they possess inherent limitations in scope and efficiency when faced with the complex genomic landscape of cancer.

In contrast, comprehensive genomic profiling utilizes next-generation sequencing (NGS) technologies to simultaneously analyze hundreds of cancer-related genes from a single tissue or blood sample [11]. Unlike single-gene tests that are confined to hotspot regions within genes, CGP detects the four main classes of genomic alterations—base substitutions, insertions and deletions, copy number alterations, and rearrangements or fusions—across a broad panel of genes [11]. This comprehensive approach has emerged as a transformative tool in oncology, enabling the identification of clinically actionable biomarkers that might otherwise be missed by sequential single-gene testing approaches. As the number of targeted therapies continues to grow, the limitations of single-gene assays become increasingly pronounced, necessitating a critical examination of the comparative advantages of CGP in both scope and efficiency for modern cancer research and treatment.

Comparative Analytical Scope

Target Range and Alteration Detection

The fundamental distinction between single-gene assays and CGP lies in the breadth of genomic interrogation. Single-gene tests are methodologically constrained to identifying alterations confined to specific genes or hotspot regions, potentially missing clinically relevant mutations in additional genes [11]. For instance, a SNaPshot multiplex PCR panel might target variants in BRAF, EGFR, and KRAS, while FISH testing would be separately required to detect rearrangements in ALK, RET, or ROS1 [10]. This targeted approach becomes increasingly problematic as new biomarkers with clinical utility are discovered.

Comprehensive genomic profiling dramatically expands the detection capability by simultaneously assessing hundreds of cancer-associated genes. The technical advantage of CGP is its ability to identify diverse alteration types across an extensive genomic territory without prior knowledge of which specific gene might be driving the cancer. A prime example of this advantage is evident in rare but actionable biomarkers like NTRK fusions, which have been identified in less than 1% of all cancers but have targeted therapies available [11]. These fusions are unlikely to be tested for with a single-gene approach due to their low frequency, yet CGP can detect them as part of its comprehensive assessment. Additionally, CGP can identify complex genomic signatures such as microsatellite instability (MSI), tumor mutational burden (TMB), and genomic loss of heterozygosity (gLOH), which have significant implications for immunotherapy response but cannot be adequately assessed through single-gene testing methods [11].

Table 1: Comparative Analysis of Detection Capabilities Between Single-Gene Testing and Comprehensive Genomic Profiling

| Parameter | Single-Gene Testing | Comprehensive Genomic Profiling |
|---|---|---|
| Genes Interrogated | 1 to several genes | 300+ genes simultaneously [10] |
| Variant Types Detected | Limited to methodology (e.g., SNVs by PCR, fusions by FISH) | All four major classes: SNVs, indels, CNAs, fusions [11] |
| Novel Biomarker Discovery | Not possible | Built-in capability for discovery |
| Genomic Signatures | Limited or not available | MSI, TMB, gLOH [11] |
| Actionable Findings in NSCLC | ~25-35% of patients [12] | 46-53% of patients [13] |

Diagnostic and Reclassification Capabilities

Beyond identifying therapeutic targets, comprehensive genomic profiling possesses a unique capability to contribute to diagnostic accuracy and tumor reclassification. In rare cases, CGP has revealed inconsistencies between primary diagnoses and molecular findings, triggering secondary pathological reviews that resulted in diagnostic reclassification [14]. For example, initial diagnoses of non-small cell lung cancer (NSCLC), sarcoma, and neuroendocrine carcinoma have been reclassified to renal cell carcinoma, medullary thyroid carcinoma, and melanoma, respectively, based on molecular findings from CGP [14]. Similarly, CGP has demonstrated significant utility in refining cancers of unknown primary (CUP) origin into distinct diagnostic categories, thereby enabling more precise treatment strategies [14].

These reclassification events have profound therapeutic implications. In one documented case, NGS testing helped correct an inaccurate primary diagnosis of leiomyosarcoma to liposarcoma, leading to indication-matched treatment with improved progression-free survival and quality of life [14]. The biomarkers driving these diagnostic changes include point mutations, gene fusions, and high tumor mutational burden, which provide molecular evidence supporting tumor origin or type. This diagnostic capability remains largely inaccessible through single-gene testing approaches, as they lack the comprehensive genomic context necessary to challenge or refine initial pathological assessments.

Operational Efficiency and Tissue Stewardship

Tissue Conservation and Testing Success

The efficiency of genomic testing is critically dependent on the optimal utilization of often limited tumor tissue. Single-gene testing approaches typically require sequential sectioning of formalin-fixed, paraffin-embedded (FFPE) tissue blocks, with each test consuming valuable material. A comparative analysis revealed that using single-gene testing prior to CGP requires more than 50 slides if all recommended tests are ordered individually, compared with only 20 slides for CGP alone [13]. This substantial difference in tissue requirements directly impacts testing success rates.

The clinical consequences of tissue exhaustion are significant. Research has demonstrated that patients with non-small cell lung cancer who underwent single-gene testing prior to CGP had significantly higher rates of test cancellation due to tissue insufficiency (17% vs. 7%) than those who had CGP only [13]. Furthermore, DNA sequencing failures were more common in the single-gene-testing-first group (13% vs. 8%), highlighting how tissue depletion negatively impacts the quality and success of subsequent comprehensive testing [13]. This is particularly problematic in cancers where biopsy samples are inherently small, such as lung cancer, where one study found that 29% of patients did not receive molecular testing results because tissue was insufficient [11].

Table 2: Impact of Testing Approach on Tissue Utilization and Success Rates in NSCLC

| Performance Metric | Single-Gene Testing First | CGP Only |
|---|---|---|
| Slide Requirement | >50 slides [13] | ~20 slides [13] |
| Test Cancellation (Tissue Exhaustion) | 17% [13] | 7% [13] |
| DNA Sequencing Failure Rate | 13% [13] | 8% [13] |
| Turnaround Time >14 Days | 62% [13] | 29% [13] |
| Identification of Guideline-Recommended Biomarkers | 46% (after failed SGT) [13] | 53% [13] |

Workflow Efficiency and Turnaround Time

The operational workflow for genomic testing directly impacts clinical decision-making and patient care. Single-gene testing typically involves a sequential process where providers order individual tests based on initial hypotheses, awaiting results before determining subsequent testing needs. This sequential approach inevitably prolongs the time to comprehensive molecular characterization. Data from a prospective study demonstrated that 62% of patients who underwent single-gene testing prior to CGP experienced turnaround times exceeding 14 days, compared to only 29% in the CGP-only group [13].

Comprehensive genomic profiling streamlines this process by consolidating multiple analyses into a single integrated workflow. Advances in NGS technology and bioinformatics have further improved the efficiency of CGP, with some targeted panels now achieving turnaround times as short as 4 days from sample processing to results [15]. This accelerated timeline is crucial in advanced cancer, where timely initiation of appropriate therapy can significantly impact outcomes. The unified reporting structure of CGP also enhances clinical utility by presenting all molecular findings in a single interpretable format, facilitating treatment decision-making based on a complete genomic profile rather than fragmented results from multiple testing modalities.

Clinical Impact and Therapeutic Implications

Identification of Actionable Alterations

The ultimate measure of genomic testing utility lies in its ability to identify clinically actionable alterations that can inform treatment decisions. Comparative studies have consistently demonstrated the superior performance of CGP in this critical dimension. Research across multiple cancer types—including non-small cell lung cancer (NSCLC), cholangiocarcinoma (CCA), pancreatic carcinoma (PC), and gastro-oesophageal carcinoma (GEC)—has shown that tumor profiling with comprehensive NGS panels improved patient eligibility for personalized therapies compared with small panels [12]. The magnitude of this advantage varies by cancer type, with particularly dramatic differences observed in malignancies with diverse genomic drivers.

In gastro-oesophageal carcinoma, comprehensive panels identified actionable targets in 40% of patients, while small panels (≤60 genes) identified none [12]. Similarly, in pancreatic carcinoma, comprehensive profiling increased eligibility for personalized therapies from 3% with small panels to 35% [12]. Even in NSCLC, where testing practices are more established, comprehensive panels identified actionable alterations in 39% of patients compared to 37% with small panels [12]. These findings underscore how CGP expands therapeutic opportunities by casting a wider genomic net, particularly important for cancers with lower mutation frequencies or those lacking dominant driver mutations.

Case series further illustrate this advantage, documenting multiple instances where comprehensive genomic profiling identified highly actionable alterations missed by prior single-gene testing [10]. These included ALK fusions, EGFR exon 20 insertions, and MET exon 14 skipping alterations—all of which have approved targeted therapies [10]. Importantly, 46% of NSCLC patients with negative prior single-gene test results had positive findings for recommended biomarkers when subsequently evaluated by CGP [13], indicating that single-gene testing frequently provides false-negative results rather than truly negative genomic profiles.

Cost-Effectiveness and Resource Utilization

The economic implications of testing strategies represent a significant consideration in healthcare resource planning. While single-gene tests may appear less expensive individually, the cumulative cost of multiple single-gene tests must be weighed against the more comprehensive information obtained from a single CGP test. Research evaluating the overall diagnostic journey cost—from hospital admission through Molecular Tumour Board evaluation—found that the cost per patient to identify someone eligible for personalized treatments varied significantly according to panel size and tumor type [12].

In pancreatic carcinoma, the cost to find a patient eligible for personalized treatments was approximately $27,000 with small panels versus $5,500 with comprehensive panels [12]. The cost efficiency of comprehensive profiling in this context stems from its higher detection rate of actionable alterations. Similarly, for gastro-oesophageal carcinoma, the cost could not be calculated for small panels (none of the patients were identified as eligible), whereas it was $5,200 with comprehensive panels [12]. These findings challenge the perception of single-gene testing as a more economical approach and instead position CGP as the better value in many clinical scenarios.
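The link between detection rate and cost per eligible patient can be made explicit with a short calculation. The sketch below is illustrative only: it assumes a flat, hypothetical per-patient testing cost (not a figure from [12]) and divides it by the fraction of patients found eligible. When no patient is eligible the ratio is undefined, which is why no cost can be stated for small panels in gastro-oesophageal carcinoma.

```python
from typing import Optional


def cost_per_eligible_patient(cost_per_tested: float, eligible_fraction: float) -> Optional[float]:
    """Average spend needed to identify one patient eligible for personalized therapy.

    cost_per_tested   -- diagnostic journey cost for one tested patient (hypothetical value)
    eligible_fraction -- share of tested patients found eligible, between 0 and 1
    Returns None when nobody is eligible, because the ratio is undefined.
    """
    if not 0.0 <= eligible_fraction <= 1.0:
        raise ValueError("eligible_fraction must lie between 0 and 1")
    if eligible_fraction == 0.0:
        return None
    return cost_per_tested / eligible_fraction


# Hypothetical per-patient cost applied to the pancreatic carcinoma eligibility
# rates quoted in the text (3% with small panels, 35% with comprehensive panels).
small_panel_cost = cost_per_eligible_patient(2000.0, 0.03)
comprehensive_cost = cost_per_eligible_patient(2000.0, 0.35)
```

In practice the per-patient cost differs between panel sizes, so a real comparison would pass a separate cost for each strategy.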

It is noteworthy that the Molecular Tumour Board discussion component accounted for only 2-3% of the total diagnostic journey cost per patient (approximately €113/patient) [12], suggesting that the interpretive expertise required to implement CGP findings represents a relatively small incremental investment compared to the substantial clinical benefits derived from more comprehensive genomic information.

Experimental Protocols and Applications

Comprehensive Genomic Profiling Wet-Lab Protocol

The following protocol outlines the standard procedure for comprehensive genomic profiling using hybrid capture-based NGS methodology, suitable for implementation in a CLIA-certified laboratory setting:

Sample Requirements and Quality Control:

- Input Material: 50-100 ng of DNA extracted from FFPE tissue sections with a minimum of 20% tumor content [15]

- Quality Assessment: DNA quantification using fluorometric methods (e.g., Qubit), with degradation assessment via fragment analysis

- Sample Tracking: Barcoding of samples to maintain chain of custody throughout the process
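The intake criteria above can be expressed as a simple gate applied before library preparation. The following is a minimal sketch, assuming the lower bound of the stated input range (50 ng) and the 20% tumor content minimum as hard cutoffs; the `Sample` class and `qc_failures` function are hypothetical names introduced for illustration.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Sample:
    sample_id: str         # barcode used for chain-of-custody tracking
    dna_ng: float          # total DNA yield from fluorometric quantification
    tumor_fraction: float  # pathologist estimate of tumor content, 0 to 1


def qc_failures(sample: Sample, min_dna_ng: float = 50.0,
                min_tumor_fraction: float = 0.20) -> List[str]:
    """Return the reasons a sample fails intake QC; an empty list means it passes."""
    reasons = []
    if sample.dna_ng < min_dna_ng:
        reasons.append("insufficient DNA input")
    if sample.tumor_fraction < min_tumor_fraction:
        reasons.append("tumor content below minimum")
    return reasons
```

Returning the list of reasons, rather than a single boolean, keeps the rejection cause attached to the sample record.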

Library Preparation:

- DNA Fragmentation: Fragment genomic DNA to ~300 bp using acoustic shearing or enzymatic fragmentation

- End Repair and A-Tailing: Repair fragment ends and add adenine overhangs using commercial library preparation kits

- Adapter Ligation: Ligate platform-specific adapters containing unique dual indices to enable sample multiplexing

- Library Amplification: Amplify adapter-ligated fragments using limited-cycle PCR (typically 4-8 cycles)

- Library Quantification: Assess library concentration using qPCR for accurate quantification of amplifiable fragments

Target Enrichment:

- Hybridization: Incubate library pools with biotinylated oligonucleotide probes targeting cancer-related genes (e.g., 324-gene panel [11] or 523-gene panel [10])

- Capture: Bind probe-hybridized fragments to streptavidin-coated magnetic beads

- Wash: Stringent washing to remove non-specifically bound fragments

- Amplification: Limited-cycle PCR to amplify captured libraries

Sequencing:

- Pooling: Normalize and pool enriched libraries based on qPCR quantification

- Cluster Generation: Load pooled libraries onto flow cell for cluster generation (bridge amplification)

- Sequencing: Perform sequencing using Illumina sequencing-by-synthesis technology with paired-end reads (2×100 bp or 2×150 bp)

- Output: Target sequencing depth of 500-1000× for tissue; minimum 100× unique molecular coverage [15]
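When planning a run, it helps to translate a target depth into the number of read pairs to allocate per sample. The sketch below uses the usual back-of-the-envelope relation (depth ≈ usable sequenced bases / panel footprint). The panel footprint, on-target fraction, and duplicate fraction are assumptions that must come from the specific assay; none of the values shown are taken from the cited protocols.

```python
import math


def required_read_pairs(target_depth: float, target_bp: int, read_length: int,
                        on_target_fraction: float, duplicate_fraction: float = 0.0) -> int:
    """Read pairs needed so that mean unique on-target depth reaches target_depth.

    target_bp          -- total size of the captured regions in base pairs (assay-specific)
    on_target_fraction -- share of sequenced bases that fall on target, in (0, 1]
    duplicate_fraction -- share of reads expected to be PCR duplicates, in [0, 1)
    """
    if not 0.0 < on_target_fraction <= 1.0:
        raise ValueError("on_target_fraction must be in (0, 1]")
    if not 0.0 <= duplicate_fraction < 1.0:
        raise ValueError("duplicate_fraction must be in [0, 1)")
    usable_bases_per_pair = 2 * read_length * on_target_fraction * (1.0 - duplicate_fraction)
    return math.ceil(target_depth * target_bp / usable_bases_per_pair)


# Hypothetical 1 Mb panel, 2x150 bp reads, 50% on-target, 500x mean depth.
pairs_needed = required_read_pairs(500, 1_000_000, 150, 0.5)
```

This estimates mean depth only; coverage uniformity across targets determines how much headroom is needed to keep every region above the minimum.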

Bioinformatic Analysis Pipeline

The computational analysis of NGS data follows a standardized workflow for variant detection and interpretation:

Primary Analysis:

- Base Calling: Convert raw signal data to nucleotide sequences (FASTQ format)

- Quality Control: Assess read quality using tools such as FastQC

- Adapter Trimming: Remove adapter sequences using Trimmomatic or similar tools
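To make the primary-analysis steps concrete, the sketch below parses FASTQ records and computes a mean Phred quality per read. It assumes the common Phred+33 encoding and four-line records; it is a teaching example, not a substitute for FastQC or Trimmomatic.

```python
def mean_phred(quality_line: str, offset: int = 33) -> float:
    """Mean Phred score of one read, assuming Phred+33 ASCII encoding."""
    scores = [ord(ch) - offset for ch in quality_line]
    return sum(scores) / len(scores)


def read_fastq(lines):
    """Yield (read_id, sequence, quality) from an iterable of FASTQ lines.

    Assumes well-formed four-line records; blank lines are ignored and a
    truncated final record is skipped.
    """
    records = [ln.rstrip("\n") for ln in lines if ln.strip()]
    for i in range(0, len(records) - 3, 4):
        header, seq, _plus, qual = records[i:i + 4]
        yield header[1:], seq, qual


example = ["@read1\n", "ACGT\n", "+\n", "II55\n"]
for read_id, seq, qual in read_fastq(example):
    low_quality = mean_phred(qual) < 20  # flag reads whose mean quality is below Q20
```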

Secondary Analysis:

- Alignment: Map reads to the reference genome (GRCh38) using an aligner such as BWA-MEM for DNA libraries (STAR is the counterpart for RNA-seq libraries)

- Duplicate Marking: Identify and mark PCR duplicates using Picard Tools

- Local Realignment: Perform realignment around indels using GATK (a GATK3-era step; GATK4 omits it because its callers reassemble reads locally)

- Base Quality Recalibration: Adjust base quality scores using GATK
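The idea behind duplicate marking can be illustrated in a few lines. The sketch below groups read pairs that share the same alignment coordinates and strand, keeps the one with the highest summed base quality, and flags the rest. This is a simplified illustration of the concept, not Picard's actual implementation, and the dictionary keys are hypothetical.

```python
from collections import defaultdict


def mark_duplicates(reads):
    """Return the names of reads flagged as PCR duplicates.

    reads -- list of dicts with keys: name, chrom, pos, strand, mate_pos, qual_sum
    Reads with identical (chrom, pos, strand, mate_pos) are treated as copies of
    one original molecule; the copy with the highest qual_sum is retained.
    """
    groups = defaultdict(list)
    for read in reads:
        key = (read["chrom"], read["pos"], read["strand"], read["mate_pos"])
        groups[key].append(read)

    duplicates = set()
    for group in groups.values():
        best = max(group, key=lambda r: r["qual_sum"])
        duplicates.update(r["name"] for r in group if r is not best)
    return duplicates


reads = [
    {"name": "r1", "chrom": "chr1", "pos": 100, "strand": "+", "mate_pos": 250, "qual_sum": 900},
    {"name": "r2", "chrom": "chr1", "pos": 100, "strand": "+", "mate_pos": 250, "qual_sum": 950},
    {"name": "r3", "chrom": "chr1", "pos": 200, "strand": "+", "mate_pos": 350, "qual_sum": 800},
]
flagged = mark_duplicates(reads)
```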

Tertiary Analysis:

- Variant Calling: Identify somatic mutations using Mutect2, VarScan2, or similar variant callers

- Copy Number Analysis: Detect amplifications and deletions using CONTRA, ADTEx, or similar tools

- Structural Variant Detection: Identify gene fusions and rearrangements using DELLY, LUMPY, or Manta

- Annotation: Annotate variants using databases such as dbSNP, COSMIC, ClinVar, and OncoKB

- Filtering: Prioritize variants based on population frequency, functional impact, and clinical relevance

Interpretation and Reporting:

- Pathogenicity Assessment: Classify variants according to AMP/ASCO/CAP guidelines

- Actionability Evaluation: Match genomic alterations to targeted therapies and clinical trials

- Report Generation: Create comprehensive patient reports with evidence-based therapeutic recommendations

Research Reagent Solutions

Table 3: Essential Research Reagents for Comprehensive Genomic Profiling

| Reagent Category | Specific Examples | Function in CGP Workflow |
|---|---|---|
| Nucleic Acid Extraction Kits | QIAamp DNA FFPE Tissue Kit, Maxwell RSC DNA FFPE Kit | Isolation of high-quality DNA from FFPE tissue specimens [15] |
| Library Preparation Kits | Illumina TruSight Oncology 500 HT, Sophia Genetics DDM library kit | Fragmentation, end repair, adapter ligation, and amplification for NGS library construction [15] [10] |
| Target Enrichment Panels | FoundationOne CDx (324 genes), OmniSeq INSIGHT (523 genes) | Hybridization capture of cancer-relevant genomic regions [11] [10] |
| Sequencing Reagents | Illumina NovaSeq 6000 S-Plex, MGI DNBSEQ-G50RS sequencing kit | Sequence generation using sequencing-by-synthesis technology [15] |
| Bioinformatic Tools | Sophia DDM, GATK, OncoKB, Cravat | Variant calling, annotation, and clinical interpretation of genomic data [15] |
| Quality Control Assays | Agilent TapeStation, Qubit dsDNA HS Assay, qPCR library quantification | Assessment of nucleic acid quality, quantity, and library preparation success [15] |

Workflow and Signaling Pathway Visualizations

Diagram 1: Comparative workflow analysis between single-gene testing and comprehensive genomic profiling approaches, highlighting differences in tissue utilization, process complexity, and outcomes.

Diagram 2: Biomarker detection capabilities across genomic testing methodologies, illustrating the comprehensive coverage of CGP compared to limited scope of single-gene assays.

The comparative analysis between comprehensive genomic profiling and traditional single-gene assays reveals a consistent pattern of advantages favoring CGP across multiple dimensions—analytical scope, operational efficiency, clinical utility, and economic value. The demonstrated ability of CGP to identify more actionable alterations, conserve precious tissue specimens, provide more rapid and comprehensive results, and ultimately guide more effective treatment decisions positions it as the superior approach for genomic profiling in contemporary oncology practice and research. As the field continues to evolve with an expanding repertoire of targeted therapies and biomarkers, the comprehensive nature of CGP becomes increasingly essential for realizing the full potential of precision oncology.

For research applications, particularly in chemical sensitivity profiling and cancer model development, CGP offers the additional advantage of generating rich genomic datasets that can be mined for discovery purposes beyond immediate clinical applications. The ability to detect novel alterations, identify complex genomic signatures, and contribute to diagnostic refinement makes CGP an invaluable tool for advancing our understanding of cancer biology and therapeutic response mechanisms. While single-gene assays may retain utility in specific, limited contexts where rapid assessment of a single biomarker is sufficient, the weight of evidence supports CGP as the foundational approach for comprehensive cancer genomic characterization in both clinical and research settings.

The management of cancer is increasingly guided by the principle of precision medicine, where treatment strategies are tailored to the specific genetic alterations found in an individual's tumor. Central to this approach is the identification of actionable mutations—somatic genetic alterations that directly influence clinical decision-making by predicting response or resistance to targeted therapies. Next-generation sequencing (NGS) has become the cornerstone technology for comprehensively profiling these alterations across hundreds of cancer-related genes simultaneously, moving beyond single-gene assays to capture the complex genomic landscape of malignancies [9]. The clinical utility of this approach is firmly established; for instance, patients with metastatic castration-resistant prostate cancer (mCRPC) harboring homologous recombination repair gene mutations can be treated with PARP inhibitors, while those with mismatch repair deficiency benefit from immune checkpoint blockade therapies [16].

The biological rationale connecting genetic alterations to therapeutic vulnerabilities stems from the concept of oncogene addiction and synthetic lethality. Oncogene addiction describes the phenomenon where cancer cells become dependent on a single activated oncogene for survival and proliferation, making them uniquely vulnerable to its inhibition. Synthetic lethality occurs when inactivation of either of two genes individually is viable, but simultaneous inactivation results in cell death—a principle exploited by PARP inhibitors in BRCA-deficient tumors. The National Cancer Institute's Molecular Analysis for Therapy Choice (NCI-MATCH) trial exemplifies how this paradigm is operationalized at scale, using NGS to match patients with relapsed or refractory cancers to therapies targeting specific molecular alterations [17]. This framework transforms cancer treatment from a histology-based approach to a genetically-guided strategy.

Methodologies for Mutation Detection and Interpretation

Sample Acquisition and Processing Considerations

Robust identification of actionable mutations begins with appropriate sample acquisition. While formalin-fixed paraffin-embedded (FFPE) tumor tissue remains the gold standard, liquid biopsy approaches using plasma, urine, or other bodily fluids offer non-invasive alternatives when tissue is unavailable [16]. Each sample type presents distinct advantages and limitations. Tumor tissues provide comprehensive genomic information but require invasive procedures. Plasma circulating tumor DNA (ctDNA) detection sensitivity depends heavily on tumor burden and shedding, with studies reporting over 70% of mCRPC patients having ctDNA variant allele frequencies (VAFs) >2%, achieving 90% concordance with tissue-based testing [16]. Urine samples have demonstrated 65.6% detection sensitivity for prostate cancer mutations, while seminal fluid shows potential despite current sampling challenges [16].

The NGS workflow consists of four critical stages: (1) template preparation, (2) sequencing, (3) imaging, and (4) data analysis [18]. For template preparation, three main approaches exist: clonally amplified templates (using emulsion PCR or bridge PCR), single-molecule templates (requiring less material and avoiding amplification bias), and circle templates (reducing error rates for cancer profiling) [18]. The choice of method depends on the application—single-molecule templates are preferred for quantitative analyses like gene expression profiling, while amplified templates are suitable for qualitative mutational analysis despite potential bias in AT-rich and GC-rich regions [18].

Sequencing Technologies and Analytical Validation

Multiple sequencing technologies are available, each with distinct performance characteristics. The Illumina platform uses sequencing-by-synthesis with fluorescently labeled reversible terminators and optical detection, while Ion Torrent employs non-optical semiconductor sequencing based on detection of hydrogen ions released during DNA polymerase activity [18]. The Oncomine Cancer Panel assay with AmpliSeq chemistry and Personal Genome Machine sequencer has been validated for clinical use in the NCI-MATCH trial, demonstrating 96.98% overall sensitivity for 265 known mutations and 99.99% specificity across four Clinical Laboratory Improvement Amendments (CLIA)-certified laboratories [17].

Analytical validation must establish performance characteristics for each variant type. The NCI-MATCH assay validation established the following limits of detection: 2.8% for single-nucleotide variants (SNVs), 10.5% for small insertions/deletions (indels), 6.8% for large indels (gap ≥4 bp), and four copies for gene amplification [17]. This rigorous validation ensures that reported variants meet quality standards for clinical decision-making. Bioinformatics pipelines for variant calling typically involve quality control of FASTQ files, alignment to reference genomes, and annotation using tools like VarScan2 and ANNOVAR, with filtering thresholds adjusted for different sample types (e.g., VAF ≥1% for tissue, ≥0.3% for plasma) [16].
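The per-variant-type limits of detection quoted above lend themselves to a lookup-based reporting check. The sketch below encodes the NCI-MATCH values from [17]; treating the limit of detection as a hard reporting cutoff, and the function and key names, are assumptions made for illustration.

```python
# Limits of detection reported for the NCI-MATCH assay [17].
LOD_VAF_PERCENT = {"snv": 2.8, "small_indel": 10.5, "large_indel": 6.8}
LOD_AMPLIFICATION_COPIES = 4


def is_reportable(variant_type: str, value: float) -> bool:
    """Check a call against the validated limit of detection for its type.

    value -- VAF in percent for sequence variants, copy number for amplifications
    """
    if variant_type == "amplification":
        return value >= LOD_AMPLIFICATION_COPIES
    if variant_type not in LOD_VAF_PERCENT:
        raise ValueError(f"no validated limit of detection for {variant_type!r}")
    return value >= LOD_VAF_PERCENT[variant_type]
```

Keeping the thresholds in one table makes it obvious that an indel at 5% VAF is handled differently from an SNV at the same frequency.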

Table 1: Comparison of NGS Performance Across Different Sample Types

| Sample Type | Detection Sensitivity | Advantages | Limitations |
|---|---|---|---|
| Tumor Tissue (FFPE) | 100% (gold standard) | Comprehensive genomic information; established protocols | Invasive procurement; not always feasible |
| Plasma ctDNA | 67.6% | Non-invasive; enables monitoring | Lower sensitivity for low tumor burden |
| Urine | 65.6% | Completely non-invasive; patient-friendly | Variable DNA concentration |
| Seminal Fluid | 33.3% | High cfDNA concentration in prostate cancer | Sampling challenges post-treatment |

Connecting Mutational Profiles to Therapeutic Opportunities

Interpretation Frameworks for Actionability

The interpretation of genomic alterations follows structured frameworks that classify mutations based on clinical evidence levels. The NCI-MATCH trial established a tiered evidence system: Level 1 includes variants credentialed for FDA-approved drugs in any tissue (e.g., BRAF V600E and vemurafenib); Level 2a comprises variants serving as eligibility criteria for ongoing clinical trials; Level 2b includes variants with evidence from N-of-1 responses; and Level 3 relies on preclinical inferential data supporting treatment selection [17]. This structured approach ensures that treatment assignments are based on rigorously validated biomarkers.
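When a tumor carries several candidate alterations, the tiered system implies an ordering: the variant backed by the strongest evidence takes priority. A minimal sketch of that ordering follows. The ranking mirrors the level names in the text, but the selection rule and function name are illustrative assumptions rather than the trial's actual assignment algorithm.

```python
# Lower rank means stronger evidence, following the order described in the text.
LEVEL_RANK = {"1": 0, "2a": 1, "2b": 2, "3": 3}


def strongest_level(levels):
    """Return the strongest evidence level among the candidates, or None if none apply."""
    known = [level for level in levels if level in LEVEL_RANK]
    if not known:
        return None
    return min(known, key=LEVEL_RANK.get)


# A tumor with one Level 3 and one Level 2a alteration is prioritized on the 2a variant.
top = strongest_level(["3", "2a"])
```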

Implementation requires assessment of specific mutation types and their functional consequences. Gain-of-function mutations in oncogenes (e.g., activating mutations in kinases) typically create direct drug targets, while loss-of-function mutations in tumor suppressor genes may indicate sensitivity to targeted therapies through synthetic lethal interactions [17]. For example, nonsense or frameshift variants in 26 tumor suppressor genes are specifically reported in the NCI-MATCH assay, as these truncating alterations may predict response to specific therapeutic classes [17]. Additionally, the mutational landscape provides biological insights—in prostate cancer, mutations in FOXA1, SPOP, and TP53 are commonly detected across sample types, while AR mutations show distinct patterns of prevalence in liquid biopsy samples compared to tissue [16].

Chemical Sensitivity Profiling through Computational Approaches

Emerging computational approaches now enable in silico chemical sensitivity profiling by integrating genomic features with chemical structure information. The ChemProbe model exemplifies this approach, using deep learning to predict cellular sensitivity to hundreds of compounds by combining transcriptomic data with chemical structures [19]. This model employs feature-wise linear modulation (FiLM) layers where chemical features scale and shift gene expression representations, effectively mimicking how chemical substructures modulate biological pathways [19]. This methodology accurately predicted breast cancer patient response in the I-SPY2 trial, achieving a macro-average area under the receiver operating characteristic curve of 0.65 for five therapeutics, demonstrating how computational models can extrapolate from cell line data to clinical predictions [19].

The interpretation of these models provides biological insights into mechanisms of chemical sensitivity. Analysis of learned parameters in ChemProbe revealed that scaling parameters grouped compounds by structural identity, while shifting parameters correlated with compound concentration [19]. Furthermore, gradient-based attribution methods identified transcriptome features reflecting compound targets and protein network modules, successfully identifying genes that drive ferroptosis [19]. This demonstrates how advanced computational approaches not only predict chemical sensitivity but also illuminate underlying biological mechanisms connecting genetic alterations to therapeutic vulnerabilities.
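The FiLM operation described above can be written in a few lines: the chemical features are mapped to a per-feature scale (gamma) and shift (beta), which are then applied to the gene expression representation. The sketch below uses plain Python lists and hypothetical weights to show the arithmetic only; it is not the ChemProbe implementation, whose layer sizes and parameters are not given here.

```python
def linear(weights, bias, x):
    """Dense layer without activation: one output per weight row."""
    return [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(weights, bias)]


def film(gene_repr, chem_features, w_gamma, b_gamma, w_beta, b_beta):
    """Feature-wise linear modulation: output_i = gamma_i * gene_repr_i + beta_i.

    gamma and beta are both computed from the chemical features, so the same
    expression profile is transformed differently for each compound.
    """
    gamma = linear(w_gamma, b_gamma, chem_features)  # per-feature scale
    beta = linear(w_beta, b_beta, chem_features)     # per-feature shift
    return [g * h + b for g, h, b in zip(gamma, gene_repr, beta)]


# Toy example with two expression features and two chemical features.
modulated = film(
    gene_repr=[1.0, 2.0],
    chem_features=[1.0, 0.0],
    w_gamma=[[2.0, 0.0], [0.0, 1.0]], b_gamma=[0.0, 1.0],
    w_beta=[[1.0, 1.0], [0.0, 0.0]], b_beta=[0.0, 0.5],
)
```

In a trained model the weights are learned, and a framework such as PyTorch or TensorFlow would replace the list arithmetic.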

Experimental Protocols and Research Applications

Protocol for NGS-Based Mutation Detection from Tissue and Liquid Biopsies

Sample Collection and DNA Extraction:

- For FFPE tissue samples, use the QIAamp DNA FFPE Tissue Kit (Qiagen) following manufacturer's protocols. Have sections reviewed by a pathologist to assess tumor content [16] [17].

- For liquid biopsy samples (plasma, urine, seminal fluid), isolate circulating tumor DNA using the QIAamp Circulating Nucleic Acid Kit (Qiagen). Collect blood in EDTA tubes and process within 2 hours to prevent genomic DNA contamination [16].

- Extract germline DNA from white blood cells using the DNeasy Blood and Tissue Kit (Qiagen) to serve as a control for identifying somatic mutations and filtering out clonal hematopoiesis variants [16].

Library Preparation and Sequencing:

- Construct sequencing libraries using the KAPA Hyper DNA Library Prep Kit (Roche). For targeted sequencing, enrich 437 cancer-related genes using probe-based hybridization capture panels [16].

- For the NCI-MATCH validated protocol, use the Oncomine Cancer Panel assay with AmpliSeq chemistry on the Personal Genome Machine sequencer. This panel covers 4066 predefined genomic variations across 143 genes [17].

- Sequence on an Illumina HiSeq4000 platform with minimum coverage of 1000× for liquid biopsies and 500× for tissue samples to ensure detection of low-frequency variants [16].

Variant Calling and Annotation:

- Process FASTQ files through quality control using Trimmomatic to remove low-quality bases (quality score below 20) and Picard to remove PCR duplicates [16].

- Align filtered paired-end reads to the human reference genome (GRCh37/hg19) using the Burrows-Wheeler Aligner, followed by realignment around indels with GATK3 [16].

- Identify single nucleotide variants and indels using VarScan2 with minimum thresholds: for tissue samples, unique variant-supporting reads ≥5 and VAF ≥1%; for plasma samples, unique variant-supporting reads ≥3 and VAF ≥0.3% [16].

- Annotate variants using ANNOVAR and filter against population databases (1000 Genomes, ExAC) to exclude common polymorphisms. Compare with matched germline DNA to distinguish somatic mutations [16].
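The sample-type-specific thresholds and the germline comparison in the steps above can be sketched as two small filters. The thresholds are those stated in the protocol [16]; the function names and the tuple representation of a variant are assumptions for illustration, and the code operates on already-called variants rather than invoking VarScan2 or ANNOVAR.

```python
# Minimum supporting reads and VAF (as a fraction) per sample type, from the protocol above.
THRESHOLDS = {
    "tissue": {"min_reads": 5, "min_vaf": 0.01},
    "plasma": {"min_reads": 3, "min_vaf": 0.003},
}


def passes_thresholds(sample_type: str, alt_reads: int, total_reads: int) -> bool:
    """Apply the read-support and VAF cutoffs for the given sample type."""
    limits = THRESHOLDS[sample_type]
    if total_reads <= 0:
        return False
    vaf = alt_reads / total_reads
    return alt_reads >= limits["min_reads"] and vaf >= limits["min_vaf"]


def somatic_only(tumor_variants, germline_variants):
    """Keep tumor variants that are absent from the matched germline sample.

    Variants are (chrom, pos, ref, alt) tuples.
    """
    germline = set(germline_variants)
    return [v for v in tumor_variants if v not in germline]
```

Variants found in white blood cell DNA are removed by the second filter, which covers both inherited variants and clonal hematopoiesis when the control is sequenced deeply enough.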

Protocol for Chemical Sensitivity Prediction Using Transcriptomic Data

Data Preprocessing and Model Training:

- Obtain basal transcriptomic profiles from cancer cell lines (e.g., Cancer Cell Line Encyclopedia) and match with drug sensitivity data (e.g., Cancer Therapeutics Response Portal) [19].

- Standardize RNA abundance values using robust z-score normalization across samples. Encode chemical structures using extended-connectivity fingerprints or other molecular representations [19].

- Implement a conditional deep learning model architecture where chemical features modulate gene expression representations through scaling and shifting operations (FiLM layers) [19].

- Train the model using five-fold cross-validation stratified by cell line to ensure generalizability. Use mean squared error loss between predicted and measured viability values, optimized with the Adam optimizer [19].
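The normalization step above can be illustrated with a median-based z-score. The sketch assumes the common definition (value minus median, divided by the scaled median absolute deviation); the 1.4826 scaling constant is a widely used convention and an assumption here, since the exact variant used in [19] is not stated in this text.

```python
from statistics import median


def robust_z_scores(values, scale: float = 1.4826):
    """Median/MAD-based z-scores for one gene across samples.

    scale -- constant that makes the MAD comparable to a standard deviation
             under normality (assumed convention)
    """
    med = median(values)
    mad = median(abs(v - med) for v in values)
    if mad == 0:
        return [0.0 for _ in values]  # no spread: every sample sits at the median
    return [(v - med) / (scale * mad) for v in values]


# The outlier (100) barely affects the scores of the other samples.
scores = robust_z_scores([1, 2, 3, 4, 100])
```

Unlike mean and standard deviation, the median and MAD are not dragged by a few extreme expression values, which is the reason for preferring them here.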

Sensitivity Prediction and Interpretation:

- Apply the trained ChemProbe model to new transcriptomic profiles by computing viability values across a range of compound concentrations [19].

- Generate dose-response curves and calculate area under the curve (AUC) values to quantify sensitivity. Establish a decision threshold for responder classification based on distribution of predicted values [19].

- Interpret model predictions using integrated gradients to identify genes driving chemical sensitivity predictions. Perform gene set enrichment analysis on top-weighted genes to identify biological pathways associated with response [19].

- Validate predictions in independent datasets such as clinical trial data (e.g., I-SPY2 trial) to assess translational utility [19].
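The dose-response summary in the steps above can be sketched with the trapezoid rule. The example integrates predicted viability over log10 concentration and normalizes by the concentration range, so that a completely insensitive sample scores 1.0. The normalization and the responder rule (AUC below a chosen threshold) are illustrative assumptions; the threshold itself has to be derived from the data.

```python
import math


def dose_response_auc(concentrations, viabilities) -> float:
    """Normalized area under the viability curve over log10 concentration."""
    if len(concentrations) != len(viabilities) or len(concentrations) < 2:
        raise ValueError("need at least two matched concentration/viability points")
    pairs = sorted(zip(concentrations, viabilities))
    xs = [math.log10(c) for c, _ in pairs]
    ys = [v for _, v in pairs]
    area = sum((xs[i + 1] - xs[i]) * (ys[i] + ys[i + 1]) / 2 for i in range(len(xs) - 1))
    return area / (xs[-1] - xs[0])


def is_responder(auc: float, threshold: float) -> bool:
    """Lower AUC means less surviving fraction, hence greater sensitivity."""
    return auc < threshold


# Viability drops to zero between the second and third dose.
auc = dose_response_auc([1, 10, 100, 1000], [1.0, 1.0, 0.0, 0.0])
```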

Table 2: Essential Research Reagents and Solutions for NGS-Based Chemical Sensitivity Profiling

| Reagent/Solution | Function | Example Products/Protocols |
|---|---|---|
| Nucleic Acid Extraction Kits | Isolation of high-quality DNA from various sample types | QIAamp DNA FFPE Tissue Kit, QIAamp Circulating Nucleic Acid Kit, DNeasy Blood and Tissue Kit |
| Target Enrichment Panels | Capture of cancer-relevant genomic regions | Oncomine Cancer Panel (143 genes), Custom panels (437 cancer-related genes) |
| Library Preparation Kits | Construction of sequencing-ready libraries | KAPA Hyper DNA Library Prep Kit, Illumina DNA Prep |
| NGS Platforms | Massively parallel sequencing of captured libraries | Illumina HiSeq4000, Personal Genome Machine, NovaSeq |
| Variant Calling Software | Identification of somatic mutations from sequence data | VarScan2, GATK, Ion Reporter |
| Chemical Sensitivity Databases | Training data for predictive models | CTRP (545 compounds), CCLE (842 cell lines) |
| Deep Learning Frameworks | Implementation of chemical sensitivity models | PyTorch, TensorFlow with FiLM layers |

Implementation in Research and Clinical Settings

The implementation of NGS-based mutation detection and chemical sensitivity profiling requires careful consideration of analytical validation and regulatory compliance. The NCI-MATCH trial established a network of four CLIA-certified laboratories that demonstrated 99.99% mean inter-operator pairwise concordance across laboratories, proving that high reproducibility of complex NGS assays is achievable with standardized protocols [17]. For clinical application, assays must undergo rigorous validation of analytical sensitivity, specificity, reproducibility, and limit of detection for each variant type [17]. This ensures that reported variants meet quality standards for therapeutic decision-making.

Current National Comprehensive Cancer Network guidelines endorse liquid biopsy methodologies when tissue testing fails or is unattainable [16]. The convergence of comprehensive genomic profiling through NGS and computational chemical sensitivity prediction represents the future of precision oncology. These approaches enable the identification of patient-specific therapeutic vulnerabilities based on the unique genetic makeup of their tumors, moving beyond histology-based classification to genetically-guided treatment strategies. As these technologies evolve, they promise to further refine our ability to match the right patient with the right therapy at the right time, ultimately improving outcomes in cancer treatment.

Visualizing Workflows and Biological Relationships

NGS Workflow for Actionable Mutation Detection

Chemical Sensitivity Prediction Framework

Actionable Mutation Clinical Translation Pathway

Next-generation sequencing (NGS) has revolutionized the detection and characterization of drug resistance in cancer by enabling comprehensive genomic analysis of tumors with unprecedented sensitivity and throughput. Unlike traditional Sanger sequencing, which processes DNA fragments individually, NGS performs massively parallel sequencing, processing millions of fragments simultaneously to identify genetic alterations that drive both primary (innate) and acquired (treatment-emergent) resistance [9]. This capability is transforming precision oncology by moving beyond single-gene assays to capture the complex genomic landscape of resistance mechanisms.

The application of NGS in chemical sensitivity profiling research provides critical insights into the dynamic evolution of tumors under therapeutic pressure. By detecting low-abundance variants and complex resistance patterns, NGS enables researchers to decipher the molecular pathways that allow cancer cells to evade treatment, thereby informing the development of more effective therapeutic strategies and combination regimens to overcome resistance [20] [21].

NGS Methodologies for Resistance Detection

Key Sequencing Technologies and Platforms

Different NGS platforms offer complementary strengths for resistance mechanism studies. Illumina sequencing utilizes sequencing-by-synthesis with fluorescently labeled nucleotides and is widely used for its high accuracy and throughput [2] [9]. Ion Torrent semiconductor sequencing detects hydrogen ions released during DNA polymerization, providing rapid turnaround times [2]. Third-generation technologies like Pacific Biosciences SMRT and Oxford Nanopore enable long-read sequencing, which is particularly valuable for resolving complex structural variations and epigenetic modifications that contribute to drug resistance [2] [22].

The selection of an appropriate NGS approach depends on the specific research objectives. Targeted panels focus on known resistance genes with deep coverage, making them ideal for detecting low-frequency variants. Whole-exome sequencing provides a broader view of coding regions, while whole-genome sequencing captures the complete genomic landscape, including non-coding regions and structural variants [9]. Single-cell sequencing represents a cutting-edge approach that resolves cellular heterogeneity in resistant populations, revealing subclonal dynamics that bulk sequencing might miss [21].

Analytical Considerations for Resistance Studies

The sensitivity of NGS in detecting resistant subclones is critically dependent on sequencing depth and variant calling thresholds. In an HIV cohort, lowering the detection threshold from the conventional 20% to 2% roughly doubled the measured prevalence of pretreatment drug resistance (from 11.08% to 22.43%), revealing clinically relevant low-abundance variants that would otherwise remain undetected [20]. Effective bioinformatics pipelines must integrate variant calling, annotation, and clinical interpretation to distinguish driver resistance mutations from passenger alterations.

Visualization tools like Trackster enable interactive exploration of NGS data, allowing researchers to dynamically adjust parameters and visualize the effects on variant calling in real-time [23]. This integrated visual analysis approach facilitates the identification of optimal analysis settings for resistance mutation detection without the computational burden of repeatedly processing entire datasets.

NGS Applications in Resistance Mechanism Elucidation

Characterizing Primary Resistance Mechanisms

Primary (innate) resistance refers to pre-existing genetic factors that render tumors insensitive to initial treatment. NGS profiling of treatment-naïve tumors has revealed that low-abundance drug-resistant variants present below the detection limit of conventional methods can significantly impact therapeutic outcomes [20]. In HIV research, which provides a model for understanding resistance mechanisms, NGS at a 2% detection threshold revealed a 22.43% prevalence of pretreatment drug resistance compared to 11.08% at the standard 20% threshold [20].

In cancer, primary resistance mechanisms identified through NGS include:

- On-target mutations that alter drug binding sites

- Off-target alterations in parallel signaling pathways

- Activation of compensatory survival pathways

- Pharmacogenomic variants affecting drug metabolism

Mapping Acquired Resistance Evolution

Acquired resistance emerges under selective therapeutic pressure through Darwinian evolution of tumor cell populations. Longitudinal NGS monitoring of patients during treatment captures the dynamic clonal evolution that underlies resistance development. The SPACEWALK study in ALK-positive NSCLC exemplifies this approach, using NGS to identify three distinct resistance mechanisms: on-target (ALK secondary mutations), off-target (bypass pathway activation), and combined mechanisms [24].

In acute myeloid leukemia (AML), deep single-cell multi-omic profiling integrating NGS with ex vivo drug response testing has revealed conserved patterns of venetoclax resistance associated with specific molecular signatures. This integrated approach identified both known and novel mechanisms of innate and treatment-related resistance, including associations with increased proliferation and CD36 expression in resistant blasts [21].

Table 1: NGS Detection of Pretreatment Drug Resistance at Different Sensitivity Thresholds

| Detection Threshold | Overall PDR Prevalence | NNRTI Resistance | INSTI Resistance |
|---|---|---|---|
| 1% | 29.74% | 15.29% | 1.22% |
| 2% | 22.43% | 11.63% | 1.22% |
| 5% | 15.47% | 8.27% | 0.17% |
| 10% | 12.95% | 6.90% | 0.17% |
| 20% | 11.08% | 4.90% | 0.17% |

Data adapted from HIV resistance study demonstrating threshold-dependent mutation detection [20]
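The threshold dependence in the table can be summarized as a fold increase relative to the conventional 20% cutoff. The short calculation below uses the overall PDR prevalence values from the table; the helper names are introduced for illustration, and `count_detected` shows the same effect on a list of hypothetical variant allele frequencies.

```python
# Overall PDR prevalence (%) by detection threshold (%), taken from the table above [20].
PDR_PREVALENCE = {1: 29.74, 2: 22.43, 5: 15.47, 10: 12.95, 20: 11.08}


def fold_increase(threshold: int, reference: int = 20) -> float:
    """Prevalence at the given threshold relative to the reference threshold."""
    return PDR_PREVALENCE[threshold] / PDR_PREVALENCE[reference]


def count_detected(vafs_percent, threshold_percent: float) -> int:
    """Number of variants whose VAF meets or exceeds the detection threshold."""
    return sum(1 for vaf in vafs_percent if vaf >= threshold_percent)


fold_at_2 = round(fold_increase(2), 2)   # about 2.02
fold_at_1 = round(fold_increase(1), 2)   # about 2.68
```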

Experimental Protocols for NGS-Based Resistance Profiling

Targeted NGS Panel for Resistance Mutation Detection

The following protocol describes the development and validation of a targeted NGS panel specifically designed for comprehensive resistance profiling in solid tumors, based on established methodologies [15]:

Sample Preparation and Quality Control

- Extract DNA from tumor samples (minimum 50 ng input) using validated extraction kits

- Assess DNA quality and quantity via fluorometry and fragment analysis

- For FFPE samples, evaluate DNA degradation and adjust library preparation accordingly

Library Preparation and Target Enrichment

- Fragment DNA to ~300 bp using acoustic shearing or enzymatic fragmentation

- Repair ends and ligate with indexing adapters containing unique molecular identifiers

- Perform hybridization-based capture using biotinylated oligonucleotides targeting 61 cancer-associated genes including known resistance genes (KRAS, EGFR, ERBB2, PIK3CA, TP53, BRCA1)

- Amplify captured libraries with limited-cycle PCR (8-10 cycles)

Sequencing and Data Analysis

- Sequence on DNBSEQ-G50RS platform using cyclic array sequencing

- Generate a minimum of 2.2 million reads per sample, with median coverage ranging from 469× to 2320×

- Process data through bioinformatics pipeline: base calling > demultiplexing > alignment > variant calling

- Annotate variants using curated resistance databases and interpret clinical significance
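Because the adapters in this protocol carry unique molecular identifiers, raw read depth and molecular coverage are different quantities. The sketch below illustrates the distinction by collapsing reads that share alignment start and UMI into one family. It assumes exact UMI matching and a hypothetical tuple layout; production tools also tolerate sequencing errors within the UMI.

```python
from collections import Counter


def molecular_coverage(reads):
    """Return (raw_depth, unique_molecules) for reads overlapping one target.

    reads -- iterable of (chrom, start, umi) tuples; reads sharing all three
             values are treated as copies of the same original molecule
    """
    families = Counter(reads)
    return sum(families.values()), len(families)


reads = [
    ("chr7", 100, "AAC"),
    ("chr7", 100, "AAC"),  # PCR copy of the first read
    ("chr7", 100, "GGT"),  # same position, different molecule
    ("chr7", 105, "AAC"),  # same UMI, different position
]
raw_depth, unique_molecules = molecular_coverage(reads)
```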

Quality Assurance Metrics

- Ensure >98% target coverage at ≥100× molecular coverage

- Maintain a limit of detection of 2.9% variant allele frequency

- Achieve 99.99% reproducibility and repeatability in variant detection (acceptance criterion >99%)

- Validate against orthogonal methods and reference standards
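The coverage criterion above (more than 98% of targets at or above 100× molecular coverage) is straightforward to check once per-target depths are available. A minimal sketch follows, with hypothetical function names and per-target depths supplied as a plain list.

```python
def fraction_covered(target_depths, min_depth: float = 100) -> float:
    """Share of targets whose molecular coverage is at least min_depth."""
    if not target_depths:
        raise ValueError("no targets supplied")
    return sum(1 for depth in target_depths if depth >= min_depth) / len(target_depths)


def passes_coverage_qc(target_depths, min_depth: float = 100,
                       min_fraction: float = 0.98) -> bool:
    """Apply the run-level acceptance rule: more than min_fraction of targets covered."""
    return fraction_covered(target_depths, min_depth) > min_fraction


# 99 of 100 targets reach 100x, so the run passes.
run_ok = passes_coverage_qc([150] * 99 + [50])
```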

Single-Cell Multi-Omic Profiling for Resistance Mechanisms

For comprehensive dissection of heterogeneous resistance mechanisms, the following single-cell protocol integrates genomic, transcriptomic, and functional profiling [21]:

Sample Processing and Single-Cell Isolation

- Collect primary patient samples (blood or bone marrow for hematologic malignancies, tissue for solid tumors)

- Dissociate into single-cell suspensions using gentle enzymatic digestion

- Isolate viable mononuclear cells using density gradient centrifugation

- Sort or capture individual cells using microfluidic or droplet-based platforms

Multi-Omic Library Preparation

- For genomic analysis: Perform single-cell DNA sequencing to identify copy number variations and mutations

- For transcriptomic analysis: Prepare single-cell RNA sequencing libraries using template-switching chemistry

- For protein analysis: Conduct mass cytometry (CyTOF) with metal-tagged antibodies

- Index cells for multi-modal data integration

Functional Drug Profiling

- Perform single-cell ex vivo drug testing (pharmacoscopy) with resistance-relevant inhibitors

- Incubate cells with drug panels for 24-72 hours across concentration gradients

- Measure cell viability, apoptosis, and signaling responses via automated imaging

- Integrate functional responses with molecular profiles
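
One common way to reduce a concentration gradient to a single number per drug is the normalized area under the viability curve. The sketch below is an assumption about how such a summary could be computed; it is not the scoring method of the pharmacoscopy platform, and the function name and example values are hypothetical.

```python
import math
from typing import Sequence


def normalized_auc(concentrations: Sequence[float], viabilities: Sequence[float]) -> float:
    """Trapezoidal area under viability vs. log10(concentration), divided by
    the log-concentration range. With viabilities expressed as fractions of the
    untreated control, 1.0 means no effect and values near 0 mean strong killing.
    """
    if len(concentrations) != len(viabilities) or len(concentrations) < 2:
        raise ValueError("need at least two matched concentration/viability pairs")
    if any(c <= 0 for c in concentrations):
        raise ValueError("concentrations must be positive")
    pairs = sorted(zip(concentrations, viabilities))
    xs = [math.log10(c) for c, _ in pairs]
    ys = [v for _, v in pairs]
    if xs[-1] == xs[0]:
        raise ValueError("concentrations must span a non-zero range")
    area = sum(
        (xs[i + 1] - xs[i]) * (ys[i] + ys[i + 1]) / 2.0 for i in range(len(xs) - 1)
    )
    return area / (xs[-1] - xs[0])


# Hypothetical four-point gradient (micromolar) with viability relative to control.
auc = normalized_auc([0.01, 0.1, 1.0, 10.0], [1.0, 0.8, 0.4, 0.1])
print(f"normalized AUC: {auc:.3f}")
```

A single value per cell population and drug makes it straightforward to join functional responses to the molecular profiles of the same sample.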

Data Integration and Analysis

- Process sequencing data using single-cell bioinformatics pipelines (Cell Ranger, Seurat)

- Map transcriptional phenotypes and identify resistance-associated expression programs

- Correlate genomic alterations with drug response patterns

- Construct phylogenetic trees to model resistance evolution

Technical Specifications and Performance Metrics

Analytical Validation of NGS Resistance Panels

Rigorous validation is essential for reliable resistance mutation detection. The following performance characteristics were demonstrated for a validated 61-gene oncology panel [15]:

Table 2: Performance Metrics of Validated NGS Resistance Panel

| Parameter | Performance Metric | Acceptance Criterion |
|---|---|---|
| Sensitivity | 98.23% (at 95% CI) | >95% |
| Specificity | 99.99% (at 95% CI) | >99.5% |
| Accuracy | 99.99% (at 95% CI) | >99% |
| Precision | 97.14% (at 95% CI) | >95% |
| Reproducibility | 99.99% | >99% |
| Repeatability | 99.99% | >99% |
| Limit of Detection | 2.9% VAF | <5% VAF |
| Minimum DNA Input | 50 ng | ≤50 ng |
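
Metrics of this kind are derived from a comparison of panel calls against an orthogonal truth set. The following sketch shows the standard definitions together with a Wilson score interval for a 95% confidence bound. The counts in the example are invented for illustration and do not reproduce the validation data behind Table 2.

```python
import math
from typing import Dict, Tuple


def panel_metrics(tp: int, fp: int, tn: int, fn: int) -> Dict[str, float]:
    """Standard diagnostic metrics from true/false positive/negative counts."""
    return {
        "sensitivity": tp / (tp + fn),
        "specificity": tn / (tn + fp),
        "precision": tp / (tp + fp),
        "accuracy": (tp + tn) / (tp + fp + tn + fn),
    }


def wilson_interval(successes: int, total: int, z: float = 1.96) -> Tuple[float, float]:
    """Wilson score interval for a proportion (z = 1.96 gives roughly 95%)."""
    if total <= 0:
        raise ValueError("total must be positive")
    p = successes / total
    denom = 1.0 + z * z / total
    center = (p + z * z / (2.0 * total)) / denom
    half = z * math.sqrt(p * (1.0 - p) / total + z * z / (4.0 * total * total)) / denom
    return center - half, center + half


# Hypothetical counts, for illustration only.
metrics = panel_metrics(tp=90, fp=5, tn=900, fn=10)
low, high = wilson_interval(successes=90, total=100)
print({name: round(value, 4) for name, value in metrics.items()})
print(f"sensitivity 95% interval: {low:.4f} to {high:.4f}")
```

Reporting the interval alongside the point estimate makes clear how much the estimate depends on the number of reference variants tested.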

Research Reagent Solutions for NGS Resistance Studies

Table 3: Essential Research Reagents for NGS-Based Resistance Profiling

| Reagent Category | Specific Products | Application in Resistance Studies |
|---|---|---|
| Library Preparation | Sophia Genetics Library Kit, Illumina Nextera Flex | Fragment DNA and add adapters for sequencing |
| Target Enrichment | Custom hybridization baits (61-gene panel) | Isolate genomic regions harboring resistance mutations |
| Sequencing | MGI DNBSEQ-G50RS, Illumina MiSeq | Generate high-quality sequencing reads |
| Data Analysis | Sophia DDM, Trackster | Identify and visualize resistance mutations |
| Single-Cell Platforms | 10X Genomics Chromium, BD Rhapsody | Resolve cellular heterogeneity in resistant populations |
| Functional Assays | Pharmacoscopy platform | Correlate genomic findings with drug response |

Data Analysis and Interpretation Framework

Bioinformatics Pipeline for Resistance Mutation Detection

The computational analysis of NGS data for resistance mechanism studies requires a specialized bioinformatics workflow:

Primary Data Analysis

- Base calling and demultiplexing using platform-specific software

- Quality control assessment (FastQC) to evaluate read quality, GC content, and adapter contamination

- Trim low-quality bases and adapter sequences using tools like Trimmomatic or Cutadapt

Secondary Analysis

- Alignment to a reference genome (GRCh37/hg19 or GRCh38/hg38) using optimized aligners (BWA, Bowtie2)

- Post-alignment processing including duplicate marking, local realignment, and base quality recalibration

- Variant calling using somatic variant callers (MuTect2, VarScan2) tuned for sensitivity to low-frequency variants

- Structural variant detection using dedicated tools (Manta, Delly)

Tertiary Analysis and Interpretation

- Annotation of variants using curated databases (OncoKB, CIViC, COSMIC)

- Identification of known resistance mutations and novel putative resistance mechanisms

- Clonal decomposition and evolutionary analysis using phylogenetic methods

- Integration with functional drug response data to validate resistance associations

- Clinical interpretation and reporting using established guidelines
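
The tertiary step of separating known resistance mutations from novel candidates can be sketched as a simple triage over filtered calls. This is a minimal illustration under stated assumptions: the `VariantCall` structure, the `triage` function, and the two-entry lookup set are hypothetical stand-ins for a real annotation step against curated databases, and the default thresholds simply reuse the 2.9% VAF and 100× figures quoted earlier.

```python
from dataclasses import dataclass
from typing import Iterable, List, Tuple


@dataclass(frozen=True)
class VariantCall:
    gene: str
    protein_change: str
    alt_reads: int
    depth: int

    @property
    def vaf(self) -> float:
        """Variant allele frequency; 0.0 when there is no coverage."""
        return self.alt_reads / self.depth if self.depth else 0.0


# Tiny illustrative lookup; a real workflow would query curated databases.
KNOWN_RESISTANCE = {("EGFR", "T790M"), ("EGFR", "C797S")}


def triage(
    calls: Iterable[VariantCall], min_vaf: float = 0.029, min_depth: int = 100
) -> Tuple[List[VariantCall], List[VariantCall]]:
    """Drop calls below the depth or VAF thresholds, then split the rest into
    known resistance mutations and candidates needing further review."""
    known, candidates = [], []
    for call in calls:
        if call.depth < min_depth or call.vaf < min_vaf:
            continue
        if (call.gene, call.protein_change) in KNOWN_RESISTANCE:
            known.append(call)
        else:
            candidates.append(call)
    return known, candidates


# Hypothetical calls for demonstration.
calls = [
    VariantCall("EGFR", "T790M", alt_reads=30, depth=600),
    VariantCall("TP53", "R175H", alt_reads=5, depth=600),
    VariantCall("PIK3CA", "E545K", alt_reads=40, depth=500),
]
known, candidates = triage(calls)
print("known:", [(c.gene, c.protein_change) for c in known])
print("candidates:", [(c.gene, c.protein_change) for c in candidates])
```

Candidates that survive filtering are the ones to carry forward into integration with functional drug response data.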

Visualization and Exploration of Resistance Data

Effective visualization is critical for interpreting complex resistance patterns. The Trackster environment enables interactive exploration of NGS data, allowing researchers to adjust parameters dynamically and see the effect on resistance variant calling in real time [23]. This integrated visual analysis approach helps identify optimal analysis settings without the computational burden of repeatedly processing entire datasets.

Advanced visualization strategies include:

- Circos plots to display genomic alterations across multiple samples

- Fishplot representations of clonal evolution during treatment

- Heatmaps of gene expression patterns in resistant versus sensitive cells

- Pathway diagrams illustrating altered signaling networks in resistance

Visualizing NGS Workflows for Resistance Studies

The following diagrams illustrate key experimental and analytical workflows for NGS-based resistance mechanism studies.

NGS Resistance Profiling Workflow - This diagram outlines the comprehensive workflow from sample collection through to resistance mechanism interpretation, highlighting key quality control checkpoints.

Resistance Mechanism Classification - This diagram categorizes the primary resistance mechanisms identifiable through NGS profiling, based on findings from the SPACEWALK study in ALK-positive NSCLC [24].

NGS technologies have fundamentally transformed our ability to decipher the complex molecular mechanisms underlying drug resistance in cancer. The approaches detailed in this application note give researchers methods to detect both primary and acquired resistance mutations, track clonal evolution under therapeutic pressure, and identify novel resistance pathways. Integrating NGS with functional drug sensitivity profiling is particularly valuable because it allows resistance mechanisms to be validated against measured drug response and helps identify therapeutic vulnerabilities.

Future developments in single-cell multi-omics, long-read sequencing, and artificial intelligence-assisted analysis will further enhance the resolution and predictive power of NGS-based resistance studies. As these technologies continue to mature, they will accelerate the development of more effective therapeutic strategies that anticipate and circumvent resistance mechanisms, ultimately improving outcomes for cancer patients.

Tumor heterogeneity, which fosters tumor evolution, is a fundamental challenge in cancer medicine, as it drives adaptation, metastasis, and therapeutic resistance [25]. Intratumor heterogeneity (ITH) refers to the presence of diverse cellular subpopulations within a single tumor, arising from cumulative genomic alterations and shaped by evolutionary pressures [26]. Tracking this dynamic clonal architecture requires methodologies capable of capturing spatial and temporal complexity. Next-generation sequencing (NGS) has emerged as a pivotal technology for comprehensive genomic profiling, enabling detailed dissection of this heterogeneity across cancer types [9] [27].

Sequential profiling of tumors via NGS provides a powerful strategy for monitoring clonal dynamics during disease progression and in response to therapeutic pressures. This approach moves beyond static molecular snapshots, revealing the patterns and forces that govern tumor evolution, from early clonal expansions to late, complex branching phylogenies [26]. The application of this methodology is particularly relevant in the context of NGS-based chemical sensitivity profiling, as it allows researchers to correlate dynamic genomic landscapes with drug response and resistance mechanisms, thereby informing the development of more effective and enduring treatment strategies.

Key Concepts and Biological Background

Models of Tumor Evolution

Tumor progression is not linear but follows evolutionary patterns that can be inferred from genomic data. Two predominant models explain the genomic landscape of advanced tumors:

- Branching Evolution and Neutral Evolution: In advanced cancers, such as some colorectal cancers, a "Big Bang" model may occur where subclones expand early without subsequent dominant selection, leading to high ITH where numerous subclones coexist without one outcompeting the others [26]. This is characterized by a predominance of passenger mutations in the late phase.

- Darwinian Selection: In contrast, other cancers, like some renal cell carcinomas, exhibit strong natural selection where driver gene alterations (e.g., in MTOR, TSC1) are found in subclones, indicating ongoing selective pressures that shape the tumor architecture [26].

Components of Intratumor Heterogeneity

ITH is fueled by multiple types of genomic alterations, each with distinct clinical implications:

- Ubiquitous (Clonal) Aberrations: Also known as trunk or founder mutations, these are present in all tumor cells and are typically early carcinogenic events. Targeting these clonal events is considered essential for achieving a profound antitumor effect [26].

- Scattered (Subclonal) Aberrations: These are progressor or branch/leaf mutations that are present only in subsets of tumor cells. They contribute significantly to ITH and can be the source of resistant populations following therapy [26].

The degree of ITH varies significantly across cancer types. For instance, lung squamous carcinoma (LUSC) often exhibits higher inter- and intratumor heterogeneity at both the genomic and transcriptomic levels compared to lung adenocarcinoma (LUAD) [28].
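
The clonal versus subclonal distinction is usually made from the cancer cell fraction (CCF), which rescales an observed VAF by tumor purity and local copy number. The sketch below uses the widely used point estimate CCF = VAF × (purity × tumor copy number + (1 − purity) × normal copy number) / (purity × multiplicity). The 0.9 cutoff for calling a variant clonal is an assumption chosen for illustration, and dedicated clonal deconvolution tools model the same quantity probabilistically rather than with a hard threshold.

```python
def cancer_cell_fraction(
    vaf: float,
    purity: float,
    tumor_cn: int = 2,
    multiplicity: int = 1,
    normal_cn: int = 2,
) -> float:
    """Point estimate of the fraction of tumor cells carrying a variant.

    multiplicity is the number of mutated copies per tumor cell.
    The result is capped at 1.0 because sampling noise can push it higher.
    """
    if not 0.0 < purity <= 1.0:
        raise ValueError("purity must be in (0, 1]")
    if multiplicity < 1 or tumor_cn < multiplicity:
        raise ValueError("multiplicity must be between 1 and tumor_cn")
    total_copies = purity * tumor_cn + (1.0 - purity) * normal_cn
    return min(1.0, vaf * total_copies / (purity * multiplicity))


def classify_clonality(ccf: float, clonal_threshold: float = 0.9) -> str:
    """Label a variant 'clonal' or 'subclonal' using an assumed CCF cutoff."""
    return "clonal" if ccf >= clonal_threshold else "subclonal"


# Hypothetical variants in a diploid region of a tumor with 80% purity.
for vaf in (0.40, 0.10):
    ccf = cancer_cell_fraction(vaf, purity=0.8)
    print(f"VAF {vaf:.2f} -> CCF {ccf:.2f} ({classify_clonality(ccf)})")
```

Because the estimate depends directly on purity and copy number, errors in either input shift variants across the clonal/subclonal boundary, which is one reason accurate tumor cell fraction estimation matters.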

Application Note: A Protocol for Sequential Tracking of Clonal Dynamics

This application note provides a detailed protocol for using targeted NGS to track clonal dynamics in solid tumors over time, specifically framed within research using cancer models for drug sensitivity profiling.

The following diagram illustrates the complete workflow for sequential profiling, from sample collection to data interpretation.

Sample Preparation and Study Design

Objective: To collect and process longitudinal tumor samples from cancer models to capture temporal genomic evolution.

Materials:

- Biological Material: Cancer model (e.g., patient-derived xenograft, organoid, or cell line) treated with a compound of interest.

- Sample Types: Fresh-frozen tissue biopsy, formalin-fixed paraffin-embedded (FFPE) tissue, or liquid biopsy (for ctDNA) [29] [30].

- Input Requirements: ≥50 ng of high-quality DNA for targeted sequencing panels. For FFPE samples, input may need to be increased due to DNA fragmentation [15].

Procedure:

- Treatment Arm Design: Establish cancer models and expose them to the chemical compound(s) under investigation. Include appropriate control arms (e.g., vehicle-treated).

- Sequential Sampling: Harvest tumor material at multiple time points:

- T₀: Baseline (pre-treatment)

- T₁: Early during treatment (e.g., 1-2 weeks)

- T₂: At maximal response

- T₃: Upon disease progression/relapse

- Sample Processing:

- For solid tissues, a pathologist should review hematoxylin and eosin-stained slides to mark areas for macrodissection or microdissection, ensuring enrichment of tumor cells and accurate estimation of tumor cell fraction [31].

- Extract genomic DNA using commercially available kits. Assess DNA quantity and quality (e.g., via spectrophotometry and fluorometry).

- Sample Quality Control (QC): Confirm that DNA samples meet pre-established QC metrics (e.g., A260/A280 ratio of 1.8-2.0, DNA integrity number >7 for FFPE samples) before proceeding to library preparation [31].

Library Preparation and Targeted Sequencing

Objective: To prepare sequencing libraries enriched for a defined panel of cancer-associated genes.

Materials:

- Targeted Gene Panel: A validated panel, such as a custom 61-gene oncopanel covering key genes (e.g., KRAS, EGFR, TP53, PIK3CA, BRCA1) [15].

- Library Prep Kit: Hybrid-capture based (e.g., Sophia Genetics) or amplicon-based library preparation kits.

- Instrumentation: Automated library preparation system (e.g., MGI SP-100RS) and benchtop sequencer (e.g., Illumina MiSeq, MGI DNBSEQ-G50RS) [15].

Procedure:

- Library Construction:

- Fragment genomic DNA to a size of ~300 bp and ligate platform-specific adapters. For hybridization-capture methods, use biotinylated oligonucleotide probes designed against the target regions [31].

- Amplify the library via PCR.

- Target Enrichment: Perform hybrid capture or amplicon PCR to isolate the genomic regions of interest. Wash away non-specific fragments.

- Library QC: Quantify the final library using quantitative PCR and assess its size distribution (e.g., via Bioanalyzer).

- Sequencing: Pool barcoded libraries and sequence on the chosen NGS platform. Aim for a mean coverage of at least 500× to reliably detect low-frequency variants, with coverage uniformity of >99% across targeted bases [15].
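
The link between sequencing depth and the ability to detect a low-frequency variant can be approximated with a binomial model. The sketch below estimates the probability of observing at least a minimum number of variant-supporting reads at a given depth and true VAF. It ignores sequencing error and UMI collapsing, and the requirement of five supporting reads is an assumed caller threshold, not a value from the validated panel.

```python
import math


def detection_probability(depth: int, vaf: float, min_alt_reads: int = 5) -> float:
    """P(at least min_alt_reads variant reads) when reads ~ Binomial(depth, vaf)."""
    if depth < 0 or min_alt_reads < 0 or not 0.0 <= vaf <= 1.0:
        raise ValueError("invalid depth, vaf, or min_alt_reads")
    # Probability of seeing fewer than min_alt_reads alt reads, then complement.
    p_below = sum(
        math.comb(depth, k) * vaf**k * (1.0 - vaf) ** (depth - k)
        for k in range(min(min_alt_reads, depth + 1))
    )
    return max(0.0, 1.0 - p_below)


# Compare a few depths at the 2.9% VAF limit of detection quoted earlier.
for depth in (100, 500, 2320):
    prob = detection_probability(depth, vaf=0.029)
    print(f"depth {depth:>4}x: P(detect) = {prob:.4f}")
```

Under these simplifying assumptions, detection probability rises steeply with depth, which is the rationale for targeting several hundred-fold coverage when subclonal variants are of interest.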

Bioinformatic Analysis and Clonal Deconvolution

Objective: To identify somatic variants and reconstruct clonal architecture from sequential samples.

Computational Tools:

- Sequence Alignment: BWA-MEM for aligning reads to a reference genome (e.g., hg38).

- Variant Calling: MuTect2 for single nucleotide variants (SNVs) and indels; Control-FREEC or CNVkit for copy number alterations (CNAs) [28].

- Clonal Deconvolution: PyClone or SciClone for inferring clonal populations based on variant allele frequencies (VAFs) and cancer cell fractions.

Procedure:

- Data Preprocessing: Convert raw sequencing data (FASTQ) to aligned reads (BAM format), including duplicate marking and base quality recalibration.