Network-Based Inference for Target Prediction: A Comparative Analysis of Methods, Applications, and Performance

This article provides a comprehensive comparative analysis of network-based inference (NBI) methods for predicting drug-target interactions (DTIs) and drug repositioning.

Abstract

This article provides a comprehensive comparative analysis of network-based inference (NBI) methods for predicting drug-target interactions (DTIs) and drug repositioning. Aimed at researchers, scientists, and drug development professionals, it explores the foundational principles of NBI, which leverage bipartite network topology to infer new associations without requiring 3D protein structures or experimentally confirmed negative samples. The review delves into key methodologies like ProbS and HeatS, examines their practical applications in pharmaceutical research, addresses common challenges and optimization strategies, and provides a rigorous validation and performance comparison with other computational approaches, such as machine learning. By synthesizing evidence from recent studies, this analysis highlights the significant potential of network-based methods to accelerate drug discovery and development.

Foundations of Network-Based Inference: Principles and Core Concepts for Target Prediction

Traditional drug discovery has long relied on a reductionist "one-drug, one-gene" paradigm, which has shown limited success for complex diseases due to poor correlation between single protein modulation and organism-level responses [1]. Network-based inference represents a fundamental shift in this approach, recognizing that both drugs and pathological processes alter interconnected biochemical networks rather than isolated targets [2]. By modeling these complex interactions, network methods provide a systems-level framework for understanding drug action, leading to more predictive identification of viable drug target combinations within the efficacy-toxicity spectrum [2].

The adoption of network approaches addresses critical failures in drug development, where approximately 90% of candidates fail during clinical trials, largely due to unexpected toxicity or lack of efficacy stemming from an inability to predict interactions with off-targets and downstream processes [2]. Network-based inference moves beyond this limitation by contextualizing target identification within systemic pharmacodynamic properties, offering a more comprehensive understanding of how pharmacological interventions alter biological systems [1].

Network-Based Inference Methodologies: A Comparative Framework

Core Methodological Approaches

Network-based inference in drug discovery encompasses several distinct methodological approaches, each with unique strengths and applications for target prediction research.

Heterogeneous Network Models: These methods integrate multisource biological data—including drugs, targets, diseases, and side effects—into unified graph structures where nodes represent biological entities and edges represent their relationships [3]. Advanced implementations like MVPA-DTI employ meta-path aggregation mechanisms to dynamically integrate information from both feature views and biological network relationship views, effectively learning potential interaction patterns between entities [3]. These models have demonstrated substantial performance improvements, with one recent implementation achieving an AUROC of 0.966 in drug-target interaction prediction tasks [3].

Causal Network Inference: This approach aims to distinguish correlation from causation in biological networks, which is fundamental for identifying bona fide therapeutic targets [4]. CausalBench, a benchmark suite for evaluating network inference methods on real-world interventional data, has revealed that method scalability remains a significant limitation in the field [4]. Contrary to theoretical expectations, methods using interventional information do not consistently outperform those using only observational data on real-world biological datasets [4].

Graph Neural Networks (GNNs): GNNs have emerged as transformative tools by accurately modeling molecular structures and interactions with binding targets [5]. These networks learn representations of drugs and targets from large-scale unlabeled data through self-supervised pre-training, then apply these representations to downstream prediction tasks like drug-target interaction, binding affinity, and mechanism of action [6]. Frameworks like DTIAM demonstrate how this approach achieves substantial performance improvements, particularly in challenging cold-start scenarios where new drugs or targets have limited experimental data [6].

Gene Regulatory Network (GRN) Inference: These methods reconstruct functional gene-gene interactomes from transcriptomic data, with single-cell RNA sequencing providing unprecedented resolution [7]. However, methodological surveys indicate that GRN inference methods using scRNA-Seq technology frequently demonstrate performance similar to random predictors, highlighting significant challenges in data processing, biological variation, and performance evaluation [7].

Comparative Performance Analysis

Table 1: Comparative Performance of Network-Based Inference Methodologies

| Method Category | Key Strengths | Limitations | Representative Performance |
|---|---|---|---|
| Heterogeneous Network Models | Integrates multimodal biological data; captures high-order semantic information | Limited efficacy on sparse networks; computationally intensive | AUROC: 0.966; AUPR: 0.901 [3] |
| Causal Network Inference | Distinguishes causal from correlative relationships; utilizes interventional data | Poor scalability; limited performance gains with interventional data | Varies by method; scalability limits performance [4] |
| Graph Neural Networks (GNNs) | Excellent for cold-start scenarios; self-supervised learning reduces labeled data needs | Dependent on quality of molecular representations | Substantial improvement in cold-start scenarios [6] |
| Gene Regulatory Networks | Single-cell resolution; models transcriptional regulation | Performance often similar to random predictors; sensitive to data preprocessing | Challenging to assess without ground truth [7] |

Experimental Benchmarking and Evaluation Frameworks

Benchmarking Platforms and Metrics

Robust evaluation of network inference methods requires specialized benchmarking platforms that provide standardized datasets and biologically motivated metrics. CausalBench is a benchmark suite that evaluates network inference methods on real-world, large-scale single-cell perturbation data [4]. Unlike traditional benchmarks built on simulated graphs, CausalBench employs biologically driven evaluation metrics, including:

- Mean Wasserstein Distance: Measures the extent to which predicted interactions correspond to strong causal effects [4]

- False Omission Rate (FOR): Quantifies the rate at which existing causal interactions are omitted by model output [4]

- Precision-Recall Trade-off: Evaluates the balance between prediction accuracy and completeness [4]

These metrics address the fundamental challenge in biological network inference: the absence of definitive ground-truth knowledge in real-world systems [4]. Traditional evaluations conducted on synthetic datasets often fail to reflect actual performance in biological applications, making these biologically-validated metrics essential for meaningful method comparison [4].
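To make the definitions of precision, recall, and false omission rate concrete, the following is a minimal sketch computed against a hypothetical reference edge set. As noted above, real biological systems generally lack such ground truth, so this only illustrates the arithmetic and is not the estimation procedure used by CausalBench. The function name, gene labels, and edge sets are assumptions made for illustration.

```python
def edge_metrics(predicted, reference, candidates):
    """Precision, recall, and false omission rate for a predicted edge set.

    predicted  -- set of (source, target) edges output by a method
    reference  -- set of edges treated as true (hypothetical ground truth)
    candidates -- set of all edges the method could have predicted
    """
    tp = len(predicted & reference)          # predicted and true
    fp = len(predicted - reference)          # predicted but not true
    omitted = candidates - predicted         # edges the method left out
    fn = len(omitted & reference)            # true edges that were omitted
    tn = len(omitted - reference)            # correctly omitted edges
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    false_omission_rate = fn / (fn + tn) if (fn + tn) else 0.0
    return precision, recall, false_omission_rate


# Toy example with three hypothetical genes and all ordered pairs as candidates.
genes = ["A", "B", "C"]
candidates = {(g, h) for g in genes for h in genes if g != h}
reference = {("A", "B"), ("B", "C")}
predicted = {("A", "B"), ("A", "C")}

precision, recall, false_omission_rate = edge_metrics(predicted, reference, candidates)
print(precision, recall, false_omission_rate)
```

Predicting more edges usually raises recall and lowers the false omission rate at the cost of precision, which is the trade-off the benchmark reports.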

Performance Trends in Method Evaluation

Recent large-scale benchmarking studies have revealed several critical trends in network inference performance. First, there exists an inherent trade-off between precision and recall across most methods, with researchers typically needing to optimize for one at the expense of the other [4]. Second, contrary to theoretical expectations, methods incorporating interventional data (GIES, DCDI variants) frequently fail to outperform observational methods (PC, GES, NOTEARS) on real biological datasets [4]. Third, scalability limitations significantly impact performance, with many methods struggling with the dimensionality of genome-scale networks [4].

Table 2: Experimental Data Sources for Network Inference

| Data Type | Source | Application in Network Inference | Key Considerations |
|---|---|---|---|
| Single-cell perturbation data | CausalBench suite [4] | Causal network inference; evaluation of method performance | Includes over 200,000 interventional datapoints; addresses dropout phenomenon [4] [7] |
| Drug-Target Interaction Data | LINCS, DrugBank, Yamanishi_08, Hetionet [6] [1] | Training and validation of DTI prediction models | Data sparsity and quality challenges; limited labeled data [6] |
| Transcriptomic Data | GEO repository (e.g., GSE150910) [1] | Gene co-expression network construction; causal gene identification | Requires normalization; confounder adjustment needed [1] |
| Molecular Structures | SMILES, molecular graphs [3] [6] | Structural feature extraction for drugs | 3D conformation features provide critical information [3] |
| Protein Sequences | Primary amino acid sequences [3] [6] | Sequence feature extraction for targets | Protein language models (e.g., Prot-T5) enhance feature quality [3] |

Experimental Protocols for Network-Based Target Identification

Integrated Causal Inference and Deep Learning Protocol

A novel computational framework integrating network analysis, statistical mediation, and deep learning demonstrates a robust protocol for identifying causal target genes and repurposable small molecules [1]. This methodology was successfully applied to Idiopathic Pulmonary Fibrosis (IPF) as a case study:

- Step 1: Network Construction - Weighted Gene Co-expression Network Analysis (WGCNA) was applied to RNA-seq data from 103 IPF patients and 103 controls to identify significantly correlated gene modules [1].

- Step 2: Causal Mediation Analysis - Bidirectional mediation analysis identified genes causally linked to disease phenotype, adjusting for clinical confounders (age, smoking status) using type-III ANOVA models [1].

- Step 3: Target Validation - Candidate causal genes were tested for association with lung function traits (FVC, DLCO) and predictive performance for disease severity using independent validation cohorts [1].

- Step 4: Compound Screening - Deep learning-based screening (DeepCE model) used the causal gene signature to identify small-molecule candidates with significant inverse correlation to the IPF-specific signature [1].

This protocol identified 145 unique mediator genes in IPF, with five genes (ITM2C, PRTFDC1, CRABP2, CPNE7, and NMNAT2) predictive of disease severity and several promising drug candidates including Telaglenastat and Merestinib [1].
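The signature-reversal idea behind Step 4 can be sketched in a few lines. The example below is not the DeepCE model; it assumes that compound-induced expression profiles have already been predicted and simply ranks compounds by Pearson correlation with the disease signature, most negative first. The correlation measure, compound names, and values are all hypothetical.

```python
from math import sqrt


def pearson(x, y):
    """Pearson correlation between two equal-length numeric sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / sqrt(var_x * var_y)


def rank_reversers(disease_signature, compound_profiles):
    """Sort compounds so the strongest inverse correlation comes first."""
    scored = [
        (name, pearson(disease_signature, profile))
        for name, profile in compound_profiles.items()
    ]
    return sorted(scored, key=lambda item: item[1])


# Hypothetical log fold-changes for four signature genes.
disease_signature = [2.0, 1.0, -1.0, -2.0]
compound_profiles = {
    "cmpd_A": [-2.0, -1.0, 1.0, 2.0],   # mirrors the signature
    "cmpd_B": [2.0, 1.0, -1.0, -2.0],   # mimics the signature
    "cmpd_C": [1.0, -1.0, 1.0, -1.0],   # weakly related
}

ranked = rank_reversers(disease_signature, compound_profiles)
for name, r in ranked:
    print(name, round(r, 3))
```

In practice a significance threshold on the correlation would be needed before calling a compound a candidate; the sketch only shows the ranking step.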

Heterogeneous Network-Based DTI Prediction Protocol

The MVPA-DTI framework demonstrates a comprehensive protocol for drug-target interaction prediction using heterogeneous networks [3]:

- Step 1: Multiview Feature Extraction - A molecular attention Transformer extracts 3D conformation features from drug chemical structures, while Prot-T5 (a protein-specific language model) extracts biophysically relevant features from protein sequences [3].

- Step 2: Heterogeneous Network Construction - Integration of drugs, proteins, diseases, and side effects from multisource heterogeneous data constructs a comprehensive biological network [3].

- Step 3: Meta-Path Aggregation - A meta-path aggregation mechanism dynamically integrates information from both feature views and biological network relationship views, learning higher-order interaction patterns [3].

- Step 4: Interaction Prediction - The model predicts novel drug-target interactions by leveraging the integrated representations, achieving an AUPR of 0.901 and AUROC of 0.966 in benchmark tests [3].

This protocol successfully identified 38 out of 53 candidate drugs as having interactions with the KCNH2 target in a case study, validating its practical utility in drug discovery pipelines [3].

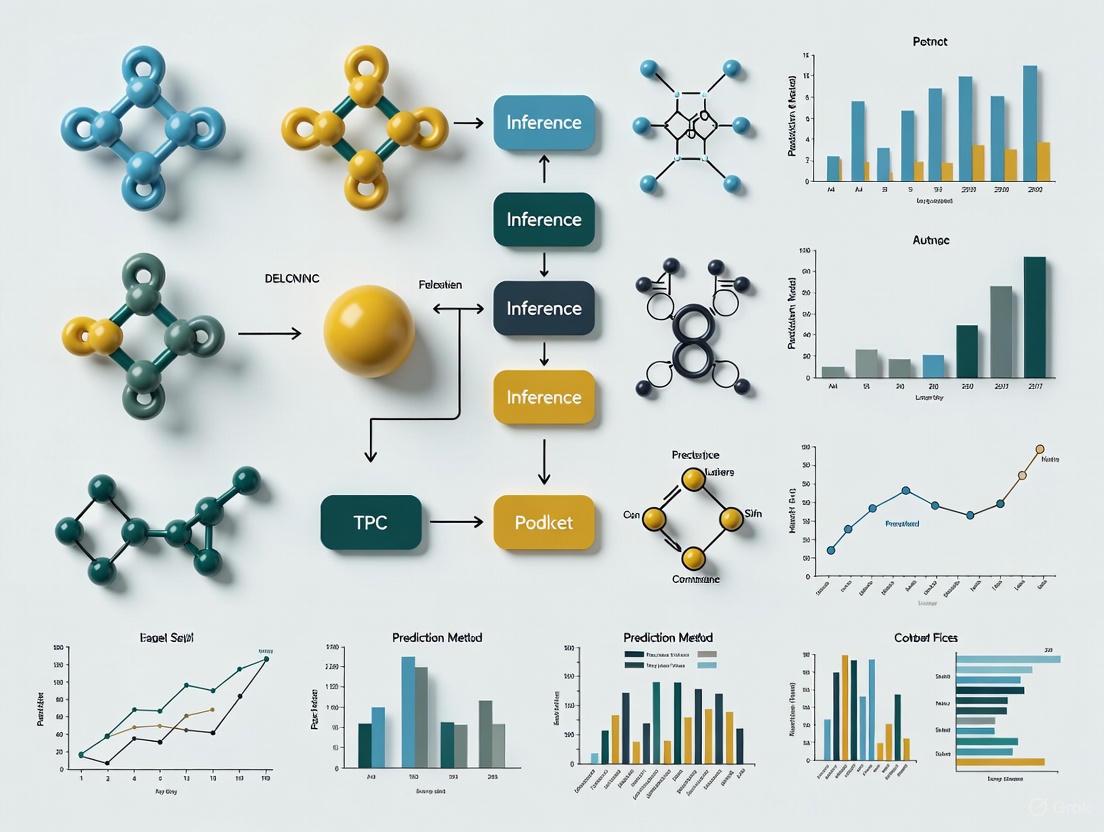

Visualization of Network Inference Workflows

Network-Based Inference Workflow: This diagram illustrates the generalized workflow for network-based inference in drug discovery, from data integration through target prediction.

Table 3: Essential Research Reagents and Computational Tools for Network-Based Inference

| Resource Category | Specific Tools/Databases | Function in Research | Key Features |
|---|---|---|---|
| Benchmarking Platforms | CausalBench [4] | Evaluation of network inference methods on real-world perturbation data | Biologically-motivated metrics; large-scale single-cell data |
| Bioinformatics Tools | WGCNA [1], GENIE3, PIDC [7] | Gene co-expression network analysis; GRN inference | Identification of correlated modules; network topology analysis |
| Deep Learning Frameworks | DTIAM [6], MVPA-DTI [3], MONN [6] | Drug-target interaction prediction; binding affinity estimation | Self-supervised learning; multi-task training; cold-start capability |
| Data Resources | LINCS [1], DrugBank [1], GEO [1] | Drug perturbation profiles; drug-target data; transcriptomic data | Large-scale reference data; standardized formats |
| Language Models | Prot-T5 [3], MolBERT [3] | Protein and molecular sequence representation | Biophysically relevant feature extraction; transfer learning |

Network-based inference represents a paradigm shift in drug discovery, moving beyond single-target approaches to model the complex interconnectedness of biological systems [2]. The integration of heterogeneous data sources, coupled with advanced computational methods like graph neural networks and causal inference, has significantly improved our ability to identify high-value therapeutic targets and repurposable drug candidates [3] [6] [1]. However, challenges remain in method scalability, performance validation, and translation to clinical success [4] [7].

Future methodological development must address several critical frontiers. First, improving the utilization of interventional data in causal network inference remains a significant opportunity, as current methods fail to consistently leverage this information effectively [4]. Second, standardization of evaluation metrics and benchmarking approaches is essential for meaningful comparison across methods [7]. Finally, the integration of multiscale models—from molecular interactions to physiological responses—will be crucial for capturing the full complexity of drug action in biological systems [2]. As these methodologies mature, network-based inference promises to deliver more effective, safer therapeutics with reduced development costs and higher clinical success rates [5].

In the landscape of computational target prediction, network-based inference (NBI) methods occupy a unique position by overcoming two fundamental limitations that constrain other approaches: dependency on three-dimensional (3D) protein structures and requirement for experimentally confirmed negative samples. Traditional structure-based methods like molecular docking require high-quality 3D structures of target proteins, which are unavailable for many biologically important targets [8]. Similarly, supervised machine learning methods typically need both confirmed interactions (positive samples) and confirmed non-interactions (negative samples) to build accurate prediction models [8]. The scarcity of reliable negative samples—experimentally validated non-interactions—poses a significant challenge for these methods [3] [6].

Network-based methods bypass these limitations by leveraging the known network of drug-target interactions (DTIs) and employing algorithms derived from recommendation systems and link prediction [8]. By treating drugs and targets as nodes in a bipartite network, these methods infer new potential interactions based solely on the topology of existing connections, without requiring structural information or negative examples [8] [9]. This independence grants NBI methods distinct advantages in coverage, scalability, and practical applicability in drug discovery pipelines.

Comparative Analysis of Methodological Limitations

Table 1: Fundamental Limitations of Different Computational Approaches for Target Prediction

| Method Category | Dependency on 3D Structures | Dependency on Negative Samples | Key Limitations |
|---|---|---|---|
| Structure-Based (Docking) | Required [8] | Not Required | Limited to proteins with solved 3D structures; computationally intensive [8] [6] |
| Ligand Similarity-Based | Not Required | Not Required | Limited to chemically similar drugs; cannot find novel scaffolds [8] [3] |
| Supervised Machine Learning | Not Required | Required [8] | Limited by quality and availability of negative samples; biased performance [8] [6] |
| Network-Based Inference (NBI) | Not Required [8] | Not Required [8] | Prediction scores not initially correlated with binding affinity [9] |

The independence from 3D structures enables network-based methods to cover much larger target space, including proteins with unknown structures such as many G protein-coupled receptors (GPCRs) [8]. This advantage is particularly significant given that among more than 800 GPCR family members, only approximately 30 have resolved crystal structures [8]. Similarly, by not requiring negative samples, NBI methods avoid the problem of limited availability of experimentally validated non-interactions from public databases and literature [8].

Experimental Validation and Performance Metrics

Benchmarking Studies and Quantitative Performance

Table 2: Experimental Performance Validation of Network-Based Methods

| Method Name | Key Innovation | Performance Metrics | Experimental Validation |
|---|---|---|---|
| NBI (Basic Algorithm) | Uses only known DTI network [8] | AUC: 0.9192 (ProbS) [10] | Relies on bipartite network topology [10] |
| SDTNBI/bSDTNBI | Incorporates drug-substructure associations [9] | Can predict for compounds outside original DTI network [9] | Discovered new compounds for ERα, EP4, NQO1 [9] |
| wSDTNBI | Incorporates binding affinity data [9] | Success rate: 9.7% (7/72 compounds) for RORγt [9] | Identified ursonic acid (IC50: 10 nM) and oleanonic acid (IC50: 0.28 μM) [9] |
| DTIAM | Self-supervised pre-training [6] | Substantial improvement on DTI, binding affinity, and mechanism-of-action tasks [6] | Superior performance in cold-start scenarios [6] |

Recent advances in network-based methods have addressed initial limitations while maintaining these core advantages. The wSDTNBI method, for instance, incorporates binding affinity data to create weighted DTI networks, enabling prediction scores correlated with biological activity while still not requiring 3D structures [9]. This approach demonstrated remarkable practical success in identifying novel RORγt inverse agonists, with a success rate (9.7%) that surpassed contemporary structure-based and deep learning-based virtual screening methods on the same target [9].

Methodological Framework and Workflows

Core Experimental Protocol for Network-Based Inference

The fundamental workflow of network-based inference methods involves several standardized steps, though specific implementations may vary:

Step 1: Network Construction

- Compile known drug-target interactions from databases to form a bipartite network

- For enhanced methods (SDTNBI, wSDTNBI), additionally construct a drug-substructure association network

- For weighted methods (wSDTNBI), assign edge weights correlated with binding affinities (Ki, Kd, IC50, EC50) [9]

Step 2: Algorithm Application

- Apply network inference algorithms such as probabilistic spreading (ProbS) [8] [10]

- Implement resource diffusion processes across the network

- Perform matrix operations to calculate prediction scores [8]

Step 3: Prioritization & Validation

- Rank predicted interactions by their scores

- Select top candidates for experimental validation

- Confirm interactions through in vitro assays (e.g., binding affinity measurements) [9]

Diagram 1: Workflow of network-based inference methods for target prediction.
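The resource diffusion in Step 2 can be illustrated with a minimal, dependency-free sketch of two-step probabilistic spreading (ProbS) on a binary drug-target matrix. This is an illustrative reading of the general scheme, not the reference implementation from the cited studies; the function name and the toy network are assumptions.

```python
def probs_scores(adjacency):
    """Two-step ProbS resource diffusion on a drug-target bipartite network.

    adjacency[i][j] is 1 if drug i is known to interact with target j, else 0.
    Returns scores[i][j], the resource reaching target j when drug i is the query.
    """
    n_drugs = len(adjacency)
    n_targets = len(adjacency[0])
    drug_degree = [sum(row) for row in adjacency]
    target_degree = [
        sum(adjacency[i][j] for i in range(n_drugs)) for j in range(n_targets)
    ]

    all_scores = []
    for query in range(n_drugs):
        # Initial resource: one unit on each known target of the query drug.
        resource = [float(v) for v in adjacency[query]]
        # Step 1: each target splits its resource equally among its drugs.
        on_drugs = [
            sum(
                adjacency[d][t] * resource[t] / target_degree[t]
                for t in range(n_targets)
                if target_degree[t] > 0
            )
            for d in range(n_drugs)
        ]
        # Step 2: each drug splits what it received equally among its targets.
        on_targets = [
            sum(
                adjacency[d][t] * on_drugs[d] / drug_degree[d]
                for d in range(n_drugs)
                if drug_degree[d] > 0
            )
            for t in range(n_targets)
        ]
        all_scores.append(on_targets)
    return all_scores


# Toy network: D0-{T0,T1}, D1-{T1,T2}, D2-{T0}.
adjacency = [
    [1, 1, 0],
    [0, 1, 1],
    [1, 0, 0],
]
scores = probs_scores(adjacency)
print(scores[0])  # [1.0, 0.75, 0.25]
print(scores[2])  # [0.75, 0.25, 0.0]
```

For ranking (Step 3), only targets without a known edge to the query drug are considered. Here D0's unlinked target T2 receives 0.25, and for D2 the unlinked target T1 (0.25) ranks above T2 (0.0). Total resource is conserved across both steps, which is the defining property of this diffusion variant.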

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Research Reagents and Resources for Network-Based Inference

| Resource Category | Specific Examples | Function in Research |
|---|---|---|
| Interaction Databases | BindingDB, ChEMBL, DrugBank [8] | Sources of known DTIs for network construction |
| Chemical Information | PubChem, DrugBank [9] | Drug structures and substructure decomposition |
| Target Information | UniProt, PDB [8] | Protein sequences and (if available) structures |
| Experimental Assays | Ki, Kd, IC50, EC50 measurements [8] [9] | Validation of predicted interactions |
| Software Tools | NBI, SDTNBI, wSDTNBI implementations [8] [9] | Execution of network inference algorithms |

Case Study: Practical Application and Validation

The wSDTNBI method exemplifies how modern network-based approaches maintain independence from 3D structures while achieving quantitative predictions. In this approach:

- A weighted DTI network is constructed with edge weights correlated with binding affinities [9]

- A drug-substructure association network enables prediction for novel compounds [9]

- A two-pronged approach calculates scores using both network inference and similarity-based methods [9]

- Parameters (α, β, γ, δ) are tuned to address network imbalances [9]

This method was experimentally validated through a virtual screening campaign for retinoid-related orphan receptor γt (RORγt) inverse agonists. From 72 purchased compounds predicted by wSDTNBI, seven were confirmed as novel inverse agonists, including ursonic acid (IC50 = 10 nM) and oleanonic acid (IC50 = 0.28 μM) [9]. The direct binding of ursonic acid to RORγt was further confirmed by X-ray crystallography, and both compounds demonstrated therapeutic effects in multiple sclerosis models [9]. This case study illustrates how network-based methods achieve high success rates without initial dependency on 3D structure information.

Network-based inference methods provide a unique and valuable approach for drug-target interaction prediction by overcoming two critical dependencies that limit other computational methods: requirement for 3D protein structures and need for experimentally validated negative samples. This independence enables comprehensive coverage of the target space, including proteins with unknown structures, and avoids biases associated with negative sample selection. As evidenced by successful applications like wSDTNBI, these methods have evolved to incorporate additional data types while maintaining their foundational advantages, establishing them as powerful tools in modern drug discovery pipelines.

Understanding the Drug-Target Bipartite Network Model

Drug-target interaction (DTI) prediction is a critical area of research in genomic drug discovery and repurposing. The drug-target bipartite network model provides a powerful computational framework for this task, representing drugs and target proteins as two distinct sets of nodes, with edges indicating known interactions between them. This model allows researchers to systematically integrate heterogeneous biological data and apply various network-based and machine-learning algorithms to infer new, potential interactions. This guide offers a comparative analysis of the prominent computational methods that leverage this model for target prediction research.

Model Foundation and Core Concepts

A drug-target bipartite network is formally defined as a graph ( G = (D, T, E) ), where ( D ) is a set of drug nodes, ( T ) is a set of target protein nodes, and ( E ) is the set of edges between them such that every edge connects a node in ( D ) to a node in ( T ) [11] [12]. This structure inherently reflects the real-world interactions where a drug (a chemical compound) binds to a protein target (often the product of a gene). The primary goal within this framework is link prediction: to assign a likelihood score to unknown drug-target pairs, identifying which are most probable to interact [13].

The prediction is fundamentally guided by the "guilt-by-association" principle, which posits that similar drugs are likely to interact with similar targets and vice versa [11] [13]. To operationalize this, methods incorporate side information, primarily:

- Chemical Space: Represented by a drug similarity matrix ( S_d ), often computed from chemical structures (e.g., using SIMCOMP which assesses common substructures) [11].

- Genomic Space: Represented by a target similarity matrix ( S_t ), typically derived from protein sequence similarities (e.g., using normalized Smith-Waterman scores) [11].

The core challenge is to integrate these similarity measures with the known interaction network to make accurate predictions for unknown pairs, a task that becomes particularly difficult due to the extreme rarity of known interactions compared to the vast number of possible pairings [11].
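As a small illustration of how guilt-by-association combines the interaction network with a drug similarity matrix, the sketch below scores each drug-target pair by the similarity-weighted fraction of other drugs that hit the target. This is a deliberately simple neighbor-weighting scheme chosen for illustration, not any of the published methods compared in this article; the function name and all values are hypothetical.

```python
def drug_side_scores(adjacency, drug_similarity):
    """Similarity-weighted neighbor scores for every drug-target pair.

    adjacency[d][t]       -- 1 if drug d is known to interact with target t
    drug_similarity[d][e] -- similarity between drugs d and e (plays the role of S_d)
    """
    n_drugs = len(adjacency)
    n_targets = len(adjacency[0])
    scores = []
    for d in range(n_drugs):
        # Exclude the drug itself so its own known edges do not leak in.
        neighbors = [e for e in range(n_drugs) if e != d]
        total = sum(drug_similarity[d][e] for e in neighbors)
        row = []
        for t in range(n_targets):
            weighted = sum(drug_similarity[d][e] * adjacency[e][t] for e in neighbors)
            row.append(weighted / total if total > 0 else 0.0)
        scores.append(row)
    return scores


# Three hypothetical drugs, two hypothetical targets.
adjacency = [
    [1, 0],
    [1, 1],
    [0, 1],
]
drug_similarity = [
    [1.0, 0.8, 0.1],
    [0.8, 1.0, 0.3],
    [0.1, 0.3, 1.0],
]
scores = drug_side_scores(adjacency, drug_similarity)
print(scores[0])
```

Drug 0 has no known edge to target 1, but both of its neighbors do, so that pair receives the maximum score of 1.0. A symmetric target-side score could be built from the target similarity matrix in the same way.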

Comparative Analysis of Methodologies

Computational approaches for DTI prediction have evolved from simple similarity-based methods to sophisticated algorithms that leverage deep learning on heterogeneous networks. The table below summarizes the core operational principles of several key methods.

Table 1: Comparison of Drug-Target Interaction Prediction Methodologies

| Method Name | Core Principle | Input Data Utilized | Key Algorithmic Approach |
|---|---|---|---|
| Bipartite Local Model (BLM) [11] | Supervised inference using local models for each drug and target. | Known DTIs, drug chemical structures, target protein sequences. | Two-step SVM classification; predictions combined for final score. |
| Semi-Bipartite Graph Model [13] | Learns topological features from an integrated network. | Known DTIs, drug-drug similarities, protein-protein similarities. | Sub-graph extraction and embedding, followed by Deep Neural Network classification. |
| AOPEDF [14] | Integrates diverse data via a heterogeneous network. | 15 different networks (chemical, genomic, phenotypic, etc.). | Arbitrary-order proximity embedding with a cascade deep forest classifier. |
| Network Proximity (sAB) [15] | Measures network distance between drug targets and diseases. | Protein-protein interactome, drug targets, disease-associated proteins. | Separation metric (( s_{AB} )) calculated from shortest paths in the interactome. |
| DHGT-DTI [16] | Captures both local and global network structures. | Heterogeneous network of drugs, targets, diseases. | Dual-view model using GraphSAGE (neighborhood) and Graph Transformer (meta-paths). |

Workflow of a Bipartite Network Inference Model

The following diagram illustrates a generalized, high-level workflow for network-based DTI prediction, which encompasses the core steps of many modern methods.

Experimental Protocols and Performance Benchmarking

To objectively compare the performance of different DTI prediction methods, researchers employ standardized experimental protocols, primarily involving benchmark datasets and cross-validation.

Standard Benchmarking Protocol

A widely used protocol involves benchmarking on four key drug-target interaction networks in humans: enzymes, ion channels, GPCRs (G-protein coupled receptors), and nuclear receptors [11]. The standard procedure is as follows:

- Data Compilation: Known interactions are collected from public databases like KEGG BRITE, BRENDA, SuperTarget, and DrugBank [11].

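- Similarity Calculation: Drug-drug similarity is computed from chemical structures (e.g., using SIMCOMP), and target-target similarity is derived from protein sequences (e.g., using normalized Smith-Waterman scores) [11].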

- Cross-Validation: Performance is typically evaluated using k-fold cross-validation (e.g., 10-fold CV). Known interactions are randomly split into k folds; the model is trained on k-1 folds and tested on the held-out fold. This process is repeated k times [11] [13].

- Performance Metrics:

  - AUC (Area Under the ROC Curve): Measures the overall ability to rank positive interactions higher than negatives. An AUC of 1.0 represents a perfect model, while 0.5 is random guessing.

  - AUPR (Area Under the Precision-Recall Curve): Often more informative than AUC for highly imbalanced datasets where non-interactions vastly outnumber known interactions, which is typical for DTI prediction [11].
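Both metrics can be computed directly from ranked prediction scores. The sketch below uses the pairwise-ranking definition of AUC and average precision as one common estimator of AUPR; other estimators (for example, trapezoidal integration of the precision-recall curve) give slightly different values. Function names and the toy data are assumptions for illustration.

```python
def roc_auc(labels, scores):
    """AUC as the probability that a positive outranks a negative (ties count 0.5)."""
    positives = [s for l, s in zip(labels, scores) if l == 1]
    negatives = [s for l, s in zip(labels, scores) if l == 0]
    wins = sum(
        1.0 if p > n else 0.5 if p == n else 0.0
        for p in positives
        for n in negatives
    )
    return wins / (len(positives) * len(negatives))


def average_precision(labels, scores):
    """Mean of the precision values measured at the rank of each positive."""
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0], reverse=True)
    hits = 0
    precision_sum = 0.0
    for rank, (_, label) in enumerate(ranked, start=1):
        if label == 1:
            hits += 1
            precision_sum += hits / rank
    return precision_sum / hits if hits else 0.0


# Held-out drug-target pairs: 1 = known interaction, 0 = unlabeled pair.
labels = [1, 0, 1, 0]
scores = [0.9, 0.8, 0.7, 0.1]
auc = roc_auc(labels, scores)
aupr = average_precision(labels, scores)
print(auc, aupr)
```

On this toy fold three of the four positive-negative pairs are ordered correctly (AUC 0.75), and the two positives sit at ranks 1 and 3 (average precision 5/6).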

Quantitative Performance Comparison

The table below summarizes the reported performance of various methods on established benchmarks, illustrating the evolution of predictive power.

Table 2: Performance Benchmarking of DTI Prediction Methods

| Method | Dataset | Reported Performance (AUC) | Reported Performance (AUPR) | Key Experimental Finding |
|---|---|---|---|---|
| BLM [11] | Ion Channels | >97% | Up to 84% (nearly 90% precision at 60% recall) | Superior to precursor algorithms at the time. |
| AOPEDF [14] | External Validation (DrugCentral) | 86.8% | - | Outperformed several state-of-the-art methods on external validation. |
| AOPEDF [14] | External Validation (ChEMBL) | 76.8% | - | Demonstrated robust generalizability to independent data. |
| Semi-Bipartite Graph + DL [13] | Multiple Benchmarks | Outperformed others | Outperformed others | Showed ability to learn sophisticated topological features beyond handcrafted heuristics. |

Advanced Protocol: Network Proximity for Drug Combinations

Beyond predicting binary interactions, network models can guide combination therapy. A key protocol involves calculating the network separation (( s_{AB} )) of two drugs relative to a disease module [15]:

- Construct the Interactome: Assemble a comprehensive human protein-protein interaction (PPI) network from multiple data sources.

- Define Modules: Identify the target sets of Drug A and Drug B, and the protein set associated with a specific disease.

- Calculate Distances: Compute the mean shortest path lengths:

  - ( d_{AB} ): between the targets of drug A and drug B.

  - ( d_{AA} ) and ( d_{BB} ): within the targets of drug A and drug B, respectively.

- Compute Separation: ( s_{AB} \equiv \langle d_{AB} \rangle - \frac{\langle d_{AA} \rangle + \langle d_{BB} \rangle}{2} ). A negative ( s_{AB} ) indicates overlapping drug targets, while a positive value indicates separated targets [15].

- Relate to Efficacy: Studies have shown that the "Complementary Exposure" configuration—where two drugs with separated targets (( s_{AB} \geq 0 )) both hit the same disease module—is correlated with therapeutic efficacy in approved drug combinations for diseases like hypertension and cancer [15].
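The separation calculation can be sketched on a toy interactome using breadth-first search. The sketch reads the protocol literally, averaging shortest path lengths over all target pairs; published implementations may define the averages differently (for example, using closest-node distances), so treat this as an assumption. Setting the within-set distance to 0 for a single-target drug is also an assumption, and the graph and protein names are hypothetical.

```python
from collections import deque
from itertools import combinations


def shortest_path_lengths(graph, source):
    """Unweighted shortest path lengths from source to every reachable node."""
    dist = {source: 0}
    queue = deque([source])
    while queue:
        node = queue.popleft()
        for neighbor in graph[node]:
            if neighbor not in dist:
                dist[neighbor] = dist[node] + 1
                queue.append(neighbor)
    return dist


def mean_between(graph, targets_a, targets_b):
    """Mean shortest path length over all reachable (a, b) target pairs."""
    lengths = []
    for a in targets_a:
        dist = shortest_path_lengths(graph, a)
        lengths.extend(dist[b] for b in targets_b if b in dist)
    return sum(lengths) / len(lengths) if lengths else float("inf")


def mean_within(graph, targets):
    """Mean shortest path length over distinct pairs inside one target set."""
    lengths = []
    for a, b in combinations(sorted(targets), 2):
        dist = shortest_path_lengths(graph, a)
        if b in dist:
            lengths.append(dist[b])
    return sum(lengths) / len(lengths) if lengths else 0.0


def separation(graph, targets_a, targets_b):
    """s_AB = <d_AB> - (<d_AA> + <d_BB>) / 2."""
    d_ab = mean_between(graph, targets_a, targets_b)
    d_aa = mean_within(graph, targets_a)
    d_bb = mean_within(graph, targets_b)
    return d_ab - (d_aa + d_bb) / 2


# Toy interactome: a chain P1 - P2 - P3 - P4 - P5.
graph = {
    "P1": ["P2"],
    "P2": ["P1", "P3"],
    "P3": ["P2", "P4"],
    "P4": ["P3", "P5"],
    "P5": ["P4"],
}
s_separated = separation(graph, {"P1", "P2"}, {"P4", "P5"})
s_overlapping = separation(graph, {"P1", "P2"}, {"P1", "P2"})
print(s_separated, s_overlapping)
```

Target sets at opposite ends of the chain give a positive separation (2.0), while identical target sets give a negative one (-0.5), matching the sign convention described above.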

Successful implementation of DTI prediction models relies on a suite of computational and data resources. The table below details key "research reagents" for this field.

Table 3: Essential Resources for Drug-Target Bipartite Network Research

| Resource Name | Type | Primary Function | Relevance to DTI Models |
|---|---|---|---|
| KEGG [11] [17] | Database | Provides curated data on pathways, drugs, and targets. | Source for known DTIs, drug chemical structures, and target sequences for benchmarking. |
| DrugBank [11] [18] [14] | Database | Comprehensive drug and target information. | A primary source for experimentally validated DTIs to build and test models. |
| BioSNAP Dataset [18] | Benchmark Data | A pre-compiled drug-target interaction network. | Provides a ready-to-use dataset (7,341 nodes, 15,138 edges) for model training and validation. |
| DINIES [17] | Web Tool / Algorithm | A supervised prediction server for DTIs. | Allows users to run predictions with custom data using PKR, SDR, or DML algorithms. |
| LIANA [19] | Software Framework | A unified interface for cell-cell communication analysis. | Exemplifies the trend of frameworks that integrate multiple resources and methods, a useful paradigm for DTI tool development. |
| Human Protein Interactome [15] | Network Data | A large-scale map of protein-protein interactions. | Essential for calculating network-based proximity measures for drug and disease modules. |

The drug-target bipartite network model has established itself as a foundational framework for in silico drug discovery. The field has matured from methods like BLM, which effectively leveraged local network information and chemical/genomic similarities, to advanced deep learning models like AOPEDF and DHGT-DTI that integrate vast, heterogeneous biological networks to capture both local and global topological features. Performance benchmarks consistently show that these modern, integrative approaches achieve high accuracy and, crucially, generalize well to external validation sets. Furthermore, the extension of these network principles to predict efficacious drug combinations using proximity measures like ( s_{AB} ) demonstrates the model's expanding utility in tackling complex challenges in pharmaceutical research. As public databases grow and algorithms become more sophisticated, network-based inference will continue to be an indispensable tool for accelerating drug development and repurposing.

In the field of network biology, topological measures provide powerful mathematical frameworks for analyzing complex biological systems, from protein-protein interactions to gene regulatory networks. These quantitative descriptors distill intricate network structures into interpretable numerical values that can correlate with and predict biological significance. Within the context of target prediction research, identifying influential nodes in biological networks is paramount for understanding disease mechanisms, identifying drug targets, and predicting intervention effects. The core premise is that the structural position of a node—whether it represents a protein, gene, or metabolite—within a network profoundly influences its functional importance and potential as a therapeutic target [20].

Among the diverse array of topological indices, degree distribution and centrality measures have emerged as fundamental tools for network-based inference. Degree distribution provides a macroscopic view of network connectivity patterns, revealing whether a network is random, scale-free, or hierarchical—each with distinct implications for robustness and vulnerability. Centrality measures, including degree, betweenness, and closeness centrality, offer complementary perspectives on node importance by quantifying different aspects of network position and influence [21]. When strategically applied to biological networks, these measures can identify critical nodes whose perturbation (e.g., through pharmacological inhibition) may yield significant therapeutic effects while minimizing unintended consequences.

The comparative analysis of these measures reveals that their performance is highly context-dependent, varying with network structure, biological system, and the specific research objective. No single measure universally outperforms others across all scenarios, necessitating a nuanced understanding of their theoretical foundations, computational requirements, and predictive capabilities for informed application in target prediction research.

Theoretical Foundations of Centrality Measures

Degree Centrality

Degree centrality represents the most intuitive and computationally straightforward measure of node influence, defined simply as the number of direct connections a node possesses. In mathematical terms, for a node ( v_i ) in graph ( G ), degree centrality is calculated as ( DC(v_{i}) = |\Gamma(v_{i})| ), where ( \Gamma(v_{i}) ) denotes the set of immediate neighbors [22]. In biological networks, nodes with high degree centrality (hubs) often correspond to proteins with multiple interaction partners or genes regulating numerous downstream targets.

The principal strength of degree centrality lies in its computational efficiency, making it applicable to very large-scale biological networks where more complex global measures become prohibitive. However, this local perspective also constitutes its main limitation: degree centrality fails to capture a node's position within the broader network context, potentially overlooking nodes that, despite moderate connectivity, occupy critically important positions as bridges between network modules [22] [23].

Betweenness Centrality

Betweenness centrality quantifies the extent to which a node acts as a bridge along the shortest paths between other nodes in the network. Formally, the betweenness centrality of a node ( v_i ) is defined as ( BC(v_{i}) = \sum_{j \neq i \neq k \in V(G)} \frac{SP_{v_{j}v_{k}}(v_{i})}{SP_{v_{j}v_{k}}} ), where ( SP_{v_{j}v_{k}} ) is the total number of shortest paths from node ( v_j ) to node ( v_k ), and ( SP_{v_{j}v_{k}}(v_{i}) ) is the number of those paths that pass through ( v_i ) [22].

This measure identifies bottleneck nodes that control information flow or molecular signaling between different network regions. In drug target applications, proteins with high betweenness centrality often represent attractive intervention points because their perturbation can disrupt communication between multiple functional modules. The primary drawback of betweenness centrality is its computational intensity, as calculating shortest paths between all node pairs becomes challenging in very large networks [23].
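The contrast between degree and betweenness can be made concrete with a small example. The sketch below computes both on a hypothetical toy network in which a single node, X, links two tightly connected triangles. Betweenness is computed with a Brandes-style accumulation, which is an implementation choice made here for brevity and is not discussed in the cited sources. Values are unnormalised and count each unordered node pair once.

```python
from collections import deque

def degree_centrality(adj):
    """DC(v) = |Gamma(v)|: the number of immediate neighbours."""
    return {v: len(nbrs) for v, nbrs in adj.items()}

def betweenness_centrality(adj):
    """Unnormalised betweenness for an undirected, unweighted graph."""
    bc = {v: 0.0 for v in adj}
    for s in adj:
        # BFS from s: count shortest paths (sigma) and record predecessors.
        sigma = {v: 0 for v in adj}
        dist = {v: -1 for v in adj}
        preds = {v: [] for v in adj}
        sigma[s], dist[s] = 1, 0
        order, queue = [], deque([s])
        while queue:
            v = queue.popleft()
            order.append(v)
            for w in adj[v]:
                if dist[w] < 0:
                    dist[w] = dist[v] + 1
                    queue.append(w)
                if dist[w] == dist[v] + 1:
                    sigma[w] += sigma[v]
                    preds[w].append(v)
        # Back-propagate dependencies from the farthest nodes inward.
        delta = {v: 0.0 for v in adj}
        for w in reversed(order):
            for v in preds[w]:
                delta[v] += sigma[v] / sigma[w] * (1.0 + delta[w])
            if w != s:
                bc[w] += delta[w]
    # Every unordered pair was visited from both endpoints, so halve the totals.
    return {v: score / 2.0 for v, score in bc.items()}

# Hypothetical network: two triangles joined only through node X.
toy = {
    "A1": ["A2", "A3"], "A2": ["A1", "A3"], "A3": ["A1", "A2", "X"],
    "X": ["A3", "B1"],
    "B1": ["X", "B2", "B3"], "B2": ["B1", "B3"], "B3": ["B1", "B2"],
}
degree = degree_centrality(toy)
betweenness = betweenness_centrality(toy)
top_bottleneck = max(betweenness, key=betweenness.get)
```

Node X has only two neighbours, fewer than A3 or B1, yet it has the highest betweenness because every shortest path between the two triangles passes through it. This is the kind of bridge node that degree-based screening alone would overlook.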

Closeness Centrality

Closeness centrality measures how quickly a node can reach all other nodes in the network via shortest paths. It is defined as the inverse of the average shortest path distance from a node to all other nodes in the network. Nodes with high closeness centrality can rapidly disseminate signals or influences throughout the network [21].

In biological contexts, closeness centrality helps identify nodes capable of broadly affecting network states, making them potentially valuable for interventions aiming to systemically modulate cellular processes. Like betweenness centrality, closeness requires global network information and can be computationally demanding for large networks [22].
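For reference, the verbal definition above can be written as a formula. The notation is an assumption introduced here: ( n ) is the number of nodes and ( d(v_i, v_j) ) is the shortest-path distance between two nodes. The ( n - 1 ) normalisation is one common convention, and the expression is only well defined when every node is reachable from ( v_i ).

```latex
\[
  CC(v_i) = \frac{n - 1}{\sum_{j \neq i} d(v_i, v_j)}
\]
% Worked example on the three-node path a - b - c (n = 3):
%   CC(b)         = 2 / (1 + 1) = 1
%   CC(a) = CC(c) = 2 / (1 + 2) = 2/3
```

The central node b reaches every other node in one step and therefore receives the maximum score, while the two end nodes score lower.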

Comparative Analysis of Centrality Measures

Performance Across Network Types

The effectiveness of centrality measures varies significantly depending on network structure and the specific biological question under investigation. Table 1 summarizes the characteristic performance profiles of major centrality measures across different network types and applications.

Table 1: Comparative Performance of Centrality Measures in Biological Networks

| Centrality Measure | Computational Complexity | Key Strength | Key Limitation | Ideal Application Context |

|---|---|---|---|---|

| Degree Centrality | Low (O(n)) | Identifies highly connected hubs; Computational efficiency | Ignores global network position; Overlooks bottlenecks | Initial screening; Local influence assessment |

| Betweenness Centrality | High (O(nm) with Brandes' algorithm on unweighted graphs with n nodes and m edges; O(n³) with all-pairs matrix methods) | Identifies critical bridges and bottlenecks | Computationally intensive for large networks | Target identification for disrupting network communication |

| Closeness Centrality | High (O(nm) with a breadth-first search from every node; O(n³) with all-pairs matrix methods) | Identifies rapidly spreading nodes | Sensitive to disconnected components; Computationally intensive | Identifying broad-scale influencers in connected networks |

| K-shell Centrality | Low to moderate (O(n + m)) | Identifies network core positions | Coarse-grained ranking; Many nodes receive same value | Hierarchical analysis; Core-periphery structure identification |

| Complex Centrality | High | Specifically designed for complex contagions | Recently developed; Less validation in biological contexts | Social contagions; Behaviors requiring reinforcement |

Experimental Validation in Target Prediction

Empirical studies have directly compared centrality measures for identifying biologically significant nodes. In one notable investigation of drug target prediction algorithms, betweenness centrality emerged as a particularly informative topological measure. The study found that network topology predominantly determined prediction accuracy, leading to the development of TREAP (Target Inference by Ranking Betweenness Values and Adjusted P-values), which combines betweenness centrality with gene expression data for improved target identification [24].

The EDDC (Entropy Degree Distance Combination) approach represents another advancement, integrating local and global measures to overcome limitations of individual centrality metrics. By combining degree, entropy, and path information, EDDC addresses the monotonicity ranking issue where traditional methods like K-shell decomposition often assign identical scores to multiple nodes [22].

For complex contagions—phenomena requiring reinforcement from multiple sources—recent research demonstrates that traditional centrality measures based on simple path length frequently misidentify influential nodes. Complex centrality, which incorporates bridge width and reinforcement requirements, significantly outperforms traditional measures in identifying optimal seeding locations for such diffusion processes [25].

Methodological Protocols for Centrality Analysis

Standard Workflow for Network-Based Target Prediction

Implementing centrality analysis in target prediction requires a systematic approach to ensure biologically meaningful results. The following workflow outlines key methodological steps:

- Network Construction: Assemble the biological network from high-quality, context-specific data. For protein-protein interaction networks, databases like STRING provide confidence-scored interactions. For gene regulatory networks, resources like Regulatory Circuits offer cell-type-specific interactions [24].

- Network Pruning: Apply biologically relevant thresholds to remove low-confidence interactions. Studies suggest that moderate thresholds (e.g., 0.6-0.7 for STRING interactions) often optimize the trade-off between network quality and completeness [24].

- Centrality Calculation: Compute multiple centrality measures using network analysis tools such as the igraph R package or Python's NetworkX library. Parallel processing can accelerate computation for betweenness and closeness centrality in large networks [23].

- Statistical Integration: Combine centrality scores with complementary biological data. The TREAP algorithm exemplifies this approach by integrating betweenness centrality with differential expression analysis (adjusted p-values) [24].

- Experimental Validation: Prioritize candidate targets based on integrated scores and validate through perturbation experiments. Single-cell RNA sequencing technologies now enable high-resolution validation of network predictions [4].
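The pruning and centrality steps of this workflow can be sketched with NetworkX. The example below is illustrative only: the edge list, protein labels, and confidence scores are hypothetical, scores are assumed to lie on a 0-1 scale, and the 0.7 cutoff is simply one value from the range mentioned above. The helper name `prune_and_rank` is introduced here and is not part of any library.

```python
import networkx as nx

def prune_and_rank(scored_edges, threshold=0.7):
    """Drop edges below the confidence threshold, then rank nodes by betweenness."""
    G = nx.Graph()
    G.add_weighted_edges_from(
        (u, v, w) for u, v, w in scored_edges if w >= threshold
    )
    # Normalised betweenness on the pruned, unweighted topology.
    bc = nx.betweenness_centrality(G, normalized=True)
    return sorted(bc.items(), key=lambda item: (-item[1], item[0]))

# Hypothetical confidence-scored interactions (protein_a, protein_b, score).
edges = [
    ("P1", "P2", 0.95), ("P1", "P3", 0.90), ("P1", "P4", 0.85),
    ("P3", "P4", 0.80), ("P2", "P5", 0.90), ("P5", "P6", 0.75),
    ("P1", "P7", 0.72),
    ("P4", "P6", 0.40),  # below the threshold, so removed by pruning
]
ranking = prune_and_rank(edges)
top_node, top_score = ranking[0]
```

Here the low-confidence P4-P6 interaction is discarded before centrality is computed. Keeping it would open a second route between the two halves of the network and lower the betweenness of the nodes on the remaining route, which is why the choice of threshold can change the ranking.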

The following diagram illustrates this methodological workflow:

Benchmarking Frameworks

Rigorous evaluation of centrality measures requires standardized benchmarking frameworks. CausalBench represents one such comprehensive benchmark specifically designed for network inference methods using large-scale single-cell perturbation data. This suite employs both biology-driven evaluations (comparing predictions to established biological knowledge) and statistical evaluations (assessing causal effect strength) to objectively compare method performance [4].

When benchmarking centrality measures for target prediction, key metrics include:

- Precision-Recall tradeoff: The ability to identify true targets while minimizing false positives

- Scalability: Computational efficiency with increasing network size

- Biological relevance: Correlation with known essential genes or validated targets
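The precision-recall trade-off listed above is often reported at a fixed cutoff k on the ranked candidate list. A minimal sketch follows; the candidate identifiers and the set of known targets are hypothetical placeholders.

```python
def precision_recall_at_k(ranked_candidates, known_targets, k):
    """Precision and recall of the top-k ranked candidates against known targets."""
    top_k = ranked_candidates[:k]
    hits = sum(1 for candidate in top_k if candidate in known_targets)
    precision = hits / k
    recall = hits / len(known_targets)
    return precision, recall

# Hypothetical ranked predictions and validated targets.
ranked = ["T3", "T7", "T1", "T9", "T4"]
known = {"T1", "T3", "T8"}

p_at_3, r_at_3 = precision_recall_at_k(ranked, known, k=3)
p_at_5, r_at_5 = precision_recall_at_k(ranked, known, k=5)
```

Extending the cutoff from three to five candidates adds no new true targets in this example, so recall is unchanged while precision falls.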

Research Reagent Solutions for Network Analysis

Implementing network centrality analysis requires both computational tools and data resources. Table 2 catalogues essential research reagents for conducting comprehensive topological analyses in biological networks.

Table 2: Essential Research Reagents and Resources for Network Centrality Analysis

| Resource Category | Specific Tools/Databases | Primary Function | Application Context |

|---|---|---|---|

| Network Data Resources | STRING, Regulatory Circuits, TRRUST | Provide experimentally validated and predicted molecular interactions | Network construction for proteins, genes, and transcription factors |

| Computational Tools | igraph (R/Python), NetworkX, Cytoscape | Calculate centrality measures and visualize biological networks | Implementation of algorithms for degree, betweenness, closeness centrality |

| Benchmarking Suites | CausalBench | Evaluate network inference method performance on perturbation data | Method validation and comparison in realistic biological contexts |

| Specialized Algorithms | TREAP, EDDC, inferCSN | Integrate centrality with other data types for improved prediction | Advanced target identification incorporating multiple data modalities |

Degree distribution and network centrality measures provide indispensable mathematical frameworks for identifying influential nodes in biological networks, with significant implications for target prediction in drug discovery. The comparative analysis presented herein demonstrates that each centrality measure offers distinct advantages and limitations, with optimal selection dependent on network structure, biological context, and specific research objectives.

Betweenness centrality has proven particularly valuable for identifying bottleneck proteins whose perturbation can disrupt disease-relevant pathways, while degree centrality remains useful for initial screening due to its computational efficiency. Recent methodological advances, including hybrid approaches like EDDC that integrate multiple measures and algorithms like TREAP that combine topological with molecular data, demonstrate the evolving sophistication of network-based target prediction.

As network biology continues to mature, the integration of topological measures with multi-omics data and single-cell resolution will likely further enhance our ability to identify therapeutically valuable targets within complex biological systems.

The Rationale Behind Link Prediction in Biological Networks

Biological systems are fundamentally composed of complex networks of interacting molecules, weaving the elaborate tapestry of life. These networks, encompassing proteins, genes, and metabolites, regulate critical processes from cellular signaling to organism-wide functions [26]. In this intricate molecular terrain, link prediction has emerged as an indispensable computational methodology for inferring missing or potential interactions between biological entities. The primary rationale for its application is to overcome the inherent incompleteness of experimentally derived biological networks and to generate actionable hypotheses for subsequent research and therapeutic development [27]. By leveraging the existing structure of known networks, link prediction provides a powerful, data-driven approach to map the vast uncharted territories of biological interactions, thereby accelerating our understanding of disease mechanisms and the identification of novel drug targets [28] [29].

This guide presents a comparative analysis of network-based inference methods, focusing on their application in target prediction research. We objectively evaluate the performance of diverse algorithmic families—from traditional topological approaches to modern graph embedding and deep learning techniques—by synthesizing findings from controlled experimental benchmarks. The following sections provide a detailed examination of their underlying methodologies, quantitative performance data, and practical protocols for implementation, offering drug development professionals a clear framework for selecting appropriate tools for their specific research contexts.

Methodological Approaches to Link Prediction

Link prediction algorithms can be broadly categorized into several classes based on their underlying computational principles. The following table summarizes the core methodologies, their key features, and representative algorithms.

Table 1: Methodological Families for Link Prediction in Biological Networks

| Method Family | Core Principle | Key Features | Representative Algorithms |

|---|---|---|---|

| Topological & Similarity-Based | Predicts links based on network structure metrics and node proximity. | Computationally lightweight; interpretable results; relies solely on network topology. | Common Neighbors, Betweenness Centrality, TREAP [29] |

| Graph Embedding | Maps network nodes into a low-dimensional vector space while preserving structural features. | Reduces high-dimensionality; facilitates use in machine learning classifiers. | Chopper, node2vec, DeepWalk, struc2vec [28] [27] |

| Machine Learning (Traditional) | Uses hand-engineered features (e.g., topological indices) with classifiers. | Requires feature engineering; can integrate diverse data types beyond topology. | Random Forests (GENIE3), Support Vector Machines [30] [31] |

| Deep Learning | Employs complex neural networks to automatically learn hierarchical features from raw data. | High predictive accuracy; capable of modeling non-linear patterns; requires large datasets. | Graph Neural Networks (GCN, GAT), GraphSAGE, Convolutional Neural Networks [31] |
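As a concrete instance of the topological family in the first row of the table, the sketch below scores every unlinked node pair by its number of shared neighbours (the Common Neighbors heuristic). The toy network and the function name are hypothetical.

```python
from itertools import combinations

def common_neighbor_scores(adj):
    """Rank non-adjacent node pairs by their number of shared neighbours."""
    scores = {}
    for u, v in combinations(sorted(adj), 2):
        if v in adj[u]:
            continue  # already linked, so there is nothing to predict
        scores[(u, v)] = len(adj[u] & adj[v])
    # Highest score first; ties broken alphabetically for reproducibility.
    return sorted(scores.items(), key=lambda item: (-item[1], item[0]))

# Hypothetical PPI network stored as neighbour sets.
toy_ppi = {
    "P1": {"P2", "P3"},
    "P2": {"P1", "P3", "P4"},
    "P3": {"P1", "P2", "P4"},
    "P4": {"P2", "P3", "P5"},
    "P5": {"P4"},
}
ranked_pairs = common_neighbor_scores(toy_ppi)
```

The pair P1-P4 ranks first because the two proteins share two interaction partners, which illustrates why such methods are cheap to compute and easy to interpret.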

The Graph Embedding Workflow

A prominent approach for modern link prediction involves graph embedding followed by a classification step. The general workflow can be visualized as follows:

Diagram 1: Graph embedding and classification workflow for link prediction.

Comparative Performance Analysis

To objectively compare the practical performance of different link prediction methods, we synthesized data from benchmark studies that evaluated algorithms on real-world biological network datasets.

Performance on Protein-Protein Interaction (PPI) Networks

Extensive experiments were conducted on tissue-specific human PPI networks from the Stanford Network Analysis Project (SNAP) [28]. The following table summarizes the embedding time and classification accuracy (Area Under the Curve, AUC) of the Chopper algorithm against other state-of-the-art graph embedding methods.

Table 2: Performance Comparison of Embedding Methods on PPI Link Prediction [28]

| Method | Embedding Time (Seconds) | AUC on Nervous System PPI | AUC on Blood PPI | AUC on Heart PPI |

|---|---|---|---|---|

| Chopper | ~50 | ~0.98 | ~0.97 | ~0.97 |

| node2vec | ~450 | ~0.96 | ~0.95 | ~0.95 |

| DeepWalk | ~400 | ~0.95 | ~0.94 | ~0.94 |

| struc2vec | ~650 | ~0.93 | ~0.92 | ~0.92 |

Experimental Protocol: The evaluation used three undirected PPI networks (Nervous System: 3,533 nodes, 54,555 edges; Blood: 3,316 nodes, 53,101 edges; Heart: 3,201 nodes, 48,719 edges). After applying the graph embedding algorithm to generate node features, feature regularization techniques were applied to reduce dimensionality. A classifier was then trained to distinguish positive interactions from randomly generated negative pairs, with performance evaluated using the AUC metric [28].

Performance in Drug Target Inference

Another critical application is drug target inference, where the goal is to predict the binding targets of pharmaceutical compounds. The TREAP algorithm, which leverages the topological feature of betweenness centrality, was benchmarked against other established methods.

Table 3: Performance in Drug Target Inference (Based on [29])

| Algorithm | Core Methodology | Key Performance Insight | Computational Demand |

|---|---|---|---|

| TREAP | Topological (Betweenness Centrality) & Statistical (p-values) | Often more accurate than state-of-the-art approaches; easy-to-interpret results. | Low |

| ProTINA | Network-based inference from gene expression | Accuracy is predominantly determined by network topology. | High |

| DeMAND | Network-based inference from gene expression | Overly complex for some applications; performance varies. | High |

Experimental Protocol: Studies typically involve treating a cell line with a drug and measuring the resulting gene expression changes. The algorithm uses a background network (e.g., a protein-protein or gene regulatory network) and the differential expression data to rank potential protein targets. Predictions are validated against known drug-target pairs from databases or follow-up experimental assays [29].

Experimental Protocols for Key Methodologies

Protocol A: Link Prediction with Graph Embedding

This protocol is based on the workflow used to evaluate the Chopper algorithm [28].

- Network Preparation: Obtain a biological network (e.g., a PPI network) formatted as a list of edges. Ensure the network is unweighted and undirected for compatibility with many standard algorithms.

- Graph Embedding: Apply the chosen graph embedding algorithm (e.g., Chopper, node2vec) to the network. This step maps each node to a low-dimensional vector, generating an embedding matrix ( H \in \mathbb{R}^{n \times d} ), where ( n ) is the number of nodes and ( d ) is the embedding dimension.

- Feature Generation for Links: For each candidate pair of nodes ( (u, v) ), create a feature vector that represents the potential link. This is often done by applying a binary operator (e.g., concatenation, element-wise product) to the embeddings of nodes ( u ) and ( v ) (( h_u ) and ( h_v )).

- Dimensionality Reduction: To improve classifier efficiency and performance, apply feature regularization or dimensionality reduction techniques (e.g., Principal Component Analysis) to the generated link feature vectors.

- Classifier Training and Evaluation:

  - Construct a labeled dataset with positive examples (known edges) and negative examples (randomly sampled non-edges).

  - Split the data into training and test sets.

  - Train a machine learning classifier (e.g., Support Vector Machine, Random Forest) on the training set.

  - Use the trained classifier to predict links on the test set and evaluate performance using metrics like AUC.
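The data-preparation and evaluation parts of Protocol A can be sketched with NumPy. This is a minimal illustration under stated assumptions: the embedding matrix is filled with random numbers as a stand-in for the output of Chopper or node2vec, the element-wise product is used as the binary operator, and AUC is computed directly from its pairwise-ranking definition. Dimensionality reduction and classifier training are left out, and all function names are hypothetical.

```python
import numpy as np

def hadamard_features(H, pairs):
    """Element-wise product h_u * h_v for each candidate pair (u, v)."""
    idx = np.asarray(pairs)
    return H[idx[:, 0]] * H[idx[:, 1]]

def sample_negative_pairs(n_nodes, edges, n_samples, rng):
    """Draw distinct random node pairs that are not known edges (assumed negatives).

    Assumes n_samples does not exceed the number of available non-edges.
    """
    known = {frozenset(e) for e in edges}
    negatives = set()
    while len(negatives) < n_samples:
        u, v = rng.choice(n_nodes, size=2, replace=False)
        pair = frozenset((int(u), int(v)))
        if pair not in known:
            negatives.add(pair)
    return sorted(tuple(sorted(p)) for p in negatives)

def auc(pos_scores, neg_scores):
    """Probability that a random positive outranks a random negative (ties count 0.5)."""
    pos = np.asarray(pos_scores, dtype=float)[:, None]
    neg = np.asarray(neg_scores, dtype=float)[None, :]
    return float(((pos > neg) + 0.5 * (pos == neg)).mean())

rng = np.random.default_rng(0)
H = rng.normal(size=(6, 4))            # placeholder for a learned n x d embedding
edges = [(0, 1), (1, 2), (2, 3), (3, 4)]
negatives = sample_negative_pairs(6, edges, n_samples=4, rng=rng)

# Feature matrix and labels, ready for a train/test split and any classifier.
X = np.vstack([hadamard_features(H, edges), hadamard_features(H, negatives)])
y = np.array([1] * len(edges) + [0] * len(negatives))
```

Randomly sampled non-edges are only presumed negatives, since some of them may be true interactions that have not yet been observed. This is worth keeping in mind when interpreting AUC values obtained this way.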

Protocol B: Target Inference with Topological Features

This protocol outlines the steps for methods like TREAP, which rely on network topology [29].

- Network and Data Integration: Compile a relevant interaction network (e.g., a gene regulatory network). Integrate this with experimental data, such as gene expression profiles from drug-treated versus control samples.

- Topological Analysis: Calculate centrality measures (e.g., betweenness centrality) for all nodes in the network. Betweenness centrality identifies nodes that frequently lie on the shortest paths between other nodes, acting as critical bridges.

- Statistical Scoring: For each node, compute a statistical score (e.g., an adjusted p-value) that quantifies the significance of the observed experimental data (e.g., differential expression).

- Target Ranking and Inference: Combine the topological and statistical scores to generate a final ranked list of potential drug targets. Nodes with high betweenness centrality and statistically significant changes are prioritized as high-confidence predictions.

- Experimental Validation: Select top-ranked candidate targets for validation using wet-lab experiments such as co-immunoprecipitation or functional knockdown/knockout assays.
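The ranking step of Protocol B can be illustrated with a simple rank-sum scheme. This is an assumption made for illustration: the protocol above says only that topological and statistical scores are combined, and the sketch should not be read as TREAP's exact scoring rule. Gene labels and values are hypothetical.

```python
def ranks(scores, higher_is_better):
    """Map each gene to its 1-based rank (rank 1 = most promising)."""
    sign = -1.0 if higher_is_better else 1.0
    ordered = sorted(scores, key=lambda g: (sign * scores[g], g))
    return {g: i + 1 for i, g in enumerate(ordered)}

def combine_ranks(betweenness, adj_pvalues):
    """Sum the betweenness rank and the adjusted p-value rank; lower totals rank first."""
    genes = betweenness.keys() & adj_pvalues.keys()
    b_rank = ranks({g: betweenness[g] for g in genes}, higher_is_better=True)
    p_rank = ranks({g: adj_pvalues[g] for g in genes}, higher_is_better=False)
    total = {g: b_rank[g] + p_rank[g] for g in genes}
    return sorted(total.items(), key=lambda item: (item[1], item[0]))

# Hypothetical inputs: network betweenness and differential-expression adjusted p-values.
betweenness = {"G1": 0.42, "G2": 0.10, "G3": 0.31, "G4": 0.02}
adj_pvalues = {"G1": 0.002, "G2": 0.001, "G3": 0.004, "G4": 0.600}
target_ranking = combine_ranks(betweenness, adj_pvalues)
```

G1 comes out on top because it ranks first on betweenness and second on significance, whereas G4 ranks last on both criteria.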

The logical flow of this topological approach is illustrated below:

Diagram 2: Workflow for topological target inference.

Successful implementation of link prediction requires a suite of computational tools and data resources. The table below details key components of the research toolkit.

Table 4: Essential Research Reagents and Resources for Link Prediction

| Resource Type | Name & Examples | Primary Function | Relevance to Link Prediction |

|---|---|---|---|

| Public Network Databases | BioGRID [32], STRING [32], MIPS [32], KEGG [26] | Provide repositories of known biological interactions (PPIs, regulatory links). | Source of gold-standard data for training algorithms and benchmarking predictions. |

| Specialized Datasets | Stanford SNAP PPI Networks [28], GeneNetWeaver (GNW) [30] | Offer curated, tissue-specific networks or in silico benchmark networks. | Enable controlled performance evaluation on realistic biological topologies. |

| Programming Libraries & Tools | Cytoscape [26], NetworkX [26], "WGCNA" R package [32] | Provide environments for network visualization, analysis, and construction (e.g., correlation networks). | Facilitate network manipulation, preliminary topological analysis, and visualization of results. |

| Algorithm Implementations | node2vec, GENIE3 [30], Graph Neural Network libraries (e.g., for GCN, GAT) [31] | Offer ready-to-use implementations of state-of-the-art inference and embedding algorithms. | Accelerate development and deployment of link prediction pipelines without building from scratch. |

The comparative analysis presented in this guide elucidates a clear trade-off between methodological complexity, computational cost, and predictive performance in biological link prediction. Topological methods like TREAP offer high interpretability and low computational demand, making them excellent for exploratory analysis and contexts where mechanistic insight is paramount [29]. In contrast, graph embedding approaches like Chopper demonstrate superior speed and classification accuracy on large, complex networks such as tissue-specific PPIs, providing a robust solution for comprehensive network completion tasks [28]. The emerging field of deep learning, particularly using Graph Neural Networks, shows immense promise for capturing non-linear patterns and integrating multimodal data, though it often requires greater computational resources and expertise [31].

For researchers in drug discovery and target prediction, the selection of an appropriate link prediction method should be guided by the specific biological question, the nature and quality of the available network data, and the resources available for computational and experimental validation. As biological datasets continue to grow in scale and complexity, the synergy between these methodological families—leveraging the interpretability of topology with the power of embedding and deep learning—will undoubtedly form the cornerstone of next-generation network inference tools.

The paradigm of drug discovery has undergone a fundamental transformation over the past several decades, shifting from the reductionist "one drug-one target" model toward a network-oriented approach embracing polypharmacology. This shift reflects the growing understanding that therapeutic efficacy, particularly for complex diseases, often requires modulation of multiple biological targets simultaneously [33] [34]. The traditional model, conceived in the early 1960s, was predicated on a simplistic perspective of human physiology where administering a single drug to modulate a specific target would revert a pathobiological state to health [34]. However, this approach has demonstrated significant limitations, with drugs frequently exhibiting promiscuous behavior by interacting with an estimated 6-28 off-target moieties on average [34].

The emerging paradigm of systems pharmacology deliberately designs therapeutic drugs for multi-targeting to afford beneficial effects to patients [34]. This approach aligns with the staggering complexity of human biology: an individual human consists of approximately 37.2 trillion cells of 210 different cell types, organized into 78 organs and organ systems, and hosts an estimated 100-300 trillion microbes that play an intimate role in human health and pathobiology [34]. Within this complex system, an estimated 3.2 × 10^25 chemical reactions and interactions occur daily in a single individual, a number exceeding the estimated grains of sand on Earth [34]. This biological complexity fundamentally challenges the one drug-one target model and necessitates more sophisticated approaches to therapeutic intervention.

Fundamental Differences Between the Two Paradigms

Core Principles and Philosophical Foundations

The traditional "one drug-one target" paradigm operates on a linear model of "one drug → one target → one disease," assuming that selectively modulating a single target will produce the desired therapeutic effect without significant off-target consequences [8]. This reductionist perspective emerged from early successes like receptor-specific antagonists but fails to account for the network nature of biological systems, where targets operate within interconnected pathways and networks [34]. The approach relies heavily on the concept of high selectivity, where drug optimization focuses primarily on maximizing affinity for a single target while minimizing interactions with others.

In contrast, the polypharmacology paradigm embraces a network model of "multi-drugs → multi-targets → multi-diseases" that acknowledges most drugs inherently interact with multiple targets in vivo [8]. This approach recognizes that therapeutic effects often emerge from coordinated modulation of multiple targets within disease-relevant networks [33]. Rather than considering off-target effects as undesirable, polypharmacology seeks to rationally design multi-target profiles that maximize efficacy while managing safety concerns [34]. The philosophical foundation rests on systems biology principles, viewing biological systems as complex, dynamic networks where interventions must account for interconnectivity and redundancy [34].

Technological and Methodological Requirements

The implementation of these paradigms requires distinctly different methodological approaches and technological infrastructures:

Table 1: Methodological Requirements of Different Drug Discovery Paradigms

| Aspect | One Drug-One Target Paradigm | Polypharmacology Paradigm |

|---|---|---|

| Primary Screening Methods | High-throughput screening against single targets | Parallel or sequential multi-target screening |

| Computational Approaches | Molecular docking, QSAR, ligand-based similarity | Network biology, systems pharmacology, chemoproteomics |

| Data Requirements | Target-specific activity data | Multi-scale omics data (genomics, proteomics, phenomics) |

| Validation Strategies | Target-specific in vitro and in vivo models | Complex disease models, systems-level validation |

| Key Limitations | Poor efficacy in complex diseases, high attrition rates | Design complexity, potential for unanticipated interactions |

The one drug-one target approach predominantly utilizes reductionist experimental models that isolate specific targets from their biological context [34]. Computational methods include molecular docking-based approaches that rely on three-dimensional structures of targets, pharmacophore-based methods, and similarity searching based on the hypothesis that similar drugs share similar targets [8]. These methods face significant limitations when target structures are unavailable, as with many G protein-coupled receptors where only approximately 30 of more than 800 members have resolved crystal structures [8].

Polypharmacology employs network-based methods that do not rely on three-dimensional structures of targets or negative samples [8]. These include network-based inference (NBI) algorithms derived from recommendation systems, which predict potential drug-target interactions (DTIs) by performing resource diffusion processes on known DTI networks [8]. Additional approaches include chemo-proteomics strategies that allow unsupervised dissection of drug polypharmacology [35], and heterogeneous network models that integrate multiview path aggregation to systematically characterize multidimensional associations between biological entities [3].
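The resource diffusion idea behind NBI can be sketched in a few lines of NumPy. The example follows the general two-step, ProbS-style formulation: each drug's resource is split equally among its known targets and then redistributed from each target equally among the drugs that hit it. The exact normalisation used in [8] should be checked against that source. The 3 × 3 interaction matrix is hypothetical, and the sketch assumes every drug and every target has at least one known interaction.

```python
import numpy as np

def nbi_scores(A):
    """Two-step resource diffusion on a drug x target adjacency matrix A."""
    A = np.asarray(A, dtype=float)
    k_drug = A.sum(axis=1)     # number of known targets per drug
    k_target = A.sum(axis=0)   # number of known drugs per target
    # W[i, o]: fraction of drug o's initial resource that ends up on drug i.
    W = (A / k_target) @ A.T / k_drug
    # F[i, j]: final resource of drug i when diffusion starts from target j.
    return W @ A

# Hypothetical known interactions: rows are drugs, columns are targets.
A = np.array([[1, 1, 0],
              [0, 1, 1],
              [1, 0, 0]])
F = nbi_scores(A)

# Mask known interactions so that only new candidates are ranked.
candidates = np.where(A == 1, -np.inf, F)
best_new_target = candidates.argmax(axis=1)
```

Only the known interaction matrix is needed as input: no target structures and no confirmed negative samples enter the calculation, which is the property highlighted in the paragraph above. Total resource is conserved for each target column, a useful sanity check on any implementation.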

Performance Comparison: Quantitative Analysis

Effectiveness in Different Therapeutic Areas

The performance of these paradigms varies significantly across therapeutic areas, with polypharmacology demonstrating particular advantage for complex diseases involving biological networks:

Table 2: Paradigm Performance Across Therapeutic Areas Based on FDA-Approved NMEs (2000-2015)

| Therapeutic Area (ATC Class) | Average Target Number per Drug | Exemplary Multi-Target Drugs | Key Findings |

|---|---|---|---|

| Nervous System | Highest (5+ targets for many drugs) | Zonisamide (31 targets), Ziprasidone (25 targets), Asenapine (20 targets) | 12 of 20 NMEs with ≥11 targets belong to this class |

| Cardiovascular System | Moderate | Dronedarone (18 targets) | Targets often cluster with metabolic disease targets |

| Antineoplastic & Immunomodulating Agents | Moderate to High | Pazopanib (10 targets) | Form distinct target clusters in network analysis |

| General Anti-infectives | Lowest (1.38) | Mostly single-target drugs | Most antimicrobials demonstrate single-target activity |

| Overall Average (All NMEs) | 2.1-5.1 (annual fluctuation) | Varies by year | 2009 showed peak multi-target activity (5.12 targets/drug) |

Data from FDA-approved New Molecular Entities (NMEs) between 2000 and 2015 reveal that nervous system drugs consistently exhibit the highest degree of polypharmacology, with many drugs targeting numerous proteins simultaneously [36]. This multi-target nature appears biologically necessary for therapeutic efficacy in complex neurological disorders. In contrast, general anti-infectives show the lowest average target number, as most drugs targeting infectious microorganisms are single-target [36]. This difference across therapeutic areas highlights the context-dependent value of polypharmacology.

Network analysis of drug-target interactions reveals that targets of nervous system NMEs form distinct clusters and have the highest degree in drug-target networks, indicating these targets are commonly engaged by different drugs [36]. Similarly, targets for antineoplastic and immunomodulating agents form their own clusters, while targets for alimentary tract and metabolic diseases tend to mix with cardiovascular targets, reflecting their interconnected physiological roles [36].
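The two quantities used in this comparison, the number of targets per drug and the degree of each target in the drug-target network, can be computed directly from a list of interactions. The following is a minimal sketch under stated assumptions: the drug and target names are hypothetical toy data, not values from the FDA NME dataset in [36], and `degree_summary` is an illustrative helper rather than a function from any published tool.

```python
from collections import defaultdict

# Hypothetical toy drug-target pairs; not taken from the FDA NME dataset.
interactions = [
    ("drug_A", "T1"), ("drug_A", "T2"), ("drug_A", "T3"),
    ("drug_B", "T2"), ("drug_B", "T3"),
    ("drug_C", "T4"),
]

def degree_summary(pairs):
    """Return (targets per drug, drugs per target, mean targets per drug)."""
    drug_targets = defaultdict(set)
    target_drugs = defaultdict(set)
    for drug, target in pairs:
        drug_targets[drug].add(target)
        target_drugs[target].add(drug)
    # Degree of a node is the number of distinct neighbours on the other side.
    drug_degree = {d: len(t) for d, t in drug_targets.items()}
    target_degree = {t: len(d) for t, d in target_drugs.items()}
    mean_targets = sum(drug_degree.values()) / len(drug_degree)
    return drug_degree, target_degree, mean_targets

drug_degree, target_degree, mean_targets = degree_summary(interactions)
print(drug_degree)    # targets per drug
print(target_degree)  # drugs per target (target degree)
print(mean_targets)   # average target number per drug
```

In this toy network, targets T2 and T3 have the highest degree because two different drugs engage them, which is the same property the text attributes to nervous system targets.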

Computational Prediction Performance

Computational methods for predicting drug-target interactions show measurable performance differences between approaches aligned with each paradigm:

Table 3: Performance Comparison of Computational DTI Prediction Methods

| Method Category | Representative Methods | Key Advantages | Limitations | Reported Performance |
|---|---|---|---|---|
| Structure-Based | Molecular docking, inverse docking | Provides structural insights, mechanism of action | Limited by available protein structures | Varies widely by target |
| Ligand Similarity-Based | SEA, ChemMapper, DTiGEMS | Computationally efficient, simple implementation | Limited to similar chemical space | Moderate (method-dependent) |
| Network-Based | NBI, heterogeneous network models | No need for 3D structures or negative samples | Limited by network completeness | AUROC: 0.966 (MVPA-DTI) [3] |
| Machine Learning-Based | DeepDTA, MONN, TransformerCPI | Handles complex patterns, integrates diverse features | Requires large training datasets | AUROC: 0.901-0.966 (varies by method) |
| Integrated Methods | MVPA-DTI, DTIAM | Combines multiple data types, higher accuracy | Computational complexity | Highest (AUPR: 0.901) [3] |

Recent advanced methods like DTIAM, a unified framework for predicting interactions, binding affinities, and activation/inhibition mechanisms between drugs and targets, demonstrate substantial performance improvement over other state-of-the-art methods across all tasks, particularly in cold start scenarios [6]. DTIAM learns drug and target representations from large amounts of label-free data through self-supervised pre-training, accurately extracting substructure and contextual information that benefits downstream prediction [6].

Similarly, the MVPA-DTI model achieves an AUROC of 0.966 and AUPR of 0.901, representing improvements of 1.7% and 0.8% respectively over baseline methods [3]. This heterogeneous network model based on multiview path aggregation integrates drugs, proteins, diseases, and side effects from multisource heterogeneous data to systematically characterize multidimensional associations between biological entities [3].
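For readers less familiar with the two metrics quoted above, the sketch below shows one common way to compute them from ranked prediction scores. It is an illustration only: the labels and scores are hypothetical toy values, AUPR is estimated here by average precision (the estimator used in [3] is not stated in this article), and the function names are assumptions introduced for the example.

```python
def auroc(labels, scores):
    """AUROC as the probability that a positive outranks a negative (ties count 0.5)."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

def average_precision(labels, scores):
    """Mean of the precision measured at each positive, ranked by descending score."""
    ranked = sorted(zip(scores, labels), key=lambda pair: -pair[0])
    hits, total = 0, 0.0
    for rank, (_, y) in enumerate(ranked, start=1):
        if y == 1:
            hits += 1
            total += hits / rank
    return total / hits

# Hypothetical toy data: 1 = known interaction, 0 = unlabeled pair.
toy_labels = [1, 0, 1, 0, 0, 1]
toy_scores = [0.9, 0.8, 0.7, 0.4, 0.3, 0.2]

roc = auroc(toy_labels, toy_scores)
ap = average_precision(toy_labels, toy_scores)
print(round(roc, 3), round(ap, 3))
```

Because known DTIs are far rarer than unlabeled pairs, AUPR is usually the stricter of the two numbers, which is consistent with the gap between 0.966 and 0.901 reported for MVPA-DTI.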

Experimental Protocols and Methodologies

Network-Based Inference Methods

Network-based inference (NBI) methods represent one of the most significant methodological advances supporting the polypharmacology paradigm. These methods are derived from recommendation algorithms used in recommender systems and link prediction algorithms in complex networks [8]. The basic NBI algorithm can predict potential drug-target interactions using only the known DTI network without any additional information about chemical structures or protein sequences [8].

The experimental workflow for network-based DTI prediction typically involves:

1. Heterogeneous Network Construction: Integrating diverse biological entities (drugs, targets, diseases, side effects) and their relationships into a unified graph structure [3]

2. Feature Extraction: Utilizing advanced representation learning methods such as molecular attention transformers for drug 3D structure information and protein-specific large language models (e.g., Prot-T5) for protein sequence features [3]

3. Meta-Path Aggregation: Dynamically integrating information from both feature views and biological network relationship views to learn potential interaction patterns [3]

4. Interaction Prediction: Applying resource diffusion algorithms, collaborative filtering, or random walk with restart to infer novel interactions [8]

These methods are simple and fast: they predict potential DTIs through straightforward physical processes such as resource diffusion on networks, which reduce mathematically to simple matrix operations [8].
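The resource diffusion step can be illustrated with a small example. The sketch below is a simplified reading of two-step diffusion on a drug-by-target matrix, not the reference implementation from [8]: the function name `nbi_scores`, the toy matrix, and the choice to place one unit of resource on each known target of a drug are assumptions made for illustration.

```python
def nbi_scores(adjacency):
    """Two-step resource diffusion on a drug-by-target 0/1 matrix (list of lists).

    Entry [d][t] of the result is the resource held by target t after
    diffusion that started from the known targets of drug d.
    """
    n_drugs, n_targets = len(adjacency), len(adjacency[0])
    drug_deg = [sum(row) for row in adjacency]
    target_deg = [sum(adjacency[d][t] for d in range(n_drugs))
                  for t in range(n_targets)]

    scores = []
    for d in range(n_drugs):
        initial = adjacency[d]  # one unit of resource on each known target
        # Step 1: targets -> drugs; each target splits its resource evenly.
        on_drugs = [
            sum(adjacency[x][t] * initial[t] / target_deg[t]
                for t in range(n_targets) if target_deg[t])
            for x in range(n_drugs)
        ]
        # Step 2: drugs -> targets; each drug splits its resource evenly.
        final = [
            sum(adjacency[x][t] * on_drugs[x] / drug_deg[x]
                for x in range(n_drugs) if drug_deg[x])
            for t in range(n_targets)
        ]
        scores.append(final)
    return scores

# Hypothetical toy network: rows are drugs d0..d2, columns are targets t0..t2.
dti = [
    [1, 1, 0],
    [0, 1, 1],
    [0, 0, 1],
]
scores = nbi_scores(dti)
print(scores[0])  # resource on each target for drug d0
```

For drug d0, target t2 is not a known target but still receives resource through the shared target t1 and drug d1, so it becomes a candidate prediction. Unlinked targets are then ranked by their final resource.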

Self-Supervised Learning Frameworks

Modern approaches like DTIAM employ multi-task self-supervised pre-training to learn drug and target representations from large amounts of unlabeled data [6]. The experimental protocol involves:

Drug Molecular Pre-training Module:

- Input: Molecular graph segmented into substructures

- Representation: n × d embedding matrix with each substructure embedded into a d-dimensional vector

- Learning: Transformer encoder with three self-supervised tasks:

  - Masked Language Modeling

  - Molecular Descriptor Prediction

  - Molecular Functional Group Prediction

Target Protein Pre-training Module:

- Utilizes Transformer attention maps to learn representations and contacts of proteins

- Based on unsupervised language modeling from large protein sequence databases

Drug-Target Prediction Module:

- Integrates compound and protein representations

- Employs automated machine learning framework with multi-layer stacking and bagging techniques

- Capable of predicting DTI, drug-target binding affinity (DTA), and mechanism of action (MoA) [6]
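To make the masked language modeling task in the drug pre-training module more concrete, the sketch below shows only the input-masking step on a sequence of substructure tokens. It does not reproduce DTIAM: the token strings, the `[MASK]` symbol, the default 15% masking ratio, and the function name are all assumptions chosen for illustration, and the Transformer encoder that would predict the masked tokens is omitted.

```python
import random

MASK = "[MASK]"  # placeholder symbol; assumed, not taken from DTIAM

def mask_substructures(tokens, mask_ratio=0.15, seed=0):
    """Replace a random subset of substructure tokens with MASK.

    Returns (masked_tokens, labels), where labels[i] holds the original
    token at masked positions and None elsewhere.
    """
    rng = random.Random(seed)
    n_mask = max(1, round(len(tokens) * mask_ratio))
    positions = set(rng.sample(range(len(tokens)), n_mask))
    masked = [MASK if i in positions else tok for i, tok in enumerate(tokens)]
    labels = [tok if i in positions else None for i, tok in enumerate(tokens)]
    return masked, labels

# Hypothetical substructure tokens for one molecule.
tokens = ["c1ccccc1", "C(=O)", "O", "N", "CC", "Cl", "S(=O)(=O)", "F"]
masked, labels = mask_substructures(tokens, mask_ratio=0.25)
print(masked)
print(labels)
```

During pre-training, a model would be asked to recover the entries of `labels` from `masked`, which requires no interaction labels and therefore fits the label-free setting described above.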

This approach accurately extracts substructure and contextual information during pre-training, improving generalization performance and providing benefits for downstream tasks, particularly in cold start scenarios where new drugs or targets lack historical interaction data [6].

Successful implementation of polypharmacology approaches requires access to comprehensive biological and chemical databases:

Table 4: Essential Databases for Polypharmacology Research

| Database | Scope and Content | Primary Application | Access |
|---|---|---|---|
| DrugBank | 6,711 drug entries including 1,447 FDA-approved small molecule drugs, 1,318 FDA-approved biotech drugs | Drug-target identification, drug repurposing | http://www.drugbank.ca/ |
| STITCH | Interactions between 300,000 small molecules and 2.6 million proteins from 1,133 organisms | Chemical-protein interaction network analysis | http://stitch.embl.de/ |
| BindingDB | 832,773 binding data for 5,765 protein targets and 362,123 small molecules | Binding affinity prediction, model validation | http://www.bindingdb.org/ |
| ChEMBL | 2D structures, calculated properties and abstracted bioactivities | QSAR modeling, chemical biology | https://www.ebi.ac.uk/chembl/ |
| PubChem BioAssay | 500,000 descriptions of assay protocols, providing 130 million bioactivity outcomes | High-throughput screening data mining | http://pubchem.ncbi.nlm.nih.gov/ |
| KEGG | Pathway information, disease networks, drug categories | Systems biology analysis, pathway mapping | http://www.genome.jp/kegg/ |

Computational Tools and Algorithms

The computational implementation of polypharmacology requires specialized tools and algorithms:

Network Analysis Tools:

- Cytoscape: Network visualization and analysis, particularly useful for integrating and analyzing heterogeneous biological networks

- Network-based Inference (NBI) Algorithms: Simple, fast algorithms derived from recommendation systems that predict DTIs through resource diffusion processes [8]

Deep Learning Frameworks:

- DTIAM: Unified framework for predicting interactions, binding affinities, and activation/inhibition mechanisms based on self-supervised learning [6]

- MVPA-DTI: Heterogeneous network model with multiview path aggregation that integrates structural and sequence information [3]

- DeepDTA: Uses convolutional neural networks to learn representations from SMILES strings of compounds and amino acid sequences of proteins [6]

Cheminformatics Resources:

- Molecular Attention Transformer: Extracts 3D conformation features from chemical structures of drugs [3]

- Prot-T5: Protein-specific large language model that explores biophysically and functionally relevant features from protein sequences [3]

The transition from "one drug-one target" to polypharmacology is more than a technical shift in drug discovery: it changes how we understand therapeutic intervention in complex biological systems. The traditional model, while successful for certain target classes, has shown limited efficacy and safety for complex diseases. Most drugs are only 30-75% effective across patient populations, and oncology drugs show particularly low response rates of approximately 25% [34].

Polypharmacology approaches, particularly those leveraging network-based inference and heterogeneous data integration, have demonstrated superior performance in predicting drug-target interactions, especially for target classes where three-dimensional structural information is limited [8]. The ability of these methods to function without negative samples or complete structural data enables broader target coverage and better performance in cold-start scenarios [6] [3].

Future advancements will likely focus on integrating multi-omics data at unprecedented scales, leveraging the exponential increase of multidisciplinary Big Data and artificial intelligence approaches [37]. Key challenges remain, including data incompleteness that currently limits most approaches from comprehensively predicting selectivity, and limited agreement on model assessment that challenges identification of optimal algorithms [37]. However, the continued development of methods like DTIAM that unify prediction of interactions, binding affinities, and mechanisms of action signals a promising direction toward more comprehensive and clinically predictive polypharmacology profiling [6].

As drug discovery continues to evolve, the successful integration of polypharmacology principles with precision medicine approaches will be essential for developing next-generation therapeutics that maximize efficacy while minimizing adverse effects in specific patient populations [34]. This integration represents the most promising path forward for addressing the staggering complexity of human biology and improving the dismal success rates that have long plagued drug development.

Key Methodologies and Real-World Applications in Drug Repositioning

Network-Based Inference (NBI) has emerged as a powerful computational paradigm in the field of drug discovery and target prediction. As pharmaceutical companies face increasing pressure to reduce the time and cost associated with traditional drug development, which can exceed 15 years and $800 million per new drug, efficient computational methods have gained significant importance [38]. NBI methods represent a class of algorithms that leverage the topological properties of biological networks to predict novel interactions, offering distinct advantages over structure-based and machine learning approaches that require three-dimensional protein structures or experimentally validated negative samples, which are often unavailable [8]. This review provides a comprehensive comparative analysis of three fundamental NBI algorithms: Probabilistic Spreading (ProbS), Heat Spreading (HeatS), and the foundational Network-Based Inference (NBI) algorithm. We examine their methodological frameworks, experimental performance, and applications in biomedical research, with a particular focus on drug repositioning and target prediction.

Algorithmic Foundations and Methodologies

Core Mathematical Frameworks

The ProbS and HeatS algorithms operate on bipartite network structures, where connections exist only between nodes of two different types, such as drugs and diseases or drugs and targets [38]. These methods utilize distinct resource allocation mechanisms to generate predictions:

Probabilistic Spreading (ProbS) employs a two-step resource diffusion process reminiscent of random walks. Given a bipartite network with drugs (D) and diseases (P), the algorithm allocates initial resources to diseases and propagates them through the network. The mathematical formulation is expressed as:

f'ᵢ = Σₗ (aᵢₗ / kₗ) × Σⱼ (aⱼₗ × fⱼ / kⱼ)

where aᵢₗ is the adjacency matrix element (1 if nodes i and l are linked, 0 otherwise), kₗ and kⱼ denote the degrees of nodes l and j, and fⱼ is the initial resource on node j [38]. This approach effectively implements a collaborative filtering mechanism based on network connectivity patterns.
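The ProbS update can also be read as a single matrix-vector product, which makes one of its properties easy to check. The block below is a short worked derivation, not part of the formulation in [38]: the symbol W is shorthand introduced here, and the degrees are assumed to be kₗ = Σᵢ aᵢₗ and kⱼ = Σₗ aⱼₗ.

```latex
% Transfer-matrix form of the ProbS update (W is notation introduced here)
f'_i = \sum_j W_{ij} f_j,
\qquad
W_{ij} = \frac{1}{k_j} \sum_l \frac{a_{il}\, a_{jl}}{k_l}

% Each column of W sums to one, so the total resource is conserved:
\sum_i W_{ij}
  = \frac{1}{k_j} \sum_l \frac{a_{jl}}{k_l} \sum_i a_{il}
  = \frac{1}{k_j} \sum_l a_{jl}
  = 1
```

Under these assumptions, ProbS only redistributes resource among nodes and never creates or destroys it, which is the sense in which the process resembles a random walk.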

Heat Spreading (HeatS) utilizes a heat diffusion analogy where "heat" propagates through the network based on differential equations. The resource allocation follows:

f'ᵢ = Σₗ (aᵢₗ / kᵢ) × Σⱼ (aⱼₗ × fⱼ / kₗ)