Network-Based Inference for Drug-Target Prediction: A Comprehensive Guide from Foundations to Clinical Applications

Abstract

This article provides a comprehensive overview of network-based inference (NBI) methods for predicting drug-target interactions (DTIs), a crucial task in modern drug discovery and repurposing. Aimed at researchers, scientists, and drug development professionals, it explores the foundational principles of NBI, which leverages the topology of bipartite drug-target networks to infer new interactions without relying on 3D protein structures or experimentally confirmed negative samples. The scope covers core methodologies, including resource-spreading algorithms and heterogeneous network integration, their practical applications in polypharmacology and side-effect prediction, strategies for optimizing performance and overcoming data sparsity, and finally, a rigorous comparison with other computational approaches, supported by experimental validation case studies. By synthesizing the latest advancements, this review serves as a valuable resource for leveraging these powerful, efficient computational tools to accelerate drug development.

The Paradigm Shift: From Single-Target to Network-Based Pharmacology

Drug-target interaction (DTI) prediction is a cornerstone of computational drug discovery, enabling the rational design of new therapeutics, the repurposing of existing drugs, and the elucidation of their mechanisms of action [1]. The process of developing a new drug—from initial research to market availability—typically requires approximately $2.3 billion and spans 10–15 years, with a success rate that fell to 6.3% by 2022 [2]. DTI prediction is a pivotal component of the discovery phase, aiming to mitigate the high costs, low success rates, and extensive timelines of traditional drug development by efficiently using the growing amount of available bioactivity data [2]. Accurate target prediction helps minimize the validation of ineffective drug-target pairs, allows for more focused experimentation, and aids in identifying potential off-target effects and multi-target drugs promising for complex disease treatment [2]. This document frames the DTI prediction problem within the context of network-based inference, a class of methods that demonstrates significant advantages for this task.

Methodological Approaches to DTI Prediction

The evolution of in silico DTI prediction methods has progressed from early structure-based techniques to modern machine learning and network-based approaches. The following table summarizes the key methodologies.

Table 1: Overview of DTI Prediction Methodologies

| Method Category | Key Principles | Representative Algorithms/Models | Advantages | Limitations |
|---|---|---|---|---|
| Early In Silico | Utilizes 3D protein structures or known bioactive compounds to simulate binding. | Molecular Docking [2], QSAR, Pharmacophore Models [2] | Provides structural insights into binding interactions. | Highly dependent on available 3D protein structures; assumes linear structure-activity relationships [2]. |
| Machine Learning (ML) | Enables models to autonomously learn complex patterns from chemical and genomic data. | KronRLS [2], SimBoost [2], DeepDTA [1] | Capable of capturing non-linear relationships; high predictive accuracy with sufficient data. | Performance can be influenced by data sparsity and quality of negative samples [3]. |
| Network-Based Inference | Treats DTIs as a bipartite network and uses algorithms to infer new links. | Network-Based Inference (NBI) [3], Probabilistic Spreading (ProbS) [3] | Does not rely on 3D structures or negative samples; simple, fast, and covers a large target space [3]. | Relies heavily on the completeness of the known interaction network. |
| Multimodal & Pre-training | Integrates diverse data types (e.g., SMILES, text, 3D structures) into a unified model. | GRAM-DTI [1], EviDTI [4] | Improves robustness and generalizability; leverages large-scale unlabeled data. | Computationally intensive; requires complex architecture design. |
| Uncertainty-Aware DL | Quantifies the confidence or uncertainty of model predictions. | EviDTI [4] | Helps prioritize candidates for experimental validation; reduces risk from overconfident false positives. | Adds model complexity; requires specialized statistical methods. |

Experimental Protocols and Workflows

Protocol for Network-Based Inference (NBI)

Network-based methods, such as NBI, leverage the topology of known DTI networks for prediction without requiring 3D protein structures or experimentally confirmed negative samples [3].

Materials:

- Known DTI Data: A bipartite network of confirmed drug-target interactions (e.g., from databases like DrugBank [4]).

- Computing Environment: Standard computational hardware capable of performing matrix operations.

Procedure:

- Network Construction: Represent the known DTIs as a bipartite graph, where one set of nodes represents drugs and the other represents targets. An edge exists between a drug and a target if their interaction is known.

- Matrix Representation: Convert this bipartite graph into a binary adjacency matrix A, where the rows represent drugs and the columns represent targets. An element aᵢⱼ = 1 if drug i interacts with target j, and 0 otherwise.

- Resource Diffusion: Execute a two-step resource diffusion process, akin to a recommendation algorithm [3]:

- Step 1: Resources from target nodes are distributed to drug nodes.

- Step 2: Resources on drug nodes are then redistributed back to target nodes.

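- Prediction Scoring: Mathematically, in its simplest unnormalized form, this process is captured by the operation W = A · Aᵀ · A. The product A · Aᵀ is a drug-by-drug matrix of shared-target counts, and multiplying it by A again yields a drug-by-target matrix W that contains the prediction scores for all possible drug-target pairs. Higher scores indicate a higher likelihood of interaction.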

- Validation: The model's performance is evaluated under a cold-start scenario (e.g., predicting targets for new drugs not in the training network) using metrics like area under the ROC curve (AUC) [3].
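The procedure above can be sketched in a few lines of plain Python. This is a minimal illustration under stated assumptions, not a reference implementation: the drug and target names are hypothetical toy data, the helper names (`matmul`, `transpose`, `rank_new_targets`) are invented for this example, and the unnormalized product A · Aᵀ · A is used as the scoring rule.

```python
# Hypothetical toy data: known drug-target pairs (names are illustrative only).
known_pairs = [
    ("drugA", "T1"), ("drugA", "T2"),
    ("drugB", "T2"), ("drugB", "T3"),
    ("drugC", "T3"),
]

drugs = sorted({d for d, _ in known_pairs})
targets = sorted({t for _, t in known_pairs})
d_idx = {d: i for i, d in enumerate(drugs)}
t_idx = {t: j for j, t in enumerate(targets)}

# Binary adjacency matrix A: rows are drugs, columns are targets.
A = [[0] * len(targets) for _ in drugs]
for d, t in known_pairs:
    A[d_idx[d]][t_idx[t]] = 1


def matmul(X, Y):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*Y)] for row in X]


def transpose(X):
    """Swap rows and columns."""
    return [list(col) for col in zip(*X)]


# Unnormalized two-step diffusion: A * A^T is drug-by-drug, and multiplying
# by A again gives a drug-by-target score matrix.
W = matmul(matmul(A, transpose(A)), A)


def rank_new_targets(drug):
    """Return candidate targets for `drug`, best first, skipping known links."""
    i = d_idx[drug]
    candidates = [(W[i][j], t) for t, j in t_idx.items() if A[i][j] == 0]
    return [t for _, t in sorted(candidates, key=lambda c: (-c[0], c[1]))]


print(rank_new_targets("drugC"))
```

In this sketch a drug with no known links would have an all-zero row in A and therefore all-zero scores, so ranking targets for such a drug needs additional information beyond the bipartite network itself.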

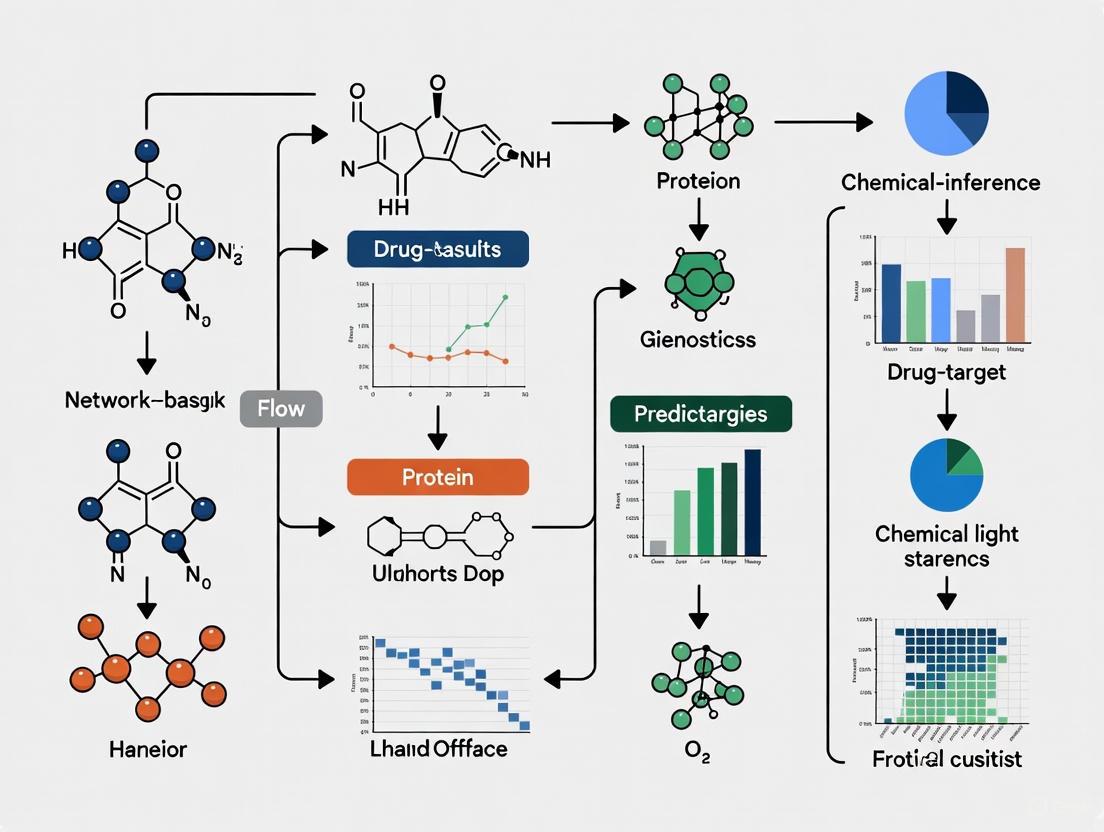

NBI Workflow: From a known DTI network to a prediction matrix via resource diffusion.

Protocol for a Modern Multimodal Deep Learning Framework (GRAM-DTI)

GRAM-DTI is a recent multimodal framework that integrates diverse data modalities for robust DTI prediction [1].

Materials:

- Multimodal Data:

- Drugs: SMILES sequences, textual descriptions, hierarchical taxonomic annotations (HTA).

- Proteins: Amino acid sequences.

- (Optional) IC50 activity measurements for weak supervision.

- Software & Models:

- Pre-trained encoders: MolFormer (for SMILES), MolT5 (for text/HTA), ESM-2 (for proteins).

- Computational framework for volume-based contrastive learning and adaptive modality dropout.

Procedure:

- Data Preprocessing and Embedding:

- For each drug and target, generate the respective multimodal inputs.

- Use the pre-trained, frozen encoders (e.g., ESM-2 for proteins) to obtain initial, high-dimensional feature vectors for each modality [1].

- Modality Projection:

- Train lightweight neural projectors to map each modality-specific embedding into a shared, lower-dimensional representation space.

- Multimodal Alignment with Volume Loss:

- Employ Gramian volume-based contrastive learning to align the four modalities (SMILES, text, HTA, protein) in the shared space simultaneously, capturing higher-order semantic relationships beyond pairwise alignment [1].

- Adaptive Modality Dropout:

- During pre-training, dynamically regulate the contribution of each modality to prevent dominant but less informative modalities from overwhelming complementary signals. This enhances model robustness [1].

- Model Training and Evaluation:

- Train the model on large-scale DTI datasets. If available, use IC50 values as an auxiliary supervision signal to ground the representations in biologically meaningful interaction strengths [1].

- Evaluate the model on benchmark datasets (e.g., Davis, KIBA) using metrics such as AUC and AUPR, and under cold-start settings to assess generalizability [1] [4].
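To make the idea of a Gramian volume concrete, the sketch below computes the volume of the parallelotope spanned by several embedding vectors as the square root of the determinant of their Gram matrix. This is the general geometric definition only; it is not taken from the GRAM-DTI code, and the function names and toy vectors are assumptions made for illustration. A small volume means the modality embeddings point in nearly the same direction, while mutually orthogonal unit vectors give a volume of 1.

```python
import math


def normalize(v):
    """Scale a vector to unit length."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def gram_matrix(vectors):
    """Gram matrix G[i][j] = <v_i, v_j> for a list of vectors."""
    return [[sum(a * b for a, b in zip(u, v)) for v in vectors] for u in vectors]


def determinant(M):
    """Determinant by Gaussian elimination with partial pivoting."""
    M = [row[:] for row in M]
    n, det = len(M), 1.0
    for c in range(n):
        pivot = max(range(c, n), key=lambda r: abs(M[r][c]))
        if abs(M[pivot][c]) < 1e-12:
            return 0.0
        if pivot != c:
            M[c], M[pivot] = M[pivot], M[c]
            det = -det
        det *= M[c][c]
        for r in range(c + 1, n):
            factor = M[r][c] / M[c][c]
            for k in range(c, n):
                M[r][k] -= factor * M[c][k]
    return det


def gram_volume(vectors):
    """Volume of the parallelotope spanned by the unit-normalized vectors."""
    G = gram_matrix([normalize(v) for v in vectors])
    return math.sqrt(max(determinant(G), 0.0))


# Hypothetical 4-dimensional embeddings for SMILES, text, HTA and protein.
well_aligned = [[1, 0.1, 0, 0], [1, 0, 0.1, 0], [1, 0, 0, 0.1], [1, 0.1, 0.1, 0]]
unaligned = [[1, 0, 0, 0], [0, 1, 0, 0], [0, 0, 1, 0], [0, 0, 0, 1]]
print(gram_volume(well_aligned), gram_volume(unaligned))
```

A contrastive objective built on this quantity would reward small volumes for matching drug-target tuples and larger volumes for mismatched ones; how GRAM-DTI does this in detail is described in the source [1].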

GRAM-DTI Multimodal Fusion: Integrating multiple drug and target representations.

The Scientist's Toolkit: Research Reagent Solutions

The following table details key computational tools and data resources essential for conducting DTI prediction research.

Table 2: Essential Research Reagents and Tools for DTI Prediction

| Item Name | Type | Function/Description | Example Use Case |
|---|---|---|---|
| SMILES String | Data Representation | A line notation for encoding the structure of chemical compounds. | Serves as the primary input for many drug encoders (e.g., MolFormer) [1]. |
| Amino Acid Sequence | Data Representation | The linear sequence of amino acids for a protein. | Serves as the primary input for protein language models like ESM-2 [1]. |
| Molecular Graph | Data Representation | Represents a drug as a 2D graph with atoms as nodes and bonds as edges. | Used by graph-based models (e.g., GraphDTA, EviDTI) to capture topological structure [4]. |
| IC50/Kd/Ki Value | Bioactivity Data | Quantitative measurements of binding affinity or inhibitory concentration. | Used as labels for regression tasks or for weak supervision during pre-training [1] [3]. |
| ESM-2 | Pre-trained Model | A large-scale protein language model that learns meaningful representations from sequences. | Used to generate powerful initial feature embeddings for target proteins [1]. |
| MolFormer | Pre-trained Model | A transformer-based model pre-trained on a large corpus of molecular SMILES strings. | Used to generate initial feature embeddings for drugs from their SMILES notation [1]. |
| Known DTI Network | Dataset/Resource | A curated collection of experimentally validated drug-target pairs. | Serves as the foundational data for network-based inference methods and for model training/validation [3]. |
| AlphaFold | Structural Model | A system that predicts a protein's 3D structure from its amino acid sequence. | Can be integrated to provide structural features for models that go beyond sequence information [2]. |

Performance Benchmarking

Quantitative evaluation on standardized benchmarks is critical for assessing the performance of DTI prediction models. The table below summarizes the performance of selected models on common datasets.

Table 3: Performance Comparison of DTI Prediction Models on Benchmark Datasets

| Model | Dataset | Accuracy (%) | AUC (%) | AUPR (%) | MCC (%) | F1 Score (%) |
|---|---|---|---|---|---|---|
| EviDTI [4] | DrugBank | 82.02 | - | - | 64.29 | 82.09 |
| EviDTI [4] | Davis | ~90.8* | ~90.1* | ~90.3* | ~90.9* | ~92.0* |
| EviDTI [4] | KIBA | ~90.6* | ~90.1* | - | ~90.3* | ~90.4* |
| GRAM-DTI [1] | Multiple | State-of-the-art | State-of-the-art | State-of-the-art | - | - |
| NBI Methods [3] | Various | Competitive | Competitive | - | - | - |

Note: Values marked with an asterisk (*) are approximate, derived from the reported performance improvements over other baseline models as detailed in the source [4]. AUC: Area Under the ROC Curve; AUPR: Area Under the Precision-Recall Curve; MCC: Matthews Correlation Coefficient.
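For readers less familiar with the threshold-based metrics in the table, the following sketch computes accuracy, F1 score, and MCC from confusion-matrix counts using their standard definitions. The counts are hypothetical and are not taken from any of the cited studies; the function returns fractions, which would be multiplied by 100 to match the percentages reported above.

```python
import math


def classification_metrics(tp, fp, tn, fn):
    """Accuracy, F1 and MCC (as fractions) from confusion-matrix counts."""
    total = tp + fp + tn + fn
    accuracy = (tp + tn) / total
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    mcc = (tp * tn - fp * fn) / denom if denom else 0.0
    return {"accuracy": accuracy, "f1": f1, "mcc": mcc}


# Hypothetical counts for a balanced test set of 200 drug-target pairs.
metrics = classification_metrics(tp=80, fp=20, tn=80, fn=20)
print({name: round(100 * value, 2) for name, value in metrics.items()})
```

With these counts accuracy and F1 are both 80% while MCC is 60%, which illustrates why MCC values tend to sit below accuracy for the same predictions.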

In the pipeline of computer-aided drug discovery, traditional structure- and ligand-based methods have served as cornerstone technologies for predicting drug-target interactions (DTIs) and identifying lead compounds [5] [6]. These approaches, including molecular docking, pharmacophore modeling, and ligand-based similarity searching, operate on distinct principles but share common limitations that restrict their universal application [3]. With the paradigm shift toward network pharmacology and polypharmacology, the "one drug → one target → one disease" model is progressively being replaced by "multi-drugs → multi-targets → multi-diseases" frameworks [3]. This evolution underscores the necessity to critically evaluate traditional computational methods, whose constraints become increasingly pronounced when addressing complex biological systems. This application note systematically delineates the fundamental limitations of these established approaches while contextualizing their role within modern network-based inference research for drug-target prediction.

Comparative Limitations of Traditional DTI Prediction Methods

The table below summarizes the core methodologies and inherent constraints of three primary traditional approaches for drug-target interaction prediction.

Table 1: Core Methodologies and Limitations of Traditional DTI Prediction Approaches

| Method Category | Fundamental Principle | Data Requirements | Key Technical Limitations |
|---|---|---|---|
| Structure-Based (Docking) [6] [3] | Predicts binding pose and affinity of a small molecule within a target's 3D structure. | High-resolution 3D protein structure (e.g., from X-ray, NMR). | Performance is highly dependent on the scoring function's accuracy [6] [7]. Computationally expensive for large libraries [8]. |
| Structure-Based (Pharmacophore) [5] [3] | Defines essential steric/electronic features for bioactivity; used as a query for screening. | Protein-ligand complex structure or set of active ligands. | Model quality is sensitive to input data quality [5]. May oversimplify interactions by ignoring subtle energetics [7]. |
| Ligand-Based [9] [3] | Infers activity based on similarity to known active compounds (2D/3D similarity, QSAR). | A set of known active and (for QSAR) inactive compounds. | Cannot identify novel scaffolds (the "similarity limitation") [3]. Requires sufficient ligand data for model building [10]. |

Unified Workflow and Failure Points

Figure: Generalized workflow for traditional virtual screening methods, highlighting the critical points where their limitations manifest.

Detailed Limitations and Underlying Causes

Data Dependency and Coverage Constraints

A primary constraint across traditional methods is their stringent data dependency, which inherently limits the scope of targets and compounds they can effectively address.

Structural Data Limitation for Docking: Molecular docking and structure-based pharmacophore modeling fundamentally require high-quality three-dimensional structures of the target protein [3] [10]. This presents a major bottleneck, as structural information is unavailable for many biologically relevant targets, such as a significant portion of G protein-coupled receptors (GPCRs) and membrane proteins [3]. Even when structures are available, the presence of co-crystallized ligands, water molecules, and loop conformations can significantly impact the accuracy of the predicted interactions [5].

Ligand Data Limitation for Ligand-Based Methods: The predictive power of ligand-based approaches, including pharmacophore modeling and QSAR, depends strongly on the quantity, quality, and chemical diversity of known active compounds used for model training [9] [10]. For understudied targets with few known modulators, building reliable models is challenging or impossible. Furthermore, these models are inherently biased toward existing chemical scaffolds, which leaves them largely unable to identify active compounds with novel, structurally distinct motifs, a phenomenon known as the "similarity limitation" [3].

Performance and Accuracy Challenges

Quantitative benchmarks reveal significant performance variations and methodological weaknesses.

Scoring Function Inaccuracy in Docking: A critical weakness of docking-based virtual screening (DBVS) lies in the imperfect correlation between computationally predicted docking scores and experimentally measured binding affinities [6] [7]. Scoring functions often struggle to accurately model solvation effects, entropy, and specific interaction energies, leading to false positives and false negatives [6]. Performance is also highly dependent on the specific docking program and target protein, with no single method consistently outperforming others across diverse targets [6] [11].

Systematic Performance Comparison: A benchmark study comparing pharmacophore-based virtual screening (PBVS) and DBVS against eight diverse protein targets demonstrated the context-dependent nature of these methods. The table below summarizes key quantitative findings from this study.

Table 2: Benchmark Performance of PBVS vs. DBVS Across Eight Targets [6] [11]

| Virtual Screening Method | Average Enrichment Factor (Higher is Better) | Superior Performance in Cases (out of 16) | Key Performance Insight |
|---|---|---|---|
| Pharmacophore-Based (PBVS) | Higher | 14 | More efficient at retrieving actives from chemical databases in this benchmark. |
| Docking-Based (DBVS) | Lower | 2 | Performance varied significantly with the choice of docking program and target. |

Key Takeaway: PBVS demonstrated a general advantage in this specific study, but DBVS remains a powerful and complementary tool, especially when 3D structural insights are crucial.

Inefficiency and Resource Demands

Computational Throughput: Traditional molecular docking is computationally intensive, making the screening of ultra-large chemical libraries containing billions of molecules practically infeasible on standard computing resources [8]. While pharmacophore-based screening is generally faster, it still requires significant computational effort for large-scale databases [5].

The Negative Sample Problem for Machine Learning: Supervised machine learning models for DTI prediction typically require both positive (known interacting) and negative (known non-interacting) drug-target pairs for training [12] [3]. However, publicly available databases are rich in confirmed positive interactions but lack experimentally validated negative samples. Using automatically generated negative sets (e.g., "one versus the rest") can introduce low-quality labels and significantly degrade model performance [3].
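The sketch below shows how an automatically generated negative set is typically assembled, which makes the source of the label noise visible. It is a minimal illustration with hypothetical names and toy data: every drug-target pair that is not a known positive is treated as unlabeled, and a random subset is then used as presumed negatives.

```python
import random


def sample_presumed_negatives(drugs, targets, positives, n, seed=0):
    """Draw n unlabeled drug-target pairs and treat them as negatives.

    These pairs are only presumed to be non-interacting: any of them could be
    an untested true interaction, which is the label-noise problem at issue.
    """
    positives = set(positives)
    unlabeled = [(d, t) for d in drugs for t in targets
                 if (d, t) not in positives]
    rng = random.Random(seed)  # fixed seed so the draw is reproducible
    return rng.sample(unlabeled, min(n, len(unlabeled)))


# Hypothetical toy network: 3 drugs x 3 targets, 5 known interactions.
drugs = ["d1", "d2", "d3"]
targets = ["t1", "t2", "t3"]
positives = [("d1", "t1"), ("d1", "t2"), ("d2", "t2"), ("d2", "t3"), ("d3", "t3")]
negatives = sample_presumed_negatives(drugs, targets, positives, n=3)
print(negatives)
```

Network-based inference sidesteps this step entirely, because it scores unlabeled pairs from the topology of the positive links alone.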

Experimental Protocols for Method Benchmarking

Protocol: Benchmarking PBVS vs. DBVS Performance

This protocol outlines the steps for a comparative performance assessment of pharmacophore-based and docking-based virtual screening, based on established benchmarking practices [6] [11].

1. Reagent and Software Solutions

- Protein Targets: Select 3-5 structurally diverse targets with known 3D structures (from PDB) and sets of experimentally confirmed active ligands.

- Compound Database: Prepare a benchmarking database for each target by combining its known active ligands with a large set of pharmaceutically relevant decoy molecules (e.g., from ZINC database).

- Software: Select PBVS software (e.g., Catalyst, LigandScout) and multiple DBVS programs (e.g., DOCK, GOLD, Glide) to account for program-specific variations.

2. Procedure

- Model Preparation:

- For PBVS: Generate a structure-based pharmacophore model for each target using a co-crystallized ligand-protein complex.

- For DBVS: Prepare the protein structure for docking (add hydrogens, assign charges) using the same complex.

- Virtual Screening Execution:

- Screen the entire benchmarking database against each target using both the PBVS and DBVS workflows.

- Record the rank of each active compound in the screened list.

- Performance Evaluation:

- Calculate Enrichment Factors (EF) at early stages of the ranked list (e.g., top 1% and 5%). EF measures how much better the method is at retrieving actives compared to a random selection.

- Generate Receiver Operating Characteristic (ROC) curves and calculate the Area Under the Curve (AUC) to assess overall performance.

3. Data Analysis

- Compare the average EF and AUC values across all targets for PBVS versus the different DBVS methods.

- The method that consistently retrieves more active compounds higher in the ranked list, resulting in higher EF and AUC values, is considered to have better performance for the tested scenario.
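The two evaluation quantities used in this protocol can be computed directly from a ranked list of labels. The sketch below is illustrative only: the function names and the ten-compound ranking are hypothetical, the list is assumed to be strictly ranked (no tied scores), and it must contain at least one active and one decoy.

```python
def enrichment_factor(ranked_labels, fraction):
    """EF at the top `fraction` of a ranked list (1 = active, 0 = decoy)."""
    n_total = len(ranked_labels)
    n_top = max(1, round(n_total * fraction))
    hit_rate_top = sum(ranked_labels[:n_top]) / n_top
    hit_rate_all = sum(ranked_labels) / n_total
    return hit_rate_top / hit_rate_all


def roc_auc(ranked_labels):
    """AUC as the fraction of (active, decoy) pairs with the active ranked first."""
    n_actives = sum(ranked_labels)
    n_decoys = len(ranked_labels) - n_actives
    decoys_seen, inverted_pairs = 0, 0
    for label in ranked_labels:
        if label == 1:
            inverted_pairs += decoys_seen  # decoys ranked above this active
        else:
            decoys_seen += 1
    return 1.0 - inverted_pairs / (n_actives * n_decoys)


# Hypothetical screening result, best-scored compound first.
ranked = [1, 1, 0, 1, 0, 0, 0, 0, 0, 0]
print(enrichment_factor(ranked, 0.2), roc_auc(ranked))
```

Here the top 20% of the list contains only actives while actives make up 30% of the library, so the EF is 1.0 / 0.3, and one of the 21 active-decoy pairs is ordered incorrectly, so the AUC is 20/21.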

Protocol: Assessing the "Similarity Limitation" in Ligand-Based Screening

This protocol is designed to evaluate the inability of ligand-based methods to identify actives with novel scaffolds [3].

1. Reagent and Software Solutions

- Active Ligand Sets: For a well-characterized target, compile a set of known active compounds and cluster them by molecular scaffold.

- Software: Use software capable of calculating molecular similarity (e.g., based on 2D fingerprints) and performing similarity searches.

2. Procedure

- Training Set Creation: Select one major scaffold cluster from the active set to serve as the "known" chemotype for training.

- Blind Test Set Creation: The remaining active compounds, belonging to different scaffold clusters, form the "novel scaffold" test set. Combine this test set with a large pool of decoys.

- Similarity Search: Use the compounds from the training set as queries to perform a similarity search against the blind test set.

- Result Analysis: Examine the ranks of the "novel scaffold" actives. If they are not enriched near the top of the list, it demonstrates the method's limitation in scaffold hopping.
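The similarity search in this protocol can be illustrated with a toy example. The sketch below assumes fingerprints are stored as Python sets of on-bit indices and uses the Tanimoto coefficient, one common choice for 2D fingerprint similarity; the compound names and bit sets are hypothetical, and real fingerprints would come from a cheminformatics toolkit.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient between two fingerprints stored as sets of on-bits."""
    union = len(fp_a | fp_b)
    return len(fp_a & fp_b) / union if union else 0.0


def rank_by_max_similarity(queries, library):
    """Rank library compounds by their highest similarity to any query."""
    scored = [(max(tanimoto(fp, q) for q in queries), name)
              for name, fp in library.items()]
    return [name for _, name in sorted(scored, key=lambda s: (-s[0], s[1]))]


# Hypothetical on-bit sets for the "known" scaffold used as queries.
training_queries = [{1, 2, 3, 4}, {1, 2, 3, 5}]

# Blind test set: one active with a different scaffold plus two decoys.
library = {
    "novel_scaffold_active": {7, 8, 9, 10},
    "decoy_1": {1, 2, 11, 12},
    "decoy_2": {3, 13, 14, 15},
}

ranking = rank_by_max_similarity(training_queries, library)
print(ranking)
```

In this toy case the novel-scaffold active shares no bits with the queries and is ranked below both decoys, which is the failure mode the protocol is designed to expose.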

The Scientist's Toolkit: Key Research Reagents and Software

Table 3: Essential Resources for Traditional and Network-Based DTI Prediction

| Resource Name | Type/Category | Primary Function in Research |
|---|---|---|
| Protein Data Bank (PDB) [5] | Database | Primary repository for 3D structural data of proteins and nucleic acids, essential for structure-based methods. |
| ChEMBL [12] [8] | Database | Manually curated database of bioactive molecules with drug-like properties, containing binding affinities and ADMET data. |
| ZINC [9] [8] | Database | Publicly available database of commercially available compounds for virtual screening. |
| LigandScout [6] [11] | Software | Tool for creating structure- and ligand-based pharmacophore models and performing virtual screening. |
| Smina [8] | Software | A variant of AutoDock Vina for molecular docking, highly customizable for scoring function development. |
| AOPEDF [12] | Algorithm/Software | A network-based method that integrates heterogeneous biological data to predict DTIs, overcoming target-structure dependency. |
| DTIAM [10] | Algorithm/Software | A unified deep learning framework for predicting interactions, binding affinities, and mechanisms of action. |

Traditional docking, pharmacophore, and ligand-based approaches have undeniably contributed to drug discovery successes but are constrained by their specific data requirements, computational costs, and limited ability to characterize polypharmacology [3]. The emergence of network-based inference methods addresses several of these shortcomings by forgoing the need for 3D structural data and negative samples, enabling the prediction of interactions on a proteome-wide scale [12] [3]. In the modern research context, traditional methods are not obsolete but are increasingly being repositioned. They serve as powerful, targeted tools for lead optimization within a specific target family or as complementary filters integrated with network-based approaches to add mechanistic depth and structural insights to system-level predictions [7]. This synergistic combination of detailed traditional and holistic network-based approaches represents the future of computational drug discovery.

Network-Based Inference (NBI) is a computational method derived from recommendation algorithms and link prediction in complex network theory, repurposed for predicting drug-target interactions (DTIs) [13] [3]. Its core principle is leveraging the topology of a known bipartite drug-target network—where connections exist only between drug and target nodes—to infer new interactions [13]. A fundamental assumption is that similar drugs tend to interact with similar targets, and this similarity is captured not by direct chemical or genomic descriptors, but purely by the network's connectivity structure [3].

A significant advantage of NBI over other computational methods is that it operates without requiring the three-dimensional structures of target proteins or experimentally confirmed negative samples (i.e., non-interacting drug-target pairs) [14] [3]. This allows NBI to explore a much larger target space, including proteins with unknown structures, such as many G protein-coupled receptors (GPCRs) [3]. The method is computationally efficient, relying primarily on matrix operations to simulate a process of resource diffusion across the network [3].

Core Methodology and Protocols

The Fundamental NBI Protocol

The basic NBI protocol uses a known DTI network to predict unknown interactions through a resource allocation process [13].

Protocol Steps:

- Network Construction: Construct a bipartite network represented by an adjacency matrix \( A \) of dimensions \( n_d \times n_t \), where \( n_d \) is the number of drugs and \( n_t \) is the number of targets. Matrix element \( A(i, j) = 1 \) if drug \( i \) interacts with target \( j \); otherwise, \( A(i, j) = 0 \) [14].

- Resource Diffusion: The prediction is formulated as a two-step resource diffusion process [13]:

- Step 1 - Resource from Targets to Drugs: For a query drug, an initial resource vector \( f_{t0} \) is placed on the target nodes; it can be a uniform distribution or based on specific prior knowledge. Each target allocates its resource to the drug nodes it connects to.

- Step 2 - Resource Back-Propagation to Targets: Resources on the drug nodes are propagated back to the target nodes, which gives the final score of each target for the query drug.

- Prediction Score Calculation: The final prediction matrix \( W \) is computed using the matrix formula \( W = A \cdot A^{T} \cdot A \), where \( A^{T} \) is the transpose of the adjacency matrix [13]. This process effectively spreads the interaction information through the entire network. A higher score in \( W(i, j) \) indicates a higher probability of interaction between drug \( i \) and target \( j \).
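The matrix formula above is unnormalized. Resource-spreading methods of this family (e.g., ProbS) typically let each node split its resource equally among its neighbours, so that the total resource is conserved. The sketch below follows the diffusion step by step for a single query drug under that assumption, with the initial resource placed on the drug's known targets; the function name and toy matrix are hypothetical.

```python
def nbi_scores(A, drug):
    """Two-step resource diffusion for one drug on a binary drug-by-target matrix A.

    Assumption: every node splits its resource equally among its neighbours
    (degree normalization); the unnormalized matrix formula skips this division.
    """
    n_drugs, n_targets = len(A), len(A[0])
    drug_degree = [sum(row) for row in A]
    target_degree = [sum(A[d][t] for d in range(n_drugs)) for t in range(n_targets)]

    # Initial resource: one unit on every known target of the query drug.
    f0 = [float(x) for x in A[drug]]

    # Step 1: each target passes its resource to the drugs linked to it.
    on_drugs = [sum(A[d][t] * f0[t] / target_degree[t]
                    for t in range(n_targets) if target_degree[t])
                for d in range(n_drugs)]

    # Step 2: each drug passes its resource back to the targets linked to it.
    return [sum(A[d][t] * on_drugs[d] / drug_degree[d]
                for d in range(n_drugs) if drug_degree[d])
            for t in range(n_targets)]


# Hypothetical network: 3 drugs (rows) x 3 targets (columns).
A = [
    [1, 1, 0],
    [0, 1, 1],
    [0, 0, 1],
]
scores = nbi_scores(A, drug=0)
print(scores)
```

For drug 0 the only target without a known link is the third one; its score of 0.25 arises solely from the path through drug 1, which shares the second target with drug 0.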

Visualization of the Fundamental NBI Resource Diffusion Process:

Advanced NBI Method: The wSDTNBI Protocol

Subsequent developments have enhanced the original NBI. The weighted Substructure-Drug-Target NBI (wSDTNBI) method incorporates binding affinity data and drug-substructure associations to make more quantitative predictions [14] [15].

Protocol Steps:

- Input Network Preparation:

- Weighted DTI Network: Construct a weighted drug-target adjacency matrix \( W_{DTI} \). Instead of binary values (0/1), the edge weights are set to be positively correlated with experimental binding affinities, so that tighter binding (a lower \( K_d \) or \( IC_{50} \)) receives a larger weight [14].

- Drug-Substructure Association (DSA) Network: Construct a binary adjacency matrix \( A_{DSA} \) where an edge connects a drug to a substructure if the drug's chemical structure contains that substructure. This network includes both drugs from the DTI network and novel compounds, enabling predictions for new molecules [14].

- Two-Pronged Prediction Score Calculation:

- Prong 1 (Network-Based - red arrows in diagram): Convert the weighted \( W_{DTI} \) to an unweighted matrix \( A_{DTI} \). Use the balanced SDTNBI (bSDTNBI) method on the integrated substructure-drug-target network to calculate normalized scores stored in matrix \( S_{norm} \) [14].

- Prong 2 (Similarity-Based - blue arrows in diagram): Calculate a drug similarity matrix using the Tanimoto coefficient on substructure fingerprints from \( A_{DSA} \). For a given drug-target pair \( (D_i, T_j) \), the similarity-based score \( S_{sim}(i, j) \) is the average edge weight of the DTIs between \( T_j \) and its \( \epsilon \) most similar known ligands [14].

- Score Integration: The final prediction score is a combination of the normalized bSDTNBI score and the similarity-based score, resulting in an output where higher scores correlate with stronger predicted binding affinity [14].
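The similarity-based prong can be sketched as follows. This is an illustration under stated assumptions rather than the published implementation: substructure fingerprints are represented as Python sets of hypothetical substructure identifiers, "most similar known ligands" is read as the known ligands of the target that are most similar to the query compound, and the edge weights are toy values already scaled so that larger means stronger binding. The rule for combining this score with the bSDTNBI score is not specified here, so it is left out.

```python
def tanimoto(a, b):
    """Tanimoto coefficient between two sets of substructure identifiers."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def similarity_score(query_subs, target, drug_subs, weighted_dti, epsilon):
    """Average DTI weight between `target` and its `epsilon` known ligands
    that are most similar to the query compound."""
    ligands = [d for (d, t) in weighted_dti if t == target]
    ligands.sort(key=lambda d: (-tanimoto(query_subs, drug_subs[d]), d))
    nearest = ligands[:epsilon]
    if not nearest:
        return 0.0  # target has no known ligands
    return sum(weighted_dti[(d, target)] for d in nearest) / len(nearest)


# Hypothetical drug-substructure associations (one row of A_DSA per drug).
drug_subs = {
    "drugA": {"s1", "s2", "s3"},
    "drugB": {"s1", "s2"},
    "drugC": {"s4", "s5"},
}

# Hypothetical weighted DTI edges (entries of W_DTI).
weighted_dti = {
    ("drugA", "T1"): 0.9,
    ("drugB", "T1"): 0.7,
    ("drugC", "T1"): 0.2,
    ("drugC", "T2"): 0.8,
}

# A novel compound that is absent from the DTI network.
query = {"s1", "s2", "s3", "s6"}
score_t1 = similarity_score(query, "T1", drug_subs, weighted_dti, epsilon=2)
print(score_t1)
```

Because the query only needs a substructure set, the same function works for compounds that have no known targets, which is what the DSA network is introduced for.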

Visualization of the wSDTNBI Two-Pronged Approach:

The Scientist's Toolkit: Research Reagent Solutions

Table 1: Essential resources for implementing NBI-based DTI prediction.

| Resource Name | Type | Function in NBI Research | Key Features |
|---|---|---|---|
| NetInfer Web Server [15] | Web Tool | User-friendly interface for predicting targets, pathways, and adverse effects using NBI methods. | Implements SDTNBI, bSDTNBI, and wSDTNBI; no local installation required. |
| Global DTI Network (v2020) [15] | Dataset | A comprehensive, curated bipartite network of known drug-target interactions. | Serves as the primary input network for resource diffusion in NBI. |
| BindingDB [16] | Database | Source of experimental binding affinity data (Kd, Ki, IC50). | Provides data to create a weighted DTI network for methods like wSDTNBI. |
| MetaADEDB [15] | Database | Comprehensive database on Adverse Drug Events (ADEs). | Used to extend NBI applications to ADE prediction. |
| Drug-Substructure Association Network [14] | Computational Construct | Network linking drugs to their constituent chemical substructures. | Enables target prediction for novel compounds outside the original DTI network. |
| Morgan Fingerprints [15] | Molecular Descriptor | A type of circular fingerprint representing molecular structure. | Used in NetInfer to calculate drug similarity for new compound input. |

Application Notes: Experimental Validation & Case Studies

Case Study 1: Drug Repurposing via Basic NBI

Objective: To identify new therapeutic targets for existing drugs (i.e., drug repurposing) using the basic NBI method [13].

Experimental Protocol for Validation:

- Prediction: Apply the NBI algorithm to a network of 12,483 FDA-approved and experimental drug-target links [13].

- Compound Selection: Prioritize and acquire top-ranking predicted drugs for specific targets (e.g., estrogen receptors, dipeptidyl peptidase-IV).

- In Vitro Binding Assays:

- Materials: Purified target proteins (e.g., human estrogen receptor alpha), candidate drugs, reference ligands, assay kits (e.g., fluorescence polarization or radiometric assays).

- Procedure: Incubate the target protein with a range of concentrations of the candidate drug. Measure the displacement of a known fluorescent or radioactive ligand. Calculate the half-maximal inhibitory concentration (IC50) or half-maximal effective concentration (EC50) to quantify potency [13].

- Functional Cellular Assays:

- Materials: Human cancer cell lines (e.g., MDA-MB-231 breast cancer cells), cell culture reagents, MTT assay kit.

- Procedure: Treat cells with vehicle control or varying concentrations of the validated drug. After incubation, add MTT reagent and measure absorbance to determine cell viability. Calculate the half-maximal inhibitory concentration for anti-proliferative effects [13].
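As a rough illustration of how an IC50 is read from dose-response data, the sketch below interpolates on a log-concentration scale between the two tested concentrations that bracket 50% response. The function name and data are hypothetical; published analyses more commonly fit a sigmoidal (e.g., four-parameter logistic) curve, so this is a simplified stand-in rather than the procedure used in the cited study.

```python
import math


def ic50_by_interpolation(concentrations, responses):
    """Estimate IC50 by log-linear interpolation around 50% response.

    concentrations: ascending, in one consistent unit (e.g., µM)
    responses: percent of control signal at each concentration (decreasing)
    """
    points = list(zip(concentrations, responses))
    for (c_lo, r_lo), (c_hi, r_hi) in zip(points, points[1:]):
        if r_lo >= 50.0 >= r_hi and r_lo != r_hi:
            frac = (r_lo - 50.0) / (r_lo - r_hi)
            log_ic50 = math.log10(c_lo) + frac * (math.log10(c_hi) - math.log10(c_lo))
            return 10 ** log_ic50
    return None  # 50% response is not bracketed by the tested range


# Hypothetical assay readout: concentrations in µM, viability in % of control.
conc = [0.01, 0.1, 1.0, 10.0, 100.0]
viability = [98.0, 90.0, 60.0, 40.0, 5.0]
ic50 = ic50_by_interpolation(conc, viability)
print(ic50)
```

For this toy series the 50% point lies halfway between 1 µM and 10 µM on the log scale, giving an estimate of about 3.16 µM.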

Results: This protocol validated five drugs, including montelukast and simvastatin, as hits against new targets with IC50/EC50 values ranging from 0.2 to 10 µM, and confirmed potent antiproliferative activity in cells [13].

Case Study 2: Virtual Screening with wSDTNBI

Objective: To discover novel, potent inverse agonists for retinoid-related orphan receptor γt (RORγt) using the advanced wSDTNBI method [14].

Experimental Protocol for Validation:

- Virtual Screening: Run the wSDTNBI algorithm on a weighted DTI network to prioritize compounds with predicted high binding affinity for RORγt.

- Compound Procurement: Purchase 72 top-ranking natural compounds for experimental testing [14].

- In Vitro Inverse Agonist Assay:

- Materials: RORγt ligand binding domain, candidate compounds, cofactor peptides, assay reagents for measuring constitutive receptor activity (e.g., luminescence-based).

- Procedure: Incubate RORγt with candidate compounds. Measure the reduction in constitutive receptor activity relative to a vehicle control. Generate dose-response curves to determine IC50 values [14].

- X-ray Crystallography:

- Materials: Crystals of the RORγt ligand-binding domain, the lead compound (e.g., ursonic acid).

- Procedure: Co-crystallize the protein with the lead compound. Collect diffraction data and solve the crystal structure to confirm direct atomic-level contact between the compound and the target protein [14].

- In Vivo Efficacy Study:

- Materials: Mouse model of multiple sclerosis (e.g., experimental autoimmune encephalomyelitis), validated lead compounds, vehicle control.

- Procedure: Administer the lead compound (e.g., ursonic acid or oleanonic acid) to the disease model. Monitor and score disease symptoms (e.g., paralysis) over time to demonstrate therapeutic efficacy [14].

Results: This integrated protocol identified seven novel RORγt inverse agonists among the 72 compounds tested, a hit rate of 9.7% (7/72). Ursonic acid and oleanonic acid were the most potent, with IC50 values of 10 nM and 0.28 µM, respectively. An X-ray crystal structure confirmed the direct binding of ursonic acid, and in vivo studies demonstrated its therapeutic effects [14].

Quantitative Performance Data

Table 2: Performance comparison of NBI and other DTI prediction methods on benchmark datasets. AUC values from 30 simulations of 10-fold cross-validation are presented as mean ± standard deviation [13].

| Method | Enzymes (AUC) | Ion Channels (AUC) | GPCRs (AUC) | Nuclear Receptors (AUC) |
|---|---|---|---|---|
| NBI [13] | 0.975 ± 0.006 | 0.976 ± 0.007 | 0.946 ± 0.019 | 0.837 ± 0.040 |
| DBSI [13] | 0.959 ± 0.008 | 0.959 ± 0.010 | 0.927 ± 0.022 | 0.779 ± 0.047 |
| TBSI [13] | 0.947 ± 0.011 | 0.947 ± 0.013 | 0.901 ± 0.027 | 0.777 ± 0.050 |
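For readers unfamiliar with the metric, the sketch below shows the standard rank-based definition of AUC for a single cross-validation fold. It is an illustration only: the exact evaluation code used in [13] is not described here, and the function name and scores are hypothetical. Pairs without a known interaction are treated as the comparison set, which is the usual convention when no validated negatives exist.

```python
def auc_from_scores(positive_scores, negative_scores):
    """AUC as the probability that a held-out known interaction outranks
    a pair without a known interaction (ties count as one half)."""
    wins = 0.0
    for p in positive_scores:
        for n in negative_scores:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(positive_scores) * len(negative_scores))

# Hypothetical prediction scores for one cross-validation fold.
held_out_known = [0.9, 0.8]
unlabeled = [0.1, 0.85]
fold_auc = auc_from_scores(held_out_known, unlabeled)
print(fold_auc)  # 0.75 (3 of the 4 positive/unlabeled comparisons are won)
```

Averaging such per-fold values over repeated runs gives a mean and standard deviation of the kind reported in the table.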

Table 3: Experimental validation results of NBI methods in case studies.

| Case Study | NBI Method | Key Finding | Experimental Result |
|---|---|---|---|
| Drug Repurposing [13] | Basic NBI | 5 old drugs with new polypharmacological targets | IC50/EC50: 0.2 - 10 µM |
| RORγt Inverse Agonist Discovery [14] | wSDTNBI | 7 novel inverse agonists identified | Best IC50: 10 nM (Ursonic Acid) |
| RORγt Discovery Success Rate [14] | wSDTNBI | Experimental hit rate | 9.7% (7 out of 72 compounds) |

In the landscape of computational drug discovery, the prediction of drug-target interactions (DTIs) is a fundamental task. Traditional computational methods, such as molecular docking and structure-based pharmacophore mapping, often rely heavily on the availability of high-resolution three-dimensional (3D) protein structures [3]. Similarly, many machine learning approaches require large sets of both confirmed interacting (positive) and non-interacting (negative) drug-target pairs for model training [17]. Network-based inference (NBI) methods have emerged as a powerful alternative, demonstrating significant advantages by overcoming both of these constraints [3]. This application note details the methodologies and experimental protocols that leverage these key advantages, providing researchers with practical guidance for implementing these techniques in drug repurposing and novel drug discovery projects.

Core Advantages and Methodological Foundations

Independence from 3D Protein Structures

A significant bottleneck in structure-based methods is their limited applicability to proteins without solved 3D structures, such as many G-protein-coupled receptors (GPCRs) [3] [17]. Network-based methods circumvent this limitation by using network topology and similarity measures instead of structural data.

- Underlying Principle: These methods operate on the "guilt-by-association" principle, inferring potential interactions from the existing network of known DTIs and similarity relationships between drugs and between targets [3] [17].

- Data Utilization: They integrate diverse data types—such as chemical structures of drugs, amino acid sequences of proteins, known DTIs, and phenotypic data—to construct comprehensive relational networks without requiring 3D structural information [3] [18].

Independence from Experimentally Validated Negative Samples

Supervised machine learning models typically require both positive and negative examples. However, publicly available databases contain predominantly positive DTI data, and experimentally validated negative samples (confirmed non-interactions) are scarce [17]. Network-based methods address this challenge through their design.

- Positive-Unlabeled (PU) Learning: The problem is inherently one of PU learning, where only positive and unlabeled examples are available [18] [19]. Many network-based algorithms are designed to function without relying on gold-standard negative samples.

- Leveraging Network Structure: Algorithms like Network-Based Inference (NBI) use resource diffusion on the known DTI network (composed only of positive interactions) to predict new links, thus bypassing the need for negative examples altogether [3].

The following table summarizes the key challenges and how network-based methods address them.

Table 1: Key Challenges Addressed by Network-Based Methods

| Challenge | Impact on Traditional Methods | Network-Based Solution |
|---|---|---|
| Lack of 3D Structures | Limits application to proteins with unknown or hard-to-resolve structures (e.g., many membrane proteins) [3] [17]. | Uses network topology, sequence similarities, and chemical similarities to infer interactions without structural data [3] [18]. |
| Absence of Negative Samples | Introduces bias and artifacts in supervised learning models; leads to the "positive-unlabeled" problem [17] [19]. | Employs algorithms that function on known positive networks or uses sophisticated sampling strategies to generate realistic negatives [3] [19]. |

Experimental Protocols and Workflows

This section provides a detailed, step-by-step protocol for implementing a network-based DTI prediction pipeline that capitalizes on the described advantages.

Protocol 1: Basic Network-Based Inference (NBI) for DTI Prediction

This protocol is adapted from the foundational NBI (or Probabilistic Spreading) method, which requires only a known DTI network [3].

1. Objective: To predict novel drug-target interactions using only a bipartite network of known DTIs, without 3D structures or negative samples.

2. Materials and Reagents

- Computational Environment: A standard computer with a Python or R environment.

- Data Source: A matrix of known DTIs (e.g., from databases like ChEMBL or DrugBank).

3. Procedure

- Step 1: Data Preparation and Network Construction

- Compile a list of drugs ( D = \{d_1, d_2, \ldots, d_m\} ) and targets ( T = \{t_1, t_2, \ldots, t_n\} ).

- Construct a bipartite adjacency matrix ( A ) of size ( m \times n ), where ( A_{ij} = 1 ) if drug ( d_i ) is known to interact with target ( t_j ), and 0 otherwise (indicating an unknown interaction).

- Step 2: Resource Diffusion and Weight Calculation

- The algorithm involves a two-step resource diffusion process across the bipartite network:

- Resource from targets to drugs: The resource located on each target node is equally distributed to the drugs it connects to.

- Resource from drugs to targets: The resource received by each drug node is then propagated back to the targets it links to.

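- Mathematically, the two steps can be written compactly as a matrix product. In its simplest, unnormalized form the score matrix ( W ) is [ W = A \cdot A^T \cdot A ] where ( A^T ) is the transpose of the adjacency matrix ( A ). Each entry ( W_{ij} ) then counts the drug-target-drug-target paths that lead from drug ( d_i ) to target ( t_j ). To reproduce the equal splitting of resource described in the two steps, each step is additionally divided by node degree: [ W = A \cdot K_T^{-1} \cdot A^T \cdot K_D^{-1} \cdot A ] where ( K_T ) and ( K_D ) are diagonal matrices holding the target degrees (column sums of ( A )) and the drug degrees (row sums of ( A )). In both forms ( W ) has size ( m \times n ) and holds a score for every drug-target pair, including the unknown ones.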

- Step 3: Prediction and Prioritization

- The scores ( W_{ij} ) for all pairs where ( A_{ij} = 0 ) (unknown interactions) represent the likelihood of a potential interaction.

- Rank these candidate DTIs in descending order of their scores for experimental validation.
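The three steps of this protocol can be sketched in a few lines of NumPy. This is a minimal illustration rather than the reference implementation from [3]: it assumes each diffusion step is divided by node degree so that resource is split equally, and the function names `nbi_scores` and `rank_candidates` and the toy matrix are hypothetical.

```python
import numpy as np

def nbi_scores(A):
    """Two-step resource diffusion on a drug x target adjacency matrix."""
    A = np.asarray(A, dtype=float)
    drug_degree = A.sum(axis=1)    # number of known targets per drug
    target_degree = A.sum(axis=0)  # number of known drugs per target
    # Inverse degrees, with isolated nodes left at zero instead of dividing by zero.
    inv_drug = np.divide(1.0, drug_degree,
                         out=np.zeros_like(drug_degree), where=drug_degree > 0)
    inv_target = np.divide(1.0, target_degree,
                           out=np.zeros_like(target_degree), where=target_degree > 0)
    # Step 1: each target splits its resource equally among its drugs.
    to_drugs = (A * inv_target) @ A.T        # shape m x m
    # Step 2: each drug splits what it received equally among its targets.
    return (to_drugs * inv_drug) @ A         # shape m x n

def rank_candidates(A, scores):
    """Return unknown (drug, target, score) triples, highest score first."""
    drug_idx, target_idx = np.nonzero(np.asarray(A) == 0)
    order = np.argsort(-scores[drug_idx, target_idx], kind="stable")
    return [(int(drug_idx[k]), int(target_idx[k]),
             float(scores[drug_idx[k], target_idx[k]])) for k in order]

# Toy network: 3 drugs x 3 targets (hypothetical data).
A = [[1, 1, 0],
     [0, 1, 1],
     [1, 0, 0]]
W = nbi_scores(A)
ranked = rank_candidates(A, W)
print(ranked)
```

Because resource is only redistributed, each row of the score matrix sums to the degree of the corresponding drug, which is a convenient sanity check on any implementation.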

Diagram 1: NBI Prediction Workflow

Protocol 2: Heterogeneous Network Construction and Feature Learning

For more advanced and accurate predictions, integrating multiple data sources into a heterogeneous network is highly beneficial. This protocol outlines the process using graph representation learning [18] [19].

1. Objective: To build a comprehensive heterogeneous network integrating multiple biological entities and learn low-dimensional feature representations (embeddings) for drugs and targets to predict DTIs.

2. Materials and Reagents

- Software: Python with libraries such as stellargraph, node2vec, or PyTorch Geometric.

- Data Sources:

- Drug-drug similarity (e.g., from chemical fingerprints).

- Target-target similarity (e.g., from protein sequence alignment).

- Known DTI network.

- (Optional) Additional networks like drug-disease or protein-protein interactions (PPI).

3. Procedure

- Step 1: Data Collection and Similarity Calculation

- Drug Similarity: Calculate the pairwise chemical similarity between all drugs using Tanimoto coefficients on molecular fingerprints (e.g., MACCS or ECFP) [17].

- Target Similarity: Calculate the pairwise sequence similarity between all targets using normalized Smith-Waterman scores or BLAST E-values [17].

- Other Data: Gather data for other node types (e.g., diseases, side effects) and relationships from public databases.

- Step 2: Heterogeneous Network Construction

- Create a graph ( G = (V, E) ) where ( V ) is the set of nodes (drugs, targets, diseases, etc.).

- Define edges ( E ) to include:

- Drug-drug edges (weighted by chemical similarity).

- Target-target edges (weighted by sequence similarity).

- Drug-target edges (known DTIs).

- Other relevant edges (e.g., drug-disease associations).

- Step 3: Network Embedding Generation

- Step 4: DTI Prediction Model Training

- For each known drug-target pair, create a feature vector by concatenating the drug embedding and the target embedding.

- Use the known DTIs as positive training examples.

- For negative examples, employ a robust negative sampling strategy: select pairs of drugs and targets that are not known to interact and are distant from each other in the network (e.g., with low topological overlap) to minimize false negatives [19].

- Train a classifier (e.g., Gradient Boosted Trees or a Neural Network) on these feature vectors to predict interaction likelihood [17].
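The negative-sampling and feature-construction parts of Step 4 can be sketched as follows. This is one possible reading of "distant in the network", not the strategy of [19]: it assumes a pair is distant when no drug-target-drug-target path connects it, and it uses random vectors as stand-ins for learned embeddings. The function names, the toy matrix, and the embedding size are all hypothetical, and the classifier itself is left out.

```python
import numpy as np

def sample_distant_negatives(A, n_samples, seed=0):
    """Sample unknown drug-target pairs with no drug-target-drug-target path."""
    A = np.asarray(A, dtype=int)
    path_counts = A @ A.T @ A   # number of three-step paths from drug i to target j
    candidates = np.argwhere((A == 0) & (path_counts == 0))
    n = min(n_samples, len(candidates))
    if n == 0:
        return []
    rng = np.random.default_rng(seed)
    chosen = rng.choice(len(candidates), size=n, replace=False)
    return [tuple(int(x) for x in candidates[k]) for k in chosen]

def pair_features(drug_emb, target_emb, pairs):
    """Concatenate drug and target embeddings for each (drug, target) pair."""
    return np.array([np.concatenate([drug_emb[i], target_emb[j]]) for i, j in pairs])

# Toy DTI matrix with two disconnected groups (hypothetical data).
A = [[1, 1, 0, 0],
     [0, 1, 0, 0],
     [0, 0, 1, 1],
     [0, 0, 0, 1]]
rng = np.random.default_rng(42)
drug_emb = rng.normal(size=(4, 4))     # stand-in embeddings: 4 drugs x 4 dims
target_emb = rng.normal(size=(4, 4))   # stand-in embeddings: 4 targets x 4 dims

positives = [tuple(int(x) for x in p) for p in np.argwhere(np.asarray(A) == 1)]
negatives = sample_distant_negatives(A, n_samples=len(positives))
X = pair_features(drug_emb, target_emb, positives + negatives)
y = np.array([1] * len(positives) + [0] * len(negatives))
print(X.shape, y.tolist())
```

The resulting `X` and `y` arrays have the shape most classifier libraries expect. Sampling as many negatives as positives keeps the training set balanced, which is a design choice of this sketch rather than a requirement of the protocol.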

Diagram 2: Heterogeneous Network Pipeline

Successful implementation of the protocols above relies on key data and software resources. The following table lists essential "research reagents" for network-based DTI prediction.

Table 2: Key Research Reagents and Resources for Network-Based DTI Prediction

| Resource Name | Type | Primary Function in Research | Key Utility / Relevance to Advantages |
|---|---|---|---|
| ChEMBL [17] | Database | Provides curated bioactivity data (IC50, Ki, Kd) for drugs and targets. | Source of experimentally validated positive interactions; enables creation of realistic benchmark datasets that may include negative samples. |
| DrugBank [20] | Database | Contains comprehensive drug, target, and DTI information, including drug structures (SMILES). | Provides drug chemical structures for similarity calculation and known DTIs for network construction, bypassing need for 3D structures. |
| HIPPIE PPI Network [21] | Database (Network) | A high-confidence protein-protein interaction network. | Used to build context-specific biological networks (e.g., for cancer) to inform target selection and understand polypharmacology, independent of 3D data. |
| STRING [20] | Database (Network) | A comprehensive database of known and predicted PPIs. | Integrates functional linkages between proteins, enriching the target-target similarity and network context beyond sequence alone. |
| RDKit | Software Library | Open-source cheminformatics toolkit. | Calculates molecular fingerprints and drug-drug similarity from SMILES strings, a core step for network construction without 3D data. |
| node2vec [17] | Software Algorithm | A graph embedding method that learns continuous feature representations for nodes in a network. | Generates drug and target embeddings from a heterogeneous network topology, serving as powerful features for DTI prediction models. |
| PathLinker [21] | Software Algorithm | Reconstructs signaling pathways within PPI networks by identifying shortest paths. | Used in network-informed target discovery to find critical connector nodes between proteins with co-existing mutations, suggesting combination drug targets. |

Performance and Validation

Network-based methods have demonstrated robust performance in predicting DTIs. The following table synthesizes quantitative results from recent studies, highlighting their effectiveness even without 3D structures or gold-standard negatives.

Table 3: Performance Benchmarks of Network-Based and Related Methods

| Model/Method | Key Principle | Reported Performance (AUROC / AUPR) | Notes on Advantages |
|---|---|---|---|
| NBI (ProbS) [3] | Resource diffusion on a DTI network. | Competitive performance on benchmark datasets (exact metrics not provided in source). | Directly operates on the known DTI network only, demonstrating core independence from 3D structures and negative samples. |
| DTIAM [10] | Self-supervised pre-training on molecular graphs and protein sequences. | Outperformed baseline methods in warm-start and cold-start scenarios. | Pre-training on large unlabeled data (sequences/graphs) reduces dependency on labeled DTI data and protein structures. |
| DT2Vec [17] | Graph embedding (node2vec) on similarity networks + classifier. | Achieved competitive results on a gold-standard dataset. | Integrates chemical and genomic spaces into low-dimensional vectors without 3D data; uses a dataset with validated negatives. |
| MVPA-DTI [18] | Heterogeneous network with multiview path aggregation. | AUROC: 0.966, AUPR: 0.901. | Integrates drug 3D conformation features (from a transformer) and protein sequence features (from Prot-T5), but the network framework provides the primary predictive power. |
| Hetero-KGraphDTI [19] | GNN with knowledge integration. | Average AUC: 0.98, Average AUPR: 0.89. | Leverages prior biological knowledge from ontologies to regularize the model, enhancing performance without relying on negative samples or 3D structures. |

Concluding Remarks

The independence from 3D structures and experimentally validated negative samples positions network-based inference as a uniquely versatile and scalable strategy for DTI prediction. The protocols and resources detailed in this application note provide a clear roadmap for researchers to apply these powerful methods. They enable the systematic exploration of drug repurposing opportunities and the discovery of novel therapeutic targets, particularly for proteins that are intractable to structural studies, thereby accelerating the drug discovery pipeline [3] [21].

The prediction of drug-target interactions (DTIs) is a critical step in genomic drug discovery and drug repurposing, enabling researchers to understand the mechanisms of action of drugs at the target level and significantly reducing the time and cost associated with traditional drug development [22] [23] [24]. While experimental methods for identifying DTIs are expensive and laborious, computational in silico approaches provide an effective means to overcome this challenge [22]. Among these, methods leveraging the underlying principles of similarity property and network topology have demonstrated remarkable success. These approaches are fundamentally based on the "guilt-by-association" assumption, which posits that similar drugs are likely to interact with similar targets and vice versa [16] [24]. This application note details the theoretical foundations, experimental protocols, and practical implementations of these principles within the context of network-based inference for DTI prediction, providing researchers with a comprehensive toolkit for computational drug discovery.

Theoretical Foundation

The Similarity Principle in DTI Prediction

The similarity property principle asserts that the chemical space of drugs and the genomic space of targets can be systematically quantified and related. Chemical similarity between drugs is commonly computed from their structural properties, often represented by Simplified Molecular-Input Line-Entry System (SMILES) strings or molecular graphs, using measures such as SIMCOMP, which provides a global similarity score based on the size of common substructures between two compounds [23] [25]. For targets, genomic sequence similarity is typically calculated from amino acid sequences using normalized Smith-Waterman scores or other alignment metrics [23]. Furthermore, the integration of heterogeneous data sources—including drug-disease associations, side-effects, and phenotypic information—enriches the similarity measures, providing a multi-view perspective that enhances prediction accuracy beyond what is possible with chemical and genomic data alone [22] [24]. Crucially, similarity is not limited to intrinsic properties; it can also be derived from the interaction network itself, for instance, by calculating the Jaccard similarity between drugs based on their shared targets within known DTI networks [22].
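The network-derived similarity mentioned at the end of this paragraph can be illustrated with a short sketch. It assumes each drug is represented by the set of its known targets; the drug and target names are hypothetical, and SIMCOMP and Smith-Waterman scoring are not reproduced here.

```python
def jaccard(set_a, set_b):
    """Jaccard similarity: size of the intersection divided by size of the union.

    Defined here as 0.0 when both sets are empty.
    """
    union = set_a | set_b
    if not union:
        return 0.0
    return len(set_a & set_b) / len(union)

# Hypothetical known targets per drug.
known_targets = {
    "drug_a": {"T1", "T2", "T3"},
    "drug_b": {"T2", "T3", "T4"},
    "drug_c": {"T5"},
}
sim_ab = jaccard(known_targets["drug_a"], known_targets["drug_b"])  # 2 shared of 4 total
sim_ac = jaccard(known_targets["drug_a"], known_targets["drug_c"])  # no shared targets
print(sim_ab, sim_ac)
```

The same function applied to the sets of drugs that hit each target gives the corresponding target-target similarity.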

Network Topology in Heterogeneous Biological Networks

Network topology refers to the structural arrangement and connectivity patterns between nodes (e.g., drugs, targets, diseases) in a network. In a DTI context, known interactions form a bipartite graph between drug and target nodes [23] [16]. The topology of this network exhibits significant correlation with drug structure similarity and target sequence similarity [23]. Topological features, such as node degree (number of connections) and cluster coefficients (measure of how nodes cluster together), are informative for prediction models, as seen in the statistics of gold-standard datasets [23]. Modern methods construct heterogeneous networks that integrate multiple node types (drugs, targets, diseases, side-effects) and relationship types, providing a more comprehensive view of the biological context [22] [24]. The key insight is that drugs or targets with similar topological properties within this heterogeneous network are more likely to be functionally correlated. Topological information is captured through low-dimensional feature representations that preserve proximities between nodes, including high-order relationships that go beyond immediate neighbors to capture more complex network structures [22] [24].

Table 1: Statistics of Gold-Standard Drug-Target Interaction Datasets [23]

| Dataset | No. of Drugs | No. of Target Proteins | No. of Known Interactions | Average Degree of Drugs | Average Degree of Targets | Cluster Coefficient of Drugs | Cluster Coefficient of Targets |
|---|---|---|---|---|---|---|---|
| Enzyme | 445 | 664 | 2926 | 6.57 | 4.40 | 0.850 | 0.902 |
| Ion Channel | 210 | 204 | 1476 | 7.02 | 7.23 | 0.871 | 0.897 |
| GPCR | 223 | 95 | 635 | 2.84 | 6.68 | 0.867 | 0.776 |
| Nuclear Receptor | 54 | 26 | 90 | 1.66 | 3.46 | 0.832 | 0.933 |
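The average-degree columns follow directly from the counts in the table: in a bipartite network, the average degree of drugs is the number of interactions divided by the number of drugs, and likewise for targets. The short check below recomputes them and also derives the network density (the fraction of all possible drug-target pairs that are known interactions), which is not in the table and is added here only to illustrate how sparse these networks are.

```python
datasets = {
    # name: (drugs, targets, known interactions, reported drug degree, reported target degree)
    "Enzyme": (445, 664, 2926, 6.57, 4.40),
    "Ion Channel": (210, 204, 1476, 7.02, 7.23),
    "GPCR": (223, 95, 635, 2.84, 6.68),
    "Nuclear Receptor": (54, 26, 90, 1.66, 3.46),
}

computed = {}
for name, (n_drugs, n_targets, n_edges, rep_drug, rep_target) in datasets.items():
    avg_drug = n_edges / n_drugs        # interactions per drug
    avg_target = n_edges / n_targets    # interactions per target
    density = n_edges / (n_drugs * n_targets)
    computed[name] = (avg_drug, avg_target, density)
    print(f"{name}: drug degree {avg_drug:.3f} (reported {rep_drug}), "
          f"target degree {avg_target:.3f} (reported {rep_target}), "
          f"density {density:.4f}")
```

The recomputed degrees agree with the reported ones to within 0.01; the small differences are consistent with the table truncating rather than rounding the second decimal.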

Table 2: Performance Comparison of State-of-the-Art DTI Prediction Methods

| Method | Core Principle | Key Algorithmic Approach | Reported Performance (AUROC) | Reported Performance (AUPR) |
|---|---|---|---|---|
| NTFRDF [22] | Multi-similarity fusion & network topology | Deep forest with low-dimensional topological features | Substantial improvement over benchmarks | Substantial improvement over benchmarks |
| DTINet [24] | Heterogeneous network integration | Random Walk with Restart (RWR) + Diffusion Component Analysis (DCA) | 5.9% higher than second-best | 5.7% higher than second-best |
| DTIAM [10] | Self-supervised pre-training | Transformer-based feature learning from molecular graphs & protein sequences | Superior performance in warm/cold start | Superior performance in warm/cold start |
| SaeGraphDTI [25] | Sequence attribute extraction & graph neural networks | Graph encoder/decoder on similarity-augmented network | Best in class on most key metrics | Best in class on most key metrics |
| BLMNII [24] | Bipartite local model + neighbor inference | Support Vector Machine (SVM) with interaction-profile inference | Benchmark | Benchmark |

Experimental Protocols

Protocol 1: Construction of a Heterogeneous Network and Feature Representation

Objective: To build a heterogeneous network integrating multiple data sources and generate low-dimensional vector representations for drugs and targets that encapsulate their topological properties [22] [24].

Materials: Known DTIs, drug chemical structures, target protein sequences, and optionally, drug-disease associations and side-effect data [23] [24].

Methodology:

- Data Collection and Similarity Calculation:

- Collect drug chemical structures (e.g., from KEGG LIGAND) and compute the drug-drug chemical similarity matrix (Sc) using a graph-based algorithm like SIMCOMP [23].

- Collect target protein sequences (e.g., from KEGG GENES) and compute the target-target sequence similarity matrix (Sg) using normalized Smith-Waterman scores [23].

- (Optional) Integrate other similarities, such as Jaccard similarity based on shared interaction profiles, and use a multi-similarity fusion strategy to create comprehensive similarity measures [22].

- Network Construction: Formally construct a heterogeneous network where nodes represent drugs, targets, and other entities (e.g., diseases). Edges represent known interactions, similarities, and other associations [22] [24].

- Network Diffusion and Feature Learning:

- Apply a network diffusion algorithm, such as Random Walk with Restart (RWR), to capture high-order proximities and the global topology of the network for each node [24].

- Use a dimensionality reduction technique, such as Diffusion Component Analysis (DCA), to obtain informative, low-dimensional vector representations from the diffusion states. This step is crucial for de-noising and capturing the underlying structural properties [24].
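The diffusion step can be sketched with a generic Random Walk with Restart. This is a textbook formulation, not the DTINet code from [24]: the restart probability of 0.5, the function name, and the four-node toy graph are assumptions, and the DCA dimensionality-reduction step is not shown.

```python
import numpy as np

def random_walk_with_restart(adjacency, seed_index, restart_prob=0.5,
                             tol=1e-10, max_iter=1000):
    """Return the steady-state visiting distribution of a walker that
    jumps back to `seed_index` with probability `restart_prob` at each step."""
    W = np.asarray(adjacency, dtype=float)
    col_sums = W.sum(axis=0)
    col_sums[col_sums == 0] = 1.0    # isolated nodes keep an all-zero column
    P = W / col_sums                 # column-normalized transition matrix
    restart = np.zeros(W.shape[0])
    restart[seed_index] = 1.0
    state = restart.copy()
    for _ in range(max_iter):
        new_state = (1.0 - restart_prob) * (P @ state) + restart_prob * restart
        if np.abs(new_state - state).sum() < tol:
            return new_state
        state = new_state
    return state

# Toy path graph: node 0 - node 1 - node 2 - node 3 (hypothetical network).
adjacency = [[0, 1, 0, 0],
             [1, 0, 1, 0],
             [0, 1, 0, 1],
             [0, 0, 1, 0]]
profile = random_walk_with_restart(adjacency, seed_index=0)
print(profile)
```

Running the walk once per node yields one diffusion profile per node. Nodes closer to the seed receive more probability mass, which is how the profile encodes proximity beyond immediate neighbors.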

Expected Outcome: A set of low-dimensional feature vectors for each drug and target node, which encode their topological context within the heterogeneous network.

Protocol 2: DTI Prediction using a Graph Neural Network Framework

Objective: To predict novel DTIs by updating drug and target features based on the topological relationships in a graph and decoding potential interactions [25].

Materials: Drug SMILES strings, target amino acid sequences, and known DTIs.

Methodology:

- Sequence Attribute Extraction:

- Encode drug SMILES strings and target amino acid sequences into fixed-length integer sequences via padding or trimming.

- Pass the encoded sequences through an embedding layer to generate initial embedding matrices.

- Use a sequence attribute extractor with one-dimensional convolutional layers of varying kernel sizes to capture key substructures and local residue patterns, producing aligned attribute sequences [25].

- Graph Encoder for Topological Feature Update:

- Construct a relational network using similarity relationships (e.g., drug-drug, target-target) and known DTIs.

- Input the initial node features (from Step 1) and the relational network into a graph encoder (e.g., a Graph Neural Network). The GNN updates each node's representation by aggregating information from its neighbors, effectively incorporating network topology [25].

- Graph Decoder for Interaction Prediction:

- The updated drug and target node features are passed to a graph decoder.

- The decoder calculates the probability of an edge (interaction) existing between a given drug-target pair, typically through a function of their respective feature vectors, to produce the final DTI prediction [25].
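The encoder and decoder steps can be illustrated in a heavily simplified form. The sketch assumes mean aggregation over neighbors with self-loops and an inner-product decoder passed through a sigmoid; the model in [25] uses learned weights and its own architecture, none of which is reproduced here. The function names, graph, and feature values are hypothetical.

```python
import numpy as np

def mean_aggregate(features, adjacency):
    """One message-passing round: average each node's features with its neighbors'."""
    A = np.asarray(adjacency, dtype=float) + np.eye(len(adjacency))  # add self-loops
    return (A @ features) / A.sum(axis=1, keepdims=True)

def decode_interaction(drug_vec, target_vec):
    """Inner-product decoder: sigmoid of the dot product, a value in (0, 1)."""
    return 1.0 / (1.0 + np.exp(-np.dot(drug_vec, target_vec)))

# Toy relational network: nodes 0-1 are drugs, nodes 2-3 are targets.
# Edges: drug0-drug1 (similarity), drug1-target2 (known DTI), target2-target3 (similarity).
adjacency = [[0, 1, 0, 0],
             [1, 0, 1, 0],
             [0, 1, 0, 1],
             [0, 0, 1, 0]]
features = np.array([[1.0, 0.0],
                     [0.8, 0.2],
                     [0.1, 0.9],
                     [0.0, 1.0]])
updated = mean_aggregate(features, adjacency)
score = decode_interaction(updated[0], updated[3])
print(updated[0], score)
```

Without trained parameters the score has no predictive meaning; the point is only to show how neighbor information enters each node's representation before a pair is scored.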

Expected Outcome: A predictive model capable of scoring unknown drug-target pairs, identifying potential interactions with high probability.

Visualizations

Heterogeneous Network Architecture for DTI Prediction

Diagram 1: Data integration and modeling workflow for DTI prediction.

Computational Workflow of a Network-Based Prediction Model

Diagram 2: Core computational steps in a network-based DTI prediction model.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Data Resources and Computational Tools for DTI Research

| Resource / Tool Name | Type | Primary Function in Research | Example Use Case |
|---|---|---|---|
| KEGG BRITE [23] | Database | Source of known drug-target interaction data. | Building a gold-standard dataset for model training and evaluation. |
| KEGG LIGAND [23] | Database | Provides chemical structures of drugs/compounds. | Calculating drug-drug chemical similarity using SIMCOMP. |
| DrugBank [23] | Database | Repository for drug and target information. | Curating comprehensive lists of drugs and their protein targets. |
| SIMCOMP [23] | Algorithm / Tool | Computes global chemical similarity based on common substructures. | Generating the drug chemical similarity matrix (Sc) from chemical graphs. |
| Smith-Waterman Algorithm [23] | Algorithm / Tool | Performs local sequence alignment to compute similarity. | Generating the target sequence similarity matrix (Sg) from amino acid sequences. |
| Random Walk with Restart (RWR) [24] | Algorithm | Models network diffusion to capture high-order node proximity. | Exploring the topological context of a node in a heterogeneous network. |
| Diffusion Component Analysis (DCA) [24] | Algorithm | Performs dimensionality reduction on network diffusion states. | Learning low-dimensional, informative feature vectors from complex networks. |
| Graph Neural Network (GNN) [25] | Algorithm / Model | Learns node representations by aggregating information from a graph. | Updating drug and target features based on the topological relationships in a DTI network. |

The drug discovery landscape is undergoing a profound transformation, shifting from the traditional 'one drug-one target' philosophy toward a more holistic polypharmacology approach. This paradigm recognizes that complex diseases often involve dysregulation of multiple interconnected pathways and that single-target therapies may prove insufficient for durable therapeutic outcomes [26]. Polypharmacology represents the science of multi-targeting molecules, where a single drug is rationally designed to interact with multiple biological targets simultaneously [27]. This shift has been largely driven by the recognition that many successful drugs, initially developed as single-target agents, subsequently revealed multi-targeting properties that contributed significantly to their therapeutic efficacy [28].

The limitations of the single-target approach have become particularly evident in the treatment of complex, multifactorial diseases such as cancer, central nervous system disorders, autoimmune conditions, and metabolic diseases [26] [27]. Network biology reveals that biological systems operate through intricate interaction networks rather than isolated linear pathways. Consequently, modulating a single node in these complex networks often triggers adaptive responses and compensatory mechanisms that limit therapeutic efficacy [28]. Polypharmacology addresses this biological complexity by designing drugs that can modulate multiple targets within disease-relevant networks, potentially leading to enhanced efficacy and reduced susceptibility to resistance mechanisms [27].

This evolution has been facilitated by advances in multiple disciplines. The exponential growth of molecular data in the post-genomic era, coupled with advancements in computational modeling, cheminformatics, and systems biology, has enabled researchers to systematically study and design polypharmacological agents [28]. Furthermore, network-based inference approaches have emerged as powerful tools for predicting drug-target interactions (DTIs) and identifying new therapeutic applications for existing drugs, accelerating the development of multi-target therapies [18].

Polypharmacology: Conceptual Framework and Definitions

Fundamental Principles

Polypharmacology encompasses several distinct but interrelated concepts. At its core, it involves "one drug-multiple targets", where a single pharmaceutical agent is designed to interact with multiple targets either within a single disease pathway or across multiple disease pathways [28] [26]. This approach can be further categorized into several mechanistic strategies:

Single drug acting on multiple targets of a unique disease pathway: This strategy focuses on parallel or sequential targets within a defined pathological process to achieve enhanced therapeutic effect through simultaneous modulation [28].

Single drug acting on multiple targets across different disease pathways: This approach is particularly relevant for complex diseases with multiple etiological factors or for treating co-morbid conditions with a single agent [28].

Multi-target-directed ligands (MTDLs): These are specifically designed compounds that incorporate structural features enabling interaction with multiple predefined biological targets [27]. MTDLs represent the rational implementation of polypharmacology principles in drug design.

The Spectrum of Drug Polypharmacology

The continuum of polypharmacology ranges from unintentional to rational design:

Serendipitous Polypharmacology: Historically, multi-targeting properties of many drugs were discovered retrospectively after clinical use. Examples include aspirin (which acts on COX-1, COX-2, and NF-κB) and sildenafil (developed for angina but found effective for erectile dysfunction) [28].

Rational Polypharmacology: Modern drug discovery increasingly employs deliberate design of MTDLs through computational prediction and structural modeling [27]. This approach leverages advanced understanding of disease networks and target structures to create optimized multi-target agents.

The spatial arrangement of pharmacophores in MTDLs falls into three primary categories [27]:

- Linked pharmacophores: Distinct molecular domains connected via a spacer (linker)

- Fused pharmacophores: Structural elements directly connected through covalent bonds without linkers

- Merged pharmacophores: Integrated structures where multiple pharmacophores share a common structural core

Table 1: Classification of Multi-Target Drugs Based on Pharmacophore Arrangement

| Arrangement Type | Structural Features | Design Considerations | Example Drugs |
|---|---|---|---|
| Linked | Distinct domains connected via cleavable or non-cleavable linkers | Linker stability, spacer length, release mechanisms | Antibody-drug conjugates (e.g., Loncastuximab tesirine) |
| Fused | Direct covalent attachment without spacers | Structural compatibility, conformational flexibility | Peptide hybrids (e.g., Tirzepatide) |
| Merged | Shared structural core with overlapping pharmacophores | Balanced affinity across targets, molecular properties optimization | Small-molecule dual receptor antagonists (e.g., Sparsentan) |

Computational Framework: Network-Based Inference for Drug-Target Prediction

Theoretical Foundation

Network-based inference represents a cornerstone of modern polypharmacology research, addressing the fundamental challenge of predicting interactions between drugs and their biological targets [18]. This approach conceptualizes biological systems as complex networks where drugs, targets, diseases, and side effects form interconnected nodes [19]. The topological relationships within these heterogeneous networks provide critical insights into potential drug-target interactions that would be difficult to identify through reductionist approaches.

The mathematical foundation of network-based inference lies in graph theory, where biological entities and their relationships are represented as nodes and edges in a heterogeneous graph ( G = (V, E) ), with ( V ) representing the set of nodes (drugs and targets) and ( E ) representing the set of edges of different types (drug-drug similarities, target-target similarities, or known interactions) [19]. By analyzing the structural properties of these networks and applying algorithms that propagate information across nodes, researchers can infer novel interactions and identify potential multi-targeting opportunities.
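As a concrete picture of such a heterogeneous graph, the sketch below stores typed nodes and typed, weighted edges in plain Python containers. The class name `HeteroGraph`, the node names, and the similarity weights are hypothetical; dedicated graph libraries offer richer equivalents.

```python
from collections import defaultdict

class HeteroGraph:
    """Minimal typed graph: nodes carry a type, edges carry a type and a weight."""

    def __init__(self):
        self.node_type = {}               # node name -> "drug" or "target"
        self.edges = defaultdict(dict)    # edge type -> {(u, v): weight}

    def add_node(self, name, kind):
        self.node_type[name] = kind

    def add_edge(self, u, v, edge_type, weight=1.0):
        if u not in self.node_type or v not in self.node_type:
            raise KeyError("both endpoints must be added as nodes first")
        self.edges[edge_type][(u, v)] = weight

    def neighbors(self, node, edge_type):
        """Nodes linked to `node` by an edge of the given type, in either direction."""
        found = []
        for (u, v), weight in self.edges[edge_type].items():
            if u == node:
                found.append((v, weight))
            elif v == node:
                found.append((u, weight))
        return found

# Hypothetical example with two drugs and two targets.
g = HeteroGraph()
for drug in ("drug_a", "drug_b"):
    g.add_node(drug, "drug")
for target in ("target_x", "target_y"):
    g.add_node(target, "target")
g.add_edge("drug_a", "drug_b", "drug-drug similarity", weight=0.7)
g.add_edge("target_x", "target_y", "target-target similarity", weight=0.4)
g.add_edge("drug_a", "target_x", "known interaction")

print(g.neighbors("drug_a", "known interaction"))
print(g.neighbors("drug_b", "drug-drug similarity"))
```

Keeping edge types separate makes it straightforward to propagate information along one relation at a time or to weight relations differently.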

Advanced Methodologies in Network-Based DTI Prediction

Recent advances in computational methods have substantially improved drug-target interaction prediction. MVPA-DTI, a heterogeneous network model that integrates multiview path aggregation, reported an AUPR (area under the precision-recall curve) of 0.901 and an AUROC (area under the receiver operating characteristic curve) of 0.966 in benchmark tests [18]. The model relies on learned feature extractors, including a molecular attention transformer for drug 3D structure analysis and a protein-specific large language model (Prot-T5) for sequence feature extraction [18].

The GRAM-DTI framework introduces adaptive multimodal representation learning, integrating four modalities of molecular and protein information through volume-based contrastive learning [29]. This approach dynamically regulates each modality's contribution during pre-training and incorporates IC50 activity measurements as weak supervision to ground representations in biologically meaningful interaction strengths [29].

Another innovative approach, DTIAM, provides a unified framework for predicting drug-target interactions, binding affinities, and mechanisms of action [10]. This model employs self-supervised pre-training on large amounts of unlabeled data to learn meaningful representations of drugs and targets, then applies these representations to downstream prediction tasks with demonstrated superiority in cold-start scenarios [10].

Table 2: Performance Comparison of Advanced DTI Prediction Models

| Model Name | Core Methodology | Key Features | Reported Performance |
|---|---|---|---|
| MVPA-DTI [18] | Heterogeneous network with multiview path aggregation | Molecular attention transformer, Prot-T5 protein sequences, meta-path information aggregation | AUPR: 0.901, AUROC: 0.966 |
| Hetero-KGraphDTI [19] | Graph neural networks with knowledge integration | Knowledge-based regularization, multi-layer message passing, biological ontology integration | Average AUC: 0.98, Average AUPR: 0.89 |
| GRAM-DTI [29] | Multimodal pre-training with adaptive modality dropout | Volume-based contrastive learning, IC50 activity supervision, four modality integration | State-of-the-art across four public datasets |
| DTIAM [10] | Self-supervised pre-training with unified prediction | Mechanism of action prediction, cold start scenario handling, binding affinity prediction | Substantial improvement over baselines in all tasks |

Core Algorithms and Real-World Implementation in Drug Discovery

Network-Based Inference (NBI) is a computational method derived from complex network theory and recommendation algorithms to predict potential links in bipartite networks [3] [13]. In the context of drug discovery, identifying novel Drug-Target Interactions (DTIs) is a costly and time-consuming experimental process [30] [3]. Computational methods like NBI address this challenge by leveraging the known topology of drug-target bipartite networks to infer unknown interactions, thereby accelerating drug repositioning and the understanding of drug polypharmacology [3] [13].

The NBI method is conceptually founded on a resource diffusion process, analogous to mass or heat diffusion in physics [13]. It operates on the principle that potential interactions can be predicted by simulating the flow of "resource" through the bipartite network structure. Its simplicity, robustness, and independence from the three-dimensional structures of targets or negative samples make it a powerful and widely applicable tool [3].

Core Methodology and Mathematical Formulation

The original NBI framework, as introduced by Zhou et al. (2007) and applied to DTI prediction by Cheng et al. (2012), models the problem using a bipartite graph [30] [13].

Bipartite Network Construction

A drug-target bipartite network is formally defined by two disjoint sets:

- A set of drugs, \( D = \{d_1, d_2, \ldots, d_m\} \)

- A set of targets, \( T = \{t_1, t_2, \ldots, t_n\} \)

The interactions between these sets are represented by a binary \( m \times n \) adjacency matrix, \( A \). An element \( A_{ij} = 1 \) if drug \( d_i \) is known to interact with target \( t_j \); otherwise, \( A_{ij} = 0 \) [30] [31] [13]. The degree of a drug node \( d_i \) is its number of known targets, \( k_i = \sum_{j=1}^{n} A_{ij} \). Similarly, the degree of a target node \( t_j \) is \( \kappa_j = \sum_{i=1}^{m} A_{ij} \) [32].
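The construction above can be sketched in a few lines of NumPy. The drug and target identifiers and the list of known pairs are made-up toy data; in practice they would come from a curated DTI database.

```python
import numpy as np

# Toy data (assumption): three drugs, four targets, six known interactions.
drugs = ["d1", "d2", "d3"]
targets = ["t1", "t2", "t3", "t4"]
known_pairs = [
    ("d1", "t1"), ("d1", "t2"),
    ("d2", "t2"), ("d2", "t3"),
    ("d3", "t3"), ("d3", "t4"),
]

drug_index = {d: i for i, d in enumerate(drugs)}
target_index = {t: j for j, t in enumerate(targets)}

# Binary m x n adjacency matrix: rows are drugs, columns are targets.
A = np.zeros((len(drugs), len(targets)), dtype=int)
for d, t in known_pairs:
    A[drug_index[d], target_index[t]] = 1

k = A.sum(axis=1)      # drug degrees k_i (number of known targets per drug)
kappa = A.sum(axis=0)  # target degrees kappa_j (number of known drugs per target)
```

Here every drug has degree 2, while targets `t1` and `t4` have degree 1 and `t2` and `t3` have degree 2.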

The Two-Step Resource Diffusion Algorithm

The core of the NBI protocol is a two-step resource diffusion process across the bipartite network. The following table details this procedure.

Table 1: The Two-Step Resource Diffusion Process in NBI

| Step | Process Description | Mathematical Formulation |
|---|---|---|
| 1 | Resource Transfer (Targets → Drugs): Initial resource located on target nodes is distributed to the drugs connected to them. The resource a drug receives is proportional to the initial resource of its linked targets and the strength of the connection. | \( f(d_i) = \sum_{\alpha=1}^{n} \frac{A_{i\alpha} f_0(t_\alpha)}{\kappa_\alpha} \) |
| 2 | Resource Back-Transfer (Drugs → Targets): The resource now located on drug nodes is transferred back to target nodes. The final resource a target receives is proportional to the resource held by its linked drugs and the strength of those connections. | \( f'(t_j) = \sum_{l=1}^{m} \frac{A_{lj} f(d_l)}{k_l} = \sum_{l=1}^{m} \frac{A_{lj}}{k_l} \sum_{\alpha=1}^{n} \frac{A_{l\alpha} f_0(t_\alpha)}{\kappa_\alpha} \) |

In these equations, \( f_0(t_\alpha) \) denotes the initial resource located on target \( t_\alpha \). Typically, the initial resource is set uniformly over the known targets of the query drug \( d_i \), with one unit per known target (i.e., \( f_0(t_\alpha) = A_{i\alpha} \)) [30] [13]. The final resource allocation \( f'(t_j) \) represents the recommendation score for target \( t_j \) given this initial setup. Because Step 2 is linear in \( f_0 \), the process can be consolidated into a single matrix operation, \( f'(t_j) = \sum_{\alpha=1}^{n} W_{j\alpha} f_0(t_\alpha) \), where the \( n \times n \) weight matrix \( W \) of the projection onto targets is:

\[ W_{j\alpha} = \frac{1}{\kappa_\alpha} \sum_{l=1}^{m} \frac{A_{lj} A_{l\alpha}}{k_l} \]

Subsequently, the final recommendation matrix \( R \) is computed as \( R = W A^{\top} \), an \( n \times m \) matrix in which \( R_{ji} \) is the score recommending target \( t_j \) to drug \( d_i \) [30]. The resulting list of potential DTIs for each drug is then sorted in descending order of this score for prioritization [30].
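The two-step diffusion and its matrix form can be checked against each other with a short NumPy sketch. It assumes the \( m \times n \) drug-by-target convention for \( A \) defined earlier and takes the initial resource for drug \( d_i \) to be row \( i \) of \( A \), so the scores are computed as \( W A^{\top} \) to keep the dimensions consistent. The function names and the toy matrix are illustrative, not taken from the cited implementations.

```python
import numpy as np


def nbi_scores(A):
    """Return the n x m score matrix R, where R[j, i] scores target j for drug i."""
    A = np.asarray(A, dtype=float)
    k = A.sum(axis=1)       # drug degrees, length m
    kappa = A.sum(axis=0)   # target degrees, length n
    # Inverse degrees, with 0 for isolated nodes to avoid division by zero.
    inv_k = np.divide(1.0, k, out=np.zeros_like(k), where=k > 0)
    inv_kappa = np.divide(1.0, kappa, out=np.zeros_like(kappa), where=kappa > 0)
    # W[j, a] = (1 / kappa_a) * sum_l A[l, j] * A[l, a] / k_l
    W = (A.T * inv_k) @ A * inv_kappa
    return W @ A.T


def nbi_two_step(A, i):
    """Explicit two-step diffusion for drug i (assumes no zero-degree nodes)."""
    A = np.asarray(A, dtype=float)
    k = A.sum(axis=1)
    kappa = A.sum(axis=0)
    f0 = A[i]                    # one unit of resource on each known target
    f_drugs = A @ (f0 / kappa)   # step 1: targets -> drugs
    return A.T @ (f_drugs / k)   # step 2: drugs -> targets


# Toy network (assumption): 3 drugs x 4 targets.
A = np.array([[1, 1, 0, 0],
              [0, 1, 1, 0],
              [0, 0, 1, 1]])
R = nbi_scores(A)

# Rank only unknown pairs: mask known interactions before taking the best target.
masked = np.where(A.T == 1, -np.inf, R)
best_new_target_for_d1 = int(np.argmax(masked[:, 0]))
```

For the first drug the scores over the four targets are 0.75, 1.0, 0.25, and 0, so the only non-zero score among its unknown targets belongs to the third target. Each column of `R` sums to the drug's degree, reflecting that the diffusion conserves the total resource.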

Performance Analysis and Benchmarking

The performance of the original NBI framework has been rigorously evaluated against other methods on benchmark datasets.

Table 2: Performance Comparison of NBI on Benchmark Datasets (10-fold Cross-Validation) [13]

| Method | Enzymes (AUC) | Ion Channels (AUC) | GPCRs (AUC) | Nuclear Receptors (AUC) |
|---|---|---|---|---|
| NBI | 0.975 ± 0.006 | 0.976 ± 0.007 | 0.946 ± 0.019 | 0.932 ± 0.039 |
| DBSI | 0.959 ± 0.008 | 0.957 ± 0.009 | 0.909 ± 0.023 | 0.887 ± 0.048 |
| TBSI | 0.943 ± 0.011 | 0.944 ± 0.012 | 0.895 ± 0.027 | 0.861 ± 0.055 |

As shown in Table 2, NBI consistently achieved the highest Area Under the Curve (AUC) values across all four major target families—Enzymes, Ion Channels, GPCRs, and Nuclear Receptors—demonstrating its superior predictive ability compared to Drug-Based and Target-Based Similarity Inference methods (DBSI and TBSI) [13].
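To make the AUC metric concrete, the sketch below computes it as the probability that a randomly chosen held-out true interaction is scored above a randomly chosen non-interaction, with ties counted as one half. The scores and labels are invented toy values, and the function does not reproduce the cross-validation protocol of [13].

```python
import numpy as np


def auc(scores, labels):
    """Rank-based AUC: fraction of (positive, negative) pairs ranked correctly."""
    scores = np.asarray(scores, dtype=float)
    labels = np.asarray(labels, dtype=bool)
    pos = scores[labels]
    neg = scores[~labels]
    # Compare every positive score with every negative score.
    diff = pos[:, None] - neg[None, :]
    wins = (diff > 0).sum() + 0.5 * (diff == 0).sum()
    return wins / (pos.size * neg.size)


# Toy held-out pairs (assumption): label 1 marks a withheld true interaction.
toy_scores = [0.9, 0.4, 0.35, 0.6, 0.1]
toy_labels = [1, 1, 0, 0, 0]
toy_auc = auc(toy_scores, toy_labels)
```

In this toy case five of the six positive-negative pairs are ordered correctly, giving an AUC of 5/6. The pairwise comparison is quadratic in the number of pairs, so a sort-based routine is preferable on large test sets.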

Experimental Validation and Application Protocol

A key strength of the NBI framework is its successful application in predicting novel DTIs for drug repositioning, followed by experimental validation.

Protocol: Experimental Validation of NBI-Predicted Drug-Target Interactions

Prediction and Prioritization:

- Input: A comprehensive drug-target bipartite network constructed from databases like DrugBank [12] [13].

- Process: Run the NBI algorithm to obtain recommendation scores for all unknown drug-target pairs.

- Output: Generate a ranked list of potential new DTIs. Select top-ranked predictions for further validation, focusing on drugs with potential for repositioning (e.g., approved drugs with known safety profiles).

In Vitro Binding Assays:

- Objective: Determine the half-maximal inhibitory concentration (IC₅₀) or dissociation constant (Kd) to confirm binding affinity between the predicted drug and target [13].

- Procedure:

  - a. Target Preparation: Express and purify the recombinant human target protein (e.g., estrogen receptor, dipeptidyl peptidase-IV) [13].

  - b. Compound Preparation: Prepare serial dilutions of the candidate drug (e.g., montelukast, simvastatin).

  - c. Binding Measurement: Use a fluorescence-based or radioligand binding assay to measure the displacement of a known, labeled ligand by the candidate drug. The assay should include positive controls (known binder) and negative controls (vehicle only) [13].

  - d. Data Analysis: Plot dose-response curves and calculate IC₅₀ values using non-linear regression. A successful prediction is typically confirmed with IC₅₀ or Kd values in the sub-micromolar to micromolar range (e.g., 0.2 to 10 µM) [13].
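The non-linear regression in step d is commonly performed with a four-parameter logistic (Hill) model. The source does not state which model was used, so the form below is an assumption. Here \( c \) is the compound concentration, \( y_{\max} \) and \( y_{\min} \) are the upper and lower signal plateaus, and \( h > 0 \) is the Hill slope:

\[ y(c) = y_{\min} + \frac{y_{\max} - y_{\min}}{1 + \left( c / \mathrm{IC}_{50} \right)^{h}} \]

At \( c = \mathrm{IC}_{50} \) the signal lies exactly halfway between the two plateaus, which is what makes the fitted parameter interpretable as the half-maximal inhibitory concentration.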

Functional Cellular Assays:

- Objective: Verify that the predicted and confirmed interaction leads to a functional biological outcome in a relevant cell line.

- Procedure:

  - a. Cell Culture: Maintain an appropriate cell line (e.g., human MDA-MB-231 breast cancer cells for anti-cancer drug validation) [13].

  - b. Viability/Proliferation Assay: Treat cells with varying concentrations of the candidate drug. After an incubation period (e.g., 48-72 hours), measure cell viability using assays like MTT or CellTiter-Glo [13].

  - c. Data Analysis: Calculate the half-maximal effective concentration (EC₅₀) for anti-proliferative effects. A significant reduction in cell viability at physiologically relevant concentrations provides strong support for the NBI prediction.

This protocol successfully validated the polypharmacology of several drugs, including montelukast, diclofenac, and simvastatin on estrogen receptors or dipeptidyl peptidase-IV, and demonstrated the anti-proliferative activity of simvastatin and ketoconazole in breast cancer cells [13].

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Reagents and Resources for NBI and Experimental Validation

| Item | Function/Description | Example Sources/Details |
|---|---|---|
| DTI Databases | Provide the foundational binary links to construct the bipartite network for NBI. | DrugBank [12] [13], BindingDB [12], ChEMBL [12], Therapeutic Target Database (TTD) [12] |
| Similarity Matrices | Optional inputs for enhanced NBI variants (e.g., DT-Hybrid). Quantify drug-drug and target-target relationships. | Drug: 2D fingerprint-based similarity (e.g., SIMCOMP) [30]. Target: Genomic sequence similarity (e.g., BLAST bit scores) [30]. |
| Computational Environment | Software for implementing the NBI algorithm and performing data analysis. | R, Python with scientific libraries (NumPy, SciPy, Pandas) [30] |
| Recombinant Proteins | Purified human target proteins for in vitro binding assays to validate predictions. | Commercially available or expressed in-house (e.g., E. coli, insect cells) [13] |
| Validated Assay Kits | Standardized biochemical kits for measuring binding affinity or enzymatic activity. | Fluorescence-based or radioligand binding assay kits specific to the target (e.g., kinase, protease, receptor) [13] |
| Cell Lines | Biologically relevant models for functional validation of predicted DTIs. | Human cancer cell lines (e.g., MDA-MB-231), primary cells, or engineered cell lines [13] |
| Cell Viability Assay Reagents | Compounds for assessing the functional cellular outcome of a confirmed DTI. | MTT, MTS, or CellTiter-Glo reagents [13] |

The paradigm in drug discovery has progressively shifted from the traditional "one drug, one target" model toward polypharmacology, which acknowledges that a single drug often interacts with multiple biological targets simultaneously [33] [3] [13]. This shift underscores the critical importance of comprehensively identifying drug-target interactions (DTIs), as these relationships determine both therapeutic efficacy and potential adverse effects. Experimental determination of DTIs remains costly and time-consuming, creating an urgent need for robust computational prediction methods [30] [34].

Among various computational approaches, network-based inference (NBI) methods have demonstrated significant advantages as they do not require three-dimensional protein structures or experimentally confirmed negative samples, which are often limited [3]. These methods leverage the topological properties of bipartite drug-target networks, treating DTI prediction as a resource allocation and diffusion process across the network [13]. This article provides a detailed examination of three advanced NBI methodologies: SDTNBI, SimSpread, and DT-Hybrid, including their underlying mechanisms, implementation protocols, and comparative performance.

Methodological Foundations

SDTNBI (Substructure-Drug-Target Network-Based Inference)

SDTNBI extends the basic NBI framework by incorporating chemical substructure information, enabling the prediction of targets for novel chemical compounds not present in the original network [33]. The method constructs a three-layer network comprising substructures, drugs, and targets.

Key Algorithmic Steps:

- Substructure Identification: Decompose known drug molecules into chemical substructures using molecular fingerprints.