Network Inference and Dynamic Modeling in Systems Biology: A Comprehensive Protocol for Biomedical Research

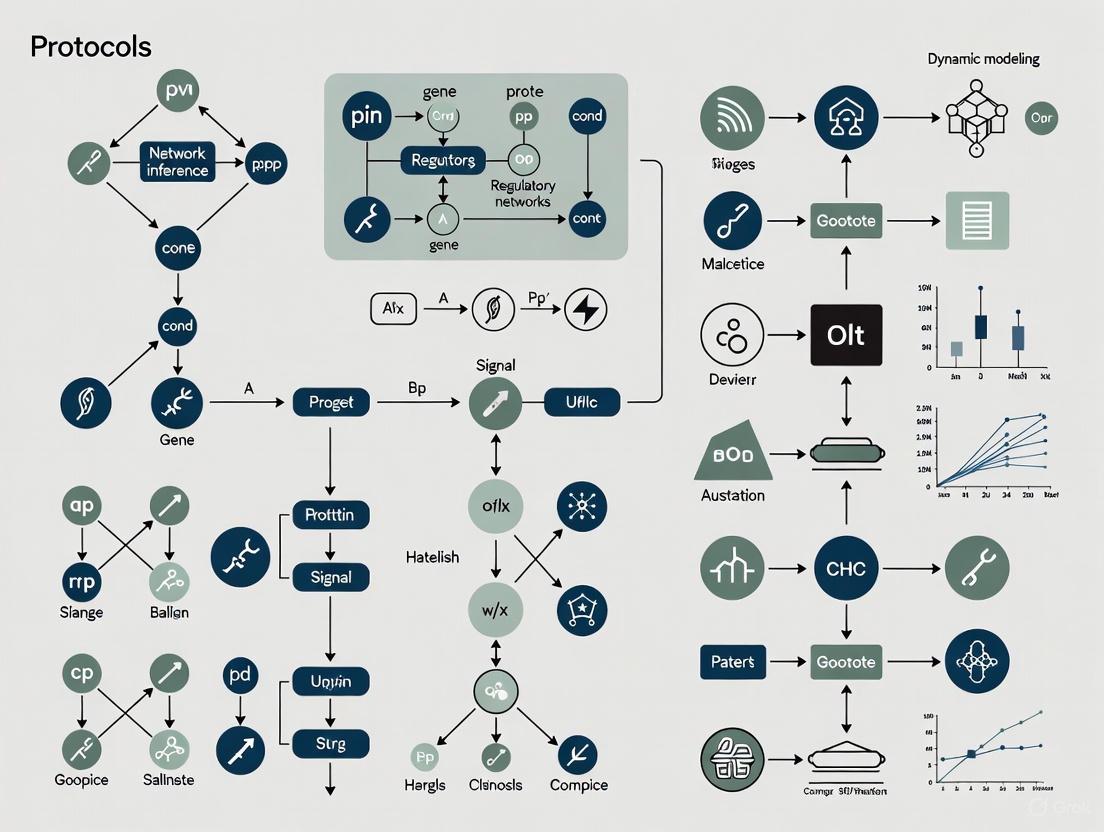

Abstract

This article provides a comprehensive guide to network inference and dynamic modeling, essential for understanding complex biological systems in biomedical research and drug development. It covers foundational concepts, core methodologies for reconstructing gene regulatory and signaling networks, and protocols for dynamic model calibration using ordinary differential equations. The guide addresses critical challenges including parameter identifiability, model distinguishability, and optimization strategies. Furthermore, it details rigorous validation frameworks, credibility standards, and comparative analysis of model performance. By integrating these elements, this resource empowers researchers to build predictive, reliable computational models for advancing therapeutic discovery and personalized medicine.

Core Concepts and Problem Formulation in Biological Network Analysis

In systems biology, cellular processes are not governed by isolated entities but by complex, interconnected networks of molecular interactions. The network inference problem refers to the computational challenge of reconstructing these hidden regulatory architectures—where nodes represent biological molecules (e.g., genes, proteins, metabolites) and directed edges signify causal influences or regulatory relationships—from experimental observation data [1]. Solving this problem is foundational for understanding the underlying mechanisms of health and disease, enabling the identification of novel drug targets and the development of personalized therapeutic strategies.

The core challenge lies in the fact that biological networks are not directly observable; they must be deduced from often noisy, high-dimensional data like transcriptomics and metabolomics. This process is further complicated by the timescale separation across different molecular layers; for instance, metabolic changes can occur in minutes, while transcriptional responses unfold over hours [1]. This document outlines the formal definition of the network inference problem, presents core quantitative concepts, details standard and emerging experimental and computational protocols, and provides guidelines for the effective visualization of inferred networks.

Quantitative Foundations: Data Types and Distribution Summaries

The first step in network inference is to summarize and understand the raw quantitative data, which typically consist of measurements of molecular abundance (e.g., gene expression, metabolite concentration) across different conditions or time points [2]. Except for very small datasets, raw values are hard to interpret without a summary that characterizes the distribution of the data: which values are present and how often they appear [2].

Table 1: Common Data Summarization Techniques for Quantitative Biological Data

| Data Type | Summary Table Type | Key Statistics | Visualization Methods | Illustrative Example from the Cited Source |
|---|---|---|---|---|
| Continuous (e.g., metabolite concentration) | Frequency Table with Bins [2] | Counts, Percentages [2] | Histogram [2] | Distribution of birth weights in a population [2] |
| Discrete Quantitative (e.g., gene copy number) | Frequency Table [2] | Counts, Percentages [2] | Histogram, Bar Chart [2] | Number of severe cyclones per year [2] |
| Categorical / Survey (e.g., patient stratification) | Frequency Table, Grid [3] | Percentages, Row Percentages [3] | Bar Chart, Pie Chart | Likelihood of product purchase survey data [3] |
| Numeric Grid (e.g., brand consumption) | Summary Table of Variable Sets [3] | Averages, Medians, Percentages [3] | Heat Map, Table | Weekly consumption of different cola brands [3] |

For continuous data, creating a frequency table requires grouping data into exhaustive and mutually exclusive intervals, or 'bins'. Care must be taken to define bin boundaries with more decimal places than the recorded data to avoid ambiguity regarding which bin an observation belongs to [2]. A histogram is a graphical representation of this frequency table, where the width of a bar represents a bin and the height represents the frequency (count or percentage) of observations within that range [2]. The choice of bin size and boundaries can significantly impact the histogram's appearance and interpretation [2].
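The binning rule above can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed implementation: the function name `frequency_table`, the sample values, and the bin edges are all assumptions chosen for the example. The data are recorded to one decimal place, so the edges are given to two decimal places and no observation can fall exactly on a boundary.

```python
from bisect import bisect_right

def frequency_table(values, edges):
    """Count observations in the bins [edges[i], edges[i+1])."""
    counts = [0] * (len(edges) - 1)
    for v in values:
        i = bisect_right(edges, v) - 1  # index of the bin containing v
        if not 0 <= i < len(counts):
            raise ValueError(f"{v} lies outside the bin range")
        counts[i] += 1
    return counts

# Hypothetical measurements recorded to one decimal place.
values = [2.9, 3.1, 3.4, 3.5, 3.5, 3.8, 4.0, 4.2]
# Edges carry one more decimal place than the data, so bins are unambiguous.
edges = [2.45, 2.95, 3.45, 3.95, 4.45]

counts = frequency_table(values, edges)
percentages = [100 * c / len(values) for c in counts]
```

Plotting `counts` against the bin intervals gives the histogram. Shifting or widening `edges` changes its shape, which is why the bin choice should be reported alongside the figure.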

Experimental Protocols for Data Generation

High-quality data is a prerequisite for reliable network inference. The following protocols describe standard methodologies for generating key data types.

Protocol: Bulk Metabolomic Profiling via Mass Spectrometry

This protocol describes the acquisition of bulk metabolomic data, which captures the relative or absolute abundances of small molecules in a biological sample.

- 1. Sample Collection and Preparation: Snap-freeze tissue or quench cell culture in liquid nitrogen to instantly halt metabolic activity. Homogenize the sample in a pre-chilled methanol:water solvent mixture to extract metabolites. Remove debris by centrifugation.

- 2. Data Acquisition via Liquid Chromatography-Mass Spectrometry (LC-MS): Separate metabolites in the extract using a reverse-phase liquid chromatography (LC) column. Ionize the separated metabolites using electrospray ionization (ESI) and analyze them with a high-resolution mass spectrometer (MS). Acquire data in both positive and negative ionization modes for comprehensive coverage.

- 3. Data Pre-processing: Convert raw instrument data files to an open format (e.g., .mzML). Perform peak picking, alignment, and integration using software (e.g., XCMS, Progenesis QI) to generate a feature table. This table contains metabolite identities (or features) as rows, samples as columns, and peak intensities as values.

- 4. Data Normalization: Normalize the feature table to correct for technical variation. Common methods include normalization by total ion intensity, internal standards (e.g., stable isotope-labeled compounds), or probabilistic quotient normalization.
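As an illustration of step 4, probabilistic quotient normalization can be sketched as follows. This is a simplified version under stated assumptions: the function name `pqn_normalize` is hypothetical, the input is laid out with samples as rows (the transpose of the feature table described in step 3), the reference profile is taken as the per-feature median across samples, and all intensities are assumed positive.

```python
from statistics import median

def pqn_normalize(table):
    """Probabilistic quotient normalization (simplified sketch).

    table: list of samples, each a list of positive feature intensities.
    """
    n_features = len(table[0])
    # Reference profile: per-feature median across all samples.
    reference = [median(sample[j] for sample in table) for j in range(n_features)]
    normalized = []
    for sample in table:
        # Quotients of this sample against the reference, feature by feature.
        quotients = [x / r for x, r in zip(sample, reference) if r > 0]
        # The median quotient estimates the sample's overall dilution factor.
        dilution = median(quotients)
        normalized.append([x / dilution for x in sample])
    return normalized

# Hypothetical data: the second sample is a two-fold concentrated copy of the first.
table = [[10.0, 20.0, 30.0], [20.0, 40.0, 60.0], [10.0, 20.0, 30.0]]
normalized = pqn_normalize(table)
```

In this toy input the two-fold difference is purely technical, so all three samples coincide after normalization.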

Protocol: Single-Cell RNA Sequencing (scRNA-seq) using 10X Genomics

This protocol details the generation of transcriptomic data at single-cell resolution, capturing cellular heterogeneity.

- 1. Single-Cell Suspension Preparation: Dissociate solid tissue or harvest cells to create a single-cell suspension. It is critical to maintain high cell viability (>90%) and prevent clumping. Filter the suspension through a flow cytometry-compatible strainer.

- 2. Cell Barcoding and Library Preparation using 10X Genomics Chromium: Load the cell suspension, barcoded gel beads, and partitioning oil onto a 10X Genomics Chromium chip. The system partitions thousands of individual cells into nanoliter-scale droplets, each containing a uniquely barcoded bead. Within each droplet, cell lysis and reverse transcription occur, labeling the mRNA from each cell with its unique barcode.

- 3. Library Construction and Sequencing: Break the emulsion, purify the barcoded cDNA, and prepare sequencing libraries following the manufacturer's instructions. This typically involves cDNA amplification, fragmentation, and the addition of adapters and sample indices. Pool libraries and sequence on an Illumina platform (e.g., NovaSeq) to a sufficient depth (e.g., 50,000 reads per cell).

- 4. scRNA-seq Data Pre-processing: Demultiplex the raw sequencing data using the cell barcodes and unique molecular identifiers (UMIs). Align reads to a reference genome (e.g., with STARsolo or Cell Ranger) and generate a gene expression matrix where rows are genes, columns are cell barcodes, and values are UMI counts. Perform quality control to remove empty droplets, doublets, and low-quality cells based on metrics like UMIs per cell and mitochondrial gene percentage.
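The cell-level filtering in step 4 can be sketched with NumPy as shown here. The function name `qc_filter`, the thresholds (500 UMIs, 20% mitochondrial fraction), and the `MT-` gene-name prefix convention are illustrative assumptions rather than recommended values; suitable cutoffs depend on tissue and chemistry. Doublet and empty-droplet detection need dedicated tools and are not covered.

```python
import numpy as np

def qc_filter(counts, gene_names, min_umis=500, max_mito_frac=0.2):
    """Keep cells with enough UMIs and a low mitochondrial fraction.

    counts: genes x cells matrix of UMI counts.
    """
    counts = np.asarray(counts)
    umis_per_cell = counts.sum(axis=0)
    # Assumes human gene symbols, where mitochondrial genes start with "MT-".
    is_mito = np.array([name.startswith("MT-") for name in gene_names])
    mito_frac = counts[is_mito].sum(axis=0) / np.maximum(umis_per_cell, 1)
    keep = (umis_per_cell >= min_umis) & (mito_frac <= max_mito_frac)
    return counts[:, keep], keep

# Hypothetical 3-gene x 3-cell matrix: a healthy cell, a near-empty droplet,
# and a cell dominated by mitochondrial transcripts.
genes = ["GAPDH", "ACTB", "MT-CO1"]
counts = np.array([[400, 10, 200],
                   [500, 5, 200],
                   [100, 5, 600]])
filtered, keep = qc_filter(counts, genes)
```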

Computational Protocols for Network Inference

Once quantitative data is generated and summarized, computational methods are applied to infer the network. Below are two protocols representing state-of-the-art approaches.

Protocol: Multi-omic Network Inference with MINIE

MINIE is a computational method that integrates bulk metabolomic and single-cell transcriptomic time-series data through a Bayesian regression framework, explicitly modeling the timescale separation between molecular layers [1].

- 1. Input Data Preparation: Format your data into two matrices:

  - Transcriptomic Data (Slow Layer): A matrix $G$ of size (number of time points × number of cells × number of genes) from scRNA-seq.

  - Metabolomic Data (Fast Layer): A matrix $M$ of size (number of time points × number of metabolites) from bulk metabolomics.

- 2. Model Formalization using Differential-Algebraic Equations (DAEs): Formalize the system dynamics using DAEs to handle timescale separation [1]:

  $$\frac{dg}{dt} = f(g, m, b_g; \theta) + \rho(g, m)\,w \quad \text{(differential equation for slow transcriptomic dynamics)}$$

  $$\frac{dm}{dt} = h(g, m, b_m; \theta) \approx 0 \quad \text{(algebraic equation for fast metabolic dynamics, assuming quasi-steady state)}$$

  Here, $g$ is gene expression, $m$ is metabolite concentration, $b_g$ and $b_m$ are baseline effects, $\theta$ are model parameters, and $w$ is noise.

- 3. Transcriptome-Metabolome Mapping Inference: Use the algebraic constraint to linearize the fast metabolic dynamics [1]:

  $$0 \approx A_{mg}\, g + A_{mm}\, m + b_m$$

  $$m \approx -A_{mm}^{-1} A_{mg}\, g - A_{mm}^{-1} b_m$$

  Infer the interaction matrices $A_{mg}$ (gene-metabolite) and $A_{mm}$ (metabolite-metabolite) by solving a sparse regression problem, constrained by prior knowledge of human metabolic reactions [1].

- 4. Regulatory Network Inference via Bayesian Regression: Using the mapped data and the DAE framework, infer the final intra- and inter-layer regulatory network topology. The Bayesian approach provides robustness to noise and yields posterior probabilities for inferred edges, allowing researchers to rank interactions by confidence [1].
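To make the quasi-steady-state mapping in step 3 concrete, the sketch below solves the linearized algebraic constraint for the metabolite vector given a gene expression vector. It is not MINIE's implementation: the function name and the small matrices are hypothetical, and the interaction matrices are simply assumed known here, whereas the method infers them by sparse regression.

```python
import numpy as np

def quasi_steady_state_metabolites(A_mg, A_mm, b_m, g):
    """Solve 0 = A_mg g + A_mm m + b_m for m (A_mm must be invertible)."""
    # Equivalent to m = -A_mm^{-1} (A_mg g + b_m), without forming the inverse.
    return np.linalg.solve(A_mm, -(A_mg @ g + b_m))

# Hypothetical system with 3 genes and 2 metabolites.
A_mg = np.array([[1.0, 0.0, 1.0],
                 [0.0, 2.0, 0.0]])   # gene -> metabolite effects
A_mm = np.array([[-2.0, 0.0],
                 [0.0, -4.0]])       # metabolite -> metabolite effects
b_m = np.array([0.5, 0.0])           # baseline term
g = np.array([1.0, 2.0, 3.0])        # gene expression at one time point

m = quasi_steady_state_metabolites(A_mg, A_mm, b_m, g)
residual = A_mg @ g + A_mm @ m + b_m  # should vanish at quasi-steady state
```

Using `np.linalg.solve` rather than an explicit inverse is the usual numerically safer choice for this kind of linear constraint.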

Protocol: GRN Inference from scRNA-seq with DAZZLE

DAZZLE addresses the prevalent "dropout" problem (false zero counts) in scRNA-seq data to improve Gene Regulatory Network (GRN) inference. It uses a novel Dropout Augmentation (DA) technique for model regularization [4].

- 1. Input Data Transformation: Start with a scRNA-seq gene expression count matrix $X$ (cells × genes). Transform the data to reduce variance and avoid log(0) using $\log(X + 1)$.

- 2. Dropout Augmentation (Model Regularization): During each training iteration, randomly select a small proportion of the non-zero expression values and set them to zero. This artificially simulates additional dropout events, exposing the model to multiple noisy versions of the data and preventing it from overfitting to the specific dropout pattern in the original dataset [4].

- 3. Model Training with a Structured Autoencoder: Employ a variational autoencoder (VAE) architecture where the adjacency matrix $A$ of the GRN is a parameter. The model is trained to reconstruct its input. A noise classifier is trained simultaneously to identify and down-weight values likely to be dropout noise during reconstruction [4].

- 4. Network Extraction and Sparsity Control: After training, the weights of the learned adjacency matrix $A$ are retrieved as the inferred GRN. DAZZLE improves stability by delaying the application of sparsity-inducing loss terms and uses a closed-form prior, leading to faster computation and reduced model size compared to predecessors like DeepSEM [4].
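Steps 1 and 2 can be illustrated with a short NumPy sketch. This shows only the data transformation and the augmentation idea, not DAZZLE's code: the function name `augment_dropout`, the 10% default rate, and the toy count matrix are assumptions made for the example.

```python
import numpy as np

def augment_dropout(x, rate=0.1, rng=None):
    """Set a random fraction (about `rate`) of the non-zero entries of x to zero."""
    rng = np.random.default_rng() if rng is None else rng
    x = np.asarray(x, dtype=float)
    # Candidate entries are non-zero; each is dropped independently.
    mask = (x != 0) & (rng.random(x.shape) < rate)
    augmented = x.copy()
    augmented[mask] = 0.0
    return augmented, mask

# Hypothetical cells x genes count matrix, transformed with log(X + 1).
raw_counts = np.array([[0, 3, 5],
                       [2, 0, 7]])
x = np.log1p(raw_counts)

# A fresh mask would be drawn at every training iteration.
augmented, mask = augment_dropout(x, rate=0.5, rng=np.random.default_rng(0))
```

Drawing a new mask per iteration means the model never sees the same dropout pattern twice, which is the regularizing effect described in step 2.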

Table 2: Key Research Reagent Solutions for Network Inference Studies

| Reagent / Resource | Function | Example Use Case |
|---|---|---|
| 10X Genomics Chromium | A microfluidic platform for partitioning single cells into droplets for barcoding and scRNA-seq library preparation [4]. | Generating high-throughput single-cell transcriptomic data for input into GRN inference tools like DAZZLE or MINIE. |
| LC-MS/MS System | An analytical chemistry instrument that separates complex mixtures (LC) and identifies/quantifies components with high sensitivity and specificity (MS/MS). | Profiling metabolite abundances for bulk metabolomic data, a key input for multi-omic inference with MINIE [1]. |
| Stable Isotope-Labeled Internal Standards | Chemically identical analogs to target metabolites with replaced atoms (e.g., ¹³C, ¹⁵N), used for absolute quantification in mass spectrometry. | Added to biological samples during metabolomic extraction to correct for losses during preparation and matrix effects during ionization. |
| Curated Metabolic Network (e.g., Recon3D) | A knowledgebase of manually curated human metabolic reactions, pathways, and metabolites [1]. | Used as a prior to constrain the solution space for metabolite-metabolite and gene-metabolite interactions during inference with MINIE [1]. |
| BEELINE Benchmarking Framework | A computational toolkit and standardized set of benchmarks for evaluating the performance of GRN inference algorithms [4]. | Used to validate and compare the accuracy of new inference methods like DAZZLE against existing state-of-the-art tools. |

Biological systems are governed by complex, interconnected networks that coordinate cellular functions, enabling organisms to grow, adapt, and respond to their environment. These networks—comprising transcriptional, signaling, metabolic, and protein-protein interactions—form the core framework of systems biology. The study of these networks through inference and dynamic modeling has become paramount for deciphering biological complexity, from fundamental cellular processes to disease mechanisms such as cancer. This article details the experimental and computational protocols essential for investigating the structure and dynamics of these biological networks, providing a practical guide for researchers and drug development professionals engaged in systems biology research.

Transcriptional Regulatory Networks (TRNs)

Transcriptional Regulatory Networks (TRNs) map the interactions between transcription factors and their target genes, revealing the program that controls gene expression. Inferring these networks from high-throughput data is crucial for understanding cell identity and fate decisions.

Key Methods and Protocols

Network Inference with Single-Cell Data: Accurately inferring GRNs from high-dimensional and noisy single-cell data remains a significant challenge. A robust hybrid framework, GGANO, integrates Gaussian Graphical Models for learning conditional independence between genes with Neural Ordinary Differential Equations for dynamic modeling and inference. This method demonstrates superior accuracy and stability compared to conventional approaches, particularly under high-noise conditions, and enables the inference of stochastic dynamics from single-cell data [5].
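The Gaussian graphical model component rests on conditional independence, which can be read off the inverse covariance (precision) matrix as partial correlations. The sketch below illustrates only that idea on simulated data; it is not the GGANO method, and the function name, the three-gene chain, and the noise level are assumptions made for the example.

```python
import numpy as np

def partial_correlations(data):
    """Partial correlation matrix from a samples x genes data array."""
    precision = np.linalg.inv(np.cov(data, rowvar=False))
    scale = np.sqrt(np.diag(precision))
    pcorr = -precision / np.outer(scale, scale)
    np.fill_diagonal(pcorr, 1.0)
    return pcorr

# Simulated regulatory chain x -> y -> z: x and z are correlated,
# but conditionally independent given y.
rng = np.random.default_rng(0)
n = 5000
x = rng.normal(size=n)
y = x + 0.5 * rng.normal(size=n)
z = y + 0.5 * rng.normal(size=n)

pcorr = partial_correlations(np.column_stack([x, y, z]))
```

In this simulation the x-z partial correlation is close to zero while the x-y and y-z entries stay large, so the indirect x-z association is not reported as a direct edge. With many genes and few cells the sample covariance becomes ill-conditioned, and a regularized estimator is needed instead of a plain inverse.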

Independent Component Analysis (ICA) for TRN Expansion: A powerful approach for dissecting regulatory structures involves applying Independent Component Analysis (ICA) to large transcriptome compendia. This signal decomposition algorithm processes hundreds of RNA-seq datasets from diverse conditions and genetic backgrounds to identify "iModulons"—independently modulated gene sets. This method has been successfully used to map the TRN of Bacteroides thetaiotaomicron, a gut symbiont, leading to a significant expansion of its known regulatory network by establishing novel regulator-regulon relationships and functionally characterizing previously unknown transcription factors like specific ECF-σ factors [6].

Table 1: Core Methods for Transcriptional Network Inference

| Method | Principle | Application Example | Key Advantage |
|---|---|---|---|
| GGANO | Hybrid of Gaussian Graphical Models & Neural ODEs | Inferring gene dynamics from single-cell RNA-seq data [5] | High accuracy and robustness to noise; models stochastic dynamics |
| Independent Component Analysis (ICA) | Signal decomposition to identify co-regulated gene sets | Mapping regulons and characterizing ECF-σ factors in B. thetaiotaomicron [6] | Systematically expands known TRNs from large transcriptome compendia |
| CRISPRi-mediated Repression | Targeted knockdown of regulators to observe network perturbation | Functional validation of regulator-regulon relationships [6] | Enables causal validation of inferred regulatory links |

Experimental Workflow: TRN Reconstruction

The following diagram outlines a general workflow for reconstructing and validating a Transcriptional Regulatory Network, integrating computational inference and experimental validation.

Research Reagent Solutions for TRN Studies

Table 2: Essential Reagents for Transcriptional Network Analysis

| Reagent / Tool | Function in Protocol |
|---|---|
| Anhydrotetracycline | Inducer for CRISPRi/dCas9 systems to control sgRNA expression [6]. |
| Erythromycin & Gentamicin | Selection antibiotics for maintaining plasmids in bacterial systems [6]. |
| BHIS Broth & Anaerobic Minimal Medium | Specialized culture media for maintaining and experimenting on fastidious microorganisms like B. thetaiotaomicron [6]. |
| dCas9 Expression Vector | Engineered plasmid for targeted gene repression without cleavage in CRISPRi validation [6]. |
| Single Guide RNA (sgRNA) Libraries | Designed RNAs to target dCas9 to specific genomic loci for repression [6]. |

Signaling Networks

Signaling networks transmit information from a cell's exterior to its interior, triggering appropriate cellular responses through complex cascades of molecular interactions. Detailed protocols are essential for dissecting this complexity.

Key Methods and Protocols

Comprehensive Protocol Resources: "Signal Transduction Protocols, 3rd Edition" provides a collection of experimental methods focused on receptor-ligand interactions and the intricacies of integrated signaling pathways. These protocols are designed to enhance critical thinking and knowledge retention in signal transduction research [7].

Specialized Laboratory Techniques: Research groups, such as the Cell Signal Transduction Networks Laboratory, develop and publish specific, peer-reviewed methodologies for studying signaling networks. These protocols offer detailed, reproducible steps for particular experimental contexts [8].

Experimental Workflow: Signaling Pathway Analysis

A generalized workflow for analyzing a signaling pathway is shown below, from stimulus to response measurement.

Metabolic Networks

Metabolic networks represent the complete set of biochemical reactions within a cell, determining its metabolic capabilities and resource utilization. Their modeling is critical for understanding diseases like cancer and for metabolic engineering in plants and microbes.

Key Methods and Protocols

Flux Balance Analysis (FBA): FBA is a constraint-based modeling approach used to predict the flow of metabolites (flux) through a genome-scale metabolic network at steady state. It is widely applied to model primary and specialized metabolism and to predict phenotypes resulting from genetic or environmental perturbations [9].
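At its core, FBA is a linear program: maximize an objective flux subject to the steady-state constraint S·v = 0 and flux bounds. The toy example below sketches this with `scipy.optimize.linprog`. The three-reaction network, the bounds, and the choice of objective are invented for illustration; genome-scale models are normally handled with dedicated constraint-based modeling packages.

```python
import numpy as np
from scipy.optimize import linprog

# Hypothetical network with metabolites A and B and three reactions:
#   v_uptake:  -> A      v_convert: A -> B      v_biomass: B ->
S = np.array([
    [1.0, -1.0,  0.0],   # mass balance for A
    [0.0,  1.0, -1.0],   # mass balance for B
])

# Flux bounds: uptake is limited to 10 units, the other reactions are loose.
bounds = [(0.0, 10.0), (0.0, 1000.0), (0.0, 1000.0)]

# linprog minimizes, so the biomass flux is negated to maximize it.
c = np.array([0.0, 0.0, -1.0])

result = linprog(c, A_eq=S, b_eq=np.zeros(S.shape[0]), bounds=bounds, method="highs")
optimal_flux = result.x
```

Because the network is a single linear pathway, the uptake bound limits every flux, and the optimum carries 10 units through all three reactions.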

13C-Metabolic Flux Analysis (13C-MFA): This technique relies on feeding cells isotope-labeled substrates (e.g., 13C-glucose) and using mass spectrometry to track the incorporation of the label into intermediate metabolites. The resulting isotopic distribution data allows for the quantitative estimation of in vivo metabolic flux rates in central carbon metabolism [10] [9].

Seahorse Metabolic Flux Analysis: This platform provides a real-time, live-cell assay of cellular bioenergetics. It measures the oxygen consumption rate (OCR) and the extracellular acidification rate (ECAR), which serve as proxies for oxidative phosphorylation and glycolysis, respectively. It is extensively used in cancer research to profile metabolic phenotypes [10].

Dynamic Metabolic Modeling: Also known as kinetic modeling, this approach uses differential equations to describe the temporal adjustments of metabolic systems. It is particularly valuable for modeling processes that are not at steady state, such as metabolic responses to environmental stimuli or during developmental transitions [9].
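A minimal kinetic model makes the contrast with steady-state methods concrete. The sketch below integrates a single Michaelis-Menten reaction S → P with a hand-written fourth-order Runge-Kutta step. The rate law, the parameter values (`vmax`, `km`), the step size, and the function names are illustrative assumptions, and a production model would normally use an established ODE solver.

```python
def mm_rate(s, vmax=1.0, km=0.5):
    """Michaelis-Menten rate for substrate concentration s."""
    return vmax * s / (km + s)

def simulate(s0, p0=0.0, t_end=5.0, dt=0.01, vmax=1.0, km=0.5):
    """Integrate dS/dt = -v(S), dP/dt = +v(S) with classical RK4."""
    def deriv(s, p):
        v = mm_rate(s, vmax, km)
        return -v, v

    s, p = s0, p0
    trajectory = [(0.0, s, p)]
    for step in range(int(round(t_end / dt))):
        k1s, k1p = deriv(s, p)
        k2s, k2p = deriv(s + 0.5 * dt * k1s, p + 0.5 * dt * k1p)
        k3s, k3p = deriv(s + 0.5 * dt * k2s, p + 0.5 * dt * k2p)
        k4s, k4p = deriv(s + dt * k3s, p + dt * k3p)
        s += dt * (k1s + 2 * k2s + 2 * k3s + k4s) / 6
        p += dt * (k1p + 2 * k2p + 2 * k3p + k4p) / 6
        trajectory.append(((step + 1) * dt, s, p))
    return trajectory

trajectory = simulate(s0=2.0)
t_final, s_final, p_final = trajectory[-1]
```

The trajectory shows substrate being depleted over time while total mass S + P is conserved, a transient that a steady-state method such as FBA cannot represent.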

Experimental Workflow: Metabolic Flux Analysis

A standard workflow for 13C-Metabolic Flux Analysis is depicted below, showing the integration of wet-lab and computational steps.

Research Reagent Solutions for Metabolic Studies

Table 3: Essential Reagents for Metabolic Network Analysis

| Reagent / Tool | Function in Protocol |
|---|---|
| 13C-Labeled Substrates (e.g., 13C-Glucose) | Tracers for MFA to follow carbon fate through metabolic pathways [10] [9]. |
| Seahorse XF Analyzer Kits | Pre-formulated assay kits to measure OCR and ECAR in live cells [10]. |
| Genetically Encoded Biosensors | Fluorescent tools for real-time monitoring of metabolite levels (e.g., NADH, ATP) in live cells [10]. |
| Anaerobic Chamber | Equipment for maintaining strict anaerobic conditions for culturing obligate anaerobes [6]. |

Protein-Protein Interaction (PPI) Networks

PPI networks map the physical associations between proteins, forming the backbone of most cellular machineries and signaling complexes.

Key Methods and Protocols

Mass Photometry: This technique measures the optical contrast generated by single protein complexes as they bind to a glass-water interface. It enables mass-resolved quantification of biomolecular mixtures, making it ideal for characterizing protein complexes, their stoichiometry, and stability without the need for labels [11].

Proximity-Dependent Labeling Techniques: Protocols such as BioID (proximity-dependent biotin identification) use engineered enzymes to biotinylate proteins in close proximity to a protein of interest. This allows for the mapping of protein interactomes in living cells, capturing weak or transient interactions that are difficult to detect with traditional methods.

Integrated Network Modeling in Systems Biology

Biological networks do not operate in isolation. A central goal of systems biology is to integrate these different network layers to build comprehensive models of cellular function.

Network Inference and Modeling Principles

Theoretical methods are indispensable for interpreting high-throughput biological data. A systems biology perspective emphasizes that methods for graph inference (reconstructing networks), graph analysis (mining networks for information), and dynamic network modeling (linking structure to behavior) are most powerful when they lead to testable biological predictions [12]. This integrated approach is applicable across model organisms, from human cells to plants [12].

Machine Learning and Future Perspectives

The field is rapidly advancing with the integration of machine learning (ML) technologies. In metabolic modeling, ML is being explored to predict enzyme kinetics, optimize metabolic models, and interpret complex multi-omics datasets [9]. Similarly, frameworks like GGANO that combine traditional models with neural networks represent the future of dynamic network inference from single-cell data [5]. These tools are poised to unravel the profound complexity and heterogeneity of biological systems.

Modern systems biology relies on high-throughput omics technologies to decipher the complex network architecture and dynamic behaviors of cellular systems. Single-cell RNA-sequencing (scRNA-seq) has revolutionized transcriptomic analysis by enabling researchers to investigate gene expression profiles at individual cell resolution, revealing cellular heterogeneity in complex biological systems that was previously obscured by bulk sequencing approaches [13]. The emergence of spatial biology and multi-omic integrations now provides unprecedented insights into how cells, molecules, and biological processes are organized and interact within their native tissue environments [14]. These technological advancements are fueling breakthroughs in oncology, neuroscience, immunology, and precision medicine by allowing researchers to study biological systems as integrated networks rather than collections of isolated components [12] [14].

The integration of scRNA-seq with other omics modalities creates a powerful framework for network inference and dynamic modeling in systems biology. Cells utilize intricate signaling and regulatory pathways connecting numerous constituents—including DNA, RNA, proteins, and small molecules—to coordinate multiple functions and adapt to changing environments [12]. High-throughput experimental methods now enable the simultaneous measurement of thousands of molecular interactions, generating complex datasets that require sophisticated theoretical approaches for meaningful interpretation [12]. This application note details standardized protocols and methodologies for leveraging these technologies to construct predictive models of cellular behavior, with particular emphasis on network inference, analysis, and modeling in systems biology research.

Single-Cell RNA-Sequencing: Core Principles and Workflows

Fundamental Technological Concepts

Single-cell RNA-sequencing differs from bulk RNA-seq in one key respect: each sequencing library reflects an individual cell rather than a pooled cell population [13]. This makes it possible to analyze cellular heterogeneity directly and to observe how individual cells behave, providing new insights into biological processes [13]. The technique is particularly valuable for questions about cell heterogeneity, early embryo development, and tumor diversity, especially when only limited numbers of cells are available [13].

The scRNA-seq workflow presents unique technical challenges distinct from bulk sequencing approaches, including scarce transcripts in single cells, inefficient mRNA capture, losses in reverse transcription, and bias in cDNA amplification due to the minute amounts of starting material [13]. scRNA-seq data are characterized by high dimensionality, noise, and sparsity, necessitating specialized computational tools for proper interpretation [15] [13]. During quality control, researchers must identify and remove low-quality individual cells and any data that might represent multiple cells (doublets), while being cautious about applying normalization techniques designed for bulk RNA-sequencing, as these can introduce significant errors into scRNA-seq data [13].

The following diagram illustrates the core steps in a standard scRNA-seq workflow, from sample preparation through data analysis:

Figure 1: scRNA-seq Workflow. The process begins with sample preparation and progresses through library preparation, sequencing, and computational analysis.

Comparative Analysis of scRNA-seq Methodologies

The table below provides a comprehensive comparison of major scRNA-seq protocols, highlighting their key technical specifications and applications:

Table 1: Comparison of Single-Cell RNA-Sequencing Protocols

| Protocol | Isolation Strategy | Transcript Coverage | UMI | Amplification Method | Unique Features and Applications |
|---|---|---|---|---|---|
| Smart-Seq2 | FACS | Full-length | No | PCR | Enhanced sensitivity for detecting low-abundance transcripts; generates full-length cDNA ideal for isoform analysis [13]. |
| Drop-Seq | Droplet-based | 3'-end | Yes | PCR | High-throughput and low cost per cell; scalable to thousands of cells simultaneously [16] [13]. |
| inDrop | Droplet-based | 3'-end | Yes | IVT | Uses hydrogel beads; low cost per cell; efficient barcode capture [13]. |
| CEL-Seq2 | FACS | 3'-end | Yes | IVT | Linear amplification reduces bias compared to PCR methods [13]. |
| Seq-Well | Microwell-based | 3'-end | Yes | PCR | Portable, low-cost, easily implemented without complex equipment [13]. |
| DroNC-Seq | Droplet-based | 3'-end | Yes | PCR | Specialized for single-nucleus sequencing, minimal dissociation bias [16] [13]. |
| SPLiT-Seq | Not required | 3'-end | Yes | PCR | Combinatorial indexing without physical separation; highly scalable and low cost [13]. |
| MATQ-Seq | Droplet-based | Full-length | Yes | PCR | Increased accuracy in quantifying transcripts; efficient detection of transcript variants [13]. |
| Fluidigm C1 | Microfluidic chip | Full-length | No | PCR | Microfluidics-based single-cell capture; precise cell handling [16] [13]. |

Cell Isolation Strategies

The initial stage of performing scRNA-seq involves extracting viable individual cells from the tissue of interest. The table below summarizes the primary methods for cell preparation and isolation:

Table 2: Cell Isolation Methods for scRNA-seq

| Method | Principle | Advantages | Limitations | Applications |
|---|---|---|---|---|
| FACS | Fluorescence-activated cell sorting | High purity and specificity based on surface markers; can select rare cell populations | Requires specialized equipment; potential for cellular stress during sorting | Ideal for targeted isolation of specific cell types using antibody-based selection [13]. |
| Droplet-based | Microfluidic encapsulation of cells | High-throughput processing of thousands of cells; cost-effective per cell | Limited control over individual cell selection; potential for multiple cells per droplet (doublets) | Excellent for unbiased profiling of complex tissues and tumor microenvironments [16] [13]. |
| Combinatorial Indexing | Split-pooling with barcodes | Does not require physical separation; can process millions of cells; no specialized equipment needed | Complex barcode design and analysis; potential index hopping | Suitable for very large-scale studies with limited sample material [13]. |

Novel methodologies have emerged to address specific research challenges. For instance, single-nucleus RNA-seq (snRNA-seq) is employed when tissue dissociation is problematic or when working with frozen samples or fragile cells [13]. Split-pooling techniques using combinatorial indexing (cell barcodes) offer distinct advantages over isolation of intact single cells, including the ability to handle large sample sizes (up to millions of cells) and greater efficiency in parallel processing of multiple samples while eliminating the need for expensive microfluidic devices [13].

Advanced Multi-Omics Integration and Spatial Biology

Emerging Multi-Omic Technologies

The field of single-cell analysis has evolved beyond transcriptomics to include multi-omic approaches that simultaneously measure multiple molecular layers from the same cells. SUM-seq (single-cell ultra-high-throughput multiplexed sequencing) represents a recent advancement that enables cost-effective, scalable co-assaying of chromatin accessibility and gene expression in single nuclei [17]. This technology allows profiling of hundreds of samples at the million-cell scale, outperforming current high-throughput single-cell methods in both throughput and data quality [17].

SUM-seq builds on a two-step combinatorial indexing approach, extending it to multi-omic applications. The method involves several key steps: (1) nuclei isolation and fixation, (2) introduction of unique sample indices for both ATAC and RNA modalities, (3) pooling of samples, (4) microfluidic barcoding, and (5) modality-specific library preparation and sequencing [17]. Performance metrics of SUM-seq consistently demonstrate high quality for both snRNA (UMIs and genes per cell) and snATAC (fragments in peaks per cell, TSS enrichment score), with library complexity metrics outperforming other ultra-high-throughput assays [17].

Spatial Biology and Tissue Context

Spatial biology has emerged as a transformative discipline that enables researchers to study how cells, molecules, and biological processes are organized and interact within their native tissue environments [14]. By combining spatial transcriptomics, proteomics, metabolomics, and high-plex multi-omics integration with advanced imaging, spatial biology provides unprecedented insights into disease mechanisms, cellular interactions, and tissue architecture [14].

The global spatial biology market is experiencing rapid growth, projected to reach $6.39 billion by 2035, with a compound annual growth rate of 13.1% [14]. This expansion is driven by rising investments in spatial transcriptomics for precision medicine, the growing importance of functional protein profiling in drug development, and the expanding use of retrospective tissue analysis for biomarker research [14]. Leading players are shaping the competitive landscape through partnerships, acquisitions, and product innovations, with companies like Bruker Corporation, Vizgen, Akoya Biosciences, and 10x Genomics advancing integrated spatial solutions [14].

Research Reagent Solutions for Multi-Omic Studies

Table 3: Essential Research Reagents and Platforms for scRNA-seq and Multi-Omics

| Reagent/Platform | Function | Application Notes |
|---|---|---|
| 10x Chromium | Microfluidic platform for single-cell partitioning | Enables high-throughput cell barcoding and library preparation; widely adopted for droplet-based scRNA-seq and multi-ome applications [17]. |
| Tn5 Transposase | Tagmentase for chromatin accessibility profiling | Loaded with barcoded oligos in SUM-seq for indexing accessible genomic regions in ATAC sequencing [17]. |
| Barcoded Oligo-dT Primers | Cell barcoding during reverse transcription | Critical for introducing unique cell identifiers during cDNA synthesis; enables sample multiplexing [17]. |
| Polyethylene Glycol (PEG) | Reverse transcription enhancer | Increases number of UMIs and genes detected per cell (~2.5- and ~2-fold respectively) with minor impact on ATAC quality [17]. |
| Glyoxal Fixative | Nuclear fixation and preservation | Maintains sample integrity for asynchronous sampling; compatible with cryopreservation for prolonged storage [17]. |
| Blocking Oligonucleotides | Reduces barcode hopping | Minimizes index cross-contamination in overloaded droplets, particularly important for ATAC modality [17]. |
| Unique Molecular Identifiers (UMIs) | Correction for PCR amplification bias | Molecular tags that distinguish original RNA molecules from PCR duplicates; essential for accurate transcript quantification [13]. |

Network Inference and Dynamic Modeling from Single-Cell Data

Computational Framework for Network Inference

The complexity and high-dimensional nature of single-cell data necessitate advanced computational approaches for network inference and dynamic modeling. GGANO represents a recent hybrid framework that integrates Gaussian Graphical Models for conditional independence learning with Neural Ordinary Differential Equations for dynamic modeling and inference [5]. Benchmark analyses demonstrate that GGANO achieves superior accuracy and stability compared to existing methods, particularly under high-noise conditions commonly encountered in scRNA-seq data [5].

Gene regulatory networks (GRNs) form the foundation of cellular decision-making processes, and accurately inferring GRN architecture from high-dimensional and noisy single-cell data remains a major challenge in systems biology [5]. Conventional approaches often struggle with robustness and interpretability, particularly when applied to complex biological processes such as cell fate decisions and complex diseases [5]. The application of GGANO to epithelial-mesenchymal transition (EMT) datasets has enabled researchers to uncover intermediate cellular states and identify key regulatory genes driving EMT progression [5].

Bayesian Multimodel Inference for Enhanced Certainty

Mathematical models are indispensable for studying the architecture and behavior of intracellular signaling networks. Because intermediate steps in these pathways are only partially observed, modelers rely on phenomenological approximations, and several competing models of the same pathway are commonly developed [18]. Bayesian multimodel inference (MMI) has emerged as an approach for increasing certainty in systems biology predictions when working with such a set of potentially incomplete models [18].

The Bayesian MMI workflow involves: (1) calibrating available models to training data by estimating unknown parameters with Bayesian inference, (2) combining the resulting predictive probability densities using MMI, and (3) generating improved multimodel predictions of important quantities in systems biology studies [18]. This approach constructs predictors by taking a linear combination of the predictive densities from each model: \( p(q \mid d_{\text{train}}, \mathfrak{M}_K) = \sum_{k=1}^{K} w_k \, p(q_k \mid M_k, d_{\text{train}}) \), with weights \( w_k \geq 0 \) that sum to 1 [18]. Application of MMI to ERK signaling pathway models has demonstrated increased certainty in predictions and robustness to changes in model composition and data uncertainty [18].
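The linear combination of predictive densities can be illustrated with a minimal sketch. It assumes, purely for illustration, that each calibrated model yields a Gaussian predictive density; the weights, means, and standard deviations below are hypothetical placeholders, and the sketch does not cover how the weights are estimated.

```python
import math

def gaussian_pdf(q, mean, std):
    """Density of a normal distribution evaluated at q."""
    z = (q - mean) / std
    return math.exp(-0.5 * z * z) / (std * math.sqrt(2.0 * math.pi))

def mmi_density(q, weights, means, stds):
    """Multimodel predictive density: weighted sum of per-model densities."""
    if any(w < 0 for w in weights) or abs(sum(weights) - 1.0) > 1e-9:
        raise ValueError("weights must be non-negative and sum to 1")
    return sum(w * gaussian_pdf(q, m, s) for w, m, s in zip(weights, means, stds))

def mmi_mean_and_variance(weights, means, stds):
    """Mean and variance of the mixture (law of total variance)."""
    mean = sum(w * m for w, m in zip(weights, means))
    var = sum(w * (s * s + (m - mean) ** 2) for w, m, s in zip(weights, means, stds))
    return mean, var

# Hypothetical predictive densities from three calibrated models (assumed values)
weights = [0.5, 0.3, 0.2]
means = [1.0, 1.2, 0.8]
stds = [0.1, 0.2, 0.15]
mix_mean, mix_var = mmi_mean_and_variance(weights, means, stds)
```

The mixture variance includes both the within-model spread and the disagreement between model means, so a multimodel prediction reflects structural uncertainty as well as parameter uncertainty.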

Network Inference Workflow

The following diagram illustrates the integrated computational pipeline for inferring gene regulatory networks from single-cell multi-omics data:

Figure 2: Network Inference Pipeline. Computational workflow for reconstructing gene network structure and dynamics from single-cell data.

Applications in Drug Discovery and Development

Model-Informed Drug Development (MIDD)

The integration of high-throughput single-cell technologies with Model-Informed Drug Development (MIDD) represents a paradigm shift in pharmaceutical research and development. MIDD provides an essential framework for advancing drug development and supporting regulatory decision-making by offering quantitative predictions and data-driven insights that accelerate hypothesis testing, improve candidate assessment efficiency, and reduce costly late-stage failures [19].

The "fit-for-purpose" strategic roadmap for MIDD aligns modeling tools with key questions of interest (QOI), context of use (COU), and model impact across all stages of drug development—from early discovery to post-market lifecycle management [19]. This approach demonstrates how MIDD tools can enhance target identification, assist with lead compound optimization, improve preclinical prediction accuracy, facilitate First-in-Human (FIH) studies, optimize clinical trial design including dosage optimization, describe clinical population pharmacokinetics/exposure-response (PPK/ER) characteristics, and support label updates during post-approval stages [19].

Omics-Enhanced Large Language Models in Drug Discovery

Large language models (LLMs), originally developed for natural language processing, are emerging as powerful tools to address challenges in omics data analysis, including high dimensionality, noise, and heterogeneity [15]. These models capture complex patterns and infer missing information from large, noisy datasets, enabling new applications in drug discovery [15].

A three-part framework for leveraging omics-based LLMs includes: (1) analyzing how LLM architectures and learning paradigms handle challenges specific to genomics, transcriptomics, and proteomics data; (2) detailing LLM applications in key areas such as uncovering disease mechanisms, identifying drug targets, predicting drug response, and simulating cellular behavior; and (3) discussing how insights from omics-integrated LLMs can inform the development of drugs targeting specific pathways, moving beyond single targets toward strategies grounded in underlying disease biology [15]. This framework provides both conceptual insights and practical guidance for leveraging LLMs in omics-driven drug discovery and development [15].

Applications in Personalized Medicine and Therapeutic Innovation

Single-cell and spatial omics technologies are driving innovations in personalized medicine by enabling detailed characterization of disease mechanisms at cellular resolution. These approaches are particularly valuable in oncology, where they facilitate the identification of novel therapeutic targets through comprehensive analysis of tumor microenvironments, cellular heterogeneity, and drug resistance mechanisms [13] [20].

The application of these technologies extends to neuroscience, immunology, and rare diseases, where they contribute to biomarker discovery, patient stratification, and understanding of disease pathogenesis [14] [20]. The continued advancement and integration of single-cell multi-omics technologies with computational modeling approaches promise to transform therapeutic development and precision medicine over the coming decade [14] [19].

In systems biology, complex molecular interactions are abstracted into networks to facilitate computational analysis and hypothesis generation. A network or graph provides a mathematical framework comprising nodes (vertices representing biological entities like genes or proteins) and edges (connections representing interactions or relationships) [21] [22]. The choice of network representation—directed or undirected, signed or unsigned—is a fundamental methodological decision that directly influences the biological insights that can be derived from network inference and dynamic modeling protocols [22] [23]. Directed networks incorporate asymmetric relationships where edges originate from one entity and terminate at another, whereas undirected networks represent symmetric, reciprocal relationships [24] [22]. Similarly, signed networks label edges with positive (activation) or negative (inhibition) signs, while unsigned networks only indicate the presence or absence of an interaction without specifying its nature [25] [23]. This application note provides a structured comparison of these representations and detailed protocols for their application in systems biology research, with a specific focus on gene regulatory network (GRN) inference.

Theoretical Foundations and Comparative Analysis

Directed vs. Undirected Networks

Table 1: Fundamental Differences Between Directed and Undirected Networks

| Feature | Directed Networks | Undirected Networks |
|---|---|---|
| Relationship Symmetry | Asymmetric (A→B does not imply B→A) | Symmetric (A-B implies B-A) [22] |
| Edge Representation | Arrows indicating direction [22] | Simple lines without direction [22] |
| Mathematical Formalization | Set of ordered pairs (E ⊆ V×V) [24] | Set of unordered pairs [22] |
| Connectivity | Path from A to B does not guarantee path from B to A [22] | Connectivity is always mutual |
| Centrality Measures | Separate in-degree and out-degree centrality [24] | Single degree centrality [22] |
| Typical Applications | Signal transduction, regulatory networks, web graphs, citation networks [24] [22] | Protein-protein interaction, co-expression networks, friendship networks [22] [23] |

Directed networks, or digraphs, are characterized by edges with a specific orientation, where a connection from node A to node B (an arc) does not imply a reciprocal connection from B to A [24]. This directionality introduces asymmetry, meaning the adjacency matrix representing the network is typically not symmetric [24]. Key concepts in directed networks include in-degree (number of incoming edges) and out-degree (number of outgoing edges), which quantify a node's prominence as a receiver or source of influence, respectively [24]. Connectivity is assessed through strongly connected components (where every node is reachable from every other via directed paths) and weakly connected components (where connectivity holds only when ignoring edge directions) [24]. In contrast, undirected networks represent bidirectional relationships where a connection between node A and node B is inherently mutual [22]. The adjacency matrix for an undirected network is symmetric, and a single degree centrality measure suffices for each node [22]. The choice between these models should be guided by the nature of the biological relationships under investigation, the specific research questions, and the available data [22].
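As a small illustration of these definitions, the sketch below builds an adjacency matrix for a hypothetical four-gene directed network (the gene names and edges are invented for the example), computes in-degree and out-degree, and then drops edge direction to obtain the symmetric undirected counterpart.

```python
import numpy as np

# Hypothetical 4-gene directed network; A[i, j] = 1 means an edge i -> j
genes = ["A", "B", "C", "D"]
A = np.array([
    [0, 1, 1, 0],   # A -> B, A -> C
    [0, 0, 1, 0],   # B -> C
    [0, 0, 0, 1],   # C -> D
    [0, 0, 0, 0],   # D has no outgoing edges
])

out_degree = A.sum(axis=1)   # row sums: edges leaving each node
in_degree = A.sum(axis=0)    # column sums: edges entering each node

# Dropping direction: an undirected edge exists if either direction exists
U = ((A + A.T) > 0).astype(int)
degree = U.sum(axis=1)       # a single degree per node suffices

directed_is_symmetric = np.array_equal(A, A.T)
undirected_is_symmetric = np.array_equal(U, U.T)
```

Here gene A acts only as a source (out-degree 2, in-degree 0), a distinction that disappears in the undirected version, where A simply has degree 2.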

Signed vs. Unsigned Networks

Table 2: Comparison of Signed and Unsigned Network Representations

| Feature | Signed Networks | Unsigned Networks |
|---|---|---|
| Edge Information | Includes sign (+ for activation, - for inhibition) [23] | Presence or absence of interaction only [23] |
| Biological Specificity | High; indicates nature of regulatory effect [23] | Low; indicates interaction without mechanistic detail |
| Correlation Treatment | Strong negative correlations may be treated as no connection [25] | Strong correlations (positive or negative) indicate connection [25] |
| Inference Complexity | Higher; requires determining sign of interaction | Lower; focus is on interaction existence |
| Analytical Utility | Essential for drawing biological insights on regulation [23] | Sufficient for theoretical work on network structure [23] |
| Recommended Use | Default for most biological applications [25] | When interaction type is unknown or irrelevant |

Signed networks encode the nature of interactions, crucially distinguishing between activation (positive edges) and inhibition/repression (negative edges) [23]. This representation is vital for understanding the dynamic behavior of biological systems, as it allows modelers to distinguish between reinforcing and antagonistic relationships. The Causal Attitude Network (CAN) model is an example that conceptualizes attitudes as networks of causally interacting evaluative reactions, a framework that can be adapted for biological signaling networks [21]. In a signed network, the sign of a correlation matters; some methodologies suggest that strongly negatively correlated nodes should be considered unconnected, leading to a "signed" network where the adjacency matrix contains only non-negative values despite the sign information [25]. Unsigned networks, conversely, only represent whether an interaction exists, treating strong correlations—whether positive or negative—as connections [25] [23]. While unsigned networks are simpler to construct and are often used in theoretical work, signed networks are generally preferable for biological applications because they preserve critical information about the direction of regulatory effects, which is essential for functional insights and predictive modeling [25] [23].
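The three treatments of correlation sign described above can be compared on a toy example. The correlation matrix and the 0.7 cutoff below are hypothetical values chosen only to make the differences visible.

```python
import numpy as np

# Hypothetical correlation matrix for three genes (symmetric, unit diagonal)
r = np.array([
    [ 1.0,  0.9, -0.8],
    [ 0.9,  1.0,  0.1],
    [-0.8,  0.1,  1.0],
])
threshold = 0.7  # illustrative cutoff, not a recommended value
off_diag = ~np.eye(len(r), dtype=bool)

# Unsigned: any strong correlation, positive or negative, is an edge
unsigned = ((np.abs(r) > threshold) & off_diag).astype(int)

# Signed labels: +1 (activation-like), -1 (inhibition-like), 0 (no edge)
signed_labels = np.sign(r).astype(int) * unsigned

# Non-negative "signed" adjacency: strong negative correlation means no connection
signed_nonneg = ((r > threshold) & off_diag).astype(int)
```

The pair with correlation -0.8 is an edge in the unsigned network, a negative edge in the labeled network, and absent from the non-negative signed adjacency.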

Experimental Protocols for Network Inference

Protocol 1: Inferring a Signed, Directed Gene Regulatory Network from Single-Cell RNA-Seq Data

This protocol details the steps for reconstructing a gene regulatory network (GRN) from single-cell RNA-sequencing data, resulting in a directed and signed network that captures causal regulatory relationships and their activating/inhibitory nature.

1. Experimental Design and Data Generation

- Single-Cell RNA-Sequencing: Perform single-cell RNA-seq on a population of cells under the biological condition of interest (e.g., a disease state, developmental time point, or after a perturbation). Each cell provides a snapshot of the expression levels of all genes, resulting in a matrix where rows represent cells and columns represent genes [23] [26].

- Pseudo-Temporal Ordering: If the data is from a static, heterogeneous population (e.g., cells at different stages of a process), use computational tools (e.g., Monocle, PAGA) to infer a pseudo-temporal ordering of the cells. This creates a trajectory that mimics the dynamic process being studied [23].

- Gene Selection: Identify a subset of genes with high biological variance for network analysis. This is typically done by plotting the Coefficient of Variation (CV; standard deviation/mean) against the mean expression for all genes and selecting genes with significantly higher CVs than expected for their expression level [23].
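The gene selection step can be sketched as follows. This is one possible reading of "higher CV than expected", assuming the expected CV is a straight-line trend in log-log space; the function name `select_variable_genes`, the simulated counts, and the choice of trend are all assumptions made for illustration.

```python
import numpy as np

def select_variable_genes(counts, n_top=2):
    """Rank genes by excess CV relative to a log-log trend of CV on mean.

    counts: array of shape (cells, genes) with non-negative expression values.
    Returns indices of the n_top genes with the largest positive residual.
    """
    mean = counts.mean(axis=0)
    std = counts.std(axis=0, ddof=1)
    keep = (mean > 0) & (std > 0)          # log CV is undefined otherwise
    log_mean = np.log(mean[keep])
    log_cv = np.log(std[keep] / mean[keep])
    slope, intercept = np.polyfit(log_mean, log_cv, deg=1)  # expected CV trend
    residual = log_cv - (slope * log_mean + intercept)
    order = np.argsort(residual)[::-1]     # largest excess variability first
    return np.flatnonzero(keep)[order[:n_top]]

# Simulated data: 20 Poisson-like genes, with gene 3 made bimodal across cells
rng = np.random.default_rng(0)
means = np.linspace(5, 50, 20)
counts = rng.poisson(lam=means, size=(500, 20)).astype(float)
counts[:250, 3] = 0.0      # gene 3 is silent in half of the cells
counts[250:, 3] *= 2.0     # and doubled in the rest, keeping its mean similar
selected = select_variable_genes(counts, n_top=1)
```

In this simulation the bimodal gene has a CV far above the trend followed by the other genes, so it ranks first.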

2. Data Preprocessing and Normalization

- Quality Control: Filter out low-quality cells and genes with low expression.

- Normalization: Normalize the expression counts to account for technical variations (e.g., sequencing depth). Common methods include log-transformation or more sophisticated approaches designed for single-cell data [26].

- Input Data Structure: The final preprocessed data for the n selected genes is represented as a set of random variables {X₁, X₂, ..., Xₙ}, where each variable corresponds to the expression level of a gene (a node in the future GRN) [23].

3. Network Inference Using a Regression-Based Algorithm

- Algorithm Selection: Choose a regression-based inference algorithm capable of producing directed and signed networks, such as GENIE3 or TIGRESS [23]. The core principle is to solve, for each gene j, a regression problem where the expression of j is predicted from the expression of all other genes i (where i ≠ j).

- Model Execution: For each target gene Xⱼ, the algorithm solves an equation of the form: Xⱼ = f(X₁, X₂, ..., Xₙ), excluding Xⱼ itself. The function f can be a tree-based model (as in GENIE3) or a linear regression model with feature selection. The weight of the edge Xᵢ → Xⱼ is derived from the importance of Xᵢ in predicting Xⱼ [23].

- Sign Assignment: Once the set of significant interactions (edges) is identified, determine the sign of each edge. This is typically achieved by calculating the correlation (e.g., Pearson or Spearman) between the expression levels of the regulator gene Xᵢ and the target gene Xⱼ across the single-cell data. A positive correlation yields a "+" sign (activation), and a negative correlation yields a "-" sign (inhibition) [23].
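The per-target regression and sign assignment can be sketched with ordinary least squares standing in for the tree-based or feature-selecting regressors; this is not the GENIE3 or TIGRESS implementation. The `regulators` argument (indices of candidate regulator genes) is an assumption added here, because a regression on static data does not by itself distinguish Xᵢ → Xⱼ from Xⱼ → Xᵢ. The sketch also assumes every gene has non-zero variance.

```python
import numpy as np

def infer_signed_directed_edges(X, regulators, top_k=None):
    """Return (regulator, target, weight, sign) tuples ranked by weight.

    X: (cells, genes) expression matrix; regulators: candidate regulator indices.
    """
    X = np.asarray(X, dtype=float)
    Z = (X - X.mean(axis=0)) / X.std(axis=0)   # standardize each gene
    corr = np.corrcoef(X, rowvar=False)        # used only for sign assignment
    edges = []
    for j in range(X.shape[1]):
        predictors = [i for i in regulators if i != j]
        if not predictors:
            continue
        # Regress target j on the candidate regulators
        coef, *_ = np.linalg.lstsq(Z[:, predictors], Z[:, j], rcond=None)
        for i, c in zip(predictors, coef):
            sign = "+" if corr[i, j] > 0 else "-"
            edges.append((i, j, float(abs(c)), sign))
    edges.sort(key=lambda e: e[2], reverse=True)   # rank by importance
    return edges if top_k is None else edges[:top_k]

# Simulated data: gene 0 activates gene 1, represses gene 2, ignores gene 3
rng = np.random.default_rng(1)
g0 = rng.normal(size=300)
g1 = 0.9 * g0 + 0.3 * rng.normal(size=300)
g2 = -0.9 * g0 + 0.3 * rng.normal(size=300)
g3 = rng.normal(size=300)
X = np.column_stack([g0, g1, g2, g3])
top_edges = infer_signed_directed_edges(X, regulators=[0], top_k=2)
```

With gene 0 as the only candidate regulator, the two top-ranked edges are 0 → 1 with a "+" sign and 0 → 2 with a "-" sign, while the edge to the unrelated gene 3 receives a weight near zero.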

4. Output and Validation

- Network Output: The final output is a set of weighted, directed, and signed edge predictions. The weight represents the confidence that a real regulatory interaction exists, the direction indicates the inferred causal relationship (Xᵢ → Xⱼ), and the sign indicates the type of regulation [23].

- Validation: Evaluate the accuracy of the inferred network against a "gold standard" dataset if available (e.g., from DREAM Challenges) using precision-recall (PR) curves or receiver operating characteristic (ROC) curves. PR curves are often considered superior for this task [23], since true regulatory edges are heavily outnumbered by non-edges and ROC curves can look favorable even when most top-ranked predictions are wrong. Experimental validation, such as siRNA knockdown or CRISPR inhibition of predicted regulator genes followed by qPCR of target genes, provides the strongest confirmation.
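A precision-recall evaluation of a ranked edge list can be sketched in a few lines. The edge names and the gold standard below are hypothetical; a real benchmark would supply both.

```python
def precision_recall_curve(ranked_edges, gold_edges):
    """Precision and recall after each successive prediction.

    ranked_edges: list of (regulator, target) sorted by decreasing confidence.
    gold_edges: non-empty set of true (regulator, target) pairs.
    """
    if not gold_edges:
        raise ValueError("gold standard must contain at least one edge")
    precision, recall = [], []
    true_positives = 0
    for k, edge in enumerate(ranked_edges, start=1):
        if edge in gold_edges:
            true_positives += 1
        precision.append(true_positives / k)
        recall.append(true_positives / len(gold_edges))
    return precision, recall

def average_precision(ranked_edges, gold_edges):
    """Sum of precision at ranks where a true edge is recovered, divided by
    the number of gold edges (unrecovered gold edges contribute zero)."""
    precision, _ = precision_recall_curve(ranked_edges, gold_edges)
    hits = [p for p, e in zip(precision, ranked_edges) if e in gold_edges]
    return sum(hits) / len(gold_edges)

# Hypothetical ranked predictions and gold standard
ranked = [("X", "Y"), ("X", "Z"), ("Z", "Y"), ("Y", "Z")]
gold = {("X", "Y"), ("Y", "Z")}
precision, recall = precision_recall_curve(ranked, gold)
ap = average_precision(ranked, gold)
```

Because direction is part of each tuple, a prediction of Z → Y does not count as recovering the true edge Y → Z.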

Protocol 2: Constructing an Unsigned, Undirected Co-Expression Network

This protocol outlines the creation of a gene co-expression network, which is typically undirected and unsigned, used to identify groups of functionally related genes without inferring causality.

1. Data Input and Preprocessing

- Use a gene expression matrix from either bulk RNA-seq or single-cell RNA-seq data. Preprocess and normalize the data as described in Protocol 1, Step 2 [23].

2. Correlation Matrix Calculation

- Compute the pairwise correlation matrix for all selected genes. The Pearson correlation coefficient is commonly used, but other measures like Spearman rank correlation can be more robust to outliers [23].

- For each pair of genes (i, j), the correlation coefficient rᵢⱼ is calculated, resulting in a symmetric matrix.

3. Network Construction and Thresholding

- The correlation matrix itself represents a fully connected, weighted, undirected network. To obtain a biologically meaningful and sparse network, apply a threshold.

- Threshold Selection: Choose a correlation threshold (e.g., |r| > 0.7) or use a method like Weighted Gene Co-expression Network Analysis (WGCNA) to transform the correlation matrix into an adjacency matrix [23]. In WGCNA, a signed or unsigned adjacency matrix can be created using a power function (soft thresholding) to emphasize strong correlations.

- Adjacency Matrix: The final adjacency matrix A is defined such that Aᵢⱼ = |rᵢⱼ|^β for an unsigned network (or other transformations for signed), where β is the soft-thresholding power. This step reduces the impact of weak correlations [23].
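Soft thresholding can be sketched with NumPy alone. The unsigned form follows the definition above; for the signed form the sketch uses ((1 + r)/2)^β, the transformation commonly used in WGCNA. The function name, the default β = 6, and the choice to zero the diagonal are assumptions for this example, not the R package's interface.

```python
import numpy as np

def soft_threshold_adjacency(expr, beta=6, signed=False):
    """expr: (samples, genes). Returns a (genes, genes) weighted adjacency matrix."""
    r = np.corrcoef(expr, rowvar=False)
    if signed:
        adjacency = ((1.0 + r) / 2.0) ** beta   # negative correlations shrink toward 0
    else:
        adjacency = np.abs(r) ** beta           # sign of the correlation is discarded
    np.fill_diagonal(adjacency, 0.0)            # ignore self-connections in this sketch
    return adjacency

# Toy data: gene 1 is the exact mirror image of gene 0; gene 2 is unrelated
expr = np.array([
    [1.0, -1.0, 0.5],
    [2.0, -2.0, 0.1],
    [3.0, -3.0, 0.9],
    [4.0, -4.0, 0.3],
])
unsigned_adj = soft_threshold_adjacency(expr, beta=6, signed=False)
signed_adj = soft_threshold_adjacency(expr, beta=6, signed=True)
```

The perfectly anti-correlated gene pair receives the maximum weight in the unsigned adjacency and a weight of essentially zero in the signed one, which is the practical difference between the two conventions.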

4. Module Detection and Analysis

- Use the resulting adjacency matrix to detect modules (clusters) of highly interconnected genes. This is typically done with hierarchical clustering and dynamic tree cutting.

- The resulting network is undirected (edges represent mutual co-expression) and unsigned (edges do not distinguish between positive and negative co-regulation, though the underlying correlations do) [23]. The primary output is a set of gene modules for functional enrichment analysis.

The Scientist's Toolkit: Essential Reagents and Computational Tools

Table 3: Key Research Reagent Solutions for Network Inference and Validation

| Item Name | Type | Function in Protocol |
|---|---|---|
| Single-Cell RNA-Seq Kit (e.g., 10x Genomics) | Wet-lab Reagent | Generates the primary gene expression matrix from individual cells, the foundational data for network inference [23] [26]. |
| DREAM Challenge Datasets | Computational Resource | Provide gold standard benchmarks with known network structures for objective algorithm evaluation and validation [23]. |
| GENIE3 / TIGRESS | Software Algorithm | Regression-based methods for inferring directed GRNs from expression data [23]. |
| WGCNA R Package | Software Algorithm | Constructs undirected co-expression networks and identifies functional gene modules from correlation matrices [23]. |
| siRNA or CRISPR-Cas9 Kit | Wet-lab Reagent | Enables experimental perturbation (knockdown/knockout) of predicted regulator genes to validate inferred edges in the network [23]. |

Advanced Topics: Dynamic and Multi-Scale Network Modeling

Biological systems are inherently dynamic. Moving beyond static network snapshots to model temporal dynamics is a key frontier. The ProbRules approach combines probabilities and logical rules to represent system dynamics across multiple scales, which is particularly useful for signal transduction networks where reactions occur in different spatial and temporal frames [27]. In this framework, the state of an interaction is represented by a probability, and a set of rules drives the evolution of these probabilities over time, allowing the model to capture the logic of succession in interaction activities [27].

For inferring dynamic networks from single-cell data, methods like GRIT (Gene Regulation Inference by Transport) have been introduced. GRIT uses optimal transport theory to fit a differential equation model to data by tracking the evolution of the cell distribution over time, effectively inferring the underlying GRN [26]. Furthermore, statistical formulations that connect single-cell stochastic dynamics to network inference on population-averaged data are crucial for proper experimental design and interpretation [28]. These models start from a description of stochastic dynamics in single cells and, through a series of assumptions, arrive at a general statistical framework for network inference from discretely observed data [28].

Network motifs are statistically over-represented, recurring patterns of interconnections found in complex biological networks [29] [30]. These small subgraphs function as fundamental computational circuits that define the dynamic behavior and functional capabilities of cellular regulatory systems [23] [31]. As building blocks of complex networks, motifs perform specific information-processing functions including signal amplification, noise filtering, temporal regulation, and response acceleration [32] [29]. The identification and characterization of these motifs provide crucial insights into the design principles of biological systems and enable the development of predictive dynamic models.

In transcriptional regulatory networks, three-node motifs are particularly significant for their well-characterized dynamical properties. Among the thirteen possible three-node directed subgraphs, only a select few are found to be statistically overrepresented in natural networks, suggesting they have been evolutionarily selected for their functional advantages [30] [33]. The most extensively studied of these include feed-forward loops, cascades, and fan-ins/outs, each conferring distinct information-processing capabilities to the network [23] [29]. This application note provides a comprehensive overview of these common network motifs, detailing their structures, dynamical functions, and experimental protocols for their inference and analysis within systems biology research.

Feed-Forward Loops: Structure, Dynamics, and Experimental Analysis

Architectural Configurations and Functional Classification

The feed-forward loop (FFL) is one of the most prevalent and well-characterized network motifs in biological systems, consistently identified as statistically overrepresented in transcriptional networks from E. coli to humans [32] [33]. This three-node pattern consists of a top regulator (X) that controls a target gene (Z) both directly and indirectly through a second regulator (Y), forming two parallel regulatory paths [32]. The FFL motif exists in eight possible configurations depending on the activation/repression nature of each interaction, which are broadly categorized as coherent or incoherent [32] [33].

In coherent FFLs (C-FFLs), the direct regulatory path from X to Z and the indirect path through Y have the same overall sign, resulting in reinforcing regulation. Conversely, in incoherent FFLs (I-FFLs), the direct and indirect paths have opposing signs, creating potentially opposing regulatory effects [32]. Among these configurations, the coherent type 1 (C1-FFL, with all three interactions being activations) and incoherent type 1 (I1-FFL, where X activates both Y and Z, but Y represses Z) occur with the highest frequency in natural networks [32]. The abundance of these specific configurations reflects their particular functional utility in cellular information processing.

Figure 1: Basic structure of a coherent type-1 feed-forward loop (C1-FFL) with all activating interactions. Node X regulates Z both directly and through regulator Y.

Dynamical Properties and Biological Functions

The specific temporal dynamics and functional capabilities of FFLs depend on both their configuration (coherent/incoherent) and the logical rules (AND/OR) governing integration of signals at the Z promoter [32] [33]. The table below summarizes the key dynamical functions of major FFL types:

Table 1: Functional properties of common FFL configurations

| FFL Type | Logic | Key Dynamical Function | Biological Examples |
|---|---|---|---|
| C1-FFL | AND | Sign-sensitive delay; Pulse filtering | Arabinose catabolism system in E. coli [33] |
| C1-FFL | OR | Off-delay; Response persistence | Not specified in sources |
| I1-FFL | AND | Pulse generation; Response acceleration | Galactose utilization system [32] |
| I1-FFL | OR | Accelerated shutdown | Not specified in sources |

The C1-FFL with AND logic functions as a sign-sensitive delay element that filters out transient input signals [32] [33]. When the input signal appears, the motif introduces a delay in Z activation because both X and Y must be present to activate Z (AND logic). However, when the input signal disappears, Z expression shuts off immediately without delay. This asymmetric temporal response allows the system to distinguish between persistent and transient input signals, potentially filtering out stochastic noise in the cellular environment [32].

The I1-FFL with AND logic operates as a pulse generator and response accelerator [32]. When the input signal appears, X rapidly activates Z directly, leading to immediate expression. As Y accumulates, it begins to repress Z, resulting in a pulse-like expression pattern. This configuration can accelerate response times compared to simple regulation by generating a rapid initial burst of expression before settling into steady-state levels [32]. The functional versatility of FFLs explains their evolutionary conservation across diverse biological systems and their implementation in synthetic biology applications [32].
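The sign-sensitive delay of the C1-FFL with AND logic can be reproduced with a deliberately simple simulation. The sketch assumes step-function (threshold) regulation, first-order decay, and unit rate constants, integrated with the Euler method; the function name and all parameter values are illustrative choices, not fitted quantities.

```python
import numpy as np

def simulate_c1_ffl_and(x_on, x_off, t_end=10.0, dt=0.001, beta=1.0, alpha=1.0, K=0.5):
    """Euler simulation of a C1-FFL with AND logic at the Z promoter.

    X is a step input active for x_on <= t < x_off. Y is produced while X is
    active; Z is produced only while X is active AND Y exceeds threshold K.
    """
    t = np.arange(0.0, t_end, dt)
    y = np.zeros_like(t)
    z = np.zeros_like(t)
    z_production = np.zeros(len(t), dtype=bool)
    for i in range(1, len(t)):
        x_active = x_on <= t[i - 1] < x_off
        z_production[i - 1] = x_active and y[i - 1] > K
        y[i] = y[i - 1] + dt * (beta * x_active - alpha * y[i - 1])
        z[i] = z[i - 1] + dt * (beta * z_production[i - 1] - alpha * z[i - 1])
    return t, y, z, z_production

# Persistent input: X is on from t = 1 to t = 6
t, y, z, prod = simulate_c1_ffl_and(x_on=1.0, x_off=6.0)
on_delay = t[prod.argmax()] - 1.0                          # lag before Z production starts
off_delay = t[len(t) - 1 - prod[::-1].argmax()] - 6.0      # lag before Z production stops

# Transient input: a pulse shorter than the ON delay
_, _, z_short, prod_short = simulate_c1_ffl_and(x_on=1.0, x_off=1.3)
```

With these parameters Y needs about ln 2 ≈ 0.69 time units to cross its threshold, so Z production starts with that delay but stops as soon as X is removed, and the 0.3-unit pulse never activates Z at all.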

Experimental Protocol: Inference and Validation of FFLs

Objective: Identify and validate functional feed-forward loop motifs from gene expression data.

Materials and Reagents:

- Single-cell RNA sequencing data (scRNA-seq)

- Gene expression matrix (cells × genes)

- Computational resources for network inference

- Validation reagents (qPCR, CRISPRi, reporter constructs)

Procedure:

Data Preprocessing

Network Inference

- Apply multiple inference algorithms (e.g., PIDC, GENIE3, PHIXER) to generate candidate networks [23]

- Extract all three-node subgraphs from the inferred network

- Calculate statistical significance using Z-score comparison to randomized networks [33]: \[ Z = \frac{N_{\text{obs}} - \langle N_{\text{rand}} \rangle}{\sigma_{\text{rand}}} \] where \(N_{\text{obs}}\) is the count in the observed network, \(\langle N_{\text{rand}} \rangle\) is the mean count in randomized networks, and \(\sigma_{\text{rand}}\) is the standard deviation of that count across the randomized networks.
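The subgraph counting and Z-score steps can be sketched in plain Python. The null model used here, degree-preserving edge swaps, is a common choice but is an assumption of this sketch, as are the function names, the number of randomizations, and the example network.

```python
import random
import statistics

def count_ffls(edges):
    """Count feed-forward loops X->Y, Y->Z, X->Z over distinct nodes."""
    edge_set = set(edges)
    successors = {}
    for u, v in edge_set:
        successors.setdefault(u, set()).add(v)
    count = 0
    for x, y in edge_set:
        for z in successors.get(y, ()):
            if z != x and (x, z) in edge_set:
                count += 1
    return count

def randomize_edges(edges, n_swaps, rng):
    """Degree-preserving randomization: swap the targets of two random edges."""
    edges = list(edges)
    edge_set = set(edges)
    for _ in range(n_swaps):
        i, j = rng.sample(range(len(edges)), 2)
        (a, b), (c, d) = edges[i], edges[j]
        new1, new2 = (a, d), (c, b)
        # Reject swaps that would create self-loops or duplicate edges
        if a == d or c == b or new1 in edge_set or new2 in edge_set:
            continue
        edge_set -= {edges[i], edges[j]}
        edge_set |= {new1, new2}
        edges[i], edges[j] = new1, new2
    return edges

def motif_z_score(edges, n_random=200, seed=0):
    """Return (N_obs, mean N_rand, sigma_rand, Z) for the FFL motif."""
    rng = random.Random(seed)
    n_obs = count_ffls(edges)
    rand_counts = [count_ffls(randomize_edges(edges, 10 * len(edges), rng))
                   for _ in range(n_random)]
    mean = statistics.mean(rand_counts)
    sd = statistics.pstdev(rand_counts)
    z = (n_obs - mean) / sd if sd > 0 else float("nan")
    return n_obs, mean, sd, z

# Hypothetical inferred network containing two FFLs and a short cascade
edges = [("A", "B"), ("B", "C"), ("A", "C"), ("A", "D"), ("D", "E"),
         ("A", "E"), ("F", "G"), ("G", "H"), ("H", "I"), ("I", "J")]
n_obs, mean_rand, sd_rand, z_score = motif_z_score(edges)
```

On a network this small the Z-score is only illustrative; in practice the comparison becomes meaningful for networks with many more edges and a larger ensemble of randomized networks.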

Motif Validation

- Select top candidate FFLs for experimental validation

- For each FFL (X→Y, X→Z, Y→Z), verify regulatory relationships using:

  - Chromatin Immunoprecipitation (ChIP) for transcription factor binding

  - CRISPRi-based repression of X and Y with measurement of Z expression

  - Reporter gene constructs with mutated binding sites

- Test dynamic response to X induction/repression using time-course qPCR

Functional Characterization

- Measure temporal expression patterns of Z in response to X activation

- Compare response dynamics to theoretical predictions

- Assess noise-filtering capabilities under stochastic stimulation

Troubleshooting:

- High false-positive rates in network inference may require integration of prior knowledge [34]

- Biological variability may necessitate increased replicate numbers

- Confirming direct versus indirect regulation requires additional experimental approaches

Cascades and Fan-In/Fan-Out Motifs

Structural Properties and Network Context

Beyond FFLs, cascades and fan-in/fan-out motifs represent fundamental architectural patterns in regulatory networks. While these motifs may not always be statistically overrepresented in isolation, they form essential connective structures that contribute significantly to network dynamics [23].

Cascades (also referred to as regulatory paths) are linear sequences of regulatory interactions in which X regulates Y, which in turn regulates Z, creating a directional flow of information through the network [23]. Correlation-based network inference algorithms show increased false positive rates on cascade structures because X and Z remain correlated through Y, so the indirect relationship is often misinterpreted as a direct X→Z regulation [23].
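The cascade problem can be demonstrated on simulated data. The sketch below generates a linear X → Y → Z chain with hypothetical coefficients and noise levels, then compares plain correlation with partial correlation computed from the inverse covariance matrix, the quantity that Gaussian graphical models use to encode conditional independence.

```python
import numpy as np

rng = np.random.default_rng(0)
n_cells = 2000
x = rng.normal(size=n_cells)
y = 0.9 * x + 0.3 * rng.normal(size=n_cells)   # X -> Y
z = 0.9 * y + 0.3 * rng.normal(size=n_cells)   # Y -> Z (no direct X -> Z)
data = np.column_stack([x, y, z])

# Pairwise correlation: X and Z look strongly connected
corr = np.corrcoef(data, rowvar=False)

# Partial correlations from the precision (inverse covariance) matrix
precision = np.linalg.inv(np.cov(data, rowvar=False))
d = np.sqrt(np.diag(precision))
partial = -precision / np.outer(d, d)
np.fill_diagonal(partial, 1.0)
```

The X–Z correlation is close to 0.9 even though no direct edge was simulated, whereas the X–Z partial correlation given Y is close to zero and the X–Y and Y–Z partial correlations remain clearly positive.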

Fan-in motifs occur when multiple regulators converge on a single target gene, enabling integrative signal processing and combinatorial control [23] [29]. In contrast, fan-out motifs feature a single regulator controlling multiple target genes, facilitating coordinated expression of functionally related genes [23] [29].

Figure 2: Structural representations of cascade, fan-in, and fan-out network motifs.

Functional Significance in Regulatory Networks

The table below summarizes the key functional roles of these motifs and their detection by different inference algorithms:

Table 2: Functional properties of cascade, fan-in, and fan-out motifs

| Motif Type | Functional Role | Algorithm Detection Performance |
|---|---|---|
| Cascade | Information transmission; Temporal delay | Increased false positives with correlation methods [23] |
| Fan-in | Combinatorial control; Signal integration | Regression methods perform poorly [23] |
| Fan-out | Coordinated expression; Single-input modules | Regression methods perform poorly [23] |

Fan-in architectures allow for complex logical integration of multiple signals at promoter regions, enabling precise control of gene expression based on specific combinations of input signals [29]. This combinatorial control is essential for context-specific gene regulation in response to multiple environmental or developmental cues.

Fan-out structures, often organized as single-input modules (SIMs), enable synchronized expression of genes encoding functionally related proteins, such as enzymes in a metabolic pathway or components of a macromolecular complex [29]. This coordinated control ensures proper stoichiometry of interacting components and efficient resource allocation.

Regression-based network inference methods generally perform poorly in detecting both fan-in and fan-out motifs [23], which is one reason to combine multiple algorithmic approaches for comprehensive network mapping. Correlation-based methods, although simple, tend to perform relatively well at detecting feed-forward loops, fan-ins, and fan-outs, but at the cost of increased false positive rates for cascades [23].

Integrated Analysis of Network Motifs

Motif Aggregation and Higher-Order Organization

Network motifs do not function in isolation but rather aggregate to form higher-order structures that define the overall system behavior [35]. The specific ways in which motifs connect and cluster within networks reveal architectural principles that are characteristic of different biological systems [35]. Methods to quantify motif clustering (M~c~) have been developed to capture the propensity of motifs to share nodes, with different network types exhibiting distinctive clustering patterns [35].

In metabolic networks, type 6 FFL clusters (characterized by a single node that serves as input to all other nodes in the cluster) dominate, representing over 70% of FFL clustering in both E. coli and A. fulgidus metabolism [35]. This architecture reflects the broad use of common metabolites (such as ATP and ADP) by multiple biosynthetic pathways, creating highly interconnected functional modules [35].

The analysis of motif aggregation patterns provides insights into evolutionary constraints and functional requirements that shape network architecture. Different types of FFL clusters predominate in different network types, suggesting that specific clustering patterns are better suited to particular biological functions [35].

Advanced Protocol: Multi-Omic Network Inference with MINIE

Objective: Infer regulatory networks integrating transcriptomic and metabolomic data to capture cross-layer motif organization.

Materials and Reagents:

- Time-series scRNA-seq data

- Time-series bulk metabolomics data

- MINIE software package [1]

- Prior knowledge database of molecular interactions

Procedure:

Data Preparation

- Collect synchronized time-series transcriptomic and metabolomic data

- Align temporal measurements from both omic layers

- Normalize datasets using platform-specific methods

Model Configuration

- Implement a Differential-Algebraic Equation (DAE) framework:

\[
\begin{array}{ll}
\dot{g} = f(g, m, b_g; \theta) + \rho(g, m)\,w \\
\dot{m} = h(g, m, b_m; \theta) \approx 0
\end{array}
\]

where \(g\) represents gene expression levels, \(m\) represents metabolite concentrations, and \(\dot{m} \approx 0\) reflects the timescale separation assumption [1].

- Incorporate prior knowledge of metabolic reactions to constrain possible interactions
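The timescale separation assumption can be made concrete with a toy example. The sketch below does not use the MINIE package or its API; it assumes a hypothetical one-gene, one-metabolite system with linear \(f\) and \(h\), omits the noise term, and compares a full two-timescale simulation with the reduced model in which \(h = 0\) is solved algebraically for \(m\).

```python
def simulate(t_end=10.0, dt=1e-3, eps=0.01):
    """Forward-Euler comparison of a toy two-timescale model and its
    quasi-steady-state (algebraic) reduction. All rate constants are
    hypothetical and chosen only for illustration."""
    b_g, d_g, k_rep = 1.0, 0.5, 0.8   # gene: basal production, decay, repression by m
    k_p, k_d = 2.0, 1.0               # metabolite: production from g, consumption

    def f(g, m):                      # slow gene dynamics (noise term omitted)
        return b_g - d_g * g - k_rep * m

    def h(g, m):                      # fast metabolite dynamics
        return k_p * g - k_d * m

    g_full, m_full = 0.0, 0.0
    g_red = 0.0
    for _ in range(int(t_end / dt)):
        # Full system: eps * dm/dt = h(g, m), so m relaxes quickly.
        g_next = g_full + dt * f(g_full, m_full)
        m_full = m_full + dt * h(g_full, m_full) / eps
        g_full = g_next
        # Reduced system: solve h(g, m) = 0 for m, then step g only.
        m_red = k_p * g_red / k_d
        g_red = g_red + dt * f(g_red, m_red)
    return g_full, m_full, g_red, k_p * g_red / k_d

g_full, m_full, g_red, m_red = simulate()
print(f"full:    g = {g_full:.4f}, m = {m_full:.4f}")
print(f"reduced: g = {g_red:.4f}, m = {m_red:.4f}")
```

When the metabolite relaxes much faster than the gene (small `eps`), the two trajectories end at nearly the same state, which is the behavior the algebraic constraint relies on.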

Network Inference

- Perform transcriptome-metabolome mapping using sparse regression

- Infer intra-layer and cross-layer regulatory interactions via Bayesian regression

- Generate consensus network integrating multiple inference runs

Motif Analysis

- Extract all three-node subgraphs from the inferred multi-omic network

- Calculate statistical significance against appropriate null models

- Identify statistically overrepresented motifs using z-score analysis

- Characterize motif clustering patterns and inter-motif connections
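The z-score step can be sketched as follows. This is a minimal illustration rather than the procedure of any specific tool: it counts only feed-forward loops (one of the possible three-node subgraphs), and it assumes a degree-preserving edge-swap null model. The function names and the toy network are hypothetical.

```python
import random
import statistics

def count_ffl(edges):
    """Count feed-forward loops X->Y, Y->Z, X->Z in a set of directed edges."""
    succ = {}
    for a, b in edges:
        succ.setdefault(a, set()).add(b)
    count = 0
    for x, ys in succ.items():
        for y in ys:
            for z in succ.get(y, ()):
                if z != x and z in ys:
                    count += 1
    return count

def rewire(edges, n_swaps, rng):
    """Degree-preserving randomization: swap targets of random edge pairs,
    rejecting swaps that would create self-loops or duplicate edges."""
    edge_list = sorted(edges)
    edge_set = set(edge_list)
    for _ in range(n_swaps):
        i, j = rng.randrange(len(edge_list)), rng.randrange(len(edge_list))
        (a, b), (c, d) = edge_list[i], edge_list[j]
        if a == d or c == b or (a, d) in edge_set or (c, b) in edge_set:
            continue
        edge_set -= {(a, b), (c, d)}
        edge_set |= {(a, d), (c, b)}
        edge_list[i], edge_list[j] = (a, d), (c, b)
    return edge_set

def motif_zscore(edges, n_null=200, seed=0):
    """Z-score of the observed FFL count against rewired null networks."""
    rng = random.Random(seed)
    observed = count_ffl(edges)
    null = [count_ffl(rewire(edges, 10 * len(edges), rng)) for _ in range(n_null)]
    sd = statistics.pstdev(null)
    z = (observed - statistics.mean(null)) / sd if sd > 0 else float("nan")
    return observed, z

# Hypothetical toy network made of five disjoint feed-forward loops.
edges = set()
for i in range(5):
    a, b, c = f"a{i}", f"b{i}", f"c{i}"
    edges |= {(a, b), (b, c), (a, c)}

observed, z = motif_zscore(edges)
print(f"observed FFLs = {observed}, z-score = {z:.1f}")
```

The choice of null model strongly affects the resulting z-scores, so the swap-based randomization here should be read as one reasonable option among several.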

Experimental Validation

- Select key cross-layer motifs for targeted validation

- Use genetic perturbations (knockdown/overexpression) of transcriptomic nodes

- Measure effects on metabolite levels via targeted mass spectrometry

- Verify regulatory relationships using reporter assays

Applications:

- Identification of functional motifs that span molecular layers

- Discovery of metabolite-mediated regulatory pathways

- Characterization of multi-layer network architecture in disease states

Research Reagents and Computational Tools

Table 3: Essential research reagents and computational tools for network motif analysis

| Resource Type | Specific Examples | Function/Application |
|---|---|---|
| Network Inference Algorithms | PIDC [23], GENIE3 [23] [34], PHIXER [23] | Inference of regulatory networks from expression data |
| Motif Discovery Tools | FANMOD [30], Kavosh [30], G-tries [30] | Identification of overrepresented network motifs |
| Statistical Frameworks | Exponential Random Graph Models (ERGMs) [31] | Assessment of motif significance controlling for network topology |
| Experimental Validation | CRISPRi, ChIP-seq, Reporter constructs | Verification of predicted regulatory interactions |
| Data Types | scRNA-seq [34], Bulk metabolomics [1] | Multi-omic measurements for network inference |

Network motifs represent fundamental building blocks of biological systems, encoding specific information-processing functions that define cellular regulatory dynamics. The feed-forward loop, with its diverse configurations and functional capabilities, serves as a paradigm for understanding how simple regulatory architectures can generate complex temporal dynamics. Cascades and fan-in/fan-out motifs provide essential connective structures that enable information flow and signal integration throughout regulatory networks.

Advanced network inference methods, particularly those integrating multi-omic data and accounting for timescale separation between molecular layers, provide powerful approaches for identifying these motifs in biological systems [1]. The experimental protocols outlined here offer comprehensive frameworks for inferring, validating, and characterizing network motifs, enabling researchers to move from computational predictions to biological insights.

As systems biology continues to evolve, the analysis of motif organization and their higher-order aggregation will remain essential for understanding the design principles of biological systems and for engineering synthetic circuits with predictable functions. The integration of sophisticated computational approaches with rigorous experimental validation provides a pathway toward deciphering the regulatory logic that underpins cellular behavior.

Core Algorithms and Dynamic Model Construction for Network Inference

Reconstructing interaction graphs from experimental data is a fundamental task in network inference and dynamic modeling [36]. Gene co-expression networks are a powerful representation for this purpose, where nodes correspond to genes and edges represent significant co-expression relationships inferred from transcriptomic data across multiple samples [37] [36]. The underlying principle is that genes exhibiting highly correlated expression patterns are likely to be functionally related or part of the same biological pathway, enabling "guilt-by-association" approaches for predicting gene function [37] [36]. Correlation-based methods provide a critical foundation for generating biological hypotheses about regulatory mechanisms and functional associations, ultimately supporting drug discovery and therapeutic development [38] [39].

Theoretical Foundations of Correlation Metrics

The choice of correlation metric fundamentally influences the structure and biological relevance of the constructed network. Different metrics capture distinct types of statistical dependencies between gene expression profiles.

Table 1: Comparison of Correlation Metrics for Gene Co-Expression Network Construction

| Correlation Metric | Relationship Type Captured | Key Advantages | Key Limitations |
|---|---|---|---|
| Pearson Correlation | Linear relationships [40] | Simple, fast to compute [40] | Sensitive to outliers; assumes normality; misses non-linear dependencies [40] |
| Spearman Correlation | Monotonic relationships [40] | Robust to outliers; no distributional assumptions [40] | Less statistical power for continuous data; loses information via ranking [40] |
| Distance Correlation | Linear and non-linear dependencies [37] [40] | Captures complex relationships; distribution-free; zero only if independent [37] [40] | Computationally intensive [40] |
| Maximal Information Coefficient (MIC) | Complex, non-linear relationships [40] | Captures a wide range of associations [40] | Can create false correlations; lacks mathematical justification [40] |

Signed Distance Correlation has been introduced to combine the strengths of distance correlation with information on the direction of association. After calculating the non-negative distance correlation, the sign of the Pearson correlation is imposed to distinguish between positive and negative co-expression relationships, which may be biologically relevant [37].
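A minimal NumPy sketch of signed distance correlation is given below. It is not the implementation used in [37]; it assumes the standard sample (V-statistic) form of distance correlation for one-dimensional variables and then imposes the sign of the Pearson correlation, as described above. The function names are hypothetical.

```python
import numpy as np

def _centered_dist(v):
    """Double-centered pairwise distance matrix of a 1-D sample."""
    d = np.abs(v[:, None] - v[None, :])
    return d - d.mean(axis=0, keepdims=True) - d.mean(axis=1, keepdims=True) + d.mean()

def distance_correlation(x, y):
    """Sample distance correlation (biased V-statistic form) of two 1-D arrays."""
    x, y = np.asarray(x, float), np.asarray(y, float)
    a, b = _centered_dist(x), _centered_dist(y)
    dcov2 = (a * b).mean()                      # squared distance covariance
    dvar_x, dvar_y = (a * a).mean(), (b * b).mean()
    if dvar_x == 0 or dvar_y == 0:
        return 0.0
    return float(np.sqrt(max(dcov2, 0.0) / np.sqrt(dvar_x * dvar_y)))

def signed_distance_correlation(x, y):
    """Distance correlation carrying the sign of the Pearson correlation."""
    r = np.corrcoef(x, y)[0, 1]
    return float(np.sign(r)) * distance_correlation(x, y)

rng = np.random.default_rng(0)
x = rng.normal(size=200)
y_neg = -x + 0.3 * rng.normal(size=200)   # negatively co-expressed toy pair
sdc_neg = signed_distance_correlation(x, y_neg)
print(f"signed distance correlation = {sdc_neg:.2f}")
```

The pairwise distance matrices make this O(n²) in the number of samples per gene pair, which is the computational cost noted in Table 1.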

Protocol: Constructing a Co-Expression Network Using WGCNA

Weighted Gene Co-expression Network Analysis (WGCNA) is a widely used framework for constructing co-expression networks and identifying modules of highly correlated genes [41]. The following protocol outlines the key steps.

Data Pre-processing and Normalization

- Input Data Preparation: Begin with a gene expression matrix (e.g., from RNA-seq or microarrays) where rows represent genes and columns represent samples [37].

- Data Cleaning: Remove genes with excessive missing data or low expression. For microarray data, genes not present across all arrays or identified as pseudogenes should be excluded [37].

- Normalization: Apply quantile normalization to make the distribution of expression values identical across different samples. This enables comparison between experiments [37].

- Handling Low Expression: To reduce noise, ignore the 20% least expressed genes from each sample before normalization. After normalization, set these ignored values to the lowest expression value in the matrix [37].
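The normalization steps above can be sketched in NumPy as follows. This is an illustrative sketch, not the pipeline of [37]: `quantile_normalize` is a standard rank-based quantile normalization, and `floor_low_expression` is one simplified reading of the low-expression rule (values below each sample's 20th percentile are flagged on the raw data and set to the matrix minimum after normalization). Both function names and the toy matrix are assumptions.

```python
import numpy as np

def quantile_normalize(expr):
    """Quantile-normalize a genes x samples matrix so every column shares
    the same empirical distribution (ties broken by order of appearance)."""
    expr = np.asarray(expr, dtype=float)
    order = np.argsort(expr, axis=0, kind="stable")
    ranks = np.argsort(order, axis=0, kind="stable")
    reference = np.sort(expr, axis=0).mean(axis=1)   # mean of each rank across samples
    return reference[ranks]

def floor_low_expression(raw, normalized, frac=0.2):
    """Set entries below each sample's `frac` quantile (in the raw data)
    to the lowest value of the normalized matrix."""
    raw = np.asarray(raw, dtype=float)
    cutoff = np.quantile(raw, frac, axis=0, keepdims=True)  # per-sample threshold
    out = np.array(normalized, dtype=float)
    out[raw < cutoff] = out.min()
    return out

# Toy genes x samples matrix (4 genes, 3 samples).
expr = np.array([[5, 4, 3],
                 [2, 1, 4],
                 [3, 4, 6],
                 [4, 2, 8]], dtype=float)
qn = quantile_normalize(expr)
floored = floor_low_expression(expr, qn)
print(qn)
```

After normalization each column contains the same set of values, only in a different order, which is what makes samples directly comparable.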

Network Construction and Module Detection

- Correlation Matrix Calculation: Compute the pairwise correlation between all genes using a chosen metric (e.g., Pearson, Spearman, or Distance Correlation). The resulting matrix is symmetric, with each cell representing the correlation coefficient between two genes [41] [42].

- Adjacency Matrix Formation: Transform the correlation matrix into an adjacency matrix. In a "weighted" network, this is done by raising the absolute value of the correlation coefficient to a power β (soft-thresholding) to emphasize strong correlations and penalize weak ones [41]. The value of β is chosen using the scale-free topology criterion, typically as the lowest power at which the network approximately follows a scale-free degree distribution.

- Module Detection: Use hierarchical clustering on a dissimilarity derived from the adjacency matrix (WGCNA commonly uses the topological overlap measure) to group genes with highly correlated expression profiles into modules. A dendrogram is generated, and branches are cut to define modules, each assigned a unique color [41].
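The network construction steps can be sketched in NumPy as shown below. This is not the WGCNA R package API; the function names are hypothetical, `beta=6` is only an illustrative value, and the topological overlap formula is the standard unsigned form that WGCNA commonly uses as the basis for clustering. The hierarchical clustering step itself is left out.

```python
import numpy as np

def soft_threshold_adjacency(expr, beta=6):
    """Unsigned weighted adjacency |cor|^beta for a genes x samples matrix."""
    cor = np.corrcoef(np.asarray(expr, dtype=float))
    adj = np.abs(cor) ** beta
    np.fill_diagonal(adj, 0.0)      # ignore self-connections
    return adj

def topological_overlap(adj):
    """Unsigned topological overlap matrix computed from an adjacency matrix
    with zero diagonal; 1 - TOM can serve as a clustering dissimilarity."""
    k = adj.sum(axis=1)             # connectivity of each gene
    shared = adj @ adj              # weighted count of shared neighbors
    tom = (shared + adj) / (np.minimum.outer(k, k) + 1.0 - adj)
    np.fill_diagonal(tom, 1.0)
    return tom

# Simulated data: two modules of five genes, each driven by its own latent factor.
rng = np.random.default_rng(0)
factors = rng.normal(size=(2, 30))
expr = np.vstack([factors[0] + 0.3 * rng.normal(size=(5, 30)),
                  factors[1] + 0.3 * rng.normal(size=(5, 30))])

adj = soft_threshold_adjacency(expr, beta=6)
tom = topological_overlap(adj)
within = adj[:5, :5][~np.eye(5, dtype=bool)].mean()
between = adj[:5, 5:].mean()
print(f"mean adjacency within module = {within:.3f}, between modules = {between:.3f}")
```

Raising correlations to the power β pushes weak between-module correlations toward zero while leaving strong within-module correlations comparatively intact, which is the effect soft-thresholding is meant to achieve.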

Relating Modules to Traits and Identifying Hub Genes

- Module Eigengene Calculation: For each module, compute the module eigengene, defined as the first principal component of the module's expression matrix. It represents the overall expression pattern of the entire module [41].

- Module-Trait Correlation: Correlate module eigengenes with external sample traits (e.g., disease state, patient survival, tumor stage). This identifies modules significantly associated with phenotypes of interest [41].

- Hub Gene Identification: Within significant modules, identify hub genes—genes with the highest connectivity (i.e., strongest correlations with other genes in the module). These genes are often considered key regulatory players and candidate biomarkers [41].
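The three steps above can be sketched with NumPy as follows. The function names, the simulated module, and the binary trait are assumptions for illustration; the eigengene is computed as the first right singular vector of the standardized module matrix, and hub genes are ranked by intramodular connectivity under a soft-threshold power of 6 (an illustrative value).

```python
import numpy as np

def module_eigengene(module_expr):
    """First principal component (across samples) of a genes x samples module matrix."""
    x = np.asarray(module_expr, dtype=float)
    x = x - x.mean(axis=1, keepdims=True)
    x = x / x.std(axis=1, keepdims=True)          # standardize each gene
    _, _, vt = np.linalg.svd(x, full_matrices=False)
    eigengene = vt[0]
    # Orient the eigengene so it correlates positively with mean module expression.
    if np.corrcoef(eigengene, x.mean(axis=0))[0, 1] < 0:
        eigengene = -eigengene
    return eigengene

def hub_genes(module_expr, gene_names, beta=6, top=3):
    """Rank genes by intramodular connectivity (row sums of |cor|^beta)."""
    adj = np.abs(np.corrcoef(np.asarray(module_expr, dtype=float))) ** beta
    np.fill_diagonal(adj, 0.0)
    k_in = adj.sum(axis=1)
    order = np.argsort(k_in)[::-1][:top]
    return [gene_names[i] for i in order]

# Simulated module: six genes follow a latent factor that depends on a binary trait.
rng = np.random.default_rng(1)
trait = np.repeat([0.0, 1.0], 30)
factor = 2.0 * trait + rng.normal(size=60)
noise_sd = np.array([0.1, 1.0, 1.0, 1.0, 1.0, 1.0])[:, None]
module = factor + noise_sd * rng.normal(size=(6, 60))
names = [f"g{i}" for i in range(6)]

me = module_eigengene(module)
r_trait = np.corrcoef(me, trait)[0, 1]
hubs = hub_genes(module, names, top=2)
print(f"module-trait correlation = {r_trait:.2f}, top hub genes = {hubs}")
```

In this simulation the gene that tracks the latent factor most closely emerges as the top hub. In real data, high connectivity suggests but does not establish a regulatory role.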

The following workflow diagram illustrates the complete WGCNA process, from data input to biological insight.

Advanced Application: Causal Inference for Drug Discovery

Network analysis can be extended beyond correlation to infer causality, which is crucial for identifying therapeutic targets. The following protocol, termed Causal WGCNA, integrates mediation analysis [38].

- Identify Trait-Associated Modules: Perform standard WGCNA to identify gene modules significantly correlated with a disease phenotype of interest [38].

- Bidirectional Mediation Analysis: For genes within significant modules, conduct statistical mediation analysis to test whether a gene's expression mediates the relationship between a genetic variant (e.g., an eQTL) and the trait, or whether the trait instead mediates the effect of the variant on gene expression. Comparing the two directions helps establish a causal direction [38].

- Prioritize Causal Genes: Select genes that are significantly identified as causal drivers of the phenotype, not just correlated with it [38].

- Deep Learning-Based Compound Screening: Use the expression signature of the prioritized causal genes to screen for compounds using a deep learning model (e.g., DeepCE). The goal is to find drugs that can reverse the disease-associated signature back to a healthy state [38].
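The mediation step can be illustrated with a simple product-of-coefficients decomposition fitted by ordinary least squares. This is a hedged sketch, not the Causal WGCNA procedure of [38]: the function names and the simulated variant, gene, and trait are assumptions, and no significance test (for example, bootstrapping the indirect effect) is included.

```python
import numpy as np

def ols(y, *predictors):
    """Least-squares slopes of y on the predictors (intercept fitted but not returned)."""
    X = np.column_stack([np.ones(len(y)), *predictors])
    coef, *_ = np.linalg.lstsq(X, y, rcond=None)
    return coef[1:]

def mediation(variant, mediator, outcome):
    """Product-of-coefficients decomposition for variant -> mediator -> outcome."""
    (a,) = ols(mediator, variant)                 # variant -> mediator
    (total,) = ols(outcome, variant)              # total effect of variant on outcome
    direct, b = ols(outcome, variant, mediator)   # direct effect, mediator -> outcome
    return {"indirect": a * b, "direct": direct, "total": total}

# Simulated data in which the gene truly mediates the variant's effect on the trait.
rng = np.random.default_rng(0)
n = 2000
variant = rng.binomial(2, 0.3, size=n).astype(float)   # genotype coded 0/1/2
gene = 0.8 * variant + rng.normal(size=n)
trait = 1.0 * gene + rng.normal(size=n)

forward = mediation(variant, gene, trait)   # variant -> gene -> trait
reverse = mediation(variant, trait, gene)   # variant -> trait -> gene
print("forward direct effect:", round(float(forward["direct"]), 3))
print("reverse direct effect:", round(float(reverse["direct"]), 3))
```

In the forward model the direct effect of the variant is close to zero once the gene is included, whereas the reverse model leaves a sizeable direct effect. That asymmetry is the kind of evidence bidirectional mediation uses to favor one causal direction.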

This integrated approach has successfully identified novel gene targets and repurposable drug candidates for complex diseases like idiopathic pulmonary fibrosis [38].

Table 2: Key Research Reagent Solutions for Gene Co-Expression Network Analysis

| Resource Name | Type | Primary Function | Reference |
|---|---|---|---|
| WGCNA R Package | Software Package | Primary tool for constructing weighted gene co-expression networks, detecting modules, and relating them to traits. | [43] [41] |
| COGENT R Package | Software Package | Validates the internal consistency and stability of co-expression networks without requiring prior biological knowledge. | [37] |
| Omics Playground | Web Platform | A point-and-click interface that provides access to WGCNA and other analytical tools for users without coding expertise. | [41] |