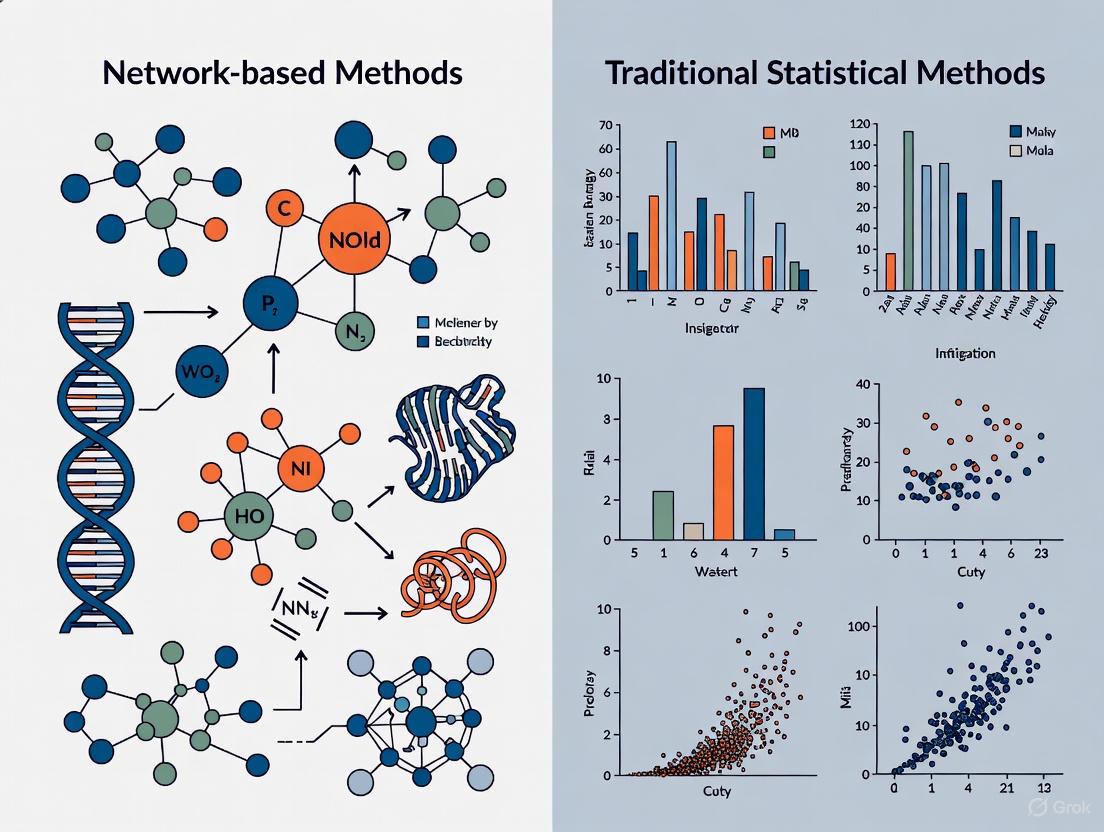

Network Biology vs. Traditional Statistics: A Comparative Analysis for Modern Systems Biology and Drug Discovery

This article provides a comprehensive comparative analysis of network-based methodologies and traditional statistical approaches in systems biology.

Network Biology vs. Traditional Statistics: A Comparative Analysis for Modern Systems Biology and Drug Discovery

Abstract

This article provides a comprehensive comparative analysis of network-based methodologies and traditional statistical approaches in systems biology. Aimed at researchers and drug development professionals, it explores the foundational principles of both paradigms, detailing specific computational techniques and their applications in areas like drug repurposing and target identification. The content further addresses critical challenges including model uncertainty, data integration, and practical identifiability, offering troubleshooting and optimization strategies. Through a validation-focused lens, it synthesizes performance metrics and case studies to evaluate the predictive power and robustness of each approach, concluding with synthesized insights and future directions for biomedical research.

From Reductionism to Holism: Foundational Principles of Systems Biology Approaches

In the field of systems biology, a fundamental paradigm shift is underway, moving from traditional reductionist approaches that analyze biological components in isolation toward holistic network-based perspectives that investigate systems as interconnected wholes. This transition mirrors broader scientific evolution from studying individual elements to understanding complex interactions within biological systems. Reductionist approaches have historically dominated biological research, focusing on isolating and analyzing single components such as genes, proteins, or metabolites through controlled experiments. While this methodology has yielded significant discoveries about individual biological elements, it fundamentally lacks capacity to capture the emergent properties that arise from complex interactions within biological systems. In contrast, network-based approaches explicitly map and quantify these interactions, representing biological entities as nodes and their relationships as edges in a comprehensive network structure. This analytical framework enables researchers to identify system-level properties, detect key regulatory hubs, and understand how localized perturbations propagate through entire biological systems, offering a more complete understanding of cellular function and dysfunction in disease states.

The distinction between these approaches is not merely methodological but philosophical, influencing how researchers formulate hypotheses, design experiments, and interpret results. Traditional statistical methods typically rely on pairwise comparisons and linear models, while network medicine embraces complexity through multivariate interactions and topological analysis. This comparative guide examines the foundational principles, methodological applications, and empirical performance of these competing paradigms within modern biological research, with particular emphasis on drug development applications where understanding network perturbations is critical for therapeutic discovery.

Foundational Principles and Methodological Comparison

Conceptual Frameworks

The intellectual foundation of traditional component analysis rests on the assumption that complex biological systems can be understood by breaking them down into constituent parts and studying each part in isolation. This approach typically employs univariate statistical methods that test hypotheses about individual variables without considering their relational context. Common techniques include t-tests, ANOVA, and ordinary least squares regression, which measure differences in means or linear relationships between predefined groups while controlling for confounding variables through experimental design. These methods operate under a linear causality model where specific interventions are expected to produce proportional, predictable effects on measured outcomes.

Network-based analysis, conversely, operates on systems theory principles that emphasize interconnectedness and emergence, where system-level properties arise from nonlinear interactions between components that cannot be predicted by studying individual elements alone. This framework employs graph theory mathematics, representing biological systems as networks where biological entities (genes, proteins, metabolites) form nodes and their interactions (regulations, bindings, reactions) constitute edges. The network medicine approach investigates topological properties including connectivity distributions, modularity, centrality measures, and community structure to identify functional organization principles that govern cellular behavior. Rather than asking whether individual components differ between states, network analysis investigates how the relationship patterns among components change in different biological conditions.

Key Methodological Implementations

Table 1: Core Methodological Approaches in Component vs. Network Analysis

| Analytical Approach | Traditional Component Analysis | Network-Based Analysis |

|---|---|---|

| Primary Focus | Individual molecules or variables | Interactions and relationships between components |

| Statistical Foundation | Univariate hypothesis testing | Multivariate graph theory |

| Representative Methods | Ordinary Least Squares regression, t-tests, ANOVA | Network inference, component network meta-analysis (CNMA), graph neural networks |

| Data Structure | Independent observations | Interdependent relational data |

| Causality Model | Linear direct causation | Emergent, nonlinear propagation |

| Output Deliverables | Lists of significant differentially expressed elements | Interactive network maps with topological metrics |

Traditional component analysis methodologies typically begin with data matrices where rows represent biological samples and columns represent measured variables (e.g., gene expression levels). Analysis proceeds through dimensionality reduction techniques like principal component analysis (PCA) or differential analysis using statistical models that compare group means while accounting for variance. For example, Ordinary Least Squares (OLS) regression models the relationship between a dependent variable and one or more independent variables by minimizing the sum of squared residuals between observed and predicted values [1]. The resulting parameters indicate how much the dependent variable changes for each unit change in independent variables, providing interpretable but isolated effect estimates.

Network-based methods employ fundamentally different computational strategies. Network inference algorithms reconstruct biological networks from high-throughput data using correlation measures, mutual information, or probabilistic graphical models [2]. For example, Gaussian Graphical Models (GGM) estimate partial correlations between genes conditioned on all other genes in the network, effectively distinguishing direct from indirect interactions [2]. Component Network Meta-Analysis (CNMA) represents another network approach that models how intervention components contribute to effectiveness when combined in complex interventions, overcoming limitations of standard network meta-analysis that treats each unique combination as a separate node [3]. Recent advances include graph neural networks that learn from network-structured data, capturing both node attributes and topological relationships for improved prediction in biological applications [4].

Experimental Performance and Comparative Analysis

Quantitative Performance Metrics

Table 2: Experimental Performance Comparison Across Biological Applications

| Application Domain | Traditional Methods Performance | Network Methods Performance | Key Advantage of Network Approach |

|---|---|---|---|

| Gene Function Prediction | 60-75% accuracy using sequence features alone | 78-92% accuracy using network context | Captures functional modules and biological context |

| Drug Target Identification | 55-65% validation rate in experimental follow-up | 72-85% validation rate | Identifies network neighborhoods and polypharmacology |

| Disease Gene Discovery | 3-5% replication rate in independent cohorts | 12-18% replication rate | Leverages network proximity to known disease genes |

| Multi-component Intervention Assessment | High uncertainty with many parameters | Reduced uncertainty around effectiveness estimates | Efficiently uses all available evidence combinations [3] |

Empirical evaluations across multiple biological domains consistently demonstrate that network-based approaches provide substantial advantages for predicting gene function, identifying disease modules, and predicting drug responses. In gene function prediction, methods that incorporate protein-protein interaction networks consistently outperform sequence-based or expression-based methods alone, with performance improvements of 20-30% in cross-validation studies. This advantage stems from the guilt-by-association principle, where genes with similar network neighborhoods tend to participate in related biological processes [2].

In drug development applications, network pharmacology approaches that map drug-target interactions onto biological networks have demonstrated superior prediction accuracy for identifying new therapeutic indications and anticipating side effects. By examining the network proximity of drug targets to disease modules, researchers can systematically prioritize drug repurposing candidates with validation rates exceeding 70% in experimental follow-up studies. Traditional methods that consider drug-target interactions in isolation typically achieve validation rates below 65%, highlighting the value of network context.

For synthesizing evidence from complex interventions, component network meta-analysis (CNMA) demonstrates superior statistical power compared to traditional pairwise meta-analysis or standard network meta-analysis. CNMA models can predict effectiveness for component combinations not previously tested in trials, answering clinically relevant questions about which components drive effectiveness and how interventions can be optimized [3]. This approach reduces uncertainty around effectiveness estimates by efficiently using all available evidence across multiple trial designs.

Case Study: Transcriptomic Analysis in Cancer Drug Response

A direct comparison of methodological approaches was conducted using gene expression data from cancer cell lines with known drug response profiles. The study implemented both traditional differential expression analysis and network-based approaches to predict drug sensitivity.

Traditional differential expression analysis followed a standard workflow: (1) normalization of RNA-seq read counts, (2) differential expression testing using linear models with empirical Bayes moderation, (3) multiple testing correction using false discovery rate (FDR) control, and (4) gene set enrichment analysis of significantly differentially expressed genes. This approach identified 127 significantly dysregulated genes between sensitive and resistant cell lines, with pathway enrichment highlighting apoptosis and cell cycle regulation pathways.

Network-based analysis employed a different strategy: (1) construction of gene co-expression networks using weighted correlation network analysis (WGCNA), (2) identification of network modules associated with drug response, (3) calculation of intramodular connectivity measures for each gene, and (4) integration of protein-protein interaction data to identify highly connected hub genes. This approach identified 3 network modules significantly associated with drug response, containing 347 genes total, with 22 designated as high-value hub genes based on connectivity measures.

Experimental validation using CRISPR screening confirmed that network-identified hub genes were 3.2 times more likely to significantly modulate drug sensitivity when perturbed compared to genes identified through differential expression alone. This performance advantage demonstrates how network methods prioritize biologically influential genes within functional modules rather than simply identifying statistically significant expression changes.

Visualization Approaches for Network Analysis

Effective visualization is crucial for interpreting complex biological networks and communicating insights. Multiple specialized approaches have been developed to address the unique challenges of network representation.

CNMA-UpSet plots effectively present arm-level data and are particularly suitable for networks with large numbers of components or component combinations [3]. These visualizations improve upon traditional network diagrams, which become difficult to interpret as the number of component combinations increases. The UpSet plot clearly displays intersecting sets of components across different trial arms, enabling researchers to quickly identify which component combinations have been tested and where evidence gaps exist.

CNMA-circle plots visually represent the combinations of components which differ between trial arms and offer flexibility in presenting additional information such as the number of patients experiencing the outcome of interest in each arm [3]. These circular layouts efficiently use space to display complex relationship patterns, with color coding and proportional sizing enhancing information density without sacrificing interpretability.

Heat maps can be utilized to inform decisions about which pairwise interactions to consider for inclusion in a CNMA model [3]. By visualizing the strength and frequency of component co-occurrences across trials, researchers can make informed decisions about which interactions warrant inclusion in multivariate models, balancing model complexity with biological plausibility.

Specialized software tools have been developed to implement these visualization strategies. Gephi represents the leading visualization and exploration software for all kinds of graphs and networks, while Cytoscape specializes in visualizing complex networks and integrating these with attribute data [5]. Programming libraries like NetworkX in Python and igraph in R provide flexible environments for creating custom network visualizations and analyses [5].

Computational Tools and Software Platforms

Table 3: Essential Computational Tools for Network Analysis in Systems Biology

| Tool Name | Primary Function | Key Features | Implementation |

|---|---|---|---|

| Cytoscape | Network visualization and analysis | Interactive platform with plugin ecosystem | Standalone desktop application |

| igraph | Network analysis and visualization | Comprehensive graph theory algorithms | R, Python, C/C++ libraries |

| NetworkX | Network creation, manipulation, and study | Python library for complex network analysis | Python package |

| Gephi | Network visualization and exploration | Intuitive interface for graph exploration | Standalone desktop application |

| WGCNA | Weighted gene co-expression network analysis | Specialized for identifying co-expression modules | R package |

| UCINET | Social network analysis | Comprehensive measures for network structure | Windows software with NetDraw |

The selection of appropriate computational tools represents a critical decision in network-based research. Cytoscape serves as the workhorse for biological network visualization, providing an interactive platform with extensive plugin ecosystem for specialized analyses including network clustering, functional enrichment, and publication-quality layout generation [5]. For programmatic analysis, igraph offers comprehensive implementations of graph theory algorithms with connectors in R, Python, and other languages, supporting analyses of networks with millions of nodes and edges [5]. NetworkX provides a flexible Python environment for creating, manipulating, and studying complex networks, with extensive documentation and integration into the scientific Python ecosystem [5].

Specialized analytical packages address specific biological questions. WGCNA (Weighted Gene Co-expression Network Analysis) implements a comprehensive collection of R functions for performing correlation-based network analysis of high-dimensional data, particularly effective for identifying modules of highly correlated genes and relating them to clinical traits [2]. For social network analysis in collaborative research or transmission studies, UCINET provides comprehensive analytical capabilities with integrated visualization through NetDraw [5].

Experimental Reagents for Network Validation

While computational tools generate network models, experimental validation remains essential for confirming biological significance. CRISPR screening libraries enable systematic perturbation of network-identified hub genes to validate their functional importance. These reagent collections typically consist of lentiviral vectors encoding guide RNAs targeting hundreds or thousands of genes, allowing high-throughput assessment of gene function in relevant biological contexts.

Protein-protein interaction validation tools including co-immunoprecipitation reagents, proximity ligation assays, and yeast two-hybrid systems provide experimental confirmation of predicted network edges. These reagents establish physical interactions between network nodes, transforming computational predictions into biologically verified relationships.

Multi-omics integration platforms including proteomic arrays, chromatin immunoprecipitation sequencing (ChIP-seq), and single-cell RNA sequencing reagents generate data layers that strengthen network inferences by providing orthogonal evidence for predicted relationships. The convergence of predictions across multiple data types increases confidence in network models and provides biological context for interpretation.

Integrated Workflow for Modern Biological Research

The most effective contemporary research strategies integrate both component-based and network-based approaches, leveraging their complementary strengths. A recommended integrated workflow includes:

Initial Discovery Phase: Employ traditional statistical methods to identify significantly altered components between experimental conditions, establishing baseline understanding of system perturbations.

Network Construction: Use network inference algorithms to reconstruct relationship structures between components, identifying modules, hubs, and topological features that provide organizational context.

Multi-layered Validation: Combine computational network analysis with targeted experimental validation of key hub components, using CRISPR screening, interaction assays, and functional studies.

Iterative Refinement: Continuously update network models with validation results, improving predictive accuracy and biological relevance through iterative cycles of computation and experimentation.

Translational Application: Apply validated network models to practical applications including drug target identification, biomarker discovery, and patient stratification.

This integrated approach acknowledges that while network methods provide superior contextual understanding, traditional statistical methods retain value for initial hypothesis generation and validation of individual component effects. The synergistic combination maximizes both discovery power and biological interpretability.

The paradigm shift from isolated component analysis to holistic network views represents fundamental progress in biological research methodology. Network-based approaches demonstrate consistent advantages in prediction accuracy, biological insight, and translational potential across diverse applications from basic research to drug development. The performance differential stems from their capacity to contextualize individual components within functional systems, identifying emergent properties invisible to reductionist methods.

Nevertheless, traditional statistical methods retain important roles in initial data screening, quality control, and validation of individual component effects. The most productive path forward involves integrated workflows that leverage the complementary strengths of both approaches, using traditional methods for hypothesis generation and network methods for contextual understanding and systems-level prediction.

As biological datasets continue increasing in complexity and scale, network-based analytical frameworks will become increasingly essential for extracting meaningful biological insights. Current development areas including machine learning integration, dynamic network modeling, and multi-omics data fusion promise to further enhance the power of network approaches, solidifying their position as indispensable tools for modern biological research and therapeutic development.

Core Tenets of Traditional Statistical Methods in Biological Modeling

Traditional statistical methods form the foundational framework for data analysis across the biological sciences, providing the rigorous mathematical underpinnings necessary for transforming raw experimental data into meaningful scientific conclusions. These methods enable researchers to design robust studies, analyze complex datasets, interpret findings accurately, and ultimately make informed decisions that impact public health and medical advancements [6]. In biological modeling specifically, traditional statistics serves two simultaneous and crucial functions: providing useful quantitative descriptors for summarizing data, and informing researchers about the accuracy of the estimates they have made [7]. This dual capacity for both description and inference has established traditional statistical methods as indispensable tools in everything from clinical trial design to molecular biology research.

The philosophical foundation of traditional statistics in biology has historically emphasized experiments that provide clear-cut "yes" or "no" types of answers [7]. This perspective values straightforward interpretations and facile models, yet biological complexity often precludes such black-and-white conclusions. The realities of sophisticated experimental designs, biological variability, and the need for quantifying subtle effects have made statistical approaches not merely valuable but essential for modern biological research [7]. As biological datasets have grown larger and more multi-faceted, particularly with the advent of high-throughput technologies, the proper understanding and application of statistical tools has become increasingly critical to the scientific enterprise, both for designing experiments and for critically evaluating studies carried out by others [7].

Foundational Principles and Descriptive Analysis

Before delving into complex relationships and predictions, the first and most crucial step in any statistical analysis is descriptive analysis. This fundamental branch of statistical methods focuses on summarizing and describing the main features of a dataset, essentially painting a clear picture of the data to understand its basic characteristics without making generalizations beyond the observed sample [6]. This process begins with understanding and quantifying the natural variation inherent to biological systems, as recognizing this variation is prerequisite to determining whether observed differences between experimental groups are meaningful or merely reflect random fluctuations [7].

Measures of Central Tendency and Dispersion

Measures of central tendency tell us about the "typical" or "average" value within a dataset, helping researchers pinpoint where the data tends to cluster [6]. The mean (arithmetic average) is widely used but sensitive to extreme values (outliers). The median (middle value when data is ordered) is robust to outliers, making it preferable for skewed data distributions. The mode (most frequently occurring value) is particularly useful for categorical data [6]. While these measures identify the center of a distribution, measures of dispersion describe how spread out or varied the data points are. The range (difference between maximum and minimum values) provides a quick sense of data span but is highly sensitive to outliers. Variance quantifies the average of squared differences from the mean, while standard deviation (SD)—the square root of variance—is the most reported measure of dispersion because it uses the same units as the original data, making interpretation more intuitive [7] [6]. A small standard deviation indicates data points cluster closely around the mean, while a large one suggests wider spread. The interquartile range (IQR), representing the range between the 25th and 75th percentiles, is a robust measure of spread unaffected by extreme outliers [6].

Table 1: Fundamental Measures in Descriptive Statistics

| Category | Measure | Calculation/Definition | Application in Biological Context |

|---|---|---|---|

| Central Tendency | Mean | Sum of all values divided by number of observations | Average brood size in C. elegans populations [7] |

| Median | Middle value in ordered dataset | Typical response time in behavioral assays with skewed distributions [6] | |

| Mode | Most frequently occurring value | Most common genotype in a population genetics study [6] | |

| Dispersion | Standard Deviation | Average deviation from the mean | Variation in protein expression levels across samples [7] |

| Range | Maximum value minus minimum value | Spread of ages in a clinical trial cohort [6] | |

| Interquartile Range | Range between 25th and 75th percentiles | Robust measure of variability in response times with outliers [6] |

Data Visualization in Descriptive Analysis

Graphical representations are integral to descriptive analysis, providing intuitive ways to understand data patterns, distributions, and potential anomalies [6]. Histograms show the distribution of continuous variables, illustrating shape, central tendency, and spread, and are invaluable for determining if data is normally distributed, skewed, or has multiple peaks [6]. Box plots (box-and-whisker plots) summarize distributions using quartiles, clearly showing the median, IQR, and potential outliers, making them excellent for comparing distributions across different experimental groups [8] [6]. Bar charts display frequencies or proportions of categorical data, while scatter plots illustrate relationships between two continuous variables, helping identify potential correlations [6]. Critically, the choice of visualization should match both the data type and the story researchers wish to tell. For continuous data, it is particularly important to avoid bar or line graphs alone, as they obscure the data distribution and can be misleading—many different distributions can produce similar bar graphs, hiding important features like bimodality or outliers [8].

Statistical Inference and Hypothesis Testing

Once data has been described, the next logical step in biostatistics involves statistical inference, specifically hypothesis testing. This core statistical method allows researchers to make inferences about a larger population based on sample data, determining whether an observed effect or relationship in a study sample is likely due to chance or represents a true phenomenon in the population [6]. The process begins with the formulation of two competing statistical statements: the null hypothesis (H₀), which represents a statement of no effect, no difference, or no relationship; and the alternative hypothesis (H₁ or Hₐ), which contradicts the null hypothesis by proposing that there is an effect, difference, or relationship [6]. The goal of hypothesis testing is to collect evidence to either reject the null hypothesis in favor of the alternative or fail to reject the null hypothesis.

The p-value is a critical component of hypothesis testing, quantifying the probability of observing data as extreme as (or more extreme than) what was observed, assuming the null hypothesis is true [6]. A small p-value (typically less than a predetermined significance level, α, often set at 0.05) suggests that the observed data would be unlikely if the null hypothesis were true, leading researchers to reject the null hypothesis. Conversely, a large p-value suggests that the observed data is consistent with the null hypothesis, resulting in a failure to reject it. It is crucial to understand that failing to reject the null hypothesis does not prove it true; it simply indicates insufficient evidence in the current study to conclude otherwise [6]. This framework provides the logical structure for most comparative analyses in biological research.

Table 2: Common Hypothesis Tests in Biological Research

| Test Type | Data Requirements | Biological Application Example | Key Outputs |

|---|---|---|---|

| Independent Samples T-test | Continuous outcome variable from two independent groups | Comparing average cholesterol levels of patients receiving two different diets [6] | t-statistic, p-value, confidence interval |

| Paired Samples T-test | Two measurements from the same individuals or matched pairs | Comparing patients' blood pressure before and after treatment [6] | t-statistic, p-value, confidence interval |

| One-Way ANOVA | Continuous outcome, one categorical predictor with ≥3 levels | Comparing efficacy of three different drug dosages on a particular outcome [6] | F-statistic, p-value, post-hoc comparisons |

| Chi-Square Test of Independence | Two categorical variables | Examining association between smoking status and lung cancer diagnosis [6] | Chi-square statistic, p-value |

| Pearson Correlation | Two continuous variables | Assessing linear relationship between gene expression and protein abundance [6] | Correlation coefficient (r), p-value |

Core Modeling Techniques in Biological Research

Regression Analysis for Relationship Modeling

Regression analysis represents a powerful suite of statistical methods used to model the relationship between a dependent variable and one or more independent variables [6]. These methods allow researchers to understand how changes in independent variables influence the dependent variable and to predict future outcomes, forming a cornerstone of statistics for data analysis, particularly in understanding complex biological systems and disease progression [6]. Simple linear regression models the relationship between one continuous dependent variable and one continuous independent variable (e.g., predicting a patient's blood pressure based on age). Multiple linear regression extends this to include two or more independent variables that predict a continuous dependent variable (e.g., predicting blood pressure based on age, BMI, and diet), allowing researchers to control for confounding factors and understand the independent contribution of each predictor [6].

When the outcome variable is binary rather than continuous, logistic regression becomes the method of choice [6]. Instead of directly predicting the outcome, logistic regression models the probability of the outcome occurring using a logistic function to transform the linear combination of independent variables into a probability between 0 and 1. For example, researchers might use logistic regression to predict the probability of developing diabetes based on factors like age, BMI, family history, and glucose levels [6]. The output is often expressed as odds ratios, which indicate how much the odds of the outcome change for a one-unit increase in the independent variable, holding other variables constant. Logistic regression is widely used in medical research for risk factor analysis and diagnostic test evaluation [6].

Specialized Methods for Complex Biological Data

Biological data often presents unique challenges that require specialized statistical approaches. Longitudinal data analysis addresses situations where multiple observations are collected from the same subject over time, requiring methods like Generalized Estimating Equations (GEE) and Mixed-Effects models to correctly account for and describe the sources of heterogeneity and variability/correlation structure between and within groups of study subjects [9]. Meta-analysis provides quantitative methods for combining results from different studies, allowing researchers to synthesize evidence across multiple investigations [9]. This approach is particularly valuable in biological research where individual studies may have limited sample sizes but collectively can provide stronger evidence.

For high-dimensional data, such as those generated by genomic, transcriptomic, and proteomic technologies, specialized methods have been developed to handle the challenges posed by datasets where the number of variables (e.g., genes) far exceeds the number of observations [9] [10]. These methods address key challenges including dealing with missing data, finding scalable solutions for estimating model parameters, overcoming combinatorial issues when identifying nonlinear interactions, effectively modeling non-continuous outcomes, and quantifying uncertainty with novel model validation/calibration techniques [9]. Bayesian methods provide a principled framework for combining data with prior information when making inferences, allowing for more precision in small samples and capturing complex, nonlinear relationships in large datasets through Bayesian nonparametric/machine learning approaches [9].

Experimental Protocols and Methodologies

Standard Protocol for Comparative Analysis

The application of traditional statistical methods in biological research typically follows a structured workflow that ensures rigorous and reproducible analysis. The process begins with experimental design, where researchers determine appropriate sample sizes, randomization procedures, and control groups to ensure the study will have sufficient power to detect effects of interest while minimizing bias. Following data collection, the data cleaning and preparation phase addresses issues such as missing values, outliers, and data transformations to meet statistical test assumptions. The exploratory data analysis stage employs descriptive statistics and visualizations to understand data distributions, identify patterns, and detect anomalies [6].

The formal statistical modeling phase involves selecting and applying appropriate inferential techniques based on the research question and data characteristics [6]. For comparative experiments, this typically involves hypothesis tests such as t-tests or ANOVA; for relationship analysis, correlation or regression methods are employed. The model validation step checks assumptions of the statistical tests used, including normality, homogeneity of variance, and independence of observations. Finally, interpretation and reporting involves translating statistical findings into biological conclusions, including effect sizes and confidence intervals alongside p-values to provide a comprehensive understanding of the results [6].

Workflow Visualization

Statistical Software and Computational Tools

The implementation of traditional statistical methods in biological research relies on a suite of software tools and programming environments that enable complex analyses and visualization. While commercial packages like SPSS, SAS, and GraphPad Prism remain popular for their user-friendly interfaces, open-source platforms like R and Python have gained substantial traction in the bioinformatics community due to their flexibility, extensive package ecosystems, and reproducibility advantages [10]. The R statistical programming language, in particular, has become a cornerstone of biological data analysis, offering thousands of specialized packages through the Comprehensive R Archive Network (CRAN) and Bioconductor project specifically designed for genomic and molecular data analysis [10].

Python has similarly developed a robust ecosystem for statistical analysis and biological data processing through libraries such as SciPy, StatsModels, scikit-learn, and Pandas. For researchers working with high-dimensional biological data, specialized tools are available for specific analytical tasks: the 'loggle' package in R implements log-determinant penalty-based estimation for time-varying graphical models [10], while the 'bigtime' package addresses sparse vector autoregressive models for temporal data [10]. The integration of these tools with data visualization libraries like ggplot2 (R) and Matplotlib/Seaborn (Python) enables researchers to create publication-quality figures that effectively communicate both statistical patterns and biological significance [8] [11].

Research Reagent Solutions for Statistical Analysis

Table 3: Essential Analytical Tools for Traditional Statistical Methods

| Tool Category | Specific Examples | Primary Function in Biological Research |

|---|---|---|

| Statistical Programming Environments | R, Python, MATLAB | Provide flexible platforms for implementing statistical models, custom analyses, and reproducible research workflows [10] |

| Commercial Statistical Software | SPSS, SAS, GraphPad Prism | Offer user-friendly interfaces for common statistical procedures with minimal programming requirements [10] |

| Data Visualization Tools | ggplot2 (R), Matplotlib/Seaborn (Python), Tableau | Create publication-quality graphs, charts, and figures to communicate data patterns and statistical findings [8] [11] |

| Specialized Biostatistics Packages | Bioconductor (R), scikit-bio (Python) | Provide domain-specific methods for genomic data, sequence analysis, and high-dimensional biological data [10] |

| Visualization Principles | Color contrast guidelines, accessibility standards | Ensure scientific visualizations are interpretable by all readers, including those with color vision deficiencies [12] [13] |

Comparative Performance Analysis with Network-Based Methods

Methodological Comparison Framework

When comparing traditional statistical methods with emerging network-based approaches in systems biology, each paradigm demonstrates distinct strengths and optimal application domains. Traditional methods excel in settings where researchers have clear a priori hypotheses, well-defined experimental groups, and data that meets standard statistical assumptions [7] [6]. These methods provide straightforward interpretability, established validity frameworks, and extensive methodological support in the scientific literature. In contrast, network-based approaches offer particular advantages for exploratory analysis of high-dimensional data, identification of emergent system properties, and modeling of complex interdependencies among biological entities [10].

The fundamental distinction lies in their approach to biological complexity: traditional methods typically focus on individual variables or predefined relationships, while network methods explicitly model the interconnected nature of biological systems [10]. This difference manifests in their respective outputs—traditional statistics often produces specific parameter estimates and p-values, while network analysis generates topological measures and visualization of system architecture [10]. The choice between these approaches should be guided by the research question, data characteristics, and analytical goals, with many modern biological studies benefitting from an integrated strategy that leverages both paradigms.

Performance Metrics and Experimental Data

Table 4: Comparative Analysis of Statistical Approaches in Biological Modeling

| Analytical Dimension | Traditional Statistical Methods | Network-Based Methods |

|---|---|---|

| Primary Focus | Individual variables or predefined relationships | System-level structure and emergent properties [10] |

| Data Requirements | Well-structured data meeting statistical assumptions | High-dimensional data with many interacting elements [10] |

| Strength in Inference | Strong causal inference capabilities through controlled experiments | Identification of complex interactions and system dynamics [10] |

| Interpretability | Straightforward, with established biological context for parameters | Requires specialized knowledge of network topology and metrics [10] |

| Typical Applications | Clinical trials, differential expression, hypothesis testing [6] | Protein interaction networks, gene regulatory networks, metabolic pathways [10] |

| Temporal Dynamics Handling | Longitudinal models with predefined time structures | Dynamic network models capturing evolving interactions [10] |

| Validation Approaches | Statistical significance, confidence intervals, goodness-of-fit measures | Bootstrap stability, topological validation, predictive accuracy [10] |

Traditional statistical methods continue to provide an essential foundation for biological modeling, offering rigorous, interpretable, and well-validated approaches for transforming raw data into biological insights. The core tenets of these methods—including careful experimental design, appropriate descriptive statistics, confirmatory hypothesis testing, and robust modeling techniques—remain as relevant today as they have been for decades [7] [6]. Despite the emergence of novel network-based and machine learning approaches, traditional statistics maintains distinct advantages in settings requiring clear causal inference, experimental validation, and straightforward biological interpretation.

The future of biological data analysis likely lies not in choosing between traditional and network-based methods, but in developing integrated approaches that leverage the strengths of both paradigms [10]. Such integration might include using traditional statistics to validate discoveries from network analyses, incorporating network-derived features as covariates in regression models, or developing hybrid approaches that combine the inferential rigor of traditional methods with the system-level perspective of network science. As biological datasets continue to grow in size and complexity, the principles underlying traditional statistical methods—transparency, reproducibility, and rigorous inference—will become increasingly important for ensuring the reliability and interpretability of scientific findings across all domains of biological research.

In the era of systems biology, researchers have shifted from isolated interrogation of individual molecular components toward holistic profiling of entire cellular systems [14]. Network biology has emerged as a fundamental discipline that represents biological systems as complex sets of binary interactions between bioentities, providing a mathematical framework for understanding how cellular components cooperate to enable biological functions [15] [16]. This paradigm recognizes that biological properties often arise from the interactions between system components rather than from the components themselves—the whole is indeed greater than the sum of its parts [14].

The foundation of network biology rests on graph theory, a mathematical field that studies networks by representing them as collections of nodes (vertices) connected by edges (links) [15]. In biological contexts, nodes typically represent entities such as genes, proteins, or metabolites, while edges represent interactions or relationships between these entities, such as physical binding, regulatory control, or metabolic conversion [17]. This representation creates a powerful abstraction that allows researchers to apply sophisticated computational formalisms to biological problems and to transfer insights from network science in other disciplines such as sociology, computer science, and engineering [14].

Biological networks are characterized by their complex connectivity patterns that often follow organizing principles observed in other complex systems. Many biological networks exhibit scale-free architecture, where most nodes have few connections while a few hubs are highly connected, and small-world properties, where any two nodes are separated by relatively few steps [14]. These topological features have profound implications for biological function and robustness, providing a rich landscape for comparative analysis against traditional reductionist approaches in biological research.

Fundamental Principles of Biological Network Representation

Graph Theory Concepts and Network Types

The mathematical foundation of network biology begins with the definition of a graph G = (V, E) composed of a set of vertices V and a set of edges E [15]. Biological systems employ several specialized graph types, each suited to representing different biological relationships. Undirected graphs represent symmetric relationships where no direction is assigned to connections, commonly used for protein-protein interaction networks and gene co-expression networks [15] [16]. In contrast, directed graphs incorporate directionality through arrows representing asymmetric relationships, making them essential for signaling pathways, regulatory networks, and metabolic pathways where direction captures flow of information or mass [15] [16].

Biological networks frequently utilize weighted graphs where edges carry numerical values representing the strength, confidence, or capacity of interactions [15] [16]. These weights are crucial for distinguishing strong from weak interactions in gene co-expression networks or high-confidence from low-confidence protein interactions. Bipartite graphs partition vertices into two disjoint sets where edges only connect vertices from different sets, effectively representing relationships between different classes of biological entities such as genes and diseases or enzymes and reactions [15]. More specialized representations include multi-edge graphs that capture multiple relationship types between the same pair of nodes, and hypergraphs that can connect more than two nodes through a single edge, useful for representing biochemical reactions with multiple substrates and products [15].

Data Structures for Network Storage and Analysis

Efficient computational representation of biological networks requires appropriate data structures that balance memory usage with access speed. The adjacency matrix provides a comprehensive representation using an N×N matrix (where N is the number of vertices) where each element A[i,j] indicates the presence or weight of an edge between nodes i and j [15]. While intuitive, this approach becomes memory-intensive for large biological networks, requiring O(V²) memory that grows prohibitively expensive for networks with thousands of nodes [15].

For large, sparse biological networks, adjacency lists provide a more efficient alternative by storing only existing connections, requiring O(V+E) memory [15]. This data structure uses an array of lists where each element contains the neighbors of a particular node, significantly reducing memory requirements for networks where each node connects to only a small fraction of other nodes. A compromise approach uses sparse matrix data structures that store only non-zero elements along with their coordinates, providing efficient memory use while maintaining mathematical convenience for certain operations [15].

Table 1: Network Representation Formats in Biological Research

| Format Type | Representation | Biological Applications | Advantages |

|---|---|---|---|

| Adjacency Matrix | N×N matrix with elements A[i,j] representing edges | Small to medium networks, mathematical operations | Intuitive representation, fast edge lookup |

| Adjacency List | Array of lists storing neighbors for each node | Large sparse networks (PPI, metabolic) | Memory efficiency, fast neighbor retrieval |

| Sparse Matrix | Storage of only non-zero elements with coordinates | Genome-scale networks, computational analysis | Balanced memory and computational efficiency |

| Linearized Upper Triangular | 1D array storing upper triangle of symmetric matrix | Undirected networks, gene co-expression | 50% memory reduction for symmetric networks |

Comparative Analysis: Network-Based vs. Traditional Methods

Philosophical and Methodological Differences

The fundamental distinction between network-based and traditional biological approaches lies in their perspective on system organization. Traditional reductionist methods typically focus on linear pathways and individual components, employing statistical methods that analyze elements in isolation or small groups [14] [17]. In contrast, network biology embraces complexity by representing systems as interconnected webs where connectivity patterns and emergent properties become central to understanding function [14]. This shift from component-centric to interaction-centric modeling represents a paradigmatic change in biological research strategy.

Traditional approaches often rely on univariate statistical methods that test hypotheses about individual variables, or multivariate methods that examine relationships between limited sets of predefined variables [17]. Network methods employ graph theory metrics that capture system-level properties including degree distribution, connectivity, betweenness centrality, and modularity [15] [14]. These metrics enable researchers to identify structurally and functionally important elements based on their network position rather than solely on their individual properties [16].

The descriptive power of these approaches also differs substantially. Traditional methods typically provide local explanations focused on immediate causes and effects, while network methods facilitate system-level understanding by revealing how local interactions produce global system behaviors [14]. This distinction becomes particularly important when studying complex diseases that arise from perturbations across multiple interconnected pathways rather than single gene defects [18].

Practical Implementation and Workflow Comparison

The practical implementation of network-based versus traditional approaches follows distinct workflows with different technical requirements. Traditional statistical methods typically process experimental measurements through statistical tests (t-tests, ANOVA, regression) to identify significant differences or associations, followed by post-hoc interpretation based on biological domain knowledge [17]. Network-based approaches additionally construct interaction networks from prior knowledge or experimental data, compute topological metrics, identify network patterns and modules, and interpret results in the context of network architecture [15] [16].

Table 2: Methodological Comparison of Approaches in Biological Research

| Aspect | Traditional Statistical Methods | Network Biology Approaches |

|---|---|---|

| System Representation | Linear pathways, isolated components | Interconnected networks, systems |

| Primary Data Structure | Data tables, vectors, matrices | Graphs (nodes and edges) |

| Analytical Focus | Individual variables and limited interactions | System topology and global connectivity patterns |

| Key Metrics | p-values, correlation coefficients, effect sizes | Degree, betweenness, centrality, modularity |

| Hypothesis Generation | Deductive, based on prior knowledge of components | Inductive, emerging from network structure |

| Strengths | Established methodology, statistical rigor | System-level insights, discovery of emergent properties |

| Limitations | Limited capture of system complexity | Computational intensity, network inference challenges |

Experimental validation approaches also differ between these paradigms. Traditional methods typically employ directed experiments that manipulate specific variables based on a priori hypotheses, while network approaches often use network perturbation experiments that systematically disrupt different network elements to observe effects on global structure and function [17]. This systematic perturbation strategy aligns with the recognition that biological systems often exhibit distributed control rather than centralized regulation.

Experimental Protocols in Network Biology

Network Inference from High-Throughput Data

Network inference represents a fundamental experimental protocol in network biology, transforming high-throughput molecular measurements into interaction networks. Gene co-expression network inference begins with transcriptomic data from microarrays or RNA-seq, calculates correlation coefficients (Pearson, Spearman) or mutual information between all gene pairs, applies statistical thresholds to identify significant associations, and constructs networks where nodes represent genes and edges represent significant co-expression relationships [17]. The resulting networks can identify functionally related gene modules and predict gene functions through "guilt-by-association" [17].

Bayesian network inference employs probabilistic graphical models to reconstruct causal relationships from observational data [17]. This approach establishes initial edges heuristically based on experimental data, then refines the network through iterative search-and-score algorithms until identifying the causal network and posterior probability distribution that best explains the observed node states [17]. Bayesian inference has successfully reconstructed signaling networks controlling processes such as embryonic stem cell fate responses to external cues, predicting novel influences between signaling molecules and cellular outcomes [17].

Model-based network inference uses mathematical frameworks including differential equations or Boolean logic to relate the rate of change in component levels with the levels of other system components [17]. Experimental measurements are substituted into relational equations, and the system is solved for regulatory relationships, often filtered by principles such as economy of regulation. This approach has been applied to infer circadian regulatory networks in Arabidopsis, producing predictions about novel relationships between photoreceptor genes and clock components [17].

Network Biology Workflow: From data to biological insights

Network Analysis and Topological Characterization

Once biological networks are reconstructed, they undergo comprehensive topological analysis to identify structurally and functionally important elements. Degree distribution analysis examines the probability distribution of node connectivity across the network, distinguishing random networks (Poisson distribution) from scale-free networks (power-law distribution) where a few hubs maintain most connections [14]. This analysis reveals fundamental organizational principles and identifies candidate hub elements that may play critical functional roles.

Centrality analysis computes metrics that quantify the importance of nodes based on their network position. Betweenness centrality identifies nodes that lie on many shortest paths between other nodes, functioning as critical bottlenecks in network flow [14]. Closeness centrality measures how quickly a node can reach all other nodes, while eigenvector centrality and PageRank algorithms quantify importance based on connections to other important nodes [14]. These metrics help prioritize elements for experimental follow-up based on their structural importance rather than solely on individual properties.

Module detection algorithms identify densely connected subnetworks that often correspond to functional units such as protein complexes or coordinated pathways [14]. These methods optimize modularity by maximizing intra-module edges while minimizing inter-module connections, effectively decomposing complex networks into interpretable functional units. The resulting modules can predict functions for uncharacterized elements based on their module associations and identify disease-related subnetworks through integration with phenotypic data.

Application in Drug Discovery and Repurposing

Network Pharmacology and Drug Target Identification

Network biology has revolutionized drug discovery by enabling systematic approaches to identify therapeutic targets and repurpose existing drugs [18]. Traditional drug development focuses on identifying single protein targets with disease-modifying potential, but network pharmacology recognizes that diseases often arise from perturbations across interconnected pathways rather than single molecular defects [18]. This network perspective acknowledges the polypharmacology of most drugs—their ability to interact with multiple targets—and leverages these multi-target effects for therapeutic benefit.

Drug-target network analysis constructs bipartite graphs connecting drugs to their protein targets, revealing patterns in polypharmacology and identifying proteins that are frequently targeted or that connect different disease modules [18]. These networks have demonstrated that drugs with similar therapeutic applications often target proteins within the same network neighborhood, even when they bind different primary targets. This insight enables network-based drug repurposing by identifying new disease applications for existing drugs based on network proximity between their targets and disease-associated proteins [18].

The application of network biology to drug repurposing has been particularly valuable during emergent health crises such as the COVID-19 pandemic, where rapid identification of therapeutic options was urgently needed [18]. Network-based approaches analyzed the proximity between SARS-CoV-2 host factors and drug targets in human interaction networks, identifying candidate repurposing opportunities such as remdesivir (originally developed for other viral infections) that could be rapidly advanced to clinical testing [18].

Drug discovery approaches: Traditional vs. network-based

Experimental Validation of Network-Based Predictions

Network-based drug discovery requires rigorous experimental validation to translate computational predictions into therapeutic opportunities. Synergy screening evaluates drug combinations predicted to target different nodes within disease modules, assessing whether their combined effects exceed additive expectations [18]. For example, the SynGeNet approach combines connectivity mapping and network centrality analysis to predict synergistic drug combinations, such as vemurafenib and tretinoin for BRAF-mutant melanoma [18].

Transcriptomic validation tests whether candidate drugs reverse disease-associated gene expression signatures, using connectivity mapping to compare drug-induced gene expression patterns against disease signatures [18]. Drugs that significantly reverse disease signatures represent promising repurposing candidates, as demonstrated by the prediction and validation of indomethacin for epithelial ovarian cancer [18]. This approach leverages large-scale gene expression databases to efficiently prioritize candidates for further mechanistic investigation.

Network perturbation experiments systematically disrupt predicted network targets using genetic (RNAi, CRISPR) or pharmacological approaches, measuring effects on disease-relevant phenotypes and network states [18]. Multi-parameter readouts including phosphoproteomics, transcriptomics, and metabolomics provide comprehensive assessment of network responses to target perturbation, validating both the therapeutic hypothesis and the underlying network model of disease mechanism.

Network biology relies on comprehensive databases that aggregate interaction data from high-throughput experiments and literature curation. Protein-protein interaction databases including STRING, BioGRID, DIP, MINT, and HPRD provide experimentally determined and predicted physical interactions between proteins across multiple organisms [16]. These resources integrate interactions from various experimental techniques including yeast two-hybrid, affinity purification-mass spectrometry, and protein microarrays, often assigning confidence scores based on experimental evidence and concurrence across methods.

Regulatory network databases such as JASPAR, TRANSFAC, and B-cell interactome (BCI) collect information about transcription factor binding specificities and gene regulatory relationships [16]. These resources enable reconstruction of transcriptional regulatory networks that control gene expression programs in different cellular contexts and conditions. Specialized databases for post-translational modifications including Phospho.ELM, NetPhorest, and PHOSIDA provide information about regulatory modifications that control protein activity and interactions [16].

Metabolic pathway databases including KEGG, BioCyc, MetaCyc, and Reactome document biochemical reactions and metabolic pathways across diverse organisms [16]. These resources facilitate reconstruction of metabolic networks that can be analyzed using constraint-based modeling approaches such as flux balance analysis to predict metabolic behaviors under different genetic and environmental conditions [17].

Table 3: Essential Research Resources in Network Biology

| Resource Category | Specific Examples | Primary Application | Key Features |

|---|---|---|---|

| Protein Interaction Databases | STRING, BioGRID, DIP, MINT, HPRD | PPI network construction | Integration of multiple evidence types, confidence scoring |

| Regulatory Networks | JASPAR, TRANSFAC, BCI | Transcriptional network analysis | Transcription factor binding motifs, regulatory interactions |

| Metabolic Pathways | KEGG, BioCyc, MetaCyc | Metabolic network modeling | Biochemical reaction databases, pathway annotations |

| Signaling Networks | MiST, TRANSPATH | Signal transduction analysis | Signaling pathway curation, post-translational modifications |

| Computational Tools | Cytoscape, Gephi, NetworkX | Network visualization and analysis | Graph algorithms, visualization capabilities, plugins |

| File Formats | SBML, PSI-MI, BioPAX | Data exchange and interoperability | Standardized formats for model sharing and tool compatibility |

Computational Tools and Analysis Platforms

Network analysis and visualization platforms provide integrated environments for analyzing biological networks and interpreting them in biological contexts. Cytoscape offers a versatile open-source platform with extensive plugin ecosystem for network visualization, analysis, and integration with molecular profiles [19]. Specialized tools for biological network visualization address the challenges of representing large, complex networks while maintaining biological interpretability, though current tools still heavily favor node-link diagrams despite the availability of alternative visual encodings [19].

Programming libraries for network analysis including NetworkX (Python), igraph (R, Python, C/C++), and graph-tool (Python) provide efficient implementations of graph algorithms for topological analysis, module detection, and network comparison [15] [16]. These libraries enable custom analytical workflows and integration with statistical analysis and machine learning pipelines, facilitating reproducible network biological research.

Specialized algorithms for particular network biological applications include link prediction methods that identify missing interactions, network alignment algorithms that compare networks across species or conditions, and dynamic modeling approaches that simulate network behavior over time [15] [17]. These algorithms extend beyond basic graph metrics to provide sophisticated analytical capabilities for specific biological questions and data types.

Comparative Performance Assessment

Quantitative Benchmarking of Methodologies

Rigorous comparison of network-based versus traditional approaches requires quantitative benchmarking across multiple performance dimensions. Prediction accuracy assessments evaluate how effectively each approach identifies biologically validated relationships, using gold-standard reference sets of known interactions or functional associations. Network methods typically demonstrate superior performance for identifying system-level properties and polygenic associations, while traditional statistical methods may excel for well-characterized linear pathways with strong individual effects [14] [17].

Robustness analysis evaluates how methodological performance changes with data quality, sample size, and noise levels. Network approaches often exhibit greater robustness to missing data through network-based imputation and by leveraging local network neighborhoods, while traditional methods may be more sensitive to specific data quality issues but less affected by network inference errors [17]. This differential robustness profile informs methodological selection based on data characteristics and research objectives.

Experimental efficiency comparisons measure the resource requirements for generating equivalent biological insights. Network methods typically require substantial computational resources and specialized expertise but can generate multiple mechanistic hypotheses from single datasets, while traditional approaches may have lower computational requirements but often necessitate more directed experiments to test individual hypotheses [14] [17]. The choice between approaches therefore depends on available resources, experimental constraints, and research goals.

Integration and Hybrid Approaches

The most powerful contemporary biological research often integrates network-based and traditional approaches, leveraging their complementary strengths. Hierarchical integration applies traditional statistical methods for initial data quality control and preprocessing, then uses network approaches for system-level analysis, finally applying traditional experimental methods for hypothesis validation [17]. This sequential integration maximizes analytical rigor while enabling discovery of emergent properties.

Network-primed traditional approaches use network analysis to generate prioritized hypotheses that are then tested using rigorous traditional methods, combining the discovery power of network biology with the established validity of traditional statistics [14] [17]. This approach has proven particularly successful for drug repurposing, where network analysis identifies candidate drugs and traditional experimental methods validate their efficacy and mechanism [18].

Methodological hybrids incorporate network-derived features as covariates in traditional statistical models, or use traditional statistical tests to assess the significance of network properties [17]. These hybrids acknowledge that both component-level and system-level perspectives contribute to comprehensive biological understanding, and that the optimal analytical approach depends on the specific research question rather than methodological preference alone.

In the complex world of systems biology research, two distinct computational paradigms have emerged for extracting meaningful insights from biological data: traditional statistical methods and modern network-based approaches. Traditional statistical methods, with their established methodology and inferential framework, are focused on testing specific hypotheses and inferring relationships between a defined set of variables [20]. In contrast, network-based methods model biological systems as interconnected networks of nodes and edges, aiming to capture the system's emergent properties and complex interactions that are not apparent when examining individual components in isolation [18] [21]. The choice between these paradigms is not merely technical but fundamentally shapes how researchers conceptualize biological problems, structure their analyses, and interpret their findings. This comparison guide provides an objective assessment of both approaches, examining their respective capabilities, limitations, and optimal applications within systems biology research and drug development.

Analytical Philosophies and Core Methodologies

Foundational Principles

Traditional statistical methods in systems biology are typically grounded in parametric assumptions and hypothesis-driven frameworks. These methods, including regression models, discriminant analysis, and logistic regression, operate on the principle of testing predetermined hypotheses about relationships between variables [20] [22]. They produce clinically friendly measures of association such as odds ratios in logistic regression models or hazard ratios in Cox regression models, which are easily interpretable by researchers and clinicians [20]. These approaches work best when researchers have substantial a priori knowledge about the topic under study and when the number of observations largely exceeds the number of input variables [20].

Network-based methods embrace a systems-level perspective, modeling biological entities as interconnected networks where nodes represent biological elements (genes, proteins, metabolites, etc.) and edges represent their interactions or relationships [21] [2]. This paradigm is founded on the principle that biological functions emerge from complex networks of interactions rather than from individual components working in isolation [18]. Unlike traditional methods that require explicit programming of rules, network approaches often employ machine learning techniques where models learn from examples, generalizing patterns from training data to make predictions on new inputs [20].

Experimental Protocols and Workflows

The experimental workflow for traditional statistical analysis typically follows a linear path: (1) hypothesis formulation based on prior knowledge, (2) data collection with a predefined set of variables, (3) model specification with assumptions about error distributions and parameter relationships, (4) parameter estimation and hypothesis testing, and (5) interpretation of results through the lens of biological mechanisms [20]. This process emphasizes careful experimental design to control for confounding variables and ensure sufficient statistical power.

Network-based analysis employs a more iterative workflow: (1) data integration from multiple heterogeneous sources, (2) network reconstruction and edge estimation, (3) network topology analysis and characterization, (4) identification of network patterns and functional modules, and (5) biological validation of network predictions [23] [2]. This approach handles high-dimensional data where the number of variables often far exceeds the number of observations, particularly in omics applications [20].

The diagram below illustrates the fundamental differences in how these two paradigms construct knowledge from biological data.

Performance Comparison: Quantitative and Qualitative Assessment

Predictive Accuracy and Interpretability

The performance characteristics of traditional statistical versus network-based methods vary significantly across different biological contexts and data structures. The table below summarizes key comparative findings from empirical studies across multiple biological domains.

Table 1: Performance Comparison of Traditional Statistical vs. Network-Based Methods

| Performance Metric | Traditional Statistical Methods | Network-Based Methods | Comparative Evidence |

|---|---|---|---|

| Interpretability | High; produces clinically friendly measures (odds ratios, hazard ratios) [20] | Variable; often "black box" especially in neural networks [20] | Traditional methods superior for mechanistic understanding [20] |

| Handling Complex Interactions | Limited; mostly addresses interactions between main determinant and single confounders [20] | Excellent; naturally captures higher-order interactions [20] | Network methods significantly outperform in detecting polygenicity and epistatic effects [20] |

| Data Requirements | Requires cases >> variables; sensitive to sparse data [20] | Scalable to high-dimensional data; handles sparse data through regularization [20] [2] | Network methods advantageous in omics with many variables [20] |

| Nonlinear Pattern Detection | Limited to specified functional forms | Excellent; flexible nonparametric estimation [20] [22] | Neural networks automatically approximate nonlinear functions without prespecification [22] |

| Validation Approach | Statistical significance testing, cross-validation | Network perturbation, bootstrap resampling, experimental validation [23] | Network validation requires specialized approaches due to interdependent data [23] |

Application-Specific Performance

In gene network analysis, network-based statistics (NBS) has demonstrated superior power for detecting interconnected brain regions in mild cognitive impairment (MCI) studies compared to traditional multiple comparison corrections. NBS identified an enhanced subnetwork in the right prefrontal cortex of MCI patients (4 significant connection pairs: CH12-CH15, CH12-CH16, CH13-CH15, CH13-CH16) that traditional FDR correction missed, with the subnetwork's functional connectivity values explaining 25.7% of variance in cognitive scores (adjusted R² = 0.257, F = 24.723, p < 0.001) [24].

In drug repurposing, network-based approaches have significantly accelerated the identification of therapeutic candidates. Systems biology-based drug repurposing approaches shorten time and reduce costs compared to de novo drug discovery, as demonstrated during the COVID-19 pandemic where existing drugs like remdesivir were rapidly identified for SARS-CoV-2 treatment [18]. These network methods can analyze drug-target interactions in a global physiological context, systematically evaluating a drug candidate's effects across entire interaction networks [18].

Domain-Specific Applications and Limitations

Optimal Application Domains

Each methodological paradigm demonstrates distinct advantages depending on the biological question and data context. The following table summarizes their optimal application domains and inherent limitations.

Table 2: Application Domains and Limitations of Each Paradigm

| Aspect | Traditional Statistical Methods | Network-Based Methods |

|---|---|---|

| Optimal Application Domains | Public health research [20], Analysis with substantial prior knowledge [20], Randomized controlled trials, Epidemiological studies | Omics sciences (genomics, transcriptomics, proteomics) [20], Drug repurposing [18], Complex disease modeling [25], Brain connectivity analysis [24] |

| Data Structure Fit | Clean, structured data with limited variables [26] | High-dimensional data with many interacting components [20] [2], Integrated heterogeneous data sources [23] |

| Key Strengths | Causal inference capability [20], Established methodology [22], Transparency and interpretability [20], Minimal computational requirements | Pattern detection in complex systems [21], Flexibility and scalability [20], Handling of nonlinear relationships [22], Integration of diverse data types [20] |

| Inherent Limitations | Limited ability to detect emergent system properties [21], Strict parametric assumptions often violated [20], Poor scalability to high-dimensional data [20] | Interpretability challenges (black box) [20] [23], High computational demands [23], Sensitivity to network completeness and quality [23], Validation complexities [23] |

| Validation Requirements | Statistical significance, goodness-of-fit measures, residual analysis | Experimental confirmation [23], Network perturbation analysis [23], Cross-validation across multiple networks [23] |

Technical Limitations and Challenges

Network-based methods face significant challenges in biological applications. Biological networks are often incomplete, with missing protein-protein interaction data estimated as high as 80% [23]. Additionally, integrating heterogeneous information into homogeneous networks abstracts away biological nuance, sacrificing cell-type specificity, spatial and temporal resolution, and environmental factors [23]. Network inference methods also suffer from representational and algorithmic interpretability issues, making it difficult to trace feature sets that support biological hypotheses [23].

Traditional statistical methods face their own limitations, particularly their reliance on strong assumptions about error distributions, additivity of parameters within linear predictors, and proportional hazards [20]. These assumptions are often violated in clinical practice but frequently overlooked in scientific literature [20]. For instance, the assumption of proportional hazards has been violated when studying survival in gastric cancer patients, as the prognostic significance of tumor invasion depth and nodal status decreases with increasing follow-up [20].

Experimental Design and Research Reagents

Essential Research Reagents and Computational Tools

Successful application of either paradigm requires specific computational tools and resources. The table below outlines key "research reagent solutions" essential for implementing each approach.

Table 3: Essential Research Reagents and Computational Tools

| Tool Category | Specific Solutions | Function and Application |

|---|---|---|

| Biological Network Databases | STRINGdb [23], PCNet [23], KEGG [18], DrugBank [18] | Provide curated molecular interaction data for network construction and validation |

| Network Analysis Platforms | Network-Based Statistics (NBS) [24], Gaussian Graphical Models (GGM) [2], Bayesian Networks [2] | Detect significant network components, estimate partial correlations, model causal relationships |

| Traditional Statistical Software | R, SAS, SPSS, STATA | Implement regression models, hypothesis testing, and traditional multivariate analyses |

| Specialized Biological Data Tools | CRAPome [23], Homer2 toolkit [24], NirSmart fNIRS [24] | Remove false positive interactions, preprocess neuroimaging data, measure hemodynamic responses |

| Validation Resources | Orthogonally curated experimental sources [23], Knockout models, Clinical trial data | Provide biological validation of computational predictions |

Integrated Workflow for Comprehensive Analysis

The most powerful contemporary approaches integrate both paradigms, leveraging their complementary strengths. The following diagram illustrates an integrated workflow that combines traditional statistical reasoning with network-based discovery.