Navigating the Data Maze: A Comprehensive Guide to Multi-Omics Imputation and Normalization

Abstract

This article provides a systematic guide for researchers and bioinformaticians tackling the critical preprocessing steps in multi-omics analysis: imputation and normalization. It establishes the foundational importance of these steps for robust data integration, details current methodological strategies from classical to AI-driven approaches, offers practical solutions for common pitfalls, and outlines frameworks for rigorous validation. By synthesizing the latest computational advancements and best practices, this guide aims to equip professionals with the knowledge to enhance data quality, ensure biological validity, and accelerate discoveries in precision medicine and drug development.

Why Preprocessing is the Keystone of Reliable Multi-Omics Analysis

In multi-omics research, the integration of diverse molecular datasets—genomics, transcriptomics, proteomics, and metabolomics—is fundamentally complicated by two pervasive technical challenges: missing values and technical noise. These data imperfections represent a significant bottleneck that can obscure true biological signals, introduce biases in statistical analysis, and ultimately compromise the validity of scientific conclusions and biomarker discovery. The inherent heterogeneity of multi-omics data types, each with distinct biochemical properties and measurement technologies, creates multiple avenues for data loss and systematic technical variation. Understanding the origins, characteristics, and methodological approaches to mitigate these issues is a critical prerequisite for any robust multi-omics study. This document delineates the nature of these challenges and provides structured experimental protocols to address them, ensuring data quality and reliability in downstream integrative analyses.

The Multi-Faceted Nature of Missing Values

Origins and Classification of Missing Data

Missing values occur systematically across all omics layers due to a combination of technical and biological factors. The mechanism of data loss is critical for selecting the appropriate imputation strategy and can be categorized as follows:

- Missing Completely at Random (MCAR): The absence of data is unrelated to any observed or unobserved variables. Example: a sample is lost during preparation due to a random pipetting error.

- Missing at Random (MAR): The probability of missingness depends on observed data but not on the missing value itself. Example: a specific metabolite is undetectable in a particular batch of samples due to a known instrument calibration issue that affects all measurements in that batch equally.

- Missing Not at Random (MNAR): The missingness is related to the unobserved missing value itself. This is the most problematic mechanism. Example: a protein's abundance falls below the detection limit of the mass spectrometer [1] [2].
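The three mechanisms can be made concrete with a small simulation. The sketch below is illustrative only: the matrix dimensions, missingness probabilities, batch labels, and the 15th-percentile detection limit are all assumptions chosen for the example, not values from the cited studies.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical log-intensity matrix: 200 samples x 50 features.
X = rng.normal(loc=20.0, scale=2.0, size=(200, 50))
batch = rng.integers(0, 2, size=200)  # observed covariate (two batches)

def apply_mask(data, mask):
    """Return a copy of `data` with masked entries set to NaN."""
    out = data.copy()
    out[mask] = np.nan
    return out

# MCAR: every entry is dropped with the same probability.
mcar_mask = rng.random(X.shape) < 0.10

# MAR: missingness depends only on an observed variable (the batch label).
p_by_batch = np.where(batch == 1, 0.30, 0.02)[:, None]
mar_mask = rng.random(X.shape) < p_by_batch

# MNAR: values below a detection limit are never observed (left-censoring).
detection_limit = np.quantile(X, 0.15)
mnar_mask = X < detection_limit

X_mcar = apply_mask(X, mcar_mask)
X_mar = apply_mask(X, mar_mask)
X_mnar = apply_mask(X, mnar_mask)

# Censoring the low values shifts the observed mean upward under MNAR,
# whereas the MCAR mean stays close to the true mean.
print("true mean      :", round(float(X.mean()), 3))
print("MCAR obs. mean :", round(float(np.nanmean(X_mcar)), 3))
print("MNAR obs. mean :", round(float(np.nanmean(X_mnar)), 3))
```

Comparing the observed means is a quick way to see why MNAR is the most problematic case: the surviving values are a biased sample of the underlying distribution.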

The following table summarizes the quantitative impact and common causes of missing data across different omics modalities, as observed in large-scale studies.

Table 1: Characteristics and Prevalence of Missing Values Across Omics Layers

| Omics Layer | Typical Missing Value Rate | Primary Causes | Data Type |
|---|---|---|---|
| Metabolomics | 10-30% | Abundance below instrument detection limit [1] | Continuous intensity |
| Proteomics | 15-40% | Low-abundance proteins, inefficient peptide detection [1] | Continuous intensity / counts |
| Lipidomics | 10-25% | Low abundance, extraction inefficiencies [1] | Continuous intensity |
| Transcriptomics | 5-20% | Lowly expressed genes, library preparation biases [3] | Counts (RNA-seq) |
| Genomics | <1-5% | Low sequencing coverage, variant calling filters | Discrete genotypes |

Consequences of Unaddressed Missing Data

Ignoring missing values through complete-case analysis (i.e., removing any sample or variable with missing data) is a statistically flawed approach that can severely compromise a study. In multi-omics data, where missingness is widespread, this leads to a drastic reduction in statistical power and the introduction of significant bias, as the remaining "complete" dataset may no longer be representative of the true biological population [4] [5]. Furthermore, many advanced machine learning and network inference algorithms require a complete data matrix to function. Without careful imputation, these models may fail entirely or produce spurious and non-reproducible findings, wasting valuable resources and potentially misleading the scientific community.
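The loss of power from complete-case analysis can be estimated with simple arithmetic. Assuming, purely for illustration, that each entry is missing independently with the same probability (an MCAR-style simplification), the expected fraction of fully observed samples is (1 - rate) raised to the number of features. With a 5% missing rate and 100 features, fewer than 1% of samples would be expected to remain.

```python
def complete_case_fraction(missing_rate: float, n_features: int) -> float:
    """Expected fraction of samples with no missing value, assuming every
    entry is missing independently with probability `missing_rate`."""
    return (1.0 - missing_rate) ** n_features

# How quickly complete cases vanish as the feature count grows.
for p in (10, 100, 1000):
    frac = complete_case_fraction(0.05, p)
    print(f"{p:>5} features -> {frac:.4%} of samples expected to be complete")
```

Real omics missingness is rarely independent across features, so this is a rough lower-bound intuition rather than a prediction for any specific dataset.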

The Pervasive Challenge of Technical Noise

Technical noise, or unwanted non-biological variation, is introduced at every stage of the multi-omics workflow, from sample collection to data acquisition. Unlike missing data, noise affects every single measurement to some degree, inflating variance and masking true biological effects. The major sources of noise include:

- Batch Effects: Systematic technical biases introduced when samples are processed in different groups (batches). These can be caused by different reagents, different personnel, instrument recalibration, or day-to-day environmental fluctuations [3] [2]. Batch effects can be strong enough to completely obscure the biological signal of interest.

- Sample Preparation Variability: Inconsistencies during nucleic acid or protein extraction, purification, and quantification can lead to significant technical variation.

- Instrument Noise: In mass spectrometry-based platforms (proteomics, metabolomics, lipidomics), this includes electronic noise and ion suppression effects. In sequencing-based platforms (genomics, transcriptomics), it includes base-calling errors and optical noise [6].

- Background and Interference: Non-specific binding in immunoassays or cross-hybridization in microarray technologies contributes to background signal.

Quantitative Impact of Normalization

The choice of normalization strategy is critical for mitigating technical noise. Different methods are optimized for specific data types and noise structures. The effectiveness of a normalization technique is typically evaluated based on its ability to improve Quality Control (QC) sample consistency and preserve biological variance.

Table 2: Evaluation of Normalization Methods for Mass Spectrometry-Based Omics

| Normalization Method | Optimal Omics Application | Key Performance Metric | Impact on Biological Variance |
|---|---|---|---|
| Probabilistic Quotient (PQN) | Metabolomics, Lipidomics, Proteomics [1] | High improvement in QC feature consistency [1] | Preserves treatment-related variance |
| LOESS (on QC samples) | Metabolomics, Lipidomics [1] | High improvement in QC feature consistency [1] | Preserves time-related variance |
| Median Normalization | Proteomics [1] | Good improvement in QC feature consistency | Preserves treatment-related variance |
| TMM | Transcriptomics (RNA-seq) [3] | Corrects for sequencing depth and composition | Maintains differential expression accuracy |
| Quantile Normalization | Microarray Transcriptomics [3] | Forces identical distributions across samples | Can be aggressive; may remove weak biological signals |
| SERRF (Machine Learning) | Metabolomics [1] | Can outperform in some datasets | Risk of masking treatment-related variance [1] |
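To make the first row of the table concrete, here is a minimal NumPy sketch of Probabilistic Quotient Normalization. It assumes a samples × features matrix of positive intensities and uses the feature-wise median across all samples as the reference profile; using QC samples as the reference, or applying a total-intensity normalization beforehand, are common variations that are not shown. The function name `pqn_normalize` is hypothetical.

```python
import numpy as np

def pqn_normalize(X, reference=None):
    """Probabilistic Quotient Normalization (sketch).

    X: samples x features array of positive intensities (NaN allowed).
    reference: optional 1-D reference profile; defaults to the feature-wise
        median across all samples.
    Returns the normalized matrix and the per-sample dilution factors.
    """
    X = np.asarray(X, dtype=float)
    if reference is None:
        reference = np.nanmedian(X, axis=0)
    quotients = X / reference                    # feature-wise quotients
    dilution = np.nanmedian(quotients, axis=1)   # most probable quotient per sample
    return X / dilution[:, None], dilution

# Toy data: one underlying profile measured at three dilutions.
profile = np.array([100.0, 50.0, 10.0, 5.0, 1.0])
X = np.vstack([profile * f for f in (0.5, 1.0, 2.0)])
X_norm, factors = pqn_normalize(X)
print(factors)
print(X_norm)
```

Because the dilution factor is a median of quotients, a minority of genuinely changed features has little influence on it, which is the property that lets PQN preserve treatment-related variance.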

Experimental Protocols for Data Cleansing

Protocol 1: A Standard Preprocessing Pipeline for Omics Data

This protocol outlines a generalized workflow for data cleaning, normalization, and missing value imputation, adaptable for various omics data types.

I. Materials and Reagents

- Raw data files (e.g., .txt, .csv, .mzML, .bam)

- Computing environment (e.g., R, Python, MATLAB)

- Reference databases (e.g., HMDB for metabolomics, UniProt for proteomics)

II. Procedure

Data Loading and Quality Control (QC)

- Load the raw data matrix (samples × features).

- Perform initial QC visualization: Generate box plots of raw intensities/log-counts and sample correlation heatmaps to identify severe outliers.

- Criteria: Remove samples with consistently low intensity or correlation coefficients below a threshold (e.g., < 0.5) with other samples in their group [3].
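The correlation criterion above can be sketched as follows. The helper name `flag_low_correlation_samples` and the choice to average each sample's Pearson correlation with the other members of its group are assumptions made for illustration; the 0.5 threshold is the example value from the text.

```python
import numpy as np

def flag_low_correlation_samples(X, groups, threshold=0.5):
    """Flag samples whose mean Pearson correlation with the other samples
    of the same group falls below `threshold`.

    X: samples x features matrix (e.g., log-intensities or log-counts).
    groups: 1-D array of group labels, one per sample.
    Returns a boolean array (True = candidate outlier).
    """
    X = np.asarray(X, dtype=float)
    groups = np.asarray(groups)
    flagged = np.zeros(X.shape[0], dtype=bool)
    for g in np.unique(groups):
        idx = np.flatnonzero(groups == g)
        if idx.size < 3:
            continue  # too few samples to judge
        corr = np.corrcoef(X[idx])        # sample-by-sample correlations
        np.fill_diagonal(corr, np.nan)    # ignore self-correlation
        flagged[idx] = np.nanmean(corr, axis=1) < threshold
    return flagged

# Toy example: six replicates of one profile, one replaced by an unrelated profile.
rng = np.random.default_rng(1)
profile = rng.normal(10, 2, size=100)
X = profile + rng.normal(0, 0.2, size=(6, 100))
X[3] = rng.normal(10, 2, size=100)
flags = flag_low_correlation_samples(X, np.array(["A"] * 6))
print(flags)
```

Flagged samples are candidates for inspection rather than automatic removal; box plots and the heatmap mentioned above help confirm whether the low correlation is technical.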

Low-Abundance Filtering

- Remove features (genes, proteins, metabolites) with a high proportion of missing values or low signal.

- Criteria for RNA-seq: Filter out genes where fewer than 10% of samples have a count ≥ 5 [3].

- Criteria for Metabolomics: Filter out metabolites present in less than 80% of samples in any experimental group.
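Both filtering criteria reduce to a boolean mask over features. The sketch below uses hypothetical helper names. For the metabolomics rule it assumes the common reading that a feature is kept when it is detected in at least 80% of samples in at least one group; if the stricter reading (every group) is intended, the `|=` accumulation would become `&=` starting from an all-True mask.

```python
import numpy as np

def filter_low_count_genes(counts, min_count=5, min_fraction=0.10):
    """Keep genes with count >= min_count in at least min_fraction of samples.
    counts: samples x genes array of raw counts. Returns a boolean keep-mask."""
    counts = np.asarray(counts)
    frac_expressed = (counts >= min_count).mean(axis=0)
    return frac_expressed >= min_fraction

def filter_by_group_presence(X, groups, min_presence=0.80):
    """Keep features detected (non-NaN) in at least `min_presence` of the
    samples of at least one group (assumed interpretation of the 80% rule)."""
    X = np.asarray(X, dtype=float)
    groups = np.asarray(groups)
    keep = np.zeros(X.shape[1], dtype=bool)
    for g in np.unique(groups):
        present = ~np.isnan(X[groups == g])
        keep |= present.mean(axis=0) >= min_presence
    return keep

# RNA-seq toy data: 10 samples x 3 genes.
counts = np.zeros((10, 3), dtype=int)
counts[0, 1] = 10      # gene 1 reaches the threshold in exactly 10% of samples
counts[:, 2] = 5       # gene 2 is expressed everywhere
print(filter_low_count_genes(counts))

# Metabolomics toy data: 4 samples (two groups) x 2 metabolites.
X = np.array([[1.0, np.nan], [1.0, 2.0], [np.nan, np.nan], [np.nan, 3.0]])
print(filter_by_group_presence(X, np.array(["a", "a", "b", "b"])))
```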

Normalization

- Select and apply a normalization method from Table 2 suited to your data type.

- Example for RNA-seq (in R): Use the `edgeR` package to perform TMM normalization and transform counts to log2-CPM (Counts Per Million) [3].

- Example for Metabolomics (in R): Use the `pqn` function from the `NORE` package to perform Probabilistic Quotient Normalization.
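For readers who want to see what the log2-CPM transformation does numerically, the following Python sketch computes a plain library-size-scaled version. It deliberately omits the TMM scaling factors that edgeR applies to the library sizes, and the `prior_count` offset is an assumption used only to avoid taking the logarithm of zero; it is not claimed to match edgeR's output.

```python
import numpy as np

def log2_cpm(counts, prior_count=0.5):
    """Library-size-scaled log2 counts per million (no TMM factors).

    counts: samples x genes array of raw counts.
    prior_count: small offset added to every count before the log.
    """
    counts = np.asarray(counts, dtype=float)
    lib_size = counts.sum(axis=1, keepdims=True)   # total counts per sample
    cpm = (counts + prior_count) / lib_size * 1e6
    return np.log2(cpm)

# Two samples with identical composition but a 10-fold depth difference.
counts = np.array([[10, 90], [100, 900]])
print(log2_cpm(counts, prior_count=0.0))
```

With the offset set to zero the two rows coincide, which shows that CPM removes sequencing-depth differences; TMM goes further by also correcting for composition effects.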

Missing Value Imputation

- Choose an imputation method based on the suspected missingness mechanism (see "Origins and Classification of Missing Data" above).

- For MCAR/MAR data, use K-Nearest Neighbors (KNN) imputation. It imputes a missing value by averaging the values from the `k` most similar samples (default k=5 is often effective) [3] [7].

- For MNAR data (e.g., left-censored data below detection limit), use methods like Minimum Imputation, Bayesian Principal Component Analysis (BPCA), or model-based methods that account for the detection limit.
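The two simplest options in this step can be sketched in NumPy. This is a simplified illustration, not the implementation used in the cited work: distances are root-mean-square differences over the features that both samples have observed, donors are restricted to samples that have the target feature, and the function names are hypothetical.

```python
import numpy as np

def knn_impute(X, k=5):
    """Fill NaNs with the mean of the k nearest samples (rows) that have
    the missing feature observed. X: samples x features."""
    X = np.asarray(X, dtype=float)
    filled = X.copy()
    n = X.shape[0]
    for i in range(n):
        miss = np.isnan(X[i])
        if not miss.any():
            continue
        # Distance from sample i to every other sample over shared features.
        dist = np.full(n, np.inf)
        for j in range(n):
            if j == i:
                continue
            shared = ~np.isnan(X[i]) & ~np.isnan(X[j])
            if shared.any():
                dist[j] = np.sqrt(np.mean((X[i, shared] - X[j, shared]) ** 2))
        order = np.argsort(dist)
        for f in np.flatnonzero(miss):
            donors = [j for j in order
                      if np.isfinite(dist[j]) and not np.isnan(X[j, f])][:k]
            if donors:
                filled[i, f] = X[donors, f].mean()
    return filled

def min_impute(X, fraction=1.0):
    """Replace NaNs in each feature with `fraction` times its minimum observed
    value, a simple heuristic for left-censored (MNAR) data."""
    X = np.asarray(X, dtype=float)
    fill = np.nanmin(X, axis=0) * fraction
    return np.where(np.isnan(X), fill, X)

X = np.array([[1.0, 2.0, 3.0],
              [1.0, 2.0, np.nan],
              [1.1, 2.1, 3.2],
              [9.0, 9.0, 9.0]])
print(knn_impute(X, k=2))
print(min_impute(X))
```

In the toy matrix the missing entry is filled from the two rows that resemble it, not from the distant fourth sample, which is the behaviour that makes KNN suitable when missingness is unrelated to the value itself.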

Batch Effect Correction

- If a batch structure is known, use a correction method like ComBat (from the `sva` R package) [3].

- Provide the normalized data matrix and batch covariate to the ComBat algorithm to remove systematic batch-related variation while preserving biological signal.
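To illustrate the idea of removing a batch-specific shift, the sketch below aligns each batch's feature means to the overall feature means. This is explicitly not ComBat: it has no empirical Bayes shrinkage, no variance adjustment, and no way to protect biological covariates, so it would remove real signal if biology is confounded with batch. The function name is hypothetical.

```python
import numpy as np

def center_batches(X, batch):
    """Remove additive batch shifts by aligning per-batch feature means to
    the overall feature means (a simple stand-in, not ComBat).

    X: samples x features matrix of normalized values.
    batch: 1-D array of batch labels, one per sample.
    """
    X = np.asarray(X, dtype=float)
    batch = np.asarray(batch)
    grand_mean = np.nanmean(X, axis=0)
    out = X.copy()
    for b in np.unique(batch):
        rows = batch == b
        out[rows] += grand_mean - np.nanmean(X[rows], axis=0)
    return out

# Toy data: the second batch carries a constant offset of +3 on every feature.
rng = np.random.default_rng(0)
X = rng.normal(size=(20, 5))
batch = np.array([0] * 10 + [1] * 10)
X[batch == 1] += 3.0
corrected = center_batches(X, batch)
print(corrected[batch == 0].mean(axis=0))
print(corrected[batch == 1].mean(axis=0))
```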

III. Data Analysis

- The resulting cleaned, normalized, and imputed data matrix is now suitable for downstream statistical analysis, including differential analysis, clustering, and machine learning.

Protocol 2: Handling Missing Data in Multi-Omics Integration Studies

This protocol specifically addresses the challenge of integrating multiple omics datasets where different samples may have missing layers, a common scenario in consortium studies [4] [5].

I. Materials

- Multiple cleaned and normalized omics datasets (e.g., Genotype, Methylation, Transcriptomics, Proteomics).

- Phenotypic and clinical data.

- Software: BayesNetty or a similar tool capable of handling mixed discrete/continuous data with missing values [4] [5].

II. Procedure

Data Filtering and Variable Selection

Model-Based Imputation within a Bayesian Framework

- This method does not impute values in a separate step. Instead, it fits a Bayesian network model directly to the incomplete data.

- The algorithm uses available data to infer the joint probability distribution of all variables.

- It calculates a posterior distribution for the parameters, which accounts for the uncertainty introduced by the missing values.

- An average network is computed over the posterior distribution, which robustly represents the causal relationships between variables despite the incomplete data [4] [5].

Network Interrogation

- The final average Bayesian network can be queried to infer possible associations and causal relationships between variables of interest (e.g., genotype -> protein -> clinical outcome) [5].

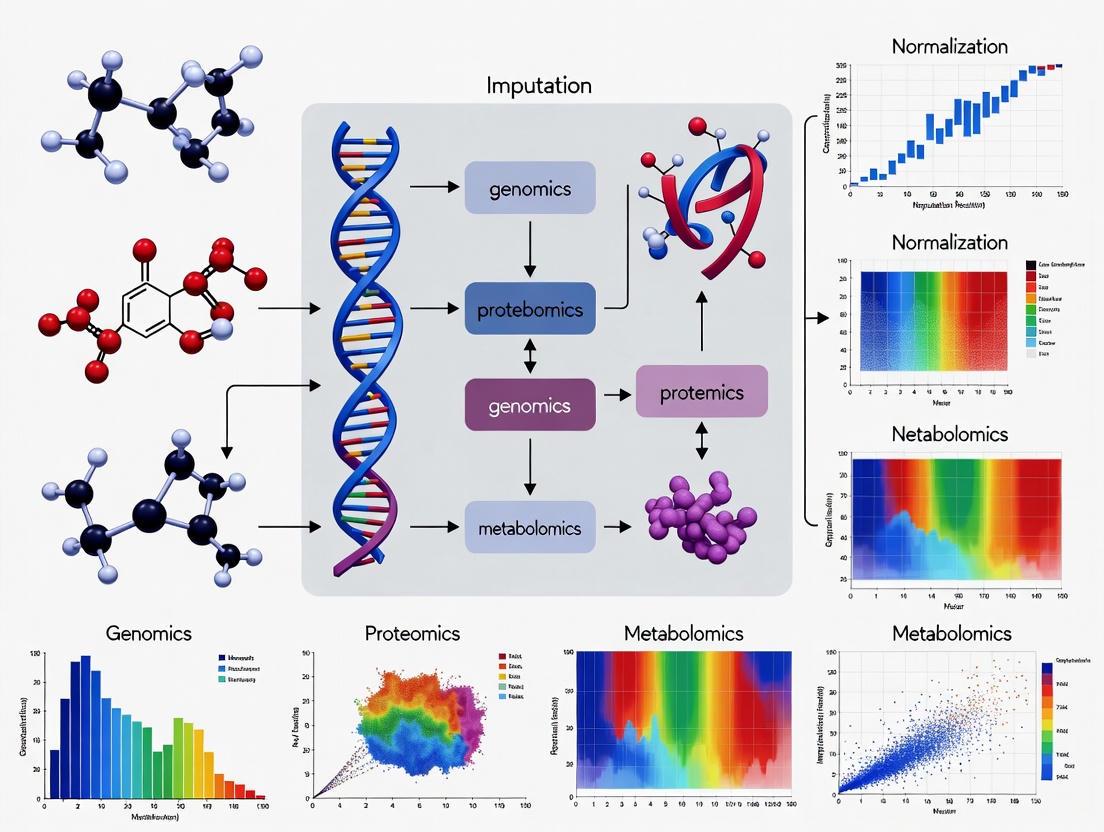

Visualizing the Experimental Workflow

The following diagram illustrates the logical relationships and sequential steps in the standard multi-omics data preprocessing workflow.

Diagram 1: Standard Multi-Omics Preprocessing Workflow.

The Scientist's Toolkit: Essential Research Reagents and Computational Solutions

Table 3: Key Research Reagents and Computational Tools for Data Cleansing

| Item / Tool Name | Function / Description | Application Context |
|---|---|---|
| QC Reference Samples | Pooled samples from all groups, run repeatedly to monitor instrument stability and for LOESS normalization [1]. | All mass spectrometry and sequencing experiments. |
| Internal Standards (IS) | Chemically similar, stable isotope-labeled analogs of target analytes added to correct for sample prep variability and ion suppression. | Targeted metabolomics, lipidomics, proteomics. |
| edgeR / DESeq2 (R packages) | Statistical packages for normalizing and analyzing RNA-seq count data (e.g., TMM method in edgeR) [3]. | Transcriptomics data analysis. |
| BayesNetty | Software package for fitting Bayesian networks to mixed discrete/continuous data with missing values, enabling causal inference [4] [5]. | Multi-omics integration with incomplete data. |
| ComBat / sva (R package) | Algorithm for adjusting for batch effects in high-dimensional data, preserving biological variance [3]. | Multi-omics data from multiple batches or centers. |
| KNN Imputation | k-Nearest Neighbors algorithm; fills a missing value using the average from the k most similar samples. A versatile, general-purpose method [3] [2]. | General imputation for MCAR/MAR data across omics layers. |
| WDL (Workflow Description Language) | A language for describing complex data processing workflows in a portable and scalable manner, ensuring reproducibility [8]. | Deploying standardized preprocessing pipelines on HPC systems. |
| Singularity / Docker | Containerization technologies that package software and dependencies into a portable, reproducible unit [8]. | Ensuring consistent software environments for analysis. |

The High-Dimension Low Sample Size (HDLSS) Problem and its Impact on Downstream Analysis

The High-Dimension, Low Sample Size (HDLSS) regime, where the number of features (p) far exceeds the number of observations (n), presents significant statistical and computational challenges for multi-omics research. This Application Note examines the theoretical foundations of the HDLSS problem and its profound impact on downstream analyses, including classification, clustering, and data integration. We detail robust experimental and computational protocols for data normalization, imputation, and dimensionality reduction specifically designed for HDLSS settings. Within the broader context of multi-omics data imputation and normalization research, these protocols are essential for ensuring the reliability of biological interpretations and the success of subsequent drug development efforts.

In modern bioinformatics, technological advances in high-throughput biology have enabled the simultaneous measurement of tens of thousands to millions of features (e.g., genes, proteins, metabolites) across a relatively small number of biological samples [9] [10]. This scenario is aptly termed the High-Dimension, Low Sample Size (HDLSS) paradigm. A pivotal characteristic of HDLSS data is that the dimensionality p is significantly larger than the sample size n, often denoted as p ≫ n [11].

This paradigm presents unique challenges that run counter to classical statistical intuition. For instance, in the limit as the dimension p → ∞ with a fixed sample size n, a standard Gaussian sample exhibits geometric properties where data vectors tend to lie on the surface of a growing sphere, and the angles between pairs of vectors approach 90 degrees, leaving the randomness in the data to appear largely as a random rotation [9]. This inherent geometry can severely degrade the performance of traditional statistical methods, leading to overfitting, model instability, and spurious correlations [12] [13] [11]. In multi-omics studies, these challenges are compounded by the need to integrate heterogeneous data types (genomics, transcriptomics, proteomics, etc.), each with its own HDLSS characteristics, missing value patterns, and technical noise [10] [14] [15]. Addressing the HDLSS problem through principled normalization, imputation, and dimensionality reduction is therefore a critical prerequisite for any meaningful multi-omics integrative analysis.
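The geometric claims in this paragraph are easy to check numerically. The following sketch draws a small standard Gaussian sample at increasing dimension and reports how tightly the vector lengths (scaled by the square root of the dimension) and pairwise angles concentrate. The sample size and the dimensions tried are arbitrary choices for illustration.

```python
import numpy as np

def hdlss_geometry(n, p, rng):
    """Draw n standard Gaussian vectors in p dimensions and return
    (norms / sqrt(p), pairwise angles in degrees)."""
    X = rng.standard_normal((n, p))
    norms = np.linalg.norm(X, axis=1)
    unit = X / norms[:, None]
    cosines = (unit @ unit.T)[np.triu_indices(n, k=1)]   # distinct pairs only
    angles = np.degrees(np.arccos(np.clip(cosines, -1.0, 1.0)))
    return norms / np.sqrt(p), angles

rng = np.random.default_rng(0)
for p in (10, 1_000, 100_000):
    scaled_norms, angles = hdlss_geometry(20, p, rng)
    print(f"p={p:>7}: norm/sqrt(p) in "
          f"[{scaled_norms.min():.3f}, {scaled_norms.max():.3f}], "
          f"angles in [{angles.min():.1f}, {angles.max():.1f}] degrees")
```

As the dimension grows, the scaled norms cluster around 1 and the angles around 90 degrees, so every sample looks roughly equidistant from every other. This is one reason distance-based methods lose discriminating power in the HDLSS regime.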

Impact on Downstream Analysis

The HDLSS problem fundamentally compromises the validity and reliability of downstream analytical tasks. Understanding these impacts is crucial for developing appropriate corrective methodologies.

Table 1: Impact of HDLSS on Key Downstream Analyses

| Analytical Task | Impact of HDLSS | Consequence |
|---|---|---|
| Classification | High misclassification rate due to overfitting and increased variance of discriminant functions [13]. | Reduced accuracy in disease subtyping, sample diagnosis, and biomarker identification. |
| Clustering | Apparent formation of clusters in high-dimensional space that may not represent true biological groups [9]. | Misleading interpretation of cell types or disease subtypes, invalidating biological conclusions. |
| Principal Component Analysis (PCA) | Sample eigenvectors fail to converge to their population counterparts; they instead converge to a cone, creating a systematic angle bias [9]. | Inaccurate data visualization and incorrect identification of primary sources of variation. |
| Feature Selection | Standard methods assume feature independence; HDLSS exacerbates the difficulty in identifying truly relevant features from a sea of irrelevant ones [11]. | Selection of redundant or irrelevant features, hindering biomarker discovery and biological insight. |
| Data Fusion & Integration | The "curse of dimensionality" affects each omics view uniquely, complicating the creation of a unified, low-dimensional representation [12]. | Failure to capture true inter-omics relationships, leading to an incomplete or distorted biological picture. |

Methodological Framework for HDLSS Data

A robust analytical framework for HDLSS multi-omics data must incorporate specialized procedures for normalization, imputation, and dimensionality reduction to mitigate the adverse effects previously described.

Normalization Strategies for Multi-Omics Data

Normalization is a critical pre-processing step to control systematic biases and minimize technical variation, making different samples and omics layers comparable [16] [14]. The choice of normalization method is particularly sensitive in HDLSS settings, where technical artifacts can easily overwhelm subtle biological signals.

Table 2: Evaluation of Normalization Methods for MS-Based Multi-Omics Data

| Normalization Method | Underlying Assumption | Performance in Multi-Omics Context |
|---|---|---|
| Total Ion Current (TIC) | Total feature intensity is consistent across all samples [14]. | Can be biased by highly abundant features; performance varies across omics types. |
| Probabilistic Quotient Normalization (PQN) | The overall distribution of feature intensities is similar across samples [14]. | Identified as optimal for metabolomics and lipidomics; also excels in proteomics. Robust for temporal studies. |
| Median Normalization | The median feature intensity is constant across samples [14]. | Excels for proteomics data; a simple and stable method. |
| LOESS (QC-based) | Assumes balanced proportions of up/down-regulated features; uses quality control (QC) samples to model systematic error [14]. | Top performer for metabolomics and lipidomics; effective at preserving time-related variance in proteomics. |
| Variance Stabilizing Normalization (VSN) | Feature variance depends on its mean and can be transformed to be constant [14]. | Applied to proteomics; transforms data distribution. |
| SERRF (Machine Learning) | Uses Random Forest on QC samples to learn and correct for systematic errors like batch effects [14]. | Can outperform others but risks overfitting and masking true biological variance. |

Protocol 1: Two-Step Pre-Acquisition Normalization for Tissue-Based Multi-Omics

Application: This protocol is designed for MS-based analysis of proteins, lipids, and metabolites extracted from the same tissue sample, minimizing technical variation prior to instrumental analysis [16].

- Tissue Homogenization: Weigh frozen tissue samples and homogenize in a methanol-water mixture (e.g., 5:2, v:v) at a consistent ratio (e.g., 0.06 mg tissue per μL solvent) [16].

- Multi-Omics Extraction: Perform a simultaneous extraction of biomolecules using a method like the Folch extraction (using methanol, water, and chloroform at a ratio of 5:2:10, v:v:v) [16].

- Post-Extraction Protein Quantification: Measure the protein concentration from the extracted protein pellet using a colorimetric assay (e.g., DCA assay) [16].

- Volume Adjustment: Normalize the volumes of the lipid and metabolite fractions based on the measured protein concentration before drying and LC-MS/MS analysis [16].

Rationale: Normalizing first by tissue weight and then by post-extraction protein concentration has been shown to generate the lowest sample variation, thereby best revealing true biological differences in subsequent analyses [16].

Multi-Omics Normalization Workflow

Advanced Imputation for Missing Data

Missing values are inevitable in omics datasets and are particularly problematic in HDLSS contexts, as they can constitute a significant portion of the already limited sample information. Integrative imputation techniques that leverage correlations across multi-omics datasets outperform methods relying on single-omics information alone [10] [17].

Table 3: Deep Learning Models for Omics Data Imputation

| Deep Learning Model | Key Principle | Strengths | Weaknesses | Suitable Omics Data |
|---|---|---|---|---|
| Autoencoder (AE) | Learns a compressed data representation (encoder) to reconstruct original data (decoder) [17]. | Excels at learning complex, non-linear relationships; relatively straightforward to train. | Prone to overfitting; latent space can be less interpretable. | scRNA-seq, bulk transcriptomics [17]. |
| Variational Autoencoder (VAE) | A probabilistic generative model that learns a latent variable distribution [17]. | More interpretable latent space; mitigates overfitting; good for modeling uncertainty. | More complex training due to KL divergence loss and sampling. | Transcriptomics, multi-omics integration [17]. |
| Generative Adversarial Networks (GANs) | Uses a generator and a discriminator in an adversarial game to produce realistic data [17]. | Highly flexible; can generate diverse, high-quality samples. | Training is unstable (mode collapse, hyperparameter sensitivity). | Image-based omics data (e.g., histology) [17]. |
| Transformer | Utilizes self-attention mechanisms to weigh the importance of all elements in a sequence [17]. | Captures long-range dependencies in data; highly parallelizable. | Computationally intensive (quadratic complexity with sequence length). | Genomics, proteomics (sequence data) [17]. |

Protocol 2: Autoencoder-Based Imputation for scRNA-seq Data

Application: This protocol uses an overcomplete autoencoder to impute missing values in a sparse gene expression matrix, minimizing alterations to biologically uninformative values [17].

- Input: A sparse gene expression matrix `R` with missing values represented as zeros or `NA`.

- Model Architecture:

  - Encoder (`E`): A neural network that maps the input data `R` to a lower-dimensional bottleneck layer.

  - Decoder (`D`): A neural network that reconstructs the full expression matrix from the bottleneck layer.

- Loss Function: Minimize the following objective:

  $$\min_{E,D} \; \lVert R - D\,\sigma(E(R)) \rVert_{0}^{2} + \frac{\lambda}{2}\left(\lVert E \rVert_{F}^{2} + \lVert D \rVert_{F}^{2}\right)$$

  where $\lVert \cdot \rVert_{0}$ implies the loss is calculated only for non-zero counts in `R`, $\sigma$ is the sigmoid activation function, and $\lambda$ is a regularization coefficient to prevent overfitting [17].

- Training: Train the model using backpropagation until the reconstruction error converges.

- Imputation: The output of the trained autoencoder, $D\,\sigma(E(R))$, is the imputed gene expression matrix.
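The objective above can be written out directly in NumPy. The sketch below makes several assumptions that are not specified in the protocol: the encoder and decoder are single weight matrices without bias terms, rows of `R` are samples so the reconstruction is computed as `sigmoid(R @ E) @ D`, plain gradient descent is used in place of a deep learning framework, and the hidden size, learning rate, step count, and toy data are arbitrary.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def masked_loss(R, E, D, lam):
    """Squared reconstruction error on the non-zero entries of R plus
    (lam / 2) * (||E||_F^2 + ||D||_F^2)."""
    err = np.where(R != 0, sigmoid(R @ E) @ D - R, 0.0)
    return np.sum(err ** 2) + 0.5 * lam * (np.sum(E ** 2) + np.sum(D ** 2))

def train_autoencoder(R, hidden=8, lam=0.1, lr=1e-3, steps=300, seed=0):
    """Minimize `masked_loss` by gradient descent; returns E, D and the
    loss history (first entry = loss before any update)."""
    rng = np.random.default_rng(seed)
    n_features = R.shape[1]
    E = rng.normal(0.0, 0.1, size=(n_features, hidden))
    D = rng.normal(0.0, 0.1, size=(hidden, n_features))
    mask = R != 0
    history = []
    for _ in range(steps):
        history.append(masked_loss(R, E, D, lam))
        H = sigmoid(R @ E)                          # hidden activations
        err = np.where(mask, H @ D - R, 0.0)        # error on observed entries only
        grad_D = 2.0 * H.T @ err + lam * D
        grad_Z = (2.0 * err @ D.T) * H * (1.0 - H)  # back through the sigmoid
        grad_E = R.T @ grad_Z + lam * E
        E -= lr * grad_E
        D -= lr * grad_D
    history.append(masked_loss(R, E, D, lam))
    return E, D, history

# Toy sparse matrix: log-transformed Poisson counts, zeros treated as missing.
rng = np.random.default_rng(0)
R = np.log1p(rng.poisson(2.0, size=(30, 8))).astype(float)
E, D, history = train_autoencoder(R)
imputed = sigmoid(R @ E) @ D   # dense output, including the former zeros
print(f"loss: {history[0]:.2f} -> {history[-1]:.2f}")
```

Masking the error term is the key design choice: zeros contribute nothing to the fit, so the network is never trained to reproduce them and is free to predict non-zero values in their place.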

Autoencoder Imputation Process

Dimensionality Reduction and Feature Selection

Direct analysis in the original high-dimensional space is often infeasible. Therefore, reducing dimensionality while preserving biological signal is paramount.

Protocol 3: Hybrid Feature Selection for HDLSS Datasets

Application: This metaheuristic method combines filtering and wrapper techniques to select a minimal set of informative features from HDLSS data, enhancing prediction model performance [11].

- Phase 1: Gradual Permutation Filtering (GPF)

  - Input: All `p` features from the HDLSS dataset.

  - Ranking: Evaluate features based on permutation importance within a model (e.g., a classifier). This involves randomly shuffling a single feature and measuring the decrease in model performance.

  - Iterative Filtering: Repeatedly (e.g., 50 times) measure permutation importance and eliminate features with importance near zero. Recalculate importance after each elimination step to minimize bias.

  - Output: A ranked list of features that have survived the filtering process.

- Phase 2: Heuristic Tribrid Search (HTS)

  - Forward Search: Start with a "first-choice feature" from the GPF-ranked list. Incrementally add the next feature from the list that most improves a performance metric (e.g., LCM).

  - Consolation Match: If performance plateaus, attempt to swap a single feature between the selected and unselected pools to escape local optima.

  - Backward Elimination: Remove the least important feature from the selected set if it does not degrade performance.

  - Stopping Criterion: The process stops when no further improvement is found via swapping or elimination.
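The filtering phase can be illustrated with a compact NumPy sketch. It is not the published GPF implementation: it assumes a nearest-centroid classifier as the model, evaluates accuracy on the training data for brevity, requires labels coded 0..C-1, and uses hypothetical function names and an arbitrary tolerance. The search phase (HTS) is not shown.

```python
import numpy as np

def nearest_centroid_accuracy(X, y, centroids):
    d = ((X[:, None, :] - centroids[None, :, :]) ** 2).sum(axis=2)
    return float((d.argmin(axis=1) == y).mean())

def permutation_importance(X, y, n_repeats=20, seed=0):
    """Mean drop in accuracy when each feature is shuffled in turn."""
    rng = np.random.default_rng(seed)
    centroids = np.vstack([X[y == c].mean(axis=0) for c in np.unique(y)])
    baseline = nearest_centroid_accuracy(X, y, centroids)
    importance = np.zeros(X.shape[1])
    for j in range(X.shape[1]):
        drops = []
        for _ in range(n_repeats):
            Xp = X.copy()
            Xp[:, j] = rng.permutation(Xp[:, j])   # break the feature-label link
            drops.append(baseline - nearest_centroid_accuracy(Xp, y, centroids))
        importance[j] = np.mean(drops)
    return importance

def gradual_filter(X, y, rounds=5, tol=1e-3):
    """Repeatedly drop features with importance <= tol, recomputing the
    importances after every round. Returns surviving feature indices,
    ranked from most to least important."""
    keep = np.arange(X.shape[1])
    for _ in range(rounds):
        imp = permutation_importance(X[:, keep], y)
        survivors = imp > tol
        if survivors.all() or not survivors.any():
            break
        keep = keep[survivors]
    imp = permutation_importance(X[:, keep], y)
    return keep[np.argsort(imp)[::-1]]

# Toy data: feature 0 separates the classes, the other five are noise.
rng = np.random.default_rng(0)
y = np.repeat([0, 1], 30)
X = rng.normal(size=(60, 6))
X[y == 1, 0] += 6.0
ranked = gradual_filter(X, y)
print(ranked)
```

In a real HDLSS analysis the importance would be measured on held-out data, since training-set accuracy overstates performance when features vastly outnumber samples.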

Data Fusion in HDLSS Settings

A universal approach for learning in an HDLSS setting involves multi-view mid-fusion [18]. When inherent data views (e.g., separate omics) are not available, this technique artificially constructs them by splitting high-dimensional feature vectors into smaller subsets. Each subset is then treated as an independent "view," and a mid-fusion integration model is applied to learn from these views simultaneously, effectively improving performance in the HDLSS context [18].
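The view-construction step described above can be sketched in a few lines. The source does not specify how features are assigned to subsets, so the random, disjoint partition used here is an assumption, as is the function name. The mid-fusion model that learns from the resulting views is not shown.

```python
import numpy as np

def split_into_views(X, n_views, seed=0):
    """Randomly partition the feature axis of X (samples x features) into
    `n_views` disjoint subsets. Returns (views, index_sets)."""
    rng = np.random.default_rng(seed)
    perm = rng.permutation(X.shape[1])
    index_sets = np.array_split(perm, n_views)
    return [X[:, idx] for idx in index_sets], index_sets

# Toy matrix: 5 samples x 12 features, split into 4 artificial views.
X = np.arange(5 * 12, dtype=float).reshape(5, 12)
views, index_sets = split_into_views(X, n_views=4)
print([v.shape for v in views])
```

Each view keeps all samples but only a fraction of the features, so the per-view ratio of features to samples is smaller than in the original matrix.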

The Scientist's Toolkit: Essential Reagents & Materials

Table 4: Key Research Reagent Solutions for Multi-Omics Experiments

| Item | Function/Application | Example/Details |
|---|---|---|
| Folch Extraction Solvents | Simultaneous extraction of proteins, lipids, and metabolites from the same biological sample [16]. | Methanol, Water, Chloroform at ratio 5:2:10 (v:v:v). |
| Internal Standards (I.S.) | Spiked into samples before LC-MS/MS analysis to correct for technical variation during sample preparation and instrument run. | Metabolomics: ¹³C₅,¹⁵N-Folic Acid. Lipidomics: EquiSplash mixture [16]. |
| Colorimetric Protein Assay | Quantification of total protein concentration for sample normalization prior to proteomic analysis. | DCA (Dichloroacetic Acid) Assay or similar (e.g., BCA, Bradford) [16]. |
| LC-MS/MS Grade Solvents | Used as mobile phases for liquid chromatography to ensure minimal background noise and high sensitivity in mass spectrometry. | MS-grade Water with 0.1% Formic Acid (FA); Acetonitrile (ACN) with 0.1% FA [16]. |
| Quality Control (QC) Pool | A pooled sample created by combining small aliquots of all study samples, used to monitor and correct for instrumental drift. | Injected at regular intervals throughout the LC-MS/MS sequence for post-acquisition normalization (e.g., LOESS QC) [14]. |

The HDLSS problem is a central challenge in contemporary multi-omics research, directly impacting the veracity of downstream analytical results. Success in this context hinges on the rigorous application of specialized protocols for data pre-processing. As detailed in this note, a combination of robust two-step normalization, advanced deep learning-based imputation, and careful dimensionality reduction or feature selection forms a defensible strategy to mitigate the perils of high-dimensionality and low sample size. Adherence to these protocols ensures that subsequent data integration and modeling efforts are built upon a reliable foundation, thereby accelerating the discovery of robust biomarkers and therapeutic targets in precision medicine.

In multi-omics research, data heterogeneity presents a fundamental challenge for integrative analysis. This heterogeneity manifests primarily through different scales (e.g., read counts for transcriptomics versus intensity values for proteomics), varying distributions (negative binomial for transcript expression, bimodal for methylation data), and disparate modalities (continuous, categorical, and right-censored data) originating from platforms including genomics, transcriptomics, proteomics, metabolomics, and epigenomics [19]. The core objective of multi-omics integration is to synthesize these heterogeneous datasets, measured on the same biological samples, to achieve a holistic understanding of biological systems and complex diseases such as cancer and neurodevelopmental disorders [20] [21]. Successfully reconciling these differences is critical for uncovering hidden patterns and complex phenomena that are not apparent from single-omics analyses alone [21].

The sources of heterogeneity are both technical and biological. Technical variance arises from differences in sample handling, reagents, instrumentation, and operator, leading to batch effects that can obscure true biological signals [22]. Biologically, different omics layers may produce complementary or occasionally conflicting signals, as seen in colorectal carcinomas where methylation profiles linked to genetic lineages showed inconsistent connections to transcriptional programs [19]. Furthermore, cohort differences in sex, age, ancestry, disease severity, and comorbidities introduce additional variance that is not disease-related, complicating the distinction between technical noise and biological signal [22]. Addressing these challenges requires robust normalization, batch correction, and specialized statistical frameworks designed to handle high-dimensional, sparse data with complex covariance structures [22].

Understanding the Dimensions of Heterogeneity

The heterogeneity in multi-omics data stems from multiple, interconnected sources. Understanding these dimensions is the first step toward developing effective integration strategies.

- Platform-Induced Heterogeneity: Different omics technologies inherently produce data with distinct characteristics. For instance, RNA-sequencing (RNA-seq) data for transcriptomics is typically count-based and follows a negative binomial distribution, while mass spectrometry-based proteomics generates continuous intensity measurements that often require variance-stabilizing normalization [22]. Methylation data, particularly for CpG islands, displays a characteristic bimodal distribution [19]. These inherent differences in measurement scales and distributions must be reconciled before integration.

- Batch Effects and Confounders: Technical batch effects are a major source of unwanted variation, introduced by differences in sample processing dates, reagent lots, sequencing lanes, or mass spectrometry runs [22]. Biological confounders, such as age, sex, post-mortem interval (for brain tissue), and cell type heterogeneity, can also introduce systematic biases that are unrelated to the biological question of interest. In neurodevelopmental disorder studies, for example, case-control imbalances or developmental stage effects are common and must be carefully adjusted for [22].

- Dimensionality and Sparsity: Omics datasets are typically "wide," with thousands to hundreds of thousands of features (e.g., genes, proteins) measured across a relatively small number of samples. This "large p, small n" scenario increases the risk of overfitting and spurious associations [22]. Additionally, data sparsity is a concern, particularly in proteomics and metabolomics, where many features may be missing not at random but due to being below the detection limit.
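One simple way to keep a single layer from dominating an integration because of its scale or feature count is to standardize each feature and then down-weight each block by the square root of its number of features. This is offered as an illustrative sketch under stated assumptions, not as the method of the cited studies: the log2 and logit (M-value) transforms, the block weighting, and all function names are choices made for the example.

```python
import numpy as np

def zscore_features(X):
    """Center and scale each column; constant columns are left at zero."""
    mu = np.nanmean(X, axis=0)
    sd = np.nanstd(X, axis=0)
    sd = np.where(sd > 0, sd, 1.0)
    return (X - mu) / sd

def harmonize_blocks(blocks):
    """blocks: dict mapping a layer name to a samples x features array that
    is already on an approximately continuous scale. Each feature is
    z-scored and each block is divided by sqrt(n_features), so every block
    contributes the same total variance; blocks are then concatenated."""
    scaled = []
    for name, X in blocks.items():
        Z = zscore_features(np.asarray(X, dtype=float))
        scaled.append(Z / np.sqrt(Z.shape[1]))
    return np.hstack(scaled)

# Toy layers measured on the same 10 samples.
rng = np.random.default_rng(0)
rna_counts = rng.poisson(50, size=(10, 4))          # count data
beta = rng.uniform(0.05, 0.95, size=(10, 6))        # methylation beta-values
blocks = {
    "rna": np.log2(rna_counts + 1.0),               # log-transform counts
    "meth": np.log2(beta / (1.0 - beta)),           # beta-values -> M-values
}
combined = harmonize_blocks(blocks)
print(combined.shape)
print(combined[:, :4].var(axis=0).sum(), combined[:, 4:].var(axis=0).sum())
```

Batch effects and confounders still need separate handling; scaling only addresses the incomparable variances between layers.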

Impact of Heterogeneity on Downstream Analysis

Failure to adequately address data heterogeneity has profound consequences on the reliability and interpretability of multi-omics studies.

- Reduced Statistical Power and False Discoveries: Unaccounted for technical variation and confounders can severely compromise downstream inference, inflating false positive rates or obscuring true biological signals [22]. Poor quality control, such as the inclusion of outlier samples due to RNA degradation, can distort differential expression analyses and bias integrative modeling.

- Impaired Integration Performance: Data heterogeneity directly challenges integration algorithms. Without proper harmonization, methods may fail to identify concordant signals across omics layers or may group samples based on technical artifacts rather than biological similarity. This can lead to inaccurate patient stratifications, unreliable biomarker discovery, and flawed molecular subtyping [19].

- Limited Reproducibility and Generalizability: Findings from multi-omics analyses that do not properly account for cohort heterogeneity (e.g., differences in ancestry, medication status) often fail to generalize to independent populations, undermining their clinical and translational potential [22].

Table 1: Key Dimensions of Data Heterogeneity in Multi-Omics Studies

| Dimension of Heterogeneity | Description | Exemplary Data Types | Primary Challenge |
|---|---|---|---|
| Scale and Distribution | Differences in data range (e.g., counts, intensities) and underlying statistical distribution. | RNA-seq (count, negative binomial), Methylation (beta-values, bimodal) | Incomparable feature variances that can dominate integration. |
| Modality | Differences in the fundamental type of data generated. | Genomic (categorical), Proteomic (continuous), Clinical (mixed) | Requires flexible algorithms that can handle diverse data structures. |
| Dimensionality | Differences in the number of features measured per omics layer. | Mutation data (highly sparse), Gene expression (dense) | "Large p, small n" problem, risk of overfitting. |
| Technical Noise | Non-biological variation introduced by experimental procedures. | Batch effects, Library preparation, Platform differences | Can confound biological signal if not corrected. |

Quantitative Benchmarks and Guidelines for Multi-Omics Study Design

Recent large-scale benchmarking studies on datasets from The Cancer Genome Atlas (TCGA) have provided evidence-based recommendations for designing robust multi-omics studies that can effectively manage data heterogeneity. These guidelines address key computational and biological factors to enhance the reliability of integration results [19].

A central finding is the critical importance of feature selection. Selecting a smaller subset of biologically relevant features (e.g., fewer than 10% of omics features) has been shown to improve clustering performance by up to 34% by reducing noise and mitigating the curse of dimensionality [19]. Sample size and class balance are also crucial. Benchmarks recommend a minimum of 26 samples per class to achieve robust cancer subtype discrimination, and advise keeping the ratio between the largest and smallest class below 3:1, as high imbalance can skew integration results [19].

The resilience of integration methods to noise is another key consideration. Studies suggest that analytical workflows should be designed to handle noise levels of up to 30%, beyond which performance can degrade significantly [19]. Adherence to these benchmarks provides a structured framework for researchers to optimize their analytical approaches.

Table 2: Evidence-Based Guidelines for Multi-Omics Study Design (MOSD)

| Factor | Category | Recommended Guideline | Impact on Analysis |
|---|---|---|---|
| Sample Size | Computational | ≥ 26 samples per class | Ensures sufficient statistical power for robust clustering. |
| Feature Selection | Computational | < 10% of omics features | Can improve clustering performance by 34%; reduces noise. |
| Class Balance | Computational | Balance ratio < 3:1 | Prevents skewed results and biased model training. |
| Noise Characterization | Computational | Noise level < 30% | Maintains model performance and reliability. |
| Omics Combinations | Biological | Gene Expression + Methylation often perform well | Provides complementary signals for patient stratification. |
| Clinical Correlation | Biological | Integrate molecular & clinical features (e.g., stage, age) | Validates biological relevance and clinical significance. |
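
The computational guidelines above lend themselves to a quick sanity check before integration. The following is a minimal sketch, assuming class labels are available as a simple list; the function name `check_study_design` and the example numbers are hypothetical, and the thresholds are simply the values quoted in the table.

```python
from collections import Counter


def check_study_design(labels, n_features_total, n_features_selected,
                       min_per_class=26, max_balance_ratio=3.0,
                       max_feature_fraction=0.10):
    """Return guideline name -> True if the guideline from the table is met."""
    counts = Counter(labels)
    smallest = min(counts.values())
    largest = max(counts.values())
    return {
        # At least 26 samples in the smallest class.
        "sample_size": smallest >= min_per_class,
        # Largest-to-smallest class ratio below 3:1.
        "class_balance": largest / smallest < max_balance_ratio,
        # Fewer than 10% of the available features retained.
        "feature_selection": n_features_selected / n_features_total < max_feature_fraction,
    }


# Hypothetical cohort: 60 samples of subtype A, 30 of subtype B,
# with 1,500 of 20,000 features kept after selection.
labels = ["A"] * 60 + ["B"] * 30
report = check_study_design(labels, n_features_total=20000, n_features_selected=1500)
print(report)
```

A failed check does not invalidate a study, but it flags where the benchmark conditions are not met and results may be less stable.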

Experimental Protocols for Data Reconciliation

This section provides detailed, step-by-step protocols for normalizing and harmonizing heterogeneous multi-omics data, a critical prerequisite for successful integration.

Protocol 4.1: Multi-Omics Data Preprocessing and Normalization

Objective: To transform raw data from each omics layer into a clean, normalized, and batch-corrected dataset ready for integration.

Materials:

- Computing Environment: R (v4.0+) or Python (v3.8+).

- Software Packages: R: DESeq2, edgeR, sva, limma. Python: scikit-learn, pandas, numpy, scanpy (for single-cell data).

- Input Data: Raw feature matrices (e.g., count matrix for RNA-seq, intensity matrix for proteomics).

Procedure:

- Quality Control (QC) and Filtering:

- Perform per-sample QC: Assess metrics such as sequencing depth (for RNA-seq), mapping rates, and number of detected features. Exclude outlier samples with signs of degradation or low quality [22].

- Perform per-feature Filtering: Remove features with excessive missingness (e.g., genes not expressed in a sufficient number of samples) or low variance, as they contribute little information.

- Platform-Specific Normalization:

- For RNA-seq Data: Apply methods that account for library size and composition bias. Use the median-of-ratios method in DESeq2 or the trimmed mean of M-values (TMM) method in edgeR [22].

- For Proteomics Data: Apply variance-stabilizing normalization, quantile normalization, or use internal reference standards to correct for technical variation in mass spectrometry data [22].

- For Methylation Data: Perform background correction and normalization using methods from the minfi or ChAMP packages.

- Batch Effect Correction:

- Identify known batch variables (e.g., processing date, sequencing run).

- Apply a batch correction algorithm such as ComBat (from the sva package) or removeBatchEffect (from the limma package) to remove systematic technical variation while preserving biological heterogeneity [22].

- For more complex batch structures, consider advanced methods like Mutual Nearest Neighbors (MNN) or deep learning-based approaches, especially in single-cell omics [22].

- Handling Missing Data:

- For proteomics and metabolomics data, impute missing values using methods like k-nearest neighbors (KNN) imputation, minimum value imputation, or model-based approaches (e.g., MissForest), depending on the assumed mechanism of missingness.
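
To make the RNA-seq normalization step of this protocol more concrete, here is a minimal NumPy sketch of the median-of-ratios idea that underlies DESeq2's size factors. It is an illustration only, not DESeq2's implementation; the function name `median_of_ratios_size_factors` and the toy count matrix are assumptions made for this example, and real analyses should rely on the R packages listed above.

```python
import numpy as np


def median_of_ratios_size_factors(counts):
    """counts: genes x samples array of raw counts. Returns one size factor per sample."""
    counts = np.asarray(counts, dtype=float)
    # Use only genes with non-zero counts in every sample, so the log is defined.
    expressed = np.all(counts > 0, axis=1)
    log_counts = np.log(counts[expressed])
    # Pseudo-reference sample: per-gene geometric mean across samples (in log space).
    log_geo_mean = log_counts.mean(axis=1, keepdims=True)
    # Size factor: median over genes of each sample's ratio to the pseudo-reference.
    return np.exp(np.median(log_counts - log_geo_mean, axis=0))


# Toy matrix (4 genes x 2 samples): sample 2 was sequenced twice as deeply.
counts = np.array([[10, 20],
                   [100, 200],
                   [50, 100],
                   [0, 5]])
size_factors = median_of_ratios_size_factors(counts)
normalized = counts / size_factors  # broadcasts one factor per column
print(size_factors)
```

Because the factor is a median over genes, a handful of strongly differential genes has little influence on it, which is how this family of methods addresses composition bias as well as library size.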

Protocol 4.2: Concatenation-Based Integration with DIABLO

Objective: To integrate multiple omics datasets using a multi-block supervised framework to identify correlated components that discriminate between pre-defined sample classes and predict clinical outcomes.

Materials:

- Software: R package mixOmics [21].

- Input Data: Normalized and batch-corrected matrices from at least two omics layers (e.g., gene expression, methylation) and a sample phenotype/class vector.

Procedure:

- Data Preparation: Ensure all normalized matrices are transformed and scaled appropriately. The mixOmics pipeline often includes internal log-transformation for count data and standardization.

- Model Design:

- Specify the omics blocks (X) and the outcome vector (Y) that represents the phenotype or class to be discriminated.

- Define the model design matrix, which controls the level of integration between different omics layers. A common starting point is a full design where all blocks are connected.

- Parameter Tuning:

- Use the tune.block.splsda function to perform a cross-validation grid search for the optimal number of components and the number of features to select per component and per omics block. This step is crucial for building a robust model.

- Model Fitting:

- Run the final block.splsda (DIABLO) model using the tuned parameters.

- The model will identify a set of components (latent variables) that maximize the covariance between the omics blocks and the correlation with the outcome.

- Result Interpretation:

- Sample Plot: Visualize sample clustering in the latent space to assess class discrimination.

- Circos Plot: Generate a circos plot to visualize the correlations between selected features from different omics layers, revealing multi-omics biomarker networks.

- Loadings: Examine the variable loadings to identify the top features from each omics platform that drive the integration and class separation.
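
The "full design" mentioned in the Model Design step can be pictured as a small square matrix with one row and column per omics block. The sketch below builds such a matrix in Python purely to show its structure; DIABLO itself is run in R through mixOmics, and the helper name `full_design`, the block names, and the convention assumed here (zeros on the diagonal, link strengths between 0 and 1 elsewhere) should be checked against the mixOmics documentation.

```python
import numpy as np


def full_design(block_names, link=1.0):
    """Square block-by-block matrix: `link` between every pair of blocks, 0 on the diagonal."""
    k = len(block_names)
    design = np.full((k, k), float(link))
    # A block is not linked to itself.
    np.fill_diagonal(design, 0.0)
    return design


# Hypothetical block names for three omics layers.
blocks = ["rna", "methylation", "protein"]
design = full_design(blocks)
print(design)
```

Lowering `link` weakens the pressure on components to correlate across blocks, which shifts the model's emphasis toward discriminating the outcome classes.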

Protocol 4.3: Deep Learning-Based Integration with Flexynesis

Objective: To leverage a flexible deep learning toolkit for integrating bulk multi-omics data for various prediction tasks, including classification, regression, and survival analysis, in a modular and accessible framework.

Materials:

- Software: Flexynesis, available via PyPi, Bioconda, or Galaxy Server [23].

- Input Data: Normalized and batch-corrected matrices from multiple omics layers. The framework supports single-task and multi-task learning.

Procedure:

- Data Preprocessing with Flexynesis:

- Use the accessory pipeline provided with Flexynesis to streamline data processing, including feature selection and hyperparameter tuning.

- Model Configuration:

- Choose from available deep learning architectures (e.g., fully connected or graph-convolutional encoders).

- Select the supervision task(s): attach Multi-Layer Perceptron (MLP) "supervisor" heads for regression (e.g., drug response), classification (e.g., cancer subtype), or survival modeling (Cox Proportional Hazards) [23].

- Training and Validation:

- Implement standard training/validation/test splits. Flexynesis automates hyperparameter optimization.

- Train the model. The framework allows joint training on multiple outcome variables, shaping the sample embedding space (latent variables) with complementary information, even when some labels are missing [23].

- Model Evaluation and Biomarker Discovery:

- Evaluate performance on the held-out test set using task-specific metrics (e.g., AUC for classification, C-index for survival).

- Use the model's interpretability features to identify key input features (biomarkers) that contribute most to the predictions.
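
For the survival task, the C-index mentioned in the evaluation step can be computed directly from held-out predictions. The following is a minimal pure-Python sketch of Harrell's concordance index; it is not part of Flexynesis, the function name `concordance_index` and the toy data are assumptions for this example, and pairs with tied event times are simply skipped.

```python
def concordance_index(times, events, risk_scores):
    """Harrell's C: fraction of comparable pairs ranked correctly by the risk score.

    times: observed follow-up times; events: 1 if the event occurred, 0 if censored;
    risk_scores: higher means the model predicts an earlier event.
    """
    concordant = 0.0
    comparable = 0
    n = len(times)
    for i in range(n):
        for j in range(n):
            # A pair is comparable when i had the event strictly before j's observed time.
            if events[i] and times[i] < times[j]:
                comparable += 1
                if risk_scores[i] > risk_scores[j]:
                    concordant += 1.0
                elif risk_scores[i] == risk_scores[j]:
                    concordant += 0.5  # tied predictions count as half
    if comparable == 0:
        raise ValueError("no comparable pairs")
    return concordant / comparable


# Toy held-out set: the third patient is censored at time 6.
times = [2, 4, 6, 8]
events = [1, 1, 0, 1]
risk_scores = [0.9, 0.7, 0.8, 0.1]
c_index = concordance_index(times, events, risk_scores)
print(c_index)
```

A value of 0.5 corresponds to random ranking and 1.0 to perfect ranking; the quadratic loop is fine for small test sets but would need a faster implementation for large cohorts.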

The Scientist's Toolkit: Essential Research Reagents and Computational Solutions

Table 3: Key Research Reagent Solutions and Computational Tools for Multi-Omics Integration

| Item / Tool Name | Type | Function in Multi-Omics Integration |
|---|---|---|
| DESeq2 / edgeR | R Software Package | Performs normalization and differential expression analysis for RNA-seq data; addresses library size and composition bias [22]. |
| ComBat (sva package) | R Software Package | Empirical Bayes method for correcting batch effects in high-dimensional data, preserving biological signal while removing technical artifacts [22]. |
| Flexynesis | Python Deep Learning Toolkit | Provides modular, reusable deep learning architectures for bulk multi-omics integration tasks like classification, regression, and survival analysis [23]. |
| DIABLO (mixOmics) | R Software Package | A supervised multi-block framework to identify highly correlated features across multiple omics datasets that discriminate between sample classes [21]. |
| Mutual Nearest Neighbors (MNN) | Computational Algorithm | A batch correction method that identifies pairs of cells (or samples) that are nearest neighbors across batches, used to align datasets and remove technical variation [22]. |
| Internal Reference Standards | Wet-Lab Reagent | Used in proteomics and metabolomics experiments; a set of known, stable isotopically labeled compounds spiked into samples to correct for technical variation during mass spectrometry [22]. |
| Single-Cell Multi-Omics Assays | Wet-Lab Protocol | Enables simultaneous measurement of genomic, transcriptomic, and epigenomic information from the same cell, resolving cellular heterogeneity without inference [24]. |
| Long-Read Sequencing | Technology Platform | Enables full-length transcript sequencing and access to complex genomic regions, improving the resolution of structural variants and isoform diversity [24]. |

In multi-omics research, the raw data generated from high-throughput technologies are never analysis-ready. They contain inherent technical artifacts that, if unaddressed, would obscure true biological signals and lead to spurious findings. Two of the most critical preprocessing steps are imputation and normalization, each serving distinct but complementary purposes. Imputation focuses on handling missing data values that arise from technical limitations, while normalization addresses systematic technical variations that prevent fair comparisons across samples or datasets [2] [25]. The confusion between these processes often stems from their shared position in data preprocessing workflows, yet their methodological approaches and ultimate goals differ fundamentally. Within the context of multi-omics data integration for precision medicine and drug development, applying these techniques appropriately is paramount for generating biologically valid, reproducible results that can inform clinical decision-making and therapeutic discovery [2] [24].

Defining the Core Concepts

The Goal of Imputation: Handling Data Gaps

Imputation is the process of estimating and filling in missing values in a dataset. In multi-omics studies, missing data is a pervasive issue arising from various sources. Technical limitations can prevent the detection of low-abundance proteins in proteomics or metabolites in metabolomics [25]. Analytical platform sensitivities vary, with some technologies failing to detect molecules present at concentrations below their detection thresholds. Biological constraints also contribute, as certain molecules may be expressed in a tissue-specific manner and thus absent in other sample types [25]. The primary goal of imputation is to create a complete data matrix suitable for downstream statistical analyses and machine learning algorithms, most of which require complete datasets. By addressing these gaps, imputation helps prevent biased parameter estimates, loss of statistical power, and reduced generalizability of findings [4].
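
As a concrete illustration of what "estimating and filling in" can mean, the sketch below implements a very simple k-nearest-neighbour imputation in NumPy. It is a teaching example under stated assumptions (samples in rows, features in columns, missing values encoded as NaN, Euclidean-style distance over shared features); the function name `knn_impute` and the toy matrix are hypothetical, and established implementations should be preferred in practice.

```python
import numpy as np


def knn_impute(X, k=2):
    """Fill NaNs in a samples x features matrix with the mean of the k nearest donor samples."""
    X = np.asarray(X, dtype=float)
    filled = X.copy()
    missing = np.isnan(X)
    for i in np.where(missing.any(axis=1))[0]:
        # Distance from sample i to every other sample, over features observed in both.
        dists = np.full(X.shape[0], np.inf)
        for j in range(X.shape[0]):
            shared = ~missing[i] & ~missing[j]
            if j != i and shared.any():
                dists[j] = np.sqrt(np.mean((X[i, shared] - X[j, shared]) ** 2))
        for f in np.where(missing[i])[0]:
            # Only neighbours that actually observed feature f can donate a value.
            donors = [j for j in np.argsort(dists)
                      if np.isfinite(dists[j]) and not missing[j, f]][:k]
            if donors:  # if no donor exists, the value is left as NaN
                filled[i, f] = X[donors, f].mean()
    return filled


# Toy intensity matrix: sample 2 is missing its third feature.
X = np.array([[1.0, 2.0, 3.0],
              [1.1, 2.1, np.nan],
              [5.0, 6.0, 7.0],
              [0.9, 1.9, 2.8]])
filled = knn_impute(X, k=2)
print(filled[1, 2])
```

This kind of neighbour-based estimate suits values that are missing for reasons unrelated to abundance; values missing because they fall below a detection limit are usually better handled with left-censored approaches such as minimum-value imputation.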

The Goal of Normalization: Enabling Fair Comparison

Normalization is the process of removing unwanted technical variation to enable fair comparisons across samples and datasets. Multi-omics data are contaminated by numerous non-biological variances including differences in sample preparation, extraction efficiency, instrumental noise, sequencing depth, and reagent batches [26] [25]. These technical artifacts can create systematic differences between samples that obscure genuine biological signals. The core objective of normalization is to eliminate these technical biases so that biological differences can be accurately discerned. This process is particularly crucial when integrating datasets from different studies, laboratories, or platforms, as it ensures that observed differences reflect true biological variation rather than technical inconsistencies [26] [27].

Table 1: Fundamental Distinctions Between Imputation and Normalization

| Aspect | Imputation | Normalization |
|---|---|---|
| Primary Goal | Handle missing data points | Remove technical variation |
| Problem Addressed | Incomplete data matrices | Systematic technical biases |
| Trigger Condition | Missing values detected | Sample-to-sample technical variability |
| Key Challenge | Preserving biological relationships in estimated values | Removing technical noise without removing biological signal |
| Common Methods | Bayesian networks, matrix factorization, k-NN | Probabilistic Quotient Normalization (PQN), LOESS, Median normalization |

Technical Protocols and Methodologies

Experimental Protocol for Data Normalization

Title: Protocol for Normalizing Mass Spectrometry-Based Multi-Omics Data in Temporal Studies

Background: This protocol outlines a robust strategy for normalizing metabolomics, lipidomics, and proteomics datasets derived from mass spectrometry, particularly suited for time-course experiments where preserving temporal biological variance is critical [26].

Reagents and Materials:

- Raw mass spectrometry data files (.raw, .mzML)

- Quality Control (QC) samples (pooled from all experimental samples)

- R statistical environment (v4.3.0 or higher)

- Normalization packages: limma (for LOESS, Median, Quantile), vsn (for VSN)

Procedure:

- Data Preparation: Process raw files using appropriate software (Compound Discoverer for metabolomics, MS-DIAL for lipidomics, Proteome Discoverer for proteomics) to generate feature intensity tables [26].

- Quality Control Assessment: Calculate the coefficient of variation (CV) for features across QC samples to assess initial data quality.

- Method Selection: Apply multiple normalization methods in parallel:

- For metabolomics and lipidomics: Implement Probabilistic Quotient Normalization (PQN) and LOESS using QC samples (LOESS QC)

- For proteomics: Implement PQN, Median, and LOESS normalization

- Effectiveness Evaluation: Assess each method's performance based on:

- Improvement in QC feature consistency (target: CV reduction >20%)

- Preservation of treatment and time-related variance (variance explained should not decrease >15% post-normalization)

- Method Implementation: Apply the optimal method(s) identified in step 4 to the entire dataset.

Troubleshooting:

- If biological variance decreases substantially post-normalization, consider adjusting parameter settings or trying an alternative method

- If QC consistency does not improve, check for outlier samples that may need exclusion prior to normalization
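
The core of this protocol, PQN followed by a QC-based consistency check, can be sketched in a few lines of NumPy. This is a simplified illustration rather than the cited study's code: it assumes a samples x features matrix of strictly positive intensities, uses the pooled QC samples to build the reference profile, and omits the total-intensity pre-scaling step that classical PQN often includes. The names `pqn_normalize` and `qc_cv` and the toy data are hypothetical.

```python
import numpy as np


def pqn_normalize(intensities, qc_mask):
    """Probabilistic quotient normalization using QC samples as the reference."""
    X = np.asarray(intensities, dtype=float)
    qc_mask = np.asarray(qc_mask, dtype=bool)
    # Reference profile: per-feature median across the pooled QC samples.
    reference = np.median(X[qc_mask], axis=0)
    # Each sample's dilution factor is the median of its feature-wise quotients.
    dilution = np.median(X / reference, axis=1, keepdims=True)
    return X / dilution


def qc_cv(intensities, qc_mask):
    """Per-feature coefficient of variation (SD / mean) across QC samples."""
    qc = np.asarray(intensities, dtype=float)[np.asarray(qc_mask, dtype=bool)]
    return qc.std(axis=0, ddof=1) / qc.mean(axis=0)


# Toy data: one underlying profile measured at four different dilutions;
# the first three rows stand in for pooled QC injections.
base = np.array([100.0, 200.0, 400.0, 800.0])
raw = np.outer([1.0, 0.5, 2.0, 1.5], base)
qc_mask = np.array([True, True, True, False])

normalized = pqn_normalize(raw, qc_mask)
cv_before = qc_cv(raw, qc_mask)
cv_after = qc_cv(normalized, qc_mask)
cv_reduction = 1.0 - np.median(cv_after) / np.median(cv_before)
print(cv_reduction)
```

The relative CV reduction can then be compared with the >20% target from the evaluation step; a parallel check on the variance explained by treatment and time is still needed to confirm that biological signal was not removed along with the technical variation.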

Experimental Protocol for Data Imputation

Title: Protocol for Bayesian Network Imputation of Multi-Omics Data with Missing Values

Background: This protocol describes a Bayesian network approach to handle missing data in multi-omics datasets, particularly effective for exploring causal relationships in incomplete datasets such as those generated from type 2 diabetes studies [4].

Reagents and Materials:

- Multi-omics dataset with missing values (genomics, proteomics, metabolomics, clinical variables)

- BayesNetty software package

- High-performance computing cluster (for datasets >1,000 samples)

Procedure:

- Data Preprocessing: Filter the full variable set (e.g., from ~16,000 to 260 variables) based on biological relevance and data quality.

- Network Setup: Initialize a Bayesian network structure incorporating prior biological knowledge where available.

- Model Fitting: Use the novel imputation method implemented in BayesNetty to fit the network to the incomplete data.

- Imputation Execution: Estimate missing values based on the conditional probability distributions within the fitted network.

- Validation: Assess imputation quality through:

- Comparison of distribution patterns pre- and post-imputation

- Sensitivity analysis using different network starting points

Troubleshooting:

- If convergence issues occur, consider reducing the number of variables or increasing iterations

- If imputed values show extreme deviations from expected ranges, check for violations of distributional assumptions

Comparative Analysis of Methods

Normalization Method Performance

Recent systematic evaluation of normalization strategies for mass spectrometry-based multi-omics datasets revealed distinct performance patterns across different omics types. The study analyzed metabolomics, lipidomics, and proteomics data from primary human cardiomyocytes and motor neurons exposed to acetylcholine-active compounds over time [26] [1].

Table 2: Performance of Normalization Methods Across Omics Types

| Omics Type | Recommended Methods | Performance Metrics | Methods to Avoid |
|---|---|---|---|
| Metabolomics | PQN, LOESS QC | Enhanced QC consistency, preserved time-related variance | SERRF (masks treatment variance) |
| Lipidomics | PQN, LOESS QC | Improved feature consistency, maintained treatment effects | SERRF (inconsistent performance) |
| Proteomics | PQN, Median, LOESS | Preserved treatment and time-related variance | VSN (over-correction) |

The study found that machine learning-based approaches like Systematic Error Removal using Random Forest (SERRF) showed inconsistent performance: SERRF outperformed other methods in some metabolomics datasets but inadvertently masked treatment-related variance in others [26]. This highlights the importance of validating normalization method performance for specific experimental contexts rather than relying on generalized assumptions.

Imputation Method Performance

Bayesian network imputation methods have demonstrated particular utility for multi-omics datasets with complex missingness patterns. Applied to a type 2 diabetes dataset comprising genotypes, proteins, metabolites, gene expression measurements, and clinical variables from 3,029 individuals, the method enabled the construction of a large average Bayesian network from which putative causal relationships could be identified [4]. This approach effectively handled the reality that no individual had complete data for all variables, making standard complete-case analysis impossible. The success of this method stems from its ability to leverage conditional relationships between variables to estimate missing values, preserving the underlying biological structure within the data.

Integrated Workflow and Visualization

Logical Workflow for Multi-Omics Data Preprocessing

The sequential relationship between imputation and normalization, along with their position in the overall data preprocessing pipeline, can be visualized through the following workflow:

Diagram 1: Multi-omics data preprocessing workflow showing the relationship between quality control, normalization, and imputation.

Decision Framework for Method Selection

The selection of appropriate imputation and normalization strategies depends on specific data characteristics and experimental designs. The following decision framework guides researchers in selecting optimal methods:

Diagram 2: Decision framework for selecting appropriate imputation and normalization methods based on data characteristics.

Research Reagent Solutions

Table 3: Essential Research Reagents and Tools for Multi-Omics Preprocessing

| Reagent/Tool | Function | Application Context |
|---|---|---|
| Compound Discoverer 3.3 | Processes raw metabolomics data | Metabolomics feature detection and alignment [26] |
| MS-DIAL 5.1 | Processes lipidomics data | Lipid identification and quantification [26] |
| Proteome Discoverer 3.0 | Processes proteomics data | Protein identification and quantification [26] |
| R limma package | Implements normalization methods | LOESS, Median, and Quantile normalization [26] |
| R vsn package | Variance stabilization | Normalization for proteomics data [26] |
| BayesNetty software | Bayesian network analysis | Handling missing data in multi-omics datasets [4] |
| Quality Control (QC) samples | Monitoring technical variance | Assessment of normalization effectiveness [26] |

Imputation and normalization serve fundamentally distinct yet complementary roles in multi-omics data preprocessing. While imputation addresses data incompleteness by estimating missing values, normalization enables fair comparison by removing technical biases. The confusion between these processes can lead to inappropriate method selection and compromised research outcomes. For normalization, method performance varies significantly across omics types, with PQN and LOESS QC showing particular promise for metabolomics and lipidomics, while PQN, Median, and LOESS excel for proteomics [26]. For imputation, Bayesian network approaches offer powerful solutions for handling missing data while preserving biological relationships [4]. Researchers must carefully consider their specific data types, experimental designs, and analytical goals when selecting and implementing these preprocessing techniques. By applying these methods appropriately and sequentially—typically normalization followed by imputation—researchers can ensure that their multi-omics analyses yield biologically valid, reproducible insights that advance precision medicine and therapeutic development.

In the era of high-throughput biology, multi-omics data integration has become a cornerstone for advancing our understanding of complex biological systems, from disease mechanisms to therapeutic discovery [28]. The promise of integrating genomics, transcriptomics, proteomics, and epigenomics data lies in obtaining a comprehensive picture of biological processes that single-omics approaches cannot capture [29]. However, this promise remains contingent on a critical yet often underestimated prerequisite: rigorous data preprocessing. Poor preprocessing practices introduce systematic distortions that propagate through the entire analytical pipeline, ultimately compromising biological interpretation and undermining scientific reproducibility. This article examines the specific consequences of inadequate preprocessing across multi-omics workflows and provides structured guidelines to mitigate these pervasive challenges.

The Critical Role of Preprocessing in Multi-Omics Research

Data preprocessing transforms raw, complex biological data into clean, analysis-ready datasets. This foundational step is not merely technical "janitor work" but constitutes an essential scientific procedure that determines the validity of all subsequent findings. In multi-omics studies, preprocessing must address the unique characteristics of each data layer while ensuring their eventual compatibility for integration [30].

Traditional manual curation of multi-omics data consumes 60-80% of a computational biologist's time, creating a significant bottleneck in research velocity [30]. This intensive process is necessary because each omics modality presents distinct preprocessing requirements—from genotype imputation and quality control for GWAS data to adapter trimming and read mapping for RNA-seq [31] [32]. Without standardized, automated preprocessing pipelines, studies risk generating irreproducible results that cannot be translated into reliable biological insights.

Table 1: Multi-Omics Data Types and Their Preprocessing Particularities

| Omics Data Type | Key Preprocessing Steps | Primary Challenges |
|---|---|---|
| Genomics (GWAS) | Genotype imputation, quality control (call rate, HWE, MAF), additive encoding [31] | High dimensionality, polygenic architecture, population stratification |
| Transcriptomics (RNA-seq) | Quality control (FastQC), adapter trimming, read mapping, normalization (TPM) [33] [32] | Library size differences, multi-mapping reads, alternative splicing |
| Epigenomics (EWAS) | Background correction, normalization, probe filtering [31] | Cell type heterogeneity, technical variation, confounding |
| Proteomics | Peptide spectral match quantification, normalization, imputation [33] | Missing data, dynamic range compression, batch effects |

Consequences of Inadequate Preprocessing

Technical Artifacts Masquerading as Biological Signals

One of the most pernicious consequences of poor preprocessing is the failure to distinguish technical artifacts from genuine biological signals. Batch effects—systematic technical variation introduced by different processing dates, technicians, or instruments—can completely overwhelm true biological signals if not properly addressed [30]. In one documented case, the first principal component in an integrated multi-omics analysis of leukemia separated samples by sequencing vendor rather than disease subtype, misleading researchers about the fundamental structure of their data [29].

AI models excel at pattern recognition but cannot inherently distinguish real biological differences from technical artifacts. Without specialized correction methods like ComBat or advanced deep learning models, batch effects masquerade as discoveries, invalidating conclusions and leading research down unproductive paths [30].
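
A quick diagnostic for the situation described above is to ask how much of the first principal component is explained by a known batch variable. The sketch below does this with NumPy on simulated data; the function name `pc1_batch_r2`, the vendor labels, and the simulated shift are all assumptions for illustration, and a high value is a prompt for closer inspection rather than proof of a batch effect.

```python
import numpy as np


def pc1_batch_r2(X, batch):
    """Fraction of PC1 variance explained by batch membership (eta-squared)."""
    X = np.asarray(X, dtype=float)
    batch = np.asarray(batch)
    centered = X - X.mean(axis=0)
    # PC1 sample scores from the SVD of the column-centered matrix.
    U, S, _ = np.linalg.svd(centered, full_matrices=False)
    pc1 = U[:, 0] * S[0]
    total = np.sum((pc1 - pc1.mean()) ** 2)
    # Between-batch sum of squares of the PC1 scores.
    between = sum(
        np.sum(batch == b) * (pc1[batch == b].mean() - pc1.mean()) ** 2
        for b in np.unique(batch)
    )
    return between / total


# Simulated example: 20 samples x 50 features, with a systematic shift
# added to every feature for samples from a hypothetical second vendor.
rng = np.random.default_rng(0)
X = rng.normal(size=(20, 50))
batch = np.array(["vendor_A"] * 10 + ["vendor_B"] * 10)
X[batch == "vendor_B"] += 3.0

r2 = pc1_batch_r2(X, batch)
print(r2)
```

Running the same check after batch correction, and against the biological grouping of interest, helps confirm that the correction removed the technical axis without flattening the signal the study is designed to detect.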

Spurious Correlations and Functional Misinterpretation

When preprocessing fails to account for the distinct statistical properties of each omics layer, integrated analyses produce spurious correlations that misinterpret functional relationships. Studies often expect high correlation between mRNA and protein expression, but frequently find only weak associations due to legitimate post-transcriptional regulation [29]. Analysts unaware of this biological reality may misinterpret low correlations as meaningful or selectively report stronger pairs, creating distorted networks of molecular interaction.

In one real-world example, an integrated plot showed a correlation of 0.3 between ATAC-seq peaks and RNA for a set of genes, but half the peaks were located >50kb away from the gene body with no supporting regulatory logic [29]. Such oversights lead to incorrect assignment of regulatory elements and misinterpretation of gene regulatory networks.

Compromised Machine Learning Performance

The high dimensionality of omics data, where the number of features (e.g., genes, variants) vastly exceeds sample size, makes machine learning models particularly vulnerable to poor preprocessing [31]. Penalized regression methods such as ridge regression, lasso, and elastic net are powerful, but they are not recommended for low sample sizes without appropriate preprocessing and can overfit severely [31].

The curse of dimensionality combined with technical noise leads to models that memorize artifacts rather than learning biology. This fundamentally limits the translational potential of predictive models for clinical applications like treatment response prediction or disease diagnosis [31] [30].

Table 2: Quantitative Impact of Poor Preprocessing on Research Efficiency

| Metric | Traditional Manual Curation | Optimized Preprocessing | Business Impact |
|---|---|---|---|
| Time-to-Harmonization | 6-8 Weeks [30] | <48 Hours [30] | Accelerates insight generation by two months |
| R&D Productivity | Constrained (60-80% time on cleaning) [30] | Increased by 15-30% [30] | Quadruples researcher focus on high-value discovery |
| Data Fidelity | Dependent on human error [30] | Auditable, ontology-bound [30] | Essential for regulatory compliance and model trust |

Resolution Mismatch and Cellular Heterogeneity Oversights

Integrating data of different resolutions without appropriate preprocessing creates fundamental misinterpretations of cellular biology. Comparing bulk RNA-seq with single-cell ATAC-seq, for example, fails when analysts don't account for missing cellular anchors or compositional differences [29]. In one case study, integration of bulk proteomics and scRNA-seq from brain tissue led to misleading correlations because proteins expressed in glial cells were not properly captured in the scRNA-seq clustering [29].

These resolution mismatches are particularly problematic in complex tissues, where cellular heterogeneity drives biological function but requires specialized preprocessing approaches to resolve across omics layers.

Figure 1: Consequences of poor preprocessing practices cascade through the analytical pipeline, ultimately compromising biological interpretation and experimental reproducibility.

Experimental Protocols for Robust Multi-Omics Preprocessing

Protocol 1: Preprocessing of GWAS Data for Predictive Modeling

This protocol outlines the standardized preprocessing of genome-wide association study (GWAS) data, based on established methodologies for constructing predictive models of disease outcomes [31].

Materials:

- Raw genotype data (e.g., Illumina Infinium Global Screening Array)

- High-performance computing environment

- PLINK 1.9 software [31]

- Michigan Imputation Server access [31]

- Haplotype Reference Consortium (HRC) reference panel [31]

Procedure:

- Quality Control (Pre-Imputation): Submit genotypes to Michigan Imputation Server for automated QC. Exclusion criteria: variants with low allelic frequency (<0.2), low call rate (<0.95), repeated variants, or variants without information [31].

- Genotype Imputation: Perform imputation with Minimac4 against the HRC reference panel (GRCh37/hg19 genomic annotation) [31].

- Quality Control (Post-Imputation): Execute a second QC analysis with PLINK 1.9. Exclusion criteria: low imputation quality (R²<0.9); variants violating Hardy-Weinberg equilibrium (HWE P<10⁻⁶); low minor allele frequency (MAF<0.01) [31].

- Data Encoding: Encode GWAS data according to an additive model in dosage format, so that each SNP is represented by the number of copies (0 to 2) of the risk or reference allele being counted [31].

Validation:

- Assess final variant count and sample retention

- Verify MAF distribution meets study requirements

- Confirm Hardy-Weinberg equilibrium in control populations
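As a rough illustration of the post-imputation filter, the sketch below applies the three thresholds to per-variant summary statistics in plain Python. It is not the PLINK workflow itself: the `Variant` record, the `passes_qc` helper, and the toy values are hypothetical, and it assumes the usual convention that a variant is excluded when its HWE p-value falls below 10⁻⁶.

```python
from dataclasses import dataclass

# Thresholds from the post-imputation QC step (assumed to be applied per variant).
MIN_R2 = 0.9       # minimum imputation quality
MIN_HWE_P = 1e-6   # variants with a smaller HWE p-value are excluded
MIN_MAF = 0.01     # minimum minor allele frequency


@dataclass
class Variant:
    """Hypothetical per-variant summary record (not a PLINK data structure)."""
    variant_id: str
    r2: float
    hwe_p: float
    dosages: list  # additive dosage per sample, between 0.0 and 2.0


def minor_allele_frequency(dosages):
    """Estimate MAF from additive dosages: mean dosage / 2 is the counted-allele frequency."""
    allele_freq = sum(dosages) / (2 * len(dosages))
    return min(allele_freq, 1 - allele_freq)


def passes_qc(v):
    """Keep a variant only if it clears all three thresholds."""
    return (
        v.r2 >= MIN_R2
        and v.hwe_p >= MIN_HWE_P
        and minor_allele_frequency(v.dosages) >= MIN_MAF
    )


# Toy data: one clean variant and one failure per criterion.
variants = [
    Variant("rs_ok", 0.97, 0.40, [0, 1, 2, 1, 0, 1]),
    Variant("rs_low_r2", 0.55, 0.40, [0, 1, 2, 1, 0, 1]),
    Variant("rs_hwe_fail", 0.97, 1e-9, [0, 1, 2, 1, 0, 1]),
    Variant("rs_rare", 0.97, 0.40, [0, 0, 0, 0, 0, 0.05]),
]
kept = [v.variant_id for v in variants if passes_qc(v)]
print(kept)
```

Comparing the length of `kept` with the input gives the variant retention figure called for in the validation checklist.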

Protocol 2: Integrated RNA-seq and Proteomics Preprocessing

This protocol provides a streamlined workflow for simultaneous preprocessing of paired transcriptome and proteome data to enable comparative molecular subgroup identification [33].

Materials:

- RNA-seq count data (e.g., from STAR aligner and featureCounts)

- Proteomic peptide-spectrum match (PSM) data from TMT mass spectrometry

- R statistical environment (v3.6.3 or later) [33]

- Required R packages: tidyverse, edgeR, limma, vsn, biomaRt [33]

Procedure:

Data Input and Structure:

- Read transcriptomic data (COUNT and TPM formats) ensuring rectangular structure with features in rows and samples in columns [33].

- Read proteomic PSM data, maintaining compatible sample organization.

Normalization and Scaling:

Batch Effect Correction:

Biology-Aware Feature Selection:

- Remove non-informative features: mitochondrial/ribosomal genes, unannotated peaks, proteins with >30% missing data [29].

- Focus on features with known biological relevance to system studied.

- Validate integration with pathway-level coherence assessment.
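The feature-removal rule in this step can be sketched as follows. This is a minimal illustration, not the cited workflow: the prefix list (`MT-`, `RPL`, `RPS`) assumes human gene symbols, the helper names and toy values are hypothetical, and a real pipeline would rely on curated annotation rather than string prefixes.

```python
import math

MAX_MISSING_FRACTION = 0.30  # threshold quoted in the protocol
# Assumed naming convention for mitochondrial and ribosomal genes (human symbols).
# Prefix matching is crude: "RPS" also matches kinases such as RPS6KA1.
EXCLUDED_PREFIXES = ("MT-", "RPL", "RPS")


def missing_fraction(values):
    """Fraction of entries that are None or NaN."""
    n_missing = sum(
        1 for v in values
        if v is None or (isinstance(v, float) and math.isnan(v))
    )
    return n_missing / len(values)


def keep_feature(name, values):
    """Drop excluded gene families and features with too much missing data."""
    if name.upper().startswith(EXCLUDED_PREFIXES):
        return False
    return missing_fraction(values) <= MAX_MISSING_FRACTION


# Toy protein matrix: feature name -> abundance per sample (None = missing).
proteins = {
    "GFAP": [1.2, None, 0.9, 1.1],     # 25% missing, kept
    "MT-CO1": [5.0, 5.1, 4.9, 5.2],    # mitochondrial, removed
    "RPL13": [3.0, 3.1, 2.9, 3.0],     # ribosomal, removed
    "AQP4": [None, None, 0.4, 0.5],    # 50% missing, removed
    "SNAP25": [2.0, 2.1, 1.9, 2.2],    # complete, kept
}
filtered = {name: vals for name, vals in proteins.items() if keep_feature(name, vals)}
print(list(filtered))
```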

Validation:

- Compare molecular subgroups identified from each modality

- Examine RNA-protein correlation patterns in known marker genes

- Assess cluster stability and biological interpretability

Figure 2: Strategic preprocessing workflow showing three-phase approach transforming raw multi-omics data into analysis-ready datasets.

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Computational Tools for Multi-Omics Preprocessing

| Tool/Resource | Function | Application Context |
|---|---|---|
| PLINK 1.9 [31] | Whole-genome association analysis | Quality control and analysis of GWAS data |
| Michigan Imputation Server [31] | Genotype imputation | HRC reference-based genotype completion |
| FastQC/MultiQC [32] | Quality control check | QC of raw sequencing data across multiple samples |
| edgeR/limma [33] | Differential expression | RNA-seq data normalization and analysis |
| ComBat/Harmony [30] [29] | Batch effect correction | Removing technical variation across datasets |
| MOFA+ [29] | Multi-omics integration | Factor analysis for integrated omics datasets |
| ColorBrewer [34] | Color palette selection | Accessible data visualization design |
| Chroma.js Palette Helper [34] | Color palette testing | Color blindness simulation and palette optimization |

The consequences of neglecting proper preprocessing in multi-omics research extend far beyond technical inconveniences—they fundamentally compromise biological interpretation and scientific reproducibility. Poor preprocessing practices introduce systematic biases that distort analytical outcomes, leading to spurious findings, wasted resources, and lost opportunities for genuine discovery. The protocols and guidelines presented here provide a framework for implementing rigorous, standardized preprocessing approaches that transform multi-omics data chaos into reliable, interpretable biological insight. As multi-omics technologies continue to evolve and integrate into clinical and pharmaceutical applications, establishing robust preprocessing foundations becomes not merely a methodological preference but an ethical imperative for reproducible science.

A Practical Toolkit: Modern Imputation and Normalization Strategies

Missing data represents a pervasive challenge in multi-omics research, significantly impeding analytical capabilities and decision-making processes across various domains including healthcare, bioinformatics, and precision oncology [35]. The inherent complexity of multi-omics data, characterized by high dimensionality, heterogeneity, and technical variability, creates formidable obstacles for integration and analysis [25]. The "four Vs" of big data—volume, velocity, variety, and veracity—pose particular challenges for conventional biostatistical methods, which often lack the flexibility to model non-linear interactions across different biological scales [25].

Imputation methodologies have evolved substantially from classical statistical approaches to contemporary machine learning and deep learning techniques, each with distinct advantages for handling different missingness patterns and data structures [35]. This progression reflects an ongoing effort to address the unique characteristics of omics data, including massive dimensionality disparities (from millions of genetic variants to thousands of metabolites), temporal heterogeneity, platform-specific technical variability, and pervasive missingness arising from both biological and technical constraints [25]. The selection of appropriate imputation strategies is crucial for maximizing the discovery of meaningful biological differences while minimizing the introduction of artifacts that could compromise downstream analyses.

Table 1: Fundamental Categories of Missing Data in Multi-Omics Studies

| Category | Description | Common Causes | Typical Impact |
|---|---|---|---|
| Missing Completely at Random (MCAR) | Missingness unrelated to any variables | Sample processing failures, random technical errors | Reduces statistical power but introduces minimal bias |
| Missing at Random (MAR) | Missingness related to observed variables but not unobserved data | Batch effects, platform-specific detection limits | Can introduce bias if missingness mechanisms are ignored |
| Missing Not at Random (MNAR) | Missingness related to the unobserved values themselves | Low-abundance molecules falling below detection thresholds | Potentially severe bias requiring specialized handling |
| Structured Missingness | Systematic patterns across samples or features | Incomplete modality acquisition, sample quality issues | Complicates integration and requires strategic imputation |
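To make the difference between MCAR and MNAR concrete, the following sketch simulates both on synthetic abundances and compares the mean of the values that remain observed. Everything here is an illustrative assumption: the log-normal distribution, the 30% missingness rate, and the detection limit placed at the 30th percentile.

```python
import random
import statistics

rng = random.Random(0)  # fixed seed so the simulation is repeatable

# Synthetic abundances for one feature across 2000 samples (assumed log-normal).
true_values = [rng.lognormvariate(0.0, 1.0) for _ in range(2000)]

# MCAR: every value is dropped with the same probability, regardless of its size.
mcar_observed = [v for v in true_values if rng.random() > 0.3]

# MNAR: values below a hypothetical detection limit are never observed.
detection_limit = statistics.quantiles(true_values, n=10)[2]  # 30th percentile
mnar_observed = [v for v in true_values if v >= detection_limit]

true_mean = statistics.mean(true_values)
mcar_bias = statistics.mean(mcar_observed) - true_mean
mnar_bias = statistics.mean(mnar_observed) - true_mean

print(f"true mean:          {true_mean:.3f}")
print(f"bias under MCAR:    {mcar_bias:+.3f}")
print(f"bias under MNAR:    {mnar_bias:+.3f}")
```

Under MCAR the observed mean stays close to the true mean and only precision is lost, whereas truncation at the detection limit shifts the observed mean upward. This is why methods that ignore the missingness mechanism can be acceptable for MCAR but misleading for MNAR.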

Classical and Statistical Imputation Methods

Classical imputation approaches form the foundational methodology for handling missing data, with development dating back to the 1930s [35]. These methods typically rely on statistical principles and assumptions about data distribution, offering interpretability and computational efficiency, particularly for datasets with limited missingness.

k-Nearest Neighbors (k-NN) and Regression-Based Approaches

k-NN imputation operates on the principle that samples with similar expression patterns across observed features will likely have similar values for missing features [36]. The method identifies the k most similar samples based on distance metrics (typically Euclidean or cosine distance) and imputes missing values as weighted averages of the neighbors' values. The key advantage of k-NN is its intuitive implementation and minimal assumptions about data distribution. However, performance deteriorates with high-dimensional data where distance metrics become less meaningful, and computational costs increase substantially with dataset size [36].
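A minimal sketch of distance-weighted k-NN imputation is shown below, written in plain Python for transparency. The function names, the inverse-distance weighting, and the choice to compute distances only over features observed in both samples are assumptions made for illustration; library implementations differ in these details.

```python
import math


def distance(a, b):
    """Root-mean-square difference over the features observed in both samples."""
    shared = [(x, y) for x, y in zip(a, b) if x is not None and y is not None]
    if not shared:
        return math.inf
    return math.sqrt(sum((x - y) ** 2 for x, y in shared) / len(shared))


def knn_impute(samples, k=2, eps=1e-9):
    """Return a copy of `samples` (rows = samples, None = missing) with gaps filled."""
    imputed = [row[:] for row in samples]
    for i, row in enumerate(samples):
        for j, value in enumerate(row):
            if value is not None:
                continue
            # Donors: other samples that observed feature j and share features with row i.
            donors = [
                (distance(row, other), other[j])
                for m, other in enumerate(samples)
                if m != i and other[j] is not None
            ]
            donors = [d for d in donors if math.isfinite(d[0])]
            donors.sort(key=lambda d: d[0])
            nearest = donors[:k]
            if not nearest:
                continue  # no usable donor: leave the gap rather than guess
            # Inverse-distance weights, so closer neighbours contribute more.
            weights = [1.0 / (d + eps) for d, _ in nearest]
            imputed[i][j] = sum(w * v for w, (_, v) in zip(weights, nearest)) / sum(weights)
    return imputed


samples = [
    [1.0, 2.0, 3.0],
    [1.1, 2.1, None],   # the missing value should land near 3.0
    [0.9, 1.9, 2.9],
    [5.0, 6.0, 7.0],    # distant sample, ignored when k=2
]
result = knn_impute(samples, k=2)
print(result[1][2])
```

The nested loops make the quadratic cost in the number of samples explicit, which is the scaling problem noted above for large datasets.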

Regression-based methods model each feature with missing values as a function of other observed features, using techniques ranging from simple linear regression to more sophisticated regularized variants (ridge, lasso) [36]. These methods can capture linear relationships effectively but struggle with the complex non-linear interactions prevalent in biological systems. The emergence of multi-omics research has highlighted the limitations of these approaches for handling the completely missing modalities common in multi-platform studies [36].
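In its simplest form, regression-based imputation fits a model on the complete cases and predicts the missing entries. The sketch below uses ordinary least squares with a single predictor; the transcript and protein values are invented for illustration, and the helper names are hypothetical.

```python
def fit_line(xs, ys):
    """Ordinary least squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def regression_impute(predictor, target):
    """Fill None entries of `target` using a line fitted on the complete cases."""
    complete = [(x, y) for x, y in zip(predictor, target) if y is not None]
    xs, ys = zip(*complete)
    slope, intercept = fit_line(xs, ys)
    return [y if y is not None else slope * x + intercept
            for x, y in zip(predictor, target)]


# Toy example: a fully observed transcript level predicts a partially missing protein level.
transcript = [1.0, 2.0, 3.0, 4.0, 5.0]
protein = [3.1, 4.9, None, 9.2, 10.8]
filled = regression_impute(transcript, protein)
print(filled)
```

Because the fitted line is the only source of information, any non-linear dependence between the two features is lost, which illustrates the limitation described above.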

Matrix Factorization and Completion

Matrix factorization approaches, particularly Non-negative Matrix Factorization (NMF), have gained significant traction for omics data analysis due to their ability to uncover latent structures and handle high-dimensional datasets [37] [36]. NMF decomposes a non-negative data matrix V (n×m) into two lower-dimensional non-negative matrices W (n×k) and H (k×m), such that V ≈ WH, where k represents the number of latent components [37].

The fundamental assumption underlying matrix completion is that the original data matrix has a low-rank structure, meaning that most features can be represented as linear combinations of a smaller number of latent factors [35]. This assumption frequently holds true for omics data, where coordinated biological processes generate strong dependencies among molecular features. For single-cell multi-omics data clustering, approaches like PLNMFG (Pseudo-label guided Non-negative Matrix Factorization with Graph constraint) integrate unified latent representation learning with cluster structure learning in a joint framework [37]. These methods perform adaptive imputation to handle dropout events while using prior pseudo-labels as constraints during collective factorization, resulting in more robust latent representations that preserve similarity information [37].
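The low-rank idea can be illustrated with a small masked NMF, in which the multiplicative updates for W and H are computed over observed entries only and the product WH then supplies estimates for the missing ones. This is a generic sketch under stated assumptions (squared-error loss, random initialization, plain Python lists); it is not the PLNMFG algorithm, and `masked_nmf` is a hypothetical name.

```python
import random


def masked_nmf(V, k, n_iter=500, seed=0, eps=1e-12):
    """Factor V (n x m, None = missing, other entries >= 0) as W (n x k) times H (k x m).

    Uses multiplicative updates restricted to the observed entries of V.
    """
    rng = random.Random(seed)
    n, m = len(V), len(V[0])
    W = [[rng.uniform(0.1, 1.0) for _ in range(k)] for _ in range(n)]
    H = [[rng.uniform(0.1, 1.0) for _ in range(m)] for _ in range(k)]
    observed = [[V[i][j] is not None for j in range(m)] for i in range(n)]

    def reconstruct():
        return [[sum(W[i][c] * H[c][j] for c in range(k)) for j in range(m)]
                for i in range(n)]

    for _ in range(n_iter):
        # Update W with H fixed: W <- W * ((M*V) H^T) / ((M*WH) H^T)
        R = reconstruct()
        for i in range(n):
            for c in range(k):
                num = sum(V[i][j] * H[c][j] for j in range(m) if observed[i][j])
                den = sum(R[i][j] * H[c][j] for j in range(m) if observed[i][j])
                W[i][c] *= num / (den + eps)
        # Update H with W fixed: H <- H * (W^T (M*V)) / (W^T (M*WH))
        R = reconstruct()
        for c in range(k):
            for j in range(m):
                num = sum(W[i][c] * V[i][j] for i in range(n) if observed[i][j])
                den = sum(W[i][c] * R[i][j] for i in range(n) if observed[i][j])
                H[c][j] *= num / (den + eps)
    return W, H, reconstruct()


# Rank-1 toy matrix (outer product of [1, 2, 3] and [1, 2, 4]) with one hidden entry.
V = [
    [1.0, 2.0, 4.0],
    [2.0, None, 8.0],   # true value is 4.0
    [3.0, 6.0, 12.0],
]
W, H, V_hat = masked_nmf(V, k=1)
print(round(V_hat[1][1], 3))
```

The toy matrix is exactly rank 1, so the hidden entry is recoverable; with real omics data the rank k must be chosen, and the quality of the imputation depends on how well the low-rank assumption holds.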

Table 2: Classical Imputation Methods and Their Applications

| Method Category | Key Algorithms | Strengths | Limitations | Optimal Use Cases |
|---|---|---|---|---|
| k-NN Based | k-NN, Ensemble k-NN | Simple implementation, preserves local structure | Computationally intensive for large datasets, sensitive to distance metrics | Small to medium datasets with limited missingness (MCAR) |
| Regression Based | Linear Regression, MICE | Models feature relationships, provides uncertainty estimates | Assumes linear relationships, may not capture complex biology | Datasets with strong linear correlations between features |
| Matrix Factorization | NMF, PMF | Discovers latent structure, handles high-dimensional data | Requires rank selection, may struggle with complex patterns | Multi-omics integration, feature extraction, co-clustering |
| Statistical Models | EM Algorithm, Bayesian Networks | Handles uncertainty, provides probabilistic framework | Computationally intensive, convergence issues | Complex missingness patterns (MAR, MNAR), causal inference |

Modern Machine Learning Approaches

Modern machine learning methods for imputation leverage more sophisticated algorithms to capture complex patterns in multi-omics data, often outperforming classical approaches, particularly for large-scale datasets with complex missingness patterns.

Random Forests and Ensemble Methods

Tree-based ensemble methods such as Random Forests can impute missing data by exploiting the relationships among features. The missForest algorithm, for instance, imputes missing values by training a random forest model on observed values and predicting missing ones, iterating until convergence [35]. These methods are particularly effective for mixed data types (continuous and categorical) and can capture non-linear relationships without strong distributional assumptions. For mass spectrometry-based multi-omics datasets, the SERRF (Systematic Error Removal using Random Forest) method uses correlated compounds in quality control samples to correct systematic errors, including batch effects and injection order variation [14].

Graph-Based Imputation

Graph neural networks (GNNs) have emerged as powerful tools for imputation in biological networks, leveraging the inherent relational structure of omics data [35]. By representing samples and features as nodes in a graph, GNNs can propagate information from observed to unobserved nodes through message-passing mechanisms, effectively imputing missing values based on topological similarities. This approach is particularly valuable for single-cell multi-omics data, where graph Laplacian constraints can preserve local neighborhood structures during imputation [37]. Methods like PLNMFG incorporate graph constraints to maintain the intrinsic structure of multi-omics data during the clustering process, demonstrating how topological information can guide accurate imputation [37].
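The message-passing intuition can be shown without any learned parameters. The sketch below is a deliberately simplified stand-in for a GNN: it fills missing node values by repeatedly averaging over graph neighbours while holding observed values fixed, which amounts to simple feature propagation rather than a trained network. The graph, the values, and the `propagate` helper are hypothetical.

```python
def propagate(values, neighbors, n_iter=100):
    """Fill None entries by iterated neighbour averaging; observed values stay fixed."""
    observed = {node for node, v in values.items() if v is not None}
    # Start unknown nodes at the mean of the observed values.
    start = sum(values[node] for node in observed) / len(observed)
    current = {node: (v if v is not None else start) for node, v in values.items()}
    for _ in range(n_iter):
        updated = dict(current)
        for node in current:
            if node in observed or not neighbors.get(node):
                continue
            # One round of message passing with mean aggregation.
            nbrs = neighbors[node]
            updated[node] = sum(current[nb] for nb in nbrs) / len(nbrs)
        current = updated
    return current


# Toy cell-similarity graph: a chain a - b - c - d with the two middle cells unmeasured.
neighbors = {"a": ["b"], "b": ["a", "c"], "c": ["b", "d"], "d": ["c"]}
values = {"a": 1.0, "b": None, "c": None, "d": 4.0}
smoothed = propagate(values, neighbors)
print(smoothed)
```

On this chain the unmeasured cells converge to values interpolated between their measured neighbours. A GNN replaces the fixed averaging rule with learned, feature-dependent aggregation, but the flow of information from observed to unobserved nodes follows the same pattern.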

Deep Generative Models