Multimodal Data Integration: Unraveling Disease Mechanisms for Precision Medicine and Drug Discovery


Abstract

This article explores the transformative role of multimodal data integration in deciphering complex disease mechanisms. Aimed at researchers, scientists, and drug development professionals, it provides a comprehensive analysis of how the fusion of diverse data types—including genomics, medical imaging, electronic health records, and wearable device outputs—is revolutionizing our understanding of pathology. The content covers foundational concepts, cutting-edge methodological frameworks like transformers and graph neural networks, practical solutions for overcoming data integration challenges, and a critical validation of clinical applications and performance metrics. By synthesizing insights across these domains, this article serves as a strategic guide for leveraging multimodal approaches to accelerate biomarker discovery, enhance therapeutic development, and advance personalized medicine.

The Foundation of Multimodal Integration: From Data Silos to a Holistic View of Disease

Multimodal data integration refers to the combined collection and analysis of diverse, complementary biological and clinical data sources to construct a holistic representation of health and disease. In biomedicine, this encompasses data types ranging from molecular profiles and medical imaging to clinical records and real-time physiological monitoring [1] [2]. The convergence of these disparate modalities through advanced artificial intelligence (AI) is driving a paradigm shift in biomedical research, yielding new insights into disease mechanisms and accelerating the development of personalized therapeutic strategies [1] [3]. This technical guide delineates the core concepts, data types, and methodologies underpinning multimodal data integration, with a specific focus on its role in elucidating complex disease pathologies.

Core Concepts and Definitions

At its foundation, multimodal data integration in biomedicine is driven by the recognition that complex diseases cannot be fully understood through a single data lens. The core principle is complementarity—each data modality provides a unique and non-redundant perspective on biological systems, and their integration yields insights that are greater than the sum of their parts [2] [4].

Multimodal Data: In the context of computer science and healthcare, this concept refers to the integration and analysis of information from multiple sources or modalities. These can include text, images, audio, video, and sensor data, among others [2] [4]. The primary objective is to leverage the complementary strengths of different data types to gain a more comprehensive understanding of a given problem or phenomenon [1].

Multimodal Artificial Intelligence (MMAI): This is an emerging and transformative domain that combines multiple data modalities to enhance decision-making. Unlike traditional AI systems that analyze a single data stream, multimodal AI integrates diverse sources such as clinical imaging, genetic profiles, biosensor outputs, and electronic health records. This integrative approach enables a deeper and more unified interpretation of human biology and disease [1] [3].

The value proposition of multimodal data is its ability to uncover complex relationships between physiological, genetic, and environmental factors, leading to more accurate diagnoses, personalized treatments, and improved outcomes [1]. For instance, in oncology, combining imaging, genomics, and clinical data allows for a more precise characterization of tumors and the development of tailored treatment plans, a process that is difficult or impossible with any single modality alone [2].

Key Data Modalities in Biomedical Research

Biomedical research leverages a wide array of data modalities. The table below summarizes the primary types, their specific examples, and their core functions in disease research.

Table 1: Key Data Modalities in Biomedical Research

| Modality Category | Specific Examples | Core Function in Disease Research |
|---|---|---|
| Genomics & Molecular Profiling | Genomic sequencing, Transcriptomics (RNA-seq), Epigenomics (methylation), Proteomics, Metabolomics [5] [1] [6] | Reveals genetic predispositions, dysregulated molecular pathways, and molecular subtypes of disease [2] [6]. |
| Medical Imaging & Histopathology | MRI, CT, X-ray, Histopathological slides, Spatial transcriptomics [1] [2] [7] | Provides anatomical, functional, and microstructural characterization of tissues and tumors [2] [4]. |
| Clinical & Patient Data | Electronic Health Records (EHRs), Clinical notes, Laboratory test results, Family history [1] [2] [3] | Offers longitudinal perspective on patient health, treatments, outcomes, and comorbidities [2]. |
| Real-Time Monitoring & Wearables | Wearable devices (e.g., fitness trackers), Continuous physiological monitors (e.g., ECG) [1] [2] | Captures dynamic, real-time data on patient health status and activity for continuous monitoring [1]. |

Methodologies for Multimodal Data Integration

The integration of heterogeneous data types requires sophisticated computational methodologies. The field is rapidly evolving beyond simple data concatenation toward complex AI-driven models capable of learning the deep relationships between modalities.

Data Fusion Techniques

Fusion techniques are the methods used to combine signals or information from different modalities. They are broadly grouped by the stage at which the combination happens [7]:

- Early Fusion: Data from different modalities are combined at the input stage, before being fed into a single model. This requires data to be transformed into a congruent format but allows the model to learn interactions from the rawest level.

- Intermediate/Joint Fusion: This is the most common approach in deep learning. Data from each modality are processed separately in the initial layers, and their learned representations (embeddings) are combined in intermediate layers of the model. This allows the model to learn complex, non-linear interactions between modalities.

- Late Fusion: Models are trained independently on each modality, and their predictions are combined at the final stage (e.g., through weighted averaging). This is flexible but cannot capture fine-grained inter-modal relationships.
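The difference between early and late fusion can be made concrete with a minimal sketch. The feature values, modality names, and weights below are hypothetical placeholders, not values from any cited study, and the per-modality probabilities stand in for the outputs of separately trained models.

```python
from typing import Dict, List


def early_fusion(features_by_modality: Dict[str, List[float]]) -> List[float]:
    """Concatenate per-modality feature vectors into one model input."""
    fused: List[float] = []
    # A fixed (sorted) modality order keeps columns aligned across patients.
    for name in sorted(features_by_modality):
        fused.extend(features_by_modality[name])
    return fused


def late_fusion(probabilities: Dict[str, float], weights: Dict[str, float]) -> float:
    """Weighted average of predictions made independently per modality."""
    total_weight = sum(weights[m] for m in probabilities)
    return sum(weights[m] * probabilities[m] for m in probabilities) / total_weight


# Hypothetical patient: feature vectors of different lengths per modality.
patient = {"imaging": [0.8, 0.1], "genomics": [1.0, 0.0, 1.0], "clinical": [63.0]}
fused_input = early_fusion(patient)

# Hypothetical per-modality model outputs and illustrative weights.
modality_probs = {"imaging": 0.9, "genomics": 0.6, "clinical": 0.3}
modality_weights = {"imaging": 2.0, "genomics": 1.0, "clinical": 1.0}
fused_risk = late_fusion(modality_probs, modality_weights)
```

Intermediate fusion is not shown because it needs trainable encoders. Conceptually it replaces the raw vectors passed to `early_fusion` with learned embeddings and trains the encoders and the downstream model together.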

Advanced AI Frameworks for Integration

Transformer Models: Initially conceived for natural language processing, transformers use self-attention mechanisms to assign weighted importance to different parts of sequential input data. This makes them highly effective for integrating clinical notes, genomic sequences, and imaging data by focusing on the most relevant features across modalities [7]. They have been used to set new benchmarks in tasks like diagnosing Alzheimer's disease by unifying imaging, clinical, and genetic information [7].
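As a rough illustration of the self-attention idea, the sketch below applies scaled dot-product attention to a short sequence of modality embeddings. It is deliberately simplified: queries, keys, and values are the raw embeddings (identity projections), whereas real transformers learn separate projection matrices and use multiple heads. The three input vectors are hypothetical stand-ins for imaging, clinical, and genetic embeddings.

```python
import math
from typing import List

Vector = List[float]


def softmax(scores: Vector) -> Vector:
    """Numerically stable softmax over a list of scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a: Vector, b: Vector) -> float:
    return sum(x * y for x, y in zip(a, b))


def self_attention(tokens: List[Vector]) -> List[Vector]:
    """Scaled dot-product self-attention with Q = K = V = tokens (assumption)."""
    dim = len(tokens[0])
    outputs: List[Vector] = []
    for query in tokens:
        # Attention weights: how strongly this token attends to every token.
        weights = softmax([dot(query, key) / math.sqrt(dim) for key in tokens])
        # Output: attention-weighted average of all token vectors.
        outputs.append(
            [sum(w * value[i] for w, value in zip(weights, tokens)) for i in range(dim)]
        )
    return outputs


# Hypothetical 2-dimensional embeddings, one per modality.
modality_tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
attended = self_attention(modality_tokens)
```

Each output row mixes information from all modalities, with the mixing weights determined by how similar the embeddings are.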

Graph Neural Networks (GNNs): GNNs are designed to model non-Euclidean, graph-structured data. In biomedicine, different data types (e.g., a patient, a gene, an image feature) can be represented as nodes in a graph, with edges representing their relationships. GNNs then aggregate feature information from a node's neighbors, making them exceptionally powerful for capturing the complex, relational structure of multimodal biomedical data [7]. They have been applied to predict outcomes like lymph node metastasis in cancer by learning the connections between image features and clinical parameters [7].
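The neighbor-aggregation step can be sketched in a few lines. This is one round of mean aggregation over an undirected graph, without the learned weight matrices and nonlinearities that a trained GNN layer would add. The node names and feature values are hypothetical.

```python
from typing import Dict, List, Tuple


def message_passing_step(
    features: Dict[str, List[float]], edges: List[Tuple[str, str]]
) -> Dict[str, List[float]]:
    """Replace each node's features with the mean over itself and its neighbors."""
    neighbors: Dict[str, List[str]] = {node: [] for node in features}
    for a, b in edges:  # edges are treated as undirected
        neighbors[a].append(b)
        neighbors[b].append(a)

    updated: Dict[str, List[float]] = {}
    for node, own in features.items():
        group = [own] + [features[n] for n in neighbors[node]]
        # Column-wise mean across the node and its neighbors.
        updated[node] = [sum(column) / len(group) for column in zip(*group)]
    return updated


# Hypothetical multimodal graph: a patient linked to a gene and an image feature.
node_features = {
    "patient": [1.0, 0.0],
    "gene": [0.0, 1.0],
    "image_feature": [1.0, 1.0],
}
graph_edges = [("patient", "gene"), ("patient", "image_feature")]
updated_features = message_passing_step(node_features, graph_edges)
```

Stacking several such steps lets information travel further across the graph, which is how a GNN relates, for example, an image feature to a gene through a shared patient node.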

Deep Latent Variable Path Modelling (DLVPM): This novel method combines the representational power of deep learning with the capacity of path modelling (structural equation modelling) to identify relationships between interacting elements in a complex system [6]. DLVPM trains a collection of submodels (measurement models), one for each data type, to create deep latent variables (DLVs) that are optimized to be maximally associated with DLVs from other connected data types. This provides a holistic, interpretable model of the interactions between, for example, genetic, epigenetic, and histological data in cancer [6].

Experimental Protocol: Implementing a DLVPM Analysis

The following protocol outlines the key steps for applying DLVPM to integrate multimodal cancer data, as described in [6].

- Path Model Specification: The analysis begins by defining a hypothesis-driven path model. This model is visually represented as a network graph and mathematically as an adjacency matrix (C), where elements c_{ij} indicate the presence (1) or absence (0) of a postulated direct influence from data type i to data type j.

- Data Collection and Curation: Gather the multimodal datasets as defined by the path model. For a cancer study, this typically includes:

  - Molecular Data: Single-nucleotide variants (SNVs), DNA methylation profiles, microRNA sequencing, and RNA sequencing data from sources like The Cancer Genome Atlas (TCGA).

  - Imaging Data: Digitized histopathological whole-slide images (WSIs) of tumor tissue.

- Measurement Model Training: A dedicated neural network (e.g., a convolutional neural network for images, a feed-forward network for molecular data) is defined for each data type. These "measurement models" are trained to generate a set of Deep Latent Variables (DLVs) for their respective modality.

- DLVPM Model Optimization: The core algorithm is trained to optimize the DLVs from each measurement model such that they are maximally associated with DLVs from other data types as specified by the path model adjacency matrix. The optimization criterion can be written as $\max \sum_{i, j,\, i \neq j}^{K} c_{ij} \, \mathrm{tr}\left( \bar{Y}_i(X_i, U_i, W_i)^{T} \, \bar{Y}_j(X_j, U_j, W_j) \right)$, where tr denotes the matrix trace, c_{ij} are the elements of the adjacency matrix C, and the DLVs are constrained to be orthogonal within each modality.

- Model Application and Interpretation: The trained DLVPM model, which represents a joint embedding of all modalities, can then be applied to various downstream tasks. This includes patient stratification, identification of key genetic loci associated with histological features, or exploration of synthetic lethal interactions using independent CRISPR-Cas9 screen data.
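To make the path model and the association term in the optimization step more tangible, the sketch below evaluates the sum of c_{ij} · tr(Ȳi^T Ȳj) for fixed latent variables. It is only an illustration under stated assumptions: it does not train any measurement models or enforce the orthogonality constraint, K is taken to be the number of data types, and the adjacency matrix and DLV values are invented toy numbers rather than anything from [6].

```python
from typing import List

Matrix = List[List[float]]  # rows = samples, columns = latent variables


def trace_of_cross_product(y_i: Matrix, y_j: Matrix) -> float:
    """tr(Yi^T Yj), which equals the sum of element-wise products."""
    return sum(a * b for row_i, row_j in zip(y_i, y_j) for a, b in zip(row_i, row_j))


def path_association(adjacency: List[List[int]], dlvs: List[Matrix]) -> float:
    """Sum of c_ij * tr(Yi^T Yj) over all pairs i != j of data types."""
    k = len(dlvs)  # assumed: K = number of data types
    return sum(
        adjacency[i][j] * trace_of_cross_product(dlvs[i], dlvs[j])
        for i in range(k)
        for j in range(k)
        if i != j
    )


# Hypothetical path model: SNV -> methylation -> histology.
C = [
    [0, 1, 0],
    [0, 0, 1],
    [0, 0, 0],
]
# Toy DLVs: two samples, one latent variable per data type.
toy_dlvs = [
    [[1.0], [-1.0]],   # SNV
    [[1.0], [-1.0]],   # methylation
    [[0.5], [-0.5]],   # histology
]
association = path_association(C, toy_dlvs)
```

In an actual DLVPM analysis the DLVs are outputs of neural networks, and training adjusts the network parameters to increase this quantity for the connections specified in C.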

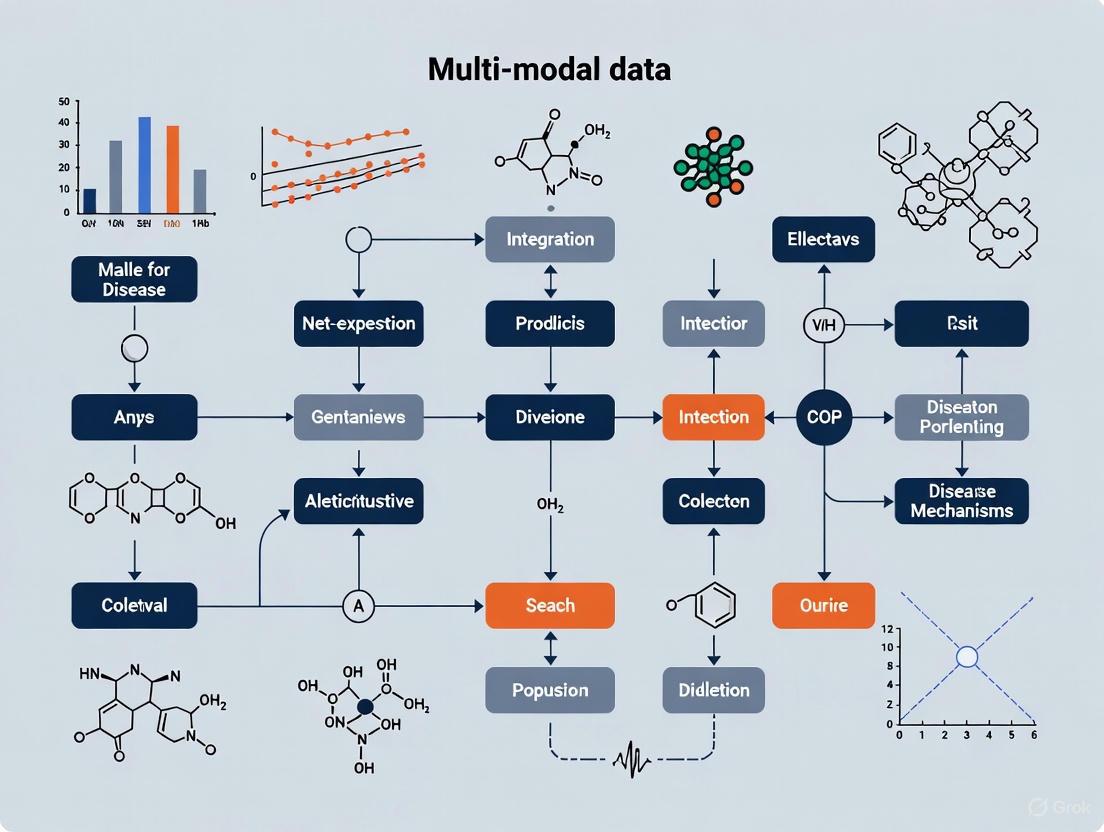

Diagram 1: DLVPM analysis workflow for multimodal data

Successfully conducting multimodal research requires access to high-quality data, computational tools, and AI models. The following table details key resources cited in recent literature.

Table 2: Essential Research Reagents and Resources for Multimodal Studies

| Resource Name | Type | Primary Function in Research | Key Application / Citation |
|---|---|---|---|
| The Cancer Genome Atlas (TCGA) | Comprehensive multimodal database | Provides co-linked data on genomics, transcriptomics, epigenomics, and histopathology for thousands of tumor samples. | Serves as a primary dataset for training and validating multimodal integration methods like DLVPM [6]. |
| The Cancer Imaging Archive (TCIA) | Medical imaging database | A large repository of medical images (MRI, CT, etc.), often linked with clinical and genomic data. | Used in AI studies for diagnostic imaging and for linking imaging phenotypes to genomic data [1]. |
| Protein Data Bank (PDB) | Structural biology database | A critical resource of experimentally validated protein and macromolecular structures. | Used for training deep learning models like AlphaFold for accurate protein structure prediction, aiding biomaterial design [1]. |
| Deep Latent Variable Path Modelling (DLVPM) | Computational Algorithm | A deep-learning-based method for mapping complex dependencies between multiple data types (e.g., omics and imaging). | Used to integrate single-nucleotide variant, methylation, RNA-seq, and histological data to obtain a holistic model of cancer [6]. |
| Graph Neural Networks (GNNs) | AI Model Framework | A class of neural networks designed to learn from graph-structured data, ideal for modeling relationships between multimodal data points. | Used to predict lymph node metastasis by constructing a graph linking image features and clinical parameters [7]. |
| Transformer Models | AI Model Architecture | Models using self-attention mechanisms to weigh the importance of different inputs, effective for sequential and multimodal data. | Applied to integrate imaging, clinical, and genetic information for superior performance in disease diagnosis [7]. |

Diagram 2: AI frameworks integrating multimodal data for disease insights

Multimodal data, encompassing genomics, imaging, clinical records, and beyond, is fundamentally redefining biomedical research. The core concepts of data complementarity and integration, powered by advanced AI frameworks like GNNs, Transformers, and DLVPM, are providing researchers with a powerful lens to investigate disease mechanisms in their full complexity. As the technologies for data generation and computational integration continue to mature, multimodal approaches are poised to unlock a new era of predictive, personalized, and preventive medicine, transforming our understanding and treatment of human disease.

Single-modality analysis has long been the standard approach in biomedical research, yet it provides inherently fragmented insights into complex disease mechanisms. This technical guide examines the potential of multimodal data integration, which systematically combines complementary biological and clinical data sources (including genomics, medical imaging, electronic health records, and wearable device outputs) to construct a multidimensional perspective of patient health. Drawing on quantitative evidence and detailed experimental protocols, it shows how multimodal integration can enhance tumor characterization, enable personalized treatment planning, and facilitate early disease diagnosis, thereby addressing critical limitations of traditional single-modality approaches.

The Fundamental Limitations of Single-Modality Analysis

Single-modality approaches in disease research provide valuable but incomplete insights into complex pathological processes. The inherent constraints of analyzing isolated data types create significant barriers to comprehensive understanding.

Incomplete Biological Context: Individual modalities capture only specific aspects of disease biology. Genomic data reveals molecular alterations but lacks spatial and temporal context, while medical imaging provides anatomical information without underlying molecular drivers.

Limited Predictive Power: Studies demonstrate that single-modality biomarkers often yield suboptimal predictive performance. In immuno-oncology, for instance, single biomarkers fail to capture the complex cellular interactions required for effective antitumor immune responses [4].

Inconsistent Findings Across Modalities: Research on psychotic disorders reveals substantial variability when different neuroimaging techniques are used independently. Structural (T1-weighted imaging), white matter integrity (DTI), and functional connectivity (rs-FC) approaches each identify different abnormalities without providing a unified pathological model [8].

Table 1: Comparative Performance of Single vs. Multimodal Classification in Psychosis Research

| Modality | Number of Studies | Internal Classification Performance | External Classification Performance |
|---|---|---|---|
| T1-weighted | 30 | Moderate | Lower relative to rs-FC |
| DTI | 9 | Moderate | Similar across modalities |
| rs-FC | 40 | Moderate | Higher relative to T1 |
| Multimodal | 14 | Moderate | No significant advantage over unimodal |
| Overall | 93 | Reliable differentiation (OR = 2.64) | High heterogeneity across studies |

Source: Meta-analysis of machine learning classification studies for schizophrenia spectrum disorders [8]

The quantitative evidence from a comprehensive meta-analysis of 93 studies reveals a critical finding: while neuroimaging modalities can reliably differentiate individuals with schizophrenia spectrum disorders from controls (OR = 2.64, 95% CI = 2.33 to 2.95), no single modality demonstrates consistent superiority, and multimodal approaches currently show no significant advantage over unimodal methods in external validation [8]. This underscores both the value and limitations of each modality while highlighting the need for more sophisticated integration methodologies.

The Multimodal Integration Paradigm: Principles and Advantages

Multimodal AI systems process and integrate information from multiple data types or sensory inputs, generating insights that are richer and more nuanced than those produced by single-modality systems [9]. In healthcare, this approach combines diverse data sources—including medical imaging (MRI, CT), laboratory results, electronic health records, wearable device outputs, and genomic profiles—to enable a more comprehensive understanding of patient health [4].

The fundamental advantage of multimodal integration lies in its ability to leverage complementary information across data types. Where one modality may be insensitive to certain pathological changes, another can provide critical missing insights. This synergistic approach enables:

Holistic Disease Characterization: Multimodal integration provides a unified view of disease pathology across multiple biological scales, from molecular alterations to systemic manifestations.

Enhanced Predictive Accuracy: By capturing complex, nonlinear relationships between different data types, multimodal models can achieve superior predictive performance compared to single-modality approaches.

Personalized Intervention Strategies: The comprehensive profiling enabled by multimodal data allows for treatment planning tailored to individual patient characteristics and disease manifestations.

Quantitative Applications in Disease Research

Oncology: Enhanced Tumor Characterization and Personalized Treatment

Multimodal integration represents a paradigm shift in oncology, enabling more precise tumor characterization and personalized therapeutic interventions.

Enhanced Tumor Subtyping: Traditional molecular subtyping methods like PAM50 based solely on gene expression profiles show limitations, as patients within the same subgroup experience different outcomes [4]. Multimodal approaches overcome this by combining pathological images with genomic and other omics data. Dedicated feature extractors—convolutional neural networks for pathological images and deep neural networks for genomic data—generate integrated feature sets that enable more accurate prediction of breast cancer molecular subtypes [4]. This approach has been extended to pan-cancer studies, with one large-scale investigation integrating transcriptome, exome, and pathology data from over 200,000 tumors to develop a multilineage cancer subtype classifier [4].

Tumor Microenvironment (TME) Analysis: Advanced technologies including single-cell and spatial transcriptomics provide fine-grained resolution of the TME, revealing cellular interactions at single-cell and spatial dimensions [4]. Multimodal features extracted from these technologies have uncovered immunotherapy-relevant heterogeneity in non-small cell lung cancer (NSCLC) and identified distinct tumor subgroups in squamous cell carcinoma [4]. Cross-modal applications demonstrate that gene expression can be predicted from histopathological images of breast cancer tissue at 100 μm resolution, while spatial transcriptomic features can reveal hidden histological characteristics in breast cancer tissue sections [4].

Personalized Treatment Planning: Multimodal integration enables tailored therapeutic approaches across multiple treatment modalities:

Radiation Therapy: Integration of high-resolution MRI scans and metabolic profiles enables accurate inference of tumor cell density in glioblastoma patients, optimizing radiotherapy regimens while minimizing damage to healthy tissue [4].

Immunotherapy: Multimodal biomarkers improve prediction of responses to immune checkpoint blockade. Combining annotated CT scans, digitized immunohistochemistry slides, and common genomic alterations in NSCLC enhances prediction of responses to anti-PD-1/PD-L1 therapies [4]. In the related setting of HER2-targeted therapy, one study demonstrated that multimodal fusion could predict anti-HER2 therapy response with an AUC of 0.91 [4].

Table 2: Multimodal Integration Applications in Oncology

| Application Domain | Data Modalities Integrated | Performance/Outcome |
|---|---|---|
| Breast Cancer Subtyping | Pathological images, genomic data, other omics | Accurate molecular subtype prediction |
| Therapy Response Prediction | Clinical, imaging, genomic data | AUC = 0.91 for anti-HER2 therapy |
| Tumor Microenvironment | Single-cell data, spatial transcriptomics, histology | Identification of distinct tumor subgroups |
| Radiotherapy Planning | MRI, metabolic profiles | Optimized dose distribution for glioblastoma |
| Immunotherapy Response | CT scans, IHC slides, genomic alterations | Improved prediction for NSCLC |

Source: Journal of Medical Internet Research (2025) [4]

Neurodegenerative Disease: Uncovering Shared Pathological Mechanisms

Multimodal integration has proven particularly valuable in deciphering complex neurodegenerative disorders like Parkinson's disease (PD), where heterogeneity has complicated therapeutic development.

Knowledge Graph Integration: Researchers have developed a comprehensive knowledge graph by integrating high-content imaging and RNA sequencing data from PD patient-specific midbrain organoids with publicly available biological data [10]. The organoids harbored LRRK2-G2019S, SNCA triplication, GBA-N370S, or MIRO1-R272Q mutations. This approach identified transcriptomic dysregulation that is common across monogenic PD forms and is also reflected in glial cells of midbrain organoids from idiopathic PD (IPD) patients.

Stratification of Idiopathic Patients: Through generation of single-cell RNA sequencing data from midbrain organoids derived from IPD patients, researchers successfully stratified IPD patients within the spectrum of monogenic PD forms [10]. This multimodal network-based analysis revealed that dysregulation in ROBO signaling might be involved in shared pathophysiology between monogenic PD and IPD cases, despite high degrees of heterogeneity [10].

Experimental Protocols and Methodologies

Protocol: Knowledge Graph Construction for Parkinson's Disease Mechanisms

Objective: Identify shared molecular dysregulation across Parkinson's disease variants using multimodal network-based data integration.

Sample Preparation:

- Generate patient-specific midbrain organoids from multiple PD variants (LRRK2-G2019S, SNCA triplication, GBA-N370S, MIRO1-R272Q) and idiopathic PD patients [10].

- Prepare samples for high-content imaging and RNA sequencing according to established organoid protocols.

Data Generation:

- High-Content Imaging: Perform multiplexed imaging of organoid sections using standardized antibody panels for key PD-relevant markers.

- RNA Sequencing: Conduct bulk and single-cell RNA sequencing on organoid samples to capture transcriptomic profiles.

- Public Data Collection: Curate relevant biological data from public repositories including protein-protein interactions, pathway databases, and genetic association data.

Data Integration and Analysis:

- Knowledge Graph Construction:

  - Represent biological entities (genes, proteins, cells, pathways) as nodes

  - Establish relationships (interactions, regulations, co-expression) as edges

  - Integrate experimental data with prior knowledge from public databases

- Network Analysis:

  - Apply graph algorithms to identify densely connected modules

  - Perform pathway enrichment analysis on identified modules

  - Calculate network centrality measures to prioritize key regulators

Validation:

- Confirm key findings using orthogonal methods (e.g., immunohistochemistry, functional assays)

- Validate predictions in independent patient cohorts where available
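The graph construction and centrality steps of this protocol can be sketched with a small in-memory graph. The entity names (`gene_A`, `pathway_X`, and so on) and relations are placeholders invented for illustration, not findings from [10]. Degree centrality is used here only as the simplest example of a centrality measure, and the source does not state which measures were applied.

```python
from collections import defaultdict
from typing import Dict, List, Set, Tuple

Triple = Tuple[str, str, str]  # (source entity, relation, target entity)


def build_graph(triples: List[Triple]) -> Dict[str, Set[str]]:
    """Build an undirected adjacency map; relation labels are ignored here."""
    adjacency: Dict[str, Set[str]] = defaultdict(set)
    for source, _relation, target in triples:
        adjacency[source].add(target)
        adjacency[target].add(source)
    return dict(adjacency)


def degree_centrality(adjacency: Dict[str, Set[str]]) -> Dict[str, float]:
    """Fraction of the other nodes that each node is directly connected to."""
    n = len(adjacency)
    return {node: len(nbrs) / (n - 1) for node, nbrs in adjacency.items()}


# Placeholder triples mixing experimental and prior-knowledge relations.
triples = [
    ("gene_A", "co_expressed_with", "gene_B"),
    ("gene_A", "member_of", "pathway_X"),
    ("gene_B", "member_of", "pathway_X"),
    ("gene_C", "member_of", "pathway_X"),
    ("gene_A", "expressed_in", "cell_type_1"),
]
graph = build_graph(triples)
centrality = degree_centrality(graph)
# Highest-centrality nodes are candidate key regulators to prioritize.
ranked = sorted(centrality, key=centrality.get, reverse=True)
```

A production knowledge graph would keep relation types and edge provenance, and would typically live in a dedicated graph database rather than a Python dictionary.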

Protocol: Multimodal Classification of Psychosis Spectrum Disorders

Objective: Compare machine learning classification performance across multiple neuroimaging modalities for distinguishing schizophrenia spectrum disorders from healthy controls.

Participant Recruitment:

- Include participants meeting criteria for schizophrenia spectrum disorders and matched healthy controls

- Ensure appropriate sample size based on power calculations

- Collect relevant demographic and clinical characteristics

Data Acquisition:

- T1-weighted Imaging: Acquire high-resolution structural images using standardized MRI protocols

- Diffusion Tensor Imaging (DTI): Collect diffusion-weighted images for white matter integrity assessment

- Resting-State Functional Connectivity (rs-FC): Obtain blood-oxygen-level-dependent (BOLD) signals during rest

Preprocessing and Feature Extraction:

- Apply modality-specific preprocessing pipelines (e.g., normalization, motion correction)

- Extract whole-brain features for each modality:

  - Regional gray matter volume or cortical thickness from T1

  - Fractional anisotropy or mean diffusivity from DTI

  - Functional connectivity matrices from rs-FC

Machine Learning Classification:

- Single-Modality Models:

  - Train separate classifiers for each modality using cross-validation

  - Optimize hyperparameters via nested cross-validation

- Multimodal Integration:

  - Apply early fusion (feature concatenation) or late fusion (classifier ensemble) strategies

  - Compare integration approaches against single-modality baselines

- Evaluation:

  - Assess performance using sensitivity, specificity, and area under ROC curve

  - Employ external validation when possible to avoid overoptimistic performance estimates
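The evaluation metrics named in this protocol can be computed directly from labels and classifier scores. The sketch below uses the rank-based definition of AUC (the probability that a randomly chosen patient scores higher than a randomly chosen control, with ties counted as one half). The labels, scores, and the 0.5 decision threshold are made-up illustrative values.

```python
from typing import List, Tuple


def sensitivity_specificity(y_true: List[int], y_pred: List[int]) -> Tuple[float, float]:
    """Sensitivity = TP / (TP + FN); specificity = TN / (TN + FP)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    return tp / (tp + fn), tn / (tn + fp)


def roc_auc(y_true: List[int], scores: List[float]) -> float:
    """AUC via pairwise comparison of positive and negative scores."""
    positives = [s for t, s in zip(y_true, scores) if t == 1]
    negatives = [s for t, s in zip(y_true, scores) if t == 0]
    wins = sum(
        1.0 if p > n else 0.5 if p == n else 0.0
        for p in positives
        for n in negatives
    )
    return wins / (len(positives) * len(negatives))


# Illustrative data: 1 = patient, 0 = healthy control.
labels = [1, 1, 1, 0, 0, 0]
classifier_scores = [0.9, 0.8, 0.4, 0.6, 0.3, 0.2]
predictions = [1 if s >= 0.5 else 0 for s in classifier_scores]  # assumed threshold

sens, spec = sensitivity_specificity(labels, predictions)
auc = roc_auc(labels, classifier_scores)
```

Sensitivity and specificity depend on the chosen threshold, whereas AUC summarizes ranking quality across all thresholds, which is one reason studies often report all three.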

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Essential Research Reagents for Multimodal Integration Studies

| Reagent/Category | Function in Multimodal Research | Specific Application Examples |
|---|---|---|
| Midbrain Organoid Kits | Patient-specific disease modeling | Parkinson's disease variant studies [10] |
| Single-Cell RNA Sequencing Kits | Transcriptomic profiling at cellular resolution | Tumor microenvironment characterization [4] |
| Spatial Transcriptomics Platforms | Gene expression with spatial context | Tumor margin analysis in oral squamous cell carcinoma [4] |
| Multiplexed Imaging Panels | Simultaneous detection of multiple protein targets | Cellular interaction mapping in tumor microenvironment [4] |
| Multimodal Nanosensors | Real-time monitoring within biological systems | Tumor microenvironment dynamics [4] |
| Knowledge Graph Databases | Integration of heterogeneous biological data | Network-based analysis of shared disease mechanisms [10] |

Technical Challenges and Implementation Considerations

Despite its transformative potential, multimodal integration faces significant technical challenges that must be addressed for successful implementation.

Data Standardization and Harmonization: The heterogeneity of multimodal data requires sophisticated methodologies capable of handling large, complex datasets [4]. Variations in data formats, resolutions, and measurement scales necessitate robust normalization and harmonization pipelines before meaningful integration can occur.
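One basic building block of such a pipeline is putting features with very different measurement scales on a common footing. The sketch below applies per-feature z-score standardization. The feature names and values are hypothetical, and this step alone does not address batch effects, site effects, or differences in resolution, all of which a full harmonization pipeline would also need to handle.

```python
from statistics import mean, pstdev
from typing import Dict, List


def zscore(values: List[float]) -> List[float]:
    """Center to mean 0 and scale to unit (population) standard deviation."""
    mu = mean(values)
    sigma = pstdev(values)
    if sigma == 0:
        # A constant feature carries no variation; map it to zeros.
        return [0.0 for _ in values]
    return [(v - mu) / sigma for v in values]


def standardize_features(table: Dict[str, List[float]]) -> Dict[str, List[float]]:
    """Standardize every feature column independently."""
    return {feature: zscore(values) for feature, values in table.items()}


# Hypothetical cohort of three patients with features on different scales.
cohort = {
    "age_years": [40.0, 50.0, 60.0],
    "gene_expression_tpm": [0.0, 10.0, 20.0],
    "scanner_flag": [1.0, 1.0, 1.0],
}
standardized = standardize_features(cohort)
```

After standardization the two varying features have identical values despite their original units, so neither dominates a downstream model purely because of scale. To avoid information leakage, the mean and standard deviation should be estimated on training data only and then reused for validation and test data.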

Computational Infrastructure: Multimodal AI systems often require more computational resources and sophisticated integration techniques compared to single-modality approaches [9]. Processing large-scale multimodal datasets demands substantial storage, memory, and processing capabilities, creating bottlenecks in model training and deployment [4].

Interpretability and Clinical Translation: Enhancing model interpretability is essential for providing clinically meaningful explanations that gain physician trust [4]. The "black box" nature of complex multimodal models presents barriers to clinical adoption, necessitating the development of explainable AI techniques that illuminate the basis for model predictions.

Future Directions and Concluding Remarks

Multimodal integration represents a paradigm shift in biomedical research, moving beyond the limitations of single-modality analysis to provide comprehensive insights into disease mechanisms. The field is evolving toward large-scale multimodal models that enhance accuracy across diverse applications [4]. Emerging areas include expanded applications in neurological and otolaryngological diseases, integration of real-time data from wearable devices, and development of more sophisticated data fusion techniques.

The imperative for integration is clear: as biomedical research confronts increasingly complex disease mechanisms, multidimensional perspectives become essential. By overcoming the limitations of single-modality analysis, multimodal integration enables more precise disease characterization, personalized treatment strategies, and ultimately, improved patient outcomes across a broad spectrum of conditions.

The investigation of complex human diseases requires a holistic view of biological systems that single-data-type approaches cannot provide. Multimodal data integration has emerged as a transformative paradigm in biomedical research, systematically combining complementary biological and clinical data sources to provide a multidimensional perspective on health and disease mechanisms [2]. This approach leverages diverse data modalities, including genomics, medical imaging, electronic health records (EHRs), wearable device outputs, and clinical notes, to construct a more comprehensive understanding of disease pathophysiology than any single source can offer independently [2].

The fundamental premise of multimodal integration is that each data type provides unique and valuable insights into patient health but, when considered in isolation, may offer an incomplete or fragmented view [2]. Genomic data reveals predispositions and molecular subtypes, medical imaging captures structural and functional manifestations, EHRs provide longitudinal clinical context, wearables supply real-time physiological monitoring, and clinical notes offer nuanced phenotypic details. The integration of these diverse data sources enables researchers to connect molecular-level alterations with clinical manifestations, thereby facilitating the elucidation of complex disease mechanisms [11].

This technical guide explores the core data sources essential for multimodal disease research, details methodologies for their integration, and presents experimental frameworks that leverage these integrated approaches to advance our understanding of disease pathogenesis.

Genomic Data

Genomic data forms the foundational layer of multimodal integration, providing insights into DNA sequences, genetic variations, and their functional consequences. Next-Generation Sequencing (NGS) technologies have revolutionized genomic analysis by enabling large-scale DNA and RNA sequencing that is faster and more cost-effective than traditional methods [12].

Technical Specifications and Applications:

- Whole Genome Sequencing (WGS): Provides complete genomic information; crucial for identifying rare genetic variants and structural variations. Key applications include rare genetic disorder diagnosis and cancer genomics [12].

- Whole Exome Sequencing (WES): Targets protein-coding regions; more cost-effective for variant discovery in clinical settings.

- RNA Sequencing: Reveals gene expression patterns and alternative splicing events; essential for understanding transcriptional regulation in disease states.

- Single-Cell Genomics: Resolves cellular heterogeneity within tissues; critical for identifying rare cell populations in tumor microenvironments [12].

- Epigenomic Profiling: Includes DNA methylation and chromatin accessibility assays; reveals regulatory mechanisms beyond DNA sequence.

The integration of genomic data with other modalities enables researchers to connect genetic predispositions with phenotypic manifestations, a crucial step for unraveling complex disease mechanisms [11].

Medical Imaging Data

Medical imaging provides structural, functional, and metabolic information about disease manifestations across spatial scales. Different imaging modalities offer complementary insights into disease characteristics.

Table 1: Medical Imaging Modalities and Their Research Applications

| Modality | Technical Specifications | Research Applications | Key Features |
|---|---|---|---|
| Magnetic Resonance Imaging (MRI) | High soft-tissue contrast; multiplanar capability | Tumor characterization, brain connectivity studies, tissue metabolism | Quantitative functional measurements (fMRI, DTI, MR spectroscopy) |
| Computed Tomography (CT) | High spatial resolution; rapid acquisition | Anatomical localization, tumor volumetry, vascular imaging | Excellent bone and contrast agent visualization |
| Positron Emission Tomography (PET) | Molecular imaging capability; high sensitivity | Metabolic activity, receptor density, treatment response | Quantification of metabolic parameters (SUV, MTV, TLG) |
| Digital Pathology | Whole slide imaging; high-resolution tissue analysis | Tumor microenvironment, cellular interactions, spatial biology | Computational pathology algorithms for feature extraction |

Quantitative multimodal imaging technologies combine multiple functional measurements, providing comprehensive characterization of disease phenotypes [2]. For instance, in oncology, integrating MRI and PET enables both anatomical localization and metabolic profiling of tumors.
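As a worked illustration of one PET parameter listed in Table 1, the body-weight-normalized standardized uptake value (SUV) is conventionally the tissue activity concentration divided by the injected dose per unit body weight. The sketch below assumes the injected dose has already been decay-corrected to scan time and that 1 mL of tissue weighs 1 g; the function name and units are illustrative choices, not taken from the cited studies.

```python
def suv_body_weight(activity_kbq_per_ml: float,
                    injected_dose_mbq: float,
                    body_weight_kg: float) -> float:
    """Body-weight SUV = tissue concentration / (injected dose / body weight).

    Assumes the dose is decay-corrected to scan time and tissue density is 1 g/mL.
    """
    if injected_dose_mbq <= 0 or body_weight_kg <= 0:
        raise ValueError("dose and body weight must be positive")
    dose_kbq = injected_dose_mbq * 1000.0   # MBq -> kBq
    weight_g = body_weight_kg * 1000.0      # kg -> g
    return activity_kbq_per_ml / (dose_kbq / weight_g)


# A tracer spread perfectly evenly through the body gives SUV = 1.
print(suv_body_weight(activity_kbq_per_ml=5.0,
                      injected_dose_mbq=350.0,
                      body_weight_kg=70.0))
```

Values above 1 indicate uptake higher than a uniform distribution would produce, which is why SUV is a common input feature when PET is fused with anatomical imaging.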

Electronic Health Records (EHRs) and Clinical Notes

EHRs contain structured and unstructured data generated during clinical care, providing real-world evidence and longitudinal perspectives on disease progression and treatment outcomes.

Structured EHR Components:

- Demographics, laboratory results, vital signs, medications, diagnoses, procedures

- Coded data using standardized terminologies (ICD, CPT, LOINC, SNOMED CT)

- Temporal sequences of clinical events enabling trajectory analysis

Unstructured Clinical Notes:

- Physician notes, progress notes, discharge summaries, pathology reports

- Require natural language processing (NLP) techniques for information extraction

- Contain rich phenotypic details, social determinants, and clinical reasoning

EHR data provides essential clinical context for molecular findings, enabling researchers to connect biomarker discoveries with patient outcomes, comorbidities, and treatment responses [2].

Wearable Device Data

Wearable devices enable continuous, real-time monitoring of physiological parameters in free-living environments, capturing dynamic disease manifestations and treatment responses.

Data Types from Wearables:

- Activity Metrics: Step count, activity type, intensity, sedentary behavior

- Cardiovascular Parameters: Heart rate, heart rate variability, blood pressure, ECG

- Sleep Patterns: Sleep stages, duration, quality, disturbances

- Physiological Stress: Galvanic skin response, skin temperature

Wearable data provides high-temporal-resolution insights into disease progression and treatment effects, complementing the episodic snapshots provided by clinical visits and diagnostic tests [2].
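To make the cardiovascular parameters concrete, the sketch below derives two common summary features from a series of beat-to-beat (RR) intervals: mean heart rate and RMSSD, a standard time-domain heart rate variability measure. The input format (a list of RR intervals in milliseconds) is an assumption; real devices export data in vendor-specific formats and usually need artifact filtering first.

```python
import math
from typing import List


def mean_heart_rate_bpm(rr_intervals_ms: List[float]) -> float:
    """Mean heart rate in beats per minute from RR intervals in milliseconds."""
    mean_rr = sum(rr_intervals_ms) / len(rr_intervals_ms)
    return 60000.0 / mean_rr


def rmssd(rr_intervals_ms: List[float]) -> float:
    """Root mean square of successive RR-interval differences (ms)."""
    if len(rr_intervals_ms) < 2:
        raise ValueError("need at least two RR intervals")
    diffs = [b - a for a, b in zip(rr_intervals_ms, rr_intervals_ms[1:])]
    return math.sqrt(sum(d * d for d in diffs) / len(diffs))


rr = [800.0, 810.0, 790.0, 800.0]  # illustrative values, not patient data
print(mean_heart_rate_bpm(rr), rmssd(rr))
```

Features like these, computed over fixed windows, turn a continuous sensor stream into tabular inputs that can be aligned with episodic clinical data.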

Multi-Modal Integration Methodologies

Computational Frameworks for Data Integration

Integrating diverse data modalities requires sophisticated computational approaches that can handle heterogeneity in data structure, scale, and meaning. Several methodological frameworks have been developed for this purpose.

Data Fusion Techniques:

- Early Fusion: Integration of raw data or features from multiple modalities before model training

- Intermediate Fusion: Combining representations from different modalities within the model architecture

- Late Fusion: Training separate models for each modality and combining their predictions

- Cross-Modal Learning: Transferring knowledge between modalities (e.g., predicting gene expression from histopathology images) [2]

Machine learning methods, particularly deep learning approaches, have shown significant promise in multimodal healthcare applications [13]. These approaches can effectively incorporate diverse data sources including imaging, text, time series, and tabular data, resulting in applications that better represent clinical reasoning processes [13].
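The difference between early and late fusion can be sketched in a few lines. The example below is a deliberately minimal illustration, not a full pipeline: early fusion is shown as feature concatenation ahead of a single downstream model, and late fusion as a weighted average of per-modality predicted probabilities. The modality names and weights are hypothetical.

```python
from typing import Dict, List, Optional


def early_fusion(features: Dict[str, List[float]], order: List[str]) -> List[float]:
    """Concatenate per-modality feature vectors in a fixed modality order."""
    fused: List[float] = []
    for name in order:
        fused.extend(features[name])
    return fused


def late_fusion(probabilities: Dict[str, float],
                weights: Optional[Dict[str, float]] = None) -> float:
    """Weighted average of per-modality predicted probabilities."""
    if weights is None:
        weights = {name: 1.0 for name in probabilities}  # equal weighting
    total = sum(weights[name] for name in probabilities)
    return sum(weights[name] * p for name, p in probabilities.items()) / total


patient = {"imaging": [0.2, 0.7], "genomics": [1.0, 0.0, 1.0]}
print(early_fusion(patient, order=["imaging", "genomics"]))
print(late_fusion({"imaging": 0.8, "genomics": 0.6}))
```

Intermediate fusion sits between the two: each modality first passes through its own encoder, and the learned representations (rather than raw features or final predictions) are combined inside the model.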

Network-Based Integration Approaches

Network-based methods provide a powerful framework for multi-omics integration by representing biological components as nodes and their interactions as edges, offering a holistic view of relationships in health and disease [11].

Table 2: Network-Based Multi-Omics Integration Methods

| Method Type | Key Features | Representative Algorithms | Applications |
|---|---|---|---|
| Similarity-Based Networks | Constructs networks based on pairwise similarities | SNF, MWSNF | Patient stratification, disease subtyping |
| Knowledge-Based Networks | Incorporates prior biological knowledge | PARADIGM, KiMo | Pathway analysis, functional interpretation |
| Tensor Decomposition | Handles multi-way data interactions | Tucker decomposition, CP decomposition | Time-series multi-omics, spatial omics |
| Multi-Layer Networks | Represents different omics layers separately | MAGNA, MINE | Cross-omics interactions, network alignment |

Network-based approaches may reveal key molecular interactions and biomarkers by integrating multi-omics data, providing a systems-level understanding of disease mechanisms [11].
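The idea behind similarity-based networks can be illustrated with a simplified sketch: build one patient-by-patient similarity matrix per omics layer, then combine them. Note that this is plain averaging of Gaussian-kernel similarities, a stand-in chosen for brevity; it is not the iterative cross-network diffusion that SNF itself performs. The kernel width `sigma` and the toy data are assumptions.

```python
import math
from typing import List

Matrix = List[List[float]]


def similarity_matrix(samples: List[List[float]], sigma: float = 1.0) -> Matrix:
    """Gaussian-kernel similarity between every pair of patients."""
    n = len(samples)
    sim = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(n):
            d2 = sum((a - b) ** 2 for a, b in zip(samples[i], samples[j]))
            sim[i][j] = math.exp(-d2 / (2 * sigma ** 2))
    return sim


def average_networks(networks: List[Matrix]) -> Matrix:
    """Element-wise mean of several patient similarity networks."""
    n = len(networks[0])
    return [[sum(net[i][j] for net in networks) / len(networks)
             for j in range(n)] for i in range(n)]


# Three patients, two toy omics layers with one feature each.
expression = [[0.0], [0.1], [5.0]]
methylation = [[1.0], [1.2], [9.0]]
fused = average_networks([similarity_matrix(expression),
                          similarity_matrix(methylation)])
print(fused[0][1] > fused[0][2])  # patients 0 and 1 are closer in both layers
```

Clustering the fused matrix (for example with spectral clustering) is the usual next step for patient stratification.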

Experimental Protocols for Multi-Modal Studies

Protocol: Multi-Modal Tumor Subtyping in Oncology

This protocol details a methodology for integrating pathological images with genomic data to achieve accurate molecular subtyping of tumors, particularly in breast cancer [2].

Research Reagent Solutions:

- Formalin-Fixed Paraffin-Embedded (FFPE) Tissue Sections: Standard preservation method for histopathological analysis

- H&E Staining Reagents: Enable morphological assessment of tissue architecture

- RNA Extraction Kit: Isolate high-quality RNA from adjacent (mirror) tissue sections

- RNA Sequencing Library Prep Kit: Prepare libraries for transcriptomic profiling

- Immunohistochemistry Assays: Validate protein-level expression of identified subtypes

Methodology:

Data Acquisition:

- Collect FFPE tissue blocks from patient cohorts

- Prepare H&E-stained sections for digital pathology scanning

- Extract RNA from adjacent tissue sections for RNA sequencing

- Perform quality control on both imaging and genomic data

Feature Extraction:

- Process whole slide images using a trained convolutional neural network (CNN) model to capture deep morphological features

- Process transcriptomic data using a trained deep neural network to extract molecular features

- Normalize features across samples and modalities

Multi-Modal Integration:

- Apply intermediate fusion techniques to combine image and genomic features

- Train a classification model on the integrated feature space

- Validate subtype predictions using orthogonal methods (IHC, survival analysis)

Validation:

- Assess prognostic significance of identified subtypes using survival analysis

- Validate biological relevance through pathway enrichment analysis

- Compare classification accuracy against single-modality approaches

This integrative approach can predict breast cancer molecular subtypes with high accuracy and has been extended to other tumor types and pan-cancer studies [2].
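The survival analysis named in the validation step is commonly based on the Kaplan-Meier estimator, computed separately for each predicted subtype and then compared. The following is a minimal sketch of that estimator, assuming follow-up times in months and an event flag of 1 for an observed event and 0 for censoring; it omits confidence intervals and the log-rank test that a real analysis would add.

```python
from typing import List, Tuple


def kaplan_meier(times: List[float], events: List[int]) -> List[Tuple[float, float]]:
    """Return (time, survival probability) at each distinct event time."""
    data = sorted(zip(times, events))
    at_risk = len(data)
    survival = 1.0
    curve: List[Tuple[float, float]] = []
    i = 0
    while i < len(data):
        t = data[i][0]
        deaths = 0
        removed = 0
        # Group all subjects sharing the same follow-up time.
        while i < len(data) and data[i][0] == t:
            deaths += data[i][1]
            removed += 1
            i += 1
        if deaths > 0:
            survival *= 1.0 - deaths / at_risk
            curve.append((t, survival))
        at_risk -= removed  # events and censored subjects both leave the risk set
    return curve


# Illustrative follow-up data for one subtype (not from any cited cohort).
print(kaplan_meier(times=[5, 8, 12, 12, 20], events=[1, 0, 1, 1, 0]))
```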

Protocol: Predicting Immunotherapy Response

This protocol outlines a method for predicting response to anti-human epidermal growth factor receptor 2 (HER2) therapy using multimodal radiology, pathology, and clinical information [2].

Research Reagent Solutions:

- Contrast Agents: For pre-treatment CT or MRI scans

- Immunohistochemistry Staining Kits: For HER2 status confirmation

- DNA Extraction Kits: For genomic analysis of relevant biomarkers

- Liquid Biopsy Collection Tubes: For circulating tumor DNA analysis

- Multiplex Immunofluorescence Assays: For tumor microenvironment characterization

Methodology:

Multi-Modal Data Collection:

- Acquire pre-treatment contrast-enhanced CT scans

- Collect digitized immunohistochemistry slides for HER2 status

- Obtain genomic data for alterations relevant to the tumor type under study

- Extract clinical variables including performance status and treatment history

Feature Engineering:

- Extract radiomic features from tumor regions on CT scans

- Calculate spatial features from histopathology slides

- Encode genomic alterations as binary features

- Normalize clinical variables
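Two of the feature engineering steps above, binary encoding of genomic alterations and normalization of clinical variables, can be sketched as follows. The gene panel and the z-score normalization are illustrative assumptions; the protocol does not specify which genes or which normalization scheme were used.

```python
import statistics
from typing import Iterable, List


def encode_alterations(altered_genes: Iterable[str], gene_panel: List[str]) -> List[int]:
    """One binary feature per panel gene: 1 if altered in this patient, else 0."""
    altered = set(altered_genes)
    return [1 if gene in altered else 0 for gene in gene_panel]


def z_score(values: List[float]) -> List[float]:
    """Standardize a clinical variable across the cohort (mean 0, SD 1)."""
    mean = statistics.mean(values)
    sd = statistics.pstdev(values)
    if sd == 0:
        return [0.0 for _ in values]  # constant variable carries no information
    return [(v - mean) / sd for v in values]


panel = ["ERBB2", "TP53", "PIK3CA"]          # hypothetical panel for illustration
print(encode_alterations(["TP53"], panel))   # one patient's alterations
print(z_score([60.0, 70.0, 80.0]))           # e.g. age across three patients
```

In practice the normalization statistics should be estimated on the training split only and then applied to validation data, to avoid leakage.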

Model Development:

- Implement a multi-modal deep learning framework

- Apply cross-modal attention mechanisms to weight informative features

- Train the model using response status as the outcome (responder vs. non-responder)

- Optimize hyperparameters using cross-validation

Performance Evaluation:

- Assess model performance using area under the curve (AUC) metrics

- Evaluate clinical utility using decision curve analysis

- Validate on external cohorts when available

The multi-modal model by Chen et al. achieved an area under the curve of 0.91 for predicting response to anti-HER2 combined immunotherapy, demonstrating superior performance compared to single-modality approaches [2].
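The two evaluation measures named in the protocol can be computed directly from predicted scores and observed response labels. The sketch below uses the rank-based (Mann-Whitney) definition of ROC AUC and the standard net benefit formula from decision curve analysis; the labels and scores are made-up values for illustration and are unrelated to the reported result.

```python
from typing import List


def roc_auc(labels: List[int], scores: List[float]) -> float:
    """Probability that a random responder scores higher than a random non-responder."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need both responders and non-responders")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5  # ties count half
    return wins / (len(pos) * len(neg))


def net_benefit(labels: List[int], scores: List[float], threshold: float) -> float:
    """Net benefit = TP/n - FP/n * pt/(1 - pt) at threshold probability pt (0 < pt < 1)."""
    n = len(labels)
    tp = sum(1 for y, s in zip(labels, scores) if s >= threshold and y == 1)
    fp = sum(1 for y, s in zip(labels, scores) if s >= threshold and y == 0)
    return tp / n - fp / n * threshold / (1.0 - threshold)


labels = [1, 1, 0, 1, 0, 0]
scores = [0.9, 0.8, 0.7, 0.6, 0.3, 0.2]
print(roc_auc(labels, scores), net_benefit(labels, scores, threshold=0.5))
```

A decision curve is obtained by evaluating `net_benefit` across a range of thresholds and comparing against the treat-all and treat-none strategies.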

Technical Implementation and Visualization

Workflow Diagram for Multi-Modal Integration

The following diagram illustrates a generalized workflow for multi-modal data integration in disease mechanisms research:

Multi-Modal Data Integration Workflow

Tumor Microenvironment Characterization

The following diagram illustrates the multi-modal approach to characterizing the tumor microenvironment, which plays a crucial role in tumor initiation, progression, metastasis, and therapy resistance [2]:

Tumor Microenvironment Multi-Modal Analysis

Implementation Considerations for Data Visualization

Effective visualization of multi-modal data requires adherence to established design principles to ensure clarity and accessibility.

Color Palette and Accessibility: The color palette used for these diagrams (#4285F4, #EA4335, #FBBC05, #34A853, #FFFFFF, #F1F3F4, #202124, #5F6368) should be applied with careful attention to contrast ratios. WCAG guidelines require a minimum contrast ratio of 4.5:1 for normal text (Level AA) and 7:1 for enhanced contrast (Level AAA) [14] [15]. All text elements in visualizations must maintain sufficient contrast against their backgrounds to ensure readability for users with visual impairments.
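Whether a given foreground/background pair from the palette meets these thresholds can be checked programmatically. The sketch below follows the WCAG 2.x definitions of relative luminance and contrast ratio; the helper names are illustrative.

```python
def _linearize(channel_8bit: int) -> float:
    """Convert an 8-bit sRGB channel to linear light (threshold as written in WCAG 2.x)."""
    c = channel_8bit / 255.0
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color: str) -> float:
    """Relative luminance of a color given as '#RRGGBB'."""
    h = hex_color.lstrip("#")
    r, g, b = (int(h[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linearize(r) + 0.7152 * _linearize(g) + 0.0722 * _linearize(b)


def contrast_ratio(color_a: str, color_b: str) -> float:
    """WCAG contrast ratio (L_lighter + 0.05) / (L_darker + 0.05), from 1 to 21."""
    l1, l2 = relative_luminance(color_a), relative_luminance(color_b)
    lighter, darker = max(l1, l2), min(l1, l2)
    return (lighter + 0.05) / (darker + 0.05)


for text_color in ["#202124", "#5F6368", "#4285F4", "#FBBC05"]:
    ratio = contrast_ratio(text_color, "#FFFFFF")
    print(text_color, round(ratio, 2), "AA" if ratio >= 4.5 else "fails AA")
```

On a white background, the dark gray #202124 clears the AAA threshold while the yellow #FBBC05 falls well below AA, so the lighter palette colors are better reserved for fills with dark text on top.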

Data Visualization Best Practices:

- Maintain high data-ink ratio by eliminating non-essential chart elements [16]

- Establish clear context through comprehensive titles, axis labels, and legends [16]

- Use color strategically to encode information and direct attention [16]

- Select appropriate chart types for different data relationships [16]

The integration of multi-modal data sources represents a paradigm shift in disease mechanisms research, enabling a more comprehensive understanding of pathological processes than previously possible. By combining genomic, imaging, EHR, wearable, and clinical note data, researchers can connect molecular-level alterations with clinical manifestations across multiple scales of biological organization.

The methodologies and experimental protocols outlined in this technical guide provide a framework for designing and implementing multi-modal studies that can advance our understanding of disease mechanisms. As computational methods continue to evolve and datasets grow in scale and complexity, multi-modal integration will play an increasingly central role in translating biomedical discoveries into improved patient outcomes.

The future of multi-modal disease research lies in the development of more sophisticated integration algorithms, standardized data protocols, and collaborative frameworks that enable researchers to leverage diverse data types effectively. By embracing these approaches, the research community can accelerate the pace of discovery and ultimately deliver on the promise of precision medicine.

The establishment of Multidisciplinary Tumor Boards (MTBs) represents a cornerstone of modern oncology, facilitating collaborative diagnosis and treatment planning by integrating diverse clinical expertise. These formal meetings, typically involving medical oncologists, surgeons, radiologists, pathologists, and radiation oncologists, review and discuss cancer diagnoses to develop personalized care strategies [17]. This collaborative model has demonstrated significant benefits in patient outcomes but faces increasing strain from rising cancer incidence, growing case complexity, and financial pressures [17]. Simultaneously, the field of oncology has entered an era of multimodal data proliferation, encompassing diverse biological and clinical data sources such as genomics, medical imaging, electronic health records, and wearable device outputs [2].

Artificial Intelligence (AI) has emerged as a transformative technology capable of synthesizing these complex multimodal datasets to enhance clinical decision-making. The integration of AI into MTBs represents a natural evolution toward precision medicine, leveraging machine learning algorithms to process vast amounts of clinical and biological information that surpass human cognitive capacity for comprehensive synthesis [18]. This technical guide explores the mechanisms by which AI systems can mimic and augment the multidisciplinary decision-making processes of traditional tumor boards, with particular emphasis on multimodal data integration frameworks and their applications in disease mechanisms research.

The Multimodal Data Landscape in Oncology

Data Modalities and Characteristics

Oncology generates vast amounts of heterogeneous data from multiple sources, each providing unique insights into cancer biology. The table below summarizes the primary data modalities relevant to AI-enhanced tumor boards:

Table: Multimodal Data Sources in Oncology

| Data Modality | Data Types | Clinical/Research Utility |
|---|---|---|
| Genomic Data | DNA sequencing (Whole genome, exome), RNA sequencing, epigenetic profiles | Identification of driver mutations, molecular subtypes, therapeutic targets [2] [18] |
| Pathology Data | Histopathological whole slide images, immunohistochemistry, spatial transcriptomics | Tumor grading, cellular morphology, tumor microenvironment characterization [2] [6] |
| Radiology Data | MRI, CT, PET-CT scans | Tumor staging, treatment response assessment, anatomical localization [2] |
| Clinical Data | Electronic health records, laboratory values, performance status, treatment history | Prognostic stratification, comorbidity assessment, toxicity monitoring [2] [19] |

Technical Challenges in Multimodal Data Integration

The integration of multimodal oncology data presents significant computational and methodological challenges. Data heterogeneity across modalities creates obstacles in direct comparison and joint analysis [2]. The sheer volume of data, particularly from imaging and sequencing technologies, requires sophisticated computational infrastructure and specialized algorithms [6]. Additionally, clinical data often exhibits irregular sampling frequencies and missing values, complicating temporal analysis [2]. Model interpretability remains crucial for clinical adoption, as physicians require transparent reasoning processes rather than black-box recommendations [2] [17].
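One common way to handle irregular sampling and missing values is to resample measurements onto a regular time grid, carry the last observation forward, and keep an explicit mask of which grid points were actually observed so a downstream model can distinguish measured from imputed values. The sketch below assumes measurements indexed by integer day; it is one simple strategy among several, not the method of any cited study.

```python
from typing import List, Optional, Tuple


def resample_locf(observations: List[Tuple[int, float]],
                  n_days: int) -> Tuple[List[Optional[float]], List[int]]:
    """Place (day, value) measurements on a daily grid with last observation carried forward.

    Returns (values, observed_mask). Days before the first measurement stay None.
    If a day has several measurements, the last one listed wins.
    """
    by_day = {}
    for day, value in observations:
        by_day[day] = value
    values: List[Optional[float]] = []
    mask: List[int] = []
    last: Optional[float] = None
    for day in range(n_days):
        if day in by_day:
            last = by_day[day]
            mask.append(1)   # measured on this day
        else:
            mask.append(0)   # imputed (or still unknown)
        values.append(last)
    return values, mask


# Illustrative lab values measured on days 0, 3 and 4 of a 6-day window.
values, mask = resample_locf([(0, 1.1), (3, 1.4), (4, 1.3)], n_days=6)
print(values)
print(mask)
```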

AI Architectures for Multimodal Data Fusion

Technical Approaches to Data Integration

Multiple AI architectural patterns have been developed to address the challenges of multimodal data fusion in oncology:

Early Fusion involves combining raw data from multiple modalities at the input level, allowing the model to learn correlations across modalities from the beginning of processing. This approach requires extensive data preprocessing and alignment but can capture subtle cross-modal interactions [6].

Intermediate Fusion utilizes separate feature extractors for each modality before combining representations in intermediate network layers. This flexible architecture accommodates modality-specific processing while enabling cross-modal learning [2].

Late Fusion processes each modality independently through separate models and combines the outputs at the decision level. This approach leverages specialized models for each data type but may miss important cross-modal correlations [2].

Deep Latent Variable Path Modelling (DLVPM) represents a cutting-edge approach that combines the representational power of deep learning with the structural mapping capabilities of path modeling. DLVPM defines measurement models for each data type and optimizes deep latent variables to be maximally associated across connected modalities while maintaining orthogonality within each data type [6].

Workflow Visualization: AI-Augmented MTB Decision Process

The following diagram illustrates the integrated workflow of an AI-augmented multidisciplinary tumor board, highlighting the fusion of multimodal data and collaborative decision-making between AI systems and clinical experts:

AI-Augmented MTB Decision Workflow

Experimental Protocols and Validation Studies

Quantitative Performance Assessment

Recent studies have systematically evaluated the concordance between AI-generated recommendations and multidisciplinary tumor board decisions. The table below summarizes key performance metrics from validation studies:

Table: AI-MTB Decision Concordance in Validation Studies

| Study Characteristics | Chen et al. [2] | Prospective Clinical Trial [19] |
|---|---|---|
| AI Model | Multi-modal model combining radiology, pathology, and clinical data | ChatGPT-4.0 based on clinical summaries |
| Primary Task | Prediction of anti-HER2 therapy response | General treatment recommendation alignment |
| Reported Metric | AUC = 0.91 | Concordance 76.4% (κ = 0.764) |
| Sample Size | Not specified | 100 patients |
| Key Finding | Superior prediction through multimodal integration | High agreement in standardized cases, limitations in complex individualized decisions |

Detailed Methodology: Prospective AI-MTB Concordance Study

A recent prospective study conducted between November 2024 and January 2025 provides a robust methodological framework for validating AI decision-support in MTB settings [19]:

Patient Cohort and Data Collection:

- 100 consecutive patients presented to the tumor board at a tertiary care institution

- Inclusion criteria: adults (>18 years) with pathologically confirmed cancer, first presentation to MTB

- Comprehensive clinical data compilation including demographics, performance status (ECOG), comorbidities, radiology and pathology reports, laboratory values, and tumor markers

- Distribution of cancer types: breast (28%), gastric (23%), esophageal (17%), colorectal (15%), other (17%)

AI Processing Protocol:

- Clinical data anonymized and structured in standardized document format

- ChatGPT-4.0 API integration with consistent prompt structure

- Model provided with complete clinical summaries without additional guidance or iterative questioning

- AI recommendations generated prior to MTB discussion to prevent bias

Outcome Measures and Statistical Analysis:

- Primary endpoint: concordance rate between AI and MTB final decisions

- Decision categories: neoadjuvant therapy, surgery, radiotherapy, additional diagnostic procedures, follow-up, adjuvant therapy, interventional sampling, endoscopic intervention, palliative care

- Statistical analysis using Cohen's Kappa for agreement and Spearman correlation

- Subgroup analysis to identify patterns in discordant cases

This protocol demonstrated that AI achieved the highest concordance in cases adhering to established guidelines (86.4%), while discordance primarily occurred in complex cases requiring nuanced clinical judgment or consideration of patient-specific contextual factors [19].
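Cohen's Kappa, the agreement statistic named in the analysis plan, corrects the raw concordance rate for the agreement expected by chance given how often each rater uses each decision category. A minimal sketch follows; the decision labels are invented for illustration and are not data from the study.

```python
from collections import Counter
from typing import List


def cohens_kappa(rater_a: List[str], rater_b: List[str]) -> float:
    """Cohen's kappa = (observed agreement - chance agreement) / (1 - chance agreement)."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("ratings must be non-empty and of equal length")
    n = len(rater_a)
    observed = sum(1 for a, b in zip(rater_a, rater_b) if a == b) / n
    count_a, count_b = Counter(rater_a), Counter(rater_b)
    categories = set(count_a) | set(count_b)
    expected = sum(count_a[c] * count_b[c] for c in categories) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used a single identical category throughout
    return (observed - expected) / (1.0 - expected)


ai = ["surgery", "surgery", "neoadjuvant", "palliative", "surgery", "neoadjuvant"]
mtb = ["surgery", "neoadjuvant", "neoadjuvant", "palliative", "surgery", "surgery"]
raw_agreement = sum(a == b for a, b in zip(ai, mtb)) / len(ai)
print(raw_agreement, cohens_kappa(ai, mtb))
```

In this toy example the raw agreement is about 0.67 while kappa is about 0.45, which shows why the two figures are usually reported side by side rather than treated as interchangeable.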

Table: Essential Research Resources for Multimodal Oncology AI

| Resource Category | Specific Examples | Research Application |
|---|---|---|
| Genomic Profiling Platforms | MSK-IMPACT, FoundationOne CDx, OncoGuide NCC Oncopanel | Comprehensive tumor mutation profiling for treatment selection [18] |
| Public Cancer Databases | The Cancer Genome Atlas (TCGA), Genomic Data Commons | Training and validation datasets for model development [6] |
| AI Frameworks for Healthcare | Deep Latent Variable Path Modelling (DLVPM), MONAI (Medical Open Network for AI) | Specialized architectures for multimodal biomedical data integration [6] |
| Clinical NLP Tools | Clinical BERT, BioMed-RoBERTa | Extraction of structured information from clinical notes and literature [18] |
| Digital Pathology Infrastructure | Whole slide imaging systems, computational pathology platforms | High-resolution tissue analysis and spatial feature extraction [2] |

Implementation Framework and Pathway Modeling

The integration of AI into clinical workflows requires careful architectural planning. The following diagram models the pathway for implementing AI systems within multidisciplinary tumor boards:

AI-MTB Implementation Pathway

Future Directions and Research Opportunities

The field of AI-enhanced multidisciplinary tumor boards continues to evolve rapidly, with several promising research directions emerging. Large-scale multimodal models represent a significant frontier, analogous to foundation models in other domains, but specifically trained on diverse clinical data types [2]. Prospective validation in multi-center trials remains essential to establish generalizability across diverse healthcare settings and patient populations [19]. Advanced interpretation techniques are needed to enhance model transparency and provide clinically meaningful explanations that build physician trust [2] [17]. Finally, regulatory science must evolve to establish robust frameworks for evaluating AI systems as medical devices, particularly for adaptive learning systems that evolve with clinical experience [18].

The integration of AI into multidisciplinary tumor boards represents a paradigm shift in oncology, enabling more precise and personalized cancer care through systematic multimodal data integration. As these technologies mature, they hold the potential to augment clinical expertise, expand access to specialized knowledge, and ultimately improve outcomes for cancer patients worldwide.

Multimodal data integration has emerged as a transformative approach in biomedical research, systematically combining complementary biological and clinical data sources to provide a multidimensional perspective of patient health [4] [2]. This paradigm enables a more comprehensive understanding of disease mechanisms across oncology, ophthalmology, neurology, and other specialties by leveraging diverse data types including genomics, medical imaging, electronic health records, and wearable device outputs [4] [2]. The integration of these heterogeneous datasets through advanced artificial intelligence (AI) and machine learning methodologies allows researchers to capture complex biological interactions that remain obscured when analyzing single modalities in isolation [20] [21]. This technical guide explores the major disease applications of multimodal integration, detailing specific methodologies, quantitative performance, and experimental protocols that demonstrate its transformative potential for disease mechanisms research and therapeutic development.

Multimodal Integration in Oncology

Oncology represents one of the most advanced domains for multimodal AI applications, leveraging diverse data types to unravel tumor biology and improve clinical outcomes across the cancer care continuum [4] [20].

Applications and Methodologies

Enhanced Tumor Characterization: Multimodal integration enables precise tumor subtyping and characterization of the tumor microenvironment (TME). Pathological images and omics data are combined using dedicated feature extractors for each modality, with a convolutional neural network for images and a deep neural network for genomic data, followed by fusion models for subtype prediction [4] [2]. Single-cell and spatial transcriptomics technologies provide fine-grained resolution of the TME, revealing cellular interactions at both single-cell and spatial dimensions [4] [21]. Cross-modal applications can predict gene expression from histopathological images of breast cancer tissue (100 µm resolution) and vice versa [4].

Personalized Treatment Planning: Multimodal scanning techniques and mathematical models integrate high-resolution MRI with metabolic profiles to design personalized radiotherapy plans for glioblastoma, enabling accurate inference of tumor cell density [4] [2]. For immunotherapy, multimodal factors are translated into clinically usable predictive markers by combining annotated CT scans, digitized immunohistochemistry slides, and genomic alterations to improve prediction of immune checkpoint blockade responses [4] [20].

Early Detection and Risk Stratification: Machine learning models utilizing clinical metadata, mammography, and trimodal ultrasound demonstrate superior breast cancer risk prediction compared to pathologist-level assessments [20]. The MONAI framework provides open-source AI tools for precise delineation of breast areas in mammograms and integration of radiomics with demographic data for improved risk assessment [20].

Drug Development and Clinical Trials: AI-driven platforms analyze large-scale molecular datasets to identify drug candidates, with AI-designed molecules progressing to clinical trials at twice the rate of traditionally developed drugs [20]. Multimodal integration optimizes clinical trial recruitment through eligibility-matching engines and enables real-time adaptive randomization informed by multimodal AI (MMAI) analytics [20].

Quantitative Performance in Oncology

Table 1: Performance Metrics of Multimodal AI in Oncology Applications

| Application Area | Specific Task | Performance Metric | Result | Data Modalities Integrated |
|---|---|---|---|---|
| Immunotherapy Response | Anti-HER2 therapy response prediction | Area Under the Curve | 0.91 [4] | Radiology, pathology, clinical information |
| Lung Cancer Risk Prediction | Lung cancer risk stratification | ROC-AUC | 0.92 [20] | Low-dose CT scans |
| Digital Pathology | Genomic alteration inference | ROC-AUC | 0.89 [20] | Histology slides |
| Melanoma Prognosis | 5-year relapse prediction | ROC-AUC | 0.833 [20] | Imaging, histology, genomics, clinical data |
| Metastatic NSCLC Treatment | Benefit from combination therapy | Hazard Ratio Reduction | 0.88-0.56 [20] | Radiomics, digital pathology, genomics |
| Prostate Cancer Outcomes | Long-term outcome prediction | Relative Improvement | 9.2-14.6% [20] | Phase 3 trial data multimodal integration |

Experimental Workflow for Tumor Subtype Classification

Protocol Title: Multimodal Integration for Breast Cancer Subtype Classification

Objective: To accurately classify breast cancer molecular subtypes using paired histopathology images and genomic data.

Materials and Reagents:

- Formalin-fixed, paraffin-embedded (FFPE) tumor tissue sections

- DNA/RNA extraction kits (e.g., Qiagen AllPrep)

- Microarray or RNA-seq reagents for gene expression profiling

- Hematoxylin and eosin (H&E) staining reagents

- Whole slide scanning system

Procedure:

- Sample Preparation: Section FFPE blocks at 4-5 μm thickness for H&E staining and adjacent sections for nucleic acid extraction.

- Image Acquisition: Scan H&E slides at 40× magnification using a whole slide scanner; ensure minimum resolution of 0.25 μm/pixel.

- Genomic Data Generation: Extract RNA and perform gene expression profiling using microarray or RNA-seq following manufacturer protocols.

- Feature Extraction:

  - Process whole slide images through a pre-trained convolutional neural network (CNN) such as ResNet-50 to extract deep morphological features.

  - Process gene expression data through a deep neural network to extract genomic features.

- Data Fusion: Integrate image and genomic features using a fusion model (feature-level or decision-level fusion).

- Subtype Classification: Train a classifier (e.g., random forest, support vector machine) on the fused features to predict PAM50 molecular subtypes.

- Validation: Perform cross-validation and external validation using independent datasets.
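For the cross-validation step, folds are usually stratified so that each one preserves the subtype proportions of the full cohort, which matters when some PAM50 subtypes are rare. The sketch below shows a deterministic round-robin assignment; it is a simplified illustration (real pipelines typically shuffle within each class first), and the label strings are placeholders.

```python
from collections import defaultdict
from typing import Dict, List


def stratified_folds(labels: List[str], k: int) -> List[List[int]]:
    """Split sample indices into k folds with near-equal class proportions."""
    by_class: Dict[str, List[int]] = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)
    folds: List[List[int]] = [[] for _ in range(k)]
    offset = 0
    for label in sorted(by_class):
        # Deal each class's samples round-robin, continuing where the last class stopped.
        for position, idx in enumerate(by_class[label]):
            folds[(position + offset) % k].append(idx)
        offset += len(by_class[label])
    return folds


subtypes = ["LumA", "LumA", "LumA", "LumA", "Basal", "Basal"]
for fold_id, test_idx in enumerate(stratified_folds(subtypes, k=2)):
    train_idx = [i for i in range(len(subtypes)) if i not in test_idx]
    print(fold_id, sorted(test_idx), sorted(train_idx))
```

Each fold serves once as the held-out test set while the classifier is trained on the remaining samples; external validation on an independent dataset remains a separate step.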

Quality Control:

- Ensure RNA integrity number (RIN) >7.0 for genomic analyses

- Verify image quality with focus quality metrics

- Implement batch correction for technical variations

Figure 1: Experimental workflow for multimodal tumor subtype classification in oncology

Research Reagent Solutions for Oncology

Table 2: Essential Research Reagents for Multimodal Oncology Studies

| Reagent/Technology | Primary Function | Application Context |
|---|---|---|
| 10x Genomics Visium | Spatial transcriptomics | Tumor microenvironment characterization [21] |
| Multiplexed Ion Beam Imaging | Multiplexed protein detection | Simultaneous measurement of 40+ markers in tissue [4] |
| Cell-free DNA extraction kits | Liquid biopsy sample preparation | Non-invasive cancer detection and monitoring [20] |
| Single-cell RNA sequencing kits | Cellular heterogeneity analysis | Tumor cell plasticity and immune infiltration [21] |
| Multiplex immunohistochemistry kits | Multiplexed protein detection | Spatial protein expression in tumor tissues [4] |
| GATK (Genome Analysis Toolkit) | Genomic variant discovery | Mutation detection in multimodal studies [21] |

Multimodal Integration in Ophthalmology

Ophthalmology has emerged as a frontier for multimodal AI applications, leveraging diverse imaging modalities and clinical data to enhance diagnosis and management of vision-threatening conditions [22] [23].

Applications and Methodologies

Glaucoma Management: Multimodal networks combining optical coherence tomography (OCT), fundus photography, demographics, and clinical features achieve exceptional performance (AUC=0.97) for glaucoma detection [22]. Fusion models like FusionNet integrate visual field reports and peripapillary circular OCT scans to detect glaucomatous optic neuropathy (AUC=0.95) [22]. The GAMMA (Glaucoma grAding from Multi-Modality imAges) dataset enables development of algorithms for glaucoma grading using 2D fundus images and 3D OCT data [22].

Advanced Architectures: Transformer-based multimodal architectures like MM-RAF use self-attention mechanisms with three key modules: bilateral contrastive alignment to bridge semantic gaps between modalities, multiple instance learning representation to integrate multiple OCT scans, and hierarchical attention fusion to enhance cross-modal interaction [22]. These architectures effectively handle cross-modal information interaction even with significant modality differences.

Foundation Models: EyeCLIP represents a multimodal visual-language foundation model trained on 2.77 million ophthalmology images across 11 modalities with clinical text [24]. Its novel pretraining combines self-supervised reconstruction, multimodal image contrastive learning, and image-text contrastive learning to capture shared representations across modalities, demonstrating robust performance across 14 benchmark datasets [24].
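The image-text contrastive objective mentioned above can be illustrated with a generic CLIP-style symmetric loss: matched image and text embeddings are pulled together while mismatched pairs within a batch are pushed apart. This is a sketch of the general technique only, not EyeCLIP's actual implementation; the temperature value and toy embeddings are assumptions.

```python
import math
from typing import List

Vector = List[float]


def _normalize(v: Vector) -> Vector:
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def _cross_entropy_diagonal(logits: List[List[float]]) -> float:
    """Mean cross-entropy where the correct class for row i is column i."""
    total = 0.0
    for i, row in enumerate(logits):
        m = max(row)  # subtract the max for numerical stability
        log_sum_exp = m + math.log(sum(math.exp(x - m) for x in row))
        total += log_sum_exp - row[i]
    return total / len(logits)


def clip_style_loss(image_emb: List[Vector], text_emb: List[Vector],
                    temperature: float = 0.07) -> float:
    """Symmetric contrastive loss over a batch of paired image/text embeddings."""
    img = [_normalize(v) for v in image_emb]
    txt = [_normalize(v) for v in text_emb]
    # Cosine similarity between every image and every text, scaled by temperature.
    logits = [[sum(a * b for a, b in zip(i, t)) / temperature for t in txt] for i in img]
    logits_t = [list(col) for col in zip(*logits)]
    return 0.5 * (_cross_entropy_diagonal(logits) + _cross_entropy_diagonal(logits_t))


images = [[1.0, 0.0], [0.0, 1.0]]
print(clip_style_loss(images, [[1.0, 0.0], [0.0, 1.0]]))  # correctly paired captions
print(clip_style_loss(images, [[0.0, 1.0], [1.0, 0.0]]))  # swapped captions
```

The correctly paired batch yields a loss near zero and the swapped batch a much larger one, which is the signal that drives the two encoders toward a shared representation space.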

Systemic Disease Prediction: Ophthalmic imaging serves as a non-invasive predictive tool for circulatory system diseases, with models trained on retinal fundus images predicting cardiovascular risk factors [22] [24]. The eye's unique accessibility as a window to the circulatory system enables assessment of systemic conditions including stroke and myocardial infarction risk [24].

Quantitative Performance in Ophthalmology

Table 3: Performance Metrics of Multimodal AI in Ophthalmology Applications

| Application Area | Specific Task | Performance Metric | Result | Data Modalities Integrated |
|---|---|---|---|---|
| Glaucoma Detection | Glaucoma classification | AUC | 0.97 [22] | OCT, fundus photos, demographics, clinical features |
| Glaucomatous Optic Neuropathy | Detection from multiple tests | AUC | 0.95 [22] | Visual field reports, peripapillary OCT |
| Rare Disease Classification | 17 rare diseases classification | AUC | Superior performance [24] | 14 imaging modalities |
| Diabetic Retinopathy | DR classification with few-shot learning | AUC | 0.681-0.757 [24] | Color fundus photography |
| Multi-disease Diagnosis | Foundation model performance | AUC Improvement | 4-5% [23] | Multiple ophthalmic imaging modalities |
| Accuracy Improvement | General multimodal vs unimodal | Accuracy Improvement | 2-7% [23] | Various ophthalmic data combinations |

Experimental Workflow for Multimodal Ophthalmic AI

Protocol Title: Multimodal Integration for Glaucoma Diagnosis and Progression Assessment

Objective: To develop a multimodal AI system for comprehensive glaucoma diagnosis and progression prediction using diverse ophthalmic data.

Materials and Reagents:

- Spectral-domain optical coherence tomography (SD-OCT) system

- Color fundus camera

- Visual field analyzer

- Tonometer for intraocular pressure measurement

- Data preprocessing pipelines for each modality

Procedure:

Data Acquisition:

- Acquire SD-OCT volumes of the optic nerve head and macula

- Capture color fundus photographs centered on the optic disc

- Perform standard automated perimetry (visual field testing)

- Record intraocular pressure measurements and patient demographics

Image Preprocessing:

- Apply quality assessment to exclude poor-quality images

- Perform illumination correction on fundus photographs

- Register OCT volumes to a common coordinate system

- Extract retinal nerve fiber layer (RNFL) thickness maps from OCT

Feature Extraction:

- Process fundus images through CNN to extract optic disc and RNFL features

- Extract thickness measurements from OCT volumes using segmentation algorithms

- Process visual field data to extract pattern deviation and total deviation values

- Create feature vectors from clinical parameters (IOP, age, family history)

Multimodal Fusion:

- Implement feature-level fusion using concatenation or cross-modal attention

- Alternatively, use decision-level fusion to combine predictions from single-modality models

- Apply bilateral contrastive alignment to bridge semantic gaps between fundus and OCT features [22]
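
The alignment step can be sketched as a symmetric contrastive loss. This is a minimal illustration, assuming "bilateral" means the loss is applied in both directions (fundus to OCT and OCT to fundus). The function names, the temperature value, and the toy embeddings are hypothetical and do not reproduce the published MM-RAF implementation.

```python
import math

def l2_normalize(vec):
    """Scale a vector to unit length (zero vectors are returned unchanged)."""
    norm = math.sqrt(sum(x * x for x in vec)) or 1.0
    return [x / norm for x in vec]

def diagonal_cross_entropy(logits):
    """Mean cross-entropy where the correct column for row i is column i."""
    total = 0.0
    for i, row in enumerate(logits):
        m = max(row)  # subtract the max for numerical stability
        log_sum = m + math.log(sum(math.exp(v - m) for v in row))
        total += log_sum - row[i]
    return total / len(logits)

def symmetric_contrastive_loss(fundus_emb, oct_emb, temperature=0.1):
    """Average of fundus->OCT and OCT->fundus contrastive losses.

    Row i of each input is assumed to come from the same eye, so matched
    pairs sit on the diagonal of the similarity matrix.
    """
    f = [l2_normalize(v) for v in fundus_emb]
    o = [l2_normalize(v) for v in oct_emb]
    sim = [[sum(a * b for a, b in zip(fv, ov)) / temperature for ov in o]
           for fv in f]
    sim_t = [list(col) for col in zip(*sim)]  # transpose for the reverse direction
    return 0.5 * (diagonal_cross_entropy(sim) + diagonal_cross_entropy(sim_t))

# Toy embeddings: matched pairs point in similar directions.
fundus = [[1.0, 0.0], [0.0, 1.0]]
oct_aligned = [[0.9, 0.1], [0.1, 0.9]]
oct_shuffled = [[0.1, 0.9], [0.9, 0.1]]
loss_aligned = symmetric_contrastive_loss(fundus, oct_aligned)
loss_shuffled = symmetric_contrastive_loss(fundus, oct_shuffled)
```

In this sketch the loss is small when each fundus embedding is closest to the OCT embedding from the same eye, and large when the pairing is shuffled.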

Model Training:

- Train a classifier for glaucoma diagnosis (normal vs glaucoma)

- For progression assessment, train a regression model to predict future visual field loss

- Use multi-task learning to simultaneously optimize diagnosis and progression tasks
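
One common way to set up the multi-task objective is a weighted sum of a classification loss and a regression loss. The sketch below assumes binary cross-entropy for diagnosis and mean squared error for future visual field loss; the weighting parameter `prog_weight` and the function names are illustrative choices, not values taken from the cited studies.

```python
import math

def binary_cross_entropy(prob, label, eps=1e-7):
    """Cross-entropy for one predicted probability and a 0/1 label."""
    p = min(max(prob, eps), 1.0 - eps)  # clip to avoid log(0)
    return -(label * math.log(p) + (1 - label) * math.log(1.0 - p))

def multitask_loss(diag_probs, diag_labels, prog_preds, prog_targets,
                   prog_weight=0.5):
    """Diagnosis loss plus a weighted progression loss, averaged per patient."""
    n = len(diag_labels)
    cls = sum(binary_cross_entropy(p, y)
              for p, y in zip(diag_probs, diag_labels)) / n
    reg = sum((p - t) ** 2 for p, t in zip(prog_preds, prog_targets)) / n
    return cls + prog_weight * reg

# One patient: uncertain diagnosis, progression estimate off by 2 units.
example_loss = multitask_loss([0.5], [1], [0.0], [2.0], prog_weight=0.5)
```

The weight controls how strongly progression errors influence shared layers relative to diagnosis errors, and in practice it would be tuned on validation data.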

Validation:

- Perform cross-validation on the training dataset

- Test on a held-out test set with expert annotations as ground truth

- Compare performance against clinical experts and single-modality baselines
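
The baseline comparison is usually reported as AUC. As a minimal sketch, AUC can be computed from its rank interpretation: the probability that a randomly chosen glaucoma case scores higher than a randomly chosen normal case. The labels and scores below are made-up toy values, not study data.

```python
def roc_auc(labels, scores):
    """AUC as the fraction of (positive, negative) pairs ranked correctly.

    Ties count as half a correct pair. Runs in O(P * N), which is fine
    for illustration but slow for very large test sets.
    """
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))

# Toy held-out labels with hypothetical model outputs.
labels = [0, 0, 1, 1]
fundus_only_scores = [0.1, 0.4, 0.35, 0.8]
multimodal_scores = [0.1, 0.3, 0.6, 0.8]
auc_fundus = roc_auc(labels, fundus_only_scores)
auc_multimodal = roc_auc(labels, multimodal_scores)
```

A real comparison would also report confidence intervals, for example by bootstrapping patients, before claiming that one model outperforms another.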

Quality Control:

- Exclude images with quality scores below established thresholds

- Ensure consistent imaging protocols across different devices

- Implement data augmentation to address class imbalance

Figure 2: Multimodal workflow for ophthalmic AI applications

Multimodal Integration in Neurology

Neurology benefits from multimodal integration by combining neuroimaging, genetic risk scores, wearable sensor data, and clinical information to improve detection and prognostication of neurodegenerative diseases [25].

Applications and Methodologies

Neurodegenerative Disease Prediction: Machine learning models combining structural MRI parameters, accelerometry data from wearable devices, polygenic risk scores, and lifestyle information achieve high performance (AUC=0.819) for predicting neurodegenerative disease incidence [25]. This outperforms models that omit MRI parameters (AUC=0.688), demonstrating the value of multimodal integration [25].

Structural MRI Biomarkers: Multiple MRI parameters serve as reliable biomarkers, including hippocampal volume (associated with Alzheimer's disease), cortical thickness (entorhinal cortex for mild cognitive impairment), and white matter hyperintensities (cerebral small vessel disease) [25]. These parameters capture distinct aspects of neurodegenerative pathology and provide complementary information when combined.

Wearable Device Monitoring: Accelerometers in wearable devices capture motor impairments characteristic of neurodegenerative diseases, including gait changes in Alzheimer's disease (slower gait, shorter stride length) and the rigidity, tremor, and freezing seen in Parkinson's disease [25]. Machine learning analysis of 24-hour activity patterns enables detection of prodromal stages before clinical diagnosis.

Multimodal Risk Stratification: Integration of multimodal factors identifies individuals at highest risk for conversion from mild cognitive impairment to dementia. Feature importance analyses reveal that structural MRI parameters constitute 18 of the 20 most important features for neurodegenerative disease prediction, with accelerometry data providing the remaining key predictors [25].

Quantitative Performance in Neurology

Table 4: Performance Metrics of Multimodal AI in Neurology Applications

| Application Area | Specific Task | Performance Metric | Result | Data Modalities Integrated |
|---|---|---|---|---|
| Neurodegenerative Disease | Incidence prediction | AUC | 0.819 [25] | MRI, accelerometry, PRS, lifestyle |
| Parkinson's Detection | Diagnosis from wrist accelerometer | Accuracy | >85% [25] | Accelerometry data |
| Parkinson's Diagnosis | Gaussian mixture model classifier | AUC | 0.69-0.85 [25] | Gait and low-movement data |
| Neurodegenerative Prediction | Model without MRI parameters | AUC | 0.688 [25] | Accelerometry, PRS, lifestyle |

Experimental Workflow for Neurodegenerative Disease Prediction

Protocol Title: Multimodal Integration for Neurodegenerative Disease Risk Prediction

Objective: To develop a predictive model for neurodegenerative disease incidence using multimodal data from the UK Biobank.

Materials and Reagents:

- 3T MRI scanner with standardized structural sequences

- Wrist-worn accelerometers (Axivity AX3 recommended)

- DNA extraction and genotyping kits

- Clinical assessment protocols for lifestyle factors

Procedure:

Data Collection:

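- Acquire T1-weighted structural MRI scans with 1 mm isotropic resolution, together with the T2-weighted FLAIR sequences needed for white matter hyperintensity quantification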

- Distribute wrist accelerometers for 7-day continuous wear

- Collect blood samples for genotyping and polygenic risk score calculation

- Administer lifestyle questionnaires (diet, exercise, cognitive activity)

MRI Processing:

- Perform volumetric segmentation of hippocampal, amygdala, and cortical regions

- Measure cortical thickness using surface-based analysis (FreeSurfer)

- Quantify white matter hyperintensity volume from FLAIR sequences

- Extract regional volumetric measurements for subcortical structures

Accelerometry Analysis:

- Process raw accelerometer data to extract gait parameters during walking bouts

- Calculate activity metrics including sedentary time, light activity, and moderate-vigorous activity

- Derive circadian rhythm metrics from 24-hour activity patterns

- Extract features related to movement smoothness and coordination
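
The activity metrics can be sketched with the widely used ENMO summary (Euclidean norm minus one g, truncated at zero). This is an illustrative sketch only: the cut-points separating sedentary, light, and moderate-vigorous activity are hypothetical placeholders, and real analyses would use validated thresholds for the device and population.

```python
import math

def enmo_mg(x, y, z):
    """ENMO in milli-g for one tri-axial sample given in g units."""
    magnitude = math.sqrt(x * x + y * y + z * z)
    return max(magnitude - 1.0, 0.0) * 1000.0

def classify_epoch(mean_enmo, light_cut=30.0, mvpa_cut=100.0):
    """Label an epoch by its mean ENMO (cut-points are assumed values)."""
    if mean_enmo < light_cut:
        return "sedentary"
    if mean_enmo < mvpa_cut:
        return "light"
    return "mvpa"

def activity_fractions(epoch_means):
    """Share of epochs spent in each activity category."""
    counts = {"sedentary": 0, "light": 0, "mvpa": 0}
    for value in epoch_means:
        counts[classify_epoch(value)] += 1
    total = len(epoch_means)
    return {name: count / total for name, count in counts.items()}

# A device lying still measures only gravity, so ENMO is zero.
resting = enmo_mg(0.0, 0.0, 1.0)
# Four toy epoch means in milli-g.
fractions = activity_fractions([5.0, 50.0, 150.0, 10.0])
```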

Genetic Risk Assessment:

- Calculate polygenic risk scores for Alzheimer's and Parkinson's diseases

- Incorporate APOE ε4 status for Alzheimer's-specific risk

- Include known genetic variants associated with neurodegenerative conditions
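
A polygenic risk score is typically a weighted sum of risk-allele dosages, with weights taken from genome-wide association effect sizes. The sketch below assumes dosages of 0, 1, or 2 per variant; the variant identifiers and effect sizes are invented for illustration and do not correspond to real loci.

```python
def polygenic_risk_score(dosages, effect_sizes):
    """Sum of effect size times allele dosage over scored variants.

    Variants missing from a participant's genotype data are skipped,
    which is one simple (assumed) way to handle incomplete genotyping.
    """
    score = 0.0
    for variant, beta in effect_sizes.items():
        dosage = dosages.get(variant)
        if dosage is None:
            continue
        score += beta * dosage
    return score

# Hypothetical effect sizes (log odds ratios) and one participant's dosages.
effect_sizes = {"variant_a": 0.10, "variant_b": 0.30, "variant_c": 0.50}
participant = {"variant_a": 2, "variant_b": 1}  # variant_c not genotyped
prs = polygenic_risk_score(participant, effect_sizes)
```

APOE ε4 carrier status is often kept as a separate feature rather than folded into the score, so that its large individual effect remains visible to the downstream model.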

Multimodal Integration:

- Use the XGBoost gradient-boosting algorithm to integrate all modalities

- Train separate models for all neurodegenerative diseases, Alzheimer's-specific, and Parkinson's-specific prediction

- Perform feature importance analysis to identify key predictors across modalities
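
Once a tree-based model has produced per-feature importances, they can be summarized by modality. The following sketch works on a plain dictionary of importances, so it does not depend on any particular library; the feature names, modality labels, and importance values are hypothetical.

```python
from collections import defaultdict

def importance_by_modality(importances, modality_of):
    """Normalized share of total importance contributed by each modality."""
    totals = defaultdict(float)
    for feature, value in importances.items():
        totals[modality_of[feature]] += value
    grand_total = sum(totals.values())
    return {modality: value / grand_total for modality, value in totals.items()}

def top_k_modality_counts(importances, modality_of, k):
    """How many of the k most important features come from each modality."""
    ranked = sorted(importances, key=importances.get, reverse=True)[:k]
    counts = defaultdict(int)
    for feature in ranked:
        counts[modality_of[feature]] += 1
    return dict(counts)

# Hypothetical importances from a fitted model.
importances = {
    "hippocampal_volume": 0.4,
    "entorhinal_thickness": 0.3,
    "gait_speed": 0.2,
    "prs_alzheimers": 0.1,
}
modality_of = {
    "hippocampal_volume": "MRI",
    "entorhinal_thickness": "MRI",
    "gait_speed": "accelerometry",
    "prs_alzheimers": "genetics",
}
shares = importance_by_modality(importances, modality_of)
top3 = top_k_modality_counts(importances, modality_of, k=3)
```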

Validation:

- Validate models using longitudinal follow-up data with clinical diagnoses

- Assess performance using time-dependent ROC analysis

- Evaluate calibration and clinical utility with decision curve analysis
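
Decision curve analysis rests on the net benefit statistic, which weighs true positives against false positives at a chosen risk threshold. The sketch below uses the standard formula, net benefit = TP/n - (FP/n) * pt/(1 - pt), on toy labels and risks that are not study data.

```python
def net_benefit(labels, risks, threshold):
    """Net benefit of intervening on everyone with risk >= threshold."""
    if not 0.0 < threshold < 1.0:
        raise ValueError("threshold must be strictly between 0 and 1")
    n = len(labels)
    tp = sum(1 for y, r in zip(labels, risks) if r >= threshold and y == 1)
    fp = sum(1 for y, r in zip(labels, risks) if r >= threshold and y == 0)
    return tp / n - (fp / n) * threshold / (1.0 - threshold)

def net_benefit_treat_all(labels, threshold):
    """Reference strategy: intervene on every participant."""
    prevalence = sum(labels) / len(labels)
    return prevalence - (1.0 - prevalence) * threshold / (1.0 - threshold)

# Toy cohort: two incident cases and two non-cases.
labels = [1, 0, 1, 0]
risks = [0.9, 0.2, 0.6, 0.7]
model_nb = net_benefit(labels, risks, threshold=0.5)
treat_all_nb = net_benefit_treat_all(labels, threshold=0.5)
```

A model is considered clinically useful at a given threshold when its net benefit exceeds both the treat-all strategy and the treat-none strategy, whose net benefit is zero.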

Quality Control:

- Exclude participants with neurological diagnoses at baseline

- Ensure MRI data passes quality control for motion artifacts

- Verify accelerometer wear time compliance (>16 hours/day for ≥4 days)
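
The wear-time criterion above translates directly into a small check. The function name and the input format (a list of daily wear hours) are assumptions made for this sketch.

```python
def is_wear_compliant(daily_wear_hours, min_hours=16.0, min_days=4):
    """True if more than min_hours were recorded on at least min_days days."""
    valid_days = sum(1 for hours in daily_wear_hours if hours > min_hours)
    return valid_days >= min_days

# Seven-day recording with four days above the 16-hour threshold.
compliant = is_wear_compliant([24.0, 20.0, 17.0, 16.5, 3.0, 0.0, 10.0])
# Exactly 16 hours does not satisfy the strict ">16 hours" criterion.
borderline = is_wear_compliant([16.0] * 7)
```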

Figure 3: Multimodal integration workflow for neurodegenerative disease prediction

Cross-Disease Methodological Framework

The implementation of multimodal integration across disease domains shares common methodological frameworks and technical challenges that require specialized approaches.

Multimodal Fusion Strategies

Feature-Level Fusion: Early fusion combines raw or extracted features from multiple modalities into a joint representation before model training [22] [21]. This approach enables the model to learn complex interactions between modalities but requires careful handling of heterogeneous data structures and scales.
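
As a minimal sketch of early fusion, the example below standardizes each feature within its modality and then concatenates the per-patient vectors, which is one simple way to handle differing scales. The helper names and the toy imaging and clinical values are hypothetical.

```python
import statistics

def zscore_columns(rows):
    """Standardize each column to zero mean and unit variance."""
    columns = list(zip(*rows))
    means = [statistics.fmean(col) for col in columns]
    # Constant columns get a divisor of 1.0 to avoid division by zero.
    stds = [statistics.pstdev(col) or 1.0 for col in columns]
    return [[(v - m) / s for v, m, s in zip(row, means, stds)] for row in rows]

def early_fusion(*modalities):
    """Concatenate standardized feature blocks patient by patient.

    Each modality is a list of feature rows, one row per patient, in the
    same patient order.
    """
    scaled = [zscore_columns(block) for block in modalities]
    n_patients = len(scaled[0])
    return [sum((block[i] for block in scaled), []) for i in range(n_patients)]

# Two patients: imaging features on a large scale, clinical on a small one.
imaging = [[100.0, 200.0], [300.0, 400.0]]
clinical = [[1.0], [3.0]]
fused = early_fusion(imaging, clinical)
```

After standardization, no modality dominates the joint representation purely because of its measurement units.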

Decision-Level Fusion: Late fusion trains separate models on each modality and combines their predictions through weighted averaging, majority voting, or meta-learners [22]. This approach preserves modality-specific dynamics but may miss low-level cross-modal interactions.
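
Two of the combination rules named above, weighted averaging and majority voting, can be sketched in a few lines. The per-modality probabilities and weights are illustrative values, not outputs of any cited model.

```python
def weighted_average(probabilities, weights):
    """Combine per-modality probabilities using non-negative weights."""
    total = sum(weights)
    return sum(p * w for p, w in zip(probabilities, weights)) / total

def majority_vote(probabilities, threshold=0.5):
    """Return 1 if more than half of the modality models vote positive."""
    votes = sum(1 for p in probabilities if p >= threshold)
    return 1 if votes * 2 > len(probabilities) else 0

# Hypothetical outputs from imaging, wearable, and clinical models.
modality_probs = [0.9, 0.6, 0.2]
fused_probability = weighted_average(modality_probs, weights=[2.0, 1.0, 1.0])
fused_vote = majority_vote(modality_probs)
```

Weights are often set from each model's validation performance, while a meta-learner replaces the fixed rule with a model trained on the per-modality predictions.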

Hybrid Fusion: Combined approaches leverage both feature-level and decision-level fusion to balance their respective advantages [22]. This provides flexibility in algorithm design but increases computational complexity and requires careful optimization.

Cross-Modal Attention: Advanced interaction strategies use attention mechanisms to dynamically weight the importance of different modalities and their features [22] [24]. Transformer-based architectures have shown particular success in learning complex cross-modal relationships through self-attention and cross-attention mechanisms.
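
The core of cross-attention is scaled dot-product attention in which queries come from one modality and keys and values from another. The sketch below omits the learned projection matrices and multiple heads of a full transformer layer, and the toy vectors are invented for illustration.

```python
import math

def softmax(values):
    """Numerically stable softmax over a list of scores."""
    m = max(values)
    exps = [math.exp(v - m) for v in values]
    total = sum(exps)
    return [e / total for e in exps]

def cross_attention(queries, keys, values):
    """Attend from one modality (queries) to another (keys and values).

    Returns the attended outputs and the attention weights per query.
    """
    scale = math.sqrt(len(keys[0]))
    outputs, all_weights = [], []
    for q in queries:
        scores = [sum(a * b for a, b in zip(q, k)) / scale for k in keys]
        weights = softmax(scores)
        all_weights.append(weights)
        outputs.append([sum(w * v[j] for w, v in zip(weights, values))
                        for j in range(len(values[0]))])
    return outputs, all_weights

# One query token from modality A attending over two tokens from modality B.
attended, attn = cross_attention(
    queries=[[1.0, 0.0]],
    keys=[[1.0, 0.0], [0.0, 1.0]],
    values=[[10.0], [20.0]],
)
```

The attention weights can also be inspected afterwards to see which tokens of the second modality each query relied on.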

Technical Challenges and Solutions

Data Heterogeneity: Variations in data format, structure, and coding standards across modalities complicate integration [4] [21]. Solutions include development of unified data frameworks, normalization pipelines, and cross-modal alignment techniques.

Missing Modalities: Real-world clinical data often has incomplete modalities across patients [24]. Approaches include generative methods to impute missing modalities, flexible architectures that can handle variable input combinations, and transfer learning from complete to incomplete datasets.
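
One simple form of a flexible architecture pools only the modality embeddings that are present for a given patient. The sketch below assumes each modality encoder outputs a vector of the same length and marks a missing modality with `None`; the modality names and values are hypothetical.

```python
def fuse_available(embeddings):
    """Average the embeddings of whichever modalities are present."""
    present = [vec for vec in embeddings.values() if vec is not None]
    if not present:
        raise ValueError("at least one modality is required")
    dim = len(present[0])
    return [sum(vec[i] for vec in present) / len(present) for i in range(dim)]

# This patient has OCT and visual field data but no fundus photograph.
patient = {"oct": [1.0, 3.0], "fundus": None, "visual_field": [3.0, 5.0]}
fused_embedding = fuse_available(patient)
```

Because the pooled vector has the same size regardless of which inputs are available, the downstream classifier does not need a separate model for every modality combination.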

Computational Complexity: Large-scale multimodal datasets demand significant computational resources [21]. Distributed computing, efficient model architectures, and dimensionality reduction techniques help address these challenges.

Model Interpretability: Complex multimodal models can function as "black boxes" [4] [2]. Visualization techniques, attention maps, feature importance analysis, and model distillation methods enhance interpretability for clinical adoption.

Multimodal data integration marks a shift in how disease mechanisms are studied, enabling a more comprehensive understanding of complex biological systems across oncology, ophthalmology, neurology, and beyond. The methodologies and performance metrics detailed in this guide show that combining complementary data modalities through AI and machine learning generally outperforms single-modality analysis. Future work will focus on large-scale foundation models, standardized integration frameworks, improved interpretability, and clinical translation, which together are needed to realize the potential of this approach for precision medicine and therapeutic development.

Frameworks and Applications: Technical Strategies for Integrating Data Modalities

In the realm of artificial intelligence (AI) and healthcare, multimodal data integration has emerged as a transformative approach for researching disease mechanisms and advancing therapeutic development. This paradigm involves systematically combining complementary biological and clinical data sources—including genomics, medical imaging, electronic health records (EHRs), and wearable device outputs—to construct a multidimensional perspective of patient health and disease pathology [4] [2]. The primary objective of multimodal data integration is to leverage the complementary strengths of different data types to gain a more comprehensive understanding of complex biological systems and disease processes than any single data modality can provide independently [2].