Matrix Factorization vs. Deep Learning: A Comprehensive Guide to Drug-Target Interaction Prediction

Abstract

This article provides a rigorous comparison of matrix factorization and deep learning methodologies for predicting drug-target interactions (DTIs), a critical task in accelerating drug discovery and repositioning. Aimed at researchers and drug development professionals, it explores the foundational principles of both approaches, detailing their specific applications and algorithmic mechanisms. The content further addresses key challenges such as data scarcity, noise, and model interpretability, offering practical troubleshooting and optimization strategies. By synthesizing validation benchmarks and performance metrics, this review delivers actionable insights to guide method selection and outlines future directions for integrating these powerful computational techniques in biomedical research.

The Fundamentals of DTI Prediction: From Problem to Core Paradigms

The Critical Role of DTI Prediction in Modern Drug Discovery and Repositioning

The process of traditional drug discovery has long been characterized by astronomical costs, extended timelines, and high failure rates, with the average development cycle exceeding a decade and costing more than $2.6 billion per approved drug [1]. In this challenging landscape, computational prediction of drug-target interactions (DTI) has emerged as a transformative approach to significantly accelerate early discovery phases and reduce resource expenditures. DTI prediction fundamentally aims to identify and characterize interactions between pharmaceutical compounds and their biological targets, primarily proteins, through in silico methods rather than purely experimental approaches [2]. This capability is particularly valuable for drug repositioning (also known as drug repurposing), which identifies new therapeutic applications for existing drugs already approved for other indications [3] [1].

The evolution of DTI prediction methodologies has largely progressed from traditional matrix factorization techniques to more contemporary deep learning architectures, each with distinct strengths, limitations, and optimal application scenarios [1]. Matrix factorization approaches, including neighborhood-regularized logistic matrix factorization (NRLMF) and self-paced learning with dual similarity information (SPLDMF), leverage similarity matrices and collaborative filtering principles to infer unknown interactions from known DTI networks [3] [4]. In contrast, deep learning methods utilize sophisticated neural network architectures—including graph neural networks (GNNs), transformers, and evidential deep learning—to learn complex, hierarchical representations from raw molecular data [5] [6] [7]. This comprehensive analysis objectively compares these methodological paradigms through quantitative performance metrics, experimental protocols, and practical implementation considerations to guide researchers in selecting appropriate strategies for specific DTI prediction scenarios.

Matrix Factorization Approaches

Matrix factorization techniques conceptualize DTI prediction as a matrix completion problem where the goal is to reconstruct missing entries in a drug-target interaction matrix. These methods typically employ dimensionality reduction to project drugs and targets into a shared latent space where their interactions can be modeled as inner products [3] [4]. The self-paced learning with dual similarity information and matrix factorization (SPLDMF) approach represents a recent advancement that addresses two fundamental challenges: (1) susceptibility to bad local optima due to high noise and missing data, and (2) insufficient learning power from single similarity information [3]. SPLDMF incorporates a self-paced learning mechanism that progressively includes samples from simple to complex during training, mimicking human learning processes to improve optimization with sparse data [3]. Additionally, it integrates multiple similarity measures for both drugs and targets, enhancing the model's capacity to identify potential associations accurately.
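The self-paced idea can be made concrete with a small sketch. SPLDMF's exact regularizer is not given here, so the code assumes the classic "hard" self-paced formulation. Minimizing $\sum_i v_i \ell_i - \lambda \sum_i v_i$ over $v_i \in \{0, 1\}$ selects exactly those samples whose current loss $\ell_i$ is below the age parameter $\lambda$. Growing $\lambda$ then admits harder samples. The function names, the growth schedule, and the fixed losses are hypothetical.

```python
def self_paced_weights(losses, lam):
    """Hard self-paced weights: v_i = 1 if loss_i < lam, else 0.

    This is the closed-form minimiser of sum_i v_i * loss_i - lam * sum_i v_i
    over v_i in {0, 1} (assumed regulariser, not necessarily SPLDMF's).
    """
    return [1 if loss < lam else 0 for loss in losses]


def curriculum(losses, lam0=0.5, growth=1.5, rounds=4):
    """Return the selected-sample weights for a growing age parameter lam.

    In a real model the losses would be recomputed after each refit of the
    factorization; here they are held fixed to show how the training set expands.
    """
    lam = lam0
    schedule = []
    for _ in range(rounds):
        schedule.append(self_paced_weights(losses, lam))
        lam *= growth  # admit harder (higher-loss) samples in the next round
    return schedule


losses = [0.1, 0.4, 0.7, 1.2]  # hypothetical per-pair reconstruction losses
for weights in curriculum(losses):
    print(weights)
```

Easy pairs (low loss) enter first, and the noisiest pairs are only included once the model is already in a reasonable region of parameter space. This is the intuition behind avoiding bad local optima.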

Another notable matrix factorization variant, the convolutional broad learning system for DTI prediction (ConvBLS-DTI), combines neighborhood regularization with a broad learning network architecture [4]. This approach uses weighted K-nearest known neighbors (WKNKN) as a preprocessing strategy for handling unknown drug-target pairs, followed by neighborhood regularized logistic matrix factorization (NRLMF) to extract features focused on known interaction pairs [4]. The broad learning component then enables efficient classification without the need for deep network retraining, offering computational advantages for certain scenarios.
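A minimal sketch of WKNKN preprocessing follows the commonly described recipe. Each drug's interaction profile is re-estimated from its K most similar *known* drugs, meaning drugs with at least one interaction. The neighbor weights decay by rank with a factor `eta`. The same estimate is computed on the target side, and the two estimates are averaged. Finally, the result is merged with the original matrix so that known interactions are kept. The helper names and the default `K` and `eta` values are illustrative assumptions, not ConvBLS-DTI's settings.

```python
import numpy as np

def _neighbour_estimate(Y, S, K, eta):
    """Estimate each row of Y from its K most similar known rows (rows with >= 1 interaction)."""
    known = np.flatnonzero(Y.sum(axis=1) > 0)
    est = np.zeros_like(Y)
    for i in range(Y.shape[0]):
        cand = known[known != i]                                  # a row is never its own neighbour
        if cand.size == 0:
            continue
        nn = cand[np.argsort(-S[i, cand], kind="stable")][:K]    # K nearest known neighbours
        w = eta ** np.arange(nn.size) * S[i, nn]                  # similarity weight, decayed by rank
        z = S[i, nn].sum()                                        # normalising constant
        if z > 0:
            est[i] = w @ Y[nn] / z
    return est

def wknkn(Y, S_drug, S_target, K=5, eta=0.7):
    """Fill unknown (zero) entries with neighbour-based scores; known 1s are kept."""
    Y = np.asarray(Y, dtype=float)
    S_drug = np.asarray(S_drug, dtype=float)
    S_target = np.asarray(S_target, dtype=float)
    y_d = _neighbour_estimate(Y, S_drug, K, eta)           # drug-side estimate
    y_t = _neighbour_estimate(Y.T, S_target, K, eta).T     # target-side estimate
    return np.maximum(Y, (y_d + y_t) / 2.0)

# Toy example: drug 1 has no known targets but is similar (0.8) to drug 0.
Y = np.array([[1, 0], [0, 0]])
print(wknkn(Y, [[1.0, 0.8], [0.8, 1.0]], np.eye(2)))
```

The new drug inherits a soft score (here 0.5) for the target of its similar neighbor, so the downstream NRLMF step no longer sees it as an all-zero row.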

Deep Learning Approaches

Deep learning approaches for DTI prediction leverage multiple neural network architectures to automatically learn relevant features from raw molecular representations, eliminating the need for manual feature engineering [7] [2]. The EviDTI framework represents a cutting-edge approach that utilizes evidential deep learning (EDL) to provide uncertainty estimates alongside interaction predictions [5]. This framework integrates multi-dimensional drug representations (2D topological graphs and 3D spatial structures) with target sequence features extracted from protein language models like ProtTrans [5]. The incorporation of uncertainty quantification is particularly valuable for practical drug discovery, as it helps prioritize DTIs with higher confidence predictions for experimental validation, thereby reducing the risk and cost associated with false positives.

More recent developments include large language model (LLM)-powered frameworks such as LLM³-DTI, which constructs multi-modal embeddings of drugs and targets using domain-specific LLMs [8]. These approaches employ dual cross-attention mechanisms and fusion modules to align and integrate information from different modalities [8]. Another advanced architecture, MVPA-DTI, constructs heterogeneous networks incorporating multisource data from proteins, drugs, diseases, and side effects. It uses meta-path aggregation to dynamically integrate information from both feature views and biological network relationship views [6]. Together, these approaches reflect the field's progression toward increasingly integrative, biologically informed modeling strategies.
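To show what "dual cross-attention" means mechanically, here is a sketch under simplifying assumptions. The details of LLM³-DTI's modules are not given here, so the sketch uses plain scaled dot-product attention without learned projections. Drug tokens attend over protein residues, and residues attend over drug tokens. Each context is then mean-pooled and concatenated as a stand-in fusion step. All function names and the random toy embeddings are hypothetical.

```python
import numpy as np

def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)   # subtract the max for numerical stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def cross_attention(queries, keys, values):
    """Scaled dot-product attention of one modality (queries) over another (keys/values)."""
    d = queries.shape[-1]
    weights = softmax(queries @ keys.T / np.sqrt(d))   # (n_q, n_k); each row sums to 1
    return weights @ values, weights

def dual_cross_attention(drug_tokens, protein_tokens):
    """Hypothetical fusion: attend in both directions, mean-pool, then concatenate."""
    drug_ctx, _ = cross_attention(drug_tokens, protein_tokens, protein_tokens)
    prot_ctx, _ = cross_attention(protein_tokens, drug_tokens, drug_tokens)
    return np.concatenate([drug_ctx.mean(axis=0), prot_ctx.mean(axis=0)])

rng = np.random.default_rng(0)
drug = rng.normal(size=(4, 8))       # 4 drug tokens with 8-dim embeddings (toy values)
protein = rng.normal(size=(10, 8))   # 10 residue embeddings
fused = dual_cross_attention(drug, protein)
print(fused.shape)
```

In a trained model the queries, keys, and values would come from learned linear projections of the LLM embeddings. The fused vector would then feed a classifier head.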

Table 1: Core Methodological Characteristics of Matrix Factorization vs. Deep Learning Approaches

| Characteristic | Matrix Factorization | Deep Learning |
|---|---|---|
| Core Principle | Dimensionality reduction into latent space | Automated feature learning from raw data |
| Data Requirements | Known interaction matrices, similarity measures | Diverse data types (sequences, structures, graphs) |
| Typical Inputs | Drug and target similarity matrices | Molecular graphs, sequences, 3D structures |
| Representative Methods | SPLDMF, NRLMF, ConvBLS-DTI | EviDTI, LLM³-DTI, MVPA-DTI, DeepConv-DTI |
| Key Strengths | Computational efficiency, interpretability | High predictive accuracy, handling complex patterns |
| Primary Limitations | Limited feature learning, similarity dependence | Data hunger, computational intensity, black-box nature |

Performance Comparison: Quantitative Analysis

Rigorous benchmarking across multiple datasets provides critical insights into the relative performance of matrix factorization versus deep learning approaches for DTI prediction. Experimental results consistently demonstrate that while both methodological families can achieve strong performance, advanced deep learning models generally hold slight advantages on most standard metrics, particularly for complex prediction scenarios.

On the Davis and KIBA datasets, which exhibit substantial class imbalance, the EviDTI deep learning framework outperformed multiple baseline methods [5]. On KIBA, EviDTI exceeded the best baseline model by 0.6% in accuracy, 0.4% in precision, 0.3% in Matthews correlation coefficient (MCC), 0.4% in F1 score, and 0.1% in area under the ROC curve (AUC) [5]. On Davis, EviDTI exceeded the best baseline by 0.8% in accuracy, 0.6% in precision, 0.9% in MCC, 2% in F1 score, 0.1% in AUC, and 0.3% in area under the precision-recall curve (AUPR) [5]. The gains are modest, mostly under 1% with the 2% F1 gain on Davis the largest. However, they are consistent across metrics, which suggests that advanced deep learning approaches handle complex, imbalanced data distributions robustly.
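Because these comparisons hinge on several metrics at once, it helps to see how each one is computed. The sketch below uses standard definitions written in plain Python. Accuracy, precision, recall, F1, and MCC come from a thresholded confusion matrix. AUC is the probability that a random positive outscores a random negative. AUPR is estimated as non-interpolated average precision. The function names are illustrative. The published benchmarks may use library implementations with slightly different tie or interpolation handling.

```python
import math

def threshold_metrics(y_true, y_pred):
    """Confusion-matrix metrics for binary labels and binary predictions."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    mcc = (tp * tn - fp * fn) / denom if denom else 0.0
    return {"accuracy": (tp + tn) / len(y_true), "precision": precision,
            "recall": recall, "f1": f1, "mcc": mcc}

def roc_auc(y_true, scores):
    """P(score of a random positive > score of a random negative); ties count 1/2."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

def average_precision(y_true, scores):
    """AUPR estimate: mean precision at the rank of each positive (no interpolation)."""
    ranked = sorted(zip(scores, y_true), key=lambda pair: -pair[0])
    hits, total = 0, 0.0
    for rank, (_, label) in enumerate(ranked, start=1):
        if label == 1:
            hits += 1
            total += hits / rank
    return total / max(hits, 1)

y = [1, 0, 1, 0, 1]
s = [0.9, 0.8, 0.7, 0.3, 0.2]
print(threshold_metrics(y, [1 if v >= 0.5 else 0 for v in s]))
print(roc_auc(y, s), average_precision(y, s))
```

On heavily imbalanced DTI data, MCC and AUPR are usually more informative than accuracy and AUC, because the large number of negatives inflates the latter two.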

Matrix factorization methods remain highly competitive, particularly on standard benchmark datasets. SPLDMF achieved an AUC of 0.982 and an AUPR of 0.815 on the Enzyme benchmark, outperforming contemporary methods at the time of publication [3]. Similarly, ConvBLS-DTI improved both AUC and AUPR across four benchmark datasets under three prediction scenarios [4]. These results indicate that well-optimized matrix factorization approaches can deliver state-of-the-art performance on many standard DTI prediction tasks while maintaining computational efficiency.

Table 2: Performance Comparison Across Methodological Categories on Benchmark Datasets

| Method | Category | Dataset | AUC | AUPR | Accuracy | F1 Score |
|---|---|---|---|---|---|---|
| SPLDMF [3] | Matrix Factorization | Enzyme | 0.982 | 0.815 | - | - |
| EviDTI [5] | Deep Learning | DrugBank | - | - | 82.02% | 82.09% |
| EviDTI [5] | Deep Learning | Davis | - | 0.903* | - | - |
| MVPA-DTI [6] | Deep Learning | Multiple | 0.966 | 0.901 | - | - |
| ConvBLS-DTI [4] | Matrix Factorization | NR | Improved | Improved | - | - |

Note: * indicates improvement over baseline rather than absolute value

For cold-start scenarios involving novel drugs or targets with few or no known interactions, EviDTI performed particularly well, achieving 79.96% accuracy, 81.20% recall, a 79.61% F1 score, and an MCC of 59.97% [5]. Its AUC of 86.69% was slightly lower than TransformerCPI's 86.93%. Even so, its balanced performance across multiple metrics suggests that uncertainty-aware modeling is valuable in challenging scenarios where training data are sparse [5].
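A cold-start evaluation differs from a random split in one key respect: the test set contains drugs (or targets) that never appear in training. The sketch below shows a "new drug" split on hypothetical (drug, target, label) triples. The exact cold-start protocol used in [5] may differ, for example in split ratios or in whether both drugs and targets are held out.

```python
import random

def cold_drug_split(pairs, test_fraction=0.2, seed=0):
    """Split (drug, target, label) triples so that no test drug appears in training."""
    drugs = sorted({d for d, _, _ in pairs})
    rng = random.Random(seed)
    rng.shuffle(drugs)
    n_test = max(1, int(len(drugs) * test_fraction))
    test_drugs = set(drugs[:n_test])          # these drugs are unseen during training
    train = [p for p in pairs if p[0] not in test_drugs]
    test = [p for p in pairs if p[0] in test_drugs]
    return train, test

# Hypothetical toy data: 5 drugs x 2 targets.
pairs = [(f"d{i}", f"t{j}", (i + j) % 2) for i in range(5) for j in range(2)]
train, test = cold_drug_split(pairs)
print(len(train), len(test))
```

Splitting at the level of pairs instead of entities would leak each test drug's other interactions into training. This is why cold-start scores are typically lower than random-split scores.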

Experimental Protocols and Methodologies

Matrix Factorization Experimental Framework

Standard experimental protocols for matrix factorization approaches typically begin with data preprocessing and similarity calculation steps. For SPLDMF, this involves computing multiple similarity matrices for drugs and targets using various metrics such as chemical structure similarity for drugs and sequence similarity for targets [3]. The method then employs a self-paced learning strategy that gradually incorporates samples from simple to complex into training, effectively reducing the impact of noisy data and poor local optima [3]. The core matrix factorization component integrates these multiple similarity measures through a weighted approach, with the model objective function combining reconstruction error with neighborhood regularization terms to preserve local similarity structures [3].
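The neighborhood-regularization idea can be sketched with a generic graph-regularized objective. Its components are a squared reconstruction error plus $\lambda_d \operatorname{tr}(U^\top L_d U) + \lambda_t \operatorname{tr}(V^\top L_t V)$, where $L = D - S$ is the Laplacian of a similarity matrix. This is not SPLDMF's full objective; its multi-similarity weighting and self-paced weights are omitted. The regularization weights and function names are hypothetical. The key identity is $\operatorname{tr}(U^\top L U) = \tfrac12 \sum_{ij} S_{ij} \lVert \mathbf{u}_i - \mathbf{u}_j \rVert^2$, so similar drugs are pulled toward similar latent vectors.

```python
import numpy as np

def laplacian(S):
    """Unnormalised graph Laplacian L = D - S of a symmetric similarity matrix."""
    S = np.asarray(S, dtype=float)
    return np.diag(S.sum(axis=1)) - S

def regularised_loss(Y, U, V, S_d, S_t, lam_d=0.1, lam_t=0.1, mask=None):
    """Masked squared reconstruction error plus graph-Laplacian neighbourhood penalties."""
    mask = np.ones_like(Y, dtype=float) if mask is None else mask
    recon = np.sum(mask * (Y - U @ V.T) ** 2)            # fit to the interaction matrix
    reg_d = np.trace(U.T @ laplacian(S_d) @ U)           # similar drugs -> similar u_i
    reg_t = np.trace(V.T @ laplacian(S_t) @ V)           # similar targets -> similar v_j
    return recon + lam_d * reg_d + lam_t * reg_t

rng = np.random.default_rng(0)
A = rng.random((5, 5))
S = (A + A.T) / 2                                        # symmetric toy drug similarity
U = rng.normal(size=(5, 3))
lhs = np.trace(U.T @ laplacian(S) @ U)
rhs = 0.5 * sum(S[i, j] * np.sum((U[i] - U[j]) ** 2) for i in range(5) for j in range(5))
print(np.isclose(lhs, rhs))
```

Because the penalty is a weighted sum of squared distances between neighbors, it can be minimized with the same gradient machinery as the reconstruction term.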

The ConvBLS-DTI protocol implements a different approach, beginning with the WKNKN preprocessing method to estimate values for unknown drug-target pairs based on known neighbors [4]. This is followed by application of NRLMF to extract latent features from the updated drug-target interaction matrix, emphasizing known interaction pairs [4]. The final classification step employs a broad learning system with convolutional feature extraction, which maps the latent features to enhanced random feature spaces and connects them through sparse autoencoders for final prediction [4]. This hybrid approach maintains computational efficiency while enhancing predictive performance through the broad learning architecture.
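The reason a broad learning system avoids deep retraining can be shown in a few lines. Input features are expanded through random "feature" and "enhancement" nodes. Only the output weights are learned, and they are obtained in closed form by ridge regression, $W = (A^\top A + rI)^{-1} A^\top y$. This is a simplified sketch. The convolutional feature mapping and sparse-autoencoder refinement of ConvBLS-DTI are omitted. The class name, node counts, and ridge value are hypothetical.

```python
import numpy as np

class TinyBroadLearner:
    """Minimal broad learning system: random expansion + closed-form ridge output layer."""

    def __init__(self, n_feature=20, n_enhance=40, ridge=1e-2, seed=0):
        self.n_feature, self.n_enhance, self.ridge = n_feature, n_enhance, ridge
        self.rng = np.random.default_rng(seed)

    def _expand(self, X):
        Z = np.tanh(X @ self.W_f + self.b_f)   # mapped feature nodes (random, untrained)
        H = np.tanh(Z @ self.W_h + self.b_h)   # enhancement nodes (random, untrained)
        return np.hstack([Z, H])

    def fit(self, X, y):
        d = X.shape[1]
        self.W_f = self.rng.normal(size=(d, self.n_feature))
        self.b_f = self.rng.normal(size=self.n_feature)
        self.W_h = self.rng.normal(size=(self.n_feature, self.n_enhance))
        self.b_h = self.rng.normal(size=self.n_enhance)
        A = self._expand(X)
        # Closed-form ridge solution; no backpropagation is involved.
        self.W_out = np.linalg.solve(A.T @ A + self.ridge * np.eye(A.shape[1]), A.T @ y)
        return self

    def decision_function(self, X):
        return self._expand(X) @ self.W_out

# Toy usage on two separable blobs standing in for latent DTI features.
rng = np.random.default_rng(1)
X = np.vstack([rng.normal(-2, 1, size=(50, 4)), rng.normal(2, 1, size=(50, 4))])
y = np.r_[np.zeros(50), np.ones(50)]
model = TinyBroadLearner().fit(X, y)
print(np.mean((model.decision_function(X) > 0.5) == y))
```

Adding nodes only requires re-solving a linear system, which is where the computational advantage of broad learning comes from.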

Deep Learning Experimental Framework

Deep learning protocols for DTI prediction typically involve more complex multi-modal feature extraction pipelines. The EviDTI framework exemplifies this approach with separate encoders for drug and target features [5]. For target proteins, it uses the ProtTrans protein language model to generate initial sequence representations, which a light attention module then processes to capture residue-level interaction patterns [5]. For drugs, it encodes 2D topological information with the MG-BERT model followed by a 1D CNN. It also encodes 3D spatial structures through geometric deep learning on atom-bond and bond-angle graphs [5]. These representations are concatenated and passed through an evidential layer that outputs the parameters of a Dirichlet distribution, enabling simultaneous prediction of interaction probabilities and their associated uncertainties [5].
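The step from "Dirichlet parameters" to "probability plus uncertainty" can be illustrated as follows. The sketch assumes the common evidential deep learning recipe: non-negative evidence from a softplus, concentration parameters $\alpha = e + 1$, Dirichlet strength $S = \sum_k \alpha_k$, expected probabilities $\alpha / S$, and uncertainty $u = K / S$ for $K$ classes. The activation actually used by EviDTI is an assumption here, and the function name is hypothetical.

```python
import numpy as np

def evidential_head(logits):
    """Map raw outputs (n_samples, K) to expected class probabilities and uncertainty u = K / S."""
    logits = np.asarray(logits, dtype=float)
    evidence = np.logaddexp(0.0, logits)              # softplus: evidence >= 0
    alpha = evidence + 1.0                            # Dirichlet concentration parameters
    strength = alpha.sum(axis=-1, keepdims=True)      # Dirichlet strength S
    prob = alpha / strength                           # expected probabilities (rows sum to 1)
    uncertainty = logits.shape[-1] / strength[..., 0] # u in (0, 1]; u = 1 means no evidence
    return prob, uncertainty

# Columns: [interaction, no interaction]; rows: confident, evidence-free, confident-negative.
prob, u = evidential_head([[8.0, -8.0], [0.0, 0.0], [-8.0, 8.0]])
print(prob.round(3), u.round(3))
```

Candidates with a high interaction probability *and* a low `u` would be natural first picks for experimental validation. A pair with little evidence stays near $u = 1$ even if its probability looks moderate.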

The MVPA-DTI protocol implements a heterogeneous network approach that constructs multi-entity graphs incorporating drugs, proteins, diseases, and side effects [6]. It employs a molecular attention transformer to extract 3D conformation features from drug structures and the ProtT5 protein-specific language model to extract biophysically relevant features from protein sequences [6]. The model then uses a meta-path aggregation mechanism to dynamically integrate information from both feature views and biological network relationship views, capturing higher-order interaction patterns through message passing in the heterogeneous graph [6].
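Meta-path aggregation can be illustrated on a toy heterogeneous graph. Composing relation matrices along a path such as drug–disease–drug ($A_{dz} A_{dz}^\top$) yields a drug–drug adjacency. Neighbor features are averaged over that adjacency, and several meta-path views are then combined with softmax weights. In MVPA-DTI these weights would be learned attention scores. Here they are fixed inputs, and all names are hypothetical.

```python
import numpy as np

def metapath_adjacency(*relations):
    """Compose relation matrices along a meta-path, e.g. drug-disease-drug = A_dz @ A_dz.T."""
    M = np.asarray(relations[0], dtype=float)
    for R in relations[1:]:
        M = M @ np.asarray(R, dtype=float)
    M = (M > 0).astype(float)      # keep connectivity, drop path counts
    np.fill_diagonal(M, 0.0)       # remove self-loops created by the composition
    return M

def aggregate(M, H):
    """Mean of neighbour features reachable through the meta-path (isolated rows stay zero)."""
    deg = M.sum(axis=1, keepdims=True)
    out = np.zeros((M.shape[0], H.shape[1]))
    return np.divide(M @ H, deg, out=out, where=deg > 0)

def fuse(views, scores):
    """Softmax-weighted sum of per-meta-path embeddings (scores would normally be learned)."""
    w = np.exp(scores - np.max(scores))
    w /= w.sum()
    return sum(wi * v for wi, v in zip(w, views))

A_dz = np.array([[1, 0], [1, 1], [0, 1]])        # 3 drugs x 2 diseases (toy)
H = np.array([[1.0, 0.0], [0.0, 1.0], [2.0, 2.0]])  # toy drug features
M = metapath_adjacency(A_dz, A_dz.T)
print(aggregate(M, H))
```

A second meta-path, such as drug–side effect–drug, would produce another view. The `fuse` step decides how much each relational view contributes.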

Successful implementation of DTI prediction models requires leveraging specialized databases, software tools, and computational resources. The following toolkit summarizes essential resources referenced in recent literature for developing and evaluating both matrix factorization and deep learning approaches.

Table 3: Essential Research Resources for DTI Prediction

| Resource | Type | Primary Function | Relevance |
|---|---|---|---|
| DrugBank [5] [3] | Database | Drug structures, target information, interaction data | Benchmark dataset for validation |
| Davis & KIBA [5] [2] | Database | Binding affinity measurements, kinase targets | Benchmark datasets with continuous binding scores |
| BindingDB [2] | Database | Drug-target binding affinities, focusing on proteins | Training data for binding affinity prediction |
| Yamanishi Gold Standard [3] | Dataset | Curated drug-target pairs across four target classes | Benchmark for binary interaction prediction |
| ProtTrans/ProtT5 [5] [6] | Software | Protein language models for sequence representation | Target feature extraction in deep learning |
| MG-BERT [5] | Software | Molecular graph pre-training for drug representation | Drug feature extraction in deep learning |
| Graph Neural Networks [6] [1] | Framework | Graph-structured data processing | Modeling molecular structures and networks |
| RDKit [2] | Software | Cheminformatics and molecular manipulation | Drug structure processing and feature calculation |

Interpretation Guidelines and Decision Framework

Selecting between matrix factorization and deep learning approaches requires careful consideration of multiple factors, including data availability, computational resources, performance requirements, and interpretability needs. Matrix factorization methods generally offer advantages in scenarios with limited computational resources, small to medium-sized datasets, and when model interpretability is prioritized [3] [4]. These approaches provide more transparent reasoning through similarity matrices and latent factors that can be directly examined by domain experts. Additionally, they typically require less hyperparameter tuning and demonstrate faster training times compared to deep learning alternatives.

Deep learning approaches excel in scenarios with large-scale, multi-modal data, complex interaction patterns, and when the highest possible predictive accuracy is required [5] [6] [7]. The capacity to automatically learn relevant features from raw data eliminates the dependency on manually engineered similarity measures, which may not fully capture complex biochemical relationships [7]. Furthermore, advanced deep learning frameworks like EviDTI provide uncertainty quantification that is particularly valuable for real-world drug discovery applications, as it enables prioritization of high-confidence predictions for experimental validation [5]. However, these benefits come with increased computational demands, greater data requirements, and more challenging model interpretation.

For practical implementation, researchers should consider a staged approach beginning with matrix factorization methods to establish baseline performance, particularly when working with standard benchmark datasets or limited computational resources. Deep learning approaches should be employed when handling diverse data modalities (sequences, structures, networks), addressing cold-start problems with sophisticated transfer learning, or when uncertainty estimation is critical for decision-making [5] [6]. As the field continues to evolve, hybrid approaches that combine elements of both paradigms may offer the most promising direction, leveraging the efficiency and interpretability of matrix factorization with the representational power of deep learning.

The process of drug discovery traditionally relies on experimental methods to identify interactions between drugs and target proteins. However, these experimental approaches are notoriously time-consuming, costly, and resource-intensive [9] [10]. A major consequence of this is that the resulting drug-target interaction (DTI) matrices are extremely sparse, meaning that over 99% of potential drug-target pairs have unknown interaction status [10]. This sparsity presents a fundamental challenge for computational prediction methods, as they must learn from a very small set of known interactions to predict a vast landscape of unknown ones. This guide objectively compares how two dominant computational paradigms—matrix factorization (MF) and deep learning (DL)—address this core challenge, evaluating their performance, methodologies, and applicability to different drug discovery scenarios.

Performance Comparison: Matrix Factorization vs. Deep Learning

The following tables summarize the core characteristics and quantitative performance of representative Matrix Factorization and Deep Learning models.

Table 1: Overview and Comparison of Model Characteristics

| Model Name | Core Paradigm | Key Strategy for Sparsity | Handles Cold Start? | Key Advantage |
|---|---|---|---|---|
| NRLMF [11] | Matrix Factorization | Logistic MF, Graph Regularization | Limited | Computationally efficient, interpretable |
| MF with Denoising Autoencoders [12] | Matrix Factorization | Uses side information (similarity matrices) to fill sparsity | Yes (with side info) | Leverages auxiliary data to mitigate sparsity |
| Hyperbolic MF [13] | Matrix Factorization (Hyperbolic) | Models biological space as hyperbolic, lowering embedding dimension | Improved over Euclidean MF | Superior accuracy with lower dimensions; better reflects biological tree-like topology |
| DeepDTA [14] | Deep Learning | Uses 1D CNN on drug SMILES and protein sequences | Limited | Automates feature learning from raw data |
| GraphDTA [14] | Deep Learning | Uses graph representation for drug molecules | Limited | Captures structural information of drugs |
| RSGCL-DTI [9] | Deep Learning (Graph Contrastive) | Combines structural and relational features via contrastive learning | Yes | Enhances feature representation; excellent on unseen pairs |
| MGCLDTI [15] | Deep Learning (Graph Contrastive) | Densification strategy on DTI matrix & multi-view learning | Yes | Alleviates noise from sparsity; captures topological similarity |
| DTI-RME [11] | Ensemble Learning | Robust L2-C loss, multi-kernel fusion, ensemble structures | Yes | Combats label noise, effective multi-view fusion |

Table 2: Comparative Predictive Performance on Benchmark Datasets

| Model | Dataset | Performance Metrics | Reported Performance Highlights |
|---|---|---|---|
| Hyperbolic MF [13] | Not Specified | Accuracy, Embedding Dimension | Superior accuracy vs. Euclidean MF; embedding dimension reduced by an order of magnitude. |
| DeepDTAGen [14] | KIBA | MSE: 0.146, CI: 0.897, r²m: 0.765 | Outperforms traditional ML (KronRLS, SimBoost) and deep learning models (e.g., GraphDTA). |
| DeepDTAGen [14] | Davis | MSE: 0.214, CI: 0.890, r²m: 0.705 | Surpasses second-best deep model (SSM-DTA) with 2.4% improvement in r²m. |
| DRaW [10] | Four Benchmark Datasets | Not Specified (Comparative Accuracy) | Significantly outperforms several MF methods and a deep model (MT-DTI). |
| DTI-RME [11] | Five Real-World Datasets | Not Specified (Comparative Accuracy) | Demonstrates superior performance against a range of baseline and recent deep learning methods. |
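The affinity benchmarks above report MSE and the concordance index (CI). CI is the fraction of pairs with different true affinities whose predicted values are in the same order, with prediction ties counted as one half. A plain-Python sketch of both follows. It is an O(n²) illustration, not the benchmark code used in [14].

```python
def concordance_index(y_true, y_pred):
    """Fraction of comparable pairs (y_true[i] > y_true[j]) ranked correctly; ties count 0.5."""
    num, den = 0.0, 0
    n = len(y_true)
    for i in range(n):
        for j in range(n):
            if y_true[i] > y_true[j]:          # only pairs with different true affinities count
                den += 1
                if y_pred[i] > y_pred[j]:
                    num += 1.0
                elif y_pred[i] == y_pred[j]:
                    num += 0.5
    return num / den if den else 0.0

def mse(y_true, y_pred):
    """Mean squared error between true and predicted affinities."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)

print(concordance_index([5.0, 6.2, 7.1], [5.4, 5.3, 7.0]), mse([1.0, 2.0], [1.0, 4.0]))
```

CI rewards correct *ranking* of affinities, whereas MSE penalizes absolute errors. This is why the two can disagree about which model is better.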

Experimental Protocols and Methodologies

Matrix Factorization Approaches

Matrix factorization methods decompose the sparse drug-target interaction matrix $R$ (where $r_{ij} = 1$ indicates a known interaction between drug $i$ and target $j$) into lower-dimensional latent matrices for drugs ($U$) and targets ($V$). The probability of an interaction is typically modeled as a function of the latent vectors $\mathbf{u}_i$ and $\mathbf{v}_j$ [13].
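As a concrete reference point for the logistic variant listed first below, here is a sketch of plain logistic MF. It fits $p_{ij} = \sigma(\mathbf{u}_i^\top \mathbf{v}_j)$ to a binary matrix by gradient ascent on the Bernoulli log-likelihood with L2 shrinkage. NRLMF's importance weighting of positives and its neighborhood regularization are deliberately left out. The learning rate, rank, and epoch count are illustrative assumptions.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def logistic_mf(R, rank=2, lr=0.1, reg=0.01, epochs=500, seed=0):
    """Fit p_ij = sigmoid(u_i . v_j) to a binary DTI matrix R by full-batch gradient ascent."""
    rng = np.random.default_rng(seed)
    n_drugs, n_targets = R.shape
    U = 0.1 * rng.normal(size=(n_drugs, rank))
    V = 0.1 * rng.normal(size=(n_targets, rank))
    for _ in range(epochs):
        G = R - sigmoid(U @ V.T)   # d(log-likelihood)/d(logits) for every drug-target pair
        # Simultaneous update: both right-hand sides use the previous U and V.
        U, V = U + lr * (G @ V - reg * U), V + lr * (G.T @ U - reg * V)
    return U, V

# Toy matrix: drugs 0-1 bind targets 0-1, drugs 2-3 bind targets 2-3.
R = np.kron(np.eye(2), np.ones((2, 2)))
U, V = logistic_mf(R)
print(sigmoid(U @ V.T).round(2))
```

Every unobserved pair is treated here as a negative. This is exactly the label-noise issue that later methods such as DTI-RME try to soften.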

- Standard Logistic Matrix Factorization (e.g., NRLMF) [11]: This method models the probability $p_{ij}$ of a DTI with the logistic function, $p_{ij} = \sigma(\mathbf{u}_i^\top \mathbf{v}_j)$, where $\mathbf{u}_i$ and $\mathbf{v}_j$ are the latent vectors of drug $i$ and target $j$, and $\sigma$ is the logistic function. The model is trained to maximize the likelihood of the observed interaction matrix.

- Hyperbolic Matrix Factorization [13]: This approach challenges the standard Euclidean space assumption. It embeds drugs and targets in hyperbolic space, which better reflects the tree-like, hierarchical topology of biological systems. The distance in hyperbolic space is used to calculate the interaction probability, $p_{ij} = \sigma(-d_{\mathcal{L}}^2(\mathbf{u}_i, \mathbf{v}_j))$, where $d_{\mathcal{L}}$ is the hyperbolic (Lorentzian) distance. This allows a more accurate representation of complex networks with an embedding dimension an order of magnitude lower.

- MF with Denoising Autoencoders [12]: This hybrid model combines matrix factorization with autoencoders. It learns the hidden factors of drugs and targets simultaneously from both the interaction matrix and their side information (e.g., drug similarity, target similarity). The autoencoder uses the side information to reconstruct the original data, learning a robust feature representation that helps address the sparsity issue.
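The hyperbolic scoring rule can be made concrete in the Lorentz (hyperboloid) model. Points satisfy $\langle x, x \rangle_{\mathcal{L}} = -1$ with $\langle x, y \rangle_{\mathcal{L}} = -x_0 y_0 + \sum_k x_k y_k$. The squared Lorentzian distance is then $d_{\mathcal{L}}^2(x, y) = -2 - 2\langle x, y \rangle_{\mathcal{L}} \ge 0$. The sketch assumes unit curvature and this squared-distance form; [13] may parameterize it differently. Taken literally, $\sigma(-d_{\mathcal{L}}^2)$ can never exceed 0.5 because the distance is non-negative, so a practical model needs an offset. The `bias` argument below is a hypothetical stand-in for such terms.

```python
import numpy as np

def lift_to_hyperboloid(z):
    """Place a d-dim vector on the unit hyperboloid <x, x>_L = -1 by solving for x0."""
    z = np.asarray(z, dtype=float)
    x0 = np.sqrt(1.0 + np.sum(z ** 2, axis=-1, keepdims=True))
    return np.concatenate([x0, z], axis=-1)

def lorentz_inner(x, y):
    """Minkowski inner product <x, y>_L = -x0*y0 + sum_k xk*yk."""
    return -x[..., 0] * y[..., 0] + np.sum(x[..., 1:] * y[..., 1:], axis=-1)

def squared_lorentz_distance(x, y):
    """d_L^2(x, y) = -2 - 2<x, y>_L, which is >= 0 on the hyperboloid."""
    return -2.0 - 2.0 * lorentz_inner(x, y)

def interaction_probability(u, v, bias=0.0):
    """sigmoid(bias - d_L^2(u, v)); with bias = 0 the maximum is 0.5 (at u == v)."""
    return 1.0 / (1.0 + np.exp(squared_lorentz_distance(u, v) - bias))

drug = lift_to_hyperboloid([0.3, -0.2])    # toy 2-dim latent coordinates
target = lift_to_hyperboloid([1.5, 0.4])
print(squared_lorentz_distance(drug, target), interaction_probability(drug, target))
```

Distances in this geometry grow roughly exponentially toward the boundary. This is what lets low-dimensional hyperbolic embeddings represent hierarchical, tree-like structure that would need many Euclidean dimensions.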

Deep Learning Approaches

Deep learning models automatically learn feature representations from raw data, avoiding manual feature engineering.

- Sequence-Based Models (e.g., DeepDTA) [14]: These models take the raw SMILES string of a drug and the amino acid sequence of a target as input. They typically use Convolutional Neural Networks (CNNs) to extract local sequence patterns and hierarchical features, which are then combined to predict the interaction or binding affinity.

- Graph-Based Models (e.g., GraphDTA, GCN-DTI) [14] [11]: These models represent a drug as a molecular graph, where atoms are nodes and bonds are edges. They use Graph Neural Networks (GNNs) or Graph Convolutional Networks (GCNs) to learn features that encode the topological structure of the drug molecule. This is often combined with protein sequence features extracted by a CNN.

- Graph Contrastive Learning Models (e.g., RSGCL-DTI, MGCLDTI) [9] [15]: These are self-supervised methods designed to learn robust node representations from the graph structure itself, which is particularly useful when labeled data (known interactions) is sparse.

- RSGCL-DTI [9] first constructs a heterogeneous DTI graph. It then derives drug-drug and protein-protein relational similarity networks from it. Graph contrastive learning is applied to these homogeneous networks to extract relational features. These are combined with structural features (from D-MPNN for drugs and CNN for proteins) for the final prediction.

- MGCLDTI [15] employs a densification strategy on the original sparse DTI matrix to alleviate noise. It also uses DeepWalk to extract global topological node representations from a multi-view heterogeneous graph. A graph contrastive learning model with node masking is then applied to enhance local structural awareness and optimize node embeddings before final prediction with a classifier like LightGBM.

- Multitask & Ensemble Models (e.g., DTI-RME) [11]: DTI-RME uses an ensemble approach to model multiple data structures simultaneously (drug-target pair, drug, target, and low-rank structure). It introduces a robust L2-C loss function to handle outliers (i.e., unknown interactions mislabeled as zeros) and employs multi-kernel learning to effectively fuse multiple views of the data.
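The contrastive objective shared by graph contrastive methods can be illustrated with a generic InfoNCE loss. Row $i$ of two augmented views forms a positive pair, and all other rows act as negatives. The exact losses and augmentations used by RSGCL-DTI and MGCLDTI (for example, node masking followed by a GNN encoder) are not reproduced. The small Gaussian perturbation below is an assumed stand-in for a second view.

```python
import numpy as np

def info_nce(z1, z2, temperature=0.5):
    """One-directional InfoNCE: row i of z1 should be most similar to row i of z2."""
    z1 = z1 / np.linalg.norm(z1, axis=1, keepdims=True)   # unit vectors -> cosine similarity
    z2 = z2 / np.linalg.norm(z2, axis=1, keepdims=True)
    logits = z1 @ z2.T / temperature
    logits = logits - logits.max(axis=1, keepdims=True)   # stabilise the log-sum-exp
    log_prob = logits - np.log(np.exp(logits).sum(axis=1, keepdims=True))
    return -np.mean(np.diag(log_prob))                    # positives sit on the diagonal

rng = np.random.default_rng(0)
z = rng.normal(size=(8, 16))                               # toy node embeddings
aligned = info_nce(z, z + 0.05 * rng.normal(size=z.shape)) # matching views
shuffled = info_nce(z, np.roll(z, 1, axis=0))              # mismatched views
print(aligned, shuffled)
```

Because the loss only needs two views of the same graph, it provides a training signal even when labeled interactions are scarce. This is the property that makes contrastive pre-training attractive for sparse DTI data.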

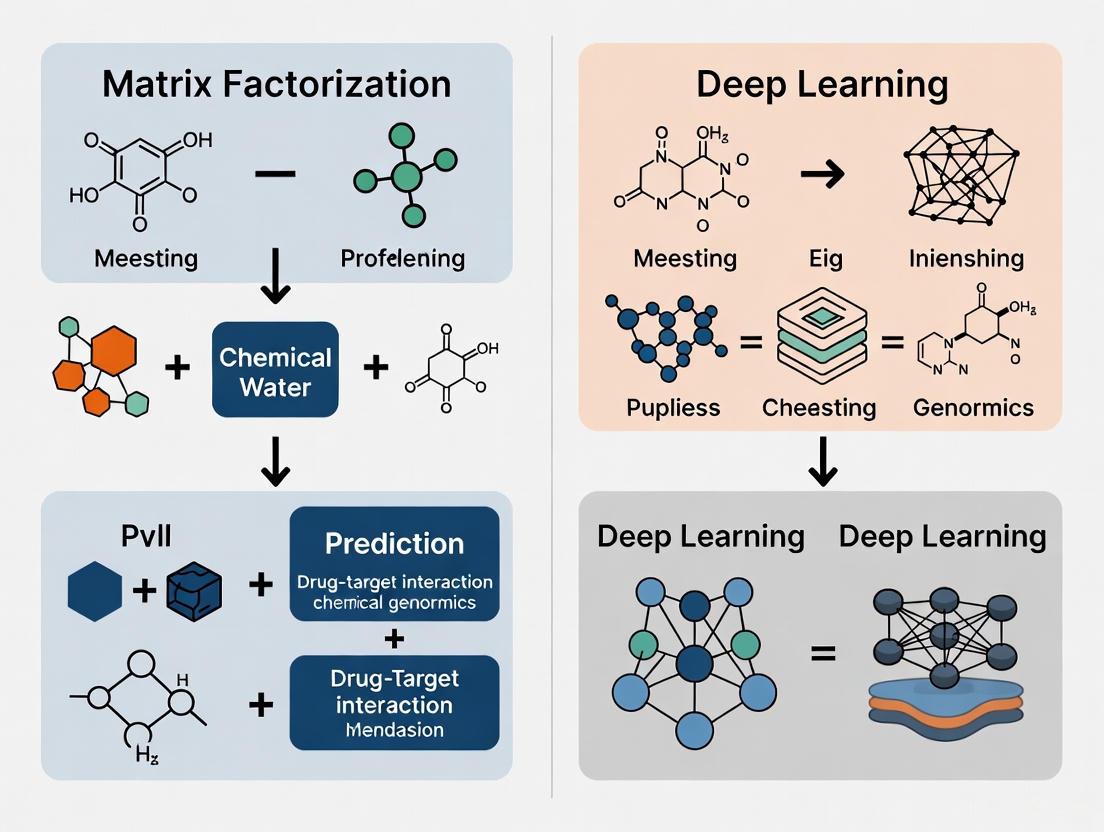

Workflow Visualization

The following diagram illustrates the core methodological differences in how MF and DL approaches handle sparse DTI data.

Diagram 1: A comparison of the MF and DL workflows for DTI prediction.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools and Datasets for DTI Research

| Tool/Resource | Type | Primary Function in DTI Research | Example Use Case |
|---|---|---|---|
| Drug SMILES Strings | Data Representation | A string-based linear notation representing the 2D structure of a drug molecule. | Input for sequence-based models like DeepDTA [14]. |
| Drug Molecular Graph | Data Representation | A graph where nodes are atoms and edges are chemical bonds. | Input for graph-based models like GraphDTA and GCN-DTI [14] [11]. |
| Protein Amino Acid Sequence | Data Representation | The linear sequence of amino acids for a target protein. | Input for models to extract protein features using CNNs or other networks [9] [14]. |
| Known DTI Databases (e.g., DrugBank, KEGG) | Benchmark Dataset | Curated databases of experimentally validated drug-target interactions. | Used as gold-standard labels for model training and testing [11]. |
| Similarity Matrices (Drug & Target) | Side Information | Matrices quantifying chemical similarity between drugs and sequence/functional similarity between targets. | Used by MF and ensemble methods to mitigate data sparsity [12] [11]. |
| Heterogeneous Network | Data Structure | An integrated network linking drugs, targets, diseases, and other entities. | Provides a rich context for graph-based and contrastive learning models to extract relational features [9] [15]. |

The high cost and inherent sparsity of experimental DTI data remain a central challenge in computational drug discovery. Our analysis indicates that while matrix factorization methods offer computational efficiency and have been improved via hyperbolic geometry and side information, they can be fundamentally limited by the sparse matrix they aim to decompose. In contrast, modern deep learning approaches, particularly those using graph neural networks and contrastive learning, demonstrate a powerful capacity to learn robust feature representations directly from raw data and by exploiting the relational structure within biological networks. These methods increasingly show superior performance in challenging but realistic scenarios like predicting interactions for new drugs or new targets (the "cold-start" problem) [9] [14] [15]. The emerging trend is towards hybrid and ensemble models, such as DTI-RME [11], which combine the strengths of different paradigms to create more robust and accurate predictors, ultimately providing researchers with more reliable tools to navigate the sparse DTI landscape.

Chemogenomics represents a paradigm shift in drug discovery, focusing on the systematic screening of chemical compounds against families of biologically relevant targets. At its core lies the prediction of Drug-Target Interactions (DTI), which serves as the foundational step for understanding drug mechanisms, identifying new therapeutic applications for existing drugs (drug repurposing), and de novo drug design [16]. The conventional drug discovery process is notoriously cost-intensive and time-consuming, often requiring over a decade and billions of dollars to bring a single drug to market [16] [17]. Computational in silico methods, particularly chemogenomic approaches, have gained substantial prominence for their ability to reduce this burden by narrowing down the search space for potential drug candidates, thereby indirectly reducing incurred costs, time, and labor [16].

A chemogenomic framework for DTI prediction fundamentally treats the interaction between a drug and a target protein as a computational prediction problem. It leverages the available large-scale data on drugs (e.g., chemical structures, side effects) and targets (e.g., protein sequences, genomic data) to build predictive models [16]. These models can then be validated through subsequent in vitro or in vivo experiments, creating a more efficient and targeted discovery pipeline [16].

Comparative Analysis: Matrix Factorization vs. Deep Learning

This guide objectively compares two dominant computational paradigms within the chemogenomic framework: Matrix Factorization (MF) and Deep Learning (DL). The following sections and tables detail their performance, underlying principles, and applicability.

Performance and Characteristic Comparison

Table 1: Key Performance Metrics of Representative MF and DL Models on Benchmark Datasets

| Model Name | Model Type | Dataset | AUC | AUPR | Key Strengths |
|---|---|---|---|---|---|
| SPLDMF [3] | Matrix Factorization | Enzyme (E) | 0.982 | 0.815 | Robust to data noise and high missing rates |
| DNILMF [18] | Matrix Factorization | Nuclear Receptors (NR) | High* | High* | Integrates dual similarity information |
| EviDTI [5] | Deep Learning | DrugBank | N/A (Acc: 0.820) | N/A | Provides uncertainty estimates |
| EviDTI [5] | Deep Learning | KIBA | Competitive | Competitive | Superior on imbalanced datasets |
| DRaW [10] | Deep Learning | COVID-19 | Outperformed MF | Outperformed MF | Avoids data leakage; uses feature vectors |
| DrugMAN [19] | Deep Learning | Multiple scenarios | Best performance | Best performance | Excellent generalization in cold-start settings |

Note: * Specific AUC/AUPR values for DNILMF are not reported here; the source states that it outperformed prior state-of-the-art methods [18]. N/A: metric not reported. Acc: accuracy.

Table 2: Fundamental Characteristics and Applicability of MF and DL Approaches

| Feature | Matrix Factorization (MF) | Deep Learning (DL) |
|---|---|---|
| Core Principle | Decomposes the interaction matrix into lower-dimensional latent matrices [10] | Uses multi-layer neural networks to learn hierarchical feature representations automatically [16] [5] |
| Linearity | Inherently linear; models linear relationships well [20] | Inherently non-linear; captures complex, non-linear patterns [20] |
| Data Requirement | Effective even with smaller datasets [3] | Requires large datasets for optimal performance and to prevent overfitting [5] |
| Handling Sparsity | Struggles with extreme sparsity; requires integration of side information [10] [21] | Deep architectures (e.g., autoencoders) can learn from sparse data and side information [21] |
| Cold-Start Problem | Suffers from cold start for new drugs/targets with no known interactions [16] [10] | Generalizes better; can handle cold start via features learned from chemical structure and target sequence [5] [19] |
| Interpretability | Relatively high; latent factors can sometimes be interpreted [20] | Lower ("black-box" nature), though attention mechanisms are improving this [16] [5] |
| Computational Cost | Generally lower computational complexity [20] | Higher computational cost and training time [16] |
| Uncertainty Quantification | Not inherently supported | Supported by advanced frameworks such as evidential deep learning (EviDTI) [5] |

Detailed Experimental Protocols

To ensure reproducibility and provide a clear understanding of how the data in Table 1 is generated, this section outlines the standard experimental protocols for evaluating DTI prediction models.

A. Data Sourcing and Curation: Experimental validation relies on gold-standard benchmark datasets. Common sources include DrugBank, KEGG, BRENDA, and ChEMBL [3] [19] [18]. The widely used Yamanishi dataset is categorized into four target classes: Enzymes (E), Ion Channels (IC), G Protein-Coupled Receptors (GPCR), and Nuclear Receptors (NR) [3] [18]. The quality and comprehensiveness of these datasets are critical, and they typically include drug chemical structures, target protein sequences, and known binary interaction pairs [18].

B. Similarity Matrix Construction: A crucial step in both MF and DL methods is the construction of similarity matrices.

- Drug Similarity: Often calculated from 2D chemical structures with tools such as SIMCOMP. SIMCOMP compares drugs as graphs and scores each pair by their common substructures using a Tanimoto (Jaccard)-type coefficient [18].

- Target Similarity: Typically derived from protein sequence alignment using a normalized version of the Smith-Waterman score to compute sequence similarity [18].
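Both similarity measures can be sketched in plain Python. This is a minimal illustration that rests on several assumptions. Fingerprints are represented as sets of "on" bit indices. The toy Smith-Waterman scorer uses match = 2, mismatch = -1 and a linear gap penalty of -1, rather than the BLOSUM62 matrix with affine gaps usually applied to proteins. All function names are hypothetical. The step that matters is the normalization SW(a, b) / sqrt(SW(a, a) · SW(b, b)), which gives identical sequences a score of 1.

```python
import math

def tanimoto(fp_a: set, fp_b: set) -> float:
    """Tanimoto (Jaccard) coefficient between fingerprints given as sets of on-bit indices."""
    if not fp_a and not fp_b:
        return 0.0
    shared = len(fp_a & fp_b)
    return shared / (len(fp_a) + len(fp_b) - shared)

def smith_waterman(a: str, b: str, match: int = 2, mismatch: int = -1, gap: int = -1) -> int:
    """Best local-alignment score with a toy linear gap penalty (not BLOSUM62 / affine gaps)."""
    prev = [0] * (len(b) + 1)
    best = 0
    for i in range(1, len(a) + 1):
        curr = [0] * (len(b) + 1)
        for j in range(1, len(b) + 1):
            diag = prev[j - 1] + (match if a[i - 1] == b[j - 1] else mismatch)
            curr[j] = max(0, diag, prev[j] + gap, curr[j - 1] + gap)
            best = max(best, curr[j])
        prev = curr
    return best

def normalized_sw(a: str, b: str) -> float:
    """SW(a, b) / sqrt(SW(a, a) * SW(b, b)); identical sequences score 1.0."""
    denom = math.sqrt(smith_waterman(a, a) * smith_waterman(b, b))
    return smith_waterman(a, b) / denom if denom > 0 else 0.0

print(tanimoto({1, 2, 3, 4}, {3, 4, 5}))         # 2 shared bits / 5 distinct bits
print(normalized_sw("MKTAYIAK", "MKTAHIAK"))     # one substitution, so below 1.0
```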

C. Evaluation Methodology: Model performance is rigorously assessed using cross-validation and standard metrics.

- Cross-Validation: k-fold cross-validation (e.g., 5 trials of 10-fold CV) is the standard protocol for obtaining robust performance estimates and detecting overfitting [3] [18].

- Evaluation Metrics:

- AUC (Area Under the ROC Curve): Measures the overall ability to distinguish between interacting and non-interacting pairs.

- AUPR (Area Under the Precision-Recall Curve): Often more informative than AUC for highly imbalanced datasets where non-interactions far outnumber known interactions [3] [18].

- Additional Metrics: Accuracy, Precision, Recall, F1-score, and Matthews Correlation Coefficient (MCC) are also commonly reported [5].
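Both headline metrics can be computed from a vector of labels and a vector of predicted scores. The sketch below assumes NumPy. It uses the rank (Mann-Whitney) form of AUC and estimates AUPR as average precision, which is one common estimator among several. Library implementations such as scikit-learn may handle ties slightly differently. The toy example has 2 positives among 10 pairs and shows how AUPR can sit well below AUC on imbalanced data.

```python
import numpy as np

def roc_auc(y_true, scores):
    """AUC = P(random positive outscores random negative); ties count as 1/2."""
    y_true = np.asarray(y_true, dtype=bool)
    scores = np.asarray(scores, dtype=float)
    pos, neg = scores[y_true], scores[~y_true]
    # Pairwise comparison: fine for small evaluation sets (O(P * N) memory).
    greater = (pos[:, None] > neg[None, :]).sum()
    ties = (pos[:, None] == neg[None, :]).sum()
    return (greater + 0.5 * ties) / (len(pos) * len(neg))

def average_precision(y_true, scores):
    """AUPR estimated as the mean of precision@k taken at the rank k of each positive."""
    y_true = np.asarray(y_true, dtype=bool)
    order = np.argsort(-np.asarray(scores, dtype=float), kind="stable")
    hits = y_true[order]
    precision_at_k = np.cumsum(hits) / np.arange(1, len(hits) + 1)
    return precision_at_k[hits].mean()

y = np.array([1, 0, 0, 0, 0, 1, 0, 0, 0, 0])
s = np.array([0.9, 0.8, 0.6, 0.5, 0.4, 0.35, 0.3, 0.2, 0.1, 0.05])
print(roc_auc(y, s), average_precision(y, s))   # 0.75 and about 0.667
```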

D. Scenario-Based Testing: Models are tested under different prediction scenarios to evaluate their real-world applicability, particularly for drug repurposing [3] [18]:

- Scenario 1 (Warm Start): Predicting interactions between known drugs and known targets.

- Scenario 2 & 3 (Cold Start): Predicting interactions for a new drug with known targets, or a known drug with new targets.

- Scenario 4 (Very Cold Start): Predicting interactions between new drugs and new targets, the most challenging scenario.
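The four scenarios differ only in what is held out. The NumPy sketch below yields train/test masks over the drug x target matrix. Scenario 1 holds out random pairs. Scenarios 2 and 3 hold out whole rows or whole columns. Scenario 4 tests a block of held-out drugs x held-out targets and drops every pair that touches either set from training. The function name and the fold-assignment scheme are assumptions, not a specific paper's protocol.

```python
import numpy as np

def cv_masks(n_drugs, n_targets, k=10, scenario=1, seed=0):
    """Yield (train_mask, test_mask) boolean matrices of shape (n_drugs, n_targets)."""
    rng = np.random.default_rng(seed)
    if scenario == 1:
        # Warm start: hold out random drug-target pairs.
        for fold in np.array_split(rng.permutation(n_drugs * n_targets), k):
            test = np.zeros(n_drugs * n_targets, dtype=bool)
            test[fold] = True
            test = test.reshape(n_drugs, n_targets)
            yield ~test, test
        return
    if scenario not in (2, 3, 4):
        raise ValueError("scenario must be 1, 2, 3 or 4")
    drug_folds = np.array_split(rng.permutation(n_drugs), k)
    target_folds = np.array_split(rng.permutation(n_targets), k)
    for d_fold, t_fold in zip(drug_folds, target_folds):
        new_drug = np.zeros(n_drugs, dtype=bool)
        new_drug[d_fold] = True
        new_target = np.zeros(n_targets, dtype=bool)
        new_target[t_fold] = True
        if scenario == 2:      # new drugs: hold out whole rows
            test = np.repeat(new_drug[:, None], n_targets, axis=1)
            train = ~test
        elif scenario == 3:    # new targets: hold out whole columns
            test = np.repeat(new_target[None, :], n_drugs, axis=0)
            train = ~test
        else:                  # new drugs AND new targets
            test = new_drug[:, None] & new_target[None, :]
            train = ~new_drug[:, None] & ~new_target[None, :]
        yield train, test

for train, test in cv_masks(6, 5, k=3, scenario=4):
    print(train.sum(), test.sum())
```

In Scenario 4, training excludes every pair that involves a held-out drug or target. Without that exclusion, the "new" entities would leak into training through their other interactions.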

Workflow and Logical Frameworks

The following diagrams illustrate the core workflows for Matrix Factorization and Deep Learning approaches in DTI prediction, highlighting their distinct logical structures.

Matrix Factorization Workflow for DTI

Deep Learning Workflow for DTI

Table 3: Key Research Reagent Solutions for DTI Prediction

| Tool / Resource | Type | Primary Function in DTI Research |
|---|---|---|
| DrugBank [3] [19] | Database | Provides comprehensive data on FDA-approved drugs, their chemical structures, and known targets. |
| KEGG [3] [18] | Database | Source for drug compounds, target protein sequences, and pathway information for validation. |
| ChEMBL [19] | Database | A manually curated database of bioactive molecules with drug-like properties and binding affinities. |
| SIMCOMP [18] | Software Tool | Calculates drug-drug similarity based on chemical structure, a key input for similarity-based models. |
| Smith-Waterman Algorithm [18] | Algorithm | Computes target-target sequence similarity, a fundamental feature for many DTI prediction methods. |
| Yamanishi et al. 2008 Benchmark Dataset [3] [18] | Benchmark Dataset | Gold-standard dataset for training and fairly comparing the performance of different DTI models. |
| Molecular Graph Representation [5] | Data Representation | Represents a drug's 2D topological structure for input into Graph Neural Networks (GNNs). |
| ProtTrans [5] | Pre-trained Model | A protein language model used to generate powerful initial representations of target protein sequences. |

The comparative analysis indicates that while Matrix Factorization remains a strong, interpretable, and computationally efficient choice for certain scenarios, Deep Learning models generally push the performance boundary further, especially in handling non-linearity, data sparsity, and the critical cold-start problem [10] [5] [19]. The emergence of DL models that provide uncertainty quantification, like EviDTI, represents a significant advancement for making drug discovery pipelines more robust and reliable by allowing researchers to prioritize high-confidence predictions for experimental validation [5].

The future of chemogenomic DTI prediction appears to be leaning towards hybrid models that leverage the strengths of both worlds. For instance, AutoMF combines MF with deep learning-based denoising autoencoders to learn effective latent factors from side information, thereby mitigating the sparsity and cold-start issues of traditional MF [21]. Furthermore, the integration of multi-modal data (e.g., 2D/3D drug structures, protein sequences, and heterogeneous network information) through sophisticated deep learning architectures is poised to further enhance prediction accuracy and model generalizability, solidifying the role of in silico methods as an indispensable tool in modern drug discovery [5] [17].

Drug-target interaction (DTI) prediction is a crucial step in drug discovery and repurposing. It aims to identify novel interactions between existing drugs and biological targets. Matrix factorization (MF), a class of collaborative filtering algorithms, has been widely adapted for this task. MF algorithms decompose a user-item interaction matrix into the product of two lower-dimensional rectangular matrices. In the DTI setting, the "user-item" matrix is the drug-target interaction matrix: rows represent drugs, columns represent targets, and entries indicate known interactions. This matrix is factorized into two latent feature matrices, one for drugs and one for targets. The underlying assumption is that the interaction pattern can be captured by a small number of latent factors. These factors may correspond to hidden properties such as specific chemical substructures or protein domains.

The prediction of new interactions is achieved by computing the dot product of the corresponding drug and target latent factor vectors. This approach became particularly prominent during the Netflix prize challenge due to its effectiveness, as reported by Simon Funk in 2006, and was subsequently adapted for biological applications. The fundamental objective of MF in DTI prediction is to approximate the original interaction matrix by learning meaningful latent representations that can generalize to predict unknown interactions, thereby accelerating drug discovery processes while reducing reliance on costly experimental methods.

Core Mathematical Principles and Model Variants

Fundamental Model Formulation

The core mathematical principle of matrix factorization is to decompose a given matrix into the product of two lower-dimensional matrices. Consider a drug-target interaction matrix \( R \in \mathbb{R}^{m \times n} \) with \( m \) drugs and \( n \) targets. The goal is to find \( H \in \mathbb{R}^{m \times d} \) (drug latent factors) and \( W \in \mathbb{R}^{d \times n} \) (target latent factors) such that \( R \approx \tilde{R} = HW \). The number of latent factors \( d \) is typically much smaller than \( m \) and \( n \). The predicted interaction score between drug \( u \) and target \( i \) is \( \tilde{r}_{ui} = \sum_{f=1}^{d} H_{u,f} W_{f,i} \), the inner product of row \( u \) of \( H \) and column \( i \) of \( W \).

The model is learned by minimizing an objective function that combines a reconstruction error term with regularization terms that prevent overfitting. A standard objective is \( \arg\min_{H,W} \|R - \tilde{R}\|_F^2 + \alpha \|H\|_F^2 + \beta \|W\|_F^2 \). Here \( \|\cdot\|_F \) denotes the Frobenius norm, and \( \alpha \) and \( \beta \) are regularization parameters that control the complexity of the drug and target latent factor matrices, respectively. The number of latent factors \( d \) is a critical hyperparameter that tunes the model's expressive power: too few factors may underfit, while too many may overfit the training data.
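The objective can be minimized by plain full-batch gradient descent, using the gradients \( -2(R - HW)W^\top + 2\alpha H \) and \( -2H^\top(R - HW) + 2\beta W \). The NumPy sketch below is illustrative only. The hyperparameter values, the toy matrix and the function names are assumptions. Published DTI models typically add side information and train with SGD or alternating least squares. A pair \( (u, i) \) is scored as `H[u] @ W[:, i]`.

```python
import numpy as np

def factorize(R, d=5, alpha=0.01, beta=0.01, lr=0.01, epochs=2000, seed=0):
    """Full-batch gradient descent on ||R - HW||_F^2 + alpha*||H||_F^2 + beta*||W||_F^2."""
    rng = np.random.default_rng(seed)
    m, n = R.shape
    H = 0.1 * rng.standard_normal((m, d))    # drug latent factors, m x d
    W = 0.1 * rng.standard_normal((d, n))    # target latent factors, d x n
    for _ in range(epochs):
        E = R - H @ W                        # reconstruction residual
        grad_H = -2 * E @ W.T + 2 * alpha * H
        grad_W = -2 * H.T @ E + 2 * beta * W
        H -= lr * grad_H
        W -= lr * grad_W
    return H, W

def objective(R, H, W, alpha=0.01, beta=0.01):
    return (np.linalg.norm(R - H @ W, "fro") ** 2
            + alpha * np.linalg.norm(H, "fro") ** 2
            + beta * np.linalg.norm(W, "fro") ** 2)

# Hypothetical binary interaction matrix: 6 drugs x 5 targets.
R = np.array([[1, 0, 0, 1, 0],
              [1, 0, 0, 1, 0],
              [0, 1, 1, 0, 0],
              [0, 1, 1, 0, 1],
              [0, 0, 0, 1, 1],
              [1, 0, 0, 0, 0]], dtype=float)
H, W = factorize(R, d=3)
scores = H @ W    # scores[u, i] = sum over f of H[u, f] * W[f, i]
print(objective(R, H, W))
print(scores.round(2))
```

Zero entries that receive a high reconstructed score are the candidate new interactions.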

Advanced Matrix Factorization Variants

Several specialized MF variants have been developed to address specific challenges in DTI prediction:

Funk MF: The original algorithm proposed by Simon Funk does not compute a singular value decomposition. Instead, it learns the two factor matrices directly by stochastic gradient descent on the known entries, which makes it an SVD-like machine learning model rather than SVD proper. It was designed for explicit numerical ratings but can be adapted to binary interaction data.

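SVD++: This extension incorporates both explicit and implicit interactions and adds user and item bias terms. In the DTI setting, drugs play the role of users and targets the role of items. Its biased core predicts \( \tilde{r}_{ui} = \mu + b_i + b_u + \sum_{f=1}^{d} H_{u,f} W_{f,i} \), where \( \mu \) is the global mean, \( b_i \) is the item bias, and \( b_u \) is the user bias. SVD++ further augments the user factor with an implicit-feedback term, replacing \( H_{u,f} \) with \( H_{u,f} + |N(u)|^{-1/2} \sum_{j \in N(u)} Y_{j,f} \). Here \( N(u) \) is the set of items with implicit feedback from user \( u \), and \( Y \) holds the implicit item factors. However, SVD++ is not model-based, so it cannot produce latent factors for a newly added user without retraining the whole model. This is an instance of the cold-start problem.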
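A minimal NumPy sketch of the SVD++ scoring rule, assuming already-trained parameters (training is omitted). The names are hypothetical. `implicit[u]` lists the items (targets) for which user (drug) \( u \) has implicit feedback. What counts as implicit feedback for a drug is left open here.

```python
import numpy as np

def svdpp_score(u, i, mu, b_user, b_item, H, W, Y, implicit):
    """mu + b_i + b_u + sum_f (H[u, f] + |N(u)|^-1/2 * sum_{j in N(u)} Y[j, f]) * W[f, i]."""
    n_u = implicit[u]                         # N(u): items with implicit feedback from user u
    user_vec = H[u].copy()
    if len(n_u) > 0:
        user_vec += Y[n_u].sum(axis=0) / np.sqrt(len(n_u))
    return mu + b_item[i] + b_user[u] + user_vec @ W[:, i]

# Tiny hypothetical parameters: 1 user (drug), 2 items (targets), d = 2 latent factors.
H = np.array([[1.0, 0.0]])                    # user factors, users x d
W = np.array([[1.0, 0.0],
              [0.0, 1.0]])                    # item factors, d x items
Y = np.array([[0.0, 0.0],
              [0.0, 2.0]])                    # implicit item factors, items x d
score = svdpp_score(0, 1, mu=0.1, b_user=np.array([0.2]), b_item=np.array([0.0, -0.1]),
                    H=H, W=W, Y=Y, implicit=[[1]])
print(score)
```

Dropping the implicit term (an empty `implicit[u]`) recovers the plain biased MF predictor.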

Asymmetric SVD: This model-based algorithm replaces the user latent factor matrix with a matrix that learns user preferences as a function of their ratings, making it particularly suitable for handling new users with few ratings without retraining the entire model.

Group-specific SVD: This approach clusters users and items based on dependency information and similarity characteristics, then approximates latent factors for new entities through group effects, providing immediate predictions even for new drugs or targets.

Weighted Matrix Factorization (WMF): This variant addresses the sparsity problem by splitting the objective function into two sums, one over observed entries and another over unobserved entries treated as zeros: \( \min_{H,W} \sum_{(u,i) \in \text{obs}} \big(R_{ui} - H_{u,:} W_{:,i}\big)^2 + w_0 \sum_{(u,i) \notin \text{obs}} \big(H_{u,:} W_{:,i}\big)^2 \), where \( w_0 \) is a hyperparameter weighting the two terms.
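The WMF objective can be evaluated directly from a boolean mask of observed pairs. This NumPy sketch uses hypothetical names and only computes the loss; in practice the loss is minimized with weighted alternating least squares or SGD. Setting \( w_0 = 0 \) ignores unobserved pairs entirely. Setting \( w_0 = 1 \) treats every unobserved pair as a zero with full weight.

```python
import numpy as np

def wmf_objective(R, observed, H, W, w0):
    """Squared error on observed pairs plus w0 times the squared score on unobserved pairs."""
    P = H @ W                                  # predicted scores, shape (m, n)
    observed_term = ((R - P)[observed] ** 2).sum()
    unobserved_term = (P[~observed] ** 2).sum()
    return observed_term + w0 * unobserved_term

R = np.array([[1.0, 0.0],
              [0.0, 1.0]])
observed = np.array([[True, False],
                     [False, True]])           # hypothetical mask of observed pairs
H = np.array([[1.0], [0.5]])                   # d = 1
W = np.array([[1.0, 0.5]])
print(round(wmf_objective(R, observed, H, W, w0=0.1), 4))  # 0.6125
```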

Experimental Protocols and Performance Benchmarks

Standard Evaluation Datasets and Protocols

Researchers typically evaluate matrix factorization methods for DTI prediction using several benchmark datasets with varying characteristics. Common datasets include the gold-standard datasets comprising Nuclear Receptors (NR), Ion Channels (IC), G Protein-Coupled Receptors (GPCR), and Enzymes (E), as well as larger datasets such as Luo's dataset and DrugBank-derived datasets. These datasets exhibit significant sparsity, with interaction rates ranging from 6.41% for NR down to 0.18% for the Luo dataset.
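The interaction rate is the number of known interactions divided by the number of drug-target pairs. This sketch uses the commonly reported sizes of the NR dataset (54 drugs, 26 targets, 90 interactions) and of Luo's dataset (708 drugs, 1,512 targets, 1,923 interactions). These sizes are assumptions of the sketch rather than figures stated above, and they reproduce the two rates:

```python
def interaction_rate(n_interactions, n_drugs, n_targets):
    """Fraction of all drug-target pairs that are known interactions."""
    return n_interactions / (n_drugs * n_targets)

# Commonly reported dataset sizes (assumed here, not taken from the text above).
print(f"NR:  {interaction_rate(90, 54, 26):.2%}")        # 90 / 1,404 pairs
print(f"Luo: {interaction_rate(1923, 708, 1512):.2%}")   # 1,923 / 1,070,496 pairs
```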

Standard evaluation protocols use k-fold cross-validation (typically 5 or 10 folds), repeated several times to obtain stable performance estimates. Performance is assessed with Area Under the Receiver Operating Characteristic Curve (AUC), Area Under the Precision-Recall Curve (AUPR), Accuracy (ACC), Recall, Precision, Matthews Correlation Coefficient (MCC), and F1 score. These metrics offer complementary views of model performance. AUPR is particularly important for the imbalanced datasets common in DTI prediction.

Comparative Performance Analysis

Table 1: Performance Comparison of Matrix Factorization and Deep Learning Methods on Benchmark DTI Datasets

| Method | Category | NR (AUC) | IC (AUC) | GPCR (AUC) | Enzyme (AUC) | Davis (AUC) | KIBA (AUC) |
|---|---|---|---|---|---|---|---|
| NRLMF | Matrix Factorization | 0.832 | 0.903 | 0.872 | 0.892 | 0.883 | 0.891 |
| MSCMF | Matrix Factorization | 0.819 | 0.897 | 0.865 | 0.885 | 0.876 | 0.883 |
| DLapRLS | Matrix Factorization | 0.827 | 0.901 | 0.869 | 0.889 | 0.880 | 0.888 |
| DRaW | Deep Learning | 0.878 | 0.937 | 0.912 | 0.926 | 0.912 | 0.921 |
| MGCLDTI | Deep Learning | 0.871 | 0.931 | 0.906 | 0.921 | 0.907 | 0.916 |
| EviDTI | Deep Learning | 0.875 | 0.935 | 0.910 | 0.924 | 0.910 | 0.919 |
| DTI-RME | Hybrid | 0.882 | 0.941 | 0.917 | 0.930 | 0.917 | 0.926 |

Table 2: Performance Comparison on COVID-19 Antiviral Prediction Datasets

| Method | Category | Dataset DS1 (AUC) | Dataset DS2 (AUC) | Dataset DS3 (AUC) | Precision | Recall |
|---|---|---|---|---|---|---|
| Funk MF | Matrix Factorization | 0.791 | 0.776 | 0.783 | 0.752 | 0.741 |
| SVD++ | Matrix Factorization | 0.803 | 0.789 | 0.795 | 0.768 | 0.756 |
| DRaW | Deep Learning | 0.887 | 0.872 | 0.881 | 0.839 | 0.825 |
| VDA-KLMF | Matrix Factorization | 0.812 | 0.798 | 0.805 | 0.781 | 0.769 |

Experimental results consistently show that matrix factorization methods provide reasonable baseline performance but are generally outperformed by modern deep learning approaches. For instance, on the COVID-19 antiviral prediction datasets, the deep learning model DRaW achieved AUC scores of 0.887, 0.872, and 0.881 across the three datasets. These are well above the matrix factorization baselines, whose AUCs lie between 0.776 and 0.812. Similarly, on the benchmark datasets, DTI-RME achieved the highest AUC scores: 0.882 (NR), 0.941 (IC), 0.917 (GPCR), and 0.930 (Enzymes). DTI-RME is a hybrid approach combining robust loss, multi-kernel learning, and ensemble learning.

Critical Analysis of Limitations and Challenges

Matrix factorization methods face several fundamental limitations in the context of DTI prediction:

Data Sparsity: The drug-target matrix is typically extremely sparse, with known associations often making up less than 1% of all possible pairs; in the Luo dataset, the interaction rate is merely 0.18%. As a result, many drugs and targets have interaction vectors that are almost entirely zeros. MF treats these near-zero vectors as uninformative features, which significantly degrades prediction quality.

Cold Start Problem: MF methods struggle with new drugs or targets that have no known interactions, as they lack latent factor representations. Although techniques like group-specific SVD can partially mitigate this by assigning group labels to new entities, they still require some reliable interactions for accurate prediction.

Interpretation of Zeros: A significant challenge arises from the ambiguous interpretation of zero entries in the interaction matrix. Zeros can represent either confirmed non-interactions or simply unknown associations that may actually interact. This ambiguity introduces noise and false negatives that negatively impact model training.

Linear Assumptions: Traditional MF methods capture primarily linear relationships between drugs and targets through dot products, potentially missing complex non-linear patterns that deep learning models can capture.

Fixed Matrix Size: MF is inherently limited to the matrix dimensions fixed during training. When new drugs or targets (new rows or columns) emerge, the entire model must be retrained. This makes MF inflexible for dynamically evolving biological databases.

Data Leakage: Some implementations use the interaction matrix itself as the feature matrix. In that case the labels to be predicted are already present in the input, which can leak information during training and artificially inflate performance metrics.

Workflow Diagram of Matrix Factorization for DTI Prediction

Diagram 1: Matrix Factorization Workflow for DTI Prediction. This diagram illustrates the standard pipeline for applying matrix factorization to drug-target interaction prediction, from input data preprocessing through model training to performance evaluation.

Table 3: Essential Research Reagents and Computational Resources for DTI Prediction Studies

| Resource Category | Specific Examples | Function/Purpose | Key Characteristics |
|---|---|---|---|
| Benchmark Datasets | Nuclear Receptors (NR), Ion Channels (IC), GPCR, Enzymes (E), Luo dataset, DrugBank datasets | Provide standardized benchmarks for method development and comparison | Varying sparsity levels (0.18%-6.41% interaction rates), different biological contexts |
| Similarity Kernels | Gaussian Interaction Kernel, Cosine Interaction Kernel, Jaccard Similarity | Measure similarity between drugs and targets based on different criteria | Capture different aspects of molecular and structural similarity |
| Evaluation Metrics | AUC, AUPR, Accuracy, Precision, Recall, F1-score, MCC | Quantify prediction performance across different aspects | Provide complementary insights, with AUPR particularly important for imbalanced data |
| Optimization Algorithms | Stochastic Gradient Descent (SGD), Weighted Alternating Least Squares (WALS) | Learn model parameters by minimizing the objective function | SGD offers flexibility; WALS converges faster for specific objectives |
| Regularization Techniques | L2 regularization, Graph regularization, Weighted Matrix Factorization | Prevent overfitting and improve model generalization | Control model complexity, handle sparsity in the interaction matrix |
| Validation Protocols | k-fold Cross-Validation; scenario-specific settings CVP (held-out pairs), CVD (new drugs), CVT (new targets) | Ensure robust performance estimation and scenario-specific evaluation | Test models under realistic conditions, including new drugs and targets |

Matrix factorization represents a foundational approach for drug-target interaction prediction, offering interpretable latent factors and relatively simple implementation. However, its performance is fundamentally constrained by data sparsity, linear assumptions, and cold-start problems. Contemporary research demonstrates that deep learning methods consistently outperform traditional matrix factorization across diverse DTI prediction scenarios, particularly for complex datasets and cold-start situations. The most promising recent approaches, such as DTI-RME, increasingly adopt hybrid strategies that integrate matrix factorization's strengths with robust loss functions, multi-kernel learning, and ensemble methods to address inherent limitations.

Future research directions should focus on developing more adaptive matrix factorization variants that can better handle the extreme sparsity and noise characteristics of biological interaction data. Integration with multi-modal data sources, including drug chemical structures, target sequences, and network information, represents another promising avenue. As the field progresses, the combination of matrix factorization's interpretability with deep learning's expressive power will likely yield the most significant advances in accurate and reliable DTI prediction, ultimately accelerating drug discovery and repurposing efforts.

The accurate prediction of drug-target interactions (DTIs) is a critical bottleneck in drug discovery. It can take 10–17 years and cost approximately $2.6 billion to bring a new drug to market, highlighting the urgent need for computational methods that can accelerate this process [22] [6]. Two dominant computational paradigms have emerged: matrix factorization (MF) and deep learning (DL). MF methods, grounded in linear algebra, project drugs and targets into a low-dimensional latent space where interactions are modeled as simple dot products. In contrast, DL methods use multi-layered neural networks to automatically learn hierarchical representations from raw data, such as molecular graphs and protein sequences, capturing complex, non-linear relationships. This guide provides an objective comparison of their performance, experimental protocols, and applicability in real-world drug development.

Performance Comparison: Quantitative Benchmarks

The following tables summarize the experimental performance of representative MF and DL models across several benchmark datasets, including Yamanishi (containing Enzymes/E, Ion Channels/IC, GPCRs, and Nuclear Receptors/NR), Davis, and KIBA.

Table 1: Performance Comparison of Matrix Factorization Models

| Model | Core Methodology | Dataset | AUC | AUPR | Key Advantage |
|---|---|---|---|---|---|
| SPLDMF [3] | Self-Paced Learning with Dual similarity MF | E | 0.982 | 0.815 | Alleviates bad local optima from data noise |
| Hyperbolic MF [13] | Logistic MF in Hyperbolic Space | NR | 0.990* | 0.900* | Lower embedding dimension; models tree-like data |
| NRLMF [4] | Neighborhood Regularized Logistic MF | IC | 0.974* | 0.894* | Incorporates neighborhood information |
| ConvBLS-DTI [4] | MF + Broad Learning System | GPCR | 0.934* | 0.842* | Reduces impact of data sparsity |

Note: Values marked with an asterisk (*) are approximated from textual descriptions in the respective sources.

Table 2: Performance Comparison of Deep Learning Models

| Model | Core Methodology | Dataset | AUC | AUPR | Key Advantage |
|---|---|---|---|---|---|
| EviDTI [5] | Evidential DL on 2D/3D drug graphs & protein sequences | Davis | 0.916* | 0.711* | Provides uncertainty estimates for predictions |
| MGCLDTI [15] | Multivariate Fusion & Graph Contrastive Learning | Luo's Dataset | 0.973 | 0.941 | Alleviates network sparsity via DTI matrix densification |
| Hetero-KGraphDTI [23] | GNN with Knowledge-Based Regularization | Multiple Benchmarks | 0.980 (Avg) | 0.890 (Avg) | Integrates prior biological knowledge (e.g., GO, DrugBank) |
| DeepMPF [17] | Multi-modal (Sequence, Structure, Similarity) & Meta-path | Gold Standard Sets | Competitive | Competitive | Integrates drug-protein-disease heterogeneous networks |
| MVPA-DTI [6] | Heterogeneous Network with Multi-view Path Aggregation | - | 0.966 | 0.901 | Fuses 3D drug structures and protein sequence language models |

Note: Values marked with an asterisk (*) are approximated from textual descriptions in the respective sources.

Experimental Protocols and Methodologies

Matrix Factorization Workflow

Traditional MF methods treat DTI prediction as a matrix completion task. The binary interaction matrix \( R \) is factorized into two low-dimensional matrices representing drug and target latent vectors [3] [13]. The workflow typically involves:

- Similarity Matrix Construction: Calculating drug-drug (e.g., based on chemical structure) and target-target (e.g., based on sequence) similarity matrices [3].

- Objective Function Optimization: Minimizing a loss function that often includes regularization terms to prevent overfitting and incorporate neighborhood information from the similarity matrices [4] [13]. For example, Hyperbolic MF uses a logistic loss function based on hyperbolic distance [13].

- Interaction Scoring: Predicting the probability of an interaction via the dot product (or its hyperbolic equivalent) of the learned drug and target latent vectors [3] [13].

Matrix Factorization Workflow: A linear algebraic approach to DTI prediction.
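The logistic, similarity-aware variant can be illustrated with a simplified NumPy sketch in the spirit of neighborhood-regularized logistic MF. It is not NRLMF's exact algorithm. Several pieces are assumptions: the positive-class weight `c`; the Laplacian penalties \( \tfrac{\alpha}{2}\operatorname{tr}(H^\top L_d H) \) and \( \tfrac{\beta}{2}\operatorname{tr}(W L_t W^\top) \), built from full rather than nearest-neighbor similarity matrices; plain gradient descent; the toy similarities; and all hyperparameters. The Laplacian terms pull the latent vectors of similar drugs (or targets) toward each other. This is how similarity information enters the factorization.

```python
import numpy as np

def laplacian(S):
    """Graph Laplacian L = D - S of a symmetric, non-negative similarity matrix."""
    return np.diag(S.sum(axis=1)) - S

def sigmoid(Z):
    return 1.0 / (1.0 + np.exp(-Z))

def logistic_mf(Y, S_drug, S_target, d=4, c=5.0, lam=0.1, alpha=0.1, beta=0.1,
                lr=0.02, epochs=1500, seed=0):
    """Gradient descent on a positive-weighted logistic loss with L2 and Laplacian penalties."""
    rng = np.random.default_rng(seed)
    m, n = Y.shape
    H = 0.1 * rng.standard_normal((m, d))    # drug latent vectors (rows)
    W = 0.1 * rng.standard_normal((d, n))    # target latent vectors (columns)
    L_d, L_t = laplacian(S_drug), laplacian(S_target)
    for _ in range(epochs):
        P = sigmoid(H @ W)                   # predicted interaction probabilities
        # Derivative of -[c*y*log(p) + (1 - y)*log(1 - p)] with respect to the logit
        G = (c * Y + 1 - Y) * P - c * Y
        grad_H = G @ W.T + lam * H + alpha * L_d @ H
        grad_W = H.T @ G + lam * W + beta * W @ L_t
        H -= lr * grad_H
        W -= lr * grad_W
    return H, W

# Toy data: drugs (targets) that share a target (drug) are treated as similar.
Y = np.array([[1, 0, 0, 1, 0],
              [1, 0, 0, 1, 0],
              [0, 1, 1, 0, 0],
              [0, 1, 1, 0, 1],
              [0, 0, 0, 1, 1],
              [1, 0, 0, 0, 0]], dtype=float)
S_drug = (Y @ Y.T > 0).astype(float)
np.fill_diagonal(S_drug, 0.0)
S_target = (Y.T @ Y > 0).astype(float)
np.fill_diagonal(S_target, 0.0)
H, W = logistic_mf(Y, S_drug, S_target)
P = sigmoid(H @ W)
print(P.round(2))
```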

Deep Learning Workflow

DL approaches eschew hand-crafted features and directly consume raw or minimally processed data, using non-linear transformations to learn hierarchical representations [5] [23] [17]. A common graph-based DL workflow includes:

- Graph Representation: Constructing a heterogeneous graph where nodes represent drugs, targets, and diseases, and edges represent their interactions and similarities [23] [17].

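- Feature Encoding: Using a specialized encoder for each data modality, for example graph neural networks for molecular graphs and pre-trained protein language models such as ProtTrans for sequences [5].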

- Representation Learning & Fusion: Aggregating node information via message-passing in GNNs and fusing multi-modal features (e.g., sequence, structure, knowledge graph) into a unified representation [15] [17].

- Classification: The fused representation is fed into a final classifier (e.g., an MLP or LightGBM) to predict the interaction probability [15] [5].

Deep Learning Workflow: A hierarchical representation learning approach.
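The encode-fuse-classify pipeline can be shown in a deliberately tiny NumPy sketch with random, untrained weights (training is omitted). Every component is a simplifying assumption. A mean-aggregation message-passing step per layer stands in for a real GNN on the molecular graph. Amino-acid composition stands in for a protein language model such as ProtTrans. Fusion is plain concatenation followed by a two-layer MLP with a sigmoid output.

```python
import numpy as np

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"
rng = np.random.default_rng(0)

def gnn_layer(X, A, weight):
    """Message passing: each atom averages its own and its neighbours' features, then linear + ReLU."""
    A_hat = A + np.eye(len(A))                        # add self-loops
    A_norm = A_hat / A_hat.sum(axis=1, keepdims=True)
    return np.maximum(0.0, A_norm @ X @ weight)

def encode_drug(X, A, weights):
    for weight in weights:
        X = gnn_layer(X, A, weight)
    return X.mean(axis=0)                             # graph readout: mean over atoms

def encode_protein(seq):
    """Toy protein encoder: amino-acid composition (stand-in for a language-model embedding)."""
    counts = np.array([seq.count(aa) for aa in AMINO_ACIDS], dtype=float)
    return counts / max(len(seq), 1)

def predict(drug_vec, prot_vec, W1, b1, w2, b2):
    """Fuse by concatenation, then a two-layer MLP with a sigmoid output."""
    z = np.concatenate([drug_vec, prot_vec])
    h = np.maximum(0.0, W1 @ z + b1)
    return 1.0 / (1.0 + np.exp(-(w2 @ h + b2)))

# Hypothetical 4-atom chain molecule (bonds 0-1, 1-2, 2-3) with 3 features per atom.
X = rng.standard_normal((4, 3))
A = np.array([[0, 1, 0, 0],
              [1, 0, 1, 0],
              [0, 1, 0, 1],
              [0, 0, 1, 0]], dtype=float)
gnn_weights = [0.1 * rng.standard_normal((3, 8)), 0.1 * rng.standard_normal((8, 8))]
W1, b1 = 0.1 * rng.standard_normal((16, 8 + len(AMINO_ACIDS))), np.zeros(16)
w2, b2 = 0.1 * rng.standard_normal(16), 0.0

drug_vec = encode_drug(X, A, gnn_weights)
prot_vec = encode_protein("MKTAYIAKQR")
p = predict(drug_vec, prot_vec, W1, b1, w2, b2)
print(f"untrained interaction probability: {p:.3f}")
```

In a real model these weights would be learned end to end, typically with a binary cross-entropy loss on known interacting and non-interacting pairs.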

The Scientist's Toolkit: Key Research Reagents and Solutions

Successful DTI prediction experiments, whether using MF or DL, rely on a foundation of key data resources and software tools.

Table 3: Essential Research Reagents for DTI Prediction

| Category | Item | Function in Research | Example Sources |
|---|---|---|---|
| Benchmark Datasets | Yamanishi '08 | Gold-standard datasets for model training and benchmarking; include drugs, targets, and known interactions for enzymes, ion channels, GPCRs, and nuclear receptors. [3] [22] | KEGG BRITE, BRENDA, SuperTarget, DrugBank [3] |
| | Davis, KIBA | Provide continuous binding affinity values for drug-target pairs, framing the task as a regression problem. [5] [22] | - |
| Software & Libraries | Graph Neural Network Libraries | Enable the implementation of models that learn from graph-structured data (e.g., molecular graphs, heterogeneous networks). | PyTorch Geometric, Deep Graph Library (DGL) |
| | Pre-trained Language Models | Provide powerful, transferable feature extractors for protein sequences and molecular structures, boosting model performance. [5] [6] | ProtTrans (Proteins), MG-BERT (Molecules) [5] |
| Knowledge Bases | Gene Ontology (GO), DrugBank | Provide structured biological knowledge that can be integrated as prior information to regularize models and improve interpretability. [23] | - |

Discussion: Strategic Selection for Drug Development

Choosing between MF and DL depends on the specific research context and constraints.

Choose Matrix Factorization when working with smaller datasets or requiring high computational efficiency. MF models are often simpler to train and interpret. They are particularly effective when high-quality similarity matrices are available and when the underlying biological relationships are relatively linear. Their performance can be robust, especially with sophisticated regularization [3] [4] [13].

Choose Deep Learning when facing complex, multi-modal data and prioritizing peak predictive accuracy. DL excels at learning from raw data—such as SMILES strings, molecular graphs, and protein sequences—without heavy reliance on hand-crafted features. It is the preferred paradigm for "cold-start" problems involving novel drugs or targets with no known interactions, as it can infer properties from structure [5] [23]. Furthermore, advanced DL models like EviDTI provide uncertainty quantification, a critical feature for de-risking experimental validation in drug discovery [5].

In conclusion, while MF offers simplicity and efficiency, DL provides a powerful framework for learning complex representations from raw data, leading to state-of-the-art performance in DTI prediction and holding significant potential to accelerate drug development.

Inside the Algorithms: How Matrix Factorization and Deep Learning Model DTIs

The prediction of drug-target interactions (DTI) is a crucial phase in drug discovery and drug repositioning, serving to identify new therapeutic uses for existing drugs and accelerate development cycles [3]. Within this field, matrix factorization (MF) and deep learning (DL) represent two dominant computational approaches. MF methods characteristically decompose the observed drug-target interaction matrix into lower-dimensional latent factor matrices, capturing the underlying interaction patterns [3] [24]. In contrast, deep learning models leverage more complex architectures like graph neural networks and transformers to learn hierarchical representations from raw data such as drug structures and protein sequences [5] [25]. This guide provides an objective performance comparison of advanced matrix factorization techniques, specifically those employing neighborhood regularization and self-paced learning, against contemporary deep learning models, supported by experimental data and detailed methodologies.

Methodological Breakdown of Featured Approaches

Matrix Factorization with Self-Paced Learning and Dual Similarity (SPLDMF)

The SPLDMF model was developed to overcome two key challenges in traditional MF: susceptibility to bad local optima due to data noise and sparsity, and insufficient learning power from single similarity measures [3].

- Core Protocol: The model integrates self-paced learning (SPL) into the matrix factorization process. SPL mimics the human learning process by automatically selecting training samples from simple to complex, which enhances robustness against noisy and incomplete data [3].

- Dual Similarity Integration: Beyond using a single similarity metric, SPLDMF incorporates multiple sources of similarity information for both drugs and targets. This multi-view similarity enriches the model's understanding of potential associations [3].

- Optimization Objective: The method minimizes a regularized squared error function, combining the reconstruction error of known interactions with regularization terms for the latent factors and the learned similarities [3].
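The self-paced idea can be illustrated with the classic hard-weighting scheme. An entry takes part in training only while its current loss is below an "age" parameter \( \lambda \), and \( \lambda \) grows each round, so harder entries are admitted progressively. This NumPy sketch is a generic self-paced MF, not SPLDMF itself. The dual-similarity terms are omitted, and the hard 0/1 weights, the growth schedule, the toy matrix and the hyperparameters are all assumptions.

```python
import numpy as np

def spl_weights(losses, lam):
    """Hard self-paced weights: 1 for 'easy' entries whose loss is below lam, else 0."""
    return (losses < lam).astype(float)

def self_paced_mf(R, d=3, reg=0.01, lr=0.02, lam=0.2, growth=2.0, rounds=6, steps=300, seed=0):
    rng = np.random.default_rng(seed)
    m, n = R.shape
    H = 0.1 * rng.standard_normal((m, d))
    W = 0.1 * rng.standard_normal((d, n))
    for _ in range(rounds):
        V = spl_weights((R - H @ W) ** 2, lam)    # entries admitted this round
        for _ in range(steps):
            E = V * (R - H @ W)                   # residuals of admitted entries only
            grad_H = -2 * E @ W.T + 2 * reg * H
            grad_W = -2 * H.T @ E + 2 * reg * W
            H -= lr * grad_H
            W -= lr * grad_W
        lam *= growth                             # raise the threshold: admit harder entries
    return H, W, V

R = np.array([[1, 0, 0, 1, 0],
              [1, 0, 0, 1, 0],
              [0, 1, 1, 0, 0],
              [0, 1, 1, 0, 1],
              [0, 0, 0, 1, 1],
              [1, 0, 0, 0, 0]], dtype=float)
H, W, V = self_paced_mf(R)
print(V.mean(), (H @ W).round(2))
```

An entry whose loss stays high, for example a mislabeled pair, is admitted late and so has less influence on the early, well-conditioned rounds. This is the intuition behind the robustness to noise claimed for SPL.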

Evidential Deep Learning for DTI (EviDTI)

Representing the deep learning paradigm, EviDTI addresses the challenge of overconfidence in traditional DL models by providing reliable uncertainty estimates for its predictions [5].

- Core Protocol: This framework uses a multi-modal data approach. It encodes protein sequences via the pre-trained model ProtTrans and uses both 2D topological graphs and 3D spatial structures to represent drugs [5].

- Uncertainty Quantification: A key differentiator is its use of an evidential deep learning (EDL) layer. Instead of outputting a single probability, the model outputs parameters for a Dirichlet distribution, allowing it to quantify the uncertainty (confidence) of each prediction [5].

- Training Objective: The model is trained to minimize a loss function that includes the predictive error for known interactions and a term that penalizes evidence for incorrect classes, leading to well-calibrated uncertainties [5].
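The evidential output layer can be sketched in a few lines of NumPy, following the general evidential deep learning recipe. The network produces non-negative evidence \( e_k \). From it come the Dirichlet parameters \( \alpha_k = e_k + 1 \), the strength \( S = \sum_k \alpha_k \), the expected probabilities \( \alpha_k / S \), and the uncertainty \( u = K/S \) for \( K \) classes. The softplus evidence function and the two-class setup are assumptions; EviDTI's exact layer and loss may differ.

```python
import numpy as np

def evidential_head(logits):
    """Turn raw outputs (..., K) into Dirichlet parameters, expected probabilities and uncertainty."""
    logits = np.asarray(logits, dtype=float)
    evidence = np.logaddexp(0.0, logits)              # softplus: evidence >= 0
    alpha = evidence + 1.0                            # Dirichlet concentration parameters
    strength = alpha.sum(axis=-1, keepdims=True)      # S = sum_k alpha_k
    prob = alpha / strength                           # expected class probabilities
    uncertainty = logits.shape[-1] / strength[..., 0] # u = K / S, in (0, 1]
    return alpha, prob, uncertainty

# Two classes (interaction, no interaction): strong vs. weak evidence.
alpha, prob, u = evidential_head([[8.0, -8.0], [0.1, 0.0]])
print(prob.round(3))
print(u.round(3))
```

The second pair has almost no evidence for either class, so its uncertainty is much higher. This is the signal a screening pipeline could use to deprioritize it for experimental validation.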

DeepMPF: A Multi-Modal Meta-Path Framework

DeepMPF is a hybrid framework that leverages deep learning for feature extraction but is grounded in the relational principles often captured by factorization methods [17].

- Core Protocol: It constructs a protein-drug-disease heterogeneous network. The model learns features from three modalities: sequence data, heterogeneous network structure, and similarity information [17].

- Meta-Path Analysis: It employs six predefined meta-path schemas (e.g., Drug-Disease-Drug, Target-Disease-Target) to capture high-order, semantic relationships within the heterogeneous network, preserving complex structural information [17].

- Feature Fusion and Training: Features from all modalities are jointly learned to generate comprehensive descriptors, and a deep learning model is used to calculate the final interaction probability [17].
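One standard way to turn a meta-path into numbers is to multiply the relation matrices along it. For Drug-Disease-Drug, \( M = A A^\top \) counts the path instances between each pair of drugs, and PathSim normalizes these counts into a similarity. This NumPy sketch uses PathSim purely as an illustration; it is a swapped-in technique, not necessarily how DeepMPF consumes meta-paths. The toy association matrix is hypothetical.

```python
import numpy as np

def metapath_counts(*relations):
    """Count path instances along a meta-path by chaining relation matrices."""
    M = relations[0]
    for A in relations[1:]:
        M = M @ A
    return M

def pathsim(M):
    """PathSim for a symmetric meta-path: 2 * M[x, y] / (M[x, x] + M[y, y])."""
    diag = np.diag(M).astype(float)
    denom = diag[:, None] + diag[None, :]
    return np.divide(2.0 * M, denom, out=np.zeros(M.shape), where=denom > 0)

# Hypothetical drug-disease associations: 3 drugs x 4 diseases.
A_drug_disease = np.array([[1, 1, 0, 0],
                           [1, 0, 1, 0],
                           [0, 0, 0, 1]])
M = metapath_counts(A_drug_disease, A_drug_disease.T)   # Drug-Disease-Drug
print(M)
print(pathsim(M))
```

Longer schemas such as Drug-Target-Disease-Target-Drug follow the same pattern, passing more relation matrices to `metapath_counts`.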

Performance Comparison & Experimental Data

The following tables summarize the quantitative performance of the featured models against various baseline methods on standard benchmark datasets.

Table 1: Performance Comparison on the DrugBank Dataset

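| Model | Type | Accuracy (%) | Precision (%) | MCC (%) | F1-Score (%) |
|---|---|---|---|---|---|
| SPLDMF [3] | Matrix Factorization | - | - | - | - |
| EviDTI [5] | Deep Learning | 82.02 | 81.90 | 64.29 | 82.09 |
| MolTrans [5] | Deep Learning | 80.12 | 79.50 | 60.38 | 79.80 |
| GraphDTA [5] | Deep Learning | 79.80 | 78.40 | 59.69 | 78.70 |

Note: SPLDMF reports AUC (0.982) and AUPR (0.815) on the Yamanishi Enzyme dataset [3], not the accuracy-based DrugBank metrics in this table. Its results are therefore not directly comparable with the other rows.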

Table 2: Performance on Davis and KIBA Datasets (DeepMPF: Yamanishi NR and GPCR)

| Model | Dataset | Accuracy (%) | Precision (%) | MCC (%) | F1-Score (%) | AUC (%) | AUPR (%) |
|---|---|---|---|---|---|---|---|
| EviDTI [5] | Davis | 80.20 | 75.60 | 63.90 | 78.00 | 93.40 | 87.30 |
| EviDTI [5] | KIBA | 80.90 | 76.70 | 62.10 | 78.60 | 91.90 | 87.00 |
| Best Baseline [5] | Davis | 79.40 | 75.00 | 63.00 | 76.00 | 93.30 | 87.00 |
| Best Baseline [5] | KIBA | 80.30 | 76.30 | 61.80 | 78.20 | 91.80 | 86.90 |
| DeepMPF [17] | NR | - | - | - | - | ~97.0 | - |
| DeepMPF [17] | GPCR | - | - | - | - | ~99.0 | - |

Table 3: Cold-Start Scenario Performance (DrugBank)

| Model | Accuracy (%) | Recall (%) | F1-Score (%) | MCC (%) | AUC (%) |
|---|---|---|---|---|---|
| EviDTI [5] | 79.96 | 81.20 | 79.61 | 59.97 | 86.69 |
| TransformerCPI [5] | - | - | - | - | 86.93 |

Workflow Visualization

Figure: SPLDMF model workflow.

Figure: Multi-modal framework (DeepMPF) workflow.

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 4: Key Research Reagents and Computational Tools for DTI Prediction

| Item / Solution | Function in DTI Research | Example Sources / Tools |
|---|---|---|
| Gold Standard Datasets | Provides benchmark data for model training and evaluation. Includes known drug-target pairs. | Yamanishi (NR, GPCR, IC, E) [3], Davis [5], KIBA [5], DrugBank [3] [5] |
| Drug Chemical Data | Represents molecular structure for similarity calculation and feature extraction. | SMILES, Molecular Fingerprints, 2D/3D Graph Structures [5] [2] |
| Target Protein Data | Represents protein information for interaction prediction. | Protein Sequences (FASTA), 3D Structures (PDB), PSSM Profiles [2] [17] |
| Similarity Matrices | Quantifies drug-drug and target-target relationships, crucial for MF and network models. | SIMCOMP (Drug), Smith-Waterman (Target) [17] |
| Heterogeneous Networks | Integrates multi-type biological entities (drugs, targets, diseases) to provide rich context. | Protein-Drug-Disease Association Networks [17] |
| Pre-trained Models | Provides advanced initial feature representations for drugs and proteins, boosting DL performance. | ProtTrans (Proteins) [5], MG-BERT (Drugs) [5] |

The accurate prediction of drug-target interactions (DTI) is a crucial phase in drug discovery and drug repositioning, serving as a foundation for identifying novel therapeutics. [3] Traditional drug development is both costly and time-consuming, often spanning over a decade with associated costs ranging between $161 million and $4.54 billion. [26] Computational approaches help narrow the pool of compound candidates, offering significant starting points for experimental validation. [26] Within this domain, a fundamental methodological divide exists between classical matrix factorization (MF) techniques and emerging deep learning (DL) approaches. While deep learning models theoretically offer greater representational power, carefully designed matrix factorization methods remain highly competitive, particularly when enhanced with sophisticated biological insights and geometric considerations. [13]

This guide focuses on advanced MF methodologies that integrate dual-similarity information and topological features to achieve state-of-the-art performance. We objectively compare these enhanced MF techniques with one another and with deep learning approaches. The experimental data and detailed methodologies provided here are intended to help researchers, scientists, and drug development professionals select computational tools for DTI prediction.

Methodological Framework of Advanced Matrix Factorization

Core Principles of Matrix Factorization for DTI

Matrix factorization methods for DTI prediction fundamentally operate by decomposing the drug-target interaction matrix into two low-rank matrices representing latent drug and target features. [3] The underlying assumption is that the interaction matrix has low intrinsic dimension, meaning that most of its variation can be explained by a small set of latent features. [13] Consider a set of drugs \( A = \{a^i\}_{i=1}^{m} \), a set of targets \( B = \{b^j\}_{j=1}^{n} \), and the interaction matrix \( R = (r_{i,j})_{m \times n} \). Here \( r_{i,j} = 1 \) indicates an interaction, and \( r_{i,j} = 0 \) indicates no interaction or unknown status. MF aims to find latent vectors \( u^i, v^j \in \mathbb{R}^d \) (with \( d \ll \max(m,n) \)) that model the probability of interaction effectively, often through a logistic function:

\[ p_{i,j} = p(r_{i,j} = 1 \mid u^i, v^j) = \frac{\exp(\langle u^i, v^j \rangle)}{1 + \exp(\langle u^i, v^j \rangle)} \]

The model parameters are learned by minimizing a regularized loss function, typically through alternating gradient descent or similar optimization techniques. [13]
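A minimal NumPy sketch of logistic MF follows. It fits the logistic model above by alternating gradient steps on U and V, minimizing the L2-regularized negative log-likelihood. The hyperparameter values (`d`, `lam`, `lr`, `n_iter`) and the toy matrix are illustrative assumptions, not settings from the cited work. Neighborhood terms and importance weighting used by published variants are omitted.

```python
import numpy as np


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def logistic_mf(R, d=3, lam=0.1, lr=0.05, n_iter=500, seed=0):
    """Fit p_ij = sigmoid(<u_i, v_j>) to a binary matrix R by alternating gradient descent.

    Loss: -sum_ij [r_ij log p_ij + (1 - r_ij) log(1 - p_ij)] + lam/2 (||U||^2 + ||V||^2)
    """
    rng = np.random.default_rng(seed)
    m, n = R.shape
    U = 0.1 * rng.standard_normal((m, d))  # latent drug factors
    V = 0.1 * rng.standard_normal((n, d))  # latent target factors
    for _ in range(n_iter):
        # Step on U with V fixed: dLoss/dU = (P - R) V + lam U
        P = sigmoid(U @ V.T)
        U -= lr * ((P - R) @ V + lam * U)
        # Step on V with U fixed: dLoss/dV = (P - R)^T U + lam V
        P = sigmoid(U @ V.T)
        V -= lr * ((P - R).T @ U + lam * V)
    return U, V


# Toy interaction matrix with two drug/target blocks
R = np.zeros((6, 5))
R[:3, :2] = 1.0
R[3:, 2:] = 1.0
U, V = logistic_mf(R)
P = sigmoid(U @ V.T)  # predicted interaction probabilities
```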

Key Enhancement Strategies

Recent advances in MF methods have primarily focused on addressing two fundamental challenges. The first is the tendency of models to converge to poor local optima, because DTI data are highly noisy and have high missing rates. The second is the limited learning capacity that results from relying on a single type of similarity information. [3] To address these limitations, researchers have developed three principal enhancement strategies:

- Self-Paced Learning (SPL): Inspired by the human learning process, SPL begins training on the easiest samples and automatically admits progressively harder ones in a self-paced manner. This helps the model avoid poor local optima, especially when data are sparse. [3]

- Dual-Similarity Integration: Incorporating multiple similarity measures for drugs and targets significantly improves model capacity for learning and accurately identifying potential associations. This includes chemical structure similarity for drugs, sequence similarity for targets, and topological feature similarities derived from interaction networks. [3] [18]

- Geometric Considerations: Moving beyond traditional Euclidean space, some advanced MF methods now employ hyperbolic space as the latent biological space, which better captures the tree-like, highly clustered topology inherent in biological systems. [13]
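Self-paced learning can be wrapped around logistic MF, as in the minimal sketch below. It uses the classic hard-weighting SPL regularizer: each matrix entry gets weight 1 if its current loss is below the pace parameter `lam` and 0 otherwise, and `lam` is multiplied by `mu` after each round so that harder entries join later. SPLDMF's own regularizer and schedule may differ. The starting quantile, `mu`, and the other hyperparameters are assumptions.

```python
import numpy as np


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def spl_weights(loss, lam):
    """Hard SPL weights: the minimiser over v in {0,1} of sum(v * loss) - lam * sum(v)."""
    return (loss < lam).astype(float)


def spl_logistic_mf(R, d=3, reg=0.1, lr=0.05, rounds=8, steps=50,
                    mu=1.3, start_quantile=0.5, seed=0):
    rng = np.random.default_rng(seed)
    m, n = R.shape
    U = 0.1 * rng.standard_normal((m, d))
    V = 0.1 * rng.standard_normal((n, d))
    lam = None
    selected = []  # fraction of matrix entries used in each round
    for _ in range(rounds):
        P = sigmoid(U @ V.T)
        loss = -(R * np.log(P + 1e-12) + (1 - R) * np.log(1 - P + 1e-12))
        if lam is None:
            # Assumption: begin with roughly the easiest half of the entries
            lam = np.quantile(loss, start_quantile)
        W = spl_weights(loss, lam)
        selected.append(float(W.mean()))
        for _ in range(steps):
            # Weighted logistic-MF gradients: only selected entries contribute
            P = sigmoid(U @ V.T)
            U -= lr * ((W * (P - R)) @ V + reg * U)
            P = sigmoid(U @ V.T)
            V -= lr * ((W * (P - R)).T @ U + reg * V)
        lam *= mu  # raise the pace so harder entries are admitted next round
    return U, V, selected
```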

Comparative Analysis of Advanced MF Methods

Performance Metrics and Experimental Setup

To ensure fair comparison across DTI prediction methods, researchers typically employ standardized evaluation metrics and benchmark datasets. The most widely used metrics include the Area Under the Receiver Operating Characteristic Curve (AUC) and the Area Under the Precision-Recall Curve (AUPR). [3] [18] [5] AUC measures the overall ranking performance, while AUPR is particularly informative for imbalanced datasets where non-interactions far outnumber known interactions. [3]
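Both metrics can be computed from ranked scores, as in the pure-Python sketch below. AUC is computed as the probability that a randomly chosen known interaction outranks a randomly chosen non-interaction, with ties counting one half. AUPR is approximated by average precision, one common estimator. Library implementations differ in tie handling and interpolation, so small numerical differences are expected. The labels and scores are hypothetical.

```python
def roc_auc(labels, scores):
    """AUC as the probability that a random positive outscores a random negative (ties = 1/2)."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def average_precision(labels, scores):
    """AUPR estimate: mean precision at the rank of each positive, scores sorted descending."""
    order = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    hits, precisions = 0, []
    for rank, i in enumerate(order, start=1):
        if labels[i] == 1:
            hits += 1
            precisions.append(hits / rank)
    return sum(precisions) / len(precisions)


# Hypothetical scores for five drug-target pairs, two of them known interactions
labels = [1, 0, 1, 0, 0]
scores = [0.9, 0.8, 0.7, 0.2, 0.1]
auc, aupr = roc_auc(labels, scores), average_precision(labels, scores)  # both 5/6 here
```

Appending extra negatives that score below every positive raises AUC but leaves average precision unchanged. This is one reason AUPR is preferred when non-interactions dominate.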

Standard benchmark datasets include the Yamanishi datasets (Enzymes, Ion Channels, GPCRs, and Nuclear Receptors), DrugBank, Davis, and KIBA. [3] [5] [2] Experimental protocols typically involve cross-validation (e.g., 5 trials of 10-fold cross-validation) with careful consideration of different prediction scenarios: known drug-known target, known drug-new target, new drug-known target, and new drug-new target (cold-start scenario). [3] [18] [5]
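The four prediction scenarios differ in what is held out. The sketch below generates train/test pair sets for each scenario. The setting names and the fold scheme are illustrative assumptions, as is the choice to drop every training pair that touches a held-out drug or target in the "new" settings. Repeated trials would rerun the procedure with different seeds.

```python
import random
from itertools import product


def cv_splits(n_drugs, n_targets, setting, n_folds=10, seed=0):
    """Yield (train_pairs, test_pairs) for one DTI cross-validation scenario.

    setting: "pair"       known drug - known target (hold out random pairs)
             "new_drug"   new drug - known target (hold out whole drugs)
             "new_target" known drug - new target (hold out whole targets)
             "new_both"   new drug - new target (cold start)
    """
    if setting not in ("pair", "new_drug", "new_target", "new_both"):
        raise ValueError(f"unknown setting: {setting}")
    rng = random.Random(seed)
    all_pairs = list(product(range(n_drugs), range(n_targets)))
    drugs, targets, pairs = list(range(n_drugs)), list(range(n_targets)), all_pairs[:]
    rng.shuffle(drugs)
    rng.shuffle(targets)
    rng.shuffle(pairs)
    for k in range(n_folds):
        held_d, held_t = set(drugs[k::n_folds]), set(targets[k::n_folds])
        if setting == "pair":
            test = set(pairs[k::n_folds])
        elif setting == "new_drug":
            test = {(i, j) for i, j in all_pairs if i in held_d}
        elif setting == "new_target":
            test = {(i, j) for i, j in all_pairs if j in held_t}
        else:
            test = {(i, j) for i, j in all_pairs if i in held_d and j in held_t}
        # In the "new" settings, no training pair may mention a held-out drug or target
        drop_d = held_d if setting in ("new_drug", "new_both") else set()
        drop_t = held_t if setting in ("new_target", "new_both") else set()
        train = {(i, j) for i, j in all_pairs
                 if (i, j) not in test and i not in drop_d and j not in drop_t}
        yield train, test
```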

Table 1: Performance Comparison of Advanced MF Methods on Yamanishi Datasets

| Method | Dataset | AUC | AUPR | Key Features |
|---|---|---|---|---|
| SPLDMF [3] | Enzymes | 0.982 | 0.815 | Self-paced learning, dual similarity |
| SPLDMF [3] | Ion Channels | 0.972 | 0.812 | Self-paced learning, dual similarity |
| SPLDMF [3] | GPCR | 0.932 | 0.672 | Self-paced learning, dual similarity |
| SPLDMF [3] | Nuclear Receptors | 0.866 | 0.596 | Self-paced learning, dual similarity |
| DNILMF [18] | Enzymes | 0.973 | 0.845 | Dual-network integration, logistic MF |
| HMF [13] | Nuclear Receptors | 0.906 | 0.723 | Hyperbolic geometry, low embedding dimension |
| NRLMF [18] | Enzymes | 0.968 | 0.836 | Neighborhood regularization, logistic MF |

Table 2: Cold-Start Performance Comparison

| Method | Scenario | Accuracy | MCC | F1 Score | AUC | Notes |
|---|---|---|---|---|---|---|
| EviDTI [5] | Cold-Start | 79.96% | 59.97% | 79.61% | 86.69% | - |
| SPLDMF [3] | New Drug-New Target | Not reported | Not reported | Not reported | Not reported | High performance reported |
| HMF [13] | Cold-Start | Not reported | Not reported | Not reported | Not reported | Improved over Euclidean MF |

Comparative Analysis with Deep Learning Approaches

While advanced MF methods demonstrate strong performance, deep learning approaches have also shown remarkable results in DTI prediction. The EviDTI framework, which utilizes evidential deep learning for uncertainty quantification, achieves an accuracy of 82.02%, precision of 81.90%, MCC of 64.29%, and F1 score of 82.09% on the DrugBank dataset. [5] Similarly, Top-DTI, which integrates topological data analysis with large language models, demonstrates superior performance in challenging cold-split scenarios where test and validation sets contain drugs or targets absent from the training set. [26]

When comparing these approaches, several key distinctions emerge:

- Data Requirements: Deep learning methods typically require larger datasets for effective training but can automatically learn relevant features from raw data. [5] [2]

- Interpretability: MF methods often provide more interpretable latent spaces, while DL approaches can function as "black boxes." [13]

- Computational Efficiency: Well-designed MF methods can outperform deep learning in many collaborative filtering applications, with simpler architectures that are easier to optimize. [13]

- Uncertainty Quantification: Recent DL approaches like EviDTI offer sophisticated uncertainty estimation, helping prioritize DTIs with higher confidence predictions for experimental validation. [5]

Detailed Experimental Protocols

Protocol for Self-Paced Learning with Dual Similarity MF (SPLDMF)

The SPLDMF method employs a four-step procedure for DTI prediction: [3]

Profile Inferring and Kernel Construction: New drug/target profiles are inferred using nearest neighbors (typically K=5), addressing scenarios with new drugs or targets. Similarity matrices are converted to kernel matrices using Gaussian interaction profile kernels.
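Both operations in this step can be sketched in NumPy, assuming their common definitions. A new (all-zero) drug profile is replaced by the similarity-weighted average of its K nearest known drugs. The Gaussian interaction profile kernel is `K[i, j] = exp(-gamma * ||y_i - y_j||^2)` with `gamma = gamma_prime / mean_i ||y_i||^2`, and `gamma_prime = 1` here as an assumption. SPLDMF's exact weighting and bandwidth may differ, and the function names are hypothetical. Target profiles are handled the same way on the transposed matrix.

```python
import numpy as np


def infer_profiles(Y, S, k=5):
    """Fill all-zero rows of Y (new drugs) with the similarity-weighted mean profile
    of their k most similar known drugs."""
    Y = np.asarray(Y, dtype=float).copy()
    row_sums = Y.sum(axis=1)
    known = np.flatnonzero(row_sums > 0)
    for i in np.flatnonzero(row_sums == 0):
        nbrs = known[np.argsort(-S[i, known])[:k]]
        weights = S[i, nbrs]
        if weights.sum() > 0:
            Y[i] = weights @ Y[nbrs] / weights.sum()
    return Y


def gip_kernel(Y, gamma_prime=1.0):
    """Gaussian interaction profile kernel between the rows of Y:
    K[i, j] = exp(-gamma * ||y_i - y_j||^2), gamma = gamma_prime / mean_i ||y_i||^2."""
    Y = np.asarray(Y, dtype=float)
    sq_norms = (Y ** 2).sum(axis=1)
    gamma = gamma_prime / sq_norms.mean()
    sq_dists = sq_norms[:, None] + sq_norms[None, :] - 2.0 * Y @ Y.T
    return np.exp(-gamma * np.maximum(sq_dists, 0.0))  # clip tiny negatives from rounding


# Hypothetical 3-drug x 3-target profiles; drug 2 is new (no known interactions)
Y = np.array([[1, 0, 1], [1, 0, 0], [0, 0, 0]])
S_chem = np.array([[1.0, 0.9, 0.8], [0.9, 1.0, 0.1], [0.8, 0.1, 1.0]])
Y_filled = infer_profiles(Y, S_chem, k=2)  # fill new profiles before building the kernel
K_drug = gip_kernel(Y_filled)
```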

Kernel Diffusion: A nonlinear kernel diffusion technique combines different kernels, overcoming limitations of simple linear combination approaches. This integrates drug chemical structure similarity, target sequence similarity, and topological feature similarities.
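One well-known nonlinear diffusion of this kind is similarity network fusion (SNF). In SNF, each kernel's sparse k-nearest-neighbor structure repeatedly propagates the other kernel's full structure. The sketch below follows that update rule for two kernels. It is not claimed to match SPLDMF's exact formulation, and `k`, `t`, and the toy kernels are assumptions.

```python
import numpy as np


def full_normalize(W):
    """SNF 'full' kernel: off-diagonal W[i, j] / (2 * sum_{k != i} W[i, k]), diagonal 1/2."""
    W = W.astype(float).copy()
    np.fill_diagonal(W, 0.0)
    P = W / (2.0 * W.sum(axis=1, keepdims=True))
    np.fill_diagonal(P, 0.5)
    return P


def knn_normalize(W, k):
    """SNF 'sparse' kernel: keep each row's k most similar others, rescaled to sum to 1."""
    W = W.astype(float).copy()
    np.fill_diagonal(W, 0.0)
    S = np.zeros_like(W)
    for i in range(W.shape[0]):
        nbrs = np.argsort(-W[i])[:k]
        S[i, nbrs] = W[i, nbrs] / W[i, nbrs].sum()
    return S


def fuse_two_kernels(W1, W2, k=3, t=10):
    """Nonlinear cross-diffusion of two similarity kernels, following the SNF update rule."""
    P1, P2 = full_normalize(W1), full_normalize(W2)
    S1, S2 = knn_normalize(W1, k), knn_normalize(W2, k)
    for _ in range(t):
        # Each view's local structure (S) propagates the other view's global structure (P)
        P1_next = S1 @ P2 @ S1.T
        P2_next = S2 @ P1 @ S2.T
        P1 = (P1_next + P1_next.T) / 2.0  # keep kernels symmetric
        P2 = (P2_next + P2_next.T) / 2.0
    return (P1 + P2) / 2.0


# Hypothetical drug kernels, e.g. chemical-structure and interaction-profile similarity
x = np.arange(6, dtype=float)
W_chem = np.exp(-((x[:, None] - x[None, :]) ** 2) / 4.0)
W_gip = np.exp(-np.abs(x[:, None] - x[None, :]) / 2.0)
fused = fuse_two_kernels(W_chem, W_gip, k=3, t=5)
```

Unlike a weighted sum, the products in the update let an edge that is strong in one kernel reinforce neighbors in the other, which is what makes the combination nonlinear.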

Model Training with Self-Paced Learning: The model is trained using self-paced learning, which gradually includes more complex samples to avoid bad local optima. The objective function incorporates neighborhood regularization to exploit local similarity information.
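Neighborhood regularization is commonly written as a graph-Laplacian penalty on the latent factors, as in NRLMF-style models. It is small when similar drugs (or targets) have nearby latent vectors. The sketch assumes that form with a symmetric k-nearest-neighbor graph. The weighting coefficient applied to this term in the full objective is omitted, and the function names are hypothetical.

```python
import numpy as np


def knn_graph(S, k):
    """Symmetric k-nearest-neighbour adjacency from a similarity matrix (self-loops excluded)."""
    A = np.zeros(S.shape, dtype=float)
    for i in range(S.shape[0]):
        nbrs = [j for j in np.argsort(-S[i]) if j != i][:k]
        A[i, nbrs] = S[i, nbrs]
    return np.maximum(A, A.T)  # keep an edge if either endpoint lists the other


def neighborhood_penalty(U, A):
    """0.5 * sum_ij A_ij ||u_i - u_j||^2, computed as trace(U^T L U) with L = D - A."""
    L = np.diag(A.sum(axis=1)) - A  # graph Laplacian
    return float(np.trace(U.T @ L @ U))


def neighborhood_grad(U, A):
    """Gradient of the penalty with respect to U: 2 L U (A symmetric)."""
    L = np.diag(A.sum(axis=1)) - A
    return 2.0 * L @ U
```

In training, this gradient (scaled by the regularization weight) is added to the data-fit gradient of U, and likewise for V with the target similarity graph.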

Prediction and Neighborhood Smoothing: Interaction scores are generated, with neighborhood smoothing applied to enhance predictions for new drugs/targets based on their similarity to known entities.

Figure 1: SPLDMF Experimental Workflow - Illustrating the integration of dual-similarity information and self-paced learning in matrix factorization for DTI prediction.

Protocol for Hyperbolic Matrix Factorization (HMF)

Hyperbolic Matrix Factorization employs a fundamentally different geometric approach: [13]

Hyperbolic Space Embedding: Drugs and targets are represented as points in hyperbolic space \( \mathbb{H}^d \) rather than Euclidean space, better accommodating the tree-like topology of biological systems.

Geodesic Distance Calculation: The hyperbolic (geodesic) distance between points \( x, y \in \mathbb{H}^d \) is \[ d_{\mathbb{H}^d}(x, y) = \operatorname{arccosh}(-\langle x, y \rangle_{\mathcal{L}}) \] where \( \langle x, y \rangle_{\mathcal{L}} = -x_0 y_0 + \sum_{k=1}^{d} x_k y_k \) is the Lorentzian bilinear form.

Probability Modeling: The probability of interaction is modeled using the logistic function in Lorentz space: \[ p_{i,j} = \frac{\exp(-d_{\mathcal{L}}^2(u^i, v^j))}{1 + \exp(-d_{\mathcal{L}}^2(u^i, v^j))} \] where \( d_{\mathcal{L}}^2(x, y) = -2 - 2\langle x, y \rangle_{\mathcal{L}} \) is the squared Lorentzian distance.

Optimization in Hyperbolic Space: Parameters are optimized using alternating gradient descent adapted for hyperbolic space, requiring Riemannian optimization techniques to account for the manifold structure.
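The geometry in these steps can be checked numerically. The sketch below assumes the hyperboloid (Lorentz) model with curvature -1 and lifts Euclidean vectors onto it via `x_0 = sqrt(1 + ||z||^2)`, one common parameterization. The function names are hypothetical, and the Riemannian optimizer is not shown.

```python
import numpy as np


def lift_to_hyperboloid(z):
    """Map Euclidean z in R^d onto {x in R^(d+1) : <x, x>_L = -1, x_0 > 0}."""
    z = np.asarray(z, dtype=float)
    x0 = np.sqrt(1.0 + np.sum(z ** 2, axis=-1, keepdims=True))
    return np.concatenate([x0, z], axis=-1)


def lorentz_inner(x, y):
    """Lorentzian bilinear form <x, y>_L = -x_0 y_0 + sum_{k>=1} x_k y_k."""
    return -x[..., 0] * y[..., 0] + np.sum(x[..., 1:] * y[..., 1:], axis=-1)


def geodesic_distance(x, y):
    # Clip guards against arguments slightly below 1 caused by rounding
    return np.arccosh(np.maximum(-lorentz_inner(x, y), 1.0))


def sq_lorentz_distance(x, y):
    return -2.0 - 2.0 * lorentz_inner(x, y)


def interaction_prob(u, v):
    """Logistic model of the squared Lorentzian distance, as in the HMF probability above."""
    d2 = sq_lorentz_distance(u, v)
    return np.exp(-d2) / (1.0 + np.exp(-d2))


origin = lift_to_hyperboloid(np.zeros(2))
# z = (sinh 1.5, 0) lifts to (cosh 1.5, sinh 1.5, 0): geodesic distance 1.5 from the origin
near = lift_to_hyperboloid(np.array([np.sinh(1.5), 0.0]))
far = lift_to_hyperboloid(np.array([np.sinh(3.0), 0.0]))
```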

This approach achieves superior accuracy while lowering embedding dimension by an order of magnitude, providing additional evidence that hyperbolic geometry underpins large biological networks. [13]

Table 3: Key Research Reagents and Computational Resources for DTI Prediction

| Resource Category | Specific Examples | Function and Application |
|---|---|---|
| Benchmark Datasets | Yamanishi (E, IC, GPCR, NR) [3] [18] | Gold standard datasets for method validation and comparison |
| | DrugBank [5] [2] | Comprehensive drug-target interaction database |
| | Davis, KIBA [5] | Challenging datasets with affinity measurements |
| Similarity Measures | Chemical Structure (SIMCOMP) [18] | Computes drug similarity based on chemical structures |
| | Sequence Similarity (Smith-Waterman) [18] | Computes target similarity based on protein sequences |
| | Gaussian Interaction Profile [18] | Creates similarity measures from interaction topology |
| Implementation Frameworks | Logistic Matrix Factorization [3] [13] | Core algorithm for interaction probability modeling |
| | Neighborhood Regularization [3] [18] | Incorporates local similarity information into models |
| | Hyperbolic Geometry Libraries [13] | Enables implementation of hyperbolic space embeddings |
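Smith-Waterman-based target similarity is usually normalized by the two self-alignment scores, `SW(a, b) / sqrt(SW(a, a) * SW(b, b))`. The pure-Python sketch below assumes a toy match/mismatch/linear-gap scoring scheme. Real pipelines typically use a substitution matrix such as BLOSUM62 with affine gaps through an established alignment library. The function names and sequences are hypothetical.

```python
def smith_waterman(a, b, match=2, mismatch=-1, gap=-2):
    """Best local-alignment score between sequences a and b (linear gap penalty)."""
    prev = [0] * (len(b) + 1)
    best = 0
    for i in range(1, len(a) + 1):
        cur = [0] * (len(b) + 1)
        for j in range(1, len(b) + 1):
            diag = prev[j - 1] + (match if a[i - 1] == b[j - 1] else mismatch)
            # Local alignment: scores are floored at 0 so an alignment can restart anywhere
            cur[j] = max(0, diag, prev[j] + gap, cur[j - 1] + gap)
            best = max(best, cur[j])
        prev = cur
    return best


def normalized_sw(a, b, **scoring):
    """Target similarity SW(a, b) / sqrt(SW(a, a) * SW(b, b))."""
    return smith_waterman(a, b, **scoring) / (
        smith_waterman(a, a, **scoring) * smith_waterman(b, b, **scoring)) ** 0.5


# Hypothetical peptide fragments differing at one position
sim = normalized_sw("MKTAYIAK", "MKTAHIAK")  # 13 / 16 = 0.8125
```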

Figure 2: Logical Relationships in Advanced MF - Showing how core matrix factorization methods integrate with similarity measures, enhancement techniques, and data resources.

Advanced matrix factorization methods integrating dual-similarity information and topological features represent a sophisticated approach to DTI prediction that remains highly competitive with deep learning alternatives. The SPLDMF method demonstrates how self-paced learning and comprehensive similarity integration can achieve state-of-the-art performance (AUC up to 0.982 on enzyme datasets), [3] while hyperbolic MF reveals the importance of geometric considerations in biological representation learning. [13]