Machine Learning in Chemogenomics: Advanced Frameworks for Predicting Drug-Target Interactions

Abstract

This article provides a comprehensive overview of the transformative role of machine learning (ML) in chemogenomics for predicting drug-target interactions (DTIs). It explores the foundational principles of chemogenomic methods, which integrate chemical and biological data to overcome the limitations of traditional ligand-based and docking approaches. The scope covers a wide array of ML methodologies, from ensemble learning and deep neural networks to novel hybrid frameworks that leverage feature engineering and data balancing techniques like Generative Adversarial Networks (GANs). It further addresses critical challenges such as data sparsity, class imbalance, and model generalizability, while detailing rigorous validation protocols and performance metrics essential for real-world application. Designed for researchers, scientists, and drug development professionals, this review synthesizes current advances and practical strategies to accelerate drug discovery and repositioning.

Chemogenomics and DTI Prediction: Foundations and Core Principles

The process of drug discovery is fundamentally reliant on the accurate identification of interactions between drug molecules and their protein targets. Drug-target interaction (DTI) prediction serves as a critical component in the early stages of the drug discovery pipeline, enabling researchers to identify potential drug candidates more efficiently [1]. Traditional experimental drug discovery, which determines DTIs in the laboratory, is reliable but is characterized by high costs, lengthy development cycles (often 10-15 years), and low success rates (recent overall success rates have fallen to approximately 6.3%) [1]. These challenges have catalyzed the adoption of in silico computational methods, particularly those leveraging machine learning (ML) and deep learning (DL), which offer the potential to significantly reduce drug development costs and timelines while efficiently utilizing the growing amount of available chemical and biological data [1] [2].

In the context of chemogenomics research, DTI prediction represents a paradigm shift from traditional single-target approaches to a more comprehensive framework that simultaneously explores interactions across multiple proteins and chemical compounds [3]. This approach operates on the principle that the prediction of a drug-target interaction may benefit from known interactions between other targets and other molecules, thereby enabling the prediction of unexpected "off-targets" that often lead to undesirable side effects and failure in drug development processes [3]. The integration of artificial intelligence, specifically ML and DL, has pushed the boundaries of predictive performance in DTI prediction, creating new opportunities for accelerating therapeutic development [4] [5].

Current Methodologies in DTI Prediction

Evolution of Computational Approaches

The landscape of in silico DTI prediction has evolved substantially from early structure-based methods to modern data-driven approaches. Early methodologies primarily focused on molecular docking and ligand-based virtual screening techniques [1]. Molecular docking, introduced by Kuntz et al. in 1982, utilizes the three-dimensional structure of target proteins to position candidate drug molecules within active sites, simulating potential binding interactions and estimating binding free energies [1]. Ligand-based methods, such as quantitative structure-activity relationship (QSAR) and pharmacophore models, predict new drug candidates by leveraging known bioactivity data and establishing mathematical correlations between molecular structure and biological activity [1].

However, these early approaches faced significant limitations, including dependency on protein 3D structures (which were often scarce), difficulties in capturing complex nonlinear structure-activity relationships, and limited ability to explore novel chemical spaces [1]. These challenges catalyzed the adoption of machine learning techniques, beginning with pioneering work by Yamanishi et al., who constructed a dual-layer model integrating chemical and genomic information [1].

Modern Machine Learning Frameworks

Contemporary DTI prediction leverages diverse machine learning frameworks, each with distinct advantages and applications:

Table 1: Overview of Modern DTI Prediction Approaches

| Method Category | Key Examples | Core Principles | Advantages | Limitations |
|---|---|---|---|---|
| Similarity-Based | KronRLS, SimBoost | Integrates drug chemical structure similarity with target sequence similarity | High interpretability, foundation for quantitative prediction | Limited serendipity, may not capture complex nonlinear relationships |
| Network-Based | DTINet, BridgeDPI, MVGCN | Integrates multiple interaction networks (drug-target, drug-drug, protein-protein) | Does not require 3D structures, can incorporate diverse data sources | Cold-start problem for new drugs/targets, computationally intensive |
| Feature-Based ML | Random Forest, SVM | Uses expert-engineered chemical and protein descriptors | Handles new drugs/targets via features, interpretable | Feature selection is crucial, class imbalance issues |
| Deep Learning | DeepConv-DTI, GraphDTA, MolTrans | Learns abstract representations from raw data (SMILES, sequences, graphs) | Automatic feature extraction, handles complex patterns | Low interpretability, requires large datasets |
| Hybrid & Advanced DL | EviDTI, DrugMAN, GAN+RFC | Combines multiple data types with advanced architectures | State-of-the-art performance, uncertainty quantification | Computational complexity, implementation challenging |

Similarity-based methods represent some of the earliest machine learning approaches for DTI prediction. KronRLS integrates drug chemical structure similarity with Smith-Waterman similarity scores of target sequences within a Kronecker regularized least-squares framework, formally defining DTI prediction as a regression task [1]. SimBoost introduced the first nonlinear approach for continuous DTI prediction, incorporating prediction intervals as confidence measures and interpretable features derived from similarity matrices [1].
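To make the Kronecker regularized least-squares idea concrete, here is a minimal NumPy sketch of its closed-form solution. It assumes symmetric positive semidefinite drug and target similarity kernels `K_d` and `K_t` (for example, chemical-structure and Smith-Waterman similarities) and an interaction matrix `Y` with drugs as rows and targets as columns. Eigendecomposing each kernel separately avoids ever forming the full Kronecker product. The function name and the regularization value are illustrative assumptions, not taken from the KronRLS authors' code.

```python
import numpy as np

# Illustrative sketch: the function name and default lam are hypothetical.
def kron_rls_fit_predict(K_d: np.ndarray, K_t: np.ndarray, Y: np.ndarray, lam: float = 1.0):
    """Solve K_d @ A @ K_t + lam * A = Y, the matrix form of
    (K_t kron K_d + lam * I) vec(A) = vec(Y) with column-major vec.

    K_d: (n_drugs, n_drugs) symmetric PSD drug similarity kernel
    K_t: (n_targets, n_targets) symmetric PSD target similarity kernel
    Y:   (n_drugs, n_targets) observed interaction scores
    Returns dual coefficients A and in-sample predictions F = K_d @ A @ K_t.
    """
    # Eigendecompose each kernel on its own: K = U diag(w) U^T
    w_d, U = np.linalg.eigh(K_d)
    w_t, V = np.linalg.eigh(K_t)
    # Rotate the labels into the joint eigenbasis
    Y_rot = U.T @ Y @ V
    # Eigenvalues of the Kronecker product are all pairwise products w_d[i] * w_t[j]
    A_rot = Y_rot / (np.outer(w_d, w_t) + lam)
    A = U @ A_rot @ V.T
    F = K_d @ A @ K_t
    return A, F
```

For a new drug, predictions follow the same pattern: multiply its similarity row against the training drugs, `k_new @ A @ K_t`. The eigendecomposition route costs roughly O(n³ + m³) instead of O((nm)³) for a direct solve.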

Network-based methods leverage the "guilt-by-association" principle, operating on the premise that similar drugs tend to interact with similar targets. DTINet integrates data from diverse sources including drugs, proteins, diseases, and side effects, learning low-dimensional representations to manage noise and high-dimensional characteristics of biological data [1]. BridgeDPI effectively combines network- and learning-based approaches to enhance DTI prediction by introducing network-level information [1]. MVGCN (Multi-View Graph Convolutional Network) integrates similarity networks with bipartite networks, using self-supervised learning for initial node embeddings [1].
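The guilt-by-association premise can be illustrated with a similarity-weighted neighbour score: a candidate pair (d, t) is scored by how strongly the drugs most similar to d are already known to bind t. This is a generic sketch of the principle under the assumption of a precomputed drug-drug similarity matrix. It is not the algorithm used by DTINet, BridgeDPI, or MVGCN, and the function name and choice of `k` are hypothetical.

```python
import numpy as np

# Illustrative sketch of guilt-by-association; names and defaults are hypothetical.
def neighbour_profile_scores(S_drug: np.ndarray, Y: np.ndarray, k: int = 3) -> np.ndarray:
    """Score every drug-target pair from the interactions of each drug's k most similar drugs.

    S_drug: (n_drugs, n_drugs) drug-drug similarity matrix, values in [0, 1]
    Y:      (n_drugs, n_targets) binary matrix of known interactions
    """
    n = S_drug.shape[0]
    scores = np.zeros(Y.shape, dtype=float)
    for i in range(n):
        sims = np.array(S_drug[i], dtype=float)
        sims[i] = -np.inf  # exclude the drug itself so its own labels are not copied back
        nbrs = np.argsort(sims)[::-1][:k]  # indices of the k most similar other drugs
        w = S_drug[i, nbrs]
        if w.sum() > 0:
            # Similarity-weighted average of the neighbours' interaction profiles
            scores[i] = w @ Y[nbrs] / w.sum()
    return scores
```

Excluding each drug from its own neighbourhood keeps the score a genuine prediction rather than a copy of the known label.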

Feature-based machine learning approaches utilize expert-engineered descriptors for drugs and targets. Their main benefit is that they can handle new drugs and targets without requiring similarity information between drugs or between target sequences, because descriptors can always be extracted for any compound or protein [6]. However, these methods face challenges in feature selection and class imbalance [6].

Deep learning methods have revolutionized DTI prediction by automating feature extraction. DeepConv-DTI applies convolutional neural networks to protein sequences and drug fingerprints [5]. GraphDTA utilizes graph neural networks to represent drug molecules as graphs rather than as SMILES strings [5]. MolTrans employs transformer architectures to model complex molecular interactions [5]. These methods often report stronger benchmark performance than earlier approaches, but the automatically learned feature representations raise concerns about interpretability and reliability [6].

Hybrid and advanced deep learning frameworks represent the cutting edge in DTI prediction. EviDTI utilizes evidential deep learning for uncertainty quantification, integrating multiple data dimensions including drug 2D topological graphs, 3D spatial structures, and target sequence features [5]. DrugMAN integrates multiplex heterogeneous functional networks with a mutual attention network, using graph attention network-based integration to learn network-specific low-dimensional features for drugs and target proteins [7]. GAN-based hybrid frameworks address critical challenges like data imbalance through generative adversarial networks to create synthetic data for the minority class [4].

Application Notes & Protocols

Protocol 1: Implementing a GAN-Based Hybrid Framework for DTI Prediction

Background & Principles: Data imbalance represents a significant challenge in DTI prediction, where the minority class of positive drug-target interactions is substantially underrepresented, leading to biased models with reduced sensitivity and higher false negative rates [4]. This protocol outlines the implementation of a novel hybrid framework that combines generative adversarial networks (GANs) with traditional machine learning to address this limitation, leveraging comprehensive feature engineering and advanced data balancing techniques [4].

Experimental Procedure:

Step 1: Data Curation and Preprocessing

- Collect drug-target interaction data from BindingDB (Kd, Ki, and IC50 datasets)

- Curate the datasets to ensure data quality, removing duplicates and standardizing identifiers

- Split the data into training, validation, and test sets using an 80:10:10 ratio
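Step 1 can be sketched in plain Python, assuming each record is a `(drug_id, target_id, label)` tuple whose identifiers have already been standardized (for example, InChIKeys for drugs and UniProt accessions for targets). Duplicate pairs are collapsed, pairs reported with conflicting labels are dropped rather than guessed (an assumed curation rule), and the rest are shuffled with a fixed seed and split 80:10:10. The function name and record layout are hypothetical.

```python
import random
from collections import defaultdict

# Illustrative sketch: function name, record layout and conflict rule are hypothetical.
def curate_and_split(records, seed=42, fractions=(0.8, 0.1, 0.1)):
    """Deduplicate (drug_id, target_id, label) records and split them 80:10:10."""
    labels = defaultdict(set)
    for drug, target, label in records:
        labels[(drug, target)].add(label)
    # Keep only pairs with a single consistent label; sort for a reproducible order
    pairs = sorted((d, t, next(iter(ls))) for (d, t), ls in labels.items() if len(ls) == 1)
    random.Random(seed).shuffle(pairs)
    n = len(pairs)
    n_train = int(fractions[0] * n)
    n_val = int(fractions[1] * n)
    train = pairs[:n_train]
    val = pairs[n_train:n_train + n_val]
    test = pairs[n_train + n_val:]
    return train, val, test
```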

Step 2: Feature Engineering

- For drug compounds: Extract structural features using MACCS (Molecular ACCess System) keys, which encode molecular structures as binary fingerprints representing the presence or absence of specific substructures [4]

- For target proteins: Compute amino acid composition (AAC) and dipeptide composition (DPC) to represent biomolecular properties, capturing the fractional content of amino acids and their pairs in the protein sequence [4]

- Combine drug and target features into a unified feature representation for each drug-target pair
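The protein descriptors in Step 2 are straightforward to compute directly. The sketch below assumes a sequence over the 20 standard amino acids, returns the 20-dimensional AAC vector and the 400-dimensional DPC vector, and concatenates both with a precomputed drug fingerprint. The MACCS bits are assumed to come from a cheminformatics toolkit (RDKit, for instance, provides `rdkit.Chem.MACCSkeys.GenMACCSKeys`); the helper names are hypothetical.

```python
from itertools import product

# Illustrative sketch: helper names are hypothetical.
AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"  # the 20 standard residues
DIPEPTIDES = ["".join(p) for p in product(AMINO_ACIDS, repeat=2)]  # 400 ordered pairs


def aac(seq: str) -> list[float]:
    """Amino acid composition: fraction of each standard residue in the sequence."""
    counts = dict.fromkeys(AMINO_ACIDS, 0)
    for aa in seq.upper():
        if aa in counts:  # non-standard letters (X, B, U, ...) are ignored
            counts[aa] += 1
    total = sum(counts.values()) or 1
    return [counts[aa] / total for aa in AMINO_ACIDS]


def dpc(seq: str) -> list[float]:
    """Dipeptide composition: fraction of each of the 400 adjacent residue pairs."""
    seq = seq.upper()
    counts = dict.fromkeys(DIPEPTIDES, 0)
    for i in range(len(seq) - 1):
        pair = seq[i:i + 2]
        if pair in counts:
            counts[pair] += 1
    total = sum(counts.values()) or 1
    return [counts[p] / total for p in DIPEPTIDES]


def pair_features(maccs_bits: list[int], seq: str) -> list[float]:
    """Concatenate drug fingerprint bits with the AAC and DPC target descriptors."""
    return [float(b) for b in maccs_bits] + aac(seq) + dpc(seq)
```

With 166 MACCS bits, each drug-target pair becomes a 586-dimensional vector (166 + 20 + 400).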

Step 3: Data Balancing with GANs

- Train a Generative Adversarial Network on the minority class (positive interactions) to generate synthetic samples

- The generator network creates synthetic feature vectors, while the discriminator network distinguishes between real and synthetic samples

- After training, use the generator to produce synthetic minority class samples until approximate class balance is achieved

- Combine synthetic samples with original training data to create a balanced dataset
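The mechanics of Step 3 can be shown with a deliberately tiny NumPy GAN: a linear generator and a logistic-regression discriminator trained with the standard adversarial losses (non-saturating form for the generator) on minority-class feature vectors, after which the minority class is topped up until both classes have equal counts. This is only a sketch. Published frameworks use multilayer networks built in PyTorch or TensorFlow, and the architecture, warm start, learning rate, step count and function names here are all assumptions. The balancing should be applied to the training split only.

```python
import numpy as np

# Illustrative toy GAN: architecture, hyperparameters and names are hypothetical.
def _sigmoid(s):
    return 1.0 / (1.0 + np.exp(-s))

def train_toy_gan(X_min, noise_dim=8, steps=500, lr=0.01, batch=32, seed=0):
    """Adversarially train G(z) = z @ W + b against D(x) = sigmoid(x @ w + c)
    on minority-class rows X_min; return a function that samples m rows."""
    rng = np.random.default_rng(seed)
    n, d = X_min.shape
    W = rng.normal(scale=0.1, size=(noise_dim, d))  # generator weights
    b = X_min.mean(axis=0).astype(float)             # warm start near the data (assumption)
    w = rng.normal(scale=0.1, size=d)                # discriminator weights
    c = 0.0
    for _ in range(steps):
        real = X_min[rng.integers(0, n, batch)]
        fake = rng.normal(size=(batch, noise_dim)) @ W + b
        # Discriminator step: minimize -log D(real) - log(1 - D(fake))
        g_real = _sigmoid(real @ w + c) - 1.0  # dLoss/dlogit for real rows
        g_fake = _sigmoid(fake @ w + c)        # dLoss/dlogit for fake rows
        w -= lr * (real.T @ g_real + fake.T @ g_fake) / batch
        c -= lr * (g_real.sum() + g_fake.sum()) / batch
        # Generator step (non-saturating): minimize -log D(G(z))
        z = rng.normal(size=(batch, noise_dim))
        fake = z @ W + b
        g_logit = _sigmoid(fake @ w + c) - 1.0
        g_x = np.outer(g_logit, w) / batch     # chain rule through x @ w
        W -= lr * z.T @ g_x
        b -= lr * g_x.sum(axis=0)

    def sample(m):
        return rng.normal(size=(m, noise_dim)) @ W + b

    return sample

def balance_with_gan(X, y, minority_label=1, **gan_kwargs):
    """Append GAN samples to the minority class until both classes have equal counts."""
    X_min = X[y == minority_label]
    deficit = int((y != minority_label).sum() - len(X_min))
    if deficit <= 0:
        return X, y
    if len(X_min) == 0:
        raise ValueError("need at least one minority-class example to train the GAN")
    X_syn = train_toy_gan(X_min, **gan_kwargs)(deficit)
    y_syn = np.full(deficit, minority_label, dtype=y.dtype)
    return np.vstack([X, X_syn]), np.concatenate([y, y_syn])
```

Keeping the original rows first makes it easy to track which samples are synthetic, for example to exclude them from validation folds.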

Step 4: Model Training and Optimization

- Implement a Random Forest Classifier with optimized hyperparameters

- Train the model on the balanced training dataset

- Validate model performance on the separate validation set, tuning hyperparameters as needed

- Employ cross-validation to assess robustness and detect overfitting

Step 5: Model Evaluation

- Evaluate the final model on the held-out test set using multiple metrics: accuracy, precision, sensitivity, specificity, F1-score, and ROC-AUC [4]

- Compare performance against baseline models without GAN-based balancing
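All of the threshold metrics in Step 5 derive from the binary confusion matrix, and ROC-AUC can be computed from continuous scores as the probability that a random positive outranks a random negative. A minimal sketch for the positive (interacting) class follows. Some papers report class-weighted averages instead, which can change the numbers, so the averaging scheme is worth stating alongside any comparison. The O(n²) AUC loop is for clarity only, and the function names are hypothetical.

```python
# Illustrative sketch: function names are hypothetical.
def binary_metrics(y_true, y_pred):
    """Accuracy, precision, sensitivity (recall), specificity and F1 for labels in {0, 1}."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)

    def safe_div(a, b):
        return a / b if b else 0.0

    precision = safe_div(tp, tp + fp)
    sensitivity = safe_div(tp, tp + fn)
    return {
        "accuracy": safe_div(tp + tn, len(y_true)),
        "precision": precision,
        "sensitivity": sensitivity,
        "specificity": safe_div(tn, tn + fp),
        "f1": safe_div(2 * precision * sensitivity, precision + sensitivity),
    }


def roc_auc(y_true, scores):
    """ROC-AUC as P(score of a random positive > score of a random negative); ties count 1/2."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))
```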

Troubleshooting Tips:

- If GAN training is unstable, consider modifying the network architecture or adjusting learning rates

- If model performance plateaus, experiment with alternative feature extraction methods or hyperparameter configurations

- For overfitting, implement stronger regularization or increase the diversity of synthetic samples

Protocol 2: Evidential Deep Learning for Uncertainty-Aware DTI Prediction

Background & Principles: Traditional deep learning models for DTI prediction often produce overconfident predictions for out-of-distribution samples, lacking the ability to quantify uncertainty in their predictions [5]. This protocol describes the implementation of EviDTI, an evidential deep learning framework that provides uncertainty estimates alongside predictions, enabling more reliable decision-making in drug discovery pipelines [5].

Experimental Procedure:

Step 1: Data Preparation

- Curate benchmark datasets (DrugBank, Davis, KIBA) following established preprocessing protocols

- Split data into training, validation, and test sets (80:10:10 ratio)

- For cold-start evaluation, ensure strict separation where drugs and targets in the test set do not appear in training
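One way to implement the strict cold-start separation is to partition drugs and targets first, then keep only the pairs whose drug and target fall on the same side of the partition. Pairs mixing a held-out entity with a training entity are discarded, which shrinks the usable data. The sketch below assumes `(drug, target, label)` tuples. The fraction, seed and function name are assumptions rather than the EviDTI authors' procedure, and a validation set can be carved out of the training side the same way.

```python
import random

# Illustrative sketch: function name, fraction and seed are hypothetical.
def cold_start_split(pairs, test_frac=0.2, seed=0):
    """Split (drug, target, label) pairs so test drugs AND test targets are unseen in training."""
    rng = random.Random(seed)
    drugs = sorted({d for d, _, _ in pairs})
    targets = sorted({t for _, t, _ in pairs})
    rng.shuffle(drugs)
    rng.shuffle(targets)
    test_drugs = set(drugs[: int(len(drugs) * test_frac)])
    test_targets = set(targets[: int(len(targets) * test_frac)])
    train, test = [], []
    for d, t, y in pairs:
        if d in test_drugs and t in test_targets:
            test.append((d, t, y))
        elif d not in test_drugs and t not in test_targets:
            train.append((d, t, y))
        # pairs with exactly one held-out entity are dropped to avoid leakage
    return train, test
```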

Step 2: Protein Feature Encoding

- Utilize ProtTrans, a protein language pre-trained model, to extract initial protein sequence features [5]

- Process the initial representations through a light attention (LA) module to provide insights into local interactions at the residue level

- The LA module highlights functionally important residues while suppressing noise in the sequence representations

Step 3: Drug Feature Encoding

- For 2D topological information: Use MG-BERT, a molecular graph pre-training model, to obtain initial drug representations, then process them through a 1D convolutional neural network (1D CNN) [5]

- For 3D spatial structure: Convert drug molecules into atom-bond graphs and bond-angle graphs, with representations obtained through the GeoGNN module

- Concatenate 2D and 3D drug representations to form comprehensive drug embeddings

Step 4: Evidential Layer Implementation

- Concatenate the target and drug representations into a unified feature vector

- Feed the concatenated representation into the evidential layer, which outputs the parameters α of a Dirichlet distribution

- Calculate prediction probabilities and corresponding uncertainty values from the Dirichlet parameters

- Higher uncertainty values indicate less reliable predictions, enabling better prioritization for experimental validation
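The source states only that the evidential layer outputs Dirichlet parameters α. Assuming EviDTI follows the standard evidential deep learning formulation for K classes (non-negative evidence e_k, α_k = e_k + 1, Dirichlet strength S = Σ α_k, expected probability p_k = α_k / S, and uncertainty u = K / S), the post-processing reduces to a few lines. The softplus activation used to make the evidence non-negative is an assumed choice, and the function name is hypothetical.

```python
import numpy as np

# Illustrative sketch of standard evidential post-processing; names are hypothetical.
def dirichlet_outputs(logits: np.ndarray):
    """Map raw evidential-layer outputs (n_samples, K) to alpha, probabilities and uncertainty."""
    evidence = np.logaddexp(0.0, logits)            # softplus keeps evidence non-negative
    alpha = evidence + 1.0                          # Dirichlet concentration parameters
    strength = alpha.sum(axis=1, keepdims=True)     # S = sum_k alpha_k
    probs = alpha / strength                        # expected class probabilities
    uncertainty = alpha.shape[1] / strength[:, 0]   # u = K / S, in (0, 1]
    return alpha, probs, uncertainty
```

When there is no evidence at all, u equals 1 and the probabilities are uniform. In practice, a cut-off such as keeping only predictions with u below a chosen value would be tuned on validation data, as the implementation considerations below suggest.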

Step 5: Model Training and Evaluation

- Implement a specialized loss function that minimizes prediction error while maximizing evidence for correct classes

- Train the model using standard backpropagation with early stopping based on validation performance

- Evaluate on test sets using standard metrics (accuracy, precision, recall, MCC, F1, AUC, AUPR) and uncertainty calibration metrics

- Compare against baseline models (RF, SVM, NB, DeepConv-DTI, GraphDTA, MolTrans, etc.)
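The exact EviDTI loss is not given here. As one hedged example of a loss that "minimizes prediction error while maximizing evidence for correct classes", the sketch below implements the mean-squared-error evidential loss from the evidential deep learning literature. It omits the KL-divergence regularizer that is usually annealed in during training. The function name is hypothetical, and `alpha` is assumed to be the Dirichlet parameters produced by the evidential layer.

```python
import numpy as np

# Illustrative sketch of an MSE-based evidential loss (KL term omitted); name is hypothetical.
def evidential_mse_loss(alpha: np.ndarray, y_onehot: np.ndarray) -> float:
    """Mean over samples of sum_k (y_k - p_k)^2 + p_k (1 - p_k) / (S + 1).

    The first term is the squared prediction error. The second is the variance of
    p_k under the Dirichlet, which shrinks as the total evidence S grows, so the
    loss rewards accumulating evidence for the correct class.
    """
    strength = alpha.sum(axis=1, keepdims=True)
    p = alpha / strength
    err = ((y_onehot - p) ** 2).sum(axis=1)
    var = (p * (1.0 - p) / (strength + 1.0)).sum(axis=1)
    return float((err + var).mean())
```

Strong evidence for the correct class yields a lower loss than weak, uniform evidence, and strong evidence for the wrong class yields a higher one.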

Implementation Considerations:

- The framework can be adapted to different molecular representations based on data availability

- Uncertainty thresholds should be determined empirically based on the desired trade-off between recall and precision

- For deployment, set uncertainty cut-offs that determine which predictions proceed to experimental validation

Performance Benchmarking

Table 2: Performance Comparison of Advanced DTI Prediction Models

| Model | Dataset | Accuracy | Precision | Sensitivity | Specificity | F1-Score | ROC-AUC |
|---|---|---|---|---|---|---|---|
| GAN+RFC [4] | BindingDB-Kd | 97.46% | 97.49% | 97.46% | 98.82% | 97.46% | 99.42% |
| GAN+RFC [4] | BindingDB-Ki | 91.69% | 91.74% | 91.69% | 93.40% | 91.69% | 97.32% |
| GAN+RFC [4] | BindingDB-IC50 | 95.40% | 95.41% | 95.40% | 96.42% | 95.39% | 98.97% |
| EviDTI [5] | DrugBank | 82.02% | 81.90% | - | - | 82.09% | - |
| EviDTI [5] | Davis | +0.8% vs baselines | +0.6% vs baselines | - | - | +2.0% vs baselines | +0.1% vs baselines |
| EviDTI [5] | KIBA | +0.6% vs baselines | +0.4% vs baselines | - | - | +0.4% vs baselines | +0.1% vs baselines |
| DeepLPI [4] | BindingDB | - | - | 83.1% | 79.2% | - | 89.3% |
| kNN-DTA [4] | BindingDB-IC50 | - | - | - | - | - | - (regression model; reports RMSE = 0.684) |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for DTI Prediction Research

| Resource Category | Specific Tools/Databases | Key Functionality | Application in DTI Research |
|---|---|---|---|
| Bioactivity Databases | BindingDB, ChEMBL, Davis, KIBA | Provide curated drug-target interaction data with binding affinities | Training and benchmarking datasets for model development |
| Chemical Representation | MACCS Keys, Extended-Connectivity Fingerprints (ECFPs), SMILES | Encode molecular structures as machine-readable features | Feature extraction for drug compounds |
| Protein Representation | ProtTrans, ESM, Amino Acid Composition, Dipeptide Composition | Generate protein features from sequence and structural information | Feature extraction for target proteins |
| Deep Learning Frameworks | PyTorch, TensorFlow, DeepGraph | Implement and train neural network architectures | Building GANs, graph neural networks, transformers |
| Specialized DTI Tools | EviDTI, DrugMAN, Komet, KronRLS | Pre-built models for specific DTI prediction scenarios | Benchmarking, transfer learning, production deployment |
| Uncertainty Quantification | Evidential Deep Learning, Monte Carlo Dropout, Ensemble Methods | Estimate prediction reliability and model confidence | Prioritizing candidates for experimental validation |

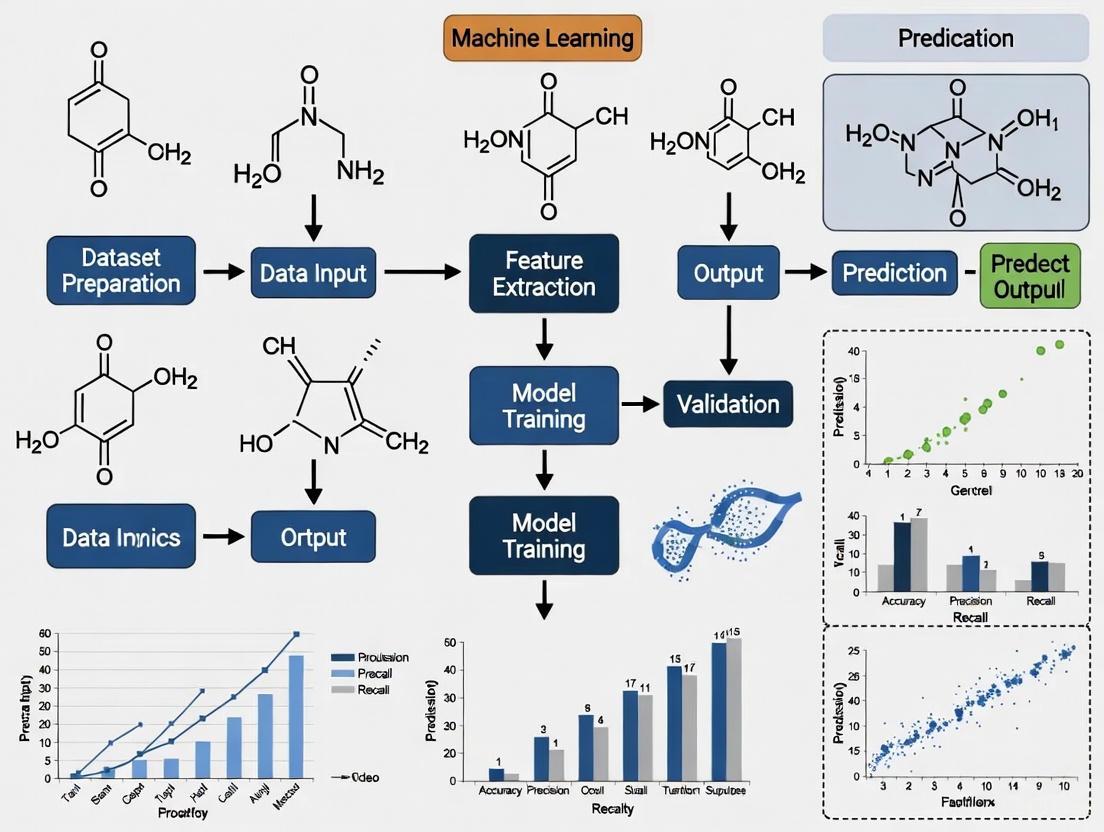

Workflow Diagrams

GAN-Based Hybrid Framework Workflow

EviDTI Framework Architecture

The integration of advanced machine learning methodologies into DTI prediction has fundamentally transformed the early drug discovery pipeline. The protocols and frameworks outlined in this document, from GAN-based hybrid approaches that address data imbalance to evidential deep learning models that provide uncertainty quantification, represent the cutting edge of computational drug discovery [4] [5]. The GAN-based hybrid framework achieved accuracy above 97% on the BindingDB-Kd dataset [4], while evidential models such as EviDTI supply the reliability estimates needed for informed decision-making in pharmaceutical research [5].

As the field continues to evolve, several emerging trends promise to further enhance DTI prediction capabilities. The integration of large language models and protein structure prediction tools like AlphaFold offers new opportunities for improved feature representation [1]. Similarly, the development of frameworks capable of integrating heterogeneous information sources through mutual attention networks provides pathways to more comprehensive interaction modeling [7]. For researchers and drug development professionals, the adoption of these advanced computational protocols enables more efficient prioritization of candidate compounds for experimental validation, ultimately accelerating the therapeutic development process and reducing the substantial costs associated with traditional drug discovery approaches.

Chemogenomics represents a transformative paradigm in modern drug discovery, systematically investigating the interactions between chemical compounds and biological target families on a genomic scale. By integrating complementary data from internal and external sources into unified chemogenomics databases, this approach enables the extraction of actionable information from vast biological datasets [8]. The establishment of structured, model-ready databases is crucial for applications ranging from focused library design and tool compound selection to target deconvolution in phenotypic screening and predictive model building [8]. This protocol outlines comprehensive methodologies for constructing chemogenomic frameworks, implementing machine learning models for drug-target interaction prediction, and applying these resources to accelerate therapeutic development. Through standardized data capture, harmonization, and integration practices, researchers can harness the full potential of chemogenomic data to navigate the complex landscape of drug discovery, ultimately reducing attrition rates and enhancing development efficiency.

Chemogenomics databases serve as foundational resources that systematically organize compound-target interaction data, enabling researchers to navigate the complex relationship between chemical space and biological space. These databases harmonize data from diverse sources, including historical in-house data and public repositories, into a unified framework that supports various chemical biology applications [8]. The evolution of high-throughput screening technologies has generated an explosion of experimentally discovered associations between compounds and targets, necessitating robust database infrastructures to maximize their utility [8].

Key Public Chemogenomics Databases

Table 1: Major Public Chemogenomics Databases and Their Characteristics

| Database Name | Primary Focus | Key Features | Data Sources |
|---|---|---|---|
| ChEMBL [9] | Bioactivity data | Manually curated database of bioactive molecules with drug-like properties | Published literature, patent documents |
| DrugBank [9] | Drug and target data | Comprehensive drug and drug target information with detailed mechanisms | Experimental, clinical, and molecular data |
| TTD (Therapeutic Target Database) [9] | Therapeutic targets | Focuses on known therapeutic protein and nucleic acid targets | Clinical, pre-clinical, and experimental data |
| STITCH (Search Tool for Interacting Chemicals) [8] | Chemical-protein interactions | Includes compound-protein and protein-protein interactions, filterable by tissue | Multiple public databases with confidence scoring |
| Drug2Gene [8] | Small-molecule activity | Complex query building with results viewable by compound, target, or relation | 19 different public databases (version 3.2) |
| BindingDB [10] [4] | Binding affinity data | Focuses on drug-target binding affinities (Kd, Ki, IC50) | Experimental measurements from scientific literature |

Data Harmonization and Integration Protocols

Successful chemogenomics implementation requires meticulous data harmonization and integration protocols to ensure data quality and interoperability:

- Compound Standardization: Implement standardized chemical representation using identifiers such as InChI (International Chemical Identifier) to enable accurate compound matching across different databases [8].

- Target Normalization: Map protein targets to standardized gene identifiers and sequences using resources like UniProt to ensure consistent biological annotation [9].

- Bioactivity Data Curation: Establish consistent thresholds for binding affinity values and implement quality control measures to handle conflicting data points, such as excluding compound-target pairs whose bioactivity values differ by more than one order of magnitude [9].

- Metadata Annotation: Capture comprehensive experimental metadata, including assay conditions, measurement types (IC50, Ki, Kd), and experimental contexts to enable proper data interpretation [8].
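The conflict rule above can be sketched as follows. Each measurement is converted to a negative log molar value (pActivity = 9 − log10(value in nM)) and grouped by standardized compound identifier, target identifier and measurement type. Pairs whose replicate values span more than one log unit (a 10-fold difference) are dropped, and the median is kept otherwise. The record layout, the use of the median, and the function name are assumptions.

```python
import math
from collections import defaultdict
from statistics import median

# Illustrative sketch: record layout, aggregation rule and name are hypothetical.
def aggregate_bioactivity(records, max_spread=1.0):
    """records: iterable of (inchikey, uniprot_id, activity_type, value_nM).

    Returns {(inchikey, uniprot_id, activity_type): median pActivity}, excluding
    groups whose measurements differ by more than `max_spread` log units.
    """
    grouped = defaultdict(list)
    for inchikey, uniprot_id, activity_type, value_nm in records:
        if value_nm is None or value_nm <= 0:
            continue  # unusable measurement
        # nM -> molar -> negative log10, i.e. 9 - log10(value in nM)
        grouped[(inchikey, uniprot_id, activity_type)].append(9.0 - math.log10(value_nm))
    curated = {}
    for key, values in grouped.items():
        if max(values) - min(values) <= max_spread:
            curated[key] = median(values)
    return curated
```

Keeping the measurement type in the key reflects the metadata bullet: Ki, Kd and IC50 values are not pooled blindly.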

Computational Frameworks for Chemogenomic Analysis

Machine learning approaches have revolutionized chemogenomic analysis by enabling the prediction of complex relationships between chemical structures and biological targets. These methods leverage both chemical descriptor spaces and biological descriptor spaces to build predictive models with applications across the drug discovery pipeline.

Molecular and Target Representation Methods

Effective representation of compounds and targets is fundamental to chemogenomic analysis. The following protocols outline standard approaches for feature extraction:

Molecular Descriptor Calculation:

- 2D Molecular Descriptors: Calculate 188 Mol2D descriptors including constitutional, topological, connectivity indices, shape descriptors, and charge descriptors using standardized cheminformatics toolkits [9].

- Structural Fingerprints: Generate MACCS keys (Molecular ACCess System) to represent structural features of compounds as binary vectors for similarity assessment [4].

- Graph Representations: Represent molecules as graphs with atoms as nodes and bonds as edges to preserve structural information for graph neural networks [11].

Protein Target Representation:

- Sequence-Based Features: Calculate amino acid composition, dipeptide composition, and autocorrelation features from protein sequences to capture biochemical properties [4].

- Gene Ontology Terms: Incorporate Gene Ontology (GO) terms across biological process (BP), molecular function (MF), and cellular component (CC) categories to represent functional characteristics [9].

- Evolutionary Information: Use position-specific scoring matrices (PSSMs) or protein language model embeddings to capture evolutionary conservation patterns [10].

Table 2: Machine Learning Approaches in Chemogenomics

| Algorithm Category | Representative Methods | Key Applications in Chemogenomics | Advantages |
|---|---|---|---|
| Traditional Machine Learning | Random Forests, Support Vector Machines [12] [13] | Target prediction, compound classification | Interpretability, effectiveness with structured features |
| Deep Learning | Convolutional Neural Networks (CNNs), Recurrent Neural Networks (RNNs) [12] [4] | Drug-target affinity prediction, sequence analysis | Automatic feature learning from raw data |
| Graph Machine Learning | Graph Neural Networks (GNNs) [11] | Molecular property prediction, structure-based interaction modeling | Natural representation of molecular structure |
| Multitask Learning | DeepDTAGen framework [10] | Simultaneous affinity prediction and target-aware drug generation | Knowledge transfer across related tasks |

Advanced Architectures for Drug-Target Interaction Prediction

Recent advances in deep learning have produced sophisticated architectures specifically designed for chemogenomic applications:

Multitask Learning Framework (DeepDTAGen): This approach simultaneously predicts drug-target binding affinities and generates novel target-aware drug candidates using a shared feature space. The framework employs the FetterGrad algorithm to mitigate gradient conflicts between tasks, ensuring aligned learning across prediction and generation objectives [10].

Ensemble Chemogenomic Models: Construct multiple chemogenomic models using different descriptor sets for compounds and proteins, then combine them to improve prediction performance. Validation studies demonstrate that such ensemble models can identify 57.96% of known targets in the top-10 predictions, representing approximately 50-fold enrichment over random guessing [9].
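The top-10 evaluation and fold-enrichment figure above can be computed for any ensemble in a few lines. The sketch averages per-model target scores for one compound, ranks the targets, and reports the fraction of known targets found in the top k. It also reports the enrichment of that fraction over the random expectation k / N, where N is the number of candidate targets. Averaging raw scores assumes the models' outputs are on comparable scales (rank averaging is a common alternative). The function names and the dictionary layout are hypothetical.

```python
# Illustrative sketch: names and data layout are hypothetical.
def ensemble_scores(model_scores):
    """Average target scores across models; model_scores is a list of {target: score} dicts."""
    targets = set().union(*model_scores)
    return {t: sum(m.get(t, 0.0) for m in model_scores) / len(model_scores) for t in targets}


def topk_recall_and_enrichment(scores, known_targets, k=10):
    """Fraction of known targets ranked in the top k, and its fold-enrichment over random."""
    ranked = sorted(scores, key=lambda t: (-scores[t], t))  # ties broken alphabetically
    top = set(ranked[:k])
    recall = len(top & set(known_targets)) / len(known_targets)
    random_recall = k / len(scores)  # expected recall of a uniformly random top-k list
    return recall, recall / random_recall
```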

Hybrid ML-DL Frameworks with GANs: Address data imbalance issues in DTI prediction by employing Generative Adversarial Networks (GANs) to create synthetic data for the minority class, significantly reducing false negatives. This approach achieved remarkable performance metrics including accuracy of 97.46%, precision of 97.49%, and ROC-AUC of 99.42% on BindingDB-Kd datasets [4].

Diagram 1: Chemogenomics Data Integration Workflow

Experimental Protocols for Chemogenomic Applications

Protocol: Target Deconvolution in Phenotypic Screening

Target deconvolution identifies the molecular targets responsible for the observed phenotypic effects of bioactive compounds.

Materials and Reagents:

- Phenotypic screening hit list with chemical structures

- Curated chemogenomics database (e.g., CHEMGENIE)

- Statistical analysis software (R, Python)

- Pathway analysis tools (KEGG, GO)

Procedure:

Step 1: Input Compound Preparation

- Standardize chemical structures of screening hits

- Calculate molecular descriptors or fingerprints

- Annotate compounds with known bioactivity profiles

Step 2: Database Query and Enrichment Analysis

- Query chemogenomics database for known targets of screening hits

- Perform statistical enrichment analysis to identify overrepresented targets

- Calculate p-values using Fisher's exact test with multiple testing correction

Step 3: Pathway and Network Analysis

- Map enriched targets to biological pathways using KEGG or Reactome

- Construct protein-protein interaction networks around prioritized targets

- Identify key network nodes with high centrality measures

Step 4: Experimental Validation Prioritization

- Rank candidate targets based on enrichment scores and network properties

- Consider tissue expression patterns and biological context

- Design follow-up experiments for top candidate targets
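The enrichment step can be sketched with a one-sided Fisher's exact test per target (equivalently, the upper tail of the hypergeometric distribution), followed by Benjamini-Hochberg false discovery rate correction. The sketch assumes that, for each target, screening hits are compared against the whole screened library. The argument layout and function names are assumptions, and SciPy and statsmodels provide tested implementations for production use.

```python
from math import comb

# Illustrative sketch: argument layout and names are hypothetical.
def fisher_greater(hits_with, hits_total, library_with, library_total):
    """One-sided Fisher's exact p-value that a target is over-represented among hits.

    hits_with:     hits annotated to the target
    hits_total:    number of screening hits
    library_with:  library compounds (hits included) annotated to the target
    library_total: number of screened compounds
    """
    denom = comb(library_total, hits_total)
    upper = min(hits_total, library_with)
    # Sum hypergeometric probabilities P(X = x) for every x >= the observed count
    return sum(
        comb(library_with, x) * comb(library_total - library_with, hits_total - x)
        for x in range(hits_with, upper + 1)
    ) / denom


def benjamini_hochberg(pvalues):
    """Return Benjamini-Hochberg adjusted p-values (q-values) in the original order."""
    m = len(pvalues)
    order = sorted(range(m), key=lambda i: pvalues[i])
    adjusted = [0.0] * m
    running_min = 1.0
    for rank in range(m, 0, -1):  # walk from the largest p-value down
        i = order[rank - 1]
        running_min = min(running_min, pvalues[i] * m / rank)
        adjusted[i] = running_min
    return adjusted
```

Targets whose adjusted p-value falls below a chosen false discovery rate (0.05 is a common default) would then move on to the pathway and network analysis.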

Protocol: Building Predictive Polypharmacology Models

This protocol details the construction of models that predict multiple targets for chemical compounds.

Materials:

- Curated compound-target interaction dataset

- Molecular descriptor calculation software (RDKit, CDK)

- Machine learning framework (scikit-learn, PyTorch, TensorFlow)

- High-performance computing resources

Procedure:

Step 1: Dataset Preparation

- Collect known compound-target interactions from ChEMBL and BindingDB

- Standardize activity measurements and define positive interactions with an activity threshold (e.g., Ki ≤ 100 nM)

- Split data into training (70%), validation (15%), and test (15%) sets

Step 2: Feature Engineering

- Calculate comprehensive molecular descriptors (188 Mol2D descriptors)

- Generate protein sequence features (amino acid composition, dipeptide composition)

- Create combined compound-target pair representations

Step 3: Model Training

- Implement ensemble methods (Random Forest, XGBoost) as baselines

- Train deep learning architectures (Graph Neural Networks, Transformers)

- Optimize hyperparameters using cross-validation on the training set

Step 4: Model Evaluation

- Assess affinity-regression performance using concordance index (CI) and mean squared error (MSE)

- Evaluate ranking metrics (top-k accuracy) for target prediction

- Perform external validation on the held-out test set

Step 5: Model Interpretation

- Apply feature importance analysis (SHAP, permutation importance)

- Identify key molecular features driving target predictions

- Visualize chemical subspaces associated with polypharmacology
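Because the concordance index is less familiar than MSE, here is a direct O(n²) sketch for continuous affinities (for example, pKi values). Over all pairs with different true affinities, it counts the fraction whose predictions are ordered the same way, with tied predictions counting one half. This follows the definition commonly used in affinity-prediction benchmarks. The function names are hypothetical, and a sorting-based implementation is preferable for large test sets.

```python
# Illustrative sketch: function names are hypothetical.
def concordance_index(y_true, y_pred):
    """Fraction of comparable pairs (different true values) ranked in the correct order."""
    concordant, comparable = 0.0, 0
    n = len(y_true)
    for i in range(n):
        for j in range(i + 1, n):
            if y_true[i] == y_true[j]:
                continue  # pairs with equal true affinity are not comparable
            comparable += 1
            # Orient the pair so that `hi` has the larger true affinity
            hi, lo = (i, j) if y_true[i] > y_true[j] else (j, i)
            if y_pred[hi] > y_pred[lo]:
                concordant += 1.0
            elif y_pred[hi] == y_pred[lo]:
                concordant += 0.5
    return concordant / comparable if comparable else 0.0


def mse(y_true, y_pred):
    """Mean squared error between true and predicted affinities."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)
```

A CI of 1.0 means perfect ranking, 0.5 matches random or constant predictions, and 0.0 means a fully reversed ranking.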

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Research Reagents and Computational Tools for Chemogenomics

| Tool/Reagent | Type | Function/Application | Implementation Example |
|---|---|---|---|
| CHEMGENIE Database [8] | Data Resource | Integrated chemogenomics database for compound-target associations | Centralized repository combining internal and external bioactivity data |
| MACCS Keys [4] | Molecular Fingerprint | Structural representation of compounds for similarity searching | 166-bit structural keys for molecular similarity calculations |
| Mol2D Descriptors [9] | Molecular Descriptors | 2D molecular features for QSAR and machine learning | 188 descriptors including constitutional, topological, and charge descriptors |
| Amino Acid Composition [4] | Protein Descriptor | Representation of protein sequence features | Frequency of amino acids in protein sequences for target characterization |
| GANs for Data Balancing [4] | Computational Method | Address class imbalance in DTI datasets | Generate synthetic minority class samples to improve model sensitivity |
| Graph Neural Networks [11] | Machine Learning Architecture | Model molecular structures as graphs for property prediction | Message passing neural networks operating on atom-bond representations |
| FetterGrad Algorithm [10] | Optimization Method | Mitigate gradient conflicts in multitask learning | Minimize Euclidean distance between task gradients during training |

Diagram 2: Target Deconvolution Workflow

Data Visualization and Interpretation Guidelines

Effective visualization of chemogenomics data requires careful consideration of color spaces and perceptual uniformity to accurately communicate complex relationships.

Colorization Rules for Biological Data Visualization

- Identify Data Nature: Categorize variables as nominal (e.g., target classes), ordinal (e.g., affinity levels), interval, or ratio (e.g., binding affinity values) to determine appropriate color schemes [14].

- Select Perceptually Uniform Color Spaces: Utilize CIE Luv or CIE Lab color spaces instead of standard RGB to ensure perceptual uniformity, where color changes correspond to consistent perceptual differences [14].

- Assess Color Deficiencies: Check visualizations for accessibility using color deficiency simulators to ensure interpretability for users with color vision deficiencies [14].

- Contextualize Color Meaning: Apply domain-specific color conventions (e.g., red for inhibitory effects, blue for stimulatory effects) while providing clear legends [14].
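To make the perceptual-uniformity rule concrete, the minimal sketch below converts 8-bit sRGB colors to CIE L\*a\*b\* (D65 white point) and measures their difference with the simple CIE76 formula, ΔE\*ab = Euclidean distance in Lab space. It assumes standard sRGB input. In practice, a color-science library and a more refined metric such as CIEDE2000 would usually be preferred.

```python
import numpy as np

# D65 reference white in XYZ (Y normalized to 1.0)
WHITE_D65 = np.array([0.95047, 1.00000, 1.08883])

# Linear-sRGB -> XYZ matrix for the D65 white point
SRGB_TO_XYZ = np.array([
    [0.4124564, 0.3575761, 0.1804375],
    [0.2126729, 0.7151522, 0.0721750],
    [0.0193339, 0.1191920, 0.9503041],
])

def srgb_to_lab(rgb255):
    """Convert an 8-bit sRGB triplet to CIE L*a*b* (D65)."""
    c = np.asarray(rgb255, dtype=float) / 255.0
    # Undo the sRGB transfer curve to obtain linear light
    linear = np.where(c <= 0.04045, c / 12.92, ((c + 0.055) / 1.055) ** 2.4)
    t = (SRGB_TO_XYZ @ linear) / WHITE_D65
    # CIE Lab nonlinearity, with its linear segment near zero
    delta = 6 / 29
    f = np.where(t > delta ** 3, np.cbrt(t), t / (3 * delta ** 2) + 4 / 29)
    L = 116 * f[1] - 16
    a = 500 * (f[0] - f[1])
    b = 200 * (f[1] - f[2])
    return np.array([L, a, b])

def delta_e76(rgb1, rgb2):
    """CIE76 color difference between two sRGB colors."""
    return float(np.linalg.norm(srgb_to_lab(rgb1) - srgb_to_lab(rgb2)))

# Two pairs that differ by the same 40-unit step in the green channel
print(delta_e76((0, 40, 0), (0, 80, 0)), delta_e76((0, 200, 0), (0, 240, 0)))
```

The two printed differences are not equal: the same RGB step is perceived as a larger change among dark greens than among bright ones. This is why a gradient that is evenly spaced in RGB can exaggerate or hide differences in, for example, binding-affinity heatmaps.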

Visualization of Multi-scale Biomolecular Data

Molecular visualization employs multiple representation models to highlight different structural aspects:

- Skeletal Models: Use ball-and-stick or stick representations to emphasize atomic connectivity and bonding patterns in small molecules [15].

- Cartoon Models: Implement ribbon diagrams to visualize protein secondary structures and folding patterns [15].

- Surface Models: Apply solvent-accessible surface (SAS) or solvent-excluded surface (SES) representations to analyze molecular interactions and binding pockets [15].

These visualization approaches, when combined with appropriate color schemes, enable researchers to intuitively understand complex structural relationships and interaction patterns between compounds and their biological targets.

In modern drug discovery, chemogenomics aims to relate the vast chemical space of potential compounds to the genomic space of biological targets, facilitating the identification of novel drug-target interactions (DTIs) [16]. The accurate prediction of these interactions is a critical and rate-limiting step, with machine learning (ML) emerging as a powerful tool to accelerate this process by leveraging large-scale chemical and biological data [16] [17]. The performance and generalizability of ML models are profoundly influenced by the quality, scope, and characteristics of the underlying databases used for training [17]. Among the most critical resources for DTI prediction are BindingDB, DrugBank, and ChEMBL. These databases provide manually curated, high-quality data on bioactive molecules, approved drugs, and quantitative protein-ligand binding measurements, forming the foundational data upon which chemogenomic models are built. This application note provides a detailed overview of these three key databases, summarizes their data into comparable tables, and outlines experimental protocols for their use in ML-driven chemogenomics research, specifically framed to address common challenges such as model generalizability and annotation bias.

Core Characteristics and Data Content

ChEMBL is a manually curated database of bioactive molecules with drug-like properties, primarily extracted from the scientific literature. It focuses on quantitative bioactivity data (e.g., IC₅₀, Kᵢ) essential for structure-activity relationship (SAR) analysis and rational drug design [18]. As of recent updates, it contains over 2.4 million compounds and 20.3 million bioactivity measurements [18].

DrugBank is a comprehensive resource combining detailed drug data with target information. It is uniquely positioned as a knowledgebase for FDA-approved and experimental drugs, providing rich information on mechanisms of action, pharmacokinetics, drug-drug interactions, and clinical data [19] [18]. It contains over 17,000 drug entries and links to 5,000 protein targets [18].

BindingDB is a public database focused on measured binding affinities between proteins and small, drug-like molecules. It provides quantitative interaction data, such as Kd, Ki, and IC50 values, which are critical for validating binding predictions and modeling structure-activity relationships [18]. It boasts over 3 million binding data entries for more than 1.3 million compounds and 9,500 targets [18].

Table 1: Core Characteristics of BindingDB, DrugBank, and ChEMBL

| Feature | BindingDB | DrugBank | ChEMBL |
|---|---|---|---|
| Primary Focus | Protein-ligand binding affinities | Approved & experimental drugs; pharmacology | Bioactive molecules & SAR data |
| Total Compounds | >1.3 million [18] | >17,000 [18] | >2.4 million [18] |
| Total Targets | ~9,500 [18] | ~5,000 [18] | >9,500 (as of earlier data) [19] |
| Key Data Types | Kd, Ki, IC50 [18] | Drug targets, mechanisms, pharmacokinetics, pathways [19] [20] [18] | IC50, Ki, SAR, bioactivity data [19] [18] |
| Curation Style | Hybrid (manual + automated) [18] | Hybrid (manually validated + automated updates) [18] | Manual (expert-curated from literature/patents) [18] |
| Access | Free and publicly available [18] | Free for non-commercial use [18] | Free and publicly available [18] |

Quantitative Data and Molecular Coverage

The databases differ significantly in their size and scope, which directly influences their application in drug discovery pipelines. ChEMBL is the largest in terms of unique bioactivity records, making it invaluable for training ML models on a diverse chemical and biological space. BindingDB provides the deepest and most focused collection of quantitative binding measurements. DrugBank, while smaller in compound count, offers the richest contextual and pharmacological information for its entities, which is crucial for understanding drug mechanism and repurposing potential.

Table 2: Statistical Overview and Molecular Coverage

| Aspect | BindingDB | DrugBank | ChEMBL |
|---|---|---|---|
| Bioactivity Records | 3 million+ [18] | N/A (focus on drug entities) | 20.3 million+ [18] |
| Therapeutic Coverage | Broad (any protein with binding data) | Focused (approved, experimental, nutraceutical drugs) [19] | Broad (from medicinal chemistry literature) [19] |
| Data Source | Scientific literature [18] | Scientific literature, regulatory documents [18] | Scientific literature, patents [18] |
| Unique Value for ML | Quantitative affinity data for model validation [17] | Rich pharmacological context and known drug-target pairs [16] | Massive-scale, quantitative bioactivity data for SAR [19] |

Critical Considerations for Machine Learning Applications

A paramount challenge in using these databases for ML is the problem of annotation imbalance and topological shortcuts [17]. The known drug-target interaction (DTI) network is a bipartite graph with a fat-tailed degree distribution, meaning a few proteins and ligands (hubs) have a disproportionately large number of known interactions, while the majority have very few [17]. Furthermore, an anti-correlation exists between a node's degree and its average dissociation constant (Kd), meaning high-degree nodes tend to have stronger binding affinities [17]. ML models can exploit these topological features as shortcuts, learning to predict binding based on a molecule's popularity in the network rather than its structural or sequence-based features. This leads to models that fail to generalize to novel proteins or ligands not present in the training data [17].
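The shortcut can be illustrated with a hypothetical toy network. The "model" below ignores chemistry and sequence entirely and scores each candidate (ligand, protein) pair by the product of the two nodes' degrees in the training network. The edge lists and labels are invented purely for illustration. On real, annotation-biased benchmarks, a degree-only baseline of this kind is a useful sanity check that a structure-aware model should clearly outperform.

```python
from collections import Counter

def degree_scores(train_edges, candidate_pairs):
    """Score (ligand, protein) pairs by the product of their training-network degrees.

    Only node 'popularity' is used. Nodes absent from training get degree 0,
    so novel ligands or proteins are always ranked last.
    """
    lig_deg = Counter(lig for lig, _ in train_edges)
    prot_deg = Counter(prot for _, prot in train_edges)
    return [lig_deg[lig] * prot_deg[prot] for lig, prot in candidate_pairs]

def roc_auc(labels, scores):
    """Rank-based AUC: chance a random positive outranks a random negative (ties count 0.5)."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

# Hypothetical toy data: ligand L1 and protein P1 are hubs
train_edges = [("L1", "P1"), ("L1", "P2"), ("L1", "P3"),
               ("L2", "P1"), ("L3", "P1"), ("L4", "P4")]
test_pairs = [("L1", "P4"), ("L4", "P1"), ("L2", "P2"), ("L3", "P3")]
test_labels = [1, 1, 0, 0]   # positives happen to involve hubs
scores = degree_scores(train_edges, test_pairs)
print(scores, roc_auc(test_labels, scores))
```

In this contrived example, popularity alone separates positives from negatives perfectly, even though no molecular information is used.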

Strategies to Mitigate Bias:

- Network-based Negative Sampling: Instead of assuming all unobserved pairs are negative, use network distance (e.g., shortest path) to select likely non-binding pairs as robust negative samples [17].

- Unsupervised Pre-training: Pre-train model embeddings for proteins and ligands on larger, unrelated chemical and sequence libraries to learn meaningful representations before fine-tuning on binding data, reducing dependency on limited annotations [17].

- Cross-Validation Strategy: Implement strict cross-validation splits in which the proteins or ligands in the test set are entirely absent from the training set (leave-one-out at the level of whole proteins or ligands, often called a cold-start split) to properly assess generalizability to novel entities [17].
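The network-based negative sampling strategy can be sketched as follows, assuming the known DTIs are given as (ligand, protein) pairs. The sketch runs a breadth-first search from each ligand over the bipartite interaction graph and keeps unobserved pairs whose shortest-path distance is at least a cutoff. Treating pairs in disconnected components as infinitely distant, and therefore eligible, is an assumption of this sketch. Because every ligand-to-protein path in a bipartite graph has odd length (1, 3, 5, ...), a cutoff of 3 excludes only the observed pairs, so larger cutoffs give stricter negatives. The function names and the default cutoff are illustrative, not taken from a published implementation.

```python
import math
from collections import defaultdict, deque

def bipartite_adjacency(edges):
    """Undirected adjacency map; keys are tagged ('L', id) or ('P', id)."""
    adj = defaultdict(set)
    for lig, prot in edges:
        adj[("L", lig)].add(("P", prot))
        adj[("P", prot)].add(("L", lig))
    return adj

def bfs_distances(adj, source):
    """Unweighted shortest-path lengths from source to every reachable node."""
    dist = {source: 0}
    queue = deque([source])
    while queue:
        node = queue.popleft()
        for nbr in adj[node]:
            if nbr not in dist:
                dist[nbr] = dist[node] + 1
                queue.append(nbr)
    return dist

def network_negatives(edges, min_distance=5):
    """Unobserved (ligand, protein) pairs at shortest-path distance >= min_distance."""
    adj = bipartite_adjacency(edges)
    ligands = sorted({lig for lig, _ in edges})
    proteins = sorted({prot for _, prot in edges})
    negatives = []
    for lig in ligands:
        dist = bfs_distances(adj, ("L", lig))
        for prot in proteins:
            d = dist.get(("P", prot), math.inf)   # unreachable -> infinite distance
            if d >= min_distance:
                negatives.append((lig, prot))
    return negatives

# Hypothetical network: a chain L1-P1-L2-P2-L3-P3 plus a separate L4-P4 edge
edges = [("L1", "P1"), ("L2", "P1"), ("L2", "P2"),
         ("L3", "P2"), ("L3", "P3"), ("L4", "P4")]
print(network_negatives(edges, min_distance=5))
```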

Diagram 1: ML Pitfall from Data Bias

Experimental Protocols for Data Extraction and Curation

Protocol 1: Building a Robust Dataset for DTI Prediction

This protocol describes the steps to create a high-quality, machine-learning-ready dataset from ChEMBL, BindingDB, and DrugBank, designed to mitigate annotation bias.

Research Reagent Solutions:

- Computational Environment: A Python environment (v3.8+) with key libraries, including pandas for data manipulation, rdkit for cheminformatics, and requests for API access.

- Data Sources: Direct download links or RESTful API endpoints for ChEMBL, BindingDB, and DrugBank.

- Identifier Mapping Tools: UniProt ID mapping service to harmonize protein identifiers across databases.

- Cheminformatics Toolkit: CACTVS or OpenBabel for structure normalization and canonicalization, crucial for accurate compound comparison [19].

Procedure:

- Data Acquisition: Download the latest public releases of ChEMBL (SQLite or flat file), BindingDB (CSV), and DrugBank (requires registration) [18].

- Structure Normalization: Process all chemical structures using a toolkit like CACTVS to normalize stereochemistry, charges, and remove duplicates. Apply rules to generate unique structure identifiers (e.g., InChIKey) at different normalization levels (e.g., ignoring stereochemistry or salts) to ensure consistent compound representation [19].

- Protein Identifier Harmonization: Map all protein identifiers to a standard namespace (e.g., UniProt IDs) using the UniProt mapping service. This is critical for integrating target data across all three sources [19].

- Activity Thresholding and Labeling: For each protein-ligand pair, define a binding label based on a consistent activity threshold (e.g., Kd or IC50 < 100 nM for "positive") [17]. This creates the foundational positive set.

- Negative Set Sampling (Network-Based): Implement a robust negative sampling strategy. Instead of random sampling, select protein-ligand pairs that are separated by a minimum shortest path distance (e.g., >=3) in the known DTI network. This helps select pairs that are topologically distant and more likely to be true negatives [17].

- Data Integration and Splitting: Merge the positive and robust negative sets. Split the final dataset into training, validation, and test sets using a stratified, entity-level leave-out approach, ensuring that all interactions for a given protein or ligand fall entirely within one split, to test model generalizability to novel entities [17].
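The thresholding and splitting steps can be sketched in plain Python. The sketch assumes measurements have already been normalized to (InChIKey, UniProt ID, value in nM) records. It aggregates repeated measurements of a pair with the median (an assumption; other aggregation rules are also common), applies the 100 nM threshold, and assigns whole proteins to either the training or the test split. The helper names and the placeholder identifiers such as "LIG-A" are hypothetical.

```python
import random
from collections import defaultdict
from statistics import median

def positive_pairs(records, threshold_nm=100.0):
    """Keep (ligand, protein) pairs whose median affinity is below threshold_nm.

    records: iterable of (ligand_id, protein_id, value_nm), where value_nm is a
    Kd or IC50 already converted to nanomolar.
    """
    values = defaultdict(list)
    for lig, prot, value_nm in records:
        values[(lig, prot)].append(value_nm)
    return sorted(pair for pair, v in values.items() if median(v) < threshold_nm)

def protein_cold_split(pairs, test_fraction=0.2, seed=0):
    """Split (ligand, protein, label) triples so no protein is in both splits."""
    proteins = sorted({prot for _, prot, _ in pairs})
    random.Random(seed).shuffle(proteins)
    n_test = max(1, round(test_fraction * len(proteins)))
    test_proteins = set(proteins[:n_test])
    train = [p for p in pairs if p[1] not in test_proteins]
    test = [p for p in pairs if p[1] in test_proteins]
    return train, test

# Hypothetical records (identifiers are placeholders, not real InChIKeys)
records = [("LIG-A", "P1", 12.0), ("LIG-A", "P1", 40.0), ("LIG-B", "P1", 850.0),
           ("LIG-A", "P2", 95.0), ("LIG-C", "P3", 3.5)]
positives = positive_pairs(records)
# Negatives would come from the network-based sampling step; one is added by hand here
labeled = [(lig, prot, 1) for lig, prot in positives] + [("LIG-B", "P3", 0)]
train, test = protein_cold_split(labeled, test_fraction=0.34)
print(positives, len(train), len(test))
```

The same grouping idea applies to ligand-cold or fully cold (both entities unseen) splits by grouping on the ligand identifier, or on both.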

Diagram 2: Data Curation Workflow

Protocol 2: A Workflow for In Silico Drug Repurposing

This protocol leverages the rich pharmacological data in DrugBank combined with the extensive bioactivity data in ChEMBL and BindingDB to identify new therapeutic uses for existing drugs.

Research Reagent Solutions:

- DrugBank Database: Provides the core list of approved drugs, their known targets, and associated diseases.

- ChEMBL/BindingDB APIs: Used to query for additional bioactivity data for the drug compounds against off-targets.

- Pathway/Network Analysis Tools: Software like the ReactomeFIViz Cytoscape app enables visualization of drug targets in the context of biological pathways and networks [20].

- Docking Software (Optional): Tools like AutoDock Vina can be used for structural validation of predicted novel interactions [17].

Procedure:

- Candidate Drug Selection: From DrugBank, extract a list of approved drugs. Filter based on safety profile or other relevant criteria.

- Off-Target Profiling: For each candidate drug, query ChEMBL and BindingDB using its canonical SMILES or InChIKey to retrieve all known bioactivity data against any human protein, not just its primary targets.

- Pathway and Network Mapping: Using an application like ReactomeFIViz, import the list of all known and potential targets for the drug. Map these targets to Reactome pathways and the Functional Interaction (FI) network [20].

- Pathway Enrichment Analysis: Perform over-representation analysis to identify pathways that are significantly enriched with the drug's targets. Pathways enriched with off-targets may suggest new disease mechanisms or reveal potential side effects [20].

- Hypothesis Generation: If the pathway enrichment analysis reveals strong associations with a disease unrelated to the drug's original indication, this forms a repurposing hypothesis. For example, a drug whose off-targets are significantly enriched in a cancer-associated signaling pathway (e.g., RAF/MAPK cascade) could be a candidate for oncology repurposing [20].

- Experimental Validation: The top predicted repurposing candidates should be validated through in vitro binding assays or phenotypic screens, or further investigated with in silico docking simulations if 3D structures are available [17].
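Over-representation analysis is commonly based on a one-sided hypergeometric test: given N background genes, K of which belong to a pathway, it gives the probability of observing at least k pathway members among n drug targets by chance. The sketch below computes that p-value with Python's standard library and adds a Benjamini-Hochberg adjustment for testing many pathways. It is a generic illustration with hypothetical counts, not the exact procedure implemented in ReactomeFIViz.

```python
from math import comb

def enrichment_p_value(k, n, K, N):
    """One-sided hypergeometric p-value P(X >= k).

    N: background size (e.g., all annotated human proteins)
    K: background genes annotated to the pathway
    n: drug targets (known + predicted) mapped to the background
    k: targets that fall in the pathway
    """
    upper = min(K, n)
    hits = sum(comb(K, i) * comb(N - K, n - i) for i in range(k, upper + 1))
    return hits / comb(N, n)

def benjamini_hochberg(p_values):
    """Benjamini-Hochberg adjusted p-values (FDR), returned in input order."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down, keeping adjusted values monotone
    for rank in range(m, 0, -1):
        idx = order[rank - 1]
        running_min = min(running_min, p_values[idx] * m / rank)
        adjusted[idx] = running_min
    return adjusted

# Hypothetical counts: 25 targets, 5 of them in a 100-gene pathway, 20,000-gene background
p = enrichment_p_value(k=5, n=25, K=100, N=20000)
print(p, benjamini_hochberg([p, 0.04, 0.03]))
```

Under these counts only about 0.125 pathway members would be expected by chance, so five observed members yield a very small p-value.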

BindingDB, DrugBank, and ChEMBL are indispensable, complementary resources for chemogenomics and ML-based drug discovery. BindingDB offers precise binding measurements, DrugBank provides deep pharmacological context, and ChEMBL delivers unparalleled scale of bioactivity data. The effective application of these databases requires careful data curation and an awareness of inherent biases, such as annotation imbalance, which can limit the generalizability of ML models. By adhering to the protocols outlined herein—particularly those for robust dataset construction and bias mitigation—researchers can more reliably leverage these foundational data sources to predict novel drug-target interactions and accelerate the development of new therapeutics.

Advantages of Chemogenomic Methods over Ligand-Based and Docking Approaches

The accurate prediction of drug-target interactions (DTIs) is a critical bottleneck in pharmaceutical research, with traditional experimental methods being time-consuming, expensive, and low-throughput [21]. In silico approaches have emerged as powerful alternatives, primarily falling into three categories: ligand-based, docking-based (structure-based), and chemogenomic methods. Ligand-based approaches, including quantitative structure-activity relationship (QSAR) and pharmacophore models, predict new drug candidates by leveraging known bioactivity data and chemical similarity [1]. Structure-based methods, such as molecular docking, predict the binding mode and affinity of a ligand within a target protein's active site using three-dimensional structural information [22]. In contrast, modern chemogenomic methods integrate diverse chemical and biological information using machine learning (ML) and deep learning (DL) to model interactions across entire drug-target networks [1] [23].

This application note delineates the distinct advantages of chemogenomic approaches over traditional ligand-based and docking methods. We provide a structured comparison of their capabilities, detailed experimental protocols for implementing chemogenomic frameworks, and visualizations of key workflows. The content is framed within the broader thesis that machine learning-driven chemogenomics represents a paradigm shift in drug discovery by enabling more comprehensive, accurate, and scalable prediction of drug-target interactions.

Comparative Analysis of DTI Prediction Approaches

Table 1: Fundamental Characteristics of DTI Prediction Approaches

| Feature | Ligand-Based Methods | Docking-Based Methods | Chemogenomic Methods |
|---|---|---|---|
| Primary Data | Known active compounds, chemical structures [1] | 3D protein structures, ligand conformations [22] | Diverse data: chemical structures, protein sequences, interaction networks, omics data [1] [23] [21] |
| Core Principle | Chemical similarity principle [24] | Complementary fit and binding energy calculation [22] | Machine learning from heterogeneous, large-scale datasets [4] [23] |
| Handling Novelty | Limited to chemical space near known actives [1] | Dependent on availability of high-quality protein structures [24] | Capable of exploring novel chemical and target spaces [23] |
| Key Limitation | Cannot identify targets for structurally novel compounds [1] | Computationally expensive; limited by structural data availability and scoring function accuracy [1] [24] | Requires large, high-quality datasets for training; "black box" interpretability issues [23] |

Table 2: Performance and Applicability Comparison

| Aspect | Ligand-Based Methods | Docking-Based Methods | Chemogenomic Methods |
|---|---|---|---|
| Typical Application | Virtual screening for analogs of known drugs [1] | Lead optimization, binding mode analysis [22] | Large-scale DTI prediction, drug repurposing, polypharmacology studies [23] [25] |
| Throughput | High | Low to Medium | Very High [21] |
| Reported Performance (AUC) | Varies widely by method and dataset | Varies by protein and docking program | Up to 0.98-0.99 on benchmark datasets [4] [21] |
| Cold-Start Problem | Severe for novel scaffolds | Severe for proteins without structures | Mitigated by using sequence and network information [21] |

The fundamental advantage of chemogenomic methods lies in their capacity for data integration. Whereas traditional approaches rely on a single data type, chemogenomics can combine drug fingerprints (e.g., MACCS keys, ECFP), target representations (e.g., amino acid composition, protein language model embeddings), and known interaction networks in a single predictive model [4] [23]. This enables the capture of complex, non-linear relationships that simpler similarity-based or physics-based scoring functions cannot readily represent.

Furthermore, chemogenomic approaches directly address key challenges in drug discovery, such as data imbalance through techniques like Generative Adversarial Networks (GANs) for synthetic data generation [4], and polypharmacology by naturally modeling a drug's interaction profile across multiple targets [23] [25]. The scalability of ML models allows for the screening of billions of potential drug-target pairs, which is computationally prohibitive for docking simulations [21].

Experimental Protocols for Chemogenomic DTI Prediction

Protocol 1: Hybrid Machine Learning Framework with Data Balancing

This protocol outlines the implementation of a high-performance chemogenomic framework that combines feature engineering with data balancing, as demonstrated in a recent study achieving >97% accuracy on BindingDB datasets [4].

1. Feature Engineering

- Drug Representation: Encode drug molecules using MACCS structural keys or Morgan fingerprints (radius 2, 2048 bits) to create fixed-length feature vectors representing molecular structure [4] [24].

- Target Representation: Represent target proteins using amino acid composition (AAC) and dipeptide composition (DPC) to capture sequence-based biochemical properties [4].

2. Data Balancing with GANs

- Problem: DTI datasets typically exhibit extreme imbalance, with negative interactions vastly outnumbering positive ones.

- Solution: Train a Generative Adversarial Network (GAN) on the minority class (positive interactions) to generate synthetic positive samples.

- Procedure: (a) pre-train the GAN on known positive drug-target pairs; (b) generate synthetic positive samples until class balance is achieved; (c) combine the synthetic data with the original training set.

3. Model Training and Prediction

- Algorithm: Employ a Random Forest Classifier, optimized for high-dimensional data.

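- Training: Use 5-fold cross-validation, fitting the GAN and adding synthetic samples within each training fold only, so that no validation fold contains synthetic data derived from its own positives.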

- Validation: Evaluate on held-out test sets using AUC-ROC, precision, recall, and F1-score.

4. Experimental Validation

- Select top-ranked novel DTIs for in vitro validation using binding affinity assays (e.g., IC50, Ki determination).
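The training and evaluation loop can be sketched with scikit-learn under stated assumptions. The feature matrix is synthetic random data standing in for concatenated drug and target features. To keep the example short, the sketch substitutes simple random oversampling of positives for the GAN; this is a swapped-in technique, not the GAN method of [4]. The design point that carries over to a GAN is that balancing happens inside each training fold, while validation folds keep their natural class imbalance.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import f1_score, precision_score, recall_score, roc_auc_score
from sklearn.model_selection import StratifiedKFold

def oversample_positives(X, y, rng):
    """Stand-in for GAN balancing: resample positives with replacement up to the negative count."""
    pos_idx = np.flatnonzero(y == 1)
    n_extra = int((y == 0).sum() - pos_idx.size)
    if n_extra <= 0:
        return X, y
    extra = rng.choice(pos_idx, size=n_extra, replace=True)
    return np.vstack([X, X[extra]]), np.concatenate([y, y[extra]])

def cv_with_fold_balancing(X, y, n_splits=5, seed=0):
    """Stratified k-fold CV; only the training part of each fold is balanced."""
    rng = np.random.default_rng(seed)
    skf = StratifiedKFold(n_splits=n_splits, shuffle=True, random_state=seed)
    results = []
    for train_idx, val_idx in skf.split(X, y):
        X_tr, y_tr = oversample_positives(X[train_idx], y[train_idx], rng)
        model = RandomForestClassifier(n_estimators=200, random_state=seed)
        model.fit(X_tr, y_tr)
        prob = model.predict_proba(X[val_idx])[:, 1]
        pred = (prob >= 0.5).astype(int)
        results.append({
            "auc": roc_auc_score(y[val_idx], prob),
            "precision": precision_score(y[val_idx], pred, zero_division=0),
            "recall": recall_score(y[val_idx], pred, zero_division=0),
            "f1": f1_score(y[val_idx], pred, zero_division=0),
        })
    return results

# Synthetic, imbalanced stand-in data (roughly 10% positives)
data_rng = np.random.default_rng(42)
X = data_rng.normal(size=(500, 32))
y = (X[:, 0] + 0.5 * X[:, 1] + data_rng.normal(scale=0.5, size=500) > 1.6).astype(int)
scores = cv_with_fold_balancing(X, y)
print({k: round(float(np.mean([r[k] for r in scores])), 3) for k in scores[0]})
```

Replacing `oversample_positives` with a GAN that is trained on the positive rows of `X_tr` only would preserve this leakage-free structure.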

Protocol 2: Graph Neural Network with Knowledge Integration

This protocol describes a cutting-edge graph-based chemogenomic approach that integrates biological knowledge, achieving state-of-the-art performance with AUC up to 0.98 [21].

1. Heterogeneous Graph Construction

- Nodes: Define two node types: drugs and targets.

- Edges: Establish multiple edge types:

- Drug-drug: Based on chemical similarity (Tanimoto coefficient on fingerprints).

- Target-target: Based on sequence similarity (Smith-Waterman score) or protein-protein interactions.

- Drug-target: Known interactions from databases like ChEMBL or BindingDB.

- Features: Initialize node features using molecular fingerprints for drugs and sequence-derived embeddings for targets.

2. Graph Representation Learning

- Model Architecture: Implement a Graph Convolutional Network (GCN) or Graph Attention Network (GAT) with multi-layer message passing.

- Knowledge Integration: Incorporate biological knowledge graphs (e.g., Gene Ontology, DrugBank) as regularization constraints during training to ensure embeddings are biologically plausible.

3. Model Optimization

- Training Objective: Use binary cross-entropy loss for interaction prediction.

- Negative Sampling: Employ an enhanced negative sampling strategy to select non-interacting pairs that are structurally similar to known interactions, creating a more challenging and realistic training set [21].

- Regularization: Apply knowledge-based regularization to align learned representations with known ontological relationships.

4. Interpretation and Validation

- Salience Mapping: Visualize attention weights to identify salient molecular substructures and protein motifs driving predictions.

- Experimental Validation: Prioritize novel predictions with high confidence scores and clear biological interpretability for wet-lab validation.
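The graph construction and message-passing steps can be illustrated with a deliberately tiny NumPy sketch. It assumes drug fingerprints are given as sets of "on" bit indices and links drugs whose Tanimoto similarity reaches an arbitrary 0.5 cutoff. It then adds known drug-target edges and one target-target edge, and applies a single untrained graph-convolution layer with symmetric normalization, H' = ReLU(D^-1/2 (A + I) D^-1/2 H W), where D is the degree matrix of A + I. For simplicity, drugs and targets share one node index space and feature dimension, weights are random, and no knowledge-based regularization is included. The sketch therefore shows the data flow only; it is not Hetero-KGraphDTI or any trained model.

```python
import numpy as np

def tanimoto(a, b):
    """Tanimoto coefficient between two fingerprints given as sets of on-bits."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0

def gcn_layer(A, H, W):
    """One GCN propagation step: ReLU(D^-1/2 (A + I) D^-1/2 H W)."""
    A_hat = A + np.eye(A.shape[0])
    d_inv_sqrt = 1.0 / np.sqrt(A_hat.sum(axis=1))
    A_norm = A_hat * d_inv_sqrt[:, None] * d_inv_sqrt[None, :]
    return np.maximum(A_norm @ H @ W, 0.0)

# Hypothetical inputs: 3 drugs (fingerprint on-bits) and 2 targets
drug_fps = [{1, 4, 7, 9}, {1, 4, 7, 12}, {2, 3, 20}]
known_dti = [(0, 0), (2, 1)]           # (drug index, target index)
target_similar = [(0, 1, 0.8)]         # (target i, target j, sequence similarity)
n_drugs, n_targets = len(drug_fps), 2
n = n_drugs + n_targets                # targets occupy indices n_drugs .. n-1

A = np.zeros((n, n))
for i in range(n_drugs):               # drug-drug chemical-similarity edges
    for j in range(i + 1, n_drugs):
        if tanimoto(drug_fps[i], drug_fps[j]) >= 0.5:
            A[i, j] = A[j, i] = 1.0
for d, t in known_dti:                 # drug-target interaction edges
    A[d, n_drugs + t] = A[n_drugs + t, d] = 1.0
for t1, t2, sim in target_similar:     # target-target similarity edges
    if sim >= 0.5:
        A[n_drugs + t1, n_drugs + t2] = A[n_drugs + t2, n_drugs + t1] = 1.0

rng = np.random.default_rng(0)
H0 = rng.normal(size=(n, 8))           # stand-in initial node features
W = rng.normal(size=(8, 4)) / np.sqrt(8)
H1 = gcn_layer(A, H0, W)
score = float(H1[1] @ H1[n_drugs + 0])  # untrained score: drug 1 vs target 0
print(A, score)
```

In a trained model, `W` would be learned by minimizing the binary cross-entropy of such pair scores (passed through a sigmoid) against known interactions and sampled negatives.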

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for Chemogenomic DTI Prediction

| Resource Name | Type | Function in Research | Access Information |
|---|---|---|---|
| ChEMBL | Database | Manually curated database of bioactive molecules with drug-target interactions, ideal for model training and benchmarking [24]. | https://www.ebi.ac.uk/chembl/ |
| BindingDB | Database | Public database of measured binding affinities for drug-target pairs, useful for training affinity prediction models [4]. | https://www.bindingdb.org/ |
| DrugBank | Database | Comprehensive resource combining detailed drug data with drug target information, valuable for validation [23]. | https://go.drugbank.com/ |
| AutoDock Vina | Software | Molecular docking tool used for generating comparative baseline data or structural insights [22]. | http://vina.scripps.edu/ |
| MolTarPred | Software | Ligand-centric target prediction method based on 2D chemical similarity, effective for benchmarking [24]. | Stand-alone code |
| Hetero-KGraphDTI | Software/Algorithm | Graph neural network framework integrating multiple data types and knowledge graphs for state-of-the-art prediction [21]. | Custom implementation |

Workflow and Pathway Visualizations

Graph 1: High-Level Chemogenomic Workflow. This diagram illustrates the comprehensive workflow for chemogenomic-based DTI prediction, highlighting the integrated data sources and the critical steps of feature engineering and experimental validation.

Graph 2: GAN Data Balancing Protocol. This diagram details the procedure for addressing data imbalance using Generative Adversarial Networks (GANs), a key advantage of advanced chemogenomic methods.

Chemogenomic methods represent a significant advancement over traditional ligand-based and docking approaches by leveraging machine learning to integrate heterogeneous data types, address dataset imbalances, and model the complex landscape of drug-target interactions at scale. The protocols and resources provided herein offer researchers a practical roadmap for implementing these powerful methods in their drug discovery pipelines.

Future developments in this field will likely focus on improving model interpretability, integrating higher-quality structural data from AlphaFold, and leveraging large language models for enhanced biological representation learning [1] [26]. As these technologies mature, chemogenomic approaches will become increasingly indispensable for the efficient discovery of novel therapeutics and the repurposing of existing drugs, ultimately accelerating the delivery of new treatments to patients.

Machine Learning Architectures and Feature Engineering for DTI Prediction

In the field of chemogenomics and drug discovery, accurately predicting drug-target interactions (DTIs) is a critical yet challenging task. The foundation of modern computational approaches for DTI prediction lies in effective feature representation of molecular and proteomic data. Feature extraction methods have evolved significantly from traditional predefined descriptors to advanced learned representations, enabling machines to interpret chemical and biological entities for predicting binding affinities and interactions. This transformation is crucial for reducing the high costs and long timelines associated with traditional drug development processes, where approximately 60-70% of drug candidates fail due to poor efficacy or adverse effects [4].

The evolution of molecular representation has progressed from human-readable formats like IUPAC names to computer-oriented representations like SMILES (Simplified Molecular-Input Line-Entry System), molecular fingerprints, and graph-based representations [27]. Similarly, protein sequence representation has advanced from basic amino acid sequence encoding to sophisticated embeddings that capture physicochemical properties and evolutionary information. These representations form the foundational feature sets for machine learning (ML) and deep learning (DL) models in DTI prediction, enabling more accurate and efficient identification of potential drug-target pairs [28] [29].

Molecular Representation Methods

Traditional Small Molecule Representations

Traditional molecular representation methods rely on explicit, rule-based feature extraction to convert chemical structures into machine-readable formats. These methods have laid a strong foundation for many computational approaches in drug discovery.

SMILES (Simplified Molecular-Input Line-Entry System) represents molecules as strings of ASCII characters that specify molecular structure through atomic symbols and connectivity indicators. For example, the popular drug acetaminophen can be represented in SMILES format as "CC(=O)Nc1ccc(O)cc1" [27]. While SMILES offers compact encoding and human-readability (with practice), it has limitations including non-uniqueness (multiple valid SMILES for the same molecule) and sensitivity to syntax variations.

Molecular Fingerprints encode molecular structures as bit strings or numerical vectors representing the presence or absence of specific substructures or physicochemical properties. The most prominent types include:

- MACCS (Molecular ACCess System) keys: A set of 166 predefined structural fragments used for binary molecular representation [4] [27].

- Extended-Connectivity Fingerprints (ECFPs): Circular fingerprints that capture molecular features within increasing radial diameters, particularly valuable for structure-activity relationship studies [29].

- Graph-based fingerprints: Encode molecular graph properties including paths, branches, and ring systems.
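The idea behind ECFP-style circular fingerprints can be sketched without a cheminformatics toolkit. Each atom starts with an identifier derived from simple invariants. At every iteration, that identifier is re-hashed together with its neighbors' identifiers and bond orders, so iteration r describes an atomic environment of radius r. All identifiers are finally folded into a fixed-length bit vector. This is a simplified illustration: its invariants (element symbol and heavy-atom degree) and its use of `hashlib` are assumptions. It does not reproduce RDKit's Morgan bits and omits refinements such as duplicate-environment removal.

```python
import hashlib

def _hash(obj):
    """Deterministic 32-bit integer hash of a Python object's repr."""
    return int(hashlib.sha1(repr(obj).encode()).hexdigest()[:8], 16)

def circular_fingerprint(atoms, bonds, radius=2, n_bits=1024):
    """Simplified ECFP-like fingerprint.

    atoms: list of element symbols; bonds: list of (i, j, bond_order).
    Returns the set of on-bit indices after folding into n_bits.
    """
    neighbors = {i: [] for i in range(len(atoms))}
    for i, j, order in bonds:
        neighbors[i].append((j, order))
        neighbors[j].append((i, order))
    # Iteration 0: invariants are the element and the heavy-atom degree
    ids = [_hash((atoms[i], len(neighbors[i]))) for i in range(len(atoms))]
    all_ids = set(ids)
    for _ in range(radius):
        new_ids = []
        for i in range(len(atoms)):
            # Sorting (bond order, neighbor id) pairs makes the result independent of atom order
            env = sorted((order, ids[j]) for j, order in neighbors[i])
            new_ids.append(_hash((ids[i], tuple(env))))
        ids = new_ids
        all_ids.update(ids)
    return {identifier % n_bits for identifier in all_ids}

# Ethanol (SMILES "CCO") as a heavy-atom graph C0-C1-O2 with single bonds
ethanol = circular_fingerprint(["C", "C", "O"], [(0, 1, 1), (1, 2, 1)])
# The same molecule with its atoms listed in a different order (O0-C1-C2)
ethanol_reordered = circular_fingerprint(["O", "C", "C"], [(0, 1, 1), (1, 2, 1)])
print(sorted(ethanol) == sorted(ethanol_reordered))
```

The folding step (`% n_bits`) is also where bit collisions arise, since different environments can map to the same bit.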

Table 1: Comparison of Traditional Molecular Representation Methods

| Representation Type | Format | Key Features | Common Applications | Limitations |
|---|---|---|---|---|
| SMILES | String | Atomic symbols, bonds, branching | Sequence-based models, chemical databases | Non-unique representation, syntax sensitivity |
| MACCS Keys | Binary vector (166 bits) | Structural fragments | Similarity searching, virtual screening | Limited to predefined substructures |
| ECFP | Integer array | Circular atom environments | QSAR, machine learning | Computationally intensive for large molecules |
| Molecular Descriptors | Numerical vector | Physicochemical properties | QSAR, property prediction | May require feature selection |

Modern AI-Driven Molecular Representations

Recent advancements in artificial intelligence have introduced data-driven representation learning approaches that automatically extract relevant features from molecular data [29].

Language Model-Based Representations treat molecular representations (SMILES/SELFIES) as a specialized chemical language. Models such as Transformers tokenize molecular strings at atomic or substructure levels and process them through architectures adapted from natural language processing [29]. These approaches learn contextual molecular representations without relying on predefined rules or expert knowledge.
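Atom-level tokenization of SMILES is often done with a regular expression that keeps multi-character units together, such as bracket atoms, `Cl`, `Br`, and two-digit `%nn` ring closures. The pattern below is one of the kind widely used for SMILES language models. It is shown as an illustrative sketch; the tokenizer of any specific model may differ.

```python
import re

# Bracket atoms, two-letter halogens, aromatic atoms, bonds, branches, and ring closures
SMILES_TOKEN = re.compile(
    r"(\[[^\]]+]|Br?|Cl?|N|O|S|P|F|I|b|c|n|o|s|p|\(|\)|\.|=|#|-|\+|\\|/|:|~|@|\?|>|\*|\$|%[0-9]{2}|[0-9])"
)

def tokenize_smiles(smiles):
    """Split a SMILES string into atom-level tokens."""
    tokens = SMILES_TOKEN.findall(smiles)
    # Fail loudly if any character was not covered by the pattern
    if "".join(tokens) != smiles:
        raise ValueError(f"Untokenizable characters in {smiles!r}")
    return tokens

print(tokenize_smiles("CC(=O)Nc1ccc(O)cc1"))   # acetaminophen
```

Each token is then mapped to an integer index in a vocabulary before being fed to a Transformer or similar sequence model.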

Graph-Based Representations model molecules directly as graphs where atoms represent nodes and bonds represent edges. Graph Neural Networks (GNNs), particularly Graph Attention Networks (GATs), process these molecular graphs to learn representations that capture both local atomic environments and global molecular topology [28] [29]. These methods naturally represent molecular structure without information loss that can occur in string-based representations.

Multimodal and Contrastive Learning approaches integrate multiple representation types (e.g., combining graph-based and sequence-based views) to create more comprehensive molecular embeddings. Contrastive learning frameworks enhance representation quality by maximizing agreement between differently augmented views of the same molecule while distinguishing between different molecules [29].
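Contrastive objectives of this kind are frequently implemented as the normalized temperature-scaled cross-entropy (NT-Xent, or InfoNCE) loss. The NumPy sketch below assumes two batches of embeddings, `z1` and `z2`, in which row i of each comes from a different augmented view of the same molecule. Each embedding must then identify its partner among the other 2N - 1 embeddings in the batch. The temperature value is an arbitrary illustrative choice.

```python
import numpy as np

def nt_xent_loss(z1, z2, temperature=0.1):
    """NT-Xent loss for paired views z1[i] <-> z2[i] (both shaped N x d)."""
    z = np.concatenate([z1, z2], axis=0)
    z = z / np.linalg.norm(z, axis=1, keepdims=True)    # unit vectors -> cosine similarity
    sim = z @ z.T / temperature
    np.fill_diagonal(sim, -np.inf)                       # an embedding is not its own candidate
    n = z1.shape[0]
    # Row i's positive is i + n (first half) or i - n (second half)
    positives = np.concatenate([np.arange(n, 2 * n), np.arange(0, n)])
    # Numerically stable row-wise log-softmax
    row_max = sim.max(axis=1, keepdims=True)
    log_prob = sim - row_max - np.log(np.exp(sim - row_max).sum(axis=1, keepdims=True))
    return float(-log_prob[np.arange(2 * n), positives].mean())

rng = np.random.default_rng(0)
anchors = rng.normal(size=(8, 16))
aligned = nt_xent_loss(anchors, anchors + 0.01 * rng.normal(size=(8, 16)))
unrelated = nt_xent_loss(anchors, rng.normal(size=(8, 16)))
print(aligned, unrelated)
```

When the two views agree, the loss is close to zero; with unrelated "views", it approaches log(2N - 1), the value for chance-level identification.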

Protein Sequence Representation Methods

Traditional Protein Feature Extraction

Protein sequence representation methods transform amino acid sequences into numerical feature vectors that capture relevant biochemical properties for predictive modeling.

Amino Acid Composition (AAC) represents proteins as a 20-dimensional vector containing the occurrence frequencies of each standard amino acid. Dipeptide Composition (DC) extends AAC by considering the frequencies of consecutive amino acid pairs, capturing local sequence order information [4] [28].
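Both descriptors are straightforward to compute. In the minimal sketch below, AAC yields 20 values and DC yields 20 × 20 = 400 values, each a fraction that sums to 1. It assumes the 20 standard one-letter codes. Non-standard residues are ignored, and dipeptides are counted only when both residues are standard; this is one of several possible conventions.

```python
from itertools import product

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"
DIPEPTIDES = ["".join(p) for p in product(AMINO_ACIDS, repeat=2)]   # 400 ordered pairs

def aac(sequence):
    """20-dim amino acid composition: fraction of each standard residue."""
    seq = [aa for aa in sequence.upper() if aa in AMINO_ACIDS]
    total = len(seq) or 1   # avoid division by zero for empty input
    return [seq.count(aa) / total for aa in AMINO_ACIDS]

def dpc(sequence):
    """400-dim dipeptide composition: fraction of each adjacent residue pair."""
    seq = sequence.upper()
    # Skip pairs containing a non-standard residue instead of deleting the residue,
    # which would create artificial adjacencies
    pairs = [seq[i:i + 2] for i in range(len(seq) - 1)
             if seq[i] in AMINO_ACIDS and seq[i + 1] in AMINO_ACIDS]
    counts = dict.fromkeys(DIPEPTIDES, 0)
    for pair in pairs:
        counts[pair] += 1
    total = len(pairs) or 1
    return [counts[dp] / total for dp in DIPEPTIDES]

features = aac("MKTAYIAKQR") + dpc("MKTAYIAKQR")   # 420-dim target vector
print(len(features))
```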

Evolutionary Scale Modeling (ESM-1b) leverages unsupervised learning on millions of protein sequences to generate contextual embeddings that capture evolutionary information and structural constraints [28]. These embeddings often outperform handcrafted features for predicting protein function and interactions.

FEGS (Feature Extraction based on Graphical and Statistical features) is a novel approach that integrates graphical representation of protein sequences based on physicochemical properties with statistical features [30]. This method transforms a protein sequence into a 578-dimensional numerical vector that has demonstrated superior performance in phylogenetic analysis compared to other feature extraction methods.

Advanced Protein Representation Learning

Modern protein representation methods employ deep learning architectures to automatically learn relevant features from sequence data and structural information.

Sequence-Based Deep Learning approaches use convolutional neural networks (CNNs) and recurrent neural networks (RNNs) to extract local motifs and long-range dependencies from raw amino acid sequences [4] [31]. For example, DeepConv-DTI uses 1D-CNN on protein sequences to obtain feature representations for DTI prediction [31].
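The core operation of such sequence CNNs can be sketched in NumPy. The sequence is one-hot encoded, and a bank of filters slides along it (a 1D convolution, implemented as cross-correlation as in most deep-learning libraries). A ReLU is then applied, and each filter's responses are global-max-pooled, so proteins of any length map to a fixed-length vector. The filters here are random and the layer sizes are illustrative. In DeepConv-DTI and related models, the filters are learned, and the published architectures differ in details such as depth and window sizes.

```python
import numpy as np

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"

def one_hot(sequence):
    """L x 20 one-hot matrix; non-standard residues become all-zero rows."""
    index = {aa: i for i, aa in enumerate(AMINO_ACIDS)}
    x = np.zeros((len(sequence), len(AMINO_ACIDS)))
    for pos, aa in enumerate(sequence.upper()):
        if aa in index:
            x[pos, index[aa]] = 1.0
    return x

def conv1d_maxpool(x, filters, bias):
    """Valid 1D convolution + ReLU + global max pooling.

    x: (L, 20); filters: (n_filters, width, 20); bias: (n_filters,).
    Returns an (n_filters,) vector whose size does not depend on L.
    """
    width = filters.shape[1]
    if x.shape[0] < width:
        raise ValueError("sequence shorter than filter width")
    windows = np.stack([x[i:i + width] for i in range(x.shape[0] - width + 1)])
    responses = np.einsum("lwc,fwc->lf", windows, filters) + bias   # (n_windows, n_filters)
    return np.maximum(responses, 0.0).max(axis=0)

rng = np.random.default_rng(0)
filters = rng.normal(size=(16, 5, 20))   # 16 filters spanning 5 residues
bias = np.zeros(16)
vec_short = conv1d_maxpool(one_hot("MKTAYIAKQR"), filters, bias)
vec_long = conv1d_maxpool(one_hot("MKTAYIAKQRQISFVKSHFSRQ"), filters, bias)
print(vec_short.shape, vec_long.shape)
```

Because max pooling keeps only the strongest response per filter, each output dimension can be read as "how strongly the sequence contains this filter's motif anywhere".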

Graph-Based Protein Modeling represents proteins as graphs where amino acids form nodes and their interactions form edges. Graph attention networks then process these representations to capture complex structural relationships [28].

Multimodal Protein Representations integrate multiple information sources including sequence, evolutionary information, structural features, and protein-protein interaction networks to create comprehensive protein embeddings [31].

Table 2: Protein Sequence Representation Methods for DTI Prediction

| Method | Type | Features | Dimensions | Application in DTI |
|---|---|---|---|---|
| Amino Acid Composition (AAC) | Traditional | Amino acid frequencies | 20 | Basic sequence characterization |
| Dipeptide Composition (DC) | Traditional | Adjacent amino acid pairs | 400 | Local sequence pattern capture |
| PseAAC (Pseudo AAC) | Traditional | AAC + sequence order effects | 20+λ | Incorporating sequence order |
| ESM-1b | Deep Learning | Evolutionary context embeddings | 1280 | State-of-the-art protein modeling |
| FEGS | Hybrid | Graphical + statistical features | 578 | Phylogenetic analysis, similarity |
| CNN-Based Features | Deep Learning | Motif and pattern detection | Variable | DeepConv-DTI, MIFAM-DTI |

Experimental Protocols and Application Notes

Integrated DTI Prediction Framework

This protocol outlines the implementation of a hybrid DTI prediction framework combining advanced feature engineering with machine learning, as demonstrated in recent state-of-the-art approaches [4] [28] [31].

Feature Extraction Workflow

Drug Feature Extraction:

- Input: Drug compounds in SMILES format

- Generate MACCS structural fingerprints (166 bits) using RDKit or similar cheminformatics toolkit

- Calculate physicochemical property descriptors (molecular weight, logP, hydrogen bond donors/acceptors, etc.)

- Apply Principal Component Analysis (PCA) to reduce dimensionality to 128 dimensions

- Output: 128-dimensional drug feature vector
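The drug-side steps above can be sketched with RDKit and numpy. The protocol does not list its exact descriptors, so the six used here (molecular weight, logP, H-bond donors and acceptors, TPSA, rotatable bonds), the z-scoring before PCA, and the function names are assumptions. Note that RDKit's MACCS implementation returns a 167-bit vector in which bit 0 is unused.

```python
import numpy as np
from rdkit import Chem
from rdkit.Chem import Descriptors, MACCSkeys


def drug_features(smiles: str) -> np.ndarray:
    """MACCS keys (167 bits as returned by RDKit) + six physicochemical descriptors."""
    mol = Chem.MolFromSmiles(smiles)
    if mol is None:
        raise ValueError(f"Invalid SMILES: {smiles}")
    maccs = np.array(MACCSkeys.GenMACCSKeys(mol).ToList(), dtype=float)
    physchem = np.array([
        Descriptors.MolWt(mol),
        Descriptors.MolLogP(mol),
        Descriptors.NumHDonors(mol),
        Descriptors.NumHAcceptors(mol),
        Descriptors.TPSA(mol),
        Descriptors.NumRotatableBonds(mol),
    ])
    return np.concatenate([maccs, physchem])


def pca_reduce(X, n_components=128):
    """Z-score each column, then project onto the top principal components via SVD."""
    X = np.asarray(X, dtype=float)
    if n_components > min(X.shape):
        raise ValueError("n_components cannot exceed min(n_samples, n_features)")
    std = X.std(axis=0)
    std[std == 0] = 1.0                     # constant columns (e.g. unused bits) stay at zero
    Xs = (X - X.mean(axis=0)) / std         # keeps MolWt from dominating the binary keys
    _, _, vt = np.linalg.svd(Xs, full_matrices=False)
    return Xs @ vt[:n_components].T
```

A 128-component projection needs at least 128 compounds. In a real pipeline, the mean, scale and components should be fitted on training compounds only and reused for test compounds to avoid information leakage.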

Target Protein Feature Extraction:

- Input: Protein sequences in amino acid format

- Compute dipeptide composition (400-dimensional vector)

- Generate ESM-1b embeddings using pretrained models

- Apply PCA to reduce ESM-1b embeddings to 128 dimensions

- Output: 128-dimensional target feature vector

Feature Integration:

- Concatenate drug and target feature vectors

- Alternatively, use cross-attention mechanisms to model interactions between drug and target features [31]
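For the concatenation route, each training example is one drug-target pair, so the feature matrix is assembled by indexing the per-drug and per-target matrices. A minimal sketch, assuming pairs are given as hypothetical (drug_index, target_index) tuples:

```python
import numpy as np


def build_pair_features(drug_feats, target_feats, pairs):
    """Concatenate drug and target vectors for each (drug_idx, target_idx) pair.

    drug_feats: (n_drugs, d_drug); target_feats: (n_targets, d_target).
    Returns an (n_pairs, d_drug + d_target) matrix, one row per pair.
    """
    pairs = np.asarray(pairs)
    return np.hstack([drug_feats[pairs[:, 0]], target_feats[pairs[:, 1]]])
```

With the 128-dimensional vectors from the protocol, each pair becomes a 256-dimensional input.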

Figure 1: Integrated Drug-Target Interaction Prediction Workflow

Data Balancing and Model Training

A critical challenge in DTI prediction is addressing class imbalance, where confirmed interactions are significantly outnumbered by unknown or non-interacting pairs.

Generative Adversarial Networks for Data Balancing [4]:

- Preprocessing: Partition the dataset into interacting (positive) and non-interacting (negative) classes

- GAN Architecture: Implement a generator network that creates synthetic minority class samples and a discriminator network that distinguishes between real and synthetic samples

- Training: Train the GAN on the minority class until the generator produces realistic synthetic samples

- Data Augmentation: Add generated samples to the training set to balance class distribution

- Model Training: Train Random Forest or Deep Learning classifiers on the balanced dataset
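The GAN balancing loop can be illustrated with a deliberately tiny numpy GAN. This is a toy, not the architecture of [4]: the generator is an affine map of Gaussian noise, the discriminator is a logistic model on [x, x²] (so it can detect mismatched means and variances), gradients are written out by hand, and every hyperparameter is a hypothetical choice. It assumes binary labels.

```python
import numpy as np


def _sigmoid(z):
    return 1.0 / (1.0 + np.exp(-np.clip(z, -30, 30)))


def train_toy_gan(X_min, noise_dim=4, steps=2000, lr=0.01, batch=64, reg=1e-2, seed=0):
    """Fit a tiny GAN to minority-class rows; return a sampler for synthetic rows."""
    rng = np.random.default_rng(seed)
    mu, sd = X_min.mean(axis=0), X_min.std(axis=0) + 1e-8
    real_all = (X_min - mu) / sd                                    # standardized space
    d = real_all.shape[1]
    W, b = rng.normal(scale=0.1, size=(noise_dim, d)), np.zeros(d)  # generator G(z) = zW + b
    v1, v2, c = np.zeros(d), np.zeros(d), 0.0                       # discriminator params

    for _ in range(steps):
        real = real_all[rng.integers(0, len(real_all), batch)]
        z = rng.normal(size=(batch, noise_dim))
        fake = z @ W + b
        # Discriminator: minimize -log D(real) - log(1 - D(fake)) plus an L2 penalty.
        g_r = (_sigmoid(real @ v1 + real**2 @ v2 + c) - 1.0) / batch
        g_f = _sigmoid(fake @ v1 + fake**2 @ v2 + c) / batch
        v1 -= lr * (real.T @ g_r + fake.T @ g_f + reg * v1)
        v2 -= lr * ((real**2).T @ g_r + (fake**2).T @ g_f + reg * v2)
        c -= lr * (g_r.sum() + g_f.sum())
        # Generator: minimize the non-saturating loss -log D(G(z)).
        d_fake = _sigmoid(fake @ v1 + fake**2 @ v2 + c)
        grad_fake = ((d_fake - 1.0) / batch)[:, None] * (v1 + 2.0 * fake * v2)
        W -= lr * (z.T @ grad_fake)
        b -= lr * grad_fake.sum(axis=0)

    def sample(n):
        return (rng.normal(size=(n, noise_dim)) @ W + b) * sd + mu   # back to original scale
    return sample


def balance_with_gan(X, y, **gan_kwargs):
    """Append synthetic minority rows until both classes have equal counts."""
    X, y = np.asarray(X, dtype=float), np.asarray(y)
    classes, counts = np.unique(y, return_counts=True)
    minority = classes[np.argmin(counts)]
    n_new = counts.max() - counts.min()
    X_syn = train_toy_gan(X[y == minority], **gan_kwargs)(n_new)
    return np.vstack([X, X_syn]), np.concatenate([y, np.full(n_new, minority)])
```

Only the training split should be balanced this way; synthetic rows must never reach validation or test folds.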

Performance Metrics:

- Evaluate models using accuracy, precision, sensitivity, specificity, F1-score, and ROC-AUC

- Implement nested cross-validation to avoid hyperparameter selection bias

- Use cluster-cross-validation to assess performance on novel molecular scaffolds
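The listed metrics follow directly from the confusion matrix, with ROC-AUC computed as the probability that a randomly chosen positive is scored above a randomly chosen negative. A dependency-free sketch for binary labels (positive class = 1); the O(n²) AUC loop is for clarity, not speed:

```python
def roc_auc(y_true, y_score):
    """Rank-based ROC-AUC; ties between a positive and a negative count as 1/2."""
    pos = [s for t, s in zip(y_true, y_score) if t == 1]
    neg = [s for t, s in zip(y_true, y_score) if t == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def binary_metrics(y_true, y_pred, y_score):
    """Accuracy, precision, sensitivity (recall), specificity, F1 and ROC-AUC."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    sensitivity = tp / (tp + fn) if tp + fn else 0.0
    return {
        "accuracy": (tp + tn) / len(pairs),
        "precision": precision,
        "sensitivity": sensitivity,
        "specificity": tn / (tn + fp) if tn + fp else 0.0,
        "f1": (2 * precision * sensitivity / (precision + sensitivity)
               if precision + sensitivity else 0.0),
        "roc_auc": roc_auc(y_true, y_score),
    }
```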

Table 3: Performance Benchmarks of GAN-Based DTI Prediction on BindingDB Datasets

| Dataset | Accuracy | Precision | Sensitivity | Specificity | F1-Score | ROC-AUC |
|---|---|---|---|---|---|---|
| BindingDB-Kd | 97.46% | 97.49% | 97.46% | 98.82% | 97.46% | 99.42% |
| BindingDB-Ki | 91.69% | 91.74% | 91.69% | 93.40% | 91.69% | 97.32% |
| BindingDB-IC50 | 95.40% | 95.41% | 95.40% | 96.42% | 95.39% | 98.97% |

Multi-Source Information Fusion with Attention Mechanisms

Advanced DTI prediction models integrate multiple feature sources using attention mechanisms to improve prediction accuracy [28] [31].

MIFAM-DTI Protocol [28]:

Multi-Source Feature Extraction:

- Drug features: physicochemical properties and MACCS fingerprints from SMILES

- Target features: dipeptide composition and ESM-1b embeddings from amino acid sequences

- Apply PCA to reduce each feature vector to 128 dimensions

Graph Attention Network Processing:

- Construct adjacency matrices using cosine similarity between feature vectors

- Apply logical OR operation on adjacency matrices

- Process through graph attention networks to learn attention weights

- Generate final drug and target representation vectors
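The graph steps can be sketched in numpy: build one cosine-similarity adjacency per feature source, merge them with a logical OR, and run a single-head GAT-style attention layer over the merged graph. The similarity threshold, weight shapes and the use of concatenated features as node inputs are assumptions; MIFAM-DTI's exact configuration is not given here.

```python
import numpy as np


def cosine_adjacency(F, threshold=0.5):
    """Boolean adjacency: link entities whose feature vectors have cosine similarity >= threshold."""
    norm = np.linalg.norm(F, axis=1, keepdims=True)
    norm[norm == 0] = 1.0
    Fn = F / norm
    return (Fn @ Fn.T) >= threshold


def gat_layer(H, A, W, a, slope=0.2):
    """Single-head graph attention.

    H: (n, d_in) node features; A: (n, n) boolean adjacency;
    W: (d_in, d_out) projection; a: (2 * d_out,) attention vector.
    Returns the new (n, d_out) node representations and the attention weights.
    """
    A = np.asarray(A, dtype=bool) | np.eye(len(H), dtype=bool)  # every node attends to itself
    Z = H @ W
    d_out = Z.shape[1]
    # e_ij = LeakyReLU(a^T [z_i || z_j]), split into source and neighbour terms
    e = (Z @ a[:d_out])[:, None] + (Z @ a[d_out:])[None, :]
    e = np.where(e > 0, e, slope * e)
    e = np.where(A, e, -np.inf)                 # attend only along edges
    e = e - e.max(axis=1, keepdims=True)
    alpha = np.exp(e)
    alpha /= alpha.sum(axis=1, keepdims=True)   # softmax over each node's neighbours
    return alpha @ Z, alpha
```

Usage, for two hypothetical feature sources of the same drugs: `A = cosine_adjacency(F_phys) | cosine_adjacency(F_maccs)`, then `gat_layer(np.hstack([F_phys, F_maccs]), A, W, a)`.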

Multi-Head Self-Attention:

- Apply multi-head self-attention to capture dependencies within feature sequences

- Concatenate outputs from multiple attention heads
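Multi-head self-attention splits the projections into heads, runs scaled dot-product attention in each, and concatenates the results. A minimal numpy sketch, assuming the representation is arranged as a (seq_len, d_model) matrix of tokens; how MIFAM-DTI shapes its inputs into sequences is not specified here, and the weight matrices are hypothetical.

```python
import numpy as np


def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def multi_head_self_attention(X, Wq, Wk, Wv, Wo, n_heads):
    """X: (seq_len, d_model); Wq, Wk, Wv, Wo: (d_model, d_model). Returns (seq_len, d_model)."""
    L, d_model = X.shape
    if d_model % n_heads:
        raise ValueError("d_model must be divisible by n_heads")
    d_head = d_model // n_heads

    def split(M):  # (L, d_model) -> (n_heads, L, d_head)
        return M.reshape(L, n_heads, d_head).transpose(1, 0, 2)

    Q, K, V = split(X @ Wq), split(X @ Wk), split(X @ Wv)
    scores = Q @ K.transpose(0, 2, 1) / np.sqrt(d_head)    # (n_heads, L, L)
    heads = softmax(scores) @ V                            # (n_heads, L, d_head)
    concat = heads.transpose(1, 0, 2).reshape(L, d_model)  # concatenate head outputs
    return concat @ Wo
```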

Prediction:

- Concatenate final drug and target representation vectors

- Feed through fully connected layers with dropout regularization

- Output interaction probability using sigmoid activation
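The prediction head amounts to a small multilayer perceptron. The sketch below shows a numpy forward pass with ReLU hidden layers, inverted dropout applied only in training mode, and a sigmoid output; the layer sizes, dropout rate and weights are hypothetical.

```python
import numpy as np


def predict_interaction(drug_vec, target_vec, layers, dropout=0.2, training=False, rng=None):
    """Forward pass of a fully connected head.

    layers: list of (W, b); the last layer must map to a single logit.
    Returns interaction probabilities in (0, 1).
    """
    if rng is None:
        rng = np.random.default_rng()
    h = np.concatenate([drug_vec, target_vec], axis=-1)
    for i, (W, b) in enumerate(layers):
        h = h @ W + b
        if i < len(layers) - 1:
            h = np.maximum(h, 0.0)                      # ReLU on hidden layers
            if training:
                # Inverted dropout: zero units with prob `dropout`, rescale the survivors
                mask = rng.random(h.shape) >= dropout
                h = h * mask / (1.0 - dropout)
    return (1.0 / (1.0 + np.exp(-h))).squeeze(-1)      # sigmoid -> probability
```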

MFCADTI Cross-Attention Protocol [31]:

Heterogeneous Network Feature Extraction:

- Construct biological network with drug, target, disease, and side effect nodes

- Use LINE algorithm to extract network topological features

- Extract attribute features from SMILES and amino acid sequences using Frequent Continuous Subsequence method
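LINE's first-order objective pulls the embeddings of linked nodes together and, through negative sampling, pushes random pairs apart. This numpy sketch covers only first-order proximity on an unweighted, undirected graph and draws negatives uniformly (the original LINE samples them in proportion to degree^0.75); the dimension, learning rate and epoch count are hypothetical.

```python
import numpy as np


def _sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def line_first_order(edges, n_nodes, dim=16, epochs=200, lr=0.025, n_neg=5, seed=0):
    """First-order LINE with negative sampling.

    Minimizes -log s(u_i . u_j) for observed edges and -log s(-u_i . u_k) for sampled nodes k.
    """
    rng = np.random.default_rng(seed)
    U = rng.normal(scale=0.1, size=(n_nodes, dim))
    edges = np.asarray(edges)
    edges = np.vstack([edges, edges[:, ::-1]])      # use both directions of each edge
    for _ in range(epochs):
        for i, j in edges[rng.permutation(len(edges))]:
            g = _sigmoid(U[i] @ U[j]) - 1.0          # gradient w.r.t. the positive score
            grad_i, grad_j = g * U[j], g * U[i]
            for k in rng.integers(0, n_nodes, n_neg):
                gk = _sigmoid(U[i] @ U[k])           # gradient w.r.t. a negative score
                grad_i = grad_i + gk * U[k]
                U[k] -= lr * gk * U[i]
            U[i] -= lr * grad_i
            U[j] -= lr * grad_j
    return U
```

In a heterogeneous drug-target-disease-side-effect network, all node types share one index space, and the rows of `U` for drugs and targets become their topological features.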

Cross-Attention Feature Fusion:

- Apply cross-attention mechanisms to integrate network and attribute features

- Use cross-attention to learn interaction features between drug-target pairs
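In cross-attention, queries come from one source and keys and values from another, so each drug token gathers information from the target tokens (or vice versa). A minimal numpy sketch, assuming each entity is represented as a small set of token vectors, for example its network-feature vector and its attribute-feature vector as two tokens; the projection matrices are hypothetical.

```python
import numpy as np


def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def cross_attention(query_tokens, context_tokens, Wq, Wk, Wv):
    """Scaled dot-product attention of query_tokens over context_tokens.

    query_tokens: (n_q, d); context_tokens: (n_k, d).
    Returns the (n_q, d_v) attended features and the (n_q, n_k) attention weights.
    """
    Q = query_tokens @ Wq
    K = context_tokens @ Wk
    V = context_tokens @ Wv
    weights = softmax(Q @ K.T / np.sqrt(K.shape[1]))
    return weights @ V, weights
```

Running it in both directions (drug over target, target over drug), pooling each output and concatenating gives a pair-level interaction feature.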

Interaction Prediction:

- Pass final interaction feature representations to fully connected layers

- Predict DTIs using balanced class weights
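"Balanced" class weights are commonly computed as n_samples / (n_classes × n_c), the same heuristic scikit-learn uses for `class_weight="balanced"`. The sketch applies them in a weighted binary cross-entropy; whether MFCADTI uses exactly this formula is an assumption.

```python
import numpy as np


def balanced_class_weights(y):
    """w_c = n_samples / (n_classes * n_c): rarer classes receive larger weights."""
    y = np.asarray(y)
    classes, counts = np.unique(y, return_counts=True)
    return {int(c): len(y) / (len(classes) * n) for c, n in zip(classes, counts)}


def weighted_bce(y_true, p, weights, eps=1e-7):
    """Binary cross-entropy where each sample is scaled by its class weight."""
    y_true = np.asarray(y_true)
    p = np.clip(np.asarray(p, dtype=float), eps, 1 - eps)
    w = np.where(y_true == 1, weights[1], weights[0])
    losses = -(y_true * np.log(p) + (1 - y_true) * np.log(1 - p))
    return float(np.mean(w * losses))
```

With 10 interacting and 90 non-interacting pairs, positives get weight 5.0 and negatives about 0.56, so both classes contribute equally to the total loss.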

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Tools and Resources for DTI Feature Representation Research

| Tool/Resource | Type | Function | Application Note |
|---|---|---|---|
| RDKit | Cheminformatics Library | Molecular descriptor calculation, fingerprint generation, SMILES parsing | Open-source platform for cheminformatics; supports 196 descriptors and 8 fingerprint types [27] |
| Open Babel | Chemical Toolbox | Molecular format conversion | Supports 146 molecular formats; essential for data preprocessing [27] |
| ESM-1b | Protein Language Model | Evolutionary-scale protein sequence representations | Pretrained on UniRef50; generates contextual embeddings capturing structural and functional constraints [28] |
| FEGS | Protein Feature Extraction | Graphical and statistical feature extraction from sequences | Generates 578-dimensional feature vectors; effective for similarity analysis [30] |
| BindingDB | Bioactivity Database | Experimental binding data for drug-target pairs | Primary source for positive/negative DTI samples; includes Kd, Ki, IC50 values [4] |
| DrugBank | Pharmaceutical Knowledge Base | Comprehensive drug, target, and interaction information | Source for validated DTIs; useful for benchmark dataset construction [28] |
| LINE Algorithm | Network Embedding | Network feature extraction from heterogeneous graphs | Captures first-order and second-order proximities in biological networks [31] |
| GANs | Data Generation | Synthetic sample generation for class imbalance | Creates synthetic minority class samples; improves model sensitivity [4] |

Implementation Considerations and Best Practices

Data Preprocessing and Quality Control

Effective feature representation begins with rigorous data preprocessing and quality control measures. For drug compounds, ensure SMILES strings are canonicalized and validated using toolkits like RDKit to avoid representation ambiguities [27]. For protein sequences, verify sequence integrity and remove fragments shorter than 50 amino acids that may not contain sufficient structural information. When working with public databases like BindingDB and DrugBank, implement careful curation procedures to handle conflicting annotations and eliminate duplicate entries [4] [28].
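The sequence and curation checks can be sketched in plain Python. The sketch assumes SMILES were already canonicalized (for example with RDKit's `Chem.MolToSmiles(Chem.MolFromSmiles(s))`), and it makes two further assumptions of its own: sequences with non-standard residue codes are dropped, and pairs with conflicting labels are discarded rather than resolved.

```python
VALID_AA = set("ACDEFGHIKLMNPQRSTVWY")


def clean_dti_records(records, min_len=50):
    """Filter and deduplicate (canonical_smiles, protein_sequence, label) records.

    - drops sequences shorter than `min_len` or containing non-standard residues
    - collapses duplicate (drug, target) pairs into one record
    - discards pairs annotated with conflicting labels
    """
    labels = {}
    for smiles, seq, label in records:
        seq = seq.strip().upper()
        if len(seq) < min_len or not set(seq) <= VALID_AA:
            continue
        labels.setdefault((smiles, seq), set()).add(label)
    return [(s, q, lab.pop()) for (s, q), lab in labels.items() if len(lab) == 1]
```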

Addressing class imbalance is particularly crucial in DTI prediction, as confirmed interactions typically represent a small minority of all possible drug-target pairs. The application of Generative Adversarial Networks (GANs) has demonstrated significant improvements in model sensitivity by generating synthetic minority class samples [4]. Alternative approaches include stratified sampling techniques, cost-sensitive learning, and ensemble methods that explicitly account for imbalanced distributions.

Model Selection and Validation Strategies

Model selection should be guided by dataset characteristics and prediction requirements. Random Forest classifiers consistently demonstrate strong performance with feature-based representations, particularly when combined with GAN-based data balancing [4]. For complex nonlinear relationships, deep learning architectures including Graph Neural Networks and Transformers often achieve state-of-the-art performance but require larger training datasets and computational resources [28] [29].

Validation strategies must account for specific challenges in chemogenomic data. Cluster-cross-validation, where entire molecular scaffolds are assigned to validation folds, provides more realistic performance estimates than random cross-validation by testing generalization to novel chemical structures [32]. Nested cross-validation, which tunes hyperparameters in an inner loop kept separate from the outer evaluation folds, prevents hyperparameter selection bias and yields a nearly unbiased performance estimate [32]. Additionally, temporal validation using chronologically split data simulates real-world prediction scenarios where models predict interactions for newly discovered compounds or targets.
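Cluster and nested cross-validation combine naturally in scikit-learn: an outer `GroupKFold` holds out whole clusters, and an inner `GroupKFold` inside `GridSearchCV`, run only on each outer training split, selects hyperparameters. The sketch assumes cluster labels are supplied as `groups` (scaffold labels could come from RDKit's `MurckoScaffold.MurckoScaffoldSmiles`), and the Random Forest grid is hypothetical. Every held-out fold must contain both classes for ROC-AUC to be defined.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import GridSearchCV, GroupKFold


def nested_cluster_cv(X, y, groups, param_grid, outer_folds=5, inner_folds=3, seed=0):
    """Outer loop: performance on unseen clusters. Inner loop: hyperparameter selection."""
    X, y, groups = np.asarray(X), np.asarray(y), np.asarray(groups)
    scores = []
    for train, test in GroupKFold(n_splits=outer_folds).split(X, y, groups):
        search = GridSearchCV(
            RandomForestClassifier(random_state=seed),
            param_grid,
            cv=GroupKFold(n_splits=inner_folds),
            scoring="roc_auc",
        )
        # Inner folds also respect clusters, so tuning never sees the outer test clusters.
        search.fit(X[train], y[train], groups=groups[train])
        scores.append(roc_auc_score(y[test], search.predict_proba(X[test])[:, 1]))
    return float(np.mean(scores)), scores
```

For temporal validation, the same loop can be replaced by a single split on a date column: train on records before a cutoff and evaluate on records after it.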

Figure 2: Decision Workflow for DTI Prediction Implementation

Computational Resource Requirements

Computational requirements for feature representation and DTI prediction workflows scale with the complexity of the approach. Traditional fingerprint-based methods with Random Forest classifiers can run on standard workstations with 16 GB RAM and multi-core processors. Deep learning approaches using GNNs or Transformers typically require GPU acceleration; an NVIDIA RTX 3080 or an equivalent GPU with at least 10 GB of VRAM is recommended for moderate-sized datasets [28] [29]. Large protein language models such as ESM-1b benefit from high-memory environments (32 GB+ RAM) during inference.

For organizations implementing these methods, cloud computing platforms provide flexible scaling options, with containerization (Docker) and workflow management (Nextflow, Snakemake) facilitating reproducible research across computing environments.

Feature representation forms the foundation of modern chemogenomic research and drug-target interaction prediction. The evolution from traditional fingerprints and descriptors to learned representations has significantly enhanced our ability to capture complex chemical and biological patterns relevant to drug discovery. Integrated frameworks that combine multiple representation types while addressing fundamental challenges like data imbalance and generalization to novel scaffolds demonstrate the increasing sophistication of computational approaches in this domain.

As molecular representation continues to advance, the integration of larger-scale biological knowledge, three-dimensional structural information, and advanced learning paradigms like contrastive and self-supervised learning will further enhance prediction capabilities. These computational advances, coupled with rigorous experimental validation, create a powerful framework for accelerating drug discovery and repositioning efforts, ultimately contributing to more efficient development of safe and effective therapeutics.

In the field of chemogenomics, the prediction of drug-target interactions (DTIs) is a fundamental task for understanding polypharmacology, de-orphaning drug molecules, and accelerating drug repurposing [33]. Among the computational approaches, classical machine learning (ML) models, particularly Random Forest (RF) and Support Vector Machine (SVM), remain widely used due to their interpretability, robustness with curated datasets, and strong performance on complex biological data [23]. These models typically operate within a proteochemometric (PCM) modeling framework, which integrates the chemical features of compounds with the genomic or sequence-based features of target proteins into a single supervised learning model [34] [33]. This application note details the implementation of RF and SVM for DTI prediction, providing structured protocols, performance benchmarks, and resource guidance for researchers and scientists.

Theoretical Foundation and Key Concepts

The application of RF and SVM in DTI prediction is largely grounded in the "guilt-by-association" (GBA) principle. This principle posits that similar drugs are likely to interact with similar targets, and vice versa [33]. PCM modeling extends this concept by considering both drug and target spaces simultaneously, allowing for extrapolation to novel compounds and novel targets [33].
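The guilt-by-association principle can be expressed as a nearest-neighbour score: a query drug is scored against a target by its highest Tanimoto similarity to drugs already known to bind that target. In this sketch fingerprints are represented as sets of "on" bit indices for clarity (with RDKit bit vectors, `DataStructs.TanimotoSimilarity` computes the same quantity), and the drug and target names are hypothetical.

```python
def tanimoto(a: set, b: set) -> float:
    """Tanimoto similarity: |A ∩ B| / |A ∪ B| for sets of on-bits."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def gba_score(query_fp, target, known_interactions, fingerprints):
    """Max similarity of the query to known ligands of `target` (0.0 if it has none)."""
    ligands = [d for d, t in known_interactions if t == target]
    return max((tanimoto(query_fp, fingerprints[d]) for d in ligands), default=0.0)
```

PCM models generalize this idea: instead of a fixed similarity rule, a classifier learns from drug and target descriptors jointly.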

- Random Forest is an ensemble learning method that constructs multiple decision trees during training. Its robustness in handling high-dimensional data and providing feature importance metrics makes it particularly valuable for DTI prediction, where datasets can contain thousands of molecular descriptors [35] [23].

- Support Vector Machine is a powerful classifier that finds an optimal hyperplane to separate interacting from non-interacting drug-target pairs in a high-dimensional feature space. Its effectiveness, especially with nonlinear kernels, has been demonstrated in numerous chemogenomic studies [36] [33].

The following workflow outlines the standard PCM-based DTI prediction process that leverages these algorithms.
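A minimal PCM sketch with scikit-learn, assuming `X` already holds concatenated drug and target descriptors for each pair and `y` holds binary interaction labels. The hyperparameters (200 trees, an RBF kernel with default `C`, balanced class weights) are illustrative choices, not values from the cited studies. The SVM is wrapped with a scaler because RBF kernels are sensitive to feature scale, and it is scored through its decision function, which ROC-AUC accepts directly.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC


def fit_pcm_models(X, y, seed=0):
    """Train RF and RBF-SVM PCM models on a stratified split; return test ROC-AUC for each."""
    X_tr, X_te, y_tr, y_te = train_test_split(
        X, y, test_size=0.25, stratify=y, random_state=seed)
    rf = RandomForestClassifier(
        n_estimators=200, class_weight="balanced", random_state=seed).fit(X_tr, y_tr)
    svm = make_pipeline(
        StandardScaler(), SVC(kernel="rbf", class_weight="balanced")).fit(X_tr, y_tr)
    return {
        "random_forest": roc_auc_score(y_te, rf.predict_proba(X_te)[:, 1]),
        "svm_rbf": roc_auc_score(y_te, svm.decision_function(X_te)),
    }
```

A random split over pairs lets the same drugs and targets appear in both training and test sets, so it tends to be optimistic. The cluster-based splits described earlier give a stricter estimate for novel compounds.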

Performance Benchmarking

Classical ML models have demonstrated strong and reliable performance in DTI prediction tasks, often serving as robust baselines against which more complex deep learning models are evaluated.

Table 1: Performance Metrics of Random Forest and SVM in DTI Studies

| Model | Dataset | Key Input Features | Performance Metrics | Reference / Context |
|---|---|---|---|---|
| Random Forest | 17 Targets from ChEMBL | 3D molecular fingerprints (E3FP), Kullback-Leibler divergence | Mean Accuracy: 0.882; ROC AUC: 0.990 | [35] |