Machine Learning for Molecular Property Prediction: Advances, Applications, and Future Directions in Drug Discovery

Abstract

This article provides a comprehensive overview of machine learning (ML) applications in molecular property prediction, a critical technology accelerating drug discovery and materials science. It explores foundational concepts, from overcoming traditional experimental bottlenecks to understanding dataset limitations and uncertainty quantification. The content delves into advanced methodological frameworks, including graph neural networks, multi-task learning, and emerging architectures like Kolmogorov-Arnold Networks, highlighting user-friendly tools that democratize access for researchers. It further addresses practical challenges such as data scarcity and model optimization, while presenting rigorous validation paradigms and comparative analyses across drug modalities. Through real-world case studies on targeted protein degraders and COVID-19 drug repurposing, this resource equips researchers and drug development professionals with the knowledge to effectively implement ML strategies, enhance predictive reliability, and drive innovation in biomedical research.

The Foundation of AI in Chemistry: From Data Challenges to Core Concepts

The Critical Need for ML in Molecular Property Prediction

The discovery of new molecules for applications in pharmaceuticals, materials, and energy storage is fundamentally constrained by the slow and resource-intensive process of experimentally determining molecular properties. Machine learning (ML) has emerged as a transformative tool to overcome this bottleneck, using data-driven models to predict properties directly from molecular structures, thereby accelerating the pace of scientific discovery [1] [2]. These models learn from existing data to make rapid predictions for new molecules, significantly reducing the time, cost, and wear-and-tear on laboratory equipment associated with traditional methods [1]. However, the efficacy of these models is often hampered by challenges such as data scarcity, the need for specialized programming skills, and poor performance on out-of-distribution data [1] [2] [3]. This document outlines the critical need for ML in this domain and provides detailed application notes and protocols to enable researchers to implement these advanced techniques effectively.

Current Landscape and Key Challenges

The application of ML in molecular sciences is rapidly evolving, with research focusing on overcoming significant barriers to practical implementation.

Table 1: Key Challenges in Molecular Property Prediction

| Challenge | Impact on Research | Emerging ML Solutions |
|---|---|---|
| Data Scarcity [2] | Limits model robustness, particularly for novel molecular classes. | Multi-task learning (MTL), Adaptive Checkpointing with Specialization (ACS) [2]. |
| Programming Skill Barrier [1] | Creates an accessibility barrier for trained chemists without computational backgrounds. | User-friendly software tools (e.g., ChemXploreML) [1]. |
| Out-of-Distribution (OOD) Generalization [3] | Inflated performance estimates; models fail on chemically distinct molecules. | Robust evaluation protocols using scaffold and cluster-based data splits [3]. |
| Lack of Interpretability [4] | "Black box" predictions hinder scientific insight and hypothesis generation. | Functional group-level reasoning datasets (e.g., FGBench) [4]. |
| Ultra-Low Data Regimes [2] | Prevents ML application in new research areas with little historical data. | Specialized training schemes like ACS, enabling learning from fewer than 30 labeled samples [2]. |

A significant frontier is the move from molecule-level to functional group-level prediction. Functional groups are specific groupings of atoms that strongly influence molecular properties [4]. Incorporating this fine-grained information can provide valuable prior knowledge and lead to more interpretable, structure-aware models [4]. The FGBench dataset, comprising 625,000 molecular property reasoning problems with precise functional group annotations, is designed to enhance the reasoning capabilities of large language models (LLMs) in chemistry by uncovering hidden relationships between specific functional groups and molecular properties [4].

Application Notes: Instrumental ML Models and Datasets

This section details key resources that form the modern scientist's toolkit for molecular property prediction.

Table 2: Essential Research Reagent Solutions for ML-Driven Discovery

| Item Name | Type | Function & Application | Key Specifications |
|---|---|---|---|
| ChemXploreML [1] | Desktop Software | User-friendly application for predicting key molecular properties (e.g., boiling point) without deep programming skills. | Offline-capable; includes automated molecular embedders; accuracy up to 93% for critical temperature [1]. |
| ACS Training Scheme [2] | ML Algorithm | Mitigates negative transfer in multi-task graph neural networks, enabling accurate prediction in ultra-low data regimes. | Combines shared backbones with task-specific heads; adaptive checkpointing; validated with as few as 29 labeled samples [2]. |
| FGBench Dataset [4] | Benchmark Dataset | Enables training and evaluation of models on functional group-level property reasoning. Contains 625K QA pairs. | Covers 245 functional groups; includes regression and classification tasks; supports single and multiple FG interactions [4]. |
| Open Molecules 2025 (OMol25) [5] | Quantum Chemistry Dataset | Large-scale DFT dataset for training foundational models on biomolecules, metal complexes, and electrolytes. | Configurations up to 10x larger than in previous datasets; DFT calculations performed with the ORCA quantum chemistry package (v6.0.1) [5]. |
| Universal Model for Atoms (UMA) [5] | Foundational Model | Machine learning interatomic potential providing accurate predictions across a wide range of materials and molecules. | Trained on over 30 billion atoms; serves as a versatile base for downstream fine-tuning applications [5]. |

Experimental Protocols

Protocol: Implementing ACS for Multi-Task Graph Neural Networks

Purpose: To train a robust multi-task GNN that mitigates negative transfer, especially under severe task imbalance and in ultra-low data regimes [2].

Materials:

- Hardware: Computer with CUDA-enabled GPU.

- Software: Python (>=3.8), PyTorch (>=1.9), PyTorch Geometric (>=2.0).

- Data: Molecular dataset with multiple property labels (e.g., ClinTox, SIDER, Tox21).

Procedure:

- Data Preprocessing:

Model Architecture Setup:

- Backbone: Implement a shared message-passing graph neural network (MP-GNN) to generate latent molecular representations [2].

- Heads: For each property prediction task, attach a separate, task-specific multi-layer perceptron (MLP) head.
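A minimal PyTorch sketch of this backbone-plus-heads layout follows. It assumes a single molecule given as a dense atom-feature matrix `x` and a 0/1 adjacency matrix `adj`, and uses one simple sum-aggregation message-passing layer; the class names `SimpleMPLayer` and `MultiTaskMPGNN` are hypothetical, and a production implementation would batch graphs and use a layer from a library such as PyTorch Geometric instead.

```python
import torch
import torch.nn as nn


class SimpleMPLayer(nn.Module):
    """One message-passing step: each atom combines its own state with the sum of its neighbours' states."""

    def __init__(self, dim: int):
        super().__init__()
        self.self_lin = nn.Linear(dim, dim)
        self.nbr_lin = nn.Linear(dim, dim, bias=False)

    def forward(self, h: torch.Tensor, adj: torch.Tensor) -> torch.Tensor:
        # adj is a dense (num_atoms x num_atoms) 0/1 matrix, so adj @ h sums neighbour states
        return torch.relu(self.self_lin(h) + self.nbr_lin(adj @ h))


class MultiTaskMPGNN(nn.Module):
    """Shared message-passing backbone with one MLP head per property-prediction task."""

    def __init__(self, in_dim: int, hidden: int, num_tasks: int, num_layers: int = 3):
        super().__init__()
        self.embed = nn.Linear(in_dim, hidden)
        self.layers = nn.ModuleList([SimpleMPLayer(hidden) for _ in range(num_layers)])
        self.heads = nn.ModuleList(
            [nn.Sequential(nn.Linear(hidden, hidden), nn.ReLU(), nn.Linear(hidden, 1)) for _ in range(num_tasks)]
        )

    def encode(self, x: torch.Tensor, adj: torch.Tensor) -> torch.Tensor:
        h = torch.relu(self.embed(x))
        for layer in self.layers:
            h = layer(h, adj)
        return h.sum(dim=0)  # sum readout: one latent vector per molecule

    def forward(self, x: torch.Tensor, adj: torch.Tensor) -> torch.Tensor:
        z = self.encode(x, adj)  # shared representation used by every task
        return torch.cat([head(z) for head in self.heads])  # shape: (num_tasks,)
```

Because the readout is a sum over atoms, the prediction does not depend on the order in which atoms are listed.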

ACS Training Scheme:

- Initialize the shared GNN backbone and all task-specific MLP heads.

- For each training epoch, perform a forward pass for all tasks. Calculate the loss for each task independently, masking losses where labels are absent.

- Update all model parameters via backpropagation using a combined (e.g., summed) loss.

- Adaptive Checkpointing: After each epoch, evaluate the model on the validation set for every task. For any task where the validation loss achieves a new minimum, checkpoint (save) the state of the shared backbone and that task's specific head [2].

- Continue training until convergence criteria are met for all tasks.

Model Specialization:

- Upon completion of training, the final model for each task is the checkpointed backbone-head pair that achieved the lowest validation loss for that specific task [2].
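The training loop, masked loss, and per-task checkpointing can be sketched as follows. This is an illustrative reading of the protocol, not the reference ACS implementation: to stay short it assumes a plain MLP backbone over fixed feature vectors (standing in for the MP-GNN), full-batch updates, regression targets with missing labels stored as NaN, and a fixed epoch budget in place of a convergence test. All function names are hypothetical.

```python
import copy

import torch
import torch.nn as nn


def masked_task_losses(pred: torch.Tensor, target: torch.Tensor) -> torch.Tensor:
    """Per-task mean squared error that ignores missing labels (NaN entries in `target`)."""
    mask = ~torch.isnan(target)
    sq_err = (pred - torch.nan_to_num(target)) ** 2
    sq_err = torch.where(mask, sq_err, torch.zeros_like(sq_err))
    counts = mask.sum(dim=0).clamp(min=1)  # avoid division by zero for tasks with no labels
    return sq_err.sum(dim=0) / counts  # shape: (num_tasks,)


def predict(backbone: nn.Module, heads: nn.ModuleList, x: torch.Tensor) -> torch.Tensor:
    z = backbone(x)  # shared latent representation
    return torch.cat([head(z) for head in heads], dim=1)  # (num_molecules, num_tasks)


def train_acs(backbone, heads, x_tr, y_tr, x_va, y_va, epochs=100, lr=1e-3):
    optimizer = torch.optim.Adam(list(backbone.parameters()) + list(heads.parameters()), lr=lr)
    best_val = [float("inf")] * len(heads)
    checkpoints = [None] * len(heads)
    for epoch in range(epochs):
        backbone.train()
        heads.train()
        loss = masked_task_losses(predict(backbone, heads, x_tr), y_tr).sum()  # summed task losses
        optimizer.zero_grad()
        loss.backward()
        optimizer.step()

        # Adaptive checkpointing: snapshot backbone + head whenever a task reaches a new validation minimum
        backbone.eval()
        heads.eval()
        with torch.no_grad():
            val_losses = masked_task_losses(predict(backbone, heads, x_va), y_va)
        for t, val in enumerate(val_losses.tolist()):
            if val < best_val[t]:
                best_val[t] = val
                checkpoints[t] = {
                    "epoch": epoch,
                    "backbone": copy.deepcopy(backbone.state_dict()),
                    "head": copy.deepcopy(heads[t].state_dict()),
                }
    return checkpoints, best_val


def build_specialist(backbone, heads, checkpoints, task: int) -> nn.Module:
    """Specialization: rebuild the backbone-head pair saved at this task's best validation loss."""
    ckpt = checkpoints[task]
    task_backbone = copy.deepcopy(backbone)
    task_backbone.load_state_dict(ckpt["backbone"])
    task_head = copy.deepcopy(heads[task])
    task_head.load_state_dict(ckpt["head"])
    return nn.Sequential(task_backbone, task_head).eval()
```

One caveat of this sketch: a task with no labels in the validation set always reports a loss of zero, so it keeps its epoch-0 checkpoint; such tasks should be handled explicitly in practice.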

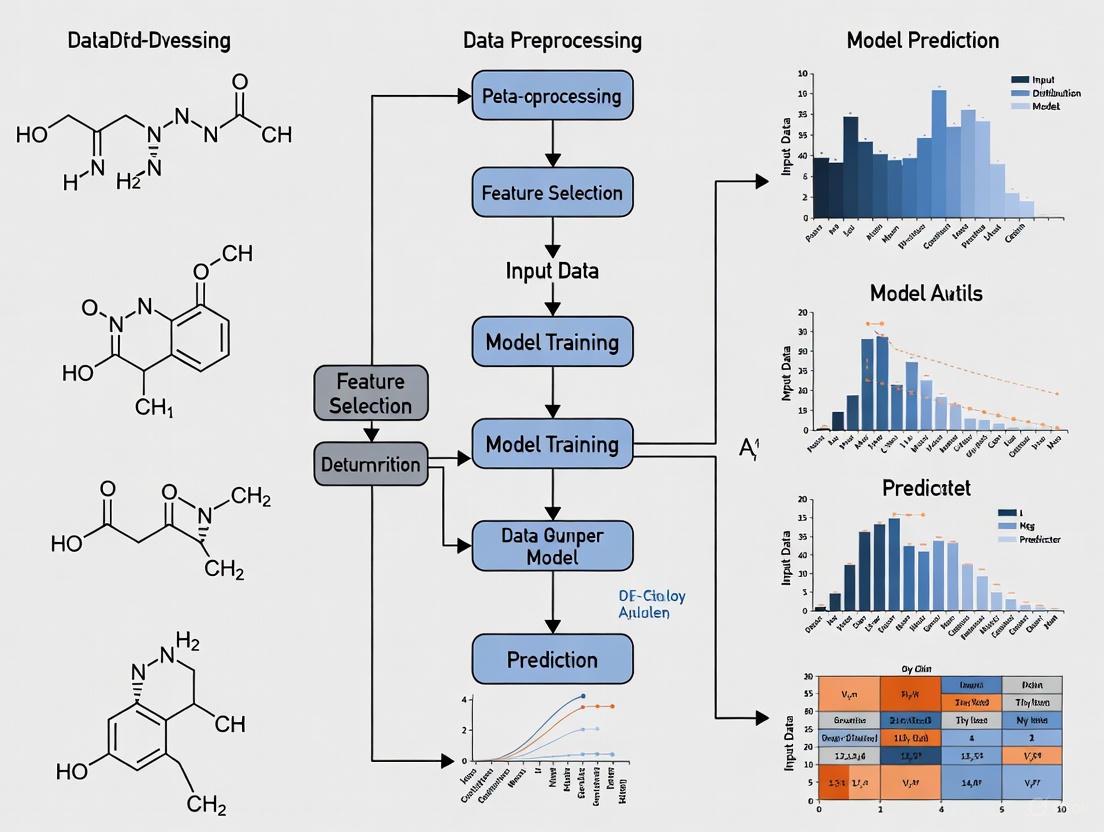

Workflow Diagram: ACS Mitigates Negative Transfer in Multi-Task Learning

Protocol: Robust OOD Evaluation of ML Models

Purpose: To assess the real-world applicability and generalization capability of molecular property prediction models by testing them on out-of-distribution data [3].

Materials:

- A trained ML model (e.g., Random Forest, GNN).

- A molecular dataset with property labels (e.g., from MoleculeNet).

- Computing environment for generating molecular fingerprints (e.g., RDKit for ECFP4).

Procedure:

- Data Splitting Strategy:

- Scaffold Split: Partition the dataset based on the Bemis-Murcko scaffold, ensuring that molecules with the same core scaffold are contained within a single split. This separates the data based on central molecular structures [3].

- Cluster Split (More Challenging): a. Generate ECFP4 fingerprints for all molecules in the dataset. b. Perform K-means clustering on the fingerprint vectors. c. Assign entire clusters to training, validation, and test sets. This ensures that chemically similar molecules are not leaked across splits, creating a tougher OOD test [3].
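The two strategies differ only in how molecules are grouped; once each molecule has a group label (a scaffold SMILES or a K-means cluster ID), whole groups are assigned to splits. The sketch below uses a greedy, largest-group-first assignment similar to common scaffold-split implementations; the function names and the 80/10/10 fractions are illustrative assumptions. RDKit and scikit-learn are imported lazily inside the labeling helpers so that the splitter itself needs only the standard library.

```python
from collections import defaultdict


def murcko_scaffold(smiles: str) -> str:
    """Bemis-Murcko scaffold SMILES, used as the group label for a scaffold split (requires RDKit)."""
    from rdkit.Chem.Scaffolds import MurckoScaffold
    return MurckoScaffold.MurckoScaffoldSmiles(smiles=smiles, includeChirality=False)


def kmeans_cluster_ids(fingerprints, n_clusters: int, seed: int = 0) -> list:
    """K-means labels for a (num_molecules x num_bits) array, e.g. ECFP4 bit vectors (requires scikit-learn)."""
    from sklearn.cluster import KMeans
    km = KMeans(n_clusters=n_clusters, n_init=10, random_state=seed)
    return km.fit_predict(fingerprints).tolist()


def group_split(group_labels, frac_train=0.8, frac_valid=0.1):
    """Assign whole groups (scaffolds or clusters) to train/valid/test so no group spans two splits."""
    groups = defaultdict(list)
    for idx, label in enumerate(group_labels):
        groups[label].append(idx)
    n = len(group_labels)
    train, valid, test = [], [], []
    # Largest groups first: big, common chemotypes fill the training set; rare ones end up in valid/test
    for members in sorted(groups.values(), key=len, reverse=True):
        if len(train) + len(members) <= frac_train * n:
            train.extend(members)
        elif len(valid) + len(members) <= frac_valid * n:
            valid.extend(members)
        else:
            test.extend(members)
    return train, valid, test
```

For a scaffold split, pass `[murcko_scaffold(s) for s in smiles_list]` to `group_split`; for a cluster split, pass the output of `kmeans_cluster_ids`.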

- Model Training and Evaluation:

- Train the model separately on the training set of each splitting strategy above, and also on a random split to obtain an in-distribution (ID) reference.

- Evaluate each trained model on its corresponding test set.

- Critical Analysis: Compare performance across the random, scaffold-split, and cluster-split test sets. Across a set of candidate models, ID (random-split) performance correlates strongly with OOD performance under scaffold splits (Pearson r ≈ 0.9) but much more weakly under cluster splits (r ≈ 0.4) [3]. Model selection based on ID performance alone is therefore unreliable for real-world OOD applications.
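To run this analysis on your own models, collect one ID score and one OOD score per candidate model, then check how well the former predicts the latter and whether the two selection criteria pick the same model. A minimal standard-library sketch; the function names are hypothetical, and scores are assumed to be higher-is-better (such as ROC-AUC).

```python
import math


def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length score lists."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = math.sqrt(sum((x - mx) ** 2 for x in xs))
    sy = math.sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy)


def id_vs_ood_selection(results: dict) -> dict:
    """results maps model name -> (ID score, OOD score); higher scores are better."""
    names = list(results)
    id_scores = [results[m][0] for m in names]
    ood_scores = [results[m][1] for m in names]
    best_id = max(names, key=lambda m: results[m][0])
    best_ood = max(names, key=lambda m: results[m][1])
    return {
        "pearson_r": pearson_r(id_scores, ood_scores),
        "best_by_id": best_id,
        "best_by_ood": best_ood,
        "ood_regret": results[best_ood][1] - results[best_id][1],  # OOD score lost by selecting on ID
    }
```

A low `pearson_r` together with a non-zero `ood_regret` is the situation the protocol warns about: the model that looks best in-distribution is not the one that generalizes best.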

Workflow Diagram: Evaluating Model Robustness on OOD Data

The integration of machine learning into molecular property prediction is no longer a niche advantage but a critical necessity for accelerating scientific discovery. The field is rapidly addressing its core challenges through innovative software that lowers accessibility barriers [1], advanced training schemes that mitigate data scarcity [2], and rigorous benchmarking that ensures real-world robustness [3]. By adopting the detailed application notes and experimental protocols outlined in this document, from implementing ACS for multi-task learning to conducting rigorous OOD evaluations, researchers and drug development professionals can reliably leverage state-of-the-art ML tools. This will enable them to push the boundaries of molecular design, leading to faster development of new medicines, materials, and sustainable technologies.

The translation of molecular structures into a machine-readable format, known as molecular representation, serves as the foundational step in artificial intelligence (AI)-assisted drug discovery [6]. An effective representation bridges the gap between chemical structures and their biological activity or physicochemical properties, enabling machine learning models to predict molecular behavior, design novel compounds, and navigate the vast chemical space [6] [7]. The choice of representation fundamentally determines the chemical information retained, directly influencing model performance, interpretability, and applicability in real-world drug discovery pipelines [8] [9].

Over years of research, three primary categories of molecular representations have emerged as central to computational chemistry and cheminformatics: string-based representations (notably SMILES), molecular fingerprints, and graph-based models [6] [9]. Each paradigm offers distinct advantages and limitations, making them suitable for different tasks and stages of the drug discovery process. More recently, fragment-based and set-based representations have emerged as innovative approaches that challenge conventional methodologies [8] [10]. This article provides a detailed examination of these core molecular representations, offering structured comparisons, experimental protocols, and visualization to equip researchers with practical knowledge for implementing these techniques in molecular property prediction research.

SMILES and String-Based Representations

Core Principles and Syntax

The Simplified Molecular Input Line Entry System (SMILES) provides a compact ASCII string representation of a molecule's structure [6] [11]. A SMILES string encodes atoms, bonds, branching, and ring closures through a rule-based syntax. Atoms are represented by their atomic symbols (e.g., C, N, O); atoms outside the organic subset (B, C, N, O, P, S, F, Cl, Br, I), and atoms carrying a charge, isotope label, or explicit hydrogen count, are enclosed in square brackets (e.g., [Na+], [13C], [NH4+]) [11]. A bond between adjacent atoms is implied to be single (or aromatic, between two aromatic atoms) unless written explicitly as double (=), triple (#), or aromatic (:); in practice, aromaticity is usually indicated by lowercase atomic symbols, as in c for aromatic carbon [11]. Branches are enclosed in parentheses, and ring closures are indicated by matching digits placed after the two atoms that close the ring [11].

For example, aspirin can be written as CC(=O)OC1=CC=CC=C1C(=O)O. Reading left to right, CC(=O)O is the acetoxy (ester) group, C1=CC=CC=C1 is the benzene ring written in Kekulé form, and the final C(=O)O is the carboxylic acid group [11]. A toolkit can compute a canonical SMILES for each molecule, but canonicalization algorithms are toolkit-specific, and the same structure admits many valid SMILES strings depending on the order in which atoms are traversed, a property known as non-uniqueness [11] [12].
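Non-uniqueness is easy to observe with RDKit: two different SMILES for aspirin parse to the same molecule and therefore share one canonical string, and randomized SMILES (often used for data augmentation) all map back to it. The canonical strings are RDKit's own; other toolkits canonicalize differently. The helper names are illustrative.

```python
from rdkit import Chem


def canonical_smiles(smiles: str) -> str:
    """Return RDKit's canonical SMILES; raise ValueError if the string cannot be parsed."""
    mol = Chem.MolFromSmiles(smiles)
    if mol is None:
        raise ValueError(f"Invalid SMILES: {smiles!r}")
    return Chem.MolToSmiles(mol)  # canonical output is the default


def randomized_smiles(smiles: str, n: int = 5) -> list:
    """Generate n valid SMILES with randomized atom ordering for the same molecule."""
    mol = Chem.MolFromSmiles(smiles)
    if mol is None:
        raise ValueError(f"Invalid SMILES: {smiles!r}")
    return [Chem.MolToSmiles(mol, canonical=False, doRandom=True) for _ in range(n)]
```

Canonicalizing before deduplication prevents the same compound from appearing in both training and test sets under different spellings.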

Processing SMILES for Machine Learning

Before SMILES strings can be processed by machine learning models, they must be tokenized and converted into numerical format. Naive character-level tokenization is insufficient as it fails to handle multi-character atoms (e.g., "Cl", "Br") or complex bracketed species correctly [11]. A standard approach uses regular expressions (regex) to split the string into chemically meaningful tokens.

These tokens are subsequently mapped to integer indices or dense vector embeddings (e.g., via an nn.Embedding layer in PyTorch) to be fed into sequence models such as Recurrent Neural Networks (RNNs) or Transformers [11].
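A minimal tokenizer sketch, using a regular expression of the kind widely used in SMILES language-model work (an assumption; other variants exist), followed by vocabulary building and fixed-length integer encoding. The resulting ID lists are what an `nn.Embedding(len(vocab), dim, padding_idx=0)` layer would consume; the helper names and the `<pad>`/`<unk>` special tokens are illustrative choices.

```python
import re

# Bracket atoms first, then two-letter halogens (Br, Cl), organic-subset atoms, bonds, branches, ring digits
SMILES_REGEX = re.compile(
    r"(\[[^\]]+]|Br?|Cl?|N|O|S|P|F|I|b|c|n|o|s|p|\(|\)|\.|=|#|-|\+|\\|/|:|~|@|\?|>|\*|\$|%[0-9]{2}|[0-9])"
)


def tokenize(smiles: str) -> list:
    tokens = SMILES_REGEX.findall(smiles)
    # findall silently skips unmatched characters, so verify nothing was lost
    if "".join(tokens) != smiles:
        raise ValueError(f"Untokenizable characters in {smiles!r}")
    return tokens


def build_vocab(smiles_list, specials=("<pad>", "<unk>")) -> dict:
    vocab = {tok: i for i, tok in enumerate(specials)}  # <pad> gets index 0
    for smi in smiles_list:
        for tok in tokenize(smi):
            vocab.setdefault(tok, len(vocab))
    return vocab


def encode(smiles: str, vocab: dict, max_len: int) -> list:
    ids = [vocab.get(tok, vocab["<unk>"]) for tok in tokenize(smiles)][:max_len]
    return ids + [vocab["<pad>"]] * (max_len - len(ids))  # right-pad to a fixed length
```

Note how `Cl`, `Br`, and bracketed species such as `[Na+]` each become a single token, which naive character splitting would break apart.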

Limitations and Advanced String-Based Representations

Classical SMILES presents several challenges for machine learning. Its non-uniqueness can lead to models failing to recognize different strings as the same molecule [12]. Furthermore, SMILES strings are highly sensitive to small syntax errors, and models can generate invalid strings with unmatched parentheses or incorrect atom valences [8] [11]. They also lack explicit spatial information, which can be critical for understanding molecular behavior [11].

To address these issues, several advanced string-based representations have been developed:

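- DeepSMILES: A SMILES variant that replaces paired ring-closure digits with a single ring-size symbol and uses only closing parentheses for branches, which removes most syntax errors caused by long-range dependencies; it can still produce semantically invalid strings [8].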

- SELFIES (Self-Referencing Embedded Strings): A representation designed so that every string corresponds to a valid molecular graph, which significantly improves robustness in generative tasks [8].

- t-SMILES (tree-based SMILES): A recently introduced, flexible, fragment-based framework that describes molecules using SMILES-type strings obtained by performing a breadth-first search on a full binary tree formed from a fragmented molecular graph [8]. This approach demonstrates higher novelty scores and outperforms classical SMILES, DeepSMILES, and SELFIES in various goal-directed tasks [8].

Molecular Fingerprints

Definition, Categories, and Common Types

Molecular fingerprints are fixed-length vectors that encode the presence or frequency of specific structural patterns or substructures within a molecule [6] [13]. They are widely used in tasks such as similarity searching, clustering, and Quantitative Structure-Activity Relationship (QSAR) modeling due to their computational efficiency [6] [13]. Fingerprints can be broadly categorized as follows:

- Path-Based Fingerprints: Generate features by analyzing paths through the molecular graph. Examples include Depth First Search (DFS) and Atom Pair (AP) fingerprints [13].

- Circular Fingerprints: A class of topological (2D) fingerprints that generate fragments from the molecular graph by iteratively considering each atom and its neighbors up to a specified radius. The most widely used algorithm is the Extended-Connectivity Fingerprint (ECFP), often considered a de facto standard for drug-like molecules [13] [9]. A related variant, the Functional Class Fingerprint (FCFP), uses pharmacophore-based atom types [13].

- Substructure-Based Fingerprints: Use a predefined dictionary of structural fragments (e.g., MACCS keys or PubChem fingerprints), where each bit indicates the presence or absence of a specific substructure [13].

- Pharmacophore Fingerprints: Encode the presence of pharmacophoric features (e.g., hydrogen bond donors/acceptors) and the spatial relationships between them, focusing on molecular interaction capabilities [13].

- String-Based Fingerprints: Operate directly on SMILES strings by fragmenting them into fixed-size substrings, such as LINGO and MinHashed fingerprints (MHFP) [13].
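Several of these fingerprint types can be computed with RDKit. The sketch below, which assumes a recent RDKit release providing the `rdFingerprintGenerator` module, builds an ECFP4-like Morgan fingerprint (radius 2), a path-based atom-pair fingerprint, and MACCS keys, and compares molecules by Tanimoto similarity. RDKit stores the 166 MACCS keys in a 167-bit vector whose bit 0 is unused. The helper names are illustrative.

```python
import numpy as np
from rdkit import Chem, DataStructs
from rdkit.Chem import MACCSkeys, rdFingerprintGenerator

# ECFP4-like: Morgan algorithm with radius 2 (diameter 4), folded to 2048 bits
MORGAN_GEN = rdFingerprintGenerator.GetMorganGenerator(radius=2, fpSize=2048)
# Path-based: atom-pair fingerprint folded to 2048 bits
ATOM_PAIR_GEN = rdFingerprintGenerator.GetAtomPairGenerator(fpSize=2048)


def compute_fingerprints(smiles: str) -> dict:
    mol = Chem.MolFromSmiles(smiles)
    if mol is None:
        raise ValueError(f"Invalid SMILES: {smiles!r}")
    return {
        "ecfp4": MORGAN_GEN.GetFingerprint(mol),
        "atom_pair": ATOM_PAIR_GEN.GetFingerprint(mol),
        "maccs": MACCSkeys.GenMACCSKeys(mol),  # substructure keys, 167-bit vector
    }


def to_numpy(fp) -> np.ndarray:
    """Convert an RDKit bit vector into a NumPy array for use with ML libraries."""
    arr = np.zeros((0,), dtype=np.int8)
    DataStructs.ConvertToNumpyArray(fp, arr)  # resizes arr to the fingerprint length
    return arr
```

For example, `DataStructs.TanimotoSimilarity(fa["ecfp4"], fb["ecfp4"])` gives the ECFP4 similarity between aspirin and salicylic acid when `fa` and `fb` are the outputs of `compute_fingerprints` for those molecules.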

Table 1: Categories and Characteristics of Common Molecular Fingerprints

| Fingerprint Category | Representative Examples | Information Encoded | Typical Vector Length |
|---|---|---|---|
| Circular/Topological | ECFP, FCFP, Morgan | Local atom environments & connectivity | 1024, 2048 |
| Substructure/Structural Keys | MACCS, PubChem | Presence of predefined substructures | 166 (MACCS), 881 (PubChem) |
| Path-Based | Atom Pair, Topological | Linear paths through molecular graph | 1024+ |
| Pharmacophore | PH2, PH3 | 3D pharmacophoric features & distances | Varies |
| String-Based | MHFP, LINGO | Substrings from SMILES representation | 1024+ |

Performance and Selection Guidelines

The effectiveness of a fingerprint is highly context-dependent and can vary significantly based on the chemical space and the specific prediction task [13] [14]. For instance, while ECFP is a default choice for drug-like compounds, other fingerprints may match or outperform it when working with natural products, which have distinct structural motifs like a higher fraction of sp³-hybridized carbons and multiple stereocenters [13].

A comprehensive benchmark study evaluating 20 different fingerprint types on over 100,000 unique natural products revealed that no single fingerprint consistently outperformed all others across 12 different bioactivity prediction tasks [13]. This finding underscores the importance of evaluating multiple fingerprinting algorithms for optimal performance on a given dataset.

Table 2: Fingerprint Performance on Natural Product Bioactivity Prediction (Adapted from [13])

This table summarizes the performance ranking (1 = best) of selected fingerprints across multiple classification tasks. A lower average rank indicates better overall performance.

| Fingerprint | Average Rank (Across 12 Tasks) | Notable Strengths |
|---|---|---|
| ECFP4 | ~3.5 | Good balance of performance and interpretability |
| Patterned MACCS | ~4.0 | Effective for scaffold hopping |
| PH2 (Pharmacophore Pairs) | ~4.5 | Captures interaction features |
| Avalon | ~5.0 | Robust on diverse structures |
| MAP4 (MinHashed Atom Pair) | ~5.5 | Captures larger substructures |

For general-purpose applications with drug-like molecules, ECFP (radius 2 or 3, vector size 1024 or 2048) is a robust starting point [9]. When dealing with specialized chemical spaces (e.g., natural products, polymers) or specific objectives (e.g., scaffold hopping), exploring pharmacophore-based, path-based, or data-driven fingerprints is highly recommended [6] [13].
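Because no fingerprint wins everywhere, a practical step is to benchmark several on your own data. The sketch below assumes the fingerprints have already been converted to NumPy arrays (one array per fingerprint type, same molecule order) and compares them with a random forest and cross-validated ROC-AUC. The model choice and `n_estimators` value are illustrative; passing a `GroupKFold` splitter as `cv` together with scaffold or cluster `groups` gives an OOD-style comparison instead of stratified random folds.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import cross_val_score


def rank_fingerprints(feature_sets: dict, y, cv=5, groups=None, seed=0) -> list:
    """Return [(name, mean ROC-AUC, std ROC-AUC)] sorted best first, one row per fingerprint type."""
    results = []
    for name, X in feature_sets.items():
        model = RandomForestClassifier(n_estimators=100, random_state=seed)
        extra = {} if groups is None else {"groups": groups}  # used by group-aware splitters
        scores = cross_val_score(model, X, y, cv=cv, scoring="roc_auc", **extra)
        results.append((name, float(np.mean(scores)), float(np.std(scores))))
    return sorted(results, key=lambda row: row[1], reverse=True)
```

Reporting the standard deviation alongside the mean helps judge whether the gap between two fingerprints is larger than fold-to-fold noise.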

Graph-Based and Set-Based Representations

Molecular Graphs as Intuitive Representations

Intuitively, small molecules can be represented as graphs, where atoms constitute the nodes and bonds constitute the edges [7] [9]. Formally, a molecular graph is defined as $G = (V, E)$, where $V$ is the set of nodes (atoms) and $E$ is the set of edges (bonds) [9]. This representation can be enriched with a node feature matrix (encoding atom type, charge, hybridization, etc.) and an edge feature matrix (encoding bond type, conjugation, stereochemistry, etc.) [7] [9]. Connectivity is commonly stored in an adjacency matrix $A$, where $A_{ij} = 1$ if atoms $i$ and $j$ are bonded and $A_{ij} = 0$ otherwise [9].
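A sketch of converting a SMILES string into the node feature matrix, adjacency matrix, and edge list described above using RDKit. The feature set (a one-hot atom type over a hypothetical short element list, degree, formal charge, aromaticity, and hybridization for nodes; bond order, conjugation, and ring membership for edges) is an illustrative choice, and stereochemistry is omitted for brevity.

```python
import numpy as np
from rdkit import Chem

ATOM_TYPES = (6, 7, 8, 9, 16, 17)  # hypothetical element list: C, N, O, F, S, Cl
HYBRIDIZATIONS = (
    Chem.HybridizationType.SP,
    Chem.HybridizationType.SP2,
    Chem.HybridizationType.SP3,
)


def one_hot(value, choices):
    """One-hot encode `value`, with a final 'other' slot for anything not in `choices`."""
    return [float(value == c) for c in choices] + [float(value not in choices)]


def mol_to_graph(smiles: str):
    mol = Chem.MolFromSmiles(smiles)
    if mol is None:
        raise ValueError(f"Invalid SMILES: {smiles!r}")
    # Node feature matrix: one row per heavy atom
    x = np.array(
        [
            one_hot(atom.GetAtomicNum(), ATOM_TYPES)
            + [float(atom.GetDegree()), float(atom.GetFormalCharge()), float(atom.GetIsAromatic())]
            + one_hot(atom.GetHybridization(), HYBRIDIZATIONS)
            for atom in mol.GetAtoms()
        ]
    )
    adj = Chem.GetAdjacencyMatrix(mol).astype(np.float32)  # A_ij = 1 if atoms i and j are bonded
    # Edge list and edge features, with each bond stored in both directions
    pairs, feats = [], []
    for bond in mol.GetBonds():
        i, j = bond.GetBeginAtomIdx(), bond.GetEndAtomIdx()
        f = [bond.GetBondTypeAsDouble(), float(bond.GetIsConjugated()), float(bond.IsInRing())]
        pairs += [(i, j), (j, i)]
        feats += [f, f]
    edge_index = np.array(pairs, dtype=np.int64).T.reshape(2, -1)  # shape (2, 2 * num_bonds)
    edge_attr = np.array(feats, dtype=np.float32).reshape(-1, 3)
    return x, adj, edge_index, edge_attr
```

The `(2, num_edges)` edge-index layout mirrors the convention used by common GNN libraries, while the dense `adj` suits small molecules and simple implementations.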

Graph Neural Networks (GNNs) are the dominant architecture for learning from this representation. They operate through a message-passing mechanism, where nodes iteratively aggregate information from their neighbors to build meaningful representations that capture both local atomic environments and the global molecular topology [7]. This makes GNNs particularly powerful for capturing complex structure-property relationships that may be challenging for other representations.
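A round of message passing fits in a few lines. The sketch below uses one simple update rule, in which each atom's new state is ReLU(h_v W_self + (sum of neighbour states) W_nbr), followed by a sum readout; GCN, GIN, and MPNN layers differ in how they weight and combine these terms. The NumPy setting and random weights are purely illustrative.

```python
import numpy as np


def message_passing(h, adj, w_self, w_nbr, steps=2):
    """Repeatedly update every atom state from itself and the sum of its neighbours' states."""
    for _ in range(steps):
        neighbor_sum = adj @ h  # row v holds the sum of h_u over neighbours u of v
        h = np.maximum(0.0, h @ w_self + neighbor_sum @ w_nbr)  # ReLU update
    return h


def readout(h):
    """Sum pooling: an order-independent molecule-level vector."""
    return h.sum(axis=0)
```

After `steps` rounds, each atom's state depends on atoms up to `steps` bonds away, which is how local environments are combined into information about the wider molecular topology.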

Molecular Set Representation Learning

A recent innovation challenges the necessity of explicit bonds in molecular representations. Molecular Set Representation Learning (MSR) posits that representing a molecule as a set (formally, a multiset) of atoms may better capture the true nature of molecules, especially given the fuzzy definition of bonds in conjugated systems and the importance of dynamic intermolecular interactions [10].

In this framework, a molecule is represented as a set of k-dimensional vectors, where each vector encodes the invariants of a single atom (e.g., atomic number, degree, formal charge), similar to the initial atom identifiers used in ECFP generation (radius zero) [10]. This representation contains no explicit connectivity information. Specialized neural network architectures like DeepSets or Set-Transformer are required to handle this unordered, variable-sized input while maintaining permutation invariance [10].

Remarkably, the simplest set-based model (MSR1) that uses only atom invariants without any bond information has been shown to achieve performance competitive with state-of-the-art GNNs on several benchmark datasets [10]. This suggests that for certain tasks, explicit graph topology might be less critical than previously assumed, or that topological information is implicitly encoded within the atom invariants.
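The set-based idea can be illustrated with a generic DeepSets model: encode each atom-invariant vector independently, sum, then transform the pooled vector. This is a sketch under stated assumptions, not the MSR1 architecture from [10]: the invariants (atomic number, degree, formal charge, total hydrogen count), layer sizes, and random untrained weights are our own choices. Ethanol and dimethyl ether contain the same atoms, yet their invariant sets differ because degree and hydrogen count already reflect how the atoms are connected.

```python
import numpy as np


def atom_invariants(smiles: str) -> np.ndarray:
    """One row per heavy atom: (atomic number, degree, formal charge, total H count). Requires RDKit."""
    from rdkit import Chem  # imported lazily so the model below runs without RDKit
    mol = Chem.MolFromSmiles(smiles)
    return np.array(
        [[a.GetAtomicNum(), a.GetDegree(), a.GetFormalCharge(), a.GetTotalNumHs()] for a in mol.GetAtoms()],
        dtype=float,
    )


class DeepSets:
    """rho(sum_i phi(x_i)) with single-layer phi and rho and random, untrained weights."""

    def __init__(self, in_dim: int, hidden: int, out_dim: int, seed: int = 0):
        rng = np.random.default_rng(seed)
        self.w_phi = rng.normal(scale=0.1, size=(in_dim, hidden))
        self.w_rho = rng.normal(scale=0.1, size=(hidden, out_dim))

    def __call__(self, atoms: np.ndarray) -> np.ndarray:
        phi = np.tanh(atoms @ self.w_phi)  # per-atom encoder, applied to each set element independently
        pooled = phi.sum(axis=0)  # permutation-invariant aggregation; no bonds are used
        return np.tanh(pooled @ self.w_rho)
```

Because the only interaction between atoms is the sum, the output is identical for any ordering of the atom rows.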

Comparative Analysis and Application Protocols

Systematic Comparison of Representation Performance

A large-scale evaluation of molecular property prediction models provides critical insights into the practical performance of different representations. One systematic study trained over 62,000 models on various datasets, including MoleculeNet benchmarks and opioids-related datasets, to investigate the predictive power of fixed representations, SMILES sequences, and molecular graphs [9].

The findings indicate that representation learning models (e.g., GNNs, SMILES-based Transformers) do not consistently outperform models using fixed fingerprints, especially on smaller datasets [9]. The performance of advanced models is highly dependent on dataset size, and they often exhibit limited gains on traditional benchmarks, suggesting that these benchmarks may not fully leverage the strengths of complex representation learning architectures [9] [10]. Furthermore, the presence of activity cliffs—where small structural changes lead to large property changes—can significantly challenge all model types [9].

Table 3: Strengths, Weaknesses, and Ideal Use Cases of Core Representations

| Representation | Key Advantages | Key Limitations | Ideal Application Context |
|---|---|---|---|
| SMILES/Strings | Compact; directly usable by sequence models; fast processing [11]. | Non-unique; syntactically fragile (invalid strings possible); limited spatial information [8] [11]. | Ligand-based screening; data augmentation with randomized SMILES [12]. |
| Molecular Fingerprints | Fast similarity search; partially interpretable; computationally efficient [6] [13]. | Predefined features may miss relevant chemistry [13]. | High-throughput virtual screening; QSAR with limited data [6] [9]. |
| Molecular Graphs | Natural structure encoding; captures topology [7]. | Memory intensive; expressive power of standard message-passing GNNs bounded by the Weisfeiler-Lehman (WL) test [8] [7]. | Property prediction with sufficient data; structure-aware tasks [7] [9]. |
| Molecular Sets | No bond definition needed; simple input; competitive performance [10]. | Newer and less established; requires specialized architectures [10]. | Complex systems (e.g., conjugated bonds); promising alternative to GNNs [10]. |

Protocol: Benchmarking Molecular Representations for Property Prediction

Objective: To systematically evaluate and compare the performance of different molecular representations (SMILES, Fingerprints, Graphs) on a specific molecular property prediction task.

Materials and Reagents (The Software Toolkit):

- Data Source: A curated dataset (e.g., from ChEMBL, MoleculeNet) with molecular structures (as SMILES) and associated property/activity labels.

- Cheminformatics Library: RDKit (for structure standardization, fingerprint calculation, and graph generation) [13] [9].

- Machine Learning Frameworks: PyTorch or TensorFlow.

- Specialized Libraries:

Experimental Workflow:

Data Preparation and Curation:

- Standardize all molecular structures using RDKit (e.g., neutralize charges, remove salts).

- Generate canonical SMILES to ensure a consistent representation.

- Split the dataset into training, validation, and test sets using a scaffold split to evaluate the model's ability to generalize to novel chemotypes; this is more challenging, and more representative of prospective use, than a random split [9] [10].
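The standardization and canonicalization steps can be sketched with RDKit's `rdMolStandardize` module. The sequence used here (default cleanup, keep the parent fragment to strip counter-ions, neutralize, then write canonical SMILES) is one reasonable default rather than a universal standard, and the helper names are illustrative.

```python
from rdkit import Chem
from rdkit.Chem.MolStandardize import rdMolStandardize

_UNCHARGER = rdMolStandardize.Uncharger()


def standardize_smiles(smiles: str):
    """Return a standardized canonical SMILES, or None if RDKit cannot parse the input."""
    mol = Chem.MolFromSmiles(smiles)
    if mol is None:
        return None
    mol = rdMolStandardize.Cleanup(mol)  # RDKit's default cleanup (e.g. normalization, reionization)
    mol = rdMolStandardize.FragmentParent(mol)  # keep the parent fragment, dropping salts/counter-ions
    mol = _UNCHARGER.uncharge(mol)  # neutralize remaining charges where possible
    return Chem.MolToSmiles(mol)  # canonical SMILES


def standardize_dataset(records):
    """records: iterable of (smiles, label). Drops unparsable entries; groups labels by standardized SMILES."""
    grouped = {}
    for smiles, label in records:
        key = standardize_smiles(smiles)
        if key is not None:
            grouped.setdefault(key, []).append(label)
    return grouped
```

Grouping labels by standardized SMILES exposes duplicates (for example, a salt and its free acid) that would otherwise leak across splits; how to merge their labels is a separate, task-specific decision.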

Feature Generation:

- SMILES Representation: Tokenize the canonical SMILES strings using a regex-based tokenizer. Build a vocabulary and create an embedding layer.

- Fingerprint Representation: Calculate selected fingerprints (e.g., ECFP4, i.e., RDKit's Morgan fingerprint with radius 2, and MACCS keys) for all molecules using RDKit. Convert them into fixed-length bit or count vectors.

- Graph Representation: Use RDKit to convert each SMILES string into a graph object. Define node features (e.g., atom type, degree, hybridization) and edge features (e.g., bond type). Represent the graph as adjacency matrices and feature matrices compatible with GNN libraries.

- Set Representation: For each molecule, create a set of vectors where each vector represents the invariants of a single non-hydrogen atom (e.g., atomic number, degree, formal charge), without any connectivity information [10].

Model Training and Evaluation:

- For each representation, train a corresponding model architecture:

- SMILES: A Transformer encoder (e.g., a BERT-style model) or an RNN.

- Fingerprints: A simple Multilayer Perceptron (MLP) or a CNN.

- Graphs: A GNN such as a Graph Isomorphism Network (GIN) or a Message-Passing Neural Network (MPNN).

- Sets: A Set-Transformer or DeepSets model.

- Train all models on the same data splits.

- Evaluate models on the test set using task-appropriate metrics (e.g., ROC-AUC for classification, RMSE for regression). Repeat each experiment with several random seeds and report the mean and standard deviation, so that differences between representations can be judged against run-to-run variability.

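Once each representation has been trained with several seeds, the per-seed test scores can be collapsed into a comparative table. A standard-library sketch; the function names are hypothetical, and `higher_is_better=False` should be used for error metrics such as RMSE.

```python
from statistics import mean, stdev


def summarize_runs(scores_by_representation: dict, higher_is_better: bool = True) -> list:
    """Collapse per-seed test scores into (representation, mean, std, n_runs) rows, best first."""
    rows = []
    for name, scores in scores_by_representation.items():
        spread = stdev(scores) if len(scores) > 1 else 0.0
        rows.append((name, mean(scores), spread, len(scores)))
    return sorted(rows, key=lambda row: row[1], reverse=higher_is_better)


def to_markdown(rows: list, metric: str) -> str:
    """Render the summary rows as a pipe table."""
    lines = [f"| Representation | Mean {metric} | Std | Runs |", "|---|---|---|---|"]
    lines += [f"| {name} | {m:.3f} | {s:.3f} | {n} |" for name, m, s, n in rows]
    return "\n".join(lines)
```

If two representations' means differ by less than their standard deviations, the ranking between them should not be over-interpreted.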

Expected Output: A comparative performance table and analysis highlighting which representation(s) are most effective for the specific dataset and task, providing actionable insights for future modeling efforts.

Visual Guide to Molecular Representations and Applications

The following diagrams illustrate the logical relationships between different molecular representations and their typical applications in a drug discovery pipeline.

Table 4: Key Software and Computational "Reagents" for Molecular Representation Research

| Tool/Resource Name | Type/Category | Primary Function in Research |
|---|---|---|
| RDKit | Cheminformatics Library | Core structure manipulation, SMILES parsing, fingerprint calculation (ECFP, Morgan), and molecular graph generation [13] [9]. |
| PyTorch Geometric | Machine Learning Library | Provides implementations of numerous Graph Neural Networks (GNNs) and utilities for handling graph-structured data [7]. |
| Hugging Face Transformers | Machine Learning Library | Offers pre-trained Transformer models and easy-to-use frameworks for fine-tuning on SMILES data for classification or generation [6] [11]. |
| Deep Graph Library (DGL) | Machine Learning Library | An alternative library for building and training GNN models [7]. |
| t-SMILES Framework | Specialized Representation | Provides code algorithms (TSSA, TSDY, TSID) for generating fragment-based molecular representations to enhance model performance and novelty [8]. |
| Molecular Set Representation Architectures | Specialized Model Code | Implements set-based learning models (e.g., MSR1, MSR2, SR-GINE) as an alternative to graph-based approaches [10]. |
| ChemBERTa, MolBERT | Pre-trained Language Model | Provides transfer learning for SMILES-based tasks, having been pre-trained on large chemical corpora [11] [12]. |

Navigating Dataset Bias, Size, and Chemical Space Coverage

Molecular property prediction is a critical task in drug discovery, where the goal is to build machine learning models that can accurately map a chemical structure to a target property. The real-world utility of these models is heavily influenced by three interconnected factors: the size of the training dataset, the biases inherent within the data, and the coverage of chemical space. A model trained on a small, biased dataset that poorly represents the vastness of chemical space is likely to generalize poorly to novel compounds, potentially misguiding research directions and wasting valuable resources. This Application Note provides a structured overview of these challenges, supported by quantitative data from recent literature, and offers detailed protocols to help researchers navigate these complexities effectively.

Key Challenges in Molecular Property Datasets

The Critical Role of Dataset Size

The performance of molecular property prediction models depends strongly on the volume of training data. A systematic study that trained over 62,000 models found that representation learning models, including sophisticated graph neural networks, often fail to outperform models built on fixed molecular representations when training data are limited, and concluded that dataset size is essential for representation learning models to excel [9]. Data requirements grow with model complexity: simpler models can converge with limited data, whereas complex deep learning models, with their high parameter counts, typically need substantially more data to learn robust representations [15].

Table 1: Heuristics for Estimating Data Requirements in Machine Learning

| Method | Description | Use Case & Limitations |
|---|---|---|
| 10 Times Rule [16] [17] [15] | Requires at least 10 data examples for each feature or parameter in the model. | Useful as a starting heuristic for simpler models; less applicable to large deep learning models with millions of parameters. |
| Factor of Model Parameters [15] | Budgets dataset size as a function of the number of trainable model parameters (e.g., 10-20 samples per parameter). | More directly encodes model complexity into data needs; a suggested formulation for neural networks. |
| Statistical Power Analysis [15] | A principled statistical method to estimate sample size based on effect size, error tolerance, and population variance. | Provides a quantitative formalism to translate performance criteria into data volume requirements. |
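The three heuristics can be turned into quick back-of-the-envelope calculations. The power-analysis function uses one common textbook formula (a two-sided, one-sample z-test that detects a mean shift of size `effect` given standard deviation `sigma`); this formulation, and the function names, are illustrative assumptions rather than necessarily the method described in [15].

```python
import math
from statistics import NormalDist


def ten_times_rule(num_features: int) -> int:
    """At least 10 examples per input feature."""
    return 10 * num_features


def mlp_parameter_count(layer_sizes: list) -> int:
    """Weights plus biases of a fully connected network, e.g. [2048, 256, 1]."""
    return sum(n_in * n_out + n_out for n_in, n_out in zip(layer_sizes[:-1], layer_sizes[1:]))


def samples_from_parameters(num_parameters: int, samples_per_parameter: int = 10) -> int:
    return samples_per_parameter * num_parameters


def power_analysis_sample_size(effect: float, sigma: float, alpha: float = 0.05, power: float = 0.8) -> int:
    """n = ((z_{1-alpha/2} + z_{power}) * sigma / effect)^2, rounded up."""
    z = NormalDist()  # standard normal
    n = ((z.inv_cdf(1 - alpha / 2) + z.inv_cdf(power)) * sigma / effect) ** 2
    return math.ceil(n)
```

For instance, a fully connected network with layer sizes 2048, 256, and 1 (a plausible fingerprint MLP) has 524,801 parameters, so the 10-samples-per-parameter rule would call for over five million labeled molecules; this is why parameter-based rules are mainly practical for small models.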

Pervasive Dataset Biases and Inconsistencies

Data heterogeneity and distributional misalignments pose critical challenges, often compromising predictive accuracy. In preclinical safety modeling, significant misalignments and inconsistent property annotations have been identified between gold-standard data sources and popular benchmarks like the Therapeutic Data Commons (TDC) [18]. These discrepancies arise from differences in experimental conditions, measurement protocols, and chemical space coverage. Naive integration of such heterogeneous data without proper assessment can introduce noise and degrade model performance, highlighting that data standardization alone does not guarantee improvement [18]. Furthermore, molecular datasets often suffer from severe class imbalance, where certain property values or structural classes are over-represented. This can lead to models that are biased toward predicting frequent classes, failing to generalize to the long tail of rare but potentially valuable compounds [19].
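Two simple pre-modeling checks follow from this paragraph: comparing the labels that two sources assign to the same molecules, and measuring class imbalance. The sketch assumes both sources are already keyed by a standardized identifier (for example, canonical SMILES or InChIKey) and uses the "balanced" inverse-frequency weighting n / (k * count_c); the tolerance and function names are illustrative.

```python
from collections import Counter


def label_conflicts(source_a: dict, source_b: dict, tol: float) -> dict:
    """Molecules present in both sources whose values differ by more than `tol`."""
    shared = sorted(source_a.keys() & source_b.keys())
    return {
        mol_id: (source_a[mol_id], source_b[mol_id])
        for mol_id in shared
        if abs(source_a[mol_id] - source_b[mol_id]) > tol
    }


def overlap_fraction(source_a: dict, source_b: dict) -> float:
    """Fraction of all distinct molecules that appear in both sources."""
    union = source_a.keys() | source_b.keys()
    return len(source_a.keys() & source_b.keys()) / len(union) if union else 0.0


def inverse_frequency_weights(labels: list) -> dict:
    """Balanced class weights n / (k * count_c): rarer classes receive proportionally larger weights."""
    counts = Counter(labels)
    n, k = len(labels), len(counts)
    return {cls: n / (k * cnt) for cls, cnt in counts.items()}
```

A high conflict rate on the shared molecules is a signal to investigate assay conditions before merging sources, rather than pooling them naively.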

The Problem of Incomplete Chemical Space Coverage

The ultimate goal of a predictive model is to make accurate predictions for novel, potentially synthetically accessible compounds. A model's ability to do this is tied to the diversity of its training data. If the training set covers only a narrow region of chemical space, the model's applicability domain will be correspondingly limited. Techniques for molecular generation and optimization, such as the CSearch method, rely on broad coverage to effectively explore and identify promising candidates. CSearch uses a global optimization algorithm with fragment-based virtual synthesis to efficiently explore synthesizable, drug-like chemical space, generating novel compounds optimized for a given objective function with high computational efficiency [20]. Ensuring that training data supports this kind of exploration is paramount.

Table 2: Summary of Key Studies on Data Challenges in Molecular Property Prediction

| Study Focus | Key Findings | Impact on Model Performance |
|---|---|---|
| Systematic Model Evaluation [9] | Trained 62,820 models; representation learning models show limited performance without sufficient data. | Highlights that dataset size is a foundational element; large-scale data is crucial for advanced models to outperform simple baselines. |
| Data Consistency Assessment [18] | Found significant misalignments between benchmark and gold-standard ADME datasets. | Naive data integration can degrade performance; rigorous pre-modeling consistency checks are vital for reliable predictions. |
| Chemical Space Search (CSearch) [20] | Achieved 300-400x computational efficiency over virtual library screening for generating optimized compounds. | Demonstrates the power of informed exploration of chemical space; generated molecules were highly optimized, synthesizable, and novel. |
| Few-Shot Learning [21] | A meta-learning approach improves predictive accuracy with limited training samples. | Provides a methodological solution for low-data regimes by effectively leveraging shared and property-specific molecular knowledge. |

Experimental Protocols for Data Handling and Evaluation

Protocol: Data Consistency Assessment (DCA) Prior to Modeling

Purpose: To identify and address dataset misalignments, outliers, and batch effects before model training, so that the resulting predictive models are robust and generalizable [18].

Materials: AssayInspector software package; a Python environment (with SciPy, Plotly, Matplotlib, Seaborn); molecular datasets in SMILES format.

Procedure:

- Data Input: Compile and load molecular datasets from different sources (e.g., public benchmarks, in-house data, literature-curated gold standards) into AssayInspector.

- Descriptive Statistics Generation: Execute AssayInspector to generate a summary report containing:

  - Number of molecules and endpoint statistics (mean, standard deviation, quartiles for regression; class counts for classification).

  - Statistical comparison of endpoint distributions using the two-sample Kolmogorov–Smirnov test (regression) or the chi-square test (classification).

- Visualization and Inspection: Generate and analyze key plots:

  - Property Distribution Plots: Visually compare the distribution of the target property (e.g., half-life, clearance) across all datasets.

  - Chemical Space Plots: Use the built-in UMAP projection to visualize the coverage and overlap of different datasets in the molecular descriptor space.

  - Dataset Discrepancy Plots: Identify molecules that appear in multiple datasets and compare their property annotations for inconsistencies.

- Insight Report Analysis: Review the automated insight report from AssayInspector, which flags:

  - Datasets with significantly different endpoint distributions.

  - Conflicting annotations for shared molecules.

  - Outliers and out-of-range data points.

- Data Curation Decision: Based on the DCA report, decide to either exclude highly inconsistent data sources, apply corrective transformations, or proceed with integration while acknowledging potential uncertainty.
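Two of the checks in this protocol, the two-sample Kolmogorov–Smirnov comparison and the search for conflicting annotations on shared molecules, can be sketched in plain Python. This is not AssayInspector's API; the function names and the tolerance are hypothetical, and the datasets are assumed to be dictionaries keyed by already-canonicalized SMILES. In practice, `scipy.stats.ks_2samp` returns both the statistic and a p-value.

```python
from bisect import bisect_right


def ks_statistic(a, b):
    """Two-sample Kolmogorov-Smirnov statistic D = max |F_a(x) - F_b(x)|."""
    a, b = sorted(a), sorted(b)
    d = 0.0
    for x in a + b:  # the supremum is attained at one of the observed values
        fa = bisect_right(a, x) / len(a)  # empirical CDF of sample a at x
        fb = bisect_right(b, x) / len(b)  # empirical CDF of sample b at x
        d = max(d, abs(fa - fb))
    return d


def conflicting_annotations(source_a, source_b, tol):
    """SMILES present in both sources whose endpoint values differ by more than tol."""
    shared = source_a.keys() & source_b.keys()
    return sorted(s for s in shared if abs(source_a[s] - source_b[s]) > tol)


benchmark = {"CCO": 1.0, "c1ccccc1": 2.0, "CC": 0.5}
in_house = {"CCO": 1.05, "c1ccccc1": 3.0}
print(ks_statistic(list(benchmark.values()), list(in_house.values())))
print(conflicting_annotations(benchmark, in_house, tol=0.5))  # ['c1ccccc1']
```

A large D (or a small p-value from a full test) signals that two sources describe different endpoint distributions, which is the kind of misalignment the curation decision step has to resolve.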

Diagram 1: Data Consistency Assessment Workflow

Protocol: Few-Shot Molecular Property Prediction via Heterogeneous Meta-Learning

Purpose: To accurately predict molecular properties in challenging low-data regimes by effectively extracting and integrating both property-shared and property-specific molecular features [21].

Materials: Molecular datasets (e.g., from MoleculeNet), Python, a deep learning framework (e.g., PyTorch, TensorFlow), graph neural network libraries.

Procedure:

- Feature Extraction:

  - Property-Specific Knowledge: Use a Graph Isomorphism Network (GIN) or similar pre-trained GNN to process the molecular graph. This encoder captures contextual information and specific substructures relevant to individual properties.

  - Property-Shared Knowledge: Use a self-attention encoder on the molecular features to extract fundamental structures and commonalities shared across different properties.

- Relational Learning: Based on the property-shared features, infer molecular relations using an adaptive relational learning module to capture the latent structure of the chemical space in the low-data regime.

- Meta-Training (Heterogeneous Strategy):

  - Inner Loop: For each individual few-shot learning task, update the parameters of the property-specific feature encoder. This allows the model to adapt quickly to new tasks with limited data.

  - Outer Loop: Jointly update all model parameters (including the property-shared encoder) across all tasks. This consolidates general, transferable knowledge.

- Alignment and Prediction: Refine the final molecular embedding by aligning it with the property label within the property-specific classifier, which produces the final prediction.
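The division of labor between the two loops can be illustrated with a deliberately tiny model. In the sketch below, a hypothetical linear model y = w·x + b stands in for the encoders: w plays the role of the property-shared parameters (updated only in the outer loop) and b the property-specific ones (adapted in the inner loop, then also updated in the outer loop). It uses a first-order, MAML-style approximation, which is an assumption for illustration and not necessarily the exact update scheme of [21].

```python
def mse_grads(w, b, xs, ys):
    """Gradients of the mean squared error of y_hat = w*x + b w.r.t. w and b."""
    n = len(xs)
    residuals = [w * x + b - y for x, y in zip(xs, ys)]
    grad_w = sum(2 * r * x for r, x in zip(residuals, xs)) / n
    grad_b = sum(2 * r for r in residuals) / n
    return grad_w, grad_b


def meta_train(tasks, w=0.0, b=0.0, inner_lr=0.5, outer_lr=0.05, steps=50):
    """First-order meta-training: w is 'shared', b is 'property-specific'."""
    for _ in range(steps):
        sum_gw, sum_gb = 0.0, 0.0
        for (xs, ys), (xq, yq) in tasks:
            # Inner loop: adapt only the property-specific parameter on the support set.
            _, gb_support = mse_grads(w, b, xs, ys)
            b_task = b - inner_lr * gb_support
            # Outer-loop signal: query-set gradients of the adapted model
            # (first-order approximation, no second derivatives).
            gw, gb = mse_grads(w, b_task, xq, yq)
            sum_gw += gw
            sum_gb += gb
        # Outer loop: jointly update all parameters, averaged over tasks.
        w -= outer_lr * sum_gw / len(tasks)
        b -= outer_lr * sum_gb / len(tasks)
    return w, b


def make_task(offset):
    """Toy task y = 2x + offset: slope shared across tasks, offset task-specific."""
    support_x, query_x = [0.0, 1.0, 2.0, 3.0], [3.0, 4.0]
    support = (support_x, [2 * x + offset for x in support_x])
    query = (query_x, [2 * x + offset for x in query_x])
    return support, query


w, b = meta_train([make_task(c) for c in (-1.0, 0.0, 1.0)])
print(round(w, 6))  # the shared slope converges to 2.0
```

Because every toy task shares the same slope, the outer loop recovers it in w, while a single inner-loop step on each task's support set is enough to fit that task's offset.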

Diagram 2: Heterogeneous Meta-Learning Architecture

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 3: Key Software Tools and Datasets for Molecular Property Prediction

| Tool / Resource | Type | Function & Application |
|---|---|---|
| RDKit [9] [18] [20] | Cheminformatics Library | Open-source toolkit for calculating molecular descriptors (e.g., 2D, 3D), generating fingerprints (ECFP, Morgan), and handling SMILES strings. |
| AssayInspector [18] | Data Consistency Tool | Python package for identifying dataset misalignments, outliers, and batch effects through statistical tests and visualizations before model training. |
| Therapeutic Data Commons (TDC) [9] [18] | Data Benchmark Platform | Provides standardized benchmarks and curated datasets for molecular property prediction, including ADME parameters. |
| CSearch [20] | Chemical Space Search Tool | Global optimization algorithm that uses virtual synthesis and a pre-trained objective function to efficiently generate synthesizable, optimized compounds. |
| ECFP/Morgan Fingerprints [9] [20] | Molecular Representation | Circular fingerprints that encode molecular substructures, serving as a robust fixed representation for traditional ML models. |
| Graph Neural Networks (GNNs) [9] [20] [21] | Model Architecture | Deep learning models that operate directly on molecular graphs to learn task-specific representations; powerful when sufficient data are available. |
| Meta-Learning Algorithms [21] | Learning Framework | Enables models to learn from few examples by leveraging knowledge from related tasks; well suited to low-data property prediction. |

Understanding the Applicability Domain for Reliable Predictions

In the field of machine learning for molecular property prediction, the Applicability Domain (AD) of a model defines the specific region of chemical space—characterized by model descriptors and modeled response—within which the model's predictions are considered reliable [22] [23]. The fundamental principle is that a Quantitative Structure-Activity Relationship (QSAR) or other predictive model is not universally applicable; its reliability depends on how similar a new query compound is to the chemicals used in the model's training set [24]. Knowledge of the domain of applicability is therefore essential for ensuring accurate and reliable model predictions and is a cornerstone of trustworthy artificial intelligence (AI) in drug discovery [25] [26].

The need for a defined applicability domain is formally recognized in international regulatory guidelines. It constitutes the third principle of the OECD (Organization for Economic Co-operation and Development) validation principles for QSAR models, which states that a model must have "a defined domain of applicability" [23]. This provides a crucial framework for deciding when a model's output can be trusted for decision-making, particularly in a regulatory context or when prioritizing compounds for synthesis in a drug discovery project [27] [28].

The Critical Importance of AD in Molecular Property Prediction

The core challenge that the applicability domain addresses is the performance degradation machine learning models experience when predicting on data that falls outside their domain of applicability [25]. This degradation can manifest as high prediction errors (large residual magnitudes) and/or unreliable uncertainty estimates [25]. Without a method to estimate the model's domain, a researcher has no a priori knowledge of whether a prediction for a new test molecule is reliable.

In practical terms, the error of QSAR models has been shown to increase robustly as the distance (e.g., Tanimoto distance on Morgan fingerprints) to the nearest training set molecule increases [29]. This observation aligns with the molecular similarity principle, which posits that molecules similar to known active ligands are likely active themselves [29]. Consequently, defining an applicability domain acts as a quality control filter, restricting predictions to those molecules for which the model is sufficiently accurate [29].
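The distance-to-nearest-neighbor filter described above can be sketched as follows. The fingerprints are assumed to be computed elsewhere (for example, Morgan fingerprints from RDKit) and passed in as sets of on-bit indices; the 0.6 distance cutoff and the function names are hypothetical and would need to be chosen by inspecting how error grows with distance for a given model.

```python
def tanimoto(a, b):
    """Tanimoto similarity between two fingerprints given as sets of on-bit indices."""
    if not a and not b:
        return 1.0  # convention: two empty fingerprints are identical
    inter = len(a & b)
    return inter / (len(a) + len(b) - inter)


def nearest_distance(query, train_fps):
    """Tanimoto distance (1 - similarity) to the most similar training fingerprint."""
    return min(1.0 - tanimoto(query, fp) for fp in train_fps)


def in_domain(query, train_fps, max_distance=0.6):
    """Hypothetical AD rule: trust the prediction only if a close training neighbor exists."""
    return nearest_distance(query, train_fps) <= max_distance


train = [{1, 2, 3, 7}, {2, 3, 4}, {10, 11, 12}]
print(nearest_distance({1, 2, 3}, train))
print(in_domain({20, 21}, train))  # False: no shared bits with any training molecule
```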

Furthermore, the concept is becoming increasingly important for generative artificial intelligence in drug design. For generative models, the AD helps constrain the algorithm to produce structures in drug-like portions of the chemical space, preventing the generation of unrealistic, unstable, or uninteresting molecules [27].

Methodological Approaches for Defining AD

Several methodological approaches have been developed to define the applicability domain of a predictive model. These methods can be broadly classified into categories based on their underlying principles and can be applied as universal methods or as approaches dependent on a specific machine learning algorithm [24].

Table 1: Overview of Key Applicability Domain Definition Methods

| Method Category | Description | Key Examples |
|---|---|---|
| Distance-Based Methods | Measures the distance of a query compound from the training set distribution in the descriptor space. | Leverage: based on Mahalanobis distance to the training set center [24] [28]; k-Nearest Neighbors (k-NN): uses distance to the k nearest training set compounds [24]. |
| Range-Based Methods | Defines the AD as the multidimensional space enclosed by the minimum and maximum values of the descriptors in the training set. | Bounding Box: a hyper-rectangle defined by the extreme descriptor values [24]. |
| Geometrical Methods | Defines a boundary that encompasses the training data in the feature space. | Convex Hull: a geometric boundary that contains all training points [25]. |
| Density-Based Methods | Estimates the probability density of the training data in the feature space. | Kernel Density Estimation (KDE): provides a continuous measure of likelihood for a query point [25]. |
| Model-Specific Methods | Leverages the internal mechanics of the ML algorithm to estimate prediction reliability. | One-Class SVM: identifies a boundary around the training data [24]; Conformal Prediction: a framework that provides prediction intervals/sets with guaranteed validity when calibration and test data are exchangeable [30]. |
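For the leverage approach in the table, the formulation commonly used in QSAR practice (stated here from general usage, not taken from the cited sources) is shown below. X is the n × (p+1) training matrix of p descriptors plus an intercept column, x_i is the corresponding row vector for compound i (a query compound is scored the same way), and h* is the usual warning leverage:

```latex
h_i = \mathbf{x}_i^{\top} \left( \mathbf{X}^{\top} \mathbf{X} \right)^{-1} \mathbf{x}_i,
\qquad
h^{*} = \frac{3(p+1)}{n}
```

A compound with h_i > h* lies far from the centroid of the training descriptors and is treated as outside the applicability domain. With an intercept column, h_i increases monotonically with the Mahalanobis distance of the compound from the training-set mean, which is why leverage is grouped with the distance-based methods.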

A recent, general approach for determining the AD employs Kernel Density Estimation (KDE), which assesses the distance between data in feature space using density estimates [25]. This method offers advantages including natural accounting for data sparsity and the ability to handle arbitrarily complex geometries of ID regions without being limited to a single, pre-defined shape like a convex hull [25].
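A minimal sketch of a density-based AD check follows. It assumes an isotropic Gaussian kernel with a fixed, hand-picked bandwidth and flags a query as out-of-domain when its estimated density falls below a low quantile (here the 5th percentile) of the training-set densities. Both the bandwidth and the quantile rule are illustrative assumptions, not the specific procedure of [25].

```python
import math


def kde_density(point, data, bandwidth):
    """Gaussian kernel density estimate at `point` from training vectors `data`."""
    d = len(point)
    norm = (2 * math.pi * bandwidth ** 2) ** (-d / 2)
    total = 0.0
    for x in data:
        sq_dist = sum((p - q) ** 2 for p, q in zip(point, x))
        total += math.exp(-sq_dist / (2 * bandwidth ** 2))
    return norm * total / len(data)


def density_threshold(train, bandwidth, quantile=0.05):
    """Cutoff density: a low quantile of the densities of the training points themselves."""
    densities = sorted(kde_density(x, train, bandwidth) for x in train)
    return densities[int(quantile * (len(densities) - 1))]


def in_domain_kde(query, train, bandwidth, threshold):
    """In-domain if the query sits in a region at least as dense as the threshold."""
    return kde_density(query, train, bandwidth) >= threshold


train = [(0.0, 0.0), (0.1, 0.0), (0.0, 0.1), (0.1, 0.1)]
cutoff = density_threshold(train, bandwidth=0.5)
print(in_domain_kde((0.05, 0.05), train, 0.5, cutoff))  # True
print(in_domain_kde((5.0, 5.0), train, 0.5, cutoff))    # False
```

Unlike a convex hull, this check has no fixed shape: a query inside the hull but in a sparsely populated gap between clusters still receives a low density.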

For kernel-based models (e.g., using Support Vector Machines), specialized AD methods have been developed that rely solely on the kernel similarity between structures, as traditional vectorial-descriptor approaches are not directly applicable [31].

Experimental Protocols for AD Determination

This section provides a detailed, step-by-step protocol for implementing two common AD methods: the Standardization Approach (a distance-based method) and the Conformal Prediction framework.

Protocol 1: Applicability Domain using the Standardization Approach

This is a simple, computationally efficient universal method for identifying outliers and compounds outside the AD [23].

Materials and Software:

- A dataset with calculated molecular descriptors for both training and test sets.

- Statistical software capable of basic calculations (e.g., MS Excel, Python, R).

Procedure:

- Descriptor Standardization: For each descriptor \( i \) used in the model, standardize the values for all compounds (training and test) using the mean \( \bar{X}_i \) and standard deviation \( \sigma_{X_i} \) of the training set only: \( S_{ki} = (X_{ki} - \bar{X}_i) / \sigma_{X_i} \), where \( S_{ki} \) is the standardized descriptor \( i \) for compound \( k \) and \( X_{ki} \) is the original descriptor value [23].

- Calculate Overall Standardization Value: For each compound \( k \), compute the overall standardization value \( S_k \), the maximum of the absolute values of its standardized descriptors: \( S_k = \max( |S_{k1}|, |S_{k2}|, \ldots, |S_{kn}| ) \) [23].

- Determine Threshold: A commonly used threshold for the maximum absolute value of the standardized descriptors is 2.5. This means a descriptor value more than 2.5 standard deviations from the training set mean is considered an outlier [23].

- Define AD and Identify Outliers:

  - Training Set: Compounds with \( S_k > 2.5 \) are considered X-outliers and may be removed to refine the model.

  - Test Set: Compounds with \( S_k > 2.5 \) are considered outside the Applicability Domain, and their predictions should be treated as unreliable [23].
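The standardization steps above translate directly into a few lines of Python. This sketch assumes descriptors are already computed and arranged as one list of values per compound, uses the sample standard deviation of the training set (the protocol does not specify sample versus population SD), and assumes constant (zero-variance) descriptors have been removed beforehand; the function names are hypothetical.

```python
import statistics


def fit_standardizer(train):
    """Per-descriptor mean and standard deviation, from the training set only."""
    columns = list(zip(*train))
    means = [statistics.mean(col) for col in columns]
    sds = [statistics.stdev(col) for col in columns]  # assumes no zero-variance descriptors
    return means, sds


def standardization_value(x, means, sds):
    """S_k: maximum absolute standardized descriptor value for one compound."""
    return max(abs((xi - m) / s) for xi, m, s in zip(x, means, sds))


def outside_ad(x, means, sds, threshold=2.5):
    """True if any descriptor lies more than `threshold` SDs from the training mean."""
    return standardization_value(x, means, sds) > threshold


train = [[1.0, 10.0], [2.0, 20.0], [3.0, 30.0]]
means, sds = fit_standardizer(train)
print(standardization_value([6.0, 20.0], means, sds))  # 4.0
print(outside_ad([4.0, 40.0], means, sds))             # False (S_k = 2.0)
```

Applying the same `outside_ad` call to training compounds flags X-outliers, and applying it to test compounds flags predictions to be treated as unreliable.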

Protocol 2: Applicability Domain using Conformal Prediction

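Conformal Prediction (CP) is a framework that wraps an existing model to produce prediction intervals for regression or prediction sets for classification. Provided the calibration and test data are exchangeable, these intervals or sets contain the true value with a user-chosen probability (e.g., 95%) [30].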

Materials and Software:

- A trained machine learning model (e.g., Random Forest, Support Vector Machine).

- A proper training set, a calibration set, and a test set.

- Programming environment with CP libraries (e.g., in Python).

Procedure:

- Data Splitting: Split the initial data into three parts:

  - A proper training set to train the underlying ML model.

  - A calibration set to calculate nonconformity scores.

  - An external test set for final evaluation [30].

- Train the Model: Train the chosen ML predictor on the proper training set.

- Calculate Nonconformity Scores: Use the trained model to predict the calibration set. For each calibration compound, compute a nonconformity score, which measures how far the prediction is from the observed value. For regression, a common nonconformity measure is the absolute prediction error [30].

- Generate Prediction Intervals: For a new test compound and a chosen significance level \( \alpha \) (e.g., 0.05 for 95% confidence):

  - Obtain the point prediction \( \hat{y} \) from the model.

  - Construct the prediction interval as \( [\hat{y} - s, \hat{y} + s] \), where \( s \) is the \( \lceil (n+1)(1-\alpha) \rceil \)-th smallest of the \( n \) calibration nonconformity scores, i.e., approximately their \( (1-\alpha) \) quantile [30].

- Addressing Non-Exchangeability (Advanced): If the test data come from a different region of chemical space (non-exchangeable with the original calibration set), the coverage guarantee may no longer hold. To restore reliability without retraining the model, replace the original calibration set with a small, experimentally characterized subset of data from the new target domain, so that the calibration and test data are more nearly exchangeable [30].
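The calibration and interval steps of split conformal regression fit in a short sketch. It assumes point predictions for the calibration set are already available from any trained model and uses the absolute error as the nonconformity score; the function names are hypothetical, and libraries such as nonconformist offer fuller implementations.

```python
import math


def conformal_quantile(scores, alpha):
    """The ceil((n+1)(1-alpha))-th smallest score; infinite if n is too small for alpha."""
    n = len(scores)
    k = math.ceil((n + 1) * (1 - alpha))
    if k > n:
        return math.inf  # not enough calibration data for this confidence level
    return sorted(scores)[k - 1]


def calibrate(cal_predictions, cal_labels, alpha=0.05):
    """Half-width s of the interval, from absolute-error nonconformity scores."""
    scores = [abs(y - p) for p, y in zip(cal_predictions, cal_labels)]
    return conformal_quantile(scores, alpha)


def predict_interval(point_prediction, s):
    """Symmetric prediction interval [y_hat - s, y_hat + s]."""
    return (point_prediction - s, point_prediction + s)


s = calibrate([0] * 19, list(range(1, 20)), alpha=0.1)
print(s)                          # 18
print(predict_interval(5.0, 2.0))  # (3.0, 7.0)
```

The recalibration strategy for non-exchangeable data amounts to calling `calibrate` again on predictions and measurements for the small target-domain subset and using the new `s`; the underlying model is left unchanged.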

Workflow and Logical Relationships

The following diagram illustrates the general workflow for developing a QSAR model with an Applicability Domain, integrating the key concepts and protocols described in this document.

Figure 1: Workflow for QSAR Model Development with Applicability Domain Assessment. The diagram outlines the key steps, from data preparation to making reliable predictions on new compounds, highlighting the two primary AD protocols.

This section details key computational tools and resources essential for implementing AD in molecular property prediction research.

Table 2: Essential Computational Tools for Applicability Domain Research

| Tool/Resource Name | Type/Function | Brief Description of Role in AD Determination |
|---|---|---|
| RDKit | Open-Source Cheminformatics | Used to calculate molecular descriptors (e.g., ECFP fingerprints) and physicochemical properties, which form the basis for many AD methods [27]. |
| Standardization App | Standalone Software | A dedicated tool for implementing the standardization approach for AD, available at http://dtclab.webs.com/software-tools [23]. |
| KNIME | Workflow Management System | Provides nodes (e.g., Enalos Domain nodes) to compute AD based on Euclidean distances or leverages within a visual, no-code/low-code environment [23]. |
| Conformal Prediction Libraries | Programming Library | Libraries in Python or R (e.g., nonconformist) that implement the conformal prediction framework for uncertainty quantification and reliable AD definition [30]. |
| Applicability Domain using Standardization | Web Application | An open-access application that allows users to identify outliers and test set compounds outside the AD using the descriptor pool of training and test sets [23]. |

Integrating a well-defined applicability domain is not an optional step but a fundamental requirement for the reliable application of machine learning models in molecular property prediction. It directly addresses the critical need for estimating prediction uncertainty, thereby enabling researchers and drug developers to distinguish between interpolative predictions, which are generally trustworthy, and extrapolative predictions, which require caution. As the field progresses with more complex models and generative AI, robust AD methodologies, such as kernel density estimation and conformal prediction, will be indispensable for building trust, ensuring reproducibility, and making informed decisions in drug discovery pipelines.

In the field of machine learning (ML) for molecular property prediction, understanding and accurately modeling the key categories of molecular properties is foundational to accelerating drug discovery and materials science. Absorption, Distribution, Metabolism, Excretion, and Toxicity (ADMET) properties, alongside fundamental physicochemical profiles, are critical determinants of a compound's viability as a therapeutic agent [32] [33]. Undesirable ADMET properties account for approximately 40% of drug candidate failures, and toxicity alone contributes another 30%, underscoring the need for early and accurate assessment [34]. This document details the key property categories, provides structured data for comparison, outlines experimental and computational protocols for their determination, and visualizes the core workflows that integrate these elements into ML-driven research.

Key Property Categories and Quantitative Data

Molecular properties can be broadly categorized into ADMET properties and physicochemical properties. The tables below summarize the specific endpoints and typical values of interest for researchers.

Table 1: Core ADMET Property Endpoints and Descriptions

| Property Category | Specific Endpoint | Description & Research Significance |
|---|---|---|
| Absorption | Bioavailability | Fraction of administered drug reaching systemic circulation; crucial for dosing [33]. |
| Distribution | Volume of Distribution (Vd) | Predicts drug concentration in plasma versus tissues; determines loading dose [33]. |
| Distribution | Blood-Brain Barrier (BBB) Penetration | Classifies whether a compound can cross the BBB; vital for CNS-targeting drugs [34] [35]. |
| Metabolism | Cytochrome P450 (CYP) Inhibition (e.g., 2C9, 2C19, 2D6, 3A4) | Predicts drug-drug interactions by assessing inhibition of key metabolic enzymes [36]. |
| Excretion | Renal Clearance | Primary route of elimination for many drugs; critical for patients with renal impairment [33]. |
| Toxicity | hERG Inhibition | Predicts potential for cardiotoxicity (long QT syndrome) [34]. |
| Toxicity | Hepatotoxicity | Predicts drug-induced liver injury [34]. |
| Toxicity | Ames Test | Predicts mutagenic potential (genotoxicity) [34]. |

Table 2: Fundamental Physicochemical and Medicinal Chemistry Properties

| Property Category | Specific Property | Typical Target Range/Value & Influence |
|---|---|---|
| Lipophilicity | Log P (Partition coefficient) | Optimal range ~1-3; impacts membrane permeability and solubility [34]. |
| Solubility | Aqueous Solubility (Log S) | High aqueous solubility is generally desirable for good absorption [36]. |
| Polar Surface Area | Topological Polar Surface Area (TPSA) | < 140 Ų is often associated with good cell membrane permeability [34]. |
| Drug-likeness | Lipinski's Rule of Five | A rule of thumb for assessing the likelihood of a compound being an orally active drug [34]; its criteria are molecular weight ≤ 500 Da, log P ≤ 5, ≤ 5 hydrogen-bond donors, and ≤ 10 hydrogen-bond acceptors. |
| Structural Alerts | Toxicophore Presence | Identifies substructures associated with toxicity (e.g., mutagenic aromatic amines) [34] [37]. |
| Electrical Property | Dielectric Constant (ε) | For energy materials like immersion coolants, a low ε is often targeted (e.g., ~3-7) [38]. |
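The Rule of Five row can be turned into a simple filter. The sketch assumes the four descriptors are computed beforehand (for example with RDKit's `Descriptors.MolWt`, `Crippen.MolLogP`, `Lipinski.NumHDonors`, and `Lipinski.NumHAcceptors`) and follows the common convention of tolerating at most one violation; the function names are hypothetical.

```python
def lipinski_violations(mol_weight, logp, h_donors, h_acceptors):
    """Count Rule-of-Five violations from precomputed descriptor values."""
    return sum([
        mol_weight > 500,   # molecular weight in Da
        logp > 5,           # octanol-water partition coefficient
        h_donors > 5,       # hydrogen-bond donors
        h_acceptors > 10,   # hydrogen-bond acceptors
    ])


def passes_rule_of_five(mol_weight, logp, h_donors, h_acceptors, max_violations=1):
    """Common convention: more than one violation suggests poor oral absorption."""
    return lipinski_violations(mol_weight, logp, h_donors, h_acceptors) <= max_violations


print(lipinski_violations(180.16, 1.2, 1, 4))   # 0
print(passes_rule_of_five(650.0, 6.1, 2, 8))    # False (two violations)
```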

Modern computational platforms like ADMETlab 3.0 cover a wide array of these properties, offering predictions for 119 endpoints, including 21 physicochemical properties, 19 medicinal chemistry properties, 34 ADME endpoints, and 36 toxicity endpoints [34] [37].

Experimental and Computational Protocols

Protocol: Predicting ADMET Properties using a Graph Neural Network (GNN)

This protocol details the use of an attention-based GNN for molecular property prediction, using only molecular structure as input [36].

1. Molecular Graph Representation

- Input: Obtain the Simplified Molecular Input Line Entry System (SMILES) string for the compound of interest [36].

- Graph Construction: Convert the SMILES string into a molecular graph \( G = (V, E) \), where:

  - \( V \) represents the set of nodes (atoms).

  - \( E \) represents the set of edges (bonds).

- Adjacency Matrices: Generate multiple adjacency matrices to represent the entire molecule and specific substructures:

  - \( A_1 \): All bonds.

  - \( A_2 \): Single bonds only.

  - \( A_3 \): Double bonds only.

  - \( A_4 \): Triple bonds only.

  - \( A_5 \): Aromatic bonds only.

  All matrices are zero-padded to a consistent dimension \( N \times N \), where \( N \) is the maximum number of atoms considered [36].

- Node Feature Matrix (\( H \)): For each atom (node), create a feature vector by one-hot encoding the following atomic properties, then concatenate the vectors into a full matrix [36]:

  - Atom type (atomic number)

  - Formal charge

  - Hybridization type

  - Whether the atom is in a ring

  - Whether the atom is in an aromatic ring

  - Chirality
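The adjacency-matrix and one-hot steps can be sketched without any chemistry library once the bonds are known. Here the molecule is assumed to be given as a list of (atom_i, atom_j, bond_type) tuples, with bond-type strings named after RDKit's `BondType` members; in practice these would come from parsing the SMILES string. The function names are hypothetical.

```python
BOND_TYPES = ("SINGLE", "DOUBLE", "TRIPLE", "AROMATIC")


def adjacency_matrices(bonds, max_atoms):
    """Return [A_1, ..., A_5]: A_1 holds all bonds, A_2..A_5 one bond type each.

    Matrices are symmetric and zero-padded to max_atoms x max_atoms.
    """
    mats = [[[0] * max_atoms for _ in range(max_atoms)]
            for _ in range(1 + len(BOND_TYPES))]
    for i, j, bond_type in bonds:
        k = 1 + BOND_TYPES.index(bond_type)  # index of the type-specific matrix
        for m in (0, k):                     # A_1 plus the matching type matrix
            mats[m][i][j] = mats[m][j][i] = 1
    return mats


def one_hot(value, choices):
    """One-hot encode `value` against an ordered list of allowed `choices`."""
    return [1 if value == c else 0 for c in choices]


# Toy 3-atom chain padded to N = 4: atom 0 -single- atom 1 =double= atom 2.
mats = adjacency_matrices([(0, 1, "SINGLE"), (1, 2, "DOUBLE")], max_atoms=4)
print(mats[0][1])  # all-bond row for atom 1: [1, 0, 1, 0]
print(one_hot("C", ["C", "N", "O"]) + one_hot(0, [-1, 0, 1]))  # atom type + formal charge
```

Each atom's full feature vector is the concatenation of several such one-hot blocks, one per property in the list above.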

2. Model Architecture and Training

- Architecture: Employ a Graph Neural Network with an attention mechanism. The model processes the multiple adjacency matrices and the node feature matrix [36].

- Learning Task: The GNN is trained to perform either:

- Regression (e.g., for predicting lipophilicity (Log P) or aqueous solubility).

- Classification (e.g., for predicting CYP450 enzyme inhibition).

- Training & Validation: Implement a five-fold cross-validation (CV) strategy on large, publicly available datasets (e.g., >4,200 compounds) to ensure model robustness and prevent overfitting [36].

3. Prediction and Output

- The trained model takes the molecular graph input and outputs a predicted value (for regression) or a classification probability (e.g., "CYP3A4 inhibitor" or "non-inhibitor") [36].

Protocol: High-Accuracy Physical Property Prediction using Pre-Trained Models

This protocol describes using a pre-trained molecular representation learning model, fine-tuned for specific bulk physical properties [38].

1. Pre-Trained Model Utilization

- Model Selection: Utilize a pre-trained model like Org-Mol, which is based on the Uni-Mol 3D transformer architecture and has been pre-trained on 60 million semi-empirically optimized small organic molecule structures [38].

- Input: Use the 3D molecular coordinates of the compound as the sole input.

2. Fine-Tuning for Specific Properties

- Data Collection: For the target property (e.g., dielectric constant, glass transition temperature \( T_g \)), collect a high-quality experimental dataset from public sources or in-house measurements [38].

- Transfer Learning: Fine-tune the pre-trained Org-Mol model on the collected property-specific dataset. This process adapts the general-purpose molecular representations to the specific structure-property relationship of interest [38].

3. High-Throughput Screening

- Deployment: Apply the fine-tuned model to a large virtual library of molecules (e.g., millions of ester compounds) to predict the target property for all candidates [38].

- Validation: Select top-performing candidates from the in silico screening for experimental synthesis and validation to confirm model predictions [38].

Workflow and Relationship Visualizations

The following diagrams illustrate the logical relationships and experimental workflows described in the protocols.

Molecular Graph ML Workflow

Pre-trained Model Fine-tuning

The Scientist's Toolkit: Essential Research Reagents and Computational Solutions

Table 3: Key Resources for Molecular Property Prediction Research

| Tool / Resource Name | Type | Primary Function in Research |
|---|---|---|
| ADMETlab 3.0 | Web Server / Computational Platform | Provides a comprehensive platform for predicting 119 ADMET, physicochemical, and medicinal chemistry endpoints from molecular structure [34] [37]. |
| Directed Message Passing Neural Network (DMPNN) | Algorithm / Model Architecture | A graph neural network that learns molecular encodings via bond-centered convolutions, often combined with molecular descriptors for enhanced performance in property prediction [34]. |
| Chemprop | Software Package | An implementation of DMPNN specifically designed for molecular property prediction, supporting multi-task learning [34]. |
| Org-Mol | Pre-trained Model | A 3D transformer-based model pre-trained on millions of organic molecules, which can be fine-tuned to accurately predict bulk physical properties from single-molecule inputs [38]. |
| RDKit | Open-Source Cheminformatics Library | Used to compute 2D molecular descriptors, generate molecular graphs from SMILES, and perform other essential cheminformatics tasks [34] [36]. |
| Therapeutics Data Commons (TDC) | Data Platform / Benchmark | Provides curated datasets and benchmarking tools for fair comparison of models on drug discovery tasks, including ADMET property prediction [36]. |
| Low-Rank Adaptation (LoRA) | Model Fine-tuning Technique | A parameter-efficient method to adapt large chemical language models (e.g., ChemBERTa) for specific property prediction tasks, drastically reducing computational cost [35]. |
| Adaptive Checkpointing with Specialization (ACS) | Training Scheme | A multi-task GNN training method that mitigates "negative transfer" in imbalanced datasets, enabling reliable prediction in ultra-low data regimes [2]. |

Advanced ML Architectures and Real-World Applications in Biomedicine

Molecular property prediction is a critical task in drug discovery and materials science, where accurately forecasting properties like toxicity, solubility, or bioactivity can significantly accelerate research and reduce costs. Within machine learning for molecular property prediction, Graph Neural Networks (GNNs) have emerged as powerful tools that directly learn from the natural graph representation of molecules, where atoms constitute nodes and chemical bonds form edges. Among GNN architectures, Message Passing Neural Networks (MPNNs), Graph Convolutional Networks (GCNs), and Graph Attention Networks (GATs) represent foundational frameworks that have driven substantial progress in the field. These models learn rich molecular representations by aggregating and transforming information from atomic neighborhoods, capturing complex structure-property relationships that often elude traditional descriptor-based approaches. This application note provides a structured comparison of these architectures and detailed experimental protocols for their implementation in molecular property prediction tasks.

Core Architectural Frameworks

Message Passing Neural Networks (MPNNs) provide a generalized framework that unifies various graph neural network approaches. In MPNNs, learning occurs through iterative message passing phases in which nodes receive and aggregate information from their direct neighbors and update their internal representations based on these aggregated messages. This framework is particularly well suited to molecular graphs because it mirrors the locality of chemical interactions. A 2025 study found that MPNNs achieved the best performance (R² = 0.75) among the GNN architectures it compared for predicting cross-coupling reaction yields [39].
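One message-passing step and a graph-level readout can be sketched as follows. The choices here are simplifying assumptions: messages are the neighbor's features scaled by a scalar edge weight, aggregation is a sum, and the update is a hypothetical element-wise ReLU(a·h_v + b·m_v) with fixed scalars a and b. A real MPNN learns neural message and update functions and can also update edge states.

```python
def message_passing_step(h, edges, a=1.0, b=1.0):
    """One step: h_v' = ReLU(a * h_v + b * sum over neighbors w of e_vw * h_w)."""
    dim = len(h[0])
    messages = [[0.0] * dim for _ in h]
    for v, w, weight in edges:  # undirected bonds: messages flow both ways
        for k in range(dim):
            messages[v][k] += weight * h[w][k]
            messages[w][k] += weight * h[v][k]
    return [
        [max(0.0, a * hv[k] + b * mv[k]) for k in range(dim)]
        for hv, mv in zip(h, messages)
    ]


def sum_readout(h):
    """Graph-level embedding: element-wise sum over all node states."""
    return [sum(col) for col in zip(*h)]


# Three-atom chain 0-1-2 with one-dimensional node features.
h1 = message_passing_step([[1.0], [2.0], [3.0]], [(0, 1, 1.0), (1, 2, 1.0)])
print(h1)               # [[3.0], [6.0], [5.0]]
print(sum_readout(h1))  # [14.0]
```

Stacking T such steps lets information travel T bonds, which is why the number of message-passing layers controls the size of the substructures the model can see.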

Graph Convolutional Networks (GCNs) use a layer-wise propagation rule derived from a first-order approximation of spectral graph convolutions. Each node's representation is updated from a symmetrically normalized sum of its own features and those of its neighbors. This architecture effectively captures local neighborhood dependencies but may struggle to capture long-range interactions in molecular graphs without sufficient depth.
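For reference, the GCN propagation rule in its standard form (Kipf and Welling; stated from general knowledge rather than from the sources cited here) is:

```latex
H^{(l+1)} = \sigma\left( \tilde{D}^{-1/2} \tilde{A} \tilde{D}^{-1/2} H^{(l)} W^{(l)} \right),
\qquad \tilde{A} = A + I_N,
\qquad \tilde{D}_{ii} = \sum_{j} \tilde{A}_{ij}
```

Here A is the N × N adjacency matrix, adding the identity I_N gives every atom a self-loop so that its own features are retained, H^{(l)} holds the node features at layer l, W^{(l)} is a learnable weight matrix, and σ is a nonlinearity such as ReLU. The symmetric normalization by D̃^{-1/2} on both sides keeps high-degree atoms from dominating the aggregated signal.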

Graph Attention Networks (GATs) incorporate self-attention mechanisms into the propagation steps, enabling nodes to assign varying importance to features of their neighbors during aggregation. Unlike GCNs which use fixed weighting schemes, GATs compute attention coefficients that determine how strongly neighboring nodes influence each other's updates. This allows for more expressive modeling of molecular interactions where certain atomic neighbors or functional groups may be more relevant to property prediction than others.
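The attention step that distinguishes GATs from GCNs can be sketched for a single head. The sketch follows the usual GAT scoring form, LeakyReLU(aᵀ[Wh_i ‖ Wh_j]) followed by a softmax over each node's neighbors plus itself, but omits the output nonlinearity and multi-head concatenation; the weight matrix W and attention vector a are hand-set here, whereas in a real model they are learned.

```python
import math


def leaky_relu(x, slope=0.2):
    return x if x > 0 else slope * x


def matvec(W, h):
    """Multiply matrix W (list of rows) by vector h."""
    return [sum(w * x for w, x in zip(row, h)) for row in W]


def gat_layer(h, neighbors, W, a):
    """Single-head graph attention: attention-weighted average of transformed features."""
    z = [matvec(W, hi) for hi in h]  # shared linear transform W h_i
    out = []
    for i, nbrs in enumerate(neighbors):
        candidates = [i] + list(nbrs)  # include a self-loop
        # Unnormalized score e_ij = LeakyReLU(a . [z_i || z_j]); '+' concatenates lists.
        scores = [leaky_relu(sum(ak * v for ak, v in zip(a, z[i] + z[j])))
                  for j in candidates]
        top = max(scores)  # subtract the max for numerical stability
        exps = [math.exp(s - top) for s in scores]
        alpha = [e / sum(exps) for e in exps]  # softmax over the neighborhood
        out.append([sum(al * z[j][k] for al, j in zip(alpha, candidates))
                    for k in range(len(z[i]))])
    return out


h = [[1.0], [3.0]]
print(gat_layer(h, [[1], [0]], W=[[1.0]], a=[0.0, 0.0]))  # uniform weights: [[2.0], [2.0]]
print(gat_layer(h, [[1], [0]], W=[[1.0]], a=[0.0, 1.0]))  # attention favors the larger neighbor
```

With a zero attention vector every neighbor gets equal weight and the layer reduces to plain mean aggregation; a non-zero vector lets the layer weight some neighbors more heavily, which is the extra expressiveness described above.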

Performance Comparison Across Molecular Tasks

Table 1: Performance comparison of GNN architectures across molecular property prediction tasks

| Architecture | Dataset/Property | Performance Metric | Result | Key Advantage |
|---|---|---|---|---|
| MPNN | Cross-coupling reaction yields [39] | R² | 0.75 | Superior predictive accuracy |
| GCN | Molecular property benchmarks [40] | Varies by dataset | Competitive | Computational efficiency |
| GAT | OGB-MolHIV (bioactivity) [41] | ROC-AUC | 0.807 | Global attention mechanism |
| EGNN | Geometry-sensitive properties [41] | MAE | 0.22-0.25 | 3D coordinate integration |
| KA-GNN [42] | Multiple benchmarks | Varies by dataset | State-of-the-art | Enhanced expressivity & interpretability |
| Descriptor-based (SVM) | ADME/T prediction [40] | Varies by dataset | Often superior | Computational efficiency |

Recent advancements include Kolmogorov-Arnold GNNs (KA-GNNs) which integrate Kolmogorov-Arnold networks into GNN components, demonstrating superior accuracy and computational efficiency across seven molecular benchmarks [42]. Equivariant GNNs (EGNNs) incorporate 3D molecular geometry, achieving the lowest mean absolute error for geometry-sensitive properties like air-water partition coefficients (MAE = 0.25) [41].

Experimental Protocols

Standardized Model Implementation Workflow

Data Preparation and Preprocessing

- Begin with molecular datasets in SMILES format or graph representations

- For molecular graphs, represent atoms as nodes (with features: atom type, hybridization, valence) and bonds as edges (with features: bond type, conjugation)

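- Split the dataset by scaffold: all molecules sharing a Bemis-Murcko scaffold are assigned to the same split, so test molecules are structurally distinct from training molecules. This prevents leakage between near-duplicate structures and avoids overly optimistic performance estimates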

- For 3D-aware models (EGNN), include molecular geometry coordinates either from computational optimization (DFT) or experimental crystallography data

- Normalize node features and target variables for regression tasks
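A scaffold split can be sketched without any cheminformatics toolkit by passing in a function that maps a SMILES string to its scaffold. In practice that function would typically wrap RDKit's `MurckoScaffold.MurckoScaffoldSmiles`. Here a hand-written lookup table stands in for it, and the greedy largest-group-first assignment is one common heuristic, not the only one.

```python
from collections import defaultdict
from typing import Callable, List, Sequence, Tuple

def scaffold_split(
    smiles: Sequence[str],
    scaffold_fn: Callable[[str], str],
    frac_train: float = 0.8,
    frac_valid: float = 0.1,
) -> Tuple[List[int], List[int], List[int]]:
    """Assign whole scaffold groups to train/valid/test so no scaffold spans two splits."""
    groups = defaultdict(list)
    for idx, smi in enumerate(smiles):
        groups[scaffold_fn(smi)].append(idx)
    # Largest groups first (ties broken by first index), so rare scaffolds land in valid/test
    ordered = sorted(groups.values(), key=lambda g: (-len(g), g[0]))
    n = len(smiles)
    train, valid, test = [], [], []
    for group in ordered:
        if len(train) + len(group) <= frac_train * n:
            train.extend(group)
        elif len(valid) + len(group) <= frac_valid * n:
            valid.extend(group)
        else:
            test.extend(group)
    return train, valid, test

# Hypothetical, hand-assigned Bemis-Murcko scaffolds for five toy molecules
TOY_SCAFFOLDS = {
    "Oc1ccccc1": "c1ccccc1",   # phenol
    "Nc1ccccc1": "c1ccccc1",   # aniline
    "Cc1ccccc1": "c1ccccc1",   # toluene
    "Cc1cccnc1": "c1ccncc1",   # 3-methylpyridine
    "OC1CCCCC1": "C1CCCCC1",   # cyclohexanol
}
mols = list(TOY_SCAFFOLDS)
train, valid, test = scaffold_split(mols, TOY_SCAFFOLDS.__getitem__, frac_train=0.6, frac_valid=0.2)
```

All three benzene derivatives end up in training, while the pyridine and cyclohexane scaffolds are held out.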

Model Configuration

- Implement MPNN with 4-6 message-passing layers; because each layer extends a node's receptive field by one bond, this covers substructures spanning up to 4-6 bonds

- Configure GCN with 2-4 convolutional layers with residual connections to mitigate oversmoothing

- Implement GAT with 4-8 attention heads to capture diverse interaction patterns

- Set hidden dimensions between 64-256 based on dataset size and complexity

- Use learning rates of 0.0001-0.001 with Adam or AdamW optimizers

- Apply regularization techniques: dropout (0.1-0.5), weight decay (1e-5 to 1e-4), and batch normalization
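One way to explore the ranges above is random search. The sketch below samples configurations from them and draws the learning rate and weight decay log-uniformly because each spans an order of magnitude. The discrete hidden-dimension choices and the sampling scheme itself are assumptions, not prescribed settings.

```python
import math
import random

# Ranges taken from the configuration list; discrete hidden sizes are an assumption
SEARCH_SPACE = {
    "mpnn_layers": (4, 6),
    "gcn_layers": (2, 4),
    "gat_heads": (4, 8),
    "hidden_dim": (64, 128, 256),
    "lr": (1e-4, 1e-3),
    "weight_decay": (1e-5, 1e-4),
    "dropout": (0.1, 0.5),
    "optimizer": ("Adam", "AdamW"),
}

def log_uniform(rng: random.Random, low: float, high: float) -> float:
    """Sample so that every order of magnitude in [low, high] is equally likely."""
    return math.exp(rng.uniform(math.log(low), math.log(high)))

def sample_config(rng: random.Random) -> dict:
    s = SEARCH_SPACE
    return {
        "mpnn_layers": rng.randint(*s["mpnn_layers"]),  # randint bounds are inclusive
        "gcn_layers": rng.randint(*s["gcn_layers"]),
        "gat_heads": rng.randint(*s["gat_heads"]),
        "hidden_dim": rng.choice(s["hidden_dim"]),
        "lr": log_uniform(rng, *s["lr"]),
        "weight_decay": log_uniform(rng, *s["weight_decay"]),
        "dropout": rng.uniform(*s["dropout"]),
        "optimizer": rng.choice(s["optimizer"]),
    }

rng = random.Random(42)
configs = [sample_config(rng) for _ in range(20)]
```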

Training and Validation

- Train models with early stopping based on validation loss with patience of 30-50 epochs

- Use appropriate loss functions: Mean Squared Error for regression, Cross-Entropy for classification

- Implement gradient clipping (max norm: 1.0-5.0) for training stability

- For imbalanced datasets, apply techniques from GATE-GNN including ensemble methods and transfer learning [43]

- Validate using k-fold cross-validation with scaffold splitting where feasible
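The stopping and clipping rules above are simple enough to state directly. This framework-agnostic sketch tracks the best validation loss with a patience counter and rescales gradients by their global L2 norm. In PyTorch the clipping step corresponds to `torch.nn.utils.clip_grad_norm_`. The synthetic loss curve is purely illustrative.

```python
import numpy as np

class EarlyStopping:
    """Signal a stop when validation loss has not improved for `patience` epochs."""

    def __init__(self, patience: int = 30, min_delta: float = 0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best = float("inf")
        self.best_epoch = -1
        self.bad_epochs = 0

    def step(self, epoch: int, val_loss: float) -> bool:
        """Record one epoch; return True if training should stop."""
        if val_loss < self.best - self.min_delta:
            self.best, self.best_epoch, self.bad_epochs = val_loss, epoch, 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience

def clip_by_global_norm(grads, max_norm: float = 1.0):
    """Rescale gradient arrays so their joint L2 norm is at most max_norm."""
    total = float(np.sqrt(sum(np.sum(g * g) for g in grads)))
    scale = min(1.0, max_norm / (total + 1e-6))
    return [g * scale for g in grads], total

# Validation loss improves for 10 epochs, then plateaus at a worse value
stopper = EarlyStopping(patience=30)
losses = [1.0 / (e + 1) for e in range(10)] + [0.2] * 100
stop_epoch = None
for epoch, loss in enumerate(losses):
    if stopper.step(epoch, loss):
        stop_epoch = epoch
        break

clipped, total = clip_by_global_norm([np.array([3.0, 4.0])], max_norm=1.0)
```

The best checkpoint (epoch 9 here) is the one to restore after stopping.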

Specialized Implementation Considerations

For MPNNs in Reaction Yield Prediction [39]

- Implement edge feature updates in addition to node updates

- Include reaction condition features (catalyst, solvent, temperature) as global context

- Use the integrated gradients method for model interpretability

- Train on diverse cross-coupling reactions (Suzuki, Sonogashira, Buchwald-Hartwig)
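Edge updates and a global reaction-condition context can be combined in a single message-passing step. In the NumPy sketch below, each edge state is updated from both endpoint atoms, the previous edge state, and a global condition vector `u`. Incoming edge states are then summed at each atom and used to update the atom state. The encoding of catalyst, solvent, and temperature into `u`, the layer sizes, and the random weights are hypothetical.

```python
import numpy as np

def relu(x):
    return np.maximum(x, 0.0)

def mpnn_step(h, edge_index, e, u, w_edge, w_node):
    """One message-passing step with edge updates and a global context vector.

    h: (N, F) atom states; edge_index: (2, E) directed edges src -> dst;
    e: (E, Fe) edge states; u: (Fu,) global reaction-condition vector."""
    src, dst = edge_index
    u_edges = np.broadcast_to(u, (e.shape[0], u.shape[0]))
    # 1) Edge update from both endpoints, the old edge state, and global context
    e_new = relu(np.concatenate([h[src], h[dst], e, u_edges], axis=1) @ w_edge)
    # 2) Sum incoming edge states at each destination atom
    m = np.zeros((h.shape[0], e_new.shape[1]))
    np.add.at(m, dst, e_new)
    # 3) Atom update from old state, aggregated messages, and global context
    u_nodes = np.broadcast_to(u, (h.shape[0], u.shape[0]))
    h_new = relu(np.concatenate([h, m, u_nodes], axis=1) @ w_node)
    return h_new, e_new

rng = np.random.default_rng(0)
N, F, Fe, Fu, H = 3, 4, 2, 3, 5
h = rng.normal(size=(N, F))
edge_index = np.array([[0, 1, 1, 2], [1, 0, 2, 1]])  # each bond stored in both directions
e = rng.normal(size=(4, Fe))
u = np.array([1.0, 0.0, 0.35])                        # hypothetical encoded conditions
w_edge = rng.normal(size=(2 * F + Fe + Fu, H))
w_node = rng.normal(size=(F + H + Fu, H))
h1, e1 = mpnn_step(h, edge_index, e, u, w_edge, w_node)
```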

For 3D-Aware Models (EGNN) [41]

- Incorporate E(n)-equivariant layers preserving translational and rotational symmetry

- Initialize with 3D molecular coordinates from quantum mechanics calculations

- Use distance-based attention in message functions

- Rely on the built-in rotational equivariance rather than random-rotation data augmentation, which is only needed for non-equivariant 3D models
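The following NumPy sketch is modeled on the E(n)-equivariant graph layer of Satorras et al. (2021) over a fully connected set of atoms. Messages depend only on invariant quantities (node features and squared interatomic distances), and coordinates are updated along relative position vectors. These two choices make the feature output invariant and the coordinate output equivariant to rotations, reflections, and translations. The small two-layer MLPs and random weights are placeholders, and the distance-based attention mentioned in the list is omitted for brevity.

```python
import numpy as np

def mlp(x, w1, w2):
    """Two-layer perceptron with SiLU activation, applied along the last axis."""
    z = x @ w1
    return (z / (1.0 + np.exp(-z))) @ w2

def egnn_layer(h, x, params):
    """One E(n)-equivariant layer on a fully connected graph of n atoms."""
    n = h.shape[0]
    diff = x[:, None, :] - x[None, :, :]               # (n, n, 3) relative positions x_i - x_j
    dist2 = np.sum(diff ** 2, axis=-1, keepdims=True)  # (n, n, 1) invariant squared distances
    hi = np.broadcast_to(h[:, None, :], (n, n, h.shape[1]))
    hj = np.broadcast_to(h[None, :, :], (n, n, h.shape[1]))
    mask = 1.0 - np.eye(n)[:, :, None]                 # exclude self-messages
    m = mlp(np.concatenate([hi, hj, dist2], axis=-1), *params["edge"]) * mask
    coef = mlp(m, *params["coord"])                    # (n, n, 1) invariant scalar weights
    x_new = x + np.sum(diff * coef * mask, axis=1) / (n - 1)
    h_new = h + mlp(np.concatenate([h, m.sum(axis=1)], axis=-1), *params["node"])
    return h_new, x_new

rng = np.random.default_rng(0)
F, M, K = 4, 8, 16
params = {
    "edge": (rng.normal(size=(2 * F + 1, K)) * 0.1, rng.normal(size=(K, M)) * 0.1),
    "coord": (rng.normal(size=(M, K)) * 0.1, rng.normal(size=(K, 1)) * 0.1),
    "node": (rng.normal(size=(F + M, K)) * 0.1, rng.normal(size=(K, F)) * 0.1),
}
h = rng.normal(size=(5, F))   # 5 atoms with 4 features each
x = rng.normal(size=(5, 3))   # hypothetical 3D coordinates
h_new, x_new = egnn_layer(h, x, params)
```

Rotating and translating `x` before the call rotates and translates `x_new` in the same way while leaving `h_new` unchanged, which is why random-rotation augmentation adds nothing for such layers.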

For Attention-Based Models (GAT, Graphormer) [41]

- Implement global attention mechanisms for capturing long-range dependencies

- Use Laplacian positional encodings for structural context

- Apply edge encoding biases in attention score computation

- Consider linearized attention for improved computational efficiency with large molecules
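Laplacian positional encodings give each atom the entries of the low-frequency eigenvectors of the graph Laplacian, which tells attention layers where an atom sits in the molecular graph. The NumPy sketch below uses the symmetric normalized Laplacian and drops the trivial eigenvector; the choice of `k` is an assumption. Eigenvector signs are arbitrary, so implementations commonly flip them at random during training.

```python
import numpy as np

def laplacian_pe(adj: np.ndarray, k: int) -> np.ndarray:
    """Return the k lowest non-trivial eigenvectors of L = I - D^-1/2 A D^-1/2."""
    deg = adj.sum(axis=1)
    d_inv_sqrt = np.where(deg > 0, 1.0 / np.sqrt(np.maximum(deg, 1e-12)), 0.0)
    lap = np.eye(adj.shape[0]) - d_inv_sqrt[:, None] * adj * d_inv_sqrt[None, :]
    _, eigvecs = np.linalg.eigh(lap)   # eigenvalues ascending, orthonormal eigenvectors
    return eigvecs[:, 1:k + 1]         # skip the trivial first eigenvector

# Benzene ring heavy atoms as a 6-cycle
n = 6
adj = np.zeros((n, n))
for i in range(n):
    adj[i, (i + 1) % n] = adj[(i + 1) % n, i] = 1.0
pe = laplacian_pe(adj, k=2)   # one 2-dimensional encoding per atom
```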

Diagram 1: MPNN framework with architectural variants for molecular graphs

Table 2: Essential research reagents and computational resources for GNN implementation

| Resource | Type | Function/Purpose | Implementation Example |
|---|---|---|---|
| RDKit | Software Library | Molecular graph generation from SMILES, feature calculation | Convert chemical structures to graph representations with atom/bond features |
| PyTorch Geometric | Deep Learning Library | GNN model implementation, graph data processing | Pre-built GCN, GAT, and MPNN layers; mini-batch handling for graphs |
| Deep Graph Library | Deep Learning Library | Flexible GNN implementations, multi-framework support | Experimental architectures, custom message-passing functions |
| OGB (Open Graph Benchmark) | Benchmark Datasets | Standardized evaluation, dataset preprocessing | MoleculeNet-derived molecular datasets (e.g., ogbg-molhiv), performance evaluation pipelines |
| ColabFold/AlphaFold | Structural Prediction | 3D protein structures | Predict target-protein structures; small-molecule conformers for EGNN input are typically generated with RDKit (ETKDG) or quantum-chemistry optimization |
| SHAP/Integrated Gradients | Interpretability Tools | Model explanation, feature importance | Identify influential molecular substructures for predictions |

Advanced Methodologies and Emerging Approaches

Innovative Framework Integrations

Kolmogorov-Arnold GNNs represent a significant architectural advancement that replaces standard multilayer perceptrons in GNNs with Kolmogorov-Arnold network modules. These KA-GNNs integrate Fourier-based univariate functions in node embedding, message passing, and readout components, demonstrating consistent outperformance over conventional GNNs in both prediction accuracy and computational efficiency [42]. Implementation requires:

- Replacing MLP transformations with KAN layers using Fourier-series-based univariate functions

- Implementing KA-GCN variant for improved node embedding with local chemical context

- Developing KA-GAT variant with expressive edge embedding initialization

- Leveraging enhanced interpretability to identify chemically meaningful substructures
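A KAN layer with Fourier-series edge functions can be sketched as follows. Each input-output pair (i, o) has its own learnable univariate function phi_{o,i}(x) = sum_k a_k cos(kx) + b_k sin(kx), and each output sums these functions over the inputs. This NumPy version is a generic illustration with assumed sizes and initialization, not the KA-GNN authors' implementation. In a KA-GNN, such a layer would stand in for the MLP used in node embedding, message passing, or readout.

```python
from typing import Optional

import numpy as np

class FourierKANLayer:
    """KAN layer whose learnable edge functions are truncated Fourier series.

    y[o] = sum_i sum_{k=1..K} (a[o,i,k] cos(k x_i) + b[o,i,k] sin(k x_i)) + bias[o]
    """

    def __init__(self, in_dim: int, out_dim: int, num_freqs: int = 4,
                 rng: Optional[np.random.Generator] = None):
        if rng is None:
            rng = np.random.default_rng()
        scale = 1.0 / np.sqrt(in_dim * num_freqs)
        self.a = rng.normal(scale=scale, size=(out_dim, in_dim, num_freqs))
        self.b = rng.normal(scale=scale, size=(out_dim, in_dim, num_freqs))
        self.bias = np.zeros(out_dim)
        self.k = np.arange(1, num_freqs + 1)

    def __call__(self, x: np.ndarray) -> np.ndarray:
        kx = x[:, :, None] * self.k[None, None, :]      # (B, in_dim, K)
        # Contract over inputs i and frequencies k for every output o
        y = np.einsum("bik,oik->bo", np.cos(kx), self.a)
        y += np.einsum("bik,oik->bo", np.sin(kx), self.b)
        return y + self.bias

    def edge_function(self, o: int, i: int, t: np.ndarray) -> np.ndarray:
        """Evaluate the learned univariate function phi_{o,i} at points t (for inspection)."""
        kt = t[:, None] * self.k[None, :]
        return np.cos(kt) @ self.a[o, i] + np.sin(kt) @ self.b[o, i]

rng = np.random.default_rng(0)
layer = FourierKANLayer(in_dim=3, out_dim=2, num_freqs=4, rng=rng)
x = rng.normal(size=(5, 3))   # e.g. 5 atoms with 3 aggregated features each
y = layer(x)
```

Because each output is a plain sum of univariate functions, `edge_function` can be plotted per input to inspect what the layer has learned, which is the basis of the interpretability argument.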

Molecular Set Representation Learning offers an alternative to graph-based representations by treating molecules as sets of atoms rather than explicitly connected graphs. This approach addresses limitations in bond definition, particularly for conjugated systems and non-covalent interactions [10]. Key implementations include:

- MSR1: Single-set atom-based representation without explicit topology

- MSR2: Dual-set representation with separate atom and bond invariants

- SR-GINE: Integration of set representation layers with graph isomorphism networks

- Performance competitive with state-of-the-art GNNs on benchmark datasets
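The defining property of a set representation is permutation invariance without any adjacency information. The sketch below uses a generic DeepSets-style encoder, rho(sum_i phi(x_i)), as a stand-in; it is not the published MSR1 or MSR2 architecture, and the feature sizes and random weights are assumptions. A second encoder over bond features, concatenated with the atom encoding, loosely mirrors the dual-set idea.

```python
import numpy as np

def set_encoder(feats, w_phi, w_rho):
    """Permutation-invariant embedding of an unordered set of feature vectors."""
    phi = np.maximum(feats @ w_phi, 0.0)   # per-element encoder
    pooled = phi.sum(axis=0)               # sum pooling ignores element order
    return np.maximum(pooled @ w_rho, 0.0)

def dual_set_encoder(atom_feats, bond_feats, atom_params, bond_params):
    """Encode the atom set and the bond set separately, then concatenate."""
    return np.concatenate([set_encoder(atom_feats, *atom_params),
                           set_encoder(bond_feats, *bond_params)])

rng = np.random.default_rng(0)
atoms = rng.normal(size=(5, 6))   # 5 atoms, 6 hypothetical atom invariants each
bonds = rng.normal(size=(4, 3))   # 4 bonds, 3 hypothetical bond invariants each
w_phi, w_rho = rng.normal(size=(6, 16)), rng.normal(size=(16, 8))
wb_phi, wb_rho = rng.normal(size=(3, 8)), rng.normal(size=(8, 4))
mol_vec = dual_set_encoder(atoms, bonds, (w_phi, w_rho), (wb_phi, wb_rho))
```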

Integration with Large Language Models

Recent approaches combine GNN structural learning with knowledge extracted from Large Language Models (LLMs), leveraging both molecular structure and human prior knowledge [44]. The protocol involves:

- Generating knowledge-based features using LLMs (GPT-4o, GPT-4.1, DeepSeek-R1)

- Extracting structural features from pre-trained molecular models

- Feature fusion through concatenation or attention-based merging

- Joint training with both knowledge and structural representations

This hybrid approach addresses the long-tail distribution of molecular knowledge in LLMs while maintaining structural awareness, outperforming single-modality models across multiple property prediction tasks [44].
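The two fusion options in the protocol can be contrasted in a few lines. In this NumPy sketch, concatenation simply stacks a knowledge-derived vector and a structural embedding. Attention-based merging instead projects each modality into a shared space and weights it by a softmax over scores against a learnable query vector. The vector sizes, the query-based scoring, and the random placeholders for LLM-derived and pre-trained-model features are assumptions.

```python
import numpy as np

def softmax(z: np.ndarray) -> np.ndarray:
    z = z - z.max()          # shift for numerical stability
    e = np.exp(z)
    return e / e.sum()

def concat_fusion(knowledge_vec: np.ndarray, structure_vec: np.ndarray) -> np.ndarray:
    """Concatenation fusion: keep both modalities side by side."""
    return np.concatenate([knowledge_vec, structure_vec])

def attention_fusion(modality_vecs, projections, query):
    """Project each modality to a shared space and average with softmax attention weights."""
    projected = [w @ v for w, v in zip(projections, modality_vecs)]
    scores = np.array([query @ p for p in projected]) / np.sqrt(query.shape[0])
    weights = softmax(scores)
    fused = np.sum([a * p for a, p in zip(weights, projected)], axis=0)
    return fused, weights

rng = np.random.default_rng(0)
knowledge = rng.normal(size=6)   # placeholder for LLM-derived knowledge features
structure = rng.normal(size=4)   # placeholder for a pre-trained structural embedding
concat = concat_fusion(knowledge, structure)
fused, weights = attention_fusion(
    [knowledge, structure],
    [rng.normal(size=(3, 6)), rng.normal(size=(3, 4))],
    query=rng.normal(size=3),
)
```

Concatenation preserves all information but grows the input dimension; attention merging keeps a fixed size and exposes per-modality weights that can be inspected.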

Diagram 2: Hybrid architecture combining LLM knowledge with GNN structural features

Performance Optimization and Troubleshooting

Addressing Common Implementation Challenges

Class Imbalance in Molecular Datasets

Molecular datasets often exhibit significant class imbalance, particularly for rare properties or activities. The GATE-GNN architecture provides specialized mechanisms to address this through ensemble methods with graph ensemble weight attention and transfer learning [43]. Implementation strategies include:

- Dynamic node interaction modules with learnable attention weights

- Ensemble approaches that leverage embeddings from earlier layers

- Transfer learning from related molecular tasks with more balanced data

- Cost-sensitive learning with adjusted loss functions
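Cost-sensitive learning often amounts to up-weighting the minority class in the loss. The sketch below implements a positive-class-weighted binary cross-entropy on logits. Setting `pos_weight` to the ratio of negatives to positives is a common heuristic rather than a rule, and PyTorch exposes the same idea through the `pos_weight` argument of `BCEWithLogitsLoss`.

```python
import numpy as np

def weighted_bce(logits, labels, pos_weight: float) -> float:
    """Mean binary cross-entropy on logits with positives up-weighted by pos_weight."""
    logits = np.asarray(logits, dtype=float)
    labels = np.asarray(labels, dtype=float)
    log_p = -np.logaddexp(0.0, -logits)       # log(sigmoid(z)), computed stably
    log_not_p = -np.logaddexp(0.0, logits)    # log(1 - sigmoid(z)), computed stably
    loss = -(pos_weight * labels * log_p + (1.0 - labels) * log_not_p)
    return float(loss.mean())

labels = np.array([1, 0, 0, 0, 0, 0, 0, 0, 0, 0])              # 1 active, 9 inactives
pos_weight = float((labels == 0).sum() / (labels == 1).sum())   # 9.0
balanced = weighted_bce(np.zeros(10), labels, pos_weight)
unweighted = weighted_bce(np.zeros(10), labels, 1.0)
```

With the weight applied, the single active compound contributes as much to the loss as all nine inactives combined.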

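Oversmoothing and Oversquashing

Deep GNNs frequently suffer from oversmoothing (node representations becoming indistinguishable as layers are stacked) and oversquashing (information from an exponentially growing receptive field being compressed into fixed-size node vectors, which is most severe at structural bottlenecks and for long-range dependencies). Mitigation approaches include: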

- Residual connections between GNN layers

- Graph rewiring techniques like Gumbel-MPNN which uses Gumbel-Softmax to modify edges and reduce neighborhood distribution deviations [45]

- Attention-based neighborhood sampling

- Depth-wise regularization and intermediate supervision
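The effect of depth, and of a residual term, can be seen in a toy experiment. The sketch below repeatedly applies mean aggregation over an 8-atom ring, with learned weights and nonlinearities deliberately omitted, and measures how much node features still differ from one another. Plain propagation collapses the features toward a constant, whereas the initial-residual update H <- (1 - alpha) P H + alpha X used in APPNP-style propagation keeps part of each node's own signal. The ring graph and alpha = 0.1 are illustrative assumptions.

```python
import numpy as np

def cycle_adjacency(n: int) -> np.ndarray:
    """Adjacency matrix of an n-membered ring."""
    adj = np.zeros((n, n))
    for i in range(n):
        adj[i, (i + 1) % n] = adj[(i + 1) % n, i] = 1.0
    return adj

def propagate(adj, x, num_layers, alpha=0.0):
    """Repeated mean aggregation; alpha > 0 adds an initial-residual term."""
    a_hat = adj + np.eye(adj.shape[0])
    p = a_hat / a_hat.sum(axis=1, keepdims=True)   # row-normalized (mean) aggregation
    h = x.copy()
    for _ in range(num_layers):
        h = (1.0 - alpha) * (p @ h) + alpha * x
    return h

rng = np.random.default_rng(0)
adj = cycle_adjacency(8)
x = rng.normal(size=(8, 4))
spread0 = x.std(axis=0)   # per-feature spread across atoms before propagation
plain = propagate(adj, x, num_layers=100).std(axis=0) / spread0
resid = propagate(adj, x, num_layers=100, alpha=0.1).std(axis=0) / spread0
```

After 100 layers the plain model's features are numerically identical across atoms, while the residual variant retains a clearly nonzero fraction of the original spread.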

Computational Efficiency

For large-scale virtual screening applications, computational efficiency becomes critical. Recent benchmarks indicate that despite the popularity of GNNs, traditional descriptor-based models like SVM and XGBoost can outperform graph-based models in both prediction accuracy and computational efficiency for certain molecular properties [40]. Practical recommendations include:

- Evaluating problem complexity before model selection

- Considering hybrid approaches with molecular fingerprints for less complex properties

- Using MPNNs for reaction yield prediction where they demonstrate superior performance [39]

- Implementing EGNNs for geometry-sensitive properties where 3D information is crucial [41]
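Fingerprint baselines are cheap to build and worth running before a GNN. The sketch below is a similarity-weighted k-nearest-neighbor regressor on Tanimoto similarity, a simple stand-in for the SVM and XGBoost models cited above rather than a reproduction of them. Fingerprints are represented as sets of on-bit indices; in practice these would come from a toolkit such as RDKit (for example, Morgan fingerprints), and the tiny training set here is invented for illustration.

```python
from typing import List, Set

def tanimoto(a: Set[int], b: Set[int]) -> float:
    """Tanimoto (Jaccard) similarity between two sets of on-bits."""
    if not a and not b:
        return 1.0
    inter = len(a & b)
    return inter / (len(a) + len(b) - inter)

def knn_predict(query: Set[int], train_fps: List[Set[int]],
                train_y: List[float], k: int = 3) -> float:
    """Similarity-weighted k-nearest-neighbor regression on fingerprints."""
    sims = sorted(((tanimoto(query, fp), y) for fp, y in zip(train_fps, train_y)),
                  reverse=True)[:k]
    total = sum(s for s, _ in sims)
    if total == 0:
        return sum(train_y) / len(train_y)   # no overlap at all: fall back to the mean
    return sum(s * y for s, y in sims) / total

# Invented toy data: two structural families with distinct property values
train_fps = [{1, 2, 3}, {1, 2, 4}, {7, 8, 9}, {7, 8}]
train_y = [1.0, 1.2, 5.0, 5.5]
pred = knn_predict({1, 2, 3, 4}, train_fps, train_y, k=3)
```

The query shares bits only with the first family, so its prediction is the similarity-weighted mean of 1.0 and 1.2.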

MPNNs, GCNs, and GATs provide powerful foundational frameworks for molecular property prediction, each with distinct strengths and optimal application domains. MPNNs offer strong performance for reaction prediction tasks, GCNs provide computational efficiency for standard property prediction, and GATs excel at capturing complex molecular interactions through attention mechanisms. Emerging approaches like KA-GNNs, molecular set representation learning, and LLM-GNN hybrids represent promising research directions that address current limitations in molecular representation. Successful implementation requires careful architectural selection based on specific molecular tasks, appropriate handling of dataset imbalances and structural constraints, and thoughtful integration of complementary approaches from both traditional machine learning and modern deep learning paradigms.

The accurate prediction of molecular properties is a cornerstone of modern drug discovery and development. Traditional computational models often face limitations in expressiveness, interpretability, and their ability to integrate diverse molecular representations. Recently, two innovative architectural paradigms have emerged to address these challenges: Kolmogorov-Arnold Graph Neural Networks (KA-GNNs) and Multi-Type Feature Fusion frameworks. KA-GNNs integrate the novel mathematical foundation of Kolmogorov-Arnold Networks (KANs) into graph neural networks, enhancing their approximation capabilities and transparency [42] [46]. Simultaneously, Multi-Type Feature Fusion architectures systematically combine heterogeneous molecular data sources—such as molecular graphs, sequences, and fingerprints—to create more comprehensive molecular representations [47] [48]. Framed within the broader context of machine learning for molecular property prediction, this article details the application of these architectures, providing structured experimental data, standardized protocols, and essential implementation tools for researchers and drug development professionals.

Theoretical Foundations

Kolmogorov-Arnold Networks (KANs) in a Nutshell

KANs are inspired by the Kolmogorov-Arnold representation theorem, which states that any continuous multivariate function on a bounded domain can be represented as a finite composition of continuous univariate functions and addition [49]. Unlike traditional Multi-Layer Perceptrons (MLPs), which apply fixed nonlinear activation functions at nodes, KANs place learnable univariate functions on the edges of the network [42]. These univariate functions are typically parameterized using B-splines or Fourier series, allowing the network to learn activation shapes adaptively from data [42] [49]. This design has been reported to give KANs better parameter efficiency, interpretability, and approximation accuracy than MLPs with a comparable number of parameters [46].
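Stated formally, for a continuous function f on [0,1]^n the theorem guarantees continuous univariate functions phi_{q,p} and Phi_q such that the first identity below holds. A KAN layer generalizes the inner sum so that each output of layer l+1 is a sum of learnable univariate functions of that layer's inputs. The second identity follows the notation of the original KAN formulation and is given here for orientation only.

```latex
f(x_1, \dots, x_n) = \sum_{q=0}^{2n} \Phi_q\!\left( \sum_{p=1}^{n} \phi_{q,p}(x_p) \right)

x_{l+1,j} = \sum_{i=1}^{n_l} \phi_{l,j,i}\left( x_{l,i} \right), \qquad j = 1, \dots, n_{l+1}
```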

The Principle of Multi-Type Feature Fusion

Multi-Type Feature Fusion is predicated on the understanding that no single molecular representation can fully encapsulate the complexity of a compound's structure and properties. This paradigm proposes that integrating complementary information from multiple sources—such as molecular graphs (capturing topological structure), SMILES sequences (capturing local chemical context), molecular fingerprints (encoding substructure presence), and even molecular images—leads to more robust and accurate predictive models [47] [50] [48]. The central challenge lies in developing effective fusion mechanisms—such as gating mechanisms, attention-based fusion, or specialized neural modules—that can seamlessly integrate these disparate data types without succumbing to issues like feature redundancy or information loss [47] [51].

KA-GNNs: Architecture and Applications

Core Architectural Framework

KA-GNNs systematically replace standard MLP components within classical Graph Neural Networks (GNNs) with KAN-based modules. This integration occurs across three fundamental stages of graph processing, as illustrated below.

- Node Embedding: The initial representation of each atom (node) is generated by passing atomic features (e.g., atom type, formal charge) and local bond context through a KAN layer instead of a linear layer followed by a fixed activation [42] [49].