Machine Learning for Drug-Target Interaction Prediction: Performance Evaluation, Current Challenges, and Future Directions

Abstract

Accurate prediction of Drug-Target Interactions (DTIs) is a critical, yet challenging, step in accelerating drug discovery and repurposing. This article provides a comprehensive performance evaluation of machine learning (ML) and deep learning (DL) methods for DTI prediction, tailored for researchers, scientists, and drug development professionals. We explore the foundational concepts and the evolution of computational approaches, from classical similarity-based methods to advanced graph neural networks and evidential deep learning. The review delves into methodological innovations, including feature engineering and multimodal data integration, while critically addressing persistent challenges such as data imbalance, model generalization, and uncertainty quantification. A comparative analysis of state-of-the-art models on benchmark datasets highlights performance metrics, robustness, and scalability. By synthesizing current capabilities and limitations, this article aims to serve as a roadmap for developing more reliable, efficient, and trustworthy computational tools for therapeutic development.

From Docking to Deep Learning: The Evolution of DTI Prediction Foundations

In the landscape of modern drug discovery, accurately predicting Drug-Target Interactions (DTI) stands as a critical bottleneck with multi-billion dollar implications. Traditional experimental methods for identifying DTIs, while reliable, are hampered by significant drawbacks including high costs and lengthy development cycles that substantially limit the pace of drug development [1] [2]. The pharmaceutical industry faces a persistent challenge: approximately 60-70% of drug candidates fail due to poor efficacy or adverse effects, highlighting the crucial importance of accurate DTI prediction early in the discovery pipeline [3].

Computational approaches, particularly deep learning (DL) techniques, have emerged as promising solutions to accelerate DTI identification and reduce development costs [1] [2]. These methods can be broadly classified into network-based approaches and proteochemometrics (PCM), with recent PCM methods receiving increased attention for their ability to learn complex patterns from drug and target representations [1]. However, despite significant advances, practical application of these models faces a major challenge: high probability predictions do not necessarily correspond to high confidence, leading to overconfidence in predictions for out-of-distribution and noisy samples [1] [2]. This overconfidence can introduce unreliable predictions into downstream processes, pushing false positives into experimental validation and potentially delaying the entire drug discovery process.

This guide provides an objective performance evaluation of contemporary machine learning methods for DTI prediction, focusing on experimental data, methodologies, and practical implementation considerations for researchers and drug development professionals.

Performance Comparison: Evaluating State-of-the-Art DTI Prediction Models

Comprehensive Benchmarking Across Multiple Datasets

To objectively evaluate model performance, researchers typically employ multiple benchmark datasets with different characteristics. The table below summarizes the reported performance of leading DTI prediction models on DrugBank, Davis, KIBA, and several BindingDB subsets.

Table 1: Performance Comparison of DTI Models on Benchmark Datasets

| Model | Dataset | Accuracy (%) | Precision (%) | MCC (%) | F1 Score (%) | AUC (%) | AUPR (%) |
|---|---|---|---|---|---|---|---|
| EviDTI | DrugBank | 82.02 | 81.90 | 64.29 | 82.09 | - | - |
| EviDTI | Davis | +0.8* | +0.6* | +0.9* | +2.0* | +0.1* | +0.3* |
| EviDTI | KIBA | +0.6* | +0.4* | +0.3* | +0.4* | +0.1* | - |
| GAN+RFC | BindingDB-Kd | 97.46 | 97.49 | - | 97.46 | 99.42 | - |
| GAN+RFC | BindingDB-Ki | 91.69 | 91.74 | - | 91.69 | 97.32 | - |
| GAN+RFC | BindingDB-IC50 | 95.40 | 95.41 | - | 95.39 | 98.97 | - |
| CAMF-DTI | BindingDB | - | - | - | - | - | - |
| BarlowDTI | BindingDB-kd | - | - | - | - | 93.64 | - |

Note: Values marked with an asterisk (*) are percentage-point improvements over the previous best baseline model, not absolute scores. MCC stands for Matthews Correlation Coefficient, AUC for Area Under the ROC Curve, and AUPR for Area Under the Precision-Recall Curve.

EviDTI performs consistently well on DrugBank, with its strongest result in precision (81.90%) alongside competitive accuracy (82.02%), MCC (64.29%), and F1 score (82.09%) [1]. On the Davis and KIBA datasets, both characterized by significant class imbalance, it also outperforms prior models: on Davis it exceeds the best baseline by 0.8 percentage points in accuracy, 0.6 in precision, 0.9 in MCC, 2.0 in F1 score, 0.1 in AUC, and 0.3 in AUPR [1].

The GAN+RFC model reports very high scores on the BindingDB subsets, reaching 97.46% accuracy, 97.49% precision, and 99.42% ROC-AUC on the BindingDB-Kd dataset [3]. BarlowDTI is likewise reported as achieving state-of-the-art performance on the BindingDB-kd benchmark, with a ROC-AUC of 93.64% (0.9364) [3].

Cold-Start Scenario Performance

Evaluating model performance under cold-start scenarios is crucial for assessing real-world applicability where predictions are needed for novel drugs or targets with limited interaction data.

Table 2: Cold-Start Scenario Performance Comparison

| Model | Accuracy (%) | Recall (%) | F1 Score (%) | MCC (%) | AUC (%) |
|---|---|---|---|---|---|
| EviDTI | 79.96 | 81.20 | 79.61 | 59.97 | 86.69 |
| TransformerCPI | - | - | - | - | 86.93 |

In cold-start scenarios following the practice established by Wang et al., EviDTI outperforms other models in several evaluation metrics, especially in accuracy (79.96%), recall (81.20%), F1 score (79.61%) and MCC value (59.97%), though its AUC value (86.69%) is slightly lower than TransformerCPI's 86.93% [2].
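A cold-start split can be illustrated with a short sketch. The source does not describe the exact protocol of Wang et al., so the version below is only one common variant (a "cold-drug" split) under stated assumptions: the function name `cold_drug_split`, the toy pairs, and the test fraction are all hypothetical.

```python
import random

def cold_drug_split(pairs, test_fraction=0.2, seed=0):
    """Split (drug, target, label) triples so that no test drug appears in training.

    Assumption: this is a cold-drug split; a cold-target split would hold out
    targets instead. It is not necessarily the protocol used for EviDTI.
    """
    drugs = sorted({drug for drug, _, _ in pairs})
    rng = random.Random(seed)
    rng.shuffle(drugs)
    n_test = max(1, int(len(drugs) * test_fraction))
    test_drugs = set(drugs[:n_test])
    train = [p for p in pairs if p[0] not in test_drugs]
    test = [p for p in pairs if p[0] in test_drugs]
    return train, test

# Hypothetical toy data: four drugs, two targets.
pairs = [
    ("D1", "T1", 1), ("D1", "T2", 0),
    ("D2", "T1", 0), ("D2", "T2", 1),
    ("D3", "T1", 1), ("D3", "T2", 1),
    ("D4", "T1", 0), ("D4", "T2", 0),
]
train, test = cold_drug_split(pairs, test_fraction=0.25, seed=42)

train_drugs = {drug for drug, _, _ in train}
test_drugs = {drug for drug, _, _ in test}
print("held-out drugs:", sorted(test_drugs))
```

Because whole drugs are held out, the test set measures how well a model extrapolates to unseen chemistry rather than how well it interpolates between known pairs, which is typically why cold-start scores are lower than random-split scores.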

Experimental Protocols and Methodologies

EviDTI Framework Architecture

The EviDTI framework employs a multi-modal approach to DTI prediction, integrating various data dimensions and utilizing evidential deep learning (EDL) for uncertainty quantification [1] [2]. The experimental protocol involves three main components:

Protein Feature Encoder: Utilizes the protein sequence pre-training model ProtTrans as the initial encoder to generate target representations. This representation undergoes further feature extraction through a light attention (LA) module to provide insights into local interactions at the residue level [1].

Drug Feature Encoder: Encodes both 2D topological information and 3D structural information of drugs. For 2D topological graphs, initial representations are derived using the MG-BERT pre-trained model, subsequently processed by a 1DCNN. The 3D spatial structure is converted into an atom-bond graph and a bond-angle graph, with representations obtained through the GeoGNN module [1].

Evidential Layer: The target and drug representations are concatenated and fed into the evidential layer. The output is the parameter α, used to calculate prediction probability and corresponding uncertainty value [1] [2].

The framework was validated on three different experimental datasets: DrugBank, Davis, and KIBA, randomly divided into training, validation, and test sets in a ratio of 8:1:1 [1]. The implementation uses seven evaluation metrics: accuracy (ACC), recall, precision, Matthews correlation coefficient (MCC), F1 score, area under the ROC curve (AUC), and area under the precision-recall curve (AUPR) [1].
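The random 8:1:1 division described above can be sketched in a few lines. This is a minimal illustration rather than the EviDTI code: the helper name `random_split`, the seed, and the synthetic pairs are assumptions.

```python
import random

def random_split(items, ratios=(8, 1, 1), seed=0):
    """Shuffle items and split them into train/validation/test by the given ratios."""
    items = list(items)
    random.Random(seed).shuffle(items)
    total = sum(ratios)
    n_train = len(items) * ratios[0] // total
    n_val = len(items) * ratios[1] // total
    train = items[:n_train]
    val = items[n_train:n_train + n_val]
    test = items[n_train + n_val:]  # remainder goes to the test set
    return train, val, test

# Hypothetical drug-target pairs with binary labels.
pairs = [("drug%d" % i, "target%d" % (i % 7), i % 2) for i in range(100)]
train, val, test = random_split(pairs)
print(len(train), len(val), len(test))
```

Fixing the seed keeps the split reproducible across runs, which matters when comparing models on the same held-out test set.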

CAMF-DTI Methodology

CAMF-DTI incorporates coordinate attention, multi-scale feature fusion, and cross-attention mechanisms to enhance both representation and interaction learning of drug and protein features [4]. The experimental protocol includes:

Drug Encoder: Drug molecules represented by SMILES strings are converted into molecular graphs G = (V, E), where V denotes atom nodes and E denotes chemical bonds. Using the DGL-LifeSci toolkit, each atom is encoded as a 74-dimensional feature vector including atom type, degree, hydrogen count, charge, hybridization, and aromaticity [4]. A three-layer Graph Convolutional Network (GCN) learns molecular representations through node feature updates at each layer.

Protein Encoder: Protein sequences are processed with coordinate attention to preserve directional and spatial information. The coordinate attention mechanism jointly encodes spatial position and sequence directionality, improving localization of key interaction regions [4].

Multi-Scale Feature Fusion: Applied to both drug and protein encoders to capture local binding patterns and global conformational information at multiple receptive fields [4].

Cross-Attention Module: Models dynamic interactions between drugs and proteins, generating a joint representation that passes to multilayer perceptrons (MLPs) for final DTI prediction [4].
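To make the cross-attention idea concrete, here is a minimal sketch of generic scaled dot-product cross-attention in which drug tokens attend over protein tokens. The source does not give CAMF-DTI's projections, head count, or attention direction, so everything below (function names, toy vectors, the absence of learned projection matrices) is an illustrative assumption.

```python
import math

def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def cross_attention(queries, keys, values):
    """Each query row attends over all key rows and returns a weighted sum of values."""
    d = len(keys[0])
    outputs, weights = [], []
    for q in queries:
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in keys]
        w = softmax(scores)
        weights.append(w)
        outputs.append([
            sum(w[j] * values[j][f] for j in range(len(values)))
            for f in range(len(values[0]))
        ])
    return outputs, weights

# Hypothetical 2-dimensional embeddings: two drug tokens, three protein tokens.
drug_tokens = [[1.0, 0.0], [0.0, 1.0]]
protein_tokens = [[1.0, 0.0], [0.5, 0.5], [0.0, 1.0]]

# Drug tokens act as queries; protein tokens supply keys and values.
attended, weights = cross_attention(drug_tokens, protein_tokens, protein_tokens)
for row in weights:
    print([round(w, 3) for w in row])
```

Each row of `weights` shows how strongly one drug token attends to each protein position; a full model would typically apply learned projections before this step and may also attend in the opposite direction.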

CAMF-DTI was evaluated on four benchmark datasets: BindingDB, BioSNAP, C.elegans, and Human, demonstrating consistent outperformance against seven state-of-the-art baselines in terms of AUROC, AUPRC, Accuracy, F1-score, and MCC [4].
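The node feature updates performed by the drug encoder's GCN layers can be sketched on a toy graph. This is a hedged illustration using the standard normalized graph convolution, not the DGL-LifeSci implementation: the two-dimensional atom features (instead of 74), the identity weights (instead of learned ones), and the mean-pooling readout are all assumptions made to keep the example small.

```python
import math

def gcn_layer(adj, feats, weight):
    """One graph convolution: ReLU(D^-1/2 (A + I) D^-1/2 H W)."""
    n = len(adj)
    # Add self-loops so each atom retains its own features.
    a_hat = [[adj[i][j] + (1.0 if i == j else 0.0) for j in range(n)] for i in range(n)]
    deg = [sum(row) for row in a_hat]
    norm = [[a_hat[i][j] / math.sqrt(deg[i] * deg[j]) for j in range(n)] for i in range(n)]
    d_in, d_out = len(weight), len(weight[0])
    # Aggregate neighbour features, then apply the linear map and ReLU.
    agg = [[sum(norm[i][k] * feats[k][f] for k in range(n)) for f in range(d_in)]
           for i in range(n)]
    return [[max(0.0, sum(agg[i][f] * weight[f][o] for f in range(d_in)))
             for o in range(d_out)] for i in range(n)]

# Toy molecule: the heavy atoms of ethanol, C-C-O, as an undirected graph.
adjacency = [[0, 1, 0],
             [1, 0, 1],
             [0, 1, 0]]
# Hypothetical one-hot atom types [is_carbon, is_oxygen].
atom_features = [[1.0, 0.0], [1.0, 0.0], [0.0, 1.0]]
identity = [[1.0, 0.0], [0.0, 1.0]]  # stand-in for learned weights

hidden = atom_features
layer_outputs = []
for _ in range(3):  # three stacked layers, as in the description above
    hidden = gcn_layer(adjacency, hidden, identity)
    layer_outputs.append(hidden)

# Mean pooling over atoms gives one vector per molecule (an assumed readout).
molecule_vector = [sum(col) / len(hidden) for col in zip(*hidden)]
print([round(x, 3) for x in molecule_vector])
```

After three layers each atom's representation mixes information from atoms up to three bonds away, which is the intuition behind stacking layers.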

GAN-Based Hybrid Framework

The GAN-based hybrid framework addresses critical challenges in DTI prediction, particularly data imbalance and feature engineering [3]. The methodology involves:

Feature Engineering: Leverages MACCS keys to extract structural drug features and amino acid/dipeptide compositions to represent target biomolecular properties, enabling deeper understanding of chemical and biological interactions [3].

Data Balancing: Employs Generative Adversarial Networks (GANs) to create synthetic data for the minority class, effectively reducing false negatives and improving predictive model sensitivity [3].

Random Forest Classification: Utilizes Random Forest Classifier (RFC) optimized for handling high-dimensional data to make precise DTI predictions [3].

The framework was validated across diverse datasets, including BindingDB-Kd, BindingDB-Ki, and BindingDB-IC50, demonstrating scalability and robustness [3].
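The amino acid and dipeptide composition features mentioned under Feature Engineering are simple frequency vectors and can be sketched as follows. The function names and the example sequence are hypothetical, and the handling of non-standard residues is an assumption noted in the comments; MACCS keys are not shown because they require a cheminformatics toolkit.

```python
from collections import Counter
from itertools import product

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"  # the 20 standard residues

def amino_acid_composition(seq):
    """20-dimensional vector of residue frequencies.

    Assumption: non-standard residues count toward the length but get no slot.
    """
    counts = Counter(seq)
    return [counts[a] / len(seq) for a in AMINO_ACIDS]

def dipeptide_composition(seq):
    """400-dimensional vector of frequencies of adjacent residue pairs."""
    pair_counts = Counter(seq[i:i + 2] for i in range(len(seq) - 1))
    n_pairs = len(seq) - 1
    return [pair_counts[a + b] / n_pairs for a, b in product(AMINO_ACIDS, repeat=2)]

seq = "MKTAYIAKQR"  # hypothetical short protein fragment
aac = amino_acid_composition(seq)
dpc = dipeptide_composition(seq)
print(len(aac), len(dpc))
```

The two vectors are usually concatenated with the drug fingerprint to form the fixed-length input that a classifier such as a random forest expects.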

Successful implementation of DTI prediction models requires specific computational reagents and resources. The following table details key components essential for reproducing state-of-the-art results.

Table 3: Essential Research Reagents for DTI Prediction Implementation

| Resource Category | Specific Tool/Dataset | Function/Purpose | Key Specifications |
|---|---|---|---|
| Protein Feature Extraction | ProtTrans [1] | Protein sequence pre-training model for initial target representation | Generates initial protein sequence features |
| Drug Feature Extraction | MG-BERT [1] | Molecular graph pre-trained model for 2D drug representations | Processes 2D topological graph information |
| 3D Structure Processing | GeoGNN [1] | Geometric deep learning for 3D drug spatial structure | Encodes atom-bond and bond-angle graphs |
| Dataset | DrugBank [1] | Benchmark dataset for model training and validation | Used with 8:1:1 train/validation/test split |
| Dataset | Davis [1] | Benchmark dataset with kinase inhibition measurements | Challenging due to class imbalance |
| Dataset | KIBA [1] | Benchmark dataset with kinase inhibitor bioactivities | Known for complex imbalance patterns |
| Dataset | BindingDB [4] [3] | Collection of protein-ligand binding affinities | Multiple subsets (Kd, Ki, IC50) available |
| Implementation Framework | DGL-LifeSci [4] | Toolkit for graph neural networks in life sciences | Version 1.0; encodes atom-level features |
| Evaluation Metrics | Multiple [1] | Comprehensive model performance assessment | ACC, Recall, Precision, MCC, F1, AUC, AUPR |

Uncertainty Quantification: Addressing the Overconfidence Challenge

A significant advancement in recent DTI prediction research is the incorporation of uncertainty quantification to address the overconfidence problem prevalent in traditional deep learning models [1] [2].

Evidential Deep Learning Implementation

EviDTI utilizes evidential deep learning (EDL) to provide uncertainty estimates alongside predictions, enabling researchers to distinguish between reliable and high-risk predictions [1] [2]. This approach addresses a fundamental limitation of traditional DL models, which are often poorly calibrated and can output high prediction probabilities even when the model has little basis for confidence [1].

The evidence layer in EviDTI outputs the parameter α, which is used to calculate both prediction probability and corresponding uncertainty value, allowing the model to dynamically adjust confidence levels according to knowledge boundaries [1]. This capability mirrors human cognitive processes, where familiar questions receive certain answers while unknown domains trigger explicit uncertainty expression [1].
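How α can yield both a probability and an uncertainty is easiest to see in the standard EDL formulation, where α parameterizes a Dirichlet distribution over class probabilities. The sketch below assumes that formulation (expected probability α_k / S and uncertainty K / S, with S the sum of α and K the number of classes); the source does not confirm that EviDTI uses exactly these formulas, and the α values are invented.

```python
def dirichlet_prediction(alpha):
    """Return (expected class probabilities, uncertainty) for Dirichlet parameters.

    Assumes the common EDL convention alpha_k = evidence_k + 1, so alpha_k >= 1.
    """
    if any(a < 1.0 for a in alpha):
        raise ValueError("each alpha must be >= 1 under this convention")
    strength = sum(alpha)                  # S: total evidence plus one per class
    probs = [a / strength for a in alpha]  # expected probability of each class
    uncertainty = len(alpha) / strength    # K / S: approaches 1 with no evidence
    return probs, uncertainty

# Hypothetical outputs for [no interaction, interaction].
confident = dirichlet_prediction([1.0, 41.0])  # strong evidence for interaction
unsure = dirichlet_prediction([1.2, 1.8])      # very little evidence either way

for name, (probs, u) in [("confident", confident), ("unsure", unsure)]:
    print(name, [round(p, 3) for p in probs], round(u, 3))
```

Both examples favour the "interaction" class, yet the second carries far higher uncertainty because the total evidence is small. This is the distinction a single softmax probability cannot express.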

Practical Applications of Uncertainty Estimates

Uncertainty quantification enhances drug discovery efficiency by prioritizing DTIs with higher confidence predictions for experimental validation [1]. In a case study focused on tyrosine kinase modulators, uncertainty-guided predictions successfully identified novel potential modulators targeting tyrosine kinase FAK and FLT3 [1].

Well-calibrated uncertainty information helps mitigate resource inefficiency by reducing the introduction of unreliable predictions into downstream processes, including the pushing of false positives into experimental validation and the omission of potentially active compounds in virtual screening [1] [2].
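One way to act on these estimates is to shortlist only predictions that are both likely and low in uncertainty. The sketch below is illustrative: the thresholds, field names, drug identifiers, and scores are invented for the example and are not taken from the EviDTI case study.

```python
def prioritize(predictions, max_uncertainty=0.2, min_probability=0.5):
    """Keep confident positive predictions and rank them by probability."""
    kept = [
        p for p in predictions
        if p["uncertainty"] <= max_uncertainty and p["probability"] >= min_probability
    ]
    return sorted(kept, key=lambda p: p["probability"], reverse=True)

# Hypothetical model outputs for candidate drug-target pairs.
predictions = [
    {"pair": ("drugA", "FAK"), "probability": 0.95, "uncertainty": 0.05},
    {"pair": ("drugB", "FLT3"), "probability": 0.97, "uncertainty": 0.60},
    {"pair": ("drugC", "FAK"), "probability": 0.80, "uncertainty": 0.10},
    {"pair": ("drugD", "FLT3"), "probability": 0.30, "uncertainty": 0.08},
]

shortlist = prioritize(predictions)
for p in shortlist:
    print(p["pair"], p["probability"], p["uncertainty"])
```

Note that `drugB` has the highest probability but is excluded because its uncertainty is high; ranking by probability alone would have sent it to the bench first.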

Uncertainty-Guided Decision Pipeline

Based on comprehensive experimental evaluations across multiple benchmark datasets, EviDTI demonstrates robust overall performance, particularly in precision (81.90% on DrugBank) and handling of class-imbalanced datasets like Davis and KIBA [1]. The incorporation of evidential deep learning for uncertainty quantification addresses a critical challenge in practical DTI prediction implementation, providing researchers with confidence estimates crucial for prioritization decisions in drug discovery pipelines [1] [2].

The GAN-based hybrid framework achieves remarkable performance on BindingDB subsets, with accuracy reaching 97.46% on BindingDB-Kd and ROC-AUC of 99.42%, demonstrating the effectiveness of addressing data imbalance through synthetic data generation [3]. Meanwhile, CAMF-DTI's integration of coordinate attention and multi-scale feature fusion demonstrates consistent outperformance across multiple benchmarks, highlighting the importance of preserving directional information in protein sequences and capturing features at multiple receptive fields [4].

Future directions in DTI prediction research will likely focus on enhanced uncertainty quantification, improved handling of cold-start scenarios, more sophisticated multi-modal data integration, and increased model interpretability for domain experts. As these computational methods continue maturing, their integration into standardized drug discovery workflows promises to significantly reduce development costs and timelines while increasing the success rate of novel therapeutic candidates.

The field of drug-target interaction (DTI) prediction stands as a crucial component in the drug discovery pipeline, where accurate predictions can significantly reduce the time and cost associated with bringing new therapeutics to market [5]. For decades, traditional computational methods, primarily molecular docking simulations and manual feature curation, have served as the cornerstone of in silico drug discovery efforts. However, the landscape is rapidly shifting with the emergence of sophisticated machine learning (ML) and deep learning (DL) approaches [6] [7].

Molecular docking, a structure-based method introduced in the 1980s, aims to predict the binding conformation and affinity of a small molecule (ligand) to a target protein [8]. Concurrently, manual feature curation involves researchers hand-crafting descriptive features from biological and chemical data—such as molecular descriptors and protein sequences—to feed into machine learning models [7]. While these methods have contributed valuable insights, they face profound limitations in scalability, accuracy, and their ability to capture the complex, dynamic nature of biomolecular interactions.

This guide objectively compares the performance of these traditional methodologies against modern ML-based alternatives, framing the analysis within a broader thesis on performance evaluation for DTI prediction research. By synthesizing recent experimental data and detailing foundational methodologies, we provide researchers and drug development professionals with a clear, evidence-based perspective on this pivotal technological shift.

Limitations of Traditional Docking Simulations

Molecular docking operates on a search-and-score framework, exploring possible ligand poses and evaluating them with a scoring function [8]. A fundamental and persistent challenge is the treatment of protein flexibility.

The Critical Challenge of Protein Flexibility

Traditional docking methods often treat proteins as rigid bodies, an oversimplification that ignores the dynamic induced fit effect—the conformational changes a protein undergoes upon ligand binding [8]. This limits their performance in realistic scenarios like apo-docking (using unbound protein structures) and cross-docking (docking ligands to alternative receptor conformations) [8]. As summarized in Table 1, performance drops significantly in these tasks compared to idealized re-docking because the method cannot accurately model the structural adaptations required for binding.

Table 1: Performance of Docking Methods Across Different Tasks

| Docking Task | Description | Key Challenge | Reported Accuracy Range |
|---|---|---|---|
| Re-docking | Docking a ligand back into its bound (holo) receptor conformation. | Overfitting to ideal geometries; poor generalization. | Varies, but generally high |
| Flexible Re-docking | Uses holo structures with randomized binding-site sidechains. | Robustness to minor conformational changes. | Not Specified |
| Cross-docking | Ligands docked to alternative receptor conformations (e.g., from different complexes). | Accounting for different induced fits without a priori knowledge. | Lower than re-docking |
| Apo-docking | Uses unbound (apo) receptor structures. | Inferring large-scale conformational changes from apo to holo state. | 0% to >90% (highly fragile) |
| Blind Docking | Predicting both ligand pose and binding site location. | High dimensionality; least constrained task. | Not Specified |

Performance and Accuracy Gaps

The performance of traditional docking is inconsistent. As noted in breast cancer research, the accuracy of docking protocols can range from a complete failure (0%) to over 90%, highlighting its fragility when not meticulously validated [9]. A key issue is that docking scores often fail to correlate with real-world binding affinity, leading to false positives and complicating virtual screening efforts [8] [9]. Furthermore, the computational demand of exhaustively sampling conformational space makes high-accuracy flexible docking prohibitively expensive for large-scale virtual screening [8].

Limitations of Manual Feature Curation

Before the rise of end-to-end deep learning, a significant research effort focused on manual feature curation for machine learning models. This process requires domain experts to hand-select and engineer informative descriptors from raw data, such as calculating molecular fingerprints from chemical structures or extracting specific physicochemical properties from protein sequences [7].

This approach is inherently limited. The manual selection process is time-consuming, labor-intensive, and can introduce human bias, as it relies on pre-existing knowledge of what features are considered important [7]. Consequently, these models may miss subtle or complex patterns in the raw data that are not captured by the pre-defined features. This limits the model's ability to discover novel and predictive relationships, ultimately constraining its predictive power and generalizability [7].

The Machine Learning Paradigm: Modern Alternatives

Modern deep learning approaches directly address the core limitations of traditional methods by learning complex patterns directly from data, thereby automating feature extraction and, in some cases, integrating flexibility.

Deep Learning for Flexible Molecular Docking

New deep learning models are transforming docking by moving beyond the rigid-body assumption. DiffDock, a diffusion-based model, achieves state-of-the-art accuracy at a fraction of the computational cost of traditional methods by iteratively refining a ligand's pose [8]. Emerging models like FlexPose enable end-to-end flexible modeling of protein-ligand complexes, directly addressing the challenge of induced fit by accommodating input structures regardless of their conformational state (apo or holo) [8]. These methods demonstrate the potential of DL to not only match but surpass traditional docking, particularly in more realistic and challenging docking scenarios.

Automated Representation Learning for DTI

Deep learning models automatically learn hierarchical feature representations from raw input data, such as Simplified Molecular-Input Line-Entry System (SMILES) strings for drugs and amino acid sequences for proteins [6] [7]. This eliminates the need for manual feature engineering. Graph neural networks (GNNs), for example, natively represent molecules as topological graphs, preserving crucial structural information about atoms and bonds [2] [7]. Furthermore, Evidential Deep Learning (EDL) frameworks like EviDTI address the critical issue of uncertainty quantification, allowing models to express confidence in their predictions and mitigate the risk of overconfident, incorrect results [2].

Performance Benchmark: Manual Review vs. AI Curation

The efficiency gains of automated data processing are not limited to molecular modeling. A comparative study in clinical data extraction for breast cancer research provides a compelling benchmark, as detailed in Table 2. The LLM-based approach demonstrated comparable accuracy to manual physician review while drastically reducing processing time and resource requirements [10].

Table 2: Performance Comparison: Manual Review vs. LLM-Based Processing

| Metric | Manual Physician Review | LLM-Based Processing (Claude 3.5 Sonnet) |
|---|---|---|
| Sample Size | 1,366 cases | 1,734 cases |
| Extraction Accuracy | Baseline | 90.8% |
| Processing Time | 7 months (5 physicians) | 12 days (2 physicians) |
| Physician Hours | 1,025 hours | 96 hours (91% reduction) |
| Cost | Not specified | $260 total ($0.15 per case) |
| Key Strength | Not specified | Significantly better capture of survival events (41 vs 11, P=.002) |
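The derived figures in the table can be checked with a few lines of arithmetic. The inputs are taken from the table; the per-case normalization at the end is an added illustration that assumes the reported hours correspond to the listed sample sizes.

```python
# Values from the table above.
manual_hours, llm_hours = 1025, 96
manual_cases, llm_cases = 1366, 1734
total_cost_usd = 260

# Reduction in total physician hours, as reported in the table.
hours_reduction = (manual_hours - llm_hours) / manual_hours
cost_per_case = total_cost_usd / llm_cases

# Assumption: normalizing by cohort size, since the two cohorts differ.
manual_hours_per_case = manual_hours / manual_cases
llm_hours_per_case = llm_hours / llm_cases
per_case_reduction = 1 - llm_hours_per_case / manual_hours_per_case

print(f"Reduction in physician hours: {hours_reduction:.1%}")
print(f"Cost per case: ${cost_per_case:.2f}")
print(f"Reduction in hours per case: {per_case_reduction:.1%}")
```

The total-hours reduction comes to roughly 90.6%, which the table rounds to 91%, and the cost works out to about $0.15 per case. Because the LLM cohort is larger, the per-case reduction is slightly higher than the headline figure.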

Essential Research Reagent Solutions

The advancement of DTI prediction research relies on a suite of key computational tools and datasets. The following table details essential "research reagents" for this field.

Table 3: Key Research Reagents for DTI Prediction

| Reagent Name | Type | Primary Function | Relevance to DTI Research |
|---|---|---|---|
| PDBBind [6] | Dataset | Curated database of protein-ligand complexes with 3D structures and binding affinities. | Primary benchmark for training and evaluating structure-based and affinity prediction models. |
| BindingDB [6] | Dataset | Public database of measured binding affinities for drug-like molecules and proteins. | Provides binding data for training and validating DTA models. |
| Davis [2] [6] | Dataset | Contains kinase inhibition data for a set of compounds. | A standard benchmark dataset, particularly for DTA prediction tasks. |
| KIBA [2] [6] | Dataset | Provides kinase inhibitor bioactivity scores integrating multiple sources. | Used for benchmarking DTI and DTA models on a large, integrated dataset. |
| DiffDock [8] | Software/Tool | A deep learning model using diffusion for molecular docking. | State-of-the-art tool for predicting ligand poses; represents the modern ML approach to docking. |
| EviDTI [2] | Software/Tool | An evidential deep learning framework for DTI prediction. | Predicts interactions and provides uncertainty estimates, enhancing reliability for decision-making. |
| ProtTrans [2] | Software/Tool | A pre-trained protein language model. | Used to generate powerful, contextual feature representations from amino acid sequences. |

Experimental Protocols and Workflows

To ensure reproducible and comparable results, rigorous experimental protocols are essential in DTI research.

Standard Model Evaluation Protocol

A typical workflow for evaluating a new DTI/DTA model involves several key steps, as used in the evaluation of EviDTI and other models [2] [6]:

- Dataset Selection: Use one or more benchmark datasets (e.g., Davis, KIBA, DrugBank).

- Data Splitting: Randomly split the data into training, validation, and test sets, typically in an 80:10:10 ratio [2]. To assess performance on novel interactions, a "cold-start" scenario is also used, where drugs or targets in the test set are not present in the training data [2].

- Model Training & Validation: Train the model on the training set and use the validation set for hyperparameter tuning.

- Performance Assessment: Evaluate the model on the held-out test set using a standard set of metrics, including:
  - Area Under the ROC Curve (AUC): Measures the overall ranking performance.
  - Area Under the Precision-Recall Curve (AUPR): More informative than AUC for imbalanced datasets.
  - Precision, Recall, and F1 Score: Provide insights into classification performance.
  - Matthews Correlation Coefficient (MCC): A balanced measure for binary classification.
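The metrics listed above can be computed from first principles, which helps clarify what each one measures. The sketch below uses only the standard library; the labels and scores are made up, the 0.5 decision threshold is an assumption, and `average_precision` is one common step-wise estimate of AUPR that does not handle tied scores specially.

```python
import math

def classification_metrics(y_true, y_pred):
    """Threshold-dependent metrics from the binary confusion matrix."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    mcc = (tp * tn - fp * fn) / denom if denom else 0.0
    return {"accuracy": (tp + tn) / len(y_true), "precision": precision,
            "recall": recall, "f1": f1, "mcc": mcc}

def roc_auc(y_true, scores):
    """Probability that a random positive outscores a random negative (ties count 0.5)."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

def average_precision(y_true, scores):
    """Mean of the precision values at the rank of each positive example."""
    order = sorted(range(len(scores)), key=lambda i: -scores[i])
    hits, total = 0, 0.0
    for rank, i in enumerate(order, start=1):
        if y_true[i] == 1:
            hits += 1
            total += hits / rank
    return total / sum(y_true)

# Hypothetical labels and model scores.
y_true = [1, 0, 1, 1, 0, 0, 1, 0]
scores = [0.9, 0.8, 0.7, 0.6, 0.4, 0.3, 0.2, 0.1]
y_pred = [1 if s >= 0.5 else 0 for s in scores]  # assumed 0.5 threshold

metrics = classification_metrics(y_true, y_pred)
auc = roc_auc(y_true, scores)
aupr = average_precision(y_true, scores)
print(metrics, round(auc, 4), round(aupr, 4))
```

AUC and AUPR depend only on the ranking of the scores, whereas accuracy, precision, recall, F1, and MCC change with the chosen threshold, so the two groups should be reported together.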

LLM-Based Clinical Data Curation Protocol

The study comparing LLM-based processing to manual review followed a specific, replicable methodology [10]:

- Data Preparation: Deidentified clinical data was automatically extracted from a clinical data warehouse (CDW) and organized into prestructured sheets.

- Prompt Development: The LLM prompt was developed over a 3-phase iterative process (2 days total) using sample data to refine extraction rules for diagnoses, procedures, and biomarkers.

- LLM Processing: The preprocessed data was fed to Claude 3.5 Sonnet via its web interface to structure clinical variables into a CSV format.

- Validation: A stratified random sample of 50 records per group (900 data points total) was independently assessed by four breast surgical oncologists to determine accuracy.

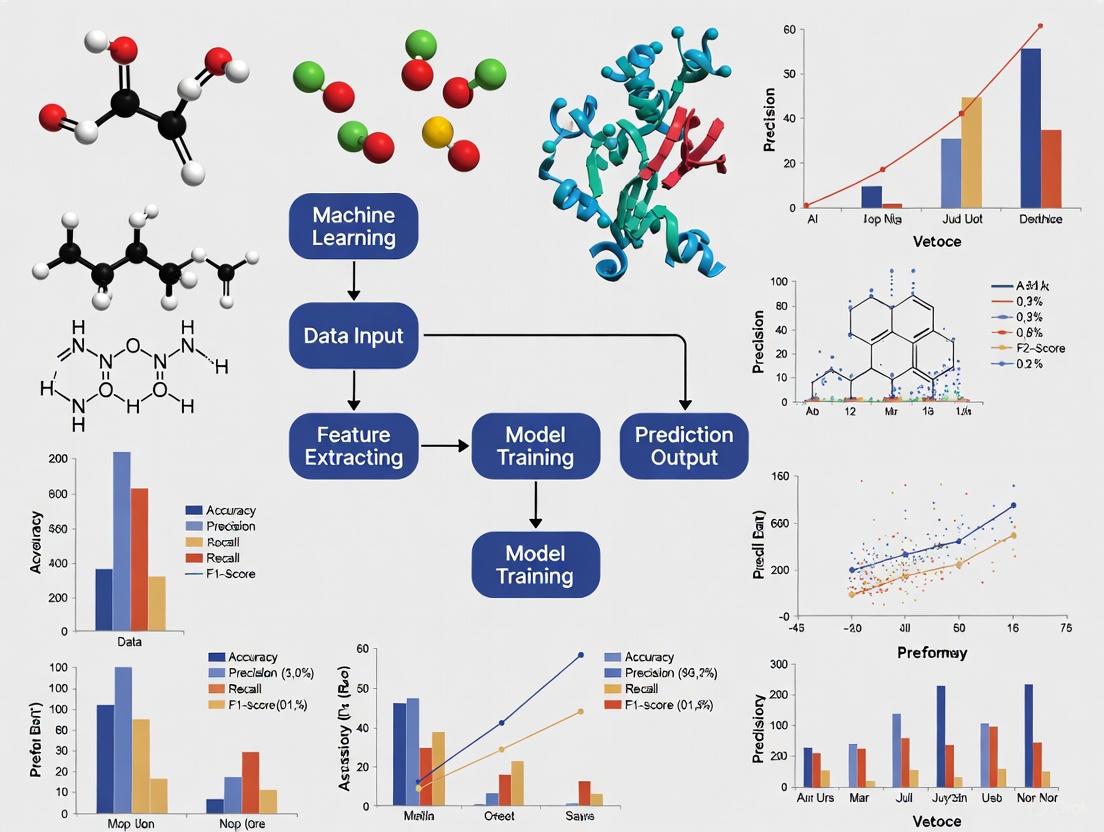

The following diagram visualizes the core methodological shift from a traditional, sequential workflow to an integrated, AI-driven paradigm in drug discovery.

Diagram 1: Contrasting methodological paradigms in DTI research, highlighting the transition from human-dependent, sequential steps to an automated, integrated AI approach.

The evidence demonstrates a clear and compelling shift in the paradigm of DTI prediction research. Traditional methods, namely rigid docking simulations and manual feature curation, are increasingly constrained by their inherent limitations: an inability to model dynamic protein flexibility, inconsistent and computationally expensive performance, and a reliance on biased, human-engineered features.

Modern machine learning approaches, including flexible deep learning docking models, automated representation learning, and evidential frameworks for uncertainty, directly address these shortcomings. They offer a path toward more accurate, efficient, and reliable predictions. The quantitative data, from the 91% reduction in physician hours for data curation to the superior performance of models like EviDTI on benchmark datasets, underscores that the future of computational drug discovery lies in the intelligent application of these advanced AI methodologies. For researchers and drug development professionals, embracing and contributing to this shift is essential for accelerating the delivery of life-saving therapeutics.

In the field of computational drug discovery, accurately predicting the relationships between drugs and their biological targets is a fundamental task. Two primary concepts form the cornerstone of this research: Drug-Target Interaction (DTI) and Drug-Target Affinity (DTA). While often discussed together, they represent distinct scientific questions and computational challenges. DTI prediction is essentially a binary classification problem that aims to determine whether a drug and target interact at all. In contrast, DTA prediction is a regression problem that quantifies the strength of this binding, typically measured by values such as dissociation constant (Kd), inhibition constant (Ki), or half-maximal inhibitory concentration (IC50) [11] [12].

Understanding this distinction is crucial for developing and evaluating machine learning methods, as each task requires different model architectures, performance metrics, and experimental validation approaches. This guide provides a comprehensive comparison of these core concepts, supported by experimental data and methodological insights from state-of-the-art research.

Defining the Core Concepts and Their Predictive Tasks

Drug-Target Interaction (DTI)

DTI prediction is formulated as a binary classification task where the goal is to predict whether a binding event occurs between a drug molecule and a target protein [11]. The output is typically a yes/no decision, which helps in preliminary screening of potential drug candidates. However, this approach has limitations—it doesn't differentiate between strong and weak binders and often struggles with the lack of reliable negative samples (pairs known not to interact) [12].

Drug-Target Affinity (DTA)

DTA prediction goes a step further by quantifying the binding strength as a continuous value [11] [13]. This reflects the real-world biochemical reality where interactions are not merely present or absent but exist on a spectrum of binding strengths. Predicting affinity is more informative for lead optimization in drug discovery, as it helps prioritize compounds with the strongest potential therapeutic effects [12].

Table 1: Fundamental Differences Between DTI and DTA Tasks

| Feature | Drug-Target Interaction (DTI) | Drug-Target Affinity (DTA) |
|---|---|---|
| Problem Type | Binary Classification | Regression |
| Primary Output | Interaction (Yes/No) | Binding Affinity (Continuous Value) |
| Typical Metrics | Accuracy, AUC, F1-Score, MCC [2] [14] | MSE, CI, RMSE, $r_m^2$ [13] |
| Biochemical Meaning | Presence/Absence of Binding | Strength of Binding (Kd, Ki, IC50) [12] |
| Main Challenge | Lack of verified negative samples [12] | Precisely quantifying interaction strength |
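The relationship between the two tasks can be made concrete: a DTA measurement becomes a DTI label once an affinity cutoff is chosen. The sketch below assumes Kd values in nanomolar, converts them to pKd = -log10(Kd in molar), and applies a cutoff of pKd >= 7 (Kd <= 100 nM). That cutoff is an illustrative assumption; studies use different thresholds, and the measurements are invented.

```python
import math

def pkd_from_nm(kd_nm):
    """Convert a dissociation constant in nM to pKd = -log10(Kd in M)."""
    return 9.0 - math.log10(kd_nm)

def to_interaction_label(kd_nm, threshold_pkd=7.0):
    """Binarize affinity: 1 (interacts) if pKd meets the assumed cutoff, else 0."""
    return 1 if pkd_from_nm(kd_nm) >= threshold_pkd else 0

# Hypothetical Kd measurements in nM.
measurements = {
    ("drugA", "kinase1"): 10.0,      # tight binder
    ("drugB", "kinase1"): 10000.0,   # weak binder
}

labels = {pair: to_interaction_label(kd) for pair, kd in measurements.items()}
for pair, kd in measurements.items():
    print(pair, round(pkd_from_nm(kd), 2), labels[pair])
```

Binarization discards the difference between a 10 nM and a 90 nM binder, which is the information DTA models are designed to retain.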

Performance Evaluation of Machine Learning Methods

Deep learning models have become prominent in both DTI and DTA prediction. Their performance is evaluated on public benchmark datasets using task-specific metrics, as summarized below.

Performance on DTI Prediction (Binary Classification)

The table below showcases the performance of various state-of-the-art models on a typical DTI classification task, evaluated using metrics like AUC and F1-score.

Table 2: Performance Comparison of State-of-the-Art DTI Prediction Models

| Model | AUROC | AUPRC | Accuracy | F1-Score | MCC |
|---|---|---|---|---|---|
| EviDTI [2] | 0.8669 | - | 0.7996 | 0.7961 | 0.5997 |
| BiMA-DTI [14] | >0.936 (Best) | High | - | - | - |
| GAN+RFC [15] | 0.9942 | - | 0.9746 | 0.9746 | - |
| CAMF-DTI [4] | High | High | High | High | High |
| M³ST-DTI [16] | Consistently Outperforms SOTA | - | - | - | - |

Key Insights:

- EviDTI incorporates evidential deep learning to provide uncertainty estimates for its predictions, which is valuable for prioritizing experimental validation and mitigating overconfidence [2].

- BiMA-DTI leverages a hybrid Mamba-Attention network, demonstrating strong performance, particularly in capturing long-range dependencies in sequences [14].

- The GAN+RFC model addresses the critical issue of data imbalance by using Generative Adversarial Networks (GANs) to generate synthetic data for the minority class, resulting in exceptionally high performance metrics [15].

Performance on DTA Prediction (Regression)

For DTA prediction, the following table compares the performance of regression models on benchmark datasets like Davis and KIBA, using metrics such as Mean Squared Error (MSE) and Concordance Index (CI).

Table 3: Performance Comparison of State-of-the-Art DTA Prediction Models

| Model | Davis (MSE/CI) | KIBA (MSE/CI) | BindingDB (metric in cell) | Key Feature |

|---|---|---|---|---|

| GRA-DTA [13] | 0.225 / 0.890 | 0.142 / 0.897 | - | Combines GraphSAGE & BiGRU |

| DeepDTA [13] | ~0.260 / ~0.880 | ~0.179 / ~0.880 | - | Baseline CNN model |

| MvGraphDTA [17] | - | - | - | Multi-view (Graph & Line Graph) |

| kNN-DTA [15] | - | - | 0.684 (IC50, RMSE) | Non-parametric, retrieval-based |

| MDCT-DTA [15] | - | - | 0.475 (MSE) | Multi-scale diffusion & interaction |

Key Insights:

- GRA-DTA utilizes GraphSAGE for drug graph representation and an attention-based BiGRU for protein sequences, effectively capturing both structural and sequential information [13].

- MvGraphDTA introduces a novel approach by using both original molecular graphs and their line graphs to extract richer structural and relational features, leading to superior performance [17].

- kNN-DTA is a notable non-parametric method that boosts performance during inference by aggregating information from nearest neighbors in the training set, requiring no additional training [15].
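
As a rough illustration of the two regression metrics reported in Table 3, the sketch below computes MSE and a pairwise Concordance Index (CI) in plain Python. The quadratic-time CI and the toy affinity values are assumptions made for clarity; published benchmarks typically rely on optimized library implementations.

```python
def mse(y_true, y_pred):
    """Mean squared error between measured and predicted affinities."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


def concordance_index(y_true, y_pred):
    """Fraction of comparable pairs whose predicted order matches the true order.

    Pairs with identical true affinities are skipped; tied predictions count 0.5.
    """
    concordant, comparable = 0.0, 0
    n = len(y_true)
    for i in range(n):
        for j in range(i + 1, n):
            if y_true[i] == y_true[j]:
                continue  # not comparable
            comparable += 1
            true_diff = y_true[i] - y_true[j]
            pred_diff = y_pred[i] - y_pred[j]
            if pred_diff == 0:
                concordant += 0.5
            elif (true_diff > 0) == (pred_diff > 0):
                concordant += 1.0
    return concordant / comparable if comparable else float("nan")


# Hypothetical affinities on a log scale.
y_true = [5.0, 6.0, 7.0, 8.0]
y_pred = [5.1, 5.9, 7.2, 7.0]
print(round(mse(y_true, y_pred), 3), round(concordance_index(y_true, y_pred), 3))
```

In this toy case only one of the six pairs is ranked in the wrong order, which shows that CI rewards correct ranking while MSE penalizes the size of each error.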

Experimental Protocols and Methodologies

To ensure reproducible and fair comparisons, researchers follow standardized experimental protocols. The workflow below illustrates the general process for developing and evaluating a DTI/DTA model, from data preparation to performance assessment.

Diagram 1: General Workflow for DTI/DTA Model Development

Data Sourcing and Curation

The first step involves gathering data from public databases. Key benchmark datasets include [6]:

- Davis: Contains binding affinities for kinases, measured mainly by Kd values.

- KIBA: A larger and more balanced dataset that integrates Ki, Kd, and IC50 information.

- BindingDB: A comprehensive database of drug-target binding data, including Kd, Ki, and IC50.

- BioSNAP, Yamanishi_08, Hetionet: Commonly used for DTI binary prediction tasks [11] [4].

Data Splitting Strategies

A critical aspect of protocol design is how the data is split into training, validation, and test sets. Different splitting strategies test the model's ability to generalize under various real-world scenarios [14]:

- Random Split (E1): A standard random partition of all drug-target pairs.

- Drug Cold Start (E2): Tests generalization to new drugs not seen in the training set.

- Target Cold Start (E3): Tests generalization to new targets not seen in the training set.

- Strict Cold Start (E4): Tests generalization to pairs where both the drug and the target are new.

The diagram below visualizes these different data splitting strategies, which are crucial for evaluating model generalizability.

Diagram 2: Data Splitting Strategies for Evaluation
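
The four splitting strategies (E1 to E4) can be sketched as follows. This is a minimal illustration under stated assumptions: pairs are `(drug_id, target_id, label)` tuples, the helper names are hypothetical, and in the strict cold-start case pairs with exactly one unseen entity are simply discarded, which is one common convention rather than the only one.

```python
import random


def random_split(pairs, test_frac=0.2, seed=0):
    """E1: shuffle all (drug, target, label) pairs and cut off a test fraction."""
    rng = random.Random(seed)
    shuffled = list(pairs)
    rng.shuffle(shuffled)
    n_test = int(len(shuffled) * test_frac)
    return shuffled[n_test:], shuffled[:n_test]


def _hold_out(ids, frac, rng):
    """Pick a random subset of entity IDs to reserve for the test set."""
    ids = sorted(ids)
    return set(rng.sample(ids, max(1, int(len(ids) * frac))))


def cold_split(pairs, mode, test_frac=0.2, seed=0):
    """E2 (mode='drug'), E3 (mode='target'), or E4 (mode='both') cold-start split."""
    if mode not in ("drug", "target", "both"):
        raise ValueError("mode must be 'drug', 'target', or 'both'")
    rng = random.Random(seed)
    held_drugs = set()
    held_targets = set()
    if mode in ("drug", "both"):
        held_drugs = _hold_out({d for d, _, _ in pairs}, test_frac, rng)
    if mode in ("target", "both"):
        held_targets = _hold_out({t for _, t, _ in pairs}, test_frac, rng)

    train, test = [], []
    for d, t, y in pairs:
        new_d, new_t = d in held_drugs, t in held_targets
        if mode == "both":
            if new_d and new_t:
                test.append((d, t, y))
            elif not new_d and not new_t:
                train.append((d, t, y))
            # Pairs with exactly one unseen entity are dropped to avoid leakage.
        elif new_d or new_t:
            test.append((d, t, y))
        else:
            train.append((d, t, y))
    return train, test


# Hypothetical toy data: 10 drugs x 10 targets with arbitrary labels.
pairs = [(f"D{i}", f"T{j}", (i + j) % 2) for i in range(10) for j in range(10)]
train, test = cold_split(pairs, mode="both")
print(len(train), len(test))
```

Splitting by entity rather than by pair is what prevents a drug or target in the test set from also contributing training examples.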

Input Representations and Feature Extraction

A model's performance is heavily influenced by how drugs and targets are represented. Recent models show a trend towards multi-modal and multi-scale feature extraction [16].

Drug Representations:

Target Representations:

Advanced models like M³ST-DTI and BiMA-DTI fuse features from textual (sequence), structural (graph), and functional (biological role) modalities to create a more comprehensive representation [16] [14].

The Scientist's Toolkit: Research Reagent Solutions

The following table lists key computational tools, datasets, and model architectures that are essential for contemporary DTI/DTA research.

Table 4: Essential Research Reagents for DTI/DTA Research

| Reagent / Resource | Type | Primary Function / Utility |

|---|---|---|

| BindingDB [6] [15] | Database | Primary source for binding affinity data (Kd, Ki, IC50). |

| Davis & KIBA [13] | Benchmark Dataset | Standard benchmarks for DTA model regression tasks. |

| RDKit [13] | Software Library | Converts drug SMILES strings into molecular graphs for GNN-based models. |

| ProtTrans [2] | Pre-trained Model | Provides powerful initial feature embeddings for protein sequences. |

| Graph Neural Network (GNN) [4] [17] | Model Architecture | Learns representations from the topological structure of drug molecules. |

| Attention Mechanism [13] [14] | Model Component | Identifies and weights important substructures in sequences and graphs. |

| Evidential Deep Learning (EDL) [2] | Training Framework | Provides uncertainty quantification for more reliable predictions. |

| Generative Adversarial Network (GAN) [15] | Model Architecture | Addresses data imbalance by generating synthetic minority-class samples. |

DTI and DTA prediction, while interconnected, represent distinct challenges in computational drug discovery. DTI is a classification task focused on identifying potential binding events, whereas DTA is a regression task aimed at quantifying the strength of these interactions. The evaluation of machine learning models for these tasks must therefore use different metrics and rigorous data splitting protocols.

Current research trends are moving towards frameworks that are multi-modal (integrating sequence, graph, and functional data), robust to cold-start problems, and capable of providing uncertainty estimates. Models like DTIAM [11], which unify the prediction of interaction, affinity, and mechanism of action, and EviDTI [2], which quantifies predictive uncertainty, represent the cutting edge. For researchers, the choice between a DTI or DTA approach—and the selection of an appropriate model—should be guided by the specific stage of the drug discovery pipeline and the biological question at hand.

Chemogenomics represents a paradigm shift in drug discovery, moving from a single-target focus to a systematic approach that aims to identify all possible ligands for all potential drug targets within a biological system [18] [19]. This field operates on the core principle that similar compounds tend to interact with similar targets, and conversely, similar targets tend to bind similar compounds [18]. By systematically exploring these chemical-biological interactions, researchers can simultaneously identify novel therapeutic compounds and their corresponding molecular targets, significantly accelerating the early drug discovery pipeline [20] [19].

The completion of the human genome project revealed approximately 3000 "druggable" targets, yet only about 800 have been investigated to any significant extent by the pharmaceutical industry [18]. This untapped pharmacological space presents both a challenge and an opportunity that chemogenomics seeks to address through high-throughput experimental and computational approaches. The ultimate goal is to construct a comprehensive two-dimensional matrix mapping the relationships between chemical compounds (rows) and biological targets (columns), where each cell represents a binding constant or functional effect [18].

Within this framework, drug-target interaction (DTI) prediction has emerged as a crucial computational component, enabling researchers to prioritize candidate interactions for experimental validation. Recent advances in machine learning, particularly deep learning, have dramatically improved our ability to accurately predict these interactions, thereby bridging the chemical space of compounds with the genomic space of potential drug targets [1] [6] [7].

Computational Methodologies in Chemogenomics

Fundamental Descriptors for Navigating Chemical and Target Spaces

The effectiveness of any chemogenomics approach depends critically on how both ligands (chemical compounds) and targets (proteins) are represented and compared. For ligands, descriptors range from one-dimensional (1-D) global properties to complex three-dimensional (3-D) structural representations [18]. 1-D descriptors include molecular weight, atom counts, and predicted properties like log P (lipophilicity), which are fast to compute and useful for preliminary filtering [18]. 2-D topological descriptors capture structural connectivity through molecular graphs or fingerprints that encode predefined structural patterns, with the Tanimoto coefficient serving as a popular similarity metric [18]. 3-D conformational descriptors incorporate spatial information about pharmacophores, molecular shapes, and fields, providing the most physiologically relevant representation but requiring careful handling of molecular alignment and conformational sampling [18].
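
To make the Tanimoto coefficient concrete, the sketch below computes it for two fingerprints stored as sets of "on" bit indices. The fingerprints are invented for illustration, and returning 0.0 for two empty fingerprints is an assumed convention; cheminformatics toolkits provide their own fingerprint types and similarity routines.

```python
def tanimoto(fp_a: set, fp_b: set) -> float:
    """Tanimoto coefficient: shared on-bits divided by the union of on-bits."""
    if not fp_a and not fp_b:
        return 0.0  # assumed convention for two empty fingerprints
    shared = len(fp_a & fp_b)
    return shared / (len(fp_a) + len(fp_b) - shared)


# Hypothetical fingerprints: each integer is the index of a set bit.
compound_a = {1, 2, 3, 4}
compound_b = {3, 4, 5}
print(tanimoto(compound_a, compound_b))  # 2 shared bits out of 5 distinct bits
```

A value of 1.0 means the two fingerprints are identical, while 0.0 means they share no structural patterns.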

For target proteins, classification similarly spans multiple dimensions. 1-D sequence information enables clustering of targets by family (e.g., GPCRs, kinases) through sequence alignment methods [18]. 2-D structural classifications map protein folds and secondary structure elements, while 3-D atomic coordinates from X-ray crystallography or NMR provide the most detailed structural information [18]. In chemogenomic approaches, the ligand-binding site often receives particular attention, as structural similarities among related targets are typically most pronounced in these regions [18].

Experimental Protocols for DTI Model Evaluation

Standardized evaluation protocols are essential for objectively comparing different DTI prediction approaches. The following methodology is representative of current best practices in the field [1] [3]:

Dataset Preparation: Publicly available benchmark datasets such as DrugBank, Davis, KIBA, and BindingDB are partitioned into training, validation, and test sets, typically in an 8:1:1 ratio. These datasets contain known drug-target pairs with associated binding affinities or binary interaction labels.

Data Balancing: To address the common issue of class imbalance (where non-interacting pairs far outnumber interacting ones), techniques like Generative Adversarial Networks (GANs) are employed to create synthetic data for the minority class, effectively reducing false negatives [3].

Feature Engineering: Comprehensive feature extraction includes:

Model Training and Optimization: Models are trained using appropriate loss functions and optimized via techniques like cross-validation. For deep learning models, pre-trained representations from large chemical or biological corpora are often utilized to enhance generalization [1].

Performance Assessment: Models are evaluated using multiple metrics including Accuracy (ACC), Recall, Precision, Matthews Correlation Coefficient (MCC), F1 score, Area Under the ROC Curve (AUC), and Area Under the Precision-Recall Curve (AUPR) [1] [3].
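
The threshold-based metrics named above all derive from the confusion matrix. The following is a minimal sketch in plain Python, assuming binary labels encoded as 0/1 and returning 0.0 whenever a denominator is zero (an assumed convention); the example labels are invented.

```python
import math


def classification_metrics(y_true, y_pred):
    """Accuracy, precision, recall, F1, and MCC from binary labels (0/1)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)

    def safe_div(num, den):
        return num / den if den else 0.0

    precision = safe_div(tp, tp + fp)
    recall = safe_div(tp, tp + fn)
    mcc_den = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    return {
        "accuracy": safe_div(tp + tn, len(y_true)),
        "precision": precision,
        "recall": recall,
        "f1": safe_div(2 * precision * recall, precision + recall),
        "mcc": safe_div(tp * tn - fp * fn, mcc_den),
    }


# Hypothetical predictions: 3 interacting pairs, 5 non-interacting pairs.
y_true = [1, 1, 1, 0, 0, 0, 0, 0]
y_pred = [1, 1, 0, 1, 0, 0, 0, 0]
print(classification_metrics(y_true, y_pred))
```

MCC uses all four cells of the confusion matrix, which is why it is often preferred over accuracy when non-interacting pairs dominate.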

The following diagram illustrates the conceptual framework of chemogenomics and the corresponding computational prediction workflow:

Comparative Performance Evaluation of Machine Learning Approaches

Quantitative Comparison of DTI Prediction Models

Table 1: Performance comparison of recent DTI prediction models on benchmark datasets (2023-2025)

| Model | Year | Dataset | AUC | AUPR | Accuracy | Precision | Recall | MCC |

|---|---|---|---|---|---|---|---|---|

| GAN+RFC [3] | 2025 | BindingDB-Kd | 0.994 | - | 0.975 | 0.975 | 0.975 | - |

| EviDTI [1] | 2025 | DrugBank | - | - | 0.820 | 0.819 | - | 0.643 |

| Hetero-KGraphDTI [21] | 2025 | Multiple | 0.980 | 0.890 | - | - | - | - |

| SaeGraphDTI [22] | 2025 | Davis | - | - | - | - | - | - |

| GAN+RFC [3] | 2025 | BindingDB-Ki | 0.973 | - | 0.917 | 0.917 | 0.917 | - |

| EviDTI [1] | 2025 | KIBA | - | - | Competitive | +0.4% vs baselines | - | +0.3% vs baselines |

| GAN+RFC [3] | 2025 | BindingDB-IC50 | 0.990 | - | 0.954 | 0.954 | 0.954 | - |

Table 2: Methodological characteristics of featured DTI prediction approaches

| Model | Architecture Type | Drug Representation | Target Representation | Key Innovation |

|---|---|---|---|---|

| GAN+RFC [3] | Hybrid ML/DL | MACCS keys | Amino acid/dipeptide composition | GAN-based data balancing |

| EviDTI [1] | Evidential Deep Learning | 2D graph + 3D structure | Protein sequence (ProtTrans) | Uncertainty quantification |

| Hetero-KGraphDTI [21] | Graph Neural Network | Molecular structure | Protein sequence | Knowledge graph integration |

| SaeGraphDTI [22] | Graph Neural Network | SMILES attributes | Sequence attributes | Adaptive graph connectivity |

Analysis of Model Performance and Applicability

The quantitative comparisons reveal several important trends in DTI prediction. The GAN+RFC model demonstrates exceptional performance on BindingDB datasets, particularly for the BindingDB-Kd dataset where it achieves a remarkable AUC of 0.994 and accuracy of 97.5% [3]. This hybrid approach leverages generative adversarial networks to address data imbalance, creating synthetic minority class samples that significantly improve model sensitivity and reduce false negatives.

The EviDTI framework introduces a crucial innovation for practical drug discovery: uncertainty quantification [1]. By employing evidential deep learning, EviDTI provides confidence estimates alongside its predictions, allowing researchers to prioritize drug-target pairs with higher certainty for experimental validation. This addresses a critical limitation of traditional deep learning models, which often produce overconfident predictions for novel compounds or targets outside their training distribution.
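
How an evidential model turns its outputs into a confidence estimate can be sketched with the standard subjective-logic formulation used in evidential deep learning: non-negative per-class evidence parameterizes a Dirichlet distribution, and the leftover mass becomes the uncertainty. Whether EviDTI uses exactly this parameterization is an assumption here, and the evidence values below are invented.

```python
def evidential_summary(evidence):
    """Map non-negative per-class evidence to probabilities, beliefs, and uncertainty.

    alpha_k = e_k + 1 are Dirichlet parameters, S = sum(alpha) is the total
    strength, belief_k = e_k / S, and uncertainty u = K / S, so that
    sum(beliefs) + u == 1.
    """
    if any(e < 0 for e in evidence):
        raise ValueError("evidence must be non-negative")
    num_classes = len(evidence)
    alpha = [e + 1.0 for e in evidence]
    strength = sum(alpha)
    probs = [a / strength for a in alpha]
    beliefs = [e / strength for e in evidence]
    uncertainty = num_classes / strength
    return probs, beliefs, uncertainty


# Hypothetical outputs for the classes [interacts, does not interact].
confident = evidential_summary([18.0, 0.0])  # strong evidence for one class
unsure = evidential_summary([0.5, 0.5])      # little evidence for either class
print(confident[0], confident[2])
print(unsure[0], unsure[2])
```

A pair with little total evidence receives high uncertainty even when its predicted probability looks unremarkable, which is the signal used to decide which predictions to trust for experimental follow-up.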

Graph-based approaches like Hetero-KGraphDTI and SaeGraphDTI demonstrate the growing importance of relational information in DTI prediction [21] [22]. These models leverage not only the intrinsic features of drugs and targets but also the complex network relationships between them, including drug-drug similarities, target-target interactions, and known DTI networks. By incorporating this topological information, graph-based models can better generalize to novel compounds and targets through guilt-by-association reasoning.

The following workflow diagram illustrates the architecture of a modern, multimodal DTI prediction system:

Essential Research Reagents and Computational Tools

Table 3: Key research reagents and computational resources for chemogenomics studies

| Resource Type | Specific Examples | Primary Function | Relevance to DTI Prediction |

|---|---|---|---|

| Compound Libraries | Chemogenomic libraries [23] [19] | Systematic screening against target families | Provides training data and validation sets |

| Target Families | Kinases, GPCRs, Proteases [19] | Representative protein classes | Enables family-specific model development |

| Benchmark Datasets | DrugBank, Davis, KIBA, BindingDB [1] [3] [22] | Standardized performance evaluation | Enables fair comparison between methods |

| Feature Extraction Tools | ProtTrans, MG-BERT [1] | Generating molecular and protein representations | Provides input features for machine learning models |

| Deep Learning Frameworks | Graph Neural Networks, Transformers [6] [21] | Model implementation | Enables development of novel architectures |

The integration of chemogenomics principles with advanced machine learning has fundamentally transformed the landscape of drug-target interaction prediction. The comparative analysis presented in this guide demonstrates that while traditional machine learning approaches like Random Forests can achieve impressive performance when enhanced with techniques like GAN-based data balancing [3], newer paradigms incorporating evidential deep learning [1], graph neural networks [21] [22], and multi-modal learning [6] offer distinct advantages for practical drug discovery.

The most significant advances in recent years have addressed critical challenges in the field: data imbalance through synthetic sample generation [3], prediction reliability through uncertainty quantification [1], and model interpretability through attention mechanisms and knowledge integration [21]. These developments have gradually bridged the gap between computational predictions and experimental validation, increasing the trustworthiness of DTI models in decision-making processes.

Future progress in this field will likely focus on several key areas: (1) improved handling of out-of-distribution compounds and targets through better generalization techniques; (2) integration of multi-omics data and biological context beyond simple binary interactions; and (3) development of more sophisticated uncertainty quantification methods that can guide experimental prioritization with greater confidence. As these computational approaches continue to mature, they will play an increasingly central role in realizing the original promise of chemogenomics: to systematically map the interactions between chemical and genomic spaces for accelerated therapeutic development.

The accurate prediction of drug-target interactions (DTIs) is a critical step in the drug discovery process, offering the potential to significantly reduce development costs, shorten research timelines, and facilitate drug repositioning [24] [5]. Traditional experimental methods for determining DTIs are notoriously time-consuming, expensive, and labor-intensive, creating a pressing need for efficient computational alternatives [25] [3]. In silico methods, particularly those based on machine learning (ML), have emerged as powerful tools for this task, capable of systematically screening thousands of compounds to identify promising candidates for further experimental validation [5]. These computational approaches leverage the growing amount of available bioactivity data, compound libraries, and protein sequences to predict interactions with high efficiency [5].

Over the years, a diverse set of ML methodologies for DTI prediction has been developed. These can be broadly categorized into several paradigms, each with its own underlying principles, strengths, and limitations. This guide focuses on three foundational categories: similarity-based methods, which operate on the principle that chemically similar drugs tend to interact with similar targets; feature-based methods, which use learned or engineered representations of drugs and targets for prediction; and network-based methods, which model the complex web of interactions as a graph to infer new links [26] [25] [27]. Recent integrated and hybrid methods have also been developed, combining elements from these categories to overcome their individual limitations [27] [28].

This article provides a comparative guide to these ML approaches, framing the discussion within the broader context of performance evaluation for DTI prediction research. It is designed to equip researchers, scientists, and drug development professionals with a clear understanding of the current methodological landscape, supported by experimental data and structured comparisons.

Methodological Foundations and Comparative Analysis

The following sections detail the core principles, representative models, advantages, and disadvantages of each major category of DTI prediction methods.

Similarity-Based Methods

Similarity-based methods form one of the earliest and most intuitive classes of techniques for DTI prediction. They are grounded in the "guilt-by-association" principle, which posits that similar drugs are likely to interact with similar target proteins and vice versa [26] [25]. These methods typically rely on constructing comprehensive similarity matrices for both drugs and targets, based on information such as chemical structure, side effects, or protein sequence. Predictions are then made by propagating interaction information across these similarity networks [26] [27].

- Core Principle: The fundamental assumption is that if a drug $D_i$ interacts with a target $T_j$, then:

- Drugs similar to $D_i$ are likely to interact with target $T_j$.

- Targets similar to $T_j$ are likely to interact with drug $D_i$ [26].

- Representative Models:

- KronRLS: A kernel-based method that integrates drug and target similarity matrices within a Kronecker regularized least-squares framework, formally defining DTI prediction as a regression task [5].

- SimBoost: A nonlinear approach that introduces prediction intervals and uses features derived from similarity matrices and neighboring relationships for continuous DTI prediction [5].

- DTiGEMS: Integrates multiple drug-drug similarities and employs a similarity selection and fusion algorithm to enhance prediction accuracy [24].

- Advantages and Disadvantages:

- Advantages: These methods are conceptually simple, do not require explicit feature extraction, and can effectively connect the chemical space of drugs with the genomic space of targets [26] [27].

- Disadvantages: Their performance is heavily dependent on the quality and completeness of the similarity measures. They often struggle to identify interactions for novel drugs or targets that lack similar neighbors in the known interaction network (the "cold start" problem) and may overlook complex biochemical properties [24] [27].
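
The guilt-by-association principle can be illustrated with a simple similarity-weighted profile: a new drug's score for each target is the similarity-weighted average of the interaction profiles of known drugs. This is a generic sketch and not an implementation of KronRLS, SimBoost, or DTiGEMS; the similarity values and interaction matrix are invented.

```python
def weighted_profile_scores(similarities, interactions):
    """Score every target for a query drug from its similarity to known drugs.

    similarities[i]   : similarity between the query drug and known drug i
    interactions[i][j]: 1 if known drug i interacts with target j, else 0
    """
    total = sum(similarities)
    n_targets = len(interactions[0])
    if total == 0:
        # No similar neighbors: the cold-start failure mode noted above.
        return [0.0] * n_targets
    return [
        sum(s * row[j] for s, row in zip(similarities, interactions)) / total
        for j in range(n_targets)
    ]


# Hypothetical data: three known drugs, two targets.
similarities = [0.9, 0.1, 0.0]
interactions = [
    [1, 0],  # drug 0 binds target 0
    [0, 1],  # drug 1 binds target 1
    [1, 1],  # drug 2 binds both
]
print(weighted_profile_scores(similarities, interactions))
```

When the query drug resembles none of the known drugs, every score collapses to zero, which shows concretely why these methods depend on the coverage of the similarity measure.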

Feature-Based Methods

Feature-based methods, also referred to as feature-based chemogenomic approaches, treat DTI prediction as a supervised learning problem. These methods rely on representing drugs and targets using informative features, which are then used to train a classification or regression model [26] [29]. The representations can be manually engineered (e.g., molecular fingerprints for drugs, amino acid composition for proteins) or learned directly from raw data (e.g., SMILES strings, protein sequences) using deep learning [5] [3].

- Core Principle: Knowledge about drugs, targets, and confirmed interactions is translated into feature vectors. A predictive model is trained on these features to learn the complex patterns that govern interactions, which can then be applied to new drug-target pairs [26].

- Representative Models:

- DeepDTA: A deep learning model that uses convolutional neural networks (CNNs) on drug SMILES strings and protein sequences to predict binding affinities [24] [5].

- GraphDTA: Represents drug molecules as graphs and employs graph neural networks (GNNs) to learn features for affinity prediction, better capturing the topological structure of molecules [1].

- EviDTI: An evidential deep learning framework that integrates 2D and 3D drug structures with target sequence features, providing not only predictions but also uncertainty estimates, which is crucial for prioritizing experimental validation [1].

- Transformer-based Models (e.g., TransformerCPI, MolTrans): Utilize attention mechanisms to capture long-range dependencies and complex interactions within and between drug and protein sequences [1] [5].

- Advantages and Disadvantages:

- Advantages: Capable of learning complex, non-linear relationships from data. With deep learning, they can automatically learn relevant features from raw data, reducing the need for manual feature engineering. They can achieve high prediction accuracy, especially when large datasets are available [1] [29].

- Disadvantages: Performance can be constrained by the quality and size of the labeled dataset. They often require significant computational resources for training and can be less interpretable than simpler models [5] [29].
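
A manually engineered feature vector of the kind described above can be sketched as follows: the protein is summarized by its amino acid composition and concatenated with a binary drug fingerprint. The function names, the three-bit fingerprint, and the short peptide are illustrative assumptions; real pipelines use much longer fingerprints (for example MACCS keys) and full-length sequences.

```python
AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"  # the 20 standard residues


def amino_acid_composition(sequence: str):
    """Return the fraction of each standard amino acid in a protein sequence."""
    counts = {aa: 0 for aa in AMINO_ACIDS}
    valid = 0
    for residue in sequence.upper():
        if residue in counts:  # skip non-standard symbols such as 'X'
            counts[residue] += 1
            valid += 1
    if valid == 0:
        return [0.0] * len(AMINO_ACIDS)
    return [counts[aa] / valid for aa in AMINO_ACIDS]


def pair_features(drug_bits, protein_sequence):
    """Concatenate a binary drug fingerprint with the protein composition vector."""
    return [float(b) for b in drug_bits] + amino_acid_composition(protein_sequence)


# Hypothetical inputs: a 3-bit fingerprint and a 4-residue peptide.
features = pair_features([1, 0, 1], "AAAC")
print(len(features), features[:5])
```

The resulting fixed-length vector can be passed to any standard classifier or regressor, which is what makes this family of methods straightforward to apply to new drug-target pairs.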

Network-Based Methods

Network-based methods model the DTI problem within a graph or network framework. Drugs, targets, and sometimes other entities like diseases or side effects are represented as nodes, while known interactions and relationships form the edges [25] [28]. These methods then use graph algorithms, such as random walks, matrix factorization, or graph neural networks, to infer new interactions by analyzing the topology of the network [25] [27].

- Core Principle: New interactions can be predicted by analyzing the proximity and connectivity patterns within a heterogeneous biological network. The structure of the network itself contains implicit information about potential associations [25] [28].

- Representative Models:

- NBI (Network-Based Inference): A classic method derived from recommendation algorithms that performs resource diffusion on the known DTI network to predict new interactions, without requiring any additional information beyond the network itself [25].

- DTINet: Learns low-dimensional representations of drugs and proteins by integrating diverse data sources and applying methods like random walk with restart (RWR) and diffusion component analysis (DCA) [5] [30].

- GCN-DTI: Uses graph convolutional networks (GCNs) to learn features from a graph representation of drugs and targets, which are then fed into a deep neural network for interaction prediction [30] [28].

- MGCLDTI: A more recent approach that integrates multi-source information and uses graph contrastive learning (GCL) to learn robust node representations, addressing challenges like data sparsity and noise [28].

- Advantages and Disadvantages:

- Advantages: Do not rely on the 3D structures of targets or predefined negative samples. They can provide a systematic view of interaction patterns and are particularly well-suited for integrating diverse types of biological data into a unified model [25] [27].

- Disadvantages: Predictive performance can be sensitive to the sparsity and noise inherent in biological networks. Some methods may have limited ability to capture the intricate biochemical details of the interactions [24] [28].
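
The resource diffusion idea behind NBI can be sketched as a two-step spreading process on the bipartite drug-target network: resource placed on a drug's known targets flows to all drugs sharing those targets, then back to targets. This is a simplified illustration under the assumption of an unweighted binary adjacency matrix; the toy network is invented.

```python
def nbi_scores(adj, drug_idx):
    """Two-step resource diffusion on a bipartite drug-target network.

    adj[d][t] is 1 if drug d is known to interact with target t, else 0.
    Returns one score per target for the query drug `drug_idx`.
    """
    n_drugs, n_targets = len(adj), len(adj[0])
    target_deg = [sum(adj[d][t] for d in range(n_drugs)) for t in range(n_targets)]
    drug_deg = [sum(row) for row in adj]

    # Initial resource: one unit on each known target of the query drug.
    resource_t = [float(x) for x in adj[drug_idx]]

    # Step 1: each target splits its resource equally among its drugs.
    resource_d = [
        sum(adj[d][t] * resource_t[t] / target_deg[t]
            for t in range(n_targets) if target_deg[t] > 0)
        for d in range(n_drugs)
    ]

    # Step 2: each drug splits what it received equally among its targets.
    return [
        sum(adj[d][t] * resource_d[d] / drug_deg[d]
            for d in range(n_drugs) if drug_deg[d] > 0)
        for t in range(n_targets)
    ]


# Hypothetical network: 3 drugs (rows) x 3 targets (columns).
adj = [
    [1, 1, 0],
    [0, 1, 1],
    [0, 0, 1],
]
print(nbi_scores(adj, drug_idx=0))
```

Drug 0 has no known link to the third target, yet that target receives a non-zero score through the drug that shares the second target, which is how the network topology alone suggests new candidate interactions.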

Integrated and Hybrid Methods

Recognizing that no single category is universally superior, recent research has focused on integrated or hybrid methods that combine the strengths of multiple paradigms [27]. For instance, MVPA-DTI constructs a heterogeneous network and employs a meta-path aggregation mechanism to dynamically integrate feature views (from drug structures and protein sequences) with biological network relationship views [24]. Another example, DTI-RME, combines robust loss functions, multi-kernel learning, and ensemble learning to address label noise, ineffective multi-view fusion, and incomplete structural modeling simultaneously [30]. Experimental assessments have demonstrated that these integrated methods often outperform approaches from a single category [27].

Performance Evaluation and Experimental Data

A rigorous evaluation is essential for comparing the performance of different DTI prediction methods. This section outlines standard evaluation protocols, datasets, and metrics, followed by a comparative analysis of results from recent studies.

Experimental Protocols and Benchmarking Standards

To ensure fair and reproducible comparisons, researchers typically adhere to common experimental setups:

- Datasets: Models are trained and tested on publicly available benchmark datasets. Commonly used datasets include:

- BindingDB: A large database containing binding affinities (Kd, Ki, IC50) for drugs and target proteins [3] [29].

- Davis: Provides kinase inhibition data (Kd values) for a set of drugs and kinases [1] [29].

- KIBA: A large-scale dataset that combines Ki, Kd, and IC50 values into a unified bioactivity score, helping to mitigate experimental bias [1] [29].

- Gold Standard Datasets (NR, GPCR, IC, E): Curated by Yamanishi et al., these are smaller datasets categorized by target protein family (Nuclear Receptors, G-Protein Coupled Receptors, Ion Channels, Enzymes) and are widely used for binary interaction prediction [30] [29].

- Evaluation Metrics: Performance is measured using a range of metrics to provide a comprehensive view.

- For classification tasks (predicting whether an interaction exists or not):

- AUC-ROC (Area Under the Receiver Operating Characteristic Curve): Measures the model's ability to distinguish between interacting and non-interacting pairs across all classification thresholds.

- AUPR (Area Under the Precision-Recall Curve): Often more informative than AUC-ROC for imbalanced datasets, where non-interacting pairs far outnumber interacting ones.

- F1-Score: The harmonic mean of precision and recall.

- For regression tasks (predicting binding affinity values):

- MSE (Mean Squared Error): The average of the squares of the errors between predicted and actual values.

- RMSE (Root Mean Squared Error): The square root of the MSE, more interpretable as it is in the same units as the target variable.

- Validation Scenarios: Methods are often evaluated under different scenarios to test their generalization capability:

- Cross-Validation on Pairs (CVP): Standard random splitting of known drug-target pairs.

- Cross-Validation on Targets (CVT): Tests the model's performance on new targets that were not seen during training.

- Cross-Validation on Drugs (CVD): Tests the model's performance on new drugs that were not seen during training [30].
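
The two ranking metrics listed above can be computed without choosing a classification threshold. The sketch below uses the pairwise definition of AUC-ROC and average precision as one common estimator of AUPR; both function names and the toy scores are assumptions for illustration, and tied scores in the average precision are handled only by sort order.

```python
def auroc(y_true, scores):
    """Probability that a random positive is scored above a random negative."""
    pos = [s for y, s in zip(y_true, scores) if y == 1]
    neg = [s for y, s in zip(y_true, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative example")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def average_precision(y_true, scores):
    """Mean of the precision values at the rank of each positive example."""
    ranked = sorted(zip(scores, y_true), key=lambda pair: -pair[0])
    hits, total = 0, 0.0
    for rank, (_, label) in enumerate(ranked, start=1):
        if label == 1:
            hits += 1
            total += hits / rank
    if hits == 0:
        raise ValueError("need at least one positive example")
    return total / hits


# Hypothetical scores for 2 interacting and 3 non-interacting pairs.
y_true = [1, 0, 1, 0, 0]
scores = [0.9, 0.8, 0.7, 0.3, 0.1]
print(round(auroc(y_true, scores), 3), round(average_precision(y_true, scores), 3))
```

Because average precision only looks at where the positives fall in the ranking, adding many low-scoring negatives leaves it unchanged while pushing AUC-ROC upward, which is one reason AUPR is favored for imbalanced DTI data.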

Comparative Performance Data

The following tables summarize the performance of various methods as reported in recent literature, providing a quantitative basis for comparison.

Table 1: Performance on Binding Affinity Prediction (Regression Tasks)

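This table shows results on the BindingDB dataset, where the goal is to predict continuous binding affinity values (lower RMSE is better). The DeepLPI and BarlowDTI rows report test AUC (higher is better) rather than RMSE, so they are not directly comparable with the other rows.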

| Model | Approach Category | BindingDB (IC50) RMSE | BindingDB (Ki) RMSE |

|---|---|---|---|

| kNN-DTA [3] | Similarity-based / Neighborhood | 0.684 | 0.750 |

| Ada-kNN-DTA [3] | Similarity-based / Neighborhood | 0.675 | 0.735 |

| MDCT-DTA [3] | Feature-based (Deep Learning) | 0.475 | - |

| DeepLPI [3] | Feature-based (Deep Learning) | - | Test AUC: 0.790 |

| BarlowDTI [3] | Feature-based (Deep Learning) | - | Test AUC: 0.936 |

Table 2: Performance on Binary Interaction Prediction (Classification Tasks)

This table presents results for classifying whether a drug-target pair interacts, with performance measured by AUPR (higher is better). Results for EviDTI and baseline models are on the DrugBank, Davis, and KIBA datasets [1].

| Model | Approach Category | DrugBank (AUPR) | Davis (AUPR) | KIBA (AUPR) |

|---|---|---|---|---|

| Random Forest (RF) [1] | Feature-based (Traditional ML) | - | 0.668 | 0.762 |

| SVM [1] | Feature-based (Traditional ML) | - | 0.653 | 0.753 |

| MolTrans [1] | Feature-based (Deep Learning) | - | 0.699 | 0.787 |

| GraphormerDTI [1] | Feature-based (Deep Learning) | - | 0.715 | 0.795 |

| EviDTI [1] | Feature-based (Deep Learning) | Reported "competitive" | 0.724 | 0.799 |

Table 3: Performance of Hybrid and Network-Based Models

This table includes results for network-based and hybrid models on various datasets, highlighting their performance in different scenarios.

| Model | Approach Category | Dataset | Metric | Performance |

|---|---|---|---|---|

| MVPA-DTI [24] | Hybrid (Network + Feature) | Not Specified | AUROC / AUPR | 0.966 / 0.901 |

| DTI-RME [30] | Hybrid (Ensemble, Multi-kernel) | Luo Dataset | AUROC | 0.951 |

| MGCLDTI [28] | Network-based (Graph Learning) | Yamanishi_GPCR | AUROC | 0.934 |

Critical Analysis of Performance Trends

The experimental data reveals several key trends in the performance of DTI prediction methods:

- Advantage of Integrated Methods: As noted in a 2022 comparative analysis, integrated methods that combine network-based and machine learning techniques generally outperform methods from a single category [27]. This is corroborated by the strong performance of models like MVPA-DTI and DTI-RME, which systematically combine multiple views and data types.

- Handling Data Imperfections: Methods that explicitly address common data challenges, such as label noise and sparsity, show improved robustness. For example, DTI-RME's robust loss function is designed to handle outliers in the interaction matrix, which often correspond to undiscovered interactions rather than true negatives [30]. Similarly, the use of GANs for data balancing, as reported in one study, led to high accuracy (97.46%), precision (97.49%), and sensitivity (97.46%) on the BindingDB-Kd dataset [3].

- The Role of Pretraining and Language Models: The integration of large language models (LLMs) for proteins (e.g., ProtT5) and drugs has driven significant performance gains. These models, pretrained on massive unlabeled datasets, provide high-quality, generalized feature representations that enhance prediction accuracy [24] [5].

- Importance of Uncertainty Quantification: Beyond pure predictive accuracy, the ability to quantify prediction uncertainty is increasingly recognized as vital for practical application. Models like EviDTI, which provide uncertainty estimates, help prioritize the most reliable predictions for experimental validation, thereby improving the efficiency of the drug discovery pipeline [1].
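
To make the prioritization idea in the last point concrete, here is a minimal sketch that assumes the subjective-logic formulation commonly used in evidential deep learning, where non-negative per-class evidence parameterizes a Dirichlet distribution. It is not claimed to reproduce EviDTI's implementation; the function names, the `max_uncertainty` threshold, and the example evidence values are hypothetical.

```python
def evidential_summary(evidence):
    """Turn non-negative per-class evidence into probabilities and an uncertainty.

    evidence: e.g. [e_no_interaction, e_interaction].
    Uses alpha_k = e_k + 1, S = sum(alpha), p_k = alpha_k / S, u = K / S.
    """
    if any(e < 0 for e in evidence):
        raise ValueError("evidence must be non-negative")
    k = len(evidence)
    alpha = [e + 1.0 for e in evidence]
    strength = sum(alpha)
    probs = [a / strength for a in alpha]
    uncertainty = k / strength  # 1.0 when there is no evidence at all
    return probs, uncertainty


def prioritize(candidates, max_uncertainty=0.2):
    """Keep low-uncertainty pairs and rank them by interaction probability.

    candidates: list of (pair_id, evidence) tuples (hypothetical format).
    """
    kept = []
    for pair_id, evidence in candidates:
        probs, u = evidential_summary(evidence)
        if u <= max_uncertainty:
            kept.append((pair_id, probs[1], u))
    return sorted(kept, key=lambda item: item[1], reverse=True)


candidates = [("a", [2, 16]), ("b", [0, 1]), ("c", [1, 37])]
for pair_id, prob, u in prioritize(candidates):
    print(pair_id, round(prob, 3), round(u, 3))
```

In this sketch a pair with little total evidence is dropped even if its predicted class leans toward interaction, which is the behaviour one would want before committing experimental resources.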

Essential Research Reagents and Computational Tools

Successful DTI prediction research relies on a suite of computational "reagents" – datasets, software libraries, and feature extraction tools. The table below catalogs key resources frequently used in the field.

Table 4: Key Research Reagents and Resources for DTI Prediction

| Resource Name | Type | Function and Application in DTI Research |

|---|---|---|

| DrugBank [30] [29] | Database | A comprehensive resource containing detailed drug, target, and interaction data, used for building and testing predictive models. |

| BindingDB [3] [29] | Database | A public database of measured binding affinities, primarily focusing on drug-target interactions, used for regression-based DTA tasks. |

| KEGG, BRENDA, SuperTarget [30] | Database | Provide complementary information on pathways, enzyme functions, and drug-target relations, used for dataset curation and validation. |

| Gold Standard Datasets (NR, GPCR, IC, E) [30] [29] | Benchmark Dataset | Curated datasets for binary DTI prediction, allowing for direct comparison of methods across different target protein families. |

| SMILES [24] [29] | Data Representation | A string-based notation for representing molecular structures of drugs, used as input for many feature-based deep learning models. |

| Molecular Fingerprints (e.g., MACCS) [3] | Feature Extraction | Binary vectors representing the presence or absence of specific chemical substructures, used for calculating drug similarity and as input features. |

| ProtTrans / ProtT5 [24] [1] | Feature Extraction | Protein language models (ProtT5 is part of the ProtTrans family) that convert protein sequences into biophysically and functionally relevant feature representations. |

| AlphaFold [5] [29] | Feature Extraction | A system that predicts protein 3D structures from amino acid sequences, providing structural features for structure-aware DTI models. |

| RDKit [29] | Software Library | An open-source toolkit for cheminformatics, used for processing SMILES strings, generating molecular fingerprints, and calculating descriptors. |

Workflow and Conceptual Diagrams

The following diagram illustrates the high-level logical workflow and the relationships between the main methodological categories discussed in this guide.

DTI Prediction Methodology Workflow

This diagram outlines the general pipeline for DTI prediction. Input data, comprising drug and target information along with known interactions, is processed by one of the core methodological categories. Each category contains specific representative models (e.g., KronRLS, DeepDTA, DTINet). The trend towards integrated methods is shown, as they synthesize concepts from multiple categories. The final output is a prediction of either a binary interaction or a quantitative binding affinity.

The field of computational drug-target interaction prediction has matured significantly, offering a diverse taxonomy of machine learning approaches. Similarity-based methods provide a strong, interpretable baseline. Feature-based methods, particularly deep learning models, excel at learning complex patterns from raw data and often achieve state-of-the-art accuracy. Network-based methods offer a powerful framework for integrating heterogeneous biological data and leveraging topological information.

Current evidence, both from the literature and the experimental data summarized herein, indicates that no single category is universally superior. The most significant performance gains are increasingly coming from integrated and hybrid methods that successfully combine the strengths of multiple paradigms—for instance, by fusing features from protein language models with the relational context of heterogeneous networks [24] [27] [28]. Furthermore, addressing endemic challenges like data sparsity, label noise, and the need for reliable uncertainty quantification, as seen in models like DTI-RME and EviDTI, is becoming a key differentiator for practical utility [1] [30].

For researchers and drug development professionals, the choice of method should be guided by the specific problem context, the available data, and the desired outcome. For novel target or drug scenarios, methods robust to "cold starts" are essential. When interpretability and reliability are paramount, models providing confidence estimates are invaluable. As the field continues to evolve, the integration of increasingly powerful foundation models such as AlphaFold and large language models, coupled with sophisticated multi-view learning frameworks, promises to further narrow the gap between computational prediction and experimental reality, accelerating the pace of drug discovery.
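
Robustness to cold starts is usually assessed with a split in which held-out drugs (or targets) never appear in training. The following is a minimal sketch of a cold-drug split under that assumption; the function name, the `(drug_id, target_id, label)` tuple format, and the default fraction are illustrative choices rather than a protocol taken from the cited studies.

```python
import random


def cold_drug_split(pairs, test_fraction=0.2, seed=0):
    """Split so that every pair of a held-out drug lands in the test set.

    pairs: list of (drug_id, target_id, label) tuples (hypothetical format).
    """
    drugs = sorted({drug for drug, _, _ in pairs})
    rng = random.Random(seed)
    rng.shuffle(drugs)
    n_test = max(1, round(len(drugs) * test_fraction))
    test_drugs = set(drugs[:n_test])
    train = [p for p in pairs if p[0] not in test_drugs]
    test = [p for p in pairs if p[0] in test_drugs]
    return train, test
```

The same function applied to the target column gives a cold-target split; a random split over pairs, by contrast, lets the model see every drug during training and tends to overstate performance on novel compounds.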

Architectural Innovations: A Deep Dive into State-of-the-Art DTI Models

In the field of drug discovery, accurately predicting drug-target interactions (DTIs) is a critical yet challenging task. Feature engineering—the process of transforming raw data into informative features that better represent the underlying problem—plays a fundamental role in developing effective computational models [31]. For DTI prediction, this involves creating meaningful numerical representations from the complex structural and biological data of drugs and target proteins. Among the various techniques, the combination of MACCS keys for drug representation and amino acid compositions for target characterization has established a robust, interpretable foundation for machine learning models [3] [32].

This approach addresses a core challenge in computational drug discovery: effectively integrating chemical and biological information to capture the complex biochemical relationships that govern molecular interactions [3]. While newer deep learning methods have emerged, feature-based methods using engineered descriptors remain competitive, often offering greater interpretability and lower computational requirements [33] [32]. This guide compares the performance of this feature engineering paradigm against contemporary alternatives, examining its experimental validation, practical implementation, and position within the current DTI prediction landscape.

Core Methodologies: Feature Representation and Experimental Design

Drug Representation: MACCS Structural Keys

The MACCS (Molecular ACCess System) keys are a widely used structural fingerprint system that encodes the presence or absence of specific chemical substructures within a drug molecule [3] [32]. This representation transforms a drug's complex molecular structure into a fixed-length binary vector (typically 166 or 960 bits), where each bit indicates whether a particular structural pattern exists in the molecule. These patterns include specific functional groups, ring systems, atom types, and connectivity patterns that are chemically significant for molecular recognition and binding.

Target Representation: Amino Acid and Dipeptide Compositions

For target proteins, amino acid composition (AAC) and dipeptide composition (DC) provide fundamental sequence-derived features. AAC calculates the normalized frequency of each of the 20 standard amino acids within a protein sequence, while DC calculates the frequency of all 400 possible pairs of adjacent amino acids, thereby capturing local sequence order information [3] [33]. These compositions reflect important physicochemical properties of proteins—such as hydrophobicity, charge, and structural propensity—that influence their interaction with drug molecules.
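
The two compositions are simple enough to compute directly. The sketch below is one way to do so in plain Python; the function names are illustrative, and the handling of non-standard residues (ignored in AAC, and any dipeptide containing one skipped in DC) is an assumption rather than a convention taken from the cited studies.

```python
from itertools import product

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"  # the 20 standard residues
DIPEPTIDES = ["".join(p) for p in product(AMINO_ACIDS, repeat=2)]  # 400 pairs


def amino_acid_composition(sequence):
    """Return the 20-dimensional normalized residue frequency vector."""
    residues = [c for c in sequence.upper() if c in AMINO_ACIDS]
    if not residues:
        raise ValueError("sequence contains no standard amino acids")
    return [residues.count(a) / len(residues) for a in AMINO_ACIDS]


def dipeptide_composition(sequence):
    """Return the 400-dimensional normalized adjacent-pair frequency vector."""
    seq = sequence.upper()
    counts = dict.fromkeys(DIPEPTIDES, 0)
    total = 0
    for first, second in zip(seq, seq[1:]):
        pair = first + second
        if pair in counts:  # skip pairs containing non-standard residues
            counts[pair] += 1
            total += 1
    if total == 0:
        raise ValueError("sequence contains no standard dipeptides")
    return [counts[d] / total for d in DIPEPTIDES]


sequence = "MKVLAAGIV"  # short hypothetical fragment, for illustration only
aac = amino_acid_composition(sequence)
dc = dipeptide_composition(sequence)
print(len(aac), len(dc))
```

Concatenating the two vectors yields a 420-dimensional protein descriptor whose length does not depend on the sequence length, which is what makes it convenient as input to classical classifiers.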

Experimental Workflow and Protocol

The standard experimental protocol for evaluating MACCS and AAC/DC-based DTI prediction models follows a systematic workflow that integrates these feature representations with machine learning classification.

Figure 1: Experimental workflow for MACCS and AAC/DC-based DTI prediction

The standard implementation involves several key stages [3] [32]:

- Dataset Curation: Public DTI databases (BindingDB, DrugBank) provide confirmed interacting and non-interacting pairs.

- Feature Extraction: MACCS keys (166-bit) for drugs; AAC (20-dimensional) and DC (400-dimensional) for proteins.

- Data Balancing: Techniques like Generative Adversarial Networks (GANs) address class imbalance in experimental datasets.

- Classifier Training: Random Forest or SVM models are trained on concatenated drug-target features.

- Performance Evaluation: Models are evaluated using cross-validation and standard metrics (Accuracy, Precision, Recall, AUC-ROC).
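
The threshold-based metrics used in the evaluation stage all derive from the four confusion-matrix counts. The following minimal sketch shows the definitions; the function name and the toy labels are illustrative, and the example uses plain (unweighted) binary metrics, which may differ from the averaging used in any particular study.

```python
def classification_metrics(y_true, y_pred):
    """Binary metrics from hard 0/1 predictions; label 1 means 'interacts'."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)

    def ratio(num, den):
        # Return 0.0 instead of failing when a denominator is empty.
        return num / den if den else 0.0

    precision = ratio(tp, tp + fp)
    recall = ratio(tp, tp + fn)
    return {
        "accuracy": ratio(tp + tn, len(pairs)),
        "precision": precision,
        "recall": recall,  # also called sensitivity
        "specificity": ratio(tn, tn + fp),
        "f1": ratio(2 * precision * recall, precision + recall),
    }


# Toy example: 4 interacting and 6 non-interacting pairs (hypothetical).
y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
y_pred = [1, 1, 1, 0, 0, 0, 0, 0, 0, 1]
metrics = classification_metrics(y_true, y_pred)
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```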

Table 1: Essential research reagents and computational tools for feature-based DTI prediction

| Resource Name | Type | Primary Function | Application in MACCS/AAC-DC Workflow |

|---|---|---|---|

| RDKit [34] | Software Library | Cheminformatics and ML | Processes SMILES, generates MACCS keys, and calculates molecular properties |

| DGL-LifeSci [4] | Toolkit | Graph Neural Networks | Constructs molecular graphs from SMILES strings for advanced feature extraction |

| BindingDB [3] | Database | Bioactivity Data | Provides experimentally validated DTIs for model training and benchmarking |

| DrugBank [33] [2] | Database | Drug & Target Information | Sources for drug structures, target sequences, and known interactions |

| PubChem [33] [34] | Database | Chemical Information | Source for drug compounds and their structural identifiers (CIDs) |

| UniProt [33] | Database | Protein Sequence & Feature | Provides target protein sequences for feature extraction (AAC/DC) |

| scikit-learn | Library | Machine Learning | Implements RF, SVM classifiers and evaluation metrics for model development |

Performance Comparison and Experimental Data

Benchmark Performance of MACCS and AAC/DC Approaches

The feature engineering approach using MACCS keys and amino acid/dipeptide compositions has been evaluated on multiple benchmark datasets. The following table summarizes key experimental results from recent studies:

Table 2: Performance comparison of MACCS and AAC/DC-based models on benchmark datasets

| Dataset | Model Architecture | Accuracy (%) | Precision (%) | Recall/Sensitivity (%) | Specificity (%) | F1-Score (%) | ROC-AUC (%) |

|---|---|---|---|---|---|---|---|

| BindingDB-Kd [3] | GAN + Random Forest | 97.46 | 97.49 | 97.46 | 98.82 | 97.46 | 99.42 |

| BindingDB-Ki [3] | GAN + Random Forest | 91.69 | 91.74 | 91.69 | 93.40 | 91.69 | 97.32 |

| BindingDB-IC50 [3] | GAN + Random Forest | 95.40 | 95.41 | 95.40 | 96.42 | 95.39 | 98.97 |

| Enzyme [32] | SVM + Feature Selection | - | - | - | - | - | 89.90* |

| Ion Channel [32] | SVM + Feature Selection | - | - | - | - | - | 92.90* |

| GPCR [32] | SVM + Feature Selection | - | - | - | - | - | 82.10* |

| Nuclear Receptor [32] | SVM + Feature Selection | - | - | - | - | - | 65.50* |

| Human [33] | MIFAM-DTI (Multi-source) | - | - | - | - | - | 98.20 |

Area Under Precision-Recall Curve (AUPR) values. *Area Under ROC Curve (AUC) value.

Comparative Analysis Against Alternative Approaches

When compared with other modern DTI prediction paradigms, the MACCS and AAC/DC feature engineering approach demonstrates distinct advantages and limitations:

Table 3: Performance comparison against alternative DTI prediction methodologies

| Model Type | Key Features | Representative Models | Performance (AUC-ROC) | Relative Advantages | Relative Limitations |

|---|---|---|---|---|---|

| Feature Engineering (MACCS+AAC/DC) | Structural keys, amino acid compositions | RF/SVM with MACCS+AAC/DC [3] [32] | 91-99% | High interpretability, computational efficiency, robust on small datasets | Limited to predefined features, may miss complex patterns |

| Graph Neural Networks | Molecular graphs, spatial structures | GraphDTA [2], MGraphDTA [4] | 85-92% | Captures topological structure, no feature engineering required | Computationally intensive, requires large datasets |

| Transformer & Attention Models | Self-attention, sequence context | MolTrans [2], TransformerCPI [2] | 87-94% | Captures long-range dependencies, state-of-the-art on some benchmarks | High parameter count, limited interpretability |

| Hybrid/Multi-Source Models | Integrates multiple representations | MIFAM-DTI [33], CAMF-DTI [4] | 95-98% | Leverages complementary information, often highest performance | Complex implementation, potential redundancy |