Ligand-Based vs. Target-Based Chemogenomics: A Strategic Guide for Modern Drug Discovery

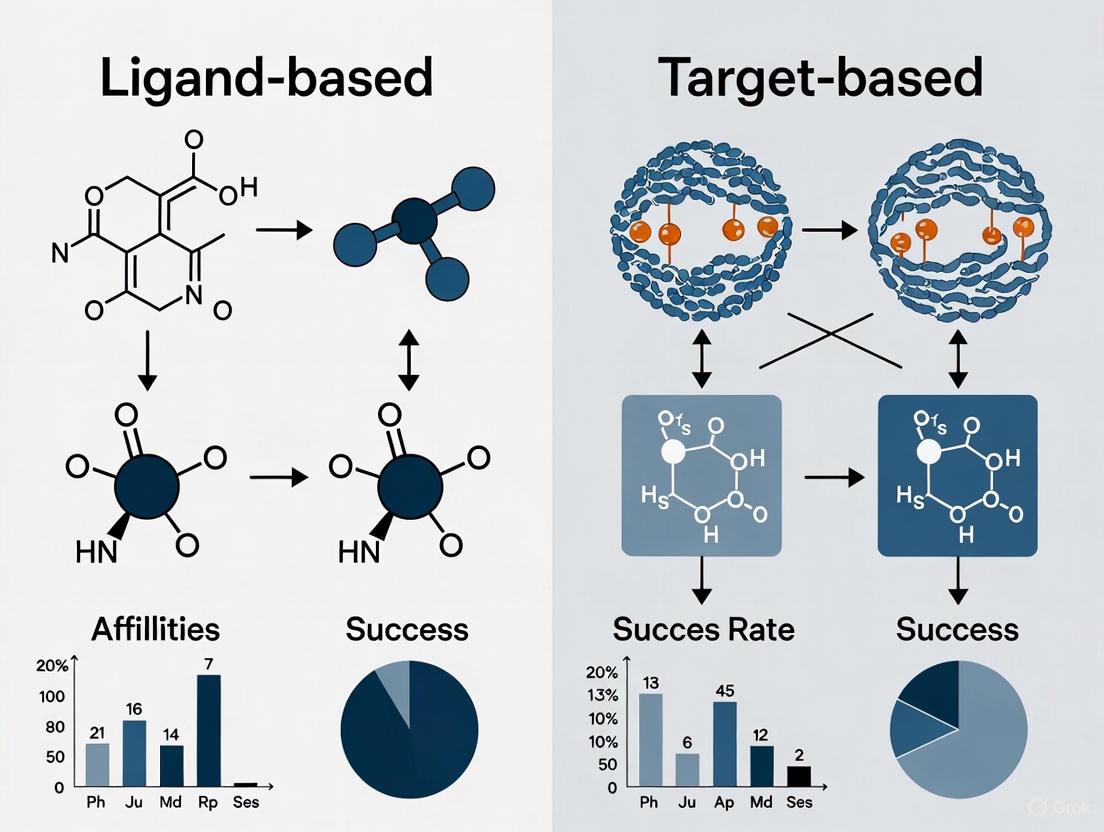

Abstract

This article provides a comprehensive comparison of ligand-based and target-based chemogenomic approaches, two foundational strategies in contemporary drug discovery. Aimed at researchers, scientists, and drug development professionals, it explores the core principles, methodologies, and practical applications of each paradigm. The content delves into the distinct advantages and limitations of both approaches, offers strategies for troubleshooting and optimization, and presents a framework for their rigorous validation and comparative analysis. By synthesizing insights from current literature and case studies, this guide aims to empower scientists to select and integrate the most effective computational strategies for target identification, lead optimization, and drug repurposing, ultimately enhancing the efficiency and success rate of drug development pipelines.

Core Principles: Unveiling the Foundations of Chemogenomic Strategies

In the field of chemogenomics, which systematically explores the interaction between chemical space and biological target space, two primary computational strategies have emerged for drug discovery and target prediction: ligand-based and target-based approaches [1] [2] [3]. The core premise linking these paradigms is that similar ligands tend to bind to similar targets, and conversely, similar targets tend to bind to similar ligands [1] [3]. This guide provides a comparative analysis of these two methodologies, supported by recent performance data and detailed experimental protocols.

Core Principles and Methodologies

The fundamental difference between these approaches lies in their starting point and the primary information they utilize for drug discovery and target prediction.

Ligand-Based Approaches

Ligand-based methods rely on the principle that compounds with similar chemical structures are likely to have similar biological activities [1] [4]. These approaches do not require explicit structural information about the target protein.

- Key Techniques: The most common techniques include chemical similarity searching using molecular fingerprints, pharmacophore modeling, and Quantitative Structure-Activity Relationship (QSAR) analysis [1] [5]. These methods describe molecules using 1D, 2D, or 3D molecular descriptors [1].

- Similarity Measurement: Structural similarity is typically quantified using the Tanimoto coefficient, which compares molecular fingerprints such as Morgan fingerprints (ECFP-like) or FP2 fingerprints [1] [4]. For example, the MOST (MOst-Similar ligand-based Target inference) approach uses fingerprint similarity and explicit bioactivity data of the most-similar ligands to predict targets with high accuracy [4].
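The similarity measures mentioned above are simple to state in code. The sketch below assumes each fingerprint is represented as a set of "on" bit indices (in practice these would come from a cheminformatics toolkit such as RDKit or Open Babel); the example fingerprints are made up for illustration.

```python
def tanimoto(fp_a: set[int], fp_b: set[int]) -> float:
    """Tanimoto coefficient: shared on-bits divided by the union of on-bits."""
    shared = len(fp_a & fp_b)
    union = len(fp_a) + len(fp_b) - shared
    return shared / union if union else 0.0


def dice(fp_a: set[int], fp_b: set[int]) -> float:
    """Dice coefficient: twice the shared on-bits over the total on-bit count."""
    total = len(fp_a) + len(fp_b)
    return 2 * len(fp_a & fp_b) / total if total else 0.0


# Hypothetical fingerprints (sets of on-bit positions), not real molecules.
query_fp = {1, 4, 9, 16, 25}
reference_fp = {1, 4, 9, 36}

print(tanimoto(query_fp, reference_fp))  # 3 shared bits / 6 bits in the union
print(dice(query_fp, reference_fp))      # 2 * 3 shared bits / 9 on-bits in total
```

Both coefficients range from 0 (no shared bits) to 1 (identical fingerprints); for the same pair, Dice is never lower than Tanimoto.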

Target-Based Approaches

Target-based methods, in contrast, begin with the protein target of interest. These approaches leverage the three-dimensional structure of the target to identify or design potential ligands.

- Key Techniques: The predominant technique is molecular docking, which predicts how a small molecule binds to a protein's active site and scores the interaction strength [6] [7]. Structure-based virtual screening can systematically dock chemical libraries against arrays of protein targets [8].

- Requirements: These methods depend on the availability of high-quality 3D protein structures, which can come from experimental methods like X-ray crystallography or from computational models generated by tools like AlphaFold [6].

Table 1: Fundamental Characteristics of Ligand-Based and Target-Based Approaches

| Characteristic | Ligand-Based Approaches | Target-Based Approaches |
|---|---|---|
| Starting Point | Known active ligands | Protein target structure |
| Core Principle | "Similar ligands have similar activities" | "Structure-based molecular recognition" |
| Target Info Required | No 3D structure needed | High-quality 3D structure essential |
| Primary Techniques | Chemical similarity searching, QSAR, pharmacophore modeling | Molecular docking, structure-based virtual screening |
| Data Sources | ChEMBL, DrugBank, BindingDB [6] | PDB, AlphaFold models [6] |

Performance Comparison and Experimental Data

Recent systematic comparisons provide quantitative insights into the performance of these approaches. A 2025 benchmark study evaluated seven target prediction methods using a shared dataset of FDA-approved drugs, offering direct performance comparisons [6].

Table 2: Performance Comparison of Representative Target Prediction Methods

| Method Name | Approach Type | Algorithm/Fingerprint | Key Performance Findings |
|---|---|---|---|
| MolTarPred | Ligand-based | 2D similarity, MACCS/Morgan fingerprints | Most effective method in 2025 benchmark; Morgan fingerprints with Tanimoto outperformed MACCS with Dice scores [6] |
| MOST | Ligand-based | Morgan/FP2 fingerprints with machine learning | Achieved 87-95% accuracy in cross-validation; Morgan fingerprint slightly better than FP2 [4] |
| RF-QSAR | Target-centric | Random forest, ECFP4 | Included in systematic comparison [6] |
| TargetNet | Target-centric | Naïve Bayes, multiple fingerprints | Included in systematic comparison [6] |
| CMTNN | Target-centric | Neural network, Morgan fingerprints | Included in systematic comparison [6] |
| D3Similarity | Ligand-based | 2D and 3D similarity combination | Complementary to structure-based docking; uses 2D × 3D value as integrated score [9] |

Performance Trade-offs and Optimization

The 2025 comparison revealed that model optimization strategies involve important trade-offs. For instance, applying high-confidence filtering to interaction data, while improving precision, reduces recall, making it less ideal for drug repurposing applications where maximizing potential leads is prioritized [6].
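The precision/recall trade-off can be made concrete with a small calculation. The sketch below uses invented target sets: one prediction list obtained from an unfiltered reference database and one from a high-confidence subset. The names and values are illustrative assumptions, not data from the cited study.

```python
def precision_recall(predicted: set[str], true: set[str]) -> tuple[float, float]:
    """Return (precision, recall) for a set of predicted targets."""
    true_positives = len(predicted & true)
    precision = true_positives / len(predicted) if predicted else 0.0
    recall = true_positives / len(true) if true else 0.0
    return precision, recall


# Hypothetical known targets of one query drug.
known_targets = {"T1", "T2", "T3", "T4"}

# Hypothetical predictions using all interaction data vs. a filtered subset.
predicted_unfiltered = {"T1", "T2", "T3", "T5", "T6"}
predicted_filtered = {"T1", "T2"}

print(precision_recall(predicted_unfiltered, known_targets))  # (0.6, 0.75)
print(precision_recall(predicted_filtered, known_targets))    # (1.0, 0.5)
```

In this toy case filtering removes both false positives, but it also loses one true target, which is the kind of loss that matters when the goal is to surface as many repurposing candidates as possible.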

Experimental Protocols

To illustrate how these approaches are implemented in practice, here are detailed methodologies from key studies.

Protocol 1: Ligand-Based Target Prediction (MOST Approach)

The MOST approach provides a robust protocol for ligand-based target inference [4]:

- Data Preparation: Extract bioactivity data (e.g., Ki values) from curated databases like ChEMBL. Filter for direct binding data with high confidence scores and resolve multiple measurements for the same target-ligand pair.

- Fingerprint Calculation: Generate molecular fingerprints for all compounds. The Morgan fingerprint (radius = 2) is recommended based on its superior performance.

- Similarity Search: For each query compound, calculate Tanimoto coefficients against all ligands in the training database. Identify the most-similar ligand(s) for each target.

- Model Training: Use machine learning methods (Logistic Regression or Random Forest performed best) to learn the relationship between fingerprint similarity, bioactivity of the most-similar ligand, and the probability of the query compound being active.

- Target Prediction: Apply the trained model to predict potential targets for new query compounds, using explicit bioactivity data (pKi values) of the most-similar ligands to calculate statistical significance (p-values) and control false discovery rates in multiple-target predictions.
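The similarity-search step of this protocol can be sketched as follows. This is a minimal illustration, not the MOST implementation: it only finds the most-similar known ligand per target and ranks targets by that similarity, leaving out the machine-learning model and p-value calculation. Fingerprints are assumed to be sets of on-bit indices, and all ligand IDs, target names, and pKi values are hypothetical.

```python
def tanimoto(fp_a: set[int], fp_b: set[int]) -> float:
    shared = len(fp_a & fp_b)
    union = len(fp_a) + len(fp_b) - shared
    return shared / union if union else 0.0


def rank_targets(query_fp, ligand_db):
    """Rank targets by the similarity of their most-similar known ligand.

    ligand_db: iterable of (ligand_id, target, fingerprint, pKi) tuples.
    Returns a list of (target, similarity, ligand_id, pKi), best first.
    """
    best = {}
    for ligand_id, target, fp, pki in ligand_db:
        sim = tanimoto(query_fp, fp)
        if target not in best or sim > best[target][0]:
            best[target] = (sim, ligand_id, pki)
    rows = [(target, *hit) for target, hit in best.items()]
    return sorted(rows, key=lambda row: row[1], reverse=True)


# Hypothetical training ligands with measured pKi values.
ligand_db = [
    ("L1", "KinaseA", {1, 2, 3, 4}, 8.1),
    ("L2", "KinaseA", {1, 2, 7}, 6.0),
    ("L3", "GPCR_B", {5, 6, 7, 8}, 7.2),
    ("L4", "GPCR_B", {1, 5, 6}, 5.5),
]
ranked = rank_targets({1, 2, 3, 5}, ligand_db)
for target, sim, ligand_id, pki in ranked:
    print(f"{target}: most-similar ligand {ligand_id} (Tc={sim:.2f}, pKi={pki})")
```

The similarity and pKi of each most-similar ligand are exactly the two features the protocol then feeds into the classifier in the model-training step.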

Protocol 2: Structure-Based Virtual Screening

A standard protocol for target-based screening involves [6] [7]:

- Target Preparation: Obtain the 3D structure of the target protein from PDB or generate it using computational tools like AlphaFold. Prepare the structure by adding hydrogen atoms, assigning partial charges, and optimizing side-chain conformations.

- Binding Site Identification: Define the binding pocket, either from known co-crystallized ligands or through binding site prediction algorithms.

- Ligand Library Preparation: Curate a library of small molecules for screening, ensuring correct protonation states, tautomers, and 3D conformations.

- Molecular Docking: Systematically dock each compound from the library into the binding site using programs like AutoDock Vina or Glide. Generate multiple binding poses per compound.

- Scoring and Ranking: Use scoring functions to evaluate and rank the binding poses. Apply consensus scoring or post-processing with machine-learning-based scoring functions to improve accuracy.

- Experimental Validation: Select top-ranked compounds for in vitro testing to validate the predictions.
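One simple form of the consensus scoring mentioned in the ranking step is rank averaging. The sketch below assumes that each scoring function returns an energy-like score where lower (more negative) is better, as with AutoDock Vina affinities; the compound IDs and score values are invented for illustration.

```python
def rank_positions(scores: dict[str, float]) -> dict[str, int]:
    """Map each compound to its rank (1 = best, i.e. lowest score)."""
    ordered = sorted(scores, key=scores.get)
    return {compound: pos for pos, compound in enumerate(ordered, start=1)}


def consensus_rank(score_tables: list[dict[str, float]]) -> list[str]:
    """Order compounds by their mean rank across several scoring functions."""
    ranks = [rank_positions(table) for table in score_tables]
    # Only compounds scored by every function can be compared fairly.
    compounds = set.intersection(*(set(r) for r in ranks))
    mean_rank = {c: sum(r[c] for r in ranks) / len(ranks) for c in compounds}
    return sorted(mean_rank, key=lambda c: (mean_rank[c], c))


# Hypothetical scores from two different scoring functions.
scorer_a = {"cpd1": -9.2, "cpd2": -7.5, "cpd3": -8.1}
scorer_b = {"cpd1": -6.0, "cpd2": -6.5, "cpd3": -7.0}

print(consensus_rank([scorer_a, scorer_b]))  # ['cpd3', 'cpd1', 'cpd2']
```

Averaging ranks rather than raw scores avoids having to put scoring functions with different units or scales on a common footing.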

Successful implementation of these approaches requires access to specialized databases, software tools, and computational resources.

Table 3: Essential Resources for Chemogenomics Research

| Resource Category | Specific Tools/Databases | Key Function |
|---|---|---|
| Bioactivity Databases | ChEMBL, BindingDB, DrugBank | Provide curated ligand-target interaction data for ligand-based approaches [6] [8] |
| Protein Structure Resources | Protein Data Bank (PDB), AlphaFold Database | Source of 3D protein structures for target-based approaches [6] [8] |
| Cheminformatics Toolkits | RDKit, Open Babel | Calculate molecular fingerprints, perform structural manipulations, and similarity searching [9] [4] |
| Docking Software | AutoDock Vina, Glide, GOLD | Perform molecular docking and structure-based virtual screening [7] |
| Specialized Target Prediction Tools | MolTarPred, MOST, SEA, D3Similarity | Integrated platforms for predicting drug targets using various algorithms [6] [9] [4] |

Application Contexts and Decision Framework

The choice between ligand-based and target-based approaches depends on the specific research question, data availability, and project goals.

When to Prefer Ligand-Based Approaches

- Novel Target Identification: When discovering new protein targets for existing drugs or natural products with known phenotypic effects [2] [5].

- Limited Structural Data: When high-quality 3D structures of the target protein are unavailable [3].

- Drug Repurposing: To identify new therapeutic indications for existing drugs by predicting off-target effects [6] [4].

- Natural Products Research: For elucidating mechanisms of action of traditional medicines or natural products with complex structures [2] [5].

When to Prefer Target-Based Approaches

- Novel Lead Identification: When searching for new chemical starting points against a well-characterized target [7].

- Rational Drug Design: To optimize lead compounds through structure-based design when protein-ligand complex structures are available [7].

- Selectivity Profiling: To design selective compounds for specific protein family members by exploiting differences in binding sites [5].

- Target-Hopping: To identify ligands for orphan receptors with known structures but limited ligand information [3].

Emerging Trends and Integrated Approaches

The distinction between ligand-based and target-based paradigms is increasingly blurring with the emergence of integrated strategies [8] [10]. Modern chemogenomics leverages both chemical and biological information to create more accurate predictive models.

Hybrid Target-Ligand Approaches

These methods simultaneously consider descriptors of both ligands and proteins, creating unified models that can predict interactions across broader sections of the protein-ligand space [8]. For instance, some approaches merge protein sequence descriptors with ligand fingerprints to create interaction models that can generalize to proteins with limited ligand information [8].
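To make the idea of merging protein and ligand descriptors concrete, the sketch below builds one feature vector per ligand-protein pair by concatenating a ligand bit vector with the protein's amino-acid composition. Amino-acid composition is chosen here only because it is the simplest sequence descriptor; the fingerprint length, on-bit positions, and sequence are illustrative assumptions rather than details from the cited work.

```python
AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"


def aa_composition(sequence: str) -> list[float]:
    """Fraction of each of the 20 standard amino acids in the sequence."""
    seq = sequence.upper()
    counts = [seq.count(aa) for aa in AMINO_ACIDS]
    total = sum(counts)
    return [c / total if total else 0.0 for c in counts]


def fingerprint_vector(on_bits: set[int], n_bits: int) -> list[float]:
    """Expand a set of on-bit indices into a fixed-length 0/1 vector."""
    return [1.0 if i in on_bits else 0.0 for i in range(n_bits)]


def pair_features(on_bits: set[int], sequence: str, n_bits: int = 16) -> list[float]:
    """Concatenate ligand and protein descriptors for one ligand-protein pair."""
    return fingerprint_vector(on_bits, n_bits) + aa_composition(sequence)


# Hypothetical ligand fingerprint and (very short) protein sequence.
features = pair_features({0, 3}, "ACCA", n_bits=8)
print(len(features))  # 8 ligand bits + 20 composition values = 28
```

Because every pair is described by both components, a single model trained on such vectors can in principle score a protein that has no known ligands of its own, as long as related proteins appear in the training data.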

Artificial Intelligence and Machine Learning

Recent advances incorporate deep learning and knowledge graphs to significantly improve target prediction accuracy [5]. These systems offer multi-dimensional drug-target interaction analysis, integrate multi-omics data, and provide interpretable decision support with clinical translatability [5].

The field continues to evolve with improvements in both approaches: ligand-based methods are incorporating more sophisticated similarity metrics and explicit bioactivity data [4], while target-based methods benefit from more accurate protein structure prediction and machine-learning scoring functions [6].

Decision Framework for Selecting Between Ligand-Based and Target-Based Approaches

Both ligand-based and target-based approaches offer distinct advantages for drug discovery within the chemogenomics paradigm. Ligand-based methods excel when sufficient ligand bioactivity data exists, providing high prediction accuracy and efficiency for applications like drug repurposing. Target-based approaches offer rational structure-based design capabilities when reliable protein structures are available. The most effective drug discovery strategies often integrate both approaches, leveraging their complementary strengths to navigate the complex landscape of protein-ligand interactions. As both methodologies continue to advance—with improvements in similarity metrics, machine learning scoring functions, and hybrid models—their impact on accelerating drug discovery and understanding polypharmacology will continue to grow.

The accurate prediction of interactions between a drug and its target protein is a cornerstone of modern drug discovery, serving as an efficient analog to costly and time-consuming wet-lab experiments [11]. The foundational principles guiding this field have evolved into two primary computational philosophies: the ligand-based paradigm, which operates on the "guilt-by-association" principle that similar ligands tend to bind similar targets, and the target-based paradigm, which prioritizes structural complementarity between a drug and the three-dimensional (3D) structure of its protein target [12] [10]. Within these paradigms, chemogenomic approaches that systematically study the interaction of small molecules with macromolecular target families have gained prominence [11]. This guide provides an objective comparison of these methodologies, evaluating their performance, detailing experimental protocols, and situating them within the broader context of chemogenomic research for an audience of researchers, scientists, and drug development professionals.

Methodological Foundations and Comparative Workflow

The two approaches, ligand-based and target-based, offer distinct yet complementary paths for predicting drug-target interactions (DTIs). The ligand-based approach relies on the similarity principle, where a query molecule is compared to a database of known active ligands; if the query is highly similar to a known ligand, it is predicted to bind the same target [6] [11]. In contrast, the target-based approach uses the 3D structure of a protein target to perform molecular docking, simulating how a query compound might fit and bind within a specific binding site, thereby prioritizing structural complementarity [13] [10]. A third, hybrid category known as proteochemometric modeling (PCM) has emerged, which integrates information from both multiple ligands and related protein targets into a single machine-learning model, allowing for both inter- and extrapolation to novel compounds and targets [12].

The diagram below illustrates the logical relationships and workflows connecting these core principles to their corresponding methodologies and data requirements.

Performance Comparison of Representative Methods

A comparative study published in 2025 systematically evaluated seven target prediction methods using a shared benchmark dataset of FDA-approved drugs [6]. The table below summarizes the key characteristics of these representative methods, together with the study's main performance findings, providing an objective basis for comparison.

Table 1: Performance and characteristics of representative DTI prediction methods

| Method | Type | Algorithm | Key Data Source | Performance Notes |
|---|---|---|---|---|
| MolTarPred [6] | Ligand-centric | 2D similarity | ChEMBL 20 | Most effective method in 2025 comparison; Morgan fingerprints with Tanimoto score performed best. |
| PPB2 [6] | Ligand-centric | Nearest Neighbor/Naïve Bayes/Deep Neural Network | ChEMBL 22 | Uses top 2000 similar ligands; integrates multiple algorithms. |
| RF-QSAR [6] | Target-centric | Random Forest | ChEMBL 20 & 21 | ECFP4 fingerprints; considers top 4 to 110 similar ligands. |
| TargetNet [6] | Target-centric | Naïve Bayes | BindingDB | Utilizes multiple fingerprints (FP2, MACCS, ECFP). |
| ChEMBL [6] | Target-centric | Random Forest | ChEMBL 24 | Uses Morgan fingerprints. |
| CMTNN [6] | Target-centric | Multitask Neural Network | ChEMBL 34 | Run locally via ONNX runtime. |
| SuperPred [6] | Ligand-centric | 2D/Fragment/3D Similarity | ChEMBL & BindingDB | Employs ECFP4 fingerprints. |

Beyond this direct comparison, other machine learning approaches demonstrate the potential of handling highly imbalanced datasets, a common challenge in DTI prediction where known interactions are vastly outnumbered by unknown pairs. A 2025 study using a random forest classifier combined with an undersampling strategy (NearMiss) achieved state-of-the-art performance on gold standard datasets, with auROC values of up to 99.33% on enzymes and 92.26% on nuclear receptors [14]. Furthermore, novel deep learning models like DrugMAN, which integrate heterogeneous network information using mutual attention networks, have shown robust performance, particularly in challenging "cold-start" scenarios where information about new drugs or targets is limited [15].
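The undersampling idea can be illustrated with a simplified NearMiss-1-style selection: keep the majority-class samples (here, unlabeled drug-target pairs) whose average distance to their k nearest minority-class samples (known interactions) is smallest. This is a sketch under stated assumptions: the cited study's exact NearMiss variant, features, and parameters are not given here, and the 2D points below are made up.

```python
import math


def nearmiss1(majority, minority, n_keep, k=3):
    """Keep the n_keep majority points closest, on average, to their
    k nearest minority points (NearMiss-1-style selection)."""
    if not minority:
        raise ValueError("minority class must not be empty")

    def mean_dist_to_nearest_minority(point):
        nearest = sorted(math.dist(point, m) for m in minority)[:k]
        return sum(nearest) / len(nearest)

    return sorted(majority, key=mean_dist_to_nearest_minority)[:n_keep]


# Hypothetical feature vectors: two known interactions, four unlabeled pairs.
known_pairs = [(0.0, 0.0), (1.0, 0.0)]
unlabeled_pairs = [(0.5, 0.5), (5.0, 5.0), (0.0, 1.0), (9.0, 9.0)]

kept = nearmiss1(unlabeled_pairs, known_pairs, n_keep=2, k=2)
print(kept)  # the two unlabeled points lying closest to the known interactions
```

After selection the two classes have equal size, so a classifier such as a random forest is no longer dominated by the majority class.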

Experimental Protocols for Method Evaluation

To ensure the reliability and reproducibility of DTI prediction methods, rigorous experimental protocols are essential. The following sections detail the key methodologies for benchmark dataset preparation and model evaluation as employed in recent comparative studies.

Benchmark Dataset Preparation

A critical first step involves constructing a high-quality, non-overlapping benchmark dataset. The following protocol is adapted from the 2025 comparative study [6]:

- Database Selection and Hosting: Select a comprehensive, experimentally validated database such as ChEMBL (version 34). Host the PostgreSQL version of the database locally for efficient data retrieval.

- Data Retrieval and Filtering: Query the relevant tables (molecule_dictionary, target_dictionary, activities) to retrieve bioactivity records. Filter for records with standard values (IC50, Ki, or EC50) below a specific threshold (e.g., 10,000 nM) to retain only pairs with meaningful activity.

- Data Cleaning:

- Exclude entries associated with non-specific or multi-protein targets by filtering out target names containing keywords like "multiple" or "complex."

- Remove duplicate compound-target pairs, retaining only unique interactions.

- High-Confidence Filtering (Optional): To enhance data quality for specific analyses, create a filtered subset that only includes interactions with a high confidence score (e.g., a minimum score of 7 in ChEMBL, indicating direct protein complex subunits are assigned).

- Benchmark Set Creation for Evaluation:

- Collect molecules with FDA approval years from the database.

- To prevent bias and overestimation of performance, ensure these molecules are excluded from the main database used for prediction.

- Randomly select a sample (e.g., 100 FDA-approved drugs) to serve as the query set for validation.
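The filtering steps above can be sketched in a few lines of plain Python. The record fields (`molecule`, `target`, `target_name`, `type`, `value_nm`, `confidence`) are simplified, hypothetical names rather than the actual ChEMBL schema, and the records themselves are invented.

```python
import random

ACTIVITY_TYPES = {"IC50", "Ki", "EC50"}
EXCLUDED_KEYWORDS = ("multiple", "complex")


def build_reference_set(records, max_nm=10_000, min_confidence=None, holdout=()):
    """Filter bioactivity records and keep one record per compound-target pair."""
    holdout = set(holdout)
    seen_pairs = set()
    kept = []
    for rec in records:
        if rec["type"] not in ACTIVITY_TYPES or rec["value_nm"] >= max_nm:
            continue  # wrong measurement type or activity too weak
        if any(word in rec["target_name"].lower() for word in EXCLUDED_KEYWORDS):
            continue  # non-specific or multi-protein target
        if min_confidence is not None and rec["confidence"] < min_confidence:
            continue  # optional high-confidence filter
        if rec["molecule"] in holdout:
            continue  # benchmark drugs must not leak into the reference set
        pair = (rec["molecule"], rec["target"])
        if pair in seen_pairs:
            continue  # duplicate compound-target pair
        seen_pairs.add(pair)
        kept.append(rec)
    return kept


def sample_benchmark(approved_ids, n, seed=0):
    """Draw a reproducible random query set from the approved-drug IDs."""
    return random.Random(seed).sample(sorted(approved_ids), n)


def make_record(molecule, target, target_name, type_, value_nm, confidence):
    return {"molecule": molecule, "target": target, "target_name": target_name,
            "type": type_, "value_nm": value_nm, "confidence": confidence}


# Hypothetical records covering each filtering rule.
records = [
    make_record("M1", "T1", "Kinase A", "Ki", 50, 9),
    make_record("M1", "T1", "Kinase A", "IC50", 80, 9),      # duplicate pair
    make_record("M2", "T2", "Receptor complex", "Ki", 10, 6),  # keyword match
    make_record("M3", "T3", "Protease B", "IC50", 20_000, 9),  # above threshold
    make_record("M4", "T4", "Channel C", "EC50", 300, 5),    # low confidence
    make_record("M5", "T1", "Kinase A", "Ki", 5, 9),         # approved drug
]
queries = sample_benchmark({"M5"}, n=1)
reference = build_reference_set(records, holdout=queries)
strict = build_reference_set(records, min_confidence=7, holdout=queries)
print(len(reference), len(strict))  # 2 1
```

Passing the sampled query drugs as `holdout` is what enforces the non-overlap requirement: a prediction method cannot simply look up the benchmark drugs in its own reference data.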

This workflow is visualized below, outlining the sequence of steps from raw data to a finalized benchmark dataset.

Performance Evaluation Strategy

The evaluation of different DTI prediction methods should be designed to simulate real-world scenarios and test generalization ability. The protocol used by the DrugMAN study exemplifies this approach [15]:

- Scenario-Based Data Splitting: Partition the known drug-target pairs into four different settings to assess model robustness:

- Warm Start: Both drugs and targets in the test set have known interactions in the training data.

- Drug-Cold: Drugs in the test set are new (absent from the training data).

- Target-Cold: Targets in the test set are new.

- Both-Cold: Both drugs and targets in the test set are new.

- Model Training and Prediction: Train each model on the training set and generate interaction predictions for the test set.

- Performance Metrics Calculation: Calculate standard metrics including the Area Under the Receiver Operating Characteristic Curve (AUROC), the Area Under the Precision-Recall Curve (AUPRC), and the F1-score. A model that generalizes well shows only a small drop in these metrics when moving from the warm-start to the cold-start scenarios.
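The four evaluation scenarios follow directly from whether a test pair's drug and target were seen during training. The sketch below labels test pairs accordingly; the drug and target identifiers are hypothetical, and how the DrugMAN study constructed its actual splits is not reproduced here.

```python
def label_test_pairs(train_pairs, test_pairs):
    """Assign each (drug, target) test pair to an evaluation scenario."""
    train_drugs = {drug for drug, _ in train_pairs}
    train_targets = {target for _, target in train_pairs}
    labels = {}
    for drug, target in test_pairs:
        known_drug = drug in train_drugs
        known_target = target in train_targets
        if known_drug and known_target:
            labels[(drug, target)] = "warm"
        elif known_target:
            labels[(drug, target)] = "drug-cold"    # new drug, known target
        elif known_drug:
            labels[(drug, target)] = "target-cold"  # known drug, new target
        else:
            labels[(drug, target)] = "both-cold"
    return labels


# Hypothetical training and test pairs.
train = [("D1", "T1"), ("D2", "T2")]
test = [("D1", "T2"), ("D3", "T1"), ("D2", "T3"), ("D3", "T3")]

for pair, scenario in label_test_pairs(train, test).items():
    print(pair, scenario)
```

Reporting metrics separately per label makes the drop from warm-start to cold-start performance visible instead of averaging it away.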

Successful DTI prediction relies on a suite of computational tools and databases. The table below catalogs key resources, their primary functions, and their relevance to the discussed methodologies.

Table 2: Key research reagents and resources for DTI prediction

| Resource Name | Type | Function | Relevance to Methodologies |
|---|---|---|---|
| ChEMBL [6] [16] | Database | Provides experimentally validated bioactivity data (IC50, Ki, etc.) for compounds and targets. | Primary data source for ligand-based and PCM methods. |
| BindingDB [6] [16] | Database | Focuses on measured binding affinities between drugs and targets. | Key resource for ligand-based and target-centric models. |
| PDBbind [16] | Database | A curated subset of the PDB with binding affinity data for protein-ligand complexes. | Essential for training and testing structure-based, target-centric methods. |
| DrugBank [12] [15] | Database | Contains comprehensive drug and drug-target information. | Used for benchmarking and validating predictions, especially for approved drugs. |
| AlphaFold [13] | Tool | Predicts high-accuracy 3D protein structures from amino acid sequences. | Expands target coverage for structure-based docking by providing reliable models for proteins without experimental structures. |
| Molecular Fingerprints (e.g., Morgan, ECFP) [6] | Molecular Descriptor | Encodes molecular structure into a fixed-length bit string for similarity search and machine learning. | Core component of ligand-based methods and feature input for many machine learning models. |
| BindingNet v2 [16] | Dataset | A large collection of 689,796 modeled protein-ligand complexes. | Augments training data for deep learning models, improving generalization for novel ligand prediction. |
| NearMiss [14] | Algorithm | An undersampling strategy to balance imbalanced datasets by controlling the number of majority class samples. | Used in data preprocessing to improve model performance on datasets with few known interactions. |
| Mutual Attention Network [15] | Algorithm | A deep learning component that captures interaction information between drug and target representations. | Enhances hybrid and network-based models by learning complex, non-linear interaction patterns. |

The comparative analysis presented in this guide demonstrates that both ligand-based and target-based chemogenomic approaches offer distinct advantages. Ligand-centric methods like MolTarPred excel in efficiency and are highly effective for target fishing and drug repurposing when similar ligands are known [6]. In contrast, target-centric methods provide a mechanistic basis for interaction based on structural complementarity, which is crucial for novel target screening [13] [10]. The emerging trend, however, points toward hybridization and data integration. Methods that combine ligand and target information, such as proteochemometric modeling [12], or that leverage heterogeneous network data with advanced deep learning architectures, such as DrugMAN [15], show superior generalization ability, especially in challenging cold-start scenarios. Furthermore, the availability of larger and more diverse datasets, like BindingNet v2, and the integration of high-quality predicted structures from AlphaFold, are set to further enhance the accuracy and scope of all computational DTI prediction methods, solidifying their role as indispensable tools in modern drug discovery [13] [16].

The Shift from Single-Target to Polypharmacology and Systems-Level Analysis

For much of the past century, drug discovery was dominated by a "one target–one drug" approach, focused on developing highly selective ligands for individual disease proteins based on the belief that this would maximize therapeutic benefit and minimize off-target effects [17]. While this strategy achieved some successes, it has major limitations: approximately 90% of single-target drug candidates fail in late-stage trials due to lack of efficacy or unexpected toxicity [17]. These failures often stem from a reductionist view that overlooks the complex, redundant, and networked nature of human biology, in which the system can easily compensate when only a single node is targeted [17].

In contrast, polypharmacology represents a fundamental paradigm shift—the rational design of small molecules that act on multiple therapeutic targets simultaneously [17]. This approach offers a transformative strategy to overcome biological redundancy, network compensation, and drug resistance, particularly for complex diseases with multifactorial etiologies [17] [18]. The clinical success of many "promiscuous" drugs revealed that multi-target activity could be advantageous rather than detrimental, leading to the intentional development of multi-target-directed ligands (MTDLs) [17] [18]. Polypharmacology has demonstrated particular success across oncology, neurodegeneration, metabolic disorders, and infectious diseases, where synergistic therapeutic effects can be achieved while potentially reducing adverse events and improving patient compliance compared to combination therapies [17].

Underpinning this shift are advanced computational methods that enable systematic analysis at a systems level. Chemogenomics—the systematic screening of targeted chemical libraries against families of drug targets—has emerged as a key strategy that integrates target and drug discovery [19] [2]. This approach leverages both ligand-based and target-based methods to efficiently explore chemical and target space, accelerating the identification of novel therapeutic targets and bioactive compounds [2] [11].

Computational Methodologies: A Comparative Framework

Computational approaches for target identification and drug discovery have evolved into two primary categories: ligand-based and target-based methods, both of which can be applied within a chemogenomics framework.

Ligand-Based Approaches

Ligand-based methods rely on the similarity principle: molecules with similar structural features are likely to exhibit similar biological activities [20]. These approaches are particularly valuable when the 3D structure of the target protein is unknown.

Similarity-Based Virtual Screening: This technique identifies new hits from large compound libraries by comparing candidate molecules against known active compounds using 2D molecular fingerprints or 3D molecular descriptors [20]. The underlying assumption is that structurally similar molecules will interact with similar biological targets.

Quantitative Structure-Activity Relationship (QSAR) Modeling: QSAR uses statistical and machine learning methods to relate molecular descriptors to biological activity [20]. Both 2D and 3D QSAR models are used for virtual screening and compound prioritization, with recent advances in 3D QSAR methods improving predictive accuracy even with limited data.

Target-Based Approaches

Target-based methods utilize the 3D structural information of target proteins to predict interactions with potential drug candidates.

Molecular Docking: This core technique predicts the bound orientation and conformation of ligand molecules within a target's binding pocket, scoring them based on interaction energies [20]. Most docking protocols treat the ligand as flexible while keeping the protein rigid, a simplification that makes high-throughput screening feasible.

Free-Energy Perturbation (FEP): A more computationally intensive method that estimates binding free energies using thermodynamic cycles [20]. FEP is primarily used during lead optimization to quantitatively evaluate the impact of small structural modifications on binding affinity.
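The thermodynamic cycle behind relative FEP calculations can be written compactly. For two ligands A and B (labels introduced here for illustration), the difference in binding free energy equals the free energy of alchemically transforming A into B in the bound complex minus that of the same transformation free in solvent:

$$
\Delta\Delta G_{\text{bind}}(A \to B) = \Delta G_{\text{bind}}(B) - \Delta G_{\text{bind}}(A) = \Delta G_{\text{complex}}(A \to B) - \Delta G_{\text{solvent}}(A \to B)
$$

A negative value indicates that B is predicted to bind more tightly than A, which is how a small structural modification is scored during lead optimization.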

Hybrid and Integrated Approaches

Increasingly, researchers are combining ligand-based and target-based methods to leverage their complementary strengths:

Sequential Integration: Large compound libraries are first filtered using fast ligand-based screening, after which the most promising candidates undergo more computationally intensive structure-based methods like docking [20].

Parallel Screening: Both approaches are run independently on the same compound library, with results compared or combined in a consensus scoring framework to increase confidence in selecting true positives [20].

Complementary Information Capture: Ensembles of protein pocket conformations can capture binding site flexibility, while ligand-based methods infer critical binding features from known active molecules [20].
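The sequential integration strategy can be sketched as a two-stage funnel: a cheap similarity filter followed by a more expensive docking step applied only to the shortlist. In the sketch below, `dock_score` is a hypothetical stand-in for a call to a real docking program, fingerprints are sets of on-bit indices, and all compounds and scores are invented.

```python
def tanimoto(fp_a: set[int], fp_b: set[int]) -> float:
    shared = len(fp_a & fp_b)
    union = len(fp_a) + len(fp_b) - shared
    return shared / union if union else 0.0


def sequential_screen(library, reference_fp, dock_score, sim_cutoff=0.5, top_n=2):
    """Stage 1: keep compounds similar to a known active.
    Stage 2: dock only the shortlist and return the best-scoring compounds."""
    shortlist = [cid for cid, fp in library.items()
                 if tanimoto(fp, reference_fp) >= sim_cutoff]
    scores = {cid: dock_score(cid) for cid in shortlist}
    return sorted(scores, key=scores.get)[:top_n]  # lower score = better


# Hypothetical library and known-active fingerprint.
library = {"c1": {1, 2, 3}, "c2": {1, 2, 9}, "c3": {7, 8, 9}, "c4": {1, 2, 3, 4}}
known_active_fp = {1, 2, 3}

# Placeholder docking function: a lookup table that records which compounds it saw.
fake_scores = {"c1": -7.0, "c2": -9.1, "c3": -10.0, "c4": -8.2}
docked = []


def dock_score(compound_id: str) -> float:
    docked.append(compound_id)
    return fake_scores[compound_id]


hits = sequential_screen(library, known_active_fp, dock_score)
print(hits)    # ['c2', 'c4']
print(docked)  # 'c3' never reaches the docking stage
```

The toy example also shows the cost of the funnel: `c3` has the best placeholder docking score but is discarded by the similarity filter, which is one motivation for the parallel screening strategy described above.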

The following diagram illustrates the workflow for integrating these complementary approaches:

Performance Comparison of Target Prediction Methods

Systematic Evaluation of Prediction Tools

A systematic comparison evaluated seven target prediction methods using a shared benchmark dataset of FDA-approved drugs to ensure reliability and consistency across different approaches [6]. The study assessed both stand-alone codes and web servers, including MolTarPred, PPB2, RF-QSAR, TargetNet, ChEMBL, CMTNN, and SuperPred [6]. The evaluation used ChEMBL version 34, containing 15,598 targets, 2.4 million compounds, and 20.7 million interactions, with data quality enhanced by filtering for high-confidence interactions [6].

Table 1: Comparative Performance of Target Prediction Methods

| Method | Type | Algorithm | Fingerprints/Descriptors | Key Findings |
|---|---|---|---|---|
| MolTarPred | Ligand-centric | 2D similarity | MACCS, Morgan | Most effective method overall; Morgan fingerprints with Tanimoto scores outperformed MACCS [6] |
| PPB2 | Ligand-centric | Nearest neighbor/Naïve Bayes/deep neural network | MQN, Xfp, ECFP4 | Uses top 2000 similar ligands for prediction [6] |
| RF-QSAR | Target-centric | Random forest | ECFP4 | Considers top 4, 7, 11, 33, 66, 88 and 110 similar ligands [6] |
| TargetNet | Target-centric | Naïve Bayes | FP2, Daylight-like, MACCS, E-state, ECFP2/4/6 | Algorithm uses multiple fingerprint types [6] |
| ChEMBL | Target-centric | Random forest | Morgan | Web server implementation [6] |
| CMTNN | Target-centric | Multitask neural network (run via ONNX runtime) | Morgan | Stand-alone code implementation [6] |
| SuperPred | Ligand-centric | 2D/fragment/3D similarity | ECFP4 | Integrates similarity approaches [6] |

Performance Optimization Strategies

The comparative analysis revealed several key optimization strategies for improving prediction accuracy:

Fingerprint and Metric Selection: For MolTarPred, Morgan fingerprints with Tanimoto similarity scores demonstrated superior performance compared to MACCS fingerprints with Dice scores [6]. This highlights the importance of fingerprint and metric selection in optimizing ligand-based prediction methods.

High-Confidence Filtering: Employing high-confidence filtering (minimum confidence score of 7 in ChEMBL database) improved data quality but reduced recall, making this approach less ideal for drug repurposing applications where maximizing potential hit identification is prioritized [6].

Database Selection: ChEMBL was identified as particularly suitable for novel protein target prediction due to its extensive chemogenomic data, while DrugBank proved more appropriate for predicting new drug indications against known targets [6].

Experimental Protocols and Methodologies

Benchmark Dataset Preparation

The experimental protocol for comparative evaluation of target prediction methods involves carefully constructed benchmark datasets and validation frameworks:

Data Source and Curation: Researchers utilized ChEMBL version 34, containing over 2.4 million compounds and 20.7 million interactions [6]. The database was queried locally via PostgreSQL, retrieving data from the molecule_dictionary, target_dictionary, and activities tables.

Bioactivity Criteria: Records were selected with standard values for IC50, Ki, or EC50 below 10,000 nM to ensure relevant binding affinities [6]. Duplicate compound-target pairs were then removed so that only unique pairs remained, yielding 1,150,487 unique ligand-target interactions.

Quality Control Measures: Entries associated with non-specific or multi-protein targets were excluded by filtering out targets containing keywords like "multiple" or "complex" [6]. A filtered database containing only high-confidence interactions (minimum confidence score of 7) was employed to enhance data quality.

Benchmark Construction: Molecules with FDA approval years were collected to prepare a benchmark dataset of FDA-approved drugs [6]. To prevent bias, these molecules were excluded from the main database to avoid overlap with known drugs during prediction. A random selection of 100 samples from the FDA-approved drugs dataset was used for method validation.
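The curation steps above can be sketched on a handful of in-memory records. This is only an illustration under stated assumptions: the record layout, field names, and example values are hypothetical and do not reflect the ChEMBL schema or the study's actual SQL queries.

```python
# Hypothetical activity records; field names are illustrative only.
records = [
    {"compound": "C1", "target": "Kinase A", "type": "IC50", "value_nm": 50.0, "confidence": 9},
    {"compound": "C1", "target": "Kinase A", "type": "Ki", "value_nm": 80.0, "confidence": 9},
    {"compound": "C2", "target": "Kinase A", "type": "IC50", "value_nm": 25000.0, "confidence": 9},
    {"compound": "C3", "target": "Protein complex B", "type": "EC50", "value_nm": 10.0, "confidence": 7},
    {"compound": "C4", "target": "GPCR C", "type": "Ki", "value_nm": 5.0, "confidence": 5},
    {"compound": "C5", "target": "GPCR C", "type": "Kd", "value_nm": 5.0, "confidence": 9},
    {"compound": "C6", "target": "GPCR C", "type": "Ki", "value_nm": 5.0, "confidence": 8},
]


def curate(rows, max_nm=10000.0, min_confidence=7):
    """Return unique (compound, target) pairs that pass every filter."""
    allowed_types = {"IC50", "Ki", "EC50"}
    blocked_keywords = ("multiple", "complex")
    seen, kept = set(), []
    for row in rows:
        # Bioactivity criteria: accepted measurement type, value below cutoff.
        if row["type"] not in allowed_types or row["value_nm"] >= max_nm:
            continue
        # Quality control: drop non-specific or multi-protein targets.
        if any(word in row["target"].lower() for word in blocked_keywords):
            continue
        # High-confidence filter.
        if row["confidence"] < min_confidence:
            continue
        # Keep only the first occurrence of each compound-target pair.
        pair = (row["compound"], row["target"])
        if pair not in seen:
            seen.add(pair)
            kept.append(pair)
    return kept


print(curate(records))
```

Only the first C1 record and the C6 record survive: the others fail the duplicate, potency, keyword, confidence, or measurement-type checks in turn.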

Method Implementation and Evaluation

Tool Execution: The seven target prediction methods comprised stand-alone codes run locally (MolTarPred and CMTNN) and web servers that had to be queried manually (PPB2, RF-QSAR, TargetNet, ChEMBL, and SuperPred) [6].

Performance Metrics: Methods were evaluated based on recall and prediction accuracy, with specific attention to how optimization strategies like high-confidence filtering affected performance characteristics [6].
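To make the recall trade-off concrete, here is a small sketch that scores two hypothetical prediction lists against a set of known targets. The target labels and both lists are invented for illustration; the study's exact metric definitions are not given in this guide, so the standard definitions of recall and precision are assumed.

```python
def recall_and_precision(predicted, known):
    """Recall = found known targets / all known targets;
    precision = found known targets / all predictions."""
    predicted, known = set(predicted), set(known)
    found = len(predicted & known)
    recall = found / len(known) if known else 0.0
    precision = found / len(predicted) if predicted else 0.0
    return recall, precision


# Hypothetical known targets of one benchmark drug.
known_targets = {"T1", "T2", "T3", "T4"}

# A permissive prediction list and a stricter, shorter one.
permissive = ["T1", "T2", "T3", "T5", "T6", "T7"]
strict = ["T1", "T2"]

print(recall_and_precision(permissive, known_targets))
print(recall_and_precision(strict, known_targets))
```

In this toy case the stricter list is entirely correct but recovers only half of the known targets, which mirrors the kind of loss in recall reported for high-confidence filtering.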

Research Reagent Solutions for Chemogenomic Studies

Table 2: Essential Research Reagents and Databases for Chemogenomics

| Resource | Type | Primary Application | Key Features |

|---|---|---|---|

| ChEMBL | Database | Bioactivity data repository | 2.4M+ compounds, 15.5K+ targets, 20.7M+ interactions; experimentally validated data [6] |

| MolTarPred | Software Tool | Target prediction | Ligand-centric; uses Morgan fingerprints with Tanimoto similarity; top performer in comparative study [6] |

| PPB2 | Web Server | Polypharmacology prediction | Combines nearest neighbor, Naïve Bayes, and deep neural networks; uses MQN, Xfp, ECFP4 fingerprints [6] |

| RF-QSAR | Web Server | Target prediction | Random forest algorithm with ECFP4 fingerprints; target-centric approach [6] |

| AutoDock | Software Tool | Molecular docking | Models flexibility in targeted macromolecules; improved free-energy scoring system [11] |

| AlphaFold | AI Tool | Protein structure prediction | Generates high-quality structural models from amino acid sequences [17] |

Case Studies and Practical Applications

Successful Drug Repurposing through Target Prediction

Fenofibric Acid: A case study on fenofibric acid demonstrated its potential for drug repurposing as a THRB modulator for thyroid cancer treatment using computational target prediction approaches [6]. This illustrates the practical application of these methods in identifying new therapeutic uses for existing drugs.

Gleevec (imatinib mesylate): Originally developed to treat leukemia by inhibiting the kinase encoded by the Bcr-Abl fusion gene, Gleevec was later found to also inhibit the PDGF receptor and KIT, leading to its repurposing for gastrointestinal stromal tumors [11]. This repositioning success shows how a single drug can act on multiple targets, which is the essence of polypharmacology.

Mebendazole and Actarit: MolTarPred discovered hMAPK14 as a potent target of mebendazole, confirmed by in vitro validation [6]. Similarly, the method predicted Carbonic Anhydrase II (CAII) as a new target of Actarit, suggesting potential repurposing of this rheumatoid arthritis drug for hypertension, epilepsy, and certain cancers [6].

Emerging Trends and Future Directions

The field of polypharmacology and chemogenomics continues to evolve with several emerging trends:

AI-Driven Polypharmacology: Recent advances in deep learning, reinforcement learning, and generative models have accelerated the discovery and optimization of multi-target agents [17]. These AI-driven platforms enable de novo design of dual and multi-target compounds, some demonstrating biological efficacy in vitro.

Integration of Omics Data: Combining chemogenomic approaches with CRISPR functional screens, pathway simulations, and systems biology provides a more comprehensive framework for guiding multi-target design [17].

Clinical Translation: Recent drug approvals continue to reflect the polypharmacology trend. Among 73 new drugs introduced in 2023-2024 in Germany, 18 (approximately 25%) aligned with polypharmacology concepts, including 10 antitumor agents, 5 drugs for autoimmune disorders, and 1 antidiabetic/anti-obesity drug [18].

The following diagram illustrates how polypharmacology addresses the limitations of single-target approaches in complex disease networks:

The shift from single-target to polypharmacology represents a fundamental evolution in drug discovery, moving from reductionist approaches to systems-level analysis that acknowledges the complex, networked nature of biological systems and disease pathologies. Computational methods have been instrumental in enabling this transition, with ligand-based and target-based chemogenomic approaches providing complementary tools for identifying and optimizing multi-target-directed ligands.

Performance comparisons reveal that integrated approaches leveraging both ligand and target information typically outperform single-method strategies, with tools like MolTarPred demonstrating particular efficacy when properly optimized. As artificial intelligence and machine learning continue to advance, alongside growing chemical and biological databases, the systematic discovery and optimization of polypharmacological agents is poised to become increasingly sophisticated and effective.

For researchers and drug development professionals, the practical implication is clear: embracing polypharmacology through integrated computational methods can address the limitations of single-target strategies, potentially leading to more effective therapeutics for complex diseases that have proven resistant to traditional approaches. The continued development and refinement of these computational tools will be essential for realizing the full potential of polypharmacology in delivering next-generation medicines.

Chemogenomics represents a systematic approach to understanding the interactions between chemical compounds and biological targets on a genomic scale. This field relies heavily on robust, well-curated public databases to accelerate drug discovery by enabling the prediction and analysis of Drug-Target Interactions (DTIs). The high costs, extended timelines (typically 10-15 years), and low success rates (approximately 6.3% as of 2022) of traditional drug development have made in silico approaches using these databases indispensable for preliminary screening and target identification [13]. These computational methods efficiently leverage the growing amount of available bioactivity and structural data to mitigate the risks and resource demands of experimental validation alone. Within this landscape, three databases have emerged as fundamental resources: ChEMBL for bioactivity data, the Protein Data Bank (PDB) for structural biology, and DrugBank for pharmaceutical knowledge. This guide provides an objective comparison of these resources, framing their capabilities within the context of ligand-based versus target-based chemogenomic approaches, and delivers practical experimental protocols for their application in predictive research.

Table 1: Core Characteristics of Major Chemogenomic Databases

| Feature | ChEMBL | DrugBank | RCSB PDB |

|---|---|---|---|

| Primary Focus | Bioactive molecules with drug-like properties & quantitative bioactivity [21] | Comprehensive drug & drug target information, including mechanistic & pharmacological data [22] [23] | Experimentally-determined 3D structures of biological macromolecules [24] |

| Key Data Types | Bioactivity measurements (e.g., IC50, Ki), targets, assays, molecules, documents [25] | Drug structures, mechanisms of action, interactions (drug-drug, drug-food), pharmacokinetics, targets [26] [22] | Atomic coordinates, experimental data, computed structure models, ligands, nucleic acids, and complexes [24] [27] |

| Data Source | Manually curated from literature, direct depositions, other public databases [25] | Manually curated from textbooks, journal articles, and external databases [23] | Experimental methods (X-ray, NMR, Cryo-EM) deposited by researchers; CSMs from AlphaFold DB [24] [27] |

| Unique Identifier | ChEMBL ID (e.g., 'CHEMBL123456') [25] | DrugBank ID (e.g., 'DB00001') | PDB ID (e.g., '1ABC') [24] |

| Quantitative Strength | pChEMBL value for standardized potency/affinity comparison [25] | FDA approval status, drug interactions, dosing information [22] | Resolution, R-factor, clustering data for structure quality [24] |

Database-Specific Capabilities and Research Applications

ChEMBL: A Repository for Bioactivity Data and Ligand-Based Discovery

ChEMBL is a manually curated database of bioactive molecules with drug-like properties, originally launched in 2009 [21] [25]. Its core function is to store quantitative bioactivity data (e.g., IC50, Ki) extracted from scientific literature, which is then standardized into searchable and comparable formats like the pChEMBL value—a negative logarithmic scale that allows for the comparison of roughly comparable measures of half-maximal response concentration, potency, or affinity [25]. The database has significantly expanded from its initial focus on medicinal chemistry literature to include data from patents, direct depositions from academic and industrial groups, and integrated datasets from other public resources like PubChem BioAssay [25]. This makes ChEMBL an essential tool for ligand-based virtual screening methods, such as Quantitative Structure-Activity Relationship (QSAR) modeling and pharmacophore modeling, which predict new drug candidates by leveraging known bioactivity data from structurally similar compounds [13].
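Because the pChEMBL value is the negative base-10 logarithm of a molar concentration, converting from nanomolar units is a one-line calculation. The helper below is a minimal sketch; its name is illustrative and not part of any ChEMBL client library.

```python
import math


def pchembl_from_nm(value_nm: float) -> float:
    """Convert a potency/affinity in nM to a -log10(molar) value.

    -log10(value_nm * 1e-9) simplifies to 9 - log10(value_nm).
    """
    if value_nm <= 0:
        raise ValueError("concentration must be positive")
    return 9.0 - math.log10(value_nm)


for nm in (1.0, 10.0, 1000.0, 10000.0):
    print(f"{nm:>8.1f} nM -> {pchembl_from_nm(nm):.2f}")
```

On this scale, each tenfold gain in potency adds one unit, so a 1 µM compound sits at 6 and a 10 µM cutoff corresponds to 5.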

DrugBank: Integrating Drug and Target Knowledge for Pharmaceutical Research

DrugBank is a unique bioinformatics and cheminformatics resource that combines detailed chemical, pharmacological, and pharmaceutical drug data with comprehensive drug target information [22] [23]. First released in 2006, it serves as a "gold standard" knowledgebase that bridges the gap between discrete chemical data and biological target contexts [22] [23]. Its DrugCard entries provide extensive information, including drug indications, mechanisms of action, biotransformation pathways, drug-drug interactions, and sequences and structures of protein targets [23]. This integrated view is particularly valuable for understanding polypharmacology, identifying drug repurposing opportunities, and predicting off-target effects. The database is crucial for research that requires a holistic understanding of a drug's pharmacological profile, from its chemical structure to its clinical applications and metabolizing enzymes [22].

Protein Data Bank (PDB): The Structural Foundation for Target-Based Approaches

The RCSB Protein Data Bank is the global archive for experimentally-determined 3D structures of biological macromolecules, including proteins, nucleic acids, and complex assemblies [24] [27]. Established in 1971, the PDB provides the foundational structural data required for target-based drug discovery approaches [27]. Its primary value lies in enabling structure-based drug design, most notably through molecular docking, a technique that uses the 3D structure of target proteins to position candidate drug molecules within active sites and simulate potential binding interactions [13]. A key recent development is the integration of over one million Computed Structure Models (CSMs) from AlphaFold DB and ModelArchive, which provide predictive models for proteins without experimentally-solved structures, thereby greatly expanding the structural coverage of the proteome [24] [27]. The PDB is also committed to open access and education, with resources like the "Molecule of the Month" series making structural biology accessible to a broad audience [27].

Experimental Protocols for Chemogenomic Workflows

The following protocols outline standard methodologies for leveraging these databases in ligand-based and target-based prediction tasks, which represent the two major computational paradigms in chemogenomics.

Protocol 1: Ligand-Based Virtual Screening Using ChEMBL

This protocol uses known active compounds from ChEMBL to identify new candidates without requiring a 3D protein structure.

Methodology:

- Define Query and Extract Actives: Identify a target of interest (e.g., a specific kinase). Query the ChEMBL database to extract all compounds reported with bioactivity (e.g., IC50 < 1 µM) against that target, along with their associated canonical SMILES strings and pChEMBL values [25].

- Calculate Molecular Descriptors: For each active compound, compute a set of molecular descriptors (e.g., molecular weight, LogP, topological polar surface area) and molecular fingerprints (e.g., ECFP4 fingerprints) using a cheminformatics toolkit like RDKit.

- Build a Predictive Model:

  - QSAR Model: Use the computed descriptors and associated bioactivity values (pChEMBL) to train a regression model (e.g., Random Forest or Support Vector Machine) to predict the activity of new compounds [13].

  - Similarity Search: Use the molecular fingerprints of the known actives to screen a large virtual compound library (e.g., the ZINC database). Compounds with a Tanimoto coefficient above a defined threshold (e.g., 0.7) are considered potential hits.

- Validate and Prioritize Hits: Use internal cross-validation to assess model performance. Apply the model or similarity search to an external test set from a later ChEMBL release to validate its predictive power. Prioritize compounds with high predicted activity or similarity for further experimental testing.
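The similarity-search branch of this protocol can be sketched as follows. Fingerprints are represented as sets of on-bit indices so the example runs without a cheminformatics toolkit; in practice they would come from a tool such as RDKit. The compound names and bit sets are hypothetical, and scoring each library compound by its best match to any known active is one common design choice, not something the protocol prescribes.

```python
def tanimoto(a: set, b: set) -> float:
    """Shared on-bits divided by on-bits present in either fingerprint."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def screen(library: dict, actives: list, threshold: float = 0.7) -> list:
    """Return (name, score) for library compounds whose best Tanimoto
    similarity to any known active meets the threshold, best first."""
    hits = []
    for name, bits in library.items():
        best = max(tanimoto(bits, active) for active in actives)
        if best >= threshold:
            hits.append((name, best))
    return sorted(hits, key=lambda hit: hit[1], reverse=True)


# Toy data: one known active and three hypothetical library compounds.
known_actives = [set(range(1, 11))]            # bits 1..10
virtual_library = {
    "lib_A": set(range(1, 10)) | {11},         # 9 shared bits, 11 in union
    "lib_B": {1, 2, 3, 20, 21},                # 3 shared bits, 12 in union
    "lib_C": set(range(1, 11)),                # identical to the active
}

for name, score in screen(virtual_library, known_actives):
    print(f"{name}: {score:.2f}")
```

Here lib_C and lib_A pass the 0.7 cutoff while lib_B does not; lowering the threshold widens the hit list at the cost of more dissimilar candidates.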

Protocol 2: Target-Based Screening Using Molecular Docking with PDB Structures

This protocol predicts binding modes and affinities by leveraging the 3D structural information from the PDB.

Methodology:

- Target Selection and Preparation: Search the RCSB PDB for a high-resolution structure of your target protein, ideally in complex with a relevant small molecule [24]. Prioritize structures with high resolution (e.g., < 2.0 Å) and completeness. Prepare the protein structure by removing water molecules (except those involved in key interactions), adding hydrogen atoms, and assigning correct protonation states.

- Ligand Library Preparation: Obtain a library of candidate compounds, for example, investigational drugs from DrugBank [26]. Generate 3D conformers for each compound and optimize their geometries using energy minimization.

- Molecular Docking: Define the binding site on the protein target, typically based on the location of a co-crystallized ligand. Dock each compound from your library into the binding site using software like AutoDock Vina or Glide. The docking algorithm will score and rank poses based on estimated binding free energy [13].

- Analysis and Hit Selection: Analyze the top-ranking poses for key interactions with the protein target, such as hydrogen bonds, hydrophobic contacts, and salt bridges. Visually inspect the most promising complexes. Select compounds with favorable docking scores and plausible interaction geometries for experimental validation.

The logical flow of these two primary approaches within a research project can be visualized as parallel pathways that inform each other.

Comparative Analysis: Ligand-Based vs. Target-Based Data Utilization

The choice between ligand-based and target-based approaches often depends on the available data, which in turn dictates the primary database used. The following table contrasts how each database supports these distinct yet complementary strategies.

Table 2: Database Support for Ligand-Based vs. Target-Based Approaches

| Aspect | Ligand-Based (ChEMBL-Centric) | Target-Based (PDB-Centric) |

|---|---|---|

| Primary Database | ChEMBL | RCSB PDB |

| Core Data Used | Bioactivity values (IC50, Ki), chemical structures, SMILES strings [13] [25] | 3D atomic coordinates of protein-ligand complexes [13] |

| Key Predictive Method | QSAR, Pharmacophore Modeling, Similarity Search [13] | Molecular Docking, Structure-Based Virtual Screening [13] |

| Primary Advantage | Does not require a 3D protein structure; can leverage vast historical bioactivity data [13] | Provides atomic-level insight into binding modes and interactions; can design novel scaffolds [13] |

| Key Limitation | Limited to chemical space similar to known actives; struggles with novel targets (cold-start) [13] | Highly dependent on the availability and quality of a 3D structure; scoring functions can be inaccurate [13] |

| Role of DrugBank | Provides clinical context, mechanisms of action, and known drug compounds for library building [23] | Offers links between approved/experimental drugs and their known protein targets for validation [22] |

Emerging Trends and Integrated Approaches

The distinction between ligand-based and target-based methods is increasingly blurred by modern integrated and machine learning approaches. For instance, the DGraphDTA model constructs protein graphs based on protein contact maps derived from 3D structures in the PDB, thereby incorporating spatial information into affinity prediction [13]. Furthermore, the application of Large Language Models (LLMs) and the integration of AlphaFold predicted models are advancing feature engineering for targets without experimental structures [13]. These hybrid methods, which often pull data from all three databases, aim to overcome the inherent limitations of any single approach, such as data sparsity and the "cold-start" problem for new targets with no known binders [13].

The Scientist's Toolkit: Essential Research Reagent Solutions

Successful in silico chemogenomic research relies on a digital toolkit of software and resources that facilitate the extraction, processing, and analysis of data from these primary databases.

Table 3: Essential Digital Tools for Chemogenomic Research

| Tool/Resource | Function | Application Context |

|---|---|---|

| RDKit | An open-source cheminformatics toolkit for descriptor calculation, fingerprint generation, and molecular operations. | Critical for preparing ligand libraries from ChEMBL or DrugBank for QSAR and docking studies [13]. |

| AutoDock Vina | A widely used molecular docking program for predicting protein-ligand binding poses and scoring affinities. | A common choice for target-based virtual screening using structures from the PDB [13]. |

| RCSB PDB API | A programmatic interface to search, filter, and retrieve data and structures from the PDB archive. | Enables automated workflows for fetching structural data and metadata for large-scale analysis [24]. |

| pChEMBL Value | A standardized negative logarithmic scale for bioactivity data. | Allows for direct comparison of potency/affinity measurements from different assay types within ChEMBL [25]. |

| AlphaFold DB | A database of highly accurate predicted protein structures generated by DeepMind's AlphaFold2. | Provides computed structure models for targets lacking experimental structures, expanding the scope of structure-based design [24] [27]. |

In Silico Toolkits: Methods, Algorithms, and Real-World Applications

In the field of chemogenomics, drug-target interaction prediction is primarily pursued through two complementary paradigms: target-based and ligand-based approaches. Target-based methods rely on knowledge of the 3D structure of the protein target, using techniques such as molecular docking to identify potential binders [6] [28]. In contrast, ligand-based methods leverage the principle that chemically similar molecules are likely to exhibit similar biological activities [29]. This guide focuses on the latter, providing a comparative analysis of the three core ligand-based techniques: molecular similarity, Quantitative Structure-Activity Relationship (QSAR) modeling, and pharmacophore modeling. These methods are indispensable when the target protein structure is unknown, poorly characterized, or difficult to model, as is common with many membrane protein targets [28]. The fundamental advantage of ligand-based design is its ability to bypass the need for structural target information, instead using known bioactive molecules as templates to discover new hits or optimize lead compounds [6].

Comparative Performance of Ligand-Based Methods

The following table summarizes the core principles, strengths, and limitations of the three primary ligand-based methods, providing a framework for selection based on research objectives.

| Method | Core Principle | Typical Applications | Key Strengths | Primary Limitations |

|---|---|---|---|---|

| Molecular Similarity | Measures structural or property resemblance between molecules using fingerprints and similarity metrics [6] [30]. | Virtual screening, target fishing, drug repurposing [6]. | Fast and intuitive; requires no training set of activity data; effective for identifying novel chemotypes via scaffold hopping [6] [30]. | Limited by known ligand data; may miss novel chemotypes if similarity is narrowly defined [6]. |

| QSAR Modeling | Correlates numerical molecular descriptors with biological activity using statistical or ML models [31] [32]. | Lead optimization, activity and ADMET property prediction [33] [31]. | Predictive and quantitative; provides mechanistic insights; can model complex, non-linear relationships with modern ML [31]. | Requires a dataset of compounds with known activity; model performance depends on data quality and applicability domain [33]. |

| Pharmacophore Modeling | Identifies and maps the essential steric and electronic features for biological activity [34] [32]. | Virtual screening, de novo molecular generation, understanding key binding interactions [34] [32]. | Provides an intuitive 3D visualization of interaction requirements; can integrate both ligand and target structure information [34]. | Conformational analysis can be complex; quality depends on the input molecules' quality and diversity [32]. |

Performance Benchmarks and Data

Recent comparative studies provide quantitative performance data for these methods. A 2025 systematic comparison of target prediction methods evaluated several ligand-centric approaches using a benchmark of FDA-approved drugs [6]. The study found that MolTarPred, a 2D similarity-based method, was the most effective for drug repurposing, with optimizations showing that Morgan fingerprints with a Tanimoto similarity score outperformed other fingerprint and metric combinations [6].

For QSAR models, best practices are evolving. A 2025 analysis demonstrated that for virtual screening of ultra-large chemical libraries, models trained on imbalanced datasets (reflecting the real-world abundance of inactives) and optimized for high Positive Predictive Value (PPV) achieved hit rates at least 30% higher than models trained on balanced datasets and optimized for Balanced Accuracy [35]. This highlights the critical importance of selecting the right performance metric for the specific application.
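The difference between the two metrics is easiest to see on confusion-matrix counts. The sketch below compares two hypothetical classifiers on an imbalanced library of 10,000 compounds with 100 actives; all counts are invented for illustration and are not taken from the cited analysis.

```python
def ppv(tp: int, fp: int) -> float:
    """Positive predictive value: fraction of predicted actives that are real."""
    return tp / (tp + fp) if (tp + fp) else 0.0


def balanced_accuracy(tp: int, fp: int, tn: int, fn: int) -> float:
    """Mean of sensitivity (recall on actives) and specificity (recall on inactives)."""
    sensitivity = tp / (tp + fn)
    specificity = tn / (tn + fp)
    return (sensitivity + specificity) / 2


# Hypothetical model A: finds most actives but flags many inactives.
model_a = dict(tp=90, fp=990, tn=8910, fn=10)
# Hypothetical model B: misses more actives but its picks are mostly real.
model_b = dict(tp=40, fp=10, tn=9890, fn=60)

for name, m in (("A", model_a), ("B", model_b)):
    print(name,
          f"BA={balanced_accuracy(**m):.3f}",
          f"PPV={ppv(m['tp'], m['fp']):.3f}")
```

Model A wins on balanced accuracy, yet fewer than one in ten of its predicted actives is real, whereas most of model B's picks are. When only a small number of top-ranked compounds can be tested, the PPV is what determines the experimental hit rate.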

Experimental Protocols and Methodologies

Molecular Similarity Search Protocol

Objective: To identify potential inhibitors of a target protein using a known active compound as a query.

Workflow:

- Query Selection: Select a known high-affinity ligand (e.g., from ChEMBL or BindingDB) and obtain its canonical SMILES string [6].

- Fingerprint Generation: Encode the query molecule and all molecules in the screening database (e.g., ZINC, ChEMBL) into a molecular fingerprint. The Morgan fingerprint (radius 2, 2048 bits) is a currently recommended choice [6].

- Similarity Calculation: Compute the similarity between the query fingerprint and every database fingerprint using the Tanimoto coefficient [6].

- Hit Identification: Rank all database compounds by their similarity score and select the top candidates for further experimental validation [6].

QSAR Model Development Protocol

Objective: To build a predictive model that relates molecular structures to a specific biological activity (e.g., pIC50).

Workflow:

- Data Curation: Collect a set of compounds with reliably measured biological activity. A minimum of 20-30 compounds is recommended [32].

- Descriptor Calculation: Use software like PaDEL or RDKit to compute numerical molecular descriptors (e.g., molecular weight, logP, topological indices) for all compounds [31] [32].

- Dataset Splitting: Divide the data into a training set (~80%) for model building and a test set (~20%) for validation [32].

- Model Training: Use the training set and machine learning algorithms (e.g., Random Forest, Support Vector Machines) to build a model that correlates descriptors with activity [31].

- Model Validation: Critically assess the model's predictive power on the held-out test set using metrics like R² (for continuous models) and PPV (for classification models used in virtual screening) [35].
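The split-train-validate loop in this workflow can be sketched with the standard library alone. To stay self-contained, the example swaps in a one-descriptor least-squares line for the Random Forest or SVM named above, and it uses synthetic (descriptor, pIC50) pairs; both are assumptions made purely for illustration.

```python
import random


def fit_line(xs, ys):
    """Ordinary least squares for y = slope * x + intercept."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_y = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_y) ** 2 for t in y_true)
    return 1 - ss_res / ss_tot


# Synthetic data: 25 compounds, one descriptor, small deterministic noise.
data = [(0.2 * i, 5.0 + 0.8 * (0.2 * i) + 0.1 * ((i % 3) - 1)) for i in range(25)]

rng = random.Random(0)           # fixed seed for a reproducible split
rng.shuffle(data)
cut = len(data) * 4 // 5         # 80% training, 20% held-out test
train, test = data[:cut], data[cut:]

slope, intercept = fit_line([x for x, _ in train], [y for _, y in train])
test_pred = [slope * x + intercept for x, _ in test]
r2 = r_squared([y for _, y in test], test_pred)
print(f"train={len(train)} test={len(test)} test R2={r2:.3f}")
```

The key point is that R² is computed only on compounds the model never saw during fitting; reporting the training-set fit instead would overstate predictive power.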

Ligand-Based Pharmacophore Modeling Protocol

Objective: To create a 3D model of the essential features a molecule must possess to bind to a target, using a set of known active ligands.

Workflow (as applied to dengue protease inhibitors [32]):

- Ligand Selection: Select a set of 3-5 known active compounds with diverse structures but high potency. Energy-minimize their structures using a tool like Avogadro with the MMFF94 force field [32].

- Model Generation: Input the energy-minimized structures (in .mol2 format) into pharmacophore modeling software like PharmaGist. The software will identify common chemical features (e.g., hydrogen bond donors/acceptors, hydrophobic regions, aromatic rings) and their spatial arrangement [32].

- Model Screening: Use the resulting pharmacophore hypothesis to screen a large database (e.g., ZINC) via a tool like ZINCPharmer to find molecules that match the feature arrangement [32].

- Hit Refinement: Subject the virtual hits to further analysis, such as molecular docking and ADMET prediction, to prioritize the most promising candidates for experimental testing [32].

Figure 1: A workflow diagram illustrating the three primary ligand-based methodologies for computational drug discovery. All paths begin with known active ligands and converge on the identification of new candidate compounds for experimental testing.

The following table details key computational tools, databases, and descriptors essential for implementing the ligand-based methods discussed.

| Tool/Resource Name | Type | Primary Function | Relevance to Methods |

|---|---|---|---|

| ChEMBL [6] | Database | Curated database of bioactive molecules with drug-like properties. | Source of known active ligands for all three methods. |

| ZINC [32] | Database | Publicly available database of commercially-available compounds for virtual screening. | Primary screening library for similarity and pharmacophore searches. |

| RDKit [34] | Cheminformatics Toolkit | Open-source toolkit for cheminformatics and machine learning. | Fingerprint and descriptor calculation; general molecular manipulation. |

| PaDEL [32] | Software | Calculates molecular descriptors and fingerprints. | Descriptor calculation for QSAR modeling. |

| Morgan Fingerprints [6] | Molecular Representation | A type of circular fingerprint encoding the atomic environment. | State-of-the-art fingerprint for molecular similarity calculations. |

| PharmaGist [32] | Software | Online server for generating ligand-based pharmacophore models. | Creates pharmacophore hypotheses from a set of active ligands. |

| ZINCPharmer [32] | Web Server | Online tool for screening the ZINC database using a pharmacophore query. | Identifies molecules that match a given pharmacophore model. |

| Random Forest [31] | Algorithm | Robust machine learning algorithm for classification and regression. | A top choice for building modern, non-linear QSAR models. |

Molecular similarity, QSAR, and pharmacophore modeling represent a powerful arsenal of ligand-based methods that remain crucial in modern drug discovery. The choice between them depends on the available data and the specific research goal: molecular similarity for rapid screening and repurposing, QSAR for quantitative activity prediction and lead optimization, and pharmacophore modeling for a more structural understanding of interaction requirements. The ongoing integration of artificial intelligence, through graph neural networks and transformers, is enhancing the predictive power and scope of these classical approaches [31] [30]. By understanding the comparative strengths, performance benchmarks, and experimental protocols outlined in this guide, researchers can make informed decisions to efficiently navigate the vast chemical space and accelerate the discovery of novel therapeutic agents.

Target-based computational methods are pillars of modern structure-based drug design, enabling the prediction of how small molecules interact with biological macromolecules at an atomic level. These methods, which include molecular docking, Structure-Based Virtual Screening (SBVS), and Free-Energy Perturbation (FEP), leverage 3D structural information to prioritize compounds for synthesis and experimental testing, significantly accelerating early-stage drug discovery campaigns [36] [37]. Their application is crucial for hit identification, lead optimization, and understanding polypharmacology.

This guide provides a comparative analysis of these three key methodologies, framing them within the broader context of chemogenomic approaches. While ligand-based methods rely on the principle that similar molecules have similar activities, target-based methods utilize the physical structure of the target protein, offering a powerful, mechanism-driven strategy for discovering novel scaffolds, even in the absence of known active compounds.

The three methods differ fundamentally in their computational intensity, primary application, and the qualitative versus quantitative nature of their predictions. Table 1 summarizes their core characteristics and typical performance metrics.

Table 1: Comparative Overview of Target-Based Methods

| Method | Primary Application | Computational Cost | Key Performance Metrics | Typical Performance |

|---|---|---|---|---|

| Molecular Docking | Binding pose prediction; Initial hit identification from large libraries. | Low to Moderate (GPU can accelerate) | Pose Accuracy (RMSD ≤ 2 Å); Physical Validity (PB-valid); Virtual Screening Enrichment (EF1%, logAUC) | Pose Accuracy (Traditional: >90% on known complexes; DL: >70%); EF1%: Can reach 28-31 with ML re-scoring [38] [39] |

| Structure-Based Virtual Screening (SBVS) | Prioritizing compounds from ultra-large libraries (billions of molecules). | High (scales with library size) | Hit Rate; Enrichment Factor (EF); logAUC | Hit rates improve dramatically with billion-molecule libraries; Performance is modelable and improvable [40] [41] |

| Free-Energy Perturbation (FEP) | Lead optimization; predicting binding affinity changes for congeneric series. | Very High (requires extensive GPU resources) | Mean Unsigned Error (MUE) vs. experiment; Accuracy within 1.0 kcal/mol | MUE of ~1.0 kcal/mol (roughly 5-fold in affinity at room temperature); Successfully guides discovery of selective inhibitors [42] [37] |

Molecular docking serves as the foundational tool for predicting how a ligand binds to a protein's binding site. A comprehensive 2025 benchmarking study evaluated traditional, deep learning (DL), and hybrid docking methods across multiple dimensions [39]. The results revealed clear performance tiers for pose prediction on benchmark sets such as the Astex Diverse Set and PoseBusters: traditional methods (e.g., Glide SP) and hybrid methods (AI scoring with traditional search) lead in combined success rate (RMSD ≤ 2 Å and physically valid), followed by generative diffusion models (e.g., SurfDock), with regression-based DL models trailing behind [39]. While DL methods like SurfDock can achieve high pose accuracy (>70%), they often produce physically implausible structures with steric clashes, which is a key limitation [39].
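The difference between pose accuracy and the combined success rate can be made concrete with a minimal sketch. It assumes poses are given as lists of heavy-atom coordinates in matching order; the function names `pose_rmsd` and `success_rates`, the toy coordinates, and the per-complex results are all hypothetical, and the validity flags stand in for whatever physical-plausibility check a benchmark applies.

```python
import math

def pose_rmsd(pred, ref):
    """Heavy-atom RMSD in Å between two poses given as lists of (x, y, z).

    Atoms must be in the same order in both poses. No superposition is
    applied, because docked poses are compared in the receptor frame.
    Symmetry-equivalent atoms are NOT handled in this sketch.
    """
    if len(pred) != len(ref) or not ref:
        raise ValueError("poses must be non-empty and have the same atom count")
    sq_sum = sum((p[k] - r[k]) ** 2 for p, r in zip(pred, ref) for k in range(3))
    return math.sqrt(sq_sum / len(ref))

def success_rates(rmsds, physically_valid, cutoff=2.0):
    """Return (pose accuracy, combined success rate) over a set of complexes."""
    n = len(rmsds)
    accurate = [r <= cutoff for r in rmsds]
    combined = [a and v for a, v in zip(accurate, physically_valid)]
    return sum(accurate) / n, sum(combined) / n

# Toy pose: the prediction is the reference shifted rigidly by 1 Å along x.
reference_pose = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0), (1.5, 1.5, 0.0)]
predicted_pose = [(x + 1.0, y, z) for x, y, z in reference_pose]
shift_rmsd = pose_rmsd(predicted_pose, reference_pose)

# Hypothetical per-complex RMSDs and validity flags for four complexes.
accuracy, combined = success_rates([0.8, 1.9, 3.2, 1.1], [True, False, True, True])
print(shift_rmsd, accuracy, combined)
```

In this toy set, three of four poses fall within 2 Å, but one of them fails the validity check, so the combined rate drops from 0.75 to 0.5. That gap is the effect the benchmark highlights for some DL methods.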

SBVS uses docking to search massive molecular libraries. Performance is quantified by the hit rate—the fraction of tested compounds that show activity. Studies of large-scale docking campaigns, where billions of molecules are docked and hundreds are tested, show that hit rates are a predictable function of docking score and the library's intrinsic "hit-proneness" [41]. Performance can be significantly enhanced by re-scoring docking outputs with machine learning-based scoring functions (ML SFs). For instance, re-scoring docking results for the malaria target PfDHFR with CNN-Score improved early enrichment (EF1%) from worse-than-random to 28 for the wild-type and 31 for a resistant mutant [38].
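As a rough illustration of how early enrichment is computed, the sketch below ranks a toy library by docking score and compares the active rate in the top 1% with the active rate in the whole library. The function name `enrichment_factor`, the `lower_is_better` flag, and the synthetic library are assumptions made for this example, not part of any cited tool.

```python
def enrichment_factor(scores, is_active, fraction=0.01, lower_is_better=True):
    """EF at a given fraction: active rate in the top slice / active rate overall."""
    n = len(scores)
    if n == 0 or n != len(is_active):
        raise ValueError("scores and labels must be non-empty and equal in length")
    total_actives = sum(1 for a in is_active if a)
    if total_actives == 0:
        raise ValueError("at least one active is required")
    n_top = max(1, int(round(fraction * n)))
    # Most docking programs report more negative scores for better poses.
    order = sorted(range(n), key=lambda i: scores[i], reverse=not lower_is_better)
    top_actives = sum(1 for i in order[:n_top] if is_active[i])
    return (top_actives / n_top) / (total_actives / n)

# Synthetic library of 1000 molecules; the score equals the rank position.
scores = [float(i) for i in range(1000)]
active_positions = {0, 2, 5, 400, 900}          # hypothetical actives
labels = [i in active_positions for i in range(1000)]

ef1 = enrichment_factor(scores, labels, fraction=0.01)
print(f"EF1% = {ef1:.1f}")
```

Here three of the five actives land in the top ten molecules, so the top 1% is 60 times richer in actives than the library as a whole. An EF1% of 1 corresponds to random ranking, and values below 1 to worse-than-random ranking.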

FEP provides a higher-accuracy, physics-based method for predicting relative binding free energies. It is the most computationally intensive of the three methods, but its predictions are highly accurate, with average errors near 1.0 kcal/mol, which is within experimental uncertainty [42] [37]. Recent advances include Absolute Binding Free Energy (ABFE) calculations, which remove the need for a closely related reference ligand, and Active Learning FEP, which combines FEP with faster QSAR methods to explore chemical space more efficiently [42]. In a 2025 case study on Wee1 kinase inhibitors, FEP was used to profile 6.7 billion design ideas, leading to the discovery of novel, potent, and selective clinical candidates [37].

Experimental Protocols and Workflows

Benchmarking Molecular Docking and SBVS Performance

A rigorous protocol for evaluating docking and SBVS performance uses a high-quality benchmark set such as DEKOIS 2.0, which pairs known active molecules with decoys that are matched to the actives in physicochemical properties but presumed inactive [38].

Key Steps:

- Protein Preparation: Obtain crystal structures (e.g., from PDB). Remove water molecules, ions, and redundant chains. Add and optimize hydrogen atoms using tools like OpenEye's "Make Receptor" [38].

- Ligand/Decoy Preparation: Prepare the active and decoy molecules from the benchmark set. Generate multiple conformations for each ligand using software like Omega [38].

- Docking Grid Definition: Define the docking grid box centered on the binding site. The size should encompass all relevant residues (e.g., 21.33 Å × 25.00 Å × 19.00 Å for a typical target) [38].

- Docking Execution: Perform docking with the tools being evaluated (e.g., AutoDock Vina, FRED, PLANTS) using their default search parameters [38].

- Re-scoring with ML SFs (Optional): Extract the top poses from docking and re-score them using pretrained machine learning scoring functions like CNN-Score or RF-Score-VS v2 [38].

- Performance Analysis: Calculate key metrics:

  - Enrichment Factor (EF): Measures the concentration of true actives in the top-ranked fraction of the screened library. EF1% is the enrichment in the top 1% [38].

  - pROC-AUC: The area under the semi-log ROC curve, assessing the method's ability to rank actives above decoys [38].

  - pROC-Chemotype Plots: Analyze the diversity and affinity of actives retrieved at early enrichment stages [38].
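The semi-log ROC metric in the analysis step can be sketched as follows. This uses one common formulation, in which the pROC-AUC is the mean of -log10(FPR) evaluated at each active, with a zero false-positive rate floored at 1/(number of decoys). The cited study may implement it differently. The function name `proc_auc` and the toy ranking are hypothetical.

```python
import math

def proc_auc(scores, is_active, lower_is_better=True):
    """Mean of -log10(false-positive rate) at the rank of each active.

    The FPR is floored at 1/n_decoys so that actives ranked above every
    decoy do not produce log(0). Ties are broken arbitrarily in this sketch.
    """
    n_decoys = sum(1 for a in is_active if not a)
    n_actives = len(is_active) - n_decoys
    if n_decoys == 0 or n_actives == 0:
        raise ValueError("need at least one active and one decoy")
    order = sorted(range(len(scores)), key=lambda i: scores[i],
                   reverse=not lower_is_better)
    decoys_seen = 0
    total = 0.0
    for i in order:
        if is_active[i]:
            fpr = max(decoys_seen, 1) / n_decoys
            total += -math.log10(fpr)
        else:
            decoys_seen += 1
    return total / n_actives

# Toy ranking: 100 decoys with scores 1..100 (lower is better),
# one active ranked first and one ranked after the tenth decoy.
scores = [0.0, 10.5] + [float(i) for i in range(1, 101)]
labels = [True, True] + [False] * 100

value = proc_auc(scores, labels)
print(f"pROC-AUC = {value:.3f}")
```

The first active contributes -log10(1/100) = 2 and the second contributes -log10(10/100) = 1, giving a mean of 1.5. Under this formulation a random ranking gives roughly 0.434, so the logarithmic axis rewards actives that appear very early in the list.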

The workflow for a comparative docking and SBVS study is visualized below.

Free-Energy Perturbation for Binding Affinity Prediction

The FEP workflow, particularly for Relative Binding Free Energy (RBFE) calculations, is based on a thermodynamic cycle that allows for the calculation of the relative binding free energy between two similar ligands without simulating the physical binding process.
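Written out, the cycle yields the identity below. The leg labels "complex" and "solvent" are notation introduced here for illustration: each denotes the alchemical free energy of transforming ligand A into ligand B, in the protein-bound state and free in solution, respectively.

$$
\Delta\Delta G_{\mathrm{bind}}(A \to B) = \Delta G_{\mathrm{bind}}(B) - \Delta G_{\mathrm{bind}}(A) = \Delta G_{\mathrm{complex}}(A \to B) - \Delta G_{\mathrm{solvent}}(A \to B)
$$

Because free energy is a state function, the two alchemical legs on the right close the cycle and give the same difference as the two physical binding events on the left, which are far more expensive to simulate directly.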

Key Steps:

- System Setup: Construct the thermodynamic cycle for the ligand pair in both their protein-bound and solvated (unbound) states [42] [37].

- Lambda Schedule Selection: Define a series of intermediate, non-physical "λ states" to alchemically morph one ligand into the other. Modern tools use automated scheduling to optimize the number of windows, balancing accuracy and computational cost [42].

- Force Field Parameterization: Assign accurate parameters to describe the energetics of the system. For challenging ligand torsions, quantum mechanics (QM) calculations can be used to refine parameters [42].

- Solvation and Sampling: Ensure the system is properly hydrated. Techniques like Grand Canonical Monte Carlo (GCMC) can be used to sample water positions effectively, which is critical for reducing hysteresis [42].

- Running Simulations: Perform molecular dynamics simulations at each λ window. For perturbations involving formal charge changes, longer simulation times are typically required for reliable results [42].

- Analysis: Analyze the simulation data to compute the relative binding free energy (ΔΔG) between the two ligands. The accuracy is typically validated against experimental data, with a goal of achieving a mean unsigned error (MUE) of ~1.0 kcal/mol [37].
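The final analysis step can be sketched in a few lines, assuming the per-window free energy increments for each leg have already been estimated (for example with an estimator such as BAR or MBAR). All numbers below are invented for illustration, and the function names are hypothetical rather than taken from any FEP package.

```python
import math

R_KCAL = 0.0019872041  # gas constant in kcal/(mol*K)

def leg_free_energy(window_increments):
    """Total alchemical free energy of one leg: sum over adjacent λ windows."""
    return sum(window_increments)

def relative_binding_free_energy(complex_windows, solvent_windows):
    """ΔΔG_bind(A→B) = ΔG_complex(A→B) - ΔG_solvent(A→B), in kcal/mol."""
    return leg_free_energy(complex_windows) - leg_free_energy(solvent_windows)

def experimental_ddg(kd_a, kd_b, temperature=298.15):
    """Experimental ΔΔG(A→B) = RT ln(Kd_B / Kd_A), in kcal/mol."""
    return R_KCAL * temperature * math.log(kd_b / kd_a)

def mean_unsigned_error(predicted, experimental):
    """Average absolute deviation between predicted and measured ΔΔG values."""
    if len(predicted) != len(experimental) or not predicted:
        raise ValueError("inputs must be non-empty and equal in length")
    return sum(abs(p - e) for p, e in zip(predicted, experimental)) / len(predicted)

# Invented per-window increments (kcal/mol) for one A→B perturbation.
complex_leg = [-0.9, -0.7, -0.5, -0.3]
solvent_leg = [-0.4, -0.3, -0.2, -0.1]
ddg_pred = relative_binding_free_energy(complex_leg, solvent_leg)

# Invented affinities: ligand B binds ten times more tightly than ligand A.
ddg_exp = experimental_ddg(kd_a=100e-9, kd_b=10e-9)

mue = mean_unsigned_error([ddg_pred], [ddg_exp])
print(f"predicted {ddg_pred:.2f}, experimental {ddg_exp:.2f}, MUE {mue:.2f} kcal/mol")
```

In practice the MUE is computed over all ligand pairs in a congeneric series rather than a single edge, and cycle-closure checks across the perturbation network are a common additional sanity test.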

The following diagram illustrates the core thermodynamic cycle and key steps of an FEP workflow.

The Scientist's Toolkit: Essential Research Reagents and Databases

Successful implementation of these computational methods relies on access to robust software tools, high-quality databases, and powerful computing infrastructure. Table 2 lists key resources.

Table 2: Essential Research Reagents and Resources for Target-Based Methods

| Category | Item / Resource | Function / Description | Relevant Use Case |
|---|---|---|---|
| Software & Tools | AutoDock Vina, FRED, PLANTS | Traditional molecular docking programs. | SBVS and pose prediction benchmarking [38]. |
| Software & Tools | Glide SP | High-performance traditional docking tool. | Known for high physical validity of poses [39]. |
| Software & Tools | SurfDock, DiffBindFR | Deep learning-based generative docking models. | Achieving high pose prediction accuracy [39]. |
| Software & Tools | CNN-Score, RF-Score-VS v2 | Machine learning scoring functions. | Re-scoring docking outputs to improve virtual screening enrichment [38]. |
| Software & Tools | FEP Software (e.g., Flare FEP, FEP+) | Suite for running free energy calculations. | Predicting relative binding affinities during lead optimization [42] [37]. |
| Software & Tools | Chemprop | Deep learning framework for molecular property prediction. | Training models to predict docking scores and guide screening [40]. |
| Databases & Benchmarks | Protein Data Bank (PDB) | Repository for experimentally determined 3D protein structures. | Source of protein structures for docking and FEP setup [38]. |
| Databases & Benchmarks | DEKOIS 2.0 | Benchmarking sets with actives and decoys. | Evaluating docking and SBVS performance [38]. |
| Databases & Benchmarks | ChEMBL, BindingDB | Databases of bioactive molecules with drug-like properties, affinities, and ADMET data. | Source of known active compounds for validation and training ML models [6] [12]. |
| Databases & Benchmarks | Large-Scale Docking (LSD) Database | Website providing docking scores/poses for 6.3B molecules across 11 targets. | Benchmarking machine learning and chemical space exploration methods [40]. |
| Computing Infrastructure | GPU Clusters | High-performance computing. | Essential for running FEP calculations and deep learning docking models in a practical timeframe [42] [39]. |

Molecular docking, SBVS, and FEP represent a spectrum of target-based methods with complementary strengths in drug discovery. Docking provides a fast, accessible tool for initial pose prediction and for screening billions of compounds, especially when enhanced by ML re-scoring. SBVS leverages docking to efficiently explore ultra-large chemical spaces, with predictable and improvable hit rates. FEP sits at the high-fidelity end, providing quantitative affinity predictions that approach experimental accuracy and are critical for lead optimization, albeit at a higher computational cost.

The choice of method depends on the project stage and available resources. For rapid screening of vast libraries, SBVS is indispensable. For optimizing a lead series with a known binding mode, FEP offers unparalleled precision in affinity prediction. The ongoing integration of machine learning, as seen in ML scoring functions and active learning workflows, is creating powerful hybrid approaches that leverage the speed of data-driven models and the rigor of physics-based simulations. This synergy, combined with the increasing availability of high-quality protein structures from experimental methods and AI prediction tools like AlphaFold2, promises to further solidify the role of target-based methods in accelerating the discovery of new therapeutics.

The prediction of drug-target interactions (DTIs) is a cornerstone of modern drug discovery, crucial for identifying new therapeutic candidates and repurposing existing drugs [43]. Traditionally, this field has been dominated by two distinct computational philosophies: ligand-based approaches and target-based approaches. Ligand-based methods operate on the principle that similar molecules tend to interact with similar biological targets, relying heavily on the chemical fingerprint similarity of compounds [6] [11]. In contrast, target-based methods, such as molecular docking, use the three-dimensional structure of a protein target to predict how and where a small molecule might bind [11]. With the advent of robust biological databases and increased computational power, a third, more integrative paradigm has emerged: chemogenomic approaches. These methods systematically leverage both chemical and biological information, often using machine learning to learn the complex relationships between drugs and their targets from large-scale datasets [43] [11]. The evolution of machine learning (ML), from classic algorithms like Random Forests to modern deep learning and Graph Neural Networks (GNNs), has been the primary engine driving this chemogenomic revolution, leading to unprecedented accuracy in predicting novel drug-target interactions [44] [45].
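The ligand-based principle described above can be illustrated with a minimal nearest-neighbor sketch. It assumes fingerprints are already available as sets of on-bit indices (in practice these would come from a cheminformatics toolkit), and the ligand names, bit sets, and targets are entirely hypothetical.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient between two fingerprints given as sets of on-bits."""
    if not fp_a and not fp_b:
        return 0.0
    shared = len(fp_a & fp_b)
    return shared / (len(fp_a) + len(fp_b) - shared)

def predict_targets(query_fp, reference_ligands, top_k=2):
    """Rank annotated ligands by similarity to the query and return their targets.

    reference_ligands: list of (ligand_name, fingerprint, annotated_target).
    """
    scored = [(tanimoto(query_fp, fp), name, target)
              for name, fp, target in reference_ligands]
    scored.sort(key=lambda item: item[0], reverse=True)
    return [(name, target, round(sim, 3)) for sim, name, target in scored[:top_k]]

# Hypothetical reference set of ligands with known targets.
reference = [
    ("ligand_A", frozenset({1, 2, 3, 5}), "target_X"),
    ("ligand_B", frozenset({1, 2, 3, 4, 6}), "target_Y"),
    ("ligand_C", frozenset({7, 8, 9}), "target_Z"),
]
query = frozenset({1, 2, 3, 4})

predictions = predict_targets(query, reference)
print(predictions)
```

The query shares four of five distinct bits with `ligand_B` (similarity 0.8) and three of five with `ligand_A` (0.6), so `target_Y` is ranked first. Chemogenomic models generalize this idea by learning from drug and target features jointly instead of relying on ligand similarity alone.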

Comparative Performance of Machine Learning Methods

As ML techniques have advanced, so too has their performance in DTI prediction. The following table summarizes key quantitative results from recent studies and benchmark analyses, providing a clear comparison across different algorithmic families.

Table 1: Performance Comparison of Different DTI Prediction Methods on Various Benchmark Datasets

| Method Category | Specific Model/Approach | Dataset(s) Used | Key Performance Metrics | Year/Reference |
|---|---|---|---|---|
| Similarity-Based (Ligand-Centric) | MolTarPred (Morgan fingerprints) | ChEMBL 34, FDA-approved drugs | Most effective in systematic comparison [6] | 2025 |
| Classical Machine Learning | GAN + Random Forest Classifier | BindingDB-Kd | Acc: 97.46%, AUC: 99.42% [44] | 2025 |
| Classical Machine Learning | Feature Selection + Rotation Forest | Enzyme, Ion Channels, GPCRs, Nuclear Receptors | Acc: 98.12%, 98.07%, 96.82%, 95.64% [46] | 2023 |
| Kernel & Matrix Factorization | Kernelized Bayesian Matrix Factorization | Cancer cell line screening data | Effective for integrated QSAR [46] | 2016 |
| Deep Learning (General) | DeepLPI (CNN + biLSTM) | BindingDB | AUC: 0.790 (Test Set) [44] | 2025 |
| Graph Neural Networks | Hetero-KGraphDTI (GNN + Knowledge) | Multiple benchmarks | Avg. AUC: 0.98, Avg. AUPR: 0.89 [45] | 2025 |
| Graph Neural Networks | Multi-modal GCN Framework | DrugBank | AUC: 0.96 [45] | 2023 |
| Graph Neural Networks | Graph-based Multi-network | KEGG | AUC: 0.98 [45] | 2024 |

The data reveal a clear trend, with the caveat that the figures come from different datasets and evaluation protocols and are therefore not directly comparable. Classical ML models like Random Forest, especially when enhanced with feature selection [46] or data balancing techniques like Generative Adversarial Networks (GANs) [44], achieve remarkably high accuracy, while modern deep learning approaches extend strong performance to heterogeneous, multi-benchmark settings. GNN-based models consistently achieve top-tier performance, with the recently proposed Hetero-KGraphDTI framework reporting an average AUC of 0.98 across multiple benchmarks [45]. This model's integration of biological knowledge graphs appears to mitigate over-smoothing and enhance the biological interpretability of its predictions.
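Several entries in the table report both AUC and AUPR, and the two can diverge when interacting pairs are rare. The sketch below computes both on a small invented ranking, using the pairwise-comparison form of ROC AUC and average precision as a step-wise estimate of AUPR. The function names and data are assumptions for illustration and do not reproduce any cited study's evaluation code.

```python
def roc_auc(scores, labels):
    """Probability that a random positive outscores a random negative (ties = 0.5)."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

def average_precision(scores, labels):
    """Mean of the precision values measured at the rank of each positive."""
    order = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    hits = 0
    precision_sum = 0.0
    for rank, i in enumerate(order, start=1):
        if labels[i] == 1:
            hits += 1
            precision_sum += hits / rank
    if hits == 0:
        raise ValueError("need at least one positive")
    return precision_sum / hits

# Invented predictions for ten drug-target pairs, two of which truly interact.
scores = [0.9, 0.8, 0.7, 0.6, 0.5, 0.4, 0.3, 0.2, 0.1, 0.05]
labels = [1, 0, 0, 1, 0, 0, 0, 0, 0, 0]

auc = roc_auc(scores, labels)
aupr = average_precision(scores, labels)
print(f"AUC = {auc:.3f}, AUPR (average precision) = {aupr:.3f}")
```

With two positives among ten pairs, the AUC is 0.875 while the average precision is 0.75. AUPR penalizes false positives near the top of the ranking more heavily, which makes it informative to report alongside AUC for imbalanced DTI datasets.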

Experimental Protocols and Methodologies

The superior performance of modern models is a direct result of sophisticated experimental protocols and feature engineering strategies. Below is a workflow diagram illustrating a typical advanced DTI prediction pipeline, integrating elements from several state-of-the-art approaches.

Data Preparation and Feature Engineering

The foundation of any robust DTI model is high-quality, well-curated data. Commonly used databases include ChEMBL [6], BindingDB [44], and DrugBank [11], which provide experimentally validated interactions, compound structures, and target information.

- Drug Feature Extraction: Small molecules are typically represented as molecular fingerprints, such as MACCS keys or Morgan fingerprints (ECFP), which encode molecular structures as bit vectors denoting the presence or absence of specific substructures [6] [46]. Newer methods represent drugs as molecular graphs, where atoms are nodes and bonds are edges, allowing GNNs to learn informative representations directly from the graph structure [45].