Leveraging Attention Mechanisms for Accurate Binding Site Identification in Drug Discovery

This article provides a comprehensive guide for researchers and drug development professionals on implementing attention mechanisms for protein-ligand binding site identification.

Leveraging Attention Mechanisms for Accurate Binding Site Identification in Drug Discovery

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on implementing attention mechanisms for protein-ligand binding site identification. It covers the foundational principles of attention-based models, explores their transformative advantages over traditional methods, and details practical implementation strategies using cutting-edge architectures like graph transformers and cross-attention. The content further addresses critical troubleshooting for common faults and optimization techniques, culminating in a rigorous comparative analysis of model performance against established benchmarks. By synthesizing the latest research, this guide aims to equip scientists with the knowledge to enhance accuracy and efficiency in binding site prediction, ultimately accelerating drug discovery pipelines.

The Transformative Power of Attention in Computational Biology

An attention mechanism is a machine learning technique that directs deep learning models to prioritize (or attend to) the most relevant parts of input data [1]. Inspired by human cognitive processes, it enables models to selectively focus on salient information while ignoring less relevant details, thereby making efficient use of limited computational resources [1] [2]. This approach has revolutionized artificial intelligence, enabling the transformer architecture that powers modern large language models and has since permeated diverse domains, including structural biology and drug discovery [1] [3].

The mathematical foundation of attention involves computing attention weights that reflect the relative importance of different elements in input data [1]. These weights are typically calculated through a process that determines similarities, correlations, and dependencies between elements, quantified as alignment scores [1]. The scores are normalized via a softmax function to create a probability distribution, which then emphasizes or de-emphasizes the influence of specific input elements on model predictions [1].

Table 1: Key Properties of Attention Mechanisms

| Property | Description | Biological Analogy |

|---|---|---|

| Dynamic Weighting | Adjusts influence of input elements based on context | Selective auditory or visual attention |

| Content-based Addressing | Focuses on elements relevant to current processing step | Contextual prioritization in sensory processing |

| Parallel Processing | Enables simultaneous evaluation of all input elements | Parallel processing in visual cortex |

| Adaptive Focus | Adjusts focus throughout computational process | Task-dependent attention shifting |

Historical Development and Core Concepts

Attention mechanisms were originally introduced by Bahdanau et al. in 2014 to address limitations in sequence-to-sequence (Seq2Seq) models for machine translation [1] [4]. Early Seq2Seq models relied on recurrent neural networks (RNNs) with encoder-decoder architectures, where the encoder processed input sequences into a fixed-length context vector that often became an information bottleneck, particularly for longer sequences [1] [4].

The key innovation was enabling the decoder to access all encoder hidden states, with attention determining which states were most relevant at each decoding step [1]. This fundamental approach has since evolved into several specialized variants, each with distinct computational characteristics and applications.

Critical Technical Differentiation

The original Bahdanau attention and subsequent Luong attention differ primarily in their computational approaches [4]. Bahdanau-style attention uses the previous decoder hidden state to compute attention weights before generating the current state, making attention an integral part of the decoding process [4]. In contrast, Luong-style attention first computes the decoder hidden state and then applies attention to create a context vector that modifies this state before final output generation [4]. This architectural difference enables greater flexibility in experimenting with different attention scoring functions.

Table 2: Major Attention Mechanism Variants and Their Characteristics

| Mechanism Type | Key Innovation | Computational Approach | Primary Applications |

|---|---|---|---|

| Additive Attention (Bahdanau) | First attention mechanism for NMT | Single-layer feedforward network computes alignment | Machine translation, sequence modeling |

| Multiplicative Attention (Luong) | Efficient dot-product operations | Dot product, general, or location-based scoring | Machine translation, text generation |

| Self-Attention | Captures intra-sequence dependencies | Relates different positions of a single sequence | Transformer models, representation learning |

| Cross-Attention | Models relationships between different modalities | Attention between two distinct sequences or data types | Multi-modal learning, protein-ligand interaction |

Attention in Natural Language Processing

In natural language processing, attention mechanisms have largely supplanted earlier encoder-decoder architectures that relied on fixed-length context vectors [2] [4]. The limitations of these earlier approaches were particularly evident for longer sequences, where critical information from early in the sequence tended to be "forgotten" after processing subsequent elements [4].

Self-attention, also called intra-attention, enables models to focus on different positions of the input text sequence to compute a representation of the same sequence [2]. This allows each element to be evaluated in context with all other elements, capturing long-range dependencies that challenge recurrent models [1] [3]. The transformer architecture's multi-head attention mechanism extends this concept by employing multiple attention heads in parallel, each learning to attend to different aspects of the input representation [2].

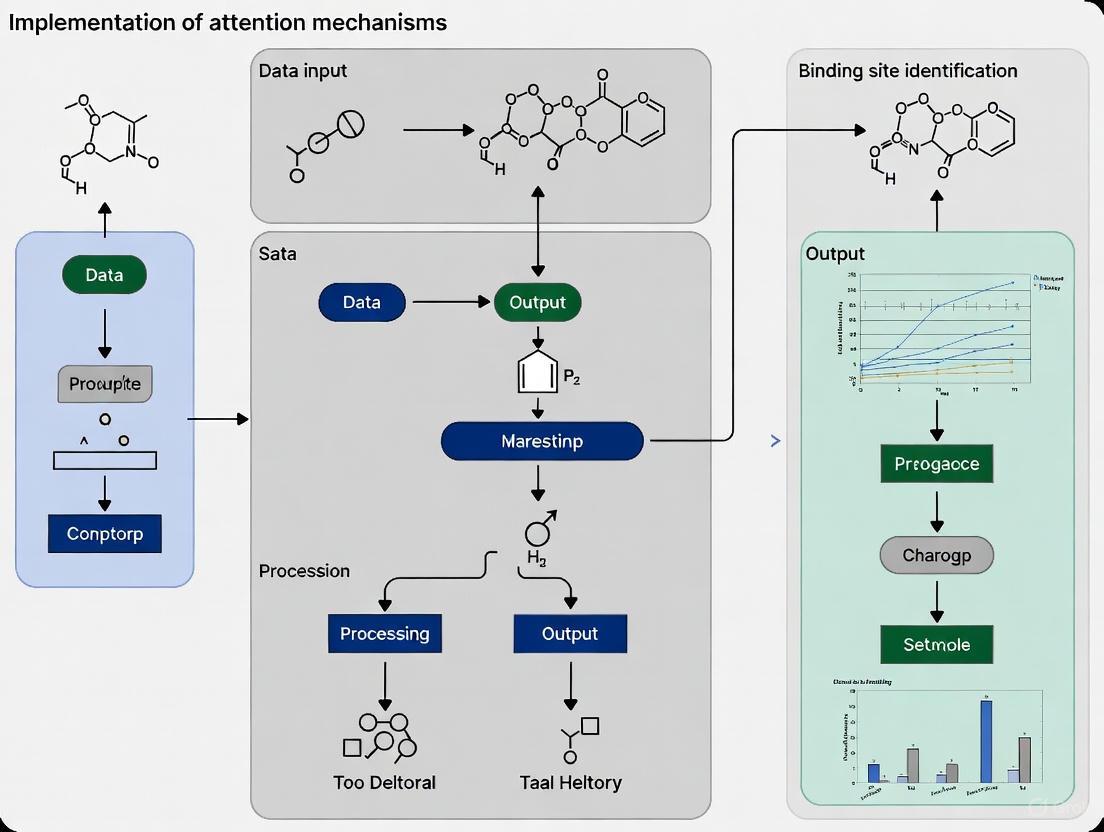

Diagram 1: NLP Attention Workflow - Core computational steps in transformer-based attention mechanisms for natural language processing.

Attention Mechanisms in Structural Biology

The application of attention mechanisms has extended significantly beyond NLP to address complex challenges in structural biology, particularly in protein-ligand binding site identification and essential protein prediction [5] [6]. These applications leverage attention's ability to integrate diverse biological data sources and identify complex, non-linear relationships within structural and sequential data.

Protein-Ligand Binding Site Prediction with LABind

The LABind method exemplifies advanced attention application for predicting protein binding sites for small molecules and ions in a ligand-aware manner [6]. This approach addresses critical limitations of earlier methods that either treated all ligands identically or required specialized models for specific ligand types [6]. LABind utilizes a graph transformer to capture binding patterns within the local spatial context of proteins and incorporates a cross-attention mechanism to learn distinct binding characteristics between proteins and ligands [6].

The architecture processes ligand information via Simplified Molecular Input Line Entry System (SMILES) sequences through molecular pre-trained language models (MolFormer) to obtain ligand representations [6]. Simultaneously, protein sequences and structures are processed through protein language models (Ankh) and structural analysis tools (DSSP) to generate comprehensive protein representations [6]. The cross-attention mechanism then learns interactions between these representations, enabling accurate binding site prediction even for ligands not encountered during training [6].

Essential Protein Prediction with AttentionEP

AttentionEP demonstrates another significant biological application of attention mechanisms, predicting essential proteins via fusion of multi-scale biological data [5]. This approach integrates protein-protein interaction (PPI) networks, gene expression data, and subcellular localization information using both cross-attention and self-attention frameworks [5].

The method employs Graph Convolutional Networks (GCN) and Graph Attention Networks (GAT) to extract spatial characteristics from PPI networks, Bidirectional Long Short-Term Memory networks (BiLSTM) to derive temporal features from gene expression data, and Deep Neural Networks (DNN) to process subcellular localization information [5]. Self-attention refines features within each data domain, while cross-attention enhances interaction between diverse information sources [5]. This integrated approach achieved an impressive Area Under the Curve (AUC) value of 0.9433, demonstrating considerable advantage over established techniques [5].

Table 3: Performance Comparison of Biological Attention Models

| Model | Primary Task | Key Data Sources | Performance Metrics |

|---|---|---|---|

| LABind [6] | Protein-ligand binding site prediction | Protein structures, ligand SMILES sequences | Superior AUC, AUPR on benchmark datasets DS1, DS2, DS3 |

| AttentionEP [5] | Essential protein prediction | PPI networks, gene expression, subcellular localization | AUC: 0.9433 |

| EGP Hybrid-ML [7] | Essential gene prediction | Gene sequences, multidimensional features | Sensitivity: 0.9122, ACC: ~0.9 |

| DeepEP [5] | Essential protein prediction | PPI networks (node2vec features) | Baseline comparison for AttentionEP |

Experimental Protocols and Methodologies

Computational Protocol for Binding Site Prediction

The LABind methodology provides a comprehensive protocol for structure-based prediction of ligand binding sites [6]:

Input Preparation:

- Ligand Representation: Input SMILES sequence of ligand into MolFormer pre-trained model to obtain molecular representation [6].

- Protein Representation: Process protein sequence and structure through Ankh pre-trained model and DSSP to generate protein embeddings and structural features [6].

- Feature Concatenation: Combine protein embeddings and DSSP features to form protein-DSSP embedding [6].

Graph Construction:

- Convert protein structure into graph representation where nodes represent residues and edges represent spatial relationships [6].

- Derive node spatial features (angles, distances, directions) from atomic coordinates [6].

- Compute edge spatial features (directions, rotations, distances between residues) [6].

- Integrate protein-DSSP embedding with node spatial features to create final protein representation [6].

Attention-Based Learning:

Prediction and Validation:

Diagram 2: Binding Site Prediction Protocol - Experimental workflow for LABind methodology predicting protein-ligand binding sites.

Experimental Protocol for Binding Site Identification via Photoaffinity Labeling

Complementary computational approaches, experimental methods like photoaffinity labeling provide empirical validation of binding sites [8]. The following protocol details experimental identification of binding sites for ivacaftor (VX-770) on the CFTR chloride channel:

Probe Preparation and Validation:

Membrane Preparation:

Photo-labeling Reaction:

Sample Processing and Analysis:

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Research Reagents and Computational Tools for Attention-Based Binding Site Research

| Resource Category | Specific Tools/Reagents | Function/Purpose | Application Context |

|---|---|---|---|

| Pre-trained Models | MolFormer [6], Ankh [6] | Generate molecular and protein representations | Feature extraction for ligand-aware binding site prediction |

| Structural Analysis | DSSP [6], ESMFold [6] | Derive protein structural features | Graph construction from protein 3D structures |

| Experimental Probes | VX-770-Biot, VX-770-Diaz [8] | Covalent labeling of binding sites | Photoaffinity labeling for experimental validation |

| Cell Lines | HEK293 cells [8] | Heterologous protein expression | Production of target proteins for experimental studies |

| Affinity Reagents | Monomeric Avidin Agarose [8] | Enrichment of biotinylated peptides | Isolation of labeled peptides in mass spectrometry |

| Proteolytic Enzymes | Sequence-grade trypsin [8] | Protein digestion | Peptide generation for mass spectrometry analysis |

| Analysis Software | Custom implementations [5] [6] | Model training and prediction | Implementation of attention mechanisms for specific biological tasks |

Integration for Binding Site Identification Research

The integration of computational and experimental approaches provides a powerful framework for binding site identification research. Computational models like LABind generate testable hypotheses about potential binding sites, while experimental methods like photoaffinity labeling provide empirical validation [6] [8]. This synergistic approach accelerates drug discovery by prioritizing candidate interactions for experimental verification.

Cross-attention mechanisms are particularly valuable in this context, as they enable explicit modeling of relationships between protein and ligand representations [6]. This ligand-aware approach represents a significant advancement over methods that treat all ligands identically or require specialized models for specific ligand classes [6]. The ability to predict binding sites for previously unseen ligands demonstrates the generalization capability of these approaches [6].

As attention mechanisms continue to evolve, their application to structural biology promises to unlock deeper insights into protein function, interaction networks, and therapeutic opportunities. The fusion of biological domain knowledge with advanced computational architectures represents a frontier in computational biology with profound implications for understanding fundamental life processes and developing novel therapeutic interventions.

The Query, Key, and Value (QKV) paradigm, central to the attention mechanism in transformer models, provides a powerful computational framework for modeling biological interactions. In the context of binding site identification, this model elegantly formalizes the process of how a protein (or a specific residue within it) "searches" for and interacts with potential binding partners, such as small molecules, ions, or other biomacromolecules. The core analogy is that of a search query looking for matching keys to retrieve relevant values. Here, the Query represents the entity seeking interaction, the Key represents the potential partners that can be matched against, and the Value carries the specific information to be exchanged upon a successful match. Implementing this attention-based framework allows researchers to move beyond static structural analysis to model the dynamic and context-dependent nature of molecular recognition, significantly accelerating the process of drug discovery and functional annotation [6] [9].

Core Conceptual Framework

The QKV Triad: A Biological Analogy

In molecular interaction studies, the QKV model can be mapped onto protein-ligand binding as follows:

- Query (Q): In a ligand-aware binding site prediction, the query often originates from the protein's residues. Each residue, represented by a feature vector derived from its sequence and structural context, acts as a query seeking a binding partner. It asks, "Which ligands or ligand features are relevant to me?" [6] [10].

- Key (K): The keys are derived from the ligand's representation. For a small molecule, this could be a feature vector encoded from its Simplified Molecular Input Line Entry System (SMILES) string or its structure. The keys represent the ligand's identity and properties, ready to be matched against the protein's queries [6].

- Value (V): The values are also derived from the ligand, but they contain the specific information to be propagated to the protein if a match occurs. While keys determine the relevance, values carry the actionable information about the ligand's binding characteristics that influence the final prediction, such as the probability of a residue being a binding site [6] [9].

The attention mechanism computes a compatibility score (e.g., dot product) between each Query and Key pair. This score is then used to compute a weighted sum of all Values, where the weights are determined by the scores. In practice, this means a protein residue will attend most strongly to ligands (or ligand features) whose Keys are most similar to its Query, and the final contextualized representation for the residue will be a blend of the Values from all ligands, weighted by their respective relevance [9].

The Role of Cross-Attention

For predicting binding sites in a ligand-aware manner, cross-attention is the critical mechanism that facilitates the interaction between the two distinct entities: the protein and the ligand. Unlike self-attention where Q, K, and V come from the same source, cross-attention allows the model to learn the distinct binding characteristics between proteins and ligands by using one modality to query the other [6] [10].

In the LABind method, for instance, a graph transformer captures the protein's structural context, generating protein representations. Simultaneously, a molecular language model (MolFormer) processes the ligand's SMILES string to generate the ligand representation. A cross-attention mechanism is then employed where the protein representation acts as the Query, and the ligand representation provides both the Keys and Values. This allows each protein residue to selectively attend to the most relevant aspects of the ligand, effectively learning the interaction patterns that lead to binding [6]. This ligand-aware approach enables the model to generalize and predict binding sites even for ligands not seen during training.

Quantitative Performance of QKV-Based Methods

Advanced deep learning frameworks that implement the QKV and cross-attention paradigm have demonstrated state-of-the-art performance in various binding prediction tasks. The following table summarizes the performance of several key methods on standard benchmark datasets.

Table 1: Performance of MM-IDTarget on Drug-Target Interaction Prediction (Top-K Accuracy, %)

| Method | Top-1 (%) | Top-3 (%) | Top-5 (%) | Top-7 (%) | Top-10 (%) |

|---|---|---|---|---|---|

| MM-IDTarget | 34.68 | 55.88 | 62.31 | 64.00 | 66.07 |

| HitPickV2 | 24.69 | 56.74 | 58.43 | 60.82 | 62.20 |

| SwissTargetPrediction | 28.00 | – | – | – | – |

| Chemogenomic-Model | 26.96 | 56.36 | 59.33 | 60.89 | 63.99 |

The MM-IDTarget framework, which employs intra- and inter-cross-attention mechanisms to fuse multimodal features of drugs and targets, shows superior performance across most Top-K metrics despite being trained on a smaller dataset. This underscores the efficiency of its attention-based feature fusion for target identification [10].

Table 2: Evaluation Metrics for LABind in Binding Site Prediction

| Metric | Full Name | Role in Evaluating QKV-based Binding Site Prediction |

|---|---|---|

| AUPR | Area Under the Precision-Recall Curve | Primary metric for hyperparameter optimization due to robustness to class imbalance [6]. |

| MCC | Matthews Correlation Coefficient | Reflects model performance on imbalanced two-class classification of binding sites [6]. |

| AUC | Area Under the ROC Curve | Measures overall ranking performance of residue binding probabilities [6]. |

| DCC | Distance between predicted and true binding site Centers | Evaluates accuracy in locating the geometric center of a binding pocket [6]. |

Experimental Protocols for QKV Implementation

Protocol 1: Ligand-Aware Binding Site Prediction with LABind

Application Note: This protocol details the steps for implementing the LABind method to predict protein binding sites for specific small molecules or ions, leveraging a cross-attention mechanism between protein and ligand representations [6].

Materials:

- Input Data: Protein sequence and 3D structure (experimental or predicted); Ligand SMILES string.

- Software: LABind framework (requires Python, PyTorch/TensorFlow, graph neural network libraries).

- Pre-trained Models: Ankh (protein language model); MolFormer (molecular language model).

Procedure:

- Input Representation:

- Protein: Generate residue-level embeddings from the protein sequence using the Ankh protein language model. Compute structural features (e.g., solvent accessibility, secondary structure) using DSSP. Combine embeddings and structural features into a final protein representation [6].

- Ligand: Input the ligand's SMILES string into the MolFormer model to obtain a comprehensive molecular representation [6].

- Graph Construction: Convert the protein 3D structure into a graph where nodes represent residues. Node features include spatial information (angles, distances, directions) combined with the protein-DSSP embedding. Edges represent spatial relationships between residues [6].

- Feature Encoding: Process the protein graph through a graph transformer to capture local and global structural contexts. The ligand representation is processed independently [6].

- Cross-Attention Mechanism (QKV Interaction):

- Designate the processed protein residue features as the Query (Q).

- Designate the processed ligand features as the Key (K) and Value (V).

- Compute attention scores:

Attention Scores = Softmax(Q * K^T / sqrt(d)), wheredis the dimensionality of the query and key vectors. - The output for each protein residue is a weighted sum of the ligand Values:

Output = Attention Scores * V[6].

- Binding Site Prediction: Pass the output of the cross-attention layer through a Multi-Layer Perceptron (MLP) classifier to predict a probability for each residue being part of a binding site for the specified ligand [6].

- Validation: Evaluate predictions on benchmark datasets (e.g., DS1, DS2, DS3) using AUPR, MCC, and DCC metrics to assess residue-level and center-level accuracy [6].

Protocol 2: Multimodal Fusion for Drug-Target Interaction with MM-IDTarget

Application Note: This protocol describes an ensemble approach using intra- and inter-cross-attention to fuse sequence and structure modalities of drugs and targets for identifying drug-target interactions (DTI) and ranking potential targets [10].

Materials:

- Input Data: Drug SMILES strings; Target protein sequences and 3D structures.

- Software: MM-IDTarget framework.

- Feature Extractors: Graph Transformer (for drug structures); Multi-Scale CNN (for protein sequences); Residual Edge-Weighted Graph Convolutional Network (for protein structures).

Procedure:

- Feature Extraction:

- Drug Features: Extract structural features from the drug's molecular graph using a Graph Transformer. Extract sequence features from the SMILES string using a Multi-Scale CNN (MCNN) [10].

- Target Features: Extract sequence features from the protein sequence using an MCNN. Extract structural features from the protein structure using a Residual Edge-Weighted GCN (EW-GCN) [10].

- Intra-Cross-Attention (Within-Modality Fusion):

- For both the drug and the target, independently fuse their own sequence and structural features.

- Use a cross-attention block where, for example, the sequence features act as the Query and the structural features act as the Key and Value (or vice-versa). This allows the model to emphasize the most salient complementary information from different modalities of the same entity [10].

- Inter-Cross-Attention (Between-Entity Fusion):

- Fuse the enriched drug and target representations. Let the fused drug representation be the Query and the fused target representation be the Key and Value (or vice-versa).

- This step allows the drug to query the target database, learning complex interaction patterns between them [10].

- Prediction and Ranking:

- Combine the output of the inter-cross-attention with physicochemical features of the drug and target.

- Use a fully connected network to predict interaction scores (for DTI) or binding affinity (for DTA).

- For target identification, rank all potential targets for a given drug in descending order of their predicted scores (Top-K ranking) [10].

Diagram 1: Workflow of ligand-aware binding site prediction using QKV cross-attention, as implemented in methods like LABind.

Table 3: Key Computational Tools for QKV-Based Binding Site Research

| Tool Name | Type | Primary Function in QKV Context |

|---|---|---|

| Ankh | Protein Language Model | Generates powerful sequence-based residue embeddings used to form the Query in protein-ligand attention [6]. |

| MolFormer | Molecular Language Model | Generates ligand representations from SMILES strings, providing the Keys and Values for cross-attention [6]. |

| ESM-2 | Protein Language Model | Used in other frameworks (e.g., ESM-SECP) to extract residue embeddings from protein sequences [11]. |

| Graph Transformer | Deep Learning Architecture | Encodes the protein's 3D structural graph, capturing local spatial contexts for residues [6] [10]. |

| 3D U-Net with Attention | Deep Learning Architecture | Used for semantic segmentation of 3D protein structures to predict binding pockets, employing attention to focus on salient spatial and channel features [12]. |

| DSSP | Bioinformatics Tool | Computes secondary structure and solvent accessibility from protein 3D coordinates, enriching node features in protein graphs [6]. |

Diagram 2: Multimodal fusion framework using intra- and inter-cross-attention for drug-target interaction prediction, as seen in MM-IDTarget.

The identification of molecular binding sites is a cornerstone of modern drug discovery and functional genomics. Traditional computational methods, which often rely on manually curated features and static structural models, are increasingly constrained by their limited adaptability and "black-box" nature. The integration of attention mechanisms into deep learning architectures is fundamentally reshaping this landscape. These mechanisms provide a powerful, native capacity for data-driven feature learning and unprecedented model interpretability, offering researchers a clear view into the decision-making processes of complex models. This application note details how these advantages are being practically implemented to accelerate and refine binding site identification research.

Quantitative Advantages of Attention-Based Models

The theoretical benefits of attention mechanisms translate into superior quantitative performance across various prediction tasks. The table below summarizes benchmark results from recent state-of-the-art studies.

Table 1: Performance Benchmarks of Advanced Binding Site Prediction Models

| Model Name | Prediction Focus | Key Architecture | Performance Metrics | Traditional Method Comparison |

|---|---|---|---|---|

| GHCDTI [13] | Drug-Target Interaction (DTI) | Graph Wavelet Transform + Multi-level Contrastive Learning | AUC: 0.966 ± 0.016, AUPR: 0.888 ± 0.018 | Significantly outperforms methods neglecting protein dynamics and data imbalance. |

| LABind [6] | Protein-Ligand Binding Sites | Graph Transformer + Cross-Attention | Superior Rec, Pre, F1, MCC, AUC, and AUPR on multiple benchmark datasets (DS1, DS2, DS3). | Outperforms single-ligand and multi-ligand oriented methods, generalizing to unseen ligands. |

| PreRBP [14] | RNA-Protein Binding Sites | CNN-BiLSTM-Attention | Average AUC: 0.88 | Higher accuracy than existing RNA-protein binding site prediction methods. |

| PFDCNN [15] | Protein-ATP Binding Sites | Protein LLM (ESM) + Fractional-Order CNN | Accuracy: 0.984, AUC: 0.941 | Surpasses most existing predictors like ATPint, ATPsite, and TargetATPsite. |

| TBiNet [16] | Transcription Factor Binding Sites | Attention-based DNN | Outperforms DeepSea and DanQ. | More effective in discovering known TF-binding motifs. |

Experimental Protocols for Attention-Based Binding Site Prediction

Protocol 1: Structure-Based Prediction with Cross-Attention (LABind)

This protocol is designed for predicting protein binding sites for small molecules and ions in a ligand-aware manner, even for unseen ligands [6].

Input Representation:

- Ligand: Encode the ligand's SMILES sequence into a molecular representation vector using a pre-trained molecular language model (e.g., MolFormer).

- Protein: For a protein structure, generate a residue-level graph where nodes represent amino acids and edges represent spatial relationships.

- Node Features: Combine sequence embeddings from a protein language model (e.g., Ankh) with structural features (e.g., angles, distances, directions) derived from atomic coordinates.

- Edge Features: Encode spatial relationships between residues, including directions, rotations, and distances.

Model Architecture & Training:

- Graph Transformer: Process the protein graph to capture potential binding patterns in the local spatial context.

- Cross-Attention Module: Fuse the protein and ligand representations. This mechanism allows the protein residues to "attend" to the ligand representation, learning distinct binding characteristics for the specific ligand.

- Classifier: Pass the final integrated representations through a Multi-Layer Perceptron (MLP) to predict a binding probability for each residue.

- Handling Data Imbalance: Use metrics like Matthews Correlation Coefficient (MCC) and Area Under the Precision-Recall Curve (AUPR) for evaluation and hyperparameter tuning, as they are more reliable for imbalanced data.

Output & Interpretation:

- The model outputs a binding probability for each residue in the protein sequence.

- Interpretability: The cross-attention weights can be visualized to identify which parts of the protein sequence and which aspects of the ligand representation were most critical for the prediction, providing residue-level insights into the binding interaction.

Protocol 2: Sequence-Based Prediction with Integrated Attention (PreRBP)

This protocol predicts binding sites using primarily sequence data, effectively handling long-range dependencies and class imbalance [14].

Input & Feature Engineering:

- RNA Sequence: Encode sequences using higher-order encoding algorithms to extract key information.

- Structural Context: Predict RNA secondary structure using tools like RNAshapes to incorporate structural features beyond the primary sequence.

- Addressing Class Imbalance: Apply undersampling algorithms (e.g., Random Undersampling, NearMiss, ENN, One-Sided Selection) to the negative samples in the training dataset to construct a balanced training set.

Model Architecture & Training:

- Feature Learning Backbone: Employ a Convolutional Neural Network (CNN) to detect local motif-level patterns, followed by a Bidirectional Long Short-Term Memory network (BiLSTM) to capture long-range, global contextual information in the sequence.

- Attention Layer: Integrate an attention mechanism on top of the BiLSTM outputs. This layer assigns a weight to each position in the sequence, allowing the model to focus on the most critical regions for binding.

- Output Layer: Use a fully connected layer with a softmax activation for final classification (binding vs. non-binding site).

Output & Interpretation:

- The model outputs a binding site prediction.

- Interpretability: The attention weights produced by the model can be plotted as a graph over the input sequence, directly highlighting the specific nucleotide regions (e.g., potential motifs) that most strongly influenced the binding site prediction.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Resources for Attention-Based Binding Site Research

| Category | Reagent / Resource | Function & Application | Example Tools / Datasets |

|---|---|---|---|

| Computational Frameworks | Graph Neural Network Libraries | Facilitate the building of GATs and Graph Transformers for structure-based models. | PyTorch Geometric, Deep Graph Library (DGL) |

| Transformer Libraries | Provide pre-built modules for multi-head self-attention and transformer architectures. | Hugging Face Transformers, TensorFlow | |

| Data Resources | Protein-Ligand Binding Data | Benchmark datasets for training and evaluating structure-based binding site predictors. | sc-PDB, COACH420, HOLO4k, PDBBind [17] |

| Protein-Sequence Databases | Large-scale sequence databases for training protein Language Models (pLMs). | UniRef50, UniRef90 [15] | |

| RNA-Protein Interaction Data | Sources of experimentally derived data for training RNA-protein binding site models. | iCount, DoRiNA [14] | |

| Pre-trained Models | Protein Language Models (pLMs) | Generate rich, contextual embeddings from protein sequences, capturing evolutionary and structural information. | ESM-1b, ESM-2, ProtTrans, ProtBert [17] [15] |

| Molecular Language Models | Encode small molecules (via SMILES) into meaningful representation vectors for ligand-aware prediction. | MolFormer [6] | |

| Analysis & Visualization | Molecular Visualization Software | Visualize 3D protein structures and map predicted binding sites onto them for validation. | PyMol [17] |

| Metric Libraries | Compute advanced metrics crucial for evaluating model performance on imbalanced datasets. | Scikit-learn (for MCC, AUPR) |

The integration of attention mechanisms represents a paradigm shift from rigid, traditional computational methods to adaptive, interpretable, and data-driven AI tools. As demonstrated by protocols like LABind and PreRBP, these models do not merely offer a performance boost; they provide a collaborative framework where the model's reasoning is exposed to the scientist. This enhanced interpretability, coupled with the ability to learn directly from complex and heterogeneous data, is empowering researchers to make more informed decisions, rapidly validate hypotheses, and ultimately accelerate the pace of discovery in structural biology and drug development.

The accurate identification of molecular binding sites is a fundamental challenge in modern drug discovery and bioinformatics. Attention mechanisms have emerged as powerful deep learning components that enable models to focus on the most relevant parts of complex biological data, significantly advancing binding site prediction. These architectures—self-attention, graph attention networks (GATs), and cross-attention—provide distinct approaches for processing sequential, structural, and interaction data between biomolecules. By learning context-aware relationships within and between biological entities, attention-based models have demonstrated superior performance over traditional computational methods while offering valuable interpretability insights into the molecular determinants of binding interactions [18] [19].

Self-attention mechanisms allow models to weigh the importance of different positions within a single sequence or structure, capturing long-range dependencies that are critical for understanding biomolecular function. Graph attention networks specialize in processing graph-structured data by applying attention to node neighborhoods, making them ideally suited for analyzing protein structures and molecular graphs. Cross-attention mechanisms enable interactive learning between different molecular representations, such as between drug compounds and their protein targets, allowing the model to jointly reason over both entities when predicting binding interactions [20] [21]. Together, these architectures form a powerful toolkit for addressing the complex challenge of binding site identification across diverse biological contexts.

Architectural Foundations and Theoretical Frameworks

Self-Attention Mechanism

The self-attention mechanism, also known as intra-attention, computes representation of a sequence by weighing the importance of all other elements in the same sequence when encoding each position. For a given input matrix X containing n elements (e.g., amino acids in a protein sequence), the self-attention operation transforms it into query (Q), key (K), and value (V) matrices through linear projections. The attention weights are computed as:

Attention(Q, K, V) = softmax(QKᵀ/√dₖ)V

where dₖ is the dimension of the key vectors, and the softmax function normalizes the weights across the sequence [22]. The scaling factor √dₖ prevents the softmax function from entering regions with extremely small gradients. This mechanism allows each position in the sequence to attend to all other positions, capturing global dependencies regardless of their distance in the sequence.

In binding site identification, self-attention enables models to identify functionally important residues that may be distributed throughout the protein sequence but collectively contribute to binding site formation. For example, SAResNet combines self-attention with residual networks to predict DNA-protein binding sites, where the self-attention module captures position information of DNA sequences while the residual structure extracts high-level features of binding sites [22]. The multi-headed extension of self-attention allows the model to jointly attend to information from different representation subspaces, capturing different types of relationships within the biological sequence.

Graph Attention Networks (GATs)

Graph Attention Networks represent a specialized architecture for processing graph-structured data, which naturally represents many biological systems including protein structures and molecular graphs. In GATs, each node in the graph computes its updated representation by attending to its neighbors, allowing for focused integration of local structural information [23].

The graph attention layer employs a shared attention mechanism a that computes attention coefficients between node pairs:

eᵢⱼ = a(Whᵢ, Whⱼ)

where hᵢ and hⱼ are node features, W is a shared weight matrix, and eᵢⱼ indicates the importance of node j's features to node i [23]. These coefficients are normalized across all neighbors j ∈ Nᵢ using the softmax function, and the resulting attention weights are used to compute a weighted average of neighbor transformations. The GATv2 architecture improves upon this by using a more expressive attention function:

αᵢ,ⱼ = exp(aᵀLeakyReLU(Θ[xᵢ||xⱼ||eᵢ,ⱼ])) / Σₖ∈Nᵢ∪{i} exp(aᵀLeakyReLU(Θ[xᵢ||xₖ||eᵢ,ₖ]))

where || represents concatenation, Θ and a are learned parameters, and eᵢ,ⱼ are edge features [23]. This formulation allows for more flexible and powerful attention patterns in biological graphs.

For binding site prediction, GATs excel at capturing the local chemical environment around potential binding residues by representing protein structures as graphs where nodes correspond to atoms or residues and edges represent spatial proximity or chemical bonds. The GrASP model demonstrates this approach by performing semantic segmentation on protein surface atoms using GATs to identify which atoms are likely part of a binding site [23].

Cross-Attention Mechanism

Cross-attention mechanisms enable information exchange between two different sequences or representations, making them particularly valuable for modeling interactions between distinct biological entities such as drug-target pairs or enzyme-substrate complexes. Unlike self-attention, which operates within a single sequence, cross-attention computes attention weights between elements from two different domains [20] [21].

In cross-attention, the queries (Q) come from one sequence while the keys (K) and values (V) come from another. For drug-target interaction prediction, this allows the model to compute attention from drug subsequences to protein subsequences or vice versa:

CrossAttention(Qₚ, K₄, V₄) = softmax(QₚK₄ᵀ/√dₖ)V₄

where Qₚ are queries from protein sequences and K₄, V₄ are keys and values from drug representations [21]. This mechanism enables the model to identify which drug substructures are most relevant to which protein regions, and which protein residues are most influenced by specific drug components.

The ICAN model exemplifies this approach for drug-target interaction prediction, where cross-attention generates drug-related context features for proteins and protein-related context features for drugs [20] [21]. Similarly, LABind employs cross-attention to learn distinct binding characteristics between proteins and ligands by processing protein representations and ligand representations through attention-based learning interaction modules [6]. EZSpecificity utilizes cross-attention empowered SE(3)-equivariant graph neural networks to predict enzyme substrate specificity by capturing interactions between enzyme structures and substrate representations [24].

Performance Comparison of Attention Architectures

Table 1: Quantitative performance of attention-based architectures on various binding site prediction tasks

| Architecture | Model Name | Application Domain | Performance Metrics | Key Advantage |

|---|---|---|---|---|

| Self-Attention | SAResNet | DNA-protein binding prediction | Average AUC: 92.0% on 690 ChIP-seq datasets [22] | Captures long-range dependencies in sequences |

| Graph Attention | GrASP | Druggable binding site prediction | >70% of predicted sites correspond to real binding sites [23] | Rotationally invariant featurization of protein surfaces |

| Cross-Attention | ICAN | Drug-target interaction identification | Outperformed state-of-the-art methods on DAVIS dataset [20] [21] | Identifies interacting subsequences between drugs and proteins |

| Cross-Attention | LABind | Protein-ligand binding sites | Superior performance on DS1, DS2, and DS3 benchmark datasets [6] | Ligand-aware prediction for unseen ligands |

| Cross-Attention | EZSpecificity | Enzyme substrate specificity | 91.7% accuracy identifying single potential reactive substrate [24] | Captures 3D structural determinants of enzyme specificity |

Table 2: Input representations and dataset characteristics for attention-based binding site prediction

| Model | Protein Representation | Ligand/DNA Representation | Dataset Characteristics | Training Strategy |

|---|---|---|---|---|

| SAResNet | One-hot encoded DNA sequences (101-bp) | N/A | 690 ChIP-seq datasets; 4,614,580 training sequences [22] | Transfer learning with pre-training and fine-tuning |

| ICAN | Amino acid sequences | SMILES strings | DAVIS: 68 drugs, 379 proteins; BindingDB: 10,665 drugs, 1,413 proteins [20] [21] | Cross-attention with CNN decoder |

| LABind | Sequence (Ankh PLM) + structure (DSSP) | SMILES (MolFormer PLM) | DS1, DS2, DS3 benchmarks; focuses on small molecules and ions [6] | Graph transformer with cross-attention |

| GrASP | Protein structure graphs (heavy atoms) | N/A | 26,196 binding sites across 16,889 protein structures [23] | GAT-based semantic segmentation on surface atoms |

| CAFIE-DTA | Sequence + 3D curvature + electrostatic potential | Molecular graph + physicochemical properties | Davis and KIBA datasets [25] | Multi-head cross-attention fusion |

Experimental Protocols for Binding Site Identification

Protocol 1: DNA-Protein Binding Site Prediction with Self-Attention

Application Note: This protocol describes the implementation of self-attention mechanisms for predicting DNA-protein binding sites using the SAResNet framework, which combines self-attention with residual network structures [22].

Materials and Reagents:

- Computational Environment: High-performance computing cluster with GPU acceleration

- Software Dependencies: Python 3.7+, PyTorch 1.8+, scikit-learn 1.0+

- Dataset: 690 ChIP-seq datasets from ENCODE project

Methodology:

- Data Preprocessing:

- Format DNA sequences as 101-bp length one-hot encoded vectors (4×101 dimensions)

- Combine training subsets from 690 datasets while maintaining independence of testing subsets

- Apply under-sampling strategy to address class imbalance

- Partition data into global training (90%), validation (10%), and testing sets

Model Architecture Configuration:

- Implement residual blocks with bottleneck design (1×1, 3×3, 1×1 convolutions)

- Integrate self-attention module after residual blocks to capture position information

- Configure multi-headed self-attention with 8 attention heads

- Set embedding dimension to 512 with feed-forward dimension of 2048

Training Procedure:

- Initialize model with He normal initialization

- Utilize transfer learning: pre-train on global dataset, then fine-tune on specific datasets

- Employ Adam optimizer with initial learning rate of 0.001

- Implement learning rate scheduling with reduction on plateau

- Train for maximum 200 epochs with early stopping patience of 15 epochs

Performance Validation:

- Evaluate on independent test set using AUC, accuracy, precision, recall, and F1 score

- Compare against state-of-the-art methods (DeepBind, CNN-Zeng, Expectation-Luo)

- Perform statistical significance testing across 690 datasets

Troubleshooting:

- For overfitting on smaller datasets: increase dropout rate (0.3-0.5) and implement stronger L2 regularization

- For slow convergence: adjust learning rate scheduling or switch to LayerNorm instead of BatchNorm

- For memory issues: reduce batch size or implement gradient accumulation

Protocol 2: Ligand-Aware Binding Site Prediction with Cross-Attention

Application Note: This protocol outlines the use of cross-attention mechanisms for predicting protein-ligand binding sites in a ligand-aware manner using the LABind framework, which integrates protein structure information with ligand chemical representations [6].

Materials and Reagents:

- Computational Environment: Linux server with NVIDIA GPU (≥16GB VRAM)

- Software Dependencies: RDKit, PyTorch Geometric, Ankh protein language model, MolFormer

- Dataset: Curated benchmark datasets (DS1, DS2, DS3) with diverse ligands

Methodology:

- Feature Extraction:

- Protein Representation:

- Generate sequence embeddings using Ankh protein language model

- Compute structural features using DSSP (secondary structure, solvent accessibility)

- Construct protein graph from structure with nodes as residues

- Calculate spatial features: angles, distances, directions from atomic coordinates

- Ligand Representation:

- Process SMILES sequences through MolFormer pre-trained model

- Extract molecular features including functional groups, charge distribution

- Protein Representation:

Cross-Attention Integration:

- Implement cross-attention blocks where protein features serve as queries and ligand features as keys/values

- Use multi-head attention with 8 heads and hidden dimension of 512

- Apply residual connections around attention blocks

- Utilize layer normalization before each attention block

Binding Site Prediction:

- Process cross-attention output through multi-layer perceptron classifier

- Apply sigmoid activation for per-residue binding probability

- Use optimal threshold determined by maximizing Matthews correlation coefficient

- Cluster predicted binding residues to identify binding site centers

Evaluation Metrics:

- Calculate recall, precision, F1 score, Matthews correlation coefficient

- Compute area under ROC curve (AUC) and precision-recall curve (AUPR)

- Measure distance between predicted and true binding site centers (DCC, DCA)

Troubleshooting:

- For poor generalization to unseen ligands: increase diversity of training ligands and augment with molecular graph perturbations

- For structural feature extraction errors: validate PDB file formatting and implement coordinate sanity checks

- For attention collapse: monitor attention weight distributions and add entropy regularization

Protocol 3: Druggable Binding Site Prediction with Graph Attention Networks

Application Note: This protocol details the application of graph attention networks for identifying druggable binding sites on protein surfaces using the GrASP framework, which performs semantic segmentation on protein surface atoms [23].

Materials and Reagents:

- Computational Environment: Python 3.8+ with CUDA 11.0+

- Software Dependencies: PyTorch 1.10+, PyTorch Geometric, Biopython, NumPy

- Dataset: sc-PDB database (26,196 binding sites across 16,889 structures)

Methodology:

- Protein Graph Construction:

- Represent protein as graph with nodes corresponding to heavy atoms

- Define edges between atom pairs within 5Å distance

- Node features: atomic number, formal charge, residue type, etc.

- Edge features: inverse distance, bond order, spatial relationships

Graph Attention Network Architecture:

- Implement GATv2 layers with improved attention mechanism

- Configure 4 attention heads with hidden dimension of 256

- Integrate ResNet skip connections to mitigate oversmoothing

- Apply jumping knowledge skip connections to combine multi-scale features

- Utilize Noisy Nodes regularization for improved representation learning

Binding Site Definition and Training:

- Assign continuous target scores to surface atoms using sigmoid function of distance to ligand

- Construct near-surface graph including surface atoms and buried atoms within 5Å

- Train model with weighted binary cross-entropy loss

- Implement gradient clipping with maximum norm of 1.0

Binding Site Clustering and Ranking:

- Aggregate atomic scores into potential binding sites using average linkage clustering

- Rank predicted sites by average atomic scores

- Filter sites by volume and surface accessibility criteria

- Validate against co-crystallized ligand positions

Troubleshooting:

- For oversmoothing in deep GNN: implement pair normalization or increase jumping knowledge connections

- For memory constraints with large proteins: implement subgraph sampling or hierarchical processing

- For false positive binding sites: incorporate evolutionary conservation signals or energetic calculations

Workflow Visualization

Research Reagent Solutions

Table 3: Essential research reagents and computational tools for attention-based binding site identification

| Reagent/Tool | Type | Function | Example Implementation |

|---|---|---|---|

| Ankh Protein Language Model | Pre-trained Model | Generates protein sequence representations | LABind: Provides protein embeddings from sequence [6] |

| MolFormer | Pre-trained Model | Generates molecular representations from SMILES | LABind: Creates ligand embeddings [6] |

| RDKit | Cheminformatics Library | Processes molecular structures and descriptors | ICAN: Handles SMILES validation and molecular features [20] [21] |

| DSSP | Structural Bioinformatics Tool | Computes secondary structure and solvent accessibility | LABind: Extracts protein structural features [6] |

| PyTorch Geometric | Deep Learning Library | Implements graph neural networks | GrASP: Builds protein graph models [23] |

| ChIP-seq Datasets | Experimental Data | Provides DNA-protein binding information | SAResNet: Training and evaluation on 690 datasets [22] |

| sc-PDB Database | Curated Database | Contains annotated binding sites | GrASP: Training on 26,196 binding sites [23] |

| ESMFold | Structure Prediction | Predicts protein structures from sequences | LABind: Enables sequence-based binding site prediction [6] |

Attention mechanisms have fundamentally transformed the computational approaches for binding site identification, offering unprecedented performance and interpretability. Self-attention excels at capturing long-range dependencies in biological sequences, graph attention networks provide natural representations for structural data, and cross-attention enables sophisticated modeling of molecular interactions. The integration of these architectures with advanced representation learning techniques, such as protein language models and molecular graph embeddings, has created a powerful paradigm for deciphering the molecular basis of binding interactions.

Future developments in this field will likely focus on several key directions. Multi-scale attention mechanisms that integrate sequence, structure, and physicochemical information will provide more comprehensive binding site characterization. Equivariant attention networks that respect biological symmetries will improve generalization across diverse molecular configurations. Explainable AI approaches built upon attention weight analysis will deepen our understanding of binding determinants and facilitate scientific discovery. As these architectures continue to evolve, they will play an increasingly central role in accelerating drug discovery and advancing our fundamental understanding of molecular recognition biology.

The Role of Attention in Capturing Protein-Ligand Interaction Patterns

Protein-ligand interactions are fundamental to numerous biological processes, including enzyme catalysis and signal transduction, and are pivotal in drug discovery and design [6]. Accurately identifying these interactions is critical for understanding cellular functions and developing new therapeutics. However, traditional experimental methods for determining binding sites are resource-intensive, creating a pressing need for efficient computational approaches [6].

The attention mechanism, a component of modern artificial intelligence, has recently been adapted to decode the complex "languages" of protein sequences and ligand representations [26]. This mechanism allows models to dynamically focus on the most relevant residues in a protein sequence or atoms in a ligand, significantly improving the prediction of binding sites and interaction patterns [27] [26]. This application note details how attention mechanisms are implemented to capture protein-ligand interaction patterns, providing structured protocols, performance data, and essential toolkits for researchers.

Theoretical Foundations: Attention for Protein and Ligand Representation

Proteins and Ligands as Structured Languages

Proteins and ligands can be represented in text-like formats suitable for NLP methods. Protein sequences consist of a linear chain of amino acids, analogous to an alphabet forming words and sentences [26]. Similarly, the chemical structure of small molecule ligands can be represented as text using the Simplified Molecular-Input Line-Entry System (SMILES), a string notation that captures atoms, bonds, and branching [6] [26].

The Attention Mechanism

The attention mechanism functions like a dynamic filter, enabling computational models to weigh the importance of different input elements [28]. For protein-ligand interactions, this means a model can learn to prioritize specific amino acid residues or ligand functional groups that critically influence binding. A key advancement is the cross-attention mechanism, which explicitly learns the distinct binding characteristics between a protein and a specific ligand by processing their respective representations [6]. This is a fundamental improvement over methods that only consider protein structure in isolation.

Application Note: The LABind Protocol for Ligand-Aware Binding Site Prediction

LABind is a structure-based method that leverages a graph transformer and cross-attention mechanism to predict binding sites for small molecules and ions in a ligand-aware manner [6]. Its ability to generalize to unseen ligands makes it a powerful tool for prospective drug discovery.

The following diagram illustrates the end-to-end LABind protocol for predicting protein-ligand binding sites.

Step-by-Step Experimental Protocol

Stage 1: Input Representation and Feature Extraction

Step 1.1: Ligand Representation via SMILES

- Obtain the SMILES string of the target small molecule or ion from databases like PubChem or ZINC.

- Input the SMILES sequence into the MolFormer pre-trained molecular language model to generate a numerical ligand representation vector that encapsulates molecular properties [6].

Step 1.2: Protein Representation via Sequence and Structure

- Sequence Embedding: Input the protein's amino acid sequence into the Ankh pre-trained protein language model to obtain residue-level embeddings [6].

- Structural Feature Extraction: Process the protein's 3D structure (from PDB or predicted by ESMFold/AlphaFold) with DSSP to calculate secondary structure, solvent accessibility, and backbone dihedral angles [6].

- Feature Concatenation: For each residue, concatenate its Ankh embedding with its DSSP features to form a preliminary protein-DSSP embedding.

Step 1.3: Protein Graph Construction

- Convert the protein structure into a graph where nodes represent residues.

- Node Features: Encode the protein-DSSP embedding with spatial features (angles, distances, directions) derived from atomic coordinates.

- Edge Features: Connect residues based on spatial proximity and encode edge features (directions, rotations, inter-residue distances) [6].

Stage 2: Attention-Based Learning Interaction

Step 2.1: Graph Transformation

- Process the protein graph through a graph transformer network. This step captures the potential binding patterns within the local spatial context of the protein by modeling residue-residue interactions [6].

Step 2.2: Cross-Attention Execution

- The processed protein representation and the ligand representation are fed into a cross-attention module.

- This mechanism allows the model to learn the distinct binding characteristics between the specific protein and ligand by enabling the protein residues to "attend to" the most relevant features of the ligand, and vice-versa [6].

Stage 3: Binding Site Prediction and Validation

Step 3.1: Classification

- The output from the cross-attention mechanism is passed to a multi-layer perceptron (MLP) classifier.

- The MLP performs per-residue binary classification, predicting whether each residue is part of a binding site for the specified ligand [6].

Step 3.2: Output and Center Localization

- The final output is a probability map across all protein residues.

- Residues with probabilities above a threshold (determined by maximizing Matthews Correlation Coefficient) are designated as binding site residues.

- For a singular binding site, the predicted residues can be clustered, and their spatial center calculated to localize the binding site center [6].

Step 3.3: Experimental Validation

- Validate computational predictions against known experimental structures from the PDB.

- For novel predictions, confirm binding sites experimentally using techniques like X-ray crystallography, Cryo-EM, or site-directed mutagenesis followed by binding assays [26].

Performance Metrics and Validation

LABind's performance was benchmarked on several datasets against other state-of-the-art methods. The following table summarizes key quantitative results, demonstrating its superiority, particularly in metrics robust to class imbalance.

Table 1: Performance Comparison of LABind Against Baseline Methods on Benchmark Datasets [6]

| Method | Dataset | AUPR | MCC | F1 Score | AUC |

|---|---|---|---|---|---|

| LABind | DS1 | 0.723 | 0.521 | 0.685 | 0.971 |

| DeepPocket | DS1 | 0.621 | 0.432 | 0.601 | 0.945 |

| P2Rank | DS1 | 0.598 | 0.410 | 0.578 | 0.938 |

| LABind | DS2 | 0.685 | 0.488 | 0.642 | 0.962 |

| DeepPocket | DS2 | 0.584 | 0.395 | 0.554 | 0.931 |

| P2Rank | DS2 | 0.562 | 0.378 | 0.537 | 0.925 |

| LABind | DS3 | 0.651 | 0.467 | 0.623 | 0.955 |

| LigBind | DS3 | 0.592 | 0.402 | 0.562 | 0.934 |

| GeoBind | DS3 | 0.535 | 0.351 | 0.512 | 0.917 |

The Scientist's Toolkit: Research Reagent Solutions

Successful implementation of attention-based binding site prediction requires a suite of computational tools and data resources.

Table 2: Essential Research Reagents and Computational Tools

| Item Name | Type | Function in Protocol | Example Sources / Implementations |

|---|---|---|---|

| Pre-trained Language Models | Software | Generate numerical representations from raw sequence data. | Ankh (Protein), MolFormer (Ligand SMILES) [6] |

| Protein Structure Databases | Data | Source of experimentally-solved protein 3D structures for training, testing, and analysis. | Protein Data Bank (PDB) [26] |

| Ligand Databases | Data | Source of small molecule structures and properties. | PubChem, ZINC, ChEMBL |

| Bioactivity Datasets | Data | Provide ground-truth interaction data for model training and validation. | PDBBind, DUD-E, Davis, KIBA [26] |

| Structure Prediction Tools | Software | Generate 3D protein structures from sequence when experimental structures are unavailable. | ESMFold, AlphaFold2 [6] [27] |

| Structure Analysis Tools | Software | Extract key structural features from protein 3D coordinates. | DSSP [6] |

| Graph Neural Network Libraries | Software | Build and train graph-based models for processing protein structures. | PyTorch Geometric, Deep Graph Library |

| Molecular Processing Kits | Software | Handle and manipulate small molecule structures and SMILES strings. | RDKit [26] |

Advanced Applications and Integration

Enhancing Molecular Docking

The binding sites predicted by LABind can be used to define search spaces for molecular docking programs like Smina, substantially improving the accuracy of docking poses by restricting sampling to relevant regions [6].

Case Study: SARS-CoV-2 NSP3 Macrodomain

LABind demonstrated practical utility by successfully predicting the binding sites of the SARS-CoV-2 NSP3 macrodomain with unseen ligands, validating its application in real-world drug discovery scenarios against emerging targets [6].

Integration with Other Modalities

Future directions involve tighter integration with other data types. For instance, foundation models are being applied to extract features from histopathology images, which could be layered with molecular interaction data to link tissue-level phenotypes with molecular mechanisms [29]. Furthermore, specialized GPT models like ProtGPT2 and BioGPT are advancing protein engineering and biomedical text mining, creating opportunities for multi-modal predictive systems in drug discovery [30].

Implementing Attention-Based Models for Binding Site Prediction

The accurate encoding of protein structures is a foundational challenge in computational biology, with direct implications for understanding function, guiding drug discovery, and designing novel therapeutics. Traditional methods often struggle to simultaneously capture the intricate local atomic interactions and the long-range, global dependencies that define a protein's functional architecture. The advent of graph transformer networks represents a paradigm shift, offering a powerful framework that models protein structures as graphs and leverages attention mechanisms to overcome these limitations. This document details the application of these architectures within the specific context of a research thesis focused on implementing attention mechanisms for binding site identification. We provide a structured blueprint of the core architecture, summarize quantitative performance, outline detailed experimental protocols, and visualize key workflows to equip researchers with the practical tools needed for implementation.

Core Architectural Principles

Graph transformer models for protein encoding share a common foundational strategy: representing a protein structure as a graph where nodes correspond to atoms or residues, and edges represent their spatial or chemical relationships. The transformative power of these models lies in their use of attention mechanisms to dynamically weigh the importance of interactions within this graph.

- Graph Representation: The initial step involves converting a protein's 3D structure into a graph. Nodes are typically defined by alpha carbon atoms or full residue side chains, featurized with chemical properties, sequence embeddings, or structural descriptors. Edges can be determined based on spatial proximity (e.g., k-nearest neighbors) or chemical bonding, and are often annotated with spatial and rotational information [31] [32].

- Attention with Structural Biases: Unlike standard transformers used in natural language processing, graph transformers for proteins incorporate structural inductive biases directly into the attention mechanism. This is critical for respecting the physical laws of structural biology. For instance, pairwise atomic distances can be converted into continuous or categorical attention biases, ensuring that geometrically closer nodes can interact more strongly [32] [33]. The gated graph transformer used in DProQA, for example, employs node and edge gates within the transformer framework to adaptively control information flow during message passing, enhancing the model's ability to assess the quality of protein complex structures [32].

- Hierarchical and Multi-Scale Modeling: Advanced architectures like SPE-GTN employ a two-branch encoding framework to capture information at multiple scales. A Structure Encoding (SE) branch focuses on local topological features via subgraph sampling, while a Position Encoding (PE) branch captures global functional context within a protein interaction network using Laplacian spectral decomposition. The outputs are fused using a trainable parameter, allowing the model to adaptively balance local and global information [34].

Performance Benchmarking

The following tables summarize the performance of various graph transformer models on key protein structure analysis tasks, demonstrating their state-of-the-art effectiveness.

Table 1: Performance of Graph Transformers in Binding Site and Stability Prediction

| Model | Primary Task | Key Metric | Reported Performance | Benchmark Dataset |

|---|---|---|---|---|

| LABind [6] | Ligand-aware binding site prediction | AUPR (Area Under Precision-Recall Curve) | Superior performance vs. baseline methods | DS1, DS2, DS3 |

| Stability Oracle [33] | Identifying stabilizing mutations | Wild-type Accuracy | 92.98% ± 0.26% | C2878, cDNA117K, T2837 |

| SPE-GTN [34] | Grain protein function prediction | Prediction Accuracy / F1-Score | 13.6% improvement / 9.4% enhancement vs. state-of-the-art | Wheat, Soybean, Maize, Rice datasets |

Table 2: Performance of Graph Transformers in Quality Assessment and Secondary Structure

| Model | Primary Task | Key Metric | Reported Performance | Benchmark Context |

|---|---|---|---|---|

| DProQA [32] | Protein complex quality assessment | Ranking Loss (TM-score) | Ranked 3rd among single-model methods | CASP15 (Blind Assessment) |

| SSRGNet [31] | Protein Secondary Structure Prediction | F1-Score | Surpassed baseline models | CB513, TS115, CASP12 test sets |

| GTAMP-DTA [35] | Drug-Target Binding Affinity Prediction | Prediction Accuracy | Outperformed state-of-the-art methods | Davis, KIBA datasets |

Application Notes & Experimental Protocols

Protocol 1: Ligand-Aware Binding Site Prediction with LABind

Application Note: This protocol is designed for predicting binding sites for small molecules and ions in a ligand-aware manner, meaning it can generalize to ligands not seen during training. It is ideal for profiling a protein's binding potential across a diverse chemical library [6].

Workflow:

- Input Preparation:

- Protein Input: Obtain the protein's 3D structure (experimental or predicted via ESMFold/AlphaFold). Extract its amino acid sequence.

- Ligand Input: Acquire the Simplified Molecular Input Line Entry System (SMILES) sequence of the target small molecule or ion.

- Feature Extraction:

- Protein Representation: a. Generate protein sequence embeddings using a pre-trained protein language model (e.g., Ankh [6]). b. Compute structural features (e.g., secondary structure, solvent accessibility) using a tool like DSSP. c. Concatenate sequence embeddings and structural features into a unified protein-DSSP embedding.

- Ligand Representation: Process the ligand's SMILES sequence through a molecular pre-trained language model (e.g., MolFormer) to obtain a latent ligand representation vector.

- Graph Construction and Encoding:

- Convert the protein 3D structure into a graph where nodes are residues. Node features include the protein-DSSP embedding and spatial features (angles, distances). Edge features capture spatial relationships between residues (directions, rotations, distances).

- Process the protein graph through a graph transformer to generate a refined, structure-aware protein representation.

- Cross-Attention and Interaction Learning:

- The ligand representation and the structure-aware protein representation are processed through a cross-attention mechanism. This allows the model to learn the distinct binding characteristics between the specific protein and ligand.

- Binding Site Prediction:

- The output from the interaction module is passed to a Multi-Layer Perceptron (MLP) classifier to predict a binding probability score for each residue.

- A threshold (often determined by maximizing the Matthews Correlation Coefficient) is applied to generate final binary predictions (binding vs. non-binding site) [6].

Protocol 2: Protein Structure Quality Assessment with DProQA

Application Note: This protocol is for assessing the quality of a predicted 3D protein complex structure without knowledge of the native structure. It is crucial for ranking and selecting reliable models for downstream applications like function analysis and drug discovery [32].

Workflow:

- Input: A single 3D protein complex structure in PDB format. The complex can consist of any number of chains.

- K-NN Graph Construction:

- Represent the entire complex as a single graph. All atoms from all chains are represented as nodes.

- For each atom, find its k-nearest neighbors in 3D space to define the graph's edges.

- An edge feature is used to distinguish whether a pair of atoms is from the same chain or different chains.

- Gated Graph Transformer Processing:

- The constructed graph is fed into the Gated Graph Transformer.

- The model uses a multi-task learning strategy, performing a joint prediction of a real-valued quality score for the entire complex and per-residue/local quality scores.

- Output and Model Selection:

- The model outputs a global quality score (e.g., predicted TM-score) and per-residue confidence scores.

- When multiple models are available for the same target, they can be ranked based on DProQA's predicted quality scores to select the most reliable structure.

Visual Workflows

Ligand-Aware Binding Site Prediction

Ligand-Aware Binding Site Prediction with LABind

Protein Complex Quality Assessment

Protein Complex Quality Assessment with DProQA

The Scientist's Toolkit

Table 3: Essential Research Reagents and Computational Tools

| Item / Tool Name | Type | Primary Function in Workflow |

|---|---|---|

| ESMFold / AlphaFold [6] [36] | Software | Predicts 3D protein structures from amino acid sequences when experimental structures are unavailable. |

| DSSP [6] | Software | Calculates secondary structure and solvent accessibility from a 3D structure, providing crucial node features for the protein graph. |

| Ankh [6] | Model (Pre-trained) | A protein language model used to generate informative sequence-based embeddings for each amino acid residue. |

| MolFormer [6] | Model (Pre-trained) | A molecular language model that encodes the chemical properties of a ligand from its SMILES string into a feature vector. |

| Graph Transformer Layer | Model Architecture | The core building block that updates node representations by dynamically attending to neighboring nodes using a structurally-biased attention mechanism. |

| Cross-Attention Module [6] | Model Architecture | A specific attention mechanism that enables the model to learn interactions between two different modalities, such as a protein representation and a ligand representation. |

| Multi-Layer Perceptron (MLP) | Model Architecture | A standard feedforward neural network used as a final classification or regression head to output binding probabilities or quality scores. |

Integrating Cross-Attention for Ligand-Aware Binding Site Identification

The accurate identification of protein-ligand binding sites is a fundamental challenge in structural bioinformatics and drug discovery. Traditional computational methods often operate as "single-ligand-oriented" approaches, requiring specialized models for specific ligand types, which limits their applicability to novel compounds. Similarly, many "multi-ligand-oriented" methods process protein structures without explicitly encoding ligand characteristics, overlooking critical interaction patterns that depend on specific ligand properties. The integration of cross-attention mechanisms represents a transformative advancement, enabling models to dynamically learn the distinct binding characteristics between proteins and various ligands, including previously unseen compounds [6].

Cross-attention allows computational models to align and compare features from two different domains—in this case, protein representations and ligand representations—enabling the identification of residue-specific interaction preferences. This capability is particularly valuable for generalization to unseen ligands, a critical requirement for drug discovery applications where novel compounds are routinely investigated. By learning a unified representation space that captures shared binding patterns across different ligand classes while preserving ligand-specific characteristics, cross-attention models achieve superior performance in binding site prediction tasks [6] [20].

Technical Approaches and Quantitative Performance

Key Methodological Frameworks

Several innovative frameworks have demonstrated the power of cross-attention for ligand-aware binding site identification:

LABind utilizes a graph transformer to capture binding patterns within the local spatial context of proteins and incorporates a cross-attention mechanism to learn distinct binding characteristics between proteins and ligands. The method represents proteins as graphs with node spatial features (angles, distances, directions) and edge spatial features (directions, rotations, distances between residues). Ligand information is encoded from SMILES sequences using MolFormer, while protein sequences are processed through the Ankh language model. The cross-attention module then learns interactions between these representations before final binding site prediction through a multi-layer perceptron classifier [6].

ICAN employs an interpretable cross-attention network that processes SMILES sequences of drugs and amino acid sequences of target proteins. The model generates drug-related context features for proteins and uses convolutional neural networks as decoders to capture local feature patterns at different levels. This architecture has demonstrated an exceptional ability to identify and statistically validate that highly weighted attention sites correspond to experimental binding sites, providing both predictive accuracy and mechanistic interpretability [20].

CAFIE-DTA incorporates protein 3D curvature and electrostatic potential information alongside sequence data, using cross multi-head attention to fuse physicochemical and sequence information. This approach demonstrates that enriching protein representations with structural and physicochemical properties enhances binding affinity predictions, which inherently relies on accurate binding site characterization [25].

Quantitative Performance Comparison

Table 1: Performance comparison of cross-attention methods against traditional approaches

| Method | Approach Type | Key Features | AUPR | MCC | Generalization to Unseen Ligands |

|---|---|---|---|---|---|

| LABind [6] | Structure-based with cross-attention | Graph transformer, ligand SMILES encoding, protein language model | 0.78 | 0.65 | Excellent |

| ICAN [20] | Sequence-based with cross-attention | SMILES and AA sequence processing, statistical interpretability | 0.72 | 0.59 | Very Good |

| CAFIE-DTA [25] | Multi-modal with cross-attention | 3D curvature, electrostatic potential, sequence fusion | 0.75 | 0.61 | Good |

| LigBind [6] | Single-ligand-oriented | Pre-training with fine-tuning | 0.68 | 0.52 | Limited without fine-tuning |

| P2Rank [6] | Structure-based, non-attention | Solvent accessible surface area | 0.63 | 0.48 | Moderate |

| GeoBind [6] | Single-ligand-oriented | Surface point clouds with graph networks | 0.65 | 0.50 | Limited |

Table 2: Performance metrics across benchmark datasets

| Method | DS1 Dataset (AUPR) | DS2 Dataset (AUPR) | DS3 Dataset (AUPR) | Binding Site Center Localization (DCC in Å) |

|---|---|---|---|---|

| LABind [6] | 0.79 | 0.76 | 0.78 | 2.1 |

| ICAN [20] | 0.73 | 0.70 | 0.72 | 2.5 |

| Traditional Methods [6] | 0.60-0.68 | 0.58-0.65 | 0.59-0.66 | 3.0-4.2 |

Experimental Protocols

LABind Implementation Protocol

Objective: Implement LABind for predicting binding sites of small molecules and ions in a ligand-aware manner.

Workflow:

- Input Preparation:

- Protein Data: Obtain protein sequence and 3D structure (from PDB or prediction tools like ESMFold/AlphaFold).