Integrating QSAR and Molecular Docking in Modern Drug Discovery: From AI-Driven Models to Clinical Applications

Abstract

This article provides a comprehensive overview of the integrated application of Quantitative Structure-Activity Relationship (QSAR) modeling and molecular docking in contemporary drug discovery. It explores the foundational principles of these computational methods, detailing their evolution from classical statistical approaches to modern AI-enhanced techniques. The content covers practical methodologies, addresses common challenges in model development and optimization, and outlines rigorous validation frameworks. Aimed at researchers, scientists, and drug development professionals, this review highlights how the synergy between ligand-based QSAR and structure-based docking creates powerful, efficient pipelines for lead compound identification and optimization, significantly accelerating preclinical drug development while reducing costs and experimental attrition.

The Essential Partnership: Understanding QSAR and Molecular Docking Fundamentals

The evolution of Quantitative Structure-Activity Relationship (QSAR) modeling represents a cornerstone of modern computational drug discovery, transitioning from classical statistical approaches to sophisticated artificial intelligence (AI)-driven methodologies. This journey began with foundational work by Crum-Brown and Fraser in 1868, who published the first general QSAR equation, and progressed through seminal contributions including Hammett's electronic parameters, Hansch analysis incorporating lipophilicity, and Free-Wilson deconstruction of substituent contributions [1]. The field has since expanded through machine learning (ML) and deep learning (DL) algorithms that now empower researchers to predict biological activity, optimize lead compounds, and navigate chemical spaces containing billions of molecules with unprecedented accuracy and efficiency [2] [3].

This progression has fundamentally transformed drug discovery from a trial-and-error process to a data-driven science, significantly reducing development timelines and costs while improving success rates [4]. The integration of AI with QSAR has been particularly transformative, enabling virtual screening of extensive chemical databases, de novo drug design, and multi-parameter optimization for specific biological targets [2]. This document details the historical context, methodological advances, and practical protocols that define classical and contemporary QSAR approaches, providing researchers with actionable frameworks for implementation within modern drug discovery pipelines.

Historical Foundations of QSAR

The conceptual foundations of QSAR emerged in the late 19th century with observations that biological activity could be correlated with molecular properties. In 1868, Crum-Brown and Fraser proposed the first general equation relating chemical structure to biological effect: Φ = f(C), where Φ represents physiological activity and C denotes chemical constitution [1]. Subsequent work by Richet demonstrated an inverse relationship between toxicity and water solubility for various organic compounds, while Meyer and Overton independently established correlations between lipophilicity (measured as oil-water partition coefficients) and narcotic activity [1].

The modern QSAR era began in the 1960s with the pioneering work of Corwin Hansch, who introduced a quantitative framework correlating biological activity with physicochemical parameters through linear free-energy relationships. The general form of the Hansch equation is expressed as:

Log BA = a log P + b σ + c E_s + constant (linear form)

Log BA = a log P + b (log P)² + c σ + d E_s + constant (nonlinear form) [1]

where Log BA is the logarithm of biological activity, log P represents lipophilicity, σ denotes the Hammett electronic parameter, and E_s represents Taft's steric parameter. This approach assumed that substituent contributions were additive and independent, enabling the prediction of biological activity for novel analogs [1].
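As a worked consequence of the nonlinear form, setting the derivative with respect to log P to zero gives the lipophilicity at which predicted activity peaks. This is a standard calculus step rather than a result quoted from [1], and it assumes the coefficient b of the squared term is negative, so the parabola opens downward:

```latex
\frac{\partial\,\mathrm{Log}\,BA}{\partial\,\log P} = a + 2b\,\log P = 0
\quad\Longrightarrow\quad
\log P_{\mathrm{opt}} = -\frac{a}{2b}, \qquad b < 0
```

For example, with purely hypothetical fitted values a = 1.2 and b = -0.3, the optimum would be log P_opt = -1.2 / (2 × -0.3) = 2.0.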

Concurrently, Free and Wilson developed a complementary approach based on the presence or absence of specific substituents at defined molecular positions. The Free-Wilson model is mathematically expressed as:

Log BA = μ + Σa_i a_j

where μ represents the average activity of the parent scaffold, and a_i a_j denotes the contribution of specific substituents at particular positions [1]. This de novo approach allowed for bioactivity prediction without explicit physicochemical parameters but required numerous analogs with systematic substitution patterns.

Subsequently, Kubinyi proposed a mixed approach combining elements of both methodologies:

Log BA = Σa_i a_j + Σk_i φ_j + k

where Σa_i a_j represents the Free-Wilson substituent contributions and Σk_i φ_j denotes the Hansch-type physicochemical parameters [1]. This hybrid framework enhanced predictive capability by incorporating both structural and physicochemical descriptors.

Table 1: Historical Evolution of Key QSAR Methodologies

| Time Period | Key Methodologies | Core Principles | Representative Equation |
|---|---|---|---|
| 1868 | Crum-Brown & Fraser | First general structure-activity equation | Φ = f(C) |
| Early 1900s | Meyer-Overton, Richet | Lipophilicity-activity relationships | Toxicity ∝ 1/(water solubility) |
| 1960s | Hansch Analysis | Linear free-energy relationships | Log BA = a log P + b σ + c E_s + constant |
| 1960s | Free-Wilson | Substituent contribution additivity | Log BA = μ + Σa_i a_j |
| 1970s | Mixed Approach | Combined Hansch & Free-Wilson | Log BA = Σa_i a_j + Σk_i φ_j + k |
| 1980s-1990s | 3D-QSAR (CoMFA, CoMSIA) | 3D molecular fields & steric/electrostatic interactions | BA = f(steric, electrostatic, hydrophobic fields) |
| 2000s-Present | AI-Integrated QSAR | Machine learning, deep learning, generative models | BA = f(GNNs, transformers, neural networks) |

Classical QSAR: Methodologies and Protocols

Hansch Analysis Protocol

Objective: To develop a quantitative model correlating biological activity with physicochemical properties using multiple linear regression (MLR).

Materials and Reagents:

- Chemical Dataset: 20-50 structurally related compounds with measured biological activity (e.g., IC₅₀, EC₅₀, Kᵢ)

- Software Tools: DRAGON [2], PaDEL-Descriptor [5], or RDKit [2] for descriptor calculation

- Statistical Software: QSARINS [2], Build QSAR [2], or scikit-learn for model development and validation

Experimental Procedure:

Data Collection and Preparation

- Assemble a congeneric series of compounds with experimentally determined biological activities measured under consistent conditions

- Convert biological activity values to logarithmic form (e.g., Log(1/IC₅₀)) to linearize dose-response relationships

- Apply chemical curation to standardize structures, remove duplicates, and identify errors using tools like the KNIME platform [2]
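The logarithmic conversion in the data preparation step can be sketched as below. This is a minimal illustration that assumes IC₅₀ values are reported in nanomolar; the function name `pic50_from_nanomolar` and the example values are hypothetical.

```python
import math


def pic50_from_nanomolar(ic50_nm: float) -> float:
    """Convert an IC50 in nanomolar to pIC50 = -log10(IC50 in mol/L)."""
    if ic50_nm <= 0:
        raise ValueError("IC50 must be positive")
    return -math.log10(ic50_nm * 1e-9)


# Hypothetical IC50 values in nM, for illustration only
ic50_values_nm = [1.0, 50.0, 1000.0]
pic50_values = [pic50_from_nanomolar(v) for v in ic50_values_nm]
```

More potent compounds (lower IC₅₀) receive higher pIC₅₀ values, which keeps the regression target on a scale where equal steps correspond to equal fold changes in potency.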

Molecular Descriptor Calculation

- Calculate lipophilicity parameters (log P) using fragmental or atom-based methods

- Compute electronic parameters (σ) based on Hammett substituent constants

- Determine steric parameters (E_s) using Taft's method or molar refractivity

- Consider additional relevant descriptors including molar refractivity, hydrogen bonding capabilities, and topological indices

Model Development using Multiple Linear Regression

- Perform feature selection to identify the most relevant descriptors using stepwise regression, genetic algorithms, or LASSO regularization [2]

- Construct the Hansch equation using MLR: Log BA = a log P + b σ + c E_s + constant

- Evaluate nonlinear relationships by incorporating squared terms (e.g., (log P)²) to account for parabolic lipophilicity-activity relationships

Model Validation

- Assess goodness-of-fit using coefficient of determination (R²) and adjusted R²

- Perform internal validation via leave-one-out (LOO) or leave-many-out cross-validation, reporting Q² values

- Conduct external validation using a test set of compounds not included in model development

- Apply the OECD QSAR validation principles to ensure regulatory acceptance [5]

Model Interpretation and Application

- Interpret regression coefficients to determine the relative contribution of each physicochemical property to biological activity

- Use the validated model to predict activities of virtual compounds before synthesis

- Design new analogs with optimized physicochemical properties guided by model insights
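The regression and internal validation steps of this protocol can be sketched as follows. This is a minimal illustration on synthetic data, not the workflow of any cited tool: the descriptor ranges, the coefficients in `true_coef`, and the helper names `fit_mlr`, `predict`, `r_squared`, and `loo_q2` are assumptions made for the example. Q² is computed here as 1 - PRESS/SS_tot using the full-data mean, which is one common convention.

```python
import numpy as np


def fit_mlr(X, y):
    """Ordinary least squares with an intercept appended as the last column."""
    A = np.column_stack([X, np.ones(len(X))])
    coef, *_ = np.linalg.lstsq(A, y, rcond=None)
    return coef


def predict(coef, X):
    """Apply fitted coefficients (descriptors first, intercept last)."""
    return np.column_stack([X, np.ones(len(X))]) @ coef


def r_squared(y, y_hat):
    """Coefficient of determination for fitted values."""
    ss_res = np.sum((y - y_hat) ** 2)
    ss_tot = np.sum((y - y.mean()) ** 2)
    return 1.0 - ss_res / ss_tot


def loo_q2(X, y):
    """Leave-one-out Q2: refit without each compound, then predict it."""
    preds = np.empty_like(y)
    for i in range(len(y)):
        mask = np.arange(len(y)) != i
        preds[i] = predict(fit_mlr(X[mask], y[mask]), X[i:i + 1])[0]
    press = np.sum((y - preds) ** 2)
    return 1.0 - press / np.sum((y - y.mean()) ** 2)


# Synthetic congeneric series (all values are made up for illustration)
rng = np.random.default_rng(0)
n = 30
log_p = rng.uniform(0.0, 4.0, n)    # lipophilicity
sigma = rng.uniform(-0.5, 0.8, n)   # Hammett-like electronic parameter
e_s = rng.uniform(-2.0, 0.0, n)     # Taft-like steric parameter
X = np.column_stack([log_p, sigma, e_s])
true_coef = np.array([0.8, -1.1, 0.4])
y = X @ true_coef + 3.0 + rng.normal(0.0, 0.1, n)

coef = fit_mlr(X, y)                 # [a, b, c, constant]
r2 = r_squared(y, predict(coef, X))
q2 = loo_q2(X, y)
```

A large gap between `r2` and `q2` would suggest overfitting; external validation on held-out compounds is still needed, as the protocol states.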

Case Study Application: Talukder et al. integrated classical QSAR with docking and simulations to prioritize EGFR-targeting phytochemicals for non-small cell lung cancer, demonstrating the enduring relevance of Hansch principles in modern drug discovery [2].

Free-Wilson Analysis Protocol

Objective: To develop a QSAR model based on substituent contributions at specific molecular positions without explicit physicochemical parameters.

Materials and Reagents:

- Chemical Dataset: 30-100 compounds with systematic variation of substituents at defined molecular positions

- Software Tools: Molecular spreadsheet software with MLR capabilities or specialized Free-Wilson analysis tools

Experimental Procedure:

Data Matrix Preparation

- Identify the molecular scaffold and define substitution positions (R₁, R₂, ..., Rₙ)

- Create a binary matrix indicating the presence (1) or absence (0) of each possible substituent at each position

- Ensure the dataset contains sufficient structural variation to avoid co-linearity in the design matrix

Model Development

- Apply MLR to the binary design matrix with biological activity as the dependent variable

- Solve the equation: Log BA = μ + Σa_i a_j, where μ is the average activity of the parent scaffold, and a_i a_j represents the contribution of substituent j at position i

- Apply constraints to avoid overparameterization, typically requiring at least 5-10 compounds per substituent parameter

Model Validation and Application

- Validate using cross-validation techniques and external test sets

- Interpret substituent contributions to identify favorable chemical groups at each position

- Predict activity of unsynthesized combinations of substituents

- Prioritize synthetic targets based on predicted potency enhancements

Limitations: The Free-Wilson approach requires numerous analogs with systematic substitution patterns and cannot extrapolate beyond the chemical space defined by the training set substituents [1].
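A toy version of the Free-Wilson design matrix and fit is sketched below. The scaffold, substituents, and activity values are hypothetical, and the example drops one reference substituent (H) per position to avoid collinearity, so μ here is the activity of the unsubstituted parent (the Fujita-Ban convention, which is an assumption of this sketch rather than something specified above). The dataset is far smaller than the protocol recommends and is chosen only so the arithmetic is easy to follow.

```python
import numpy as np

# Hypothetical substituent options per position; "H" is the reference
positions = {"R1": ["H", "Cl", "OMe"], "R2": ["H", "Me"]}

# One indicator column per non-reference substituent at each position
columns = [(pos, sub) for pos, subs in positions.items() for sub in subs[1:]]


def encode(compound):
    """Binary row: 1.0 if the compound carries that substituent at that position."""
    return [1.0 if compound[pos] == sub else 0.0 for pos, sub in columns]


# Hypothetical compounds with illustrative log(1/IC50) values
compounds = [
    ({"R1": "H", "R2": "H"}, 5.0),
    ({"R1": "Cl", "R2": "H"}, 5.6),
    ({"R1": "OMe", "R2": "H"}, 4.7),
    ({"R1": "H", "R2": "Me"}, 5.4),
    ({"R1": "Cl", "R2": "Me"}, 6.0),
]

X = np.array([encode(c) for c, _ in compounds])
y = np.array([activity for _, activity in compounds])

# Least-squares fit of Log BA = mu + sum of substituent contributions
A = np.column_stack([np.ones(len(X)), X])
coef, *_ = np.linalg.lstsq(A, y, rcond=None)
mu = coef[0]
contributions = dict(zip(columns, coef[1:]))

# Predict an unsynthesized combination of known substituents
new_compound = {"R1": "OMe", "R2": "Me"}
predicted = mu + float(np.dot(encode(new_compound), coef[1:]))
```

The prediction only works because both OMe at R1 and Me at R2 already appear in the training set; a substituent never seen at a position has no column and cannot be scored, which is the extrapolation limit noted above.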

The Transition to AI-Integrated QSAR

The integration of artificial intelligence, particularly machine learning and deep learning, has transformed QSAR from statistically driven linear models to complex nonlinear algorithms capable of navigating high-dimensional chemical spaces [2]. This transition addresses key limitations of classical approaches, including their inability to model complex structure-activity relationships and handle large, diverse chemical datasets.

Machine learning algorithms including Support Vector Machines (SVM), Random Forests (RF), and k-Nearest Neighbors (kNN) have become standard tools in cheminformatics, offering robust performance for virtual screening and toxicity prediction [2]. These methods capture nonlinear relationships without prior assumptions about data distribution, significantly expanding the applicability domain of QSAR models.

More recently, deep learning architectures including Graph Neural Networks (GNNs), Transformers, and Generative Adversarial Networks (GANs) have further advanced the field by learning molecular representations directly from structural data without manual descriptor engineering [2] [6]. These approaches generate "deep descriptors" that capture hierarchical molecular features, enabling more flexible and data-driven QSAR pipelines applicable across diverse chemical spaces [2].

Table 2: Comparison of Classical Statistical and AI-Integrated QSAR Approaches

| Aspect | Classical QSAR | AI-Integrated QSAR |
|---|---|---|
| Core Algorithms | Multiple Linear Regression, Partial Least Squares | Random Forests, SVM, GNNs, Transformers |
| Molecular Representation | Predefined physicochemical descriptors & substituent indices | Learned representations (fingerprints, graph embeddings, SMILES encodings) |
| Handling of Nonlinear Relationships | Limited (requires explicit specification) | Excellent (automatically captures complex patterns) |
| Data Efficiency | Requires careful feature selection with limited variables | Effective with high-dimensional descriptor spaces |
| Interpretability | High (explicit coefficients for each parameter) | Variable (requires SHAP, LIME for interpretation) [2] |
| Applicability Domain | Restricted to congeneric series | Broad coverage of diverse chemical spaces |
| Implementation Tools | QSARINS, Build QSAR [2] | scikit-learn, DeepChem, PyTorch, TensorFlow |

Modern AI-Integrated QSAR Protocols

Machine Learning-Guided Virtual Screening Protocol

Objective: To rapidly identify bioactive compounds from ultralarge chemical libraries by combining machine learning classification with molecular docking.

Materials and Reagents:

- Chemical Libraries: Enamine REAL Space, ZINC15, or other make-on-demand libraries (up to billions of compounds) [3]

- Software Tools: RDKit for descriptor calculation, CatBoost or Deep Neural Networks for classification, molecular docking software (AutoDock, Glide, etc.)

- Computing Resources: High-performance computing cluster for large-scale docking and machine learning

Experimental Procedure:

Initial Docking and Training Set Generation

- Randomly select 1 million compounds from the target chemical library

- Perform molecular docking against the target protein using standard protocols

- Identify the top-scoring 1% of compounds (10,000 molecules) as the "active" class

- Label the remaining compounds as "inactive" for binary classification

Descriptor Calculation and Feature Engineering

- Compute molecular descriptors (e.g., Morgan fingerprints) for all docked compounds

- Split the dataset into training (80%) and calibration (20%) sets

Classifier Training and Conformal Prediction

- Train multiple CatBoost classifiers (5 independent models) on the training set using Morgan fingerprints [3]

- Apply the Mondrian conformal prediction framework to calibrate confidence levels

- Generate normalized p-values for each compound in the validation set

- Aggregate predictions across all classifiers by taking median p-values

Virtual Screening of Ultralarge Library

- Compute molecular descriptors for the entire chemical library (billions of compounds)

- Apply the trained conformal predictor with an optimized significance level (ε)

- Select compounds predicted as "virtual actives" with controlled error rates

- Perform molecular docking on the reduced compound set (typically 1-10% of original library)

Experimental Validation

- Select top-ranked compounds from the docking screen for synthesis or acquisition

- Test selected compounds in biological assays to validate predicted activity

- Iterate the model using newly acquired experimental data to improve performance

Case Study Application: Researchers applied this protocol to screen 3.5 billion compounds against G protein-coupled receptors, reducing computational costs by more than 1,000-fold while successfully identifying ligands with multi-target activity tailored for therapeutic effect [3].
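The conformal prediction step of this protocol can be illustrated with a small class-conditional (Mondrian) p-value calculation. This is a sketch under stated assumptions, not the implementation used in [3]: the nonconformity score (one minus the probability assigned to the hypothesised class), the function names, and the calibration probabilities are all illustrative choices.

```python
from statistics import median


def nonconformity(prob_active, label):
    """1 - probability the classifier assigns to the hypothesised label."""
    return 1.0 - prob_active if label == 1 else prob_active


def mondrian_p_values(cal_probs, cal_labels, test_prob_active):
    """Class-conditional p-values: each label is calibrated on its own class only."""
    p_values = {}
    for label in (0, 1):
        cal_scores = [nonconformity(p, label)
                      for p, lab in zip(cal_probs, cal_labels) if lab == label]
        test_score = nonconformity(test_prob_active, label)
        n_at_least = sum(1 for s in cal_scores if s >= test_score)
        p_values[label] = (n_at_least + 1) / (len(cal_scores) + 1)
    return p_values


def aggregate_median(p_value_dicts):
    """Combine p-values from several classifiers by taking the median per label."""
    return {label: median(d[label] for d in p_value_dicts) for label in (0, 1)}


def prediction_set(p_values, epsilon):
    """Labels that cannot be rejected at significance level epsilon."""
    return {label for label, p in p_values.items() if p > epsilon}


# Hypothetical calibration set: predicted P(active) and true labels (1 = active)
cal_probs = [0.9, 0.8, 0.7, 0.6, 0.4, 0.3, 0.2, 0.1, 0.05]
cal_labels = [1, 1, 1, 1, 0, 0, 0, 0, 0]

# A library compound that the classifier scores at P(active) = 0.85
p_values = mondrian_p_values(cal_probs, cal_labels, 0.85)
is_virtual_active = prediction_set(p_values, epsilon=0.2) == {1}
```

Calibrating each class separately matters here because actives are only about 1% of the docked sample; a single pooled calibration would be dominated by the inactive class.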

Deep Learning-Based QSAR Protocol

Objective: To develop predictive QSAR models using deep neural networks that automatically learn relevant features from molecular structures.

Materials and Reagents:

- Chemical Dataset: Large-scale bioactivity data (10,000+ compounds) from public databases (ChEMBL, PubChem) or proprietary sources

- Software Tools: DeepChem, PyTorch, or TensorFlow for deep learning implementation

- Computing Resources: GPU-accelerated workstations or cloud computing instances

Experimental Procedure:

Data Preparation and Curation

- Collect bioactivity data from reliable sources with uniform measurement conditions

- Apply rigorous chemical curation: standardize structures, remove duplicates, and correct errors [5]

- Split data into training (70%), validation (15%), and test (15%) sets using time-split or scaffold-based splitting
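A scaffold-based split can be sketched in a few lines once each compound has a scaffold key. The version below is a simplified greedy scheme and only an illustration: it assumes the scaffold keys were computed beforehand (for example, Bemis-Murcko scaffolds from a cheminformatics toolkit), and the function name `scaffold_split` and the example data are hypothetical.

```python
from collections import defaultdict


def scaffold_split(scaffolds, frac_train=0.7, frac_valid=0.15):
    """Split compound ids so that no scaffold appears in more than one subset.

    scaffolds: dict mapping compound id -> precomputed scaffold key.
    """
    groups = defaultdict(list)
    for compound_id, scaffold in scaffolds.items():
        groups[scaffold].append(compound_id)

    # Largest scaffold groups first; ties broken by key for determinism
    ordered = sorted(groups.items(), key=lambda kv: (-len(kv[1]), kv[0]))

    n = len(scaffolds)
    train, valid, test = [], [], []
    for _, members in ordered:
        if len(train) + len(members) <= frac_train * n:
            train.extend(members)
        elif len(valid) + len(members) <= frac_valid * n:
            valid.extend(members)
        else:
            test.extend(members)
    return train, valid, test


# Hypothetical dataset: 21 compounds spread over six scaffolds
group_sizes = {"scaffold_A": 7, "scaffold_B": 6, "scaffold_C": 3,
               "scaffold_D": 2, "scaffold_E": 2, "scaffold_F": 1}
scaffolds = {f"{scaf}_{i}": scaf
             for scaf, size in group_sizes.items() for i in range(size)}

train_ids, valid_ids, test_ids = scaffold_split(scaffolds)
```

Because whole scaffold groups are assigned together, the achieved fractions only approximate the 70/15/15 target, but the test set then contains chemotypes the model has not seen during training.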

Molecular Representation Selection

- Choose appropriate molecular input representations based on data size and complexity:

- Extended-Connectivity Fingerprints (ECFPs): For standard machine learning models

- Graph Representations: Atoms as nodes, bonds as edges for GNNs

- SMILES Sequences: For transformer-based models

- 3D Molecular Structures: For spatial-convolutional networks

Model Architecture Design

- Implement a neural network architecture suited to the chosen representation (e.g., a GNN for graph inputs or a transformer for SMILES sequences)

- Configure network depth, width, and regularization (dropout, batch normalization)

- Define appropriate loss function (mean squared error for regression, cross-entropy for classification)

Model Training and Optimization

- Train models using mini-batch gradient descent with early stopping

- Optimize hyperparameters (learning rate, hidden layers, dropout rate) using Bayesian optimization or grid search

- Monitor training and validation performance to detect overfitting

- Apply regularization techniques to improve generalization
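The early-stopping rule used during training can be sketched independently of any deep learning framework. The helper below is an illustrative assumption, not an API of DeepChem, PyTorch, or TensorFlow: it takes a sequence of per-epoch validation losses and reports the best epoch and the epoch at which training would stop under a given patience.

```python
def early_stopping_epoch(val_losses, patience=3, min_delta=0.0):
    """Return (best_epoch, stop_epoch) for a sequence of validation losses.

    Training stops once `patience` epochs pass without the loss improving
    on the best value by more than `min_delta`.
    """
    best_loss = float("inf")
    best_epoch = -1
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss - min_delta:
            best_loss, best_epoch = loss, epoch
        elif epoch - best_epoch >= patience:
            return best_epoch, epoch
    return best_epoch, len(val_losses) - 1


# Hypothetical validation losses: improvement stalls after epoch 3
val_losses = [0.90, 0.70, 0.55, 0.50, 0.52, 0.51, 0.53, 0.60]
best_epoch, stop_epoch = early_stopping_epoch(val_losses, patience=3)
```

In practice the model weights from `best_epoch` would be restored, since the later epochs show validation loss drifting upward while training loss typically keeps falling.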

Model Interpretation and Explanation

- Apply explainable AI techniques such as SHAP or LIME [2] to interpret model predictions

- Visualize important molecular features contributing to predictions

- Validate model interpretations against known medicinal chemistry principles

Model Deployment and Integration

- Deploy trained models as web services or integrate them into drug discovery platforms

- Establish continuous learning pipelines to update models with new data

- Integrate with other computational tools (molecular docking, ADMET prediction) for comprehensive drug discovery workflows

Case Study Application: AI-integrated QSAR models have been successfully applied to design α-glucosidase inhibitors for diabetes treatment [7] and to discover precision cancer immunomodulation therapies targeting immune checkpoints [6].

The Scientist's Toolkit: Essential Research Reagents and Software

Table 3: Essential Computational Tools for Classical and AI-Integrated QSAR

| Tool Category | Specific Tools | Key Functionality | Applicability |
|---|---|---|---|
| Descriptor Calculation | DRAGON [2], PaDEL-Descriptor [5], RDKit [2] | Compute molecular descriptors & fingerprints | Classical & ML QSAR |
| Classical QSAR Modeling | QSARINS [2], Build QSAR [2] | Multiple regression, model validation | Classical QSAR |
| Machine Learning Libraries | scikit-learn, KNIME [2], CatBoost [3] | SVM, Random Forests, Gradient Boosting | ML-QSAR |
| Deep Learning Frameworks | DeepChem, PyTorch, TensorFlow | GNNs, Transformers, Neural Networks | DL-QSAR |
| Molecular Docking | AutoDock, Glide, GOLD | Structure-based virtual screening | Complementary to QSAR |
| Cheminformatics Platforms | RDKit, OpenBabel, ChemAxon | Chemical representation, manipulation | All QSAR approaches |
| Model Interpretation | SHAP [2], LIME [2] | Explainable AI, feature importance | ML & DL QSAR |

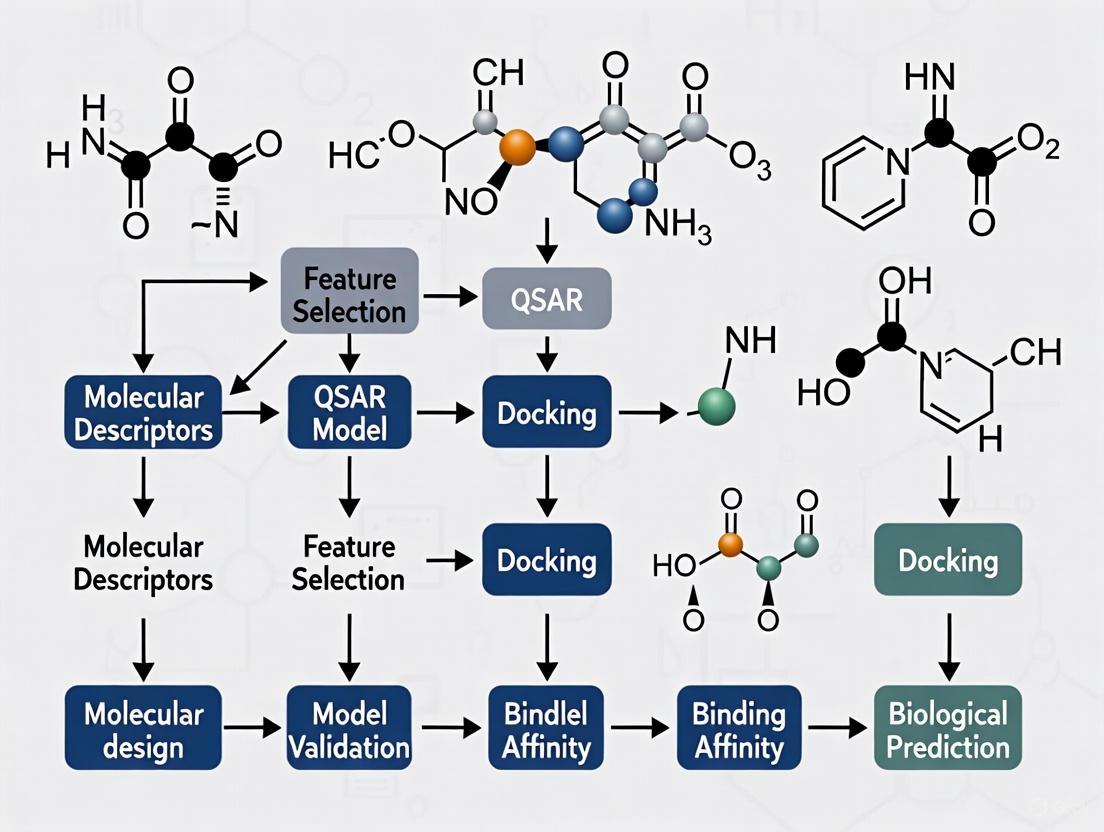

Workflow Visualization

Diagram 1: Historical evolution of QSAR methodologies from early observations to contemporary AI-integrated approaches, highlighting key methods and their primary applications.

Diagram 2: Modern AI-integrated QSAR workflow illustrating the key steps from data collection to experimental validation, highlighting the integration of machine learning with conformal prediction for efficient virtual screening.

Quantitative Structure-Activity Relationship (QSAR) models are regression or classification models used in the chemical and biological sciences and engineering to relate a set of "predictor" variables (X) to the potency of a response variable (Y) [8]. In essence, QSAR is a methodology that correlates the chemical structure of a molecule with its biochemical, physical, pharmaceutical, or biological effect using mathematical and statistical techniques [9]. These models first summarize a supposed relationship between chemical structures and biological activity in a dataset of chemicals, and then predict the activities of new chemicals [8]. The fundamental assumption underlying QSAR is that similar molecules have similar activities, a principle also known as the Structure-Activity Relationship (SAR) [8]. The basic mathematical expression of a QSAR model is:

Activity = f(physicochemical properties and/or structural properties) + error [8]

QSAR has evolved significantly since its inception in the 1960s with Corwin Hansch's pioneering work on Hansch analysis [10]. From the early use of a few easily interpretable physicochemical descriptors and simple linear models, QSAR has transformed into a sophisticated field that utilizes thousands of chemical descriptors and complex machine learning methods due to advancements in cheminformatics [10]. The related term QSPR (Quantitative Structure-Property Relationships) refers to models where a chemical property is modeled as the response variable instead of biological activity [8].

Fundamental Principles of QSAR

The SAR Principle and Paradox

The basic assumption for all molecule-based hypotheses in QSAR is that similar molecules have similar activities, known as the Structure-Activity Relationship (SAR) principle [8]. This principle suggests that compounds with similar structures often exhibit similar activities, which is supported by extensive chemical practice [10]. However, the SAR paradox refers to the fact that it is not universally true that all similar molecules have similar activities [8]. This paradox highlights the complexity of molecular interactions and the challenges in predicting biological activity based solely on structural similarity.
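The similarity principle and its exceptions can be made concrete with a small example. The sketch below uses the Tanimoto coefficient on sets of fingerprint bit indices and flags pairs that are structurally similar yet differ sharply in activity. The fingerprints, activities, and both thresholds are hypothetical values chosen for illustration.

```python
from itertools import combinations


def tanimoto(fp_a: set, fp_b: set) -> float:
    """Tanimoto coefficient |A ∩ B| / |A ∪ B| over sets of 'on' bit indices."""
    if not fp_a and not fp_b:
        return 0.0
    shared = len(fp_a & fp_b)
    return shared / (len(fp_a) + len(fp_b) - shared)


# Hypothetical fingerprints (sets of on-bits) and pIC50 values
fingerprints = {
    "mol1": {1, 2, 3, 4, 5, 6, 7, 8, 9},
    "mol2": {1, 2, 3, 4, 5, 6, 7, 8, 10},
    "mol3": {1, 20, 21, 22},
}
activities = {"mol1": 8.1, "mol2": 5.9, "mol3": 6.0}

# Illustrative thresholds: similar structures, activity gap of 2 log units
SIMILARITY_CUTOFF = 0.75
ACTIVITY_GAP = 2.0

similar_but_different = [
    (a, b)
    for a, b in combinations(sorted(fingerprints), 2)
    if tanimoto(fingerprints[a], fingerprints[b]) >= SIMILARITY_CUTOFF
    and abs(activities[a] - activities[b]) >= ACTIVITY_GAP
]
```

Here `mol1` and `mol2` share eight of ten distinct bits yet differ by more than two log units, which is the kind of pair the SAR paradox describes.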

Essential Steps in QSAR Studies

The principal steps of QSAR/QSPR studies include [8] [9]:

- Selection of data set and extraction of structural/empirical descriptors: Assembling a collection of chemically related compounds with known biological activities or properties.

- Variable selection: Identifying the most relevant molecular descriptors that correlate with the biological activity.

- Model construction: Developing mathematical relationships between the selected descriptors and the biological activity.

- Validation and evaluation: Assessing the robustness, predictive power, and applicability domain of the developed model.
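The applicability domain in the final step can be assessed in several ways; the source does not name a method, so the sketch below assumes one widely used option, the leverage approach, in which a query compound is flagged when its leverage exceeds the warning value h* = 3(p + 1)/n for p descriptors and n training compounds. Function names and data are hypothetical.

```python
import numpy as np


def leverages(X_train, X_query):
    """Leverage h = x^T (X^T X)^-1 x, with an intercept column added to both sets."""
    A = np.column_stack([np.ones(len(X_train)), X_train])
    Q = np.column_stack([np.ones(len(X_query)), X_query])
    xtx_inv = np.linalg.pinv(A.T @ A)
    # Row-wise quadratic form q_i^T (X^T X)^-1 q_i
    return np.einsum("ij,jk,ik->i", Q, xtx_inv, Q)


def warning_leverage(X_train):
    """Common threshold h* = 3 (p + 1) / n."""
    n, p = X_train.shape
    return 3.0 * (p + 1) / n


# Hypothetical training descriptors (40 compounds, 2 scaled descriptors)
rng = np.random.default_rng(1)
X_train = rng.normal(0.0, 1.0, size=(40, 2))

# One query near the centre of the training data, one far outside it
X_query = np.array([[0.0, 0.0], [8.0, 8.0]])

h = leverages(X_train, X_query)
h_star = warning_leverage(X_train)
inside_domain = h <= h_star
```

Predictions for compounds flagged as outside the domain are extrapolations and should be treated with caution rather than discarded automatically.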

Dimensions of QSAR

QSAR methodologies have evolved through different dimensions of complexity [9]:

- 1D-QSAR: Correlates pKa (dissociation constant) and log P (partition coefficient) with biological activity.

- 2D-QSAR: Correlates biological activity with the overall structure pattern of drug molecules in two-dimensional space, considering parameters like hydrogen bonds, molecular refractivity, topological indices, and dipole moment.

- 3D-QSAR: Correlates biological activity with the three-dimensional structure of the molecule and its properties, considering steric hindrance, hydrogen bond acceptors/donors, and hydrophobic interactions.

- 4D-QSAR: Extends 3D-QSAR by incorporating multiple representations of ligand conformations.

Molecular Descriptors in QSAR

Molecular descriptors are mathematical representations of molecular structures that quantify characteristics of molecules [10]. They serve as critical tools for converting chemical structural features into numerical or symbolic representations that can be correlated with biological activity [8] [10].

Categories of Molecular Descriptors

Molecular descriptors can be categorized based on the type of molecular information they encode:

Table 1: Categories of Molecular Descriptors in QSAR

| Descriptor Category | Description | Examples | Calculation Methods |
|---|---|---|---|
| Constitutional Descriptors | Describe molecular composition without connectivity or geometry | Molecular weight, atom counts, bond counts | Simple counting algorithms [11] |
| Topological Descriptors | Encode connectivity patterns within molecules | Topological indices, connectivity indices | Graph theory-based algorithms [12] |
| Geometric Descriptors | Describe molecular size and shape in 3D space | Principal moments of inertia, molecular volume | Computational geometry approaches [10] |
| Electronic Descriptors | Characterize electronic distribution and properties | HOMO/LUMO energies, dipole moment, polarizability | Quantum chemical calculations (semi-empirical, ab initio) [11] |
| Physicochemical Descriptors | Represent bulk physical and chemical properties | Partition coefficient (log P), solubility, molar refractivity | Empirical formulas, group contribution methods [11] |

Key Electronic Descriptors

Electronic descriptors are particularly important in QSAR as they often directly relate to a molecule's reactivity and interaction capabilities:

HOMO and LUMO Energies: HOMO (Highest Occupied Molecular Orbital) and LUMO (Lowest Unoccupied Molecular Orbital) energies are quantum-mechanical descriptors related to molecular reactivity [11]. According to Frontier Orbital Theory, nucleophilic attack occurs by electron flow from the HOMO of a nucleophile into the LUMO of an electrophile. Molecules with electrons at accessible (near-zero) HOMO levels tend to be good nucleophiles, while molecules with low LUMO energies tend to be good electrophiles [11].

Polarizability: Polarizability characterizes how readily the atomic or molecular charge distribution is distorted by external static or oscillating electromagnetic fields [11]. Static polarizability can be rigorously calculated as the first derivative of the dipole moment with respect to the electric field, or the second derivative of molecular energy with respect to the electric field. Polarizability depends on the electronic structure of atoms and molecules, with larger atoms generally being more polarizable than small atoms [11].
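Written out, the two equivalent definitions of static polarizability follow from the standard expansion of the molecular energy in a uniform electric field F. The sign convention below (energy lowered by the induced dipole) is the usual one and is stated here as an assumption, since the text above does not give signs:

```latex
E(F) = E_0 - \mu_0 F - \tfrac{1}{2}\,\alpha F^2 - \dots
\qquad
\mu(F) = -\frac{\partial E}{\partial F} = \mu_0 + \alpha F + \dots
\qquad
\alpha = \left.\frac{\partial \mu}{\partial F}\right|_{F=0}
       = -\left.\frac{\partial^2 E}{\partial F^2}\right|_{F=0}
```

In three dimensions the same relations hold component-wise, giving the polarizability tensor with elements α_ij = ∂μ_i/∂F_j.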

The following diagram illustrates the workflow for calculating key molecular descriptors, highlighting the computational methods involved:

QSAR Modeling Approaches

Types of QSAR Methods

Various QSAR approaches have been developed to handle different aspects of molecular representation and activity prediction:

Fragment-Based (Group Contribution) QSAR

This approach, also known as GQSAR, determines properties based on the sum of fragment contributions [8]. For example, the partition coefficient (logP) can be predicted by atomic methods (XLogP or ALogP) or by chemical fragment methods (CLogP) [8]. Fragment-based methods are generally accepted as better predictors than atomic-based methods [8]. GQSAR allows flexibility to study various molecular fragments of interest in relation to the variation in biological response, and it considers cross-term fragment descriptors to identify key fragment interactions [8].

3D-QSAR

3D-QSAR applies force field calculations that require three-dimensional structures of a set of small molecules with known activities (the training set) [8]. The training-set molecules must be superimposed (aligned), using either experimental data or molecule superimposition software. 3D-QSAR uses computed potentials (e.g., the Lennard-Jones potential) rather than experimental constants and is concerned with the overall molecule rather than a single substituent [8]. The first 3D-QSAR method was Comparative Molecular Field Analysis (CoMFA), which examines steric and electrostatic fields correlated by partial least squares regression (PLS) [8].

Chemical Descriptor-Based QSAR

This approach computes descriptors quantifying various electronic, geometric, or steric properties of a molecule as a whole, rather than from individual fragments [8]. It differs from 3D-QSAR in that descriptors are computed from scalar quantities rather than from 3D fields [8].

String and Graph-Based QSAR

These methods use direct molecular representations without explicit descriptor calculation. String-based QSAR uses SMILES strings directly for activity prediction [8], while graph-based methods use the molecular graph directly as input for QSAR models [8], though these often yield inferior performance compared to descriptor-based QSAR models [8].

Mathematical Modeling Techniques

QSAR model development utilizes various statistical and machine learning methods:

- Traditional Methods: Early QSAR models were based on linear methods, including multiple linear regression (MLR) and principal component analysis (PCA) [10].

- Partial Least Squares (PLS): Chemists often prefer PLS methods because they apply feature extraction and induction in one step [8].

- Machine Learning Methods: With advancements in cheminformatics, both linear and nonlinear machine learning methods have emerged, including support vector machines (SVM), decision trees, artificial neural networks (ANN), and deep learning models [8] [10].

The following workflow outlines the key stages in developing and validating a robust QSAR model:

Experimental Protocols and Applications

Protocol: Calculating Electronic Descriptors Using Semi-Empirical Methods

This protocol describes the calculation of HOMO/LUMO energies and polarizability for barbiturate analogs using MOPAC, which can be applied to QSAR studies of central nervous system depressants [11].

Materials and Software

- Chemical structures of compounds (in MOL format)

- MOLDEN software (or equivalent molecular visualization package)

- MOPAC software with PM6 parameter set

- Computer system with appropriate computational resources

Procedure

- Structure Preparation: Obtain the structure of the ethyl analog of barbituric acid using an online SMILES Translator or molecular builder. Save the structure as a 3D MOL file.

- Software Setup: Read the structure into MOLDEN by typing `molden barbiturate_1.mol` in the command line.

- Job Configuration: Open the Z-matrix editor without changing the structure. Select MOPAC from the Format menu and submit the job. In the Submit Mopac Job window:

- Keep Task as "Geometry Optimization"

- Keep Method as "PM6"

- Set Charge to 0 and Spin to "Singlet" for neutral molecules with paired electrons

- Modify keywords: Remove NOXYZ, PRNT=2, COMPFG and add XYZ, STATIC, POLAR for polarizability calculation

- Provide a unique job name and descriptive title

- Calculation Execution: Click Submit to start the calculation. For barbiturate-sized molecules, the calculation typically completes in approximately 20 seconds.

- Result Extraction: Examine the output file (`barbiturate_1.out`) using the command `tail barbiturate_1.out` in a Unix shell. Locate the polarizability volumes (in Å³ units) near the end of the file for analysis.

Notes

- Verify that all formal valences are satisfied before calculation

- For HOMO energy calculations, use Gaussian with 6-31G* basis set instead of MOPAC

- Record all calculated descriptor values systematically for subsequent QSAR analysis

Protocol: Developing and Validating a QSAR Model

Materials

- Dataset of compounds with known biological activities (IC₅₀, EC₅₀, etc.)

- Cheminformatics software (e.g., various commercial or open-source QSAR packages)

- Molecular descriptor calculation tools

- Statistical analysis software or programming environment (R, Python, etc.)

Procedure

- Data Set Preparation: Curate a set of structurally similar molecules with known biological activity values. Ensure the dataset encompasses a wide variety of chemical structures within the same class to improve model generalization [10].

- Descriptor Calculation: Compute molecular descriptors for all compounds in the dataset. These may include constitutional, topological, geometric, electronic, and physicochemical descriptors [9].

- Variable Selection: Apply feature selection techniques to identify the most relevant descriptors that correlate with biological activity while reducing dimensionality and minimizing overfitting [8].

- Model Construction: Apply mathematical techniques such as partial least squares (PLS) regression, principal component regression (regression on components derived by principal component analysis, PCA), or machine learning methods to develop a relationship between selected descriptors and biological activity [8] [9].

- Model Validation:

- Internal Validation: Perform cross-validation to assess model robustness [8].

- External Validation: Split the dataset into training and test sets, or use blind external validation by applying the model to new external data [8].

- Data Randomization: Verify the absence of chance correlation through Y-scrambling [8].

- Applicability Domain Assessment: Define the chemical space where the model can make reliable predictions based on the training set structures and properties [8].
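
The internal validation and data randomization steps above can be sketched in a few lines. This is a minimal illustration, not a reference implementation: it assumes a single descriptor and an ordinary least-squares line, uses made-up descriptor and activity values, and computes leave-one-out q² as 1 - PRESS/SS_tot with the overall activity mean in the denominator (some packages use the per-fold training mean instead). The function names are hypothetical.

```python
import random
from statistics import mean


def fit_line(x, y):
    """Ordinary least squares for one descriptor: y ~ a + b * x."""
    mx, my = mean(x), mean(y)
    sxx = sum((xi - mx) ** 2 for xi in x)
    b = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y)) / sxx
    return my - b * mx, b


def r_squared(x, y):
    """Fitted (non-cross-validated) coefficient of determination."""
    a, b = fit_line(x, y)
    my = mean(y)
    ss_res = sum((yi - (a + b * xi)) ** 2 for xi, yi in zip(x, y))
    ss_tot = sum((yi - my) ** 2 for yi in y)
    return 1.0 - ss_res / ss_tot


def q2_loo(x, y):
    """Leave-one-out cross-validated q2 = 1 - PRESS / SS_tot."""
    press = 0.0
    for i in range(len(x)):
        a, b = fit_line(x[:i] + x[i + 1:], y[:i] + y[i + 1:])
        press += (y[i] - (a + b * x[i])) ** 2
    my = mean(y)
    ss_tot = sum((yi - my) ** 2 for yi in y)
    return 1.0 - press / ss_tot


def y_scramble(x, y, n_rounds=100, seed=0):
    """Refit the model on shuffled activities; returns one R2 per round."""
    rng = random.Random(seed)
    scores = []
    for _ in range(n_rounds):
        shuffled = list(y)
        rng.shuffle(shuffled)
        scores.append(r_squared(x, shuffled))
    return scores


# Made-up example data: one descriptor value and one pIC50 per compound.
descriptor = [0.5, 1.0, 1.5, 2.0, 2.5, 3.0, 3.5, 4.0]
activity = [5.1, 5.4, 6.1, 6.3, 7.0, 7.2, 7.9, 8.1]
```

A model is usually considered free of chance correlation when the R² values from `y_scramble` are clearly lower than the R² and q² of the real model; the scrambled values should cluster near zero.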

Application in Drug Discovery

QSAR has found extensive applications in drug discovery and development:

- Lead Optimization: QSAR guides the process of lead optimization by predicting how structural changes affect biological activity [9].

- Toxicity Prediction: QSAR models predict the toxicological profiles of compounds, reducing the need for extensive animal testing [9].

- Virtual Screening: QSAR-based virtual screening identifies molecules likely to be effective against specific protein targets, as demonstrated in COVID-19 drug discovery efforts targeting SARS-CoV-2 proteins [9].

- Green Chemistry: QSAR supports green chemistry initiatives by identifying compounds unlikely to be successful early in the development process, reducing waste and increasing efficiency [9].

Table 2: Essential Research Reagents and Computational Tools for QSAR Studies

| Category | Item | Function/Application | Examples |

|---|---|---|---|

| Computational Software | Quantum Chemistry Packages | Calculate electronic descriptors (HOMO/LUMO energies, polarizability) | Gaussian, Gamess, Firefly (PC GAMESS), MOPAC [11] |

| Computational Software | Molecular Modeling & Visualization | Structure preparation, visualization, and analysis | MOLDEN, ChemSketch, Avogadro [13] [11] |

| Computational Software | QSAR Modeling Platforms | Develop, validate, and apply QSAR models | Various commercial and open-source QSAR packages [10] |

| Databases | Chemical Databases | Source compound structures for QSAR datasets | ZINC, PubChem, ChEMBL [13] [14] |

| Databases | Protein Data Bank | Provide 3D structures of biological macromolecules for 3D-QSAR and target identification | RCSB PDB [13] [14] |

| Molecular Descriptors | Constitutional Descriptors | Describe basic molecular composition | Molecular weight, atom counts, bond counts [11] |

| Molecular Descriptors | Electronic Descriptors | Characterize electronic properties relevant to reactivity | HOMO/LUMO energies, dipole moment, polarizability [11] |

| Molecular Descriptors | Topological Descriptors | Encode molecular connectivity patterns | Topological indices, connectivity indices [12] |

| Statistical & Modeling Tools | Statistical Analysis Software | Perform regression, classification, and machine learning | R, Python with scikit-learn, various specialized QSAR tools [10] |

QSAR represents a powerful approach for establishing quantitative relationships between molecular structures and their biological activities or physicochemical properties. The core principles of QSAR revolve around the calculation and selection of appropriate molecular descriptors, the application of robust statistical and machine learning methods to develop predictive models, and the rigorous validation of these models to ensure their reliability and applicability. Molecular descriptors serve as the fundamental language that translates chemical structures into numerical values that can be correlated with biological endpoints.

The integration of QSAR with molecular docking and other computational approaches has created a powerful paradigm in modern drug discovery research. As the field continues to evolve with emerging technologies such as deep learning, larger and higher-quality datasets, and more accurate molecular descriptors, the predictive ability, interpretability, and application domain of QSAR models will continue to improve, solidifying their role as indispensable tools in drug design and molecular engineering.

Molecular docking is a cornerstone computational technique in structure-based drug discovery, enabling researchers to predict the preferred orientation and binding affinity of a small molecule (ligand) when bound to a target macromolecule (receptor) [15] [16]. By simulating this molecular recognition process, docking provides critical insights into fundamental biochemical processes and supports the identification and optimization of potential therapeutic candidates, such as nutraceuticals for disease management [16]. The technique is grounded in the long-standing "lock-and-key" and "induced-fit" theories of ligand-receptor binding, which postulate that the ligand must sterically and electrostatically complement the protein's binding site [15]. This application note details the fundamental principles, standard protocols, and key applications of molecular docking, framing it within the broader context of Quantitative Structure-Activity Relationship (QSAR) and modern drug discovery research.

Fundamental Principles and Terminology

The Docking Process: Sampling and Scoring

The molecular docking process fundamentally consists of two interrelated steps [15] [16] [17]:

- Sampling (Pose Prediction): The exploration of possible conformations, orientations, and positions of the ligand within the receptor's binding site. The goal is to generate a set of plausible binding modes, or "poses."

- Scoring (Affinity Prediction): The evaluation and ranking of the generated poses using a scoring function. This function estimates the binding affinity, typically correlating the computed score with the predicted binding free energy (ΔG) [17].
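
To make the link between a score and a binding free energy concrete, the sketch below converts ΔG to a dissociation constant using the standard relation ΔG = RT ln(Kd), with a 1 mol/L standard state. It is only an illustration: docking scores are rough estimates of ΔG, so a Kd derived from a score should be read as an order-of-magnitude indication at best. The function names and the default temperature of 298.15 K are assumptions made for this example.

```python
import math

R_KCAL = 1.987204e-3  # gas constant in kcal/(mol*K)


def kd_from_delta_g(delta_g_kcal, temperature_k=298.15):
    """Dissociation constant (mol/L) implied by a binding free energy (kcal/mol)."""
    return math.exp(delta_g_kcal / (R_KCAL * temperature_k))


def delta_g_from_kd(kd_molar, temperature_k=298.15):
    """Binding free energy (kcal/mol) implied by a dissociation constant (mol/L)."""
    return R_KCAL * temperature_k * math.log(kd_molar)


# Example: a score of -9.6 kcal/mol, if taken at face value as a free energy.
example_kd = kd_from_delta_g(-9.6)
```

Under these assumptions a score of -9.6 kcal/mol corresponds to a Kd on the order of 100 nM, and each additional 1.4 kcal/mol of binding energy changes the Kd by roughly a factor of ten at room temperature.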

Search Algorithms

Search algorithms are designed to efficiently navigate the vast conformational and orientational space of the ligand within the binding site. They can be broadly classified as shown in Table 1 [15] [16] [17].

Table 1: Classification of Common Sampling Algorithms in Molecular Docking

| Algorithm Class | Specific Methods | Key Characteristics | Representative Software |

|---|---|---|---|

| Systematic | Systematic Search | Exhaustively rotates rotatable bonds by fixed intervals; thorough but computationally complex. | Glide, FRED [17] |

| Systematic | Incremental Construction | Fragments the ligand, docks a base fragment, and builds the molecule incrementally. | FlexX, DOCK [15] [17] |

| Stochastic | Monte Carlo (MC) | Makes random changes to the ligand; new conformations are accepted or rejected based on a probabilistic criterion. | ICM, QXP, early AutoDock [15] [17] |

| Stochastic | Genetic Algorithm (GA) | Encodes ligand degrees of freedom as "genes"; evolves poses over generations via crossover and mutation. | GOLD, AutoDock [15] [18] [17] |

| Molecular Dynamics | - | Simulates physical atomic movements; often used for post-docking refinement. | Various MD packages [15] |
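
The probabilistic acceptance criterion used by Monte Carlo samplers can be illustrated with a toy example. The sketch below applies the Metropolis criterion to a single coordinate that stands in for the ligand's degrees of freedom; a real docking program perturbs translations, rotations, and torsions and uses a proper scoring function. The score function, step size, and `kT` value are assumptions chosen for illustration, not parameters of any particular software.

```python
import math
import random


def toy_score(x):
    """Hypothetical one-dimensional 'scoring function' with its minimum at x = 2."""
    return (x - 2.0) ** 2 - 5.0


def metropolis_search(score, start, steps=2000, step_size=0.5, kT=0.6, seed=0):
    """Random-walk search that accepts moves by the Metropolis criterion."""
    rng = random.Random(seed)
    current, current_score = start, score(start)
    best, best_score = current, current_score
    for _ in range(steps):
        trial = current + rng.uniform(-step_size, step_size)
        trial_score = score(trial)
        delta = trial_score - current_score
        # Always accept improvements; accept worse moves with probability exp(-delta/kT).
        if delta <= 0 or rng.random() < math.exp(-delta / kT):
            current, current_score = trial, trial_score
            if current_score < best_score:
                best, best_score = current, current_score
    return best, best_score


best_pose, best_pose_score = metropolis_search(toy_score, start=0.0)
```

Accepting some uphill moves is what lets the search escape local minima; lowering `kT` makes the walk greedier, while raising it broadens the exploration.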

Scoring Functions

Scoring functions are mathematical approximations used to predict the binding affinity of a ligand pose. They fall into several categories, each with distinct advantages and limitations [16] [17].

Table 2: Major Classes of Scoring Functions

| Scoring Function Type | Fundamental Principle | Examples |

|---|---|---|

| Force Field-Based | Calculates binding energy by summing non-bonded interaction terms (van der Waals, electrostatic). | AutoDock, DOCK, GoldScore [16] |

| Empirical | Fits weighted energy terms (e.g., H-bonds, hydrophobic contacts) to experimental binding affinity data. | ChemScore, LUDI [15] [16] |

| Knowledge-Based | Derives potentials of mean force from statistical analyses of atom-pair frequencies in known protein-ligand structures. | PMF, DrugScore [16] |

| Consensus Scoring | Combines multiple scoring functions to improve reliability and reduce method-specific biases. | - |
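
Consensus scoring can be implemented in several ways. One simple option, sketched here under the assumption that every scoring function follows a "lower is better" convention, is to rank compounds separately under each function and then sort by average rank. The three score tables are invented values for illustration and do not correspond to real program output.

```python
def rank_positions(scores):
    """Map compound name -> rank (1 = best), assuming lower scores are better."""
    ordered = sorted(scores, key=scores.get)
    return {name: position + 1 for position, name in enumerate(ordered)}


def consensus_rank(score_tables):
    """Order compounds by their average rank across several scoring functions."""
    names = list(score_tables[0])
    ranks = [rank_positions(table) for table in score_tables]
    average = {n: sum(r[n] for r in ranks) / len(ranks) for n in names}
    return sorted(names, key=average.get)


# Invented scores from three hypothetical scoring functions (different scales).
scores_force_field = {"cmpd_A": -9.1, "cmpd_B": -7.4, "cmpd_C": -8.2}
scores_empirical = {"cmpd_A": -31.0, "cmpd_B": -35.5, "cmpd_C": -33.0}
scores_knowledge = {"cmpd_A": -120.0, "cmpd_B": -95.0, "cmpd_C": -110.0}

consensus_order = consensus_rank(
    [scores_force_field, scores_empirical, scores_knowledge]
)
```

Working with ranks rather than raw scores avoids having to put scoring functions with different units and scales on a common footing.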

The following diagram illustrates the logical workflow and the core components of a standard molecular docking process.

Standard Docking Protocol

A robust docking protocol is essential for obtaining biologically meaningful and reproducible results [17]. The steps below outline a generalized workflow applicable to most docking software.

Pre-docking Preparation

- Target Protein Preparation:

- Obtain the 3D structure of the target protein from a reliable source (e.g., Protein Data Bank, PDB).

- Remove native ligands, co-crystallized water molecules, and other irrelevant heteroatoms, unless they are known to be critical for binding (e.g., catalytic water, metal ions) [18] [19].

- Add missing hydrogen atoms and assign correct protonation states to amino acid residues (e.g., His, Asp, Glu) at the physiological pH of interest.

- Assign partial charges and optimize the structure using energy minimization to relieve steric clashes.

- Ligand Preparation:

- Obtain or draw the 2D structure of the ligand.

- Generate plausible 3D conformations and determine the most stable tautomeric and isomeric state.

- Assign accurate bond orders and Gasteiger or other appropriate partial charges.

- Ensure the ligand structure is energetically minimized.

- Binding Site Definition:

- If the binding site is known from experimental data, define it using the coordinates of the native ligand or key residues.

- For blind docking, use cavity detection programs (e.g., GRID, POCKET) to identify potential binding pockets on the entire protein surface [15].

Docking Execution and Analysis

- Parameter Selection: Choose an appropriate search algorithm and scoring function based on the system's requirements (e.g., speed vs. accuracy, ligand flexibility).

- Pose Generation and Scoring: Run the docking simulation to generate multiple ligand poses, which are then ranked by the scoring function.

- Post-docking Analysis:

- Pose Clustering: Cluster the top-ranked poses based on structural similarity (e.g., Root-Mean-Square Deviation, RMSD) to identify the most consistent binding modes.

- Interaction Analysis: Visually inspect the top poses to identify key molecular interactions (hydrogen bonds, hydrophobic contacts, pi-stacking, salt bridges) with the protein target. This step is critical for validating the biological plausibility of the prediction [20] [19].

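- Validation: If an experimental structure is available (e.g., a co-crystallized ligand), compare the top-ranked pose with it by calculating the heavy-atom RMSD. A lower RMSD indicates better pose-prediction accuracy; values of about 2.0 Å or less are commonly treated as a successful reproduction of the experimental binding mode.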
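
The RMSD used in the clustering and validation steps above can be computed directly from two coordinate lists. The sketch below assumes that both poses list the same heavy atoms in the same order and that they share the receptor's coordinate frame, as docked poses normally do, so no superposition is performed. It does not correct for symmetry-equivalent atoms, which dedicated tools handle.

```python
import math


def pose_rmsd(coords_a, coords_b):
    """Root-mean-square deviation (same units as the input) between two poses.

    Each pose is a sequence of (x, y, z) tuples for matching heavy atoms.
    """
    if len(coords_a) != len(coords_b) or not coords_a:
        raise ValueError("poses must contain the same non-zero number of atoms")
    squared = sum(
        (a - b) ** 2
        for atom_a, atom_b in zip(coords_a, coords_b)
        for a, b in zip(atom_a, atom_b)
    )
    return math.sqrt(squared / len(coords_a))


# Invented coordinates: a reference pose and the same pose shifted 1 Å along x.
reference_pose = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0), (1.5, 1.5, 0.0)]
docked_pose = [(x + 1.0, y, z) for x, y, z in reference_pose]
```

The same function can serve as the distance measure when clustering poses, for example by grouping poses whose pairwise RMSD falls below a chosen cutoff.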

Advanced Considerations and Controls

Incorporating Flexibility and Solvent Effects

- Receptor Flexibility: Traditional docking often treats the receptor as rigid, which is a major limitation. Advanced approaches incorporate protein flexibility through methods like ensemble docking (using multiple receptor conformations), soft docking (allowing minor van der Waals overlaps), or explicit side-chain flexibility [15] [18].

- Solvent and Cofactors: The role of structural water molecules can be critical. Some docking programs, like GOLD and Flare, allow for the explicit treatment of "toggle" or "displaceable" water molecules during the docking process [18] [19]. Similarly, the presence of metal ions and cofactors can be integrated into the docking simulation.

The Rise of Deep Learning in Docking

Deep learning (DL) is reshaping the molecular docking landscape [20] [17]. Modern DL-based docking paradigms include:

- Generative Diffusion Models: These models, such as SurfDock, show superior pose prediction accuracy by generating poses through a denoising process [20].

- Regression-based Models: These predict binding affinity and conformation directly from input data but can sometimes produce physically implausible structures [20].

- Hybrid Methods: Frameworks like Interformer integrate traditional conformational searches with AI-driven scoring functions, offering a balanced performance [20].

It is crucial to note that while DL methods can achieve high pose accuracy, they may exhibit high steric tolerance and fail to recover critical molecular interactions, underscoring the continued need for expert analysis and experimental validation [20].

Controls for Large-Scale Docking

For large-scale virtual screens of ultra-large libraries (containing billions of molecules), establishing controls is paramount [21]. Key controls include:

- Enrichment Studies: Before a full-scale screen, dock a known active ligand and a set of decoy molecules to ensure the docking protocol can correctly prioritize the active compound.

- Redocking and Cross-docking: Validate the method by redocking a native ligand into its original protein structure and by cross-docking it into related but distinct protein structures.

- Consensus Scoring: Use multiple scoring functions to rank compounds, as consensus hits are more likely to be true positives.
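
An enrichment study is usually summarized with an enrichment factor (EF): the fraction of actives found in the top-ranked portion of the list divided by the fraction expected from random selection. The sketch below is a minimal version that assumes the input is a list of booleans already sorted from best to worst docking score; the function name and the example ranking are hypothetical.

```python
def enrichment_factor(ranked_is_active, fraction=0.01):
    """EF at the given top fraction of a ranked list of active/decoy flags."""
    n_total = len(ranked_is_active)
    n_actives = sum(ranked_is_active)
    if n_total == 0 or n_actives == 0:
        raise ValueError("need a non-empty list with at least one active")
    n_top = max(1, round(n_total * fraction))
    hits_top = sum(ranked_is_active[:n_top])
    # (hits_top / n_top) / (n_actives / n_total), written to avoid rounding error.
    return (hits_top * n_total) / (n_top * n_actives)


# Invented ranking of 20 compounds (4 actives, 16 decoys), best score first.
ranking = [True, True, False, True, False] + [False] * 14 + [True]
ef_top_quarter = enrichment_factor(ranking, fraction=0.25)
```

An EF close to 1 means the protocol performs no better than random picking, so values well above 1 at small fractions are what a retrospective control should show before committing to a full-scale screen.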

The Scientist's Toolkit: Research Reagent Solutions

The following table details essential tools and resources used in a typical molecular docking study.

Table 3: Essential Research Reagents and Tools for Molecular Docking

| Item Name | Function / Application | Examples / Notes |

|---|---|---|

| Protein Structure | Provides the 3D atomic coordinates of the target receptor. | RCSB Protein Data Bank (PDB), AlphaFold DB [17] [22] |

| Small Molecule Database | Source of ligands for virtual screening. | ZINC, ChEMBL, PubChem [21] [22] |

| Docking Software | Performs the core docking calculation (sampling and scoring). | AutoDock Vina, GOLD, Glide, DOCK, Surflex [15] [18] [16] |

| Structure Visualization | Critical for analyzing and interpreting docking results. | PyMOL, UCSF Chimera, Flare [19] |

| Force Field | Provides parameters for energy calculations and minimization. | CHARMM, AMBER, OPLS [16] |

| Molecular Dynamics Software | Used for pre- or post-docking refinement to model flexibility and dynamics. | GROMACS, NAMD, AMBER [15] [17] |

Application Notes

A. Practical Guide to Virtual Screening

Virtual screening (VS) is a primary application of docking used to identify novel hit compounds from large chemical libraries [17] [21]. The workflow for a standard VS campaign is illustrated below.

Protocol:

- Library Curation: Select a diverse, drug-like compound library (e.g., ZINC15) [21]. Pre-process the library to generate 3D structures and apply filters for undesirable functional groups or poor physicochemical properties.

- High-Throughput Docking: Execute the docking protocol established in the Standard Docking Protocol section above across the entire prepared library. High-performance computing (HPC) clusters are typically employed for this task [21].

- Hit Prioritization: Analyze the top-ranking compounds. Do not rely solely on the docking score. Critically assess the following:

- Pose Consistency: Do structurally similar compounds bind in a consistent manner?

- Interaction Patterns: Do the hits form key interactions with residues known to be critical for function (e.g., catalytic residues)?

- Chemical Appeal: Are the hits synthetically accessible, and do they have desirable properties for further optimization?

- Experimental Validation: The final, essential step is to procure or synthesize the top-ranked virtual hits and test their activity and binding in biochemical and/or cellular assays [17] [21].
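
The physicochemical filtering mentioned in the Library Curation step can take many forms. As one common example, the sketch below applies Lipinski's rule of five (molecular weight at most 500 Da, logP at most 5, at most 5 hydrogen-bond donors, at most 10 hydrogen-bond acceptors) and tolerates one violation. The protocol above does not prescribe this particular filter, and the property values are invented; in practice they would be computed with a cheminformatics toolkit.

```python
from dataclasses import dataclass


@dataclass
class Compound:
    name: str
    mol_weight: float  # Da
    logp: float
    h_donors: int
    h_acceptors: int


def lipinski_violations(compound):
    """Count how many rule-of-five limits the compound exceeds."""
    return sum(
        [
            compound.mol_weight > 500,
            compound.logp > 5,
            compound.h_donors > 5,
            compound.h_acceptors > 10,
        ]
    )


def drug_like(compounds, max_violations=1):
    """Keep compounds with at most `max_violations` rule-of-five violations."""
    return [c for c in compounds if lipinski_violations(c) <= max_violations]


# Invented library entries for illustration only.
library = [
    Compound("cmpd_1", 342.4, 2.8, 2, 5),
    Compound("cmpd_2", 612.7, 6.1, 3, 9),   # too heavy and too lipophilic
    Compound("cmpd_3", 498.0, 5.4, 1, 7),   # one violation, still accepted
]
filtered = drug_like(library)
```

Filters for reactive or otherwise undesirable functional groups would be applied as a separate substructure-matching step, which is not shown here.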

B. Integrating Docking with QSAR in a Drug Discovery Thesis

Molecular docking and QSAR are highly synergistic computational techniques. In the context of a drug discovery thesis, they can be integrated to form a powerful workflow for lead optimization [23] [9]:

- Hit Identification: Use molecular docking for the virtual screening of large libraries to identify initial hit compounds.

- Lead Generation: Synthesize or acquire a series of analogs based on the initial hit.

- QSAR Model Development: Test the analog series for biological activity. Use the resulting activity data (e.g., IC₅₀) and calculated molecular descriptors to build a QSAR model [9]. This model establishes a mathematical relationship between chemical structure and biological activity for the series.

- Mechanistic Insight with Docking: Dock representative compounds from the series into the protein target. Analyze the binding modes to understand the structural basis for the activity trends observed in the QSAR model. The interactions observed can guide the choice of descriptors for the QSAR model.

- Rational Design: The combined insights from QSAR (predictive power) and docking (structural context) are used to rationally design new compounds with predicted higher potency and improved properties. This creates an iterative cycle of design, prediction, synthesis, and testing, accelerating the lead optimization process [23].

In modern drug discovery, the integration of Quantitative Structure-Activity Relationship (QSAR) modeling and molecular docking has created a synergistic framework that significantly enhances the efficiency and success rate of identifying therapeutic candidates [2]. While QSAR models correlate molecular descriptors or structural features with biological activity, molecular docking simulations predict how small molecules interact with target proteins at the atomic level [24]. Together, these methods form a complementary pipeline that bridges ligand-based and structure-based drug design approaches, providing both predictive power and mechanistic insight [25].

This integrated approach is particularly valuable for addressing complex challenges in drug development, including the prediction of ADMET properties (Absorption, Distribution, Metabolism, Excretion, and Toxicity), prioritizing compounds for synthesis, and understanding the structural basis of activity against therapeutic targets such as kinases, tubulin, and viral polymerases [2] [26] [24]. The convergence of these computational methodologies enables researchers to navigate vast chemical spaces more effectively while reducing reliance on expensive high-throughput screening [2].

Complementary Strengths: How QSAR and Docking Interact

Theoretical Framework and Workflow Integration

QSAR and docking approach the drug discovery problem from different but complementary angles. QSAR models, particularly those enhanced by machine learning, excel at identifying quantitative relationships between molecular features and biological activity across compound series [2] [27]. These models can rapidly predict activity for virtual compounds before synthesis, enabling efficient prioritization. Molecular docking provides structural context for these relationships by revealing atomic-level interactions between ligands and their protein targets, helping medicinal chemists understand why certain structural features enhance potency [26] [24].

The synergy between these approaches is maximized when they are deployed in a coordinated workflow. QSAR models can prioritize compounds for docking studies, while docking results can inform QSAR model development by identifying key interaction features. This creates a virtuous cycle of prediction and validation that accelerates lead optimization [25].
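
The first half of that cycle, in which QSAR predictions decide which compounds are worth docking, can be sketched as a two-tier filter. The functions passed in below are hypothetical stand-ins: `predict_pic50` represents a trained QSAR model and `dock_score` a docking run that returns a score where more negative is better. The cutoff and all values are invented for illustration.

```python
def tiered_screen(library, predict_pic50, dock_score, pic50_cutoff=6.0, top_n=2):
    """Shortlist compounds by predicted potency, then rank them by docking score."""
    shortlist = [c for c in library if predict_pic50(c) >= pic50_cutoff]
    ranked = sorted(shortlist, key=dock_score)  # more negative score = better
    return ranked[:top_n]


# Lookup tables standing in for a QSAR model and a docking program.
toy_pic50 = {"cmpd_1": 7.2, "cmpd_2": 5.1, "cmpd_3": 6.4, "cmpd_4": 6.9}
toy_dock = {"cmpd_1": -8.7, "cmpd_2": -10.2, "cmpd_3": -9.3, "cmpd_4": -7.5}

hits = tiered_screen(list(toy_pic50), toy_pic50.get, toy_dock.get)
```

In this toy case `cmpd_2` has the best docking score but never reaches the docking tier because its predicted potency is below the cutoff, which is the behaviour a tiered strategy trades for speed.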

Table 1: Complementary Strengths of QSAR and Molecular Docking

| Aspect | QSAR Approach | Molecular Docking | Integrated Benefit |

|---|---|---|---|

| Primary Focus | Statistical relationship between structure and activity [2] | Physical interaction between ligand and protein [24] | Comprehensive understanding from statistical trends to structural mechanisms |

| Chemical Space Exploration | Rapid screening of thousands to billions of compounds [2] | Detailed analysis of hundreds to thousands of candidates | Efficient tiered screening strategy |

| Output Deliverables | Predictive activity models and quantitative potency estimates [26] [27] | Binding poses, affinity scores, and interaction maps [24] | Both quantitative predictions and structural hypotheses for optimization |

| Target Information Requirements | Can operate with only compound structures and activities (ligand-based) [2] | Requires 3D protein structure (structure-based) [28] | Enables drug design for targets with varying structural characterization |

| Optimization Guidance | Identifies favorable physicochemical properties and substituents [27] | Reveals specific interactions to enhance (H-bonds, hydrophobic contacts) [26] | Multi-dimensional optimization strategy |

Workflow Visualization

The following diagram illustrates the integrated workflow between QSAR and molecular docking, showing how they complement each other in a drug discovery pipeline:

Integrated QSAR and Docking Workflow in Drug Discovery

Case Studies in Integrated Application

Aurora Kinase Inhibitor Development

A comprehensive study on imidazo[4,5-b]pyridine derivatives as Aurora kinase A inhibitors demonstrated the power of combining multiple QSAR techniques with docking simulations [26]. Researchers developed four different QSAR models (HQSAR, CoMFA, CoMSIA, and TopomerCoMFA) with excellent predictive statistics (cross-validation coefficients q² of 0.892-0.905), then used these models to identify key structural features influencing anticancer activity. The TopomerCoMFA model, which exhibited the highest external predictive ability (r²pred = 0.855), was particularly valuable for virtual screening of the ZINC database to identify novel R groups with potential enhanced activity [26].

Following QSAR-based design, molecular docking studies of the newly designed compounds with the Aurora A kinase structure (PDB ID: 1MQ4) helped validate binding modes and identify specific molecular interactions responsible for high affinity. This integration allowed researchers to design ten novel compounds with predicted improved activity profiles, which were further validated through molecular dynamics simulations and ADMET prediction [26].

Table 2: Key Research Reagent Solutions for Integrated QSAR-Docking Studies

| Reagent/Category | Specific Examples | Function in Research |

|---|---|---|

| Molecular Modeling Software | SYBYL2.0, Gaussian 09W, SCIGRESS, RDKit [26] [24] [29] | Compound structure building, optimization, and descriptor calculation |

| Descriptor Calculation Tools | DRAGON, PaDEL, ChemOffice [2] [24] | Computation of molecular descriptors for QSAR model development |

| Protein Structure Databases | Protein Data Bank (PDB) [26] [29] | Source of 3D protein structures for molecular docking targets |

| Chemical Databases | ZINC Database [26] | Source of commercially available compounds for virtual screening |

| Docking Platforms | AutoDock, Molecular Operating Environment (MOE) [24] [29] | Prediction of ligand-protein interactions and binding affinities |

| Dynamics Simulation Packages | GROMACS, AMBER, Desmond [26] [24] | Assessment of complex stability and interaction persistence over time |

Tubulin Inhibitors for Breast Cancer Therapy

In the development of 1,2,4-triazine-3(2H)-one derivatives as tubulin inhibitors for breast cancer treatment, researchers employed an integrated computational approach that highlighted the complementary nature of QSAR and docking [24]. The QSAR model, developed with DFT-calculated descriptors, achieved a coefficient of determination (R²) of 0.849 and identified absolute electronegativity and water solubility as key determinants of inhibitory activity. This provided quantitative guidelines for molecular design, which were then contextualized through docking studies that revealed how the most promising compound (Pred28) achieved a high binding affinity (-9.6 kcal/mol) through specific interactions with the tubulin colchicine binding site [24].

The docking results complemented the QSAR predictions by providing structural insights into why certain electronic properties enhanced activity, specifically how electronegativity features enabled optimal hydrogen bonding and hydrophobic interactions with key residues. Molecular dynamics simulations further strengthened this integration by demonstrating the stability of these interactions over time, with Pred28 showing the lowest RMSD (0.29 nm) during 100 ns simulations [24].

Coronavirus Polymerase Inhibitor Screening

A study on human coronavirus polymerase inhibitors showcased how QSAR and docking can be combined for repurposing existing nucleoside analogs [29]. Researchers calculated QSAR parameters including frontier orbital energies (HOMO-LUMO gap), electron affinity, and solvation properties for four anti-HCV drugs (Sofosbuvir, IDX-184, R7128, and MK-0608) and compared them to native nucleotides and Ribavirin. The QSAR analysis revealed that IDX-184 possessed electronic properties favorable for polymerase inhibition, which was subsequently confirmed through docking studies against 19 coronavirus polymerase models [29].

This combined approach demonstrated that IDX-184 would likely show superior binding compared to Ribavirin against MERS-CoV polymerase, while MK-0608 showed comparable performance. The synergy here allowed researchers to rapidly prioritize candidates for experimental testing without synthesizing new compounds, highlighting the efficiency gains possible through integrated computational approaches [29].

Experimental Protocols for Integrated Workflows

Protocol 1: Combined QSAR and Docking for Lead Optimization

This protocol outlines the steps for implementing an integrated QSAR-docking approach to optimize lead compounds, based on methodologies successfully applied in recent studies [26] [24] [27]:

Dataset Curation and Preparation

- Collect a structurally related compound series with measured biological activities (IC50 or Ki values)

- Convert activity values to pIC50 (-log10 of the IC50 expressed in mol/L) for model development

- Divide compounds into training and test sets (typically 80:20 ratio) using rational selection methods to ensure representative chemical space coverage [24]
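
The conversion and splitting steps above can be sketched as follows. The example assumes IC50 values in nanomolar, so pIC50 = 9 - log10(IC50 in nM). For the split it uses activity-ranked sampling, in which compounds are sorted by pIC50 and every fifth one goes to the test set; this is only one simple way to obtain an 80:20 split that spans the activity range, and the cited studies may have used other rational selection methods. All compound names and values are invented.

```python
import math


def pic50_from_nm(ic50_nm):
    """pIC50 from an IC50 given in nanomolar: -log10(IC50 in mol/L)."""
    return 9.0 - math.log10(ic50_nm)


def activity_ranked_split(records, test_every=5):
    """Sort (name, pIC50) records by activity and send every n-th one to the test set."""
    ordered = sorted(records, key=lambda record: record[1], reverse=True)
    train, test = [], []
    for index, record in enumerate(ordered):
        if index % test_every == test_every - 1:
            test.append(record)
        else:
            train.append(record)
    return train, test


# Invented IC50 values in nM for ten hypothetical compounds.
ic50_nm = {f"cmpd_{i}": value for i, value in enumerate(
    [12.0, 45.0, 150.0, 1000.0, 3200.0, 8.0, 520.0, 75.0, 2400.0, 310.0], start=1)}
dataset = [(name, pic50_from_nm(value)) for name, value in ic50_nm.items()]
train_set, test_set = activity_ranked_split(dataset)
```

Sampling across the sorted activities keeps both potent and weak compounds in the test set, which a purely random split of a small dataset does not guarantee.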

Molecular Descriptor Calculation and Selection

- Generate optimized 3D structures using molecular mechanics (MMFF94 or Tripos force field) followed by quantum chemical refinement (DFT with B3LYP/6-31G) [24]

- Calculate a diverse set of molecular descriptors (for example, constitutional, topological, geometric, electronic, and physicochemical descriptors)

- Apply feature selection techniques (genetic algorithm, stepwise regression) to identify most relevant descriptors [30]

QSAR Model Development and Validation

- Develop multiple QSAR models using various algorithms (MLR, PLS, RF, SVM) [2] [27]

- Validate models using both internal (cross-validation, q²) and external validation (predictions on test set, r²pred) [26]

- Apply strict validation criteria: q² > 0.5 and r²pred > 0.6 for predictive models [26]

- Interpret model coefficients to identify structural features favoring activity
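
The external validation statistic and the acceptance thresholds listed above can be expressed compactly. The sketch below assumes the common definition r²pred = 1 - Σ(y_obs - y_pred)² / Σ(y_obs - ȳ_train)², where the sums run over the test set and ȳ_train is the mean activity of the training set. The function names and the observed and predicted values are invented for illustration.

```python
def r2_pred(y_obs_test, y_pred_test, y_train_mean):
    """External predictive r2 computed on the test set."""
    press = sum((obs - pred) ** 2 for obs, pred in zip(y_obs_test, y_pred_test))
    ss_dev = sum((obs - y_train_mean) ** 2 for obs in y_obs_test)
    return 1.0 - press / ss_dev


def passes_criteria(q2, r2p, q2_min=0.5, r2p_min=0.6):
    """Apply the q2 > 0.5 and r2pred > 0.6 thresholds stated in the protocol."""
    return q2 > q2_min and r2p > r2p_min


# Invented test-set pIC50 values and model predictions.
observed = [6.0, 7.0, 8.0]
predicted = [6.2, 6.9, 7.7]
external_r2 = r2_pred(observed, predicted, y_train_mean=6.5)
```

Both thresholds must hold together: a model with high q² but poor r²pred fits its own training data well yet fails on new compounds, which is the situation external validation is meant to expose.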

Structure-Based Validation through Docking

- Prepare protein structure (from PDB or homology modeling) by adding hydrogens, assigning charges, and optimizing hydrogen bonds [26] [29]

- Define binding site based on known ligand or catalytic residues

- Dock training set compounds to verify that predicted active compounds show favorable binding interactions

- Use consensus scoring from multiple scoring functions to improve binding affinity predictions

Virtual Screening and Compound Design

- Apply validated QSAR model to screen virtual compound libraries

- Select top-ranked compounds for docking studies to verify binding mode feasibility

- Analyze docking poses to identify key protein-ligand interactions (hydrogen bonds, hydrophobic contacts, π-π stacking)

- Design new compounds by incorporating favorable structural features identified from both QSAR and docking

Experimental Validation and Iterative Refinement

- Synthesize and test top-predicted compounds

- Incorporate new experimental data to refine QSAR models

- Use iterative cycles of prediction and validation to optimize lead compounds

Protocol 2: 3D-QSAR Guided by Docking Pose Alignment

This specialized protocol is particularly useful when developing 3D-QSAR models that require spatial alignment of molecules, with docking providing the alignment rule [28]:

Binding Conformation Generation

- Dock each compound in the dataset to the target protein using flexible docking protocols

- Select the predominant binding pose for each compound based on clustering analysis and interaction consistency

- Extract the bound conformation for use in molecular alignment

Molecular Alignment for 3D-QSAR

- Align compounds using three different methods:

- Receptor-based alignment: Use docking poses directly

- Ligand-based alignment: Align to a common scaffold or pharmacophore

- Common substructure alignment: Identify maximum common substructure for alignment

- Evaluate which alignment method produces the most predictive 3D-QSAR model [28]

3D-QSAR Model Development

- Calculate CoMFA (steric and electrostatic) and CoMSIA (additional hydrophobic, H-bond donor/acceptor) fields [26] [28]

- Use Partial Least Squares (PLS) regression to correlate field values with biological activity

- Generate 3D contour maps to visualize regions where specific molecular properties enhance or diminish activity

Model Application and Design

- Use contour maps to guide molecular modifications

- Design new compounds that incorporate favorable steric, electrostatic, and hydrophobic features indicated by the 3D-QSAR model

- Verify that designed compounds maintain complementary binding interactions identified through docking

The synergistic integration of QSAR and molecular docking represents a powerful paradigm in modern drug discovery, effectively bridging ligand-based and structure-based approaches [2] [25]. This complementary relationship enables researchers to leverage the predictive power of QSAR models with the mechanistic insights provided by docking simulations, creating a more comprehensive framework for compound optimization [26] [24]. As both methodologies continue to advance through incorporation of machine learning and improved force fields [2] [31], their integration will become increasingly seamless and impactful. The case studies and protocols presented here provide a roadmap for researchers seeking to implement this synergistic approach in their drug discovery efforts, potentially accelerating the identification and optimization of novel therapeutic agents across multiple disease areas.

Virtual screening and lead optimization represent two pivotal phases in modern computer-aided drug discovery, significantly reducing time and costs associated with bringing new therapeutics to market [32]. Virtual screening serves as a preliminary filtering technology to identify bioactive compounds from extensive chemical libraries, functioning as a complementary approach to high-throughput screening [33]. Once potential hits are identified, lead optimization focuses on improving their characteristics, including target selectivity, biological activity, potency, and toxicity profiles [34]. Within this framework, quantitative structure-activity relationship (QSAR) studies and molecular docking have emerged as indispensable computational tools that provide rational guidance for structural modification and efficacy enhancement [33] [35]. This application note details standardized protocols and practical considerations for implementing these methodologies within drug discovery pipelines.

Virtual Screening: Approaches and Applications

Virtual screening (VS) involves the in silico screening of compound libraries to identify molecules most likely to bind to a specific drug target [32]. It has become a cornerstone of modern drug discovery due to its ability to efficiently explore vast chemical spaces that would be prohibitively expensive and time-consuming to assay experimentally [36].

Key Virtual Screening Approaches

There are two primary VS approaches, which can be used independently or in combination:

Table 1: Comparison of Virtual Screening Approaches

| Approach | Description | Data Requirements | Key Advantages |
|---|---|---|---|
| Structure-Based Virtual Screening | Uses 3D structural information of the target protein to identify compounds that complement the binding site [32] | High-resolution protein structure (X-ray, NMR, or cryo-EM); homology models [32] [37] | Can identify novel scaffolds; provides structural insights for optimization |
| Ligand-Based Virtual Screening | Utilizes known active ligands to search for structurally or pharmacologically similar compounds [32] [33] | Bioactivity data of known ligands; molecular descriptors/fingerprints [38] | Effective when protein structure is unavailable; leverages existing structure-activity data |
| Pharmacophore-Based Screening | Identifies compounds containing essential steric and electronic features for optimal target interactions [32] [39] | Either protein structure or known active ligands | Abstract representation allows scaffold hopping to novel chemotypes |

Quantitative Insights and Performance Metrics

An analysis of virtual screening results published between 2007 and 2011 revealed that hit identification criteria vary significantly across studies [40]. Only approximately 30% of studies reported a clear, predefined hit cutoff, and there was no consensus on selection criteria. The distribution of activity cutoffs used in these studies illustrates the practical considerations behind hit selection:

- 1-25 μM: 136 studies (most common range)

- 25-50 μM: 54 studies

- 50-100 μM: 51 studies

- 100-500 μM: 56 studies

- >500 μM: 25 studies (typically fragment-based screens) [40]
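To make the relative weight of each cutoff range easier to see, the counts listed above can be converted into shares. The short sketch below does only that arithmetic; the shares are relative to the 322 entries listed here, not to all studies surveyed in [40], and the dictionary keys are illustrative labels.

```python
# Counts of studies per activity-cutoff range, as listed above [40].
cutoff_counts = {
    "1-25 uM": 136,
    "25-50 uM": 54,
    "50-100 uM": 51,
    "100-500 uM": 56,
    ">500 uM": 25,
}

# Total number of listed entries and the percentage share of each range.
total = sum(cutoff_counts.values())
shares = {rng: 100.0 * n / total for rng, n in cutoff_counts.items()}

for rng, pct in shares.items():
    print(f"{rng:>11}: {cutoff_counts[rng]:3d} studies ({pct:.1f}%)")
```

On these numbers, the 1-25 μM range accounts for roughly 42% of the listed cutoffs, while cutoffs above 500 μM account for under 8%.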

Modern implementations combining machine learning with traditional methods show remarkable efficiency improvements. One recent study demonstrated a 1000-fold acceleration in binding energy predictions compared to classical docking-based screening when using machine learning approaches [36].

Experimental Protocols

Protocol 1: Structure-Based Pharmacophore Modeling and Virtual Screening

This protocol generates pharmacophore models from protein-ligand structural data for virtual screening [32].

Software Requirements: Molecular Operating Environment (MOE), Discovery Studio, or similar package with pharmacophore modeling capabilities.

Procedure:

Protein Structure Preparation

- Obtain the 3D structure from the Protein Data Bank (PDB) or via homology modeling [32] [37]. AlphaFold2 can generate reliable protein structures if experimental ones are unavailable [32].

- Add hydrogen atoms, assign protonation states, and correct any missing residues or atoms [32].

- Conduct energy minimization to relieve steric clashes.

Binding Site Characterization

- If the structure contains a bound ligand, define the binding site around this ligand.

- For apo structures, use binding site detection tools (e.g., GRID, LUDI) to identify potential binding pockets based on geometric and energetic properties [32].

Pharmacophore Feature Generation

- Analyze interactions between the protein and bound ligand (or binding site residues).

- Map key interaction features: hydrogen bond donors (HBD), hydrogen bond acceptors (HBA), hydrophobic areas (H), positively/negatively ionizable groups (PI/NI), and aromatic rings (AR) [32].

- Add exclusion volumes (XVOL) to represent the physical boundaries of the binding pocket [32].

Feature Selection and Model Validation

- Select features most critical for binding affinity, removing redundant or less important features [32].

- Validate the model using known active and inactive compounds to ensure it discriminates effectively.
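The validation step above does not prescribe specific metrics. One common way to quantify how well a model discriminates actives from inactives is to compute sensitivity, specificity, and an enrichment factor from a labeled validation set. The sketch below assumes this choice of metrics; the function name and the example counts are hypothetical.

```python
def screening_metrics(is_active, is_hit):
    """Return (sensitivity, specificity, enrichment factor) for a screen.

    is_active: list of bools, True for known actives in the validation set.
    is_hit:    list of bools, True where the pharmacophore model matched.
    """
    pairs = list(zip(is_active, is_hit))
    tp = sum(a and h for a, h in pairs)              # actives retrieved
    fn = sum(a and not h for a, h in pairs)          # actives missed
    fp = sum((not a) and h for a, h in pairs)        # inactives retrieved
    tn = sum((not a) and (not h) for a, h in pairs)  # inactives rejected
    if tp + fn == 0 or tn + fp == 0 or tp + fp == 0:
        raise ValueError("need actives, inactives, and at least one hit")
    sensitivity = tp / (tp + fn)
    specificity = tn / (tn + fp)
    # Enrichment factor: active rate among hits vs. active rate overall.
    enrichment = (tp / (tp + fp)) / ((tp + fn) / len(pairs))
    return sensitivity, specificity, enrichment


# Hypothetical validation set: 10 actives and 90 inactives;
# the model retrieves 8 of the actives and 9 of the inactives.
is_active = [True] * 10 + [False] * 90
is_hit = [True] * 8 + [False] * 2 + [True] * 9 + [False] * 81
sens, spec, ef = screening_metrics(is_active, is_hit)
print(f"sensitivity={sens:.2f} specificity={spec:.2f} EF={ef:.2f}")
```

An enrichment factor well above 1 indicates that the model concentrates actives among its hits compared with random selection.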

Virtual Screening Implementation

- Apply the pharmacophore model as a query to screen compound databases using a search algorithm (e.g., MOE's pharmacophore search) [39].

- Generate multiple conformations for each database compound to account for flexibility.

- Retain compounds that match the pharmacophore features within defined spatial constraints.
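The matching logic of the screening step can be illustrated with a simplified sketch. It is not the search algorithm used by MOE or any other package. It assumes that a query is a list of typed feature points, that each conformer has already been reduced to typed feature points, and that a match means all pairwise inter-feature distances agree within a tolerance. Exclusion volumes and chirality are ignored, and all names and coordinates are hypothetical.

```python
from itertools import product
from math import dist


def matches_pharmacophore(query, conformer_features, tolerance=1.0):
    """True if the conformer can satisfy every query feature.

    query, conformer_features: lists of (feature_type, (x, y, z)).
    A match requires same-type features whose pairwise distances differ
    from the query distances by at most `tolerance` (in angstroms).
    """
    # For each query feature, list conformer features of the same type.
    candidates = [
        [i for i, (ftype, _) in enumerate(conformer_features) if ftype == qtype]
        for qtype, _ in query
    ]
    for assignment in product(*candidates):
        if len(set(assignment)) < len(assignment):
            continue  # a conformer feature may be used only once
        if all(
            abs(
                dist(query[a][1], query[b][1])
                - dist(conformer_features[assignment[a]][1],
                       conformer_features[assignment[b]][1])
            ) <= tolerance
            for a in range(len(query))
            for b in range(a + 1, len(query))
        ):
            return True
    return False


def screen(query, conformers_by_compound, tolerance=1.0):
    """Retain compounds for which at least one conformer matches."""
    return [
        name
        for name, conformers in conformers_by_compound.items()
        if any(matches_pharmacophore(query, c, tolerance) for c in conformers)
    ]


# Hypothetical three-feature query and two-compound library.
query = [("HBD", (0, 0, 0)), ("HBA", (3, 0, 0)), ("AR", (0, 4, 0))]
library = {
    "cmpd_A": [[("HBD", (1, 1, 1)), ("HBA", (4.2, 1, 1)), ("AR", (1, 5.1, 1))]],
    "cmpd_B": [[("HBD", (0, 0, 0)), ("HBA", (6, 0, 0)), ("AR", (0, 4, 0))]],
}
hits = screen(query, library, tolerance=0.5)
print(hits)
```

Because the comparison uses internal distances only, no alignment of the conformer to the query is needed in this simplified form.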

[Figure: workflow diagram of the structure-based pharmacophore screening process]

Protocol 2: Molecular Docking for Lead Optimization

This protocol employs molecular docking to guide lead optimization through structure-activity relationship (SAR) analysis [37].

Software Requirements: Docking software (GOLD, AutoDock, Smina), molecular visualization tool (PyMOL, Chimera).

Procedure:

Structural Data Preparation and Validation

- Select high-resolution protein structure (<2.5 Å recommended) from PDB [37].

- Examine electron density maps to identify flexible or poorly resolved regions.

- Prepare protein by adding hydrogens, assigning charges, and removing water molecules (unless functionally important).

Ligand Preparation

- Generate 3D structures of lead compounds and analogs.

- Assign proper protonation states at physiological pH.

- Perform energy minimization using appropriate force fields.

Docking Workflow Establishment

- Define binding site coordinates based on known ligand position or active site residues.

- Select docking algorithm (genetic algorithm, Monte Carlo) and scoring function appropriate for your target [37].

- Validate docking protocol by re-docking known ligands and reproducing experimental binding poses (RMSD < 2.0 Å).
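The re-docking check above reduces to a root-mean-square deviation between the experimental and re-docked ligand coordinates. The minimal sketch below assumes both poses list the same heavy atoms in the same order and share the receptor's coordinate frame, so no superposition is applied. It does not correct for symmetry-equivalent atoms, and the coordinates are invented for illustration.

```python
from math import sqrt


def pose_rmsd(reference, docked):
    """RMSD in angstroms between two poses with identical atom ordering."""
    if not reference or len(reference) != len(docked):
        raise ValueError("poses must be non-empty and contain the same atoms")
    squared = sum(
        (a - b) ** 2
        for ref_atom, dock_atom in zip(reference, docked)
        for a, b in zip(ref_atom, dock_atom)
    )
    return sqrt(squared / len(reference))


# Hypothetical heavy-atom coordinates (x, y, z) in angstroms.
crystal_pose = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0), (1.5, 1.5, 0.0)]
redocked_pose = [(0.5, 0.0, 0.0), (2.0, 0.0, 0.0), (2.0, 1.5, 0.0)]

rmsd = pose_rmsd(crystal_pose, redocked_pose)
protocol_ok = rmsd < 2.0  # acceptance threshold from the protocol above
print(f"RMSD = {rmsd:.2f} A, protocol validated: {protocol_ok}")
```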

SAR Analysis and Compound Prioritization

- Dock series of analog structures to explore structure-activity relationships.

- Analyze binding modes to identify key interactions contributing to affinity.

- Correlate docking scores with experimental activities to validate predictive capability.
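The correlation step above can be sketched with Pearson and Spearman coefficients. The protocol does not say which coefficient to use, so both are shown. The docking scores and pIC50 values are invented, and the sketch assumes that more negative scores indicate better predicted binding, so a useful protocol should give a strongly negative correlation.

```python
from math import sqrt


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sd_x = sqrt(sum((a - mean_x) ** 2 for a in x))
    sd_y = sqrt(sum((b - mean_y) ** 2 for b in y))
    return cov / (sd_x * sd_y)


def ranks(values):
    """1-based ranks; tied values receive the average of their ranks."""
    order = sorted(range(len(values)), key=values.__getitem__)
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            result[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return result


def spearman(x, y):
    """Spearman rank correlation: Pearson correlation of the ranks."""
    return pearson(ranks(x), ranks(y))


# Hypothetical analog series: docking scores (kcal/mol) and measured pIC50.
docking_scores = [-9.1, -8.4, -7.9, -7.2, -6.5]
measured_pic50 = [8.2, 7.9, 7.1, 6.8, 6.0]

r = pearson(docking_scores, measured_pic50)
rho = spearman(docking_scores, measured_pic50)
print(f"Pearson r = {r:.2f}, Spearman rho = {rho:.2f}")
```

A rank-based coefficient is often the more forgiving choice here, since docking scores are generally better at ordering analogs than at reproducing absolute affinities.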

Interaction Mapping for Design

- Identify suboptimal interactions in current leads that could be improved.

- Propose structural modifications to enhance complementary interactions.

- Design new analogs with improved potency or selectivity.

[Figure: iterative lead optimization cycle informed by docking and SAR analysis]

Protocol 3: Machine Learning-QSAR Model Development

This protocol develops robust 2D QSAR models using machine learning to predict compound activity [38] [35].

Software Requirements: Python with scikit-learn, PaDEL descriptor software, KNIME, or other cheminformatics platforms.

Procedure:

Dataset Curation

Molecular Descriptor Calculation and Feature Selection

Model Training and Validation

- Split data into training (80%) and test (20%) sets, ensuring representative chemical space coverage [38].

- Train multiple ML algorithms: Support Vector Machine (SVM), Artificial Neural Network (ANN), and Random Forest (RF) [38].

- Optimize hyperparameters for each algorithm using grid search and cross-validation.
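The training steps above can be sketched with scikit-learn. The sketch assumes a Random Forest regressor as the single algorithm shown, a synthetic descriptor matrix in place of real descriptors, and an arbitrary small hyperparameter grid. A plain random split is used, which does not by itself guarantee representative chemical space coverage.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor
from sklearn.metrics import r2_score
from sklearn.model_selection import GridSearchCV, train_test_split

# Placeholder data: 200 compounds x 10 descriptors with synthetic activities.
rng = np.random.default_rng(0)
X = rng.normal(size=(200, 10))
y = 1.5 * X[:, 0] - X[:, 1] + rng.normal(scale=0.3, size=200)

# 80/20 split into training and held-out test sets.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, random_state=0
)

# Grid search with 5-fold cross-validation on the training set only.
param_grid = {"n_estimators": [50, 100], "max_depth": [None, 5]}
search = GridSearchCV(
    RandomForestRegressor(random_state=0), param_grid, cv=5, scoring="r2"
)
search.fit(X_train, y_train)

# The best model is refit on the full training set and scored once on the test set.
test_r2 = r2_score(y_test, search.predict(X_test))
print(search.best_params_, round(test_r2, 3))
```

The same pattern applies to SVM or neural network estimators by swapping the estimator and its parameter grid. Keeping the test set out of the grid search avoids an optimistic bias in the reported performance.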

Model Evaluation and Selection

Model Application for Prediction

- Use the validated model to predict activities of virtual compound libraries.

- Apply ADMET filters to prioritize compounds with favorable drug-like properties [38].

- Select top-ranked compounds for further experimental validation.
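The prediction and filtering steps above can be illustrated with a simple prioritization sketch. The protocol does not specify which ADMET filters to apply, so Lipinski's rule of five stands in here as an assumed drug-likeness filter. The candidate names, property values, and predicted activities are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    predicted_pic50: float  # output of the validated QSAR model
    mol_weight: float       # g/mol
    logp: float
    hbd: int                # hydrogen bond donors
    hba: int                # hydrogen bond acceptors


def passes_rule_of_five(c, max_violations=1):
    """Lipinski's rule of five, tolerating at most `max_violations`."""
    violations = sum([c.mol_weight > 500, c.logp > 5, c.hbd > 5, c.hba > 10])
    return violations <= max_violations


def prioritize(candidates, top_n):
    """Filter by drug-likeness, then rank by predicted activity."""
    kept = [c for c in candidates if passes_rule_of_five(c)]
    kept.sort(key=lambda c: c.predicted_pic50, reverse=True)
    return kept[:top_n]


# Hypothetical virtual library with QSAR predictions attached.
library = [
    Candidate("cmpd_A", 8.1, 420.0, 3.2, 2, 6),
    Candidate("cmpd_B", 8.5, 650.0, 6.1, 3, 9),  # two violations: removed
    Candidate("cmpd_C", 7.4, 310.0, 2.1, 1, 4),
    Candidate("cmpd_D", 7.9, 510.0, 4.5, 2, 7),  # one violation: kept
]
selected = [c.name for c in prioritize(library, top_n=2)]
print(selected)
```

In this example the compound with the highest predicted activity is discarded because it violates two of the four criteria.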

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 2: Key Research Reagents and Computational Tools for Virtual Screening and Lead Optimization

| Category | Specific Tools/Resources | Function and Application |
|---|---|---|
| Structural Databases | Protein Data Bank (PDB) [37] | Repository of 3D protein structures for structure-based design |
| Compound Libraries | ZINC Database [36] | Commercially available compounds for virtual screening |
| Bioactivity Data | ChEMBL Database [36] [38] | Curated bioactivity data for ligand-based design and QSAR modeling |
| Docking Software | GOLD, AutoDock, Smina [37] [36] | Predict binding poses and scores for protein-ligand complexes |
| Pharmacophore Modeling | MOE, Discovery Studio [39] | Create and screen pharmacophore models for virtual screening |
| Descriptor Calculation | PaDEL [38] | Compute molecular descriptors and fingerprints for QSAR |
| Machine Learning Libraries | scikit-learn [38] | Implement ML algorithms for QSAR and activity prediction |

Virtual screening and lead optimization represent interconnected pillars of modern computational drug discovery. Structure-based approaches leveraging pharmacophore modeling and molecular docking provide mechanistic insights for compound design [32] [37], while ligand-based QSAR strategies efficiently leverage existing structure-activity data to guide optimization [38] [35]. The integration of machine learning methodologies across these domains offers unprecedented acceleration, enabling more effective navigation of chemical space and enhanced prediction of compound properties [41] [36]. By implementing the standardized protocols outlined in this application note, researchers can establish robust computational workflows that significantly enhance efficiency in identifying and optimizing novel therapeutic candidates.

From Theory to Practice: Implementing QSAR and Docking Methodologies

Molecular descriptors are mathematical representations of a molecule's structural, physicochemical, and electronic properties that form the foundational variables in Quantitative Structure-Activity Relationship (QSAR) modeling [42] [43]. The selection of appropriate descriptors is a critical step in building robust QSAR models, as they quantitatively encode chemical information that can be correlated with biological activity [10]. Descriptors are typically classified by dimensionality—1D, 2D, 3D, and 4D—based on the level of structural information they encode, with each category offering distinct advantages for specific applications in drug discovery [2] [43]. The evolution of QSAR from classical linear models to sophisticated machine learning and deep learning frameworks has further emphasized the importance of strategic descriptor selection to capture complex, nonlinear patterns across large chemical spaces [2] [10]. This protocol provides a comprehensive guide to the classification, calculation, and application of molecular descriptors within modern QSAR workflows, with particular emphasis on integration with molecular docking studies.

Molecular Descriptor Classification and Characteristics

Molecular descriptors can be broadly categorized by dimensionality, with each level incorporating increasingly complex structural information. The table below summarizes the key descriptor types, their characteristics, and primary applications in drug discovery research.

Table 1: Classification of Molecular Descriptors by Dimensionality

| Descriptor Type | Structural Information Encoded | Example Descriptors | Computational Cost | Primary Applications |
|---|---|---|---|---|
| 1D Descriptors | Bulk properties & whole-molecule characteristics | Molecular weight, log P, atom counts, polar surface area [43] [44] | Low | Preliminary screening, ADMET prediction [44] |
| 2D Descriptors | Structural fragments & molecular connectivity | Topological indices, connectivity fingerprints, graph-based descriptors [2] [43] | Low to Moderate | High-throughput virtual screening, similarity searching [45] [43] |
| 3D Descriptors | Molecular shape, surface, & volume properties | Molecular surface area, volume, 3D-MoRSE descriptors, WHIM descriptors [2] [45] | Moderate to High | Ligand-protein docking, binding affinity prediction [45] [46] |
| 4D Descriptors | Conformational flexibility & ensemble properties | Ensemble-averaged spatial features, grid-based occupancy [2] | High | Incorporating flexibility in binding site interactions [2] |
| Quantum Chemical Descriptors | Electronic structure & reactivity properties | HOMO-LUMO energies, dipole moment, electrostatic potential surfaces [2] | Very High | Modeling reaction pathways & electronic interactions [2] |
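To show why the simplest descriptors in the table are cheap to compute, the sketch below derives atom counts and molecular weight directly from a molecular formula. It assumes a flat formula string without parentheses, charges, or isotopes, and a small table of rounded standard atomic masses. Dedicated packages such as RDKit or PaDEL-Descriptor would be used in practice.

```python
import re

# Rounded standard atomic masses (g/mol) for a few common elements.
ATOMIC_MASS = {
    "H": 1.008, "C": 12.011, "N": 14.007, "O": 15.999,
    "F": 18.998, "S": 32.06, "Cl": 35.45,
}


def atom_counts(formula):
    """Parse a flat molecular formula such as 'C9H8O4' into element counts."""
    counts = {}
    for symbol, number in re.findall(r"([A-Z][a-z]?)(\d*)", formula):
        counts[symbol] = counts.get(symbol, 0) + (int(number) if number else 1)
    return counts


def molecular_weight(formula):
    """Sum of atomic masses; raises KeyError for elements not in the table."""
    return sum(ATOMIC_MASS[s] * n for s, n in atom_counts(formula).items())


def heavy_atom_count(formula):
    """Number of non-hydrogen atoms."""
    return sum(n for s, n in atom_counts(formula).items() if s != "H")


aspirin = "C9H8O4"
mw = molecular_weight(aspirin)
heavy = heavy_atom_count(aspirin)
print(atom_counts(aspirin), round(mw, 2), heavy)
```

No connectivity or geometry is needed at this level, which is what keeps the computational cost low compared with the 2D, 3D, and 4D rows of the table.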

Experimental Protocols for Descriptor Calculation and Application

Protocol 1: Comprehensive Descriptor Generation Workflow

Objective: To generate a multi-dimensional descriptor set for QSAR model development.

Materials and Software:

- Chemical Structures: Standardized molecular structures in SDF, MOL2, or SMILES format [42]

- Descriptor Calculation Software: RDKit, PaDEL-Descriptor, Dragon, Mordred, or Schrödinger's DeepAutoQSAR [2] [42] [47]

- Computational Environment: Workstation with multi-core processor (≥16 GB RAM recommended for 3D/4D descriptors)

Procedure:

Data Preprocessing:

Descriptor Calculation:

- 1D/2D Descriptors: Process structures through RDKit or PaDEL-Descriptor to calculate constitutional, topological, and electronic descriptors [42] [44].

- 3D Descriptors: Use Dragon or Schrödinger Maestro to compute steric, surface, and shape descriptors from energy-minimized 3D structures [2] [45].

- Quantum Chemical Descriptors: Perform geometry optimization and orbital calculation using Gaussian, GAMESS, or Schrödinger Jaguar at appropriate theory levels (e.g., B3LYP/6-31G*) [2].

- 4D Descriptors: Generate conformational ensembles using molecular dynamics simulations (e.g., 1-10 ns in explicit solvent) and calculate ensemble-averaged spatial descriptors [2].
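The ensemble-averaging idea behind 4D descriptors can be sketched as follows. The example assumes the conformers have already been extracted from a simulation as lists of atomic coordinates, uses an unweighted (mass-free) radius of gyration as the spatial descriptor, and weights every conformer equally rather than by Boltzmann population. The coordinates are invented.

```python
from math import sqrt
from statistics import mean, pstdev


def radius_of_gyration(coords):
    """Unweighted radius of gyration of a set of (x, y, z) coordinates."""
    n = len(coords)
    centroid = [sum(p[i] for p in coords) / n for i in range(3)]
    squared = sum(
        sum((p[i] - centroid[i]) ** 2 for i in range(3)) for p in coords
    )
    return sqrt(squared / n)


def ensemble_average(conformers, descriptor):
    """Mean and population standard deviation of a descriptor over conformers."""
    values = [descriptor(c) for c in conformers]
    return mean(values), pstdev(values)


# Hypothetical two-conformer ensemble of a three-atom fragment (angstroms).
extended = [(-2.0, 0.0, 0.0), (0.0, 0.0, 0.0), (2.0, 0.0, 0.0)]
folded = [(-1.0, 0.0, 0.0), (0.0, 1.0, 0.0), (1.0, 0.0, 0.0)]

avg_rg, sd_rg = ensemble_average([extended, folded], radius_of_gyration)
print(f"<Rg> = {avg_rg:.3f} A, spread = {sd_rg:.3f} A")
```

The spread across the ensemble is itself informative, since a large value signals a flexible molecule for which a single 3D conformer would be a poor summary.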

Descriptor Preprocessing: